repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

koxudaxi/fastapi-code-generator | fastapi | 84 | ValueError: 'template/main.jinja2' is not in the subpath | I'm trying to make my project compatible with Poetry, so I need to add a `start` function that invokes uvicorn. That lets me declare the following Poetry run script:

```
[tool.poetry.scripts]
run = "skeleton_python_api.main:start"
```

My project has the following structure:

```
├── README.md
├── openapi.yaml
├── poetry.lock
├── pyproject.toml
├── skeleton_python_api
│   ├── __init__.py
│   ├── main.py
│   └── models.py
├── template
│   └── main.jinja2
└── tests
    ├── __init__.py
    └── test_skeleton_python_api.py
```

When I run the following command:

```
(skeleton-python-api-PB31_aPS-py3.9) ➜ skeleton-python-api git:(master) ✗ fastapi-codegen --input openapi.yaml --output skeleton_python_api -t template
```

I'm getting an error:

```
Traceback (most recent call last):
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/bin/fastapi-codegen", line 8, in <module>
    sys.exit(app())
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/lib/python3.9/site-packages/typer/main.py", line 214, in __call__
    return get_command(self)(*args, **kwargs)
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/lib/python3.9/site-packages/click/core.py", line 829, in __call__
    return self.main(*args, **kwargs)
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/lib/python3.9/site-packages/click/core.py", line 782, in main
    rv = self.invoke(ctx)
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/lib/python3.9/site-packages/click/core.py", line 1066, in invoke
    return ctx.invoke(self.callback, **ctx.params)
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/lib/python3.9/site-packages/click/core.py", line 610, in invoke
    return callback(*args, **kwargs)
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/lib/python3.9/site-packages/typer/main.py", line 497, in wrapper
    return callback(**use_params)  # type: ignore
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/lib/python3.9/site-packages/fastapi_code_generator/__main__.py", line 28, in main
    return generate_code(input_name, input_text, output_dir, template_dir)
  File "/Users/bartosz.nadworny/Library/Caches/pypoetry/virtualenvs/skeleton-python-api-PB31_aPS-py3.9/lib/python3.9/site-packages/fastapi_code_generator/__main__.py", line 50, in generate_code
    relative_path = target.relative_to(template_dir.absolute())
  File "/usr/local/Cellar/python@3.9/3.9.0_2/Frameworks/Python.framework/Versions/3.9/lib/python3.9/pathlib.py", line 928, in relative_to
    raise ValueError("{!r} is not in the subpath of {!r}"
ValueError: 'template/main.jinja2' is not in the subpath of '/Users/bartosz.nadworny/workspace/space/skeleton-python-api/template' OR one path is relative and the other is absolute.
```
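The last frames show the underlying `pathlib` behaviour: `relative_to` compares path parts literally, so a relative path such as `template/main.jinja2` (presumably produced by iterating over the relative `template` directory) is never "inside" an absolute one. A minimal, standard-library-only sketch reproducing that; `relative_template_path` is a hypothetical helper, not fastapi-code-generator's code:

```python
from pathlib import Path


def relative_template_path(target: Path, template_dir: Path) -> Path:
    """Path of `target` relative to `template_dir`, even if either one is relative.

    Making both sides absolute first avoids mixing a relative and an
    absolute path, which is what raises the ValueError in the traceback.
    """
    return target.absolute().relative_to(template_dir.absolute())


if __name__ == "__main__":
    template_dir = Path("template")
    target = Path("template/main.jinja2")

    try:
        # Same mix as in the traceback: relative target, absolute base.
        target.relative_to(template_dir.absolute())
    except ValueError as exc:
        print("ValueError:", exc)

    print(relative_template_path(target, template_dir))  # main.jinja2
```

Under that assumption, passing an absolute template directory (for example `-t "$(pwd)/template"`) might sidestep the error, since paths derived from it would then be absolute as well.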

Template:

```
from __future__ import annotations

import uvicorn
from fastapi import FastAPI

{{imports}}

app = FastAPI(
    {% if info %}
    {% for key,value in info.items() %}
    {{ key }} = "{{ value }}",
    {% endfor %}
    {% endif %}
    )


{% for operation in operations %}
@app.{{operation.type}}('{{operation.snake_case_path}}', response_model={{operation.response}})
def {{operation.function_name}}({{operation.snake_case_arguments}}) -> {{operation.response}}:
    {%- if operation.summary %}
    """
    {{ operation.summary }}
    """
    {%- endif %}
    pass
{% endfor %}


def start():
    uvicorn.run(app, host="0.0.0.0", port=8000)
```

# Env

macOS

Python 3.9.0

fastapi-code-generator 0.1.0 | closed | 2021-01-07T12:13:38Z | 2021-01-13T09:29:49Z | https://github.com/koxudaxi/fastapi-code-generator/issues/84 | [] | nadworny | 1 |

miguelgrinberg/python-socketio | asyncio | 338 | Python Server & Node Client: on authentication failure, the client receives one fixed error in every scenario | Hello,

I have a scenario where the HTTPS server is written in Python and the client in Node.js.

On the client, I need to show two different messages for the following scenarios:

- If a client tries to connect to an invalid URL such as 'abc.com', I need to show the message "server not found".
- If the user enters a valid URL but passes an invalid token, I need to show the message "Invalid token".

When authentication fails, whatever type of error I raise on the server, the client only ever receives one fixed error: "**websocket error**". I cannot receive, on the client side, the same error object and text that the server sent.

In Node, I have just one default handler that receives any type of connection error:

```
socket.on("connect_error", (data) => {
  console.log(data);
})
```

So if the server raises _ConnectionRefusedError('authentication failed')_ (see the server code), I would like to compare the type and text message of the handler's parameter in Node.js and, based on that, decide which message to show the user.

> My Python HTTPS server + Socket.IO code looks like this:

```
import socketio
from aiohttp import web
import asyncio
import eventlet
import ssl
import jwt
from urllib.parse import urlparse, parse_qs

sio = socketio.AsyncServer(cors_allowed_origins="*")
app = web.Application()
sio.attach(app)


@sio.event
async def connect(sid, environ):
    print('>>>> connect <<<<< ')
    print(sid)
    raise ConnectionRefusedError('authentication failed')


@sio.event
def disconnect(sid):
    print('>>>> disconnect <<<<< ')


@sio.event
def error(sid, data):
    print('>>>> error <<<<< ')


@sio.event
async def message(sid, data):
    print('>>>> message <<<<< ')
    print(data)
    return 'Acknowledgement From Server'


ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
ctx.load_cert_chain('server.cert', 'server.key')
web.run_app(app, host='localhost', port=2499, ssl_context=ctx)
```
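To tell the "Invalid token" case apart, the `connect` handler first has to read the token the client sends via `query`. A minimal sketch, assuming the `environ` passed to `connect` is a WSGI-style dict carrying the raw query string under `QUERY_STRING`; `extract_token` is a hypothetical helper, not part of python-socketio, and verifying the JWT itself is left out:

```python
from urllib.parse import parse_qs


def extract_token(environ):
    """Return the first `token` query parameter, or None if it is absent.

    Assumes a WSGI-style environ dict with the raw query string under
    'QUERY_STRING'; illustrative only, not a python-socketio API.
    """
    params = parse_qs(environ.get("QUERY_STRING", ""))
    values = params.get("token")
    return values[0] if values else None


if __name__ == "__main__":
    # The Socket.IO client may add its own parameters next to `token`.
    sample = {"QUERY_STRING": "token=abc.def.ghi&EIO=3&transport=websocket"}
    print(extract_token(sample))  # abc.def.ghi
```

The handler could then raise `ConnectionRefusedError` with one message for a missing token and another for a token that fails verification. Whether that message reaches the Node client's `connect_error` handler instead of the generic "websocket error" is the open question of this issue; the sketch does not settle it.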

#################################################################

> My client code is:

```
const io = require('socket.io-client');
var jwt = require('jsonwebtoken');

let token = jwt.sign({ username: 'git' }, '2YyZ?&qLkKus`pGV', { expiresIn: 60 * 60 });

const socket = io.connect('wss://localhost:2499', {
  forceNew: true,
  autoConnect: true,
  rejectUnauthorized: false,
  reconnection: false,
  secure: true,
  transports: ['websocket'],
  hostname: 'localhost',
  port: 2499,
  upgrade: false,
  query: {
    token
  },
})

socket.on("connect", (data, aaaaa) => {
  console.log("connect====>");
  console.log(data);
})

socket.on("connect_error", (data) => {
  console.log("connect_error====>");
  console.log(data);
})

socket.on("message", (data) => {
  console.log("message===>", data);
})

socket.on("error", (data) => {
  console.log("Error===>", data);
})

socket.on("disconnect", (data) => {
  console.log("disconnect===>", data);
});
```

What's wrong here? Why am I not getting the same error on the client side?

How can I achieve the desired output?

Thanks in advance | closed | 2019-08-21T08:38:00Z | 2019-08-29T05:46:39Z | https://github.com/miguelgrinberg/python-socketio/issues/338 | [

"question"

] | harshkoralwala | 6 |

bmoscon/cryptofeed | asyncio | 332 | OHLCV Aggregation Coinbase fails with `unexpected keyword argument 'order_type'` | I use the script `examples/demo_ohlcv.py`

```
from cryptofeed import FeedHandler
from cryptofeed.backends.aggregate import OHLCV
from cryptofeed.callback import Callback
from cryptofeed.defines import TRADES
from cryptofeed.exchanges import Coinbase
from cryptofeed.exchanges import Binance


async def ohlcv(data=None):
    print(data)


def main():
    f = FeedHandler()
    f.add_feed(Coinbase(pairs=['BTC-USD', 'ETH-USD', 'BCH-USD'], channels=[TRADES], callbacks={TRADES: OHLCV(Callback(ohlcv), window=30)}))
    #f.add_feed(Binance(pairs=['BTC-USDT'], channels=[TRADES], callbacks={TRADES: OHLCV(Callback(ohlcv), window=30)}))
    f.run()


if __name__ == '__main__':
    main()
```

Binance and FTX work; for Coinbase, however, I get:

```
TypeError: __call__() got an unexpected keyword argument 'order_type'
```
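The `TypeError` means some object's `__call__` received a keyword argument (`order_type`) that its signature does not declare, presumably because the Coinbase trade callback passes a field the other exchanges do not. A minimal, hypothetical illustration of that failure mode (these classes are not cryptofeed's code) and of a signature that tolerates extra fields by collecting them in `**kwargs`:

```python
class StrictCallback:
    """Declares exactly the keyword arguments it expects, nothing more."""

    def __call__(self, *, pair, price, amount):
        return (pair, price, amount)


class TolerantCallback:
    """Names the fields it uses and collects any extra ones in **extra."""

    def __call__(self, *, pair, price, amount, **extra):
        return (pair, price, amount, sorted(extra))


# Hypothetical trade payload; 'order_type' plays the role of the unexpected field.
SAMPLE_TRADE = {"pair": "BTC-USD", "price": 18000.0, "amount": 0.5, "order_type": "limit"}

if __name__ == "__main__":
    try:
        StrictCallback()(**SAMPLE_TRADE)
    except TypeError as exc:
        print("strict:", exc)
    print("tolerant:", TolerantCallback()(**SAMPLE_TRADE))
```

Whether the fix belongs in the aggregator's signature or in the Coinbase feed is for the maintainers to decide; the sketch only shows where such a `TypeError` comes from.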

I am using the current dev version, 1.6.2.

Am I using it wrong?

Thanks for a quick reply

| closed | 2020-11-20T18:43:57Z | 2020-11-21T01:35:42Z | https://github.com/bmoscon/cryptofeed/issues/332 | [

"bug"

] | degloff | 1 |

coqui-ai/TTS | pytorch | 3,799 | [Bug] Demo Inference Produces Distorted Audio Output | ### Describe the bug

I followed the demo code provided by Coqui to create a simple dataset and fine-tune a model using Gradio. However, when I load the model and perform inference, the output audio is heavily distorted, resembling the sound of a hair shaving machine.

You can listen to the output at the following link: [Distorted Audio Output](https://voca.ro/12zTyyaafKBF).

Steps to Reproduce:

1. Create dataset: followed the instructions to create a simple dataset using the demo code.
2. Fine-tune model: used the Gradio interface provided in the demo to fine-tune the model.
3. Load model and run inference: loaded the fine-tuned model and performed inference.

All three steps (dataset creation, fine-tuning and inference) were done through the Gradio interface launched with:

`py TTS/TTS/demos/xtts_ft_demo/xtts_demo.py`

Expected Result:

The model should produce clear and intelligible speech corresponding to the input text.

Actual Result:

The output audio is distorted and unintelligible. You can hear the output here: [Distorted Audio Output](https://voca.ro/12zTyyaafKBF).

Additional Information:

I verified that CUDA and the NVIDIA drivers are correctly installed and operational.

The nvidia-smi command confirms that the GPU is recognized and utilized by the system.

Other models and libraries utilizing CUDA work as expected.

Logs and Error Messages:

No explicit error messages were encountered during the execution. The process completes without any exceptions.

Request:

Could you please provide guidance on how to resolve this issue or if there are any specific configurations required to avoid such distortion in the output?

Thank you for your assistance.

### To Reproduce

`py TTS/TTS/demos/xtts_ft_demo/xtts_demo.py`

### Expected behavior

_No response_

### Logs

_No response_

### Environment

```shell

- Operating System: Windows 11

- Python Version: 3.10.4

- CUDA Version: 11.5

- PyTorch Version: 1.11.0+cu115

- coqui-ai Version: Last Update on github

```

### Additional context

_No response_ | closed | 2024-06-25T18:29:54Z | 2025-01-03T08:49:11Z | https://github.com/coqui-ai/TTS/issues/3799 | [

"bug",

"wontfix"

] | Heshamtr | 1 |

aio-libs/aiomysql | sqlalchemy | 195 | unable to perform operation on <TCPTransport closed=True reading=False 0x1e41248>; the handler is closed | Hi, I use:

- Python 3.5.3
- aiohttp 2.0.7
- aiomysql 0.0.9
- SQLAlchemy 1.1.10

When the application has been running for a long time, it throws the following error:

```
2017-07-27 10:28:13 rtgroom.py[line:141] ERROR Traceback (most recent call last):
  File "/home/wwwroot/ykrealtime/rtgame/models/mysql/rtgroom.py", line 137, in get_invite_me_count
    RTG_room.select().where(RTG_room.c.create_by == create_by).where(RTG_room.c.status == 1))
  File "/home/wwwroot/ykrealtime/venv/lib/python3.5/site-packages/aiomysql/utils.py", line 66, in __await__
    resp = yield from self._coro
  File "/home/wwwroot/ykrealtime/venv/lib/python3.5/site-packages/aiomysql/sa/connection.py", line 107, in _execute
    yield from cursor.execute(str(compiled), post_processed_params[0])
  File "/home/wwwroot/ykrealtime/venv/lib/python3.5/site-packages/aiomysql/cursors.py", line 239, in execute
    yield from self._query(query)
  File "/home/wwwroot/ykrealtime/venv/lib/python3.5/site-packages/aiomysql/cursors.py", line 460, in _query
    yield from conn.query(q)
  File "/home/wwwroot/ykrealtime/venv/lib/python3.5/site-packages/aiomysql/connection.py", line 397, in query
    yield from self._execute_command(COMMAND.COM_QUERY, sql)
  File "/home/wwwroot/ykrealtime/venv/lib/python3.5/site-packages/aiomysql/connection.py", line 627, in _execute_command
    self._write_bytes(prelude + sql[:chunk_size - 1])
  File "/home/wwwroot/ykrealtime/venv/lib/python3.5/site-packages/aiomysql/connection.py", line 568, in _write_bytes
    return self._writer.write(data)
  File "/usr/local/lib/python3.5/asyncio/streams.py", line 294, in write
    self._transport.write(data)
  File "uvloop/handles/stream.pyx", line 632, in uvloop.loop.UVStream.write (uvloop/loop.c:74612)
  File "uvloop/handles/handle.pyx", line 150, in uvloop.loop.UVHandle._ensure_alive (uvloop/loop.c:54917)
RuntimeError: unable to perform operation on <TCPTransport closed=True reading=False 0x1e41248>; the handler is closed
```
| closed | 2017-07-27T03:44:36Z | 2018-12-06T03:38:03Z | https://github.com/aio-libs/aiomysql/issues/195 | [] | larryclean | 4 |

taverntesting/tavern | pytest | 564 | Support using custom function in request.auth | As stated in the [requests documentation](https://requests.readthedocs.io/en/master/user/advanced/#custom-authentication), the user may pass a subclass of AuthBase as the auth parameter. This is very useful when authentication is more complicated than passing a session token or basic auth.

Perhaps this could be supported by allowing the user to pass a custom function that returns an instance of an AuthBase subclass, similar to the following:

```
request:
  auth:
    $ext:
      function: security:prepare_auth
      extra_kwargs:
        username: "{username_variable}"
```

In `security.py`, the user could then write:

```
from requests.auth import AuthBase


class PizzaAuth(AuthBase):
    """Attaches HTTP Pizza Authentication to the given Request object."""

    def __init__(self, username):
        # setup any auth-related data here
        self.username = username

    def __call__(self, r):
        # modify and return the request
        r.headers['X-Pizza'] = self.username
        return r


def prepare_auth(**kwargs):
    username = kwargs['username']
    return PizzaAuth(username)
```
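The `function: security:prepare_auth` string follows a `module:function` convention. A minimal sketch of how such a string could be resolved and called with `extra_kwargs`, using only `importlib`; `resolve_ext_function` and `build_auth` are hypothetical helpers, and tavern's actual `$ext` loader may work differently:

```python
import importlib


def resolve_ext_function(spec):
    """Turn a 'module.path:function_name' string into the callable it names.

    Hypothetical helper for illustration; tavern's own loader may differ.
    """
    module_name, sep, func_name = spec.partition(":")
    if not sep or not module_name or not func_name:
        raise ValueError(f"expected 'module:function', got {spec!r}")
    module = importlib.import_module(module_name)
    return getattr(module, func_name)


def build_auth(ext_block):
    """Call the configured function with its extra_kwargs to get the auth object."""
    func = resolve_ext_function(ext_block["function"])
    return func(**ext_block.get("extra_kwargs", {}))


if __name__ == "__main__":
    # A stdlib callable stands in for security:prepare_auth so this runs anywhere.
    auth = build_auth({"function": "types:SimpleNamespace",
                       "extra_kwargs": {"username": "alice"}})
    print(auth.username)  # alice
```

Under that assumption, the returned `PizzaAuth` instance would be passed straight to requests as `auth=`, and requests calls it on the request being prepared.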

I hope this makes sense.

I do have a fix which can be submitted shortly. Let me know what you think. | open | 2020-06-28T13:08:51Z | 2021-01-13T13:36:33Z | https://github.com/taverntesting/tavern/issues/564 | [] | sohoffice | 2 |

allenai/allennlp | data-science | 5,105 | Build Fairness Library | **Motivation:** As models and datasets become increasingly large and complex, it is critical to evaluate the fairness of models according to multiple definitions of fairness and mitigate bias in learned representations. This library aims to make fairness metrics, fairness training tools, and bias mitigation algorithms extremely easy to use and accessible to researchers and practitioners of all levels.

**Success Criteria:**

* Create a fairness library, and apply it to the Textual Entailment model, publishing an analysis for where the present models fall short and where they should improve.

* Write a blog post and guide chapter and add a model and demo for the implementations of the fairness metrics and bias mitigation algorithms, and explain the broader impact.

**Milestones**

Implement the following:

Fairness Metrics

- [x] Independence, Separation, Sufficiency

- [x] Sparse Annotations for Ground-Truth

- [ ] Dataset Bias Amplification, Model Bias Amplification

Training-Time Fairness Algorithms (with and without Demographics):

- [x] Through Adversarial Learning (with Demographics)

- [ ] Minimax (without Demographics)

- [ ] Repeated Loss Minimization (without Demographics)

Bias Mitigation Algorithms:

- [x] Linear projection, Hard debiasing, OSCaR, Iterative Null Space Projection

- [x] Bias direction methods: Classification Normal, Two Means, Paired PCA, PCA

- [x] Contextualized word embeddings

Bias Metrics:

- [x] WEAT, Embedding Coherence Test, NLI

Communication:

- [x] blog post

- [x] guide chapter

- [x] demo

- [x] contribute binary gender bias-mitigated model for SNLI to allennlp-models

- [x] contribute binary gender bias-mitigated model for SNLI to demos

| open | 2021-04-08T21:25:54Z | 2022-12-15T16:09:49Z | https://github.com/allenai/allennlp/issues/5105 | [] | ArjunSubramonian | 38 |

jacobgil/pytorch-grad-cam | computer-vision | 491 | the example in README needs to be updated | This link: https://jacobgil.github.io/pytorch-gradcam-book/Class%20Activation%20Maps%20for%20Semantic%20Segmentation.html

I found that the code can now automatically use the same device as the model:

```
class BaseCAM:
    def __init__(self,
                 model: torch.nn.Module,
                 target_layers: List[torch.nn.Module],
                 reshape_transform: Callable = None,
                 compute_input_gradient: bool = False,
                 uses_gradients: bool = True,
                 tta_transforms: Optional[tta.Compose] = None) -> None:
        self.model = model.eval()
        self.target_layers = target_layers
        # Use the same device as the model.
        self.device = next(self.model.parameters()).device
        xxx
```
| open | 2024-03-14T07:33:48Z | 2024-03-14T07:37:37Z | https://github.com/jacobgil/pytorch-grad-cam/issues/491 | [] | 578223592 | 1 |

pywinauto/pywinauto | automation | 1,171 | {AttributeError}'EditWrapper' object has no attribute 'is_editable' | ## Expected Behavior

I get an edit control from a window and want to check whether the control is editable.

[https://pywinauto.readthedocs.io/en/latest/code/pywinauto.controls.uia_controls.html?highlight=is_editable#pywinauto.controls.uia_controls.EditWrapper.is_editable](https://pywinauto.readthedocs.io/en/latest/code/pywinauto.controls.uia_controls.html?highlight=is_editable#pywinauto.controls.uia_controls.EditWrapper.is_editable)

According to the documentation, the method `is_editable` is available on `pywinauto.controls.uia_controls.EditWrapper`.

## Actual Behavior

When I call `is_editable()`, it throws the error "{AttributeError}'EditWrapper' object has no attribute 'is_editable'".

## Steps to Reproduce the Problem

1. Open the 7zFM application.
2. Get the descendants whose control type is "Edit".
3. Choose the edit control "uia_controls.EditWrapper - 'C:', Edit".
4. Call the method `is_editable()`.

## Short Example of Code to Demonstrate the Problem

## Specifications

- Pywinauto version: 0.6.8
- Python version and bitness: Python 3.8
- Platform and OS: Windows 10

| open | 2022-01-25T01:09:29Z | 2022-01-25T01:09:29Z | https://github.com/pywinauto/pywinauto/issues/1171 | [] | jiliguluss | 0 |

jschneier/django-storages | django | 1,243 | S3Boto3Storage.exists() always returns False | Hey guys, I need a little help again.

I'm having an issue with S3Boto3Storage.exists().

It always returns False even though the directory exists in the bucket.

I need to know what is going wrong, because if a user uploads a new file with the same content but a different file name, I want only the newly uploaded file to be visible.

I'm attaching the AWS config, storages_backends.py and views.py.

settings.py

```
# AWS Config
AWS_ACCESS_KEY_ID = 'AWS_ACCESS_KEY_ID '
AWS_SECRET_ACCESS_KEY = 'AWS_SECRET_ACCESS_KEY'
AWS_STORAGE_BUCKET_NAME = 'AWS_STORAGE_BUCKET_NAME'
# Note: django-storages reads AWS_S3_SIGNATURE_VERSION, and the trailing comma makes this value a tuple.
AWS_S3_SIGNATURE_NAME = 's3v4',
AWS_S3_REGION_NAME = 'ap-south-1'
AWS_S3_FILE_OVERWRITE = True
AWS_DEFAULT_ACL = None
# Note: the django-storages setting is spelled AWS_S3_VERIFY.
AWS_S3_VERITY = True
DEFAULT_FILE_STORAGE = 'storages.backends.s3boto3.S3Boto3Storage'
```

storages_backends.py

```
from storages.backends.s3boto3 import S3Boto3Storage


class MediaStorage(S3Boto3Storage):
    bucket_name = 'bucket-name'
```

views.py (where the logic goes wrong):

```
if request.method == 'POST':
    print('INSIDE POST DOC UPLOAD')
    doc_storage = MediaStorage()
    team = Team.objects.filter(teamID=user.username).get()
    media_storage = MediaStorage()
    file_path_bucket = 'documents/{0}'.format(request.user.username)
    print('Path is: ', file_path_bucket)
    # The line below always returns False
    print('Check Dir', media_storage.exists(file_path_bucket))
```

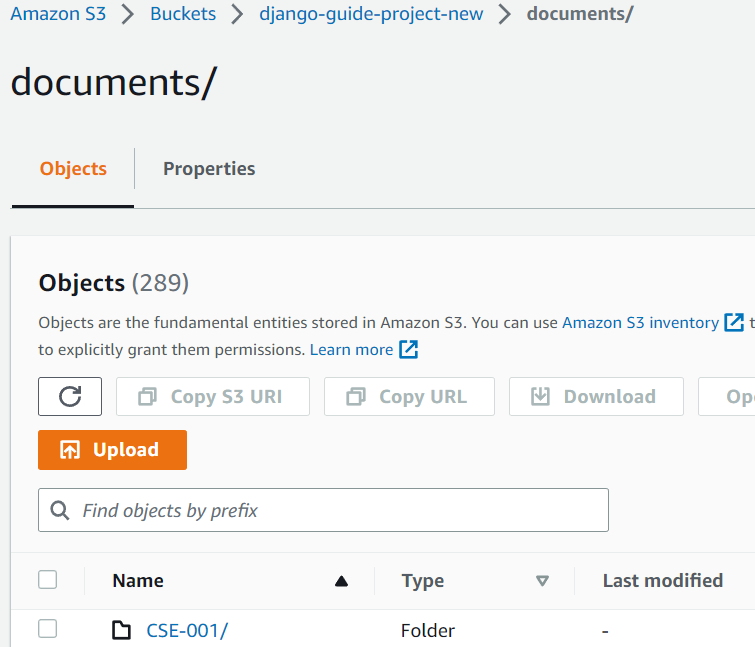

For example, if I upload a file to documents/CSE-001, that directory is present. So when I pass this directory to exists(), it should return True instead of False, because the directory exists inside the bucket. I'm attaching a screenshot of the directory that is created when a user uploads a file.
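As far as I understand, S3 has no real directories: `documents/CSE-001` only exists as a common prefix of object keys such as `documents/CSE-001/report.pdf`, while `exists()` asks whether an object with exactly the given key exists. A minimal, storage-independent sketch of a prefix check; `prefix_exists` is a hypothetical helper, the key names are made up, and in practice the keys would come from a bucket listing (for example boto3's `list_objects_v2` with a `Prefix`) rather than a Python list:

```python
def prefix_exists(keys, prefix):
    """Return True if any object key lives "under" a directory-like prefix.

    A trailing slash is enforced so that 'documents/CSE-00' does not
    match keys such as 'documents/CSE-001/...'.
    """
    directory = prefix.rstrip("/") + "/"
    return any(key.startswith(directory) for key in keys)


if __name__ == "__main__":
    keys = ["documents/CSE-001/report.pdf", "documents/CSE-002/notes.txt"]
    print(prefix_exists(keys, "documents/CSE-001"))  # True
    print(prefix_exists(keys, "documents/CSE-00"))   # False
```

Django's storage API also offers `listdir(path)`, which may be a more direct way to ask the same question; its exact behaviour for this case is not verified here.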

Please help me with that...

I'm not sure where I have gone wrong

Thank you | closed | 2023-04-24T16:08:38Z | 2023-05-20T18:06:14Z | https://github.com/jschneier/django-storages/issues/1243 | [] | bphariharan1301 | 1 |

holoviz/panel | jupyter | 7,150 | global loading spinner static asset not available | #### ALL software version info

panel 1.3.8

Docker version 26.1.3, build b72abbb

conda 24.1.2

The app is running locally inside a Docker container.

#### Description of expected behavior and the observed behavior

I would expect the loading spinner to be loaded successfully.

#### Complete, minimal, self-contained example code that reproduces the issue

```
panel serve /home/jovy/work/notebooks/A.ipynb --port 5006 --address 0.0.0.0 --allow-websocket-origin=0.0.0.0:5006 --log-level debug --autoreload --reuse-sessions --global-loading-spinner
```

```
2024-08-15 16:02:06,114 Uncaught exception GET /static/extensions/panel//arc_spinner.svg (172.17.0.1)
HTTPServerRequest(protocol='http', host='0.0.0.0:5006', method='GET', uri='/static/extensions/panel//arc_spinner.svg', version='HTTP/1.1', remote_ip='172.17.0.1')
Traceback (most recent call last):
  File "/opt/conda/envs/myenv/lib/python3.11/site-packages/tornado/web.py", line 1792, in _execute
    self.finish()
  File "/opt/conda/envs/myenv/lib/python3.11/site-packages/tornado/web.py", line 1218, in finish
    self.set_etag_header()
  File "/opt/conda/envs/myenv/lib/python3.11/site-packages/tornado/web.py", line 1702, in set_etag_header
    etag = self.compute_etag()
           ^^^^^^^^^^^^^^^^^^^
  File "/opt/conda/envs/myenv/lib/python3.11/site-packages/tornado/web.py", line 2775, in compute_etag
    assert self.absolute_path is not None
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
AssertionError
2024-08-15 16:02:06,115 500 GET /static/extensions/panel//arc_spinner.svg (172.17.0.1) 1.75ms
2024-08-15 16:02:06,120 Subprotocol header received
2024-08-15 16:02:06,121 WebSocket connection opened
```
| closed | 2024-08-15T16:09:39Z | 2024-08-24T12:15:02Z | https://github.com/holoviz/panel/issues/7150 | [] | updiversity | 0 |

LAION-AI/Open-Assistant | machine-learning | 3,747 | Not able to get to the dashboard | There is no way for me to access the dashboard tools. I see the dashboard for a split second, and then it just goes back to the main page, which lists contributors and affiliates. | closed | 2024-01-31T01:26:35Z | 2024-01-31T05:14:23Z | https://github.com/LAION-AI/Open-Assistant/issues/3747 | [] | RayneDrip | 1 |

labmlai/annotated_deep_learning_paper_implementations | deep-learning | 71 | Question about the framework | Thanks for your excellent work on so many implementations. I was wondering whether you would accept algorithms implemented in TensorFlow, MXNet or PaddlePaddle rather than PyTorch? | closed | 2021-07-27T05:07:38Z | 2021-08-07T02:17:25Z | https://github.com/labmlai/annotated_deep_learning_paper_implementations/issues/71 | [

"question"

] | littletomatodonkey | 2 |

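nl8590687/ASRT_SpeechRecognition | tensorflow | 142 | Extracting the speech content | I have a set of training data in which every audio clip contains both blank (silent) segments and human speech. Is there a method to extract only the human speech and save it as a WAV file? | closed | 2019-09-18T06:46:32Z | 2021-11-22T14:06:12Z | https://github.com/nl8590687/ASRT_SpeechRecognition/issues/142 | [] | zraul | 5 |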

ultralytics/yolov5 | machine-learning | 12,527 | speed estimate using yolo5 - put coordinates of cars in xml file or csv | ### Search before asking

- [x] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

Hi, I am using YOLOv5 with PyTorch and I can detect cars; everything works. I also have code for speed detection, but it needs the cars' coordinates. Can I do something else, using only YOLO, to estimate speed in my video?

Thank you for your help.

### Additional

_No response_ | closed | 2023-12-19T22:06:32Z | 2024-10-20T19:34:52Z | https://github.com/ultralytics/yolov5/issues/12527 | [

"question"

] | gchinta1 | 6 |

bmoscon/cryptofeed | asyncio | 217 | [Feature request] Support recording "Market price" or "index" on BitMEX | They seem to be terribly out of line when the market gets turbulent. | open | 2020-03-13T03:55:55Z | 2020-08-01T00:49:43Z | https://github.com/bmoscon/cryptofeed/issues/217 | [

"Feature Request"

] | xiandong79 | 7 |

jmcnamara/XlsxWriter | pandas | 193 | Problem with one formula | Hello.

Sorry, I don't speak English very well (I am French).

I have a small problem with a formula.

I reduced my program to make the problem easier to explain.

``` python
import xlsxwriter

workbook = xlsxwriter.Workbook('test.xlsx')
worksheet = workbook.add_worksheet()

worksheet.write('A1', 'SUCCEED')
worksheet.write('A2', 'FAILED')
worksheet.write('A3', 'SUCCEED')

#worksheet.write_formula('A5', '=NB.SI(A1:A3;"SUCCEED")') #French
worksheet.write_formula('B5', '=COUNTIF(A1:A3;"SUCCEED")') #English

workbook.close()
```

I want to count how many times "SUCCEED" appears in my results.

But Excel cannot open the .xlsx file once it is generated.

The error (in French), translated:

"Sorry... We found a problem with the content of "test.xlsx", but we can try to recover as much content as possible. If you trust the source of this workbook, click Yes."

I think the corresponding English Excel error is:

"We're sorry. We can't open test.xlsx because we found a problem with its contents."

The report:

``` xml
<?xml version="1.0" encoding="UTF-8" standalone="yes"?>
<recoveryLog xmlns="http://schemas.openxmlformats.org/spreadsheetml/2006/main"><logFileName>error087360_01.xml</logFileName><summary>Des erreurs ont été détectées dans le fichier « C:\Users\ArnaudF\Desktop\test.xlsx »</summary><removedRecords summary="Liste des enregistrements supprimés ci-dessous :"><removedRecord>Enregistrements supprimés: Formule dans la partie /xl/worksheets/sheet1.xml</removedRecord></removedRecords></recoveryLog>
```

LibreOffice shows "Err:508" instead of the result.

Yet I don't see any error in the Python code...

Any idea what the problem is?

Thank you and good day/evening

| closed | 2014-12-12T14:58:03Z | 2019-10-17T14:14:32Z | https://github.com/jmcnamara/XlsxWriter/issues/193 | [] | Keevar | 4 |

strawberry-graphql/strawberry | asyncio | 3,617 | Unable to import strawberry.django since v0.236.0 | I receive an error when trying to build the project since [`v0.236.0`](https://github.com/strawberry-graphql/strawberry/releases/tag/0.236.0)

## Describe the Bug

```
  File "/Users/boesch/.pyenv/versions/project/lib/python3.10/site-packages/strawberry/django/__init__.py", line 16, in __getattr__
    raise AttributeError(
AttributeError: Attempted import of strawberry.django.type failed. Make sure to install the'strawberry-graphql-django' package to use the Strawberry Django extension API.
```

## System Information

```
# requirements.in
strawberry-graphql[asgi]==0.236.0
strawberry-graphql-django==0.37.0
```

Note that I'm keeping strawberry django at `v0.37.0` even though their latest release is [`v0.47.2`](https://github.com/strawberry-graphql/strawberry-django/releases/tag/v0.47.2) because, from what I can tell without digging into it too much yet, [`v0.37.1`](https://github.com/strawberry-graphql/strawberry-django/releases/tag/v0.37.1) forces asgi 3.8+ when django 4.2 wants 3.7

Not the problem to resolve here, but fyi, I don't _think_ I can upgrade strawberry django yet. Will probably make an issue over there

## Additional Context

Tbh I'm not seeing anything at first glance in https://github.com/strawberry-graphql/strawberry/pull/3546/files that would cause this (though it is a big PR 😅). Nothing significant changed within the `strawberry/django` package at least 🤷

Running `strawberry upgrade update-imports` just updates some unset types for me, still have the issue, fwiw. | closed | 2024-09-04T14:48:25Z | 2025-03-20T15:56:51Z | https://github.com/strawberry-graphql/strawberry/issues/3617 | [

"bug"

] | bradleyoesch | 3 |

gradio-app/gradio | python | 10,658 | Events injecting function instead of called function value for gr.State | ### Describe the bug

I've noticed that the render function injects the value of gr.State both before the state value is called (that is, the callable itself) AND after it is called. If I understand correctly, it should only inject the result of the call, not the callable itself.

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python
import gradio as gr

with gr.Blocks() as demo:
    input_text = gr.Textbox(label="input")
    input_state = gr.State(
        lambda: bool()
    )

    @gr.render(inputs=[input_text, input_state])
    def show_split(text, state):
        print(state)
        if len(text) == 0:
            gr.Markdown("## No Input Provided")
        else:
            for letter in text:
                gr.Textbox(letter)

demo.launch()
```
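Until this is addressed upstream, one possible defensive workaround inside the render function is to normalize the value: if it is still callable, call it to obtain the default. A minimal sketch; `resolve_state` is a hypothetical helper, and it would misbehave for a State that is meant to hold a function:

```python
def resolve_state(value):
    """Return the state's real value, calling it if a default factory leaked through.

    Caveat: a State that intentionally stores a function would be called too.
    """
    return value() if callable(value) else value


if __name__ == "__main__":
    print(resolve_state(lambda: bool()))  # False
    print(resolve_state(False))           # False
```

Inside `show_split`, the first line would then be `state = resolve_state(state)`.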

### Logs

Here is the output of the above code from the print statement inside the decorated render function:

```shell
<function <lambda> at 0x000001F66D8CCE00>
False
```

### System Info

```shell
Gradio Environment Information:
------------------------------
Operating System: Windows
gradio version: 5.17.1
gradio_client version: 1.7.1

------------------------------------------------
gradio dependencies in your environment:

aiofiles: 23.2.1
anyio: 4.8.0
audioop-lts is not installed.
fastapi: 0.115.8
ffmpy: 0.5.0
gradio-client==1.7.1 is not installed.
httpx: 0.28.1
huggingface-hub: 0.29.0
jinja2: 3.1.5
markupsafe: 2.1.5
numpy: 2.2.3
orjson: 3.10.15
packaging: 24.2
pandas: 2.2.3
pillow: 11.1.0
pydantic: 2.10.6
pydub: 0.25.1
python-multipart: 0.0.20
pyyaml: 6.0.2
ruff: 0.9.6
safehttpx: 0.1.6
semantic-version: 2.10.0
starlette: 0.45.3
tomlkit: 0.13.2
typer: 0.15.1
typing-extensions: 4.12.2
urllib3: 2.3.0
uvicorn: 0.34.0
authlib; extra == 'oauth' is not installed.
itsdangerous; extra == 'oauth' is not installed.

gradio_client dependencies in your environment:

fsspec: 2025.2.0
httpx: 0.28.1
huggingface-hub: 0.29.0
packaging: 24.2
typing-extensions: 4.12.2
websockets: 14.2
```

### Severity

I can't work around it | open | 2025-02-23T00:57:41Z | 2025-03-03T07:31:16Z | https://github.com/gradio-app/gradio/issues/10658 | [

"bug"

] | brycepg | 1 |

robinhood/faust | asyncio | 535 | Consumer thread not yet started when enable_kafka = False | I'd like to run a Faust worker without doing anything with Kafka, for example to run timers.

## Steps to reproduce

```
import faust
from faust.app.base import BootStrategy


class App(faust.App):
    class BootStrategy(BootStrategy):
        enable_kafka = False


app = App('test')
```

## Expected behavior

App starts.

## Actual behavior

The app crashes while initializing the TableManager:

```
[^Worker]: Error: ConsumerNotStarted('Consumer thread not yet started')
Traceback (most recent call last):
  File "/usr/local/lib/python3.8/site-packages/mode/worker.py", line 273, in execute_from_commandline
    self.loop.run_until_complete(self._starting_fut)
  File "/usr/local/lib/python3.8/asyncio/base_events.py", line 612, in run_until_complete
    return future.result()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 736, in start
    await self._default_start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 743, in _default_start
    await self._actually_start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 767, in _actually_start
    await child.maybe_start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 795, in maybe_start
    await self.start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 736, in start
    await self._default_start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 743, in _default_start
    await self._actually_start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 767, in _actually_start
    await child.maybe_start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 795, in maybe_start
    await self.start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 736, in start
    await self._default_start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 743, in _default_start
    await self._actually_start()
  File "/usr/local/lib/python3.8/site-packages/mode/services.py", line 760, in _actually_start
    await self.on_start()
  File "/usr/local/lib/python3.8/site-packages/faust/tables/manager.py", line 143, in on_start
    await self._update_channels()
  File "/usr/local/lib/python3.8/site-packages/faust/tables/manager.py", line 162, in _update_channels
    tp for tp in self.app.consumer.assignment()
  File "/usr/local/lib/python3.8/site-packages/faust/transport/consumer.py", line 1292, in assignment
    return self._thread.assignment()
  File "/usr/local/lib/python3.8/site-packages/faust/transport/drivers/aiokafka.py", line 754, in assignment
    return ensure_TPset(self._ensure_consumer().assignment())
  File "/usr/local/lib/python3.8/site-packages/faust/transport/drivers/aiokafka.py", line 792, in _ensure_consumer
    raise ConsumerNotStarted('Consumer thread not yet started')

faust.exceptions.ConsumerNotStarted: Consumer thread not yet started

```

# Versions

* Python version: 3.8.1

* Faust version: 1.10.3

* Operating system: Debian Buster | closed | 2020-02-24T13:41:29Z | 2020-02-26T23:28:57Z | https://github.com/robinhood/faust/issues/535 | [] | joekohlsdorf | 1 |

aleju/imgaug | machine-learning | 669 | cval not behaving correctly when given float value | According to [the docs](https://imgaug.readthedocs.io/en/latest/source/api_augmenters_geometric.html) `cval` should accept float values and create new pixels according to the given value:

> **cval** (number ... ) – The constant value to use when filling in newly created pixels. ... _It may be a float value._

However, in practice (at least with `imgaug.augmenters.Affine`) this does not work: the fill value actually used appears to be `int(cval)`. My use case is `float32` images with values in `[0.0, 1.0]`.

The issue can be reproduced this way:

```
import numpy as np
import matplotlib.pyplot as plt
import imgaug as ia
import imgaug.augmenters as iaa

im = np.array(ia.quokka(size=(256,256)),dtype=np.float32)
im = im/(2**8-1)
print("First pixel = " + str(im[0,0,:]))

aug = iaa.Affine(scale=0.8,cval=0.4)
aug_im = aug(image=im)
print("First pixel = " + str(aug_im[0,0,:]))

plt.imsave('./regular.png',im)
plt.imsave('./scaled.png',aug_im)
```

Output:

```
First pixel = [0.19215687 0.30588236 0.32156864]
First pixel = [0. 0. 0.]
```

The second pixel value should instead be `[0.4 0.4 0.4]`.
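To make the expected behaviour concrete, here is a minimal NumPy-only reference sketch. It is not imgaug's implementation, and it assumes nearest-neighbour sampling for simplicity. It zooms about the image centre and fills newly created pixels with a float `cval` without casting it to an integer. With `scale=0.8`, the corner pixel maps outside the source image, so it should equal `cval` exactly.

```python
import numpy as np


def scale_about_center(image: np.ndarray, scale: float, cval: float) -> np.ndarray:
    """Nearest-neighbour zoom about the image centre (reference sketch).

    Output pixels whose source location falls outside `image` are set to
    `cval`, keeping its float value for float images (no int() cast).
    """
    h, w = image.shape[:2]
    out = np.empty_like(image)
    out[...] = cval  # constant fill with the float value as given
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.mgrid[0:h, 0:w]
    # Inverse mapping: for each output pixel, find the source pixel it samples.
    src_y = np.rint((ys - cy) / scale + cy).astype(int)
    src_x = np.rint((xs - cx) / scale + cx).astype(int)
    inside = (src_y >= 0) & (src_y < h) & (src_x >= 0) & (src_x < w)
    out[inside] = image[src_y[inside], src_x[inside]]
    return out
```

Comparing `scale_about_center(im, 0.8, 0.4)[0, 0]` with `aug_im[0, 0]` from the snippet above shows whether the fill value is being truncated.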

Resulting images:

If this can't be fixed, please update the documentation, because the current behavior doesn't match what it describes.

| closed | 2020-05-20T15:18:42Z | 2020-05-25T19:47:49Z | https://github.com/aleju/imgaug/issues/669 | [

"bug"

] | cdjameson | 1 |

deepinsight/insightface | pytorch | 1,855 | RAM | ```

# our RAM is 256G

mount -t tmpfs -o size=140G tmpfs /train_tmp

```
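The comment above sizes the tmpfs for a machine with 256G of RAM. On Linux, `free -h` shows total memory in the `total` column of its `Mem:` row, and `htop` shows used and total memory in its `Mem` bar. Below is a minimal Python sketch of the same check. It assumes a Linux/POSIX system where `os.sysconf` exposes `SC_PAGE_SIZE` and `SC_PHYS_PAGES`.

```python
import os


def total_ram_bytes() -> int:
    """Total physical memory: page size times number of physical pages (POSIX)."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")


if __name__ == "__main__":
    gib = total_ram_bytes() / 2**30
    print(f"Total RAM: {gib:.1f} GiB")
```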

How do I find out how much RAM my computer has?

Is it the `Mem` value in `htop`? | open | 2021-12-11T09:31:01Z | 2021-12-13T02:57:32Z | https://github.com/deepinsight/insightface/issues/1855 | [] | alicera | 2 |

wkentaro/labelme | deep-learning | 987 | [Question] Why doesn't the Labelme GUI have an option to open flags.txt? | closed | 2022-02-15T06:00:50Z | 2022-02-25T21:09:07Z | https://github.com/wkentaro/labelme/issues/987 | [] | YuaXan | 1 | |

pyjanitor-devs/pyjanitor | pandas | 570 | [ENH] Series toset() functionality | # Brief Description

<!-- Please provide a brief description of what you'd like to propose. -->

I would like to propose a `toset()` method, similar to [tolist()](https://pandas.pydata.org/pandas-docs/stable/reference/api/pandas.Series.tolist.html).

Essentially, it would call `tolist()` and then convert the result to a set.

Note: if the Series contains any unhashable element, this will raise an exception.
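Here is a minimal sketch of the proposed behaviour, written as a plain function. The name `toset` follows the proposal. Registering it as a `Series` method is left out, so this is an illustration rather than pyjanitor's implementation.

```python
import pandas as pd


def toset(series: pd.Series) -> set:
    """Return the values of `series` as a Python set.

    Mirrors the proposal: convert with tolist(), then build a set.
    Raises TypeError if any element is unhashable (for example, a list).
    """
    return set(series.tolist())


print(toset(pd.Series([1, 2, 2, 3])))  # {1, 2, 3}
```

Because `set()` needs hashable elements, a Series of lists raises `TypeError`, which matches the note above.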

# Example API

<!-- One of the selling points of pyjanitor is the API. Hence, we guard the API very carefully, and want to

make sure that it is accessible and understandable to many people. Please provide a few examples of what the API

of the new function you're proposing will look like. We have provided an example that you should modify. -->


```python

# convert series to a set

df['col1'].toset()

```

| closed | 2019-09-15T07:26:08Z | 2019-09-24T13:28:33Z | https://github.com/pyjanitor-devs/pyjanitor/issues/570 | [

"enhancement",

"good first issue",

"good intermediate issue",

"being worked on"

] | eyaltrabelsi | 5 |

hzwer/ECCV2022-RIFE | computer-vision | 332 | Question about tracking a point | Thank you for this library: I tried it with the `Dockerfile` - without `GPU` - and I was able to generate a new file right away.

Let's say I have an input video with two contiguous frames: `frame_1` and `frame_2`.

On the input video, on `frame_1`, I have a point with known coordinates.

In the output video, is there a way to find the point's coordinates:

1) in the frames generated between `frame_1` and `frame_2`?

2) in `frame_2`?

Thank you very much again. | open | 2023-08-01T10:37:46Z | 2023-08-03T12:33:49Z | https://github.com/hzwer/ECCV2022-RIFE/issues/332 | [] | carlok | 2 |

comfyanonymous/ComfyUI | pytorch | 6,652 | Image generation on 3090 is sometimes broken and worse than on 2060, and can't reproduce it | ### Expected Behavior

I have a workflow that produces very different results on an RTX 2060 in another PC and on my RTX 3090. This image looks correct and was generated on the 2060.

### Actual Behavior

This is what gets generated on my 3090 no matter what I do: updating PyTorch and drivers, changing the VAE, changing attention options, and changing fp32/bf16 settings. These produce slight changes in the image, but it remains broken. Also, generating on the CPU is completely broken.

### Steps to Reproduce

[ComfyUI_01254_.json](https://github.com/user-attachments/files/18609546/ComfyUI_01254_.json)

The model used is obsessionIllustrious_v31.safetensors, https://civitai.com/models/820208?modelVersionId=1136462

No custom nodes required

### Debug Logs

```powershell

@:~/github/ComfyUI$ python main.py --use-sage-attention --highvram --disable-all-custom-nodes

...

Checkpoint files will always be loaded safely.

Total VRAM 24135 MB, total RAM 64001 MB

pytorch version: 2.6.0+cu126

Set vram state to: HIGH_VRAM

Device: cuda:0 NVIDIA GeForce RTX 3090 : cudaMallocAsync

Using sage attention

ComfyUI version: 0.3.13

...

Skipping loading of custom nodes

Starting server

To see the GUI go to: http://127.0.0.1:8188

got prompt

model weight dtype torch.float16, manual cast: None

model_type EPS

Using pytorch attention in VAE

Using pytorch attention in VAE

VAE load device: cuda:0, offload device: cpu, dtype: torch.bfloat16

CLIP/text encoder model load device: cuda:0, offload device: cpu, current: cpu, dtype: torch.float16

loaded diffusion model directly to GPU

Requested to load SDXL

loaded completely 9.5367431640625e+25 4897.0483474731445 True

Requested to load SDXLClipModel

loaded completely 9.5367431640625e+25 1560.802734375 True

100%|█████████████████████████████████████████████████████████████████████████████| 25/25 [00:07<00:00, 3.40it/s]

Requested to load AutoencoderKL

loaded completely 9.5367431640625e+25 159.55708122253418 True

Prompt executed in 9.84 seconds

```

### Other

_No response_ | open | 2025-01-30T22:52:53Z | 2025-02-04T15:24:05Z | https://github.com/comfyanonymous/ComfyUI/issues/6652 | [

"Potential Bug"

] | Nekotekina | 4 |

jumpserver/jumpserver | django | 14,718 | [Question] JumpServer server requirement | ### Product Version

Latest

### Product Edition

- [X] Community Edition

- [ ] Enterprise Edition

- [ ] Enterprise Trial Edition

### Installation Method

- [X] Online Installation (One-click command installation)

- [ ] Offline Package Installation

- [ ] All-in-One

- [ ] 1Panel

- [ ] Kubernetes

- [ ] Source Code

### Environment Information

Linux Server

### 🤔 Question Description

What are the server requirements for JumpServer (e.g. OS version, CPU cores, RAM)?

I'm looking for the minimum, recommended, and best-practice specifications.

### Expected Behavior

_No response_

### Additional Information

_No response_ | closed | 2024-12-24T02:09:35Z | 2024-12-24T09:33:30Z | https://github.com/jumpserver/jumpserver/issues/14718 | [

"🤔 Question"

] | aryasenawiryady | 2 |

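jumpserver/jumpserver | django | 14,383 | [Bug] Local Golang code fails to connect to PgSQL | ### Product Version

v3.10.13

### Product Edition

- [ ] Community Edition

- [X] Enterprise Edition

- [ ] Enterprise Trial Edition

### Installation Method

- [ ] Online Installation (One-click command installation)

- [x] Offline Package Installation

- [ ] All-in-One

- [ ] 1Panel

- [ ] Kubernetes

- [ ] Source Code

### Environment Information

JumpServer version: JumpServer Enterprise Edition Version: v3.10.13

Deployment architecture: jumpserver server -> public-network domain gateway -> pgsql

### 🐛 Bug Description

Local Golang code cannot connect using the Database connect info obtained from the JumpServer workbench.

### Steps to Reproduce

Log in to JumpServer, go to the workbench, and select the PgSQL asset to connect to.

Under connection information, choose Native -> DB Guide. Local Golang code that uses the DB Connect info obtained there cannot connect.

### Expected Behavior

Local code can connect to the database using the information obtained from DB Guide.

### Additional Information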

_No response_
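Here is a minimal Python sketch that separates a network problem from a driver or credential problem. It only checks whether a TCP connection to the host and port shown in DB Guide can be opened. The function name is hypothetical, and the host in the usage line is a placeholder, not a real JumpServer value.

```python
import socket


def can_open_tcp(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP handshake to (host, port) completes within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # connection refused, unreachable, DNS failure, or timeout
        return False
```

For example, `can_open_tcp("db-guide-host.example", 5432)` uses PostgreSQL's default port; substitute the host and port DB Guide actually shows. If this returns `False`, the Go code and driver are not the cause.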

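### Attempted Solutions

1. Tried a TCP packet capture: the TCP connection cannot be established.

2. Checked the network firewall and confirmed that the firewall is not what prevents the TCP handshake. | closed | 2024-10-30T09:44:40Z | 2024-11-28T08:41:59Z | https://github.com/jumpserver/jumpserver/issues/14383 | [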

"🐛 Bug",

"🔘 Inactive"

] | ChenTitan49 | 3 |

thunlp/OpenPrompt | nlp | 309 | import break when using latest transformers | file: pipeline_base.py

line: 4

code: `from transformers.generation_utils import GenerationMixin`

This import fails with the latest transformers; it should be replaced with `from transformers import GenerationMixin`. | open | 2024-05-02T19:24:14Z | 2024-05-02T19:24:14Z | https://github.com/thunlp/OpenPrompt/issues/309 | [] | xiyang-aads-lilly | 0 |

Lightning-AI/pytorch-lightning | pytorch | 20,530 | Batch size finder code example in dark mode is light instead of dark | ### 📚 Documentation

On the [Batch size finder advanced tricks](https://lightning.ai/docs/pytorch/stable/advanced/training_tricks.html#batch-size-finder) page, the example code is rendered in light mode even when dark mode is enabled, which makes it hard to read:

<img width="891" alt="Hard to read light mode code example" src="https://github.com/user-attachments/assets/84f54224-1281-4faf-8228-580d2f8db566" />

In dark mode, the code example should instead look like this:

<img width="974" alt="Screenshot 2025-01-06 at 12 59 06 PM" src="https://github.com/user-attachments/assets/0455b527-faae-4125-b2a7-e63db02519f0" />

cc @lantiga @borda | open | 2025-01-06T18:03:51Z | 2025-01-06T18:05:21Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20530 | [

"docs",

"needs triage"

] | nicolasperez19 | 0 |

Yorko/mlcourse.ai | seaborn | 169 | Missing image on Lesson 3 notebook | Hey,

Image _credit_scoring_toy_tree_english.png_ is missing from the topic3_decision_trees_kNN notebook. | closed | 2018-02-19T11:17:20Z | 2018-08-04T16:08:25Z | https://github.com/Yorko/mlcourse.ai/issues/169 | [

"minor_fix"

] | henriqueribeiro | 3 |

alteryx/featuretools | data-science | 2,399 | Refactor computation of primitive lists in `DeepFeatureSynthesis` `__init__` | When building the following lists, there is a lot of code duplication:

- `self.groupby_trans_primitives`

- `self.agg_primitives`

- `self.where_primitives`

- `self.trans_primitives`
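Below is a hypothetical sketch of the kind of shared helper this refactor could introduce. One function resolves a list of primitive names or classes and validates each against an expected base class, and it is called once per list. All names here (`resolve_primitives`, the toy classes, `LOOKUP`) are illustrative and are not featuretools' actual internals.

```python
from typing import Dict, Iterable, List, Type, Union


# Toy stand-ins for the real primitive base classes (illustrative only).
class PrimitiveBase:
    pass


class AggregationPrimitive(PrimitiveBase):
    pass


class TransformPrimitive(PrimitiveBase):
    pass


class Mean(AggregationPrimitive):
    pass


class Absolute(TransformPrimitive):
    pass


LOOKUP: Dict[str, Type[PrimitiveBase]] = {"mean": Mean, "absolute": Absolute}


def resolve_primitives(
    primitives: Iterable[Union[str, Type[PrimitiveBase]]],
    lookup: Dict[str, Type[PrimitiveBase]],
    expected: Type[PrimitiveBase],
    arg_name: str,
) -> List[Type[PrimitiveBase]]:
    """Map names to primitive classes and check that each subclasses `expected`."""
    resolved = []
    for prim in primitives:
        if isinstance(prim, str):
            key = prim.lower()
            if key not in lookup:
                raise ValueError(f"Unknown primitive {prim!r} in {arg_name}")
            prim = lookup[key]
        if not (isinstance(prim, type) and issubclass(prim, expected)):
            raise ValueError(f"{prim!r} in {arg_name} is not a {expected.__name__}")
        resolved.append(prim)
    return resolved
```

Each list could then be built with a single call, e.g. `resolve_primitives(agg_primitives, LOOKUP, AggregationPrimitive, "agg_primitives")`. The helper can also be unit-tested without constructing the whole class.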

Furthermore, refactoring this logic outside of the `__init__` would help make the code more expressive and testable. | open | 2022-12-13T05:03:45Z | 2023-03-15T22:48:59Z | https://github.com/alteryx/featuretools/issues/2399 | [

"enhancement",

"refactor",

"tech debt"

] | sbadithe | 0 |

oegedijk/explainerdashboard | dash | 220 | Feature value input to get_contrib_df | Hello,

I understand that the get_contrib_df function can be used to get the contributions of the various features to the final prediction for a particular data index from the table. However, is it possible to get the contribution table by passing a list/array of data points to this function? I'm guessing it is possible, since the Input Feature table and contributions plot work in the explainer dashboard. I'm just not sure how to call this function, because none of its input arguments accept a list or an array.

Thank you,

Andy | open | 2022-05-25T05:24:51Z | 2022-05-25T05:24:51Z | https://github.com/oegedijk/explainerdashboard/issues/220 | [] | andypatrac | 0 |

aeon-toolkit/aeon | scikit-learn | 2,043 | [ajb/remove_metric] is STALE | @TonyBagnall,

ajb/remove_metric has had no activity for 143 days.

This branch will be automatically deleted in 32 days. | closed | 2024-09-09T01:28:04Z | 2024-09-12T07:26:31Z | https://github.com/aeon-toolkit/aeon/issues/2043 | [

"stale branch"

] | aeon-actions-bot[bot] | 1 |

LAION-AI/Open-Assistant | machine-learning | 2,760 | No option to delete session history | Deleting a history session, as prompted by the assistant, cannot be done. | closed | 2023-04-19T15:44:02Z | 2023-04-23T20:02:49Z | https://github.com/LAION-AI/Open-Assistant/issues/2760 | [] | taskmgr0 | 1 |

tflearn/tflearn | tensorflow | 882 | About loss in Tensorboard | Hello everyone,

I ran the Multi-layer perceptron example and visualized the loss in TensorBoard.

Does "Loss" refer to the training loss on each batch? And "Loss/Validation" refers to the loss on validation set? What does "Loss_var_loss" refer to?

| open | 2017-08-22T14:57:32Z | 2017-08-26T07:15:47Z | https://github.com/tflearn/tflearn/issues/882 | [] | zhao62 | 3 |

pytest-dev/pytest-xdist | pytest | 1,063 | Enable configuring numprocesses's default `tx` command | Over the years I've used and introduced xdist whenever possibly to speed up pytest runs.

Usually just using the `-n X` notation was sufficient. But in our current application, we have to use the `--tx` notation to ensure we're using eventlet.

```

--tx '4*popen//execmodel=eventlet'

```

This is a lot to type if you 'just' want to speed up tests. And combining it with `-n` reverts it to just using `popen`.

Ideally, I'd configure it to default to `popen//execmodel=eventlet` and then increase the number of processes with the `-n X` notation.

So my feature request would be:

Allow configuring which execution method `-n` actually uses, with `popen` remaining the default.

So that in your pytest.ini you can do something like this:

```ini

[pytest]

addopts = --default-tx popen//execmodel=eventlet

```

Then more workers could be added as desired with the `-n` notation.

| open | 2024-04-12T14:19:07Z | 2024-04-16T09:52:35Z | https://github.com/pytest-dev/pytest-xdist/issues/1063 | [] | puittenbroek | 5 |

vaexio/vaex | data-science | 2,183 | [BUG-REPORT]"Unknown variables or column: ' while using jit | **Description**

Hi, I got this "Unknown variables or column: '" error while using `jit_numba()` / `jit_cuda()`.

I'm just trying a simplified version of your JIT tutorial; the code is below:

```
import numpy as np
import vaex

df = vaex.example()


def arc_distance(theta_1, phi_1, theta_2, phi_2):
    """
    Calculates the pairwise arc distance
    between all points in vector a and b.
    """
    temp = (np.sin((theta_2-2-theta_1)/2)**2
            + np.cos(theta_1)*np.cos(theta_2) * np.sin((phi_2-phi_1)/2)**2)
    distance_matrix = 2 * np.arctan2(np.sqrt(temp), np.sqrt(1-temp))
    return distance_matrix


# without jit
df['arc_distance'] = arc_distance(df.x * np.pi/180,
                                  df.y * np.pi/180,
                                  df.z * np.pi/180,
                                  df.vx * np.pi/180)

df.mean(df.arc_distance)  # works fine here

df['arc_distance_cuda'] = df.arc_distance.jit_numba()  # error raised here
df.mean(df.arc_distance_cuda)
```
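For reference, `arc_distance` follows the haversine form of the central angle between two points on a sphere. Here θ plays the role of latitude and φ the role of longitude (angles in radians), `temp` corresponds to $a$, and `distance_matrix` corresponds to $d$. The standard formula uses $\theta_2 - \theta_1$ in the first term, while the snippet computes `theta_2-2-theta_1`. That difference is probably unrelated to the "Unknown variables or column" error, but it does change the computed values.

$$
a = \sin^2\!\left(\frac{\theta_2 - \theta_1}{2}\right) + \cos\theta_1 \cos\theta_2 \sin^2\!\left(\frac{\phi_2 - \phi_1}{2}\right),
\qquad
d = 2\,\operatorname{atan2}\!\left(\sqrt{a},\ \sqrt{1 - a}\right)
$$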

**Software information**

- Numpy: 1.22.0 / Numba: 0.56.0 / python: 3.9.13

- Vaex version {'vaex': '4.11.1', 'vaex-core': '4.11.1', 'vaex-viz': '0.5.2', 'vaex-hdf5': '0.12.3', 'vaex-server': '0.8.1', 'vaex-astro': '0.9.1', 'vaex-jupyter': '0.8.0', 'vaex-ml': '0.18.0'}

- Vaex was installed via: pip

- OS: Win10

I didn't encounter this problem in another environment (Python 3.8), and I don't think its package versions differ much from the current environment. Although `@jit` acceleration isn't essential for me (at least for now), I'd still like to know how to avoid this error. | closed | 2022-08-24T06:03:54Z | 2022-08-26T09:18:59Z | https://github.com/vaexio/vaex/issues/2183 | [] | GMfatcat | 5 |

docarray/docarray | pydantic | 1,601 | Handle `max_elements` from HNSWLibIndexer | By default, `max_elements` is set to 1024. I believe `max_elements` should be recomputed and the index resized dynamically. | closed | 2023-05-31T13:08:32Z | 2023-06-01T08:00:59Z | https://github.com/docarray/docarray/issues/1601 | [] | JoanFM | 0 |

supabase/supabase-py | fastapi | 717 | Test failures on Python 3.12 | # Bug report

## Describe the bug

Tests are broken against Python 3.12.

```AttributeError: module 'pkgutil' has no attribute 'ImpImporter'. Did you mean: 'zipimporter'?```

## To Reproduce

Run test script in a python 3.12 environment.

## Expected behavior

Tests should not fail.

## Logs

```bash

ERROR: invocation failed (exit code 1), logfile: /Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/log/py312-3.log

========================================================================== log start ===========================================================================

ERROR: Exception:

Traceback (most recent call last):

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/base_command.py", line 167, in exc_logging_wrapper

status = run_func(*args)

^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/req_command.py", line 247, in wrapper

return func(self, options, args)

^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/commands/install.py", line 315, in run

session = self.get_default_session(options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/req_command.py", line 98, in get_default_session

self._session = self.enter_context(self._build_session(options))

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/req_command.py", line 125, in _build_session

session = PipSession(

^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/network/session.py", line 343, in __init__

self.headers["User-Agent"] = user_agent()

^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/network/session.py", line 175, in user_agent

setuptools_dist = get_default_environment().get_distribution("setuptools")

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 180, in get_distribution

return next(matches, None)

^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 175, in <genexpr>

matches = (

^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/base.py", line 594, in iter_all_distributions

for dist in self._iter_distributions():

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 168, in _iter_distributions

for dist in finder.find_eggs(location):

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 136, in find_eggs

yield from self._find_eggs_in_dir(location)

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 103, in _find_eggs_in_dir

from pip._vendor.pkg_resources import find_distributions

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_vendor/pkg_resources/__init__.py", line 2164, in <module>

register_finder(pkgutil.ImpImporter, find_on_path)

^^^^^^^^^^^^^^^^^^^

AttributeError: module 'pkgutil' has no attribute 'ImpImporter'. Did you mean: 'zipimporter'?

Traceback (most recent call last):

File "<frozen runpy>", line 198, in _run_module_as_main

File "<frozen runpy>", line 88, in _run_code

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/__main__.py", line 31, in <module>

sys.exit(_main())

^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/main.py", line 70, in main

return command.main(cmd_args)

^^^^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/base_command.py", line 101, in main

return self._main(args)

^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/base_command.py", line 223, in _main

self.handle_pip_version_check(options)

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/req_command.py", line 179, in handle_pip_version_check

session = self._build_session(

^^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/cli/req_command.py", line 125, in _build_session

session = PipSession(

^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/network/session.py", line 343, in __init__

self.headers["User-Agent"] = user_agent()

^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/network/session.py", line 175, in user_agent

setuptools_dist = get_default_environment().get_distribution("setuptools")

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 180, in get_distribution

return next(matches, None)

^^^^^^^^^^^^^^^^^^^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 175, in <genexpr>

matches = (

^

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/base.py", line 594, in iter_all_distributions

for dist in self._iter_distributions():

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 168, in _iter_distributions

for dist in finder.find_eggs(location):

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 136, in find_eggs

yield from self._find_eggs_in_dir(location)

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_internal/metadata/importlib/_envs.py", line 103, in _find_eggs_in_dir

from pip._vendor.pkg_resources import find_distributions

File "/Users/harish/Workspaces/oss/supabase/supabase-py/.tox/py312/lib/python3.12/site-packages/pip/_vendor/pkg_resources/__init__.py", line 2164, in <module>

register_finder(pkgutil.ImpImporter, find_on_path)

^^^^^^^^^^^^^^^^^^^

AttributeError: module 'pkgutil' has no attribute 'ImpImporter'. Did you mean: 'zipimporter'?

```

## System information

- OS: macOS

## Additional context

The tests were launched via a `tox` script (see https://github.com/supabase-community/supabase-py/issues/696).

| closed | 2024-03-03T05:12:43Z | 2024-03-23T13:24:45Z | https://github.com/supabase/supabase-py/issues/717 | [

"bug"

] | tinvaan | 2 |

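QingdaoU/OnlineJudge | django | 476 | "system error" shown when submitting code |

Before submitting an issue, please:

- Read the documentation carefully: http://docs.onlinejudge.me/#/

- Search and review past issues

- Do not disclose security issues on GitHub; instead, send an email to `admin@qduoj.com`. A red envelope (cash reward) will be sent as thanks, depending on the severity of the vulnerability.

Then, when submitting the issue, please state the following clearly:

- What problem you ran into and what you were doing at the time, ideally with steps to reproduce

- What the error message is. If you cannot see an error message, check the corresponding log file in the data folder. Wrap long error messages in a code block.

- What you tried in order to fix the problem

- For page issues, state the browser version and include screenshots if possible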

| open | 2024-09-15T12:54:37Z | 2024-09-15T12:54:37Z | https://github.com/QingdaoU/OnlineJudge/issues/476 | [] | leeway-z | 0 |

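hankcs/HanLP | nlp | 1,059 | After Traditional Chinese segmentation, 髮 becomes 發 | <!--

The notes and version number are required; otherwise there will be no reply. If you want a quick reply, please fill in the template carefully. Thank you for your cooperation.

-->

## Notes

Please confirm the following:

* I have carefully read the following documents and did not find an answer:

- [Home page documentation](https://github.com/hankcs/HanLP)

- [wiki](https://github.com/hankcs/HanLP/wiki)

- [FAQ](https://github.com/hankcs/HanLP/wiki/FAQ)

* I have searched for my question using [Google](https://www.google.com/#newwindow=1&q=HanLP) and the [issue search](https://github.com/hankcs/HanLP/issues), and did not find an answer.

* I understand that the open-source community is a voluntary community that came together out of shared interest and bears no responsibility or obligation. I will be polite and thank everyone who helps me.

* [x] I have typed an x in these brackets to confirm all of the above.

## Version

<!-- For release builds, give the jar file name without the extension; for the GitHub repository version, state whether it is the master or portable branch -->

The current latest version is: 1.7.1

The version I am using is: 1.6.8

<!-- The above is required; the rest is free-form -->

## My Question

I use TraditionalChineseTokenizer.segment to segment "飛利浦整髮造型吹風梳"

### Expected Output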

```

[飛利浦/ntc, 整髮/v, 造型/n, 吹風/vn, 梳/v]

```

### Actual Output

```

[飛利浦/ntc, 整發/v, 造型/n, 吹風/vn, 梳/v]

```

### Other Information

Segmenting with NLPTokenizer.analyze produces the expected output.

| closed | 2018-12-25T06:25:13Z | 2018-12-25T19:54:56Z | https://github.com/hankcs/HanLP/issues/1059 | [

"improvement"

] | gunblues | 1 |

nteract/papermill | jupyter | 405 | Using papermill to test notebooks | Hi,

I am using papermill to check that some notebooks run without problems. I don't need to output any notebook. Is there a way to run a notebook without output? | open | 2019-07-26T06:54:23Z | 2021-03-11T22:54:23Z | https://github.com/nteract/papermill/issues/405 | [

"question"

] | argenisleon | 3 |

AUTOMATIC1111/stable-diffusion-webui | pytorch | 16,376 | [Feature Request]: add support for stablediffusion.cpp inference. | ### Is there an existing issue for this?

- [X] I have searched the existing issues and checked the recent builds/commits

### What would your feature do ?

stablediffusion.cpp runs fast on CPU, uses less memory than PyTorch, and supports quantized models, which take up much less space.

### Proposed workflow

1. Go to settings

2. Set the inference method to stablediffusion.cpp

### Additional information

_No response_ | open | 2024-08-13T03:48:05Z | 2024-08-13T03:48:05Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/16376 | [

"enhancement"

] | sss123next | 0 |

gradio-app/gradio | data-visualization | 10,667 | gr.load_chat has no documentation on gradio.app | ### Describe the bug

The [How to Create a Chatbot with Gradio](https://www.gradio.app/guides/creating-a-chatbot-fast) guide references a URL that does not exist, this is the documentation for the `gr.load_chat` function.

https://www.gradio.app/docs/gradio/load_chat

https://github.com/gradio-app/gradio/blob/f0a920c4934880645fbad783077ae9c7519856ce/guides/05_chatbots/01_creating-a-chatbot-fast.md?plain=1#L27

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

https://www.gradio.app/docs/gradio/load_chat does not exist and returns a 404.

### Screenshot

_No response_

### Logs

```shell

```

### System Info

```shell

n/a

```

### Severity

I can work around it | closed | 2025-02-24T17:46:19Z | 2025-02-25T00:49:49Z | https://github.com/gradio-app/gradio/issues/10667 | [

"bug",

"docs/website"

] | alexandercarruthers | 1 |

sgl-project/sglang | pytorch | 4,436 | [Feature] enable SGLang custom all reduce by default | ### Checklist

- [ ] 1. If the issue you raised is not a feature but a question, please raise a discussion at https://github.com/sgl-project/sglang/discussions/new/choose Otherwise, it will be closed.

- [ ] 2. Please use English, otherwise it will be closed.

### Motivation

We need community users to help test these cases. After confirming that there are no issues, we will default to using the custom all reduce implemented in SGLang. You can reply with your test results below this issue. Thanks!

**GPU Hardware Options**:

- H100/H200/H20/H800/A100

**Model Configurations with Tensor Parallelism (TP) Settings**:

- Llama 8B with TP 1/2/4/8

- Llama 70B with TP 4/8

- Qwen 7B with TP 1/2/4/8

- Qwen 32B with TP 4/8

- DeepSeek V3 with TP 8/16

**Environment Variables**:

```

export USE_VLLM_CUSTOM_ALLREDUCE=0

export USE_VLLM_CUSTOM_ALLREDUCE=1

```

**Benchmarking Commands**:

```bash

python3 -m sglang.bench_one_batch --model-path model --batch-size --input 128 --output 8

python3 -m sglang.bench_serving --backend sglang

```

### Related resources

_No response_ | open | 2025-03-14T19:46:52Z | 2025-03-18T08:29:14Z | https://github.com/sgl-project/sglang/issues/4436 | [

"good first issue",

"help wanted",

"high priority",

"performance"

] | zhyncs | 5 |

autokey/autokey | automation | 659 | Add return codes to mouse.wait_for_click and keyboard.wait_for_keypress | ### Has this issue already been reported?

- [X] I have searched through the existing issues.

### Is this a question rather than an issue?

- [X] This is not a question.

### What type of issue is this?

Enhancement

### Which Linux distribution did you use?

N/A

### Which AutoKey GUI did you use?

_No response_

### Which AutoKey version did you use?

N/A

### How did you install AutoKey?

N/A

### Can you briefly describe the issue?

I want to know how a wait for click or keypress completed so I can use that information for flow control in scripts.

E.g. a loop continues indefinitely until the mouse is clicked. This has to distinguish between a timeout and a click.

The same thing with a loop terminated by a keypress.

### Can the issue be reproduced?

N/A

### What are the steps to reproduce the issue?

N/A

### What should have happened?

These API calls should return 0 for success and one or more defined non-zero values to cover any alternatives. So far, 1 for timeout/failure is all that comes to mind.
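Here is a minimal sketch of the proposed return-code convention. It uses a plain `threading.Event` as a hypothetical stand-in for whatever AutoKey uses internally to signal a click or keypress. The constants and function names are illustrative, not AutoKey's API.

```python
import threading

WAIT_OK = 0       # the click/keypress happened
WAIT_TIMEOUT = 1  # the timeout expired first


def wait_with_status(event: threading.Event, timeout: float) -> int:
    """Block until `event` is set or `timeout` seconds pass; report which happened."""
    return WAIT_OK if event.wait(timeout) else WAIT_TIMEOUT


def run_until_clicked(clicked: threading.Event, step, poll: float = 0.5) -> int:
    """Call `step()` repeatedly until `clicked` is set; return how many steps ran."""
    steps = 0
    while wait_with_status(clicked, poll) == WAIT_TIMEOUT:
        step()
        steps += 1
    return steps
```

`run_until_clicked` is the loop from the description: it keeps working while waits time out and stops once the click is reported. This only works because a timeout and a click return different codes.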

### What actually happened?

AFAIK, they do not return any status code, which amounts to always reporting success no matter what happened.

### Do you have screenshots?

_No response_

### Can you provide the output of the AutoKey command?

_No response_

### Anything else?

_No response_ | open | 2022-02-08T20:34:56Z | 2023-06-18T16:59:58Z | https://github.com/autokey/autokey/issues/659 | [

"enhancement",

"scripting",

"good first issue"

] | josephj11 | 21 |

sqlalchemy/sqlalchemy | sqlalchemy | 10,792 | Stop turning a normal language into some kind of Frankenstein | ### Describe the bug

Stop turning a normal language into some kind of Frankenstein

### Optional link from https://docs.sqlalchemy.org which documents the behavior that is expected

_No response_

### SQLAlchemy Version in Use

2.0.2

### DBAPI (i.e. the database driver)

psycopg2

### Database Vendor and Major Version

PostgreSQL 15

### Python Version

3.11

### Operating system

OSX

### To Reproduce

```python

.

```

### Error

```

# Copy the complete stack trace and error message here, including SQL log output if applicable.

```

### Additional context

_No response_ | closed | 2023-12-26T11:54:45Z | 2023-12-26T12:00:04Z | https://github.com/sqlalchemy/sqlalchemy/issues/10792 | [] | undergroundenemy616 | 0 |

horovod/horovod | machine-learning | 3,091 | horovod installation: tensorflow not detected when using intel-tensorflow-avx512. | **Environment:**

1. Framework: TensorFlow

2. Framework version: intel-tensorflow-avx512==2.5.0

3. Horovod version: 0.22.1

4. MPI version: openmpi 4.0.3

5. CUDA version: N/A, cpu only

6. NCCL version: N/A, cpu only

7. Python version: 3.8

10. OS and version: Ubuntu focal

11. GCC version: 9.3.0

12. CMake version: 3.16.3

**Bug report:**

I'm trying to install Horovod after installing intel-tensorflow-avx512, but Horovod fails to detect that version of TensorFlow.
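One way to narrow this down is to check which installed pip distribution supplies the `tensorflow` module and where that module lives. The sketch below is only a diagnostic under stated assumptions: it uses the standard library alone (Python 3.8+), the helper name `tensorflow_install_info` is hypothetical, and it does not reproduce Horovod's own CMake detection logic.

```python
import importlib.metadata
import importlib.util


def tensorflow_install_info():
    """Report where the `tensorflow` module comes from, without importing it."""
    # Every installed distribution whose name mentions TensorFlow, e.g.
    # "tensorflow", "tensorflow-gpu" or "intel-tensorflow-avx512".
    names = set()
    for dist in importlib.metadata.distributions():
        meta = dist.metadata
        name = (meta.get("Name") if meta is not None else None) or ""
        if "tensorflow" in name.lower():
            names.add(name)
    # find_spec locates the package on sys.path but does not execute it.
    spec = importlib.util.find_spec("tensorflow")
    return {
        "distributions": sorted(names),
        "module_origin": spec.origin if spec is not None else None,
    }


if __name__ == "__main__":
    info = tensorflow_install_info()
    print("TensorFlow distributions:", info["distributions"])
    print("tensorflow module file:", info["module_origin"])
```

Running it inside the container shows whether the `tensorflow` module resolves to the intel-tensorflow-avx512 installation that the build is expected to find.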

The Singularity build file is here:

https://github.com/kaufman-lab/build_containers/blob/8145f3c58d237e0c3953d45ff58cf750397bc781/geospatial_plus_ml_horovod4.1.0.def

In particular:

```
HOROVOD_WITH_TENSORFLOW=1 HOROVOD_WITH_MPI=1 HOROVOD_WITHOUT_GLOO=1 HOROVOD_WITHOUT_MXNET=1 HOROVOD_CPU_OPERATIONS=MPI HOROVOD_WITHOUT_PYTORCH=1 pip install --no-cache-dir horovod[tensorflow]==0.22.1 --no-dependencies --force-reinstall
```

The build log is here:

https://github.com/kaufman-lab/build_containers/runs/3268356819?check_suite_focus=true

In particular, note the successful installation of TensorFlow (specifically the intel-tensorflow-avx512 variant):

```
+ python3 -m pip freeze
absl-py==0.13.0
astunparse==1.6.3
cachetools==4.2.2
certifi==2021.5.30
cffi==1.14.6
charset-normalizer==2.0.4
cloudpickle==1.6.0
flatbuffers==1.12
future==0.18.2
gast==0.4.0
GDAL==3.0.4
google-auth==1.34.0
google-auth-oauthlib==0.4.5
google-pasta==0.2.0
grpcio==1.34.1
h5py==3.1.0
idna==3.2
intel-tensorflow-avx512==2.5.0
keras-nightly==2.5.0.dev2021032900
Keras-Preprocessing==1.1.2
Markdown==3.3.4
numpy==1.19.5
oauthlib==3.1.1
opt-einsum==3.3.0
packaging==21.0
Pillow==8.3.1
protobuf==3.17.3
psutil==5.8.0
pyasn1==0.4.8
pyasn1-modules==0.2.8
pycparser==2.20
pyparsing==2.4.7
PyYAML==5.4.1
requests==2.26.0
requests-oauthlib==1.3.0
rsa==4.7.2
scipy==1.7.1
six==1.15.0
tensorboard==2.5.0
tensorboard-data-server==0.6.1
tensorboard-plugin-wit==1.8.0
tensorflow-estimator==2.5.0
termcolor==1.1.0
typing==3.7.4.3
typing-extensions==3.7.4.3
urllib3==1.26.6
Werkzeug==2.0.1
wrapt==1.12.1
```

And here is the message saying that TensorFlow couldn't be found:

```
CMake Error at /usr/share/cmake-3.16/Modules/FindPackageHandleStandardArgs.cmake:146 (message):
  Could NOT find Tensorflow (missing: Tensorflow_LIBRARIES) (Required is at
  least version "1.15.0")
``` | open | 2021-08-07T07:31:46Z | 2021-08-09T15:37:07Z | https://github.com/horovod/horovod/issues/3091 | [

"bug"

] | myoung3 | 4 |

Significant-Gravitas/AutoGPT | python | 8,740 | Add `integer` to `NodeHandle` type list | The JSON schema type `integer` is not defined in the type list in `<NodeHandle>`, causing it to show up as `(any)` rather than `(integer)` on block inputs/outputs with that type.

[https://github.com/Significant-Gravitas/AutoGPT/blob/86535b5811f8d1cc0bdde2232693919c4b1115e3/autogpt_platform/frontend/src/components/NodeHandle.tsx#L22-L29](https://github.com/Significant-Gravitas/AutoGPT/blob/86535b5811f8d1cc0bdde2232693919c4b1115e3/autogpt_platform/frontend/src/components/NodeHandle.tsx#L22-L29)
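The lookup behind the badge can be sketched language-neutrally in Python (the real component is TypeScript). The table contents and the `handle_label` helper are hypothetical; only the fall-back-to-`any` behavior for a missing type comes from the issue.

```python
# Hypothetical, simplified label tables; the real one lives in NodeHandle.tsx.
LABELS_BEFORE = {
    "string": "string",
    "number": "number",
    "boolean": "boolean",
    "object": "object",
    "array": "array",
}
# The fix the issue asks for: add an entry for the JSON schema type "integer".
LABELS_AFTER = {**LABELS_BEFORE, "integer": "integer"}


def handle_label(schema_type: str, labels: dict) -> str:
    """Return the type badge shown on a handle, falling back to 'any'."""
    return f"({labels.get(schema_type, 'any')})"
```

With the old table, `handle_label("integer", LABELS_BEFORE)` yields `(any)`; with the added entry it yields `(integer)`.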

<img src="https://uploads.linear.app/a47946b5-12cd-4b3d-8822-df04c855879f/3d4e9efe-7804-441e-83ef-53dab7c32832/d668d7b5-430e-4d76-bfc8-4fbc9bd0668d?signature=eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJwYXRoIjoiL2E0Nzk0NmI1LTEyY2QtNGIzZC04ODIyLWRmMDRjODU1ODc5Zi8zZDRlOWVmZS03ODA0LTQ0MWUtODNlZi01M2RhYjdjMzI4MzIvZDY2OGQ3YjUtNDMwZS00ZDc2LWJmYzgtNGZiYzliZDA2NjhkIiwiaWF0IjoxNzMyMjEwNDc4LCJleHAiOjMzMzAyNzcwNDc4fQ.a8Bl1Ua7JVzcIWUSQI0DD9XOKYhydUZorNSpK6TLKXg " alt="image.png" width="348" height="231" /> | closed | 2024-11-21T17:34:39Z | 2024-12-10T17:46:17Z | https://github.com/Significant-Gravitas/AutoGPT/issues/8740 | [

"platform/frontend"

] | Pwuts | 0 |

howie6879/owllook | asyncio | 70 | Could you update the Docker Hub image? | I'm not very familiar with Python and haven't managed to get it working.

The version on Docker Hub is a bit outdated. | closed | 2019-09-01T12:11:34Z | 2019-09-02T02:56:40Z | https://github.com/howie6879/owllook/issues/70 | [] | henri001 | 2 |

gradio-app/gradio | data-science | 10,046 | HTML component issue |

There is one remaining small problem, which I believe is in the front-end code: when both the `container` and `show_label` attributes are set to `True`, the two visibly conflict.

I think the cause is that the two use different label elements: the HTML component renders its label with a `<span>`, while other components use `<label>`.

_Originally posted by @nuclearrockstone in https://github.com/gradio-app/gradio/issues/10014#issuecomment-2492948301_

| closed | 2024-11-27T06:06:52Z | 2024-11-28T19:13:57Z | https://github.com/gradio-app/gradio/issues/10046 | [

"bug"

] | nuclearrockstone | 0 |

airtai/faststream | asyncio | 1,414 | Bug: The Kafka consumer remains blocked indefinitely after commit failures, unable to recover | **Describe the bug**

In version 0.5.x, when using aiokafka with `auto_commit=False`, a Kafka rebalance that causes a consumer commit to fail leaves the consumer blocked indefinitely; it never resumes normal consumption.

With `auto_commit=True`, or after reverting to version 0.4.7, the issue does not occur: the consumer quickly resumes consumption after commit failures.

**How to reproduce**

My code looks like this:

```python
from fastapi import FastAPI
from faststream.kafka.fastapi import KafkaRouter, KafkaMessage

router = KafkaRouter(bootstrap_servers='10.0.3.61:9092')

subscriber = router.subscriber('in_topic', group_id='test', auto_commit=False)
publisher = router.publisher('out_topic')


@subscriber
async def handle(msg: dict, kafka_msg: KafkaMessage):
    # do something...
    await publisher.publish(msg)


app = FastAPI(lifespan=router.lifespan_context)
app.include_router(router)
```

Steps to reproduce the behavior:

1. Use version 0.5.x.

2. Set `auto_commit=False`.

3. Trigger a Kafka rebalance that makes consumer commits fail.

**Expected behavior**

The consumer should quickly recover and resume consumption.

**Observed behavior**

The consumer remains blocked indefinitely and never resumes normal consumption.

**Screenshots**

When a Kafka rebalance causes consumer commit failures, the consumer remains blocked from then on until it goes offline.

**Environment**

faststream==0.5.x

**Additional context**

Provide any other relevant context or information about the problem here.

| closed | 2024-05-02T07:34:01Z | 2024-05-04T16:51:41Z | https://github.com/airtai/faststream/issues/1414 | [

"bug"

] | JohannT9527 | 0 |

InstaPy/InstaPy | automation | 6,000 | Setting timeout on join_pods function | Hi & Happy new year!

Can you please let me know if there is a way to stop `join_pods` interaction after some specified time?

The problem is that it currently keeps interacting with the pods indefinitely, which results in Instagram blocking my activity.

I would therefore like to set a time limit on that function.

Is this somehow possible currently?
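Whether InstaPy offers a built-in limit is not stated here. One generic approach, sketched under assumptions, is to interrupt a long-running call from outside with a timer signal. It relies on the standard `signal.setitimer`, so it works only on Unix and only in the main thread. `time_limit` and `TimeLimitExceeded` are hypothetical names. Interrupting a Selenium-driven session mid-action may also leave the browser in an odd state.

```python
import signal
import time
from contextlib import contextmanager


class TimeLimitExceeded(Exception):
    """Raised when the wrapped block runs longer than allowed."""


@contextmanager
def time_limit(seconds: float):
    """Interrupt the enclosed block after `seconds` (Unix, main thread only)."""

    def _on_alarm(signum, frame):
        raise TimeLimitExceeded(f"time limit of {seconds}s exceeded")

    previous = signal.signal(signal.SIGALRM, _on_alarm)
    signal.setitimer(signal.ITIMER_REAL, seconds)
    try:
        yield
    finally:
        # Cancel any pending timer and restore the handler that was there before.
        signal.setitimer(signal.ITIMER_REAL, 0)
        signal.signal(signal.SIGALRM, previous)


if __name__ == "__main__":
    try:
        with time_limit(1.0):
            time.sleep(5)  # stand-in for a long-running call such as join_pods
    except TimeLimitExceeded as exc:
        print("Stopped:", exc)
```

In a real script, the `join_pods` call would be wrapped the same way as the `time.sleep` stand-in, inside a `try`/`except TimeLimitExceeded` block.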

Many thanks. | open | 2021-01-01T20:15:06Z | 2021-07-21T03:19:20Z | https://github.com/InstaPy/InstaPy/issues/6000 | [

"wontfix"

] | alinakhay | 1 |

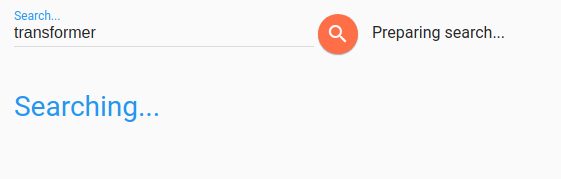

d2l-ai/d2l-en | computer-vision | 1,737 | Search doesn't appear to work | Currently, the search page shows no results and just "Preparing search"...

http://d2l.ai/search.html?q=transformer

Possibly related to this error in the console:

| closed | 2021-04-26T22:16:35Z | 2021-05-17T03:12:17Z | https://github.com/d2l-ai/d2l-en/issues/1737 | [

"bug"

] | indigoviolet | 3 |

nolar/kopf | asyncio | 1,018 | Problem in walkthrough diff example | ### Long story short

The [diff]() example only works if the `labels` field already exists. At that point in the walkthrough, `labels` has not been created yet (and would likely be pruned if it were created empty).
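The failure mode can be illustrated with a plain-Python sketch of building the label patch from the diff. It assumes that, as in Kopf's field handlers, each diff item is an `(op, field, old, new)` tuple whose `field` path is relative to `metadata.labels`. Under that assumption, when the whole `labels` mapping appears, the item's `field` is empty and `field[0]` raises `IndexError`. The helper `labels_patch_from_diff` is hypothetical.

```python
from typing import Any, Dict, Iterable, Optional, Tuple

DiffItem = Tuple[str, Tuple[str, ...], Any, Any]


def labels_patch_from_diff(diff: Iterable[DiffItem]) -> Dict[str, Optional[str]]:
    """Build a metadata.labels merge patch from a diff relative to metadata.labels."""
    patch: Dict[str, Optional[str]] = {}
    for op, field, old, new in diff:
        if field:
            # A single label was added, changed or removed: field == ("label-key",).
            # For a removal, new is None, which deletes the key in a merge patch.
            patch[field[0]] = new
        else:
            # The whole labels mapping appeared or disappeared: field == ().
            # This is the case where the walkthrough's field[0] raises IndexError.
            for key in old or {}:
                patch[key] = None
            patch.update(new or {})
    return patch
```

Inside the handler, `labels_patch = labels_patch_from_diff(diff)` would then replace the dict comprehension.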

### Kopf version

1.36.0

### Kubernetes version

1.24.8

### Python version

3.10

### Code

```python
import kopf
import kubernetes


@kopf.on.field('ephemeralvolumeclaims', field='metadata.labels')
def relabel(diff, status, namespace, **kwargs):
    labels_patch = {field[0]: new for op, field, old, new in diff}
    pvc_name = status['create_fn']['pvc-name']
    pvc_patch = {'metadata': {'labels': labels_patch}}

    api = kubernetes.client.CoreV1Api()
    obj = api.patch_namespaced_persistent_volume_claim(
        namespace=namespace,
        name=pvc_name,
        body=pvc_patch,
    )
```

### Logs

```none

/home/jsolbrig/anaconda3/envs/kopf/lib/python3.10/site-packages/kopf/_core/reactor/running.py:176: FutureWarning: Absence of either namespaces or cluster-wide flag will become an error soon. For now, switching to the cluster-wide mode for backward compatibility.

warnings.warn("Absence of either namespaces or cluster-wide flag will become an error soon."