repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

pyjanitor-devs/pyjanitor | pandas | 544 | Welcome Zijie to the team! | On the basis of his contribution to extend pyjanitor to be compatible with pyspark, I have added @zjpoh to the team. Welcome on board!

Zijie and @anzelpwj have the most experience amongst us with pyspark, and I'm looking forward to seeing their contributions!

cc: @zbarry @szuckerman @hectormz @shandou @sallyhong @anzelpwj @jk3587 | closed | 2019-08-23T21:57:33Z | 2019-09-06T23:16:42Z | https://github.com/pyjanitor-devs/pyjanitor/issues/544 | [] | ericmjl | 2 |

Anjok07/ultimatevocalremovergui | pytorch | 724 | Performance decreases with high batch size | If I set the batch size to a value like 10, RAM usage goes up (to around 100 GB of 128 GB), but performance actually seems to get worse. Is this expected? | open | 2023-08-07T18:51:38Z | 2023-08-08T00:07:00Z | https://github.com/Anjok07/ultimatevocalremovergui/issues/724 | [] | GilbertoRodrigues | 0 |

flasgger/flasgger | rest-api | 623 | Can syntax highlighting be supported? | Is there any plan to support syntax highlighting in descriptions? | open | 2024-08-13T04:21:10Z | 2024-08-13T04:23:01Z | https://github.com/flasgger/flasgger/issues/623 | [] | rice0524168 | 0 |

OpenInterpreter/open-interpreter | python | 1,109 | Is `%% [commands]` not implemented yet, or is it a bug? | ### Describe the bug

I found the following message when I looked at %help.

<img width="401" alt="image" src="https://github.com/OpenInterpreter/open-interpreter/assets/2724312/ae75d188-8ffa-413d-a5c7-3f61dcc9be44">

According to this Help, `%% [commands]` seems to allow you to execute shell commands in the Interpreter console.

I found this to be a very nice feature.

However, when I typed the following command on Open Interpreter, nothing was executed.

Could this possibly not yet be implemented?

Or is it a bug?

If anyone knows anything about the behavior of this command, please let me know.

### Reproduce

1. Open Interpreter

2. Execute command `%% ls`

### Expected behavior

1. A list of files in the current directory is displayed

### Screenshots

_No response_

### Open Interpreter version

0.2.3

### Python version

3.10.12

### Operating System name and version

Windows 11 WSL/Ubuntu 22.04.4 LTS

### Additional context

_No response_ | open | 2024-03-22T10:30:08Z | 2024-03-31T02:12:42Z | https://github.com/OpenInterpreter/open-interpreter/issues/1109 | ["Bug", "Help Required", "Triaged"] | ynott | 3 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 1,201 | No gradient penalty in WGAN-GP | When computing WGAN-GP loss, the cal_gradient_penalty function is not called, and gradient penalty is not applied. | open | 2020-11-29T14:58:05Z | 2020-11-29T14:58:05Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1201 | [] | GwangPyo | 0 |

JaidedAI/EasyOCR | deep-learning | 320 | Can it be used in php? | Can it be used in php? | closed | 2020-12-02T06:33:20Z | 2022-03-02T09:24:09Z | https://github.com/JaidedAI/EasyOCR/issues/320 | [] | netwons | 0 |

hankcs/HanLP | nlp | 1,904 | When running pip install hanlp[full], it installs: Collecting tensorflow>=2.8.0 Using cached tensorflow-2.17.0-cp39-cp39-win_amd64.whl (2.0 kB) | <!--

Thanks for finding a bug; please fill out the form below carefully:

-->

**Describe the bug**

A clear and concise description of what the bug is.

**Code to reproduce the issue**

Provide a reproducible test case that is the bare minimum necessary to generate the problem.

```python

# Run the following command on Windows 11 23H2

pip install hanlp[full]

```

**Describe the current behavior**

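When installing HanLP[full] with Python 3.9.13 on Windows 11 23H2, tensorflow==2.17.0 is installed automatically. This does not match the version required by the project's setup.py, so the program cannot run properly. In addition, attempting to downgrade tensorflow runs into conflicts among several dependencies.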

**Expected behavior**

Installing HanLP[full] should automatically select a suitable tensorflow version, or offer advice on resolving the dependency conflicts.

**System information**

-OS Platform and Distribution: Windows 11 23H2

-Python version: 3.9.13

-HanLP version: 2.1.0b59

**Other info / logs**

D:\>cd d:\Code-Compile\Language\Python39\Scripts

d:\Code-Compile\Language\Python39\Scripts>pip -V

pip 22.0.4 from D:\Code-Compile\Language\Python39\lib\site-packages\pip (python 3.9)

d:\Code-Compile\Language\Python39\Scripts>pip install hanlp[full]

Collecting hanlp[full]

Downloading hanlp-2.1.0b59-py3-none-any.whl (651 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 651.6/651.6 KB 42.8 MB/s eta 0:00:00

Collecting pynvml

Using cached pynvml-11.5.3-py3-none-any.whl (53 kB)

Collecting sentencepiece>=0.1.91

Using cached sentencepiece-0.2.0-cp39-cp39-win_amd64.whl (991 kB)

Collecting torch>=1.6.0

Using cached torch-2.4.0-cp39-cp39-win_amd64.whl (198.0 MB)

Collecting hanlp-downloader

Using cached hanlp_downloader-0.0.25-py3-none-any.whl

Collecting hanlp-trie>=0.0.4

Using cached hanlp_trie-0.0.5-py3-none-any.whl

Collecting termcolor

Using cached termcolor-2.4.0-py3-none-any.whl (7.7 kB)

Collecting hanlp-common>=0.0.20

Using cached hanlp_common-0.0.20-py3-none-any.whl

Collecting toposort==1.5

Using cached toposort-1.5-py2.py3-none-any.whl (7.6 kB)

Collecting transformers>=4.1.1

Downloading transformers-4.44.1-py3-none-any.whl (9.5 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 9.5/9.5 MB 28.7 MB/s eta 0:00:00

Collecting tensorflow>=2.8.0

Using cached tensorflow-2.17.0-cp39-cp39-win_amd64.whl (2.0 kB)

Collecting perin-parser>=0.0.12

Using cached perin_parser-0.0.14-py3-none-any.whl

Collecting penman==1.2.1

Using cached Penman-1.2.1-py3-none-any.whl (43 kB)

Collecting fasttext-wheel==0.9.2

Using cached fasttext_wheel-0.9.2-cp39-cp39-win_amd64.whl (225 kB)

Collecting networkx>=2.5.1

Using cached networkx-3.2.1-py3-none-any.whl (1.6 MB)

Collecting pybind11>=2.2

Using cached pybind11-2.13.4-py3-none-any.whl (240 kB)

Requirement already satisfied: setuptools>=0.7.0 in d:\code-compile\language\python39\lib\site-packages (from fasttext-wheel==0.9.2->hanlp[full]) (58.1.0)

Collecting numpy

Using cached numpy-2.0.1-cp39-cp39-win_amd64.whl (16.6 MB)

Collecting phrasetree>=0.0.9

Using cached phrasetree-0.0.9-py3-none-any.whl

Collecting scipy

Using cached scipy-1.13.1-cp39-cp39-win_amd64.whl (46.2 MB)

Collecting tensorflow-intel==2.17.0

Using cached tensorflow_intel-2.17.0-cp39-cp39-win_amd64.whl (385.0 MB)

Collecting libclang>=13.0.0

Using cached libclang-18.1.1-py2.py3-none-win_amd64.whl (26.4 MB)

Collecting google-pasta>=0.1.1

Using cached google_pasta-0.2.0-py3-none-any.whl (57 kB)

Collecting grpcio<2.0,>=1.24.3

Downloading grpcio-1.65.5-cp39-cp39-win_amd64.whl (4.1 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 4.1/4.1 MB 53.0 MB/s eta 0:00:00

Collecting astunparse>=1.6.0

Using cached astunparse-1.6.3-py2.py3-none-any.whl (12 kB)

Collecting absl-py>=1.0.0

Using cached absl_py-2.1.0-py3-none-any.whl (133 kB)

Collecting protobuf!=4.21.0,!=4.21.1,!=4.21.2,!=4.21.3,!=4.21.4,!=4.21.5,<5.0.0dev,>=3.20.3

Using cached protobuf-4.25.4-cp39-cp39-win_amd64.whl (413 kB)

Collecting wrapt>=1.11.0

Using cached wrapt-1.16.0-cp39-cp39-win_amd64.whl (37 kB)

Collecting h5py>=3.10.0

Using cached h5py-3.11.0-cp39-cp39-win_amd64.whl (3.0 MB)

Collecting ml-dtypes<0.5.0,>=0.3.1

Using cached ml_dtypes-0.4.0-cp39-cp39-win_amd64.whl (126 kB)

Collecting numpy

Using cached numpy-1.26.4-cp39-cp39-win_amd64.whl (15.8 MB)

Collecting keras>=3.2.0

Using cached keras-3.5.0-py3-none-any.whl (1.1 MB)

Collecting tensorboard<2.18,>=2.17

Using cached tensorboard-2.17.1-py3-none-any.whl (5.5 MB)

Collecting gast!=0.5.0,!=0.5.1,!=0.5.2,>=0.2.1

Using cached gast-0.6.0-py3-none-any.whl (21 kB)

Collecting opt-einsum>=2.3.2

Using cached opt_einsum-3.3.0-py3-none-any.whl (65 kB)

Collecting typing-extensions>=3.6.6

Using cached typing_extensions-4.12.2-py3-none-any.whl (37 kB)

Collecting flatbuffers>=24.3.25

Using cached flatbuffers-24.3.25-py2.py3-none-any.whl (26 kB)

Collecting requests<3,>=2.21.0

Using cached requests-2.32.3-py3-none-any.whl (64 kB)

Collecting packaging

Using cached packaging-24.1-py3-none-any.whl (53 kB)

Collecting tensorflow-io-gcs-filesystem>=0.23.1

Using cached tensorflow_io_gcs_filesystem-0.31.0-cp39-cp39-win_amd64.whl (1.5 MB)

Collecting six>=1.12.0

Using cached six-1.16.0-py2.py3-none-any.whl (11 kB)

Collecting fsspec

Using cached fsspec-2024.6.1-py3-none-any.whl (177 kB)

Collecting sympy

Using cached sympy-1.13.2-py3-none-any.whl (6.2 MB)

Collecting filelock

Using cached filelock-3.15.4-py3-none-any.whl (16 kB)

Collecting jinja2

Using cached jinja2-3.1.4-py3-none-any.whl (133 kB)

Collecting pyyaml>=5.1

Using cached PyYAML-6.0.2-cp39-cp39-win_amd64.whl (162 kB)

Collecting safetensors>=0.4.1

Using cached safetensors-0.4.4-cp39-none-win_amd64.whl (286 kB)

Collecting tqdm>=4.27

Using cached tqdm-4.66.5-py3-none-any.whl (78 kB)

Collecting regex!=2019.12.17

Using cached regex-2024.7.24-cp39-cp39-win_amd64.whl (269 kB)

Collecting huggingface-hub<1.0,>=0.23.2

Downloading huggingface_hub-0.24.6-py3-none-any.whl (417 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 417.5/417.5 KB 25.5 MB/s eta 0:00:00

Collecting tokenizers<0.20,>=0.19

Using cached tokenizers-0.19.1-cp39-none-win_amd64.whl (2.2 MB)

Collecting idna<4,>=2.5

Using cached idna-3.7-py3-none-any.whl (66 kB)

Collecting certifi>=2017.4.17

Using cached certifi-2024.7.4-py3-none-any.whl (162 kB)

Collecting urllib3<3,>=1.21.1

Using cached urllib3-2.2.2-py3-none-any.whl (121 kB)

Collecting charset-normalizer<4,>=2

Using cached charset_normalizer-3.3.2-cp39-cp39-win_amd64.whl (100 kB)

Collecting colorama

Using cached colorama-0.4.6-py2.py3-none-any.whl (25 kB)

Collecting MarkupSafe>=2.0

Using cached MarkupSafe-2.1.5-cp39-cp39-win_amd64.whl (17 kB)

Collecting mpmath<1.4,>=1.1.0

Using cached mpmath-1.3.0-py3-none-any.whl (536 kB)

Collecting wheel<1.0,>=0.23.0

Using cached wheel-0.44.0-py3-none-any.whl (67 kB)

Collecting rich

Using cached rich-13.7.1-py3-none-any.whl (240 kB)

Collecting namex

Using cached namex-0.0.8-py3-none-any.whl (5.8 kB)

Collecting optree

Using cached optree-0.12.1-cp39-cp39-win_amd64.whl (263 kB)

Collecting tensorboard-data-server<0.8.0,>=0.7.0

Using cached tensorboard_data_server-0.7.2-py3-none-any.whl (2.4 kB)

Collecting markdown>=2.6.8

Downloading Markdown-3.7-py3-none-any.whl (106 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 106.3/106.3 KB 6.0 MB/s eta 0:00:00

Collecting werkzeug>=1.0.1

Using cached werkzeug-3.0.3-py3-none-any.whl (227 kB)

Collecting importlib-metadata>=4.4

Downloading importlib_metadata-8.4.0-py3-none-any.whl (26 kB)

Collecting pygments<3.0.0,>=2.13.0

Using cached pygments-2.18.0-py3-none-any.whl (1.2 MB)

Collecting markdown-it-py>=2.2.0

Using cached markdown_it_py-3.0.0-py3-none-any.whl (87 kB)

Collecting zipp>=0.5

Using cached zipp-3.20.0-py3-none-any.whl (9.4 kB)

Collecting mdurl~=0.1

Using cached mdurl-0.1.2-py3-none-any.whl (10.0 kB)

Installing collected packages: toposort, sentencepiece, phrasetree, penman, namex, mpmath, libclang, flatbuffers, zipp, wrapt, wheel, urllib3, typing-extensions, termcolor, tensorflow-io-gcs-filesystem, tensorboard-data-server, sympy, six, safetensors, regex, pyyaml, pynvml, pygments, pybind11, protobuf, packaging, numpy, networkx, mdurl, MarkupSafe, idna, hanlp-common, grpcio, gast, fsspec, filelock, colorama, charset-normalizer, certifi, absl-py, werkzeug, tqdm, scipy, requests, optree, opt-einsum, ml-dtypes, markdown-it-py, jinja2, importlib-metadata, hanlp-trie, h5py, google-pasta, fasttext-wheel, astunparse, torch, rich, perin-parser, markdown, huggingface-hub, hanlp-downloader, tokenizers, tensorboard, keras, transformers, tensorflow-intel, tensorflow, hanlp

WARNING: The script penman.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script wheel.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script isympy.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script pygmentize.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script pybind11-config.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script f2py.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script normalizer.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script tqdm.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script markdown-it.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The scripts convert-caffe2-to-onnx.exe, convert-onnx-to-caffe2.exe and torchrun.exe are installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script markdown_py.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script huggingface-cli.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script tensorboard.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script transformers-cli.exe is installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The scripts import_pb_to_tensorboard.exe, saved_model_cli.exe, tensorboard.exe, tf_upgrade_v2.exe, tflite_convert.exe, toco.exe and toco_from_protos.exe are installed in 'D:\Code-Compile\Language\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

Successfully installed MarkupSafe-2.1.5 absl-py-2.1.0 astunparse-1.6.3 certifi-2024.7.4 charset-normalizer-3.3.2 colorama-0.4.6 fasttext-wheel-0.9.2 filelock-3.15.4 flatbuffers-24.3.25 fsspec-2024.6.1 gast-0.6.0 google-pasta-0.2.0 grpcio-1.65.5 h5py-3.11.0 hanlp-2.1.0b59 hanlp-common-0.0.20 hanlp-downloader-0.0.25 hanlp-trie-0.0.5 huggingface-hub-0.24.6 idna-3.7 importlib-metadata-8.4.0 jinja2-3.1.4 keras-3.5.0 libclang-18.1.1 markdown-3.7 markdown-it-py-3.0.0 mdurl-0.1.2 ml-dtypes-0.4.0 mpmath-1.3.0 namex-0.0.8 networkx-3.2.1 numpy-1.26.4 opt-einsum-3.3.0 optree-0.12.1 packaging-24.1 penman-1.2.1 perin-parser-0.0.14 phrasetree-0.0.9 protobuf-4.25.4 pybind11-2.13.4 pygments-2.18.0 pynvml-11.5.3 pyyaml-6.0.2 regex-2024.7.24 requests-2.32.3 rich-13.7.1 safetensors-0.4.4 scipy-1.13.1 sentencepiece-0.2.0 six-1.16.0 sympy-1.13.2 tensorboard-2.17.1 tensorboard-data-server-0.7.2 tensorflow-2.17.0 tensorflow-intel-2.17.0 tensorflow-io-gcs-filesystem-0.31.0 termcolor-2.4.0 tokenizers-0.19.1 toposort-1.5 torch-2.4.0 tqdm-4.66.5 transformers-4.44.1 typing-extensions-4.12.2 urllib3-2.2.2 werkzeug-3.0.3 wheel-0.44.0 wrapt-1.16.0 zipp-3.20.0

WARNING: You are using pip version 22.0.4; however, version 24.2 is available.

You should consider upgrading via the 'D:\Code-Compile\Language\Python39\python.exe -m pip install --upgrade pip' command.

* [x] I've completed this form and searched the web for solutions.

<!-- ⬆️ Be sure to check this box, otherwise your issue will be automatically deleted by the bot! --> | closed | 2024-08-21T12:57:55Z | 2024-08-22T01:16:28Z | https://github.com/hankcs/HanLP/issues/1904 | ["bug"] | qingting04 | 1 |

mirumee/ariadne-codegen | graphql | 308 | include_all_inputs = false leads to IndexError in isort | `ariadne-codegen --config ariadne-codegen.toml` runs fine unless I add `include_all_inputs = false` to my `ariadne-codegen.toml` file. With `include_all_inputs = false`, the command fails with this error:

```

File "/Users/user/my-project/venv/bin/ariadne-codegen", line 8, in <module>

sys.exit(main())

^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/click/core.py", line 1157, in __call__

return self.main(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/click/core.py", line 1078, in main

rv = self.invoke(ctx)

^^^^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/click/core.py", line 1434, in invoke

return ctx.invoke(self.callback, **ctx.params)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/click/core.py", line 783, in invoke

return __callback(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/ariadne_codegen/main.py", line 37, in main

client(config_dict)

File "/Users/user/my-project/venv/lib/python3.12/site-packages/ariadne_codegen/main.py", line 81, in client

generated_files = package_generator.generate()

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/ariadne_codegen/client_generators/package.py", line 152, in generate

self._generate_input_types()

File "/Users/user/my-project/venv/lib/python3.12/site-packages/ariadne_codegen/client_generators/package.py", line 307, in _generate_input_types

code = self._add_comments_to_code(ast_to_str(module), self.schema_source)

^^^^^^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/ariadne_codegen/utils.py", line 33, in ast_to_str

return format_str(isort.code(code), mode=Mode())

^^^^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/isort/api.py", line 92, in sort_code_string

sort_stream(

File "/Users/user/my-project/venv/lib/python3.12/site-packages/isort/api.py", line 210, in sort_stream

changed = core.process(

^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/isort/core.py", line 422, in process

parsed_content = parse.file_contents(import_section, config=config)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/user/my-project/venv/lib/python3.12/site-packages/isort/parse.py", line 522, in file_contents

if "," in import_string.split(just_imports[-1])[-1]:

~~~~~~~~~~~~^^^^

IndexError: list index out of range

```

BTW, nothing is wrong with `include_all_enums = false`, it doesn't cause any issues.

UPD:

```

$ ariadne-codegen --version

ariadne-codegen, version 0.14.0

$ isort --version

_ _

(_) ___ ___ _ __| |_

| |/ _/ / _ \/ '__ _/

| |\__ \/\_\/| | | |_

|_|\___/\___/\_/ \_/

isort your imports, so you don't have to.

VERSION 5.12.0

``` | open | 2024-08-13T17:07:40Z | 2025-01-08T18:54:46Z | https://github.com/mirumee/ariadne-codegen/issues/308 | [] | weblab-misha | 7 |

MaartenGr/BERTopic | nlp | 2,304 | Lightweight installation: use safetensors without torch | ### Feature request

Remove dependency on `torch` when loading the topic model saved with safetensors.

### Motivation

I was happy to find [the guide](https://maartengr.github.io/BERTopic/getting_started/tips_and_tricks/tips_and_tricks.html#lightweight-installation) to a lightweight BERTopic installation (without `torch`). However, `BERTopic.load()` seems to depend on `torch` through safetensors.

Removing torch from the requirements gives me a 2x speedup when building my Docker container. Since I host the embedding model on another service, I would really like to avoid this dependency if possible.

Specifically, the problem is [here](https://github.com/MaartenGr/BERTopic/blob/master/bertopic/_save_utils.py#L514) in _save_utils.py:

``` python

def load_safetensors(path):
    """Load safetensors and check whether it is installed."""
    try:
        import safetensors.torch  # <----
        import safetensors

        return safetensors.torch.load_file(path, device="cpu")
    except ImportError:
        raise ValueError("`pip install safetensors` to load .safetensors")

```
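A minimal sketch of what a torch-free variant of the function above could look like, assuming the rest of the loading code can work with NumPy arrays instead of torch tensors (that assumption is not verified here). The helper name `load_safetensors_numpy` is hypothetical and not part of BERTopic; `safetensors.numpy.load_file` is the NumPy loader shipped with safetensors.

```python
def load_safetensors_numpy(path):
    """Load a .safetensors file as a dict of NumPy arrays, without importing torch.

    Hypothetical helper name; not part of BERTopic.
    """
    try:
        # safetensors.numpy only needs NumPy, not torch.
        from safetensors.numpy import load_file
    except ImportError:
        raise ValueError("`pip install safetensors` to load .safetensors")
    # Returns {tensor_name: numpy.ndarray}
    return load_file(path)
```

Callers that later need torch tensors would have to convert the arrays themselves, which is the trade-off of this approach.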

### Your contribution

I suggest using the function `safetensors.safe_open()` with `framework='numpy'` instead. This way the load() does not have the unnecessary requirement for torch to be installed. | closed | 2025-03-13T12:05:01Z | 2025-03-17T07:40:01Z | https://github.com/MaartenGr/BERTopic/issues/2304 | [] | hedgeho | 1 |

PokeAPI/pokeapi | api | 495 | Wurmple evolution chain error. |

In https://pokeapi.co/api/v2/evolution-chain/135/, Cascoon is set as an evolution of Silcoon instead of Wurmple. | closed | 2020-05-25T10:18:14Z | 2020-06-03T18:23:11Z | https://github.com/PokeAPI/pokeapi/issues/495 | ["duplicate"] | ESSutherland | 6 |

netbox-community/netbox | django | 18,585 | Filtering circuits by location not working | ### Deployment Type

NetBox Cloud

### NetBox Version

v4.2.3

### Python Version

3.11

### Steps to Reproduce

1. Attach a location to a circuit as a termination point.

2. Go to the location and, under "Related objects", click on circuits (or circuit terminations).

3. `https://netbox.local/circuits/circuits/?location_id=<id>`

### Expected Behavior

Only the attached circuit(s) show(s) up.

### Observed Behavior

Filter not working, all circuits are being displayed. | closed | 2025-02-06T10:39:02Z | 2025-02-18T18:33:08Z | https://github.com/netbox-community/netbox/issues/18585 | ["type: bug", "status: accepted", "severity: low"] | Azmodeszer | 0 |

DistrictDataLabs/yellowbrick | matplotlib | 949 | Some plot directive visualizers not rendering in Read the Docs | Currently on Read the Docs (develop branch), a few of our visualizers that use the plot directive (#687) are not rendering the plots:

- [Classification Report](http://www.scikit-yb.org/en/develop/api/classifier/classification_report.html)

- [Silhouette Scores](http://www.scikit-yb.org/en/develop/api/cluster/silhouette.html)

- [ScatterPlot](http://www.scikit-yb.org/en/develop/api/contrib/scatter.html)

- [JointPlot](http://www.scikit-yb.org/en/develop/api/features/jointplot.html)

| closed | 2019-08-15T20:58:39Z | 2019-08-29T00:03:24Z | https://github.com/DistrictDataLabs/yellowbrick/issues/949 | ["type: bug", "type: documentation"] | rebeccabilbro | 1 |

ets-labs/python-dependency-injector | asyncio | 31 | Make Objects compatible with Python 3.3 | Acceptance criteria:

- Tests on Python 3.3 passed.

- Badge with supported version added to README.md

| closed | 2015-03-17T13:02:30Z | 2015-03-26T08:03:49Z | https://github.com/ets-labs/python-dependency-injector/issues/31 | ["enhancement"] | rmk135 | 0 |

qwj/python-proxy | asyncio | 126 | reconnect ssh proxy session | Hi,

Please add the ability to reconnect the SSH session after a disconnect. Currently, a failed or disconnected SSH session only produces a session state notification in the log:

```

May 03 22:21:48 debian jumphost.py[1520]: ERROR:asyncio: Task exception was never retrieved

May 03 22:21:48 debian jumphost.py[1520]: future: <Task finished coro=<ProxySSH.patch_stream.<locals>.channel() done, defined at /usr/local/lib/python3.7/dist-packages/pproxy/server.py:610> exception=ConnectionLost('Connection lost')>

May 03 22:21:48 debian jumphost.py[1520]: Traceback (most recent call last):

May 03 22:21:48 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/pproxy/server.py", line 612, in channel

May 03 22:21:48 debian jumphost.py[1520]: buf = await ssh_reader.read(65536)

May 03 22:21:48 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/asyncssh/stream.py", line 131, in read

May 03 22:21:48 debian jumphost.py[1520]: return await self._session.read(n, self._datatype, exact=False)

May 03 22:21:48 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/asyncssh/stream.py", line 495, in read

May 03 22:21:48 debian jumphost.py[1520]: raise exc

May 03 22:21:48 debian jumphost.py[1520]: asyncssh.misc.ConnectionLost: Connection lost

May 03 22:23:34 debian jumphost.py[1520]: DEBUG:jumphost: socks5 z.z.z.z:65418 -> sshtunnel x.x.x.x:22 -> y.y.y.y:22

May 03 22:23:34 debian jumphost.py[1520]: Traceback (most recent call last):

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/pproxy/server.py", line 87, in stream_handler

May 03 22:23:34 debian jumphost.py[1520]: reader_remote, writer_remote = await roption.open_connection(host_name, port, local_addr, lbind)

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/pproxy/server.py", line 227, in open_connection

May 03 22:23:34 debian jumphost.py[1520]: reader, writer = await asyncio.wait_for(wait, timeout=timeout)

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/lib/python3.7/asyncio/tasks.py", line 416, in wait_for

May 03 22:23:34 debian jumphost.py[1520]: return fut.result()

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/pproxy/server.py", line 649, in wait_open_connection

May 03 22:23:34 debian jumphost.py[1520]: reader, writer = await conn.open_connection(host, port)

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/asyncssh/connection.py", line 3537, in open_connection

May 03 22:23:34 debian jumphost.py[1520]: *args, **kwargs)

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/asyncssh/connection.py", line 3508, in create_connection

May 03 22:23:34 debian jumphost.py[1520]: chan = self.create_tcp_channel(encoding, errors, window, max_pktsize)

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/asyncssh/connection.py", line 2277, in create_tcp_channel

May 03 22:23:34 debian jumphost.py[1520]: errors, window, max_pktsize)

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/asyncssh/channel.py", line 110, in __init__

May 03 22:23:34 debian jumphost.py[1520]: self._recv_chan = conn.add_channel(self)

May 03 22:23:34 debian jumphost.py[1520]: File "/usr/local/lib/python3.7/dist-packages/asyncssh/connection.py", line 939, in add_channel

May 03 22:23:34 debian jumphost.py[1520]: 'SSH connection closed')

May 03 22:23:34 debian jumphost.py[1520]: asyncssh.misc.ChannelOpenError: SSH connection closed

May 03 22:23:34 debian jumphost.py[1520]: DEBUG:jumphost: SSH connection closed from z.z.z.z

```

My monkeypatch as a workaround:

```python

import pproxy.server


class ProxySSH(pproxy.server.ProxySSH):
    async def wait_open_connection(self, host, port, local_addr, family, tunnel=None):
        # Drop a cancelled connection future so a new SSH connection is attempted.
        if self.sshconn is not None and self.sshconn.cancelled():
            self.sshconn = None
        try:
            await self.wait_ssh_connection(local_addr, family, tunnel)
            conn = self.sshconn.result()
            if isinstance(self.jump, pproxy.server.ProxySSH):
                reader, writer = await self.jump.wait_open_connection(host, port, None, None, conn)
            else:
                host, port = self.jump.destination(host, port)
                if self.jump.unix:
                    reader, writer = await conn.open_unix_connection(self.jump.bind)
                else:
                    reader, writer = await conn.open_connection(host, port)
                reader, writer = self.patch_stream(reader, writer, host, port)
            return reader, writer
        except Exception as ex:
            if not self.sshconn.done():
                self.sshconn.set_exception(ex)
            # Forget the failed connection so the next call reconnects.
            self.sshconn = None
            raise


# Replace the library class with the patched one.
pproxy.server.ProxySSH = ProxySSH

``` | closed | 2021-05-03T19:32:33Z | 2021-05-11T23:04:18Z | https://github.com/qwj/python-proxy/issues/126 | [] | keenser | 2 |

biolab/orange3 | pandas | 6,129 | Orange installed from conda/pip does not have an icon (on Mac) | ### Discussed in https://github.com/biolab/orange3/discussions/6122

<div type='discussions-op-text'>

<sup>Originally posted by **DylanZDD** September 4, 2022</sup>

<img width="144" alt="Screen Shot 2022-09-04 at 12 26 04" src="https://user-images.githubusercontent.com/44270787/188297386-c463907c-9e7f-45ea-b46f-b0ad9b6f8f23.png">

<img width="1431" alt="Screen Shot 2022-09-04 at 12 26 13" src="https://user-images.githubusercontent.com/44270787/188297398-7584db2e-be45-4b6b-839a-f20de5185e50.png">

</div>

A quick search led me here:

https://stackoverflow.com/questions/33134594/set-tkinter-python-application-icon-in-mac-os-x

I think other platforms have similar problems. | closed | 2022-09-05T11:49:28Z | 2023-01-20T08:39:42Z | https://github.com/biolab/orange3/issues/6129 | ["bug", "snack"] | markotoplak | 0 |

glumpy/glumpy | numpy | 6 | ffmpeg dependency | Every gloo-*.py example seems to depend on ffmpeg. The problem is that python executes the package `__init__.py` file (`glumpy/ext/__init__.py`) even if one wants to import only from a sub-package (`from glumpy.ext.inputhook import inputhook_manager, stdin_ready`). Seems to me this "always import ffmpeg" feature is not intentional.

```

fdkz@woueao:~/fdkz/extlibsrc/glumpy/examples$ python gloo-terminal.py

Traceback (most recent call last):

File "gloo-terminal.py", line 7, in <module>

import glumpy

File "/Library/Python/2.7/site-packages/glumpy/__init__.py", line 8, in <module>

from . app import run

File "/Library/Python/2.7/site-packages/glumpy/app/__init__.py", line 17, in <module>

from glumpy.ext.inputhook import inputhook_manager, stdin_ready

File "/Library/Python/2.7/site-packages/glumpy/ext/__init__.py", line 12, in <module>

from . import ffmpeg_reader

File "/Library/Python/2.7/site-packages/glumpy/ext/ffmpeg_reader.py", line 37, in <module>

from . ffmpeg_conf import FFMPEG_BINARY # ffmpeg, ffmpeg.exe, etc...

File "/Library/Python/2.7/site-packages/glumpy/ext/ffmpeg_conf.py", line 86, in <module>

raise IOError("FFMPEG binary not found. Try installing MoviePy"

IOError: FFMPEG binary not found. Try installing MoviePy manually and specify the path to the binary in the file conf.py

```
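The import behaviour described above can be reproduced with a throwaway package. The sketch below is only an illustration: `demo_pkg` and its files are hypothetical and unrelated to glumpy. It shows that importing `demo_pkg.sub` runs `demo_pkg/__init__.py` first.

```python
import importlib
import os
import sys
import tempfile


def init_runs_on_submodule_import():
    """Return True if importing demo_pkg.sub executed demo_pkg/__init__.py."""
    with tempfile.TemporaryDirectory() as root:
        pkg_dir = os.path.join(root, "demo_pkg")  # hypothetical package name
        os.makedirs(pkg_dir)
        # The package __init__ sets a flag so we can tell that it ran.
        with open(os.path.join(pkg_dir, "__init__.py"), "w") as f:
            f.write("INIT_RAN = True\n")
        with open(os.path.join(pkg_dir, "sub.py"), "w") as f:
            f.write("VALUE = 1\n")
        sys.path.insert(0, root)
        try:
            importlib.invalidate_caches()
            # Only the submodule is requested...
            importlib.import_module("demo_pkg.sub")
            # ...but the parent package was imported (and executed) as well.
            return getattr(sys.modules["demo_pkg"], "INIT_RAN", False)
        finally:
            sys.path.remove(root)
            sys.modules.pop("demo_pkg.sub", None)
            sys.modules.pop("demo_pkg", None)


print(init_runs_on_submodule_import())
```

One possible way to avoid the hard dependency, under the assumption that nothing else relies on the eager import, would be to defer `from . import ffmpeg_reader` until the reader is actually used.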

| closed | 2014-11-18T18:45:38Z | 2014-11-27T08:45:38Z | https://github.com/glumpy/glumpy/issues/6 | [] | fdkz | 1 |

davidsandberg/facenet | computer-vision | 821 | AttributeError: 'dict' object has no attribute 'iteritems' | Epoch: [1][993/1000] Time 0.462 Loss nan Xent nan RegLoss nan Accuracy 0.456 Lr 0.00005 Cl nan

Epoch: [1][994/1000] Time 0.447 Loss nan Xent nan RegLoss nan Accuracy 0.556 Lr 0.00005 Cl nan

Epoch: [1][995/1000] Time 0.471 Loss nan Xent nan RegLoss nan Accuracy 0.489 Lr 0.00005 Cl nan

Epoch: [1][996/1000] Time 0.469 Loss nan Xent nan RegLoss nan Accuracy 0.556 Lr 0.00005 Cl nan

Epoch: [1][997/1000] Time 0.457 Loss nan Xent nan RegLoss nan Accuracy 0.589 Lr 0.00005 Cl nan

Epoch: [1][998/1000] Time 0.469 Loss nan Xent nan RegLoss nan Accuracy 0.456 Lr 0.00005 Cl nan

Epoch: [1][999/1000] Time 0.474 Loss nan Xent nan RegLoss nan Accuracy 0.500 Lr 0.00005 Cl nan

Epoch: [1][1000/1000] Time 0.460 Loss nan Xent nan RegLoss nan Accuracy 0.444 Lr 0.00005 Cl nan

Saving variables

Variables saved in 0.74 seconds

Saving metagraph

Metagraph saved in 3.01 seconds

Saving statistics

Traceback (most recent call last):

File "src/train_softmax.py", line 580, in <module>

main(parse_arguments(sys.argv[1:]))

File "src/train_softmax.py", line 260, in main

for key, value in stat.iteritems():

AttributeError: 'dict' object has no attribute 'iteritems'
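
The traceback is consistent with running the script under Python 3, where `dict.iteritems()` no longer exists and `dict.items()` takes its place. A minimal sketch, assuming `stat` is a plain dictionary of statistics (the keys and values below are invented for illustration and are not taken from `train_softmax.py`):

```python
# Hypothetical stand-in for the `stat` dictionary named in the traceback.
stat = {"loss": [0.9, 0.7], "accuracy": [0.45, 0.55]}


def iterate_stats(stats):
    """Collect (key, value) pairs with dict.items(), which is available on
    both Python 2 and Python 3."""
    pairs = []
    for key, value in stats.items():  # .iteritems() exists only on Python 2
        pairs.append((key, value))
    return pairs


print(iterate_stats(stat))
```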

| closed | 2018-07-27T02:32:16Z | 2021-12-20T15:04:53Z | https://github.com/davidsandberg/facenet/issues/821 | [] | alanMachineLeraning | 3 |

tflearn/tflearn | data-science | 741 | how to build ResNet 152-layer model and extract the penultimate hidden layer's image feature | I have a pre-trained ResNet 152-layer model: http://download.tensorflow.org/models/resnet_v1_152_2016_08_28.tar.gz

I want to use this 152-layer model to extract image features, specifically the output of the penultimate hidden layer (as shown in the code).

1. The main question is how to build a 152-layer ResNet model. (I only see that setting n = 18 gives a 110-layer ResNet.) Alternatively, how can I build a 50-layer ResNet model?

2. Is my code for extracting the penultimate hidden layer's image feature correct?

```

from __future__ import division, print_function, absolute_import

import tflearn

from PIL import Image

import numpy as np

# Residual blocks

# 32 layers: n=5, 56 layers: n=9, 110 layers: n=18

n = 18

# Data loading

from tflearn.datasets import cifar10

(X, Y), (testX, testY) = cifar10.load_data()

Y = tflearn.data_utils.to_categorical(Y, 10)

testY = tflearn.data_utils.to_categorical(testY, 10)

# Real-time data preprocessing

img_prep = tflearn.ImagePreprocessing()

img_prep.add_featurewise_zero_center(per_channel=True)

# Real-time data augmentation

img_aug = tflearn.ImageAugmentation()

img_aug.add_random_flip_leftright()

img_aug.add_random_crop([32, 32], padding=4)

# Building Residual Network

net = tflearn.input_data(shape=[None, 32, 32, 3],

data_preprocessing=img_prep,

data_augmentation=img_aug)

net = tflearn.conv_2d(net, 16, 3, regularizer='L2', weight_decay=0.0001)

net = tflearn.residual_block(net, n, 16)

net = tflearn.residual_block(net, 1, 32, downsample=True)

net = tflearn.residual_block(net, n-1, 32)

net = tflearn.residual_block(net, 1, 64, downsample=True)

net = tflearn.residual_block(net, n-1, 64)

net = tflearn.residual_block(net, 1, 64, downsample=True)

net = tflearn.residual_block(net, n-1, 64)

net = tflearn.batch_normalization(net)

net = tflearn.activation(net, 'relu')

output_layer = tflearn.global_avg_pool(net)

# Regression

net = tflearn.fully_connected(output_layer, 10, activation='softmax')

mom = tflearn.Momentum(0.1, lr_decay=0.1, decay_step=32000, staircase=True)

net = tflearn.regression(net, optimizer=mom,

loss='categorical_crossentropy')

# Training

model = tflearn.DNN(net, checkpoint_path='resnet_v1_152.ckpt',

max_checkpoints=10, tensorboard_verbose=0,

clip_gradients=0.)

model.fit(X, Y, n_epoch=200, validation_set=(testX, testY),

snapshot_epoch=False, snapshot_step=500,

show_metric=True, batch_size=128, shuffle=True,

run_id='resnet_cifar10')

model.save('./resnet_v1_152.ckpt')

#---------------

# now extract the penultimate hidden layer's image feature

img = Image.open(file_path)

img = img.resize((32, 32), Image.ANTIALIAS)

img = np.asarray(img, dtype="float32")

imgs = np.asarray([img])

model_test = tflearn.DNN(output_layer, session = model.session)

model_test.load('resnet_v1_152.ckpt', weights_only = True)

predict_y = model_test.predict(imgs)

print("layer's feature: {}".format(predict_y))

``` | open | 2017-05-05T12:05:01Z | 2017-07-26T08:49:35Z | https://github.com/tflearn/tflearn/issues/741 | [] | willduan | 3 |

jupyterlab/jupyter-ai | jupyter | 485 | Empty string config fields cause confusing errors | ## Description

If a user types into a config field, then deletes what was written, then clicks save, sometimes the field is saved as an empty string `""`. This can cause confusing behavior because this will then be passed as a keyword argument to the underlying LangChain provider.
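
One possible backend-side guard is to drop empty-string fields before they are forwarded as keyword arguments. This is only a sketch: `strip_empty_fields` and the field names are hypothetical and are not part of jupyter-ai or LangChain.

```python
def strip_empty_fields(fields):
    """Return a copy of `fields` without entries whose value is an empty or
    whitespace-only string. Other falsy values (0, False, None) are kept."""
    return {
        key: value
        for key, value in fields.items()
        if not (isinstance(value, str) and value.strip() == "")
    }


# Hypothetical saved config: the user typed into `api_base`, then cleared it.
saved = {"api_base": "", "temperature": 0, "model_id": "some-model"}
provider_kwargs = strip_empty_fields(saved)
print(provider_kwargs)
```

Filtering only strings is a deliberate choice here, so that a legitimate `0` or `False` setting is not silently discarded.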

Next steps are to

1. Set up test case coverage, preferably E2E if possible

2. Implement changes in the frontend and backend to address this | open | 2023-11-21T18:22:40Z | 2023-11-21T18:23:09Z | https://github.com/jupyterlab/jupyter-ai/issues/485 | [

"bug"

] | dlqqq | 0 |

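vastsa/FileCodeBox | fastapi | 74 | Can S3 object storage protocol compatibility be added? | I would like to connect to MinIO, which is compatible with the S3 API and should be similar to OSS. Can the existing OSS integration be used to connect to MinIO? Adding some S3 compatibility would allow many more storage backends to be connected. | closed | 2023-07-06T09:19:48Z | 2023-08-15T09:26:20Z | https://github.com/vastsa/FileCodeBox/issues/74 | [] | Oldming1 | 2 |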

gradio-app/gradio | data-visualization | 10,399 | TabbedInterface does not work with Chatbot defined in ChatInterface | ### Describe the bug

When defining a `Chatbot` in `ChatInterface`, the `TabbedInterface` does not render it properly.

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python

import gradio as gr

def chat():
    return "Hello"

chat_ui = gr.ChatInterface(
    fn=chat,
    type="messages",
    chatbot=gr.Chatbot(type="messages"),
)

demo = gr.TabbedInterface([chat_ui], ["Tab 1"])

demo.launch()

```

### Screenshot

### Logs

```shell

```

### System Info

```shell

Gradio Environment Information:

------------------------------

Operating System: Darwin

gradio version: 5.12.0

gradio_client version: 1.5.4

------------------------------------------------

gradio dependencies in your environment:

aiofiles: 23.2.1

anyio: 4.8.0

audioop-lts is not installed.

fastapi: 0.115.6

ffmpy: 0.5.0

gradio-client==1.5.4 is not installed.

httpx: 0.27.2

huggingface-hub: 0.27.1

jinja2: 3.1.5

markupsafe: 2.1.5

numpy: 2.2.2

orjson: 3.10.15

packaging: 24.2

pandas: 2.2.3

pillow: 11.1.0

pydantic: 2.10.5

pydub: 0.25.1

python-multipart: 0.0.20

pyyaml: 6.0.2

ruff: 0.4.10

safehttpx: 0.1.6

semantic-version: 2.10.0

starlette: 0.41.3

tomlkit: 0.13.2

typer: 0.15.1

typing-extensions: 4.12.2

urllib3: 2.3.0

uvicorn: 0.34.0

authlib; extra == 'oauth' is not installed.

itsdangerous; extra == 'oauth' is not installed.

gradio_client dependencies in your environment:

fsspec: 2024.12.0

httpx: 0.27.2

huggingface-hub: 0.27.1

packaging: 24.2

typing-extensions: 4.12.2

websockets: 14.2

```

### Severity

Blocking usage of gradio | open | 2025-01-21T17:11:08Z | 2025-01-22T17:19:36Z | https://github.com/gradio-app/gradio/issues/10399 | [

"bug"

] | arnaldog12 | 2 |

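babysor/MockingBird | deep-learning | 381 | The two preset vocoders each have pros and cons; in which direction should they be improved? | There are two preset vocoders, g_hifigan and pretained.

Audio generated with g_hifigan has a very accurate timbre, but it carries an electronic, robotic sound.

Audio generated with pretained has a less accurate timbre and a lower volume, but it has no electronic sound.

In which direction should this be improved so that the result has the advantages of both?

Is it a vocoder training problem, a problem with the source audio, or the synthesizer?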

| open | 2022-02-10T10:36:47Z | 2022-02-10T12:51:42Z | https://github.com/babysor/MockingBird/issues/381 | [] | funboomen | 1 |

ivy-llc/ivy | pytorch | 28,344 | Fix Frontend Failing Test: tensorflow - math.tensorflow.math.argmax | To-do List: https://github.com/unifyai/ivy/issues/27499 | closed | 2024-02-20T09:49:40Z | 2024-02-20T15:36:42Z | https://github.com/ivy-llc/ivy/issues/28344 | [

"Sub Task"

] | Sai-Suraj-27 | 0 |

gradio-app/gradio | data-visualization | 10,160 | gr.BrowserState first variable entry is not value, its default_value | ### Describe the bug

One cannot simply replace gr.State with gr.BrowserState, because passing `value` as a keyword raises an error: the first parameter is named `default_value` instead. This causes multiple headaches when swapping one for the other. Having `default_value` as the first parameter also breaks the convention followed by all other components.

### Have you searched existing issues? 🔎

- [X] I have searched and found no existing issues

### Reproduction

This doesn't work.

```python

import gradio as gr

local_storage=gr.BrowserState(value="my Thing")

```

### Screenshot

not relevant.

### Logs

```shell

running gradio 5.8.0,

```

### System Info

```shell

running on Mac M2,

Gradio Environment Information:

------------------------------

Operating System: Darwin

gradio version: 5.8.0

gradio_client version: 1.5.1

------------------------------------------------

gradio dependencies in your environment:

aiofiles: 22.1.0

anyio: 3.7.1

audioop-lts is not installed.

fastapi: 0.115.6

ffmpy: 0.3.2

gradio-client==1.5.1 is not installed.

httpx: 0.27.0

huggingface-hub: 0.25.2

jinja2: 3.1.2

markupsafe: 2.0.1

numpy: 1.26.4

orjson: 3.10.5

packaging: 23.2

pandas: 2.2.3

pillow: 10.4.0

pydantic: 2.7.4

pydub: 0.25.1

python-multipart: 0.0.19

pyyaml: 6.0

ruff: 0.5.0

safehttpx: 0.1.6

semantic-version: 2.10.0

starlette: 0.41.3

tomlkit: 0.12.0

typer: 0.12.5

typing-extensions: 4.12.2

urllib3: 2.2.2

uvicorn: 0.30.5

authlib; extra == 'oauth' is not installed.

itsdangerous; extra == 'oauth' is not installed.

gradio_client dependencies in your environment:

fsspec: 2023.10.0

httpx: 0.27.0

huggingface-hub: 0.25.2

packaging: 23.2

typing-extensions: 4.12.2

websockets: 11.0.3

```

### Severity

I can work around it | closed | 2024-12-09T17:43:14Z | 2024-12-12T15:34:17Z | https://github.com/gradio-app/gradio/issues/10160 | [

"bug",

"pending clarification"

] | robwsinnott | 2 |

koaning/scikit-lego | scikit-learn | 221 | add --pre-commit features | Our CI jobs are taking longer now, and since 25% of the issues are flake-related, it may be a good idea to add black to this project and enforce it with a pre-commit hook.

@MBrouns objections? | closed | 2019-10-18T14:09:08Z | 2020-01-24T21:41:41Z | https://github.com/koaning/scikit-lego/issues/221 | [

"enhancement"

] | koaning | 2 |

roboflow/supervision | deep-learning | 1,038 | Opencv channel swap in ImageSinks | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Description

Hello, I realized that OpenCV uses BGR instead of RGB, so the following code causes a channel swap:

```python

with sv.ImageSink(target_dir_path=output_dir, overwrite=True) as sink:
    annotated_img = box_annotator.annotate(
        scene=np.array(Image.open(img_dir).convert("RGB")),
        detections=results,
        labels=labels,
    )
    sink.save_image(
        image=annotated_img, image_name="test.jpg"
    )

```

The swap goes away only if I first call `cv2.cvtColor(annotated_img, cv2.COLOR_RGB2BGR)`. The same thing happens with video sinks and anywhere else that uses OpenCV to write images.

I wonder if it is possible to add this conversion by default or at least mention this in the docs? Thanks a lot!
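
For reference, the same conversion can be written without OpenCV by reversing the channel axis. A minimal sketch, assuming an `H x W x 3` RGB array; `rgb_to_bgr` is an illustrative helper and not part of supervision:

```python
import numpy as np


def rgb_to_bgr(image):
    """Reverse the last (channel) axis of an H x W x 3 array: RGB -> BGR."""
    if image.ndim != 3 or image.shape[2] != 3:
        raise ValueError("expected an H x W x 3 image")
    # Return a contiguous copy rather than a negatively strided view.
    return np.ascontiguousarray(image[..., ::-1])


# A single pure-red pixel in RGB order.
rgb = np.array([[[255, 0, 0]]], dtype=np.uint8)
bgr = rgb_to_bgr(rgb)
print(bgr[0, 0].tolist())
```

The converted array could then be handed to `sink.save_image(image=..., image_name=...)` in place of the RGB one. Applying the helper twice returns the original channel order.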

### Use case

For saving images with `ImageSink()`

### Additional

_No response_

### Are you willing to submit a PR?

- [X] Yes I'd like to help by submitting a PR! | closed | 2024-03-24T21:48:00Z | 2024-03-26T01:46:31Z | https://github.com/roboflow/supervision/issues/1038 | [

"enhancement"

] | zhmiao | 3 |

dot-agent/nextpy | pydantic | 157 | Why hasn't this project been updated for a while | This is a good project, but it hasn't been updated for a long time. Why is that | open | 2024-09-23T09:25:54Z | 2025-01-13T12:36:11Z | https://github.com/dot-agent/nextpy/issues/157 | [] | redpintings | 2 |

scikit-learn/scikit-learn | machine-learning | 30,594 | DOC: Example of `train_test_split` with `pandas` DataFrames | ### Describe the issue linked to the documentation

Currently, the example [here](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.train_test_split.html) only illustrates the use case of `train_test_split` for `numpy` arrays. I think an additional example featuring a `pandas` DataFrame would make this page more beginner-friendly. Would you guys be interested?

### Suggest a potential alternative/fix

The modification in [`model_selection/_split`](https://github.com/scikit-learn/scikit-learn/blob/d666202a9349893c1bd106cc9ee0ff0a807c7cf3/sklearn/model_selection/_split.py) would be the following:

```

"""

Example: Data are a `numpy` array

--------

>>> Current example

Example: Data are a `pandas` DataFrame

--------

>>> from sklearn import datasets

>>> from sklearn.model_selection import train_test_split

>>> iris = datasets.load_iris(as_frame=True)

>>> X, y = iris['data'], iris['target']

>>> X.head()

sepal length (cm) sepal width (cm) petal length (cm) petal width (cm)

0 5.1 3.5 1.4 0.2

1 4.9 3.0 1.4 0.2

2 4.7 3.2 1.3 0.2

3 4.6 3.1 1.5 0.2

4 5.0 3.6 1.4 0.2

>>> y.head()

0 0

1 0

2 0

3 0

4 0

>>> X_train, X_test, y_train, y_test = train_test_split(

... X, y, test_size=0.33, random_state=42) # rows will be shuffled

>>> X_train.head()

sepal length (cm) sepal width (cm) petal length (cm) petal width (cm)

96 5.7 2.9 4.2 1.3

105 7.6 3.0 6.6 2.1

66 5.6 3.0 4.5 1.5

0 5.1 3.5 1.4 0.2

122 7.7 2.8 6.7 2.0

>>> y_train.head()

96 1

105 2

66 1

0 0

122 2

>>> X_test.head()

sepal length (cm) sepal width (cm) petal length (cm) petal width (cm)

73 6.1 2.8 4.7 1.2

18 5.7 3.8 1.7 0.3

118 7.7 2.6 6.9 2.3

78 6.0 2.9 4.5 1.5

76 6.8 2.8 4.8 1.4

>>> y_test.head()

73 1

18 0

118 2

78 1

76 1

"""

``` | closed | 2025-01-06T11:53:30Z | 2025-02-06T10:44:52Z | https://github.com/scikit-learn/scikit-learn/issues/30594 | [

"Documentation"

] | victoris93 | 2 |

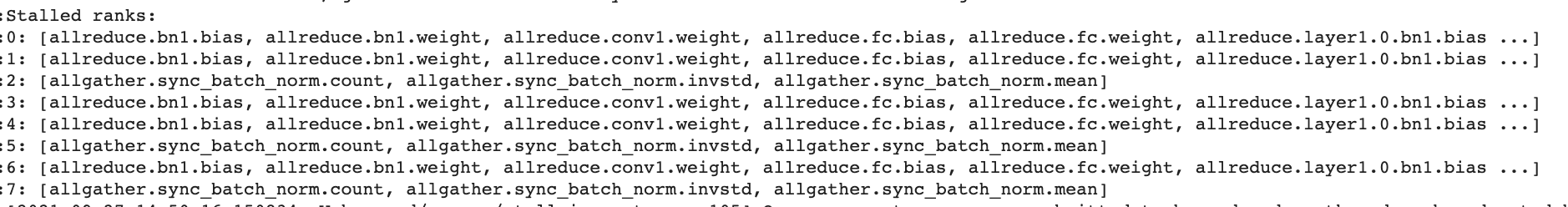

horovod/horovod | tensorflow | 3,181 | Horovod stalled ranks when using hvd.SyncBatchNorm in pytorch amp mode | Excuse me! Recently I use hvd.SyncBatchNorm to train pytorch resnet50, followed [this pr](https://github.com/horovod/horovod/pull/3018/files) and find the phenomenon that the horovod stalled rank when using pytorch amp mode. When disabling amp, it workes normly. All above experiments using `single machine 8gpus`.

### Environment

horovod: 0.19.2

pytorch: 1.7.0

nccl: 2.7.8

### The relevant code

```python

from torchvision import models

model = models.resnet50(norm_layer=hvd.SyncBatchNorm)

optimizer = torch.optim.SGD(model.parameters(), lr=args.learning_rate, momentum=args.momentum, weight_decay=args.weight_decay)

optimizer = hvd.DistributedOptimizer(optimizer, named_parameters=model.named_parameters())

# amp code

scaler = amp.grad_scaler.GradScaler()

...

with amp.autocast_mode.autocast():
    output = model(data)
    loss = loss_fn(output)

scaler.scale(loss).backward()

scaler.step(optimizer)

scaler.update()

```

Is the cause an incompatibility between PyTorch AMP and Horovod?

| closed | 2021-09-27T07:33:08Z | 2021-12-18T14:28:20Z | https://github.com/horovod/horovod/issues/3181 | [

"wontfix"

] | wuyujiji | 6 |

scikit-image/scikit-image | computer-vision | 7,331 | The function skimage.util.compare_images fails silently if called with integers matrices | ### Description:

I was trying to call `skimage.util.compare_images` with integer matrices (the documentation does not forbid this).

The function does return a value, but not the expected one.

I found that `skimage.util.img_as_float32`, which is called inside `skimage.util.compare_images`, does not convert the int matrix to a float matrix as I expected, resulting in a wrong return value from `compare_images`.
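
A possible workaround, sketched here with NumPy only, is to cast both inputs to a floating-point dtype explicitly before comparing them, so that no dtype-dependent rescaling can take place. As far as I understand, the `img_as_float*` helpers rescale integer inputs according to their dtype range instead of casting value for value, which would explain the result. `abs_diff` is an illustrative stand-in for the `'diff'` method, not the scikit-image implementation.

```python
import numpy as np


def abs_diff(image0, image1):
    """Absolute difference after an explicit cast to float32. A plain cast
    keeps the numeric values, so integer 1 becomes 1.0."""
    a = np.asarray(image0, dtype=np.float32)
    b = np.asarray(image1, dtype=np.float32)
    if a.shape != b.shape:
        raise ValueError("images must have the same shape")
    return np.abs(a - b)


mat_c = abs_diff([[1.0, 1.0], [1.0, 1.0]], [[1, 1], [1, 1]])
print(mat_c.tolist())
```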

### Way to reproduce:

```python

import skimage.util as skiu

mat_a = skiu.img_as_float32([[1.0, 1.0], [1.0, 1.0]])

mat_b = skiu.img_as_float32([[1, 1], [1, 1]])

mat_c = skiu.compare_images(mat_a, mat_b, method='diff')

assert mat_a[0][0] == 1.0 # OK

assert mat_b[0][0] == 1.0 # Will fail

assert mat_c[0][0] == 0 #Will fail too

```

### Version information:

```Shell

3.11.2 (main, Mar 13 2023, 12:18:29) [GCC 12.2.0]

Linux-4.4.0-22621-Microsoft-x86_64-with-glibc2.36

scikit-image version: 0.22

```

| open | 2024-02-29T12:46:04Z | 2024-09-08T02:37:35Z | https://github.com/scikit-image/scikit-image/issues/7331 | [

":sleeping: Dormant",

":bug: Bug"

] | Hish15 | 2 |

sigmavirus24/github3.py | rest-api | 936 | Allow preventing file contents retrieval to enable updating large files | Please allow creating a `file_contents` object without retrieving the current contents. This is because [GitHub's contents API only supports retrieving files up to 1 MB](https://developer.github.com/v3/repos/contents/#get-contents), and I need to update a file that is larger than 1 MB but not necessarily read it. I would suggest the following syntax: `repo.file_contents('folder/myfile.txt', retrieve=False)`. Attempting to access file contents on an object whose contents have not been retrieved yet could either attempt to retrieve and return the contents, return `None`, or throw an exception. Manual retrieval could be attempted with `.retrieve()`. #741 is a slightly related issue. Thanks! | open | 2019-04-16T01:00:07Z | 2019-04-16T01:00:07Z | https://github.com/sigmavirus24/github3.py/issues/936 | [] | stevennyman | 0 |

nolar/kopf | asyncio | 353 | [PR] Force annotations to end strictly with alphanumeric characters | > <a href="https://github.com/nolar"><img align="left" height="50" src="https://avatars0.githubusercontent.com/u/544296?v=4"></a> A pull request by [nolar](https://github.com/nolar) at _2020-04-28 11:05:15+00:00_

> Original URL: https://github.com/zalando-incubator/kopf/pull/353

> Merged by [nolar](https://github.com/nolar) at _2020-04-28 12:29:33+00:00_

## What do these changes do?

Force annotation names to end strictly with alphanumeric characters, not only to fit into 63 chars.

_"Learning Kubernetes the hard way."_

## Description

The annotation names are not only limited to 63 characters, and not only to a specific alphabet, but also to the beginning/ending characters. Otherwise, it fails to patch:

```

$ kubectl patch … --type=merge -p '{"metadata": {"annotations": {"kopf.zalando.org/long.handler.id.here-WumEzA--": "{}"}}}'

The … is invalid: metadata.annotations: Invalid value: "kopf.zalando.org/long.handler.id.here-WumEzA--": name part must consist of alphanumeric characters, '-', '_' or '.', and must start and end with an alphanumeric character (e.g. 'MyName', or 'my.name', or '123-abc', regex used for validation is '([A-Za-z0-9][-A-Za-z0-9_.]*)?[A-Za-z0-9]')

```

Since the base64 encoding of a digest was used to make the shortened annotation names unique in #346, a name can end with `=` characters, for example.

In this case, we do not need the actual base64'ed value, we just need a persistent and unique suffix. So, cutting those special non-alphanumeric characters is fine.
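
The idea can be sketched as follows. This is an illustration under assumptions, not kopf's actual code: the hash function (`hashlib.blake2b`), the digest size, and the helper name `shorten_annotation_name` are placeholders chosen for the example, and only the name part after the `prefix/` is handled.

```python
import base64
import hashlib
import re

# Validation regex quoted in the Kubernetes error message in this description.
NAME_PART = re.compile(r"([A-Za-z0-9][-A-Za-z0-9_.]*)?[A-Za-z0-9]")


def shorten_annotation_name(name, max_length=63):
    """Return `name` unchanged if it fits; otherwise cut it and append a
    persistent, purely alphanumeric suffix derived from the full name."""
    if len(name) <= max_length:
        return name
    digest = hashlib.blake2b(name.encode("utf-8"), digest_size=4).digest()
    encoded = base64.urlsafe_b64encode(digest).decode("ascii")
    # Drop '=', '-' and '_' so the result ends with an alphanumeric character.
    suffix = re.sub(r"[^A-Za-z0-9]", "", encoded)
    if not suffix:
        # Extremely unlikely, but keep the suffix non-empty in any case.
        suffix = digest.hex()
    head = name[: max_length - len(suffix) - 1]
    return head + "-" + suffix


long_name = "long.handler.id.here." * 5
short_name = shorten_annotation_name(long_name)
print(len(short_name), bool(NAME_PART.fullmatch(short_name)))
```

The suffix only has to be stable and unique enough for a given handler id, so discarding a few characters of the encoded digest does not matter for this purpose.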

The change is backward compatible (despite the hashing function change since 0.27rc4): first, it was never released beyond RC; second, it was not working for non-alphanumeric annotations anyway. Proper alphanumeric annotations will remain the same as in 0.27rc4.

## Issues/PRs

> Issues: #331

> Related: #346

## Type of changes

- Bug fix (non-breaking change which fixes an issue)

## Checklist

- [x] The code addresses only the mentioned problem, and this problem only

- [x] I think the code is well written

- [x] Unit tests for the changes exist

- [x] Documentation reflects the changes

- [x] If you provide code modification, please add yourself to `CONTRIBUTORS.txt`

<!-- Are there any questions or uncertainties left?

Any tasks that have to be done to complete the PR? -->

| closed | 2020-08-18T20:04:28Z | 2020-08-23T20:57:43Z | https://github.com/nolar/kopf/issues/353 | [

"bug",

"archive"

] | kopf-archiver[bot] | 0 |

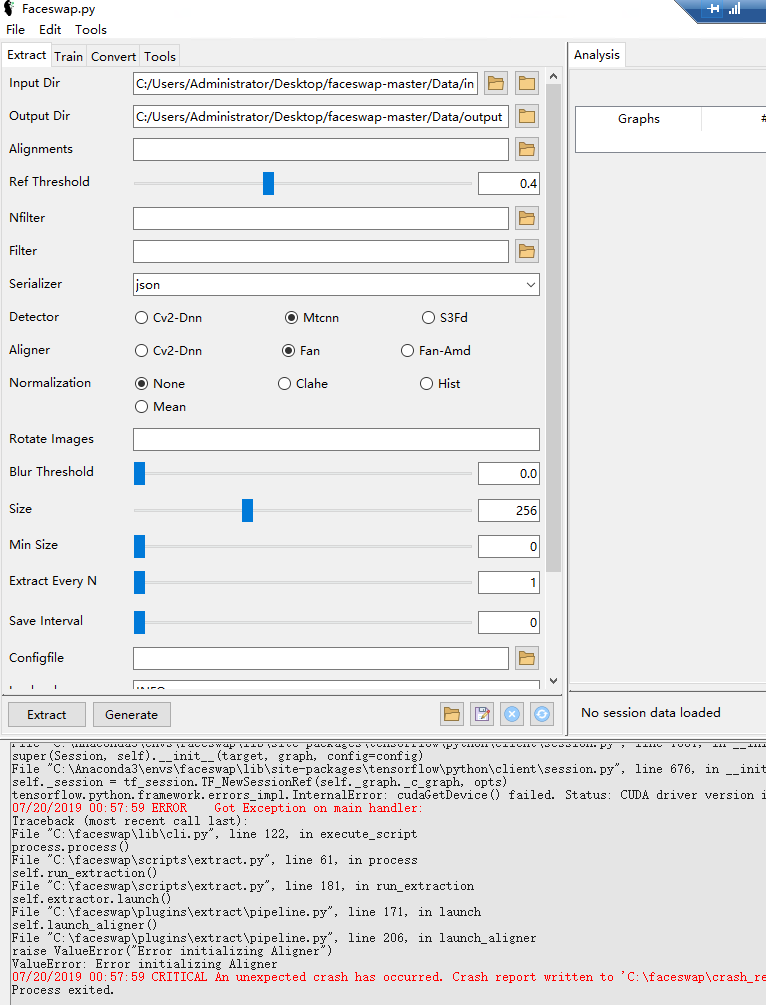

deepfakes/faceswap | deep-learning | 800 | ValueError: Error initializing Aligner | **Describe the bug**

Extract Exception

**To Reproduce**

Steps to reproduce the behavior:

1. Download the release from https://github.com/deepfakes/faceswap/releases/download/v1.0.0/faceswap_setup_x64.exe

2. Install it

3. Open FaceSwap, select the Extract page, and fill in 'input dir' and 'output dir'

4. Click the Extract button

5. See error

**Expected behavior**

The output folder should have many files

**Screenshots**

**Desktop (please complete the following information):**

- OS: [Windows-10-10.0.17763-SP0]

- Browser [IE]

- Version [v11]

**Additional context**

`

07/20/2019 00:57:46 MainProcess MainThread multithreading start DEBUG Started all threads 'save_faces': 1

07/20/2019 00:57:46 MainProcess MainThread extract process_item_count DEBUG Items already processed: 0

07/20/2019 00:57:47 MainProcess MainThread extract process_item_count DEBUG Items to be Processed: 5985

07/20/2019 00:57:47 MainProcess MainThread pipeline launch DEBUG Launching aligner and detector

07/20/2019 00:57:47 MainProcess MainThread pipeline launch_aligner DEBUG Launching Aligner

07/20/2019 00:57:47 MainProcess MainThread multithreading __init__ DEBUG Initializing SpawnProcess: (target: 'Aligner.run', args: (), kwargs: {})

07/20/2019 00:57:47 MainProcess MainThread multithreading __init__ DEBUG Initialized SpawnProcess: 'Aligner.run'

07/20/2019 00:57:47 MainProcess MainThread multithreading start DEBUG Spawning Process: (name: 'Aligner.run', args: (), kwargs: {'event': <multiprocessing.synchronize.Event object at 0x000001D2082895C0>, 'error': <multiprocessing.synchronize.Event object at 0x000001D2082B4748>, 'log_init': <function set_root_logger at 0x000001D27F2F2C80>, 'log_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x1d27f34c828>, 'log_level': 10, 'in_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x1d208289908>, 'out_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x1d2082896d8>}, daemon: True)

07/20/2019 00:57:47 MainProcess MainThread multithreading start DEBUG Spawned Process: (name: 'Aligner.run', PID: 9360)

07/20/2019 00:57:49 Aligner.run MainThread _base initialize DEBUG _base initialize Align: (PID: 9360, args: (), kwargs: {'event': <multiprocessing.synchronize.Event object at 0x000002745378FA20>, 'error': <multiprocessing.synchronize.Event object at 0x000002745618D438>, 'log_init': <function set_root_logger at 0x0000027453A6AB70>, 'log_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x2745c822f28>, 'log_level': 10, 'in_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x2745c824080>, 'out_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x2745c8240f0>})

07/20/2019 00:57:49 Aligner.run MainThread fan initialize INFO Initializing Face Alignment Network...

07/20/2019 00:57:49 Aligner.run MainThread fan initialize DEBUG fan initialize: (args: () kwargs: {'event': <multiprocessing.synchronize.Event object at 0x000002745378FA20>, 'error': <multiprocessing.synchronize.Event object at 0x000002745618D438>, 'log_init': <function set_root_logger at 0x0000027453A6AB70>, 'log_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x2745c822f28>, 'log_level': 10, 'in_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x2745c824080>, 'out_queue': <AutoProxy[Queue] object, typeid 'Queue' at 0x2745c8240f0>})

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats __init__ DEBUG Initializing GPUStats

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats initialize DEBUG OS is not macOS. Using pynvml

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_device_count DEBUG GPU Device count: 1

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_active_devices DEBUG Active GPU Devices: [0]

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_handles DEBUG GPU Handles found: 1

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_driver DEBUG GPU Driver: 385.54

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_devices DEBUG GPU Devices: ['GeForce GTX 1060 6GB']

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_vram DEBUG GPU VRAM: [6144.0]

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats __init__ DEBUG Initialized GPUStats

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats initialize DEBUG OS is not macOS. Using pynvml

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_device_count DEBUG GPU Device count: 1

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_active_devices DEBUG Active GPU Devices: [0]

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_handles DEBUG GPU Handles found: 1

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_free DEBUG GPU VRAM free: [5860.96484375]

07/20/2019 00:57:49 Aligner.run MainThread gpu_stats get_card_most_free DEBUG Active GPU Card with most free VRAM: {'card_id': 0, 'device': 'GeForce GTX 1060 6GB', 'free': 5860.96484375, 'total': 6144.0}

07/20/2019 00:57:49 Aligner.run MainThread _base get_vram_free VERBOSE Using device GeForce GTX 1060 6GB with 5860MB free of 6144MB

07/20/2019 00:57:49 Aligner.run MainThread fan initialize VERBOSE Reserving 2240MB for face alignments

07/20/2019 00:57:49 Aligner.run MainThread fan load_graph VERBOSE Initializing Face Alignment Network model...

07/20/2019 00:57:53 Aligner.run MainThread _base run ERROR Caught exception in child process: 9360

07/20/2019 00:57:53 Aligner.run MainThread _base run ERROR Traceback:

Traceback (most recent call last):

File "C:\faceswap\plugins\extract\align\_base.py", line 112, in run

self.align(*args, **kwargs)

File "C:\faceswap\plugins\extract\align\_base.py", line 127, in align

self.initialize(*args, **kwargs)

File "C:\faceswap\plugins\extract\align\fan.py", line 47, in initialize

raise err

File "C:\faceswap\plugins\extract\align\fan.py", line 41, in initialize

self.model = FAN(self.model_path, ratio=tf_ratio)

File "C:\faceswap\plugins\extract\align\fan.py", line 199, in __init__

self.session = self.set_session(ratio)

File "C:\faceswap\plugins\extract\align\fan.py", line 221, in set_session

session = self.tf.Session(config=config)

File "C:\Anaconda3\envs\faceswap\lib\site-packages\tensorflow\python\client\session.py", line 1551, in __init__

super(Session, self).__init__(target, graph, config=config)

File "C:\Anaconda3\envs\faceswap\lib\site-packages\tensorflow\python\client\session.py", line 676, in __init__

self._session = tf_session.TF_NewSessionRef(self._graph._c_graph, opts)

tensorflow.python.framework.errors_impl.InternalError: cudaGetDevice() failed. Status: CUDA driver version is insufficient for CUDA runtime version

Traceback (most recent call last):

File "C:\faceswap\lib\cli.py", line 122, in execute_script

process.process()

File "C:\faceswap\scripts\extract.py", line 61, in process

self.run_extraction()

File "C:\faceswap\scripts\extract.py", line 181, in run_extraction

self.extractor.launch()

File "C:\faceswap\plugins\extract\pipeline.py", line 171, in launch

self.launch_aligner()

File "C:\faceswap\plugins\extract\pipeline.py", line 206, in launch_aligner

raise ValueError("Error initializing Aligner")

ValueError: Error initializing Aligner

============ System Information ============

encoding: cp936

git_branch: master

git_commits: 5da91b7 Add .ico back for legacy windows installs

gpu_cuda: 9.0

gpu_cudnn: 7.0.5

gpu_devices: GPU_0: GeForce GTX 1060 6GB

gpu_devices_active: GPU_0

gpu_driver: 385.54

gpu_vram: GPU_0: 6144MB

os_machine: AMD64

os_platform: Windows-10-10.0.17763-SP0

os_release: 10

py_command: C:\faceswap\faceswap.py extract -i C:/Users/Administrator/Desktop/faceswap-master/Data/input/6026016444322A7E967FAD9273213C77.mp4 -o C:/Users/Administrator/Desktop/faceswap-master/Data/output -l 0.4 --serializer json -D mtcnn -A fan -nm none -bt 0.0 -sz 256 -min 0 -een 1 -si 0 -L INFO -gui

py_conda_version: conda 4.7.5

py_implementation: CPython

py_version: 3.6.8

py_virtual_env: True

sys_cores: 4

sys_processor: Intel64 Family 6 Model 94 Stepping 3, GenuineIntel

sys_ram: Total: 8128MB, Available: 5726MB, Used: 2402MB, Free: 5726MB

=============== Pip Packages ===============

absl-py==0.7.1

astor==0.7.1

certifi==2019.6.16

cloudpickle==1.2.1

cycler==0.10.0

cytoolz==0.10.0

dask==2.1.0

decorator==4.4.0

fastcluster==1.1.25

ffmpy==0.2.2

gast==0.2.2

grpcio==1.16.1

h5py==2.9.0

imageio==2.5.0

imageio-ffmpeg==0.3.0

joblib==0.13.2

Keras==2.2.4

Keras-Applications==1.0.8

Keras-Preprocessing==1.1.0

kiwisolver==1.1.0

Markdown==3.1.1

matplotlib==2.2.2

mkl-fft==1.0.12

mkl-random==1.0.2

mkl-service==2.0.2

mock==3.0.5

networkx==2.3

numpy==1.16.2

nvidia-ml-py3==7.352.0

olefile==0.46

pathlib==1.0.1

Pillow==6.1.0

protobuf==3.8.0

psutil==5.6.3

pyparsing==2.4.0

pyreadline==2.1

python-dateutil==2.8.0

pytz==2019.1

PyWavelets==1.0.3

pywin32==223

PyYAML==5.1.1

scikit-image==0.15.0

scikit-learn==0.21.2

scipy==1.2.1

six==1.12.0

tensorboard==1.13.1

tensorflow==1.13.1

tensorflow-estimator==1.13.0

termcolor==1.1.0

toolz==0.10.0

toposort==1.5

tornado==6.0.3

tqdm==4.32.1

Werkzeug==0.15.4

wincertstore==0.2

============== Conda Packages ==============

# packages in environment at C:\Anaconda3\envs\faceswap:

#

# Name Version Build Channel

_tflow_select 2.1.0 gpu

absl-py 0.7.1 py36_0

astor 0.7.1 py36_0

blas 1.0 mkl

ca-certificates 2019.5.15 0

certifi 2019.6.16 py36_0

cloudpickle 1.2.1 py_0

cudatoolkit 10.0.130 0

cudnn 7.6.0 cuda10.0_0

cycler 0.10.0 py36h009560c_0

cytoolz 0.10.0 py36he774522_0

dask-core 2.1.0 py_0

decorator 4.4.0 py36_1

fastcluster 1.1.25 py36h830ac7b_1000 conda-forge

ffmpeg 4.1.3 h6538335_0 conda-forge

ffmpy 0.2.2 pypi_0 pypi

freetype 2.9.1 ha9979f8_1

gast 0.2.2 py36_0

grpcio 1.16.1 py36h351948d_1

h5py 2.9.0 py36h5e291fa_0

hdf5 1.10.4 h7ebc959_0

icc_rt 2019.0.0 h0cc432a_1

icu 58.1 vc14_0 conda-forge

imageio 2.5.0 py36_0

imageio-ffmpeg 0.3.0 py_0 conda-forge

intel-openmp 2019.4 245

joblib 0.13.2 py36_0

jpeg 9c hfa6e2cd_1001 conda-forge

keras 2.2.4 0

keras-applications 1.0.8 py_0

keras-base 2.2.4 py36_0

keras-preprocessing 1.1.0 py_1

kiwisolver 1.1.0 py36ha925a31_0

libblas 3.8.0 8_mkl conda-forge

libcblas 3.8.0 8_mkl conda-forge

liblapack 3.8.0 8_mkl conda-forge

liblapacke 3.8.0 8_mkl conda-forge

libmklml 2019.0.3 0

libpng 1.6.37 h7602738_0 conda-forge

libprotobuf 3.8.0 h7bd577a_0

libtiff 4.0.10 h6512ee2_1003 conda-forge

libwebp 1.0.2 hfa6e2cd_2 conda-forge

lz4-c 1.8.3 he025d50_1001 conda-forge

markdown 3.1.1 py36_0

matplotlib 2.2.2 py36had4c4a9_2

mkl 2019.4 245

mkl-service 2.0.2 py36he774522_0

mkl_fft 1.0.12 py36h14836fe_0

mkl_random 1.0.2 py36h343c172_0

mock 3.0.5 py36_0

networkx 2.3 py_0

numpy 1.16.2 py36h19fb1c0_0

numpy-base 1.16.2 py36hc3f5095_0

nvidia-ml-py3 7.352.0 pypi_0 pypi

olefile 0.46 py36_0

opencv 4.1.0 py36hb4945ee_5 conda-forge

openssl 1.1.1c he774522_1

pathlib 1.0.1 py36_1

pillow 6.1.0 py36hdc69c19_0

pip 19.1.1 py36_0

protobuf 3.8.0 py36h33f27b4_0

psutil 5.6.3 py36he774522_0

pyparsing 2.4.0 py_0

pyqt 5.9.2 py36h6538335_2

pyreadline 2.1 py36_1

python 3.6.8 h9f7ef89_7

python-dateutil 2.8.0 py36_0

pytz 2019.1 py_0

pywavelets 1.0.3 py36h8c2d366_1

pywin32 223 py36hfa6e2cd_1

pyyaml 5.1.1 py36he774522_0

qt 5.9.7 hc6833c9_1 conda-forge

scikit-image 0.15.0 py36ha925a31_0

scikit-learn 0.21.2 py36h6288b17_0

scipy 1.2.1 py36h29ff71c_0

setuptools 41.0.1 py36_0

sip 4.19.8 py36h6538335_0

six 1.12.0 py36_0

sqlite 3.29.0 he774522_0

tensorboard 1.13.1 py36h33f27b4_0

tensorflow 1.13.1 gpu_py36h9006a92_0

tensorflow-base 1.13.1 gpu_py36h871c8ca_0

tensorflow-estimator 1.13.0 py_0

tensorflow-gpu 1.13.1 h0d30ee6_0

termcolor 1.1.0 py36_1

tk 8.6.8 hfa6e2cd_0

toolz 0.10.0 py_0

toposort 1.5 py_3 conda-forge

tornado 6.0.3 py36he774522_0

tqdm 4.32.1 py_0

vc 14.1 h0510ff6_4

vs2015_runtime 14.15.26706 h3a45250_4

werkzeug 0.15.4 py_0

wheel 0.33.4 py36_0

wincertstore 0.2 py36h7fe50ca_0

xz 5.2.4 h2fa13f4_1001 conda-forge

yaml 0.1.7 hc54c509_2

zlib 1.2.11 h2fa13f4_1005 conda-forge

zstd 1.4.0 hd8a0e53_0 conda-forge

`

| closed | 2019-07-20T01:22:15Z | 2019-07-20T09:59:27Z | https://github.com/deepfakes/faceswap/issues/800 | [] | 463728946 | 1 |

sigmavirus24/github3.py | rest-api | 523 | "422 Invalid request" when creating a commit without author and/or committer | [The documentation](http://github3py.readthedocs.org/en/latest/repos.html) says the `author` and `committer` parameters of the `create_commit` method are optional, but that does not seem to be true.

```

>>> commit = repo.create_commit('test commit', t.sha, [])

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/usr/lib/python3.5/site-packages/github3/decorators.py", line 38, in auth_wrapper

return func(self, *args, **kwargs)

File "/usr/lib/python3.5/site-packages/github3/repos/repo.py", line 508, in create_commit

json = self._json(self._post(url, data=data), 201)

File "/usr/lib/python3.5/site-packages/github3/models.py", line 100, in _json

if self._boolean(response, status_code, 404) and response.content:

File "/usr/lib/python3.5/site-packages/github3/models.py", line 121, in _boolean

raise GitHubError(response)

github3.models.GitHubError: 422 Invalid request.

"email", "name" weren't supplied.

"email", "name" weren't supplied.

>>> commit = repo.create_commit('test commit', t.sha, [], {'name':'Yi EungJun', 'email':'test@mail.com'})

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/usr/lib/python3.5/site-packages/github3/decorators.py", line 38, in auth_wrapper

return func(self, *args, **kwargs)

File "/usr/lib/python3.5/site-packages/github3/repos/repo.py", line 508, in create_commit

json = self._json(self._post(url, data=data), 201)

File "/usr/lib/python3.5/site-packages/github3/models.py", line 100, in _json

if self._boolean(response, status_code, 404) and response.content:

File "/usr/lib/python3.5/site-packages/github3/models.py", line 121, in _boolean

raise GitHubError(response)

github3.models.GitHubError: 422 Invalid request.

"email", "name" weren't supplied.

```

I am using 0.9.4.
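
A minimal sketch of a possible workaround, assuming `create_commit` accepts `author` and `committer` keyword arguments as the linked documentation describes, and that each is a dict with `name` and `email` keys, as the 422 message suggests. The second traceback reports the missing fields only once, which may mean the committer is the remaining gap. `make_identity`, `commit_with_identity`, and `FakeRepo` are hypothetical names used for illustration; `FakeRepo` only stands in for a real repository object so the snippet runs without network access.

```python
def make_identity(name, email):
    """Build the identity dict; the 422 response names these two keys."""
    return {"name": name, "email": email}


def commit_with_identity(repo, message, tree_sha, parents, name, email):
    """Call create_commit with both author and committer filled in.

    Assumption: `repo` exposes create_commit(message, tree, parents,
    author=..., committer=...), as the documentation cited above implies.
    """
    identity = make_identity(name, email)
    return repo.create_commit(
        message, tree_sha, parents, author=identity, committer=identity
    )


class FakeRepo:
    """Hypothetical stand-in that records the arguments it receives."""

    def create_commit(self, message, tree, parents, author=None, committer=None):
        return {
            "message": message,
            "tree": tree,
            "parents": parents,
            "author": author,
            "committer": committer,
        }


result = commit_with_identity(
    FakeRepo(), "test commit", "abc123", [], "Yi EungJun", "test@mail.com"
)
```

With a real repository object, the same helper would be called with `repo` and `t.sha` in place of `FakeRepo()` and `"abc123"`.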

| closed | 2015-12-26T12:21:47Z | 2018-03-22T02:23:45Z | https://github.com/sigmavirus24/github3.py/issues/523 | [] | eungjun-yi | 2 |

thtrieu/darkflow | tensorflow | 461 | restore cnn in native tensorflow | Thanks for the project. It works well on my custom dataset. I want to restore only the CNN (from the input to the last convolution layer) in native TensorFlow (or TF-Slim). How can I do that? Thanks! | open | 2017-12-05T21:03:10Z | 2018-01-08T03:55:05Z | https://github.com/thtrieu/darkflow/issues/461 | [] | ghost | 0 |

nolar/kopf | asyncio | 400 | [archival placeholder] | This is a placeholder for later issues/prs archival.

It is needed now to reserve the initial issue numbers before going with actual development (PRs), so that later these placeholders could be populated with actual archived issues & prs with proper intra-repo cross-linking preserved. | closed | 2020-08-18T20:05:38Z | 2020-08-18T20:05:39Z | https://github.com/nolar/kopf/issues/400 | [

"archive"

] | kopf-archiver[bot] | 0 |

sammchardy/python-binance | api | 721 | Instance of 'Client' has no 'futures_coin_account_trades' | Hi,

I have been using this library since December.

Thank you to the contributors of python-binance!

I recently updated to version 0.7.9, but none of the futures_coin_XXX() functions can be called.

Here is the sample code I am using:

```

import os

from binance.client import Client

# Get environment variables
api_key = os.environ.get('API_KEY')
api_secret = os.environ.get('API_SECRET')

client = Client(api_key, api_secret)

ping = client.futures_coin_ping()
print(ping)

```

Error Return:

```

Traceback (most recent call last):

File "test.py", line 15, in <module>

ping = client.futures_coin_ping()

AttributeError: 'Client' object has no attribute 'futures_coin_ping'

```

However, this works well if I replace `client.futures_coin_ping()` with `client.futures_ping()`.

It seems that only the futures_coin functions have an issue.
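
One way to narrow this down is to check which methods the installed client actually exposes. The sketch below is only an illustration: `missing_methods` and `OldClient` are hypothetical names, and `OldClient` stands in for an installed build that lacks the coin-futures calls.

```python
def missing_methods(obj, names):
    """Return the names from `names` that `obj` does not expose as callables."""
    return [name for name in names if not callable(getattr(obj, name, None))]


class OldClient:
    """Hypothetical stand-in for a client build without the coin-futures calls."""

    def futures_ping(self):
        return {}


wanted = ["futures_ping", "futures_coin_ping", "futures_coin_account_trades"]
absent = missing_methods(OldClient(), wanted)
print(absent)
```

Passing the real `client` instead of `OldClient()` would show whether the installed package contains these methods at all; `pip show python-binance` reports which version is actually installed in the active environment.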

| open | 2021-03-06T06:54:12Z | 2021-03-12T22:33:40Z | https://github.com/sammchardy/python-binance/issues/721 | [] | zyairelai | 1 |

twelvedata/twelvedata-python | matplotlib | 1 | [Bug] get_stock_exchanges_list not working | **Describe the bug**

It is not possible to call the function `td.get_stock_exchanges_list()`.

**To Reproduce**

Calling `td.get_stock_exchanges_list()` raises:

```

/usr/local/lib/python3.6/dist-packages/twelvedata/client.py in get_stock_exchanges_list(self)

44 :rtype: StockExchangesListRequestBuilder

45 """

---> 46 return StockExchangesListRequestBuilder(ctx=self.ctx)

47

48 def get_forex_pairs_list(self):

NameError: name 'StockExchangesListRequestBuilder' is not defined

```

**Cause of the error**

The error is probably caused by a naming mismatch: the method references `StockExchangesListRequestBuilder`, but the imported names use the `Endpoint` suffix:

```

from .endpoints import (

StocksListEndpoint,

StockExchangesListEndpoint,

ForexPairsListEndpoint,

CryptocurrenciesListEndpoint,

CryptocurrencyExchangesListEndpoint,

)

```
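
The mismatch can be reproduced in isolation. The sketch below is a simplified, hypothetical model of the situation, not the library's actual code: the class names mirror the ones in the traceback and the import list, while `Client.broken` and `Client.fixed` are invented for illustration. It shows why the failure surfaces only when the method is called, not at import time.

```python
class StockExchangesListEndpoint:
    """Stand-in for the class that the import list above provides."""

    def __init__(self, ctx):
        self.ctx = ctx


class Client:
    """Hypothetical, simplified client that mirrors the mismatch."""

    def __init__(self, ctx):
        self.ctx = ctx

    def broken(self):
        # References a name that was never imported or defined, so Python
        # raises NameError only when this line runs.
        return StockExchangesListRequestBuilder(ctx=self.ctx)  # noqa: F821

    def fixed(self):
        # Uses the name that is actually available in the module.
        return StockExchangesListEndpoint(ctx=self.ctx)


client = Client(ctx={})

try:
    client.broken()
    error_name = None
except NameError as exc:
    error_name = type(exc).__name__

fixed_result = client.fixed()
```

Whether the right fix in the library is to rename the imports or the references is a decision for the maintainers; the sketch only demonstrates the failure mode.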

**Further notes**

The same thing seems to apply for some other endpoints. | closed | 2020-01-08T13:14:33Z | 2020-01-13T00:11:29Z | https://github.com/twelvedata/twelvedata-python/issues/1 | [] | bandor151 | 1 |

KevinMusgrave/pytorch-metric-learning | computer-vision | 421 | Cannot allocate memory : Getting RAM full issue while using 110 GB RAM and 1024 memory queue size. | Hi Kevin,

I have around 15k images and around 6k labels for them. During the first epoch, all 110 GB of RAM fills up and training stops with the error:

`RuntimeError: [enforce fail at CPUAllocator.cpp:68] . DefaultCPUAllocator: can't allocate memory: you tried to allocate 51380224 bytes. Error code 12 (Cannot allocate memory)`

I have the following settings:

batch_size = 8

out_embedding_size = 2048 # final embedding size

memory_size = 1024 #XBM queue size
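
As a rough back-of-the-envelope check, the size of the embedding queue implied by these settings can be estimated. This is a sketch that assumes the embeddings are stored as float32 (4 bytes each) and ignores the label queue; it is not a measurement of what the library actually allocates.

```python
BYTES_PER_FLOAT32 = 4  # assumption: embeddings are stored as float32

memory_size = 1024         # XBM queue size from the settings above
out_embedding_size = 2048  # final embedding size from the settings above

# Size of a queue holding memory_size embeddings of out_embedding_size floats.
queue_bytes = memory_size * out_embedding_size * BYTES_PER_FLOAT32
queue_mib = queue_bytes / 2**20

# Size of the single allocation that failed, taken from the RuntimeError text.
failed_alloc_bytes = 51380224
failed_alloc_mib = failed_alloc_bytes / 2**20

print(f"embedding queue: {queue_mib:.1f} MiB")
print(f"failed allocation: {failed_alloc_mib:.1f} MiB")
```

Under these assumptions the queue is a few MiB, which is tiny compared with 110 GB, so the queue by itself seems unlikely to explain the exhaustion; the failed allocation is also small, which suggests memory was already nearly full when it was requested.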

Attaching the RAM usage snapshot.

<img width="1131" alt="Ram full" src="https://user-images.githubusercontent.com/7069488/153206740-52ff1c7a-606c-4a0a-bb91-3c5f288ab49c.png">

| closed | 2022-02-09T13:04:07Z | 2022-02-10T07:29:42Z | https://github.com/KevinMusgrave/pytorch-metric-learning/issues/421 | [

"question"

] | abhinav3 | 6 |

explosion/spaCy | machine-learning | 12,140 | ro_core_news_lg missing | <!-- NOTE: For questions or install related issues, please open a Discussion instead. -->

## How to reproduce the behaviour

<!-- Include a code example or the steps that led to the problem. Please try to be as specific as possible. -->

Click on

https://spacy.io/models/ro#ro_core_news_lg

or try

python -m spacy [download](https://spacy.io/api/cli#download) ro_core_news_lg

## Your Environment

<!-- Include details of your environment. You can also type `python -m spacy info --markdown` and copy-paste the result here.-->

* Operating System:

* Python Version Used:

* spaCy Version Used:

* Environment Information:

| closed | 2023-01-22T07:58:52Z | 2023-03-03T01:43:41Z | https://github.com/explosion/spaCy/issues/12140 | [

"lang / ro"

] | TigranI | 4 |

uriyyo/fastapi-pagination | fastapi | 842 | Pagination is not working with tortoise-orm version 0.20.0 | Hi!

I'm trying to use pagination with the latest version of `tortoise-orm (0.20.0)` according to the [official integration tortoise-orm example](https://github.com/uriyyo/fastapi-pagination/blob/main/examples/pagination_tortoise.py).

However, it does not work: I get a Pydantic model serialization error.

I then tried the following, which works with the default `paginate` function:

```python

from typing import Any

from fastapi import Depends
from fastapi_pagination import Params, paginate, Page

from apps.contacts.models import Country
from apps.utils.cbv.routers import InferringRouter
from apps.utils.cbv.views import cbv

router = InferringRouter()


@cbv(router)
class CountryViews:
    @router.get("/countries", response_model=Page[Any], tags=["address"])
    async def country_list(self, params: Params = Depends()) -> Any:
        return paginate(await Country.all().values(), params=params)

```

I'm using [cbv](https://fastapi-utils.davidmontague.xyz/user-guide/class-based-views/) and [InferringRouter](https://fastapi-utils.davidmontague.xyz/user-guide/inferring-router/) from [FastAPI utils](https://fastapi-utils.davidmontague.xyz/user-guide/class-based-views/).

As they don't support Pydantic version 2.0 yet, I've copied the two modules into my local utility packages so that I can use them with Pydantic version 2.0.

Can you please tell me what I can do to use the tortoise-orm integration of `fastapi-pagination` instead of the default `paginate`?

@uriyyo | closed | 2023-09-22T17:42:26Z | 2023-09-26T15:04:42Z | https://github.com/uriyyo/fastapi-pagination/issues/842 | [

"bug"

] | xalien10 | 4 |

jonaswinkler/paperless-ng | django | 1,332 | [BUG] How to debug classifier that doesn't auto classify? | The model seems to have been generated according to the admin/log, yet after consumption nothing else happens to any new documents.

Until a certain(?) number of documents had been added, the log contained `classify..no model existing yet..`. Now `consumption finished` remains the last output for each document.

I've tried:

- Creating an inbox tag and adding/removing it to existing documents

- Adding type, correspondent and tag to 50 of ca. 70 documents

- `document-retagger`[^1]

I can't see any suspicious log entry to start investigating.

Where should I start debugging?

Note: Tags of type `Any` are working