repo_name (string, length 9-75) | topic (string, 30 classes) | issue_number (int64, 1-203k) | title (string, length 1-976) | body (string, length 0-254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, length 38-105) | labels (list, length 0-9) | user_login (string, length 1-39) | comments_count (int64, 0-452) |
|---|---|---|---|---|---|---|---|---|---|---|---|

tflearn/tflearn | data-science | 943 | cross_product term | Anyone know how to use cross_product in tflearn?

Or how to transform tensorflow indicator to tensor for tflearn ?

```

tf.feature_column.indicator_column(tf.feature_column.categorical_column_with_vocabulary_list(cc,cc_size[1]))

``` | open | 2017-10-26T16:52:06Z | 2017-10-26T16:52:06Z | https://github.com/tflearn/tflearn/issues/943 | [] | whizzalan | 0 |

whitphx/streamlit-webrtc | streamlit | 1,046 | ReferenceError: weakly-referenced object no longer exists | Updated to 0.43.3 but I still get the same error on Mac M1 Pro | closed | 2022-09-01T12:31:35Z | 2022-09-05T12:03:18Z | https://github.com/whitphx/streamlit-webrtc/issues/1046 | [] | creeksflowing | 12 |

home-assistant/core | asyncio | 140,995 | HomeKit Integration: Missing and Non-Functional Entities After Update (Smartmi Air Purifier P2) | ### The problem

Hello! First of all, thank you for your hard work on the HomeKit integration. I need your help with an issue that appeared after updating Home Assistant.

After the update, several entities in the HomeKit integration disappeared, and new ones appeared, but they are not working. Here are the details:

**Device:**

Name: Smartmi Air Purifier P2

Model: ZMKQJHQP21

Manufacturer: Beijing Smartmi Electronic Technology Co., Ltd.

**Previously Working Entities:**

sensor.smartmi_air_purifier_p2_air_purifier_status

fan.smartmi_air_purifier_p2

sensor.smartmi_air_purifier_p2_pm2_5_density

sensor.smartmi_air_purifier_p2_pm10_density

Additionally, there were entities for operation modes and filter status.

**Current Situation:**

After the update, the following entities are present but non-functional:

fan.smartmi_air_purifier_p2 - Unavailable

sensor.smartmi_air_purifier_p2_air_purifier_status - Unavailable

sensor.smartmi_air_purifier_p2_air_quality - Shows values from 1 to 5, but other entities are missing or not working.

### What version of Home Assistant Core has the issue?

2025.3.3

### What was the last working version of Home Assistant Core?

_No response_

### What type of installation are you running?

Home Assistant Supervised

### Integration causing the issue

homekit_controller

### Link to integration documentation on our website

_No response_

### Diagnostics information

{

"data": {

"config-entry": {

"title": "Smartmi Air Purifier P2",

"version": 1,

"data": {

"AccessoryIP": "**REDACTED**",

"AccessoryIPs": [

"192.168.33.61"

],

"AccessoryLTPK": "xxxxxxxxx713709b0d957456bf18b61cbd418b027fb66de6",

"AccessoryPairingID": "xx:2x:xx:5x:3x:xx",

"AccessoryPort": 80,

"Connection": "IP",

"iOSDeviceLTPK": "xxxxxxe0f48ea6b6a4e3de32073a7d",

"iOSDeviceLTSK": "**REDACTED**",

"iOSPairingId": "xxxxxxx48e6-ba29-5f4be17810bd"

}

},

"entity-map": [

{

"aid": 1,

"services": [

{

"iid": 1,

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"characteristics": [

{

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"iid": 2,

"perms": [

"pw"

],

"format": "bool",

"description": "Identify"

},

{

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"iid": 3,

"perms": [

"pr"

],

"format": "string",

"value": "Beijing Smartmi Electronic Technology Co., Ltd.",

"description": "Manufacturer",

"maxLen": 64

},

{

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"iid": 4,

"perms": [

"pr"

],

"format": "string",

"value": "ZMKQJHQP21",

"description": "Model",

"maxLen": 64

},

{

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"iid": 5,

"perms": [

"pr"

],

"format": "string",

"value": "Smartmi Air Purifier P2",

"description": "Name",

"maxLen": 64

},

{

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"iid": 6,

"perms": [

"pr"

],

"format": "string",

"value": "**REDACTED**",

"description": "Serial Number",

"maxLen": 64

},

{

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"iid": 7,

"perms": [

"pr"

],

"format": "string",

"value": "3.0.2",

"description": "Firmware Revision",

"maxLen": 64

}

]

},

{

"iid": 15,

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"characteristics": [

{

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"iid": 16,

"perms": [

"pr",

"ev"

],

"format": "uint8",

"value": 1,

"description": "Air Quality",

"minValue": 0,

"maxValue": 5,

"minStep": 1

},

{

"type": "0000xxx-xxxx-xxxx-xxxxx-xxxxxxxxx",

"iid": 17,

"perms": [

"pr"

],

"format": "string",

"value": "My air quality sensor",

"description": "Name",

"maxLen": 64

}

]

}

]

}

],

"device": {

"name": "Smartmi Air Purifier P2",

"model": "ZMKQJHQP21",

"manfacturer": "Beijing Smartmi Electronic Technology Co., Ltd.",

"sw_version": "3.0.2",

"entities": [

{

"original_name": "Smartmi Air Purifier P2 Air Purifier Status",

"original_device_class": "enum",

"entity_category": "diagnostic",

"state": {

"entity_id": "sensor.smartmi_air_purifier_p2_air_purifier_status",

"state": "unavailable",

"attributes": {

"restored": true,

"options": [

"inactive",

"idle",

"purifying"

],

"device_class": "enum",

"friendly_name": "Smartmi Air Purifier P2 Air Purifier Status",

"supported_features": 0

}

}

},

{

"original_name": "Smartmi Air Purifier P2 Air Quality",

"original_device_class": "aqi",

"state": {

"entity_id": "sensor.smartmi_air_purifier_p2_air_quality",

"state": "1",

"attributes": {

"state_class": "measurement",

"device_class": "aqi",

"friendly_name": "Smartmi Air Purifier P2 Air Quality"

}

}

},

{

"original_name": "Smartmi Air Purifier P2 Identify",

"original_device_class": "identify",

"entity_category": "diagnostic",

"state": {

"entity_id": "button.smartmi_air_purifier_p2_identify",

"state": "2025-03-17T07:35:22.947612+00:00",

"attributes": {

"device_class": "identify",

"friendly_name": "Smartmi Air Purifier P2 Identify"

}

}

},

{

"original_name": "Smartmi Air Purifier P2 My air purifier",

"original_device_class": null,

"state": {

"entity_id": "fan.smartmi_air_purifier_p2",

"state": "unavailable",

"attributes": {

"restored": true,

"friendly_name": "purifier",

"supported_features": 48

}

}

}

]

}

}

}

### Example YAML snippet

```yaml

```

### Anything in the logs that might be useful for us?

```txt

```

### Additional information

_No response_ | open | 2025-03-20T14:01:02Z | 2025-03-20T17:27:04Z | https://github.com/home-assistant/core/issues/140995 | [

"integration: homekit"

] | FleshZLO | 1 |

tqdm/tqdm | jupyter | 1,438 | bar_format typeerror for {rate:.3f} format | ## my code

```py

from tqdm.auto import tqdm , trange

# from tqdm.notebook import tqdm

from time import sleep

with tqdm(total=10,

desc="desc", bar_format="[{desc}: {percentage:3.0f}%] |{bar}| [{n_fmt}/{total_fmt}] [{elapsed}<<{remaining}] [{rate:.3f} {unit}/s] "

) as t:

for i in range(10):

sleep(0.1)

t.update()

```

## env

environment, where applicable:

```python

import tqdm, sys

print(tqdm.__version__, sys.version, sys.platform)

```

4.64.0 3.9.9 (tags/v3.9.9:ccb0e6a, Nov 15 2021, 18:08:50) [MSC v.1929 64 bit (AMD64)] win32

## traceback

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "D:\programs\python\python39\lib\site-packages\tqdm\std.py", line 1109, in __init__

self.refresh(lock_args=self.lock_args)

File "D:\programs\python\python39\lib\site-packages\tqdm\std.py", line 1361, in refresh

self.display()

File "D:\programs\python\python39\lib\site-packages\tqdm\std.py", line 1509, in display

self.sp(self.__str__() if msg is None else msg)

File "D:\programs\python\python39\lib\site-packages\tqdm\std.py", line 1165, in __str__

return self.format_meter(**self.format_dict)

File "D:\programs\python\python39\lib\site-packages\tqdm\std.py", line 524, in format_meter

nobar = bar_format.format(bar=full_bar, **format_dict)

TypeError: unsupported format string passed to NoneType.__format__

## other

Using plain `{rate}` without a format spec works fine.

- [*] I have marked all applicable categories:

+ [*] exception-raising bug

+ [ ] visual output bug

- [*] I have visited the [source website], and in particular

read the [known issues]

- [*] I have searched through the [issue tracker] for duplicates

- [*] I have mentioned version numbers, operating system and

[source website]: https://github.com/tqdm/tqdm/

[known issues]: https://github.com/tqdm/tqdm/#faq-and-known-issues

[issue tracker]: https://github.com/tqdm/tqdm/issues?q=

| open | 2023-03-02T13:51:56Z | 2025-02-09T21:54:41Z | https://github.com/tqdm/tqdm/issues/1438 | [] | ZX1209 | 1 |
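
The traceback in the tqdm report above ends in `NoneType.__format__`, which suggests that `rate` was still `None` when the bar was first drawn. The following is a minimal sketch, using only the standard library, of why a float spec fails on `None` and how formatting the value beforehand avoids it. `format_rate` and `try_format` are hypothetical helpers for illustration, not part of tqdm.

```python
def format_rate(rate, unit="it"):
    """Format a rate that may be None (hypothetical helper, not a tqdm API)."""
    if rate is None:
        return f"? {unit}/s"
    return f"{rate:.3f} {unit}/s"


def try_format(template, **fields):
    """Return the formatted string, or the TypeError message if formatting fails."""
    try:
        return template.format(**fields)
    except TypeError as exc:
        return f"TypeError: {exc}"


# A float spec applied to None fails, as in the traceback above.
failed = try_format("[{rate:.3f} {unit}/s]", rate=None, unit="it")

# A plain replacement field on None falls back to str(None) and works.
plain = try_format("[{rate} {unit}/s]", rate=None, unit="it")

# Formatting the value beforehand handles both cases.
safe_none = "[" + format_rate(None) + "]"
safe_float = "[" + format_rate(12.34567) + "]"
```

tqdm's `bar_format` documentation also lists a pre-formatted `{rate_fmt}` field, which may be the simpler route when a custom precision is not required.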

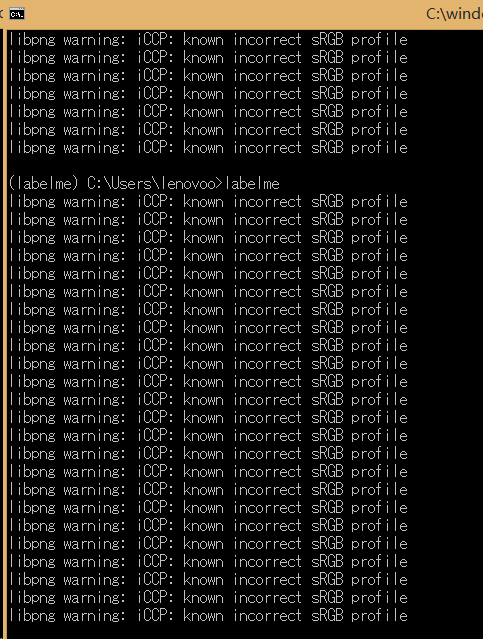

wkentaro/labelme | computer-vision | 318 | libpng warning: iCCP: known incorrect sRGB profile | Hi, what does this warning mean? Although it doesn't affect my use.

| closed | 2019-02-14T07:43:52Z | 2019-04-27T01:59:00Z | https://github.com/wkentaro/labelme/issues/318 | [] | lck1201 | 0 |

darrenburns/posting | rest-api | 160 | Scripting get_variable method not implemented | The [Scripting](https://posting.sh/guide/scripting/) docs contain a reference to the `posting.get_variable()` method, but it doesn't look like this has been implemented (yet).

As a workaround, it looks like we could use `vars = posting.variables` and process the returned dict.

e.g.

```python

from posting import Posting
import json


def setup(posting: Posting) -> None:
    auth_token = "auth_token"

    # Capture the variables currently set
    vars = posting.variables

    # Check to see if auth token is set
    if auth_token not in vars:
        print(f"{auth_token} not found")
        posting.set_variable(auth_token, "1234567890")

    # Debug - dump the updated variables
    vars = posting.variables
    print(f"Vars: {json.dumps(vars, sort_keys=True, indent=4)}")

    # Debug to see the var is set.
    print(f"Auth: {vars[auth_token]}")

```

| closed | 2024-12-31T13:58:53Z | 2025-03-02T18:07:40Z | https://github.com/darrenburns/posting/issues/160 | [

"bug"

] | zDavidB | 2 |

axnsan12/drf-yasg | django | 691 | No utf-8 symbols support for generated JSON and YAML | When generating files via `^swagger(?P<format>\.json|\.yaml)$`, UTF-8 symbols are not supported, even though the defined descriptions may contain such symbols | open | 2021-01-14T12:25:29Z | 2025-03-07T12:13:27Z | https://github.com/axnsan12/drf-yasg/issues/691 | [

"triage"

] | ProstoMaxim | 1 |

voila-dashboards/voila | jupyter | 1,341 | Persistent loop of Matplotlib figure animation results in flickering output when run in a thread | ## Description

The [Mesa](https://github.com/projectmesa/mesa) agent-based modeling library is looking to replace its self-hosted Tornado-based visualization server with Voilà. I have made a prototype in https://github.com/rht/mesa-examples/tree/voila. However, I encountered the flickering issue reported in #431. To summarize, it is like running the Game of Life simulation with play and stop buttons. The play and stop work, except that the display flickers.

The long-running loop: https://github.com/rht/mesa-examples/blob/99a68386226f3fe5be3953d25a84bd92f8b7065c/examples/boltzmann_wealth_model/run_voila.py#L161-L170. If I run the loop without threading or multiprocessing, it runs just fine. Each iteration lasts about 300 ms. My hypothesis is that the solutions in #431 do not apply because in that issue only one plot is constantly re-rendered, whereas in my case three objects are constantly re-rendered:

- the time series plot of the simulation

- the imshow heatmap view of the agents

- the elapsed time of each step, displayed in a `widgets.Output`

https://github.com/rht/mesa-examples/blob/99a68386226f3fe5be3953d25a84bd92f8b7065c/examples/boltzmann_wealth_model/run_voila.py#L154-L159

I have tried the `plt.draw()` as recommended in https://github.com/voila-dashboards/voila/issues/431#issuecomment-542390982, but it didn't work out. I have also tried adding `clear_output(wait=True)`, and it didn't work out.

I haven't tried JupyterLab yet, and have been focusing on making it work with `voila --no-browser --debug`. I apologize in advance if this issue is neither concise nor self-contained.

## Context

<!--Complete the following for context, and add any other relevant context-->

- voila version 0.4.0

- Operating System and version: NixOS 23.05

- Browser and version: Brave v1.52.126 | open | 2023-06-26T10:06:20Z | 2024-02-12T23:25:17Z | https://github.com/voila-dashboards/voila/issues/1341 | [

"bug"

] | rht | 6 |

microsoft/nlp-recipes | nlp | 625 | [ASK] transformers.abstractive_summarization_bertsum.py not importing transformers | ### Description

I run in Google Colab the following code

```

!pip install --upgrade

!pip install -q git+https://github.com/microsoft/nlp-recipes.git

!pip install jsonlines

!pip install pyrouge

!pip install scrapbook

import os

import shutil

import sys

from tempfile import TemporaryDirectory

import torch

import nltk

from nltk import tokenize

import pandas as pd

import pprint

import scrapbook as sb

nlp_path = os.path.abspath("../../")

if nlp_path not in sys.path:
    sys.path.insert(0, nlp_path)

from utils_nlp import models

from utils_nlp.models import transformers

from utils_nlp.models.transformers.abstractive_summarization_bertsum \

import BertSumAbs, BertSumAbsProcessor

```

It breaks on the last line and I get the following error

```

/usr/local/lib/python3.7/dist-packages/utils_nlp/models/transformers/abstractive_summarization_bertsum.py in <module>()

15 from torch.utils.data.distributed import DistributedSampler

16 from tqdm import tqdm

---> 17 from transformers import AutoTokenizer, BertModel

18

19 from utils_nlp.common.pytorch_utils import (

ModuleNotFoundError: No module named 'transformers'

```

In summary, the code in abstractive_summarization_bertsum.py doesn't resolve transformers where it is located in the transformer folder. Is it something to be fixed on your side? | open | 2022-01-11T10:21:31Z | 2022-02-17T23:23:40Z | https://github.com/microsoft/nlp-recipes/issues/625 | [] | neqkir | 1 |

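Gerapy/Gerapy | django | 77 | Uploaded project .py files cannot be edited | Project .py files cannot contain Chinese characters. After I replaced all the Chinese text with English, the file became editable. I hope this can be fixed. | open | 2018-08-01T07:14:42Z | 2020-07-24T08:19:53Z | https://github.com/Gerapy/Gerapy/issues/77 | [] | ghost | 1 |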

jina-ai/serve | deep-learning | 5,402 | Bind to `host` instead of `default_host` | **Describe the bug**

Flow accepts `host` parameter because it inherits from client and gateway but is confusing as shown in #5401 | closed | 2022-11-17T08:59:04Z | 2022-11-21T15:43:42Z | https://github.com/jina-ai/serve/issues/5402 | [

"area/community"

] | JoanFM | 5 |

psf/requests | python | 6,080 | How to uninstall requests library using setup.py? | We are in a factory environment where we cannot use pip.

We installed the requests library using `python setup.py install`.

Is it possible to uninstall the requests library using setup.py? Please share the command. | closed | 2022-03-07T10:57:39Z | 2023-03-08T00:03:28Z | https://github.com/psf/requests/issues/6080 | [] | ashokchandran | 1 |

Tanuki/tanuki.py | pydantic | 35 | Align statements do not support lists or tuples | The following align statements error out when the inputs are lists or tuples

```

@Monkey.patch
def classify_sentiment(input: List[str]) -> Literal['Good', 'Bad', 'Neutral']:  # Multi-class classification
    """
    Determine if the input is positive, negative or neutral sentiment
    """

@Monkey.align
def align():
    assert classify_sentiment(["I thought the ending was awesome"]) == 'Good'
    assert classify_sentiment(["The acting was horrendous"]) == 'Bad'
    assert classify_sentiment(["It was a dark and stormy night"]) == 'Neutral'

```

```

@Monkey.patch
def classify_sentiment(input: tuple) -> Literal['Good', 'Bad', 'Neutral']:  # Multi-class classification
    """
    Determine if the input is positive, negative or neutral sentiment
    """

@Monkey.align
def align():
    assert classify_sentiment(("I thought the ending was awesome", "It was really good")) == 'Good'

``` | closed | 2023-11-03T18:32:28Z | 2023-11-08T14:56:49Z | https://github.com/Tanuki/tanuki.py/issues/35 | [] | MartBakler | 0 |

tflearn/tflearn | data-science | 1,074 | tflearn doesn't work with newer TensorFlow versions | I found that tflearn doesn't work with newer TensorFlow versions.

Is it a bug?

My Tensorflow version: 1.8.0

TfLearn version: 0.3.2

OS: Win10 x64

Python version: 3.6.6 | open | 2018-07-16T06:55:25Z | 2018-07-16T06:55:25Z | https://github.com/tflearn/tflearn/issues/1074 | [] | polar99 | 0 |

koxudaxi/fastapi-code-generator | fastapi | 328 | [] in query parameter name generates code that cannot be formatted | When a parameter name contains `[]`, the generator fails to produce valid code. See this part of an OpenAPI schema:

```yaml

parameters:

- in: query

name: color[]

schema:

type: array

items:

type: string

```

Possible solutions:

1. Correct the schema to not use `[]`

2. Patch the template to strip `[]`, while handling possible name collisions such as `color` and `color[]`

3. Add a `--no-code-format` flag that allows generating invalid code to be fixed manually later

What do you think about it?

| open | 2023-03-01T23:23:32Z | 2023-03-01T23:23:32Z | https://github.com/koxudaxi/fastapi-code-generator/issues/328 | [] | Skyross | 0 |
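
The second option in the fastapi-code-generator report above (strip `[]` but handle collisions) can be sketched as follows. This is only an illustration under stated assumptions: `to_identifier` and `sanitize_parameter_names` are hypothetical helpers, not functions of fastapi-code-generator, and the numeric-suffix scheme is one arbitrary way to resolve collisions.

```python
import keyword
import re


def to_identifier(name: str) -> str:
    """Turn an OpenAPI parameter name into a valid Python identifier."""
    # Drop the array marker, then replace any remaining non-word characters.
    ident = re.sub(r"\W", "_", name.replace("[]", ""))
    if not ident or ident[0].isdigit():
        ident = "_" + ident
    if keyword.iskeyword(ident):
        ident += "_"
    return ident


def sanitize_parameter_names(names):
    """Map original names to unique identifiers, adding a suffix on collision."""
    mapping = {}
    used = set()
    for name in names:
        base = to_identifier(name)
        candidate = base
        counter = 2
        while candidate in used:
            candidate = f"{base}_{counter}"
            counter += 1
        used.add(candidate)
        mapping[name] = candidate
    return mapping


mapping = sanitize_parameter_names(["color", "color[]", "class", "2d[]"])
```

In generated FastAPI code, the original wire name could then presumably be preserved with `Query(alias="color[]")`, so only the Python-side argument name changes.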

chainer/chainer | numpy | 8,195 | ChainerX take allows OOB access | I think we should not allow this?

```

>>> x = chx.array([1,2])

>>> y = x.take(chx.array([3]), axis=0)

>>> y

array([2], shape=(1,), dtype=int64, device='native:0')

```

numpy raises an exception for this. | closed | 2019-09-29T04:36:42Z | 2019-10-10T03:52:57Z | https://github.com/chainer/chainer/issues/8195 | [

"pr-ongoing",

"ChainerX"

] | shinh | 1 |
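
To make the behaviours in the ChainerX `take` report above concrete, here is a pure-Python sketch of a 1-D `take` with three out-of-bounds policies. The mode names are borrowed from NumPy's `take` (`raise`, `wrap`, `clip`); the function itself is hypothetical and is not ChainerX or NumPy code. Note that for `[1, 2]` and index `3`, both wrapping and clipping return `2`, so the output in the report does not reveal which policy was in effect.

```python
def take(values, indices, mode="raise"):
    """Pick values[i] for each i in indices from a 1-D sequence."""
    n = len(values)
    result = []
    for i in indices:
        if mode == "raise":
            # Negative indices are allowed as long as they stay in range.
            if not -n <= i < n:
                raise IndexError(
                    f"index {i} is out of bounds for axis 0 with size {n}"
                )
        elif mode == "wrap":
            i %= n
        elif mode == "clip":
            i = max(0, min(i, n - 1))
        else:
            raise ValueError(f"unknown mode: {mode!r}")
        result.append(values[i])
    return result


wrapped = take([1, 2], [3], mode="wrap")
clipped = take([1, 2], [3], mode="clip")
```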

twelvedata/twelvedata-python | matplotlib | 7 | [Bug] Technical Indicator Plotly | Hello,

I ran the 'static' (chart) example code from git without error using python 3.5.

The chart appeared in my browser.

However, the technical indicators are not appearing on the chart (compared to the image of the chart on the git and PyPI pages).

Either there is a bug, or I need to write additional code (presumably using plotly modules) to configure the chart to display the technical indicators properly. Which is it?

I'm on Ubuntu 16.04. Using Pycharm.

I had to use ts.show_plotly() to display the chart, otherwise the chart doesn't show.

I also tried on Python3.6 and 3.7 and neither seemed to make a difference.

Thanks for your help.

Matt | closed | 2020-04-26T05:54:30Z | 2020-04-26T16:28:56Z | https://github.com/twelvedata/twelvedata-python/issues/7 | [] | majikbyte | 2 |

jina-ai/clip-as-service | pytorch | 430 | Adding Bi-LSTM layer to word-level embeddings | Has anyone got any examples of them adding a classification layer (as per the Bert paper) for NER? | open | 2019-08-02T18:16:42Z | 2019-08-02T18:16:42Z | https://github.com/jina-ai/clip-as-service/issues/430 | [] | samjtozer | 0 |

kennethreitz/responder | flask | 45 | Probable bug in GraphQL JSON request handling | There seems to be a bug in parsing of JSON GraphQL requests.

Test case (disregard the fact that it would also fail if parsed successfully):

```python

def test_graphql_schema_json_query(api, schema):
    api.add_route("/", schema)

    r = api.session().post("http://;/", headers={"Accept": "json", "Content-type": "json"}, data={'query': '{ hello }'})
    assert r.ok

```

Result on the latest `master`:

```

tests/test_responder.py:123:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

.env/lib/python3.7/site-packages/requests/sessions.py:559: in post

return self.request('POST', url, data=data, json=json, **kwargs)

.env/lib/python3.7/site-packages/starlette/testclient.py:312: in request

json=json,

.env/lib/python3.7/site-packages/requests/sessions.py:512: in request

resp = self.send(prep, **send_kwargs)

.env/lib/python3.7/site-packages/requests/sessions.py:622: in send

r = adapter.send(request, **kwargs)

.env/lib/python3.7/site-packages/starlette/testclient.py:159: in send

raise exc from None

.env/lib/python3.7/site-packages/starlette/testclient.py:156: in send

loop.run_until_complete(connection(receive, send))

/usr/local/Cellar/python/3.7.0/Frameworks/Python.framework/Versions/3.7/lib/python3.7/asyncio/base_events.py:568: in run_until_complete

return future.result()

responder/api.py:71: in asgi

resp = await self._dispatch_request(req)

responder/api.py:112: in _dispatch_request

self.graphql_response(req, resp, schema=view)

responder/api.py:200: in graphql_response

query = self._resolve_graphql_query(req)

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

req = <responder.models.Request object at 0x105f20c18>

@staticmethod

def _resolve_graphql_query(req):

if "json" in req.mimetype:

> return req.json()["query"]

E AttributeError: 'Request' object has no attribute 'json'

```

I tried fixing it myself, but got tangled in the web of `async/await`-s and ended up breaking other stuff 😅

I will try again later unless someone else wants to pick it up. | closed | 2018-10-14T19:14:04Z | 2018-10-15T07:32:21Z | https://github.com/kennethreitz/responder/issues/45 | [] | artemgordinskiy | 2 |
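
For reference, the intent of `_resolve_graphql_query` in the traceback above can be sketched with the standard library alone. This is not responder's implementation: `resolve_graphql_query` is a hypothetical, synchronous helper that takes the raw body bytes directly, which sidesteps the async body handling mentioned in the report.

```python
import json


def resolve_graphql_query(mimetype: str, body: bytes) -> str:
    """Extract the GraphQL query from a raw request body (hypothetical helper)."""
    text = body.decode("utf-8")
    if "json" in mimetype:
        # JSON requests carry the query under the "query" key.
        payload = json.loads(text)
        return payload["query"]
    # Otherwise treat the whole body as the query text.
    return text


json_body = json.dumps({"query": "{ hello }"}).encode("utf-8")
from_json = resolve_graphql_query("application/json", json_body)
from_text = resolve_graphql_query("text/plain", b"{ hello }")
```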

twopirllc/pandas-ta | pandas | 381 | VWAP that matched values of TradingView VWAP indicator (anchor) | Running

python: 3.8.5

pandas_ta version: 0.3.14b0

Description:

Trying to get a VWAP that matches the values of the TradingView indicator at this link.

https://www.tradingview.com/support/solutions/43000502018-volume-weighted-average-price-vwap/

I think the key to matching this TradingView indicator is its "Anchor Setting", default is "session".

I want a pandas_ta VWAP that matches the TradingView indicator on a 30-minute chart.

The "Timeseries Offset Aliases" documentation shows: T,min = minutely frequency

I am not sure what combination of the pandas_ta "anchor" and "offset" settings can be used to match the TradingView VWAP on a 30-minute chart.

Also, I am not sure whether pandas_ta can match this TradingView indicator at all.

Code I have tried:

```python

df.set_index(pd.DatetimeIndex(df["date"]), inplace=True)

vwap = df.ta.vwap(anchor="T", offset=30, append=True)

```

I can provide screenshots if necessary.

| closed | 2021-08-31T05:37:20Z | 2021-09-07T01:07:04Z | https://github.com/twopirllc/pandas-ta/issues/381 | [

"info"

] | slhawk98 | 12 |
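
As a rough illustration of what a "session" anchor means in the pandas-ta VWAP question above, here is a pure-Python sketch of a cumulative VWAP that resets at the start of each session. It rests on two assumptions that are not confirmed by the report: a session is taken to be one calendar date, and the price source is the typical price (high + low + close) / 3. `session_vwap` is a hypothetical helper, not a pandas-ta function.

```python
from datetime import datetime


def session_vwap(bars):
    """Cumulative VWAP that resets whenever the calendar date changes.

    bars: iterable of (timestamp, high, low, close, volume), sorted by time.
    """
    out = []
    current_day = None
    cum_pv = 0.0
    cum_vol = 0.0
    for ts, high, low, close, volume in bars:
        if ts.date() != current_day:
            # New session: restart both running sums.
            current_day = ts.date()
            cum_pv = 0.0
            cum_vol = 0.0
        typical = (high + low + close) / 3.0
        cum_pv += typical * volume
        cum_vol += volume
        out.append(cum_pv / cum_vol if cum_vol else float("nan"))
    return out


bars = [
    (datetime(2021, 8, 30, 9, 30), 11.0, 9.0, 10.0, 100),
    (datetime(2021, 8, 30, 10, 0), 13.0, 11.0, 12.0, 300),
    (datetime(2021, 8, 31, 9, 30), 21.0, 19.0, 20.0, 50),
]
vwap = session_vwap(bars)
```

Under this reading, the bar interval (30 minutes here) does not affect the anchor; an anchor of one minute would restart the sums on every minute, which is probably not what a session anchor is meant to do.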

dbfixtures/pytest-postgresql | pytest | 574 | Maintain v3.x line with psycopg2 support | ### What action do you want to perform

Since `psycopg` 3 isn't slated for GA with [SQLAlchemy until their 2.0 release](https://github.com/sqlalchemy/sqlalchemy/issues/6842), most users of SQLAlchemy are still using v1.4 with `psycopg2`. For those of us on SQLAlchemy v1.4, it doesn't really make sense to write tests with this fixture package that requires `psycopg` 3. Would you be open to maintaining the v3.x line so that we can continue to get feature upgrades and fixes without the `psycopg2` requirement? I'd be happy to help maintain that branch if so.

### What are the results

### What are the expected results | open | 2022-03-07T14:10:22Z | 2022-03-08T12:54:43Z | https://github.com/dbfixtures/pytest-postgresql/issues/574 | [

"question"

] | winglian | 3 |

anselal/antminer-monitor | dash | 31 | Reboot when detected chips (Os) =/= 180 | I have a couple of weird D3s that sometimes say 175-179 chips are Os and the rest are Xs and are fixed with a simple reboot on their static ip page, it'd be great to have a built in option to automatically reboot the miner if any Xs are detected. | closed | 2017-11-27T08:36:39Z | 2017-11-27T20:32:49Z | https://github.com/anselal/antminer-monitor/issues/31 | [

":dancing_men: duplicate"

] | ckl33 | 4 |

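Nemo2011/bilibili-api | api | 614 | [Question] Risk-control verification failure message | **Python version:** 3.12.1

**Module version:** 16.1.1

**Runtime environment:** Windows

<!-- Be sure to provide the module version and make sure it is the latest -->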

---

```

user_info = await bilibili_api.user.User(uid=uid, credential=credential).get_user_info()

bilibili_api.exceptions.ResponseCodeException.ResponseCodeException: 接口返回错误代码:-352,信息:风控校验失败。

{'code': -352, 'message': '风控校验失败', 'ttl': 1, 'data': {'v_voucher': 'voucher_121bba47-4c20-4250-998c-454ef9a0a8cc'}}

```

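A risk-control prompt appeared while fetching a Bilibili user's profile information, even though a credential was passed in the code.

After restarting the program it seems to work normally again; I'm not sure when this risk control will be triggered again. | closed | 2023-12-28T05:18:21Z | 2024-01-09T11:51:17Z | https://github.com/Nemo2011/bilibili-api/issues/614 | [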

"need debug info",

"anti-spider"

] | iconFehu | 0 |

facebookresearch/fairseq | pytorch | 5,090 | NLLB License | ## ❓ Questions and Help

Here is the NLLB model's license, https://github.com/facebookresearch/fairseq/blob/nllb/LICENSE.model.md

Can we use NLLB model output (translation from language X to language Y) to train a model and release that model under a Commercially permitted license (i.e., Apache 2.0)? I understand the model license is for `Attribution-NonCommercial 4.0 International` but to generate the model output, we are actually paying for the compute hours. I understand that we cannot use model weights for commercial purposes. But what about the output generated by the model?

| open | 2023-04-24T18:34:04Z | 2023-05-19T08:45:26Z | https://github.com/facebookresearch/fairseq/issues/5090 | [

"question",

"needs triage"

] | sbmaruf | 2 |

Farama-Foundation/Gymnasium | api | 742 | [Bug Report] gymnasium.error.NamespaceNotFound: Namespace gym_examples not found. | ### Describe the bug

I've followed https://gymnasium.farama.org/tutorials/gymnasium_basics/environment_creation/#creating-a-package.

I have successfully registered the environment, but when I try to use that environment, I receive the error mentioned in the title. I have seen a similar issue here (https://github.com/Farama-Foundation/Gymnasium/issues/400), but all of my code is using Gymnasium (you can see it on my GitHub). Did I do something wrong? If so, please help me.

My github code: https://github.com/NghiaPhamttk27/GridWorld/tree/main

### Code example

_No response_

### System info

_No response_

### Additional context

_No response_

### Checklist

- [X] I have checked that there is no similar [issue](https://github.com/Farama-Foundation/Gymnasium/issues) in the repo

| closed | 2023-10-16T15:47:38Z | 2024-04-06T13:15:36Z | https://github.com/Farama-Foundation/Gymnasium/issues/742 | [

"bug"

] | NghiaPhamttk27 | 2 |

ultralytics/yolov5 | deep-learning | 13,402 | Feature map channel not same as what I defined | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I have recently been working on head detection. To deploy the model on devices with limited compute, I took a yoloface-500k model and tried to train it in the YOLOv5 framework. The model YAML is defined as follows:

```yaml

nc: 1 # number of classes

depth_multiple: 1.0 # model depth multiple

width_multiple: 1.0 # layer channel multiple

anchors:

- [4, 6, 7, 10, 11, 15]

- [16, 24, 33, 25, 26, 41]

- [47, 60, 83, 97, 141, 149]

backbone:

# [from, number, module, args]

# args: out_channels, size, stride

[

[-1, 1, Conv, [8, 3, 2]], # 0 [batch, 8, size/2, size/2]

[-1, 1, DWConv, [8, 3, 1]], # 1 [320]

[-1, 1, Conv, [4, 1, 1 ]], # 2 [320]

[-1, 1, Conv, [24, 1, 1]], # 3 [-1, 1, DWConv, [24, 3, 2]] # 4

[-1, 1, Conv, [6, 1, 1]], # 4

[-1, 1, Bottleneck3, [6]], # 5

[-1, 1, Conv, [36, 1, 1]], # 6

[-1, 1, DWConv, [36, 3, 2]], # 7 [160]

[-1, 1, Conv, [8, 1, 1]], # 8

[-1, 2, Bottleneck3, [8]], # 9

[-1, 1, Conv, [48, 1, 1]], # 10

[-1, 1, DWConv, [48, 3, 2]], # 11 [80]

[-1, 1, Conv, [16, 1, 1]], # 12

[-1, 3, Bottleneck3, [16]], # 13

[-1, 1, Conv, [96, 1, 1]], # 14

[-1, 1, DWConv, [96, 3, 1]], # 15

[-1, 1, Conv, [24, 1, 1]], # 16

[-1, 2, Bottleneck3, [24]], # 17

[-1, 1, Conv, [144, 1, 1]], # 18 [80]

[-1, 1, DWConv, [144, 3, 2]], # 19 [80] -> [40]

[-1, 1, Conv, [40, 1, 1]], # 20

[-1, 2, Bottleneck3, [40]], # 21 [batch, 40, size/16, size/16]

]

head: [

[-1, 1, Conv, [80, 1, 1]], # 22 [40]

[[-1, -4], 1, Concat, [1]], # 23 [batch, 224, size/16, size/16] [40]

[-1, 1, Conv, [48, 1, 1]], # 24

[-1, 1, DWConv, [48, 3, 1]], # 25

[-1, 1, Conv, [36, 1, 1]], # 26

[-1, 1, Conv, [18, 1, 1]], # 27 [batch, 18, size/8, size/8] -> [40]

[-5, 1, nn.Upsample, [None, 2, "nearest"]], # 28 [80]

[[-1, 11], 1, Concat, [1]], # 29 [80] ch = 272

[-1, 1, Conv, [24, 1, 1]], # 30

[-1, 1, DWConv, [24, 3, 1]], # 31

[-1, 1, Conv, [24, 1, 1]], # 32

[-1, 1, Conv, [18, 1, 1]], # 33 [batch, 18, 160, 160] -> [80]

[-5, 1, nn.Upsample, [None, 2, "nearest"]], # 34 [1, 272, 320, 320] -> [160]

[[-1, 7], 1, Concat, [1]], # 35

[-1, 1, Conv, [18, 1, 1]], # 36

[-1, 1, DWConv, [18, 3, 1]], # 37

[-1, 1, Conv, [24, 1, 1]], # 38

[-1, 1, Conv, [18, 1, 1]], # 39 [batch, 18, 320, 320] -> [160]

[[39, 33, 27], 1, Detect, [nc, anchors]],

]

```

The arrows in the file only mark the size changes I made to each layer relative to a previous version; they are not important for this issue.

My problem is this: as defined in layers 27, 33 and 39, each of these three layers should output an 18-channel feature map. However, when I run `detect.py` with the .pt weight file I got from training, the outputs of these layers all have 24 channels:

```txt

Layer 0: torch.Size([1, 8, 320, 320])

Layer 1: torch.Size([1, 8, 320, 320])

Layer 2: torch.Size([1, 8, 320, 320])

Layer 3: torch.Size([1, 24, 320, 320])

Layer 4: torch.Size([1, 8, 320, 320])

Layer 5: torch.Size([1, 8, 320, 320])

Layer 6: torch.Size([1, 40, 320, 320])

Layer 7: torch.Size([1, 40, 160, 160])

Layer 8: torch.Size([1, 8, 160, 160])

Layer 9: torch.Size([1, 8, 160, 160])

Layer 10: torch.Size([1, 48, 160, 160])

Layer 11: torch.Size([1, 48, 80, 80])

Layer 12: torch.Size([1, 16, 80, 80])

Layer 13: torch.Size([1, 16, 80, 80])

Layer 14: torch.Size([1, 96, 80, 80])

Layer 15: torch.Size([1, 96, 80, 80])

Layer 16: torch.Size([1, 24, 80, 80])

Layer 17: torch.Size([1, 24, 80, 80])

Layer 18: torch.Size([1, 144, 80, 80])

Layer 19: torch.Size([1, 144, 40, 40])

Layer 20: torch.Size([1, 40, 40, 40])

Layer 21: torch.Size([1, 40, 40, 40])

Layer 22: torch.Size([1, 80, 40, 40])

Layer 23: torch.Size([1, 224, 40, 40])

Layer 24: torch.Size([1, 48, 40, 40])

Layer 25: torch.Size([1, 48, 40, 40])

Layer 26: torch.Size([1, 40, 40, 40])

Layer 27: torch.Size([1, 24, 40, 40])

Layer 28: torch.Size([1, 224, 80, 80])

Layer 29: torch.Size([1, 272, 80, 80])

Layer 30: torch.Size([1, 24, 80, 80])

Layer 31: torch.Size([1, 24, 80, 80])

Layer 32: torch.Size([1, 24, 80, 80])

Layer 33: torch.Size([1, 24, 80, 80])

Layer 34: torch.Size([1, 272, 160, 160])

Layer 35: torch.Size([1, 312, 160, 160])

Layer 36: torch.Size([1, 24, 160, 160])

Layer 37: torch.Size([1, 24, 160, 160])

Layer 38: torch.Size([1, 24, 160, 160])

Layer 39: torch.Size([1, 24, 160, 160])

```
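
The widths printed above differ from the YAML in a regular way: 4 becomes 8, 6 becomes 8, 36 becomes 40 and 18 becomes 24. A small sketch shows that rounding each requested width up to the next multiple of 8 reproduces exactly these numbers. That the framework applies such a rule when building the model is an assumption here, not something the output alone confirms, and `round_up_to_multiple` is a hypothetical helper.

```python
import math


def round_up_to_multiple(channels: int, divisor: int = 8) -> int:
    """Round a channel count up to the nearest multiple of divisor."""
    return math.ceil(channels / divisor) * divisor


# Widths requested in the YAML for layers 2, 4, 6 and 27.
requested = [4, 6, 36, 18]
rounded = [round_up_to_multiple(c) for c in requested]
```

Widths that are already multiples of 8, such as 24, 48 or 144, are left unchanged by this rule, which also agrees with the printed shapes.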

What makes it even stranger is the previous version I mentioned above:

```yaml

nc: 1 # number of classes

depth_multiple: 1.0 # model depth multiple

width_multiple: 1.0 # layer channel multiple

anchors:

- [4, 6, 7, 10, 11, 15]

- [16, 24, 33, 25, 26, 41]

- [47, 60, 83, 97, 141, 149]

backbone:

# [from, number, module, args]

# args: out_channels, size, stride

[

[-1, 1, Conv, [8, 3, 2]], # 0 [batch, 8, size/2, size/2]

[-1, 1, DWConv, [8, 3, 1]], # 1 [320]

[-1, 1, Conv, [4, 1, 1 ]], # 2 [320]

[-1, 1, Conv, [24, 1, 1]], # 3 [-1, 1, DWConv, [24, 3, 2]] # 4

[-1, 1, Conv, [6, 1, 1]], # 4

[-1, 1, Bottleneck3, [6]], # 5

[-1, 1, Conv, [36, 1, 1]], # 6

[-1, 1, DWConv, [36, 3, 2]], # 7 [160]

[-1, 1, Conv, [8, 1, 1]], # 8

[-1, 2, Bottleneck3, [8]], # 9

[-1, 1, Conv, [48, 1, 1]], # 10

[-1, 1, DWConv, [48, 3, 2]], # 11 [80]

[-1, 1, Conv, [16, 1, 1]], # 12

[-1, 3, Bottleneck3, [16]], # 13

[-1, 1, Conv, [96, 1, 1]], # 14

[-1, 1, DWConv, [96, 3, 1]], # 15

[-1, 1, Conv, [24, 1, 1]], # 16

[-1, 2, Bottleneck3, [24]], # 17

[-1, 1, Conv, [144, 1, 1]], # 18 [80]

[-1, 1, DWConv, [144, 3, 2]], # 19 [40]

[-1, 1, Conv, [40, 1, 1]], # 20

[-1, 2, Bottleneck3, [40]], # 21 [batch, 40, size/16, size/16]

]

head: [

[-1, 1, Conv, [80, 1, 1]], # 22

[-1, 1, nn.Upsample, [None, 2, "nearest"]], # 23 [1, 80, 80, 80]

[[-1, -6], 1, Concat, [1]], # 24 [batch, 224, size/8, size/8]

[-1, 1, Conv, [48, 1, 1]], # 25

[-1, 1, DWConv, [48, 3, 1]], # 26

[-1, 1, Conv, [36, 1, 1]], # 27

[-1, 1, Conv, [18, 1, 1]], # 28 [batch, 18, size/8, size/8]

[-5, 1, nn.Upsample, [None, 2, "nearest"]], # 29

[[-1, 10], 1, Concat, [1]], # 30

[-1, 1, Conv, [24, 1, 1]], # 31

[-1, 1, DWConv, [24, 3, 1]], # 32

[-1, 1, Conv, [24, 1, 1]], # 33

[-1, 1, Conv, [18, 1, 1]], # 34 [batch, 18, 160, 160]

[-5, 1, nn.Upsample, [None, 2, "nearest"]], # 35 [1, 272, 320, 320]

[[-1, 6], 1, Concat, [1]], # 36

[-1, 1, Conv, [18, 1, 1]], # 37

[-1, 1, DWConv, [18, 3, 1]], # 38

[-1, 1, Conv, [24, 1, 1]], # 39

[-1, 1, Conv, [18, 1, 1]], # 40 [batch, 18, 320, 320]

[[40, 34, 28], 1, Detect, [nc, anchors]],

]

```

This version is similar, except that the strides of some convolution layers differ from those in the later version. By the way, I changed the strides only to reduce the feature map sizes from 80, 160, 320 to 40, 80, 160, in order to get better performance on the edge device. This version, by contrast, outputs three feature maps with 18 channels each.

```txt

Layer 0: torch.Size([1, 8, 320, 320])

Layer 1: torch.Size([1, 8, 320, 320])

Layer 2: torch.Size([1, 8, 320, 320])

Layer 3: torch.Size([1, 24, 320, 320])

Layer 4: torch.Size([1, 8, 320, 320])

Layer 5: torch.Size([1, 8, 320, 320])

Layer 6: torch.Size([1, 40, 320, 320])

Layer 7: torch.Size([1, 40, 160, 160])

Layer 8: torch.Size([1, 8, 160, 160])

Layer 9: torch.Size([1, 8, 160, 160])

Layer 10: torch.Size([1, 48, 160, 160])

Layer 11: torch.Size([1, 48, 80, 80])

Layer 12: torch.Size([1, 16, 80, 80])

Layer 13: torch.Size([1, 16, 80, 80])

Layer 14: torch.Size([1, 96, 80, 80])

Layer 15: torch.Size([1, 96, 80, 80])

Layer 16: torch.Size([1, 24, 80, 80])

Layer 17: torch.Size([1, 24, 80, 80])

Layer 18: torch.Size([1, 144, 80, 80])

Layer 19: torch.Size([1, 144, 40, 40])

Layer 20: torch.Size([1, 40, 40, 40])

Layer 21: torch.Size([1, 40, 40, 40])

Layer 22: torch.Size([1, 80, 40, 40])

Layer 23: torch.Size([1, 80, 80, 80])

Layer 24: torch.Size([1, 224, 80, 80])

Layer 25: torch.Size([1, 48, 80, 80])

Layer 26: torch.Size([1, 48, 80, 80])

Layer 27: torch.Size([1, 40, 80, 80])

Layer 28: torch.Size([1, 18, 80, 80])

Layer 29: torch.Size([1, 224, 160, 160])

Layer 30: torch.Size([1, 272, 160, 160])

Layer 31: torch.Size([1, 24, 160, 160])

Layer 32: torch.Size([1, 24, 160, 160])

Layer 33: torch.Size([1, 24, 160, 160])

Layer 34: torch.Size([1, 18, 160, 160])

Layer 35: torch.Size([1, 272, 320, 320])

Layer 36: torch.Size([1, 312, 320, 320])

Layer 37: torch.Size([1, 18, 320, 320])

Layer 38: torch.Size([1, 18, 320, 320])

Layer 39: torch.Size([1, 24, 320, 320])

Layer 40: torch.Size([1, 18, 320, 320])

```

I wonder what makes the output channels different. It seems that the yolov3 framework has automatically modified the last several layers of the new model: in my design, the output channels of the last four layers are 18, 18, 24, 24, but in the first txt output I showed above they are 24, 24, 24, 24. Why does this change take place?
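
One possible explanation, sketched under assumptions rather than taken from the repository: the YOLOv5-style model parser is understood to scale each requested output width by `width_multiple` and round it up to a multiple of 8, except when the width equals the detection output size `no = na * (nc + 5)`. With `nc: 1` and three anchors per level, `no` is 18, so an 18-channel layer would be left alone while 4, 6 and 36 become 8, 8 and 40, which matches the log above. If `no` is no longer 18 in the newer config, 18 would round up to 24. The helper `resolved_channels` below is hypothetical and written only for illustration.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def resolved_channels(requested, width_multiple, no):
    """Hypothetical helper: channel count after parsing, under the assumed rule.

    Widths equal to the detection output size `no` are kept as-is;
    everything else is scaled and rounded up to a multiple of 8.
    """
    if requested == no:
        return requested
    return make_divisible(requested * width_multiple, 8)


na, nc = 3, 1          # anchors per level and number of classes from the yaml above
no = na * (nc + 5)     # detection outputs per cell

for c in (4, 6, 36, 18):
    print(c, "->", resolved_channels(c, 1.0, no))
```

Under this assumption, checking the value of `no` implied by `nc` and `anchors` in the newer yaml would be a reasonable first step.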

### Additional

_No response_ | closed | 2024-11-07T06:27:23Z | 2024-11-08T20:26:33Z | https://github.com/ultralytics/yolov5/issues/13402 | [

"question",

"detect"

] | tobymuller233 | 3 |

syrupy-project/syrupy | pytest | 116 | Syrupy assertion diff does not show missing carriage return | **Describe the bug**

Syrupy assertion diff does not show missing carriage return.

**To Reproduce**

Add this test.

```python
def test_example(snapshot):
    assert snapshot == "line 1\r\nline 2"
```

Run `pytest --snapshot-update`.

Remove the `\r` from the string in the test case so you get:

```python
def test_example(snapshot):
    assert snapshot == "line 1\nline 2"
```

Run `pytest`. The test will fail with a useless diff of "...".

**Expected behavior**

Syrupy should output each line with the missing carriage return, with some indication of what's missing.
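
As a rough illustration of the expected behavior (not Syrupy's actual reporter; `visible_diff` is a hypothetical name), a diff can make control characters visible by comparing the `repr()` of each line, so a missing `\r` shows up as a literal difference:

```python
import difflib


def visible_diff(expected, actual):
    """Diff two strings line by line, showing control characters via repr()."""
    exp_lines = [repr(line) for line in expected.splitlines(keepends=True)]
    act_lines = [repr(line) for line in actual.splitlines(keepends=True)]
    # Drop ndiff's "? " hint lines; keep removed, added and unchanged lines.
    return [d for d in difflib.ndiff(exp_lines, act_lines) if not d.startswith("? ")]


for line in visible_diff("line 1\r\nline 2", "line 1\nline 2"):
    print(line)
```

The removed line then carries `\r\n` and the added line only `\n`, which is the kind of indication the report asks for.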

**Additional context**

Syrupy v0.3.1

#113 fixed the bug where carriage returns were not being serialized. This issue addresses the missing functionality in the snapshot assertion diff reporter.

| closed | 2020-01-15T14:40:21Z | 2020-03-08T04:23:00Z | https://github.com/syrupy-project/syrupy/issues/116 | [

"bug",

"released"

] | noahnu | 3 |

apify/crawlee-python | automation | 311 | Document tiered proxies | closed | 2024-07-16T07:12:48Z | 2024-07-18T15:48:15Z | https://github.com/apify/crawlee-python/issues/311 | [

"documentation",

"t-tooling"

] | vdusek | 0 | |

fastapi/sqlmodel | fastapi | 208 | Pylance / VSCode cannot find sqlmodel typings correctly | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X] I already read and followed all the tutorial in the docs and didn't find an answer.

- [X] I already checked if it is not related to SQLModel but to [Pydantic](https://github.com/samuelcolvin/pydantic).

- [X] I already checked if it is not related to SQLModel but to [SQLAlchemy](https://github.com/sqlalchemy/sqlalchemy).

### Commit to Help

- [X] I commit to help with one of those options 👆

### Example Code

```python
from fastapi import FastAPI
from model import Hero, User
from sqlmodel import create_engine, SQLModel, Session, select

app = FastAPI()

engine = create_engine("sqlite:///database.db")
SQLModel.metadata.create_all(engine)


@app.get("/")
async def read_root():
    hero_1 = Hero(name="Deadpond", secret_name="Dive Wilson")
    with Session(engine) as session:
        session.add(hero_1)
        session.commit()
        session.refresh(hero_1)
        return hero_1
```

### Description

<img width="1155" alt="Screen Shot 2021-12-29 at 2 58 04 PM" src="https://user-images.githubusercontent.com/260667/147709474-1613074e-c983-4736-ab5c-9b0d3fd8fad9.png">

For some reason, none of the sqlmodel objects are analyzed correctly. I have tried the same file in PyCharm and it works.

This _might_ be an issue with Pylance and not a bug on sqlmodel. I am hoping others might know of a solution.

### Operating System

macOS

### Operating System Details

_No response_

### SQLModel Version

0.0.6

### Python Version

3.10.1

### Additional Context

❯ pdm list

Package Version Location

----------------- -------- --------

anyio 3.4.0

asgiref 3.4.1

asttokens 2.0.5

black 21.12b0

click 8.0.3

devtools 0.8.0

executing 0.8.2

fastapi 0.70.1

greenlet 1.1.2

h11 0.12.0

httptools 0.3.0

idna 3.3

mypy 0.930

mypy-extensions 0.4.3

pathspec 0.9.0

platformdirs 2.4.1

pydantic 1.9.0a2

python-dotenv 0.19.2

pyyaml 6.0

six 1.16.0

sniffio 1.2.0

sqlalchemy 1.4.29

sqlalchemy2-stubs 0.0.2a19

sqlmodel 0.0.6

starlette 0.16.0

tomli 1.2.3

typing-extensions 4.0.1

uvicorn 0.16.0

uvloop 0.16.0

watchgod 0.7

websockets 10.1 | open | 2021-12-29T23:05:18Z | 2021-12-30T01:04:05Z | https://github.com/fastapi/sqlmodel/issues/208 | [

"question"

] | amir20 | 2 |

chezou/tabula-py | pandas | 125 | read_pdf returns None on my Linux only | # Summary of your issue

I have moved from Mac to Linux Mint. Every attempt to run `read_pdf` returns `None` instead of a dataframe. I have not seen a similar issue online.

# Environment

- [x] Paste the output of `import tabula; tabula.environment_info()` on Python REPL: ?

```
Python version:
2.7.15 |Anaconda, Inc.| (default, May 1 2018, 23:32:55)
[GCC 7.2.0]
Java version:
openjdk version "1.8.0_03-Ubuntu"
OpenJDK Runtime Environment (build 1.8.0_03-Ubuntu-8u77-b03-3ubuntu3-b03)
OpenJDK 64-Bit Server VM (build 25.03-b03, mixed mode)
tabula-py version: 1.3.1
platform: Linux-4.4.0-21-generic-x86_64-with-debian-stretch-sid
uname:
('Linux', 'nawaf', '4.4.0-21-generic', '#37-Ubuntu SMP Mon Apr 18 18:33:37 UTC 2016', 'x86_64', 'x86_64')
linux_distribution: (u'Ubuntu', u'16.04', u'Xenial Xerus')
mac_ver: ('', ('', '', ''), '')
```

If not possible to execute `tabula.environment_info()`, please answer following questions manually.

- [x] Paste the output of `python --version` command on your terminal: ?

`Python 2.7.15 :: Anaconda, Inc.`

- [x] Paste the output of `java -version` command on your terminal: ?

```

openjdk version "1.8.0_03-Ubuntu"

OpenJDK Runtime Environment (build 1.8.0_03-Ubuntu-8u77-b03-3ubuntu3-b03)

OpenJDK 64-Bit Server VM (build 25.03-b03, mixed mode)

```

- [x] Does `java -h` command work well?; Ensure your java command is included in `PATH`

```

Usage: java [-options] class [args...]

(to execute a class)

or java [-options] -jar jarfile [args...]

(to execute a jar file)

where options include:

-d32 use a 32-bit data model if available

-d64 use a 64-bit data model if available

-server to select the "server" VM

-zero to select the "zero" VM

-jamvm to select the "jamvm" VM

-dcevm to select the "dcevm" VM

The default VM is server,

because you are running on a server-class machine.

$PATH

bash: /usr/lib/jvm/java-1.8.0-openjdk-amd64/bin:/usr/lib/jvm/java-1.8.0-openjdk-amd64/bin:/home/nalsabhan/anaconda2/bin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games: No such file or directory

```

- [x] Write your OS and its version: ?

`Linux version 4.4.0-21-generic (buildd@lgw01-21) (gcc version 5.3.1 20160413 (Ubuntu 5.3.1-14ubuntu2) ) #37-Ubuntu SMP Mon Apr 18 18:33:37 UTC 2016`

- [x] (Optional, but really helpful) Your PDF URL: ?

https://www.tsu.ge/data/file_db/faculty_social_political/B2-nimushi.pdf

# What did you do when you faced the problem?

Tested multiple pdf files with text in them. Tried to update JAVA and made sure it is in the path

## Example code:

```

import tabula as tb

df = tb.read_pdf("pdfs/test.pdf", pages = [3])

print df

```

## Output:

```

None

```

## What did you intend it to be?

I expected a table with text scattered in it.

| closed | 2018-12-24T22:10:18Z | 2018-12-30T11:00:57Z | https://github.com/chezou/tabula-py/issues/125 | [] | nalsabhan | 5 |

waditu/tushare | pandas | 792 | The stock_basic function returns incomplete results | ts_code

0 000001.SZ

1 000002.SZ

2 000004.SZ

3 000005.SZ

4 000006.SZ

5 000007.SZ

6 000008.SZ

7 000009.SZ

8 000010.SZ

9 000011.SZ

10 000012.SZ

11 000014.SZ

12 000016.SZ

13 000017.SZ

14 000018.SZ

15 000019.SZ

16 000020.SZ

17 000021.SZ

18 000022.SZ

19 000023.SZ

20 000025.SZ

21 000026.SZ

22 000027.SZ

23 000028.SZ

24 000029.SZ

25 000030.SZ

26 000031.SZ

27 000032.SZ

28 000034.SZ

29 000035.SZ

... ...

3526 603936.SH

3527 603937.SH

3528 603938.SH

3529 603939.SH

3530 603955.SH

3531 603958.SH

3532 603959.SH

3533 603960.SH

3534 603963.SH

3535 603966.SH

3536 603968.SH

3537 603969.SH

3538 603970.SH

3539 603976.SH

3540 603977.SH

3541 603978.SH

3542 603979.SH

3543 603980.SH

3544 603985.SH

3545 603986.SH

3546 603987.SH

3547 603988.SH

3548 603989.SH

3549 603990.SH

3550 603991.SH

3551 603993.SH

3552 603996.SH

3553 603997.SH

3554 603998.SH

3555 603999.SH

[3556 rows x 1 columns]

| closed | 2018-10-30T13:09:42Z | 2018-12-19T05:25:34Z | https://github.com/waditu/tushare/issues/792 | [] | zhanguoce | 5 |

saulpw/visidata | pandas | 2,379 | Can't disable mouse | **Small description**

Mouse-disable commands do nothing. In-session disable states invalid command. I'm running in a headless environment over SSH. Since I don't have access to a system clipboard, I need to be able to select values.

**Expected result**

I should be free to select terminal text with my mouse.

**Actual result with screenshot**

If you get an unexpected error, please include the full stack trace that you get with `Ctrl-E`.

Not disabled. Not including a screenshot in order to avoid confusing the issue.

**Steps to reproduce with sample data and a .vd**

First try reproducing without any user configuration by using the flag `-N`.

e.g. `echo "abc" | vd -f txt -N`

Tried adding to ~/.visidatarc:

```

options.mouse_interval = 0 # disables the mouse-click

options.scroll_incr = 0 # disables the scroll wheel

```

Tried disabling directly:

```

[SPACE] mouse-disable

```

Shows:

```

[...]| no binding for mouse-disable

```

Please attach the commandlog (saved with `Ctrl-D`) to show the steps that led to the issue.

See [here](http://visidata.org/docs/save-restore/) for more details.

Not necessary given that specific commands just aren't doing anything with no prior history.

**Additional context**

Please include the version of VisiData and Python.

VisiData: 1.5.2-1

Python: 3.8.10

VisiData was installed via Apt on Ubuntu 20.04.1 .

The bodge for my particular issue (needing to copy values out the remote terminal) is just to enter command mode, which makes everything in the terminal selectable.

| closed | 2024-04-12T05:17:25Z | 2024-04-12T19:52:02Z | https://github.com/saulpw/visidata/issues/2379 | [

"bug",

"fixed"

] | dsoprea | 2 |

ned2/slapdash | dash | 12 | Enable callback validation | Out of the box, a dynamic multi-page app like Slapdash requires callback validation to be turned off, as callbacks will need to be defined for components that don't yet exist in the layout. The [Dash docs](https://dash.plot.ly/urls) have an example in the section titled "Dynamically Create a Layout for Multi-Page App Validation" that shows how to avoid suppressing callback validation. | closed | 2018-12-29T03:39:06Z | 2022-10-19T12:35:35Z | https://github.com/ned2/slapdash/issues/12 | [] | ned2 | 2 |

InstaPy/InstaPy | automation | 6,450 | acc.txt | Yountrust | closed | 2022-01-03T00:44:32Z | 2022-01-08T19:28:15Z | https://github.com/InstaPy/InstaPy/issues/6450 | [] | liyahworks | 1 |

cvat-ai/cvat | computer-vision | 8,263 | I want to work as a data annotator | I want to work for cvat.ai as a data annotator can you help me how to start? | closed | 2024-08-06T13:47:09Z | 2024-08-06T16:19:27Z | https://github.com/cvat-ai/cvat/issues/8263 | [

"invalid"

] | fatmard947 | 0 |

dask/dask | scikit-learn | 10,881 | applying tuple with pyarrow | <!-- Please include a self-contained copy-pastable example that generates the issue if possible.

Please be concise with code posted. See guidelines below on how to provide a good bug report:

- Craft Minimal Bug Reports http://matthewrocklin.com/blog/work/2018/02/28/minimal-bug-reports

- Minimal Complete Verifiable Examples https://stackoverflow.com/help/mcve

Bug reports that follow these guidelines are easier to diagnose, and so are often handled much more quickly.

-->

When applying tuple to a dask dataframe without pyarrow installed, it gives a column with tuples as expected. If instead we apply it with pyarrow installed, we get string dtypes instead.

The problem can be reproduced by the following commands in the console:

```bash

$ pyenv deactivate

$ pyenv virtualenv --clear 3.10.12 tuple10 # create a clear environment

$ pyenv activate tuple10

$ pip install dask[dataframe]==2024.1.1

$ python tuple_test.py # we expect a tuple to be the result

d

0 <class 'tuple'>

$ pip install pyarrow

$ python tuple_test.py # but with pyarrow we get a string instead

d

0 <class 'str'>

```

with tuple_test.py

```python
import dask.dataframe as dd
import pandas as pd


def apply_tuple_on_two_cols(
    counts_df: dd.DataFrame,
):
    counts_df["d"] = counts_df[["b", "c"]].apply(
        tuple, axis=1, meta=pd.Series(dtype=object)
    )
    counts_df["d"] = counts_df["d"].apply(
        type,
        meta=pd.Series(dtype=object),
    )
    return counts_df[["d"]]


def test_tuple_application():
    counts = dd.from_pandas(
        pd.DataFrame({"a": ["1"], "b": ["2"], "c": [3]}), npartitions=1
    )
    result = apply_tuple_on_two_cols(counts)
    print(result.compute())


if __name__ == "__main__":
    test_tuple_application()
```

**Environment**:

- Dask version:2024.1.1

- Pyarrow version: 15.0.0

- Python version:3.10.12

- Operating System:Ubuntu 22.04

- Install method (conda, pip, source):pip

| open | 2024-02-01T15:20:45Z | 2024-02-02T08:38:50Z | https://github.com/dask/dask/issues/10881 | [

"convert-string"

] | SurkynRik | 2 |

gunthercox/ChatterBot | machine-learning | 1,555 | AttributeError: 'ChatBot' object has no attribute 'set_trainer' | Hi,

Just after installing ChatterBot ( version is 1.0.0a3.) , I tried to execute the following code snippet from quick start guide:

```

from chatterbot import ChatBot

chatbot = ChatBot("Ron Obvious")

from chatterbot.trainers import ListTrainer

conversation = [

"Hello",

"Hi there!",

"How are you doing?",

"I'm doing great.",

"That is good to hear",

"Thank you.",

"You're welcome."

]

chatbot.set_trainer(ListTrainer)

chatbot.train(conversation)

```

It failed to execute with the error, " AttributeError: 'ChatBot' object has no attribute 'set_trainer' ". I couldn't find any other post related to this attribute either.

I skimmed through the code of chatterbot.py and found ChatBot indeed has neither 'set_trainer' nor 'train' function.

Am I missing something here? I would really appreciate if anybody could help me here.

Thanks, | closed | 2019-01-09T09:55:18Z | 2020-12-27T06:32:24Z | https://github.com/gunthercox/ChatterBot/issues/1555 | [

"answered"

] | achingacham | 18 |

ultralytics/ultralytics | pytorch | 19,566 | Cleanest way to customize the model.val() method for custom validation. | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

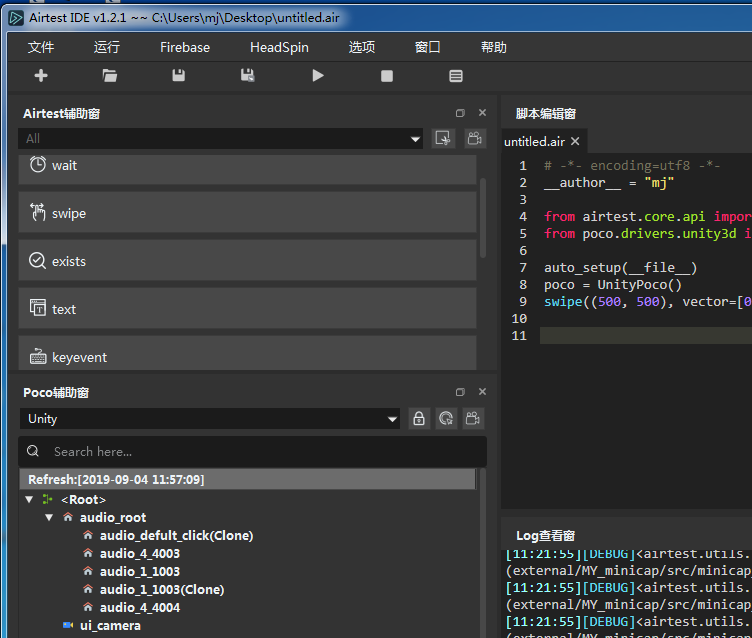

Hello, I plan to customise the standard validation (see photo) to my needs (use of def ap50, def ap70 with difficulty levels). How do I have to do this to extend the validation cleanly? By default, the OBBValidator (based on DetectionValidator) is used for this.

**Current validation code:**

```python

from ultralytics import YOLO

model = YOLO('/home/heizung1/ultralytics_yolov8-obb_ob_kitti/ultralytics/kitti_bev_yolo/run10_Adam_89.2_87.9/weights/best.pt', task='obb', verbose=False)

metrics = model.val_kitti(data='/home/heizung1/ultralytics_yolov8-obb_ob_kitti/ultralytics/cfg/datasets/kitti_bev.yaml', imgsz=640,

batch=16, save_json=False, conf=0.001, iou=0.5, max_det=300, half=False,

device='0', dnn=False, plots=False, rect=False, split='val', project=None, name=None)

```

**Current validation output:**

**Desired validation output:**

`Class` , `Images`, `Instances`, `Box(P R AP50 AP70): 100% etc.`

`AP50` and `AP70` categorized in difficulties columns: Easy, Moderate, Hard

The information for the difficulties are in my validation labels: `standard OBB format (1 0.223547 0.113517 0.223496 0.049611 0.258965 0.049583 0.259016 0.113489) difficulty infos (22.58 0.00 0)`.

For the training I modified: https://github.com/ultralytics/ultralytics/blob/23a90142dc66fbb180fe1bb513a2adc44322c978/ultralytics/data/utils.py#L97 to handle only the required label information.

I think it would be good to start in small steps, so my first consideration would be how to load the labels from a newly created .cache file that contains all the label information and then split it into two variables. One will be used to execute the standard processes (prediction, calculation IoU, metrics) and the other will be processed to develop 1 difficulty level from the 3 values.
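
A minimal sketch of the last step, reducing the three extra label values to one difficulty level. It assumes the values are ordered as (bounding box height in pixels, truncation, occlusion), which is not confirmed by the label description above, and it uses the thresholds published for the KITTI object benchmark. The function name `kitti_difficulty` is hypothetical.

```python
def kitti_difficulty(height_px, truncation, occlusion):
    """Return 'easy', 'moderate', 'hard', or None if the object fits no level.

    Assumed argument order: box height in pixels, truncation in [0, 1],
    occlusion level as an integer (0 = fully visible).
    """
    # (name, minimum box height, maximum truncation, maximum occlusion level)
    levels = (
        ("easy", 40.0, 0.15, 0),
        ("moderate", 25.0, 0.30, 1),
        ("hard", 25.0, 0.50, 2),
    )
    for name, min_height, max_trunc, max_occ in levels:
        if height_px >= min_height and truncation <= max_trunc and occlusion <= max_occ:
            return name
    return None


# The example label values from above, under the assumed ordering.
print(kitti_difficulty(22.58, 0.00, 0))
```

Objects that meet no level return `None`, so a custom validator could skip them when accumulating the per-difficulty AP50 and AP70 statistics.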

Thank you for any useful input and guidance during this customization!

### Additional

_No response_ | open | 2025-03-07T09:44:22Z | 2025-03-15T03:28:25Z | https://github.com/ultralytics/ultralytics/issues/19566 | [

"question",

"OBB"

] | Petros626 | 13 |

idealo/image-super-resolution | computer-vision | 17 | Weights | Really simple issue, but the weights for Large RDN model were updated in the wget command, but not in the execution of ISR_Prediction_Tutorial.ipynb (it's downloading PSNR-driven/rdn-C6-D20-G64-G064-x2_PSNR_epoch086.hdf5, but calling weights/rdn-C6-D20-G64-G064-x2_div2k-e086.hdf5) | closed | 2019-04-09T11:28:39Z | 2019-04-11T16:29:05Z | https://github.com/idealo/image-super-resolution/issues/17 | [] | victorca25 | 1 |

plotly/dash-table | plotly | 742 | Feature request: Clear cell selection | Hi!

It's a common question at the [community](https://community.plotly.com/t/deselect-cell-in-data-table/25447).

I know we can use `Output("table", "selected_cells")` to set an empty cell selection, but it still leaves some selection box like this:

Is there a way to remove this as well?

It'd be nice to use `esc` as a hotkey to clear the cells selections. Or maybe click twice at a single selected cell to clear the selection. | open | 2020-04-13T23:50:30Z | 2020-04-13T23:50:30Z | https://github.com/plotly/dash-table/issues/742 | [] | victor-ab | 0 |

apache/airflow | automation | 48,009 | Restore support of starting mapped tasks from triggerer | ### Body

#48006 disabled starting mapped tasks from triggerer because it was crashing the scheduler (https://github.com/apache/airflow/issues/47735). It was discovered late in the Airflow 3 beta process, so disabling it was a reasonable choice.

When time permits, one could look into restoring this capability, perhaps with limitations.

### Committer

- [x] I acknowledge that I am a maintainer/committer of the Apache Airflow project. | open | 2025-03-20T13:39:31Z | 2025-03-24T16:54:20Z | https://github.com/apache/airflow/issues/48009 | [

"kind:feature",

"kind:meta",

"area:dynamic-task-mapping",

"area:Triggerer"

] | dstandish | 0 |

tensorpack/tensorpack | tensorflow | 1,276 | Error when try to register my own dataset | Hi

I think I got the same error as in #1215 https://github.com/tensorpack/tensorpack/issues/1215.

This is the structure of my own dataset

COCO/DIR/

_______|__annotations/

_________________|__instances_train2017.json

_________________|__instances_val2017.json

_______|__train2017

_______|__val2017

As it was proposed on #1215 I used this code in coco.py:

```
def register_coco(basedir):
    DatasetRegistry.register("train2017", lambda: COCODetection(basedir, "train2017"))
    DatasetRegistry.register("val2017", lambda: COCODetection(basedir, "val2017"))
```

but I get this error:

```

Traceback (most recent call last):

File "train.py", line 74, in <module>

train_dataflow = get_train_dataflow()

File "/home/federicolondon2019/tensorpack/examples/FasterRCNN/data.py", line 391, in get_train_dataflow

roidbs = list(itertools.chain.from_iterable(DatasetRegistry.get(x).training_roidbs() for x in cfg.DATA.TRAIN))

File "/home/federicolondon2019/tensorpack/examples/FasterRCNN/data.py", line 391, in <genexpr>

roidbs = list(itertools.chain.from_iterable(DatasetRegistry.get(x).training_roidbs() for x in cfg.DATA.TRAIN))

File "/home/federicolondon2019/tensorpack/examples/FasterRCNN/dataset/dataset.py", line 90, in get

assert name in DatasetRegistry._registry, "Dataset {} was not registered!".format(name)

AssertionError: Dataset t was not registered!

```
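
The name in the assertion, `t`, is the first character of `train2017`. That suggests (an assumption, since the relevant config value is not visible here) that `cfg.DATA.TRAIN` holds a plain string rather than a tuple of names, so the loop in `get_train_dataflow` iterates over characters. The stand-alone sketch below reproduces the message with a hypothetical dictionary registry in place of `DatasetRegistry`:

```python
# Hypothetical stand-in for the dataset registry.
registry = {"train2017": "train split", "val2017": "val split"}


def lookup_all(names):
    """Look up every dataset name, mimicking the loop over cfg.DATA.TRAIN."""
    found = []
    for name in names:
        assert name in registry, "Dataset {} was not registered!".format(name)
        found.append(registry[name])
    return found


print(lookup_all(("train2017",)))   # a tuple of names works

try:
    lookup_all("train2017")         # a bare string is iterated character by character
except AssertionError as err:
    print(err)
```

If this is the cause, writing the value as a one-element tuple, `('train2017',)` with the trailing comma, would be the fix to try.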

I changed coco.py and config.py to adapt my own dataset to the code.

Below coco.py, config.py and the log.

Hopefully you can help me.

Thanks!

If you're asking about an unexpected problem which you do not know the root cause,

use this template. __PLEASE DO NOT DELETE THIS TEMPLATE, FILL IT__:

If you already know the root cause to your problem,

feel free to delete everything in this template.

### 1. What you did:

(1) **If you're using examples, what's the command you run:**

train.py --config MODE_MASK=True MODE_FPN=True DATA.BASEDIR=/home/federicolondon2019/tensorpack/COCO/DIR BACKBONE.WEIGHTS=/home/federicolondon2019/tensorpack/models/ImageNet-R50-AlignPadding.npz

(2) **If you're using examples, have you made any changes to the examples? Paste `git status; git diff` here:**

COCO.PY (changed version)

```

import json

import numpy as np

import os

import tqdm

from tensorpack.utils import logger

from tensorpack.utils.timer import timed_operation

from config import config as cfg

from dataset import DatasetRegistry, DatasetSplit

__all__ = ['register_coco']

class COCODetection(DatasetSplit):

# handle the weird (but standard) split of train and val

_INSTANCE_TO_BASEDIR = {

'valminusminival2014': 'val2017',

'minival2014': 'val2017',

}

"""

Mapping from the incontinuous COCO category id to an id in [1, #category]

For your own coco-format, dataset, change this to an **empty dict**.

"""

COCO_id_to_category_id = {1: 1, 2: 2, 3: 3, 4: 4, 5: 5, 6: 6, 7: 7, 8: 8, 9: 9, 10: 10, 11: 11, 12: 12, 13: 13, 14: 14, 15: 15, 16: 16, 17: 17, 18: 18, 19: 19, 20: 20, 21: 21, 22: 22, 23: 23, 24: 24, 25: 25, 26: 26, 27: 27, 28: 28, 29: 29, 30: 30, 31: 31, 32: 32, 33: 33, 34: 34, 35: 35, 36: 36, 37: 37} # noqa

"""

80 names for COCO

For your own coco-format dataset, change this.

"""

class_names = [

'Bird', 'Ground_Animal', 'Crosswalk_Plain', 'Person', 'Bicyclist', 'Motorcyclist', 'Other_Rider', 'Lane_Marking_-_Crosswalk', 'Banner', 'Bench', 'Bike_Rack', 'Billboard', 'Catch_Basin', 'CCTV_Camera', 'Fire_Hydrant', 'Junction_Box', 'Mailbox', 'Manhole', 'Phone_Booth', 'Street_Light', 'Pole', 'Traffic_Sign_Frame', 'Utility_Pole', 'Traffic_Light', 'Traffic_Sign_(Back)', 'Traffic_Sign_(Front)', 'Trash_Can', 'Bicycle', 'Boat', 'Bus', 'Car', 'Caravan', 'Motorcycle', 'Other_Vehicle', 'Trailer', 'Truck', 'Wheeled_Slow'] # noqa

cfg.DATA.CLASS_NAMES = class_names

def __init__(self, basedir, split):

"""

Args:

basedir (str): root of the dataset which contains the subdirectories for each split and annotations

split (str): the name of the split, e.g. "train2017".

The split has to match an annotation file in "annotations/" and a directory of images.

Examples:

For a directory of this structure:

DIR/

annotations/

instances_XX.json

instances_YY.json

XX/

YY/

use `COCODetection(DIR, 'XX')` and `COCODetection(DIR, 'YY')`

"""

basedir = os.path.expanduser(basedir)

self._imgdir = os.path.realpath(os.path.join(

basedir, self._INSTANCE_TO_BASEDIR.get(split, split)))

assert os.path.isdir(self._imgdir), "{} is not a directory!".format(self._imgdir)

annotation_file = os.path.join(

basedir, 'annotations/instances_{}.json'.format(split))

assert os.path.isfile(annotation_file), annotation_file

from pycocotools.coco import COCO

self.coco = COCO(annotation_file)

self.annotation_file = annotation_file

logger.info("Instances loaded from {}.".format(annotation_file))

# https://github.com/cocodataset/cocoapi/blob/master/PythonAPI/pycocoEvalDemo.ipynb

def print_coco_metrics(self, json_file):

"""

Args:

json_file (str): path to the results json file in coco format

Returns:

dict: the evaluation metrics

"""

from pycocotools.cocoeval import COCOeval

ret = {}

cocoDt = self.coco.loadRes(json_file)

cocoEval = COCOeval(self.coco, cocoDt, 'bbox')

cocoEval.evaluate()

cocoEval.accumulate()

cocoEval.summarize()

fields = ['IoU=0.5:0.95', 'IoU=0.5', 'IoU=0.75', 'small', 'medium', 'large']

for k in range(6):

ret['mAP(bbox)/' + fields[k]] = cocoEval.stats[k]

json_obj = json.load(open(json_file))

if len(json_obj) > 0 and 'segmentation' in json_obj[0]:

cocoEval = COCOeval(self.coco, cocoDt, 'segm')

cocoEval.evaluate()

cocoEval.accumulate()

cocoEval.summarize()

for k in range(6):

ret['mAP(segm)/' + fields[k]] = cocoEval.stats[k]

return ret

def load(self, add_gt=True, add_mask=False):

"""

Args:

add_gt: whether to add ground truth bounding box annotations to the dicts

add_mask: whether to also add ground truth mask

Returns:

a list of dict, each has keys including:

'image_id', 'file_name',

and (if add_gt is True) 'boxes', 'class', 'is_crowd', and optionally

'segmentation'.

"""

with timed_operation('Load annotations for {}'.format(

os.path.basename(self.annotation_file))):

img_ids = self.coco.getImgIds()

img_ids.sort()

# list of dict, each has keys: height,width,id,file_name

imgs = self.coco.loadImgs(img_ids)

for idx, img in enumerate(tqdm.tqdm(imgs)):

img['image_id'] = img.pop('id')

img['file_name'] = os.path.join(self._imgdir, img['file_name'])

if idx == 0:

# make sure the directories are correctly set

assert os.path.isfile(img["file_name"]), img["file_name"]

if add_gt:

self._add_detection_gt(img, add_mask)

return imgs

def _add_detection_gt(self, img, add_mask):

"""

Add 'boxes', 'class', 'is_crowd' of this image to the dict, used by detection.

If add_mask is True, also add 'segmentation' in coco poly format.

"""

# ann_ids = self.coco.getAnnIds(imgIds=img['image_id'])

# objs = self.coco.loadAnns(ann_ids)

objs = self.coco.imgToAnns[img['image_id']] # equivalent but faster than the above two lines

if 'minival' not in self.annotation_file:

# TODO better to check across the entire json, rather than per-image

ann_ids = [ann["id"] for ann in objs]

assert len(set(ann_ids)) == len(ann_ids), \

"Annotation ids in '{}' are not unique!".format(self.annotation_file)

# clean-up boxes

width = img.pop('width')

height = img.pop('height')

all_boxes = []

all_segm = []

all_cls = []

all_iscrowd = []

for objid, obj in enumerate(objs):

if obj.get('ignore', 0) == 1:

continue

x1, y1, w, h = list(map(float, obj['bbox']))

# bbox is originally in float

# x1/y1 means upper-left corner and w/h means true w/h. This can be verified by segmentation pixels.

# But we do make an assumption here that (0.0, 0.0) is upper-left corner of the first pixel

x2, y2 = x1 + w, y1 + h

# np.clip would be quite slow here

x1 = min(max(x1, 0), width)

x2 = min(max(x2, 0), width)

y1 = min(max(y1, 0), height)

y2 = min(max(y2, 0), height)

w, h = x2 - x1, y2 - y1

# Require non-zero seg area and more than 1x1 box size

if obj['area'] > 1 and w > 0 and h > 0 and w * h >= 4:

all_boxes.append([x1, y1, x2, y2])

all_cls.append(self.COCO_id_to_category_id.get(obj['category_id'], obj['category_id']))

iscrowd = obj.get("iscrowd", 0)

all_iscrowd.append(iscrowd)

if add_mask:

segs = obj['segmentation']

if not isinstance(segs, list):

assert iscrowd == 1

all_segm.append(None)

else:

valid_segs = [np.asarray(p).reshape(-1, 2).astype('float32') for p in segs if len(p) >= 6]

if len(valid_segs) == 0:

logger.error("Object {} in image {} has no valid polygons!".format(objid, img['file_name']))

elif len(valid_segs) < len(segs):

logger.warn("Object {} in image {} has invalid polygons!".format(objid, img['file_name']))

all_segm.append(valid_segs)

# all geometrically-valid boxes are returned

if len(all_boxes):

img['boxes'] = np.asarray(all_boxes, dtype='float32') # (n, 4)

else:

img['boxes'] = np.zeros((0, 4), dtype='float32')

cls = np.asarray(all_cls, dtype='int32') # (n,)

if len(cls):

assert cls.min() > 0, "Category id in COCO format must > 0!"

img['class'] = cls # n, always >0

img['is_crowd'] = np.asarray(all_iscrowd, dtype='int8') # n,

if add_mask:

# also required to be float32

img['segmentation'] = all_segm

    def training_roidbs(self):
        return self.load(add_gt=True, add_mask=cfg.MODE_MASK)

    def inference_roidbs(self):
        return self.load(add_gt=False)

    def eval_inference_results(self, results, output):
        continuous_id_to_COCO_id = {v: k for k, v in self.COCO_id_to_category_id.items()}
        for res in results:
            # convert to COCO's incontinuous category id
            if res['category_id'] in continuous_id_to_COCO_id:
                res['category_id'] = continuous_id_to_COCO_id[res['category_id']]
            # COCO expects results in xywh format
            box = res['bbox']
            box[2] -= box[0]
            box[3] -= box[1]
            res['bbox'] = [round(float(x), 3) for x in box]

        assert output is not None, "COCO evaluation requires an output file!"
        with open(output, 'w') as f:
            json.dump(results, f)
        if len(results):
            # sometimes may crash if the results are empty?
            return self.print_coco_metrics(output)
        else:
            return {}

def register_coco(basedir):
    DatasetRegistry.register("train2017", lambda: COCODetection(basedir, "train2017"))
    DatasetRegistry.register("val2017", lambda: COCODetection(basedir, "val2017"))


if __name__ == '__main__':
    basedir = '~/data/coco'
    c = COCODetection(basedir, 'train2014')
    roidb = c.load(add_gt=True, add_mask=True)
    print("#Images:", len(roidb))

```
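
For anyone following the box handling in the listing above, here is a minimal standalone sketch of the two conversions it performs: clipping a COCO-style `[x, y, w, h]` annotation to the image and storing it as `[x1, y1, x2, y2]`, then converting a predicted box back to `xywh` for the results file. The helper names `clip_xywh_to_xyxy`, `is_valid_box` and `xyxy_to_xywh` are made up for illustration and are not part of tensorpack; the `w * h >= 4` check copies the filter used in the listing.

```python
def clip_xywh_to_xyxy(bbox, width, height):
    """Clip a COCO-style [x, y, w, h] box to the image and return [x1, y1, x2, y2]."""
    x1, y1, w, h = map(float, bbox)
    x2, y2 = x1 + w, y1 + h
    # Same min/max clamping as in the listing.
    x1 = min(max(x1, 0), width)
    x2 = min(max(x2, 0), width)
    y1 = min(max(y1, 0), height)
    y2 = min(max(y2, 0), height)
    return [x1, y1, x2, y2]


def is_valid_box(box, area, min_box_area=4):
    """Apply the listing's filter: mask area > 1 and a clipped box of at least min_box_area pixels."""
    x1, y1, x2, y2 = box
    w, h = x2 - x1, y2 - y1
    return area > 1 and w > 0 and h > 0 and w * h >= min_box_area


def xyxy_to_xywh(box):
    """Convert [x1, y1, x2, y2] to [x, y, w, h], rounded to 3 decimals, without mutating the input."""
    x1, y1, x2, y2 = box
    return [round(float(v), 3) for v in (x1, y1, x2 - x1, y2 - y1)]


# A box that sticks out of a 100x25 image on the left and at the bottom.
box = clip_xywh_to_xyxy([-5, 10, 30, 20], width=100, height=25)
print(box)
print(is_valid_box(box, area=200))
print(xyxy_to_xywh(box))
```

One difference from the listing: `eval_inference_results` subtracts in place on `res['bbox']`, while `xyxy_to_xywh` here returns a new list, so converting the same result twice does not shrink the box a second time.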

CONFIG.PY (changed version)

```

import numpy as np

import os

import pprint

import six

from tensorpack.utils import logger

from tensorpack.utils.gpu import get_num_gpu

__all__ = ['config', 'finalize_configs']

class AttrDict():

    _freezed = False
    """ Avoid accidental creation of new hierarchies. """

    def __getattr__(self, name):
        if self._freezed:
            raise AttributeError(name)
        if name.startswith('_'):
            # Do not mess with internals. Otherwise copy/pickle will fail
            raise AttributeError(name)
        ret = AttrDict()
        setattr(self, name, ret)
        return ret

    def __setattr__(self, name, value):
        if self._freezed and name not in self.__dict__:
            raise AttributeError(
                "Config was freezed! Unknown config: {}".format(name))
        super().__setattr__(name, value)

    def __str__(self):
        return pprint.pformat(self.to_dict(), indent=1, width=100, compact=True)

    __repr__ = __str__

    def to_dict(self):
        """Convert to a nested dict. """
        return {k: v.to_dict() if isinstance(v, AttrDict) else v
                for k, v in self.__dict__.items() if not k.startswith('_')}

    def update_args(self, args):
        """Update from command line args. """
        for cfg in args:
            keys, v = cfg.split('=', maxsplit=1)
            keylist = keys.split('.')

            dic = self
            for i, k in enumerate(keylist[:-1]):
                assert k in dir(dic), "Unknown config key: {}".format(keys)
                dic = getattr(dic, k)
            key = keylist[-1]

            oldv = getattr(dic, key)
            if not isinstance(oldv, str):
                v = eval(v)
            setattr(dic, key, v)

    def freeze(self, freezed=True):
        self._freezed = freezed
        for v in self.__dict__.values():
            if isinstance(v, AttrDict):
                v.freeze(freezed)

    # avoid silent bugs
    def __eq__(self, _):
        raise NotImplementedError()

    def __ne__(self, _):
        raise NotImplementedError()

config = AttrDict()

_C = config # short alias to avoid coding

# mode flags ---------------------

_C.TRAINER = 'horovod' # options: 'horovod', 'replicated'

_C.MODE_MASK = True # FasterRCNN or MaskRCNN

_C.MODE_FPN = False

# dataset -----------------------

_C.DATA.BASEDIR = '/home/federicolondon2019/tensorpack/COCO/DIR'

# All TRAIN dataset will be concatenated for training.

_C.DATA.TRAIN = ('train2017', ) # i.e. trainval35k, AKA train2017

# Each VAL dataset will be evaluated separately (instead of concatenated)

_C.DATA.VAL = ('val2017', ) # AKA val2017

# These two configs will be populated later by the dataset loader:

_C.DATA.NUM_CATEGORY = 37 # without the background class (e.g., 80 for COCO)

_C.DATA.CLASS_NAMES = [] # NUM_CLASS (NUM_CATEGORY+1) strings, the first is "BG".

# whether the coordinates in the annotations are absolute pixel values, or a relative value in [0, 1]

_C.DATA.ABSOLUTE_COORD = True

# Number of data loading workers.

# In case of horovod training, this is the number of workers per-GPU (so you may want to use a smaller number).

# Set to 0 to disable parallel data loading

_C.DATA.NUM_WORKERS = 10

# backbone ----------------------

_C.BACKBONE.WEIGHTS = '' # /path/to/weights.npz

_C.BACKBONE.RESNET_NUM_BLOCKS = [3, 4, 6, 3] # for resnet50

# RESNET_NUM_BLOCKS = [3, 4, 23, 3] # for resnet101

_C.BACKBONE.FREEZE_AFFINE = False # do not train affine parameters inside norm layers

_C.BACKBONE.NORM = 'FreezeBN' # options: FreezeBN, SyncBN, GN, None

_C.BACKBONE.FREEZE_AT = 2 # options: 0, 1, 2

# Use a base model with TF-preferred padding mode,

# which may pad more pixels on right/bottom than top/left.

# See https://github.com/tensorflow/tensorflow/issues/18213

# In tensorpack model zoo, ResNet models with TF_PAD_MODE=False are marked with "-AlignPadding".

# All other models under `ResNet/` in the model zoo are using TF_PAD_MODE=True.

# Using either one should probably give the same performance.

# We use the "AlignPadding" one just to be consistent with caffe2.

_C.BACKBONE.TF_PAD_MODE = False

_C.BACKBONE.STRIDE_1X1 = False # True for MSRA models

# schedule -----------------------

_C.TRAIN.NUM_GPUS = None # by default, will be set from code

_C.TRAIN.WEIGHT_DECAY = 1e-4

_C.TRAIN.BASE_LR = 1e-2 # defined for total batch size=8. Otherwise it will be adjusted automatically

_C.TRAIN.WARMUP = 1000 # in terms of iterations. This is not affected by #GPUs

_C.TRAIN.WARMUP_INIT_LR = 1e-2 * 0.33 # defined for total batch size=8. Otherwise it will be adjusted automatically

_C.TRAIN.STEPS_PER_EPOCH = 500

_C.TRAIN.STARTING_EPOCH = 1 # the first epoch to start with, useful to continue a training

# LR_SCHEDULE means equivalent steps when the total batch size is 8.

# When the total bs!=8, the actual iterations to decrease learning rate, and

# the base learning rate are computed from BASE_LR and LR_SCHEDULE.

# Therefore, there is *no need* to modify the config if you only change the number of GPUs.

_C.TRAIN.LR_SCHEDULE = [120000, 160000, 180000] # "1x" schedule in detectron

# _C.TRAIN.LR_SCHEDULE = [240000, 320000, 360000] # "2x" schedule in detectron

# Longer schedules for from-scratch training (https://arxiv.org/abs/1811.08883):

# _C.TRAIN.LR_SCHEDULE = [960000, 1040000, 1080000] # "6x" schedule in detectron

# _C.TRAIN.LR_SCHEDULE = [1500000, 1580000, 1620000] # "9x" schedule in detectron

_C.TRAIN.EVAL_PERIOD = 25 # period (epochs) to run evaluation

# preprocessing --------------------

# Alternative old (worse & faster) setting: 600

_C.PREPROC.TRAIN_SHORT_EDGE_SIZE = [800, 800] # [min, max] to sample from

_C.PREPROC.TEST_SHORT_EDGE_SIZE = 800

_C.PREPROC.MAX_SIZE = 1333

# mean and std in RGB order.

# Un-scaled version: [0.485, 0.456, 0.406], [0.229, 0.224, 0.225]

_C.PREPROC.PIXEL_MEAN = [123.675, 116.28, 103.53]

_C.PREPROC.PIXEL_STD = [58.395, 57.12, 57.375]

# anchors -------------------------

_C.RPN.ANCHOR_STRIDE = 16

_C.RPN.ANCHOR_SIZES = (32, 64, 128, 256, 512) # sqrtarea of the anchor box

_C.RPN.ANCHOR_RATIOS = (0.5, 1., 2.)

_C.RPN.POSITIVE_ANCHOR_THRESH = 0.7

_C.RPN.NEGATIVE_ANCHOR_THRESH = 0.3

# rpn training -------------------------

_C.RPN.FG_RATIO = 0.5 # fg ratio among selected RPN anchors

_C.RPN.BATCH_PER_IM = 256 # total (across FPN levels) number of anchors that are marked valid

_C.RPN.MIN_SIZE = 0

_C.RPN.PROPOSAL_NMS_THRESH = 0.7

# Anchors which overlap with a crowd box (IOA larger than threshold) will be ignored.

# Setting this to a value larger than 1.0 will disable the feature.

# It is disabled by default because Detectron does not do this.

_C.RPN.CROWD_OVERLAP_THRESH = 9.99

_C.RPN.HEAD_DIM = 1024 # used in C4 only

# RPN proposal selection -------------------------------

# for C4

_C.RPN.TRAIN_PRE_NMS_TOPK = 12000

_C.RPN.TRAIN_POST_NMS_TOPK = 2000

_C.RPN.TEST_PRE_NMS_TOPK = 6000

_C.RPN.TEST_POST_NMS_TOPK = 1000 # if you encounter OOM in inference, set this to a smaller number

# for FPN, #proposals per-level and #proposals after merging are (for now) the same

# if FPN.PROPOSAL_MODE = 'Joint', these options have no effect

_C.RPN.TRAIN_PER_LEVEL_NMS_TOPK = 2000

_C.RPN.TEST_PER_LEVEL_NMS_TOPK = 1000

# fastrcnn training ---------------------

_C.FRCNN.BATCH_PER_IM = 512

_C.FRCNN.BBOX_REG_WEIGHTS = [10., 10., 5., 5.] # Slightly better setting: 20, 20, 10, 10

_C.FRCNN.FG_THRESH = 0.5

_C.FRCNN.FG_RATIO = 0.25 # fg ratio in a ROI batch