repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |
|---|---|---|---|---|---|---|---|---|---|---|---|

mwaskom/seaborn | data-science | 2,788 | [Feature] Multiple rugplots on same plot | Hi there,

Is there a way to add multiple rugplots to the same figure? This would be useful when having unique values on one axis and multiple grouping variables to color by. I understand this is achievable with shapes/colors for groups of low cardinality, but I believe it can quickly get confusing. Currently, when calling `rugplot` twice, the second `rugplot` draws over the first one.

Example:

```{python}

import string

import random

import numpy as np

import pandas as pd

import seaborn as sns

N = 20

groups_a = random.choices(string.ascii_letters[0:5], k=N)

groups_b = random.choices(string.ascii_uppercase[0:5], k=N)

Y = np.random.random(N)

X = range(N)

df = pd.DataFrame.from_dict({"X":X, "Y":Y, "A":groups_a,"B":groups_b})

sns.scatterplot(data=df, x="X", y="Y")

sns.rugplot(data=df, x="X", hue="A", linewidth=12)

sns.rugplot(data=df, x="X", hue="B", linewidth=12)

```

This yields:

Expected output would be something like:

(of course with more modifications of cmaps etc. to make the plot more clear)

| closed | 2022-05-02T10:20:48Z | 2022-05-02T13:18:32Z | https://github.com/mwaskom/seaborn/issues/2788 | [] | jeskowagner | 8 |

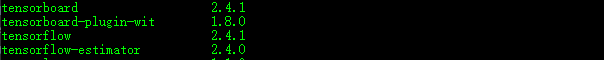

vllm-project/vllm | pytorch | 14,789 | [Bug]: Clarification on LoRA Support for Gemma3ForConditionalGeneration | ### Your current environment

<img width="886" alt="Image" src="https://github.com/user-attachments/assets/056cca27-67e9-4399-9411-cffa94760c04" />

### 🐛 Describe the bug

Hey vLLM Team,

I’d like to clarify the LoRA support for `Gemma3ForConditionalGeneration`. The [supported models documentation](https://docs.vllm.ai/en/latest/models/supported_models.html) states that this model supports LoRA, but after reviewing the code, it doesn’t seem to have LoRA support implemented.

Could you confirm if LoRA is indeed supported for this model, or if the documentation needs an update?

Thanks!

<img width="597" alt="Image" src="https://github.com/user-attachments/assets/eeb1d626-d84d-4f23-ae0b-44587ff77544" />

### Before submitting a new issue...

- [x] Make sure you already searched for relevant issues, and asked the chatbot living at the bottom right corner of the [documentation page](https://docs.vllm.ai/en/latest/), which can answer lots of frequently asked questions. | closed | 2025-03-14T01:37:07Z | 2025-03-15T01:21:18Z | https://github.com/vllm-project/vllm/issues/14789 | [

"bug"

] | angkywilliam | 2 |

holoviz/panel | plotly | 6,822 | Panel ChatInterface examples should not use TextAreaInput | I think the pn.chat.ChatAreaInput should be mentioned here https://panel.holoviz.org/reference/chat/ChatInterface.html | closed | 2024-05-10T17:22:00Z | 2024-05-13T19:24:15Z | https://github.com/holoviz/panel/issues/6822 | [

"type: docs"

] | ahuang11 | 0 |

plotly/dash | dash | 2,221 | [BUG] Background callbacks with different outputs not working | **Describe your context**

Please provide us your environment, so we can easily reproduce the issue.

- replace the result of `pip list | grep dash` below

```

dash 2.6.1

dash-bootstrap-components 1.2.0

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

```

**Describe the bug**

Background callbacks don't work if they are generated with a function/loop. For example, I've created a function `gen_callback` that creates a new callback given a css id.

```python

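# Assumes `app` is an existing dash.Dash instance with a background callback manager.
from dash import Input, Output

def gen_callback(css_id, x):
    # Register one background callback per output id.
    @app.callback(
        Output(css_id, 'children'),
        Input('my-dropdown', 'value'),
        background=True,
    )
    def callback_name(value):
        print(f"Inside callback_name for {css_id}")
        return int(value) + x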

```

The background callback manager uses a hash function to know the key to get. This hash function just takes into account the code of the function, but not the variables used to generate that function.

https://github.com/plotly/dash/blob/c897b2b094543e930a862fe51d48abfb78057df7/dash/long_callback/managers/__init__.py#L101-L105

I think the hash should also take into account the `Output` list of the callback.

**Expected behavior**

Using a variable as the id of one of the outputs creates multiple different, valid callbacks, that should work fine with background=True.

**Possible temporary solution**

Generate a function with `exec()`, replacing parts of a template code, and decorate the compiled function.
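
To illustrate the collision described above, here is a minimal sketch. It is not Dash's actual hashing code: `key_from_code` and `key_from_code_and_output` are hypothetical helpers, and hashing the function's bytecode stands in for "hashing the code of the function". Under those assumptions, two callbacks produced by the same factory get the same key, while mixing the output id into the hash separates them.

```python
import hashlib


def make_callback(output_id, offset):
    # Factory: every returned closure shares the same code object.
    def callback(value):
        return int(value) + offset
    return callback


def key_from_code(fn):
    # Hypothetical cache key built from the function's bytecode only.
    return hashlib.sha256(fn.__code__.co_code).hexdigest()


def key_from_code_and_output(fn, output_id):
    # Hypothetical cache key that also mixes in the output id.
    digest = hashlib.sha256(fn.__code__.co_code)
    digest.update(output_id.encode("utf-8"))
    return digest.hexdigest()


cb_a = make_callback("out-a", 1)
cb_b = make_callback("out-b", 2)

# Same bytecode, so a code-only key cannot tell the two callbacks apart.
same = key_from_code(cb_a) == key_from_code(cb_b)

# Including the output id yields distinct keys.
distinct = (
    key_from_code_and_output(cb_a, "out-a")
    != key_from_code_and_output(cb_b, "out-b")
)
```

This is only meant to show why including the `Output` list in the hash, as suggested above, would distinguish generated callbacks.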

| closed | 2022-09-08T08:05:57Z | 2023-06-25T22:28:46Z | https://github.com/plotly/dash/issues/2221 | [] | daviddavo | 5 |

koxudaxi/datamodel-code-generator | pydantic | 1,541 | Issue with generating model from JSON Compound Schema | **Describe the bug**

Trying to generate a pydantic model from a compound JSON schema fails and returns the following error (just the end of the otherwise very long message):

`yaml.scanner.ScannerError: mapping values are not allowed in this context

in "<unicode string>", line 11, column 25`

**To Reproduce**

Taken from [json-schema doc](https://json-schema.org/understanding-json-schema/structuring.html)

Example schema:

```json

{

"$id": "https://example.com/schemas/customer",

"$schema": "https://json-schema.org/draft/2020-12/schema",

"type": "object",

"properties": {

"first_name": { "type": "string" },

"last_name": { "type": "string" },

"shipping_address": { "$ref": "/schemas/address" },

"billing_address": { "$ref": "/schemas/address" }

},

"required": ["first_name", "last_name", "shipping_address", "billing_address"],

"$defs": {

"address": {

"$id": "/schemas/address",

"$schema": "http://json-schema.org/draft-07/schema#",

"type": "object",

"properties": {

"street_address": { "type": "string" },

"city": { "type": "string" },

"state": { "$ref": "#/definitions/state" }

},

"required": ["street_address", "city", "state"],

"definitions": {

"state": { "enum": ["CA", "NY", "... etc ..."] }

}

}

}

}

```

Used commandline:

```

$ datamodel-codegen --input .\json_schema\test.json --input-file-type jsonschema --output test.py

```

**Expected behavior**

Expected the generation of a pydantic schema

**Version:**

- OS: windows 11 22H2

- Python version: 3.11.4

- datamodel-code-generator version: 0.21.4

**Additional context**

A similar issue was described in "jsonschema $ref parsed as yaml?" (#564). However, when I tried replacing the base URI with "blank", I got a FileNotFound error when referring to the sub `$id`.

| closed | 2023-09-11T01:16:23Z | 2023-11-19T17:05:54Z | https://github.com/koxudaxi/datamodel-code-generator/issues/1541 | [

"enhancement"

] | clementboutaric2 | 2 |

erdewit/ib_insync | asyncio | 250 | How to get the pre-market-price? | I would like to get the pre-market-price -> the price between 8 -9.30.

By the way, I don't know how to get the price.

Would you please inform me?

I really appreciate your help

I will wait for your favorable reply.

Thanks. | closed | 2020-05-04T15:21:24Z | 2020-05-07T10:28:40Z | https://github.com/erdewit/ib_insync/issues/250 | [] | lovetrading10 | 1 |

onnx/onnx | pytorch | 6,579 | Undefined Symbol when importing onnx | # Bug Report

### Is the issue related to model conversion?

No.

### Describe the bug

I am trying to build onnx on ppc64le arch with external protobuf and with shared libraries. When I install the onnx wheel, and try importing it, I see issues of undefined symbol.

```

>>> import onnx

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/builder/dev1/lib64/python3.12/site-packages/onnx/__init__.py", line 77, in <module>

from onnx.onnx_cpp2py_export import ONNX_ML

ImportError: /home/builder/new_scripts/onnx/onnx/.setuptools-cmake-build/libonnx.so: undefined symbol: _ZN4onnx25TensorProto_DataType_NameB5cxx11ENS_20TensorProto_DataTypeE

```

### System information

<!--

- OS Platform and Distribution (*e.g. Linux Ubuntu 20.04*): ppc64le

- ONNX version (*e.g. 1.13*): v1.17.0

- Python version: 3.12

- GCC/Compiler version (if compiling from source): GCC 13

- CMake version: 3.31.1

- Protobuf version: 4.25.3

- Visual Studio version (if applicable):--> NA

### Reproduction instructions

<!--

- Describe the code to reproduce the behavior.

```

import onnx

model = onnx.load('model.onnx')

...

```

- Attach the ONNX model to the issue (where applicable)-->

### Expected behavior

Running `import onnx` followed by `onnx.__version__` should show the version.

### Notes

Listing my cmake args:

```

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_INSTALL_PREFIX=$ONNX_PREFIX"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_AR=${AR}"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_LINKER=${LD}"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_NM=${NM}"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_OBJCOPY=${OBJCOPY}"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_OBJDUMP=${OBJDUMP}"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_RANLIB=${RANLIB}"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_STRIP=${STRIP}"

export CMAKE_ARGS="${CMAKE_ARGS} -DBUILD_SHARED_LIBS=ON"

export CMAKE_ARGS="${CMAKE_ARGS} -DONNX_BUILD_SHARED_LIBS=ON"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_CXX_STANDARD=17"

export CMAKE_ARGS="${CMAKE_ARGS} -DONNX_USE_PROTOBUF_SHARED_LIBS=ON"

export CMAKE_ARGS="${CMAKE_ARGS} -DONNX_USE_LITE_PROTO=ON"

export CMAKE_ARGS="${CMAKE_ARGS} -DProtobuf_PROTOC_EXECUTABLE=$ENV_PREFIX/bin/protoc -DProtobuf_LIBRARY=$ENV_PREFIX/lib/libprotobuf.so"

export CMAKE_ARGS="${CMAKE_ARGS} -DCMAKE_PREFIX_PATH=$CMAKE_PREFIX_PATH"

```

creating whl using `python -m pip wheel -w dist -vv --no-build-isolation --no-deps .`

| open | 2024-12-09T13:01:23Z | 2024-12-09T13:01:23Z | https://github.com/onnx/onnx/issues/6579 | [

"bug"

] | Aman-Surkar | 0 |

miguelgrinberg/microblog | flask | 68 | SearchableMixin limited to Post objects | Hi,

At this moment SearchableMixin is limited only to Post class. Could it be possible to change it to be more universal with the following change?

```

db.case(when, value=cls.id)), total

```

And then reuse the same mixin with User class

```

class User(SearchableMixin, UserMixin, db.Model):

__searchable__ = ['username']

...

db.event.listen(db.session, 'before_commit', User.before_commit)

db.event.listen(db.session, 'after_commit', User.after_commit)

```

| closed | 2018-01-11T13:24:29Z | 2018-03-06T01:32:58Z | https://github.com/miguelgrinberg/microblog/issues/68 | [

"bug"

] | avoidik | 1 |

healthchecks/healthchecks | django | 1,132 | Additional blank checks are created through API? | Hi, I'm having this odd issue and I could use a bit of a sanity check.

I create checks like this:

```bash

curl --silent "$HC_CHECK_URL/api/v3/checks/" \

--header "X-Api-Key: $HC_API_KEY" \

--data @- | jq <<-EOF

{

"name": "${image_local}",

"slug": "${image_slug}",

"timeout": 5184000,

"grace": 3600,

"tz": "Redacted",

"channels": "Redacted",

"unique": ["slug"]

}

EOF

```

And this seems to trigger the correct check slug. I see a new event/ping there, but it also creates these empty checks.

To note, the check name (image_local variable) changes on every build, but the slug (image_slug) doesn't change. I've noticed that after the initial check creation, new calls to the same check don't update the name. Is this by design?
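
One thing worth checking, offered as an assumption rather than a diagnosis: in the shell snippet above the here-document is attached to `jq`, not to `curl`, so `curl --data @-` reads whatever the script's own standard input is. A minimal Python sketch that avoids the here-document by building the same request body explicitly is shown below. `build_check_request` is a hypothetical helper, the base URL and key in the usage note are placeholders, and the redacted `tz` and `channels` fields are left out.

```python
import json
import urllib.request


def build_check_request(base_url, api_key, name, slug):
    # Same fields as the curl payload above, minus the redacted ones.
    payload = {
        "name": name,
        "slug": slug,
        "timeout": 5184000,
        "grace": 3600,
        "unique": ["slug"],
    }
    # The body is passed explicitly, so nothing depends on stdin.
    return urllib.request.Request(
        url=f"{base_url}/api/v3/checks/",
        data=json.dumps(payload).encode("utf-8"),
        headers={"X-Api-Key": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

Calling `urllib.request.urlopen(build_check_request("https://hc.example.org", "my-key", "image:tag", "image"))` would send it. Inspecting `request.data` first makes it easy to confirm the body is not empty.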

| closed | 2025-03-05T23:15:04Z | 2025-03-05T23:54:03Z | https://github.com/healthchecks/healthchecks/issues/1132 | [] | rwjack | 2 |

danimtb/dasshio | dash | 109 | support for 'dash wand'? | hello there,

i've been using the dash buttons in my brand new HA setup for a few weeks now.

then i've learned about the dash wand, bought one on ebay but had no success with the provided config URL you've posted here:

`http://192.168.0.1/?amzn_ssid=<SSID>&amzn_pw=<PASSWORD>`

google also has no clue.

could anyone lead me in the right direction?

is this a future feature maybe? | closed | 2022-03-12T01:24:39Z | 2023-06-12T07:20:02Z | https://github.com/danimtb/dasshio/issues/109 | [] | sebaschn | 1 |

kubeflow/katib | scikit-learn | 2,132 | After updating to version 0.15.0, the name argument in KatibClient().get_success_trial_details() has disappeared. | /kind bug

**What steps did you take and what happened:**

The code below, which worked well in version 0.14.0, fails because of the name parameter in version 0.15.0.

```

trial_details_log = kclient.get_success_trial_details(

name=experiment, namespace=namespace

)

```

**What did you expect to happen:**

I wonder if this was done intentionally in version 0.15.0 or if a bug fix is needed. Is it something that will be changed in the future update version?

- Katib version (check the Katib controller image version): 0.15.0

- Kubernetes version: (`kubectl version`): v1.21.11

- OS (`uname -a`): `Linux control-plane.minikube.internal 5.4.0-144-generic #161~18.04.1-Ubuntu SMP Fri Feb 10 15:55:22 UTC 2023 x86_64 x86_64 x86_64 GNU/Linux`

---

<!-- Don't delete this message to encourage users to support your issue! -->

Impacted by this bug? Give it a 👍 We prioritize the issues with the most 👍

| closed | 2023-03-24T00:50:49Z | 2023-03-27T00:51:45Z | https://github.com/kubeflow/katib/issues/2132 | [

"kind/bug"

] | moey920 | 3 |

pydantic/pydantic-ai | pydantic | 1,028 | Create llms.txt and llms-full.txt | Right now we have only `llms.txt`, but that shows the full docs. We should follow the spec properly, and add them to the hub: https://llmstxthub.com/ | open | 2025-03-02T10:06:59Z | 2025-03-20T12:22:43Z | https://github.com/pydantic/pydantic-ai/issues/1028 | [] | Kludex | 10 |

desec-io/desec-stack | rest-api | 528 | GUI: allow declaring API tokens read-only during creation | It would be useful to have a capability for read-only API tokens.

The use case I have is that we'd like to check if all values are up to date via `terraform plan -detailed-exitcode || fail` as part of a CI job. At the moment that could only be done by giving that CI job a token with read/write access.

This is a "least authority" concern similar to #347. | open | 2021-04-14T13:18:03Z | 2024-10-07T17:11:35Z | https://github.com/desec-io/desec-stack/issues/528 | [

"enhancement",

"help wanted",

"gui"

] | Valodim | 6 |

ipython/ipython | data-science | 14,113 | error with jupyter_notebook_config.json file | **Hi, I face this error with my jupyter_notebook_config.json file, when I use "jupyter notebook"**

Exception while loading config file /data/Wu_Feizhen/wfz05/.jupyter/jupyter_notebook_config.json

Traceback (most recent call last):

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/application.py", line 858, in _load_config_files

config = loader.load_config()

^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/loader.py", line 576, in load_config

dct = self._read_file_as_dict()

^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/loader.py", line 582, in _read_file_as_dict

return json.load(f)

^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/__init__.py", line 293, in load

return loads(fp.read(),

^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 3 column 13 (char 33)

Traceback (most recent call last):

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/bin/jupyter-notebook", line 11, in <module>

sys.exit(main())

^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/jupyter_core/application.py", line 277, in launch_instance

return super().launch_instance(argv=argv, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/application.py", line 991, in launch_instance

app.initialize(argv)

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/application.py", line 113, in inner

return method(app, *args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/notebook/notebookapp.py", line 2169, in initialize

self.init_server_extension_config()

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/notebook/notebookapp.py", line 2026, in init_server_extension_config

section = manager.get(self.config_file_name)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/notebook/services/config/manager.py", line 25, in get

recursive_update(config, cm.get(section_name))

^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/notebook/config_manager.py", line 100, in get

recursive_update(data, json.load(f))

^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/__init__.py", line 293, in load

return loads(fp.read(),

^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 3 column 13 (char 33)

**Then I ran "jupyter notebook --debug" and got this:**

Searching ['/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/etc/jupyter', '/data/Wu_Feizhen/wfz05/.jupyter', '/data/Wu_Feizhen/wfz05/.local/etc/jupyter', '/usr/local/etc/jupyter', '/etc/jupyter'] for config files

[D 21:29:21.651 NotebookApp] Looking for jupyter_config in /etc/jupyter

[D 21:29:21.651 NotebookApp] Looking for jupyter_config in /usr/local/etc/jupyter

[D 21:29:21.651 NotebookApp] Looking for jupyter_config in /data/Wu_Feizhen/wfz05/.local/etc/jupyter

[D 21:29:21.651 NotebookApp] Looking for jupyter_config in /data/Wu_Feizhen/wfz05/.jupyter

[D 21:29:21.651 NotebookApp] Looking for jupyter_config in /data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/etc/jupyter

[D 21:29:21.651 NotebookApp] Looking for jupyter_notebook_config in /etc/jupyter

[D 21:29:21.651 NotebookApp] Looking for jupyter_notebook_config in /usr/local/etc/jupyter

[D 21:29:21.651 NotebookApp] Looking for jupyter_notebook_config in /data/Wu_Feizhen/wfz05/.local/etc/jupyter

[D 21:29:21.651 NotebookApp] Looking for jupyter_notebook_config in /data/Wu_Feizhen/wfz05/.jupyter

[D 21:29:21.652 NotebookApp] Loaded config file: /data/Wu_Feizhen/wfz05/.jupyter/jupyter_notebook_config.py

[E 21:29:21.652 NotebookApp] Exception while loading config file /data/Wu_Feizhen/wfz05/.jupyter/jupyter_notebook_config.json

Traceback (most recent call last):

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/application.py", line 858, in _load_config_files

config = loader.load_config()

^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/loader.py", line 576, in load_config

dct = self._read_file_as_dict()

^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/loader.py", line 582, in _read_file_as_dict

return json.load(f)

^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/__init__.py", line 293, in load

return loads(fp.read(),

^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 3 column 13 (char 33)

[D 21:29:21.653 NotebookApp] Looking for jupyter_notebook_config in /data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/etc/jupyter

[D 21:29:21.653 NotebookApp] Raising open file limit: soft 1024->4096; hard 4096->4096

[D 21:29:21.673 NotebookApp] Paths used for configuration of jupyter_notebook_config:

/etc/jupyter/jupyter_notebook_config.json

[D 21:29:21.673 NotebookApp] Paths used for configuration of jupyter_notebook_config:

/usr/local/etc/jupyter/jupyter_notebook_config.json

[D 21:29:21.673 NotebookApp] Paths used for configuration of jupyter_notebook_config:

/data/Wu_Feizhen/wfz05/.local/etc/jupyter/jupyter_notebook_config.json

[D 21:29:21.673 NotebookApp] Paths used for configuration of jupyter_notebook_config:

/data/Wu_Feizhen/wfz05/.jupyter/jupyter_notebook_config.json

Traceback (most recent call last):

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/bin/jupyter-notebook", line 11, in <module>

sys.exit(main())

^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/jupyter_core/application.py", line 277, in launch_instance

return super().launch_instance(argv=argv, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/application.py", line 991, in launch_instance

app.initialize(argv)

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/traitlets/config/application.py", line 113, in inner

return method(app, *args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/notebook/notebookapp.py", line 2169, in initialize

self.init_server_extension_config()

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/notebook/notebookapp.py", line 2026, in init_server_extension_config

section = manager.get(self.config_file_name)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/notebook/services/config/manager.py", line 25, in get

recursive_update(config, cm.get(section_name))

^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/site-packages/notebook/config_manager.py", line 100, in get

recursive_update(data, json.load(f))

^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/__init__.py", line 293, in load

return loads(fp.read(),

^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/data/Wu_Feizhen/wfz05/anaconda3/envs/jupyter_R/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 3 column 13 (char 33)

**Could anyone please help me with this issue? Many thanks!**
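
Both tracebacks end in `json.decoder.JSONDecodeError: Expecting value: line 3 column 13 (char 33)`, which points at a position inside `jupyter_notebook_config.json`. The sketch below shows one way to read that position with the standard library. The `sample` text is hypothetical, since the real file contents are not shown in this report, and `locate_json_error` is an illustrative helper.

```python
import json


def locate_json_error(text):
    """Return (message, line, column, offending line), or None if text parses."""
    try:
        json.loads(text)
    except json.JSONDecodeError as err:
        lines = text.splitlines()
        # lineno is 1-based; guard against an error reported past the last line.
        offending = lines[err.lineno - 1] if err.lineno <= len(lines) else ""
        return err.msg, err.lineno, err.colno, offending
    return None


# Hypothetical broken config: a Python-style u"..." string is not valid JSON.
sample = '{\n  "NotebookApp": {\n    "password": u"sha1:abc"\n  }\n}\n'
result = locate_json_error(sample)
```

Reading the real file with `open(path).read()` and passing it to the same helper would show which line of the config the decoder rejects.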

| closed | 2023-07-11T13:44:47Z | 2023-07-13T12:06:43Z | https://github.com/ipython/ipython/issues/14113 | [] | BioVictory | 1 |

AntonOsika/gpt-engineer | python | 113 | Add deployment instructions to output | Let me preface this by saying I am not a developer, but I have a decent level of technical understanding and understand how code works.

In my instructions for the code I was generating, I said that I wanted step by step instructions for deploying the application on a server. In my case, this is a Telegram bot. It added that request to the specification, but did not provide the instructions in the output.

It might be good to have an instruction available to the user that does not generate code, but allows them to request instructions for installation or some other component of the app delivery. | closed | 2023-06-17T16:51:48Z | 2023-06-18T19:20:20Z | https://github.com/AntonOsika/gpt-engineer/issues/113 | [

"enhancement",

"help wanted",

"good first issue"

] | clickbrain | 3 |

CorentinJ/Real-Time-Voice-Cloning | python | 468 | SyntaxError: invalid syntax when running python demo_cli.py | ```

$: python demo_cli.py

Traceback (most recent call last):

File "demo_cli.py", line 2, in <module>

from utils.argutils import print_args

File "Real-Time-Voice-Cloning-master/utils/argutils.py", line 22

def print_args(args: argparse.Namespace, parser=None):

^

SyntaxError: invalid syntax

```
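
A `SyntaxError` at a function annotation such as `args: argparse.Namespace` is what a Python 2 interpreter reports, so one likely explanation (an assumption, since the interpreter version is not given above) is that `python` resolves to Python 2 on this machine. A small sketch of a version check follows. `supports_annotations` is a hypothetical helper name.

```python
import sys


def supports_annotations(version_info=sys.version_info):
    # Function annotations were introduced with Python 3.
    return version_info[0] >= 3


if not supports_annotations():
    print("This interpreter is Python %d.%d; try running with python3."
          % (sys.version_info[0], sys.version_info[1]))
```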

| closed | 2020-08-04T15:48:21Z | 2020-08-04T15:56:43Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/468 | [] | biplab1 | 3 |

clovaai/donut | nlp | 220 | Training one common model on two different type of images | I have been trying to train a common model that can extract data from two different types of images. It extracts all the other details, but not the ID numbers, for either type of image. Can anyone suggest something to improve the results? | open | 2023-07-01T04:16:28Z | 2023-07-01T04:56:23Z | https://github.com/clovaai/donut/issues/220 | [] | NavneetSingh20 | 0 |

flairNLP/flair | pytorch | 2,699 | NER on custom data fails to start training and gets stuck before 1st epoch | I'm trying to train a custom NER model with Flair using my own data (BIO format).

from flair.data import Corpus

from flair.datasets import ColumnCorpus

columns = {0 : 'text', 1 : 'ner'}

data_folder = 'Data_french/'

corpus: Corpus = ColumnCorpus(data_folder, columns,

train_file = 'train.txt',

test_file = 'test.txt',

dev_file = 'eval.txt')

2022-04-01 13:59:25,399 Reading data from Data_french

2022-04-01 13:59:25,401 Train: Data_french/train.txt

2022-04-01 13:59:25,401 Dev: Data_french/eval.txt

2022-04-01 13:59:25,401 Test: Data_french/test.txt

CPU times: user 49min 20s, sys: 5min 54s, total: 55min 15s

Wall time: 55min 18s

print(len(corpus.train))

print(corpus.train[0].to_tagged_string('ner'))

4948186

Myélogramme en sortie d'aplasie le 17.04.2013 `<B-DATE>` : rémission cytologique .

tag_type = 'ner'

tag_dictionary = corpus.make_label_dictionary(label_type=tag_type)

2022-04-01 15:37:39,082 Computing label dictionary. Progress:

100%|██████████| 4948186/4948186 [05:20<00:00, 15435.43it/s]

2022-04-01 15:42:59,660 Corpus contains the labels: ner (#179449386)

2022-04-01 15:42:59,660 Created (for label 'ner') Dictionary with 17 tags: <unk>, O, B-DATE, B-PATIENT, B-VILLE, I-DATE, B-DOCTOR, B-ZIP, I-PATIENT, I-DOCTOR, B-STR, I-STR, B-PHONE, I-PHONE, B-EMAIL, I-EMAIL, I-VILLE

tag_dictionary.get_items()

['<unk>',

'O',

'B-DATE',

'B-PATIENT',

'B-VILLE',

'I-DATE',

'B-DOCTOR',

'B-ZIP',

'I-PATIENT',

'I-DOCTOR',

'B-STR',

'I-STR',

'B-PHONE',

'I-PHONE',

'B-EMAIL',

'I-EMAIL',

'I-VILLE']

from flair.embeddings import WordEmbeddings, FlairEmbeddings, StackedEmbeddings

embedding_types = [

# French fastText word embeddings

WordEmbeddings("fr"),

# contextual string embeddings, forward

FlairEmbeddings('fr-forward'),

# contextual string embeddings, backward

FlairEmbeddings('fr-backward'),

]

embeddings : StackedEmbeddings = StackedEmbeddings(embeddings=embedding_types)

from flair.models import SequenceTagger

tagger : SequenceTagger = SequenceTagger(hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type=tag_type,

use_crf=True)

from flair.trainers import ModelTrainer

trainer : ModelTrainer = ModelTrainer(tagger, corpus)

trainer.train('flair_all_data_model',

#train_with_dev=True,

learning_rate=0.1,

mini_batch_size=64,

max_epochs=150,

embeddings_storage_mode='none')

Everything up to this point works fine and training starts, but it never reaches the first epoch; it gets stuck at this stage:

2022-04-01 15:53:22,942 ----------------------------------------------------------------------------------------------------

2022-04-01 15:53:22,942 Model: "SequenceTagger(

(embeddings): StackedEmbeddings(

(list_embedding_0): WordEmbeddings(

'fr'

(embedding): Embedding(1000000, 300)

)

(list_embedding_1): FlairEmbeddings(

(lm): LanguageModel(

(drop): Dropout(p=0.5, inplace=False)

(encoder): Embedding(275, 100)

(rnn): LSTM(100, 1024)

(decoder): Linear(in_features=1024, out_features=275, bias=True)

)

)

(list_embedding_2): FlairEmbeddings(

(lm): LanguageModel(

(drop): Dropout(p=0.5, inplace=False)

(encoder): Embedding(275, 100)

(rnn): LSTM(100, 1024)

(decoder): Linear(in_features=1024, out_features=275, bias=True)

)

)

)

(word_dropout): WordDropout(p=0.05)

(locked_dropout): LockedDropout(p=0.5)

(embedding2nn): Linear(in_features=2348, out_features=2348, bias=True)

(rnn): LSTM(2348, 256, batch_first=True, bidirectional=True)

(linear): Linear(in_features=512, out_features=19, bias=True)

(beta): 1.0

(weights): None

(weight_tensor) None

)"

2022-04-01 15:53:22,943 ----------------------------------------------------------------------------------------------------

2022-04-01 15:53:22,943 Corpus: "Corpus: 4948186 train + 608305 dev + 620581 test sentences"

2022-04-01 15:53:22,943 ----------------------------------------------------------------------------------------------------

2022-04-01 15:53:22,944 Parameters:

2022-04-01 15:53:22,944 - learning_rate: "0.1"

2022-04-01 15:53:22,944 - mini_batch_size: "64"

2022-04-01 15:53:22,944 - patience: "3"

2022-04-01 15:53:22,945 - anneal_factor: "0.5"

2022-04-01 15:53:22,945 - max_epochs: "150"

2022-04-01 15:53:22,945 - shuffle: "True"

2022-04-01 15:53:22,945 - train_with_dev: "False"

2022-04-01 15:53:22,946 - batch_growth_annealing: "False"

2022-04-01 15:53:22,946 ----------------------------------------------------------------------------------------------------

2022-04-01 15:53:22,946 Model training base path: "flair_all_data_model"

2022-04-01 15:53:22,946 ----------------------------------------------------------------------------------------------------

2022-04-01 15:53:22,947 Device: cuda:0

2022-04-01 15:53:22,947 ----------------------------------------------------------------------------------------------------

2022-04-01 15:53:22,947 Embeddings storage mode: none

2022-04-01 15:53:22,949 ----------------------------------------------------------------------------------------------------

| closed | 2022-04-01T13:56:19Z | 2022-06-22T12:40:37Z | https://github.com/flairNLP/flair/issues/2699 | [] | elazzouzi1080 | 1 |

huggingface/transformers | tensorflow | 36,541 | Wrong dependency: `"tensorflow-text<2.16"` | ### System Info

- `transformers` version: 4.50.0.dev0

- Platform: Windows-10-10.0.26100-SP0

- Python version: 3.10.11

- Huggingface_hub version: 0.29.1

- Safetensors version: 0.5.3

- Accelerate version: not installed

- Accelerate config: not found

- DeepSpeed version: not installed

- PyTorch version (GPU?): not installed (NA)

- Tensorflow version (GPU?): not installed (NA)

- Flax version (CPU?/GPU?/TPU?): not installed (NA)

- Jax version: not installed

- JaxLib version: not installed

- Using distributed or parallel set-up in script?: no

### Who can help?

@stevhliu @Rocketknight1

### Information

- [ ] The official example scripts

- [ ] My own modified scripts

### Tasks

- [ ] An officially supported task in the `examples` folder (such as GLUE/SQuAD, ...)

- [ ] My own task or dataset (give details below)

### Reproduction

I am trying to install the packages needed for creating a PR to test my changes. Running `pip install -e ".[dev]"` from this [documentation](https://huggingface.co/docs/transformers/contributing#create-a-pull-request) results in the following error:

```markdown

ERROR: Cannot install transformers and transformers[dev]==4.50.0.dev0 because these package versions have conflicting dependencies.

The conflict is caused by:

transformers[dev] 4.50.0.dev0 depends on tensorflow<2.16 and >2.9; extra == "dev"

tensorflow-text 2.8.2 depends on tensorflow<2.9 and >=2.8.0; platform_machine != "arm64" or platform_system != "Darwin"

transformers[dev] 4.50.0.dev0 depends on tensorflow<2.16 and >2.9; extra == "dev"

tensorflow-text 2.8.1 depends on tensorflow<2.9 and >=2.8.0

To fix this you could try to:

1. loosen the range of package versions you've specified

2. remove package versions to allow pip to attempt to solve the dependency conflict

ERROR: ResolutionImpossible: for help visit https://pip.pypa.io/en/latest/topics/dependency-resolution/#dealing-with-dependency-conflicts

```

This happens because of the specification of `tensorflow-text<2.16` here:

https://github.com/huggingface/transformers/blob/c0c5acff077ac7c8fe68a0fdbad24306dbd9d4e3/setup.py#L179

### Expected behavior

`transformers[dev]` requires `tensorflow` above version 2.9, while `tensorflow-text` explicitly restricts TensorFlow to versions below 2.9.
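
To make the conflict concrete, here is a minimal sketch that checks a few candidate TensorFlow versions against the two constraint sets quoted in the pip output above. It uses only the standard library and a deliberately simplified version parser (no pre-release or PEP 440 edge cases). The helper names `parse` and `satisfies` and the candidate list are illustrative assumptions, not part of pip or `transformers`.

```python
import operator

# Comparison operators that appear in the pip error message.
OPS = {
    "<": operator.lt,
    "<=": operator.le,
    ">": operator.gt,
    ">=": operator.ge,
    "==": operator.eq,
}


def parse(version, width=3):
    """Turn '2.9' into (2, 9, 0). Simplified: pre-release tags are not handled."""
    parts = [int(part) for part in version.split(".")]
    parts += [0] * (width - len(parts))  # pad so that 2.9 and 2.9.0 compare equal
    return tuple(parts)


def satisfies(version, constraints):
    """Return True if `version` meets every (operator, bound) pair."""
    parsed = parse(version)
    return all(OPS[op](parsed, parse(bound)) for op, bound in constraints)


# Constraints copied from the error message above.
DEV_TF = [(">", "2.9"), ("<", "2.16")]      # transformers[dev] on tensorflow
TEXT_TF = [(">=", "2.8.0"), ("<", "2.9")]   # tensorflow-text 2.8.x on tensorflow

# Illustrative candidates only; not an exhaustive list of releases.
candidates = ["2.8.0", "2.8.4", "2.9.0", "2.10.0", "2.15.0", "2.16.1"]

compatible = [
    v for v in candidates
    if satisfies(v, DEV_TF) and satisfies(v, TEXT_TF)
]
print(compatible)
```

No candidate can pass both checks, because one range starts strictly above 2.9 and the other ends strictly below it, which is consistent with pip reporting `ResolutionImpossible`.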

Also, there is no 2.16 version of either `tensorflow` or `tensorflow-text`:

https://pypi.org/project/tensorflow/#history

https://pypi.org/project/tensorflow-text/#history

```markdown

INFO: pip is looking at multiple versions of transformers[dev] to determine which version is compatible with other requirements. This could take a while.

ERROR: Could not find a version that satisfies the requirement tensorflow<2.16,>2.9; extra == "dev" (from transformers[dev]) (from versions: 2.16.0rc0, 2.16.1, 2.16.2, 2.17.0rc0, 2.17.0rc1, 2.17.0, 2.17.1, 2.18.0rc0, 2.18.0rc1, 2.18.0rc2, 2.18.0, 2.19.0rc0)

ERROR: No matching distribution found for tensorflow<2.16,>2.9; extra == "dev"

```

What is the correct `tensorflow-text` version? | open | 2025-03-04T15:23:19Z | 2025-03-07T00:14:03Z | https://github.com/huggingface/transformers/issues/36541 | [

"bug"

] | d-kleine | 6 |

strawberry-graphql/strawberry | fastapi | 3,501 | `UnallowedReturnTypeForUnion` Error thrown when having a response of Union Type and using Generics since 0.229.0 release | ## Describe the Bug

An `UnallowedReturnTypeForUnion` error is thrown when a response has a Union type and uses Generics (with an interface), since the 0.229.0 release.

Sharing the playground [link](https://play.strawberry.rocks/?gist=a3c87c1b7816a2a3251cdf442b4569e1) showing the error for ease of replication.

This feature was working before, but it seems to have broken with the 0.229.0 release.

## System Information

- Operating system:

- Strawberry version (if applicable): 0.229.0

## Additional Context

Playground Link : https://play.strawberry.rocks/?gist=a3c87c1b7816a2a3251cdf442b4569e1 | closed | 2024-05-15T13:19:10Z | 2025-03-20T15:56:43Z | https://github.com/strawberry-graphql/strawberry/issues/3501 | [

"bug"

] | jibujacobamboss | 2 |

wkentaro/labelme | computer-vision | 658 | Backward compatibility Issues | I have old JSON files that were created with a version of labelme that is only a few months old.

But the version packaged with Ubuntu Focal Fossa 20.04 fails to open the JSON file, saying that `lineColor` is missing.

```json

{

"shapes": [

{

"shape_type": "polygon",

"points": [

[

95.10204081632652,

159.1020408163265

],

[

117.55102040816325,

147.26530612244898

],

[

145.30612244897958,

147.6734693877551

],

[

153.87755102040816,

162.77551020408163

],

[

156.734693877551,

185.22448979591834

],

[

153.0612244897959,

186.85714285714283

],

[

152.6530612244898,

195.42857142857142

],

[

150.6122448979592,

197.0612244897959

],

[

138.77551020408163,

206.44897959183672

],

[

97.14285714285714,

208.89795918367346

],

[

96.3265306122449,

202.77551020408163

],

[

89.38775510204081,

186.0408163265306

],

[

88.16326530612244,

177.0612244897959

]

],

"flags": {},

"group_id": null,

"label": "Minibus"

},

{

"shape_type": "polygon",

"points": [

[

0.0,

184.81632653061223

],

[

26.530612244897956,

185.22448979591834

],

[

40.0,

198.6938775510204

],

[

37.14285714285714,

226.85714285714283

],

[

21.224489795918366,

233.38775510204079

],

[

16.3265306122449,

235.0204081632653

],

[

12.244897959183673,

230.53061224489795

],

[

0.0,

232.16326530612244

]

],

"flags": {},

"group_id": null,

"label": "Car"

},

{

"shape_type": "polygon",

"points": [

[

215.91836734693877,

166.0408163265306

],

[

211.83673469387753,

170.93877551020407

],

[

207.34693877551018,

177.0612244897959

],

[

204.48979591836732,

185.22448979591834

],

[

200.40816326530611,

191.75510204081633

],

[

207.34693877551018,

194.20408163265304

],

[

202.85714285714283,

202.77551020408163

],

[

197.55102040816325,

209.30612244897958

],

[

202.44897959183672,

209.7142857142857

],

[

211.42857142857142,

196.6530612244898

],

[

217.55102040816325,

199.91836734693877

],

[

223.6734693877551,

208.89795918367346

],

[

226.1224489795918,

206.44897959183672

],

[

220.40816326530611,

194.61224489795916

],

[

215.1020408163265,

188.89795918367346

],

[

215.51020408163265,

173.3877551020408

],

[

219.99999999999997,

172.57142857142856

]

],

"flags": {},

"group_id": null,

"label": "Pedestrian"

},

{

"shape_type": "polygon",

"points": [

[

233.46938775510202,

131.75510204081633

],

[

229.79591836734693,

135.0204081632653

],

[

232.6530612244898,

138.28571428571428

],

[

228.97959183673467,

139.91836734693877

],

[

231.0204081632653,

141.95918367346937

],

[

233.87755102040813,

147.6734693877551

],

[

234.28571428571428,

158.6938775510204

],

[

235.1020408163265,

158.6938775510204

],

[

239.59183673469386,

158.28571428571428

],

[

237.55102040816325,

147.6734693877551

],

[

237.95918367346937,

144.0

],

[

239.99999999999997,

141.14285714285714

],

[

238.36734693877548,

137.46938775510202

],

[

235.51020408163262,

137.87755102040816

]

],

"flags": {},

"group_id": null,

"label": "Pedestrian"

},

{

"shape_type": "polygon",

"points": [

[

33.87755102040816,

139.91836734693877

],

[

31.83673469387755,

143.59183673469386

],

[

28.97959183673469,

149.30612244897958

],

[

31.020408163265305,

155.0204081632653

],

[

32.244897959183675,

159.51020408163265

],

[

33.46938775510204,

168.89795918367346

],

[

36.326530612244895,

168.48979591836732

],

[

36.73469387755102,

157.0612244897959

],

[

35.51020408163265,

151.34693877551018

],

[

38.367346938775505,

150.53061224489795

]

],

"flags": {},

"group_id": null,

"label": "Pedestrian"

},

{

"shape_type": "polygon",

"points": [

[

126.53061224489795,

147.26530612244898

],

[

131.83673469387753,

139.91836734693877

],

[

135.91836734693877,

139.91836734693877

],

[

140.0,

133.79591836734693

],

[

159.18367346938774,

133.3877551020408

],

[

166.12244897959184,

139.91836734693877

],

[

165.7142857142857,

151.75510204081633

],

[

165.7142857142857,

156.6530612244898

],

[

162.44897959183672,

155.0204081632653

],

[

159.18367346938774,

158.6938775510204

],

[

153.0612244897959,

159.51020408163265

],

[

146.53061224489795,

146.85714285714283

]

],

"flags": {},

"group_id": null,

"label": "Car"

},

{

"shape_type": "polygon",

"points": [

[

144.6629213483146,

133.14606741573033

],

[

148.03370786516854,

123.87640449438202

],

[

149.7191011235955,

121.06741573033707

],

[

151.68539325842696,

119.9438202247191

],

[

156.46067415730337,

116.29213483146067

],

[

170.22471910112358,

116.29213483146067

],

[

175.0,

117.97752808988764

],

[

178.93258426966293,

127.24719101123596

],

[

179.4943820224719,

131.46067415730337

],

[

177.52808988764045,

146.34831460674158

],

[

168.82022471910113,

150.28089887640448

],

[

166.01123595505618,

148.87640449438203

],

[

166.29213483146066,

139.8876404494382

],

[

159.2696629213483,

133.14606741573033

]

],

"flags": {},

"group_id": null,

"label": "Minibus"

},

{

"shape_type": "polygon",

"points": [

[

140.4494382022472,

132.58426966292134

],

[

137.07865168539325,

128.37078651685394

],

[

137.07865168539325,

120.78651685393258

],

[

140.1685393258427,

115.73033707865169

],

[

144.1011235955056,

120.78651685393258

],

[

144.9438202247191,

125.56179775280899

]

],

"flags": {},

"group_id": null,

"label": "Motorcycle"

},

{

"shape_type": "polygon",

"points": [

[

196.91011235955057,

128.93258426966293

],

[

192.97752808988764,

122.75280898876404

],

[

193.53932584269663,

116.57303370786516

],

[

196.62921348314606,

112.07865168539325

],

[

200.8426966292135,

116.29213483146067

],

[

201.40449438202248,

119.9438202247191

],

[

202.52808988764045,

125.84269662921348

]

],

"flags": {},

"group_id": null,

"label": "Motorcycle"

},

{

"shape_type": "polygon",

"points": [

[

214.8876404494382,

124.71910112359551

],

[

215.1685393258427,

117.41573033707866

],

[

212.92134831460675,

117.69662921348315

],

[

216.29213483146066,

110.11235955056179

],

[

220.22471910112358,

115.4494382022472

],

[

221.06741573033707,

118.53932584269663

],

[

220.5056179775281,

124.71910112359551

],

[

218.82022471910113,

128.37078651685394

]

],

"flags": {},

"group_id": null,

"label": "Motorcycle"

},

{

"shape_type": "polygon",

"points": [

[

205.8988764044944,

125.56179775280899

],

[

204.2134831460674,

122.75280898876404

],

[

203.65168539325842,

116.85393258426966

],

[

203.65168539325842,

115.4494382022472

],

[

205.3370786516854,

107.86516853932584

],

[

217.13483146067415,

105.33707865168539

],

[

219.9438202247191,

106.46067415730337

],

[

219.9438202247191,

111.51685393258427

],

[

223.03370786516854,

109.26966292134831

],

[

225.28089887640448,

113.48314606741573

],

[

225.28089887640448,

114.8876404494382

],

[

224.7191011235955,

119.10112359550561

],

[

224.1573033707865,

123.59550561797752

],

[

220.78651685393257,

125.56179775280899

]

],

"flags": {},

"group_id": null,

"label": "Car"

},

{

"shape_type": "polygon",

"points": [

[

237.64044943820224,

123.87640449438202

],

[

236.23595505617976,

120.50561797752809

],

[

235.3932584269663,

112.07865168539325

],

[

237.92134831460675,

103.93258426966293

],

[

248.87640449438203,

103.08988764044943

],

[

259.2696629213483,

104.7752808988764

],

[

263.4831460674157,

115.1685393258427

],

[

264.0449438202247,

119.3820224719101

],

[

260.39325842696627,

125.84269662921348

],

[

257.5842696629214,

125.84269662921348

],

[

257.02247191011236,

122.47191011235955

],

[

255.3370786516854,

122.47191011235955

],

[

255.0561797752809,

124.71910112359551

],

[

252.80898876404493,

124.71910112359551

],

[

252.24719101123594,

123.87640449438202

],

[

244.9438202247191,

122.75280898876404

],

[

244.9438202247191,

125.84269662921348

],

[

243.25842696629212,

126.12359550561797

],

[

241.01123595505618,

125.84269662921348

]

],

"flags": {},

"group_id": null,

"label": "Minibus"

},

{

"shape_type": "polygon",

"points": [

[

260.00884955752207,

151.20353982300884

],

[

260.00884955752207,

141.91150442477874

],

[

257.3539823008849,

138.81415929203538

],

[

257.3539823008849,

134.83185840707964

],

[

259.1238938053097,

133.50442477876103

],

[

259.5663716814159,

129.5221238938053

],

[

261.33628318584067,

129.5221238938053

],

[

262.2212389380531,

134.38938053097343

],

[

265.3185840707964,

135.27433628318582

],

[

264.87610619469024,

152.5309734513274

]

],

"flags": {},

"group_id": null,

"label": "Pedestrian"

},

{

"shape_type": "polygon",

"points": [

[

213.99115044247785,

101.64601769911502

],

[

214.43362831858406,

94.12389380530972

],

[

229.92035398230087,

92.79646017699113

],

[

235.2300884955752,

93.23893805309733

],

[

235.6725663716814,

96.7787610619469

],

[

233.90265486725662,

98.99115044247786

],

[

233.4601769911504,

102.53097345132741

],

[

231.69026548672565,

100.31858407079645

],

[

224.16814159292034,

99.87610619469025

],

[

224.16814159292034,

108.72566371681415

]

],

"flags": {},

"group_id": null,

"label": "Car"

}

],

"imagePath": "../AnnoImages/936.jpeg",

"flags": {},

"version": "4.2.9",

"imageData": "/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAAgGBgcGBQgHBwcJCQgKDBQNDAsLDBkSEw8UHRofHh0aHBwgJC4nICIsIxwcKDcpLDAxNDQ0Hyc5PTgyPC4zNDL/2wBDAQkJCQwLDBgNDRgyIRwhMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjIyMjL/wAARCADwAUADASIAAhEBAxEB/8QAHwAAAQUBAQEBAQEAAAAAAAAAAAECAwQFBgcICQoL/8QAtRAAAgEDAwIEAwUFBAQAAAF9AQIDAAQRBRIhMUEGE1FhByJxFDKBkaEII0KxwRVS0fAkM2JyggkKFhcYGRolJicoKSo0NTY3ODk6Q0RFRkdISUpTVFVWV1hZWmNkZWZnaGlqc3R1dnd4eXqDhIWGh4iJipKTlJWWl5iZmqKjpKWmp6ipqrKztLW2t7i5usLDxMXGx8jJytLT1NXW19jZ2uHi4+Tl5ufo6erx8vP09fb3+Pn6/8QAHwEAAwEBAQEBAQEBAQAAAAAAAAECAwQFBgcICQoL/8QAtREAAgECBAQDBAcFBAQAAQJ3AAECAxEEBSExBhJBUQdhcRMiMoEIFEKRobHBCSMzUvAVYnLRChYkNOEl8RcYGRomJygpKjU2Nzg5OkNERUZHSElKU1RVVldYWVpjZGVmZ2hpanN0dXZ3eHl6goOEhYaHiImKkpOUlZaXmJmaoqOkpaanqKmqsrO0tba3uLm6wsPExcbHyMnK0tPU1dbX2Nna4uPk5ebn6Onq8vP09fb3+Pn6/9oADAMBAAIRAxEAPwD3A3KI21pVU+hanebhgN/J7ZrhPtUu4sfmY925qRL+ZbqO4K5KDABYkYq+Uzudv5p7FjTHuVRd0kqoo7lq4691u5nl3xuYBjG1D1rOe7leLy3ZnXdnk5pcoXPQhcx+V5gkBTuwbj86qQa1a3EwhjmbcfbiuFS5cIU3sEbqueKfBcPbyrIrcg0+ULs9BLsJwQSRjmsPW9St4pzumzugZMLyc5z+HWuZn1G5c7jOzMf9vFZE8r+fHvGcEjOfakkguejReIrK6tghmaN3jHLrxkj1q+dTtVwWuUGenzV5nbmQ28eCowMbR09KsCRioPPTtRYLs7wa7YHpc4+bowNMvtXT7Owt72NJR0J5zXC+Z70u/wBKfKFzol1u+jQvNcb42OMDr+FMtPEdxFLmZmdPTcawN/qaQutFkB3c2v2MIH78uSBwnNQQeJLaSYq5ZUP3WP8AWuKzQZKVgOun8Sol9GELNACQxB6+4q3c6/bWzICXfem4FOf61wZlwMkkUCfPGTkUWFc7O58UwRtiFZJD6k4FUh4puCGJCjB+4M8/jXMlzR5mFppILl2S/lkld8sCxLEAml/tC4H3ZpFGegc1SBBOTxTi4f8AwFAGla61e2aBI52CjJweeaaNY1DzWc3c2W64aswn3/A0gJOOfyNAzTutTubwqZJCdowMGqv2iUN/rHx/vGo4LpxCmDgY5zQTlsk9aQrCy3M5glQyP8yMOGPoa4PUZJX0m4BdvmhPG72rvdkgk2EHnj1rg5vntnU90K4P0xUsuJ52HbaPmNG5v7zfnTQMilpFDSWz94/nQCf7x+tLikxTA1DBbKPLkvW3ZPIORiqp+yhZ/wB65Yf6s/3qq4oxSEXknsY1P7uV2Pqab9vRXbbADGcYVvYVSxRQBaF/Mjl0wpLbhjovGMUf2ld7k/enCDGPX/GqtFO4Ekt1cSyF5JnYnoc1GWY87jz60YoxSuB9IjANDkDkDimoeOCDj1qKSdQ3JI9q2MiKWRRnI5qqZWGewqSVw+QOM1WkIHU0WGSC4+Y55FEk5OCOB3zVUsO1KDlTmkMeHzxweepqvO/72Ijsx4/CnbqhmPzRk92osBaglIVgDyG6VOkoC4J6dqoxkiUqe461Nn1pAWdwIbJ696RXIwCcj1qEE7hjn2p+4n
jFFwJN/wAvFJk9uaYenGOelAVjgAnP0ouFiQudwzTTIOuaYN23H6VG/POcH0xRcCR5F8s5PbpTUk+Y4HGBUbkKvDbjimxFnXKqzfLklRmk2Fi1vFPJULnrUBR/IMuCFHQmoRIeOenamncdi8JV3cjOO1M8/DnAAU9KiyHxnCnHenGEIuBIGOe1AWFMpLcUzzW5FPitWlXcpwPcVFIcOVBzjvRcLEkUmEAOMZP86siUMp29c96p24BWQMM+lSRIzyBQCAe/SkIueasagFvrxXCzkCSZRziRxn/gRrsidrnI4HrXGXzH+0rsAH/XNgD35/rUsqJ5442SuPRj/Okrc/4R65mmkk8xEVmJAIOetTp4XP8AFdDr2Sp5rFHOYJpcV1SeGrYffmkb1xgVYj0HTk6xMx9S5pcwWOMxShSTxg/Su7TTrCL7tpCPquf51OEhT7sca49FApczGcEllcPwsMjZ9FNTpo1+/S2f8eP5125lVR94D8arPf2sRIaZB7Ci7GcunhzUXPMaL/vPVuLwpck/vJ4l+gLVrNrFqGwu9mP92M0h1Un7ltMwPcjFK7EVF8KQj/WXUhPoqgVOnhzTk6iVj7t/hSnUrkkbLUY9TJVe51W8iI+SNcn0osFz18xlh0De56/nVediqYP5N/jXNX/jFYbkJbvHJHsOckgdPpWNbeLblItkkjSEn5dw6V086MbM655go4JH8qiZ2cZxkeoql9vtpWj8s5d8ZGdvatNLOIxkpcrgEgsD0/KjnQWHwQCQjGMjtSXFu0TggDBqOzkJuzbfNkISGAz0962JYEwSzqQr43PKMdR6c0rjsYZVlwSMVFLn5O/zCtO6ltvNs1Uw5MgEhUlhgqev6Ul1bWkLowu4s7+ignH50XApw28srqY1ymcZNWxYS92Ue2KsvqFoIgqh2MfKhRjJq2dQsptkn2ZWDLnYzHNRcqxRjhSLhsM2ee1KyoWI2r39fSrH223DlvsrIo+6iYA/E96je/tVz/oqf8Denp1Ya9hoBHOVH0X2xUqIQM7hgAcZxVNr+GW5TbHAP4QinrW2mn+bbpMpTLKCQRUSlYcU3uY5tUd8mUgHpgZoFgjy48w4HU4o1a9bSvK+RXLEggN0rDfX7pHYCSFhuOMDt2o1aHZI6A2Fsig5b6moYLhYGAkkYxDPG7jr6VNpUsOqwiWQhApwSxGc8U0x6aHHm3Cn94eN+OKm7vqPQzCTtJzkE5xn8qcYgrxCXMYb7zMOBz1rY+06BD96eFgOgGW/pWJrOqJdyCKzCx28Z+9twWx/IVak9iGicRxoxDXcAX0XJ/pU8UFtL/y/AZ7CM1lC1uyqlcK/qGxQ1hdCE+ZPwB93Jp3RNmbDCytZUDXMzlh1XgYof7D9q8tEd2xkZbrWSoLxR5BDBQMVIkc/mr5Zwc/ez0obSKSZrWzRFnCQE4x0NLIh28xlQexeobd7hHYi3c56ttPNJdpe3e0C3ZQM8kgUuddwsyLMRYDzAB6muc1Gxnk1e5mgt2MDsCr9jwAe9b/9k3w5Plxj/af/AOtUZt5o/wByZlIZTkqufypcyYzItNHu7rOBGmD/ABP/AIVDd2E9rMY2kjyOpANapniikwZiG9B1qvcGDLOwdz6kY7UWVx3ZXtNLF31uWBHUBRTNQ0+C08grcO4kYgjNTIWj2PgrWTrcrfY1cHlZhg/XNDsEU2zOuHlGovGoYBMAKG+8p6n61disNmxJm4BGXY8tx09qzJb0wJBORmXdgHviqcuqTzxMxY57c9KC3BpnTmWEWpMZG1crxWHbhJdQn7jaD/OltLkDS4twIWTdkg9DmqtvKltfOZGIBiOCB3zQXyWgbAhRewpcIOiisU6ldPGpUqGL7eFrVR3g08PIvnXL9ABkKPcCpdjElyO1Z2qRrLGOcMOg9fapUjuJ7eQSIyluBzjb706O3MTKxCLjjLtk/pWc6rWyLUerKDbhhiQMf3uv0xUSoFkTBJxzwM0biy7zyx6E1PE8QZ
TJkbem3/PNaXIsRy3Egc5kZhjit/QLlfLaNrkIWx+7yRnmse5SOSVTEdoOMZHrzWha2kVtd+c0gcgKyYb2B5x+NNp9Bq19S9q8Rs5oRHNLtbcGy59a2kZLZADImzAYLu9qwtZnNwschBBDHnB6Y96m81p2iMqt5e0AlFAbHtniizsUrJmkskZMLeYC29cjdnqcVLcTQFQodSu4EkN+dWJtK0OWw+1abruySEbzbXkW1mxgkAjiuYcOJ5I8k7WPSiKTBs2pZWW9M8MkYB42bu1NMyq7t57KxPaTHHYVjxht2SMgDoasDErFGHGPvDiqIuXRLE+cyyEDuzGlWNXfKK5I7Gn6dBA6TxuAVCdTUkCodRkADFTCjjDfWocmUIQ8DpIwVB656UXWoXQdoEvZGhX7qrIcfpUNykJ1FwwBBjU8n3qkY23EgHbk8iiLuSxJdxcE8+uTT+PSmCMhTgZxUmOnygcVdxEgI2tgqoweWpS4GCrAY69KfHbK1qzk5IJ4xxVORAk0MmBg5GSPbP8ASldMbL4iTyQ0lyI+zBj/ACA61Gbu2imwczIOpB27v8Kpz3JlYtuJ571TLsWPHWjViOvh1nTCqk2c59S0xPFapv8ATVjWSO03qehAz/WvPIpTsQZxgdQK0LS9nglXZIAhODuHFS4JjudomsKCfJsFGM8t3/SkbW75siO2iUgck/8A66oQsAgw+4HnJNK8mwk4JGB796FBICwdQv3zmSNOOCBUJuLxjl7psDsKqxXaT/NG4YA8sBxUhkULkyYB6HijlQEkV9HC5MkjtuGFBJOTnnrVK4ubm4lRkhaJC2zcBkniopJYoyJZFZ1WXgE9iM0l7rSGJGjLfK33CuKbuthq3UYdOmjXzWK7COpPNU5jlWJmJUDqfpUouJ7lgJHUKOdpbA65q8beyn/drPjJxkKMH1460rtbidjljqjvcQxAMo81dpPcdKdrbyNaEBWEasC42+9WW062xDK6lJVwwznJPbFU9VcvZzqcn5DyT+NG6Ki7M527lJ2NnGG7VV8zCMOeTT5keVMqMhRk0iWlzIMxxswNUi5yuzViO3T4UbOxkGDj+LvVWCfyL6JmBYcjAGc8U6EzPYNETxAd2M/gaV7Vk05bvB3FwFA9wR/WjS5q3eBafWUH7tYkDA/daVcj64FM/tLUHGIrRDkcHzM/1FDOlrBHvJTMY2gDr75FQNfktmNIWUesmOfWnYwsrbjZr2+EJbzI1bjhFyMHvk1HYXN0+oQiWYvG2cYYY6VsWOlzXaNdXPlkNGFCr0I9azbmCKy1y0jhQopwcE556U7aGd1c1bPSnMKq4EbJncSDk+1NvNLkRkMUO5WPRT0qZLyGFj5b7SPQ1ZF7MRuRw3HIZazRRg37sWEZhAMe5NyseTmt/TDBDpw+02iSSMhKs08iH06KwFVpYjJDJI6jJfdgD2/+tU5LeTCQVPysMEe+asRFfvEyv5Z25YFVViVA/E09Jw8MahRwBziormMmEscEKo5q1p8UUlqhMe5wTk7sZpN6CFgDyxyIqjIBOP8AP0q025pGIwM4I4x1ANSRgIzCONSueRjNPRwYIwyjmMc/QY/pUOQXIjlckqGI7YpyxBM7gQSegqxlmUYGaYOCPkwM96nmEpDIro2t0ijDRyZVgPpSbovtducgo0bLlie3So7tFRoWAKgSDOPrioHKpqCRuFCLIQPxFHmVqy1KIUvo+U2tEwHT2NWkcbQyMHTH3TVK+jiVrcKUzkjarD+6cVMk32Rk8xMyFASyvkAYyBRy6AXwYPKyzKuR69KrvLZbGEbBnCnBweKoyorzSMXYIe2f/rVJFbD5SGO0fez3p6Idy5BBI0RG4A4yQKy7mdDNbRlSFMo+9+XStNAViGZAT6n+VZeqxCJI5AScSDrRFq4OxMdLuXkOFVVDYBY4z71VltlhfEhcBgdoIx371oGdpW3RswDehpkkBaWIyiZwTjpVc1hWILKySS3EkjYXeVFPihSN13
HLCRMZHH3gO/Wpba2uPOmggtp32tuCqhJxgGkuba6tFLTwNEwIZfNHXkdKfNcR0ErrbRk+XtUDgYxmqx1EDHGADzk1Qub55YlSZ4WZSQiRrz9SakeJnHAJAY+lUgNHw1Y6ZcpcW+oarBbrvBUMOWzUd5LZSM0VoCIllIRnPLKOAcdBmsaGxM99LChVTjcS7YFad2gt0WIFHIQfMnNRLRlrValOZ8o4wdowxIqhc5dmjbA3HoBVt5AMBSRn7xz1qG5k837o4HehXZLsRHhclulS21yYnUrgEHqaqKvPfNWEQOwzkewFDQiy8pkiEec8dz0qhcwA6dclgNxicc/Q4q4EmDgLGzc8HbnNSSwsYZg6n7rDBGOxqBq7Zx62cQSN8bt2Dz7r/jTN0llfKIQSSqgr13djTrW7RbSEP1UAYrRsb+B5UXBEh4+7Ti5X8jZD4rCDTrp5DIzLIuNm3IA9/Wma7Gz6YzR4URkSEfSrVwheXJaUqRwqdqSdPO0yeM5UsjD5j7daq+oNs5IyefELeV9jA5jJ6DPUe1Jpdg9/qkdoQR82ZMdgOtWNYltpfsxgAEkce2XHcirFkTbXk12C4YtwF7Z5NbpXOds7ry1RAoAVR0AFcV4ikVNftgP4VXP51tpr2xVEgD5HJU4P41xt/e/b9ZNyBgFxtBPYYxSkmgjqacqNt3Rx4UjnB6f4VrWastvhmLfKeD2rbmsUcsAqkE8HGCB7+tVntFHJmRCByE5rG6RaRFKM2hx3VSfzqJEL2kGM53n+VWpJYDEwAZpNvOe1SQFzZsI4sbWGCDz6UcwjNdHCuNrn5SDgVPpSPJbnapYBwMj3rQczvbeWTkdxv56dazdJMqbovLZgxH8WMc9eKlyuhaGn5JgYhwoyBj5gTUqRxeVGTIgAZlIxk9c1XmZC5YvGdvcIT+pNRvexIQCdw37uBjsKx5tA0LqmEsqtI+COg78U9ZIjcbEjLAHABPWs59WAP7uNTjrntUdpqsbzkuwPPAx/hQr9hpmhq06y2sxjh8sKnGeuQQf6VS1VluJUnWMM7EEgHt71allmuWmhWMbTGc7Vyeh/LtVeSKabSLaQAgFQMjA7Y+tXEG3YpO7r5e8ouJBwPvda13gZCfLKuQeMk1gXEDIjMSMqeADW7JcWLomZ3OMMdiH698VTu9iVdlaSecfKYVXHaiK5lbuFPvT3uoWfIa4kPoEQf4mr9hPLAHcwPASn3o15b25HH4VVkikisHmfgSM2f7oqveW7zWE8kccjiJfMdgCdozjP612uhRT6rqEELXk8ZdSRvl68ZxgVz18YPsk9s0rRuUYFEBwxxkbiDRbUrQ0dA8K6rdwW91HZyJBIgZZEZMnjqMtVPVmaKZA13dswlXln/DgjjvUOmXEQ0i2OLgyBSG2zZVeT27fhTJ9RilidBPuCbfKUcYIIJ4NOwXELmK9lKieTci8hjn0zUt7bSvp85EaBNm594ySRgkgHoeOtRi/WXUWeMhlCleT2yCK09SNtP4WEiXYF0JGVoCfvJtyDStLqN2SMXS4pJdUEJ2cq2AzADpVuMgxIfkJKj+EntWZAdk0THgnqfwq4FbYpEmBgcBzVxM+hNaxg6nkjblDwB1q5d2sUrR5L5P4VlCc211Gy/eC9/wCKtMyusCs5Vgo3Ahupx0pPVjMp4kVnUdj0NVZCN2MEYHYVd86KVm8wSBmb7qD/ABqwdIQ4MlzDbjptlnTd+hp2sIysZUYHJHQCtDToyqknGT3q1HBY2YYG5V3BKscEgH06U9Ykdg9oWkAGGwmOfapkUIRlT39MU6OKAo/mTFGIwECZNRylomIdSGPRTUiQFwdm1pSCAx9agpHJ/wDCLS+aQhxEScEtk1o6V4Yg+1mMzsrhckrg/wBa1X0a5kYlIZnA6lVyM4yaTSjFFelI3DHYcsv06VfTQG2ZUFg03hS51NnbzoZgmA2B94Dp+NYNyxNrckM26NTg10NvqhtfDmt6auCtw3BJ6cg5H5
CubSJi94SG2SqcfiKasiW2znUIVxJJzjnHrXVwaVJbWMchmUEqGkVuBk88Vg6ba/atQQSYWJWBbP8AKuuvb1FRVjKsxbBxzgVspWIkmzn71IrayknjUqW+VCOnvXOHg59Oa6DU4Jp1WJHQRKc/M2Mmsp7IoVXzEJPdTwPrUOabGoNI9d0i2/tiAhrU3CqB5iwTLHz6ZwePpzV6HwdA0snnfaYGDDbH9lYgAn+9kk49cCsGO9mdmIuZFDHPyEDp06CteC9tQoNzdsXOSQ0rc8+mahajbaKuo6Fb2OR56NjcAsAZm/HIAH51FbaZAlq5k1SxhAAyHzvB69MEdqs3FxZSxnywjL/uf/WrPMoMK7QWP+7jNDiguZupyNBbyxytMCRhGBGCe3ArKtNRlhyFdkbHJBxu/Kr+rzyxbBtKtnoy1hiUpK4JCkHqB1NQ4hoTS3c7q68gA9T35ppumnYxhAcEfNmppIpUtWPmRFWbPPWqcUWFDg7xgHaKOVCLiSoyGOMLHnqTzinpbI+6RXBJbJCrgA1WgaEksRgjoKcb1ycKoUDsKhp9AL0UjFsFmDMDkgmo4GZQMcbeAadbNFKxAJZyBzmniIJG5LHcGJ2+1WnpZj5WxzoXhZ2J3MM802A7QjHDDAODU0+PKJHAAAxWhp2j3F7ps08UMTRQfeZ5lQn2GTn9KIslKxjG9lBIX5VPZeKktNTnilXEjYJ6GnG0WT+NUOeQ3akGnIjEtMxxgkqKLJopJs34NctY2HmSXCSbuqBcYxgjJFJbTWmF8sDGBknvx3rnJUPnnJ+QHjJ/pV6GFowpWQbQM7h2qGuUTuTWkskVm0Mf3s/eJzikEUao/wC6xtPJJ6CpYIwkj/vVJbsKldlDPwGG3kfjWkZ2QFWynjVXOCcnjA9qtvcxMu0BzuBHUccVGsC3P7zJT1VRgmmiARbTI27/AGSOop86Y7Exiti2yMMzKgOcfxY5pIpnDCI7lIHOOPeoSDKwmhCRjPCjgcUGEu7HncSScccUXsOxYn+TyZpclSONrBjn39KJHV2O5gAMFVznFTwWjSRxxmFpEGSfmwCfrTzoyC4M1yTFa/3Yzuf6DPFHOgsUIclo5N3Bb5s9hV1ksWjbyy+/aWJU53fpxXQaeNAgAb7PKw2ksZ7kDAHsBWBq0mnPf40UyR28iYPJO/n36CrDQeLm0CmR4g2fmZWPf16Vs6VZaVq7XEVyt1CbeXCCJQu5T0zmuQH3sCSQ49Fqzp93KmpCYvISGMjFueg4zSdrE6mjqtpAtwRpUkzRRs0KvKf4lOGGfY102g6FNb+ULmawKzRhlaSX51OB/CR2+tV9N8R38nmQ20ccgWTotupJyNxPT1pZ9cviCLmO2Y9f3lpGf6Vna42+xo6ki2NhezXNqHtIU+aS2vRuPQcrt4/M1x2jC0aWeaOB4c42IzZO3696lvbmIwzTGaCCSU7PKSIKrAjGcDisfRb2R7sJIxZmjxkt3pySS0KptvcrSIkGp6hbwKApKlQ3piqkqsUeQ8jPOBxmrGp74deycfOik459RUlzt+yMBwd2a55Ts0U1qc5DbIjE/MAD2q0tuZWLqXbcOBsFRXl2ba3PkkghhuyuBVeyv5Z7+OGSTEbHmulVXuiOVmh9mMSqPJByNpBGfxp4srcNmSG3CjsOtWWaGOaNFjt5CzAEgnj9a02ge1sWu2S2QRsUKA84xw3481PtdR8rtYrWzW8EKjLKCOmM4P41aN1EW2Kc47kYrmjfs9sVYBVXgEU+K/3Splm2+nWpbl0Iub4v0jYg569cUovd7YSGacj+6KynupYkYZyp9OtLHcXOd6TmPruA4+tHtHbUDQvb2K/iaNrRVZj8gyuR6YwK546dNKJpY8SLFkyEnBHPXFTSxym6HlOzcZGKhkgaM45Hy84p86fUNSm7sRjGAOtMVmdhGD1PSpCJJMxoCcDgDmp47R4wrnOD/EBVXQFdMbSrAZHekAU5H8jSyApMwq5DpssyeY
AQB1Y8CplOMFqNJsZbzeQwBXOe5qe4uMMQO46intaSJuQqSOw6Z+lVZEUS7SdoA+UmpjJS1RopuKJhK5Tg8Hsa0LC/eFiAQ2PWsqBS6NjJJxwK0UtGWESAoc+vHenO1g5kiecyzsnmEFiTzx0rQtfmcqwUbVBHv7ms0Ww8wOblAVPQ05lVwxEjAY5JfP8A+uiDS0BEL27XF6wjBZWJ2noOp71JcRPaoI3wrleAGzx68VZjglEBKKwC9Dj2qiXEiCR53EhX7oH5Vd00Q4u4olZ1JjG3aOma37LTFdFkvNT0+zYnkPNvb8lz+XWnaPEtiRdvbQtKeAHUOBwMnB4rYHiG/Q5TyI/dLaMf+y0cikDbQ6LSNHchj4ktN2P4bZyPzyKbq/hiWzhjuBNFPA4GGI8on6AnNWLTxTewSMZJndGVhtXCjd2PSue1S5n1lpHmmd2JYfN/Cw5GPyo9mkClqXoNJKLjCRjHbk/4UlxZLHfWhTecMdzMc8elaEUzy2sLyKVkKjcCMHOOaa5AGQB+NZ21NEzCvr+QakJI4z5dscH5sA962xd6ZGBNfW80hkXKBH7e9c1exGK6mjwWMhyCP5VbktHbTYQI2aVc8Ee9aaCabNPV7nTHsj9httkvdy/QY571lW14llC1v5scsaqHBXg5x938M0sdpMYcNFMHGcbcAfzqumlX16wTGxVOCzn+lJyQKLNGLSpTB+9jkLHJP77A9fSnQ2y6ZM5cKonb5FDZ6DnJrZG4L24A71m6vbz3cCi3CrKjhlYnGKy9pruV7Mr3XiP+y5BHAkbg8tvz1GRjg+9Z7eMpXck2tvyMYA9sVDdaXqDzSSfZUkXPBZ1JqmmmXzFM2DY3cghentWiqon2bLd5r/8AauyIWsNvIWwrxk/limWmnzG5tJUBYK/znbjjOD+laOjaY1rdSzzWgQ9IicZx781oX935EJ2AF/4R61jKrd2RtCktzB1qKE6zaBAdrRnv3zxVCWYvuAVtvTOKmuX33VncMcDzOWPaor145ZgSd5HAA7UtOpM7IydUjf8As92KHYcc/jWXYkHULclSwLrlR1bkcVvTxtNatCfusMdawZFNveHBOY36j61omjG50sfkpNJ9uEtusRJVbZBkt2GWqB9VhuriQzwzyIUEYVJAGPvypA/KrTxI+0Md2Wzg037PGDhY0z1yBWXOkx85n+QhVgkZB9Tzn8KhggxLlgVx2FbxiidMBFHuOKRLaMMHTcCOhLVXtmyErspRTtDNkDaoPU9xUU99mQuB8zHOTWnJFFO5L7iR1NK8VkilHAOR3bFRKsl0G07mXNeHYNpAY9cUWj79wJ4Jy2KuabEjpJJHChw5VD7dqn+zIUkaNBvbufWn7Rdh7FKRI0ikSBsBgQwx37GpLC0lulUjCoVAJZuCPSoWsrlNx2kjHUUiPcCARhWyF6DpTldr3WCauakekLFdCaSTcMcptyPSpppzEygRp5Y7Ien4VkG9u2QBo2EgPXp+dTf6RdsrsQp7Ac1zOjNu82P0Llxswnlqp3fxMe/oayb2CSRgBGpI7xjitmDTpS+8xsD6mrX2QIB52361tSXJsUqcpHOQQSiEgKMkdTV+C2aeJQUk3DrW3BaBz+5tw59QMCtSPSXZ/mkKJ/dQc+/NaObZoqKW5jR6dbLFm4UgY5ZiP6VNBYRyjbbWjMPULgfma6WDSLaLDeSCw/ic5NaAjUAAD8KEmXeMdEc7Hoc8ke2SQRqeoUZNULnwtJEsP2YmZSQHHTHHWupub6C1nFtISJmG5V2nn8aIIVkUSybST3PStacDGpMrw6FaJHCn23bhfmBAJHOakl0y0twrPqZKc7gYk6dsd60oo7XuqfpVDxPawyaBdtHKiTRwtJER13AcD8a22Rg9SgieFUbM2ozvhvujgf8AoNQi/sCuyxkVyhJZgmCxyccn8K4u6icPuMjA7sSBx0NT2V1HZIzO5Iwev6VLasNHWwSPLCZHIJZjyDkU8Rs+MCk0a3
J0m2LY5XPpWkkQjyTz6CsGzeKM9tLSZlMgJ2nIGe9WktkTt+dWMZGelBK9qlstIYIlAyAKRiOwodz0GKaBjms2ylEgu5zbWc04iaRo1LCNerewqraajFe2UFySsSz/AHFY8k9xUmqmY6bMLbmcj5V/veorhrG4klvtOtI4ZG8iX92jdF5yeO2KErmmiVzvNhc9OO1O8vFPyBUM84jUnvUjMfV72+sr63FssUkRicvGxweOhz2qnc3BnkydoA9DkCqHiF3nuN4n4xtCHgfTNRRyrFCsfC4XoOapbGU5OImpyRGOMR8YbJpo2gZVNue5qpcSGV8gjFJGGlfAz06U+xyyk2xzu65WMbh6E1QFiTL5kwOS2TV24me0xMFV1DYdT0IqXz7S9mVYJHOVztcdPWhtoOV2uIkmzGOWHr2qWKUvhioYHvQ8IjYnyhgDqTTHLggEFgeiDjArNyuQTvcoCSgdiedoWp0EsxKoAPYnGKcZUGJHmKr1KjGaqS3EUVx5izHBGSB/KlzSfwo1joSyrFERHI3JHJU5FUdXZY7QNGh/u793bFST3drKMxIxkxwT61G6Ne2hjcjB6GnTjLm5mKWpU0Kd42kQgmM9wfumt+GRvu5BB7gYNYem23k3U0RySpx9a2UhY85VPcmtKkVzBGLZY3HPOPfIzQzrxx19Kmg0+aUDy0kf3PArSttClIHmsqD+6n+NQomiovqc/LbvKvC7PdqsWNlqCMRDG0gPcrgfXJrsbbRoIiCseW9WNXltgBz+QFbJNmvLCJzdvpF46g3EyqPSMc/nWpb6REhDFSx9X5rVConYU7cDxjkdqapidQhjtkUYxx7VYigLsI40LE9hyavWsekwlW1TUIkkP/LBW+YfXHNayeJdAs02wyEKP7kLf4VfIZOoZ8Wh3UsjAQlVBxvc43fQdalk8MXjIRHPDGT3wSasHxzow6NO30j/APr04+MrALu8mbbt3fw5/nVezZnzkFv4Umjb99dRyLycGPnP1zUr+HI4Vz50CKOpZP8A69Mn8b6emnXNyqSh4Ymk2MvUCvNtQ8ZXms3Mf74i3mh8xMH8xjtinyvuF7mjrWrxWGsG1hEcsWCPMQ7QtZXivVWe2sraNFVXjEj4m3GT5iBwDxXJ6rEElWQEsTkMSfxqCB5TJGEiLEEcqmT1qr6Ba5uXdpNdW0SW6FpGO5lHU0+w8NXUocXC+WjY4P3q0tE067i1SS/kUrG6bVjY5IrpQTjpWEpGsYENrEYIUhUkqi4Gam5z1yacMngDApdhA4/Gs7miQwkjjrTCQM08jrzULOAeealstIb3zkCo5JdvAJJpjyFyQBj1NIFAqCrDgCSDkk571w9sfK8ZFcfMJ2H0yM13AwmCOMVzd3ZxN4mjuxvGJELMAdp+U/1ArSLtciUWzo5JAi5yOBWJqV+QSqHLEflT9Qv1jXPBz90A1iIxlnBkYgEjLf1qEjR6Gdq6NKsBHPzn+VOcCRy5BCjoKu3pSJmRDvQdwOtVQmxA8/Ck8AU+fQ46krsjEW8FvLCKO5P9KkTb5SgYyV6Ch0ku2KghI8dOnapltkTarOWKgYAqJT01IsircQb7WTeSPM6cd6wdOmeC8GASR2Arq50jnVQVIA+6AaYltbwszrAqzYOCDyKUatkO9lYVMTqduGA4zjqacYCc5LDHanCSURKFwu0AZxSPJggmQElh26e1Z31JIMAMcHBYYINEdsskoMdvgjuOlacFjI2PKtic9C1asGiu+POkC89Err2OpUu5hDS7YHMgVWxkhepq3Z6VGSfJt3HoxBxXSQabChG2Mt05bmr6WilcEcegp6sp8iRw2naY03iC9gkkMZCKSF78V1NpodtEwYRF2Hdq1UhijGVjVSe4H9ak9u3Wq5W2T7RIiS2VeuB7Cpgqr0ApC2R2pPrV8qRm5NjywFJuJpvAoyaCRTgjk4oyEV5GO1UUsTTSR2rl7K11eGbUjfO7W8uNpL7hjOeg6VS1B6ElzcsSpA
4K9cY5qoJZNxOTg9atmS0JXcWdiPlCozZ/IUiy/LI0ei3rrH99xbttH1JrZGJW3s/U/jSAnPXr1rVFpLdWyyR6LfIWGd3kHGKRdA1d0WSPTJQjDhpGVP0JzVIVy3rcENtYwymUrp9xZuhaTHUrxwOcZ9q85sEJ0iEZ897afyleLPKsOevvXW6jpeo2zRxXsdutvcHyvlnWRlJ78ZAplrp9rpGmmxhH2hpG3Tyt0J9APSpkNGKdPn1ARlUwu4MWb7vBrv4oIgqskaLnnhcVz08jQskMcZlcj5VXhVFdDbuWtkLEZxziueozeCJgMCjAApByPb3pCwxxzWDZskLnHrTHlAHNRvIBnnPtVaWZQuTx7VDZRK856nhaqvMXJC8Z71CZGlbJ+6OgpRvJAWM5J4pxjdlXsPLrGuXICjqxNSLIrqGDAqehBzWL4xsidGRBcrJcMQfIQcr9fSqGji6stKEUxZG3Z2selXKnyoiM7s6Z5gvfA96x7vUV2kj7oOMf3vasy7ugd24kqPvZasU3rSTZP3OgHpSVO+pbmjReR5XLMcsalntprJFeQbd43KD3pbCPzpAeqjkmq2pXbX184Gdo4Az1qWzOo7RK8t35KAhcuzcFqakjCQNM43nrTxEGCtJGp4wCe34UfYluJskHaOpFRdI5vUntpIyjSZLMCMLVmR0kkLQgqx6ITn9adaWaQICwQqv3lLY3flVmL7OVIiwrjhjJ2/OsZTDQoJKCp3ELg8A0B02liQT1AxViK7to1G6FFQYYnOC3t61JJOJWVowvlgc4NJK4JIzbmbDuuSu0fMrNlvyqHdLKC2QqkcAHG78O1Xp0t08wgBmBOWIzzUCvDIpyNzHsoOeK1VhK56DFp0u0GSUY9EGKvW9miuAFyffmu4TQtORQCpb/AHnqZNK05T8tvHn65r0VRLdY4eRApAAA4FRkAHrWp4i1PTbC48iOyVnx9/nP4VhwX0F221BtJPQnNPlsLnuTEntR160pGDSfWo1GLwKTNB9qQketIYE0hYD3NITnim8L7mgqw7PBOOlLFf2BsJI5r8xLIeQq/e9uaYAxbAGAe5rnNZudP02WNLiRhIVbbmLI5pwepMlodGNb0qyhJj1S6YqOigAe30pyeO7KKGRJJzMjdQ8w/XC15zeX2n3du0dvcFGOA8jqQPyrLQWCNzfggdwv/wBeunnZhyI9UfxzpPksH8wE4wIX+b/x4YFQnxfonCi0nkfgYkkySa80e6sC4L3TMB02qBSprFtbXgkkgmlIO4IZwi/ouf1pXCx6Jc+JrWJSf7HRMcgsOh9cZrLsnN+Jbg8KWOB2x+FcvN4pUxeYthD88nR3ZxntxnBrRs9WfbpzkqFmDmREXAJ7dKzm2XBG3qFyLGzE20OpkRAM+pAz+Fa9jKstruQHbvYL74OM1x+u3LXElrbEjcX3FAeQP6V18SrBCIoxhVHFc8zoiiwXABz+VQs5bpx7UwuCeTUMk4HCj8awNUiSeQIhOQW9KqBHc7mJPoKcAWPNSgYXFI0toNVQF6UGWKEM0hAIQkDPWngAEda4291VP+EjvY5SFCxtFGWOBnr9K2o25jGq9Cle6iZbq3IYESTjP5119y6oxRcZHQ4ry23ljg1GBpctGr7mAPv2rup9RW4iMkLArIOtViF7wsPsVNQcXAMQxgHr71heWyTFCMEHmtVBhgB0q7Fp8crGWQ7cDqO9Qp8qLnG5ShunsrTywBlvUc1BEpG6RgAO2akkTfKW52g/KDVgLnkjauAeTWUpdjllJt2Gx2zXcMhAK4xhv51Ik3kW8cUYDFcZO3nrmq8t28cbRRjAJ4C1XdzArESbicg4+vWs+VsmTNCW4jmhCSyFCO5XJb8qpS3XlqFJY7TxuJqlnew8xicGn7/mOOnStI00jO4T3czoWSLAYenJojvZEiBUqc8c9qZ5hLdTmmOibeRweuKvlQ7ltL5wyscNg88daR7ne2SMAHcoHbnNVdg2jjp0FMyx6/
pTshH0DBcqFwNpbtiuj0ra8JfbznqawotFv0PyW4OP70m3+ldFpsE8FvtnVFOeitmvQuTY5HxxpxUJLaQPJPJxtjXJ9c1yGmaTfvrEEAtrhZGPzllxj3r2O7M4gP2faH9W7Vj29/DarIRIjzMcuVGKllGVrWmJYhfLJORyTWL061sanqMNzKIDMPPbsT0FZGOfWs2axEJJ6Ckx6/pS4zTguazLIypPQU5Yx35NS4IHB/CkLc8mpuVYAo6c1ynjXThcW8dx9jM+wYIV9pA/KupLc1T1AK9jMDjJQ4zQnqDPJrq0cw+VFaNCzEEK024n/Cq0WjTOT5zLGMcEOCTTRPNLfIl1IzKrfNlu1XLm7hHywBuOhLV0XMbEZ0RBHnz23egxU6ZRgkclooAALTxhm/DNZBnlH3WIB7CmEyMehJoA2Xs3vsma/gXy/uqFCr9cLVmymSKZJN5e2tDtLrwxB9K58JKw+6xx6VuaFpd3c71aFkhfGXNTOVikrjLV5p9eaaCOSRXckBucLnoa9D+1sQOMcVStLCGziCRqAPXuasMQBxj6Cuac7m8IkhnJ68Ui5Y5I/CmIhJDHirCLg81kbpWHDI680GVQO/vxQQSTjjiqksMpPAQj/aoQMle7XHByR3BrjvF8ttLYlzHEJyw2sD8x9a37yMpbs8hhjCjJbb0rz6UvreoAKGW3U8kjhR61rTWtzKe1ivbaYZ7F7jftkJ/dr61b0O7dLz7NKThvXtWjKIlURxDESjCg1kzxPFdLcA4IPUVrJ3REFys6uKBp5QgHJ7irOokJFHbo/wAw+9gdsVFpF+htjKykkDrj9ao3cheWR9wJB65rke9i6k0loJ5rOygYJP8AepLuWWFWRwfMI4xzVcNKIycgAnjP61HLPgeYSAdhALGmo6nK3YjFztRvnDsT27U0Pls4x9ahG3CgDcT3xV6C0MqneSo6gjvVOSRk9Srlm5znHHAqzHZzSYJBXHNaUFokTrHtUj1FWXjPmkwuwBHOB+YNZurqKxlfZIhhTIS3BJK9KX+zm5UsQynnIrTCKjGSQryeRjken1prNnoyrubB/wAannbL5TJNpuKjdz7+lO+wMOOmeh3VphSV4VTg9SKdvd5RJIgV89OlNVHcfKfSdRmWMPsLruPbPNcHLe6lKG8zXbSNVGWAl3H8SKTR7rT21AEao92+ckwoQo57k16lydzqtX1a3tf9F85RPIOFB5rjFVkZmkJUMenc1yWq6n5PjWSQuSp7lsnhjT9Y8SOttLJbrgDOGJ5Y0mUkRtObjx1sB+VVxgH3rrApBwa878JM0+um5lYFmGWI616EJAe5/Os5msUSAAdePagkAccVGXz0GaUAnrWZdhckjikwBTt2OBTOT1pDsMkJI+XAPqazLuxmulIM5A9AK1TtHpimkg8DvSuOxyEnhC2diWkbJPUCkXwZZE8s5+hrsBEMdfwprBQOtHOw5EcqPCGnIeVZqlXwzp4I/cg/WuhK7sYyFpHCqpAwPc0nJlciMhNEsIcYhQn3FWAiRLgAADtT3YAnGfqaiIL9PzrNybKURrsDwASaFixgnBJqZECr65607HFI0GhcdqdzilAOKUg0h2GH2qNvrTyccVWuZhFHg9T0pDKupItzayW0n3JBhsf41xoiTT7loIpGMD9d3rW7e3bH5QeT3rKlg89eOG6g1pFtIzmkyrPEUORnBqW0t0mYeYoK9xUtuDcKVYc4wQatm0e1iywCkntzVTnpYxmrakV3OsaJHFGAgPUVVULLIWYcHsRTiN4Zc5PXBPFSSqqRksB04OcDNYoxlJleYqVwGLYHABrHIczFJM7lbpWqIpjvAGwnGSR2qSK0TarOV3Y5x9arnUSL3GadaFD50m31UY5+taJkPqNrdjSOn7zIwg6DBz2pcEqQdo2ngCsZybJY52VTwd2OnsanXciGNsqoBG7tkiojAVYkAHd1U/WnLDIJT5jhioBYD+L2qdhoiLsLj5o8ZHcdOKa+Cy
kHGKkL2xYiRiCc7VHHSo5SgAdSQvAZWXvjpmj3mUrjhL8qxqAoI6/56Uvm+YjyEHecZ5/DpUAnR1JCMCTxUjsAseVYqVyGHFFncLM3rTVNPgtAl3d25OclIxnH4mnL4y0m0YCGOWVv9nCivOUs52IxG5z6A1oWuhX88gEdu4B7sMV7F0FmXNUvZL+/a7UbSegqWy0q71FQGZhHnqa29M8LOjK90QxHO0dK6aCzVFxgAegFRKZcYGXpGjQacMxglyOWNbyJgcnj3pQgQU/OfpWTNbAMDoaUMTTAueelOyBUhYWkL+nNNJJ9qUL3NIYcnrRkCgkLUbOT0pDQpbNMwWPP4UAc5pjygA46UhiuzDgDj1qB5Cc5OcCmyy/LjP4ZqMZPJ4X0qGy4xuJy59vWnomKPQDpTs+lIrYUEAUDnNMHNLkCgaJOKjkcLwKCx25qu75yTSZQSSYUnv8AWsS+u9rE5y3YGrFzcCNiScKBzWJI7SuWPWnFCbEAy3zEkHqTUiJs69c8UxPQ9Ku2ls055GAOpqpMkZFZnzftJOxB3HeqlzOVc7WJjz25/StDUXSIeXCqgDO5jWRcBYhvBzkd+MGs7ts561S2hEkhD4Hyk5+9VryjPGquucjoTnJ9az7aN7mUtJ8sQzgA45rUaXymOQR6D19KJabHJqKkoRRGVJAPQ03MKOQSoiJ6mqcupFGIIJO0DI42mucubueWeSSSZjyeOnWnCi5FqDerOnn1G3g2jh1kJ2OvPQc8VUfUgo3BgDjhT19q5eCQiUybsYz3qU3JbG5juJzmt1h0i4xRtvfymIx+Xlj8xdjkZp8ty5QRicHaMYx3+nes6J1Kud+/CniqonCSFjkuOhJ4p+zTexpZG2u2CVCTIsoB+ZiDz+dXI4ZZX8tZA5Ub8bgST3/H6VzyX4lypwWPVxxn8KvXFw0aLKAFckEKf5cUSgPSxqLFMFJAYKTwAufx9qtyL8y7mKBRyDz3rDtLiSaQWy7iwA+6SM8DgmtSKBIXbzmCkHGXfG36Vi4NMytdH//Z",

"imageWidth": 320,

"imageHeight": 240

}

```

I've added the JSON file, but it isn't being read.

Any help is appreciated because this is due for review soon and I have more than two hundred images to annotate. | closed | 2020-05-17T21:30:07Z | 2020-06-23T18:20:04Z | https://github.com/wkentaro/labelme/issues/658 | [] | juniorkibirige | 7 |

microsoft/MMdnn | tensorflow | 891 | AttributeError: 'NoneType' object has no attribute 'name' | Converting a TensorFlow model to Caffe:

from ._conv import register_converters as _register_converters

Parse file [model.ckpt-61236.meta] with binary format successfully.

Tensorflow model file [model.ckpt-61236.meta] loaded successfully.

Tensorflow checkpoint file [model.ckpt-61236] loaded successfully. [435] variables loaded.

WARNING:tensorflow:From /home/lsp/.local/lib/python3.6/site-packages/mmdnn/conversion/tensorflow/tensorflow_parser.py:269: extract_sub_graph (from tensorflow.python.framework.graph_util_impl) is deprecated and will be removed in a future version.

Instructions for updating:

Use tf.compat.v1.graph_util.extract_sub_graph

TensorflowEmitter has not supported operator [IteratorV2] with name [IteratorV2].

TensorflowEmitter has not supported operator [IteratorGetNext] with name [IteratorGetNext].

Traceback (most recent call last):

File "/home/lsp/.local/bin/mmconvert", line 8, in <module>

sys.exit(_main())

File "/home/lsp/.local/lib/python3.6/site-packages/mmdnn/conversion/_script/convert.py", line 102, in _main

ret = convertToIR._convert(ir_args)

File "/home/lsp/.local/lib/python3.6/site-packages/mmdnn/conversion/_script/convertToIR.py", line 120, in _convert

parser.run(args.dstPath)

File "/home/lsp/.local/lib/python3.6/site-packages/mmdnn/conversion/common/DataStructure/parser.py", line 22, in run

self.gen_IR()

File "/home/lsp/.local/lib/python3.6/site-packages/mmdnn/conversion/tensorflow/tensorflow_parser.py", line 421, in gen_IR

func(current_node)

File "/home/lsp/.local/lib/python3.6/site-packages/mmdnn/conversion/tensorflow/tensorflow_parser.py", line 815, in rename_FusedBatchNorm

self.set_weight(source_node.name, 'mean', self.ckpt_data[mean.name])

AttributeError: 'NoneType' object has no attribute 'name'

So, what can I do? | open | 2020-08-21T02:09:29Z | 2020-08-21T02:10:58Z | https://github.com/microsoft/MMdnn/issues/891 | [] | lsplsplsp1111 | 1 |

amdegroot/ssd.pytorch | computer-vision | 292 | this line is wrong, tensor used as index while the dim do not same | ayers/modules/multibox_loss.py

# Hard Negative Mining

loss_c[pos] = 0 # filter out pos boxes for now

loss_c = loss_c.view(num, -1)

It should be:

loss_c = loss_c.view(num, -1)

loss_c[pos] = 0 # filter out pos boxes for now | open | 2019-02-21T03:44:28Z | 2019-03-17T05:34:44Z | https://github.com/amdegroot/ssd.pytorch/issues/292 | [] | caijch | 3 |
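A minimal sketch of why the order matters in the ssd.pytorch report above. It uses NumPy as a stand-in for PyTorch tensors and assumes, as the report implies, that `loss_c` starts out as a flat column while `pos` is a boolean mask of shape `(num, num_priors)`. The sizes and values are made up for illustration.

```python
import numpy as np

num, num_priors = 2, 3  # hypothetical batch size and number of prior boxes

# Per-box loss stored as a flat column, shape (num * num_priors, 1)
loss_c = np.arange(1.0, num * num_priors + 1).reshape(-1, 1)

# Boolean mask marking positive boxes, shape (num, num_priors)
pos = np.array([[True, False, False],
                [False, True, False]])

# Original order: the mask is applied before the reshape, so the shapes disagree.
try:
    loss_c[pos] = 0
    mismatch_raised = False
except IndexError:
    mismatch_raised = True

# Proposed order: reshape to (num, num_priors) first, then apply the mask.
fixed = loss_c.reshape(num, -1).copy()
fixed[pos] = 0
```

With the reshape done first, the mask and the loss have the same shape, so the positive entries are zeroed one for one.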

replicate/cog | tensorflow | 1,244 | Rethink/redesign validation of prediction responses | We recently ran into an issue in the Replicate production environment where a model with an output type of `cog.File` returned a string rather than a file-like object. This highlighted multiple issues relating to output handling and validation of responses from the model, namely:

1. Cog's synchronous prediction API validates the entire prediction response before returning it to the user, but the asynchronous prediction API doesn't do this anywhere.

2. [`upload_files`](https://github.com/replicate/cog/blob/2b4515b3635c4d1a8ca5d8f94c2e390e383b96d4/python/cog/json.py#L46) quietly ignores things that don't look like file handles. This results in the value returned by the model being passed back to Replicate, even though it was a 10MB base64-encoded blob and not a URL.

It's not immediately obvious how and where to fix this in the code. There are at least two things that seem a bit wrong here:

- While returning an invalid type is clearly a model error, it seems to me that `POST /predictions` should probably return a prediction with a `failed` status and an appropriate error message rather than a 500.

- The async update path (both polling and webhooks) should probably refuse to propagate invalid payloads. | open | 2023-08-02T11:08:13Z | 2024-01-31T11:45:02Z | https://github.com/replicate/cog/issues/1244 | [] | nickstenning | 4 |
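One way to picture the two suggestions in the cog report above is a small validation step that runs before any payload is returned or propagated. This is only a sketch and not Cog's actual code: `looks_like_file` and `build_prediction_response` are hypothetical helpers, and the response fields are assumed for illustration.

```python
import io
from typing import Any, Dict


def looks_like_file(value: Any) -> bool:
    """Return True if the value exposes a callable read(), like a file handle."""
    return callable(getattr(value, "read", None))


def build_prediction_response(output: Any) -> Dict[str, Any]:
    """Hypothetical helper: validate a file-typed output before propagating it."""
    if not looks_like_file(output):
        # Report a failed prediction instead of passing the raw value along.
        return {
            "status": "failed",
            "error": f"expected a file-like output, got {type(output).__name__}",
            "output": None,
        }
    return {"status": "succeeded", "error": None, "output": output}


good = build_prediction_response(io.BytesIO(b"data"))
bad = build_prediction_response("aGVsbG8=")  # a base64 string instead of a file
```

Running the same check on both the synchronous and asynchronous paths would keep an invalid payload from reaching webhooks or polling clients.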

adamerose/PandasGUI | pandas | 48 | PandasGUI (Not Responding) | Hi,

Trying to use PandasGUI on Windows 10.

Installed via pip in Python 3.8.6

Tried in both Jupyter Notebook and VS Code in .ipynb file.

Added `from pandasgui import show`

As soon as I use `show(df)` it opens and hangs on the below.

| closed | 2020-10-22T06:49:22Z | 2020-11-05T18:00:45Z | https://github.com/adamerose/PandasGUI/issues/48 | [] | eddylit | 5 |

lepture/authlib | django | 5 | Cannot log in with Facebook: "Missing client_id parameter." | From authorize_access_token call I receive:

'{"error":{"message":"Missing client_id parameter.","type":"OAuthException","code":101,"fbtrace_id":"AkqIKeKkCiT"}}'

While debugging, I found that in oauth.py, in fetch_access_token(...), the formatted body contains the query parameter "client=....", which for Facebook should be "client_id=...".

Unfortunately, there is no compliance_hook for it here.

I would suggest adding a compliance_hook for it. In fact, I would like to contribute this to authlib myself. | closed | 2017-12-05T17:29:49Z | 2017-12-07T03:22:44Z | https://github.com/lepture/authlib/issues/5 | [

"bug"

] | anikolaienko | 5 |
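A rough sketch of what such a hook might do for the authlib report above: rewrite the form-encoded token request body so that a `client` field becomes `client_id`. The function `rename_client_param` is hypothetical, and how a hook would be registered with authlib is not shown here because the report does not describe it.

```python
from urllib.parse import parse_qsl, urlencode


def rename_client_param(body: str) -> str:
    """Hypothetical hook: rename a `client` form field to `client_id`."""
    pairs = parse_qsl(body, keep_blank_values=True)
    fixed = [("client_id" if key == "client" else key, value) for key, value in pairs]
    return urlencode(fixed)


# Example body with made-up values, shaped like the one described in the report.
body = "grant_type=authorization_code&code=abc&client=my-app"
fixed_body = rename_client_param(body)
```

All other fields pass through unchanged and keep their original order.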

deezer/spleeter | tensorflow | 601 | [Bug] ModuleNotFoundError: No module named 'tensorflow.contrib' |

How to fix it up? | closed | 2021-03-30T17:29:54Z | 2021-04-02T13:34:10Z | https://github.com/deezer/spleeter/issues/601 | [

"bug",

"invalid"

] | cfuncode | 1 |

dpgaspar/Flask-AppBuilder | rest-api | 1,949 | Select2 3.5.2 XSS vulnerability | Select2 3.5.2 is affected by an XSS vulnerability.

https://security.snyk.io/package/npm/select2/3.5.2-browserify

https://security.snyk.io/package/npm/select2

I tried updating select2.js and select2.css to the latest stable release (4.0.13) in the Superset application, and it works. | closed | 2022-11-07T09:07:38Z | 2022-12-22T08:24:09Z | https://github.com/dpgaspar/Flask-AppBuilder/issues/1949 | [] | n1k9 | 1 |

holoviz/panel | matplotlib | 7,416 | Opening notebook from url with panelite, fromURL parameter? | #### Is your feature request related to a problem? Please describe.

A way to open notebooks from urls with panelite

such as https://github.com/YoraiLevi/interactive_matplotlib/blob/b09c7720069ad41337d8acf2540cda223c50a9dc/examples/draggable_line_matplotlib_widgets.ipynb#L7

#### Describe the solution you'd like

the following link should open the notebook

`https://panelite.holoviz.org/lab/index.html?fromURL=https://raw.githubusercontent.com/YoraiLevi/interactive_matplotlib/refs/heads/master/examples/draggable_line_matplotlib_widgets.ipynb`

#### Describe alternatives you've considered

JupyterLite does open the notebook from this URL using the `fromURL` parameter:

`https://jupyter.org/try-jupyter/lab/index.html?fromURL=https://raw.githubusercontent.com/YoraiLevi/interactive_matplotlib/refs/heads/master/examples/draggable_line_matplotlib_widgets.ipynb`

#### Additional context

https://discourse.holoviz.org/t/opening-notebook-from-url-with-panelite/8346

| open | 2024-10-17T20:57:28Z | 2024-10-17T20:57:28Z | https://github.com/holoviz/panel/issues/7416 | [] | YoraiLevi | 0 |

bmoscon/cryptofeed | asyncio | 1,014 | BITMEX: Failed to parse symbol information: 'expiry' | **Describe the bug**

2024-03-01 14:27:05,508 : ERROR : BITMEX: Failed to parse symbol information: 'expiry'

Traceback (most recent call last):

File "/home/ec2-user/.cache/pypoetry/virtualenvs/feed-CdS8cHT0-py3.9/lib64/python3.9/site-packages/cryptofeed/exchange.py", line 105, in symbol_mapping

syms, info = cls._parse_symbol_data(data if len(data) > 1 else data[0])

File "/home/ec2-user/.cache/pypoetry/virtualenvs/feed-CdS8cHT0-py3.9/lib64/python3.9/site-packages/cryptofeed/exchanges/bitmex.py", line 61, in _parse_symbol_data

s = Symbol(base, quote, type=stype, expiry_date=entry['expiry'])

KeyError: 'expiry'

2024-03-01 14:27:05,510:ERROR:An error occurred: 'expiry'