repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

mirumee/ariadne | graphql | 1,051 | Support passing Python `Enum` directly to `make_executable_schema` | `make_executable_schema` could have two lines of magic that would repack `Enum`s to `ariadne.EnumType(enum.__name__, enum).bind_to_schema(schema)` | closed | 2023-03-17T17:02:54Z | 2023-03-20T12:27:06Z | https://github.com/mirumee/ariadne/issues/1051 | [

"enhancement",

"docs"

] | rafalp | 0 |

aminalaee/sqladmin | sqlalchemy | 181 | Show the form fields in the order of `only` | ### Checklist

- [X] There are no similar issues or pull requests for this yet.

### Is your feature related to a problem? Please describe.

`get_model_form()` receives `only` parameter in `Sequence` but ignores its order to build form fields.

### Describe the solution you would like.

`attributes` should align to the order of `only` if specified like:

```python

attributes = []
names = only or mapper.attrs.keys()
for name in names:
    if exclude and name in exclude:
        continue
    attributes.append((name, mapper.attrs[name]))

```

### Describe alternatives you considered

_No response_

### Additional context

_No response_ | closed | 2022-06-16T10:56:08Z | 2022-06-19T08:59:44Z | https://github.com/aminalaee/sqladmin/issues/181 | [] | okapies | 0 |

sebastianruder/NLP-progress | machine-learning | 209 | Semantic parsing / natural language query | It would be really interesting to see a state-of-the-art summary for semantic parsing tasks, like natural-language query into databases. Is that task category too broad to really accommodate comparable metrics? I see [a bunch of papers at stateoftheart.ai](https://www.stateoftheart.ai/?area=Natural%20Language%20Processing&task=Semantic%20Parsing) but the metrics columns there are quite sparse. | closed | 2019-01-16T02:11:23Z | 2019-01-16T16:27:58Z | https://github.com/sebastianruder/NLP-progress/issues/209 | [] | gthb | 2 |

fastapi/sqlmodel | sqlalchemy | 249 | Create Relationships with Unique Fields (UniqueViolationError) | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X] I already read and followed all the tutorial in the docs and didn't find an answer.

- [X] I already checked if it is not related to SQLModel but to [Pydantic](https://github.com/samuelcolvin/pydantic).

- [X] I already checked if it is not related to SQLModel but to [SQLAlchemy](https://github.com/sqlalchemy/sqlalchemy).

### Commit to Help

- [X] I commit to help with one of those options 👆

### Example Code

```python

# From the SQLModel Tutorial (https://sqlmodel.tiangolo.com/tutorial/relationship-attributes/create-and-update-relationships/)
from typing import List, Optional

from sqlmodel import Field, Relationship, Session, SQLModel, create_engine


class Team(SQLModel, table=True):
    # id: Optional[int] = Field(default=None, primary_key=True)
    id: int = Field(default=None, primary_key=True)  # NEW
    name: str = Field(index=True)

    heroes: List["Hero"] = Relationship(back_populates="team")


class Hero(SQLModel, table=True):
    # id: Optional[int] = Field(default=None, primary_key=True)
    id: int = Field(default=None, primary_key=True)  # NEW
    name: str = Field(index=True)

    # team_id: Optional[int] = Field(default=None, foreign_key="team.id")
    # team: Optional[Team] = Relationship(back_populates="heroes")
    team_id: int = Field(default=None, foreign_key="team.id")  # NEW
    team: Team = Relationship(back_populates="heroes")  # NEW


from sqlalchemy.ext.asyncio import AsyncSession  # ADDED: 2022_02_24


# def create_heroes():
async def create_heroes(session: AsyncSession, request: Hero):  # NEW, EDITED: 2022_02_24
    # with Session(engine) as session:  # EDITED
    # team_preventers = Team(name="Preventers", headquarters="Sharp Tower")  # REMOVE
    # team_z_force = Team(name="Z-Force", headquarters="Sister Margaret’s Bar")  # REMOVE
    assigned_team = Team(name=request.team_to_assign)
    new_hero = Hero(
        name=request.hero_name,
        team=assigned_team
    )
    session.add(new_hero)
    await session.commit()  # EDITED: 2022_02_24
    await session.refresh(new_hero)  # EDITED: 2022_02_24
    return new_hero  # ADDED: 2022_02_24

# Code below omitted 👇

```

### Description

I'm following the SQLModel tutorial as I implement my own version. I have a model very similar to the above example (derived from the Hero/Team example given in the tutorial on how to implement One-To-Many relationships with SQLModel).

When I use this approach, it does write the required Team and Hero objects to my database. However, it does not check the Team table to ensure that the "team_to_assign" from the request object does not already exist. So, if I use the "create_heroes" function (in two separate commits) to create two Heroes who are on the same team, I get two entries for the same team in the Team table. This is not desirable. If the team already exists, the Hero being created should use the id that already exists for that team.

When I implement "sa_column_kwargs={"unique": True}" within the "name" Field of the Team table, I can no longer create a new Hero if they are to be connected to a Team that already exists. I get the error:

> `sqlalchemy.exc.IntegrityError: (sqlalchemy.dialects.postgresql.asyncpg.IntegrityError) <class 'asyncpg.exceptions.UniqueViolationError'>: duplicate key value violates unique constraint "ix_team_name"

> DETAIL: Key (name)=(team_name) already exists.

> [SQL: INSERT INTO "team" (name) VALUES (%s) RETURNING "team".id]`

I was hoping that would somehow tell SQLModel to skip the insertion of a Team that already exists and get the appropriate Team id instead. Clearly it just stops it from happening. SQLModel doesn't appear to check that a Team already exists before inserting it into the Team table.

Am I missing something about how to handle this with SQLModel, or am I meant to employ my own logic to check the Team table prior to generating the Hero object to insert?

Thanks for your time!
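
A minimal sketch of the "check the Team table first, then insert" logic asked about above. It deliberately uses the standard-library `sqlite3` module rather than SQLModel's session API, so the table layout and the helper names (`make_demo_db`, `get_or_create_team`, `create_hero`) are assumptions for illustration only, not part of SQLModel or the tutorial.

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with a unique team name (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE team (id INTEGER PRIMARY KEY, name TEXT UNIQUE NOT NULL)"
    )
    conn.execute(
        "CREATE TABLE hero ("
        "id INTEGER PRIMARY KEY, name TEXT NOT NULL, "
        "team_id INTEGER REFERENCES team(id))"
    )
    return conn


def get_or_create_team(conn, name):
    """Return the id of the team called `name`, inserting it only if absent."""
    row = conn.execute("SELECT id FROM team WHERE name = ?", (name,)).fetchone()
    if row is not None:
        return row[0]  # team already exists: reuse its id
    cur = conn.execute("INSERT INTO team (name) VALUES (?)", (name,))
    return cur.lastrowid


def create_hero(conn, hero_name, team_name):
    """Insert a hero linked to an existing or newly created team."""
    team_id = get_or_create_team(conn, team_name)
    cur = conn.execute(
        "INSERT INTO hero (name, team_id) VALUES (?, ?)", (hero_name, team_id)
    )
    conn.commit()
    return cur.lastrowid, team_id
```

Calling `create_hero` twice with the same team name leaves a single row in `team`. Under concurrent writers the select-then-insert step can still race, so the unique constraint remains the final safeguard and the caller may need to retry on an integrity error.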

### Operating System

Linux

### Operating System Details

_No response_

### SQLModel Version

0.0.6

### Python Version

3.10

### Additional Context

Using async libraries:

SQLAlchemy = {extras = ["asyncio"], version = "^1.4.31"}

asyncpg = "^0.25.0"

| closed | 2022-02-23T17:02:05Z | 2022-03-02T14:08:42Z | https://github.com/fastapi/sqlmodel/issues/249 | [

"question"

] | njdowdy | 11 |

microsoft/MMdnn | tensorflow | 694 | Transfer from Keras to Caffe 【error for SeparableConv】 | Platform (like ubuntu 16.04/win10):ubuntu 16.04

Python version:python2.7.12

Source framework with version (like Tensorflow 1.4.1 with GPU):keras 2.2.4

Destination framework with version (like CNTK 2.3 with GPU):caffe

Pre-trained model path (webpath or webdisk path):

Running scripts:

mmconvert -sf keras -iw age.h5 --dstNodeName age -df caffe -om mycaffemodel

```

Using TensorFlow backend.

2019-07-12 17:28:20.255156: I tensorflow/core/platform/cpu_feature_guard.cc:140] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX2 AVX512F FMA

2019-07-12 17:28:20.361369: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1212] Found device 0 with properties:

name: Quadro P2000 major: 6 minor: 1 memoryClockRate(GHz): 1.4805

pciBusID: 0000:65:00.0

totalMemory: 4.94GiB freeMemory: 4.28GiB

2019-07-12 17:28:20.361401: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1312] Adding visible gpu devices: 0

2019-07-12 17:28:20.573748: I tensorflow/core/common_runtime/gpu/gpu_device.cc:993] Creating TensorFlow device (/job:localhost/replica:0/task:0/device:GPU:0 with 4032 MB memory) -> physical GPU (device: 0, name: Quadro P2000, pci bus id: 0000:65:00.0, compute capability: 6.1)

IR network structure is saved as [cf3c22ff6bc040c4b67fefb242b96e02.json].

IR network structure is saved as [cf3c22ff6bc040c4b67fefb242b96e02.pb].

IR weights are saved as [cf3c22ff6bc040c4b67fefb242b96e02.npy].

Parse file [cf3c22ff6bc040c4b67fefb242b96e02.pb] with binary format successfully.

CaffeEmitter has not supported operator [SeparableConv].

separable_conv2d_1

CaffeEmitter has not supported operator [SeparableConv].

separable_conv2d_2

CaffeEmitter has not supported operator [SeparableConv].

separable_conv2d_3

CaffeEmitter has not supported operator [SeparableConv].

separable_conv2d_4

CaffeEmitter has not supported operator [SeparableConv].

separable_conv2d_5

CaffeEmitter has not supported operator [SeparableConv].

separable_conv2d_6

CaffeEmitter has not supported operator [SeparableConv].

separable_conv2d_7

CaffeEmitter has not supported operator [SeparableConv].

separable_conv2d_8

Target network code snippet is saved as [cf3c22ff6bc040c4b67fefb242b96e02.py].

Target weights are saved as [cf3c22ff6bc040c4b67fefb242b96e02.npy].

Traceback (most recent call last):

File "/home/bill/.local/bin/mmconvert", line 10, in <module>

sys.exit(_main())

File "/home/bill/.local/lib/python2.7/site-packages/mmdnn/conversion/_script/convert.py", line 112, in _main

dump_code(args.dstFramework, network_filename + '.py', temp_filename + '.npy', args.outputModel, args.dump_tag)

File "/home/bill/.local/lib/python2.7/site-packages/mmdnn/conversion/_script/dump_code.py", line 32, in dump_code

save_model(MainModel, network_filepath, weight_filepath, dump_filepath)

File "/home/bill/.local/lib/python2.7/site-packages/mmdnn/conversion/caffe/saver.py", line 9, in save_model

MainModel.make_net(dump_net)

File "cf3c22ff6bc040c4b67fefb242b96e02.py", line 100, in make_net

n = KitModel()

File "cf3c22ff6bc040c4b67fefb242b96e02.py", line 38, in KitModel

n.batch_normalization_4 = L.BatchNorm(n.separable_conv2d_1, eps=0.0010000000475, use_global_stats=True, ntop=1)

File "/home/bill/E/dl_base/caffe_program/caffe_mobilenet/python/caffe/net_spec.py", line 180, in __getattr__

return self.tops[name]

KeyError: 'separable_conv2d_1'

```

I have previously converted MXNet's depthwise conv to Caffe's group conv successfully. How should I handle Keras's SeparableConv and convert it to Caffe's group conv? Thanks!
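
For reference, a separable convolution can be written as a depthwise convolution (one kernel per input channel, which corresponds to a group convolution with `group` equal to the number of input channels) followed by a 1x1 pointwise convolution. The sketch below shows that decomposition in plain Python, assuming stride 1, no padding, no bias and a depth multiplier of 1. The function names are made up for illustration and are not MMdnn, Keras or Caffe APIs.

```python
def depthwise_conv2d(x, dw):
    """Filter each channel with its own kernel.

    x:  input as nested lists, shape [C][H][W]
    dw: depthwise kernels, shape [C][k][k]
    Valid padding, stride 1; equivalent to a group conv with groups == C.
    """
    channels, height, width = len(x), len(x[0]), len(x[0][0])
    k = len(dw[0])
    out = []
    for c in range(channels):
        plane = []
        for i in range(height - k + 1):
            row = []
            for j in range(width - k + 1):
                row.append(
                    sum(
                        x[c][i + a][j + b] * dw[c][a][b]
                        for a in range(k)
                        for b in range(k)
                    )
                )
            plane.append(row)
        out.append(plane)
    return out


def pointwise_conv2d(x, pw):
    """Mix channels with a 1x1 convolution.

    x:  input, shape [C][H][W]
    pw: pointwise weights, shape [M][C] (M output channels)
    """
    channels, height, width = len(x), len(x[0]), len(x[0][0])
    return [
        [
            [sum(pw[m][c] * x[c][i][j] for c in range(channels)) for j in range(width)]
            for i in range(height)
        ]
        for m in range(len(pw))
    ]


def separable_conv2d(x, dw, pw):
    """Depthwise step followed by pointwise step."""
    return pointwise_conv2d(depthwise_conv2d(x, dw), pw)
```

Under these assumptions, the Keras layer's depthwise kernel would map to one grouped convolution layer and its pointwise kernel to a second layer with kernel size 1. Whether the converter can emit that pair automatically is a separate question.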

| open | 2019-07-12T09:50:49Z | 2020-06-24T01:09:25Z | https://github.com/microsoft/MMdnn/issues/694 | [] | zys1994 | 1 |

InstaPy/InstaPy | automation | 6,630 | No way to create posts? |

## Expected Behavior

I would think that an Instagram automation library as comprehensive as this one would have a way for one to create posts.

## Current Behavior

However, I am unable to find one.

## Possible Solution (optional)

Perhaps I am mistaken. If not, however, this seems like something that this library should be able to do.

## InstaPy configuration

| closed | 2022-07-07T02:27:52Z | 2022-08-16T21:50:37Z | https://github.com/InstaPy/InstaPy/issues/6630 | [] | kaijif | 1 |

zappa/Zappa | django | 440 | [Migrated] Using Extra CloudFront Distro to Restrict TLS Protocol and Ciphers? | Originally from: https://github.com/Miserlou/Zappa/issues/1154 by [Erstwild](https://github.com/Erstwild)

I have been working on creating a "regulated industry" deployment of Zappa. I have one issue open about putting the DynamoDB instance in a VPC. The other problem, which I have been working on with AWS, is finding the best currently available way to restrict the TLS protocol and ciphers. The idea I had, which they also recommended trying, is adding an additional CloudFront distribution with the following settings: https://aws.amazon.com/blogs/aws/cloudfront-update-https-tls-v1-1v1-2-to-the-origin-addmodify-headers/

So essentially this would change the standard:

Client -> Route53 -> CloudFront (Created by API GW) -> API Gateway -> Lambda backend

to this:

Client -> Route53 -> CloudFront (custom distro with TLS settings) -> CloudFront (Created by API GW) -> API Gateway -> Lambda backend

I know this will add some latency, but I am hoping it won't be a showstopper if I can get it working. My question is whether other issues are likely to arise with this approach, or whether there are other changes I will need to make. | closed | 2021-02-20T08:34:52Z | 2024-04-13T16:17:58Z | https://github.com/zappa/Zappa/issues/440 | [

"no-activity",

"auto-closed"

] | jneves | 2 |

plotly/dash-bio | dash | 711 | Browser scrolls and zooms simultaneously NglMoleculeViewer | I am talking about `dash_bio.NglMoleculeViewer` here:

The browser page scrolls while the mouse is inside the viewer window, so the page scrolls at the same time as the viewer zooms in or out.

I was told by the original developer that this could be an easy fix by overwriting the `stage.mouseControls` of the core ngl viewer.

Can you help me with this?

Python version is: 3.10.4

These are the used dependencies:

aiohttp 3.8.3

aiosignal 1.2.0

ansi2html 1.8.0

appnope 0.1.3

argon2-cffi 21.3.0

argon2-cffi-bindings 21.2.0

asttokens 2.0.5

async-timeout 4.0.2

attrs 21.4.0

backcall 0.2.0

beautifulsoup4 4.11.1

biopython 1.79

black 22.8.0

bleach 5.0.0

bokeh 2.4.3

Brotli 1.0.9

certifi 2022.5.18.1

cffi 1.15.0

charset-normalizer 2.0.12

click 8.1.3

cloudpickle 2.1.0

colorcet 3.0.0

colour 0.1.5

cycler 0.11.0

dash 2.6.1

dash-bio 1.0.2

dash-bootstrap-components 1.2.1

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

dask 2022.6.0

datashader 0.14.0

datashape 0.5.2

debugpy 1.6.0

decorator 5.1.1

defusedxml 0.7.1

distributed 2022.6.0

docker-pycreds 0.4.0

entrypoints 0.4

executing 0.8.3

fastjsonschema 2.15.3

Flask 2.2.2

Flask-Compress 1.12

fonttools 4.33.3

frozenlist 1.3.1

fsspec 2022.5.0

GEOparse 2.0.3

gitdb 4.0.9

GitPython 3.1.27

h5py 3.7.0

HeapDict 1.0.1

holoviews 1.14.9

idna 3.3

imageio 2.19.3

ipykernel 6.15.0

ipython 8.4.0

ipython-genutils 0.2.0

ipywidgets 7.7.0

itsdangerous 2.1.2

jedi 0.18.1

Jinja2 3.1.2

joblib 1.1.0

jsonschema 4.6.0

jupyter 1.0.0

jupyter-client 7.3.4

jupyter-console 6.4.3

jupyter-core 4.10.0

jupyter-dash 0.4.2

jupyterlab-pygments 0.2.2

jupyterlab-widgets 1.1.0

kaleido 0.2.1

kiwisolver 1.4.3

llvmlite 0.38.1

locket 1.0.0

Markdown 3.3.7

MarkupSafe 2.1.1

matplotlib 3.5.2

matplotlib-inline 0.1.3

mistune 0.8.4

msgpack 1.0.4

multidict 6.0.2

multipledispatch 0.6.0

mypy-extensions 0.4.3

nbclient 0.6.4

nbconvert 6.5.0

nbformat 5.4.0

nest-asyncio 1.5.5

networkx 2.8.4

notebook 6.4.12

numba 0.55.2

numpy 1.21.6

packaging 21.3

pandas 1.4.3

pandocfilters 1.5.0

panel 0.13.1

param 1.12.1

ParmEd 3.4.3

parso 0.8.3

partd 1.2.0

pathspec 0.10.1

pathtools 0.1.2

periodictable 1.6.1

pexpect 4.8.0

pickleshare 0.7.5

Pillow 9.1.1

pip 22.3

platformdirs 2.5.2

plotly 5.8.2

prometheus-client 0.14.1

promise 2.3

prompt-toolkit 3.0.29

protobuf 3.20.1

psutil 5.9.1

ptyprocess 0.7.0

pure-eval 0.2.2

pycparser 2.21

pyct 0.4.8

pyfaidx 0.7.1

Pygments 2.12.0

pynndescent 0.5.7

pyparsing 3.0.9

pyrsistent 0.18.1

python-dateutil 2.8.2

pytz 2022.1

pyviz-comms 2.2.0

PyWavelets 1.3.0

PyYAML 6.0

pyzmq 23.1.0

qtconsole 5.3.1

QtPy 2.1.0

requests 2.28.0

retrying 1.3.3

scikit-image 0.19.3

scikit-learn 1.1.1

scipy 1.8.1

seaborn 0.11.2

Send2Trash 1.8.0

sentry-sdk 1.6.0

setproctitle 1.2.3

setuptools 60.2.0

shortuuid 1.0.9

six 1.16.0

sklearn 0.0

smmap 5.0.0

sortedcontainers 2.4.0

soupsieve 2.3.2.post1

stack-data 0.3.0

tblib 1.7.0

tenacity 8.0.1

terminado 0.15.0

threadpoolctl 3.1.0

tifffile 2022.5.4

tinycss2 1.1.1

tomli 2.0.1

toolz 0.11.2

torch 1.11.0

torchsummary 1.5.1

tornado 6.1

tqdm 4.64.0

traitlets 5.3.0

typing_extensions 4.2.0

umap-learn 0.5.3

urllib3 1.26.9

wandb 0.12.20

wcwidth 0.2.5

webencodings 0.5.1

Werkzeug 2.2.2

wheel 0.37.1

widgetsnbextension 3.6.0

xarray 2022.3.0

yarl 1.8.1

zict 2.2.0

| open | 2022-11-16T08:36:05Z | 2022-11-16T10:20:35Z | https://github.com/plotly/dash-bio/issues/711 | [] | Ento0n | 1 |

nerfstudio-project/nerfstudio | computer-vision | 2,862 | TypeError: __init__() got an unexpected keyword argument 'dataparser' | **Describe the bug**

After installing and setting up the environment, I ran the example command `ns-train nerfacto --data data/nerfstudio/poster` and got the following error.

It worked without error two weeks ago, so I am not sure whether it is due to some inconsistency between versions.

**To Reproduce**

Steps to reproduce the behavior:

1. Download and install the `nerfstudio` repo

2. Run the sample command `ns-train nerfacto --data data/nerfstudio/poster`

3. See error

```

(ns) xxx@xxx:/data/xxx/projects/nerfstudio$ ns-train nerfacto --data data/nerfstudio/poster

Traceback (most recent call last):

File "/home/ruihan/anaconda3/envs/ns/bin/ns-train", line 8, in <module>

sys.exit(entrypoint())

File "/data/ruihan/projects/nerfstudio/nerfstudio/scripts/train.py", line 263, in entrypoint

tyro.cli(

File "/home/ruihan/.local/lib/python3.8/site-packages/tyro/_cli.py", line 187, in cli

output = _cli_impl(

File "/home/ruihan/.local/lib/python3.8/site-packages/tyro/_cli.py", line 454, in _cli_impl

out, consumed_keywords = _calling.call_from_args(

File "/home/ruihan/.local/lib/python3.8/site-packages/tyro/_calling.py", line 157, in call_from_args

value, consumed_keywords_child = call_from_args(

File "/home/ruihan/.local/lib/python3.8/site-packages/tyro/_calling.py", line 122, in call_from_args

value, consumed_keywords_child = call_from_args(

File "/home/ruihan/.local/lib/python3.8/site-packages/tyro/_calling.py", line 122, in call_from_args

value, consumed_keywords_child = call_from_args(

File "/home/ruihan/.local/lib/python3.8/site-packages/tyro/_calling.py", line 247, in call_from_args

return unwrapped_f(*positional_args, **kwargs), consumed_keywords # type: ignore

TypeError: __init__() got an unexpected keyword argument 'dataparser'

```

**Expected behavior**

Expected it to run the nerfacto training.
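
One way to test the version-inconsistency suspicion is to check whether the class being constructed actually accepts the keyword named in the error. The sketch below uses only the standard library. `OldConfig` and `NewConfig` are hypothetical stand-ins for two versions of a config class and are not nerfstudio or tyro classes.

```python
import inspect
from dataclasses import dataclass


@dataclass
class OldConfig:
    """Hypothetical: a config class as defined in an older install."""

    data: str = ""


@dataclass
class NewConfig:
    """Hypothetical: a newer definition that gained a `dataparser` field."""

    data: str = ""
    dataparser: str = "default"


def accepts_keyword(cls, name):
    """Return True if cls.__init__ can be called with `name` as a keyword."""
    params = inspect.signature(cls.__init__).parameters
    if name in params:
        return True
    # A **kwargs parameter accepts any keyword.
    return any(p.kind is inspect.Parameter.VAR_KEYWORD for p in params.values())
```

If the check returns `False` for the class that is actually imported at runtime, the caller and the class definition probably come from different versions, for example when packages are split between a conda environment and `~/.local`.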

| closed | 2024-02-01T15:58:27Z | 2024-02-01T21:45:19Z | https://github.com/nerfstudio-project/nerfstudio/issues/2862 | [] | RuihanGao | 6 |

python-restx/flask-restx | flask | 193 | Why was the changelog removed from the repository and the documentation? | **Ask a question**

Why was the changelog removed from the repository and the documentation?

**Additional context**

Hi, I was looking at moving a project from flask_restplus==0.10.1 to restx but had a hard time figuring out what had changed. The docs say that it is mostly compatible with restplus, but that is specific to a version. I think relabeling tags was a bad idea and can be reverted. Also, having the changelog in the docs is not only very common but also a great way to assess the risks when moving from restplus to restx.

| open | 2020-08-07T16:17:54Z | 2020-09-02T18:48:40Z | https://github.com/python-restx/flask-restx/issues/193 | [

"question"

] | avilaton | 3 |

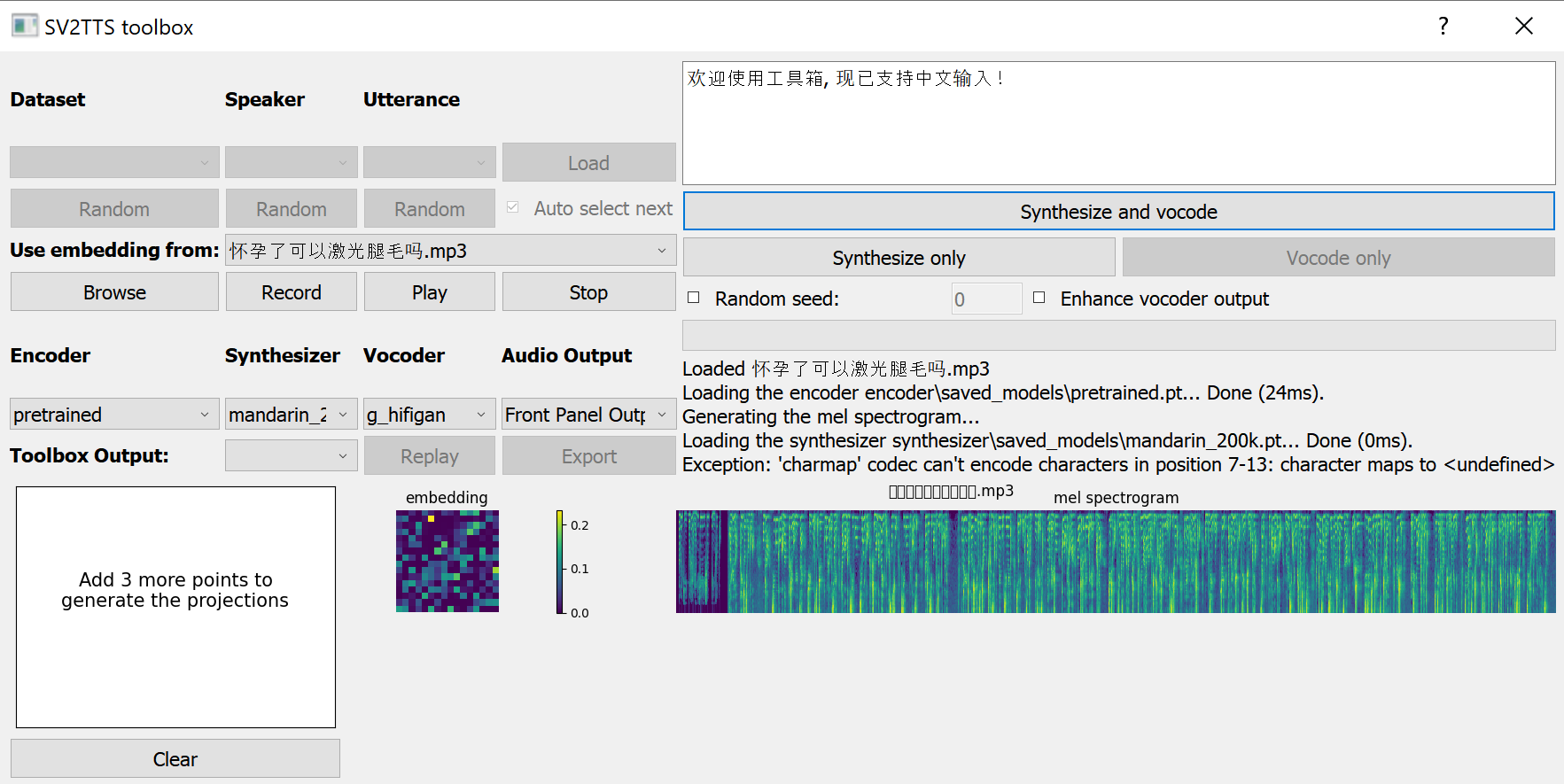

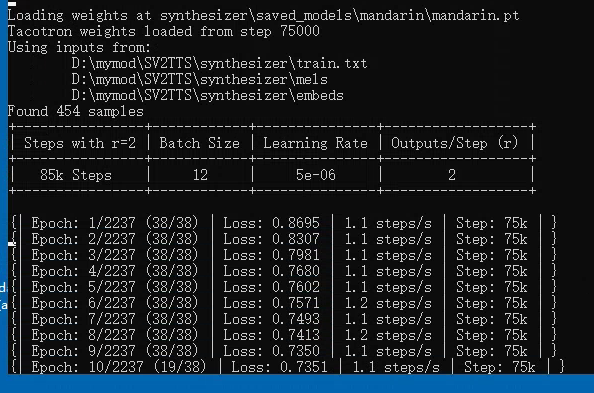

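babysor/MockingBird | deep-learning | 204 | Toolbox inference error when using a new corpus | I recently trained the synthesizer on a new corpus (not the one provided by the author), without changing the training format. However, when I load the model in the toolbox for inference, I get the following error.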

`Feel free to add your own. You can still use the toolbox by recording samples yourself.

Loaded encoder "pretrained.pt" trained to step 1564501

Synthesizer using device: cpu

Trainable Parameters: 30.875M

Traceback (most recent call last):

File "C:\Users\VC\MockingBird\toolbox\__init__.py", line 123, in <lambda>

func = lambda: self.synthesize() or self.vocode()

File "C:\Users\VC\MockingBird\toolbox\__init__.py", line 237, in synthesize

specs = self.synthesizer.synthesize_spectrograms(texts, embeds)

File "C:\Users\VC\MockingBird\synthesizer\inference.py", line 87, in synthesize_spectrograms

self.load()

File "C:\Users\VC\MockingBird\synthesizer\inference.py", line 65, in load

self._model.load(self.model_fpath)

File "C:\Users\VC\MockingBird\synthesizer\models\tacotron.py", line 497, in load

self.load_state_dict(checkpoint["model_state"])

File "C:\Users\anaconda3\envs\VC-test\lib\site-packages\torch\nn\modules\module.py", line 1407, in load_state_dict

self.__class__.__name__, "\n\t".join(error_msgs)))

RuntimeError: Error(s) in loading state_dict for Tacotron:

Unexpected key(s) in state_dict: "gst.encoder.convs.0.weight", "gst.encoder.convs.0.bias", "gst.encoder.convs.1.weight", "gst.encoder.convs.1.bias", "gst.encoder.convs.2.weight", "gst.encoder.convs.2.bias", "gst.encoder.convs.3.weight", "gst.encoder.convs.3.bias", "gst.encoder.convs.4.weight", "gst.encoder.convs.4.bias", "gst.encoder.convs.5.weight", "gst.encoder.convs.5.bias", "gst.encoder.bns.0.weight", "gst.encoder.bns.0.bias", "gst.encoder.bns.0.running_mean", "gst.encoder.bns.0.running_var", "gst.encoder.bns.0.num_batches_tracked", "gst.encoder.bns.1.weight", "gst.encoder.bns.1.bias", "gst.encoder.bns.1.running_mean", "gst.encoder.bns.1.running_var", "gst.encoder.bns.1.num_batches_tracked", "gst.encoder.bns.2.weight", "gst.encoder.bns.2.bias", "gst.encoder.bns.2.running_mean", "gst.encoder.bns.2.running_var", "gst.encoder.bns.2.num_batches_tracked", "gst.encoder.bns.3.weight", "gst.encoder.bns.3.bias", "gst.encoder.bns.3.running_mean", "gst.encoder.bns.3.running_var", "gst.encoder.bns.3.num_batches_tracked", "gst.encoder.bns.4.weight", "gst.encoder.bns.4.bias", "gst.encoder.bns.4.running_mean", "gst.encoder.bns.4.running_var", "gst.encoder.bns.4.num_batches_tracked", "gst.encoder.bns.5.weight", "gst.encoder.bns.5.bias", "gst.encoder.bns.5.running_mean", "gst.encoder.bns.5.running_var", "gst.encoder.bns.5.num_batches_tracked", "gst.encoder.gru.weight_ih_l0", "gst.encoder.gru.weight_hh_l0", "gst.encoder.gru.bias_ih_l0", "gst.encoder.gru.bias_hh_l0", "gst.stl.embed", "gst.stl.attention.W_query.weight", "gst.stl.attention.W_key.weight", "gst.stl.attention.W_value.weight".` | open | 2021-11-09T07:27:15Z | 2021-11-09T13:10:05Z | https://github.com/babysor/MockingBird/issues/204 | [] | gaga820402 | 1 |
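
The `Unexpected key(s) in state_dict` message in the MockingBird report above lists only `gst.*` parameters, meaning the checkpoint holds weights for a `gst` submodule that the model being constructed does not define. A small sketch for summarising such a mismatch is shown below. It works on plain lists of key names, so the example keys and helper names are assumptions for illustration and no PyTorch call is involved.

```python
from collections import Counter


def diff_state_dict_keys(model_keys, checkpoint_keys):
    """Compare parameter names expected by a model with those in a checkpoint."""
    model_keys, checkpoint_keys = set(model_keys), set(checkpoint_keys)
    return {
        # present in the checkpoint but not defined by the model
        "unexpected": sorted(checkpoint_keys - model_keys),
        # defined by the model but absent from the checkpoint
        "missing": sorted(model_keys - checkpoint_keys),
    }


def summarize_by_prefix(keys):
    """Count keys per top-level module name (the text before the first dot)."""
    return dict(Counter(key.split(".", 1)[0] for key in keys))
```

When every unexpected key shares one prefix, as here, a likely reading is that training and inference built the model with different options for that submodule. Aligning the two configurations is usually safer than dropping the extra weights.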

pydata/xarray | pandas | 9,815 | Test failure on RISC-V platform | ### What happened?

I am a Fedora packager.

When I run pytest on the RISC-V platform, I get four failures.

### What did you expect to happen?

_No response_

### Minimal Complete Verifiable Example

```Python

pytest xarray/tests/test_backends.py::TestNetCDF4ViaDaskData::test_roundtrip_mask_and_scale

pytest xarray/tests/test_backends.py::TestNetCDF4Data::test_roundtrip_mask_and_scale

```

### MVCE confirmation

- [x] Minimal example — the example is as focused as reasonably possible to demonstrate the underlying issue in xarray.

- [ ] Complete example — the example is self-contained, including all data and the text of any traceback.

- [x] Verifiable example — the example copy & pastes into an IPython prompt or [Binder notebook](https://mybinder.org/v2/gh/pydata/xarray/main?urlpath=lab/tree/doc/examples/blank_template.ipynb), returning the result.

- [ ] New issue — a search of GitHub Issues suggests this is not a duplicate.

- [X] Recent environment — the issue occurs with the latest version of xarray and its dependencies.

### Relevant log output

```Python

XFAIL tests/test_units.py::TestDataArray::test_searchsorted[int64-function_searchsorted-identical_unit] - xarray does not implement __array_function__

XFAIL tests/test_units.py::TestDataArray::test_missing_value_filling[int64-method_bfill] - ffill and bfill lose units in data

XFAIL tests/test_units.py::TestPlots::test_units_in_slice_line_plot_labels_isel[coord_unit1-coord_attrs1] - pint.errors.UnitStrippedWarning

XFAIL tests/test_units.py::TestPlots::test_units_in_line_plot_labels[coord_unit1-coord_attrs1] - indexes don't support units

XFAIL tests/test_variable.py::TestVariableWithDask::test_pad[xr_arg2-np_arg2-median] - median is not implemented by Dask

XFAIL tests/test_units.py::TestDataset::test_missing_value_filling[int64-method_bfill] - ffill and bfill lose the unit

XFAIL tests/test_variable.py::TestVariableWithDask::test_pad[xr_arg0-np_arg0-median] - median is not implemented by Dask

XFAIL tests/test_units.py::TestDataArray::test_interp_reindex_like[int64-method_interp_like-data] - uses scipy

XFAIL tests/test_variable.py::TestVariableWithDask::test_pad[xr_arg2-np_arg2-reflect] - dask.array.pad bug

XFAIL tests/test_units.py::TestDataset::test_interp_reindex_like[float64-method_interp_like-coords] - uses scipy

XFAIL tests/test_variable.py::TestVariableWithDask::test_pad[xr_arg3-np_arg3-median] - median is not implemented by Dask

XFAIL tests/test_variable.py::TestVariableWithDask::test_pad[xr_arg4-np_arg4-median] - median is not implemented by Dask

XFAIL tests/test_units.py::TestDataArray::test_numpy_methods_with_args[float64-no_unit-function_clip] - xarray does not implement __array_function__

XFAIL tests/test_units.py::TestDataset::test_interp_reindex[int64-method_interp-data] - uses scipy

XFAIL tests/test_variable.py::TestVariableWithDask::test_pad[xr_arg3-np_arg3-reflect] - dask.array.pad bug

XFAIL tests/test_units.py::TestDataArray::test_interp_reindex_like[int64-method_interp_like-coords] - uses scipy

XFAIL tests/test_units.py::TestDataset::test_interp_reindex_like[int64-method_interp_like-data] - uses scipy

XFAIL tests/test_units.py::TestDataset::test_interp_reindex[int64-method_interp-coords] - uses scipy

XFAIL tests/test_units.py::TestDataset::test_interp_reindex_like[int64-method_interp_like-coords] - uses scipy

XPASS tests/test_computation.py::test_cross[a6-b6-ae6-be6-cartesian--1-False]

XPASS tests/test_dataarray.py::TestDataArray::test_copy_coords[True-expected_orig0]

XPASS tests/test_dataarray.py::TestDataArray::test_to_dask_dataframe - dask-expr is broken

XPASS tests/test_dataset.py::TestDataset::test_copy_coords[True-expected_orig0]

XPASS tests/test_computation.py::test_cross[a5-b5-ae5-be5-cartesian--1-False]

XPASS tests/test_plot.py::TestImshow::test_dates_are_concise - Failing inside matplotlib. Should probably be fixed upstream because other plot functions can handle it. Remove this test when it works, already in Common2dMixin

XPASS tests/test_units.py::TestVariable::test_computation[int64-method_rolling_window-numbagg] - converts to ndarray

XPASS tests/test_units.py::TestVariable::test_computation[int64-method_rolling_window-None] - converts to ndarray

XPASS tests/test_units.py::TestVariable::test_computation[float64-method_rolling_window-numbagg] - converts to ndarray

XPASS tests/test_units.py::TestVariable::test_computation[float64-method_rolling_window-None] - converts to ndarray

XPASS tests/test_units.py::TestDataset::test_computation_objects[float64-coords-method_rolling] - strips units

XPASS tests/test_units.py::TestDataset::test_computation_objects[int64-coords-method_rolling] - strips units

XPASS tests/test_variable.py::TestVariableWithDask::test_pad[xr_arg0-np_arg0-reflect] - dask.array.pad bug

XPASS tests/test_variable.py::TestVariableWithDask::test_pad[xr_arg1-np_arg1-reflect] - dask.array.pad bug

FAILED tests/test_backends.py::TestNetCDF4ViaDaskData::test_roundtrip_mask_and_scale[dtype0-create_unsigned_false_masked_scaled_data-create_encoded_unsigned_false_masked_scaled_data]

FAILED tests/test_backends.py::TestNetCDF4Data::test_roundtrip_mask_and_scale[dtype0-create_unsigned_false_masked_scaled_data-create_encoded_unsigned_false_masked_scaled_data]

FAILED tests/test_backends.py::TestNetCDF4ViaDaskData::test_roundtrip_mask_and_scale[dtype1-create_unsigned_false_masked_scaled_data-create_encoded_unsigned_false_masked_scaled_data]

FAILED tests/test_backends.py::TestNetCDF4Data::test_roundtrip_mask_and_scale[dtype1-create_unsigned_false_masked_scaled_data-create_encoded_unsigned_false_masked_scaled_data]

```

### Anything else we need to know?

TestNetCDF4ViaDaskData::test_roundtrip_mask_and_scale

TestNetCDF4Data::test_roundtrip_mask_and_scale

### Environment

<details>

commit: 1bb867d573390509dbc0379f0fd318a6985dab45

python: 3.13.0 (main, Oct 8 2024, 00:00:00) [GCC 14.2.1 20240912 (Red Hat 14.2.1-3)]

python-bits: 64

OS: Linux

OS-release: 6.1.55

machine: riscv64

processor:

byteorder: little

LC_ALL: None

LANG: C.UTF-8

LOCALE: ('C', 'UTF-8')

libhdf5: 1.12.1

libnetcdf: 4.9.2

xarray: 2024.11.0

pandas: 2.2.1

numpy: 1.26.4

scipy: 1.11.3

netCDF4: 1.7.1

pydap: None

h5netcdf: None

h5py: None

zarr: 2.18.3

cftime: 1.6.4

nc_time_axis: None

iris: None

bottleneck: 1.3.7

dask: 2024.9.1

distributed: None

matplotlib: 3.9.1

cartopy: None

seaborn: 0.13.2

numbagg: None

fsspec: 2024.3.1

cupy: None

pint: 0.24.4

sparse: None

flox: None

numpy_groupies: None

setuptools: 69.2.0

pip: 24.2

conda: None

pytest: 8.3.3

mypy: None

IPython: None

sphinx: 7.3.7

</details>

| open | 2024-11-24T10:21:51Z | 2025-02-16T18:37:58Z | https://github.com/pydata/xarray/issues/9815 | [

"bug",

"needs triage"

] | U2FsdGVkX1 | 14 |

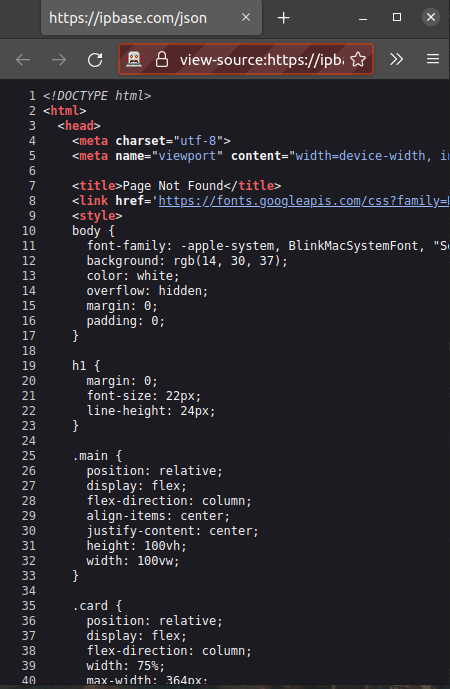

InstaPy/InstaPy | automation | 6,628 | Not working view-source:https://freegeoip.app/json |

## Expected Behavior

Log in

## Current Behavior

I'm trying to log in, but the request to get the IP no longer works. The request to the ipbase endpoint returns a 404.

## Possible Solution (optional)

Switch to another free IP geolocation API.

## InstaPy configuration

```

session = InstaPy(
    username=insta_username,
    password=insta_password,
    headless_browser=False,
)

```

| open | 2022-07-02T19:19:10Z | 2022-11-23T05:38:17Z | https://github.com/InstaPy/InstaPy/issues/6628 | [] | Arwiim | 2 |

flairNLP/flair | pytorch | 2,887 | issue loading MultiTagger (unpickling error) | I am using Hunflair and having below error while running it: Can anyone suggest me the next step?

Traceback (most recent call last):

File "hunflair.py", line 35, in <module>

hunflair = MultiTagger.load("hunflair")

File "/home/sgu260/.local/lib/python3.8/site-packages/flair/models/sequence_tagger_model.py", line 1079, in load

model = SequenceTagger.load(model_name)

File "/home/sgu260/.local/lib/python3.8/site-packages/flair/nn/model.py", line 142, in load

state = torch.load(f, map_location="cpu")

File "/home/sgu260/.local/lib/python3.8/site-packages/torch/serialization.py", line 713, in load

return _legacy_load(opened_file, map_location, pickle_module, **pickle_load_args)

File "/home/sgu260/.local/lib/python3.8/site-packages/torch/serialization.py", line 930, in _legacy_load

result = unpickler.load()

_pickle.UnpicklingError: pickle data was truncated

| closed | 2022-08-05T18:49:56Z | 2023-01-07T13:48:14Z | https://github.com/flairNLP/flair/issues/2887 | [

"question",

"wontfix"

] | shashank140195 | 1 |

huggingface/datasets | computer-vision | 7,357 | Python process aborted with GIL issue when using image dataset | ### Describe the bug

The issue is visible only with the latest `datasets==3.2.0`.

When using an image dataset, the Python process is aborted right before exit with the following error:

```

Fatal Python error: PyGILState_Release: thread state 0x7fa1f409ade0 must be current when releasing

Python runtime state: finalizing (tstate=0x0000000000ad2958)

Thread 0x00007fa33d157740 (most recent call first):

<no Python frame>

Extension modules: numpy.core._multiarray_umath, numpy.core._multiarray_tests, numpy.linalg._umath_linalg, numpy.fft._pocketfft_internal, numpy.random._common, numpy.random.bit_generator, numpy.random._boun

ded_integers, numpy.random._mt19937, numpy.random.mtrand, numpy.random._philox, numpy.random._pcg64, numpy.random._sfc64, numpy.random._generator, pyarrow.lib, pandas._libs.tslibs.ccalendar, pandas._libs.ts

libs.np_datetime, pandas._libs.tslibs.dtypes, pandas._libs.tslibs.base, pandas._libs.tslibs.nattype, pandas._libs.tslibs.timezones, pandas._libs.tslibs.fields, pandas._libs.tslibs.timedeltas, pandas._libs.t

slibs.tzconversion, pandas._libs.tslibs.timestamps, pandas._libs.properties, pandas._libs.tslibs.offsets, pandas._libs.tslibs.strptime, pandas._libs.tslibs.parsing, pandas._libs.tslibs.conversion, pandas._l

ibs.tslibs.period, pandas._libs.tslibs.vectorized, pandas._libs.ops_dispatch, pandas._libs.missing, pandas._libs.hashtable, pandas._libs.algos, pandas._libs.interval, pandas._libs.lib, pyarrow._compute, pan

das._libs.ops, pandas._libs.hashing, pandas._libs.arrays, pandas._libs.tslib, pandas._libs.sparse, pandas._libs.internals, pandas._libs.indexing, pandas._libs.index, pandas._libs.writers, pandas._libs.join,

pandas._libs.window.aggregations, pandas._libs.window.indexers, pandas._libs.reshape, pandas._libs.groupby, pandas._libs.json, pandas._libs.parsers, pandas._libs.testing, charset_normalizer.md, requests.pa

ckages.charset_normalizer.md, requests.packages.chardet.md, yaml._yaml, markupsafe._speedups, PIL._imaging, torch._C, torch._C._dynamo.autograd_compiler, torch._C._dynamo.eval_frame, torch._C._dynamo.guards

, torch._C._dynamo.utils, torch._C._fft, torch._C._linalg, torch._C._nested, torch._C._nn, torch._C._sparse, torch._C._special, sentencepiece._sentencepiece, sklearn.__check_build._check_build, psutil._psut

il_linux, psutil._psutil_posix, scipy._lib._ccallback_c, scipy.sparse._sparsetools, _csparsetools, scipy.sparse._csparsetools, scipy.linalg._fblas, scipy.linalg._flapack, scipy.linalg.cython_lapack, scipy.l

inalg._cythonized_array_utils, scipy.linalg._solve_toeplitz, scipy.linalg._decomp_lu_cython, scipy.linalg._matfuncs_sqrtm_triu, scipy.linalg.cython_blas, scipy.linalg._matfuncs_expm, scipy.linalg._decomp_up

date, scipy.sparse.linalg._dsolve._superlu, scipy.sparse.linalg._eigen.arpack._arpack, scipy.sparse.linalg._propack._spropack, scipy.sparse.linalg._propack._dpropack, scipy.sparse.linalg._propack._cpropack,

scipy.sparse.linalg._propack._zpropack, scipy.sparse.csgraph._tools, scipy.sparse.csgraph._shortest_path, scipy.sparse.csgraph._traversal, scipy.sparse.csgraph._min_spanning_tree, scipy.sparse.csgraph._flo

w, scipy.sparse.csgraph._matching, scipy.sparse.csgraph._reordering, scipy.special._ufuncs_cxx, scipy.special._ufuncs, scipy.special._specfun, scipy.special._comb, scipy.special._ellip_harm_2, scipy.spatial

._ckdtree, scipy._lib.messagestream, scipy.spatial._qhull, scipy.spatial._voronoi, scipy.spatial._distance_wrap, scipy.spatial._hausdorff, scipy.spatial.transform._rotation, scipy.optimize._group_columns, s

cipy.optimize._trlib._trlib, scipy.optimize._lbfgsb, _moduleTNC, scipy.optimize._moduleTNC, scipy.optimize._cobyla, scipy.optimize._slsqp, scipy.optimize._minpack, scipy.optimize._lsq.givens_elimination, sc

ipy.optimize._zeros, scipy.optimize._highs.cython.src._highs_wrapper, scipy.optimize._highs._highs_wrapper, scipy.optimize._highs.cython.src._highs_constants, scipy.optimize._highs._highs_constants, scipy.l

inalg._interpolative, scipy.optimize._bglu_dense, scipy.optimize._lsap, scipy.optimize._direct, scipy.integrate._odepack, scipy.integrate._quadpack, scipy.integrate._vode, scipy.integrate._dop, scipy.integr

ate._lsoda, scipy.interpolate._fitpack, scipy.interpolate._dfitpack, scipy.interpolate._bspl, scipy.interpolate._ppoly, scipy.interpolate.interpnd, scipy.interpolate._rbfinterp_pythran, scipy.interpolate._r

gi_cython, scipy.special.cython_special, scipy.stats._stats, scipy.stats._biasedurn, scipy.stats._levy_stable.levyst, scipy.stats._stats_pythran, scipy._lib._uarray._uarray, scipy.stats._ansari_swilk_statis

tics, scipy.stats._sobol, scipy.stats._qmc_cy, scipy.stats._mvn, scipy.stats._rcont.rcont, scipy.stats._unuran.unuran_wrapper, scipy.ndimage._nd_image, _ni_label, scipy.ndimage._ni_label, sklearn.utils._isf

inite, sklearn.utils.sparsefuncs_fast, sklearn.utils.murmurhash, sklearn.utils._openmp_helpers, sklearn.metrics.cluster._expected_mutual_info_fast, sklearn.preprocessing._csr_polynomial_expansion, sklearn.p

reprocessing._target_encoder_fast, sklearn.metrics._dist_metrics, sklearn.metrics._pairwise_distances_reduction._datasets_pair, sklearn.utils._cython_blas, sklearn.metrics._pairwise_distances_reduction._bas

e, sklearn.metrics._pairwise_distances_reduction._middle_term_computer, sklearn.utils._heap, sklearn.utils._sorting, sklearn.metrics._pairwise_distances_reduction._argkmin, sklearn.metrics._pairwise_distanc

es_reduction._argkmin_classmode, sklearn.utils._vector_sentinel, sklearn.metrics._pairwise_distances_reduction._radius_neighbors, sklearn.metrics._pairwise_distances_reduction._radius_neighbors_classmode, s

klearn.metrics._pairwise_fast, PIL._imagingft, google._upb._message, h5py._errors, h5py.defs, h5py._objects, h5py.h5, h5py.utils, h5py.h5t, h5py.h5s, h5py.h5ac, h5py.h5p, h5py.h5r, h5py._proxy, h5py._conv,

h5py.h5z, h5py.h5a, h5py.h5d, h5py.h5ds, h5py.h5g, h5py.h5i, h5py.h5o, h5py.h5f, h5py.h5fd, h5py.h5pl, h5py.h5l, h5py._selector, _cffi_backend, pyarrow._parquet, pyarrow._fs, pyarrow._azurefs, pyarrow._hdfs

, pyarrow._gcsfs, pyarrow._s3fs, multidict._multidict, propcache._helpers_c, yarl._quoting_c, aiohttp._helpers, aiohttp._http_writer, aiohttp._http_parser, aiohttp._websocket, frozenlist._frozenlist, xxhash

._xxhash, pyarrow._json, pyarrow._acero, pyarrow._csv, pyarrow._dataset, pyarrow._dataset_orc, pyarrow._parquet_encryption, pyarrow._dataset_parquet_encryption, pyarrow._dataset_parquet, regex._regex, scipy

.io.matlab._mio_utils, scipy.io.matlab._streams, scipy.io.matlab._mio5_utils, PIL._imagingmath, PIL._webp (total: 236)

Aborted (core dumped)

```

### Steps to reproduce the bug

Install `datasets==3.2.0`

Run the following script:

```python

import datasets

DATASET_NAME = "phiyodr/InpaintCOCO"
NUM_SAMPLES = 10


def preprocess_fn(example):
    return {
        "prompts": example["inpaint_caption"],
        "images": example["coco_image"],
        "masks": example["mask"],
    }


default_dataset = datasets.load_dataset(
    DATASET_NAME, split="test", streaming=True
).filter(lambda example: example["inpaint_caption"] != "").take(NUM_SAMPLES)

test_data = default_dataset.map(
    lambda x: preprocess_fn(x), remove_columns=default_dataset.column_names
)

for data in test_data:
    print(data["prompts"])

```

### Expected behavior

The script should not hang or crash.

### Environment info

- `datasets` version: 3.2.0

- Platform: Linux-5.15.0-50-generic-x86_64-with-glibc2.31

- Python version: 3.11.0

- `huggingface_hub` version: 0.25.1

- PyArrow version: 17.0.0

- Pandas version: 2.2.3

- `fsspec` version: 2024.2.0 | open | 2025-01-06T11:29:30Z | 2025-03-08T15:59:36Z | https://github.com/huggingface/datasets/issues/7357 | [] | AlexKoff88 | 1 |

mlfoundations/open_clip | computer-vision | 2 | Avenue for exploration - augmenting training set with colour palettes / texture names /more meta data | Part of the fun with CLIP is using it in conjunction with VQGAN.

This allows prompts to generate images.

Something is lost in this translation, though.

They say a picture is worth a thousand words, but what if some extra data were injected into the training?

This could be, say, textures, or maybe even geometric descriptions or metadata.

| closed | 2021-07-30T11:03:50Z | 2021-07-30T19:52:20Z | https://github.com/mlfoundations/open_clip/issues/2 | [] | johndpope | 1 |

SciTools/cartopy | matplotlib | 2,088 | cartopy crashes with 0.21.0 | Hi,

The simplest cartopy script crashes when cartopy 0.21.0 is installed with `pip install cartopy==0.21.0`:

```

import cartopy.crs as ccrs

import matplotlib.pyplot as plt

ax = plt.axes(projection=ccrs.PlateCarree())

ax.coastlines()

plt.show()

```

This produces a core dump.

The relevant installed packages are:

```

python 3.9.13

matplotlib 3.6.0

Cartopy 0.21.0

``` | closed | 2022-09-26T17:57:56Z | 2022-09-27T07:35:54Z | https://github.com/SciTools/cartopy/issues/2088 | [] | PBrockmann | 2 |

Lightning-AI/pytorch-lightning | pytorch | 20,276 | Lightning places model inputs and model on different devices | ### Bug description

In the following code snippet, `lmm` is an instance of a class that inherits from `nn.Module` and wraps a Hugging Face model and processor.

```

class ICVModel(pl.LightningModule):
    def __init__(self, lmm, icv_encoder: torch.nn.Module) -> None:
        super().__init__()
        self.lmm = lmm
        self.lmm.requires_grad_(False)
        self.icv_encoder = icv_encoder
        self.eos_token = self.lmm.processor.tokenizer.eos_token

    def forward(self, ice_texts, query_texts, answers, images):
        query_answer = [
            query + answer + self.eos_token
            for query, answer in zip(query_texts, answers)
        ]
        query_images = [img[-setting.num_image_in_query :] for img in images]
        query_inputs = self.lmm.process_input(query_answer, query_images)
        query_outputs = self.lmm.model(
            **query_inputs,
            labels=query_inputs["input_ids"],
        )

```

However, a device mismatch error is raised at

```python

query_outputs = self.lmm.model(
    **query_inputs,
    labels=query_inputs["input_ids"],
)

```

When I printed `inputs.pixel_values.device`, `self.device`, and `self.lmm.device` outside of `lmm.model.forward`, I got

```

rank[0]: cpu cuda:0 cuda:0

rank[1]: cpu cuda:1 cuda:1

```

Inside the Idefics (`self.lmm.model`) forward pass, when I printed `inputs.pixel_values.device` and `self.device`, I got

```

rank[0]: cuda:0 cuda:0

rank[1]: cuda:0 cuda:1

```

### What version are you seeing the problem on?

v2.4

### How to reproduce the bug

_No response_

### Error messages and logs

Full stack trace:

```

[rank1]: File "/home/jyc/ICLTestbed/dev/train.py", line 103, in <module>

[rank1]: main()

[rank1]: File "/home/jyc/ICLTestbed/dev/train.py", line 72, in main

[rank1]: trainer.fit(

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/trainer/trainer.py", line 538, in fit

[rank1]: call._call_and_handle_interrupt(

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/trainer/call.py", line 46, in _call_and_handle_interrupt

[rank1]: return trainer.strategy.launcher.launch(trainer_fn, *args, trainer=trainer, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/strategies/launchers/subprocess_script.py", line 105, in launch

[rank1]: return function(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/trainer/trainer.py", line 574, in _fit_impl

[rank1]: self._run(model, ckpt_path=ckpt_path)

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/trainer/trainer.py", line 981, in _run

[rank1]: results = self._run_stage()

[rank1]: ^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/trainer/trainer.py", line 1025, in _run_stage

[rank1]: self.fit_loop.run()

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/fit_loop.py", line 205, in run

[rank1]: self.advance()

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/fit_loop.py", line 363, in advance

[rank1]: self.epoch_loop.run(self._data_fetcher)

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/training_epoch_loop.py", line 140, in run

[rank1]: self.advance(data_fetcher)

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/training_epoch_loop.py", line 250, in advance

[rank1]: batch_output = self.automatic_optimization.run(trainer.optimizers[0], batch_idx, kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/optimization/automatic.py", line 190, in run

[rank1]: self._optimizer_step(batch_idx, closure)

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/optimization/automatic.py", line 268, in _optimizer_step

[rank1]: call._call_lightning_module_hook(

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/trainer/call.py", line 167, in _call_lightning_module_hook

[rank1]: output = fn(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/core/module.py", line 1306, in optimizer_step

[rank1]: optimizer.step(closure=optimizer_closure)

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/core/optimizer.py", line 153, in step

[rank1]: step_output = self._strategy.optimizer_step(self._optimizer, closure, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/strategies/ddp.py", line 270, in optimizer_step

[rank1]: optimizer_output = super().optimizer_step(optimizer, closure, model, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/strategies/strategy.py", line 238, in optimizer_step

[rank1]: return self.precision_plugin.optimizer_step(optimizer, model=model, closure=closure, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/plugins/precision/deepspeed.py", line 129, in optimizer_step

[rank1]: closure_result = closure()

[rank1]: ^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/optimization/automatic.py", line 144, in __call__

[rank1]: self._result = self.closure(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/utils/_contextlib.py", line 115, in decorate_context

[rank1]: return func(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/optimization/automatic.py", line 129, in closure

[rank1]: step_output = self._step_fn()

[rank1]: ^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/loops/optimization/automatic.py", line 317, in _training_step

[rank1]: training_step_output = call._call_strategy_hook(trainer, "training_step", *kwargs.values())

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/trainer/call.py", line 319, in _call_strategy_hook

[rank1]: output = fn(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/strategies/strategy.py", line 389, in training_step

[rank1]: return self._forward_redirection(self.model, self.lightning_module, "training_step", *args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/strategies/strategy.py", line 640, in __call__

[rank1]: wrapper_output = wrapper_module(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1532, in _wrapped_call_impl

[rank1]: return self._call_impl(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1541, in _call_impl

[rank1]: return forward_call(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/deepspeed/utils/nvtx.py", line 18, in wrapped_fn

[rank1]: ret_val = func(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/deepspeed/runtime/engine.py", line 1899, in forward

[rank1]: loss = self.module(*inputs, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1532, in _wrapped_call_impl

[rank1]: return self._call_impl(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1541, in _call_impl

[rank1]: return forward_call(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/pytorch_lightning/strategies/strategy.py", line 633, in wrapped_forward

[rank1]: out = method(*_args, **_kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/ICLTestbed/dev/icv_model.py", line 89, in training_step

[rank1]: loss_dict = self(**batch)

[rank1]: ^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1532, in _wrapped_call_impl

[rank1]: return self._call_impl(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1541, in _call_impl

[rank1]: return forward_call(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/ICLTestbed/dev/icv_model.py", line 42, in forward

[rank1]: query_outputs = self.lmm.model(

[rank1]: ^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1532, in _wrapped_call_impl

[rank1]: return self._call_impl(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1541, in _call_impl

[rank1]: return forward_call(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/accelerate/hooks.py", line 170, in new_forward

[rank1]: output = module._old_forward(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/transformers/models/idefics/modeling_idefics.py", line 1493, in forward

[rank1]: outputs = self.model(

[rank1]: ^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1532, in _wrapped_call_impl

[rank1]: return self._call_impl(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1541, in _call_impl

[rank1]: return forward_call(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/accelerate/hooks.py", line 170, in new_forward

[rank1]: output = module._old_forward(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/transformers/models/idefics/modeling_idefics.py", line 1181, in forward

[rank1]: image_hidden_states = self.vision_model(

[rank1]: ^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1532, in _wrapped_call_impl

[rank1]: return self._call_impl(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1541, in _call_impl

[rank1]: return forward_call(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/accelerate/hooks.py", line 170, in new_forward

[rank1]: output = module._old_forward(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/transformers/models/idefics/vision.py", line 467, in forward

[rank1]: hidden_states = self.embeddings(pixel_values, interpolate_pos_encoding=interpolate_pos_encoding)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1532, in _wrapped_call_impl

[rank1]: return self._call_impl(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1541, in _call_impl

[rank1]: return forward_call(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/accelerate/hooks.py", line 170, in new_forward

[rank1]: output = module._old_forward(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/transformers/models/idefics/vision.py", line 147, in forward

[rank1]: patch_embeds = self.patch_embedding(pixel_values.to(dtype=target_dtype)) # shape = [*, width, grid, grid]

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1532, in _wrapped_call_impl

[rank1]: return self._call_impl(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/module.py", line 1541, in _call_impl

[rank1]: return forward_call(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/accelerate/hooks.py", line 170, in new_forward

[rank1]: output = module._old_forward(*args, **kwargs)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/conv.py", line 460, in forward

[rank1]: return self._conv_forward(input, self.weight, self.bias)

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: File "/home/jyc/miniconda3/envs/icl/lib/python3.12/site-packages/torch/nn/modules/conv.py", line 456, in _conv_forward

[rank1]: return F.conv2d(input, weight, bias, self.stride,

[rank1]: ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[rank1]: RuntimeError: Expected all tensors to be on the same device, but found at least two devices, cuda:0 and cuda:1! (when checking argument for argument weight in method wrapper_CUDA__cudnn_convolution)

```

### Environment

_No response_

### More info

_No response_ | closed | 2024-09-12T13:27:39Z | 2025-02-13T06:46:52Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20276 | [

"bug",

"needs triage",

"ver: 2.4.x"

] | Kamichanw | 6 |

pallets/flask | flask | 4,553 | asserts with `pytest.raises` should be outside the `with` block | I've been using Flask's test suite to evaluate our [Slipcover](https://github.com/plasma-umass/slipcover) coverage tool and noticed likely bugs in the Flask tests. For example, in `tests/test_basic.py`, you have

```python

def test_response_type_errors():
    [...]

    with pytest.raises(TypeError) as e:
        c.get("/none")
        assert "returned None" in str(e.value)
        assert "from_none" in str(e.value)

    with pytest.raises(TypeError) as e:
        c.get("/small_tuple")
        assert "tuple must have the form" in str(e.value)

    [...]

    with pytest.raises(TypeError) as e:
        c.get("/bad_type")
        assert "it was a bool" in str(e.value)

```
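
For comparison, here is a minimal self-contained sketch of both placements. It assumes only that `pytest` is installed; `view_returning_none` and the two `check_*` functions are hypothetical stand-ins written for this illustration, not code from the Flask test suite. Statements that follow the raising call inside the `with` block are skipped, while statements placed after the block run and can inspect `e.value`.

```python
import pytest


def view_returning_none():
    # Hypothetical stand-in for a request that makes the framework raise.
    raise TypeError("The view function returned None.")


def check_inside_block():
    executed = []
    with pytest.raises(TypeError) as e:
        view_returning_none()
        # Never reached: the exception leaves the block at the line above.
        executed.append("inside")
    return executed


def check_after_block():
    executed = []
    with pytest.raises(TypeError) as e:
        view_returning_none()
    # Reached: the context manager has captured the exception in e.value.
    assert "returned None" in str(e.value)
    executed.append("after")
    return executed


print(check_inside_block())
print(check_after_block())
```

The first function returns an empty list because its bookkeeping line is silently skipped, which is the same reason the indented assertions in the test above never run.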

You probably don't want the `assert` statements checking `e` indented, as they won't execute when the exception is thrown. | closed | 2022-04-27T15:30:57Z | 2022-05-14T00:07:22Z | https://github.com/pallets/flask/issues/4553 | [

"testing"

] | jaltmayerpizzorno | 2 |

microsoft/unilm | nlp | 1,224 | Doubts about the MARIO-LAION dataset | **Describe**

Model I am using TextDiffuser:

I found that there are some index numbers starting with "50001" in the MARIO-LAION dataset, but I did not find the corresponding subfolder in the meta information (40G) file.

| open | 2023-07-26T06:55:16Z | 2024-02-03T16:02:57Z | https://github.com/microsoft/unilm/issues/1224 | [] | scutyuanzhi | 3 |

ultralytics/yolov5 | machine-learning | 13,144 | How to increase FPS camera capture inside the Raspberry Pi 4B 8GB with best.onnx model | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and found no similar bug report.

### YOLOv5 Component

Detection

### Bug

Hi, I am currently building traffic sign detection and recognition with YOLOv5 (PyTorch) using the YOLOv5s model. I run the model with the `detect.py` file and get only 1 FPS. The dataset contains around 2K images, and I trained for 200 epochs. I run the code with:

python detect.py --weights best.onnx --img 640 --conf 0.7 --source 0

Is there any modification to the code that would let me get more than 4 FPS?

### Environment

-Raspberry Pi 4B with 8GB Ram

-Webcam

-Model best.onnx

-Train using Yolov5 Pytorch

### Minimal Reproducible Example

_No response_

### Additional

_No response_

### Are you willing to submit a PR?

- [X] Yes I'd like to help by submitting a PR! | open | 2024-06-27T21:16:08Z | 2024-10-20T19:49:02Z | https://github.com/ultralytics/yolov5/issues/13144 | [

"bug",

"Stale"

] | Killuagg | 13 |

ageitgey/face_recognition | python | 1,312 | Details of face_encodings() function | * face_recognition version:1.3.0

* Python version:3.x

* Operating System:linux

### Description

This is not really an issue, but rather an attempt to understand the details of the `face_encodings()` function.

### What I Did

In the examples in the Markdown file, you always take the very first of the multiple lists returned. What does this function actually return, and why do you always take the first list for comparison?

| closed | 2021-05-12T17:47:29Z | 2021-05-22T19:25:09Z | https://github.com/ageitgey/face_recognition/issues/1312 | [] | AI-07 | 1 |

allenai/allennlp | pytorch | 4,971 | Failed to import WordTokenizer | Hello, I'm using Allennlp 1.4.0 and Allen-model 1.4.0 in my project. I ran the command `allennlp serve --archive-path <path> --predictor <predictor> --include-package <package_name>` and got the error `Failed to import WordTokenizer from allennlp.data.tokenizers.word_tokenizer`.

I've looked for WordTokenizer in every version from 0.9.0 to the latest one. Only version 0.9.0 contains WordTokenizer. Is there a specific reason for removing WordTokenizer? Or was it moved or changed to another method?

I also tried v0.9.0, but when I run `python -m allennlp.service.server_simple`, I get another error: `/usr/bin/python: No module named allennlp.service`

It would be appreciated if someone could offer some advice.

| closed | 2021-02-11T13:30:16Z | 2021-02-26T20:17:15Z | https://github.com/allenai/allennlp/issues/4971 | [

"question"

] | yanchao-yu | 3 |

piccolo-orm/piccolo | fastapi | 221 | Naming of columns | ### Discussed in https://github.com/piccolo-orm/piccolo/discussions/206

Allow ``Table`` columns to map to database columns with different names.

For example:

```python

class MyTable(Table):

name = Varchar(name="person_name")

```

In the example above, when doing queries, you will use `MyTable.name`, but the actual column in the database is called `person_name`.

This is useful when using Piccolo on legacy databases with non-ideal column names.
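
To make the idea concrete, here is a toy sketch in plain Python of how an attribute name could be decoupled from the underlying database column name. It is not Piccolo's implementation or API: `Column`, `db_name`, and `build_select` are hypothetical names invented for this illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Column:
    attr_name: str                 # name used in Python code, e.g. "name"
    db_name: Optional[str] = None  # name in the database, if it differs

    @property
    def column_name(self) -> str:
        # Fall back to the attribute name when no override is given.
        return self.db_name or self.attr_name


def build_select(table: str, columns: List[Column]) -> str:
    parts = []
    for col in columns:
        if col.column_name == col.attr_name:
            parts.append(f'"{col.column_name}"')
        else:
            # Alias the legacy column back to the Python-facing name.
            parts.append(f'"{col.column_name}" AS "{col.attr_name}"')
    return f'SELECT {", ".join(parts)} FROM "{table}"'


print(build_select("my_table", [Column("id"), Column("name", "person_name")]))
```

Aliasing in the generated SQL keeps query results keyed by the Python-facing name, so calling code would not need to know the legacy column name.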

| closed | 2021-09-08T09:31:14Z | 2021-10-05T20:10:21Z | https://github.com/piccolo-orm/piccolo/issues/221 | [

"enhancement"

] | dantownsend | 7 |

matterport/Mask_RCNN | tensorflow | 2,906 | Training balloon.py ValueError: Expected a symbolic Tensors or a callable for the loss value. Please wrap your loss computation in a zero argument `lambda`. | python==3.7.15

tensorflow==2.10.0

keras==2.10.0

".\Mask_RCNN\mrcnn\model.py"

```

# Add L2 Regularization
# Skip gamma and beta weights of batch normalization layers.
reg_losses = [keras.regularizers.l2(self.config.WEIGHT_DECAY)(w) / tf.cast(tf.size(w), tf.float32)
              for w in self.keras_model.trainable_weights
              if 'gamma' not in w.name and 'beta' not in w.name]
print(tf.add_n(reg_losses))
self.keras_model.add_loss(tf.add_n(reg_losses))

```

The following error occurs:

```

  File ".\Mask_RCNN\mrcnn\model.py", line 2107, in compile
    self.keras_model.add_loss(tf.add_n(reg_losses))
  File ".\anaconda3\envs\MaskRCNN\lib\site-packages\keras\engine\base_layer.py", line 1488, in add_loss
    "Expected a symbolic Tensors or a callable for the loss value. "
ValueError: Expected a symbolic Tensors or a callable for the loss value. Please wrap your loss computation in a zero argument `lambda`.

```

Could anyone please help? | open | 2022-11-14T23:42:10Z | 2023-02-14T04:09:32Z | https://github.com/matterport/Mask_RCNN/issues/2906 | [] | sanso62 | 2 |

AUTOMATIC1111/stable-diffusion-webui | pytorch | 16,130 | [Bug]: weird>launch.py: error: unrecognized arguments: --lora-dir | ### Checklist

- [ ] The issue exists after disabling all extensions

- [X] The issue exists on a clean installation of webui

- [ ] The issue is caused by an extension, but I believe it is caused by a bug in the webui

- [X] The issue exists in the current version of the webui

- [x] The issue has not been reported before recently

- [ ] The issue has been reported before but has not been fixed yet

### What happened?

When running webui.bat, it throws:

launch.py: error: unrecognized arguments: --lora-dir

### Steps to reproduce the problem

1. Install a fresh webui with git.

2. Run webui-user and wait.

3. Run webui.bat.

### What should have happened?

It should not throw `launch.py: error: unrecognized arguments: --lora-dir`.

### What browsers do you use to access the UI ?

Mozilla Firefox

### Sysinfo

Windows 11, Python 3.11

### Console logs

```Shell

no module 'xformers'. Processing without...

usage: launch.py [-h] [--update-all-extensions] [--skip-python-version-check] [--skip-torch-cuda-test] [--reinstall-xformers] [--reinstall-torch] [--update-check] [--test-server]

[--log-startup] [--skip-prepare-environment] [--skip-install] [--dump-sysinfo] [--loglevel LOGLEVEL] [--do-not-download-clip] [--data-dir DATA_DIR] [--config CONFIG]

[--ckpt CKPT] [--ckpt-dir CKPT_DIR] [--vae-dir VAE_DIR] [--gfpgan-dir GFPGAN_DIR] [--gfpgan-model GFPGAN_MODEL] [--no-half] [--no-half-vae] [--no-progressbar-hiding]

[--max-batch-count MAX_BATCH_COUNT] [--embeddings-dir EMBEDDINGS_DIR] [--textual-inversion-templates-dir TEXTUAL_INVERSION_TEMPLATES_DIR]

[--hypernetwork-dir HYPERNETWORK_DIR] [--localizations-dir LOCALIZATIONS_DIR] [--allow-code] [--medvram] [--medvram-sdxl] [--lowvram] [--lowram]

[--always-batch-cond-uncond] [--unload-gfpgan] [--precision {full,autocast}] [--upcast-sampling] [--share] [--ngrok NGROK] [--ngrok-region NGROK_REGION]

[--ngrok-options NGROK_OPTIONS] [--enable-insecure-extension-access] [--codeformer-models-path CODEFORMER_MODELS_PATH] [--gfpgan-models-path GFPGAN_MODELS_PATH]

[--esrgan-models-path ESRGAN_MODELS_PATH] [--bsrgan-models-path BSRGAN_MODELS_PATH] [--realesrgan-models-path REALESRGAN_MODELS_PATH] [--dat-models-path DAT_MODELS_PATH]

[--clip-models-path CLIP_MODELS_PATH] [--xformers] [--force-enable-xformers] [--xformers-flash-attention] [--deepdanbooru] [--opt-split-attention]

[--opt-sub-quad-attention] [--sub-quad-q-chunk-size SUB_QUAD_Q_CHUNK_SIZE] [--sub-quad-kv-chunk-size SUB_QUAD_KV_CHUNK_SIZE]

[--sub-quad-chunk-threshold SUB_QUAD_CHUNK_THRESHOLD] [--opt-split-attention-invokeai] [--opt-split-attention-v1] [--opt-sdp-attention] [--opt-sdp-no-mem-attention]

[--disable-opt-split-attention] [--disable-nan-check] [--use-cpu USE_CPU [USE_CPU ...]] [--use-ipex] [--disable-model-loading-ram-optimization] [--listen] [--port PORT]

[--show-negative-prompt] [--ui-config-file UI_CONFIG_FILE] [--hide-ui-dir-config] [--freeze-settings] [--freeze-settings-in-sections FREEZE_SETTINGS_IN_SECTIONS]

[--freeze-specific-settings FREEZE_SPECIFIC_SETTINGS] [--ui-settings-file UI_SETTINGS_FILE] [--gradio-debug] [--gradio-auth GRADIO_AUTH]

[--gradio-auth-path GRADIO_AUTH_PATH] [--gradio-img2img-tool GRADIO_IMG2IMG_TOOL] [--gradio-inpaint-tool GRADIO_INPAINT_TOOL] [--gradio-allowed-path GRADIO_ALLOWED_PATH]

[--opt-channelslast] [--styles-file STYLES_FILE] [--autolaunch] [--theme THEME] [--use-textbox-seed] [--disable-console-progressbars] [--enable-console-prompts]

[--vae-path VAE_PATH] [--disable-safe-unpickle] [--api] [--api-auth API_AUTH] [--api-log] [--nowebui] [--ui-debug-mode] [--device-id DEVICE_ID] [--administrator]

[--cors-allow-origins CORS_ALLOW_ORIGINS] [--cors-allow-origins-regex CORS_ALLOW_ORIGINS_REGEX] [--tls-keyfile TLS_KEYFILE] [--tls-certfile TLS_CERTFILE]

[--disable-tls-verify] [--server-name SERVER_NAME] [--gradio-queue] [--no-gradio-queue] [--skip-version-check] [--no-hashing] [--no-download-sd-model] [--subpath SUBPATH]

[--add-stop-route] [--api-server-stop] [--timeout-keep-alive TIMEOUT_KEEP_ALIVE] [--disable-all-extensions] [--disable-extra-extensions] [--skip-load-model-at-start]

[--unix-filenames-sanitization] [--filenames-max-length FILENAMES_MAX_LENGTH] [--no-prompt-history] [--ldsr-models-path LDSR_MODELS_PATH] [--lora-dir LORA_DIR]

[--lyco-dir-backcompat LYCO_DIR_BACKCOMPAT] [--scunet-models-path SCUNET_MODELS_PATH] [--swinir-models-path SWINIR_MODELS_PATH]

launch.py: error: unrecognized arguments: --lora-dir D

```

### Additional information

Not sure, but I downloaded from source using git. | closed | 2024-07-02T15:44:24Z | 2024-07-06T13:49:17Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/16130 | [

"bug-report"

] | hgftrdw45ud67is8o89 | 2 |

Nike-Inc/koheesio | pydantic | 166 | [BUG] Snowflake sync task for insert Change Data Feed is not working in case of existing row in snowflake already | ## Describe the bug

The issue is in the line https://github.com/Nike-Inc/koheesio/blob/720da7a12d4b8b49de4367bf051007175c2af5cb/src/koheesio/integrations/spark/snowflake.py#L973, which causes the update to be skipped when the `change_type` is 'insert' and the row is already present in Snowflake (for example, after a delete followed by an insert of the same row on the delta side).

## Steps to Reproduce

1. Perform a delete operation on a row in the delta table.

2. Perform an insert operation on the same row in the delta table.

3. Run the synchronization process to merge the delta table with the Snowflake table.

4. Observe that the insert operation is skipped if the row already exists in Snowflake.

## Expected behavior

The insert operation should be performed even if the row already exists in Snowflake, ensuring that the latest data is correctly synchronized.
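The difference between the current and the expected behavior can be illustrated with a minimal sketch in plain Python rather than Snowflake SQL. Everything here is hypothetical: the `merge` helper, the `id` and `value` fields, and the assumption that only the latest change per key reaches the merge step are stand-ins for illustration, not koheesio's actual implementation. The only detail taken from this report is that matched rows are updated solely for the `update_postimage` change type.

```python
def merge(target, changes, update_types):
    """Apply change-feed rows to `target`, a dict keyed by row id (hypothetical helper)."""
    for change in changes:
        key, kind = change["id"], change["_change_type"]
        if key in target:
            if kind == "delete":
                del target[key]
            elif kind in update_types:
                target[key] = change["value"]
            # Any other change type on a matched row is silently skipped.
        elif kind != "delete":
            target[key] = change["value"]
    return target


# Row 1 already exists in the target. The change feed reports it as an
# "insert" because it was deleted and re-inserted on the delta side.
changes = [{"id": 1, "value": "new", "_change_type": "insert"}]

# Matched rows updated only for "update_postimage": the insert is skipped.
skipped = merge({1: "old"}, changes, update_types={"update_postimage"})

# Matched rows also updated for "insert": the latest value is synchronized.
synced = merge({1: "old"}, changes, update_types={"update_postimage", "insert"})

print(skipped)
print(synced)
```

In this sketch, widening the set of change types that update a matched row is one possible way to obtain the expected result; whether that is the right fix for the real merge query is left open.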

## Additional context

The issue occurs because the merge query only updates rows when `temp._change_type` is 'update_postimage'. This condition does not account for rows that have been deleted and then inserted again in the delta table. | open | 2025-02-06T23:20:12Z | 2025-02-26T13:34:57Z | https://github.com/Nike-Inc/koheesio/issues/166 | [

"bug"

] | mikita-sakalouski | 0 |

streamlit/streamlit | machine-learning | 10,648 | adding the help parameter to a (Button?) widget pads it weirdly instead of staying to the left. | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [x] I added a very descriptive title to this issue.

- [x] I have provided sufficient information below to help reproduce this issue.

### Summary

```python

st.page_link('sub_pages/resources/lesson_plans.py', label='Lesson Plans', icon=':material/docs:', help='View and download lesson plans')

```

## Unexpected Outcome

---

## Expected

### Reproducible Code Example

```Python

import streamlit as st

st.page_link('sub_pages/resources/lesson_plans.py', label='Lesson Plans', icon=':material/docs:', help='View and download lesson plans')

```

### Steps To Reproduce

1. Add help to a button widget

### Expected Behavior

Button keeps help text and is on the left

### Current Behavior

Button keeps help text but is on the right

### Is this a regression?

- [x] Yes, this used to work in a previous version.

### Debug info

- Streamlit version: 1.43.0

- Python version: 3.12.2

- Operating System: Windows 11

- Browser: Chrome

### Additional Information

> Yes, this used to work in a previous version.

1.42.0 works | closed | 2025-03-05T09:56:06Z | 2025-03-07T21:21:05Z | https://github.com/streamlit/streamlit/issues/10648 | [

"type:bug",

"status:confirmed",

"priority:P1",

"feature:st.download_button",

"feature:st.button",

"feature:st.link_button",

"feature:st.page_link"

] | thehamish555 | 2 |

PaddlePaddle/models | nlp | 4,803 | What format should a custom dataset for DCGAN be in? | open | 2020-08-16T11:52:38Z | 2024-02-26T05:10:28Z | https://github.com/PaddlePaddle/models/issues/4803 | [] | xiaolifeimianbao | 3 | |

ultralytics/ultralytics | pytorch | 19,553 | Build a dataloader without training | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

Hi. I would like to examine data in a train dataloader. How can I build one without starting training? I plan to train a detection model. Here is my attempt:

``` python

from ultralytics.models.yolo.detect import DetectionTrainer

import os

# Define paths

DATA_YAML = f"{os.environ['DATASETS']}/drone_tiny/data.yaml" # Path to dataset YAML file

WEIGHTS_PATH = f"{os.environ['WEIGHTS']}/yolo11n.pt" # Path to local weights file

SAVE_IMAGES_DIR = f"{os.environ['PROJECT_ROOT']}/saved_images"

# Ensure save directory exists

os.makedirs(SAVE_IMAGES_DIR, exist_ok=True)

# Create the trainer from overrides (training is not started here)

trainer = DetectionTrainer(

    overrides=dict(

        data=DATA_YAML

    )

)

train_data, test_data = trainer.get_dataset()

dataloader = trainer.get_dataloader(train_data)

```

But this fails with the following error:

```bash

Ultralytics 8.3.77 🚀 Python-3.10.12 torch-2.3.1+cu121 CUDA:0 (NVIDIA GeForce GTX 1080 Ti, 11169MiB)

engine/trainer: task=detect, mode=train, model=None, data=/home/daniel/drone_detection/datasets/drone_tiny/data.yaml, epochs=100, time=None, patience=100, batch=16, imgsz=640, save=True, save_period=-1, cache=False, device=None, workers=8, project=None, name=train9, exist_ok=False, pretrained=True, optimizer=auto, verbose=True, seed=0, deterministic=True, single_cls=False, rect=False, cos_lr=False, close_mosaic=10, resume=False, amp=True, fraction=1.0, profile=False, freeze=None, multi_scale=False, overlap_mask=True, mask_ratio=4, dropout=0.0, val=True, split=val, save_json=False, save_hybrid=False, conf=None, iou=0.7, max_det=300, half=False, dnn=False, plots=True, source=None, vid_stride=1, stream_buffer=False, visualize=False, augment=False, agnostic_nms=False, classes=None, retina_masks=False, embed=None, show=False, save_frames=False, save_txt=False, save_conf=False, save_crop=False, show_labels=True, show_conf=True, show_boxes=True, line_width=None, format=torchscript, keras=False, optimize=False, int8=False, dynamic=False, simplify=True, opset=None, workspace=None, nms=False, lr0=0.01, lrf=0.01, momentum=0.937, weight_decay=0.0005, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=7.5, cls=0.5, dfl=1.5, pose=12.0, kobj=1.0, nbs=64, hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, degrees=0.0, translate=0.1, scale=0.5, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, bgr=0.0, mosaic=1.0, mixup=0.0, copy_paste=0.0, copy_paste_mode=flip, auto_augment=randaugment, erasing=0.4, crop_fraction=1.0, cfg=None, tracker=botsort.yaml, save_dir=/home/daniel/drone_detection/runs/detect/train9

train: Scanning /home/daniel/drone_detection/datasets/drone_tiny/train/labels.cache... 14180 images, 5 backgrounds, 304 corrupt: 100%|██████████| 14180/14180 [00:00<?, ?it/s]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0080.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.0372]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0085.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.0828 1.0406]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0090.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.1289 1.1016]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0160.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.0086]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0165.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.0617]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0170.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.1147]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0175.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.1697]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0180.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.2278]

train: WARNING ⚠️ /home/daniel/drone_detection/datasets/drone_tiny/train/images/V_BIRD_008_0185.png: ignoring corrupt image/label: non-normalized or out of bounds coordinates [ 1.2805]