repo_name (string, length 9 to 75) | topic (string, 30 classes) | issue_number (int64, 1 to 203k) | title (string, length 1 to 976) | body (string, length 0 to 254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, length 38 to 105) | labels (list, length 0 to 9) | user_login (string, length 1 to 39) | comments_count (int64, 0 to 452) |

|---|---|---|---|---|---|---|---|---|---|---|---|

Gurobi/gurobi-logtools | plotly | 17 | Cut name pattern too restrictive | When extracting statistics about generated cuts, the pattern for matching cut names is too restrictive. It does not match `Relax-and-lift` which is generated for `912-glass4-0.log`. I believe the hyphen is not included in the `[\w ]` pattern for the cut name. | closed | 2022-01-11T08:03:21Z | 2022-01-12T12:48:46Z | https://github.com/Gurobi/gurobi-logtools/issues/17 | [] | ronaldvdv | 1 |
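
The restriction described in the gurobi-logtools issue above can be reproduced with a small sketch. The log-line shape (`  Name: count`) and both pattern names are assumptions for illustration; they are not the patterns used in gurobi-logtools itself.

```python
import re

# Assumed shape of a cut-statistics line: leading spaces, a name, ": ", a count.
# NARROW_CUT mirrors the `[\w ]` character class mentioned in the issue.
NARROW_CUT = re.compile(r"^\s*(?P<name>[\w ]+): (?P<count>\d+)$")

# WIDE_CUT adds a literal hyphen to the class (placed last so it is not a range).
WIDE_CUT = re.compile(r"^\s*(?P<name>[\w -]+): (?P<count>\d+)$")


def parse_cut(line, pattern):
    """Return (name, count) if the line matches, otherwise None."""
    match = pattern.match(line)
    if match is None:
        return None
    return match.group("name"), int(match.group("count"))
```

With these hypothetical patterns, a hyphenated name such as `Relax-and-lift` is only captured once the hyphen is part of the character class.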

openapi-generators/openapi-python-client | fastapi | 681 | field required (type=value_error.missing) | Hi,

I'm sure this is related to my project, not to the openapi-python-client, but needed some help.

When running the app, I get the following errors:

```

Error(s) encountered while generating, client was not created

Failed to parse OpenAPI document

50 validation errors for OpenAPI

paths -> /auth/login -> post -> requestBody -> content -> application/x-www-form-urlencoded -> schema -> $ref

field required (type=value_error.missing)

paths -> /auth/login -> post -> requestBody -> content -> application/x-www-form-urlencoded -> schema -> properties -> user -> $ref

field required (type=value_error.missing)

paths -> /auth/login -> post -> requestBody -> content -> application/x-www-form-urlencoded -> schema -> properties -> user -> required

value is not a valid list (type=type_error.list)

paths -> /auth/login -> post -> requestBody -> content -> application/x-www-form-urlencoded -> schema -> properties -> password -> $ref

field required (type=value_error.missing)

paths -> /auth/login -> post -> requestBody -> content -> application/x-www-form-urlencoded -> schema -> properties -> password -> required

....

```

And here's some of api.yaml file that I'm using:

```
paths:
  /auth/login:
    post:
      tags:
        - Auth
      summary: Authenticate with API
      description: Authenticate with API getting bearer token
      requestBody:
        description: Request values
        required: true
        content:
          application/x-www-form-urlencoded:
            schema:
              type: object
              properties:
                user:
                  description: API user name
                  type: string
                  required: true
                password:
                  description: API password
                  type: string
                  required: true
              required:
                - user
                - password
```

Any thoughts on what's wrong? | closed | 2022-10-04T22:03:24Z | 2024-09-04T00:36:49Z | https://github.com/openapi-generators/openapi-python-client/issues/681 | [

"🐞bug"

] | cristiang777 | 7 |
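
A possible reading of the validation errors in the openapi-python-client issue above: in an OpenAPI 3 Schema Object, `required` is a list of property names on the object, so a boolean `required: true` inside an individual property fails with "value is not a valid list". The sketch below works on a plain dict that mirrors the schema from the issue; the helper name `normalize_required` is hypothetical and is not part of openapi-python-client.

```python
import copy


def normalize_required(schema):
    """Move boolean per-property `required` flags into the object-level list.

    Returns a new dict; the input schema is left untouched.
    """
    schema = copy.deepcopy(schema)
    required = list(schema.get("required", []))
    for name, prop in schema.get("properties", {}).items():
        if isinstance(prop.get("required"), bool):
            # Drop the invalid boolean and remember the property if it was true.
            if prop.pop("required") and name not in required:
                required.append(name)
    if required:
        schema["required"] = required
    return schema


login_schema = {
    "type": "object",
    "properties": {
        "user": {"description": "API user name", "type": "string", "required": True},
        "password": {"description": "API password", "type": "string", "required": True},
    },
    "required": ["user", "password"],
}

fixed_schema = normalize_required(login_schema)
```

After this step the properties carry only `description` and `type`, and the object-level `required` list is unchanged.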

suitenumerique/docs | django | 403 | ⚗️AI return with complex data | ## Improvement

### Problem:

When we query the AI, we convert the editor data to **markdown**. This works fine when the data has a simple structure, but with complex structures such as tables, the data returned by the AI becomes very lossy.

### Tests:

- Try sending the JSON structure instead and see whether the AI is smart enough to perform the actions without damaging the JSON structure.

- Another solution, probably better: send only the text content of the BlockNote JSON to the AI, bind each piece of text to an ID (it may already be bound to one), then use this ID to replace the text in the JSON. This way, we keep the complex structure on the frontend and replace only the text.

## Code to improve

https://github.com/numerique-gouv/impress/blob/50891afd055b5dada1d34e57ab447638865410af/src/frontend/apps/impress/src/features/docs/doc-editor/components/AIButton.tsx#L284-L305

| closed | 2024-11-06T06:15:29Z | 2025-03-13T15:38:35Z | https://github.com/suitenumerique/docs/issues/403 | [

"bug",

"enhancement",

"frontend",

"backend",

"editor"

] | AntoLC | 0 |

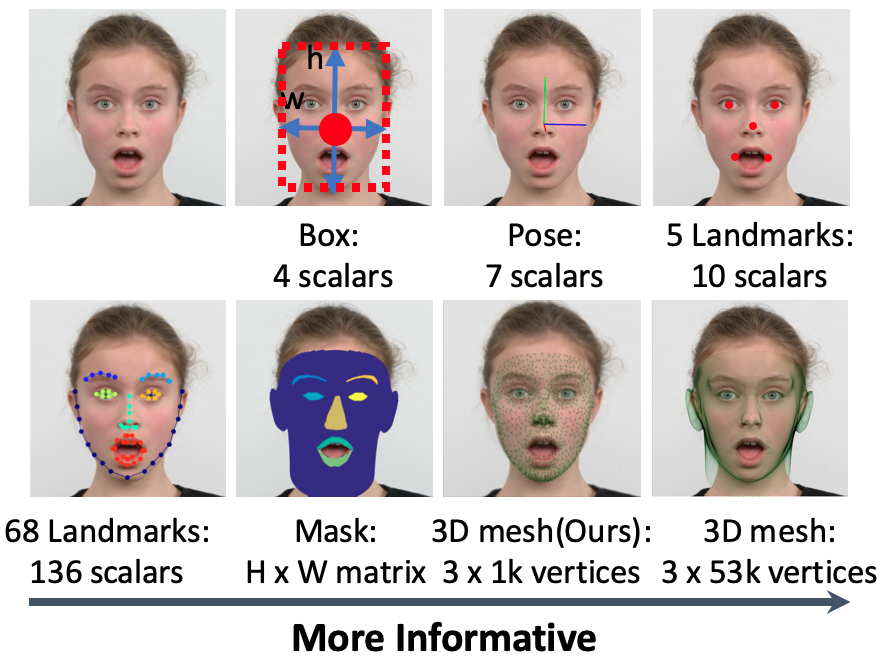

deepinsight/insightface | pytorch | 1,793 | How to 3D visualize face alignments? | **Hi**

Is there an example of how to visualize face alignments in 3D, or even 2D, such as the 3D mesh and the 2D 68 landmarks in this photo?

Is `face.normed_embedding` from FaceAnalysis the 3D alignment?

Please explain how to get the alignment data and how to save it as an image.

Thanks in advance

[https://insightface.ai/assets/img/custom/thumb_retinaface.png](https://insightface.ai/assets/img/custom/thumb_retinaface.png)

| open | 2021-10-19T09:08:36Z | 2021-10-21T11:28:15Z | https://github.com/deepinsight/insightface/issues/1793 | [] | zerodwide | 3 |

jonaswinkler/paperless-ng | django | 495 | [BUG] Doesn't remember PDF-viewer setting | Hey, thanks for introducing the setting to switch back to the built-in embed pdf-viewer.

However, the system does not remember the setting. If I close the session, it is set back to default.

I am using two containers with cookie-prefix, if that is an information you may need.

Thank you :) | closed | 2021-02-02T15:23:49Z | 2021-02-02T16:05:23Z | https://github.com/jonaswinkler/paperless-ng/issues/495 | [] | praul | 3 |

tableau/server-client-python | rest-api | 1,377 | Schedules REST API is encountering an issue with responses: interval details for 'Hourly' and 'Daily' schedules are missing. This information, vital for accurate task execution, is currently unavailable. Are you aware of this issue? | Missing interval details specifically days of the week to execute schedules in API responses for 'Daily' and 'Hourly' schedules, crucial for task configuration and execution. 'Monthly' and 'Weekly' schedules retrieving necessary details.

Requesting assistance in schedule API responses to include the missing interval details, particularly the days of the week for 'Daily' and 'Hourly' schedules. Your insights and suggestions on how best to integrate this information into our API responses would be greatly appreciated.

Attached to this message is a PDF containing the current API responses for reference.

[Schedule Frequencies.pdf](https://github.com/tableau/server-client-python/files/15398196/Schedule.Frequencies.pdf)

| closed | 2024-05-22T05:05:22Z | 2024-08-21T23:37:15Z | https://github.com/tableau/server-client-python/issues/1377 | [

"help wanted",

"fixed"

] | Hiraltailor1 | 3 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 351 | Clone | closed | 2020-05-26T09:17:56Z | 2020-06-25T07:42:55Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/351 | [] | syrakusmrdrte | 0 | |

Tinche/aiofiles | asyncio | 40 | memory leak? | Hi Tinche

First of all thanks for the great work! Asynchronous file support for asyncio is a great thing to have!

While testing a small project, I noticed a large number of threads and high memory consumption in the Python process. I decided to write a small test script which just writes to a file in a loop and tracks the memory:

```python
#!/usr/bin/python3
import asyncio
import aiofiles
import os
import psutil


async def printMemory():
    for iteration in range(0, 20):
        # grab the memory statistics
        p = psutil.Process(os.getpid())
        vms = p.memory_info().vms / (1024.0*1024.0)
        threads = p.num_threads()
        print(f'Iteration {iteration:>2d} - Memory usage (VMS): {vms:>6.1f} Mb; # threads: {threads:>2d}')

        # simple write to a test file
        async with aiofiles.open('test.txt', mode='w') as f:
            await f.write('hello\n')

        # a wait, just for the sake of it
        await asyncio.sleep(1)


loop = asyncio.get_event_loop()
try:
    loop.run_until_complete(printMemory())
finally:
    loop.close()
```

The output shows some worrisome numbers (run with Python 3.6.5 on Debian 8.10 (jessie)):

```

Iteration 0 - Memory usage (VMS): 92.5 Mb; # threads: 1

Iteration 1 - Memory usage (VMS): 308.5 Mb; # threads: 4

Iteration 2 - Memory usage (VMS): 524.6 Mb; # threads: 7

Iteration 3 - Memory usage (VMS): 740.6 Mb; # threads: 10

Iteration 4 - Memory usage (VMS): 956.6 Mb; # threads: 13

Iteration 5 - Memory usage (VMS): 1172.6 Mb; # threads: 16

Iteration 6 - Memory usage (VMS): 1388.7 Mb; # threads: 19

Iteration 7 - Memory usage (VMS): 1604.7 Mb; # threads: 22

Iteration 8 - Memory usage (VMS): 1820.8 Mb; # threads: 25

Iteration 9 - Memory usage (VMS): 2036.8 Mb; # threads: 28

Iteration 10 - Memory usage (VMS): 2252.8 Mb; # threads: 31

Iteration 11 - Memory usage (VMS): 2468.8 Mb; # threads: 34

Iteration 12 - Memory usage (VMS): 2684.8 Mb; # threads: 37

Iteration 13 - Memory usage (VMS): 2900.8 Mb; # threads: 40

Iteration 14 - Memory usage (VMS): 2972.8 Mb; # threads: 41

Iteration 15 - Memory usage (VMS): 2972.8 Mb; # threads: 41

Iteration 16 - Memory usage (VMS): 2972.8 Mb; # threads: 41

Iteration 17 - Memory usage (VMS): 2972.8 Mb; # threads: 41

Iteration 18 - Memory usage (VMS): 2972.8 Mb; # threads: 41

Iteration 19 - Memory usage (VMS): 2972.8 Mb; # threads: 41

```

Any idea where this could come from?

| open | 2018-05-02T05:24:53Z | 2018-05-02T21:41:36Z | https://github.com/Tinche/aiofiles/issues/40 | [] | alexlocher | 1 |
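
One plausible reading of the numbers in the aiofiles issue above, offered as an assumption rather than a diagnosis: the thread count stops growing at 41, which would fit a default thread pool that keeps spawning workers until it reaches its cap, with each new thread reserving virtual memory for its stack. The sketch below uses only the standard library (a plain blocking write sent through `run_in_executor`, not aiofiles) to show how a bounded default executor limits thread growth; the function names and the `max_workers=2` value are illustrative.

```python
import asyncio
import os
import tempfile
import threading
from concurrent.futures import ThreadPoolExecutor


def blocking_write(path, text):
    # Ordinary blocking file write, executed on a worker thread.
    with open(path, "w") as handle:
        handle.write(text)


async def write_many(loop, path, rounds):
    # Passing None selects the loop's default executor.
    for _ in range(rounds):
        await loop.run_in_executor(None, blocking_write, path, "hello\n")
    return threading.active_count()


def thread_growth_with_bounded_pool(rounds=20, max_workers=2):
    """Return how many extra threads exist after `rounds` writes."""
    baseline = threading.active_count()
    loop = asyncio.new_event_loop()
    executor = ThreadPoolExecutor(max_workers=max_workers)
    loop.set_default_executor(executor)
    fd, path = tempfile.mkstemp()
    os.close(fd)
    try:
        final = loop.run_until_complete(write_many(loop, path, rounds))
    finally:
        executor.shutdown(wait=True)
        loop.close()
        os.remove(path)
    return final - baseline
```

Calling `thread_growth_with_bounded_pool()` should report at most `max_workers` additional threads, however many writes are issued.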

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 1,027 | ConnectionRefusedError: [WinError 10061] No connection could be made because the target machine actively refused it. | open | 2020-05-14T07:45:35Z | 2020-06-10T07:14:03Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1027 | [] | bu-fan-jun | 1 | |

plotly/dash-table | dash | 189 | data types - decimal formatting options for numerical columns | closed | 2018-11-01T11:06:25Z | 2019-02-28T15:18:34Z | https://github.com/plotly/dash-table/issues/189 | [] | chriddyp | 7 | |

pyjanitor-devs/pyjanitor | pandas | 784 | Add slice feature to `select_columns` | # Brief Description

I love the [select_columns]() method. It is powerful, especially the glob style name selection (beautiful!). I wonder if it is possible to add slice option, or if it is just unnecessary.

# Example API

Currently, you pass the selection to a list:

```python

# current implementation

df = pd.DataFrame(...).select_columns(['a', 'b', 'c', 'col_*'], invert=True)

```

I think it would be nice if we could do this:

```python

# proposed addition

df = pd.DataFrame(...).select_columns(['a': 'c', 'col_*'], invert=True)

```

Probably similar to what you do with [np.r_](https://numpy.org/doc/stable/reference/generated/numpy.r_.html) (which is only for indices)

| closed | 2020-12-16T07:19:29Z | 2021-01-28T17:02:39Z | https://github.com/pyjanitor-devs/pyjanitor/issues/784 | [] | samukweku | 2 |
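
The slice idea from the pyjanitor issue above can be sketched without touching pyjanitor or pandas. Since `['a': 'c', ...]` is not valid Python, this sketch assumes the range would be passed as `slice('a', 'c')` and treated as inclusive on both ends, as label slices are in pandas `.loc`. The function `resolve_columns` is hypothetical and only resolves column names; it is not the `select_columns` implementation.

```python
from fnmatch import fnmatchcase


def resolve_columns(columns, selectors, invert=False):
    """Resolve glob strings and label slices against an ordered list of names."""
    columns = list(columns)
    picked = []
    for selector in selectors:
        if isinstance(selector, slice):
            # Label slice: both endpoints are included.
            start = 0 if selector.start is None else columns.index(selector.start)
            stop = len(columns) - 1 if selector.stop is None else columns.index(selector.stop)
            matches = columns[start:stop + 1]
        else:
            # Plain names and glob patterns share one code path.
            matches = [name for name in columns if fnmatchcase(name, selector)]
        for name in matches:
            if name not in picked:
                picked.append(name)
    if invert:
        return [name for name in columns if name not in picked]
    return picked


names = ["id", "a", "b", "c", "col_1", "col_2", "z"]
selected = resolve_columns(names, [slice("a", "c"), "col_*"])
dropped = resolve_columns(names, [slice("a", "c"), "col_*"], invert=True)
```

Here `selected` covers `a` through `c` plus the `col_*` matches, and `dropped` holds the remaining names.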

mars-project/mars | scikit-learn | 2,896 | [BUG] Deref stage may raises AssertionError: chunk key xxx will have negative ref count | <!--

Thank you for your contribution!

Please review https://github.com/mars-project/mars/blob/master/CONTRIBUTING.rst before opening an issue.

-->

**Describe the bug**

A clear and concise description of what the bug is.

Try to run the `pytest -s -v --log-cli-level=debug mars/dataframe/groupby/tests/test_groupby_execution.py::test_groupby_sample` in the latest master.

The test case will be ***PASSED***, but there is an unretrieved task exception:

```python

ERROR asyncio:isolation.py:36 Task exception was never retrieved

future: <Task finished coro=<<coroutine without __name__>()> exception=AssertionError('chunk key fba521b5c44e2f43199042f58b5fa971_0 will have negative ref count')>

Traceback (most recent call last):

File "mars/oscar/core.pyx", line 251, in __pyx_actor_method_wrapper

return await result_handler(result)

File "mars/oscar/core.pyx", line 385, in _handle_actor_result

task_result = await coros[0]

File "mars/oscar/core.pyx", line 428, in mars.oscar.core._BaseActor._run_actor_async_generator

async with self._lock:

File "mars/oscar/core.pyx", line 429, in mars.oscar.core._BaseActor._run_actor_async_generator

with debug_async_timeout('actor_lock_timeout',

File "mars/oscar/core.pyx", line 434, in mars.oscar.core._BaseActor._run_actor_async_generator

res = await gen.athrow(*res)

File "/home/admin/mars-ant/mars/services/task/supervisor/processor.py", line 655, in start

yield processor.decref_stage.batch(*decrefs)

File "mars/oscar/core.pyx", line 439, in mars.oscar.core._BaseActor._run_actor_async_generator

res = await self._handle_actor_result(res)

File "mars/oscar/core.pyx", line 359, in _handle_actor_result

result = await result

File "/home/admin/mars-ant/mars/oscar/batch.py", line 148, in _async_batch

return [await self._async_call(*d.args, **d.kwargs)]

File "/home/admin/mars-ant/mars/oscar/batch.py", line 95, in _async_call

return await self.func(*args, **kwargs)

File "/home/admin/mars-ant/mars/services/task/supervisor/processor.py", line 67, in inner

return await func(processor, *args, **kwargs)

File "/home/admin/mars-ant/mars/services/task/supervisor/processor.py", line 288, in decref_stage

await self._lifecycle_api.decref_chunks(decref_chunk_keys)

File "/home/admin/mars-ant/mars/services/lifecycle/api/oscar.py", line 134, in decref_chunks

return await self._lifecycle_tracker_ref.decref_chunks(chunk_keys)

File "mars/oscar/core.pyx", line 251, in __pyx_actor_method_wrapper

return await result_handler(result)

File "mars/oscar/core.pyx", line 385, in _handle_actor_result

task_result = await coros[0]

File "mars/oscar/core.pyx", line 428, in mars.oscar.core._BaseActor._run_actor_async_generator

async with self._lock:

File "mars/oscar/core.pyx", line 429, in mars.oscar.core._BaseActor._run_actor_async_generator

with debug_async_timeout('actor_lock_timeout',

File "mars/oscar/core.pyx", line 432, in mars.oscar.core._BaseActor._run_actor_async_generator

res = await gen.asend(res)

File "/home/admin/mars-ant/mars/services/lifecycle/supervisor/tracker.py", line 94, in decref_chunks

to_remove_chunk_keys = self._get_remove_chunk_keys(chunk_keys)

File "/home/admin/mars-ant/mars/services/lifecycle/supervisor/tracker.py", line 82, in _get_remove_chunk_keys

assert ref_count >= 0, f"chunk key {chunk_key} will have negative ref count"

AssertionError: chunk key fba521b5c44e2f43199042f58b5fa971_0 will have negative ref count

```

The exception may be caused by the operand execution error:

```python

ERROR mars.services.task.supervisor.stage:stage.py:172 Subtask gTsCHkuFyK77u7ahMDUJzxD0 errored

Traceback (most recent call last):

File "/home/admin/mars-ant/mars/services/scheduling/worker/execution.py", line 356, in internal_run_subtask

subtask, band_name, subtask_api, batch_quota_req

File "/home/admin/mars-ant/mars/services/scheduling/worker/execution.py", line 456, in _retry_run_subtask

return await _retry_run(subtask, subtask_info, _run_subtask_once)

File "/home/admin/mars-ant/mars/services/scheduling/worker/execution.py", line 107, in _retry_run

raise ex

File "/home/admin/mars-ant/mars/services/scheduling/worker/execution.py", line 67, in _retry_run

return await target_async_func(*args)

File "/home/admin/mars-ant/mars/services/scheduling/worker/execution.py", line 398, in _run_subtask_once

return await asyncio.shield(aiotask)

File "/home/admin/mars-ant/mars/services/subtask/api.py", line 69, in run_subtask_in_slot

subtask

File "/home/admin/mars-ant/mars/oscar/backends/context.py", line 190, in send

return self._process_result_message(result)

File "/home/admin/mars-ant/mars/oscar/backends/context.py", line 70, in _process_result_message

raise message.as_instanceof_cause()

File "/home/admin/mars-ant/mars/oscar/backends/pool.py", line 541, in send

result = await self._run_coro(message.message_id, coro)

File "/home/admin/mars-ant/mars/oscar/backends/pool.py", line 333, in _run_coro

return await coro

File "/home/admin/mars-ant/mars/oscar/api.py", line 120, in __on_receive__

return await super().__on_receive__(message)

File "mars/oscar/core.pyx", line 507, in __on_receive__

raise ex

File "mars/oscar/core.pyx", line 500, in mars.oscar.core._BaseActor.__on_receive__

return await self._handle_actor_result(result)

File "mars/oscar/core.pyx", line 385, in _handle_actor_result

task_result = await coros[0]

File "mars/oscar/core.pyx", line 428, in mars.oscar.core._BaseActor._run_actor_async_generator

async with self._lock:

File "mars/oscar/core.pyx", line 429, in mars.oscar.core._BaseActor._run_actor_async_generator

with debug_async_timeout('actor_lock_timeout',

File "mars/oscar/core.pyx", line 434, in mars.oscar.core._BaseActor._run_actor_async_generator

res = await gen.athrow(*res)

File "/home/admin/mars-ant/mars/services/subtask/worker/runner.py", line 125, in run_subtask

result = yield self._running_processor.run(subtask)

File "mars/oscar/core.pyx", line 439, in mars.oscar.core._BaseActor._run_actor_async_generator

res = await self._handle_actor_result(res)

File "mars/oscar/core.pyx", line 359, in _handle_actor_result

result = await result

File "/home/admin/mars-ant/mars/oscar/backends/context.py", line 190, in send

return self._process_result_message(result)

File "/home/admin/mars-ant/mars/oscar/backends/context.py", line 70, in _process_result_message

raise message.as_instanceof_cause()

File "/home/admin/mars-ant/mars/oscar/backends/pool.py", line 541, in send

result = await self._run_coro(message.message_id, coro)

File "/home/admin/mars-ant/mars/oscar/backends/pool.py", line 333, in _run_coro

return await coro

File "/home/admin/mars-ant/mars/oscar/api.py", line 120, in __on_receive__

return await super().__on_receive__(message)

File "mars/oscar/core.pyx", line 507, in __on_receive__

raise ex

File "mars/oscar/core.pyx", line 500, in mars.oscar.core._BaseActor.__on_receive__

return await self._handle_actor_result(result)

File "mars/oscar/core.pyx", line 385, in _handle_actor_result

task_result = await coros[0]

File "mars/oscar/core.pyx", line 428, in mars.oscar.core._BaseActor._run_actor_async_generator

async with self._lock:

File "mars/oscar/core.pyx", line 429, in mars.oscar.core._BaseActor._run_actor_async_generator

with debug_async_timeout('actor_lock_timeout',

File "mars/oscar/core.pyx", line 434, in mars.oscar.core._BaseActor._run_actor_async_generator

res = await gen.athrow(*res)

File "/home/admin/mars-ant/mars/services/subtask/worker/processor.py", line 616, in run

result = yield self._running_aio_task

File "mars/oscar/core.pyx", line 439, in mars.oscar.core._BaseActor._run_actor_async_generator

res = await self._handle_actor_result(res)

File "mars/oscar/core.pyx", line 359, in _handle_actor_result

result = await result

File "/home/admin/mars-ant/mars/services/subtask/worker/processor.py", line 463, in run

await self._execute_graph(chunk_graph)

File "/home/admin/mars-ant/mars/services/subtask/worker/processor.py", line 223, in _execute_graph

await to_wait

File "/home/admin/mars-ant/mars/lib/aio/_threads.py", line 36, in to_thread

return await loop.run_in_executor(None, func_call)

File "/home/admin/.pyenv/versions/3.7.7/lib/python3.7/concurrent/futures/thread.py", line 57, in run

result = self.fn(*self.args, **self.kwargs)

File "/home/admin/mars-ant/mars/services/subtask/worker/tests/subtask_processor.py", line 70, in _execute_operand

super()._execute_operand(ctx, op)

File "/home/admin/mars-ant/mars/core/mode.py", line 77, in _inner

return func(*args, **kwargs)

File "/home/admin/mars-ant/mars/services/subtask/worker/processor.py", line 191, in _execute_operand

return execute(ctx, op)

File "/home/admin/mars-ant/mars/core/operand/core.py", line 485, in execute

result = executor(results, op)

File "/home/admin/mars-ant/mars/dataframe/groupby/sample.py", line 323, in execute

errors=op.errors,

File "/home/admin/mars-ant/mars/dataframe/groupby/sample.py", line 314, in <listcomp>

sample_pd[iloc_col].to_numpy()

File "/home/admin/mars-ant/mars/dataframe/groupby/sample.py", line 69, in _sample_groupby_iter

n=n, frac=frac, replace=replace, weights=w, random_state=random_state

File "/home/admin/.pyenv/versions/3.7.7/lib/python3.7/site-packages/pandas/core/generic.py", line 5365, in sample

locs = rs.choice(axis_length, size=n, replace=replace, p=weights)

File "mtrand.pyx", line 965, in numpy.random.mtrand.RandomState.choice

ValueError: [address=127.0.0.1:42482, pid=84916] Cannot take a larger sample than population when 'replace=False'

```

**To Reproduce**

To help us reproducing this bug, please provide information below:

1. Your Python version `3.7.7`

2. The version of Mars you use `Latest master`

3. Versions of crucial packages, such as numpy, scipy and pandas `numpy==1.21.5 pandas==1.3.5`

4. Full stack of the error.

5. Minimized code to reproduce the error.

**Expected behavior**

A clear and concise description of what you expected to happen.

**Additional context**

I added some logs and found that the decref stage does not match the incref stage:

The incref stage only increfs the `MapReduceOperand` with `stage == reduce`:

```python
for chunk in subtask.chunk_graph:
    if (
        isinstance(chunk.op, MapReduceOperand)
        and chunk.op.stage == OperandStage.reduce
    ):
        # reducer
        data_keys = chunk.op.get_dependent_data_keys()
        incref_chunk_keys.extend(data_keys)
        # main key incref as well, to ensure existence of meta
        incref_chunk_keys.extend([key[0] for key in data_keys])
```

But the decref stage decrefs every matched `MapReduceOperand`, regardless of its stage:

``` python
# if subtask not executed, rollback incref of predecessors
for inp_subtask in subtask_graph.predecessors(subtask):
    for result_chunk in inp_subtask.chunk_graph.results:
        # for reducer chunk, decref mapper chunks
        if isinstance(result_chunk.op, ShuffleProxy):
            for chunk in subtask.chunk_graph:
                if isinstance(chunk.op, MapReduceOperand):
                    data_keys = chunk.op.get_dependent_data_keys()
                    print(">>>>>>>>>", chunk.op, chunk.op.stage, data_keys)
                    decref_chunk_keys.extend(data_keys)
                    decref_chunk_keys.extend(
                        [key[0] for key in data_keys]
                    )
```

The failing data key in the traceback above belongs to a `DataFrameGroupByOperand` whose stage is `None`:

``` python

>>>>>>>>> DataFrameGroupByOperand <key=7bbacc1ca962ead3202f2ee0d4774909> None ['fba521b5c44e2f43199042f58b5fa971_0']

```

| closed | 2022-04-02T03:35:26Z | 2022-04-09T12:01:38Z | https://github.com/mars-project/mars/issues/2896 | [

"type: bug",

"mod: lifecycle service"

] | fyrestone | 1 |
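
The mismatch described in the Mars issue above can be illustrated with a toy reference tracker. Everything here is a simplified stand-in: operands are plain dicts, and `reducer_keys` and `RefTracker` are hypothetical names, not Mars APIs. The point of the sketch is that using the same predicate on the incref and decref paths keeps the counts balanced.

```python
from collections import Counter


def reducer_keys(ops):
    """Collect dependent keys only from map-reduce operands in the reduce stage."""
    keys = []
    for op in ops:
        if op["kind"] == "map_reduce" and op["stage"] == "reduce":
            keys.extend(op["dependent_keys"])
    return keys


class RefTracker:
    """Minimal reference counter that refuses to go below zero."""

    def __init__(self):
        self.counts = Counter()

    def incref(self, keys):
        for key in keys:
            self.counts[key] += 1

    def decref(self, keys):
        for key in keys:
            new_count = self.counts[key] - 1
            assert new_count >= 0, f"chunk key {key} will have negative ref count"
            self.counts[key] = new_count


ops = [
    {"kind": "map_reduce", "stage": "reduce", "dependent_keys": ["m_0"]},
    {"kind": "map_reduce", "stage": None, "dependent_keys": ["g_0"]},
]

tracker = RefTracker()
tracker.incref(reducer_keys(ops))
tracker.decref(reducer_keys(ops))  # symmetric filter: counts return to zero
```

Decrementing `g_0`, which was never incremented because its stage is `None`, would trip the same kind of assertion shown in the traceback.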

python-restx/flask-restx | flask | 632 | How do you set the array of "Server Objects" per the spec | **Ask a question**

The openAPI spec includes a [Server Object ](https://swagger.io/specification/#server-object). I have used this for localhost, staging, production URLs for generated clients. Is it possible to set these in restx so that the swagger document includes this object? | closed | 2025-01-14T05:53:12Z | 2025-01-14T16:29:48Z | https://github.com/python-restx/flask-restx/issues/632 | [

"question"

] | rodericj | 2 |

benbusby/whoogle-search | flask | 308 | [BUG] <brief bug description> | **Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

**Deployment Method**

- [ ] Heroku (one-click deploy)

- [x] Docker

- [ ] `run` executable

- [ ] pip/pipx

- [ ] Other: [describe setup]

**Version of Whoogle Search**

- [ ] Latest build from [source] (i.e. GitHub, Docker Hub, pip, etc)

- [x] Version [0.4.0 - 0.4.1]

- [ ] Not sure

**Additional context**

So I have been messing with this for a couple of hours trying to get the latest version or the 0.4.0 version to work, but DuckDuckGo bangs don't work in either (I type them and click enter or search and nothing happens). Also, the 0.4.1 version seems to have a weird error: when I do a search, it removes the last letter from my query. So right now I am using the 0.3.2 version and would like to have the CSS that 0.4.0 and 0.4.1 have, but can't because of these errors. Thanks for this great project, and for making it easy to use and set up. | closed | 2021-05-10T05:39:13Z | 2021-05-10T16:13:52Z | https://github.com/benbusby/whoogle-search/issues/308 | [

"bug"

] | czadikem | 1 |

jackmpcollins/magentic | pydantic | 410 | Does magentic support o1 and o1-mini models? | Hi,

I was trying to use these models today while generating structured output with `OpenaiChatModel`. But, I ran into issues as these models do not support the same range of parameters as older models like gpt-4. Thus magentic breaks quite heavily.

Notably, the o1 models don't:

* support to set `max_tokens` (aside from setting it to 1)

* allow the `parallel_tool_calls parameter`

* support `tools`

| open | 2025-01-28T13:35:08Z | 2025-02-03T12:36:36Z | https://github.com/jackmpcollins/magentic/issues/410 | [] | Lawouach | 2 |

blacklanternsecurity/bbot | automation | 1,422 | Optimize scan status message | Recently I've noticed that in large scans, it takes a long time (10+ seconds) to calculate the third and final status message. This is a problem, since it significantly increases the duration of the scan. | closed | 2024-06-01T20:29:39Z | 2024-06-01T21:11:47Z | https://github.com/blacklanternsecurity/bbot/issues/1422 | [

"enhancement"

] | TheTechromancer | 1 |

aio-libs/aiopg | sqlalchemy | 473 | cannot insert in PostgreSQL 8.1 | Hi,

Recap:

I'm using postgreSQL version 8.1.23 and I got this error:

```

psycopg2.ProgrammingError: syntax error at or near «RETURNING»

LINE 1: INSERT INTO tbl (val) VALUES (E'abc') RETURNING tbl.id

```

This is the code that runs the insert from the demo code in README:

```
metadata = sa.MetaData()

tbl = sa.Table('tbl', metadata,
               sa.Column('id', sa.Integer, primary_key=True),
               sa.Column('val', sa.String(255)))

await conn.execute(tbl.insert().values(val='abc'))

async for row in conn.execute(tbl.select()):
    print(row.id, row.val)
```

I've created the engine with **enable_hstore** set to False in order to work with this Postgres version. I am already acquiring connections from this engine and querying _selects_ and they're all working fine.

I've also created tables with **implicit_returning=False**, which I found in the source code as a way to avoid adding the RETURNING clause, because that clause is not valid in this PostgreSQL version.

But now, if a column has no default defined or its default is None, the following error appears after awaiting the query:

`AttributeError: 'NoneType' object has no attribute 'is_callable'`

I don't clearly see how I can set a specific dialect or insert items... :(

Thank you in advance! | closed | 2018-05-11T13:41:43Z | 2020-12-21T05:53:26Z | https://github.com/aio-libs/aiopg/issues/473 | [] | Marruixu | 1 |

TencentARC/GFPGAN | deep-learning | 95 | Possible to use Real-ESRGAN on mac os AMD, and 16-bit images? | Faces work excellent with GFPGAN, it's pretty amazing, but without CUDA support, the backgrounds are left pretty nasty. Still amazed at how fast it runs on CPU (only a few seconds per image, even with scale of 8), granted it's using the non-color version, but I have colorizing software and do the rest manually. I've been unsuccessful trying to enable color using the Paper model and forcing CPU.

More importantly than color, as I use other software for colorization, is the background. I need to get the backgrounds restored and not just faces.

I've tried setting `--bg_upsampler realesrgan` flag, and it does not throw an error, but this seems to have no effect on the output image. I do get the warning though that Real-ESRGAN is slow and not used for CPU. Is it possible to enable Real-ESRGAN on macOS so that it uses AMD GPU and restores the background (I have a desktop with Pro Vega 64)? I saw the other Real-ESRGAN compiled for mac/AMD, maybe the two can be linked somehow?

If it can't use the AMD GPU, can it be forced to use the CPU? I don't care if it's slow, I just need it to work. :) I do a lot of rendering that is slow, because sometimes it's the only way. Main thing is getting it to work.

Also, is it possible to enable the use of 16-bit PNG, TIFF, or cinema DNG? Would be really cool if it could support 32-bit float TIFF or EXR.

Thank you | open | 2021-11-11T14:41:12Z | 2021-11-12T20:06:38Z | https://github.com/TencentARC/GFPGAN/issues/95 | [] | KeygenLLC | 1 |

roboflow/supervision | computer-vision | 1,567 | Bug in git-committers-plugin-2, v2.4.0 | At the moment, an error is observed when running the `mkdocs build` action from develop.

```

File "/opt/hostedtoolcache/Python/3.10.15/x64/lib/python3.10/site-packages/mkdocs_git_committers_plugin_2/plugin.py", line 121, in get_contributors_to_file

'avatar': commit['author']['avatar_url'] if user['avatar_url'] is not None else ''

UnboundLocalError: local variable 'user' referenced before assignment

```

This is due to: https://github.com/ojacques/mkdocs-git-committers-plugin-2/issues/72

| closed | 2024-10-03T21:29:35Z | 2024-10-04T23:41:07Z | https://github.com/roboflow/supervision/issues/1567 | [

"bug",

"documentation",

"github_actions"

] | LinasKo | 4 |

Evil0ctal/Douyin_TikTok_Download_API | fastapi | 244 | TikTok works, but Douyin returns an error. Is this a server problem? I am using a Japanese server with wildcard DNS resolution set up through Cloudflare! | ***Platform where the error occurred?***

Such as: Douyin/TikTok

***The endpoint where the error occurred?***

Such as: API-V1/API-V2/Web APP

***Submitted input value?***

Such as: video link

***Have you tried again?***

Such as: Yes, the error still exists after X time after the error occurred.

***Have you checked the readme or interface documentation for this project?***

Such as: Yes, and it is very sure that the problem is caused by the program.

| closed | 2023-08-18T08:59:49Z | 2023-08-28T07:53:55Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/244 | [

"BUG",

"enhancement"

] | tiermove | 7 |

huggingface/transformers | pytorch | 36,532 | After tokenizers upgrade, the length of the token does not correspond to the length of the model | ### System Info

transformers:4.48.1

tokenizers:0.2.1

python:3.9

### Who can help?

@ArthurZucker @itazap

### Information

- [ ] The official example scripts

- [x] My own modified scripts

### Tasks

- [ ] An officially supported task in the `examples` folder (such as GLUE/SQuAD, ...)

- [x] My own task or dataset (give details below)

### Reproduction

code snippet:

```
tokenizer = PegasusTokenizer.from_pretrained('IDEA-CCNL/Randeng-Pegasus-238M-Summary-Chinese')

model = AutoModelForSeq2SeqLM.from_pretrained(
    'IDEA-CCNL/Randeng-Pegasus-238M-Summary-Chinese',
    config=config
)

training_args = Seq2SeqTrainingArguments(
    output_dir=config['model_name'],
    evaluation_strategy="epoch",
    # report_to="none",
    save_strategy="epoch",
    per_device_train_batch_size=4,
    per_device_eval_batch_size=4,
    num_train_epochs=4,
    predict_with_generate=True,
    logging_steps=0.1
)

trainer = Seq2SeqTrainer(
    model=model,
    args=training_args,
    train_dataset=train_dataset,
    eval_dataset=eval_dataset,
    tokenizer=tokenizer,
    data_collator=data_collator,
    compute_metrics=compute_metrics
)
```

Error message:

Trial process:

My original versions were transformers 4.29.1 and tokenizers 0.13.3. With these, the model ran inference and trained normally.

After upgrading, the above error occurred and normal training was not possible. Therefore, I adjusted the model's embedding size with `model.resize_token_embeddings(len(tokenizer))`. The original model length is 50000, while the tokenizer loads with length 50103. As a result, the model I trained produced abnormal inference results.

I then tried again, keeping tokenizers at 0.13.3 and upgrading transformers to 4.33.3 (1. I need to upgrade because the NPU only supports version 4.3.20. 2. This is the highest version compatible with that tokenizers release). After switching to this version, training and inference are normal. As long as tokenizers is greater than 0.13.3, the length changes.

### Expected behavior

I expect tokenizer to be compatible with the original code | closed | 2025-03-04T09:58:59Z | 2025-03-05T09:49:44Z | https://github.com/huggingface/transformers/issues/36532 | [

"bug"

] | CurtainRight | 3 |

xorbitsai/xorbits | numpy | 63 | Register all members in top-level init file | closed | 2022-12-09T04:50:16Z | 2022-12-13T04:46:51Z | https://github.com/xorbitsai/xorbits/issues/63 | [] | aresnow1 | 0 | |

skypilot-org/skypilot | data-science | 4,625 | [k8s] Unexpected error when relaunching an INIT cluster on k8s which failed due to capacity error | To reproduce:

1. Launch a managed job with the controller on k8s with the following `~/.sky/config.yaml`

```yaml

jobs:

controller:

resources:

cpus: 2

cloud: kubernetes

```

```console

$ sky jobs launch test.yaml --cloud aws --cpus 2 -n test-mount-bucket

Task from YAML spec: test.yaml

Managed job 'test-mount-bucket' will be launched on (estimated):

Considered resources (1 node):

----------------------------------------------------------------------------------------

CLOUD INSTANCE vCPUs Mem(GB) ACCELERATORS REGION/ZONE COST ($) CHOSEN

----------------------------------------------------------------------------------------

AWS m6i.large 2 8 - us-east-1 0.10 ✔

----------------------------------------------------------------------------------------

Launching a managed job 'test-mount-bucket'. Proceed? [Y/n]:

⚙︎ Translating workdir and file_mounts with local source paths to SkyPilot Storage...

Workdir: 'examples' -> storage: 'skypilot-filemounts-vscode-904d206c'.

Folder : 'examples' -> storage: 'skypilot-filemounts-vscode-904d206c'.

Created S3 bucket 'skypilot-filemounts-vscode-904d206c' in us-east-1

Excluded files to sync to cluster based on .gitignore.

✓ Storage synced: examples -> s3://skypilot-filemounts-vscode-904d206c/ View logs at: ~/sky_logs/sky-2025-01-30-23-19-02-003572/storage_sync.log

Excluded files to sync to cluster based on .gitignore.

✓ Storage synced: examples -> s3://skypilot-filemounts-vscode-904d206c/ View logs at: ~/sky_logs/sky-2025-01-30-23-19-09-895566/storage_sync.log

✓ Uploaded local files/folders.

Launching managed job 'test-mount-bucket' from jobs controller...

Warning: Credentials used for [GCP, AWS] may expire. Clusters may be leaked if the credentials expire while jobs are running. It is recommended to use credentials that never expire or a service account.

⚙︎ Launching managed jobs controller on Kubernetes.

W 01-30 23:19:33 instance.py:863] run_instances: Error occurred when creating pods: sky.provision.kubernetes.config.KubernetesError: Insufficient memory capacity on the cluster. Required resources (cpu=4, memory=34359738368) were not found in a single node. Other SkyPilot tasks or pods may be using resources. Check resource usage by running `kubectl describe nodes`. Full error: 0/1 nodes are available: 1 Insufficient memory. preemption: 0/1 nodes are available: 1 No preemption victims found for incoming pod.

sky.provision.kubernetes.config.KubernetesError: Insufficient memory capacity on the cluster. Required resources (cpu=4, memory=34359738368) were not found in a single node. Other SkyPilot tasks or pods may be using resources. Check resource usage by running `kubectl describe nodes`.

Full error: 0/1 nodes are available: 1 Insufficient memory. preemption: 0/1 nodes are available: 1 No preemption victims found for incoming pod.

During handling of the above exception, another exception occurred:

NotImplementedError

The above exception was the direct cause of the following exception:

sky.provision.common.StopFailoverError: During provisioner's failover, stopping 'sky-jobs-controller-11d9a692' failed. We cannot stop the resources launched, as it is not supported by Kubernetes. Please try launching the cluster again, or terminate it with: sky down sky-jobs-controller-11d9a692

```

2. Launch again:

```console

$ sky jobs launch test.yaml --cloud aws --cpus 2 -n test-mount-bucket

Task from YAML spec: test.yaml

Managed job 'test-mount-bucket' will be launched on (estimated):

Considered resources (1 node):

----------------------------------------------------------------------------------------

CLOUD INSTANCE vCPUs Mem(GB) ACCELERATORS REGION/ZONE COST ($) CHOSEN

----------------------------------------------------------------------------------------

AWS m6i.large 2 8 - us-east-1 0.10 ✔

----------------------------------------------------------------------------------------

Launching a managed job 'test-mount-bucket'. Proceed? [Y/n]:

⚙︎ Translating workdir and file_mounts with local source paths to SkyPilot Storage...

Workdir: 'examples' -> storage: 'skypilot-filemounts-vscode-b7ba6a41'.

Folder : 'examples' -> storage: 'skypilot-filemounts-vscode-b7ba6a41'.

Created S3 bucket 'skypilot-filemounts-vscode-b7ba6a41' in us-east-1

Excluded files to sync to cluster based on .gitignore.

✓ Storage synced: examples -> s3://skypilot-filemounts-vscode-b7ba6a41/ View logs at: ~/sky_logs/sky-2025-01-30-23-20-51-067815/storage_sync.log

Excluded files to sync to cluster based on .gitignore.

✓ Storage synced: examples -> s3://skypilot-filemounts-vscode-b7ba6a41/ View logs at: ~/sky_logs/sky-2025-01-30-23-20-58-164407/storage_sync.log

✓ Uploaded local files/folders.

Launching managed job 'test-mount-bucket' from jobs controller...

Warning: Credentials used for [AWS, GCP] may expire. Clusters may be leaked if the credentials expire while jobs are running. It is recommended to use credentials that never expire or a service account.

Cluster 'sky-jobs-controller-11d9a692' (status: INIT) was previously in Kubernetes (gke_sky-dev-465_us-central1-c_skypilotalpha). Restarting.

⚙︎ Launching managed jobs controller on Kubernetes.

⨯ Failed to set up SkyPilot runtime on cluster. View logs at: ~/sky_logs/sky-2025-01-30-23-21-05-243052/provision.log

AssertionError: cpu_request should not be None

``` | open | 2025-01-30T23:24:59Z | 2025-01-31T02:32:32Z | https://github.com/skypilot-org/skypilot/issues/4625 | [

"good first issue",

"good starter issues"

] | Michaelvll | 0 |
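
A note for reading the first error above: the `memory=34359738368` figure in the `KubernetesError` is in bytes. The following is only a small arithmetic sketch (the variable names are made up for illustration) converting that value to GiB, which makes it easier to compare against what `kubectl describe nodes` reports.

```python
# Value copied verbatim from the KubernetesError message in the log above.
required_memory_bytes = 34359738368

GIB = 2 ** 30  # bytes per GiB
required_memory_gib = required_memory_bytes / GIB

print(f"{required_memory_gib:g} GiB")
```

The same log line also reports `cpu=4`, while the config in step 1 sets `cpus: 2`.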

coqui-ai/TTS | pytorch | 3,371 | Windows installation error | ### Describe the bug

When I install TTS, pip fails to build the wheels.

### To Reproduce

pip install TTS

### Expected behavior

_No response_

### Logs

_No response_

### Environment

```shell

{

"CUDA": {

"GPU": [],

"available": false,

"version": null

},

"Packages": {

"PyTorch_debug": false,

"PyTorch_version": "2.1.1+cpu",

"TTS": "0.21.3",

"numpy": "1.26.0"

},

"System": {

"OS": "Windows",

"architecture": [

"64bit",

"WindowsPE"

],

"python": "3.11.5",

"version": "10.0.22621"

}

}

```

### Additional context

_No response_ | closed | 2023-12-06T02:47:52Z | 2024-01-14T09:35:19Z | https://github.com/coqui-ai/TTS/issues/3371 | [

"bug"

] | yyi016100 | 7 |

tableau/server-client-python | rest-api | 729 | Add Documentation for Prep Flow classes and methods | Hi!

Update: per comment below, verified FlowItem class and methods already exist. Updating this request for documentation of these items.

Original request:

Currently there is no FlowItem class and methods in TSC. Can we get that on the backlog (similar to [DatasourceItem](https://tableau.github.io/server-client-python/docs/api-ref#datasourceitem-class) and [WorkbookItem](https://tableau.github.io/server-client-python/docs/api-ref#workbookitem-class))? For now we have done a workaround using metadata api integration, but the code implementation was lengthy.

Cheers!

Chadd | open | 2020-11-13T16:49:21Z | 2022-07-22T19:14:34Z | https://github.com/tableau/server-client-python/issues/729 | [

"docs"

] | thechadd | 3 |

tqdm/tqdm | jupyter | 646 | File stream helpers | - [✓] I have visited the [source website], and in particular

read the [known issues]

- [✓] I have searched through the [issue tracker] for duplicates

- [✓] I have mentioned version numbers, operating system and

environment, where applicable:

```

>>> print(tqdm.__version__, sys.version, sys.platform)

4.26.0 3.6.6 |Anaconda custom (64-bit)| (default, Jun 28 2018, 11:07:29)

[GCC 4.2.1 Compatible Clang 4.0.1 (tags/RELEASE_401/final)] darwin

```

Would a helper around file streams for `tqdm` be useful? It seems like inputs to `tqdm` are often files.

Here is a simple example:

```python3

import os

from tqdm import tqdm


class ProgressReader:
    def __init__(self, reader, pbar):
        self._pbar = pbar
        self._reader = reader

    # Can also override other read methods
    def read(self, *args, **kwargs):
        prev = self.tell()
        result = self._reader.read(*args, **kwargs)
        self._pbar.update(self.tell() - prev)
        return result

    # Delegate everything else
    def __getattr__(self, attr):
        return getattr(self._reader, attr)

    # Support with blocks
    def __enter__(self, *args, **kwargs):
        self._reader.__enter__(*args, **kwargs)
        return self

    def __exit__(self, *args, **kwargs):
        # Forward the exception triple unchanged to the wrapped reader
        return self._reader.__exit__(*args, **kwargs)


# `filename` is the path of the file to read
pbar = tqdm(total=os.path.getsize(filename), unit='B', unit_scale=True, unit_divisor=1024)
with ProgressReader(open(filename, 'rb'), pbar) as f:
    pass

```

Would welcome comments on the best approach here, e.g.: either wrap `tqdm` instance (as above) or directly extending `tqdm()` to support file streams by switching on the input type | open | 2018-12-01T23:05:19Z | 2018-12-01T23:06:53Z | https://github.com/tqdm/tqdm/issues/646 | [] | smallnamespace | 0 |
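
On the question of approach, one possible variation on the wrapper is sketched below. It is not part of tqdm: `CountingReader` and its `on_bytes` callback are hypothetical names. Instead of calling `tell()` before and after each read, it reports `len()` of the returned chunk, so it would also work for streams that are not seekable. The callback could be `pbar.update`; here a plain list is used so the sketch runs with the standard library only.

```python
import io


class CountingReader:
    """Wrap a binary stream and report how many bytes each read() returned."""

    def __init__(self, reader, on_bytes):
        self._reader = reader
        self._on_bytes = on_bytes  # hypothetical callback, e.g. pbar.update

    def read(self, *args, **kwargs):
        chunk = self._reader.read(*args, **kwargs)
        self._on_bytes(len(chunk))
        return chunk

    # Delegate everything else to the wrapped stream
    def __getattr__(self, attr):
        return getattr(self._reader, attr)

    def __enter__(self):
        self._reader.__enter__()
        return self

    def __exit__(self, *exc_info):
        return self._reader.__exit__(*exc_info)


seen = []
with CountingReader(io.BytesIO(b"x" * 10), seen.append) as f:
    while f.read(4):
        pass
```

The trade-off is that counting `len(chunk)` only measures bytes for binary streams; for text-mode files it would count characters instead.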

httpie/cli | api | 727 | JavaScript is disabled | I use httpie to access hackerone.com, but the response says that JavaScript needs to be enabled:

> [root@localhost ~]# http https://hackerone.com/monero/hacktivity?sort_type=latest_disclosable_activity_at\&filter=type%3Aall%20to%3Amonero&page=1

> It looks like your JavaScript is disabled. To use HackerOne, enable JavaScript in your browser and refresh this page.

How to fix it? | closed | 2018-11-06T05:50:09Z | 2018-11-06T05:57:17Z | https://github.com/httpie/cli/issues/727 | [] | linkwik | 1 |
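
One detail in the command above: the first `&` is escaped as `\&`, but the `&page=1` at the end is not, so a POSIX shell would normally treat that `&` as the background operator and never pass `page=1` to httpie. A minimal sketch, using only Python's standard library, of quoting the whole URL so it reaches the command as a single argument:

```python
import shlex

# URL copied from the command in the issue, with the shell escape removed.
url = (
    "https://hackerone.com/monero/hacktivity"
    "?sort_type=latest_disclosable_activity_at"
    "&filter=type%3Aall%20to%3Amonero&page=1"
)

# shlex.quote wraps the URL so that ? and & lose their special meaning in the shell.
command = "http " + shlex.quote(url)
print(command)
```

This only addresses the quoting. The JavaScript message itself presumably comes from the HTML the site returns to clients that do not execute scripts, which quoting does not change.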

DistrictDataLabs/yellowbrick | scikit-learn | 337 | Create a localization for Turkish language docs | I would like to translate some of the yellowbrick documentation into Turkish. Can you please create a localization for Turkish-language docs. | closed | 2018-03-15T01:15:44Z | 2018-03-16T02:23:25Z | https://github.com/DistrictDataLabs/yellowbrick/issues/337 | [

"level: expert",

"type: documentation"

] | Zeynepelabiad | 2 |

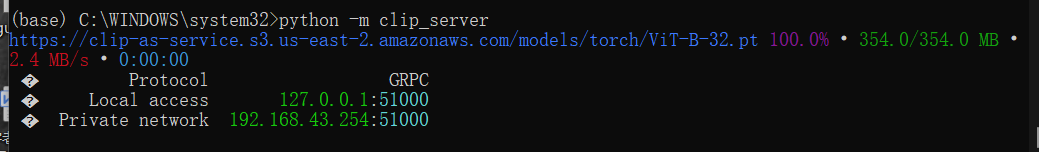

jina-ai/clip-as-service | pytorch | 691 | When executing python -m clip_server, the public address doesn't appear… |

It has stayed like this for a long time. Is that normal? | closed | 2022-04-23T10:20:23Z | 2022-04-25T05:29:43Z | https://github.com/jina-ai/clip-as-service/issues/691 | [] | LioHaunt | 3 |

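PaddlePaddle/models | nlp | 4,863 | Running inference directly from strings | The official run_ernie_sequence_labeling.py script runs inference by reading data from disk and wrapping it in a data iterator.

Is there a way to take strings that are already in memory and run inference on them directly?

My in-memory strings are in a list, e.g. ['string 1', 'string 2', 'string 3'], and I would like to run inference on them directly, segmenting the text and returning part-of-speech tags.

Thanks!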

| closed | 2020-09-21T04:14:42Z | 2020-09-22T07:03:31Z | https://github.com/PaddlePaddle/models/issues/4863 | [] | LeeYongchao | 1 |

iperov/DeepFaceLab | deep-learning | 5,417 | DeepFaceLab VRAM recognition problem. | My computer specifications are as follows.

cpu : ryzen 4800H

gpu : Rtx3060 (notebook) 6GB

memory: 16GB

The task manager shows the video RAM correctly as 6GB.

However, DeepFaceLab recognizes only 3.4GB of VRAM, resulting in frequent memory errors. Does anyone know a solution? | open | 2021-10-22T00:28:27Z | 2023-06-08T22:48:35Z | https://github.com/iperov/DeepFaceLab/issues/5417 | [] | Parksehoon1505 | 5 |

qubvel-org/segmentation_models.pytorch | computer-vision | 657 | save model for inference | Thank you for your excellent repositories, including this one.

I want to save my custom model so that I can load it later without getting a "segmentation_models.pytorch not found" error

and without installing the package. What do I have to do: save the model class together with the model_state_dict, or something else?

I was able to do this with the TensorFlow repo: I compiled the model with its loss and metrics, and it loads like any TensorFlow model without installing anything besides the TensorFlow framework.

How can I do the same with this PyTorch model? | closed | 2022-09-21T15:07:10Z | 2022-11-29T09:42:25Z | https://github.com/qubvel-org/segmentation_models.pytorch/issues/657 | [

"Stale"

] | Mahmuod1 | 3 |

Gozargah/Marzban | api | 851 | Wireguard | Greetings, and thank you for your work.

If possible, I would like to request that the ability to configure the Xray core's WireGuard be added to Marzban's host settings,

and also that accounting be added to WireGuard, so that each user can be given an account with a specific data/time limit, as with the other protocols.

Thank you | closed | 2024-03-05T20:46:58Z | 2024-03-06T08:36:42Z | https://github.com/Gozargah/Marzban/issues/851 | [

"Duplicate"

] | w0l4i | 1 |

paperless-ngx/paperless-ngx | django | 8,910 | [BUG] Custom fields endpoint does not respect API version | ### Description

Hey folks, thank you a ton for all the effort you put into Paperless, it's easily one of the best maintained self-hosted projects out there!

A fairly recent update (I believe #8299) changed the API response for the custom fields endpoint from

```json

"id": 1,

"name": "TestSelect",

"data_type": "select",

"extra_data": {

"select_options": [

"A",

"B"

],

"default_currency": null

},

"document_count": 0

```

to

```json

"id": 27,

"name": "TestSelect",

"data_type": "select",

"extra_data": {

"select_options": [

{

"id": "5t7Ix9oT5zdhPVrV",

"label": "A"

},

...

]

},

"document_count": 1

```

but the [API version](https://docs.paperless-ngx.com/api/#api-versioning) wasn't incremented and calls using any of the older versions still receive responses using this new format.

### Steps to reproduce

```bash

curl --request GET \

--url 'http://{{server}}/api/custom_fields/' \

--header 'accept: application/json; version=6' \

--header 'content-type: application/json'

```

### Webserver logs

```bash

Not applicable

```

### Browser logs

```bash

```

### Paperless-ngx version

2.14.5

### Host OS

Ubuntu 22.04

### Installation method

Docker - official image

### System status

```json

```

### Browser

_No response_

### Configuration changes

_No response_

### Please confirm the following

- [x] I believe this issue is a bug that affects all users of Paperless-ngx, not something specific to my installation.

- [x] This issue is not about the OCR or archive creation of a specific file(s). Otherwise, please see above regarding OCR tools.

- [x] I have already searched for relevant existing issues and discussions before opening this report.

- [x] I have updated the title field above with a concise description. | closed | 2025-01-25T23:17:09Z | 2025-02-27T03:10:20Z | https://github.com/paperless-ngx/paperless-ngx/issues/8910 | [

"bug",

"backend"

] | LeoKlaus | 4 |
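
Until the versioning question is settled, a client could tolerate both response shapes shown above. The following is a minimal sketch using only the standard library: `build_custom_fields_request` and `select_option_labels` are hypothetical helper names, the base URL is a placeholder, and no request is actually sent. The header format follows the `curl` call in the reproduction steps.

```python
import urllib.request


def build_custom_fields_request(base_url, version):
    """Build (but do not send) a versioned request for the custom fields endpoint."""
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/custom_fields/",
        headers={"Accept": f"application/json; version={version}"},
    )


def select_option_labels(custom_field):
    """Return select option labels for either the old or the new response shape."""
    options = (custom_field.get("extra_data") or {}).get("select_options") or []
    labels = []
    for option in options:
        if isinstance(option, dict):
            labels.append(option["label"])  # new shape: {"id": ..., "label": ...}
        else:
            labels.append(option)  # old shape: plain string
    return labels


# Both payloads are abbreviated from the examples in the issue.
old_shape = {"data_type": "select", "extra_data": {"select_options": ["A", "B"]}}
new_shape = {
    "data_type": "select",
    "extra_data": {"select_options": [{"id": "5t7Ix9oT5zdhPVrV", "label": "A"}]},
}

# Placeholder server address, not taken from the issue.
request = build_custom_fields_request("http://localhost:8000", 6)
```

This is a client-side workaround only; it does not change what the server returns for older API versions.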

tensorflow/tensor2tensor | deep-learning | 1,306 | Transformer model crashing due to memory issues trying to translate garbled text | I am translating a Chinese text file into English using the transformer model. My text file has a lot of garbled text and it causes memory usage to spike.

Here is the text:

https://gist.github.com/echan00/acde1d7e460cd9e467dfd612ea14ab66

Besides avoiding sending garbled text to the model, how else could I mitigate the problem described? | open | 2018-12-16T03:54:45Z | 2018-12-21T08:46:48Z | https://github.com/tensorflow/tensor2tensor/issues/1306 | [] | echan00 | 0 |

StructuredLabs/preswald | data-visualization | 520 | [FEATURE] Introduce tab() Component for Multi-Section UI Navigation | **Goal**

Add a `tab()` component that enables developers to organize UI content into labeled tabs within their Preswald apps—ideal for sectioning long dashboards, multiple views, or split data insights.

---

### 📌 Motivation

Many dashboards and tools created with Preswald contain multiple logical sections (e.g., charts, tables, filters). Scrolling through all at once creates cognitive overload. A `tab()` component provides:

- Better user experience through organized navigation

- A way to switch between related views in-place

- Clean separation of logic (e.g., Overview vs. Details)

This aligns with the existing layout vision (e.g., `size`, `collapsible()`), and will significantly improve interface design for real-world dashboards.

---

### ✅ Acceptance Criteria

- [ ] Add `tab()` component to `preswald/interfaces/components.py`

- [ ] Frontend implementation using ShadCN’s `Tabs` from `@/components/ui/tabs.tsx`

- [ ] Create `TabWidget.jsx` to render tabs and dynamic child content

- [ ] Register component in `DynamicComponents.jsx`

- [ ] Tab component should accept:

- `label: str` – Label for the tab container

- `tabs: list[dict]` – Each item with `title: str`, `components: list`

- `size: float = 1.0` – For layout sizing

- [ ] Ensure tabs support rendering other registered components (e.g., text, plotly, table) inside them

- [ ] Fully documented in SDK

---

### 🛠 Implementation Plan

#### 1. **Backend – Component Definition**

In `preswald/interfaces/components.py`:

```python

def tab(

label: str,

tabs: list[dict],

size: float = 1.0,

) -> None:

service = PreswaldService.get_instance()

component_id = f"tab-{hashlib.md5(label.encode()).hexdigest()[:8]}"

component = {

"type": "tab",

"id": component_id,

"label": label,

"size": size,

"tabs": tabs,

}

service.append_component(component)

```

Register it in `__init__.py`:

```python

from .components import tab

```

---

#### 2. **Frontend – UI Component**

Create: `frontend/src/components/widgets/TabWidget.jsx`

```jsx

import React from 'react';

import { Tabs, TabsList, TabsTrigger, TabsContent } from '@/components/ui/tabs';

import { Card } from '@/components/ui/card';

const TabWidget = ({ _label, tabs = [] }) => {

const [activeTab, setActiveTab] = React.useState(tabs?.[0]?.title || '');

return (

<Card className="mb-4 p-4 rounded-2xl shadow-md">

<h2 className="font-semibold text-lg mb-2">{_label}</h2>

<Tabs value={activeTab} onValueChange={setActiveTab}>

<TabsList className="mb-2 flex space-x-2">

{tabs.map((tab) => (

<TabsTrigger key={tab.title} value={tab.title}>

{tab.title}

</TabsTrigger>

))}

</TabsList>

{tabs.map((tab) => (

<TabsContent key={tab.title} value={tab.title}>

{/* Render nested components */}

{tab.components?.map((child, i) => (

<div key={i}>{window.renderDynamicComponent(child)}</div>

))}

</TabsContent>

))}

</Tabs>

</Card>

);

};

export default TabWidget;

```

---

#### 3. **DynamicComponents.jsx – Register `tab`**

```jsx

import TabWidget from '@/components/widgets/TabWidget';

case 'tab':

return (

<TabWidget

{...commonProps}

_label={component.label}

tabs={component.tabs}

/>

);

```

Ensure `renderDynamicComponent()` is accessible globally or passed through props to render children.

---

### 🧪 Testing Plan

- Add a test in `examples/iris/hello.py`:

```python

from preswald import tab, text, table, get_df, connect

connect()

df = get_df("sample_csv")

tab(

label="Data Views",

tabs=[

{"title": "Intro", "components": [text("Welcome to the Iris app.")]},

{"title": "Table", "components": [table(df)]},

]

)

```

- Run with `preswald run` and confirm:

- Tab titles appear

- Content switches correctly

- Styling matches Tailwind theme

---

### 🧾 SDK Usage Example

```python

tab(

label="Navigation",

tabs=[

{"title": "Overview", "components": [text("Summary goes here")]},

{"title": "Data", "components": [table(df)]},

{"title": "Chart", "components": [plotly(fig)]}

]

)

```

---

### 📚 Docs To Update

- [ ] `/docs/sdk/tab.mdx` – Full parameters and example

- [ ] `/docs/layout/guide.mdx` – Add layout pattern for `tab()`

---

### 🧩 Files Involved

- `preswald/interfaces/components.py`

- `frontend/src/components/widgets/TabWidget.jsx`

- `frontend/src/components/ui/tabs.tsx`

- `DynamicComponents.jsx`

---

### 💡 Future Ideas

- Allow dynamic content loading (lazy tabs)

- Optional `icon` support per tab

- Persist selected tab state across sessions | open | 2025-03-24T06:15:12Z | 2025-03-24T06:15:12Z | https://github.com/StructuredLabs/preswald/issues/520 | [

"enhancement"

] | amrutha97 | 0 |
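
The component id scheme from the backend step of the plan can be looked at in isolation. This is a sketch using only `hashlib`; `tab_component_id` is a hypothetical helper name that mirrors the expression in the proposed `tab()` function, not an existing Preswald API.

```python
import hashlib


def tab_component_id(label: str) -> str:
    """Derive the id the same way the proposed tab() does: 'tab-' + first 8 md5 hex chars."""
    return f"tab-{hashlib.md5(label.encode()).hexdigest()[:8]}"


first = tab_component_id("Data Views")
second = tab_component_id("Data Views")
other = tab_component_id("Navigation")
```

Because the id depends only on `label`, it is stable across reruns, but two `tab()` calls with the same label would get the same id. Whether that matters depends on how the frontend keys components, which the plan does not specify.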

jpadilla/django-rest-framework-jwt | django | 485 | Documentation not found | Hi there, the documentation has gone, could anyone find it? THANKS!! | closed | 2019-07-09T15:32:47Z | 2019-07-09T23:48:24Z | https://github.com/jpadilla/django-rest-framework-jwt/issues/485 | [] | YipCyun | 2 |

dpgaspar/Flask-AppBuilder | rest-api | 2,090 | How to enable ldap authentication |

### Environment

Flask-Appbuilder version: 4.3.4

### Describe the expected results

I am setting the following in `config.py` but it still doesn't seem to be using ldap in any way. Anything I can do to check?

```python

AUTH_TYPE = AUTH_LDAP

AUTH_LDAP_SERVER = "ldaps://my-ldap-server.com"

AUTH_LDAP_USE_TLS = False

```

### Describe the actual results

Nothing seems to happen, and checking the underlying database the user table is not populated (which is something I would have expected)

### Steps to reproduce

| open | 2023-07-21T19:02:18Z | 2023-09-25T05:19:55Z | https://github.com/dpgaspar/Flask-AppBuilder/issues/2090 | [

"question"

] | jcauserjt | 2 |

proplot-dev/proplot | data-visualization | 356 | Plotting stuff example gives `ValueError` | I'm learning proplot from the documentation. When I try the [Plotting stuff](https://proplot.readthedocs.io/en/latest/basics.html#Plotting-stuff) example, it raises `ValueError: DiscreteNorm is not invertible.`

My matplotlib and proplot versions are as follows:

```python

In [1]: matplotlib.__version__

Out[1]: '3.5.1'

In [2]: pplt.__version__

Out[2]: '0.9.5.post301'

```

I'm not sure whether this is related to the incompatibility with matplotlib of version 3.5.1.

| closed | 2022-04-23T14:10:54Z | 2023-03-29T05:45:02Z | https://github.com/proplot-dev/proplot/issues/356 | [

"bug"

] | chenyulue | 1 |

Lightning-AI/pytorch-lightning | pytorch | 19,730 | ValueError: dictionary update sequence element #0 has length 1; 2 is required | ### Bug description

I am trying to train a Lightning model that inherits from pl.LightningModule and implements a simple feed-forward network. The issue is that when I run it, it spits out the below error trace coming from trainer.fit(). I found this [very similar issue](https://github.com/Lightning-AI/pytorch-lightning/issues/9318), where downgrading to `torchmetrics<=0.5.0` fixed the issue, but that is not possible in my case as v2.2.0 of pytorch-lightning is not compatible with such an old version of torchmetrics. I tried downgrading to 0.7., the oldest compatible version, but it led to a different error also in the trainer.fit method.

Thanks for your attention and I would appreciate any help with this.

### What version are you seeing the problem on?

v2.2

### How to reproduce the bug

```python

# Below is the model class definition

import pytorch_lightning as pl

import torch

import numpy as np

from torch.nn import MSELoss, L1Loss

from torchmetrics import R2Score

torch.random.manual_seed(123)

class LightningModelSimple(pl.LightningModule):

def __init__(

self,

latent_model,

readout_model=None,

losses={},

metrics=[],

gpu=True,

learning_rate=0.001,

weight_decay=0.0,

):

super().__init__()

self.save_hyperparameters()

self.latent_model = latent_model

if readout_model is None:

self.readout_model = torch.nn.Identity()

else:

self.readout_model = readout_model

# losses

if "target" in losses:

self.loss_target = losses["target"]

else:

self.loss_target = None

if "latent_target" in losses:

self.loss_latent_target = losses["latent_target"]

self.weight_loss_latent_target = losses["weight_loss_latent_target"]

else:

self.loss_latent_target = None

self.gpu = gpu

self.metrics = metrics

self.learning_rate = learning_rate

self.weight_decay = weight_decay

def forward(self, x):

x_latent = self.latent_model(x)

y = self.readout_model(x_latent)

return y

def step(self, partition, batch, batch_idx):

spectra, target_glucose = batch

# get latent predictions

self.pred_latent = self.latent_model(spectra.float())

# get glucose predictions

self.pred_glucose = self.readout_model(self.pred_latent)

# compute losses

loss = 0

if self.loss_target is not None:

loss += self.loss_target(self.pred_glucose, target_glucose)

self.log(partition + "_loss_target", loss, on_epoch=True)

if self.loss_latent_target is not None:

loss_latent_target = (

self.weight_loss_latent_target

* self.loss_latent_target(self.pred_latent, target_glucose.unsqueeze(1))

)

self.log(

partition + "_loss_latent_target", loss_latent_target, on_epoch=True

)

loss += loss_latent_target

self.log(partition + "_loss_total", loss, on_epoch=True)

for metric_name, metric in self.metrics:

self.log(

partition + "_" + metric_name,

metric(self.pred_glucose, target_glucose),

on_epoch=True,

)

return loss

def training_step(self, batch, batch_idx):

return self.step("train", batch, batch_idx)

def validation_step(self, batch, batch_idx):

return self.step("val", batch, batch_idx)

def test_step(self, batch, batch_idx):

return self.step("test", batch, batch_idx)

def configure_optimizers(self):

return torch.optim.Adam(

self.parameters(),

lr=self.hparams.learning_rate,

weight_decay=self.hparams.weight_decay,

)

# This should go in a different file called helpers.py

def log_parameter(params, parser, param_name=""):

if isinstance(params, dict):

for key in params.keys():

if key == "class_path":

parser = log_parameter(params[key], parser, param_name)

else:

parser = log_parameter(params[key], parser, key)

else:

parser.add_argument("--" + param_name, type=type(params), default=params)

return parser

def update(config_data, params):

for k, v in params.items():

if isinstance(v, collections.abc.Mapping):

config_data[k] = update(config_data.get(k, {}), v)

else:

config_data[k] = v

return config_data

def train_model(config_file, **kwargs):

loader = yaml.SafeLoader

with open(config_file, "r") as stream:

config_data = yaml.load(stream, Loader=loader)

if "params" in kwargs:

config_data = update(config_data, kwargs["params"])

if "latent_model" in kwargs:

config_data["lightning_model"]["init_args"]["latent_model"] = kwargs[

"latent_model"

]

# experiment_name = config_data["experiment_name"]

n_epochs = config_data["n_epochs"]

pl.seed_everything(1234)

# add arguments to parser

parser = ArgumentParser(conflict_handler="resolve")

parser.add_argument(

"--auto-select-gpus", default=True, help="run automatically on GPU if available"

)

parser.add_argument("--max-epochs", default=n_epochs, type=int)

parser.add_argument("gpus", type=int, default=1)

parser = log_parameter(config_data, parser)

# parse arguments to trainer

args = parser.parse_args()

if args.gpus == 1:

device = "cuda"

elif args.gpus == 0:

device = "cpu"

# create mlflow experiment if it doesn't yet exist

try:

current_experiment = dict(mlflow.get_experiment_by_name(args.experiment_name))

experiment_id = current_experiment["experiment_id"]

except:

print("creating new experiment")

experiment_id = mlflow.create_experiment(args.experiment_name)

# # start experiment

with mlflow.start_run(experiment_id=experiment_id) as run:

with open("log.txt", "a") as log_file:

log_file.write("'" + str(run.info.run_id) + "'" + ", ")

path_mlflow_results = (

"mlruns/" + str(experiment_id) + "/" + str(run.info.run_id)

)

path_checkpoints = path_mlflow_results + "/checkpoints"

# copy yaml file to mlflow results

# TODO: this is a hack for now, this should automatically be logged

# with open(path_mlflow_results + "/" + config_file, "w") as f:

with open(path_mlflow_results + "/config.yaml", "w") as f:

yaml.dump(config_data, f)

# initialize dataloader

config_data = initialize_datamodule(config_data)

datamodule = config_data["datamodule"]

# extract key for model selection

loss_key = config_data["metric_model_selection"]

if (

config_data["datamodule"].split_label_val == "Barcode"

and "val_" in loss_key[0]

):

raise ValueError(

"split_label_val=Barcode with metric_model_selection=",

loss_key,

" introduces data leakage",

)

# initialize lightning model

if (

config_data["lightning_model"]["class_path"]

== "models.lightning_model.LightningModel"

):

use_val_test_data_in_train = True

elif (

config_data["lightning_model"]["class_path"]

== "models.lightning_model.LightningModelSimple"

):

use_val_test_data_in_train = False

config_data = initialize_modules(config_data)

lightning_model = config_data["lightning_model"]

print(type(lightning_model))

print(type(datamodule))

# monitor different metrics depending on loss variable

checkpoints = []

monitored_metrics = config_data["monitored_metrics"]

for i, (me, mo) in enumerate(monitored_metrics):

ckpt = pl.callbacks.ModelCheckpoint(

monitor=me,

mode=mo,

dirpath=path_checkpoints,

filename="{epoch:02d}-{" + me + ":.4f}",

save_top_k=1,

)

checkpoints.append(ckpt)

# checkpoints.append(

# pl.callbacks.ModelCheckpoint(

# dirpath=path_checkpoints,

# filename="every_n_{epoch:02d}",

# every_n_epochs=10,

# save_top_k=-1, # <--- this is important!

# )

# )

# log all parameter

mlflow.pytorch.autolog()

for arg in vars(args):

mlflow.log_param(arg, getattr(args, arg))

# train model

trainer = pl.Trainer(max_epochs=n_epochs, logger=True, callbacks=checkpoints)

# TODO: this is very hacky and should be revisited

# we create a combined dataloader which is the same for train/validation/test

# batching is applied to the train dataloader, thus there will be multiple batches with the batch size defined in config.yaml

# the validation and test dataloaders only have one batch which has the size of the entire validation/test set

# inside the lightning module we read out the validation and test batch at step 0 and save it as a class

# attribute such that all validation and test data can be used in all training steps

if use_val_test_data_in_train:

datamodule.setup(stage="")

iterables_train = {

"train": datamodule.train_dataloader(),

"val": datamodule.val_dataloader(),

"test": datamodule.test_dataloader(),

}

iterables_val = {

"train": datamodule.train_dataloader(),

"val": datamodule.val_dataloader(),

"test": datamodule.test_dataloader(),

}

iterables_test = {

"train": datamodule.train_dataloader(),

"val": datamodule.val_dataloader(),

"test": datamodule.test_dataloader(),

}

combined_loader_train = CombinedLoader(iterables_train, mode="max_size")

combined_loader_val = CombinedLoader(iterables_val, mode="max_size")

combined_loader_test = CombinedLoader(iterables_test, mode="max_size")

trainer.fit(lightning_model, combined_loader_train, combined_loader_val)

else:

trainer.fit(lightning_model, datamodule=datamodule)

# evaluate tests for all monitored metrics

ckpts = glob.glob(path_checkpoints + "/*")

for ckpt in ckpts:

if loss_key[0] in ckpt:

if use_val_test_data_in_train:

result = trainer.test(

dataloaders=combined_loader_test, ckpt_path=ckpt

)

else:

result = trainer.test(datamodule=datamodule, ckpt_path=ckpt)

print(result)

# Finally the main file

import torch

import utils.helpers as helpers

torch.random.manual_seed(123)

if __name__ == "__main__":

# profil data

# train_model("config_profil_latent.yaml")

# train_model("config_profil_readout.yaml")

# train_model("config_profil.yaml")

# train_model("config_profil_simple.yaml")

for weight_decay in [1.0]:

for val_subject in range(0, 14):

params = {

"datamodule": {

"init_args": {

"val_index": [val_subject],

"test_index": [],

}

},

"lightning_model": {

"init_args": {

"weight_decay": weight_decay,

}

},

}

helpers.train_model("config_profil_simple.yaml", params=params)

```

### Error messages and logs

```

Traceback (most recent call last):

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/trainer/call.py", line 44, in _call_and_handle_interrupt

return trainer_fn(*args, **kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/trainer/trainer.py", line 579, in _fit_impl

self._run(model, ckpt_path=ckpt_path)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/trainer/trainer.py", line 969, in _run

_log_hyperparams(self)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/loggers/utilities.py", line 95, in _log_hyperparams

logger.save()

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/lightning_utilities/core/rank_zero.py", line 42, in wrapped_fn

return fn(*args, **kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/lightning_fabric/loggers/csv_logs.py", line 157, in save

self.experiment.save()

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/loggers/csv_logs.py", line 67, in save

save_hparams_to_yaml(hparams_file, self.hparams)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/core/saving.py", line 354, in save_hparams_to_yaml

yaml.dump(v)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/__init__.py", line 253, in dump

return dump_all([data], stream, Dumper=Dumper, **kwds)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/__init__.py", line 241, in dump_all

dumper.represent(data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 27, in represent

node = self.represent_data(data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 48, in represent_data

node = self.yaml_representers[data_types[0]](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 199, in represent_list

return self.represent_sequence('tag:yaml.org,2002:seq', data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 92, in represent_sequence

node_item = self.represent_data(item)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 48, in represent_data

node = self.yaml_representers[data_types[0]](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 199, in represent_list

return self.represent_sequence('tag:yaml.org,2002:seq', data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 92, in represent_sequence

node_item = self.represent_data(item)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 52, in represent_data

node = self.yaml_multi_representers[data_type](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 356, in represent_object

return self.represent_mapping(tag+function_name, value)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 118, in represent_mapping

node_value = self.represent_data(item_value)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 48, in represent_data

node = self.yaml_representers[data_types[0]](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 207, in represent_dict

return self.represent_mapping('tag:yaml.org,2002:map', data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 118, in represent_mapping

node_value = self.represent_data(item_value)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 52, in represent_data

node = self.yaml_multi_representers[data_type](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 330, in represent_object

dictitems = dict(dictitems)

ValueError: dictionary update sequence element #0 has length 1; 2 is required

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/pap_spiden_com/spiden_ds/experiments/artemis/main.py", line 28, in <module>

helpers.train_model("config_profil_simple.yaml", params=params)

File "/home/pap_spiden_com/spiden_ds/experiments/artemis/utils/helpers.py", line 191, in train_model

trainer.fit(lightning_model, datamodule=datamodule)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/mlflow/utils/autologging_utils/safety.py", line 573, in safe_patch_function

patch_function(call_original, *args, **kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/mlflow/utils/autologging_utils/safety.py", line 252, in patch_with_managed_run

result = patch_function(original, *args, **kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/mlflow/pytorch/_lightning_autolog.py", line 386, in patched_fit

result = original(self, *args, **kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/mlflow/utils/autologging_utils/safety.py", line 554, in call_original

return call_original_fn_with_event_logging(_original_fn, og_args, og_kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/mlflow/utils/autologging_utils/safety.py", line 489, in call_original_fn_with_event_logging

original_fn_result = original_fn(*og_args, **og_kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/mlflow/utils/autologging_utils/safety.py", line 551, in _original_fn

original_result = original(*_og_args, **_og_kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/trainer/trainer.py", line 543, in fit

call._call_and_handle_interrupt(

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/trainer/call.py", line 67, in _call_and_handle_interrupt

logger.finalize("failed")

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/lightning_utilities/core/rank_zero.py", line 42, in wrapped_fn

return fn(*args, **kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/lightning_fabric/loggers/csv_logs.py", line 166, in finalize

self.save()

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/lightning_utilities/core/rank_zero.py", line 42, in wrapped_fn

return fn(*args, **kwargs)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/lightning_fabric/loggers/csv_logs.py", line 157, in save

self.experiment.save()

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/loggers/csv_logs.py", line 67, in save

save_hparams_to_yaml(hparams_file, self.hparams)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/pytorch_lightning/core/saving.py", line 354, in save_hparams_to_yaml

yaml.dump(v)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/__init__.py", line 253, in dump

return dump_all([data], stream, Dumper=Dumper, **kwds)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/__init__.py", line 241, in dump_all

dumper.represent(data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 27, in represent

node = self.represent_data(data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 48, in represent_data

node = self.yaml_representers[data_types[0]](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 199, in represent_list

return self.represent_sequence('tag:yaml.org,2002:seq', data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 92, in represent_sequence

node_item = self.represent_data(item)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 48, in represent_data

node = self.yaml_representers[data_types[0]](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 199, in represent_list

return self.represent_sequence('tag:yaml.org,2002:seq', data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 92, in represent_sequence

node_item = self.represent_data(item)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 52, in represent_data

node = self.yaml_multi_representers[data_type](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 356, in represent_object

return self.represent_mapping(tag+function_name, value)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 118, in represent_mapping

node_value = self.represent_data(item_value)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 48, in represent_data

node = self.yaml_representers[data_types[0]](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 207, in represent_dict

return self.represent_mapping('tag:yaml.org,2002:map', data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 118, in represent_mapping

node_value = self.represent_data(item_value)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 52, in represent_data

node = self.yaml_multi_representers[data_type](self, data)

File "/opt/conda/envs/artemis/lib/python3.10/site-packages/yaml/representer.py", line 330, in represent_object

dictitems = dict(dictitems)

ValueError: dictionary update sequence element #0 has length 1; 2 is required

```
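The last frame in both tracebacks is `dictitems = dict(dictitems)`. The `ValueError` itself can be reproduced without Lightning or PyYAML, and a small helper can narrow down which top-level hyperparameter fails to serialize. This is only a sketch: `find_undumpable` and `example_hparams` are hypothetical names, `json.dumps` stands in for `yaml.dump` so the snippet has no third-party dependency, and the traceback does not say which hyperparameter is actually at fault.

```python
import json


def find_undumpable(hparams, dump):
    """Return {key: exception} for every top-level entry that `dump` rejects."""
    failures = {}
    for key, value in hparams.items():
        try:
            dump(value)
        except Exception as exc:  # broad on purpose: we only want to locate the culprit
            failures[key] = exc
    return failures


# dict() raises the same ValueError as in the traceback when an element of
# its argument is not a (key, value) pair.
try:
    dict([("only_key",)])
except ValueError as exc:
    message = str(exc)

# Hypothetical hyperparameters; the object() entry cannot be serialized.
example_hparams = {"weight_decay": 1.0, "val_index": [0], "callback": object()}
failures = find_undumpable(example_hparams, json.dumps)

print(message)
print(sorted(failures))
```

Passing `yaml.dump` as `dump` together with the model's real hyperparameters would mirror the `yaml.dump(v)` call shown in the traceback.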

### Environment

<details>

<summary>Current environment</summary>

```

#- Lightning Component (e.g. Trainer, LightningModule, LightningApp, LightningWork, LightningFlow):