repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

taverntesting/tavern | pytest | 301 | Issue with sending delete method | I always receive this error when running a DELETE request:

tavern.util.exceptions.TestFailError: Test 'Delete non-existant experiment' failed:

- Status code was 400, expected 404:

{"error": "The server could not handle your request: 400 Bad Request: The browser (or proxy) sent a request that this server could not understand."}

------------------------------ Captured log call -------------------------------

base.py 37 ERROR Status code was 400, expected 404:

{"error": "The server could not handle your request: 400 Bad Request: The browser (or proxy) sent a request that this server could not understand."}

It all of a sudden started happening. I am on version 0.22.1. If I execute the same request in Postman it runs just fine. | closed | 2019-03-07T13:56:59Z | 2019-03-07T18:49:55Z | https://github.com/taverntesting/tavern/issues/301 | [] | mrwatchmework | 1 |

marshmallow-code/marshmallow-sqlalchemy | sqlalchemy | 664 | I suspect a breaking change related to "include_relationships=True" and schema.load() in version 1.4.1 | Hi! I recently had to test, on my local machine, a portion of our code that has been running happily on staging. I didn't pin a version for my local container instance, so it pulled `1.4.1`, the latest. Version `1.4.1` seems to treat the implicitly defined fields (from the `include_relationships=True` option) as required during `schema.load()`, which I THINK isn't the desired or USUAL behavior.

Previously (specifically in 1.1.0) we were able to deserialize into objects with schemas that have `include_relationships` set to True without providing values for the relationship fields, which intuitively makes sense. On `1.4.1`, however, the same load raises a ValidationError (missing field). This behavior wasn't reproducible on `1.1.0`. I can't say whether it also occurs in versions between `1.1.0` and `1.4.1`, because we hadn't used any of them until my recent local testing with `1.4.1`.
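For readers comparing versions: marshmallow's `Schema.load()` accepts a `partial` argument (a bool, or an iterable of field names) that skips the required-field check, so `schema.load(data, partial=("address",))` may work around the problem until the change is confirmed. The stdlib sketch below only models that check. `validate_required` and the field names are hypothetical and are not marshmallow-sqlalchemy code.

```python
from typing import Iterable, Union


def validate_required(
    data: dict,
    required: Iterable[str],
    partial: Union[bool, Iterable[str]] = False,
) -> dict:
    """Return {field: [message]} for required fields missing from data.

    Hypothetical model of a required-field check; `partial` mirrors the
    idea behind marshmallow's argument of the same name.
    """
    if partial is True:
        return {}  # partial=True skips every required check
    skipped = set(partial) if partial else set()
    errors = {}
    for name in required:
        if name not in data and name not in skipped:
            errors[name] = ["Missing data for required field."]
    return errors


# 'address' stands in for a relationship field that became required.
print(validate_required({"name": "Ada"}, ["name", "address"]))
print(validate_required({"name": "Ada"}, ["name", "address"], partial=("address",)))
```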

```python
class UserSchema(Base):
    class Meta:
        model = User
        # includes e.g. an 'address' relationship attr (a backref or an
        # explicitly declared field on the SQLAlchemy model)
        include_relationships = True
        include_fk = True
        load_instance = True

# Usage and expected behavior
schema = UserSchema(unknown='exclude', session=session)
user = schema.load(data)  # Schema.load() takes the input dict positionally
# returns a <User> object

# Observed behavior
# ... same steps
# raises: Missing data for required field 'address'
``` | open | 2025-03-14T18:27:03Z | 2025-03-22T10:59:09Z | https://github.com/marshmallow-code/marshmallow-sqlalchemy/issues/664 | [] | Curiouspaul1 | 2 |

vllm-project/vllm | pytorch | 14,531 | [Bug]: [Tests] Initialization Test Does Not Work With V1 | ### Your current environment

- Converting over the tests to use V1, this is not working

### 🐛 Describe the bug

- works

```bash

VLLM_USE_V1=0 pytest -v -x models/test_initialization.py -k "not Cohere"

```

- fails on Grok 1

```bash

VLLM_USE_V1=1 pytest -v -x models/test_initialization.py -k "not Cohere"

```

- works! (this is wild)

```bash

VLLM_USE_V1=1 pytest -v -x models/test_initialization.py -k "Grok"

```

### Before submitting a new issue...

- [x] Make sure you already searched for relevant issues, and asked the chatbot living at the bottom right corner of the [documentation page](https://docs.vllm.ai/en/latest/), which can answer lots of frequently asked questions. | open | 2025-03-10T02:38:38Z | 2025-03-10T02:38:38Z | https://github.com/vllm-project/vllm/issues/14531 | [

"bug"

] | robertgshaw2-redhat | 0 |

stanfordnlp/stanza | nlp | 971 | Slovak multiword doesn't work | **Describe the bug**

The Slovak model does not handle multiword tokens such as "naňho"; the pipeline fails with an `AssertionError` in `build_dependencies`.

**To Reproduce**

```py
>>> import stanza
>>> stanza.download("sk")
>>> nlp=stanza.Pipeline("sk")
>>> doc=nlp("Ten naňho spadol a zabil ho.")
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "~/.local/lib/python3.9/site-packages/stanza/pipeline/core.py", line 231, in __call__
    doc = self.process(doc)
  File "~/.local/lib/python3.9/site-packages/stanza/pipeline/core.py", line 225, in process
    doc = process(doc)
  File "~/.local/lib/python3.9/site-packages/stanza/pipeline/depparse_processor.py", line 51, in process
    sentence.build_dependencies()
  File "~/.local/lib/python3.9/site-packages/stanza/models/common/doc.py", line 555, in build_dependencies
    assert(word.head == head.id)
AssertionError
```

**Expected behavior**

"naňho" should be split into two words "na neho"

**Environment (please complete the following information):**

- OS: Debian

- Python version: Python 3.9.2

- Stanza version: 1.3.0
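Conceptually, multiword-token (MWT) expansion maps one surface token to several syntactic words before dependency parsing, which is the step the reporter expects for "naňho". The sketch below is a hypothetical dictionary lookup and not Stanza's MWT model, which is learned. Its single table entry comes from the expected behavior stated above.

```python
# Hypothetical lookup table; only the entry from this report is included.
MWT_TABLE = {"naňho": ["na", "neho"]}


def expand_tokens(tokens):
    """Replace each known multiword token with its component words."""
    words = []
    for token in tokens:
        # Look up case-insensitively; unknown tokens pass through unchanged.
        words.extend(MWT_TABLE.get(token.lower(), [token]))
    return words


print(expand_tokens("Ten naňho spadol a zabil ho .".split()))
# ['Ten', 'na', 'neho', 'spadol', 'a', 'zabil', 'ho', '.']
```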

| closed | 2022-03-06T04:43:30Z | 2022-03-06T10:46:42Z | https://github.com/stanfordnlp/stanza/issues/971 | [

"bug",

"fixed on dev"

] | KoichiYasuoka | 2 |

horovod/horovod | tensorflow | 3,501 | Is there a problem with ProcessSetTable Finalize when elastic? | Background:

Suppose there are currently 4 ranks on 4 machines.

Machine 1 fails, so rank 1 exits immediately and its final shutdown logic is never executed.

The remaining machines then perform the shutdown operation in elastic mode and call the `process_set_table.Finalize` function. This function uses allgather to determine whether each process set needs to be removed, but rank 1 has already exited at this point. In theory, the allgather should therefore fail or hang on the remaining processes, so they cannot shut down normally and elastic training cannot recover.
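The concern can be reproduced in miniature with a thread barrier standing in for allgather. A collective that waits for every rank cannot complete once one participant is gone, so the survivors either block forever or need a timeout. This is a stdlib analogy with hypothetical names, not Horovod's implementation.

```python
import threading


def run_collective(num_ranks, dead_ranks, timeout=1.0):
    """Simulate a collective op (e.g. allgather) that needs every rank.

    Returns the set of ranks whose wait failed because the barrier broke.
    """
    barrier = threading.Barrier(num_ranks)
    failed = set()
    lock = threading.Lock()

    def rank_main(rank):
        if rank in dead_ranks:
            return  # this rank exited without reaching Finalize
        try:
            barrier.wait(timeout=timeout)
        except threading.BrokenBarrierError:
            with lock:
                failed.add(rank)

    threads = [threading.Thread(target=rank_main, args=(r,)) for r in range(num_ranks)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return failed


print(run_collective(4, dead_ranks=set()))  # empty set: every rank arrives
print(run_collective(4, dead_ranks={1}))    # ranks 0, 2 and 3 time out
```

Without the `timeout`, the surviving threads would wait forever, which matches the hang described above.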

@maxhgerlach | closed | 2022-04-02T08:23:35Z | 2022-05-12T03:16:01Z | https://github.com/horovod/horovod/issues/3501 | [

"bug"

] | Richie-yan | 1 |

neuml/txtai | nlp | 265 | Add scripts to train query translation models | Add training scripts for building query translation models. | closed | 2022-04-18T09:41:28Z | 2022-04-18T14:39:26Z | https://github.com/neuml/txtai/issues/265 | [] | davidmezzetti | 0 |

ymcui/Chinese-BERT-wwm | tensorflow | 184 | Some questions about fill-mask | 中国[MASK]:

```

{'sequence': '中 国 :', 'score': 0.5457051992416382, 'token': 8038, 'token_str': ':'}

{'sequence': '中 国 :', 'score': 0.09207046031951904, 'token': 131, 'token_str': ':'}

{'sequence': '中 国 -', 'score': 0.06536566466093063, 'token': 118, 'token_str': '-'}

{'sequence': '中 国 。', 'score': 0.06007284298539162, 'token': 511, 'token_str': '。'}

{'sequence': '中 国 版', 'score': 0.03868889436125755, 'token': 4276, 'token_str': '版'}

{'sequence': '中 国 ;', 'score': 0.01822206936776638, 'token': 8039, 'token_str': ';'}

{'sequence': '中 国 的', 'score': 0.013966748490929604, 'token': 4638, 'token_str': '的'}

{'sequence': '中 国 ,', 'score': 0.007958734408020973, 'token': 8024, 'token_str': ','}

{'sequence': '中 国 网', 'score': 0.006388372275978327, 'token': 5381, 'token_str': '网'}

{'sequence': '中 国,', 'score': 0.005788101349025965, 'token': 117, 'token_str': ','}

```

机器[MASK]:

```

{'sequence': '机 器 。', 'score': 0.2849466800689697, 'token': 511, 'token_str': '。'}

{'sequence': '机 器 :', 'score': 0.21833810210227966, 'token': 8038, 'token_str': ':'}

{'sequence': '机 器 ;', 'score': 0.13236992061138153, 'token': 8039, 'token_str': ';'}

{'sequence': '机 器 :', 'score': 0.08217491209506989, 'token': 131, 'token_str': ':'}

{'sequence': '机 器 人', 'score': 0.028695881366729736, 'token': 782, 'token_str': '人'}

{'sequence': '机 器 )', 'score': 0.02431340701878071, 'token': 8021, 'token_str': ')'}

{'sequence': '机 器 ;', 'score': 0.023457376286387444, 'token': 132, 'token_str': ';'}

{'sequence': '机 器 的', 'score': 0.012613171711564064, 'token': 4638, 'token_str': '的'}

{'sequence': '机 器 、', 'score': 0.010766545310616493, 'token': 510, 'token_str': '、'}

{'sequence': '机 器 (', 'score': 0.010289286263287067, 'token': 8020, 'token_str': '('}

```

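Intuitively, if the prefix is "中国" (China), the next character "人" (giving "中国人", "Chinese people") should have a higher probability. Why do the results of this experiment contain so many punctuation marks?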
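To look at the character predictions without the punctuation, the candidates can be filtered by Unicode category. This stdlib sketch assumes the pipeline output is a list of dicts with `token_str` and `score` keys, as shown above. The sample values are copied from the 中国[MASK] results.

```python
import unicodedata


def drop_punctuation(predictions):
    """Keep predictions whose token is not entirely Unicode punctuation (P*)."""
    kept = []
    for pred in predictions:
        token = pred["token_str"]
        if not all(unicodedata.category(ch).startswith("P") for ch in token):
            kept.append(pred)
    return kept


sample = [
    {"token_str": ":", "score": 0.5457051992416382},
    {"token_str": "-", "score": 0.06536566466093063},
    {"token_str": "。", "score": 0.06007284298539162},
    {"token_str": "版", "score": 0.03868889436125755},
    {"token_str": "的", "score": 0.013966748490929604},
]
print([p["token_str"] for p in drop_punctuation(sample)])  # ['版', '的']
```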

| closed | 2021-05-17T12:52:17Z | 2021-05-27T21:43:27Z | https://github.com/ymcui/Chinese-BERT-wwm/issues/184 | [

"stale"

] | yoopaan | 3 |

pydantic/pydantic-core | pydantic | 884 | MultiHostUrl build function return type is str | The return type of the `build` function of the `MultiHostUrl` class in `_pydantic_core.pyi` is `str`, but the [migration guide](https://docs.pydantic.dev/latest/migration/#url-and-dsn-types-in-pydanticnetworks-no-longer-inherit-from-str) says that DSN types no longer inherit from `str`.

Selected Assignee: @dmontagu | closed | 2023-08-15T09:05:04Z | 2023-08-15T09:28:08Z | https://github.com/pydantic/pydantic-core/issues/884 | [

"duplicate"

] | mojtabaAmir | 1 |

MycroftAI/mycroft-core | nlp | 3,038 | Can I download the entire package just once? | So far I've set up Mycroft about 20 times, trying different approaches: VBox, Virtmgr, the Mycroft Docker image, Ubuntu, minideb, etc.

All of this is burning through my bandwidth and seems unnecessary, since every install fetches the same files.

Can I download the entire package just once, instead of having dev_mycroft download all the packages every time? I'm using the same Ubuntu or Debian hosts every time. Would a list of packages for apt help? Then at least I could move them from /var/cache/apt/archives. Or could they be put into a reusable deb file? Or how about an AppImage? | closed | 2021-11-22T05:49:42Z | 2024-09-08T08:24:48Z | https://github.com/MycroftAI/mycroft-core/issues/3038 | [] | auwsom | 7 |

drivendataorg/cookiecutter-data-science | data-science | 282 | Check for existing directory earlier | As soon as the user enters the project name (or repo name? which is used for the directory name), check if the directory already exists and either quit or prompt for a new name.

Otherwise, the user has to answer all of the questions and THEN get an error message. | open | 2022-08-26T18:53:52Z | 2025-03-07T18:58:23Z | https://github.com/drivendataorg/cookiecutter-data-science/issues/282 | [

"enhancement"

] | AllenDowney | 1 |

ultralytics/ultralytics | machine-learning | 18,826 | Failing to compute gradients, RuntimeError: element 0 of tensors does not require grad and does not have a grad_fn | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

I'm researching perturbations in neural networks. I have a video in which YOLOv11 correctly detects several objects. I'd like to add a gradient-based perturbation to each frame so that the model fails to detect objects in the modified frames.

My current approach is:

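```python
import cv2
import numpy as np
import torch
import torch.nn.functional as F

epsilon = 0.03  # perturbation size; not defined in the original snippet, example value


def fgsm(gradients, tensor):
    perturbation = epsilon * gradients.sign()
    alt_img = tensor + perturbation
    alt_img = torch.clamp(alt_img, 0, 1)  # clip pixel values to [0, 1]
    # detach and move to CPU before converting to a HWC uint8 NumPy image
    alt_img_np = alt_img.squeeze().permute(1, 2, 0).detach().cpu().numpy()
    alt_img_np = (alt_img_np * 255).astype(np.uint8)
    return alt_img_np


def perturb(model, cap):
    fourcc = cv2.VideoWriter_fourcc(*"mp4v")  # same code as 0x7634706d
    out = cv2.VideoWriter("perturbed.mp4", fourcc, 30.0, (640, 640))
    print("CUDA Available: ", torch.cuda.is_available())
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    model.to(device)

    while cap.isOpened():
        ret, img = cap.read()
        if not ret:  # end of the video
            break
        resized = cv2.resize(img, (640, 640))
        rgb = cv2.cvtColor(resized, cv2.COLOR_BGR2RGB)
        tensor = torch.from_numpy(rgb).float().to(device)
        tensor = tensor.permute(2, 0, 1).unsqueeze(0)  # HWC -> NCHW
        tensor /= 255.0  # normalize to [0, 1]
        tensor.requires_grad = True

        output = model(tensor)
        # The Results boxes are post-processed (NMS) detections; they appear to be
        # produced without autograd tracking, which would explain the RuntimeError here.
        target = output[0].boxes.cls.long()
        logits = output[0].boxes.data
        loss = -F.cross_entropy(logits, target)
        loss.backward()  # backpropagation
        gradients = tensor.grad

        if gradients is not None:
            alt_img = fgsm(gradients, tensor)
            alt_bgr = cv2.cvtColor(alt_img, cv2.COLOR_RGB2BGR)  # OpenCV expects BGR
            cv2.imshow("Perturbed video", alt_bgr)
            cv2.waitKey(1)  # let the window refresh
            out.write(alt_bgr)

    out.release()
```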
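For reference, the update that `fgsm()` implements is the fast gradient sign method, x' = clip(x + ε · sign(∇ₓL), 0, 1). The step uses only the sign of the gradient, so it has an effect only if a gradient actually reaches the input tensor. Forcing `loss.requires_grad = True` just turns `loss` into a new leaf tensor, so `backward()` stops there and `tensor.grad` stays `None`. Below is a framework-free sketch of the update on plain lists; the function name and values are illustrative.

```python
def fgsm_step(pixels, grads, epsilon):
    """One FGSM step on flat lists of pixel values in [0, 1]."""
    def sign(g):
        return (g > 0) - (g < 0)  # 1, 0 or -1

    # Move each pixel by epsilon in the direction of its gradient's sign, then clip.
    return [min(1.0, max(0.0, p + epsilon * sign(g))) for p, g in zip(pixels, grads)]


print(fgsm_step([0.5, 0.99, 0.2], [3.0, 0.4, 0.0], epsilon=0.05))
```

A zero gradient leaves the pixel unchanged, and values pushed past 1.0 are clipped.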

Without `loss.requires_grad = True`, I receive:

```

loss.backward() #Backpropagation

^^^^^^^^^^^^^^^

File "/var/data/python/lib/python3.11/site-packages/torch/_tensor.py", line 581, in backward

torch.autograd.backward(

File "/var/data/python/lib/python3.11/site-packages/torch/autograd/__init__.py", line 347, in backward

_engine_run_backward(

File "/var/data/python/lib/python3.11/site-packages/torch/autograd/graph.py", line 825, in _engine_run_backward

return Variable._execution_engine.run_backward( # Calls into the C++ engine to run the backward pass

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

RuntimeError: element 0 of tensors does not require grad and does not have a grad_fn

```

If I enable `loss.requires_grad = True`, I am able to extract gradients from `loss`, but they don't seem to be applied correctly (and don't lead to a decrease in detection/classification performance).

What am I missing?

Thanks.

### Additional

_No response_ | closed | 2025-01-22T13:49:32Z | 2025-03-02T15:59:00Z | https://github.com/ultralytics/ultralytics/issues/18826 | [

"question",

"detect"

] | gp-000 | 10 |

xzkostyan/clickhouse-sqlalchemy | sqlalchemy | 194 | Release 0.2.2 version | We have merged PR #180 and need to release a new version of the library to fix the issue in Apache Superset.

@xzkostyan Can we do this? | closed | 2022-08-23T07:26:15Z | 2022-08-24T07:33:19Z | https://github.com/xzkostyan/clickhouse-sqlalchemy/issues/194 | [] | EugeneTorap | 2 |

ets-labs/python-dependency-injector | flask | 73 | Review and update ExternalDependency provider docs | closed | 2015-07-13T07:33:19Z | 2015-07-16T22:15:30Z | https://github.com/ets-labs/python-dependency-injector/issues/73 | [

"docs"

] | rmk135 | 0 | |

encode/apistar | api | 51 | Using http.QueryParams without query params under gunicorn causes KeyError | ```
[2017-04-16 20:24:24 -0700] [67301] [ERROR] Traceback (most recent call last):
  File "venv/lib/python3.6/site-packages/apistar/app.py", line 68, in func
    state[output] = function(**kwargs)
  File "app.py", line 27, in build
    return cls(url_decode(environ['QUERY_STRING']))
KeyError: 'QUERY_STRING'
``` | closed | 2017-04-17T05:07:57Z | 2017-04-17T16:40:25Z | https://github.com/encode/apistar/issues/51 | [] | kinabalu | 1 |

pytest-dev/pytest-cov | pytest | 270 | Local test failure: ModuleNotFoundError: No module named 'helper' | I am seeing the following test failure locally, also when using tox, and even from a fresh git clone:

```

platform linux -- Python 3.7.2, pytest-4.3.0, py-1.8.0, pluggy-0.9.0

rootdir: …/Vcs/pytest-cov, inifile: setup.cfg

plugins: forked-1.0.2, cov-2.6.1

collected 113 items

tests/test_pytest_cov.py F

=========================================================================================== FAILURES ===========================================================================================

____________________________________________________________________________________ test_central[branch2x] ____________________________________________________________________________________

…/Vcs/pytest-cov/tests/test_pytest_cov.py:187: in test_central

'*10 passed*'

E Failed: nomatch: '*- coverage: platform *, python * -*'

E and: '============================= test session starts =============================='

E and: 'platform linux -- Python 3.7.2, pytest-4.3.0, py-1.8.0, pluggy-0.9.0 -- …/Vcs/pytest-cov/.venv/bin/python'

E and: 'cachedir: .pytest_cache'

E and: 'rootdir: /tmp/pytest-of-user/pytest-647/test_central0, inifile:'

E and: 'plugins: forked-1.0.2, cov-2.6.1'

E and: 'collecting ... collected 0 items / 1 errors'

E and: ''

E and: '==================================== ERRORS ===================================='

E and: '_______________________ ERROR collecting test_central.py _______________________'

E and: "ImportError while importing test module '/tmp/pytest-of-user/pytest-647/test_central0/test_central.py'."

E and: 'Hint: make sure your test modules/packages have valid Python names.'

E and: 'Traceback:'

E and: 'test_central.py:1: in <module>'

E and: ' import sys, helper'

E and: "E ModuleNotFoundError: No module named 'helper'"

E and: ''

E fnmatch: '*- coverage: platform *, python * -*'

E with: '----------- coverage: platform linux, python 3.7.2-final-0 -----------'

E nomatch: 'test_central* 9 * 85% *'

E and: 'Name Stmts Miss Branch BrPart Cover Missing'

E and: '-------------------------------------------------------------'

E and: 'test_central.py 9 8 4 0 8% 3-11'

E and: ''

E and: '!!!!!!!!!!!!!!!!!!! Interrupted: 1 errors during collection !!!!!!!!!!!!!!!!!!!!'

E and: '=========================== 1 error in 0.05 seconds ============================'

E remains unmatched: 'test_central* 9 * 85% *'

------------------------------------------------------------------------------------- Captured stdout call -------------------------------------------------------------------------------------

running: …/Vcs/pytest-cov/.venv/bin/python -mpytest --basetemp=/tmp/pytest-of-user/pytest-647/test_central0/runpytest-0 -v --cov=/tmp/pytest-of-user/pytest-647/test_central0 --cov-report=term-missing /tmp/pytest-of-user/pytest-647/test_central0/test_central.py --cov-branch --basetemp=/tmp/pytest-of-user/pytest-647/basetemp

in: /tmp/pytest-of-user/pytest-647/test_central0

============================= test session starts ==============================

platform linux -- Python 3.7.2, pytest-4.3.0, py-1.8.0, pluggy-0.9.0 -- …/Vcs/pytest-cov/.venv/bin/python

cachedir: .pytest_cache

rootdir: /tmp/pytest-of-user/pytest-647/test_central0, inifile:

plugins: forked-1.0.2, cov-2.6.1

collecting ... collected 0 items / 1 errors

==================================== ERRORS ====================================

_______________________ ERROR collecting test_central.py _______________________

ImportError while importing test module '/tmp/pytest-of-user/pytest-647/test_central0/test_central.py'.

Hint: make sure your test modules/packages have valid Python names.

Traceback:

test_central.py:1: in <module>

import sys, helper

E ModuleNotFoundError: No module named 'helper'

----------- coverage: platform linux, python 3.7.2-final-0 -----------

Name Stmts Miss Branch BrPart Cover Missing

-------------------------------------------------------------

test_central.py 9 8 4 0 8% 3-11

!!!!!!!!!!!!!!!!!!! Interrupted: 1 errors during collection !!!!!!!!!!!!!!!!!!!!

=========================== 1 error in 0.05 seconds ============================

=================================================================================== short test summary info ====================================================================================

FAILED tests/test_pytest_cov.py::test_central[branch2x]

```
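The traceback shows `import sys, helper` failing inside the temporary test directory. One common cause of this kind of `ModuleNotFoundError` is that the directory holding `helper.py` is not on `sys.path` in the pytest subprocess. The stdlib sketch below reproduces that mechanism in isolation. It is not pytest-cov's test fixture, and `import_from` and the module contents are made up.

```python
import importlib
import os
import sys
import tempfile


def import_from(directory, module_name):
    """Import module_name with `directory` temporarily prepended to sys.path."""
    sys.modules.pop(module_name, None)  # drop any cached copy found elsewhere
    sys.path.insert(0, directory)
    try:
        return importlib.import_module(module_name)
    finally:
        sys.path.remove(directory)


with tempfile.TemporaryDirectory() as tmp:
    with open(os.path.join(tmp, "helper.py"), "w") as fh:
        fh.write("VALUE = 42\n")
    try:
        importlib.import_module("helper")  # tmp is not on sys.path yet
        print("found a different 'helper' elsewhere on sys.path")
    except ModuleNotFoundError as exc:
        print("without tmp on sys.path:", exc)
    print("with tmp on sys.path:", import_from(tmp, "helper").VALUE)
```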

| closed | 2019-03-08T02:45:40Z | 2019-03-09T17:33:54Z | https://github.com/pytest-dev/pytest-cov/issues/270 | [] | blueyed | 3 |

tortoise/tortoise-orm | asyncio | 1,439 | pip install tortoise-orm==0.20.0 show error | When I run `pip install -U tortoise-orm==0.20.0 -i https://pypi.python.org/simple`, I get this error.

However, https://tortoise.github.io/index.html shows that 0.20.0 has been published.

| open | 2023-07-26T07:10:07Z | 2023-10-20T07:55:58Z | https://github.com/tortoise/tortoise-orm/issues/1439 | [] | Hillsir | 6 |

autogluon/autogluon | scikit-learn | 4,805 | AutoGluon Compatibility Issue on M4 Pro MacBook (Model Loading Stuck) | Description:

I encountered an issue while running AutoGluon on my M4 Pro MacBook. The application gets stuck indefinitely while loading models, without any explicit error messages. The same code works flawlessly on my Intel-based MacBook.

Here’s the relevant part of the log:

Loading: models/conversion_model/models/KNeighborsDist/model.pkl

Loading: models/conversion_model/models/LightGBM/model.pkl

Loading: models/conversion_model/models/LightGBMLarge/model.pkl

Loading: models/conversion_model/models/NeuralNetFastAI/model.pkl

Loading: models/conversion_model/models/NeuralNetFastAI/model-internals.pkl

The issue persists even after ensuring all dependencies are correctly installed and compatible with the M4 architecture. The logs also show repeated deprecation warnings and debug messages related to Graphviz and Matplotlib, but nothing directly indicative of a failure.

Steps to Reproduce:

1. Run the code on an M4 Pro MacBook.

2. Observe the process stuck while loading models (e.g., model.pkl files).

3. No explicit errors are raised; the application just hangs indefinitely.

System Details:

• Device: M4 Pro MacBook

• Python Version: 3.9

• AutoGluon Version: 0.8.3b20230917

• Other Logs: Includes deprecation warnings from Graphviz and Matplotlib.
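Because the process hangs without raising an error, one stdlib way to see where it is stuck is `faulthandler.dump_traceback_later`. It prints every thread's stack if a call has not finished within a timeout. This is a hedged sketch: `call_with_watchdog` is a hypothetical helper, and the commented usage assumes AutoGluon's `TabularPredictor.load` and the model directory from the log above.

```python
import faulthandler
import sys


def call_with_watchdog(func, *args, timeout=300.0, **kwargs):
    """Run func; while it is still running after `timeout` seconds, dump all
    thread stacks (repeating every `timeout` seconds) so the blocking call shows up."""
    # sys.__stderr__ is the original stderr, which has a real file descriptor.
    faulthandler.dump_traceback_later(timeout, repeat=True, file=sys.__stderr__)
    try:
        return func(*args, **kwargs)
    finally:
        faulthandler.cancel_dump_traceback_later()


# Hypothetical usage with the predictor from the log above:
# from autogluon.tabular import TabularPredictor
# predictor = call_with_watchdog(TabularPredictor.load, "models/conversion_model", timeout=120)
```

The dumped stacks would show whether loading is blocked in unpickling, in a native library, or somewhere else.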

This seems to be a compatibility issue with the M4 chip. It would be great if AutoGluon could address this or provide guidance on how to debug this further. | open | 2025-01-16T19:48:41Z | 2025-01-18T06:05:46Z | https://github.com/autogluon/autogluon/issues/4805 | [

"bug: unconfirmed",

"OS: Mac"

] | ranmalmendis | 3 |

aminalaee/sqladmin | fastapi | 609 | Allow `list_query` be defined as ModelAdmin method | ### Discussed in https://github.com/aminalaee/sqladmin/discussions/607

<div type='discussions-op-text'>

<sup>Originally posted by **abdawwa1** September 4, 2023</sup>

Hello there, how can I get access to `request.session` in a class that inherits from the `ModelView` class?

Example:

```
class UserProfileAdmin(ModelView, model=User):
    list_query = select(User).filter(User.id == request.session.get("user"))
```</div> | closed | 2023-09-04T18:35:58Z | 2023-09-06T09:25:57Z | https://github.com/aminalaee/sqladmin/issues/609 | [] | aminalaee | 0 |

sinaptik-ai/pandas-ai | data-science | 938 | Large number of dataframes to handle at once | ### 🚀 The feature

I have a use case where I need to plug in, say, 100 dataframes, each with about 15-20 columns but not many rows. I would use SmartDatalake and pass in all the dataframes as a list. However, when I enter a query and it has to choose the right dataframe for my request, it passes a data snippet from all 100 dataframes in the prompt, which can easily result in token-limit errors.

I'm not sure how to overcome this. Perhaps a vector database holding context for each dataframe could help, so that only the relevant dataframes are retrieved for a given request.
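The retrieval idea above can be sketched without a vector database. Describe each dataframe by its name and column names, score the descriptions against the query, and send only the top matches to the LLM. This stdlib sketch uses bag-of-words cosine similarity as a stand-in for embeddings. The dataframe names and columns are invented, and none of this is pandas AI's API.

```python
import math
import re
from collections import Counter


def _vector(text):
    """Lower-cased word counts; '_' is not matched, so order_date -> order, date."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a, b):
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def select_dataframes(query, schemas, k=2):
    """Return the names of the k schemas most similar to the query.

    `schemas` maps a dataframe name to its list of column names.
    """
    q = _vector(query)
    scored = [
        (_cosine(q, _vector(name + " " + " ".join(cols))), name)
        for name, cols in schemas.items()
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [name for _, name in scored[:k]]


schemas = {
    "sales": ["region", "order_date", "revenue"],
    "employees": ["name", "department", "salary"],
    "inventory": ["sku", "warehouse", "stock_level"],
}
print(select_dataframes("total revenue by region", schemas, k=1))  # ['sales']
```

Swapping `_vector` for a real embedding model keeps the same shape: only the k selected dataframes would then contribute snippets to the prompt.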

### Motivation, pitch

I was just testing out pandas AI and this scenario came to mind. In the real world, if we have a large number of dataframes and want to automate chat over all of that data, the current implementation of pandas AI (passing a snippet of every dataframe with the prompt) won't be able to handle it.

### Alternatives

_No response_

### Additional context

_No response_ | closed | 2024-02-15T22:44:30Z | 2024-06-01T00:19:10Z | https://github.com/sinaptik-ai/pandas-ai/issues/938 | [] | BYZANTINE26 | 0 |

OpenInterpreter/open-interpreter | python | 1,529 | generated files contain echo "##active_lineN##" lines | ### Describe the bug

When I ask it to create files, the written files often contain these tracing lines, and I can't find any instruction that makes it avoid them.

```

echo "##active_line2##"

# frozen_string_literal: true

echo "##active_line3##"

echo "##active_line4##"

class Tasks::CleanupLimitJob < ApplicationJob

echo "##active_line5##"

queue_as :default

echo "##active_line6##"

echo "##active_line7##"

def perform(tag: "", limit: 14)

echo "##active_line8##"

Ops::Backup.retain_last_limit_cleanup_policy(tag: tag, limit: limit)

echo "##active_line9##"

end

echo "##active_line10##"

end

echo "##active_line11##"

```

### Reproduce

Have it read a gem project and ask it to generate, e.g., a new ActiveJob class.

### Expected behavior

Written files should not contain the `echo` lines that are used for debugging.
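Until the tracing markers stop leaking into written files, they can be stripped in a post-processing step. A minimal sketch, assuming the markers always sit on their own line in exactly the `echo "##active_lineN##"` form shown above:

```python
import re

# Matches a whole line consisting only of the tracing marker, e.g.
#   echo "##active_line7##"
MARKER_LINE = re.compile(r'^\s*echo "##active_line\d+##"\s*$')


def strip_active_line_markers(text):
    """Remove Open Interpreter tracing lines, keeping every other line as-is."""
    kept = [line for line in text.splitlines(keepends=True) if not MARKER_LINE.match(line)]
    return "".join(kept)


sample = 'echo "##active_line2##"\n# frozen_string_literal: true\necho "##active_line3##"\nclass Foo\nend\n'
print(strip_active_line_markers(sample), end="")
```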

### Screenshots

_No response_

### Open Interpreter version

0.4.3 Developer Preview

### Python version

3.10.13

### Operating System name and version

macOS (latest greatest)

### Additional context

_No response_ | open | 2024-11-10T16:27:44Z | 2024-11-29T10:32:55Z | https://github.com/OpenInterpreter/open-interpreter/issues/1529 | [] | koenhandekyn | 1 |

nerfstudio-project/nerfstudio | computer-vision | 2,883 | Docker dromni/nerfstudio ns-train crashes | **Describe the bug**

Docker `dromni/nerfstudio` `ns-train` crashes in `tinycudann/modules.py:19` with `Unknown compute capability. Ensure PyTorch with CUDA support is installed.`

**To Reproduce**

Steps to reproduce the behavior:

1. Download data

```
docker run --gpus all \
  --user $(id -u):$(id -g) \
  -v $(pwd)/workspace:/workspace/ \
  -v $(pwd)/datasets:/datasets/ \
  -v $(pwd)/cache:/home/user/.cache/ \
  --rm -it \
  --shm-size=12gb \
  dromni/nerfstudio:1.0.1 \
  ns-download-data blender --save-dir /datasets
```

2. Train

```
docker run --gpus all \
  --user $(id -u):$(id -g) \
  -v $(pwd)/workspace:/workspace/ \
  -v $(pwd)/datasets:/datasets/ \
  -v $(pwd)/cache:/home/user/.cache/ \
  -p 0.0.0.0:7007:7007 \
  --rm -it \
  --shm-size=12gb \
  dromni/nerfstudio:1.0.1 \
  ns-train nerfacto --data datasets/blender/mic
```

3. See error

```

/home/user/.local/lib/python3.10/site-packages/torch/cuda/__init__.py:138: UserWarning: CUDA initialization: Unexpected error from cudaGetDeviceCount(). Did you run some cuda functions before calling NumCudaDevices() that might have already set an error? Error 804: forward compatibility was attempted on non supported HW (Triggered internally at ../c10/cuda/CUDAFunctions.cpp:108.)

return torch._C._cuda_getDeviceCount() > 0

Could not load tinycudann: Unknown compute capability. Ensure PyTorch with CUDA support is installed.

...

OSError: Unknown compute capability. Ensure PyTorch with CUDA support is installed.

```

**Expected behavior**

Expected `ns-train nerfacto --data datasets/blender/mic` to run successfully and show progress similar to the [documentation](https://docs.nerf.studio/quickstart/first_nerf.html).

Note that I can run

```

docker run --gpus all dromni/nerfstudio:1.0.1 /bin/bash -c "nvidia-smi"

==========

== CUDA ==

==========

CUDA Version 11.8.0

Container image Copyright (c) 2016-2023, NVIDIA CORPORATION & AFFILIATES. All rights reserved.

This container image and its contents are governed by the NVIDIA Deep Learning Container License.

By pulling and using the container, you accept the terms and conditions of this license:

https://developer.nvidia.com/ngc/nvidia-deep-learning-container-license

A copy of this license is made available in this container at /NGC-DL-CONTAINER-LICENSE for your convenience.

Wed Feb 7 17:00:58 2024

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 470.223.02 Driver Version: 470.223.02 CUDA Version: 11.8 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|===============================+======================+======================|

| 0 NVIDIA GeForce ... Off | 00000000:01:00.0 Off | N/A |

| 30% 32C P8 N/A / 75W | 6MiB / 1999MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

| 1 NVIDIA GeForce ... Off | 00000000:02:00.0 Off | N/A |

| 27% 32C P8 6W / 180W | 2MiB / 8119MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=============================================================================|

```

with `nvidia-smi -L`

```

GPU 0: NVIDIA GeForce GTX 1050

GPU 1: NVIDIA GeForce GTX 1080

```

and with `docker run --gpus all dromni/nerfstudio:1.0.1 /bin/bash -c "nvcc -V"`:

```

==========

== CUDA ==

==========

CUDA Version 11.8.0

Container image Copyright (c) 2016-2023, NVIDIA CORPORATION & AFFILIATES. All rights reserved.

This container image and its contents are governed by the NVIDIA Deep Learning Container License.

By pulling and using the container, you accept the terms and conditions of this license:

https://developer.nvidia.com/ngc/nvidia-deep-learning-container-license

A copy of this license is made available in this container at /NGC-DL-CONTAINER-LICENSE for your convenience.

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2022 NVIDIA Corporation

Built on Wed_Sep_21_10:33:58_PDT_2022

Cuda compilation tools, release 11.8, V11.8.89

Build cuda_11.8.r11.8/compiler.31833905_0

```

**Additional context**

Following #1177, I suspect the issue is related to `tinycudann` and [architecture-related issues](https://github.com/nerfstudio-project/nerfstudio/issues/1317). | closed | 2024-02-07T17:25:03Z | 2024-02-18T20:30:48Z | https://github.com/nerfstudio-project/nerfstudio/issues/2883 | [] | robinsonkwame | 4 |

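vastsa/FileCodeBox | fastapi | 148 | Cannot share files using Cloudflare R2 | I configured Cloudflare R2 through the S3 storage option. Uploading works normally, but downloading a file through the share page shows this error: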

UnauthorizedSigV2 authorization is not supported. Please use SigV4 instead.

It needs to be updated to SigV4. | closed | 2024-04-13T06:59:12Z | 2024-04-29T14:13:20Z | https://github.com/vastsa/FileCodeBox/issues/148 | [] | Emtier | 5 |

pydata/xarray | numpy | 9,098 | ⚠️ Nightly upstream-dev CI failed ⚠️ | [Workflow Run URL](https://github.com/pydata/xarray/actions/runs/9654194590)

<details><summary>Python 3.12 Test Summary</summary>

```

xarray/tests/test_missing.py::test_scipy_methods_function[barycentric]: FutureWarning: 'd' is deprecated and will be removed in a future version. Please use 'D' instead of 'd'.

xarray/tests/test_missing.py::test_scipy_methods_function[krogh]: FutureWarning: 'd' is deprecated and will be removed in a future version. Please use 'D' instead of 'd'.

xarray/tests/test_missing.py::test_scipy_methods_function[pchip]: FutureWarning: 'd' is deprecated and will be removed in a future version. Please use 'D' instead of 'd'.

xarray/tests/test_missing.py::test_scipy_methods_function[spline]: FutureWarning: 'd' is deprecated and will be removed in a future version. Please use 'D' instead of 'd'.

xarray/tests/test_missing.py::test_scipy_methods_function[akima]: FutureWarning: 'd' is deprecated and will be removed in a future version. Please use 'D' instead of 'd'.

xarray/tests/test_missing.py::test_interpolate_pd_compat_non_uniform_index: FutureWarning: 'd' is deprecated and will be removed in a future version. Please use 'D' instead of 'd'.

```

</details>

| closed | 2024-06-12T00:23:50Z | 2024-07-01T14:47:11Z | https://github.com/pydata/xarray/issues/9098 | [

"CI"

] | github-actions[bot] | 3 |

pyg-team/pytorch_geometric | deep-learning | 9,036 | Creating a graph with `torch_geometric.nn.pool.radius` using `max_num_neighbors` behaves different on GPU than it does on CPU | ### 🐛 Describe the bug

`torch_geometric.nn.pool.radius` behaves differently on CPU than on GPU when `max_num_neighbors` is used. On CPU, it adds connections based on the angle within the circle (i.e. it starts adding connections to nodes in the first quadrant, then moves on to the second quadrant, ...). On GPU, it scatters the connections uniformly across the circle. The GPU behavior is the one I would expect. The following script visualizes this:

```
import os
from argparse import ArgumentParser

import matplotlib.pyplot as plt
import torch
from torch_geometric.nn.pool import radius


def parse_args():
    parser = ArgumentParser()
    parser.add_argument("--accelerator", type=str, required=True, choices=["gpu", "cpu"])
    return vars(parser.parse_args())


def main(accelerator):
    if accelerator == "cpu":
        dev = torch.device("cpu")
    else:
        dev = torch.device("cuda:0")
    torch.manual_seed(0)
    x = torch.rand(512)
    y = torch.rand(512)
    pos = torch.stack([x, y], dim=1).to(dev)
    center = torch.tensor([0.5, 0.5]).unsqueeze(0).to(dev)
    # BUG: with CPU, the edges will all be from the first quadrant instead of uniformly distributed across the whole circle
    edges = radius(x=pos, y=center, r=0.5, max_num_neighbors=128)
    ax = plt.gcf().gca()
    ax.add_patch(plt.Circle((0.5, 0.5), 0.5, color="g", fill=False))
    plt.scatter([0.5], [0.5])
    colors = ["red" if i in edges[1] else "black" for i in range(len(x))]
    plt.scatter(x, y, c=colors)
    title = f"{os.name}_{accelerator}"
    plt.title(title)
    # plt.show()
    plt.savefig(f"{title}.svg")


if __name__ == "__main__":
    main(**parse_args())
```

# With --accelerator gpu

Connections to other nodes within the radius are scattered uniformly.

# With --accelerator cpu

Connections to other nodes within the radius are taken first from the first quadrant; once all points in the first quadrant are taken, nodes from the second quadrant are taken, and so on.
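The difference between the two behaviours can be illustrated without PyTorch. The stdlib sketch below uses a hypothetical helper, `radius_neighbors` (not the torch-cluster implementation), to contrast keeping the first `max_num_neighbors` hits in scan order, which resembles the CPU result described above, with sampling the cap uniformly from all in-radius points, which resembles the GPU result. That the CPU kernel's scan order correlates with position is an inference from the plots, not from the kernel source.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def in_radius(points: Sequence[Point], center: Point, r: float) -> List[int]:
    """Indices of all points within distance r of center, in input order."""
    cx, cy = center
    return [i for i, (x, y) in enumerate(points) if math.hypot(x - cx, y - cy) <= r]


def radius_neighbors(points, center, r, max_num_neighbors, uniform=True, seed=0):
    """Cap the neighbour list at max_num_neighbors.

    uniform=False keeps the first hits in scan order (order-dependent result);
    uniform=True draws the cap uniformly at random from all in-radius points.
    """
    hits = in_radius(points, center, r)
    if len(hits) <= max_num_neighbors:
        return hits
    if not uniform:
        return hits[:max_num_neighbors]
    rng = random.Random(seed)
    return sorted(rng.sample(hits, max_num_neighbors))


if __name__ == "__main__":
    rng = random.Random(0)
    pts = [(rng.random(), rng.random()) for _ in range(512)]
    # Simulate a scan that visits points in angular order around the center.
    pts.sort(key=lambda p: math.atan2(p[1] - 0.5, p[0] - 0.5))
    first = radius_neighbors(pts, (0.5, 0.5), 0.5, 128, uniform=False)
    sampled = radius_neighbors(pts, (0.5, 0.5), 0.5, 128, uniform=True)
    print(len(first), len(sampled))
```

With `uniform=False` on angle-sorted input, the selected points all come from one angular sector, which is the pattern reported for CPU.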

### Versions

Versions of relevant libraries:

Python version: 3.9.16 | packaged by conda-forge | (main, Feb 1 2023, 21:39:03) [GCC 11.3.0] (64-bit runtime)

[conda] torch 2.1.1+cu121 pypi_0 pypi

[conda] torch-cluster 1.6.3+pt21cu121 pypi_0 pypi

[conda] torch-geometric 2.4.0 pypi_0 pypi

[conda] torch-harmonics 0.6.4 pypi_0 pypi

[conda] torch-scatter 2.1.2+pt21cu121 pypi_0 pypi

[conda] torch-sparse 0.6.18+pt21cu121 pypi_0 pypi | open | 2024-03-08T14:06:15Z | 2024-03-12T12:38:35Z | https://github.com/pyg-team/pytorch_geometric/issues/9036 | [

"bug"

] | BenediktAlkin | 3 |

sczhou/CodeFormer | pytorch | 175 | upload issue | Where do we upload our image? The dialog box for uploading images is not working.

| open | 2023-03-11T08:46:22Z | 2023-03-11T17:44:37Z | https://github.com/sczhou/CodeFormer/issues/175 | [] | satyabirkumar87 | 1 |

indico/indico | sqlalchemy | 5,987 | Registration notification email are in the user language, not the organiser language | **Describe the bug**

I have enabled automatic email notifications when a user registers for my event. These emails are in the user's default language (German, Polish, ...) instead of my default language as the organiser (French, or at least English).

**To Reproduce**

Not easily, as one would need dummy accounts set up with different languages.

**Expected behavior**

I would expect the email to be in my language (or in the event's default language, if there is such a thing).

**Screenshots**

Email I've received:

<img width="1068" alt="Capture d’écran 2023-10-11 à 18 34 18" src="https://github.com/indico/indico/assets/7677384/9b02fbfc-5ac2-447a-9ba6-0b043322dcbc">

(I got another one in Polish, but the bulk of the emails are in English.)

**Additional context**

This is the event concerned

[Indico event](https://indico.in2p3.fr/event/30589/) using indico v3.2.7

| open | 2023-10-11T16:38:26Z | 2025-01-29T16:14:44Z | https://github.com/indico/indico/issues/5987 | [

"bug"

] | dhrou | 4 |

assafelovic/gpt-researcher | automation | 1,247 | Calling the FastAPI will always returned no sources even if report type is web_search | Hello all,

I need to expose GPT Researcher as an API endpoint, so I have written the following:

```python
from fastapi import APIRouter, HTTPException, FastAPI
from pydantic import BaseModel
from typing import Optional, List, Dict
from gpt_researcher import GPTResearcher
import asyncio
import os
import time
from dotenv import load_dotenv
from backend.utils import write_md_to_word

# Load environment variables from .env file
load_dotenv()

# Verify API keys are present
if not os.getenv("OPENAI_API_KEY"):
    raise ValueError("OPENAI_API_KEY environment variable is not set")
if not os.getenv("TAVILY_API_KEY"):
    raise ValueError("TAVILY_API_KEY environment variable is not set")

app = FastAPI()
router = APIRouter()


class ResearchRequest(BaseModel):
    query: str
    report_type: str = "detailed_report"
    report_source: str = "deep_research"
    source_urls: Optional[List[str]] = []
    query_domains: Optional[List[str]] = []
    headers: Optional[Dict] = None
    verbose: bool = True  # Set verbose to True by default
    tone: Optional[str] = "Objective"


@router.post("/api/v1/research")
async def generate_research(request: ResearchRequest):
    try:
        if not request.query.strip():
            raise HTTPException(status_code=400, detail="Query cannot be empty")

        # Initialize researcher with web search settings
        researcher = GPTResearcher(
            query=request.query,
            report_type=request.report_type,
            report_source=request.report_source,
            source_urls=request.source_urls,
            query_domains=request.query_domains,
            headers=request.headers,
            verbose=request.verbose,
            tone=request.tone
        )

        # Conduct research and generate report
        await researcher.conduct_research()
        report = await researcher.write_report()

        # Get additional information
        source_urls = researcher.get_source_urls()
        research_costs = researcher.get_costs()
        research_images = researcher.get_research_images()
        research_sources = researcher.get_research_sources()

        # Generate DOCX file
        sanitized_filename = f"task_{int(time.time())}_{request.query[:50]}"
        docx_path = await write_md_to_word(report, sanitized_filename)

        response_data = {
            "report": report,
            "source_urls": source_urls,
            "research_costs": research_costs,
            "context": researcher.context,
            "visited_urls": list(researcher.visited_urls),
            "research_images": research_images,
            "research_sources": research_sources,
            "agent_info": {
                "type": researcher.agent,
                "role": researcher.role
            },
            "docx_path": docx_path
        }

        return {
            "status": "success",
            "data": response_data
        }

    except Exception as e:
        raise HTTPException(status_code=500, detail=str(e))


# Add this at the end of the file
app.include_router(router)

if __name__ == "__main__":
    import uvicorn
    uvicorn.run(app, host="0.0.0.0", port=8001)
```

The report I get back is:

> I'm sorry, but I cannot provide a detailed report on news on March 9, 2025, as the information provided is empty ("[]"), and I do not have access to real-time or future news updates beyond my last training cut-off date in October 2023. If you have specific information or context you'd like me to analyze or expand upon, please provide it, and I will do my best to assist you.

Even if I remove the `source_urls` block, GPT Researcher always reports that it has been given no sources.

Is there another function that crawls and fills the sources array?

Has anyone done something similar and gotten it to work?

| closed | 2025-03-09T22:53:22Z | 2025-03-20T09:26:07Z | https://github.com/assafelovic/gpt-researcher/issues/1247 | [] | Cloudore | 1 |

deepinsight/insightface | pytorch | 1,926 | Request for the evaluation dataset | Several algorithms are evaluated on the **IFRT** dataset. Could you share a link to the IFRT dataset, or tell developers where to find it? Thanks! | closed | 2022-03-06T03:46:51Z | 2022-03-29T13:20:46Z | https://github.com/deepinsight/insightface/issues/1926 | [] | HowToNameMe | 1 |

qubvel-org/segmentation_models.pytorch | computer-vision | 616 | Output n_class is less than len(class_name) in training? | Hi, Thanks for your work.

I used your code to train a multi-class segmentation model. I have 10 classes, but after training, the output mask only contains 8 classes (checked with `np.unique`).

Do you know how this happened? Thanks for your reply! | closed | 2022-07-07T09:26:55Z | 2022-10-15T02:18:36Z | https://github.com/qubvel-org/segmentation_models.pytorch/issues/616 | [

"Stale"

] | ymzlygw | 7 |
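One common explanation (not confirmed for this case) is that the mask is produced with a per-pixel argmax, so a class that never has the highest score at any pixel does not appear in `np.unique` at all, even though the model still outputs a channel for it. The stdlib sketch below, with hypothetical helpers `argmax_mask` and `missing_classes`, counts how many pixels each class wins and lists the classes that never win; with NumPy the same count is `np.bincount(mask.ravel(), minlength=n_classes)`.

```python
from collections import Counter
from typing import List, Sequence


def argmax_mask(logits: Sequence[Sequence[float]]) -> List[int]:
    """Per-pixel argmax; logits[pixel][class] holds the model's class scores."""
    return [max(range(len(scores)), key=scores.__getitem__) for scores in logits]


def class_histogram(mask: Sequence[int], n_classes: int) -> List[int]:
    """Number of pixels assigned to each class id 0..n_classes-1."""
    counts = Counter(mask)
    return [counts.get(c, 0) for c in range(n_classes)]


def missing_classes(mask: Sequence[int], n_classes: int) -> List[int]:
    """Class ids that never win the argmax, i.e. absent from np.unique(mask)."""
    return [c for c, n in enumerate(class_histogram(mask, n_classes)) if n == 0]


# Toy example: 4 pixels, 3 classes; class 2 is never the top score.
logits = [[2.0, 0.1, 0.5], [0.3, 1.5, 1.0], [1.0, 0.2, 0.9], [0.1, 3.0, 2.9]]
mask = argmax_mask(logits)
print(mask, class_histogram(mask, 3), missing_classes(mask, 3))
```

If the missing classes are rare in the training masks, they may simply never win at inference time; checking the class distribution of the training data is a reasonable next step.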

Lightning-AI/pytorch-lightning | pytorch | 20,145 | mps and manual_seed_all | ### Description & Motivation

Hi,

I'm sorry to bother you here, but I can't find any information about this anywhere.

I'm struggling to adapt a function I use with CUDA to set the same seed on both the GPU and the CPU.

Here is the function I worked from:

```python
import numpy as np
import torch

# Function for setting the seed
def set_seed(seed):
    np.random.seed(seed)
    torch.manual_seed(seed)
    if torch.cuda.is_available():
        torch.cuda.manual_seed(seed)
        torch.cuda.manual_seed_all(seed)

set_seed(42)

# Ensure that all operations are deterministic on GPU (if used) for reproducibility
torch.backends.cudnn.deterministic = True
torch.backends.cudnn.benchmark = False
```

Here is how I tried to adapt the function for MPS:

```python
import numpy as np
import torch

# Function for setting the seed
def set_seed(seed):
    np.random.seed(seed)
    torch.manual_seed(seed)
    if torch.backends.mps.is_available():  # GPU operations have separate seeds
        torch.mps.manual_seed(seed)
        torch.mps.manual_seed_all(seed)

set_seed(42)
```

However, after reading the PyTorch documentation, it seems that `torch.mps.manual_seed_all()` doesn't exist.

I don't know how to get around this problem and keep my results reproducible using MPS.

If I comment out the `torch.mps.manual_seed_all()` line, I get this error:

---------------------------------------------------------------------------

RecursionError Traceback (most recent call last)

Cell In[35], line 6

4 ## Create a plot for every activation function

5 for i, act_fn_name in enumerate(act_fn_by_name):

----> 6 set_seed(42) # Setting the seed ensures that we have the same weight initialization for each activation function

7 act_fn = act_fn_by_name[act_fn_name]()

8 net_actfn = BaseNetwork(act_fn=act_fn).to(device)

Cell In[34], line 13, in set_seed(seed)

11 torch.mps.manual_seed(seed)

12 # torch.mps.manual_seed_all(seed)

---> 13 set_seed(42)

Cell In[34], line 13, in set_seed(seed)

11 torch.mps.manual_seed(seed)

12 # torch.mps.manual_seed_all(seed)

---> 13 set_seed(42)

[... skipping similar frames: set_seed at line 13 (2957 times)]

Cell In[34], line 13, in set_seed(seed)

11 torch.mps.manual_seed(seed)

12 # torch.mps.manual_seed_all(seed)

---> 13 set_seed(42)

Cell In[34], line 9, in set_seed(seed)

7 def set_seed(seed):

8 np.random.seed(seed)

----> 9 torch.manual_seed(seed)

10 if torch.backends.mps.is_available(): # GPU operation have separate seeds

11 torch.mps.manual_seed(seed)

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/_dynamo/eval_frame.py:451](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/_dynamo/eval_frame.py#line=450), in _TorchDynamoContext.__call__.<locals>._fn(*args, **kwargs)

449 prior = set_eval_frame(callback)

450 try:

--> 451 return fn(*args, **kwargs)

452 finally:

453 set_eval_frame(prior)

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/_dynamo/external_utils.py:36](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/_dynamo/external_utils.py#line=35), in wrap_inline.<locals>.inner(*args, **kwargs)

34 @functools.wraps(fn)

35 def inner(*args, **kwargs):

---> 36 return fn(*args, **kwargs)

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/random.py:45](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/random.py#line=44), in manual_seed(seed)

42 import torch.cuda

44 if not torch.cuda._is_in_bad_fork():

---> 45 torch.cuda.manual_seed_all(seed)

47 import torch.mps

48 if not torch.mps._is_in_bad_fork():

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/cuda/random.py:126](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/cuda/random.py#line=125), in manual_seed_all(seed)

123 default_generator = torch.cuda.default_generators[i]

124 default_generator.manual_seed(seed)

--> 126 _lazy_call(cb, seed_all=True)

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/cuda/__init__.py:230](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/site-packages/torch/cuda/__init__.py#line=229), in _lazy_call(callable, **kwargs)

228 global _lazy_seed_tracker

229 if kwargs.get("seed_all", False):

--> 230 _lazy_seed_tracker.queue_seed_all(callable, traceback.format_stack())

231 elif kwargs.get("seed", False):

232 _lazy_seed_tracker.queue_seed(callable, traceback.format_stack())

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/traceback.py:213](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/traceback.py#line=212), in format_stack(f, limit)

211 if f is None:

212 f = sys._getframe().f_back

--> 213 return format_list(extract_stack(f, limit=limit))

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/traceback.py:227](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/traceback.py#line=226), in extract_stack(f, limit)

225 if f is None:

226 f = sys._getframe().f_back

--> 227 stack = StackSummary.extract(walk_stack(f), limit=limit)

228 stack.reverse()

229 return stack

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/traceback.py:383](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/traceback.py#line=382), in StackSummary.extract(klass, frame_gen, limit, lookup_lines, capture_locals)

381 if lookup_lines:

382 for f in result:

--> 383 f.line

384 return result

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/traceback.py:306](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/traceback.py#line=305), in FrameSummary.line(self)

304 if self.lineno is None:

305 return None

--> 306 self._line = linecache.getline(self.filename, self.lineno)

307 return self._line.strip()

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/linecache.py:30](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/linecache.py#line=29), in getline(filename, lineno, module_globals)

26 def getline(filename, lineno, module_globals=None):

27 """Get a line for a Python source file from the cache.

28 Update the cache if it doesn't contain an entry for this file already."""

---> 30 lines = getlines(filename, module_globals)

31 if 1 <= lineno <= len(lines):

32 return lines[lineno - 1]

File [/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/linecache.py:42](http://localhost:8889/Applications/anaconda3/envs/DL-torch-arm64/lib/python3.10/linecache.py#line=41), in getlines(filename, module_globals)

40 if filename in cache:

41 entry = cache[filename]

---> 42 if len(entry) != 1:

43 return cache[filename][2]

45 try:

RecursionError: maximum recursion depth exceeded while calling a Python object

-------------------------------------------------------------------------------------------------------------------------
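The traceback shows `set_seed(42)` on line 13 *inside* `set_seed` (cell `In[34]`), so in that cell the call appears to be indented into the function body: every call re-enters `set_seed` until Python's recursion limit is reached, independent of `manual_seed_all`. The frames from `torch/random.py` also suggest that `torch.manual_seed` already forwards the seed to CUDA and MPS, so a separate `torch.mps.manual_seed_all` should not be needed (MPS exposes a single device, which is presumably why it does not exist). A minimal sketch under those assumptions, with the call dedented; the optional imports only let it run where NumPy or PyTorch is absent. Lightning also ships `pytorch_lightning.seed_everything`, which covers similar ground.

```python
import random


def set_seed(seed: int) -> None:
    """Seed Python, NumPy and PyTorch RNGs (NumPy/PyTorch only if installed)."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass
    try:
        import torch
    except ImportError:
        return
    # Seeds the CPU generator; per the traceback above, torch.manual_seed
    # also forwards the seed to CUDA and MPS when they are available.
    torch.manual_seed(seed)
    if torch.backends.mps.is_available():
        # Redundant with the call above, but explicit; there is no mps.manual_seed_all.
        torch.mps.manual_seed(seed)


# Call at module level, *outside* the function body, to avoid infinite recursion.
set_seed(42)
```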

Sorry if my message is unclear, or if this is too big a request for such a small use case.

Thank you for your answer.

I can add more information if needed.

### Pitch

I would like to use:

torch.mps.manual_seed_all

After a night of thinking, I'm wondering how MPS is treated.

Is it considered a GPU, or some kind of GPU/CPU mix? In the second case, maybe I wouldn't need a `manual_seed_all` function, because my whole processing environment would get the same seed from `torch.mps.manual_seed`.

But I still have this `RecursionError`.

And the error seems to come from the point where `torch.cuda.manual_seed_all` is called, on line 45 of `manual_seed` in `torch/random.py`.

Well thank you for your help and answers

### Alternatives

I wish I had the knowledge to do so.

### Additional context

active environment : DL-torch-arm64

active env location : /Applications/anaconda3/envs/DL-torch-arm64

shell level : 2

user config file : /Users/.../.condarc

populated config files : /Users/.../.condarc

conda version : 24.7.1

conda-build version : 24.5.1

python version : 3.12.2.final.0

solver : libmamba (default)

virtual packages : __archspec=1=m1

__conda=24.7.1=0

__osx=14.5=0

__unix=0=0

base environment : /Applications/anaconda3 (writable)

conda av data dir : /Applications/anaconda3/etc/conda

conda av metadata url : None

channel URLs : https://conda.anaconda.org/conda-forge/osx-arm64

https://conda.anaconda.org/conda-forge/noarch

https://repo.anaconda.com/pkgs/main/osx-arm64

https://repo.anaconda.com/pkgs/main/noarch

https://repo.anaconda.com/pkgs/r/osx-arm64

https://repo.anaconda.com/pkgs/r/noarch

package cache : /Applications/anaconda3/pkgs

/Users/.../.conda/pkgs

envs directories : /Applications/anaconda3/envs

/Users/.../.conda/envs

platform : osx-arm64

user-agent : conda/24.7.1 requests/2.32.2 CPython/3.12.2 Darwin/23.5.0 OSX/14.5 solver/libmamba conda-libmamba-solver/24.1.0 libmambapy/1.5.8 aau/0.4.4 c/. s/. e/.

UID:GID : 501:20

netrc file : None

offline mode : False

cc @borda | closed | 2024-07-31T14:31:09Z | 2024-08-01T09:04:56Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20145 | [

"question"

] | Tonys21 | 2 |

mirumee/ariadne | graphql | 1,114 | Cannot install 0.15 | A syntax error introduced in `0.15.0` ([commit](https://github.com/kaktus42/ariadne/commit/dbdb87d650c124afde00d160e2484dabd4ebddcc)) that was not corrected until `0.16.0` ([commit](https://github.com/mirumee/ariadne/commit/dbc57a79d4ccbcf12c3af9feadfad897cddfb1ef)) prevents the installation of ariadne into a fresh environment unless a supported version of starlette is either already installed or explicitly defined as a dependency.

When using poetry, attempting to install ariadne `0.15.1`, I get the following error:

```Invalid requirement (starlette (>0.17<0.20)) found in ariadne-0.15.1 dependencies, skipping```
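The requirement string in the error, `starlette (>0.17<0.20)`, lacks the comma that separates version clauses; a valid specifier would be `starlette>0.17,<0.20`. The sketch below is a deliberately simplified, stdlib-only check (hypothetical `looks_like_valid_specifier`, not full PEP 440 parsing) showing why the comma matters: each comma-separated clause must be exactly one operator followed by one version. For real validation, the `packaging` library's `SpecifierSet` is the usual tool.

```python
import re

# One clause: a PEP 440 comparison operator followed by a version-like token.
# Simplified: the token allows digits, letters, '.', '*', '+', '!', '-', '_'.
_CLAUSE = re.compile(r"^\s*(~=|===|==|!=|<=|>=|<|>)\s*[0-9A-Za-z.*+!_-]+\s*$")


def looks_like_valid_specifier(spec: str) -> bool:
    """Return True if every comma-separated clause is one operator plus a version."""
    return all(_CLAUSE.match(clause) for clause in spec.split(","))


print(looks_like_valid_specifier(">0.17,<0.20"))  # comma-separated clauses
print(looks_like_valid_specifier(">0.17<0.20"))   # the form from the error message
```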

Could we cut a bugfix release (`0.15.2`) to make the package installable?

While I don't think it's a very high priority, there are likely active projects whose environments were upgraded incrementally from an older version and now specify `^0.15`; such environments cannot be rebuilt from their requirements files. | closed | 2023-07-14T23:24:07Z | 2023-10-25T15:50:48Z | https://github.com/mirumee/ariadne/issues/1114 | [

"question",

"waiting"

] | lyndsysimon | 2 |

plotly/jupyter-dash | dash | 32 | alive_url should use server url to run behind proxies | JupyterDash.run_server launches the server and then queries its health using the [alive_url composed of host and port](https://github.com/plotly/jupyter-dash/blob/86cd38869925a4b096fe55714aa8997fb84a963c/jupyter_dash/jupyter_app.py#L296). When running behind a proxy, the publicly available URL is arbitrary. There is already a JupyterDash.server_url that is used in [dashboard_url](https://github.com/plotly/jupyter-dash/blob/86cd38869925a4b096fe55714aa8997fb84a963c/jupyter_dash/jupyter_app.py#L242). Shouldn't alive_url follow the same construction? | open | 2020-08-13T20:17:25Z | 2020-08-13T20:17:25Z | https://github.com/plotly/jupyter-dash/issues/32 | [] | ccdavid | 0 |
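A sketch of the proposed construction, assuming the health check should go through the same public base URL as `dashboard_url`. The function name `build_alive_url` and the example paths are hypothetical, and JupyterDash's actual alive route is not reproduced here; the point is only to join the base URL, a path prefix and the route without doubling or dropping slashes.

```python
from urllib.parse import urljoin


def build_alive_url(server_url: str, path_prefix: str, alive_route: str) -> str:
    """Join a public server URL, a path prefix and a health-check route.

    All three inputs are hypothetical; this mirrors how a dashboard URL behind
    a proxy would be built, instead of using the raw host:port.
    """
    # Ensure the base ends with '/' so urljoin appends rather than replaces.
    base = urljoin(server_url.rstrip("/") + "/", path_prefix.lstrip("/"))
    if not base.endswith("/"):
        base += "/"
    return urljoin(base, alive_route.lstrip("/"))


print(build_alive_url("https://hub.example.org", "/user/me/proxy/8050/", "_alive_token"))
```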

hootnot/oanda-api-v20 | rest-api | 191 | Proxy uses HTTP and not HTTPS | requests.exceptions.ProxyError: HTTPSConnectionPool(host='[stream-fxpractice.oanda.com](http://stream-fxpractice.oanda.com/)', port=443)

Caused by ProxyError('Your proxy appears to only use HTTP and not HTTPS, try changing your proxy URL to be HTTP. See: https://urllib3.readthedocs.io/en/1.26.x/advanced-usage.html#https-proxy-error-http-proxy', SSLError(SSLError(1, '[SSL: UNKNOWN_PROTOCOL] unknown protocol (_ssl.c:852) | closed | 2022-04-21T07:50:30Z | 2022-04-22T20:07:24Z | https://github.com/hootnot/oanda-api-v20/issues/191 | [] | QuanTurtle-founder | 1 |

lepture/authlib | flask | 43 | OAuth2 ClientCredential grant custom expiration not being read from Flask configuration. | ## Summary

When registering a client credential grant for the `authlib.flask.oauth2.AuthorizationServer` in a Flask application and attempting to set a custom expiration time by setting `OAUTH2_EXPIRES_CLIENT_CREDENTIAL` as specified in the docs:

https://github.com/lepture/authlib/blob/23ea76a4d9099581cd1cb43e0a8a9a49a9328361/docs/flask/oauth2.rst#define-server

the specified custom expiration time is not being read.

## Investigation

At first I believed there was simply an error in the docs, i.e. that the configuration key should be pluralized, e.g. `OAUTH2_EXPIRES_CLIENT_CREDENTIALS`. However, on a closer look, it seems the default client credential grant expiration time set in the Authlib code base was not being used at all; the value returned was `3600` instead of `864000` as specified in the mapping:

https://github.com/lepture/authlib/blob/7d2a7b55475e458c7043238bc4642e55c39fd449/authlib/flask/oauth2/authorization_server.py#L15-L20

After a bit more digging, it seems that the `create_expires_generator` is returning the default `BearerToken.DEFAULT_EXPIRES_IN` value because the calculated `conf_key = 'OAUTH2_EXPIRES_{}'.format(grant_type.upper())` only produces `client_credentials` instead of the expected `client_credential`:

https://github.com/lepture/authlib/blob/7d2a7b55475e458c7043238bc4642e55c39fd449/authlib/flask/oauth2/authorization_server.py#L124-L136
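A simplified model of the mismatch described above (hypothetical names, not Authlib's actual code). It assumes, as the issue implies, that both the built-in mapping and the documented config key use the singular `client_credential`, while the grant type passed in is `client_credentials`; both lookups then miss and the generic default of 3600 is returned.

```python
DEFAULT_EXPIRES_IN = 3600  # stand-in for BearerToken.DEFAULT_EXPIRES_IN

# Assumed shape of the linked mapping: keyed by the singular grant name.
GRANT_TYPES_EXPIRES = {"client_credential": 864000}


def expires_in_for(grant_type: str, config: dict) -> int:
    """Config override first, then the per-grant default, then the global default."""
    conf_key = "OAUTH2_EXPIRES_{}".format(grant_type.upper())
    return config.get(conf_key, GRANT_TYPES_EXPIRES.get(grant_type, DEFAULT_EXPIRES_IN))


cfg = {"OAUTH2_EXPIRES_CLIENT_CREDENTIAL": 7200}
print(expires_in_for("client_credentials", cfg))  # plural grant type: both lookups miss
print(expires_in_for("client_credential", cfg))   # singular: the override is found
```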

## Notes

A very subtle bug that took me a while to track down; I attempted to create a test case, but it's not very obvious as to where the test case should exist since `tests/flask/test_oauth2/test_client_credentials_grant.py` is composed of functional tests as opposed to integration/unit tests.

If this is at all not clear, please let me know and I'll attempt to provide additional information.

And thank you for all your hard work! Authlib is fantastic and has been a pleasure to use, even in its not-completely-done state. | closed | 2018-04-20T13:40:27Z | 2018-04-20T13:54:31Z | https://github.com/lepture/authlib/issues/43 | [] | jperras | 5 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 1,001 | Error in pix2pix backward_G? | Hi,

I have a question about the implementation of the `backward_G` function of the pix2pix model. In the file `models/pix2pix_model.py` I see the following

```python
def backward_G(self):
    """Calculate GAN and L1 loss for the generator"""
    # First, G(A) should fake the discriminator
    fake_AB = torch.cat((self.real_A, self.fake_B), 1)
    pred_fake = self.netD(fake_AB)
    self.loss_G_GAN = self.criterionGAN(pred_fake, True)
    ...
```

Here we call `criterionGAN` on the discriminator's prediction for a fake input, but set the target as if it were a real (`True`) input. Shouldn't that be `False` instead? If not, why not?

Btw, I understand that the generator needs to fool the discriminator, but I thought this was done while still giving the discriminator the correct labels at all times.

Now we are just fooling the discriminator by giving wrong information...
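Assuming the vanilla (BCE) GAN mode (the repository's `GANLoss` also supports other modes such as LSGAN), labelling the fake as `True` in `backward_G` makes the generator minimize `-log(p)`, where `p` is the discriminator's probability that `G(A)` is real; this is the standard non-saturating generator objective. The discriminator is still trained with fakes labelled `False` in its own backward pass, so it keeps receiving correct labels. Using `False` in `backward_G` would instead reward the generator for making fakes *easier* to detect. A stdlib sketch of the two losses for a single probability `p`:

```python
import math


def bce(p: float, target_is_real: bool) -> float:
    """Binary cross-entropy for one discriminator probability p = sigmoid(D(x))."""
    eps = 1e-12  # avoid log(0)
    return -math.log(p + eps) if target_is_real else -math.log(1.0 - p + eps)


def generator_loss(p_fake: float) -> float:
    # What backward_G does: score the fake against the "real" label.
    return bce(p_fake, target_is_real=True)


def discriminator_fake_loss(p_fake: float) -> float:
    # What the discriminator's update does with the same fake: label it "fake".
    return bce(p_fake, target_is_real=False)


for p in (0.1, 0.5, 0.9):
    print(f"D(G(A))={p:.1f}  G loss={generator_loss(p):.3f}  D fake loss={discriminator_fake_loss(p):.3f}")
```

The generator's loss falls as `p` rises while the discriminator's loss on the same fake rises, which is exactly the adversarial tension the two backward passes encode.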

| closed | 2020-04-23T08:50:38Z | 2020-04-24T09:53:25Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1001 | [] | zwep | 1 |

HIT-SCIR/ltp | nlp | 366 | Import error: how do I fix it? Thanks | Error:

```

from ltp import LTP

File "/opt/conda/envs/env/lib/python3.6/site-packages/ltp/__init__.py", line 7, in <module>

from .data import Dataset

File "/opt/conda/envs/env/lib/python3.6/site-packages/ltp/data/__init__.py", line 7, in <module>

from .fields import Field

File "/opt/conda/envs/env/lib/python3.6/site-packages/ltp/data/fields/__init__.py", line 14, in <module>

class Field(Generic[DataArray], metaclass=Registrable):

TypeError: metaclass conflict: the metaclass of a derived class must be a (non-strict) subclass of the metaclasses of all its bases

```
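The message is Python's metaclass-conflict rule: a class's metaclass must be a subclass of the metaclasses of all its bases. On Python 3.6, `typing.Generic` has its own metaclass (`GenericMeta`), so `class Field(Generic[DataArray], metaclass=Registrable)` conflicts unless `Registrable` derives from it; Python 3.7 removed `GenericMeta` (PEP 560), so running under Python 3.7+ may avoid the error, assuming the rest of the package supports it. The sketch below reproduces the rule with hypothetical stand-in metaclasses and shows the usual remedy of a combined metaclass.

```python
from typing import Generic, TypeVar

T = TypeVar("T")


class RegistrableMeta(type):
    """Hypothetical stand-in for ltp's Registrable metaclass."""
    registry = {}

    def __new__(mcls, name, bases, namespace, **kwargs):
        cls = super().__new__(mcls, name, bases, namespace, **kwargs)
        RegistrableMeta.registry[name] = cls
        return cls


class OtherMeta(type):
    """Stands in for typing.GenericMeta, Generic's metaclass on Python 3.6."""


class Base(metaclass=OtherMeta):
    pass


def define_conflicting_class():
    # Neither RegistrableMeta nor OtherMeta derives from the other -> TypeError.
    class Field(Base, metaclass=RegistrableMeta):
        pass
    return Field


# Remedy: a metaclass deriving from both conflicting metaclasses.
class CombinedMeta(RegistrableMeta, OtherMeta):
    pass


class Field(Base, metaclass=CombinedMeta):
    pass


# On Python 3.7+, Generic's metaclass is plain `type`, so this combination is fine.
class ModernField(Generic[T], metaclass=RegistrableMeta):
    pass
```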

How can I fix this? Thanks | closed | 2020-06-17T11:49:02Z | 2020-06-18T03:38:20Z | https://github.com/HIT-SCIR/ltp/issues/366 | [] | MrRace | 1 |

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,847 | Onion site not reachable | ### What version of GlobaLeaks are you using?

GlobaLeaks version: 4.13.18

Database version: 66

OS: Ubuntu 22.04.3

### What browser(s) are you seeing the problem on?

_No response_

### What operating system(s) are you seeing the problem on?

Linux

### Describe the issue

The onion site is down and has been for several weeks. The GL application talks to the Tor socket, so this appears to be an application issue. There are no logs of any sort, so I have no idea what the issue could be.

I'm raising this here because [apparently the discussion board goes unanswered](https://github.com/orgs/globaleaks/discussions/3814).

### Proposed solution

Restarting GL, Tor, and the entire server does nothing, so I have no idea what the issue is; probably the code. Maybe add some logging so we can debug it ourselves, and then also fix it. | open | 2023-12-07T20:56:53Z | 2025-02-03T08:49:58Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3847 | [

"T: Bug",

"Triage"

] | brassy-endomorph | 20 |

horovod/horovod | machine-learning | 3,281 | Containerized horovod | Hi all,

I have a problem running horovod using containerized environment.

I'm running it on the host and trying it on a single machine first:

```

horovodrun -np 4 -H localhost:4 python keras_mnist_advanced.py

2021-11-18 00:12:14.851827: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Successfully opened dynamic library libcudart.so.11.0

[1,1]<stderr>:2021-11-18 00:12:17.677706: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Successfully opened dynamic library libcudart.so.11.0

[1,2]<stderr>:2021-11-18 00:12:17.677699: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Successfully opened dynamic library libcudart.so.11.0

[1,0]<stderr>:2021-11-18 00:12:17.678337: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Successfully opened dynamic library libcudart.so.11.0

[1,3]<stderr>:2021-11-18 00:12:17.715725: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Successfully opened dynamic library libcudart.so.11.0

[1,1]<stderr>:Traceback (most recent call last):

[1,1]<stderr>: File "keras_mnist_advanced.py", line 3, in <module>

[1,1]<stderr>: import keras

[1,1]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/__init__.py", line 20, in <module>

[1,1]<stderr>: from . import initializers

[1,1]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/initializers/__init__.py", line 124, in <module>

[1,1]<stderr>: populate_deserializable_objects()

[1,1]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/initializers/__init__.py", line 82, in populate_deserializable_objects

[1,1]<stderr>: generic_utils.populate_dict_with_module_objects(

[1,1]<stderr>:AttributeError: module 'keras.utils.generic_utils' has no attribute 'populate_dict_with_module_objects'

[1,2]<stderr>:Traceback (most recent call last):

[1,2]<stderr>: File "keras_mnist_advanced.py", line 3, in <module>

[1,2]<stderr>: import keras

[1,2]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/__init__.py", line 20, in <module>

[1,2]<stderr>: from . import initializers

[1,2]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/initializers/__init__.py", line 124, in <module>

[1,2]<stderr>: populate_deserializable_objects()

[1,2]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/initializers/__init__.py", line 82, in populate_deserializable_objects

[1,2]<stderr>: generic_utils.populate_dict_with_module_objects(

[1,2]<stderr>:AttributeError: module 'keras.utils.generic_utils' has no attribute 'populate_dict_with_module_objects'

[1,0]<stderr>:Traceback (most recent call last):

[1,0]<stderr>: File "keras_mnist_advanced.py", line 3, in <module>

[1,0]<stderr>: import keras

[1,0]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/__init__.py", line 20, in <module>

[1,0]<stderr>: from . import initializers

[1,0]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/initializers/__init__.py", line 124, in <module>

[1,0]<stderr>: populate_deserializable_objects()

[1,0]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/initializers/__init__.py", line 82, in populate_deserializable_objects

[1,0]<stderr>: generic_utils.populate_dict_with_module_objects(

[1,0]<stderr>:AttributeError: module 'keras.utils.generic_utils' has no attribute 'populate_dict_with_module_objects'

[1,3]<stderr>:Traceback (most recent call last):

[1,3]<stderr>: File "keras_mnist_advanced.py", line 3, in <module>

[1,3]<stderr>: import keras

[1,3]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/__init__.py", line 20, in <module>

[1,3]<stderr>: from . import initializers

[1,3]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/initializers/__init__.py", line 124, in <module>

[1,3]<stderr>: populate_deserializable_objects()

[1,3]<stderr>: File "/usr/local/lib/python3.7/dist-packages/keras/initializers/__init__.py", line 82, in populate_deserializable_objects

[1,3]<stderr>: generic_utils.populate_dict_with_module_objects(

[1,3]<stderr>:AttributeError: module 'keras.utils.generic_utils' has no attribute 'populate_dict_with_module_objects'

-------------------------------------------------------

Primary job terminated normally, but 1 process returned

a non-zero exit code. Per user-direction, the job has been aborted.

-------------------------------------------------------

--------------------------------------------------------------------------

mpirun detected that one or more processes exited with non-zero status, thus causing

the job to be terminated. The first process to do so was:

Process name: [[44863,1],2]

Exit code: 1

--------------------------------------------------------------------------

```
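This `AttributeError` is commonly reported when the standalone `keras` package does not match the installed TensorFlow version (the traceback shows `import keras` loading `/usr/local/lib/python3.7/dist-packages/keras`). A hedged diagnostic sketch: it reads both installed versions with `importlib.metadata` (Python 3.8+; on 3.7 the `importlib_metadata` backport offers the same API) and compares major.minor, assuming the convention from TensorFlow 2.6 onward that the matching standalone Keras release shares TensorFlow's major.minor version. `keras_matches_tensorflow` is a hypothetical helper, not a Horovod or TensorFlow API.

```python
from typing import Optional, Tuple

try:
    from importlib.metadata import PackageNotFoundError, version
except ImportError:  # Python < 3.8: pip install importlib_metadata
    from importlib_metadata import PackageNotFoundError, version


def major_minor(ver: str) -> Tuple[int, int]:
    """'2.7.0' -> (2, 7); ignores patch and pre-release suffixes."""
    major, minor = ver.split(".")[:2]
    return int(major), int("".join(ch for ch in minor if ch.isdigit()) or 0)


def installed_version(dist: str) -> Optional[str]:
    try:
        return version(dist)
    except PackageNotFoundError:
        return None


def keras_matches_tensorflow(keras_ver: str, tf_ver: str) -> bool:
    """Assumed convention (TF >= 2.6): standalone keras shares TF's major.minor."""
    return major_minor(keras_ver) == major_minor(tf_ver)


if __name__ == "__main__":
    tf_ver = installed_version("tensorflow") or installed_version("tensorflow-gpu")
    keras_ver = installed_version("keras")
    print("tensorflow:", tf_ver, "keras:", keras_ver)
    if tf_ver and keras_ver and not keras_matches_tensorflow(keras_ver, tf_ver):
        print(f"Mismatch: consider installing keras=={'.'.join(map(str, major_minor(tf_ver)))}.*")
```

Running it inside the container, with the same interpreter `horovodrun` uses, shows whether a mismatch is plausible before changing any packages.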

Any ideas on how to fix this?

| closed | 2021-11-18T00:13:28Z | 2021-11-18T17:09:04Z | https://github.com/horovod/horovod/issues/3281 | [] | dimanzt | 1 |

pytest-dev/pytest-html | pytest | 536 | How to add additional column in pytest html report | I need help customizing the pytest-html report. I need to show the status codes of failed network requests for each test case in the report, so I wrote the code below. The `Statuscode` column header is created successfully, but no data appears in it, and no per-test status code values show up in the report.

```python
# conftest.py
@pytest.mark.optionalhook
def pytest_html_results_table_header(cells):
    cells.append(html.th('Statuscode'))


@pytest.mark.optionalhook
def pytest_html_result_table_row(report, cells):
    cells.append(html.td(report.statuscode))


def pytest_runtest_makereport(item):
    """
    Extends the PyTest Plugin to take and embed screenshot in html report, whenever test fails.
    :param item:
    """
    pytest_html = item.config.pluginmanager.getplugin('html')
    outcome = yield
    report = outcome.get_result()
    setattr(report, "duration_formatter", "%H:%M:%S.%f")
    extra = getattr(report, 'extra', [])
    statuscode = []
    if report.when == 'call' or report.when == "setup":
        xfail = hasattr(report, 'wasxfail')
        if (report.skipped and xfail) or (report.failed and not xfail):
            file_name = report.nodeid.replace("::", "_") + ".png"
            _capture_screenshot(file_name)
            if file_name:
                html = '<div><img src="%s" alt="screenshot" style="width:304px;height:228px;" ' \
                       'onclick="window.open(this.src)" align="right"/></div>' % file_name
                extra.append(pytest_html.extras.html(html))
            for request in driver.requests:
                if url in request.url and request.response.status_code >= 400 and request.response.status_code <= 512:
                    statuscode.append(request.response.status_code)
            print("*********Status codes************", statuscode)
    report.statuscode = statuscode
    report.extra = extra
``` | closed | 2022-07-19T13:23:53Z | 2023-03-05T16:16:07Z | https://github.com/pytest-dev/pytest-html/issues/536 | [] | Alfeshani-Kachhot | 6 |
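Two details in the snippet above look likely to explain the empty column. First, the row hook is spelled `pytest_html_result_table_row`, but the hook pytest-html calls is `pytest_html_results_table_row` (plural, matching the header hook), so the row hook is never invoked. Second, `pytest_runtest_makereport` uses `yield` without being registered as a hook wrapper. A corrected sketch follows, assuming pytest-html 4.x, where table cells are HTML strings (on 3.x, append `py.xml.html` elements as in the original). `driver` and `url` come from the original suite and are assumed to be defined elsewhere; `error_status_codes` is a hypothetical helper, and the screenshot logic is omitted for brevity.

```python
# conftest.py (sketch for pytest-html 4.x, where cells are HTML strings)
import pytest


def error_status_codes(requests, url_fragment, low=400, high=512):
    """Status codes in [low, high] of captured responses whose URL contains url_fragment."""
    codes = []
    for request in requests:
        response = getattr(request, "response", None)
        if response is None:  # the request never got a response
            continue
        if url_fragment in request.url and low <= response.status_code <= high:
            codes.append(response.status_code)
    return codes


@pytest.hookimpl(optionalhook=True)
def pytest_html_results_table_header(cells):
    cells.append("<th>Statuscode</th>")


@pytest.hookimpl(optionalhook=True)
def pytest_html_results_table_row(report, cells):  # note: "results", plural
    # Not every report is guaranteed to carry the attribute, so default to empty.
    codes = getattr(report, "statuscode", [])
    cells.append("<td>{}</td>".format(", ".join(str(c) for c in codes)))


@pytest.hookimpl(hookwrapper=True)  # required because the hook uses `yield`
def pytest_runtest_makereport(item, call):
    outcome = yield
    report = outcome.get_result()
    report.statuscode = []
    if report.when in ("setup", "call"):
        xfail = hasattr(report, "wasxfail")
        if (report.skipped and xfail) or (report.failed and not xfail):
            # `driver` and `url` are the original suite's globals (assumed to exist).
            report.statuscode = error_status_codes(driver.requests, url)
```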

gradio-app/gradio | python | 9,975 | It isn't possible to disable the heading of a Label | ### Describe the bug

By default, `gr.Label` will always show the top class in a `h2` tag, even if the confidence for that class is <0.5. There doesn't seem to be any way to disable this.

See also https://discuss.huggingface.co/t/how-to-hide-first-label-in-label-component/58036

### Have you searched existing issues? 🔎

- [X] I have searched and found no existing issues

### Reproduction

See above.

### Screenshot

_No response_

### Logs

_No response_

### System Info

```shell
N/A
```

### Severity

Blocking usage of gradio | closed | 2024-11-17T09:34:39Z | 2024-11-27T19:26:25Z | https://github.com/gradio-app/gradio/issues/9975 | ["bug"] | umarbutler | 1 |

dynaconf/dynaconf | flask | 379 | [bug] dict-like iteration seems broken (when using example from Home page) | **Describe the bug**

When I run the example from section https://www.dynaconf.com/#initialize-dynaconf-on-your-project ("Using Python only") and try the dict-like iteration from section https://www.dynaconf.com/#reading-settings-variables, the assertions pass, but iterating over the settings object raises a TypeError.

**To Reproduce**

Run the following `dynaconf_settings.py` file in Python 3.7 (with Dynaconf 3.0.0) with the following `settings.toml` file (copied and edited from Home page).

1. Having the following folder structure

<details>

<summary> Project structure </summary>

```bash
$ tree -v
.
├── SCRIMMAGE_1.md
├── curio_examples.py
├── dynaconf_settings.py
├── ini2json.py
├── settings.toml
├── trio_cancel.py
├── trio_rib.py
└── watchservers.sh

0 directories, 8 files
```

</details>

2. Having the following config files:

<details>

<summary> Config files </summary>

**settings.toml**

```toml
key = "value"
a_boolean = false
number = 789 # had to edit this to match assertion above; previous value: 1234
a_float = 56.8
a_list = [1, 2, 3, 4]
a_dict = {hello="world"}

[a_dict.nested]
other_level = "nested value"
```

</details>

3. Having the following app code:

<details>

<summary> Code </summary>

**dynaconf_settings.py**

```python
from dynaconf import Dynaconf

settings = Dynaconf(
    settings_files=["settings.toml"],
)

assert settings.key == "value"
assert settings.number == 789
assert settings.a_dict.nested.other_level == "nested value"
assert settings['a_boolean'] is False
assert settings.get("DONTEXIST", default=1) == 1

for key, value in settings: # dict like iteration
    print(key, value)
```

</details>

4. Executing under the following environment

<details>

<summary> Execution </summary>

```bash
$ python3 --version
Python 3.7.7
$ python3 dynaconf_settings.py
Traceback (most recent call last):
  File "dynaconf_settings.py", line 15, in <module>
    for key, value in settings: # dict like iteration
  File "/usr/local/lib/python3.7/site-packages/dynaconf/base.py", line 285, in __getitem__
    value = self.get(item, default=empty)
  File "/usr/local/lib/python3.7/site-packages/dynaconf/utils/parse_conf.py", line 195, in evaluate
    value = f(settings, *args, **kwargs)
  File "/usr/local/lib/python3.7/site-packages/dynaconf/base.py", line 377, in get
    if "." in key and dotted_lookup:
TypeError: argument of type 'int' is not iterable
```

</details>

**Expected behavior**

No exception is raised when iterating over the settings object.
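The traceback suggests why this happens: the `for` statement ends up in `__getitem__`. When a class defines `__getitem__` but no `__iter__`, Python falls back to the legacy sequence protocol and calls `obj[0]`, `obj[1]`, and so on, so the integer `0` reaches a `"." in key` check that assumes a string. The sketch below reproduces this with a hypothetical `FakeSettings` class (not Dynaconf's code) and shows that adding an `__iter__` yielding `(key, value)` pairs makes the loop work.

```python
class FakeSettings:
    """Hypothetical stand-in: supports settings[key] but defines no __iter__."""

    def __init__(self, data):
        self._data = dict(data)

    def get(self, key, default=None):
        # Like the check in the traceback, this assumes `key` is a str.
        if "." in key:
            value = self._data
            for part in key.split("."):
                value = value.get(part, default) if isinstance(value, dict) else default
            return value
        return self._data.get(key, default)

    def __getitem__(self, item):
        return self.get(item)


class IterableSettings(FakeSettings):
    """Same object, plus __iter__ yielding (key, value) pairs."""

    def __iter__(self):
        return iter(self._data.items())


settings = FakeSettings({"key": "value", "number": 789})
try:
    # No __iter__: Python calls settings[0], settings[1], ... and the
    # int 0 reaches `"." in key`, raising TypeError.
    for key, value in settings:
        print(key, value)
except TypeError as exc:
    print("TypeError:", exc)

# With __iter__ defined, dict-like iteration works.
for key, value in IterableSettings({"key": "value", "number": 789}):
    print(key, value)
```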

**Environment (please complete the following information):**

- OS: Mac OS 10.14

- Dynaconf Version 3.0.0 (via pip3)

| closed | 2020-07-30T17:18:34Z | 2020-08-06T17:51:53Z | https://github.com/dynaconf/dynaconf/issues/379 | ["bug", "Pending Release"] | lilalinda | 1 |

axnsan12/drf-yasg | django | 295 | Model | closed | 2019-01-18T00:33:37Z | 2019-01-18T00:33:51Z | https://github.com/axnsan12/drf-yasg/issues/295 | [] | oneandonlyonebutyou | 0 | |

CanopyTax/asyncpgsa | sqlalchemy | 69 | Dependency on old version of asyncpg | When using `asyncpgsa`, it's hard to use the latest version of asyncpg, because `install_requires` contains `asyncpg~=0.12.0`.

What's more, I'd like to use `asyncpgsa` only as an SQL query compiler, without using its context managers (as shown [here](http://asyncpgsa.readthedocs.io/en/latest/#compile)). In that case, `asyncpg` is in practice no longer a dependency of `asyncpgsa`, since the library serves only as a query compiler.
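If `asyncpg` became optional (for example, declared under `extras_require`), the library would typically guard the import so that compile-only code never touches it. A minimal sketch of that pattern; the helper name `require_asyncpg` and the extra name `asyncpg` are hypothetical, not asyncpgsa's actual code.

```python
import importlib

# Hypothetical packaging side, in setup.py:
#     install_requires=["sqlalchemy"],
#     extras_require={"asyncpg": ["asyncpg"]},


def require_asyncpg():
    """Return the asyncpg module, or raise a helpful ImportError if the extra is missing."""
    try:
        return importlib.import_module("asyncpg")
    except ImportError as exc:
        raise ImportError(
            "asyncpg is required for the connection helpers; "
            "install it with: pip install 'asyncpgsa[asyncpg]'"
        ) from exc
```

Only the connection and context-manager helpers would call `require_asyncpg()`; the query compiler would not import `asyncpg` at all, so it keeps working when the extra is not installed.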

How about moving `asyncpg` to `extras_require`? Or splitting the library into 2 separate packages (compiler and asyncpg adapter (any better name?)). | closed | 2018-01-25T14:44:04Z | 2018-02-02T17:49:59Z | https://github.com/CanopyTax/asyncpgsa/issues/69 | [] | bitrut | 3 |

ivy-llc/ivy | tensorflow | 28,459 | Fix Ivy Failing Test: paddle - sorting.msort | To-do List: https://github.com/unifyai/ivy/issues/27501 | closed | 2024-03-01T10:01:31Z | 2024-03-08T11:16:09Z | https://github.com/ivy-llc/ivy/issues/28459 | ["Sub Task"] | MuhammadNizamani | 0 |

joeyespo/grip | flask | 100 | TypeError: Can't use a string pattern on a bytes-like object | When I try to export a file with the flags `--gfm --export`, I get the following error:

```
grip --gfm --export Kalender.md
Exporting to Kalender.html
Traceback (most recent call last):
  File "/usr/local/bin/grip", line 9, in <module>
    load_entry_point('grip==3.2.0', 'console_scripts', 'grip')()
  File "/usr/local/lib/python3.4/dist-packages/grip/command.py", line 78, in main
    True, args['<address>'])
  File "/usr/local/lib/python3.4/dist-packages/grip/exporter.py", line 36, in export
    render_offline, render_wide, render_inline)
  File "/usr/local/lib/python3.4/dist-packages/grip/exporter.py", line 18, in render_page
    return render_app(app)
  File "/usr/local/lib/python3.4/dist-packages/grip/renderer.py", line 8, in render_app
    response = c.get('/')
  File "/usr/local/lib/python3.4/dist-packages/werkzeug/test.py", line 774, in get
    return self.open(*args, **kw)
  File "/usr/local/lib/python3.4/dist-packages/flask/testing.py", line 108, in open
    follow_redirects=follow_redirects)
  File "/usr/local/lib/python3.4/dist-packages/werkzeug/test.py", line 742, in open
    response = self.run_wsgi_app(environ, buffered=buffered)
  File "/usr/local/lib/python3.4/dist-packages/werkzeug/test.py", line 659, in run_wsgi_app
    rv = run_wsgi_app(self.application, environ, buffered=buffered)
  File "/usr/local/lib/python3.4/dist-packages/werkzeug/test.py", line 867, in run_wsgi_app
    app_iter = app(environ, start_response)
  File "/usr/local/lib/python3.4/dist-packages/flask/app.py", line 1836, in __call__
    return self.wsgi_app(environ, start_response)
  File "/usr/local/lib/python3.4/dist-packages/flask/app.py", line 1820, in wsgi_app
    response = self.make_response(self.handle_exception(e))
  File "/usr/local/lib/python3.4/dist-packages/flask/app.py", line 1403, in handle_exception
    reraise(exc_type, exc_value, tb)
  File "/usr/local/lib/python3.4/dist-packages/flask/_compat.py", line 33, in reraise
    raise value
  File "/usr/local/lib/python3.4/dist-packages/flask/app.py", line 1817, in wsgi_app
    response = self.full_dispatch_request()
  File "/usr/local/lib/python3.4/dist-packages/flask/app.py", line 1470, in full_dispatch_request
    self.try_trigger_before_first_request_functions()
  File "/usr/local/lib/python3.4/dist-packages/flask/app.py", line 1497, in try_trigger_before_first_request_functions
    func()
  File "/usr/local/lib/python3.4/dist-packages/grip/server.py", line 111, in retrieve_styles
    app.config['STYLE_ASSET_URLS_INLINE']))
  File "/usr/local/lib/python3.4/dist-packages/grip/server.py", line 304, in _get_styles
    content = re.sub(asset_pattern, match_asset, _download(app, style_url))
  File "/usr/lib/python3.4/re.py", line 175, in sub
    return _compile(pattern, flags).sub(repl, string, count)
TypeError: can't use a string pattern on a bytes-like object
```
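The last frames show `re.sub` being given a `str` pattern and, judging by the error, a `bytes` subject (the downloaded stylesheet). The sketch below reproduces that mismatch and one common fix, decoding the bytes before substituting. The pattern, CSS content and `match_asset` body are hypothetical, since grip's actual `asset_pattern` is not shown here, and the decode assumes the stylesheet is UTF-8.

```python
import re

# Hypothetical stand-ins for grip's asset pattern and downloaded stylesheet.
asset_pattern = r'url\("?([^")]+)"?\)'
css_bytes = b'body { background: url("img/bg.png"); }'


def match_asset(match):
    # Rewrite each asset URL to point at a local directory.
    return "url(assets/" + match.group(1) + ")"


try:
    # str pattern + bytes subject: raises TypeError, as in the traceback.
    re.sub(asset_pattern, match_asset, css_bytes)
except TypeError as exc:
    print("TypeError:", exc)

# Decoding first makes the pattern and subject types agree.
css_text = css_bytes.decode("utf-8")
fixed = re.sub(asset_pattern, match_asset, css_text)
print(fixed)
```

The alternative is to keep everything as bytes (a `rb'...'` pattern and a replacement function returning bytes); decoding is usually simpler when the result is later embedded in HTML text.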

| closed | 2015-03-04T22:17:11Z | 2015-06-01T02:16:32Z | https://github.com/joeyespo/grip/issues/100 | ["bug"] | clawoflight | 5 |

plotly/dash | plotly | 3,230 | document type checking for Dash apps | We want `pyright dash` to produce few (or no) errors. We should add a note to the documentation saying we are working toward this goal, while also explaining that `pyright dash` currently produces errors and that piecemeal community contributions are very welcome. | closed | 2025-03-20T16:01:18Z | 2025-03-21T17:40:33Z | https://github.com/plotly/dash/issues/3230 | ["documentation", "P1"] | gvwilson | 0 |

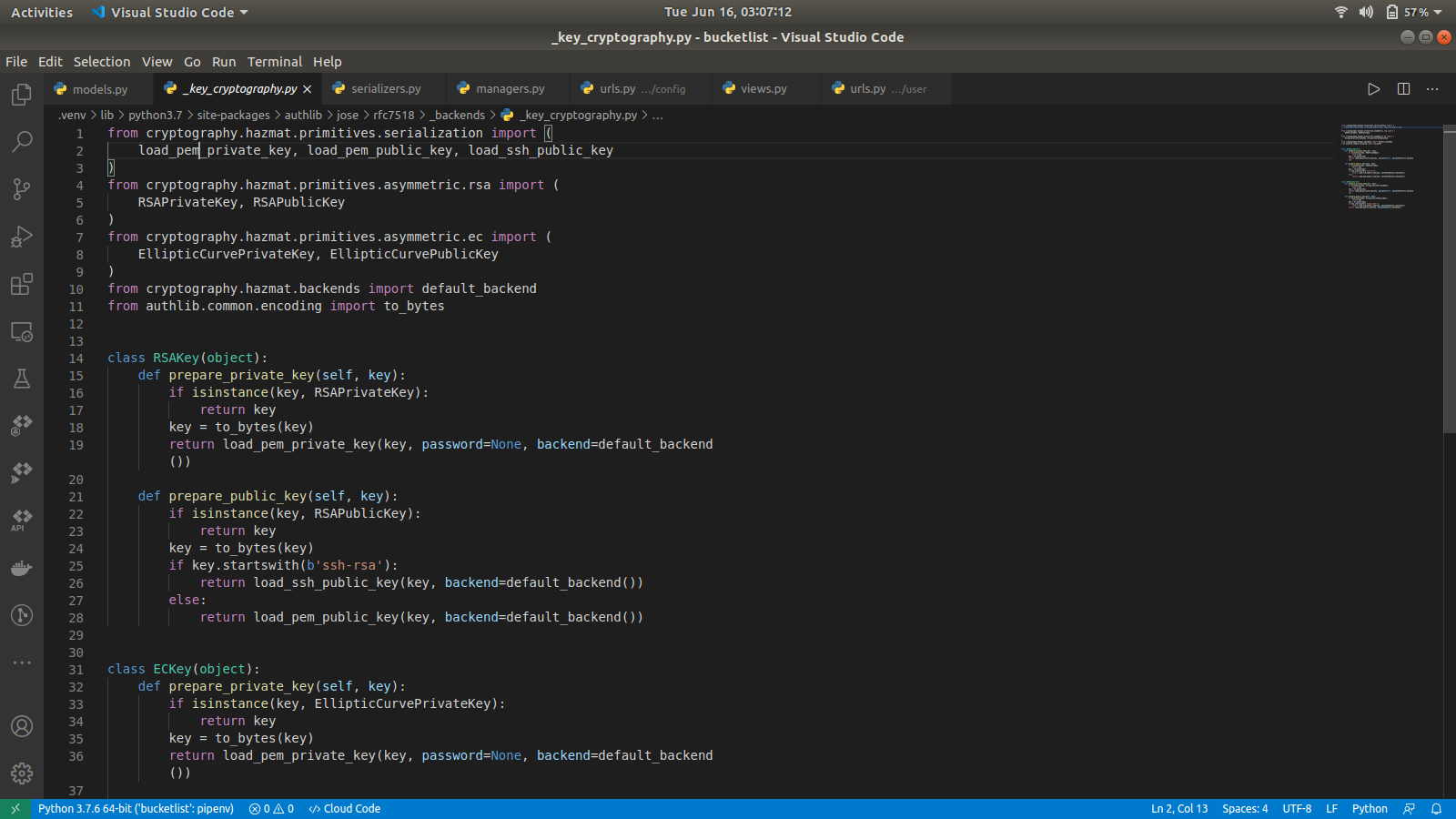

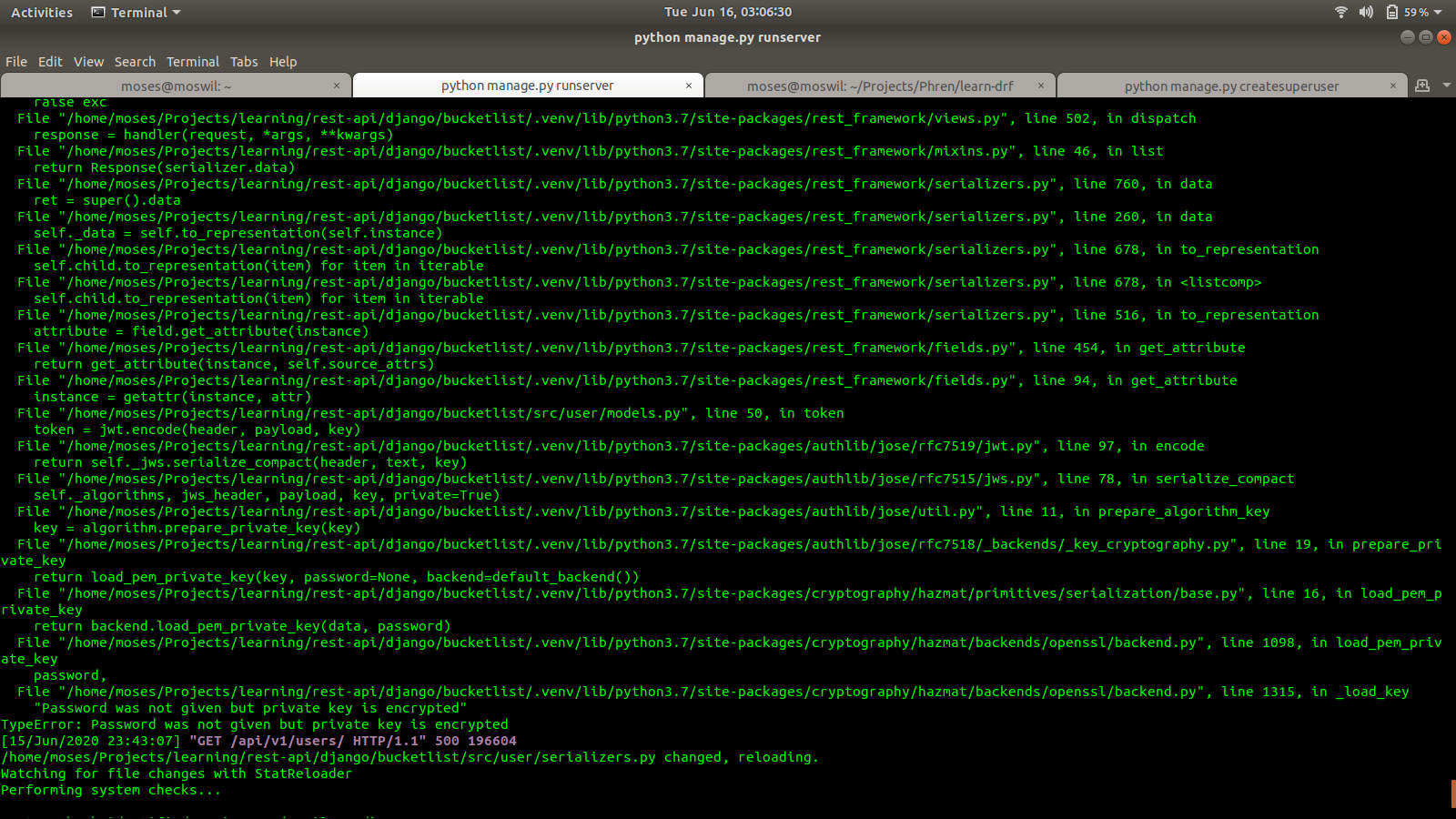

lepture/authlib | django | 238 | Allow Developers to use encrypted public and private keys for JWT | **Is your feature request related to a problem? Please describe.**

- When using `jwt.encode(header, payload, key)` with a passphrase-protected key, an error is raised. This is because, when the classes `authlib.jose.rfc7518._backends._key_cryptography.RSAKey` and `authlib.jose.rfc7518._backends._key_cryptography.ECKey` call `cryptography.hazmat.primitives.serialization.load_pem_private_key`, the `password` argument is passed as `None` by default, and developers using `authlib` are given no option to pass in a password.
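The underlying behavior can be reproduced with the `cryptography` package alone. This sketch assumes a recent `cryptography` release (where the `backend` argument is optional) and uses a placeholder passphrase: loading an encrypted PEM with `password=None` raises `TypeError`, while passing the passphrase returns a usable key object. Whether `jwt.encode` in a given Authlib version accepts such a pre-loaded key object instead of PEM bytes is not confirmed here.

```python
from cryptography.hazmat.primitives import serialization
from cryptography.hazmat.primitives.asymmetric import rsa

PASSPHRASE = b"example-passphrase"  # placeholder passphrase for this demo

# Create a throwaway RSA key and serialize it as a passphrase-protected PEM,
# standing in for the developer's encrypted key file.
private_key = rsa.generate_private_key(public_exponent=65537, key_size=2048)
encrypted_pem = private_key.private_bytes(
    encoding=serialization.Encoding.PEM,
    format=serialization.PrivateFormat.PKCS8,
    encryption_algorithm=serialization.BestAvailableEncryption(PASSPHRASE),
)

# What the issue describes: password=None fails for an encrypted key.
try:
    serialization.load_pem_private_key(encrypted_pem, password=None)
except TypeError as exc:
    print("password=None:", exc)

# Supplying the passphrase yields a usable key object.
loaded_key = serialization.load_pem_private_key(encrypted_pem, password=PASSPHRASE)
print(loaded_key.key_size)  # 2048
```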


**Describe the solution you'd like**

- Allow the developers to use password-protected keys


**Describe alternatives you've considered**


**Additional context**


| closed | 2020-06-16T00:14:11Z | 2020-06-16T23:06:19Z | https://github.com/lepture/authlib/issues/238 | ["feature request"] | moswil | 2 |

nonebot/nonebot2 | fastapi | 3,303 | Plugin: nonebot-plugin-VividusFakeAI | ### PyPI project name

nonebot-plugin-vividusfakeai

### Plugin import package name

nonebot_plugin_VividusFakeAI

### Tags

[{"label":"Flirting","color":"#eded0b"},{"label":"Group members","color":"#ed0b1f"},{"label":"Play","color":"#4eed0b"}]

### Plugin configuration

```dotenv

```

### Plugin test

- [ ] To re-run the plugin test, check the box on the left | closed | 2025-02-06T15:35:49Z | 2025-02-10T12:35:38Z | https://github.com/nonebot/nonebot2/issues/3303 | ["Plugin", "Publish"] | hlfzsi | 3 |

graphql-python/graphene | graphql | 905 | Django model with custom ModelManager causes GraphQL query to fail | Hi everyone,

I have narrowed down the problem. If I don't define a custom `objects` attribute on my model `Reference`, GraphQL queries execute fine. But if I do, I get this response:

```
{
  "errors": [
    {
      "message": "object of type 'ManyRelatedManager' has no len()",
      "locations": [
        {
          "line": 7,
          "column": 9
        }
      ],
      "path": [
        "requests",
        "edges",
        0,
        "node",
        "references"