repo_name stringlengths 9 75 | topic stringclasses 30

values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2

values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

dynaconf/dynaconf | flask | 790 | Add custom converters to the docs | The PR https://github.com/dynaconf/dynaconf/pull/784

allowed

```py

# app.py

from pathlib import Path

from dynaconf.utils import parse_conf

parse_conf.converters["@path"] = (

lambda value: value.set_casting(Path)

if isinstance(value, parse_conf.Lazy)

else Path(value)

)

```

```toml

# setti... | closed | 2022-08-16T17:52:27Z | 2022-08-18T10:19:20Z | https://github.com/dynaconf/dynaconf/issues/790 | [

"Docs"

] | rochacbruno | 3 |

jackmpcollins/magentic | pydantic | 388 | AWS Bedrock support | Hi Jack 👋 ,

Probably a long-shot (given the large API differences), but are there any plans to support [AWS Bedrock](https://docs.aws.amazon.com/code-library/latest/ug/python_3_bedrock-runtime_code_examples.html#anthropic_claude)?

Given Amazon's investment into Anthropic, we've seen an uptick in interest in usi... | open | 2024-12-18T08:16:59Z | 2024-12-18T17:00:40Z | https://github.com/jackmpcollins/magentic/issues/388 | [] | mnicstruwig | 1 |

stanfordnlp/stanza | nlp | 1,324 | [QUESTION] GUD model? | In your webpage you report results on Greek **gud** model : https://stanfordnlp.github.io/stanza/performance.html

But when I'm trying to download available models only this model for Greek is available, namely Greek **gdt** and in the huggingface platform as well--

is the Greek GUD model available somewhere online ... | closed | 2023-12-20T15:31:45Z | 2024-02-25T00:14:59Z | https://github.com/stanfordnlp/stanza/issues/1324 | [

"question"

] | vistamou | 2 |

JaidedAI/EasyOCR | deep-learning | 1,386 | CRAFT training on non ocr images | Hi,

This is regarding training the CRAT model (the detection segment of EasyOCR). Apart from images containing text as part of the dataset, I also have images with no text, and I want the model to be trained on both types. While label files are provided for images containing text, I am unsure how to create labels for ... | open | 2025-03-12T07:06:33Z | 2025-03-12T07:06:33Z | https://github.com/JaidedAI/EasyOCR/issues/1386 | [] | gupta9ankit5 | 0 |

Miksus/rocketry | pydantic | 170 | BUG crashed in pickling | **Describe the bug**

On the second run of my task, the following log line triggers continuously in a loop forever:

```

CRITICAL:rocketry.task:Task 'daily_notification' crashed in pickling. Cannot pickle: {'__dict__': {'session': <rocketry.session.Session object at 0x2f6d4f9a0>, 'permanent': False, 'fmt_log_message... | open | 2022-12-11T11:21:49Z | 2023-12-15T09:18:42Z | https://github.com/Miksus/rocketry/issues/170 | [

"bug"

] | tekumara | 3 |

slackapi/bolt-python | fastapi | 1,007 | Lazy Listener for Bolt Python not doing what it is supposed to | I am trying to create a slack app that involves querying data from another service through their API and trying to host it on AWS lambda. Since querying data from another API is going to take quite a long time and slack has the 3 second time-out I've been trying to use the lazy listener, but even just copy pasting from... | closed | 2024-01-03T14:56:14Z | 2024-01-12T22:51:43Z | https://github.com/slackapi/bolt-python/issues/1007 | [

"question"

] | sinjachen | 6 |

onnx/onnx | scikit-learn | 6,710 | type-coverage-of-popular-python-packages-and-github-badge | Hello,

maybe that's of interest for us:

https://discuss.python.org/t/type-coverage-of-popular-python-packages-and-github-badge/63401

https://html-preview.github.io/?url=https://github.com/lolpack/type_coverage_py/blob/main/index.html

def list_weapons(request, pass:str):

return pass

it's be a problem because pass can't use as variable in python. how to handle it?

| open | 2024-01-25T17:00:16Z | 2024-01-26T12:31:20Z | https://github.com/vitalik/django-ninja/issues/1063 | [] | lutfyneutron | 1 |

AUTOMATIC1111/stable-diffusion-webui | deep-learning | 15,765 | [Bug]: Prompt S/R uses newlines as a delimeter as well as commas, but only commas are mentioned in the tooltip/hint | ### Checklist

- [ ] The issue exists after disabling all extensions

- [X] The issue exists on a clean installation of webui

- [ ] The issue is caused by an extension, but I believe it is caused by a bug in the webui

- [X] The issue exists in the current version of the webui

- [ ] The issue has not been reported ... | open | 2024-05-12T19:13:03Z | 2024-05-13T02:10:24Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/15765 | [

"bug-report"

] | redactedaccount | 0 |

keras-rl/keras-rl | tensorflow | 88 | Parameterization of P for CDQN | I was getting NaNs in my cdqn like https://github.com/matthiasplappert/keras-rl/issues/28 . I suspect the below exponential and I'm trying out an alternative parameterization that seems to be working. Squaring might have slightly less chance of exploding than exponential but means less control over small values. Any th... | closed | 2017-03-15T00:12:33Z | 2019-01-12T15:40:45Z | https://github.com/keras-rl/keras-rl/issues/88 | [

"wontfix"

] | bstriner | 1 |

miguelgrinberg/flasky | flask | 401 | clarification | closed | 2018-12-08T18:09:35Z | 2018-12-08T18:11:14Z | https://github.com/miguelgrinberg/flasky/issues/401 | [] | roygb1v | 0 | |

encode/httpx | asyncio | 3,232 | How to implement failed retry | The starting point for issues should usually be a discussion...

https://github.com/encode/httpx/discussions

Possible bugs may be raised as a "Potential Issue" discussion, feature requests may be raised as an "Ideas" discussion. We can then determine if the discussion needs to be escalated into an "Issue" or not.

... | closed | 2024-06-28T00:40:18Z | 2024-06-28T16:51:36Z | https://github.com/encode/httpx/issues/3232 | [] | yuanjie-ai | 0 |

Gozargah/Marzban | api | 1,568 | Error when use change user plan to next | **Describe the bug**

This bug is not critical, because it performs its functions. But from my side, as an API developer, it is rather strange to see a 404 error when everything works successfully. It accrues the next plan to the user, but apparently the check comes after the accrual and the system already considers th... | closed | 2025-01-05T14:13:29Z | 2025-01-14T22:08:57Z | https://github.com/Gozargah/Marzban/issues/1568 | [

"Bug",

"Backend",

"P1",

"API",

"DB"

] | sm1ky | 3 |

falconry/falcon | api | 1,534 | Middleware process_response function is missing parameter | The `process_response` function used in the middleware in the [readme file](https://github.com/falconry/falcon/blob/master/README.rst) and on the [quickstart page](https://falcon.readthedocs.io/en/stable/user/quickstart.html) is missing the `req_succeeded` parameter, which was introduced in Falcon 2.0.0.

It's curren... | closed | 2019-05-07T16:20:18Z | 2019-05-20T05:04:27Z | https://github.com/falconry/falcon/issues/1534 | [

"bug",

"documentation"

] | pbjr23 | 0 |

modoboa/modoboa | django | 3,329 | Modoboa upgrade resulted in broken pop3 support | # Impacted versions

* OS Type: Debian

* OS Version: 12.4

* Database Type: PostgreSQL

* Modoboa: 2.3.2

* installer used: Yes

* Webserver: Nginx

# Current behavior

After the upgrade, the dovecot-pop3d service did not install correctly and is now not working. I have tried to manually install the dovecot-pop3... | closed | 2024-10-28T21:38:44Z | 2024-10-29T02:06:42Z | https://github.com/modoboa/modoboa/issues/3329 | [] | pappastech | 2 |

graphql-python/graphene-sqlalchemy | graphql | 148 | MySQL Enum reflection convertion issue | When using reflection to build the models (instead of declarative) the Enum conversion fails because the ENUM column is treated as string so doing ```type.name``` returns None.

```sql

CREATE TABLE `foos` (

`id` int(11) unsigned NOT NULL AUTO_INCREMENT,

`status` ENUM('open', 'closed') DEFAULT 'open',

PRIMAR... | open | 2018-07-24T13:14:49Z | 2018-07-24T13:14:49Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/148 | [] | sebastiandev | 0 |

wkentaro/labelme | deep-learning | 430 | Video annotation example | Hi,

Sorry for make you angry.

But I am new to this field. so please help me on this concepts.

Unable to understand the concept of video annotation.

I am using video_to_image.py to convert video frames to image file. Then I run labelme to label the images.

Then I have no idea to convert that images to video.

Final... | closed | 2019-06-28T11:33:54Z | 2021-02-27T04:45:17Z | https://github.com/wkentaro/labelme/issues/430 | [] | suravijayjilla | 6 |

vanna-ai/vanna | data-visualization | 594 | ORA-24550: signal received: Unhandled exception: Code=c0000005 Flags=0 | **Describe the bug**

When I used flask with openai+chroma+Microsoft SQL Server database for initial training, when I generated about 2000 plan data, I carried out vn.train(plan=plan) operation. However, training 100 pieces of data will kill my flask process and output an error :”ORA-24550: signal received: Unhandled e... | closed | 2024-08-09T07:19:22Z | 2024-08-09T07:30:28Z | https://github.com/vanna-ai/vanna/issues/594 | [

"bug"

] | DawsonPeres | 2 |

scikit-tda/kepler-mapper | data-visualization | 252 | Issue with generating visuals in mapper | **Describe the bug**

During execution of the mapper.visualize() function, it errors out in Visuals.py (line 573 - np.asscalar(object) "Numpy does not support attribute asscalar".

**To Reproduce**

Steps to reproduce the behavior:

1. brew install numpy (1.26.3)

2. Open Visual Studio Code

3. Follow the instructi... | closed | 2024-01-29T13:08:41Z | 2024-07-06T11:38:50Z | https://github.com/scikit-tda/kepler-mapper/issues/252 | [

"bug"

] | wingenium-nagesh | 7 |

pallets-eco/flask-wtf | flask | 12 | FieldList(FileField) does not follow request.files | When form data is passed to a FieldList, each key in the form data

dict whose prefix corresponds to the encapsulate field's name results

in the creation of an entry. This allows for the addition of new field

entries on the basis of submitted form data and thus dynamic

field creation. See:

http://groups.google.com/gro... | closed | 2012-02-29T16:46:08Z | 2021-05-30T01:24:51Z | https://github.com/pallets-eco/flask-wtf/issues/12 | [

"bug",

"import"

] | rduplain | 5 |

koaning/scikit-lego | scikit-learn | 535 | [FEATURE] Time Series Target Encoding Transformer | Hi all,

I am a data scientist who is working mainly on the time series problems. Usually, the best features are lags, rolling means and target encoders. I have already a transformer for Spark DataFrames that I use in my daily work for creating these features.

I want to contribute to creating this time series targ... | open | 2022-09-21T14:35:37Z | 2022-09-23T08:49:41Z | https://github.com/koaning/scikit-lego/issues/535 | [

"enhancement"

] | canerturkseven | 4 |

yt-dlp/yt-dlp | python | 11,831 | 'Unable to connect to proxy' 'No connection could be made because the target machine actively refused it' | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm reporting a bug unrelated to a specific site

- [X] I've verified that I have **updated yt-dlp to nightly or master** ([update instructions](http... | closed | 2024-12-16T02:18:35Z | 2025-02-10T18:24:06Z | https://github.com/yt-dlp/yt-dlp/issues/11831 | [

"question"

] | pmpnmf | 4 |

PaddlePaddle/models | computer-vision | 4,894 | run_ernie.sh infer 进行预测,数据格式问题 | 训练了模型之后,`bash run_ernie.sh infer` 进行预测,预测的数据格式不应该是未标注的数据吗?你这里为什么只能用标注过的数据做预测

```bash

function run_infer() {

echo "infering"

python run_ernie_sequence_labeling.py \

--mode infer \

--ernie_config_path "${ERNIE_PRETRAINED_MODEL_PATH}/ernie_config.json" \

# --init_checkpoint "${ERNIE_... | open | 2020-09-30T03:30:08Z | 2020-12-16T06:34:02Z | https://github.com/PaddlePaddle/models/issues/4894 | [

"user"

] | Adrian-Yan16 | 4 |

howie6879/owllook | asyncio | 100 | 爬起点的书名报错 | 请问下大佬,开起点爬虫时候报错

AttributeError: 'Response' object has no attribute 'html'

这个是什么情况呢 | closed | 2021-01-17T04:18:21Z | 2021-01-17T05:12:21Z | https://github.com/howie6879/owllook/issues/100 | [] | HuanYuanHe | 3 |

PaddlePaddle/PaddleHub | nlp | 2,337 | 使用ernie_vilg,提供了AK,SK,依然不能连接 | 欢迎您反馈PaddleHub使用问题,非常感谢您对PaddleHub的贡献!

在留下您的问题时,辛苦您同步提供如下信息:

- 版本、环境信息

1)PaddleHub和PaddlePaddle版本:请提供您的PaddleHub和PaddlePaddle版本号,例如PaddleHub1.4.1,PaddlePaddle1.6.2

2)系统环境:请您描述系统类型,例如Linux/Windows/MacOS/,python版本

- 复现信息:如为报错,请给出复现环境、复现步骤

在aistudio里运行

# os.environ["WENXIN_AK"] = "" # 替换为你的 API Key

# os.envir... | open | 2024-11-27T08:43:33Z | 2024-11-27T08:43:37Z | https://github.com/PaddlePaddle/PaddleHub/issues/2337 | [] | BarryYin | 0 |

holoviz/colorcet | plotly | 69 | Some categorical colormaps are given as list of numerical RGB instead of list of hex strings | colorcet 2.0.6

The colorcet user guide specifically mentions that it provides 'Bokeh-style' palettes as lists of hex strings, which is handy when working with Bokeh.

However, I realised this was not the case for some of the categorical palettes, including `cc.glasbey_bw` and `cc.glasbey_hv`. These return lists of... | closed | 2021-09-08T14:01:54Z | 2021-11-27T02:29:42Z | https://github.com/holoviz/colorcet/issues/69 | [] | TheoMathurin | 2 |

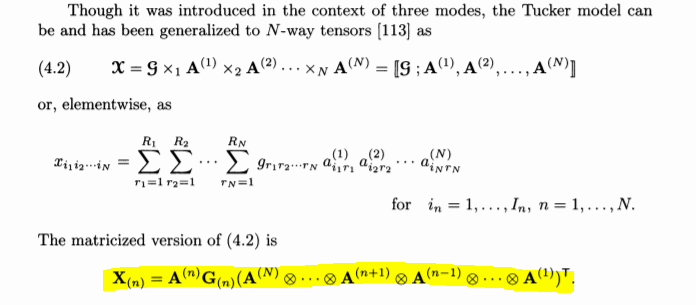

tensorly/tensorly | numpy | 163 | An equation doesn't hold. | #### Describe the bug

The highlighted equation doesn't hold for the tucker tensor. For more information, please refer to page 475 of Tensor Decompositions and Applications (2009).

#### Steps or Code t... | closed | 2020-03-19T09:21:01Z | 2020-03-20T00:29:44Z | https://github.com/tensorly/tensorly/issues/163 | [] | Haobo1108 | 2 |

neuml/txtai | nlp | 87 | Add Embeddings Cluster component | Add Embeddings Cluster component

- This component will manage a number of embeddings shards

- The shards will be API references

- The cluster component will mirror embeddings operations

- The API will seamlessly determine if an Embeddings or Embeddings Cluster should be created based on settings | closed | 2021-05-11T20:20:44Z | 2021-05-19T00:14:06Z | https://github.com/neuml/txtai/issues/87 | [] | davidmezzetti | 0 |

jina-ai/clip-as-service | pytorch | 298 | Cannot start Bert service. | Hi, sorry for reopening this issue but:

I used pip install -U bert-serving-server bert-serving-client

, I ran it again to be sure .

(Bert) C:\Users\fad>pip install -U bert-serving-server bert-serving-client

Requirement already up-to-date: bert-serving-server in c:\programdata\anaconda3\envs\bert\lib\site-pack... | closed | 2019-03-29T03:15:20Z | 2019-08-07T16:57:04Z | https://github.com/jina-ai/clip-as-service/issues/298 | [] | faddyai | 1 |

OFA-Sys/Chinese-CLIP | nlp | 100 | 关于数据集的构造 | 您好!我目前有一个疑问,能不能自己造一些图片

样本,比如.png的格式,能否直接改成您这边的数据集要求格式,当做训练集重新训练模型? | closed | 2023-05-08T09:28:56Z | 2023-05-15T02:50:37Z | https://github.com/OFA-Sys/Chinese-CLIP/issues/100 | [] | Sally6651 | 5 |

schemathesis/schemathesis | pytest | 1,809 | Support Python 3.12 | Blocked on `aiohttp` [update](https://github.com/aio-libs/aiohttp/issues/7675)

Other todos:

- [ ] Update builds to include Python 3.12

- [ ] Update classifiers & docs | closed | 2023-10-09T08:13:27Z | 2023-11-03T15:33:16Z | https://github.com/schemathesis/schemathesis/issues/1809 | [

"Priority: Medium",

"Status: Blocked",

"Type: Compatibility"

] | Stranger6667 | 0 |

cvat-ai/cvat | computer-vision | 9,225 | Return to the first page after visiting invalid page | ### Actions before raising this issue

- [x] I searched the existing issues and did not find anything similar.

- [x] I read/searched [the docs](https://docs.cvat.ai/docs/)

### Is your feature request related to a problem? Please describe.

When visiting a non-existent page for some resource in UI, you're shown a error... | open | 2025-03-18T17:13:28Z | 2025-03-18T17:13:45Z | https://github.com/cvat-ai/cvat/issues/9225 | [

"enhancement",

"ui/ux",

"good first issue"

] | zhiltsov-max | 0 |

huggingface/datasets | pytorch | 6,457 | `TypeError`: huggingface_hub.hf_file_system.HfFileSystem.find() got multiple values for keyword argument 'maxdepth' | ### Describe the bug

Please see https://github.com/huggingface/huggingface_hub/issues/1872

### Steps to reproduce the bug

Please see https://github.com/huggingface/huggingface_hub/issues/1872

### Expected behavior

Please see https://github.com/huggingface/huggingface_hub/issues/1872

### Environment info

Please s... | closed | 2023-11-29T01:57:36Z | 2023-11-29T15:39:03Z | https://github.com/huggingface/datasets/issues/6457 | [] | wasertech | 5 |

jupyter/nbgrader | jupyter | 1,041 | Use Nbgrader without a DB in simplest way possible |

I am trying to use nbgrader for a course I am supervising. However, due to logistics of the course I only need a very small subset of the available features.

Specifically, I just want to generate an assignment version of my notebooks, without any added features. Meaning, I just want to strip the solution blocks an... | closed | 2018-11-01T15:19:17Z | 2019-01-13T19:01:06Z | https://github.com/jupyter/nbgrader/issues/1041 | [

"question"

] | AKuederle | 3 |

jonaswinkler/paperless-ng | django | 446 | Consuming stops, when Mail-Task starts | Still migrate lots of Documents via Consumer to paperless-ng (Docker, Version 1.0.0, using inotify for consumer). When i put Documents (10-50) into the consumer-folder the consumer starts consuming. But when the task paperless_mail.tasks.process_mail_accounts starts while consuming, consuming stops and all waiting docu... | closed | 2021-01-26T09:46:57Z | 2021-01-26T21:11:01Z | https://github.com/jonaswinkler/paperless-ng/issues/446 | [

"bug"

] | andbez | 4 |

alirezamika/autoscraper | web-scraping | 42 | HTML Parameter | I read a previous post that mentioned capability for the HTML parameter, in which I could render a JS application using another tool (BS or Selenium) and pass in the HTML data for AutoScraper to parse. Does anyone have steps or documentation on how to use this parameter? | closed | 2020-12-08T00:28:31Z | 2020-12-15T13:43:39Z | https://github.com/alirezamika/autoscraper/issues/42 | [] | j3vr0n | 2 |

custom-components/pyscript | jupyter | 337 | Cannot delete persistent variables | I tried to set the variable to None still the entity doesn't get removed. I want to remove this variable from the entities list as I don't want the token to display. I had no idea that it converts persistent variables into entities. I think it was not mentioned in the reference documents.

but I can't query it on DjangoListObjectField, only on DjangoObjectField. Is there any way I can do that?

| closed | 2018-02-21T09:41:52Z | 2018-03-26T15:47:59Z | https://github.com/eamigo86/graphene-django-extras/issues/25 | [] | giovannicimolin | 11 |

gradio-app/gradio | python | 10,085 | gradio-client: websockets is out of date | ### Describe the bug

The latest websockets is 14.1. gradio-client requires <13.0 >=10.0

https://github.com/gradio-app/gradio/blob/main/client/python/requirements.txt#L6

I can work around it by creating a fork.

### Have you searched existing issues? 🔎

- [X] I have searched and found no existing issues

### ... | closed | 2024-11-30T00:10:26Z | 2024-12-02T20:02:53Z | https://github.com/gradio-app/gradio/issues/10085 | [

"bug"

] | btakita | 0 |

koaning/scikit-lego | scikit-learn | 534 | [BUG] Future stability of meta-modelling | Scikit-lego is a great asset and the future of this library is important. The meta-modelling option employs the `from sklearn.utils.metaestimators import if_delegate_has_method` method. However, this method is depreciated in the most recent scikit-learn release and will be removed in version 1.3, which will be an issue... | closed | 2022-09-18T17:44:05Z | 2023-06-10T18:24:11Z | https://github.com/koaning/scikit-lego/issues/534 | [

"bug"

] | KulikDM | 4 |

ScrapeGraphAI/Scrapegraph-ai | machine-learning | 400 | BedRock Malformed input request: #/texts/0: expected maxLength: 2048, actual: 19882, please reformat your input and try agai | **Describe the bug**

I followed the example of bedrock https://github.com/VinciGit00/Scrapegraph-ai/blob/main/examples/bedrock/smart_scraper_bedrock.py

It was working in the first place. Then after I replace the url from source="https://perinim.github.io/projects/", to source="https://www.seek.com.au/jobs?page=... | closed | 2024-06-20T11:56:31Z | 2024-12-05T15:39:01Z | https://github.com/ScrapeGraphAI/Scrapegraph-ai/issues/400 | [] | TomZhaoJobadder | 9 |

InstaPy/InstaPy | automation | 6,059 | How to add an LOOP/While statement to Instapy quickstart | Hi,

I'd like to know how I can add a loop statement.

My quickstart file seems to work only when the hourly limit, and the 'amount' to follow = 10

So as soon as it reaches 10, it's jumping to unfollow people, but i'd like it to follow 7500, 10 people per hour, and 200 people a day max. But still to follow until... | open | 2021-01-29T00:56:35Z | 2021-07-21T02:19:03Z | https://github.com/InstaPy/InstaPy/issues/6059 | [

"wontfix"

] | molasunfish | 1 |

vastsa/FileCodeBox | fastapi | 96 | 上传文件报错500 | 开发者你好

我在设置了文件上传大小后,上传文件报错500,错误信息如下

```

ERROR: Exception in ASGI application

Traceback (most recent call last):

File "/usr/local/lib/python3.9/site-packages/uvicorn/protocols/http/h11_impl.py", line 408, in run_asgi

result = await app( # type: ignore[func-returns-value]

File "/usr/local/lib/python3... | closed | 2023-09-23T06:55:19Z | 2024-06-17T09:52:08Z | https://github.com/vastsa/FileCodeBox/issues/96 | [] | zhchy1996 | 3 |

aminalaee/sqladmin | asyncio | 701 | Add file downloading | ### Checklist

- [X] There are no similar issues or pull requests for this yet.

### Is your feature related to a problem? Please describe.

I want to have the possibility to download files from the admin panel

### Describe the solution you would like.

If string is filepath than you can download it

![ima... | open | 2024-01-23T15:48:54Z | 2024-01-23T17:13:09Z | https://github.com/aminalaee/sqladmin/issues/701 | [] | EnotShow | 0 |

miguelgrinberg/microblog | flask | 95 | pages require login redirection failed after login | first and most important, thanks buddy! you " mega project with flask " is amazing , i have learned a lot from this article. i am new to python and flask and you really help me take the first step.

however, excelent in all,there still some small problem exist, in chapter 4 "user login", authentication is required... | closed | 2018-03-30T04:01:14Z | 2018-04-03T04:22:33Z | https://github.com/miguelgrinberg/microblog/issues/95 | [] | rhodesiaxlo | 2 |

vitalik/django-ninja | rest-api | 898 | Expose OpenAPI `path_prefix` in NinjaAPI contstructor | **Is your feature request related to a problem? Please describe.**

`get_openapi_schema` takes the `path_prefix` parameter, but the only way I can see to change it is to subclass `NinjaAPI`. Our use case is that we want to set the prefix to an empty string, and customize the path in the docs with the `servers` paramete... | closed | 2023-11-01T18:38:14Z | 2023-11-02T16:43:12Z | https://github.com/vitalik/django-ninja/issues/898 | [] | scott-8 | 2 |

encode/databases | sqlalchemy | 178 | Implement return rows affected by operation if lastrowid is not set for asyncpg and adopt | It's the continuation of the PR https://github.com/encode/databases/pull/150 (as this PR only implements for mysql and sqlite) for the ticket https://github.com/encode/databases/issues/61 to cover other back-ends. | open | 2020-03-16T15:29:52Z | 2020-09-28T00:02:25Z | https://github.com/encode/databases/issues/178 | [] | gvbgduh | 2 |

Gerapy/Gerapy | django | 43 | 调度爬虫的时候可否增加 添加参数的功能? | 平常调度爬虫的时候会 curl ..... -a arg1=value1 -a arg2=value2 -a arg3=value3 传参数,在使用 Gerapy的时候,调度爬虫,点run后就直接提交运行了。

这个虽然可以直接修改文件里面的参数来做这样的操作,但是还是要修改文件代码,总觉得有点麻烦。

要是能直接在调度的时候添加的话就挺好的。 | open | 2018-02-23T10:17:49Z | 2022-08-30T06:14:30Z | https://github.com/Gerapy/Gerapy/issues/43 | [] | lvsoso | 3 |

mithi/hexapod-robot-simulator | plotly | 117 | pip install problem (on windows) | requirements.txt requests markupsafe 1.1.1 but werkzeug 2.2.3 requires MarkupSafe 2.1.1 or above | open | 2023-08-01T14:23:21Z | 2023-12-04T11:06:51Z | https://github.com/mithi/hexapod-robot-simulator/issues/117 | [] | bestbinaryboi | 2 |

huggingface/transformers | pytorch | 36,210 | Token healing throws error with "Qwen/Qwen2.5-Coder-7B-Instruct" | ### System Info

OS type: Sequoia 15.2 Apple M2 Pro.

I tried to reproduce using 🤗 [Inference Endpoints](https://endpoints.huggingface.co/AI-MO/endpoints/dedicated) when deploying https://huggingface.co/desaxce/Qwen2.5-Coder-7B-Instruct. It's a fork of `Qwen/Qwen2.5-Coder-7B-Instruct` with `token_healing=True` and a `... | open | 2025-02-15T09:01:46Z | 2025-03-18T08:04:00Z | https://github.com/huggingface/transformers/issues/36210 | [

"bug"

] | desaxce | 1 |

Urinx/WeixinBot | api | 61 | 请问发送文件后不停synccheck到window.synccheck={retcode:"0",selector:"2"}? | 请问通过https://wx.qq.com/cgi-bin/mmwebwx-bin/webwxsendappmsg?fun=async&f=json和https://wx.qq.com/cgi-bin/mmwebwx-bin/webwxsendappmsg?fun=async&f=json上传一个文件后,https://webpush.wx.qq.com/cgi-bin/mmwebwx-bin/synccheck不停得到window.synccheck={retcode:"0",selector:"2"},但https://wx.qq.com/cgi-bin/mmwebwx-bin/webwxsync又没有消息,该如何办呢?

| open | 2016-06-12T09:25:19Z | 2016-06-12T12:11:46Z | https://github.com/Urinx/WeixinBot/issues/61 | [] | dereky7 | 1 |

OpenInterpreter/open-interpreter | python | 669 | MacOS & conda env did not run interpreter. | ### Describe the bug

I tried following environment

- Mac OS v14.0 Sonoma

- conda environment : python=3.11 (created by 'conda create python=3.11')

```bash

$ pip install open-interpreter

```

could be done.

When I tried 'interpreter' command on terminal, following error occured.

```bash

...

File ... | closed | 2023-10-21T04:36:34Z | 2023-10-27T13:49:03Z | https://github.com/OpenInterpreter/open-interpreter/issues/669 | [

"Bug"

] | yoyoyo-yo | 1 |

kymatio/kymatio | numpy | 1,062 | Sample to use Scattering 2D with ImageDataGenerator.flowfromdirectory | testDataGen = ImageDataGenerator(rescale = 1.0 / 255)

trainGenerator = trainDataGen.flow_from_directory(os.path.join(dspth,'SplitDataset', 'Train'),

target_size = (32, 32),

batch_size = 32,

... | open | 2025-02-20T07:27:22Z | 2025-02-20T07:27:22Z | https://github.com/kymatio/kymatio/issues/1062 | [] | malathip72 | 0 |

inducer/pudb | pytest | 544 | isinstance(torch.tensor(0), Sized) is True, but it len() will throw error | **Describe the bug**

`isinstance(torch.tensor(0), Sized)` is True, but it `len()` will throw error

```

[08/23/2022 05:51:06 PM] ERROR stringifier failed var_view.py:607

... | closed | 2022-08-23T10:10:49Z | 2022-08-24T02:31:35Z | https://github.com/inducer/pudb/issues/544 | [

"Bug"

] | Freed-Wu | 2 |

ultrafunkamsterdam/undetected-chromedriver | automation | 1,842 | Detected on Twitch | Nodriver and undetected driver are detected on twitch.tv | closed | 2024-04-24T12:28:36Z | 2024-04-24T15:16:14Z | https://github.com/ultrafunkamsterdam/undetected-chromedriver/issues/1842 | [] | fontrendererobj | 0 |

plotly/dash-cytoscape | plotly | 143 | [BUG] expected an incorrect layout name ("close-bilkent") in 0.3.0 | <!--

Thanks for your interest in Plotly's Dash Cytoscape Component!

Note that GitHub issues in this repo are reserved for bug reports and feature

requests. Implementation questions should be discussed in our

[Dash Community Forum](https://community.plotly.com/c/dash).

Before opening a new issue, please search ... | closed | 2021-06-11T13:59:23Z | 2021-09-06T19:28:06Z | https://github.com/plotly/dash-cytoscape/issues/143 | [] | manmustbecool | 0 |

ray-project/ray | tensorflow | 51,351 | Release test aggregate_groups.few_groups (sort_shuffle_pull_based) failed | Release test **aggregate_groups.few_groups (sort_shuffle_pull_based)** failed. See https://buildkite.com/ray-project/release/builds/35758#0195916e-bf24-40ef-8732-80656689b01b for more details.

Managed by OSS Test Policy | closed | 2025-03-13T22:07:28Z | 2025-03-17T17:37:07Z | https://github.com/ray-project/ray/issues/51351 | [

"bug",

"P0",

"triage",

"data",

"release-test",

"jailed-test",

"ray-test-bot",

"weekly-release-blocker",

"stability"

] | can-anyscale | 2 |

ultralytics/yolov5 | machine-learning | 12,643 | Training on non-square images | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I have images of size 1280x720. I want to use these non-square images for training, my co... | closed | 2024-01-17T15:30:10Z | 2024-10-20T19:37:39Z | https://github.com/ultralytics/yolov5/issues/12643 | [

"question",

"Stale"

] | bharathsanthanam94 | 9 |

Esri/arcgis-python-api | jupyter | 1,846 | User' object has no attribute 'availableCredits' | **Describe the bug**

User object is not providing "AvailableCredits" or "AssignedCredits", for **some** users in AGOL, though the user clearly has credits in AGOL assigned and available to them.

**To Reproduce**

Steps to reproduce the behavior:

```python

from arcgis.gis import GIS

gis = GIS("home")

userid = ... | closed | 2024-06-17T15:55:12Z | 2024-06-18T06:31:48Z | https://github.com/Esri/arcgis-python-api/issues/1846 | [

"cannot reproduce"

] | MSLTrebesch | 2 |

nvbn/thefuck | python | 1,227 | Python exception when executing rule git_hook_bypass: NoneType is not iterable | <!-- If you have any issue with The Fuck, sorry about that, but we will do what we

can to fix that. Actually, maybe we already have, so first thing to do is to

update The Fuck and see if the bug is still there. -->

<!-- If it is (sorry again), check if the problem has not already been reported and

if not, just op... | closed | 2021-08-10T21:07:52Z | 2021-08-17T13:41:54Z | https://github.com/nvbn/thefuck/issues/1227 | [] | bbukaty | 3 |

onnx/onnx | tensorflow | 6,017 | Optimizing Node Ordering in ONNX Graphs: Ensuring Correct Sequence for Model Generation | I am working on generating an ONNX converter for some framework, and previously, I was iterating through the nodes of the ONNX model to generate a feed-forward network corresponding to each node of the ONNX model. However, after analyzing several ONNX models and the order of their nodes, I realized that I can't simply ... | open | 2024-03-12T08:35:51Z | 2024-03-12T16:00:30Z | https://github.com/onnx/onnx/issues/6017 | [

"question"

] | kumar-utkarsh0317 | 1 |

huggingface/datasets | nlp | 6,451 | Unable to read "marsyas/gtzan" data | Hi, this is my code and the error:

```

from datasets import load_dataset

gtzan = load_dataset("marsyas/gtzan", "all")

```

[error_trace.txt](https://github.com/huggingface/datasets/files/13464397/error_trace.txt)

[audio_yml.txt](https://github.com/huggingface/datasets/files/13464410/audio_yml.txt)

Python 3.11.5

... | closed | 2023-11-25T15:13:17Z | 2023-12-01T12:53:46Z | https://github.com/huggingface/datasets/issues/6451 | [] | gerald-wrona | 3 |

huggingface/datasets | deep-learning | 6,484 | [Feature Request] Dataset versioning | **Is your feature request related to a problem? Please describe.**

I am working on a project, where I would like to test different preprocessing methods for my ML-data. Thus, I would like to work a lot with revisions and compare them. Currently, I was not able to make it work with the revision keyword because it was n... | open | 2023-12-08T16:01:35Z | 2023-12-11T19:13:46Z | https://github.com/huggingface/datasets/issues/6484 | [] | kenfus | 2 |

Anjok07/ultimatevocalremovergui | pytorch | 1,000 | What parameter to choose for importing a model from vocal remover by tsurumeso? | I trained a model with the beta version 6 (vocal remover by tsurumeso). I imported it into UVR 5 but the parameter doesn't fit anymore.

It used to be:

1band_sr44100_hl_1024

Which parameter is it now? | open | 2023-12-01T14:53:19Z | 2023-12-01T14:53:19Z | https://github.com/Anjok07/ultimatevocalremovergui/issues/1000 | [] | KatieBelli | 0 |

microsoft/MMdnn | tensorflow | 671 | UnboundLocalError: local variable 'x' referenced before assignment | Platform (like ubuntu 16.04/win10):ubuntu 18.04

Python version:3.6.7

Source framework with version (like Tensorflow 1.4.1 with GPU):Tensorflow 1.13.1

Destination framework with version (like CNTK 2.3 with GPU):

Pre-trained model path (webpath or webdisk path):

Running scripts:

WARNING:tensorflow:From /h... | open | 2019-06-05T06:17:08Z | 2019-06-25T06:22:48Z | https://github.com/microsoft/MMdnn/issues/671 | [] | moonjiaoer | 15 |

robotframework/robotframework | automation | 4,827 | What about adding support of !import to yaml variables | By default yaml does not have any include statement and RF YamlImporter class loader does not support it neither, however adding import can be simply added:

https://gist.github.com/joshbode/569627ced3076931b02f | closed | 2023-07-19T12:19:52Z | 2023-07-23T21:34:04Z | https://github.com/robotframework/robotframework/issues/4827 | [] | sebastianciupinski | 1 |

lexiforest/curl_cffi | web-scraping | 444 | Observing BoringSSL Exception intermittently for specific user-agents | **The question**

_BoringSSL Exception:_

Observing BoringSSL Exception intermittently while using user-agents such as `Mozilla/5.0 (iPhone; CPU iPhone OS 13_4_1 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) Mobile/15E148 [FBAN/FBIOS;FBDV/iPhone12,3;FBMD/iPhone;FBSN/iOS;FBSV/13.4.1;FBSS/3;FBID/phone;FBLC/... | closed | 2024-11-26T23:11:00Z | 2024-12-03T09:17:36Z | https://github.com/lexiforest/curl_cffi/issues/444 | [

"question"

] | charliedelta02 | 1 |

deezer/spleeter | tensorflow | 21 | Example script doesn't seem to be working. | I'm just following the instructions found in the README, and I was able to successfully run the scripts, and I get two output files, vocals.wav and accompaniment.wav, but both the files sound exactly the same as the original. I am not hearing any difference between the three.

This is exactly what I ran:

```

git ... | closed | 2019-11-04T16:59:59Z | 2019-11-05T12:41:07Z | https://github.com/deezer/spleeter/issues/21 | [

"bug",

"enhancement",

"MacOS",

"conda"

] | crobertsbmw | 3 |

cvat-ai/cvat | pytorch | 8,795 | cvat version 2.0 reported an error when installing nuctl autoannotation function | ### Actions before raising this issue

- [X] I searched the existing issues and did not find anything similar.

- [X] I read/searched [the docs](https://docs.cvat.ai/docs/)

### Steps to Reproduce

[root@bogon cvat-2.0.0]# nuctl deploy --project-name cvat --path serverless/pytorch/ultralytics/15Strip_surface_inspectio... | closed | 2024-12-09T07:48:27Z | 2024-12-09T07:54:41Z | https://github.com/cvat-ai/cvat/issues/8795 | [

"bug"

] | chang50961471 | 2 |

TracecatHQ/tracecat | pydantic | 71 | Change toast to sonner | # Motivation

Current toast is annoying - doesn't have expiry so you have to close it manually.

A sonner https://ui.shadcn.com/docs/components/sonner (so I can see past notifications) can make this UX better | closed | 2024-04-20T15:28:48Z | 2024-06-16T19:25:53Z | https://github.com/TracecatHQ/tracecat/issues/71 | [

"frontend"

] | daryllimyt | 1 |

streamlit/streamlit | machine-learning | 10,229 | Page Refresh detection and data persistance between refresh page | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar feature requests.

- [x] I added a descriptive title and summary to this issue.

### Summary

Currently,

- There is no way to detect page refresh

- There is no way to persists data between page refresh... | open | 2025-01-22T16:13:29Z | 2025-01-22T16:13:42Z | https://github.com/streamlit/streamlit/issues/10229 | [

"type:enhancement"

] | learncodingforweb | 1 |

plotly/dash-component-boilerplate | dash | 173 | Unable to create project | Hi all, I'm trying to initialize the cookiecutter project by following the main page's instructions, but I receive the error shown below.

- Node.JS version: v23.5.0

- npm version: 10.9.2

- Python version: 3.11

I'm not sure if it's the same issue raised in #148 in 2022, but the proposed solution of downgrading to node... | open | 2024-12-25T18:36:33Z | 2025-02-18T09:02:26Z | https://github.com/plotly/dash-component-boilerplate/issues/173 | [] | nulinspiratie | 1 |

lukasmasuch/streamlit-pydantic | pydantic | 54 | Requirements.txt | Thank you so much for the fantastic Streamlit plugin!

I'm currently facing some challenges in getting the examples to run smoothly. Could someone please share a requirements.txt file that is compatible with the provided examples?

Many thanks in advance! | open | 2024-01-26T22:40:44Z | 2024-01-26T22:40:44Z | https://github.com/lukasmasuch/streamlit-pydantic/issues/54 | [] | dmmsop | 0 |

open-mmlab/mmdetection | pytorch | 12,303 | RuntimeError: indices should be on the same device as the indexed tensor in MMRotate tutorial | **Description:**

I encountered a `RuntimeError` when following the [MMRotate tutorial](https://github.com/open-mmlab/mmrotate/blob/main/demo/MMRotate_Tutorial.ipynb). The error occurs when running `inference_detector(model, img)`. My model is on `cuda:0`, but it seems like some indices are on the CPU, causing a misma... | open | 2025-02-07T12:59:56Z | 2025-02-07T13:00:14Z | https://github.com/open-mmlab/mmdetection/issues/12303 | [] | ramondalmau | 0 |

graphql-python/graphene-sqlalchemy | graphql | 407 | Allow a custom filter class with the purpose of using all the base filters, and adding sqlalchemy-filter esk filters | So a pretty important use case at my company is the ability to add custom filters that aren't field specific

Here is an example use case using the below hack discussed

```

class UserNode(SQLAlchemyObjectType):

class Meta:

model = User

interfaces = (LevNode,)

filter = UserFilter

cla... | closed | 2024-03-18T15:32:36Z | 2024-09-15T00:55:04Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/407 | [] | adiberk | 1 |

jupyter/docker-stacks | jupyter | 1,909 | CI improvements and speedups | There are a few things that might be improved / changed and everyone is welcome to implement these ideas:

1. Get rid of upload/download artifact and use a registry server. This would mean much less uploads (most layers will be already uploaded). It might be a good idea to use [service containers](https://docs.github... | closed | 2023-05-28T15:06:10Z | 2024-01-13T14:57:18Z | https://github.com/jupyter/docker-stacks/issues/1909 | [

"type:Enhancement",

"good first issue"

] | mathbunnyru | 2 |

autogluon/autogluon | data-science | 4,679 | OSError: /usr/local/lib/python3.10/dist-packages/torchaudio/lib/libtorchaudio.so: undefined symbol: _ZNK3c105Error4whatEv | Hi,

unfortunately, the Google Colab: https://colab.research.google.com/github/autogluon/autogluon/blob/stable/docs/tutorials/multimodal/text_prediction/beginner_text.ipynb#scrollTo=d2535bb3

throws the following exception:

Can you please point me to references which would allow me to host this on gitlab pages?

The instructions [here](https://jupyterbook.org/en/stable/start/publish.html) work for github b... | open | 2023-03-07T14:06:00Z | 2024-10-28T18:17:39Z | https://github.com/jupyter-book/jupyter-book/issues/1962 | [

"bug"

] | zohimchandani | 2 |

neuml/txtai | nlp | 853 | PGVector not enable | Hi,

Trying to persist index via psql, but I get the error

`_

raise ImportError('PGVector is not available - install "ann" extra to enable')

ImportError: PGVector is not available - install "ann" extra to enable`

I've installed the ANN backend via `pip installl txtai[ann]`, yet same issue.

Sample code... | closed | 2025-01-12T16:17:37Z | 2025-01-20T18:26:39Z | https://github.com/neuml/txtai/issues/853 | [] | thelaycon | 5 |

tqdm/tqdm | pandas | 1,434 | Multiple pbars with leave=None cause leftover stuff on console and wrong position of cursor | - [x] I have marked all applicable categories:

+ [ ] exception-raising bug

+ [x] visual output bug

- [x] I have visited the [source website], and in particular

read the [known issues]

- [x] I have searched through the [issue tracker] for duplicates

- [x] I have mentioned version numbers, operating syste... | open | 2023-02-22T10:06:10Z | 2024-10-16T03:10:12Z | https://github.com/tqdm/tqdm/issues/1434 | [] | fireattack | 4 |

facebookresearch/fairseq | pytorch | 5,224 | ValueError: cannot register model architecture (w2l_conv_glu_enc) | ## 🐛 Bug

I have installed fairseq following the instructions on your site

git clone https://github.com/pytorch/fairseq

cd fairseq

pip install --editable ./

Then I follow the instructions at https://github.com/facebookresearch/fairseq/tree/main/examples/speech_recognition to train a Tranformers speech recogni... | open | 2023-06-28T23:09:42Z | 2023-07-20T16:21:08Z | https://github.com/facebookresearch/fairseq/issues/5224 | [

"bug",

"needs triage"

] | vivektyagiibm | 4 |

bmoscon/cryptofeed | asyncio | 342 | HUOBI_SWAP error | **Describe the bug**

I get following error message from HUOBI_SWAP.

```bash

2020-12-02 21:46:59,329 : ERROR : HUOBI_SWAP: encountered an exception, reconnecting

Traceback (most recent call last):

File "/usr/local/lib/python3.8/dist-packages/cryptofeed-1.6.2-py3.8.egg/cryptofeed/feedhandler.py", line 258, in _con... | closed | 2020-12-02T20:55:55Z | 2020-12-03T06:00:01Z | https://github.com/bmoscon/cryptofeed/issues/342 | [

"bug"

] | yohplala | 4 |

pywinauto/pywinauto | automation | 627 | How to check if edit control type is editable or not (to check whether it is disable)? | closed | 2018-12-10T08:46:00Z | 2019-05-18T14:16:04Z | https://github.com/pywinauto/pywinauto/issues/627 | [

"enhancement",

"refactoring_critical"

] | NeJaiswal | 4 | |

pyqtgraph/pyqtgraph | numpy | 2,772 | pyqtgraph.GraphItem horizontal lines appear when nodes share the exact same coordinates | ### Short description

Horizontal lines appearing in plotted Graph layouts using GraphItem

### Code to reproduce

Code to reproduce the horizontal lines using the test_data variable as the coordinates and the test_edges variable as the edgelist

```python

import pyqtgraph as pg

import numpy as np

from matplotl... | closed | 2023-07-11T12:58:22Z | 2024-02-16T02:58:09Z | https://github.com/pyqtgraph/pyqtgraph/issues/2772 | [] | simonvw95 | 6 |

huggingface/transformers | machine-learning | 36,125 | Transformers | ### Model description

Transformer Practice

### Open source status

- [x] The model implementation is available

- [x] The model weights are available

### Provide useful links for the implementation

_No response_ | closed | 2025-02-10T22:18:45Z | 2025-02-11T13:52:00Z | https://github.com/huggingface/transformers/issues/36125 | [

"New model"

] | HemanthVasireddy | 1 |

tfranzel/drf-spectacular | rest-api | 397 | Allow json/yaml schema response selection via query parameter | When using ReDoc or SwaggerUI it could be helpful if users could view the json and yaml schema in the browser. Currently the `/schema` url selects the response format bases on content negotiation. I am not sure how browsers handle this and what the defaults are, for me chrome seems to default to yaml. I prefer json and... | closed | 2021-05-21T08:01:03Z | 2021-05-27T18:15:00Z | https://github.com/tfranzel/drf-spectacular/issues/397 | [] | valentijnscholten | 2 |

FujiwaraChoki/MoneyPrinter | automation | 157 | [Feature request] Adding custom script | **Describe the solution you'd like**

Frontend option to add custom script for video

| closed | 2024-02-10T16:19:45Z | 2024-02-11T09:46:42Z | https://github.com/FujiwaraChoki/MoneyPrinter/issues/157 | [] | Tungstenfur | 1 |

rthalley/dnspython | asyncio | 1,064 | License specification is missing | I see some time earlier someone already [raised that question](https://github.com/rthalley/dnspython/pull/1038)

I just pulled the latest package from Pypi and it still doesn't have License information:

<img width="516" alt="Screenshot 2024-03-05 at 1 26 49 PM" src="https://github.com/rthalley/dnspython/assets/31595... | closed | 2024-03-05T06:33:28Z | 2024-03-05T13:59:23Z | https://github.com/rthalley/dnspython/issues/1064 | [

"Bug"

] | freakaton | 2 |

encode/databases | sqlalchemy | 73 | Accessing Record values by dot notation | If I am not missing anything, is there a way to get PG Record values using dot notation instead of dict-like syntax?

For now we have to use something like `user['hashed_password']` which breaks `Readability counts.` of zen :)

Would be great to use just `user.hashed_password`. | closed | 2019-03-26T10:26:15Z | 2019-03-26T10:34:35Z | https://github.com/encode/databases/issues/73 | [] | Spacehug | 1 |

keras-team/keras | tensorflow | 20,189 | Keras different versions have numerical deviations when using pretrain model | The following code will have output deviations between Keras 3.3.3 and Keras 3.5.0.

```python

#download model

from modelscope import snapshot_download

base_path = 'q935499957/Qwen2-0.5B-Keras'

import os

dir = 'models'

try:

os.mkdir(dir)

except:

pass

model_dir = snapshot_download(base_path,local_dir=d... | closed | 2024-08-30T11:21:08Z | 2024-08-31T07:38:23Z | https://github.com/keras-team/keras/issues/20189 | [] | pass-lin | 2 |

huggingface/transformers | pytorch | 36,472 | Dtensor support requires torch>=2.5.1 | ### System Info

torch==2.4.1

transformers@main

### Who can help?

#36335 introduced an import on Dtensor https://github.com/huggingface/transformers/blob/main/src/transformers/modeling_utils.py#L44

but this doesn't exist on torch==2.4.1, but their is no guard around this import and setup.py lists torch>=2.0.

@Arthu... | closed | 2025-02-28T05:02:22Z | 2025-03-05T10:27:02Z | https://github.com/huggingface/transformers/issues/36472 | [

"bug"

] | winglian | 6 |

scikit-tda/kepler-mapper | data-visualization | 159 | Target space of lens function something other than R? | The mapper algorithm starts with a real valued function f:X->R, where X is the data set and R is 1d Euclidean space.

According to the original paper, the target space of the lenses (called filters in the paper) may be Euclidean space, but it might also be a circle, a torus, a tree or another metric space.

My qu... | closed | 2019-03-19T13:02:09Z | 2019-04-10T08:37:40Z | https://github.com/scikit-tda/kepler-mapper/issues/159 | [] | karinsasaki | 3 |

dmlc/gluon-nlp | numpy | 1,473 | [Benchmark] Improve NLP Backbone Benchmark | ## Description

In GluonNLP, we introduced the benchmarking script in https://github.com/dmlc/gluon-nlp/tree/master/scripts/benchmarks.

The goal is to track the training + inference latency of common NLP backbones so that we can choose the appropriate ones for our task. This will help users train + deploy models wit... | open | 2021-01-09T23:00:58Z | 2021-01-13T17:30:16Z | https://github.com/dmlc/gluon-nlp/issues/1473 | [

"enhancement",

"help wanted",

"performance"

] | sxjscience | 0 |

sebastianruder/NLP-progress | machine-learning | 82 | Where do the ELMo WSD results come from? | The only result in the paper is the averaged/overall result. | closed | 2018-08-24T10:08:10Z | 2018-08-29T13:50:44Z | https://github.com/sebastianruder/NLP-progress/issues/82 | [] | frankier | 3 |

marshmallow-code/marshmallow-sqlalchemy | sqlalchemy | 362 | Syntax Error when deploying Flask App to sever | Ubuntu: 20.04

Python: 3.8.5

Flask 1.1.2

marshmallow: 3.10.0

SQLAlchemy: 1.3.22

marshmallow-sqlalchemy: 0.24.1

Following [this tutorial](https://www.digitalocean.com/community/tutorials/how-to-deploy-a-flask-application-on-an-ubuntu-vps) on setting a Flask app up on a server. Seemingly is all working however I... | closed | 2021-01-19T13:07:43Z | 2021-01-21T11:59:11Z | https://github.com/marshmallow-code/marshmallow-sqlalchemy/issues/362 | [] | klongbeard | 1 |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.