repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

saulpw/visidata | pandas | 1,549 | Does there exist a Slack community? | Hi - thanks for creating this awesome tool. I was wondering if you'd consider starting a Slack community where VisiData users could share tips and learn together.

Thanks,

Roman

| closed | 2022-10-03T06:15:30Z | 2022-10-03T15:52:00Z | https://github.com/saulpw/visidata/issues/1549 | [

"question"

] | rbratslaver | 1 |

CorentinJ/Real-Time-Voice-Cloning | deep-learning | 1,046 | proposing accessibility changes for the gui | hi, please use wxpython instead of qt, as wxpython has the highest degree of accessibility to screen readers. Thanks. I would pull request it if I knew enough python, but I'm still in the basics. | open | 2022-03-31T10:35:40Z | 2022-04-10T17:48:15Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1046 | [] | king-dahmanus | 8 |

RobertCraigie/prisma-client-py | asyncio | 799 | Support for most recent prisma version | <!--

Thanks for helping us improve Prisma Client Python! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by enabling additional logging output.

See https://prisma-client-py.readthedocs.io/en/stable/reference/logging/ for how to enable additional log... | closed | 2023-07-28T07:50:39Z | 2023-10-22T23:01:43Z | https://github.com/RobertCraigie/prisma-client-py/issues/799 | [] | tobiasdiez | 1 |

ultralytics/ultralytics | machine-learning | 19,696 | May I ask how to mark the key corners of the box? There is semantic ambiguity | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

May I ask how to mark the key corners of the box? There is semantic ambiguit... | open | 2025-03-14T09:45:40Z | 2025-03-16T04:33:07Z | https://github.com/ultralytics/ultralytics/issues/19696 | [

"question"

] | missTL | 4 |

ultralytics/ultralytics | python | 18,705 | yolov8obb realtime detect and save data | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/ultralytics/ultralytics/discussions) and found no similar questions.

### Question

I am using yolov8obb to detect angles. There are 33 small angles on a... | open | 2025-01-16T03:39:20Z | 2025-01-20T20:39:16Z | https://github.com/ultralytics/ultralytics/issues/18705 | [

"question",

"OBB",

"detect"

] | zhang990327 | 9 |

dynaconf/dynaconf | fastapi | 251 | [bug] Exception when getting non existing dotted keys with default value | **Describe the bug**

Using settings.get(key, default) with a non-existing key - depending on the default value - produces an exception.

Am I missing something obvious?

**To Reproduce**

Steps to reproduce the behavior:

1. Having the following folder structure

- app.py

- config.py

2. Having the following ... | closed | 2019-10-25T19:30:31Z | 2019-11-13T20:54:03Z | https://github.com/dynaconf/dynaconf/issues/251 | [

"bug",

"in progress",

"Pending Release"

] | sebbegg | 2 |
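For illustration, the behavior the reporter appears to expect from `settings.get(key, default)` can be sketched with plain nested mappings. This is only a sketch under stated assumptions: `get_dotted` is a hypothetical helper written for this example, not part of dynaconf's API, and it makes no claim about how dynaconf resolves dotted keys internally.

```python
from collections.abc import Mapping
from typing import Any

# Sentinel so that a stored value of None is not confused with "missing".
_MISSING = object()


def get_dotted(data: Mapping, key: str, default: Any = None) -> Any:
    """Hypothetical helper: walk a nested mapping along a dotted key.

    Returns `default` (whatever its type) as soon as any path segment
    is missing, instead of raising.
    """
    current: Any = data
    for part in key.split("."):
        if not isinstance(current, Mapping):
            # We ran into a leaf value before consuming the whole key.
            return default
        current = current.get(part, _MISSING)
        if current is _MISSING:
            return default
    return current
```

With this shape, a call such as `get_dotted({"db": {"host": "localhost"}}, "db.port", 5432)` falls back to the default regardless of whether the default is a number, a string, or a dict.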

man-group/arctic | pandas | 817 | Support for mongo transactions | Hi,

Is there any plan to introduce the ability to modify the mongo db atomically (i.e. making use of mongo transactions)?

In the code I see `ArcticTransaction`, which is context based rather than mongo based, and it works for the VersionStore only.

Thanks! | closed | 2019-09-21T11:15:29Z | 2019-10-09T06:10:51Z | https://github.com/man-group/arctic/issues/817 | [] | scoriiu | 0 |

tensorpack/tensorpack | tensorflow | 614 | imgaug.Saturation | 1. What I did: Use `fbresnet_augmentor` in `tensorpack/examples/Inception/imagenet_utils.py`.

2. What I observed: when using the `fbresnet_augmentor` in the code, some augmented images contain overly brightly colored artifacts:

when calling the ListTopics operation (reached max retries: 2): exception while calling sn... | closed | 2024-11-19T01:09:42Z | 2024-12-10T01:30:24Z | https://github.com/localstack/localstack/issues/11870 | [

"type: bug",

"status: response required",

"aws:sns",

"status: resolved/stale"

] | stoutlinden | 3 |

cupy/cupy | numpy | 9,028 | `cupy.add` behaves differently from `numpy.add` on `uint8` | ### Description

`cupy.add` behaves differently from `numpy.add` on `uint8`

### To Reproduce

```py

import numpy as np

import cupy as cp

print(np.add(np.uint8(255),1))

print(cp.add(cp.uint8(255),1))

```

```bash

0

256

```

### Installation

Wheel (`pip install cupy-***`)

### Environment

```

OS ... | closed | 2025-03-12T15:00:57Z | 2025-03-21T05:59:35Z | https://github.com/cupy/cupy/issues/9028 | [

"issue-checked"

] | apiqwe | 2 |

MilesCranmer/PySR | scikit-learn | 293 | [Feature] Can I clone SymbolicRegression.jl manually while running pysr.install()? | I always have difficulty cloning git repos while running pysr.install(), but I can clone those repos manually. Can I put repos like SymbolicRegression.jl somewhere so that I can skip the cloning step while running install()? | closed | 2023-04-18T08:07:09Z | 2023-04-18T11:28:42Z | https://github.com/MilesCranmer/PySR/issues/293 | [

"enhancement"

] | WhiteGL | 1 |

jschneier/django-storages | django | 1,439 | File not found error on Azure | After updating django-storages to 1.14, when trying to upload an image through the admin panel I'm getting a file not found error with the following trace:

```

Internal Server Error: /admin/images/multiple/add/

Traceback (most recent call last):

File "/usr/local/lib/python3.12/site-packages/django/core/handlers/e... | closed | 2024-07-25T17:02:15Z | 2024-08-31T22:15:13Z | https://github.com/jschneier/django-storages/issues/1439 | [] | Morefra | 4 |

NVIDIA/pix2pixHD | computer-vision | 183 | Does the program preprocess the input image? | When I input an image with very low contrast, the output results increase the contrast a lot.

What is the reason for this? | open | 2020-03-06T03:27:14Z | 2020-04-22T11:36:02Z | https://github.com/NVIDIA/pix2pixHD/issues/183 | [] | Kodeyx | 1 |

viewflow/viewflow | django | 266 | [Question] How to automatically assign a user to a task? | If I were creating a workflow that has a step that selects a team member to be assigned to a task based on certain conditions, how would I do that? | closed | 2020-03-26T20:44:18Z | 2020-05-12T03:08:28Z | https://github.com/viewflow/viewflow/issues/266 | [

"request/question",

"dev/flow"

] | variable | 1 |

PokemonGoF/PokemonGo-Bot | automation | 5,491 | catch_pokemon: catch filter does not work | I set the bot to catch above 400 CP or above 0.85 IV,

but the bot seems to be catching everything.

### Your FULL config.json (remove your username, password, gmapkey and any other private info)

<!-- Provide your FULL config file, feel free to use services such as pastebin.com to reduce clutter -->

```

"catch": {

"any": {"catch_above_cp": 4... | closed | 2016-09-16T20:17:54Z | 2016-09-17T03:10:27Z | https://github.com/PokemonGoF/PokemonGo-Bot/issues/5491 | [] | liejuntao001 | 2 |

comfyanonymous/ComfyUI | pytorch | 6,302 | 'ASGD is already registered in optimizer at torch.optim.asgd' is:issue | ### Your question

an error: 'ASGD is already registered in optimizer at torch.optim.asgd' is:issue

### Logs

```powershell

File "E:\ComfyUI-aki\ComfyUI-aki-v1.5\python\lib\site-packages\mmengine\optim\optimizer\builder.py", line 28, in register_torch_optimizers

OPTIMIZERS.register_module(module=_optim)

File ... | closed | 2025-01-01T04:03:33Z | 2025-01-02T00:38:01Z | https://github.com/comfyanonymous/ComfyUI/issues/6302 | [

"User Support",

"Custom Nodes Bug"

] | alexlooks | 1 |

PrefectHQ/prefect | automation | 17,251 | Consider committing the uv lockfile | ### Describe the current behavior

Hi, thank you for making prefect!

I'm considering packaging this for nixos (a linux distribution).

Having the lockfile committed to the project would make this much easier.

I've seen the todo in the dockerfile saying to consider committing it.

Just saying here that if you have time, ... | closed | 2025-02-23T09:42:21Z | 2025-03-07T22:48:20Z | https://github.com/PrefectHQ/prefect/issues/17251 | [

"enhancement"

] | happysalada | 3 |

microsoft/hummingbird | scikit-learn | 172 | Support for LGBMRanker? | Hello there,

I am wondering if the current implementation supports conversion from LightGBMRanker, which is part of the LightGBM package? Is there anything special about LightGBMRanker, and will the converter for LGBMRegressor work in this case? Here is the error I got when I tried to convert the LGBMRanker

```

M... | closed | 2020-06-26T21:18:37Z | 2020-07-21T00:17:16Z | https://github.com/microsoft/hummingbird/issues/172 | [] | go2ready | 5 |

explosion/spaCy | nlp | 13,769 | Bug in Span.sents | <!-- NOTE: For questions or install related issues, please open a Discussion instead. -->

When a `Doc`'s entity is in the second to the last sentence, and the last sentence consists only of one token, `entity.sents` includes that last 1-token sentence (even though the entity is fully contained by the previous sentence.... | open | 2025-03-12T18:03:13Z | 2025-03-12T18:03:13Z | https://github.com/explosion/spaCy/issues/13769 | [] | nrodnova | 0 |

exaloop/codon | numpy | 308 | Importing codon code from python | Is it possible to build a codon script to a .dylib or .so file to be imported into python for execution? I didn't see it in the documentation. I did see how to build object files but you cannot import those into python. | closed | 2023-03-30T05:17:48Z | 2025-02-27T18:07:13Z | https://github.com/exaloop/codon/issues/308 | [] | NickDatLe | 7 |

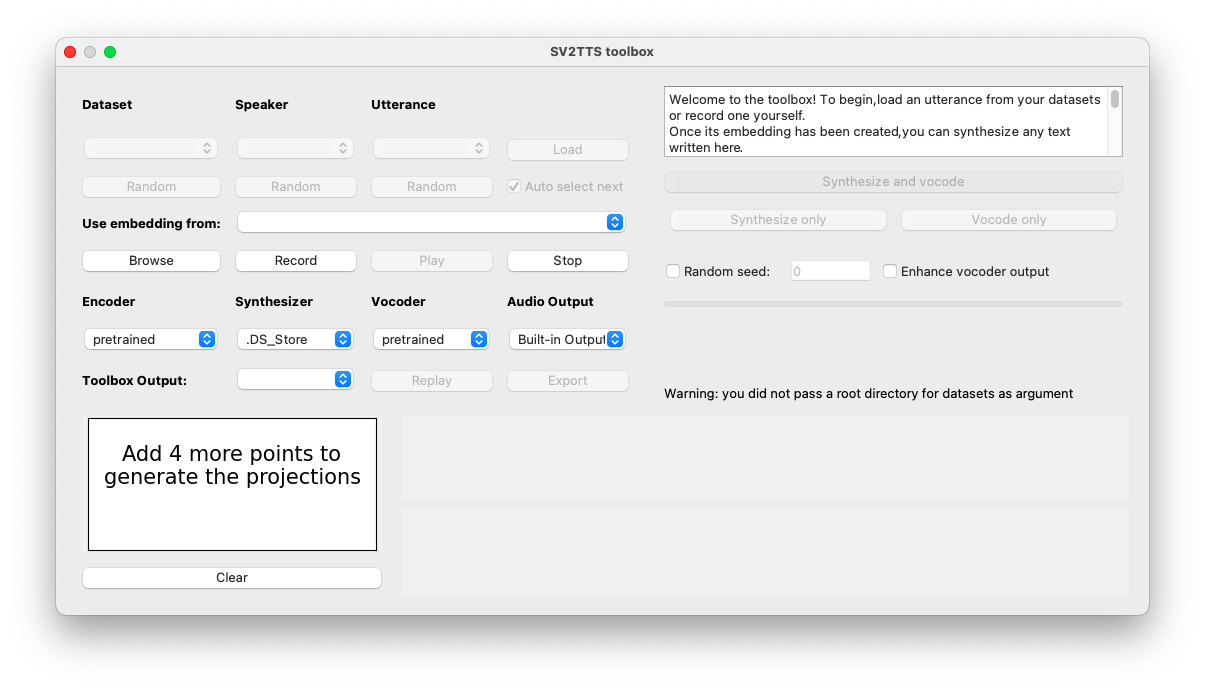

CorentinJ/Real-Time-Voice-Cloning | pytorch | 630 | Can't load dataset |

datasets_root: None

Warning: you did not pass a root directory for datasets as argument.

The recognized datasets are:

LibriSpeech/dev-clean

LibriSpeech/dev-ot... | closed | 2021-01-18T13:40:04Z | 2021-01-18T19:13:48Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/630 | [] | notluke27 | 2 |

christabor/flask_jsondash | plotly | 104 | Make chart specific documentation show up in UI | What likely needs to happen:

1. Docs moved inside of package (OR linked via setuptools)

2. Docs read and imported via python

3. Docs then parsed and available on a per-widget basis.

The ultimate goal of the above is so that there is never any disconnect between docs and UI. It should always stay in sync.

| open | 2017-05-10T22:26:23Z | 2017-05-14T20:31:45Z | https://github.com/christabor/flask_jsondash/issues/104 | [

"enhancement",

"docs"

] | christabor | 0 |

scrapy/scrapy | web-scraping | 6,451 | SSL error - dh key too small | > https://data.airbreizh.asso.fr/

Fails in Scrapy (default settings) but works fine in a web-browser (new or old).

```

[scrapy.downloadermiddlewares.retry] DEBUG: Retrying <GET https://data.airbreizh.asso.fr> (failed 1 times): [<twisted.python.failure.Failure OpenSSL.SSL.Error: [('SSL routines', '', 'dh key too ... | closed | 2024-08-01T13:02:38Z | 2024-08-01T14:27:30Z | https://github.com/scrapy/scrapy/issues/6451 | [] | mohmad-null | 1 |

pydata/xarray | numpy | 9,839 | Advanced interpolation returning unexpected shape | ### What happened?

Hi !

I have been using Xarray for quite some time, and recently I started using fresh NC files from https://cds.climate.copernicus.eu/ (up to now I had been using NC4 files that I have had for quite some time). I have an issue regarding Xarray's interpolation with advanced indexing: the returned shape by interp... | closed | 2024-11-29T15:52:43Z | 2024-12-14T08:33:12Z | https://github.com/pydata/xarray/issues/9839 | [

"topic-interpolation"

] | vianneylan | 7 |

robotframework/robotframework | automation | 4,787 | How can i add a column to the robot test report? | closed | 2023-06-08T07:53:56Z | 2023-06-09T23:06:09Z | https://github.com/robotframework/robotframework/issues/4787 | [] | liulangdexiaoxin | 1 | |

trevismd/statannotations | seaborn | 54 | Add support for LSD & Tukey multiple comparisons | In ecology studies, we often apply LSD or Tukey multiple comparisons, which are vital when labeling the significance of a batch of experimental results. Most multiple comparison methods have been integrated in statsmodels.stats.multicomp. You may get more information on multiple comparison methods from https://zhuanlan.zh... | open | 2022-04-18T15:06:15Z | 2022-06-04T23:19:53Z | https://github.com/trevismd/statannotations/issues/54 | [] | lxw748 | 2 |

FactoryBoy/factory_boy | django | 421 | Use django test database when running test | I have my database defined as followed in my django settings:

```python

DATABASES = {

'default': {

'ENGINE': 'django.db.backends.postgresql',

'USER': 'mydatabaseuser',

'NAME': 'mydatabase',

'TEST': {

'NAME': 'mytestdatabase',

},

},

}

```

When ... | closed | 2017-09-29T13:02:18Z | 2017-10-02T15:06:14Z | https://github.com/FactoryBoy/factory_boy/issues/421 | [] | BrunoGodefroy | 1 |

healthchecks/healthchecks | django | 152 | Twitter DM integration | Twitter DM integration will be awesome to have. Send alerts to user as DM from a standard healthchecks account. | closed | 2018-02-01T06:50:29Z | 2018-08-20T15:23:40Z | https://github.com/healthchecks/healthchecks/issues/152 | [] | thejeshgn | 2 |

pallets-eco/flask-sqlalchemy | sqlalchemy | 398 | provide support for sqlalchemy.ext.automap | SQLAlchemy's automapper provides reflection of an existing database and its relations.

| closed | 2016-05-28T08:18:58Z | 2020-12-05T20:55:43Z | https://github.com/pallets-eco/flask-sqlalchemy/issues/398 | [] | nexero | 6 |

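Evil0ctal/Douyin_TikTok_Download_API | fastapi | 115 | [BUG] The values in official_api in the API response need to be modified | ***Platform where the error occurred?***

e.g. Douyin/TikTok

***Endpoint where the error occurred?***

e.g. API-V1/API-V2/Web APP

***Submitted input value?***

e.g. a short video link

***Did you try again?***

e.g. Yes, the error still persisted X amount of time after it first occurred.

***Did you check this project's README or API documentation?***

e.g. Yes, and I am quite sure the problem is caused by the program.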

| closed | 2022-12-02T11:25:21Z | 2022-12-02T23:01:45Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/115 | [

"BUG",

"Fixed"

] | Evil0ctal | 1 |

miguelgrinberg/python-socketio | asyncio | 691 | AsyncAioPikaManager connect | https://github.com/miguelgrinberg/python-socketio/blob/2f0d8bbd8c4de43fe26e0f2edcd05aef3c8c71f9/socketio/asyncio_aiopika_manager.py#L68-L76

A connection is built every time a message is published. Can this be improved? | closed | 2021-05-25T08:19:24Z | 2021-06-27T19:45:29Z | https://github.com/miguelgrinberg/python-socketio/issues/691 | [

"question"

] | wangjianweiwei | 2 |

AirtestProject/Airtest | automation | 986 | Cannot install pocoui by using poetry on macOS |

**Describe the bug**

Cannot install pocoui by using poetry

```

➜ poetry-demo poetry add pocoui

Creating virtualenv poetry-demo-ZKg_bqkG-py3.8 in /Users/trevorwang/Library/Caches/pypoetry/virtualenvs

Using version ^1.0.84 for pocoui

Updating dependencies

Resolving dependencies... (13.5s)

Writing lock ... | open | 2021-11-22T02:23:05Z | 2021-11-22T02:23:05Z | https://github.com/AirtestProject/Airtest/issues/986 | [] | trevorwang | 0 |

psf/requests | python | 6,707 | Requests 2.32.0 Not supported URL scheme http+docker | <!-- Summary. -->

Newest version of requests 2.32.0 has an incompatibility with python lib `docker`

```

INTERNALERROR> Traceback (most recent call last):

INTERNALERROR> File "/var/lib/jenkins/workspace/Development_sm_master/gravity/.nox/lib/python3.10/site-packages/requests/adapters.py", line 532, in send

... | closed | 2024-05-20T19:36:07Z | 2024-06-04T16:21:25Z | https://github.com/psf/requests/issues/6707 | [] | joshzcold | 16 |

mars-project/mars | numpy | 2,463 | [BUG] Failed to execute query when there are multiple arguments | **Describe the bug**

When executing query with more than three joint arguments, the query failed with SyntaxError.

**To Reproduce**

```python

import numpy as np

import mars.dataframe as md

df = md.DataFrame({'a': np.random.rand(100),

'b': np.random.rand(100),

'c c': np.ra... | closed | 2021-09-17T07:14:18Z | 2021-09-29T16:00:55Z | https://github.com/mars-project/mars/issues/2463 | [

"type: bug",

"good first issue",

"mod: dataframe"

] | wjsi | 1 |

junyanz/pytorch-CycleGAN-and-pix2pix | computer-vision | 788 | Pre-trained model cannot be downloaded | Can anyone share | open | 2019-10-11T09:00:31Z | 2022-10-23T13:23:08Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/788 | [] | jiaying96 | 4 |

CorentinJ/Real-Time-Voice-Cloning | python | 1,309 | Hid21.1 | open | 2024-07-29T20:24:21Z | 2024-07-29T20:24:21Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1309 | [] | Hid21 | 0 | |

capitalone/DataProfiler | pandas | 414 | Meaning of quantile names | When profiling a numerical column, I get these quantiles:

```json

"quantiles": {

"0": 657.04,

"1": 999.18,

"2": 1335.71

},

```

Based on the data, I guess these are the 25, 50, and 75 percentiles. I feel like 0, 1, and 2 is not meaningful to the human reader, and als... | open | 2021-09-14T08:26:18Z | 2021-09-14T15:20:18Z | https://github.com/capitalone/DataProfiler/issues/414 | [] | ian-contiamo | 1 |
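To make the naming concern concrete, here is a small sketch of quartiles keyed by percentile rather than by position. It relies on the issue's own guess that the three values are the 25th, 50th, and 75th percentiles; `labelled_quartiles` is a hypothetical helper built on the standard library, and nothing here is meant to describe how DataProfiler computes or stores its quantiles.

```python
import statistics


def labelled_quartiles(values):
    """Return quartile cut points keyed by percentile instead of by index."""
    # statistics.quantiles(..., n=4) returns exactly three cut points:
    # the first quartile, the median, and the third quartile.
    q1, q2, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return {"25%": q1, "50%": q2, "75%": q3}
```

Keys such as `"25%"` are one possible convention; the point is only that the label carries the meaning that `0`, `1`, and `2` leave implicit.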

ansible/awx | django | 15,611 | Support for AAP 2.5 and greater? | ### Please confirm the following

- [X] I agree to follow this project's [code of conduct](https://docs.ansible.com/ansible/latest/community/code_of_conduct.html).

- [X] I have checked the [current issues](https://github.com/ansible/awx/issues) for duplicates.

- [X] I understand that AWX is open source software pro... | closed | 2024-11-04T18:00:21Z | 2025-02-12T15:42:56Z | https://github.com/ansible/awx/issues/15611 | [

"type:enhancement",

"community"

] | ryancbutler | 4 |

encode/databases | asyncio | 333 | unable to iterate over multiple rows from database using python in celery | I am using the `databases` python package (https://pypi.org/project/databases/) to manage connection to my postgresql database

from the documentation (https://www.encode.io/databases/database_queries/#queries)

it says i can either use

```

# Fetch multiple rows without loading them all into memory at once

quer... | closed | 2021-05-12T18:45:49Z | 2021-05-13T12:43:58Z | https://github.com/encode/databases/issues/333 | [] | encryptblockr | 0 |

koxudaxi/fastapi-code-generator | pydantic | 350 | Allow generation of subdirs when using '--template-dir' | Hi.

I'd love to be able to generate sub directories when using the `--template-dir` option. Currently this is not possible as in [fastapi_code_generator/__main__](https://github.com/koxudaxi/fastapi-code-generator/blob/master/fastapi_code_generator/__main__.py#L179) `for target in template_dir.rglob("*"):` is used i... | open | 2023-05-17T11:17:55Z | 2023-05-17T11:23:59Z | https://github.com/koxudaxi/fastapi-code-generator/issues/350 | [] | ThisIsANiceName | 0 |
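As a rough sketch of the requested behavior, the loop over `template_dir.rglob("*")` could keep each file's path relative to the template root and recreate it under the output directory. `render_tree` is a hypothetical function written for this example, and it copies file contents verbatim instead of rendering them; it is not the generator's actual code.

```python
from pathlib import Path


def render_tree(template_dir: Path, output_dir: Path) -> list:
    """Mirror every file under template_dir into output_dir, keeping sub directories."""
    written = []
    for target in sorted(template_dir.rglob("*")):
        if not target.is_file():
            # Directories are created on demand below.
            continue
        relative = target.relative_to(template_dir)
        destination = output_dir / relative
        destination.parent.mkdir(parents=True, exist_ok=True)
        # A real generator would render the template here; this sketch
        # copies the text unchanged.
        destination.write_text(target.read_text())
        written.append(relative.as_posix())
    return written
```

The key design choice is `relative_to(template_dir)`: using it rather than only the file name is what preserves the nested layout.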

jmcnamara/XlsxWriter | pandas | 545 | Feature request: Chart legend border and legend styling. | Hi,

There are few options for `chart.set_legend()`.

I think it would be great if there were styling options such as a border.

Thank you. | closed | 2018-08-03T07:27:20Z | 2018-08-23T23:18:31Z | https://github.com/jmcnamara/XlsxWriter/issues/545 | [

"feature request",

"short term"

] | Pusnow | 3 |

biolab/orange3 | scikit-learn | 6,323 | add RangeSlider gui component | <!--

Thanks for taking the time to submit a feature request!

For the best chance at our team considering your request, please answer the following questions to the best of your ability.

-->

**What's your use case?**

<!-- In other words, what's your pain point? -->

Orange3 does not currently support RangeSlid... | closed | 2023-01-31T11:01:26Z | 2023-02-17T07:51:32Z | https://github.com/biolab/orange3/issues/6323 | [] | kodymoodley | 3 |

widgetti/solara | flask | 14 | Support for route in solara.ListItem() | Often you will need to point a listitem to solara route. We can have a `path_or_route` param to the `ListItem()`. to support vue route (similar to `solara.Link()`). | open | 2022-09-01T10:41:58Z | 2022-09-01T10:42:21Z | https://github.com/widgetti/solara/issues/14 | [] | prionkor | 0 |

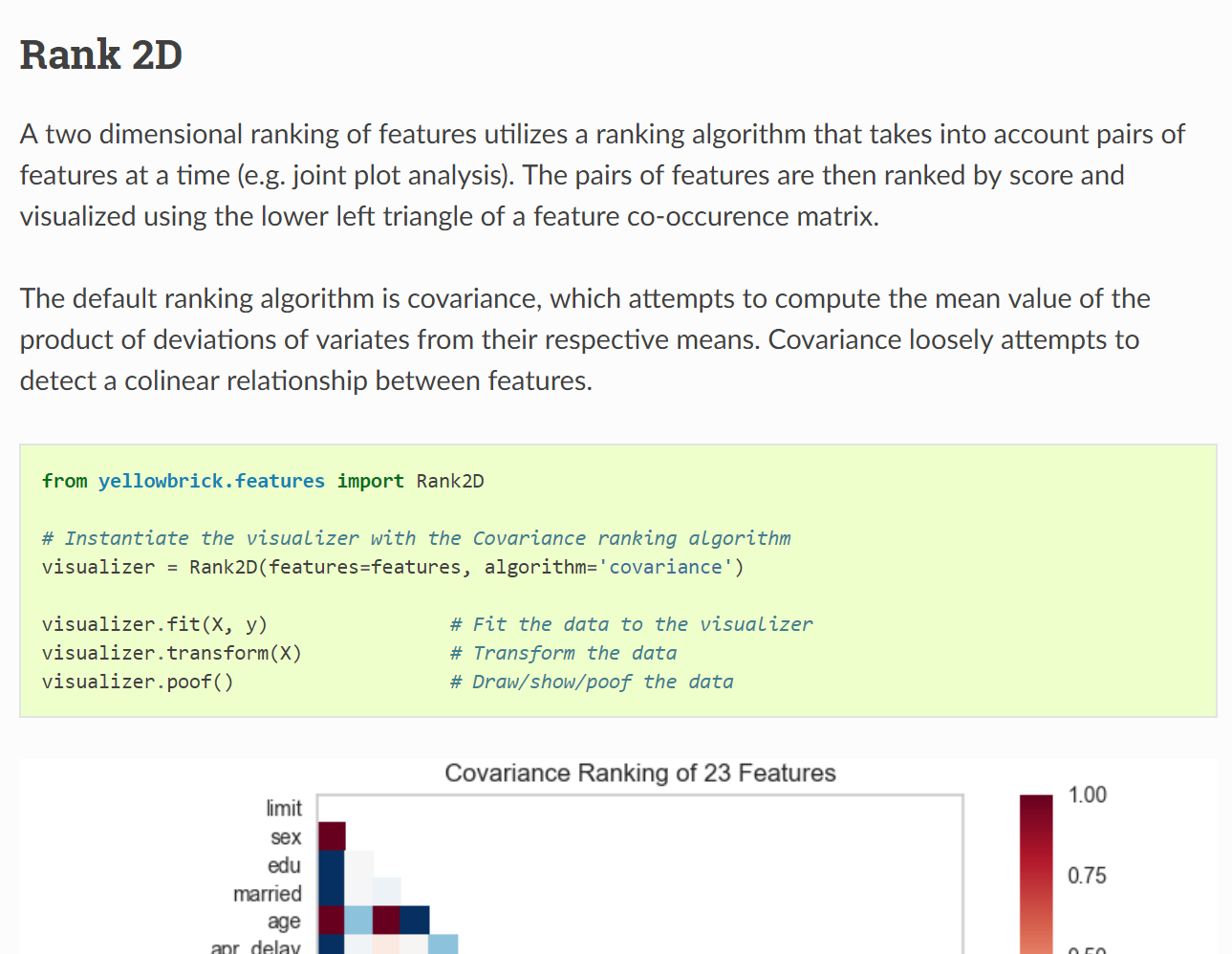

DistrictDataLabs/yellowbrick | scikit-learn | 660 | Update default ranking algorithm in Rank2D documentation | In the documentation for the `Rank2D` visualizer, it says `covariance` is the default ranking algorithm when it is actually `pearson`.

@DistrictDataLabs/team-oz-maintainers

| closed | 2018-11-30T15:08:15Z | 2018-12-03T16:38:53Z | https://github.com/DistrictDataLabs/yellowbrick/issues/660 | [

"type: documentation"

] | Kautumn06 | 0 |

modin-project/modin | data-science | 6,766 | read_pickle: AttributeError: 'Series' object has no attribute 'columns' | Modin version: b8323b5f46e25d257c08f55745c018197f7530f5

Reproducer:

```python

import pandas

import modin.pandas as pd

pandas.Series([1,2,3,4]).to_pickle("test_series.pkl")

pd.read_pickle("test_series.pkl") # <- failed

```

Output:

```python

UserWarning: Ray execution environment not yet initialized.... | open | 2023-11-23T15:33:54Z | 2023-11-23T15:33:54Z | https://github.com/modin-project/modin/issues/6766 | [

"bug 🦗",

"pandas.io",

"P1"

] | anmyachev | 0 |

pytorch/vision | machine-learning | 8,118 | missing labels in FER2013 test data | ### 🐛 Describe the bug

The file **test.csv** has no label column, so the labels in the test split all have value None:

```

from torchvision.datasets import FER2013

dat = FER2013(root='./', split='test')

print(dat[0][1])

```

Adding labels to the file raises a RuntimeError, presumably because of a resulting diffe... | closed | 2023-11-15T09:01:24Z | 2024-06-04T10:21:51Z | https://github.com/pytorch/vision/issues/8118 | [

"enhancement",

"help wanted",

"module: datasets"

] | dtafler | 8 |

aimhubio/aim | tensorflow | 2,347 | Bring all history w/o sampling by cli convert wandb | ## Proposed refactoring or deprecation

(Not sure if it's okay with this category: Feature suggestion instead..?)

In `aim/cli/convert/processors/wandb.py`, https://github.com/aimhubio/aim/blob/8441a58ac1e39b8cd5a5b802794c9c944effdfd4/aim/cli/convert/processors/wandb.py#L45

we've been trying bringing **SAMPLED** his... | closed | 2022-11-15T06:40:13Z | 2022-12-06T19:47:06Z | https://github.com/aimhubio/aim/issues/2347 | [

"area / integrations",

"type / code-health",

"phase / shipped"

] | hjoonjang | 3 |

home-assistant/core | asyncio | 140,888 | 100% CPU usage after updating | ### The problem

After updating my HA instance, I noticed the fans were cranked up to max. ssh-ed, looked at top and saw the following:

9735 root 20 0 3929.6m 225.3m 200.0 1.4 0:11.90 S /usr/bin/java -Xmx1g -jar /app/api/app.jar

9765 root 20 0 3915.5m 172.9m 194.7 1.1 0:04.72 S /usr/bin/ja... | closed | 2025-03-18T18:04:07Z | 2025-03-18T19:41:56Z | https://github.com/home-assistant/core/issues/140888 | [] | fearlesschicken | 1 |

keras-team/keras | tensorflow | 20,748 | Inconsistent validation data handling in Keras 3 for Language Model fine-tuning | ## Issue Description

When fine-tuning language models in Keras 3, there are inconsistencies in how validation data should be provided. The documentation suggests validation_data should be in (x, y) format, but the actual requirements are unclear and the behavior differs between training and validation phases.

## ... | closed | 2025-01-10T14:52:19Z | 2025-02-14T02:01:32Z | https://github.com/keras-team/keras/issues/20748 | [

"type:support",

"stat:awaiting response from contributor",

"stale",

"Gemma"

] | che-shr-cat | 4 |

seleniumbase/SeleniumBase | web-scraping | 2,790 | Time Zone Based on IP ,Time Zone,Time From IP,Time From Javascript | IP based time zone, local time zone, IP based time, local time, WebGL, WebGL Report, WebGPU Report,

=================================================================================

some issues were found. Unable to set these parameters on your own. Some parameters, such as WebGL, WebGL Report, and WebGPU Report, ar... | closed | 2024-05-20T10:08:12Z | 2024-05-21T14:41:24Z | https://github.com/seleniumbase/SeleniumBase/issues/2790 | [

"duplicate",

"external",

"UC Mode / CDP Mode"

] | xipeng5 | 6 |

HIT-SCIR/ltp | nlp | 706 | PyTorch and LTP installation problem | **

> pip install -i https://pypi.tuna.tsinghua.edu.cn/simple torch transformers

**

Looking in indexes: https://pypi.tuna.tsinghua.edu.cn/simple

Requirement already satisfied: torch in e:\anaconda\lib\site-packages (2.3.1+cu118)

Requirement already satisfied: transformers in e:\anaconda\lib\site-packages (4.42.... | closed | 2024-07-06T11:32:46Z | 2024-07-08T06:52:06Z | https://github.com/HIT-SCIR/ltp/issues/706 | [] | gbl7212 | 0 |

zihangdai/xlnet | nlp | 36 | Default parameters for base model | Hi @kimiyoung and @zihangdai (and all others from the xlnet team),

thanks for sharing the implementation and pre-trained model(s) :heart:

I've some questions regarding to pre-training XLNet:

* Could you provide the default parameters for the `train.py` and `train_gpu.py` script when training a **base** XLNet ... | open | 2019-06-23T22:28:30Z | 2019-06-23T22:37:52Z | https://github.com/zihangdai/xlnet/issues/36 | [] | stefan-it | 0 |

tqdm/tqdm | pandas | 1,457 | Appearance in VSCode using a dark theme. | Hi,

I'm really not sure why this bothers me but nevertheless it does.

This is a fresh Conda environment. I tried to follow the workaround from [this](https://stackoverflow.com/questions/71534901/m... | open | 2023-03-27T11:57:23Z | 2024-09-05T05:22:02Z | https://github.com/tqdm/tqdm/issues/1457 | [] | Chris888-CMD | 2 |

joeyespo/grip | flask | 271 | Internal server error | I issue a command 'grip README.md' and I get a response

```

* Running on http://localhost:6419

```

When I use Chrome to go to that URL I get an error 500: Internal Server Error.

I look at the terminal window where I invoked the 'grip' command and I see a lot of output but most significantly I see:

```

UnicodeD... | open | 2018-05-24T00:54:57Z | 2018-06-02T00:40:32Z | https://github.com/joeyespo/grip/issues/271 | [

"bug"

] | KevinBurton | 0 |

itamarst/eliot | numpy | 162 | types documentation gives broken Field examples | https://github.com/ClusterHQ/eliot/blob/master/docs/source/types.rst#message-types says `USERNAME = Field.for_types("username", [str])` (and gives several other examples of `Field.for_types` with two arguments). The method requires a third argument, the field description, so the examples don't work as-is.

| open | 2015-05-08T14:25:39Z | 2018-09-22T20:59:17Z | https://github.com/itamarst/eliot/issues/162 | [

"documentation"

] | exarkun | 0 |

matplotlib/matplotlib | data-science | 29,234 | TODO spotted: Separating xy2 and slope | I was reading through the `_axes.pyi` file when I spotted a [TODO comment](https://github.com/matplotlib/matplotlib/blame/84fbae8eea3bb791ae9175dbe77bf5dee3368275/lib/matplotlib/axes/_axes.pyi#L142)

The aim was to separate the `xy2` and `slope` declarations.

Thought I should point it out | open | 2024-12-05T07:14:55Z | 2024-12-05T07:58:31Z | https://github.com/matplotlib/matplotlib/issues/29234 | [] | adityaraute | 3 |
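One possible reading of that TODO is that the stub could declare two overloads, one taking `xy2` and one taking `slope`. The sketch below shows that pattern on a stand-in function; `axline_sketch`, its return value, and the exact overload signatures are assumptions made for illustration and are not taken from matplotlib's stubs.

```python
from typing import Tuple, overload

Point = Tuple[float, float]


# Overload 1: the line is given by two points.
@overload
def axline_sketch(xy1: Point, xy2: Point, *, slope: None = ...) -> Tuple[Point, float]: ...


# Overload 2: the line is given by one point and a slope.
@overload
def axline_sketch(xy1: Point, xy2: None = ..., *, slope: float) -> Tuple[Point, float]: ...


def axline_sketch(xy1, xy2=None, *, slope=None):
    """Hypothetical stand-in: return the anchor point and the line's slope."""
    if (xy2 is None) == (slope is None):
        raise TypeError("Exactly one of 'xy2' and 'slope' must be given")
    if slope is None:
        # Vertical lines (equal x values) are not handled in this sketch.
        slope = (xy2[1] - xy1[1]) / (xy2[0] - xy1[0])
    return xy1, float(slope)
```

With separate overloads, a type checker can flag a call that passes both arguments or neither, which a single signature with two optional parameters cannot express.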

QingdaoU/OnlineJudge | django | 19 | The command in the test deployment instructions has a spelling error | http://qingdaou.github.io/OnlineJudge/easy_install.html

cp OnlineJudge/oj/custom_setting.example.py OnlineJudge/oj/custom_setting.py

In this command, `setting` in both file names should be `settings`.

| closed | 2016-03-01T06:25:55Z | 2016-03-01T06:29:35Z | https://github.com/QingdaoU/OnlineJudge/issues/19 | [] | zzh1996 | 1 |

apify/crawlee-python | web-scraping | 908 | Request handler should be able to timeout blocking sync code in user defined handler | Current purely async implementation of request handler is not capable of triggering timeout for blocking sync code. Such code is created by users and we have no control over it. So we can't expect only async blocking code.

Following simple user defined handler can't currently trigger timeout even if it should.

```

@cr... | open | 2025-01-15T11:00:09Z | 2025-01-20T10:22:00Z | https://github.com/apify/crawlee-python/issues/908 | [

"bug",

"t-tooling"

] | Pijukatel | 1 |
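The general effect described in this report can be reproduced with the standard library alone. The sketch below is not crawlee's code: `blocking_handler`, `threaded_handler`, and `run_with_timeout` are hypothetical names, and moving the work to a thread is just one possible mitigation, assumed here for illustration.

```python
import asyncio
import time


def blocking_work(seconds: float) -> str:
    # Synchronous code that never yields control to the event loop.
    time.sleep(seconds)
    return "done"


async def blocking_handler(seconds: float) -> str:
    # Runs the blocking call directly on the event loop thread.
    return blocking_work(seconds)


async def threaded_handler(seconds: float) -> str:
    # Runs the blocking call in a worker thread, so the loop stays responsive.
    return await asyncio.to_thread(blocking_work, seconds)


async def run_with_timeout(handler, work_seconds: float, timeout: float) -> str:
    try:
        return await asyncio.wait_for(handler(work_seconds), timeout=timeout)
    except asyncio.TimeoutError:
        return "timed out"
```

Calling `asyncio.run(run_with_timeout(blocking_handler, 0.3, 0.05))` lets the handler finish because the loop never gets a chance to fire the timeout, while the threaded variant times out. Note that the worker thread itself is not interrupted; it keeps running until the blocking call returns.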

yzhao062/pyod | data-science | 278 | No verbose mode for MO_GAAL and SO_GAAL | MO_GAAL and SO_GAAL print a lot during their work: which epoch number is being trained, which one is being tested and so on. While it's useful for some developers, for people who simply consume the functionality the only effect is exploding of their logs.

I would like to request to add "verbose" parameter, similarl... | open | 2021-02-02T15:37:03Z | 2021-04-12T15:34:25Z | https://github.com/yzhao062/pyod/issues/278 | [] | GBR-613 | 1 |

fastapi-users/fastapi-users | fastapi | 302 | Weird response from /auth/register | When making a request to /auth/register through curl or python requests I have no problem registering a user. When I run it through Jquery Ajax I get back the error message:

> Expecting value: line 1 column 1 (char 0)

I looked through some of the code to find out why I would get this back without results.

Here... | closed | 2020-08-17T15:37:52Z | 2020-09-09T13:11:01Z | https://github.com/fastapi-users/fastapi-users/issues/302 | [

"question",

"stale"

] | Houjio | 4 |

clovaai/donut | nlp | 250 | dataset script missing error | Hi,

I'm using this project with my own custom dataset. I created a sample data in the dataset folder as specified in the README.md with a folder for (test/validation/train) with the metadata

Then i ran this command:

python train.py --config config/train_cord.yaml --pretrained_model_name_or_path "naver-clova-ix... | open | 2023-09-14T12:06:50Z | 2023-11-04T17:52:35Z | https://github.com/clovaai/donut/issues/250 | [] | segaranp | 1 |

ets-labs/python-dependency-injector | asyncio | 166 | Update Cython to 0.27 | There was a new release of Cython (0.27), so we need to re-compile current code on it, re-run testing on all currently supported Python versions. | closed | 2017-10-03T21:52:07Z | 2017-10-10T22:13:35Z | https://github.com/ets-labs/python-dependency-injector/issues/166 | [

"enhancement"

] | rmk135 | 0 |

vitalik/django-ninja | django | 1,177 | Some way to deal with auth | - | closed | 2024-05-28T17:01:14Z | 2024-05-28T17:49:28Z | https://github.com/vitalik/django-ninja/issues/1177 | [] | Rey092 | 0 |

PaddlePaddle/models | nlp | 4,955 | Problem with infer.py in DCGAN | When running infer.py in DCGAN,

data contains only mnist, so why is celeba still being loaded?

` have some kind of initial setup for "role", "content" (basic rules of communication)?

Just like for Cha... | closed | 2023-10-30T12:53:55Z | 2023-10-30T17:25:37Z | https://github.com/OpenInterpreter/open-interpreter/issues/717 | [] | SuperMaximus1984 | 2 |

saulpw/visidata | pandas | 1,911 | (Unnecessary) hidden dependency on setuptools; debian package broken | **Small description**

When installing visidata from the package-manager without having python3-setuptools installed, the following error appears when starting visidata:

```

Traceback (most recent call last):

File "/usr/bin/vd", line 3, in <module>

import visidata.main

File "/usr/lib/python3/dist-packa... | closed | 2023-06-05T13:29:50Z | 2023-07-31T03:59:47Z | https://github.com/saulpw/visidata/issues/1911 | [

"bug",

"fixed"

] | LaPingvino | 1 |

explosion/spaCy | machine-learning | 13,595 | Document good practices for caching spaCy models in CI setup | I use spaCy in a Jupyter book which currently downloads multiple spaCy models on every CI run, which wastes time and bandwidth.

The best solution would be to download and cache the models once, and get them restored on subsequent CI runs.

Are there any bits of documentation covering this concern somewhere? I coul... | open | 2024-08-13T15:19:09Z | 2024-08-13T15:19:09Z | https://github.com/explosion/spaCy/issues/13595 | [] | ghisvail | 0 |

wsvincent/awesome-django | django | 239 | Awesome-django | Django Awesome | closed | 2023-11-29T05:41:46Z | 2023-11-29T13:40:32Z | https://github.com/wsvincent/awesome-django/issues/239 | [] | Jaewook-github | 0 |

dfm/corner.py | data-visualization | 192 | module 'corner' has no attribute 'corner' | I am having trouble getting corner to run.

I've installed it using:

`python -m pip install corner`

I am trying to run the code from the getting started section of the docs page.

I've tried importing as:

`from corner import corner` or

`import corner as cor`

I keep getting the error:

`AttributeErro... | closed | 2022-02-10T16:16:36Z | 2022-02-11T15:19:56Z | https://github.com/dfm/corner.py/issues/192 | [] | FluidTop3 | 3 |

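chatanywhere/GPT_API_free | api | 308 | The free-tier 4omini reports an invalid key when the conversation gets slightly long; starting a new conversation fixes it | At first I thought the service was down, but then I found this was the problem: as soon as the conversation gets a bit long, it throws an error. 3.5turbo works fine, though. What is the cause? | closed | 2024-10-23T01:50:57Z | 2024-10-27T18:37:13Z | https://github.com/chatanywhere/GPT_API_free/issues/308 | [] | sofs2005 | 1 |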

deepfakes/faceswap | machine-learning | 542 | Possible AI contributor here with ethical concerns | closed | 2018-12-07T21:49:21Z | 2018-12-08T00:21:49Z | https://github.com/deepfakes/faceswap/issues/542 | [] | AndrasEros | 0 | |

charlesq34/pointnet | tensorflow | 231 | Error when training sem_seg model with my own data | I have created hdf5 files and when I ran train.py, it displayed this:

/home/junzheshen/pointnet/JS PointNet/PointNet 1000 ptsm2 without error/provider.py:91: H5pyDeprecationWarning: The default file mode will change to 'r' (read-only) in h5py 3.0. To suppress this warning, pass the mode you need to h5py.File(), or s... | open | 2020-02-28T16:31:21Z | 2020-06-14T03:32:59Z | https://github.com/charlesq34/pointnet/issues/231 | [] | shinguncher | 3 |

strawberry-graphql/strawberry | asyncio | 3,171 | Mypy doesn't see attributes on @strawberry.type | <!-- Provide a general summary of the bug in the title above. -->

Mypy throws error: 'Unexpected keyword argument...' on instance creation for @strawberry.type.

<!--- This template is entirely optional and can be removed, but is here to help both you and us. -->

<!--- Anything on lines wrapped in comments like these w... | closed | 2023-10-25T12:54:40Z | 2025-03-20T15:56:26Z | https://github.com/strawberry-graphql/strawberry/issues/3171 | [

"bug"

] | ziehlke | 4 |

matplotlib/matplotlib | data-science | 29,760 | [Bug]: Changing the array returned by get_data affects future calls to get_data but does not change the plot on canvas.draw. | ### Bug summary

I use get_ydata for simplicity. I call it twice, with orig=True and False, respectively, storing the returned arrays as yT and yF, which are then changed. The drawn position is the original one, seems to be a third entity, maybe cached.

Using set_ydata moves the marker, but does not touch the arrays y... | closed | 2025-03-15T19:18:59Z | 2025-03-19T18:00:05Z | https://github.com/matplotlib/matplotlib/issues/29760 | [] | Rainald62 | 3 |

ageitgey/face_recognition | python | 1,352 | If I send Image as File Object from React Application, it is not able to find the locations | * face_recognition version: 1.3.0

* Python version: 3.9

* Operating System: Windows 10

### Description

If I send Image as File Object from React Application, it is not able to find the locations. But if I save that image again by just opening and saving using paint, it works fine.

| open | 2021-08-06T12:56:37Z | 2021-08-06T12:56:37Z | https://github.com/ageitgey/face_recognition/issues/1352 | [] | mihir2510 | 0 |

nonebot/nonebot2 | fastapi | 3,002 | Plugin: ZXPM plugin management | ### PyPI project name

nonebot-plugin-zxpm

### Plugin import package name

nonebot_plugin_zxpm

### Tags

[{"label":"小真寻","color":"#fbe4e4"},{"label":"多平台适配","color":"#ea5252"},{"label":"插件管理","color":"#456df1"}]

### Plugin configuration options

```dotenv

zxpm_db_url="sqlite:data/zxpm/db/zxpm.db"

zxpm_notice_info_cd=300

zxpm_ban_reply="才不会给你发消息."

zxpm_ban_leve... | closed | 2024-10-05T23:27:23Z | 2024-10-08T02:24:42Z | https://github.com/nonebot/nonebot2/issues/3002 | [

"Plugin"

] | HibiKier | 6 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 637 | Toolbox launches but doesn't work | I execute the demo_toolbox.py and the toolbox launches. I click Browse and pull in a wav file. The toolbox shows the wav file loaded and a mel spectrogram displays. The program then says "Loading the encoder \encoder\saved_models\pretrained.pt

but that is where the program just goes to sleep until I exit out. I v... | closed | 2021-01-22T21:56:38Z | 2021-01-23T00:36:12Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/637 | [] | bigdog3604 | 4 |

onnx/onnx | scikit-learn | 6,004 | There is an error in converting LLaVA model. It should not be adapted to this model. How should this model be converted to onnx | # Ask a Question

### Question

<!-- Explain your question here. -->

### Further information

```

`The argument `from_transformers` is deprecated, and will be removed in optimum 2.0. Use `export` instead

Framework not specified. Using pt to export the model.

Traceback (most recent call last):

File "/root/... | closed | 2024-03-08T02:26:34Z | 2024-03-09T00:41:18Z | https://github.com/onnx/onnx/issues/6004 | [

"question",

"topic: converters"

] | woaichixihong | 1 |

widgetti/solara | fastapi | 84 | Reported occasional crash of solara.dev | Reported at https://www.reddit.com/r/Python/comments/13fegbp/comment/jjva0xp/?utm_source=reddit&utm_medium=web2x&context=3

```

11vue.runtime.esm.js:1897 Error: Cannot sendat l.send (solara-widget-manager8.min.js:39... | open | 2023-05-12T13:19:13Z | 2023-05-12T13:19:13Z | https://github.com/widgetti/solara/issues/84 | [] | maartenbreddels | 0 |

donnemartin/system-design-primer | python | 244 | Article suggestion: Web architecture 101 | I thought this was an exceptionally well written high-level overview of modern web architecture, and short too (10 min)

https://engineering.videoblocks.com/web-architecture-101-a3224e126947

Perhaps worth putting next to the CS075 course notes? | open | 2018-12-29T18:39:27Z | 2019-01-20T20:02:09Z | https://github.com/donnemartin/system-design-primer/issues/244 | [

"needs-review"

] | stevenqzhang | 0 |

napari/napari | numpy | 7,105 | file menu error on closing/opening napari from jupyter | ### 🐛 Bug Report

When running napari from a notebook, after closing the viewer and reopening it, just opening the ```File``` menu triggers an error and makes it then impossible to open a new viewer. The bug appeared in napari 5.0.

### 💡 Steps to Reproduce

1. Open a viewer:

```

import napari

viewer =... | closed | 2024-07-18T23:09:38Z | 2024-07-22T08:45:55Z | https://github.com/napari/napari/issues/7105 | [

"bug",

"priority:high"

] | guiwitz | 6 |

matplotlib/matplotlib | data-visualization | 29,259 | [Bug]: No module named pyplot | ### Bug summary

No module named pyplot

### Code for reproduction

```Python

import matplotlib.pyplot as plt

import numpy as np

from scipy.integrate import solve_ivp

# Parámetros

miumax = 0.65

ks = 12.8

a = 1.12

b = 1

Sm = 98.3

Pm = 65.2

Yxs = 0.067

m = 0.230

alfa = 7.3

beta = 0 # 0.15

si = 54.45

x... | closed | 2024-12-08T21:01:23Z | 2024-12-09T16:15:33Z | https://github.com/matplotlib/matplotlib/issues/29259 | [

"Community support"

] | abelardogit | 3 |

miguelgrinberg/Flask-SocketIO | flask | 1,451 | SocketIO Chat Web app showing weird errors | Hi all,

I am getting a weird error when running a Flask-SocketIO chat web app on a development server. See below.

`127.0.0.1 - - [04/Jan/2021 19:23:18] code 400, message Bad request version ('úú\x13\x01\x13\x02\x13\x03À+À/À,À0̨̩À\x13À\x14\x00\x9c\x00\x9d\x00/\x005\x01\x00\x01\x93\x8a\x8a\x00\x00\x00\x... | closed | 2021-01-04T13:56:34Z | 2021-04-06T13:16:39Z | https://github.com/miguelgrinberg/Flask-SocketIO/issues/1451 | [

"question"

] | AAYU6H | 4 |

deepset-ai/haystack | nlp | 8,741 | DocumentSplitter updates Document's meta data after initializing the Document | **Describe the bug**

`_create_docs_from_splits` of the `DocumentSplitter` initializes a new document and then changes its meta data afterward. This means that the document's ID is created without taking into account the additional meta data. Documents that have the same content and only differ in page number will recei... | closed | 2025-01-17T09:43:10Z | 2025-01-20T08:51:49Z | https://github.com/deepset-ai/haystack/issues/8741 | [

"P2"

] | julian-risch | 0 |
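The ID problem described in the haystack issue above can be illustrated with a minimal sketch. The `Doc` class and `make_id` helper below are hypothetical stand-ins written for this illustration, not Haystack's actual `Document` or `DocumentSplitter` code; the only assumption taken from the report is that the ID is derived from content and meta once, at construction time.

```python
import hashlib
from dataclasses import dataclass, field


def make_id(content: str, meta: dict) -> str:
    # Hypothetical ID scheme: hash the content together with the sorted meta items.
    payload = content + repr(sorted(meta.items()))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class Doc:
    """Hypothetical document whose ID is computed once, when it is created."""
    content: str
    meta: dict = field(default_factory=dict)
    id: str = ""

    def __post_init__(self):
        # The ID reflects content and meta as they are at construction time only.
        if not self.id:
            self.id = make_id(self.content, self.meta)


# Meta added after construction: both documents hashed identical inputs.
late_a = Doc("same text")
late_a.meta["page_number"] = 1
late_b = Doc("same text")
late_b.meta["page_number"] = 2

# Meta passed to the constructor: the page number takes part in the hash.
early_a = Doc("same text", {"page_number": 1})
early_b = Doc("same text", {"page_number": 2})

ids_collide_when_meta_set_late = late_a.id == late_b.id
ids_differ_when_meta_set_early = early_a.id != early_b.id
```

Under these assumptions, passing the complete meta to the constructor is what keeps two splits with identical content but different page numbers from sharing an ID.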

dunossauro/fastapi-do-zero | pydantic | 299 | Quiz lesson 6 | ## Bug found:

It was supposed to be 'get_current_user' | closed | 2025-02-07T07:34:34Z | 2025-02-09T06:17:34Z | https://github.com/dunossauro/fastapi-do-zero/issues/299 | [] | matheussricardoo | 0 |

ageitgey/face_recognition | python | 1,371 | facerec_from_webcam.py crashing | * face_recognition version: 1.3.0

* Python version: 3.x

* Operating System: Mac OS BS

### Description

Testing this https://github.com/ageitgey/face_recognition/blob/master/examples/facerec_from_webcam.py

### What I Did

Same code as above link

Camera becomes active, that is visible (green light on), but t... | open | 2021-09-19T14:52:29Z | 2021-09-19T14:52:29Z | https://github.com/ageitgey/face_recognition/issues/1371 | [] | smileBeda | 0 |

dpgaspar/Flask-AppBuilder | flask | 1,628 | KeyError using SQLAlchemy Automap with HStore, JSON Fields | Hi folks,

I'm using Sqlalchemy Automap with Flask-AppBuilder to create an internal dashboard - The database table has fields like HSTORE, JSON which are probably throwing a KeyError. I'm getting this Error log: `:ERROR:flask_appbuilder.forms:Column attachments Type not supported` Here's the KeyError [traceback](http... | closed | 2021-05-01T15:34:55Z | 2022-04-17T16:24:29Z | https://github.com/dpgaspar/Flask-AppBuilder/issues/1628 | [

"stale"

] | sameerkumar18 | 2 |

holoviz/panel | plotly | 7,611 | Bokeh Categorical Heatmap not getting updated when used in a panel application | <!--

Thanks for contacting us! Please read and follow these instructions carefully, then you can delete this introductory text. Note that the issue tracker is NOT the place for usage questions and technical assistance; post those at [Discourse](https://discourse.holoviz.org) instead. Issues without the required informa... | closed | 2025-01-10T10:05:57Z | 2025-01-16T17:25:23Z | https://github.com/holoviz/panel/issues/7611 | [

"bokeh"

] | Davide-sd | 2 |

paperless-ngx/paperless-ngx | machine-learning | 8,118 | [BUG] Creating a superuser password in docker compose drops all characters after "$" | ### Description

Starting up a new paperless-ngx instance with a set admin/superuser password in your environment variables does not respect your password in quotes, all characters after a dollar sign ($) in your password will be disregarded, including the dollar sign. Trying to log in will tell you the password is wro... | closed | 2024-10-30T18:04:53Z | 2024-11-30T03:13:44Z | https://github.com/paperless-ngx/paperless-ngx/issues/8118 | [

"not a bug"

] | DM2602 | 2 |

jupyter/docker-stacks | jupyter | 1,959 | [ENH] - Document correct way to persist conda packages | ### What docker image(s) is this feature applicable to?

base-notebook

### What change(s) are you proposing?

Documenting a "blessed" way of persisting installed python packages, such that they are not removed after the container is recreated.

Ideally this should be a way which doesn't require persisting the ... | closed | 2023-08-04T11:40:00Z | 2023-08-20T16:19:54Z | https://github.com/jupyter/docker-stacks/issues/1959 | [

"type:Enhancement",

"tag:Documentation"

] | laundmo | 6 |

MaartenGr/BERTopic | nlp | 1,816 | semi-supervised topic modelling with multiple labels per document | Hi there,

I have a dataset with 2000 participants who reported their most negative daily event for 90 days. They completed three questions related to their most negative event:

(1) an open-ended written question "what was your most negative event today?"

(2) which categories does this event belong to (select all t... | open | 2024-02-17T01:40:00Z | 2024-02-19T16:55:01Z | https://github.com/MaartenGr/BERTopic/issues/1816 | [] | justin-boldsen | 2 |

deepfakes/faceswap | machine-learning | 1,386 | Error with tensorflow. | It gives me this message when I try to run it.

I reinstalled everything, from the drivers to CUDA and TensorFlow, but it still doesn't work.

H:\faceswap\faceswap>"C:\ProgramData\Miniconda3\scripts\activate.bat" && conda activate "faceswap" && python "H:\faceswap\faceswap/faceswap.py" gui

Setting Faceswap backend to N... | closed | 2024-05-01T19:41:12Z | 2024-05-10T11:45:48Z | https://github.com/deepfakes/faceswap/issues/1386 | [] | donleo78 | 1 |

erdewit/ib_insync | asyncio | 286 | Trailing Stop Limit Percentage order | Is it possible to create Trailing Stop Limit Percentage orders? Could you point me to part of the code to create this kind of order? Thanks. | closed | 2020-08-04T19:21:26Z | 2020-09-20T13:19:26Z | https://github.com/erdewit/ib_insync/issues/286 | [] | minhnhat93 | 1 |

keras-team/keras | python | 20,723 | Add `keras.ops.rot90` for `tf.image.rot90` | tf api: https://www.tensorflow.org/api_docs/python/tf/image/rot90

torch api: https://pytorch.org/docs/stable/generated/torch.rot90.html

jax api: https://jax.readthedocs.io/en/latest/_autosummary/jax.numpy.rot90.html

In keras, it provides [RandomRotation](https://keras.io/api/layers/preprocessing_layers/image_augme... | closed | 2025-01-04T17:42:20Z | 2025-01-13T02:16:35Z | https://github.com/keras-team/keras/issues/20723 | [

"type:feature"

] | innat | 4 |

ydataai/ydata-profiling | pandas | 1,479 | Add a report on outliers | ### Missing functionality

I'm missing an easy report to see outliers.

### Proposed feature

An outlier to me is some value more than 3 std dev away from the mean.

I calculate this as:

```python

mean = X.mean()

std = X.std()

lower, upper = mean - 3*std, mean + 3*std

outliers = X[(X < lower) | (X > upper)]

... | open | 2023-10-15T08:25:24Z | 2023-10-16T20:56:52Z | https://github.com/ydataai/ydata-profiling/issues/1479 | [

"feature request 💬"

] | svaningelgem | 1 |

stanfordnlp/stanza | nlp | 1,362 | Stanza 1.8.1 failing to split sentence apart | **Describe the bug**

We've encountered a sentence pattern where Stanza fails to split apart two sentences. It appears when certain names are used (e.g. Max, Anna) but not with others (e.g. Ann).

**To Reproduce**

Steps to reproduce the behavior:

1. Go to http://stanza.run/ or input into stanza either sentence:

... | open | 2024-03-06T21:07:11Z | 2024-03-11T22:31:26Z | https://github.com/stanfordnlp/stanza/issues/1362 | [

"bug"

] | khannan-livefront | 2 |

amdegroot/ssd.pytorch | computer-vision | 563 | Questions about `RandSampleCrop` in `augmentation.py` | Hi, In lines 259 and 260 of `augmentation.py`, the code is

```

left = random.uniform(width - w)

top = random.uniform(height - h)

```

I don't understand why the lower limit is set for 'left' and 'top', not the upper limit like

```

left = random.uniform(high=width - w)

top = random.uniform(high=height - h)

``` | closed | 2021-11-11T08:58:00Z | 2021-11-11T10:20:05Z | https://github.com/amdegroot/ssd.pytorch/issues/563 | [] | zhiyiYo | 1 |

deeppavlov/DeepPavlov | nlp | 1,367 | Review analytics and prepare list of all models that we want to support or deprecate | Moved to internal Trello | closed | 2021-01-12T11:13:22Z | 2021-11-30T10:09:06Z | https://github.com/deeppavlov/DeepPavlov/issues/1367 | [] | danielkornev | 4 |

lexiforest/curl_cffi | web-scraping | 351 | Import problem(for real) |

I accidentally deleted the code contained in the "Session" file, but after I put it back and ran the code again, it still didn't work.

| closed | 2024-07-16T13:36:10Z | 2024-07-22T11:33:48Z | https://github.com/lexiforest/curl_cffi/issues/351 | [

"bug"

] | Jeremyyen0978 | 3 |

huggingface/datasets | pandas | 7,001 | Datasetbuilder Local Download FileNotFoundError | ### Describe the bug

I was trying to download a dataset and save it as parquet, following the [tutorial](https://huggingface.co/docs/datasets/filesystems#download-and-prepare-a-dataset-into-a-cloud-storage) from Hugging Face. However, during execution I hit a FileNotFoundError.

I debugged the code and it seems... | open | 2024-06-25T15:02:34Z | 2024-06-25T15:21:19Z | https://github.com/huggingface/datasets/issues/7001 | [] | purefall | 1 |
