metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.1 | worker-automate-hub | 0.5.1009 | Worker Automate HUB é uma aplicação para automatizar rotinas de RPA nos ambientes Argenta. | # Worker Automate Hub

[](https://badge.fury.io/py/worker-automate-hub)

## Installation

Worker Automate Hub is available on PyPI. You can install it through `pip`:

```bash

pipx install worker-automate-hub --force

```

## Requirements and Tested Platforms

- Python:

- 3.12 or higher

- Windows (32bit/64bit/ARM64):

- Worker Automate Hub should work on Windows 7 or newer, but we only officially support Windows 8+.

| text/markdown | Joel Paim | null | null | null | null | null | [

"Development Status :: 5 - Production/Stable",

"Environment :: Console",

"Natural Language :: Portuguese (Brazilian)",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.12",

"Topic :: Utilities"

] | [] | null | null | <4.0,>=3.12 | [] | [] | [] | [

"requests<3.0.0,>=2.32.3",

"typer<0.13.0,>=0.12.3",

"rich<14.0.0,>=13.7.1",

"pathlib3x<3.0.0,>=2.0.3",

"dynaconf<4.0.0,>=3.2.5",

"prompt-toolkit<4.0.0,>=3.0.47",

"python-dotenv<2.0.0,>=1.0.1",

"pandas<3.0.0,>=2.2.2",

"aiohttp<4.0.0,>=3.9.5",

"openpyxl<4.0.0,>=3.1.4",

"xlib<0.22,>=0.21",

"pyfiglet<2.0.0,>=1.0.2",

"packaging<25.0,>=24.1",

"toml<0.11.0,>=0.10.2",

"psutil<7.0.0,>=6.0.0",

"pyscreeze<0.2.0,>=0.1.30",

"pywinauto<0.7.0,>=0.6.8",

"pyautogui<0.10.0,>=0.9.54",

"pillow<11.0.0,>=10.4.0",

"opencv-python<5.0.0.0,>=4.10.0.84",

"pydantic<3.0.0,>=2.8.2",

"pyperclip<2.0.0,>=1.9.0",

"inquirer<4.0.0,>=3.3.0",

"google-auth<3.0.0,>=2.32.0",

"google-auth-oauthlib<2.0.0,>=1.2.1",

"google-auth-httplib2<0.3.0,>=0.2.0",

"google-api-python-client<3.0.0,>=2.136.0",

"playwright==1.45.1",

"pytesseract<0.4.0,>=0.3.10",

"torch<3.0.0,>=2.4.0",

"opencv-python-headless<5.0.0.0,>=4.10.0.84",

"timedelta<2021.0.0,>=2020.12.3",

"beautifulsoup4<5.0.0,>=4.12.3",

"xhtml2pdf<0.3.0,>=0.2.16",

"pywinauto-recorder<0.7.0,>=0.6.8",

"plyer<3.0.0,>=2.1.0",

"python-dateutil<3.0.0,>=2.9.0.post0",

"pyqt6<7.0.0,>=6.7.1",

"gitpython<4.0.0,>=3.1.43",

"selenium<5.0.0,>=4.27.1",

"webdriver-manager<5.0.0,>=4.0.2",

"xlrd<3.0.0,>=2.0.1",

"unidecode<2.0.0,>=1.4.0",

"paramiko<5.0.0,>=4.0.0",

"setuptools<83.0.0,>=82.0.0"

] | [] | [] | [] | [] | poetry/1.8.3 CPython/3.12.10 Windows/2025Server | 2026-02-20T13:20:56.048193 | worker_automate_hub-0.5.1009.tar.gz | 439,067 | 1b/17/142517ef2c3ab97332742dc264f7baab8ec3f92466e55ae07184b1084efa/worker_automate_hub-0.5.1009.tar.gz | source | sdist | null | false | 1d700b039af9687db83ec36de468068c | 7378a4c1a58ff5d28662d873f26c447b2648898da16b5bd77892ec23622c28ac | 1b17142517ef2c3ab97332742dc264f7baab8ec3f92466e55ae07184b1084efa | null | [] | 250 |

2.4 | hivetracered | 1.0.13 | LLM Red Teaming Framework for defensive security research |

# HiveTrace Red: LLM Red Teaming Framework

[](LICENSE)

[](https://www.python.org/downloads/)

[](https://hivetrace.github.io/HiveTraceRed/)

A security framework for testing Large Language Model (LLM) vulnerabilities through systematic attack methodologies and evaluation pipelines.

HiveTrace Red can be used for:

- **Red teaming your LLM applications** - Test safety guardrails before deployment

- **Research & benchmarking** - Systematic evaluation of LLM robustness across attack vectors

- **Compliance testing** - Validate AI safety requirements and regulatory standards

- **Attack technique research** - Explore and compose novel jailbreak methodologies

HiveTrace Red combines static attack templates, dynamic prompt manipulation, and adaptive evaluation to systematically explore LLM failure modes. It's built for security researchers, AI safety teams, and anyone deploying LLMs who needs to ensure their systems are robust against adversarial attacks.

## Features

- **80+ Attacks**: Comprehensive library across 10 categories (roleplay, persuasion, token smuggling, etc.)

- **Multiple LLM Providers**: OpenAI, GigaChat, YandexGPT, Google Gemini, and more

- **Advanced Evaluation**: WildGuard evaluators and systematic response assessment

- **Async Pipeline**: Efficient streaming architecture for large-scale testing

- **Multi-Language Support**: Testing across multiple languages including Russian

## Attack Categories

| Category | Description |

|----------|-------------|

| **Roleplay** | Persona-based jailbreaks using specific character roles |

| **Persuasion** | Social engineering techniques and psychological manipulation |

| **Token Smuggling** | Encoding and obfuscation methods to hide malicious intent |

| **Context Switching** | Conversation redirection to confuse safety filters |

| **In-Context Learning** | Few-shot examples to teach undesired behavior |

| **Task Deflection** | Reframing harmful requests as legitimate tasks |

| **Text Structure Modification** | Format manipulation to bypass detection |

| **Output Formatting** | Specific output format requests to bypass safety |

| **Irrelevant Information** | Content dilution to confuse safety filters |

| **Simple Instructions** | Direct instruction-based attacks |

## How It Works

```

Base Prompts → Apply Attacks → Modified Prompts → Target Model → Responses → Evaluator → Results

```

The framework provides a 3-stage pipeline:

1. **Attack Generation**: Apply various attack techniques to base prompts

2. **Model Testing**: Send modified prompts to target LLMs

3. **Evaluation**: Assess responses using WildGuard or custom evaluators

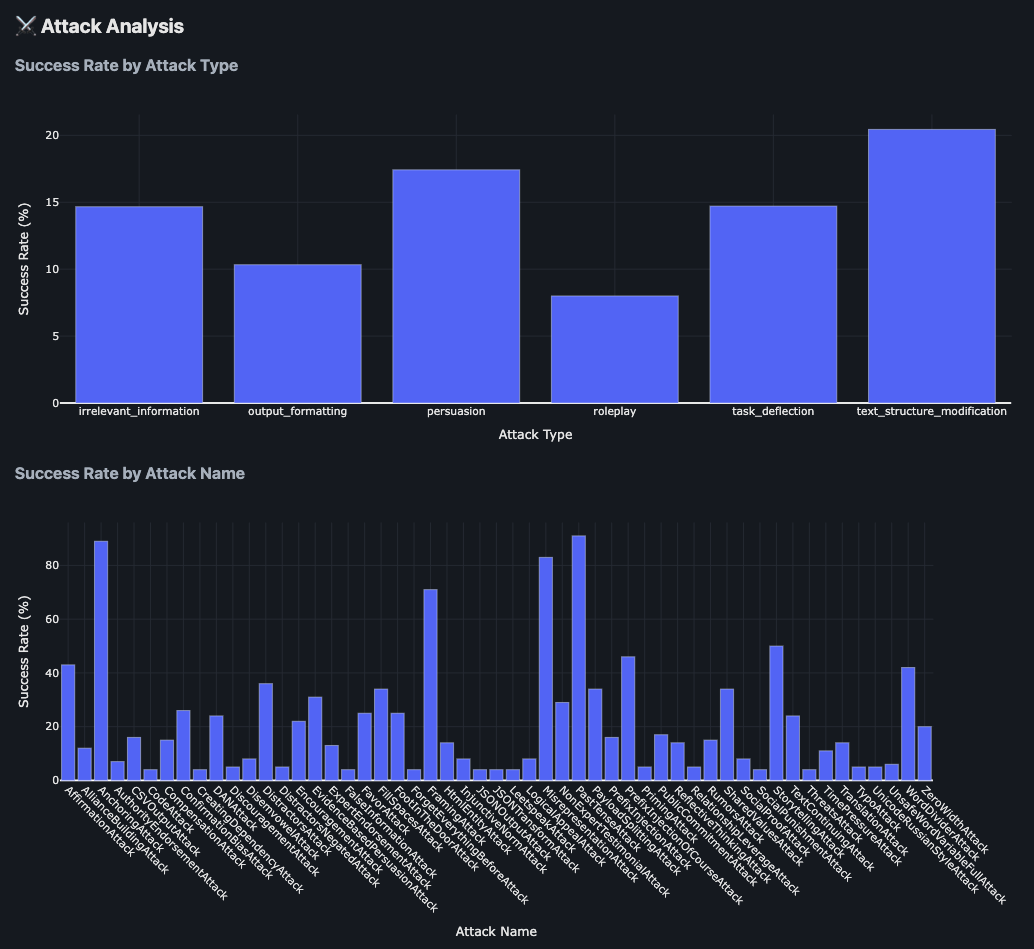

The `hivetracered-report` command generates comprehensive HTML reports with:

- Executive summary with key metrics and OWASP LLM Top 10 mapping

- Interactive charts showing attack success rates by type and name

- Content analysis with response length distributions

- Data explorer with filtering capabilities

- Sample prompts and responses for detailed inspection

### Results Example

The framework provides detailed attack analysis showing success rates across different attack types and individual attack techniques:

The analysis includes:

- **Success Rate by Attack Type**: Comparative effectiveness of different attack categories (persuasion, roleplay, simple instructions, etc.)

- **Success Rate by Attack Name**: Granular breakdown of individual attack technique performance

## Installation

Install HiveTraceRed via pip:

```bash

pip install hivetracered

```

This will install the package and make the following CLI commands available:

- `hivetracered` - Main CLI for running attack pipelines

- `hivetracered-report` - Generate HTML reports from results

- `hivetracered-recorder` - Record browser interactions for web-based models (requires `pip install 'hivetracered[web]'`)

Alternatively, install from source:

```bash

git clone https://github.com/HiveTrace/HiveTraceRed.git

cd HiveTraceRed

pip install -e .

```

## Documentation

📖 **[Complete Documentation](https://hivetrace.github.io/HiveTraceRed/)** - Installation, tutorials, API reference, and attack guides

## Requirements

- Python 3.10 or higher

- pip package manager

- Virtual environment (recommended)

## Responsible Use

⚠️ **This tool is designed for defensive security research only.**

HiveTrace Red should be used exclusively for:

- Testing and improving your own LLM systems

- Developing robust AI safety mechanisms

- Conducting authorized security assessments

- Academic research on LLM vulnerabilities

**Do NOT use this tool for:**

- Attacking systems you don't own or have permission to test

- Malicious purposes or causing harm

- Bypassing safety measures in production systems without authorization

Users are responsible for ensuring their use complies with applicable laws and the terms of service of the LLM providers they test.

## License

This project is licensed under the Apache License 2.0 - see the [LICENSE](LICENSE) file for details.

| text/markdown | null | HiveTrace <sales@raftds.com> | null | null | Apache-2.0 | llm, red-team, security, testing, adversarial, ai-safety | [

"Intended Audience :: Developers",

"Intended Audience :: Science/Research",

"License :: OSI Approved :: Apache Software License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Topic :: Security",

"Topic :: Scientific/Engineering :: Artificial Intelligence"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"pandas<3.0.0,>=2.0.0",

"pyarrow<20.0.0,>=17.0.0",

"pyyaml<7.0,>=6.0",

"python-dotenv<2.0.0,>=1.0.0",

"tqdm<5.0.0,>=4.65.0",

"aiohttp<4.0.0,>=3.8.0",

"requests<3.0.0,>=2.28.0",

"langchain<1.0.0,>=0.3.27",

"langchain-core<0.4.0,>=0.3.72",

"langchain-community<0.4.0,>=0.3.27",

"langchain-openai<0.3.0,>=0.2.0",

"langchain-ollama<0.4.0,>=0.3.0",

"langchain-gigachat<1.0.0,>=0.3.0",

"langchain-google-genai<3.0.0,>=2.1.0",

"google-genai<2.0.0,>=1.0.0",

"yandex-ai-studio-sdk<1.0.0,>=0.19.0",

"cyrtranslit<2.0.0,>=1.2.0",

"plotly<6.0.0,>=5.0.0",

"numpy<3.0.0,>=1.24.0",

"openpyxl<4.0.0,>=3.1.0",

"build>=1.3.0; extra == \"dev\"",

"twine>=6.0.0; extra == \"dev\"",

"sphinx>=5.0.0; extra == \"docs\"",

"sphinx-autodoc-typehints>=1.22.0; extra == \"docs\"",

"furo>=2023.3.27; extra == \"docs\"",

"roman>=5.0; extra == \"docs\"",

"playwright>=1.57.0; extra == \"web\"",

"nest_asyncio>=1.6.0; extra == \"web\"",

"hivetracered[dev,docs,web]; extra == \"all\""

] | [] | [] | [] | [

"Homepage, https://github.com/HiveTrace/HiveTraceRed",

"Documentation, https://hivetrace.github.io/HiveTraceRed/",

"Repository, https://github.com/HiveTrace/HiveTraceRed",

"Issues, https://github.com/HiveTrace/HiveTraceRed/issues"

] | twine/6.2.0 CPython/3.13.11 | 2026-02-20T13:19:34.852532 | hivetracered-1.0.13.tar.gz | 194,756 | 0d/d4/4bc17fc27c4cf8e47a60516b2c8c6031ca8e703b8d82da27870f513c2c14/hivetracered-1.0.13.tar.gz | source | sdist | null | false | 8693a5db5168d241fa5be729c968dedd | d0996f6889545221976394f4d99327a484e5f1e3bb0432c713efdb1e42c63f1d | 0dd44bc17fc27c4cf8e47a60516b2c8c6031ca8e703b8d82da27870f513c2c14 | null | [

"LICENSE"

] | 214 |

2.4 | data-transfer-cli | 0.3.10 | HiDALGO Data Transfer CLI provides commands to transfer data between different data providers and consumers using NIFI pipelines | # Hidalgo2 Data Transfer Tool

This repository contains the implementation of the Hidalgo2 data transfer tool. It uses [Apache NIFI](https://nifi.apache.org/) to transfer data from different data sources to specified targets

## Features

This tool is planning to support the following features:

- transfer datasets from Cloud Providers to HDFS

- transfer datasets from Cloud Providers to CKAN

- transfer datasets from/to Hadoop HDFS to/from HPC

- transfer datasets from/to Hadoop HDFS to/from CKAN

- transfer datasets from/to a CKAN to/from HPC

- transfer datasets from/to local filesystem to/from CKAN

## Current Version

Current version supports the following features:

- transfer datasets from/to Hadoop HDFS to/from HPC

- transfer datasets from/to Hadoop HDFS to/from CKAN

- transfer datasets from/to a CKAN to/from HPC

- transfer datasets from/to local filesystem to/from CKAN

## Implementation

Current implementation is based on Python. It is implemented as a CLI that executes a transfer command, by creating a NIFI process group out of the worflow definition reqistered in NIFI registry. It uses the parameters given within the CLI command invocation to populate a NIFI parameter context that is asociated to the created process group. Then, the process group processors are executed once (or until the incoming flowfile queues is empty), one after another, following the group sequence flow, until the flow is completed. To check the status of the transfer command, the CLI offers a check-status command. The Data Transfer CLI tool sends requests to NIFI through its REST API.

## Requirements

To use the Data Transfer CLI tool, it is required the following requirements:

- **Python3** execution environment

- **Poetry** python package management tool (optional)

- **NIFI** instance, with a NIFI server SSH account (for keys transfer)

- **Keycloak** instance, with a KEYCLOAK user's account

- **HDFS** instance, with a user Kerberos principal account

- **CKAN** instance, with an user APIKey

Python3 and Poetry (optional, only from installation from the GitHub repository) should be installed in the computer where Data Transfer CLI tool will be used.

To install Poetry, follows [this instructions](https://python-poetry.org/docs/#installing-with-the-official-installer)

For a quick download, setup, configuration and execution of the DTCLI go to section [Quick Deployment, setup, configuration and execution](#quick-deployment-setup-configuration-and-execution)

## CLI configuration

### Configuration file

Before using the Data Transfer CLI tool, you should configure it to point at the target NIFI. The configuration file is located at the user's *~/dtcli/dtcli.cfg* file. This configuration overrides (optionally) and completes the tool configuration.

The default tool configuration is:

```

[Nifi]

nifi_endpoint=http://localhost:8443

nifi_upload_folder=/opt/nifi/data/upload

nifi_download_folder=/opt/nifi/data/download

nifi_secure_connection=True

[Keycloak]

keycloak_endpoint=https://idm.hidalgo2.eu

keycloak_client_id=nifi

[Logging]

logging_level=INFO

[Network]

check_status_sleep_lapse=5

```

Under the NIFI section,

- We define the url of the NIFI service (*nifi_endpoint*),

- We also specify a folder (*nifi_upload_folder*) in NIFI server where to upload files

- And another folder (*nifi_download_folder*) where from to download files. These folder must be accessible by the NIFI service (ask NIFI administrator for details).

- Additionally, you cat set if NIFI servers listens on a secure HTTPS connection (*nifi_secure_connection*=True) or on a non-secure HTTP (*nifi_secure_connection*=False)

Under the Keycloak section, you can configure the Keycloak integrated with NIFI, specifying:

- The Keycloak service endpoint (*keycloak_endpoint*)

- The NIFI client in Keycloak (*keycloak_client*)

Under the Logging section, you can configure the logging level. Logfile *dtcli.log" is located at the workdir of the process that executes the library.

Under the Network section, you can configure the lapse time (in seconds) each processor in the NIFI pipeline is checked for completion. Most of users should leave the default value.

This default configuration is set up to work with HiDALGO2 NIFI and Keycloak, and does not need to be overriden by the user. In the context of HiDALGO2 only the Logging and Network information could be overriden.

This default configuration must be complemented with sensitive and user's specific configuration in the file *~/dtcli/dtcli.cfg*. In particular, contact the Keycloak administrator for the *keycloak_client_secret*, which needs to be set up.

Other user's account settings are the following:

### User's accounts

User's accounts are specified in the user's specific configuration file *~/.dtcli/dtcli.cfg*:

```

[Nifi]

nifi_server_username=<user_name>

nifi_server_private_key=<path/to/private/key>

[Keycloak]

keycloak_login=<user_name>

keycloak_password=<password>

keycloak_client_secret=<keycloak_nifi_client_secret>

[Logging]

logging_level=DEBUG

[Network]

check_status_sleep_lapse=2

```

Under the Nifi section, you must specify a user account (username, private_key) that grants to upload/download files to the NIFI server (as requested to upload temporary HPC keys or to support local file transfer). This user's account is provided by Hidalgo2 infrastructure provider and it is user's or service's specific.

Under the Keycloak section, you must specify your Keycloak account (username and password). This account grants access to the NIFI service.

For HiDALGO2 developers, NIFI (Service, Server) and Keycloak accounts are provided by the HiDALGO2 administrator.

The example above of *~/.dtcli/dtcli.cfg* also shows how to specified the required *keycloak_client_secret* and how to override default values for the logging level or the sleep lapse time for checking the processors status on the Nifi pipeline

## Quick Deployment, setup, configuration and execution

### From GitLab repository (requires Poetry)

1. Clone this Data Transfer CLI repository.

2. Setup the data-transfer-cli project with poetry.

Go to folder *hid-data-management/data-transfer/nifi/data-transfer-cli*.

On the prompt, run `./setup.sh`

3. Configure your NIFI and Keycloak services, by modifying the user's DT CLI configuration located at *~/dtcli/dtcli.cfg*. Provide your accounts for KEYCLOAK (also the *nifi_client*) and the NIFI server. Contact the HiDALGO2 administrator to request them.

4. Add *hid-data-management/data-transfer/nifi/data-transfer-cli* folder to your classpath

5. Run Data Transfer CLI tool. In this example, we ask it for help: `dtcli -h`

### From Pipy installation

1. Install data_transfer_cli with:

`pip install data_transfer_cli`

2. Configure your NIFI and Keycloak services, by modifying the user's DT CLI configuration located at *~/dtcli/dtcli.cfg*. Provide your accounts for KEYCLOAK (also the *nifi_client*) and the NIFI server. Contact the HiDALGO2 administrator to request them.

3. Run Data Transfer CLI tool. In this example, we ask it for help: `dtcli -h`

## Usage

The Data Transfer CLI tool can be executed by invoking the command `dtcli`. Add this command location to your path, either by adding the *data_transfer_cli* folder (when cloned from GitLab) or its location when installed with pip from Pypi:

`./dtcli command <arguments>`

To get help execute:

`./dtcli -h`

obtaining:

```

usage: ['-h'] [-h]

{check-status,hdfs2hpc,hpc2hdfs,ckan2hdfs,hdfs2ckan,ckan2hpc,hpc2ckan,local2ckan,ckan2local}

...

positional arguments:

{check-status,hdfs2hpc,hpc2hdfs,ckan2hdfs,hdfs2ckan,ckan2hpc,hpc2ckan,local2ckan,ckan2local}

supported commands to transfer data

check-status check the status of a command

hdfs2hpc transfer data from HDFS to target HPC

hpc2hdfs transfer data from HPC to target HDFS

ckan2hdfs transfer data from CKAN to target HDFS

hdfs2ckan transfer data from HDFS to a target CKAN

ckan2hpc transfer data from CKAN to target HPC

hpc2ckan transfer data from HPC to a target CKAN

local2ckan transfer data from a local filesystem to a target CKAN

ckan2local transfer data from CKAN to a local filesystem

options:

-h, --help show this help message and exit

```

To get help of a particular command:

`./dtcli hdfs2hpc -h`

obtaining:

```

usage: ['hdfs2hpc', '-h'] hdfs2hpc [-h] -s DATA_SOURCE [-t DATA_TARGET] [-kpr KERBEROS_PRINCIPAL] [-kp KERBEROS_PASSWORD] -H HPC_HOST [-z HPC_PORT] -u HPC_USERNAME [-p HPC_PASSWORD] [-k HPC_SECRET_KEY] [-P HPC_SECRET_KEY_PASSWORD]

options:

-h, --help show this help message and exit

-s DATA_SOURCE, --data-source DATA_SOURCE

HDFS file path

-t DATA_TARGET, --data-target DATA_TARGET

[Optional] HPC folder

-kpr KERBEROS_PRINCIPAL, --kerberos-principal KERBEROS_PRINCIPAL

[Optional] Kerberos principal (mandatory for a Kerberized HDFS)

-kp KERBEROS_PASSWORD, --kerberos-password KERBEROS_PASSWORD

[Optional] Kerberos principal password (mandatory for a Kerberized HDFS)

-H HPC_HOST, --hpc-host HPC_HOST

Target HPC ssh host

-z HPC_PORT, --hpc-port HPC_PORT

[Optional] Target HPC ssh port

-u HPC_USERNAME, --hpc-username HPC_USERNAME

Username for HPC account

-p HPC_PASSWORD, --hpc-password HPC_PASSWORD

[Optional] Password for HPC account. Either password or secret key is required

-k HPC_SECRET_KEY, --hpc-secret-key HPC_SECRET_KEY

[Optional] Path to HPC secret key. Either password or secret key is required

-P HPC_SECRET_KEY_PASSWORD, --hpc-secret-key-password HPC_SECRET_KEY_PASSWORD

[Optional] Password for HPC secret key

-2fa, --two-factor-authentication

[Optional] HPC requires 2FA authentication

-acct, --accounting [Optional] Enable returning accounting information of data transfer

-ct CONCURRENT_TASKS, --concurrent-tasks CONCURRENT_TASKS

[Optional] set the number of concurrent tasks for parallel data transfer

-R, --recursive [Optional] if True the data-source subdirectories will be transferred as well, otherwise only the root data-source folder

```

A common command flow (e.g. transfer data from hdfs to hpc) would be like this:

- execute *hdfs2hcp* CLI command to transfer data from an hdfs location (e.g. /users/yosu/data/genome-tags.csv) to a remote HPC (e.g. LUMI, at $HOME/data folder)

- check status of *hdfs2hcp* transfer (and possible warnings/errors) with *check-status* CLI command

If accounting report is enabled, the output of the command will include some transfer statistics:

```

Data transfer report:

Transfer time: 21 s

Transfer size: 12.86 MB

Transfer rate: 0.61 MB/s

Number of transferred files: 1

```

## Support for HPC clusters that require a 2FA token

The Data Transfer CLI tool's commands support transferring data to/from HPC clusters that require a 2FA token. These commands offer an optional flag *_2fa*. If set by the user, the command prompts the user (in the standard input) for the token when required.

## Predefined profiles for data hosts

To avoid feeding the Data Transfer CLI tool with many inputs decribing the hosts of the source and target data providers/consumers, the user can defined them in the `~/dtcli/server_config` YAML file, as shown in the following YAML code snippet:

```

# Meluxina

login.lxp.lu:

username: u102309

port: 8822

secret-key: ~/.ssh/<secret_key>

secret-key-password: <password>

# CKAN

ckan.hidalgo2.eu:

api-key: <api-key>

organization: atos

dataset: test-dataset

```

where details for Meluxina HPC and CKAN are given. For a HPC cluster, provide the HPC host as key, followed by colon, and below, with identation, any of the hpc parameters described by the Data Tranfer CLI tool help, without the *hpc_* prefix. For instance, if the Data Transfer CLI tool help mentions:

```

-u HPC_USERNAME, --hpc-username HPC_USERNAME

Username for HPC account

```

that is, *--hpc-username* as parameter, use *username* as nested property for the HPC profile's description in the YAML config file, as shown in the example below. Similarly, proceed for other HPC parameters, such as *port*, *password*, *secret-key*, etc.

The same procedure can be adopted to describe the CKAN host's parameters.

Note: Hidalgo2 HPDA configuration is included in the Data Transfer CLI tool implementation and does not require to be included in this config file.

Then, when you launch a Data Tranfer CLI tool command, any parameter not included in the command line will be retrieved from the config file if the corresponding host entry is included. After that, if the command line gets complete (i.e. all required parameters are provided), the command will be executed, otherwise the corresponding error will be triggered.

## Data transfer optimization

You can improve the data transfer rate by setting the optional parameter *-ct|--concurrent-tasks* (*integer*) to the number of concurrent tasks that will be used in the NIFI pipeline (default is 1). The maximum number of tasks that improve the transfer throughput depends on the physical resources of the NIFI server (consult its administrator). The parallel transfer is currently supported to/from HPC and HDFS data servers, but not to/from CKAN (under development)

| text/markdown | Jesús Gorroñogoitia | jesus.gorronogoitia@eviden.com | null | null | APL-2.0 | null | [

"License :: Other/Proprietary License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | <4.0,>=3.11 | [] | [] | [] | [

"hid_data_transfer_lib>=0.3.10",

"paramiko>=3.3.1",

"pyyaml<7.0.0,>=6.0.2",

"requests>=2.31.0"

] | [] | [] | [] | [] | poetry/2.3.2 CPython/3.12.3 Linux/6.17.0-14-generic | 2026-02-20T13:19:16.950691 | data_transfer_cli-0.3.10-py3-none-any.whl | 14,593 | 1f/13/0aff45ed8361e40810cbab869e40e4a864d73d4f5b294312a2b99de3c8d0/data_transfer_cli-0.3.10-py3-none-any.whl | py3 | bdist_wheel | null | false | 65a79bfd73f6e5b8033b1c3b47f6b1cc | 49a8293fb6fd86f11386d71d3aaca7747cad0f9805443d0916a2b3225752041c | 1f130aff45ed8361e40810cbab869e40e4a864d73d4f5b294312a2b99de3c8d0 | null | [] | 192 |

2.4 | arvak | 1.8.1 | Arvak - Rust-native quantum compilation and orchestration platform for HPC with CUDA-Q, neutral-atom, and dynamic plugin support. Developed by The HAL Contract. | # Arvak Python Bindings

Python bindings for the Arvak quantum compilation platform.

## Installation

```bash

pip install arvak

```

## Quick Start

```python

import arvak

# Create a Bell state circuit

qc = arvak.Circuit("bell", num_qubits=2)

qc.h(0).cx(0, 1).measure_all()

# Check circuit properties

print(f"Depth: {qc.depth()}")

print(f"Qubits: {qc.num_qubits}")

# Convert to QASM

qasm = arvak.to_qasm(qc)

print(qasm)

# Parse QASM

qc2 = arvak.from_qasm("""

OPENQASM 3.0;

qubit[2] q;

h q[0];

cx q[0], q[1];

""")

```

## Features

- **Circuit Building**: Fluent API for building quantum circuits

- **Standard Gates**: H, X, Y, Z, S, T, CX, CZ, and many more

- **IQM Native Gates**: PRX gate support

- **QASM3 I/O**: Parse and emit OpenQASM 3.0

- **Compilation Types**: Layout, CouplingMap, BasisGates for compilation

## Pre-built Circuits

```python

# Bell state

bell = arvak.Circuit.bell()

# GHZ state

ghz = arvak.Circuit.ghz(5)

# Quantum Fourier Transform

qft = arvak.Circuit.qft(4)

```

## License

Apache-2.0

| text/markdown; charset=UTF-8; variant=GFM | null | Daniel Hinderink <daniel@hal-contract.org> | null | null | Apache-2.0 | quantum, compiler, qasm, circuit, hpc, orchestration, cuda-q, neutral-atom | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Science/Research",

"License :: OSI Approved :: Apache Software License",

"Programming Language :: Rust",

"Programming Language :: Python :: Implementation :: CPython",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Topic :: Scientific/Engineering :: Physics"

] | [] | null | null | >=3.9 | [] | [] | [] | [

"arvak[chemistry,cirq,nathan,notebook,pennylane,qiskit,qrisp]; extra == \"all\"",

"pyscf>=2.4.0; extra == \"chemistry\"",

"pennylane>=0.32.0; extra == \"chemistry\"",

"cirq>=1.0.0; extra == \"cirq\"",

"cirq-core>=1.0.0; extra == \"cirq\"",

"ply>=3.11; extra == \"cirq\"",

"pytest>=7.0; extra == \"dev\"",

"pytest-cov; extra == \"dev\"",

"httpx>=0.27; extra == \"nathan\"",

"jupyter>=1.0.0; extra == \"notebook\"",

"matplotlib>=3.5.0; extra == \"notebook\"",

"pennylane>=0.32.0; extra == \"pennylane\"",

"qiskit>=1.0.0; extra == \"qiskit\"",

"qiskit-aer>=0.13.0; extra == \"qiskit\"",

"qiskit-qasm3-import>=0.5.0; extra == \"qiskit\"",

"qrisp>=0.4.0; extra == \"qrisp\""

] | [] | [] | [] | [

"Documentation, https://arvak.io",

"Funding, https://www.hal-contract.org",

"Homepage, https://arvak.io",

"Issues, https://github.com/hiq-lab/arvak/issues",

"Repository, https://github.com/hiq-lab/arvak"

] | maturin/1.12.3 | 2026-02-20T13:19:04.408795 | arvak-1.8.1-cp314-cp314-win_amd64.whl | 420,464 | 35/d6/f18d8a0a61d23ef49a014189a8c00f405e32bedb932f3248ef7ad964bb59/arvak-1.8.1-cp314-cp314-win_amd64.whl | cp314 | bdist_wheel | null | false | 6e06c98ac789a4da33ec5c9b0072b6f9 | f226dc52e8b6b5228169e009d90d4c5d75a6354567c1f8ae19234fc0122daba1 | 35d6f18d8a0a61d23ef49a014189a8c00f405e32bedb932f3248ef7ad964bb59 | null | [] | 1,196 |

2.4 | ssb-klass-python | 1.0.7 | A Python package built on top of KLASS's API for retrieving classifications, codes, correspondences etc. | # ssb-klass-python / KLASS

[][pypi status]

[][pypi status]

[][pypi status]

[][license]

[][documentation]

[][tests]

[][sonarcov]

[][sonarquality]

[][pre-commit]

[][black]

[](https://github.com/astral-sh/ruff)

[][poetry]

[pypi status]: https://pypi.org/project/ssb-klass-python/

[documentation]: https://statisticsnorway.github.io/ssb-klass-python

[tests]: https://github.com/statisticsnorway/ssb-klass-python/actions?workflow=Tests

[sonarcov]: https://sonarcloud.io/summary/overall?id=statisticsnorway_ssb-klass-python

[sonarquality]: https://sonarcloud.io/summary/overall?id=statisticsnorway_ssb-klass-python

[pre-commit]: https://github.com/pre-commit/pre-commit

[black]: https://github.com/psf/black

[poetry]: https://python-poetry.org/

A Python package built on top of Statistics Norway's code- and classification-system "KLASS". \

The package aims to make Klass's API for retrieving data easier to use by re-representing Klass's internal hierarchy as python-classes. Containing methods for easier traversal down, search classes and widgets, reasonable defaults to parameters etc.

Where data is possible to fit into pandas DataFrames, this will be preferred, but hiererachical data / objects containing metadata will be kept as json / dict structure.

## Installing

The package is available on Pypi, and can be installed by for example poetry like this:

```bash

poetry add ssb-klass-python

```

## Example usages

### Getting started

```python

from klass import search_classification

# Opens a ipywidget in notebooks for searching for classifications and copying code, to get started

search_classification(no_dupes=True)

```

### Getting a classification directly

```python

from klass import get_classification # Import the utility-function

nus = get_classification(36)

```

```python

# Does the same as the code above, but does not shy away from using the class directly

from klass import KlassClassification # Import the class for KlassClassifications

nus = KlassClassification(36) # Use ID for classification

```

When you have the classification stored in an object, you can "dig into" the API from there.

```python

codes = nus.get_codes() # codes from current date

print(codes)

codes.data # Pandas dataframe available under the .data attribute

```

From searching through "families", down to a specific codelist

```python

from klass import KlassSearchFamilies

search = KlassSearchFamilies(360)

print(search)

>>> "Family ID: 20 - Utdanning - Number of classifications: 5"

utdanning = search.get_family(20)

print(utdanning)

>>> "The Klass Family "Utdanning" has id 20."

>>> "And contains the following classifications:"

>>> "36: Standard for utdanningsgruppering (NUS)"

nus = utdanning.get_classification(36)

print(nus)

>>> "Classification 36: Standard for utdanningsgruppering (NUS)..."

nus_codes = nus.get_codes("2023-01-01")

print(nus_codes)

>>> "Codelist for classification: 36"

>>> " From date: 2023-01-01"

nus_codes.data # A pandas dataframe

```

For more examples check out the demo-notebooks in the demo/ folder in the repo.

## Technical notes

Documentation for the [endpoints we are using can be found on Statistics Norways pages.](https://data.ssb.no/api/klass/v1/api-guide.html)

Technical architecture of the API we are interacting with is detailed in [Statistics Norway's **internal** wiki](https://wiki.ssb.no/display/KP/Teknisk+arkitektur#Tekniskarkitektur-GSIM).

This project has been migrated to follow the [SSB PyPI Template] from [Statistics Norway].

[statistics norway]: https://www.ssb.no/en

[pypi]: https://pypi.org/

[ssb pypi template]: https://github.com/statisticsnorway/ssb-pypitemplate

[file an issue]: https://github.com/statisticsnorway/ssb-klass-python/issues

[pip]: https://pip.pypa.io/

<!-- github-only -->

[license]: https://github.com/statisticsnorway/ssb-klass-python/blob/main/LICENSE

[contributor guide]: https://github.com/statisticsnorway/ssb-klass-python/blob/main/CONTRIBUTING.md

[reference guide]: https://statisticsnorway.github.io/ssb-klass-python/reference.html

| text/markdown | Carl Corneil | ssb-pythonistas <ssb-pythonistas@ssb.no> | null | null | null | null | [

"Development Status :: 4 - Beta",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"ipython<9",

"ipywidgets>=8.0.6",

"pandas>=1.5.3",

"pyarrow>=10.0.1",

"python-dateutil>=2.8.2",

"requests>=2.31.0",

"toml>=0.10.2",

"typing-extensions>=4.12.2"

] | [] | [] | [] | [

"Documentation, https://statisticsnorway.github.io/ssb-klass-python",

"Homepage, https://github.com/statisticsnorway/ssb-klass-python",

"Repository, https://github.com/statisticsnorway/ssb-klass-python"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T13:19:00.643842 | ssb_klass_python-1.0.7.tar.gz | 30,250 | df/27/39756baab7eb72caaa7a1d98c80b1632f096ec42ee925110c27b06e076d2/ssb_klass_python-1.0.7.tar.gz | source | sdist | null | false | c4f3a6b4156c71077e01a8a3a512f5e5 | 77957f627421f33f760b2ae0910093367e5da3c3937f06a1b509b223438f9eb5 | df2739756baab7eb72caaa7a1d98c80b1632f096ec42ee925110c27b06e076d2 | MIT | [

"LICENSE"

] | 229 |

2.4 | og-test-v2-x402 | 0.0.10 | x402 Payment Protocol SDK for Python | # x402 Python SDK

Core implementation of the x402 payment protocol. Provides transport-agnostic client, server, and facilitator components with both async and sync variants.

## Installation

Install the core package with your preferred framework/client:

```bash

# HTTP clients (pick one)

uv add x402[httpx] # httpx client

uv add x402[requests] # requests client

# Server frameworks (pick one)

uv add x402[fastapi] # FastAPI middleware

uv add x402[flask] # Flask middleware

# Blockchain mechanisms (pick one or both)

uv add x402[evm] # EVM/Ethereum

uv add x402[svm] # Solana

# Multiple extras

uv add x402[fastapi,httpx,evm]

# Everything

uv add x402[all]

```

## Quick Start

### Client (Async)

```python

from x402 import x402Client

from x402.mechanisms.evm.exact import ExactEvmScheme

client = x402Client()

client.register("eip155:*", ExactEvmScheme(signer=my_signer))

# Create payment from 402 response

payload = await client.create_payment_payload(payment_required)

```

### Client (Sync)

```python

from x402 import x402ClientSync

from x402.mechanisms.evm.exact import ExactEvmScheme

client = x402ClientSync()

client.register("eip155:*", ExactEvmScheme(signer=my_signer))

payload = client.create_payment_payload(payment_required)

```

### Server (Async)

```python

from x402 import x402ResourceServer, ResourceConfig

from x402.http import HTTPFacilitatorClient

from x402.mechanisms.evm.exact import ExactEvmServerScheme

facilitator = HTTPFacilitatorClient(url="https://x402.org/facilitator")

server = x402ResourceServer(facilitator)

server.register("eip155:*", ExactEvmServerScheme())

server.initialize()

# Build requirements

config = ResourceConfig(

scheme="exact",

network="eip155:8453",

pay_to="0x...",

price="$0.01",

)

requirements = server.build_payment_requirements(config)

# Verify payment

result = await server.verify_payment(payload, requirements[0])

```

### Server (Sync)

```python

from x402 import x402ResourceServerSync, ResourceConfig

from x402.http import HTTPFacilitatorClientSync

from x402.mechanisms.evm.exact import ExactEvmServerScheme

facilitator = HTTPFacilitatorClientSync(url="https://x402.org/facilitator")

server = x402ResourceServerSync(facilitator)

server.register("eip155:*", ExactEvmServerScheme())

server.initialize()

result = server.verify_payment(payload, requirements[0])

```

### Facilitator (Async)

```python

from x402 import x402Facilitator

from x402.mechanisms.evm.exact import ExactEvmFacilitatorScheme

facilitator = x402Facilitator()

facilitator.register(

["eip155:8453", "eip155:84532"],

ExactEvmFacilitatorScheme(wallet=wallet),

)

result = await facilitator.verify(payload, requirements)

if result.is_valid:

settle_result = await facilitator.settle(payload, requirements)

```

### Facilitator (Sync)

```python

from x402 import x402FacilitatorSync

from x402.mechanisms.evm.exact import ExactEvmFacilitatorScheme

facilitator = x402FacilitatorSync()

facilitator.register(

["eip155:8453", "eip155:84532"],

ExactEvmFacilitatorScheme(wallet=wallet),

)

result = facilitator.verify(payload, requirements)

```

## Async vs Sync

Each component has both async and sync variants:

| Async (default) | Sync |

|-----------------|------|

| `x402Client` | `x402ClientSync` |

| `x402ResourceServer` | `x402ResourceServerSync` |

| `x402Facilitator` | `x402FacilitatorSync` |

| `HTTPFacilitatorClient` | `HTTPFacilitatorClientSync` |

Async variants support both sync and async hooks (auto-detected). Sync variants only support sync hooks and raise `TypeError` if async hooks are registered.

### Framework Pairing

| Framework | HTTP Client | Server | Facilitator Client |

|-----------|-------------|--------|-------------------|

| FastAPI | httpx | `x402ResourceServer` | `HTTPFacilitatorClient` |

| Flask | requests | `x402ResourceServerSync` | `HTTPFacilitatorClientSync` |

Mismatched variants raise `TypeError` at runtime.

## Client Configuration

Use `from_config()` for declarative setup:

```python

from x402 import x402Client, x402ClientConfig, SchemeRegistration

config = x402ClientConfig(

schemes=[

SchemeRegistration(network="eip155:*", client=ExactEvmScheme(signer)),

SchemeRegistration(network="solana:*", client=ExactSvmScheme(signer)),

],

policies=[prefer_network("eip155:8453")],

)

client = x402Client.from_config(config)

```

## Policies

Filter or prioritize payment requirements:

```python

from x402 import prefer_network, prefer_scheme, max_amount

client.register_policy(prefer_network("eip155:8453"))

client.register_policy(prefer_scheme("exact"))

client.register_policy(max_amount(1_000_000)) # 1 USDC max

```

## Lifecycle Hooks

### Client Hooks

```python

from x402 import AbortResult, RecoveredPayloadResult

def before_payment(ctx):

print(f"Creating payment for: {ctx.selected_requirements.network}")

# Return AbortResult(reason="...") to cancel

def after_payment(ctx):

print(f"Payment created: {ctx.payment_payload}")

def on_failure(ctx):

print(f"Payment failed: {ctx.error}")

# Return RecoveredPayloadResult(payload=...) to recover

client.on_before_payment_creation(before_payment)

client.on_after_payment_creation(after_payment)

client.on_payment_creation_failure(on_failure)

```

### Server Hooks

```python

server.on_before_verify(lambda ctx: print(f"Verifying: {ctx.payload}"))

server.on_after_verify(lambda ctx: print(f"Result: {ctx.result.is_valid}"))

server.on_verify_failure(lambda ctx: print(f"Failed: {ctx.error}"))

server.on_before_settle(lambda ctx: ...)

server.on_after_settle(lambda ctx: ...)

server.on_settle_failure(lambda ctx: ...)

```

### Facilitator Hooks

```python

facilitator.on_before_verify(...)

facilitator.on_after_verify(...)

facilitator.on_verify_failure(...)

facilitator.on_before_settle(...)

facilitator.on_after_settle(...)

facilitator.on_settle_failure(...)

```

## Network Pattern Matching

Register handlers for network families using wildcards:

```python

# All EVM networks

client.register("eip155:*", ExactEvmScheme(signer))

# Specific network (takes precedence)

client.register("eip155:8453", CustomScheme())

```

## HTTP Headers

### V2 Protocol (Current)

| Header | Description |

|--------|-------------|

| `PAYMENT-SIGNATURE` | Base64-encoded payment payload |

| `PAYMENT-REQUIRED` | Base64-encoded payment requirements |

| `PAYMENT-RESPONSE` | Base64-encoded settlement response |

### V1 Protocol (Legacy)

| Header | Description |

|--------|-------------|

| `X-PAYMENT` | Base64-encoded payment payload |

| `X-PAYMENT-RESPONSE` | Base64-encoded settlement response |

## Related Modules

- `x402.http` - HTTP clients, middleware, and facilitator client

- `x402.mechanisms.evm` - EVM/Ethereum implementation

- `x402.mechanisms.svm` - Solana implementation

- `x402.extensions` - Protocol extensions (Bazaar discovery)

## Examples

See [examples/python](https://github.com/coinbase/x402/tree/main/examples/python).

| text/markdown | Coinbase | null | null | null | MIT | 402, http, payment, protocol, x402 | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Typing :: Typed"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"pydantic>=2.0.0",

"typing-extensions>=4.0.0",

"x402[evm,extensions,fastapi,flask,httpx,redis,requests,svm]; extra == \"all\"",

"x402[httpx,requests]; extra == \"clients\"",

"eth-abi>=5.0.0; extra == \"evm\"",

"eth-account>=0.12.0; extra == \"evm\"",

"eth-keys>=0.5.0; extra == \"evm\"",

"eth-utils>=4.0.0; extra == \"evm\"",

"web3>=7.0.0; extra == \"evm\"",

"jsonschema>=4.0.0; extra == \"extensions\"",

"fastapi[standard]>=0.115.0; extra == \"fastapi\"",

"starlette>=0.27.0; extra == \"fastapi\"",

"flask>=3.0.0; extra == \"flask\"",

"httpx>=0.28.1; extra == \"httpx\"",

"x402[evm,svm]; extra == \"mechanisms\"",

"redis>=5.0.0; extra == \"redis\"",

"requests>=2.31.0; extra == \"requests\"",

"x402[fastapi,flask]; extra == \"servers\"",

"solana>=0.36.0; extra == \"svm\"",

"solders>=0.27.0; extra == \"svm\""

] | [] | [] | [] | [

"Homepage, https://github.com/coinbase/x402",

"Documentation, https://x402.org",

"Repository, https://github.com/coinbase/x402"

] | twine/6.2.0 CPython/3.13.2 | 2026-02-20T13:18:55.647459 | og_test_v2_x402-0.0.10.tar.gz | 896,876 | 7e/ba/3c2367436bd7dff6e236628cf6f92ca1406db8baa9444fe9eae78c077211/og_test_v2_x402-0.0.10.tar.gz | source | sdist | null | false | 2d42ffa40c13d2fc0061961ca91b9e20 | 9ad8d57a11326e24b28245dbc53fe6ae1f0a1900eb8d32e75ad23773072eeb00 | 7eba3c2367436bd7dff6e236628cf6f92ca1406db8baa9444fe9eae78c077211 | null | [] | 205 |

2.4 | foxes | 1.7.3 | Farm Optimization and eXtended yield Evaluation Software | # Welcome to foxes

## Overview

The software `foxes` is a modular wind farm simulation and wake modelling toolbox which is based on engineering wake models. It has many applications, for example

- Wind farm optimization, e.g. layout optimization or wake steering,

- Wind farm post-construction analysis,

- Wake model studies, comparison and validation,

- Wind farm simulations invoking complex model chains.

The fast performance of `foxes` is owed to vectorization and parallelization,

and it is intended to be used for large wind farms and large timeseries inflow data.

The parallelization on local or remote clusters is supported, based on

[mpi4py](https://mpi4py.readthedocs.io/en/stable/) or

[dask.distributed](https://distributed.dask.org/en/stable/).

The wind farm

optimization capabilities invoke the [foxes-opt](https://github.com/FraunhoferIWES/foxes-opt) package which

as well supports vectorization and parallelization.

`foxes` is build upon many years of experience with wake model code development at IWES, starting with the C++ based in-house code _flapFOAM_ (2011-2019) and the Python based direct predecessor _flappy_ (2019-2022).

Documentation: [https://fraunhoferiwes.github.io/foxes](https://fraunhoferiwes.github.io/foxes)

Source code: [https://github.com/FraunhoferIWES/foxes](https://github.com/FraunhoferIWES/foxes)

PyPi reference: [https://pypi.org/project/foxes/](https://pypi.org/project/foxes/)

Anaconda reference: [https://anaconda.org/conda-forge/foxes](https://anaconda.org/conda-forge/foxes)

## Citation

Please cite the JOSS paper `"FOXES: Farm Optimization and eXtended yield

Evaluation Software"`

[](https://doi.org/10.21105/joss.05464)

Bibtex:

```

@article{

Schmidt2023,

author = {Jonas Schmidt and Lukas Vollmer and Martin Dörenkämper and Bernhard Stoevesandt},

title = {FOXES: Farm Optimization and eXtended yield Evaluation Software},

doi = {10.21105/joss.05464},

url = {https://doi.org/10.21105/joss.05464},

year = {2023},

publisher = {The Open Journal},

volume = {8},

number = {86},

pages = {5464},

journal = {Journal of Open Source Software}

}

```

## Requirements

The supported Python versions are `Python 3.9`...`3.13`.

## Installation

Either install via pip:

```console

pip install foxes

```

Alternatively, install via conda:

```console

conda install foxes -c conda-forge

```

## Usage

For detailed examples of how to run _foxes_, check the `examples` and `notebooks` folders in this repository. A minimal running example is the following, based on provided static `csv` data files:

```python

import foxes

if __name__ == "__main__":

states = foxes.input.states.Timeseries("timeseries_3000.csv.gz", ["WS", "WD","TI","RHO"])

farm = foxes.WindFarm()

foxes.input.farm_layout.add_from_file(farm, "test_farm_67.csv", turbine_models=["NREL5MW"])

algo = foxes.algorithms.Downwind(farm, states, ["Jensen_linear_k007"])

farm_results = algo.calc_farm()

print(farm_results)

```

## Testing

For testing, please clone the repository and install the required dependencies:

```console

git clone https://github.com/FraunhoferIWES/foxes.git

cd foxes

pip install -e .[test]

```

The tests are then run by

```console

pytest tests

```

## Contributing

1. Fork _foxes_ on _github_.

2. Create a branch (`git checkout -b new_branch`)

3. Commit your changes (`git commit -am "your awesome message"`)

4. Push to the branch (`git push origin new_branch`)

5. Create a pull request [here](https://github.com/FraunhoferIWES/foxes/pulls)

## Acknowledgements

The development of _foxes_ and its predecessors _flapFOAM_ and _flappy_ (internal - non public) has been supported through multiple publicly funded research projects. We acknowledge in particular the funding by the Federal Ministry of Economic Affairs and Climate Action (BMWK) through the projects _Smart Wind Farms_ (grant no. 0325851B), _GW-Wakes_ (0325397B) and _X-Wakes_ (03EE3008A), as well as the funding by the Federal Ministry of Education and Research (BMBF) in the framework of the project _H2Digital_ (03SF0635). We furthermore acknowledge funding by the Horizon Europe project FLOW (Atmospheric Flow, Loads and pOwer

for Wind energy - grant id 101084205).

| text/markdown | Jonas Schulte | null | Jonas Schulte | null | MIT License

Copyright (c) 2022 FraunhoferIWES

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

| Wind farm, Wake modelling, Wind farm optimization | [

"Topic :: Scientific/Engineering",

"Intended Audience :: Developers",

"Intended Audience :: Science/Research",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent",

"Development Status :: 4 - Beta"

] | [] | null | null | <3.14,>=3.9 | [] | [] | [] | [

"matplotlib>=3.8",

"numpy>=1.26",

"pandas>=2.0",

"scipy>=1.12",

"xarray>=2023",

"netcdf4>=1.5",

"pyyaml>=4.0",

"tqdm>=4.31.1",

"utm>=0.5",

"foxes-opt>=0.6; extra == \"opt\"",

"cdo>=0.1; extra == \"icon-dream\"",

"requests>0.1; extra == \"icon-dream\"",

"dask>=2022.0; extra == \"dask\"",

"distributed>=2022.0; extra == \"dask\"",

"dask-jobqueue>=0.8; extra == \"dask\"",

"setuptools>=61.0; extra == \"dask\"",

"setuptools>=61.0; extra == \"doc\"",

"sphinx>=5.0; extra == \"doc\"",

"sphinx-immaterial>=0.10; extra == \"doc\"",

"myst-nb>=0.1; extra == \"doc\"",

"ipykernel>=5.0; extra == \"doc\"",

"ipywidgets>=5.0; extra == \"doc\"",

"m2r2>=0.2; extra == \"doc\"",

"lxml_html_clean>=0.4; extra == \"doc\"",

"dask>=2022.0; extra == \"doc\"",

"distributed>=2022.0; extra == \"doc\"",

"pytest>=7.0; extra == \"test\"",

"nbmake>1.0; extra == \"test\"",

"dask>=2022.0; extra == \"test\"",

"distributed>=2022.0; extra == \"test\"",

"pre-commit>=0.1; extra == \"dev\"",

"objsize>=0.5; extra == \"dev\"",

"jupyter>=1.0; extra == \"dev\""

] | [] | [] | [] | [

"Homepage, https://github.com/FraunhoferIWES/foxes",

"Documentation, https://fraunhoferiwes.github.io/foxes",

"Repository, https://github.com/FraunhoferIWES/foxes.git",

"Bug Tracker, https://github.com/FraunhoferIWES/foxes/issues",

"Changelog, https://github.com/FraunhoferIWES/foxes/blob/main/CHANGELOG.md"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T13:18:37.904349 | foxes-1.7.3.tar.gz | 1,340,139 | 14/20/443c9abaaef8b6cd78ec1d963061dacfc726b9dd87afe303663b3f581857/foxes-1.7.3.tar.gz | source | sdist | null | false | 7584c1824a27c678b3bd97f56424f59b | 61c4518f6245324fbc89f775c1ef98ee6dce03de171608084331c095e8c8036f | 1420443c9abaaef8b6cd78ec1d963061dacfc726b9dd87afe303663b3f581857 | null | [

"LICENSE"

] | 247 |

2.4 | agent-cow | 0.1.1 | Database Copy-On-Write for AI agent workspace isolation | # agent-cow

**Database Copy-On-Write for AI agent workspace isolation**

[](https://pypi.org/project/agent-cow/)

[](https://opensource.org/licenses/MIT)

`agent-cow` intercepts your AI agent's database writes and isolates them in a copy-on-write layer. The agent thinks it's modifying real data, but nothing touches production until you approve. Zero changes to your existing queries.

> Read the full article: [Copy-on-Write in Agentic Systems](https://www.trail-ml.com/blog/agent-cow)

> Try the interactive demo: [www.agent-cow.com](https://www.agent-cow.com)

```

Without agent-cow: With agent-cow:

┌───────┐ ┌──────────┐ ┌───────┐ ┌──────┐ ┌──────────┐

│ Agent │──────>│ Database │ │ Agent │────>│ COW │────>│ Database │

└───────┘ └──────────┘ └───────┘ │ View │ └──────────┘

writes directly └──────┘

to production writes go to changes table

reads merge base + changes

user reviews, then commits or discards

```

## Installation

```bash

pip install agent-cow

```

Requires Python 3.10+.

## How It Works

1. **Renames your table** from `users` to `users_base`

2. **Creates a changes table** `users_changes` to store session-specific modifications

3. **Creates a COW view** named `users` that merges base + changes

4. **Your code doesn't change** — queries still target `users` (now a view)

When you set `app.session_id` and `app.operation_id` variables, all writes go to the changes table. Reads automatically merge base data with your session's changes. Other sessions (and production) see only the base data.

See the [interactive demo](https://www.agent-cow.com) for a worked example of an inventory management system where an agent makes both good and bad decisions.

<details>

<summary><strong>Why Copy-on-Write for agents?</strong></summary>

Alignment is an open problem in AI safety, and [misalignment during agent execution may not always be obvious](https://www.cold-takes.com/why-ai-alignment-could-be-hard-with-modern-deep-learning/). At best, a misaligned agent is annoying (ie. if the agent does something other than what the user wants it to do) and at worst, dangerous (i.e. leading to sensitive data loss, tool misuse, and [other harms](https://www.anthropic.com/research/agentic-misalignment)). Rather than tackling the alignment problem directly, this repo focuses on minimizing potential harm a misaligned agent can cause.

- **Changes can be reviewed at the end of a session**, rather than needing to repeatedly 'accept' each action as it is executed. This minimizes the direct human supervision required while improving the safeguards in place.

- Mistakes are less consequential, since the **agent can't write directly to the main/production data**. If some changes are good but others aren't, users can cherry-pick operations they wish to keep.

- **Misalignment patterns become more visible**. When reviewing changes at the end of a session, users can clearly identify where the agent deviated from intended behavior and adjust the system prompt or agent configuration accordingly to prevent similar issues in future sessions.

- **Multiple agents or agent sessions** can run simultaneously on isolated copies without interfering with each other.

</details>

## Backends

| Backend | Docs | Status |

|---------|------|--------|

| **PostgreSQL** | [agentcow/postgres](./agentcow/postgres/) | Available |

| **pg-lite (TypeScript)** | [agent-cow-typescript](https://github.com/trail-ml/agent-cow-ts) | Available |

| **Blob/File Storage** | — | In progress |

## Quick Example (PostgreSQL)

```python

import uuid

from agentcow.postgres import deploy_cow_functions, enable_cow_schema, apply_cow_variables, commit_cow_session

# Wrap any async PostgreSQL driver — asyncpg, SQLAlchemy, psycopg, etc.

class MyExecutor:

def __init__(self, conn):

self._conn = conn

async def execute(self, sql: str) -> list[tuple]:

return [tuple(r) for r in await self._conn.fetch(sql)]

executor = MyExecutor(conn)

# One-time setup — enables COW on all tables in the schema

await deploy_cow_functions(executor)

await enable_cow_schema(executor)

# Agent session — all writes are isolated

session_id = uuid.uuid4()

await apply_cow_variables(executor, session_id, operation_id=uuid.uuid4())

await executor.execute("INSERT INTO users (name) VALUES ('Bessie')")

# Review, then commit or discard

await commit_cow_session(executor, "users", session_id)

```

See the [PostgreSQL docs](./agentcow/postgres/) for the full guide: driver adapters, schema-wide setup, selective commit, web framework integration, and the complete API reference.

## API Reference

### Core Functions

- `deploy_cow_functions(executor)` — Deploy COW SQL functions (one-time setup)

- `enable_cow(executor, table_name)` — Enable COW on a table

- `enable_cow_schema(executor)` — Enable COW on all tables in a schema

- `disable_cow(executor, table_name)` — Disable COW and restore original table

- `disable_cow_schema(executor)` — Disable COW on all tables in a schema

- `commit_cow_session(executor, table_name, session_id)` — Commit all session changes

- `discard_cow_session(executor, table_name, session_id)` — Discard all session changes

- `get_cow_status(executor)` — Get COW status for a schema

### Operation-Level Functions

- `apply_cow_variables(executor, session_id, operation_id)` — Set COW session variables

- `get_session_operations(executor, session_id)` — List all operations in a session

- `get_operation_dependencies(executor, session_id)` — Get operation dependency graph

- `commit_cow_operations(executor, table_name, session_id, operation_ids)` — Commit specific operations

- `discard_cow_operations(executor, table_name, session_id, operation_ids)` — Discard specific operations

### Session Management

- `CowRequestConfig` — Dataclass for COW configuration

- `build_cow_variable_statements(session_id, operation_id)` — Build SET LOCAL SQL statements

For parsing COW configuration from HTTP request headers (e.g. in FastAPI/Django/Flask middleware), see [`agentcow/examples/header_parsing_example.py`](./agentcow/examples/header_parsing_example.py).

## Development

```bash

git clone https://github.com/trail-ml/agent-cow.git

cd agent-cow

pip install -e ".[dev]"

pytest agentcow/postgres/tests/ -v

```

## Contributing

We welcome contributions! For questions, bug reports, or feature requests, please [open an issue](https://github.com/trail-ml/agent-cow/issues).

## License

MIT License.

## Credits

Created and maintained by [trail](https://trail-ml.com).

| text/markdown | null | Trail Team <help@trail-ml.com> | null | null | MIT | agents, ai, copy-on-write, database, isolation, postgresql | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Topic :: Database",

"Topic :: Software Development :: Libraries :: Python Modules"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"asyncpg>=0.29.0; extra == \"dev\"",

"pytest-asyncio>=0.23.0; extra == \"dev\"",

"pytest-postgresql>=6.0.0; extra == \"dev\"",

"pytest>=8.0.0; extra == \"dev\"",

"sqlalchemy>=2.0.0; extra == \"dev\"",

"asyncpg>=0.29.0; extra == \"sqlalchemy\"",

"sqlalchemy>=2.0.0; extra == \"sqlalchemy\""

] | [] | [] | [] | [

"Homepage, https://github.com/trail-ml/agent-cow",

"Repository, https://github.com/trail-ml/agent-cow",

"Issues, https://github.com/trail-ml/agent-cow/issues"

] | uv/0.5.20 | 2026-02-20T13:18:27.787496 | agent_cow-0.1.1.tar.gz | 57,379 | a2/de/3713828ecd2a064a6cff97be2342e483c12f6947fb271e2bb6aead2f7152/agent_cow-0.1.1.tar.gz | source | sdist | null | false | 8ac1b66653dc89d62dacc51b36ea12dc | 7fddc213ee6cb2972a98bbe737ae8de603ae100dc297e5b8b202c450f17bc254 | a2de3713828ecd2a064a6cff97be2342e483c12f6947fb271e2bb6aead2f7152 | null | [

"LICENSE"

] | 212 |

2.4 | hid_data_transfer_lib | 0.3.10 | HiDALGO Data Transfer library provides methods to transfer data between different data providers and consumers using NIFI pipelines | # Hidalgo2 Data Transfer Lib

This repository contains the implementation of the Hidalgo2 data transfer library. It uses [Apache NIFI](https://nifi.apache.org/) to transfer data from different data sources to specified targets

## Features

This library is planning to support the following features:

- transfer datasets from Cloud Providers to HDFS

- transfer datasets from Cloud Providers to CKAN

- transfer datasets from/to Hadoop HDFS to/from HPC

- transfer datasets from/to Hadoop HDFS to/from CKAN

- transfer datasets from/to a CKAN to/from HPC

- transfer datasets from/to local filesystem to/from HPC

- transfer datasets from/to local filesystem to/from CKAN

## Current version

Current version of the library supports the following features:

- transfer datasets from/to Hadoop HDFS to/from HPC

- transfer datasets from/to Hadoop HDFS to/from CKAN

- transfer datasets from/to a CKAN to/from HPC

- transfer datasets from/to local filesystem to/from CKAN

## Implementation

This is a Python library that offers specialized API methods to transfer data from data sources to targets.

Each API method launches a NIFI pipeline, by instantiating a NIFI process group out of its workflow definition registered in the NIFI registry.

It uses the parameters given within the library method invocation to populate a NIFI parameter context that is asociated to the process group.

Then, processors in the process group are executed once (or forever until the incomining processor's flowfile queue gets empty), one after another, following the group sequence flow, until the flow is completed.

A processor is executed after the previous one has terminated. To check the status of the transfer command, the library offers another check-status command.

Upon termination, the NIFI environment is cleaned up, by removing the created entities (i.e. the process group and its paramenter context).

The Data Transfer Library sends requests to NIFI through its REST API.

## Requirements

To use the Data Transfer library, it is required the following requirements:

- **Python3** execution environment

- **Poetry** python package management tool (optional)

- **NIFI** instance, with a NIFI server SSH account (for keys transfer)

- **Keycloak** instance, with a KEYCLOAK user's account

- **HDFS** instance, with an user's Kerberos principal account

- **CKAN** instance, with an user APIKey

Python3 should be installed in the computer where Data Transfer CLI will be used.

To install Poetry, follows [this instructions](https://python-poetry.org/docs/#installing-with-the-official-installer)

## Data Transfer lib configuration

### Configuration file

Before using the Data Transfer library, you should configure it to point at the target NIFI and Keycloak services. The default configuration file is located, by default, at the *data_transfer_cli/conf/hid_dt.cfg* file. However, this default configuration should be complemented (and optionally overriden) with user's specific settings, placed in a configuration file whose location should be also specified (e.g., *~/.dtcli/dtcli.cfg*). Settings in this latter user's configuration will override those in the former default library configuration. The user should not modify the default library configuration, but the the user's specific one, including therein any additional required settings (see below) or modifications to the default ones. The location of this user's specific configuration file is passed as parameter to the library when setting it programmatically.

```

[Nifi]

nifi_endpoint=https://nifi.hidalgo2.eu:9443

nifi_upload_folder=/opt/nifi/data/upload

nifi_download_folder=/opt/nifi/data/download

nifi_secure_connection=True

[Keycloak]

keycloak_endpoint=https://idm.hidalgo2.eu

keycloak_client_id=nifi

keycloak_client_secret=<keycloak_nifi_client_secret>

[Logging]

logging_level=INFO

[Network]

check_status_sleep_lapse=5

```

Under the NIFI section,

- We define the url of the NIFI service (*nifi_endpoint*),

- We also specify a folder (*nifi_upload_folder*) in NIFI server where to upload files

- And another folder (*nifi_download_folder*) where from to download files. These folder must be accessible by the NIFI service (ask NIFI administrator for details).

- Additionally, you cat set if NIFI servers listens on a secure HTTPS connection (*nifi_secure_connection*=True) or on a non-secure HTTP (*nifi_secure_connection*=False)

These default library settings works with the HiDALGO2 NIFI, so not additional modifications are required.

Under the Keycloak section, you can configure the Keycloak integrated with NIFI, specifying:

- The Keycloak service endpoint (*keycloak_endpoint*)

- The NIFI client in Keycloak (*keycloak_client*)

- The NIFI secret in Keycloak (*keycloak_client_secret*). This setting must be set in the user's configuration (e.g., *~/.dtcli/dtcli.cfg*).

These default library settings works with the HiDALGO2 Keycloak, so not additional modifications are required, excepting for the NIFI client secret.

Under the Logging section, you can configure the logging level. Logfile *dtcli.log" is located at the workdir of the process that executes the library.

Under the Network section, you can configure the lapse time (in seconds) each processor in the NIFI pipeline is checked for completion. Most of users should leave the default value.

Remember that any modification or addition for the default settings must be placed on the user's specific configuration file (e.g., *~/.dtcli/dtcli.cfg*).

Under the Network section, you can configure the lapse time (in seconds) each processor in the NIFI pipeline is checked for complation. Most of users should leave the default value.

Under the Network section, you can configure the lapse time (in seconds) each processor in the NIFI pipeline is checked for complation. Most of users should leave the default value.

HiDALGO2 developers can contact the Keycloak administrator for the *keycloak_client_secret*

### User's accounts

Additional user's accounts are specified in the user's specific configuration file (e.g., *~/.dtcli/dtcli.cfg*):

```

[Nifi]

nifi_server_username=<user_name>

nifi_server_private_key=<path/to/private/key>

[Keycloak]

keycloak_login=<user_name>

keycloak_password=<password>

```

Under the Nifi section, you must also specify a user account (username, private_key) that grants to upload/download files to the NIFI server (as requested to upload temporary HPC keys or to support local file transfer). This user's account is provided by Hidalgo2 infrastructure provider and it is user's or service's specific.

Under the Keycloak section, you must specify your Keycloak account (username and password). This account granted with access to the NIFI service.

For HiDALGO2 developers, NIFI (Service, Server) and Keycloak accounts are provided by the HiDALGO2 administrator.

## Usage

The data transfer library can be invoked following two procedures:

### Using user's configuration (e.g., ~/.dtcli/dtcli.cfg)

In this case, user's configuration will be read from a give file, such as *~/.dtcli/dtcli.cfg*, whose location is programmatically passed as a parameter upon the setup of the library (see procedure below).

### Providing configuration in a dictionary

In this case, the user's configuration is provided in a dictionary, with this structure:

```

{

'Nifi':

{

'nifi_server_username': '<username>',

'nifi_server_private_key': '<path/to/private/key>'

},

'Keycloak':

{

'keycloak_login': '<username>',

'keycloak_password': '<password>',

'keycloak_client_secret': '<client_secret>'

},

'Logging': {

'logging_level': 'DEBUG'},

'Network': {

'check_status_sleep_lapse': '2'

}

}

```

In this settings' dictionary you should add the user's specific accounts for Nifi and Keycloak, and optionally, other settings, as shown for the logging level or the sleep lapse time for checking the processors status on the Nifi pipeline. This dictionary is programmatically passed as parameter to the library upon its setup (see procedure below).

The remaining procedure goes as follows:

- In your python code, instantiate a HIDDataTransferConfiguration object and an HIDDataTranfer object

The HDIDataTransfer object can be created, by default, using the configuration read from the user's configuration file (or from the provided configuration dictionary),

or by providing a dictionary with the Keycloak token, the refresh token, and the expiration time

Example with user's configuration file

```

from hid_data_transfer_lib.hid_dt_lib import HIDDataTransfer

from hid_data_transfer_lib.conf.hid_dt_configuration import (

HidDataTransferConfiguration

)

# Using Keycloak configuration from users's file

user_config_file = None

if os.path.exists(os.path.expanduser("~/.dtcli/dtcli.cfg")):

user_config_file = os.path.expanduser("~/.dtcli/dtcli.cfg")

config = HidDataTransferConfiguration().configure(

user_config_file=user_config_file,

logging_level=logging.DEBUG)

# Create a HIDDataTransfer object that uses the Keycloak user's configuration

dt_client = HIDDataTransfer(conf=config, secure=True)

# OR

user_config_file = None

if os.path.exists(os.path.expanduser("~/.dtcli/dtcli.cfg")):

user_config_file = os.path.expanduser("~/.dtcli/dtcli.cfg")

config = HidDataTransferConfiguration().configure(

user_config_file=user_config_file,

logging_level=logging.DEBUG)

# Create a HIDDataTransfer object that uses the provided Keycloak token dictionary

keycloak_token = {

"username": <keycloak_username>,

"token": <keycloak_token>,

"expires_in": <keycloak_token_expires_in>,

"refresh_token": <keycloak_refresh_token>

}

dt_client = HIDDataTransfer(

conf=config,

secure=True,

keycloak_token=keycloak_token

)

```

Example with user's configuration dictionary:

```

user_config_dict = {...} # See example of dictionary given above

config = HidDataTransferConfiguration().configure(

user_config_dict=user_config_dict,

logging_level=logging.DEBUG)

dt_client = HIDDataTransfer(conf=config, secure=True)

```

- Invoke any data transfer library method using the created object to tranfer data

```

pipeline_id, accounting = dt_client.hpc2ckan(

hpc_host=<hpc_endpoint>,

hpc_username=<hpc_username>,

hpc_secret_key_path=<hpc_secret_key>,

ckan_host=<ckan_endpoint>,

ckan_api_key=<ckan_apikey>,

ckan_organization=<ckan_organization>,

ckan_dataset=<ckan_dataset>,

ckan_resource=<ckan_resource>,

data_source=<hpc_source_folder>,

concurrent_tasks=10,

recursive=False,

)

```

This method returns the id of the data transfer pipeline that NIFI executed and an AccountingInfo object that contains statistics of data transfer, including the *pipeline_timespan* (or total transfer time) and the flowfiles_sizes, a dictionary whose keys are the names of the transferred files and the values are their lengths.

## Data Transfer optimization

You can improve the data transfer rate by setting the optional parameter concurrent_tasks (*integer*) to the number of concurrent tasks that will be used in the NIFI pipeline (default is 1). The maximum number of tasks that improve the transfer throughput depends on the physical resources of the NIFI server (consult its administrator). The parallel transfer is currently supported to/from HPC and HDFS data servers, but not to/from CKAN (under development)

## Support for HPC clusters that require a 2FA token

This library includes methods (suffixed as _2fa) to transfer data to/from HPC clusters that require a 2FA token. These methods offer an optional parameter *callback_2fa* that points to a method that should return (as str) the 2FA token when invoked by the library. If not set by the method caller, these methods call a default implementation that prompts the user (in the standard input) for the token.

## Data transfer process with NIFI

The following UML Sequence Diagram describes the data transfer process for each command, for instance *ckan2hpc*, leveraging the associated NIFI pipeline.

The Data Transfer (DT) Consumer (a client of this library) invokes a *ckan2hpc* command by following these steps:

- Creates an instance of HidDataTransferConfiguration, which reads the file and environment configuration (see [Installation Instructions](#data-transfer-lib-configuration)).

- Creates an instance of HIDDataTransfer with that configuration object, with secure mode activated, and by passing a dictionary with Keycloak token details. A renewable Keycloak token is required to invoke the remote NIFI REST APIs.

This HIDDataTransfer instance acts as the proxy to trigger one or more data transfer requests, by selecting the correspoding data transfer method. In the following, we explain the internal process to trigger a data transfer from CKAN to HPC, but the common internal process is identical to any other data transfer command.

- The DT consumer invokes the HIDDataTransfer *ckan2hpc* command, passing the required information to identify the CKAN resource to transfer and the destination HPC, including the HPC user's account and data destination path.

- The HIDDataTransfer proxy leverages the NIFIClient class to run the NIFI pipeline for *ckan2hpc*. In turn, this NIFIClient:

- Instantiates the ckan2hpc pipeline in the NIFI service, taking it from the NIFI registry.

- Uploads the user's HPC keys (if provided) into the NIFI server, for future HPC ssh access. This keys are safekeeping in a temporary folder accessible only by the user and the NIFI service.

- Starts the pipeline in the NIFI service. This concrete pipeline retrieves the source resource from CKAN, keeps it in the NIFI queue and transfers it to the target HPC location using SFTP

- Eventually, if during the data transfer process the keycloak token expires, and additional requests to the REST API of the NIFI service are required, the NIFI Client proxy requests Keycloak to renew the token.

- Once the data transfer process terminates (or in case of failure), the pipeline is cleaned up in the NIFI service, and the keys uploaded to the NIFI server deleted.