metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | smello-server | 0.1.2 | A local web dashboard for inspecting outgoing HTTP requests from your code | # Smello Server

A local web dashboard for inspecting outgoing HTTP requests captured by the [smello](https://pypi.org/project/smello/) client SDK — including gRPC calls made by Google Cloud libraries.

## Setup

```bash

pip install smello-server

smello-server run

```

The dashboard opens at `http://localhost:5110`.

Or with Docker:

```bash

docker run -p 5110:5110 ghcr.io/smelloscope/smello

```

Then add the client SDK to your Python code:

```bash

pip install smello

```

```python

import smello

smello.init()

# All outgoing requests are now captured (HTTP and gRPC)

```

## API

Smello Server provides a JSON API for exploring captured requests from the command line.

```bash

# List all captured requests

curl -s http://localhost:5110/api/requests | python -m json.tool

# Filter by method, host, status, or URL substring

curl -s 'http://localhost:5110/api/requests?method=POST&host=api.stripe.com'

# Get full request/response details

curl -s http://localhost:5110/api/requests/{id} | python -m json.tool

# Clear all requests

curl -X DELETE http://localhost:5110/api/requests

```

## CLI Options

```bash

smello-server run --host 0.0.0.0 --port 5110 --db-path /tmp/smello.db

```

## Requires

- Python >= 3.14

## Links

- [Documentation & Source](https://github.com/smelloscope/smello)

- [smello client SDK on PyPI](https://pypi.org/project/smello/)

| text/markdown | null | Roman Imankulov <roman.imankulov@gmail.com> | null | null | null | null | [

"Development Status :: 3 - Alpha",

"Framework :: FastAPI",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.14",

"Topic :: Software Development :: Testing",

"Topic :: System :: Networking :: Monitoring"

] | [] | null | null | >=3.14 | [] | [] | [] | [

"aiosqlite>=0.20.0",

"fastapi>=0.115.0",

"jinja2>=3.1.0",

"tortoise-orm>=0.22.0",

"uvicorn[standard]>=0.34.0"

] | [] | [] | [] | [

"Homepage, https://github.com/smelloscope/smello",

"Repository, https://github.com/smelloscope/smello",

"Issues, https://github.com/smelloscope/smello/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T13:55:57.680207 | smello_server-0.1.2.tar.gz | 11,334 | 4a/14/1031fc7b268b0e09a384bf8525e7e0c128dc2bf4924752df60a9d629a198/smello_server-0.1.2.tar.gz | source | sdist | null | false | 7ab30aedde874562949015cda7087cbb | 4610b5dd32b338287ddd1e29b14d0eb242a08c5b6f7136b1b5b2e397700f0a22 | 4a141031fc7b268b0e09a384bf8525e7e0c128dc2bf4924752df60a9d629a198 | MIT | [] | 199 |

2.4 | navani | 0.1.14 | Package for processing and plotting echem data from cyclers | # navani

Navani is a Python module for processing and plotting electrochemical data from battery cyclers, combining other open source libraries to create pandas dataframes with a normalized schema across multiple cycler brands. It is intended to be easy to use for those unfamiliar with programming.

Contains functions to compute dQ/dV and dV/dQ.

Full documentation can be found [here](https://be-smith.github.io/navani/).

Currently supports:

- BioLogic MPR (`.mpr`)

- Arbin res files (`.res`)

- Simple `.txt` and Excel `.xls`/`.xlsx` formats produced by e.g., Arbin, Ivium and Lanhe/Lande

- Neware NDA and NDAX (`.nda`, `.ndax`)

The main dependencies are:

- pandas

- [galvani](https://github.com/echemdata/galvani) (BioLogic MPR)

- [mdbtools](https://github.com/mdbtools/mdbtools) (for reading Arbin's .res files with galvani).

- [NewareNDA](https://github.com/Solid-Energy-Systems/NewareNDA) (for reading Neware's NDA and NDAx formats).

Navani is released under the terms of the MIT license.

> [!WARNING]

> The [galvani](https://github.com/echemdata/galvani) dependency is available under the terms of [GPLv3 License](https://github.com/echemdata/galvani/blob/master/LICENSE). We believe this usage to be valid following the GPLv3 interpretation of the [copyright holder for galvani](https://github.com/echemdata/galvani/issues/51#issuecomment-701500053). The galvani library is not distributed with Navani, but installing Navani from PyPI will also install GPL-licensed dependencies. Users are responsible for GPL compliance of any downstream projects in this regard.

## Installation

You will need Python 3.10 or higher to use Navani.

Navani can now be installed using pip:

```shell

pip install navani

```

However it is still advised to install navani using [uv](https://docs.astral.sh/uv/), to manage dependencies.

To install Navani and its dependencies, clone this repository and use uv to setup a virtual environment with the dependencies:

```shell

git clone git@github.com/be-smith/navani

cd navani

uv venv

uv sync

```

You should now have an environment you can activate with all the required dependencies (except mdbtools, which is covered later).

To activate this environment simply run from the navani folder:

```shell

source .venv/bin/activate

```

If you would like to contribute to navani it is recommended to install the dev dependencies, this can be done simply by:

```shell

uv sync --all-extras --dev

```

If don't want to use uv it is still strongly recommended to use a fresh Python environment to install navani, using e.g., `conda create` or `python -m venv <chosen directory`.

To install navani, either clone this repository and install from your local copy:

```shell

git clone git@github.com/be-smith/navani

cd navani

pip install .

```

The additional non-Python mdbtools dependency to `galvani` that is required to read Arbin's `.res` format can be installed on Ubuntu via `sudo apt install mdbtools`, with similar instructions available for other Linux distributions and macOS [here](https://github.com/mdbtools/mdbtools).

## Usage

The main entry point to navani is the `navani.echem.echem_file_loader` function, which will do file type detection and return a pandas dataframe.

Many different plot types are then available, as shown below:

```python

import pandas as pd

import navani.echem as ec

df = ec.echem_file_loader(filepath)

fig, ax = ec.charge_discharge_plot(df, 1)

```

<img src="https://github.com/be-smith/navani/raw/main/docs/Example_figures/Graphite_charge_discharge_plot.png" alt="Graphite charge discharge plot example" width="50%" height="50%">

Also included are functions for extracting dQ/dV from the data:

```python

for cycle in [1, 2]:

mask = df['half cycle'] == cycle

voltage, dqdv, capacity = ec.dqdv_single_cycle(df['Capacity'][mask], df['Voltage'][mask],

window_size_1=51,

polyorder_1=5,

s_spline=0.0,

window_size_2=51,

polyorder_2=5,

final_smooth=True)

plt.plot(voltage, dqdv)

plt.xlim(0, 0.5)

plt.xlabel('Voltage / V')

plt.ylabel('dQ/dV / mAhV$^{-1}$')

```

<img src="https://github.com/be-smith/navani/raw/main/docs/Example_figures/Graphite_dqdv.png" alt="Graphite dQ/dV plot example" width="50%" height="50%">

And easily plotting multiple cycles:

```python

fig, ax = ec.multi_dqdv_plot(df, cycles=cycles,

colormap='plasma',

window_size_1=51,

polyorder_1=5,

s_spline=1e-7,

window_size_2=251,

polyorder_2=5,

final_smooth=True)

```

<img src="https://github.com/be-smith/navani/raw/main/docs/Example_figures/Si_dQdV.png" alt="Si dQ/dV plot example" width="50%" height="50%">

Simple jupyter notebooks and Colab notebooks can be found [here](https://github.com/be-smith/navani/blob/main/Simple%20example%20jupyter.ipynb) for Jupyter and [here](https://github.com/be-smith/navani/blob/main/Simple_examples_colab.ipynb) for Colab.

Whilst a more detailed Colab notebook can be found [here](https://github.com/be-smith/navani/blob/main/Detailed_colab_tutorial.ipynb).

| text/markdown | null | Ben Smith <ben.ed.smith2@gmail.com> | null | null | MIT | null | [] | [] | null | null | >=3.10 | [] | [] | [] | [

"numpy<2,~=1.26",

"pandas<3,~=2.2",

"scipy<2,~=1.15",

"datalab-org-galvani>=0.5.0",

"matplotlib<4,~=3.10",

"openpyxl<4,~=3.1",

"NewareNDA>=2024.8.1",

"requests<3,~=2.32",

"pyarrow>=10.0; extra == \"parquet\""

] | [] | [] | [] | [

"Homepage, https://github.com/be-smith/navani",

"Issues, https://github.com/be-smith/navani/issues",

"Documentation, https://be-smith.github.io/navani/"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T13:55:51.032522 | navani-0.1.14.tar.gz | 7,042,877 | a4/22/55d737361e30334107f68dc98adce7ba3fe0782f1c9cb9fa238ff17724e3/navani-0.1.14.tar.gz | source | sdist | null | false | 2996c538d4968e8024d475f961e7c2cc | dfe8e81a80ee4c3ce0bb70209684148fcb54132dcaa46e314522ce2f5cc9fe1c | a42255d737361e30334107f68dc98adce7ba3fe0782f1c9cb9fa238ff17724e3 | null | [

"LICENSE"

] | 254 |

2.4 | geospacelab | 0.13.0 | Collect, manage, and visualize geospace data. | <p align="center">

<img width="500" src="https://github.com/JouleCai/geospacelab/blob/master/docs/images/logo_v1_landscape_accent_colors.png">

</p>

# GeospaceLAB (geospacelab)

[](https://opensource.org/licenses/BSD-3-Clause)

[](https://www.python.org/)

[](https://zenodo.org/badge/latestdoi/347315860)

[](https://pepy.tech/project/geospacelab)

GeospaceLAB provides a framework of data access, analysis, and visualization for the researchers in space physics and space weather. The documentation can be found

on [readthedocs.io](https://geospacelab.readthedocs.io/en/latest/).

## Features

- Class-based data manager, including

- __DataHub__: the core module (top-level class) to manage data from multiple sources,

- __Dataset__: the middle-level class to download, load, and process data from a data source,

- __Variable__: the base-level class to store the data array of a variable with various attributes, including its

error, name, label, unit, group, and dependencies.

- Extendable

- Provide a standard procedure from downloading, loading, and post-processing the data.

- Easy to extend for a data source which has not been supported in the package.

- Flexible to add functions for post-processing.

- Visualization

- Time series plots with

- automatically adjustable time ticks and tick labels.

- dynamical panels (flexible to add or remove panels).

- automatically detect the time gaps.

- useful marking tools (vertical line crossing panels, shadings, top bars, etc, see Example 2 in

[Usage](https://github.com/JouleCai/geospacelab#usage))

- Map projection

- Polar views with

- coastlines in either GEO or AACGM (APEX) coordinate system.

- mapping in either fixed lon/mlon mode or in fixed LST/MLT mode.

- Support 1-D or 2-D plots with

- satellite tracks (time ticks and labels)

- nadir colored 1-D plots

- gridded surface plots

- Space coordinate system transformation

- Unified interface for cs transformations.

- Toolboxes for data analysis

- Basic toolboxes for numpy array, datetime, logging, python dict, list, and class.

## Built-in data sources:

| Data Source | Variables | File Format | Downloadable | Express | Status |

|------------------------------|------------------------------------|-----------------------|---------------|-------------------------------|--------|

| CDAWeb/OMNI | Solar wind and IMF |*cdf* | *True* | __OMNIDashboard__ | stable |

| CDAWeb/DMSP/SSUSI/EDR_AUR | DMSP SSUSI EDR_AUR emission lines | *netcdf* | *True* | __DMSPSSUSIDashboard__ | stable |

| Madrigal/EISCAT | Ionospheric Ne, Te, Ti, ... | *EISCAT-hdf5*, *Madrigal-hdf5* | *True* | __EISCATDashboard__ | stable |

| Madrigal/GNSS/TECMAP | Ionospheric GPS TEC map | *hdf5* | *True* | - | beta |

| Madrigal/DMSP/s1 | DMSP SSM, SSIES, etc | *hdf5* | *True* | __DMSPTSDashboard__ | stable |

| Madrigal/DMSP/s4 | DMSP SSIES | *hdf5* | *True* | __DMSPTSDashboard__ | stable |

| Madrigal/DMSP/e | DMSP SSJ | *hdf5* | *True* | __DMSPTSDashboard__ | stable |

| Madrigal/Millstone Hill ISR+ | Millstone Hill ISR | *hdf5* | *True* | __MillstoneHillISRDashboard__ | stable |

| Madrigal/Poker Flat ISR | Poker Flat ISR | *hdf5* | *True* | __-_ | stable |

| JHUAPL/AMPERE/fitted | AMPERE FAC | *netcdf* | *False* | __AMPEREDashboard__ | stable |

| SuperDARN/POTMAP | SuperDARN potential map | *ascii* | *False* | - | stable |

| WDC/Dst | Dst index | *IAGA2002-ASCII* | *True* | - | stable |

| WDC/ASYSYM | ASY/SYM indices | *IAGA2002-ASCII* | *True* | __OMNIDashboard__ | stable |

| WDC/AE | AE indices | *IAGA2002-ASCII* | *True* | __OMNIDashboard__ | stable |

| GFZ/Kp | Kp/Ap indices | *ASCII* | *True* | - | stable |

| GFZ/Hpo | Hp30 or Hp60 indices | *ASCII* | *True* | - | stable |

| GFZ/SNF107 | SN, F107 | *ASCII* | *True* | - | stable |

| ESA/SWARM/EFI_LP_HM | SWARM Ne, Te, etc. | *netcdf* | *True* | - | stable |

| ESA/SWARM/EFI_TCT02 | SWARM cross track vi | *netcdf* | *True* | - | stable |

| ESA/SWARM/AOB_FAC_2F | SWARM FAC, auroral oval boundary | *netcdf* | *True* | - | beta |

| TUDelft/SWARM/DNS_POD | Swarm $\rho_n$ (GPS derived) | *ASCII* | *True* | - | stable |

| TUDelft/SWARM/DNS_ACC | Swarm $\rho_n$ (GPS+Accelerometer) | *ASCII* | *True* | - | stable |

| TUDelft/GOCE/WIND_ACC | GOCE neutral wind | *ASCII* | *True* | - | stable |

| TUDelft/GRACE/WIND_ACC | GRACE neutral wind | *ASCII* | *True* | - | stable |

| TUDelft/GRACE/DNS_ACC | Grace $\rho_n$ | *ASCII* | *True* | - | stable |

| TUDelft/CHAMP/DNS_ACC | CHAMP $\rho_n$ | *ASCII* | *True* | - | stable |

| UTA/GITM/2DALL | GITM 2D output | *binary*, *IDL-sav* | *False* | - | beta |

| UTA/GITM/3DALL | GITM 3D output | *binary*, *IDL-sav* | *False* | - | beta |

## Installation

### 1. The python distribution "*__Anaconda__*" is recommended:

The package was tested with the anaconda distribution and with **PYTHON>=3.7** under **Ubuntu 20.04** and **MacOS Big Sur**.

With Anaconda, it may be easier to install some required dependencies listed below, e.g., cartopy, using the _conda_ command.

It's also recommended installing the package and dependencies in a virtual environment with anaconda.

After [installing the anaconda distribution](https://docs.anaconda.com/anaconda/install/index.html), a virtual environment can be created by the code below in the terminal:

```shell

conda create --name [YOUR_ENV_NAME] -c conda-forge python cython cartopy

```

The package "spyder" is a widely-used python IDE. Other IDEs, like "VS Code" or "Pycharm" also work.

> **_Note:_** The recommended IDE is Spyder. Sometimes, a *RuntimeError* can be raised

> when the __aacgmv2__ package is called in **PyCharm** or **VS Code**.

> If you meet this issue, try to compile the codes in **Spyder** several times.

After creating the virtual environement, you need to activate the virtual environment:

```shell

conda activate [YOUR_ENV_NAME]

```

and then to install the package as shown below or to start the IDE **Spyder**.

More detailed information to set the anaconda environment can be found [here](https://conda.io/projects/conda/en/latest/user-guide/tasks/manage-environments.html#),

### 2. Installation

#### Quick install from the pre-built release (recommended):

```shell

pip install geospacelab

```

#### Install from [Github](https://github.com/JouleCai/geospacelab) (not recommended):

```shell

pip install git+https://github.com/JouleCai/geospacelab@master

```

### 2. Dependencies

The package dependencies need to be installed before or after the installation of the package.

Several dependencies will be installed automatically with the package installation,

including __toml__, __requests__, __bueatifulsoup4__, __numpy__, __scipy__, __matplotlib__, __h5py__, __netcdf4__,

__cdflib__, __madrigalweb__, __sscws__, and __aacgmv2__.

Other dependencies will be needed if you see a *__ImportError__* or *__ModuleNotFoundError__*

displayed in the python console. Some frequently used modules and their installation methods are listed below:

- [__cartopy__](https://scitools.org.uk/cartopy/docs/latest/installing.html): Map projection for geospatial data.

- ```conda install -c conda-forge cartopy ```

- [__apexpy__ \*](https://apexpy.readthedocs.io/en/latest/reference/Apex.html): Apex and Quasi-Dipole geomagnetic

coordinate system.

- ```pip install apexpy ```

- [__geopack__](https://github.com/tsssss/geopack): The geopack and Tsyganenko models in Python.

- ```pip install geopack ```

> ([\*]()): The **_gcc_** or **_gfortran_** compilers are required before installing the package.

> - gcc: ```conda install -c conda-forge gcc```

> - gfortran: ```conda install -c conda-forge gfortran ```

Please install the packages above, if needed.

Note: The package is currently pre-released. The installation methods may be changed in the future.

### 4. First-time startup and basic configuration

Some basic configurations will be made with the first-time import of the package. Following the messages prompted in the python console, the first configuration is to set the root directory for storing the data.

When the modules to access the online Madrigal database is imported, it will ask for the inputs of user's full name, email, and affiliation.

The user's configuration can be found from the *__toml__* file below:

```

[your_home_directory]/.geospacelab/config.toml

```

The user can set or change the preferences in the configuration file. For example, to change the root directory for storing the data, modify or add the lines in "config.toml":

```toml

[datahub]

data_root_dir = "YOUR_ROOT_DIR"

```

To set the Madrigal cookies, change the lines:

```toml

[datahub.madrigal]

user_fullname = "YOUR_NAME"

user_email = "YOU_EMAIL"

user_affiliation = "YOUR_AFFILIATION"

```

### 5. Upgrade

If the package is installed from the pre-built release. Update the package via:

```shell

pip install geospacelab --upgrade

```

### 6. Uninstallation

Uninstall the package via:

```shell

pip uninstall geospacelab

```

If you don't need the user's configuration, delete the file at **_[your_home_directory]/.geospacelab/config.toml_**

## Usage

### Example 1: Dock a sourced dataset and get variables:

The core of the data manager is the class Datahub. A Datahub instance will be used for docking a buit-in sourced dataset, or adding a temporary or user-defined dataset.

The "dataset" is a Dataset instance, which is used for loading and downloading

the data.

Below is an example to load the EISCAT data from the online service. The module will download EISCAT data automatically from

[the EISCAT schedule page](https://portal.eiscat.se/schedule/) with the presetttings of loading mode "AUTO" and file type "eiscat-hdf5".

Example 1:

```python

import datetime

from geospacelab.datahub import DataHub

# settings

dt_fr = datetime.datetime.strptime('20210309' + '0000', '%Y%m%d%H%M') # datetime from

dt_to = datetime.datetime.strptime('20210309' + '2359', '%Y%m%d%H%M') # datetime to

database_name = 'madrigal' # built-in sourced database name

facility_name = 'eiscat' # facility name

site = 'UHF' # facility attributes required, check from the eiscat schedule page

antenna = 'UHF'

modulation = 'ant'

# create a datahub instance

dh = DataHub(dt_fr, dt_to)

# dock the first dataset (dataset index starts from 0)

ds_isr = dh.dock(datasource_contents=[database_name, 'isr', facility_name],

site=site, antenna=antenna, modulation=modulation, data_file_type='madrigal-hdf5')

# load data

ds_isr.load_data()

# assign a variable from its own dataset to the datahub

n_e = dh.assign_variable('n_e')

T_i = dh.assign_variable('T_i')

# get the variables which have been assigned in the datahub

n_e = dh.get_variable('n_e')

T_i = dh.get_variable('T_i')

# if the variable is not assigned in the datahub, but exists in the its own dataset:

comp_O_p = dh.get_variable('comp_O_p', dataset=ds_isr) # O+ ratio

# above line is equivalent to

comp_O_p = dh.datasets[0]['comp_O_p']

# The variables, e.g., n_e and T_i, are the class Variable's instances,

# which stores the variable values, errors, and many other attributes, e.g., name, label, unit, depends, ....

# To get the value of the variable, use variable_isntance.value, e.g.,

print(n_e.value) # return the variable's value, type: numpy.ndarray, axis 0 is always along the time, check n_e.depends.items{}

print(n_e.error)

```

### Example 2: EISCAT quicklook plot

The EISCAT quicklook plot shows the GUISDAP analysed results in the same format as the online EISCAT quicklook plot.

The figure layout and quality are improved. In addition, several marking tools like vertical lines, shadings, top bars can be

added in the plot. See the example script and figure below:

In "example2.py"

```python

import datetime

import geospacelab.express.eiscat_dashboard as eiscat

dt_fr = datetime.datetime.strptime('20201209' + '1800', '%Y%m%d%H%M')

dt_to = datetime.datetime.strptime('20201210' + '0600', '%Y%m%d%H%M')

site = 'UHF'

antenna = 'UHF'

modulation = '60'

load_mode = 'AUTO'

dashboard = eiscat.EISCATDashboard(

dt_fr, dt_to, site=site, antenna=antenna, modulation=modulation, load_mode='AUTO',

data_file_type="madrigal-hdf5"

)

dashboard.quicklook()

# dashboard.save_figure() # comment this if you need to run the following codes

# dashboard.show() # comment this if you need to run the following codes.

"""

As the dashboard class (EISCATDashboard) is a inheritance of the classes Datahub and TSDashboard.

The variables can be retrieved in the same ways as shown in Example 1.

"""

n_e = dashboard.assign_variable('n_e')

print(n_e.value)

print(n_e.error)

"""

Several marking tools (vertical lines, shadings, and top bars) can be added as the overlays

on the top of the quicklook plot.

"""

# add vertical line

dt_fr_2 = datetime.datetime.strptime('20201209' + '2030', "%Y%m%d%H%M")

dt_to_2 = datetime.datetime.strptime('20201210' + '0130', "%Y%m%d%H%M")

dashboard.add_vertical_line(dt_fr_2, bottom_extend=0, top_extend=0.02, label='Line 1', label_position='top')

# add shading

dashboard.add_shading(dt_fr_2, dt_to_2, bottom_extend=0, top_extend=0.02, label='Shading 1', label_position='top')

# add top bar

dt_fr_3 = datetime.datetime.strptime('20201210' + '0130', "%Y%m%d%H%M")

dt_to_3 = datetime.datetime.strptime('20201210' + '0430', "%Y%m%d%H%M")

dashboard.add_top_bar(dt_fr_3, dt_to_3, bottom=0., top=0.02, label='Top bar 1')

# save figure

dashboard.save_figure()

# show on screen

dashboard.show()

```

Output:

>

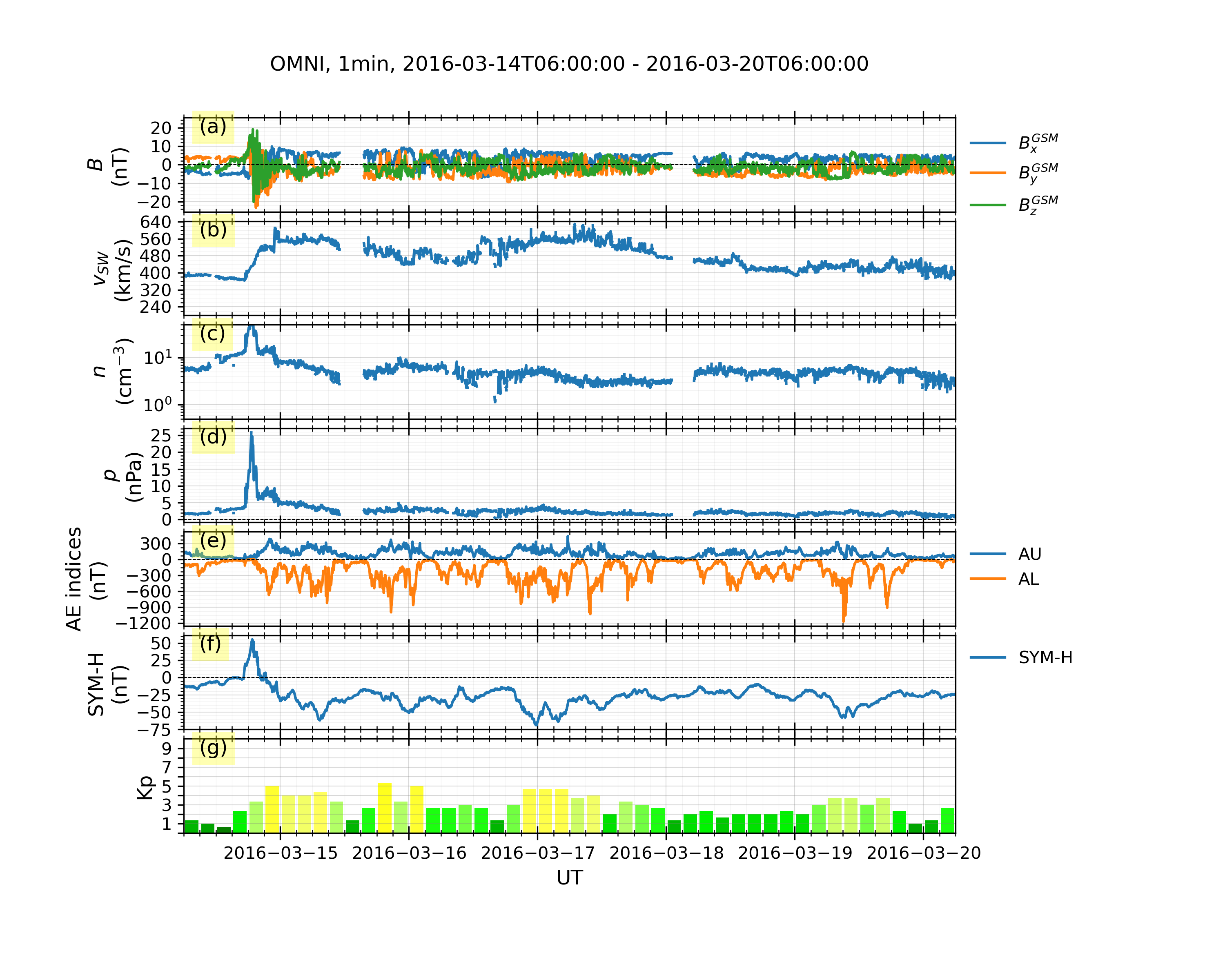

### Example 3: OMNI data and geomagnetic indices (WDC + GFZ):

In "example3.py"

```python

import datetime

import geospacelab.express.omni_dashboard as omni

dt_fr = datetime.datetime.strptime('20160314' + '0600', '%Y%m%d%H%M')

dt_to = datetime.datetime.strptime('20160320' + '0600', '%Y%m%d%H%M')

omni_type = 'OMNI2'

omni_res = '1min'

load_mode = 'AUTO'

dashboard = omni.OMNIDashboard(

dt_fr, dt_to, omni_type=omni_type, omni_res=omni_res, load_mode=load_mode

)

dashboard.quicklook()

# data can be retrieved in the same way as in Example 1:

dashboard.list_assigned_variables()

B_x_gsm = dashboard.get_variable('B_x_GSM', dataset_index=0)

# save figure

dashboard.save_figure()

# show on screen

dashboard.show()

```

Output:

>

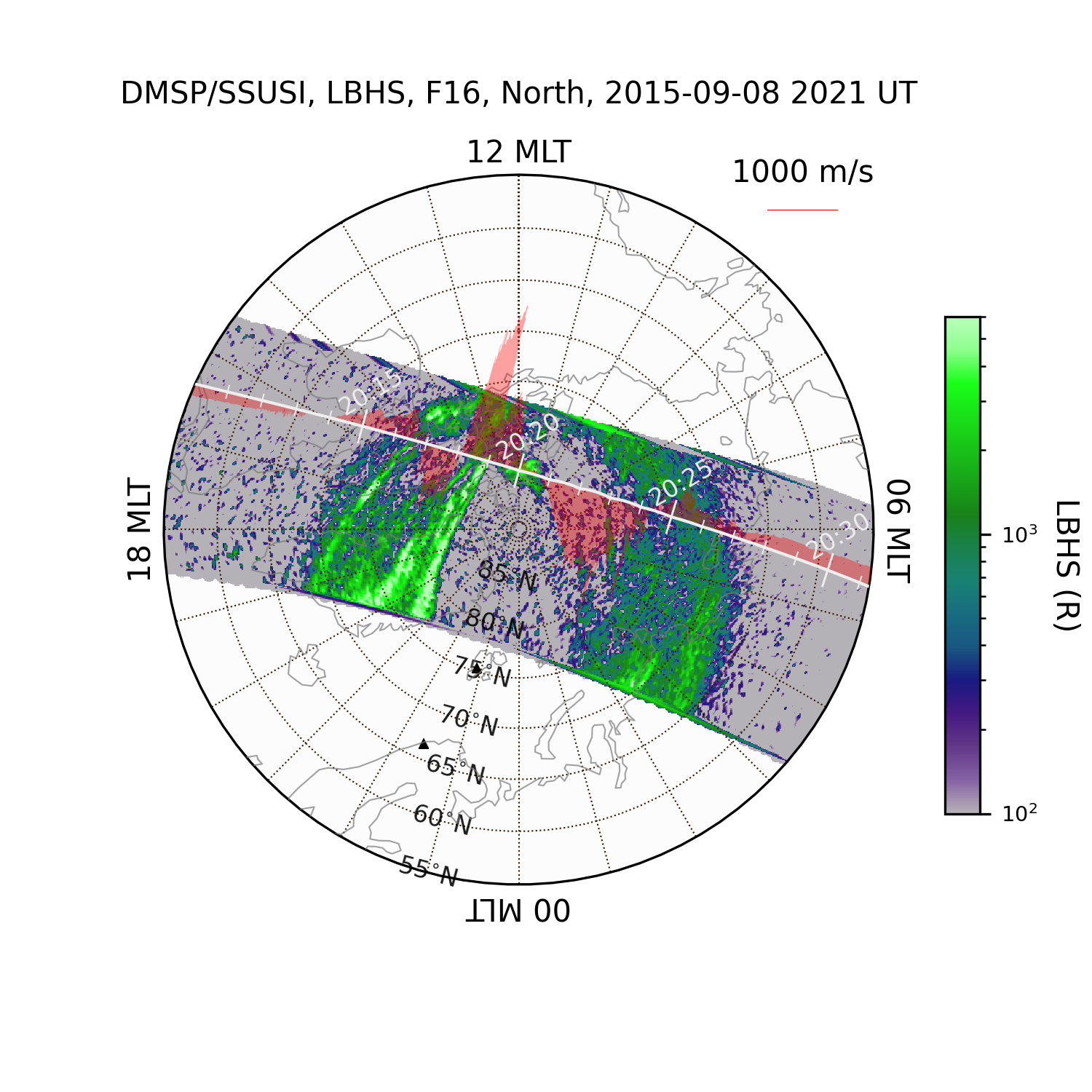

### Example 4: Mapping geospatial data in the polar map.

> **_NOTE_**: JHUAPL stopped supporting DMSP SSUSI on 14 Feb 2025.

From then on, the data source has been switched from JHUAPL to CDAWeb.

```python

import datetime

import matplotlib.pyplot as plt

import geospacelab.visualization.mpl.geomap.geodashboards as geomap

dt_fr = datetime.datetime(2015, 9, 8, 8)

dt_to = datetime.datetime(2015, 9, 8, 23, 59)

time_c = datetime.datetime(2015, 9, 8, 20, 21)

pole = 'N'

sat_id = 'f16'

band = 'LBHS'

# Create a geodashboard object

dashboard = geomap.GeoDashboard(dt_fr=dt_fr, dt_to=dt_to, figure_config={'figsize': (5, 5)})

# If the orbit_id is specified, only one file will be downloaded. This option saves the downloading time.

# dashboard.dock(datasource_contents=['jhuapl', 'dmsp', 'ssusi', 'edraur'], pole='N', sat_id='f17', orbit_id='46863')

# If not specified, the data during the whole day will be downloaded.

ds_ssusi = dashboard.dock(datasource_contents=['cdaweb', 'dmsp', 'ssusi', 'edr_aur'], pole=pole, sat_id=sat_id, orbit_id=None)

ds_s1 = dashboard.dock(

datasource_contents=['madrigal', 'satellites', 'dmsp', 's1'],

dt_fr=time_c - datetime.timedelta(minutes=45),

dt_to=time_c + datetime.timedelta(minutes=45),

sat_id=sat_id, replace_orbit=True)

dashboard.set_layout(1, 1)

# Get the variables: LBHS emission intensiy, corresponding times and locations

lbhs = ds_ssusi['GRID_AUR_' + band]

dts = ds_ssusi['DATETIME'].flatten()

mlat = ds_ssusi['GRID_MLAT']

mlon = ds_ssusi['GRID_MLON']

mlt = ds_ssusi['GRID_MLT']

# Search the index for the time to plot, used as an input to the following polar map

ind_t = dashboard.datasets[0].get_time_ind(ut=time_c)

if (dts[ind_t] - time_c).total_seconds()/60 > 60: # in minutes

raise ValueError("The time does not match any SSUSI data!")

lbhs_ = lbhs.value[ind_t]

mlat_ = mlat.value[ind_t]

mlon_ = mlon.value[ind_t]

mlt_ = mlt.value[ind_t]

# Add a polar map panel to the dashboard. Currently the style is the fixed MLT at mlt_c=0. See the keywords below:

panel = dashboard.add_polar_map(

row_ind=0, col_ind=0, style='mlt-fixed', cs='AACGM',

mlt_c=0., pole=pole, ut=time_c, boundary_lat=55., mirror_south=True

)

# Some settings for plotting.

pcolormesh_config = lbhs.visual.plot_config.pcolormesh

# Overlay the SSUSI image in the map.

ipc = panel.overlay_pcolormesh(

data=lbhs_, coords={'lat': mlat_, 'lon': mlon_, 'mlt': mlt_}, cs='AACGM',

regridding=False, **pcolormesh_config)

# Add a color bar

panel.add_colorbar(ipc, c_label=band + " (R)", c_scale=pcolormesh_config['c_scale'], left=1.1, bottom=0.1,

width=0.05, height=0.7)

# Overlay the gridlines

panel.overlay_gridlines(lat_res=5, lon_label_separator=5)

# Overlay the coastlines in the AACGM coordinate

panel.overlay_coastlines()

# Overlay cross-track velocity along satellite trajectory

sc_dt = ds_s1['SC_DATETIME'].value.flatten()

sc_lat = ds_s1['SC_GEO_LAT'].value.flatten()

sc_lon = ds_s1['SC_GEO_LON'].value.flatten()

sc_alt = ds_s1['SC_GEO_ALT'].value.flatten()

sc_coords = {'lat': sc_lat, 'lon': sc_lon, 'height': sc_alt}

v_H = ds_s1['v_i_H'].value.flatten()

panel.overlay_cross_track_vector(

vector=v_H, unit_vector=1000, vector_unit='m/s', alpha=0.3, color='red',

sc_coords=sc_coords, sc_ut=sc_dt, cs='GEO',

)

# Overlay the satellite trajectory with ticks

panel.overlay_sc_trajectory(sc_ut=sc_dt, sc_coords=sc_coords, cs='GEO')

# Overlay sites

panel.overlay_sites(

site_ids=['TRO', 'ESR'], coords={'lat': [69.58, 78.15], 'lon': [19.23, 16.02], 'height': 0.},

cs='GEO', marker='^', markersize=2)

# Add the title and save the figure

polestr = 'North' if pole == 'N' else 'South'

panel.add_title(title='DMSP/SSUSI, ' + band + ', ' + sat_id.upper() + ', ' + polestr + ', ' + time_c.strftime('%Y-%m-%d %H%M UT'))

plt.savefig('DMSP_SSUSI_' + time_c.strftime('%Y%m%d-%H%M') + '_' + band + '_' + sat_id.upper() + '_' + pole, dpi=300)

# show the figure

plt.show()

```

Output:

>

This is an example showing the HiLDA aurora in the dayside polar cap region

(see also [DMSP observations of the HiLDA aurora (Cai et al., JGR, 2021)](https://agupubs.onlinelibrary.wiley.com/doi/10.1029/2020JA028808)).

Other examples for the time-series plots and map projections can be found [here](https://github.com/JouleCai/geospacelab/tree/master/examples)

## Acknowledgements and Citation

### Acknowledgements

We acknowledge all the dependencies listed above for their contributions to implement a lot of functionality in GeospaceLAB.

### Citation

If GeospaceLAB is used for your scientific work, please mention it in the publication and cite the package:

> Cai L, Aikio A, Kullen A, Deng Y, Zhang Y, Zhang S-R, Virtanen I and Vanhamäki H (2022), GeospaceLAB: Python package

for managing and visualizing data in space physics. Front. Astron. Space Sci. 9:1023163. doi: [10.3389/fspas.2022.1023163](https://www.frontiersin.org/articles/10.3389/fspas.2022.1023163/full)

In addition, please add the following text in the "Methods" or "Acknowledgements" section:

> This research has made use of GeospaceLAB v?.?.?, an open-source Python package to manage and visualize data in space physics.

Please include the project logo (see the top) to acknowledge GeospaceLAB in posters or talks.

### Co-authorship

GeospaceLAB aims to help users to manage and visualize multiple kinds of data in space physics in a convenient way. We welcome collaboration to support your research work. If the functionality of GeospaceLAB plays a critical role in a research paper, the co-authorship is expected to be offered to one or more developers.

## Notes

- The current version is a pre-released version. Many features will be added soon.

| text/markdown | Lei Cai | lei.cai@oulu.fi | null | null | BSD 3-Clause License | Geospace, EISCAT, DMSP, Space weather, Ionosphere, Space, Magnetosphere | [

"Development Status :: 4 - Beta",

"Intended Audience :: Science/Research",

"Topic :: Scientific/Engineering :: Physics",

"Topic :: Scientific/Engineering :: Visualization",

"Topic :: Scientific/Engineering :: Astronomy",

"Topic :: Software Development :: Build Tools",

"License :: OSI Approved :: BSD License",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12"

] | [] | https://github.com/JouleCai/geospacelab | null | <3.13,>=3.9 | [] | [] | [] | [

"cython",

"requests>=2.26.0",

"beautifulsoup4>=4.9.3",

"natsort>=7.1.1",

"numpy<2.4.0",

"scipy>=1.6.0",

"netcdf4>=1.5.7",

"h5py>=3.2.1",

"matplotlib>=3.5",

"madrigalweb>=3.3",

"aacgmv2>=2.6.2",

"cdflib>=1.2.3",

"geopack>=1.0.10",

"palettable",

"tqdm",

"toml",

"sscws",

"pandas>=1.5.3",

"keyring"

] | [] | [] | [] | [] | twine/6.2.0 CPython/3.12.12 | 2026-02-20T13:55:18.290061 | geospacelab-0.13.0.tar.gz | 461,449 | 3c/2f/152aa83aa17781fa0c53cb8862b9dfdcf2518936e1b48cd959731fe2050a/geospacelab-0.13.0.tar.gz | source | sdist | null | false | a5a5762d6a7a47b8997ac2d032cca040 | f1050efd1a31deaafc0cb4e37d6f7176b74d0cb81faaf8b42c791453472c43d4 | 3c2f152aa83aa17781fa0c53cb8862b9dfdcf2518936e1b48cd959731fe2050a | null | [

"LICENSE"

] | 224 |

2.3 | oreum_core | 0.11.13 | Core tools for use on projects by Oreum Industries | # Oreum Core Tools `oreum_core`

[](https://www.python.org)

[](https://choosealicense.com/licenses/apache-2.0/)

[](https://github.com/oreum-industries/oreum_core/releases)

[](https://pypi.org/project/oreum_core)

[](https://github.com/oreum-industries/oreum_core/actions/workflows/lint.yml)

[](https://github.com/oreum-industries/oreum_core/actions/workflows/publish.yml)

[](https://github.com/astral-sh/ruff)

[](https://pypi.org/project/interrogate/)

[](https://github.com/PyCQA/bandit)

---

## 1. Description and Scope

`oreum_core` is an ever-evolving package of core tools for use on client

projects by Oreum Industries.

+ Provides an essential workflow for data curation, EDA, basic ML using the core

scientific Python stack incl. `numpy`, `scipy`, `matplotlib`, `seaborn`,

`pandas`, `scikit-learn`, `umap-learn`

+ Optionally provides an advanced Bayesian modeling workflow in R&D and

Production using a leading probabilistic programming stack incl. `pymc`,

`pytensor`, `arviz`

(do `pip install oreum_core[pymc]`)

+ Optionally enables a generalist black-box ML workflow in R&D using a leading

Gradient Boosted Trees stack incl. `catboost`, `xgboost`, `optuna`, `shap`

(do `pip install oreum_core[tree]`)

+ Also includes several utilities for text cleaning, sql scripting, file handling

This package **is**:

+ A work in progress (v0.y.z) and liable to breaking changes and inconvenience

to the user

+ Solely designed for ease of use and rapid development by employees of

Oreum Industries, and selected clients with guidance

This package **is not**:

+ Intended for public usage and will not be supported for public usage

+ Intended for contributions by anyone not an employee of Oreum Industries,

and unsolicited contributions will not be accepted.

### Notes

+ Project began on 2021-01-01

+ The `README.md` is MacOS and POSIX oriented

+ See `LICENCE.md` for licensing and copyright details

+ See `pyproject.toml` for various package details

+ This uses a logger named `'oreum_core'`, feel free to incorporate or ignore

see `__init__.py` for details

+ Hosting:

+ Source code repo on [GitHub](https://github.com/oreum-industries/oreum_core)

+ Source code release on [GitHub](https://github.com/oreum-industries/oreum_core/releases)

+ Package release on [PyPi](https://pypi.org/project/oreum_core)

+ Implementation:

+ This project is enabled by a modern, open-source, advanced software stack

for data curation, statistical analysis and predictive modelling

+ Specifically we use an open-source Python-based suite of software packages,

the core of which is often known as the Scientific Python stack, supported

by [NumFOCUS](https://numfocus.org)

+ Once installed (see section 2), see `LICENSES_3P.md` for full

details of all package licences

+ Environments: this project was originally developed on a Macbook Air M2

(Apple Silicon ARM64) running MacOS 15 (Sequoia) using `osx-arm64` Accelerate

## 2. Instructions to Create Dev Environment

For local development on MacOS

### 2.0 Pre-requisite installs via `homebrew`

1. Install Homebrew, see instructions at [https://brew.sh](https://brew.sh)

2. Install system-level tools incl. `direnv`, `gcc`, `git`, `graphviz`, `uv`:

```zsh

$> make brew

```

### 2.1 Git clone the repo

Assumes system-level tools installed as above:

```zsh

$> git clone https://github.com/oreum-industries/oreum_core

$> cd oreum_core

```

Then allow `direnv` on MacOS to autorun file `.envrc` upon directory open

### 2.2 Create virtual environment and install dev packages

Notes:

+ We use local `.venv/` virtual env via [`uv`](https://github.com/astral-sh/uv)

+ Packages are technically articulated in `pyproject.toml` and might not be the

latest - to aid stability for `pymc` (usually in a state of development flux)

#### 2.2.1 Create the dev environment

From the dir above `oreum_core/` project dir:

```zsh

$> make -C oreum_core/ dev

```

This will also create some files to help confirm / diagnose successful installation:

+ `dev/install_log/blas_info.txt` for the `BLAS MKL` installation for `numpy`

+ `LICENSES_3P.md` details the license for each third-party package used

#### 2.2.2 (Optional best practice) Test successful installation of dev env

From the dir above `oreum_core/` project dir:

```zsh

$> make -C oreum_core/ dev-test

```

This will also add files `dev/install_log/tests_[numpy|scipy].txt` which detail

successful installation (or not) for `numpy`, `scipy`

#### 2.2.3 (Useful during env install experimentation): To remove the dev env

From the dir above `oreum_core/` project dir:

```zsh

$> make -C oreum_core/ dev-uninstall

```

### 2.3 Code Linting & Repo Control

#### 2.3.1 Pre-commit

We use [pre-commit](https://pre-commit.com) to run a suite of automated tests

for code linting & quality control and repo control prior to commit on local

development machines.

+ Precommit is already installed by the `make dev` command (which itself calls

`pip install -e .[dev]`)

+ The pre-commit script will then run on your system upon `git commit`

+ See this project's `.pre-commit-config.yaml` for details

#### 2.3.2 Github Actions

We use [Github Actions](https://docs.github.com/en/actions/using-workflows) aka

Github Workflows to run:

1. A suite of automated tests for commits received at the origin (i.e. GitHub)

2. Publishing to PyPi upon creating a GH Release

+ See `Makefile` for the CLI commands that are issued

+ See `.github/workflows/*` for workflow details

#### 2.3.3 Git LFS

We use [Git LFS](https://git-lfs.github.com) to store any large files alongside

the repo. This can be useful to replicate exact environments during development

and/or for automated tests

+ This requires a local machine install

(see [Getting Started](https://git-lfs.github.com))

+ See `.gitattributes` for details

### 2.4 Configs for Local Development

Some notes to help configure local development environment

#### 2.4.1 Git config `~/.gitconfig`

```yaml

[user]

name = <YOUR NAME>

email = <YOUR EMAIL ADDRESS>

```

### 2.5 Install VSCode IDE

We strongly recommend using [VSCode](https://code.visualstudio.com) for all

development on local machines, and this is a hard pre-requisite to use

the `.devcontainer` environment (see section 3)

This repo includes relevant lightweight project control and config in:

```zsh

oreum_core.code-workspace

.vscode/extensions.json

.vscode/settings.json

```

### 2.6 Publishing to PyPi

A note for maintainers (Oreum Industries only), publishing to pypi, ensure

local dev machine presence of the following in a config file `~/.pypirc`

```yaml

[distutils]

index-servers =

pypi

testpypi

[pypi]

repository = https://upload.pypi.org/legacy/

username = __token__

[testpypi]

repository = https://test.pypi.org/legacy/

username = __token__

```

---

## 3. Code Standards

Even when writing R&D code, we strive to meet and exceed (even define) best

practices for code quality, documentation and reproducibility for modern

data science projects.

### 3.1 Code Linting & Repo Control

We use a suite of automated tools to check and enforce code quality. We indicate

the relevant shields at the top of this README. See section 1.4 above for how

this is enforced at precommit on developer machines and upon PR at the origin as

part of our CI process, prior to master branch merge.

These include:

+ [`ruff`](https://docs.astral.sh/ruff/) - extremely fast standardised linting

and formatting, which replaces `black`, `flake8`, `isort`

+ [`interrogate`](https://pypi.org/project/interrogate/) - ensure complete Python

docstrings

+ [`bandit`](https://github.com/PyCQA/bandit) - test for common Python security

issues

We also run a suite of general tests pre-packaged in

[`precommit`](https://pre-commit.com).

---

Copyright 2025 Oreum FZCO t/a Oreum Industries. All rights reserved.

Oreum FZCO, IFZA, Dubai Silicon Oasis, Dubai, UAE, reg. 25515

[oreum.io](https://oreum.io)

---

Oreum Industries © 2025

| text/markdown | null | Oreum Industries <info@oreum.io> | null | null | null | null | [

"Development Status :: 4 - Beta",

"Intended Audience :: Science/Research",

"Intended Audience :: Financial and Insurance Industry",

"License :: OSI Approved :: Apache Software License",

"Natural Language :: English",

"Operating System :: MacOS",

"Operating System :: Unix",

"Programming Language :: Python",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.13",

"Topic :: Scientific/Engineering",

"Topic :: Scientific/Engineering :: Information Analysis",

"Topic :: Scientific/Engineering :: Visualization"

] | [] | null | null | ==3.13.* | [] | [

"oreum_core"

] | [] | [

"csv2md",

"dask",

"fastparquet",

"ftfy",

"matplotlib",

"matplotlib-inline",

"pandas[excel,parquet,plot]==2.3.*",

"patsy",

"scikit-learn",

"scipy",

"seaborn<0.14",

"statsmodels",

"bandit; extra == \"dev\"",

"hypothesis; extra == \"dev\"",

"interrogate; extra == \"dev\"",

"ipython; extra == \"dev\"",

"meson; extra == \"dev\"",

"ninja; extra == \"dev\"",

"pipdeptree; extra == \"dev\"",

"pip-licenses; extra == \"dev\"",

"pooch; extra == \"dev\"",

"pre-commit; extra == \"dev\"",

"pytest; extra == \"dev\"",

"ruff; extra == \"dev\"",

"graphviz; extra == \"pymc\"",

"pymc; extra == \"pymc\"",

"catboost; extra == \"tree\"",

"category_encoders; extra == \"tree\"",

"graphviz; extra == \"tree\"",

"optuna; extra == \"tree\"",

"optuna-integration; extra == \"tree\"",

"nbformat; extra == \"tree\"",

"shap; extra == \"tree\"",

"xgboost; extra == \"tree\""

] | [] | [] | [] | [

"Homepage, https://github.com/oreum-industries/oreum_core"

] | python-requests/2.32.5 | 2026-02-20T13:54:54.840428 | oreum_core-0.11.13.tar.gz | 158,302 | 7c/4d/51945bf26bb4a5bce0f1e0567236b4f2ed5a2c6a9df4570bd90915e51ecc/oreum_core-0.11.13.tar.gz | source | sdist | null | false | 3d6d6409803ab9e0394f98cfefbdc03a | fc5fc18274eb319faa718de4905099a242e38079ce49bd35a04cbe819d771b76 | 7c4d51945bf26bb4a5bce0f1e0567236b4f2ed5a2c6a9df4570bd90915e51ecc | null | [] | 0 |

2.4 | llmbo-bedrock | 0.2.3 | Large Language Model Batch Operations | # LLMbo - Large Language model batch operations

AWS Bedrock offers powerful capabilities for running batch inference jobs with large language models.

However, orchestrating these jobs, managing inputs and outputs, and ensuring consistent result structures can be arduous.

LLMbo aims to solve these problems by providing an intuitive, Pythonic interface for Bedrock batch operations.

Additionally, it provides a method of using batch inference for structured responses,

taking inspiration from the likes of [instructor](https://pypi.org/project/instructor/),

[mirascope](https://pypi.org/project/mirascope/) and [pydanticai](https://pypi.org/project/pydantic-ai/).

You provide a model output as a pydantic model and llmbo creates takes care of the rest.

See the AWS documentation for [models that support batch inference.](https://docs.aws.amazon.com/bedrock/latest/userguide/batch-inference-supported.html)

Currently the library has full support (including StructuredBatchInference) for Anthropic and Mistral models.

Other models may be supported through the default adapter, or you can write and register your own.

## Prerequisites

- A `.env` file with an entry for `AWS_PROFILE=`. This profile should have sufficient

permissions to create and schedule a batch inference job. See the [AWS instructions](https://docs.aws.amazon.com/bedrock/latest/userguide/batch-inference-permissions.html)

- [A service role with the required permissions to execute the job.](https://docs.aws.amazon.com/bedrock/latest/userguide/batch-inference-permissions.html#batch-inference-permissions-service),

- A s3 bucket to store the input and outputs for the job. S3 buckets must exists in the same region as you execute the job. This is a limitation of AWS batch inference rather that the

package.

- Inputs will be written to `f{s3_bucket}/input/{job_name}.jsonl`

- Outputs will be written to `f{s3_bucket}/output/{job_id}/{job_name}.jsonl.out` and

`f{s3_bucket}/output/{job_id}/manifest.json.out`

## Install

```bash

pip install llmbo-bedrock

```

## Getting started

Here's a quick example of how to use LLMbo:

### BatchInferer

```python

from llmbo import BatchInferer, ModelInput

bi = BatchInferer(

model_name="anthropic.claude-v2",

bucket_name="my-inference-bucket",

region="us-east-1",

job_name="example-batch-job",

role_arn="arn:aws:iam::123456789012:role/BedrockBatchRole"

)

# Prepare your inputs using the ModelInput class, you also need to include an id

# input Dict[job_id: ModelInput ]

inputs = {

f"{i:03}": ModelInput(

messages=[{"role": "user", "content": f"Question {i}"}]

) for i in range(100)

}

# Run the batch job, this prepares, uploads, creates the job, monitors the progress

# and downloads the results

results = bi.auto(inputs)

```

### StructuredInferer

For structured inference, simply define a Pydantic model and use StructuredBatchInferer:

```python

from pydantic import BaseModel

from llmbo import StructuredBatchInferer

class ResponseSchema(BaseModel):

answer: str

confidence: float

sbi = StructuredBatchInferer(

output_model=ResponseSchema,

model_name="anthropic.claude-v2",

# ... other parameters

)

# The results will be validated against ResponseSchema

structured_results = sbi.auto(inputs)

```

For more detailed examples see the followingin llmbo.py:

- `examples/batch_inference_example.py`: for an example of free text response

- `examples/structured_batch_inference_example.py`: for an example of structured response ala instructor | text/markdown | null | David Gillespie <david.gillespie@digital.cabinet-office.gov.uk> | null | null | null | null | [] | [] | null | null | >=3.10 | [] | [] | [] | [

"boto3<2.0,>=1.3",

"pydantic>=2.10",

"python-dotenv>=1.0.1"

] | [] | [] | [] | [

"Homepage, https://co-cddo.github.io/gds-idea-llmbo/",

"Documentation, https://co-cddo.github.io/gds-idea-llmbo/api/",

"Repository, https://github.com/co-cddo/gds-idea-llmbo.git",

"Issues, https://github.com/co-cddo/gds-idea-llmbo/issues"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-20T13:53:11.088555 | llmbo_bedrock-0.2.3-py3-none-any.whl | 23,901 | cc/0b/3e7b015315b27eb6d93ad9d97dbcfedc773080dfcee4c58c5e905e4f23c1/llmbo_bedrock-0.2.3-py3-none-any.whl | py3 | bdist_wheel | null | false | eefaa9b8af66f87c1962a4385bdead56 | c736c39c4fc4e268590045aa4efdf66f9d303d9b2cda3af1f1d191e58851c19a | cc0b3e7b015315b27eb6d93ad9d97dbcfedc773080dfcee4c58c5e905e4f23c1 | null | [

"LICENSE"

] | 208 |

2.4 | robotframework-robocop | 8.1.1 | Static code analysis tool (linter) and code formatter for Robot Framework | # Robocop

[](https://github.com/MarketSquare/robotframework-robocop/actions/workflows/unit-tests.yml "GitHub Workflow Unit Tests Status")

[](https://pepy.tech/project/robotframework-robocop)

---

<img style="float:right" src="https://raw.githubusercontent.com/MarketSquare/robotframework-robocop/main/docs/source/images/robocop_logo_small.png">

- [Introduction](#introduction)

- [Documentation](#documentation)

- [Requirements](#requirements)

- [Installation](#installation)

- [Usage](#usage)

- [Example Output](#example-output)

- [MCP Server](#mcp-server)

- [Values](#values)

- [FAQ](#faq)

---

## Introduction

Robocop is a tool that performs static code analysis and formatting of [Robot Framework](https://github.com/robotframework/robotframework) code.

It uses official [Robot Framework parsing API](https://robot-framework.readthedocs.io/en/stable/) to parse files and

runs a number of checks, looking for potential errors or violations to code quality standards (commonly referred to as

*linting issues*).

> Hosted on [GitHub](https://github.com/MarketSquare/robotframework-robocop).

## Documentation

Full documentation is available at [https://robocop.dev](https://robocop.dev). :open_book:

The most common questions with answers can be found at the bottom ⬇ of this README file.

## Requirements

Python 3.9+ :snake: and Robot Framework 4.0+ :robot:.

## Installation

You can install the latest version of Robocop simply by running:

```

pip install -U robotframework-robocop

```

## Usage

Robocop runs by default from the current directory, and it discovers supported files recursively.

To lint the files, you can run:

```

robocop check

```

To format the files, you can run:

```commandline

robocop format

```

All command line options can be displayed in a help message by executing:

```

robocop -h

```

## Example Output

Executing command:

```

robocop check --reports rules_by_error_type test.robot

```

Will result in the following output:

```text

test.robot:17:1 SPC22 Not enough whitespace after 'Test Teardown' setting

|

15 |

16 | Test Setup Keyword

17 | Test Teardown Keyword2

| ^ SPC22

18 | Testtimeout 1min

19 | Documentation this is doc

|

test.robot:28:1 SPC14 Variable in Variables section is not left aligned

|

1 | *** Variables ***

2 | ${VAR} 1

3 | ${VAR} 1

| ^ SPC14

4 | ${VAR} 1

5 | VALUE 1

Found 2 issues: 2 ERRORs, 0 WARNINGs, 0 INFO.

```

## MCP Server

Robocop provides an [MCP (Model Context Protocol)](https://modelcontextprotocol.io/) server that allows AI assistants like Claude and GitHub Copilot to lint and format Robot Framework code directly.

Install with MCP support:

```bash

pip install robotframework-robocop[mcp]

```

Run the server:

```bash

robocop-mcp

```

Full documentation: [MCP Server](https://robocop.dev/stable/integrations/ai/)

## Values

Original *RoboCop* - a fictional cybernetic police officer - was the following three prime directives

which also drive the progress of Robocop linter:

First Directive: **Serve the public trust**

Which lies behind the creation of the project - to **serve** developers and testers as a tool to build applications they can **trust**.

Second Directive: **Protect the innocent**

**The innocent** testers and developers have no intention of producing ugly code, but sometimes, you know, it just happens,

so Robocop is there to **protect** them.

Third Directive: **Uphold the law**

Following the coding guidelines established in the project are something crucial to keep the code clean,

readable and understandable by others, and Robocop can help to **uphold the law**.

## FAQ

<details>

<summary>Can I integrate Robocop with my code editor (IDE)?</summary>

**Yes**, Robocop integrates nicely with popular IDEs like PyCharm or VSCode

thanks to [the RobotCode](https://robotcode.io/) plugin.

Read a simple manual (README) in that project to figure out how to install and use it.

</details>

<details>

<summary>Can I load configuration from a file?</summary>

**Yes**, you can use toml-based configuration files:

**`pyproject.toml` file**

**`robocop.toml` file**

**`robot.toml` file**

Example configuration file:

```toml

[tool.robocop]

exclude = ["deprecated.robot"]

[tool.robocop.lint]

select = [

"rulename",

"ruleid"

]

configure = [

"line-too-long.line_length=110"

]

[tool.robocop.format]

select = ["NormalizeNewLines"]

configure = [

"NormalizeNewLines.flatten_lines=True"

]

```

Multiple configuration files are supported. However, global-like options such as ``--verbose`` or ``--reports`` are

only loaded from a top configuration file. Read more in

[configuration](https://robocop.dev/stable/configuration/).

</details>

<details>

<summary>I use different coding standards. Can I configure rules so that they fit my needs?</summary>

**Yes**, some rules and formatters are configurable. You can find the configuration details in the documentation or

by running:

```commandline

robocop docs rule_name_or_id

robocop docs formatter_name

```

Configuring is done by using `-c / --configure` command line option followed by pattern

`<name>.<param_name>=<value>` where:

- `<name>` can either be rule name or its id, or formatter name

- `<param_name>` is a public name of the parameter

- `<value>` is a desired value of the parameter

For example:

```

robocop check --configure line-too-long.line_length=140

```

---

Each rule's severity can also be overwritten. Possible values are

`e/error`, `w/warning` or `i/info` and are case-insensitive. Example:

```

robocop check -c too-long-test-case.severity=e

```

---

If there are special cases in your code that violate the rules,

you can also exclude them in the source code.

Example:

```

Keyword with lowercased name # robocop: off

```

More about it in

[our documentation](https://robocop.dev/stable/configuration/configuration_reference/#selecting-rules).

</details>

<details>

<summary>Can I define custom rules?</summary>

**Yes**, you can define and include custom rules using `--custom-rules` command line option

by providing a path to a file containing your rule(s):

```

robocop --custom-rules my/own/rule.py --custom-rules external_rules.py

```

If you feel that your rule is very helpful and should be included in Robocop permanently,

you can always share your solution by

[submitting a pull request](https://github.com/MarketSquare/robotframework-robocop/pulls).

You can also share your idea by

[creating an issue](https://github.com/MarketSquare/robotframework-robocop/issues/new/choose).

More about custom rules with code examples in

[our documentation](https://robocop.dev/stable/linter/custom_rules/).

</details>

<details>

<summary>Can I use Robocop in continuous integration (CI) tools?</summary>

**Yes**, Robocop is able to produce different kinds of reports that are supported by most popular platforms such as

GitHub, Gitlab, Sonar Qube, etc. Read more in [integrations](https://robocop.dev/stable/integrations/precommit/).

</details>

---

> Excuse me, I have to go. Somewhere there is a crime happening. - Robocop

| text/markdown | null | Bartlomiej Hirsz <bartek.hirsz@gmail.com>, Mateusz Nojek <matnojek@gmail.com> | null | Bartlomiej Hirsz <bartek.hirsz@gmail.com> | null | automation, formatter, formatting, linter, qa, robotframework, testautomation, testing | [

"Development Status :: 5 - Production/Stable",

"Framework :: Robot Framework",

"Framework :: Robot Framework :: Tool",

"Intended Audience :: Developers",

"License :: OSI Approved :: Apache Software License",

"Operating System :: OS Independent",

"Programming Language :: Python",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14",

"Topic :: Software Development :: Quality Assurance",

"Topic :: Software Development :: Testing",

"Topic :: Utilities"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"jinja2>=3.1.4",

"msgpack>=1.0.0",

"pathspec>=0.12",

"platformdirs>=4.3",

"pytz>=2022.7",

"rich>=10.11.0",

"robotframework<7.5,>=5.0",

"tomli-w>=1.0",

"tomli==2.2.1; python_version < \"3.11\"",

"typer>=0.12.5",

"typing-extensions>=4.15.0",

"fastmcp>=2.13.0; extra == \"mcp\""

] | [] | [] | [] | [

"Bug tracker, https://github.com/MarketSquare/robotframework-robocop/issues",

"Source code, https://github.com/MarketSquare/robotframework-robocop",

"Documentation, https://robocop.dev/",

"Homepage, https://robocop.dev/"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-20T13:53:08.293302 | robotframework_robocop-8.1.1.tar.gz | 974,174 | f4/da/abfb9ee808c814cc553a94cf3f25aac0b866683b8bef5ae78544619947f5/robotframework_robocop-8.1.1.tar.gz | source | sdist | null | false | c00c02b0f3907d683fd90fd58382b14c | 47fcca15042d2d9adbc086542a58c1c6cf058c9a0e29bf38edf0ebc60e63e845 | f4daabfb9ee808c814cc553a94cf3f25aac0b866683b8bef5ae78544619947f5 | null | [

"LICENSE"

] | 6,525 |

2.1 | epyt | 2.3.5.0 | EPyT: An EPANET-Python Toolkit for Smart Water Network Simulations. The EPyT is inspired by the EPANET-Matlab Toolkit. | <a href="http://www.kios.ucy.ac.cy"><img src="https://www.kios.ucy.ac.cy/wp-content/uploads/2021/07/Logotype-KIOS.svg" width="200" height="100"/><a>

[](https://github.com/KIOS-Research/EPyT/blob/main/LICENSE.md)

[](https://pypi.org/project/epyt/)

[](https://pepy.tech/project/epyt)

[](https://pepy.tech/project/epyt)

[](https://github.com/OpenWaterAnalytics/EPyT/actions/workflows/build_tests.yml)

[](https://epanet-python-toolkit-epyt.readthedocs.io/en/latest/?badge=latest)

[](https://doi.org/10.21105/joss.05947)

# EPANET Python Toolkit (EPyT)

The `EPANET-Python Toolkit` is an open-source software, originally developed by the [KIOS Research and Innovation Center of Excellence, University of Cyprus](http://www.kios.ucy.ac.cy/) which operates within the Python environment, for providing a programming interface for the latest version of [EPANET](https://github.com/OpenWaterAnalytics/epanet), a hydraulic and quality modeling software created by the US EPA, with Python, a high-level technical computing software. The goal of the EPANET Python Toolkit is to serve as a common programming framework for research and development in the growing field of smart water networks.

The `EPANET-Python Toolkit` features easy to use commands/wrappers for viewing, modifying, simulating and plotting results produced by the EPANET libraries.

For support, please use the OWA community forum: https://github.com/orgs/OpenWaterAnalytics/discussions

## Table of Contents

- [EPANET Python Toolkit (EPyT)](#epanet-python-toolkit-epyt)

- [How to cite](#how-to-cite)

- [Requirements](#requirements)

- [How to install](#how-to-install)

- [How to use the Toolkit](#how-to-use-the-toolkit)

- [How to fix/report bugs](#how-to-fixreport-bugs)

- [Licenses](#licenses)

- [Contributors](#contributors)

- [Contributing](#contributing)

- [Recommendation](#recommendation)

- [List of EPyT Functions](#list-of-epyt-functions)

- [List of EPANET 2.2 Functions](#list-of-epanet-2012-functions-supported)

- [List of EPANET 2.3 Functions Supported](#list-of-epanet-23-functions-supported)

- [List of EPANET MSX Functions API](#list-of-epanet-msx-functions-api)

## How to cite

Kyriakou, M. S., Demetriades, M., Vrachimis, S. G., Eliades, D. G., & Polycarpou, M. M. (2023). EPyT: An EPANET-Python Toolkit for Smart Water Network Simulations. Journal of Open Source Software, 8(92), 5947. https://doi.org/10.21105/joss.05947

```

@article{Kyriakou2023,

author = {Kyriakou, Marios S. and Demetriades, Marios and Vrachimis, Stelios G. and Eliades, Demetrios G. and Polycarpou, Marios M.},

doi = {10.21105/joss.05947},

journal = {Journal of Open Source Software},

month = dec,

number = {92},

pages = {5947},

title = {{EPyT: An EPANET-Python Toolkit for Smart Water Network Simulations}},

url = {https://joss.theoj.org/papers/10.21105/joss.05947},

volume = {8},

year = {2023}

}

```

↑ [Back to top](#table-of-contents)

## Requirements

* Python >=3.9

* Windows, OSX or Linux

* [EPANET 2.2](https://github.com/OpenWaterAnalytics/epanet)

Linux: `sudo cp libepanet2.so /lib/x86_64-linux-gnu/libepanet.so`

↑ [Back to top](#table-of-contents)

## How to install

**Environments -> base (root) -> open terminal -> pip install epyt**

* PyPI: <b>pip install epyt</b>

↑ [Back to top](#table-of-contents)

## How to use the Toolkit

**Minimum Example:**

```python

>>> from epyt import epanet

>>>

>>> d = epanet('Net1.inp')

>>> d.getNodeCount()

>>> d.getNodeElevations()

```

**Minumun Example using custom Library:**

```python

>>> from epyt import epanet

>>>

>>>epanetlib=os.path.join(os.getcwd(), 'epyt','libraries','win','epanet2.dll')

>>>msxlib=os.path.join(os.getcwd(), 'epyt','libraries','win','epanetmsx.dll')

>>>d = epanet(inpname, customlib=epanetlib)

>>>d.loadMSXFile(msxname, customMSXlib=msxlib)

```

**More examples:**

[https://github.com/KIOS-Research/EPYT/tree/main/epyt/examples](https://github.com/KIOS-Research/EPYT/tree/main/epyt/examples#readme)

↑ [Back to top](#table-of-contents)

## How to fix/report bugs

To fix a bug `Fork` the `EPyT`, `Edit` the code and make the appropriate change, and then `Pull` it so that we evaluate it.

Keep in mind that some bugs may exist in the `EPANET` libraries, in case you are not receiving the expected results.

↑ [Back to top](#table-of-contents)

## Licenses

* `EPANET`: Public Domain

* `EPANET-Python Toolkit (EPyT)`: EUPL

↑ [Back to top](#table-of-contents)

## Contributors

* Marios Kyriakou, [KIOS Research and Innovation Center of Excellence, University of Cyprus](http://www.kios.ucy.ac.cy/)

* Marios Demetriades, [KIOS Research and Innovation Center of Excellence, University of Cyprus](http://www.kios.ucy.ac.cy/)

* Stelios Vrachimis, [KIOS Research and Innovation Center of Excellence, University of Cyprus](http://www.kios.ucy.ac.cy/)

* Demetrios Eliades, [KIOS Research and Innovation Center of Excellence, University of Cyprus](http://www.kios.ucy.ac.cy/)

The `EPyT` is based/inspired on the [EPANET-Matlab Toolkit](https://github.com/OpenWaterAnalytics/EPANET-Matlab-Toolkit).

## Contributing

If you want to contribute, please check out our [Code of Conduct](https://github.com/KIOS-Research/EPyT/blob/dev/CODE_OF_CONDUCT.md). Everyone is welcome to contribute whether reporting a new [issue](https://github.com/KIOS-Research/EPyT/issues), suggesting a new feature, or writing code. If you want to contribute code, you can create a new fork in the repo to your own account. Make your commits on your dev branch (based on dev) and when you are finished then you can create a [pull request](https://github.com/KIOS-Research/EPyT/pulls) to test the code and discuss your changes.

## Recommendation

* Install Anaconda<br>

* Run `EPyT` with [Spyder IDE](https://www.spyder-ide.org/)

* Run `EPyT` with [PyCharm](https://www.jetbrains.com/pycharm/)

*Settings on Spyder IDE*

* Tools -> Preferrences

* View -> Window layouts -> Matlab layout

* Enable interactive plot on matlibplot

: Tools -> Preferences -> IPython console -> Graphics -> Graphics backend -> Backend: Automatic

↑ [Back to top](#table-of-contents)

## List of EPyT Functions

| Function |Description|

|-------------------------------------|---------------------------|

| addControls | Adds a new simple control |

| addCurve | Adds a new curve appended to the end of the existing curves |

| addLinkPipe | Adds a new pipe |

| addLinkPipeCV | Adds a new control valve pipe |

| addLinkPump | Adds a new pump |

| addLinkValveFCV | Adds a new FCV valve |

| addLinkValveGPV | Adds a new GPV valve |

| addLinkValvePBV | Adds a new PBV valve |

| addLinkValvePCV | Adds a new PCV valve and returns the index of the new PCV valve |

| addLinkValvePRV | Adds a new PRV valve |

| addLinkValvePSV | Adds a new PSV valve |

| addLinkValveTCV | Adds a new TCV valve |

| addNodeJunction | Adds a new junction |

| addNodeJunctionDemand | Adds a new demand to a junction given the junction index, base demand, demand time pattern and demand category name |

| addNodeReservoir | Adds a new reservoir |

| addNodeTank | Adds a new tank |

| addPattern | Adds a new time pattern to the network |

| addRules | Adds a new rule-based control to a project |

| appRotateNetwork | Rotates the network by theta degrees counter-clockwise |

| appShiftNetwork | Shifts the network |

| clearReport | Clears the contents of a project's report file |

| closeHydraulicAnalysis | Closes the hydraulic analysis system, freeing all allocated memory |

| closeNetwork | Closes down the Toolkit system |

| closeQualityAnalysis | Closes the water quality analysis system, freeing all allocated memory |

| copyReport | Copies the current contents of a project's report file to another file |

| createProject | Creates a new epanet projec |

| deleteAllTemps | Delete all temporary files (.inp, .bin) created in networks folder |

| deleteControls | Deletes an existing simple control |

| deleteCurve | Deletes a data curve from a project |

| deleteLink | Deletes a link |

| deleteNode | Deletes nodes |

| deleteNodeJunctionDemand | Deletes a demand from a junction given the junction index and demand index.

| deletePattern | Deletes a time pattern from a project |

| deletePatternsAll | Deletes all time patterns from a project |

| deleteProject | Deletes the epanet project |

| deleteRules | Deletes an existing rule-based control given it's index |

| getAdjacencyMatrix | Compute the adjacency matrix (connectivity graph) considering the flows, using mean flow |

| getAllAttributes | Get all attributes of a given Python object |

| getCMDCODE | Retrieves the CMC code |

| getComputedHydraulicTimeSeries | Computes hydraulic simulation and retrieves all time-series |

| getComputedQualityTimeSeries | Computes Quality simulation and retrieves all or some time-series |

| getComputedTimeSeries | Run analysis with binary fil |

| getConnectivityMatrix | Retrieve the Connectivity Matrix of the networ |

| getConsumerDemandDelivered | Retrieves the delivered consumer demand for a specific node |

| getConsumerDemandRequested | Retrieves the requested consumer demand for a specific node |

| getControlCount | Retrieves the number of controls => will replace getControlRulesCount |

| getControlRulesCount | Retrieves the number of controls |

| getControlState | Retrieves the enabled state of a specified control in the EPANET model |

| getControls | Retrieves the parameters of all control statements |

| getCounts | Retrieves the number of network components |

| getCurveComment | Retrieves the comment string of a curve |

| getCurveCount | Retrieves the number of curves |

| getCurveIndex | Retrieves the index of a curve with specific ID |

| getCurveLengths | Retrieves number of points in a curve |

| getCurveNameID | Retrieves the IDs of curves |

| getCurveType | Retrieves the curve-type for all curves |

| getCurveTypeIndex | Retrieves the curve-type index for all curves |

| getCurveValue | Retrieves the X, Y values of points of curves |

| getCurvesInfo | Retrieves all the info of curves |

| getDemandModel | Retrieves the type of demand model in use and its parameters |

| getENfunctionsImpemented | Retrieves the epanet functions that have been developed |

| getError | Retrieves the text of the message associated with a particular error or warning code |

| getFlowUnits | Retrieves flow units used to express all flow rates |

| getLibFunctions | Retrieves the functions of DLL |

| getLinkActualQuality | Retrieves the current computed link quality (read only) |

| getLinkBulkReactionCoeff | Retrieves the value of all link bulk chemical reaction coefficient |

| getLinkComment | Retrieves the comment string assigned to the link object |

| getLinkCount | Retrieves the number of links |

| getLinkDiameter | Retrieves the value of link diameters |

| getLinkEnergy | Retrieves the current computed pump energy usage (read only) |

| getLinkExpansionProperties | Retrieves the expansion properties for a specified link (pipe) |

| getLinkFlows | Retrieves the current computed flow rate (read only) |

| getLinkHeadloss | Retrieves the current computed head loss (read only) |

| getLinkInControl | Function to determine wether a link apperas in any simple or rule based control |

| getLinkIndex | Retrieves the indices of all links, or the indices of an ID set of links |

| getLinkInitialSetting | Retrieves the value of all link roughness for pipes or initial speed for pumps or initial setting for valves |

| getLinkInitialStatus | Retrieves the value of all link initial status |

| getLinkLeakArea | Function to retrieve the leak area for a specified link (pipe) |

| getLinkLeakageRate | Retrieves the leakage rate of a specific pipe (link) at a given point in time |

| getLinkLength | Retrieves the value of link lengths |

| getLinkMinorLossCoeff | Retrieves the value of link minor loss coefficients |

| getLinkNameID | Retrieves the ID label(s) of all links, or the IDs of an index set of links |

| getLinkNodesIndex | Retrieves the indexes of the from/to nodes of all links |

| getLinkPipeCount | Retrieves the number of pipes |

| getLinkPipeIndex | Retrieves the pipe indices |

| getLinkPipeNameID | Retrieves the pipe ID |

| getLinkPumpCount | Retrieves the number of pumps |

| getLinkPumpECost | Retrieves the pump average energy price |

| getLinkPumpECurve | Retrieves the pump efficiency v |

| getLinkPumpEPat | Retrieves the pump energy price time pattern index |

| getLinkPumpEfficiency | Retrieves the current computed pump efficiency (read only) |

| getLinkPumpHCurve | Retrieves the pump head v |

| getLinkPumpHeadCurveIndex | Retrieves the index of a head curve for all pumps |

| getLinkPumpIndex | Retrieves the pump indices |

| getLinkPumpNameID | Retrieves the pump ID |

| getLinkPumpPatternIndex | Retrieves the pump speed time pattern index |

| getLinkPumpPatternNameID | Retrieves pump pattern name ID |

| getLinkPumpPower | Retrieves the pump constant power rating (read only) |

| getLinkPumpState | Retrieves the current computed pump state (read only) (see @ref EN_PumpStateType) |

| getLinkPumpSwitches | Retrieves the number of pump switches |

| getLinkPumpType | Retrieves the type of a pump |

| getLinkPumpTypeCode | Retrieves the code of type of a pump |

| getLinkQuality | Retrieves the value of link quality |

| getLinkResultIndex | Retrieves the order in which a link's results were saved to an output file |

| getLinkRoughnessCoeff | Retrieves the value of link roughness coefficient |

| getLinkSettings | Retrieves the current computed value of all link roughness for pipes or actual speed for pumps or actual setting for valves |

| getLinkStatus | Retrieves the current link status (see @ref EN_LinkStatusType) (0 = closed, 1 = open) |

| getLinkType | Retrieves the link-type code for all links |

| getLinkTypeIndex | Retrieves the link-type code for all links |

| getLinkValues | Retrieves property values for all links within the EPANET model during a hydraulic analysis |

| getLinkValveCount | Retrieves the number of valves |

| getLinkValveCurveGPV | Retrieves the valve curve for a specified general purpose valve (GPV) |

| getLinkValveCurvePCV | Retrieves the valve curve for a specified pressure control valve (PCV) |

| getLinkValveIndex | Retrieves the valve indices |

| getLinkValveNameID | Retrieves the valve ID |

| getLinkVelocity | Retrieves the current computed flow velocity (read only) |

| getLinkVertices | Retrieves the coordinate's of a vertex point assigned to a link |

| getLinkVerticesCount | Retrieves the number of internal vertex points assigned to a link |

| getLinkWallReactionCoeff | Retrieves the value of all pipe wall chemical reaction coefficient |

| getLinksInfo | Retrieves all link info |

| getNetworksDatabase | Retrieves all EPANET Input Files from EPyT database |

| getNodeActualDemand | Retrieves the computed value of all node actual demands |

| getNodeActualDemandSensingNodes | Retrieves the computed demand values at some sensing nodes |

| getNodeActualQuality | Retrieves the computed values of the actual quality for all nodes |

| getNodeActualQualitySensingNodes | Retrieves the computed quality values at some sensing node |

| getNodeBaseDemands | Retrieves the value of all node base demands |

| getNodeComment | Retrieves the comment string assigned to the node object |

| getNodeCount | Retrieves the number of nodes |

| getNodeDemandCategoriesNumber | Retrieves the value of all node base demands categorie number |

| getNodeDemandDeficit | Retrieves the amount that full demand is reduced under PDA |

| getNodeDemandPatternIndex | Retrieves the value of all node base demands pattern index |

| getNodeDemandPatternNameID | Retrieves the value of all node base demands pattern name ID |

| getNodeElevations | Retrieves the value of all node elevations |

| getNodeEmitterCoeff | Retrieves the value of all node emmitter coefficients |

| getNodeEmitterFlow | Retrieves node emmiter flow |

| getNodeHydraulicHead | Retrieves the computed values of all node hydraulic heads |

| getNodeInControl | Function to determine wether a node apperas in any simple or rule based control |

| getNodeIndex | Retrieves the indices of all nodes or some nodes with a specified ID |

| getNodeInitialQuality | Retrieves the value of all node initial quality |

| getNodeJunctionCount | Retrieves the number of junction nodes |

| getNodeJunctionDemandIndex | Retrieves the demand index of the junctions |

| getNodeJunctionDemandName | Gets the name of a node's demand category |

| getNodeJunctionIndex | Retrieves the indices of junctions |

| getNodeJunctionNameID | Retrieves the junction ID label |

| getNodeLeakageFlow | Retrieves the leakage flow for a specific node |

| getNodeMassFlowRate | Retrieves the computed mass flow rates per minute of chemical sources for all nodes |

| getNodeNameID | Retrieves the ID label of all nodes or some nodes with a specified index |

| getNodePatternIndex | Retrieves the value of all node demand pattern indices |

| getNodePressure | Retrieves the computed values of all node pressures |