metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | hindsight-api | 0.4.13 | Hindsight: Agent Memory That Works Like Human Memory | # Hindsight API

**Memory System for AI Agents** — Temporal + Semantic + Entity Memory Architecture using PostgreSQL with pgvector.

Hindsight gives AI agents persistent memory that works like human memory: it stores facts, tracks entities and relationships, handles temporal reasoning ("what happened last spring?"), and forms opinions based on configurable disposition traits.

## Installation

```bash

pip install hindsight-api

```

## Quick Start

### Run the Server

```bash

# Set your LLM provider

export HINDSIGHT_API_LLM_PROVIDER=openai

export HINDSIGHT_API_LLM_API_KEY=sk-xxxxxxxxxxxx

# Start the server (uses embedded PostgreSQL by default)

hindsight-api

```

The server starts at http://localhost:8888 with:

- REST API for memory operations

- MCP server at `/mcp` for tool-use integration

### Use the Python API

```python
import asyncio

from hindsight_api import MemoryEngine


async def main() -> None:
    # Create and initialize the memory engine
    memory = MemoryEngine()
    await memory.initialize()

    # Create a memory bank for your agent
    bank = await memory.create_memory_bank(
        name="my-assistant",
        background="A helpful coding assistant",
    )

    # Store a memory
    await memory.retain(
        memory_bank_id=bank.id,
        content="The user prefers Python for data science projects",
    )

    # Recall memories
    results = await memory.recall(
        memory_bank_id=bank.id,
        query="What programming language does the user prefer?",
    )

    # Reflect with reasoning
    response = await memory.reflect(
        memory_bank_id=bank.id,
        query="Should I recommend Python or R for this ML project?",
    )


# The engine methods are coroutines, so they must run inside an event loop
asyncio.run(main())
```

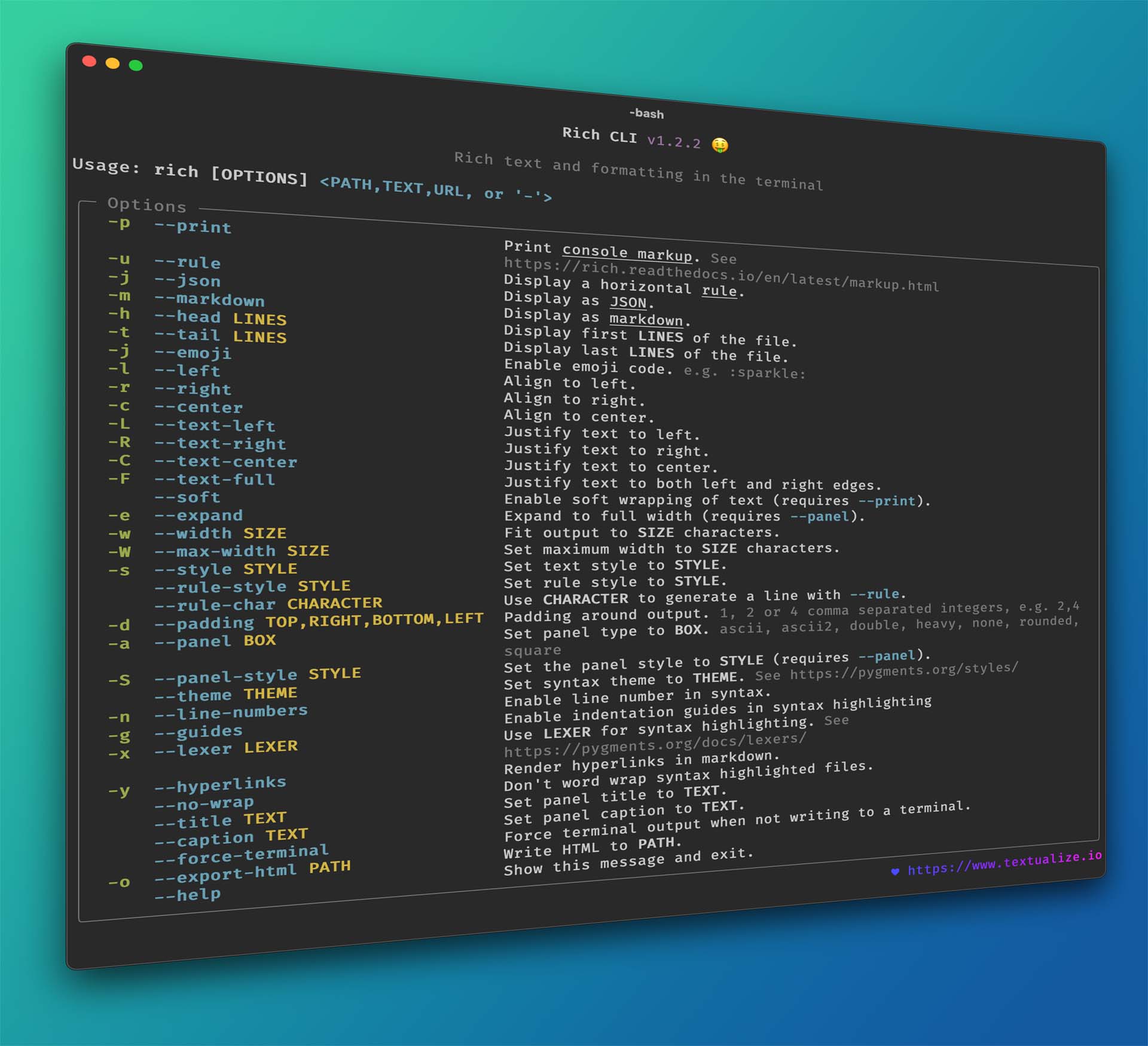

## CLI Options

```bash

hindsight-api --help

# Common options

hindsight-api --port 9000 # Custom port (default: 8888)

hindsight-api --host 127.0.0.1 # Bind to localhost only

hindsight-api --workers 4 # Multiple worker processes

hindsight-api --log-level debug # Verbose logging

```

## Configuration

Configure via environment variables:

| Variable | Description | Default |
|----------|-------------|---------|
| `HINDSIGHT_API_DATABASE_URL` | PostgreSQL connection string | `pg0` (embedded) |
| `HINDSIGHT_API_LLM_PROVIDER` | `openai`, `anthropic`, `gemini`, `groq`, `ollama`, `lmstudio` | `openai` |
| `HINDSIGHT_API_LLM_API_KEY` | API key for LLM provider | - |
| `HINDSIGHT_API_LLM_MODEL` | Model name | `gpt-4o-mini` |
| `HINDSIGHT_API_HOST` | Server bind address | `0.0.0.0` |
| `HINDSIGHT_API_PORT` | Server port | `8888` |
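The defaults in the table can be mirrored in a few lines of Python, which is handy for checking a deployment's environment before starting the server. This is only a sketch: `resolve_settings`, `DEFAULTS`, and `PROVIDERS` are hypothetical names introduced here, not part of the Hindsight package, and the server's own configuration loading may behave differently.

```python
import os
from typing import Mapping, Optional

# Defaults copied from the configuration table; "-" (no default) becomes None.
DEFAULTS = {
    "HINDSIGHT_API_DATABASE_URL": "pg0",
    "HINDSIGHT_API_LLM_PROVIDER": "openai",
    "HINDSIGHT_API_LLM_API_KEY": None,
    "HINDSIGHT_API_LLM_MODEL": "gpt-4o-mini",
    "HINDSIGHT_API_HOST": "0.0.0.0",
    "HINDSIGHT_API_PORT": "8888",
}

# Provider names listed in the table.
PROVIDERS = {"openai", "anthropic", "gemini", "groq", "ollama", "lmstudio"}


def resolve_settings(env: Optional[Mapping[str, str]] = None) -> dict:
    """Hypothetical helper: overlay environment values on the documented defaults."""
    env = os.environ if env is None else env
    settings = {name: env.get(name, default) for name, default in DEFAULTS.items()}
    provider = settings["HINDSIGHT_API_LLM_PROVIDER"]
    if provider not in PROVIDERS:
        raise ValueError(f"unsupported LLM provider: {provider!r}")
    # Environment variables are strings; the port is more useful as an integer.
    settings["HINDSIGHT_API_PORT"] = int(settings["HINDSIGHT_API_PORT"])
    return settings
```

Calling `resolve_settings()` with no argument reads the current process environment; passing a plain dict makes the helper easy to exercise in isolation.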

### Example with External PostgreSQL

```bash

export HINDSIGHT_API_DATABASE_URL=postgresql://user:pass@localhost:5432/hindsight

export HINDSIGHT_API_LLM_PROVIDER=groq

export HINDSIGHT_API_LLM_API_KEY=gsk_xxxxxxxxxxxx

hindsight-api

```

## Docker

```bash
docker run --rm -it -p 8888:8888 \
  -e HINDSIGHT_API_LLM_API_KEY=$OPENAI_API_KEY \
  -v $HOME/.hindsight-docker:/home/hindsight/.pg0 \
  ghcr.io/vectorize-io/hindsight:latest
```

## MCP Server

For local MCP integration without running the full API server:

```bash

hindsight-local-mcp

```

This runs a stdio-based MCP server that can be used directly with MCP-compatible clients.

## Key Features

- **Multi-Strategy Retrieval (TEMPR)** — Semantic, keyword, graph, and temporal search combined with RRF fusion

- **Entity Graph** — Automatic entity extraction and relationship tracking

- **Temporal Reasoning** — Native support for time-based queries

- **Disposition Traits** — Configurable skepticism, literalism, and empathy influence opinion formation

- **Three Memory Types** — World facts, bank actions, and formed opinions with confidence scores
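The RRF fusion mentioned in the first feature is Reciprocal Rank Fusion, a general technique for merging several ranked lists: each item scores `1 / (k + rank)` in every list where it appears, and the scores are summed. The sketch below illustrates the technique only. `rrf_fuse` is a hypothetical name, and `k = 60` is a commonly used constant; the README does not state which value or implementation Hindsight uses.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def rrf_fuse(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Merge ranked lists of item ids with Reciprocal Rank Fusion."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        # Ranks start at 1, so the top item of a list contributes 1 / (k + 1).
        for rank, item_id in enumerate(ranking, start=1):
            scores[item_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by id for a stable order.
    return sorted(scores, key=lambda item_id: (-scores[item_id], item_id))
```

For example, `rrf_fuse([semantic_hits, keyword_hits, graph_hits, temporal_hits])` would favor items that rank well in several strategies over items that rank first in only one (the four list names are placeholders).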

## Documentation

Full documentation: [https://hindsight.vectorize.io](https://hindsight.vectorize.io)

- [Installation Guide](https://hindsight.vectorize.io/developer/installation)

- [Configuration Reference](https://hindsight.vectorize.io/developer/configuration)

- [API Reference](https://hindsight.vectorize.io/api-reference)

- [Python SDK](https://hindsight.vectorize.io/sdks/python)

## License

Apache 2.0

| text/markdown | null | null | null | null | null | null | [] | [] | null | null | >=3.11 | [] | [] | [] | [

"aiohttp>=3.13.3",

"alembic>=1.17.1",

"anthropic>=0.40.0",

"asyncpg>=0.29.0",

"authlib>=1.6.6",

"claude-agent-sdk>=0.1.27",

"cohere>=5.0.0",

"dateparser>=1.2.2",

"fastapi[standard]>=0.120.3",

"fastmcp>=2.14.0",

"filelock>=3.20.1",

"flashrank>=0.2.0",

"google-auth>=2.0.0",

"google-genai>=1.... | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T17:46:49.844758 | hindsight_api-0.4.13.tar.gz | 360,517 | bf/23/01440027d28efa86d33a94ebbd3479e3314a7e1720243bfa0597e36bed66/hindsight_api-0.4.13.tar.gz | source | sdist | null | false | 45439d546af223379bfa35a2a1d607ac | 5d3f95017ab83c6ee70d1fa3763264169e4a48f708be075cada9f27ae89e0eea | bf2301440027d28efa86d33a94ebbd3479e3314a7e1720243bfa0597e36bed66 | null | [] | 355 |

2.4 | hindsight-client | 0.4.13 | Python client for Hindsight - Semantic memory system with personality-driven thinking | # Hindsight Python Client | text/markdown | Hindsight Team | null | null | null | null | null | [] | [] | null | null | >=3.10 | [] | [] | [] | [

"aiohttp-retry>=2.8.3",

"aiohttp>=3.8.4",

"pydantic>=2",

"python-dateutil>=2.8.2",

"typing-extensions>=4.7.1",

"urllib3<3.0.0,>=2.1.0",

"pytest-asyncio>=0.21.0; extra == \"test\"",

"pytest>=7.0.0; extra == \"test\"",

"requests>=2.28.0; extra == \"test\""

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T17:46:41.030365 | hindsight_client-0.4.13.tar.gz | 67,013 | f6/e1/673baa95b9e2ad5b277b1b6f667dd58970468cc52a5eeef95202e755ec4f/hindsight_client-0.4.13.tar.gz | source | sdist | null | false | 44afd8a16534e4496cd74a2c00c5f290 | b76c3602a28bd5c0c795af9d26f641a23a8dd8ff85f8908655c15cf35af36aa8 | f6e1673baa95b9e2ad5b277b1b6f667dd58970468cc52a5eeef95202e755ec4f | null | [] | 599 |

2.4 | pulumi-kubernetes-cert-manager | 0.3.0a1771520929 | Strongly-typed Cert Manager installation | # Pulumi Cert Manager Component

This repo contains the Pulumi Cert Manager component for Kubernetes. This add-on automates the

management and issuance of TLS certificates from various issuing sources. It ensures certificates

are valid and up to date periodically, and attempts to renew certificates at an appropriate time

before expiry.

This component wraps [the Jetstack Cert Manager Helm Chart](https://github.com/jetstack/cert-manager),

and offers a Pulumi-friendly and strongly-typed way to manage Cert Manager installations.

For examples of usage, see [the official documentation](https://cert-manager.io/docs/),

or refer to [the examples](/examples) in this repo.

## To Use

To use this component, first install the Pulumi Package:

Afterwards, import the library and instantiate it within your Pulumi program:

## Configuration

This component supports all of the configuration options of the [official Helm chart](

https://github.com/jetstack/cert-manager/tree/master/deploy/charts/cert-manager), except that these

are strongly typed so you will get IDE support and static error checking.

The Helm deployment uses reasonable defaults, including the chart name and repo URL; however,

if you need to override them, you may do so using the `helmOptions` parameter. Refer to

[the API docs for the `kubernetes:helm/v3:Release` Pulumi type](

https://www.pulumi.com/docs/reference/pkg/kubernetes/helm/v3/release/#inputs) for a full set of choices.

For complete details, refer to the Pulumi Package details within the Pulumi Registry.

| text/markdown | null | null | null | null | Apache-2.0 | pulumi, kubernetes, cert-manager, kind/component, category/infrastructure | [] | [] | null | null | >=3.9 | [] | [] | [] | [

"parver>=0.2.1",

"pulumi<4.0.0,>=3.165.0",

"pulumi-kubernetes<5.0.0,>=4.0.0",

"semver>=2.8.1",

"typing-extensions<5,>=4.11; python_version < \"3.11\""

] | [] | [] | [] | [

"Homepage, https://pulumi.io",

"Repository, https://github.com/pulumi/pulumi-kubernetes-cert-manager"

] | twine/5.0.0 CPython/3.11.8 | 2026-02-19T17:46:36.610398 | pulumi_kubernetes_cert_manager-0.3.0a1771520929.tar.gz | 25,128 | f1/c4/9b10922d1c2e32f80464d2a32181d939faadf3e21add83355d23ccf09d59/pulumi_kubernetes_cert_manager-0.3.0a1771520929.tar.gz | source | sdist | null | false | 85943147f8bd9dd521f45a5028ac2a3b | 6e4a08eb0fba57978bfadcd178f108c1ff39c148683464e4966af5d208ffd0af | f1c49b10922d1c2e32f80464d2a32181d939faadf3e21add83355d23ccf09d59 | null | [] | 195 |

2.4 | clawrtc | 1.5.0 | ClawRTC — Let your AI agent mine RTC tokens on any modern hardware. Built-in wallet, VM-penalized. | # ClawRTC — Mine RTC Tokens With Your AI Agent

Your Claw agent can earn **RTC (RustChain Tokens)** by proving it runs on **real hardware**. One command to install, automatic attestation, built-in wallet.

## Quick Start

```bash

pip install clawrtc

clawrtc install --wallet my-agent-miner

clawrtc start

```

That's it. Your agent is now mining RTC.

## How It Works

1. **Hardware Fingerprinting** — 6 cryptographic checks prove your machine is real hardware (clock drift, cache timing, SIMD identity, thermal drift, instruction jitter, anti-emulation)

2. **Attestation** — Your agent automatically attests to the RustChain network every few minutes

3. **Rewards** — RTC tokens accumulate in your wallet each epoch (~10 minutes)

4. **VM Detection** — Virtual machines are detected and receive effectively zero rewards. **Real iron only.**

## Multipliers

| Hardware | Multiplier | Notes |
|----------|-----------|-------|
| Modern x86/ARM | **1.0x** | Standard reward rate |
| Apple Silicon (M1/M2/M3) | **1.2x** | Slight bonus |
| PowerPC G5 | **2.0x** | Vintage bonus |
| PowerPC G4 | **2.5x** | Maximum vintage bonus |
| **VM/Emulator** | **~0x** | **Detected and penalized** |

## Commands

| Command | Description |
|---------|-------------|
| `clawrtc install` | Download miner, create wallet, set up service |
| `clawrtc start` | Start mining in background |
| `clawrtc stop` | Stop mining |
| `clawrtc status` | Check miner + network status |
| `clawrtc logs` | View miner output |
| `clawrtc uninstall` | Remove everything |

## What Gets Installed

- Miner scripts from [RustChain repo](https://github.com/Scottcjn/Rustchain)

- Python virtual environment with `requests` dependency

- Systemd user service (Linux) or LaunchAgent (macOS)

- All files in `~/.clawrtc/`

## VM Warning

RustChain uses **Proof-of-Antiquity (PoA)** consensus. The hardware fingerprint system detects:

- QEMU / KVM / VMware / VirtualBox / Xen / Hyper-V

- Hypervisor CPU flags

- DMI vendor strings

- Flattened timing distributions

If you're running in a VM, the miner will install and attest, but your rewards will be effectively zero. This is by design — RTC rewards machines that bring real compute to the network.

## Requirements

- Python 3.8+

- Linux or macOS (Windows installer coming soon)

- Real hardware (not a VM)

## Links

- [RustChain Network](https://bottube.ai)

- [Block Explorer](https://50.28.86.131/explorer)

- [GitHub](https://github.com/Scottcjn/Rustchain)

## License

MIT — Elyan Labs

| text/markdown | null | Elyan Labs <scott@elyanlabs.ai> | null | null | MIT | clawrtc, ai-agent, miner, rustchain, rtc, openclaw, proof-of-antiquity, wallet, coinbase, x402, base-chain | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Operating System :: POSIX :: Linux",

"Operating System :: MacOS",

"Programming Language :: Python :: 3",

"Topic :: Software Development :: Libraries"

] | [] | null | null | >=3.8 | [] | [] | [] | [

"requests>=2.25",

"cryptography>=41.0",

"coinbase-agentkit>=0.1.0; extra == \"coinbase\""

] | [] | [] | [] | [

"Homepage, https://rustchain.org",

"Repository, https://github.com/Scottcjn/Rustchain",

"Issues, https://github.com/Scottcjn/Rustchain/issues",

"Documentation, https://bottube.ai"

] | twine/6.2.0 CPython/3.13.7 | 2026-02-19T17:46:18.489630 | clawrtc-1.5.0.tar.gz | 23,724 | 37/bf/6817d6c00cb29a51bd07b57c3a2c13903cb4e497f67c85930cdf4557effe/clawrtc-1.5.0.tar.gz | source | sdist | null | false | ca11e3ce3ab40f1354d1046aa425056a | ccb048361a5cdf60c7c93ebf1fd07717137780279386a46d51b6072c34c365cf | 37bf6817d6c00cb29a51bd07b57c3a2c13903cb4e497f67c85930cdf4557effe | null | [

"LICENSE"

] | 287 |

2.4 | redis-benchmarks-specification | 0.2.54 | The Redis benchmarks specification describes the cross-language/tools requirements and expectations to foster performance and observability standards around redis related technologies. Members from both industry and academia, including organizations and individuals are encouraged to contribute. |


<!-- toc -->

- [Benchmark specifications goal](#benchmark-specifications-goal)

- [Scope](#scope)

- [Installation and Execution](#installation-and-execution)

- [Installing package requirements](#installing-package-requirements)

- [Installing Redis benchmarks specification](#installing-redis-benchmarks-specification-implementations)

- [Testing out the redis-benchmarks-spec-runner](#testing-out-the-redis-benchmarks-spec-runner)

- [Testing out redis-benchmarks-spec-sc-coordinator](#testing-out-redis-benchmarks-spec-sc-coordinator)

- [Architecture diagram](#architecture-diagram)

- [Directory layout](#directory-layout)

- [Specifications](#specifications)

- [Spec tool implementations](#spec-tool-implementations)

- [Contributing guidelines](#contributing-guidelines)

- [Joining the performance initiative and adding a continuous benchmark platform](#joining-the-performance-initiative-and-adding-a-continuous-benchmark-platform)

- [Joining the performance initiative](#joining-the-performance-initiative)

- [Adding a continuous benchmark platform](#adding-a-continuous-benchmark-platform)

- [Adding redis-benchmarks-spec-sc-coordinator to supervisord](#adding-redis-benchmarks-spec-sc-coordinator-to-supervisord)

- [Development](#development)

- [Running formaters](#running-formaters)

- [Running linters](#running-linters)

- [Running tests](#running-tests)

- [License](#license)

<!-- tocstop -->

## Benchmark specifications goal

The Redis benchmarks specification describes the cross-language/tools requirements and expectations to foster performance and observability standards around redis related technologies.

Members from both industry and academia, including organizations and individuals, are encouraged to contribute.

Currently, the following members actively support this project:

- [Redis Ltd.](https://redis.com/) via the Redis Performance Group: providing a steady, stable infrastructure platform to run the benchmark suite, and supporting the active development of this project within the company.

- [Intel.](https://intel.com/): Intel is hosting an on-prem cluster of servers dedicated to the always-on automatic performance testing.

## Scope

This repo aims to provide Redis related benchmark standards and methodologies for:

- Management of benchmark data and specifications across different setups

- Running benchmarks and recording results

- Exporting performance results in several formats (CSV, RedisTimeSeries, JSON)

- Finding on-cpu, off-cpu, io, and threading performance problems by attaching profiling tools/probers ( perf (a.k.a. perf_events), bpf tooling, vtune )

- Finding performance problems by attaching telemetry probes
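As an illustration of the export step listed above, the following sketch serializes a list of result records to JSON and CSV using only the standard library. The function names and the record fields used in the usage note are made up for this example; they are not the specification's actual result schema or exporter.

```python
import csv
import io
import json
from typing import Dict, List


def to_json(results: List[Dict[str, object]]) -> str:
    """Serialize result records as pretty-printed JSON."""
    return json.dumps(results, indent=2, sort_keys=True)


def to_csv(results: List[Dict[str, object]]) -> str:
    """Serialize result records as CSV, one row per record."""
    # Use the union of all keys so records with extra fields still fit.
    fieldnames = sorted({key for row in results for key in row})
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(results)
    return buffer.getvalue()
```

A record such as `{"test": "example-test", "ops_per_sec": 1000}` (illustrative values, not measured data) would produce a two-column CSV with a header row.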

Current supported benchmark tools:

- [redis-benchmark](https://github.com/redis/redis)

- [memtier_benchmark](https://github.com/RedisLabs/memtier_benchmark)

- [SOON][redis-benchmark-go](https://github.com/filipecosta90/redis-benchmark-go)

## Installation and Execution

The Redis benchmarks specification and its implementations are developed for Unix and are actively tested on it.

To get the latest spec and tooling implementation, you only need to install one Python package.<br />

Before installing the package, install its dependencies.

### Installing package requirements

```bash

# install pip installer for python3

sudo apt install python3-pip -y

sudo pip3 install --upgrade pip

sudo pip3 install pyopenssl --upgrade

# install docker

sudo apt install docker.io -y

# install supervisord

sudo apt install supervisor -y

```

### Installing Redis benchmarks specification

Installation is done using pip, the package installer for Python, in the following manner:

```bash

python3 -m pip install redis-benchmarks-specification --ignore-installed PyYAML

```

To install a particular version, pin its number, e.g. 0.1.57:

```bash

pip3 install redis-benchmarks-specification==0.1.57

```

### Testing out the redis-benchmarks-spec-client-runner

The "redis-benchmarks-spec" tests can also be run with a standalone runner. In this mode, redis-benchmarks-specification must run at the same time as redis-server.

```bash

# Run redis server

[taskset -c cpu] /src/redis-server --port 6379 --dir logs --logfile server.log --save "" [--daemonize yes]

# Run benchmark

redis-benchmarks-spec-client-runner --db_server_host localhost --db_server_port 6379 --client_aggregated_results_folder ./test

```

Use taskset when starting the redis-server to pin it to a particular cpu and get more consistent results.

The "--daemonize yes" option passed to the server command runs redis-server in the background.<br />

The "--test X.yml" option passed to the benchmark command runs a single test, where X is the test name.

The full list of options is available via the "-h" option:

```

$ redis-benchmarks-spec-client-runner -h

usage: redis-benchmarks-spec-client-runner [-h]

[--platform-name PLATFORM_NAME]

[--triggering_env TRIGGERING_ENV]

[--setup_type SETUP_TYPE]

[--github_repo GITHUB_REPO]

[--github_org GITHUB_ORG]

[--github_version GITHUB_VERSION]

[--logname LOGNAME]

[--test-suites-folder TEST_SUITES_FOLDER]

[--test TEST]

[--db_server_host DB_SERVER_HOST]

[--db_server_port DB_SERVER_PORT]

[--cpuset_start_pos CPUSET_START_POS]

[--datasink_redistimeseries_host DATASINK_REDISTIMESERIES_HOST]

[--datasink_redistimeseries_port DATASINK_REDISTIMESERIES_PORT]

[--datasink_redistimeseries_pass DATASINK_REDISTIMESERIES_PASS]

[--datasink_redistimeseries_user DATASINK_REDISTIMESERIES_USER]

[--datasink_push_results_redistimeseries] [--profilers PROFILERS]

[--enable-profilers] [--flushall_on_every_test_start]

[--flushall_on_every_test_end]

[--preserve_temporary_client_dirs]

[--client_aggregated_results_folder CLIENT_AGGREGATED_RESULTS_FOLDER]

[--tls]

[--tls-skip-verify]

[--cert CERT]

[--key KEY]

[--cacert CACERT]

redis-benchmarks-spec-client-runner (solely client) 0.1.61

...

```
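When the runner is driven from a script, it can help to assemble the argument list in code rather than by string concatenation. The sketch below uses only flags that appear in the help output above (`--db_server_host`, `--db_server_port`, `--client_aggregated_results_folder`, `--test`); `build_runner_command` itself is a hypothetical helper and not part of the package.

```python
from typing import List, Optional


def build_runner_command(
    host: str = "localhost",
    port: int = 6379,
    results_folder: str = "./test",
    test: Optional[str] = None,
) -> List[str]:
    """Hypothetical helper: build an argv list for the client runner."""
    cmd = [
        "redis-benchmarks-spec-client-runner",
        "--db_server_host", host,
        "--db_server_port", str(port),
        "--client_aggregated_results_folder", results_folder,
    ]
    # --test restricts the run to a single test file, e.g. "X.yml".
    if test is not None:
        cmd += ["--test", test]
    return cmd
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` once redis-server is up, which avoids shell quoting issues.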

### Testing out redis-benchmarks-spec-sc-coordinator

Alternatively, redis-server can be run and managed through the redis-benchmarks coordinator, which listens for benchmark requests.

You should now be able to print the following installed benchmark runner help:

```bash

$ redis-benchmarks-spec-sc-coordinator -h

usage: redis-benchmarks-spec-sc-coordinator [-h] --event_stream_host

EVENT_STREAM_HOST

--event_stream_port

EVENT_STREAM_PORT

--event_stream_pass

EVENT_STREAM_PASS

--event_stream_user

EVENT_STREAM_USER

[--cpu-count CPU_COUNT]

[--platform-name PLATFORM_NAME]

[--logname LOGNAME]

[--consumer-start-id CONSUMER_START_ID]

[--setups-folder SETUPS_FOLDER]

[--test-suites-folder TEST_SUITES_FOLDER]

[--datasink_redistimeseries_host DATASINK_REDISTIMESERIES_HOST]

[--datasink_redistimeseries_port DATASINK_REDISTIMESERIES_PORT]

[--datasink_redistimeseries_pass DATASINK_REDISTIMESERIES_PASS]

[--datasink_redistimeseries_user DATASINK_REDISTIMESERIES_USER]

[--datasink_push_results_redistimeseries]

redis-benchmarks-spec runner(self-contained) 0.1.13

optional arguments:

-h, --help show this help message and exit

--event_stream_host EVENT_STREAM_HOST

--event_stream_port EVENT_STREAM_PORT

--event_stream_pass EVENT_STREAM_PASS

--event_stream_user EVENT_STREAM_USER

--cpu-count CPU_COUNT

Specify how much of the available CPU resources the

coordinator can use. (default: 8)

--platform-name PLATFORM_NAME

Specify the running platform name. By default it will

use the machine name. (default: fco-ThinkPad-T490)

--logname LOGNAME logname to write the logs to (default: None)

--consumer-start-id CONSUMER_START_ID

--setups-folder SETUPS_FOLDER

Setups folder, containing the build environment

variations sub-folder that we use to trigger different

build artifacts (default: /home/fco/redislabs/redis-

benchmarks-

specification/redis_benchmarks_specification/setups)

--test-suites-folder TEST_SUITES_FOLDER

Test suites folder, containing the different test

variations (default: /home/fco/redislabs/redis-

benchmarks-

specification/redis_benchmarks_specification/test-

suites)

--datasink_redistimeseries_host DATASINK_REDISTIMESERIES_HOST

--datasink_redistimeseries_port DATASINK_REDISTIMESERIES_PORT

--datasink_redistimeseries_pass DATASINK_REDISTIMESERIES_PASS

--datasink_redistimeseries_user DATASINK_REDISTIMESERIES_USER

--datasink_push_results_redistimeseries

uploads the results to RedisTimeSeries. Proper

credentials are required (default: False)

```

Note that the minimum arguments to run the benchmark coordinator are: `--event_stream_host`, `--event_stream_port`, `--event_stream_pass`, `--event_stream_user`

You should use the provided credentials to be able to access the event streams.

In addition, you will need to agree with the Performance Group on the unique platform name that will be used to showcase results and coordinate work, among other things.

If everything runs correctly, you should see a log like the following sample when you run the tool with the credentials:

```bash

$ poetry run redis-benchmarks-spec-sc-coordinator --platform-name example-platform \
    --event_stream_host <...> \
    --event_stream_port <...> \
    --event_stream_pass <...> \
    --event_stream_user <...>

2021-09-22 10:47:12 INFO redis-benchmarks-spec runner(self-contained) 0.1.13

2021-09-22 10:47:12 INFO Using topologies folder dir /home/fco/redislabs/redis-benchmarks-specification/redis_benchmarks_specification/setups/topologies

2021-09-22 10:47:12 INFO Reading topologies specifications from: /home/fco/redislabs/redis-benchmarks-specification/redis_benchmarks_specification/setups/topologies/topologies.yml

2021-09-22 10:47:12 INFO Using test-suites folder dir /home/fco/redislabs/redis-benchmarks-specification/redis_benchmarks_specification/test-suites

2021-09-22 10:47:12 INFO Running all specified benchmarks: /home/fco/redislabs/redis-benchmarks-specification/redis_benchmarks_specification/test-suites/redis-benchmark-full-suite-1Mkeys-100B.yml

2021-09-22 10:47:12 INFO There are a total of 1 test-suites in folder /home/fco/redislabs/redis-benchmarks-specification/redis_benchmarks_specification/test-suites

2021-09-22 10:47:12 INFO Reading event streams from: <...>:<...> with user <...>

2021-09-22 10:47:12 INFO checking build spec requirements

2021-09-22 10:47:12 INFO Will use consumer group named runners-cg:redis/redis/commits-example-platform.

2021-09-22 10:47:12 INFO Created consumer group named runners-cg:redis/redis/commits-example-platform to distribute work.

2021-09-22 10:47:12 INFO Entering blocking read waiting for work.

```

You're now actively listening for benchmark requests to Redis!

## Architecture diagram

In a very brief description, github.com/redis/redis upstream changes trigger an HTTP API call containing the

relevant git information.

The HTTP request is then converted into an event (tracked within Redis) that triggers multiple build variant requests based on the distinct platforms described in [`platforms`](redis_benchmarks_specification/setups/platforms/).

As soon as a new build variant request is received, the build agent ([`redis-benchmarks-spec-builder`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/__builder__/))

prepares the artifact(s) and proceeds into adding an artifact benchmark event so that the benchmark coordinator ([`redis-benchmarks-spec-sc-coordinator`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/__self_contained_coordinator__/)) can deploy/manage the required infrastructure and DB topologies, run the benchmark, and export the performance results.
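The flow described above (commit event, fan-out into build variant requests per platform, then one benchmark event per built artifact) can be modeled in memory as follows. Every class, function, and naming scheme in this sketch is hypothetical and exists only to illustrate the data flow; the real tools exchange these events through Redis, and their payloads are not shown in this document.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class CommitEvent:
    """Git information carried by the initial HTTP API call."""
    git_hash: str
    git_branch: str


@dataclass(frozen=True)
class BuildRequest:
    """One build variant request for one platform."""
    commit: CommitEvent
    platform: str


@dataclass(frozen=True)
class BenchmarkEvent:
    """Emitted by the build agent once an artifact is ready."""
    build: BuildRequest
    artifact: str


def fan_out_builds(commit: CommitEvent, platforms: Sequence[str]) -> List[BuildRequest]:
    # One build request per platform definition.
    return [BuildRequest(commit, platform) for platform in platforms]


def build_artifact(request: BuildRequest) -> BenchmarkEvent:
    # Stand-in for the real build step: only an artifact name is derived here.
    artifact = f"redis-server-{request.commit.git_hash[:7]}-{request.platform}"
    return BenchmarkEvent(request, artifact)
```

A coordinator would then consume each `BenchmarkEvent`, deploy the topology, run the benchmark, and export the results.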

## Directory layout

### Specifications

The following is a high level status report for currently available specs.

* `redis_benchmarks_specification`

* [`test-suites`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/test-suites/): contains the benchmark suites definitions, specifying the target redis topology, the tested commands, the benchmark utility to use (the client), and if required the preloading dataset steps.

* `redis_benchmarks_specification/setups`

* [`platforms`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/setups/platforms/): contains the standard platforms considered to provide steady stable results, and to represent common deployment targets.

* [`topologies`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/setups/topologies/): contains the standard deployment topologies definition with the associated minimum specs to enable the topology definition.

* [`builders`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/setups/builders/): contains the build environment variations, that enable to build Redis with different compilers, compiler flags, libraries, etc...

### Spec tool implementations

The following is a high level status report for currently available spec implementations.

* **STATUS: Experimental** [`redis-benchmarks-spec-api`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/__api__/): contains the API that translates the POST HTTP request triggered by github.com/redis/redis upstream changes, fetches the relevant git/source info, and converts it into an event (tracked within Redis).

* **STATUS: Experimental** [`redis-benchmarks-spec-builder`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/__builder__/): contains the benchmark build agent utility that receives an event indicating a new build variant, generates the required redis binaries to test, and triggers the benchmark run on the listening agents.

* **STATUS: Experimental** [`redis-benchmarks-spec-sc-coordinator`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/__self_contained_coordinator__/): contains the coordinator utility that listens for benchmark suite run requests and sets up the required steps to spin up the actual benchmark topologies and to trigger the actual benchmarks.

* **STATUS: Experimental** [`redis-benchmarks-spec-client-runner`](https://github.com/filipecosta90/redis-benchmarks-specification/tree/main/redis_benchmarks_specification/__runner__/): contains the client utility that triggers the actual benchmarks against a provided endpoint. This tool is setup-agnostic and expects the DB to be properly spun up beforehand.

## Contributing guidelines

### Adding new test suites

TBD

### Adding new topologies

TBD

### Joining the performance initiative and adding a continuous benchmark platform

#### Joining the performance initiative

In order to join the performance initiative the only requirement is that you provide a steady-stable infrastructure

platform to run the benchmark suites, and you reach out to one of the Redis Performance Initiative members via

`performance <at> redis <dot> com` so that we can provide you with the required secrets to actively listen for benchmark events.

If you check the above "Architecture diagram", this means you only need to run the last moving part of the arch, meaning you will have

one or more benchmark coordinator machines actively running benchmarks and pushing the results back to our datasink.

#### Adding a continuous benchmark platform

To run the benchmarks on the platform, you need the pip installer for python3 and docker.

In addition, we recommend managing the state of the `redis-benchmarks-spec-sc-coordinator` process(es) with a process monitoring tool such as

supervisorctl, launchd, daemontools, or similar.

This example relies on `supervisorctl` for process management.

##### Adding redis-benchmarks-spec-sc-coordinator to supervisord

Let's add a supervisord entry as follows:

```

vi /etc/supervisor/conf.d/redis-benchmarks-spec-sc-coordinator-1.conf

```

You can use the following template and update according to your credentials:

```ini
[supervisord]
loglevel = debug

[program:redis-benchmarks-spec-sc-coordinator]
command = redis-benchmarks-spec-sc-coordinator --platform-name bicx02 \
    --event_stream_host <...> \
    --event_stream_port <...> \
    --event_stream_pass <...> \
    --event_stream_user <...> \
    --datasink_push_results_redistimeseries \
    --datasink_redistimeseries_host <...> \
    --datasink_redistimeseries_port <...> \
    --datasink_redistimeseries_pass <...> \
    --logname /var/opt/redis-benchmarks-spec-sc-coordinator-1.log
startsecs = 0
autorestart = true
startretries = 1
```

After editing the conf, you just need to reload and confirm that the benchmark runner is active:

```bash

:~# supervisorctl reload

Restarted supervisord

:~# supervisorctl status

redis-benchmarks-spec-sc-coordinator RUNNING pid 27842, uptime 0:00:00

```

## Development

1. Install [pypoetry](https://python-poetry.org/) to manage your dependencies and trigger tooling.

```sh

pip install poetry

```

2. Install dependencies from the lock file:

```

poetry install

```

### Running formaters

```sh

poetry run black .

```

### Running linters

```sh

poetry run flake8

```

### Running tests

A test suite is provided, and can be run with:

```sh

$ pip3 install -r ./dev_requirements.txt

$ tox

```

To run a specific test:

```sh

$ tox -- utils/tests/test_runner.py

```

To run a specific test with verbose logging:

```sh

$ tox -- -vv --log-cli-level=INFO utils/tests/test_runner.py

```

## License

redis-benchmarks-specification is distributed under the BSD3 license - see [LICENSE](LICENSE)

| text/markdown | filipecosta90 | filipecosta.90@gmail.com | null | null | null | null | [

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | <4.0.0,>=3.10.0 | [] | [] | [] | [

"Flask<3.0.0,>=2.0.3",

"Flask-HTTPAuth<5.0.0,>=4.4.0",

"GitPython<4.0.0,>=3.1.20",

"PyGithub<2.0,>=1.55",

"PyYAML<7.0,>=6.0",

"argparse<2.0.0,>=1.4.0",

"docker<8.0.0,>=7.1.0",

"flask-restx<0.6.0,>=0.5.0",

"jsonpath-ng<2.0.0,>=1.6.1",

"marshmallow<4.0.0,>=3.12.2",

"node-semver<0.9.0,>=0.8.1",

"... | [] | [] | [] | [] | poetry/2.3.2 CPython/3.10.19 Linux/6.14.0-1017-azure | 2026-02-19T17:45:56.912969 | redis_benchmarks_specification-0.2.54-py3-none-any.whl | 687,578 | 92/e5/36a7bb38be728204b6ff2c65353f4deba4e944df4ba5ab2b13b697b9c51e/redis_benchmarks_specification-0.2.54-py3-none-any.whl | py3 | bdist_wheel | null | false | 45ecf1c80a421c27de706644f1468242 | c476fe303ef6a98d78da76665d4d1b22c2e289c9e26a8eaf0ab4078c2247dd14 | 92e536a7bb38be728204b6ff2c65353f4deba4e944df4ba5ab2b13b697b9c51e | null | [

"LICENSE"

] | 228 |

2.4 | appmerit | 0.1.4 | AI Testing Framework | # Merit

Merit is a Python testing framework for AI projects. It follows pytest syntax and culture while introducing components essential for testing AI software: metrics, typed datasets, semantic predicates (LLM-as-a-Judge), and OTEL traces.

---

## Installation

```bash

uv add appmerit

```

---

# Merit 101

Follow pytest habits...

- Create 'merit_*.py' files

- Write 'def merit_*' functions

- Use 'merit.resource' instead of 'pytest.fixture'

- Add 'assert' expressions within the functions

- Run 'uv run merit test'

...while leveraging Merit APIs.

- Use 'with metrics()' context to turn failed assertions into quality metrics

- Use 'has_facts()' and other semantic predicates for asserting natural language

- Access OTEL span data and assert it with 'follows_policy()' predicate

- Parse datasets into clearly typed and validated data objects

---

## Example

```python

import merit
from merit import Case, Metric, metrics
from merit.predicates import has_unsupported_facts, follows_policy
from pydantic import BaseModel


@merit.sut
def store_chatbot(prompt: str) -> str:
    return call_llm(prompt)


@merit.metric
def accuracy():
    metric = Metric()
    yield metric

    assert metric.mean > 0.8
    yield metric.mean


class Refs(BaseModel):
    kb: str
    expected_tool: str | None = None


cases = [
    Case(sut_input_values={"prompt": "When are you open?"}, references=Refs(kb="Store hours: 9 AM - 6 PM, Monday-Saturday. Closed Sundays.")),
    Case(sut_input_values={"prompt": "Return policy?"}, references=Refs(kb="30-day returns with receipt.")),
    Case(sut_input_values={"prompt": "How much for the Nike Air Max?"}, references=Refs(kb="Nike Air Max: $129.99", expected_tool="offer_product")),
]


@merit.iter_cases(cases)
@merit.repeat(3)
async def merit_chatbot_no_hallucinations(
    case: Case[Refs],
    store_chatbot,
    accuracy: Metric,
    trace_context):
    """AI agent relies on knowledge base and tool calls for transactional questions"""
    response = store_chatbot(**case.sut_input_values)

    # Verify the answer doesn't contain any unsupported facts
    with metrics(accuracy):
        assert not await has_unsupported_facts(response, case.references.kb)

    # Verify tool was called when expected
    if expected_tool := case.references.expected_tool:
        sut_spans = trace_context.get_sut_spans(name="store_chatbot")
        tool_names = [
            s.attributes.get("llm.request.functions.0.name")
            for s in trace_context.get_llm_calls()
            if s.attributes
        ]
        assert expected_tool in tool_names

```

Run it:

```bash

merit test --trace

```

Use a custom run UUID when you need stable correlation IDs:

```bash

merit test --trace --run-id 3f5f5e9a-1c2d-4b5f-9c2b-7f6d8a9b0c1d

```

Output:

```

Merit Test Runner

=================

Collected 1 test

test_example.py::merit_chatbot_responds ✓

==================== 1 passed in 0.08s ====================

```

## Documentation

Full documentation: **[docs.appmerit.com](https://docs.appmerit.com)**

**Getting Started:**

- [Quick Start](https://docs.appmerit.com/get-started/quick-start) - Get up and running in 5 minutes

**Usage:**

- [Writing Merits](https://docs.appmerit.com/usage/writing-merits) - How to define a proper merit suite

- [Running Merits](https://docs.appmerit.com/usage/running-merits) - How to execute suites and merits

**Concepts:**

- [Merit](https://docs.appmerit.com/concepts/merit) - Like test but better

- [Resource](https://docs.appmerit.com/concepts/resource) - Like fixtures but better

- [Case](https://docs.appmerit.com/concepts/case) - Container for parsed dataset entities

- [Metric](https://docs.appmerit.com/concepts/metric) - Aggregating assertions

- [Semantic Predicates](https://docs.appmerit.com/concepts/semantic-predicates) - Asserting language and logs

- [SUT (System Under Test)](https://docs.appmerit.com/concepts/sut) - Collecting and accessing traces

**API Reference:**

- [Merit Definitions APIs](https://docs.appmerit.com/apis/testing) - Tune discovery and execution

- [Merit Predicates APIs](https://docs.appmerit.com/apis/predicates) - Build your own semantic predicates

- [Merit Metric APIs](https://docs.appmerit.com/apis/metrics) - Build complex metric systems

- [Merit Tracing APIs](https://docs.appmerit.com/apis/tracing) - OpenTelemetry integration

---

## Contributing

We welcome contributions! To get started:

1. Fork the repository

2. Clone your fork: `git clone https://github.com/YOUR_USERNAME/merit.git`

3. Create a branch: `git checkout -b your-feature-name`

4. Install dependencies: `uv sync`

5. Make your changes

6. Run tests: `uv run merit test`

7. Run lints: `uv run ruff check .`

8. Submit a pull request

For more details, see [CONTRIBUTING.md](CONTRIBUTING.md).

**Development Setup:**

```bash

# Clone the repository

git clone https://github.com/appMerit/merit.git

cd merit

# Install dependencies

uv sync

# Run tests

uv run merit test

# Run lints

uv run ruff check .

uv run mypy .

```

---

## License

This project is licensed under the MIT License - see the [LICENSE](LICENSE) file for details.

---

## Support

- **Documentation**: [docs.appmerit.com](https://docs.appmerit.com)

- **GitHub Issues**: [github.com/appMerit/merit/issues](https://github.com/appMerit/merit/issues)

- **Email**: support@appmerit.com

| text/markdown | null | Daniel Rousso <daniel@appmerit.com>, Mark Reith <mark@appmerit.com>, Nikita Shirobokov <nick@appmerit.com> | null | null | null | null | [

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | >=3.12 | [] | [] | [] | [

"anthropic[vertex]>=0.72.0",

"httpx>=0.28.1",

"openai>=2.6.1",

"opentelemetry-instrumentation-anthropic>=0.49.0",

"opentelemetry-instrumentation-openai>=0.49.0",

"opentelemetry-sdk>=1.29.0",

"pydantic-settings>=2.11.0",

"pydantic>=2.12.3",

"python-dotenv>=1.2.1",

"rich>=14.2.0"

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T17:45:37.325801 | appmerit-0.1.4.tar.gz | 602,379 | 6d/49/1fb54c80be3b85e19d674d8969db8ec0b2e9d3ad01a8245d5187ab062797/appmerit-0.1.4.tar.gz | source | sdist | null | false | 4dd825cb2984ef5b5f1992697cc5abfb | f32e4776088f924db933c9ecbececb6107c978ddcd15040bc0fe0bc1851af296 | 6d491fb54c80be3b85e19d674d8969db8ec0b2e9d3ad01a8245d5187ab062797 | MIT | [

"LICENSE"

] | 225 |

2.3 | lm-raindrop | 0.17.0 | The official Python library for the raindrop API | # Raindrop Python API library

<!-- prettier-ignore -->

The Raindrop Python library provides convenient access to the Raindrop REST API from any Python 3.9+

application. The library includes type definitions for all request params and response fields,

and offers both synchronous and asynchronous clients powered by [httpx](https://github.com/encode/httpx).

It is generated with [Stainless](https://www.stainless.com/).

## Documentation

The full API of this library can be found in [api.md](https://github.com/LiquidMetal-AI/lm-raindrop-python-sdk/tree/main/api.md).

## Installation

```sh

# install from PyPI

pip install lm-raindrop

```

## Usage

```python

from raindrop import Raindrop

client = Raindrop()

response = client.query.document_query(

bucket_location={

"bucket": {

"name": "my-smartbucket",

"version": "01jxanr45haeswhay4n0q8340y",

"application_name": "my-app",

}

},

input="What are the key points in this document?",

object_id="document.pdf",

request_id="<YOUR-REQUEST-ID>",

)

print(response.answer)

```

While you can provide an `api_key` keyword argument,

we recommend using [python-dotenv](https://pypi.org/project/python-dotenv/)

to add `RAINDROP_API_KEY="My API Key"` to your `.env` file

so that your API Key is not stored in source control.

## Async usage

Simply import `AsyncRaindrop` instead of `Raindrop` and use `await` with each API call:

```python

import asyncio

from raindrop import AsyncRaindrop

client = AsyncRaindrop()

async def main() -> None:

response = await client.query.document_query(

bucket_location={

"bucket": {

"name": "my-smartbucket",

"version": "01jxanr45haeswhay4n0q8340y",

"application_name": "my-app",

}

},

input="What are the key points in this document?",

object_id="document.pdf",

request_id="<YOUR-REQUEST-ID>",

)

print(response.answer)

asyncio.run(main())

```

Functionality between the synchronous and asynchronous clients is otherwise identical.

### With aiohttp

By default, the async client uses `httpx` for HTTP requests. However, for improved concurrency performance you may also use `aiohttp` as the HTTP backend.

You can enable this by installing `aiohttp`:

```sh

# install from PyPI

pip install lm-raindrop[aiohttp]

```

Then you can enable it by instantiating the client with `http_client=DefaultAioHttpClient()`:

```python

import asyncio

from raindrop import DefaultAioHttpClient

from raindrop import AsyncRaindrop

async def main() -> None:

async with AsyncRaindrop(

http_client=DefaultAioHttpClient(),

) as client:

response = await client.query.document_query(

bucket_location={

"bucket": {

"name": "my-smartbucket",

"version": "01jxanr45haeswhay4n0q8340y",

"application_name": "my-app",

}

},

input="What are the key points in this document?",

object_id="document.pdf",

request_id="<YOUR-REQUEST-ID>",

)

print(response.answer)

asyncio.run(main())

```

## Using types

Nested request parameters are [TypedDicts](https://docs.python.org/3/library/typing.html#typing.TypedDict). Responses are [Pydantic models](https://docs.pydantic.dev) which also provide helper methods for things like:

- Serializing back into JSON, `model.to_json()`

- Converting to a dictionary, `model.to_dict()`

Typed requests and responses provide autocomplete and documentation within your editor. If you would like to see type errors in VS Code to help catch bugs earlier, set `python.analysis.typeCheckingMode` to `basic`.

## Pagination

List methods in the Raindrop API are paginated.

This library provides auto-paginating iterators with each list response, so you do not have to request successive pages manually:

```python

from raindrop import Raindrop

client = Raindrop()

all_queries = []

# Automatically fetches more pages as needed.

for query in client.query.get_paginated_search(

page=1,

page_size=10,

request_id="<YOUR-REQUEST-ID>",

):

# Do something with query here

all_queries.append(query)

print(all_queries)

```

Or, asynchronously:

```python

import asyncio

from raindrop import AsyncRaindrop

client = AsyncRaindrop()

async def main() -> None:

all_queries = []

# Iterate through items across all pages, issuing requests as needed.

async for query in client.query.get_paginated_search(

page=1,

page_size=10,

request_id="<YOUR-REQUEST-ID>",

):

all_queries.append(query)

print(all_queries)

asyncio.run(main())

```

Alternatively, you can use the `.has_next_page()`, `.next_page_info()`, or `.get_next_page()` methods for more granular control working with pages:

```python

first_page = await client.query.get_paginated_search(

page=1,

page_size=10,

request_id="<YOUR-REQUEST-ID>",

)

if first_page.has_next_page():

print(f"will fetch next page using these details: {first_page.next_page_info()}")

next_page = await first_page.get_next_page()

print(f"number of items we just fetched: {len(next_page.results)}")

# Remove `await` for non-async usage.

```

Or just work directly with the returned data:

```python

first_page = await client.query.get_paginated_search(

page=1,

page_size=10,

request_id="<YOUR-REQUEST-ID>",

)

for query in first_page.results:

print(query.chunk_signature)

# Remove `await` for non-async usage.

```
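Conceptually, the auto-paginating iterators described in this section behave like a generator that requests the next page only when the current one is exhausted. The following is a minimal standard-library sketch of that idea, not the library's implementation: `iter_all_items` and the `fetch_page` callable (returning a list of items and a has-next flag) are hypothetical names introduced for illustration.

```python
def iter_all_items(fetch_page, first_page=1):
    """Yield items from every page, requesting the next page only when needed.

    `fetch_page(page)` is a hypothetical callable returning (items, has_next);
    it stands in for whatever request the real client would issue.
    """
    page = first_page
    while True:
        items, has_next = fetch_page(page)
        yield from items
        if not has_next:
            return
        page += 1
```

Because the generator is lazy, a caller that stops iterating early never triggers requests for the remaining pages.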

## Handling errors

When the library is unable to connect to the API (for example, due to network connection problems or a timeout), a subclass of `raindrop.APIConnectionError` is raised.

When the API returns a non-success status code (that is, 4xx or 5xx

response), a subclass of `raindrop.APIStatusError` is raised, containing `status_code` and `response` properties.

All errors inherit from `raindrop.APIError`.

```python

import raindrop
from raindrop import Raindrop

client = Raindrop()

try:
    client.query.document_query(
        bucket_location={
            "bucket": {
                "name": "my-smartbucket",
                "version": "01jxanr45haeswhay4n0q8340y",
                "application_name": "my-app",
            }
        },
        input="What are the key points in this document?",
        object_id="document.pdf",
        request_id="<YOUR-REQUEST-ID>",
    )
except raindrop.APIConnectionError as e:
    print("The server could not be reached")
    print(e.__cause__)  # an underlying Exception, likely raised within httpx.
except raindrop.RateLimitError as e:
    print("A 429 status code was received; we should back off a bit.")
except raindrop.APIStatusError as e:
    print("Another non-200-range status code was received")
    print(e.status_code)
    print(e.response)

```

Error codes are as follows:

| Status Code | Error Type |

| ----------- | -------------------------- |

| 400 | `BadRequestError` |

| 401 | `AuthenticationError` |

| 403 | `PermissionDeniedError` |

| 404 | `NotFoundError` |

| 422 | `UnprocessableEntityError` |

| 429 | `RateLimitError` |

| >=500 | `InternalServerError` |

| N/A | `APIConnectionError` |
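The table above can be read as a simple lookup. The sketch below restates it in plain Python for illustration only; `error_name_for_status` is a hypothetical helper, and the fallback to `APIStatusError` for status codes not listed in the table is an assumption based on the statement that all non-success responses raise a subclass of `raindrop.APIStatusError`.

```python
# Status codes and error type names copied from the table above.
STATUS_TO_ERROR = {
    400: "BadRequestError",
    401: "AuthenticationError",
    403: "PermissionDeniedError",
    404: "NotFoundError",
    422: "UnprocessableEntityError",
    429: "RateLimitError",
}


def error_name_for_status(status_code):
    """Return the error type name the table associates with a status code."""
    if status_code >= 500:
        return "InternalServerError"
    # Assumption: unlisted codes fall back to the generic base class.
    return STATUS_TO_ERROR.get(status_code, "APIStatusError")
```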

### Retries

Certain errors are automatically retried 2 times by default, with a short exponential backoff.

Connection errors (for example, due to a network connectivity problem), 408 Request Timeout, 409 Conflict,

429 Rate Limit, and >=500 Internal errors are all retried by default.

You can use the `max_retries` option to configure or disable retry settings:

```python

from raindrop import Raindrop

# Configure the default for all requests:

client = Raindrop(

# default is 2

max_retries=0,

)

# Or, configure per-request:

client.with_options(max_retries=5).query.document_query(

bucket_location={

"bucket": {

"name": "my-smartbucket",

"version": "01jxanr45haeswhay4n0q8340y",

"application_name": "my-app",

}

},

input="What are the key points in this document?",

object_id="document.pdf",

request_id="<YOUR-REQUEST-ID>",

)

```
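For readers unfamiliar with the pattern, the following is a minimal standard-library sketch of retrying with exponential backoff. It is not the library's implementation: the helper name `call_with_retries`, the base delay, and the choice of `ConnectionError` as the only retryable error are assumptions made for illustration.

```python
import time


def call_with_retries(func, max_retries=2, base_delay=0.5, sleep=time.sleep):
    """Call func(), retrying on ConnectionError with exponential backoff.

    Hypothetical helper for illustration only; not part of the raindrop package.
    """
    attempt = 0
    while True:
        try:
            return func()
        except ConnectionError:
            if attempt >= max_retries:
                raise  # retries exhausted: surface the last error
            # The delay doubles on each retry: base_delay, 2 * base_delay, ...
            sleep(base_delay * (2 ** attempt))
            attempt += 1
```

With `max_retries=2`, the function makes at most three attempts in total, which matches the "retried 2 times" wording above.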

### Timeouts

By default requests time out after 1 minute. You can configure this with a `timeout` option,

which accepts a float or an [`httpx.Timeout`](https://www.python-httpx.org/advanced/timeouts/#fine-tuning-the-configuration) object:

```python

import httpx
from raindrop import Raindrop

# Configure the default for all requests:
client = Raindrop(
    # 20 seconds (default is 1 minute)
    timeout=20.0,
)

# More granular control:
client = Raindrop(
    timeout=httpx.Timeout(60.0, read=5.0, write=10.0, connect=2.0),
)

# Override per-request:
client.with_options(timeout=5.0).query.document_query(
    bucket_location={
        "bucket": {
            "name": "my-smartbucket",
            "version": "01jxanr45haeswhay4n0q8340y",
            "application_name": "my-app",
        }
    },
    input="What are the key points in this document?",
    object_id="document.pdf",
    request_id="<YOUR-REQUEST-ID>",
)

```

On timeout, an `APITimeoutError` is raised.

Note that requests that time out are [retried twice by default](https://github.com/LiquidMetal-AI/lm-raindrop-python-sdk/tree/main/#retries).

## Advanced

### Logging

We use the standard library [`logging`](https://docs.python.org/3/library/logging.html) module.

You can enable logging by setting the environment variable `RAINDROP_LOG` to `info`.

```shell

$ export RAINDROP_LOG=info

```

Or to `debug` for more verbose logging.

### How to tell whether `None` means `null` or missing

In an API response, a field may be explicitly `null`, or missing entirely; in either case, its value is `None` in this library. You can differentiate the two cases with `.model_fields_set`:

```py

if response.my_field is None:
    if 'my_field' not in response.model_fields_set:
        print('Got json like {}, without a "my_field" key present at all.')
    else:
        print('Got json like {"my_field": null}.')

```
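The same distinction exists at the raw JSON level, which may help clarify what `.model_fields_set` is tracking. The sketch below uses only the standard library; `describe_field` is a hypothetical helper introduced for illustration and is not part of the raindrop package.

```python
import json


def describe_field(raw_json, key):
    """Report whether `key` is absent, explicitly null, or set in a JSON object."""
    data = json.loads(raw_json)
    if key not in data:
        return "missing"  # the key never appeared in the payload
    if data[key] is None:
        return "null"  # the key appeared with an explicit null value
    return "set"
```

In both the "missing" and "null" cases a plain attribute lookup would give `None`, which is why a separate membership check is needed.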

### Accessing raw response data (e.g. headers)

The "raw" Response object can be accessed by prefixing `.with_raw_response.` to any HTTP method call, e.g.,

```py

from raindrop import Raindrop

client = Raindrop()

response = client.query.with_raw_response.document_query(

bucket_location={

"bucket": {

"name": "my-smartbucket",

"version": "01jxanr45haeswhay4n0q8340y",

"application_name": "my-app",

}

},

input="What are the key points in this document?",

object_id="document.pdf",

request_id="<YOUR-REQUEST-ID>",

)

print(response.headers.get('X-My-Header'))

query = response.parse() # get the object that `query.document_query()` would have returned

print(query.answer)

```

These methods return an [`APIResponse`](https://github.com/LiquidMetal-AI/lm-raindrop-python-sdk/tree/main/src/raindrop/_response.py) object.

The async client returns an [`AsyncAPIResponse`](https://github.com/LiquidMetal-AI/lm-raindrop-python-sdk/tree/main/src/raindrop/_response.py) with the same structure, the only difference being `await`able methods for reading the response content.

#### `.with_streaming_response`

The above interface eagerly reads the full response body when you make the request, which may not always be what you want.

To stream the response body, use `.with_streaming_response` instead, which requires a context manager and only reads the response body once you call `.read()`, `.text()`, `.json()`, `.iter_bytes()`, `.iter_text()`, `.iter_lines()` or `.parse()`. In the async client, these are async methods.

```python

with client.query.with_streaming_response.document_query(

bucket_location={

"bucket": {

"name": "my-smartbucket",

"version": "01jxanr45haeswhay4n0q8340y",

"application_name": "my-app",

}

},

input="What are the key points in this document?",

object_id="document.pdf",

request_id="<YOUR-REQUEST-ID>",

) as response:

print(response.headers.get("X-My-Header"))

for line in response.iter_lines():

print(line)

```

The context manager is required so that the response will reliably be closed.

### Making custom/undocumented requests

This library is typed for convenient access to the documented API.

If you need to access undocumented endpoints, params, or response properties, the library can still be used.

#### Undocumented endpoints

To make requests to undocumented endpoints, use `client.get`, `client.post`, and other HTTP verbs. Client options (such as retries) are respected when making these requests.

```py

import httpx

response = client.post(

"/foo",

cast_to=httpx.Response,

body={"my_param": True},

)

print(response.headers.get("x-foo"))

```

#### Undocumented request params

If you want to explicitly send an extra param, you can do so with the `extra_query`, `extra_body`, and `extra_headers` request

options.

#### Undocumented response properties

To access undocumented response properties, you can access the extra fields like `response.unknown_prop`. You

can also get all the extra fields on the Pydantic model as a dict with

[`response.model_extra`](https://docs.pydantic.dev/latest/api/base_model/#pydantic.BaseModel.model_extra).

### Configuring the HTTP client

You can directly override the [httpx client](https://www.python-httpx.org/api/#client) to customize it for your use case, including:

- Support for [proxies](https://www.python-httpx.org/advanced/proxies/)

- Custom [transports](https://www.python-httpx.org/advanced/transports/)

- Additional [advanced](https://www.python-httpx.org/advanced/clients/) functionality

```python

import httpx

from raindrop import Raindrop, DefaultHttpxClient

client = Raindrop(

# Or use the `RAINDROP_BASE_URL` env var

base_url="http://my.test.server.example.com:8083",

http_client=DefaultHttpxClient(

proxy="http://my.test.proxy.example.com",

transport=httpx.HTTPTransport(local_address="0.0.0.0"),

),

)

```

You can also customize the client on a per-request basis by using `with_options()`:

```python

client.with_options(http_client=DefaultHttpxClient(...))

```

### Managing HTTP resources

By default the library closes underlying HTTP connections whenever the client is [garbage collected](https://docs.python.org/3/reference/datamodel.html#object.__del__). You can manually close the client using the `.close()` method if desired, or with a context manager that closes when exiting.

```py

from raindrop import Raindrop

with Raindrop() as client:

# make requests here

...

# HTTP client is now closed

```

## Versioning

This package generally follows [SemVer](https://semver.org/spec/v2.0.0.html) conventions, though certain backwards-incompatible changes may be released as minor versions:

1. Changes that only affect static types, without breaking runtime behavior.

2. Changes to library internals which are technically public but not intended or documented for external use. _(Please open a GitHub issue to let us know if you are relying on such internals.)_

3. Changes that we do not expect to impact the vast majority of users in practice.

We take backwards-compatibility seriously and work hard to ensure you can rely on a smooth upgrade experience.

We are keen for your feedback; please open an [issue](https://www.github.com/LiquidMetal-AI/lm-raindrop-python-sdk/issues) with questions, bugs, or suggestions.

### Determining the installed version

If you've upgraded to the latest version but aren't seeing the new features you expected, your Python environment is likely still using an older version.

You can determine the version that is being used at runtime with:

```py

import raindrop

print(raindrop.__version__)

```
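If importing the package is not convenient, the installed distribution metadata can be queried instead. This is a sketch using the standard library's `importlib.metadata`; the helper name `installed_version` is hypothetical, and the distribution name `lm-raindrop` is taken from the installation command above.

```python
from importlib import metadata


def installed_version(dist_name):
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


print(installed_version("lm-raindrop"))
```

A result of `None` suggests the interpreter running the script does not have the package installed, which often points to a different virtual environment than the one that was upgraded.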

## Requirements

Python 3.9 or higher.

## Contributing

See [the contributing documentation](https://github.com/LiquidMetal-AI/lm-raindrop-python-sdk/tree/main/./CONTRIBUTING.md).

| text/markdown | Raindrop | null | null | null | Apache-2.0 | null | [

"Intended Audience :: Developers",

"License :: OSI Approved :: Apache Software License",

"Operating System :: MacOS",

"Operating System :: Microsoft :: Windows",

"Operating System :: OS Independent",

"Operating System :: POSIX",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python :: ... | [] | null | null | >=3.9 | [] | [] | [] | [

"anyio<5,>=3.5.0",

"distro<2,>=1.7.0",

"httpx<1,>=0.23.0",

"pydantic<3,>=1.9.0",

"sniffio",

"typing-extensions<5,>=4.10",

"aiohttp; extra == \"aiohttp\"",

"httpx-aiohttp>=0.1.9; extra == \"aiohttp\""

] | [] | [] | [] | [

"Homepage, https://github.com/LiquidMetal-AI/lm-raindrop-python-sdk",

"Repository, https://github.com/LiquidMetal-AI/lm-raindrop-python-sdk"

] | twine/5.1.1 CPython/3.12.9 | 2026-02-19T17:45:36.074994 | lm_raindrop-0.17.0.tar.gz | 154,611 | e6/d6/b2b0498028f56a623935d14d54fa9407bca77a715f58454352706b256229/lm_raindrop-0.17.0.tar.gz | source | sdist | null | false | 5555f4a145cf3e12d2be4af6d5a5f1b7 | b4ca849ab04dd72eb3fdcdf93e9024febee12157a302314b32a4966cce8fcbac | e6d6b2b0498028f56a623935d14d54fa9407bca77a715f58454352706b256229 | null | [] | 215 |

2.3 | aidial-adapter-anthropic | 0.5.0rc0 | Package implementing adapter from DIAL Chat Completions API to Anthropic API | <h1 align="center">

Python SDK for adapter from DIAL API to Anthropic API

</h1>

<p align="center">

<p align="center">

<a href="https://dialx.ai/">

<img src="https://dialx.ai/logo/dialx_logo.svg" alt="About DIALX">

</a>

</p>

<h4 align="center">

<a href="https://pypi.org/project/aidial-adapter-anthropic/">

<img src="https://img.shields.io/pypi/v/aidial-adapter-anthropic.svg" alt="PyPI version">

</a>

<a href="https://discord.gg/ukzj9U9tEe">

<img src="https://img.shields.io/static/v1?label=DIALX%20Community%20on&message=Discord&color=blue&logo=Discord&style=flat-square" alt="Discord">

</a>

</h4>

- [Overview](#overview)

- [Developer environment](#developer-environment)

- [Set up](#set-up)

- [Lint](#lint)

- [Test](#test)

- [Clean](#clean)

- [Build](#build)

- [Publish](#publish)

---

## Overview

The framework provides an adapter from the [AI DIAL Chat Completion API](https://dialx.ai/dial_api#operation/sendChatCompletionRequest) to the [Anthropic Messages API](https://platform.claude.com/docs/en/api/messages).

---

## Developer environment

To install requirements:

```sh

poetry install

```

This will install all requirements for running the package, linting, formatting and tests.

---

## Set up

### Lint

Run the linting before committing:

```sh

make lint

```

To auto-fix formatting issues run:

```sh

make format

```

### Test

Run unit tests locally for all available Python versions:

```sh

make test

```

Run unit tests for a specific Python version:

```sh

make test PYTHON=3.13

```

### Clean

To remove the virtual environment and build artifacts run:

```sh

make clean

```

### Build

To build the package run:

```sh

make build

```

### Publish

To publish the package to PyPI run:

```sh

make publish

```

| text/markdown | EPAM RAIL | SpecialEPM-DIALDevTeam@epam.com | null | null | Apache-2.0 | ai | [

"Topic :: Software Development :: Libraries :: Python Modules"

] | [] | https://epam-rail.com | null | <4.0,>=3.11 | [] | [] | [] | [

"aidial-sdk<1,>=0.28.0",

"anthropic<1,>=0.79.0",

"pydantic<3,>=2.8.2",

"pillow<13,>=10.4.0",

"aiohttp<4,>=3.13.3"

] | [] | [] | [] | [

"Homepage, https://epam-rail.com",

"Repository, https://github.com/epam/ai-dial-adapter-anthropic/",

"Documentation, https://epam-rail.com/dial_api"

] | poetry/2.1.1 CPython/3.11.14 Linux/6.11.0-1018-azure | 2026-02-19T17:45:33.747912 | aidial_adapter_anthropic-0.5.0rc0.tar.gz | 35,087 | 16/ca/ee361a9affb558cf623a7cd17f1b70c0e6e7c517853222f7ab04a152c420/aidial_adapter_anthropic-0.5.0rc0.tar.gz | source | sdist | null | false | 741a8a93ec78cb8c6d06ed6953c68d22 | ec021d12148ff9e42fd182dbbbcddbe668a20e4c51c3398b43cc81351bfdfb8e | 16caee361a9affb558cf623a7cd17f1b70c0e6e7c517853222f7ab04a152c420 | null | [] | 186 |

2.4 | import-parent | 0.0.1 | Python package that allows a user to easily import local functions from parent folders. | # import-parent

A small utility for importing Python modules using paths relative to the

calling script — without modifying your project structure.

Inspired by R's `here()`.

---

## Installation

`pip install import-parent`

## Usage

`from import_parent import import_parent`

| text/markdown | null | Michael Boerman <michaelboerman@hey.com> | null | null | null | null | [

"Programming Language :: Python :: 3",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent"

] | [] | null | null | >=3.8 | [] | [] | [] | [] | [] | [] | [] | [

"Homepage, https://github.com/michaelboerman/import_parent",

"Issues, https://github.com/michaelboerman/import_parent/issues"

] | twine/6.2.0 CPython/3.13.1 | 2026-02-19T17:45:32.180332 | import_parent-0.0.1.tar.gz | 1,993 | 09/15/2cbd2513d716d469cab9e81c385a19a51c273f571046bf27d012faf687e7/import_parent-0.0.1.tar.gz | source | sdist | null | false | 2ab1b7def3c85a93ea326e9687f12715 | b9210800db552843a0038e2df44428b572a8b372d8f12d5a3334abdb89047778 | 09152cbd2513d716d469cab9e81c385a19a51c273f571046bf27d012faf687e7 | null | [] | 232 |

2.4 | InfoTracker | 0.7.1 | Column-level SQL lineage, impact analysis, and breaking-change detection (MS SQL first) | # InfoTracker

**Column-level SQL lineage extraction and impact analysis for MS SQL Server**

InfoTracker is a powerful command-line tool that parses T-SQL files and generates detailed column-level lineage in OpenLineage format. It supports advanced SQL Server features including table-valued functions, stored procedures, temp tables, and EXEC patterns.

## 🚀 Features

- **Column-level lineage** - Track data flow at the column level with precise transformations

- **Advanced SQL support** - T-SQL dialect with temp tables, variables, CTEs, and window functions

- **Impact analysis** - Find upstream and downstream dependencies with flexible selectors

- **Wildcard matching** - Support for table wildcards (`schema.table.*`) and column wildcards (`..pattern`)

- **Breaking change detection** - Detect schema changes that could break downstream processes

- **Multiple output formats** - Text tables or JSON for integration with other tools

- **OpenLineage compatible** - Standard format for data lineage interoperability

- **dbt (compiled SQL) support** - Run on compiled dbt models with `--dbt`

- **Rich HTML viz** - Zoom/pan, column search, per‑attribute isolate (UP/DOWN/BOTH), sidebar resize and select/clear all

- **Advanced SQL objects** - Table-valued functions (TVF) and dataset-returning procedures

- **Temp table tracking** - Full lineage through EXEC into temp tables

## 📦 Installation

### From PyPI (Recommended)

```bash

pip install InfoTracker

```

### From GitHub

```bash

# Latest stable release

pip install git+https://github.com/InfoMatePL/InfoTracker.git

# Development version

git clone https://github.com/InfoMatePL/InfoTracker.git

cd InfoTracker

pip install -e .

```

### Verify Installation

```bash

infotracker --help

```

## ⚡ Quick Start

### 1. Extract Lineage

```bash

# Extract lineage from SQL files

infotracker extract --sql-dir examples/warehouse/sql --out-dir build/lineage

# Extract lineage from compiled dbt models

infotracker extract --dbt --sql-dir examples/dbt_warehouse/models --out-dir build/dbt_lineage

```

Flags:

- `--sql-dir DIR`: Directory with .sql files (required)

- `--out-dir DIR`: Output folder for lineage artifacts (default from config or build/lineage)

- `--adapter NAME`: SQL dialect adapter (default from config)

- `--catalog FILE`: Optional YAML catalog with schemas

- `--fail-on-warn`: Exit non-zero if warnings occurred

- `--include PATTERN`: Glob include filter

- `--exclude PATTERN`: Glob exclude filter

- `--encoding NAME`: File encoding for SQL files (default: auto)

- `--dbt`: Enable dbt mode (compiled SQL)

### 2. Run Impact Analysis

```bash

# Find what feeds into a column (upstream)

infotracker impact -s "+STG.dbo.Orders.OrderID" --graph-dir build/lineage

# Find what uses a column (downstream)

infotracker impact -s "STG.dbo.Orders.OrderID+" --graph-dir build/lineage

# Both directions

infotracker impact -s "+dbo.fct_sales.Revenue+" --graph-dir build/lineage

```

Flags:

- `-s, --selector TEXT`: Column selector; use + for direction markers (required)

- `--graph-dir DIR`: Folder with column_graph.json (required; produced by extract)

- `--max-depth N`: Traversal depth; 0 = unlimited (full lineage). Default: 0

- `--out PATH`: Write output to file instead of stdout

- `--format text|json`: Output format (set globally or per-invocation)
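To make the `--max-depth` semantics concrete, here is a small sketch of a depth-limited breadth-first traversal in which `0` means unlimited. It is not InfoTracker's code: the layout of `column_graph.json` is not described here, so the example uses a hypothetical in-memory adjacency dict, and the function name `downstream` is an assumption.

```python
from collections import deque


def downstream(graph, start, max_depth=0):
    """Breadth-first walk over `graph` (node -> list of dependent nodes).

    max_depth=0 means unlimited, mirroring the flag description above.
    `graph` is a hypothetical adjacency dict, not the real file format.
    """
    seen = {start}
    queue = deque([(start, 0)])
    reached = []
    while queue:
        node, depth = queue.popleft()
        if max_depth and depth >= max_depth:
            continue  # depth limit reached: do not expand this node further
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                reached.append(nxt)
                queue.append((nxt, depth + 1))
    return reached
```

An upstream traversal would use the same loop over a reversed graph.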

### 3. Detect Breaking Changes

```bash

# Compare two versions of your schema

infotracker diff --base build/lineage --head build/lineage_new

```

Flags:

- `--base DIR` - Folder with base artifacts (required)

- `--head DIR` - Folder with head artifacts (required)

- `--format text|json` - Output format

- `--threshold LEVEL` - Severity threshold: `NON_BREAKING`|`POTENTIALLY_BREAKING`|`BREAKING`
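
One plausible reading of `--threshold` is "report changes at or above this severity". The Python sketch below shows that reading only; the ordering of the three levels, the `at_or_above` helper, and the shape of the change records are assumptions, not InfoTracker's documented behavior or output schema.

```python
# Assumed ordering, from least to most severe.
SEVERITY_ORDER = ["NON_BREAKING", "POTENTIALLY_BREAKING", "BREAKING"]


def at_or_above(changes, threshold):
    """Keep only changes whose severity is at or above the threshold."""
    minimum = SEVERITY_ORDER.index(threshold)
    return [
        change
        for change in changes
        if SEVERITY_ORDER.index(change["severity"]) >= minimum
    ]


# Hypothetical change records, invented for illustration only.
changes = [
    {"object": "dbo.fct_sales.Revenue", "severity": "BREAKING"},
    {"object": "dbo.Orders.Notes", "severity": "NON_BREAKING"},
    {"object": "dbo.Orders.OrderDate", "severity": "POTENTIALLY_BREAKING"},
]

for change in at_or_above(changes, "POTENTIALLY_BREAKING"):
    print(change["severity"], change["object"])
```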

### 4. Visualize the Graph

```bash

# Generate an interactive HTML graph (lineage_viz.html) for a built graph

infotracker viz --graph-dir build/lineage

```

Flags:

- `--graph-dir DIR` - Folder with column_graph.json (required)

- `--out PATH` - Output HTML path (default: `<graph_dir>/lineage_viz.html`)

Open the generated `lineage_viz.html` in your browser. You can click a column to highlight upstream/downstream lineage; press Enter in the search box to highlight all matches.

By default, the canvas is empty. Use the left sidebar to toggle objects on (checkboxes are initially unchecked).

## 📖 Selector Syntax

InfoTracker supports flexible column selectors for precise impact analysis:

| Selector Format | Description | Example |

|-----------------|-------------|---------|

| `table.column` | Simple format (adds default `dbo` schema) | `Orders.OrderID` |

| `schema.table.column` | Schema-qualified format | `dbo.Orders.OrderID` |

| `database.schema.table.column` | Database-qualified format | `STG.dbo.Orders.OrderID` |

| `schema.table.*` | Table wildcard (all columns) | `dbo.fct_sales.*` |

| `..pattern` | Column wildcard (name contains pattern) | `..revenue` |

| `..pattern*` | Column wildcard with fnmatch | `..customer*` |

### Direction Control

- `selector` - downstream dependencies (default)

- `+selector` - upstream sources

- `selector+` - downstream dependencies (explicit)

- `+selector+` - both upstream and downstream
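
The direction rules above can be sketched in a few lines of Python. This is an illustrative sketch, not InfoTracker's parser; the function name `parse_direction` and the returned labels are assumptions chosen for the example.

```python
def parse_direction(selector):
    """Split a selector into (core_selector, direction).

    A leading '+' requests upstream, a trailing '+' requests downstream,
    both markers request both, and no marker defaults to downstream.
    """
    upstream = selector.startswith("+")
    downstream = len(selector) > 1 and selector.endswith("+")
    core = selector.strip("+")

    if upstream and downstream:
        direction = "both"
    elif upstream:
        direction = "upstream"
    else:
        direction = "downstream"
    return core, direction


for example in (
    "dbo.fct_sales.*",
    "+STG.dbo.Orders.OrderID",
    "STG.dbo.Orders.OrderID+",
    "+dbo.fct_sales.Revenue+",
):
    print(example, "->", parse_direction(example))
```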

## 💡 Examples

### Basic Usage

```bash

# Extract lineage first (always run this before impact analysis)

infotracker extract --sql-dir examples/warehouse/sql --out-dir build/lineage

# Basic column lineage

infotracker impact -s "+dbo.fct_sales.Revenue" --graph-dir build/lineage # What feeds this column?

infotracker impact -s "STG.dbo.Orders.OrderID+" --graph-dir build/lineage # What uses this column?

```

### Wildcard Selectors

```bash

# All columns from a specific table

infotracker impact -s "dbo.fct_sales.*" --graph-dir build/lineage

infotracker impact -s "STG.dbo.Orders.*" --graph-dir build/lineage

# Find all columns containing "revenue" (case-insensitive)

infotracker impact -s "..revenue" --graph-dir build/lineage

# Find all columns starting with "customer"

infotracker impact -s "..customer*" --graph-dir build/lineage

```
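
To make the two column-wildcard forms concrete, the Python sketch below matches a `..pattern` selector against a column name. It assumes a plain pattern means a case-insensitive "contains" check and that a pattern with glob characters is handed to `fnmatch`; applying case-insensitivity to the glob form is an assumption, and `match_column` is a hypothetical helper, not part of InfoTracker's API.

```python
from fnmatch import fnmatchcase


def match_column(selector, column_name):
    """Return True if a '..pattern' selector matches the column name."""
    if not selector.startswith(".."):
        raise ValueError("column wildcard selectors start with '..'")

    pattern = selector[2:].lower()
    name = column_name.lower()

    # Glob characters switch from substring matching to fnmatch matching.
    if any(char in pattern for char in "*?["):
        return fnmatchcase(name, pattern)
    return pattern in name


print(match_column("..revenue", "TotalRevenue"))
print(match_column("..customer*", "CustomerID"))
print(match_column("..customer*", "OldCustomerID"))
```

Under these assumptions, `..revenue` matches any column whose name contains "revenue", while `..customer*` matches only names that start with "customer".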

### Advanced SQL Objects

```bash

# Table-valued function columns (upstream)

infotracker impact -s "+dbo.fn_customer_orders_tvf.*" --graph-dir build/lineage

# Procedure dataset columns (upstream)

infotracker impact -s "+dbo.usp_customer_metrics_dataset.*" --graph-dir build/lineage

# Temp table lineage from EXEC

infotracker impact -s "+#temp_table.*" --graph-dir build/lineage

```

### Output Formats

```bash

# Text output (default, human-readable)

infotracker impact -s "+..revenue" --graph-dir build/lineage

# JSON output (machine-readable)

infotracker --format json impact -s "..customer*" --graph-dir build/lineage > customer_lineage.json

# Control traversal depth

infotracker impact -s "+dbo.Orders.OrderID" --max-depth 2 --graph-dir build/lineage

# Note: --max-depth defaults to 0 (unlimited / full lineage)

```

### Breaking Change Detection

```bash

# Extract baseline

infotracker extract --sql-dir sql_v1 --out-dir build/baseline

# Extract new version

infotracker extract --sql-dir sql_v2 --out-dir build/current

# Detect breaking changes

infotracker diff --base build/baseline --head build/current

# Filter by severity

infotracker diff --base build/baseline --head build/current --threshold BREAKING

```

## Output Format

Impact analysis returns these columns (topologically sorted by level):

- **from** - Source column (fully qualified)

- **to** - Target column (fully qualified)

- **direction** - `upstream` or `downstream`

- **transformation** - Type of transformation (`IDENTITY`, `ARITHMETIC`, `AGGREGATION`, `CASE_AGGREGATION`, `DATE_FUNCTION`, `WINDOW`, etc.). For UX clarity, CAST and CASE are shown as `expression`.

- **description** - Human-readable transformation description

- **level** - Topological distance from the selected column (1 = direct neighbor, then 2, 3, …)

Results are automatically deduplicated and sorted topologically by level (then direction/from/to). Use `--format json` for machine-readable output.
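
The deduplication and ordering described above can be approximated as follows. This is a sketch of the stated sort order (level, then direction, from, to) over hand-written rows; the `dedupe_and_sort` helper and the sample rows are assumptions, and real output carries the additional `transformation` and `description` fields.

```python
def dedupe_and_sort(rows):
    """Drop duplicate edges and order rows by level, direction, from, to."""
    unique = {}
    for row in rows:
        key = (row["level"], row["direction"], row["from"], row["to"])
        unique.setdefault(key, row)  # keep the first occurrence of each edge
    return [unique[key] for key in sorted(unique)]


# Hypothetical result rows, invented for illustration only.
rows = [
    {"from": "dbo.stg_orders.Amount", "to": "dbo.fct_sales.Revenue",
     "direction": "upstream", "level": 1},
    {"from": "STG.dbo.Orders.Amount", "to": "dbo.stg_orders.Amount",
     "direction": "upstream", "level": 2},
    {"from": "dbo.stg_orders.Amount", "to": "dbo.fct_sales.Revenue",
     "direction": "upstream", "level": 1},  # duplicate of the first row
]

for row in dedupe_and_sort(rows):
    print(row["level"], row["direction"], row["from"], "->", row["to"])
```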

### New Transformation Types

The enhanced transformation taxonomy includes:

- `ARITHMETIC_AGGREGATION` - Arithmetic operations combined with aggregation functions

- `COMPLEX_AGGREGATION` - Multi-step calculations involving multiple aggregations

- `DATE_FUNCTION` - Date/time calculations like DATEDIFF, DATEADD

- `DATE_FUNCTION_AGGREGATION` - Date functions applied to aggregated results

- `CASE_AGGREGATION` - CASE statements applied to aggregated results

### Advanced Object Support

InfoTracker now supports advanced SQL Server objects:

**Table-Valued Functions (TVF):**

- Inline TVF (`RETURNS TABLE AS RETURN (SELECT ...)`) - Parsed directly from the SELECT statement

- Multi-statement TVF (`RETURNS @table TABLE (...)`) - Extracts the schema from the table variable definition

- Function parameters are tracked as filter metadata (they do not create columns)

**Dataset-Returning Procedures:**

- Procedures ending with a SELECT statement are treated as dataset sources

- Output schema extracted from the final SELECT statement

- Parameters tracked as filter metadata affecting lineage scope

**EXEC into Temp Tables:**

- `INSERT INTO #temp EXEC procedure` patterns create edges from procedure columns to temp table columns

- Temp table lineage propagates downstream to final targets

- Supports complex workflow patterns combining functions, procedures, and temp tables

## Configuration

InfoTracker follows this configuration precedence:

1. **CLI flags** (highest priority) - override everything

2. **infotracker.yml** config file - project defaults

3. **Built-in defaults** (lowest priority) - fallback values
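
The precedence order above amounts to a layered lookup. The Python sketch below shows one way to express it with `collections.ChainMap`; it is an illustration, not InfoTracker's loader. The `resolve_settings` helper is hypothetical, and the two built-in defaults are copied from the Configuration Options table in this README.

```python
from collections import ChainMap

# Defaults as listed in the Configuration Options table.
BUILT_IN_DEFAULTS = {
    "out_dir": "lineage",
    "severity_threshold": "NON_BREAKING",
}


def resolve_settings(cli_flags, file_config):
    """Merge settings: CLI flags win, then infotracker.yml, then defaults."""
    # Flags the user did not pass are represented as None and ignored.
    given_flags = {key: value for key, value in cli_flags.items() if value is not None}
    return dict(ChainMap(given_flags, file_config, BUILT_IN_DEFAULTS))


settings = resolve_settings(
    cli_flags={"out_dir": None, "severity_threshold": "BREAKING"},
    file_config={"out_dir": "build/lineage"},
)
print(settings)
```

Here `severity_threshold` comes from the CLI flag and `out_dir` from the config file, because no `--out-dir` flag was given.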

### Configuration File

Create an `infotracker.yml` file in your project root:

```yaml

sql_dirs:

- "sql/"

- "models/"

out_dir: "build/lineage"

exclude_dirs:

- "__pycache__"

- ".git"

severity_threshold: "POTENTIALLY_BREAKING"

```

### Configuration Options

| Setting | Description | Default | Examples |

|---------|-------------|---------|----------|

| `sql_dirs` | Directories to scan for SQL files | `["."]` | `["sql/", "models/"]` |

| `out_dir` | Output directory for lineage files | `"lineage"` | `"build/artifacts"` |

| `exclude_dirs` | Directories to skip | `[]` | `["__pycache__", "node_modules"]` |

| `severity_threshold` | Breaking change detection level | `"NON_BREAKING"` | `"BREAKING"` |

## 📚 Documentation

- **[Architecture](docs/architecture.md)** - Core concepts and design

- **[Lineage Concepts](docs/lineage_concepts.md)** - Data lineage fundamentals

- **[CLI Usage](docs/cli_usage.md)** - Complete command reference

- **[Configuration](docs/configuration.md)** - Advanced configuration options

- **[DBT Integration](docs/dbt_integration.md)** - Using with DBT projects

- **[OpenLineage Mapping](docs/openlineage_mapping.md)** - Output format specification

- **[Breaking Changes](docs/breaking_changes.md)** - Change detection and severity levels

- **[Advanced Use Cases](docs/advanced_use_cases.md)** - TVFs, stored procedures, and complex scenarios

- **[Edge Cases](docs/edge_cases.md)** - SELECT *, UNION, temp tables handling

- **[FAQ](docs/faq.md)** - Common questions and troubleshooting

## 🖼 Visualization (viz)

Generate an interactive HTML page to explore column-level lineage:

```bash

# After extract (column_graph.json present in the folder)

infotracker viz --graph-dir build/lineage

# Options

# --out <path> Output HTML path (default: <graph_dir>/lineage_viz.html)

# --graph-dir Folder with column_graph.json [required]

```

Tips:

- Search supports table names, full IDs (namespace.schema.table), column names, and URIs. Press Enter to highlight all matches.

- Click a column to switch into lineage mode (upstream/downstream highlight). Clicking another column clears the previous selection.

- Right‑click a column row to open a context menu: Show upstream, Show downstream, Show both, Clear filter. In isolate mode only the path columns and edges remain visible (background clicks won’t clear; use Clear filter).