metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | ctrlcode | 0.1.1 | Adaptive coding harness with differential fuzzing - transforms AI slop into production-ready code | # ctrl+code

Adaptive coding harness with differential fuzzing - transforms AI slop into production-ready code.

## Configuration

ctrl+code follows platform conventions for config and data storage:

| Platform | Config | Data | Cache |

|----------|--------|------|-------|

| **Linux** | `~/.config/ctrlcode/` | `~/.local/share/ctrlcode/` | `~/.cache/ctrlcode/` |

| **macOS** | `~/Library/Application Support/ctrlcode/` | `~/Library/Application Support/ctrlcode/` | `~/Library/Caches/ctrlcode/` |

| **Windows** | `%APPDATA%\ctrlcode\` | `%LOCALAPPDATA%\ctrlcode\` | `%LOCALAPPDATA%\ctrlcode\Cache\` |

### Environment Variables

Override default directories:

- `CTRLCODE_CONFIG_DIR`: Config file location

- `CTRLCODE_DATA_DIR`: Session logs and persistent data

- `CTRLCODE_CACHE_DIR`: Conversation storage and temp files

### Configuration File

Copy `config.example.toml` to your config directory as `config.toml` and fill in your API keys.

### Agent Instructions (AGENT.md)

Customize agent behavior with `AGENT.md` files, loaded hierarchically:

1. **Global** (`~/.config/ctrlcode/AGENT.md`) - Your personal defaults across all projects

2. **Project** (`<workspace>/AGENT.md`) - Project-specific instructions

Example global `AGENT.md`:

```markdown

# Global Agent Defaults

- Always use semantic commit messages

- Show tool results explicitly

- Prefer built-in tools over scripts

```

Example project `AGENT.md`:

```markdown

# MyProject Instructions

## Architecture

- Frontend: React + TypeScript

- Backend: FastAPI + PostgreSQL

## Style

- Use async/await for all I/O

- Prefer functional components

```

Instructions are injected into the system prompt, giving the agent context about your preferences and project structure.

## Installation

```bash

uv pip install ctrlcode

```

## Usage

Start the TUI (auto-launches server):

```bash

ctrlcode

```

Or start server separately:

```bash

ctrlcode-server

```

| text/markdown | null | null | null | null | null | null | [] | [] | null | null | >=3.12 | [] | [] | [] | [

"aiohttp>=3.10.0",

"anthropic>=0.40.0",

"apscheduler>=3.11.2",

"faiss-cpu>=1.13.2",

"harness-utils>=0.3.1",

"httpx>=0.28.1",

"mcp>=1.0.0",

"networkx>=3.6.1",

"openai>=1.54.0",

"platformdirs>=4.5.1",

"playwright>=1.58.0",

"pyperclip>=1.11.0",

"sentence-transformers>=5.2.2",

"textual>=7.5.0"... | [] | [] | [] | [] | uv/0.7.17 | 2026-02-19T17:05:46.902641 | ctrlcode-0.1.1.tar.gz | 322,687 | f8/55/80c6733276dbbe710016f63996e30efc25665efe74971c5f574402a0451a/ctrlcode-0.1.1.tar.gz | source | sdist | null | false | 92c284a546a84e74026fbebe5b8570da | 8466759135a25eaf448da7c29d8e9a808663030e175a221a452abbf71da639fa | f85580c6733276dbbe710016f63996e30efc25665efe74971c5f574402a0451a | null | [] | 219 |

2.4 | fairical | 2.0.2 | Fairical is a Python library to assess adjustable demographically fair Machine Learning (ML) systems | <!--

SPDX-FileCopyrightText: Copyright © 2025 Idiap Research Institute <contact@idiap.ch>

SPDX-License-Identifier: GPL-3.0-or-later

-->

[](https://fairical.readthedocs.io/en/v2.0.2/)

[](https://gitlab.idiap.ch/medai/software/fairical/commits/v2.0.2)

[](https://www.idiap.ch/software/medai/docs/medai/software/fairical/v2.0.2/coverage/index.html)

[](https://gitlab.idiap.ch/medai/software/fairical)

# Fairical

Fairical is a Python library for rigorously evaluating and comparing demographically

fair machine-learning systems through the lens of multi-objective optimization. Rather

than treating fairness as a single constraint, Fairical recognizes that real-world

deployments must balance multiple, often conflicting fairness metrics (e.g., demographic

parity, equalized odds across race, gender, age) alongside traditional utility measures

like accuracy. It implements a model-agnostic evaluation framework that approximates

Pareto fronts of utility-fairness trade-offs, then distills each system's performance

into a compact measurement table and radar chart. By calculating convergence (how close

models get to optimal trade-offs), diversity (uniform distribution and spread of

solutions), capacity (number of non-dominated points), and a unified

convergence-diversity score via hypervolume, Fairical delivers both quantitative rigor

and qualitative clarity.

For installation and usage instructions, check-out our documentation.

If you use this library in published material, we kindly ask you to cite this work:

```bibtex

@article{ozbulak_multi-objective_2025,

title={A Multi-Objective Evaluation Framework for Analyzing Utility-Fairness Trade-Offs in Machine Learning Systems},

author={Özbulak, Gökhan and Jimenez-del-Toro, Oscar and Fatoretto, Maíra and Berton, Lilian and Anjos, André},

journal={Machine Learning for Biomedical Imaging},

volume={3},

number={Special issue on FAIMI},

pages={938--957},

doi={10.59275/j.melba.2025-ab9a},

year={2025}

}

```

| text/markdown | null | Gokhan Ozbulak <gokhan.ozbulak@idiap.ch> | null | null | null | null | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: GNU General Public License v3 (GPLv3)",

"Natural Language :: English",

"Programming Language :: Python :: 3",

"Topic :: Software Development :: Libraries :: Python Modules"

] | [] | null | null | >=3.11 | [] | [] | [] | [

"click",

"compact-json",

"fairlearn",

"matplotlib",

"numpy",

"pydantic>=2",

"pymoo",

"scikit-learn",

"tabulate",

"auto-intersphinx; extra == \"doc\"",

"furo; extra == \"doc\"",

"sphinx; extra == \"doc\"",

"sphinx-autodoc-typehints; extra == \"doc\"",

"sphinx-click; extra == \"doc\"",

"sp... | [] | [] | [] | [

"documentation, https://fairical.readthedocs.io/en/v2.0.2/",

"homepage, https://pypi.org/project/fairical",

"repository, https://gitlab.idiap.ch/medai/software/fairical",

"changelog, https://gitlab.idiap.ch/medai/software/fairical/-/releases"

] | twine/6.2.0 CPython/3.13.9 | 2026-02-19T17:05:26.093832 | fairical-2.0.2.tar.gz | 59,554 | 99/6e/ae214a2768a74f0ca6574751e5916d9c07857f430c025bb01a89cf2a1b48/fairical-2.0.2.tar.gz | source | sdist | null | false | 70bcbb292958a7f7a657e530653bf74c | 078a2d310da1b8861e74c07bae68270d113f65af8ddbb6681337fd79dbc34794 | 996eae214a2768a74f0ca6574751e5916d9c07857f430c025bb01a89cf2a1b48 | GPL-3.0-or-later | [] | 223 |

2.3 | reskinner | 4.2.2 | Instantaneous theme changing for PySimpleGUI and FreeSimpleGUI windows. | # Reskinner: Dynamic Theme Switching for PySimpleGUI and FreeSimpleGUI

[](https://pypi.org/project/reskinner/)

[](https://pypi.org/project/reskinner/)

[](https://opensource.org/licenses/MIT)

[](https://github.com/astral-sh/uv)

[](https://github.com/astral-sh/ruff)

[](https://pepy.tech/project/reskinner)

[](https://github.com/definite-d/psg_reskinner/issues)

[](https://github.com/definite-d/psg_reskinner/stargazers)

Reskinner is a Python 3 library for [PySimpleGUI](https://github.com/pysimplegui/pysimplegui) and [FreeSimpleGUI](https://github.com/spyoungtech/FreeSimpleGUI) that enables changing the theme of a GUI window at runtime **without** needing to recreate or re-instantiate the window.

It provides a smooth, dynamic way to update your application's appearance on the fly, with optional animations and support for multiple color interpolation and easing modes. Reskinner is lightweight, easy to integrate, and works with both major PySimpleGUI-compatible frameworks.

To learn more, visit [the GitHub repository](https://github.com/definite-d/reskinner), and consider starring the project if you find it useful.

| text/markdown | Divine U. Afam-Ifediogor | null | null | null | MIT License Copyright (c) 2025 Divine Afam-Ifediogor Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions: The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software. THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE. | PySimpleGUI, FreeSimpleGUI, reskin, realtime, theme, color, gui | [

"Development Status :: 6 - Mature",

"Framework :: PySimpleGUI",

"Framework :: PySimpleGUI :: 4",

"Framework :: PySimpleGUI :: 5",

"License :: OSI Approved :: MIT License",

"Natural Language :: English",

"Programming Language :: Python",

"Programming Language :: Python :: 3",

"Programming Language ::... | [] | null | null | >=3.7 | [] | [] | [] | [

"colour>=0.1.5",

"strenum; python_full_version < \"3.11\"",

"importlib-metadata; python_full_version < \"3.8\"",

"typing-extensions; python_full_version < \"3.8\"",

"freesimplegui>=5.0.0; extra == \"fsg\"",

"pysimplegui>=4.60.3.96; extra == \"psg\""

] | [] | [] | [] | [

"Homepage, https://github.com/definite-d/reskinner/",

"Bug Tracker, https://github.com/definite-d/reskinner/issues/"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-19T17:05:24.130925 | reskinner-4.2.2-py3-none-any.whl | 19,611 | b8/b5/b14f0972f4d85854af6a24d89c38d2c3ef06ae9616abee96e48bf6195688/reskinner-4.2.2-py3-none-any.whl | py3 | bdist_wheel | null | false | 3da1d64fd6bf1488cf38dc6fdb533ed7 | 33a32ecc08abf9b4d13b12695b6753df54db6158726683a366fd7582c38e2f16 | b8b5b14f0972f4d85854af6a24d89c38d2c3ef06ae9616abee96e48bf6195688 | null | [] | 226 |

2.4 | reme-ai | 0.3.0.0b3 | Remember Me, Refine Me. | <p align="center">

<img src="docs/_static/figure/reme_logo.png" alt="ReMe Logo" width="50%">

</p>

<p align="center">

<a href="https://pypi.org/project/reme-ai/"><img src="https://img.shields.io/badge/python-3.10+-blue" alt="Python Version"></a>

<a href="https://pypi.org/project/reme-ai/"><img src="https://img.shields.io/pypi/v/reme-ai.svg?logo=pypi" alt="PyPI Version"></a>

<a href="https://pepy.tech/project/reme-ai/"><img src="https://img.shields.io/pypi/dm/reme-ai" alt="PyPI Downloads"></a>

<a href="https://github.com/agentscope-ai/ReMe"><img src="https://img.shields.io/github/commit-activity/m/agentscope-ai/ReMe?style=flat-square" alt="GitHub commit activity"></a>

</p>

<p align="center">

<a href="./LICENSE"><img src="https://img.shields.io/badge/license-Apache--2.0-black" alt="License"></a>

<a href="./README.md"><img src="https://img.shields.io/badge/English-Click-yellow" alt="English"></a>

<a href="./README_ZH.md"><img src="https://img.shields.io/badge/简体中文-点击查看-orange" alt="简体中文"></a>

<a href="https://github.com/agentscope-ai/ReMe"><img src="https://img.shields.io/github/stars/agentscope-ai/ReMe?style=social" alt="GitHub Stars"></a>

</p>

<p align="center">

<strong>Memory Management Kit for Agents, Remember Me, Refine Me.</strong><br>

<em><sub>If you find it useful, please give us a ⭐ Star.</sub></em>

</p>

---

ReMe is a **modular memory management kit** that provides AI agents with unified memory capabilities—enabling the ability to extract, reuse, and share memories across users, tasks, and agents.

Agent memory can be viewed as:

```text

Agent Memory = Long-Term Memory + Short-Term Memory

= (Personal + Task + Tool) Memory + (Working Memory)

```

- **Personal Memory**: Understand user preferences and adapt to context

- **Task Memory**: Learn from experience and perform better on similar tasks

- **Tool Memory**: Optimize tool selection and parameter usage based on historical performance

- **Working Memory**: Manage short-term context for long-running agents without context overflow

---

## 📰 Latest Updates

- **[2026-02]** 💻 ReMeCli: A terminal-based AI chat assistant with built-in memory management. Automatically compacts long conversations into summaries to free up context space, and persists important information as Markdown files for retrieval in future sessions. Memory design inspired by [OpenClaw](https://github.com/openclaw/openclaw).

- [Quick Start](docs/cli/quick_start_en.md)

- Type `/horse` to trigger the Year of the Horse Easter egg -- fireworks, a galloping horse animation, and a random blessing.

<table border="0" cellspacing="0" cellpadding="0" style="border: none;">

<tr style="border: none;">

<td width="10%" style="border: none; vertical-align: middle; text-align: center;">

<strong>马<br>上<br>有<br>钱</strong>

</td>

<td width="80%" style="border: none;">

<video src="https://github.com/user-attachments/assets/d731ae5c-80eb-498b-a22c-8ab2b9169f87" autoplay muted loop controls></video>

</td>

<td width="10%" style="border: none; vertical-align: middle; text-align: center;">

<strong>马<br>到<br>成<br>功</strong>

</td>

</tr>

</table>

- **[2025-12]** 📄 Our procedural (task) memory paper has been released on [arXiv](https://arxiv.org/abs/2512.10696)

- **[2025-11]** 🧠 React-agent with working-memory demo ([Intro](docs/work_memory/message_offload.md)) with ([Quick Start](docs/cookbook/working/quick_start.md)) and ([Code](cookbook/working_memory/work_memory_demo.py))

- **[2025-10]** 🚀 Direct Python import support: use `from reme_ai import ReMeApp` without HTTP/MCP service

- **[2025-10]** 🔧 Tool Memory: data-driven tool selection and parameter optimization ([Guide](docs/tool_memory/tool_memory.md))

- **[2025-09]** 🎉 Async operations support, integrated into agentscope-runtime

- **[2025-09]** 🎉 Task memory and personal memory integration

- **[2025-09]** 🧪 Validated effectiveness in appworld, bfcl(v3), and frozenlake ([Experiments](docs/cookbook))

- **[2025-08]** 🚀 MCP protocol support ([Quick Start](docs/mcp_quick_start.md))

- **[2025-06]** 🚀 Multiple backend vector storage (Elasticsearch & ChromaDB) ([Guide](docs/vector_store_api_guide.md))

- **[2024-09]** 🧠 Personalized and time-aware memory storage

---

## ✨ Architecture Design

<p align="center">

<img src="docs/_static/figure/reme_structure.jpg" alt="ReMe Architecture" width="80%">

</p>

ReMe provides a **modular memory management kit** with pluggable components that can be integrated into any agent framework. The system consists of:

#### 🧠 **Task Memory/Experience**

Procedural knowledge reused across agents

- **Success Pattern Recognition**: Identify effective strategies and understand their underlying principles

- **Failure Analysis Learning**: Learn from mistakes and avoid repeating the same issues

- **Comparative Patterns**: Different sampling trajectories provide more valuable memories through comparison

- **Validation Patterns**: Confirm the effectiveness of extracted memories through validation modules

Learn more about how to use task memory from [task memory](docs/task_memory/task_memory.md)

#### 👤 **Personal Memory**

Contextualized memory for specific users

- **Individual Preferences**: User habits, preferences, and interaction styles

- **Contextual Adaptation**: Intelligent memory management based on time and context

- **Progressive Learning**: Gradually build deep understanding through long-term interaction

- **Time Awareness**: Time sensitivity in both retrieval and integration

Learn more about how to use personal memory from [personal memory](docs/personal_memory/personal_memory.md)

#### 🔧 **Tool Memory**

Data-driven tool selection and usage optimization

- **Historical Performance Tracking**: Success rates, execution times, and token costs from real usage

- **LLM-as-Judge Evaluation**: Qualitative insights on why tools succeed or fail

- **Parameter Optimization**: Learn optimal parameter configurations from successful calls

- **Dynamic Guidelines**: Transform static tool descriptions into living, learned manuals

Learn more about how to use tool memory from [tool memory](docs/tool_memory/tool_memory.md)

#### 🧠 Working Memory

Short‑term contextual memory for long‑running agents via **message offload & reload**:

- **Message Offload**: Compact large tool outputs to external files or LLM summaries

- **Message Reload**: Search (`grep_working_memory`) and read (`read_working_memory`) offloaded content on demand

📖 **Concept & API**:

- Message offload overview: [Message Offload](docs/work_memory/message_offload.md)

- Offload / reload operators: [Message Offload Ops](docs/work_memory/message_offload_ops.md), [Message Reload Ops](docs/work_memory/message_reload_ops.md)

💻 **End‑to‑End Demo**:

- Working memory quick start: [Working Memory Quick Start](docs/cookbook/working/quick_start.md)

- ReAct agent with working memory: [react_agent_with_working_memory.py](cookbook/working_memory/react_agent_with_working_memory.py)

- Runnable demo: [work_memory_demo.py](cookbook/working_memory/work_memory_demo.py)

---

## 🛠️ Installation

### Install from PyPI (Recommended)

```bash

pip install reme-ai

```

### Install from Source

```bash

git clone https://github.com/agentscope-ai/ReMe.git

cd ReMe

pip install .

```

### Environment Configuration

ReMe requires LLM and embedding model configurations. Copy `example.env` to `.env` and configure:

```bash

FLOW_LLM_API_KEY=sk-xxxx

FLOW_LLM_BASE_URL=https://xxxx/v1

FLOW_EMBEDDING_API_KEY=sk-xxxx

FLOW_EMBEDDING_BASE_URL=https://xxxx/v1

```

---

## 🚀 Quick Start

### HTTP Service Startup

```bash

reme \

backend=http \

http.port=8002 \

llm.default.model_name=qwen3-30b-a3b-thinking-2507 \

embedding_model.default.model_name=text-embedding-v4 \

vector_store.default.backend=local

```

### MCP Server Support

```bash

reme \

backend=mcp \

mcp.transport=stdio \

llm.default.model_name=qwen3-30b-a3b-thinking-2507 \

embedding_model.default.model_name=text-embedding-v4 \

vector_store.default.backend=local

```

### Core API Usage

#### Task Memory Management

```python

import requests

# Experience Summarizer: Learn from execution trajectories

response = requests.post("http://localhost:8002/summary_task_memory", json={

"workspace_id": "task_workspace",

"trajectories": [

{"messages": [{"role": "user", "content": "Help me create a project plan"}], "score": 1.0}

]

})

# Retriever: Get relevant memories

response = requests.post("http://localhost:8002/retrieve_task_memory", json={

"workspace_id": "task_workspace",

"query": "How to efficiently manage project progress?",

"top_k": 1

})

```

<details>

<summary>Python import version</summary>

```python

import asyncio

from reme_ai import ReMeApp

async def main():

async with ReMeApp(

"llm.default.model_name=qwen3-30b-a3b-thinking-2507",

"embedding_model.default.model_name=text-embedding-v4",

"vector_store.default.backend=memory"

) as app:

# Experience Summarizer: Learn from execution trajectories

result = await app.async_execute(

name="summary_task_memory",

workspace_id="task_workspace",

trajectories=[

{

"messages": [

{"role": "user", "content": "Help me create a project plan"}

],

"score": 1.0

}

]

)

print(result)

# Retriever: Get relevant memories

result = await app.async_execute(

name="retrieve_task_memory",

workspace_id="task_workspace",

query="How to efficiently manage project progress?",

top_k=1

)

print(result)

if __name__ == "__main__":

asyncio.run(main())

```

</details>

<details>

<summary>curl version</summary>

```bash

# Experience Summarizer: Learn from execution trajectories

curl -X POST http://localhost:8002/summary_task_memory \

-H "Content-Type: application/json" \

-d '{

"workspace_id": "task_workspace",

"trajectories": [

{"messages": [{"role": "user", "content": "Help me create a project plan"}], "score": 1.0}

]

}'

# Retriever: Get relevant memories

curl -X POST http://localhost:8002/retrieve_task_memory \

-H "Content-Type: application/json" \

-d '{

"workspace_id": "task_workspace",

"query": "How to efficiently manage project progress?",

"top_k": 1

}'

```

</details>

#### Personal Memory Management

```python

# Memory Integration: Learn from user interactions

response = requests.post("http://localhost:8002/summary_personal_memory", json={

"workspace_id": "task_workspace",

"trajectories": [

{"messages":

[

{"role": "user", "content": "I like to drink coffee while working in the morning"},

{"role": "assistant",

"content": "I understand, you prefer to start your workday with coffee to stay energized"}

]

}

]

})

# Memory Retrieval: Get personal memory fragments

response = requests.post("http://localhost:8002/retrieve_personal_memory", json={

"workspace_id": "task_workspace",

"query": "What are the user's work habits?",

"top_k": 5

})

```

<details>

<summary>Python import version</summary>

```python

import asyncio

from reme_ai import ReMeApp

async def main():

async with ReMeApp(

"llm.default.model_name=qwen3-30b-a3b-thinking-2507",

"embedding_model.default.model_name=text-embedding-v4",

"vector_store.default.backend=memory"

) as app:

# Memory Integration: Learn from user interactions

result = await app.async_execute(

name="summary_personal_memory",

workspace_id="task_workspace",

trajectories=[

{

"messages": [

{"role": "user", "content": "I like to drink coffee while working in the morning"},

{"role": "assistant",

"content": "I understand, you prefer to start your workday with coffee to stay energized"}

]

}

]

)

print(result)

# Memory Retrieval: Get personal memory fragments

result = await app.async_execute(

name="retrieve_personal_memory",

workspace_id="task_workspace",

query="What are the user's work habits?",

top_k=5

)

print(result)

if __name__ == "__main__":

asyncio.run(main())

```

</details>

<details>

<summary>curl version</summary>

```bash

# Memory Integration: Learn from user interactions

curl -X POST http://localhost:8002/summary_personal_memory \

-H "Content-Type: application/json" \

-d '{

"workspace_id": "task_workspace",

"trajectories": [

{"messages": [

{"role": "user", "content": "I like to drink coffee while working in the morning"},

{"role": "assistant", "content": "I understand, you prefer to start your workday with coffee to stay energized"}

]}

]

}'

# Memory Retrieval: Get personal memory fragments

curl -X POST http://localhost:8002/retrieve_personal_memory \

-H "Content-Type: application/json" \

-d '{

"workspace_id": "task_workspace",

"query": "What are the user'\''s work habits?",

"top_k": 5

}'

```

</details>

#### Tool Memory Management

```python

import requests

# Record tool execution results

response = requests.post("http://localhost:8002/add_tool_call_result", json={

"workspace_id": "tool_workspace",

"tool_call_results": [

{

"create_time": "2025-10-21 10:30:00",

"tool_name": "web_search",

"input": {"query": "Python asyncio tutorial", "max_results": 10},

"output": "Found 10 relevant results...",

"token_cost": 150,

"success": True,

"time_cost": 2.3

}

]

})

# Generate usage guidelines from history

response = requests.post("http://localhost:8002/summary_tool_memory", json={

"workspace_id": "tool_workspace",

"tool_names": "web_search"

})

# Retrieve tool guidelines before use

response = requests.post("http://localhost:8002/retrieve_tool_memory", json={

"workspace_id": "tool_workspace",

"tool_names": "web_search"

})

```

<details>

<summary>Python import version</summary>

```python

import asyncio

from reme_ai import ReMeApp

async def main():

async with ReMeApp(

"llm.default.model_name=qwen3-30b-a3b-thinking-2507",

"embedding_model.default.model_name=text-embedding-v4",

"vector_store.default.backend=memory"

) as app:

# Record tool execution results

result = await app.async_execute(

name="add_tool_call_result",

workspace_id="tool_workspace",

tool_call_results=[

{

"create_time": "2025-10-21 10:30:00",

"tool_name": "web_search",

"input": {"query": "Python asyncio tutorial", "max_results": 10},

"output": "Found 10 relevant results...",

"token_cost": 150,

"success": True,

"time_cost": 2.3

}

]

)

print(result)

# Generate usage guidelines from history

result = await app.async_execute(

name="summary_tool_memory",

workspace_id="tool_workspace",

tool_names="web_search"

)

print(result)

# Retrieve tool guidelines before use

result = await app.async_execute(

name="retrieve_tool_memory",

workspace_id="tool_workspace",

tool_names="web_search"

)

print(result)

if __name__ == "__main__":

asyncio.run(main())

```

</details>

<details>

<summary>curl version</summary>

```bash

# Record tool execution results

curl -X POST http://localhost:8002/add_tool_call_result \

-H "Content-Type: application/json" \

-d '{

"workspace_id": "tool_workspace",

"tool_call_results": [

{

"create_time": "2025-10-21 10:30:00",

"tool_name": "web_search",

"input": {"query": "Python asyncio tutorial", "max_results": 10},

"output": "Found 10 relevant results...",

"token_cost": 150,

"success": true,

"time_cost": 2.3

}

]

}'

# Generate usage guidelines from history

curl -X POST http://localhost:8002/summary_tool_memory \

-H "Content-Type: application/json" \

-d '{

"workspace_id": "tool_workspace",

"tool_names": "web_search"

}'

# Retrieve tool guidelines before use

curl -X POST http://localhost:8002/retrieve_tool_memory \

-H "Content-Type: application/json" \

-d '{

"workspace_id": "tool_workspace",

"tool_names": "web_search"

}'

```

</details>

#### Working Memory Management

```python

import requests

# Summarize and compact working memory for a long-running conversation

response = requests.post("http://localhost:8002/summary_working_memory", json={

"messages": [

{

"role": "system",

"content": "You are a helpful assistant. First use `Grep` to find the line numbers that match the keywords or regular expressions, and then use `ReadFile` to read the code around those locations. If no matches are found, never give up; try different parameters, such as searching with only part of the keywords. After `Grep`, use the `ReadFile` command to view content starting from a specified `offset` and `limit`, and do not exceed 100 lines. If the current content is insufficient, you can continue trying different `offset` and `limit` values with the `ReadFile` command."

},

{

"role": "user",

"content": "搜索下reme项目的的README内容"

},

{

"role": "assistant",

"content": "",

"tool_calls": [

{

"index": 0,

"id": "call_6596dafa2a6a46f7a217da",

"function": {

"arguments": "{\"query\": \"readme\"}",

"name": "web_search"

},

"type": "function"

}

]

},

{

"role": "tool",

"content": "ultra large context , over 50000 tokens......"

},

{

"role": "user",

"content": "根据readme回答task memory在appworld的效果是多少,需要具体的数值"

}

],

"working_summary_mode": "auto",

"compact_ratio_threshold": 0.75,

"max_total_tokens": 20000,

"max_tool_message_tokens": 2000,

"group_token_threshold": 4000,

"keep_recent_count": 2,

"store_dir": "test_working_memory",

"chat_id": "demo_chat_id"

})

```

<details>

<summary>Python import version</summary>

```python

import asyncio

from reme_ai import ReMeApp

async def main():

async with ReMeApp(

"llm.default.model_name=qwen3-30b-a3b-thinking-2507",

"embedding_model.default.model_name=text-embedding-v4",

"vector_store.default.backend=memory"

) as app:

# Summarize and compact working memory for a long-running conversation

result = await app.async_execute(

name="summary_working_memory",

messages=[

{

"role": "system",

"content": "You are a helpful assistant. First use `Grep` to find the line numbers that match the keywords or regular expressions, and then use `ReadFile` to read the code around those locations. If no matches are found, never give up; try different parameters, such as searching with only part of the keywords. After `Grep`, use the `ReadFile` command to view content starting from a specified `offset` and `limit`, and do not exceed 100 lines. If the current content is insufficient, you can continue trying different `offset` and `limit` values with the `ReadFile` command."

},

{

"role": "user",

"content": "搜索下reme项目的的README内容"

},

{

"role": "assistant",

"content": "",

"tool_calls": [

{

"index": 0,

"id": "call_6596dafa2a6a46f7a217da",

"function": {

"arguments": "{\"query\": \"readme\"}",

"name": "web_search"

},

"type": "function"

}

]

},

{

"role": "tool",

"content": "ultra large context , over 50000 tokens......"

},

{

"role": "user",

"content": "根据readme回答task memory在appworld的效果是多少,需要具体的数值"

}

],

working_summary_mode="auto",

compact_ratio_threshold=0.75,

max_total_tokens=20000,

max_tool_message_tokens=2000,

group_token_threshold=4000,

keep_recent_count=2,

store_dir="test_working_memory",

chat_id="demo_chat_id",

)

print(result)

if __name__ == "__main__":

asyncio.run(main())

```

</details>

<details>

<summary>curl version</summary>

```bash

curl -X POST http://localhost:8002/summary_working_memory \

-H "Content-Type: application/json" \

-d '{

"messages": [

{

"role": "system",

"content": "You are a helpful assistant. First use `Grep` to find the line numbers that match the keywords or regular expressions, and then use `ReadFile` to read the code around those locations. If no matches are found, never give up; try different parameters, such as searching with only part of the keywords. After `Grep`, use the `ReadFile` command to view content starting from a specified `offset` and `limit`, and do not exceed 100 lines. If the current content is insufficient, you can continue trying different `offset` and `limit` values with the `ReadFile` command."

},

{

"role": "user",

"content": "搜索下reme项目的的README内容"

},

{

"role": "assistant",

"content": "",

"tool_calls": [

{

"index": 0,

"id": "call_6596dafa2a6a46f7a217da",

"function": {

"arguments": "{\"query\": \"readme\"}",

"name": "web_search"

},

"type": "function"

}

]

},

{

"role": "tool",

"content": "ultra large context , over 50000 tokens......"

},

{

"role": "user",

"content": "根据readme回答task memory在appworld的效果是多少,需要具体的数值"

}

],

"working_summary_mode": "auto",

"compact_ratio_threshold": 0.75,

"max_total_tokens": 20000,

"max_tool_message_tokens": 2000,

"group_token_threshold": 4000,

"keep_recent_count": 2,

"store_dir": "test_working_memory",

"chat_id": "demo_chat_id"

}'

```

</details>

---

## 📦 Pre-built Memory Library

ReMe provides a **memory library** with pre-extracted, production-ready memories that agents can load and use immediately:

### Available Memory Packs

| Memory Pack | Domain | Size | Description |

|----------------------|----------------|---------------|-------------------------------------------------------------------------------------|

| **`appworld.jsonl`** | Task Execution | ~100 memories | Complex task planning patterns, multi-step workflows, and error recovery strategies |

| **`bfcl_v3.jsonl`** | Tool Usage | ~150 memories | Function calling patterns, parameter optimization, and tool selection strategies |

### Loading Pre-built Memories

```python

# Load pre-built memories

response = requests.post("http://localhost:8002/vector_store", json={

"workspace_id": "appworld",

"action": "load",

"path": "./docs/library/"

})

# Query relevant memories

response = requests.post("http://localhost:8002/retrieve_task_memory", json={

"workspace_id": "appworld",

"query": "How to navigate to settings and update user profile?",

"top_k": 1

})

```

<details>

<summary>Python import version</summary>

```python

import asyncio

from reme_ai import ReMeApp

async def main():

async with ReMeApp(

"llm.default.model_name=qwen3-30b-a3b-thinking-2507",

"embedding_model.default.model_name=text-embedding-v4",

"vector_store.default.backend=memory"

) as app:

# Load pre-built memories

result = await app.async_execute(

name="vector_store",

workspace_id="appworld",

action="load",

path="./docs/library/"

)

print(result)

# Query relevant memories

result = await app.async_execute(

name="retrieve_task_memory",

workspace_id="appworld",

query="How to navigate to settings and update user profile?",

top_k=1

)

print(result)

if __name__ == "__main__":

asyncio.run(main())

```

</details>

## 🧪 Experiments

### 🌍 [Appworld Experiment](docs/cookbook/appworld/quickstart.md)

We tested ReMe on Appworld using Qwen3-8B (non-thinking mode):

| Method | Avg@4 | Pass@4 |

|--------------|---------------------|---------------------|

| without ReMe | 0.1497 | 0.3285 |

| with ReMe | 0.1706 **(+2.09%)** | 0.3631 **(+3.46%)** |

Pass@K measures the probability that at least one of the K generated samples successfully completes the task (

score=1).

The current experiment uses an internal AppWorld environment, which may have slight differences.

You can find more details on reproducing the experiment in [quickstart.md](docs/cookbook/appworld/quickstart.md).

### 🔧 [BFCL-V3 Experiment](docs/cookbook/bfcl/quickstart.md)

We tested ReMe on BFCL-V3 multi-turn-base (randomly split 50train/150val) using Qwen3-8B (thinking mode):

| Method | Avg@4 | Pass@4 |

|--------------|---------------------|---------------------|

| without ReMe | 0.4033 | 0.5955 |

| with ReMe | 0.4450 **(+4.17%)** | 0.6577 **(+6.22%)** |

### 🧊 [Frozenlake Experiment](docs/cookbook/frozenlake/quickstart.md)

| without ReMe | with ReMe |

|:----------------------------------------------------------------------------------------------------:|:----------------------------------------------------------------------------------------------------:|

| <p align="center"><img src="docs/_static/figure/frozenlake_failure.gif" alt="GIF 1" width="30%"></p> | <p align="center"><img src="docs/_static/figure/frozenlake_success.gif" alt="GIF 2" width="30%"></p> |

We tested on 100 random frozenlake maps using qwen3-8b:

| Method | pass rate |

|--------------|------------------|

| without ReMe | 0.66 |

| with ReMe | 0.72 **(+6.0%)** |

You can find more details on reproducing the experiment in [quickstart.md](docs/cookbook/frozenlake/quickstart.md).

### 🛠️ [Tool Memory Benchmark](docs/tool_memory/tool_bench.md)

We evaluated Tool Memory effectiveness using a controlled benchmark with three mock search tools using Qwen3-30B-Instruct:

| Scenario | Avg Score | Improvement |

|------------------------|-----------|-------------|

| Train (No Memory) | 0.650 | - |

| Test (No Memory) | 0.672 | Baseline |

| **Test (With Memory)** | **0.772** | **+14.88%** |

**Key Findings:**

- Tool Memory enables data-driven tool selection based on historical performance

- Success rates improved by ~15% with learned parameter configurations

You can find more details in [tool_bench.md](docs/tool_memory/tool_bench.md) and the implementation at [run_reme_tool_bench.py](cookbook/tool_memory/run_reme_tool_bench.py).

## 📚 Resources

### Getting Started

- **[Quick Start](./cookbook/simple_demo)**: Practical examples for immediate use

- [Tool Memory Demo](cookbook/simple_demo/use_tool_memory_demo.py): Complete lifecycle demonstration of tool memory

- [Tool Memory Benchmark](cookbook/tool_memory/run_reme_tool_bench.py): Evaluate tool memory effectiveness

### Integration Guides

- **[Direct Python Import](docs/cookbook/working/quick_start.md)**: Embed ReMe directly into your agent code

- **[HTTP Service API](docs/vector_store_api_guide.md)**: RESTful API for multi-agent systems

- **[MCP Protocol](docs/mcp_quick_start.md)**: Integration with Claude Desktop and MCP-compatible clients

### Memory System Configuration

- **[Personal Memory](docs/personal_memory)**: User preference learning and contextual adaptation

- **[Task Memory](docs/task_memory)**: Procedural knowledge extraction and reuse

- **[Tool Memory](docs/tool_memory)**: Data-driven tool selection and optimization

- **[Working Memory](docs/work_memory/message_offload.md)**: Short-term context management for long-running agents

### Advanced Topics

- **[Operator Pipelines](reme_ai/config/default.yaml)**: Customize memory processing workflows by modifying operator chains

- **[Vector Store Backends](docs/vector_store_api_guide.md)**: Configure local, Elasticsearch, Qdrant, or ChromaDB storage

- **[Example Collection](./cookbook)**: Real-world use cases and best practices

---

## ⭐ Support & Community

- **Star & Watch**: Stars surface ReMe to more agent builders; watching keeps you updated on new releases.

- **Share your wins**: Open an issue or discussion with what ReMe unlocked for your agents—we love showcasing community builds.

- **Need a feature?** File a request and we’ll help shape it together.

---

## 🤝 Contribution

We believe the best memory systems come from collective wisdom. Contributions welcome 👉[Guide](docs/contribution.md):

### Code Contributions

- **New Operators**: Develop custom memory processing operators (retrieval, summarization, etc.)

- **Backend Implementations**: Add support for new vector stores or LLM providers

- **Memory Services**: Extend with new memory types or capabilities

- **API Enhancements**: Improve existing endpoints or add new ones

### Documentation Improvements

- **Integration Examples**: Show how to integrate ReMe with different agent frameworks

- **Operator Tutorials**: Document custom operator development

- **Best Practice Guides**: Share effective memory management patterns

- **Use Case Studies**: Demonstrate ReMe in real-world applications

---

## 📄 Citation

```bibtex

@software{AgentscopeReMe2025,

title = {AgentscopeReMe: Memory Management Kit for Agents},

author = {Li Yu and

Jiaji Deng and

Zouying Cao and

Weikang Zhou and

Tiancheng Qin and

Qingxu Fu and

Sen Huang and

Xianzhe Xu and

Zhaoyang Liu and

Boyin Liu},

url = {https://reme.agentscope.io},

year = {2025}

}

@misc{AgentscopeReMe2025Paper,

title={Remember Me, Refine Me: A Dynamic Procedural Memory Framework for Experience-Driven Agent Evolution},

author={Zouying Cao and

Jiaji Deng and

Li Yu and

Weikang Zhou and

Zhaoyang Liu and

Bolin Ding and

Hai Zhao},

year={2025},

eprint={2512.10696},

archivePrefix={arXiv},

primaryClass={cs.AI},

url={https://arxiv.org/abs/2512.10696},

}

```

---

## ⚖️ License

This project is licensed under the Apache License 2.0 - see the [LICENSE](./LICENSE) file for details.

---

## Star History

[](https://www.star-history.com/#agentscope-ai/ReMe&Date)

| text/markdown | null | "jinli.yl" <jinli.yl@alibaba-inc.com>, "dengjiaji.djj" <dengjiaji.djj@alibaba-inc.com>, "caozouying.czy" <caozouying.czy@alibaba-inc.com>, "weikangzhou.zwk" <weikangzhou.zwk@alibaba-inc.com> | null | null | Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright 2025 Alibaba Group

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

| llm, memory, experience, memoryscope, ai, mcp, http, reme, personal | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"Intended Audience :: Science/Research",

"License :: OSI Approved :: Apache Software License",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Topic :: Scientifi... | [] | null | null | >=3.10 | [] | [] | [] | [

"flowllm[reme]>=0.2.0.10",

"sqlite-vec>=0.1.6",

"prompt_toolkit>=3.0.52",

"rich>=14.2.0",

"asyncpg>=0.31.0",

"chromadb>=1.3.5",

"dashscope>=1.25.1",

"elasticsearch>=9.2.0",

"fastapi>=0.121.3",

"fastmcp>=2.14.1",

"httpx>=0.28.1",

"litellm>=1.80.0",

"loguru>=0.7.3",

"mcp>=1.25.0",

"numpy>=... | [] | [] | [] | [

"Homepage, https://github.com/agentscope-ai/ReMe",

"Documentation, https://reme.agentscope.io/",

"Repository, https://github.com/agentscope-ai/ReMe"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T17:05:10.873101 | reme_ai-0.3.0.0b3.tar.gz | 443,849 | 68/cf/d519d62663a58906c43ccab6270ec0c9308c26e7677a0125ee58b84d5258/reme_ai-0.3.0.0b3.tar.gz | source | sdist | null | false | bbe1a43bd2e99dce97c63f3d15f441c1 | 237a3b6380395f08367992da1e7546d0cb421b9243b2961ff19114588af87c11 | 68cfd519d62663a58906c43ccab6270ec0c9308c26e7677a0125ee58b84d5258 | null | [

"LICENSE"

] | 197 |

2.1 | krippendorff-graph | 0.2.0 | A Python package for computing krippendorffs alpha for graph (modified from https://github.com/grrrr/krippendorff-alpha/blob/master/krippendorff_alpha.py) | # Krippendorff-alpha-for-graph

Compute Krippendorff's alpha for graph, modified from https://github.com/grrrr/krippendorff-alpha/

Package URL: https://pypi.org/project/krippendorff-graph/

### Changes

1. Used Networkx to instantiate graph

2. Added custom node/edge and graph metrics (see below)

3. Forced a pre-computation of distance matrix to boost efficiency for computing, and store it as .npy

- within-units disagreement (Do)

- within- and between-units expected total disagreement (De)

4. Not properly tested, but as long as you have a pandas dataframe that satisfies the following shape, it works.

- the df has a feature column storing annotated graphs (list of tuples, such as [("subject_1", "predicate_1", "object_1"), ("subject_2", "predicate_2", "object_2")])

- feature column can also be nodes or edges (tuple of strings)

- a column indicating annotator id

- annotation id is ordered the same way for all annotator

5. Note that, distance metric interacts with the networkx graph type when calling instantiate_networkx_graph(). There are the following graph types,

- nx.Graph

- nx.DiGraph

- nx.MultiGraph

- nx.MultiDiGraph

6. Two categories of distance metric are implemented.

- Lenient metric: node/edge or graph overlap

- Strict metric: nominal metric, graph edit distance

7. Depending on your how many graphs you have, computation of graph distance matrix can take a long time.

### Python installation

Open your terminal, activate your preferred environment, then type in

```

pip install krippendorff_graph

```

### Node/edge Metrics

#### Lenient metric

1. Node/Edge Overlap Metric: if two sets of nodes or edges overlap, the distance between these two sets is 0; else 1.

#### Moderate metric

1. Node/Edge Jaccard Distance metric: it captures partial similarity by measuring the proportion of shared nodes or edges between two sets.

#### Strict metric

1. Nominal Metric: exact match of two sets of ndoes or edges.

### Graph Metrics

#### Lenient metric

1. Graph Overlap Metric: if two graphs overlap, the distance between these two sets is 0; else 1.

#### Moderate metric

1. Normalized Graph Edit Distance

- normalized by computing distance between g1 and g0 and between g2 and g0

- g0 is an empty graph

#### Strict metric

1. Nominal Metric: exact match of two sets of triples.

### Example Usage

###### Compute distance matrix of graphs

```

import pandas as pd

from krippendorff_graph import compute_alpha, compute_distance_matrix, graph_edit_distance, graph_overlap_metric, nominal_metric

df = pd.DataFrame.from_dict({"annotator": [1,1,1,1,2,2,2,2,3,3,3,3,4,4,4,4],

"narrative": [

["bla, ela, pla, mla."],["bla, ela, pla, mla."],["bla, ela, pla, mla."],["bla, ela, pla, mla."],

["bla, ela, pla, mla."],["bla, ela, pla, mla."],["bla, ela, pla, mla."],["bla, ela, pla, mla."],

["bla, ela, pla, mla."],["bla, ela, pla, mla."],["bla, ela, pla, mla."],["bla, ela, pla, mla."],

["bla, ela, pla, mla."],["bla, ela, pla, mla."],["bla, ela, pla, mla."],["bla, ela, pla, mla."]

],

"graph_feature": [

{("sub", "pre", "obj")},{("sub1", "pre1", "obj1"), ("sub2", "pre2", "obj2")},{("sub", "pre", "obj")},{("sub", "pre", "obj")},

*,{("sub", "pre", "obj")},{("sub", "pre", "obj")},{("sub", "pre", "obj")},

{("sub", "pre", "obj")},{("sub1", "pre1", "obj1"), ("sub2", "pre2", "obj2")},{("sub", "pre", "obj")},{("sub1", "pre1", "obj1"), ("sub2", "pre2", "obj2")},

*,{("sub", "pre", "obj")},{("sub", "pre", "obj")},{("sub1", "pre1", "obj1"), ("sub2", "pre2", "obj2")}

]

})

data = [

df[df["annotator"]==1].graph_feature.to_list(),

df[df["annotator"]==2].graph_feature.to_list(),

df[df["annotator"]==3].graph_feature.to_list(),

df[df["annotator"]==4].graph_feature.to_list()

]

empty_graph_indicator = "*" # indicator for missing values

save_path = "./lenient_distance_matrix.npy"

feature_column="graph_feature"

graph_distance_metric= node_overlap_metric

forced = True

if not Path(save_path).exists() or forced:

distance_matrix = compute_distance_matrix(df=df,

feature_column=feature_column, graph_distance_metric=graph_distance_metric,

empty_graph_indicator=empty_graph_indicator, save_path=save_path, graph_type=nx.Graph)

else:

distance_matrix = np.load(save_path)

print("Lenient node metric: %.3f" % compute_alpha(data, distance_matrix=distance_matrix, missing_items=empty_graph_indicator))

```

(Please help contributing by making a PR - it will be faster than reporting an issue since the maintainer might be slower than you.)

| text/markdown | Junbo Huang | junbo.huang@uni-hamburg.de | null | null | Apache 2 License | null | [] | [] | https://github.com/junbohuang/Krippendorff-alpha-for-graph | null | null | [] | [] | [] | [

"pandas",

"scikit-learn",

"requests",

"networkx",

"tqdm",

"numpy"

] | [] | [] | [] | [] | twine/6.2.0 CPython/3.9.21 | 2026-02-19T17:03:54.986981 | krippendorff_graph-0.2.0-py3-none-any.whl | 9,315 | 26/2f/610b1a0f42cc4971b919cd916194f99312b09c03bc828b48520e82fd04c0/krippendorff_graph-0.2.0-py3-none-any.whl | py3 | bdist_wheel | null | false | fd12f29522d176d06098a755be317bcd | 72732bc9b202e2fb7a5b53bb13bcb434f4d050210bd3df11d570a188e3f7a911 | 262f610b1a0f42cc4971b919cd916194f99312b09c03bc828b48520e82fd04c0 | null | [] | 102 |

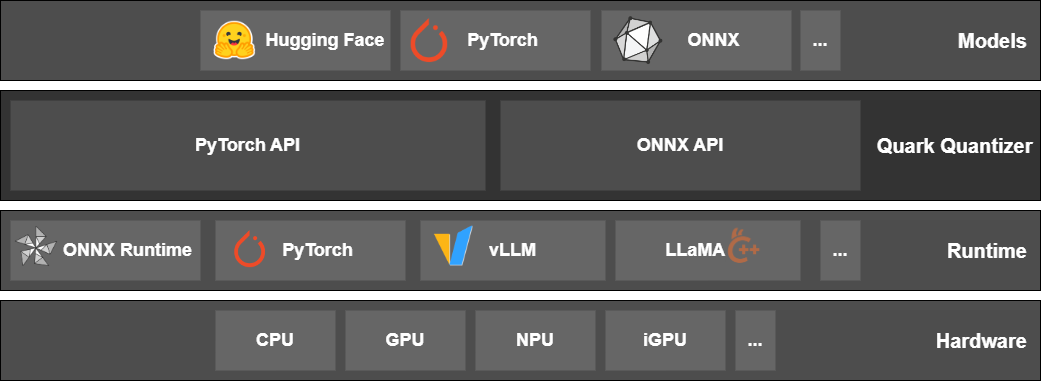

2.4 | amd-quark | 0.11.1 | AMD Quark is a comprehensive cross-platform toolkit designed to simplify and enhance the quantization of deep learning models. Supporting both PyTorch and ONNX models, AMD Quark empowers developers to optimize their models for deployment on a wide range of hardware backends, achieving significant performance gains without compromising accuracy. | <div align="center">

# AMD Quark Model Optimizer

[](https://quark.docs.amd.com/latest/)

[](https://pypi.org/project/amd-quark/)

[](./LICENSE)

[](https://www.python.org/)

[PyTorch Examples](https://quark.docs.amd.com/latest/pytorch/pytorch_examples.html) |

[ONNX Examples](https://quark.docs.amd.com/latest/onnx/onnx_examples.html) |

[Documentation](https://quark.docs.amd.com/) |

[Release Notes](https://quark.docs.amd.com/latest/release_note.html)

</div>

**AMD Quark** is a comprehensive cross-platform toolkit designed to simplify and enhance the quantization of deep learning models. Supporting both PyTorch and ONNX models, AMD Quark empowers developers to optimize their models for deployment on a wide range of hardware backends, achieving significant performance gains without compromising accuracy.

## Features

| Feature Set | PyTorch backend | ONNX backend |

| ---------------------- | ----------------------------------------------------------------------------------------------------------------------------------- | ----------------------------------------------------------------------------------------- |

| Data Types | int4, uint4, int8, uint8, float16, bfloat16, OCP FP8 E4M3/E5M2, OCP MX INT8, OCP MX FP4, OCP MX FP6 E3M2/E2M3, OCP MX FP8 E4M3/E5M2 | int4, uint4, int8, uint8, int16, uint16, int32, uint32, float16, bfloat16, BFP16, MX4/MX6/MX9, OCP MX INT8, OCP MX FP4, OCP MX FP6 E3M2/E2M3, OCP MX FP8 E4M3/E5M2 |

| Quant Mode | eager mode, FX graph mode | ONNX graph mode |

| Quant Strategy | static quant, dynamic quant, weight-only | static quant, dynamic quant, weight-only |

| Quant Scheme | per-tensor, per-channel, per-group | per-tensor, per-channel |

| Symmetric | symmetric, asymmetric | symmetric, asymmetric |

| Calibration Method | MinMax, Percentile, MSE | MinMax, Percentile, MinMSE, Entropy, NonOverflow |

| Scale Type | float16, float32 | float16, float32 |

| KV-Cache Quant | FP8 KV-Cache Quant | N/A |

| Supported Ops. | `nn.Linear`, `nn.Conv2d`, `nn.ConvTranspose2d`, `nn.Embedding`, `nn.EmbeddingBag`, | Almost all ONNX ops, |

| | `nn.BatchNorm2d`, `nn.BatchNorm3d`, `nn.LeakyReLU`, `nn.AvgPool2d`, `nn.AdaptiveAvgPool2d` | see [Full List](https://quark.docs.amd.com/latest/onnx/user_guide_supported_optype_datatype.html) |

| Pre-Quant Optimization | SmoothQuant, QuaRot | QuaRot, SmoothQuant, CLE |

| Quantization Algorithm | AWQ, GPTQ, Qronos | AdaQuant, AdaRound, GPTQ, Bias Correction |

| Export Format | ONNX, JSON-Safetensors, GGUF(Q4_1) | N/A |

| Operating Systems | Linux {ROCm, CUDA, CPU}, Windows {CPU} | Linux {ROCm, CUDA, CPU}, Windows {CUDA, CPU} |

## Model Support Table

| Quantization Technique | Supported Models |

| ------------------------------------- | ------------------------------------------------------------------------------------------------- |

| LLM Pruning | [Model Support](examples/torch/language_modeling/llm_pruning/example_quark_torch_llm_pruning.rst) |

| LLM Post Training Quantization (PTQ) | [Model Support](examples/torch/language_modeling/llm_ptq/example_quark_torch_llm_ptq.rst) |

| LLM Quantization Aware Training (QAT) | [Model Support](examples/torch/language_modeling/llm_qat/example_quark_torch_llm_qat.rst) |

| Vision Model Quantization | [Model Support](examples/torch/vision/model_support.md) |

| Quark for ONNX | [Model Support](examples/onnx/model_support.md) |

## Installation

Official releases of AMD Quark are available on PyPI https://pypi.org/project/amd-quark/, and can be installed with pip:

```shell

pip install amd-quark

```

> [!NOTE]\

> For full instructions to install AMD Quark from Python wheels or ZIP files, refer to our [🛠️Installation Guide](https://quark.docs.amd.com/latest/install.html). The Installation Guide also contains verification steps that apply to building from source.

### Installing from Source

1. Clone or download this repository.

2. Follow the steps from the [PyTorch](https://pytorch.org/get-started/locally/) website to install the appropriate PyTorch package for your system.

3. You can then build and install AMD Quark, and its dependencies, which are detailed in [requirements.txt](requirements.txt), by running:

```shell

git clone --recursive https://github.com/AMD/Quark

cd Quark

# [Optional] run git submodule if you are updating an existing Quark repository

git submodule sync

git submodule update --init --recursive

pip install .

```

## Resources

AMD Quark's documentation site contains [Getting Started](https://quark.docs.amd.com/latest/basic_usage.html), _API documentation_ for both [PyTorch](https://quark.docs.amd.com/latest/autoapi/pytorch_apis.html) and [ONNX](https://quark.docs.amd.com/latest/autoapi/onnx_apis.html) backends, and other detailed information.

The Installation Guide includes our [Recommended First Time User Installation](https://quark.docs.amd.com/latest/install.html#recommended-first-time-user-installation) guide, to get set up with Quark quickly.

Check out our _Frequently Asked Questions_ for both [PyTorch](https://quark.docs.amd.com/latest/pytorch/pytorch_faq.html) and [ONNX](https://quark.docs.amd.com/latest/onnx/onnx_faq.html) for more details.

* [📖Documentation](https://quark.docs.amd.com/)

* [📄FAQ (PyTorch)](https://quark.docs.amd.com/latest/pytorch/pytorch_faq.html)

* [📄FAQ (ONNX)](https://quark.docs.amd.com/latest/onnx/onnx_faq.html)

AMD Quark provides examples of Language Model and Image Classification model quantization, which can be found under [examples/torch/](examples/torch/) and [examples/onnx/](examples/onnx/).

These examples are documented here:

* [💡PyTorch Examples](https://quark.docs.amd.com/latest/pytorch/pytorch_examples.html)

* [💡ONNX Examples](https://quark.docs.amd.com/latest/onnx/onnx_examples.html)

The examples folder also contain integrations of other quantizers under [examples/torch/extensions/](examples/torch/extensions/). You can read about those here:

* [Brevitas Integration](examples/torch/extensions/brevitas/example_quark_torch_brevitas.rst)

* [Integration with AMD Pytorch-light (APL)](examples/torch/extensions/pytorch_light/example_quark_torch_pytorch_light.rst).

## Contributing

AMD Quark is not set up to accept community contributions (bug reports, feature requests, or Pull Requests) just yet.

Please watch this space!

## License and Copyright

Copyright (C) 2025, Advanced Micro Devices, Inc. All rights reserved. SPDX-License-Identifier: MIT.

See [LICENSE](LICENSE) file for detail.

| text/markdown | AMD | help@amd.com | null | AMD Quark Maintainers <quark.maintainers@amd.com> | MIT License

Copyright (c) 2023 Advanced Micro Devices, Inc.

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

| quantization, pytorch, onnx | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Developers",

"Intended Audience :: Education",

"Intended Audience :: Science/Research",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :... | [] | null | null | <3.13,>=3.9.0 | [] | [] | [] | [

"evaluate",

"joblib",

"ninja",

"numpy<=2.1.3",

"onnx<=1.19.0,>=1.16.0",

"onnxscript",

"onnxslim>=0.1.84",

"pandas",

"plotly",

"protobuf",

"pydantic",

"rich",

"scipy",

"sentencepiece",

"tqdm",

"zstandard",

"mypy==1.18.2; extra == \"lint\"",

"opencv-python; extra == \"lint\"",

"pre... | [] | [] | [] | [

"documentation, https://quark.docs.amd.com",

"homepage, https://github.com/amd/quark",

"issues, https://github.com/amd/quark/issues",

"repository, https://github.com/amd/quark.git"

] | twine/6.2.0 CPython/3.11.13 | 2026-02-19T17:03:38.272699 | amd_quark-0.11.1-py3-none-any.whl | 1,857,393 | 5c/28/ab71c6b10e017e6b2877dff74197d8270e486621dcd08dee1c0308424b0a/amd_quark-0.11.1-py3-none-any.whl | py3 | bdist_wheel | null | false | a16294a49a4a88b08aa1b000465761b6 | dabc284fb1532f96efb53a590bbc6c2f73c2ebac603d92fb4a3f2e4a822f1cc1 | 5c28ab71c6b10e017e6b2877dff74197d8270e486621dcd08dee1c0308424b0a | null | [

"LICENSE"

] | 175 |

2.4 | bagit-create | 1.4.2 | Create BagIt packages harvesting data from upstream sources | # bagit-create

[](https://pypi.org/project/bagit-create/) [](https://github.com/psf/black) [](https://www.python.org/downloads/release/python-3100/)

"BagIt Create" is a tool to export digital repository records in packages with a consistent format, according to the [CERN Submission Information Package specification](https://gitlab.cern.ch/digitalmemory/sip-spec).

Digital Repositories powered by Invenio v1, Invenio v3, Invenio RDM, CERN Open Data and Indico are supported, as well as GitLab repositories and locally found folders.

Quick start:

```

# Install

pip install bagit-create

# Create bag for CDS record 2728246

bic --recid 2728246 --source cds

```

#### Table of contents

- [Install](#install)

- [LXPLUS](#lxplus)

- [Development](#development)

- [Usage](#usage)

- [Examples](#examples)

- [Options](#options)

- [Features](#features)

- [Supported sources](#supported-sources)

- [URL parsing](#url-parsing)

- [Light bags](#light-bags)

- [Configuration](#configuration)

- [Indico](#indico)

- [Invenio v1.x](#invenio-v1x)

- [CERN SSO](#cern-sso)

- [Local](#local)

- [CodiMD](#codimd)

- [Advanced usage](#advanced-usage)

- [Module](#module)

- [Accessing CERN firewalled websites](#accessing-cern-firewalled-websites)

- [bibdocfile](#bibdocfile)

---

# Install

Pre-requisites:

```bash

# On CentOS

yum install gcc krb5-devel python3-devel

```

If you just need to run BagIt Create from the command line:

```bash

# Install from PyPi

pip install bagit-create

# Check installed version

bic --version

# Create bag for CDS record 2728246

bic --recid 2728246 --source cds

```

## LXPLUS

BagIt-Create can be easily installed and used on LXPLUS (e.g. if you need access to mounted EOS folders):

```bash

pip3 install bagit-create --user

```

Check if `.local/bin` (where pip puts the executables) is in the path. If not `export PATH=$PATH:~/.local/bin`.

## Development

Clone this repository and then install the package with the `-e` flag:

```bash

# Clone the repository

git clone https://gitlab.cern.ch/digitalmemory/bagit-create

cd bagit-create

# Create a virtual environment and activate it

python -m venv env

source env/bin/activate

# Install the project in editable mode

pip install -e .

# Check installed version

bic --version

# You're done! Create a SIP for a CDS resource from its URL:

bic --url http://cds.cern.ch/record/2798105 -v

# Install additional packages for testing

pip install pytest oais_utils

# Run tests

# Set an Indico API key, or expect some test to fail

export INDICO_KEY=<YOUR_INDICO_KEY>

export GITLAB_KEY=<YOUR_GITLAB_KEY>

python -m pytest

```

Code is formatted using **black** and linted with **flake8**. A VSCode settings file is provided for convenience.

# Usage

You usually just need to specify the location of the record you are trying to create a package for.

You can do it by specifying the "Source" (see [supported sources](#supported-sources)) and the Record ID:

```bash

bic --recid 2728246 --source cds

```

or passing an URL (currently only works with CDS, Zenodo and CERN Open Data links):

```

bic --url http://cds.cern.ch/record/2665537

```

## Examples

GitLab:

```

bic --source gitlab --token <YOUR_TOKEN> --recid 104913 -vv

```

CDS:

```bash

# (Expect error) Removed resource

bic --recid 1 --source cds

# (Expect error) Public resource but metadata requires authorisation

bic --recid 1000 --source cds

# Resource with a lot of large videos, light bag

bic --recid 1000571 --source cds --dry-run

# Resource with just a PDF

bic --recid 2728246 --source cds

```

ilcdoc:

```bash

# ilcdoc #

bic --source ilcdoc --recid 62959 --verbose

bic --source ilcdoc --recid 34794 --verbose

```

Zenodo

```bash

bic --recid 3911261 --source zenodo --verbose

bic --recid 3974864 --source zenodo --verbose

```

Indico

```bash

bic --recid 1024767 --source indico

```

CERN Open Data

```bash

bic --recid 1 --source cod --dry-run --verbose

bic --recid 8884 --source cod --dry-run --verbose --alternate-uri

bic --recid 8884 --source cod --dry-run --verbose

bic --recid 5200 --source cod --dry-run --verbose

bic --recid 8888 --source cod --dry-run --verbose

bic --recid 10101 --source cod --dry-run --verbose

bic --recid 10102 --source cod --dry-run --verbose

bic --recid 10103 --source cod --dry-run --verbose

bic --recid 10104 --source cod --dry-run --verbose

bic --recid 10105 --source cod --dry-run --verbose

bic --recid 10101 --source cod --verbose

bic --recid 10102 --source cod --verbose

bic --recid 10103 --source cod --verbose

bic --recid 10104 --source cod --verbose

bic --recid 10105 --source cod --verbose

```

Some more advanced recipes can be found in the `examples/` folder.

## Options

```sh

--version Show the version and exit.

--recid TEXT Record ID of the resource the upstream

digital repository. Required by every

pipeline but local.

-s, --source [cds|ilcdoc|cod|zenodo|inveniordm|indico|local|ilcagenda]

Select source pipeline from the supported

ones.

-u, --url TEXT Provide an URL for the Record

[Works with CDS, Open Data and Zenodo links]

-d, --dry-run Skip downloads and create a `light` bag,

without any payload.

-a, --alternate-uri Use alternative uri instead of https for

fetch.txt (e.g. root endpoints for CERN

Open Data instead of http).

-v, --verbose Enable basic logging (verbose, 'info'

level).

-vv, --very-verbose Enable verbose logging (very verbose,

'debug' level).