metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | reaktiv | 0.21.2 | Signals for Python - inspired by Angular Signals / SolidJS. Reactive Declarative State Management Library for Python - automatic dependency tracking and reactive updates for your application state. | # reaktiv

<div align="center">

**Reactive Declarative State Management Library for Python** - automatic dependency tracking and reactive updates for your application state.

[Website](https://reaktiv.bui.app/) | [Live Playground](https://reaktiv.bui.app/#playground) | [Documentation](https://reaktiv.bui.app/docs) | [Deep Dive Article](https://bui.app/the-missing-manual-for-signals-state-management-for-python-developers/)

</div>

## Installation

```bash

pip install reaktiv

# or with uv

uv pip install reaktiv

```

`reaktiv` is a **reactive declarative state management library** that lets you **declare relationships between your data** instead of manually managing updates. When data changes, everything that depends on it updates automatically - eliminating a whole class of bugs where you forget to update dependent state.

**Think of it like Excel spreadsheets for your Python code**: when you change a cell value, all formulas that depend on it automatically recalculate. That's exactly how `reaktiv` works with your application state.

**Key benefits:**

- 🐛 **Fewer bugs**: No more forgotten state updates or inconsistent data

- 📋 **Clearer code**: State relationships are explicit and centralized

- ⚡ **Better performance**: Only recalculates what actually changed (fine-grained reactivity)

- 🔄 **Automatic updates**: Dependencies are tracked and updated automatically

- 🎯 **Python-native**: Built for Python's patterns with full async support

- 🔒 **Type safe**: Full type hint support with automatic inference

- 🚀 **Lazy evaluation**: Computed values are only calculated when needed

- 💾 **Smart memoization**: Results are cached and only recalculated when dependencies change

## Documentation

Full documentation is available at [https://reaktiv.bui.app/docs/](https://reaktiv.bui.app/docs/).

For a comprehensive guide, check out [The Missing Manual for Signals: State Management for Python Developers](https://bui.app/the-missing-manual-for-signals-state-management-for-python-developers/).

## Quick Start

```python

from reaktiv import Signal, Computed, Effect

# Your reactive data sources

name = Signal("Alice")

age = Signal(30)

# Reactive derived data - automatically stays in sync

@Computed
def greeting():
    return f"Hello, {name()}! You are {age()} years old."

# Reactive side effects - automatically run when data changes

# IMPORTANT: Must assign to variable to prevent garbage collection

greeting_effect = Effect(lambda: print(f"Updated: {greeting()}"))

# Just change your base data - everything reacts automatically

name.set("Bob") # Prints: "Updated: Hello, Bob! You are 30 years old."

age.set(31) # Prints: "Updated: Hello, Bob! You are 31 years old."

```

## Core Concepts

`reaktiv` provides three simple building blocks for reactive programming - just like Excel has cells and formulas:

1. **Signal**: Holds a reactive value that can change (like an Excel cell with a value)

2. **Computed**: Automatically derives a reactive value from other signals/computed values (like an Excel formula)

3. **Effect**: Runs reactive side effects when signals/computed values change (like Excel charts that update when data changes)

```python

# Signal: wraps a reactive value (like Excel cell A1 = 5)

counter = Signal(0)

# Computed: derives from other reactive values (like Excel cell B1 = A1 * 2)

@Computed
def doubled():
    return counter() * 2

# Effect: reactive side effects (like Excel chart that updates when cells change)
def print_values():
    print(f"Counter: {counter()}, Doubled: {doubled()}")

counter_effect = Effect(print_values)

counter.set(5) # Reactive update: prints "Counter: 5, Doubled: 10"

```

### Excel Spreadsheet Analogy

If you've ever used Excel, you already understand reactive programming:

| Cell | Value/Formula | reaktiv Equivalent |

|------|---------------|-------------------|

| A1 | `5` | `Signal(5)` |

| B1 | `=A1 * 2` | `Computed(lambda: a1() * 2)` |

| C1 | `=A1 + B1` | `Computed(lambda: a1() + b1())` |

When you change A1 in Excel, B1 and C1 automatically recalculate. That's exactly what happens with reaktiv:

```python

# Excel-style reactive programming in Python

a1 = Signal(5) # A1 = 5

@Computed # B1 = A1 * 2
def b1() -> int:
    return a1() * 2

@Computed # C1 = A1 + B1
def c1() -> int:
    return a1() + b1()

# Display effect (like Excel showing the values)

display_effect = Effect(lambda: print(f"A1={a1()}, B1={b1()}, C1={c1()}"))

a1.set(10) # Change A1 - everything recalculates automatically!

# Prints: A1=10, B1=20, C1=30

```

Just like in Excel, you don't need to manually update B1 and C1 when A1 changes - the dependency tracking handles it automatically.
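To make the idea of automatic dependency tracking more concrete, here is a minimal toy sketch in plain Python. It is not reaktiv's implementation: `ToyCell` and `ToyFormula` are hypothetical names used only for illustration. A formula records which cells it reads while it runs, and a cell marks its dependent formulas stale when it is set.

```python
# Toy illustration only: NOT reaktiv's implementation.
_active = []  # stack of formulas currently being evaluated


class ToyCell:
    """A value holder that remembers which formulas have read it."""

    def __init__(self, value):
        self._value = value
        self._dependents = set()

    def __call__(self):
        if _active:  # a formula is running: record the dependency
            self._dependents.add(_active[-1])
        return self._value

    def set(self, value):
        self._value = value
        for formula in list(self._dependents):
            formula.invalidate()


class ToyFormula:
    """A derived value, recomputed lazily after a dependency changed."""

    def __init__(self, fn):
        self._fn = fn
        self._stale = True
        self._value = None
        self._dependents = set()

    def invalidate(self):
        if not self._stale:
            self._stale = True
            for formula in list(self._dependents):
                formula.invalidate()

    def __call__(self):
        if _active:  # read by another formula: record the dependency
            self._dependents.add(_active[-1])
        if self._stale:
            _active.append(self)
            try:
                self._value = self._fn()
            finally:
                _active.pop()
            self._stale = False
        return self._value


a1 = ToyCell(5)
b1 = ToyFormula(lambda: a1() * 2)     # B1 = A1 * 2
c1 = ToyFormula(lambda: a1() + b1())  # C1 = A1 + B1

first = c1()   # computes b1, then c1
a1.set(10)     # marks b1 and c1 stale, nothing is recomputed yet
second = c1()  # recomputes on demand
```

In this toy, nothing is recalculated at `set()` time; the stale flags only cause a recomputation the next time a value is read. This mirrors the lazy evaluation and caching described in this README.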

```mermaid

graph TD

%% Define node subgraphs for better organization

subgraph "Data Sources"

S1[Signal A]

S2[Signal B]

S3[Signal C]

end

subgraph "Derived Values"

C1[Computed X]

C2[Computed Y]

end

subgraph "Side Effects"

E1[Effect 1]

E2[Effect 2]

end

subgraph "External Systems"

EXT1[UI Update]

EXT2[API Call]

EXT3[Database Write]

end

%% Define relationships between nodes

S1 -->|"get()"| C1

S2 -->|"get()"| C1

S2 -->|"get()"| C2

S3 -->|"get()"| C2

C1 -->|"get()"| E1

C2 -->|"get()"| E1

S3 -->|"get()"| E2

C2 -->|"get()"| E2

E1 --> EXT1

E1 --> EXT2

E2 --> EXT3

%% Change propagation path

S1 -.-> |"1\. set()"| C1

C1 -.->|"2\. recompute"| E1

E1 -.->|"3\. execute"| EXT1

%% Style nodes by type

classDef signal fill:#4CAF50,color:white,stroke:#388E3C,stroke-width:1px

classDef computed fill:#2196F3,color:white,stroke:#1976D2,stroke-width:1px

classDef effect fill:#FF9800,color:white,stroke:#F57C00,stroke-width:1px

%% Apply styles to nodes

class S1,S2,S3 signal

class C1,C2 computed

class E1,E2 effect

%% Legend node

LEGEND[" Legend:

• Signal: Stores a value, notifies dependents

• Computed: Derives value from dependencies

• Effect: Runs side effects when dependencies change

• → Data flow / Dependency (read)

• ⟿ Change propagation (update)

"]

classDef legend fill:none,stroke:none,text-align:left

class LEGEND legend

```

### Additional Features That reaktiv Provides

**Lazy Evaluation** - Computations only happen when results are actually needed:

```python

# This expensive computation isn't calculated until you access it

@Computed
def expensive_calc():
    return sum(range(1000000)) # Not calculated yet!

print(expensive_calc()) # NOW it calculates when you need the result

print(expensive_calc()) # Instant! (cached result)

```

**Memoization** - Results are cached until dependencies change:

```python

# Results are automatically cached for efficiency

a1 = Signal(5)

@Computed
def b1():
    return a1() * 2 # Define the computation

result1 = b1() # Calculates: 5 * 2 = 10

result2 = b1() # Cached! No recalculation needed

a1.set(6) # Dependency changed - cache invalidated

result3 = b1() # Recalculates: 6 * 2 = 12

```

**Fine-Grained Reactivity** - Only affected computations recalculate:

```python

# Independent data sources don't affect each other

a1 = Signal(5) # Independent signal

d2 = Signal(100) # Another independent signal

@Computed # Depends only on a1
def b1():
    return a1() * 2

@Computed # Depends on a1 and b1
def c1():
    return a1() + b1()

@Computed # Depends only on d2
def e2():
    return d2() / 10

a1.set(10) # Only b1 and c1 recalculate, e2 stays cached

d2.set(200) # Only e2 recalculates, b1 and c1 stay cached

```

This intelligent updating means your application only recalculates what actually needs to be updated, making it highly efficient.

## The Problem This Solves

Consider a simple order calculation:

### Without reaktiv (Manual Updates)

```python

class Order:
    def __init__(self):
        self.price = 100.0
        self.quantity = 2
        self.tax_rate = 0.1
        self._update_totals() # Must remember to call this

    def set_price(self, price):
        self.price = price
        self._update_totals() # Must remember to call this

    def set_quantity(self, quantity):
        self.quantity = quantity
        self._update_totals() # Must remember to call this

    def _update_totals(self):
        # Must update in the correct order
        self.subtotal = self.price * self.quantity
        self.tax = self.subtotal * self.tax_rate
        self.total = self.subtotal + self.tax
        # Oops, forgot to update the display!

```

### With reaktiv (Excel-style Automatic Updates)

This is like Excel - change a cell and everything recalculates automatically:

```python

from reaktiv import Signal, Computed, Effect

# Base values (like Excel input cells)

price = Signal(100.0) # A1

quantity = Signal(2) # A2

tax_rate = Signal(0.1) # A3

# Formulas (like Excel computed cells)

@Computed # B1 = A1 * A2
def subtotal():
    return price() * quantity()

@Computed # B2 = B1 * A3
def tax():
    return subtotal() * tax_rate()

@Computed # B3 = B1 + B2
def total():
    return subtotal() + tax()

# Auto-display (like Excel chart that updates automatically)

total_effect = Effect(lambda: print(f"Order total: ${total():.2f}"))

# Just change the input - everything recalculates like Excel!

price.set(120.0) # Change A1 - B1, B2, B3 all update automatically

quantity.set(3) # Same thing

```

Benefits:

- ✅ Cannot forget to update dependent data

- ✅ Updates always happen in the correct order

- ✅ State relationships are explicit and centralized

- ✅ Side effects are guaranteed to run

## Type Safety & Decorator Benefits

`reaktiv` provides full type hint support, making it compatible with static analysis tools such as ruff, mypy, and pyright. This enables better IDE autocompletion, early error detection, and improved code maintainability.

```python

from reaktiv import Signal, Computed, Effect

# Explicit type annotations

name: Signal[str] = Signal("Alice")

age: Signal[int] = Signal(30)

active: Signal[bool] = Signal(True)

# Type inference works automatically

score = Signal(100.0) # Inferred as Signal[float]

items = Signal([1, 2, 3]) # Inferred as Signal[list[int]]

# Computed values preserve and infer types

@Computed
def name_length(): # Type is automatically ComputeSignal[int]
    return len(name())

@Computed
def greeting(): # Type is automatically ComputeSignal[str]
    return f"Hello, {name()}!"

@Computed
def total_score(): # Type is automatically ComputeSignal[float]
    return score() * 1.5

# Type-safe update functions
def increment_age(current: int) -> int:
    return current + 1

age.update(increment_age) # Type checked!

```

## Why This Pattern?

```mermaid

graph TD

subgraph "Traditional Approach"

T1[Manual Updates]

T2[Scattered Logic]

T3[Easy to Forget]

T4[Hard to Debug]

T1 --> T2

T2 --> T3

T3 --> T4

end

subgraph "Reactive Approach"

R1[Declare Relationships]

R2[Automatic Updates]

R3[Centralized Logic]

R4[Guaranteed Consistency]

R1 --> R2

R2 --> R3

R3 --> R4

end

classDef traditional fill:#f44336,color:white

classDef reactive fill:#4CAF50,color:white

class T1,T2,T3,T4 traditional

class R1,R2,R3,R4 reactive

```

This reactive approach comes from frontend frameworks like **Angular** and **SolidJS**, where fine-grained reactivity revolutionized UI development. While those frameworks use this reactive pattern to efficiently update user interfaces, the core insight applies everywhere: **declaring reactive relationships between data leads to fewer bugs** than manually managing updates.

The reactive pattern is particularly valuable in Python applications for:

- Configuration management with cascading overrides

- Caching with automatic invalidation

- Real-time data processing pipelines

- Request/response processing with derived context

- Monitoring and alerting systems

## Practical Examples

### Reactive Configuration Management

```python

from reaktiv import Signal, Computed

# Multiple reactive config sources

defaults = Signal({"timeout": 30, "retries": 3})

user_prefs = Signal({"timeout": 60})

feature_flags = Signal({"new_retry_logic": True})

# Automatically reactive merged config

@Computed
def config():
    return {
        **defaults(),
        **user_prefs(),
        **feature_flags()
    }

print(config()) # {'timeout': 60, 'retries': 3, 'new_retry_logic': True}

# Change any source - merged config reacts automatically

defaults.update(lambda d: {**d, "max_connections": 100})

print(config()) # Now includes max_connections

```

### Reactive Data Processing Pipeline

```python

import time

from reaktiv import Signal, Computed, Effect

# Reactive raw data stream

raw_data = Signal([])

# Reactive processing pipeline

@Computed
def filtered_data():
    return [x for x in raw_data() if x > 0]

@Computed
def processed_data():
    return [x * 2 for x in filtered_data()]

@Computed
def summary():
    data = processed_data()
    return {
        "count": len(data),
        "sum": sum(data),
        "avg": sum(data) / len(data) if data else 0
    }

# Reactive monitoring - MUST assign to variable!

summary_effect = Effect(lambda: print(f"Summary: {summary()}"))

# Add data - entire reactive pipeline recalculates automatically

raw_data.set([1, -2, 3, 4]) # Prints summary

raw_data.update(lambda d: d + [5, 6]) # Updates summary

```

#### Reactive Pipeline Visualization

```mermaid

graph LR

subgraph "Reactive Data Processing Pipeline"

RD[raw_data<br/>Signal<list>]

FD[filtered_data<br/>Computed<list>]

PD[processed_data<br/>Computed<list>]

SUM[summary<br/>Computed<dict>]

RD -->|reactive filter x > 0| FD

FD -->|reactive map x * 2| PD

PD -->|reactive aggregate| SUM

SUM --> EFF[Effect: print summary]

end

NEW[New Data] -.->|"raw_data.set()"| RD

RD -.->|reactive update| FD

FD -.->|reactive update| PD

PD -.->|reactive update| SUM

SUM -.->|reactive trigger| EFF

classDef signal fill:#4CAF50,color:white

classDef computed fill:#2196F3,color:white

classDef effect fill:#FF9800,color:white

classDef input fill:#9C27B0,color:white

class RD signal

class FD,PD,SUM computed

class EFF effect

class NEW input

```

### Reactive System Monitoring

```python

from reaktiv import Signal, Computed, Effect

# Reactive system metrics

cpu_usage = Signal(20)

memory_usage = Signal(60)

disk_usage = Signal(80)

# Reactive health calculation

system_health = Computed(lambda:
    "critical" if any(x > 90 for x in [cpu_usage(), memory_usage(), disk_usage()]) else
    "warning" if any(x > 75 for x in [cpu_usage(), memory_usage(), disk_usage()]) else
    "healthy"
)

# Reactive automatic alerting - MUST assign to variable!
alert_effect = Effect(lambda: print(f"System status: {system_health()}")
                      if system_health() != "healthy" else None)

cpu_usage.set(95) # Reactive system automatically prints: "System status: critical"

```

## Advanced Features

### LinkedSignal (Writable derived state)

`LinkedSignal` is a writable computed signal that can be manually set by users but will automatically reset when its source context changes. Use it for “user overrides with sane defaults” that should survive some changes but reset on others.

Common use cases:

- Pagination: selection resets when page changes

- Wizard flows: step-specific state resets when the step changes

- Filters & search: user-picked value persists across pagination, resets when query changes

- Forms: default values computed from context but user can override temporarily

**Using the @Linked decorator:**

```python

from reaktiv import Signal, Linked

page = Signal(1)

# Writable derived state that resets whenever page changes

@Linked
def selection() -> str:
    return f"default-for-page-{page()}"

selection.set("custom-choice") # user override

print(selection()) # "custom-choice"

page.set(2) # context changes → resets

print(selection()) # "default-for-page-2"

```

**Alternative: factory function style:**

```python

from reaktiv import LinkedSignal

# Still supported
selection = LinkedSignal(lambda: f"default-for-page-{page()}")

```

Advanced pattern (explicit source and previous-state aware computation):

```python

from reaktiv import Signal, LinkedSignal, PreviousState

# Source contains (query, page). We want selection to persist across page changes

# but reset when the query string changes.

query = Signal("shoes")

page = Signal(1)

def compute_selection(src: tuple[str, int], prev: PreviousState[str] | None) -> str:
    current_query, _ = src

    # If only the page changed, keep previous selection
    if prev is not None and isinstance(prev.source, tuple) and prev.source[0] == current_query:
        return prev.value

    # Otherwise, provide a new default for the new query
    return f"default-for-{current_query}"

selection = LinkedSignal(source=lambda: (query(), page()), computation=compute_selection)

print(selection()) # "default-for-shoes"

selection.set("red-sneakers")

page.set(2) # page changed, same query → keep user override

print(selection()) # "red-sneakers"

query.set("boots") # query changed → reset to new default

print(selection()) # "default-for-boots"

```

Notes:

- It’s writable: call `selection.set(...)` or `selection.update(...)` to override.

- It auto-resets based on the dependencies you read (simple pattern) or your custom `source` logic (advanced pattern).
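The reset-on-context-change behaviour can be pictured with a small pull-based toy in plain Python. This is a sketch only: `ToyLinked` is a hypothetical class that re-checks its source on every read, which is an assumption made for simplicity and not how reaktiv tracks dependencies.

```python
class ToyLinked:
    """Writable derived value whose override is dropped when the source changes."""

    def __init__(self, source, default_for):
        self._source = source            # zero-argument callable returning the context
        self._default_for = default_for  # computes the default for a given context
        self._last_source = source()
        self._has_override = False
        self._override = None

    def set(self, value):
        # Remember the context in which the override was made.
        self._last_source = self._source()
        self._override = value
        self._has_override = True

    def __call__(self):
        current = self._source()
        if current != self._last_source:
            # Context changed since the last read or write: drop the override.
            self._last_source = current
            self._has_override = False
        if self._has_override:
            return self._override
        return self._default_for(current)


page = {"number": 1}  # hypothetical stand-in for a page signal
selection = ToyLinked(lambda: page["number"], lambda n: f"default-for-page-{n}")

initial = selection()
selection.set("custom-choice")
overridden = selection()
page["number"] = 2
after_page_change = selection()
```

The three reads follow the same sequence as the `@Linked` example: a computed default, the user override, and then a fresh default once the page changes.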

### Resource - Async Data Loading

`Resource` brings async operations into your reactive application with automatic dependency tracking, request cancellation, and comprehensive status management. Perfect for API calls and data fetching.

```python

import asyncio

from reaktiv import Resource, Signal, ResourceStatus

# Reactive parameter

user_id = Signal(1)

# Async data loader

async def fetch_user(params):
    # Check for cancellation
    if params.cancellation.is_set():
        return None

    await asyncio.sleep(0.5) # Simulate API call
    return {"id": params.params["user_id"], "name": f"User {params.params['user_id']}"}

async def main():
    # Create resource
    user_resource = Resource(
        params=lambda: {"user_id": user_id()}, # When user_id changes, auto-reload
        loader=fetch_user
    )

    # Wait for initial load
    await asyncio.sleep(0.6)

    # Access data safely
    if user_resource.has_value():
        print(user_resource.value()) # {"id": 1, "name": "User 1"}

    # Changing param automatically triggers reload
    user_id.set(2)
    await asyncio.sleep(0.6)
    print(user_resource.value()) # {"id": 2, "name": "User 2"}

asyncio.run(main())

```

**Key features:**

- **6 status states**: IDLE, LOADING, RELOADING, RESOLVED, ERROR, LOCAL

- **Automatic request cancellation** when parameters change (prevents race conditions)

- **Seamless integration** with Computed and Effect

- **Manual control** via `reload()`, `set()`, and `update()` methods

- **Atomic snapshots** for safe state access

- **Automatic cleanup** when garbage collected

For complete documentation, examples, and design patterns, see the [Resource User Guide](https://reaktiv.bui.app/docs/resource-guide.html).

### Custom Equality

```python

# For objects where you want value-based comparison

items = Signal([1, 2, 3], equal=lambda a, b: a == b)

items.set([1, 2, 3]) # Won't trigger updates (same values)

```

### Update Functions

```python

counter = Signal(0)

counter.update(lambda x: x + 1) # Increment based on current value

```

### Async Effects

**Recommendation: Use synchronous effects** - they provide better control and predictable behavior:

```python

import asyncio

from reaktiv import Signal, Effect

my_signal = Signal("initial")

# ✅ RECOMMENDED: Synchronous effect with async task spawning
def sync_effect():
    # Signal values captured at this moment - guaranteed consistency
    current_value = my_signal()

    # Spawn async task if needed
    async def background_work():
        await asyncio.sleep(0.1)
        print(f"Processing: {current_value}")

    # asyncio.create_task() requires a running event loop
    asyncio.create_task(background_work())

# MUST assign to variable!
my_effect = Effect(sync_effect)

```

**Experimental: Direct async effects**

Async effects are experimental and should be used with caution:

```python

import asyncio

async def async_effect():
    await asyncio.sleep(0.1)
    print(f"Async processing: {my_signal()}")

# MUST assign to variable!

my_async_effect = Effect(async_effect)

```

**Key differences:**

- **Synchronous effects**: Block the signal update until complete, ensuring signal values don't change during effect execution

- **Async effects** (experimental): Allow signal updates to complete immediately, but signal values may change while the async effect is running

**Note:** Most applications should use synchronous effects for predictable behavior.
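The consistency difference can be illustrated with plain `asyncio`, independent of reaktiv. In this sketch the `state` dict and both function names are hypothetical stand-ins for a signal and its effects. The first pattern captures the value before any `await`, while the second reads it afterwards and therefore observes a later write.

```python
import asyncio

state = {"value": "initial"}  # hypothetical stand-in for a signal
seen = []


def snapshot_then_spawn():
    # The value is captured synchronously, before any await can interleave.
    captured = state["value"]

    async def background_work():
        await asyncio.sleep(0.01)
        seen.append(("snapshot", captured))

    return asyncio.create_task(background_work())


async def read_after_await():
    await asyncio.sleep(0.01)
    # The value is read after the await, so later writes are visible here.
    seen.append(("late-read", state["value"]))


async def main():
    task_a = snapshot_then_spawn()
    task_b = asyncio.create_task(read_after_await())
    state["value"] = "changed"  # the write happens while both tasks are pending
    await asyncio.gather(task_a, task_b)


asyncio.run(main())
results = dict(seen)
```

Here `results["snapshot"]` holds the value from the moment the work was scheduled, whereas `results["late-read"]` holds whatever the state was when the coroutine resumed.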

### Untracked Reads

Use `untracked()` to read signals without creating dependencies:

```python

from reaktiv import Signal, Computed, Effect, untracked

user_id = Signal(1)

debug_mode = Signal(False)

# This computed only depends on user_id, not debug_mode
def get_user_data():
    uid = user_id() # Creates dependency
    if untracked(debug_mode): # No dependency created
        print(f"Loading user {uid}")
    return f"User data for {uid}"

user_data = Computed(get_user_data)

debug_mode.set(True) # Won't trigger recomputation

user_id.set(2) # Will trigger recomputation

```

**Context Manager Usage**

You can also use `untracked` as a context manager to read multiple signals without creating dependencies. This is useful for logging or conditional logic inside an effect without adding extra dependencies.

```python

from reaktiv import Signal, Computed, Effect, untracked

name = Signal("Alice")

is_logging_enabled = Signal(False)

log_level = Signal("INFO")

greeting = Computed(lambda: f"Hello, {name()}!")

# An effect that depends on `greeting`, but reads other signals untracked

def display_greeting():
    # Create a dependency on `greeting`
    current_greeting = greeting()

    # Read multiple signals without creating dependencies
    with untracked():
        logging_active = is_logging_enabled()
        current_log_level = log_level()

    if logging_active:
        print(f"LOG [{current_log_level}]: Greeting updated to '{current_greeting}'")

    print(current_greeting)

# MUST assign to variable!
greeting_effect = Effect(display_greeting)

# Initial run prints: "Hello, Alice!"

name.set("Bob")
# Prints: "Hello, Bob!"

is_logging_enabled.set(True)
log_level.set("DEBUG")
# Prints nothing, because these are not dependencies of the effect.

name.set("Charlie")
# Prints:
# LOG [DEBUG]: Greeting updated to 'Hello, Charlie!'
# Hello, Charlie!

```

The context manager approach is particularly useful when you need to read multiple signals for logging, debugging, or conditional logic without creating reactive dependencies.

### Batch Updates

Use `batch()` to group multiple updates and trigger effects only once:

```python

from reaktiv import Signal, Effect, batch

name = Signal("Alice")

age = Signal(30)

city = Signal("New York")

def print_info():
    print(f"{name()}, {age()}, {city()}")

info_effect = Effect(print_info)

# Effect prints one time on init

# Without batch - prints 3 times

name.set("Bob")

age.set(25)

city.set("Boston")

# With batch - prints only once at the end

with batch():
    name.set("Charlie")
    age.set(35)
    city.set("Chicago")

# Only prints once: "Charlie, 35, Chicago"

```
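One way to picture batching is a depth counter plus a queue of pending callbacks that is flushed when the outermost batch exits. The sketch below is a toy in plain Python with hypothetical names (`toy_batch`, `notify`); it is not how reaktiv implements `batch()`.

```python
from contextlib import contextmanager

_depth = 0     # how many toy_batch() blocks are currently open
_pending = []  # callbacks waiting for the outermost batch to finish


def notify(callback):
    """Run the callback now, or queue it once if a batch is active."""
    if _depth == 0:
        callback()
    elif callback not in _pending:
        _pending.append(callback)


@contextmanager
def toy_batch():
    global _depth
    _depth += 1
    try:
        yield
    finally:
        _depth -= 1
        if _depth == 0:
            queued = _pending[:]
            _pending.clear()
            for callback in queued:
                callback()


calls = []


def on_change():
    calls.append("ran")


notify(on_change)  # outside a batch: runs immediately
notify(on_change)  # runs immediately again
calls_before_batch = len(calls)

with toy_batch():
    notify(on_change)  # queued
    notify(on_change)  # already queued, ignored
    notify(on_change)  # already queued, ignored

calls_after_batch = len(calls)
```

Three notifications inside the batch produce a single extra call, which is the same observable effect as the `with batch():` example above.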

### Error Handling

Proper error handling is crucial to prevent cascading failures:

```python

from reaktiv import Signal, Computed, Effect

# Example: Division computation that can fail

numerator = Signal(10)

denominator = Signal(2)

# Unsafe computation - can throw ZeroDivisionError

unsafe_division = Computed(lambda: numerator() / denominator())

# Safe computation with error handling

def safe_divide():
    try:
        return numerator() / denominator()
    except ZeroDivisionError:
        return float('inf') # or return 0, or handle as needed

safe_division = Computed(safe_divide)

# Error handling in effects
def safe_print():
    try:
        unsafe_result = unsafe_division()
        print(f"Unsafe result: {unsafe_result}")
    except ZeroDivisionError:
        print("Error: Division by zero!")

    safe_result = safe_division()
    print(f"Safe result: {safe_result}")

effect = Effect(safe_print)

# Test error scenarios

denominator.set(0) # Triggers ZeroDivisionError in unsafe computation

# Prints: "Error: Division by zero!" and "Safe result: inf"

```

## Important Notes

### ⚠️ Effect Retention (Critical!)

**Effects must be assigned to a variable to prevent garbage collection.** This is the most common mistake when using reaktiv:

```python

# ❌ WRONG - effect gets garbage collected immediately and won't work

Effect(lambda: print("This will never print"))

# ✅ CORRECT - effect stays active

my_effect = Effect(lambda: print("This works!"))

# ✅ Also correct - store in a list or class attribute

effects = []

effects.append(Effect(lambda: print("This also works!")))

# ✅ In classes, assign to self

class MyClass:
    def __init__(self):
        self.counter = Signal(0)
        # Keep effect alive by assigning to instance
        self.effect = Effect(lambda: print(f"Counter: {self.counter()}"))

```

**Why this design?** This explicit retention requirement prevents accidental memory leaks. Unlike some reactive systems that automatically keep effects alive indefinitely, `reaktiv` requires you to explicitly manage effect lifetimes. When you no longer need an effect, simply let the variable go out of scope or delete it - the effect will be automatically cleaned up. This gives you control over when reactive behavior starts and stops, preventing long-lived applications from accumulating abandoned effects.
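The lifetime behaviour described above can be pictured with Python's standard `weakref` module. This is only a sketch under the assumption that subscribers are tracked through weak references; `ToySubscriber` and `registry` are hypothetical, and reaktiv's internals may differ.

```python
import gc
import weakref


class ToySubscriber:
    """Hypothetical stand-in for an effect object."""

    def __init__(self, label):
        self.label = label


# The registry holds only weak references, so it never keeps a subscriber alive.
registry = weakref.WeakSet()

registry.add(ToySubscriber("unbound"))  # no strong reference is kept anywhere
kept = ToySubscriber("bound")           # strong reference via the variable
registry.add(kept)

gc.collect()  # make collection explicit on interpreters without refcounting
alive = sorted(s.label for s in registry)

del kept      # dropping the last strong reference releases the subscriber
gc.collect()
alive_after_del = [s.label for s in registry]
```

Only the subscriber bound to a variable survives, and it disappears from the registry as soon as that variable is deleted.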

**Manual cleanup:** You can also explicitly dispose of effects when you're done with them:

```python

my_effect = Effect(lambda: print("This will run"))

# ... some time later ...

my_effect.dispose() # Manually clean up the effect

# Effect will no longer run when dependencies change

```

### Mutable Objects

By default, reaktiv uses identity comparison. For mutable objects:

```python

data = Signal([1, 2, 3])

# This triggers update (new list object)

data.set([1, 2, 3])

# This doesn't trigger update (same object, modified in place)

current = data()

current.append(4) # reaktiv doesn't see this change

```
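The distinction between identity and value comparison is plain Python behaviour and can be checked without reaktiv. The helper `should_notify` below is a hypothetical illustration of the decision a signal has to make on `set()`, not the library's code.

```python
import operator


def should_notify(old, new, equal=operator.is_):
    """Return True when replacing old with new counts as a change."""
    return not equal(old, new)


stored = [1, 2, 3]

# Identity comparison: a new list object counts as a change,
# even though it holds the same values.
new_object_same_values = should_notify(stored, [1, 2, 3])

# Value comparison: equal contents do not count as a change.
value_based = should_notify(stored, [1, 2, 3], equal=operator.eq)

# In-place mutation keeps the same object, so identity comparison sees no change.
stored.append(4)
same_object_after_mutation = should_notify(stored, stored)
```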

### Working with Lists and Dictionaries

When working with mutable objects like lists and dictionaries, you need to create new objects to trigger updates:

#### Lists

```python

items = Signal([1, 2, 3])

# ❌ WRONG - modifies in place, no update triggered

current = items()

current.append(4) # reaktiv doesn't detect this

# ✅ CORRECT - create new list

items.set([*items(), 4]) # or items.set(items() + [4])

# ✅ CORRECT - using update() method

items.update(lambda current: current + [4])

items.update(lambda current: [*current, 4])

# Other list operations

items.update(lambda lst: [x for x in lst if x > 2]) # Filter

items.update(lambda lst: [x * 2 for x in lst]) # Map

items.update(lambda lst: lst[:-1]) # Remove last

items.update(lambda lst: [0] + lst) # Prepend

```

#### Dictionaries

```python

config = Signal({"timeout": 30, "retries": 3})

# ❌ WRONG - modifies in place, no update triggered

current = config()

current["new_key"] = "value" # reaktiv doesn't detect this

# ✅ CORRECT - create new dictionary

config.set({**config(), "new_key": "value"})

# ✅ CORRECT - using update() method

config.update(lambda current: {**current, "new_key": "value"})

# Other dictionary operations

config.update(lambda d: {**d, "timeout": 60}) # Update value

config.update(lambda d: {k: v for k, v in d.items() if k != "retries"}) # Remove key

config.update(lambda d: {**d, **{"max_conn": 100, "pool_size": 5}}) # Merge multiple

```

#### Alternative: Value-Based Equality

If you prefer to modify objects in place, provide a custom equality function:

```python

# For lists - compares actual values

def list_equal(a, b):
    return len(a) == len(b) and all(x == y for x, y in zip(a, b))

items = Signal([1, 2, 3], equal=list_equal)

# Now you can modify in place and trigger updates manually

current = items()

current.append(4)

items.set(current) # Triggers update because values changed

# For dictionaries - compares actual content

def dict_equal(a, b):
    return a == b

config = Signal({"timeout": 30}, equal=dict_equal)

current = config()

current["retries"] = 3

config.set(current) # Triggers update

```

## More Examples

You can find more example scripts in the [examples](./examples) folder to help you get started with using this project.

Including integration examples with:

- [FastAPI - Websocket](./examples/fastapi_websocket.py)

- [NiceGUI - Todo-App](./examples/nicegui_todo_app.py)

- [Reactive Data Pipeline with NumPy and Pandas](./examples/data_pipeline_numpy_pandas.py)

- [Jupyter Notebook - Reactive IPyWidgets](./examples/reactive_jupyter_notebook.ipynb)

- [NumPy Matplotlib - Reactive Plotting](./examples/numpy_plotting.py)

- [IoT Sensor Agent Thread - Reactive Hardware](./examples/iot_sensor_agent_thread.py)

---

**Inspired by** Angular Signals and SolidJS reactivity • **Built for** Python developers who want fewer state management bugs • **Made in** Hamburg

| text/markdown | null | Tuan Anh Bui <mail@bui.app> | null | null | MIT | null | [

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12"

] | [] | null | null | >=3.9 | [] | [] | [] | [] | [] | [] | [] | [

"Homepage, https://github.com/buiapp/reaktiv"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-19T10:44:37.722870 | reaktiv-0.21.2.tar.gz | 85,019 | c0/2b/3162c689f5ed78b0a29d9cf2911af3279667110335c04cd270a7b82eb5f8/reaktiv-0.21.2.tar.gz | source | sdist | null | false | fba4c597881608e25cbfa489771a495f | 69a96c993457947af0ce6f29a8d4b8e607168d2f6a963f3a398894813677a9ce | c02b3162c689f5ed78b0a29d9cf2911af3279667110335c04cd270a7b82eb5f8 | null | [] | 401 |

2.4 | alt-pytest-asyncio | 0.9.5 | Alternative pytest plugin to pytest-asyncio | Alternative Pytest Asyncio

==========================

This plugin allows you to have async pytest fixtures and tests.

This plugin only supports python 3.11 and above.

The code here is influenced by pytest-asyncio but with some differences:

* Error tracebacks are from your tests, rather than asyncio internals

* There is only one loop for all of the tests

* You can manage the lifecycle of the loop yourself outside of pytest by using this plugin with your own loop

* No need to explicitly mark your tests as async. (pytest-asyncio requires you mark your async tests because it also supports other event loops like curio and trio)

Like pytest-asyncio it supports async tests, coroutine fixtures and async generator fixtures.

Full documentation can be found at https://alt-pytest-asyncio.readthedocs.io

| text/x-rst | null | Stephen Moore <delfick755@gmail.com> | null | null | MIT | null | [

"Framework :: Pytest",

"Topic :: Software Development :: Testing"

] | [] | null | null | >=3.11 | [] | [] | [] | [

"pytest>=8.0.0",

"alt-pytest-asyncio-test-driver; extra == \"dev\"",

"tools; extra == \"dev\"",

"tools; extra == \"tools\""

] | [] | [] | [] | [

"Homepage, https://github.com/delfick/alt-pytest-asyncio"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T10:44:17.279349 | alt_pytest_asyncio-0.9.5.tar.gz | 9,030 | 38/52/920ab736636580ea40ce47d1ba98a027e273a32e4be144eed2f928d9ac41/alt_pytest_asyncio-0.9.5.tar.gz | source | sdist | null | false | 80506ce5d3c561353500fbc84a4ed450 | 8171fbf00fbb4d763d7ded77e343dc443bd111901b1f5654ad271cf94a74c248 | 3852920ab736636580ea40ce47d1ba98a027e273a32e4be144eed2f928d9ac41 | null | [

"LICENSE"

] | 490 |

2.4 | charmlibs-interfaces-tls-certificates | 1.7.0 | The charmlibs.interfaces.tls_certificates package. | # charmlibs.interfaces.tls_certificates

The `tls-certificates` interface library.

To install, add `charmlibs-interfaces-tls-certificates` to your Python dependencies. Then in your Python code, import as:

```py

from charmlibs.interfaces import tls_certificates

```

See the [reference documentation](https://documentation.ubuntu.com/charmlibs/reference/charmlibs/interfaces/tls-certificates).

Also see the [usage documentation](https://charmhub.io/tls-certificates-interface) on Charmhub. This documentation was written when the library was hosted on Charmhub, so some parts might not be directly applicable.

| text/markdown | The TLS team at Canonical | null | null | null | null | null | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Developers",

"License :: OSI Approved :: Apache Software License",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python :: 3"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"cryptography>=43.0.0",

"ops",

"pydantic"

] | [] | [] | [] | [

"Repository, https://github.com/canonical/charmlibs",

"Issues, https://github.com/canonical/charmlibs/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T10:43:57.758544 | charmlibs_interfaces_tls_certificates-1.7.0.tar.gz | 145,552 | da/fa/9c20a264c42adcad31293e4588e421c53ae24c105199e4e1c9d7374a4e64/charmlibs_interfaces_tls_certificates-1.7.0.tar.gz | source | sdist | null | false | bb0e49e1bed67f6c5fbceb7686a98a56 | 7fe79c78fab51a864c96d8d731049479610a014152a75dd585568ad268ecaafa | dafa9c20a264c42adcad31293e4588e421c53ae24c105199e4e1c9d7374a4e64 | null | [] | 475 |

2.4 | lumora | 0.3.7 | A composable image generation and document rendering library for Python, powered by Pillow. | <p align="center">

<img src="assets/icon.svg" alt="Lumora" width="128" height="128">

</p>

<h1 align="center">Lumora</h1>

<p align="center">

A composable image generation and document rendering library for Python, powered by Pillow.

</p>

<p align="center">

<a href="https://pypi.org/project/lumora/"><img src="https://img.shields.io/pypi/v/lumora.svg" alt="PyPI"></a>

<a href="https://www.python.org/downloads/"><img src="https://img.shields.io/badge/python-3.12+-blue.svg" alt="Python 3.12+"></a>

<a href="https://github.com/krypton-byte/lumora/blob/main/LICENSE"><img src="https://img.shields.io/badge/license-MIT-green.svg" alt="MIT License"></a>

<a href="https://krypton-byte.github.io/lumora/playground/"><img src="https://img.shields.io/badge/try-playground-7c6ef0.svg" alt="Playground"></a>

<a href="https://krypton-byte.github.io/lumora/docs/"><img src="https://img.shields.io/badge/docs-mkdocs-blue.svg" alt="Documentation"></a>

</p>

---

## Overview

**Lumora** lets you build complex, pixel-perfect images, multi-page PDF documents, and PPTX presentations entirely in Python — no browser, no template engine, no external rendering service.

Inspired by modern declarative UI toolkits (Jetpack Compose, Flutter), Lumora provides a composable widget tree where every element — containers, columns, rows, text, images — is sized, laid out, clipped, and rendered automatically.

### Key features

- **Composable layouts** — `Container`, `Column`, `Row`, `Stack`, `Expanded`, `Spacer`, `SizedBox`, `Padding`

- **Rich text** — `TextWidget`, `RichTextWidget` (inline HTML-like markup), `JustifiedTextWidget`, `HighlightTextWidget`

- **Advanced styling** — gradients (linear & radial), rounded corners, circles, strokes, shadows, text transforms

- **Visual effects** — blur, stroke outlines, glassmorphism corners, alpha gradients, custom effects

- **GPU acceleration** — optional Taichi-powered GPU backend (`lumora[gpu]`) with automatic CPU/NumPy fallback

- **Smart render caching** — content-addressable LRU cache with structural hashing; unchanged widgets return instantly on re-render (~17× speedup)

- **Design-resolution scaling** — define once at a virtual size, render to any resolution with LANCZOS resampling

- **PDF export** — multi-page documents with optional metadata via pikepdf

- **PPTX export** — PowerPoint presentations with auto-fitted slide dimensions via python-pptx

- **`@preview` decorator** — mark specific `Page` subclasses for selective rendering in the playground and VS Code extension

- **Pure Python** — depends on Pillow, NumPy, and python-pptx; pikepdf is an optional extra

---

## Monorepo structure

```

lumora/

├── packages/

│ ├── lumora/ # Core Python library (published to PyPI)

│ └── lumora-vscode/ # VS Code extension for live preview

├── docs/

│ ├── content/ # MkDocs documentation source

│ ├── playground/ # Browser-based playground (Vite + React)

│ └── landing/ # Landing page

├── examples/ # Example scripts

└── pyproject.toml # Python project configuration

```

---

## Try it online

The **[Lumora Playground](https://krypton-byte.github.io/lumora/playground/)** lets you write and render Lumora pages directly in the browser — no installation required. Features include:

- Live code editor with Python syntax highlighting

- Instant rendering with render time display

- Zoom, pan, and fit controls for the preview

- Export to PDF (client-side via jsPDF) and PPTX

- Page reordering before export

- File save/open and shareable URLs

- Auto-saves your code between sessions

- Built-in examples to get started

---

## Installation

```bash

pip install lumora

```

For PDF metadata support (title, author, etc.):

```bash

pip install lumora[pikepdf]

```

For GPU-accelerated rendering via [Taichi](https://www.taichi-lang.org/):

```bash

pip install lumora[gpu]

```

Or with [uv](https://docs.astral.sh/uv/):

```bash

uv add lumora # core (includes python-pptx)

uv add lumora[pikepdf] # with PDF metadata

uv add lumora[gpu] # with GPU acceleration

```

### Requirements

- Python 3.12+

- Pillow >= 12.1

- NumPy >= 2.4

- python-pptx >= 0.6 *(included — for PPTX export)*

- pikepdf >= 10.3 *(optional — for PDF metadata)*

- Taichi >= 1.7 *(optional — for GPU acceleration)*

---

## Quick start

```python

from lumora.page import Page, preview

from lumora.layout import Container, Column, TextWidget

from lumora.config import RGBColor, Alignment, Margin

from lumora.font import TextStyle, FontWeight

title_style = TextStyle(

font_size=72,

font_family="Inter",

font_weight=FontWeight.BOLD,

paint=RGBColor(255, 255, 255),

)

body_style = TextStyle(

font_size=32,

font_family="Inter",

paint=RGBColor(200, 200, 200),

)

@preview

class HelloPage(Page):

design_width = 1920

design_height = 1080

def build(self):

return Container(

width=self.design_width,

height=self.design_height,

background=RGBColor(25, 25, 35),

alignment=Alignment.CENTER,

padding=Margin(60, 60, 60, 60),

child=Column(

spacing=20,

children=[

TextWidget("Hello, Lumora!", style=title_style),

TextWidget(

"Composable image generation in pure Python.",

style=body_style,

),

],

),

)

page = HelloPage()

page.save("hello.png", width=1920)

```

### The `@preview` decorator

Mark any `Page` subclass with `@preview` to flag it for selective rendering in the [Playground](https://krypton-byte.github.io/lumora/playground/) and the [VS Code extension](#vs-code-extension). When `@preview` is present on any class, only decorated classes are rendered — otherwise all `Page` subclasses are rendered:

```python

from lumora.page import Page, preview

@preview

class DesignDraft(Page):

"""Only this page renders in the playground/VS Code."""

...

class ProductionPage(Page):

"""This page is skipped in preview tools."""

...

```
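The selection rule described above can be sketched in a few lines. This is only an illustration under stated assumptions: the `Page` and `preview` stand-ins, the `_is_preview` marker attribute, and the `select_pages` helper are hypothetical and are not taken from Lumora's source.

```python
# Hypothetical sketch of the selection rule; not Lumora's actual implementation.


class Page:
    """Stand-in for lumora.page.Page, kept minimal for illustration."""


def preview(cls):
    """Stand-in decorator: flag a Page subclass for preview rendering."""
    cls._is_preview = True  # hypothetical marker attribute
    return cls


def select_pages(pages):
    """Return only flagged pages if any exist, otherwise every page."""
    # Look at the class's own __dict__ so the flag is not inherited by subclasses.
    flagged = [p for p in pages if p.__dict__.get("_is_preview", False)]
    return flagged or list(pages)


@preview
class DesignDraft(Page):
    pass


class ProductionPage(Page):
    pass


print([p.__name__ for p in select_pages([DesignDraft, ProductionPage])])
print([p.__name__ for p in select_pages([ProductionPage])])
```

With one decorated class in the list, only that class is selected; with none, every page is returned.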

---

## Widget catalog

| Widget | Description |

|-|-|

| `Container` | Box with background, padding, alignment, clipping, effects |

| `Column` | Vertical layout with spacing and axis alignment |

| `Row` | Horizontal layout with spacing and axis alignment |

| `Stack` | Overlay children on top of each other |

| `Expanded` | Fill remaining space in a `Column` or `Row` |

| `Spacer` | Fixed-size empty space |

| `SizedBox` | Force explicit width/height on a child |

| `Padding` | Add insets around a child |

| `TextWidget` | Single-style text block |

| `RichTextWidget` | Inline markup: `<b>`, `<i>`, `<color>`, `<size>`, etc. |

| `JustifiedTextWidget` | Fully justified paragraph text |

| `HighlightTextWidget` | Text with highlighted background spans |

| `ImageWidget` | Render an image from file or PIL Image |

| `LineWidget` | Horizontal line / divider |

| `GlassContainer` | Frosted-glass effect container |

---

## Multi-page PDF

```python

from lumora.page import Document, DocumentMetadata, Format

doc = Document()

doc.add_page(CoverPage())

doc.add_page(ChartPage())

doc.add_page(SummaryPage())

# Without metadata — no pikepdf needed

doc.save("report.pdf", width=1920)

# With metadata — requires: pip install lumora[pikepdf]

doc.save(

"report.pdf",

Format.PDF,

DocumentMetadata(title="Q4 Report", author="Analytics"),

width=1920,

)

```

---

## PPTX (PowerPoint) export

Slide dimensions automatically match the image aspect ratio — no stretching.

```python

doc.save("slides.pptx", Format.PPTX)

# With metadata

doc.save(

"slides.pptx",

Format.PPTX,

DocumentMetadata(title="Q4 Report", author="Analytics"),

width=1920,

)

# At half resolution

doc.save("slides.pptx", Format.PPTX, scale=0.5)

```
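To illustrate what matching the image aspect ratio means, here is a rough sketch that derives slide dimensions from a rendered image size. It is not Lumora's code: the `slide_size_emu` helper and its default slide width are assumptions. The only fixed fact is that PPTX files measure lengths in English Metric Units (914,400 per inch).

```python
EMU_PER_INCH = 914_400  # English Metric Units per inch, the length unit in PPTX files


def slide_size_emu(image_width_px, image_height_px, slide_width_in=13.333):
    """Return (width, height) in EMU for a slide with the image's aspect ratio.

    slide_width_in is a hypothetical default, chosen only for this example.
    """
    width_emu = round(slide_width_in * EMU_PER_INCH)
    # Scale the height so that width / height equals the image's ratio.
    height_emu = round(width_emu * image_height_px / image_width_px)
    return width_emu, height_emu


w, h = slide_size_emu(1920, 1080)
print(w, h, round(w / h, 3))
```

Because the height is derived from the width, an image placed at full slide size fills the slide without being stretched.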

---

## GPU acceleration

Lumora includes a dual compute backend. When `lumora[gpu]` is installed,

heavy pixel operations (gradients, blending, vignette, noise, etc.) are

offloaded to the GPU via [Taichi](https://www.taichi-lang.org/). Without

Taichi, the same operations run on the CPU using optimised NumPy.

```bash

pip install lumora[gpu]

```

Check the active backend at runtime:

```python

from lumora.backend import get_backend

print(get_backend()) # 'taichi' or 'numpy'

```

Taichi tries CUDA → Vulkan → Metal → OpenGL and finally falls back to

CPU if no GPU driver is available. Even in CPU mode, Taichi kernels

benefit from multi-threading and JIT compilation.
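The optional-dependency fallback can be pictured roughly as follows. This is a minimal sketch, assuming the choice depends only on whether the `taichi` package is importable; `pick_backend` is a hypothetical helper and does not reflect how `lumora.backend` is actually written.

```python
import importlib.util


def pick_backend(prefer_gpu=True):
    """Return 'taichi' when the taichi package is importable, else 'numpy'."""
    # find_spec returns None for a missing top-level package instead of raising.
    if prefer_gpu and importlib.util.find_spec("taichi") is not None:
        return "taichi"
    return "numpy"


print(pick_backend())
print(pick_backend(prefer_gpu=False))
```

Checking with `find_spec` avoids importing Taichi just to learn whether it is installed.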

---

## Render caching

Lumora includes a **content-addressable render cache** that makes

re-rendering fast — especially during live-preview workflows where only

a few widgets change between refreshes.

Every widget computes a *structural hash* from its parameters and the

hashes of its children. When `render()` is called, Lumora checks the

cache first and returns the stored image immediately on a hit. Only

widgets whose content actually changed are re-rendered.

**Highlights:**

- **Automatic** — enabled by default, zero configuration needed.

- **Content-based** — even if widget objects are recreated (e.g. on each VS Code preview refresh), cache hits occur as long as the parameters match.

- **LRU + memory budget** — defaults to 256 MB; evicts least-recently-used entries when the budget is exceeded.

- **~17× speedup** on hot re-renders with partial changes (benchmarked on a 25-widget tree).
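As a rough illustration of structural hashing combined with LRU eviction, consider the sketch below. It is not Lumora's cache: `structural_hash` and `LRUCache` are hypothetical names, and the cache is bounded by entry count rather than by a memory budget to keep the example short.

```python
import hashlib
from collections import OrderedDict


def structural_hash(kind, params, child_hashes=()):
    """Hash a widget from its type name, parameters, and children's hashes."""
    h = hashlib.sha256()
    h.update(kind.encode())
    # Sort the parameters so that keyword order does not affect the key.
    h.update(repr(sorted(params.items())).encode())
    for child in child_hashes:
        h.update(child.encode())
    return h.hexdigest()


class LRUCache:
    """Tiny LRU cache bounded by entry count (a real cache might bound bytes)."""

    def __init__(self, max_entries=2):
        self._data = OrderedDict()
        self.max_entries = max_entries
        self.hits = 0
        self.misses = 0

    def get_or_render(self, key, render):
        if key in self._data:
            self.hits += 1
            self._data.move_to_end(key)  # mark as most recently used
            return self._data[key]
        self.misses += 1
        value = render()
        self._data[key] = value
        if len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict the least recently used entry
        return value


cache = LRUCache()
text_a = structural_hash("Text", {"value": "Hello", "size": 32})
text_b = structural_hash("Text", {"size": 32, "value": "Hello"})  # same content
column = structural_hash("Column", {"spacing": 20}, [text_a])

cache.get_or_render(text_a, lambda: "rendered text")
cache.get_or_render(text_b, lambda: "rendered text")  # hit: the keys are equal
cache.get_or_render(column, lambda: "rendered column")
print(cache.hits, cache.misses)
```

Because the key is derived from content rather than object identity, a freshly constructed widget with the same parameters maps to the same cache entry, and a change in any child changes the hash of every ancestor.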

### Configuration

```python

from lumora.cache import RenderCache

# Raise memory budget to 512 MB

RenderCache.configure(max_mb=512)

# Disable caching entirely

RenderCache.configure(enabled=False)

# Inspect hit/miss statistics

print(RenderCache.instance().stats)

# {'hits': 19, 'misses': 1, 'hit_rate': 0.95, 'entries': 20,

# 'memory_mb': 42.0, 'max_memory_mb': 256.0}

# Clear all cached entries

RenderCache.instance().clear()

```

---

## Tooling

### Playground

The **[Lumora Playground](https://krypton-byte.github.io/lumora/playground/)** runs entirely in the browser via PyScript/Pyodide. Write, render, zoom/pan, and export — with auto-save and shareable URLs.

<p align="center">

<img src="https://cdn.jsdelivr.net/gh/bgstanly/stream@157861b/output_playground.webp" alt="Lumora Playground Demo" width="720" />

</p>

<p align="center"><em>Lumora Playground — write and render Lumora pages directly in the browser</em></p>

### VS Code Extension

The **Lumora Preview** extension (`packages/lumora-vscode/`) provides live preview directly in VS Code with hot reload.

<p align="center">

<img src="https://cdn.jsdelivr.net/gh/bgstanly/stream@157861b/output_vscode.webp" alt="Lumora VS Code Extension Demo" width="720" />

</p>

<p align="center"><em>Lumora VS Code Extension — live preview with hot reload</em></p>

Files must start with `# @lumora-file-preview` on the first line, and pages must be decorated with `@preview`:

```python

# @lumora-file-preview

from lumora.page import Page, preview

from lumora.layout import Container, TextWidget

from lumora.config import RGBColor, Alignment

from lumora.font import TextStyle, FontWeight

@preview

class MyPage(Page):

design_width = 1920

design_height = 1080

def build(self):

return Container(

width=self.design_width,

height=self.design_height,

background=RGBColor(25, 25, 40),

alignment=Alignment.CENTER,

child=TextWidget(

"Hello!",

style=TextStyle(

font_size=80,

font_weight=FontWeight.BOLD,

paint=RGBColor(255, 255, 255),

),

),

)

```

Install from the [Releases](https://github.com/krypton-byte/lumora/releases) page — download the `.vsix` file and install via `Ctrl+Shift+P` → "Extensions: Install from VSIX...".

---

## Development

```bash

# Clone the monorepo

git clone https://github.com/krypton-byte/lumora.git

cd lumora

# Install Python dependencies

uv sync --group docs --group dev

# Run examples

uv run python examples/01_hello_world.py

# Serve docs locally

uv run mkdocs serve -f docs/mkdocs.yml

# Run the playground dev server (in a subshell, so the working directory is unchanged)

(cd docs/playground && bun install && bun run dev)

# Build the VS Code extension

(cd packages/lumora-vscode && bun install && bun run compile)

```

---

## Documentation

Full API documentation is available at **[krypton-byte.github.io/lumora/docs/](https://krypton-byte.github.io/lumora/docs/)**.

---

## License

MIT — see [LICENSE](LICENSE) for details.

| text/markdown | null | null | null | null | MIT | composable, generation, image, layout, pdf, pillow, report, widget | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.12",

"Topic :: Multimedia :: Graphics",

"Topic :: Software Development :: Libraries :: Python Modules"

] | [] | null | null | >=3.12 | [] | [] | [] | [

"numpy>=2.4.2",

"pillow>=12.1.1",

"python-pptx>=0.6.21",

"pikepdf>=10.3.0; extra == \"all\"",

"taichi>=1.7.0; extra == \"all\"",

"taichi>=1.7.0; extra == \"gpu\"",

"pikepdf>=10.3.0; extra == \"pikepdf\""

] | [] | [] | [] | [

"Documentation, https://krypton-byte.github.io/lumora",

"Repository, https://github.com/krypton-byte/lumora",

"Issues, https://github.com/krypton-byte/lumora/issues"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-19T10:43:20.538219 | lumora-0.3.7-py3-none-any.whl | 4,827,679 | 75/7b/5827fbf524c550a9650197cea6785f82bd1a3b4d555bdbc36a2a2e02a29b/lumora-0.3.7-py3-none-any.whl | py3 | bdist_wheel | null | false | ec2d2818d0930049b6d7457c800167c8 | f95ce3c07e7a415b7efb08bf75d5ef9d9f5ea2c3438e5ca1821e17e33dba7f85 | 757b5827fbf524c550a9650197cea6785f82bd1a3b4d555bdbc36a2a2e02a29b | null | [] | 233 |

2.4 | manila | 19.1.1 | Shared Storage for OpenStack | ========================

Team and repository tags

========================

.. image:: https://governance.openstack.org/tc/badges/manila.svg

:target: https://governance.openstack.org/tc/reference/tags/index.html

.. Change things from this point on

======

MANILA

======

You have come across an OpenStack shared file system service. It has

identified itself as "Manila." It was abstracted from the Cinder

project.

* Wiki: https://wiki.openstack.org/wiki/Manila

* Developer docs: https://docs.openstack.org/manila/latest/

Getting Started

---------------

If you'd like to run from the master branch, you can clone the git repo:

git clone https://opendev.org/openstack/manila

For developer information please see

`HACKING.rst <https://opendev.org/openstack/manila/src/branch/master/HACKING.rst>`_

You can raise bugs here https://bugs.launchpad.net/manila

Python client

-------------

https://opendev.org/openstack/python-manilaclient

* Documentation for the project can be found at:

https://docs.openstack.org/manila/latest/

* Release notes for the project can be found at:

https://docs.openstack.org/releasenotes/manila/

* Source for the project:

https://opendev.org/openstack/manila

* Bugs:

https://bugs.launchpad.net/manila

* Blueprints:

https://blueprints.launchpad.net/manila

* Design specifications are tracked at:

https://specs.openstack.org/openstack/manila-specs/

| null | OpenStack | openstack-discuss@lists.openstack.org | null | null | null | null | [

"Environment :: OpenStack",

"Intended Audience :: Information Technology",

"Intended Audience :: System Administrators",

"License :: OSI Approved :: Apache Software License",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python",

"Programming Language :: Python :: 3 :: Only",

"Program... | [] | https://docs.openstack.org/manila/latest/ | null | >=3.8 | [] | [] | [] | [

"pbr>=5.5.0",

"alembic>=1.4.2",

"defusedxml>=0.7.1",

"eventlet>=0.26.1",

"greenlet>=0.4.16",

"lxml>=4.5.2",

"netaddr>=0.8.0",

"oslo.config>=8.3.2",

"oslo.context>=3.1.1",

"oslo.db>=8.4.0",

"oslo.i18n>=5.0.1",

"oslo.log>=4.4.0",

"oslo.messaging>=14.1.0",

"oslo.middleware>=4.1.1",

"oslo.po... | [] | [] | [] | [] | twine/6.2.0 CPython/3.11.14 | 2026-02-19T10:41:38.215853 | manila-19.1.1.tar.gz | 3,513,967 | e8/4c/c7d0ca024a9d0afa66f3ca414835ae4ce22f4d8db9f3b4d381eaf3418958/manila-19.1.1.tar.gz | source | sdist | null | false | a6a0a55b0af68d9231cb41e581d43964 | 2abf92408cf3ae69eabc799e587ed4cf1f1c3325ab0f3944479d7d34241d6a0e | e84cc7d0ca024a9d0afa66f3ca414835ae4ce22f4d8db9f3b4d381eaf3418958 | null | [

"LICENSE"

] | 230 |

2.4 | cycode | 3.10.1 | Boost security in your dev lifecycle via SAST, SCA, Secrets & IaC scanning. | # Cycode CLI User Guide

The Cycode Command Line Interface (CLI) is an application you can install locally to scan your repositories for secrets, infrastructure as code misconfigurations, software composition analysis vulnerabilities, and static application security testing issues.

This guide walks you through both installation and usage.

# Table of Contents

1. [Prerequisites](#prerequisites)

2. [Installation](#installation)

1. [Install Cycode CLI](#install-cycode-cli)

1. [Using the Auth Command](#using-the-auth-command)

2. [Using the Configure Command](#using-the-configure-command)

3. [Add to Environment Variables](#add-to-environment-variables)

1. [On Unix/Linux](#on-unixlinux)

2. [On Windows](#on-windows)

2. [Install Pre-Commit Hook](#install-pre-commit-hook)

3. [Cycode CLI Commands](#cycode-cli-commands)

4. [MCP Command](#mcp-command-experiment)

1. [Starting the MCP Server](#starting-the-mcp-server)

2. [Available Options](#available-options)

3. [MCP Tools](#mcp-tools)

4. [Usage Examples](#usage-examples)

5. [Scan Command](#scan-command)

1. [Running a Scan](#running-a-scan)

1. [Options](#options)

1. [Severity Threshold](#severity-option)

2. [Monitor](#monitor-option)

3. [Cycode Report](#cycode-report-option)

4. [Package Vulnerabilities](#package-vulnerabilities-option)

5. [License Compliance](#license-compliance-option)

6. [Lock Restore](#lock-restore-option)

2. [Repository Scan](#repository-scan)

1. [Branch Option](#branch-option)

3. [Path Scan](#path-scan)

1. [Terraform Plan Scan](#terraform-plan-scan)

4. [Commit History Scan](#commit-history-scan)

1. [Commit Range Option (Diff Scanning)](#commit-range-option-diff-scanning)

5. [Pre-Commit Scan](#pre-commit-scan)

6. [Pre-Push Scan](#pre-push-scan)

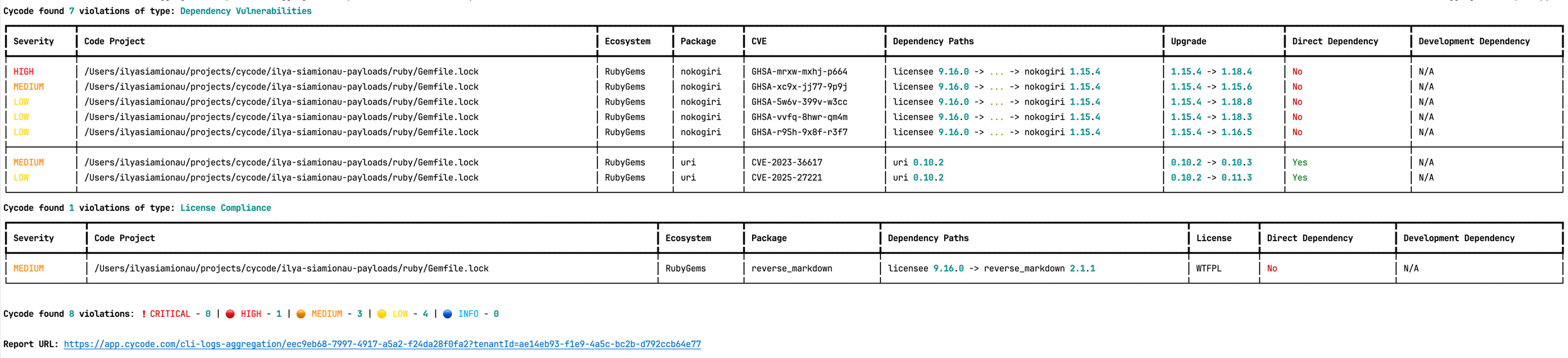

2. [Scan Results](#scan-results)

1. [Show/Hide Secrets](#showhide-secrets)

2. [Soft Fail](#soft-fail)

3. [Example Scan Results](#example-scan-results)

1. [Secrets Result Example](#secrets-result-example)

2. [IaC Result Example](#iac-result-example)

3. [SCA Result Example](#sca-result-example)

4. [SAST Result Example](#sast-result-example)

4. [Company Custom Remediation Guidelines](#company-custom-remediation-guidelines)

3. [Ignoring Scan Results](#ignoring-scan-results)

1. [Ignoring a Secret Value](#ignoring-a-secret-value)

2. [Ignoring a Secret SHA Value](#ignoring-a-secret-sha-value)

3. [Ignoring a Path](#ignoring-a-path)

4. [Ignoring a Secret, IaC, SCA, or SAST Rule](#ignoring-a-secret-iac-sca-or-sast-rule)

5. [Ignoring a Package](#ignoring-a-package)

6. [Ignoring via a config file](#ignoring-via-a-config-file)

6. [Report command](#report-command)

1. [Generating SBOM Report](#generating-sbom-report)

7. [Import command](#import-command)

8. [Scan logs](#scan-logs)

9. [Syntax Help](#syntax-help)

# Prerequisites

- The Cycode CLI application requires Python version 3.9 or later. The MCP command is available only for Python 3.10 and above. If you're using an earlier Python version, this command will not be available.

- Use the [`cycode auth` command](#using-the-auth-command) to authenticate to Cycode with the CLI

- Alternatively, you can get a Cycode Client ID and Client Secret Key by following the steps detailed in the [Service Account Token](https://docs.cycode.com/docs/en/service-accounts) and [Personal Access Token](https://docs.cycode.com/v1/docs/managing-personal-access-tokens) pages, which contain details on getting these values.

# Installation

The following installation steps are applicable to both Windows and UNIX / Linux operating systems.

> [!NOTE]

> The following steps assume the use of `python3` and `pip3` for Python-related commands; however, some systems may instead use the `python` and `pip` commands, depending on your Python environment’s configuration.

## Install Cycode CLI

To install the Cycode CLI application on your local machine, perform the following steps:

1. Open your command line or terminal application.

2. Execute one of the following commands:

- To install from [PyPI](https://pypi.org/project/cycode/):

```bash

pip3 install cycode

```

- To install from [Homebrew](https://formulae.brew.sh/formula/cycode):

```bash

brew install cycode

```

- To install from [GitHub Releases](https://github.com/cycodehq/cycode-cli/releases), download the executable for your operating system and architecture, then run the following commands:

```bash

cd /path/to/downloaded/cycode-cli

chmod +x cycode

./cycode

```

3. Finally, authenticate the CLI. There are three ways to set the Cycode client ID and credentials (client secret or OIDC ID token):

- [cycode auth](#using-the-auth-command) (**Recommended**)

- [cycode configure](#using-the-configure-command)

- Add them to your [environment variables](#add-to-environment-variables)

### Using the Auth Command

> [!NOTE]

> This is the **recommended** method for setting up your local machine to authenticate with Cycode CLI.

1. Type the following command into your terminal/command line window:

`cycode auth`

2. A browser window will appear, asking you to log into Cycode (as seen below):

<img alt="Cycode login" height="300" src="https://raw.githubusercontent.com/cycodehq/cycode-cli/main/images/cycode_login.png"/>

3. Enter your login credentials on this page and log in.

4. You will eventually be taken to the page below, where you'll be asked to choose the business group you want to authorize Cycode with (if applicable):

<img alt="authorize CLI" height="450" src="https://raw.githubusercontent.com/cycodehq/cycode-cli/main/images/authorize_cli.png"/>

> [!NOTE]

> This will be the default method for authenticating with the Cycode CLI.

5. Click the **Allow** button to authorize the Cycode CLI on the selected business group.

<img alt="allow CLI" height="450" src="https://raw.githubusercontent.com/cycodehq/cycode-cli/main/images/allow_cli.png"/>

6. Once completed, you'll see the following screen if the authorization succeeded:

<img alt="successfully auth" height="450" src="https://raw.githubusercontent.com/cycodehq/cycode-cli/main/images/successfully_auth.png"/>

7. In the terminal/command line screen, you will see the following when exiting the browser window:

`Successfully logged into cycode`

### Using the Configure Command

> [!NOTE]

> If you already set up your Cycode Client ID and Client Secret through the Linux or Windows environment variables, those credentials will take precedence over this method.

1. Type the following command into your terminal/command line window:

```bash

cycode configure

```

2. Enter your Cycode API URL value (leave blank to use the default value).

`Cycode API URL [https://api.cycode.com]: https://api.onpremise.com`

3. Enter your Cycode APP URL value (leave blank to use the default value).

`Cycode APP URL [https://app.cycode.com]: https://app.onpremise.com`

4. Enter your Cycode Client ID value.

`Cycode Client ID []: 7fe5346b-xxxx-xxxx-xxxx-55157625c72d`

5. Enter your Cycode Client Secret value (skip if you plan to use an OIDC ID token).

`Cycode Client Secret []: c1e24929-xxxx-xxxx-xxxx-8b08c1839a2e`

6. Enter your Cycode OIDC ID Token value (optional).

`Cycode ID Token []: eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9...`

7. If the values were entered successfully, you'll see the following message:

`Successfully configured CLI credentials!`

and/or

`Successfully configured Cycode URLs!`

If you go into the `.cycode` folder under your user folder, you'll find these credentials were created and placed in the `credentials.yaml` file in that folder.

The URLs were placed in the `config.yaml` file in that folder.

### Add to Environment Variables

#### On Unix/Linux:

```bash

export CYCODE_CLIENT_ID={your Cycode ID}

```

and

```bash

export CYCODE_CLIENT_SECRET={your Cycode Secret Key}

```

If your organization uses OIDC authentication, you can provide the ID token instead (or in addition):

```bash

export CYCODE_ID_TOKEN={your Cycode OIDC ID token}

```
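Once the variables are exported, a quick check like the one below can confirm that a usable combination is present before running the CLI. This is a hedged sketch, not part of Cycode: the helper names `credentials_from_env` and `has_usable_credentials` are hypothetical, and the rule encoded (a client ID plus either a client secret or an OIDC ID token) is taken from the description of credentials in this guide.

```python
import os


def credentials_from_env(env=None):
    """Collect Cycode credentials from environment variables, if present."""
    env = os.environ if env is None else env
    names = ("CYCODE_CLIENT_ID", "CYCODE_CLIENT_SECRET", "CYCODE_ID_TOKEN")
    # Skip variables that are unset or set to an empty string.
    return {name: env[name] for name in names if env.get(name)}


def has_usable_credentials(creds):
    """True for a client ID plus either a client secret or an OIDC ID token."""
    has_secret = "CYCODE_CLIENT_SECRET" in creds or "CYCODE_ID_TOKEN" in creds
    return "CYCODE_CLIENT_ID" in creds and has_secret


# Example values only; pass nothing to read the real environment instead.
example = {"CYCODE_CLIENT_ID": "example-id", "CYCODE_ID_TOKEN": "example-token"}
creds = credentials_from_env(example)
print(sorted(creds), has_usable_credentials(creds))
```

Calling `credentials_from_env()` with no argument reads `os.environ`, so the same check works on any platform where the variables are set.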

#### On Windows

1. From the Control Panel, navigate to the System menu:

<img height="30" src="https://raw.githubusercontent.com/cycodehq/cycode-cli/main/images/image1.png" alt="system menu"/>

2. Next, click Advanced system settings:

<img height="30" src="https://raw.githubusercontent.com/cycodehq/cycode-cli/main/images/image2.png" alt="advanced system setting"/>

3. In the System Properties window that opens, click the Environment Variables button:

<img height="30" src="https://raw.githubusercontent.com/cycodehq/cycode-cli/main/images/image3.png" alt="environments variables button"/>

4. Create `CYCODE_CLIENT_ID` and `CYCODE_CLIENT_SECRET` variables with values matching your ID and Secret Key, respectively. If you authenticate via OIDC, add `CYCODE_ID_TOKEN` with your OIDC ID token value as well:

<img height="100" src="https://raw.githubusercontent.com/cycodehq/cycode-cli/main/images/image4.png" alt="environment variables window"/>

5. Add the location of `cycode.exe` to your `PATH` to complete the installation.

## Install Pre-Commit Hook

Cycode's pre-commit and pre-push hooks can be set up within your local repository so that the Cycode CLI application will identify any issues with your code automatically before you commit or push it to your codebase.

> [!NOTE]

> pre-commit and pre-push hooks are not available for IaC scans.

Perform the following steps to install the pre-commit hook:

### Installing Pre-Commit Hook

1. Install the pre-commit framework (Python 3.9 or higher must be installed):

```bash

pip3 install pre-commit

```

2. Navigate to the top directory of the local Git repository you wish to configure.

3. Create a new YAML file named `.pre-commit-config.yaml` (include the beginning `.`) in the repository’s top directory that contains the following:

```yaml

repos:

- repo: https://github.com/cycodehq/cycode-cli

rev: v3.5.0

hooks:

- id: cycode

stages: [pre-commit]

```

4. Modify the created file for your specific needs. Use hook ID `cycode` to enable scan for Secrets. Use hook ID `cycode-sca` to enable SCA scan. Use hook ID `cycode-sast` to enable SAST scan. If you want to enable all scanning types, use this configuration:

```yaml

repos:

- repo: https://github.com/cycodehq/cycode-cli

rev: v3.5.0

hooks:

- id: cycode

stages: [pre-commit]

- id: cycode-sca

stages: [pre-commit]

- id: cycode-sast

stages: [pre-commit]

```

5. Install Cycode’s hook:

```bash

pre-commit install

```

A successful hook installation will result in the message: `Pre-commit installed at .git/hooks/pre-commit`.

6. Keep the pre-commit hook up to date:

```bash

pre-commit autoupdate

```

It will automatically bump `rev` in `.pre-commit-config.yaml` to the latest available version of Cycode CLI.

> [!NOTE]

> The hook triggers on the `git commit` command.

> It runs only on the files that are staged for commit.

### Installing Pre-Push Hook

To install the pre-push hook in addition to or instead of the pre-commit hook:

1. Add the pre-push hooks to your `.pre-commit-config.yaml` file:

```yaml

repos:

- repo: https://github.com/cycodehq/cycode-cli

rev: v3.5.0

hooks:

- id: cycode-pre-push

stages: [pre-push]

```

2. Install the pre-push hook:

```bash

pre-commit install --hook-type pre-push

```

3. For both pre-commit and pre-push hooks, use:

```bash

pre-commit install

pre-commit install --hook-type pre-push

```

> [!NOTE]

> Pre-push hooks trigger on `git push` command and scan only the commits about to be pushed.

# Cycode CLI Commands

The following are the options and commands available with the Cycode CLI application:

| Option | Description |

|-------------------------------------------------------------------|------------------------------------------------------------------------------------|

| `-v`, `--verbose` | Show detailed logs. |

| `--no-progress-meter` | Do not show the progress meter. |

| `--no-update-notifier` | Do not check CLI for updates. |

| `-o`, `--output [rich\|text\|json\|table]` | Specify the output type. The default is `rich`. |

| `--client-id TEXT` | Specify a Cycode client ID for this specific scan execution. |

| `--client-secret TEXT` | Specify a Cycode client secret for this specific scan execution. |

| `--id-token TEXT` | Specify a Cycode OIDC ID token for this specific scan execution. |

| `--install-completion` | Install completion for the current shell. |

| `--show-completion [bash\|zsh\|fish\|powershell\|pwsh]` | Show completion for the specified shell, to copy it or customize the installation. |

| `-h`, `--help` | Show options for given command. |

| Command | Description |
|---------|-------------|
| [auth](#using-the-auth-command) | Authenticate your machine to associate the CLI with your Cycode account. |
| [configure](#using-the-configure-command) | Initial command to configure your CLI client authentication. |
| [ignore](#ignoring-scan-results) | Ignore a specific value, path or rule ID. |
| [mcp](#mcp-command-experiment) | Start the Model Context Protocol (MCP) server to enable AI integration with Cycode scanning capabilities. |
| [scan](#running-a-scan) | Scan the content for Secrets/IaC/SCA/SAST violations. You'll need to specify which scan type to perform: commit-history/path/repository/etc. |
| [report](#report-command) | Generate a report. You need to specify which report type to generate (for example, SBOM). |
| status | Show the CLI status and exit. |

# MCP Command \[EXPERIMENT\]

> [!WARNING]
> The MCP command is available only for Python 3.10 and above. If you're using an earlier Python version, this command will not be available.

The Model Context Protocol (MCP) command allows you to start an MCP server that exposes Cycode's scanning capabilities to AI systems and applications. This enables AI models to interact with Cycode CLI tools via a standardized protocol.

> [!TIP]
> For the best experience, install Cycode CLI globally on your system using `pip install cycode` or `brew install cycode`, then authenticate once with `cycode auth`. After global installation and authentication, you won't need to configure `CYCODE_CLIENT_ID` and `CYCODE_CLIENT_SECRET` environment variables in your MCP configuration files.

[Install the Cycode MCP server in Cursor](https://cursor.com/en/install-mcp?name=cycode&config=eyJjb21tYW5kIjoidXZ4IGN5Y29kZSBtY3AiLCJlbnYiOnsiQ1lDT0RFX0NMSUVOVF9JRCI6InlvdXItY3ljb2RlLWlkIiwiQ1lDT0RFX0NMSUVOVF9TRUNSRVQiOiJ5b3VyLWN5Y29kZS1zZWNyZXQta2V5IiwiQ1lDT0RFX0FQSV9VUkwiOiJodHRwczovL2FwaS5jeWNvZGUuY29tIiwiQ1lDT0RFX0FQUF9VUkwiOiJodHRwczovL2FwcC5jeWNvZGUuY29tIn19)

## Starting the MCP Server

To start the MCP server, use the following command:

```bash
cycode mcp
```

By default, this starts the server using the `stdio` transport, which is suitable for local integrations and AI applications that can spawn subprocesses.

### Available Options

| Option | Description |
|--------|-------------|
| `-t, --transport` | Transport type for the MCP server: `stdio`, `sse`, or `streamable-http` (default: `stdio`) |
| `-H, --host` | Host address to bind the server (used only for non-stdio transports) (default: `127.0.0.1`) |
| `-p, --port` | Port number to bind the server (used only for non-stdio transports) (default: `8000`) |
| `--help` | Show help message and available options |

### MCP Tools

The MCP server provides the following tools that AI systems can use:

| Tool Name | Description |
|-----------|-------------|
| `cycode_secret_scan` | Scan files for hardcoded secrets |
| `cycode_sca_scan` | Scan files for Software Composition Analysis (SCA) - vulnerabilities and license issues |
| `cycode_iac_scan` | Scan files for Infrastructure as Code (IaC) misconfigurations |
| `cycode_sast_scan` | Scan files for Static Application Security Testing (SAST) - code quality and security flaws |
| `cycode_status` | Get Cycode CLI version, authentication status, and configuration information |
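
A client can confirm which of these tools a running server exposes by sending the MCP `tools/list` request. The following is a minimal Python sketch of the JSON-RPC message and of a check against the tool names in the table above. The helper names and the sample response are hypothetical and hand-written for illustration; a real client also performs the MCP initialization handshake first, which is omitted here.

```python
import json

# Tool names taken from the table above.
EXPECTED_TOOLS = {
    "cycode_secret_scan",
    "cycode_sca_scan",
    "cycode_iac_scan",
    "cycode_sast_scan",
    "cycode_status",
}


def tools_list_request(request_id: int = 1) -> dict:
    """Build a JSON-RPC 2.0 request for the MCP `tools/list` method."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}


def missing_tools(response: dict) -> set:
    """Return the expected tool names that a `tools/list` response does not list."""
    listed = {tool["name"] for tool in response["result"]["tools"]}
    return EXPECTED_TOOLS - listed


# Hypothetical response, written by hand only to exercise the helper.
sample_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {"tools": [{"name": name} for name in sorted(EXPECTED_TOOLS)]},
}

print(json.dumps(tools_list_request()))
print(missing_tools(sample_response))
```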

### Usage Examples

#### Basic Command Examples

Start the MCP server with default settings (stdio transport):

```bash
cycode mcp
```

Start the MCP server with explicit stdio transport:

```bash
cycode mcp -t stdio
```

Start the MCP server with Server-Sent Events (SSE) transport:

```bash
cycode mcp -t sse -p 8080
```

Start the MCP server with streamable HTTP transport on custom host and port:

```bash
cycode mcp -t streamable-http -H 0.0.0.0 -p 9000
```

Learn more about MCP Transport types in the [MCP Protocol Specification – Transports](https://modelcontextprotocol.io/specification/2025-03-26/basic/transports).

#### Configuration Examples

##### Using MCP with Cursor/VS Code/Claude Desktop/etc (mcp.json)

> [!NOTE]
> For EU Cycode environments, make sure to set the appropriate `CYCODE_API_URL` and `CYCODE_APP_URL` values in the environment variables (e.g., `https://api.eu.cycode.com` and `https://app.eu.cycode.com`).

Follow [this guide](https://code.visualstudio.com/docs/copilot/chat/mcp-servers) to configure the MCP server in **VS Code/GitHub Copilot**. Keep in mind that VS Code's `settings.json` uses an `mcp` object containing a nested `servers` object, rather than a standalone `mcpServers` object.
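
The difference in nesting can be sketched in Python. This only illustrates the two shapes described above; the helper name `to_vscode_settings` is hypothetical, and the exact keys VS Code accepts should be checked against the linked guide.

```python
import json


def to_vscode_settings(mcp_json: dict) -> dict:
    """Re-nest an `mcpServers`-style config under `mcp.servers`.

    Hypothetical helper: it only moves the server entries and does not
    validate them against what VS Code actually accepts.
    """
    return {"mcp": {"servers": dict(mcp_json.get("mcpServers", {}))}}


# Standalone `mcpServers` object, as used by mcp.json-style clients.
mcp_json = {
    "mcpServers": {
        "cycode": {"command": "cycode", "args": ["mcp"]},
    }
}

vscode_settings = to_vscode_settings(mcp_json)
print(json.dumps(vscode_settings, indent=2))
```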

For **stdio transport** (direct execution):

```json
{
  "mcpServers": {
    "cycode": {
      "command": "cycode",
      "args": ["mcp"],
      "env": {
        "CYCODE_CLIENT_ID": "your-cycode-id",
        "CYCODE_CLIENT_SECRET": "your-cycode-secret-key",
        "CYCODE_API_URL": "https://api.cycode.com",
        "CYCODE_APP_URL": "https://app.cycode.com"
      }
    }
  }
}
```

For **stdio transport** with `pipx` installation:

```json
{
  "mcpServers": {
    "cycode": {
      "command": "pipx",
      "args": ["run", "cycode", "mcp"],
      "env": {
        "CYCODE_CLIENT_ID": "your-cycode-id",
        "CYCODE_CLIENT_SECRET": "your-cycode-secret-key",
        "CYCODE_API_URL": "https://api.cycode.com",
        "CYCODE_APP_URL": "https://app.cycode.com"
      }
    }
  }
}
```

For **stdio transport** with `uvx` installation:

```json
{
  "mcpServers": {
    "cycode": {
      "command": "uvx",
      "args": ["cycode", "mcp"],
      "env": {
        "CYCODE_CLIENT_ID": "your-cycode-id",
        "CYCODE_CLIENT_SECRET": "your-cycode-secret-key",
        "CYCODE_API_URL": "https://api.cycode.com",
        "CYCODE_APP_URL": "https://app.cycode.com"
      }
    }
  }
}
```
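
The three stdio variants above differ only in the launcher command and its arguments. A minimal Python sketch that generates the same structure is shown below; `LAUNCHERS` and `stdio_config` are hypothetical names introduced for illustration, and the `env` block is left optional on the assumption that credentials may already be available through `cycode auth`.

```python
import json
from typing import Optional

# Launcher variants copied from the stdio examples in this section.
LAUNCHERS = {
    "direct": ("cycode", ["mcp"]),
    "pipx": ("pipx", ["run", "cycode", "mcp"]),
    "uvx": ("uvx", ["cycode", "mcp"]),
}


def stdio_config(launcher: str = "direct", env: Optional[dict] = None) -> dict:
    """Build an mcp.json-style dict for the stdio transport."""
    command, args = LAUNCHERS[launcher]
    server = {"command": command, "args": list(args)}
    if env:
        # Only include credentials when they are passed in explicitly.
        server["env"] = dict(env)
    return {"mcpServers": {"cycode": server}}


print(json.dumps(stdio_config("uvx"), indent=2))
```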

For **SSE transport** (Server-Sent Events):

```json
{
  "mcpServers": {
    "cycode": {
      "url": "http://127.0.0.1:8000/sse"
    }
  }
}
```

For **SSE transport** on custom port:

```json
{
  "mcpServers": {
    "cycode": {
      "url": "http://127.0.0.1:8080/sse"
    }
  }
}
```

For **streamable HTTP transport**:

```json
{
  "mcpServers": {
    "cycode": {
      "url": "http://127.0.0.1:8000/mcp"
    }
  }
}
```
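
The URL a client needs follows from the transport, host, and port the server was started with. The sketch below derives it in Python. The endpoint paths `/sse` and `/mcp` are taken from the examples in this section rather than from a documented guarantee, and the function names are hypothetical.

```python
def mcp_client_url(transport: str, host: str = "127.0.0.1", port: int = 8000) -> str:
    """Build the client URL for a network transport.

    Assumption: the paths below mirror the examples in this README.
    """
    paths = {"sse": "/sse", "streamable-http": "/mcp"}
    if transport not in paths:
        # stdio has no URL: the client spawns `cycode mcp` as a subprocess.
        raise ValueError(f"transport {transport!r} has no URL")
    return f"http://{host}:{port}{paths[transport]}"


def mcp_json_for_url(url: str, name: str = "cycode") -> dict:
    """Wrap a URL in the `mcpServers` structure used by mcp.json."""
    return {"mcpServers": {name: {"url": url}}}


print(mcp_client_url("sse"))
print(mcp_json_for_url(mcp_client_url("streamable-http", port=9000)))
```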

##### Running MCP Server in Background

For **SSE transport** (start server first, then configure client):

```bash
# Start the MCP server in the background
cycode mcp -t sse -p 8000 &

# Then configure the client in mcp.json (shown as comments so this block stays valid shell):
# {
#   "mcpServers": {
#     "cycode": {
#       "url": "http://127.0.0.1:8000/sse"
#     }
#   }
# }
```

For **streamable HTTP transport**:

```bash
# Start the MCP server in the background
cycode mcp -t streamable-http -H 127.0.0.2 -p 9000 &

# Then configure the client in mcp.json (shown as comments so this block stays valid shell):
# {
#   "mcpServers": {
#     "cycode": {
#       "url": "http://127.0.0.2:9000/mcp"
#     }
#   }
# }
```
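
Because the server is started before the client is configured, a script may want to wait until the port accepts connections. The following is a minimal sketch using only the Python standard library; `wait_for_port` is a hypothetical helper and is not part of the Cycode CLI. It checks only that a TCP connection succeeds, not that the MCP endpoint is healthy.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 10.0) -> bool:
    """Return True once a TCP connection to host:port succeeds.

    Returns False if no connection succeeds before `timeout` seconds elapse.
    """
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with socket.create_connection((host, port), timeout=1.0):
                return True
        except OSError:
            # Server not listening yet; retry shortly.
            time.sleep(0.2)
    return False
```

For the SSE example above, `wait_for_port("127.0.0.1", 8000)` would be called after starting `cycode mcp -t sse -p 8000 &` and before pointing the client at the URL.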

> [!NOTE]
> The MCP server requires proper Cycode CLI authentication to function. Make sure you have authenticated using `cycode auth` or configured your credentials before starting the MCP server.

### Troubleshooting MCP

If you encounter issues with the MCP server, enable debug logging to get more detailed information about what's happening. There are two ways to do so:

1. Using the `-v` or `--verbose` flag:

```bash
cycode -v mcp
```

2. Using the `CYCODE_CLI_VERBOSE` environment variable:

```bash
CYCODE_CLI_VERBOSE=1 cycode mcp
```
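
When the server is launched from a script rather than a shell, the same two options apply. A minimal Python sketch is shown below; `verbose_mcp_launch` is a hypothetical helper that only prepares the command and environment and does not start anything itself.

```python
import os
from typing import Optional


def verbose_mcp_launch(use_flag: bool = True, base_env: Optional[dict] = None):
    """Return (argv, env) for starting `cycode mcp` with debug logging.

    use_flag=True uses the `-v` flag; use_flag=False sets the
    CYCODE_CLI_VERBOSE environment variable instead.
    """
    env = dict(os.environ if base_env is None else base_env)
    if use_flag:
        argv = ["cycode", "-v", "mcp"]
    else:
        argv = ["cycode", "mcp"]
        env["CYCODE_CLI_VERBOSE"] = "1"
    return argv, env
```

The result can be passed to `subprocess.Popen(argv, env=env)` to start the server with debug output.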

The debug logs will show detailed information about:

- Server startup and configuration

- Connection attempts and status

- Tool execution and results

- Any errors or warnings that occur

This information can be helpful when:

- Diagnosing connection issues

- Understanding why certain tools aren't working