id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,894,549 | Top productivity Hacks for Busy business owners | Conquering the Chaos: top productivity Hacks for Busy business owners The life of an enterprise... | 0 | 2024-06-20T09:34:27 | https://dev.to/spanking_solutions_0af849/top-productivity-hacks-for-busy-business-owners-2d8o | Conquering the Chaos: top productivity Hacks for Busy business owners

The life of a business owner is a whirlwind. Between strategizing, managing teams, and keeping operations running smoothly, it’s easy to get overwhelmed and struggle to find enough hours in the day. Fortunately, there are some productivity ha... | spanking_solutions_0af849 | |

1,894,548 | Developing Content for Every Stage of the Customer Journey | The business world is plagued with many challenges. These challenges require specific strategies to... | 0 | 2024-06-20T09:34:13 | https://dev.to/martinbaun/developing-content-for-every-stage-of-the-customer-journey-2emn | career, learning, design, architecture |

The business world is plagued with many challenges. These challenges require specific strategies to help you navigate them.

I’ll explain these challenges and the strategies to overcome and succeed.

## Customer acquisition

Companies invest endlessly to acquire customers. They do so to convince them that their brand ... | martinbaun |

1,894,547 | Let’s Talk About The Real Reason For All These Tech Layoffs | We all know that those corporate statements that announce mass tech layoffs and claim that the job... | 0 | 2024-06-20T09:33:51 | https://dev.to/manojgohel/lets-talk-about-the-real-reason-for-all-these-tech-layoffs-451a | layoffs | We all know that those corporate statements that announce mass tech layoffs and claim that the job cuts are actually a plan to fuel growth — we all know that’s bullshit.

Right?

I mean, let’s get this out of the way. The gall on that reasoning actually offends me. Like, on a deep spiritual level. Because if there’s a te... | manojgohel |

1,894,546 | Saving time and money through task Automation | Automating Your way to success: Saving time and money through task Automation In today’s fast-paced... | 0 | 2024-06-20T09:33:28 | https://dev.to/spanking_solutions_0af849/saving-time-and-money-through-task-automation-13eh | Automating Your way to success: Saving time and money through task Automation

In today’s fast-paced business environment, efficiency is paramount. Every minute counts, and every dollar saved can be reinvested in growth. Automation, the process of using technology to carry out tasks traditionally achieve... | spanking_solutions_0af849 | |

1,894,437 | TESTT | TESTTTT | 0 | 2024-06-20T07:22:42 | https://dev.to/testthacked/testt-5aga | tets | TESTTTT | john00900 |

1,894,545 | Xintuo New Energy Co., Ltd.: Leading the Charge in Renewable Solutions | The time which try next: Xintuo New Energy Co. Ltd. : Innovating Renewable Energy Solutions to get... | 0 | 2024-06-20T09:32:17 | https://dev.to/shown_hems_4c10a550372b38/xintuo-new-energy-co-ltd-leading-the-charge-in-renewable-solutions-mj2 | design |

The time which try next: Xintuo New Energy Co. Ltd. : Innovating Renewable Energy Solutions to get the best

It certainly are a component which are definite is important of sustainability means. Traditional power are finite harmful to the surroundings that are environmental. Because more people discover this, It op... | shown_hems_4c10a550372b38 |

1,894,544 | 🧵 Web Workers and Multithreading in JavaScript | JavaScript is a single-threaded language, meaning it can only execute one task at a time. However,... | 0 | 2024-06-20T09:31:17 | https://dev.to/dipakahirav/web-workers-and-multithreading-in-javascript-573l | javascript, webdev, programming, learning | JavaScript is a single-threaded language, meaning it can only execute one task at a time. However, with Web Workers, we can perform background tasks without blocking the main thread. Let's dive into how Web Workers enable multithreading in JavaScript! 🧙♂️

please subscribe to my [YouTube channel](https://www.youtube.... | dipakahirav |

1,894,543 | OpenSign v1.5.0 introduces new features including custom email templates, revoke document functionality | We are excited to announce the release of OpenSign v1.5.0. This update brings a host of new features... | 0 | 2024-06-20T09:31:03 | https://dev.to/opensign001/opensign-v150-introduces-new-features-including-custom-email-templates-revoke-document-functionality-1b81 | We are excited to announce the release of OpenSign [v1.5.0.](https://github.com/OpenSignLabs/OpenSign) This update brings a host of new features and improvements to enhance your experience. The new features in this version are broken down as follows:

What’s New

Major features

1. The digital signature on completion c... | opensign001 | |

1,894,403 | Reviewing the Replika Free Trial | Key Highlights Replika offers a free trial that allows users to experience the benefits... | 0 | 2024-06-20T09:30:00 | https://dev.to/novita_ai/reviewing-the-replika-free-trial-4j56 | ## Key Highlights

- Replika offers a free trial that allows users to experience the benefits of having an AI friend.

- During the free trial, users have access to limited features, but can upgrade to Replika Pro for additional benefits.

- Getting started with Replika is easy, and users can sign up without providing any c... | novita_ai | |

1,894,518 | Character AI Roleplay Tips: Unlocking Success with GPU Pods | Key Highlights Character AI is a powerful tool that allows users to have engaging... | 0 | 2024-06-20T09:30:00 | https://dev.to/novita_ai/character-ai-roleplay-tips-unlocking-success-with-gpu-pods-m9f | ## Key Highlights

- Character AI is a powerful tool that allows users to have engaging conversations with bots that act like real humans.

- By following these tips, you can master Character AI roleplay and have an immersive and enjoyable experience.

- Tips include defining your character's backstory and motivations, establishing a clear voice and personali... | novita_ai | |

1,894,524 | How to Train Compute-Optimal Large Language Models? | Introduction Recently, an LLM with only 70B parameters outperforms GPT 3. This LLM, called... | 0 | 2024-06-20T09:29:42 | https://dev.to/novita_ai/how-to-train-compute-optimal-large-language-models-48cb | llm | ## Introduction

Recently, an LLM with only 70B parameters outperformed GPT-3. This LLM, called Chinchilla, was developed by Hoffmann and his colleagues. In their work, they state that [**current LLMs**](https://blogs.novita.ai/llm-leaderboard-2024-predictions-revealed/) are not compute-optimal. Why is this? How do they ... | novita_ai |

1,894,542 | SOLVED Unable to get page count Is poppler installed and in PATH | A post by Free Python Code | 0 | 2024-06-20T09:28:14 | https://dev.to/freepythoncode/solved-unable-to-get-page-count-is-poppler-installed-and-in-path-566l | python, solved, pdf, tutorial | {% embed https://www.youtube.com/watch?v=PyF1Vh9040Y %}

| freepythoncode |

1,894,541 | How to create or generate a WORD file using Python | Hi 🙂🖐 In this post, I will show you how to create or generate a word file using Python and... | 0 | 2024-06-20T09:26:04 | https://dev.to/freepythoncode/how-to-create-or-generate-a-word-file-using-python-3llg | python, coding, tutorial, beginners | ## Hi 🙂🖐

In this post, I will show you how to create or generate a `word` file

using Python and a library called python_docx

## Install python_docx

```

pip install python_docx

```

```python

from docx import Document

word_doc = Document()

```

## Add heading

```python

word_doc.add_heading('This is word file genera... | freepythoncode |

1,894,539 | How to Create E2E Tests for React Using Playwright | Setting up end-to-end (E2E) tests for your project has never been easier. With Playwright, you can... | 0 | 2024-06-20T09:22:22 | https://dev.to/dutchskull/how-to-create-e2e-tests-for-react-using-playwright-4fgp | react, webdev, testing, playwright | Setting up end-to-end (E2E) tests for your project has never been easier. With Playwright, you can create robust tests that ensure your application works as expected across different browsers.

## Dependencies

To get started, you'll need:

- [Playwright](https://playwright.dev/docs/intro)

- [Playwright VS Code extensi... | dutchskull |

1,894,538 | FanClub: Where Creators and Fans Connect and Collaborate! | FanClub is a cutting-edge social platform revolutionizing the way creators and fans connect and... | 0 | 2024-06-20T09:21:16 | https://dev.to/fanclub/fanclub-where-creators-and-fans-connect-and-collaborate-bnj | testing, go, softwaredevelopment, socialmedia | [FanClub](https://fanclub.app/) is a cutting-edge social platform revolutionizing the way creators and fans connect and collaborate. Our platform offers creators a suite of powerful tools to engage directly with their audience through live streams, Q&A sessions, and exclusive content releases. This fosters deeper conne... | fanclub |

1,894,537 | Dark Fiber Market Growth Driver: Rise in Data Center Interconnectivity | Dark Fiber Market size was valued at $ 6.5 Bn in 2022 and is expected to grow to $ 16.55 Bn by 2030... | 0 | 2024-06-20T09:20:02 | https://dev.to/vaishnavi_farkade_/dark-fiber-market-growth-driver-rise-in-data-center-interconnectivity-5g2i | **The Dark Fiber Market size was valued at $6.5 Bn in 2022 and is expected to reach $16.55 Bn by 2030, growing at a CAGR of 12.4% from 2023 to 2030.**

**Market Scope & Overview:**

The global Dark Fiber Market Growth Driver research report provides an in-depth analysis of the existing and future state of the industry. The ... | vaishnavi_farkade_ | |

1,894,536 | [DAY 57-59] I learned React & Redux | Hi everyone! Welcome back to another blog where I document the things I learned in web development. I... | 27,380 | 2024-06-20T09:17:34 | https://dev.to/thomascansino/day-57-59-i-learned-react-redux-157h | learning, react, redux, webdev | Hi everyone! Welcome back to another blog where I document the things I learned in web development. I do this because it helps retain the information and concepts as it is some sort of an active recall.

On days 57-59, after acquiring the DSA certificate from freeCodeCamp, I continued on to the next course which is the... | thomascansino |

1,894,535 | What Are Web Beacons? Should I do something about them? | Should you do something about them? Well, let’s look at a 101 so we get more context! Web... | 0 | 2024-06-20T09:14:47 | https://dev.to/zoltan_fehervari_52b16d1d/what-are-web-beacons-should-i-do-something-about-them-4nai | webbeacons, webdev, website, pixels | Should you do something about them? Well, let’s look at a 101 so we get more context!

## Web Tracking 101: What Are Web Beacons?

Web beacons, also known as pixel tags or clear GIFs, are tiny, transparent images usually embedded in websites or emails. These beacons, often just 1x1 pixel in size, communicate with a ser... | zoltan_fehervari_52b16d1d |

1,894,534 | 3 Things About Managing Money | What do you think? Is anything missing, hehe 😁 Let's learn together and discuss in the comments,... | 0 | 2024-06-20T09:14:08 | https://dev.to/appardana/3-hal-tentang-manage-uang-5c9e | uang, keuangan, management, finance |

What do you think? Is anything missing, hehe 😁

Let's learn together and discuss in the comments, and save this post so you don't forget 💬😝

📬DM for Business

🌱Follow : @appardana🎍

💭Stay Young, Be Innovative and Keep Lear... | appardana |

1,894,533 | SOLID explained with iOS examples | The SOLID principles are a set of guidelines for designing software that is easy to maintain and... | 0 | 2024-06-20T09:14:05 | https://dev.to/ishouldhaveknown/solid-explained-with-ios-examples-28ni | solidprinciples, ios, swift | The SOLID principles are a set of guidelines for designing software that is easy to maintain and extend.

## 1. Single Responsibility Principle (SRP)

> A class should have only one reason to change, meaning it should only have one job or responsibility.

```swift

// WRONG: A class that handles both user authentication... | ishouldhaveknown |

341,034 | Monica 2.1 | Monica development will be ended with this project. I don't know if I will develop Monica anymore in... | 6,841 | 2020-05-21T16:00:42 | https://realicejoanne.gitbook.io/blog/2019/12/monica-2.1 | android, api, socialmedia, college | Monica development will be ended with this project. I don't know if I will develop Monica anymore in the future but let's hope this idea won't be stolen by anyone...please...readers...

Okay so basically this semester I teamed up again with Rifqy and Raihan because we are the team of Monica 2.0 in the previous semester... | trianne24 |

1,894,532 | CREATIVE MEDIA PRODUCTIONS | Our services • Ad Film • Corporate Film • Industrial Film • Documentry • TV Commercial •... | 0 | 2024-06-20T09:13:51 | https://dev.to/mslive_technologies_6025c/creative-media-productions-jik |

Our services

• Ad Film

• Corporate Film

• Industrial Film

• Documentry

• TV Commercial

• Expedition Film

• Commercial Video

No.1, 1st Floor, Lakshmi Paradise,

Valliammal Street, New Avadi Road,

Kilpauk, Chennai -... | mslive_technologies_6025c | |

1,894,529 | Why Choose Custom Software Development? | In the modern era, with the pace of the digital world that is fast running, companies always are... | 0 | 2024-06-20T09:10:55 | https://dev.to/jiten/why-choose-custom-software-development-39d2 | softwaredevelopment, software, development |

In the modern era, with the pace of the digital world that is fast running, companies always are looking for new marketing ideas to stay ahead of the competition. One of the best strategies that could highly help a ... | jiten |

1,894,530 | Types of Transformer-Based Foundation Models | Transformer-based foundation models have revolutionized natural language processing (NLP) and are... | 0 | 2024-06-20T09:09:30 | https://victorleungtw.com/2024/06/20/transformer/ | nlp, transformers, autoencoders, autoregressive | Transformer-based foundation models have revolutionized natural language processing (NLP) and are categorized into three primary types: encoder-only, decoder-only, and encoder-decoder models. Each type is trained using a specific objective function and is suited for different types of generative tasks. Let’s dive deepe... | victorleungtw |

1,894,528 | Simplify Your DIY Projects with a Cordless Screwdriver | screenshot-1714492004336.png Simplify Your DIY Projects with a Cordless Screwdriver Introduction Do... | 0 | 2024-06-20T09:08:21 | https://dev.to/thea_askinshboy_f72b54b7/simplify-your-diy-projects-with-a-cordless-screwdriver-4afi | design |

Simplify Your DIY Projects with a Cordless Screwdriver

Introduction

Do you like to fix or make things with your hands? Do you want to make your DIY projects easier and safer? Then you need a cordless screwdriver! It is an innovative tool that can help you with your projects. Here, we wil... | thea_askinshboy_f72b54b7 |

1,894,527 | Why Coding Plagiarism Checkers are Essential For Developers | Ever written programs for hours and then wondered if your code is really original? Sometimes, it is... | 0 | 2024-06-20T09:07:16 | https://dev.to/codequiry/why-coding-plagiarism-checkers-are-essential-for-developers-1oco | webdev, codingplagiarismchecker, codeplagiarismchecker, codequiry | Ever written programs for hours and then wondered if your code is really original? Sometimes, it is easy to copy someone else's work in this fast-paced world of development. That's where the Coding Plagiarism Checker steps in. These intelligent tools scan your code against a database of millions of codes already availa... | codequiry |

1,894,512 | Most Useful C# .NET 🚀 Snippets | When diving into C# .NET development, efficiency and productivity are key. Whether you’re a seasoned... | 0 | 2024-06-20T09:05:52 | https://dev.to/shahed1bd/most-useful-c-net-snippets-1o16 | When diving into C# .NET development, efficiency and productivity are key. Whether you’re a seasoned developer or just starting, having a collection of useful snippets at your fingertips can significantly enhance your workflow. These snippets not only save time but also help in writing clean, efficient, and bug-free co... | shahed1bd | |

1,894,526 | Why Choose WordPress in June 2024: Top 8 Benefits | Opt-in for a hosting company that offers WordPress hosting can further streamline your site... | 0 | 2024-06-20T09:05:07 | https://taiwoadefowope.hashnode.dev/why-choose-wordpress-in-june-2024-top-8-benefits | > [Opt-in for a hosting company](https://partners.hostgator.com/ZdJrgQ) that offers WordPress hosting can further streamline your site management.

## What is WordPress?

WordPress powers almost one-third of all websites worldwide, from simple personal blogs to intricate corporate websites for companies like Sony, T... | taiwo17 | |

1,891,413 | A refresher on GitHub Pages | I moved my blog from WordPress to GitLab Pages in... 2016. I'm happy with the solution. However, I... | 0 | 2024-06-20T09:02:00 | https://blog.frankel.ch/refresher-github-pages/ | github, githubactions, githubpages | I moved my [blog](https://blog.frankel.ch/) from WordPress to [GitLab Pages](https://docs.gitlab.com/ee/user/project/pages/) in... 2016. I'm happy with the solution. However, I used [GitHub Pages](https://pages.github.com/) when I was teaching for both the courses and the exercises, _e.g._, [Java EE](https://formations... | nfrankel |

1,894,523 | Why Choose a JS Gantt Library? Advantages and Use Cases | Project management is a constantly evolving field that requires effective tools to track tasks,... | 0 | 2024-06-20T08:59:49 | https://dev.to/lenormor/why-choose-a-js-gantt-library-advantages-and-use-cases-58n3 | webdev, javascript, programming, learning | Project management is a constantly evolving field that requires effective tools to track tasks, deadlines, and resources. Among the various tools available, Gantt charts stand out for their ability to provide a clear and structured overview of project progress. With the advent of web technologies, JavaScript (JS) Gantt... | lenormor |

1,894,520 | Wuxi Alsman Compressor Co., Ltd.: Pioneers in Compressor Technology | Wuxi Alsman Compressor Co Ltd Making Life Easier and Compressor Technology Perhaps you have heard... | 0 | 2024-06-20T08:57:33 | https://dev.to/thea_askinshboy_f72b54b7/wuxi-alsman-compressor-co-ltd-pioneers-in-compressor-technology-36j | design | Wuxi Alsman Compressor Co Ltd Making Life Easier and Compressor Technology

Perhaps you have heard about Wuxi Alsman Compressor Co Ltd They've been the ongoing providers that produces machines that will help you are doing activities most effortlessly and quickly, particularly if you need to use air to do so W... | thea_askinshboy_f72b54b7 |

1,894,519 | EOL and EOS Dates | Hii all I wanted to know the End of life and end of support dates for puppet 5 (linux) | 0 | 2024-06-20T08:56:48 | https://dev.to/kiruthika/eol-and-eos-dates-43ia | help | Hii all

I wanted to know the End of life and end of support dates for puppet 5 (linux) | kiruthika |

1,894,517 | How to make human-readable file size in Python | Hi 🙂🖐 In this post, I will show you how to make human-readable file size like: MB, KB, GB I will... | 0 | 2024-06-20T08:55:18 | https://dev.to/freepythoncode/how-to-make-human-readable-file-size-in-python-2bg2 | python, coding, beginners, tutorial | Hi 🙂🖐

In this post, I will show you how to make human-readable file size

like: **MB, KB, GB**

I will use a Python library called `human-readable`

## install

```

pip install human-readable

```

Create any file to test with. In this code, I created a text file to test.

```python

from human_readable.files import file_size

... | freepythoncode |

1,894,516 | Building Information Modeling Market Forecast: Regional Growth Analysis | The Building Information Modeling Market Size was valued at $ 7.8 Bn in 2023 and is expected to reach... | 0 | 2024-06-20T08:55:15 | https://dev.to/vaishnavi_farkade_/building-information-modeling-market-forecast-regional-growth-analysis-2f4k | **The Building Information Modeling Market size was valued at $7.8 Bn in 2023 and is expected to reach $22.03 Bn by 2031, growing at a CAGR of 13.85% from 2024 to 2031.**

**Market Scope & Overview:**

A competitive quadrant is included in the study, which is a patented method for analyzing and evaluating a company's posi... | vaishnavi_farkade_ | |

1,894,515 | How to configure the style of the legend separately in VChart and change the shape of the graphic | Question title How to configure the style of legends separately in VChart and change the... | 0 | 2024-06-20T08:54:49 | https://dev.to/xuefei1313/how-to-configure-the-style-of-the-legend-separately-in-vchart-and-change-the-shape-of-the-graphic-5clj |

### Question title

How to configure the style of legends separately in VChart and change the shape of graphics

### Problem description

How to change the legend item graphic of a column chart series to a circle

, in... | 0 | 2024-06-20T08:52:28 | https://dev.to/happyer/the-dawn-of-the-swift-6-era-2ghn | ios, swift, macos, development | ## 1. Introduction

At the recently concluded Apple Worldwide Developers Conference (WWDC), in addition to the highly anticipated announcement of Apple Intelligence, Apple officially released Swift 6.0.

## 2. A Decade of Swift's Development

Since its debut in 2014, Swift has traversed a decade of remarkable progress. ... | happyer |

1,894,510 | Time Off Tracking Software Market: Growth Opportunities | Global Time Off Tracking Software Market Size Was Valued at USD 2.71 Billion in 2022, and is... | 0 | 2024-06-20T08:51:59 | https://dev.to/sakshi_patil_4a2376732717/time-off-tracking-software-market-growth-opportunities-1f9e |

Global Time Off Tracking Software Market Size Was Valued at USD 2.71 Billion in 2022, and is Projected to Reach USD 8.59 Billion by 2030, Growing at a CAGR of 15.52% From 2023-2030

Time Off Tracking Software effectively oversees and manages employees' leave requests, absences, and vacation schedules. It automates the ... | sakshi_patil_4a2376732717 | |

1,894,508 | Svg World Map | Inspired by mashape.com | 0 | 2024-06-20T08:48:42 | https://dev.to/hamed_1051/svg-world-map-1c56 | codepen | Inspired by mashape.com

{% codepen https://codepen.io/DonSinDRom/pen/RwzgBX %} | hamed_1051 |

1,894,507 | Elevating Kitchen Appliance Production: Techniques for Enhanced Efficiency | Elevating Kitchen area Home device Manufacturing: Methods for Improved Effectiveness Lots of people... | 0 | 2024-06-20T08:47:00 | https://dev.to/thea_askinshboy_f72b54b7/elevating-kitchen-appliance-production-techniques-for-enhanced-efficiency-5ge8 | design |

Elevating Kitchen Appliance Production: Techniques for Enhanced Efficiency

Lots of people like to cook, and having dependable kitchen appliances can make the job simpler and much more pleasurable. We will talk about the benefits of elevating kit... | thea_askinshboy_f72b54b7 |

1,894,506 | Essential Debugging Techniques for Network and Service Connectivity | In the fast-paced world of software development, effective debugging is crucial for ensuring smooth... | 0 | 2024-06-20T08:45:41 | https://dev.to/saniyathossain/essential-debugging-techniques-for-network-and-service-connectivity-55ei | In the fast-paced world of software development, effective debugging is crucial for ensuring smooth operations and quick resolutions to issues. This article provides practical examples and tips for using essential tools like `curl`, `telnet`, and `tcpdump`, along with connectivity checks for services such as Redis, MyS... | saniyathossain | |

1,894,505 | Semaphore - The Traffic Signals of Concurrency | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-20T08:43:12 | https://dev.to/shoyebwritescode/semaphore-the-traffic-signals-of-concurrency-5dke | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Semaphores are signaling mechanisms that manage access to **shared resources**, ensuring **orderly execution** and mitigating simultaneous **access conflicts**. Semaphores safeguard **d... | shoyebwritescode |
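
To make the semaphore explainer above concrete, here is a minimal sketch using Python's standard `threading.Semaphore`. The function name `run_workers`, the worker counts, and the short sleep that stands in for real work are all assumptions made for illustration; none of them come from the original post.

```python
import threading
import time


def run_workers(worker_count: int, max_concurrent: int) -> int:
    """Run worker_count threads, but let only max_concurrent of them
    use the shared resource at once. Returns the peak concurrency seen."""
    semaphore = threading.Semaphore(max_concurrent)  # the "traffic signal"
    counter_lock = threading.Lock()  # protects the bookkeeping counters
    active = 0
    peak = 0

    def worker() -> None:
        nonlocal active, peak
        # Blocks here once max_concurrent workers already hold a permit.
        with semaphore:
            with counter_lock:
                active += 1
                peak = max(peak, active)
            time.sleep(0.01)  # placeholder for work on the shared resource
            with counter_lock:
                active -= 1

    threads = [threading.Thread(target=worker) for _ in range(worker_count)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return peak


if __name__ == "__main__":
    print("peak concurrent workers:", run_workers(6, 2))
```

The semaphore only limits how many threads are inside the guarded section at once; the separate lock is still needed so the counters themselves are updated safely.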

1,894,504 | Let’s go for the best PHP Frameworks in 2024 | I talked to a few PHP developers and asked their opinion. After buying them beer and wine they opened... | 0 | 2024-06-20T08:41:22 | https://dev.to/zoltan_fehervari_52b16d1d/lets-go-for-the-best-php-frameworks-in-2024-2hpp | php, phpframeworks, phpdevelopment, phpprogramming | I talked to a few PHP developers and asked their opinion.

After buying them beer and wine they opened up.

This is what they told me…

Selecting the right PHP framework is crucial for the success of your project. I’m gonna provide an overview of the most popular PHP frameworks, their pros and cons, and their suitability f... | zoltan_fehervari_52b16d1d |

1,894,502 | Know about the differences – Selenium vs. Scriptless Testing | With the emergence of Agile, it’s no secret that the way engineers build and test software has... | 0 | 2024-06-20T08:38:50 | https://dev.to/jamescantor38/know-about-the-differences-selenium-vs-scriptless-testing-27he | selenium, scriptlesstesting, testgrid | With the emergence of Agile, it’s no secret that the way engineers build and test software has evolved considerably in recent years. Traditional methods of testing will no longer suffice as software becomes more advanced. Test Automation was created with the advancement of technology to speed up the testing process by ... | jamescantor38 |

1,894,501 | How to make the axis label with graphics in VChart? | How to make the axis label with graphics in VChart? Question title How to make... | 0 | 2024-06-20T08:36:46 | https://dev.to/xuefei1313/how-to-make-the-axis-label-with-graphics-in-vchart-4079 | # How to make the axis label with graphics in VChart?

### Question title

How to make axis labels with graphics in VChart?

### Problem description

Want to mark the special value label of the x-axis with a graph

to help developers deliver live updates in their applications by automatically keeping their database and frontend clients in sync.

[LiveSync](https://hubs.la/Q02CzvRD0) is made of two components, the [Models SDK](https://hubs.la/Q02CzvS_0)... | zknill |

1,894,498 | Demystifying Recursion: A Brief Explanation | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-20T08:33:47 | https://dev.to/vidyarathna/demystifying-recursion-a-brief-explanation-3l1k | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

**Concept: Recursion**

Recursion is a programming technique where a function calls itself to solve smaller instances of the same problem. It’s essential for tasks like tree traversal ... | vidyarathna |
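
The recursion explainer above can be sketched in a few lines of Python. This is only an illustrative example: the `Node` class, `tree_sum`, and `factorial` are hypothetical names chosen here, not code from the original submission.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A simple tree node (illustrative shape, not from the post)."""
    value: int
    children: list = field(default_factory=list)


def factorial(n: int) -> int:
    """Classic recursion: n! is defined in terms of (n - 1)!."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n <= 1:
        # Base case: stops the chain of self-calls.
        return 1
    # Recursive case: a smaller instance of the same problem.
    return n * factorial(n - 1)


def tree_sum(node: Node) -> int:
    """Tree traversal: a node's total is its value plus its subtrees' totals.
    A leaf has no children, so the recursion ends there."""
    return node.value + sum(tree_sum(child) for child in node.children)


if __name__ == "__main__":
    tree = Node(1, [Node(2), Node(3, [Node(4)])])
    print(factorial(5), tree_sum(tree))
```

Both functions follow the same pattern: a base case that returns directly, and a recursive case that calls the function on a strictly smaller input.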

1,894,497 | Biosensors Market Report: Wearable Devices Analysis | The Biosensors Market size was valued at $ 29.2 Bn in 2023 and is expected to grow at a CAGR of 7.9%... | 0 | 2024-06-20T08:30:35 | https://dev.to/vaishnavi_farkade_/biosensors-market-report-wearable-devices-analysis-1n45 | **The Biosensors Market size was valued at $29.2 Bn in 2023 and is expected to reach $53.74 Bn by 2031, growing at a CAGR of 7.9% from 2024 to 2031.**

**Market Scope & Overview:**

The research report has dedicated several volumes of analysis industry research and Biosensors Market Report share analysis of hig... | vaishnavi_farkade_ | |

1,894,496 | Create virtual host on nginx server(Ubuntu) | The deployment of application on server is a very tedious task. From installation of nginx to... | 0 | 2024-06-20T08:30:34 | https://dev.to/palchandu_dev/create-virtual-host-on-nginx-serverubuntu-5gj4 | Deploying an application on a server is a tedious task, from installing nginx to creating a virtual host and linking it from /etc/nginx/sites-available to /etc/nginx/sites-enabled. Unless the virtual host is enabled this way, it will not work.

The following are the steps to create a virtual host and make it enable... | palchandu_dev | |

1,894,495 | Choosing the Right Web Development Platform | In the digital age, a website serves as the cornerstone of an organization’s online presence, making... | 0 | 2024-06-20T08:29:39 | https://dev.to/webstudio/choosing-the-right-web-development-platform-2la8 | webdev, websitedevelopment, webdesignn, career | In the digital age, a website serves as the cornerstone of an organization’s online presence, making the choice of web development platform a critical decision. From aligning with business objectives and enhancing user experience to ensuring security and scalability, the platform on which a website is built plays a piv... | webstudionepal |

1,894,494 | Making Memories Last: Gift Box Manufacturer Ensuring Durability | Keep a Durable Gift Box – to your Memories Alive Innovation from Our Manufacturer Do you wish to... | 0 | 2024-06-20T08:29:39 | https://dev.to/barret_riendeaumdhj_eea4/making-memories-last-gift-box-manufacturer-ensuring-durability-2dbd | design | Keep a Durable Gift Box – to your Memories Alive Innovation from Our Manufacturer

Do you wish to keep your memories which are precious in your head Would you need a memory that is great share with all your family members Then you will need to have them locked in if yes What’s the way in which is best to ... | barret_riendeaumdhj_eea4 |

1,894,493 | The Phishing Revolution: AI-Powered Deception Makes Us All Vulnerable | Think you can spot a phishing email a mile away? Think again. Artificial intelligence is making these... | 0 | 2024-06-20T08:28:12 | https://dev.to/otunkay/the-phishing-revolution-ai-powered-deception-makes-us-all-vulnerable-3d5g | Think you can spot a phishing email a mile away? Think again. Artificial intelligence is making these attacks more sophisticated and undetectable than ever before.

AI is being used to personalise phishing emails, crafting messages that appear to come from trusted sources and mimicking writing styles with uncanny accura... | otunkay | |

1,894,492 | Top 11 DevOps Tools on Github | Ehy Everybody 👋 It’s Antonio, CEO & Founder at Litlyx. I come back to you with a... | 0 | 2024-06-20T08:27:59 | https://dev.to/litlyx/top-11-devops-tools-on-github-2cn5 | opensource, webdev, beginners, programming | ## Ehy Everybody 👋

It’s **Antonio**, CEO & Founder at **[Litlyx](https://litlyx.com).**

I come back to you with a curated **Awesome List of resources** that you can find interesting.

Today's subject is...

```bash

Top 11 DevOps Tools

```

---

### Leave a **star** on our open-source [repo](https://github.com/Litlyx/li... | litlyx |

1,894,491 | Cross HTM/CSS compiler | Hey all! I've recently taken on a task at my company to build an email template. Naively, you might... | 0 | 2024-06-20T08:27:32 | https://dev.to/malo_legoff_29fbc05816bf/cross-htmcss-compiler-458j | webdev, email, html, css | Hey all!

I've recently taken on a task at my company to build an email template. Naively, you might think it would be simple. However, I quickly realized that HTML/CSS support in email clients is:

- Outdated

- Inconsistent from one client to another

I'm considering the idea of a cross-HTML/CSS compiler that transfor... | malo_legoff_29fbc05816bf |

1,894,490 | PerfDog Evo v10.3: Unleashing New Possibilities in Performance Testing | We are thrilled to introduce the latest version of PerfDog Evo, v10.3, which comes with a host of new... | 0 | 2024-06-20T08:26:36 | https://dev.to/wetest/try-it-out-perfdog-evo-v103-unleashing-new-possibilities-in-performance-testing-4m8p | performance, gametesting, qa, apptesting | We are thrilled to introduce the latest version of PerfDog Evo, v10.3, which comes with a host of new features and improvements aimed at enhancing your performance testing experience. Stay ahead of the curve and make the most of PerfDog's state-of-the-art performance testing solutions with this latest update.

# What's... | wetest |

1,894,489 | Best Seo Services | Rank on TOP of Google Through the best search engine optimization service At MSLive Technologies,... | 0 | 2024-06-20T08:26:02 | https://dev.to/mslive_technologies_6025c/best-seo-services-5dja |

Rank on TOP of Google Through the best search engine optimization service

At MSLive Technologies, we specialize in strategies to significantly increase your website traffic, driving more potential customers to you... | mslive_technologies_6025c | |

1,894,487 | How to avoid the outline being blocked when hovering the pie chart sector? | Question title How to avoid the outline being blocked when hovering the pie chart sector... | 0 | 2024-06-20T08:24:34 | https://dev.to/xuefei1313/how-to-avoid-the-outline-being-blocked-when-hovering-the-pie-chart-sector-34fe |

### Question title

How to avoid the outline being blocked when hovering the pie chart sector in VChart?

### Problem description

The hover stroke of the pie chart sector is configured, but it will be obscured by other sectors

Imagine this: you wake up to a digital nightmare. Your entire company network is locked down, but there's no ransom note demanding Bitcoin. Instead, the attackers have unleashed your most sensitive data – customer re... | otunkay | |

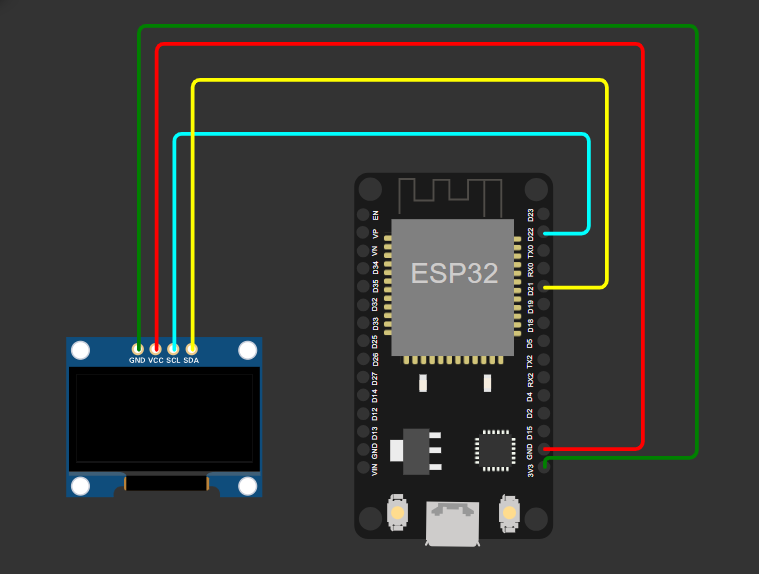

1,894,485 | Using an OLED Display with MicroPython on ESP32 | Introduction In this tutorial, we will learn how to interface an OLED display with an... | 27,763 | 2024-06-20T08:20:44 | https://dev.to/shemanto_sharkar/using-an-oled-display-with-micropython-on-esp32-d9h | micropython, arduino, esp32, iot |

#### Introduction

In this tutorial, we will learn how to interface an OLED display with an ESP32 microcontroller using MicroPython. OLED displays are great for displaying text and si... | shemanto_sharkar |

1,894,483 | All-American Charm: The Wooden Crate Box with Americana Flair | All-American Charms: The Wooden Crate Boxes with Americana Flair Introduction Buying a geniune and... | 0 | 2024-06-20T08:20:20 | https://dev.to/barret_riendeaumdhj_eea4/all-american-charm-the-wooden-crate-box-with-americana-flair-38gc | design | All-American Charms: The Wooden Crate Boxes with Americana Flair

Introduction

Looking for a genuine and charming way to package and display your merchandise? Look no further than the All-American Charms wooden crate box. This revolutionary pac... | barret_riendeaumdhj_eea4 |

1,894,482 | The Data Science Lifecycle: From Raw Data to Real-World Results | Data science is a powerful field with the potential to revolutionize how we understand and interact... | 0 | 2024-06-20T08:16:58 | https://dev.to/fizza_c3e734ee2a307cf35e5/the-data-science-lifecycle-from-raw-data-to-real-world-results-2kcf | datascience, data, lifecycle | Data science is a powerful field with the potential to revolutionize how we understand and interact with the world. But for aspiring data scientists, the process can seem daunting. Where do you even begin?

The answer lies in the data science lifecycle, a structured approach that transforms raw data into actionable insi... | fizza_c3e734ee2a307cf35e5 |

1,894,481 | How to achieve only selecting the current item in VChart when clicking on the legend? | Question title How to implement VChart to only select the current item when clicking on a... | 0 | 2024-06-20T08:16:39 | https://dev.to/xuefei1313/how-to-achieve-only-selecting-the-current-item-in-vchart-when-clicking-on-the-legend-2gf0 |

### Question title

How to implement VChart to only select the current item when clicking on a legend?

### Problem description

When clicking on the legend, can you change to select the current item and not select other items?

### Solution

VChart supports configuring legend selection mode, including single... | xuefei1313 | |

1,894,480 | How to configure animations in VChart portfolio diagrams? | Question title How to configure animations for VChart's combo chart? Problem... | 0 | 2024-06-20T08:14:55 | https://dev.to/xuefei1313/how-to-configure-animations-in-vchart-portfolio-diagrams-4577 |

### Question title

How to configure animations for VChart's combo chart?

### Problem description

In a biaxial diagram, how to make the left and right axes execute the animation in order, and after the left axis column is executed, the right axis line will play the animation again?

#### Introduction

In this tutorial, we will learn how to interface the HC-SR04 ultrasonic sensor with an ESP32 microcontroller using MicroPython. The HC-SR04 sensor... | shemanto_sharkar |

1,894,474 | Betting Odds Scraper for UEFA Euro 2024 | UEFA 2024 starts now! In the world of sports betting, real-time monitoring of odds for... | 0 | 2024-06-20T08:07:40 | https://dev.to/emilia/wettquoten-scraper-fur-uefa-euro-2024-1ogo | wettquoten, uefa, python, opensource |

UEFA 2024 starts now! In the world of sports betting, real-time monitoring of odds is crucial for bettors. Fluctuations in the odds often signal potential winning opportunities. In di... | emilia |

1,894,476 | Regression Testing in Software Testing: Ensuring Reliability and Stability | Regression testing is an essential practice in the field of software testing that ensures recent... | 0 | 2024-06-20T08:07:09 | https://dev.to/keploy/regression-testing-in-software-testing-ensuring-reliability-and-stability-2d13 | webdev, javascript, beginners, programming |

Regression testing is an essential practice in the field of software testing that ensures recent code changes do not negatively impact existing functionality. This process is crucial for maintaining the reliability ... | keploy |

1,894,475 | Choosing the Right Hardware Tools for Your Toolbox | Picking the Right Tools for the Toolbox Obtaining the technologies being most useful their toolbox... | 0 | 2024-06-20T08:06:26 | https://dev.to/madeline_jonesb_f8139fc95/choosing-the-right-hardware-tools-for-your-toolbox-4d4o |

Picking the Right Tools for the Toolbox

Having the right tools in your toolbox is essential for almost any task. Whether the job is big or small, using the best tool for it makes a huge difference. Here are some points to think about when choosing the gear that... | madeline_jonesb_f8139fc95 | |

1,890,051 | 10 Microservice Best Practices for System Design Interview | Microservices best practices for System design interview which you can also follow to build scalable and highly resilient applications | 0 | 2024-06-20T08:03:41 | https://dev.to/somadevtoo/10-microservice-best-practices-for-building-scalable-and-resilient-apps-1p0j | microservices, systemdesign, softwaredevelopment, development | ---

title: 10 Microservice Best Practices for System Design Interview

published: true

description: Microservices best practices for System design interview which you can also follow to build scalable and highly resilient applications

tags: microservices, systemdesign, softwaredevelopment, development

# cover_image: ht... | somadevtoo |

1,894,472 | EPTFE Membrane Technology Explained | EPTFE Membrane Technology Explained Are you interested in learning about the latest technology in... | 0 | 2024-06-20T08:00:56 | https://dev.to/madeline_jonesb_f8139fc95/eptfe-membrane-technology-explained-3039 | EPTFE Membrane Technology Explained

Are you interested in learning about the latest technology in breathable, waterproof membranes? Look no further than EPTFE membrane technology! This innovative technology offers numerous advantages and applications, and we're here to explain it all in easy-to-understand la... | madeline_jonesb_f8139fc95 | |

1,894,471 | Unit Testing: Why It Matters and How to Do It Effectively in Python | Introduction Unit testing is a critical aspect of software development that ensures... | 0 | 2024-06-20T08:00:40 | https://dev.to/manavcodaty/unit-testing-why-it-matters-and-how-to-do-it-effectively-in-python-g65 | bug, programming, python | # Introduction

Unit testing is a critical aspect of software development that ensures individual components of a program work as intended. It helps identify bugs early, facilitates maintenance, and improves code quality. This blog post will delve into why unit testing is important and how to implement it effectively i... | manavcodaty |
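To make the idea of testing one component in isolation concrete, here is a minimal sketch using Python's standard `unittest` module. The function `apply_discount` and its behavior are hypothetical, invented only for illustration; they do not come from the original post.

```python
import unittest


def apply_discount(price, percent):
    """Return price reduced by percent (hypothetical example function)."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    """Each test checks one small, independent behavior of the function."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_returns_original_price(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)

    def test_invalid_percent_raises(self):
        # Invalid input should fail loudly instead of returning a wrong price.
        with self.assertRaises(ValueError):
            apply_discount(80.0, 150)
```

Saved to a file, the tests can be run with `python -m unittest <filename>`. Keeping each test focused on a single behavior makes a failure point directly at what broke.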

1,891,673 | Pros & Cons of Git Worktrees | One might wonder why you would use multiple Git worktrees if you can switch between branches and... | 0 | 2024-06-20T08:00:00 | https://devot.team/blog/git-worktrees | One might wonder why you would use multiple Git worktrees if you can switch between branches and stash it whenever you change something?

Let me guide you through the enchanting world of Git worktrees within a Git repository. We'll unravel the mysteries of how they differ from conventional branching strategies and shed... | ana_klari_e98cbb26da5af3 | |

1,854,003 | Forget your database exists! Leave it to Metis | As developers, we all strive to keep our systems in shape. We maintain them, we review metrics and... | 0 | 2024-06-20T08:00:00 | https://www.metisdata.io/blog/forget-your-databases-exist-leave-it-to-metis | sql, database, monitoring | As developers, we all strive to keep our systems in shape. We maintain them, we review metrics and logs, and we react to alerts. We do whatever it takes to make sure that our systems do not break, especially databases that are crucial to our applications. Wouldn’t it be great if there was no need to do the maintenance ... | adammetis |

1,894,470 | This is what you need to know about Machine Code | Just as in the core of our hearts… At the core of every application, from simple calculators to... | 0 | 2024-06-20T07:58:32 | https://dev.to/zoltan_fehervari_52b16d1d/this-is-what-you-need-to-know-about-machine-code-20nd | machinecode, lowlevelcode, programming | Just as in the core of our hearts…

At the core of every application, from simple calculators to complex operating systems, lies a language of zeros and ones known as machine code. This lowest-level programming language converts human-readable instructions into a format that the CPU can directly execute.

So?

So under... | zoltan_fehervari_52b16d1d |

1,894,469 | Conch | Your Undetectable AI Writing Assistant. Use Conch to write, study, and work faster. Conch helps you... | 0 | 2024-06-20T07:58:04 | https://dev.to/malik_hamid_311d4b4c65819/conch-4cob | ai, writing, study | [](https://www.getconch.ai/)

Your Undetectable AI Writing Assistant. Use Conch to write, study, and work faster.

Conch helps you with our four core features:

- Stealth: Record your lectures, meetings, presentations, or interviews to automatically create notes, and flashcards

- Write: Highlight text to edit and improve... | malik_hamid_311d4b4c65819 |

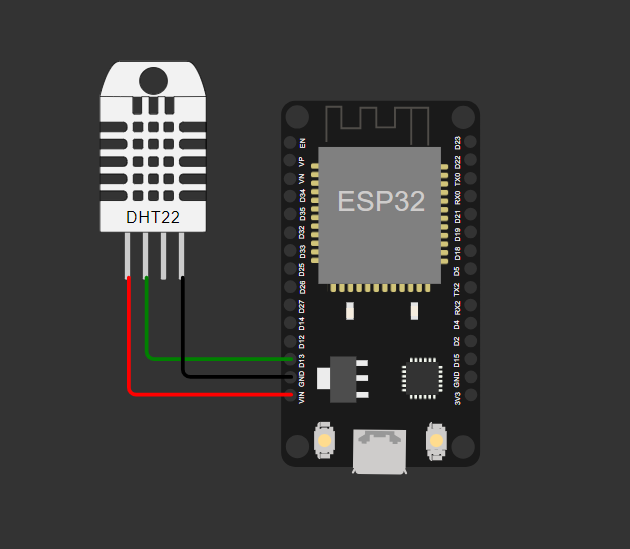

1,894,467 | DHT22 with MicroPython on ESP32 | Introduction In this tutorial, we will learn how to interface a DHT22 temperature and... | 27,763 | 2024-06-20T07:56:29 | https://dev.to/shemanto_sharkar/dht22-with-micropython-on-esp32-16j6 | micropython, esp32, arduino, robotics |

#### Introduction

In this tutorial, we will learn how to interface a DHT22 temperature and humidity sensor with an ESP32 microcontroller using MicroPython. The DHT22 is a reliable sensor for measuri... | shemanto_sharkar |

1,894,466 | How to Kill a Process Binding to a Specific Port in PowerShell | If you frequently run into issues where a process is binding to a specific port and you need to free... | 0 | 2024-06-20T07:56:06 | https://dev.to/dutchskull/how-to-kill-a-process-binding-to-a-specific-port-in-powershell-lh | terminal, powershell, process, port | If you frequently run into issues where a process is binding to a specific port and you need to free that port, PowerShell provides a convenient way to do this. In this blog post, we’ll walk you through how to create a custom PowerShell function that kills the process binding to a specific port.

## The Problem

Often,... | dutchskull |

1,894,465 | Deploy helm charts with go lang | What is Helm ? Helm is designed to simplify the deployment and management of complex application... | 0 | 2024-06-20T07:55:37 | https://medium.com/@varunrathod0045/deploy-helm-charts-with-go-lang-fa3b967af124 | go, helm, kubernetes | > **What is Helm ?**

Helm is designed to simplify the deployment and management of complex application workloads in Kubernetes. It functions similarly to a package manager for Kubernetes, where the packages are known as Helm Charts. A Helm Chart combines a template file, which outlines the Kubernetes resources to be d... | rathod0045 |

1,894,464 | Jiangyin Metallurgy Hydraulic Machinery Factory: Innovators in Metal Processing Equipment | You might want to check out Jiangyin Metallurgy Hydraulic Machinery Factory if you want to... | 0 | 2024-06-20T07:53:43 | https://dev.to/madeline_jonesb_f8139fc95/jiangyin-metallurgy-hydraulic-machinery-factory-innovators-in-metal-processing-equipment-2jhj | You might want to check out Jiangyin Metallurgy Hydraulic Machinery Factory if you work with steel. The company produces machines that shape and form steel into all kinds of useful things. Here are five reasons why Jiangyin Metallurgy is a great option for steel processing produ... | madeline_jonesb_f8139fc95 | |

1,894,321 | Understanding IQueryable<T> in C# | Hi There! 👋🏻 You've probably used IEnumerable<T>, and most certainly used... | 0 | 2024-06-20T07:53:35 | https://dev.to/rasheedmozaffar/understanding-iqueryable-in-c-4n37 | csharp, dotnet, learning, database | ## Hi There! 👋🏻

You've probably used `IEnumerable<T>`, and most certainly used `List<T>` if you've coded in C# before. These two are very popular and are often presented early to you when you're learning the language. But have you heard of `IQueryable<T>`? This one is a little more advanced, but it's got so much powe... | rasheedmozaffar |

1,894,463 | Firebase Crashlytics : Integration in React Native App | Crashlytics is a powerful tool from Firebase that helps us track and analyze... | 0 | 2024-06-20T07:50:47 | https://dev.to/deepbb/firebase-crashlytics-integration-in-react-native-app-2p1b | reactnative, firebase, javascript, debug | Crashlytics is a powerful tool from Firebase that helps us track and analyze crashes in real time. By enabling Crashlytics in your app, you can determine the root cause of a crash and understand its impact on your users, so you can keep your app stable.

In this article you c... | deepbb |

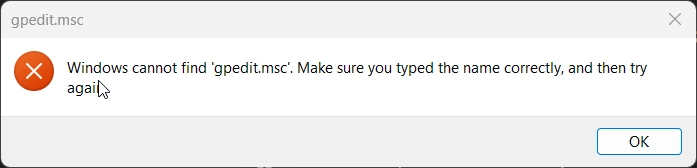

1,894,462 | Fix 'Windows Cannot Find gpedit.msc' in Windows 11! | Cause: The Group Policy Editor is disabled on Home editions and only available for Pro, Enterprise,... | 0 | 2024-06-20T07:49:39 | https://winsides.com/fix-windows-cannot-find-gpedit-msc-in-windows-11/ | windows11, gpedit, msc, batchfile | **Cause**: The Group Policy Editor is disabled on Home editions and only available for Pro, Enterprise, and Education editions.

**Solution**: Enable gpedit.msc using a batch file method.

Create a batch... | vigneshwaran_vijayakumar |

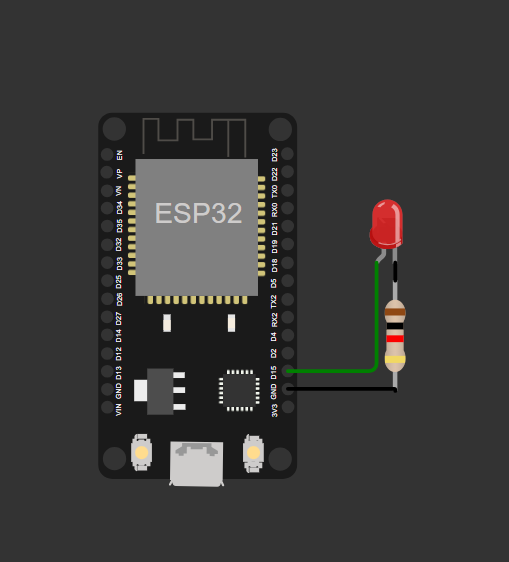

1,894,461 | MicroPython ESP32: Blink LED | Certainly! Here's a description for your blog along with a diagram and comments explaining the... | 27,763 | 2024-06-20T07:49:06 | https://dev.to/shemanto_sharkar/micropython-esp32-blink-led-210d | micropython, esp32, arduino, robotics |

### Blog Description

Welcome to another exciting tutorial on MicroPython programming! Today, we'll be... | shemanto_sharkar |

1,894,460 | UV Lamps Market Trends, Size, Share, Growth Forecast 2023-2033: Latest Developments | The UV lamps market is poised for substantial growth from 2023 to 2033, driven by increasing demand... | 0 | 2024-06-20T07:48:25 | https://dev.to/swara_353df25d291824ff9ee/uv-lamps-market-trends-size-share-growth-forecast-2023-2033-latest-developments-2dk | The [UV lamps market](https://www.persistencemarketresearch.com/market-research/uv-lamps-market.asp) is poised for substantial growth from 2023 to 2033, driven by increasing demand across various applications such as water treatment, air purification, and surface sterilization in healthcare and food processing industri... | swara_353df25d291824ff9ee | |

1,894,459 | Sai Suvidha Packers And Mover | Established in 2012. We started “Sai Suvidha Packers And Movers” in 2012 but we have over 10 years of... | 0 | 2024-06-20T07:45:06 | https://dev.to/indrapal456/sai-suvidha-packers-and-mover-5bh4 | packer, movers, cartransportations, localshifting | Established in 2012. We started “Sai Suvidha Packers And Movers” in 2012 but we have over 10 years of experience in the Moving industry. This helps us to provide our customers with exceptional and leading service in the industry

Working as packers and movers for many years, we have done a variety of Interstate moves,... | indrapal456 |

1,894,458 | How Blockchain Certification Can Boost Your Professional Credentials? | Blockchain technology has proved its potential as a formidable trend for the future. It offers the... | 0 | 2024-06-20T07:44:15 | https://dev.to/101blockchians_/how-blockchain-certification-can-boost-your-professional-credentials-29gg | blockchain, certification, learnblockchain, career | Blockchain technology has proved its potential as a formidable trend for the future. It offers the advantage of decentralization alongside enhanced flexibility and security through cryptography. Most important of all, the applications of blockchain are no longer limited to cryptocurrencies. You can find many pr... | 101blockchians_ |

1,894,457 | Elevate Your Beauty Experience at the Best Salon in Science City, Ahmedabad | Nestled in the dynamic area of Science City, Ahmedabad, our salon offers a haven for those seeking... | 0 | 2024-06-20T07:44:06 | https://dev.to/abitamim_patel_7a906eb289/elevate-your-beauty-experience-at-the-best-salon-in-science-city-ahmedabad-5a2c | Nestled in the dynamic area of **[Science City, Ahmedabad](https://trakky.in/ahmedabad/nearby/?area=Science%20city)**, our salon offers a haven for those seeking top-notch hair and beauty services. Our dedicated team is committed to providing luxurious and professional care, ensuring you leave feeling refreshed and bea... | abitamim_patel_7a906eb289 | |

1,894,456 | Player Agency: Why so serious? | Again, touching on my previous topics, I’m going to lay out something relatable here…I know, this is... | 0 | 2024-06-20T07:42:30 | https://dev.to/zoltan_fehervari_52b16d1d/player-agency-why-so-serious-mnm | playeragency, videogames, gamedev, gameprogramming | Again, touching on my previous topics, I’m going to lay out something relatable here…I know, this is about gaming, again…

**Stay with me though!**

Player agency is a pivotal(vital, important, etc.) element in many video games, granting players the power to shape their in-game experiences. This feature allows players ... | zoltan_fehervari_52b16d1d |

1,894,455 | Trader vs. Investor What's the Difference | Understanding the distinction between traders and investors is crucial for anyone looking to enter... | 0 | 2024-06-20T07:40:07 | https://dev.to/georgewilliam4425/trader-vs-investor-whats-the-difference-227m | Understanding the distinction between traders and investors is crucial for anyone looking to enter the financial markets. Both roles play vital parts in the [forex](https://bit.ly/forex-trading-1), trading, and broader financial markets, including CFDs. This article highlights the key differences between traders and in... | georgewilliam4425 | |

1,894,454 | Understanding SOLID Principles and Their Implementation in React | The SOLID principles, introduced by Robert C. Martin, provide a framework for developing software... | 0 | 2024-06-20T07:37:34 | https://dev.to/rahulvijayvergiya/understanding-solid-principles-and-their-implementation-in-react-fm5 | react, webdev, solidprinciples, designpatterns | The SOLID principles, introduced by Robert C. Martin, provide a framework for developing software that is maintainable, extensible, and adaptable as projects evolve. By adopting these practices, developers can write cleaner and more efficient code. These principles guide the creation of software that remains easy to un... | rahulvijayvergiya |

1,894,453 | Answer: Flutter geolocator package not retrieving location | answer re: Flutter geolocator package not... | 0 | 2024-06-20T07:37:02 | https://dev.to/anuragdhunna/answer-flutter-geolocator-package-not-retrieving-location-4gkf | flutter, ios, location, dart | {% stackoverflow 78371298 %} | anuragdhunna |

1,894,452 | Shutterepair | Looking for professional Shutterepair services in London? Our team of skilled technicians is here to... | 0 | 2024-06-20T07:36:49 | https://dev.to/shutterepair/shutterepair-121l | Looking for professional **[Shutterepair](https://shutterepair.co.uk/)** services in London? Our team of skilled technicians is here to provide you with fast and reliable service. We specialise in all types of shutter repairs, from simple adjustments to major damage. Using only the best quality materials and equipment,... | shutterepair | |

1,894,451 | 【Ethical Hacker】Nice to meet you~ | Thank you for your support of the TECNO Security Response Center. We hope to have more cooperation... | 0 | 2024-06-20T07:35:51 | https://dev.to/tecno-security/ethical-hacker-nice-to-meet-you-4co2 | security, mobile, cybersecurity |

Thank you for your support of the TECNO Security Response Center. We hope to have more cooperation and communication with everyone, so we have gathered everyone in the Slack workspace. We will share various informa... | tecno-security |

1,894,450 | [Flutter] Web Github pages | Assumes you have already created the repository "your_id.github.io"... | 0 | 2024-06-20T07:34:39 | https://dev.to/sidcodeme/flutter-web-github-pages-141e | flutter, web, github, pages | * Assumes you have already created the repository "your_id.github.io"

* Assumes you have already created your Flutter web project

1. If you own a custom domain, go to the directory where you created the Flutter project

```shell

$ cd my_flutter_director... | sidcodeme |

1,894,449 | MobX vs. Redux: Why MobX Might Be the Better Choice for Your Next Project | State management can be a scary concept, especially for those new to coding. But fear not, because... | 0 | 2024-06-20T07:32:51 | https://dev.to/mananpoojara/mobx-vs-redux-why-mobx-might-be-the-better-choice-for-your-next-project-29k6 | javascript, tutorial, learning, webdev | State management can be a scary concept, especially for those new to coding. But fear not, because MobX is here to make things a whole lot easier! With its straightforward approach and effortless optimal rendering, MobX simplifies state management in ways you never thought possible.

**What is MobX?**

MobX is a state ... | mananpoojara |

1,894,448 | Booklet Label Market: Latest Developments, Opportunities, Growth Forecast 2024-2031 | The global booklet label market is set to expand significantly, with a projected CAGR of 4.1% from... | 0 | 2024-06-20T07:32:03 | https://dev.to/swara_353df25d291824ff9ee/booklet-label-market-latest-developments-opportunities-growth-forecast-2024-2031-2233 | The global booklet label market is set to expand significantly, with a projected CAGR of 4.1% from 2024 to 2031, increasing its value from US$ 0.42469 billion to US$ 0.6465 billion. Booklet labels, also known as extended content labels (ECLs), play a crucial role in sectors like pharmaceuticals, food and beverage, and ... | swara_353df25d291824ff9ee | |

1,894,447 | Machine Learning's Basic Definitions | What is Machine Learning? Machine learning is a branch of artificial intelligence (AI)... | 27,754 | 2024-06-20T07:30:08 | https://dev.to/shemanto_sharkar/machine-learnings-basic-definitions-3fd3 | machinelearning, ai, datascience, python | ## What is Machine Learning?

Machine learning is a branch of artificial intelligence (AI) that allows computers and machines to learn from existing information (data) and apply that learning to perform other similar tasks without explicit programming.

## What are features and target variables?

Features, also known as ... | shemanto_sharkar |
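As a rough illustration of the split between features (the inputs a model learns from) and the target variable (the value it should predict), here is a small sketch in plain Python. The housing records, the column names `area_sqft`, `bedrooms`, and `price`, and the helper `split_features_target` are all hypothetical, made up for this example only.

```python
# Hypothetical housing records, invented for illustration.
records = [
    {"area_sqft": 850, "bedrooms": 2, "price": 120000},
    {"area_sqft": 1200, "bedrooms": 3, "price": 175000},
    {"area_sqft": 1600, "bedrooms": 4, "price": 240000},
]

FEATURE_NAMES = ["area_sqft", "bedrooms"]  # inputs the model learns from
TARGET_NAME = "price"                      # value the model should predict


def split_features_target(rows, feature_names, target_name):
    """Separate each row into a feature vector and its target value."""
    X = [[row[name] for name in feature_names] for row in rows]
    y = [row[target_name] for row in rows]
    return X, y


X, y = split_features_target(records, FEATURE_NAMES, TARGET_NAME)
print(X[0], y[0])
```

The `X`/`y` naming follows a common convention: one feature vector per example in `X`, and the matching target value at the same index in `y`.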

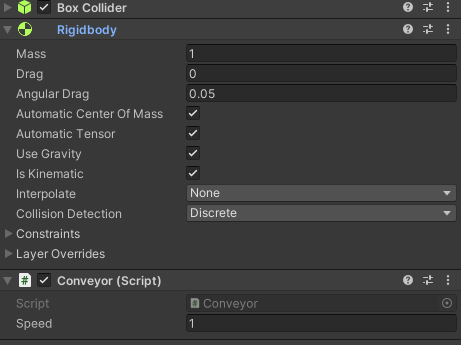

1,894,446 | [Unity] How to create a conveyor? | public class Conveyor : MonoBehaviour { public float speed = 1f; Rigidbody rb; void... | 0 | 2024-06-20T07:29:30 | https://dev.to/piler-tam/unity-how-to-create-a-conveyor-2h8a |

```

public class Conveyor : MonoBehaviour

{

public float speed = 1f;

Rigidbody rb;

void Start()

{

rb = GetComponent<Rigidbody>();

}

private void FixedUpdate()

{

Vector3... | piler-tam | |

1,894,445 | Dynamic rendering: Zoom-level | Renderers can adapt programmatically to the application state and result in different ways of drawing... | 0 | 2024-06-20T07:28:42 | https://dev.to/lenormor/dynamic-rendering-zoom-level-mn7 | javascript, webdev, devops, productivity | Renderers can adapt programmatically to the application state and result in different ways of drawing your activities. This article will cover the AssignmentActivityRenderer implemented in the ScheduleJS Viewer and explain its mechanics.

## What are [ScheduleJS](https://schedulejs.com) renderers?

The concept of an `A... | lenormor |
