id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,892,818 | Warlock.js From Nodejs Course Into a fine grained framework | Introduction It all started in the beginnings of 2023, where I started a new course here... | 0 | 2024-06-20T14:35:44 | https://dev.to/hassanzohdy/warlockjs-from-nodejs-course-into-a-fine-grained-framework-36kp | typescript, node, framework, mongodb | ## Introduction

It all started at the beginning of 2023, when I started a new course here on DEV titled [Nodejs Course 2023](https://dev.to/hassanzohdy/nodejs-course-2023-introduction-to-nodejs-3n4e), in which we build the entire project from scratch.

Every single file there was written during the articl... | hassanzohdy |

1,893,828 | CREATING A FOLDER USING GIT BASH AND PUSHING IT TO GITHUB | Git is a distributed version control system that multiple developers use to work on a... | 0 | 2024-06-20T14:34:52 | https://dev.to/dorablog2024/creating-a-folder-using-git-bash-and-pushing-it-to-github-41p4 | devops, git, cloud, github | Git is a distributed version control system that multiple developers use to work on a project.

GitHub is a cloud platform that uses Git as its core technology.

To create code and push it to GitHub using Git Bash, follow this step-by-step process.

1. Open your git bash terminal and enter your details using

the fo... | dorablog2024 |

1,894,869 | Coinmarketrate.com is a rating agency and a free instrument for the crypto | Coinmarketrate.com is a rating agency and a free instrument for the crypto community aimed to provide... | 0 | 2024-06-20T14:32:43 | https://dev.to/coinmarketrate/coinmarketratecom-is-a-rating-agency-and-a-free-instrument-for-the-crypto-2ibi | agency, community, startup, web | Coinmarketrate.com is a rating agency and a free instrument for the crypto community aimed to provide the unique data and the adaptive and captivating interaction between users. This helps to increase the quality of information content regarding cryptocurrencies due to reducing the amount of time spent on the analysis,... | coinmarketrate |

1,894,834 | Harnessing the Power of Predictive Analytics: Techniques and Applications | In today’s data-driven world, predictive analytics has become an essential tool for businesses aiming... | 0 | 2024-06-20T13:29:52 | https://dev.to/linda0609/harnessing-the-power-of-predictive-analytics-techniques-and-applications-om7 | In today’s data-driven world, predictive analytics has become an essential tool for businesses aiming to forecast outcomes and make informed decisions. By leveraging a wide array of approaches such as deep learning, neural networks, machine learning, text analysis, and artificial intelligence, predictive analytics tran... | linda0609 | |

1,894,868 | Orchestrating the API Symphony: Managing Traffic and Routing with Gateways and Service Meshes | In the age of microservices architectures, APIs (Application Programming Interfaces) act as the... | 0 | 2024-06-20T14:32:13 | https://dev.to/syncloop_dev/orchestrating-the-api-symphony-managing-traffic-and-routing-with-gateways-and-service-meshes-4bbd | webdev, javascript, ai, api | In the age of microservices architectures, APIs (Application Programming Interfaces) act as the critical communication channels between loosely coupled services. Effective management of API traffic and routing is crucial for ensuring smooth operation, scalability, and security. This blog explores two essential tools, ... | syncloop_dev |

1,894,867 | The secret to rapid app development | We use applications to make our day-to-day tasks easier. But have you ever thought about building... | 0 | 2024-06-20T14:31:55 | https://dev.to/aaikansh_22/the-secret-to-rapid-app-development-13c3 | developers, engineer, lowcode, development | We use applications to make our day-to-day tasks easier. But have you ever thought about building applications in the easiest and most efficient way? Here I am talking about the [low-code]() way to build applications ... | aaikansh_22 |

1,894,866 | React Native download remote file | import RNFetchBlob from 'rn-fetch-blob' // code for component const toast = useToast(); ... | 0 | 2024-06-20T14:31:44 | https://dev.to/cozeniths/react-native-download-remote-file-6a4 | reat, android, reactnative, webdev |

```

import RNFetchBlob from 'rn-fetch-blob'

// code for component

const toast = useToast();

const downloadCSV = async () => {

if (loading) return;

setLoading(true)

const { fs } = RNFetchBlob;

let downloadsDir = fs.dirs?.DownloadDir; // Directory where downloaded files are ... | cozeniths |

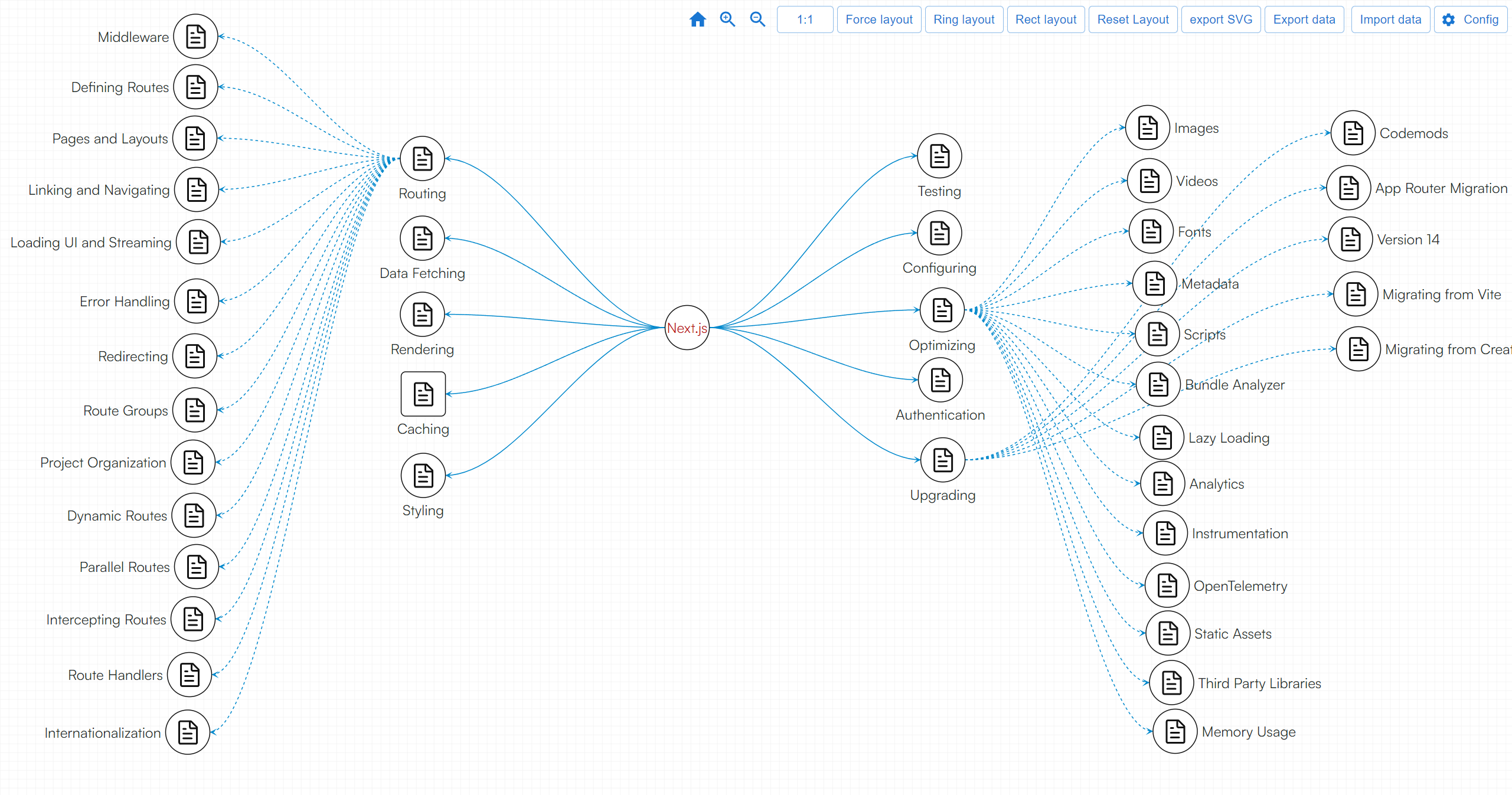

1,894,865 | Nextjs latest version of knowledge graph | Since I’m learning Next.js recently, I used AddGraph to create a simple knowledge graph about... | 0 | 2024-06-20T14:31:16 | https://dev.to/fridaymeng/nextjs-latest-version-of-knowledge-graph-m2e | datavis, nextjs, tooling | Since I’m learning Next.js recently, I used AddGraph to create a simple knowledge graph about Next.js.

[Demo](https://addgraph.com/nextjs) | fridaymeng |

1,894,828 | Difference between `||` and `??` in JS | Basically, both operators. The most fundamental difference lies in how they interpret the result of... | 0 | 2024-06-20T13:21:47 | https://dev.to/syueying/difference-between-and-in-js-1h74 | javascript, beginners, programming | Basically, both operators. The most fundamental difference lies in how they interpret the result of their left expression.

`||` treats its left as a boolean. Regardless of what the left expression is, it ultimately evaluates to either `true` or `false`. This is why, when the left operand is `0`, `''`, `NaN`, `false`, ... | syueying |
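The difference described above can be seen in a minimal sketch. The helper names `withOr` and `withNullish` are hypothetical and chosen only for illustration; they wrap the two operators so the same inputs can be compared side by side.

```javascript
// Fallback using ||: any falsy left operand (0, '', NaN, false, null, undefined)
// causes the right operand to be returned.
function withOr(value, fallback) {
  return value || fallback;
}

// Fallback using ??: only null or undefined on the left
// causes the right operand to be returned.
function withNullish(value, fallback) {
  return value ?? fallback;
}

console.log(withOr(0, 10));            // 10
console.log(withNullish(0, 10));       // 0
console.log(withOr('', 'guest'));      // guest
console.log(withNullish('', 'guest')); // prints an empty string
console.log(withOr(null, 10));         // 10
console.log(withNullish(null, 10));    // 10
```

In practice, `??` tends to be the safer default when `0`, `''`, or `false` are legitimate values that should not be replaced.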

1,894,863 | How to Rank Your Business in New York? | In the dynamic and competitive landscape of New York City, standing out as a business is both an... | 0 | 2024-06-20T14:26:58 | https://dev.to/shahrukh_khanofficial_4f/how-to-rank-your-business-in-new-york-1887 | localseo, seo, localseonyc, localseonewyork | In the dynamic and competitive landscape of New York City, standing out as a business is both an opportunity and a challenge. The city is a melting pot of cultures, industries, and potential customers, making it a prime location for business growth. However, to leverage this potential, businesses must adopt effective s... | shahrukh_khanofficial_4f |

1,894,861 | Who am I | Hello Dev Community! 👋 My name is Vidhey Bhogadi, and I am thrilled to be part of this amazing... | 0 | 2024-06-20T14:25:58 | https://dev.to/vidhey071/who-am-i-3dln | Hello Dev Community! 👋

My name is Vidhey Bhogadi, and I am thrilled to be part of this amazing community. Let me take a moment to introduce myself.

My Background 🌐

I am a full-stack developer and a self-taught tech enthusiast. Over the years, I have immersed myself in the world of technology, constantly learning an... | vidhey071 | |

1,894,903 | Keeping It Clean: EKS and `kubectl` Configuration | Previously, I was worried about, "how do I make it so that kubectl can talk to my EKS clusters". ... | 0 | 2024-06-21T19:16:33 | https://thjones2.blogspot.com/2024/06/keeping-it-clean-eks-and-kubectl.html | aws, cli, eks, kubernetes | ---

title: Keeping It Clean: EKS and `kubectl` Configuration

published: true

date: 2024-06-20 14:25:00 UTC

tags: AWS,cli,EKS,Kubernetes

canonical_url: https://thjones2.blogspot.com/2024/06/keeping-it-clean-eks-and-kubectl.html

---

[Previously](https://dev.to/ferricoxide/crib-notes-accessing-eks-cluster-with-kubectl-2h... | ferricoxide |

1,894,859 | The Importance of Communication for a Developer | To be a professional in the technology market, you need to develop hard skills, which are... | 0 | 2024-06-20T14:16:06 | https://dev.to/kecbm/a-importancia-da-comunicacao-para-uma-pessoa-desenvolvedora-4349 | braziliandevs, career, discuss, beginners | 

To be a professional in the technology market, you need to develop **hard skills**, which are **technical tools** such as a programming language, and **soft skills**, which are **behavioral skills**... | kecbm |

1,894,858 | Need a JSON object comparison that's better than line-by-line | Even though JSON objects are structured, most free and online tools for comparing JSON ignore the... | 0 | 2024-06-20T14:14:16 | https://dev.to/deltajson/need-a-json-object-comparison-thats-better-than-line-by-line-435l | json, react, npm, dynamodb | Even though JSON objects are structured, most free and online tools for comparing JSON ignore the structure and process objects line by line. Those basic comparison tools return poor results with many false positives, most fail completely because they use a line-by-line approach, and none offer a solution to the highly co... | deltajson |

1,894,842 | Tips for Building Salesforce Lightning Web Components | Building applications on Salesforce has become more streamlined and powerful with the introduction of... | 0 | 2024-06-20T14:07:58 | https://dev.to/zoyazenniefer/tips-for-building-salesforce-lightning-web-components-28fn | salesforce, webdev, programming, development | Building applications on Salesforce has become more streamlined and powerful with the introduction of Salesforce Lightning Web Components (LWC). Whether you're a seasoned developer or a newcomer to Salesforce, mastering LWCs can significantly enhance your ability to create dynamic, responsive, and efficient application... | zoyazenniefer |

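1,894,855 | 먹튀로얄 | 먹투로얄 Toto site: a safe and trustworthy casino, baccarat, and slot site Toto community 먹투로얄 The 먹투로얄 Toto site, with its varied game options and reliability, is loved by many users... | 0 | 2024-06-20T14:07:48 | https://dev.to/alemtroy08/meogtwiroyal-fif | 먹투로얄 Toto Site: A Safe and Trustworthy Casino, Baccarat, and Slot Site

Toto Community 먹투로얄

The 먹투로얄 Toto site is a platform loved by many users for its varied game options and reliability. The site offers casino, baccarat, and slot games and focuses on maintaining a safe and fair gaming environment. 먹투로얄 is regarded as a trustworthy site in the Toto community and continually works to improve user satisfaction.

A Safe Casino Site

The 먹투로얄 Toto site is well known as a safe casino site. The site applies the latest security technology to... | alemtroy08 | |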

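1,894,854 | 먹튀로얄 | 먹투로얄 Toto site: a safe choice for casino, baccarat, and slots As online gaming and betting grow in popularity, the importance of safe and trustworthy platforms is being emphasized. In response to this demand... | 0 | 2024-06-20T14:07:08 | https://dev.to/alemtroy08/meogtwiroyal-4d25 | 먹투로얄 Toto Site: A Safe Choice for Casino, Baccarat, and Slots

As online gaming and betting grow in popularity, the importance of safe and trustworthy platforms is being emphasized. The 먹투로얄 Toto site, which emerged in response to this demand, offers casino, baccarat, and slot games and provides an environment that users can enjoy with peace of mind.

Casino Site

The casino section of the 먹투로얄 Toto site offers a variety of game options. From classic table games to new games that reflect the latest trends, it provides a range of choices to suit every taste. Through fair game operation and a transparent system, the 먹투로얄 Toto site... | alemtroy08 | |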

1,894,851 | What is Simulcasting? | Simulcasting is short for simultaneous broadcasting. It is the process of broadcasting... | 0 | 2024-06-20T14:00:00 | https://www.metered.ca/blog/simulcast-what-is-simulcasting/ | webdev, javascript, devops, webrtc | Simulcasting is short for simultaneous broadcasting. It is the process of broadcasting the same media content through multiple distribution channels simultaneously.

The concept includes streaming the same content across various digital platforms including social media websites, homepage, apps and media site... | alakkadshaw |

1,894,841 | Wishes From AI | This is a submission for the Twilio Challenge What I Built I built Wishes from AI, an... | 0 | 2024-06-20T13:59:34 | https://dev.to/vibrazy/wishes-from-ai-2jl2 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for the [Twilio Challenge ](https://dev.to/challenges/twilio)*

## What I Built

I built Wishes from AI, an innovative app designed to generate personalized celebration messages using AI and Twilio’s powerful communication APIs. This app allows users to create heartfelt messages for various occasio... | vibrazy |

1,894,105 | Page Declaration Structures in CSS | What is CSS CSS is a cascading style language for pages, used to... | 0 | 2024-06-20T13:58:34 | https://dev.to/marimnz/estruturas-de-declaracao-de-pagina-em-css-3c1d | css, beginners | ## What is CSS

CSS is a cascading style language for pages; it is used to add layouts, animations, geometric shapes, filters, counters, and other settings.

## Ways to declare CSS

**Inline CSS** : Adds CSS using the style attribute inside HTML tags;

**Internal CSS** : ... | marimnz |

1,894,853 | Design Pattern #3 - Observer Pattern | Continuing our quest into trending design patterns for front-end developers, we follow up our first... | 27,620 | 2024-06-20T13:57:55 | https://www.superviz.com/design-pattern-3-observer-pattern-for-frontend-developers | javascript, architecture, learning, webdev | Continuing our quest into trending design patterns for front-end developers, we follow up our first article [on the Singleton pattern](https://dev.to/superviz/design-pattern-1-singleton-for-frontend-developers-14p9) with a look at [the Facade pattern](https://dev.to/superviz/design-pattern-2-facade-pattern-1dhl) in thi... | vtnorton |

1,894,845 | Discover the potential of AI development services | Have you ever thought about how technology that feels as if it were lifted from the pages of a... | 0 | 2024-06-20T13:53:11 | https://dev.to/ericoliver/discover-the-potential-of-ai-development-services-27c1 | ai, webdev, development, devops | Have you ever thought about how technology that feels as if it were lifted from the pages of a sci-fi novel is changing our real-world experiences? Artificial Intelligence (AI), once a distant dream, is now a vital part of our daily lives. From smartphones that recognize our faces to virtual assistants who know just... | ericoliver |

1,894,850 | Computer Science Challenge: Discrete Math | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-20T13:50:12 | https://dev.to/alexwedsday/computer-science-challenge-discrete-math-37nm | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

<!-- Explain a computer science concept in 256 characters or less. -->

Provides us with techniques for solving problems in Computer Science and allows us to study finite and enumerated... | alexwedsday |

1,894,849 | LeetCode Day 13 Binary Tree Part 3 | LeetCode No. 104. Maximum Depth of Binary Tree Given the root of a binary tree, return its... | 0 | 2024-06-20T13:49:48 | https://dev.to/flame_chan_llll/leetcode-day-13-binary-tree-part-3-m6i | leetcode, java, algorithms, datastructures | # LeetCode No. 104. Maximum Depth of Binary Tree

Given the root of a binary tree, return its maximum depth.

A binary tree's maximum depth is the number of nodes along the longest path from the root node down to the farthest leaf node.
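The definition above maps directly onto a short recursion: an empty tree has depth 0, and any other tree has depth one more than its deeper subtree. The sketch below is in JavaScript rather than the Java used for LeetCode submissions in this series, and it assumes a plain-object node shape `{ val, left, right }`; it is an illustration, not the author's solution.

```javascript
// Assumed node shape: { val, left, right }, with null for a missing child.
function maxDepth(root) {
  // An empty subtree contributes no nodes to the path.
  if (root === null) return 0;
  // Count this node, then continue down the deeper of the two subtrees.
  return 1 + Math.max(maxDepth(root.left), maxDepth(root.right));
}

// Sample tree: 3 -> (9, 20 -> (15, 7))
const tree = {
  val: 3,
  left: { val: 9, left: null, right: null },
  right: {
    val: 20,
    left: { val: 15, left: null, right: null },
    right: { val: 7, left: null, right: null },
  },
};

console.log(maxDepth(tree)); // 3
```

Each node is visited once, so the running time is linear in the number of nodes.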

# LeetCode No. 257. Binary Tree Paths

Given the root of a binary tree, return all... | flame_chan_llll |

1,894,848 | The Data Professions | With the development of Machine Learning, new professions have emerged. There are eight main roles,... | 0 | 2024-06-20T13:49:39 | https://dev.to/moubarakmohame4/the-data-professions-30e | data, datascience, dataengineering, datastructures | With the development of Machine Learning, new professions have emerged. There are eight main roles, in addition to more traditional positions like project manager or developer. These roles help form a team capable of managing a project from start to finish. Creating models is certainly important, but it is not enough. ... | moubarakmohame4 |

1,894,847 | Inrate's Comprehensive ESG Methodology: Pioneering Sustainable Investment Solutions | In an era where sustainable investing is gaining momentum, Inrate stands out with its rigorous... | 0 | 2024-06-20T13:49:12 | https://dev.to/inrate_esg_037e7b133fe497/inrates-comprehensive-esg-methodology-pioneering-sustainable-investment-solutions-4mgl |

In an era where sustainable investing is gaining momentum, Inrate stands out with its rigorous Environmental, Social, and Governance (ESG) methodology. Our approach is designed to offer transparent, precise, and actionable insights, empowering investors to make informed decisions that drive positive environmental and ... | inrate_esg_037e7b133fe497 | |

1,894,846 | Understanding SOLID | Quality of software is the fundamental basis for developing any system. After all, the higher the... | 0 | 2024-06-20T13:46:24 | https://dev.to/darlangui/understanding-solid-c2g | solidprinciples, learning, agile, rust | Quality of software is the fundamental basis for developing any system. After all, the higher the software quality, the fewer errors there are, and it facilitates maintenance and the addition of new functionalities in the future.

Simply put, software quality can be said to be inversely proportional to the incidence of... | darlangui |

1,894,844 | Introduction to Business Dispute Resolution | In the dynamic world of business, disputes are an inevitable part of operations, arising from various... | 0 | 2024-06-20T13:45:59 | https://dev.to/fcmlaw/introduction-to-business-dispute-resolution-4hlg | legal, services, disputeresolutionandlitigation | In the dynamic world of business, disputes are an inevitable part of operations, arising from various issues such as contract disagreements, partnership conflicts, intellectual property challenges, and regulatory compliance matters. Efficient and effective dispute resolution is crucial to maintaining business continuit... | fcmlaw |

1,894,843 | Security and Robustness in Machine Learning Models | The growing adoption of machine learning (ML) models in critical applications, such as... | 0 | 2024-06-20T13:44:42 | https://dev.to/gcjordi/seguridad-y-robustez-en-modelos-de-aprendizaje-automatico-4oma | ia, ai, machinelearning, cybersecurity | The growing adoption of machine learning (ML) models in critical applications, such as medicine, autonomous driving, and cybersecurity, has increased the importance of ensuring their security and robustness.

ML models, especially those based on deep neural networks, are vulnerable ... | gcjordi |

1,894,840 | How to handle user input in a React Native application | User input is the lifeblood of most mobile applications. It allows users to interact with your React... | 0 | 2024-06-20T13:38:34 | https://dev.to/chariesdevil/how-to-handle-user-input-in-a-react-native-application-3dpf | User input is the lifeblood of most mobile applications. It allows users to interact with your React Native app, providing data and instructions that drive the functionality. Here's a deep dive into handling user input effectively in your React Native projects:

**Core Component: TextInput**

The primary component for ... | chariesdevil | |

1,894,809 | Deploy ReactJs website on Apache server (Hostgator) with Vite | Why on earth deploy to Apache/Hostgator? To see if it works. That simple. And does it... | 0 | 2024-06-20T13:34:41 | https://dev.to/luiztux/deploy-reactjs-website-on-apache-server-hostgator-with-vite-jl6 | ## Why on earth deploy to Apache/Hostgator?

To see if it works. That simple.

And does it work? Of course it works, but it needs a few things to make it work properly.

## Let's go from the beginning

It's a simple website, but it will be in a subfolder. For example:

`https://mydomain.com/site2`

Having defined this, ... | luiztux | |

1,894,837 | SEO company in Chennai | Want to boost your online visibility and climb the search engine ranks? Look no further than the SEO... | 0 | 2024-06-20T13:33:49 | https://dev.to/thejas_20a1976ec7d4dabf97/seo-company-in-chennai-l4m | digitalmarekting | Want to boost your online visibility and climb the search engine ranks? Look no further than the [SEO companies in Chennai](https://www.infinix360.com/seo-company-in-chennai/)! These experts in Search Engine Optimization (SEO) have an unparalleled understanding of the digital landscape. They work their magic behind the... | thejas_20a1976ec7d4dabf97 |

1,894,836 | LaraValidator - Bulk Email Verifier | LaraValidator's bulk email verifier helps you improve your email marketing performance. Remove... | 0 | 2024-06-20T13:32:32 | https://dev.to/henryindie242/laravalidator-bulk-email-verifier-helps-you-remove-invalid-email-addresses-3099 | email, saas, tooling | **LaraValidator**'s bulk email verifier helps you improve your email marketing performance by removing invalid, disposable, and spam-trap email addresses. If you are running cold email campaigns, LaraValidator is also a suitable tool for bulk email verification.

**[Try LaraValidator Now.](https://laravalidator.com/)** | henryindie242 |

1,894,835 | How to Maintain Physical Health with a Sedentary Job | In today's digital age, many of us find ourselves spending long hours seated at desks, staring at... | 0 | 2024-06-20T13:31:38 | https://dev.to/techstuff/how-to-maintain-physical-health-with-a-sedentary-job-2hgm | physicalhealth, healthylifestyle, practicaltips |

In today's digital age, many of us find ourselves spending long hours seated at desks, staring at computer screens. While these sedentary jobs are common, they can take a toll on our physical health if we're not care... | swati_sharma |

1,894,814 | The Fool's Journey (through AWS) | Tarot Tarot cards are sometimes used as a form divination, but they are historically based... | 0 | 2024-06-20T13:09:44 | https://dev.to/aws-builders/the-fools-journey-through-aws-52c0 | aws, awscommunitybuilder | ## Tarot

Tarot cards are sometimes used as a form of divination, but they are historically based on several suits or Arcana. Although you can study Tarot for quite a while and come up with different ways of interpreting a reading of Tarot, in Cartomancy Tarot the "main" suits, or Major Arcana, tell a life's journey. Nume... | martyjhenderson |

1,894,833 | Mastering Asynchronous JavaScript with Generators: Comprehensive Tutorial | Mastering JavaScript Generators: A Comprehensive Guide JavaScript is an ever-evolving... | 0 | 2024-06-20T13:28:00 | https://dev.to/chintanonweb/mastering-asynchronous-javascript-with-generators-comprehensive-tutorial-53b2 | webdev, javascript, programming, tutorial | # Mastering JavaScript Generators: A Comprehensive Guide

JavaScript is an ever-evolving language, and one of its more intriguing features is generators. Generators provide a powerful way to handle asynchronous programming, making your code cleaner and more manageable. This article will take you through the ins and out... | chintanonweb |
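As a small illustration of the feature this article introduces, here is a minimal generator sketch. The function name `countTo` is hypothetical and not taken from the article; the example only shows how `function*` and `yield` produce values lazily, one `next()` call at a time.

```javascript
// A generator function pauses at each yield and resumes on the next call to next().
function* countTo(limit) {
  for (let i = 1; i <= limit; i++) {
    yield i;
  }
}

const counter = countTo(3);
console.log(counter.next());       // { value: 1, done: false }
console.log(counter.next().value); // 2

// Generators are iterable, so spread and for...of work on them as well.
console.log([...countTo(3)]);      // [ 1, 2, 3 ]
```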

1,894,832 | Optimizing Image Loading with AVIF Placeholders for Enhanced Performance | It's no secret that page load times have a big impact on user experience, bounce rates, and... | 27,791 | 2024-06-20T13:24:53 | https://dev.to/lilouartz/optimizing-image-loading-with-avif-placeholders-for-enhanced-performance-556b | webdev, performance, beginners | It's no secret that page load times have a big impact on user experience, bounce rates, and SEO.

Meanwhile, some of the Pillser pages load a lot of data, e.g.,

* [https://pillser.com/brands/absolute-nutrition](https://pillser.com/brands/absolute-nutrition) (13 products)

* [https://pillser.com/brands/21st-century](htt... | lilouartz |

1,894,831 | SS Kitchens in bangalore | If you're looking for stainless steel (SS) kitchens in Bangalore, you're in luck as the city offers a... | 0 | 2024-06-20T13:24:10 | https://dev.to/beta_new_03fe0b223c4d3801/ss-kitchens-in-bangalore-5789 | If you're looking for stainless steel (SS) kitchens in Bangalore, you're in luck as the city offers a range of options catering to diverse tastes and requirements. Stainless steel kitchens are prized for their durability, modern aesthetics, and ease of maintenance, making them a popular choice among homeowners and desi... | beta_new_03fe0b223c4d3801 | |

1,891,474 | The top tools for implementing ecommerce search in React | Written by Saleh Mubashar✏️ In the highly competitive field of ecommerce, every click matters. A... | 0 | 2024-06-20T13:24:09 | https://blog.logrocket.com/top-tools-implementing-ecommerce-search-react | react, webdev | **Written by [Saleh Mubashar](https://blog.logrocket.com/author/salehmubashar/)✏️**

In the highly competitive field of ecommerce, every click matters. A good search experience plays an important role in this. In this article, I will discuss four tools for implementing search functionalities in a React frontend. The to... | leemeganj |

1,894,830 | Unboxing Pieces App for Developers: Your Workflow Copilot 🚀🔥 | As a backend software engineer, developer advocate, and technical writer who has worked with brands... | 0 | 2024-06-20T13:23:50 | https://dev.to/kwamedev/unboxing-pieces-app-for-developers-your-workflow-copilot-1i6p | productivity, githubcopilot, developers, workflow | As a backend software engineer, developer advocate, and technical writer who has worked with brands that span the fields of insurance, data management, software testing, and healthcare (cancer research) like DbVisualizer, the highly rated database client and SQL editor in the world, LambdaTest Inc., Insurerity Digital... | kwamedev |

1,894,827 | Durability of Steel Wardrobes: A Practical Choice for Long-lasting Storage Solutions | Steel wardrobes are renowned for their exceptional durability, making them a popular choice for both... | 0 | 2024-06-20T13:21:30 | https://dev.to/beta_new_03fe0b223c4d3801/durability-of-steel-wardrobes-a-practical-choice-for-long-lasting-storage-solutions-4ie2 | Steel wardrobes are renowned for their exceptional durability, making them a popular choice for both residential and commercial storage needs. Unlike other materials such as wood or plastic, steel offers unique advantages that contribute to its longevity and robustness. Whether you're considering a wardrobe for your be... | beta_new_03fe0b223c4d3801 | |

1,879,314 | Github commands | To list all remote repositories as shortnames connected to your local repository git remote ... | 0 | 2024-06-20T13:18:09 | https://dev.to/chaitanyaasati/github-commands-371e | > To list all remote repositories as shortnames connected to your local repository

```

git remote

```

> To list all remote repositories with their URLs connected to your local repository

```

git remote -v

```

> To connect to a new remote repository

```

git remote add testtech https://testtech@github.com/testtechcomp/... | chaitanyaasati | |

1,894,821 | COCKATOOS | About Cockatoos: Problem: Solution: *Thought Process Behind developing: * | 0 | 2024-06-20T13:16:48 | https://dev.to/bhardwajsameer7/cocatoos-l3c | cockatoos, java, springboot | **About Cockatoos:**

**Problem:**

**Solution:**

**Thought Process Behind Developing:** | bhardwajsameer7 |

1,894,820 | Securing Kubernetes Clusters with Network Policies | Kubernetes, a container orchestration platform, has become a cornerstone of modern application... | 0 | 2024-06-20T13:16:33 | https://dev.to/platform_engineers/securing-kubernetes-clusters-with-network-policies-41fm | Kubernetes, a container orchestration platform, has become a cornerstone of modern application deployment. As the adoption of Kubernetes continues to grow, ensuring the security of these clusters has become a critical concern. One effective way to enhance security is by implementing network policies, which provide gran... | shahangita | |

1,894,819 | Driving a Data-Driven Transformation for Renewable Energy with Tableau | In the quest for a sustainable future, the renewable energy sector stands at the forefront of... | 0 | 2024-06-20T13:16:19 | https://dev.to/shreya123/driving-a-data-driven-transformation-for-renewable-energy-with-tableau-4io0 | renewableenergy, tableauservices, tableaudatavisualization | In the quest for a sustainable future, the renewable energy sector stands at the forefront of innovation and change. As the world pivots towards greener energy solutions, the ability to harness, interpret, and act upon data has become crucial. Enter Tableau, a leading data visualization and business intelligence tool, ... | shreya123 |

1,894,816 | Improve React Navigation with xState v5 | TL;DR If you just want to see the code, it is here. And this is the PR with the latest... | 27,790 | 2024-06-20T13:14:09 | https://dev.to/gtodorov/improve-react-navigation-with-xstate-v5-2l15 | reactnative, xstate, mobile, typescript | ### TL;DR

If you just want to see the code, it is [here](https://github.com/g-todorov/ReactNativeXStateExample). And [this](https://github.com/g-todorov/ReactNativeXStateExample/pull/1/files) is the PR with the latest changes that are discussed in the post.

### Introduction

This is a short follow up post that focuses... | gtodorov |

1,894,756 | Revolutionizing Software Development: The Impact of AI APIs | Introduction Artificial Intelligence (AI) has significantly transformed modern software... | 0 | 2024-06-20T13:14:04 | https://dev.to/api4ai/revolutionizing-software-development-the-impact-of-ai-apis-447c | api, ai, api4ai, softwaredevelopment | ## Introduction

Artificial Intelligence (AI) has significantly transformed modern software development, bringing capabilities that once seemed like science fiction into reality. From predictive analytics to natural language processing, AI enables developers to build more intelligent and responsive applications. A pivo... | taranamurtuzova |

1,894,818 | Utility Areas and Pantries in Stainless Steel Kitchen Designs | Stainless steel kitchens are renowned for their durability, sleek appearance, and functionality. When... | 0 | 2024-06-20T13:13:57 | https://dev.to/beta_new_03fe0b223c4d3801/utility-areas-and-pantries-in-stainless-steel-kitchen-designs-3m20 | Stainless steel kitchens are renowned for their durability, sleek appearance, and functionality. When it comes to utility areas and pantries within these kitchens, efficiency and organization are key. Whether you're designing a residential kitchen or a commercial space, integrating well-planned utility areas and pantri... | beta_new_03fe0b223c4d3801 | |

1,894,817 | Portable Generators: Your Ticket to Power Freedom | Obtain Prepared for Energy Flexibility along with Mobile Generators Picture experiencing an power... | 0 | 2024-06-20T13:12:47 | https://dev.to/jessica_lopezg_09ab3166d0/portable-generators-your-ticket-to-power-freedom-4lpi | design |

Get Ready for Power Freedom with Portable Generators

Picture experiencing a power outage at home and having no electric power at all. No television, no internet, no lights, not even refrigeration. It sounds frightening, right? However, fret mos... | jessica_lopezg_09ab3166d0 |

1,894,815 | JS Runtime / Execution context | A JavaScript runtime is the environment or engine needed to run JavaScript code. These... | 0 | 2024-06-20T13:11:23 | https://dev.to/bekmuhammaddev/js-runtime-execution-context-5396 | runtime, execution, context, aripovdev | A JavaScript runtime is the environment or engine needed to run JavaScript code. These runtime environments parse and execute JavaScript code. JavaScript is considered the only language that runs natively in the browser.

Types of runtimes and their execution environments:

1-Google Chrome (Browser)

Browsers are JavaScript's main run... | bekmuhammaddev |

1,894,812 | Ignore Files Just for Yourself in Git | Tutorial on how to exclude files only for yourself without affecting other developers or repositories. | 0 | 2024-06-20T13:05:32 | https://dev.to/macorreag/ignora-archivos-solo-para-ti-en-git-14oi | git, gitignore, developer, tricks | ---

title: Ignore Files Just for Yourself in Git

published: true

description: Tutorial on how to exclude files only for yourself without affecting other developers or repositories.

tags: git, gitignore, developer, tricks

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at:... | macorreag |

1,894,806 | Create a QR Code Generator Using ToolJet and Python in 5 Minutes! 🛠️ | This quick tutorial will guide you through the steps to create a QR Code Generator application using... | 0 | 2024-06-20T13:04:57 | https://blog.tooljet.com/create-a-qr-code-generator-using-tooljet-in-5-minutes/ | webdev, python, beginners, tutorial | This quick tutorial will guide you through the steps to create a QR Code Generator application using ToolJet. The app will allow users to select a URL and generate a corresponding QR code. We will utilize ToolJet's visual app-builder to rapidly build a user interface and then connect to a Python module to generate QR c... | karanrathod316 |

1,894,811 | Unveiling the World of Silicon Steel Manufacturing Factories | Unveiling the World of Silicon Steel Manufacturing Factories. Silicon steel has unique properties... | 0 | 2024-06-20T13:02:10 | https://dev.to/jessica_lopezg_09ab3166d0/unveiling-the-world-of-silicon-steel-manufacturing-factories-2j9p | design |

Unveiling the World of Silicon Steel Manufacturing Factories

Silicon steel has unique properties that make it useful in many industries. We will explore the world of its manufacturing factories to learn about their benefits, innovation, safety, use, solution, quality, application.... | jessica_lopezg_09ab3166d0 |

1,894,808 | Drive in Style: The Hottest Car Accessories on the Market | Drive in Style: The Best Accessories for Your Car. Are you looking for ways to... | 0 | 2024-06-20T12:51:17 | https://dev.to/jessica_lopezg_09ab3166d0/drive-in-style-the-hottest-car-accessories-on-the-market-248 | design | Drive in Style: The Best Accessories for Your Car

Are you looking for ways to improve your driving experience and add some style to your car? If so, you need to read about the latest car accessories available on the market. These accessories will take your driving experience to the ... | jessica_lopezg_09ab3166d0 |

1,894,807 | Djokaj Garten & Baustoffe: Your Go-To Source for Garden and Building Materials in Reutlingen | If you live in Reutlingen, Germany, and need high-quality garden and building materials,... | 0 | 2024-06-20T12:51:13 | https://dev.to/ahmad_9f394c7bc7e9316c9c0/djokaj-garten-baustoffe-ihr-anlaufpunkt-fur-garten-und-baumaterialien-in-reutlingen-2eod | If you live in Reutlingen, Germany, and need high-quality garden and building materials, Djokaj Garten & Baustoffe is your best choice. Since its founding in 2018, the company has quickly established itself as a reliable source for private and business customers, with a wide range of products and serv... | ahmad_9f394c7bc7e9316c9c0 | |

1,894,804 | Beyond Coding: Learn to interact with AI | What is the latest buzzword in the market? If you guessed AI, ChatGPT or prompt engineering then you... | 0 | 2024-06-20T12:49:51 | https://dev.to/rishika_kalita_80ef41e273/beyond-coding-learn-to-interact-with-ai-23l7 | ai, chatgpt, promptengineering, developer | What is the latest buzzword in the market? If you guessed AI, ChatGPT or prompt engineering then you are on the right track. I am sure every one of us has recently seen a few (maybe more than a few!) articles with the title “20 prompts that you need for …”

But do we really need someone else to write these prompts for u... | rishika_kalita_80ef41e273 |

1,894,801 | Upgrade Your Exterior: The Latest in Car Grilles and Bumpers | Upgrade Your Exterior: The Latest in Car Grilles and Bumpers. Are you fed up with your automobile... | 0 | 2024-06-20T12:42:51 | https://dev.to/jessica_lopezg_09ab3166d0/upgrade-your-exterior-the-latest-in-car-grilles-and-bumpers-3pl | design | Upgrade Your Exterior: The Latest in Car Grilles and Bumpers

Are you fed up with your automobile looking outdated and boring? Do you want to give it a fresh look and enhance its safety features? Look no further than upgrading your vehicle's grille and bumpers. We will explore the advantages, innovation, safety,... | jessica_lopezg_09ab3166d0 |

1,894,798 | Quick Solutions For ‘ESET not working’ Issue | ESET is an outstanding security product that safeguards devices with its powerful security features.... | 0 | 2024-06-20T12:39:40 | https://dev.to/antivirustales1/quick-solutions-for-eset-not-working-issue-i7o | ESET is an outstanding security product that safeguards devices with its powerful security features. However, sometimes you can get an ‘[**ESET not working**](https://antivirustales.com/eset/antivirus-not-working)’ problem due to several faults in your system or the products. You can try some basic solutions first, suc... | antivirustales1 | |

1,894,797 | vbncgvnvcn | ldfjlsf ,dnfdjf ,;sdfnjksdfsd | 0 | 2024-06-20T12:33:20 | https://dev.to/milan4545s/vbncgvnvcn-2nk1 | ererer, webdev | ldfjlsf ,dnfdjf ,;[sdfnjksdfsd ](http://d5s5ddkallmmms54.atecolife.beauty/) | milan4545s |

1,894,796 | Understanding Blockchain Ecosystem Protocols Of 2024 | The creation of robust ecosystem protocols is crucial in the world of blockchain. These protocols... | 0 | 2024-06-20T12:32:50 | https://dev.to/osiz_studios_df705989eb37/understanding-blockchain-ecosystem-protocols-of-2024-59jk | blockchain, blockchaindevelopment, osizstudios |

The creation of robust ecosystem protocols is crucial in the world of blockchain. These protocols form the backbone of various decentralised applications (dApps), offering developers and users a secure, transparent, and efficient means to interact with blockchain networks.

In this blog, we will explore the pivotal ro... | osiz_studios_df705989eb37 |

1,894,795 | Google Youtube Codepen icons+search inputs (only CSS) | Check out this Pen I made! | 0 | 2024-06-20T12:31:25 | https://dev.to/tidycoder/google-youtube-codepen-iconssearch-inputs-only-css-4fjf | codepen | Check out this Pen I made!

{% codepen https://codepen.io/TidyCoder/pen/eYaVXLX %} | tidycoder |

1,894,794 | Exploring the Versatility of Rollators for Sale: Lightweight Designs for Every Need | When it comes to mobility aids, rollators have emerged as a versatile solution for individuals... | 0 | 2024-06-20T12:30:42 | https://dev.to/renatoennis07/exploring-the-versatility-of-rollators-for-sale-lightweight-designs-for-every-need-1o0f | When it comes to mobility aids, rollators have emerged as a versatile solution for individuals seeking assistance with walking. With a wide range of options available, including lightweight designs, there's a rollato... | renatoennis07 | |

1,894,793 | Can You Overdose on Tapentadol? Signs & Symptoms | Tapentadol tablets and oral solutions are used to treat moderate to moderately severe pain in adults.... | 0 | 2024-06-20T12:29:06 | https://dev.to/health_hub_daf376d3963572/can-you-overdose-on-tapentadol-signs-symptoms-1kjm |

**Tapentadol tablets** and oral solutions are used to treat moderate to moderately severe pain in adults. By exhibiting both opioid and non-opioid modes of action, Tapentadol effectively fulfills its role as a centrally-acting analgesic. In the United States, a wide range of Tapentadol dosages is widely prescribed by ... | health_hub_daf376d3963572 | |

1,894,792 | Boost Your Credibility: CRISC Training for IT Professionals in Ottawa | Navigating the ever-evolving world of cybersecurity? You recognize the importance of risk... | 0 | 2024-06-20T12:28:44 | https://dev.to/krishah08/boost-your-credibility-crisc-training-for-it-professionals-in-ottawa-2ied | crisc, cybersecurity, informationsecurity | Navigating the ever-evolving world of cybersecurity? You recognize the importance of risk management, but how can you truly demonstrate your knowledge and advance your career? Enter CRISC training, a powerful tool to boost your credibility and solidify your position as a trusted IT risk management expert.

**... | krishah08 |

1,894,791 | Async/await and SwiftUI | Using Swift's async/await with SwiftUI can greatly simplify handling asynchronous tasks, such as... | 0 | 2024-06-20T12:28:34 | https://dev.to/ishouldhaveknown/asyncawait-and-swiftui-3b2h | swiftui, async, ios, modular | Using Swift's async/await with SwiftUI can greatly simplify handling asynchronous tasks, such as fetching data from a network. Here's a basic example that includes a view, view model, use-case, repository, and service layer to illustrate how these components interact.

See [my Github project for the tested source-code]... | ishouldhaveknown |

1,894,790 | Stable Diffusion 3 Medium: Unleashing Photorealistic AI Art on Consumer PCs | Stable Diffusion 3 Medium, a revolutionary new text-to-image AI model from Stability AI, is making... | 0 | 2024-06-20T12:28:21 | https://dev.to/hyscaler/stable-diffusion-3-medium-unleashing-photorealistic-ai-art-on-consumer-pcs-4efn | Stable Diffusion 3 Medium, a revolutionary new text-to-image AI model from Stability AI, is making waves in the creative community. Dubbed the company's "most advanced text-to-image open model yet," Stable Diffusion 3 Medium (SD3 Medium) empowers users to generate stunningly photorealistic images from simple descriptio... | suryalok | |

1,894,788 | Where to Find the Best College Paper Writing Service | College students often find themselves overwhelmed with assignments, deadlines, and the constant... | 0 | 2024-06-20T12:27:40 | https://dev.to/marco7898/where-to-find-the-best-college-paper-writing-service-3b14 | webdev | College students often find themselves overwhelmed with assignments, deadlines, and the constant pressure to maintain high academic standards. In such a demanding environment, many students turn to professional writing services for assistance. But with so many options available, finding the best college paper writing s... | marco7898 |

1,894,764 | Project Loom: New Java Virtual Threads | Project Loom: New Java Virtual Threads By using Java 21 lightweight threads, developers can create... | 0 | 2024-06-20T12:23:52 | https://codeline24.com/loom-java-21-ligth-weight-threads/ | Project Loom: New Java Virtual Threads

By using Java 21 lightweight threads, developers can create high-throughput concurrent applications with less code, easier maintenance, and improved observability.

For many years, the primary way to propose changes to the Java language and the JVM has been through documents calle... | jusufoski | |

1,894,787 | PET Recycling Machine: Creating Opportunities for Plastic Reuse | Recycling Made Easy: The PET Recycling Machine. Welcome to the world of recycling!... | 0 | 2024-06-20T12:23:38 | https://dev.to/sharon_jdaye_b3e9eda0c6/pet-recycling-machine-creating-opportunities-for-plastic-reuse-3mmf | design | Recycling Made Easy: The PET Recycling Machine

Welcome to the world of recycling! Recycling is a way of reusing materials so that they do not go to waste. Recycling helps us reduce pollution and make our environment cleaner and safer. One of the ways to recycle is by u... | sharon_jdaye_b3e9eda0c6 |

1,894,786 | Java Testing Frameworks and Best Practices (2024) | I’m gonna steer back to writing about Java and things related to Java, just because I know you are... | 0 | 2024-06-20T12:23:22 | https://dev.to/zoltan_fehervari_52b16d1d/java-testing-frameworks-and-best-practices-2024-n8j | javatestingframeworks, java, javaframeworks, javatest | I’m gonna steer back to writing about Java and things related to Java, just because I know you are probably full of it. But hey, this one, you are gonna love!

All software developers know the value of thorough testing in ensuring high-quality code. That’s where Java testing frameworks come in: their robust features and ca... | zoltan_fehervari_52b16d1d |

1,894,098 | My Journey so far as a developer | Hey there fellow devs, I would like to use this post to share my journey so far as a developer. It's... | 0 | 2024-06-20T12:22:54 | https://dev.to/kansoldev/my-journey-so-far-as-a-developer-4d23 | Hey there fellow devs, I would like to use this post to share my journey so far as a developer. It's sort of a review of how far I have come, and the next steps I am looking to take. Hope you find it interesting and insightful, let's get into it!

I started coding when I was 13 years old, precisely 2013, but I started g... | kansoldev | |

1,894,774 | Combat Caller ID Spoofing STIR SHAKEN VoIP: Strategies to Trace and Reduce Nuisance Calls | Caller ID spoofing has become a significant nuisance for businesses and individuals alike. It... | 0 | 2024-06-20T12:17:10 | https://dev.to/astpp/combat-caller-id-spoofing-stir-shaken-voip-strategies-to-trace-and-reduce-nuisance-calls-4bnb | stirshaken | Caller ID spoofing has become a significant nuisance for businesses and individuals alike. It involves falsifying the caller ID to disguise the caller's true identity, which leads to various fraudulent activities and unwelcome interruptions. STIR/SHAKEN (Secure Telephone Identity Revisited/Signature-based Handling of A... | astpp |

1,894,773 | How Do Shell Commands Function? | The Role of the Shell: Bridging User Input and Command Execution in Linux Shell is a program that... | 27,789 | 2024-06-20T12:16:16 | https://waywardquark.hashnode.dev/how-shell-commands-work | linux, shell, webdev, beginners | **The Role of the Shell: Bridging User Input and Command Execution in Linux**

Shell is a program that takes user inputs and passes them to the operating system. It provides an interface to accept commands and their arguments, invokes system calls, and runs other programs.
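As a rough illustration of the description above, the core of a shell can be sketched in a few lines of Python: split the input line into a program name and its arguments, then ask the operating system to run that program and wait for it. This is only a minimal sketch, not how Bash or Zsh are implemented. The function names `parse_command` and `run_command` are made up for this example, and real shells also handle pipes, redirection, built-ins, and job control.

```python
import shlex
import subprocess
import sys


def parse_command(line: str) -> list[str]:
    """Split a command line into the program name and its arguments,
    honoring quotes the way a POSIX shell would."""
    return shlex.split(line)


def run_command(argv: list[str]) -> int:
    """Ask the operating system to start the program and wait for it.
    Returns the program's exit status."""
    if not argv:
        return 0  # empty input: nothing to run
    try:
        completed = subprocess.run(argv)
    except FileNotFoundError:
        # Shells conventionally report 127 for "command not found".
        print(f"{argv[0]}: command not found", file=sys.stderr)
        return 127
    return completed.returncode


if __name__ == "__main__":
    # Hypothetical demo: run the current Python interpreter as the "command".
    status = run_command([sys.executable, "-c", "print('hello from a tiny shell')"])
    print("exit status:", status)
```

Wrapping these two functions in a loop that reads a line, parses it, and runs it gives the basic read-parse-execute cycle of an interactive shell.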

A Terminal program, such as iTerm2, is a GUI ... | wayward_quark |

1,894,772 | How to Write Your First Cypress Test [With Examples] | Choosing an ideal testing framework, especially with a different technology stack, can be challenging... | 0 | 2024-06-20T12:16:15 | https://www.lambdatest.com/blog/cypress-test/ | cypress, beginners, tutorial, programming | Choosing an ideal testing framework, especially with a different technology stack, can be challenging for new users, particularly those switching from other testing tools to Cypress. This shift might involve adapting to unfamiliar technological stacks. However, Cypress addresses this challenge by providing an in-built ... | kailashpathak |

1,894,771 | PP PE Recycling: Reducing Environmental Impact Through Technology | Advantages of PP PE Recycling. Recycling is the process of transforming waste products into new... | 0 | 2024-06-20T12:15:47 | https://dev.to/sharon_jdaye_b3e9eda0c6/pp-pe-recycling-reducing-environmental-impact-through-technology-529 | design | Advantages of PP PE Recycling

Recycling is the process of transforming waste products into new products. PP PE recycling refers to the recycling of plastic materials widely found in packaging. As a practice, recycling is essential for reducing environmental impact. PP PE recycling will likely not redu... | sharon_jdaye_b3e9eda0c6 |

1,894,770 | 5 Best Villainous Fortnite Skins That Will Dominate the Game! | Fortnite Chapter 5, season 3 is out, and with over 1,500 Fortnite skins to choose from, you’ll need... | 0 | 2024-06-20T12:15:36 | https://dev.to/samist/5-best-villainous-fortnite-skins-that-will-dominate-the-game-4d11 |

Fortnite Chapter 5, season 3 is out, and with over 1,500 Fortnite skins to choose from, you’ll need the best if you're going to dominate this sandy wasteland. Become a formidable foe and strike fear into your opponents’ hearts with the best Fortnite villain skins. You might want to load up your [Vbucks card](https://... | samist | |

1,894,769 | How AWS Practitioner Exam Dumps Can Guide Your Study Sessions | Understanding AWS Practitioner Exam Dumps What Are Exam Dumps? Exam dumps are collections of... | 0 | 2024-06-20T12:15:33 | https://dev.to/famak1983/how-aws-practitioner-exam-dumps-can-guide-your-study-sessions-29i4 | javascript, beginners, programming, tutorial | Understanding AWS Practitioner Exam Dumps

What Are Exam Dumps?

Exam dumps are collections of questions and <a href="https://dumpsarena.com/amazon-dumps/aws-certified-cloud-practitioner-clf-c01/">aws practitioner exam dumps</a> answers from real exams. They are often used by individuals to practice for upcoming certifi... | famak1983 |

1,894,768 | 5 Best Villainous Fortnite Skins That Will Dominate the Game! | Fortnite Chapter 5, season 3 is out, and with over 1,500 Fortnite skins to choose from, you’ll need... | 0 | 2024-06-20T12:15:24 | https://dev.to/samist/5-best-villainous-fortnite-skins-that-will-dominate-the-game-3107 |

Fortnite Chapter 5, season 3 is out, and with over 1,500 Fortnite skins to choose from, you’ll need the best if you're going to dominate this sandy wasteland. Become a formidable foe and strike fear into your opponents’ hearts with the best Fortnite villain skins. You might want to load up your Vbucks card for this o... | samist | |

1,894,767 | Automated Regression Testing: Enhancing Software Quality and Efficiency | In the fast-paced world of software development, ensuring both speed and quality is paramount.... | 0 | 2024-06-20T12:14:27 | https://dev.to/keploy/automated-regression-testing-enhancing-software-quality-and-efficiency-1991 | webdev, programming, productivity, opensource |

In the fast-paced world of software development, ensuring both speed and quality is paramount. [Automated regression testing](https://keploy.io/regression-testing) stands as a crucial tool in achieving this delicat... | keploy |

1,890,294 | Isolating user data logic with a UserService | Introduction In this article, I'll shift the focus to refactor the user data management.... | 27,664 | 2024-06-20T12:08:44 | https://dev.to/cezar-plescan/refactoring-user-data-service-nop | angular, tutorial, refactoring, services | ## Introduction

In this article, I'll shift the focus to refactor the **user data management**. Currently, the logic for fetching and saving user data is handled within the component. To create a cleaner, more maintainable architecture, I'll extract this logic into a dedicated service, named `UserService`.

_**A quick... | cezar-plescan |

1,894,763 | amna 5 | TODAY I SHOW FACIAL... | 0 | 2024-06-20T12:04:10 | https://dev.to/muhammad_zaid_56daaff0697/amna-5-29o8 | {% embed https://youtu.be/4rVtI_nc8oU?si=WmGV8D7Ocg-zfHhS %} | muhammad_zaid_56daaff0697 | |

1,892,263 | Introduction to AWS Services | Introduction to AWS Services, Part One | Professional Computing Capsules | Intro to AWS – part 1 Welcome to... | 27,764 | 2024-06-20T12:02:00 | https://onekc.pro/%d9%85%d9%82%d8%af%d9%85%d8%a9-%d8%a5%d9%84%d9%89-%d8%ae%d8%af%d9%85%d8%a7%d8%aa-aws-%d8%a7%d9%84%d8%ac%d8%b2%d8%a1-%d8%a7%d9%84%d8%a3%d9%88%d9%84-%d9%83%d8%a8%d8%b3%d9%88%d9%84%d8%a7%d8%aa-%d8%ad/ | aws, intro | Introduction to AWS Services, Part One | Professional Computing Capsules |

Intro to AWS – part 1

Welcome to the Cloud Computing Capsules from One Thousand Cloud Professionals, where we offer a simplified introduction to the main AWS services.

Introduction to AWS Services

----------------------------------

If you are thinking about building a new system or migrating your curr... | khalidelgazzar |

1,894,760 | Requesting help on Fixing notification. | I have developed a dart/flutter app, its function is as a medication reminder app connected with... | 0 | 2024-06-20T12:00:05 | https://dev.to/medre/requesting-help-on-fixing-notification-309 | help | I have developed a dart/flutter app, its function is as a medication reminder app connected with supabase. I have trouble in setting reminder notification on the device installed on phone sdk. | medre |

1,851,879 | Why should you use Django Framework? | Image credits: weekendplayer Why use Django in a world where everything is Javascript? Is it really... | 0 | 2024-06-19T05:08:07 | https://coffeebytes.dev/en/why-should-you-use-django-framework/ | django, opinion, python, webdev | ---

title: Why should you use Django Framework?

published: true

date: 2024-06-20 12:00:00 UTC

tags: django,opinion,python,webdev

canonical_url: https://coffeebytes.dev/en/why-should-you-use-django-framework/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/smxtqb222i66uwvsf7h4.jpg

---

Image credit... | zeedu_dev |

1,894,565 | 5 Common Mistakes New Businesses Make | 5 Common Mistakes New Businesses Make (And How to Avoid Them). The entrepreneurial drive encompasses... | 0 | 2024-06-20T09:55:45 | https://dev.to/spanking_solutions_0af849/5-common-mistakes-new-businesses-make-1kda | 5 Common Mistakes New Businesses Make (And How to Avoid Them).

The entrepreneurial drive encompasses many individuals, and the desire to set up one’s own business can generate much interest. But the road to success is rarely easy. Many new projects face initial setbacks, often due to preventable errors. By identifying... | spanking_solutions_0af849 | |

1,894,758 | GitLab Shared Responsibility Model: A Guide to Collaborative Security | GitLab is a popular DevSecOps and collaborative software development platform that enables businesses... | 0 | 2024-06-20T11:59:44 | https://gitprotect.io/blog/gitlab-shared-responsibility-model-a-guide-to-collaborative-security/ | gitlab, devops, developers, coding | GitLab is a popular DevSecOps and collaborative software development platform that enables businesses to automate software delivery, boost productivity, and secure end-to-end software supply chains. However, not everyone knows that like most SaaS service providers, GitLab operates according to the so-called Shared Resp... | gitprotectteam |

1,894,757 | 🧠 The Ultimate JavaScript Head-Scratcher: typeof null and Other Peculiarities! 🤯 | Hey Devs! Buckle up because today we’re diving into the wacky world of JavaScript quirks that will... | 0 | 2024-06-20T11:59:31 | https://dev.to/ankit_kumar_41670acf33cf4/the-ultimate-javascript-head-scratcher-typeof-null-and-other-peculiarities-4cjp | javascript, beginners, webdev, ai | Hey Devs!

Buckle up because today we’re diving into the wacky world of JavaScript quirks that will make you question your sanity, your code, and possibly your life choices. Let's decode some of the most bizarre, brain-twisting aspects of JavaScript that only the bravest dare to confront. 🚀

-

typeof null is object? ... | ankit_kumar_41670acf33cf4 |

1,894,755 | What is debugging? (Simplest explanation) | Basically, detecting errors in a program (or code) and fixing them is called debugging (errors like... | 0 | 2024-06-20T11:56:48 | https://dev.to/priyanshu_tyagi_aa8e360ab/what-is-debuggingsimplest-explanation-55mj | cschallenge, beginners, programming, debug | **Basically, detecting errors in a program (or code) and fixing them is called debugging** (errors like logic errors, missing semicolons, or other small mistakes that crash the program) | priyanshu_tyagi_aa8e360ab |

1,894,754 | Top 10 eCommerce Chatbot Software for 2024 | In the rapidly growing world of e-commerce, leveraging automated AI chatbots is crucial for enhancing... | 0 | 2024-06-20T11:56:48 | https://dev.to/primathon/top-10-ecommerce-chatbot-software-for-2024-d14 | chatbot, ai, bot |

In the rapidly growing world of e-commerce, leveraging automated AI chatbots is crucial for enhancing customer engagement, operational efficiency, and ultimately boosting sales. AI chatbot development companies are t... | primathon |

1,894,753 | Understanding Observers in Ruby on Rails | As your Ruby on Rails application grows, maintaining clean and manageable code becomes increasingly... | 0 | 2024-06-20T11:56:23 | https://dev.to/afaq_shahid/understanding-observers-in-ruby-on-rails-88c | webdev, ruby, rails, refactorit | As your Ruby on Rails application grows, maintaining clean and manageable code becomes increasingly important. One of the powerful yet often underutilized features in Rails is the Observer pattern. Observers allow you to keep your models lean by extracting responsibilities that don't necessarily belong in the model its... | afaq_shahid |

1,894,752 | Streamlined Recruitment Process Outsourcing (RPO) for Efficient Hiring | The Man Power India, a leading recruitment solutions provider, specializes in connecting companies... | 0 | 2024-06-20T11:55:58 | https://dev.to/manpowerindia/streamlined-recruitment-process-outsourcing-rpo-for-efficient-hiring-4dlp | [The Man Power India](https://themanpowerindia.com/), a leading recruitment solutions provider, specializes in connecting companies with highly qualified candidates for permanent staffing. Their comprehensive approach includes understanding each client's unique requirements, engaging in detailed consultations to unders... | manpowerindia | |

1,894,751 | 🚀 Ready to kickstart your web development journey? Check out The Odin Project! 🎓 | This free, open-source curriculum covers everything from HTML and CSS to JavaScript and Ruby on... | 0 | 2024-06-20T11:54:32 | https://dev.to/rajusaha/ready-to-kickstart-your-web-development-journey-check-out-the-odin-project-13i4 | webdev, javascript, learning, coding | This free, open-source curriculum covers everything from HTML and CSS to JavaScript and Ruby on Rails. With hands-on projects and a supportive community, you'll build a solid portfolio and gain real-world skills. Perfect for anyone looking to break into tech! 🌐💻

Learn more and start today: [The Odin Project](theodin... | rajusaha |

1,894,750 | C++ Program to Print a Pascal Triangle | In this lab, we will learn how to write a C++ program that prints Pascal's triangle. Pascal's triangle is a triangular array of binomial coefficients, with the coefficients as its entries. It can be used to compute combinations and binomial expansions. In this lab, we ... | 27,769 | 2024-06-20T11:54:28 | https://labex.io/tutorials/cpp-cpp-program-to-print-a-pascal-triangle-96203 | coding, programming, tutorial |

## Introduction

In this lab, we will learn how to write a C++ program that prints Pascal's triangle. Pascal's triangle is a triangular array of binomial coefficients, with the coefficients as its entries. It can be used to compute combinations and binomial expansions... | labby |

1,894,749 | [Game of Purpose] Day 32 | Today I had overnight guests, so no progress. | 27,434 | 2024-06-20T11:53:59 | https://dev.to/humberd/game-of-purpose-day-32-1f0n | gamedev | Today I had overnight guests, so no progress. | humberd |

1,894,748 | There’s a magic to Game Mechanics In Video Games | Everyone has played before. Everyone has played video games. Our childhood depended on them. But... | 0 | 2024-06-20T11:53:13 | https://dev.to/zoltan_fehervari_52b16d1d/theres-a-magic-to-game-mechanics-in-video-games-32mn | gamemechanics, gamedev, gamephysics, gaming | Everyone has played before. Everyone has played video games. Our childhood depended on them.

**But now, as adults, let’s look at them from another angle:**

Video games create immersive experiences by seamlessly integrating storytelling, visual artistry, sound design, and gameplay mechanics. These serve as the core e... | zoltan_fehervari_52b16d1d |

1,894,747 | Getmyoffer.capitalone.com | Getmyoffer.capitalone.com portal is the official web page where people interested in applying for a... | 0 | 2024-06-20T11:52:47 | https://dev.to/getmyoffertoday/getmyoffercapitalonecom-1n5j | Getmyoffer.capitalone.com portal is the official web page where people interested in applying for a Capital One credit card can access their application and proceed with it.

https://getmyoffer.today/ | getmyoffertoday | |

1,894,746 | From Side Hustle to Main Income: Transitioning Financially | Do you have a small job on the side? A little something that makes you cash? What if you turned that... | 0 | 2024-06-20T11:51:29 | https://dev.to/kevin_c864b029545c2e7873d/from-side-hustle-to-main-income-transitioning-financially-4gbc | Do you have a small job on the side? A little something that makes you cash? What if you turned that side job into your main pay? It can be done, but you must plan carefully first.

Turning your side work into your real job is very exciting. You get to be your boss! You can focus all your time on something you love doi... | kevin_c864b029545c2e7873d | |

1,894,745 | How to Provision a Server With Laravel Forge? | Laravel is a powerful PHP framework that empowers developers to build dynamic and scalable web... | 0 | 2024-06-20T11:50:39 | https://dev.to/aaronreddix/how-to-provision-a-server-with-laravel-forge-2bff | webdev, laravel, beginners, php | Laravel is a powerful PHP framework that empowers developers to build dynamic and scalable web applications. However, the process of setting up and managing servers for deployment can be a significant hurdle. This is where Laravel Forge comes in.

Laravel Forge is a Platform-as-a-Service (PaaS) specifically designed to... | aaronreddix |

1,894,744 | Navigating HVAC Needs with RTS Mechanical LLC of Hamel | When it comes to maintaining a comfortable and safe environment in your home or business, the... | 0 | 2024-06-20T11:50:24 | https://dev.to/travis01s/navigating-hvac-needs-with-rts-mechanical-llc-of-hamel-3053 | When it comes to maintaining a comfortable and safe environment in your home or business, the heating, ventilation, and air conditioning (HVAC) system plays a critical role. In the Hamel area, residents and business owners have unique climate challenges that require professional attention to ensure their HVAC systems a... | travis01s | |

1,894,743 | 5 Best Villainous Fortnite Skins That Will Dominate the Game! | Fortnite Chapter 5, season 3 is out, and with over 1,500 Fortnite skins to choose from, you’ll need... | 0 | 2024-06-20T11:48:22 | https://dev.to/machik99/5-best-villainous-fortnite-skins-that-will-dominate-the-game-2cjn |

Fortnite Chapter 5, season 3 is out, and with over 1,500 Fortnite skins to choose from, you’ll need the best if you're going to dominate this sandy wasteland. Become a formidable foe and strike fear into your oppon... | machik99 |
