id (int64, 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |

|---|---|---|---|---|---|---|---|---|

1,894,271 | Renaming Bugs as "UnWritten Test Cases" | The Code Crusaders Story There was a team of superheroes called the "Code Crusaders". Their job was... | 0 | 2024-06-20T04:48:53 | https://dev.to/codejourney/renaming-bugs-as-unwritten-test-cases-3h0b | bugsarenewtestcases, notabug, xp |

**The Code Crusaders Story**

There was a team of superheroes called the "Code Crusaders". Their job was to protect the digital world from bad things like glitches and bugs.

One day, they got a call that a virus wa... | codejourney |

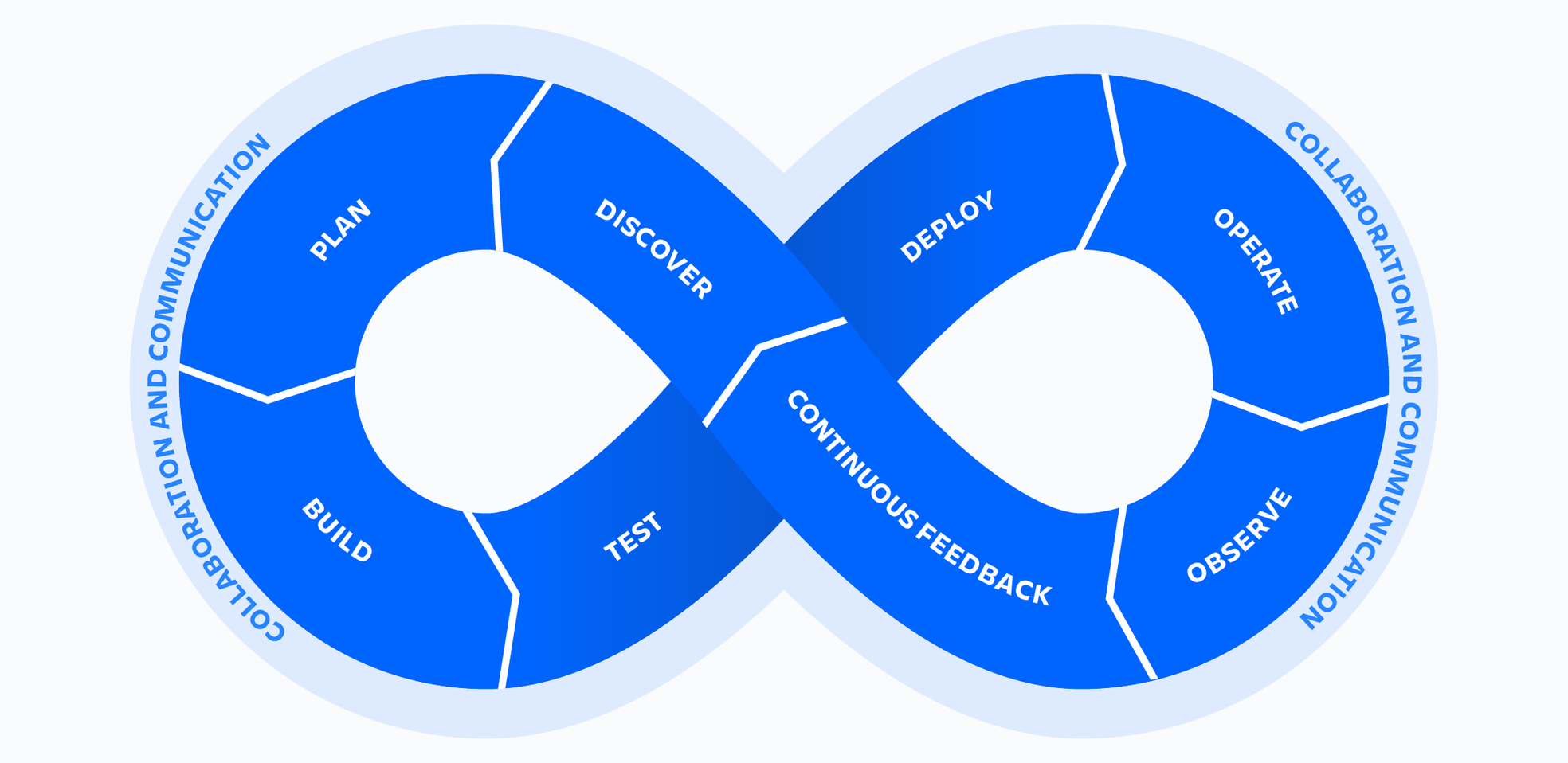

1,894,270 | My 100-Day DevOps Journey: Day 1 Recap | Today marks the beginning of my 100-day adventure into the world of DevOps. I'm diving into the... | 0 | 2024-06-20T04:43:09 | https://dev.to/dilshavijay/my-100-day-devops-journey-day-1-recap-1h2o | devops, 100daysofdevops, challenge, beginners |

Today marks the beginning of my 100-day adventure into the world of DevOps. I'm diving into the practices, tools, and culture that define DevOps, aiming to not just learn technical skills but also foster a collabo... | dilshavijay |

1,894,267 | Ultimate Guide to Effective Project Progress Communication with Top Management | Effective communication of project progress to top management is pivotal for the success of any... | 0 | 2024-06-20T04:37:33 | https://dev.to/futuristicgeeks/ultimate-guide-to-effective-project-progress-communication-with-top-management-10id | webdev, leadership, teamlead, projectmanager | Effective communication of project progress to top management is pivotal for the success of any project. Beyond sharing updates, it involves presenting information in a way that is strategic, insightful, and aligned with organizational goals. This guide outlines advanced strategies and tips for project managers to conv... | futuristicgeeks |

1,894,266 | Boost Your Coding Efficiency: 10 Productivity Tips for Developers | Hey there, code warrior! Ready to up your productivity game and make your coding sessions not just... | 0 | 2024-06-20T04:32:25 | https://dev.to/delia_code/boost-your-coding-efficiency-10-productivity-tips-for-developers-2b45 | productivity, career, codenewbie |

Hey there, code warrior! Ready to up your productivity game and make your coding sessions not just more efficient but also a lot more enjoyable? As developers, we often juggle numerous tasks, deadlines, and challenges that can affect our productivity and mental health. Enhancing productivity isn't just about working h... | delia_code |

1,894,265 | AMAN 4 | BEAUTY FACE MATE POWDER... | 0 | 2024-06-20T04:27:03 | https://dev.to/muhammad_zaid_56daaff0697/aman-4-3gej | {% embed https://youtu.be/5pp9xXQBtwQ?si=4iCKoBd73ASClHDT %} | muhammad_zaid_56daaff0697 | |

1,894,264 | What are the current trends in web development? | After being a web developer, it's clear that knowing how to code is not enough! But you have to... | 0 | 2024-06-20T04:26:58 | https://dev.to/joyanderson1702/what-are-the-current-trends-in-web-development-32b1 | webdev, learning, programming, tooling | Once you work as a web developer, it becomes clear that knowing how to code is not enough: you also have to keep up with the latest trends in web development. These trends not only help you build web applications but are also changing the way websites are developed.

According to an Adobe study, 59% of ... | joyanderson1702 |

1,894,259 | Salah reminder Apple Shortcuts in Bahasa Indonesia | Shortcut link : https://www.icloud.com/shortcuts/faad766c51f448c4888239b07401188d Daftar... | 0 | 2024-06-20T04:10:20 | https://dev.to/azet/salah-reminder-in-bahasa-indonesia-1036 | ios, appleshortcuts |

**Shortcut link :** [https://www.icloud.com/shortcuts/faad766c51f448c4888239b07401188d](https://www.icloud.com/shortcuts/faad766c51f448c4888239b07401188d)

## Table of contents:

- [How it works](https://gist.github.com/al... | azet |

1,894,263 | AMNA 3 | LADIES EYE SHADE KIT FOR... | 0 | 2024-06-20T04:26:16 | https://dev.to/muhammad_zaid_56daaff0697/amna-3-5b7f | {% embed https://youtu.be/nrGCdVIgrDs?si=BzkGHz3Laf8OPhsI %} | muhammad_zaid_56daaff0697 | |

1,894,262 | AMNA 2 | TODAY SHOW MY SEVEN HERBAL... | 0 | 2024-06-20T04:21:56 | https://dev.to/muhammad_zaid_56daaff0697/amna-2-36n5 | {% embed https://youtu.be/JixNFC29eWs?si=LkXlahqoe9WCicgA %} | muhammad_zaid_56daaff0697 | |

1,894,261 | AMNA 1 | TODAY I GIVE REVIEW ON... | 0 | 2024-06-20T04:21:04 | https://dev.to/muhammad_zaid_56daaff0697/amna-1-1ed2 | {% embed https://youtu.be/dRaAirc9sYM?si=uNGYVACmIwAOFGcL %} | muhammad_zaid_56daaff0697 | |

1,894,260 | Exploring ZNTECH New Energy Technology's Innovations | Exploring ZNTECH New Energy Technology's Innovations Can you need to find out about the charged... | 0 | 2024-06-20T04:11:58 | https://dev.to/laura_hills_aeae9d9e7a982/exploring-zntech-new-energy-technologys-innovations-1nf1 | design |

Exploring ZNTECH New Energy Technology's Innovations

Do you want to find out about the new energy technology most recently developed by ZNTECH? You are in the right place: we will explore ZNTECH's innovations and how you could benefit from them.

Advantages of ZNTECH's New Energy Technology

ZNTECH's ... | laura_hills_aeae9d9e7a982 |

1,894,258 | Setting up NVIDIA stuff on Pop!_OS | I had a lot of trouble with Ubuntu+NVIDIA which I solved by just installing PopOS. On Ubuntu I was... | 0 | 2024-06-20T04:03:41 | https://dev.to/tallesl/setting-up-nvidia-stuff-on-popos-3o17 | ubuntu, nvidia, linux | I had a lot of trouble with Ubuntu+NVIDIA which I solved by just installing PopOS. On Ubuntu I was getting small 'hiccups' all the time (my machine was freezing for a few seconds) and sometimes crashed under heavy video usage.

Technically PopOS is just Ubuntu under the hood, so it shouldn't be better when compared to ... | tallesl |

1,894,257 | Warning signs that a business needs to hire a website development company to help resolve a crisis | An abandoned website with no visitors. Let's start with the first point, which seems to be the clearest warning sign... | 0 | 2024-06-20T04:00:01 | https://dev.to/c3cugjc7666/sayyaanetuuen-thiithurkicchtngcchaangbrisathrabthmaaewbaichtmaachwyaekwikrt-3p76 | 1. An abandoned website with no visitors

Let's start with the first point, which seems to be the clearest warning sign: you built a website, but visitor numbers fall short of the target and keep dwindling, until the site ends up abandoned and forgotten and cannot even be found through search. It is essential to bring in professional experts to rescue, improve, fix, and develop the website... | c3cugjc7666 | |

1,894,256 | Exploring Rupee Games: Fun, Rewards, and More | Welcome to the world of Rupee Games, where fun and excitement await! Rupee Games is an online gaming... | 0 | 2024-06-20T03:52:20 | https://dev.to/fghnmj/exploring-rupee-games-fun-rewards-and-more-4dmp | Welcome to the world of Rupee Games, where fun and excitement await! Rupee Games is an online gaming platform that offers a wide range of games and opportunities to win real rewards. In this article, we will explore what makes Rupee Games so special, including its diverse game selection, competitive tournaments, attrac... | fghnmj | |

1,894,255 | 10 Reasons Your Side Projects Aren't Making Money (And How to Fix Them!) | Starting a side project with the hope of turning it into a profitable venture is exciting, but many... | 0 | 2024-06-20T03:52:04 | https://dev.to/delia_code/10-reasons-your-side-projects-arent-making-money-and-how-to-fix-them-hh | webdev, career, beginners, softwaredevelopment | Starting a side project with the hope of turning it into a profitable venture is exciting, but many people encounter roadblocks along the way. Let’s explore why many side projects fail to make money and how you can steer clear of these pitfalls to find success.

## 1. Lack of Market Research

### Why It’s a Problem

Man... | delia_code |

1,894,254 | Sass I | Sass is a CSS preprocessor that lets us give CSS superpowers through the nesting of... | 0 | 2024-06-20T03:50:03 | https://dev.to/fernandomoyano/lo-basico-de-sass-2287 | webdev, beginners, css |

**Sass** is a CSS preprocessor that lets us give CSS superpowers through selector nesting, partial files, and advanced functions. The Sass compiler processes all of this and finally delivers a single CSS file, ready and optimized for produc... | fernandomoyano |

1,547,240 | A simple task, a senior's mind | Like all software developers, especially seniors, I was faced today with the task of making a simple,... | 0 | 2023-07-24T12:24:03 | https://dev.to/antosdev/a-simple-task-a-seniors-mind-gka | programming, designsystem, api, softwareengineering | Like all software developers, especially seniors, I was faced today with the task of making a simple, brain-dead design decision that I should be able to make in my sleep. But with every passing minute I came up with new designs and solutions to reach the same result, so after spending 15 minutes thinking about it I got ... | antosdev |

1,894,253 | Volunteer Backend Developer | Join Generals as a volunteer Backend Developer and help build a platform that fosters unity in the... | 0 | 2024-06-20T03:48:24 | https://dev.to/rejoicedo/volunteer-backend-developer-1mg | backend, backenddevelopment, node, nextjs | Join **Generals** as a volunteer Backend Developer and help build a platform that fosters unity in the body of Christ!

**Responsibilities:**

- Design and develop the server-side logic and database integration

- Create RESTful APIs and ensure data security and scalability

- Collaborate with our team to integrate fronte... | rejoicedo |

1,894,252 | Volunteer Frontend Developer | Join Generals as a volunteer Frontend Developer and help build a platform that promotes unity in the... | 0 | 2024-06-20T03:44:34 | https://dev.to/rejoicedo/volunteer-frontend-developer-589c | javascript, ai, career, frontend | Join Generals as a volunteer Frontend Developer and help build a platform that promotes unity in the body of Christ!

**Responsibilities:**

- Design and develop a user-friendly interface for our web application

- Create responsive, interactive, and visually appealing components

- Collaborate with our team to integrate ... | rejoicedo |

1,894,251 | First Post | This is my first post. I want to improve my skills, so I will start sharing my study notes and... | 0 | 2024-06-20T03:25:19 | https://dev.to/xdoggox/first-post-2h20 | firstpost, development | This is my first post. I want to improve my skills, so I will start sharing my study notes and exercises. I won't do tutorials or anything like that, I'll just share what I learn. Your opinion is welcome as long as it is kind and generates learning 😁😁

So, let's do this :) | xdoggox |

1,894,248 | Dive into 3D: Building Environments in Unity with Pre-Made Assets | Unity reigns supreme as a powerful game engine for crafting compelling 3D experiences. But what if... | 0 | 2024-06-20T03:18:26 | https://dev.to/epakconsultant/dive-into-3d-building-environments-in-unity-with-pre-made-assets-100i | unity3d | Unity reigns supreme as a powerful game engine for crafting compelling 3D experiences. But what if you're new to 3D development or lack the artistic expertise to create intricate environments? Fear not! Unity embraces the use of pre-existing assets, allowing you to construct captivating worlds without needing to model ... | epakconsultant |

1,894,247 | Sure! Here is a simple HTML code example for creating a basic web page: | road.co.id Sure! Here is a simple HTML code example for creating a web page... | 0 | 2024-06-20T03:14:39 | https://dev.to/jasa_cs_96/tentu-berikut-contoh-kode-html-sederhana-untuk-membuat-halaman-web-dasar-5d9j | webdev, beginners, programming, tutorial | [road.co.id](https://road.co.id/) Sure! Here is a simple HTML code example for creating a basic web page:

HTML

<!DOCTYPE html>

<html>

<head>

<title>Halaman Web Sederhana</title>

</head>

<body>

<h1>Selamat datang di halaman web saya!</h1>

<p>Ini adalah paragraf berisi konten halaman web.</p>

</body>

</html>

Gu... | jasa_cs_96 |

1,894,246 | Sure! Here is a simple HTML code example for creating a basic web page: | road.co.id Sure! Here is a simple HTML code example for creating a web page... | 0 | 2024-06-20T03:14:38 | https://dev.to/jasa_cs_96/tentu-berikut-contoh-kode-html-sederhana-untuk-membuat-halaman-web-dasar-4350 | webdev, beginners, programming, tutorial | [road.co.id](https://road.co.id/) Sure! Here is a simple HTML code example for creating a basic web page:

HTML

<!DOCTYPE html>

<html>

<head>

<title>Halaman Web Sederhana</title>

</head>

<body>

<h1>Selamat datang di halaman web saya!</h1>

<p>Ini adalah paragraf berisi konten halaman web.</p>

</body>

</html>

Gu... | jasa_cs_96 |

1,894,245 | Sure! Here is a simple HTML code example for creating a basic web page: | road.co.id Sure! Here is a simple HTML code example for creating a web page... | 0 | 2024-06-20T03:14:38 | https://dev.to/jasa_cs_96/tentu-berikut-contoh-kode-html-sederhana-untuk-membuat-halaman-web-dasar-2amn | webdev, beginners, programming, tutorial | [road.co.id](https://road.co.id/) Sure! Here is a simple HTML code example for creating a basic web page:

HTML

<!DOCTYPE html>

<html>

<head>

<title>Halaman Web Sederhana</title>

</head>

<body>

<h1>Selamat datang di halaman web saya!</h1>

<p>Ini adalah paragraf berisi konten halaman web.</p>

</body>

</html>

Gu... | jasa_cs_96 |

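1,894,244 | The Guo Farm Is Simply a Financial Scam | ... | 0 | 2024-06-20T03:13:13 | https://dev.to/cryptosire24509/guo-nong-chang-jiu-shi-chang-jin-rong-pian-ju-5gp6 |

Guo Wengui lied to his hundreds of thousands of online followers, promising that they would earn huge profits if they invested in GTV Media Group, the Himalaya Farm Alliance, G|Clubs, and the Himalaya Exchange. Since at least March 2018, Guo Wengui has been the mastermind planning this complex financial scam; together with his financier Kin Ming Je (余建明), he defrauded thousands of people and took in more than one billion US dollars. Guo Wengui's success has something of a "cult" flavor: he summons believers in the name of "anti-communism", builds a community sealed off from outside information, and punishes those whose faith wavers through bullying or threats. ... | cryptosire24509 | |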

1,894,243 | I'm Moth, and this is my first post! | Hi, I'm Moth. A husband and father of two, I enjoy fishing, hiking, DIY projects, playing Fortnite... | 0 | 2024-06-20T03:12:15 | https://dev.to/moth668/im-moth-and-this-is-my-first-post-3mn | Hi, I'm Moth. A husband and father of two, I enjoy fishing, hiking, DIY projects, playing Fortnite with family, OSRS, restoring a 1991 Nissan 300zx. I'm an overly optimistic jack of all trades.

I'm just beginning my journey. One of the tasks in this course is to participate in the community. This is how I found DEV, a... | moth668 | |

1,894,242 | Test Creation using Record and Playback Testing | The world of software development is progressing each day, where applications are constantly evolving... | 0 | 2024-06-20T03:11:46 | https://dev.to/elle_richard_232/test-creation-using-record-and-playback-testing-56nf | software, cypress, testing | The world of software development progresses each day: applications are constantly evolving and new features are continuously being added, so ensuring software quality is of utmost importance. In such a scenario, manual testing can be time-consuming and error-prone. And that is where automation testing comes to ... | elle_richard_232 |

1,894,240 | Boosting AI Accuracy: Unveiling Retrieval-Augmented Generation (RAG) | Large language models (LLMs) have revolutionized how we interact with machines. From composing... | 0 | 2024-06-20T03:09:14 | https://dev.to/epakconsultant/boosting-ai-accuracy-unveiling-retrieval-augmented-generation-rag-39mb | rag | Large language models (LLMs) have revolutionized how we interact with machines. From composing realistic dialogue to generating creative text formats, their capabilities are impressive. However, LLMs sometimes struggle with factual accuracy, relying solely on the patterns they learn from vast amounts of training data. ... | epakconsultant |

1,894,239 | Captcha Chaos? Conquering Challenges with Techniques and Strategies | CAPTCHAs (Completely Automated Public Turing test to tell Computers and Humans Apart) act as... | 0 | 2024-06-20T03:04:30 | https://dev.to/epakconsultant/captcha-chaos-conquering-challenges-with-techniques-and-strategies-2ba2 | captcha | CAPTCHAs (Completely Automated Public Turing test to tell Computers and Humans Apart) act as gatekeepers, protecting websites from automated bots. While they serve a purpose, encountering CAPTCHAs can be frustrating, especially when you're a legitimate user. This article explores techniques and strategies to navigate t... | epakconsultant |

1,894,238 | Effortless Container Deployment with AWS App Runner 🏃♂️🚀 | Effortless Container Deployment with AWS App Runner 🏃♂️🚀 Introduction to AWS... | 0 | 2024-06-20T03:02:23 | https://dev.to/virajlakshitha/effortless-container-deployment-with-aws-app-runner-3n3j |

# Effortless Container Deployment with AWS App Runner 🏃♂️🚀

### Introduction to AWS App Runner

In today's fast-paced world of software development, speed and agility are paramount. Enter AWS App Runner, a fully managed container a... | virajlakshitha | |

1,894,237 | Transforming App Development with Stable Diffusion Model | 1. Introduction The integration of AI models like Stable Diffusion into app development... | 27,673 | 2024-06-20T02:58:23 | https://dev.to/rapidinnovation/transforming-app-development-with-stable-diffusion-model-2mgl | ## 1\. Introduction

The integration of AI models like Stable Diffusion into app development marks a transformative era in how applications are designed, developed, and deployed. Stable Diffusion specializes in generating high-quality images from textual descriptions, enhancing user engagement and creativity.

## 2\. W... | rapidinnovation | |

1,894,236 | Setting Up Your Own PostgreSQL Playground: A Beginner's Guide | PostgreSQL, a powerful and open-source object-relational database management system (ORDBMS), is a... | 0 | 2024-06-20T02:56:23 | https://dev.to/epakconsultant/setting-up-your-own-postgresql-playground-a-beginners-guide-22kg | postgres | PostgreSQL, a powerful and open-source object-relational database management system (ORDBMS), is a popular choice for businesses and developers alike. Its robust features and reliability make it a great platform to store and manage your data. This guide will walk you through installing and configuring a basic PostgreSQ... | epakconsultant |

1,894,235 | Analysis of the small-pitch LED market in the second half of 2024 | High-end exhibitions, smart cities and program broadcasting: the dominant force In the second half... | 0 | 2024-06-20T02:55:36 | https://dev.to/sostrondylan/analysis-of-the-small-pitch-led-market-in-the-second-half-of-2024-40gj | led, display, applications | High-end exhibitions, smart cities and program broadcasting: the dominant force

In the second half of 2024, high-end exhibitions, smart cities and program broadcasting will continue to be the dominant forces in the growth of the [small-pitch LED](https://sostron.com/products/) application market. These three applicati... | sostrondylan |

1,894,228 | Using Miniconda with Conda-Forge to Avoid Anaconda Licensing Issues | In the world of data science and Python development, Anaconda has long been a popular choice due to... | 0 | 2024-06-20T02:46:29 | https://dev.to/kaamisan/using-miniconda-with-conda-forge-to-avoid-anaconda-licensing-issues-5hkj | conda, python, anaconda, license | In the world of data science and Python development, Anaconda has long been a popular choice due to its comprehensive package management and environment management capabilities. However, [recent changes](https://www.anaconda.com/blog/is-conda-free) in Anaconda's licensing policies have made some organizations with more... | kaamisan |

1,891,089 | Android Performance Optimization: Best Practices and Tools | Introduction In early 2015, Google launched a series of 16 brief videos on Android... | 0 | 2024-06-20T02:46:25 | https://dev.to/wetest/android-performance-optimization-best-practices-and-tools-262g | # Introduction

In early 2015, Google launched a series of 16 brief videos on Android performance optimization best practices. These 3-5 minute videos aimed to help developers build faster and more efficient Android apps. The course not only explained the fundamental principles of performance issues in the Android syste... | wetest | |

1,894,227 | Nuxt3 CSR Background Image Lazy loading | I currently have background images being displayed for my pages from within the CSS.... | 0 | 2024-06-20T02:43:38 | https://dev.to/kontact00/nuxt3-csr-background-image-lazy-loading-28i7 | nuxt3, webdev, nuxt, javascript |

I currently have background images being displayed for my pages from within the CSS. i.e.

```css
.Inner-Page-Banner {
  width: 100%;
  height: auto;
  min-height: 280px;
  background: url(../img/trailer-owners.webp) no-repeat center top;
  margin-top: 85px;
  text-align: center;
}
```

I'm going through the process of perform... | kontact00 |

1,894,226 | https://youtu.be/cLOT0APQzDs?si=-BkWdqi4Tc16aeQ2 | A post by Elahe Adz | 0 | 2024-06-20T02:31:50 | https://dev.to/elahe_adz_464473549b9e89f/httpsyoutubeclot0apqzdssi-bkwdqi4tc16aeq2-2eom | elahe_adz_464473549b9e89f | ||

1,894,225 | Exploring AI-Assisted UI Development: Lessons from Creating a Hacker News Clone | Recently, I've become interested in exploring the use of AI as a collaborator in the coding process.... | 0 | 2024-06-20T02:30:25 | https://dev.to/spencertower/exploring-ai-assisted-ui-development-lessons-from-creating-a-hacker-news-clone-1nne | Recently, I've become interested in exploring the use of AI as a collaborator in the coding process. How can it expedite development, and what are the potential benefits and drawbacks of this approach?

After watching the following video: [Building Hacker News with AI, describe a UI and AI builds it for us](https://www... | spencertower | |

1,656,495 | EC2 Snapshot Management: How to get AWS EC2 Snapshot Information with Python | Introduction Amazon Elastic Compute Cloud (EC2) snapshots are integral to data backup and... | 0 | 2024-06-20T02:23:14 | https://dev.to/aws-builders/ec2-snapshot-management-how-to-get-aws-ec2-snapshot-information-with-python-753 | aws, python | ## Introduction

Amazon Elastic Compute Cloud (EC2) snapshots are integral to data backup and disaster recovery strategies within AWS. They provide point-in-time copies of your EC2 instance volumes, allowing you to restore data quickly and reliably in the event of failures, data loss, or system corruption. As organizat... | cloudsege |

1,894,224 | Essential HTTP Status Codes Every Web Developer Should Know | Understanding HTTP status codes is crucial for web developers. These codes provide insight into the... | 0 | 2024-06-20T02:21:39 | https://dev.to/vyan/essential-http-status-codes-every-web-developer-should-know-2gnd | webdev, beginners, http, react | Understanding HTTP status codes is crucial for web developers. These codes provide insight into the status of a request made to a server, helping you diagnose and troubleshoot issues effectively. Here’s a comprehensive guide to 18 essential HTTP status codes that you need to know.

## 1. 200 OK

**Request Succeeded**

T... | vyan |

1,894,223 | iceriveroutlet | We are a trusted partner of IceRiver. We offer customers a wide range of IceRiver miners to purchase.... | 0 | 2024-06-20T02:21:27 | https://dev.to/kaspa_miningsitessitio/iceriveroutlet-4m46 | We are a trusted partner of IceRiver. We offer customers a wide range of IceRiver miners to purchase. Order your KASPA ASIC MINING today!

IBELINK BM-KS Max Miner – 10.5 Th/s

The iBeLink BM-KS Max is an advanced cryptocurrency miner specifically designed to operate with the KHeavyH... | kaspa_miningsitessitio | |

1,894,221 | How To Handle Large Data Volumes-single table with 10 million records | Handling large single tables with 10 million records requires choosing the right SQL editor and tools... | 0 | 2024-06-20T02:19:19 | https://dev.to/concerate/how-to-handle-large-data-volumes-single-table-with-10-million-records-1ohh | Handling large single tables with 10 million records requires choosing the right SQL editor and tools that can efficiently manage and query large datasets without causing performance issues or memory overload. Here are recommended SQL editors and tools, along with performance optimization suggestions.

Recommended SQL E... | concerate | |

1,894,220 | 🕒 Understanding the Event Loop and Concurrency Model in JavaScript | JavaScript is single-threaded, but it manages to handle asynchronous operations seamlessly. The magic... | 0 | 2024-06-20T02:18:25 | https://dev.to/dipakahirav/understanding-the-event-loop-and-concurrency-model-in-javascript-1ml2 | javascript, eventloop, webdev, programming | JavaScript is single-threaded, but it manages to handle asynchronous operations seamlessly. The magic behind this is the Event Loop. In this blog, we'll dive deep into how the Event Loop works and how JavaScript handles concurrency. 🧙♂️

please subscribe to my [YouTube channel](https://www.youtube.com/@DevDivewithDip... | dipakahirav |

1,894,219 | Understanding Zod: A Comprehensive Guide to Schema Validation in JavaScript/Typescript | In the rapidly evolving world of JavaScript development, ensuring the integrity of data is paramount.... | 0 | 2024-06-20T02:13:32 | https://dev.to/abhilaksharora/understanding-zod-a-comprehensive-guide-to-schema-validation-in-javascripttypescript-171k | webdev, javascript, typescript, programming | In the rapidly evolving world of JavaScript development, ensuring the integrity of data is paramount. Whether you're developing a full-stack application, working on the frontend, or managing a complex backend system, the need to validate data consistently and reliably is crucial. Enter Zod, a powerful TypeScript-first ... | abhilaksharora |

1,894,218 | Elixir Tricks & Tips for develop & debugging | Intro I will continue add tips & tricks for develop/debugging with Elixir in this... | 0 | 2024-06-20T02:11:31 | https://dev.to/manhvanvu/elixir-tricks-tips-for-develop-debugging-1p0p | elixir, tips, tricks | ## Intro

I will keep adding tips & tricks for developing and debugging with Elixir to this post, to share my experience with Elixir.

## Add an .iex.exs file for easier development & debugging

Add an `.iex.exs` file to the root folder of your Elixir app, then:

1. Add aliases to save typing

For example, add:

```Elixir

alias MyApp.Background.Worker... | manhvanvu |

1,894,216 | VigRXPlus: Unveiling My Authentic Journey to Enhanced Performance | ⭐ Exploring the Science Behind VigRXPlus Understanding the Ingredients When... | 0 | 2024-06-20T02:07:10 | https://dev.to/superdeals/vigrxplus-unveiling-my-authentic-journey-to-enhanced-performance-54ec | ## ⭐ Exploring the Science Behind VigRXPlus

### Understanding the Ingredients

When it comes to **male enhancement supplements**, one key factor that sets VigRXPlus apart is its powerful blend of natural ingredients. From Korean Red Ginseng to S... | superdeals | |

1,894,215 | Lock / Mutex to a post graduate CS student (Difficulty 4) | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-20T02:00:31 | https://dev.to/sauravshah31/lock-mutex-to-a-post-graduate-cs-student-difficulty-4-m52 | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

A mutex uses architecture-specific atomic instructions (uninterruptible) until successful, allowing thread access to a critical section. For non-atomic architectures, complex logic invo... | sauravshah31 |

1,894,214 | How the Bathroom app offers a real-life solution to a real-world problem | We've all seen the hype around the next big app. But sometimes it's the simple, practical solutions... | 0 | 2024-06-20T01:59:40 | https://dev.to/kevinraposo/how-the-bathroom-app-offers-a-real-life-solution-to-a-real-world-problem-599 | webdev, bootstrap, opinion, opensource | We've all seen the hype around the next big app. But sometimes it's the simple, practical solutions that really shine.

Take the [Bathroom App](https://bathroom.app) – it tackles a universal problem with elegance and a focus on user needs. The concept is straightforward: find a bathroom near you, fast.

But where it ... | kevinraposo |

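1,894,213 | Variable scope and lifetime | A variable's scope and its lifetime sound similar, but they mean different things: scope refers to the places in the code where the variable is valid; you can also think of it as the places where, if you write the variable's name, the compiler recognizes it,... | 0 | 2024-06-20T01:58:12 | https://dev.to/codemee/bian-shu-de-you-xiao-fan-wei-scope-yu-sheng-ming-zhou-qi-life-time-2h3l | python, cpp | A variable's scope and its lifetime sound similar, but they mean different things:

- Scope refers to the places in the code where the variable is valid. You can also think of it as the places where, if you write the variable's name, the compiler recognizes it, meaning the name is valid there and the variable's contents can be accessed.

- Lifetime refers to the point in time during execution at which memory is allocated for the variable, and the point in time at which that allocated memory is released.

Clearly, whenever execution reaches code inside the variable's scope, it must also be within the variable's lifetime; otherwise the variable could not be accessed. The reverse does not hold: at run time a variable can still be within its lifetime and yet be inaccessible because the current point of execution lies outside its scope. For example, here is a case in Python: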

```python

>>... | codemee |

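1,894,212 | Digging into history: why "operator" is translated as 『運算子』 | Most programming textbooks translate operator as 『運算子』. I have always been puzzled by the 『子』: what exactly does it mean? Some say this 『子』 is just a word-final suffix, like the 『子』 in 『帽子』 (hat), 『鞋子』 (shoe), and so on,... | 0 | 2024-06-20T01:48:14 | https://dev.to/codemee/kao-gu-operator-wei-shi-mo-fan-yi-wei-yun-suan-zi--2d9m | 詞辨, 翻譯 | Most programming textbooks translate operator as 『運算子』. I have always been puzzled by the 『子』: what exactly does it mean? Some say this 『子』 is just a word-final suffix with no meaning of its own, like the 『子』 in 『帽子』 (hat), 『鞋子』 (shoe), and so on. But the 『子』 in 『運算子』 is pronounced 『ㄗˇ』, not 『ㄗ˙』 as the suffix is, so it does not carry the same meaning.

Because I could not work this out, I used to prefer translating operator as 『運算器』, taking the -er/-or ending to mean the "person" or "tool" that does something. But since this rendering differs from the commonly used 『運算子』, quite a few people told me they could not accept it, yet they could not give a reason for translating it as 『運算子』 either.

Recently, while consulting the 《遠流中文活用大辭典》 dictionary, I found that [in its explanation of the character 『子』](https://lib.... | codemee |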

1,894,211 | Stay Updated with Python/FastAPI/Django: Weekly News Summary (10/06/2024–16/06/2024) | Dive into the latest tech buzz with this weekly news summary, focusing on Python, FastAPI, and Django... | 0 | 2024-06-20T01:42:13 | https://poovarasu.dev/python-fastapi-django-weekly-news-summary-10-06-2024-to-16-06-2024/ | python, django, flask, fastapi | Dive into the latest tech buzz with this weekly news summary, focusing on Python, FastAPI, and Django updates from June 10th to June 16th, 2024. Stay ahead in the tech game with insights curated just for you!

This summary offers a concise overview of recent advancements in the Python/FastAPI/Django framework, providin... | poovarasu |

1,894,209 | Stay Updated with PHP/Laravel: Weekly News Summary (10/06/2024–16/06/2024) | Dive into the latest tech buzz with this weekly news summary, focusing on PHP and Laravel updates... | 0 | 2024-06-20T01:40:22 | https://poovarasu.dev/php-laravel-weekly-news-summary-10-06-2024-to-16-06-2024/ | php, laravel | Dive into the latest tech buzz with this weekly news summary, focusing on PHP and Laravel updates from June 10th to June 16th, 2024. Stay ahead in the tech game with insights curated just for you!

This summary offers a concise overview of recent advancements in the PHP/Laravel framework, providing valuable insights fo... | poovarasu |

1,894,205 | Item 36: Use the EnumSet class instead of bit fields | 1. Traditional Use of Bit Fields: Enumeration with Powers of 2: Each enum constant is... | 0 | 2024-06-20T01:37:10 | https://dev.to/giselecoder/item-36-use-a-classe-enumset-em-vez-dos-campos-de-bits-1gh6 | java, effectivava, development, enums | **1. Traditional Use of Bit Fields:**

- Enumeration with Powers of 2:

Each enum constant is assigned a power of 2.

Combining Constants: The bitwise OR operation is used to combine constants.

- Set Operations:

Union and intersection are performed efficiently with bitwise arithmetic.
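The bit-field technique described above can be sketched in Java roughly as follows, next to the `EnumSet` form the item's title recommends. This is only an illustrative sketch: the class `TextStyles`, its constants, the `Style` enum, and the helper methods `union` and `intersection` are hypothetical names chosen for this example and do not come from the original post.

```java
import java.util.EnumSet;
import java.util.Set;

// Hypothetical example class; all names here are illustrative assumptions.
final class TextStyles {
    // Traditional bit field: each constant is a distinct power of 2.
    static final int STYLE_BOLD = 1 << 0;      // 1
    static final int STYLE_ITALIC = 1 << 1;    // 2
    static final int STYLE_UNDERLINE = 1 << 2; // 4

    // Enum used for the EnumSet alternative.
    enum Style { BOLD, ITALIC, UNDERLINE }

    // Union of two bit fields via bitwise OR.
    static int union(int a, int b) {
        return a | b;
    }

    // Intersection of two bit fields via bitwise AND.
    static int intersection(int a, int b) {
        return a & b;
    }

    public static void main(String[] args) {
        // Bit-field version: the combined value prints as an opaque number.
        int flags = union(STYLE_BOLD, STYLE_ITALIC);
        System.out.println(flags); // 3
        System.out.println(intersection(flags, STYLE_ITALIC | STYLE_UNDERLINE)); // 2

        // EnumSet version: the same sets, type-safe and readable when printed.
        Set<Style> styles = EnumSet.of(Style.BOLD, Style.ITALIC);
        Set<Style> common = EnumSet.copyOf(styles);
        common.retainAll(EnumSet.of(Style.ITALIC, Style.UNDERLINE));
        System.out.println(styles); // [BOLD, ITALIC]
        System.out.println(common); // [ITALIC]
    }
}
```

Both halves compute the same union and intersection; the difference is that the `int` version prints as a bare number, while the `EnumSet` version keeps the constant names.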

**2. Des... | giselecoder |

1,890,795 | Exploring the full potential of the Logto Management API | In this article, we will reemphasize the definition of the Logto Management API, explain how it... | 0 | 2024-06-20T01:34:43 | https://blog.logto.io/explore-management-api/ | webdev, programming, identity, opensource | In this article, we will reemphasize the definition of the Logto Management API, explain how it works, and showcase typical scenarios to boost your productivity and unlock more use cases.

---

As an infrastructure service, we strive to make using our Logto Management API easier and faster. We've recently updated our gu... | palomino |

1,894,204 | SDLC without DevOps | The Software Development Life Cycle (SDLC) is a structured process that guides the creation of... | 0 | 2024-06-20T01:31:34 | https://dev.to/abir101/sdlc-without-devops-4jj3 | webdev, devops, deployment, productivity | The Software Development Life Cycle (SDLC) is a structured process that guides the creation of high-quality software. It encompasses all stages, from initial planning and requirements gathering, through coding and testing, to deployment and maintenance. This article explores the SDLC in the context of a Java applicatio... | abir101 |

1,894,190 | Item 35: Use instance fields instead of ordinals | 1. Problems with Using Ordinals: Difficult Maintenance: Reordering the enum constants breaks the... | 0 | 2024-06-20T01:29:02 | https://dev.to/giselecoder/item-35-use-os-campos-de-instancia-em-vez-dos-valores-ordinais-59l1 | java, efectivejava, enums, development | **1. Problems with Using Ordinals:**

- Difficult Maintenance:

Reordering the enum constants breaks any logic that depends on the ordinal() method.

- Int Value Limitations:

It is not possible to associate multiple constants with the same int value.

- Dummy Constants Required:

For intermediate int values without a name... | giselecoder |

1,894,203 | project ideas topic | develop a chat AI into our existing Angular application integrate payment gateway angular | 0 | 2024-06-20T01:23:58 | https://dev.to/shivam_sahu_704d021337aec/project-ideas-topic-31f0 | develop a chat AI into our existing Angular application

integrate payment gateway angular

| shivam_sahu_704d021337aec | |

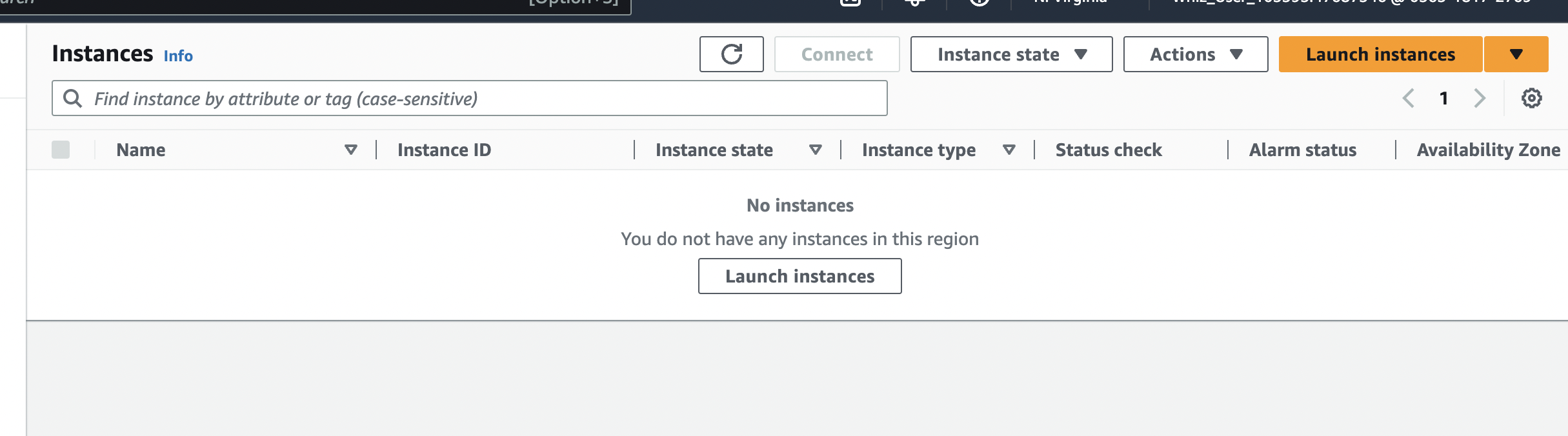

1,422,740 | Install Nginx in Ubuntu 20.04 | 1. Click on Launch Instances 2. Add Server Details Enter Server name Select OS Image here we... | 0 | 2023-04-01T15:13:52 | https://dev.to/anand_h_parmar/install-nginx-in-ubuntu-2004-5ff7 | **1. Click on Launch Instances**

**2. Add Server Details**

- Enter Server name

- Select an OS image; here we select Ubuntu 22.04

- Create a key pair file; this will be used for SSH login

| In today’s post we are going to talk about the leet code study plan SQL 50 and my journey to... | 0 | 2024-06-20T01:20:37 | https://dev.to/caresle/experience-completing-leetcode-sql-50-1-e2a | sql, leetcode | In today’s post we are going to talk about the LeetCode study plan `SQL 50` and my journey to completing this challenge.

The reason I’m taking this is that I want to improve my SQL skills. To be completely honest with you, it’s something that over time has become one of my weaknesses, one of the reasons i... | caresle |

1,894,192 | Some UX Design Principles Everyone Should Know ✨ | Hitting the ground running with a new app idea is tough. There are a million things to do and no time... | 0 | 2024-06-20T01:17:27 | https://dev.to/firas_lajmi_/some-ux-design-principles-everyone-should-know-oe4 | ux, webdev, design | Hitting the ground running with a new app idea is tough. There are a million things to do and no time to do them. You’ve gone through the “justification” phase, explaining to everyone and their mom why the world needs your application. You’ve documented every single forecast and business plan. Now it’s time to start th... | firas_lajmi_ |

1,894,191 | Load Balancer: Ensuring High Availability and Scalability | In today's digital landscape, where downtime can lead to significant revenue loss and a tarnished... | 0 | 2024-06-20T01:14:52 | https://dev.to/iaadidev/load-balancer-ensuring-high-availability-and-scalability-npg | loadbalancer, webdev, network, devops |

In today's digital landscape, where downtime can lead to significant revenue loss and a tarnished reputation, ensuring high availability and scalability of your applications is crucial. A load balancer is an essential component in achieving this goal. This blog will delve into the concept of load balancing, its impor... | iaadidev |

1,894,189 | How to get started in the Salesforce world? | Hey everyone, how's it going? Well, I hope. This will be my first article here, so I sincerely hope... | 0 | 2024-06-20T01:11:19 | https://dev.to/jhownfs/como-iniciar-no-mundo-salesforce-g8i | braziliandevs, beginners, salesforce, apex | Hey everyone, how's it going? Well, I hope. This will be my first article here, so I sincerely hope you enjoy the content. I plan to write more about the Salesforce ecosystem and give you plenty of information.

Well, as you've probably noticed, we'll talk here about how you can get started in the Salesforce world, both as a develop... | jhownfs |

1,894,188 | Windows: installing Node.js with fnm | winget install Schniz.fnm ... | 0 | 2024-06-20T01:04:33 | https://dev.to/sunj/windows-fnmeuro-nodejs-seolci-4hii | windows, fnm, node | ```

winget install Schniz.fnm

```

In PowerShell:

```

fnm env --use-on-cd | Out-String | Invoke-Expression

```

```

fnm install <version>

```

```

fnm use <version>

```

```

node -v

```

_Reference: https://blog.logrocket.com/fnm-rust-built-node-js-version-manager/_ | sunj |

1,894,187 | Effortless VM Data Security with NAKIVO and Proxmox Backup | In the realm of virtualized environments, securing data is paramount. Two powerful tools that have... | 0 | 2024-06-20T00:53:52 | https://dev.to/jeffreyboyle0033/effortless-vm-data-security-with-nakivo-and-proxmox-backup-11gn | vmbackup, dataprotection, nakivo, proxmox | In the realm of virtualized environments, securing data is paramount. Two powerful tools that have gained prominence for [safeguarding virtual machine (VM) data](https://www.wicz.com/story/50890505/simplify-vm-data-protection-with-proxmox-backup-by-nakivo) are NAKIVO Backup & Replication and Proxmox Backup Server. This... | jeffreyboyle0033 |

1,894,186 | Understanding Keywords in SEO: A Comprehensive Guide | Introduction In the vast world of Search Engine Optimization (SEO), keywords play a... | 0 | 2024-06-20T00:47:47 | https://dev.to/gohil1401/understanding-keywords-in-seo-a-comprehensive-guide-lj1 | webdev, beginners, tutorial, seo | ## Introduction

In the vast world of Search Engine Optimization (SEO), keywords play a critical role in helping search engines understand and rank your content. The strategic use of keywords can significantly enhance your website’s visibility and drive targeted traffic. This article delves into the various types of key... | gohil1401 |

1,894,185 | Gummy Bottles: The Sweet and Chewy Beverage You'll Love | screenshot-1712084318540.png Gummy Bottles: The Tasty Drink You'll Adore Gummy bottles are the... | 0 | 2024-06-20T00:47:36 | https://dev.to/susan_bakerf_65f47393fdfb/gummy-bottles-the-sweet-and-chewy-beverage-youll-love-jp9 |

Gummy Bottles: The Tasty Drink You'll Adore

Gummy bottles are the newest innovation in the world of sweet and chewy drinks, and they're taking the market by storm. Not only are they delicious and fun to eat, but they also come with numerous advantages that make them stand out from the c... | susan_bakerf_65f47393fdfb | |

1,894,184 | Cloud Computing: Main benefits and challenges faced in adopting cloud computing. | Cloud computing has become increasingly popular among companies of all sizes. It... | 0 | 2024-06-20T00:41:54 | https://dev.to/evertoncezartech/cloud-computing-principais-beneficios-e-desafios-enfrentados-na-adocao-da-computacao-em-nuvem-1184 | Cloud computing has become increasingly popular among companies of all sizes. It offers a range of benefits that can boost operational efficiency, scalability, and innovation.

In this article, we will discuss the main benefits of adopting cloud computing and the challenges faced... | evertoncezartech | |

1,894,123 | HTMX: why do I use it? | Over the past few days, a number of tweets have appeared with opinions, recommendations, or requests for reference material... | 0 | 2024-06-19T23:29:44 | https://dev.to/eduardojm/htmx-por-que-eu-uso-akl | webdev, javascript, braziliandevs | Over the past few days, a number of tweets have appeared with opinions, recommendations, or requests for reference material on using HTMX. I chose HTMX for some projects, including in production, and given the lack of content showing real-world uses of the library, I decided to start writing. This text, specifically, grew out of some ... | eduardojm |

1,894,183 | Developing a Custom Content Management System with Laravel | Introduction Content Management Systems (CMS) play a vital role in the management of... | 0 | 2024-06-20T00:32:05 | https://dev.to/kartikmehta8/developing-a-custom-content-management-system-with-laravel-5ff7 | javascript, beginners, programming, webdev | ## Introduction

Content Management Systems (CMS) play a vital role in the management of digital content for websites. While there are many popular CMS options available, developing a custom CMS with Laravel has gained significant attention in recent years. Laravel is a powerful and elegant PHP framework that provides ... | kartikmehta8 |

1,894,182 | Understanding HTML5: Key Elements and Attributes | HTML5, the latest version of the Hypertext Markup Language, brings a host of new features and... | 0 | 2024-06-20T00:30:32 | https://dev.to/vidyarathna/understanding-html5-key-elements-and-attributes-5h17 | html, webdev | HTML5, the latest version of the Hypertext Markup Language, brings a host of new features and improvements designed to enhance the web development experience. Whether you're just starting out or looking to brush up on your skills, understanding these core elements and attributes is essential. Here's a guide to some of ... | vidyarathna |

1,894,175 | Saleheen Muhammad Mustak: A Visionary Creative Graphic Designer | Saleheen Muhammad Mustak: A Visionary Creative Graphic Designer Introduction Saleheen Muhammad... | 0 | 2024-06-20T00:23:17 | https://dev.to/saleheenmuhammad/saleheen-muhammad-mustak-a-visionary-creative-graphic-designer-4m4f | webdev, javascript | Saleheen Muhammad Mustak: A Visionary Creative Graphic Designer

Introduction

Saleheen Muhammad Mustak, a 25-year-old visual artist and graphic designer, has carved a unique niche for himself in the dynamic world of design. Hailing from Bhadeswar, Gulapgonj, Sylhet, Bangladesh, Saleheen’s journey is one of creativity,... | saleheenmuhammad |

1,895,243 | How to integrate Tabler Icons into your Phoenix project | Table of Contents 1. Introduction 2. Tracking the Tabler Icons source repository 3.... | 0 | 2024-06-25T16:47:45 | https://farens.me/blog/how-to-integrate-tabler-icons-into-your-phoenix-project | phoenix, tailwindcss, tablericons | ---

title: How to integrate Tabler Icons into your Phoenix project

published: true

date: 2024-06-20 00:00:00 UTC

tags: Phoenix,TailwindCSS,TablerIcons

canonical_url: https://farens.me/blog/how-to-integrate-tabler-icons-into-your-phoenix-project

---

## Table of Contents

1. [Introduction](#introduction)

2. [... | farens |

1,893,009 | Domain Driven Design (DDD) with stakeholders: using content production contracting as an example | In my previous article, I focused on the development part of DDD, but this time I would like to delve... | 0 | 2024-06-19T23:50:47 | https://dev.to/ma2mori/domain-driven-design-ddd-with-stakeholders-using-content-production-contracting-as-an-example-526a | ddd, architecture | In [my previous article](https://dev.to/ma2mori/domain-driven-design-ddd-practice-live-streaming-app-example-3dih), I focused on the development part of DDD, but this time I would like to delve into the preliminary part. So, using the business challenges of a fictitious company as an example, we will consider how to un... | ma2mori |

1,894,125 | 👨💻neofetch is archived: what's next for terminal lovers? | I've been using neofetch since I don't know when but I always like of being extremely... | 0 | 2024-06-19T23:29:11 | https://dev.to/girordo/neofetch-is-archived-whats-next-for-terminal-lovers-342j | terminal, cli, tools, devops | I've been using neofetch for as long as I can remember, and I've always liked how extremely customizable it is.

This reminded me of when `null-ls` for Neovim was archived. It's a bittersweet reminder that things, even software, don't last forever.

I don't like to think of software as "dead." Instead, it's a chance to find new to... | girordo |

1,894,128 | Understanding Flash Loans in DeFi | In the realm of decentralized finance (DeFi), opportunities often arise that demand swift action to... | 0 | 2024-06-19T23:28:06 | https://dev.to/passandscore/understanding-flash-loans-in-defi-33n6 |

In the realm of decentralized finance (DeFi), opportunities often arise that demand swift action to capitalize on them. One such opportunity is arbitrage: when a token on Dex A can be bought at a lower price than it's being sold for on Dex B, there's potential for significant profit, which scales with the capital at ... | passandscore | |

1,894,126 | The First: D&A for beginners | ‘First post here !’ "Do you like data and analytics? Or do you want to learn the essentials to apply... | 0 | 2024-06-19T23:25:19 | https://dev.to/camila_arruda_ec496aeea5c/the-first-da-for-begginers-135i | data, analytics, analyticsbydesign, scrum | ‘First post here !’

"Do you like data and analytics? Or do you want to learn the essentials to apply in your daily work at your company? Favorite my website!"

https://daguiadebolso-iwurln7.gamma.site/

| mckinney_cothran_d91b8663 | |

1,894,113 | Laravel 11 Changes | Here are the changes that have happened in Laravel... | 0 | 2024-06-19T22:58:15 | https://dev.to/msnmongare/laravel-11-changes-1149 | laravel, webdev, beginners, programming | Here are the changes that have happened in Laravel 11:

<https://rezakhademix.medium.com/laravel-11-no-http-kernel-no-casts-no-console-kernel-721c62adb6ef> | msnmongare |

1,893,883 | React: Prevent UI Blocking from Busy Logic Using Web Workers API | I recently received a task from a company I applied to that I had to implement the socket server... | 0 | 2024-06-19T22:57:54 | https://dev.to/lico/react-prevent-ui-blocking-from-busy-logic-using-web-workers-api-59eo | react, webdev, javascript, tutorial | I recently received a task from a company I applied to: implement a socket server using Node.js. Some logic has to run whenever the server receives data from a client. To keep that logic from delaying network interaction, I used worker threads. After that, I was wondering if... | lico |

1,894,111 | HTTP Caching 101 | Table of Contents First of all: What is Caching? What is HTTP Caching? And when should we... | 0 | 2024-06-19T22:50:30 | https://dev.to/godinhojoao/-http-caching-101-4ib0 | webdev, http, cache, basic | ## Table of Contents

- [First of all: What is Caching?](#first-of-all-what-is-caching)

- [What is HTTP Caching? And when should we use it?](#what-is-http-caching-and-when-should-we-use-it)

- [Heuristic caching](#heuristic-caching)

- [Cache-Control HTTP header: Used to define caching policy on HTTP services.](#cache-co... | godinhojoao |

1,894,109 | Exploring Linux Server | Linux Server sudo: command for running a command with administrator permission apt:... | 0 | 2024-06-19T22:50:24 | https://dev.to/ebagabe/explorando-linux-server-30k0 | linux, ubuntu, devops | ## Linux Server

- `sudo`: runs a command with administrator permission

- `apt`: the tool that manages packages

- `update`: checks which packages are available.

```bash

sudo apt update

```

This command checks which packages are available for installation.

- Ao utilizar o coma... | ebagabe |

1,893,802 | Self-host - Part 1 - Securing your remote server | This blog will be first in the three-part series (maybe more, we will see) of self hosting. In first... | 27,778 | 2024-06-19T22:41:40 | https://dev.to/davorj94/self-host-part-1-securing-your-remote-server-3l94 |

This post is the first in a three-part series (maybe more, we'll see) on self-hosting. In the first part, we'll explain how to set up and secure your self-hosted server. The second part will address zero-downtime deployment using Docker Swarm. In the third part, we'll talk about backing up your databases.

... | davorj94 | |

1,894,108 | How to Register Middleware in Laravel 11 | Middleware is an essential component in Laravel applications, allowing you to filter HTTP requests... | 0 | 2024-06-19T22:41:09 | https://dev.to/msnmongare/how-to-register-middleware-in-laravel-11-3mkd | laravel, webdev, beginners, php | Middleware is an essential component in Laravel applications, allowing you to filter HTTP requests entering your application. Whether you need to authenticate users, log requests, or enforce specific conditions, middleware provides a flexible way to handle these tasks. In this guide, we will walk through the process of... | msnmongare |

1,894,107 | I want Some one to Coach me in the tech Industry. | Help me become a company owner. I have the passion and have already started some projects | 0 | 2024-06-19T22:38:23 | https://dev.to/harber_mina_ce3122c1926e4/i-want-some-one-to-coach-me-in-the-tech-industry-2leb | Help me become a company owner. I have the passion and have already started some projects | harber_mina_ce3122c1926e4 | |

1,894,104 | amna 1 | A post by Mckinney Cothran | 0 | 2024-06-19T22:18:58 | https://dev.to/mckinney_cothran_d91b8663/amna-cosmetic-1-l2b |

<iframe width="560" height="315" src="https://www.youtube.com/embed/dRaAirc9sYM?si=fJfztC78tvcgv2r1" title="YouTube video player" frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share" referrerpolicy="strict-origin-when-cross-origin" allowfullscreen>... | mckinney_cothran_d91b8663 | |

1,894,103 | Reactive Streams in Java: Using Project Reactor | Introduction In the world of modern application development, the ability to handle... | 0 | 2024-06-19T22:17:43 | https://dev.to/tutorialq/reactive-streams-in-java-using-project-reactor-d0h | reactivestreams, projectreactor, javareactive, eventdriven | ### Introduction

In the world of modern application development, the ability to handle asynchronous data streams efficiently is critical. Reactive Streams provide a powerful approach to managing this, and Project Reactor, a library for building non-blocking applications on the JVM, is at the forefront of this paradigm... | tutorialq |

1,889,566 | Building Core Banking: A Blueprint for Modern Financial Systems - Introduction | The central nervous system of a bank’s operations is its core banking system. This vital component... | 27,783 | 2024-06-19T22:15:21 | https://dev.to/claretnnamocha/building-core-banking-a-blueprint-for-modern-financial-systems-introduction-4d13 | microservices, corebanking, security, architecture | The central nervous system of a bank’s operations is its core banking system. This vital component oversees crucial daily tasks, including monitoring cash flows, administering customer accounts, and maintaining smooth operations. It’s the foundation that enables efficient banking services for customers.

Core banking r... | claretnnamocha |

1,894,101 | OdinBot.io - The Best SOL Copytrader Bot on the Market | The Best Copytrading Bots for SOL in 2024: A Comprehensive Comparison In the ever-evolving landscape... | 0 | 2024-06-19T22:06:27 | https://dev.to/odinbot/odinbotio-the-best-sol-copytrader-bot-on-the-market-41o0 | solana, copytrading | The [Best Copytrading Bots for SOL](https://odinbot.io) in 2024: A Comprehensive Comparison

In the ever-evolving landscape of cryptocurrency, copytrading bots have become indispensable tools for both novice and seasoned traders. These bots enable users to replicate the trades of successful investors, known as "whales,... | odinbot |

1,894,100 | Key metrics to measure web performance: a short introduction | Monitoring web performance is crucial for ensuring optimal user experience and business success. Here... | 0 | 2024-06-19T22:01:26 | https://dev.to/nosylasairaf/key-metrics-to-measure-web-perfomance-an-short-introduction-2dmp | Monitoring web performance is crucial for ensuring optimal user experience and business success. Here are some key metrics to consider:

## 1. **Page Load Time**

Page load time measures how long it takes for a web page to fully load in a user's browser. Faster load times improve user satisfaction and engagement.

| developercode | |

1,893,797 | How to create and connect to a Linux VM using a Public Key. | A Linux virtual machine (VM) can be created using the Azure portal. This guide will show you how to... | 0 | 2024-06-19T21:52:58 | https://dev.to/laoluafolami/how-to-create-and-connect-to-a-linux-vm-using-a-public-key-2jhb | devops, cloud, azure | A Linux virtual machine (VM) can be created using the Azure portal. This guide will show you how to use the Azure portal to deploy a Linux virtual machine using Ubuntu Server 22.04 LTS as the operating system (OS) image. A connection will be established to the VM using Secure Shell (SSH) with a public key and the NGINX... | laoluafolami |

1,892,983 | Simplified Virtual Development Environment on the Browser: GitHub Codespace | Starting my cloud journey, one of the biggest challenges I faced was finding a place to practice... | 0 | 2024-06-19T21:35:39 | https://paschalogu.notion.site/Simplified-Virtual-Development-Environment-on-the-Browser-GitHub-Codespace-07d5fe369e2840a2b7e0caae1e4f9ca9 | virtualmachine, github, development, codespace | Starting my cloud journey, one of the biggest challenges I faced was finding a place to practice Linux commands. GitHub Codespace has completely solved this problem.

As a seasoned professional, imagine being able to take your entire local development environment with you, minus the hassle of carrying your computer. Gi... | paschalogu |
