id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

1,885,151 | MySQL vs Cassandra: Everything You Need to Know | When it comes to choosing a database for your project, two popular options often come to mind: MySQL... | 0 | 2024-06-12T05:05:31 | https://five.co/blog/mysql-vs-cassandra/ | mysql, cassandra, database, learning | <!-- wp:paragraph -->

<p>When it comes to choosing a database for your project, two popular options often come to mind: <a href="https://www.mysql.com/">MySQL</a> and <a href="https://cassandra.apache.org/_/index.html">Cassandra</a>. Both databases have significant traction in the developer community, but they cater to... | domfive |

1,885,148 | Chain - a Goofy, Functional, Tree-backed List | What? Java has array-based Lists for efficient random access; there are LinkedList for... | 0 | 2024-06-12T05:04:14 | https://dev.to/fluentfuture/chain-a-goofy-functional-tree-backed-list-34dm | java, functional, tree, immutable | ## What?

Java has array-based `List`s for efficient random access, and `LinkedList` for efficient appending. Who needs a tree for a List?

Well, hear me out, just for fun, alright?

## Once upon a time

Agent Aragorn (Son of Arathorn), Jonny English and Smith collaborated on a bunch of missions.

The `Mission` ... | fluentfuture |

1,885,150 | Buy Natural Gemstone Rings Rudraksha Online Bhagya G | Discover a world of natural beauty and spiritual wellness at Bhagya G. We specialize in offering a... | 0 | 2024-06-12T05:00:09 | https://dev.to/bhagyag1/buy-natural-gemstone-rings-rudraksha-online-bhagya-g-1467 | gemstone, rudraksha, buy, online | Discover a world of natural beauty and spiritual wellness at [Bhagya G](https://bhagyag.com/). We specialize in offering a wide range of high-quality natural gemstone rings and authentic Rudraksha beads, carefully selected to enhance your well-being and infuse you

I wrote this article to share a bit of what I've learned in the [PICK](https://www.linuxtips.io/pick) from [LinuxTips](https://www.linuxtips.io/). So, grab your drink and join me.

It all started when, sometimes, security tools... | batistagabriel |

1,868,151 | TIL: How to declutter sites with uBlock Origin filters | I originally posted this post on my blog a long time ago in a galaxy far, far away. I didn't know I... | 0 | 2024-06-12T05:00:00 | https://canro91.github.io/2023/11/13/DeclutteringUBlockOrigin/ | todayilearned, productivity, design, browser | _I originally posted this post on [my blog](https://canro91.github.io/2023/11/13/DeclutteringUBlockOrigin/) a long time ago in a galaxy far, far away._

I didn't know I could restyle elements on a page with uBlock Origin, a "free, open-source ad content blocker."

The other day, while reading HackerNews, I found [this ... | canro91 |

1,838,296 | From Keyframes to Keycaps: A Journey Through Animation Design | The world of animation brings characters and stories to life with movement and magic. But before the... | 27,353 | 2024-06-12T05:00:00 | https://dev.to/shieldstring/from-keyframes-to-keycaps-a-journey-through-animation-design-419c | design, animation, career | The world of animation brings characters and stories to life with movement and magic. But before the final product graces our screens, there's a meticulous process behind the scenes. This article takes you on a journey from the foundational concept – keyframes – to the final, interactive element – the keycap on a keybo... | shieldstring |

1,885,149 | Get Geeky with Python: Build a System Monitor with Flair | Introduction Hey there, fellow coders! Today, I'm super excited to share an awesome... | 0 | 2024-06-12T04:58:40 | https://dev.to/pranjol-dev/get-geeky-with-python-build-a-system-monitor-with-flair-gh2 | python, opensource, sysadmin, beginners | ### Introduction

Hey there, fellow coders! Today, I'm super excited to share an awesome project I recently worked on—a Python System Monitor. This isn't just any ordinary system monitor; it comes with a splash of color and a whole lot of flair! Whether you're a beginner looking to level up your Python skills or an exp... | pranjol-dev |

1,885,147 | Dog Boarding Melbourne | THE BEST FOR YOUR PET Dog Boarding Melbourne We know you don't want to leave them but when you need... | 0 | 2024-06-12T04:54:26 | https://dev.to/adogsdomain/dog-boarding-melbourne-5h8 | melbournedogboarding, dogboardinginmelbourne, dogboardingmelbourne | THE BEST FOR YOUR PET

[Dog Boarding Melbourne](https://www.adogsdomain.com.au/)

We know you don't want to leave them, but when you need to, rest assured that we will make sure your pet feels comfortable and relaxed. Whether it's their first boarding experience or they’re seasoned regulars, their wellbeing is ce... | adogsdomain |

1,885,146 | How to resolve Docker Compose Warning WARN[0000] Found orphan containers | Recently I got the docker compose error: Warning WARN[0000] Found orphan containers... | 0 | 2024-06-12T04:48:20 | https://dev.to/almatins/how-to-resolve-docker-compose-warning-warn0000-found-orphan-containers-4dfi | docker, dockercompose, linux, containers | Recently I got the docker compose error:

```Warning WARN[0000] Found orphan containers ([container-name]) for this project. If you removed or renamed this service in your compose file, you can run this command with the --remove-orphans flag to clean it up.```

when I was trying to deploy more than 1 Postgresql containe... | almatins |

1,885,145 | What is Branding? Understanding its Importance in 2024 | In today's fast-paced and highly competitive business landscape, branding plays a pivotal role in... | 0 | 2024-06-12T04:48:09 | https://dev.to/jkbranding/what-is-branding-understanding-its-importance-in-2024-42gc | In today's fast-paced and highly competitive business landscape, branding plays a pivotal role in shaping the perception of companies, products & services in the minds of consumers. Understanding the essence of branding and its significance is crucial for businesses aiming to thrive and succeed in 2024 and beyond.

**D... | jkbranding | |

1,885,143 | How to resolve Docker Compose Warning WARN[0000] Found orphan containers | Recently I got the docker compose error: Warning WARN[0000] Found orphan containers... | 0 | 2024-06-12T04:45:50 | https://dev.to/sisproid/how-to-resolve-docker-compose-warning-warn0000-found-orphan-containers-2a11 | docker, linux, dockercompose | Recently I got the docker compose error:

```Warning WARN[0000] Found orphan containers ([container-name]) for this project. If you removed or renamed this service in your compose file, you can run this command with the --remove-orphans flag to clean it up.```

when I was trying to deploy more than 1 Postgresql containe... | sisproid |

1,847,053 | Kubernetes Dashboard Part 3: Helm Release Management | TL;DR: In this blog, the authors talk about the Helm dashboard by Devtron and how it can solve... | 27,311 | 2024-06-12T04:43:16 | https://devtron.ai/blog/kubernetes-dashboard-for-helm-release-management/ | helm, kubernetes, devtron, devops | TL;DR: In this blog, the authors talk about the Helm dashboard by Devtron and how it can solve various issues related to the Helm CLI and help you manage everything around Helm through an intuitive dashboard.

This blog will discuss how the Helm dashboard is used to view the installed Helm charts, see their revision histor... | devtron_inc |

1,885,141 | How Should You Handle Negative Reviews? | Buy Yelp Reviews You may have an awesome business, providing an awesome service or product, but... | 0 | 2024-06-12T04:42:08 | https://dev.to/alfred_ben/how-should-you-handle-negative-reviews-5cmd | webdev, javascript, beginners, programming | [Buy Yelp Reviews](https://mangocityit.com/service/buy-yelp-reviews/)

You may have an awesome business, providing an awesome service or product, but without proper exposure, you cannot grow. However, when you have good reviews on a site like Yelp with 178 million visitors a month, the game changes.

You can grow your ... | alfred_ben |

1,885,140 | Salesforce Data Cloud Implementation Strategies 2024 | In the ever-evolving digital landscape, businesses are constantly seeking ways to optimize their... | 0 | 2024-06-12T04:40:12 | https://dev.to/shruti_sood_543de8c196a4a/salesforce-data-cloud-implementation-strategies-2024-g06 | In the ever-evolving digital landscape, businesses are constantly seeking ways to optimize their operations and deliver superior customer experiences. Salesforce Data Cloud has emerged as a transformative tool, providing businesses with the capability to harness their data effectively. This post will explore the key st... | shruti_sood_543de8c196a4a | |

1,885,139 | Issues with Modern Games, or, How to Engage a Game's Community | This is an important topic because I think that we're starting to stray away from everlasting games.... | 0 | 2024-06-12T04:28:46 | https://dev.to/chigbeef_77/issues-with-modern-games-or-how-to-engage-a-games-community-1ic5 | gamedev | This is an important topic because I think that we're starting to stray away from everlasting games. Before I go on: great games are still being made, and I do get excited when I hear about all the cool games my friends are playing and all the features they have. Sometimes it's just amazing what is being developed.

We're go... | chigbeef_77 |

1,885,137 | Access Modifiers in TypeScript: The Gatekeepers | Access modifiers in TypeScript are essential tools for managing the accessibility of class members... | 27,696 | 2024-06-12T04:24:37 | https://dev.to/nahidulislam/access-modifiers-in-typescript-the-gatekeepers-50i | typescript, webdev, programming, learning | Access modifiers in TypeScript are essential tools for managing the accessibility of class members (properties and methods) within our code. By controlling who can access and modify these members, access modifiers help us implement encapsulation, a core principle of object-oriented programming. We can use class members... | nahidulislam |

1,885,135 | Apple=1 | A post by Ledgardo Diqcuiatco Lacson Jr aka Xhino or Ardy (Roboardy) | 0 | 2024-06-12T04:17:48 | https://dev.to/roboardy/apple1-1lj0 | roboardy | ||

1,885,134 | Asset Management and Trading Innovation: Gametop Brings Higher Liquidity to Game NFTs | NFTs are reshaping the future of the gaming industry. Gametop is committed to providing a unique and... | 0 | 2024-06-12T04:17:34 | https://dev.to/gametopofficial/asset-management-and-trading-innovation-gametop-brings-higher-liquidity-to-game-nfts-53dj | NFTs are reshaping the future of the gaming industry. Gametop is committed to providing a unique and secure integrated gaming environment for players and developers through its innovative NFT asset management and trading platform, ensuring users have the best gaming experience. The emergence of NFTs not only guarantees... | gametopofficial | |

1,247,502 | Stop Hardcoding Maps Platform API Keys! | One of the really cool things about the new suite of APIs that Google Maps Platform has been... | 27,695 | 2024-06-12T04:15:41 | https://dev.to/bamnet/stop-hardcoding-maps-platform-api-keys-5c2n | googlemaps, jwt, firebase, googlecloud | One of the really cool things about the new suite of APIs that Google Maps Platform has been releasing lately ([Routes API](https://developers.google.com/maps/documentation/routes), [Places API](https://developers.google.com/maps/documentation/places/web-service/op-overview), etc) is that they look and feel a lot like ... | bamnet |

1,885,133 | Asset Management and Trading Innovation: Gametop Brings Higher Liquidity to Game NFTs | A post by 游戏顶部 | 0 | 2024-06-12T04:15:04 | https://dev.to/gametopofficial/asset-management-and-trading-innovation-gametop-brings-higher-liquidity-to-game-nfts-3gb0 | gametopofficial | ||

1,885,132 | Navigating ADHD and Substance Use with Dr. Hanid Audish: Insights into Dangers and Preventive Measures | Attention Deficit Hyperactivity Disorder (ADHD) is a neurodevelopmental disorder characterized by... | 0 | 2024-06-12T04:13:00 | https://dev.to/drhanidaudish/navigating-adhd-and-substance-use-with-dr-hanid-audish-insights-into-dangers-and-preventive-measures-2e3b | Attention Deficit Hyperactivity Disorder (ADHD) is a neurodevelopmental disorder characterized by symptoms of inattention, impulsivity, and hyperactivity. While attention deficit hyperactivity disorder primarily affects children and adolescents, it can persist into adulthood, presenting unique challenges and vulnerabil... | drhanidaudish | |

1,885,131 | Exploring ADHD in Sports with Dr. Hanid Audish: Advantages, Hurdles, and Strategies for Triumph | Attention Deficit Hyperactivity Disorder (ADHD) is a neurodevelopmental disorder characterized by... | 0 | 2024-06-12T04:11:39 | https://dev.to/drhanidaudish/exploring-adhd-in-sports-with-dr-hanid-audish-advantages-hurdles-and-strategies-for-triumph-4j62 | Attention Deficit Hyperactivity Disorder (ADHD) is a neurodevelopmental disorder characterized by symptoms of inattention, hyperactivity, and impulsivity. While ADHD poses unique challenges in various aspects of life, including academic performance and social interactions, its impact on participation in sports and phys... | drhanidaudish | |

1,885,130 | Exploring TypeScript Functions: A Comprehensive Guide | TypeScript, a statically typed superset of JavaScript, offers a myriad of features that enhance the... | 0 | 2024-06-12T04:11:02 | https://dev.to/hasancse/exploring-typescript-functions-a-comprehensive-guide-3hii | webdev, typescript, programming, javascript | TypeScript, a statically typed superset of JavaScript, offers a myriad of features that enhance the development experience and help catch errors early in the development process. One of the core components of TypeScript is its robust support for functions. In this blog post, we’ll dive into the various aspects of TypeS... | hasancse |

1,885,129 | Celebrating ADHD Neurodiversity: Fostering Acceptance and Inclusive Environments with Dr. Hanid Audish | ADHD, or Attention Deficit Hyperactivity Disorder, is a neurodevelopmental condition that affects... | 0 | 2024-06-12T04:09:40 | https://dev.to/drhanidaudish/celebrating-adhd-neurodiversity-fostering-acceptance-and-inclusive-environments-with-dr-hanid-audish-j33 | ADHD, or Attention Deficit Hyperactivity Disorder, is a neurodevelopmental condition that affects millions of children and adolescents worldwide. While individuals with ADHD may face challenges in areas such as attention, impulse control, and hyperactivity, they also possess unique strengths and talents that contribute... | drhanidaudish | |

1,885,127 | Tips of Releasing the Magic of "Read My Essay to Me" for Developers 2024 | Discover how developers can harness the "Read My Essay to Me" text-to-speech tool to boost... | 0 | 2024-06-12T04:08:24 | https://dev.to/novita_ai/tips-of-releasing-the-magic-of-read-my-essay-to-me-for-developers-2024-2pn | readmyessaytome, texttospeech, tts, ai |

Discover how developers can harness the "Read My Essay to Me" text-to-speech tool to boost accessibility, enhance user experience, and streamline coding processes. Learn more about its benefits and integration tips.

## Key Highlights

- "Read My Essay to Me" is a state-of-the-art text-to-speech tool that seamlessly t... | novita_ai |

1,885,128 | The High Ranking Social Media Company Transforming Dubai’s Digital Landscape | In the heart of the bustling metropolis of Dubai, where innovation meets tradition, one company... | 0 | 2024-06-12T04:07:42 | https://dev.to/hive_mind_1ff41438cfa282f/the-high-ranking-social-media-company-transforming-dubais-digital-landscape-5471 | dubai, webdev, beginners | In the heart of the bustling metropolis of Dubai, where innovation meets tradition, one company stands out as a beacon of excellence in the social media sphere: Hive Mind. With a reputation as a [high ranking social media company in Dubai](https://wearehivemind.com/social-media-agency-dubai/), Hive Mind has transformed... | hive_mind_1ff41438cfa282f |

1,883,775 | On Writing a Sane API | Over my years on the ComputerCraft Discord server, I've had the opportunity to witness the creation... | 0 | 2024-06-12T04:00:00 | https://gist.github.com/MCJack123/39ac0847579b3676cc098aca5860c758 | api, design | Over my years on the [ComputerCraft Discord server](https://discord.computercraft.cc), I've had the opportunity to witness the creation of numerous APIs/libraries of all sorts. I've gotten to examine these APIs in depth, as well as answer questions involving the APIs that the creators or users have. As an API designer ... | jackmacwindows |

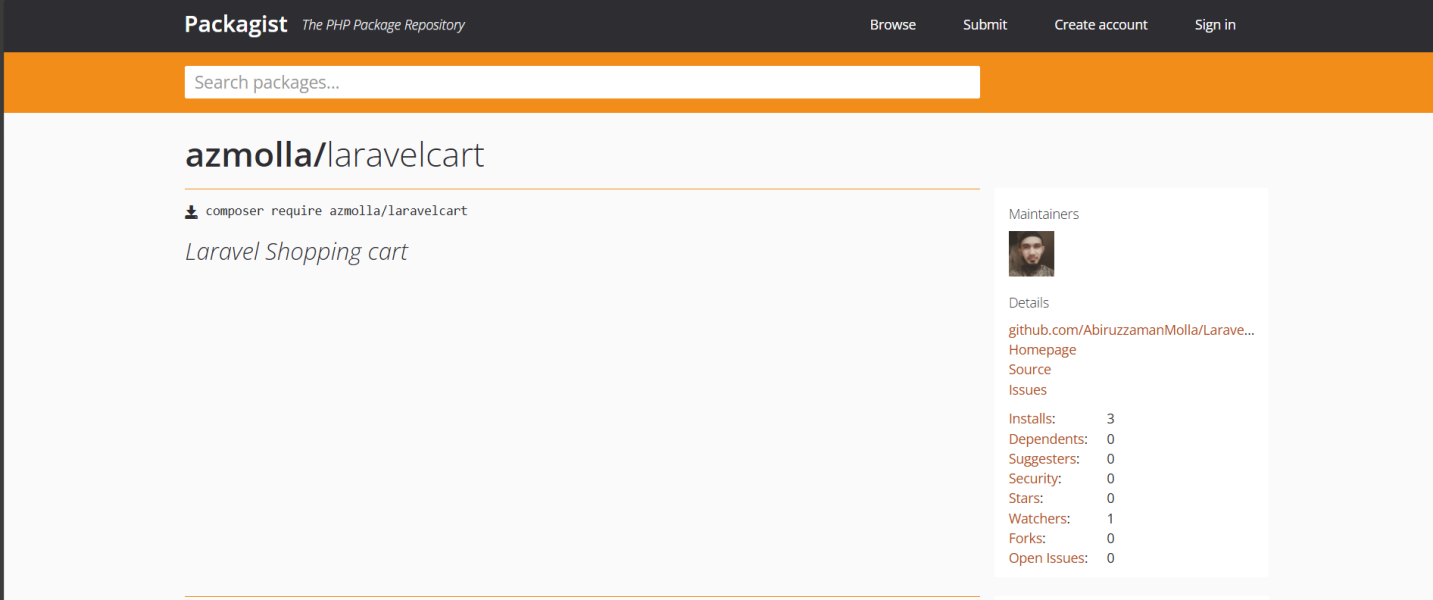

1,885,126 | Introducing LaravelCart: A Streamlined Shopping Cart Solution for Laravel Developers | Do you find managing shopping carts in your Laravel applications cumbersome? Look no further than... | 0 | 2024-06-12T03:54:48 | https://dev.to/abiruzzamanmolla/introducing-laravelcart-a-streamlined-shopping-cart-solution-for-laravel-developers-287a | laravel, laravelcart, laravelshoppingcart, laravel11 | ---

title: Introducing LaravelCart: A Streamlined Shopping Cart Solution for Laravel Developers

published: true

description:

tags: laravel, laravelcart, laravelshoppingcart, laravel11

# cover_image:

# Use a ratio of... | abiruzzamanmolla |

1,885,124 | API Architecture Styles: Sockets | API architecture is fundamental to modern application development, enabling efficient communication... | 0 | 2024-06-12T03:49:30 | https://dev.to/team3/api-architecture-styles-sockets-4pip | API architecture is fundamental to modern application development, enabling efficient communication between different systems. One architecture style particularly useful for real-time communication is the use of sockets. This article provides a comprehensive introduction to sockets, how they work, their practical appli... | team3 | |

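1,885,123 | 北川源太郎 (KITAGAWA GENTAROU): A Legendary Analyst of the Financial World | 北川 源太郎 KITAGAWA GENTAROU Place of birth: Tokyo. Education: I have been interested in numbers and finance since my student days, and I studied finance at the University of Tokyo, where I graduated. I joined a well-known investment bank and, as a financial analyst,... | 0 | 2024-06-12T03:48:45 | https://dev.to/kitagawag/bei-chuan-yuan-tai-lang-kitagawa-gentaroujin-rong-jie-noreziendoanarisuto-494o | 北川 源太郎 KITAGAWA GENTAROU

Place of birth: Tokyo

Education:

I have been interested in numbers and finance since my student days, and I studied finance at the University of Tokyo, where I graduated. I joined a well-known investment bank and began a career of more than 20 years as a financial analyst.

Brief career history:

As a senior financial analyst, I have extensive investment experience and expertise, am well versed in a range of financial analysis methods, and have a good understanding of many financial products and market trends.

In 2008, the Lehman shock seriously affected my family and my career. Through an introduction from relatives and friends, I met 山口秀久.

He redefined the direction of my life and, through his outstanding personal insight and forecasting ability and his extensive practical experience, once again a high... | kitagawag | |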

1,883,906 | Building a Fort Knox DevSecOps: Comprehensive Security Practices | _Welcome Aboard Week 2 of DevSecOps in 5: Your Ticket to Secure Development Superpowers! Hey there,... | 27,560 | 2024-06-12T03:48:00 | https://dev.to/gauri1504/building-a-fort-knox-devsecops-comprehensive-security-practices-3h7m | devsecops, devops, cloud, security | _Welcome Aboard Week 2 of DevSecOps in 5: Your Ticket to Secure Development Superpowers!

Hey there, security champions and coding warriors!

Are you itching to level up your DevSecOps game and become an architect of rock-solid software? Well, you've landed in the right place! This 5-week blog series is your fast track ... | gauri1504 |

1,863,266 | The radical concept of NixOS and why I love it! | NixOS is one of the most exciting developments in the Linux community in recent years. It is an... | 0 | 2024-06-12T03:47:35 | https://dev.to/prismlabsdev/the-radical-concept-of-nixos-and-why-i-love-it-cfk | linux, learning, nixos | NixOS is one of the most exciting developments in the Linux community in recent years. It is an independently developed Linux distribution based on the Nix package manager. It expands the concept of declarative and reproducible builds found in the Nix package manager to the entire system, bringing unmatched stability to... | jwoodrow99 |

1,885,122 | Pregnancy Termination Clinic California | We understand unwanted pregnancy can put a lot of stress on the physical and mental health of a... | 0 | 2024-06-12T03:47:18 | https://dev.to/hsc78/pregnancy-termination-clinic-california-15k3 | We understand unwanted pregnancy can put a lot of stress on the physical and mental health of a woman. In such situations, Termination of Pregnancy (TOP) or abortion can be a blessing for her. The termination can be safely performed until the end of the second trimester as per state law. There are two types of procedur... | hsc78 | |

1,885,114 | regexp lazy match | case demo string is /sbin/dhclient /xx -4 -d -nw -cf /run/dhclient/dhclient_eth1.conf -pf... | 0 | 2024-06-12T03:33:33 | https://dev.to/eiguleo/regexp-lazy-match-4bjf | # case

The demo string is:

/sbin/dhclient /xx -4 -d -nw -cf /run/dhclient/dhclient_eth1.conf -pf /run/dhclient/dhclient_eth1.pid

# target

/run/dhclient/ is the prefix path. It changes between OS versions, so the goal is to extract /run/dhclient/ from the string.

# code

`echo "/sbin/dhclient /xx -4 -d -nw -cf /run/dhclient/dhclient_eth1.conf -pf /run/... | eiguleo | |
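The post's own command is cut off above, so the following is only a minimal sketch of the lazy-match idea, not the author's solution. It assumes GNU grep built with PCRE support (`-P`); the variable names `line`, `lazy`, and `greedy` and the choice to anchor on the `-cf` option are illustrative assumptions.

```shell
#!/usr/bin/env bash
# Demo string from the post: the dhclient command line.
line='/sbin/dhclient /xx -4 -d -nw -cf /run/dhclient/dhclient_eth1.conf -pf /run/dhclient/dhclient_eth1.pid'

# Lazy: ".+?" stops at the first "/" that is followed by "dhclient_".
# \K drops the "-cf " prefix from the reported match, and "--" keeps
# grep from reading the pattern as an option.
lazy=$(printf '%s\n' "$line" | grep -oP -- '-cf \K.+?/(?=dhclient_)')

# Greedy: ".+" runs to the last "/" that is followed by "dhclient_",
# so it swallows the rest of the -cf argument and part of the -pf one.
greedy=$(printf '%s\n' "$line" | grep -oP -- '-cf \K.+/(?=dhclient_)')

printf 'lazy:   %s\n' "$lazy"
printf 'greedy: %s\n' "$greedy"
```

Because the pattern keys on the `dhclient_` file-name prefix instead of a fixed directory, the lazy version should keep returning only the prefix path if that directory moves in another OS version.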

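1,885,117 | 北川 源太郎 (KITAGAWA GENTAROU): A Financial Strategist Blending Eastern Economics | 北川 源太郎 KITAGAWA GENTAROU Place of birth: Tokyo. Education: I have been interested in numbers and finance since my student days, and I studied finance at the University of Tokyo, where I graduated. I joined a well-known investment bank and, as a financial analyst, 20... | 0 | 2024-06-12T03:33:11 | https://dev.to/kitagawagentarou/bei-chuan-yuan-tai-lang-kitagawa-gentaroudong-yang-jing-ji-torong-he-sitajin-rong-zhan-lue-jia-4kbj | 北川源太郎, 北川源太郎kitagawagentarou | 北川 源太郎 KITAGAWA GENTAROU

Place of birth: Tokyo

Education:

I have been interested in numbers and finance since my student days, and I studied finance at the University of Tokyo, where I graduated. I joined a well-known investment bank and began a career of more than 20 years as a financial analyst.

Brief career history:

As a senior financial analyst, I have extensive investment experience and expertise, am well versed in a range of financial analysis methods, and have a good understanding of many financial products and market trends.

In 2008, the Lehman shock seriously affected my family and my career. Through an introduction from relatives and friends, I met 山口秀久.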

Material Design 3. Material Design 3 is a design system created by Google. In this post, we will learn about the list expansions. The list expansions are lists with items and subit... | leonardorafael |

1,885,072 | The Era of Digital Nomads | SQLynx: The Best Choice for Individual Developers | In the age of digital nomads, where flexibility and remote work have become the norm, choosing the... | 0 | 2024-06-12T03:16:46 | https://dev.to/concerate/the-era-of-digital-nomads-sqlynx-the-best-choice-for-individual-developers-np | In the age of digital nomads, where flexibility and remote work have become the norm, choosing the right tools is crucial for individual developers.

SQLynx stands out as the best choice for personal developers due to its user-friendly interface, powerful features, and efficiency in handling SQL queries and database ma... | concerate | |

1,885,070 | Demystifying the SIP Server: The Heart of Your VoIP Communication | In today's communication landscape, Voice over IP (VoIP) has become a ubiquitous technology. But have... | 0 | 2024-06-12T03:12:36 | https://dev.to/epakconsultant/demystifying-the-sip-server-the-heart-of-your-voip-communication-5hg2 | voip | In today's communication landscape, Voice over IP (VoIP) has become a ubiquitous technology. But have you ever wondered what orchestrates those seamless phone calls over the internet? Enter the SIP server, the unsung hero behind smooth VoIP communication. This article delves into the world of SIP servers, exploring the... | epakconsultant |

1,885,069 | MIMI: Reshaping the DeFi Ecosystem to Build a Secure, Transparent, and Efficient Multi-Chain Investment Platform | In the DeFi field, MIMI is dedicated to providing users with efficient, secure, and transparent... | 0 | 2024-06-12T03:11:16 | https://dev.to/mimi_official/mimi-reshaping-the-defi-ecosystem-to-build-a-secure-transparent-and-efficient-multi-chain-investment-platform-4o9d | In the DeFi field, MIMI is dedicated to providing users with efficient, secure, and transparent financial services. With the rapid development of digital finance, more and more users hope to achieve wealth growth and management by participating in the DeFi ecosystem. Our aim is to ensure that every user, regardless of ... | mimi_official | |

1,885,068 | Lighting the Stage: Mastering Light and Shadow for Stunning 3D Scenes | The magic of 3D graphics lies not just in meticulously crafted models but also in the art of bringing... | 0 | 2024-06-12T03:06:11 | https://dev.to/epakconsultant/lighting-the-stage-mastering-light-and-shadow-for-stunning-3d-scenes-478k | The magic of 3D graphics lies not just in meticulously crafted models but also in the art of bringing them to life with convincing lighting and shading. Just like a well-lit stage elevates a performance, effective lighting techniques can elevate your 3D scenes from sterile to captivating. Let's delve into some key conc... | epakconsultant | |

1,885,067 | Effortless User Management with AWS Cognito | Effortless User Management with AWS Cognito In today's digital landscape, applications... | 0 | 2024-06-12T03:02:26 | https://dev.to/virajlakshitha/effortless-user-management-with-aws-cognito-377j |

# Effortless User Management with AWS Cognito

In today's digital landscape, applications are increasingly reliant on robust and secure user management systems. From handling user authentication and authorization to managing user pr... | virajlakshitha | |

1,885,066 | Unveiling the Magic: A Dive into 3D Graphics Concepts and Techniques | The world of 3D graphics has become ubiquitous, from captivating video games and blockbuster movies... | 0 | 2024-06-12T03:01:27 | https://dev.to/epakconsultant/unveiling-the-magic-a-dive-into-3d-graphics-concepts-and-techniques-1lho | 3d, graphics, webdev | The world of 3D graphics has become ubiquitous, from captivating video games and blockbuster movies to stunning architectural visualizations and even medical simulations. But what brings these three-dimensional worlds to life? Let's delve into some fundamental concepts and techniques that underpin the magic of 3D graph... | epakconsultant |

1,885,065 | The Orchestra of Industry: PLCs, HMIs, and SCADA Systems in Manufacturing | Manufacturing today is a symphony of automation, where machines and computer systems work in concert... | 0 | 2024-06-12T02:53:07 | https://dev.to/epakconsultant/the-orchestra-of-industry-plcs-hmis-and-scada-systems-in-manufacturing-2pd2 | manufacturing | Manufacturing today is a symphony of automation, where machines and computer systems work in concert to produce goods efficiently and precisely. Three key technologies play a vital role in conducting this industrial orchestra: Programmable Logic Controllers (PLCs), Human-Machine Interfaces (HMIs), and Supervisory Contr... | epakconsultant |

1,885,064 | JewelryOnLight | []( ) | 0 | 2024-06-12T02:52:05 | https://dev.to/lan_wang_1f9538b9feb11979/jewelryonlight-45d0 | earrings, bracelets |


| lan_wang_1f9538b9feb11979 |

1,885,063 | JewelryOnLight | A post by lan wang | 0 | 2024-06-12T02:50:37 | https://dev.to/lan_wang_1f9538b9feb11979/jewelryonlight-2o8m | earrings, bracelets |

| lan_wang_1f9538b9feb11979 |

1,885,061 | The current Lakehouse is like a false proposition | From all-in-one machine, hyper-convergence, cloud computing to HTAP, we constantly try to combine... | 0 | 2024-06-12T02:50:06 | https://dev.to/esproc_spl/the-current-lakehouse-is-like-a-false-proposition-2le4 | lackhouse, bigdata, development, programming | From all-in-one machine, hyper-convergence, cloud computing to HTAP, we constantly try to combine multiple application scenarios together and attempt to solve this type of problem through one technology so as to achieve the goal of simple and efficient use. Lakehouse, which is very hot nowadays, is exactly such a techn... | esproc_spl |

1,885,060 | Demystifying Game Development: A Look at Frameworks Like Pygame | Crafting a captivating game requires juggling various elements – graphics, sound, physics, user... | 0 | 2024-06-12T02:47:15 | https://dev.to/epakconsultant/demystifying-game-development-a-look-at-frameworks-like-pygame-2hfg | pygame | Crafting a captivating game requires juggling various elements – graphics, sound, physics, user input, and more. Game development frameworks come to the rescue, providing a foundation upon which you can build your interactive masterpiece. This article delves into the core concepts of frameworks like Pygame, equipping y... | epakconsultant |

1,885,059 | Automating Excel with Power: Building Your Own Plugin using VBA | Microsoft Excel is a powerhouse for data analysis and manipulation. But wouldn't it be great to... | 0 | 2024-06-12T02:41:11 | https://dev.to/epakconsultant/automating-excel-with-power-building-your-own-plugin-using-vba-23fk | vba | Microsoft Excel is a powerhouse for data analysis and manipulation. But wouldn't it be great to extend its capabilities with custom tools tailored to your specific needs? Enter VBA, or Visual Basic for Applications. VBA allows you to write macros and plugins that automate repetitive tasks and enhance Excel's functional... | epakconsultant |

1,885,058 | Hello World | A post by NISHANTH CS | 0 | 2024-06-12T02:37:04 | https://dev.to/nishanth_cs_0c45025324f97/hello-world-28a9 | hello |

| nishanth_cs_0c45025324f97 |

1,885,057 | CAS No.: 26530-20-1: Safety and Usage Guidelines | CAS No. : 26530-20-1, the Safe plus Innovative Component for Your specs Selecting the component and... | 0 | 2024-06-12T02:34:41 | https://dev.to/johnnie_heltonke_fbec2631/cas-no-26530-20-1-safety-and-usage-guidelines-22di | design |

CAS No.: 26530-20-1, the Safe and Innovative Component for Your Needs

Looking for a safe and innovative component for your needs? CAS No.: 26530-20-1 may be the solution for you! This chemical ingredient is commonly used by many different industries, such as food, cosmetics, and p... | johnnie_heltonke_fbec2631 |

1,885,056 | Hierarchical filter on Select tags & Select.Option of Ant Design | Hierarchical filter on Select tags &... | 0 | 2024-06-12T02:34:38 | https://dev.to/trn_thanhhiu_f59ffe159/hierarchical-filter-on-select-tags-selectoption-of-ant-design-2c9i | webdev, react, antd, beginners | {% stackoverflow 78606128 %}

Could someone help me with this, pleaseeee | trn_thanhhiu_f59ffe159 |

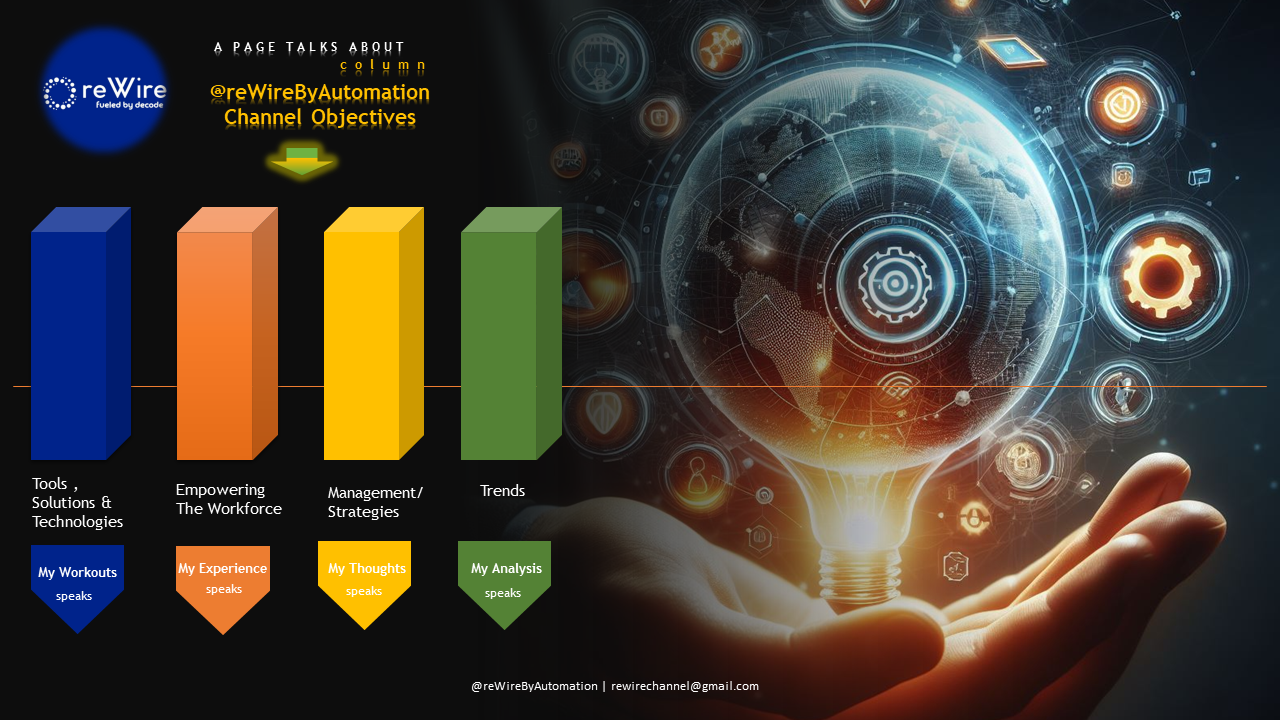

1,885,023 | A PAGE TALKS ABOUT (The 2-Minute Guide: Accessibility Evaluation Approach, Methods, and Tools) | MY WORKOUTS: PICTURE THIS The Accessibility Landscape encompasses Design, Development,... | 0 | 2024-06-12T02:33:00 | https://dev.to/rewirebyautomation/a-page-talks-about-the-2-minute-guide-accessibility-evaluation-approach-methods-and-tools-2km | a11y, testing, automation, qa | **_MY WORKOUTS: PICTURE THIS_**

The **Accessibility Landscape** encompasses **Design, Devel... | rewirebyautomation |

1,885,051 | Research on Binance Futures Multi-currency Hedging Strategy Part 1 | Click the research button on the Dashboard page, and then click the arrow to enter. Open the uploaded... | 0 | 2024-06-12T02:23:41 | https://dev.to/fmzquant/research-on-binance-futures-multi-currency-hedging-strategy-part-1-32a2 | strategy, fmzquant, binance, hedging | Click the research button on the Dashboard page, and then click the arrow to enter. Open the uploaded .pynb suffix file and press shift + enter to run line by line. There are basic tutorials in the usage help of the research environment.

Twinkly is a brand created by Italian tech company Ledworks, a market leader in [smart lights](https://twinkly.com/en-us). Just years after its 2016 launch, Twinkly has already become a global brand, revolutionizing the world ... | twinklyusa | |

1,885,021 | Dynamic CSS Shadows Creation | In this lab, we will explore how to create dynamic shadows using CSS. You will learn how to use the ::after pseudo-element and various CSS properties such as background, filter, opacity, and z-index to create an effect that mimics a box-shadow, but is based on the colors of the element itself. By the end of this lab, y... | 27,689 | 2024-06-12T02:09:19 | https://labex.io/tutorials/css-dynamic-css-shadows-creation-35194 | css, coding, programming, tutorial |

# Dynamic Shadow

`index.html` and `style.css` have already been provided in the VM.

To create a shadow that is based on the colors of an element, follow these steps:

1. Use the `::after` pseudo-element with `position: absolute` and `width` and `height` set to `100%` to fill the available space in the parent eleme... | labby |

1,885,002 | Access Google Cloud Storage from AWS Lambda using Workload Identity Federation | In this post we will look at how to access Google Cloud Storage from AWS Lambda functions using... | 0 | 2024-06-12T01:54:48 | https://dev.to/specky_shooter/access-google-cloud-storage-from-aws-lambda-using-workload-identity-federation-3laj | In this post we will look at how to access Google Cloud Storage from AWS Lambda functions using Google's Workload Identity Federation.

Typically, when you access the resources belonging to one cloud from another cloud or from any other environment, you would use a service account credentials file, but this has a coup... | specky_shooter | |

1,885,014 | How to Make Money with IPTV: A Step-by-Step Guide | Are you interested in making money by selling IPTV services? With the right tools and knowledge, you... | 0 | 2024-06-12T01:48:31 | https://dev.to/4k_ott_iptv_supplier/how-to-make-money-with-iptv-a-step-by-step-guide-3eg6 | iptv, makemoney, iptvreseller, tutorial | Are you interested in making money by selling IPTV services? With the right tools and knowledge, you can start your own IPTV reseller business and earn a steady income. In this tutorial, we will guide you through the process of setting up your business and provide you with the necessary information to get started.

The... | 4k_ott_iptv_supplier |

1,885,013 | Why I Developed a Salesforce Chrome Extension? | In the daily work of Salesforce administrators and developers, efficiency is key. One major challenge... | 0 | 2024-06-12T01:46:25 | https://dev.to/dyn/why-i-developed-a-salesforce-chrome-extension-361a | productivity, typescript, salesforce, chrome |

In the daily work of Salesforce administrators and developers, efficiency is key. One major challenge is quickly locating and accessing Salesforce configurations and metadata. To address this issue, I developed the Salesforce Spotlight Chrome Extension. Here are the reasons behind this development and the benefits it ... | dyn |

1,885,011 | flower with heart | Check out this Pen I made! | 0 | 2024-06-12T01:34:45 | https://dev.to/uzumaki156/flower-with-heart-2c0 | codepen | Check out this Pen I made!

{% codepen https://codepen.io/ahmed-abdo-the-bashful/pen/dyEVqEB %} | uzumaki156 |

1,885,010 | SQL IDEs/Editors for making MySQL usage Easier and more Efficient | As a developer, especially for those who work with MySQL databases, using the right SQL tools is... | 0 | 2024-06-12T01:33:09 | https://dev.to/concerate/sql-ideseditors-for-making-mysql-usage-easier-and-more-efficient-1fgd | For developers, especially those who work with MySQL databases, using the right SQL tools is crucial because it simplifies daily tasks.

Here are the top 3 recommended user-friendly SQL IDEs or SQL Editors:

**1. SQLynx**

SQLynx supports both web-based and client-side operations. It can handle various types of databases... | concerate | |

1,885,009 | CAS No.: 52-51-7: Potential Applications and Benefits | CAS No.: 52-51-7: Potential Applications and Benefits Are you looking for a compound chemical has... | 0 | 2024-06-12T01:29:43 | https://dev.to/walter_davisker_b9f5919a3/cas-no-52-51-7-potential-applications-and-benefits-265n | CAS No.: 52-51-7: Potential Applications and Benefits

Are you looking for a chemical compound that has many prospective uses? One such compound is known as CAS No.: 52-51-7. We'll explore the various advantages, innovations, safety measures, and applications of this versatile chemical.

Advantages and Innovations

CAS No.: 5... | walter_davisker_b9f5919a3 | |

1,885,008 | Behind the Code: Variables And Functions | Hoisting is a fundamental concept in JavaScript that often confounds newcomers and even seasoned... | 0 | 2024-06-12T01:28:40 | https://dev.to/whevaltech/behind-the-code-variables-and-functions-41ih | webdev, javascript, programming, tutorial | Hoisting is a fundamental concept in JavaScript that often confounds newcomers and even seasoned developers. This article aims to demystify hoisting by explaining what it is, how it works, and how it affects the way you write and debug JavaScript code.

## What is Hoisting?

Hoisting is JavaScript's default behavior of m... | whevaltech |

1,391,195 | Git best practices | In this post I share some reflections on Git practices and tools that have helped me in my day-to-day work,... | 0 | 2023-03-16T12:17:03 | https://dev.to/bernardo/boas-praticas-com-git-jdm | git, productivity, devops, braziliandevs | In this post I share some reflections on Git practices and tools that have helped me in my day-to-day work; I hope they help you too.

## Simplify commit histories with git rebase

When you have two branches in a project (for example, a development branch and a main branch), both ... | bernardo |

1,885,001 | Using Secure Base Images | I wrote this article to share a little of what I have learned in LinuxTips' PICK. So, grab... | 0 | 2024-06-12T01:25:28 | https://dev.to/batistagabriel/usando-imagens-base-seguras-2dc7 | docker, containers | 

I wrote this article to share a little of what I have learned in [PICK](https://www.linuxtips.io/pick) from [LinuxTips](https://www.linuxtips.io/). So, grab your drink and follow along.

It all started when, at times, the... | batistagabriel |

1,885,006 | The Role of CAS No.: 26530-20-1 in Modern Chemistry | Introduction Chemistry has plenty of complicated compounds that need recognition of Chemical... | 0 | 2024-06-12T01:24:27 | https://dev.to/walter_davisker_b9f5919a3/the-role-of-cas-no-26530-20-1-in-modern-chemistry-3840 | Introduction

Chemistry has many complex compounds, which are identified by Chemical Abstracts Service (CAS) numbers to avoid confusion between different compounds. CAS No.: 26530-20-1 is a distinct compound in modern chemistry with varied applications. It is actua... | walter_davisker_b9f5919a3 | |

1,885,005 | Crocodile line trading system Python version | Summary People who have done financial trading will probably have an experience. Sometimes... | 0 | 2024-06-12T01:24:24 | https://dev.to/fmzquant/crocodile-line-trading-system-python-version-3pkb | python, trading, cryptocurrency, fmzquant | ## Summary

Anyone who has traded financial markets will probably recognize this experience. Sometimes price fluctuations are regular, but more often they show the unstable state of a random walk. This instability is where market risks and opportunities lie. Instability also means unpredictability, so how to make return... | fmzquant |

1,884,999 | simple ways to sum an array of numbers in golang | Step 1: Loop through the Array Loop through each element of the array to access its values. Step 2:... | 0 | 2024-06-12T01:23:53 | https://dev.to/toluwasethomas/simple-ways-to-sum-an-array-of-numbers-in-golang-1chb | webdev, go, beginners, programming | Step 1: Loop through the Array

Loop through each element of the array to access its values.

Step 2: Declare the result Variable

Declare a variable result to store the cumulative sum. The type of this variable should match the type of the elements in the array (e.g., int, float64, etc.).

Step 3: Accumulate the Sum

Use... | toluwasethomas |
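
The three steps listed in the row above (loop, declare a result, accumulate) can be sketched roughly as follows. This is only an illustration and not the author's code: the `Number` constraint and the `Sum` function are names made up for this sketch, and the generic form assumes Go 1.18 or later.

```go
package main

import "fmt"

// Number is a hypothetical constraint introduced for this sketch;
// it lists the element types the example is meant to cover.
type Number interface {
	~int | ~int64 | ~float64
}

// Sum walks the slice once and accumulates every element into result.
// result has the same type as the elements, as Step 2 recommends.
func Sum[T Number](nums []T) T {
	var result T // zero value, which is 0 for numeric types
	for _, n := range nums {
		result += n // Step 3: accumulate the running total
	}
	return result
}

func main() {
	fmt.Println(Sum([]int{1, 2, 3, 4, 5})) // prints 15
	fmt.Println(Sum([]float64{1.5, 2.5}))
}
```

Using a type parameter here is one design choice among several. A plain `func sumInts(nums []int) int` with the same loop body would work just as well if only one element type is needed.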

1,885,004 | CAS No.: 225708-80-6: Safe Handling Practices | Keep Safe While Using CAS No.: 225708-80-6 What is CAS No.: 225708-80-6? CAS No.: 225708-80-6 is a... | 0 | 2024-06-12T01:20:25 | https://dev.to/walter_davisker_b9f5919a3/cas-no-225708-80-6-safe-handling-practices-3e6m | Keep Safe While Using CAS No.: 225708-80-6

What is CAS No.: 225708-80-6?

CAS No.: 225708-80-6 is a chemical compound used for a variety of purposes in industries such as agriculture, pharmaceuticals, and electronics. This compound is also known as Cyclopropane.

Advantages of CAS No.: 225708-80-6

One of this a... | walter_davisker_b9f5919a3 | |

1,884,996 | 3 Ways to Use the @Lazy Annotation in Spring | Does your Spring application take too long to start? Maybe this annotation could help you. This... | 27,602 | 2024-06-12T00:51:57 | https://springmasteryhub.com/2024/06/11/3-ways-to-use-the-lazy-annotation-in-spring/ | java, spring, springboot, programming |

Does your Spring application take too long to start? Maybe this annotation could help you.

This annotation tells Spring that a bean should be lazily initialized.

Spring will not create this bean at the start of the application. Instead, it will create it only when the bean is requested for the first time.

... | tiuwill |

1,884,995 | The Future of CAS No.: 26530-20-1 in Industry | The Future of CAS No.: 26530-20-1 in Pharmaceutical Industry Introduction Would you know what CAS... | 0 | 2024-06-12T00:50:16 | https://dev.to/walter_davisker_b9f5919a3/the-future-of-cas-no-26530-20-1-in-industry-lpo | The Future of CAS No.: 26530-20-1 in Pharmaceutical Industry

Introduction

Do you know what CAS No.: 26530-20-1 is? It is a chemical that can be used in many different markets. CAS No.: 26530-20-1 has a great many benefits, which makes it truly helpful for the companies that use it. People are cons... | walter_davisker_b9f5919a3 | |

1,884,992 | Deconstructing Search Input Box on Fluent UI's Demo Website… | The search box component that we see on the demo website is actually implemented using... | 0 | 2024-06-12T00:40:43 | https://dev.to/zawhtut/deconstructing-search-input-box-on-fluent-uis-demo-website-4nho | blazor, fluentui, searchbox, autocomplete | The search box component that we see on the demo website is actually implemented using `FluentAutocomplete`. This component combines a text box and a drop-down list box to provide autocomplete functionality.

I want to share my insights on how the `FluentAutocomplete` is implemented on FluentUI's demo website. This wil... | zawhtut |

1,884,991 | Exploring the Use of AI in Web Development | Introduction Artificial Intelligence (AI) has been a buzzword in the technological world... | 0 | 2024-06-12T00:32:39 | https://dev.to/kartikmehta8/exploring-the-use-of-ai-in-web-development-3f6h | webdev, javascript, beginners, tutorial | ## Introduction

Artificial Intelligence (AI) has been a buzzword in the technological world for quite some time now. Its potential to transform various industries is being explored, one of which is web development. With the constantly evolving needs of the online world, the use of AI in web development is gaining popu... | kartikmehta8 |

1,884,990 | IT IS NEVER TOO LATE TO RECOVER LOST BITCOIN. CONTACT AN EXPERT / LEE ULTIMATE HACKER | LEEULTIMATEHACKER@ AOL. COM Support @ leeultimatehacker .com telegram:LEEULTIMATE wh@tsapp +1 (715)... | 0 | 2024-06-12T00:30:50 | https://dev.to/jenny_lann_c731d9b51f36c4/it-is-never-too-late-to-recover-lost-bitcoin-contact-an-expert-lee-ultimate-hacker-1k68 | LEEULTIMATEHACKER@ AOL. COM

Support @ leeultimatehacker .com

telegram:LEEULTIMATE

wh@tsapp +1 (715) 314 - 9248

https://leeultimatehacker.com

Numerous transactions are often conducted online, and the risk of falling victim to scams and fraudsters is ever-present. Despite our best efforts to stay vigilant, there... | jenny_lann_c731d9b51f36c4 | |

1,881,290 | Running Advanced MongoDB Queries In TypeORM | TypeORM is a JavaScript/TypeScript ORM that works well with SQL databases and MongoDB, which is built... | 0 | 2024-06-12T00:22:17 | https://dev.to/kalashin1/running-advanced-mongodb-queries-in-typeorm-327j | node, database, mongodb, typescript | TypeORM is a JavaScript/TypeScript ORM that works well with SQL databases and MongoDB. It is built to run in the browser, on the server (Node.js), in Expo, and in other execution contexts that run JavaScript. TypeORM has excellent TypeScript support while still letting newcomers use it with plain JavaScript.

TypeO... | kalashin1 |

1,884,985 | Button Animation | A button design with a hover effect, perfect for calls-to-action or navigation elements. The button... | 0 | 2024-06-12T00:20:00 | https://dev.to/sabeerjuniad/button-animation-2n3f | codepen, animation, css | A button design with a hover effect, perfect for calls-to-action or navigation elements. The button features a smooth animation and a subtle gradient effect, making it a great addition to any web project.

{% codepen https://codepen.io/Sabeer-Junaid/pen/OJYxowy %} | sabeerjuniad |

1,884,983 | Business Loans NZ - Cash Now = Growth Tomorrow 💰🚀⭐ | Starting or expanding a business in New Zealand may require significant financial resources and... | 0 | 2024-06-12T00:18:42 | https://dev.to/businessloansnz/business-loans-nz-cash-now-growth-tomorrow-13jo | business, finance, loans, nz | Starting or expanding a business in New Zealand may require significant financial resources and support. This is where **NZ Working Capital** can step in to provide the essential helping hand with our business loans NZ services designed specifically for New Zealand businesses like yours.

Click to **[Apply Now >>](htt... | businessloansnz |

1,884,980 | Valentine's Card Flip | Check out this Pen I made! | 0 | 2024-06-12T00:10:22 | https://dev.to/enrique_portillo_3af96727/valentines-card-flip-2gc7 | codepen | Check out this Pen I made!

{% codepen https://codepen.io/hluebbering/pen/eYQgdJN %} | enrique_portillo_3af96727 |

1,884,978 | Understanding API Architecture Styles Using SOAP | What is SOAP? SOAP stands for Simple Object Access Protocol. It is a protocol used for exchanging... | 0 | 2024-06-12T00:06:58 | https://dev.to/fabiola_estefanipomamac/understanding-api-architecture-styles-using-soap-324l | **What is SOAP?**

SOAP stands for Simple Object Access Protocol. It is a protocol used for exchanging information in the implementation of web services. SOAP relies on XML (Extensible Markup Language) to format messages and usually relies on other application layer protocols, most notably HTTP and SMTP, for message neg... | fabiola_estefanipomamac | |

1,884,977 | LOOKING FOR AN EFFICIENT CRYPTO HACKER? CONTACT WEB BAILIFF CONTRACTORS | Losing a significant amount of money, especially through fraudulent means, can be devastating... | 0 | 2024-06-12T00:05:54 | https://dev.to/joyce_caroline_114bcf567a/looking-for-an-efficient-crypto-hacker-contact-web-bailiff-contractors-4854 | beginners | Losing a significant amount of money, especially through fraudulent means, can be devastating emotionally, financially, and psychologically. It's a story that unfortunately many people can relate to, as the allure of quick profits and the promise of financial security can blind even the most cautious investors. For my ... | joyce_caroline_114bcf567a |

1,884,783 | The SASS 7-1 Pattern | SASS architecture to take your project to the next level. Imagine this situation: you need... | 0 | 2024-06-12T00:00:55 | https://dev.to/yagopeixinho/padrao-7-1-do-sass-1jl7 | sass, patternsass, webdev, scss | > SASS architecture to take your project to the next level.

---

Imagine this situation: you urgently need to change the color of a button that clashes completely with the platform's standard design. You open your code editor, locate the HTML file, look for the button… and, to your surprise, realize that it does not ... | yagopeixinho |

1,886,192 | GenAI Predictions and The Future of LLMs as local-first offline Small Language Models (SLMs) | We’ve been increasingly accustomed to subscription-based economic model, which did not skip the GenAI... | 0 | 2024-07-03T18:01:18 | https://lirantal.com/blog/genai-predictions-the-future-llms-local-first-offline-small-language-models-slm/ | ---

title: GenAI Predictions and The Future of LLMs as local-first offline Small Language Models (SLMs)

published: true

date: 2024-06-12 00:00:00 UTC

tags:

canonical_url: https://lirantal.com/blog/genai-predictions-the-future-llms-local-first-offline-small-language-models-slm/

---

We’ve been increasingly accustomed t... | lirantal | |

1,812,678 | Welcome Thread - v280 | Leave a comment below to introduce yourself! You can talk about what brought you here,... | 0 | 2024-06-12T00:00:00 | https://dev.to/devteam/welcome-thread-v280-1mmp | welcome | ---

published_at : 2024-06-12 00:00 +0000

---

---

1. Leave a comment below to introduce yourself! You can talk about what brought you here, what you're learning, or just a fun fact about yourself.

2. Reply to ... | sloan |

1,851,860 | Understand inheritance types in Django models | Image credits: elifskies Sometimes, when we create Models in Django we want to give certain... | 0 | 2024-06-12T16:46:25 | https://coffeebytes.dev/en/understand-inheritance-types-in-django-models/ | django, python, database | ---

title: Understand inheritance types in Django models

published: true

date: 2024-06-12 00:00:00 UTC

tags: django,python,database

canonical_url: https://coffeebytes.dev/en/understand-inheritance-types-in-django-models/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/4vdx0zl88zsxsg17oh9g.jpg

---

... | zeedu_dev |

1,851,922 | Debounce and Throttle design patterns explained in Javascript | Image credits to i7 from Pixiv: https://www.pixiv.net/en/users/54726558 Debounce and throttle are... | 0 | 2024-06-09T21:56:56 | https://coffeebytes.dev/en/debounce-and-throttle-in-javascript/ | javascript, designpatterns, algorithms, tutorial | ---

title: Debounce and Throttle design patterns explained in Javascript

published: true

date: 2024-06-12 00:00:00 UTC

tags: javascript,designpatterns,algorithms,tutorial

canonical_url: https://coffeebytes.dev/en/debounce-and-throttle-in-javascript/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/... | zeedu_dev |

1,885,782 | A .NET Developer Guide to XUnit Test Instrumentation with OpenTelemetry and Aspire Dashboard | TL;DR In this guide, we will explore how to leverage XUnit and OpenTelemetry to... | 0 | 2024-06-14T10:57:27 | https://nikiforovall.github.io/dotnet/opentelemetry/2024/06/12/developer-guide-to-xunit-otel.html | csharp, opentelemetry, dotnet, xunit | ---

title: A .NET Developer Guide to XUnit Test Instrumentation with OpenTelemetry and Aspire Dashboard

published: true

date: 2024-06-12 00:00:00 UTC

tags: csharp, opentelemetry, dotnet, xunit

canonical_url: https://nikiforovall.github.io/dotnet/opentelemetry/2024/06/12/developer-guide-to-xunit-otel.html

---

## TL;DR

... | nikiforovall |

1,884,800 | Proxmox VE installation guide | In this guide, you will learn how to install Proxmox VE the simple way! To follow my... | 0 | 2024-06-11T23:45:59 | https://dev.to/hei-lima/guia-de-instalacao-do-proxmox-ve-3d0l | ledscommunity, devops, beginners, proxmox | In this guide, you will learn how to install Proxmox VE the simple way!

To follow my experience choosing and installing a VM manager on a server, read this article: https://dev.to/hei-lima/a-experiencia-2hf6

---

## Table of contents

1. [Proxmox](#proxmox)

2... | hei-lima |

1,884,970 | I just made React/Next Design Pattern Repo | This template includes a file structure, an editing pattern, and abstractions to edit React components more... | 0 | 2024-06-11T23:36:16 | https://dev.to/lif31up/i-just-made-reactnext-design-pattern-1mlb | This template includes a file structure, an editing pattern, and abstractions that make React components easier to edit. I'm still researching it.

link to the repo

https://github.com/lif31up/next-template | lif31up | |

1,884,958 | Burn To Earn: What is The Secret Formula? | You probably think I’m going to tell you to burn fat or get moving if you want to earn more, but no,... | 0 | 2024-06-11T23:06:35 | https://dev.to/wulirocks/burn-to-earn-what-is-the-secret-formula-2g00 | web3, blockchain, webdev, nft | You probably think I’m going to tell you to burn fat or get moving if you want to earn more, but no, not today.

The burning I’m talking about here doesn’t require any physical activity.

It’s all about clicking the right button at the right moment.

Burning an NFT means deleting it forever, and I’ll show you how this a... | wulirocks |

1,884,969 | Hosting websites with ipv6 on Hetzner servers | I had to set up a website on hetzner cloud with debian, while having to use an ipv6 address. I... | 0 | 2024-06-11T23:33:51 | https://dev.to/georg4313/hosting-websites-with-ipv6-on-hetzner-servers-57e0 | hosting, ipv6, hetzner | I had to set up a website on hetzner cloud with debian, while having to use an ipv6 address.

I struggled for some time but then stumbled on a [blog post](https://www.blunix.com/blog/ipv6-on-hetzner-cloud-server-for-hosting-a-website.html) that covered the same topic.

The amount of time it saved me with not having to... | georg4313 |

1,884,965 | [Game of Purpose] Day 24 | Today I made my drone animate propeller rotation when it's flying. Well, for now it's rotating only 1... | 27,434 | 2024-06-11T23:19:57 | https://dev.to/humberd/game-of-purpose-day-24-27ik | gamedev | Today I made my drone animate propeller rotation when it's flying. Well, for now only 1 of 4 is rotating, because I haven't figured out how to efficiently trigger events for all Child Actors of a specific type.

{% embed https://youtu.be/tXP4WcLtmh0 %}

Below you can see my pain point. Too many nodes just to trigger ... | humberd |

1,884,960 | How to Set Up a CI/CD Pipeline with GitLab: A Beginner's Guide | Introduction to CI/CD and GitLab In modern software development, Continuous Integration... | 0 | 2024-06-11T23:18:39 | https://dev.to/arbythecoder/how-to-set-up-a-cicd-pipeline-with-gitlab-a-beginners-guide-46b9 | gitlab, devops, beginners, webdev | #### Introduction to CI/CD and GitLab

In modern software development, Continuous Integration (CI) and Continuous Deployment (CD) are essential practices. CI involves automatically integrating code changes into a shared repository multiple times a day, while CD focuses on deploying the integrated code to production aut... | arbythecoder |

1,884,961 | AWS Amplify Gen2 Authentication | Sometimes the guides do not help much and something is missing to make things work. In this case,... | 0 | 2024-06-11T23:15:01 | https://dev.to/ldbravo/aws-amplify-gen2-authentication-cp6 | aws, amplify, react, authentication | Sometimes the guides do not help much and something is missing to make things work. In this case, this guide will help you set up a new project in AWS Amplify with Cognito Authentication.

There we go with the required steps:

1. Create a GitHub or CodeCommit repo.

2. Create a React App, in my case I'm using Vite.

... | ldbravo |

1,884,959 | What is Node.js? | Node.js is a server-side scripting environment that uses JavaScript for backend... | 0 | 2024-06-11T23:09:40 | https://dev.to/satyapriyaambati/what-is-nodejs-2dl0 | Node.js is a server-side scripting environment that uses JavaScript for backend programming.

[https://youtu.be/H9M02of22z4?si=QxsIqqoH_MI-cTjT] | satyapriyaambati | |

1,884,953 | Extracting the Sender from a Transaction with Go-Ethereum | When working with Ethereum transactions in Go, extracting the sender (the address that initiated the... | 0 | 2024-06-11T22:51:11 | https://dev.to/burgossrodrigo/extracting-the-sender-from-a-transaction-with-go-ethereum-1cn3 |

When working with Ethereum transactions in Go, extracting the sender (the address that initiated the transaction) is not straightforward. The go-ethereum library provides the necessary tools, but you need to follow a specific process to get the sender's address. This post will guide you through the steps required to... | burgossrodrigo | |

1,884,952 | Understanding DML, DDL, DCL,TCL SQL Commands in MySQL | MySQL is a popular relational database management system used by developers worldwide. It uses... | 0 | 2024-06-11T22:39:21 | https://dev.to/ayas_tech_2b0560ee159e661/understanding-dml-ddl-dcltcl-sql-commands-in-mysql-o1f | MySQL is a popular relational database management system used by developers worldwide. It uses Structured Query Language (SQL) to interact with databases. SQL commands can be broadly categorized into Data Manipulation Language (DML), Data Definition Language (DDL), and several other types. In this blog post, we'll expl... | ayas_tech_2b0560ee159e661 | |

1,884,896 | Understanding the Difference Between JavaScript and TypeScript | As a developer, you’ve likely heard about both JavaScript and TypeScript. While JavaScript is one of... | 0 | 2024-06-11T22:19:51 | https://dev.to/ayas_tech_2b0560ee159e661/understanding-the-difference-between-javascript-and-typescript-jm1 | As a developer, you’ve likely heard about both JavaScript and TypeScript. While JavaScript is one of the most popular programming languages for web development, TypeScript has been gaining traction due to its added features and benefits. In this blog post, I'll explore the key differences between JavaScript and TypeScr... | ayas_tech_2b0560ee159e661 | |

1,884,884 | Creating mocked data for EF Core using Bogus and more | Introduction The main objective is to demonstrate creating data for Microsoft EF Core that... | 22,612 | 2024-06-11T22:17:12 | https://dev.to/karenpayneoregon/creating-mocked-data-for-ef-core-using-bogus-and-more-2l0i | dotnetcore, database, csharp | ## Introduction

The main objective is to demonstrate creating data for Microsoft EF Core that is the same every time the application or its unit tests run.

The secondary objective is to show how to use [IOptions](https://learn.microsoft.com/en-us/dotnet/core/extensions/options) and [AddTransient](https://learn.mi... | karenpayneoregon |

1,884,895 | Unlocking the Power of Geolocation with IPStack's API | In today's hyper-connected world, understanding the geographical location of your users is paramount... | 0 | 2024-06-11T22:16:37 | https://dev.to/ipstackapi/unlocking-the-power-of-geolocation-with-ipstacks-api-30jb | geolocation, location | In today's hyper-connected world, understanding the geographical location of your users is paramount for personalized user experiences, targeted marketing campaigns, and enhanced security measures. Harnessing the power of geolocation data has become a necessity for businesses across various industries. This is where IP... | ipstackapi |
