id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,882,892 | Elixir: map casting to structs (with checks at compile time!) | Intro In this article you will learn: how to leverage compile-time validation of maps to... | 0 | 2024-06-10T08:04:28 | https://dev.to/utopos/elixir-validate-map-and-structs-keys-for-merging-at-compile-time-4dli | ---

title: Elixir: map casting to structs (with checks at compile time!)

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/wka79oe5i0zwsfjvkmt3.png

published: true

---

## Intro

In this article you will learn:

- how to leverage compile-time validation of maps to be merged later with %struct{}, that ... | utopos | |

1,882,891 | OskiStealer - Traffic Analysis - Spoonwatch | let's start: Downloading the Capture File and Understanding the... | 0 | 2024-06-10T08:03:35 | https://dev.to/mihika/oskistealer-traffic-analysis-spoonwatch-21a0 | wireshark, pcap, networkminer | ## let's start:

## Downloading the Capture File and Understanding the Assignment

1. Download the .pcap file from [pcap.](https://www.malware-traffic-analysis.net/2022/01/07/index.html)

2. Familiarize yourself with the assignment instructions.

## LAN segment data:

LAN segment range: 192.168.1[.]0/24 (192.168.1[.]0 th... | mihika |

1,882,879 | How to observe your Blazor WebAssembly application with OpenTelemetry and real user monitoring | To read this full article, click here. Observing WebAssembly applications presents unique... | 0 | 2024-06-10T08:01:46 | https://newrelic.com/blog/how-to-relic/how-to-observe-your-blazor-webassembly-application-with-opentelemetry-and-real-user-monitoring | observability, opentelemetry, opensource | To read this full article, [click here](https://newrelic.com/blog/how-to-relic/how-to-observe-your-blazor-webassembly-application-with-opentelemetry-and-real-user-monitoring?utm_source=devto&utm_medium=community&utm_campaign=global-fy25-q1-devtoupdates).

---

Observing WebAssembly applications presents unique challeng... | harrykimpel |

1,882,890 | Starter’s Guide for Amazon ElastiCache | Amazon ElastiCache is a fully managed in-memory data store and cache service provided by AWS. It is... | 0 | 2024-06-10T08:00:07 | https://dev.to/saumya27/starters-guide-for-amazon-elasticache-2aih | elasticcache, webdev | Amazon ElastiCache is a fully managed in-memory data store and cache service provided by AWS. It is designed to accelerate the performance of web applications by enabling fast retrieval of information from managed in-memory caches. ElastiCache supports two popular open-source in-memory caching engines: Redis and Memcac... | saumya27 |

1,882,889 | How Laser Hallmarking Works | Angel India for in the intricate world of jewelry and precious metals, authenticity and purity are... | 0 | 2024-06-10T07:59:19 | https://dev.to/webdesigninghouse72/how-laser-hallmarking-works-1k7j | **Angel India** for in the intricate world of jewelry and precious metals, authenticity and purity are paramount. Hallmarking is a crucial process that assures buyers of the quality of their purchase. Traditionally, this has been done manually, but technological advancements have brought about a revolution: [laser hall... | webdesigninghouse72 | |

1,882,888 | Vardhman Ambrosia | Shree Vardhman Ambrosia Gurgaon | Shree Vardhman Ambrosia Sector 70 Gurgaon | Welcome to Shree Vardhman Ambrosia, a distinguished residential community offering luxurious 4 BHK... | 0 | 2024-06-10T07:56:14 | https://dev.to/narendra_kumar_5138507a03/vardhman-ambrosia-shree-vardhman-ambrosia-gurgaon-shree-vardhman-ambrosia-sector-70-gurgaon-1meo | realestate, realestateinvestment, realestateagent, shreevardhmanambrosia | Welcome to Shree Vardhman Ambrosia, a distinguished residential community offering [**luxurious 4 BHK apartments**](https://shreevardhmanambrosia.tech) that set a new standard in modern living. This exquisite development seamlessly blends comfort, style, and convenience, providing an unparalleled living experience.

is a robust solution designed to support the comprehensive management of p... | mylearnnest |

1,882,872 | 5 Developer Techniques to Enhance LLMs Performance! | Large Language Models (LLMs) have revolutionized natural language processing, enabling applications... | 0 | 2024-06-10T07:44:56 | https://dev.to/pavanbelagatti/5-developer-techniques-to-enhance-llms-performance-3bbn | ai, developer, development, machinelearning | Large Language Models (LLMs) have revolutionized natural language processing, enabling applications that range from automated customer service to content generation. However, optimizing their performance remains a challenge due to issues like hallucinations - where the model generates plausible but incorrect informatio... | pavanbelagatti |

1,882,874 | How Cloud Hosting Streamlines Tax Prep for Busy Professionals | According to Gartner's projection, by 2028, approximately 70% of the workload will be operated within... | 0 | 2024-06-10T07:44:35 | https://dev.to/him_tyagi/how-cloud-hosting-streamlines-tax-prep-for-busy-professionals-hob | webdev, beginners, python, programming | According to [Gartner's projection](https://www.gartner.com/en/newsroom/press-releases/2023-11-20-gartner-it-infrastructure-operations-and-cloud-strategies-conference-2023-london-day-1-highlights), by 2028, approximately 70% of the workload will be operated within a cloud setting. It marks a significant increase from t... | him_tyagi |

1,882,859 | Misunderstanding Go Timer Resets | My teammate shared a snippet of code with me the other day which led to a fun rabbit hole. ... | 0 | 2024-06-10T07:40:51 | https://dev.to/milktea02/misunderstanding-go-timers-and-channels-1jal | go, programming, coding, learning | ---

title: Misunderstanding Go Timer Resets

published: true

description:

tags: golang, programming, coding, learning

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-10 03:36 +0000

---

My teammate shared a snippet of code with me the other day which led ... | milktea02 |

1,882,871 | Embark on Your Sublimation Journey: Best Printers for Beginners" | Are you ready to dive into the exciting world of sublimation printing? Whether you're a beginner... | 0 | 2024-06-10T07:39:23 | https://dev.to/myla_jack_/embark-on-your-sublimation-journey-best-printers-for-beginners-13n9 | Are you ready to dive into the exciting world of [sublimation printing](https://theprinterinsider.com/)? Whether you're a beginner looking to explore this creative craft or a seasoned enthusiast seeking the perfect starter printer, we've got you covered. Here's a curated list of the best sublimation printers tailored s... | myla_jack_ | |

1,882,422 | Component Generation with Figma API: Bridging the Gap Between Development and Design | Introduction In today's fast-paced software development landscape, efficient workflows and... | 0 | 2024-06-10T07:34:58 | https://dev.to/krjakbrjak/component-generation-with-figma-api-bridging-the-gap-between-development-and-design-1nho | figma, qml, codegeneration, go | ## Introduction

In today's fast-paced software development landscape, efficient workflows and clear responsibilities between development and design teams are crucial. One effective way to streamline these workflows is by automating component generation from design tools like [Figma](https://www.figma.com/) to code usin... | krjakbrjak |

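1,882,870 | The Future of Blockchain Technology: The Innovation of Smart Contracts | Blockchain technology is bringing innovation to many fields, not just the financial industry. Among these, smart contracts are attracting particular attention. Smart contracts enable the automatic execution of contracts and boost transparency and reliability... | 0 | 2024-06-10T07:33:25 | https://dev.to/sotatekjapan/burotukutienji-shu-nojiang-lai-xing-sumatokontorakutonoge-xin-4g2l | Blockchain technology is bringing innovation to many fields, not just the financial industry. Among these, smart contracts are attracting particular attention. Smart contracts are a groundbreaking technology that enables the automatic execution of contracts and increases transparency and reliability. This article explains the basic concepts of smart contracts, their benefits, and concrete use cases in detail.

## 1. What Is a Smart Contract?

A smart contract is a self-executing contract that runs on a blockchain. Unlike traditional contracts, smart contracts execute automatically when specific conditions are met, so no intermediary is required. As a result, they are expected to speed up transactions, reduce costs, and improve transparency.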

... | sotatekjapan | |

1,882,864 | Building a Commute Navigator using Lyzr SDK | Navigating through the hustle and bustle of city life can be daunting, especially when it comes to... | 0 | 2024-06-10T07:32:06 | https://dev.to/akshay007/building-a-commute-navigator-using-lyzr-sdk-1c60 | ai, programming, python, streamlit | Navigating through the hustle and bustle of city life can be daunting, especially when it comes to finding the quickest and most affordable commuting options. Introducing **Commute Navigator**, your ultimate AI-powered assistant designed to simplify your daily travel. Powered by the advanced capabilities of **Lyzr Auto... | akshay007 |

1,882,863 | Banff Hotel Rooms & Suites | Royal Canadian Lodge | Stay at the Royal Canadian Lodge during your Banff getaway. Enjoy our comfortable and well-equipped... | 0 | 2024-06-10T07:30:16 | https://dev.to/royal_canadianlodge_7cc2/banff-hotel-rooms-suites-royal-canadian-lodge-34dm | Stay at the [Royal Canadian Lodge](https://www.royalcanadianlodge.com/lodging) during your Banff getaway. Enjoy our comfortable and well-equipped hotel rooms & suites near downtown Banff. Book Now!

| royal_canadianlodge_7cc2 | |

1,882,860 | Sam Higginbotham's Strategies for Reducing Financial Stress | In today's fast-paced world, financial stress is a common issue that affects many individuals and... | 0 | 2024-06-10T07:28:38 | https://dev.to/samhigginbotham/sam-higginbothams-strategies-for-reducing-financial-stress-1cje | samhigginbotham, financialgoals, financialplanning, financetips | In today's fast-paced world, financial stress is a common issue that affects many individuals and families. It's easy to feel overwhelmed when juggling bills, savings, and unexpected expenses. Fortunately, there are practical strategies you can employ to reduce financial stress and regain control over your finances. [S... | samhigginbotham |

1,882,725 | Women in Tech: Challenges and Opportunities | The Landscape of Women in Tech The tech industry was first used to be said as male dominated... | 0 | 2024-06-10T07:23:21 | https://dev.to/techstuff/women-in-tech-challenges-and-opportunities-551k | **The Landscape of Women in Tech**

The tech industry was once described as a male-dominated industry, but over the last decade it has seen a transition, and the number of female employees has grown relative to that of male employees. Women are more keen to work and are proving themselves in every aspect of the industry. In spite of th... | aishna | |

1,882,857 | Satta Matka Game Development Company | PM IT Solution is a premier satta matka game development company that excels in creating captivating... | 0 | 2024-06-10T07:21:36 | https://dev.to/pankaj_seo_dd3432b20e3f9e/satta-matka-game-development-company-2o02 | PM IT Solution is a premier [satta matka game development company](https://www.thepmitsolution.com/satta-matka-game-development.php) that excels in creating captivating and engaging gaming experiences. With a team of skilled developers and designers, we specialize in crafting innovative and immersive satta matka games ... | pankaj_seo_dd3432b20e3f9e | |

1,882,856 | How to Optimize Your YouTube Thumbnail Sizes for Maximum Engagement | Creating attractive YouTube thumbnails is important to attract viewers and increase the click-through... | 0 | 2024-06-10T07:21:24 | https://dev.to/emilie_brown/how-to-optimize-your-youtube-thumbnail-sizes-for-maximum-engagement-4p11 | optimize, imageoptimization, tutorial | Creating attractive YouTube thumbnails is important to attract viewers and increase the click-through rate of your videos.

In this blog post, we will discuss the recommended YouTube thumbnail sizes and introduce you to a powerful YouTube thumbnail downloader tool that can help you streamline your workflow.

**YouTube ... | emilie_brown |

1,882,636 | Why and How to Migrate Your React App from CRA to Vite | At the time(10/06/2024) of writing this article, CRA has been effectively in a semi-dead state for... | 0 | 2024-06-10T07:19:59 | https://dev.to/idrisdev/why-and-how-to-migrate-your-react-app-from-cra-to-vite-2ni6 | react, vite, webdev, refactorit | At the time (10/06/2024) of writing this article, CRA has been effectively in a semi-dead state for the past two years. It has not received any commits since last year, nor has it received any important commits for the past two years in [CRA commit history](https://github.com/facebook/create-react-app/commits/main/). Iss... | idrisdev |

1,882,730 | Why is it important to look for the proper institute for your SAT exam preparation? | The competitive examinations like SAT, AP, ACT and LNAT are the important milestone that decides your... | 0 | 2024-06-10T07:19:40 | https://dev.to/optioneducation31/why-is-it-important-to-look-for-the-proper-institute-for-your-sat-exam-preparation-3o43 | Competitive examinations like the SAT, AP, ACT and LNAT are important milestones that determine your further studies and, with them, your path to your dream career. Many colleges and universities use these entrance examinations to make admission decisions. So basically these competitive exams li... | optioneducation31 | |

1,882,729 | The CNC Laser Cutting: How It Works and Its Key Applications | ** ANDEL INDIA** for CNC laser cutting is revolutionizing the manufacturing industry with its... | 0 | 2024-06-10T07:18:38 | https://dev.to/webdesigninghouse72/the-cnc-laser-cutting-how-it-works-and-its-key-applications-4po6 | **ANDEL INDIA** for CNC laser cutting is revolutionizing the manufacturing industry with its precision and efficiency. Whether you’re a hobbyist or a major industrial player, understanding how CNC laser cutting works and its various applications can open up new possibilities for your projects. If you’re looking for a ... | webdesigninghouse72 | |

1,882,728 | Sealing Machines: Innovations for the Modern Age | Sealing Products: Innovations for the Modern Age Sealing equipment are a definite innovation which... | 0 | 2024-06-10T07:18:02 | https://dev.to/carrie_richardsoe_870d97c/sealing-machines-innovations-for-the-modern-age-1o6b |

Sealing Products: Innovations for the Modern Age

Sealing equipment is a contemporary innovation that has revolutionized how we package products for transportation, storage, and distribution. These machines are efficient, time-saving, safe, and trustworthy ... | carrie_richardsoe_870d97c | |

1,882,727 | Elevating Your Real Estate Experience in Bangalore | When it comes to securing the best Indian real estate services, Address Advisors emerges as the... | 0 | 2024-06-10T07:15:11 | https://dev.to/adressadvisors/elevating-your-real-estate-experience-in-bangalore-53jb | When it comes to securing the [best Indian real estate services](https://addressadvisors.com/), Address Advisors emerges as the premier choice. Our commitment to a client-centric approach ensures that every aspect of our service is designed to meet and exceed the diverse needs of our clients. In today’s world, an addre... | adressadvisors | |

1,882,726 | Adaptive Artificial Intelligence in Business How Can You Implement it | Unlocking the Future: Adaptive AI and Its Transformative Power Welcome to the world of Adaptive... | 27,619 | 2024-06-10T07:14:58 | https://dev.to/aishik_chatterjee_0060e71/adaptive-artificial-intelligence-in-business-how-can-you-implement-it-fam | **Unlocking the Future: Adaptive AI and Its Transformative Power**

Welcome to the world of Adaptive Artificial Intelligence (AI), where technology transcends traditional boundaries to learn, evolve, and adapt autonomously. Unlike conventional AI, which follows rigid programming, Adaptive AI dynamically adjusts its al... | aishik_chatterjee_0060e71 | |

1,520,795 | Stop Git from tracking file changes | In this short post I'll explain how and why I like to (sometimes) stop Git from tracking file... | 0 | 2023-07-07T10:56:42 | https://dev.to/codenamegrant/stop-git-from-tracking-file-changes-1ggg | git, beginners, development | In this short post I'll explain how and why I like to (sometimes) stop Git from tracking file changes.

## Why would I want such a thing?

A simple but real-world use case would be when your LOCAL project config differs from the config required in PROD.

Take a look at this `.npmrc` file. It's configured to make use of u... | codenamegrant |

1,882,724 | An arranged marriage | I’ve always been exceedingly fond of puzzles, rubik’s cubes and other “solving” diversions since my... | 0 | 2024-06-10T07:12:12 | https://dev.to/nirmal_harikumar/an-arranged-marriage-3434 | career, learning, life, developer | I’ve always been exceedingly fond of puzzles, Rubik’s cubes and other “solving” diversions since my boyhood. Seeing my troubleshooting skills and my hunger for problem solving, my parents brought me more “problems”, this time math ones, to feed my brain and that’s how, errm no.. no that’s not me. That’s someone who I ne... | nirmal_harikumar |

1,882,723 | What is GitHub? (And Its Advantages) | According to Semrush, GitHub receives 916.6 million visits. But what exactly is GitHub? Well, it’s a... | 0 | 2024-06-10T07:11:36 | https://medium.com/@shariq.ahmed525/what-is-github-and-its-advantages-b7643fb680a3 | github, githubcopilot, githubactions | According to Semrush, GitHub receives 916.6 million visits. But what exactly is GitHub? Well, it’s a platform where developers can share their code and collaborate on various projects.

It doesn’t matter whether you are a CEO or a developer. Both can work on a project simultaneously. GitHub can be used to store, track,... | shariqahmed525 |

1,882,722 | How to Watch Live Sports on Your Irish TV Channel App | Watching live sports has become an integral part of our lives, providing entertainment, excitement,... | 0 | 2024-06-10T07:11:00 | https://dev.to/charlotte_wesker_2b851e4f/how-to-watch-live-sports-on-your-irish-tv-channel-app-4a1 | Watching live sports has become an integral part of our lives, providing entertainment, excitement, and a sense of community. With the advent of technology, it has become increasingly convenient to catch live sports action from the comfort of our homes. For those living in Ireland, there are several options to watch li... | charlotte_wesker_2b851e4f | |

1,882,721 | Mastering JavaScript Event Delegation | Introduction Event handling is a crucial aspect of building interactive web applications. As web... | 0 | 2024-06-10T07:10:09 | https://dev.to/dipakahirav/mastering-javascript-event-delegation-o1c | javascript, webdev, beginners, programming | **Introduction**

Event handling is a crucial aspect of building interactive web applications. As web applications grow in complexity, managing events efficiently becomes challenging. This is where event delegation comes in handy. In this blog post, we will delve into the concept of event delegation, understand its adv... | dipakahirav |

1,882,719 | 4 devs and a meetup | Good morning everyone and happy MonDev! In fact, once again, and with pleasure, I say good... | 0 | 2024-06-10T07:08:35 | https://dev.to/giuliano1993/4-devs-and-a-meetup-2k3o | Good morning everyone and happy MonDev!

In fact, once again, and with pleasure, I say good evening.

It's Saturday night and I'm writing to you live from the train that is taking me back to Rome after the event that I had the pleasure and luck to organize together with Giuseppe Funicello (aka Giuppi), Gianluca Lomarco ... | giuliano1993 | |

1,882,717 | Why Tech Companies and Tech People Should Not Promot and Stand with LGBTQ+ Movement | In today’s rapidly evolving business landscape, there’s increasing pressure on tech companies to take... | 0 | 2024-06-10T07:05:51 | https://dev.to/md_enayeturrahman_2560e3/why-tech-companies-and-tech-people-should-not-promot-and-stand-with-lgbtq-movement-7k5 | lgbtq, techtalks, node, medium | In today’s rapidly evolving business landscape, there’s increasing pressure on tech companies to take a stand on various social and political issues. However, it’s crucial to recognize the importance of maintaining neutrality, especially in the highly diverse and global tech industry. Here are some reasons why companie... | md_enayeturrahman_2560e3 |

1,882,716 | ChatGPT Reigns Supreme Over Google's Gemini | As Jaiden Benzz, the owner of a real estate company and marketing firm in Boca Raton, Florida, I have... | 0 | 2024-06-10T07:05:35 | https://dev.to/jaiden_benzz_88147cb30b3f/chatgpt-reigns-supreme-over-googles-gemini-1a8b |

As Jaiden Benzz, the owner of a real estate company and marketing firm in Boca Raton, Florida, I have found ChatGPT to be far superior to Google's Gemini for my business needs. ChatGPT provides better information, more detailed responses, and better scaling capabilities, making it an invaluable asset for my real estat... | jaiden_benzz_88147cb30b3f | |

1,882,707 | Import Emails from soon to be Deactivated Outlook Account to Zoho Mail | Estimated reading time: 5 minutes Are you transitioning from an Outlook account that is about to be... | 0 | 2024-06-10T07:02:07 | https://dev.to/mini96/import-emails-from-soon-to-be-deactivated-outlook-account-to-zoho-mail-49cj | webdev, tutorial | **Estimated reading time: 5 minutes**

Are you transitioning from an Outlook account that is about to be deactivated to Zoho Mail? This comprehensive walkthrough is designed to guide you through the process of exporting your emails from Outlook and importing them into Zoho Mail seamlessly. Whether you're using the Outl... | mini96 |

1,881,585 | Sorcerer’s Code: Spellbinding Regex match in Power Automate’s C# Plugin | Intro: Power Automate is a tool from Microsoft that helps people do their work... | 26,301 | 2024-06-10T07:01:46 | https://dev.to/balagmadhu/sorcerers-code-spellbinding-regex-match-in-power-automates-c-plugin-3b3o | powerautomate, powerfuldevs, regex, hacks | ## Intro:

Power Automate is a tool from Microsoft that helps people do their work automatically, like magic. It can do a lot of things without needing extra help. But when it comes to checking data to make sure it’s right, Power Automate doesn’t have a built-in way to do that using something called regex, which is like... | balagmadhu |

1,880,382 | How To Implement The WebAuthn Autocomplete Token | Introduction WebAuthn offers a cutting-edge solution with its autocomplete attribute,... | 0 | 2024-06-10T07:00:00 | https://www.corbado.com/blog/webauthn-autocomplete | ## Introduction

WebAuthn offers a cutting-edge solution with its autocomplete attribute, which facilitates passkey and password autofill. This blog post dives into the characteristics of WebAuthn autocomplete, exploring its benefits, implementation, and browser support, to help developers and product managers enhance u... | vdelitz | |

1,863,787 | InnoDB's ibdata1: Essential Management Tips for MySQL | MySQL's InnoDB storage engine relies on the ibdata1 file. This guide covers its importance and... | 21,681 | 2024-06-10T07:00:00 | https://dev.to/dbvismarketing/innodbs-ibdata1-essential-management-tips-for-mysql-4ccd | ibdata, innodb, mysql | MySQL's InnoDB storage engine relies on the ibdata1 file. This guide covers its importance and management tips.

## Examples of what ibdata1 contains

- **Data and Indexes,** unless `innodb_file_per_table=1`.

- **Doublewrite and Insert Buffers,** for transaction support.

- **Rollback Segments,** which enable rollbacks.

- **... | dbvismarketing |

1,882,713 | python selenium | 1)Selenium Architecture... | 0 | 2024-06-10T06:59:42 | https://dev.to/kavithagovindaraj/python-selenium-35ml | 1)Selenium Architecture

The architecture of Selenium is composed of :-

1) Selenium IDE:

a) IDE = Integrated Development Environment.

b) It is nothing but a simple web-browser extension.

c) You just need to download and install the extension for that particular we... | kavithagovindaraj | |

1,882,711 | 6 Legit Apps To Make Truly Passive Income By Having Your Computer Turned On. | Imagine earning money while you sleep, with your trusty computer quietly ticking away dollars for... | 0 | 2024-06-10T06:59:08 | https://dev.to/hammad_ashraf_b33a1c4cf8e/6-legit-apps-to-make-truly-passive-income-by-having-your-computer-turned-on-52j2 | makemoneyonline, makemoney, onlineearning, adswatching | Imagine earning money while you sleep, with your trusty computer quietly ticking away dollars for you. It sounds too good to be true, but in today’s world, passive income streams are a reality for many.

Maybe you’re tired of the daily grind and are looking for ways to supplement your income without taking on a second ... | hammad_ashraf_b33a1c4cf8e |

1,882,710 | Softening Fine Lines and Wrinkles with Cheyanne Mallas (PA): Youthful Skin for Moms with Bioregenerative Aesthetics | As a mom, balancing the demands of family life while maintaining a youthful and radiant complexion... | 0 | 2024-06-10T06:58:56 | https://dev.to/cheyannemallas_34/softening-fine-lines-and-wrinkles-with-cheyanne-mallas-pa-youthful-skin-for-moms-with-bioregenerative-aesthetics-nh3 | As a mom, balancing the demands of family life while maintaining a youthful and radiant complexion can be challenging. The visible signs of aging, such as fine lines and wrinkles, can affect your confidence and self-esteem, leaving you longing for smoother, more youthful-looking skin. Fortunately, advancements in biore... | cheyannemallas_34 | |

1,882,709 | How Decentralized Science(DeSci) Cures the Common Ails of Academic Research | The current state of academic research is riddled with numerous challenges, including funding... | 0 | 2024-06-10T06:57:36 | https://www.zeeve.io/blog/how-decentralized-sciencedesci-cures-the-common-ails-of-academic-research/ | decentralizedscience, blockchaintechnology | <p>The current state of academic research is riddled with numerous challenges, including funding constraints, publication biases, data accessibility issues, and barriers to collaboration. According to a <a href="https://www.pewresearch.org/politics/2009/07/09/section-3-funding-scientific-research/">PEW Research report<... | zeeve |

1,882,708 | Repairing Sun Damage with Cheyanne Mallas (California): Restoring Mom's Skin Health with Bioregenerative Aesthetics | Motherhood is a rewarding journey, but it can also take a toll on a woman's skin, especially with... | 0 | 2024-06-10T06:56:29 | https://dev.to/cheyannemallas_34/repairing-sun-damage-with-cheyanne-mallas-california-restoring-moms-skin-health-with-bioregenerative-aesthetics-52hf | Motherhood is a rewarding journey, but it can also take a toll on a woman's skin, especially with prolonged exposure to the sun's harmful rays. Sun damage can lead to premature aging, hyperpigmentation, and an increased risk of skin cancer. Fortunately, advancements in bioregenerative aesthetics offer innovative soluti... | cheyannemallas_34 | |

1,882,706 | How do I make a profit with NFT Marketplace Development? | Non-Fungible Tokens (NFTs) have changed the digital world, opening up new opportunities for creators,... | 0 | 2024-06-10T06:51:59 | https://dev.to/jacksam0101/how-do-i-make-a-profit-with-nft-marketplace-development-1719 | Non-Fungible Tokens (NFTs) have changed the digital world, opening up new opportunities for creators, collectors, and investors. As more people want unique digital items, businesses are looking for ways to benefit from this trend. Developing an NFT marketplace is a great opportunity. By building an NFT marketplace, you... | jacksam0101 | |

1,882,689 | Using shallowRef in Vue to improve performance | Handling large data structures in frontend applications is not easy. However, I recently learned... | 24,580 | 2024-06-10T06:48:26 | https://dev.to/jacobandrewsky/using-shallowref-in-vue-to-improve-performance-559f | vue, performance, javascript, typescript | Handling large data structures in frontend applications is not easy. However, I recently learned about a great feature of Vue called `shallowRef` which is a great utility that could help improve performance of your website if you are dealing with huge data structures like nested objects or arrays.

So amazing that the ... | jacobandrewsky |

1,882,702 | Need DEVOPS+AWS learning people. | I have currently completed Linux, shell scripting and working on Git/Github. any one wants to join me... | 0 | 2024-06-10T06:46:53 | https://dev.to/noaman_ali_157680b621a0ee/need-devopsaws-learning-people-2j42 | I have currently completed Linux, shell scripting and working on Git/Github. any one wants to join me in this learning process??????

| noaman_ali_157680b621a0ee | |

1,882,701 | AWS Pricing | Amazon EC2 Pricing Options On-Demand Instances Description: Pay for compute capacity by... | 0 | 2024-06-10T06:46:13 | https://dev.to/devops_den/aws-pricing-1m23 | ## Amazon EC2 Pricing Options

On-Demand Instances

Description: Pay for compute capacity by the hour or second with no long-term commitments.

Pricing Example: t2.micro (Linux/Unix): $0.0116 per hour.

Reserved Instances

Description: Up to 75% discount compared to On-Demand prices with one-year or three-year commitment... | devops_den | |

1,882,699 | Creative Construction Parallax Slider | Theme 1 | This CodePen showcases a visually engaging parallax slider for web development projects. The slider... | 0 | 2024-06-10T06:46:00 | https://dev.to/creative_salahu/creative-construction-parallax-slider-theme-1-1ba7 | codepen | This CodePen showcases a visually engaging parallax slider for web development projects. The slider features three dynamic slides, each highlighting a different theme related to innovative and industrial solutions, building design, and dream constructions. The design is fully responsive and includes smooth animations a... | creative_salahu |

1,882,695 | Everything You Should Know About Oracle Redwood | Any application’s usability and efficacy are greatly influenced by its user interface, and Oracle... | 0 | 2024-06-10T06:40:15 | https://healhow.com/everything-you-should-know-about-oracle-redwood/ | oracle, redwood |

Any application’s usability and efficacy are greatly influenced by its user interface, and Oracle Redwood is no exception. Let’s examine the blog in more detail to see how it has revolutionized user interface design ... | rohitbhandari102 |

1,882,694 | Docker DevTools Day 2.0: Dive Deep into the Docker Developer Ecosystem | Calling all Docker enthusiasts! Join us for the second edition of Docker DevTools Day Bengaluru on... | 0 | 2024-06-10T06:39:32 | https://dev.to/ajeetraina/docker-devtools-day-20-dive-deep-into-the-docker-developer-ecosystem-75m | docker, meetup, developer, genai | Calling all Docker enthusiasts! Join us for the second edition of [Docker DevTools Day Bengaluru](https://www.meetup.com/collabnix/events/301110702/) on June 22nd. This community event is your chance to dive deep into the world of developer tooling that revolves around Docker.

## What to Expect

- **Engaging Talks:** ... | ajeetraina |

1,882,693 | The Long Journey Ahead | Hey, Dev Community I'm Paul, and I'm fairly new to programming. I want to document my learning... | 0 | 2024-06-10T06:39:22 | https://dev.to/pauljd1/the-long-journey-ahead-25eb | webdev, javascript, beginners, programming | Hey, Dev Community

I'm Paul, and I'm fairly new to programming. I want to document my learning journey here. I've been coding and learning for about a month and a half now, every day.

I've been using Zero to Mastery and Scrimba for learning, and now I'm starting on Frontend Mentor for more hands-on projects.

![Image... | pauljd1 |

1,882,687 | Recruitment Databases Explained: Benefits and Best Practices | In today's competitive job market, recruitment databases have become an essential tool for HR... | 0 | 2024-06-10T06:25:44 | https://dev.to/jchristopher0033/recruitment-databases-explained-benefits-and-best-practices-104b | recruitmentdatabase, candidatemanagement, datadrivenrecruitment | In today's competitive job market, recruitment databases have become an essential tool for HR professionals and recruiters. These databases streamline the hiring process, enhance candidate management, and improve the overall efficiency of recruitment efforts. In this article, we'll explore the [benefits of using recrui... | jchristopher0033 |

1,882,692 | Konnect Packers and Movers | Address: Shop No. 25, Modi Chawl, SV Road, Bajaj Wadi, Santacruz... | 0 | 2024-06-10T06:37:01 | https://dev.to/dp_1001/konnect-packers-and-movers-218m | Address: Shop No. 25, Modi Chawl, SV Road, Bajaj Wadi, Santacruz West, Mumbai, Maharashtra 400054

Mobile No: 8433704467

Email Id: konnectpackerssantacruz@gmail.com

Website: www.konnectpackers.com

At Konnect Packers and Movers, the satisfaction of our customer is not a dream, but a r... | dp_1001 | |

1,882,691 | Courtside Chronicles: A Deep Dive into Basketball Culture in the USA with Robert Geiger (Teacher) | Basketball isn't just a sport in the United States; it's a cultural phenomenon deeply ingrained in... | 0 | 2024-06-10T06:36:45 | https://dev.to/robertgeiger/courtside-chronicles-a-deep-dive-into-basketball-culture-in-the-usa-with-robert-geiger-teacher-3l0d | Basketball isn't just a sport in the United States; it's a cultural phenomenon deeply ingrained in the fabric of society. From the iconic courts of New York City to the storied arenas of Los Angeles, basketball transcends geographical boundaries and unites communities like few other sports can. In this exploration of b... | robertgeiger | |

1,882,690 | Cross Country Running Essentials List by Robert Geiger: What to Pack for Success | Embarking on a cross country running journey requires more than just stamina and determination. The... | 0 | 2024-06-10T06:35:35 | https://dev.to/robertgeiger/cross-country-running-essentials-list-by-robert-geiger-what-to-pack-for-success-25ha | Embarking on a cross country running journey requires more than just stamina and determination. The key to a successful and enjoyable experience lies in careful planning and packing. Whether you're a seasoned runner or a novice, having the right essentials can make a significant difference in your performance and overa... | robertgeiger | |

1,882,688 | #CSS #tailwind | Where can I learn tailwind Css. | 0 | 2024-06-10T06:30:52 | https://dev.to/mateli19/css-tailwind-1gb6 | Where can I learn tailwind Css. | mateli19 | |

1,882,686 | Overcoming Common Challenges in Building a Node.js Development Team with Jurysoft’s Resource-as-a-Service Model | Introduction: Node.js has swiftly become a linchpin technology for numerous companies, prized for... | 0 | 2024-06-10T06:23:12 | https://dev.to/ajmal_kp/overcoming-common-challenges-in-building-a-nodejs-development-team-with-jurysofts-resource-as-a-service-model-3c61 | <p style="text-align: justify;"> <img alt="" class="bg kn ls c" height="394" loading="eager" role="presentation" src="https://miro.medium.com/v2/resize:fit:1400/1*QetPZvNxwu3d0daKRpd_rA.png" style="background-color: white; box-sizing: inherit; color: rgba(0, 0, 0, 0.8); font-family: medium-content-sans-serif-font,... | ajmal_kp | |

1,882,684 | VVIP Namah | VVIP Namah Ghaziabad | VVIP namah nh 24 Ghaziabad | Introducing VVIP Namah an exclusive residential community designed for discerning buyers. These... | 0 | 2024-06-10T06:21:30 | https://dev.to/narendra_kumar_5138507a03/vvip-namah-vvip-namah-ghaziabad-vvip-namah-nh-24-ghaziabad-3dih | realestate, realestateinvestment, realestatagent, vvipnamah | Introducing VVIP Namah an exclusive residential community designed for discerning buyers. These spacious apartments, with their high-end finishes and breathtaking views, perfectly blend comfort and sophistication.

[... | narendra_kumar_5138507a03 |

1,882,653 | Dynamic Datasets and Customizable Parameters for Data Analysis | In our previous blog, we unveiled the magic of Flowtrail AI's Text-to-SQL functionality, which... | 0 | 2024-06-10T06:20:01 | https://dev.to/flowtrail-ai/dynamic-datasets-and-customizable-parameters-for-data-analysis-dl2 | analytics, sql, nlp, ai |

In our [previous blog](https://dev.to/flowtrail-ai/text-to-sql-simple-way-to-generate-the-sql-in-flowtrail-ai-1717), we unveiled the magic of Flowtrail AI's Text-to-SQL functionality, which empowers users to craft complex queries using natural language. This innovation eliminates the need for deep SQL expertise, makin... | flowtrail-admin |

1,882,652 | Master the Art of App Development with Flutter: A Step-by-Step Guide | Introduction to app development with Flutter In the rapidly evolving world of mobile app development,... | 0 | 2024-06-10T06:18:47 | https://dev.to/apptagsolution/master-the-art-of-app-development-with-flutter-a-step-by-step-guide-3d26 | flutter, development, app | Introduction to app development with Flutter

In the rapidly evolving world of [**mobile app development**](https://apptagsolution.com/mobile-app-development/), a powerful and efficient framework like Flutter has emerged as a game-changer. As a developer, I have witnessed firsthand the tremendous impact Flutter has had ... | apptagsolution |

1,882,650 | Hiring Laravel Developers: How to Leverage Laravel Developers for Your Business Growth | Introduction In today's digital age, having a robust online presence is crucial for businesses to... | 0 | 2024-06-10T06:10:22 | https://dev.to/hirelaraveldevelopers/hiring-laravel-developers-how-to-leverage-laravel-developers-for-your-business-growth-k3e | webdev, programming, css, javascript | <h2>Introduction</h2>

<p>In today's digital age, having a robust online presence is crucial for businesses to thrive. One of the key components of a successful online strategy is having a well-built website or application. Laravel, a PHP framework, has emerged as a popular choice for web development due to its elegance... | hirelaraveldevelopers |

1,882,649 | Challenge Submission: Glam Up My Markup | This is a submission for [Frontend Challenge... | 0 | 2024-06-10T06:08:19 | https://dev.to/agagag/challenge-submission-glam-up-my-markup-4954 | devchallenge, frontendchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches._

## What I Built

<!-- Tell us what you built and what you were looking to achieve. -->

For the "Glam Up My Markup: Beaches" challenge, I developed an interactive and visually appealing g... | agagag |

1,882,643 | Jack-of-All-Trades or Master of One? My Journey Through Web Development | Hey Dev Community, I wanted to share a bit about my journey in web development. I'm currently... | 0 | 2024-06-10T06:07:50 | https://dev.to/ftharsh/jack-of-all-trades-or-master-of-one-my-journey-through-web-development-3k7g | webdev, codenewbie, coding, programming | Hey Dev Community,

I wanted to share a bit about my journey in web development. I'm currently rocking medium proficiency in JavaScript, React, HTML, and CSS. These tools have been my bread and butter, and they've allowed me to build some pretty cool projects. But, like any good developer, I'm always looking to expand ... | ftharsh |

1,882,386 | The C Family Tree | Image by Tomasz Steifer, Gdansk from Wikimedia Family Roll Call! Someday, I'd love to... | 0 | 2024-06-10T06:07:45 | https://dev.to/tremartin/the-c-family-tree-510e | > [Image by Tomasz Steifer, Gdansk from Wikimedia](https://commons.wikimedia.org/wiki/File:Family_tree_test1.jpg)

## **Family Roll Call!**

Someday, I'd love to make simple video games as a hobby. When this came up in conversation, I was informed that quite a lot of games are coded in C. I'd heard of multiple C langu... | tremartin | |

1,882,647 | Why Should You Invest in a Coin Development Company? | Investing in a MemeCoin development company may seem unusual at first glance, but there are many... | 0 | 2024-06-10T06:05:20 | https://dev.to/vasu99/why-should-you-invest-in-a-coin-development-company-2lpb | Investing in a MemeCoin development company may seem unusual at first glance, but there are many compelling reasons why it can be a profitable and innovative venture. Although meme Coins are often considered a humorous cryptocurrency, they have significant potential in today's digital economy. Here are the top ten reas... | vasu99 | |

1,882,646 | Reliable and Durable Industrial Pasta Making Equipment | These products might include factors like letterhead, contacting memory card, as well as... | 0 | 2024-06-10T06:05:16 | https://dev.to/carrie_richardsoe_870d97c/reliable-and-durable-industrial-pasta-making-equipment-3ck8 | These products might include factors like letterhead, contacting memory card, as well as clothing

Noting is actually really a treatment where a distinct gadget is actually really used in the direction of create a design and even photo on a product

This treatment is actually really used throughout markets in addition to... | carrie_richardsoe_870d97c | |

1,882,645 | 3 things I'd do differently if I learned to code today | This blog was originally published on Substack. Subscribe to ‘Letters to New Coders’ to receive free... | 0 | 2024-06-10T06:03:53 | https://dev.to/fahimulhaq/3-things-id-do-differently-if-i-learned-to-code-today-3glk | learntocode, beginners, career | This blog was originally published on Substack. Subscribe to ‘Letters to New Coders’ to receive free weekly posts.

Building great software. Having a fulfilling career. Solving challenging problems.

These are the aspirations that inspired me to learn programming in the early ’00s. They have likely also influenced you ... | fahimulhaq |

1,882,644 | Lua: The Modular Language You Already Know | What is Lua? Lua is a programming language that was invented in Brazil in 1993. It is a... | 0 | 2024-06-10T06:02:01 | https://dev.to/hackman78/lua-the-modular-language-you-already-know-4gop | ## What is Lua?

Lua is a programming language that was invented in Brazil in 1993. It is a scripting language known for its speed and efficiency. It was created as an in-house solution for a data-entry application that had to prepare input data files for simulations regularly throughout the day.

Lua was origin... | hackman78 | |

1,882,642 | How to create a simple remix app- A beginner guide | Remix is a framework that allows you to build modern web applications with ease. In this guide, we... | 0 | 2024-06-10T06:01:23 | https://dev.to/md_enayeturrahman_2560e3/how-to-create-a-simple-remix-app-a-beginner-guide-4b5j | remix, react, webdev, javascript | Remix is a framework that allows you to build modern web applications with ease. In this guide, we will walk you through the process of setting up a new Remix project, installing necessary dependencies, and creating a basic application with a simple form.

**Introduction**

Remix offers a powerful and flexible way to b... | md_enayeturrahman_2560e3 |

1,882,678 | Dify: Free Open-source LLM AI Chatbots Builder Platform | In the rapidly evolving field of Generative AI, Dify emerges as a powerful open-source platform for... | 0 | 2024-06-10T08:15:41 | https://blog.elest.io/dify-free-open-source-llm-ai-chatbots-builder-platform/ | opensourcesoftwares, elestio, dify, llm | ---

title: Dify: Free Open-source LLM AI Chatbots Builder Platform

published: true

date: 2024-06-10 06:00:01 UTC

tags: Opensourcesoftwares, Elestio, Dify, LLM

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/gcw0apd7sf1qgmttrm1y.png

canonical_url: https://blog.elest.io/dify-free-open-source-llm-ai-... | kaiwalyakoparkar |

1,882,641 | Perl Weekly #672 - It's time ... | Originally published at Perl Weekly 672 Hi there, ... to celebrate the release of Perl v5.40. To... | 20,640 | 2024-06-10T05:59:56 | https://perlweekly.com/archive/672.html | perl, news, programming | ---

title: Perl Weekly #672 - It's time ...

published: true

description:

tags: perl, news, programming

canonical_url: https://perlweekly.com/archive/672.html

series: perl-weekly

---

Originally published at [Perl Weekly 672](https://perlweekly.com/archive/672.html)

Hi there,

<strong> ... to celebrate the release of... | szabgab |

1,882,640 | Contract Manufacturing: Empowering Businesses with Expertise and Efficiency | In today's competitive market, companies are constantly seeking ways to streamline operations, reduce... | 0 | 2024-06-10T05:58:10 | https://dev.to/pcba1ros/contract-manufacturing-empowering-businesses-with-expertise-and-efficiency-444l | In today's competitive market, companies are constantly seeking ways to streamline operations, reduce costs, and accelerate time-to-market. Contract manufacturing has emerged as a strategic solution that enables businesses to achieve these goals by leveraging specialized expertise and resources. This article explores t... | pcba1ros | |

1,882,639 | Are you looking to find services for assignment help in USA? | For students in USA, starting an assignment can be satisfying and difficult. The assignment is the... | 0 | 2024-06-10T05:57:05 | https://dev.to/jackjose/are-you-looking-to-find-services-for-assignment-help-in-usa-2e9k | webdev, assignment, help | For students in USA, starting an assignment can be satisfying and difficult. The assignment is the final of an academic career and requires careful study, critical thinking, and strong writing. Assignment Help services exist to support students in this critical stage, offering important assistance in navigating the cha... | jackjose |

1,882,638 | Async Nx Monorepo: Enhancing Productivity and Scalability | Managing a large codebase can be a daunting task. As teams grow and projects become more complex, it... | 0 | 2024-06-10T05:56:44 | https://dev.to/hasancse/async-nx-monorepo-enhancing-productivity-and-scalability-2iim | webdev, tutorial, devops, productivity | Managing a large codebase can be a daunting task. As teams grow and projects become more complex, it becomes increasingly important to find efficient ways to handle code, dependencies, and workflows. Enter Nx, a powerful toolkit for building monorepos. But what if you could take your productivity a step further by leve... | hasancse |

1,882,635 | How to choose a cleaning service | Title: How to Choose the Right House Cleaning Service for Your Home Keeping your home clean and tidy... | 0 | 2024-06-10T05:55:30 | https://dev.to/psc2001/how-to-choose-a-cleaning-service-11gc | Title: How to Choose the Right House Cleaning Service for Your Home

Keeping your home clean and tidy can be a daunting task, especially if you have a busy lifestyle. Hiring a house cleaning service can save you time and energy, but with so many options available, it can be challenging to choose the right one. Here are... | psc2001 | |

1,882,634 | Nuxt Your Way to Native: Building Mobile Apps with Capacitor | Nuxt.js is a fantastic framework for building lightning-fast and feature-rich web applications. But... | 0 | 2024-06-10T05:53:02 | https://dev.to/sarveshk76/nuxt-your-way-to-native-building-mobile-apps-with-capacitor-2lbf | nuxt, capacitor, android, ios | Nuxt.js is a fantastic framework for building lightning-fast and feature-rich web applications. But what if you want to take your Nuxt.js creation and turn it into a native mobile app? With Capacitor, you can bridge the gap and deliver your Nuxt.js app as a native Android or iOS experience!

## Why Capacitor for Nuxt.j... | sarveshk76 |

1,882,633 | Comfort and Chic: The Rise of Loungewear in Pakistani Fashion | For centuries, Pakistani fashion has been synonymous with vibrant colors, intricate embroidery, and... | 0 | 2024-06-10T05:52:53 | https://dev.to/eastavenuenj/comfort-and-chic-the-rise-of-loungewear-in-pakistani-fashion-5c99 | clothing | For centuries, Pakistani fashion has been synonymous with vibrant colors, intricate embroidery, and flowing silhouettes. From the elegance of the shalwar kameez to the festive flair of the gharara, traditional **[Pakistani Clothes Online](https://www.eastavenuenj.com/)** has captured hearts around the world. However, i... | eastavenuenj |

1,873,006 | Let's Talk About Dataverse Low-Code Plugins | If you haven't heard about Dataverse Low-Code plugins let me catch you up. For starters plugins are... | 27,573 | 2024-06-10T05:51:01 | https://dev.to/wyattdave/lets-talk-about-dataverse-low-code-plugins-4im2 | powerplatform, dataverse, api, powerautomate | If you haven't heard about Dataverse Low-Code plugins let me catch you up.

For starters, plugins are basically functional APIs. They are built in C# and allow complex functionality in a single API call. A good example is solution export, a plugin built by Microsoft that does the following:

- Gets solution ... | wyattdave |

1,882,631 | Vikapri Training | Vikapri Training is the best institute for SAP training, Digital Marketing, Selenium & Manual... | 0 | 2024-06-10T05:48:25 | https://dev.to/gayu_vicapri_61bbf9b4e628/vikapri-training-3c1j | Vikapri Training is the best institute for SAP training, Digital Marketing, Selenium & Manual Testing, Python, Java, AI, Machine learning with 100% Job Placement. | gayu_vicapri_61bbf9b4e628 | |

1,882,630 | GPT3 WebApp in Reactjs | Exploring the Capabilities of OpenAI's GPT-3 with a ReactJS Web Application Hey... | 0 | 2024-06-10T05:48:16 | https://dev.to/sudhanshuambastha/gpt3-webapp-in-reactjs-14an | react, webapp, trial, beginnerlearningpurpose | ## Exploring the Capabilities of OpenAI's GPT-3 with a ReactJS Web Application

Hey developers! 👋 Today, I am excited to share with you a project I recently created to delve into the amazing capabilities of OpenAI's GPT-3 model using a ReactJS web application. This project was inspired by a YouTube tutorial and aimed ... | sudhanshuambastha |

1,882,628 | admission | educounsel is best for students as they guide students for their career and also select the college | 0 | 2024-06-10T05:45:11 | https://dev.to/raju_patil_77a7f1fd2b957c/admission-38k5 | [educounsel](https://www.educounselpune.com/) is best for students as they guide students for their career and also select the college | raju_patil_77a7f1fd2b957c | |

1,882,627 | Mastering JavaScript Closures: A Comprehensive Guide | JavaScript closures are a powerful yet sometimes misunderstood concept in JavaScript programming.... | 0 | 2024-06-10T05:43:05 | https://dev.to/jahid6597/mastering-javascript-closures-a-comprehensive-guide-1gd7 | webdev, javascript, beginners, discuss | JavaScript closures are a powerful yet sometimes misunderstood concept in JavaScript programming. Despite being a bit tricky to understand at first, they are powerful and essential for writing clean, modular, and efficient code. In this comprehensive guide, we will explore closures from the ground up, covering everythi... | jahid6597 |

1,882,626 | FeedForward and FeedBack Control | What the F**K is that? Well kiddo chill out! I'll teach you something cool! ... | 0 | 2024-06-10T05:37:06 | https://dev.to/maiommhoon/feedforward-and-feedback-control-433i | robotics, learning, design, algorithms | ## What the F**K is that?

Well kiddo chill out! I'll teach you something cool!

## Intro

These are concepts from control theory, used when building robots, autonomous cars, or anything else that has to control itself.

I learned this while building robots for my team to compete in VEX Robotics, the world's biggest robotics competition.

Let's ... | maiommhoon |

1,882,625 | Best product to cure acid reflux | Introduction Acid reflux, also known as gastroesophageal reflux disease (GERD), affects millions of... | 0 | 2024-06-10T05:36:55 | https://dev.to/sabir_ali_0ea4b6d31d7e4ad/best-product-to-cure-acid-reflux-4mp1 | reflux | **Introduction**

Acid [reflux](https://zemaflux.com/), also known as gastroesophageal reflux disease (GERD), affects millions of people worldwide, especially in the USA. It’s not just a minor discomfort; it can significantly impact your quality of life. In this article, we’ll explore the best product to cure acid reflu... | sabir_ali_0ea4b6d31d7e4ad |

1,882,624 | Top Email Reseller Program to Boost Your Revenue | Join Digitalaka.com's Email Reseller Program to boost your revenue with top-notch email services. | 0 | 2024-06-10T05:36:54 | https://dev.to/devid_richh_c2ad243b7ac42/top-email-reseller-program-to-boost-your-revenue-2407 | Join Digitalaka.com's **[Email Reseller Program](https://medium.com/@vikaspanache0/top-email-reseller-program-to-boost-your-revenue-0fb95ebadfaf)** to boost your revenue with top-notch email services.

| devid_richh_c2ad243b7ac42 | |

1,882,623 | What are Stablecoins? | Stablecoins are digital or crypto assets that have a value that is stable compared to a conventional... | 0 | 2024-06-10T05:30:36 | https://dev.to/lillywilson/what-are-stablecoins-i40 | bitcoin, cryptocurrency, asic | **[Stablecoins ](https://asicmarketplace.com/blog/what-are-stablecoins/)**are digital or crypto assets that have a value that is stable compared to a conventional monetary asset. Stablecoins are backed by assets with low risk, such as gold or fiat currencies. This reduces the volatility of their price. The backing asse... | lillywilson |

1,882,622 | Glam Up My Markup: Beaches - My Submission | This is a submission for Frontend Challenge v24.04.17, CSS Art: June. Inspiration... | 0 | 2024-06-10T05:29:26 | https://dev.to/harshitads44217/glam-up-my-markup-beaches-my-submission-1l24 | frontendchallenge, devchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), CSS Art: June._

## Inspiration 🌊🏄♀️🏄♂️

<!-- What are you highlighting today? -->

I was inspired by the idea of creating a serene beach scene using just HTML and CSS. I wanted to challenge myself to create a... | harshitads44217 |

1,882,621 | Use OpenAI in your JavaScript project the easy way with Ragged | Introduction Welcome to this simple tutorial on how to use OpenAI’s API in JavaScript. In... | 0 | 2024-06-10T05:28:27 | https://dev.to/monarchwadia/use-openai-in-your-javascript-project-the-easy-way-with-ragged-5g79 | ## Introduction

Welcome to this simple tutorial on how to use OpenAI’s API in JavaScript. In this tutorial, we will be taking the help of Ragged, which is a library that makes working with OpenAI very easy and uncomplicated.

In this tutorial, we’ll walk you through the steps to set up Ragged and create a basic applic... | monarchwadia | |

1,882,620 | The Comprehensive Guide to DevOps | Introduction Definition of DevOps DevOps is a set of practices that combines software... | 0 | 2024-06-10T05:26:34 | https://dev.to/sakshi_pach/the-comprehensive-guide-to-devops-4ghp | devops, beginners | ## **Introduction**

**Definition of DevOps**

DevOps is a set of practices that combines software development (Dev) and IT operations (Ops) to shorten the development lifecycle and deliver high-quality software continuously.

**Importance and relevance in modern software development**

In today’s fast-paced tech environm... | sakshi_pach |

1,882,600 | Stay ahead in web development: latest news, tools, and insights #36 | weeklyfoo #36 is here: your weekly digest of all webdev news you need to know! This time you'll find 34 valuable links in 5 categories! Enjoy! | 0 | 2024-06-10T05:18:24 | https://weeklyfoo.com/foos/foo-036/ | webdev, weeklyfoo, javascript, node |

weeklyfoo #36 is here: your weekly digest of all webdev news you need to know! This time you'll find 34 valuable links in 5 categories! Enjoy!

## 🚀 Read it!

- <a href="https://stackoverflow.blog/2024/05/22/you-should-keep-a-developer-s-journal/?utm_source=weeklyfoo&utm_medium=web&utm_campaign=weeklyfoo-36&ref=weekly... | urbanisierung |

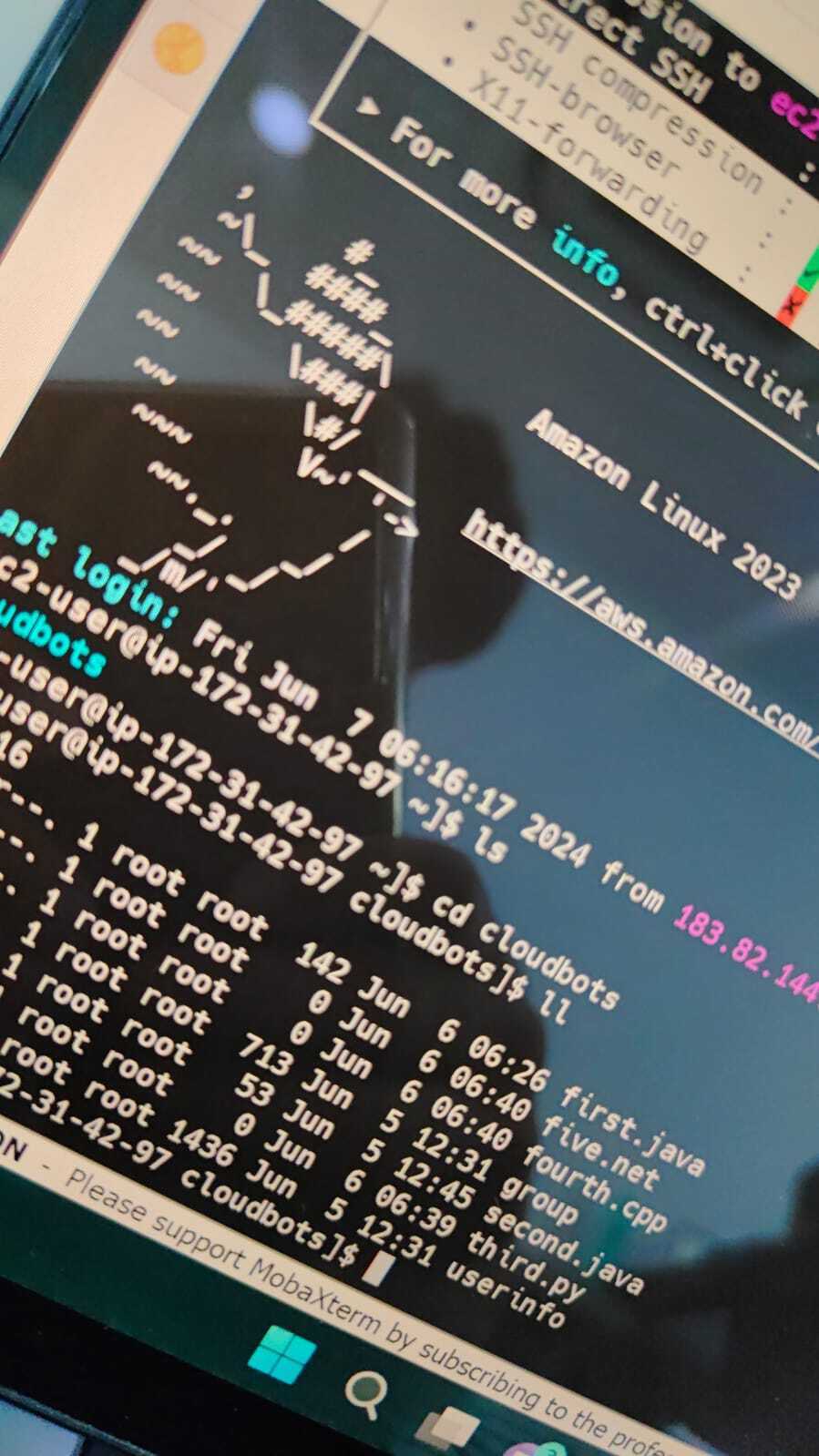

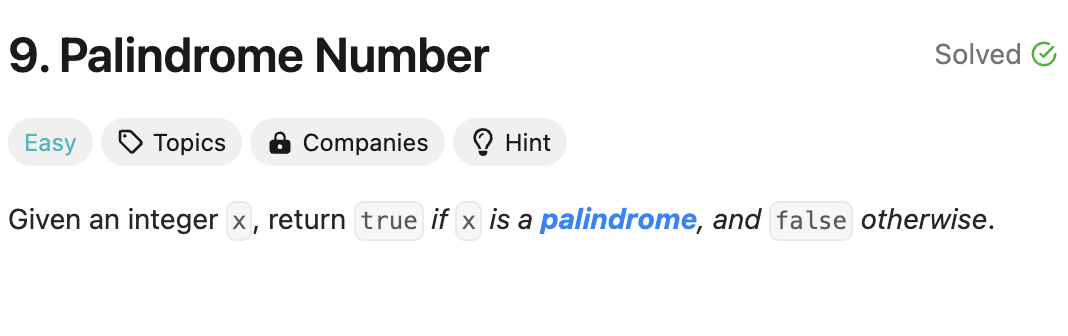

1,882,396 | My idea and submission for problem 9 on Leetcode(very detailed) | The photo of the problem 1. the analysis of the problem: First we can easily know if... | 0 | 2024-06-10T05:18:01 | https://dev.to/hallowaw/my-idea-and-submission-for-problem-9-on-leetcodevery-detailed-55d0 | cpp, beginners, programming | The photo of the problem

## 1. Analysis of the problem

First, if the number is smaller than 0, it cannot be a palindrome number.

We cannot directly compare the first digit with the last digit, so ... | hallowaw |

The top public SQL queries from the community will appear here once available.