id (int64) | title (string) | description (string) | collection_id (int64) | published_timestamp (timestamp[s]) | canonical_url (string) | tag_list (string) | body_markdown (string) | user_username (string) |

|---|---|---|---|---|---|---|---|---|

1,882,599 | Tétouan is a city in northern Morocco | Natural beauty Tétouan abounds in natural beauty, from the Rif Mountains to the pristine beaches of... | 0 | 2024-06-10T05:15:20 | https://dev.to/cskeisari665/tetouan-est-une-ville-du-nord-du-maroc-4eje | Natural beauty

Tétouan abounds in natural beauty, from the Rif Mountains to the pristine beaches of the Mediterranean coast. The nearby Talassemtane National Park offers hiking trails through lush forests, waterfalls, and breathtaking views. The park is home to a flora and f... | cskeisari665 | |

1,882,598 | Commonly Used Databases with Node.js and Express | In the world of web development, Node.js and Express have become go-to technologies for building... | 0 | 2024-06-10T05:13:29 | https://dev.to/vyan/the-most-commonly-used-databases-with-nodejs-and-express-1koe | webdev, javascript, node, database | In the world of web development, Node.js and Express have become go-to technologies for building fast, scalable, and efficient server-side applications. One crucial aspect of these applications is the database. Choosing the right database is essential for the performance and reliability of your app. In this blog post, ... | vyan |

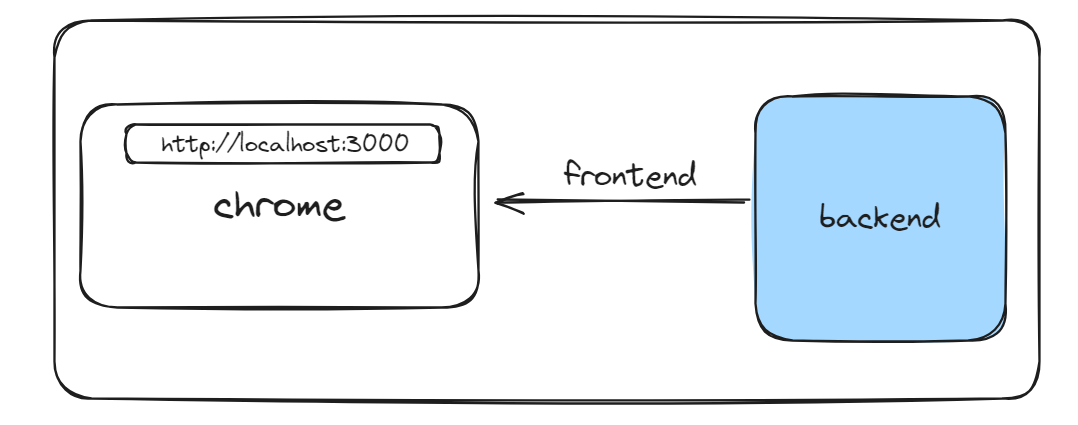

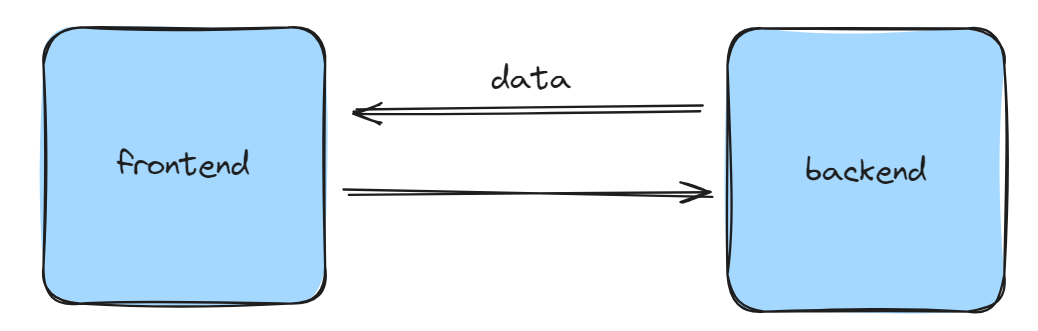

1,882,597 | REST API, Stateless, and Stateful Backend, Postman | Old architecture New architecture An API (Application Programming... | 0 | 2024-06-10T05:12:51 | https://dev.to/swarnendu0123/rest-api-stateless-and-stateful-backend-cjg | # Old architecture

# New architecture

An **API (Application Programming Interface)** is a set of rules and too... | swarnendu0123 | |
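The excerpt is cut off before the stateless/stateful comparison its title promises. As a rough illustration in TypeScript (the class, interface, and function names below are hypothetical, not from the post), the difference comes down to where the state lives:

```typescript
// Stateful: the server keeps per-client state between requests
// (a hypothetical in-memory session store keyed by session ID).
class StatefulCounterServer {
  private readonly sessions = new Map<string, number>();

  handle(sessionId: string): number {
    const next = (this.sessions.get(sessionId) ?? 0) + 1;
    this.sessions.set(sessionId, next); // state lives on this server instance
    return next;
  }
}

// Stateless: each request carries all the state the server needs,
// so the handler is a pure function and the server stores nothing.
interface CounterRequest {
  count: number;
}

function handleStateless(req: CounterRequest): CounterRequest {
  return { count: req.count + 1 };
}

const server = new StatefulCounterServer();
server.handle("session-a"); // 1
const second = server.handle("session-a"); // 2: depends on what this instance remembers

const reply = handleStateless({ count: 1 }); // { count: 2 }: any instance would answer the same
```

Because the stateless handler reads only the request, any server replica could answer it, which is why stateless designs scale out behind a load balancer more easily; the stateful version needs sticky sessions or a shared store such as a database.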

1,882,595 | Stepping into a Wearable World: A Beginner's Guide to watchOS Development | The Apple Watch has revolutionized the way we interact with technology on the go. But beyond being a... | 0 | 2024-06-10T05:09:08 | https://dev.to/epakconsultant/stepping-into-a-wearable-world-a-beginners-guide-to-watchos-development-104g | The Apple Watch has revolutionized the way we interact with technology on the go. But beyond being a mere notification hub, the Apple Watch offers a unique platform for app development. If you're intrigued by the idea of creating software for your wrist, then watchOS development might be the perfect path for you. This ... | epakconsultant | |

1,882,593 | Demystifying the Maze: A Beginner's Guide to Amazon's APIs | Amazon, the e-commerce behemoth, isn't just a platform for buying and selling goods. It also offers a... | 0 | 2024-06-10T05:05:50 | https://dev.to/epakconsultant/demystifying-the-maze-a-beginners-guide-to-amazons-apis-4lgo | amazon | Amazon, the e-commerce behemoth, isn't just a platform for buying and selling goods. It also offers a powerful suite of Application Programming Interfaces (APIs) that unlock a world of possibilities for developers. But what exactly are APIs, and how can Amazon's offerings benefit you? Let's delve into the fundamental c... | epakconsultant |

1,882,592 | The Benefits of Listing Your Home with a Real Estate Agent | Are you thinking about selling your home? The process can be overwhelming, from setting the right... | 0 | 2024-06-10T05:03:32 | https://dev.to/machik99/the-benefits-of-listing-your-home-with-a-real-estate-agent-281a | Are you thinking about selling your home? The process can be overwhelming, from setting the right price to navigating the paperwork. That's where a real estate agent comes in. Listing your home with a professional can make all the difference in achieving a smooth and profitable sale. Let's explore the key benefits of w... | machik99 | |

1,882,587 | What are you building this week? | A post by Méschac Irung | 0 | 2024-06-10T04:46:36 | https://dev.to/meschacirung/what-are-you-building-this-week--51m | webdev, discuss | meschacirung | |

1,882,591 | Scoring Steals on Amazon: Uncovering Deep Discounts and Potential Pricing Errors | For savvy shoppers, Amazon can be a treasure trove of deals. But beyond the occasional lightning deal... | 0 | 2024-06-10T05:02:02 | https://dev.to/epakconsultant/scoring-steals-on-amazon-uncovering-deep-discounts-and-potential-pricing-errors-4pi0 | amazon | For savvy shoppers, Amazon can be a treasure trove of deals. But beyond the occasional lightning deal or coupon code, there are hidden strategies to unearth heavily discounted items (50%-90% off) and even stumble upon potential pricing errors. Here's your guide to becoming an Amazon deal-hunting pro:

Discount Hunting ... | epakconsultant |

1,873,265 | Don’t Be a Hero. It's a Trap | If you want to stand out at work, don't be a hero. It's a trap. I've been there and done that. Yes,... | 27,567 | 2024-06-10T05:00:00 | https://dev.to/canro91/dont-be-a-hero-5gko | career, careerdevelopment, beginners | If you want to stand out at work, don't be a hero.

It's a trap. I've been there and done that.

Yes, I know. It feels sooo good at the end of the day when you're the one saving the day. But if you're the only one who can do something or knows how to do it, that makes you irreplaceable.

And being irreplaceable means b... | canro91 |

1,881,244 | Elasticsearch APM Server Installation and Application Monitoring | Table of Contents What Is Elasticsearch? What Is Kibana? What Is APM? Elasticsearch Installation Kibana... | 0 | 2024-06-10T04:59:23 | https://dev.to/aciklab/elasticsearch-apm-server-kurulumu-ve-uygulama-izleme-17m0 | elasticsearch, kibana, apm, monitoring | # Table of Contents

- [What Is Elasticsearch?](#elasticsearch-nedir)

- [What Is Kibana?](#kibana-nedir)

- [What Is APM?](#apm-nedir)

- [Elasticsearch Installation](#elastic-search-kurulum)

- [Kibana Installation and Obtaining the Verification Code](#kibana-kurulumu-ve-doğrulama-kodu-alma)

- [APM Server Installation](#apm-server-kurulumu)

- [Java APM Agen... | erenalpteksen |

1,882,590 | Unveiling the Voice: A Look at the Fundamentals of OpenAI TTS API | OpenAI's Text-To-Speech (TTS) API is a revolutionary tool that empowers developers to transform... | 0 | 2024-06-10T04:57:33 | https://dev.to/epakconsultant/unveiling-the-voice-a-look-at-the-fundamentals-of-openai-tts-api-gje | openai | OpenAI's Text-To-Speech (TTS) API is a revolutionary tool that empowers developers to transform written text into natural-sounding spoken language. This technology holds immense potential for various applications, from enhancing accessibility features to creating lifelike voiceovers. But how exactly does this API work,... | epakconsultant |

1,882,588 | The Power of Visual Studio: A Match Made in Developer Heaven | Visual Studio acts as the primary development environment for .NET. This intuitive IDE offers... | 0 | 2024-06-10T04:49:40 | https://dev.to/akaksha/the-power-of-visual-studio-a-match-made-in-developer-heaven-1cjm | development, developers, netdevelopers | Visual Studio acts as the primary development environment for .NET. This intuitive IDE offers excellent code editing, debugging, and testing tools, making the development process smoother and more efficient.

The [.NET Framework's](https://www.clariontech.com/blog/top-5-things-you-should-know-about-.net-framework) rich... | akaksha |

1,882,586 | TypeScript Interfaces: Crafting Code with Creative Precision | Hello everyone, peace be upon you and the mercy and blessings of God. In TypeScript, interfaces play a crucial role in... | 0 | 2024-06-10T04:45:44 | https://dev.to/bilelsalemdev/typescript-interfaces-crafting-code-with-creative-precision-65m | typescript, solid, oop, programming | Hello everyone, peace be upon you and the mercy and blessings of God.

In TypeScript, interfaces play a crucial role in defining the shape of objects and providing a contract for their behavior. They allow us to enforce consistency and ensure that our code adheres to a specific structure. Let's explore in depth what interfaces are.

## Tabl... | bilelsalemdev |
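As a minimal sketch of the "shape" and "contract" roles described above (User, Greeter, and PoliteGreeter are hypothetical names, not taken from the post):

```typescript
// Shape: every User object must have these properties.
interface User {
  readonly id: number; // cannot be reassigned after creation
  name: string;
  email?: string; // optional property
}

// Contract: any class implementing Greeter must provide greet().
interface Greeter {
  greet(user: User): string;
}

class PoliteGreeter implements Greeter {
  greet(user: User): string {
    const contact = user.email ?? "no email on file";
    return `Hello, ${user.name} (${contact})`;
  }
}

const alice: User = { id: 1, name: "Alice" };
const greeting = new PoliteGreeter().greet(alice); // "Hello, Alice (no email on file)"

// alice.id = 2;               // compile error: id is readonly
// const bob: User = { id: 2 }; // compile error: name is missing
```

The commented-out lines would be rejected by the compiler, which is the consistency guarantee the paragraph refers to; interfaces are erased at compile time and add no runtime code.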

1,882,585 | How I Built My Portfolio Using NextJS and MDX | Last year, I was inspired by amazing developers like Josh W Comeau and Kent C Dodds. I decided to... | 0 | 2024-06-10T04:42:10 | https://dev.to/beginarjun/how-i-built-my-portfolio-using-nextjs-and-mdx-168a | webdev, nextjs, howto, tutorial | Last year, I was inspired by amazing developers like [Josh W Comeau](https://joshwcomeau.com/) and [Kent C Dodds](https://kentcdodds.com/). I decided to make my own portfolio website with a blog to learn in the open and share my journey with the community. You can check it out [here](https://beginarjun.vercel.app)

## ... | beginarjun |

1,882,583 | A single API for all your conversational generative AI applications | Use the Converse API in Amazon Bedrock to create generative AI applications using a single API across... | 0 | 2024-06-10T04:38:52 | https://community.aws/content/2hP2HKfF83IHf0N5VIdfX1G7Ekl/a-single-api-for-all-your-conversational-generative-ai-applications | go, machinelearning, programming, cloud | *Use the Converse API in Amazon Bedrock to create generative AI applications using a single API across multiple foundation models*

You can now use the [**Converse API**](https://docs.aws.amazon.com/bedrock/latest/userguide/conversation-inference.html) in Amazon Bedrock to create conversational applications like chatbots... | abhirockzz |

1,882,582 | package:oidc, the Dart/Flutter authentication package you didn't know you needed, in Flutter News 2024 #23 ʚїɞ | Hey Flutter enthusiasts! Ever worry about missing key Flutter updates? Well, worry no... | 26,008 | 2024-06-10T04:36:33 | https://dev.to/lucianojung/packageoidc-the-dartflutter-authentication-package-you-didnt-know-you-needed-in-flutter-news-2024-23-eyie-54k | flutter, news, dart, discuss | ## Hey Flutter enthusiasts!

Ever worry about missing key Flutter updates? Well, worry no more!

Starting 2024, I'm here to keep you informed with a weekly Monday report. Let's stay ahead in the world of Flutter!

## Table of Contents

1. {% cta #mayor-flutter-updates %} Major Flutter updates {% endcta %}

2. {% cta #n... | lucianojung |

1,882,364 | How to Install (and Uninstall) MySQL on a Mac | Introduction In this tutorial, we will walk you through the actions necessary to perform... | 0 | 2024-06-10T00:15:51 | https://dev.to/olsido/how-to-install-and-uninstall-mysql-on-a-mac-16l8 | mysql, macos, beginners, homebrew | # Introduction

In this tutorial, we will walk you through the steps needed to install MySQL on a Mac.

There are two methods that can be used:

* download the installation package from Oracle and launch the installer file, or

* install using `homebrew`.

Since the first method is pretty straightforward,... | olsido |

1,882,581 | Bob Geiger: Running Towards Greatness - An Ex-Athlete's Coaching Odyssey in Track and Field | From Athlete to Coach: Geiger's Transformation and Contributions to Track and Field In the dynamic... | 0 | 2024-06-10T04:33:30 | https://dev.to/robertgeiger/bob-geiger-running-towards-greatness-an-ex-athletes-coaching-odyssey-in-track-and-field-1ac0 | From Athlete to Coach: Geiger's Transformation and Contributions to Track and Field

In the dynamic realm of track and field, the pursuit of greatness is an ever-present motivator. Athletes like Bob Geiger train relentlessly, pushing their bodies to their utmost limits in the hopes of securing that coveted gold medal o... | robertgeiger | |

1,882,579 | Making Meetings Matter | In today's fast-paced work environment, productive meetings are essential to keep everyone aligned... | 0 | 2024-06-10T04:29:00 | https://dev.to/apetryla/making-meetings-matter-2ook | beginners, productivity, career, discuss | In today's fast-paced work environment, productive meetings are essential to keep everyone aligned and moving forward. Yet, too often, meetings can become unproductive, leaving participants feeling unheard and unclear about the next steps. Here are some tips to ensure your meetings are efficient and effective:

1. **Se... | apetryla |

1,882,578 | Island Peak Climbing | Island Peak Climbing is one of the most beautiful trekking and peak climbing centers in the... | 0 | 2024-06-10T04:28:07 | https://dev.to/bikram_bipin_a4810dfb455f/island-peak-climbing-fg3 | webdev, javascript, beginners, programming | **_[Island Peak Climbing](https://www.nepalsocialtreks.com/trip/island-peak-climbing/)_** is one of the most beautiful trekking and peak climbing centers in the Himalayas. Located in the Everest/Khumbu region of Eastern Nepal, Island Peak Climbing is perfect for you if you are an adventure lover and enthusiast of energ... | bikram_bipin_a4810dfb455f |

1,882,577 | Cookie consent in NextJs | This article discusses how to add cookie consent to a NextJs app using cookies-next package. ... | 0 | 2024-06-10T04:27:39 | https://dev.to/afzalimdad9/cookie-consent-in-nextjs-4gnn | javascript, react, nextjs, programming |

This article discusses how to add cookie consent to a NextJs app using the `cookies-next` package.

## **Install the required packages:**

To install the package, type one of the following commands based on your packag... | afzalimdad9 |

1,882,614 | How to Build an AI Investment Analyst Agent? | Introduction Investing in stocks and other assets is an interesting affair but it can be... | 0 | 2024-06-11T14:29:57 | https://blog.composio.dev/untitled/ | ---

title: How to Build an AI Investment Analyst Agent?

published: true

date: 2024-06-10 04:18:41 UTC

tags:

canonical_url: https://blog.composio.dev/untitled/

---

## Introduction

Investing in stocks... | sohamganatra | |

1,882,569 | Mastering Design Patterns in JavaScript: 1/6 - The Module Pattern | 🎯 Mastering Design Patterns in JavaScript: 1/6 - The Module Pattern In our fast-paced tech world,... | 0 | 2024-06-10T04:17:23 | https://dev.to/kiransm/--3ga7 | 🎯 **Mastering Design Patterns in JavaScript: 1/6 - The Module Pattern**

In our fast-paced tech world, writing clean and maintainable code is essential. The **Module Pattern** in JavaSc... | kiransm | |
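The excerpt breaks off before showing the pattern. A common way to express the Module Pattern is an immediately invoked function expression (IIFE) whose closure hides private state and returns only a public API. The sketch below is in TypeScript, and `CounterModule` and its members are hypothetical:

```typescript
// The IIFE runs once; `count` lives only inside its closure.
const CounterModule = (() => {
  let count = 0; // private state, unreachable from outside

  function increment(): number {
    count += 1;
    return count;
  }

  function reset(): void {
    count = 0;
  }

  // Only the returned object forms the public API.
  return { increment, reset, current: (): number => count };
})();

CounterModule.increment();
CounterModule.increment();
const value = CounterModule.current(); // 2
```

In modern code, ES modules give the same encapsulation at file level: anything not exported stays private.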

1,882,567 | Sample Datasets and Resources for Practicing Pandas | Essential Sample Datasets and Resources for Practicing Pandas Pandas is a powerful Python... | 0 | 2024-06-10T04:13:27 | https://dev.to/sh20raj/sample-datasets-and-resources-for-practicing-pandas-56h | pandas, python | # Essential Sample Datasets and Resources for Practicing Pandas

Pandas is a powerful Python library for data manipulation and analysis. To master Pandas, it's important to work with real-world datasets and resources. In this article, we'll explore some valuable CSV datasets and resources to help you practice and enhan... | sh20raj |

1,882,566 | KERBEROS SETUP in AMBARI | Hi everyone, can anyone help me set up Kerberos in Ambari 2.7.8 with ODP? kerberos | 0 | 2024-06-10T04:12:51 | https://dev.to/rizal_trenggono_d2428c20b/kerberos-setup-in-ambari-3pab | help | Hi everyone, can anyone help me set up Kerberos in Ambari 2.7.8 with ODP?

#kerberos | rizal_trenggono_d2428c20b |

1,882,564 | The Booming Field with Bite (and a Few Bytes of Caution) | The tech industry is hot right now, let's face it. However, those aren't the only benefits—free... | 0 | 2024-06-10T04:09:35 | https://dev.to/s0330b/the-booming-field-with-bite-and-a-few-bytes-of-caution-3l9o | discuss, web3, webdev |

The tech industry is hot right now, let's face it. However, those aren't the only benefits—free kombucha on tap is also a pretty great bonus. It's difficult to find the special combination of challenge, advancement... | s0330b |

1,882,560 | Understanding End-to-End Encryption in Javascript | Introduction Privacy and security have become increasingly important when using the Internet. Rising... | 0 | 2024-06-10T04:08:31 | https://dev.to/jrob112/understanding-end-to-end-encryption-in-javascript-4f2m | **_Introduction_**

Privacy and security have become increasingly important when using the Internet. Rising concerns over data breaches and unwanted snooping have made end-to-end encryption (E2EE) a critical security technique for Internet communication. E2EE can be intimidating for some web developers, depen... | jrob112 | |

1,882,561 | Automatically Update the Local Branch with the Remote Version When Switching Branches in Git | When you switch to your main branch during development, do you ever forget to perform a git... | 0 | 2024-06-10T04:07:54 | https://dev.to/untilyou58/automatically-update-the-local-branch-with-the-remote-version-when-switching-branches-in-git-3114 | git, learning, beginners, productivity | When you switch to your main branch during development, do you ever forget to perform a `git pull`?

When you check out a particular branch, you can use a feature called "Git Hooks" to have `git pull` run automatically.

This article explains how to configure it.

# Setup procedure

1. Go to the `.git/ho... | untilyou58 |

1,882,563 | Exploring Web Development: Ruby on Rails | What is Ruby? Ruby is a general purpose programming language that features simple and... | 0 | 2024-06-10T04:07:21 | https://dev.to/alexphebert2000/exploring-web-development-ruby-on-rails-519f | ## What is Ruby?

Ruby is a general-purpose programming language with simple, beginner-friendly syntax that is still a powerful tool for experienced developers. Like JavaScript and Python, Ruby is interpreted and dynamically typed, which made my transition to Ruby fairly simple.

There are a... | alexphebert2000 | |

1,882,562 | Everything you need to know about monitoring CoreDNS for DNS performance | 📚 Introduction: Running DNS-intensive workloads can sometimes lead to intermittent CoreDNS failures... | 0 | 2024-06-10T04:07:11 | https://dev.to/aws-builders/everything-you-need-to-know-about-monitoring-coredns-for-dns-performance-5hi9 | dns, eks, troubleshooting, monitoring | 📚 Introduction:

Running DNS-intensive workloads can sometimes lead to intermittent [CoreDNS](https://kubernetes.io/docs/tasks/administer-cluster/dns-custom-nameservers/#coredns) failures caused by DNS throttling. These issues can have a significant impact on your applications.

Such disruptions can hinder the reliab... | seifrajhi |

1,882,744 | Optimize Blog Management with Angular Gantt Chart | TL;DR: Syncfusion Angular Gantt Chart is a project planning and management tool. The blog outlines... | 0 | 2024-06-19T02:38:19 | https://www.syncfusion.com/blogs/post/blog-management-angular-gantt-chart | angular, development, gantt, web | ---

title: Optimize Blog Management with Angular Gantt Chart

published: true

date: 2024-06-10 04:01:07 UTC

tags: angular, development, gantt, web

canonical_url: https://www.syncfusion.com/blogs/post/blog-management-angular-gantt-chart

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/ygio0z3pmh183xx... | gayathrigithub7 |

1,882,558 | The Ultimate Guide to Social Media Marketing for Businesses | Introduction Welcome to the ultimate guide to social media marketing for businesses! In today's... | 0 | 2024-06-10T03:51:46 | https://dev.to/cornelius_zieme_1c02fa34d/the-ultimate-guide-to-social-media-marketing-for-businesses-g5g | webdev, business, socialmedia |

Introduction

Welcome to the ultimate guide to social media marketing for businesses! In today's digital age, social media isn't just for sharing selfies and funny cat videos—it's a powerful tool that businesses can ... | cornelius_zieme_1c02fa34d |

1,880,237 | Building a Bulletproof CI/CD Pipeline: A Comprehensive Guide | Welcome Aboard Week 2 of DevSecOps in 5: Your Ticket to Secure Development Superpowers! _Hey there,... | 27,560 | 2024-06-10T03:49:00 | https://dev.to/gauri1504/building-a-bulletproof-cicd-pipeline-a-comprehensive-guide-3jg3 | devsecop, devops, cloud, security | Welcome Aboard Week 2 of DevSecOps in 5: Your Ticket to Secure Development Superpowers!

_Hey there, security champions and coding warriors!

Are you itching to level up your DevSecOps game and become an architect of rock-solid software? Well, you've landed in the right place! This 5-week blog series is your fast track ... | gauri1504 |

1,882,557 | Glam Up my Markup: Beaches | This is a submission for [Frontend Challenge... | 0 | 2024-06-10T03:46:17 | https://dev.to/2023_anshika_gupta_/glam-up-my-markup-beaches-ekh | devchallenge, frontendchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches_

## What I Built

Welcome to the "Take Me to the Beach" project! This website showcases some of the most beautiful and popular beaches globally. You can explore detailed information abou... | 2023_anshika_gupta_ |

1,882,556 | 11 Best SQL IDEs or SQL Editors for 2024 | Choosing the best SQL IDE depends on your specific needs, preferences, and the type of database you... | 0 | 2024-06-10T03:45:18 | https://dev.to/concerate/11-best-sql-ides-or-sql-editors-for-2024-447p | Choosing the best SQL IDE depends on your specific needs, preferences, and the type of database you are working with. However, here are some of the most popular and highly regarded SQL IDEs available as of 2024:

**1.SQLynx:** SQLynx is a strong contender among SQL IDEs, particularly for users who value a modern, intui... | concerate | |

1,882,554 | JavaScript Promise Methods | Summary Promise.all :- Resolves when all promises resolve, or rejects if any promise... | 0 | 2024-06-10T03:43:13 | https://dev.to/mino/javascript-promisemethords-11np | **Summary**

**Promise.all** :- Resolves when all promises resolve, or rejects if any promise rejects.

**Promise.race** :- Resolves or rejects as soon as one of the promises resolves or rejects.

**Promise.allSettled** :- Resolves when all promises have settled, with an array of outcomes.

**Promise.any** :- Resolves as ... | mino | |
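To make the four behaviours summarized above concrete, here is a small TypeScript sketch. The `ok`/`fail` helpers and the `demo` function are hypothetical, and the code assumes a runtime with ES2021 support (`Promise.any` and `AggregateError`):

```typescript
// Hypothetical helpers: one promise that fulfills, one that rejects.
const ok = (value: string): Promise<string> => Promise.resolve(value);
const fail = (reason: string): Promise<string> => Promise.reject(new Error(reason));

async function demo(): Promise<string[]> {
  const log: string[] = [];

  // Promise.all: fulfills with every value in order, or rejects on the first rejection.
  log.push((await Promise.all([ok("a"), ok("b")])).join(","));
  try {
    await Promise.all([ok("a"), fail("boom")]);
  } catch (err) {
    log.push(`all rejected: ${(err as Error).message}`);
  }

  // Promise.race: settles the same way as whichever input settles first.
  log.push(await Promise.race([ok("first"), ok("second")]));

  // Promise.allSettled: never rejects; reports each outcome as fulfilled or rejected.
  const settled = await Promise.allSettled([ok("x"), fail("y")]);
  log.push(settled.map((result) => result.status).join(","));

  // Promise.any: fulfills with the first fulfillment and ignores rejections...
  log.push(await Promise.any([fail("no"), ok("yes")]));
  // ...and rejects with an AggregateError only if every input rejects.
  try {
    await Promise.any([fail("p"), fail("q")]);
  } catch (err) {
    log.push(`any rejected with ${(err as AggregateError).errors.length} errors`);
  }

  return log;
}

demo().then((lines) => lines.forEach((line) => console.log(line)));
```

Running it logs, in order: `a,b`, `all rejected: boom`, `first`, `fulfilled,rejected`, `yes`, and `any rejected with 2 errors`.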

1,882,553 | SUPABASE | Hello! Do you know about SUPABASE and powers? Read this to have a basic knowledge of it. This has the... | 0 | 2024-06-10T03:43:10 | https://dev.to/hussain101/supabase-4jbo | Hello!

Do you know about SUPABASE and its powers?

Read this to get a basic understanding of it.

This has the following sections:

1. Introduction

2. Use in IoT

3. SUPABASE vs FIREBASE

### **INTRODUCTION**:

Supabase is an open-source platform that provides developers with a suite of tools to build and scale their applicatio... | hussain101 | |

1,882,552 | From Megacities to Micromobility: How Uber can Adapt its System Design for Different Urban Transportation Needs | Traffic-clogged streets and limited parking are the daily struggles of commuters in megacities. But... | 0 | 2024-06-10T03:34:04 | https://dev.to/marufhossain/from-megacities-to-micromobility-how-uber-can-adapt-its-system-design-for-different-urban-transportation-needs-5753 | Traffic-clogged streets and limited parking are the daily struggles of commuters in megacities. But across the globe, a new wave of urban transportation is taking hold: micromobility. Bikes, e-scooters, and e-bikes are zipping through mid-sized cities, offering a convenient and eco-friendly way to get around. The chall... | marufhossain | |

1,882,550 | Websocket | Introduction to WebSockets WebSockets represent a significant advancement in web technology,... | 0 | 2024-06-10T03:30:39 | https://dev.to/dariusc16/websocket-4chm |

**Introduction to WebSockets**

WebSockets represent a significant advancement in web technology, enabling real-time, bidirectional communication between clients and servers. Unlike traditional HTTP requests, which... | dariusc16 | |

1,881,301 | Understanding Python Decorators | I don’t think we can build a decent application without using decorators. They are everywhere.... | 0 | 2024-06-10T03:30:00 | https://dev.to/tankala/understanding-python-decorators-3n9f | webdev, beginners, programming, python | I don’t think we can build a decent application without using decorators. They are everywhere. Let's learn what they are and how we can build our own.

{% embed https://newsletter.piptrends.com/p/understanding-python-decorators %} | tankala |

1,882,549 | Elixir, Erlang, and the BEAM | It’s exciting to learn new languages, especially ones you take an interest in, but a great rule of... | 0 | 2024-06-10T03:29:22 | https://dev.to/cody-daigle/elixir-erlang-and-the-beam-5g6j | It’s exciting to learn new languages, especially ones you take an interest in, but a great rule of thumb during that learning process is to understand where they originate from. So, before taking a deep dive into Elixir, I’d say it’s a pretty solid idea to look into Erlang, Elixir’s parent language, in order to... | cody-daigle | |

1,882,548 | Iterative Development && Abstraction - Part 2 | In part 1, I wrote about taking blocks of static note cards and abstracting them into a carousel... | 0 | 2024-06-10T03:23:35 | https://dev.to/snackcode/iterative-development-abstraction-part-2-2dfp | webdev, javascript, beginners, learning | In [part 1](https://dev.to/snackcode/iterative-development-abstraction-part-1-2l9p), I wrote about taking blocks of static note cards and abstracting them into a carousel using a `@for` loop. These cards were used on a `learn more` page. The content for the cards was moved from the HTML template to a JSON arr... | snackcode |

1,882,540 | Finding the Perfect Talent Agent - A Comprehensive Guide with Micah Pittard | When you're longing to carve a niche for yourself in the entertainment universe, whether it's acting,... | 0 | 2024-06-10T03:15:47 | https://dev.to/micahpittard/finding-the-perfect-talent-agent-a-comprehensive-guide-with-micah-pittard-d3 | When you're longing to carve a niche for yourself in the entertainment universe, whether it's acting, modeling, music, or any other artistic domain, partnering with the apt talent agency, like New Standard Branding (NSB) led by Micah Pittard, can dramatically shape your career trajectory. A talent agent, particularly o... | micahpittard | |

1,882,538 | Unlocking the Power of AWS RDS: A Deep Dive into Managed Relational Databases | Unlocking the Power of AWS RDS: A Deep Dive into Managed Relational Databases ... | 0 | 2024-06-10T03:02:30 | https://dev.to/virajlakshitha/unlocking-the-power-of-aws-rds-a-deep-dive-into-managed-relational-databases-4oob |

# Unlocking the Power of AWS RDS: A Deep Dive into Managed Relational Databases

### Introduction

In today's data-driven world, businesses constantly seek ways to optimize their data management strategies. Amazon Relational Database Se... | virajlakshitha | |

1,882,537 | Intro to Embedded Systems | Introduction No matter where you are right now, chances are that an embedded system is... | 0 | 2024-06-10T02:59:34 | https://dev.to/ccwell11/intro-to-embedded-systems-5c7p | ### Introduction

No matter where you are right now, chances are that an embedded system is within arm's reach. These systems are so common that people rarely take the time to acknowledge the tech that may be incorporated in their day-to-day lives. Embedded systems are present within products like television remotes, microw... | ccwell11 | |

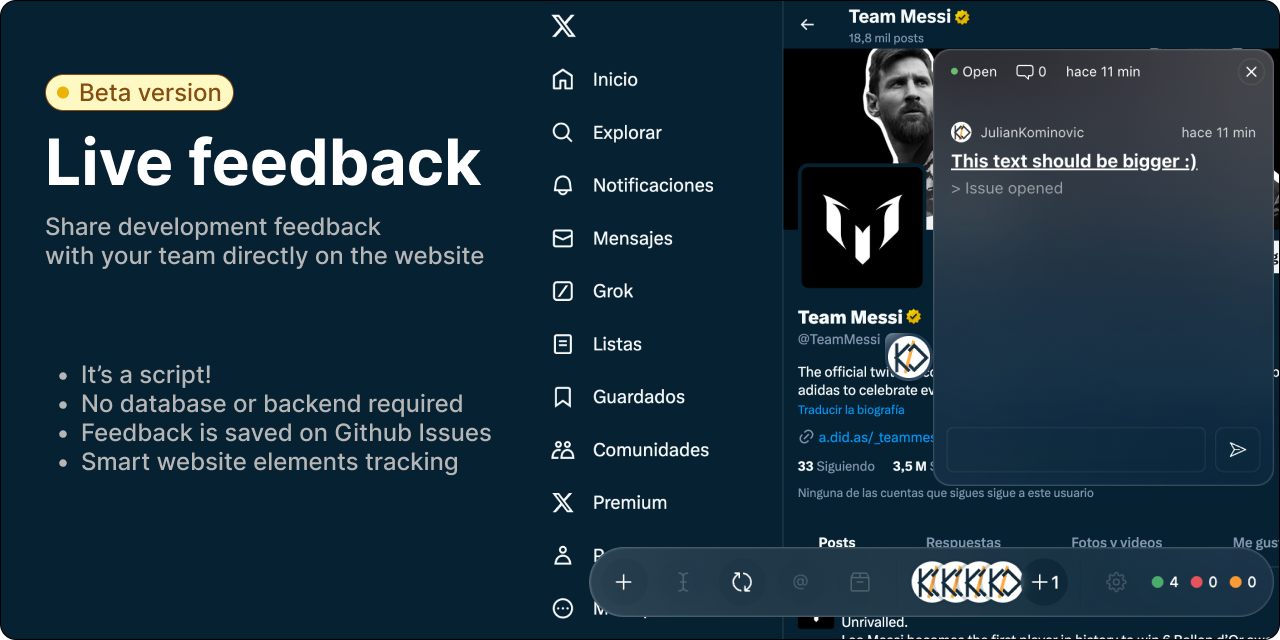

1,882,536 | Update: Live Feedback script | Hi! A few weeks ago I wrote a blog post Introducing Live Feedback as a Google Chrome... | 0 | 2024-06-10T02:58:35 | https://dev.to/juliankominovic/update-live-feedback-script-35oi | webdev, javascript, react, productivity |

Hi! A few weeks ago I wrote a blog post [Introducing Live Feedback](https://dev.to/juliankominovic/introducing-live-feedback-2cg8) as a Google Chrome Extension.

Live Feedback is a script that allows you to start... | juliankominovic |

1,882,529 | How to study React to become a pro. Introduction to React. | React has by far the largest market share among JavaScript frameworks! However, it is a framework... | 0 | 2024-06-10T02:56:34 | https://dev.to/yukionishi1129/how-to-study-react-to-become-a-pro-introduction-to-react-p3l | react, beginners, javascript, frontend | React has by far the largest market share among JavaScript frameworks!

However, it is a framework with a high failure rate, so I would like to introduce a roadmap for learning React based on my experience as an active front-end engineer!

# Understanding React overview

First of all, what can React do? And what features do... | yukionishi1129 |

1,882,535 | Interface Segregation Principle | The Interface Segregation Principle (ISP) suggests that a class should not be forced to implement... | 0 | 2024-06-10T02:50:07 | https://dev.to/palanivel_sundararajangu/interface-segregation-principle-2edj | The Interface Segregation Principle (ISP) suggests that a class should not be forced to implement methods it doesn’t need.

### Bad Practice - Principle not followed

```text
+------------------+
|  <<interface>>   |
|     IVehicle     |
+------------------+
    ... | palanivel_sundararajangu | |

1,880,385 | How To Get Out Of Tutorial Hell | Once you finish a web development course, I'd recommend building a project that you are interested in. Like... | 0 | 2024-06-10T02:45:00 | https://dev.to/thekarlesi/how-to-get-out-of-tutorial-hell-3mpf | webdev, beginners, programming, html | Once you finish a web development course, I'd recommend building a project that you are interested in.

Like, ask yourself: What are your interests? What are your passions? What do you like?

Don't build a Netflix clone. Everybody does that.

Don't build a Meta clone. Everybody does that.

Like, you should be original.

Do somet... | thekarlesi |

1,847,033 | Kubernetes Dashboard Part 2: Cluster Management | TL;DR: In this blog, the author talks about the burning issues in cluster management and how... | 27,311 | 2024-06-10T02:42:33 | https://devtron.ai/blog/kubernetes-dashboard-for-cluster-management/ | kubernetes, devtron, devops, opensource | TL;DR: In this blog, the author talks about the burning issues in cluster management and how Devtron's Kubernetes dashboard helps you manage the on-prem/ cloud Kubernetes clusters efficiently through an intuitive dashboard.

We will discuss how the Kubernetes dashboard by Devtron can be leveraged for cluster management... | devtron_inc |

1,882,534 | Test | #include <stdio.h> | 0 | 2024-06-10T02:38:44 | https://dev.to/pkp/test-1k3d | `#include <stdio.h>` | pkp | |

1,882,532 | Unity of developers is the power to advance the future | Nowadays humanity is not united as one. What if astronauts attack Earth? What will humanity do... | 0 | 2024-06-10T02:30:27 | https://dev.to/white_snow_b070f35998e724/unity-of-developers-is-the-power-to-advance-the-future-jjm | Nowadays humanity is not united as one.

What if aliens attack Earth?

What will humanity do then?

Will the Earth still fight among itself then?

What if humanity becomes one only then...?

Is such a sacrifice really necessary for the unity of humanity?

I want to ask everyone in the world

Is it necessary for someone to... | white_snow_b070f35998e724 | |

1,881,898 | Presentation video and article for the facial identification project with AWS Rekognition | Today I'm going to present a project that involves implementing facial identification points at 30... | 0 | 2024-06-10T02:17:41 | https://dev.to/aws-builders/video-de-apresentacao-e-artigo-do-projeto-de-identificacao-facial-com-aws-rekognition-4efa | aws, cloud, communitybuilder, architecture | Today I'm going to present a project that involves implementing facial identification points at 30 monitoring locations. This project will be carried out using several AWS services to ensure security, efficiency, and scalability. Let's start with an overview of the project and then go through each comp... | carlosfilho |

1,882,518 | Vertical or Horizontal Scale? | System Design Horizontal Scaling or Vertical Scaling? Hi, this is my first... | 0 | 2024-06-10T02:10:06 | https://dev.to/fidsouza/vertical-or-horizontal-scale-1dje | systemdesign, designsystem | ## System Design

### Horizontal Scaling or Vertical Scaling?

Hi, this is my first article in English, so I apologize for any mistakes. I'm trying to improve my English each day. In this article, I will explain some basic principles of system design for beginners.

### Scenario

Imagine that you have built software an... | fidsouza |

1,882,516 | Expertoapk - Find your favorite APK games and apps | ExpertoAPK is one of the best websites for downloading apps and games for devices... | 0 | 2024-06-10T02:04:47 | https://dev.to/expertoapk/expertoapk-encuentra-tus-juegos-y-aplicaciones-apk-favoritos-105g | webdev | ExpertoAPK is one of the best websites for downloading apps and games for Android and iOS devices. We share the latest app/game APK files and MOD APKs so you can enjoy the paid premium features of your apps and ga

Website: [https://expertoapk.com/](https://expertoapk.com/)

| expertoapk |

1,882,514 | [DAY 48-53] I Built A Pokemon Search App | Hi everyone! Welcome back to another blog where I document the things I learned in web development. I... | 27,380 | 2024-06-10T02:02:45 | https://dev.to/thomascansino/day-48-53-i-built-a-pokemon-search-app-pmk | beginners, learning, javascript, css | Hi everyone! Welcome back to another blog where I document the things I learned in web development. I do this because it helps me retain information and concepts, since it works as a form of active recall.

On days 48-53, I built a pokemon search app to complete the data structures & algorithms certification project in fr... | thomascansino |

1,882,512 | Flexibags: Cost-Effective Solutions for Liquid Logistics Challenges | screenshot-1717600442438.png Flexi-bags: Affordable and Safe Solution for Moving... | 0 | 2024-06-10T01:54:02 | https://dev.to/gloria_ericksonkf_4485f0b/flexibags-cost-effective-solutions-for-liquid-logistics-challenges-312b |

Flexi-bags: Affordable and Safe Solution for Moving Liquids

Introduction

Have you ever wondered how liquids such as wine, oil, and juice are transported around the world in a cost-effective and safe way? You may have heard of Flexi-bags, which are innovative and economical solutions... | gloria_ericksonkf_4485f0b | |

1,882,511 | Trainer’s win By Press Gazette Graham Atkins Solicitor | Racehorse trainer Paul D’Arcy has accepted substantial damages and a formal apology from the... | 0 | 2024-06-10T01:51:29 | https://dev.to/grahamatkins/trainers-win-by-press-gazette-graham-atkins-solicitor-41e5 | Racehorse trainer Paul D’Arcy has accepted substantial damages and a formal apology from the publishers of the Evening Star, Ipswich, after allegations that he behaved “unprofessionally, unlawfully and disgracefully” during the sale of colt Indian Haven.

D’Arcy sued publishers Archant over the 4 June 2003 article, in... | grahamatkins | |

1,882,510 | Graham Atkins Solicitor | Graham is widely-acknowledged as a leading libel, privacy and reputation management lawyer,... | 0 | 2024-06-10T01:46:00 | https://dev.to/grahamatkins/graham-atkins-solicitor-4mi9 | Graham is widely-acknowledged as a leading libel, privacy and reputation management lawyer, recognised in the media, the Legal 500, Chambers and Spears for many years. He has 30 years’ experience in his field, qualifying at Dentons, then working at media and commercial firms before founding predecessor firms Atkins Sol... | grahamatkins | |

1,882,509 | Aquaculture Tanks: Providing Controlled Environments for Fish Health | screenshot-1717600421944.png Aquaculture Tanks: Giving Fish a Safe and Healthy Habitat Aquaculture... | 0 | 2024-06-10T01:45:29 | https://dev.to/gloria_ericksonkf_4485f0b/aquaculture-tanks-providing-controlled-environments-for-fish-health-4gc4 |

Aquaculture Tanks: Giving Fish a Safe and Healthy Habitat

Aquaculture tanks are special tanks used for breeding and rearing fish in captivity. They provide a controlled environment for fish to thrive and grow in. By creating an environment that is tailored to specific species, farmers c... | gloria_ericksonkf_4485f0b | |

1,882,508 | Lawyer of the week: Graham Atkins Solicitor | Graham Atkins Solicitor, a partner at Atkins Thomson, acted for President Poroshenko of Ukraine in a... | 0 | 2024-06-10T01:44:01 | https://dev.to/grahamatkins/lawyer-of-the-week-graham-atkins-solicitor-50fh | Graham Atkins Solicitor, a partner at Atkins Thomson, acted for President Poroshenko of Ukraine in a libel action against the BBC over allegations that £300,000 was paid to extend talks between him and President Trump. The BBC accepted the claim was completely untrue, apologised and paid £50,000 damages plus. [Read mor... | grahamatkins | |

1,882,507 | Cherie Blair starts hacking legal case — Graham Atkins Solicitor | Cherie Blair is a barrister and campaigner for prison reform. Cherie Blair has started legal... | 0 | 2024-06-10T01:37:20 | https://dev.to/grahamatkins/cherie-blair-starts-hacking-legal-case-graham-atkins-solicitor-23oc | Cherie Blair is a barrister and campaigner for prison reform. Cherie Blair has started legal proceedings over phone hacking, her solicitor has confirmed.

The wife of former Prime Minister Tony Blair, herself a prominent barrister, launched a claim on Tuesday.

Mrs Blair’s [Graham Atkins Solicitor](https://atkinsdellow... | grahamatkins | |

1,882,506 | Using MongoDB Effectively in Nodejs | MongoDB is a document-oriented database, a type of NoSQL database. As such, MongoDB avoids... | 0 | 2024-06-10T01:35:46 | https://dev.to/duongphan/su-dung-mongodb-hieu-qua-trong-nodejs-2kh9 | mongodb, webdev, javascript, node | **MongoDB** is a document-oriented database, a type of NoSQL database. As such, MongoDB avoids the table-based structure of relational databases in favor of JSON-like documents with a very flexible schema, stored in a format called BSON. Because MongoDB stores data as JSON-style documents, each collection will have the ... | duongphan |

1,882,505 | Lawyer of the week: Graham Atkins, solicitor who acted for Poroshenko in BBC libel case | Graham Atkins, a partner at Atkins Thomson, acted for President Poroshenko of Ukraine in a libel... | 0 | 2024-06-10T01:33:43 | https://dev.to/grahamatkins/lawyer-of-the-week-graham-atkins-solicitor-who-acted-for-poroshenko-in-bbc-libel-case-3m2i | Graham Atkins, a partner at Atkins Thomson, acted for President Poroshenko of Ukraine in a libel action against the BBC over allegations that £300,000 was paid to extend talks between him and President Trump. The BBC accepted the claim was completely untrue, apologised and paid £50,000 damages plus legal costs.

[Grah... | grahamatkins | |

1,882,504 | How I Built My Own Personalized Google: A Step-by-Step Guide to AI Mastery | Meet My Google: Your Own Simple, Personalized AI Search, Tailor-Made Full Article What is This... | 0 | 2024-06-10T01:31:51 | https://dev.to/exploredataaiml/how-i-built-my-own-personalized-google-a-step-by-step-guide-to-ai-mastery-48ne | machinelearning, rag, ai | Meet My Google: Your Own Simple, Personalized AI Search, Tailor-Made

[Full Article](https://medium.com/ai-in-plain-english/how-i-built-my-own-personalized-google-a-step-by-step-guide-to-ai-mastery-af0eccc11883)

**What is This Article About?**

○ This article provides a step-by-step guide on building a personalized Go... | exploredataaiml |

1,882,503 | Fish Farming Tanks: Supporting Sustainable Aquaculture Practices | Fish Farming Tanks: Supporting Sustainable Aquaculture Practices If you are considering fish farming, you may already... | 0 | 2024-06-10T01:31:10 | https://dev.to/gloria_ericksonkf_4485f0b/fish-farming-tanks-supporting-sustainable-aquaculture-practices-5g95 |

Fish Farming Tanks: Supporting Sustainable Aquaculture Practices

If you are considering fish farming, you may already know that farming makes it possible to raise fish in controlled environments such as tanks. These tanks aim to ... | gloria_ericksonkf_4485f0b | |

1,882,420 | CSS Art: June (The peaceful days) | This is a submission for Frontend Challenge v24.04.17, CSS Art: June. Inspiration Today I... | 0 | 2024-06-10T01:22:13 | https://dev.to/soorajsnblaze333/css-art-june-4hme | frontendchallenge, devchallenge, css | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), CSS Art: June._

## Inspiration

Today I am posting a figment of my imagination in the form of the June CSS Challenge. Where I live, June means it's sunny, cloudy and raining at the same time. And where I live is gre... | soorajsnblaze333 |

1,882,502 | Flexibags: Safe and Secure Transport for Liquids of All Types | Flexibags: Safe and Secure Transport for Liquids of All Types As global trade continues, the... | 0 | 2024-06-10T01:20:48 | https://dev.to/gloria_ericksonkf_4485f0b/flexibags-safe-and-secure-transport-for-liquids-of-all-types-2e59 |

Flexibags: Safe and Secure Transport for Liquids of All Types

As global trade continues, the demand for safe and efficient transportation keeps growing. Flexibags are an innovative and affordable solution for transporting liquids of all types. Flexibags are large bags made ... | gloria_ericksonkf_4485f0b | |

1,882,471 | React.js Libraries You Should Use | Introduction React.js has become one of the most popular JavaScript libraries... | 0 | 2024-06-10T01:19:12 | https://dev.to/duongphan/cac-thu-vien-reactjs-nen-su-dung-4cea | react, javascript, webdev, programming | ## Introduction

React.js has become one of the most popular JavaScript libraries for user interface development. Thanks to its flexibility, efficiency, and large, supportive community, React.js is used to build everything from simple websites to complex web applications.

However, with countless libr... | duongphan |

1,882,470 | Top Methods to Find GitHub Users | Project:- 7/500 GitHub User Finder project. Description The GitHub User Finder... | 27,575 | 2024-06-10T01:14:10 | https://dev.to/raajaryan/best-ways-to-search-for-github-users-53no | javascript, beginners, opensource, github | ### Project:- 7/500 GitHub User Finder project.

## Description

The GitHub User Finder is a web application designed to help users quickly and easily find GitHub profiles and view their details. By simply entering a GitHub username, users can retrieve profile information such as repositories, followers, following, and... | raajaryan |

1,877,504 | [Hono] Simple Messaging App Using Bun and WebSocket | We often see implementations of WebSocket using the Express framework and Socket.io. However, there... | 0 | 2024-06-10T01:11:38 | https://dev.to/yutakusuno/hono-simple-messaging-app-using-bun-and-websocket-mnk | hono, bunjs, typescript, react | We often see implementations of WebSocket using the Express framework and Socket.io. However, there seem to be fewer examples of WebSocket implementations using Hono, a framework that is similar to Express but faster and lighter. In this article, I will introduce the implementation of a simple messaging app using Hono ... | yutakusuno |

1,882,468 | SLOT ALLO BANK 💎 SENSASIBET77 AGEN SLOT POPULER MUDAH JACKPOT TANPA BATAS | 💗 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (KLIK DISINI) 💗 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (KLIK DISINI) Sensasibet77 - Slot Allo Bank... | 0 | 2024-06-10T01:08:00 | https://dev.to/listi_aminah_553fea0fd533/slot-allo-bank-sensasibet77-agen-slot-populer-mudah-jackpot-tanpa-batas-5d18 | slotallobank, agenslotallobank, sensasibet77, slotallobank5000 | 💗 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([KLIK DISINI](https://heylink.me/sensasibet77.com/))

💗 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([KLIK DISINI](https://heylink.me/sensasibet77.com/))

Sensasibet77 - Slot Allo Bank is a gambl... | listi_aminah_553fea0fd533 |

1,882,465 | Mastering Technical SEO: Key Techniques for Improved Website Performance | Introduction Technical SEO is all about optimizing your website's technical aspects to improve its... | 0 | 2024-06-10T01:05:19 | https://dev.to/gohil1401/mastering-technical-seo-key-techniques-for-improved-website-performance-5b6j | webdev, beginners, tutorial, career | **Introduction**

Technical SEO is all about optimizing your website's technical aspects to improve its visibility and ranking in search engine results. It's like tuning up a car to ensure it runs smoothly and efficiently. Ready to learn how to make your website a lean, mean, SEO machine?

**1. Page Speed Optimization*... | gohil1401 |

1,882,464 | How to Build a Cloud Application | Hi and welcome to our step-by-step guide on building your first cloud web application with... | 0 | 2024-06-10T01:05:12 | https://five.co/blog/how-to-build-a-cloud-application/ | tutorial, learning, cloud, mysql | <!-- wp:paragraph -->

<p>Hi and welcome to our step-by-step guide on building your first cloud web application with Five.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Goals and Objectives: </h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>In this step-by-step tutorial, we'll build a c... | domfive |

1,882,463 | Web Developers Must Know the Difference Between Session and Cookie | 1. Introduction to Sessions and Cookies In the modern web, managing user information... | 0 | 2024-06-10T00:59:02 | https://dev.to/duongphan/lap-trinh-web-phai-phan-biet-duoc-session-va-cookie-2mi0 | session, cookie, security, webdev | ## 1. Introduction to Sessions and Cookies

In the modern web, managing user information across visits is key to creating a smooth, personalized user experience. The two main tools for doing this are sessions and cookies. In this article, w... | duongphan |

1,882,462 | Reading Laravel | Hi there. I've been working with Laravel for about 6 years now, but never read the framework code... | 0 | 2024-06-10T00:58:40 | https://dev.to/bruno_beghelli_210b82ca3b/reading-laravel-2j7p | Hi there. I've been working with Laravel for about 6 years now, but I had never read the framework code (which is expected, I think :P). I got curious about how it's written and have been doing that for a few days now, and it is both a fun and educational process. It requires some basic programming knowledge, but it feels really... | bruno_beghelli_210b82ca3b | |

1,881,473 | Budgtr Downtime incident report. | We would like to apologize to all our users for the downtime experienced last week. We understand the... | 0 | 2024-06-09T21:50:36 | https://dev.to/thobeats/budgtr-downtime-incident-report-2lph | We would like to apologize to all our users for the downtime experienced last week. We understand the discomfort this would have caused, and we have done the necessary checks and fixes to ensure that this does not happen again.

We have provided an incident report of the downtime that occurred on the 5th of June, 2024.... | thobeats | |

1,882,451 | Effective Methods to Secure Your Online Store Against Cyber Threats | In the digital age, the security of online stores is paramount. With cyber threats evolving and... | 0 | 2024-06-10T00:36:18 | https://dev.to/jchristopher0033/effective-methods-to-secure-your-online-store-against-cyber-threats-457e | dataencryption, onlinesafety, cyberprotection, ecommercesecurity | In the digital age, the security of online stores is paramount. With cyber threats evolving and becoming more sophisticated, online retailers must implement robust security measures to protect their businesses and customers. Here are some effective [methods to secure your online store](https://www.opencart.com/blog/7-w... | jchristopher0033 |

1,882,449 | Developing IoT Applications with Raspberry Pi | Introduction In the current era of technology, the Internet of Things (IoT) has brought... | 0 | 2024-06-10T00:34:05 | https://dev.to/kartikmehta8/developing-iot-applications-with-raspberry-pi-53el | webdev, javascript, beginners, programming | ## Introduction

In the current era of technology, the Internet of Things (IoT) has brought about a major revolution with its ability to connect various devices and enable seamless communication between them. One of the most popular devices used for developing IoT applications is the Raspberry Pi. It is a low-cost, cre... | kartikmehta8 |

1,541,634 | How to Easily Dockerize a Next.js Application | Hi there! In this little post I'm going to show you how to use Docker to containerize your Next.js... | 0 | 2024-06-10T00:31:18 | https://dev.to/emanuelnav/how-to-easily-dockerize-a-nextjs-application-p3f | docker, nextjs, javascript, tutorial | Hi there! In this little post I'm going to show you how to use Docker to containerize your Next.js application.

## What is Docker?

Docker is an open-source platform that enables developers to automate the deployment, scaling, and management of applications inside lightweight, portable containers. Containers are a way ... | emanuelnav |

1,882,430 | DEV Challenge: Beaches 🏖️ | This is a submission for [Frontend Challenge... | 0 | 2024-06-10T00:30:35 | https://dev.to/oliviapandora/dev-challenge-beaches-pjl | devchallenge, frontendchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches_

This is my submission to the Frontend Challenge: Beaches. I've seen the other challenges, thought about entering, and then felt like I didn't have the time/skills. But I want to keep p... | oliviapandora |

1,882,432 | Capturing Beach Memories: A Polaroid Showcase of the World's Top Beaches | This is a submission for [Frontend Challenge... | 0 | 2024-06-10T00:18:09 | https://dev.to/jennavisions/capturing-beach-memories-a-polaroid-showcase-of-the-worlds-top-beaches-4gjf | devchallenge, frontendchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches_

## What I Built

Hello DEV,

This is my first entry here.

I incorporated a Polaroid photo effect to evoke a sense of nostalgia and capture the essence of cherished beach memories. Usin... | jennavisions |

1,882,431 | Glammed Up The Beaches With Blurry Animations. | Submission for Frontend Challenge v24.04.17, Glam Up My Markup: Beaches 🏖️ What I... | 0 | 2024-06-10T00:13:38 | https://dev.to/_zaihl/glammed-up-with-blurry-animations-401o | devchallenge, frontendchallenge, css, javascript | ## Submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches 🏖️

## What I Built

For this challenge, I created a dynamic, animated web page featuring a day/night mode toggle 🌞🌜, an infinite carousel 🔄, and several other interactive elements. My goal wa... | _zaihl |

1,883,893 | Slow productivity framework | Sharing my journey from toxic productivity to embracing the Slow Productivity framework | 0 | 2024-06-11T14:55:47 | https://jonathanyeong.com/slow-productivity-framework/ | productivity | ---

title: Slow productivity framework

description: Sharing my journey from toxic productivity to embracing the Slow Productivity framework

published: true

date: 2024-06-10 00:00:00 UTC

tags: productivity

canonical_url: https://jonathanyeong.com/slow-productivity-framework/

cover_image: https://dev-to-uploads.s3.amazon... | jonoyeong |

1,883,384 | How I’m learning Clojure in 2024 | I’ve recently been learning a bit of Clojure and it’s been a lot of fun! I thought I would note down... | 0 | 2024-06-11T04:14:48 | https://anthonybruno.dev/2024/06/10/How-Im-learning-Clojure-in-2024/ | clojure, programming, beginners | ---

title: How I’m learning Clojure in 2024

published: true

date: 2024-06-10 00:00:00 UTC

tags: clojure,programming,beginners

canonical_url: https://anthonybruno.dev/2024/06/10/How-Im-learning-Clojure-in-2024/

---

I’ve recently been learning a bit of Clojure and it’s been a lot of fun! I thought I would note down what... | aussieguy |

1,851,932 | Dynamic sitemap with django | A sitemap is an xml file that functions as a map to navigate your site. Hence the name; Site (site)... | 0 | 2024-06-09T18:50:24 | https://coffeebytes.dev/en/dynamic-sitemap-with-django/ | django, python, seo | ---

title: Dynamic sitemap with django

published: true

date: 2024-06-10 00:00:00 UTC

tags: django,python,seo

canonical_url: https://coffeebytes.dev/en/dynamic-sitemap-with-django/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/uv0ub6ry6844jemd8j0f.jpg

---

A sitemap is an XML file that functions ... | zeedu_dev |

1,882,423 | [Game of Purpose] Day 22 | Today I came back from travelling, so no progress. | 27,434 | 2024-06-09T23:51:37 | https://dev.to/humberd/game-of-purpose-day-22-pl4 | gamedev | Today I came back from travelling, so no progress. | humberd |

1,882,419 | helloWorld("print") | A post by Momo241 | 0 | 2024-06-09T23:28:33 | https://dev.to/mored241/helloworldprint-541e | mored241 | ||

1,882,415 | SENSASIBET77 >> AGEN SITUS SLOT DEPOSIT BANK JENIUS TANPA POTONGAN GAMPANG MENANG | ✅ 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (KLIK DISINI) ✅ 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (KLIK DISINI) Sensasibet77 - Slot Bank Jenius... | 0 | 2024-06-09T23:17:55 | https://dev.to/sri_astuti_f02b83bb0c9172/sensasibet77-agen-situs-slot-deposit-bank-jenius-tanpa-potongan-gampang-menang-175h | slotgacor, sensasibet77, slotbankjenius, slotjenius | ✅ 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([KLIK DISINI](https://heylink.me/sensasibet77.com/))

✅ 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([KLIK DISINI](https://heylink.me/sensasibet77.com/))

Sensasibet77 - Slot Bank Jenius is a gam... | sri_astuti_f02b83bb0c9172 |

1,882,414 | Top 5 Chrome Extensions for UI/UX Designers | Chrome web store offers a lot of extensions that can be helpful in various domains, in this article I... | 0 | 2024-06-09T23:16:39 | https://dev.to/douiri/top-5-chrome-extensions-for-uiux-designers-1m9l | extensions, webdev, productivity | The Chrome Web Store offers many extensions that can be helpful in various domains. In this article, I want to share the 10 extensions that I found useful and that can help designers or frontend developers.

## 1. [WhatFont](https://chromewebstore.google.com/detail/whatfont/jabopobgcpjmedljpbcaablpmlmfcogm?hl=en-US)

This ext... | douiri |

1,882,413 | 𝗙𝗿𝗲𝘀𝗵𝗣𝗶𝗰𝗸 Web application | 🚀 𝗙𝗿𝗲𝘀𝗵𝗣𝗶𝗰𝗸: 𝗦𝗶𝗺𝗽𝗹𝗶𝗳𝘆𝗶𝗻𝗴 𝗚𝗿𝗼𝗰𝗲𝗿𝘆 𝗣𝗶𝗰𝗸𝘂𝗽𝘀 🚀 I'm thrilled to share FreshPick, a project I recently... | 0 | 2024-06-09T22:58:34 | https://dev.to/clear008/web-application-2e5d | 🚀 𝗙𝗿𝗲𝘀𝗵𝗣𝗶𝗰𝗸: 𝗦𝗶𝗺𝗽𝗹𝗶𝗳𝘆𝗶𝗻𝗴 𝗚𝗿𝗼𝗰𝗲𝗿𝘆 𝗣𝗶𝗰𝗸𝘂𝗽𝘀 🚀

I'm thrilled to share FreshPick, a project I recently completed as part of the #ALXSE Program. FreshPick is a web application designed to streamline the process of ordering fresh groceries for pickup at your local store, supporting local fa... | clear008 | |

1,882,412 | SLOT BANK OCBC💥LINK GACOR SLOT DEPOSIT BANK OCBC TERBAIK DAN TERAMANAH | *💠 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (𝐃𝐀𝐅𝐓𝐀𝐑 𝐊𝐋𝐈𝐊 𝐃𝐈𝐒𝐈𝐍𝐈) * *💠 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (𝐃𝐀𝐅𝐓𝐀𝐑 𝐊𝐋𝐈𝐊 𝐃𝐈𝐒𝐈𝐍𝐈) * Agar... | 0 | 2024-06-09T22:52:14 | https://dev.to/elis_manda_b4ab45c30bfbcb/slot-bank-ocbclink-gacor-slot-deposit-bank-ocbc-terbaik-dan-teramanah-17ef | **💠 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([𝐃𝐀𝐅𝐓𝐀𝐑 𝐊𝐋𝐈𝐊 𝐃𝐈𝐒𝐈𝐍𝐈](https://heylink.me/sensasibet7777/))

**

**💠 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([𝐃𝐀𝐅𝐓𝐀𝐑 𝐊𝐋𝐈𝐊 𝐃𝐈𝐒𝐈𝐍𝐈](https://heylink.me/sensasibet7777/))

**

💠 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (𝐃𝐀𝐅𝐓𝐀𝐑 𝐊𝐋𝐈𝐊 𝐃𝐈𝐒𝐈𝐍𝐈) slot online... | 0 | 2024-06-09T22:47:39 | https://dev.to/elis_manda_b4ab45c30bfbcb/slot-bank-sumut-link-dadtar-slot-deposit-bank-sumut-mudah-menang-modal-5-ribu-4in2 | **💠 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([𝐃𝐀𝐅𝐓𝐀𝐑 𝐊𝐋𝐈𝐊 𝐃𝐈𝐒𝐈𝐍𝐈](https://heylink.me/sensasibet7777/))**

**💠 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([𝐃𝐀𝐅𝐓𝐀𝐑 𝐊𝐋𝐈𝐊 𝐃𝐈𝐒𝐈𝐍𝐈](https://heylink.me/sensasibet7777/))**

--- My name is also Jacob R. Thompson, so I will be calling this the

Thompson Audio Mapping Format,

or the Thompson - "your name here" Audio format,

I don't care, and

as I am l... | vampeyer |

1,882,409 | Need Help With Z-Index/Positioning | I created a short video explaining my issue. If anyone can help, please let me... | 0 | 2024-06-09T22:44:01 | https://dev.to/torenrob/need-help-with-z-indexpositiong-pf3 | react, help, styling, newbie | I created a short video explaining my issue. If anyone can help, please let me know.

https://www.youtube.com/watch?v=ySAeUFbw5fo

You can reach me on Twitter @codingDr3ams or Instagram @holyhippiedad. I'll also be watching this post.

| torenrob |

1,882,406 | Polling in React | In today's tech space of web development, real-time data updates are important for creating engaging... | 0 | 2024-06-09T22:37:18 | https://dev.to/tangoindiamango/polling-in-react-3h8a | javascript, react, webdev, programming | In today's web development landscape, real-time data updates are important for creating engaging and responsive user experiences. Whether you're building a dashboard, a chat application, a server interface, or a stock trading platform, you often need to check the status of a server or a process at regular intervals... | tangoindiamango |

1,882,404 | SENSASIBET77 AGEN SITUS SLOT DEPOSIT BANK JATENG TANPA POTONGAN MUDAH MAXWIN | ✅ 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (KLIK DISINI) ✅ 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ (KLIK DISINI) Sensasibet77 - Slot Bank Jateng... | 0 | 2024-06-09T22:32:05 | https://dev.to/listi_aminah_553fea0fd533/sensasibet77-agen-situs-slot-deposit-bank-jateng-tanpa-potongan-mudah-maxwin-391h | ✅ 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([KLIK DISINI](https://heylink.me/sensasibet77.com/))

✅ 𝐋𝐈𝐍𝐊 𝐃𝐀𝐅𝐓𝐀𝐑 ❱❱ ([KLIK DISINI](https://heylink.me/sensasibet77.com/))

Sensasibet77 - Slot Bank Jateng is a gam... | listi_aminah_553fea0fd533 | |

1,882,402 | Hello to all Developers | A post by MD.MAHFUZUR RAHMAN SIAM | 0 | 2024-06-09T22:26:31 | https://dev.to/siam_khan/hello-to-all-developers-5gh5 | siam_khan | ||

1,882,559 | Handling duplicate events from Stripe in your webhook endpoint | In my recent post, detailing how I handle order fulfillment for my Stripe integration, I missed an... | 0 | 2024-06-10T04:00:13 | https://www.duncanmackenzie.net/blog/handling-duplicate-stripe-events/ | webdev, coding, stripe, azure | ---

title: Handling duplicate events from Stripe in your webhook endpoint

published: true

date: 2024-06-09 22:13:27 UTC

tags: WebDevelopment,Coding,Stripe,Azure

canonical_url: https://www.duncanmackenzie.net/blog/handling-duplicate-stripe-events/

---

In my recent post, [detailing how I handle order fulfillment for my ... | duncanma |

1,877,248 | How to authenticate a Spotify User in next.js 14 using NextAuth | In this article, we'll walk you through authenticating a user with their Spotify account in Next.js... | 0 | 2024-06-09T22:08:00 | https://dev.to/matdweb/how-to-authenticate-a-spotify-user-in-nextjs-14-using-nextauth-5f6i | spotifyapi, nextjs, nextauth, typescript | In this article, we'll walk you through authenticating a user with their Spotify account in Next.js 14. This method is compatible with Next.js 13 as well, so you're covered! 🎉

We'll be using the Spotify Provider along with NextAuth, a powerful authentication library for Next.js applications. No worries if you're new ... | matdweb |
