id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,880,602 | Day 11 of my progress as a vue dev | About today Let's start with the past few days. I made a lot of changes to my approach to work and my... | 0 | 2024-06-07T16:24:55 | https://dev.to/zain725342/day-11-of-my-progress-as-a-vue-dev-2h26 | webdev, vue, typescript, tailwindcss | **About today**

Let's start with the past few days. I made a lot of changes to my approach to work and my daily schedule and have been experimenting with some stuff. I missed posting here for the past 2 days and regret breaking my streak. However, I feel like doing something doesn't make a lot of sense if you're no... | zain725342 |

1,880,601 | How to Speak to a Human When You're Used to Talking to a Machine | For many developers, daily interactions often involve more time spent with code and virtual... | 27,390 | 2024-06-07T16:24:51 | https://dev.to/buildwebcrumbs/how-to-speak-to-a-human-when-youre-used-to-talking-to-a-machine-4p79 | For many developers, daily interactions often involve more time spent with code and virtual interfaces than with other humans.

Whether it’s issuing commands in a terminal or negotiating logic with an AI, switching back to human conversations can feel a bit... well, less straightforward.

Let’s explore some tongue-in... | pachicodes | |

1,880,576 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-07T16:15:39 | https://dev.to/abnernewman99/buy-verified-cash-app-account-49fo | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n" | abnernewman99 |

1,880,573 | Azure components of Azure | Even if you've been using Azure for a while, you might not be fully aware of what the essential... | 0 | 2024-06-07T16:13:17 | https://dev.to/arizetbest/azure-components-of-azure-2hpd | Even if you've been using Azure for a while, you might not be fully aware of what the essential elements of this massive cloud provider are.

We'll examine what I think are the three essential elements of Azure's internal architecture in this article:

1. Regions

2. Availability Zones

3. Data warehouses or centers

Le... | arizetbest | |

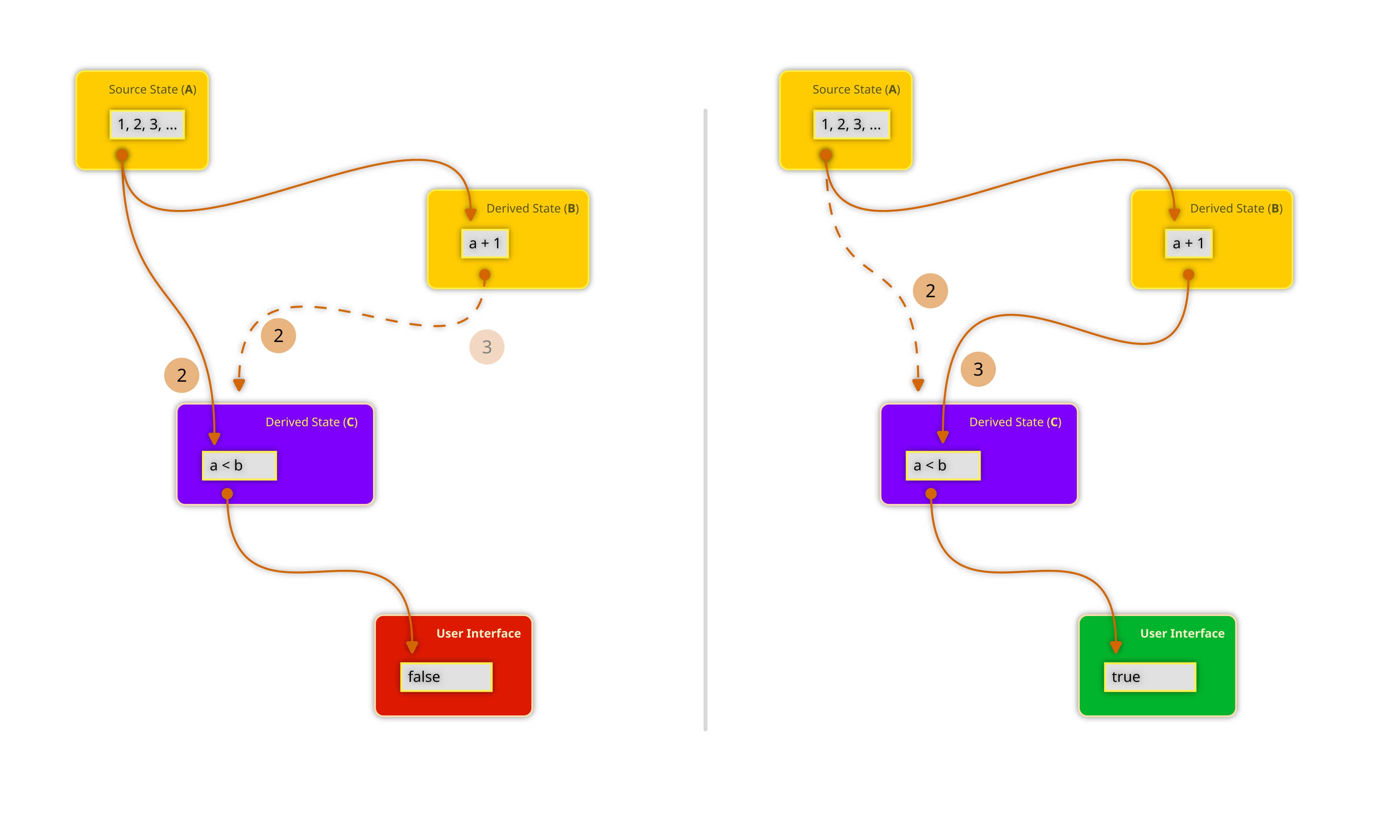

1,865,541 | Rendering "glitches" in reactive programming | Rendering glitches are a bit like the reactive world's version of the race condition where some... | 0 | 2024-06-07T16:10:53 | https://dev.to/dariomannu/rendering-glitches-in-reactive-programming-5a3k | webdev, javascript, functionalreactiveprogramming | Rendering glitches are a bit like the reactive world's version of the race condition where some derived state causes the rendering of invalid data before all its dependencies have finished updating.

In this sc... | dariomannu |

1,880,594 | Learn Programming From Scratch for Free With Rocketseat | Get started in programming and open the doors to a career full of opportunities in a market of... | 0 | 2024-06-23T13:51:03 | https://guiadeti.com.br/aprenda-programacao-do-zero-gratuito-rocketseat/ | eventos, carreira, carreiradeti, cursosgratuitos | ---

title: Learn Programming From Scratch for Free With Rocketseat

published: true

date: 2024-06-07 16:08:17 UTC

tags: Eventos,carreira,carreiradeti,cursosgratuitos

canonical_url: https://guiadeti.com.br/aprenda-programacao-do-zero-gratuito-rocketseat/

---

Get started in programming and open the doors to a career... | guiadeti |

1,880,567 | Buy Verified Payoneer Account | Buy Verified Payoneer Account Buy Verified Payoneer Account Having a reliable platform and procedure... | 0 | 2024-06-07T16:07:47 | https://dev.to/works_usa_6ee34a6e505ce13/buy-verified-payoneer-account-1428 | payoneer, payoneeraccount, verifiedpayoneer | Buy Verified Payoneer Account

**[Buy Verified Payoneer Account](https://usa5stareviews.com/product/buy-verified-payoneer-account/)** Having a reliable platform and procedure for international transactions is very important. In that case, Payoneer accounts are a simple and reliable medium. Citizens of more than 200 coun... | works_usa_6ee34a6e505ce13 |

1,880,566 | Need help with GPO | I have developed a lab with one domain controller and a domain joined machine. Now I have implemented... | 0 | 2024-06-07T15:57:00 | https://dev.to/kalyan333/need-help-with-gpo-3ch2 | computerscience | I have developed a lab with one domain controller and a domain joined machine. Now I have implemented a group policy for folder redirection which redirects the desktop, downloads and documents folder to a SMB share on the DC. The GPO works on the desktop folder but not on the downloads and documents folder. I have done... | kalyan333 |

1,880,565 | What are Gas Fees on Blockchain? | In blockchain technology, "gas fees" are a fundamental element. They function similarly to fuel... | 0 | 2024-06-07T15:56:47 | https://dev.to/metla/what-are-gas-fees-on-blockchain-444o | cryptocurrency, blockchain, ethereum, web3 | In blockchain technology, "gas fees" are a fundamental element. They function similarly to fuel for a vehicle: they are necessary for processing transactions and executing smart contracts on platforms like Ethereum.

### Understanding Gas Fees

**[Gas fees](https://metla.com/blog/what-are-gas-fees)** are payments users make... | ipratikmali |
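To make the fuel analogy concrete, here is a minimal sketch of the fee arithmetic. It assumes the simple Ethereum-style model in which the fee equals the gas consumed multiplied by the price per unit of gas; the function name `gas_fee_gwei` and the 30 gwei price are hypothetical values chosen for illustration, not figures from the article.

```python
# Sketch only: assumes fee = gas used * gas price (simple Ethereum-style model).
GWEI_PER_ETH = 10**9  # 1 ETH = 1,000,000,000 gwei


def gas_fee_gwei(gas_used: int, gas_price_gwei: int) -> int:
    """Return the total fee in gwei for a transaction."""
    return gas_used * gas_price_gwei


# A plain ETH transfer consumes 21,000 gas; 30 gwei is a made-up price.
fee_gwei = gas_fee_gwei(21_000, 30)
fee_eth = fee_gwei / GWEI_PER_ETH
print(f"{fee_gwei} gwei = {fee_eth:.5f} ETH")
```

The same transaction becomes cheaper or more expensive purely through the gas price, which is why fees rise when the network is busy.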

1,879,561 | API Security: Key Protocols | API security is a crucial component of the development process, due to the sensitive nature of the... | 0 | 2024-06-07T15:49:14 | https://dev.to/apidna/api-security-key-protocols-19fl | api, security, webdev, programming | API security is a crucial component of the development process, due to the sensitive nature of the information transferred between applications.

Personal information such as contact details, home address, date of birth, passwords, and bank details are all at risk without sufficient API security protocols in place.

In... | itsrorymurphy |

1,880,562 | How to get started with Shopify theme development | In this guide, we’ll walk you through how to get started with Shopify theme development. ... | 0 | 2024-06-07T15:48:42 | https://gadget.dev/blog/setting-up-a-local-dev-environment-for-building-shopify-themes | shopify, webdev, beginners, tutorial | In this guide, we’ll walk you through how to get started with Shopify theme development.

## Requirements

Before you get started, you need the following:

- The URL of a store you want to work on. We recommend signing up for a Shopify Partner account and creating a new development store when you first get started.

-... | gadget |

1,880,560 | Connection Pooling in Spring Boot | Connection pooling is a method specifically designed to manage and reuse database connections,... | 0 | 2024-06-07T15:46:59 | https://dev.to/lakmal_asela_8be4eb30d9db/connection-pooling-in-spring-boot-4k21 | springboot, database, connectionpooling | Connection pooling is a method specifically designed to manage and reuse database connections, enhancing the performance of applications that interact with databases.

A database connection acts as a bridge between a Java application and a database server. Establishing and terminating these connections should be making ... | lakmal_asela_8be4eb30d9db |
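The idea of reusing connections instead of opening and closing one per request can be sketched in a few lines. This is a language-agnostic illustration written in Python with the standard-library `sqlite3` and `queue` modules; it is not how Spring Boot or its default pool is implemented, and the `ConnectionPool` class and its `connection` method are hypothetical names.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """Toy fixed-size pool: connections are opened once and then reused."""

    def __init__(self, database: str, size: int = 2):
        self._idle = queue.Queue(maxsize=size)
        for _ in range(size):
            # check_same_thread=False lets a pooled connection move between threads.
            self._idle.put(sqlite3.connect(database, check_same_thread=False))

    @contextmanager
    def connection(self, timeout: float = 5.0):
        # Borrow an idle connection, waiting up to `timeout` seconds for one.
        conn = self._idle.get(timeout=timeout)
        try:
            yield conn
        finally:
            # Return the connection to the pool instead of closing it.
            self._idle.put(conn)


pool = ConnectionPool(":memory:", size=2)
with pool.connection() as conn:
    value = conn.execute("SELECT 1").fetchone()[0]
print(value)
```

Callers that find the pool empty block until a connection is returned, which is the trade-off a fixed pool size makes between throughput and load on the database server.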

1,880,556 | Unlocking Psychology with Large Language Models: Receptiviti Augmented Generation | As an increasing number of Receptiviti's customers are working with Large Language Models (LLMs),... | 0 | 2024-06-07T15:44:27 | https://dev.to/receptiviti/unlocking-psychology-with-large-language-models-receptiviti-augmented-generation-6lo | llm, rag, psychology, nlp | As an increasing number of Receptiviti's customers are working with Large Language Models (LLMs), we're seeing rising interest in integrating our technology to enhance LLMs' understanding of their users' psychology. Recognizing that an understanding of human psychology can improve Large Language Model (LLM) responses, ... | receptiviti |

1,880,553 | FastAPI Beyond CRUD Part 8 - User Account Creation (Email & Password Auth) | In this video, we employ the user authentication model developed in the previous segment to enable... | 0 | 2024-06-07T15:35:09 | https://dev.to/jod35/fastapi-beyond-crud-part-8-user-account-creation-email-password-auth-47p5 | fastapi, python, api, programming | In this video, we employ the user authentication model developed in the previous segment to enable users to create accounts within our application. Our process starts with establishing the API endpoints and incorporating the required database fields. Subsequently, we implement the logic in a service file and integrate ... | jod35 |

1,880,552 | Game for PYTHON | import random import time class Player: def __init__(self, name): self.name = name ... | 0 | 2024-06-07T15:34:16 | https://dev.to/praket_singh_1c341a50266b/game-for-python-png | ```

import random
import time

class Player:
    def __init__(self, name):
        self.name = name
        self.health = 100
        self.gold = 5
        self.berries = 0
        self.attack = 10
        self.defense = 5
        self.potions = 3
        self.weak_spell = 1
        self.special_ability = None

... | praket_singh_1c341a50266b | |

1,880,469 | Leetcode Day3 Linked List | Leetcode No.203 Remove Linked List Elements Given the head of a linked list and an integer... | 0 | 2024-06-07T15:33:20 | https://dev.to/flame_chan_llll/leetcode-day3-linked-list-2n6e | ## Leetcode No.203 Remove Linked List Elements

Given the head of a linked list and an integer val, remove all the nodes of the linked list that have Node.val == val, and return the new head.

```

/**
 * Definition for singly-linked list.
 * public class ListNode {
 *     int val;
 *     ListNode next;
 *     ListNode() {... | flame_chan_llll | |
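One common way to solve the problem stated above is to put a dummy node in front of the head so that removing the first node needs no special case. The sketch below shows that approach in Python; it is not necessarily the solution the post goes on to give, and the helpers `from_list` and `to_list` are hypothetical conveniences added only to make the example runnable.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ListNode:
    val: int = 0
    next: Optional["ListNode"] = None


def remove_elements(head: Optional[ListNode], val: int) -> Optional[ListNode]:
    """Remove every node whose value equals `val` and return the new head."""
    dummy = ListNode(next=head)  # sentinel placed in front of the real head
    prev = dummy
    while prev.next is not None:
        if prev.next.val == val:
            prev.next = prev.next.next  # unlink the matching node
        else:
            prev = prev.next  # advance only when nothing was removed
    return dummy.next


def from_list(values: List[int]) -> Optional[ListNode]:
    """Build a linked list from a Python list (illustrative helper)."""
    head = None
    for v in reversed(values):
        head = ListNode(v, head)
    return head


def to_list(head: Optional[ListNode]) -> List[int]:
    """Collect node values into a Python list (illustrative helper)."""
    out = []
    while head is not None:
        out.append(head.val)
        head = head.next
    return out


print(to_list(remove_elements(from_list([1, 2, 6, 3, 4, 5, 6]), 6)))
```

Not advancing `prev` after a removal matters: the node that slides into `prev.next` may also hold the target value, as it does in a list of consecutive matches.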

1,862,880 | Storage-first pattern using Serverless | Sometimes we have sensitive requests, and we need to prepare our systems to avoid losing them.... | 0 | 2024-06-07T15:30:48 | https://dev.to/skaznowiecki/storage-first-pattern-using-serverless-m6 | serverless, aws, lambda, s3 | Sometimes we have **sensitive requests**, and we need to prepare our systems to avoid losing them. Usually, losing these requests means that the company/product **loses money** or that issues are introduced in the product/application.

## Sensitive requests

- Payment requests

- Delivery requests

- Webhooks with external informati... | skaznowiecki |

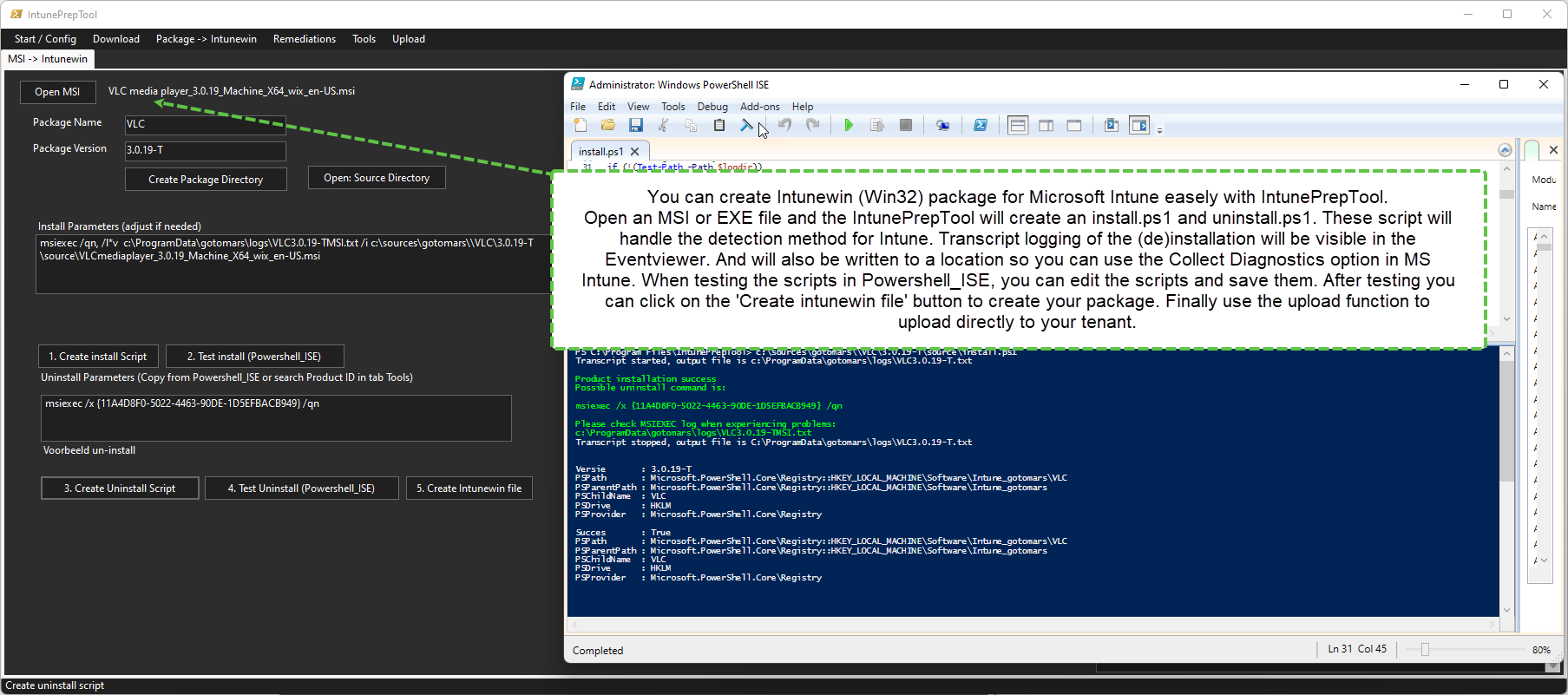

1,880,550 | Creating Win32 Intunewin packages | Hi, I would like to share a GUI-based PowerShell script that will help create Intunewin files for... | 0 | 2024-06-07T15:29:18 | https://dev.to/rinkturksma/creating-win32-intunewin-packages-gki | Hi,

I would like to share a GUI-based PowerShell script that will help create Intunewin files for Microsoft Intune.

https://github.com/rink-turksma/IntunePrepTool

#Intune

#powershell

Greetings

| rinkturksma | |

1,880,549 | How can we secure the Spring Boot Actuator endpoints? | Securing Spring Boot Actuator endpoints is crucial to protect sensitive information and operations... | 27,974 | 2024-06-07T15:27:41 | https://dev.to/codegreen/how-can-we-secure-the-spring-boot-actuator-endpoints-1hm7 | java, springboot, microservices | Securing Spring Boot Actuator endpoints is crucial to protect sensitive information and operations exposed by these endpoints from unauthorized access. Here's how we can achieve that:

1. **Using Spring Security:** Spring Security is a powerful authentication and authorization framework that can be integrated with Spr... | manishthakurani |

1,880,548 | JetThoughts Is On GoodFirms Radar for Delivering Effortless Ruby on Rails Development Services to Business of Various Niches | **JetThoughts LLC** is an excellent firm offering various services that can give the business an... | 0 | 2024-06-07T15:27:34 | https://jetthoughts.com/blog/jetthoughts-on-goodfirms-radar-for-delivering-effortless-ruby-rails-development-services-business-of-various-niches/ | ruby, rails, service |

**[JetThoughts LLC](https://www.goodfirms.co/company/jetthoughts-llc)** is an excellent ... | jetthoughts_61 |

1,880,547 | Discover a Treasure Trove of Free Stock PNG Images at PNGsHub.com | In today’s digital age, visuals play a crucial role in capturing attention and conveying messages... | 0 | 2024-06-07T15:26:59 | https://dev.to/luis_jaky_435b02d407064ec/discover-a-treasure-trove-of-free-stock-png-images-at-pngshubcom-5ph | In today’s digital age, visuals play a crucial role in capturing attention and conveying messages effectively. Whether you’re a graphic designer, content creator, or simply someone looking to enhance your projects, having access to a diverse collection of high-quality images is essential. That’s where [PNGsHub.com](htt... | luis_jaky_435b02d407064ec | |

1,878,235 | Checking object existence in large AWS S3 buckets using Python and PySpark (plus some grep comparison) | Introduction In a recent project, I needed to check whether data from a 3rd-party... | 0 | 2024-06-07T15:26:32 | https://dev.to/bgorski/checking-object-existence-in-large-aws-s3-buckets-using-python-and-pyspark-plus-some-grep-comparison-1j0o | aws, python, pyspark, programming | ## Introduction

In a recent project, I needed to check whether data from a 3rd-party database corresponded with the documents in an S3 bucket. While this might seem like a straightforward task, the dataset was massive: up to 10 million objects in a single bucket. Traditional iteration over objects l... | bgorski |

1,880,495 | Effects in Ember | Originally from How to make an effect in Ember? @trusktr asks: What’s the equivalent of Solid.js... | 0 | 2024-06-07T15:17:00 | https://dev.to/nullvoxpopuli/effects-in-ember-15n9 | ember, effect, javascript | ---

title: Effects in Ember

published: true

description:

tags:

- ember

- effect

- javascript

# cover_image: https://images.unsplash.com/photo-1531574595918-cb77c99fe5e2?q=80&w=1959&auto=format&fit=crop&ixlib=rb-4.0.3&ixid=M3wxMjA3fDB8MHxwaG90by1wYWdlfHx8fGVufDB8fHx8fA%3D%3D

# Use a ratio of 100:42 for best results.

p... | nullvoxpopuli |

1,880,841 | Top 5 Best Backend Bootcamps and Why (2024) | Breaking into backend engineering is a career journey of no return. It is one of the most rewarding... | 0 | 2024-06-10T10:27:04 | https://medium.com/backenders-club/top-5-best-backend-bootcamps-and-why-2024-6dd1b491b8ff | tutorial, waterloo, beginners, bootcamp | ---

title: Top 5 Best Backend Bootcamps and Why (2024)

published: true

date: 2024-06-07 15:26:22 UTC

tags: tutorial,Waterloo,beginners,bootcamp

canonical_url: https://medium.com/backenders-club/top-5-best-backend-bootcamps-and-why-2024-6dd1b491b8ff

---

Along with its free and paid tools for Web3 developers, from explorers to blockchain nodes, GetBlock launches several EVM testnet faucets to satisfy the growing need for developers to test their applications.

## G... | getblockapi |

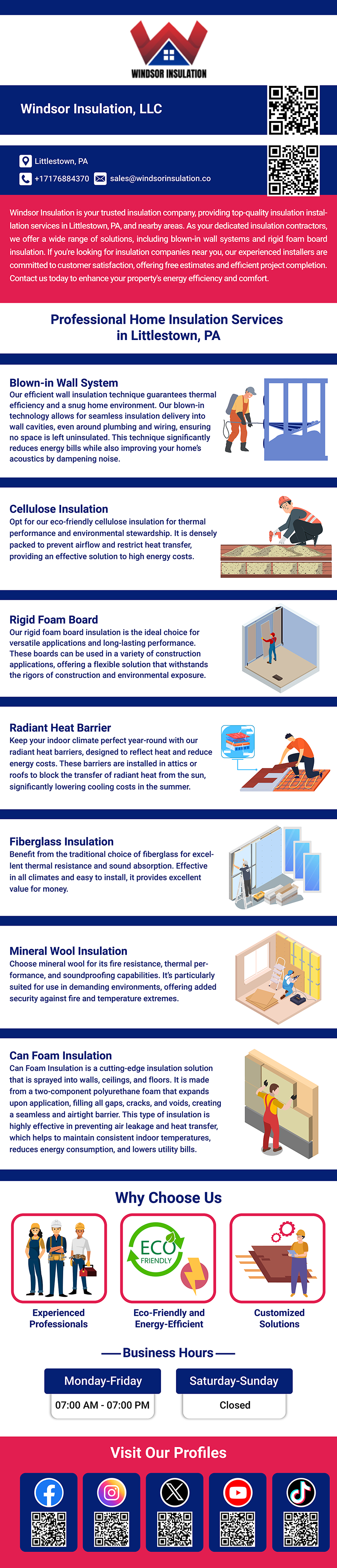

1,880,544 | Windsor Insulation | At Windsor Insulation, LLC, we are dedicated to providing top-quality insulation services to homes... | 0 | 2024-06-07T15:23:08 | https://dev.to/windsorinsulationllc/windsor-insulation-llc-1m4k |

At Windsor Insulation, LLC, we are dedicated to providing top-quality insulation services to homes and businesses in Littlestown, PA, and the surrounding areas. Our team of experienced [attic insulation contractors... | windsorinsulationllc | |

1,880,543 | What are Spring Boot Actuator endpoints? | Spring Boot Actuator endpoints provide a set of built-in HTTP endpoints that expose useful... | 0 | 2024-06-07T15:20:55 | https://dev.to/codegreen/what-are-spring-boot-actuator-endpoints-3ff5 | java, microservices, backend, springboot | Spring Boot Actuator endpoints provide a set of built-in HTTP endpoints that expose useful information and operations about the running Spring Boot application. These endpoints are invaluable for monitoring, managing, and troubleshooting the application in production.

Here are some commonly used Spring Boot Actuator e... | manishthakurani |

1,880,536 | This Week In React #188 : React 19 RC0, Data Fetching, Framer Motion, Compiler, Astro, Zod, Remix, Docusaurus, React-Query... | Hey, This is Benedikt this week, filling in for Sébastien who’s taking a newsletter break. React 19... | 18,494 | 2024-06-07T15:17:14 | https://thisweekinreact.com/newsletter/188 | react, reactnative | ---

series: This Week In React

canonical_url: https://thisweekinreact.com/newsletter/188

---

Hey,

This is [Benedikt](https://twitter.com/bndkt) this week, filling in for Sébastien who’s taking a newsletter break.

React 19 is nearing its final release, with the first RC published this week. Apart from this, there’s n... | sebastienlorber |

1,880,534 | How does Spring Boot Application achieve auto configuration internally, explain the use of @EnableAutoConfiguration? | Spring Boot's auto-configuration feature is one of its key strengths, enabling developers to rapidly... | 0 | 2024-06-07T15:11:54 | https://dev.to/codegreen/how-does-spring-boot-application-achieve-auto-configuration-internally-explain-the-use-of-enableautoconfiguration-1p4 | java, springboot | Spring Boot's auto-configuration feature is one of its key strengths, enabling developers to rapidly build and deploy applications with minimal configuration. Let me explain how it achieves this and the role of `@EnableAutoConfiguration`:

Internally, Spring Boot auto-configuration works by scanning the classpath for s... | manishthakurani |

1,880,533 | Unlock Savings with Protyre Discount Code | If you’re looking to maintain your vehicle without breaking the bank, Protyre is the place to go.... | 0 | 2024-06-07T15:11:11 | https://dev.to/harrybrook22/unlock-savings-with-protyre-discount-code-ni6 | react | - If you’re looking to maintain your vehicle without breaking the bank, Protyre is the place to go. Known for its exceptional service and extensive range of tyres, Protyre is a go-to for many motorists in the UK. To make your experience even better, you can take advantage of a [Protyre discount code](https://www.vouche... | harrybrook22 |

1,880,532 | Intermediate Python Projects | Intermediate Python Projects Python is an incredibly versatile programming language that... | 0 | 2024-06-07T15:10:03 | https://dev.to/romulogatto/intermediate-python-projects-26ng | # Intermediate Python Projects

Python is an incredibly versatile programming language that can be used for a wide range of projects. If you're feeling confident in your Python skills and looking to take on new challenges, here are some intermediate Python projects to consider. These projects will not only help you fur... | romulogatto | |

1,880,531 | what are conditional annotations in Spring Boot Application? | Conditional annotations in Spring Boot allow us to conditionally apply certain configurations or... | 0 | 2024-06-07T15:08:42 | https://dev.to/codegreen/what-are-conditional-annotations-in-spring-boot-application-1m1k | java, springboot, microservices, backend | Conditional annotations in Spring Boot allow us to conditionally apply certain configurations or beans based on the evaluation of specified conditions at runtime. These annotations help in creating flexible and customizable application setups.

Here's how conditional annotations work and their use in a Spring Boot appl... | manishthakurani |

1,880,530 | What are the best practices for versioning Spring Boot REST APIs? | Versioning Spring Boot REST APIs is crucial for maintaining compatibility, managing changes, and... | 27,974 | 2024-06-07T15:04:37 | https://dev.to/codegreen/what-are-the-best-practices-for-versioning-spring-boot-rest-apis-3a05 | java, springboot, backend, microservices | Versioning Spring Boot REST APIs is crucial for maintaining compatibility, managing changes, and ensuring smooth communication between clients and servers. Here are some best practices:

1. **URI Versioning:** Include the version number in the URI path. This approach provides clear visibility and allows clients to spe... | manishthakurani |

1,877,255 | Dynamically pairing tokio spawns | Hi everyone! While learning Rust I stumbled upon problem not covered by popular online tutorials.... | 0 | 2024-06-07T15:01:56 | https://dev.to/bbkr/dynamically-pairing-tokio-spawns-3nd4 | rust, tokio | Hi everyone!

While learning Rust I stumbled upon a problem not covered by popular online tutorials. When they talk about `tokio` and `MPSC` (multi-producer single-consumer) channels, they usually connect spawned threads in some fixed way. However, in my project I have to dynamically match asynchronous producers and consum... | bbkr |

1,880,528 | what are the steps you would take to identify and address the performance issues in Spring Boot? | When encountering performance issues in a Spring Boot application under high load, it's essential to... | 0 | 2024-06-07T15:00:53 | https://dev.to/codegreen/what-are-the-steps-you-would-take-to-identify-and-address-the-performance-issues-in-spring-boot-47af | java, springboot, microservices, backend | When encountering performance issues in a Spring Boot application under high load, it's essential to diagnose and address the root causes effectively. Here's a structured approach to tackle the problem:

1. **Performance Monitoring:** Utilize tools like Spring Boot Actuator and monitoring solutions such as Prometheus ... | manishthakurani |

1,880,527 | Classic To Lightning Migration: 5 Crucial Considerations For A Smooth Transition | A standard change many companies are making is the switch from Salesforce Classic to Lightning, a... | 0 | 2024-06-07T14:59:52 | https://www.opkey.com/blog/salesforce-classic-to-lightning-migration | classic, lightning, migration | A standard change many companies are making is the switch from Salesforce Classic to Lightning, a more contemporary and user-friendly interface. Although there are many advantages to this shift, there are drawbacks as well that need cautious preparation and implementation. This post discusses five essential things to c... | rohitbhandari102 |

1,880,526 | Cashapp $750 Gift Card Now USA | You've received a $750 Cashapp Rewards 2024, 𝐂𝐥𝐢𝐜𝐤 𝐓𝐡𝐞 𝐋𝐢𝐧𝐤 𝐁𝐞𝐥𝐨𝐰 𝐀𝐧𝐝 𝐂𝐥𝐚𝐢𝐦 𝐘𝐨𝐮𝐫 Cashapp Rewards... | 0 | 2024-06-07T14:58:12 | https://dev.to/cashapp6548145/cashapp-750-gift-card-now-usa-djm | cashapp, makemoneyonline, usanews, usajobs | You've received a $750 Cashapp Rewards 2024,

𝐂𝐥𝐢𝐜𝐤 𝐓𝐡𝐞 𝐋𝐢𝐧𝐤 𝐁𝐞𝐥𝐨𝐰 𝐀𝐧𝐝 𝐂𝐥𝐚𝐢𝐦 𝐘𝐨𝐮𝐫 Cashapp Rewards 𝐐𝐮𝐢𝐜𝐤𝐥𝐲🎁

lect your Cashapp Gift $750 Voucher per person🍔

🎉If you interested click here🍟

👇🍔👇Link>>>> https://tinyurl.com/fwys22px

that [Sourcegraph's](https://sourcegraph.com/) AI coding assistant [Cody](https://sourcegraph.com/cody) can be used offline by connecting it to a local running [Ollama](https://www.ollama.com/) server.

Now, unfortunately my l... | thorwebdev |

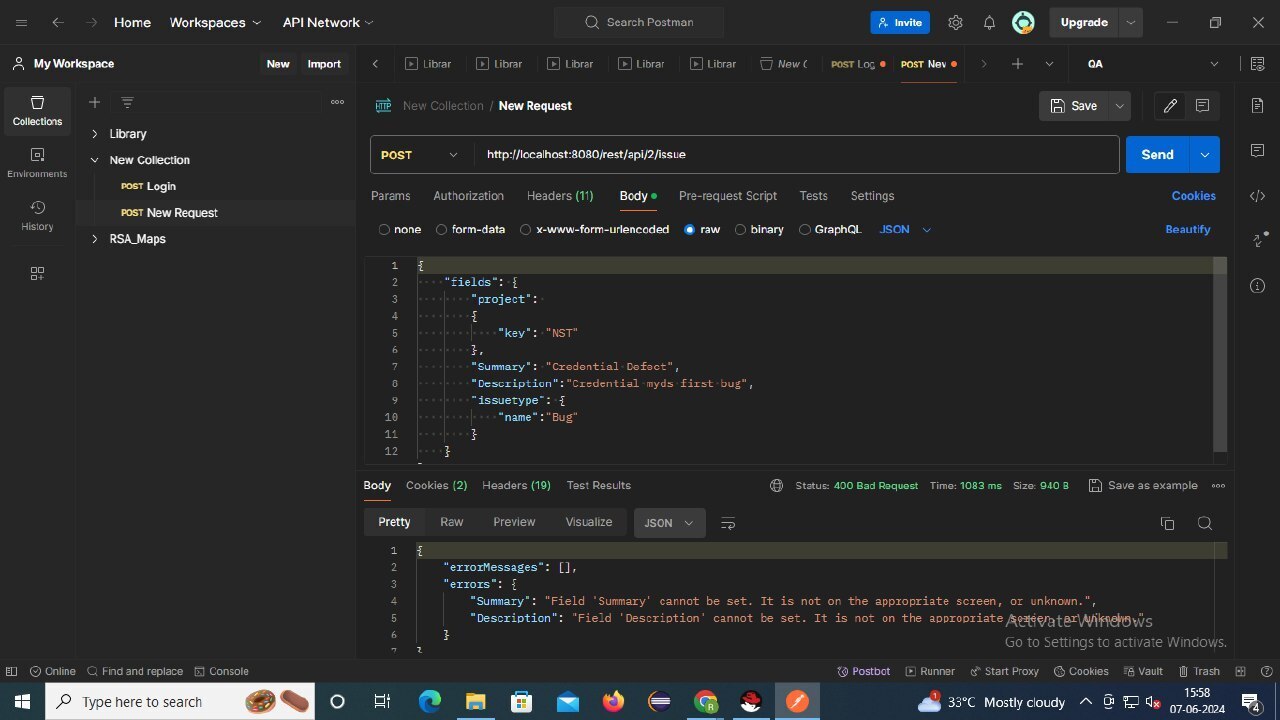

1,880,523 | Unable to create jira issue in postman | I'm facing an issue. Can anyone help me solve it? | 0 | 2024-06-07T14:51:07 | https://dev.to/rana_durgi_9e46da09122304/unable-to-create-jira-issue-in-postman-4ked | | I'm facing an issue. Can anyone help me solve it?

| rana_durgi_9e46da09122304 | |

1,880,522 | zerofeesprocessingny | Zero Fees Processing is tailored for businesses aiming to optimize financial efficiency while meeting... | 0 | 2024-06-07T14:50:52 | https://dev.to/zerofeesprocessingny/zerofeesprocessingny-20n9 | financial | Zero Fees Processing is tailored for businesses aiming to optimize financial efficiency while meeting customer expectations for straightforward and adaptable payment options. Our service spans various industries, eliminating processing fees and enhancing overall transaction clarity. For businesses looking to streamline... | zerofeesprocessingny |

1,880,521 | Life | A post by Marc-André Méthot -Meloche | 0 | 2024-06-07T14:50:47 | https://dev.to/meloche92/life-2cih | meloche92 | ||

1,880,520 | We just migrate from Google analysis to PostHog | In our project's we are using google analysis to track user details What is google analysis... | 0 | 2024-06-07T14:50:46 | https://dev.to/shu12388y/we-just-migrate-from-google-analysis-to-posthog-a6d | webdev, javascript, google, posthog | In our projects, we have been using Google Analytics to track user details.

What is Google Analytics?

Google Analytics is a powerful web analytics service offered by Google that tracks and reports website traffic. ... | shu12388y |

1,880,516 | Elevate Your Web Projects: Mastering JavaScript Accessibility🚀🚀 | Creating an accessible web experience ensures that your website is usable by as many people as... | 0 | 2024-06-07T14:48:11 | https://dev.to/dharamgfx/elevate-your-web-projects-mastering-javascript-accessibility-40oo | webdev, javascript, beginners, programming |

Creating an accessible web experience ensures that your website is usable by as many people as possible, including those with disabilities. This guide dives deep into various aspects of accessibility, providing practical examples to enhance your understanding and implementation.

## What is Accessibility?

**Definition... | dharamgfx |

1,880,515 | G | A post by EDILSON DOS SANTOS | 0 | 2024-06-07T14:46:48 | https://dev.to/edilson_rastafare_3b757b/g-2i5e | edilson_rastafare_3b757b | ||

1,880,514 | Unveiling the Future: A Look at Top Tech Trends on the Rise | The pace of technological innovation is relentless, constantly reshaping our world. From the way we... | 0 | 2024-06-07T14:45:40 | https://dev.to/dishan_maduranga_0adcb598/unveiling-the-future-a-look-at-top-tech-trends-on-the-rise-1k3o | blogging, futuretech, technology, ai | The pace of technological innovation is relentless, constantly reshaping our world. From the way we work to how we interact and entertain ourselves, new advancements are emerging that hold the potential to be truly transformative. Today, we'll delve into some of the most exciting upcoming technologies that are poised t... | dishan_maduranga_0adcb598 |

1,870,050 | Navigating the gRPC Galaxy: A Different view into Efficient 'api to api' Communication | Overview: RPC means Remote Procedure Call. It's a client-server communication mechanism,... | 0 | 2024-06-07T14:44:33 | https://dev.to/nirmalkumar/navigating-the-grpc-galaxy-a-different-view-into-efficient-api-to-api-communication-3nb1 | microservices, grpc, domaindrivendesign, webdev | ###### Overview:

* RPC stands for Remote Procedure Call. It is a client-server communication mechanism in which the client calls a procedure as if it were local, while it actually runs on a remote server.

* gRPC is Google's open source RPC framework, built around a simple service definition.

* When we work with a microservices architecture, in a few scenar... | nirmalkumar |

1,880,513 | Save Time Building Headless / Jamstack Sites | Ever get tired of rebuilding your content models over and over again? I know I do. Every time I built... | 0 | 2024-06-07T14:41:17 | https://dev.to/alangleeson/save-time-building-headless-jamstack-sites-1f5a | webdev, productivity, development, developer | Ever get tired of rebuilding your content models over and over again? I know I do. Every time I built a new website I had to rebuild a button, a hero, a navigation link etc...because they're pretty much all the same and it made setting up a new headless Jamstack website a bit tedious!

But not anymore 🎉

Introducing t... | alangleeson |

1,880,512 | Elevate Your Web Forms: Mastering JavaScript Form Submission🚀 | Why Use JavaScript to Submit Form Data? Advantages of JavaScript Form... | 0 | 2024-06-07T14:41:03 | https://dev.to/dharamgfx/elevate-your-web-forms-mastering-javascript-form-submission-5d0c | webdev, javascript, beginners, api | ## Why Use JavaScript to Submit Form Data?

### Advantages of JavaScript Form Submission

1. **Real-Time Validation**: JavaScript allows you to validate form data on the client-side before sending it to the server. This enhances user experience by providing immediate feedback.

2. **Asynchronous Requests**: Using JavaSc... | dharamgfx |

1,880,511 | Can you explain the caching mechanism available in Spring Boot? | Sure thing! In Spring Boot, we have a powerful caching mechanism that helps optimize application... | 0 | 2024-06-07T14:39:42 | https://dev.to/codegreen/can-you-explain-the-caching-mechanism-available-in-spring-boot-4ea9 | java, backend, microservices, springboot | Sure thing! In Spring Boot, we have a powerful caching mechanism that helps optimize application performance by storing frequently accessed data in memory. Let me break it down for you.

Firstly, to enable caching in our Spring Boot application, we need to include the necessary dependency in our `pom.xml` or `build.gra... | manishthakurani |

1,880,387 | Create your own content management system with Remix and Xata | In this post, you'll create a content CMS using Xata, Remix, Novel, LiteLLM, and Vercel. You'll learn... | 0 | 2024-06-07T14:37:44 | https://xata.io/blog/content-management-system-remix-xata | ai, database, tutorial | In this post, you'll create a content CMS using Xata, Remix, Novel, LiteLLM, and Vercel. You'll learn how to:

- Set up Xata

- Create a schema with different column types

- Handle forms in Remix using Form Actions

- Implement Client Side Image Uploads

- Use an AI-powered WYSIWYG Editor

- Implement content-wide search

-... | cezz |

1,880,510 | What is TypeScript and what are it's advantages and disadvantages? | TypeScript is an extension of JavaScript, offering static typing to write straightforward JavaScript... | 0 | 2024-06-07T14:33:11 | https://dev.to/afnan_ahmed/what-is-typescript-and-what-are-its-advantages-and-disadvantages-3m7h | webdev, javascript, react, typescript | TypeScript is an extension of JavaScript, offering static typing to write straightforward JavaScript code. It provides optional static typing, classes, and interfaces, along with improved code organization and object-oriented programming techniques. TypeScript also offers better development tool support and extends the... | afnan_ahmed |

1,880,508 | How would you handle inter service communication in a micro-service architecture using Spring Boot | In a microservice architecture using Spring Boot, inter-service communication is typically achieved... | 0 | 2024-06-07T14:28:57 | https://dev.to/codegreen/how-would-you-handle-inter-service-communication-in-a-micro-service-architecture-using-spring-boot-kfi | java, backend, springboot, microservices | In a microservice architecture using Spring Boot, inter-service communication is typically achieved through RESTful APIs. Here's how:

Let's illustrate with two microservices: **UserService** and **OrderService**.

1. **Define APIs:** Each microservice exposes APIs to perform various operations.

2. **Call APIs from o... | manishthakurani |

1,880,507 | Day 6 Task: File Permissions and Access Control Lists🚀 | Introduction📚 Welcome to Day 6 of the #90daysofdevops challenge. In this Blog, we will explore File... | 0 | 2024-06-07T14:27:52 | https://dev.to/oncloud7/day-6-task-file-permissions-and-access-control-lists-5h1 | linux, cloudcomputing, 90daysoddevops | **Introduction📚**

Welcome to Day 6 of the #90daysofdevops challenge. In this blog, we will explore file permissions and ownership for files and directories. We will also learn how to apply ACLs to files and directories. So let's explore permissions in Linux.📝

**File Permissions Overview📃**

File permissions are core t... | oncloud7 |
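As a small illustration of the permission bits this excerpt introduces, here is a hedged sketch using only Python's standard library. The helper name `describe_mode` and the mode `0o640` are assumptions chosen for the example, and the printed result assumes a Linux system. ACLs are not covered here, because the Python standard library has no ACL interface.

```python
import os
import stat
import tempfile


def describe_mode(mode: int) -> str:
    """Render a numeric file mode in the symbolic form that `ls -l` shows."""
    return stat.filemode(mode)


# Create a throwaway file, restrict it to owner read/write and group read.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    path = tmp.name

os.chmod(path, 0o640)
mode = os.stat(path).st_mode
print(describe_mode(mode))  # on Linux: -rw-r-----

# Clean up the temporary file.
os.remove(path)
```

Each octal digit covers one class (owner, group, others) and is the sum of read (4), write (2), and execute (1), which is why `0o640` renders as `rw-` for the owner, `r--` for the group, and `---` for everyone else.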

1,880,506 | How to Build Customizable Cancel Flows | This Blog was Originally Posted to Churnfree Blog This blog explains all about cancel flow, what it... | 0 | 2024-06-07T14:27:42 | https://churnfree.com/blog/cancel-flow/ | cancelflow, cancelservises, tutorial, retention | This Blog was Originally Posted to [Churnfree Blog](https://churnfree.com/blog/cancel-flow/?utm_source=Dev.to&utm_medium=referral&utm_campaign=Content_Distribution)

This blog explains all about cancel flow, what it is, why it is used, and step-by-step instructions on how to set up a cancel flow for free! If you’ve bee... | churnfree |

1,879,879 | Git para Principiantes, comandos básicos... | Si estás comenzando en el mundo de la programación o acabas de empezar en tu primer trabajo como... | 0 | 2024-06-07T14:21:18 | https://dev.to/andresordazrs/git-para-principiantes-comandos-basicos-5b1l | git, developers, beginners | Si estás comenzando en el mundo de la programación o acabas de empezar en tu primer trabajo como desarrollador, probablemente ya has escuchado hablar de Git. Esta poderosa herramienta de control de versiones es esencial para gestionar tu código y colaborar con otros desarrolladores. Pero si aún no entiendes completamen... | andresordazrs |

1,880,503 | LeetCode Meditations — Chapter 11: Graphs | Table of contents Introduction Representing graphs Edge List Adjacency... | 26,418 | 2024-06-07T14:20:55 | https://rivea0.github.io/blog/leetcode-meditations-chapter-11-graphs | computerscience, algorithms, typescript, javascript | ## Table of contents

- [Introduction](#intro)

- [Representing graphs](#representing-graphs)

- [Edge List](#edge-list)

- [Adjacency Matrix](#adjacency-matrix)

- [Adjacency List](#adjacency-list)

- [Traversals](#traversals)

- [Breadth-First Search (BFS)](#bfs)

- [Depth-First Search (DFS)](#dfs)

- [Res... | rivea0 |

1,860,741 | HOC Pattern - React | No segundo artigo desta série de como fazer uso de design patterns em projetos, especificamente... | 23,447 | 2024-06-07T14:17:36 | https://dev.to/deesouza/hoc-pattern-react-fmf | javascript, designpatterns, react, webdev | No segundo artigo desta série de como fazer uso de _design patterns_ em projetos, especificamente usando React, vamos considerar - um dos meus preferidos - o _pattern_ **H**igh **O**rder **C**omponent **(HOC)**.

Em poucas palavras, o **HOC** é uma técnica em que uma função recebe um componente em seu argumento e esta ... | deesouza |

1,880,496 | Why Accountants in Oxford Are the Secret Weapon for Your Business Success | In the bustling city of Oxford, renowned for its prestigious university and rich cultural heritage,... | 0 | 2024-06-07T14:17:29 | https://dev.to/hemanshu_tandlekar_017696/why-accountants-in-oxford-are-the-secret-weapon-for-your-business-success-49m3 | accountantsinoxford, accountantsoxford, bookkeeping, accountants | In the bustling city of Oxford, renowned for its prestigious university and rich cultural heritage, lies a hidden gem that many businesses... | hemanshu_tandlekar_017696 |

1,880,494 | Migrating from Push Oracles to Pull Oracles: The Future of DeFi Protocols | Introduction Decentralized Finance (DeFi) relies heavily on accurate and timely data to function... | 0 | 2024-06-07T14:09:53 | https://dev.to/gauciv3005/migrating-from-push-oracles-to-pull-oracles-the-future-of-defi-protocols-da1 | **Introduction**

Decentralized Finance (DeFi) relies heavily on accurate and timely data to function efficiently. Oracles serve as the critical link between on-chain smart contracts and off-chain data sources, providing essential information such as asset prices, interest rates, and real-world events. Traditionally, ma... | gauciv3005 | |

1,880,493 | How can I check the payment date for my SASSA R350 grant? | SASSA check r350 date 1. Visit the SASSA Website: To begin, go to the official SASSA... | 0 | 2024-06-07T14:05:36 | https://dev.to/richerdjames/how-can-i-check-the-payment-date-for-my-sassa-r350-grant-38bc | ## **[SASSA check r350 date](https://sassastats.co.za/sassa-payment-dates/)**

**1. Visit the SASSA Website:**

To begin, go to the official SASSA website using your internet browser.

**2. Navigate to Payment Information:**

Look for the section related to grant payments or payment information on the homepage.

**3. Sel... | richerdjames | |

1,880,491 | Pyth V2: Transforming Solana DeFi with Enhanced Oracle Solutions | Introduction The decentralized finance (DeFi) landscape on Solana has been rapidly expanding, driven... | 0 | 2024-06-07T14:02:49 | https://dev.to/gauciv3005/pyth-v2-transforming-solana-defi-with-enhanced-oracle-solutions-2cc | **Introduction**

The decentralized finance (DeFi) landscape on Solana has been rapidly expanding, driven by its high throughput, low latency, and low-cost transactions. Central to the functionality of DeFi platforms are reliable and accurate price feeds, provided by oracles. The Pyth Network has been a cornerstone in d... | gauciv3005 | |

1,880,490 | Migrating TypeScript Projects from Webpack to Vite | So, you're a seasoned developer, juggling TypeScript like a boss, and you've been using Webpack for... | 0 | 2024-06-07T14:00:51 | https://dev.to/josunlp/migrating-typescript-projects-from-webpack-to-vite-15nn | webdev, typescript, webpack, vite | So, you're a seasoned developer, juggling TypeScript like a boss, and you've been using Webpack for ages. Webpack is your trusty steed, your old reliable, but let's face it—sometimes it feels like you're configuring a spaceship just to build a to-do app. Enter Vite, the new kid on the block that's fast, lean, and incre... | josunlp |

1,880,489 | What was your win this week? 🙌 | Hey everyone :) We're wrapping another week -- I hope it was a good one! Looking back on it, what... | 0 | 2024-06-07T14:00:51 | https://dev.to/devteam/what-was-your-win-this-week-5ep8 | weeklyretro | Hey everyone :)

We're wrapping another week -- I hope it was a good one!

Looking back on it, what was something you were proud of accomplishing?

All wins count — big or small 🎉

Examples of 'wins' include:

- Starting a new project

- Fixing a tricky bug

- Teaming up with a teammate 🤝

| halexalexe | |

1,880,486 | What is a Container Registry? – Types and Benefits | In the rapidly changing world of modern software development, containerization has revolutionized... | 0 | 2024-06-07T13:50:54 | https://www.clouddefense.ai/what-is-a-container-registry/ |

In the rapidly changing world of modern software development, containerization has revolutionized the way we build, package, and deploy applications. Tools like Docker enable develo... | clouddefenseai | |

1,880,480 | Build a GenAI Text-to-Speech App in TypeScript | Try your hand at AI app development with this Encore for TypeScript example app, implementing an AI... | 0 | 2024-06-07T13:45:58 | https://dev.to/encore/create-your-own-genai-text-to-speech-app-in-typescript-11h7 | typescript, javascript, ai, programming | Try your hand at AI app development with this [Encore for TypeScript](https://encore.dev) example app, implementing an AI powered text-to-speech generator.

This fun app project lets you create your own text-to-speech app, powered by [ElevenLabs](https://elevenlabs.io/)' cutting-edge API for generative voice AI.

It's ... | marcuskohlberg |

1,880,485 | Dedicated Software Development Teams | Dedicated Software Development Teams We’ve compiled everything you need to know about... | 0 | 2024-06-07T13:49:52 | https://jetthoughts.com/blog/dedicated-software-development-teams-team-recruitment/ | team, recruitment, hr |

## Dedicated Software Development Teams

*We’ve compiled everything you need to know about dedicated software development teams into an extensive guide you’ll definitely need to read before hiring a dedicated team for your project. Let’s take a look!*

and Web3 applications,... | 0 | 2024-06-07T13:42:43 | https://dev.to/gauciv3005/entropy-revolutionizing-on-chain-randomness-with-the-pyth-network-1b14 | entropy | **Introduction**

In the rapidly evolving landscape of decentralized finance (DeFi) and Web3 applications, the demand for secure, reliable, and verifiable randomness is ever-growing. From fair lotteries and gaming to unpredictable algorithmic trading and unique NFT minting processes, random number generation plays a cru... | gauciv3005 |

1,880,475 | Commonly Used Git Commands | In today's tech-savvy world, mastering Git is almost a rite of passage for developers. Git is a... | 0 | 2024-06-07T13:41:39 | https://dev.to/ak_23/commonly-used-git-commands-2g0j | git, beginners, learning, programming |

In today's tech-savvy world, mastering Git is almost a rite of passage for developers. Git is a distributed version control system that helps track changes in source code during software development. Whether you're a newbie or a seasoned developer, knowing the most commonly used Git commands can save you a lot of head... | ak_23 |

1,880,474 | Reduce concurrent requests by Redux-Saga Effects | Are you familiar with the situation when the user clicks on a Load Data button 2 consecutive times at... | 0 | 2024-06-07T13:41:38 | https://jetthoughts.com/blog/reduce-concurrent-requests-by-redux-saga-effects-react/ | redux, react, javascript, frontend | Are you familiar with the situation where a user clicks a Load Data button twice in quick succession, and the second click dispatches a request while the fetch fired by the first one hasn’t finished yet? Then this blog post is for you.

website on [Jekyll](https://jekyllrb.com/) to [Forestry.io](https://forestry.io/). I’d like to share my process with you, as well as a few tips about building a Jekyll site with GitHub Pages and [Forestry.io](https://forestry.io/).

\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | xojiwat855 |

1,878,978 | Comparing SQL engines by CPU instructions for simple DML | When comparing databases, people often focus on the response time, but it's also essential to... | 0 | 2024-06-07T13:33:51 | https://dev.to/yugabyte/comparing-sql-engines-by-cpu-instructions-for-dml-48a | linux, cpu, distributed, sql | When comparing databases, people often focus on the response time, but it's also essential to consider the global CPU usage. Running a database in a Docker container automatically assigns it to a Linux control group (cgroup), making it easy to obtain execution statistics using `perf stat -G`. This method offers the ben... | franckpachot |

1,880,713 | Tutorial: Build a Digital Clock in Rust 🦀⏰ | Hello, amazing people and welcome back to my blog! Today we're going to build a digital clock in... | 0 | 2024-06-08T08:58:32 | https://eleftheriabatsou.hashnode.dev/tutorial-build-a-digital-clock-in-rust | rust, rustprogramming, rusttutorial | ---

title: Tutorial: Build a Digital Clock in Rust 🦀⏰

published: true

date: 2024-06-07 13:33:40 UTC

tags: Rust,Rustprogramming,Rusttutorial

canonical_url: https://eleftheriabatsou.hashnode.dev/tutorial-build-a-digital-clock-in-rust

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/0gjniokbxw0guyvbm... | eleftheriabatsou |