id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,877,565 | WHAT IS THE BEST FRAMEWORK ? | Guys I decided to create something iconic like MEME MONDAYS. What is something that will make us... | 0 | 2024-06-05T06:01:03 | https://dev.to/mince/wednesday-poll-day-4p9i | webdev, javascript, beginners, programming | Guys I decided to create something iconic like **MEME MONDAYS**. What is something that will make us anticipate and feel patriotic.

> **_It's voting 🤨_**

So from today onwards, we will vote for something cool.

## [What is the best javascript language ? Click me to vote 😎](https://strawpoll.com/xVg71RAGeyr)

_Wednesday... | mince |

1,877,564 | What Web3 games did right that turn 2024 narrative in their favor? | The web3 gaming industry is constantly climbing to new heights. As of March 2024, daily active... | 0 | 2024-06-05T06:00:42 | https://www.zeeve.io/blog/what-web3-games-did-right-that-turn-2024-narrative-in-their-favor/ | web3gaming, web3 | <p>The web3 gaming industry is constantly climbing to new heights. As of March 2024, daily active addresses for on-chain games stood at 6M+ and daily transaction volume was around $100 million. It is not just the player base; web3 games also lead in terms of investment. April 2024 saw the highest investment in blockchain... | zeeve |

1,877,563 | Identity and Access Management: Why it is an Absolute Necessity Today | The digital age has ushered in an era of unparalleled connectivity and innovation. But alongside the... | 0 | 2024-06-05T05:56:59 | https://dev.to/sennovate/identity-and-access-management-why-it-is-an-absolute-necessity-today-5hdi | identityaccessmanagemnt, identity, security, cybersecurity | The digital age has ushered in an era of unparalleled connectivity and innovation. But alongside the benefits, a multitude of security challenges have emerged. Data breaches are commonplace, cyberattacks are growing in sophistication, and regulatory landscapes are constantly evolving. In this ever-changing environment,... | sennovate |

1,877,562 | Glass Sliding Door Bunnings | Builtec Aluminium | Explore the premium range of glass sliding doors from Builtec Aluminium, available at Bunnings. Our... | 0 | 2024-06-05T05:56:16 | https://dev.to/builtec_aluminium_f190c92/glass-sliding-door-bunnings-builtec-aluminium-1nj6 | Explore the premium range of [glass sliding doors](http://builtecaluminium.com/shop/aluminium-glass-sliding-doors-bunnings/) from Builtec Aluminium, available at Bunnings. Our high-quality doors combine sleek, modern design with exceptional durability, making them perfect for any construction project. Builtec Aluminiu... | builtec_aluminium_f190c92 | |

1,877,551 | Certified Translation of Marriage Certificates: What You Need to Know | In our increasingly globalized world, the need for translating official documents is more common than... | 0 | 2024-06-05T05:49:40 | https://dev.to/sylaba_torres_d1c0898f383/certified-translation-of-marriage-certificates-what-you-need-to-know-1i77 | translation | In our increasingly globalized world, the need for translating official documents is more common than ever. One such important document is the marriage certificate. Whether you're moving abroad, applying for a visa, or dealing with legal matters in a foreign country, a certified translation of your marriage certificate... | sylaba_torres_d1c0898f383 |

1,877,561 | How To Memoize False and Nil Values | TL;DR: if method can return false or nil, and you want to memoize it, use defined?(@_result)... | 0 | 2024-06-05T05:55:49 | https://jetthoughts.com/blog/how-memoize-false-nil-values-ruby-rails/ | ruby, rails, tutorial, development |

](https://raw.githubusercontent.com/jetthoughts/jetthoughts.github.io/master/static/assets/img/blog/how-memoize-false-nil-values-ruby-rails/file_0.jpeg)

**TL;DR: if method can return false or nil, and you want to memoize it, use defined?(@_result) i... | jetthoughts_61 |

1,877,560 | When is it safe to sleep in a newly painted room? | Looking at your room's dreary walls, you realize how important it is to paint them.But is it safe to... | 0 | 2024-06-05T05:55:40 | https://dev.to/prime_muzan_b1a29ba9a3967/when-is-it-safe-to-sleep-in-a-newly-painted-room-21a6 | home, renovation, paintingservices, wallpainting |

Looking at your room's dreary walls, you realize how important it is to paint them. But is it safe to sleep in a freshly painted living room? The answer to this perplexing question is straightforward, and we've walked you through it in this expert painting blog. Many people are unaware that paint fumes include dangerous... | prime_muzan_b1a29ba9a3967 |

1,877,559 | The Art and Science of Interior and Exterior Designing in Construction Sites | The Art and Science of Interior and Exterior Designing in Construction Sites Construction site... | 0 | 2024-06-05T05:55:00 | https://dev.to/stuward_paul_66da82c068f2/the-art-and-science-of-interior-and-exterior-designing-in-construction-sites-3kmk | The Art and Science of Interior and Exterior Designing in Construction Sites

[Construction site](https://ckbuilding.co.uk/) interior and exterior designing is a multifaceted field that marries aesthetics with functionality, ensuring that buildings are not only visually appealing but also practical and sustainable. Thi... | stuward_paul_66da82c068f2 | |

1,877,558 | Test Driven Thinking for Solving Common Ruby Pitfalls | Comrade! Our Great Leader requests a web-service for his Despotic Duties! He has chosen you for... | 0 | 2024-06-05T05:53:53 | https://jetthoughts.com/blog/test-driven-thinking-for-solving-common-ruby-pitfalls-rails-tdd/ | rails, tdd, testing |

](https://raw.githubusercontent.com/jetthoughts/jetthoughts.github.io/master/static/assets/img/blog/test-driven-thinking-for-solving-common-ruby-pitfalls-rails-tdd/file_0.jpeg)

*Comrade! Our Great Leader requests a web-service for his Despotic Duties! He h... | jetthoughts_61 |

1,877,557 | BMI & Body Fat Percentage Calculator | The BMI Calculator is a user-friendly Java program crafted to swiftly compute Body Mass Index (BMI),... | 0 | 2024-06-05T05:53:12 | https://dev.to/sudhanshuambastha/bmi-body-fat-percentage-calculator-cbb | The BMI Calculator is a user-friendly Java program crafted to swiftly compute Body Mass Index (BMI), body fat percentage, and provide pertinent BMI and body fat categories based on user-provided data for height, weight, age, and gender.

**Technologies Used**

[](https://... | sudhanshuambastha | |

1,877,556 | Mock Everything Is a Good Way to Sink | Have you found a lot of code with mocks and stubs? But how do you feel about it? When I see... | 0 | 2024-06-05T05:52:47 | https://jetthoughts.com/blog/mock-everything-good-way-sink-tdd-testing/ | tdd, testing, development | ***Have you found a lot of code with mocks and stubs? But how do you feel about it? When I see mocks/stubs, I am always looking for the way to remove them.***

##... | jetthoughts_61 |

1,877,555 | How JetThoughts implements Joel’s test? | For those of you who don’t know who Joel Spolsky is here are some facts: Worked at... | 0 | 2024-06-05T05:51:31 | https://jetthoughts.com/blog/how-jetthoughts-implements-joels-test-deveopment-management/ | deveopment, management, project, startup | ](https://raw.githubusercontent.com/jetthoughts/jetthoughts.github.io/master/static/assets/img/blog/how-jetthoughts-implements-joels-test-deveopment-management/file_0.jpeg)

For those of you who don’t know who Joel Spolsky is ... | jetthoughts_61 |

1,877,554 | Why Testing is Crucial in Web Mobile App Development | In the fast-paced world of web mobile app development, testing is often viewed as an optional step.... | 0 | 2024-06-05T05:50:27 | https://dev.to/danielpeter/why-testing-is-crucial-in-web-mobile-app-development-5b1 | mobileappdevelopment, alphabravodevelopment, apptesting, softwaretesting |

In the fast-paced world of [web mobile app development](https://www.alphabravodevelopment.com), testing is often viewed as an optional step. However, skipping or rushing through testing can lead to disastrous conseq... | danielpeter |

1,877,553 | From Simple to Animated: Transforming Text with Doodle | Have you ever wished you could instantly visualize your ideas? Whether it's a writer describing a... | 0 | 2024-06-05T05:50:05 | https://dev.to/gptconsole/from-simple-to-animated-transforming-text-with-doodle-2cl1 |

Have you ever wished you could instantly visualize your ideas? Whether it's a writer describing a fantastical scene or a designer brainstorming product concepts, the struggle to bridge the gap between words and visuals is real. But fear not! Enter Doodle, your AI agent from GPT Console, ready to transform your text pr... | vincivinni | |

1,877,552 | Esseindia Immigration Consultant Services | Best Visa Consultant - ESSE INDIA IMMIGRATION & VISA SERVICES provides results and fulfills... | 0 | 2024-06-05T05:49:53 | https://dev.to/esseindia/esseindia-immigration-consultant-services-pon | webdev, javascript, beginners, programming | [](https://esseindia.com/)

Best Visa Consultant - ESSE INDIA IMMIGRATION & VISA SERVICES provides results and fulfills all conditions related to immigration, overseas education, investor programs and all visa servi... | esseindia |

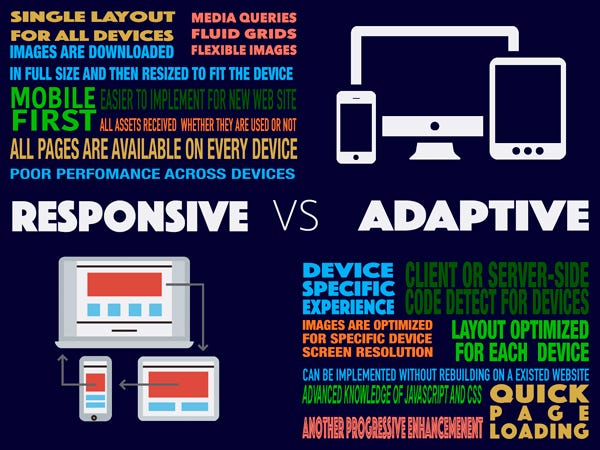

1,877,550 | Responsive or Adaptive Design? Find out which one is better for you | JetThoughts has launched a new project recently. Whole front-end team was set and started... | 0 | 2024-06-05T05:49:06 | https://jetthoughts.com/blog/responsive-or-adaptive-design-find-out-which-one-better-for-you-webdesign/ | design, webdesign, layouts |

JetThoughts recently launched a new project. The whole front-end team was assembled and started developing the UI. They designed se... | jetthoughts_61 |

1,877,549 | Hallmark Treasor | Hallmark Treasor Gandipet | Hallmark Treasor Gandipet Hyderabad | Hallmark Treasor located in the prestigious Gandipet area of Hyderabad, offers a peaceful retreat... | 0 | 2024-06-05T05:49:00 | https://dev.to/narendra_kumar_5138507a03/hallmark-treasor-hallmark-treasor-gandipet-hallmark-treasor-gandipet-hyderabad-2nf | realestate, realestateinvestment, realestateagent, hallmarktreasor | Hallmark Treasor located in the prestigious Gandipet area of Hyderabad, offers a peaceful retreat from the urban hustle while ensuring easy access to city amenities.

These meticulously designed [3 BHK homes](https:/... | narendra_kumar_5138507a03 |

1,877,548 | A Comprehensive Guide to Device Charging Carts: Essential for Modern Workspaces | In an era where technology is integral to daily operations in classrooms, offices, and healthcare... | 0 | 2024-06-05T05:48:58 | https://dev.to/addsofttech/a-comprehensive-guide-to-device-charging-carts-essential-for-modern-workspaces-3030 | blog, business, technology, software | In an era where technology is integral to daily operations in classrooms, offices, and healthcare facilities, managing and charging multiple devices efficiently becomes paramount. A device charging cart is a versatile solution designed to store, secure, and charge several electronic devices simultaneously. This guide w... | addsofttech |

1,877,546 | How to Setup a Project That Can Host Up to 1000 Users for Free | Basic Heroku Setup or Staging Configuration Hosting service: Heroku Database:... | 0 | 2024-06-05T05:47:43 | https://jetthoughts.com/blog/how-setup-project-that-can-host-up-1000-users-for-free-heroku-startup/ | heroku, startup, aws, tutorial | ](https://raw.githubusercontent.com/jetthoughts/jetthoughts.github.io/master/static/assets/img/blog/how-setup-project-that-can-host-up-1000-users-for-free-heroku-startup/file_0.jpeg)

## Basic Heroku Setup or Staging Configuration

* Hosting service: [He... | jetthoughts_61 |

1,876,893 | Glam Up My Markup: Beaches | This is a submission for [Frontend Challenge... | 0 | 2024-06-04T15:50:08 | https://dev.to/byuku/glam-up-my-markup-beaches-4cl8 | devchallenge, frontendchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches_

## What I Built

<!-- Tell us what you built and what you were looking to achieve. -->

Here's my first JS / CSS challenge. In an era where everything is built using a framework or desi... | byuku |

1,877,545 | Esseindia Immigration Consultant Services | Legal expertise Immigration companies are kept with lawyers and legal professionals who are... | 0 | 2024-06-05T05:45:56 | https://dev.to/esseindia/esseindia-immigration-consultant-services-572n | canada, visa, consultant, services | [](https://esseindia.com/)

1. Legal expertise

Immigration companies are staffed with lawyers and legal professionals who are experts in immigration law. They have deep knowledge of legal structures and rules that contro... | esseindia |

1,877,544 | How We Temporarily Transformed Our Usual Workflow for a Tight Deadline | Time makes rules Every time when we start working on a new project, short iteration or... | 0 | 2024-06-05T05:45:05 | https://jetthoughts.com/blog/how-we-temporarily-transformed-our-usual-workflow-for-tight-deadline-agile/ | workflow, agile |

](https://raw.githubusercontent.com/jetthoughts/jetthoughts.github.io/master/static/assets/img/blog/how-we-temporarily-transformed-our-usual-workflow-for-tight-deadline-agile/file_0.jpeg)

## Time makes rules

Every time when we start working on a new project, ... | jetthoughts_61 |

1,877,543 | 5 Steps to Add Remote Modals to Your Rails App | Sometimes you don’t want to write big JavaScript application just to have working remote modals in... | 0 | 2024-06-05T05:41:44 | https://jetthoughts.com/blog/5-steps-add-remote-modals-your-rails-app-javascript-ruby/ | javascript, ruby, rails, tutorial | Sometimes you don’t want to write a big JavaScript application just to have working remote modals in your Rails application. The whole JSON-response parsing thing looks big and scary. Why can’t we simply render our views on a server and just display them as modals to users? Let’s take a look at how we can implement this ... | jetthoughts_61 |

1,877,541 | Positive Reinforcement Dog Training Techniques | Training your dog can be a challenging yet rewarding experience. Positive reinforcement is a popular... | 0 | 2024-06-05T05:41:31 | https://dev.to/larsonsteve48/positive-reinforcement-dog-training-techniques-3n16 | dog | Training your dog can be a challenging yet rewarding experience. Positive reinforcement is a popular and effective method that emphasizes rewarding good behavior rather than punishing bad behavior. This approach not only strengthens the bond between you and your pet but also promotes a happy and well-behaved dog. In th... | larsonsteve48 |

1,877,508 | Why you should use Winston for Logging in JS | Logging with Winston in JS Winston JS is a popular open-sourced Javascript logging library... | 0 | 2024-06-05T05:17:26 | https://dev.to/alimalim77/why-you-should-use-winston-for-logging-in-js-d76 | webdev, javascript, programming, tutorial | # Logging with Winston in JS

Winston is a popular open-source JavaScript logging library that supports multiple transports. A transport is a destination for your logs, so the same log entry can be stored in a database and also printed to the console.

The transports can be either cons... | alimalim77 |

1,877,506 | How to make Openbox look good with ease? | I started using Linux a few years back now. In my learning path, I encountered myself with hundreds... | 0 | 2024-06-05T05:40:20 | https://dev.to/jr20xx/how-to-make-openbox-look-good-with-ease-554o | linux, openbox, customization | I started using Linux a few years ago. Along my learning path, I came across hundreds of ways to do whatever I wanted in several areas, including the chance to get a system that looks how I want it to look. I am the kind of user who likes to give a personal touch to what I use and, in the ... | jr20xx |

1,877,540 | Cleaning Up Your Rails Views With View Objects | Why logic in views is a bad idea? The main reason not to put the complex logic into your... | 0 | 2024-06-05T05:39:16 | https://jetthoughts.com/blog/cleaning-up-your-rails-views-with-view-objects-development/ | rails, development, webdev, programming |

## Why is logic in views a bad idea?

The main reason not to put complex logic into your views is, of course, testing. I don’t want to say th... | jetthoughts_61 |

1,877,539 | All About Power Point | PowerPoint is a versatile presentation software developed by Microsoft. Here's an overview of what it... | 0 | 2024-06-05T05:38:50 | https://dev.to/angelika_jolly_4aa3821499/all-about-power-point-37jn | powerplatform, powerpoint, tutorial, webdev | PowerPoint is a versatile presentation software developed by Microsoft. Here's an overview of what it offers:

1. Slides: Presentations in PowerPoint are organized into slides. You can add text, images, charts, graphs, videos, and more to each slide.

2. Templates: PowerPoint offers a variety of templates to give your ... | angelika_jolly_4aa3821499 |

1,877,538 | Quality software services for a thriving business | Nowadays, businesses have found their place in the online environment. They may want to increase... | 0 | 2024-06-05T05:37:20 | https://dev.to/growwwise/quality-software-services-for-a-thriving-business-c33 | Nowadays, businesses have found their place in the online environment. They may want to increase their visibility and make themselves known or sell products and services directly from their website. But in a large pool of industries, entrepreneurs use applications, programs, or software through which they carry out the... | growwwise | |

1,877,537 | What is Dogecoin Mining? | Dogecoin is mined using the Proof of Work method. To mine cryptocurrency, miners compete to solve... | 0 | 2024-06-05T05:34:41 | https://dev.to/lillywilson/what-is-dogecoin-mining-2g96 | dogecoin, bitcoin, cryptocurrency, asic | **[Dogecoin](https://asicmarketplace.com/blog/top-dogecoin-miners/)** is mined using the Proof of Work method. To mine cryptocurrency, miners compete to solve difficult mathematical puzzles. They receive new cryptocurrency in return for validating each block.

Dogecoin was created by a hard fork between Luckycoin ... | lillywilson |

1,877,535 | How To Name Variables And Methods In Ruby | How To Name Variables And Methods In Ruby What’s in a name? that which we call... | 0 | 2024-06-05T05:33:18 | https://jetthoughts.com/blog/how-name-variables-methods-in-ruby-programming/ | programming, ruby, bestpractices, rails |

## How To Name Variables And Methods In Ruby

> # What’s in a name? That which we call a rose

> # By any other name would smell as sweet.

> # *― William Shake... | jetthoughts_61 |

1,877,534 | 4 Steps to Bring Life into a Struggling Project | 4 Steps to Bring Life into a Struggling Project At the initial stages of a new business... | 0 | 2024-06-05T05:31:06 | https://jetthoughts.com/blog/4-steps-bring-life-into-struggling-project-startup-management/ | startup, management, agile, kanban |

## 4 Steps to Bring Life into a Struggling Project

At the initial stages of a new business or a startup, when the future is unknown, people ... | jetthoughts_61 |

1,877,533 | Why Prestige Stainless Steel Cookware is a Must-Have for Every Home | Introduction Choosing the right cookware is crucial for any home kitchen utensils, as it directly... | 0 | 2024-06-05T05:28:38 | https://dev.to/prestigeshop/why-prestige-stainless-steel-cookware-is-a-must-have-for-every-home-5c9p | **Introduction**

Choosing the right cookware is crucial for any home kitchen, as it directly impacts the quality of your meals and the ease of your cooking experience. This is where Prestige comes in: the most trusted brand in the world of cookware, it provides a high-quality, innovative cooking experience.

In this arti... | prestigeshop | |

1,877,532 | Create an Astro blog from scratch | Astro is a web framework for creating content-driven websites. It allows you to build extremely fast... | 0 | 2024-06-05T05:24:12 | https://dev.to/ramkarthik/create-an-astro-blog-from-scratch-5870 | astro, javascript, tutorial, beginners | Astro is a web framework for creating content-driven websites. It allows you to build extremely fast websites. It is perfect for building blogs and we are going to do exactly that. We are going to build an Astro blog from scratch.

You can find the complete code here: [https://github.com/Ramkarthik/astro-blog-tutorial]... | ramkarthik |

1,877,523 | How to implement strategies in Python language | Summary In the previous article, we learned about the introduction to the Python language,... | 0 | 2024-06-05T05:22:07 | https://dev.to/fmzquant/how-to-implement-strategies-in-python-language-9kd | python, strategy, cryptocurrency, fmzquant | ## Summary

In the previous article, we covered an introduction to the Python language, the basic syntax, the strategy framework and more. Although the content was dry, it is a required skill for developing trading strategies. In this article, let's continue the path of Python with a simple strategy... | fmzquant |

1,877,507 | Finest Leather Jackets | You can now shop for the finest quality leather jackets at 7thangle because we're offering a diverse... | 0 | 2024-06-05T05:15:24 | https://dev.to/7thangle/finest-leather-jackets-2a8i | You can now shop for the finest quality [leather jackets](https://www.7thangle.com) at 7thangle because we're offering a diverse range of premium jackets, each made with care and precision. Visit our website and get your stylish and durable jacket at minimal prices. | 7thangle | |

1,877,505 | From Discomfort to Relief: Understanding Minimally Invasive Pain Treatments | When managing pain, it’s all about finding the right type of relief that seamlessly fits into your... | 0 | 2024-06-05T05:10:07 | https://dev.to/tpspecialist/from-discomfort-to-relief-understanding-minimally-invasive-pain-treatments-1m38 | healthydebate, pain | When managing pain, it’s all about finding the right type of relief that seamlessly fits into your life. With the rise of minimally invasive pain treatments, those enduring chronic pain now have new hope.

These advanced methods not only reduce discomfort but also speed up recovery, transforming the way we approach pa... | tpspecialist |

1,877,503 | Linksys RE6500 Setup | With the Linksys Extender RE6500, welcome to the world of improved connectivity and wider Wi-Fi... | 0 | 2024-06-05T04:58:17 | https://dev.to/linksysextenderlogin/linksys-re6500-setup-23f9 | linksysre6400setup, extenderlinksyssetu, 19216811, linksysextendersetup | With the Linksys Extender RE6500, welcome to the world of improved connectivity and wider Wi-Fi coverage. We'll walk you through the quick and easy [Linksys RE6500 setup](https://www.linksys-extendersetup.net/linksys-re6500-setup/) process in this tutorial, so you can easily increase the reach of your network and take ... | linksysextenderlogin |

1,877,502 | Chainsight Indices now available | Recently, I have been wondering which coins I should invest in as an individual investor. And, as a... | 0 | 2024-06-05T04:57:54 | https://dev.to/hide_yoshi/chainsight-indices-now-available-1i5d | 

Recently, I have been wondering which coins I should invest in as an individual investor. And, as a DeFi developer, which product I should develop and which chain I should develop on. To resolve these questions, I have developed a family of indices called Chainsight Indices. The indices are designed to measure the... | hide_yoshi | |

1,877,501 | Creating Smooth Hover Effects for Menu Icons | In this blog post, I explore how to enhance your website's menu by adding animations to icons using CSS transitions. I provide a step-by-step guide, starting with a simple CSS solution and moving to a more refined approach using the transition and transform properties. By following this tutorial, you'll learn how to cr... | 0 | 2024-06-05T04:55:51 | https://dev.to/yordiverkroost/creating-smooth-hover-effects-for-menu-icons-4ond | frontend, webdev, css | ---

title: Creating Smooth Hover Effects for Menu Icons

published: true

description: In this blog post, I explore how to enhance your website's menu by adding animations to icons using CSS transitions. I provide a step-by-step guide, starting with a simple CSS solution and moving to a more refined approach using the tr... | yordiverkroost |

1,877,500 | The Surprising Power of Nofollow Backlinks | See Our Tactics Get Traffic From Nofollow Site. While nofollow links may not directly impact... | 0 | 2024-06-05T04:53:42 | https://dev.to/divdev/the-surprising-power-of-nofollow-backlinks-4fpg | webdev, blog, seo, backlinks |

> See Our Tactics Get Traffic From Nofollow Site. While nofollow links may not directly impact rankings, they still provide important indexing benefits

Nofollow backlinks, often misunderstood in the SEO community, offer ... | divdev |

1,877,499 | The Future of Web Design: Expert Insights from Leading Kochi Web Design Companies | In the rapidly evolving digital landscape, staying ahead of the curve in web design is crucial for... | 0 | 2024-06-05T04:51:40 | https://dev.to/witsow_branding/the-future-of-web-design-expert-insights-from-leading-kochi-web-design-companies-5fog | In the rapidly evolving digital landscape, staying ahead of the curve in web design is crucial for businesses aiming to maintain a strong online presence. Kochi, known for its burgeoning tech scene, is home to several leading web design companies that are at the forefront of innovation. These companies are setting tren... | witsow_branding | |

1,877,498 | Enhancing Your Yoga Practice with Premium Mats from Wuhan FDM Eco Fitness Product Co., Ltd. | Enhancing Your Yoga Practice with Premium Mats from Wuhan FDM Eco Fitness Product Co. Ltd. Are you... | 0 | 2024-06-05T04:51:21 | https://dev.to/patricia_carrh_36f7f0b63b/enhancing-your-yoga-practice-with-premium-mats-from-wuhan-fdm-eco-fitness-product-co-ltd-3mkh | design, product |

Enhancing Your Yoga Practice with Premium Mats from Wuhan FDM Eco Fitness Product Co. Ltd.

Are you a yoga enthusiast wanting to take your practice to the next level? Look no further! Wuhan FDM Eco Fitness Product Co. Ltd. has premium mats which will be en... | patricia_carrh_36f7f0b63b |

1,877,497 | Data Democratization: Big Data and Analytics Drive Real Estate Decisions | Big data and analytics are playing an increasingly important role in real estate. By analyzing large... | 0 | 2024-06-05T04:46:34 | https://dev.to/akaksha/data-democratization-big-data-and-analytics-drive-real-estate-decisions-5a9c | realestate, developers, data, dataanalytics | Big data and analytics are playing an increasingly important role in [real estate](https://www.clariontech.com/blog/tech-trends-transforming-real-estate-opportunities-and-challenges). By analyzing large datasets of property information, market trends, and demographics, investors, [developers](https://www.clariontech.co... | akaksha |

1,877,495 | How Laboratory Analyzers are Shaping the Future of Diagnostics | Laboratory Analyzers: The Ongoing Future Of Diagnostics Because technology continues to advance... | 0 | 2024-06-05T04:40:46 | https://dev.to/patricia_carrh_36f7f0b63b/how-laboratory-analyzers-are-shaping-the-future-of-diagnostics-2351 | design, product |

Laboratory Analyzers: The Ongoing Future Of Diagnostics

Because technology continues to advance at a fast rate, it isn't surprising that advances in medical equipment are also being made. One area where significant progress is being made is the development of Shaping the Future of ... | patricia_carrh_36f7f0b63b |

1,877,490 | Why does not postgres use my index? | This is a quick note 1. Query Conditions Not Matching the Index The index is not on the... | 0 | 2024-06-05T04:40:20 | https://dev.to/jacktt/why-does-not-postgres-use-my-index-5apf | postgres, database | _This is a quick note_

## 1. Query Conditions Not Matching the Index

- The index is not on the columns being queried.

- The query does not use the leading columns of a composite index.

- The query is not written in a way that takes advantage of the index's order.

- The query uses functions or operations that prevent t... | jacktt |

1,877,494 | How to Import Excel to MySQL [4-Step Tutorial] | Importing Excel Data into MySQL: A Beginner's Guide Are you looking to convert your Excel... | 0 | 2024-06-05T04:39:48 | https://five.co/blog/how-to-import-excel-to-mysql/ | excel, mysql, tutorial, data | <!-- wp:heading -->

<h2 class="wp-block-heading">Importing Excel Data into MySQL: A Beginner's Guide</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Are you looking to convert your Excel spreadsheet into a MySQL database? If so, you're in the right place! In this beginner-friendly tutorial, we'll walk you through t... | domfive |

1,877,492 | Hoisting for dummies (aka me) | TL/DR: Hoisting is a useful JavaScript feature that allows you to use variables and functions before... | 0 | 2024-06-05T04:35:16 | https://dev.to/bibschan/hoisting-for-dummies-aka-me-2i6k | javascript, webdev, beginners, career |

_**TL/DR:**

Hoisting is a useful JavaScript feature that allows you to use variables and functions before they are declared. However, it's crucial to remember that only declarations are hoisted, not assignments. The keywords `let` and `const` have different hoisting behaviours, and strict mode can be used to avoid pot... | bibschan |
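A minimal sketch of the TL/DR above, assuming a plain Node.js or browser console. The function names are made up for illustration and do not come from the original post.

```javascript
// Illustrative sketch of hoisting; every function name here is hypothetical.

// `var` declarations are hoisted and initialised to undefined,
// but the assignment stays where it was written.
function readVarBeforeAssignment() {
  const seen = hoistedVar; // undefined: declared, but not yet assigned
  var hoistedVar = 42;
  return [seen, hoistedVar];
}

// Function declarations are hoisted together with their bodies,
// so they can be called before the line that declares them.
function callBeforeDeclaration() {
  return greet();
  function greet() {
    return "hello";
  }
}

// `let` and `const` bindings exist from the start of their scope,
// but they sit in the temporal dead zone until the declaration runs,
// so reading them early throws a ReferenceError.
function readLetBeforeDeclaration() {
  try {
    return value; // throws: `value` is still in the temporal dead zone
  } catch (err) {
    return err.name;
  }
  let value = 1; // never reached, but it still creates the binding
}
```

Calling `readVarBeforeAssignment()` returns `[undefined, 42]`, `callBeforeDeclaration()` returns `"hello"`, and `readLetBeforeDeclaration()` returns `"ReferenceError"`, which is the difference between `var`, function declarations and `let`/`const` that the summary describes.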

1,877,488 | Vector search in Manticore | Manticore Search since 6.3.0 supports Vector Search! Let's learn more about it – what it is, what... | 0 | 2024-06-05T04:30:58 | https://dev.to/sanikolaev/vector-search-in-manticore-f1a | Manticore Search has supported **Vector Search** since version 6.3.0! Let's learn more about it: what it is, what benefits it brings, and how to use it, taking its integration into the [GitHub issue search demo](https://github.manticoresearch.com/) as an example.

### Full-text search and Vector search

[Full-text search](https://en.wi... | sanikolaev | |

1,877,485 | Mastering Migration: Shopware 5 to 6 Expert Advice | Are you eager to expand your online business? You’ll find plenty of possibilities to improve your... | 0 | 2024-06-05T04:16:32 | https://dev.to/ellasebastian/mastering-migration-shopware-5-to-6-expert-advice-4g64 | shopware, migration, shopware5to6 | Are you eager to expand your online business? You’ll find plenty of possibilities to improve your online shopping experience when you switch from Shopware 5 to Shopware 6. But at first, it might seem difficult to think about migrating your entire store. Calm down! The following guide can help you by easily upgrading fr... | ellasebastian |

1,877,484 | The Best Alternatives to Postman for API Testing | Postman is a popular tool for API development and testing, known for its user-friendly interface and... | 0 | 2024-06-05T04:13:26 | https://dev.to/vyan/the-best-alternatives-to-postman-for-api-testing-2bno | webdev, beginners, react, programming | Postman is a popular tool for API development and testing, known for its user-friendly interface and powerful features. However, there are several alternatives to Postman that offer unique capabilities and may better suit your specific needs. In this blog, we will explore some of the best alternatives to Postman for AP... | vyan |

1,877,294 | React: Design Patterns | Controlled & Uncontrolled Components | React is a powerful library for building user interfaces, and one of its core strengths lies in its... | 0 | 2024-06-05T04:00:00 | https://dev.to/andresz74/react-design-patterns-controlled-uncontrolled-components-e2c | React is a powerful library for building user interfaces, and one of its core strengths lies in its flexibility. Among the many design patterns React offers, controlled and uncontrolled components are fundamental. Understanding these patterns can significantly impact how you manage state and handle user input in your a... | andresz74 | |

1,877,480 | What is Kitchen Cabinet Refacing | Cost-Effective: Cabinet refacing is often much cheaper than a full cabinet replacement, making it a... | 0 | 2024-06-05T03:55:32 | https://dev.to/kitchens00988/what-is-kitchen-cabinet-refacing-2p5j | Cost-Effective: Cabinet refacing is often much cheaper than a full cabinet replacement, making it a budget-friendly option for homeowners.

Time-Saving: Since cabinet refacing doesn't require the removal of the existing cabinets, the process is typically quicker and less disruptive than a full renovation. This means yo... | kitchens00988 | |

1,877,479 | How to setup a Svelte project | How to setup a Svelte project You first have to have Node.JS and npm installed on your... | 0 | 2024-06-05T03:55:15 | https://dev.to/dumorando/how-to-setup-a-svelte-project-4kho | webdev, javascript, beginners, tutorial | # How to setup a Svelte project

You first need to have Node.js and npm installed on your computer.

First, run ``bun create vite .`` (or ``npm create vite .`` for npm users).

Use the down arrow key to scroll to Svelte, then hit enter.

Select JavaScript or TypeScript, whichever you prefer. SvelteKit is an advanced topic and i... | dumorando |

1,877,478 | SPA vs MPA: Which is better? | Hey there, fellow web developers and tech enthusiasts! Today, we're diving into the age-old debate:... | 0 | 2024-06-05T03:48:41 | https://dev.to/twinkle123/spa-vs-mpa-which-is-better-4mb7 | website, webdev, devops, opensource | Hey there, fellow web developers and tech enthusiasts! Today, we're diving into the age-old debate: SPA vs MPA. If you're scratching your head, wondering what these acronyms even mean, don't worry. We've got you covered in this complete guide.

First things first, let's break down the jargon. SPA stands for Single Page... | twinkle123 |

1,876,682 | Is it Normal ? | Why | 0 | 2024-06-04T13:15:57 | https://dev.to/ishaan_singhal_f3b6b687f3/is-it-normal--4hdc | Why | ishaan_singhal_f3b6b687f3 | |

1,873,474 | Cloud-Native Security: A Guide to Microservices and Serverless Protection | Welcome Aboard Week 1 of DevSecOps in 5: Your Ticket to Secure Development Superpowers! _Hey there,... | 27,560 | 2024-06-05T03:48:00 | https://dev.to/gauri1504/cloud-native-security-a-guide-to-microservices-and-serverless-protection-12d8 | devsecops, devops, cloud, security | Welcome Aboard Week 1 of DevSecOps in 5: Your Ticket to Secure Development Superpowers!

_Hey there, security champions and coding warriors!

Are you itching to level up your DevSecOps game and become an architect of rock-solid software? Well, you've landed in the right place! This 5-week blog series is your fast track ... | gauri1504 |

1,877,477 | AVIF Studio - Web page screen capture Chrome extension Made with Svelte and WebAssembly. | Installation... | 0 | 2024-06-05T03:46:37 | https://dev.to/vshareej/avif-studio-web-page-screen-capture-chrome-extension-made-with-svelte-and-webassembly-4lda | svelte, webassembly, screenshot | Installation Link

https://chromewebstore.google.com/detail/avif-studio/bcnhebdciabcnffgcgdpkkniplccpfap?hl=en

{% youtube tB6EnN3DId8 %}

AVIF Studio - Web page screen capture Chrome extension Made with Svelte and WebAssembly.

Extension for webpage screen capture

Support all major image formats - AVIF/PNG/JPEG/WEBP/PD... | vshareej |

1,877,476 | Biophilic Interior Trends That You Will Find at Your Nearest Home Furnishing Store Dallas | The artistic mimicry of nature’ – this is perhaps the best way to explain the meaning behind the... | 0 | 2024-06-05T03:46:03 | https://dev.to/dhierro/biophilic-interior-trends-that-you-will-find-at-your-nearest-home-furnishing-store-dallas-57l8 |

[](https://www.dhierro.com)

‘The artistic mimicry of nature’ – this is perhaps the best way to explain the meaning behind the recent interior trend of Biophilic Furniture. Drawing inspiration from ancient European, A... | dhierro | |

1,877,474 | Unlock Business Growth with Australian Email Database from Ready Mailing Team | Empower Your Marketing with Comprehensive Australian Email Database In today's competitive business... | 0 | 2024-06-05T03:45:54 | https://dev.to/australianemaildatab/unlock-business-growth-with-australian-email-database-from-ready-mailing-team-2pap | business, markating, service | Empower Your Marketing with Comprehensive Australian Email Database

In today's competitive business environment, having access to accurate and targeted contact information is crucial for effective marketing and business growth. Ready Mailing Team presents the ultimate solution for your marketing needs – the **[Australi... | australianemaildatab |

1,877,473 | Getting started with the Python language | Preliminary fluff So, you want to learn the Python programming language but can’t find a... | 0 | 2024-06-05T03:35:09 | https://dev.to/fmzquant/getting-started-with-the-python-language-4pkg | python, trading, cryptocurrency, fmzquant | ## Preliminary fluff

So, you want to learn the Python programming language but can’t find a concise and yet full-featured tutorial. This tutorial will attempt to teach you Python in 10 minutes. It’s probably not so much a tutorial as it is a cross between a tutorial and a cheatsheet, so it will just show you some basic... | fmzquant |

1,877,472 | Im s mahibalan, i am doctor | This is a submission for [Frontend Challenge... | 0 | 2024-06-05T03:31:21 | https://dev.to/mahi_balan_a58abd9d3f32d1/im-s-mahibalan-i-am-doctor-43gb | devchallenge, frontendchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches_

## What I Built

<!-- Tell us what you built and what you were looking to achieve. -->

## Demo

<!-- Show us your project! You can directly embed an editor into this post (see the FAQ s... | mahi_balan_a58abd9d3f32d1 |

1,877,471 | Enhance Your ReactJS Code Quality with StrictMode A Comprehensive Guide | In ReactJS, StrictMode is a tool used to highlight potential problems in an application. It activates additional checks and warnings for its descendants, making it easier to identify unsafe lifecycles, legacy API usage, and other potential issues | 0 | 2024-06-05T03:30:57 | https://dev.to/mdharoon/enhance-your-reactjs-code-quality-with-strictmode-a-comprehensive-guide-jn | react, javascript | ---

title: Enhance Your ReactJS Code Quality with StrictMode A Comprehensive Guide

published: true

description: In ReactJS, StrictMode is a tool used to highlight potential problems in an application. It activates additional checks and warnings for its descendants, making it easier to identify unsafe lifecycles, legacy ... | mdharoon |

1,877,470 | The Evolution of Medical X-Ray Machines | 37bf6fce0b28a7d445a1556097de1e22d1b3fb21c8597dda51aa5ec6eee8e748.jpg Title: How Medical X-Ray... | 0 | 2024-06-05T03:28:48 | https://dev.to/patricia_carrh_36f7f0b63b/the-evolution-of-medical-x-ray-machines-4nmd | design, product | 37bf6fce0b28a7d445a1556097de1e22d1b3fb21c8597dda51aa5ec6eee8e748.jpg

Title: How Medical X-Ray Machines Have Changed Over Time

Introduction:

Medical X-ray machines are devices that are essential in identifying and treating various medical problems. In the past, X-ray machines were bulky and didn't provide top qu... | patricia_carrh_36f7f0b63b |

1,877,469 | س | A post by shaker fadel | 0 | 2024-06-05T03:27:21 | https://dev.to/shakermohmmad/s-1187 | shakermohmmad | ||

1,877,468 | س | A post by shaker fadel | 0 | 2024-06-05T03:27:19 | https://dev.to/shakermohmmad/s-30o | shakermohmmad | ||

1,876,679 | PyInstaller failed. | 2024/6/4 I run PyInstaller and failed by following error: > pyinstaller -F... | 0 | 2024-06-04T13:11:20 | https://dev.to/kazto/pyinstaller-failed-1ph0 | 2024/6/4

I ran PyInstaller and it failed with the following error:

```

> pyinstaller -F .\main.py

Traceback (most recent call last):

File "<frozen runpy>", line 198, in _run_module_as_main

File "<frozen runpy>", line 88, in _run_code

File "C:\Users\kazto\work\MyProject\.venv\Scripts\pyinstaller.exe\__main__.py", line 8,... | kazto | |

1,877,467 | The Rise of Large Language Models A basic understanding of Chatbot AI | Not even 5 years ago, the general predictions of AI were to change the labor market. Amazon robotics... | 0 | 2024-06-05T03:25:42 | https://dev.to/pinkhathacker/the-rise-of-large-language-modelsa-basic-understanding-of-chatbot-ai-4j5p | beginners, ai, news, machinelearning | Not even 5 years ago, the general predictions of AI were to change the labor market. Amazon robotics scared millions of warehouse workers. However, it’s becoming apparent that the arts and entertainment market is the first industry to feel the full effects of AI. If you're curious about the future of AI and its potential imp... | pinkhathacker |

1,877,466 | The Perfect Fit and Excellent Design Essentials Tracksuit: Why Well-Fitted Clothes Matter | The Perfect Fit and Excellent Design Essentials Tracksuit: Why Well-Fitted Clothes Matter Enhances... | 0 | 2024-06-05T03:24:09 | https://dev.to/larrypage/the-perfect-fit-and-excellent-design-essentials-tracksuit-why-well-fitted-clothes-matter-23id | The Perfect Fit and Excellent Design Essentials Tracksuit: Why Well-Fitted Clothes Matter

Enhances Comfort and Confidence

Well-fitted clothes essentials Tracksuit are more than just a fashion statement; they are a key factor in feeling comfortable and confident. When clothes fit correctly, they move with your body, all... | larrypage | |

1,877,465 | Video Production Agency in Italy | In today's digital age, video content is king. From captivating commercials to informative... | 0 | 2024-06-05T03:22:29 | https://dev.to/orbispro/video-production-agency-in-italy-1b7 |

In today's digital age, video content is king. From captivating commercials to informative corporate videos, the power of visual storytelling cannot be underestimated. But why should you consider Italy for your vid... | orbispro | |

1,877,464 | Day 4 | on the fourth day I learned about the form used to input information from the user's side which is... | 0 | 2024-06-05T03:22:17 | https://dev.to/han_han/day-4-3cn | webdev, html, css, 100daysofcode | On the fourth day, I learned about forms, which collect input from the user and are usually placed at the bottom of a page. I began to understand the `<form>` tag, which is commonly used on websites. | han_han |

1,877,463 | Corteiz Tracksuit: Unique and Stylish Apparel for Every Season | Corteiz Tracksuit: Unique and Stylish Apparel for Every Season Corteiz tracksuit is celebrated for... | 0 | 2024-06-05T03:21:14 | https://dev.to/larrypage/corteiz-tracksuit-unique-and-stylish-apparel-for-every-season-2l00 | Corteiz Tracksuit: Unique and Stylish Apparel for Every Season

Corteiz tracksuit is celebrated for its unique design that stands out in the fashion industry. Each piece is meticulously crafted with attention to detail, making it not just an outfit but a statement. From bold patterns to subtle textures, Corteiz Clothing... | larrypage | |

1,877,091 | How to quickly add a rich text editor to your Next.js project using TipTap | Tiptap is an open source headless wrapper around ProseMirror. ProseMirror is a toolkit for building... | 0 | 2024-06-05T03:20:04 | https://dev.to/joeskills/how-to-add-a-rich-text-editor-to-your-nextjs-project-using-tiptap-1fil | [Tiptap](https://tiptap.dev/) is an open source headless wrapper around [ProseMirror](https://prosemirror.net/). ProseMirror is a toolkit for building rich text WYSIWYG editors. The best part about Tiptap is that it's headless, which means you can customize and create your rich text editor however you want. I'll be usi... | joeskills | |

1,877,462 | Spider Worldwide: A Fashion Revolution | In the ever-evolving world of fashion, some brands manage to Spider Worldwide a unique niche for... | 0 | 2024-06-05T03:19:07 | https://dev.to/larrypage/spider-worldwide-a-fashion-revolution-3hnp | In the ever-evolving world of fashion, some brands manage to Spider Worldwide a unique niche for themselves, standing out with their innovative designs, quality craftsmanship, and distinct identity. Spider Worldwide is one such brand that has captured the imagination of fashion enthusiasts globally. Known for its eclec... | larrypage | |

1,877,451 | The Innovations of Xianheng International Science and Technology Co., Ltd | The Incredible Developments of Xianheng Worldwide Scientific research as well as Innovation Carbon... | 0 | 2024-06-05T03:17:39 | https://dev.to/patricia_carrh_36f7f0b63b/the-innovations-of-xianheng-international-science-and-technology-co-ltd-972 | design, product |

The Incredible Innovations of Xianheng International Science and Technology Co., Ltd

Xianheng International Science and Technology Co., Ltd is a business that focuses on developing innovative technologies as well as products. This business has act... | patricia_carrh_36f7f0b63b |

1,877,450 | test | test | 0 | 2024-06-05T03:17:36 | https://dev.to/pko_65cb1efe0e6560eb4ce77/test-4io8 | test | pko_65cb1efe0e6560eb4ce77 | |

1,877,449 | Dive into Game Creation: Exploring the Basics of GDevelop | The world of game development can be intimidating, filled with complex engines and hefty price tags.... | 0 | 2024-06-05T03:14:03 | https://dev.to/epakconsultant/dive-into-game-creation-exploring-the-basics-of-gdevelop-1a71 | The world of game development can be intimidating, filled with complex engines and hefty price tags. But fear not! GDevelop, a free and open-source game engine, offers a beginner-friendly solution to turn your game ideas into reality. This article will equip you with the fundamental knowledge to get started with GDevel... | epakconsultant | |

1,877,448 | What are SLI, SLO and SLA, and Why are they important in SRE? | Aspect Service Level Indicator (SLI) Service Level Objective (SLO) Service Level Agreement... | 0 | 2024-06-05T03:10:29 | https://dev.to/u2633/what-are-sli-slo-and-sla-and-why-are-they-important-in-sre-1h1o | sre | | **Aspect** | **Service Level Indicator (SLI)** | **Service Level Objective (SLO)** ... | u2633 |

1,877,445 | Effortless Application Deployment and Management with AWS Elastic Beanstalk | Effortless Application Deployment and Management with AWS Elastic Beanstalk ... | 0 | 2024-06-05T03:08:48 | https://dev.to/virajlakshitha/effortless-application-deployment-and-management-with-aws-elastic-beanstalk-42id | # Effortless Application Deployment and Management with AWS Elastic Beanstalk

### Introduction to AWS Elastic Beanstalk

In today's fast-paced technological landscape, businesses and developers constantly seek efficient and scalable solutions for deploying and managing applications. AWS Elastic Beanstalk emerges as a... | virajlakshitha | |

1,877,444 | Unleash Your Creativity: Building Games with Godot | The world of game development can seem daunting, filled with complex engines and hefty price tags.... | 0 | 2024-06-05T03:08:41 | https://dev.to/epakconsultant/unleash-your-creativity-building-games-with-godot-3hnl | gamedevelope | The world of game development can seem daunting, filled with complex engines and hefty price tags. But fear not, aspiring game creators! Godot, a powerful and open-source game engine, offers a user-friendly platform to bring your game ideas to life. This guide will equip you with the foundational steps to get started w... | epakconsultant |

1,877,443 | How Shanghai Rumi Electromechanical Technology is Driving Industry Change | I Shanghai Rumi Electromechanical Innovation is actually a business that is continuing's steering... | 0 | 2024-06-05T03:08:13 | https://dev.to/patricia_carrh_36f7f0b63b/how-shanghai-rumi-electromechanical-technology-is-driving-industry-change-4db9 | design, product |

I

Shanghai Rumi Electromechanical Technology is a business that is driving change in the industry. They offer many benefits and innovations that are making work simpler and safer. They have transformed the ways in which we uti... | patricia_carrh_36f7f0b63b |

1,877,442 | SELENIUM | WHAT IS SELENIUM ? Selenium is an open-source tool for automating web browsers. It... | 0 | 2024-06-05T03:08:08 | https://dev.to/jayshankark/selenium-2c2d | #### <u>WHAT IS SELENIUM ?</u>

Selenium is an open-source tool for automating web browsers. It provides a way to interact with web pages as a user would, allowing you to automate tasks, test web applications, and scrape data from websites. We use Selenium for automation because it provides a powerful way to interact wi... | jayshankark | |

1,866,272 | Transforming the Oil and Gas Industry Everyday | Benefit from the Power of Brainboard to Make the Oil and Gas Industry More Efficient and... | 0 | 2024-06-05T03:06:00 | https://dev.to/brainboard/transforming-the-oil-and-gas-industry-everyday-4lb7 | industry, infrastructureascode, terraform, cloudcomputing | ## Benefit from the Power of Brainboard to Make the Oil and Gas Industry More Efficient and Eco-Friendly on Every Level

### Safer Drilling, Better Analytic Insights, More Efficient Production, and Cleaner Skies

### **Democratize Access to IaC Through Training**

Don’t hire expensive consultants. With Brainboard, you ... | miketysonofthecloud |

1,877,441 | Review: Is the handheld snow foam sprayer good for washing cars? | A handheld snow foam sprayer is an indispensable car accessory when washing a car. Join Accecar to explore... | 0 | 2024-06-05T03:05:46 | https://dev.to/accecar/review-binh-xit-bot-tuyet-cam-tay-rua-xe-o-to-co-tot-khong-1a00 | A handheld snow foam sprayer is an indispensable car accessory when washing a car. Join Accecar to explore the special benefits of the 2-liter handheld snow foam sprayer and its unique features:

HDPE plastic: Made from high-quality HDPE plastic, the snow foam sprayer is extremely durable and sturdy. This ensures... | accecar | |

1,877,440 | Firebase Fundamentals: Building Blocks for Your Mobile App | Firebase, Google's mobile app development platform, offers a comprehensive suite of tools to... | 0 | 2024-06-05T03:03:21 | https://dev.to/epakconsultant/firebase-fundamentals-building-blocks-for-your-mobile-app-2fb3 | firebase | Firebase, Google's mobile app development platform, offers a comprehensive suite of tools to streamline your app development process. Whether you're building for iOS, Android, or the web, Firebase provides essential features to enhance your app's functionality and user experience. Let's delve into the core functionalit... | epakconsultant |

1,877,439 | How to Deploy Flutter Apps to the Google Play Store and App Store | Today, we embark on an adventure – a journey to launch your captivating Flutter app onto the vast... | 0 | 2024-06-05T03:02:46 | https://dev.to/accecar/how-to-deploy-flutter-apps-to-the-google-play-store-and-app-store-2p1f | Today, we embark on an adventure – a journey to launch your captivating Flutter app onto the vast landscapes of the Google Play Store and App Store.

This comprehensive guide will be your trusty compass, guiding you through the essential steps of deploying your app on both prominent platforms. Whether you’re a seasoned... | accecar | |

1,877,438 | Ace Your Exams: Automated Question Generation for the Diligent Student | This one goes out to all my students who are always anxious about the upcoming exam. I see you... | 0 | 2024-06-05T02:59:59 | https://dev.to/roomals/ace-your-exams-automated-question-generation-for-the-diligent-student-11c | python, ai, productivity, machinelearning | This one goes out to all my students who are always anxious about the upcoming exam.

I see you all asking questions such as "What will the professor put on the exam?!" The answer is simple: who cares?

With this script, Ollama will generate multiple-choice questions, line by line, for you to practice on!

Now, a few words of cau... | roomals |

1,877,437 | Corteiz Cargos Pants: A Modern Wardrobe Staple | Corteiz Cargos Pants: A Modern Wardrobe Staple Corteiz cargos pants have become an essential item in... | 0 | 2024-06-05T02:57:15 | https://dev.to/larrypage/corteiz-cargos-pants-a-modern-wardrobe-staple-4bi4 | Corteiz Cargos Pants: A Modern Wardrobe Staple

Corteiz cargos pants have become an essential item in the fashion industry, blending functionality with a trendy aesthetic. This article delves into the history, design, and cultural impact of Corteiz cargo pants, exploring why they have become a go-to choice for many fash... | larrypage | |

1,844,137 | aaaa | aaaaaaaaaaaaaaaa | 0 | 2024-05-06T14:31:15 | https://dev.to/afzl210/aaaa-42e6 | aaaaaaaaaaaaaaaa | afzl210 | |

1,877,436 | Wrangling Your Keychain: A Guide to Apple Certificates for App Development | For any aspiring iOS, iPadOS, macOS, or watchOS developer, Apple's certificates and Keychain Access... | 0 | 2024-06-05T02:57:03 | https://dev.to/epakconsultant/wrangling-your-keychain-a-guide-to-apple-certificates-for-app-development-53b6 | For any aspiring iOS, iPadOS, macOS, or watchOS developer, Apple's certificates and Keychain Access can feel like a mystical realm. But fear not! Understanding these tools is crucial for signing and deploying your apps. This guide will equip you with the knowledge to confidently manage your Apple certificates and Keych... | epakconsultant | |

1,872,298 | Constraints & Validations | All This Data In my previous blog post, I wrote about objects and classes and how they can... | 0 | 2024-06-05T02:52:11 | https://dev.to/gasparericmartin/constraints-validations-2n1i | ## All This Data

In my previous blog post, I wrote about objects and classes and how they can be used to represent entities, objects, and relationships in code. As part of that post I touched on object relational mapping, the concept of using classes to map relational databases. Using these structures to store, visuali... | gasparericmartin | |

1,877,435 | 🚀 First Week of Computer Programming Courses: What I’ve Learned! 🚀 | Diving into the world of programming has been interesting so far, and my first week was packed with... | 0 | 2024-06-05T02:52:08 | https://dev.to/itschristinamba/first-week-of-computer-programming-courses-what-ive-learned-3p45 | html, networking, beginners, webdev |

Diving into the world of programming has been interesting so far, and my first week was packed with tons of things I did not know and hands-on experiences. Here’s a glimpse of what I’ve learned so far:

🌐 Building... | itschristinamba |

1,877,434 | Best Age for Children and Teenagers to Start Using Social Media | Introduction The question of when children and teenagers should start using social media... | 0 | 2024-06-05T02:46:33 | https://dev.to/synthscript/best-age-for-children-and-teenagers-to-start-using-social-media-3m07 | children, socialmedia | **Introduction**

The question of when children and teenagers should start using social media platforms is a contested one. Some argue that early exposure aids digital literacy and social skills; I firmly advocate a later age limit for accessing social media platforms. In my opinion, the right age ... | synthscript |

1,877,433 | Shanghai Rumi Electromechanical Technology's Manufacturing Capabilities | 2d7cce047a34a7d66425e4880c976d2438d4b051ac669e9e8e35cad6f7f82f10.jpg Shanghai Rumi Electromechanical... | 0 | 2024-06-05T02:44:43 | https://dev.to/patricia_carrh_36f7f0b63b/shanghai-rumi-electromechanical-technologys-manufacturing-capabilities-7d7 | design, product | 2d7cce047a34a7d66425e4880c976d2438d4b051ac669e9e8e35cad6f7f82f10.jpg

Shanghai Rumi Electromechanical Technology is a company that makes truly cool and useful machines and equipment. They have a great many special capabilities and methods that make them great at making things.

Advantages

Among t... | patricia_carrh_36f7f0b63b |

1,877,432 | CSS Naming Conventions You Need to Know and Why You Should Use Them | If you want to build a website that's cool and easy to maintain, you need to understand... | 0 | 2024-06-05T02:42:15 | https://dev.to/yogameleniawan/css-naming-convention-yang-perlu-diketahui-dan-kenapa-perlu-digunakan-1l7j | css | 

If you want to build a website that's cool and easy to maintain, you need to understand CSS naming conventions. They're like ground rules for naming classes and IDs in CSS so that your code... | yogameleniawan |

1,846,216 | How to Deploy MERN Stack over Kubernetes | Hello Everyone, Welcome to another blog! In this blog post, we will talk about how easily you can... | 0 | 2024-06-05T02:33:02 | https://devtron.ai/blog/how-to-deploy-mern-stack-over-kubernetes/ | mern, kubernetes, mongodb, devtron | Hello Everyone, Welcome to another blog! In this blog post, we will talk about how easily you can deploy your MERN stack over Kubernetes with just a few clicks using Devtron.

Yes, you read it right! You don't have to write a number of yamls or pipelines to deploy your entire stack over Kubernetes.

Let's go ahead and se... | devtron_inc |

1,877,431 | Key Partnerships and Collaborations of Hebei Long Zhou Trade Co., Ltd | Essential Collaborations as well as Collaborations of Hebei Lengthy Zhou Profession Carbon monoxide... | 0 | 2024-06-05T02:29:43 | https://dev.to/patricia_carrh_36f7f0b63b/key-partnerships-and-collaborations-of-hebei-long-zhou-trade-co-ltd-ncd | design, product |

Key Partnerships and Collaborations of Hebei Long Zhou Trade Co., Ltd

Hebei Long Zhou Trade Co., Ltd is a business that handles the production and distribution of various items such as drinks, food products, and household appliances. It... | patricia_carrh_36f7f0b63b |

1,876,465 | Using IP2Convert to create MMDB geolocation database for use with WireShark | WireShark is a free and open-source packet analyzer. It can be used to check for network attacks or... | 0 | 2024-06-05T02:28:50 | https://dev.to/ip2location/using-ip2convert-to-create-mmdb-geolocation-database-for-use-with-wireshark-3961 | webdev, tutorial, database, programming | WireShark is a free and open-source packet analyzer. It can be used to check for network attacks or to troubleshoot networking issues. Meanwhile, MMDB is a database format created by MaxMind for IP lookup. Inside WireShark, there is an option to retrieve IP geolocation data using the MMDB IP database. In this article, ... | ip2location |

1,877,397 | Streamlining Image Annotation with Annotate-Lab | Streamlining Image Annotation with Annotate-Lab Image annotation is the process of adding... | 0 | 2024-06-05T02:02:17 | https://dev.to/sumn2u/streamlining-image-annotation-with-annotate-lab-6hc | opensource, computervision, machinelearning, imageannotation | ## Streamlining Image Annotation with Annotate-Lab

Image annotation is the process of adding labels or descriptions to images to provide context for computer vision models. This task involves tagging an image with information that helps a machine understand its content. Annotation is crucial in applications such as se... | sumn2u |

1,877,430 | How to implement strategic trading in JavaScript language | Summary In the previous article, we introduced the fundamental knowledge that when using... | 0 | 2024-06-05T02:28:13 | https://dev.to/fmzquant/how-to-implement-strategic-trading-in-javascript-language-32gn | javascript, trading, cryptocurrency, fmzquant | ## Summary

In the previous article, we introduced the fundamentals needed to write a program in JavaScript, including the basic grammar and materials. In this article, we will combine it with some common strategy modules and technical indicators to build a viable intraday quantitative trading strategy.

##... | fmzquant |
