id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,876,198 | EasyLog Library | Say hello to consistent and structured logging with EasyLog! 🚀 Enjoy ✨ Context-Aware Logging: Enjoy... | 0 | 2024-06-04T05:49:52 | https://dev.to/codaarx/easylog-library-1fmc | android, kotlin, mobile, androiddev | Say hello to consistent and structured logging with EasyLog! 🚀

Enjoy

✨ Context-Aware Logging: Enjoy logs automatically referencing exact call lines and classes.

⚡ Intuitive APIs: Speed up your development process with easy-to-use and concise logging methods.

🛠️ Enhanced Debugging and Data Tracking: Keep your logs... | codaarx |

1,876,197 | The Future of Blockchain Gaming on Solana | The blockchain gaming industry is rapidly growing but lacks games that truly excite players... | 27,548 | 2024-06-04T05:49:37 | https://dev.to/aishikl/the-future-of-blockchain-gaming-on-solana-2glo | # The blockchain gaming industry is rapidly growing but lacks games that truly excite players about blockchain technology. Many current games are overly focused on the 'token economy' model, limiting their appeal and sophistication. Solana aims to revolutionize this space by offering a more immersive and competitive ga... | aishikl | |

1,876,196 | Blockchain-Based Streaming for Fairer Content Monetization | The world of streaming reigns supreme in entertainment. From music and movies to live broadcasts and... | 0 | 2024-06-04T05:47:06 | https://dev.to/donnajohnson88/blockchain-based-streaming-for-fairer-content-monetization-471o | blockchain, streaming, learning, development | The world of streaming reigns supreme in entertainment. From music and movies to live broadcasts and educational lectures, [blockchain streaming development](https://blockchain.oodles.io/blockchain-video-streaming-solutions/?utm_source=devto) offers unparalleled convenience and accessibility for content consumption. Ho... | donnajohnson88 |

1,876,195 | Hallmark Treasor Gandipet Hyderabad | Hallmark Treasor Gandipet | Hallmark Treasor is nestled in the prestigious Gandipet area of Hyderabad. This community offers a... | 0 | 2024-06-04T05:44:48 | https://dev.to/narendra_kumar_5138507a03/hallmark-treasor-gandipet-hyderabad-hallmark-treasor-gandipet-14kb | realestate, realestateagent, realestateinvestment, hallmarktreasor | Hallmark Treasor is nestled in the prestigious Gandipet area of Hyderabad. This community offers a serene escape from the city's hustle and bustle while ensuring easy access to urban amenities.

Hallmark Treasor fe... | narendra_kumar_5138507a03 |

1,876,194 | The Ultimate Guide to the Best Cold Email Tools for 2024 | In the fast-paced world of sales and marketing, reaching out to potential clients through cold emails... | 0 | 2024-06-04T05:44:06 | https://dev.to/pardeep_sharma_ecf017f389/the-ultimate-guide-to-the-best-cold-email-tools-for-2024-ope | In the fast-paced world of sales and marketing, reaching out to potential clients through cold emails remains one of the most effective strategies. However, crafting and sending cold emails can be time-consuming and challenging without the right tools. That’s where SalesBlink comes in. As a leader in the field, SalesBl... | pardeep_sharma_ecf017f389 | |

1,876,193 | How to create an Authentication & Authorization feature in Next JS 14 with another backend? | To create an authentication and authorization feature in Next.js 14 with another backend, you... | 0 | 2024-06-04T05:42:51 | https://dev.to/nadim_ch0wdhury/how-to-create-an-authentication-authorization-feature-in-next-js-14-with-another-backend-3elh | To create an authentication and authorization feature in Next.js 14 with another backend, you typically need to use a third-party authentication provider or a custom backend API. Here, I'll demonstrate how to do this using Next.js with NextAuth.js as the authentication library and a custom backend (e.g., an Express.js ... | nadim_ch0wdhury | |

1,876,190 | How to create an Authentication & Authorization feature in Next JS 14? | Creating an authentication and authorization feature in Next.js 14 involves several steps. Here’s a... | 0 | 2024-06-04T05:41:14 | https://dev.to/nadim_ch0wdhury/how-to-create-an-authentication-authorization-feature-in-next-js-14-232g | Creating an authentication and authorization feature in Next.js 14 involves several steps. Here’s a comprehensive guide to help you implement it:

### Step 1: Set Up a New Next.js Project

1. **Initialize a New Project**:

```bash

npx create-next-app@latest my-nextjs-app

cd my-nextjs-app

```

2. **Install Req... | nadim_ch0wdhury | |

1,876,189 | How to create an Authentication & Authorization feature in Express JS RESTful API? | Creating an authentication and authorization feature in an Express.js RESTful API involves several... | 0 | 2024-06-04T05:38:23 | https://dev.to/nadim_ch0wdhury/how-to-create-an-authentication-authorization-feature-in-express-js-restful-api-ge8 | Creating an authentication and authorization feature in an Express.js RESTful API involves several steps. Here's a step-by-step guide:

### Step 1: Set Up a New Express.js Project

1. **Initialize a New Project**:

```bash

mkdir project-name

cd project-name

npm init -y

```

2. **Install Required Packages**:... | nadim_ch0wdhury | |

1,876,188 | Zeeve Launchpad for Node Sale Infrastructure: Boost Security & Token Utility in Rollups | Zeeve is expanding its rollups-as-a-service (RaaS) offering with an advanced node sale launchpad for... | 0 | 2024-06-04T05:38:19 | https://www.zeeve.io/blog/zeeve-launchpad-for-node-sale-infrastructure-boost-security-token-utility-in-rollups/ | rollups | <p>Zeeve is expanding its <a href="https://www.zeeve.io/rollups/">rollups-as-a-service (RaaS)</a> offering with an advanced node sale launchpad for the node sale infrastructure. All kinds of optimistic rollup projects seeking to add an additional security and monitoring layer can leverage the Launchpad service to imple... | zeeve |

1,876,187 | How to create an Authentication & Authorization feature in Nest JS GraphQL API? | Creating an authentication and authorization feature in a NestJS GraphQL API involves several steps.... | 0 | 2024-06-04T05:36:33 | https://dev.to/nadim_ch0wdhury/how-to-create-an-authentication-authorization-feature-in-nest-js-graphql-api-35em | Creating an authentication and authorization feature in a NestJS GraphQL API involves several steps. Here’s a step-by-step guide:

### Step 1: Set Up a New NestJS Project

1. **Install Nest CLI**:

```bash

npm install -g @nestjs/cli

```

2. **Create a New Project**:

```bash

nest new project-name

```

3. *... | nadim_ch0wdhury | |

1,876,186 | How to Connect RESTful API & Express JS backend with MongoDB database? | Connecting a RESTful API in an Express.js backend with a MongoDB database involves several steps.... | 0 | 2024-06-04T05:33:32 | https://dev.to/nadim_ch0wdhury/how-to-connect-restful-api-express-js-backend-with-mongodb-database-2aaa | Connecting a RESTful API in an Express.js backend with a MongoDB database involves several steps. Here’s a step-by-step guide:

### Step 1: Set Up a New Express.js Project

1. **Initialize a New Project**:

```bash

mkdir project-name

cd project-name

npm init -y

```

2. **Install Required Packages**:

```... | nadim_ch0wdhury | |

1,876,185 | Off-Road Towing Gear by Shanghai Special Rope Co Ltd | Conquer Any Terrain: Off-Road Towing Gear by Shanghai Jinli Special Rope Co, Ltd It is also... | 0 | 2024-06-04T05:33:14 | https://dev.to/hdweyd_djjehhe_94b0dba4fc/off-road-towing-gear-by-shanghai-special-rope-co-ltd-1nam |

Conquer Any Terrain: Off-Road Towing Gear by Shanghai Jinli Special Rope Co, Ltd

Going on an adventure may be fun, but it can also be dangerous. That is why it's important to be equipped with the most useful gear to keep us safe and to overcome any terrain. ... | hdweyd_djjehhe_94b0dba4fc | |

1,864,162 | Implementing AWS Config for your Organization with CloudFormation | Objective This lab is centered on deploying AWS Config within your AWS Organization for... | 0 | 2024-06-04T05:33:07 | https://dev.to/diegop0s/aws-config-for-organizations-3i6p | aws, config, audit, security | ## Objective

This lab is centered on deploying **AWS Config** within your AWS Organization for the first time. It's advisable to familiarize yourself with essential concepts of AWS Config, AWS CloudFormation, and how they integrate with AWS Organizations if you are new to them. In case you're interested I have publish... | diegop0s |

1,876,184 | How to Connect GraphQL API & Express JS backend with MySQL/PgSQL database? | Connecting a GraphQL API in an Express.js backend with a MySQL or PostgreSQL database involves... | 0 | 2024-06-04T05:31:54 | https://dev.to/nadim_ch0wdhury/how-to-connect-graphql-api-express-js-backend-with-mysqlpgsql-databse-1dlc | Connecting a GraphQL API in an Express.js backend with a MySQL or PostgreSQL database involves several steps. Here’s a step-by-step guide for both databases:

### Step 1: Set Up a New Express.js Project

1. **Initialize a New Project**:

```bash

mkdir project-name

cd project-name

npm init -y

```

2. **Insta... | nadim_ch0wdhury | |

1,876,182 | Using DAX for Financial Analysis in Power BI | Introduction Power BI, Microsoft's powerful data visualization tool, has revolutionized how... | 0 | 2024-06-04T05:28:16 | https://dev.to/stevejacob45678/using-dax-for-financial-analysis-in-power-bi-240h | powerbi, powerbiconsulting, powerbidax | Introduction

Power BI, Microsoft's powerful data visualization tool, has revolutionized how businesses analyze and interpret their data. One of its standout features is the use of Data Analysis Expressions (DAX), a robust formula language that enhances Power BI’s data manipulation capabilities. This blog explores how ... | stevejacob45678 |

1,876,181 | How to connect RESTful API & Nest JS backend with MongoDB database? | Connecting a RESTful API in a NestJS backend with a MongoDB database involves several steps. Here’s a... | 0 | 2024-06-04T05:28:01 | https://dev.to/nadim_ch0wdhury/how-to-connect-restful-api-nest-js-backend-with-mongodb-database-1g6i | Connecting a RESTful API in a NestJS backend with a MongoDB database involves several steps. Here’s a step-by-step guide:

### Step 1: Set Up a New NestJS Project

1. **Install Nest CLI**:

```bash

npm install -g @nestjs/cli

```

2. **Create a New Project**:

```bash

nest new project-name

```

3. **Navigate... | nadim_ch0wdhury | |

1,876,180 | Hello, world! | Programming is fun! Isn't it? Anyone interested in logic with some creativity will fall in love with... | 27,589 | 2024-06-04T05:27:57 | https://dev.to/afraazahmed/hello-world-7bc | python, programming, beginners, tutorial | _Programming is fun!_

Isn't it?

Anyone interested in logic with some creativity will fall in love with coding.

Alright then, if you are interested in starting the journey of learning a programming language with me, let's take our first step in learning to code.

OK, but there are so many coding languages in the world, right... | afraazahmed |

1,876,179 | How to connect GraphQL API & Nest JS backend with MySQL/PgSQL database? | Connecting a GraphQL NestJS backend with MySQL and PostgreSQL databases involves several steps.... | 0 | 2024-06-04T05:25:31 | https://dev.to/nadim_ch0wdhury/how-to-connect-graphql-api-nest-js-backend-with-mysqlpgsql-database-1361 | Connecting a GraphQL NestJS backend with MySQL and PostgreSQL databases involves several steps. Here’s a step-by-step guide for both databases:

### Step 1: Set Up a New NestJS Project

1. **Install Nest CLI**:

```bash

npm install -g @nestjs/cli

```

2. **Create a New Project**:

```bash

nest new project-nam... | nadim_ch0wdhury | |

1,876,178 | The Role of Self-Assessment in Distance Learning | Introduction: Distance learning has revolutionized the educational landscape, offering flexibility... | 0 | 2024-06-04T05:23:34 | https://dev.to/nelson_b_school/the-role-of-self-assessment-in-distance-learning-54lp |

**Introduction:**

[Distance learning](https://nbs.org.in/) has revolutionized the educational landscape, offering flexibility and accessibility to students worldwide. At Nelson Business School, we understand the importance of equipping students with the skills and strategies necessary for success in a virtual learnin... | nelson_b_school | |

1,868,883 | PSR-4 autoloading in Laravel | What is Autoloading? Autoloading in PHP is a mechanism that automatically loads the... | 0 | 2024-05-29T11:01:19 | https://dev.to/vimuth7/psr-4-autoloading-in-laravel-57nn | ## What is Autoloading?

Autoloading in PHP is a mechanism that automatically loads the classes, interfaces, or traits you need when you use them, without the need to manually include or require their files. This helps keep your code clean and organized, especially in large projects.

## Example Without Autoloading

**1.D... | vimuth7 | |

1,876,177 | Intermediate Linux Shell Scripting: Taking Your Skills to the Next Level | As you progress from basic to intermediate shell scripting, you begin to unlock more powerful... | 0 | 2024-06-04T05:23:12 | https://dev.to/iaadidev/intermediate-linux-shell-scripting-taking-your-skills-to-the-next-level-3mg5 | linux, script, bash, devops | As you progress from basic to intermediate shell scripting, you begin to unlock more powerful capabilities, allowing for more complex automation and system management tasks. This blog will cover essential intermediate concepts such as conditional statements, loops, functions, and script parameters, providing relevant e... | iaadidev |
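The four concepts named in the shell scripting entry above (conditional statements, loops, functions, and script parameters) can be sketched together in one small script. This is only an illustration under stated assumptions: the function names `classify` and `classify_all` and the sample values `1 2 3` are hypothetical and do not come from the original post.

```bash
#!/usr/bin/env bash
# Sketch only: classify and classify_all are hypothetical names.

# Function + conditional: label one number as even or odd.
classify() {
  local n="$1"
  if (( n % 2 == 0 )); then
    echo "$n is even"
  else
    echo "$n is odd"
  fi
}

# Function + loop: run classify over every argument received.
classify_all() {
  local arg
  for arg in "$@"; do
    classify "$arg"
  done
}

# Script parameters: fall back to sample values when none are given.
if (( $# == 0 )); then
  set -- 1 2 3
fi

classify_all "$@"
```

Saved as, say, `classify.sh`, it could be run as `bash classify.sh 10 15`, which passes `10` and `15` through `"$@"` to the loop. Quoting `"$@"` keeps each argument intact even if it contains spaces.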

1,876,176 | How to create RESTful API in Nest JS? Step by step guidelines! | Creating a RESTful API in NestJS involves several steps. Here’s a step-by-step theoretical guide: ... | 0 | 2024-06-04T05:20:45 | https://dev.to/nadim_ch0wdhury/how-to-create-restful-api-in-nest-js-step-by-step-guidelines-5eg9 | Creating a RESTful API in NestJS involves several steps. Here’s a step-by-step theoretical guide:

### Step 1: Set Up a New NestJS Project

1. **Install Nest CLI**:

```bash

npm install -g @nestjs/cli

```

2. **Create a New Project**:

```bash

nest new project-name

```

3. **Navigate to the Project Director... | nadim_ch0wdhury | |

1,876,138 | Getting started with Mylanguage | Summary What is the M language? The so-called M language is a set of programmatic... | 0 | 2024-06-04T05:18:55 | https://dev.to/fmzquant/getting-started-with-mylanguage-270h | mylanguage, trading, cryptocurrency, fmzquant | ## Summary

What is the M language? The so-called M language is a set of programmatic functions that extend from the early stock trading technical indicators. Encapsulating the algorithm into a single function, the user only needs to call a certain function like "building blocks" to implement the strategy logic.

It ado... | fmzquant |

1,876,137 | My name is ameen ahamed | I was studded at 3rd STD My dad was died | 0 | 2024-06-04T05:18:48 | https://dev.to/ameen_ahamed_5ce6bb3edbf5/my-name-is-ameen-ahamed-4ad1 | I was studying in 3rd standard.

My dad died. | ameen_ahamed_5ce6bb3edbf5 | |

1,876,136 | How to create GraphQL API in Nest JS? Step by Step guidelines! | Creating a GraphQL API in NestJS involves several steps. Here's a step-by-step theoretical guide: ... | 0 | 2024-06-04T05:18:47 | https://dev.to/nadim_ch0wdhury/how-to-create-graphql-api-in-nest-js-step-by-step-guidelines-2cej | Creating a GraphQL API in NestJS involves several steps. Here's a step-by-step theoretical guide:

### Step 1: Set Up a New NestJS Project

1. **Install Nest CLI**:

```bash

npm install -g @nestjs/cli

```

2. **Create a New Project**:

```bash

nest new project-name

```

3. **Navigate to the Project Director... | nadim_ch0wdhury | |

1,876,135 | Experience India's Majesty: Aboard the Palace on Wheels Train | Imagine yourself transported to a land of vibrant colors, majestic forts, and captivating history.... | 0 | 2024-06-04T05:18:37 | https://dev.to/palaceonwheelsindia/experience-indias-majesty-aboard-the-palace-on-wheels-train-3b15 | Imagine yourself transported to a land of vibrant colors, majestic forts, and captivating history. Welcome to Rajasthan, also known as the Land of Kings . This extraordinary region in northwestern India boasts a rich heritage dating back centuries, evident in its opulent palaces, bustling bazaars, and age-old tradition... | palaceonwheelsindia | |

1,876,134 | Compressors: Powering Air Operated Valves and Actuators in Industries | Compressors: Powering Sky Run Shutoffs as well as Actuators in Markets Compressors are actually... | 0 | 2024-06-04T05:18:14 | https://dev.to/brenda_hernandezg_26bd74a/compressors-powering-air-operated-valves-and-actuators-in-industries-1occ | compress |

Compressors: Powering Air Operated Valves and Actuators in Industries

Compressors are extremely important devices that are used for a variety of purposes in industries. One of the most important applications of compressors is in powering air operated valves and actua... | brenda_hernandezg_26bd74a |

1,876,133 | Python Programming: A Beginner’s Guide | Python is an interpreted, high-level, powerful general-purpose programming language. You may ask,... | 27,589 | 2024-06-04T05:17:08 | https://dev.to/afraazahmed/python-programming-a-beginners-guide-1hig | beginners, programming, python, career | Python is an interpreted, high-level, powerful general-purpose programming language.

You may ask: Python’s a snake, right? And why is this programming language named after it?

Well, you are in the right place to find out! I’ll also answer the why, what, and how questions about Python programming.

is often the very first touchpoint a potential customer has with your brand. It serves as a visual shorthand, instantly conveying your essence and values. But a logo is more than just aesthe... | green_pixelcreationpriv |

1,876,130 | Crafting Better Software | Let me preface this by saying I am extremely pedantic and opinionated on what quality code looks... | 0 | 2024-06-04T05:13:39 | https://dev.to/silent6stringer/crafting-better-software-3hf0 | coding, softwaredevelopment, beginners |

_Let me preface this by saying I am extremely pedantic and opinionated on what quality code looks like... I have been burned enough times to know._

## What do I know?

I have written and read a lot of complex and untested code over the years. I have had to maintain services and libraries that are so complex and have ... | silent6stringer |

1,876,129 | Set Sail with Confidence: Marine Rope Supplies from Shanghai Jinli Special Rope Co., Ltd | f67df0f5ad778137f7ecd27964fb8965eda6b17a6ffb46fff75e39e2c015e24d.jpg Set Sail with Confidence:... | 0 | 2024-06-04T05:10:00 | https://dev.to/hdweyd_djjehhe_94b0dba4fc/set-sail-with-confidence-marine-rope-supplies-from-shanghai-jinli-special-rope-co-ltd-3cg6 |

Set Sail with Confidence: Marine Rope Supplies from Shanghai Jinli Special Rope Co., Ltd

If you are a boater or a sailor, you know how important it is to have the right equipment for a safe and memorable voyage. One of the most critical pieces of equipm... | hdweyd_djjehhe_94b0dba4fc | |

1,876,128 | What's with the Weird Elixir Function Names | Have you ever noticed that in all of the documentation related to Elixir, functions are referenced... | 0 | 2024-06-04T05:08:28 | https://davidturissini.com/blog/whats-with-the-weird-elixir-function-names/ | elixir, webdev, beginners | Have you ever noticed that in all of the documentation related to Elixir, functions are referenced like `function_name/2`? This is by design: Elixir itself identifies a function by [its name and the number of arguments it takes](https://hexdocs.pm/elixir/basic-types.html#identifying-functions-and-documentation). The doc... | daveturissini |

1,876,127 | Quick tip: Type of tokio spawn return | When I was implementing the metrics task using tokio, I wanted to save the result JoinHandle in a... | 0 | 2024-06-04T05:07:27 | https://dev.to/thiagomg/quick-tip-type-of-tokio-spawn-return-42i3 | rust, tokio, programming | When I was implementing the metrics task using tokio, I wanted to save the result `JoinHandle` in a struct and I saw the type being displayed by the IDE: `JoinHandle<?>`

What does it mean?

When I looked at the definition of the function `spawn`, this was the code:

```rust

pub fn spawn<F>(future: F) -> JoinHandle<F::Output>... | thiagomg |

1,876,126 | Order Food Online: Mastering A Guide to Apps and Tech | Order Food Online: Mastering A Guide to Apps and Tech Online meal ordering has ingrained... | 0 | 2024-06-04T05:02:50 | https://dev.to/adambaba/order-food-online-mastering-a-guide-to-apps-and-tech-2oa5 | coding, programming | ## Order Food Online: Mastering A Guide to Apps and Tech

Online meal ordering has ingrained itself into our daily lives. Whether you're craving sushi at midnight or want a healthy salad for lunch, the convenience of having meals delivered right to your doorstep is unbeatable. If you're new to this, don't worry! This ul... | adambaba |

1,876,125 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-06-04T05:01:24 | https://dev.to/yetolo9323/buy-verified-paxful-account-10jh | tutorial, python, ai, devops | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\n\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. 
Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. 
Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. 
These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. 
Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. 
By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. 
Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. 
It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\n \n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n" | yetolo9323 |

1,876,124 | Navigate the Waves: Marine Rope Excellence by Shanghai Jinli Special Rope Co., Ltd | f67df0f5ad778137f7ecd27964fb8965eda6b17a6ffb46fff75e39e2c015e24d.jpg Introduction: Shanghai Jinli... | 0 | 2024-06-04T04:59:26 | https://dev.to/darlene_ballardg_27f941ff/navigate-the-waves-marine-rope-excellence-by-shanghai-jinli-special-rope-co-ltd-34ik | design, product | f67df0f5ad778137f7ecd27964fb8965eda6b17a6ffb46fff75e39e2c015e24d.jpg

Introduction:

Shanghai Jinli Special Rope Co., Ltd is a long-established company that makes quality marine rope. The company has been making ropes for years, and it is known for its high level of safety and quality. The Navigate the Waves Marine fishing ... | darlene_ballardg_27f941ff |

1,876,123 | Rust vs Typescript Variables | Jumping into Rust from Typescript requires that you change the way you think about code. A... | 0 | 2024-06-04T04:58:35 | https://davidturissini.com/blog/rust-vs-typescript-variables/ | rust, typescript, webdev, beginners |

Jumping into Rust from Typescript requires that you change the way you think about code.

## A Simple Log Statement

It is trivial to log a variable in TypeScript. Simply declare the variable and then pass it to `console.log`. This is totally fine:

```ts

let value = 'string';

console.log(value);

```

It doesn't really matt... | daveturissini |

1,876,122 | Answer: How to print __int128 in g++? | answer re: How to print __int128 in g++? ... | 0 | 2024-06-04T04:58:07 | https://dev.to/mdsiaofficial/answer-how-to-print-int128-in-g-3mki | {% stackoverflow 78573447 %} | mdsiaofficial | |

1,876,121 | Shanghai Jinli Special Rope Co., Ltd: Your Off-Road Towing Expertise | f67df0f5ad778137f7ecd27964fb8965eda6b17a6ffb46fff75e39e2c015e24d.jpg Shanghai Jinli Special Rope Co.,... | 0 | 2024-06-04T04:57:39 | https://dev.to/darlene_ballardg_27f941ff/shanghai-jinli-special-rope-co-ltd-your-off-road-towing-expertise-1cb3 | design, product | f67df0f5ad778137f7ecd27964fb8965eda6b17a6ffb46fff75e39e2c015e24d.jpg

Shanghai Jinli Special Rope Co., Ltd: Your Off-Road Towing Expertise

Are you looking for a safe and reliable way to tow your off-road vehicle? Look no further than Shanghai Jinli Special Rope Co., Ltd. We are your go-to experts for all things towing off... | darlene_ballardg_27f941ff |

1,876,120 | LWC Batch Class Progress Indicator, Data Cloud Tips, Account Teams Best Practices | This is a weekly newsletter of interesting Salesforce content See the most interesting... | 25,293 | 2024-06-04T04:57:22 | https://dev.to/sfdcnews/lwc-batch-class-progress-indicator-data-cloud-tips-account-teams-best-practices-1g74 | salesforce, salesforcedevelopment, salesforceadministration, salesforceadmin | # This is a weekly newsletter of interesting Salesforce content

See the most interesting #Salesforce content from the last few days 👇

✅ **[5 Tips for Getting Started with Data Cloud](https://admin.salesforce.com/blog/2024/5-tips-for-getting-started-with-data-cloud)**

As an admin, you've probably heard of Data Cloud, but ... | sfdcnews |

1,876,119 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-04T04:55:32 | https://dev.to/yetolo9323/buy-verified-cash-app-account-20ld | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | yetolo9323 |

1,876,118 | A Developer's Guide to Boosting Ecommerce Sales: From Code to Checkout Success | Ecommerce isn't just about having a flashy website or the latest product; it's about crafting a... | 0 | 2024-06-04T04:54:44 | https://dev.to/elena143/a-developers-guide-to-boosting-ecommerce-sales-from-code-to-checkout-success-4p3h | Ecommerce isn't just about having a flashy website or the latest product; it's about crafting a digital experience that seamlessly guides shoppers from browsing to buying.

And as a developer, you're not just building a site, you're engineering that entire journey.

This guide is your roadmap to optimizing that journ... | elena143 | |

1,876,115 | Simplifying Authentication with JWT, TypeScript and Fastify | Authentication is the process of confirming the identity of a user, typically through credentials... | 0 | 2024-06-04T04:54:23 | https://dev.to/sraveend/simplifying-authentication-with-jwt-typescript-and-fastify-1dc9 | typescript, beginners, programming, tutorial | Authentication is the process of confirming the identity of a user, typically through credentials like a username and a password.

Authorization determines whether a user is permitted to perform an action once their identity has been authenticated.

JWT, or JSON Web Token, plays a crucial role in authentication and authorization p... | sraveend |

1,876,117 | Air Tanks: Supporting Air Horns and Emergency Brake Systems in Vehicles | Sky Storage containers: The Trick towards Risk-free as well as Dependable Car Bodies Sky storage... | 0 | 2024-06-04T04:51:02 | https://dev.to/brenda_hernandezg_26bd74a/air-tanks-supporting-air-horns-and-emergency-brake-systems-in-vehicles-ap4 | air, tanks |

Air Tanks: The Key to Safe and Reliable Vehicle Systems

Air tanks are essential components in modern vehicles, and they play a crucial role in ensuring safety and reliability. With the increasing number of vehicles on the road, it has ac... | brenda_hernandezg_26bd74a |

1,876,114 | 9 Essential Tips for Writing Efficient Shaders | Shaders are integral to modern computer graphics, enabling real-time rendering of complex scenes and... | 0 | 2024-06-04T04:45:58 | https://glsl.site/post/9-essential-tips-for-writing-efficient-shaders/ | gamedev, beginners, programming, tutorial |

Shaders are integral to modern computer graphics, enabling real-time rendering of complex scenes and effects. Writing efficient shaders can significantly improve the performance and visual quality of your graphics applications. Here are nine essential tips to help you write efficient and effective shaders.

## 1. Minimi... | hayyanstudio |

1,876,112 | Embrace Financial Freedom by migrating from QuickBooks to Sage Intacct | Greytrix | In today’s competitive business world, when every investment matters and every choice effects your... | 0 | 2024-06-04T04:43:19 | https://dev.to/dinesh_m/embrace-financial-freedom-by-migrating-from-quickbooks-to-sage-intacct-greytrix-16cc |

In today’s competitive business world, where every investment matters and every choice affects your company’s growth, having the right tools can make all the difference. Agree? For years, QuickBooks has been on... | dinesh_m | |

1,876,111 | Twin Screw Extruders: Meeting the Demands of Modern Polymer Industries | What are Twin Screw Extruders and Why are They Important for the Modern Polymer... | 0 | 2024-06-04T04:36:51 | https://dev.to/brenda_hernandezg_26bd74a/twin-screw-extruders-meeting-the-demands-of-modern-polymer-industries-4ia3 | extruders | What are Twin Screw Extruders and Why are They Important for the Modern Polymer Industry

Introduction

Twin screw extruders are machines that are widely used in the polymer industry to produce a wide range of products and services. These machines have two synchronous screws that turn in the exact same way mi... | brenda_hernandezg_26bd74a |

1,872,286 | Why should you automate your architecture on AWS? | Why should you automate your architecture on AWS? The answer to this question in not... | 0 | 2024-06-04T04:28:26 | https://dev.to/welcloud-io/why-should-you-automate-your-architecture-on-aws-333c | ## Why should you automate your architecture on AWS?

The answer to this question is not obvious. I remember explaining how to automate an application architecture on AWS to a colleague a few years ago, and his reaction was: "I don't understand anything!"

Maybe I didn't explain this clearly, but when someone tells me h... | welcloud-io | |

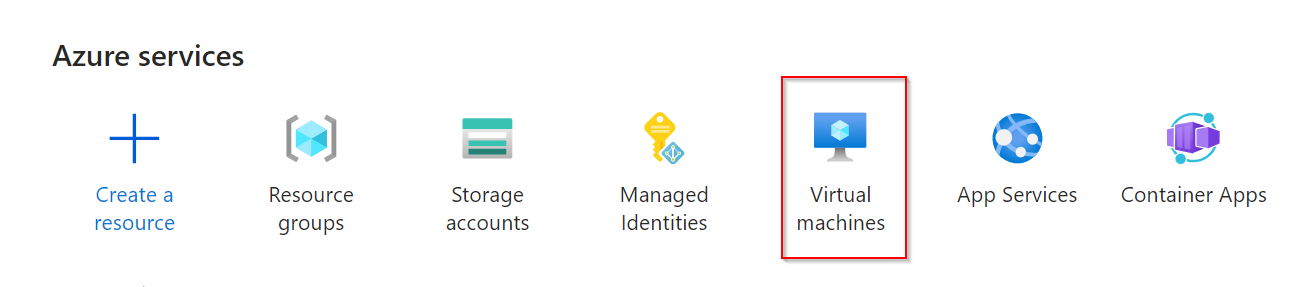

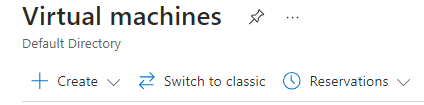

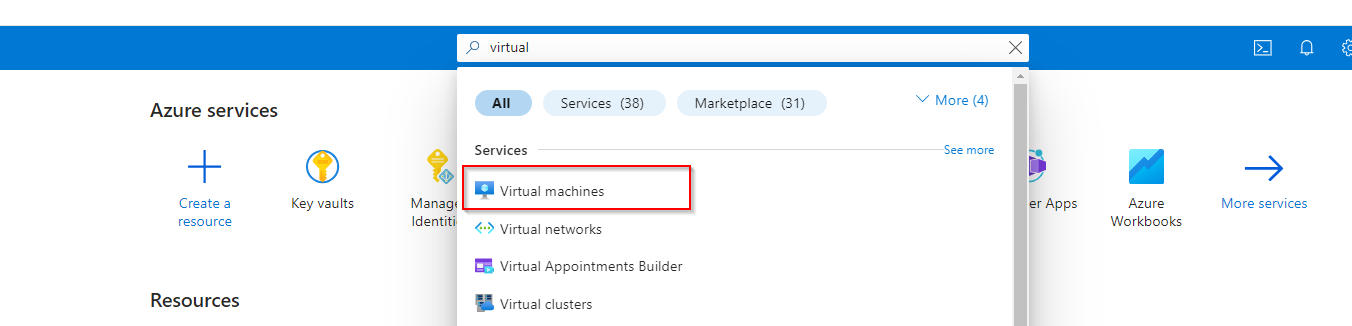

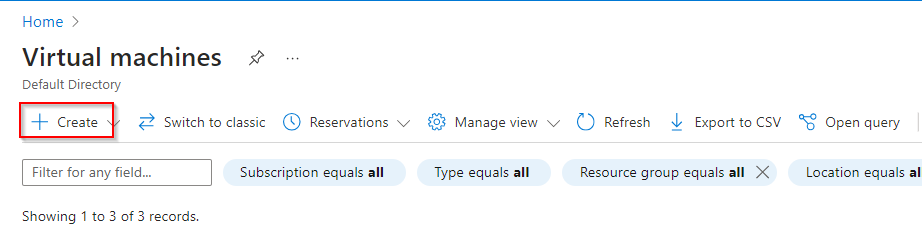

1,876,103 | Creating a Windows Server Virtual Machine and deploying a Windows Server. | In Azure Portal, select Virtual Machine Select Create Azure Virtual machine Select Resource... | 0 | 2024-06-04T04:20:23 | https://dev.to/opsyog/create-a-windows-server-and-install-windows-server-on-it-52ec | windows, azure, virtualmachine | **In Azure Portal, select Virtual Machine**

**Select Create Azure Virtual machine**

**Select Resource Group*... | opsyog |

1,875,926 | Embedding AI into the DNA of Your Company | Transform your organization into an AI-first company by embedding AI into the fabric of your business. Start by integrating AI into daily practices and strategic initiatives, encouraging experimentation, and fostering a culture of continuous learning. Ensure your team is equipped with the necessary AI tools and knowled... | 0 | 2024-06-04T04:20:11 | https://dev.to/dev3l/embedding-ai-into-the-dna-of-your-company-35nb | ai, innovation, aifirst, futureofwork | ---

title: Embedding AI into the DNA of Your Company

published: true

description: Transform your organization into an AI-first company by embedding AI into the fabric of your business. Start by integrating AI into daily practices and strategic initiatives, encouraging experimentation, and fostering a culture of continu... | dev3l |

1,876,092 | Future Prospects of Deepwater Drilling | The Future of Deepwater Drilling: Exploring the Advantages of Innovation and... | 0 | 2024-06-04T04:17:28 | https://dev.to/brenda_hernandezg_26bd74a/future-prospects-of-deepwater-drilling-6h0 | drilling | The Future of Deepwater Drilling: Exploring the Advantages of Innovation and Safety

Introduction

Deepwater drilling is the process of drilling for oil and gas at depths of 500 feet or more below the ocean's surface. This process requires significant technological advances, innovations, and safety measures. Deepwater ... | brenda_hernandezg_26bd74a |

1,876,090 | How to add flexibility to your RAG applications by choosing the right configuration(s) | Knowledge Bases for Amazon Bedrock is a fully managed capability that helps you implement the entire... | 0 | 2024-06-04T04:14:22 | https://community.aws/content/2gSzqTkFq25coY1upSDvpcVowV6/add-flexibility-to-your-rag-applications-in-amazon-bedrock | machinelearning, generativeai | [Knowledge Bases for Amazon Bedrock](https://aws.amazon.com/bedrock/knowledge-bases/) is a fully managed capability that helps you implement the entire RAG workflow from ingestion to retrieval and prompt augmentation without having to build custom integrations to data sources and manage data flows.

There are several c... | abhirockzz |

1,876,091 | Day 962 : All I Need | liner notes: Saturday : Had to do a couple of chores (like cleaning out the fridge in my van) so I... | 0 | 2024-06-04T04:13:47 | https://dev.to/dwane/day-962-all-i-need-597i | hiphop, code, coding, lifelongdev | _liner notes_:

- Saturday : Had to do a couple of chores (like cleaning out the fridge in my van) so I got to the station a little later than normal. Got my equipment set up. Of course, my laptop would decide that a firmware update would be a good thing to do! Luckily, it didn't take too long and I was able to finish g... | dwane |

1,876,089 | Anitaku: Your Gateway to High-Quality Anime with English Subtitles | In the vast realm of anime streaming platforms, finding a reliable source that offers both... | 0 | 2024-06-04T04:10:50 | https://dev.to/anitakucity/anitaku-your-gateway-to-high-quality-anime-with-english-subtitles-1fno | In the vast realm of anime streaming platforms, finding a reliable source that offers both high-quality content and English subtitles can sometimes feel like searching for a needle in a haystack. However, imagine stumbling upon a virtual oasis where your anime cravings are not only satisfied but exceeded – a place wher... | anitakucity | |

1,876,085 | Understanding CQRS (Command Query Responsibility Segregation): Why and How to Use It | What Is CQRS? CQRS, or Command Query Responsibility Segregation, is a design pattern... | 0 | 2024-06-04T03:55:05 | https://dev.to/yogameleniawan/memahami-cqrs-command-query-responsibility-segregation-kenapa-dan-bagaimana-menggunakannya-4hmf | programming, go | 

### What Is CQRS?

CQRS, or _Command Query Responsibility Segregation_, is an architectural design pattern that separates data operations into two categories: **commands** and **queries**. This means that operations for changing... | yogameleniawan |
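As a minimal sketch of the command/query split described in the entry above, assuming a hypothetical in-memory user store: the names `RenameUser`, `GetUserName`, `UserCommandHandler`, and `UserQueryHandler` are illustrative and do not come from the original article. The article is tagged `go`, so Python is used here only for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class RenameUser:
    """Command: asks the system to change state and returns no data."""
    user_id: int
    new_name: str


@dataclass(frozen=True)
class GetUserName:
    """Query: asks the system for data and changes nothing."""
    user_id: int


class UserCommandHandler:
    """Write side: the only place where the store is mutated."""

    def __init__(self, store: Dict[int, str]) -> None:
        self._store = store

    def handle(self, command: RenameUser) -> None:
        self._store[command.user_id] = command.new_name


class UserQueryHandler:
    """Read side: only reads from the store, never writes to it."""

    def __init__(self, store: Dict[int, str]) -> None:
        self._store = store

    def handle(self, query: GetUserName) -> Optional[str]:
        return self._store.get(query.user_id)
```

Both handlers share one dictionary here to keep the sketch short. A fuller CQRS setup might give the read side its own model or database, but that choice is outside what the excerpt above states.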

1,876,084 | Vacuum Pumps: Enabling Vacuum Packaging and Sealing Processes | Vacuum Pumps: A Powerful Tool in Packaging We often see food products that are tightly packed and... | 0 | 2024-06-04T03:54:41 | https://dev.to/brenda_hernandezg_26bd74a/vacuum-pumps-enabling-vacuum-packaging-and-sealing-processes-ojc | vacuum | Vacuum Pumps: A Powerful Tool in Packaging

When we go to the grocery store, we often see food products that are tightly packed and sealed. Have you ever wondered how the air is removed from the package? That is where vacuum pumps come in. Vacuum pumps are machines used to remove air and gas molecules from a container sea... | brenda_hernandezg_26bd74a |

1,876,083 | Deploying NextJS app to mobile App Stores using CapacitorJS | Have you ever thought of having a single codebase for your web and mobile apps? I was... | 0 | 2024-06-04T03:51:32 | https://dev.to/jacobporci/deploying-nextjs-app-to-mobile-app-stores-using-capacitorjs-215c | nextjs, ios, android, javascript | ## Have you ever thought of having a single codebase for your web and mobile apps?

I was recently tasked with a SPIKE ticket to research the gap analysis of getting our app to Google Play and App Store. What immediately popped up in my head was `react-native`. So I searched for popular frameworks that supported this. ... | jacobporci |

1,876,082 | **🎉 iPhone 15 Pro Max Giveaway! 🎉** | [We're excited to announce our latest giveaway! One lucky winner will receive the brand-new iPhone... | 0 | 2024-06-04T03:46:51 | https://dev.to/alquran23738336/-iphone-15-pro-max-giveaway--19h0 |

[We're excited to announce our latest giveaway! One lucky winner will receive the brand-new [iPhone 15 Pro Max](https://sites.google.com/view/3214547/home). Don’t miss out on your chance to own this amazing device! ... | alquran23738336 | |

1,876,081 | [Concept] - Don't Use HTTP 404 or 204 for Searches With No Results | Original content at https://x.com/zanfranceschi/status/1797835707962245352 Hey dev, Let's talk... | 0 | 2024-06-04T03:45:15 | https://dev.to/zanfranceschi/conceito-nao-use-http-404-ou-204-para-buscas-sem-resultados-6ki | > Original content at https://x.com/zanfranceschi/status/1797835707962245352

---

Hey dev,

Shall we talk a bit about HTTP semantics and why I think it's UGLY to return a 404 for a search with no results?

In HTTP, an image, a stylesheet, a JavaScript file, and a user's details in JSON format are all equally... | zanfranceschi | |
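The recommendation in the title of the entry above can be sketched as follows. This is a minimal illustration, not the author's code: the `USERS` data and the `search_users` and `get_user` functions are hypothetical, and the reasoning in the comments (the search resource exists even when nothing matches) is one reading of the title, since the article body is truncated here.

```python
import json
from http import HTTPStatus
from typing import Tuple

# Hypothetical data standing in for a real data source.
USERS = [{"id": 1, "name": "ana"}, {"id": 2, "name": "bruno"}]


def search_users(term: str) -> Tuple[int, str]:
    """Search endpoint: the search resource itself exists, so it answers
    200 with a body even when the list of matches is empty."""
    matches = [u for u in USERS if term.lower() in u["name"].lower()]
    return HTTPStatus.OK.value, json.dumps({"results": matches})


def get_user(user_id: int) -> Tuple[int, str]:
    """Single-resource endpoint: here 404 is appropriate, because the
    requested resource does not exist."""
    for user in USERS:
        if user["id"] == user_id:
            return HTTPStatus.OK.value, json.dumps(user)
    return HTTPStatus.NOT_FOUND.value, json.dumps({"error": "user not found"})
```

With this shape, a client can treat every 404 as "the URL is wrong" and every 200 from the search as "the search ran", checking the length of `results` to see whether anything matched.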

1,876,080 | REDEEM YOURSELF AFTER CRYPTO SCAM WITH CYBERPUNK PROGRAMMERS | The expanse of online investments, where the allure of financial prosperity intertwines with the... | 0 | 2024-06-04T03:43:28 | https://dev.to/brooke_eli_92d96bda0eba77/redeem-yourself-after-crypto-scam-with-cyberpunk-programmers-4pad | cryptocurrency, recovery, experts | The expanse of online investments, where the allure of financial prosperity intertwines with the shadows of deception, my journey commenced with a glimmer of optimism and concluded amidst the depths of disillusionment. In March of this year, I embarked upon what I believed to be a judicious venture—a $90,000 investment... | brooke_eli_92d96bda0eba77 |

1,876,073 | MindMap for full stack developer interview 🎉 | Hello devs! 🫶🏻 Here is roadmap of Interview preparation in 30 days, everything you need to hit your... | 0 | 2024-06-04T03:37:24 | https://dev.to/khushindpatel/mindmap-for-full-stack-developer-interview-1d7b | Hello devs! 🫶🏻

Here is a roadmap for interview preparation in 30 days, with everything you need to ace your full stack developer interview!🙌🏻

First things first

Follow on Instagram: [@codeandCrunch](https://www.instagram.com/codeandcrunch/)

Here is the MindMap link: https://miro.com/app/board/uXjVKAYFZuc=

### Hi, Friends! Want to Learn to Code? Read This First!

Are you just starting to learn to code but unsure which programming language to pick? Relax, in this article we will go into great detail about... | yogameleniawan |

1,876,075 | Cordless Screwdriver Safety Tips Every User Should Know | Keep Your Cordless Screwdriver Safe: Have a Look at These Tips The Cordless Screwdriver... | 0 | 2024-06-04T03:22:01 | https://dev.to/brenda_hernandezg_26bd74a/cordless-screwdriver-safety-tips-every-user-should-know-5b50 | screwdriver | Keep Your Cordless Screwdriver Safe: Have a Look at These Tips

The cordless screwdriver is a must-have tool if you are handy. It is a versatile tool that lets you fix things quickly and effortlessly. With a cordless drill you can drill ... | brenda_hernandezg_26bd74a |

1,876,074 | Quantitative trading programming language evaluation | Summary In Chapters 1 and 2, we learned the basics of quantitative trading and the uses of... | 0 | 2024-06-04T03:21:12 | https://dev.to/fmzquant/quantitative-trading-programming-language-evaluation-5ghe | trading, programming, cryptocurrency, fmzquant | ## Summary

In Chapters 1 and 2, we learned the basics of quantitative trading and the uses of FMZ Quant tools. In this chapter, we will implement the actual trading strategies. If a worker wants to do something good, he must first sharpen his tools. To implement a trading strategy, you must first master a programming l... | fmzquant |

1,876,072 | Innovations in Compressed Natural Gas Storage | Innovations in Compressed Natural Gas Storage: A New Way to Power Your Vehicle Perhaps you have... | 0 | 2024-06-04T03:20:57 | https://dev.to/hdweyd_djjehhe_94b0dba4fc/innovations-in-compressed-natural-gas-storage-4i46 |

Innovations in Compressed Natural Gas Storage: A New Way to Power Your Vehicle

Have you considered driving a car fueled by compressed natural gas? You're not alone. Many people are looking at natural gas-powered cars because of the benefits they offe... | hdweyd_djjehhe_94b0dba4fc | |

1,876,071 | Learnings from GenAI on AWS at Deloitte workshop | I attended an in-person workshop provided by Deloitte and AWS for NZ TechWeek24 on 22nd of May,... | 0 | 2024-06-04T03:18:45 | https://dev.to/notingin4k/learnings-from-genai-on-aws-at-deloitte-workshop-140n | workshop, genai, aws | I attended an in-person workshop run by Deloitte and AWS for NZ TechWeek24 on the 22nd of May and noted down some key points that I can explore further with hands-on projects later.

### Key concepts:

- LLMOps

- Considerations for shortlisting LLMs

- Hallucination & Retrieval-Augmented Generation (RAG) pattern... | notingin4k |

1,876,070 | Keeping an Eye on Your AWS Infrastructure: A Deep Dive into CloudWatch | Keeping an Eye on Your AWS Infrastructure: A Deep Dive into CloudWatch Monitoring is... | 0 | 2024-06-04T03:16:54 | https://dev.to/virajlakshitha/keeping-an-eye-on-your-aws-infrastructure-a-deep-dive-into-cloudwatch-f6 | # Keeping an Eye on Your AWS Infrastructure: A Deep Dive into CloudWatch

Monitoring is crucial for any cloud infrastructure. Without effective monitoring, you could be blindsided by performance issues, security breaches, or unexpected costs. AWS CloudWatch is a powerful, fully managed service that provides comprehensi... | virajlakshitha | |

1,876,038 | Next.js: Best Way to Organize Your Project Structure | Whenever I start a new Next.js project, I search for the best way to organize my file structure,... | 0 | 2024-06-04T03:16:04 | https://dev.to/jonathan-dev/nextjs-best-way-to-organize-your-project-structure-25o6 | nextjs, react, javascript, webdev | Whenever I start a new Next.js project, I search for the best way to organize my file structure, especially when I moved to App Router from Pages Router. There are [app routing folder and file conventions](https://nextjs.org/docs/getting-started/project-structure#app-routing-conventions) every Next.js project would nee... | jonathan-dev |

1,876,069 | Professional Portrait Photography: Creating Timeless Images | Understanding the subject To capture the essence of a person, a photographer must understand who they... | 0 | 2024-06-04T03:13:49 | https://dev.to/gabrielgorgi54/photographie-de-portrait-professionnelle-creer-des-images-intemporelles-b33 | Understanding the subject

To capture the essence of a person, a photographer must understand who they are photographing. This may involve researching the subject's background, discussing their preferences, and understanding the purpose of the portrait. Whether it is a professional portrait, a family portrait, or a pro... | gabrielgorgi54 | |

1,876,068 | Unveiling the Essentials: A Beginner's Guide to Adobe XD | In the realm of user experience (UX) and user interface (UI) design, Adobe XD stands out as a... | 0 | 2024-06-04T03:13:26 | https://dev.to/epakconsultant/unveiling-the-essentials-a-beginners-guide-to-adobe-xd-394i | uiux | In the realm of user experience (UX) and user interface (UI) design, Adobe XD stands out as a powerful tool. Whether you're a seasoned designer or just starting your journey, understanding the core concepts of Adobe XD is essential. This article equips you with the building blocks to navigate this intuitive design plat... | epakconsultant |

1,875,921 | [Game of Purpose] Day 16 | Today I finished watching a tutorial about integrating Perforce in Unreal Engine. I learned about... | 27,434 | 2024-06-03T22:57:10 | https://dev.to/humberd/game-of-purpose-day-16-1pm3 | gamedev | Today I finished watching a [tutorial](https://www.youtube.com/watch?v=7PRo8gK6SNM) about integrating Perforce in Unreal Engine.

I learned about World Partitioning. By default, all the items in a level are stored in one file, which means that only one person can edit it at a time. This makes it counter-productive in bigger ... | humberd |

1,852,294 | Ambassador Challenge: Earn your first C# Foundational Certification | This challenge will give you an introduction to C# language, providing you with the essential... | 0 | 2024-06-04T03:11:30 | https://dev.to/nahyer/ambassador-challenge-earn-your-first-c-foundational-certification-18a1 | csharp, beginners, programming, certification | This challenge will give you an introduction to C# language, providing you with the essential training you need to build robust applications in .NET and take the Foundational C# Certification exam.

Whether new or experienced, gain essential skills like variables, control structures, methods, and classes. Through hands... | nahyer |

1,875,444 | Generics in Rust: murky waters of implementing foreign traits on foreign types | This post is about what bothered me for a while in generic Rust before I could clarify what's going... | 0 | 2024-06-04T03:11:12 | https://dev.to/iprosk/generics-in-rust-murky-waters-of-implementing-foreign-traits-on-foreign-types-584n | rust, generics, numeric, beginners | This post is about what bothered me for a while in generic Rust before I could clarify what's going on (sort of), namely, implementing _foreign trait on foreign types_, especially, in the context of Rust's way of "operator overloading".

## We can't do it, or can we?

First, there is no mystery, right? [The Rust Book](... | iprosk |

1,876,067 | Shanghai Jinli Special Rope Co., Ltd: Your Off-Road Towing Expert | Innovation Shanghai Jinli Special Rope Co., Ltd strives to boost their products or services. They use... | 0 | 2024-06-04T03:05:41 | https://dev.to/brenda_hernandezg_26bd74a/shanghai-jinli-special-rope-co-ltd-your-off-road-towing-expert-3boe | off, road, rope | Innovation

Shanghai Jinli Special Rope Co., Ltd strives to improve its products and services. The company uses the latest technology to make ropes that are far more durable, stronger, and safer. Its team of specialists is always looking for new ways to innovate and create products that meet customers' specifications.

Shangha... | brenda_hernandezg_26bd74a |

1,876,066 | PRAAMS - Your ultimate investment tool | PRAAMS is a web and mobile platform for easy and complete investment analysis of 110,000 stocks and... | 0 | 2024-06-04T03:05:14 | https://dev.to/praams/praams-your-ultimate-investment-tool-505k | finance, software, investment, stocks | PRAAMS is a web and mobile platform for easy and complete investment analysis of 110,000 stocks and bonds, instant idea discovery, and intuitive portfolio construction & management. Created by professionals, for professionals, our comprehensive solution empowers you to analyse, compare, and pick stocks and bonds like a... | praams |