id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,873,705 | Using Keycloak in .NET Aspire projects | TL;DR You can use Keycloak.AuthServices.Templates to add Keycloak support for .NET Aspire... | 0 | 2024-06-05T10:52:01 | https://nikiforovall.github.io/dotnet/keycloak/2024/06/02/aspire-support-for-keycloak.html | dotnet, keycloak, aspire, aspnetcore | ---

title: Using Keycloak in .NET Aspire projects

published: true

date: 2024-06-02 00:00:00 UTC

tags: dotnet, keycloak, aspire, aspnetcore

canonical_url: https://nikiforovall.github.io/dotnet/keycloak/2024/06/02/aspire-support-for-keycloak.html

---

## TL;DR

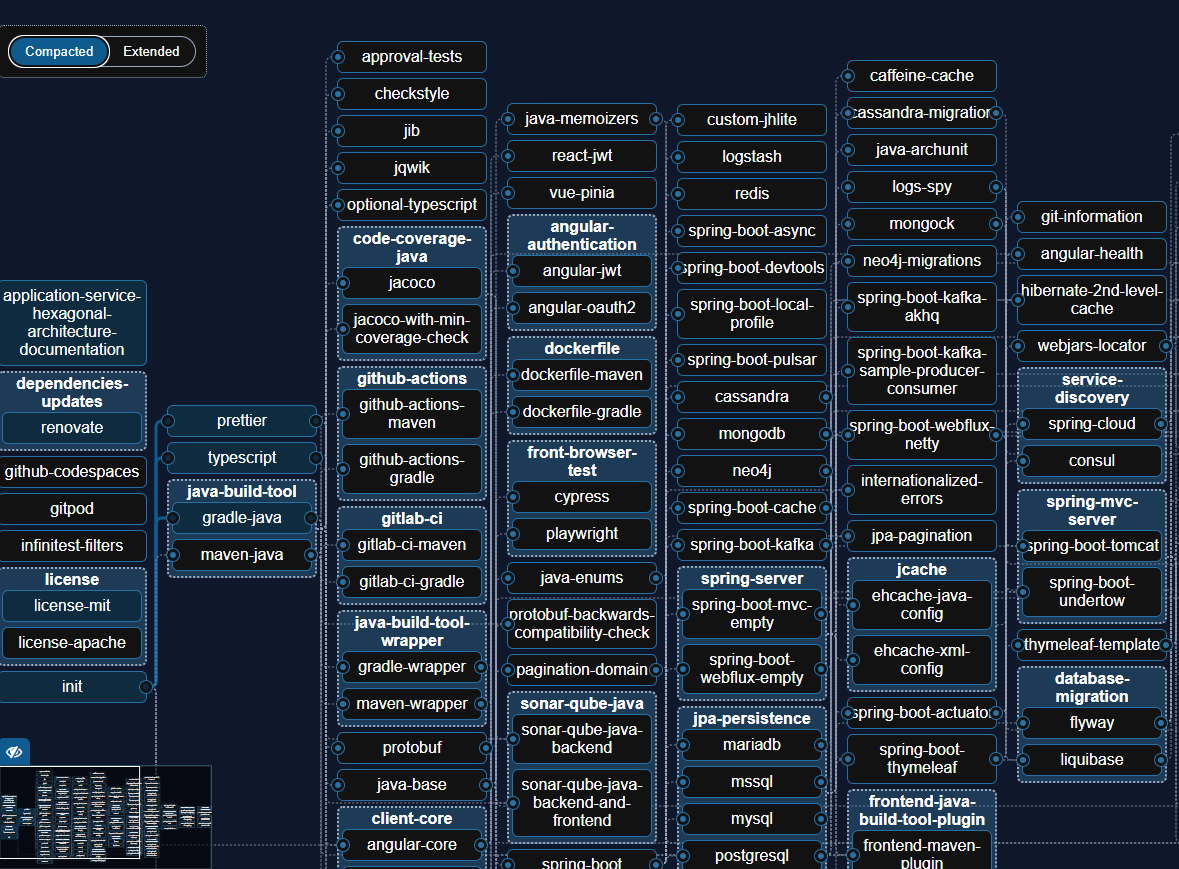

You can use [Keycloak.AuthServices.Templates](https://www.nuget.org/packages/Keycloak.AuthServices.Templates/) to add Keycloak support for .NET Aspire projects. See the docs for more details - [Keycloak.AuthServices/Aspire Support](https://nikiforovall.github.io/keycloak-authorization-services-dotnet/devex/aspire.html).

Source code: [https://github.com/NikiforovAll/keycloak-aspire-starter-template](https://github.com/NikiforovAll/keycloak-aspire-starter-template)

- [TL;DR](#tldr)

- [Introduction](#introduction)

- [Scaffold a solution](#scaffold-a-solution)

- [Run it](#run-it)

- [Code Explained](#code-explained)

- [Conclusion](#conclusion)

- [References](#references)

## Introduction

From the official [docs](https://learn.microsoft.com/en-us/dotnet/aspire/get-started/aspire-overview):

> .NET Aspire is designed to improve the experience of building .NET cloud-native apps. It provides a consistent, opinionated set of tools and patterns that help you build and run distributed apps.

Personally, I’m a big fan of Aspire because it enables great developer experience and productivity. I recommend trying it on your own 🚀

This article will show you how to get started with Keycloak and Aspire. It is based on the [Keycloak.AuthServices.Templates](https://www.nuget.org/packages/Keycloak.AuthServices.Templates) template pack, which makes it easy to get started.

💡Here is a basic example of what the integration looks like:

```csharp

var builder = DistributedApplication.CreateBuilder(args);

var keycloak = builder

.AddKeycloakContainer("keycloak")

.WithDataVolume();

var realm = keycloak.AddRealm("Test");

builder.AddProject<Projects.Api>("api")

.WithReference(realm);

builder.Build().Run();

```

### Scaffold a solution

Install a templates pack:

```bash

❯ dotnet new install Keycloak.AuthServices.Templates

# The following template packages will be installed:

# Keycloak.AuthServices.Templates::2.5.0

# Success: Keycloak.AuthServices.Templates::2.5.0 installed the following templates:

# Template Name Short Name Language Tags

# ----------------------- ----------------------- -------- -------------------------------------

# Keycloak Aspire Starter keycloak-aspire-starter [C#] Common/.NET Aspire/Cloud/API/Keycloak

# Keycloak WebApi keycloak-webapi [C#] Common/API/Keycloak

❯ dotnet new keycloak-aspire-starter -o $dev/keycloak-aspire-starter-template

# The template "Keycloak Aspire Starter" was created successfully.

```

Here is what was generated:

```bash

❯ tre

.

├── .gitignore

├── Api

│ ├── Api.csproj

│ ├── Extensions.OpenApi.cs

│ ├── Program.cs

│ ├── Properties

│ │ └── launchSettings.json

│ ├── appsettings.Development.json

│ └── appsettings.json

├── AppHost

│ ├── AppHost.csproj

│ ├── KeycloakConfiguration

│ │ ├── Test-realm.json

│ │ └── Test-users-0.json

│ ├── Program.cs

│ ├── Properties

│ │ └── launchSettings.json

│ ├── appsettings.Development.json

│ └── appsettings.json

├── Directory.Build.props

├── Directory.Packages.props

├── README.md

├── ServiceDefaults

│ ├── Extensions.cs

│ └── ServiceDefaults.csproj

├── global.json

└── keycloak-aspire-starter-template.sln

```

### Run it

```bash

❯ dotnet run --project ./AppHost/

# Building...

# info: Aspire.Hosting.DistributedApplication[0]

# Aspire version: 8.0.1+a6e341ebbf956bbcec0dda304109815fcbae70c9

# info: Aspire.Hosting.DistributedApplication[0]

# Distributed application starting.

# info: Aspire.Hosting.DistributedApplication[0]

# Application host directory is: C:\Users\Oleksii_Nikiforov\dev\keycloak-aspire-starter-template\AppHost

# info: Aspire.Hosting.DistributedApplication[0]

# Now listening on: http://localhost:15056

# info: Aspire.Hosting.DistributedApplication[0]

# Distributed application started. Press Ctrl+C to shut down.

```

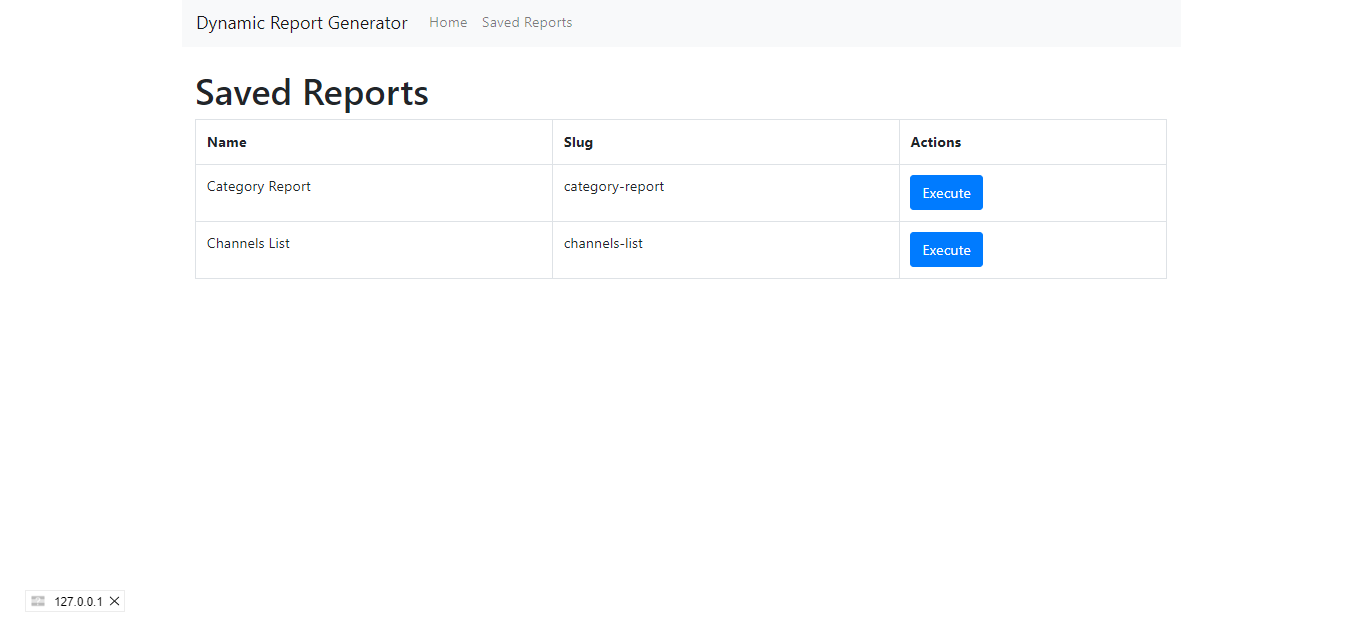

Here are the resources shown in the Aspire Dashboard:

<center>

<img src="https://nikiforovall.github.io/assets/keycloak-aspire/dashboard-resources.png" style="margin: 15px;">

</center>

As you can see, there is a `quay.io/keycloak/keycloak:24.0.3` container running. It is available on your local machine: [http://localhost:8080/](http://localhost:8080/). Use `admin:admin` credentials.

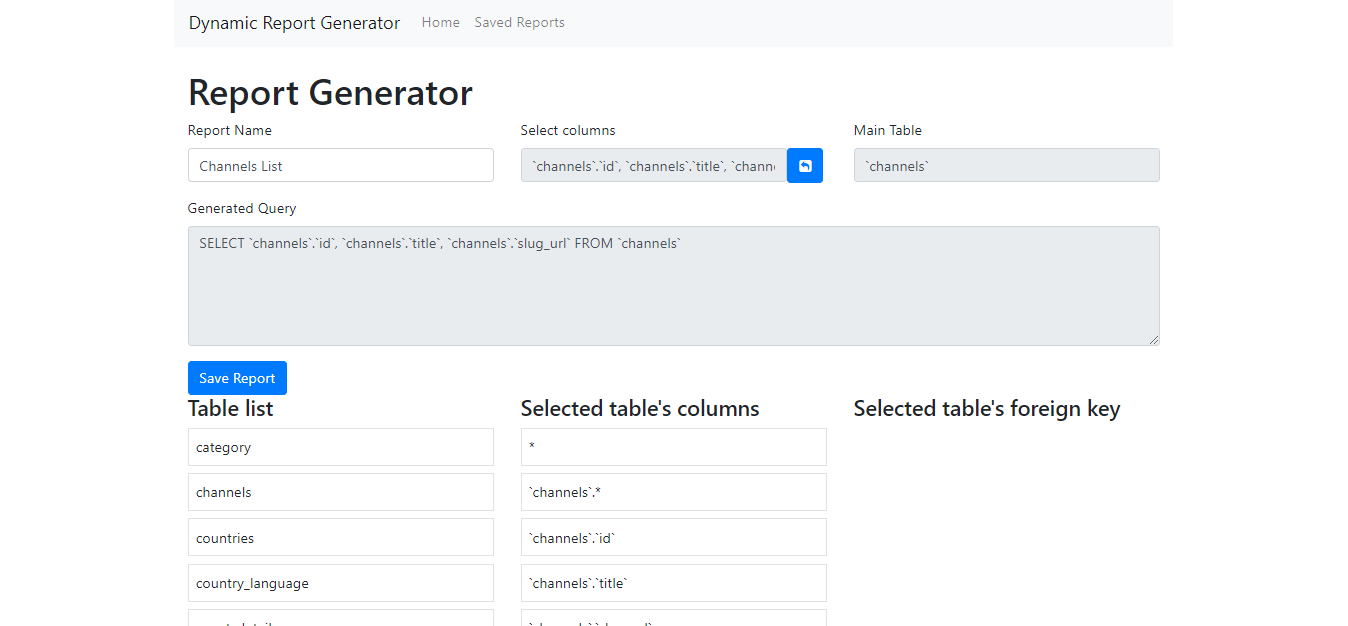

The template project is generated with example import files. They import the _Test_ realm, add _workspaces-client_, and seed test users:

<center>

<img src="https://nikiforovall.github.io/assets/keycloak-aspire/test-realm.png" style="margin: 15px;">

</center>

Now we can open Swagger UI and retrieve an access token. Note that the imported realm is configured to support [Implicit Flow](https://oauth.net/2/grant-types/implicit/). We can use it during development, as demonstrated below.

<center>

<img src="https://nikiforovall.github.io/assets/keycloak-aspire/auth-swagger-ui.png" width="100%" style="margin: 15px;">

</center>

To invoke the API, you can use Swagger UI or another HTTP tool of your choice. Here is an example using `cURL`:

```bash

curl -X 'GET' \

'https://localhost:51492/hello' \

-H 'accept: text/plain' \

-H 'Authorization: Bearer <AUTH_TOKEN>'

# Hello World!

```

## Code Explained

Basically, to set up Keycloak with Aspire, we need to do two things:

1. Add a Keycloak resource to the Aspire `AppHost`.

2. Configure the Web API to target the Keycloak installation.

Here is how to add Keycloak as a resource to Aspire:

```csharp

// AppHost/Program.cs

var builder = DistributedApplication.CreateBuilder(args);

var keycloak = builder

.AddKeycloakContainer("keycloak")

.WithDataVolume()

.WithImport("./KeycloakConfiguration/Test-realm.json")

.WithImport("./KeycloakConfiguration/Test-users-0.json");

var realm = keycloak.AddRealm("Test");

builder.AddProject<Projects.Api>("api").WithReference(keycloak).WithReference(realm);

builder.Build().Run();

```

The code above does the following:

1. Starts a Keycloak instance.

2. Imports the realm and test users.

3. References Keycloak from the API, which adds Keycloak to service discovery.

4. References the realm from the API, which adds the _Keycloak\_\_Realm_ and _Keycloak\_\_AuthServerUrl_ environment variables.

And here is how to configure `Api` to integrate with Keycloak and use _workspaces-client_:

```csharp

// Api/Program.cs

using Api;

using Keycloak.AuthServices.Authentication;

var builder = WebApplication.CreateBuilder(args);

var services = builder.Services;

var configuration = builder.Configuration;

builder.AddServiceDefaults();

services.AddApplicationOpenApi(configuration);

services.AddKeycloakWebApiAuthentication(

configuration,

options =>

{

options.Audience = "workspaces-client";

options.RequireHttpsMetadata = false;

}

);

services.AddAuthorization();

var app = builder.Build();

app.UseHttpsRedirection();

app.UseApplicationOpenApi();

app.UseAuthentication();

app.UseAuthorization();

app.MapGet("/hello", () => "Hello World!").RequireAuthorization();

app.Run();

```

## Conclusion

The integration of Keycloak with .NET Aspire projects provides first-class support for building distributed, cloud-native systems. By leveraging the `Keycloak.AuthServices.Templates` template, developers can easily scaffold a solution and configure their APIs to work with Keycloak.

* * *

🙌 `Keycloak.AuthServices.Templates` is under development. Feel free to submit PRs to [keycloak-authorization-services-dotnet](https://github.com/nikiforovall/keycloak-authorization-services-dotnet). 🙌

## References

- [https://github.com/NikiforovAll/keycloak-authorization-services-dotnet](https://github.com/NikiforovAll/keycloak-authorization-services-dotnet)

- [https://nikiforovall.github.io/keycloak-authorization-services-dotnet/devex/aspire.html](https://nikiforovall.github.io/keycloak-authorization-services-dotnet/devex/aspire.html)

- [https://learn.microsoft.com/en-us/dotnet/aspire/](https://learn.microsoft.com/en-us/dotnet/aspire/) | nikiforovall |

1,863,232 | Speeding up installation of AIO (Azure IoT Operations) deployment using a script | If you're eager to learn more about AIO (Azure IoT Operations), which is currently in private... | 0 | 2024-06-01T23:57:05 | https://dev.to/danuw/re-installing-aio-azure-iot-operations-5f73 | aio, tutorial | If you're eager to learn more about AIO (Azure IoT Operations), which is currently in private preview, it's crucial to start with a fresh install to experiment quickly and efficiently. In this post, I will guide you through the steps to reinstall your cluster using a script, ensuring a smooth learning experience. Let's get started!

For now, the assumption is that you are using a fresh Codespace created by [following these steps](https://learn.microsoft.com/en-us/azure/iot-operations/get-started/quickstart-deploy#connect-a-kubernetes-cluster-to-azure-arc). An updated script for physical machines running Ubuntu Server is coming soon...

## Set up the cluster again and deploy the AIO extension

> Note: if you are looking at a [new version of AIO](https://github.com/Azure/azure-iot-operations/releases), the script may need adapting. It was tested with AIO v0.5-preview at the time of writing.

### 1. Set up your variables

#### 1.a RESOURCE_GROUP

When you created your Codespace, you had to provide some variable values such as the resource group, so double check that the resource group variable is what you expect with `echo $RESOURCE_GROUP`, and otherwise set it using (update value as needed):

```bash

export RESOURCE_GROUP=aio-codespace-rg

```

#### 1.b IOT OPS USER_EMAIL

To ensure your user can access the AIO UI, specify your IoT Ops user email with the following command, replacing `<your-email>` with your actual email address:

```bash

export USER_EMAIL=<your-email>

```

> Note: Remember that the user needs to be a member of the tenant, such as `iotops@<TENANTNAME>.onmicrosoft.com`.

> If you do not have one, use `export USER_EMAIL=$(az ad user create --display-name 'IoT Ops User' --password <PASSWORD> --user-principal-name iotops@<TENANTNAME>.onmicrosoft.com --force-change-password-next-sign-in false -o tsv --query userPrincipalName)`

#### 1.c Other Variables (optional)

Consider double checking other environment variables such as the $CLUSTER_NAME to suit your preferences.
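
Because the setup script is sourced and relies on these variables, a quick pre-flight check can catch an unset value before anything is deployed. The sketch below is a hypothetical helper and is not part of the gist. The list of names passed to it, including `CLUSTER_NAME`, is an assumption that you should adjust to whatever your copy of the script actually reads.

```bash
# Hypothetical helper: report which of the named variables are unset or empty.
# Returns 0 when all are set, 1 otherwise. Uses `return`, not `exit`, so it is
# safe to call from a sourced script.
check_required_vars() {
  missing=0
  for name in "$@"; do
    # Indirect lookup of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "Missing required variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage: warn up front instead of failing halfway through the install.
if check_required_vars RESOURCE_GROUP USER_EMAIL CLUSTER_NAME; then
  echo "All variables are set"
else
  echo "Set the variables listed above before running the script" >&2
fi
```

The helper only checks that a value is present. It does not verify that the resource group exists or that the email belongs to your tenant.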

### 2. Login

Ensure you are signed into your Azure Account by running the following command in your terminal, then follow the instructions in your browser:

```bash

az login --use-device-code

```

### 3. Run your setup script

Create a file named setup.sh in your Codespace and copy the content from [this gist](https://gist.github.com/danuw/37c931341d5cde145564a5bfe05cc4c7/2fb3c5452d273a884da1dd8a28a7100bdfd1a656) into it.

Once you have updated your variables and logged into Azure, you are ready to run the script with the following command:

```bash

. ./setup.sh

```

> Note: This has so far been tested only in a Codespace. A script for Ubuntu Server, including the Azure CLI install and other prerequisites for a reinstall, is planned.

### 4. Manual Validation

Based on experience, if your Codespace setup remains unchanged (same number of cores, etc.), you can expect this whole process to take approximately 25 minutes.

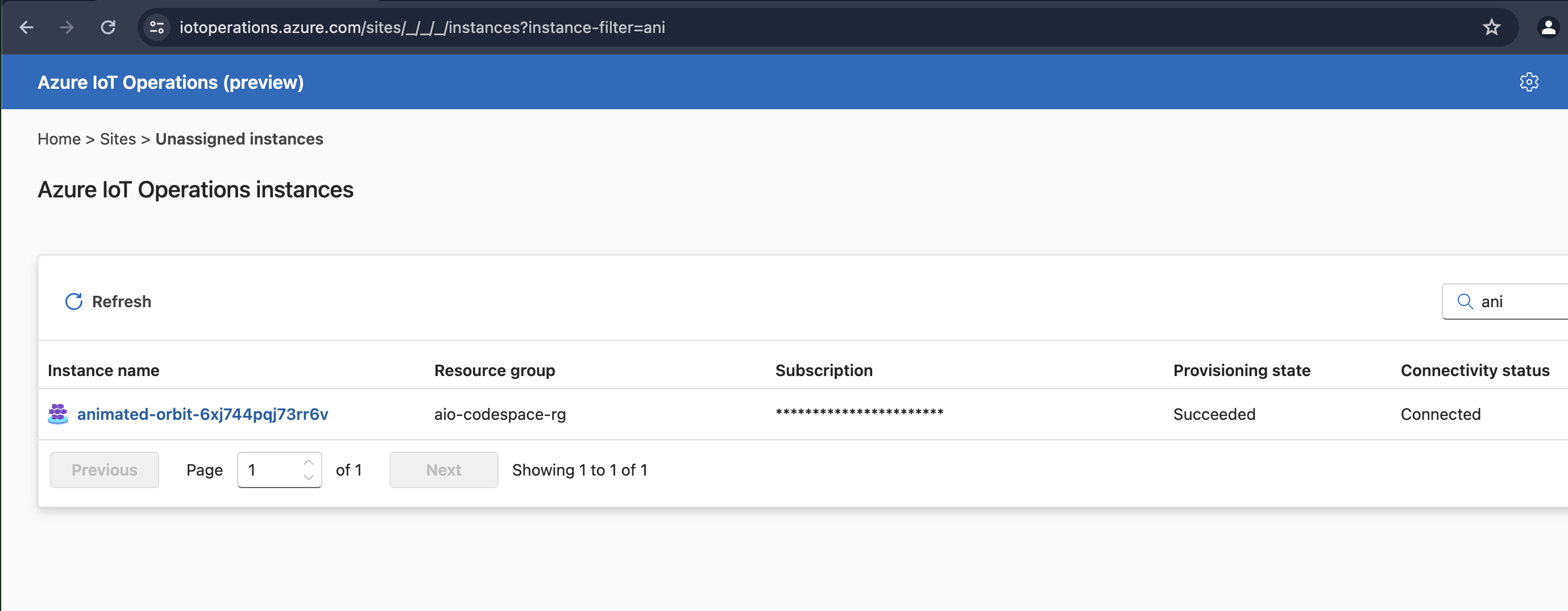

After the process completes, navigate to [IoT Operations Portal](https://iotoperations.azure.com) and sign in with your member user account. You should see your cluster listed as connected.

## Next?

Thank you for reading this far. I hope this script helps you experiment faster with AIO. In the future, I plan to:

- Expand the script with new steps: As AIO evolves and more APIs become available, additional processes can be automated. You may also consider improving the script as you explore or revisit the latest features, in which case don't forget to let me know in the gist's comments.

- Cover more scenarios, such as creating assets using CLI.

References:

- https://github.com/Azure/azure-iot-ops-cli-extension

- https://learn.microsoft.com/en-us/cli/azure/iot/ops?view=azure-cli-latest

- https://learn.microsoft.com/en-us/azure/azure-arc/kubernetes/custom-locations

- https://learn.microsoft.com/en-us/azure/iot-operations/get-started/quickstart-deploy

- https://learn.microsoft.com/en-us/azure/iot-operations/manage-devices-assets/howto-manage-assets-remotely?tabs=cli | danuw |

1,873,353 | Beachcomber: A Legacy of Family Values and Global Excellence in Hot Tub Manufacturing | Beachcomber is committed to strong family values across its entire organization, advocating for... | 0 | 2024-06-01T23:52:21 | https://dev.to/beachcomber1/beachcomber-a-legacy-of-family-values-and-global-excellence-in-hot-tub-manufacturing-2hjj | Beachcomber is committed to strong family values across its entire organization, advocating for health and happiness for everyone. Much like Porsche©, Beachcomber aims to grow alongside its staff and customers, treating each one like family. The company's pride lies not only in its high-quality handcrafted hot tubs but also in the enduring family bonds and fellowship nurtured over the decades.

As a family-owned and operated multi-generational business, Beachcomber Hot Tubs is dedicated to supporting families for generations to come. This legacy is illustrated by the presence of three generations at the factory, with founder Keith, his son Blake, and grandson Frankie working together to continue the tradition of excellence.

Beachcomber Hot Tubs has built a global reputation for its zero-defects approach and rigorous quality control in hot tub manufacturing. From Norway to Argentina and Ireland to Japan, the company's dream has evolved into a trusted global brand, represented in over 40 countries worldwide.

The company maintains a full-time specialized workforce focused on producing high-quality hot tubs and providing dedicated customer care. Beachcomber's brand is available through an omni-channel marketplace, which includes an international network of factory-trained distributors and dealerships, factory outlets that test retail experience concepts, omni-channel merchants whose values align with the company's, and a user-friendly online presence that ensures seamless pricing across all platforms.

[Beachcomber Hot Tubs](https://www.beachcomberhottubs.com/) | beachcomber1 | |

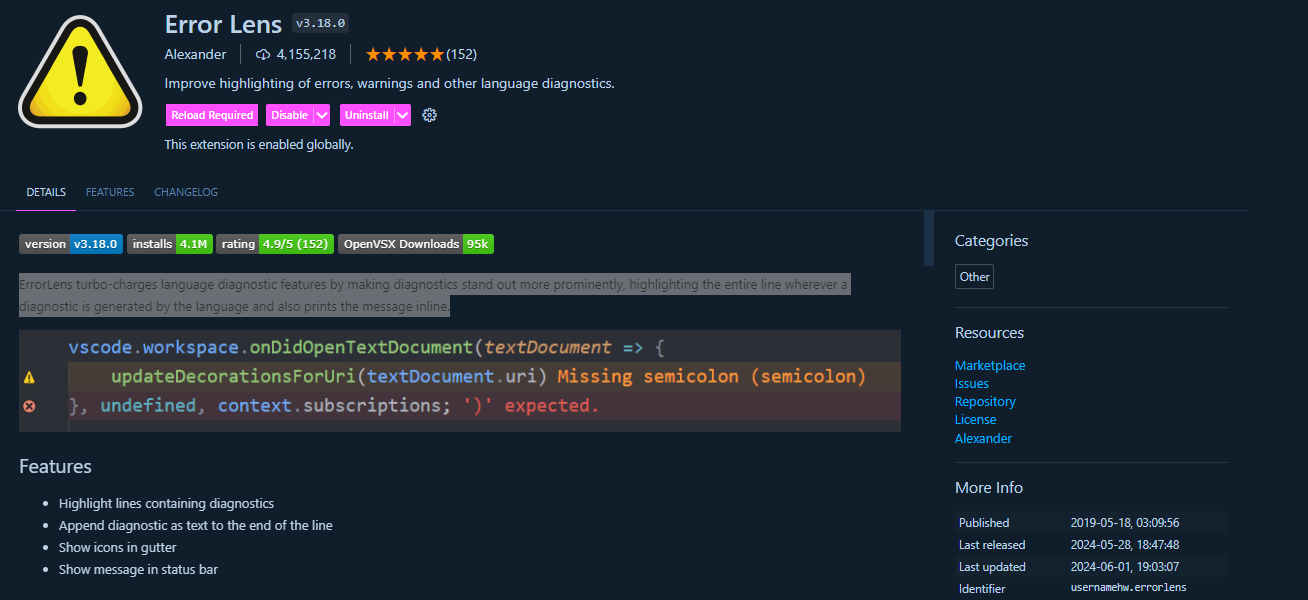

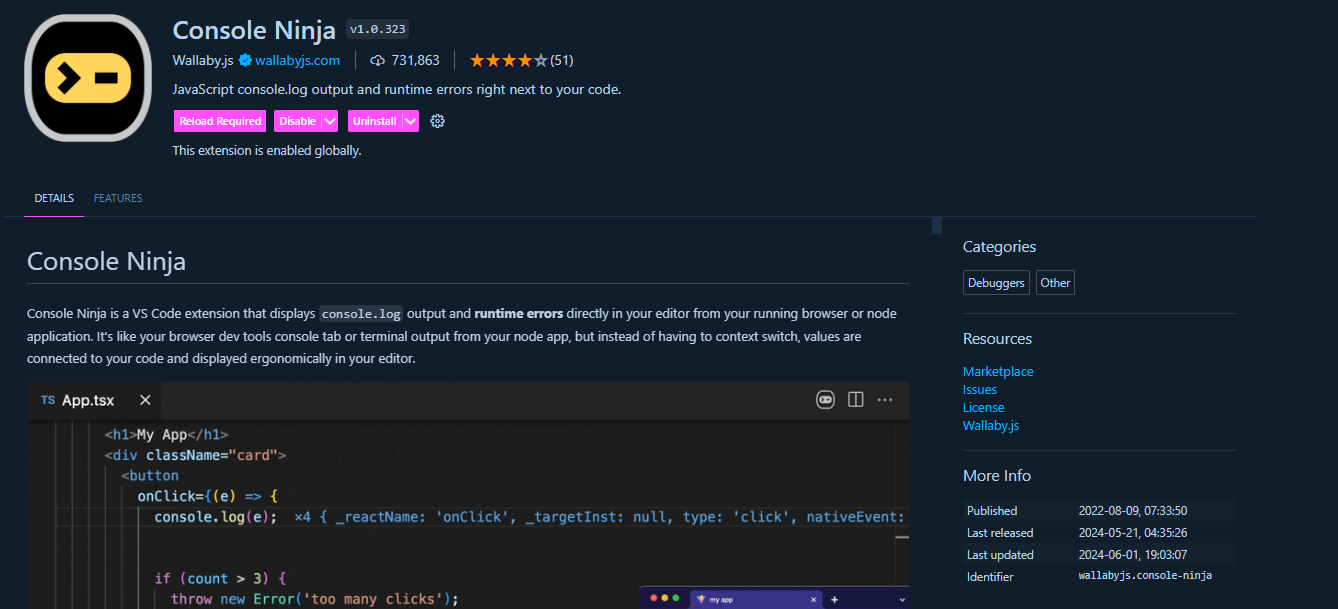

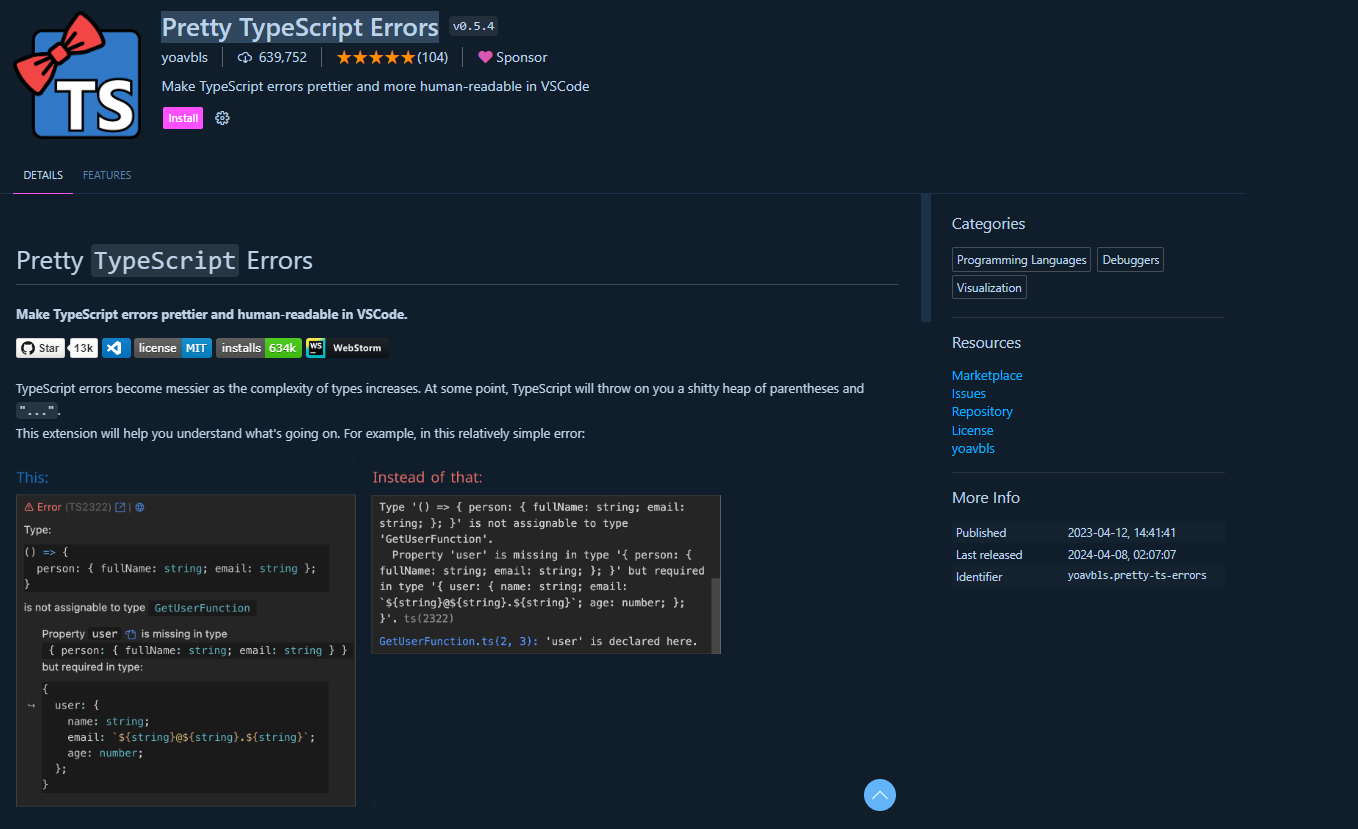

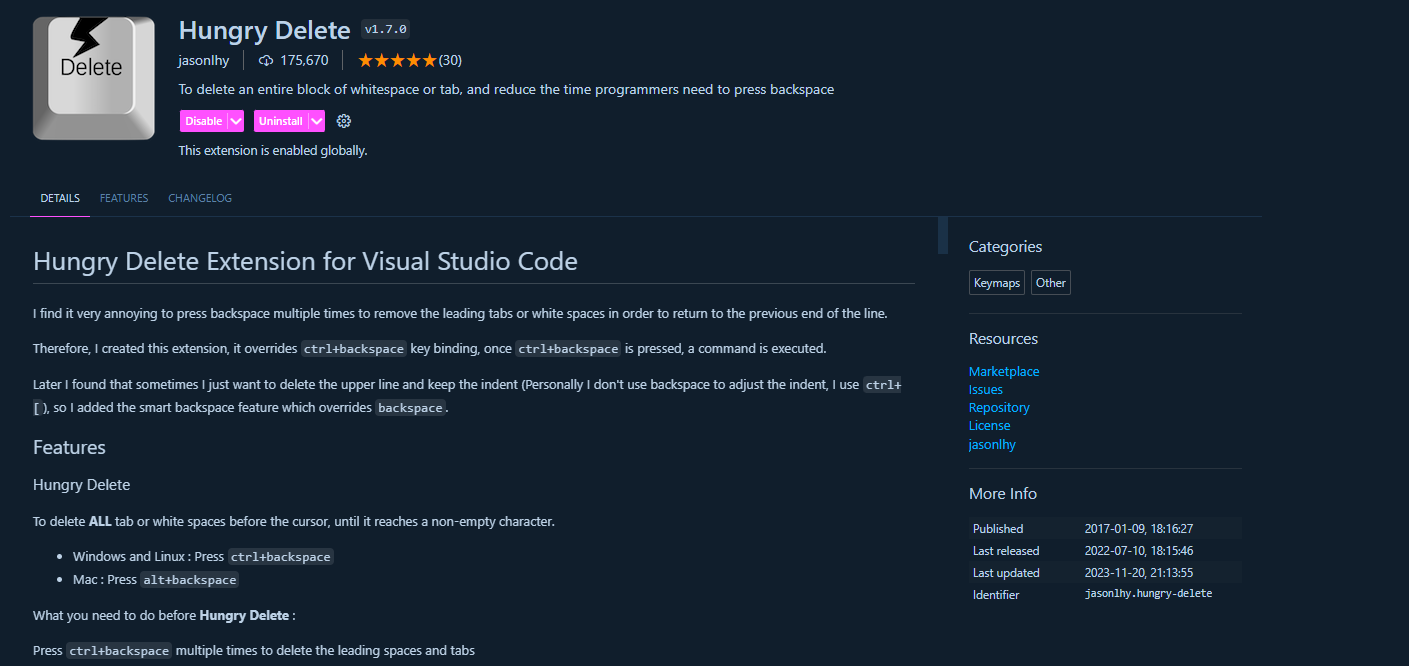

1,850,811 | 🪟Ferramentas para produtividade para dev no Sistema operacional Windows 11 | Introdução Sistema operacional 🪟 Windows 11 O Windows 11, com sua interface moderna e... | 0 | 2024-06-01T23:51:22 | https://dev.to/neiesc/dicas-e-truques-ferramentas-para-produtividade-para-dev-no-sistema-operacional-windows-11-1627 | braziliandevs, windows11, tools, productivity | ## Introdução

Sistema operacional 🪟 [Windows 11](https://www.microsoft.com/en-us/windows/windows-11)

O Windows 11, com sua interface moderna e recursos aprimorados, tem se tornado cada vez mais popular entre desenvolvedores. Neste artigo, exploraremos como personalizar e otimizar o Windows 11 para criar um ambiente de desenvolvimento altamente produtivo e eficiente. Apresentaremos uma seleção cuidadosa de ferramentas e configurações, fruto de mais de uma década de experiência na criação e refinamento de dotfiles personalizados.

Com o objetivo de aprimorar o fluxo de trabalho e impulsionar a produtividade, vamos mergulhar em um conjunto abrangente de aplicativos, extensões e personalizações. Desde gerenciadores de pacotes versáteis e soluções robustas de segurança até terminais poderosos e utilitários indispensáveis, exploraremos cada componente essencial para transformar o Windows 11 em uma verdadeira potência para desenvolvimento.

Prepare-se para descobrir como aproveitar ao máximo o Windows 11, otimizando seu sistema operacional para atender às suas necessidades específicas de desenvolvimento e desbloquear todo o seu potencial criativo.

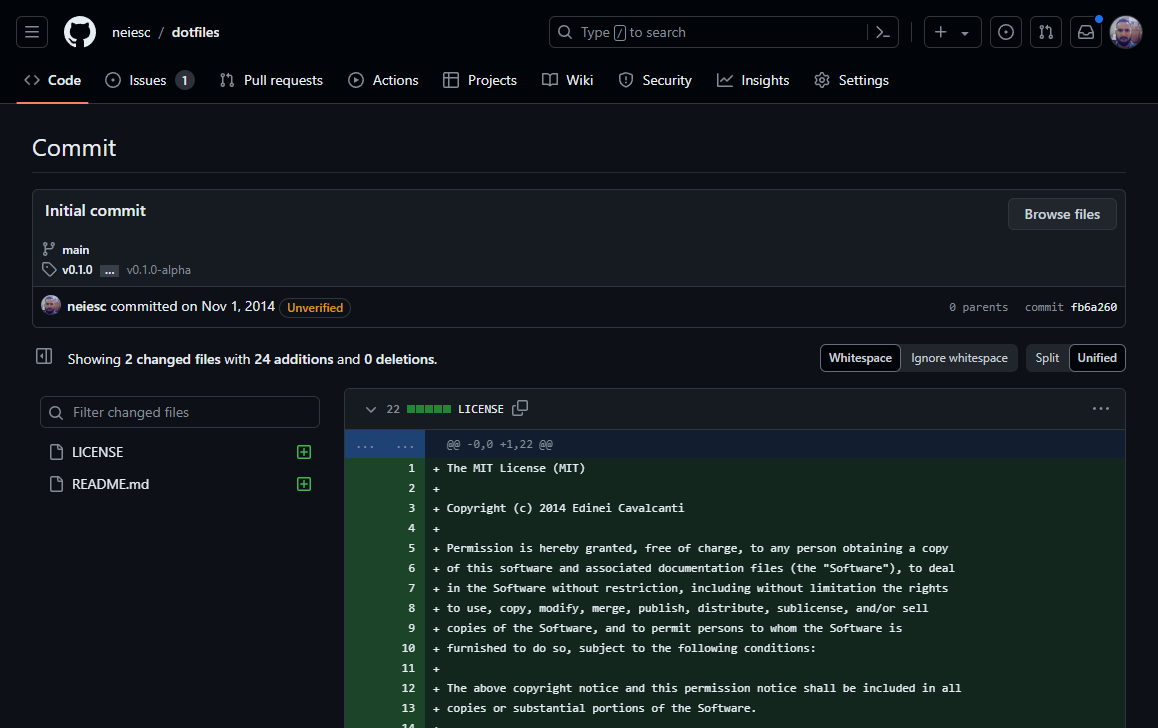

[meu dotfiles](https://github.com/neiesc/dotfiles) com mais de uma decada de criação

.

## Ferramentas

1. Gerenciador de pacote 📦 ([WingetUI](https://www.marticliment.com/wingetui)) Winget, Scoop, Chocolatey, Pip, Npm, .NET Tool e PowerShell Gallery.

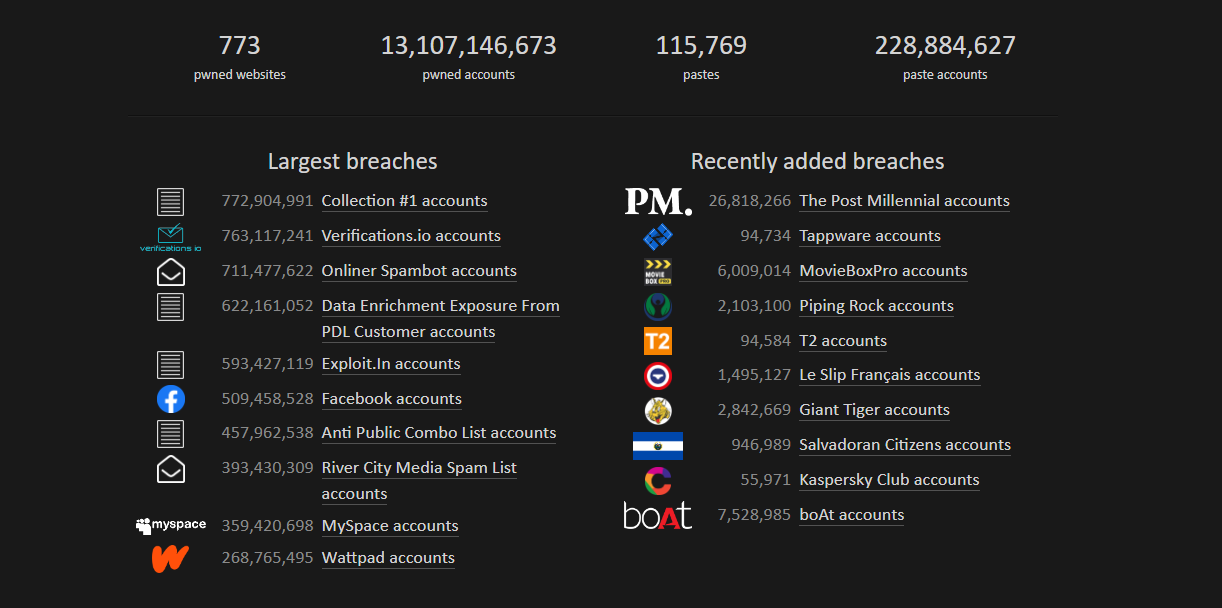

1. Gerenciador de senhas 🔑 ([Bitwarden](https://bitwarden.com)) Uso gerenciadores de senha desde 2011 ~ 2012 sendo essencial hoje em dia já que cada vez mais temos bases expostas:

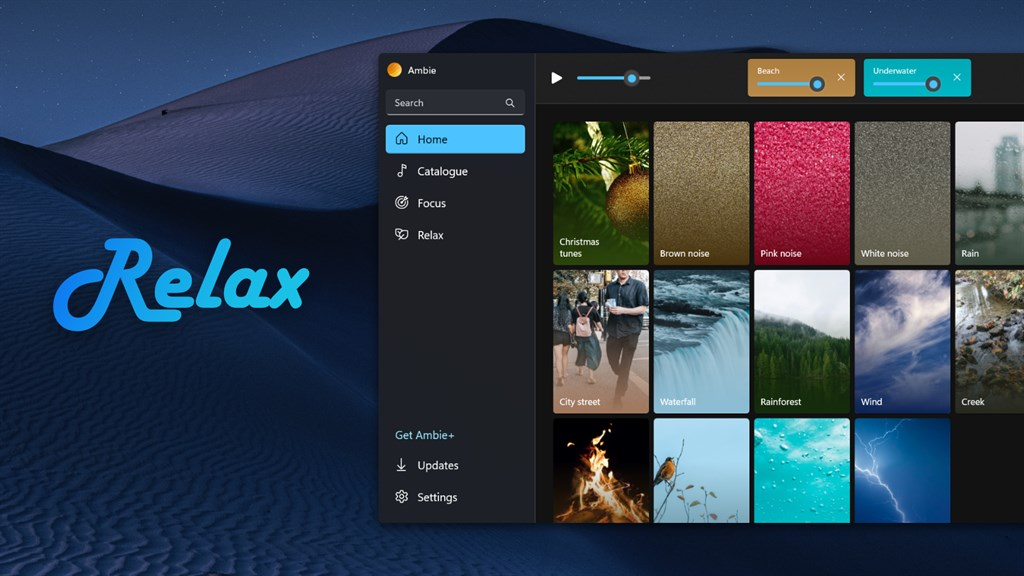

1. [Ambie White Noise](https://ambieapp.com) utilizo como com a técnica pomodoro e quando preciso colocar alguns sons de fundo ou fazer meditações.

1. [Windows Terminal](https://github.com/microsoft/terminal), já testei muitos terminais tanto no Windows o melhor sem dúvidas é o Windows Terminal.

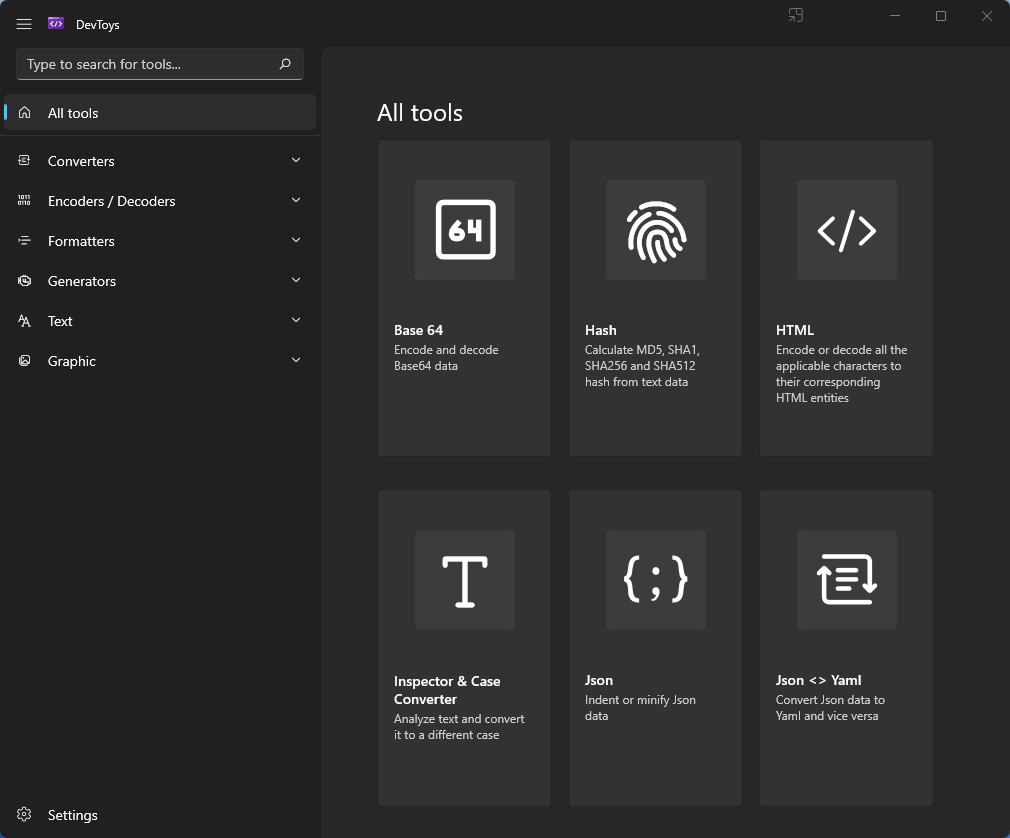

1. [DevToys](https://devtoys.app) o canivete suíço para desenvolvedores. mais de 28 ferramentas, incluindo:

- Json to Yaml and Yaml to Json converter

- Base64 Text & Image converter

- JWT encoder and decoder

- Text comparer

- Hash generator

- and more are coming! See the whole list here.

1. [Microsoft PowerToys](https://github.com/microsoft/PowerToys)

1. [Win-Debloat-Tools](https://github.com/LeDragoX/Win-Debloat-Tools)

1. [Visual Studio Code](https://code.visualstudio.com)

1. [Nushell](https://www.nushell.sh)

1. [proto](https://moonrepo.dev/proto)

1. [1.1.1.1](https://one.one.one.one/)

1. [GitKraken](https://www.gitkraken.com/)

## Conclusion

In short, Windows 11, equipped with the right tools and customized to your preferences, becomes a powerful and efficient development environment. The combination of versatile package managers, robust security solutions, customizable terminals, and indispensable utilities, like those mentioned in this article, lets developers maximize their productivity and creativity.

By adopting these tools and customizations, you get the best of Windows 11 and create a workspace optimized for your specific needs. With a tailored development environment, you will be ready to face any coding challenge with confidence and efficiency. Try these suggestions and see how Windows 11 can boost your workflow and take your projects to a new level.

## References

- https://unsplash.com/photos/person-using-windows-11-computer-on-lap-AigsWJmvoEo

- https://dev.to/nickytonline/tools-that-keep-me-productive-1no5

- https://www.akitaonrails.com/2022/02/15/akitando-114-o-melhor-setup-dev-com-arch-e-wsl2 | neiesc |

1,873,352 | Adapt Kotlin 2.0 in Android applications | Read this post in Spanish here. Recently, version 2.0 of Kotlin has been released, and with it,... | 0 | 2024-06-01T23:43:07 | https://dev.to/marlonlom/adapt-kotlin-20-in-android-applications-3cmk | kotlin, android, jetpackcompose, k2 | > [Read this post in Spanish here.](https://dev.to/marlonlom/adaptar-kotlin-20-en-aplicaciones-android-1kfa)

Version 2.0 of Kotlin was recently released, and with it come several adjustments that Android projects need in order to adopt this new version of the language, which Google officially supports for Android development (and for multiplatform development, according to recent Google I/O announcements).

In this short article, I describe my experience migrating from version `1.9.23` to version `2.0.0`. I cover what the new version changes for projects that use Kotlin, and the difficulty many of us developers may face when migrating from versions much older than `1.9.0`.

## About the K2 compiler

The K2 compiler is a complete reimplementation of the original Kotlin compiler, designed to offer significant improvements in Android application development. Introduced in Kotlin 2.0, K2 provides several advantages, among which are: faster compilation speed, performance improvements, and improved cross-platform support; These advantages applied to Android projects allow a reduction in the size of the applications by generating more compact code, as well as the generation of native code which implies greater performance in mobile applications.

### How to test K2 compiler on Android?

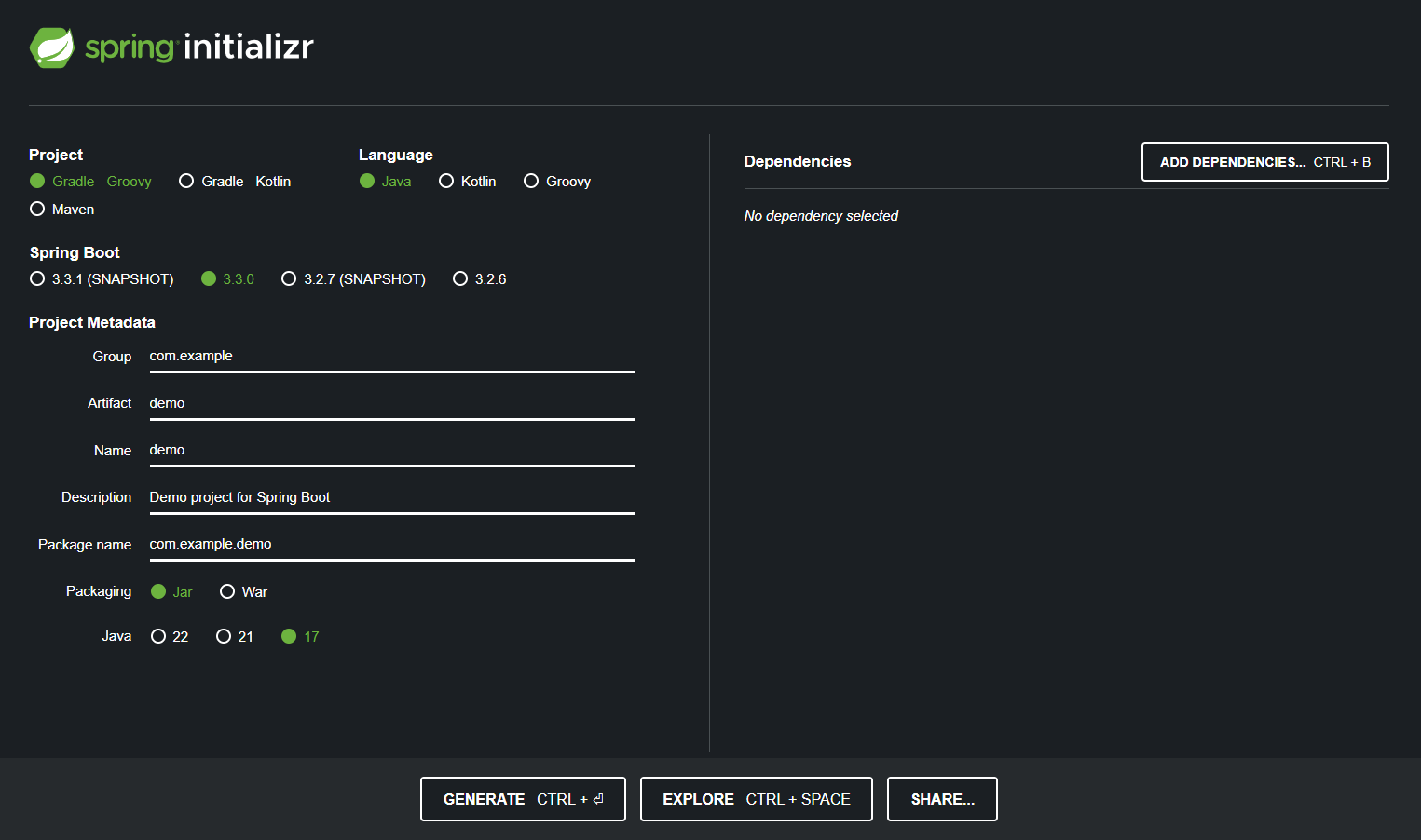

It is worth mentioning that this section covers the configuration aspect from the point of view of Android projects. I will not mention details of KMP, although several of the details indicated are easy to assimilate if one already has experience in cross-platform projects using Kotlin.

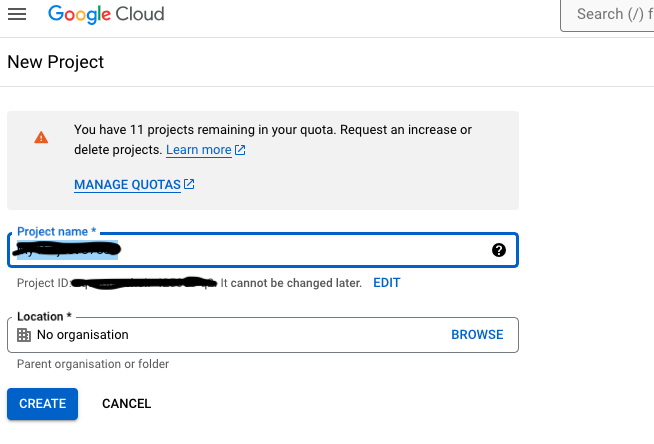

#### 1. A new build directory

Kotlin 2.0 introduces a new build output directory: `.kotlin`. You must add it to the `.gitignore` file so that its contents do not appear in commits:

```

#.gitignore

# Kotlin 2.0

.kotlin/

```
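
If you prefer to script this step, for example across several repositories, the entry can be appended only when it is missing. This is a minimal sketch; the function name `ignore_kotlin_dir` is hypothetical, and it assumes the ignore file uses the exact line `.kotlin/`.

```bash
# Hypothetical helper: add .kotlin/ to an ignore file only if it is not
# already listed, so running it repeatedly never duplicates the entry.
ignore_kotlin_dir() {
  file="${1:-.gitignore}"   # defaults to .gitignore in the current directory
  touch "$file"             # create the file if it does not exist yet
  # -x matches the whole line, -F treats the pattern as a fixed string.
  grep -qxF '.kotlin/' "$file" || printf '\n# Kotlin 2.0\n.kotlin/\n' >> "$file"
}
```

Run `ignore_kotlin_dir` from the project root, or pass the path to a specific ignore file as the first argument.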

#### 2. Apply new version of kotlin

Now go to the `gradle/libs.versions.toml` file. This section assumes that Version Catalogs are used to manage the application's dependencies (versions, plugins, etc.). Locate the reference to the Kotlin version and change it to `2.0.0`:

```

# gradle/libs.versions.toml

[versions]

agp="8.4.1"

kotlin="2.0.0"

ksp="2.0.0-1.0.21"

```

For compatibility, the versions of Kotlin Symbol Processing (KSP) and the Android Gradle Plugin (AGP) must also be updated. The previous snippet shows the versions to use.

At the library level, the following Kotlin libraries are also updated:

```

# gradle/libs.versions.toml

[libraries]

kotlin-gradle-plugin = "org.jetbrains.kotlin:kotlin-gradle-plugin:2.0.0"

kotlin-serialization-plugin = "org.jetbrains.kotlin:kotlin-serialization:2.0.0"

```

> Note: there are libraries that, at the time of writing this article, did not present problems in terms of changing versions, such as `kotlin-coroutines`, `kotlin-serialization`, `kotlin-serialization-json`.

At the plugin level, the Kotlin plugins are also updated as shown below, reusing the version reference defined earlier:

```

# gradle/libs.versions.toml

[plugins]

kotlin-android = { id = "org.jetbrains.kotlin.android", version.ref = "kotlin" }

kotlin-jvm = { id = "org.jetbrains.kotlin.jvm", version.ref = "kotlin" }

kotlin-serialization = { id = "org.jetbrains.kotlin.plugin.serialization", version.ref = "kotlin" }

```

With this done, run `gradle clean build`, or build the project from Android Studio.

#### 3. Update the Compose compiler

Another important aspect of configuring Android projects, especially those using Jetpack Compose, is the Compose compiler for Kotlin. It transforms functions annotated with `@Composable` and applies optimizations when compiling them. The way the Compose compiler version is defined has changed significantly, as described below.

Previously, the Compose compiler version had to be set in the `build.gradle.kts` file of the Gradle module that contains the Android and Jetpack Compose code.

```

/* build.gradle.kts */

…

composeOptions {

kotlinCompilerExtensionVersion="1.5.3"

}

…

```

And if you use Version Catalogs, the version would be indicated as follows:

```

# gradle/libs.versions.toml

[versions]

compose-compiler = "1.5.3"

…

```

```

/* build.gradle.kts */

…

composeOptions {

kotlinCompilerExtensionVersion = libs.versions.compose.compiler.get()

}

…

```

This approach worked at the time, but it was hard to maintain, because the Compose compiler version had to be compatible with the Kotlin version in use. It was versioned separately from the Compose libraries, and the IDE did not suggest upgrades. Every time you updated Kotlin, you had to look up the compatible Compose compiler version in the Jetpack Compose documentation. Kotlin 2.0 solves this problem.

Now, a new Gradle plugin is added to the `gradle/libs.versions.toml` file. It manages the Compose compiler version and ties it to the Kotlin version.

```

# gradle/libs.versions.toml

[plugins]

…

kotlin-compose-compiler = { id = "org.jetbrains.kotlin.plugin.compose", version.ref = "kotlin" }

```

After this, modify the `build.gradle.kts` file of the Android module that uses Jetpack Compose: remove the `composeOptions.kotlinCompilerExtensionVersion` setting and add the `kotlin-compose-compiler` plugin in the `plugins { .. }` section.

```

/* build.gradle.kts */

…

plugins {

…

alias(libs.plugins.kotlin.compose.compiler)

}

…

```

With this done, run the `gradle clean build` command or from Android Studio, build the project or synchronize.

And that's it! This plugin will configure the Compose compiler version based on the current Kotlin version.
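
In a multi-module project it is easy to miss a module that still sets the old option. As a quick sanity check, you could search the build scripts for leftovers. The function name below is hypothetical, and the sketch assumes a `grep` that supports `--include` (GNU and BSD grep both do).

```bash
# Hypothetical helper: list every Gradle build script under a directory that
# still sets kotlinCompilerExtensionVersion. Prints file:line:content for each
# hit and returns non-zero when nothing is found.
find_legacy_compose_options() {
  root="${1:-.}"   # defaults to the current directory
  grep -rn --include='*.gradle' --include='*.gradle.kts' \
    'kotlinCompilerExtensionVersion' "$root"
}
```

Calling `find_legacy_compose_options` from the project root should print nothing once every module has moved to the new plugin.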

## Conclusions

Overall, the K2 compiler represents a significant step forward for Android app development with Kotlin. Improvements in speed, performance, and extensibility make it a valuable tool for Android developers.

With Kotlin 2.0.0 becoming the standard, now is a good time to update our Android applications so that they keep pace with Kotlin, KMP, Jetpack Compose, and the other technologies that Google and app and library developers are adopting to build more modern mobile apps.

I hope you find this informative and useful and that at some point you can follow these steps in your Android applications, in order to apply a good migration to Kotlin 2.0.0.

Thanks for reading, happy coding! 😊

| marlonlom |

1,873,351 | Looking for mid-level and senior devs | Hey, everyone! I'm looking for mid-level and senior devs who are wrapping up a project or looking for a new challenge... | 0 | 2024-06-01T23:41:28 | https://dev.to/fernanda_picazio/procuro-devs-pleno-e-senior-3o97 | Hey, everyone! I'm looking for mid-level and senior devs who are wrapping up a project or looking for a new challenge, to advise as a headhunter across Brazil (I'm from São Paulo, the country's largest hub for tech jobs).

To learn more about my work, message me on WhatsApp at 11 99369-0258.

Kisses, Fe ❤️ | fernanda_picazio | |

1,873,350 | Advantages and Price of Concrete Panel Fencing | Advantages of Concrete Panel Fencing Concrete panel fencing is increasingly popular in a range of construction applications... | 0 | 2024-06-01T23:41:22 | https://dev.to/sodiumxyz/kelebihan-dan-harga-pagar-panel-beton-5gi2 | Advantages of Concrete Panel Fencing

Concrete panel fencing is increasingly popular in a range of construction applications because of the advantages it offers. Its main advantages are:

1. Strength and Durability

Concrete panel fencing has high structural strength and withstands pressure and extreme weather. Concrete is also resistant to corrosion, termites, and fire, making it a long-lasting choice for a variety of environmental conditions.

2. Fast and Easy Installation

Installing concrete panel fencing is faster than installing a conventional fence. The precast panels are designed for quick, easy installation on site, which saves time and cost.

3. Low Maintenance

Concrete does not need routine maintenance such as repainting or pest protection, which saves maintenance costs over the long term. Periodic inspection is enough to confirm there is no structural damage.

4. Aesthetics

Concrete panel fencing comes in a variety of designs and textures that can be matched to a project's aesthetic needs. It gives a neat, professional look for commercial, industrial, and residential applications alike.

5. Security and Privacy

A concrete fence offers good security because it is hard to breach. It also provides excellent privacy thanks to its solid, tall structure.

[Price of Concrete Panel Fencing](https://amertaprima.co.id/produk-precast/pagar-panel-beton/)

The price of concrete panel fencing varies depending on several factors, such as size, thickness, design, and installation location. The factors that affect the price are:

1. Size and Thickness

Concrete panel fencing is available in a range of sizes and thicknesses. Larger, thicker fences usually cost more because they need more material and labor to produce and install.

2. Design and Finishing

Custom designs and finishes can raise the cost. For example, fences with decorative textures or patterns are usually more expensive than plain ones.

3. Quantity Required

Buying in bulk often gets a better per-unit price than buying in small quantities.

4. Installation Location

Transport and installation costs vary with the project location. Installation in areas that are hard to reach or that require special equipment can raise the total cost.

5. Manufacturer or Distributor

Prices also vary between manufacturers and distributors. It is important to choose a trusted manufacturer or distributor to get a quality product at a competitive price.

Conclusion

Concrete panel fencing offers many advantages, such as strength, durability, fast installation, low maintenance, aesthetics, and security and privacy. Its price is affected by size, thickness, design, quantity, installation location, and the manufacturer or distributor. To get quality concrete panel fencing at a competitive price, choose a trusted and experienced manufacturer or distributor such as CV Amerta Prima.

With a solid reputation and a commitment to quality, CV Amerta Prima offers a range of concrete panel fencing options to suit your project's needs. For more information and a price quote, visit the official CV Amerta Prima website. | sodiumxyz | |

1,873,346 | It is so great when you try to find the bug in your code and it's just... a typo. Frustrating, yet great! | A post by BrendonMedeiros | 0 | 2024-06-01T23:25:33 | https://dev.to/punkdev/it-is-so-great-when-you-try-to-find-the-bug-in-your-code-and-its-just-a-typo-frustrating-yet-great-4kbd | punkdev | ||

1,873,342 | Is Temporal still temporal? | Temporal is the new JavaScript standard for date manipulations. It has a long, difficult journey that... | 0 | 2024-06-01T23:13:23 | https://dev.to/artalar/is-temporal-still-temporal-19f0 | webdev, javascript, temporal | [Temporal](https://tc39.es/proposal-temporal/docs/) is the new JavaScript standard for date manipulations. It has had a long, difficult journey that I want to share with you. Is it ready for production or not? Let's find out!

## Introduction

On [May 13, 2017, the first commit](https://github.com/tc39/proposal-temporal/commit/8a171d1661babda716251250fbdb4dd39f2dd1c2) of the Temporal Proposal was made, heralding a new API for date manipulation, inspired by popular libraries like moment.js and luxon.

Temporal aims to correct many of the pain points associated with the existing `Date` object in JavaScript, providing a better, more reliable framework for handling dates and times. Over the years, Temporal has evolved significantly, incorporating numerous features and improvements.

## Key Differences from Date

Temporal introduces several significant improvements over the traditional `Date` object:

1. **Correct Time Zone Handling**: Temporal is designed with thoughtful time zone management, allowing developers to handle cross-time zone scenarios seamlessly.

2. **Immutable API**: The API is immutable, ensuring that date objects aren't accidentally modified and every operation returns a new object.

3. **Duration Handling**: Temporal includes robust support for intervals through the `Duration` object, making it easier to work with time durations.

Here's an example to demonstrate the ease of use:

```js

const start = Temporal.PlainDateTime.from('2024-01-01T10:00');

const duration = Temporal.Duration.from({ hours: 3, minutes: 15 });

const end = start.add(duration);

console.log(start.toString()); // 2024-01-01T10:00

console.log(end.toString()); // 2024-01-01T13:15

console.log(duration.toString()); // PT3H15M

```

And another example:

```js

const date1 = Temporal.PlainDate.from('2024-01-01');

const date2 = Temporal.PlainDate.from('2024-12-31');

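// Without largestUnit, PlainDate.until() reports the difference in days only

const difference = date1.until(date2, { largestUnit: 'month' });

console.log(difference.toString()); // P11M30D (11 months and 30 days)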

```

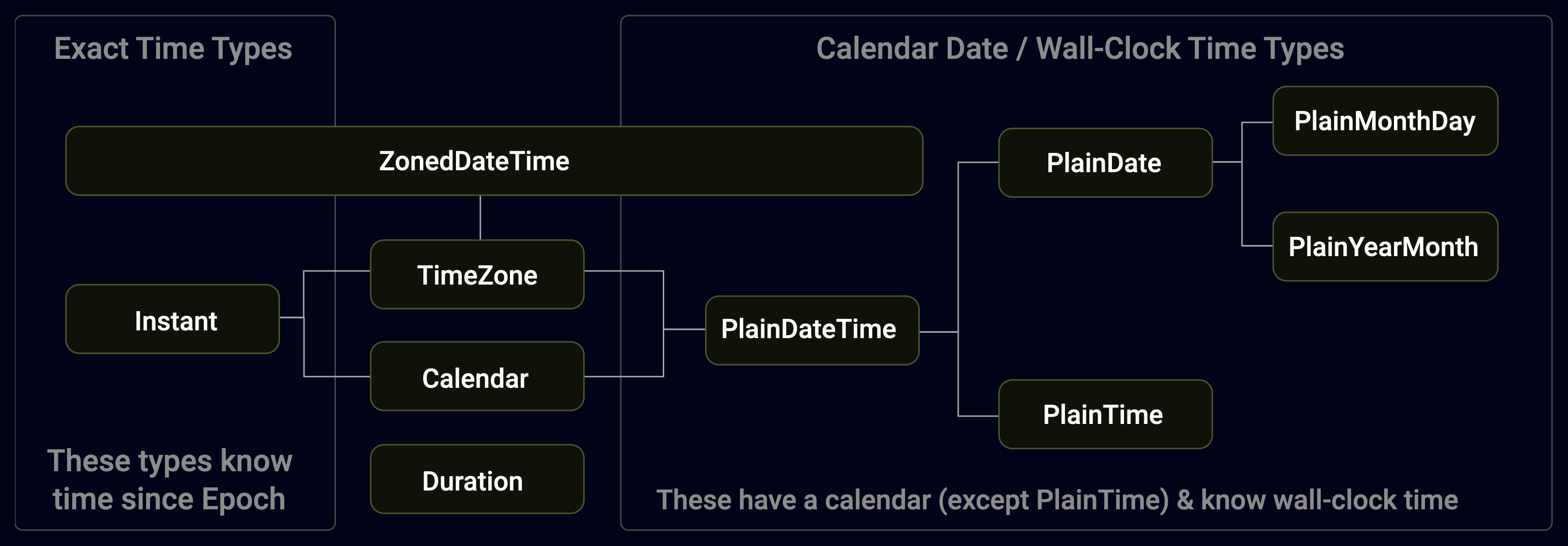

## Comprehensive Entity Model

Temporal's design incorporates a comprehensive model for date and time entities:

- **PlainDate**: Represents a date without a time zone.

- **PlainTime**: Represents a time of day without a date or time zone.

- **PlainDateTime**: Represents a combination of date and time without a time zone.

- **ZonedDateTime**: Represents a date and time in a specific time zone.

- **Duration**: Represents a time interval.

- **Instant**: Represents an exact moment in time.

You can read more about the [schematic of key entities here](https://tc39.es/proposal-temporal/docs/#object-relationship).

## Active Development and Polyfill

In 2020, there was [intensive work on the polyfill](https://github.com/js-temporal/temporal-polyfill/graphs/code-frequency). Igalia played a crucial role, investing significant effort into the development process. They wrote extensive tests, ensured type safety, and added numerous runtime assertions—adhering to best practices meticulously.

I personally used this polyfill for a year while developing a Node.js project and was extremely satisfied. I didn't encounter any bugs and even [submitted a PR to improve typings](https://github.com/tc39/proposal-temporal/pull/1445). It's gratifying to make even a small contribution to such a major project.

## Why Temporal?

By 2021, I considered the Temporal proposal and its polyfill the most well-thought-out tool for date manipulation, and I still hold this view. However, the polyfill [weighs 200 kB (50 kB gzip)](https://bundlejs.com/?q=@js-temporal/polyfill), making it somewhat heavy for front-end use. Nonetheless, it's worth noting that once Temporal is natively supported in browsers, you can simply remove the polyfill without altering your code—a luxury not available with other date libraries from NPM.

However, I couldn't afford to drag such a heavy library into every web application, and I forgot about Temporal for a while. Three years have passed since I used a well-crafted polyfill, so why hasn't it been shipped to all browsers yet?

## Roadblocks to Becoming a Standard

A key feature of the new API is sophisticated time zone management. However, this couldn't be implemented fully due to inadequacies in the existing time zone standard. [Ujjwal](https://github.com/ryzokuken), an Igalia employee and the primary developer of Temporal, proposed [extending the internet's date-time standard](https://ryzokuken.dev/draft-ryzokuken-datetime-extended/documents/rfc-3339.html) to allow for additional information. For instance, while Hawaiian law mandates a single standard time, locals often follow a different time zone. This issue isn't theoretical; it was an actual problem for my friend, a developer. The proposed extension permits specifying not just the time zone but also the [specific "calendar"](https://tc39.es/proposal-temporal/docs/calendar-review.html), accommodating such nuances.

The proposal's acceptance was a significant milestone. As of [October 23, 2023](https://mailarchive.ietf.org/arch/msg/ietf-announce/g_JdmxVWPKhV_GYYcSKVUk5czjE/), the proposal was approved!

### Platform Support

It's hard to predict when Temporal will become a standard and be available in all browsers, but there's progress. For instance, the [V8 implementation of Temporal now occupies over 2.6% of the binary](https://github.com/tc39/proposal-temporal/issues/2786), and you can [already use it in Deno](https://deno.com/blog/v1.40).

## Current Status and Future Outlook

Development on Temporal continues, with seemingly no remaining major blockers. You can track the current status here: [GitHub Issues - Proposal Temporal](https://github.com/tc39/proposal-temporal/issues/2628).

For example, recently (May 2024), [a method was removed](https://github.com/tc39/proposal-temporal/commit/d83084aab3cdf64f3cb761ad08334c9907c72e7d) - an intriguing change for a proposal on its way to becoming a standard. Does this mean Temporal is not ready yet? No, I think for a long time we can imagine Temporal as a mature date library that evolves and develops smoothly, just like any other NPM library.

So, what's better: a library with its own specific API and no future as a standard, or Temporal, a well-done, comprehensive date framework with ongoing improvements?

## Polyfill

The only complexity with Temporal is the size of the polyfill. However, an alternative polyfill has been in development for some time now: [temporal-polyfill](https://github.com/fullcalendar/temporal-polyfill) by [FullCalendar](https://fullcalendar.io/). Its size is much more appealing: 60.7 kB, or 21.7 kB gzipped. And I plan to use it in my next project! How about you? | artalar |

1,873,343 | Enhance Your Deployments with Pod Topology Spread Constraints: K8s 1.30 | Pod Topology Spread Constraints in Kubernetes help us spread Pods evenly across different parts of a... | 0 | 2024-06-01T23:09:15 | https://dev.to/cloudy05/enhance-your-deployments-with-pod-topology-spread-constraints-k8s-130-14bp | kubernetes, cloudcomputing, cloudnative, aws | Pod Topology Spread Constraints in Kubernetes help us spread Pods evenly across different parts of a cluster, such as nodes or zones. This is great for keeping our applications resilient and available. This feature makes sure to avoid clustering too many Pods in one spot, which could lead to a single point of failure.

**Key Parameters:-**

1. Topology Key:- This is the key of a node label. Nodes that share the same value for this label form one topology domain, and Pods are spread across those domains. Commonly used topology keys:

⇒ kubernetes.io/hostname → This key spreads Pods across different nodes within the cluster.

⇒ topology.kubernetes.io/zone → This key spreads Pods across different availability zones.

⇒ topology.kubernetes.io/region → This key spreads Pods across different regions.

2. MaxSkew:- Maximum allowed difference in the number of Pods between the most and least populated groups defined by your Topology Key.

3. WhenUnsatisfiable:- This is what Kubernetes does when it can't meet your specified Pod spread criteria.

⇒ DoNotSchedule → Prevents scheduling if the constraint is violated.

⇒ ScheduleAnyway → Schedules the Pod anyway, while giving priority to nodes that minimize the skew.

4. LabelSelector:- Standard Kubernetes label selector to filter which Pods are considered for the constraint.

5. minDomains:- Ensures that Pods are spread across at least ‘n’ different topology domains (for example, zones). This is an optional field which can only be used when `whenUnsatisfiable` is set to `DoNotSchedule`.

#### Basic YAML configuration

```
apiVersion: apps/v1
kind: Deployment
metadata:
  name: new-app
spec:
  replicas: 5
  selector:
    matchLabels:
      app: new-app
  template:
    metadata:
      labels:
        app: new-app
    spec:
      topologySpreadConstraints:
        - maxSkew: 1
          topologyKey: "topology.kubernetes.io/zone"
          whenUnsatisfiable: "DoNotSchedule"
          labelSelector:
            matchLabels:
              app: new-app
          minDomains: 3
      containers:
        - name: new-app-container
          image: new-app-image
```

#### Note -

Suppose we have three zones, and we are deploying Pods with a maxSkew of 1. The distribution might look like this:

• Zone A: 2 Pods

• Zone B: 2 Pods

• Zone C: 1 Pod

Here, the difference in Pod count between the most and least populated zones is 1, so the constraint is satisfied. With `DoNotSchedule`, the next Pod can only be placed in Zone C (giving 2/2/2). Placing it in Zone A or Zone B would produce a 3/2/1 split with a skew of 2, which exceeds maxSkew, so the scheduler will not place it there.
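The skew check in the note above can be sketched in a few lines of Python. This is only a simplified model for illustration, not the scheduler's actual code: the function name `eligible_zones` and the zone names are made up, and it ignores `minDomains`, node affinity, taints, and everything else the real scheduler considers.

```python
def eligible_zones(pod_counts, max_skew):
    """Return the zones where one more Pod keeps the skew within max_skew.

    Simplified model: the skew for a candidate zone is its Pod count
    after placement minus the current minimum count across all zones.
    """
    minimum = min(pod_counts.values())
    return sorted(
        zone
        for zone, count in pod_counts.items()
        if (count + 1) - minimum <= max_skew
    )


# Hypothetical zone names matching the 2/2/1 distribution above
counts = {"zone-a": 2, "zone-b": 2, "zone-c": 1}
print(eligible_zones(counts, max_skew=1))  # ['zone-c']
```

Once the Pod lands in `zone-c` and the distribution becomes 2/2/2, every zone is eligible again for the next Pod.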

#### Pod Topology Spread Constraints can be used with various k8s objects:-

1. Deployments

2. StatefulSets

3. DaemonSets

4. ReplicaSets

5. Jobs and CronJobs

#### Some common use cases:-

1. Deploying a web application with its replicas spread evenly across multiple availability zones to ensure high availability and fault tolerance.

2. Deploying a stateful application, such as a database, with Pods spread across different nodes to prevent data loss in case of node failure.

3. Deploying a batch processing application with workloads distributed across multiple zones to optimize resource utilization and ensure processing continuity.

#### Other mechanisms to achieve balanced Pod distribution and resilience:-

1. Pod AntiAffinity

2. Node Affinity

3. Cluster Autoscaler with Balance-Similar-Node Groups

4. Manual Distribution

5. Custom Schedulers

#### Significant advantages of Pod Topology Spread Constraints over other mechanisms

1. Enhanced Granularity and Control: Pod Topology Spread Constraints allow precise control over Pod distribution across various domains (e.g., zones, nodes), ensuring a balanced deployment with minimal skew between domains.

2. Automation and Simplicity: Unlike Pod AntiAffinity and Node Affinity, which can be complex and require manual management, Pod Topology Spread Constraints automatically balance Pods based on predefined rules, reducing manual effort and errors.

3. Proactive Balancing: This feature ensures Pods are evenly distributed at the time of scheduling, unlike the Cluster Autoscaler which reacts to imbalances after they occur, providing more immediate and consistent balance.

4. Versatility Across Domains: While Node Affinity focuses on nodes, Pod Topology Spread Constraints work across multiple topology domains, making them more versatile for different deployment scenarios.

5. Standardized and Built-In: As a native Kubernetes feature, Pod Topology Spread Constraints offer a standardized approach, eliminating the need for custom schedulers and ensuring compatibility with Kubernetes updates and community support.

| cloudy05 |

1,873,341 | STOLEN CRYPTOCURRENCY ASSET RECOVERY EXPERTS-(FOLKWIN EXPERT RECOVERY | ** FOLKWIN EXPERT RECOVERY emerges as a guiding light, illuminating the path to redemption for... | 0 | 2024-06-01T23:02:30 | https://dev.to/logan_noah_2920cb0686a6f5/stolen-cryptocurrency-asset-recovery-experts-folkwin-expert-recovery-3g15 |

** FOLKWIN EXPERT RECOVERY emerges as a guiding light, illuminating the path to redemption for victims ensnared in the web of online fraud. My journey with them began tentatively, shrouded in skepticism and apprehension, a result of past encounters with false promises and shattered trust. Yet, amidst the darkness, their unwavering dedication and unparalleled expertise soon shattered my doubts, paving the way for a remarkable transformation. From the outset, ** FOLKWIN EXPERT RECOVERY'S commitment to its mission was palpable. Their team of skilled professionals, akin to artisans sculpting from the chaos, meticulously pieced together the fragments of my shattered trust. With each interaction, their transparency and integrity shone brightly, dispelling the shadows of uncertainty that had clouded my judgment. It was evident that they were not merely a service provider but guardians of integrity in the realm of cybersecurity. What truly sets ** FOLKWIN EXPERT RECOVERY apart is its unwavering commitment to client satisfaction. Unlike others who may offer empty promises, they deliver tangible results that surpass mere restitution. With each update and guidance provided, they instilled a sense of hope and reassurance, forging an enduring bond of trust. Their dedication to going above and beyond for their clients is not merely a business ethos but a testament to the triumph of resilience over adversity. In cybersecurity, where threats evolve and multiply with alarming speed, ** FOLKWIN EXPERT RECOVERY stands as a bastion of reliability. Their expertise spans a myriad of fraudulent schemes, from phishing scams to investment fraud, enabling them to navigate the intricate web of deception with precision and finesse. Moreover, their proactive approach to cybersecurity empowers clients with the knowledge and tools necessary to safeguard themselves against future threats. Beyond their technical prowess, ** FOLKWIN EXPERT RECOVERY'S human-centric approach sets them apart. 
Each client is treated with empathy and respect, and their unique circumstances are carefully considered and addressed with compassion. It is this personalized touch that fosters a sense of trust and camaraderie, transforming what could be a daunting ordeal into a journey of resilience and empowerment. As I reflect on my journey with ** FOLKWIN EXPERT RECOVERY, I am filled with gratitude for their unwavering support and guidance. They have not only restored what was lost but have also imbued me with a newfound sense of confidence and security in the digital realm. In an age where trust is a scarce commodity, their steadfast commitment to integrity serves as a beacon of hope, guiding others out of the shadows and into the light. In the realm of cybersecurity, ** FOLKWIN EXPERT RECOVERY is more than just a service provider; they are champions of justice, advocates for truth, and guardians of trust. For help, Contact them with this info below:

Website: ( WWW.FOLKWINEXPERTRECOVERY.COM

Whatsapp: ( +1 (740)705-0711

Email: ( FOLKWINEXPERTRECOVERY @ TECH-CENTER . COM

Regards,

Mr Logan Noah. | logan_noah_2920cb0686a6f5 | |

1,873,339 | Discover the Best Products of 2024 Curated by Redditors - BuyReddit.com | BuyReddit brings you the top recommended products from Reddit. Popular Products (as of May 2024):... | 0 | 2024-06-01T22:46:37 | https://dev.to/ben_levay_5576197218890ea/discover-the-best-products-of-2024-curated-by-redditors-buyredditcom-591a | react, productivity, showdev | BuyReddit brings you the top recommended products from Reddit.

Popular Products (as of May 2024): https://www.buyreddit.com/popular | ben_levay_5576197218890ea |

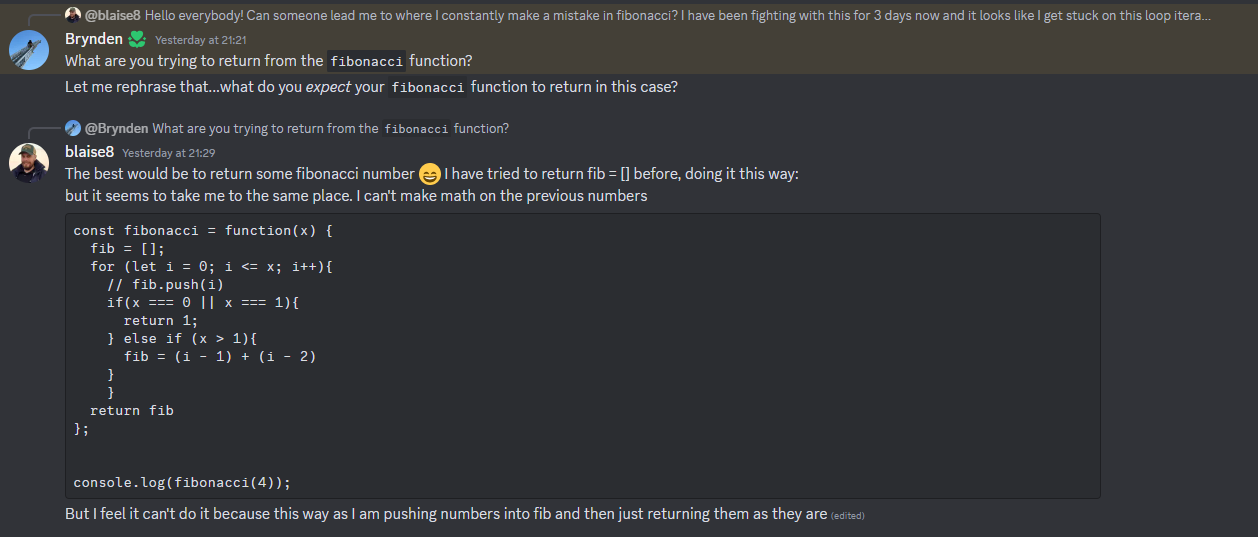

1,873,338 | The 8-Hour Debug: How a Silly Mistake Cost Me a Day | The Function function getEnrollmentByStudentIdAndCourseId( studentId: string, ... | 0 | 2024-06-01T22:34:05 | https://dev.to/mmvergara/the-8-hour-debug-how-a-silly-mistake-cost-me-a-day-543e | javascript, webdev, programming | ### The Function

```ts

function getEnrollmentByStudentIdAndCourseId(

studentId: string,

courseId: string

) {

// fetch logic

return result;

}

```

## The Mistake

Believe it or not, this function cost me a day of debugging, all because of the way I was calling it:

```ts

const studentId = "ID";

const courseId = "ID";

const enrollment = getEnrollmentByStudentIdAndCourseId(courseId, studentId);

```

I was passing the arguments in the wrong order. The function signature was `getEnrollmentByStudentIdAndCourseId(studentId, courseId)` but I was calling it with `getEnrollmentByStudentIdAndCourseId(courseId, studentId)`.

The reason it took me so long to debug was that the function **still returned a result even though the arguments were in the wrong order**; it was just the wrong result. Ultimately, I think the root cause was a bad Prisma query I wrote that allowed this to happen.

### The Adjustment

Make the argument passing more explicit by using an object:

```ts

type Args = {

courseId: string;

studentId: string;

};

function getEnrollmentByStudentIdAndCourseId({ courseId, studentId }: Args) {

// fetch logic

return result;

}

```

Ever since then, when I have a function that takes multiple arguments of the same type, like IDs, I use object destructuring to make the argument passing more explicit. And also like... I write better Prisma queries.

I hope this helps you avoid the same mistake I made. Happy coding!

| mmvergara |

1,873,337 | [Game of Purpose] Day 14 | Today no progress. I've been taking care of my home. | 27,434 | 2024-06-01T22:33:38 | https://dev.to/humberd/game-of-purpose-day-14-520 | gamedev | Today no progress. I've been taking care of my home. | humberd |

1,873,290 | The Start of E.V.O T | Hey everyone, hope all is well! I've officially started the journey now that I have all the necessary... | 0 | 2024-06-01T22:25:08 | https://dev.to/kevinpalma21/the-start-of-evo-t-ibf | beginners, learning, programming, coding | Hey everyone, hope all is well! I've officially started the journey now that I have all the necessary equipment to build the turret (might be missing a few things, but we'll go with the flow). I've begun creating the AutoCAD design for the base of the turret. This is just the prototype since it's my first time really freestyling this, so bear with me if it looks a bit weird.

I want to make a nice, sturdy base with some vents on the sides to let the components inside breathe. I want the turret to look like a railgun cannon, taking inspiration from the image below (Image 1) but with some tweaks. I have an idea for the base—here's a quick look at what I've got(image2). I want the Arduino to stay in this section of the turret, with the other bulkier equipment here. Once I have this part down, I'll start working on the actual movement of the turret.

(Image1)

(Image2)

I'm still deciding what kind of motor to use. If you have any suggestions, that would be awesome, and I'm always happy to hear your ideas and talk more about it.

This is the main update I have for you. I'll try to keep you updated every 3-4 days with good progress each time. Just wanted to let you know I haven't abandoned this project and will see it through to the end. Thank you all for the support and have a great one!

Quick pic of my Guinea Pig Yoshi

| kevinpalma21 |

1,873,284 | REST API Design Rules | Why is it important to write clean REST-API Designs In today's interconnected world,... | 0 | 2024-06-01T21:44:05 | https://dev.to/ezekiel_77/rest-api-design-rules-2c4j | webdev, backend, api, design | ## Why is it important to write clean REST-API Designs

In today's interconnected world, well-designed REST APIs are the backbone of efficient and scalable applications.

Writing clean REST API designs is crucial for several reasons:

* **Enhanced Usability:** A well-designed API is intuitive and easy to use, making it accessible to developers of all skill levels. This simplifies integration and reduces the learning curve.

* **Improved Maintainability:** Clean code promotes maintainability by making it easier to identify and fix bugs, add features, and scale the API. This ensures long-term stability and reduces development costs.

* **Increased Security:** A well-structured API with proper authentication and authorization mechanisms helps prevent unauthorized access, data breaches, and other security vulnerabilities.

* **Enhanced Performance:** A clean design optimizes performance by using efficient data structures, avoiding unnecessary calls, and minimizing latency. This provides a seamless user experience and improves overall application performance.

* **Reduced Development Time:** Well-defined API specifications and clear documentation enable faster development by eliminating guesswork and reducing the need for extensive testing. This saves valuable development time and resources.

* **Improved Scalability:** A clean design allows for easy scalability by providing a modular architecture that can be easily expanded to handle increased traffic or new features. This ensures the API can grow with the application's needs.

* **Increased Reusability:** A well-designed API can be reused across multiple applications, reducing duplication and promoting consistency. This simplifies development and saves time and effort.

* **Improved Documentation:** Clean designs are easier to document, making it clear to developers how the API works and how to use it effectively. This reduces confusion and improves adoption.

## URI Rules

**The structure of a URI is as follows**

**`scheme :// authority / path [?query][#fragment]`**

for instance

`https://soccer.api.org/teams/dortmund/players?name=Rona#2`

There are two types of resources

1. Collection resources: contain a collection of resources. A collection can be likened to a database relation (table).

2. Singleton resources: contain a single resource. A singleton can be likened to a database record.

---

When designing REST APIs:

1 Collection resources should be plural

```diff

+ soccer.api.org/teams/dortmund

- soccer.api.org/team/dortmund

```

2 Singleton resources should be singular and can be replaced by the unique id representing the resource in the database system behind the api

```diff

+soccer.api.org/teams/dortmund/players/58c1aaae-205a-11ef-aeea-a64c74618950

```

3 No **trailing forward slashes** in your URIs

```diff

+soccer.api.org/teams/dortmund/players

-soccer.api.org/teams/dortmund/players/

```

4 Use **hyphens** instead of **underscores** to improve the readability of URIs

```diff

+ api.blog.com/blogs/this-is-my-blog

- api.blog.com/blogs/this_is_my_blog

```

5 **Lowercase letters** are preferred to **uppercase letters** in URI paths

```diff

+ api.example.com/my-api/my-resource

- api.example.com/My-Api/My-Resource

```

6 No **file extensions** in URIs

```diff

+ api.example.com/api/resource

- api.example.com/api/resource.json

```

7 CRUD function names should **not** be used in URIs

```diff

+ DELETE api.example.com/api/resource

- GET api.example.com/api/resource/delete

```

8 The query component of a URI should only be used to filter collection resources

```diff

+ GET /users?role=admin

```

9 The query component of a URI should be used to paginate collection results

```diff

+ GET /users?pageSize=25&pageStartIndex=50

```
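Several of the path rules above (3 to 7) are mechanical enough to check automatically. The following is a minimal sketch, assuming you want a quick lint step for route definitions. The function name `lint_uri_path`, the messages, and the list of CRUD words are illustrative choices, not part of any standard or library.

```python
import re

# Hypothetical list of CRUD words that should not appear as path segments
CRUD_WORDS = {"get", "create", "update", "delete"}


def lint_uri_path(path):
    """Return the rule violations found in a URI path (no scheme, host, or query)."""
    problems = []
    if len(path) > 1 and path.endswith("/"):
        problems.append("trailing slash")
    if "_" in path:
        problems.append("underscore")
    if path != path.lower():
        problems.append("uppercase")
    # Only the last path segment can carry a file extension such as ".json"
    last_segment = path.rstrip("/").rsplit("/", 1)[-1]
    if re.search(r"\.[A-Za-z0-9]+$", last_segment):
        problems.append("file extension")
    if any(segment.lower() in CRUD_WORDS for segment in path.split("/")):
        problems.append("CRUD verb")
    return problems


print(lint_uri_path("/teams/dortmund/players"))   # []
print(lint_uri_path("/My-Api/my_resource.json"))  # ['underscore', 'uppercase', 'file extension']
```

A check like this could run in a unit test over every registered route, so that violations are caught before an endpoint is published.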

## HTTP Method Rules

| HTTP Method | Use |
| ----------- | --- |
| POST | To create a new resource (the "create" in CRUD) |
| GET | To get the representation of a resource (the "read" in CRUD) |
| PUT | To update a resource by replacing the **whole** resource |
| DELETE | To delete a resource |
| PATCH | To update a resource by changing only the required part, without replacing the entire resource |
| HEAD | To get only the response headers, not the body |
| OPTIONS | To get the communication options (such as the allowed methods) available for a resource |

> PUT can be used for both creating and updating a resource. However, following best practices, it's generally recommended to use POST for creating new resources and PUT for fully replacing existing ones.

---

## Versioning

Versioning an api can be important for:

**Maintaining backward compatibility:** Versioning allows you to introduce new features without breaking existing integrations that rely on older API versions. Users can continue using the familiar endpoints while those seeking new features can adopt the versioned API.

**Ensuring a consistent and well-designed API:** Consistent naming conventions across versions contribute to a user-friendly experience. Changing endpoints disrupts this experience, and versioning helps avoid it.

---

## Conclusion

Now that you're armed with these REST API design rules, it's time to put them into action! Share your API creations in the comments below, and let's build a world of well-designed and developer-friendly APIs together.

| ezekiel_77 |

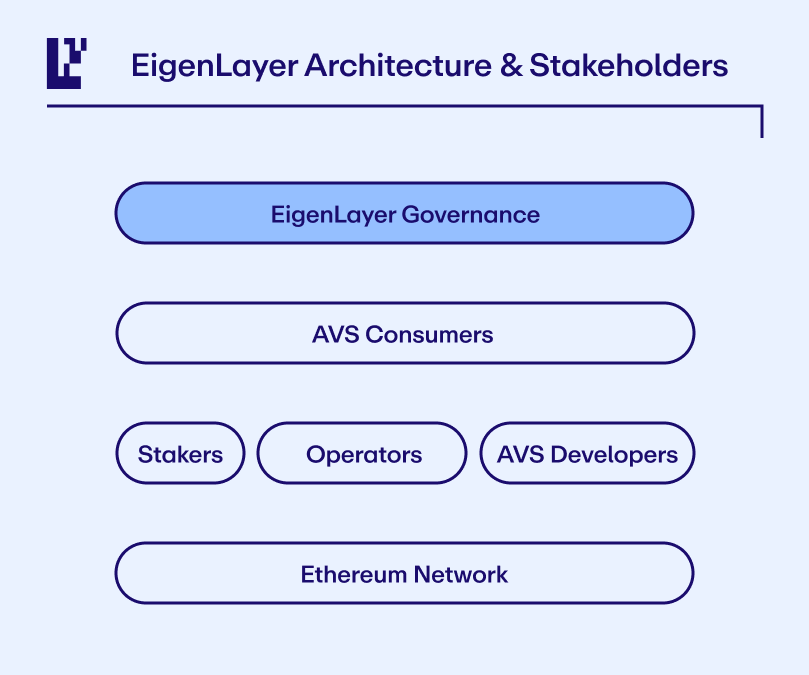

1,873,282 | Introduction to EigenLayer | Welcome to the first post in our series on EigenLayer! In this series, we'll explore how EigenLayer... | 0 | 2024-06-01T21:32:13 | https://dev.to/gaj/introduction-to-eigenlayer-45ob | crypto, ethereum, blockchain | Welcome to the first post in our series on EigenLayer! In this series, we'll explore how EigenLayer is transforming the Ethereum ecosystem by enabling the creation of Actively Validated Services (AVSs). Whether you're a seasoned developer or new to the world of blockchain, this guide will help you understand and leverage the potential of EigenLayer.

#### What is EigenLayer?

EigenLayer is a decentralized protocol built on the Ethereum blockchain. It introduces a novel concept: the ability to "restake" ETH that is already staked for securing the Ethereum network to provide additional security and validation for new decentralized applications (dApps) and services. This process enhances the utility of staked ETH, allowing it to be used for multiple purposes simultaneously.

#### Key Concepts

1. **Restaking**: This is the cornerstone of EigenLayer. It allows Ethereum stakers to opt-in their staked ETH to secure various AVSs. By doing so, the staked ETH can serve multiple roles without the need for additional capital.

2. **Actively Validated Services (AVSs)**: These are the services and applications that utilize the security provided by restaked ETH. Examples include oracles, bridges, Layer 2 solutions, and more. AVSs benefit from the robust security model of the Ethereum network while adding their own unique functionalities.

3. **Cryptoeconomic Security**: EigenLayer ensures that the restaked ETH is used responsibly. If an operator (like Joe in the example below) misbehaves or acts maliciously, they get slashed. This means a portion of the ETH staked behind them is lost, which provides a strong incentive to act honestly.

#### How Does EigenLayer Work?

Let’s break down the process using a simple example:

1. **Building an AVS**: Imagine James is a developer who wants to build an oracle service. An oracle provides trusted data feeds, which are crucial for many blockchain applications, like Julien’s lending protocol.

2. **Running the AVS**: Joe, who has significant compute power, decides to run James's oracle service. However, to ensure that Joe behaves correctly, he needs to be economically incentivized.

3. **Providing Security**: Jake, an Ethereum staker, delegates his staked ETH to Joe’s service via EigenLayer. This delegation provides cryptoeconomic security to the oracle service.

4. **Ensuring Integrity**: If Joe reports incorrect data (goes rogue), the protocol slashes the ETH staked behind his service. Because that stake was delegated by Jake, Jake loses his staked ETH, and the slashed funds cover potential losses for users like Julien.

#### Why is EigenLayer Important?

EigenLayer brings several key benefits to the Ethereum ecosystem:

- **Enhanced Utility of Staked ETH**: It maximizes the utility of staked ETH by allowing it to secure multiple services.

- **Robust Security**: The cryptoeconomic security model ensures that services are reliable and operators are incentivized to act honestly.

- **Flexible and Composable**: Developers can create a wide range of services, from oracles to Layer 2 solutions, all benefiting from the security provided by EigenLayer.

#### Getting Started with EigenLayer

In the upcoming posts, we’ll dive deeper into how you can start building on EigenLayer. We’ll cover topics like setting up your development environment, creating and deploying AVSs, and best practices for ensuring security and reliability.

Stay tuned as we embark on this journey to explore the limitless possibilities enabled by EigenLayer. Whether you’re looking to enhance existing applications or build new innovative services, EigenLayer provides the foundation to do so securely and efficiently.

Welcome to the future of decentralized services with EigenLayer! | gaj |

1,851,926 | My Top 5 Favorite Algorithm Problems at Codewars | What is codewars? Codewars is a social network of programmers who get together to... | 0 | 2024-06-01T17:36:58 | https://coffeebytes.dev/en/top-5-favorite-algorithm-problems-at-codewars/ | opinion, algorithms, codewars, leetcode | ---

title: My Top 5 Favorite Algorithm Problems at Codewars

published: true

date: 2024-06-01 21:15:00 UTC

tags: opinion,algorithms,codewars,leetcode

canonical_url: https://coffeebytes.dev/en/top-5-favorite-algorithm-problems-at-codewars/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/pdpfqinp1sdqt7rwgtuk.jpg

---

## What is codewars?

Codewars is a social network of programmers who get together to challenge each other to solve code challenges. Codewars is one of **the best websites for practicing algorithms and solving Katas**. Katas? Yes, as in Karate.

### What are katas in codewars?

In the spirit of martial arts, more specifically Karate, these code problems are called _katas_. The _katas_ are divided, ascendingly, according to their difficulty. There are katas from 8th kyu to 1st kyu, with 1st kyu being the most difficult type of _kata_ of all.

There are _katas_ on many, many topics: algorithm development, efficiency, regex, mathematics, cryptography, etc.

Collectively, the _katas_ cover a wide variety of languages: C, C++, Go, Python, Javascript, Elixir, Haskell, Rust, and even languages as esoteric as Brainfuck. Individually, each _kata_ is available in one or more languages.

Without further ado, here are my top 5 _katas_. These _katas_ **are not necessarily the most difficult ones**, but the ones that I consider to have the ideal balance between creativity and difficulty. I chose those that give the feeling of a good puzzle, the kind you can’t put down until you solve it.

By the way, **no, I’m not going to post the answers**; those are up to you.

## Multi Line Task++: Hello World

You need to write a function that returns the string “Hello, world!” in Javascript.

Requirement: Each line must have at most 2 characters, and the total number of lines must be less than 40.

Hint: It is possible to complete it in only 28 lines of code.

Original Kata: [Multi Line Task++: Hello World](https://www.codewars.com/kata/59a421985eb5d4bb41000031)

### Note on Kata

The difficult part is the two characters per line maximum. Give it a try.

```

12

34

56

78

//

```

There is a more complicated version where the limit is one character per line, in case you find this one too easy.

## Make a spiral

Your task is to create a spiral of NxN with the given size.

For example, a spiral with 5 sides should look like this:

```

00000

....0

000.0

0...0

00000

```

And size 10

```

0000000000

.........0

00000000.0

0......0.0

0.0000.0.0

0.0..0.0.0

0.0....0.0

0.000000.0

0........0

0000000000

```

The return value should contain an array of arrays, 0’s and 1’s, with the first row consisting of 1’s. For example, for the given size of 5, it should be:

``` python

[[1,1,1,1,1],[0,0,0,0,1],[1,1,1,0,1],[1,0,0,0,1],[1,1,1,1,1]]

```

For extreme cases of small spirals, the size will be at least 5.

As a general rule, the snake made of 1s cannot touch itself.

Original Kata: [Make a spiral](https://www.codewars.com/kata/534e01fbbb17187c7e0000c6)

### Note on Kata

It looks easy, but I assure you it won’t be so easy on your first try.

## The soul of wit: reverse an array

No time for stories: reverse an array (in Javascript) and return the result. Do whatever you want with the original array. Don’t use Array.prototype.reverse.

You have 30 bytes to spend.

Example: [1, 2, 3] → [3, 2, 1].

This time you won’t be able to do the other Kata thing.

Nor can you use require.

Original Kata: [The soul of wit: reverse an array](https://www.codewars.com/kata/59b81886460387d8fc000043)

### Note on Kata

The 30-byte budget means your whole solution must fit in 30 characters of code. For example, the solution below is 33 characters long, so it exceeds the limit, and it also uses `reverse`, which is not allowed.

``` javascript

const reverse = a => a.reverse();

```

## Last digit of a huge number

Given a list [x1, x2, x3, …, xn] compute the last digit (decimal) of x1 ^ (x2 ^ (x3 ^ (… ^ xn))).

Example:

lastDigit([3, 4, 2]) === 1

because 3 ^ (4 ^ 2) = 3 ^ 16 = 43046721.

Beware: powers grow incredibly fast. For example, 9 ^ (9 ^ 9) has more than 369 million digits. Your lastDigit function has to deal with those numbers efficiently.
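To see where that figure comes from, here is a small sketch of the arithmetic: the number of decimal digits of b^e is floor(e · log10(b)) + 1. The variable names are mine, and 9 ^ 9 is written out as a literal so the example does not depend on how the power is computed.

``` javascript
// The number of decimal digits of b^e is floor(e * log10(b)) + 1.
const exponent = 387420489; // 9 ^ 9, written out as a literal
const digitCount = Math.floor(exponent * Math.log10(9)) + 1;
console.log(digitCount); // a bit over 369 million
```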

Unusual cases: we assume that 0 ^ 0 = 1 and that the last digit of an empty list is equal to 1.

Original Kata: [Last digit of a huge number](https://www.codewars.com/kata/5518a860a73e708c0a000027)

### Note on Kata

If you are thinking of writing something like:

``` python
def lastDigit(arr):
    # This function is NOT the right approach: it builds the whole power tower
    total = 1
    for element in arr[::-1]:
        total = element ** total
    return str(total)[-1]

lastDigit([528374, 27415, 789392, 462589, 166837, 699678, 866982])
```

Solutions like this will get you nowhere: the Kata has to run incredibly fast.

See how long it takes to run in Python with the correct lastDigit function.

``` bash
time python script.py

real 0m0.122s
user 0m0.073s
sys 0m0.044s
```

If you try to run the above code you can probably go make a cup of coffee before it finishes executing.
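For the curious, here is a sketch of one well-known trick (spoiler alert). It is not necessarily the solution I timed above. It assumes the list contains non-negative integers, and the helper `powInt` is my own. The idea: for exponents of at least 2, powers repeat modulo 20 with a period that divides 4, so it is enough to keep each base modulo 20 and each exponent modulo 4, while remembering whether the real value was "large".

``` javascript
// Exact integer power by repeated multiplication (inputs stay small here).
function powInt(base, exp) {
  let result = 1;
  for (let i = 0; i < exp; i++) result *= base;
  return result;
}

function lastDigit(xs) {
  let n = 1; // reduced value of the tower built so far; empty list -> 1
  for (let i = xs.length - 1; i >= 0; i--) {
    // Keep the base modulo 20, but keep it >= 20 if it was >= 20.
    const base = xs[i] < 20 ? xs[i] : (xs[i] % 20) + 20;
    // Keep the exponent modulo 4, but keep it >= 4 if it was >= 4.
    const exp = n < 4 ? n : (n % 4) + 4;
    n = powInt(base, exp); // at most 39^7, which a double holds exactly
  }
  return n % 10;
}

console.log(lastDigit([3, 4, 2])); // 1
```

Every intermediate value stays tiny, so the function never has to build the huge number itself.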

## Escape the maze

You are given a complete maze as a 2-dimensional grid; more specifically, in your language, an array of strings.

`maze[0][0]` is the upper left corner and `maze[maze.length - 1][maze[0].length - 1]` is the lower right corner.

Within this 2D grid:

``` bash

' ' Free space you can walk through

'#' bush with thorns (You can't walk through it)

'^', '<', 'v' or '>' Your body facing to north, west, south or east, respectively.

```

Original Kata: [Escape the maze](https://www.codewars.com/kata/5877027d885d4f6144000404)

### Note on Kata

You are given a series of mazes along with your position in each one, and you must provide an array of moves to get out. It’s incredibly entertaining!

``` javascript

[ '##########',

'# #',

'# ##### #',

'# # # #',

'# #^# # #',

'# ### # #',

'# # #',

'######## #' ]

```
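A natural first step is finding where you are. The sketch below is a hypothetical helper (the names `FACING` and `findPlayer` are mine, not part of the Kata) that scans the array of strings for one of the four body characters from the legend above.

``` javascript
// Hypothetical helper: locate the player and the facing direction in a maze.
const FACING = { '^': 'north', '<': 'west', 'v': 'south', '>': 'east' };

function findPlayer(maze) {
  for (let row = 0; row < maze.length; row++) {
    for (let col = 0; col < maze[row].length; col++) {
      const cell = maze[row][col];
      if (FACING[cell] !== undefined) {
        return { row, col, facing: FACING[cell] };
      }
    }
  }
  return null; // no player marker found
}

console.log(findPlayer(['###', '#>#', '# #'])); // { row: 1, col: 1, facing: 'east' }
```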

## Katas with honorable mention

There are other Katas that I like very much, but they were left out of this top list. Check them out.

- [The Millionth Fibonacci Kata](https://www.codewars.com/kata/53d40c1e2f13e331fc000c26)

- [Prime Streaming [NC-17]](https://www.codewars.com/kata/59122604e5bc240817000016)

- [Breaking the Vigenère Cipher](https://www.codewars.com/kata/544e5d75908f2d5eb700052b)

- [Escape the Maze](https://www.codewars.com/kata/5877027d885d4f6144000404)

- [Simple Maze](https://www.codewars.com/kata/56bb9b7838dd34d7d8001b3c)

- [Sum strings as numbers](https://www.codewars.com/kata/5324945e2ece5e1f32000370)

- [Elemental words](https://www.codewars.com/kata/56fa9cd6da8ca623f9001233) | zeedu_dev |

1,873,038 | Why the 31st Causes Problems and How to Fix Them | Handling dates is a common task, but it can turn into a real challenge, especially... | 0 | 2024-06-01T21:06:18 | https://dev.to/yagocosta/por-que-o-dia-31-causa-problemas-e-como-soluciona-los-50ba | Handling dates is a common task, but it can turn into a real challenge, especially at the end of the month. Let's explore why bugs are more frequent on the 31st and how to avoid them in PHP/Laravel and JavaScript.

## How Dates Work

First of all, we need a quick look at how dates work in programming. Dates are usually handled as the number of milliseconds (or seconds, depending on the platform) since January 1, 1970, the famous **Unix epoch**. This numeric representation lets us manipulate dates with simple arithmetic. The problem appears when we try to shift dates across months with different numbers of days.
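A minimal sketch of that numeric representation in JavaScript, where the constant name `MS_PER_DAY` is just for illustration: adding one day is plain addition on the millisecond count.

```javascript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

const epoch = new Date(0); // zero milliseconds after the Unix epoch
const nextDay = new Date(epoch.getTime() + MS_PER_DAY);

console.log(epoch.toISOString());   // 1970-01-01T00:00:00.000Z
console.log(nextDay.toISOString()); // 1970-01-02T00:00:00.000Z
```

Days always have the same length in this representation. Months do not, which is where the trouble starts.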

## The Problem with Date Manipulation

Each month has a different number of days, which can cause problems when we move from one month to another. For example, if you start with a date on the 31st and try to go to the next month, the result can be unexpected because not every month has 31 days.

## JavaScript Example

Imagine you are building a calendar and need to navigate between months.

```javascript
const toggleMonth = (type) => {
  let date = new Date(selectedYear, selectedMonth, selectedDay);

  if (type === 'next') {
    date.setMonth(date.getMonth() + 1);
  }

  if (type === 'previous') {
    date.setMonth(date.getMonth() - 1);
  }

  setSelectedYear(date.getFullYear());
  setSelectedMonth(date.getMonth());
  setSelectedDay(date.getDate());
};
```

### Result of the Problematic Code

Suppose `selectedYear` is 2023, `selectedMonth` is 4 (May), and `selectedDay` is 31.

- When we increment the month, `date.setMonth(date.getMonth() + 1)` moves to June, but since June has only 30 days, JavaScript automatically rolls the date over to July 1.
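The rollover can be reproduced in isolation with a couple of lines, using the same values as above:

```javascript
const date = new Date(2023, 4, 31); // May 31, 2023 (months are zero-based)
date.setMonth(date.getMonth() + 1); // asks for "June 31", which does not exist

console.log(date.getMonth(), date.getDate()); // 6 1 -> July 1
```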

**Solution:**

```javascript
const toggleMonth = (type) => {
  let date = new Date(selectedYear, selectedMonth, 1); // Start from the first day of the month

  if (type === 'next') {
    date.setMonth(date.getMonth() + 1);
  } else if (type === 'previous') {
    date.setMonth(date.getMonth() - 1);
  }

  // Day 0 of the following month is the last day of the target month
  const lastDayOfMonth = new Date(date.getFullYear(), date.getMonth() + 1, 0).getDate();

  setSelectedDay(Math.min(selectedDay, lastDayOfMonth)); // Adjust the day if needed
  setSelectedYear(date.getFullYear());
  setSelectedMonth(date.getMonth());
};
```

Here we start on the 1st of the month and then clamp the day so we never land on a day that doesn't exist in the target month. This prevents May 31 from turning into July 1 instead of June 30.
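The same idea can be sketched as a pure function, which is easier to test than one that calls state setters. The name `shiftMonthClamped` and its return shape are assumptions for illustration only, not part of the original component.

```javascript
// Hypothetical helper: move `delta` months from (year, month) and clamp the
// day to the last valid day of the target month. Months are zero-based.
function shiftMonthClamped(year, month, day, delta) {
  const first = new Date(year, month + delta, 1); // always a valid date
  const lastDay = new Date(first.getFullYear(), first.getMonth() + 1, 0).getDate();
  return {
    year: first.getFullYear(),
    month: first.getMonth(),
    day: Math.min(day, lastDay),
  };
}

console.log(shiftMonthClamped(2023, 4, 31, 1)); // { year: 2023, month: 5, day: 30 }
```

Because it never builds a date with a day other than 1 (or day 0), no intermediate value can overflow into the wrong month.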

## PHP/Laravel Example

In Laravel, imagine you want to get the last day of the previous month:

**Problematic Code:**

```php

$lastDayOfPreviousMonth = Carbon::now()->subMonth()->endOfMonth();

```

### Result of the Problematic Code

Imagine today is March 31:

- With `Carbon::now()->subMonth()`, the date becomes March 3 (in non-leap years).

- When we apply `->endOfMonth()`, the date is adjusted to the last day of March, giving March 31, which is not what we expected.

**Solution:**

```php

$lastDayOfPreviousMonth = Carbon::now()->startOfMonth()->subMonth()->endOfMonth();

```

In this solution, we make sure to start from the first day of the current month before subtracting a month. This avoids problems when dealing with months of different lengths. That way, if today is March 31, the code correctly returns February 28 or 29, as appropriate.
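For comparison, the same March 31 scenario can be sketched with JavaScript's `Date`, which overflows in the same way. This is not Carbon code, and `lastDayOfPreviousMonth` is a hypothetical helper name.

```javascript
// Hypothetical helper: last day of the month before `today`.
function lastDayOfPreviousMonth(today) {
  // Day 0 of the current month is the last day of the previous month.
  return new Date(today.getFullYear(), today.getMonth(), 0);
}

const march31 = new Date(2023, 2, 31); // March 31, 2023 (non-leap year)

// Naive version: "February 31" overflows into March.
const naive = new Date(march31);
naive.setMonth(naive.getMonth() - 1);
console.log(naive.getMonth(), naive.getDate()); // 2 3 -> March 3

const safe = lastDayOfPreviousMonth(march31);
console.log(safe.getMonth(), safe.getDate()); // 1 28 -> February 28
```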

## Conclusion

Handling dates can be tricky, especially at the end of the month. By starting from a safe date and adjusting as needed, you can avoid many common bugs. Apply these improvements to your code and avoid headaches at crucial moments!

## References

- [Carbon documentation](https://carbon.nesbot.com/docs/)

- [MDN Web Docs - Date](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Date) | yagocosta | |

1,873,263 | HOW TO RECOVER YOUR CRYPTOCURRENCY FROM SUSPICIOUS INVESTMENTS AND ONLINE TRADING | my experience with Trust Geeks Hack Expert was transformative. They recovered my lost funds and... | 0 | 2024-06-01T20:45:56 | https://dev.to/cely-fernandez/how-to-recover-your-cryptocurrency-from-suspicious-investments-and-online-trading-2e79 | general, beginners, bitcoin, tutorial | my experience with Trust Geeks Hack Expert was transformative. They recovered my lost funds and provided invaluable support during a tumultuous period. Their professionalism, expertise, and dedication to helping victims of fraud are commendable. While I hope no one else endures what I did, knowing that services like Trust Geeks Hack Expert offer a beacon of light for those in similar predicaments like crypto theft or investment scams. Send a DM to Trust Geeks Hack Expert through the email: info@trustgeekshackexpert.com $ TelegramID: Trustgeekshackexper

| cely-fernandez |

1,873,262 | Need Suggestion | visit my portfolio website at https://abdullahs-portfolio.vercel.app/ Hi so its not one of the... | 0 | 2024-06-01T20:38:50 | https://dev.to/abdullah_ali_eb8b6b0c2208/need-suggestion-2i8g | javascript, webdev, beginners, career | **Visit my portfolio website at https://abdullahs-portfolio.vercel.app/**

Hi, this isn't one of those articles to read; I need a suggestion.

I'm building a project for my portfolio: a simple blogging website using the MERN stack.

It already has:

- login, signup, logout (JWT)

- create blog

- view blog

- search blog

- display blogs (pagination)

- profile view and edit

Apart from these, please suggest which **ML or AI** features I could add to the website

so that the project is a good fit for my portfolio.

_Visit my portfolio website at https://abdullahs-portfolio.vercel.app/_ | abdullah_ali_eb8b6b0c2208 |

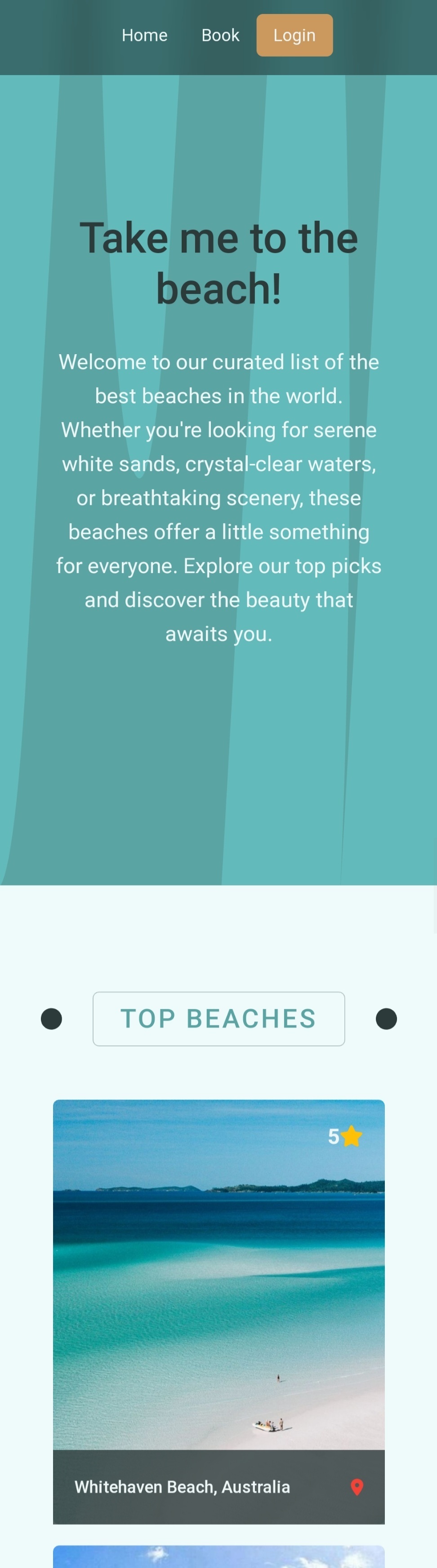

1,873,260 | Take me to a Beach! T-T | This is a submission for [Frontend Challenge... | 0 | 2024-06-01T20:29:27 | https://dev.to/dhrutisubham03/take-me-to-a-beach-t-t-5c5a | devchallenge, frontendchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches_

## What I Built

I was trying to build a table on a beach that would show different beaches in the form of cards. I wanted to give it a relaxed and "beachy" look with the help of textures and warm colors.

## Demo

I have made this for PC screens only (pardon me for being lazy). Best viewed at 1024x532.

Keyboard Inputs are also supported:

```

SpaceBar: To begin exploring.

Left and Right Arrows: To navigate the gallery.

Escape: To exit the gallery and move to landing page.

```

{% codepen https://codepen.io/dhrutisubham/pen/vYwxyNR %}

_Edit: I am facing some issues with applying a hosted font on CodePen. Make sure you check out the Netlify site as well :)_

_[View the full code here](https://github.com/dhrutisubham/glam-up-dhrutisundar)_

_[Hosted on Netlify as well](https://beaches-damn.netlify.app/)_

## Journey

I was excited to participate in the frontend challenges (Yes! This is the first time :P). When I saw the topic, I started diving deep into all kinds of illustrations (Freepik) and fonts (Google Fonts and Google Search :P) I could collect to get something into shape.

I thought showing different beaches on paper cards would be simple and cool. So, I dived into Figma and built a desktop screen for the landing page.

Given that beaches are filled with the subtle movements of nature (waves, wind and creatures), I wanted to add some transitions and animations with the help of [GSAP](https://gsap.com/). I was also dreaming of adding a crab that would randomly crawl in and out, but I decided to be happy with being able to imagine it. (Laziness-2 Me-0)

## Credits

Illustrations: [Freepik](https://freepik.com)

Beach Images: [Google Images](https://images.google.com)

Fonts: [Beach](https://www.1001fonts.com/beach-font.html), [Josefin Sans](https://fonts.google.com/specimen/Josefin+Sans)

Transitions: [GSAP](https://gsap.com/)

| dhrutisubham03 |