id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

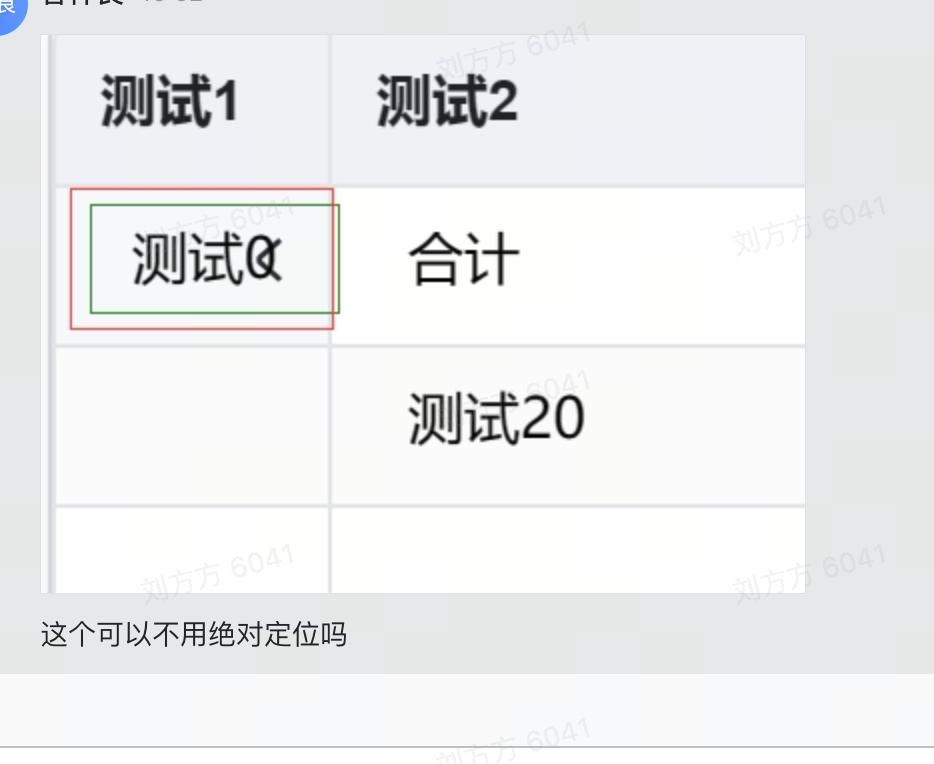

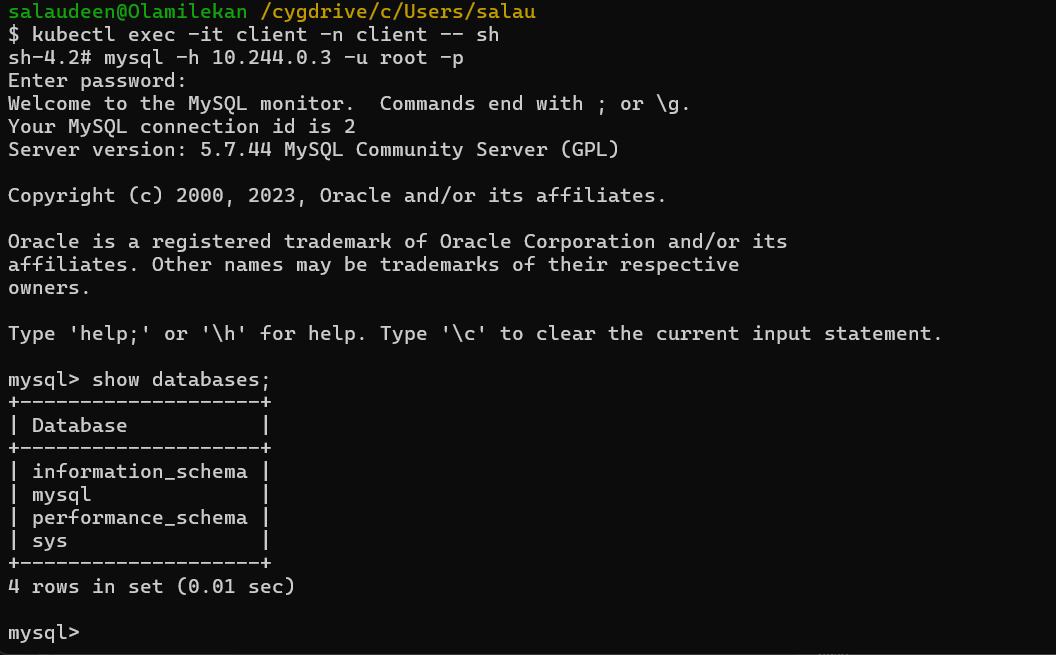

1,869,450 | Let’s highlight | Our team has been diligently adding screenshots to highlight the UI improvements made by PRs. While... | 0 | 2024-06-02T09:08:29 | https://dev.to/rationalkunal/lets-highlight-5lc | ios, swift, opensource | Our team has been diligently adding screenshots to highlight the UI improvements made by PRs. While this approach has been beneficial in pinpointing changes, it has become a cumbersome task when multiple UI elements are updated. The need to adhere to this guideline often results in the addition of numerous screenshots, which is a time-consuming process.

That's when I had a breakthrough: What if we could draw a border directly in the app to highlight changes? This would eliminate the bottleneck of manually adding borders by quick edit.

And here we are.

The plan is to create border drawing functionality:

- A simple keypress should trigger it.

- It should be separate from the app, i.e., the drawing should not affect it.

- We should be able to draw rectangles by just swiping.

## Trigger drawing functionality by keyboard keypress

I had already seen similar functionality in the FLEX tool, so that was my starting point. Interestingly, an app running on a simulator receives keyboard events as `UIPhysicalKeyboardEvent`, which exposes `_modifiedInput`, `_isKeyDown`, etc. Because these are private classes and Swift is a type-safe language, we have to read those properties through key-value coding (`value(forKey:)`). To intercept every event sent to the application, I swizzled `UIApplication.sendEvent(_:)`.

```swift

// Hack: To look into keyboard events

extension UIEvent {

var modifiedInput: String? { self.value(forKey: "_modifiedInput") as? String }

var isKeyDown: Bool? { self.value(forKey: "_isKeyDown") as? Bool }

}

class ShortcutManager {

var actionsForKeyInputs: [String: () -> Void] = [:]

// Registers an action to be performed when "key" is pressed by the user

// Note: This will override existing action for the "key"

func registerShortcut(withKey key: String, action: @escaping () -> Void) {

actionsForKeyInputs[key] = action

}

func handleKeyboardEvent(pressedKey: String) {

if let action = actionsForKeyInputs[pressedKey] {

action()

}

}

func interceptedSendEvent(_ event: UIEvent) {

guard event.isKeyDown ?? false else { return }

if let input = event.modifiedInput {

handleKeyboardEvent(pressedKey: input)

}

}

}

// Handles swizzling: just call `ShortcutManager._swizzle()`

// Expect call to `ShortcutManager.interceptedSendEvent(_: UIEvent)` when any event is performed on `UIApplication`.

extension ShortcutManager {

static func _swizzle() {

let originalSelector = #selector(UIApplication.sendEvent(_:))

let swizzledSelector = #selector(UIApplication.swizzled_sendEvent(_:))

guard let originalMethod = class_getInstanceMethod(UIApplication.self, originalSelector),

let swizzledMethod = class_getInstanceMethod(UIApplication.self, swizzledSelector) else {

return

}

let didAddMethod = class_addMethod(UIApplication.self, originalSelector, method_getImplementation(swizzledMethod), method_getTypeEncoding(swizzledMethod))

if didAddMethod {

class_replaceMethod(UIApplication.self, swizzledSelector, method_getImplementation(originalMethod), method_getTypeEncoding(originalMethod))

} else {

method_exchangeImplementations(originalMethod, swizzledMethod)

}

}

}

extension UIApplication {

@objc func swizzled_sendEvent(_ event: UIEvent) {

ShortcutManager.sharedInstance.interceptedSendEvent(event)

// Call original

swizzled_sendEvent(event)

}

}

```

_<sup>Check out the complete code and a few improvements at [github/rational-kunal/Picaso/ShortcutManager.swift](https://github.com/rational-kunal/Picaso/blob/main/Sources/Draw/ShortcutManager.swift)</sup>_

## Launch drawing functionality separate from the app

We can create a separate `UIWindow` and show it on top of the current window to keep our drawing functionality separate from the app's views.

I created a `CanvasManager` that toggles the drawing canvas when the `x` key is pressed.

```swift

class CanvasManager {

var isCanvasActive: Bool { self.canvasWindow.windowScene != nil }

/// Default initialization

/// - Shortcut "x" to toggle canvas

static func defaultInitialization() {

ShortcutManager.sharedInstance.registerShortcut(withKey: "x",

action: {

CanvasManager.sharedInstance.toggleCanvas()

})

}

public func toggleCanvas() {

isCanvasActive ? hideCanvas() : showCanvas()

}

public func showCanvas() {

guard let windowScene = UIApplication.shared.activeWindowScene else { return }

canvasWindow.rootViewController = makeRootViewController()

canvasWindow.windowScene = windowScene

canvasWindow.isHidden = false

}

public func hideCanvas() {

canvasWindow.rootViewController = nil

canvasWindow.windowScene = nil

canvasWindow.isHidden = true

}

}

```

_<sup>Check out the full code at [github/rational-kunal/Picaso/CanvasManager.swift](https://github.com/rational-kunal/Picaso/blob/main/Sources/Draw/CanvasManager.swift)</sup>_

## Draw!

To draw a border, I created a subclass of `UIView` and maintained `startPoint` and `endPoint`. We will update those in `touchesBegan()` and `touchesMoved()`. Finally, draw the rectangle from `startPoint` and `endPoint` in `draw()`.

```swift

class CanvasView: UIView {

private var startPoint: CGPoint = .zero

private var endPoint: CGPoint = .zero

override func touchesBegan(_ touches: Set<UITouch>, with event: UIEvent?) {

guard let touch = touches.first else { return }

startPoint = touch.location(in: self)

}

override func touchesMoved(_ touches: Set<UITouch>, with event: UIEvent?) {

guard let touch = touches.first else { return }

endPoint = touch.location(in: self)

setNeedsDisplay() // Request a redraw so `draw(_:)` picks up the new end point

}

override func draw(_ rect: CGRect) {

let rectangle = CGRect(x: min(startPoint.x, endPoint.x), y: min(startPoint.y, endPoint.y),

width: abs(startPoint.x - endPoint.x), height: abs(startPoint.y - endPoint.y))

let roundedRectangle = UIBezierPath(roundedRect: rectangle, cornerRadius: 3.5)

roundedRectangle.lineWidth = 2.5

UIColor.clear.setFill()

UIColor.red.setStroke()

roundedRectangle.stroke()

}

}

```

_<sup>Check out the complete code for this at [github/rational-kunal/Picaso/CanvasView.swift](https://github.com/rational-kunal/Picaso/blob/main/Sources/Draw/CanvasManager.swift)</sup>_
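The rectangle math in `draw()` does not depend on UIKit, so it can be checked in isolation. Here is a minimal sketch in Python (used only so the example runs anywhere); the names `Rect` and `rect_from_points` are hypothetical and not part of the Picaso code.

```python
from typing import NamedTuple, Tuple


class Rect(NamedTuple):
    x: float
    y: float
    width: float
    height: float


def rect_from_points(start: Tuple[float, float], end: Tuple[float, float]) -> Rect:
    """Build a rectangle from two opposite corners, whatever the drag direction."""
    (sx, sy), (ex, ey) = start, end
    # The origin is the smaller coordinate on each axis; the size is the distance.
    return Rect(x=min(sx, ex), y=min(sy, ey), width=abs(sx - ex), height=abs(sy - ey))


if __name__ == "__main__":
    # Dragging up and to the left still yields a rectangle with positive size.
    print(rect_from_points((120, 200), (40, 80)))
```

Taking `min` for the origin and `abs` for the size is what lets the swipe start from any corner.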

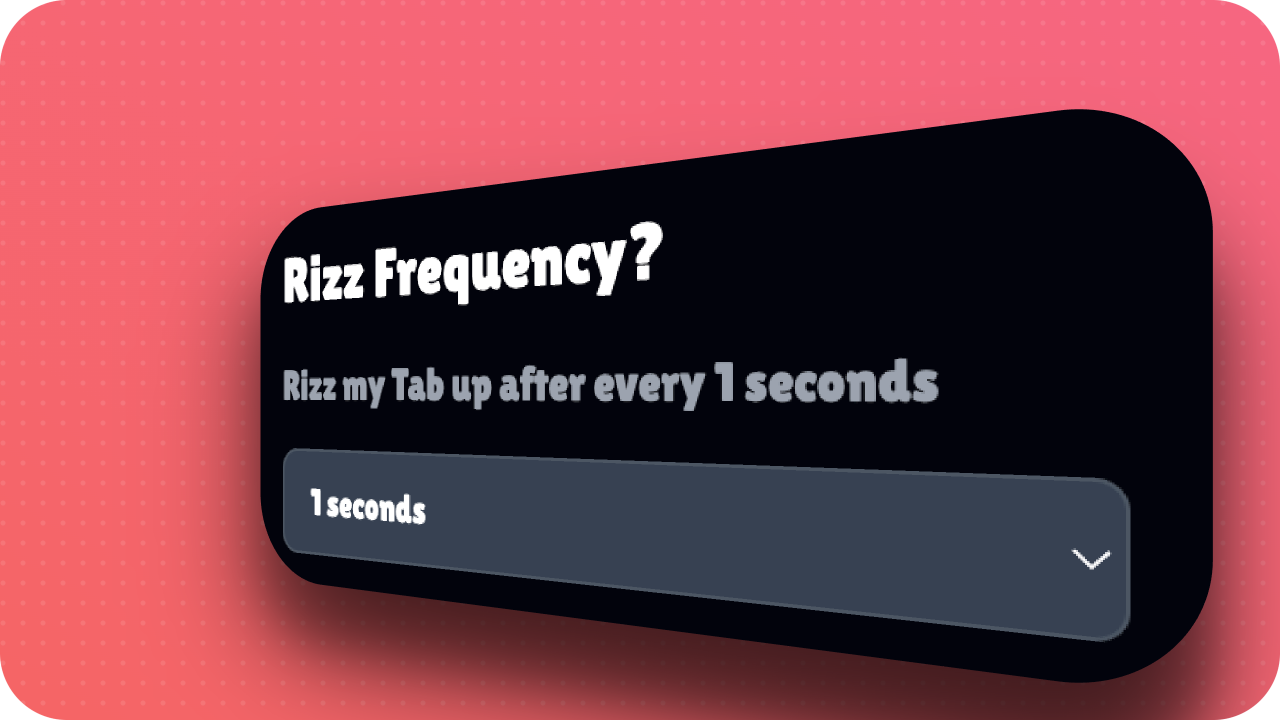

With this, we have the following output

<img src="https://dev-to-uploads.s3.amazonaws.com/uploads/articles/nzcl571jbttv8issrmmy.gif" />

## Let's over-engineer

As a further improvement, I implemented a way to resize the rectangle by dragging its corners. To achieve this, we need to manage each corner and the selection state of the rectangle. Finally, I packaged this as a Swift package for anyone who wants to try the functionality with a quick setup.

<img src="https://dev-to-uploads.s3.amazonaws.com/uploads/articles/7odqigxkas4reo34bggb.gif" />

Final comparison of before and after workflow of taking screenshots

| Before | After |
|--------|-------|
| | |

With this, I 1) improved productivity by removing the need to edit screenshots and 2) learned a few new things, such as `UIPhysicalKeyboardEvent`. Please check out the complete plugin at [github/rational-kunal/Draw](https://github.com/rational-kunal/Draw), and leave a star if you liked it.

Thank you for reading. | rationalkunal |

1,873,583 | My GitHub Profile | Hello, I'm Sudhanshu Ambastha 👋 I'm a dedicated developer who enjoys tackling coding... | 0 | 2024-06-02T09:07:32 | https://dev.to/sudhanshuambastha/my-github-profile-47bc | markdown, profilereadme, commits | ## Hello, I'm Sudhanshu Ambastha 👋

I'm a dedicated developer who enjoys tackling coding challenges and finding elegant solutions. In my free time, I explore new technologies and contribute to open-source projects. If you're interested in collaborating on exciting projects or discussing tech-related topics, feel free to reach out to me!

**Gratitude**

I recently came across a **_[post](https://dev.to/syeo66/how-i-got-to-2000-followers-on-devto-118e)_** discussing how some followers on this platform might be bots or spammers. This made me reflect on my own follower count, which is currently **_3536_**, especially considering the significant increase in followers I experienced in a single day. While it's possible that some followers might not be genuine, I am grateful for a total of **_1K views_** on all my posts combined from those who are genuinely engaging with my content. Thank you to everyone who supports me with likes and authentic engagement. Your support motivates me to continue creating valuable content. I will do my best to keep providing the quality content you enjoy.

**GitHub Profile Stats**

_Stars: 3

Clones: 16

Views: 64

Followers: 44_

I truly value the support from individuals like those **44 GitHub followers**. While many have cloned my projects, only a few have shown interest by granting them a star. **_Plagiarism is bad_**, and even if you are copying it, just consider giving it a star. If you're new and want to check traffic or how many people have cloned your project, simply visit your _desired repo > Insights > Traffic_.

I understand that not everyone may choose to star the project, but I kindly ask that if you find it useful, please consider giving it a star. It's a small gesture that means a lot to me and motivates me to generate more innovative ideas.

**Connect with Me**

You can connect with me on **_[GitHub](https://github.com/Sudhanshu-Ambastha)_**, _**[LinkedIn](https://www.linkedin.com/in/sudhanshu-ambastha-8a0b332a4/)**_ and support my work there, just as you do on **dev.to** and on **_[X](https://x.com/Sudhanshu79093)_**. Your continued support is crucial, and together we can achieve even greater milestones.

Thank you once again, and let's keep pushing the boundaries of what's possible in tech! | sudhanshuambastha |

1,839,271 | Django and PostgreSQL | Install Django on Mac: python3 -m pip install Django Enter fullscreen mode ... | 0 | 2024-06-02T09:06:36 | https://dev.to/ajeetraina/django-and-postgresql-4bfi | ## Install Django on Mac:

```

python3 -m pip install Django

```

## Creating a Django project:

```

django-admin startproject mysite

```

## View the project

```

tree mysite
mysite
├── manage.py
└── mysite
    ├── __init__.py
    ├── asgi.py
    ├── settings.py
    ├── urls.py
    └── wsgi.py

2 directories, 6 files

```

These files are:

- The outer mysite/ root directory is a container for your project. Its name doesn’t matter to Django; you can rename it to anything you like.

- manage.py: A command-line utility that lets you interact with this Django project in various ways. You can read all the details about manage.py in django-admin and manage.py.

- The inner mysite/ directory is the actual Python package for your project. Its name is the Python package name you’ll need to use to import anything inside it (e.g. mysite.urls).

- mysite/__init__.py: An empty file that tells Python that this directory should be considered a Python package. If you’re a Python beginner, read more about packages in the official Python docs.

- mysite/settings.py: Settings/configuration for this Django project. Django settings will tell you all about how settings work.

- mysite/urls.py: The URL declarations for this Django project; a “table of contents” of your Django-powered site. You can read more about URLs in URL dispatcher.

- mysite/asgi.py: An entry-point for ASGI-compatible web servers to serve your project. See How to deploy with ASGI for more details.

- mysite/wsgi.py: An entry-point for WSGI-compatible web servers to serve your project. See How to deploy with WSGI for more details.
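To confirm that a freshly generated project matches the layout described above, a small check along these lines could help. This is only a sketch using the standard library; the helper name `missing_project_files` is hypothetical and is not part of Django.

```python
from pathlib import Path
from typing import List, Sequence

# Files that `django-admin startproject mysite` produced in the listing above.
EXPECTED_FILES = (
    "manage.py",
    "mysite/__init__.py",
    "mysite/asgi.py",
    "mysite/settings.py",
    "mysite/urls.py",
    "mysite/wsgi.py",
)


def missing_project_files(project_root: str, expected: Sequence[str] = EXPECTED_FILES) -> List[str]:
    """Return the expected files that are absent under project_root."""
    root = Path(project_root)
    return [rel for rel in expected if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = missing_project_files("mysite")
    print("layout looks complete" if not missing else f"missing: {missing}")
```

Run it from the directory that contains the outer `mysite/` folder.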

```

cd mysite

python3 manage.py runserver

Watching for file changes with StatReloader

Performing system checks...

System check identified no issues (0 silenced).

You have 18 unapplied migration(s). Your project may not work properly until you apply the migrations for app(s): admin, auth, contenttypes, sessions.

Run 'python manage.py migrate' to apply them.

May 01, 2024 - 05:29:06

Django version 5.0.4, using settings 'mysite.settings'

Starting development server at http://127.0.0.1:8000/

Quit the server with CONTROL-C.

[01/May/2024 05:29:21] "GET / HTTP/1.1" 200 10629

Not Found: /favicon.ico

[01/May/2024 05:29:22] "GET /favicon.ico HTTP/1.1" 404 2110

```

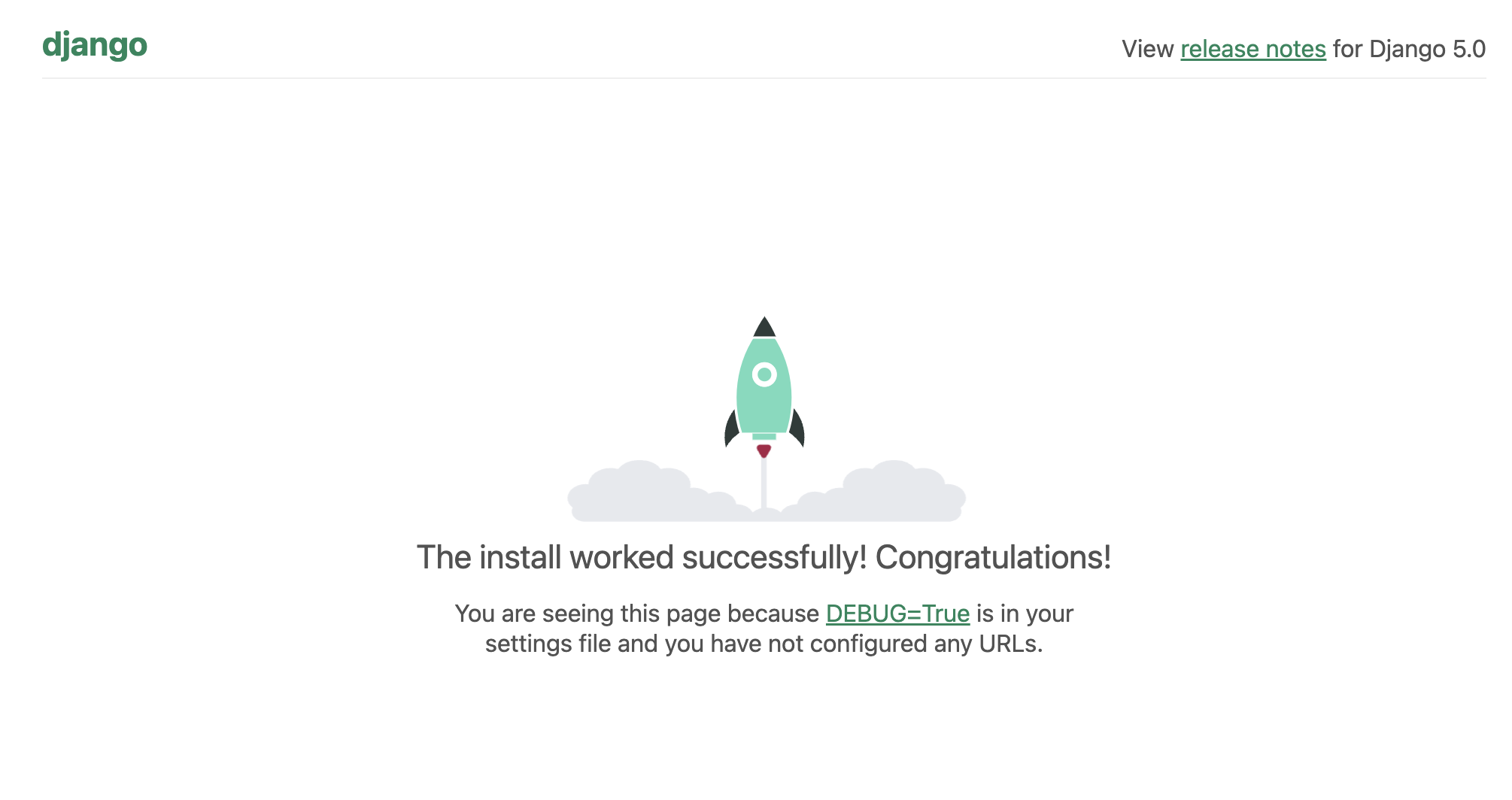

You’ve started the Django development server, a lightweight web server written purely in Python. It ships with Django so you can develop things rapidly, without having to deal with configuring a production server – such as Apache – until you’re ready for production.

| ajeetraina | |

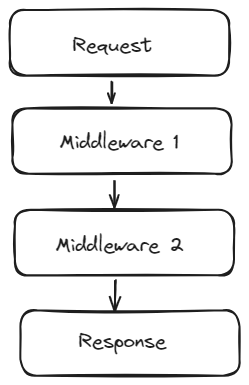

1,873,582 | FastAPI Microservices Deployment Using Kubernetes | In this article we are going to create 2 microservices in FastAPI and deploy them using kubernetes on... | 0 | 2024-06-02T09:05:14 | https://dev.to/sumangaire52/fastapi-microservices-deployment-using-kubernetes-4n4j | fastapi, docker, kubernetes, minikube | In this article we are going to create 2 microservices in FastAPI and deploy them using kubernetes on minikube. We are going to use kubernetes ingress to route requests to the respective microservices.

First, let’s go through the kubernetes features we are going to use in this article.

Kubernetes Deployment: A Kubernetes Deployment automates the management of application updates and scaling. It defines the desired state for an application, such as the number of replicas, the container image to use, and update strategies. The Deployment controller ensures that the actual state of the application matches the desired state by creating and updating pods as needed. Deployments support rolling updates, rollback capabilities, and self-healing, making it easier to maintain application availability and reliability during changes. This abstraction simplifies deploying, scaling, and managing stateless applications in a Kubernetes cluster. [https://kubernetes.io/docs/concepts/workloads/controllers/deployment/](https://kubernetes.io/docs/concepts/workloads/controllers/deployment/)

Kubernetes Service: A Kubernetes Service is an abstraction that defines a logical set of pods and a policy for accessing them, usually via a stable IP address and DNS name. Services enable communication between different parts of an application without needing to know the pods’ IP addresses, which can change.

[https://kubernetes.io/docs/tutorials/kubernetes-basics/expose/expose-intro/](https://kubernetes.io/docs/tutorials/kubernetes-basics/expose/expose-intro/)

Kubernetes Ingress: Kubernetes Ingress manages external access to services within a Kubernetes cluster. In our application we are going to use it to route traffic to the respective services (i.e., microservice 1 or microservice 2). It defines rules to route traffic based on hostnames and paths to specific services. An Ingress controller, such as NGINX or Traefik, implements these rules, providing centralized management, load balancing, SSL termination, and path-based routing. An Ingress resource has no effect on its own; an Ingress controller must be deployed in the cluster for the rules to be applied.

[https://kubernetes.io/docs/concepts/services-networking/ingress/#what-is-ingress](https://kubernetes.io/docs/concepts/services-networking/ingress/#what-is-ingress)

**Microservice 1**:

Microservice 1 is going to be pretty straightforward. It is going to have only one endpoint which is going to return “You requested microservice 1”.

- Create a folder named microservice_1 and add a file named main.py.

```

# main.py (microservice 1)
from fastapi import FastAPI

app = FastAPI()


@app.get("/")
async def root():
    return {"message": "You requested microservice 1"}

```

- Add requirements.txt file

`fastapi==0.111.0`

- Create Dockerfile

```

FROM python:3.10-slim

WORKDIR /app

COPY requirements.txt requirements.txt

RUN pip install -r requirements.txt

COPY . .

CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "80"]

```

- Build the docker image and push it to dockerhub.

Make sure to tag the image in the `username/image_name:version` format. Otherwise kubernetes won’t be able to pull the image from Docker Hub. Run these commands from the directory where the Dockerfile for microservice 1 is located.

```

$ docker build . -t sumangaire96/microservice1:v1

$ docker push sumangaire96/microservice1:v1

```
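A quick way to catch a badly formed tag before building is to validate it first. The following is a rough sketch; the pattern is deliberately simplified and does not cover every reference Docker accepts, and `ImageRef` and `parse_image_ref` are hypothetical names introduced only for illustration.

```python
import re
from typing import NamedTuple


class ImageRef(NamedTuple):
    username: str
    name: str
    version: str


# Simplified pattern for the username/image_name:version shape used in this article.
_IMAGE_RE = re.compile(
    r"^(?P<username>[a-z0-9]+)/(?P<name>[a-z0-9._-]+):(?P<version>[A-Za-z0-9._-]+)$"
)


def parse_image_ref(ref: str) -> ImageRef:
    """Split a reference into its parts, or raise ValueError if the shape is wrong."""
    match = _IMAGE_RE.match(ref)
    if match is None:
        raise ValueError(f"expected username/image_name:version, got {ref!r}")
    return ImageRef(**match.groupdict())


if __name__ == "__main__":
    print(parse_image_ref("sumangaire96/microservice1:v1"))
```

A reference without the username prefix or without a version, such as `microservice1`, raises `ValueError` in this sketch.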

**Microservice 2**:

Microservice 2 is also going to have only one endpoint which is going to return “You requested microservice 2”.

- Create a folder named microservice_2 and add a file named main.py.

```

# main.py (microservice 2)
from fastapi import FastAPI

app = FastAPI()


@app.get("/")
async def root():
    return {"message": "You requested microservice 2"}

```

- Add requirements.txt

`fastapi==0.111.0`

- Add Dockerfile

```

FROM python:3.10-slim

WORKDIR /app

COPY requirements.txt requirements.txt

RUN pip install -r requirements.txt

COPY . .

CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "80"]

```

- Build the docker image and push it to dockerhub.

`$ docker build . -t sumangaire96/microservice2:v1`

`$ docker push sumangaire96/microservice2:v1`

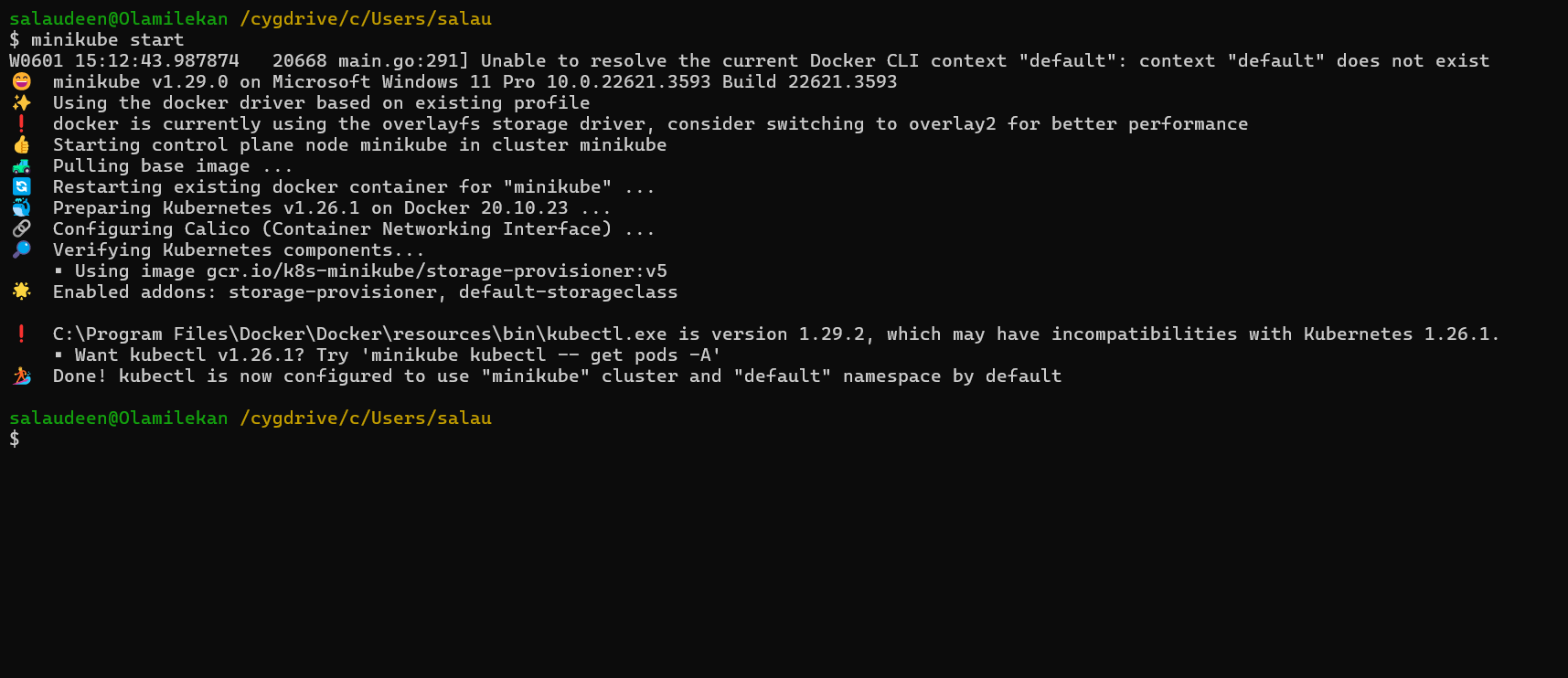

**Minikube**:

Make sure you have minikube installed. If it is not installed follow this link https://minikube.sigs.k8s.io/docs/start/. Make sure to have kubectl installed to interact with minikube cluster from the terminal. If it is not installed follow this link [https://kubernetes.io/docs/tasks/tools/](https://kubernetes.io/docs/tasks/tools/).

- Create a kubernetes cluster.

`$ minikube start`

- Enable ingress in minikube.

`$ minikube addons enable ingress`

- Create kubernetes manifest file for microservice 1.

```

# kubernetes/microservice_1.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: microservice1-deployment
spec:
  replicas: 2
  selector:
    matchLabels:
      app: microservice1
  template:
    metadata:
      labels:
        app: microservice1
    spec:
      containers:
        - name: microservice1
          image: sumangaire96/microservice1:v1
          ports:
            - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: microservice1-service
spec:
  selector:
    app: microservice1
  ports:
    - protocol: TCP
      port: 80
      targetPort: 80
  type: ClusterIP

```

- Create kubernetes manifest file for microservice 2.

```

# kubernetes/microservice_2.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: microservice2-deployment
spec:
  replicas: 2
  selector:
    matchLabels:
      app: microservice2
  template:
    metadata:
      labels:
        app: microservice2
    spec:
      containers:
        - name: microservice2
          image: sumangaire96/microservice2:v1
          ports:
            - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: microservice2-service
spec:
  selector:
    app: microservice2
  ports:
    - protocol: TCP
      port: 80
      targetPort: 80
  type: ClusterIP

```

- Create kubernetes ingress manifest file.

```

# kubernetes/ingress.yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: app-ingress
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  rules:
    - host: microservice1.local
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: microservice1-service
                port:
                  number: 80
    - host: microservice2.local
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: microservice2-service
                port:
                  number: 80

```
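To build some intuition for how these rules pick a backend, here is a simplified model in Python. It only mirrors the idea of host matching plus `pathType: Prefix` matching (element by element, longest prefix wins); it is not how the NGINX Ingress controller is implemented, and `RULES`, `matches_prefix` and `route` are hypothetical names.

```python
from typing import Dict, List, Optional, Tuple

# host -> list of (path prefix, backend service name, service port),
# copied from the ingress manifest above.
RULES: Dict[str, List[Tuple[str, str, int]]] = {
    "microservice1.local": [("/", "microservice1-service", 80)],
    "microservice2.local": [("/", "microservice2-service", 80)],
}


def matches_prefix(path: str, prefix: str) -> bool:
    """Match whole path elements, so /foo matches /foo/bar but not /foobar."""
    if prefix == "/":
        return True
    prefix = prefix.rstrip("/")
    return path == prefix or path.startswith(prefix + "/")


def route(host: str, path: str) -> Optional[Tuple[str, int]]:
    """Return (service, port) for a request, or None when no rule applies."""
    candidates = [rule for rule in RULES.get(host, []) if matches_prefix(path, rule[0])]
    if not candidates:
        return None
    # When several prefixes match, the longest one takes precedence.
    _, service, port = max(candidates, key=lambda rule: len(rule[0]))
    return service, port


if __name__ == "__main__":
    print(route("microservice1.local", "/"))
    print(route("microservice2.local", "/docs"))
    print(route("unknown.local", "/"))
```

Since both hosts use the single prefix `/`, the hostname alone decides which service receives the request.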

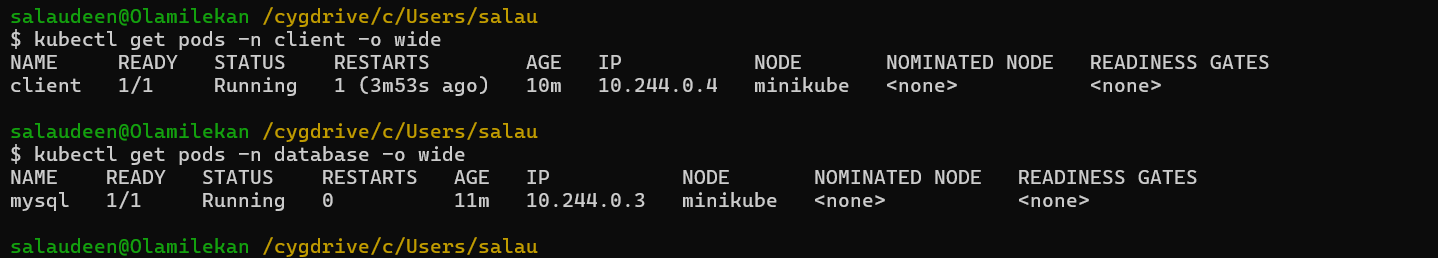

- Apply manifests

`$ kubectl apply -f microservice_1.yaml`

`$ kubectl apply -f microservice_2.yaml`

`$ kubectl apply -f ingress.yaml`

- Update hosts file to map the hostnames to the Minikube IP.

`$ echo "$(minikube ip) microservice1.local" | sudo tee -a /etc/hosts`

`$ echo "$(minikube ip) microservice2.local" | sudo tee -a /etc/hosts`

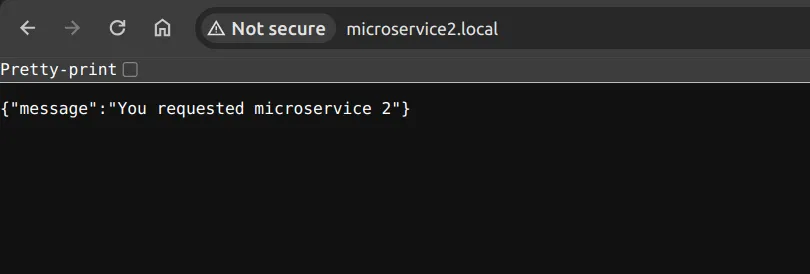

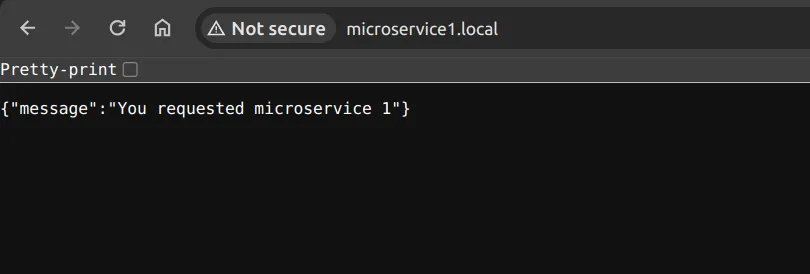

- Now go to url microservice1.local and microservice2.local. You should see output like this:

Congratulations! We have created 2 microservices in FastAPI and successfully deployed them using kubernetes. Make sure to follow me for upcoming articles. | sumangaire52 |

1,831,723 | What is Docker Debug and what problem does it solve? | The docker debug is a new command introduced in Docker Desktop 4.27.0. It allows you to open a... | 0 | 2024-06-02T09:05:08 | https://dev.to/ajeetraina/what-is-docker-debug-and-what-problem-does-it-solve-3f7g |

`docker debug` is a new command introduced in Docker Desktop 4.27.0. It allows you to open a debug shell into any container or image. It's currently in beta and available to Pro subscribers. The command aims to provide a more comprehensive ecosystem of debugging tools and is seen as a crucial part of the Docker experience, not just a single CLI command.

Docker Debug is a tool designed to speed up debugging and improve the troubleshooting process in Docker.

## What problem does it solve?

One of the main problems it tackles is the difficulty of debugging running and stopped containers. Docker Debug allows developers to join the execution context of a target container or create a temporary execution context for stopped containers, enabling them to investigate the containers.

Docker Debug also improves the shell environment for users, making it user-friendly and efficient. It includes features such as a descriptive prompt, auto-detection of the shell the user is using, persistent history across sessions, and a Nix-based package manager for adding tools as needed.

Additionally, Docker Debug helps with the issue of containers exiting unexpectedly, which previously made debugging impossible at that point.

By addressing these issues, Docker Debug enhances the usability of Docker and makes it easier for users to troubleshoot their applications.

It addresses several key issues:

1. The inability to effectively debug running and stopped containers. Docker Debug allows users to join the execution context of a target container or create a temporary execution context for stopped containers, enabling them to investigate the containers.

2. Difficulties in using the `docker exec` command, which only works for containers that ship with a shell and does not work for stopped containers.

3. The need for a user-friendly and efficient shell environment, which Docker Debug aims to provide. This includes features such as a descriptive prompt, auto-detection of the shell the user is using, persistent history across sessions, and a nix-based package manager for adding tools as needed.

4. The problem of containers exiting unexpectedly, which makes debugging impossible at that point.


## Who’s this for?

Docker Debug is primarily targeted at software developers and IT professionals who work with containerized applications, especially those using Docker for development and deployment. Here's a breakdown of who would benefit most:

### Developers:

- Building and deploying containerized applications

- Debugging issues within running or stopped containers

- Investigating runtime behavior and troubleshooting problems

- Simplifying the debugging workflow and streamlining development cycles

### IT professionals:

- Maintaining and managing containerized environments

- Diagnosing and fixing problems in production deployments

- Analyzing container performance and resource utilization

- Understanding the internals of containerized applications

While Docker Debug is currently in beta and limited to Pro subscribers, its potential benefits extend beyond these primary groups. Anyone who interacts with containerized applications, including system administrators and DevOps engineers, can benefit from its enhanced debugging capabilities and efficient shell environment.

## Getting Started

1. Ensure that you have Docker Desktop 4.27.0+ installed on your computer.

2. Verify that the `docker debug` command is working correctly

```

docker debug --help

Usage: docker debug [OPTIONS] {CONTAINER|IMAGE}

Get an enhanced shell with additional tools into any container or image

Options:

-c, --command string Evaluate the specified commands instead, passing additional positional arguments through

$argv.

--host string Daemon docker socket to connect to. E.g.: 'ssh://root@example.org',

'unix:///some/path/docker.sock'

--shell shell Select a shell. Supported: "bash", "fish", "zsh", "auto". (default auto)

--version Display version of the docker-debug plugin

```

3. Running Docker Debug for Docker Images

The `docker debug` command requires a container or image name as a command-line argument. Even if you don’t have a container up and running, or the running container doesn’t ship with a shell, Docker Debug still gives you a shell to work in.

I assume you just installed Docker Desktop 4.27.0 and right now you don’t have any image or container running on your system. Let’s jump into the Nginx image directly.

```

docker debug nginx

Pulling image, this might take a moment...

0.0.22: Pulling from docker/desktop-docker-debug-service

10c7e62bcff5: Pull complete

Digest: sha256:60b0227c4304f2d703255448aba2863c9a97e21ad0233514de3c7f200904869f

Status: Downloaded newer image for docker/desktop-docker-debug-service:0.0.22

latest: Pulling from library/nginx

a5573528b1f0: Pull complete

8897d65c8417: Pull complete

fbc138d1d206: Pull complete

06f386eb9182: Pull complete

aeb2f3db77c3: Pull complete

64fb762834ec: Pull complete

e5a7e61f6ff4: Pull complete

Digest: sha256:4c0fdaa8b6341bfdeca5f18f7837462c80cff90527ee35ef185571e1c327beac

Status: Downloaded newer image for nginx:latest

▄

▄ ▄ ▄ ▀▄▀

▄ ▄ ▄ ▄ ▄▇▀ █▀▄ █▀█ █▀▀ █▄▀ █▀▀ █▀█

▀████████▀ █▄▀ █▄█ █▄▄ █ █ ██▄ █▀▄

▀█████▀ DEBUG

Builtin commands:

- install [tool1] [tool2] ... Add Nix packages from: https://search.nixos.org/packages

- uninstall [tool1] [tool2] ... Uninstall NixOS package(s).

- entrypoint Print/lint/run the entrypoint.

- builtins Show builtin commands.

Checks:

✓ distro: Debian GNU/Linux 12 (bookworm)

✓ entrypoint linter: no errors (run 'entrypoint' for details)

Note: This is a sandbox shell. All changes will not affect the actual image.

Version: 0.0.22 (BETA)

root@6c7be49d2a11 / [nginx:latest]

docker >

```

## Inspect the entrypoint

```

docker > entrypoint

Understand how ENTRYPOINT/CMD work and if they are set correctly.

From CMD in Dockerfile:

['nginx', '-g', 'daemon off;']

From ENTRYPOINT in Dockerfile:

['/docker-entrypoint.sh']

```

By default, any container from this image will be started with following command:

```

/docker-entrypoint.sh nginx -g daemon off;

path: /docker-entrypoint.sh

args: nginx -g daemon off;

cwd:

PATH: /usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin

Lint results:

PASS: '/docker-entrypoint.sh' found

PASS: no mixing of shell and exec form

PASS: no double use of shell form

Docs:

- https://docs.docker.com/engine/reference/builder/#cmd

- https://docs.docker.com/engine/reference/builder/#entrypoint

- https://docs.docker.com/engine/reference/builder/#understand-how-cmd-and-entrypoint-interact

root@6c7be49d2a11 / [nginx:latest]

```
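The default command reported above is the exec-form `ENTRYPOINT` followed by the exec-form `CMD`, which Docker passes as default arguments. A minimal sketch of that combination in Python, where the helper name `effective_command` is hypothetical:

```python
import shlex
from typing import List


def effective_command(entrypoint: List[str], cmd: List[str]) -> List[str]:
    """Exec-form ENTRYPOINT followed by exec-form CMD as its default arguments."""
    return list(entrypoint) + list(cmd)


if __name__ == "__main__":
    argv = effective_command(["/docker-entrypoint.sh"], ["nginx", "-g", "daemon off;"])
    # The argument vector the container starts with by default.
    print(argv)
    # The same command rendered as a shell-quoted string.
    print(shlex.join(argv))
```

Note that `daemon off;` stays a single argument, which is why the `args:` line in the output above should not be read as four separate words.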

## Viewing all builtin commands

```

builtins

A docker debugging toolbox. 0.0.22

Builtin commands:

- install [tool1] [tool2] ... Add Nix packages from: https://search.nixos.org/packages

- uninstall [tool1] [tool2] ... Uninstall NixOS package(s).

- entrypoint Print/lint/run the entrypoint.

- builtins Show builtin commands.

Note: This is a sandbox shell. All changes will not affect the actual image.

Version: 0.0.22 (BETA)

root@6c7be49d2a11 / [nginx:latest]

```

## Installing NixOS Packages

```

install crossplane

Tip: You can install any package available at: https://search.nixos.org/packages.

[2024-01-22T07:13:47.863328585Z][W] tcp keep alive: request failed (Not Found): 404

[2024-01-22T07:13:52.866284045Z][W] tcp keep alive: request failed (Not Found): 404

installing 'crossplane-0.5.8'

these 2 paths will be fetched (0.07 MiB download, 0.26 MiB unpacked):

/nix/store/dnyyy0nbr9ybyh6b49b450arp3d4zgnq-python3.10-crossplane-0.5.8

/nix/store/ah81s4zhrdiz24avnfj92jw440kxy7f0-python3.10-crossplane-0.5.8-dist

copying path '/nix/store/dnyyy0nbr9ybyh6b49b450arp3d4zgnq-python3.10-crossplane-0.5.8' from 'https://cache.nixos.org'...

copying path '/nix/store/ah81s4zhrdiz24avnfj92jw440kxy7f0-python3.10-crossplane-0.5.8-dist' from 'https://cache.nixos.org'...

building '/nix/store/ryyfmaqkmy80333cprzipb8v82ghl5nf-user-environment.drv'...

root@6c7be49d2a11 / [nginx:latest]

```

## Displaying the content of a file inside Nginx

```

docker > bash -c "cat /usr/share/nginx/html/index.html"

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

html { color-scheme: light dark; }

body { width: 35em; margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif; }

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

root@6c7be49d2a11 / [nginx:latest]

docker >

```

## Tools available in Docker Debug

A number of tools are available out of the box. They do not modify your container; they simply ship with the CLI.

## 1. The vim tool

```

docker > vim

root@6c7be49d2a11 / [nginx:latest]

docker > vi hello

root@6c7be49d2a11 / [nginx:latest]

docker > ls

bin dev docker-entrypoint.sh hello lib mnt opt root sbin sys usr

boot docker-entrypoint.d etc home media nix proc run srv tmp var

root@6c7be49d2a11 / [nginx:latest]

docker >

```

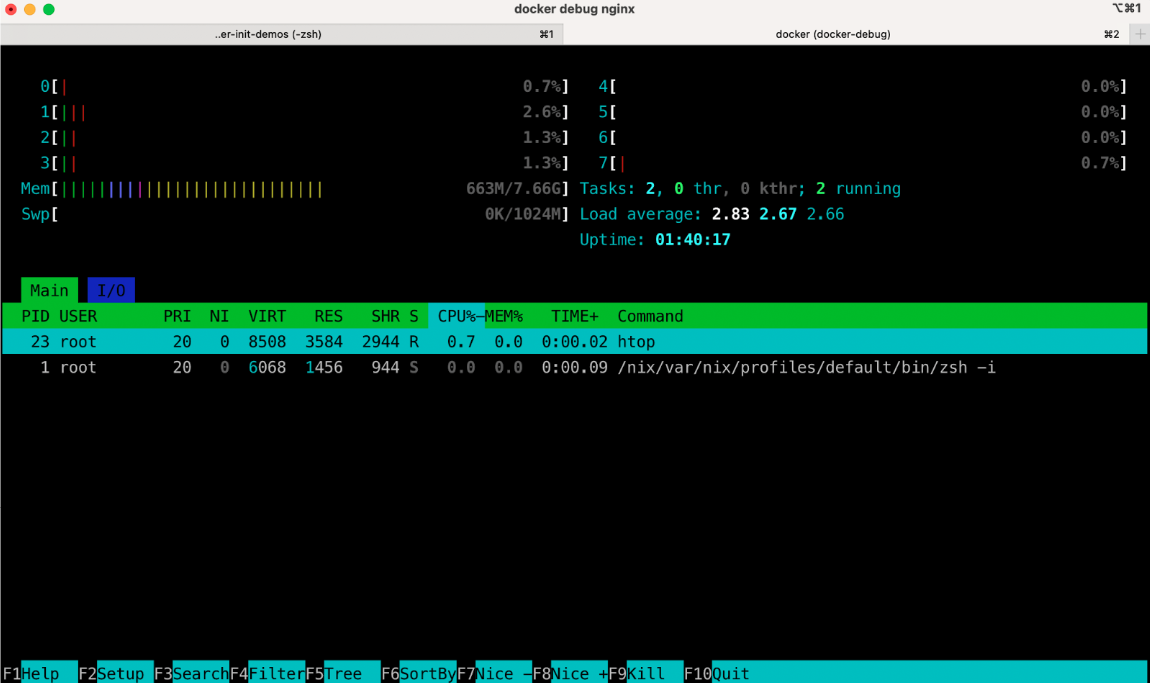

## 2. The Htop Tool

## 3. The which tool

```

which vim

/nix/var/nix/profiles/default/bin/vim

root@6c7be49d2a11 / [nginx:latest]

```

## 4. Forwarding the port temporarily

```

root@6c7be49d2a11 / [nginx:latest]

docker > forward --port 81:80 nginx

2024/01/22 09:54:02 [notice] 59#59: using the "epoll" event method

2024/01/22 09:54:02 [notice] 59#59: nginx/1.25.3

2024/01/22 09:54:02 [notice] 59#59: built by gcc 12.2.0 (Debian 12.2.0-14)

2024/01/22 09:54:02 [notice] 59#59: OS: Linux 6.6.12-linuxkit

2024/01/22 09:54:02 [notice] 59#59: getrlimit(RLIMIT_NOFILE): 1048576:1048576

2024/01/22 09:54:02 [notice] 64#64: start worker processes

2024/01/22 09:54:02 [notice] 64#64: start worker process 65

2024/01/22 09:54:02 [notice] 64#64: start worker process 66

2024/01/22 09:54:02 [notice] 64#64: start worker process 67

2024/01/22 09:54:02 [notice] 64#64: start worker process 68

2024/01/22 09:54:02 [notice] 64#64: start worker process 69

2024/01/22 09:54:02 [notice] 64#64: start worker process 70

2024/01/22 09:54:02 [notice] 64#64: start worker process 71

2024/01/22 09:54:02 [notice] 64#64: start worker process 72

```

## 5. Running Docker Debug for the running containers

```

docker run -d -p 6379:6379 redis

```

```

docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

a1ce95fc8430 redis "docker-entrypoint.s…" 26 seconds ago Up 26 seconds 0.0.0.0:6379->6379/tcp eloquent_hamilton

```

```

docker debug eloquent_hamilton

▄

▄ ▄ ▄ ▀▄▀

▄ ▄ ▄ ▄ ▄▇▀ █▀▄ █▀█ █▀▀ █▄▀ █▀▀ █▀█

▀████████▀ █▄▀ █▄█ █▄▄ █ █ ██▄ █▀▄

▀█████▀ DEBUG

Builtin commands:

- install [tool1] [tool2] ... Add Nix packages from: https://search.nixos.org/packages

- uninstall [tool1] [tool2] ... Uninstall NixOS package(s).

- entrypoint Print/lint/run the entrypoint.

- builtins Show builtin commands.

Checks:

✓ distro: Debian GNU/Linux 12 (bookworm)

✓ entrypoint linter: no errors (run 'entrypoint' for details)

This is an attach shell, i.e.:

- Any changes to the container filesystem are visible to the container directly.

- The /nix directory is invisible to the actual container.

Version: 0.0.22 (BETA)

redis@a1ce95fc8430 /data [eloquent_hamilton]

docker > ps -aef

UID PID PPID C STIME TTY TIME CMD

redis 1 0 0 10:08 ? 00:00:04 redis-server *:6379

root 47 0 0 10:24 pts/0 00:00:00 /bin/sh

root 54 47 0 10:24 pts/0 00:00:00 bash

redis 57 0 0 10:29 pts/0 00:00:00 /nix/var/nix/profiles/default/bin/zsh -i

redis 69 57 0 10:29 pts/0 00:00:00 ps -aef

redis@a1ce95fc8430 /data [eloquent_hamilton]

docker >

```

## 6. Installing the nmap package

```

docker > install nmap

Tip: You can install any package available at: https://search.nixos.org/packages.

[2024-01-22T10:36:46.561468511Z][W] tcp keep alive: request failed (Not Found): 404

[2024-01-22T10:36:51.567299097Z][W] tcp keep alive: request failed (Not Found): 404

replacing old 'nmap-7.93'

installing 'nmap-7.93'

redis@a1ce95fc8430 /data [eloquent_hamilton]

```

To check which ports are open on scanme.nmap.org, run the following command:

```

docker > nmap -v -A scanme.nmap.org

Starting Nmap 7.93 ( https://nmap.org ) at 2024-01-22 10:38 UTC

NSE: Loaded 155 scripts for scanning.

NSE: Script Pre-scanning.

Initiating NSE at 10:38

Completed NSE at 10:38, 0.00s elapsed

Initiating NSE at 10:38

Completed NSE at 10:38, 0.00s elapsed

Initiating NSE at 10:38

Completed NSE at 10:38, 0.00s elapsed

Initiating Ping Scan at 10:38

Scanning scanme.nmap.org (45.33.32.156) [2 ports]

Completed Ping Scan at 10:38, 0.22s elapsed (1 total hosts)

Initiating Parallel DNS resolution of 1 host. at 10:38

Completed Parallel DNS resolution of 1 host. at 10:38, 0.84s elapsed

Initiating Connect Scan at 10:38

Scanning scanme.nmap.org (45.33.32.156) [1000 ports]

Discovered open port 80/tcp on 45.33.32.156

Discovered open port 22/tcp on 45.33.32.156

```

| ajeetraina | |

1,843,082 | Getting Started with Nodejs | Node.js, built on Chrome's V8 JavaScript Engine, is a powerful JavaScript runtime that extends its... | 0 | 2024-06-02T09:04:28 | https://dev.to/ajeetraina/getting-started-with-nodejs-54pe | Node.js, built on Chrome's V8 JavaScript Engine, is a powerful JavaScript runtime that extends its capabilities to the server-side. Its asynchronous, event-driven nature, coupled with a non-blocking IO model, makes it exceptionally fast and efficient.

## Understanding Node.js

Node.js is built around an event loop: it runs your script's top-level code, registers callbacks, and then waits for events such as incoming requests, timers, or completed I/O. Because I/O is non-blocking, the single JavaScript thread does not sit idle waiting on one request; it moves on to the next and handles each result when its callback fires.
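A minimal sketch of that non-blocking behaviour, using only built-in timers. The `order` array and `runDemo` function are illustrative names, not part of Node.js. The timer callback is queued on the event loop, so the synchronous code after `setTimeout` runs first.

```javascript
// Records the order in which each step actually runs.
const order = [];

function runDemo(onDone) {
  order.push('start');

  // Schedule a callback; Node.js queues it and keeps going instead of waiting.
  setTimeout(() => {
    order.push('timer fired');
    onDone(order);
  }, 0);

  order.push('end of synchronous code');
}

runDemo((steps) => console.log(steps.join(' -> ')));
// prints "start -> end of synchronous code -> timer fired"
```

Even with a delay of 0 milliseconds, the callback only runs after the current synchronous code has finished.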

## Getting Started

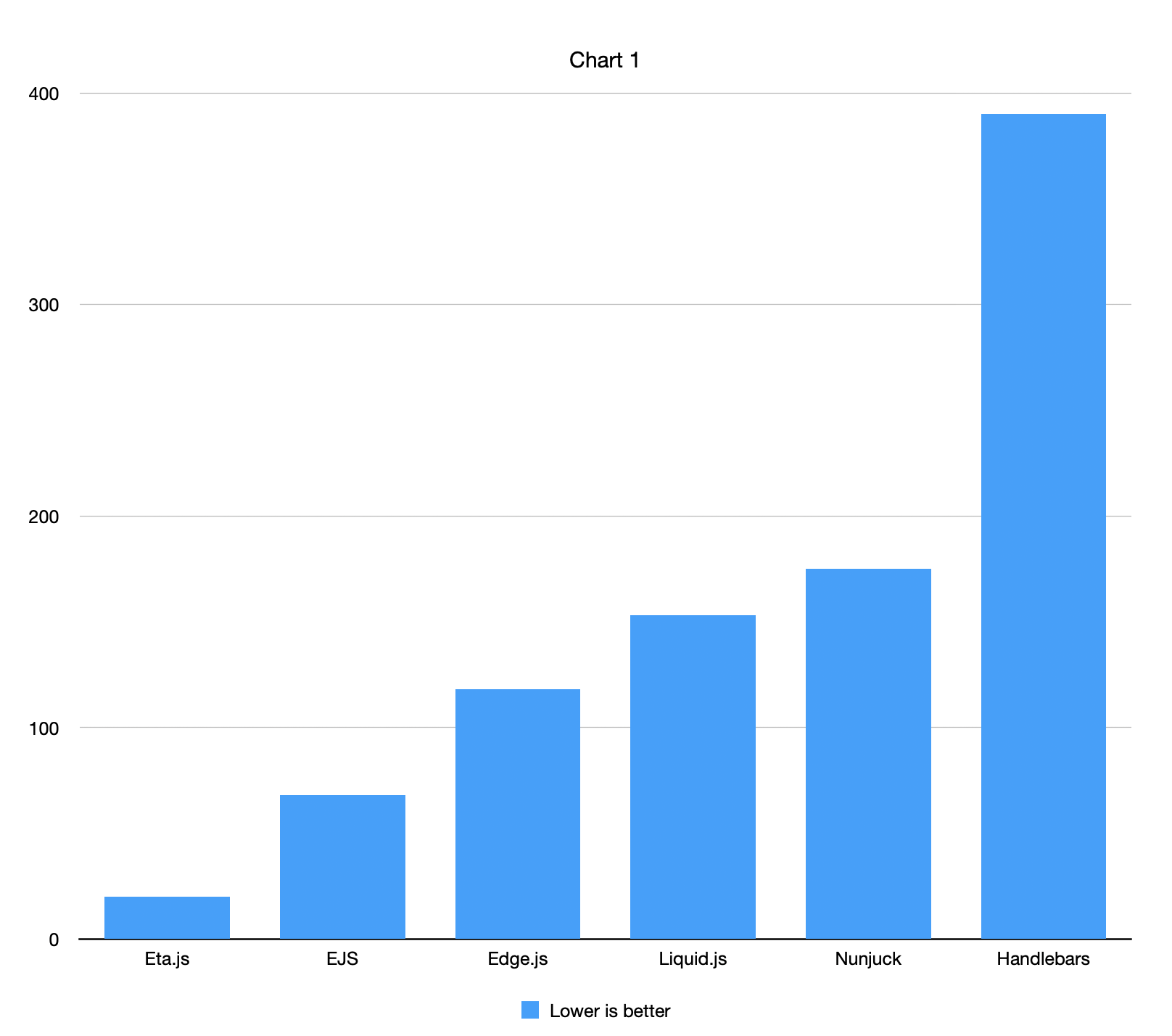

Let's walk through creating a simple Node.js application step by step. We'll create a basic web server using Express.js and render a dynamic webpage using Handlebars as the templating engine.

## Step 1: Set Up Your Project

Create a new directory for your project and navigate into it:

```

mkdir my-node-app

cd my-node-app

```

Initialize a new Node.js project:

```

npm init -y

```

This will create a package.json file with default settings.

## Step 2: Install Dependencies

Install Express.js and Handlebars as dependencies:

```

npm install express hbs

```

## Step 3: Create Your Server

Create a file named `app.js` in your project directory and open it in your code editor.

```

// Import required modules
const express = require('express');
const hbs = require('hbs');
const path = require('path');

// Create an Express app
const app = express();

// Set the view engine to Handlebars
app.set('view engine', 'hbs');

// Set the path for views and partials
app.set('views', path.join(__dirname, 'views'));
hbs.registerPartials(path.join(__dirname, 'views', 'partials'));

// Define routes
app.get('/', (req, res) => {
  // Render the index page with dynamic data
  res.render('index', {
    title: 'Home',
    author: 'Your Name'
  });
});

// Start the server
app.listen(3000, () => {
  console.log('Server is running on port 3000');
});

```

## Step 4: Create Your Views

Create a directory named `views` in your project directory. Inside it, create `index.hbs` and a `partials` subdirectory containing `header.hbs`.

File: `index.hbs`

```

<!DOCTYPE html>
<html>
  <head>
    <title>{{ title }}</title>
  </head>
  <body>
    {{!-- Partials registered from views/partials are named after their file, so header.hbs is "header" --}}
    {{> header}}
    <h1>Welcome to {{ title }}</h1>
    <p>Written by {{ author }}</p>
  </body>
</html>

```

File: `partials/header.hbs`

```

<header>
  <h1>My Node.js App</h1>
  <nav>
    <ul>
      <li><a href="/">Home</a></li>
    </ul>
  </nav>
</header>

```
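To make the data flow concrete: `res.render('index', {...})` hands the object to the template engine, which swaps each `{{ name }}` placeholder for the matching value. The toy function below imitates only that substitution step. It is an illustration under simplifying assumptions: `renderToy` is a made-up name, and real Handlebars also handles partials, HTML escaping, helpers, and much more.

```javascript
// Toy stand-in for placeholder substitution; NOT how Handlebars is implemented.
function renderToy(template, data) {
  // Match {{ key }} with optional whitespace inside the braces.
  return template.replace(/\{\{\s*(\w+)\s*\}\}/g, (match, key) =>
    Object.prototype.hasOwnProperty.call(data, key) ? String(data[key]) : ''
  );
}

const html = renderToy(
  '<h1>Welcome to {{ title }}</h1><p>Written by {{ author }}</p>',
  { title: 'Home', author: 'Your Name' }
);

console.log(html);
// prints "<h1>Welcome to Home</h1><p>Written by Your Name</p>"
```

Changing the values passed from the route changes the rendered page without touching the template, which is the point of keeping data and markup separate.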

## Step 5: Run Your Application

Navigate to your project directory in the terminal and run your Node.js application:

```

node app.js

```

Visit [http://localhost:3000](http://localhost:3000) in your web browser to see your Node.js application in action.

That's it! You've created a simple Node.js application with Express.js and Handlebars. Feel free to expand upon this example by adding more routes, views, or functionality as needed. | ajeetraina | |

1,843,302 | Integrating Backstage with Kubernetes | Kubernetes is an undeniable powerhouse in container orchestration, but its complexity can leave... | 0 | 2024-06-02T09:04:16 | https://dev.to/ajeetraina/integrating-backstage-with-kubernetes-2i1g | Kubernetes is an undeniable powerhouse in container orchestration, but its complexity can leave developers feeling lost at sea. This blog post explores how Backstage can act as a lighthouse, guiding developers through the murky waters of Kubernetes.

## The Kubernetes Challenge

There's no denying that Kubernetes packs a punch. However, its intricate nature can create a significant learning curve for developers. As a platform engineer, the goal is to provide tools that make Kubernetes more user-friendly and reduce this barrier to entry.

## Backstage: A Developer Portal Platform

Enter Backstage: a platform specifically designed for building developer portals. These portals function as a central hub for various development activities, including:

- Continuous Integration (CI) pipeline information

- Access to documentation

- Monitoring of Kubernetes deployments

## The Software Catalog: A Centralized Source

One of Backstage's core strengths is the software catalog. This catalog acts as a single source of truth for service information, including:

- Ownership details

- Git repository location

- Relationships between different services

The beauty of the software catalog lies in its adaptability and expandability. Developers can create custom plugins or leverage existing open-source options to tailor the catalog to their specific organizational needs.

## Backstage in Action: Everyday Kubernetes Tasks

The blog post dives into two practical use cases for the Backstage Kubernetes plugin. The first one addresses common developer questions, like "on which cluster is a particular service running?". Backstage eliminates the need to navigate to the Kubernetes dashboard for these basic inquiries.

The second use case tackles troubleshooting errors. Backstage aggregates crash logs from all the pods within a service and offers a convenient link to a log aggregation platform for further analysis.

## Boosting Developer Productivity

In essence, Backstage empowers developers by providing a centralized platform for viewing and managing their Kubernetes services. This streamlined approach can significantly enhance developer productivity.

Backstage comes to the rescue as a platform specifically designed for building developer portals. These portals act as a central hub, consolidating information about various development activities, including:

- Continuous Integration/Continuous Delivery (CI/CD) Pipelines: Monitor and track your CI/CD pipelines for efficient deployments.

- Documentation: Keep your team on the same page with readily accessible documentation.

- Monitoring Kubernetes Deployments: Gain insights into the health and performance of your Kubernetes deployments directly through Backstage.

## The Power of the Software Catalog

One of Backstage's core strengths is the software catalog. This catalog serves as a central repository for service information, including:

- Ownership: Clearly identify who owns and maintains each service.

- Git Repository Location: Simplify access to the codebase for each service.

- Relationships Between Services: Understand how different services interact and depend on each other.

The beauty of the software catalog lies in its adaptability. You can leverage open-source plugins or create custom plugins to tailor the catalog to your specific needs.

## Backstage in Action: Deploying on Kubernetes

To begin with, let's set up a namespace in Kubernetes to segregate services in a multi-tenant environment. We can either use the kubectl create namespace command directly or create a Namespace definition file and apply it using kubectl apply.

```

apiVersion: v1
kind: Namespace
metadata:
  name: backstage

```

This YAML file defines a namespace named "backstage".

Once the namespace is set up, we can move on to configuring PostgreSQL for our Backstage application. Firstly, we'll create a Kubernetes Secret to store the PostgreSQL username and password. This is done to ensure security and is used by both the PostgreSQL database and Backstage deployments.

```

apiVersion: v1
kind: Secret
metadata:
  name: postgres-secrets
  namespace: backstage
type: Opaque
data:
  POSTGRES_USER: YmFja3N0YWdl
  POSTGRES_PASSWORD: aHVudGVyMg==

```

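These values are base64-encoded, as Kubernetes requires for the `data` field. Base64 is an encoding, not encryption, so it does not keep the values secret from anyone who can read the Secret; treat the manifest itself as sensitive. After creating the Secret, we apply it to the Kubernetes cluster.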
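Base64 is trivially reversible, which a few lines of Node.js can show. This is a small sketch using the built-in `Buffer` class and the two encoded values from the Secret manifest above; the variable names are illustrative.

```javascript
// The encoded values come from the Secret manifest above.
const encoded = {
  POSTGRES_USER: 'YmFja3N0YWdl',
  POSTGRES_PASSWORD: 'aHVudGVyMg==',
};

// Anyone can reverse base64: decode each value back to plain text.
const decoded = Object.fromEntries(
  Object.entries(encoded).map(([key, value]) => [
    key,
    Buffer.from(value, 'base64').toString('utf8'),
  ])
);

console.log(decoded);
// prints { POSTGRES_USER: 'backstage', POSTGRES_PASSWORD: 'hunter2' }

// Going the other way, to prepare a value for a manifest:
const reencoded = Buffer.from('backstage', 'utf8').toString('base64');
console.log(reencoded);
// prints "YmFja3N0YWdl"
```

Since the plain text is recoverable this easily, avoid committing real credentials to version control, even in encoded form.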

Next, PostgreSQL requires a persistent volume to store data. We define a PersistentVolume along with a PersistentVolumeClaim to ensure data persistence.

```

apiVersion: v1
kind: PersistentVolume
metadata:
  name: postgres-storage
  namespace: backstage
  labels:
    type: local
spec:
  storageClassName: manual
  capacity:
    storage: 2G
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  hostPath:
    path: '/mnt/data'
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: postgres-storage-claim
  namespace: backstage
spec:
  storageClassName: manual
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2G

```

This creates a local volume with a capacity of 2 gigabytes. After defining the storage, we apply it to the Kubernetes cluster.

Now, we move on to deploying PostgreSQL itself. We define a Deployment descriptor for PostgreSQL, specifying its image, environment variables, and volume mounts.

```

apiVersion: apps/v1
kind: Deployment
metadata:
  name: postgres
  namespace: backstage
spec:
  replicas: 1
  selector:
    matchLabels:
      app: postgres
  template:
    metadata:
      labels:
        app: postgres
    spec:
      containers:
        - name: postgres
          image: postgres:13.2-alpine
          imagePullPolicy: 'IfNotPresent'
          ports:
            - containerPort: 5432
          envFrom:
            - secretRef:
                name: postgres-secrets
          volumeMounts:
            - mountPath: /var/lib/postgresql/data
              name: postgresdb
              subPath: data
      volumes:
        - name: postgresdb
          persistentVolumeClaim:
            claimName: postgres-storage-claim

```

This Deployment ensures that PostgreSQL is up and running. We apply it to the Kubernetes cluster, and once deployed, we can verify its status.

After setting up PostgreSQL, we proceed to create a Kubernetes Service for it. This Service allows other pods to connect to the PostgreSQL database.

```

apiVersion: v1
kind: Service
metadata:
  name: postgres
  namespace: backstage
spec:
  selector:
    app: postgres
  ports:
    - port: 5432

```

We apply this Service to the Kubernetes cluster, and now PostgreSQL is ready to handle connections from other pods.

With PostgreSQL set up, we can now proceed to deploy the Backstage instance. This involves creating secrets, a deployment, and a service for Backstage similar to what we did for PostgreSQL.

Similar to PostgreSQL, we first create a Kubernetes Secret to store any configuration secrets needed for Backstage, such as authorization tokens.

```

apiVersion: v1
kind: Secret
metadata:
  name: backstage-secrets
  namespace: backstage
type: Opaque
data:
  GITHUB_TOKEN: VG9rZW5Ub2tlblRva2VuVG9rZW5NYWxrb3ZpY2hUb2tlbg==

```

After creating the secret, we apply it to the Kubernetes cluster.

Now, we define a Deployment descriptor for Backstage, specifying its image, environment variables, and ports.

```

apiVersion: apps/v1
kind: Deployment
metadata:
  name: backstage
  namespace: backstage
spec:
  replicas: 1
  selector:
    matchLabels:
      app: backstage
  template:
    metadata:
      labels:
        app: backstage
    spec:
      containers:
        - name: backstage
          image: backstage:1.0.0
          imagePullPolicy: IfNotPresent
          ports:
            - name: http
              containerPort: 7007
          envFrom:
            - secretRef:
                name: postgres-secrets
            - secretRef:
                name: backstage-secrets

```

This Deployment ensures that the Backstage instance is up and running. We apply it to the Kubernetes cluster, and once deployed, we can verify its status.

After deploying Backstage, we create a Kubernetes Service to handle connecting requests to the correct pods.

```

apiVersion: v1
kind: Service
metadata:
  name: backstage
  namespace: backstage
spec:
  selector:
    app: backstage
  ports:
    - name: http
      port: 80
      targetPort: http

```

This Service ensures that other pods can connect to the Backstage instance. We apply it to the Kubernetes cluster.

Now, our Backstage deployment is fully operational! We can forward a local port to the service to access it locally.

```

$ sudo kubectl port-forward --namespace=backstage svc/backstage 80:80

```

With this setup, we can access our Backstage instance in a browser at localhost.

Let's delve into the additional steps and considerations for a production deployment of Backstage on Kubernetes.

- Set up a more reliable volume: The PersistentVolume configured earlier uses local Kubernetes node storage, which may not be suitable for production environments. It's recommended to replace this with a more reliable storage solution such as a cloud volume or network-attached storage.

- Expose the Backstage service: The Kubernetes Service created for Backstage is not reachable from outside the cluster. To enable external access, you can use a Kubernetes Ingress or an external load balancer.

- Update the Deployment image: To update the Kubernetes deployment with a newly published version of your Backstage Docker image, you need to update the image tag reference in the deployment YAML file and then apply the changes using `kubectl apply -f`.

```

apiVersion: apps/v1
kind: Deployment
metadata:
  name: backstage
  namespace: backstage
spec:
  replicas: 1
  selector:
    matchLabels:
      app: backstage
  template:
    metadata:
      labels:
        app: backstage
    spec:
      containers:
        - name: backstage
          image: your-updated-image:tag
          imagePullPolicy: IfNotPresent
          ports:
            - name: http
              containerPort: 7007
          envFrom:
            - secretRef:
                name: postgres-secrets
            - secretRef:
                name: backstage-secrets

```

- Configure app and backend URLs: Ensure that the URLs configured in your `app-config.yaml` file match the URLs you're forwarding locally for testing or the URLs you've set up for production environments.

```

app:
  baseUrl: http://localhost
backend:
  baseUrl: http://localhost

```

Update these URLs according to your deployment environment.

- Authentication provider configuration: If you're using an authentication provider, ensure that its address is correctly configured for the authentication pop-up to work properly. Update the relevant configurations in your Backstage application.

By addressing these additional steps and considerations, you can have a robust and production-ready deployment of Backstage on Kubernetes.

## Conclusion

By integrating Backstage with Kubernetes, you can empower your developers with a centralized platform for viewing and managing their deployments. Backstage simplifies access to crucial information and streamlines workflows, ultimately boosting developer productivity and reducing friction in your Kubernetes environment.

| ajeetraina | |

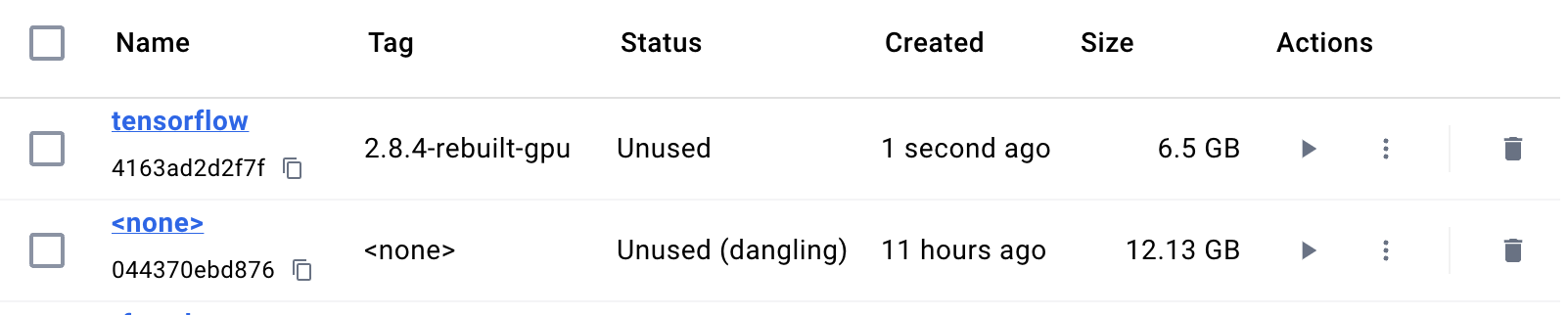

1,847,055 | Running Ollama with Docker Compose and GPUs | Large Language Models (LLMs) are revolutionizing various fields, pushing the boundaries of what... | 0 | 2024-06-02T09:04:04 | https://dev.to/ajeetraina/running-ollama-with-docker-compose-and-gpus-lkn | Large Language Models (LLMs) are revolutionizing various fields, pushing the boundaries of what machines can achieve. However, their complexity demands ever-increasing processing power. This is where accelerators like Nvidia GPUs come into play, offering a significant boost for training and inference tasks.

In this blog post, we'll guide you through running Ollama, a popular self-hosted LLM server, with Docker Compose while making use of your Nvidia GPU. We'll delve into the configuration details so you get the most out of your LLM experience.

## Prerequisites:

- Docker and Docker Compose: Ensure you have Docker and Docker Compose installed and running on your system. You can find installation instructions on the official Docker website: https://docs.docker.com/engine/install/

- Nvidia GPU: Your system must have an Nvidia GPU installed and configured. Verify this by running nvidia-smi in your terminal. If the command doesn't work or returns an error, refer to Nvidia's documentation for configuration guidance: https://docs.nvidia.com/

## Understanding the Configuration:

Now, let's explore the key components of the docker-compose.yml file that facilitates running Ollama with GPU acceleration:

## Docker Compose Version:

The Compose file below does not set a top-level `version` property. Under the Compose Specification that property is obsolete, and current Docker Compose releases ignore it and print a warning if it is present.

## Ollama Service Definition:

The services section defines the ollama service, which encapsulates the Ollama container. Here's a breakdown of its important properties:

- **image:** This specifies the Docker image for Ollama. The default is ollama/ollama, but you can use a specific version if needed (refer to Ollama's documentation for available versions).

- **deploy:** This section configures resource reservations for the Ollama container. This is where the magic happens for harnessing the GPU power.
  - **resources:** Defines the resource requirements for the container.
    - **reservations:** This nested property allows you to reserve specific devices for the container.
      - **devices:** Defines a device reservation. Within this nested configuration, we specify:
        - **driver:** Sets the device driver to nvidia to indicate we're requesting an Nvidia GPU.
        - **capabilities:** Lists the capabilities requested by Ollama. In this case, we specify "gpu" to signify our desire to leverage the GPU for processing.
        - **count:** This value determines how many Nvidia GPUs you want to reserve for Ollama. Use all to utilize all available GPUs, or give a number if you have multiple GPUs and want to dedicate a subset to Ollama.

## Persistent Volume Definition:

The volumes section defines a persistent volume named ollama. This volume ensures that any data generated by Ollama, such as trained models or configurations, persists even after container restarts. It's mounted at the /root/.ollama directory within the Ollama container.

## Putting it All Together

Here's the complete docker-compose.yml configuration for running Ollama with Nvidia GPU acceleration using Docker Compose:

```

services:
  ollama:
    container_name: ollama
    image: ollama/ollama # Replace with specific Ollama version if needed
    deploy:
      resources:
        reservations:
          devices:
            - driver: nvidia
              capabilities: ["gpu"]
              count: all # Adjust count for the number of GPUs you want to use
    volumes:
      - ollama:/root/.ollama
    restart: always

volumes:
  ollama:

```

## Running Ollama with GPU Acceleration:

With the configuration file ready, save it as docker-compose.yml in your desired directory. Now, you can run the following command to start Ollama with GPU support:

```

docker-compose up -d

```

The `-d` flag ensures the container runs in the background.

## Verification:

After running the command, you can check Ollama's logs to see if the Nvidia GPU is being utilized. Look for messages indicating "Nvidia GPU detected via cudart" or similar wording within the logs. This confirmation signifies successful GPU integration with Ollama.

## Additional Considerations:

- Refer to Ollama's official documentation for any additional configuration or resource requirements based on your specific use case.

- Adjust the count value in the devices section to match the number of Nvidia GPUs you want to dedicate to Ollama.

- This example uses Nvidia and wouldn't work for ROCm directly. It's meant to illustrate the concept of resource reservations within a Compose file (which currently doesn't support ROCm).

| ajeetraina | |

1,850,183 | What's New in Docker Desktop 4.30.0? | Docker Desktop 4.30.0 is now available to download and install on your laptop. It brings a variety... | 0 | 2024-06-02T09:03:47 | https://dev.to/ajeetraina/whats-new-in-docker-desktop-4300-52o1 |

[Docker Desktop 4.30.0](https://docs.docker.com/desktop/release-notes/#4300) is now available to download and install on your laptop. It brings a variety of improvements and bug fixes for developers building and deploying containerized applications. This update focuses on three key areas:

- Enhanced security,

- Streamlined workflows, and

- Platform-specific fixes.

The release also upgrades the following bundled components:

- Docker Compose v2.27.0

- Docker Engine v26.1.1

- Wasm runtimes:
  - Updated runwasi shims to v0.4.0
  - Updated deislabs shims to v0.11.1
  - Updated spin shim to v0.13.1

- Docker Scout CLI v1.8.0

- Docker Debug v0.0.29

- Linux kernel v6.6.26

- Go 1.22.2

## Improved Security:

### Enhanced Container Isolation (ECI)

Enhanced Container Isolation (ECI) adds a layer of security by limiting what containers can do. Docker Desktop 4.30.0 improves how ECI handles `docker build` commands run inside rootless containers, which likely strengthens the isolation between the build process and the host system.

Imagine you're building a Docker image that installs system packages. In a non-ECI environment, the build process might have access to install system packages on the host machine as well. With ECI, such access would be restricted, enhancing security.

### Linux Kernel Update

The update includes the latest Linux kernel version (v6.6.26).

This includes various security patches and improvements to the core of the Linux kernel used within Docker Desktop. These patches address vulnerabilities and potential exploits in the kernel code.

A recent kernel vulnerability might have allowed attackers to gain unauthorized access to a system. The update to version 6.6.26 likely includes a fix for this vulnerability, making it more difficult for attackers to exploit.

### Mac-Specific Security Boosts

For Mac users, Docker Desktop 4.30.0 enables the `CONFIG_SECURITY=y` kernel configuration, potentially enhancing security for tools like Tetragon.

Tetragon is an eBPF-based security observability and runtime enforcement tool. With `CONFIG_SECURITY=y` enabled, Tetragon might have access to additional kernel features that improve its ability to detect and prevent security threats within containers.

## Streamlined Workflows:

### SOCKS5 Proxy Support (Business Only)

This update allows users with a Business subscription to leverage SOCKS5 proxies for container network connections.

A company might use a SOCKS5 proxy to restrict outbound traffic from containers to specific allowed destinations. This could be useful for development environments where you only want containers to access internal resources.

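Note that `docker run` does not have a `--proxy` flag. The SOCKS5 proxy is configured once in Docker Desktop's proxy settings (under Settings > Resources > Proxies) with a URL such as `socks5://your-proxy-server:1080`, and it then applies to Docker Desktop's network connections rather than being set per container.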

### Improved Build UI

The build user interface offers better bulk deletion of build records and the ability to launch relevant web pages for container images and Git sources used in builds. Additionally, users can now download Provenance and OpenTelemetry traces in Jaeger or OTLP formats for easier analysis.

- Bulk deletion of build records: This allows you to easily remove multiple build history entries at once.

- Launching web pages for build dependencies: You can quickly access the web pages for container images and Git sources used in your builds directly from the build UI.

- Downloading Provenance and OpenTelemetry traces: These trace files provide valuable insights into the performance and behavior of your builds. You can now download them in Jaeger or OTLP formats for easier analysis with debugging tools.

Imagine you're having issues with a build and want to analyze the build process in more detail. Downloading the OpenTelemetry traces would allow you to visualize the different stages of the build and identify where the problem might be occurring.

### Kerberos and NTLM Proxy Authentication (Windows - Business Only)

Business users on Windows can now leverage Kerberos and NTLM for proxy authentication, simplifying their development workflows.

This feature simplifies development workflows for Windows users with a business subscription by enabling them to leverage existing Kerberos or NTLM proxy authentication mechanisms for container network connections.

Example:

A company might use Kerberos for internal authentication. With NTLM proxy authentication support, containerized applications can seamlessly access resources that require Kerberos or NTLM credentials without needing additional configuration within the containers themselves.

## Platform-Specific Fixes:

### Mac Bug Fixes

Docker Desktop 4.30.0 addresses several Mac-specific issues, including a segmentation fault with the Virtualization Framework, enabling SQUASHFS compression support again, and resolving a bug that prevented startup if Rosetta was not installed.

### Windows Bug Fixes

This update fixes several Windows-related bugs, including a regression in host file binding, issues with Docker CLI bash completions in WSL environments, and a problem that caused a new version of Docker Desktop to be marked as damaged. Additionally, Docker Desktop 4.30.0 introduces a simplified provisioning mode for WSL2, potentially streamlining the setup process.

By incorporating these improvements and bug fixes, Docker Desktop 4.30.0 aims to make the container development experience smoother, more secure, and more efficient for developers across all platforms.

| ajeetraina | |

1,873,581 | How to Create an Effective To-Do List | Project:- 1/500 To-Do List Project Description The To-Do List project is... | 27,575 | 2024-06-02T09:03:25 | https://dev.to/raajaryan/to-do-list-project-57dp | javascript, html, beginners, opensource | ## Project:- 1/500 To-Do List Project

## Description

The To-Do List project is designed to help users efficiently manage their daily tasks. This project demonstrates how to build a simple yet functional application using fundamental web development technologies. It allows users to add, update, and delete tasks, making task management straightforward and intuitive.

## Features

- **Add New Tasks**: Users can easily add new tasks by entering a task name and optional details in an input form. Each task is then added to the to-do list for tracking.

- **Mark Tasks as Complete**: Users can mark tasks as complete by clicking on them, which will visually differentiate completed tasks from those that are still pending.

- **Delete Tasks**: Users can remove tasks that are no longer needed by clicking a delete button, ensuring their to-do list remains clean and organized.
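The three features above map onto three small operations on an array of task objects. Here is a minimal sketch, assuming an in-memory list. The function names `addTask`, `toggleComplete`, and `deleteTask` are illustrative and may not match the repository's code.

```javascript
// In-memory task list; a real page would also update the DOM after each change.
let tasks = [];
let nextId = 1;

// Add a task with a name and optional details.
function addTask(name, details = '') {
  const task = { id: nextId++, name, details, completed: false };
  tasks.push(task);
  return task;
}

// Flip a task between pending and complete.
function toggleComplete(id) {
  const task = tasks.find((t) => t.id === id);
  if (task) task.completed = !task.completed;
  return task;
}

// Remove a task from the list by id.
function deleteTask(id) {
  tasks = tasks.filter((t) => t.id !== id);
}

const milk = addTask('Buy milk');
const report = addTask('Write report', 'Due Friday');
toggleComplete(milk.id);
deleteTask(report.id);

console.log(tasks);
// prints [ { id: 1, name: 'Buy milk', details: '', completed: true } ]
```

Giving each task a numeric `id` means a click handler only needs to know which id it belongs to, instead of relying on the task's position in the list.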

## Technologies Used

- **JavaScript**: Handles the dynamic aspects of the application, such as adding, updating, and deleting tasks.

- **HTML**: Provides the structure of the web page, including the form for adding tasks and the list for displaying them.

- **CSS**: Styles the application, ensuring it is visually appealing and responsive across different devices.

## Setup

Follow these steps to set up and run the To-Do List project on your local machine:

1. **Clone the Repository**:

First, clone the project repository from GitHub:

```bash

git clone https://github.com/deepakkumar55/ULTIMATE-JAVASCRIPT-PROJECT.git

cd ULTIMATE-JAVASCRIPT-PROJECT

```

2. **Navigate to the Project Directory**:

Use the terminal or file explorer to go to the project folder (the quotes are needed because the path contains a space):

```bash

cd "Basic Projects/1-to_do_list"

```

3. **Open `index.html` in Your Browser**:

Open the `index.html` file in your preferred web browser to view and interact with the to-do list application. You can do this by double-clicking the file or using a command like:

```bash

open index.html

```

4. **Customize the Application (Optional)**:

- **HTML**: Modify the structure to add more features or change the layout.

- **CSS**: Update the styles to match your preferred design.

- **JavaScript**: Enhance the functionality by adding features like task deadlines, priority levels, or categories.

## Contributing

We welcome contributions to improve and extend the To-Do List project. Whether you're fixing bugs, adding new features, or enhancing the documentation, your input is valuable. Here’s how you can get involved:

1. **Fork the Repository**:

Click the "Fork" button at the top right of the repository page on GitHub to create your own copy of the project.

2. **Clone Your Fork**:

Clone your forked repository to your local machine:

```bash

git clone https://github.com/<your-username>/ULTIMATE-JAVASCRIPT-PROJECT.git

cd "ULTIMATE-JAVASCRIPT-PROJECT/Basic Projects/1-to_do_list"

```

3. **Create a New Branch**:

Create a new branch for your feature or bug fix:

```bash

git checkout -b feature-name

```

4. **Make Your Changes**:

Implement your changes in the project.

5. **Commit Your Changes**:

Commit your changes with a descriptive message:

```bash

git add .

git commit -m "Add new feature: feature description"

```

6. **Push to Your Fork**:

Push your changes to your forked repository:

```bash

git push origin feature-name

```

7. **Create a Pull Request**:

Go to the original repository on GitHub and create a pull request to merge your changes into the main branch.

## Get in Touch

If you have any questions or need further assistance, feel free to open an issue on GitHub or contact us directly. Your contributions and feedback are highly appreciated!

---

Thank you for your interest in the To-Do List project. Together, we can build a more robust and feature-rich application. Happy coding! | raajaryan |

1,873,580 | How can you solve any captcha type with automatic captcha-solving technology? | In the digital age, ensuring the security of online platforms is paramount. CAPTCHAs (Completely... | 0 | 2024-06-02T09:00:37 | https://dev.to/media_tech/how-can-you-solve-any-captcha-type-with-automatic-captcha-solving-technology-12pa | In the digital age, ensuring the security of online platforms is paramount. CAPTCHAs (Completely Automated Public Turing tests to tell Computers and Humans Apart) have been widely used to differentiate between human users and automated bots. However, CAPTCHAs can often become a barrier for legitimate users. This article delves into automatic captcha-solving technology and how it can help overcome this challenge efficiently.

**Understanding CAPTCHA and Its Types**

CAPTCHAs are designed to protect websites from spam and abuse by presenting tests that are easy for humans but difficult for automated systems to solve. There are several types of CAPTCHAs:

**Text-based CAPTCHAs:** Users are required to type characters displayed in a distorted image.

**Image-based CAPTCHAs:** Users are asked to select certain images from a set, such as all pictures containing traffic lights.

**reCAPTCHA:** Google’s CAPTCHA service, which often involves ticking a checkbox or selecting images that match a prompt.

Each type of CAPTCHA has its own complexities and solving them manually can be time-consuming. This is where automatic captcha-solving technology comes into play.

**How Automatic Captcha-Solving Technology Works**

Automatic captcha-solving technologies leverage advanced algorithms and artificial intelligence to interpret and solve CAPTCHAs. Here’s a closer look at the core technologies involved:

**Optical Character Recognition (OCR)**

OCR technology is pivotal in solving text-based CAPTCHAs. It scans the image for text, recognizes the characters, and converts them into machine-encoded text. Modern OCR systems are highly accurate and can handle various distortions and font variations used in CAPTCHAs.

**Machine Learning and AI**

Machine learning models, particularly those using deep learning, are trained on large datasets of CAPTCHAs. These models learn to recognize patterns and features that distinguish different types of CAPTCHAs. As a result, they can solve both text and image-based CAPTCHAs with high precision.

**Automated Scripts and Bots**

Automated scripts and bots can mimic human interaction with CAPTCHAs. They simulate mouse movements, clicks, and keystrokes, making it possible to solve CAPTCHAs that require user interaction, such as reCAPTCHA.

**Benefits of Using Automatic Captcha-Solving Technology**

Implementing automatic captcha-solving technology offers numerous advantages:

**Enhanced User Experience**

Automatic captcha-solving technologies streamline the user experience by eliminating the need for manual CAPTCHA entry. This leads to faster access to online services and reduces frustration.

**Increased Efficiency**

Automated systems can solve CAPTCHAs at a much faster rate than humans, which is particularly beneficial for businesses that rely on high-volume transactions.

**Cost-Effective Solutions**

By reducing the need for manual labor, businesses can save on operational costs. Automated systems can handle large-scale CAPTCHA solving tasks without the need for additional human resources.

**Scalability**

Automatic captcha-solving technologies can easily scale to meet the demands of growing businesses. Whether it's for a small website or a large enterprise, these solutions can handle increasing volumes of CAPTCHA challenges.

**Best Practices for Implementing Automatic Captcha-Solving Technology**

To effectively implement automatic captcha-solving technology, businesses should follow these best practices:

**Choose Reliable Solutions**

Select technologies and vendors with a proven track record. Look for solutions that offer high accuracy rates and have been tested extensively.

**Maintain Transparency**

Inform users about the use of automatic captcha-solving technologies. Transparency builds trust and helps users understand the measures in place to protect their data.

**Regular Updates and Maintenance**

Technology evolves rapidly, and so do CAPTCHA challenges. Ensure that your automatic captcha-solving solutions are regularly updated to handle new types of CAPTCHAs and security measures.

**Monitor and Adapt**

Continuously monitor the performance of your captcha-solving technologies. Be prepared to adapt your strategies to address emerging threats and changes in CAPTCHA mechanisms.

**Conclusion**

Automatic captcha-solving technology is changing the way we interact with online security measures. By leveraging advanced algorithms and AI, these technologies offer a seamless and efficient solution to CAPTCHA challenges, enhancing user experience and operational efficiency.

**As a premier captcha solving service, CaptchaAI excels in solving reCaptcha, hCaptcha, image captchas, and many other types with unmatched efficiency.**

**What sets CaptchaAI apart is its pricing model: instead of charging per captcha, it offers unlimited captcha solving for a fixed price. This makes it the most cost-effective reCAPTCHA solving service on the market. Plus, you never have to worry about speed or accuracy. With a 99.9% accuracy rate and lightning-fast performance, CaptchaAI ensures that your captcha solving needs are met promptly and reliably.**

| media_tech | |

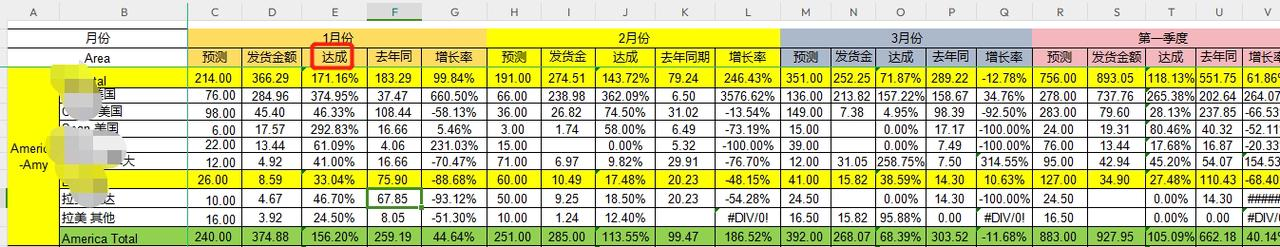

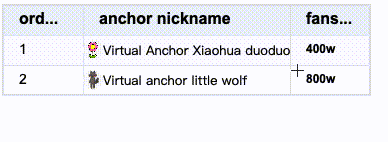

1,873,579 | When using the pivot table of the VTable component, how to display the calculated indicator results in a separate column? | Question Description Is there any configuration that can generate derived indicators?... | 0 | 2024-06-02T09:00:17 | https://dev.to/fangsmile/when-using-the-pivot-table-of-the-vtable-component-how-to-display-the-calculated-indicator-results-in-a-separate-column-3993 | webdev, visactor, vtable, visiualization | ## Question Description

Is there any configuration that can generate derived indicators, that is, compute a value from the aggregated indicator results and display it as an indicator of its own?

Description: For example, my row dimensions are region and area, my column dimension is month, and my indicators are target, actual, and achievement, where achievement is calculated as actual / target. Achievement is the indicator I want to derive, because there is no achievement field in my data.

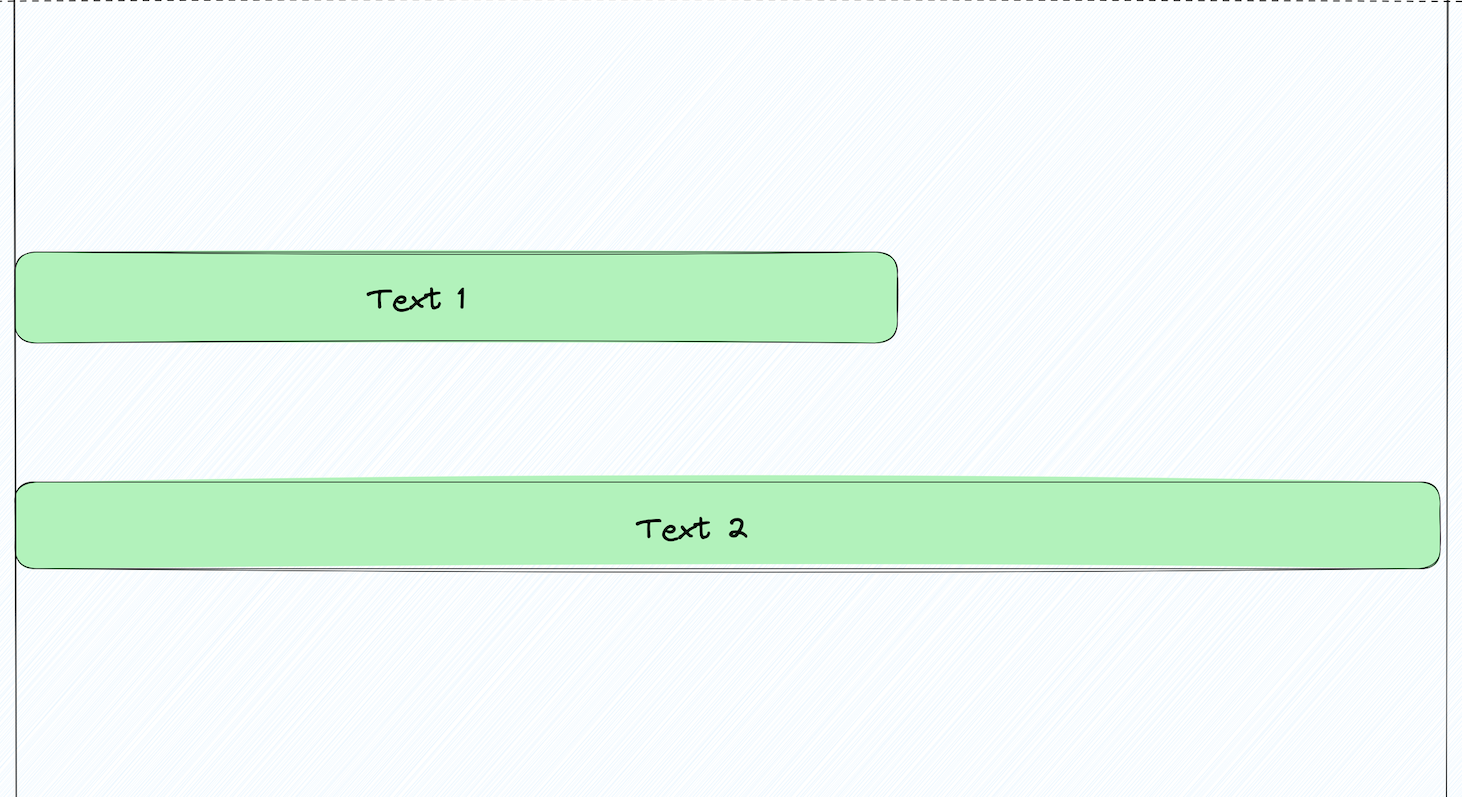

Screenshot of the problem:

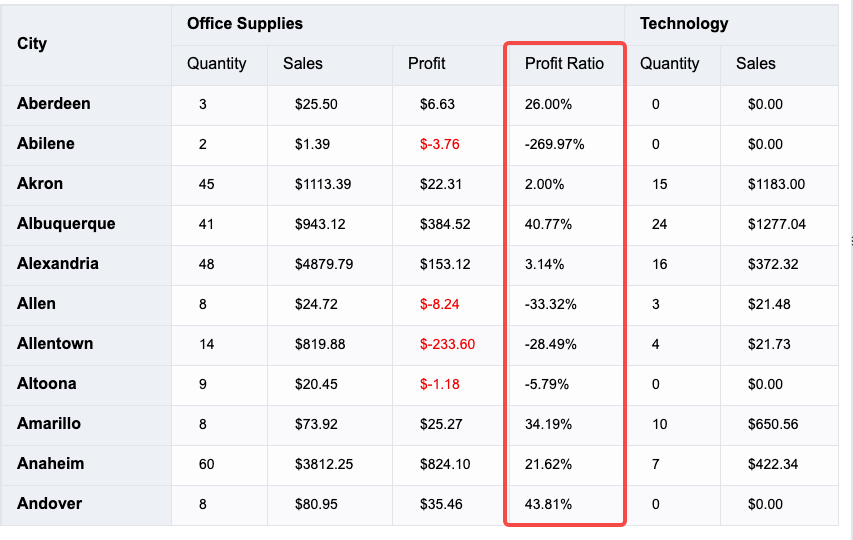

## Solution

Take the pivot table demo on the official VTable website as a starting point. We add an indicator called Profit Ratio to the original demo and use its `format` function to compute the displayed value from the Sales and Profit indicators: profit ratio = profit / sales. Because the indicators are laid out in the order Quantity, Sales, Profit, Profit Ratio, the Sales and Profit cells sit two columns and one column to the left of the Profit Ratio cell in the same row, which is why the function reads them with `col - 2` and `col - 1`.

```javascript
{
  indicatorKey: 'Profit Ratio',
  title: 'Profit Ratio',
  width: 'auto',
  showSort: false,
  headerStyle: {
    fontWeight: 'normal'
  },
  format: (value, col, row, table) => {
    // Sales and Profit are the two indicator columns to the left of this one.
    const sales = table.getCellOriginValue(col - 2, row);
    const profit = table.getCellOriginValue(col - 1, row);
    const ratio = profit / sales;
    const percentage = ratio * 100;
    return percentage.toFixed(2) + '%';
  }
}
```
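One reason to compute the ratio inside `format`, rather than adding a precomputed field to every record, is that the division has to happen after aggregation. The sketch below is plain JavaScript and does not call VTable; the sample records and the helper name `ratioLabel` are made up for illustration, and the `'-'` placeholder for empty or zero cells is an assumption rather than part of the original demo.

```javascript
// Hypothetical sample records for one pivot cell (not taken from the demo data).
const records = [
  { Sales: 100, Profit: 10 },
  { Sales: 300, Profit: 90 }
];

// Hypothetical helper: format profit / sales as a percentage string.
function ratioLabel(profit, sales) {
  const ratio = profit / sales;
  // Guard against missing values or zero sales, which would give NaN or Infinity.
  if (!Number.isFinite(ratio)) return '-';
  return (ratio * 100).toFixed(2) + '%';
}

// Aggregate first, then divide: this mirrors what the format function does per cell.
const totalSales = records.reduce((sum, r) => sum + r.Sales, 0);
const totalProfit = records.reduce((sum, r) => sum + r.Profit, 0);
const ratioOfSums = ratioLabel(totalProfit, totalSales);

// Dividing per record and summing afterwards gives a different, misleading number.
const sumOfRatios = records.reduce((sum, r) => sum + r.Profit / r.Sales, 0);

console.log(ratioOfSums); // 25.00%
console.log((sumOfRatios * 100).toFixed(2) + '%'); // 40.00%
```

With these two records the aggregated ratio is 100 / 400, while summing the per-record ratios would report 10% + 30%, so a per-record field aggregated by sum would not give the intended result.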

## Code Examples

```javascript
let tableInstance;
fetch('https://lf9-dp-fe-cms-tos.byteorg.com/obj/bit-cloud/VTable/North_American_Superstore_Pivot_data.json')
  .then(res => res.json())
  .then(data => {
    const option = {
      records: data,
      rows: [
        {
          dimensionKey: 'City',
          title: 'City',
          headerStyle: {
            textStick: true
          },
          width: 'auto'
        }
      ],
      columns: [
        {
          dimensionKey: 'Category',
          title: 'Category',
          headerStyle: {
            textStick: true
          },
          width: 'auto'
        }
      ],
      indicators: [
        {
          indicatorKey: 'Quantity',
          title: 'Quantity',
          width: 'auto',
          showSort: false,
          headerStyle: {
            fontWeight: 'normal'
          },
          style: {
            padding: [16, 28, 16, 28],
            color(args) {
              if (args.dataValue >= 0) return 'black';
              return 'red';
            }
          }
        },
        {
          indicatorKey: 'Sales',
          title: 'Sales',
          width: 'auto',
          showSort: false,
          headerStyle: {
            fontWeight: 'normal'
          },
          format: rec => {
            return '$' + Number(rec).toFixed(2);
          },
          style: {
            padding: [16, 28, 16, 28],
            color(args) {
              if (args.dataValue >= 0) return 'black';
              return 'red';
            }
          }
        },
        {
          indicatorKey: 'Profit',
          title: 'Profit',
          width: 'auto',
          showSort: false,
          headerStyle: {
            fontWeight: 'normal'
          },
          format: rec => {
            return '$' + Number(rec).toFixed(2);
          },
          style: {
            padding: [16, 28, 16, 28],
            color(args) {
              if (args.dataValue >= 0) return 'black';
              return 'red';
            }
          }
        },
        {
          // Derived indicator: there is no 'Profit Ratio' field in the data.
          indicatorKey: 'Profit Ratio',
          title: 'Profit Ratio',
          width: 'auto',
          showSort: false,
          headerStyle: {
            fontWeight: 'normal'
          },
          format: (value, col, row, table) => {
            // Sales and Profit are the two indicator columns to the left of this one.
            const sales = table.getCellOriginValue(col - 2, row);
            const profit = table.getCellOriginValue(col - 1, row);
            const ratio = profit / sales;
            const percentage = ratio * 100;
            return percentage.toFixed(2) + '%';
          }
        }
      ],
      corner: {
        titleOnDimension: 'row',
        headerStyle: {
          textStick: true
        }
      },
      dataConfig: {
        sortRules: [
          {
            sortField: 'Category',
            sortBy: ['Office Supplies', 'Technology', 'Furniture']
          }
        ]
      },
      widthMode: 'standard'
    };
    // CONTAINER_ID is expected to be defined by the hosting page, such as the official editor.
    tableInstance = new VTable.PivotTable(document.getElementById(CONTAINER_ID), option);
    window['tableInstance'] = tableInstance;
  });
```
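Applied to the original question, the same pattern might look like the sketch below. The indicator keys `Target`, `Actual`, and `Achievement`, the name `achievementIndicator`, the `'-'` placeholder, and the stub table are all assumptions for illustration; only the `format(value, col, row, table)` signature and `table.getCellOriginValue(col, row)` are taken from the example above. It also assumes the three indicators are listed in that order, so Target and Actual sit two columns and one column to the left of Achievement.

```javascript
// Hypothetical indicator config for the question's case: achievement = actual / target.
// Assumes the indicators are ordered Target, Actual, Achievement in each column group.
const achievementIndicator = {
  indicatorKey: 'Achievement',
  title: 'Achievement',
  width: 'auto',
  showSort: false,
  format: (value, col, row, table) => {
    const target = table.getCellOriginValue(col - 2, row);
    const actual = table.getCellOriginValue(col - 1, row);
    const ratio = actual / target;
    // Show a placeholder when the target is missing or zero.
    if (!Number.isFinite(ratio)) return '-';
    return (ratio * 100).toFixed(2) + '%';
  }
};

// Stub standing in for the table instance, so the format function can be tried without VTable.
const stubTable = {
  cells: { '0,0': 200, '1,0': 150 },
  getCellOriginValue(col, row) {
    return this.cells[col + ',' + row];
  }
};

console.log(achievementIndicator.format(undefined, 2, 0, stubTable)); // 75.00%
```

In a real table the object would be added to the `indicators` array after the target and actual indicators; the stub is only there to exercise the function in isolation.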

## Result Display

Paste the code from the Code Examples section directly into the official VTable editor to see the result.

## Related documents

Tutorial on pivot table usage: https://visactor.io/vtable/guide/table_type/Pivot_table/pivot_table_useage

Demo of pivot table usage: https://visactor.io/vtable/demo/table-type/pivot-analysis-table

Related API: https://visactor.io/vtable/option/PivotTable#indicators

GitHub: https://github.com/VisActor/VTable | fangsmile |

1,873,577 | What Really Matters in Your 20s? | I turned 23 recently and it changed my perspective of life. It usually happens often when you reach a... | 0 | 2024-06-02T08:55:39 | https://dev.to/stealc/what-really-matters-in-your-20s-4a39 | productivity, careerdevelopment, speaking, challenge | I turned 23 recently, and it changed my perspective on life. That often happens when you reach a certain age.

People often start their sentences with _“Get good grades and your life will be sorted”._ So I did as they said and excelled in my classes.