id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

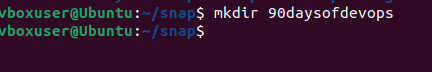

1,873,782 | Linux File System Hierarchy - DevOps Prerequisite 2 | In this article, we will explore the Linux filesystem hierarchy, detailing its directory structure... | 0 | 2024-06-02T15:30:20 | https://dev.to/iaadidev/linux-file-system-hierarchy-devops-prerequisite-2-46ai | linux, beginners, devops, opensource | In this article, we will explore the Linux filesystem hierarchy, detailing its directory structure and how it differs from other operating systems.

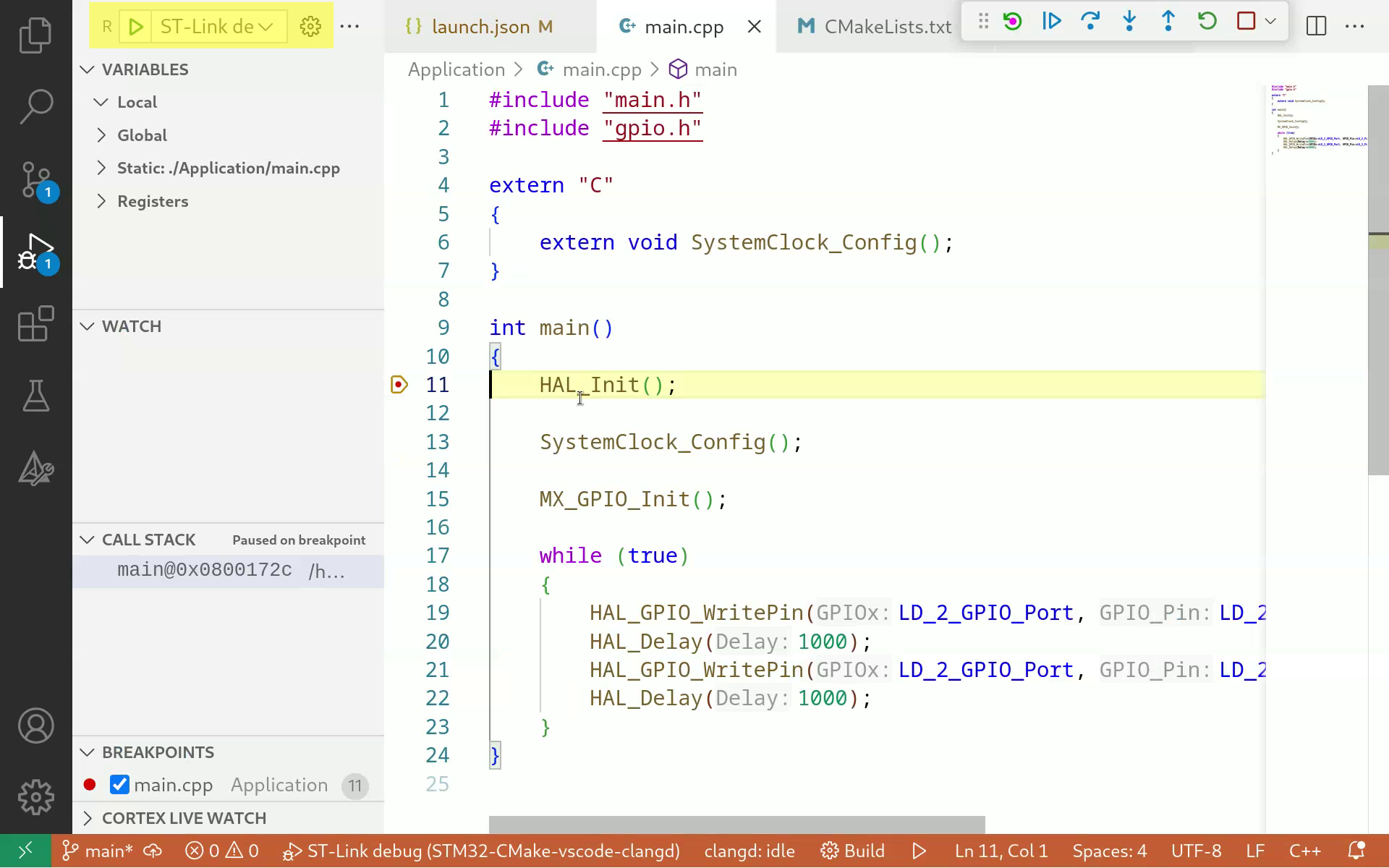

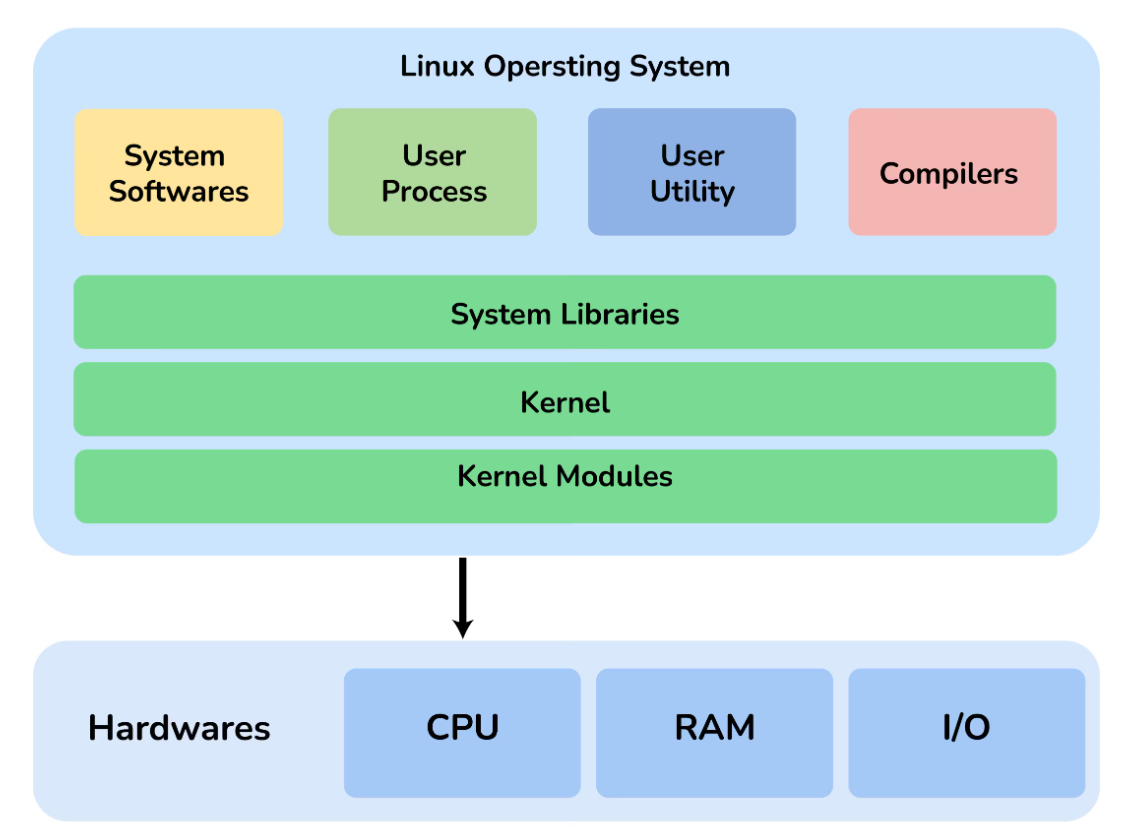

**Linux Filesystem**

The Linux OS features a secure, multi-user filesystem with a directory structure designed to balance security and functionality. User-accessible directories are kept separate from those needed by the administrator.

Linux generally adheres to the Filesystem Hierarchy Standard (FHS), established in 1994. This standard outlines the common layout conventions used by most UNIX and UNIX-like systems. It includes a primary (or root) directory with multiple branching sub-directories.

**Root Directory ( / )**

The root directory (/) is the starting point for the entire Linux filesystem hierarchy. It is the top-level directory from which all other filesystems are mounted during system boot. All files and folders branch from the root directory, regardless of where the data is physically stored.

The root directory is owned by the root user (administrator), with permissions tightly controlled to allow only administrators to add, remove, or modify its files and folders.

**Sub-Directories**

Linux conventionally includes several important sub-directories, each with its specific purpose and permissions. Some sub-directories, like /tmp, are accessible to everyone, while others, such as /etc, are restricted to administrators.

Here is a table detailing the purpose of common Linux sub-directories:

| SUB-DIRECTORY | PURPOSE |

| — — — — — — — -| — — — — -|

| /bin | Common binary executables used by all users |

| /boot | Files associated with the boot loader |

| /dev | Attached devices (USB, CD-ROM, mouse, keyboard) |

| /etc | Configuration files |

| /home | Personal directories for each user account |

| /lib | Shared system libraries |

| /media | Directory for mounting removable devices (floppy drive, CD-ROM) |

| /mnt | Directory for mounting filesystems (NFS, SMB) |

| /opt | Optional vendor add-on software |

| /proc | Virtual filesystem for system processes/resources information |

| /root | Home directory for the administrator account |

| /run | Storage for runtime information |

| /sbin | Binary executables used by the administrator |

| /srv | Data for server services |

| /sys | Virtual filesystem for hardware/driver information |

| /tmp | Temporary files purged on reboot |

| /usr | Utilities and read-only user data/programs |

| /var | Variable and log files |

**Linux versus Other Filesystems (macOS and Windows)**

**For Windows User’s Perspective**

Windows and Linux have distinct designs. Unlike Windows’ single-user system, Linux is multi-user. While Windows uses separate data drives (e.g., C:\WINDOWS and D:\DATA), Linux employs a tree-like hierarchy with everything branching from the root. On Windows, program and system files share the same path (C:\Program Files), whereas in Linux, they are separated (e.g., /bin, /boot, /usr/bin).

**For macOS User’s Perspective**

Apple’s macOS, derived from Unix and BSD, has a core file structure similar to Linux. Both have a single primary directory with sub-directories branching from the root (/). Many Linux sub-directory names are found in macOS, though some names differ (e.g., macOS uses /Users instead of /home for user accounts and personal files).

Sub-directory similarities to Linux include:

/bin /etc /dev /usr /sbin /tmp /var

Sub-directories unique to macOS include:

/Applications /Developer /Library /Network /System /Users /Volumes

**Conclusion**

The Linux filesystem, though distinct, shares similarities with other filesystems. This article has highlighted the unique aspects of the Linux filesystem. As you navigate your Linux environment, you will become more familiar with each directory’s purpose.

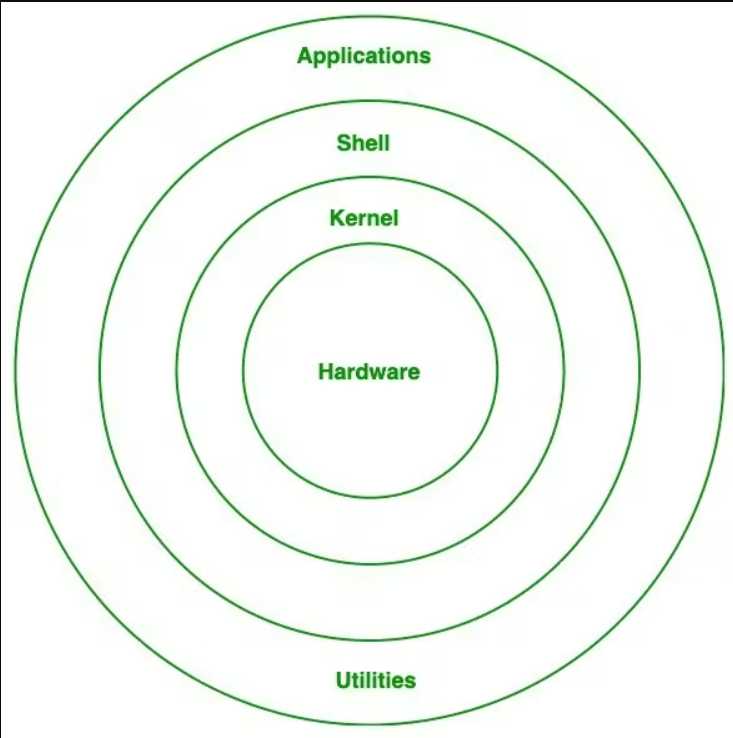

**Linux Bash Utilities**

Learn about useful Linux Bash utilities!

The Linux shell language, Bash, offers many possibilities for interacting with the OS, including file compression, archiving, and extraction from the command line. It also provides functions for looking up documentation for all commands.

For a foundation in command line basics, consider taking our “Learn the Command Line” course to practice basic navigation and filesystem modifications in Bash. | iaadidev |

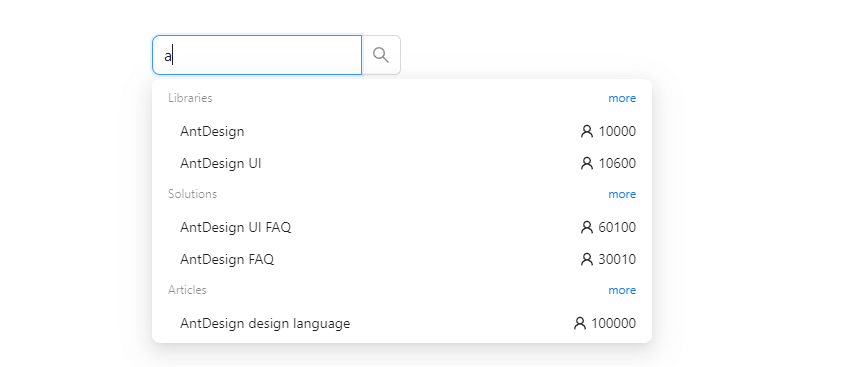

1,872,210 | how to use autocomplete in react ant design 5 | In this tutorial, we will create autocomplete in react with ant design 5. First you need to setup... | 0 | 2024-06-02T15:30:00 | https://frontendshape.com/post/how-to-use-autocomplete-in-react-ant-design-5 | react, antdesign, webdev | In this tutorial, we will create autocomplete in react with ant design 5. First you need to setup react with ant design 5 project.

<br>

[install & setup vite + react + typescript + ant design 5](https://frontendshape.com/post/install-setup-vite-react-typescript-ant-design-5)

1.Create a simple autocomplete in React using Ant Design 5's AutoComplete component and the useState hook.

```jsx

import React, { useState } from 'react';

import { AutoComplete } from 'antd';

const mockVal = (str: string, repeat = 1) => ({

value: str.repeat(repeat),

});

const App: React.FC = () => {

const [value, setValue] = useState('');

const [options, setOptions] = useState<{ value: string }[]>([]);

const [anotherOptions, setAnotherOptions] = useState<{ value: string }[]>([]);

const getPanelValue = (searchText: string) =>

!searchText ? [] : [mockVal(searchText), mockVal(searchText, 2), mockVal(searchText, 3)];

const onSelect = (data: string) => {

console.log('onSelect', data);

};

const onChange = (data: string) => {

setValue(data);

};

return (

<>

<AutoComplete

options={options}

style={{ width: 200 }}

onSelect={onSelect}

onSearch={(text) => setOptions(getPanelValue(text))}

placeholder="input here"

/>

<br />

<br />

<AutoComplete

value={value}

options={anotherOptions}

style={{ width: 200 }}

onSelect={onSelect}

onSearch={(text) => setAnotherOptions(getPanelValue(text))}

onChange={onChange}

placeholder="control mode"

/>

</>

);

};

export default App;

```

2.react ant design 5 search autocomplete with options property.

```jsx

import React from 'react';

import { UserOutlined } from '@ant-design/icons';

import { AutoComplete, Input } from 'antd';

const renderTitle = (title: string) => (

<span>

{title}

<a

style={{ float: 'right' }}

href="https://www.google.com/search?q=antd"

target="_blank"

rel="noopener noreferrer"

>

more

</a>

</span>

);

const renderItem = (title: string, count: number) => ({

value: title,

label: (

<div

style={{

display: 'flex',

justifyContent: 'space-between',

}}

>

{title}

<span>

<UserOutlined /> {count}

</span>

</div>

),

});

const options = [

{

label: renderTitle('Libraries'),

options: [renderItem('AntDesign', 10000), renderItem('AntDesign UI', 10600)],

},

{

label: renderTitle('Solutions'),

options: [renderItem('AntDesign UI FAQ', 60100), renderItem('AntDesign FAQ', 30010)],

},

{

label: renderTitle('Articles'),

options: [renderItem('AntDesign design language', 100000)],

},

];

const App: React.FC = () => (

<AutoComplete

popupClassName="certain-category-search-dropdown"

dropdownMatchSelectWidth={500}

style={{ width: 250 }}

options={options}

>

<Input.Search size="large" placeholder="input here" />

</AutoComplete>

);

export default App;

```

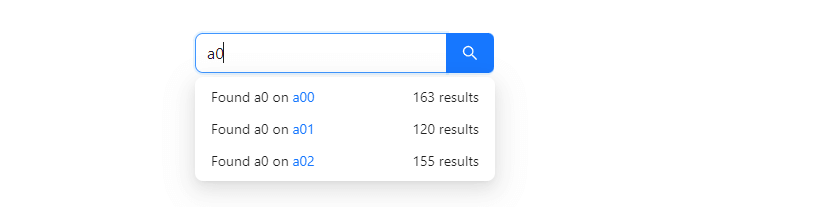

3.react typescript with ant design 5 search autocomplete.

```jsx

import React, { useState } from 'react';

import { AutoComplete, Input } from 'antd';

import type { SelectProps } from 'antd/es/select';

const getRandomInt = (max: number, min = 0) => Math.floor(Math.random() * (max - min + 1)) + min;

const searchResult = (query: string) =>

new Array(getRandomInt(5))

.join('.')

.split('.')

.map((_, idx) => {

const category = `${query}${idx}`;

return {

value: category,

label: (

<div

style={{

display: 'flex',

justifyContent: 'space-between',

}}

>

<span>

Found {query} on{' '}

<a

href={`https://s.taobao.com/search?q=${query}`}

target="_blank"

rel="noopener noreferrer"

>

{category}

</a>

</span>

<span>{getRandomInt(200, 100)} results</span>

</div>

),

};

});

const App: React.FC = () => {

const [options, setOptions] = useState<SelectProps<object>['options']>([]);

const handleSearch = (value: string) => {

setOptions(value ? searchResult(value) : []);

};

const onSelect = (value: string) => {

console.log('onSelect', value);

};

return (

<AutoComplete

dropdownMatchSelectWidth={252}

style={{ width: 300 }}

options={options}

onSelect={onSelect}

onSearch={handleSearch}

>

<Input.Search size="large" placeholder="input here" enterButton />

</AutoComplete>

);

};

export default App;

```

| aaronnfs |

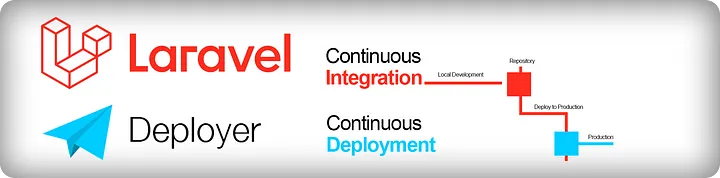

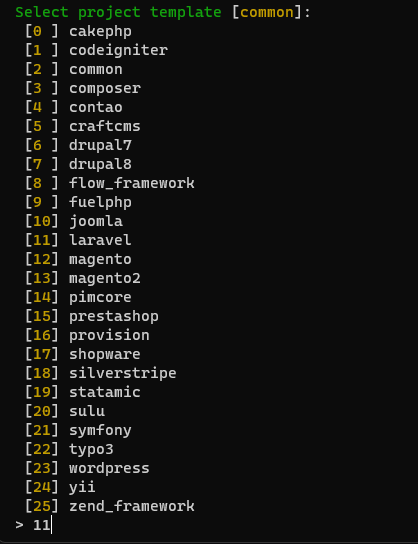

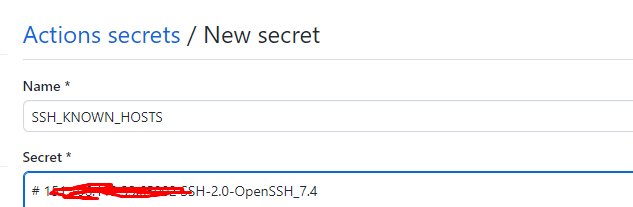

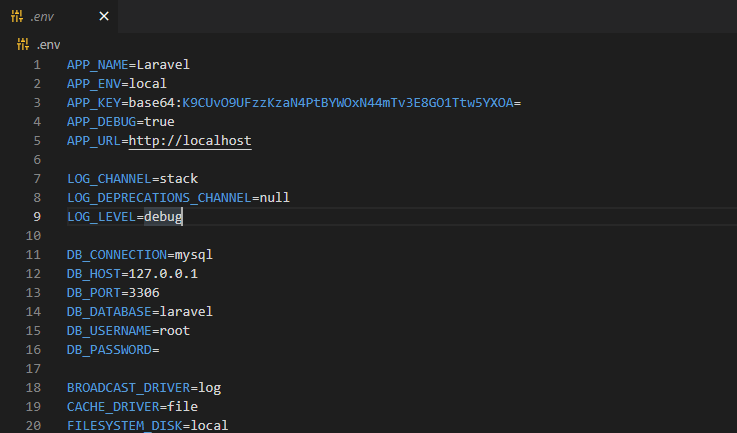

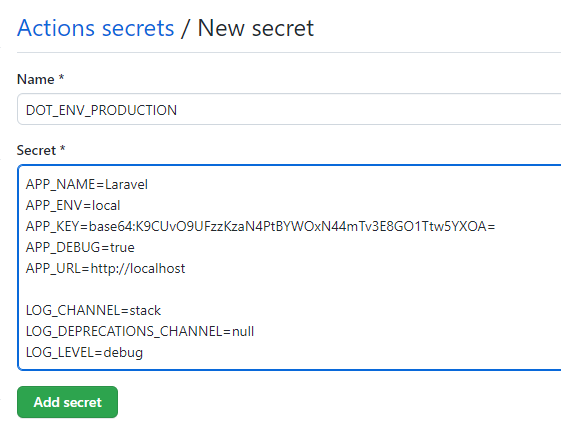

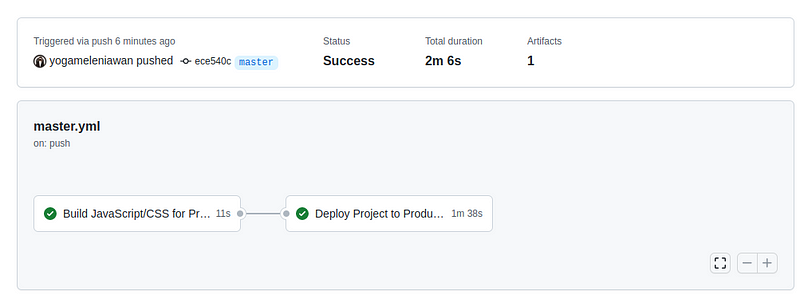

1,873,781 | 10 Metode Terbaik untuk Menjaga Kode Laravel Tetap Bersih dan Terstruktur | Menulis kode program yang bersih dan terstruktur dalam Laravel, seperti dalam framework PHP... | 0 | 2024-06-02T15:29:20 | https://dev.to/yogameleniawan/10-metode-terbaik-untuk-menjaga-kode-laravel-tetap-bersih-dan-terstruktur-14pa | laravel, programming |

Menulis kode program yang bersih dan terstruktur dalam Laravel, seperti dalam framework PHP lainnya, adalah hal yang sangat penting untuk memastikan bahwa kode temen-temen mudah dipahami, dikelola, dan diubah. Berikut adalah beberapa metode dan praktik terbaik yang dapat diikuti untuk mencapai tujuan ini:

### 1. Gunakan PSR (PHP Standard Recommendations)

Laravel secara otomatis mengikuti standar PSR-1 dan PSR-2 dalam penulisan kode. Pastikan temen-temen juga mengikutinya:

- PSR-1: Prinsip dasar penulisan kode PHP seperti penamaan file, penggunaan namespaces, dan deklarasi class.

- PSR-2: Panduan lebih rinci tentang penulisan kode seperti indentasi, panjang baris, dan penempatan kurung.

Contoh format PSR-2:

```php

<?php

namespace Vendor\Package;

use FooInterface;

use BarClass as Bar;

use OtherVendor\OtherPackage\BazClass;

class Foo extends Bar implements FooInterface

{

public function sampleFunction($arg1, $arg2 = null)

{

if ($arg1 === $arg2) {

bar();

} elseif ($arg1 > $arg2) {

$this->bar();

} else {

BazClass::bar($arg1, $arg2);

}

}

}

```

### 2. Gunakan Dependency Injection

Menggunakan Dependency Injection (DI) membuat kode lebih modular dan lebih mudah diuji maupun di-maintenance. Daripada temen-temen membuat instance dari class di dalam method, injeksikan dependensi melalui constructor atau method.

```php

<?php

class UserController extends Controller

{

protected $userService;

public function __construct(UserService $userService)

{

$this->userService = $userService;

}

public function index()

{

$users = $this->userService->getAllUsers();

return view('users.index', compact('users'));

}

}

```

### 3. Gunakan Service Container dan Service Providers

Laravel memiliki Service Container yang kuat dan flexible untuk mengelola dependency injection. Manfaatkan Service Providers untuk mengatur dependency.

Contoh Service Provider:

```php

<?php

namespace App\Providers;

use Illuminate\Support\ServiceProvider;

use App\Services\UserService;

class UserServiceProvider extends ServiceProvider

{

public function register()

{

$this->app->singleton(UserService::class, function ($app) {

return new UserService();

});

}

public function boot()

{

//

}

}

```

### 4. Gunakan Repositories dan Service Layer

Pisahkan logika bisnis dari controller dengan menggunakan pattern Repository dan Service. Repositories berfungsi sebagai layer abstraksi antara model dan controller.

Contoh Repository:

```php

<?php

namespace App\Repositories;

use App\Models\User;

class UserRepository

{

public function getAll()

{

return User::all();

}

public function findById($id)

{

return User::find($id);

}

}

```

Contoh Service:

```php

<?php

namespace App\Services;

use App\Repositories\UserRepository;

class UserService

{

protected $userRepository;

public function __construct(UserRepository $userRepository)

{

$this->userRepository = $userRepository;

}

public function getAllUsers()

{

return $this->userRepository->getAll();

}

public function getUserById($id)

{

return $this->userRepository->findById($id);

}

}

```

### 5. Gunakan Eloquent Mutators dan Accessors

Eloquent menyediakan mutators dan accessors untuk memodifikasi nilai atribut sebelum disimpan (setter) atau setelah diambil (getter) dari database.

**Accessor**

Apa itu Accessor? Accessors digunakan untuk memformat atau memanipulasi nilai atribut setelah diambil dari database.

Laravel 9 kebawah :

```php

<?php

class User extends Model

{

public function getFullNameAttribute()

{

return "{$this->first_name} {$this->last_name}";

}

}

```

Dalam Laravel 9, cara mendefinisikan accessors sedikit berbeda dengan versi sebelumnya. Anda sekarang menggunakan metode berbasis closure yang lebih deklaratif.

Laravel 9 keatas :

```php

namespace App\Models;

use Illuminate\Database\Eloquent\Model;

class User extends Model

{

protected $appends = ['full_name']; // menambahkan atribut 'full_name' ke array model

// Accessor untuk mendapatkan nama lengkap

protected function fullName(): Attribute

{

return Attribute::make(

get: fn ($value, $attributes) => "{$attributes['first_name']} {$attributes['last_name']}",

);

}

}

```

Penggunaan Accessor:

```php

<?php

$user = User::find(1);

echo $user->full_name; // Output: Nama Depan Nama Belakang

```

**Mutators**

Mutators digunakan untuk memformat atau memanipulasi nilai atribut sebelum disimpan ke database.

Misalkan temen-temen memiliki model User dengan atribut password, dan Anda ingin mengenkripsi password setiap kali disimpan ke database.

Laravel 9 kebawah:

```php

<?php

class User extends Model

{

public function setPasswordAttribute($password)

{

$this->attributes['password'] = bcrypt($password);

}

}

```

Laravel 9 keatas:

```php

<?php

namespace App\Models;

use Illuminate\Database\Eloquent\Model;

use Illuminate\Support\Facades\Hash;

class User extends Model

{

// Mutator untuk mengenkripsi password

protected function password(): Attribute

{

return Attribute::make(

set: fn ($value) => Hash::make($value),

);

}

}

```

Penggunaan Mutators:

```php

<?php

$user = new User;

$user->password = 'plain-text-password';

$user->save();

```

### 6. Gunakan Request Validation

Daripada melakukan validasi di dalam controller, temen-temen bisa gunakan Request Validation untuk membuat kode lebih bersih dan terorganisir.

Contoh Request Validation:

```php

<?php

namespace App\Http\Requests;

use Illuminate\Foundation\Http\FormRequest;

class StoreUserRequest extends FormRequest

{

public function authorize()

{

return true;

}

public function rules()

{

return [

'name' => 'required|string|max:255',

'email' => 'required|string|email|max:255|unique:users',

'password' => 'required|string|min:8|confirmed',

];

}

}

```

Penggunaan di Controller:

```php

<?php

public function store(StoreUserRequest $request)

{

$validated = $request->validated();

User::create($validated);

return redirect()->route('users.index');

}

```

### 7. Gunakan Resource Controllers

Laravel menyediakan resource controllers untuk membuat controller yang clean dan mengikuti prinsip RESTful sehingga controller resource di Laravel bisa menentukan operasi CRUD (Create, Read, Update, Delete) standar. Temen-temen bisa menggunakan **php artisan make:controller** untuk membuat resource controller.

Contoh pembuatan resource controller:

```bash

php artisan make:controller UserController --resource

```

Nanti akan menghasilkan controller seperti berikut:

```php

<?php

namespace App\Http\Controllers;

use App\Models\User;

use Illuminate\Http\Request;

class UserController extends Controller

{

// Menampilkan daftar resource

public function index()

{

$users = User::all();

return view('users.index', compact('users'));

}

// Menampilkan form untuk membuat resource baru

public function create()

{

return view('users.create');

}

// Menyimpan resource baru ke database

public function store(Request $request)

{

$validated = $request->validate([

'name' => 'required|string|max:255',

'email' => 'required|string|email|max:255|unique:users',

'password' => 'required|string|min:8',

]);

$user = new User($validated);

$user->password = bcrypt($request->password);

$user->save();

return redirect()->route('users.index');

}

// Menampilkan resource yang spesifik

public function show($id)

{

$user = User::findOrFail($id);

return view('users.show', compact('user'));

}

// Menampilkan form untuk mengedit resource yang spesifik

public function edit($id)

{

$user = User::findOrFail($id);

return view('users.edit', compact('user'));

}

// Memperbarui resource yang spesifik di database

public function update(Request $request, $id)

{

$validated = $request->validate([

'name' => 'required|string|max:255',

'email' => 'required|string|email|max:255|unique:users,email,' . $id,

'password' => 'nullable|string|min:8',

]);

$user = User::findOrFail($id);

$user->update($validated);

if ($request->filled('password')) {

$user->password = bcrypt($request->password);

$user->save();

}

return redirect()->route('users.index');

}

// Menghapus resource yang spesifik dari database

public function destroy($id)

{

$user = User::findOrFail($id);

$user->delete();

return redirect()->route('users.index');

}

}

```

Kemudian jangan lupa menambahkan routing pada web.php

```php

<?php

use App\Http\Controllers\UserController;

Route::resource('users', UserController::class);

```

Dengan menggunakan **Route::resource**, Laravel akan secara otomatis menghasilkan semua route yang diperlukan untuk operasi CRUD:

- GET **/users** - index

- GET **/users/create** - create

- POST **/user**s - store

- GET **/users/{user}** - show

- GET **/users/{user}/edit** - edit

- PUT/PATCH **/users/{user}** - update

- DELETE **/users/{user}** - destroy

### 8. Gunakan Resource untuk JSON Responses

Saat mengembangkan API, gunakan Resources untuk memastikan struktur respons yang konsisten.

Untuk membuatnya temen-temen bisa menjalankan perintah

```bash

php artisan make:resource UserResource

```

Contoh Resource:

```php

<?php

namespace App\Http\Resources;

use Illuminate\Http\Resources\Json\JsonResource;

class UserResource extends JsonResource

{

public function toArray($request)

{

return [

'id' => $this->id,

'name' => $this->name,

'email' => $this->email,

'created_at' => $this->created_at->toDateString(),

'updated_at' => $this->updated_at->toDateString(),

];

}

}

```

Penggunaan di Controller:

```php

<?php

use App\Http\Resources\UserResource;

public function show($id)

{

$user = User::findOrFail($id);

return new UserResource($user);

}

```

### 9. Gunakan Policies dan Gates untuk Authorization

Laravel menyediakan mekanisme untuk mengelola authorization melalui Policies dan Gates.

Contoh Policy:

```php

<?php

namespace App\Policies;

use App\Models\User;

use App\Models\Post;

class PostPolicy

{

public function update(User $user, Post $post)

{

return $user->id === $post->user_id;

}

}

```

Penggunaan Policy:

```php

<?php

public function update(Request $request, Post $post)

{

$this->authorize('update', $post);

// Update logic here

}

```

### 10. Gunakan Route Model Binding

Laravel mendukung route model binding untuk secara otomatis menginjeksikan model instance ke dalam route handler.

Contoh Route Model Binding:

```php

<?php

Route::get('users/{user}', function (User $user) {

return $user;

});

```

Dengan semua _best practice_ ini, temen-temen bisa memastikan bahwa kode Laravel temen-temen tetap _clean_, dapat dikelola, dan mengikuti standar industri. Kode yang clean tidak hanya membuat proses pengembangan lebih efisien tetapi juga meningkatkan kualitas dan pemeliharaan aplikasi jangka panjang. Sampai jumpa di artikel yang lain!!

| yogameleniawan |

1,873,753 | Laravel Reverb: Fitur Websocket Server pada Laravel 11 | Laravel 11 rilis pada tanggal 12 maret 2024 dengan berbagai macam fitur dan improvement baik dari... | 0 | 2024-06-02T15:18:23 | https://dev.to/yogameleniawan/laravel-reverb-fitur-websocket-server-pada-laravel-11-306a | laravel |

Laravel 11 rilis pada tanggal 12 maret 2024 dengan berbagai macam fitur dan improvement baik dari sisi performance, package, command, dan lain-lain. Salah satu fitur yang menarik untuk kita bahas adalah [Laravel Reverb](https://reverb.laravel.com/). Yuk kita bahas sedikit tentang laravel reverb ini.

Apa itu Laravel Reverb? kalau dari penjelasan official dari laravel reverb seperti ini “_Reverb is a first-party WebSocket server for Laravel applications, bringing real-time communication between client and server directly to your fingertips. Open source and an Artisan command away — with love from the Laravel team._”, jadi kurang lebih reverb ini adalah sebuah first party websocket server yang bisa digunakan dan bisa berjalan secara bersama-sama dengan projek laravel kita. Sebelum adanya reverb, ketika kita ingin membuat sebuah websocket server kita bisa menggunakan salah satu servicenya adalah [Pusher](https://pusher.com/), yang mana Pusher ini adalah salah satu service websocket server yang gratis maupun berbayar tetapi berjalan di luar Laravel.

Sekarang kita cobain aja ya bagaimana Laravel Reverb ini bekerja di Laravel. Seperti biasa temen-temen bisa langsung buka _official documentation_ dari [Laravel](https://laravel.com/).

---

Instalasi Laravel 11

```bash

composer create-project laravel/laravel:^11.0 laravel-reverb

```

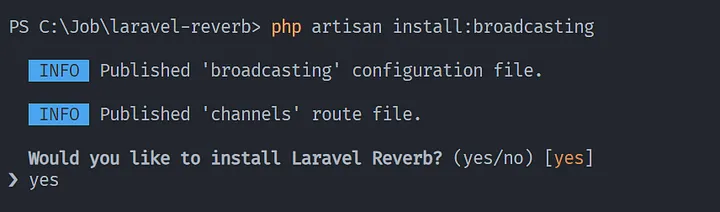

Instalasi Broadcasting, kenapa kita perlu melakukan instalasi broadcasting? karena by default di laravel 11 broadcasting tidak ditampilkan di folder routes

```bash

php artisan install:broadcasting

```

Pilih yes ketika ada pilihan untuk menginstall Laravel Reverb dikarenakan kita membutuhkan credential key dari reverb.

Pilih yes ketika ada pilihan untuk menginstall dependencies untuk broadcasting.

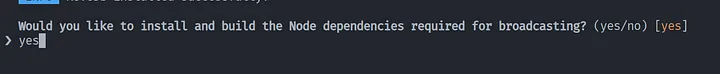

Apabila instalasi berhasil maka ketika temen-temen membuka file .env maka akan ada credential key untuk reverb.

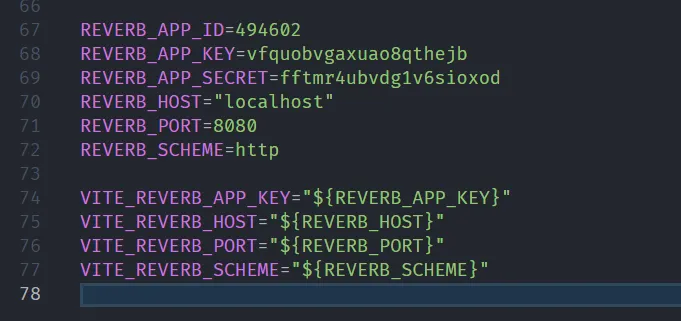

Buat event broadcast class dengan perintah di bawah ini

```bash

php artisan make:event SendMessageEvent

```

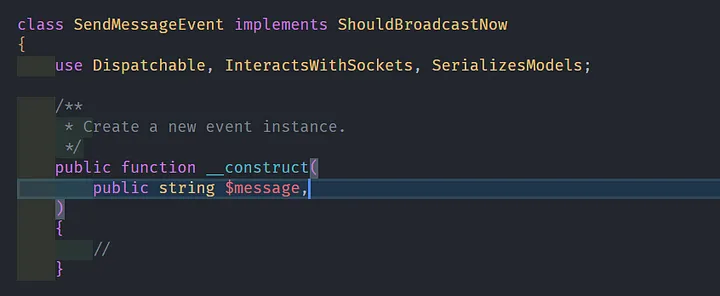

Jangan lupa tambahkan implements terhadap interface ShouldBroadcastNow

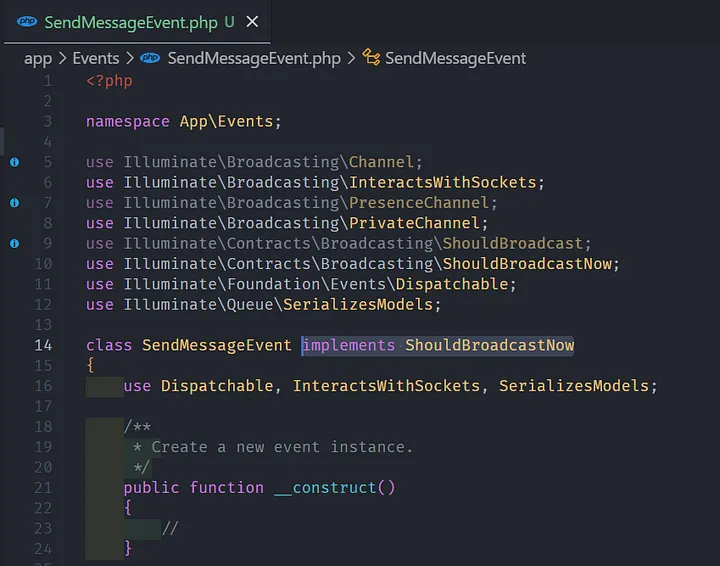

Tambahkan variabel pada constructor function yang akan digunakan sebagai variabel untuk mengirim data dari event broadcast ke channel reverb.

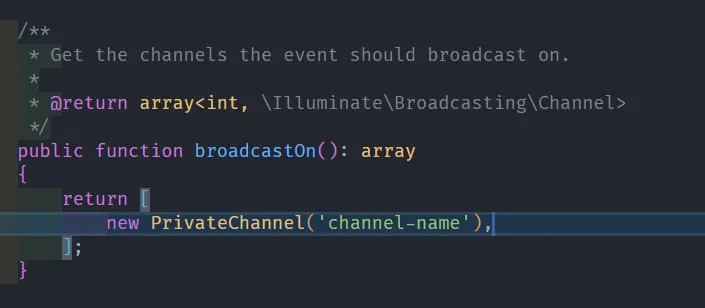

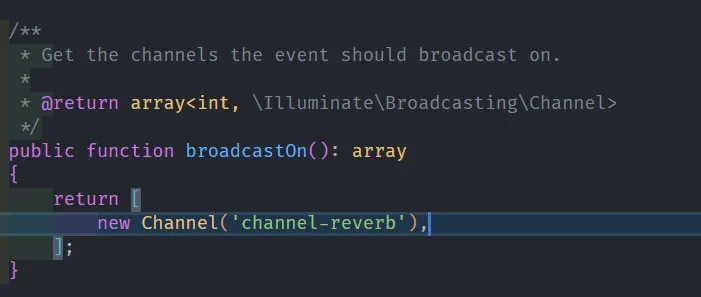

Kemudian silahkan ganti PrivateChannel menjadi Channel, kemudian nama channel default channel-name bisa kita ganti sesuai dengan kebutuhan, disini saya coba ganti menjadi channel-reverb.

Private Channel Default:

Channel Reverb:

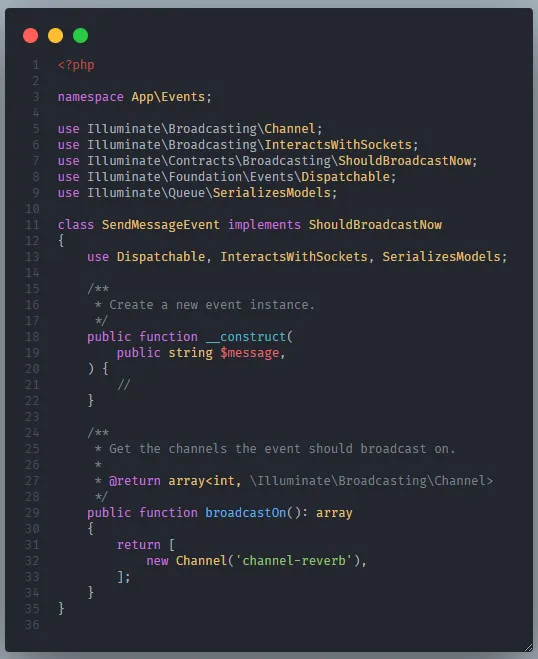

Sehingga hasil akhirnya kurang lebih seperti berikut:

```php

<?php

namespace App\Events;

use Illuminate\Broadcasting\Channel;

use Illuminate\Broadcasting\InteractsWithSockets;

use Illuminate\Contracts\Broadcasting\ShouldBroadcastNow;

use Illuminate\Foundation\Events\Dispatchable;

use Illuminate\Queue\SerializesModels;

class SendMessageEvent implements ShouldBroadcastNow

{

use Dispatchable, InteractsWithSockets, SerializesModels;

/**

* Create a new event instance.

*/

public function __construct(

public string $message,

) {

//

}

/**

* Get the channels the event should broadcast on.

*

* @return array<int, \Illuminate\Broadcasting\Channel>

*/

public function broadcastOn(): array

{

return [

new Channel('channel-reverb'),

];

}

}

```

Buat controller untuk mendefinisikan view dan function yang akan digunakan untuk mengirimkan sebuah event.

```bash

php artisan make:controller MessageController

```

Buat 2 function yang digunakan untuk membuat view dan melakukan aksi untuk mengirim event broadcast ke reverb.

```php

<?php

namespace App\Http\Controllers;

use App\Events\SendMessageEvent;

use Illuminate\Http\Request;

class MessageController extends Controller

{

public function index()

{

return view('message');

}

public function sendMessage(Request $request)

{

$message = $request->message;

event(new SendMessageEvent(

message: $message

));

return response()->json(['message' => 'Message has been send'], 200);

}

}

```

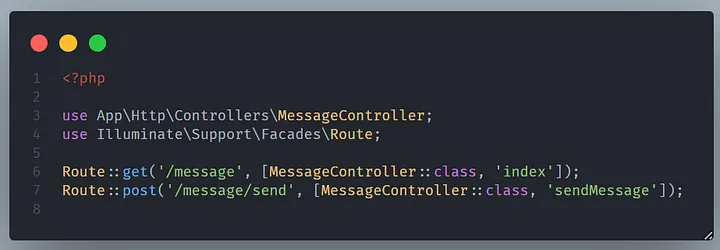

Kemudian definisikan 2 route yang digunakan untuk meng-handle route view dan route action.

```php

<?php

use App\Http\Controllers\MessageController;

use Illuminate\Support\Facades\Route;

Route::get('/message', [MessageController::class, 'index']);

Route::post('/message/send', [MessageController::class, 'sendMessage']);

```

Setelah itu silahkan distart untuk reverb websocket server menggunakan perintah dibawah ini

```bash

php artisan reverb:start

```

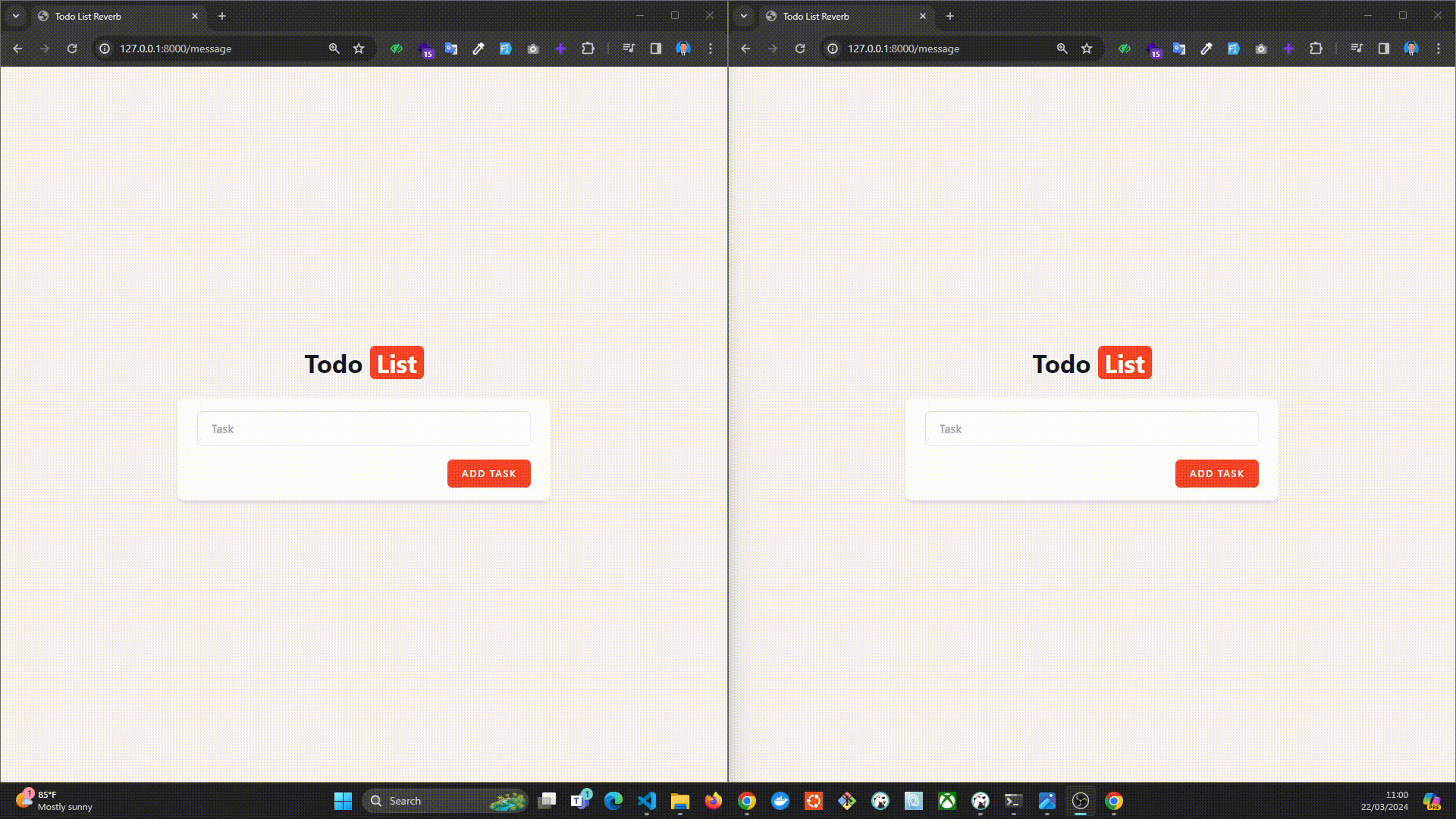

Setelah itu bisa kita langsung cobain Reverb ini ya, hasilnya kurang lebih seperti ini, kebetulan disini saya membuat aplikasi todo list sederhana.

Gimana? Cukup sederhana ya untuk konfigurasi websocket kali ini menggunakan Laravel Reverb. Tentunya dengan menggunakan reverb kita sebagai developer dipermudah untuk menggunakan websocket server pada projek Laravel. Kalau misalkan temen-temen pingin nyobain projek ini bisa diakses di repository github saya di sini : [https://github.com/yogameleniawan/laravel-reverb](https://github.com/yogameleniawan/laravel-reverb)

Oke, pembahasan tentang reverb cukup sampai disini ya temen-temen. See u di kesempatan lain dengan artikel yang berbeda.

| yogameleniawan |

1,873,749 | Bagaimana Cara Memahami useState Hooks pada React/Next JS? | Buat temen-temen yang mungkin baru saja belajar tentang react js/next js atau belum pernah belajar... | 0 | 2024-06-02T15:10:02 | https://dev.to/yogameleniawan/bagaimana-cara-memahami-usestate-hooks-pada-reactnext-js-9p8 | javascript, nextjs, react |

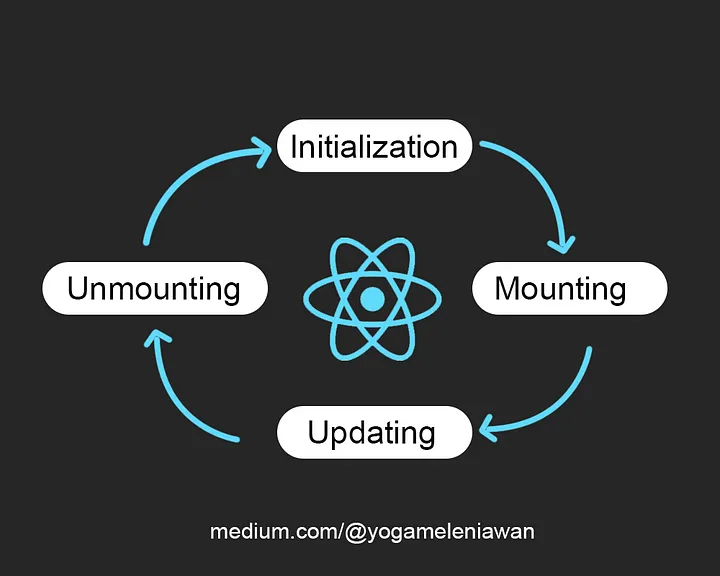

Buat temen-temen yang mungkin baru saja belajar tentang react js/next js atau belum pernah belajar sama sekali. Sepertinya artikel ini wajib untuk dibaca sampai akhir, tenang nggak banyak kok. Kalau temen-temen pernah buka dokumentasi tentang [useEffect](https://legacy.reactjs.org/docs/hooks-effect.html) dan berakhir pusing, saya akan coba bantu jelaskan sedikit.

Gambar diatas merupakan alur kerja react ketika merender sebuah komponen. Nah, tentu saja kita sebagai developer gak mau tau dong bagaimana proses yang berjalan dibelakang yang terpenting kita maunya komponen harus bisa dirender sekali ataupun berkali kali. Tapi, pertanyaanya sekarang, bagaimana cara kita untuk mengubah hasil render komponen yang sudah dirender react? Tenang, jangan dipikir terlalu jauh, useEffect hooks ini adalah solusinya.

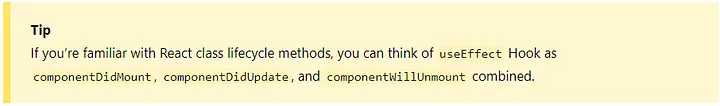

Kalau kita lihat dokumentasi yang disediakan oleh React JS, disana dijelaskan bahwa useEffect ini adalah hasil kombinasi dari componentDidMount, componentDidUpdate, dan componentWillUnmount ketika temen-temen menggunakan [Class Component](https://react.dev/reference/react/Component), tetapi jika temen-temen menggunakan [Functional Component](https://react.dev/reference/react/Component#migrating-a-simple-component-from-a-class-to-a-function) maka, temen-temen bisa menggunakan useEffect hooks ini. Kita langsung praktik pakai coding biar gak kebayang-bayang terus.

---

```javascript

import React, { useEffect, useState } from 'react'

const Home = () => {

const [counter, setCounter] = useState<number>(0);

useEffect(() => {

console.log('Running usEffect tanpa Array')

})

useEffect(() => {

console.log('Running usEffect dengan array kosong')

}, [])

useEffect(() => {

console.log('Ada yang berubah nih dari state counter')

}, [counter])

return (

<div className="flex flex-row justify-center items-center gap-2 mt-10">

<span>{counter}</span>

<button className="bg-gray-400 p-2 rounded-md text-white" onClick={() => setCounter((s) => s + 1)}>Increment</button>

</div>

)

}

export default Home

```

Bisa temen-temen lihat kode program diatas, ada 3 cara untuk mendefinisikan useEffect itu sendiri. Nah, perbedaanya ada pada [Array of Dependencies](https://react.dev/reference/react/useEffect#examples-dependencies)

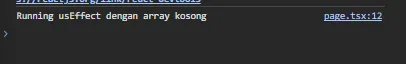

useEffect dengan array of dependencies kosong

```javascript

useEffect(() => {

console.log('Running usEffect dengan array kosong')

}, [])

```

Ketika temen-temen mendefinisikan useEffect dengan cara seperti ini maka semua kode program yang ada di dalam useEffect akan dijalankan hanya 1 kali ketika component itu dirender.

Hasil console useEffect dengan array of dependencies kosong:

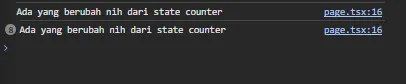

useEffect dengan array of dependencies ada isinya

```javascript

const [counter, setCounter] = useState<number>(0);

useEffect(() => {

console.log('Running usEffect dengan array kosong')

}, [counter])

```

Ketika temen-temen mendefinisikan useEffect dengan cara seperti ini maka semua kode program yang ada di dalam useEffect akan dijalankan ketika component dirender dan dijalankan kembali apabila ada perubahan terhadap state yang dimasukkan ke dalam arraynya.

```javascript

import React, { useEffect, useState } from 'react'

const Home = () => {

const [counter, setCounter] = useState<number>(0);

useEffect(() => {

console.log('Ada yang berubah nih dari state counter')

}, [counter])

return (

<div className="flex flex-row justify-center items-center gap-2 mt-10">

<span>{counter}</span>

<button

className="bg-gray-400 p-2 rounded-md text-white"

onClick={() => setCounter((s) => s + 1)}

>Increment</button>

</div>

)

}

export default Home

```

Contohnya misal user itu menekan tombol Increment maka semua kode program yang ada di dalam useEffect itu akan dijalankan kembali, tetapi jika tidak ada perubahan state maka semua kode program yang ada di dalam useEffect tersebut tidak akan dijalankan.

Hasil console useEffect dengan array of dependencies ada isinya:

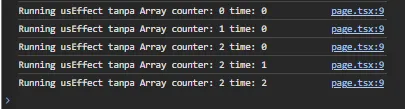

useEffect tanpa array of dependencies

```javascript

const [counter, setCounter] = useState<number>(0);

const [time, setTime] = useState<number>(0);

useEffect(() => {

console.log('Running usEffect tanpa Array')

})

```

Ketika temen-temen mendefinisikan useEffect dengan cara seperti ini maka semua kode program yang ada di dalam useEffect ini akan dijalankan apabila ada perubahan dari semua state.

```javascript

import React, { useEffect, useState } from 'react'

const Home = () => {

const [counter, setCounter] = useState<number>(0);

const [time, setTime] = useState<number>(0);

useEffect(() => {

console.log(

`Running usEffect tanpa Array

counter: ${counter} time: ${time}

`)

})

return (

<div className="flex flex-row justify-center items-center gap-2 mt-10">

<span>{counter}</span>

<span>{time}</span>

<button

className="bg-gray-400 p-2 rounded-md text-white"

onClick={() => setCounter((s) => s + 1)}

>Increment</button>

<button

className="bg-gray-400 p-2 rounded-md text-white"

onClick={() => setTime((s) => s + 1)}

>Time</button>

</div>

)

}

export default Home

```

Hasil console useEffect dengan array of dependencies kosong:

---

Bagaimana apakah penjelasan diatas mudah dipahami? kalau malah bikin temen-temen pusing maap ye bro wkwk. Kurang lebih seperti itu penjelasan yang bisa saya sampaikan tentang useEffect pada React/Next JS yang mana penjelasannya saya usahakan sesimple mungkin dengan analogi yang cukup sederhana juga. Sampai bertemu di lain artikel yang lebih menarik! Tengkyu brokk!

| yogameleniawan |

1,873,748 | Nhẫn Bạc Nam có gì mà hot??? | Kính thưa quý vị và các bạn, Hôm nay, tôi rất vinh dự được đứng đây để nói về một chủ đề mang tính... | 0 | 2024-06-02T15:05:53 | https://dev.to/suc_mktrang_53933a88068d/nhan-bac-nam-co-gi-ma-hot-7ho | Kính thưa quý vị và các bạn,

Hôm nay, tôi rất vinh dự được đứng đây để nói về một chủ đề mang tính thẩm mỹ và thời trang - nhẫn bạc nam. Đối với nhiều người, nhẫn bạc không chỉ là một phụ kiện thời trang mà còn là biểu tượng của phong cách, cá tính và thậm chí là những giá trị tinh thần sâu sắc.

1. Lịch sử và ý nghĩa của nhẫn bạc nam:

Nhẫn bạc đã xuất hiện từ hàng ngàn năm trước, được sử dụng bởi các nền văn minh cổ đại như Ai Cập, La Mã và Hy Lạp. Ở mỗi thời kỳ, nhẫn bạc mang những ý nghĩa khác nhau. Đối với người Ai Cập, nhẫn bạc tượng trưng cho sự trường tồn và vĩnh cửu. Người La Mã lại coi nhẫn bạc là biểu tượng của quyền lực và sự giàu có.

Ngày nay, nhẫn bạc nam không chỉ đơn thuần là một món trang sức mà còn là biểu tượng của sự mạnh mẽ và nam tính. Nó thể hiện sự quyết đoán, sự tự tin và phong cách riêng của người đàn ông.

2. Lợi ích của việc đeo nhẫn bạc:

Ngoài vẻ đẹp và phong cách, nhẫn bạc còn mang lại nhiều lợi ích cho sức khỏe. Bạc là một kim loại có tính kháng khuẩn và kháng nấm. Đeo nhẫn bạc có thể giúp điều hòa nhiệt độ cơ thể, cải thiện tuần hoàn máu và thậm chí giúp giảm căng thẳng.

3. Chọn nhẫn bạc nam phù hợp:

Khi chọn nhẫn bạc, người mua cần xem xét kỹ về kiểu dáng, kích thước và chất lượng bạc. Một chiếc nhẫn bạc đẹp phải có thiết kế tinh xảo, phù hợp với phong cách cá nhân và được làm từ bạc chất lượng cao. Ngoài ra, cần chú ý đến việc bảo quản và vệ sinh nhẫn bạc để giữ cho nó luôn sáng bóng và bền đẹp.

4. Nhẫn bạc trong phong cách thời trang hiện đại:

Trong thời trang hiện đại, nhẫn bạc nam được thiết kế đa dạng và phong phú. Từ những mẫu đơn giản, thanh lịch đến những mẫu phức tạp, lộng lẫy, nhẫn bạc có thể phù hợp với mọi phong cách từ cổ điển đến hiện đại. Các thương hiệu trang sức hàng đầu cũng luôn cập nhật những xu hướng mới, mang lại sự lựa chọn đa dạng cho phái mạnh.

5. Kết luận:

Nhẫn bạc nam không chỉ là một món trang sức mà còn là một phần của phong cách sống. Nó thể hiện sự tôn trọng đối với bản thân, sự chú trọng đến ngoại hình và mong muốn khẳng định cá tính. Với những ý nghĩa và lợi ích mà nhẫn bạc mang lại, chắc chắn đây sẽ là một lựa chọn hoàn hảo cho bất kỳ người đàn ông nào.

Kính thưa quý vị và các bạn,

Hy vọng qua bài diễn đàn này, chúng ta sẽ có cái nhìn sâu sắc hơn về nhẫn bạc nam và biết cách chọn lựa, sử dụng nó một cách hợp lý. Cảm ơn sự lắng nghe của quý vị và chúc quý vị một ngày vui vẻ!

Để biết bản thân phù hợp với món trang sức nào quý vị và các bạn có thể truy cập trang web [trangsucmk.com](https://trangsucmk.com/) để có thể lựa chọn cho mình một món trang sức sang trọng và phù hợp với bản thân nhất nhé hoặc truy cập [Bài Viết](https://trangsucmk.com/2024/05/31/nhan-nam-sinh/) để biết thêm chi tiết về nhẫn bạn nam.

Xin trân trọng cảm ơn! | suc_mktrang_53933a88068d | |

1,873,747 | NVM Install On Your PC | NVM (Node Version Manager) is a popular tool for managing multiple versions of Node.js on a single... | 0 | 2024-06-02T15:05:26 | https://dev.to/ars_3010/nvm-install-on-your-pc-1l07 | node, nvm, javascript, react | NVM (Node Version Manager) is a popular tool for managing multiple versions of Node.js on a single machine. Here are some important commands for NVM:

### Installation Commands

1. **Install NVM**:

```

curl -o- https://raw.githubusercontent.com/nvm-sh/nvm/v0.39.3/install.sh | bash

```

This script installs the latest version of NVM. Replace `v0.

### NVM (Node Version Manager) Important Commands

#### Installation

1. **Install NVM**:

```

curl -o- https://raw.githubusercontent.com/nvm-sh/nvm/v0.39.3/install.sh | bash

```

This command downloads and runs the NVM install script from the NVM GitHub repository.

2. **Load NVM**:

``` export NVM_DIR="$([ -z "${XDG_CONFIG_HOME-}" ] && printf %s "${HOME}/.nvm" || printf %s "${XDG_CONFIG_HOME}/nvm")"

[ -s "$NVM_DIR/nvm.sh" ] && \. "$NVM_DIR/nvm.sh"

```

This is usually added to your shell profile file (`~/.bashrc`, `~/.zshrc`, etc.) to load NVM when you open a new terminal.

#### Basic Usage

3. **Check NVM Version**:

```

nvm --version

```

4. **List Installed Node Versions**:

```

nvm ls

```

5. **List Available Node Versions**:

```

nvm ls-remote

```

6. **Install a Specific Node Version**:

```

nvm install <version>

```

Replace `<version>` with the desired version number, e.g., `nvm install 16.13.0`.

7. **Use a Specific Node Version**:

```

nvm use <version>

```

Replace `<version>` with the installed version number you want to use.

8. **Set Default Node Version**:

```

nvm alias default <version>

```

This sets the default Node.js version to be used in new shell sessions.

9. **Uninstall a Specific Node Version**:

```

nvm uninstall <version>

```

10. **Show Current Node Version**:

```

nvm current

```

11. **Run Node with a Specific Version**:

```

nvm run <version> <script.js>

```

Replace `<version>` with the Node.js version and `<script.js>` with the script you want to run.

12. **Execute NPM Commands with Specific Node Version**:

```

nvm exec <version> npm <command>

```

For example, `nvm exec 14.17.0 npm install`.

13. **Reinstall Packages from Current Version**:

```

nvm reinstall-packages <version>

```

This reinstalls global packages from the specified version into the current version.

14. **Get Help**:

```

nvm help

```

These commands cover the essential functionality of NVM, helping you to manage different Node.js versions efficiently. | ars_3010 |

1,873,744 | From Chaos to Clarity: Simplify Your Angular Code with Declarative Programming | Not that long ago I bumped into an interesting problem. I wanted to implement a “search user”... | 0 | 2024-06-02T15:01:54 | https://dev.to/krivanek06/from-chaos-to-clarity-simplify-your-angular-code-with-declarative-programming-58gm | webdev, angular, beginners, rxjs | Not that long ago I bumped into an interesting problem. I wanted to implement a “search user” dropdown. When you select a user's name, you make an API call to load more data, meanwhile the loading happens, you display a “loading…” message and once the user details are back from the server, you display those.

Kinda like the following GIF on which I will be describing the two approaches (declarative and imperative) that I used.

## The Problem Description

This is a small representation of the problem which you’ve probably bumped into many times. You have a dropdown and every time you select a value, you want to load more details about the selected item from the backend.

You display a loading message until the data is not there, maybe some fancy animation, and once the data arrives you display it.

We don’t need a server for this example, it’s enough to have a mock data service as follows:

```tsx

import { Injectable } from '@angular/core';

import { Observable, map, of } from 'rxjs';

import { delay } from 'rxjs/operators';

export type DataItem = {

id: string;

name: string;

};

export const dataItems: DataItem[] = [

{ id: 'id_1', name: 'item_1' },

{ id: 'id_2', name: 'item_2' },

{ id: 'id_3', name: 'item_3' },

{ id: 'id_4', name: 'item_4' },

{ id: 'id_5', name: 'item_5' },

];

@Injectable({

providedIn: 'root',

})

export class DataService {

/**

* simulate fake API call to the server

*/

getDataFakeAPI(itemId: string): Observable<DataItem> {

return of(itemId).pipe(

map(() => dataItems.find((d) => d.id === itemId)!),

delay(1000)

);

}

}

```

The `dataItems` are items which will be displayed inside the select dropdown and every time you change the value, you will call `getDataFakeAPI` that returns the same value with some delay - mocking API call.

## Imperative Solution

The following solution is the solution that I used initially. I will post the whole code and then go over some parts which are important in this example.

```tsx

import { Component, inject, signal } from '@angular/core';

import {

DataItem,

DataService,

dataItems

} from './data-service.service';

@Component({

selector: 'app-select-imperative',

standalone: true,

template: `

<!-- dropdown of users -->

<select (change)="onChange($event)">

@for(item of displayData; track item.id){

<option [value]="item.id">{{ item.name }}</option>

}

</select>

<h3>Selected Items </h3>

<!-- displayed selected options -->

@for(item of selectedItems(); track item.id){

<div class="item-selected" (click)="onRemove(item)">

{{ item.name }}

</div>

}

<!-- display loading state -->

@if(isLoadingData()){

<div class="item-loading"> Loading ... </div>

}

<!-- reset button -->

@if(selectedItems().length > 0){

<button type="button" class="remove" (click)="onReset()">

Reset

</button>

}

`,

})

export class SelectImperativeComponent {

private dataService = inject(DataService);

displayData = dataItems;

/**

* displayed data on the UI - loaded from the BE

*/

selectedItems = signal<DataItem[]>([]);

isLoadingData = signal(false);

/**

* on select change - load data from API

*/

onChange(event: any) {

const itemId = event.target.value;

// check if already saved

const savedIds = this.selectedItems().map((d) => d.id);

if (savedIds.includes(itemId)) {

return;

}

// set loading to true

this.isLoadingData.set(true);

// fake load data from BE

this.dataService.getDataFakeAPI(itemId).subscribe((res) => {

// save data

this.selectedItems.update((prev) => [...prev, res]);

// set loading to false

this.isLoadingData.set(false);

});

}

/**

* removes item from selected array

*/

onRemove(item: DataItem) {

this.selectedItems.update(

(prev) => prev.filter((d) => d.id !== item.id)

);

}

onReset() {

this.selectedItems.set([]);

}

}

```

Overall it’s not that complicated and it may be close to a solution that you yourself would write. First of all, there is nothing significantly wrong with this solution, but why exactly do I call this an imperative approach ?

In short, this is imperative, because your signals - `selectedItems` and `isLoadingData` - can be changed all over the places which leads to two major problems - debugging and multiple properties.

Right now the `selectedItems` is changed in 3 places and `isLoadingData` is changed in 2 places, however once the complexity of this feature grows, debugging may become an issue to figure out how the data flow happens in this feature. What if `selectedItems` and `isLoadingData` will be used in 10 places each, suddenly it is not that easy to understand what’s happening.

Also with the growing complexity, you may want to introduce another properties like `isError = signal(false)` .

Now let’s think a bit and ask the question, could we combine the `selectedItems` , `isLoadingData` and potentially a new property `isError` into only one property which would look something like:

```tsx

{

data: DataItem[];

isError: boolean;

isLoading: boolean;

}

```

## Declarative Solution

The result what we want to achieve with the declarative solution is that we want to have only one property (object), which will have the data and loading keys and we want to change the values for this property only in one place.

Here is the solution that I came up with:

```tsx

import { Component, inject, signal } from '@angular/core';

import {

DataItem,

DataService,

dataItems

} from './data-service.service';

import { Subject, map, merge, scan, startWith, switchMap } from 'rxjs';

import { toSignal } from '@angular/core/rxjs-interop';

@Component({

selector: 'app-select-declarative',

standalone: true,

template: `

<!-- dropdown of users -->

<select (change)="onChange($event)">

@for(item of displayData; track item.id){

<option [value]="item.id">{{ item.name }}</option>

}

</select>

<h3>Selected Items </h3>

<!-- displayed selected options -->

@for(item of selectedItems().data; track item.id){

<div class="item-selected" (click)="onRemove(item)">

{{ item.name }}

</div>

}

<!-- display loading state -->

@if(selectedItems().isLoading){

<div class="item-loading"> Loading ... </div>

}

<!-- reset button -->

@if(selectedItems().data.length > 0){

<button type="button" class="remove" (click)="onReset()">

Reset

</button>

}

`,

})

export class SelectDeclarativeComponent {

private dataService = inject(DataService);

displayData = dataItems;

private removeItem$ = new Subject<DataItem>();

private addItem$ = new Subject<string>();

private reset$ = new Subject<void>();

/**

* displayed data on the UI - loaded from the BE

*/

selectedItems = toSignal(

merge(

// create action to add a new item

this.addItem$.pipe(

switchMap((itemId) =>

this.dataService.getDataFakeAPI(itemId).pipe(

map((item) => ({

item,

action: 'add' as const,

})),

startWith({

item: null,

action: 'initLoading' as const,

})

)

)

),

// create action to remove an item

this.removeItem$.pipe(

map((item) => ({

item,

action: 'remove' as const,

}))

),

// create action to reset everything

this.reset$.pipe(

map(() => ({

item: null,

action: 'reset' as const,

}))

)

).pipe(

scan(

(acc, curr) => {

// add reset state

if (curr.action === 'reset') {

return {

isLoading: false,

data: [],

};

}

// display loading

if (curr.action === 'initLoading') {

return {

data: acc.data,

isLoading: true,

};

}

// check to remove item

if (curr.action === 'remove') {

return {

isLoading: false,

data: acc.data.filter((d) => d.id !== curr.item.id),

};

}

// check if already saved

const savedIds = acc.data.map((d) => d.id);

if (savedIds.includes(curr.item.id)) {

return {

isLoading: false,

data: acc.data,

};

}

// add item into the rest

return {

isLoading: false,

data: [...acc.data, curr.item],

};

},

{ data: [] as DataItem[], isLoading: false }

)

),

{

initialValue: {

data: [],

isLoading: false,

},

}

);

/**

* on select change - load data from API

*/

onChange(event: any) {

const itemId = event.target.value;

this.addItem$.next(itemId);

}

/**

* removes item from selected array

*/

onRemove(item: DataItem) {

this.removeItem$.next(item);

}

onReset() {

this.reset$.next();

}

}

```

Yes, this is longer than the previous solution, however is it more complex or simpler than the previous one?

What needs to be first highlighted that instead of changing the `selectedItems` on multiple places, you now have 3 subjects, each of them representing an action that can happen.

```tsx

private removeItem$ = new Subject<DataItem>();

private addItem$ = new Subject<string>();

private reset$ = new Subject<void>();

```

Next inside the `selectedItems` you use these subjects and map them into format you want to work with. For me the following format suited the most

```tsx

item: DataItem;

action: 'add' | 'remove' | 'initLoading' | 'reset'

```

For the `addItem$` you want to use the `startWith` operator at the end of the pipe chain. This will allow that the first action which will be emitted when selecting a new value is `initLoading` and only when the API call (`dataService.getDataFakeAPI`) finishes, it will emit again with the action `add`.

You wrap each pipe mapping with the `merge` operator, because you want to perform some common logic despite of which one of these subjects emit.

Lastly you have the giant `scan` section. The `scan` operator is similar to `reduce` , however `scan` remembers the last computation that happened and if the `scan` happens again, it will use the data from the last computation - [read more about scan](https://www.learnrxjs.io/learn-rxjs/operators/transformation/scan) .

Inside the `scan` section, you create conditions what should happen based on the action of current value that is being processed.

It may reassemble how NgRx works. You have some actions (add, remove and reset subjects) and you create reducers to updated the state of only one property.

## Final Thoughts

Overall it’s up to you, the developer, which approach you choose to solve this problem. Both have some advantages and shortcomings.

If you want to play around with this example, you can [find it on stackblitz](https://stackblitz.com/edit/stackblitz-starters-ingyij) or connect with me on [dev.to](https://dev.to/krivanek06) | [LinkedIn](https://www.linkedin.com/in/eduard-krivanek-714760148/)| [Personal Website](https://eduardkrivanek.com/) | [Github](https://github.com/krivanek06). | krivanek06 |

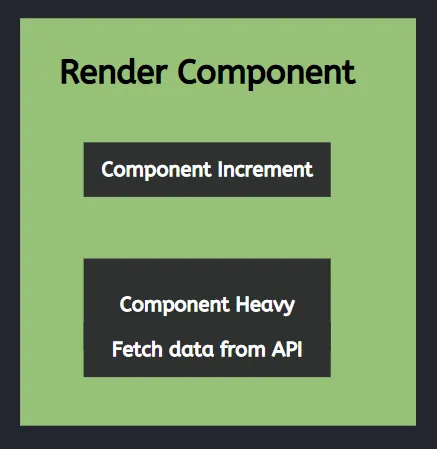

1,873,746 | Implementasi Lazy Loading pada Component Next JS yang Bisa Bikin Aplikasi Ngebut dengan Dynamic Import | Pernah mengalami aplikasi yang kita kembangkan terasa lemot karena ada banyak component yang sedang... | 0 | 2024-06-02T15:01:42 | https://dev.to/yogameleniawan/implementasi-lazy-loading-pada-component-next-js-yang-bisa-bikin-aplikasi-ngebut-dengan-menggunakan-dynamic-import-22ic | javascript, react, nextjs |

Pernah mengalami aplikasi yang kita kembangkan terasa lemot karena ada banyak _component_ yang sedang di-_render_? Pastinya semua orang yang baru belajar next js akan mengalami hal yang sama. Karena pada dasarnya ketika kita baru pertama kali belajar next js pasti tidak memperhatikan performa dari aplikasi.

Kalau kita bicara masalah performa dari aplikasi memang pengaruh ya? ketika kita menggunakan _lazy loading_ dan tidak menggunakan? Kalau data yang temen-temen gunakan itu masih sedikit dan itu proses running aplikasi dilakukan di lokal ya bisa dipastikan tidak ada pengaruh sama sekali. Tapi, kalau data temen-temen itu sudah jutaan maka itu menjadi issue yang perlu kita perbaiki.

Lalu kira-kira apa perbedaannya ketika kita menggunakan lazy loading atau tidak? Saya akan coba memberikan contoh yang paling sederhana ya temen-temen dengan menggunakan cara _dynamic import_ atau _code splitting_. Oiya, temen-temen juga bisa cobain sendiri ya dengan kode program yang akan saya tuliskan dibawah.

---

#### Tidak Menggunakan Lazy Loading

Disini kita memiliki 1 page dengan 2 component. Dibawah ini merupakan kode program untuk page dari next js.

```javascript

"use client"

import React from 'react'

import ComponentIncrement from './components/ComponentIncrement'

import HeavyComponent from './components/HeavyComponent'

const Home = () => {

return (

<main className="flex flex-col min-h-screen gap-3 m-10">

<ComponentIncrement />

<HeavyComponent/>

</main>

)

}

export default Home

```

Dibawah ini merupakan kode program dari component **ComponentIncrement**

```javascript

"use client"

import React, { useEffect, useState } from 'react'

import { setInterval } from 'timers'

const ComponentIncrement = () => {

const [count, setCount] = useState(0)

useEffect(() => {

setInterval(() => {

setCount((s) => s + 1)

}, 1000)

}, [])

return (

<div className="bg-slate-500 w-10 h-10">

{count}

</div>

)

}

export default ComponentIncrement

```

Dibawah ini merupakan kode program dari component HeavyComponent

```javascript

"use client"

import Image from "next/image";

import { useEffect, useState } from "react";

import Gambar from "../../../public/images/image.jpg"

const fetchData = async () => {

const response = await fetch(`https://jsonplaceholder.typicode.com/posts/1`);

const data = await response.json();

return data;

};

const HeavyComponent = () => {

const [data, setData] = useState<any>();

useEffect(() => {

const fetchDataAsync = async () => {

const result = await fetchData();

if (result) {

setData(result);

}

};

fetchDataAsync();

}, []);

return (

<div className="flex flex-col">

{

data != null ? <>

<Image src={Gambar} alt="Image" width={200} height={200} />

<h2>Heavy Component</h2>

<p>Title: {data.title}</p>

<p>Body: {data.body}</p>

</> : 'Fetching data from API'

}

</div>

);

};

export default HeavyComponent;

```

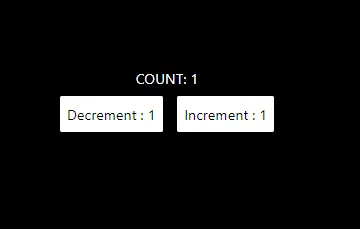

Untuk hasilnya kurang lebih seperti ini :

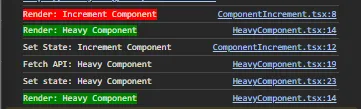

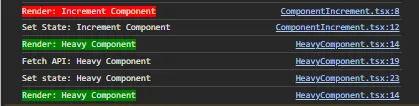

Hasil diatas belum bisa kita lihat ya temen-temen, apakah menggunakan lazy loading atau tidak. Tapi, kita akan coba berikan logging berupa teks yang akan ditampilkan pada console browser. Kurang lebih hasilnya seperti ini :

Kita akan simpan hasil itu dan nanti akan kita komparasikan diakhir artikel ini ya temen-temen untuk mengetahui yang sebenarnya fungsi dari lazy loading.

#### Menggunakan Lazy Loading

Disini kita memiliki 1 page dengan 2 component. Dibawah ini merupakan kode program untuk page dari next js.

```javascript

"use client"

import React from 'react'

import ComponentIncrement from './components/ComponentIncrement'

import dynamic from 'next/dynamic'

const DynamicHeavyComponent = dynamic(() => import('./components/HeavyComponent'), {

loading: () => <p className="text-white">Loading Heavy Component...</p>,

ssr: false,

})

const Home = () => {

return (

<main className="flex flex-col min-h-screen gap-3 m-10">

<ComponentIncrement />

<DynamicHeavyComponent />

</main>

)

}

export default Home

```

Dibawah ini merupakan kode program dari component **ComponentIncrement**

```javascript

"use client"

import React, { useEffect, useState } from 'react'

import { setInterval } from 'timers'

const ComponentIncrement = () => {

const [count, setCount] = useState(0)

useEffect(() => {

setInterval(() => {

setCount((s) => s + 1)

}, 1000)

}, [])

return (

<div className="bg-slate-500 w-10 h-10">

{count}

</div>

)

}

export default ComponentIncrement

```

Dibawah ini merupakan kode program dari component **HeavyComponent**

```javascript

"use client"

import Image from "next/image";

import { useEffect, useState } from "react";

import Gambar from "../../../public/images/image.jpg"

const fetchData = async () => {

const response = await fetch(`https://jsonplaceholder.typicode.com/posts/1`);

const data = await response.json();

return data;

};

const HeavyComponent = () => {

const [data, setData] = useState<any>();

useEffect(() => {

const fetchDataAsync = async () => {

const result = await fetchData();

if (result) {

setData(result);

}

};

fetchDataAsync();

}, []);

return (

<div className="flex flex-col">

{

data != null ? <>

<Image src={Gambar} alt="Image" width={200} height={200} />

<h2>Heavy Component</h2>

<p>Title: {data.title}</p>

<p>Body: {data.body}</p>

</> : 'Fetching data from API'

}

</div>

);

};

export default HeavyComponent;

```

Untuk hasilnya kurang lebih seperti ini, sama seperti tanpa menggunakan lazy loading :

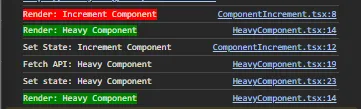

Dan untuk hasil console menggunakan lazy loading seperti ini :

Kira-kira apakah hasil console tidak menggunakan dan menggunakan lazy loading ada perbedaannya? Ada dong. Mari kita bahas ya temen-temen.

Hasil console tanpa menggunakan lazy loading 2:

Hasil console menggunakan lazy loading 2

Ketika temen-temen tidak mengimplementasikan lazy loading maka 2 component tersebut akan dirender secara paralel atau secara bersamaan dan perubahan state maupun pemanggilan end point API pun juga dilakukan secara bersamaan. Akibatnya apa? bayangkan jika salah satu component punya behavior yang cukup berat atau proses render maupun proses pengambilan datanya membutuhkan waktu. Maka, component lainnya yang seharusnya sudah selesai render maka harus nunggu sampai component sebelumnya selesai di-render.

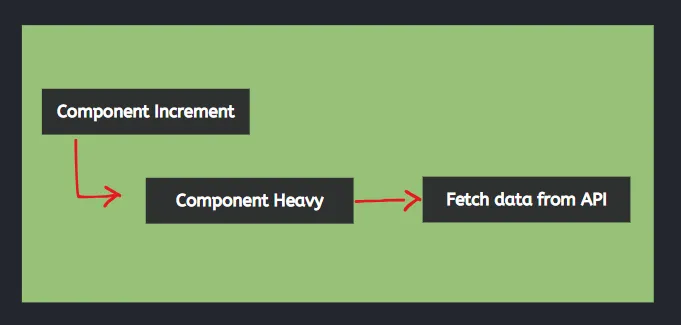

Render component secara paralel tanpa lazy loading:

Sedangkan, ketika kita mengimplementasikan lazy loading maka, masing-masing komponen itu tidak akan di-render secara bersama-sama tetapi akan di-render sesuai dengan urutannya. Selain itu, kita juga bisa memberikan UX (User Experience) dengan sebuah teks bahwa komponen tersebut sedang dimuat.

Render component secara bergantian menggunakan lazy loading:

---

Bagaimana? Apakah sudah ada gambaran pentingnya implementasi lazy loading pada aplikasi yang kita kembangkan menggunakan next js? Saya berharap artikel kali ini bisa memberikan ilmu tambahan untuk mengembangkan aplikasi menggunakan Next JS. Sampai bertemu di lain kesempatan dengan artikel yang lebih menarik lagi.

| yogameleniawan |

1,873,745 | SPBU88: Bukan Sekedar Stasiun Pengisian, Tapi Gerbang Menuju Keberuntungan dan Kehidupan Luar Biasa! | Pernahkah Anda membayangkan mengisi bensin dan sekaligus membuka gerbang menuju kekayaan dan... | 0 | 2024-06-02T14:55:06 | https://dev.to/spbu88_com_8f4314b6ea263e/spbu88-bukan-sekedar-stasiun-pengisian-tapi-gerbang-menuju-keberuntungan-dan-kehidupan-luar-biasa-58am | spbu88, spbu88login, spbu88daftar, linkalternatifspbu88 | Pernahkah Anda membayangkan mengisi bensin dan sekaligus membuka gerbang menuju kekayaan dan kehidupan yang luar biasa? Di [SPBU88](https://spbu88.com), bukan hanya tangki yang terisi, tapi juga pundi-pundi dan optimisme Anda!

SPBU88 bukan sekadar singkatan dari Stasiun Pengisian Bahan Bakar Umum. Di era digital ini, SPBU88 menjelma menjadi peluang emas untuk mengubah hidup Anda. Rasakan sensasi bermain slot yang tak terlupakan dan peluang untuk meraih harta karun yang melimpah di dunia penuh kegembiraan dan peluang emas ini.

Lebih dari Sekedar Permainan Slot

SPBU88 menghadirkan beragam jenis permainan slot dari provider ternama seperti Pragmatic Play, Microgaming, dan Slot88. Tema dan fitur unik di setiap permainan siap menantang adrenalin Anda dan membawa Anda berpetualang menjelajahi dunia penuh kegembiraan dan peluang emas.

Bonus dan Promosi yang Menggoda Selera

SPBU88 bagaikan kolam harta karun yang selalu memanjakan para pemainnya dengan bonus dan promosi yang menggoda. Dapatkan bonus deposit, bonus cashback, bonus free spins, dan masih banyak lagi. Semakin sering Anda bermain, semakin besar peluang Anda untuk meraih bonus fantastis yang bisa mengubah hidup Anda.

Kemenangan Besar Menanti di Setiap Putaran

Setiap putaran di SPBU88 penuh dengan kegembiraan dan peluang emas. Dengan sedikit keberuntungan, Anda bisa menjadi jutawan dalam sekejap mata. SPBU88 terkenal dengan jackpotnya yang fantastis, siap mengantarkan Anda pada kehidupan yang Anda impikan, penuh kemakmuran, kebahagiaan, dan pencapaian.

Layanan Terbaik dan Keamanan Terjamin

SPBU88 bukan hanya tentang hadiah dan kemenangan. Di sini, Anda juga akan mendapatkan layanan terbaik dari tim customer service yang siap membantu Anda 24/7. Keamanan data dan transaksi Anda pun terjamin dengan teknologi keamanan terdepan yang digunakan SPBU88.

Lebih dari Sekedar Permainan, SPBU88 Adalah Komunitas

SPBU88 bukan hanya tempat untuk bermain slot, tetapi juga komunitas yang dinamis dan ramah. Berinteraksilah dengan pemain lain dari seluruh dunia, berbagi tips dan strategi, dan ciptakan persahabatan yang tak ternilai.

SPBU88: Pilihan Tepat untuk Pecinta Slot yang Ingin Lebih

Bagi Anda yang haus akan sensasi, tantangan, dan peluang, SPBU88 adalah destinasi yang tepat. Kunjungi SPBU88 sekarang dan rasakan sendiri sensasi bermain slot yang tak terlupakan di surga harta karun digital ini! | spbu88_com_8f4314b6ea263e |

1,873,743 | Meningkatkan User Experiece (UX) Aplikasi Menggunakan useOptimistic Hooks pada React JS/Next JS | Seberapa penting emang user experience (UX) dari user terhadap aplikasi yang kita kembangkan?... | 0 | 2024-06-02T14:53:10 | https://dev.to/yogameleniawan/meningkatkan-user-experiece-ux-aplikasi-menggunakan-useoptimistic-hooks-pada-react-jsnext-js-4cpl | react, nextjs, javascript |

Seberapa penting emang _user experience_ (UX) dari user terhadap aplikasi yang kita kembangkan? Sangat penting bro. Kalau _first impression_ dari user terhadap aplikasi kita sudah jelek gara-gara _user experience_ yang tidak ramah lingkungan, maka user pun juga males buat buka aplikasi yang kita kembangkan. Emang ada parameternya buat nentuin bahwa UX aplikasi kita udah ramah lingkungan? Ada, parameter yang paling minimal itu penggunaan _loading _ ketika melakukan pemrosesan data/perubahan state.

Pada React JS kita bisa meningkatkan UX dari aplikasi yang kita kembangkan menggunakan useOptimistic Hooks yang bisa temen-temen baca dokumentasinya [disini ](https://react.dev/reference/react/useOptimistic) sebelum masuk lebih dalam dan implementasinya. Perlu diketahui ya temen-temen useOptimistic ini hanya tersedia pada React Canary dan Experimental Channel. Tapi, bukan berarti temen-temen kalau tidak menggunakan React Canary tidak mau berkenalan dengan useOptimistic Hooks. Tidak ada salahnya mencari ilmu baru.

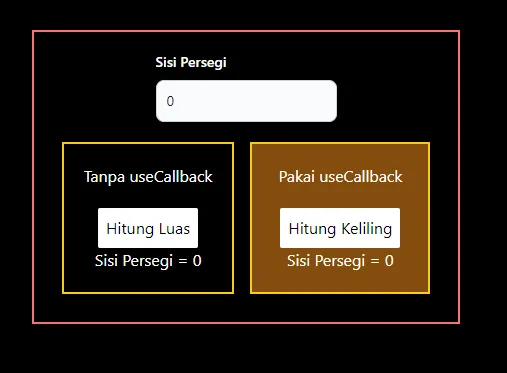

Kira-kira dari 2 perbandingan di bawah ini, temen-temen lebih prefer memilih yang mana?

GIF 1. Tanpa menggunakan useOptimistic Hooks:

GIF 2. Menggunakan useOptimistic Hooks:

Terdapat perbedaan yang cukup signifikan dari implementasi _useOptimistic_ Hooks pada komponen React JS/Next JS. Gif 1 tidak memberi tahu bahwa ada proses yang sedang terjadi ke _backend_ ketika menambahkan data baru, sedangkan Gif 2 memberi tahu kepada user dengan menambahkan opacity ketika ada proses pengiriman data ke _backend_. Saya pribadi lebih memilih opsi yang kedua karena ketika kita membuat sebuah aplikasi pastinya kita ingin user dapat berinteraksi dengan aplikasi kita sehingga _user experience_ dapat terbentuk hanya dengan hal yang cukup sederhana seperti ini.

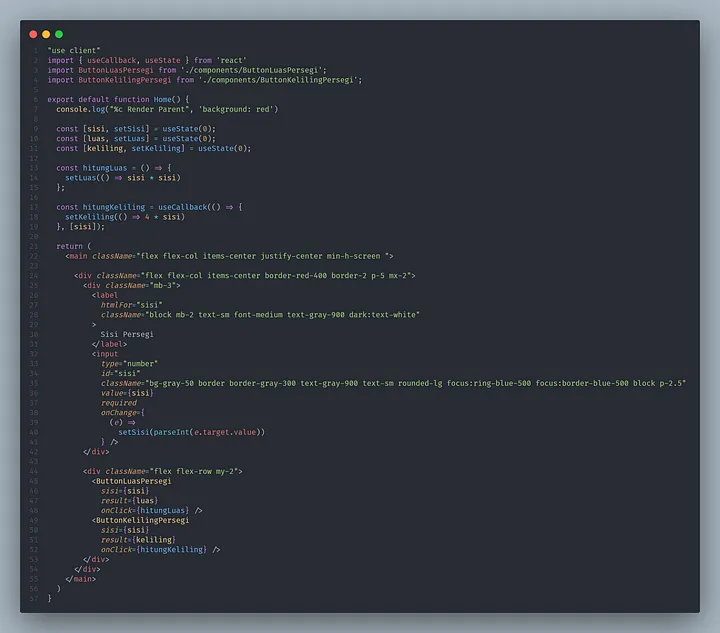

Oke, kita lanjut ke tahap implementasi kode. Kali ini saya menggunakan Next JS untuk implementasi _useOptimistic Hooks_ ini. Disini temen-temen juga bisa praktik dengan menjalankan kode program yang sudah saya buat ya.

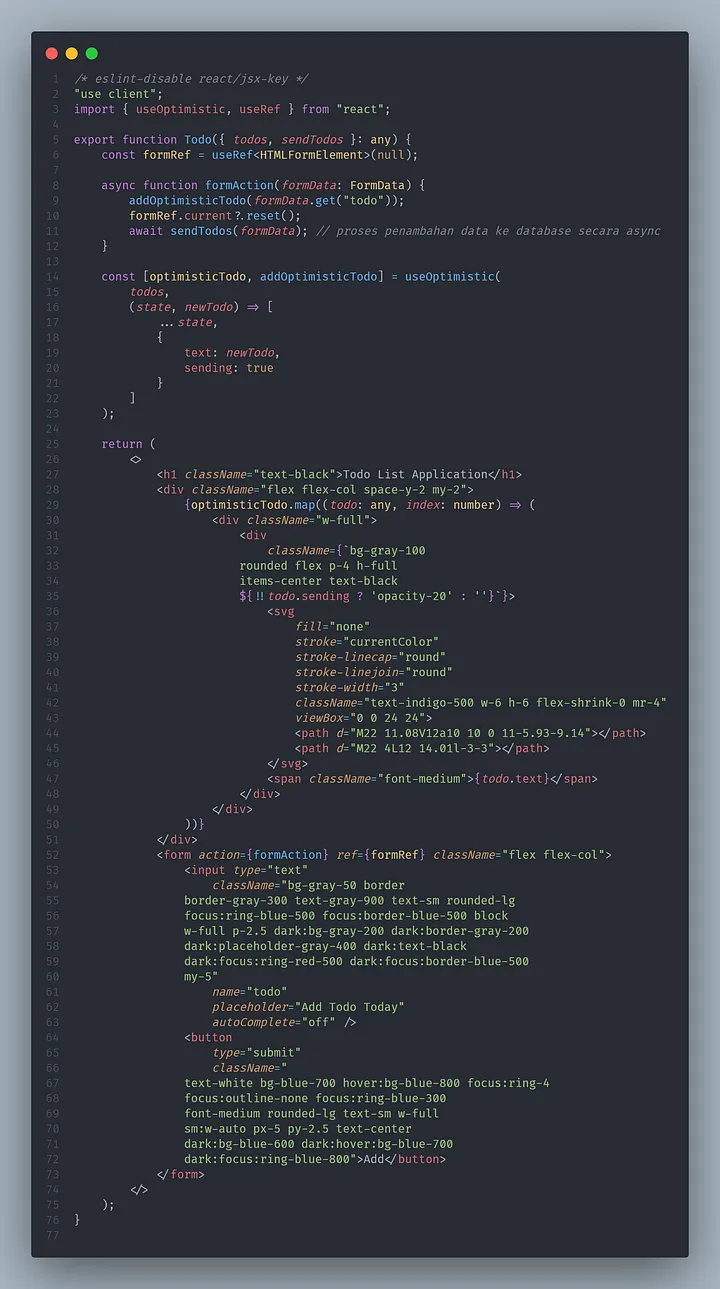

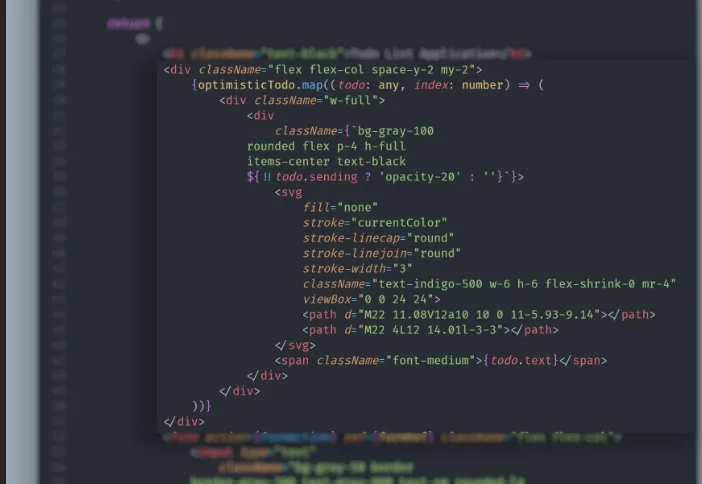

```javascript

"use client"

import { useState } from "react";

import { Todo } from "./Todo";

async function deliverTodos(todo: string) {

await new Promise((res) => setTimeout(res, 1000));

return todo;

}

export default function Home() {

const [todos, setTodos] = useState([

{ text: "Mengerjakan Tugas", sending: false }

]);

async function sendTodos(formData: any) {

const sentTodo = await deliverTodos(formData.get("todo"));

setTodos((todos: any) => [...todos, { text: sentTodo }]);

}

return (

<main className="h-screen w-screen flex items-center justify-center">

<div className="flex flex-col">

<Todo todos={todos} sendTodos={sendTodos} />

</div>

</main>

);

}

```

Lanjut, kita akan coba breakdown terlebih dahulu kode program yang sudah saya buat.

_Function deliverTodos_ ini kita analogikan sebagai function yang digunakan untuk melakukan request ke backend dengan durasi request dan response 1 detik. Jadi, kalau temen-temen udah punya endpoint sendiri bisa diimplementasikan pada function ini.

Kita membutuhkan 1 state yaitu todos sebagai initiator value dan digunakan untuk menyimpan perubahan value yang akan kita gunakan pada useOptimistic Hooks.

```javascript

/* eslint-disable react/jsx-key */

"use client";

import { useOptimistic, useRef } from "react";

export function Todo({ todos, sendTodos }: any) {

const formRef = useRef<HTMLFormElement>(null);

async function formAction(formData: FormData) {

addOptimisticTodo(formData.get("todo"));

formRef.current?.reset();

await sendTodos(formData); // proses penambahan data ke database secara async

}

const [optimisticTodo, addOptimisticTodo] = useOptimistic(

todos,

(state, newTodo) => [

...state,

{

text: newTodo,

sending: true

}

]

);

return (

<>

<h1 className="text-black">Todo List Application</h1>

<div className="flex flex-col space-y-2 my-2">

{optimisticTodo.map((todo: any, index: number) => (

<div className="w-full">

<div

className={`bg-gray-100

rounded flex p-4 h-full

items-center text-black

${!!todo.sending ? 'opacity-20' : ''}`}>

<svg

fill="none"

stroke="currentColor"

stroke-linecap="round"

stroke-linejoin="round"

stroke-width="3"

className="text-indigo-500 w-6 h-6 flex-shrink-0 mr-4"

viewBox="0 0 24 24">

<path d="M22 11.08V12a10 10 0 11-5.93-9.14"></path>

<path d="M22 4L12 14.01l-3-3"></path>

</svg>

<span className="font-medium">{todo.text}</span>

</div>

</div>

))}

</div>

<form action={formAction} ref={formRef} className="flex flex-col">

<input type="text"

className="bg-gray-50 border

border-gray-300 text-gray-900 text-sm rounded-lg

focus:ring-blue-500 focus:border-blue-500 block

w-full p-2.5 dark:bg-gray-200 dark:border-gray-200

dark:placeholder-gray-400 dark:text-black

dark:focus:ring-red-500 dark:focus:border-blue-500

my-5"

name="todo"

placeholder="Add Todo Today"

autoComplete="off" />

<button

type="submit"

className="

text-white bg-blue-700 hover:bg-blue-800 focus:ring-4

focus:outline-none focus:ring-blue-300

font-medium rounded-lg text-sm w-full

sm:w-auto px-5 py-2.5 text-center

dark:bg-blue-600 dark:hover:bg-blue-700

dark:focus:ring-blue-800">Add</button>

</form>

</>

);

}

```

Kita akan breakdown lagi kode program yang ada pada <Todo /> component.

_**function formAction**_ merupakan function yang digunakan untuk menambahkan hasil input todo ke dalam _**addOptimisticTodo**_ yang mana function ini merupakan function yang akan kita definisikan pada _useOptimisitic destruct_. Lalu, kita bisa mengirim data ke API secara asynchronous.

Lalu kita bisa mendefinisikan _passthrough_ dan _reducer_ yang ada pada _useOptimistic Hooks_. Disini saya definisikan passthrough dengan nama _optimisticTodo_ dan _reducer_ sebagai _addOptimisticTodo_. Kemudian, didalam parameter _useOptimistic_ kita bisa kirimkan todos sebagai _passthrough_ dan menuliskan _reducer_ sesuai dengan dokumentasi yang sudah disediakan oleh React JS. Di dalam reducer kita kirim 2 value yaitu text dan sending. Dengan demikian, implementasi _useOptimistic_ sudah bisa digunakan.

Sekarang, kita bisa langsung gunakan _state useOptimistic_ pada html tag komponennya seperti kode program diatas. Bagaimana? cukup mudah bukan implementasi _useOptimistic Hooks_ ini pada React JS/Next JS?

Dengan menggunakan _useOptimistic Hooks_, sekarang aplikasi yang temen-temen kembangkan sudah memenuhi syarat minimal untuk meningkatkan _user experience_. Cukup sekian ya artikel sederhana yang bisa saya tuliskan, semoga kita bisa bertemu di artikel lain dengan materi yang lebih menarik lagi.

| yogameleniawan |

1,873,741 | Mẫu đèn cầu thang 2024 | Đèn chân tường cầu thang Mẫu đèn chân tường cầu thang là một trong số các loại đèn cầu thang đẹp... | 0 | 2024-06-02T14:49:45 | https://dev.to/noithatnew_noithatnew_e45/mau-den-cau-thang-2024-3aph | Đèn chân tường cầu thang

Mẫu đèn chân tường cầu thang là một trong số các loại đèn cầu thang đẹp hiện đại nhất hiện nay. Bởi đây là một mẫu đèn cầu thang rất được nhiều người ưa thích vì có thể chiếu sáng lối đi, xác định được điểm đến rõ ràng hơn

Đặc biệt, ánh đèn chiếu từ dưới lên, luồng sáng dưới chân cũng khiến bạn cảm giác an toàn hơn khi di chuyển. Tạo lên một khung cảnh hiện đại ngay trong chính ngôi nhà của bạn.

5.Mẫu đèn cầu thang với hình dạng tam giác, vuông, tròn

Theo mình thấy thì ngày nay, các loại đèn được thiết kế với rất nhiều mẫu mã và hình dạng khác nhau. Tùy vào khu vực bạn sử dụng thì bạn có thể trang trí các mẫu đèn cầu thang sao cho phù hợp. Một vài hình dạng mà mình thấy phổ biến như:

- Mẫu đèn cầu thang hình tròn: Hình tròn là một kiểu dáng cơ bản đã quá quen thuộc nhưng tính thẩm mỹ của nó vẫn luôn được đánh giá cao. Bạn có thể trang trí đèn cầu thang bằng đèn tròn trên tường cầu thang. Nó vừa đẹp mắt lại vừa có công dụng chiếu sáng khá tốt.

- Mẫu đèn cầu thang hình tam giác: Có lẽ bạn sẽ ít gặp mẫu sản phẩm này vì nó còn khá mới mẻ với người tiêu dùng. Đèn lắp cầu thang dạng tam giác sở hữu thiết kế đơn giản nhưng vẫn cực kỳ thu hút và lạ mắt.

Không chỉ có vậy, mẫu đèn cầu thang hình tam giác còn được đánh giá cao bởi độ bền của chúng khi đừng dùng để trang trí đèn cầu thang.

- Mẫu đèn cầu thang hình vuông: Với dạng hình hộp vuông, chiếc đèn này tạo được ấn tượng bởi sự tinh tế. Vì vậy mà nó rất phù hợp với thiết kế của những công trình hiện đại.

Thông thường đèn cầu thang hình vuông được cấu tạo từ hợp kim nhôm cao cấp nên có thể điều chỉnh độ mở góc chiếu sáng linh hoạt. Rất phù hợp để trang trí đèn cầu thang.

Các hình dạng và mẫu mã đèn này còn tùy thuộc vào phong cách mà bạn muốn trang trí đèn cầu thang như thế nào. Bạn sẽ không gặp phải quá nhiều khó khăn khi tìm kiếm các loại đèn này để mang về cho ngôi nhà mình đâu nên đừng lo lắng nhé!

Trên đây là một vài mẫu đèn nổi bật dành cho cầu thang trong ngôi nhà của bạn.

Hy vọng là qua bài viết này, bạn có thể đưa ra quyết định xem mẫu trang trí đèn cầu thang nào là phù hợp với mình nhé!

Nếu bạn muốn tìm hiểu thêm nhiều thêm về đèn nội thất thì hãy tới [noithatnew.com](url) ngay nhé! | noithatnew_noithatnew_e45 | |

1,873,740 | Step Up Your Express.js Game: Advanced Middleware and Security Tips for Beginners | Express.js is a popular framework for developing web apps in Node.js. Middleware is a fundamental... | 0 | 2024-06-02T14:49:34 | https://dev.to/a_shokn/step-up-your-expressjs-game-advanced-middleware-and-security-tips-for-beginners-3bk7 | webdev, beginners, node, backenddevelopment | Express.js is a popular framework for developing web apps in Node.js. Middleware is a fundamental aspect that contributes to Express's power and flexibility. If you're familiar with the fundamentals of Express middleware, you'll recognize that it's similar to a set of steps your request takes. But what happens after the basics? Let's get started and examine sophisticated middleware topics in a basic manner.

> Middleware be like: "I'm just a simple middleware, but when things get tough, I call my next()"

### What is Middleware?

Middleware functions have access to the request object (req), the response object (res), and the following middleware function in the application's request-response cycle. These functions can execute a variety of activities, including altering the request or response objects, terminating the request-response cycle, and calling the next middleware in the stack.

Real-World Example: A Bakery

Imagine you own a bakery, and your shop is the server. Customers (requests) come in, and they have to go through several stages (middleware) to get their bread (response).

1. Request logging: A staff member logs the customer’s details.

2. Authorization: Another staff member checks if the customer has a valid membership card.

3. Processing order: The baker prepares the bread.

4. Packaging: Another staff member packs the bread.

5. Sending response: Finally, the cashier hands over the packed bread to the customer.

### 1. Error Handling Middleware:

Sometimes things go wrong, and you need a way to catch and handle errors. Error-handling middleware functions have four arguments: err, req, res, and next.

```

app.use((err, req, res, next) => {

console.error(err.stack);

res.status(500).send('Something broke!');

});

```

### 2.Chaining Middleware:

You can create modular middleware functions and chain them together for reusability and cleaner code.

```

const checkAuth = (req, res, next) => {

if (req.user) {

next();

} else {

res.status(401).send('Unauthorized');

}

};

const logRequest = (req, res, next) => {

console.log(`${req.method} ${req.url}`);

next();

};

app.use(logRequest);

app.use(checkAuth);

```

### 3.Custom Middleware for Specific Tasks

Sometimes you need middleware to perform specific tasks like data validation, rate limiting, or even modifying the request object to include additional information.

```

const addTimestamp = (req, res, next) => {

req.requestTime = Date.now();

next();

};

app.use(addTimestamp);

```

## Security Best Practices

### 1.Helmet Middleware:

When designing applications with Express.js, security is critical. Below are some lesser-known security guidelines and recommended practices that can help protect your application:

Helmet Middleware secures Express apps by setting multiple HTTP headers. It consists of a group of smaller middleware methods that set security-related HTTP headers.

```

const helmet = require('helmet');

app.use(helmet());

```

### 2.Rate Limiting:

Rate restriction prevents brute-force assaults by restricting the amount of requests a user can make in a given time period.

```

const rateLimit = require('express-rate-limit');

const limiter = rateLimit({

windowMs: 15 * 60 * 1000, // 15 minutes

max: 100, // limit each IP to 100 requests per windowMs

});

app.use(limiter);

```

### 3.Content Security Policy (CSP):