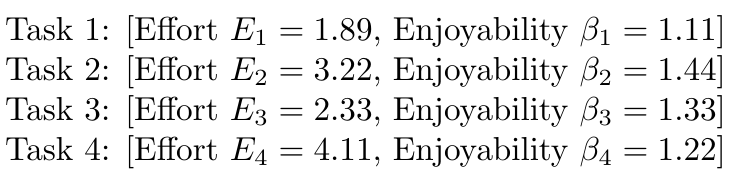

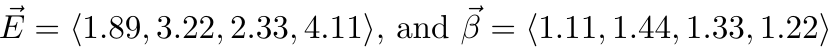

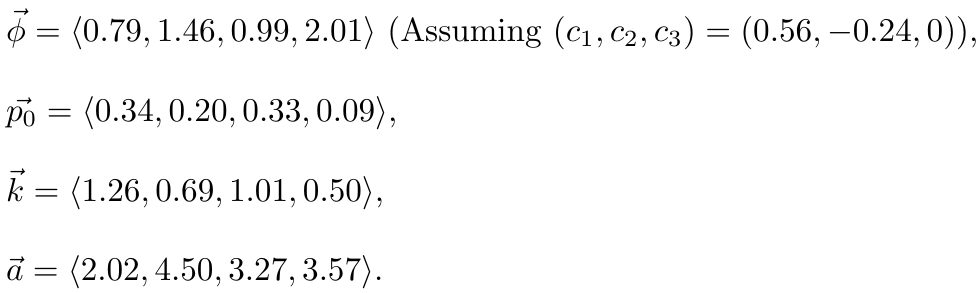

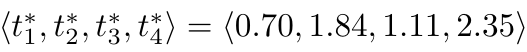

id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,872,583 | One JS Class to speak them all | Hi to everyone! 👋🏼 First of all: sorry for my English. I'm a guy from Argentina coding in... | 0 | 2024-06-01T02:26:35 | https://dev.to/mobilepadawan/one-js-class-to-speak-them-all-dl1 | javascript, speech, synthesis, class | **Hi to everyone! 👋🏼**

First of all: _sorry for my English_.

I'm a guy from Argentina coding in different programming languages since December 1994.

In my last holiday time I coded a JS Library improving and grouping in a simple JS Class the Speech Synthesis capacities included in one place the main features of this amazing way to transform a sentence in an audible thing.

I did feel myself as a fortunate coder who widely profited some of the modern capabilities included on #JavaScript to create JS Classes with private and static members (properties and methods).

I never found an opportunity, until now, to implement most of these modern features of this so-versatile programming language.

## What was the result

A JS library with just `3.17 KB` of weight (unminified) or `1.84 KB` of weight (minified) with the power of transforming a text or sentence in an audible thing. The best of all this thing is to resume in just one line of code a native feature of JS that needs between 5 to 10 lines of code for implementing using full Vanilla JS.

This library just `extends` the features of the Speech Synthesis Object and simplify in some properties and methods the Speech Synthesis and SpeechSynthesisUtterance objects.

**Test if your web browser supports Speech Synthesis**

```javascript

Speakit.TTStest();

```

**Get the available voices list in a web browser**

```javascript

Speakit.getVoices().then(voices => console.table(voices))

```

This method is based in JS Promises and returning an object array with all the voices available.

Some web browsers like `Chrome` and `Microsoft Edge` has a combination of synthesized voices and Natural voices available. The other ones like `Safari` or `Firefox` just have synthesized voices.

The `.utteranceRate` and `.utterancePitch` properties let you configure the tone and pitch of the voice. They drives values very sensitive and need several tests in different web browsers (_mobile and desktop_) to found the best tuning.

```javascript

Speakit.utteranceRate = 1.02

Speakit.utterancePitch = 1.00

```

## How to reproduce a text

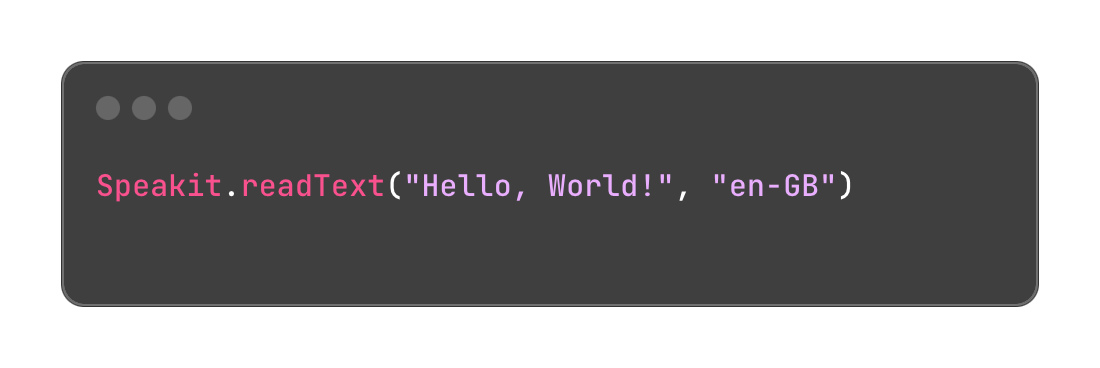

You need to call the `.readText()` method and send two arguments:

1) the string of the text or sentence to reproduce

2) the ISO language code selected (en-GB, es-AR, pt-BR)

This method has a third argument (optional) and represents the voice object corresponding to a tone or accent available in the voice list.

For example, if you are using Microsoft Edge to test your webapp and select `en-HK` as the language to reproduce audible text, you may choose `Microsoft Sam Online (Natural) - English (Hongkong)` by sending it as the third parameter.

```javascript

Speakit.readText('Hello world!',

'en-NZ',

'Microsoft Sam Online (Natural) - English (Hongkong))'

.then(()=> console.log('Text successfully readed.') )

.catch((error)=> console.error('Error reading the text:', error) )

```

The `.readText()` method works as a Promise. While it is reproducing the sentence, will wait to the end of reproduction to resolve the JS Promise. That let you establish a `.then()` control method to do something after the sentence finish played.

## Controlling the .readText() action

Of course Speakit JS let you controlling the audible reproducing action by using `.pauseSpeaking()`, `.resumeSpeaking()` or `.stopSpeaking()` methods.

## Speech Synthesis availability

This amazing JavaScript feature is available in most of the modern browsers since many years ago. In some web browsers you will find the Natural voices options, giving you a Headline in the Speech Synthesis experience of your webapps.

I invite you all to test my JS library and send me comments or suggestions to bring it to the next level. I am working already in some improvements of it.

I didn't mention all the properties and capabilities of Speakit-JS in this article. Go for it and dig all the available features.

If you wanna try this JS Library, go for it to my official repo at: https://github.com/mobilepadawan/Speakit-JS

You can also find it in NPMJS web by using `npn install speakit-js` command. This JS library just works in frontend webapps and PWAs. | mobilepadawan |

1,872,590 | Formulating Git Commit Message Best Practices | Git, the foundation of today's version control, provides tremendous power to development teams.... | 0 | 2024-06-01T02:19:59 | https://dev.to/cheikh_sadbouh_abd98924d6/formulating-git-commit-message-best-practices-1he |

Git, the foundation of today's version control, provides tremendous power to development teams. However, its actual potential is best realized when combined with well-structured and detailed commit messages. These messages provide crucial breadcrumbs for understanding the progress of your software. Let's look at the best practices for writing effective Git commit messages that improve collaboration and speed development.

## Anatomy of a Stellar Commit Message:

### 1. Change Type (Essential):

- `fix:` Signals the resolution of a bug.

- `feat:` Denotes the introduction of a new feature.

- `BREAKING CHANGE:` Highlights a modification that might necessitate updates to prevent compatibility issues, such as replacing outdated components. You can also append the '!' symbol after the type/area.

- `docs:` Reserved for updates and modifications to documentation.

- Other frequently used types include `test:`, `chore:`, `refactor:`, `build:`, and `style:`. Keep in mind that teams frequently use custom types, so make sure your procedures follow the rules set out by your team.

### 2. Affected Area (Optional):

The affected area pinpoints the specific section of the codebase impacted by the changes. Including this element brings clarity and context, especially within large projects involving multiple developers.

### 3. Concise Summary (Essential):

Keep this part brief and to the point. Employ the imperative mood—for instance, "Implement user authentication" instead of "Implemented user authentication." This practice enhances readability in automatically generated change logs and release notes.

### 4. Detailed Explanation (Optional):

Utilize this section to provide further insights into your modifications. Separate the detailed explanation from the concise summary with a blank line.

### 5. Additional Information (Optional):

Include any pertinent metadata here, such as a link to a previously reported issue (`fix #003`) or the name of the code reviewer.

Keep in mind that when you incorporate an affected area, always follow it with a colon and a space before writing the concise summary. Additionally, `BREAKING CHANGE` is case-sensitive and should be written in all uppercase.

## Illustrative Examples:

```

chore(Style_Guide): Rename variable “InvalidInput” to “invalidInput”

Rename variable to adhere to the established naming convention for improved consistency.

```

```

fix(Data_Processing)!: Update data validation rules

Revised data validation rules to exclusively accept structured data. All other data formats will be rejected.

```

```

feat: Integrate night mode functionality

```

For more extensive messages, omit the `-m` flag during the commit process. This action will open an editor, allowing for a comprehensive message. For shorter messages, use `git commit -m "summary" -m "explanation"` to distinctly separate the summary, explanation, and additional information.

## In Conclusion:

Effective commit messages are critical to enabling automation in your development workflow and promoting smooth cooperation. Always state the type of change you made along with a brief description of it. A codebase that is easier to maintain and comprehend is further enhanced by adhering to the principles of Conventional Commits.

| cheikh_sadbouh_abd98924d6 | |

1,872,588 | Cómo Exponer un Servicio Local a Internet Usando ngrok en una Mac | ¿Qué es ngrok? ngrok es una herramienta que permite exponer un servidor local a Internet a... | 0 | 2024-06-01T02:08:05 | https://dev.to/olverarobertp/como-exponer-un-servicio-local-a-internet-usando-ngrok-en-una-mac-1o3c | ### ¿Qué es ngrok?

**ngrok** es una herramienta que permite exponer un servidor local a Internet a través de un túnel seguro. Esto es especialmente útil para desarrolladores que necesitan compartir su entorno local con colaboradores, probar webhooks o mostrar prototipos a clientes sin necesidad de desplegar su aplicación a un servidor externo.

### Creación de Cuenta en ngrok

Para comenzar, necesitarás una cuenta en ngrok. Sigue estos pasos:

1. Visita [ngrok.com](https://ngrok.com).

2. Haz clic en **Sign Up** y completa el proceso de registro.

3. Una vez registrado, accede a tu **Dashboard**.

### Creación de Authtoken

1. En tu **Dashboard**, verás una sección llamada **Your Authtoken**.

2. Copia tu **Authtoken**; lo necesitarás para autenticar tu cliente ngrok.

### Instalación de ngrok en Mac

Para instalar ngrok en tu Mac, sigue estos pasos:

1. **Instala vía Homebrew**: Puedes instalar con el siguiente comando.

```sh

brew install ngrok/ngrok/ngrok

```

2. **Extrae el archivo descargado**:

```sh

ngrok config add-authtoken tu_authtoken

```

### Exponer un Servicio Ejecutándose en el Puerto 8080

Supongamos que tienes un servidor ejecutándose en tu máquina local en el puerto `8080`. Para exponerlo a Internet, utiliza el siguiente comando:

```sh

ngrok http 8080

```

Al ejecutar este comando, ngrok creará una URL pública que redirige el tráfico a tu servidor local. Verás una salida similar a esta:

```

ngrok by @inconshreveable (Ctrl+C to quit)

Session Status online

Session Expires 1 hour, 59 minutes

Version 2.3.35

Region United States (us)

Web Interface http://127.0.0.1:4040

Forwarding http://<subdomain>.ngrok.io -> http://localhost:8080

Forwarding https://<subdomain>.ngrok.io -> http://localhost:8080

```

### Validación

Para verificar que tu servicio está accesible desde Internet, abre la URL generada por ngrok (`http://<subdomain>.ngrok.io` o `https://<subdomain>.ngrok.io`) en tu navegador. Deberías ver tu aplicación funcionando como si estuvieras accediendo a ella localmente.

### Conclusiones

Usar ngrok es una forma eficiente y segura de exponer servicios locales a Internet, lo que facilita la colaboración y prueba de aplicaciones. La simplicidad de instalación y uso lo convierte en una herramienta imprescindible para desarrolladores.

¡Espero que encuentres útil esta guía! Si tienes alguna pregunta o comentario, no dudes en dejar un mensaje. 🚀

---

*¿Te gustó este post? Dale like y comparte con tus colegas. Para más contenido relacionado con desarrollo y tecnología, sígueme en LinkedIn.* | olverarobertp | |

1,872,587 | Day 1 | Today I will start trying the challenge of completing all the courses on Freecodecamp starting from... | 0 | 2024-06-01T02:03:27 | https://dev.to/han_han/day-1-2f58 | 100daysofcode, html, css, webdev | Today I will start trying the challenge of completing all the courses on Freecodecamp starting from the beginning of HTML and CSS

on today a #Day1 for me challenge in #100DaysOfCode and Today I learned a lot about HTML at #freecodecamp. I found several tags before I didn't understand their use

```

<figure>

<img src="https://cdn.freecodecamp.org/curriculum/cat-photo-app/cats.jpg" alt="Five cats looking around a field.">

<figcaption>Cats <strong>hate</strong> other cats.

</figcaption>

```

which sometimes when I add an image just use <img> directly without going into it | han_han |

1,872,586 | Unlocking the Decentralized Future: Exploring Web3 Technology 🔓 | In the constantly evolving digital landscape, a new era of the internet is on the horizon, one that... | 0 | 2024-06-01T02:02:24 | https://dev.to/vyan/unlocking-the-decentralized-future-exploring-web3-technology-3l01 | webdev, web3, javascript, beginners | In the constantly evolving digital landscape, a new era of the internet is on the horizon, one that promises to revolutionize the way we interact, transact, and exchange information. This paradigm shift is driven by the emergence of Web3, a decentralized and distributed network that aims to redefine the traditional client-server model of the internet as we know it.

### What is Web3?

Web3, also known as the decentralized web or the semantic web, is a vision for the next iteration of the internet. It builds upon the foundational principles of decentralization, transparency, and user control, leveraging cutting-edge technologies like blockchain, cryptocurrencies, and decentralized applications (dApps).

At its core, Web3 seeks to address the centralization and data monopolies that characterize the current state of the internet, where a handful of tech giants wield immense power over user data and online experiences. By embracing decentralization, Web3 aims to redistribute control and ownership back to users, fostering a more open, equitable, and resilient internet ecosystem.

### Key Pillars of Web3

1. **Decentralization**: Web3 is built on decentralized networks, such as blockchain, which eliminate the need for central authorities or intermediaries. This decentralized architecture ensures resilience, censorship resistance, and true user sovereignty over data and digital assets.

2. **Ownership and Self-Sovereignty**: In Web3, users regain control over their digital identities, data, and assets. Through the use of blockchain-based technologies like non-fungible tokens (NFTs) and decentralized identifiers (DIDs), individuals can own and manage their digital footprint without relying on centralized platforms.

3. **Trustless Interactions**: Web3 enables trustless interactions through the use of smart contracts, which are self-executing programs that automatically enforce predefined rules and agreements without the need for intermediaries or third-party oversight.

4. **Transparency and Immutability**: The underlying blockchain technology that powers Web3 ensures transparency and immutability of data. Transactions and interactions on the decentralized web are recorded on a publicly accessible, tamper-proof ledger, fostering trust and accountability.

5. **Democratization of the Internet**: By removing central points of control and enabling peer-to-peer interactions, Web3 aims to democratize the internet, giving users greater autonomy and fostering a more equitable distribution of power and opportunities.

### Potential Applications and Use Cases

The decentralized nature of Web3 opens up a wide range of promising applications and use cases across various sectors:

1. **Decentralized Finance (DeFi)**: Web3 enables the creation of decentralized financial systems, providing access to secure, transparent, and global financial services without the need for traditional intermediaries like banks or payment processors.

2. **Digital Identity and Data Ownership**: With Web3, individuals can establish self-sovereign digital identities and regain control over their personal data, reducing the risk of data breaches and privacy violations.

3. **Decentralized Marketplaces and Economies**: Web3 facilitates the creation of decentralized marketplaces and economies, enabling peer-to-peer transactions without intermediaries and empowering creators and entrepreneurs to monetize their work directly.

4. **Supply Chain and Provenance Tracking**: The immutable and transparent nature of blockchain technology makes Web3 an ideal solution for supply chain management and provenance tracking, ensuring the authenticity and traceability of goods and products.

5. **Decentralized Social Networks and Content Platforms**: Web3 paves the way for decentralized social networks and content platforms, where users own their data and content, fostering a more equitable and democratic online ecosystem.

### Challenges of Web3

While Web3 offers many promising benefits, it also faces several challenges:

1. **Scalability**: Current blockchain networks often face scalability issues, with limitations on transaction speed and throughput. This can hinder the widespread adoption of Web3 applications.

2. **User Experience**: Web3 applications can be complex and difficult for non-technical users to navigate. Improving the user experience is crucial for broader adoption.

3. **Regulatory Uncertainty**: The regulatory landscape for Web3 is still evolving. Uncertainty and inconsistent regulations across different jurisdictions can pose challenges for developers and users.

4. **Security**: While blockchain technology offers enhanced security, it is not immune to vulnerabilities. Smart contract bugs, hacking, and phishing attacks remain concerns.

5. **Energy Consumption**: Some blockchain networks, particularly those using proof-of-work consensus mechanisms, have been criticized for their high energy consumption. Sustainable and eco-friendly alternatives are being explored.

### Conclusion

Web3 technology represents a transformative shift towards a more decentralized, transparent, and user-centric internet. By leveraging blockchain technology, smart contracts, and decentralized applications, Web3 aims to empower users, foster innovation, and build a more resilient digital ecosystem. However, challenges such as scalability, user experience, and regulatory uncertainty need to be addressed to realize the full potential of Web3.

As this paradigm shift unfolds, it presents both opportunities and challenges, requiring a collective effort from developers, entrepreneurs, and users to shape the future of the web. Embrace the decentralized future and explore the vast potential of Web3 technology, where transparency, ownership, and user empowerment take center stage in the digital realm. | vyan |

1,872,585 | Exploring the Potential of AR Glasses in Healthcare | AR Glasses- Health Care Presentation Looking for an AR Glasses? Either for home or outsourcing it to... | 0 | 2024-06-01T01:56:57 | https://dev.to/pamela_ballardow_8f47cadc/exploring-the-potential-of-ar-glasses-in-healthcare-10dm | healthcare | AR Glasses- Health Care Presentation

Looking for an AR Glasses? Either for home or outsourcing it to public. Have you ever heard of AR Glasses in healthcare? Well, these are perfect cups that are perfect for exploring the possible of healthcare. AR Glasses in healthcare are an innovative technology that allows us to see digitally developed visualizations worldwide genuine. So let's dive right into it as we discussed it more further.

Features of AR Glasses in HealthCare

AR Glasses in healthcare have many benefits in healthcare, including improving the safety of patients during medical procedures. With AR Glasses in healthcare, physicians and nurses can access client real-time and complete procedures more accurately. They are allowed by this technology to spotlight the duty at hand instead of constantly looking away for information.

Innovation for AR Glasses in Healthcare

Innovation could be the key to enhancing healthcare, and AR Glasses in healthcare. This technology has revolutionized the way in which procedures which are medical performed by giving health practitioners and nurses by having a tool both reliable and accurate. With AR Glasses in healthcare, health practitioners can offer safer and more health care efficient.

Security in for AR Glasses in Healthcare

Protection is of utmost importance in healthcare, and AR cups give you the necessary tools to give safe and health care effective. AR Glasses in healthcare and nurses to possess use of data that are real-time information, allowing them to make smarter decisions and provide better look after patients.

Quality of AR Glasses in healthcare

The grade of AR spectacles is vital and must be judged according to several facets such as battery life, quality, durability, and weight. Top-quality AR glasses increase the user experience by providing clear and images which can be razor-sharp as well as extended battery life. The AR Glasses in healthcare must provide convenience whenever wore for the period extended of to avoid disquiet.

Applications of AR Glasses in Healthcare

There are many applications of Products AR Glasses in healthcare. It can be used in remote patient monitoring, emergency medical solutions, surgery, and training medical. Remote monitoring patient a system that transmits real-time data from a client to medical staff, and AR spectacles can be used being an interface for the system. AR Glasses in healthcare can be utilized in crisis solutions which are medical improve decision-making during critical situations.

Source: https://www.wupro.com/Ar-glasses | pamela_ballardow_8f47cadc |

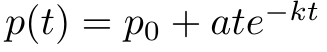

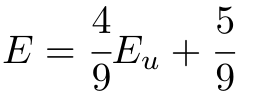

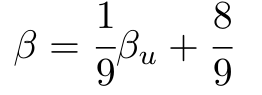

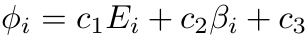

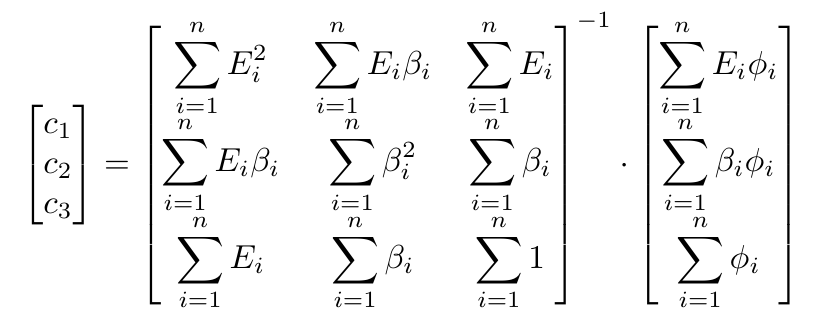

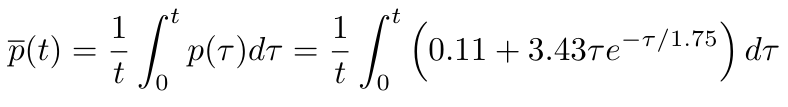

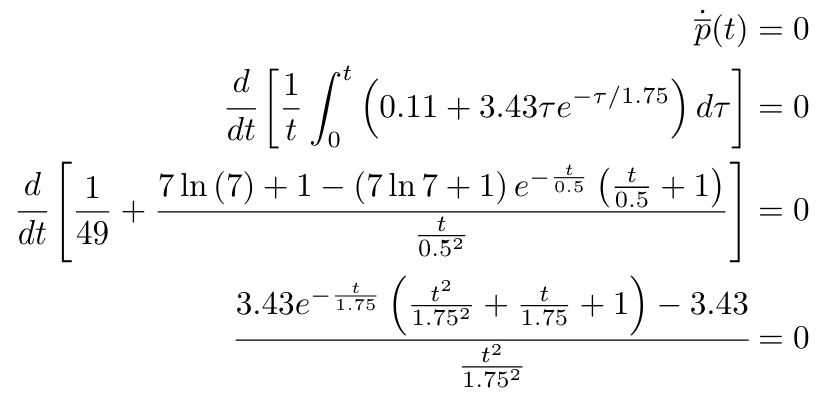

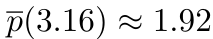

1,872,584 | Introduction To Mathematical Thinking | Part 1: Importance of Mathematics in Computer Science Overview Mathematics is... | 0 | 2024-06-01T01:56:54 | https://dev.to/niladridas/introduction-to-mathematical-thinking-57mc | machinelearning, programming, computerscience, ai | ## Part 1: Importance of Mathematics in Computer Science

## Overview

Mathematics is not just a tool but the foundation upon which computer science is built. It provides the theoretical underpinnings for many areas of computer science from algorithms and data structures to machine learning and cryptography. Understanding these mathematical principles is essential for the logic and efficiency of computer-based systems.

## Detailed Key Points

- **Algorithm Efficiency**

**Concept**: Algorithm efficiency measures how fast or how much storage an algorithm requires to solve a problem. It's typically expressed using Big O notation, which abstracts the performance of an algorithm as the size of its input data grows.

**Mathematical Foundation**: Big O notation involves concepts from calculus (for understanding growth rates) and algebra.

**Example**:

**Linear Search**: Has a complexity of O(n) because it scans each item in a list sequentially. So, if the list doubles in size, so does the search time.

**Binary Search**: Exhibits a complexity of O(log n) due to the list being divided in half each time, making the search much faster as the size increases.

- **Data Structures**

**Concept**: Effective use of data structures requires an understanding of their space (memory) and time complexity (efficiency of operations like insert, delete, search).

**Mathematical Foundation**: Analyzing these complexities again involves understanding functions and limits.

**Example**:

**Arrays**: Constant time O(1) access but linear time O(n) search.

**Hash Tables**: Aim for constant time O(1) access and search, thanks to an effective hash function which distributes entries evenly across an array.

- **Cryptography**

**Concept**: Cryptography secures information by transforming it into unreadable formats, unless one possesses a specific key. This field relies heavily on number theory and the properties of numbers.

**Mathematical Foundation**: Uses prime numbers, modular arithmetic, and algorithms like RSA for public-key encryption.

**Example**:

**RSA Algorithm**: Utilizes two large prime numbers to generate public and private keys. Secure because factoring the product of these two primes is computationally difficult.

- **Machine Learning**

**Concept**: Machine learning algorithms adjust their parameters on data input to improve their accuracy. This adjustment process is guided by statistical and probability theory to make predictions or decisions without being explicitly programmed.

**Mathematical Foundation**: Involves statistics for understanding data distributions and calculus for optimizing algorithms (like gradient descent).

**Example**:

**Linear Regression**: Finds a line (or hyperplane in higher dimensions) that best fits a set of data points. Uses simple algebraic and statistical principles to minimize the error in prediction.

**Neural Networks**: Use calculus to adjust the weights on the network's neurons during training, a process called backpropagation.

### Conclusion

These examples illustrate how deeply intertwined mathematics is with the field of computer science. Whether analyzing the efficiency of an algorithm, ensuring the rapid execution and management of data, securing information, or enabling machines to learn from data, mathematics provides the necessary theoretical framework to support and guide these activities. This foundation not only enhances the understanding but also the innovation within computer science.

## Part 2: Basic Symbols and Terminology in Mathematics

## Overview

Mathematics communicates its concepts through a unique language composed of symbols that denote operations, relationships, and values. Understanding these symbols is essential for both mathematical reasoning and programming, where such expressions are often directly translated into code.

## Detailed Explanations of Mathematical Symbols

- **Equals Sign (=)**

**Meaning**: Indicates that two expressions represent the same value.

**Example**: "3 + 4 = 7". This expression states that the sum of 3 and 4 is equal to 7.

- **Plus (+) and Minus (-) Signs**

**Meaning**: The plus sign represents addition, while the minus sign denotes subtraction.

**Examples**:

**Addition**: "5 + 2 = 7". Adds 2 to 5.

**Subtraction**: "10 - 3 = 7". Subtracts 3 from 10.

- **Multiplication (×), Division (÷)**

**Meaning**: These symbols are used for multiplying and dividing numbers, respectively.

**Examples**:

**Multiplication**: "4 × 3 = 12". Multiplies 4 by 3.

**Division**: "12 ÷ 4 = 3". Divides 12 by 4.

- **Exponentiation (^)**

**Meaning**: Indicates that a number is raised to the power of another number.

**Example**: "x^2" denotes "x raised to the power of 2". For instance, if "x = 5", then "x^2 = 25".

- **Square Root (√)**

**Meaning**: Represents the square root operation, which finds a number that, when multiplied by itself, gives the original number.

**Example**: "√16 = 4" because "4 × 4 = 16".

- **Inequalities (<, >, ≤, ≥)**

**Meaning**: These symbols compare the size or order of two values.

**Examples**:

**Less than (<)**: "3 < 5" indicates that 3 is less than 5.

**Greater than (>)**: "10 > 8" indicates that 10 is greater than 8.

**Less than or equal to (≤)**: "4 ≤ 5" indicates that 4 is less than or equal to 5.

**Greater than or equal to (≥)**: "10 ≥ 7" indicates that 10 is greater than or equal to 7.

## Practice Task and Solution

**Task**: Translate the following statement into a mathematical expression: "The sum of three times five and four is less than twenty."

**Solution**: To translate this, you recognize the operations and relationships:

"Three times five" translates to "3 × 5"

"The sum of 3 × 5 and 4" is "3 × 5 + 4"

"Is less than twenty" translates to "< 20"

**Mathematical Expression**: "3 × 5 + 4 < 20"

This example showcases how mathematical expressions encapsulate logical and numerical relationships in a concise format, pivotal for both theoretical mathematics and its application in computer science, especially in algorithms and problem-solving scenarios.

## Part 3: Introduction to Logical Thinking

## Overview

Logical thinking is the process of reasoning consistently to come to a conclusion. In computer science, logical reasoning underpins how programs make decisions and control the flow of execution. This part explores the basics of forming logical statements and how they integrate into programming.

## Key Concepts

- **Statements**

**Definition**: A statement is a declaration that is either true or false, but not both. In programming, these are often conditions that determine which code segment runs.

**Example**: "The number 4 is even." This is a true statement. In contrast, "The number 5 is even." is a false statement.

- **Logical Connectives**

**Definition**: Logical connectives are symbols or words used to connect statements to form more complex logical expressions.

**Examples**:

**AND (∧)**: True only if both connected statements are true.

**OR (∨)**: True if at least one of the connected statements is true.

**NOT (¬)**: True if the connected statement is false.

**Usage in Programming**: In conditions like `if (userIsLoggedIn && hasAccess)`, both conditions must be true to execute the block of code.

- **Conditional Statements**

**Definition**: These involve reasoning in the form "if this, then that," linking conditions to actions or outcomes.

**Example in Programming**: This code checks if a user is logged in; if true, it allows access to the dashboard.

```

if (user_is_logged_in):

access_dashboard()

```

## Detailed Example

Let's consider the logical statement: "If a user is logged in, then they can access their dashboard."

**Logical Formulation**:

_P_: User is logged in.

_Q_: User can access the dashboard.

**Implication**: _P_→_Q_ which reads as "If P, then Q."

## Activity: Creating Truth Tables

Truth tables are a way to visualize how logical connectives work. Let’s create truth tables for the basic connectives and the example given:

**Truth Table for Basic Connectives**

| **P** | **Q** | **P** ∧ **Q** | **P** ∨ **Q** | **¬P** | **P** → **Q** |

|:-----:|:-----:|:-------------:|:-------------:|:-----:|:-------------:|

| T | T | T | T | F | T |

| T | F | F | T | F | F |

| F | T | F | T | T | T |

| F | F | F | F | T | T |

**P** ∧ **Q** (AND): True only when both P and Q are true.

**P** ∨ **Q** (OR): True if at least one of P or Q is true.

**¬P** (NOT): True when P is false.

**P** → **Q** (IMPLICATION): True in all cases except where P is true and Q is false.

**Implication for the Example**:

| **User Logged In (P)** | **Access Dashboard (Q)** | **P** → **Q** |

|:----------------------:|:------------------------:|:-------------:|

| True | True | True |

| True | False | False |

| False | True | True |

| False | False | True |

This truth table shows that the user can access the dashboard only when they are logged in, mirroring the actual access control logic used in many computer systems.

### Conclusion

Understanding and applying logical thinking is essential for developing robust software that behaves as expected. By mastering logical connectives and conditional statements, programmers can write clearer, more efficient code. Logical reasoning also assists in debugging by helping to trace the flow of execution and understand where things might go wrong.

> Author's social media: [𝕏](https://x.com/niladrridas) 🔬🔭

| niladridas |

1,872,581 | UST Projectors: Compact Design with Big Benefits | UST Projectors: Compact Design with Big Advantages As technology progresses, many products being... | 0 | 2024-06-01T01:38:29 | https://dev.to/pamela_ballardow_8f47cadc/ust-projectors-compact-design-with-big-benefits-6ge | compact | UST Projectors: Compact Design with Big Advantages

As technology progresses, many products being electronic becoming smaller and smaller, yet stronger. One device such the UST projector. UST represents Ultra Short Throw, which really is a sort of projector that will project a image big a distance as small as a foot away. The compact nature of UST projectors has a plethora of benefits that produce them an choice ideal companies, schools, and houses alike.

Benefits of UST Projectors

Some great benefits of UST Projector are countless. Firstly, being little in dimensions means them very portable they are lightweight, making. You're permitted by this portability to maneuver the projector from a single space to some other, or from a single center to some other with general simplicity. Also, UST projectors do not require space much run, making them well suited for smaller venues or any area with limited area.

Innovation in tech

UST projectors are a definite total outcome of revolutionary technology. They use lasers to produce a clear and bright image without the need for any illumination additional. The maintenance-free nature of UST projectors means they are an option that wil attract users that don't want to take some time or incur the expense of replacing traditional projector bulbs usually.

Security Features

UST Projector have integrated security features that ensure the user's wellbeing during use. They normally use a Class 1 laser, which is harmless towards the eye peoples. The laser can project the image on any surface, including a wall or even a projection screen. The projection screen will further reduce the light spread providing a safer watching experience.

How exactly to Use UST Projectors

Using UST projectors is really a breeze, and everyone can learn to get it done by having a practice little. The step very first to place the projector as close as you possibly can towards the area you want to project the image on. The top could be a wall or a projection display screen. Once the projector is established, you will need to connect it to a source, like a laptop or even a DVD player, using an HDMI cable.

Quality and Service

The grade of the image created by UST projectors is superb. Making use of lasers helps to ensure that the image is bright, clear, and sharp, rendering it more fulfilling to view. UST projectors also come with an guarantee excellent meaning you are protected in the not likely event of any issues or faults.

Application of UST Projectors

UST projectors have actually different applications of Projection Screen Businesses use them to give presentations, while schools utilize them in classrooms to help make learning more engaging. Homes use UST projectors to create home theaters and gaming areas. Additionally, UST projectors are commonly used at occasions like weddings and concerts to give a more immersive and experience visually stunning.

Source: https://www.wupro.com/Ust-projector | pamela_ballardow_8f47cadc |

1,872,580 | Why Short Throw Projectors Are Ideal for Classrooms | Why Throw short Projectors ideal for Classrooms Short throw projectors are a innovation excellent is... | 0 | 2024-06-01T01:23:18 | https://dev.to/pamela_ballardow_8f47cadc/why-short-throw-projectors-are-ideal-for-classrooms-56go | short | Why Throw short Projectors ideal for Classrooms

Short throw projectors are a innovation excellent is assisting pupils and teachers alike. This technology happens to be fashioned with use and security convenience in mind. Brief throw projectors create a projection large in a short distance away from the screen, rendering it perfect for classrooms. We are going to explore exactly how short throw projectors may be used, their applications, and exactly why they've been the clear answer ideal classrooms.

Advantages of Quick Throw Projectors

Some great benefits of Short Throw Projector in classrooms are wide ranging. They feature an obvious, razor-sharp, and display bright can be seen effortlessly by all pupils in the class room, regardless of where these are typically sitting. It saves both right time and effort when getting around the class room. Instructors don't need to concern yourself with getting into the true way of the projector, as well as the shadow that has been formerly associated with conventional projectors was eradicated. Short throw projectors are more cost-effective, utilizing less lamp and electricity life.

Innovation

Short throw projectors are innovation at its most readily useful! These projectors are designed to project a large image from a distance quick. Within the past, classrooms utilized conventional projectors that had a distance range bound. Short throw projectors have revolutionized the true means instructors may use projection technology into the classroom. These are typically made with use and security convenience in mind.

Security

Security is a concern paramount it comes towards the class environment. The throw short happens to be fashioned with security at heart. Firstly, the Projector is put close to the wall, meaning that pupils are far less likely to be exposed to light direct the projector. It also eliminates the need for cords and wires that may pose a tripping hazard.

Usage

Short throw projectors are incredibly user friendly. They've been designed to be put up in minutes, without the necessity for complex construction instructions. The system can quickly be fixed for a wall or roof, making it less susceptible to damage accidental. It generates a projection large even yet in a tiny area, rendering it well suited for a class room with restricted space. Its straightforward user interface allows you for instructors to switch from a source to a different, supplying a means notably convenient combine different media and presentations in one single image or presentation.

Utilizing

Using a Short throw projector is quite simple. First, you will need a accepted destination to arrange it, like a wall surface or a whiteboard. The projector may be installed to then your wall surface using a bracket. The projector can be simply linked to any computer or other device compatible the mandatory connection cables. Some throw quick can also be controlled remotely, making them very easy to setup and run.

Service

When it comes to buying and utilizing a throw short, quality and service are necessary considerations. You need to ensure that you’re buying a item top-quality is designed to endure. It is critical to locate a provider that provides exemplary after-sales service, including warranty and support technical. By selecting a provider reliable you may be sure you may receive help if any problems arise.

Quality

Perhaps one of the most key elements in terms of investing in a throw short is quality. You need to make certain that you are buying a high-quality item that will provide exemplary image quality, durability, and life span very long. Considerations when choosing a projector include resolution, brightness, comparison, and image quality. The caliber of the image projected will determine the standard of the scholarly training that pupils get. Therefore, it's important to buy top-notch projector that may offer an learning environment engaging.

Application

Standard Throw Projector are ideal not only in schools but additionally in other surroundings such as for example boardrooms, meeting centers, and also in the home. Whether you are presenting your projects to your peers or preparing a slideshow in the home, short throw projectors provide a viewing experience exclusive. Its capability to project a image big an inferior area causes it to be the best candidate for small areas.

Source: https://www.wupro.com/Short-throw-projector | pamela_ballardow_8f47cadc |

1,872,559 | Flower | Check out this cool Pen! | 0 | 2024-06-01T01:17:13 | https://dev.to/araguaci/flower-3oc3 | codepen | ---

title: Flower

published: true

tags: codepen

---

Check out this cool Pen!

{% codepen https://codepen.io/stoumann/pen/dyQGQOK %} | araguaci |

1,872,558 | Abstract Color Theme Tool | Check out this cool Pen! | 0 | 2024-06-01T01:15:46 | https://dev.to/araguaci/abstract-color-theme-tool-amj | codepen | ---

title: Abstract Color Theme Tool

published: true

tags: codepen

---

Check out this cool Pen!

{% codepen https://codepen.io/stoumann/pen/WNGqZed %} | araguaci |

1,872,557 | Key Differences Between UST and Short Throw Projectors | Differences Between UST and Short Throw Projectors If you are not used to the world of projectors,... | 0 | 2024-06-01T01:14:32 | https://dev.to/pamela_ballardow_8f47cadc/key-differences-between-ust-and-short-throw-projectors-287n | projectors | Differences Between UST and Short Throw Projectors

If you are not used to the world of projectors, selecting between UST (ultra-short-throw) and projectors that are short-throw be quite confusing. Even although you're acquainted with projectors, the distinctions between UST and projectors that are short-throw not be completely clear. We’ll explore the key differences between UST and short-throw projectors to be able to make an purchase decision informed.

Benefits of Short Throw Projectors

While UST Projector have numerous benefits, short-throw projectors also have their merits. Short-throw projectors are perfect for bigger spaces, where you'll want to project a graphic over an extended distance. Also, they are cheaper than UST projectors, which makes them a selection popular individuals on a budget.

Innovation in a Short Throw projectors

Short-throw projectors are not as innovative as UST projectors, nevertheless they nevertheless come with enhanced functions. Numerous projectors that are short-throw have actually built-in WiFi, therefore you can hook up to your unit wirelessly. Some models even come with a running integrated, so you can stream content directly to your projector without the need to connect a computer device.

Security of Short Throw Projectors

Like UST Projector, Short Throw projectors also provide safety features. As an example, some short-throw projectors have an shut-off automated when they detect an obstruction as you're watching lens. This can help avoid damage to the projector and also decreases the risk of eye harm.

Application of UST and Short Throw Projectors

UST Short Throw projectors, such as for example classrooms or spaces which are living. Also, they are ideal for those who want a image top-notch being forced to be worried about attention security. Short-throw projectors, having said that, are perfect for bigger areas, such as for example conference rooms or lecture halls. They're also an inexpensive option for people on a budget whom nevertheless require a image top-quality.

Service for UST and Short Throw Projectors

In terms of solution, Standard Throw Projector are more costly to repair or change than short-throw projectors. This is because of the technology advanced and undeniable fact that these are typically still reasonably new. Short-throw projectors, having said that, are more affordable to repair or change, making them a better option for people who are not looking to invest an entire fortune on maintenance.

Source: https://www.wupro.com/Projector | pamela_ballardow_8f47cadc |

1,872,555 | An Easy Guide To Next14 Authentication With Kinde | This is a simple tutorial on adding authentication to your Next14 app using Kinde. To follow this... | 0 | 2024-06-01T01:08:25 | https://dev.to/joeskills/an-easy-guide-to-next14-authentication-with-kinde-auth-5f50 | kinde, nextjs, authentication, react | This is a simple tutorial on adding authentication to your Next14 app using [Kinde](https://kinde.com/). To follow this tutorial, you need a Kinde account. Kinde is a powerful user authentication service that integrates in minutes. They offer an amazing free tier for their service and the signup process is straightforward.

To continue the signup process, Kinde will ask you to add your business name and a domain name for Kinde to host your app. It also sets a default region for where your data will be stored, but you can modify it if necessary.

After clicking next, it will ask you if you want to start a project from scratch or add Kinde to your existing codebase. Since we're adding Kinde to our Next.js app, I'll choose the latter.

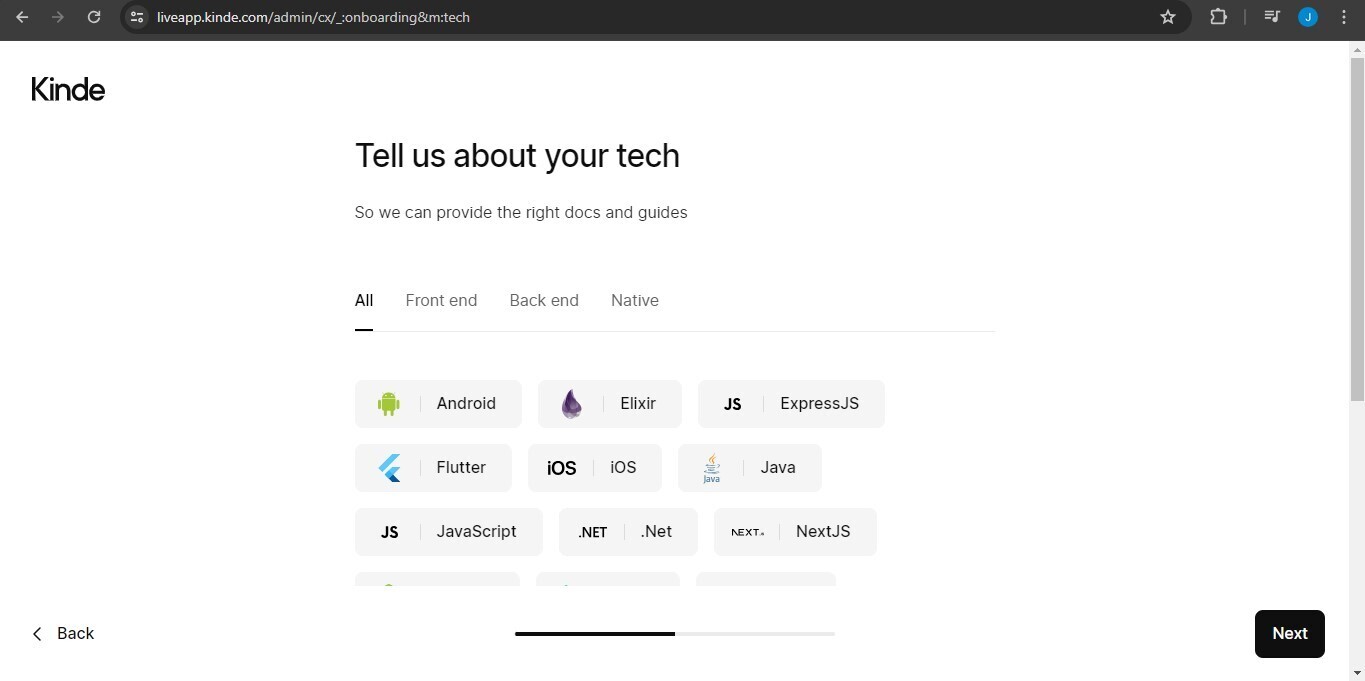

To provide the right docs, Kinde will also ask about the tech you're using on your app. We're using Next.js, so just click that and continue.

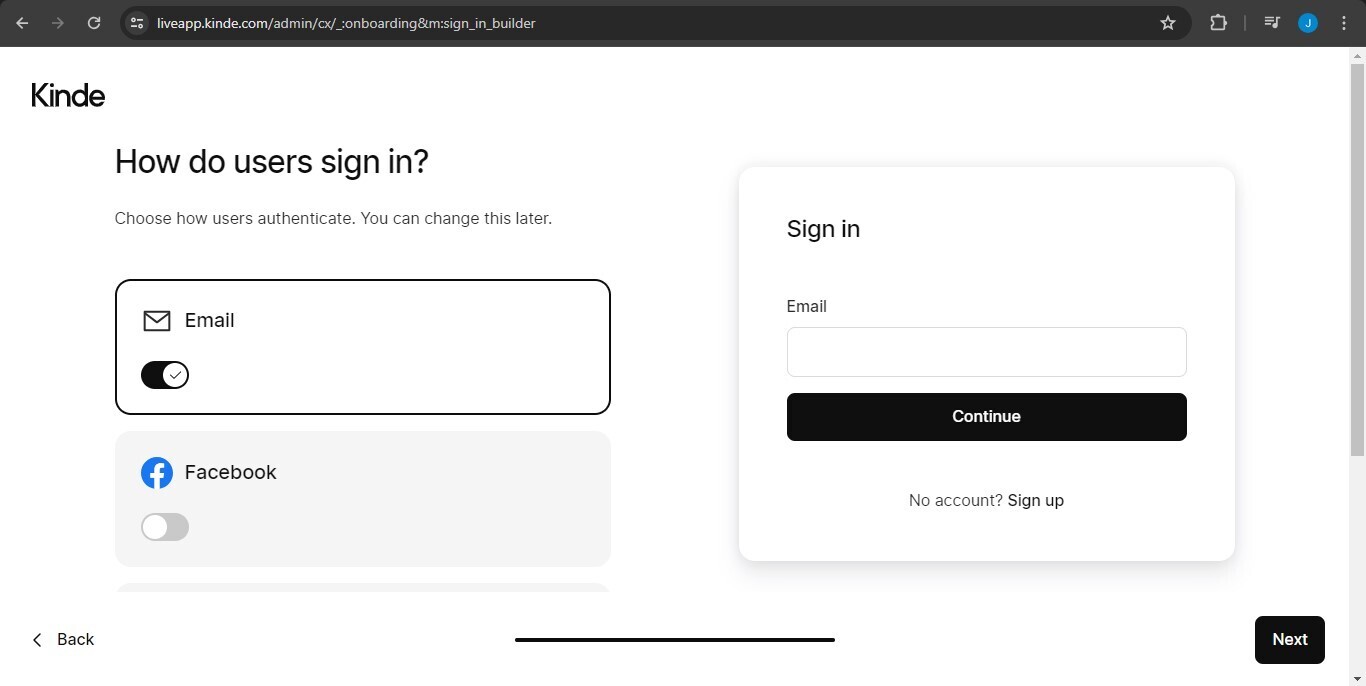

To complete the signup process, you need to choose the ways your users can be authenticated with Kinde. By default, Kinde provides email authentication, but you can add other authentication providers like Google or GitHub.

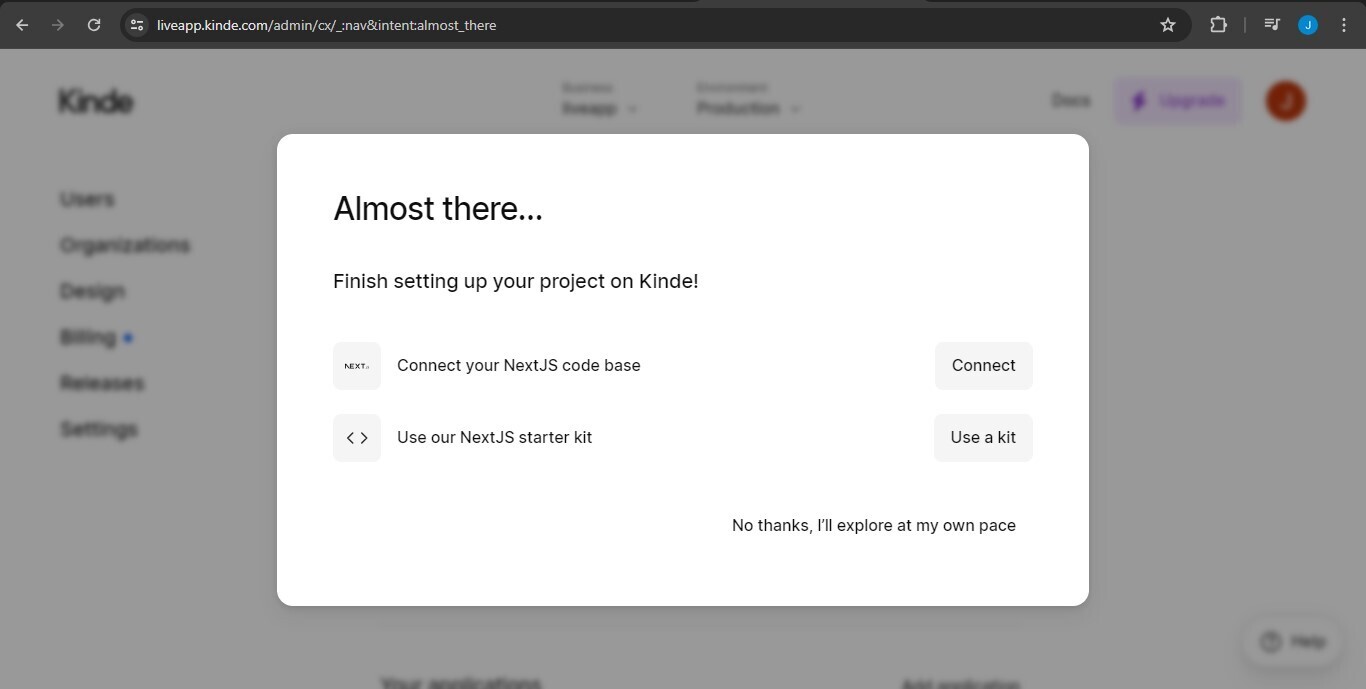

After the signup process is done, Kinde will lay out ways for you to integrate it into your app with their guides. You can just click on the connect to Next.js codebase option.

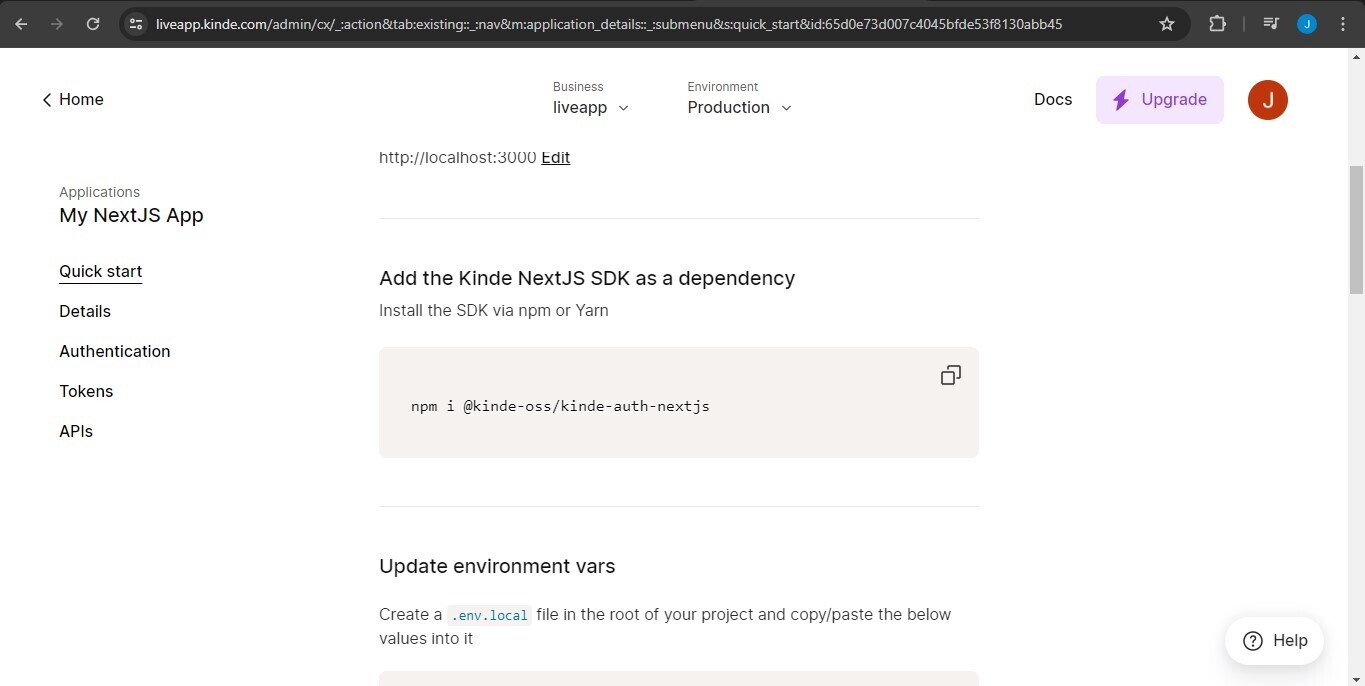

You will be redirected to a quick start guide for adding Kinde to your existing Next.js project. Scroll down and copy the npm or yarn installation command to install the Kinde Next.js SDK as a dependency. Also, copy the environment variables provided by Kinde into a .env.local file.

```

npm i @kinde-oss/kinde-auth-nextjs

```

We're almost done, the last thing you need to do is to create an api folder in your app directory, add an auth folder within it, within the auth folder a dynamic segment that looks like this [kindeauth], add a route.js file to it, and finally copy this inside of it.

```

import {handleAuth} from "@kinde-oss/kinde-auth-nextjs/server";

export const GET = handleAuth();

```

🎉You're done! You've successfully added authentication using Kinde to your Next14 app.

To start your auth flow in your app, you use the LoginLink and RegisterLink components.

```

import {RegisterLink, LoginLink} from "@kinde-oss/kinde-auth-nextjs/components";

<LoginLink>Sign in</LoginLink>

<RegisterLink>Sign up</RegisterLink>

```

🔥Happy coding!

You hear more from me on:

[X](https://x.com/code_withjoseph)

| joeskills |

1,872,553 | Silent Generators: Ensuring Uninterrupted Power with Minimal Noise | Silent Generators: Ensuring Uninterrupted Power plus Minimal Noise Silent Generators will be the... | 0 | 2024-06-01T01:05:55 | https://dev.to/pamela_ballardow_8f47cadc/silent-generators-ensuring-uninterrupted-power-with-minimal-noise-2pe1 | power |

Silent Generators: Ensuring Uninterrupted Power plus Minimal Noise

Silent Generators will be the innovation that are latest in the world of generators, ensuring power which are uninterrupted lower noise. These generators are now the game-changer, and people which can be a few switching with the {traditional generators to|generators that are traditional} these generators which are often peaceful., we intend to explore the value, safeguards, use, solution, plus quality of peaceful generators.

Great things about Silent Generators

One of the {biggest top features|top features that are biggest} of peaceful Diesel engine generators was they develop noise which are minimal contrast to generators that are main-stream. For the reason that they've enclosures which are soundproof {reduce noise pollution|noise pollution that is reduce}. Plus peaceful generators, you can enjoy power that was {uninterrupted sound which|sound that is uninterrupted} are distracting.

Next, Silent Generators are more efficient in comparison to generators being traditional. They could save on fuel expenses given that they consume less gasoline a tool of power produced. This may cause them to an alternative that are perfect those attempting to save very well {energy spending|spending that is energy}.

Innovation

Silent Generators are the product of innovation. They are typically made out of contemporary apparatus to provide power that are efficient with minimal noise. This has made them a range that has been {popular people who|people that are popular} are most domiciles, plus organizations.

Safety

Diesel generator sets are produced plus security in your thoughts. They shall have advanced safety service that decrease the risk of accidents. For instance, they have circuit breakers that avoid {accidental electrocution|electrocution that is accidental}. Furthermore, they shall has {automatic shutoff switches|shutoff that is automatic} that turn fully off the generator’s engine whenever it detects natural oils that was low or overheating.

Using Silent Generators

Silent Generators which is peaceful easy plus easy. First, be sure that the buyer is see our you handbook that is roofed aided by the generator. This could easily permit you to learn to operate it correctly.

{Before you begin the Silent Generators, check out the natural oils levels out plus fuel to make sure they are sufficient|They are sufficient before you begin the generator, check out the natural oils levels out plus fuel to make sure}. Then, connect the cords being electric the generator plus turn it in. Adjust the settings to the {required manufacturing regularity|manufacturing that is required} plus voltage, as well as the generator starts power that are creating.

Service

Silent generators or require regular servicing to {effortlessly confirm they operate|confirm they operate effortlessly} plus effectively. You ought to keep these specific things serviced our the {professional expert more|expert that is professional} than one days each year. The expert could bring repair which was routine changing the natural oils plus environment filters, checking the spark plugs, plus tightening {free part with|part that is free} the solutions.

Quality

The standard of the Silent Generators is vital since it see their {effectiveness plus durability|durability plus effectiveness}. When purchasing the generator that are peaceful select a brand name which try reputable plus make sure this has been {tested plus certified|certified plus tested} for protection. Also, glance at the guaranteed duration plus solution that has been after-sale by the manufacturer.

Application

Silent Generators require various applications in several settings. Domiciles can use them being truly a {|charged} power that are backup in case of the blackout since power outage. Hospitals plus company which was {medical utilize them|utilize that is medical} to ensure power that are uninterrupted for medical gear. Activities plus concerts can use them to make power for sound plus practices which are lighting. Finally, construction sites could use them for power products plus apparatus.

Silent Generators Products providing the dependable plus power that are efficient with minimal noise. They are an alternative which will be excellent those wanting to save on fuel costs plus minmise air pollution which was noise. Additionally, they're designed with high level protection characteristics, producing them the option that has been safer homes, companies, plus tasks. Plus solution which is {suitable quality purchase|quality that is suitable}, plus appropriate use, peaceful generators is an excellent investment that guarantees energy that are uninterrupted.

Source: https://www.kangwogroup.com/Diesel-generator-sets | pamela_ballardow_8f47cadc |

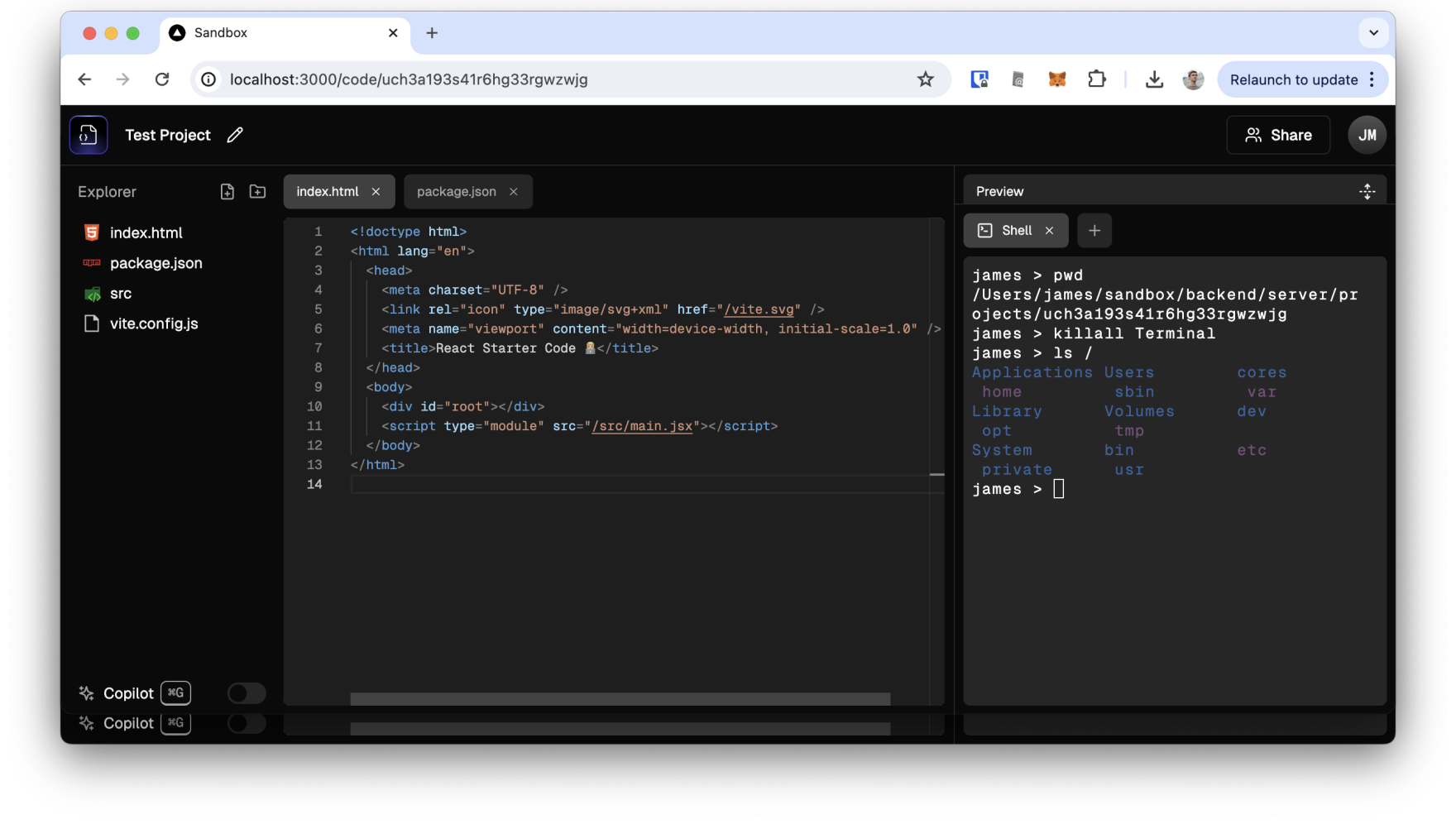

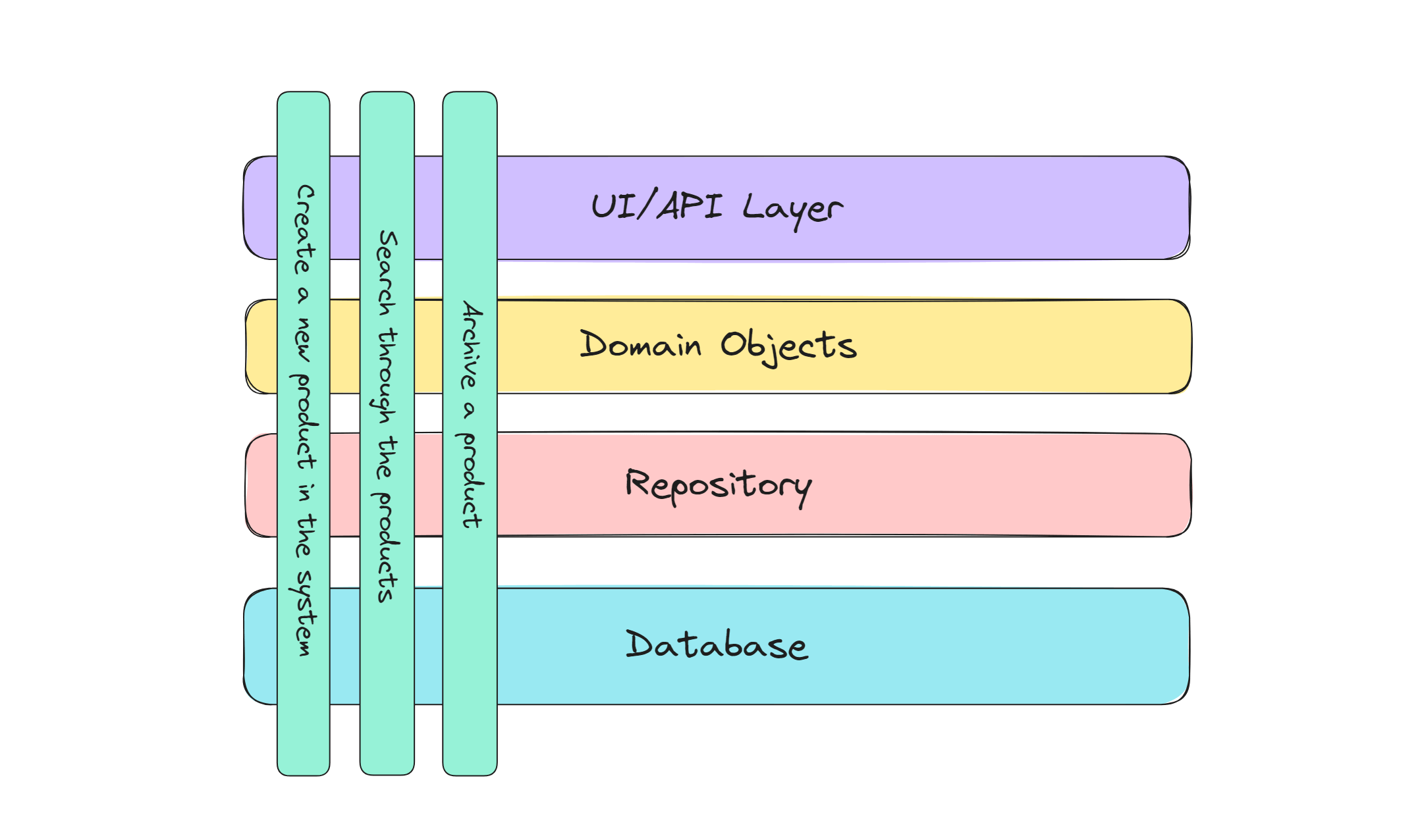

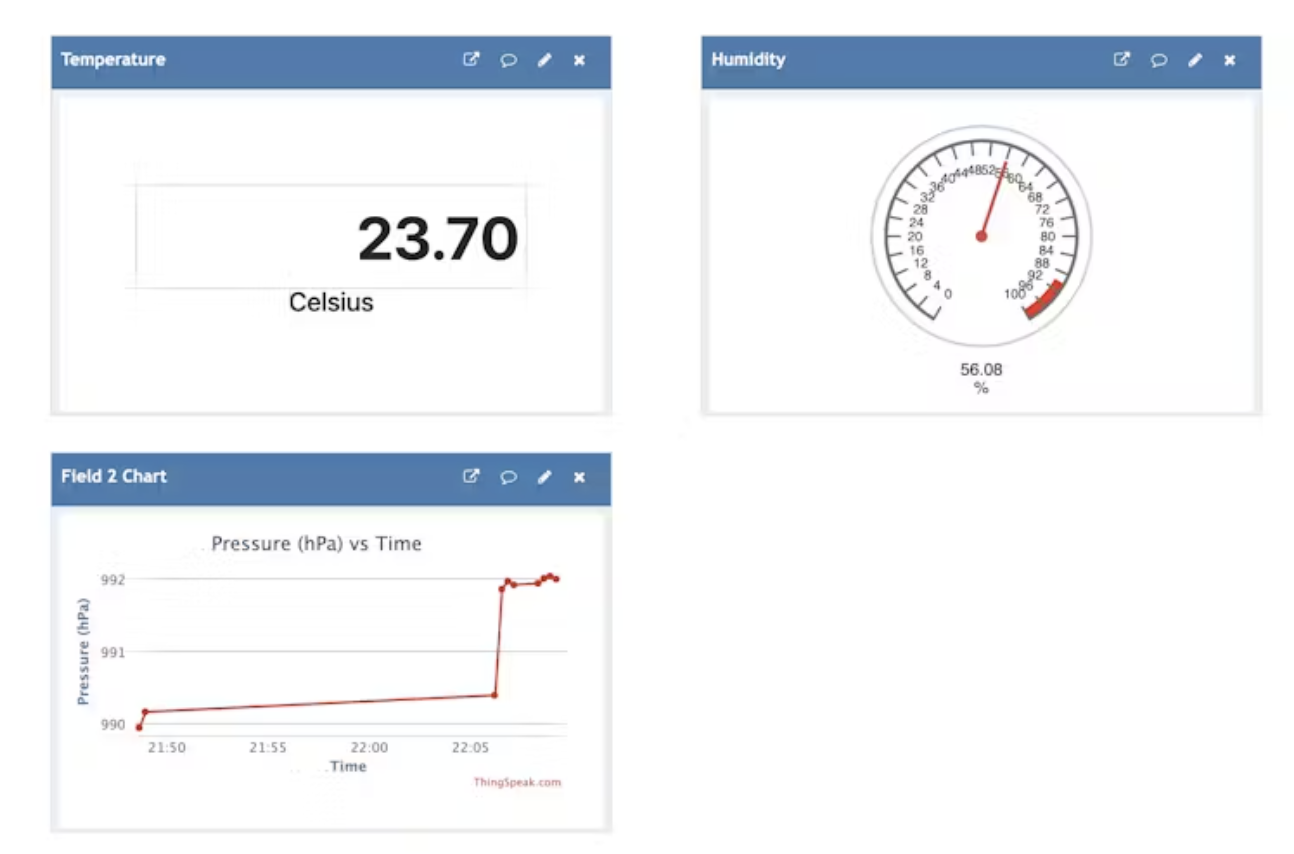

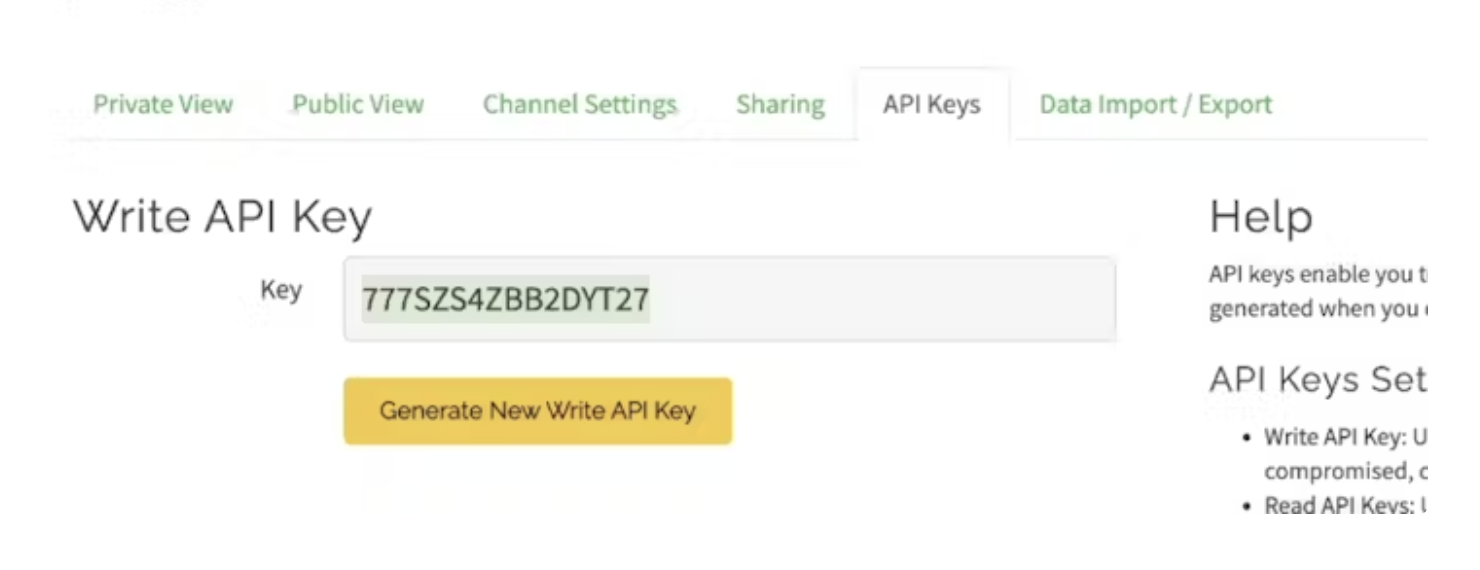

1,872,551 | Host your own web-based collaborative IDE | Intro I recently got to try Ishaan Dey's Sandbox, (ishaan1013/sandbox) which is an open... | 0 | 2024-06-01T00:49:59 | https://dev.to/jamesmurdza/how-to-setup-ishaan1013sandbox-locally-503p | ## Intro

I recently got to try Ishaan Dey's Sandbox, ([ishaan1013/sandbox](https://github.com/ishaan1013/sandbox)) which is an open source web-based editor similar to Replit that lets you write and run code in your browser.

In this post I write down the steps I followed to get the project running locally.

Quick note—in some of the text below you may see the use of emojis like 🍎 in example code. It should be very obvious what you need to put in place of the emojis—if not, leave a comment!

## Requirements

The application uses NodeJS and can be run with Docker.

Needed accounts to set up:

- [Clerk](https://clerk.com/): Used for user authentication.

- [Liveblocks](https://liveblocks.io/): Used for collaborative editing.

- [Cloudflare](https://www.cloudflare.com/): Used for relational data storage (D2) and file storage (R2).

A quick overview of the tech before we start: The deployment uses a **NextJS** app for the frontend and an **ExpressJS** server on the backend. Presumably that's because NextJS integrates well with Clerk middleware but not with Socket.io.

## Initial setup

No surprise in the first step:

```bash

git clone https://github.com/ishaan1013/sandbox

cd sandbox

```

Run `npm install` in:

```

/frontend

/backend/database

/backend/storage

/backend/server

/backend/ai

```

## Adding Clerk

Setup the Clerk account.

Get the API keys from Clerk.

Update `/frontend/.env`:

```

NEXT_PUBLIC_CLERK_PUBLISHABLE_KEY='🔑'

CLERK_SECRET_KEY='🔑'

```

## Deploying the storage bucket

Go to Cloudflare.

Create and name an R2 storage bucket in the control panel.

Copy the account ID of one domain.

Update `/backend/storage/src/wrangler.toml`:

```

account_id = '🔑'

bucket_name = '🔑'

key = 'SUPERDUPERSECRET'

```

In the `/backend/storage/src` directory:

```

npx wrangler deploy

```

## Deploying the database

Create a database:

```

npx wrangler d1 create sandbox-database

```

Use the output for the next setp.

Update `/backend/database/src/wrangler.toml`:

```

database_name = '🔑'

database_id = '🔑'

KEY = 'SUPERDUPERSECRET'

STORAGE_WORKER_URL = 'https://storage.🍎.workers.dev'

```

In the `/backend/database/src` directory:

```

npx wrangler deploy

```

## Applying the database schema

Delete the `/backend/database/drizzle/meta` directory.

In the `/backend/database/` directory:

```

npm run generate

npx wrangler d1 execute sandbox-database --remote --file=./drizzle/0000_🍏_🍐.sql

```

## Configuring the server

Update `/backend/server/.env`:

```

DATABASE_WORKER_URL='https://database.🍎.workers.dev'

STORAGE_WORKER_URL='https://storage.🍎.workers.dev'

WORKERS_KEY='SUPERDUPERSECRET'

```

## Adding Liveblocks

Setup the Liveblocks account.

Update `/frontend/.env`:

```

NEXT_PUBLIC_LIVEBLOCKS_PUBLIC_KEY='🔑'

LIVEBLOCKS_SECRET_KEY='🔑'

```

## Adding AI code generation

In the `/backend/ai` directory:

```

npx wrangler deploy

```

Update `/backend/server/.env`:

```

AI_WORKER_URL='https://ai.🍎.workers.dev'

```

## Configuring the frontend

Update `/frontend/.env`:

```

NEXT_PUBLIC_DATABASE_WORKER_URL='https://database.🍎.workers.dev'

NEXT_PUBLIC_STORAGE_WORKER_URL='https://storage.🍎.workers.dev'

NEXT_PUBLIC_WORKERS_KEY='SUPERDUPERSECRET'

```

## Running the IDE

Run `npm run dev` simultaneously in:

```

/frontend

/backend/server

```

Additionally, here's a [Dockerfile](https://github.com/jamesmurdza/sandbox/tree/feat/dockerfile) that can be used to run both components together. | jamesmurdza | |

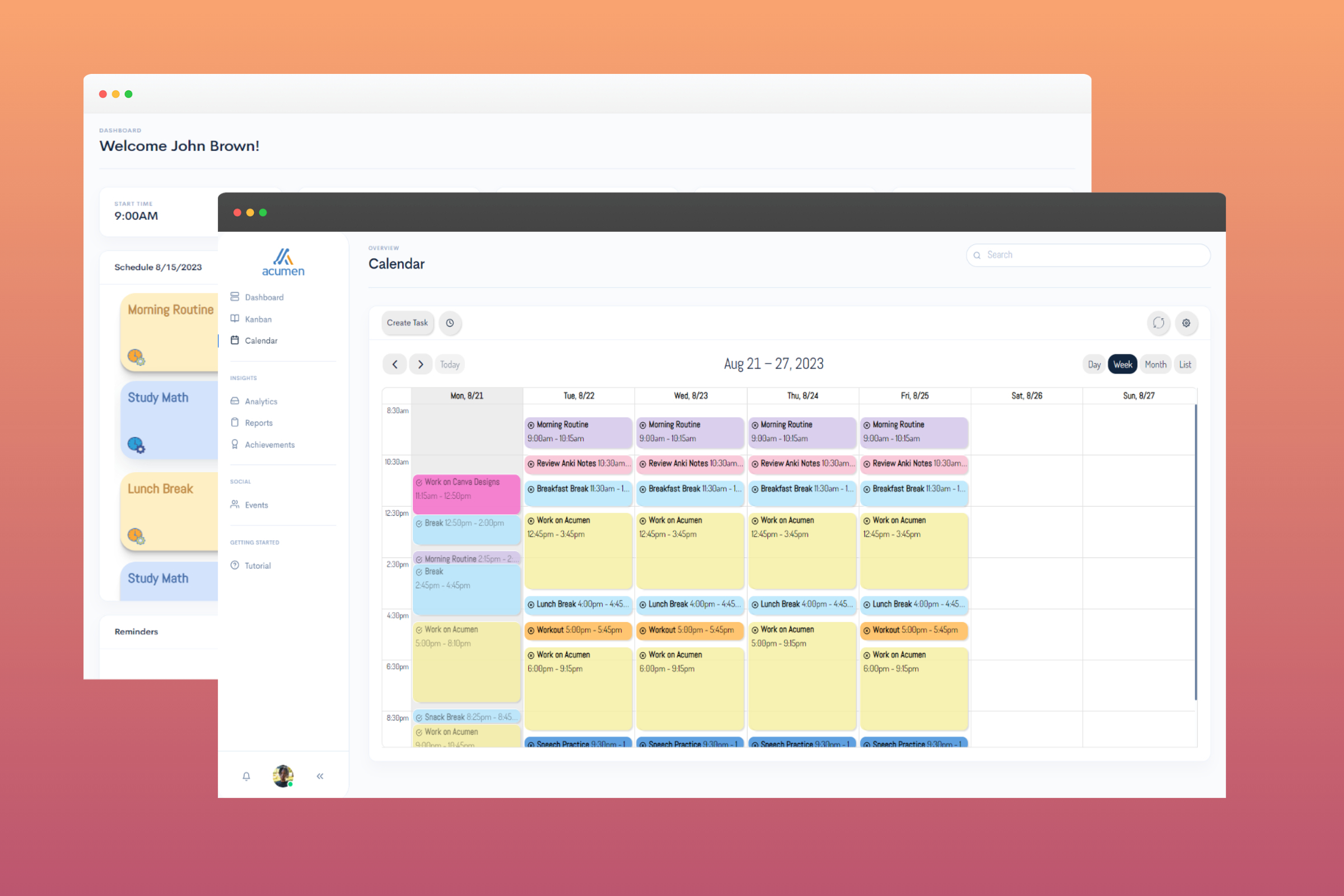

1,872,550 | Mi primer Articulo, escribiendo acerca de mi Agenda para el semestre 2 2024. "Sin saber de agendas!." | Ya son muchas las formas, conceptos, información acumulada durante unos 10 años aproximadamente. es... | 0 | 2024-06-01T00:47:24 | https://dev.to/rickdev9/mi-primer-articulo-escribiendo-acerca-de-mi-agenda-para-el-semestre-2-2024-sin-saber-de-agendas-kc4 | agenda, articulo, markdown | Ya son muchas las formas, conceptos, información acumulada durante unos 10 años aproximadamente. es por esto que me animo a escribir acerca de como voy a pretender organizar mi agenda, es decir mi tiempo para crear poryectos, actividades, enseñanzas. Las cuales pretenderé que se me anime a saber escribir de estos temas.

Si, asi es, me animaré de una vez por toda a realizar varios de mis cosas pendientes, y todo esto tendrá que ver por que me encanta aprender y compartir conocimiento.

Existe la probabilidad y posibilidad que a medida de mis publicaciones tenga algunos errores que usted encontrará al leer mis árticulos, es por ello que si te estás pasando por este sitio y cualquier de mis articulos publicados no dudes en hacerme la observación por medio de la caja de comentarios.

Agradezco la retroalimentación que me puedan hacer. Estaré presto a atender las sugerencias.

`

<table>

<thead>Agenda personal</thead>

<tr>

<thgroup>

<th>Área</th>

<th>Sección</th>

<th>Observaciones</th>

<th>Fecha de Inicio</th>

<th>Fecha de estipulada para Fin</th>

</thgroup

</tr>

<tbody>

<tr>

<td>Proyecto short-lived/td>

<td>Github</td>

<td>Microsoft Build: Aceleración de la productividad del desarrollador con GitHub y Azure para desarroll</td>

<td>Introducción a Github Copilot, Introducción a la ingeniería de solicitudes con Github Copilot, uso de GitHub Copilot con JavasCript</td>

<td>21 Junio</td>

</tr>

<tr>

<td>Proyecto short-lived/td>

<td>GitHub Pages, rickdev9's projects</td>

<td>Confirmacion .section > Hoja de vida && proyects</td>

<td>Vincular articulos y novedades a sitio dev.to && x.com && form contact & comunidad UNIMINUTO.</td>

<td>21 de Junio</td>

</tr>

<tr>

<td>Proyecto long-Running/td>

<td>Community for Gender equality and learning with teacher and students</td>

<td>investigar indicadores de género en LATAM y Colombia, Crear comunidad en Teams && vincular embedded sites web en pestañas</td>

<td>Vincular logros a sitio rickdev9's projects de GitHub Page</td>

<td> 21 de Junio</td>

</tr>

</tbody>

</table>

`

Nos veremos pronto, seguire actualizando mi agenda, pero sobre todo me la juego por cumplir estas agendas propuestas | rickdev9 |

1,872,549 | Flexible Solar Panels: A Solution for Curved Surfaces | Flexible Solar Panel Systems: The Newest Solution To Curved Surfaces Introduction: Have you ever... | 0 | 2024-06-01T00:46:00 | https://dev.to/pamela_ballardow_8f47cadc/flexible-solar-panels-a-solution-for-curved-surfaces-3gi1 | flexible | Flexible Solar Panel Systems: The Newest Solution To Curved Surfaces

Introduction:

Have you ever wondered how we can harness the charged power associated with the sunlight to generate electricity? The solution is based on solar power panels, which convert sunlight into usable energy. But imagine if you want to install solar panel systems for a area curved? This is where versatile panels that are solar in – these are typically a game-changer in the area of solar technology. Let us dive to the globe wonderful of solar power panels!

Features of Flexible Solar Panels:

Versatile solar power panels have some significant advantages over old-fashioned rigid panels that are solar. Above all, they've been flexible meaning can conform to any shape or size of the surface. They've been made by this quality ideal for curved areas like ships, RVs, and automobiles. Also, flexible panels that are solar lightweight and simple to install. Also more resistant and durable to harm from impacts, making them an improved choice for outside use. Flexible panels which are solar also better at creating energy in low light conditions. Many of these factors make versatile solar panel systems a choice top anyone trying to go green.

Innovation in Versatile Solar Panel Technology:

The growth of versatile Solar Panel that are solar due to innovative research and advances in technology. Unlike old-fashioned panels being solar use rigid materials like silicon, versatile solar panels use thin-film materials that are applied to flexible substrates like synthetic. This technology has permitted for the creation of slim and lightweight panels being solar may be stated in big quantities, making them more affordable than ever before. The innovation in versatile panel solar has opened up brand new opportunities for renewable energy.

Safety of Versatile Solar Panel Systems:

Versatile panels that are solar fashioned with security in your mind. They do not create any emissions which can be harmful and they're not just a fire risk. Additionally, they are waterproof and may come in contact with harsh conditions being outside posing any risk of electrocution or shock. The voltage low of flexible solar panel systems means they are safe to carry out and install. They are a fantastic choice for anyone concerned with security and impact environmental.

How to make use of Solar versatile Panels

Using versatile panels which can be solar effortless and straightforward. Begin by choosing the right output and size to your requirements. Then, determine where you intend to install the panels. If you should be installing the panels for a area curved make sure to select versatile panels that may flex and flex to the bend of the area. Next, you shall need certainly to install the panels utilizing adhesive or brackets. Be sure to proceed with the maker's instructions when installing the panels. After the panels are set up, simply connect them to a battery pack or inverter to start energy generating. It is that simple!

Quality and Provider of Versatile Solar Panel Systems:

When choosing Flexible Solar Panel, it is necessary to pick a quality item from a maker reputable. Try to find panels that are made out of top-quality materials and are usually built to final. Additionally, make sure to select a manufacturer that provides customer excellent and support. This can make certain you have a experience good that any dilemmas may be fixed quickly and easily.

Application of Flexible Solar Panels:

Versatile solar panel systems have a range wide of. They're ideal for usage on boats, RVs, and automobiles, where surfaces being curved common. They are able to additionally be used to power portable devices and illumination outside. Additionally, flexible solar power panels can be used in domestic and commercial settings to build power from rooftops as well as other areas that are curved. The possibilities are endless with regards to the application of Foldable Solar Panel that are solar.

Source: https://www.dhceversaving.com/flexible-solar-panel | pamela_ballardow_8f47cadc |

1,872,547 | Advanced Object-Oriented Programming in Java | Introduction Java is a popular programming language that is widely used in software... | 0 | 2024-06-01T00:34:22 | https://dev.to/kartikmehta8/advanced-object-oriented-programming-in-java-5a77 | webdev, javascript, programming, beginners | ## Introduction

Java is a popular programming language that is widely used in software development, particularly for web and mobile applications. One of the key features of Java is its support for object-oriented programming (OOP). In recent years, the demand for advanced OOP in Java has increased as developers strive to create more robust and scalable applications. In this article, we will discuss the advantages, disadvantages, and features of advanced OOP in Java.

## Advantages of Advanced OOP in Java

- **Better Code Organization and Modularization:** The use of advanced OOP in Java allows for better code organization and modularization, making it easier to maintain and update large software applications.

- **Reusable Code:** Advanced OOP enables developers to create reusable code through the use of classes and objects.

- **Improved Security and Reliability:** OOP allows for better data abstraction and encapsulation, which helps improve the security and reliability of the code.

## Disadvantages of Advanced OOP in Java

- **Steep Learning Curve:** Advanced OOP in Java requires a solid understanding of the principles of OOP, such as inheritance, polymorphism, and encapsulation, which can be challenging for beginners.

- **Performance Issues:** OOP can lead to performance issues, as it requires a significant amount of memory and processing power.

## Features of Advanced OOP in Java

- **Inheritance:** Java supports advanced OOP concepts such as inheritance, allowing classes to inherit properties and methods from other classes.

- **Polymorphism:** Java enables polymorphism, which allows methods to perform different functions based on the object that is calling them.

- **Encapsulation:** This feature helps protect the data in an object from accidental modification by restricting access to some of its components.

- **Multiple Inheritance:** Through interfaces, Java supports multiple inheritance, allowing a subclass to extend more than one parent class and thereby create complex and specialized classes.

### Example of Advanced OOP in Java

```java

public class Vehicle {

// Base class

}

public class Car extends Vehicle {

// Subclass extending the Vehicle class

private int wheels;

private int doors;

private int gears;

private boolean isManual;

public Car(String name, int wheels, int doors, int gears, boolean isManual) {

super(name);

this.wheels = wheels;

this.doors = doors;

this.gears = gears;

this.isManual = isManual;

}

// Additional methods and properties

}

public interface Control {

// Interface for multiple inheritance

void steer(int direction);

}

public class Ford extends Car implements Control {

// Subclass extending Car and implementing Control interface

public Ford(String name, int wheels, int doors, int gears, boolean isManual) {

super(name, wheels, doors, gears, isManual);

}

public void steer(int direction) {

// Implementation of the steer method

}

}

```

## Conclusion

In conclusion, advanced OOP in Java has numerous advantages, including better code organization, reusability, and improved security. However, it also has its downsides, such as a steep learning curve and potential performance issues. Nonetheless, with its powerful features and widespread use in software development, mastering advanced OOP in Java can greatly enhance a developer's skill set and improve the efficiency and quality of their code. | kartikmehta8 |

1,872,545 | Why Spring Matters for Java Developers | Writing Java Applications in the Past When writing Java applications in the past, writing... | 0 | 2024-06-01T00:15:02 | https://springmasteryhub.com/2024/05/31/why-spring-matters-for-java-developers/ | java, spring, springboot, programming | ## Writing Java Applications in the Past

When writing Java applications in the past, writing all the functionalities from scratch was common. It was time-consuming, requiring significant effort to create various factories, infrastructure code, setup code, etc.

## Growth of the Java Ecosystem and the Problem with Large Libraries

As the years passed, the Java ecosystem grew, and many libraries were created and shared to solve developers' common development problems. While these libraries were useful, they started to come with a cost.

These libraries grew too large, and using a simple functionality from a library required loading the entire package. Imagine importing a library with a thousand classes just to use a single one. Packaging your application would include all those classes even if you only needed one.

## The Solution: Small, Specific Libraries and Spring

This became a problem. A better idea was to have small libraries specific to certain problems, decoupled by topic. Instead of having large jars that did everything, we started to use small jars to solve specific problems.

For example, Jackson is a library specialized in serializing and deserializing JSON objects.

Now, Spring is a collection of these useful jars that can help you create your applications. It allows you to create a combination of dependencies (libraries) that suit your needs.

## Benefits of Spring

Spring helps by using the convention over configuration pattern. It creates a series of infrastructure beans based on the dependencies you add to your project, making things easier by providing a default configuration and allowing you to configure only what matters to you.

For example, are you using Kafka? Use the Spring Kafka dependency, customize your Kafka broker URL and some other configurations, and you're ready to go. Spring will provide a bean to send or consume messages from Kafka.

## Simplifying Development Decisions

It reduces the decisions developers need to make about handling connections, whether to use a singleton, where to put topic configurations, etc. It's already done; just customize it, and you're ready to go.

Spring uses these abstractions to make you more productive so you can focus on what matters most!

Do you use Spring in your projects? Many developers started working with Spring without knowing the Java past and how Spring makes our lives easier.

If you like Spring make sure to follow me and stay tuned to learn more! Don't miss out!

[Willian Moya (@WillianFMoya) / X (twitter.com)](https://twitter.com/WillianFMoya)

[Willian Ferreira Moya | LinkedIn](https://www.linkedin.com/in/willianmoya/)

Follow me here on dev.to! | tiuwill |

1,863,038 | Practical advice for writing React Components | I've been spending a lot of time with React lately - and I've developed some opinions. Disagree with... | 0 | 2024-06-01T00:05:42 | https://dev.to/lime517/practical-advice-for-writing-react-components-1o85 | react, tailwindcss, webdev, beginners | I've been spending a _lot_ of time with React lately - and I've developed some opinions. Disagree with any of them? Ping me at [@greveJoe](https://twitter.com/greveJoe/) on the social media platform formerly known as Twitter.

Let's get into it.

## 1. Combine Classes with clsx and twMerge

Blind concatenation of classNames is a trap, especially with Tailwind. Half the time it won't work how you expect because of CSS specificity. Instead, using `clsx` (or similar) and `twMerge` together provides flexibility when combining classes - letting you safely override default component styles with your own.

### Why This Matters

- **Cleaner Code:** `clsx` and `twMerge` help you write concise and readable class combinations.

- **Conditional Classes:** `clsx` makes it easy to apply classes based on props or state.

- **Conflict Resolution:** `twMerge` allows you to override conflicting classes, ensuring the desired styles are applied.

### Example: Custom Button Component

❌ **Don't do this**:

```jsx

const MyButton = ({ isActive, children }) => (

<button className={`bg-blue-500 text-white px-4 py-2 ${isActive ? 'border-2 border-red-500' : ''}`}>

{children}

</button>

);

```

*Why this is bad*: Concatenating classes directly can lead to hard-to-read and error-prone code, especially as the number of conditional classes increases.

✅ **Do this instead**:

```jsx

import clsx from 'clsx';

import { twMerge } from 'tailwind-merge';

const cn = (...classes) => twMerge(clsx(...classes));

const MyButton = ({ isActive, children }) => (

<button className={cn(

'bg-blue-500 text-white px-4 py-2',

isActive && 'border-2 border-red-500'

)}>

{children}

</button>

);

```

### Creating a Utility Function

To make combining classes even more convenient, create a utility function that combines `clsx` and `twMerge`:

```jsx

import clsx from 'clsx';

import { twMerge } from 'tailwind-merge';

const cn = (...classes) => twMerge(clsx(...classes));

export default cn;

```

You can then import and use this `cn` function in your components:

```jsx

import cn from './utils/cn';

const MyComponent = ({ isActive }) => (

<div className={cn('bg-white p-4', isActive && 'border-2 border-blue-500')}>

{/* ... */}

</div>

);

```

By using `clsx` and `twMerge` together, you can create cleaner, more maintainable code when working with utility-first CSS frameworks like Tailwind CSS. This approach makes it easier to apply conditional classes, resolve conflicts, and keep your components readable as your application grows in complexity.

## 2. Embrace Default Props

When building reusable components, it's crucial to allow for default props like `className` or `onClick`. This practice makes your components more composable, flexible, and easier to integrate into different contexts. It ensures that other developers can use your custom components just like native HTML elements, without needing to learn their inner workings.

In React, `forwardRef` is a powerful tool that allows your custom components to behave like native HTML elements. It enables your component to receive a `ref` prop, which can be used to access the underlying DOM element directly.

### Why This Matters

Allowing default props:

- **Enhances Flexibility:** Developers can style your component or attach event handlers without modifying its internals.

- **Improves Composability:** Your component can be seamlessly used within different layouts and contexts.

- **Reduces Learning Curve:** Other developers can use familiar props, making your component easier to adopt.

### Example: Custom Button Component

❌ **Don't do this**:

```jsx

const MyButton = ({ children }) => (

<button className="bg-blue-500 text-white px-4 py-2">

{children}

</button>

);

```

*Why this is bad*: This approach hardcodes styles and doesn't allow for additional props like `className` or `onClick`, making the component inflexible and hard to reuse.

✅ **Do this instead**:

```jsx

import React, { forwardRef } from 'react';

import clsx from 'clsx';

import { twMerge } from 'tailwind-merge';

const cn = (...classes) => twMerge(clsx(...classes));

const MyButton = forwardRef(({ children, className, ...props }, ref) => (

<button

ref={ref}

className={cn('bg-blue-500 text-white px-4 py-2', className)}

{...props}

>

{children}

</button>

));

export default MyButton;

```

### Using the Custom Button

Here's how you might use this `MyButton` component in a parent component:

```jsx

import React, { useRef } from 'react';

import MyButton from './MyButton';

const ParentComponent = () => {

const buttonRef = useRef(null);

const handleClick = () => {

if (buttonRef.current) {

console.log('Button clicked!', buttonRef.current);

}

};

return (

<div>

<MyButton ref={buttonRef} className="extra-class" onClick={handleClick}>

Click Me

</MyButton>

</div>

);

};

export default ParentComponent;

```

By embracing default props and using `forwardRef`, you create components that are flexible, reusable, and behave like native HTML elements. This approach reduces the learning curve for other developers and makes your components easier to work with in various contexts.

Here are points 3-5 and the conclusion of the blog post:

## 3. Let Parents Handle Layout and Spacing

When building reusable components, it's important to let the parent component handle layout and spacing concerns. This separates the responsibilities of the parent and child components, promoting a more modular and reusable design. A more controversial way to phrase this is: Never use `margin` - `padding` and `gap` are all you need.

### Why This Matters

- **Modularity:** Keeping layout and spacing concerns in the parent component makes the child components more modular and reusable.

- **Flexibility:** Parent components can adapt the layout and spacing based on their specific needs.

- **Consistency:** Centralizing layout and spacing decisions in the parent component ensures a consistent user interface.

### Example: List Component

❌ **Don't do this**:

```jsx

const ListItem = ({ children }) => (

<div className="mb-4">

{children}

</div>

);

const List = () => (

<div>

<ListItem>Item 1</ListItem>

<ListItem>Item 2</ListItem>

<ListItem>Item 3</ListItem>

</div>

);

```

_Why this is bad_: Each `ListItem` has a hard-coded `margin-bottom`, which can lead to inconsistent spacing and make the component less adaptable.

✅ **Do this instead**:

```jsx

const ListItem = ({ children }) => (

<div>{children}</div>

);

const List = () => (

<div className="flex flex-col gap-4">

<ListItem>Item 1</ListItem>

<ListItem>Item 2</ListItem>

<ListItem>Item 3</ListItem>

</div>

);

```

By letting the parent `List` component handle the spacing between `ListItem`s, you create a more modular and flexible design that can easily adapt to different layout requirements.

## 4. Use Controlled Components for Complex Interactions

For complex interactions like forms or modals, use controlled components to manage state and provide a clear way for the parent component to control the child component's behavior.

### Why This Matters

- **Predictability:** Controlled components make the state and behavior of the child component more predictable.

- **Easier Testing:** With controlled components, it's easier to write unit tests for the parent component.

- **Better Separation of Concerns:** The parent component manages the state, while the child component focuses on rendering and user interactions.

### Example: Modal Component

```jsx

const Modal = ({ isOpen, onClose, children }) => (

<div className={cn('fixed z-10 inset-0 overflow-y-auto', isOpen ? 'block' : 'hidden')}>

<div className="flex items-end justify-center min-h-screen pt-4 px-4 pb-20 text-center sm:block sm:p-0">

{/* Modal content */}

<div className="inline-block align-bottom bg-white rounded-lg text-left overflow-hidden shadow-xl transform transition-all sm:my-8 sm:align-middle sm:max-w-lg sm:w-full">

{children}