id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,872,367 | Understanding JS Execution Flow with Visuals | While working on a LeetCode problem, I got stuck on the concepts of setTimeout and clearTimeout. Some... | 0 | 2024-05-31T19:03:42 | https://dev.to/ayako_yk/understanding-js-execution-flow-with-visuals-1c8f | javascript, react, learning, webdev | While working on a LeetCode problem, I got stuck on the concepts of setTimeout and clearTimeout. Some developers shared their solutions and explanations, all emphasizing the importance of understanding the flow of JavaScript. The key mechanisms involved include the call stack, queues, the event loop, and web APIs.

I had read multiple documents and blogs about setTimeout and call stacks, but understanding the actual flow was quite challenging for me, especially as a visual learner. However, I came across an amazing YouTube tutorial that was perfectly explained and gave me a clear understanding, allowing me to delve deeper into the flow. I’ll describe each key term, summarize the flow in my own words, and share the link to the tutorial video.

**JavaScript Engine**

Since JavaScript is a scripting language that a computer can't execute directly, a browser has a built-in JavaScript engine that converts JavaScript into machine code. The engine has two key components: the call stack and the memory heap. The memory heap is where data is stored, but I won't delve into that this time so I can focus on the flow.

**Call Stack**

A call stack is a mechanism the engine uses to keep track of its place in a script that calls multiple functions. Functions are stacked and executed in a Last-In-First-Out (LIFO) order.
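To make the LIFO order concrete, here is a minimal sketch. The function names (`first`, `second`, `third`) and the `log` array are my own placeholders for illustration, not anything from the tutorial.

```javascript
// Hypothetical helpers: each function records when it starts and ends.
const log = [];

function third() {
  log.push("third start");
  log.push("third end"); // third() is on top of the stack, so it finishes first
}

function second() {
  log.push("second start");
  third(); // third() is pushed on top of second()
  log.push("second end");
}

function first() {
  log.push("first start");
  second(); // second() is pushed on top of first()
  log.push("first end");
}

first();
console.log(log.join("\n"));
```

The last function pushed onto the stack (`third`) is the first one to finish, and `first` only finishes after everything stacked on top of it has been popped.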

**Memory Heap**

While the stack stores fixed-sized data, the heap dynamically allocates memory for objects and functions.

**Blocking vs Non-Blocking**

Blocking code doesn't allow the next task to be executed until the blocking code finishes executing. In contrast, non-blocking code, such as asynchronous calls like setTimeout or Promise, allows the next task to be executed without waiting.
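Here is a small sketch of the difference, assuming a browser or Node.js environment. The `blockFor` helper and the `order` array are hypothetical names I made up for this example.

```javascript
const order = [];

// Blocking: a synchronous busy-wait. Nothing else can run until it returns.
function blockFor(ms) {
  const end = Date.now() + ms;
  while (Date.now() < end) {
    // keep the call stack busy
  }
}

order.push("before blocking");
blockFor(20);
order.push("after blocking");

// Non-blocking: setTimeout only schedules the callback and returns right away.
const done = new Promise((resolve) => {
  setTimeout(() => {
    order.push("timer callback");
    resolve(order);
  }, 0);
});
order.push("after scheduling timer");

done.then((result) => console.log(result.join("\n")));
```

Even with a delay of 0, the timer callback runs after "after scheduling timer", because the callback has to wait until the call stack is empty.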

**Event Queue**

The event queue is a queue of tasks to be executed when the call stack is empty. There are two kinds of queues or tasks: microtasks and macrotasks.

**Microtasks**

Microtasks are created by promises, such as those in `then`, `catch`, and `finally` methods. They are prioritized over macrotasks.

**Macrotasks**

Macrotasks (often just called tasks) cover the other asynchronous callbacks, such as those scheduled by setTimeout or setInterval and those triggered by user events.

**Event Loop**

The event loop handles the execution of the event queue.

**Here's the flow:**

1. Regular functions to be executed are stacked in the call stack.

2. They are executed from the top in a Last-In-First-Out (LIFO) order.

3. JavaScript is single-threaded, but time-consuming tasks such as fetching APIs or setTimeout are handled by the browser's web APIs, outside the JavaScript engine. Once these asynchronous tasks are complete, their callbacks are placed in task queues. Promise-related callbacks are placed in the microtask queue, while others go into the macrotask queue.

4. The event loop monitors the call stack and the task queues. When the call stack is empty, the event loop runs the tasks in the microtask queue, one after another, until that queue is empty. When both the call stack and the microtask queue are empty, it picks the first task from the macrotask queue.
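The flow above can be sketched in a few lines. This is only an illustration under my own assumptions: the `events` array and the labels are made up, and the second timer exists just to read the result after both queues have been processed.

```javascript
const events = [];

// Macrotask: the callback goes to the macrotask queue once the timer expires.
setTimeout(() => events.push("macrotask: setTimeout"), 0);

// Microtasks: promise callbacks go to the microtask queue.
Promise.resolve()
  .then(() => events.push("microtask: then 1"))
  .then(() => events.push("microtask: then 2"));

// Synchronous code runs first, straight from the call stack.
events.push("sync: end of script");

// Read the result a little later, after both queues have been processed.
const finished = new Promise((resolve) => {
  setTimeout(() => resolve(events), 10);
});

finished.then((result) => console.log(result.join("\n")));
```

The synchronous line is recorded first, then both promise callbacks, and only then the setTimeout callback, even though setTimeout was called before the promise was created.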

I'd like to share the amazing tutorial video that explains the flow using perfect visual images. Hope some of the visual learners can find it useful. Here's the URL: [https://youtu.be/eiC58R16hb8?si=ODoxBlfGxFZkJT9b]

| ayako_yk |

1,872,366 | Temu coupon code $100 off: act200019 | Get ready to save big with our incredible Temu coupon for $100 off! Our amazing Temu $100 off coupon... | 0 | 2024-05-31T19:02:39 | https://dev.to/hello_mini/temu-coupon-code-100-off-act200019-4m4l | temu | Get ready to save big with our incredible Temu coupon for $100 off! Our amazing Temu $100 off coupon code (act200019) will give you a flat $100 discount on your order value, making your shopping experience even more rewarding.

As a special bonus, new customers will also receive a free gift along with the $100 discount just for trying out Temu! Check out these fantastic coupons for Temu:

aaj92731: Get a flat $100 off for new users + a special gift.

aaf63818: Enjoy $100 off on purchases of $150 or more.

aaj92731: New users get $100 off + a free gift on orders over $300.

aah64133: Get $100 off on orders above $200 (existing users).

tad80664: Take $100 off on purchases of $250 or more (existing users). | hello_mini |

1,872,365 | Redeem Temu coupon $100 off {aah64133} for New and Existing Customers | New users can get a $100 coupon bundle on Temu by using the code [aah64133]. This coupon provides... | 0 | 2024-05-31T19:01:56 | https://dev.to/priyamishra123976/redeem-temu-coupon-100-off-aah64133-for-new-and-existing-customers-40p1 | New users can get a $100 coupon bundle on Temu by using the code [aah64133]. This coupon provides discounts on products across various categories like clothing, accessories, jewelry, and more. Temu also offers a sitewide sale with savings up to 90% off. They provide free shipping and free returns for up to 90 days after purchase. If your delivery is late, you can get a $5 credit. Additionally, Temu has a price protection policy - if the price drops within 30 days of your purchase, you can request a partial refund. To get the $100 coupon bundle, download the Temu app using the link in the description of the YouTube video or search for the code [ach320371]. Existing customers can use the code [aah64133] to get the $100 coupon bundle.

New users can Get a huge $100 off coupon bundle for your entire purchase, plus an additional 30% discount on top of that! That's right, slash prices by up to 70% with code {aah64133} or { ach320371 } at checkout. If you are a new customer on Temu and you want to get $100 off on your shopping, then use the latest $100 Temu coupon bundle code {aah64133} or { ach320371 } . You can also avail up to 90% off on your first purchase with Temu, and if your order exceeds $120, then you will be eligible for free shipping.

Here are the latest Temu coupon bundle codes for new users:

Temu $100 coupon bundle new users - {aah64133} or { ach320371 }

Temu coupon code $100 off - {aah64133} or { ach320371 }

Temu coupon $100 off - {aah64133} or { ach320371 }

Temu Coupon Code $100 Off - {aah64133} or { ach320371 }

Temu Coupon $100 Off for Existing Customers 2024

The Temu coupon $100 off for existing customers is a limited-time offer, but the good news is that it's valid throughout 2024. This means that you can take advantage of this incredible discount multiple times throughout the year, allowing you to save big on a wide range of products and categories.

Temu coupon code for existing customers: {aah64133} or { ach320371 }

Temu Coupon $100 Off for Existing Customers 2024: {aah64133} or { ach320371 }

50% off Temu coupon Code- {aah64133} or { ach320371 }

Buy1 Get 1 free Temu code - {aah64133} or { ach320371 }

Temu Buy 5 Get 6 free coupon code - {aah64133} or { ach320371 }

Temu $100 coupon bundle - {aah64133} or { ach320371 }

Temu coupon code $100 Canada - {aah64133} or { ach320371 }[Both New & Existing]

50% Off Temu UK code - {aah64133} or { ach320371 }[Both New & Existing]

Temu Coupon Code for Mexico: {aah64133} or { ach320371 }[Both New & Existing]

Temu Coupon Code for USA: {aah64133} or { ach320371 }[Both New & Existing]

Temu Coupon Code for UAE: {aah64133} or { ach320371 }[Both New & Existing]

Temu coupon Code for Japan: {aah64133} or { ach320371 }[Both New & Existing]

Temu Coupon Code for Australia: aci384098 [Both New & Existing]

#temu | priyamishra123976 | |

1,872,364 | pyaction pulled 4 million times and counting from the GitHub Container Registry | I have been maintaining, pyaction, which is a Docker container with Python, git, and the GitHub CLI.... | 21,164 | 2024-05-31T19:01:12 | https://dev.to/cicirello/pyaction-pulled-4-million-times-and-counting-from-the-github-container-registry-47i3 | github, docker, python, showdev | I have been maintaining [pyaction](https://github.com/cicirello/pyaction), which is a [Docker container with Python, git, and the GitHub CLI](https://dev.to/cicirello/pyaction-a-docker-container-with-python-git-and-the-github-cli-930). Recently, pyaction surpassed 4 million pulls from the [GitHub Container Registry](https://github.com/cicirello/pyaction/pkgs/container/pyaction).

The pyaction container was originally developed to support developing GitHub Actions in Python. There are several GitHub container actions that use pyaction as the base image, and specifically pull it from the GitHub Container Registry. So this likely represents over 4 million runs of those dependent Actions. It is also available from [Docker Hub](https://hub.docker.com/r/cicirello/pyaction).

## More Information

Please consider starring pyaction's GitHub repository, and even better using it to develop Actions, or just to use the GitHub CLI:

{% github cicirello/pyaction %}

For more information about pyaction, see my earlier post here on DEV, as well as an information page about pyaction on the web.

{% link https://dev.to/cicirello/pyaction-a-docker-container-with-python-git-and-the-github-cli-930 %}

{% embed https://actions.cicirello.org/pyaction/ %}

## Where You Can Find Me

Follow me [here on DEV](https://dev.to/cicirello) and on [GitHub](https://github.com/cicirello):

{% user cicirello %}

| cicirello |

1,863,670 | Free All-in-One Web Development Tool | After dedicating 7 years and huge amount of hours to my project, it is now ready for public use. I am... | 0 | 2024-05-31T19:01:05 | https://dev.to/kooboo/free-all-in-one-web-development-tool-42l5 | webdev, javascript, wordpress | After dedicating 7 years and a huge number of hours to my project, it is now ready for public use. I am eager to gather feedback from the community on the value of my tool, named Kooboo.

---

## What is it?

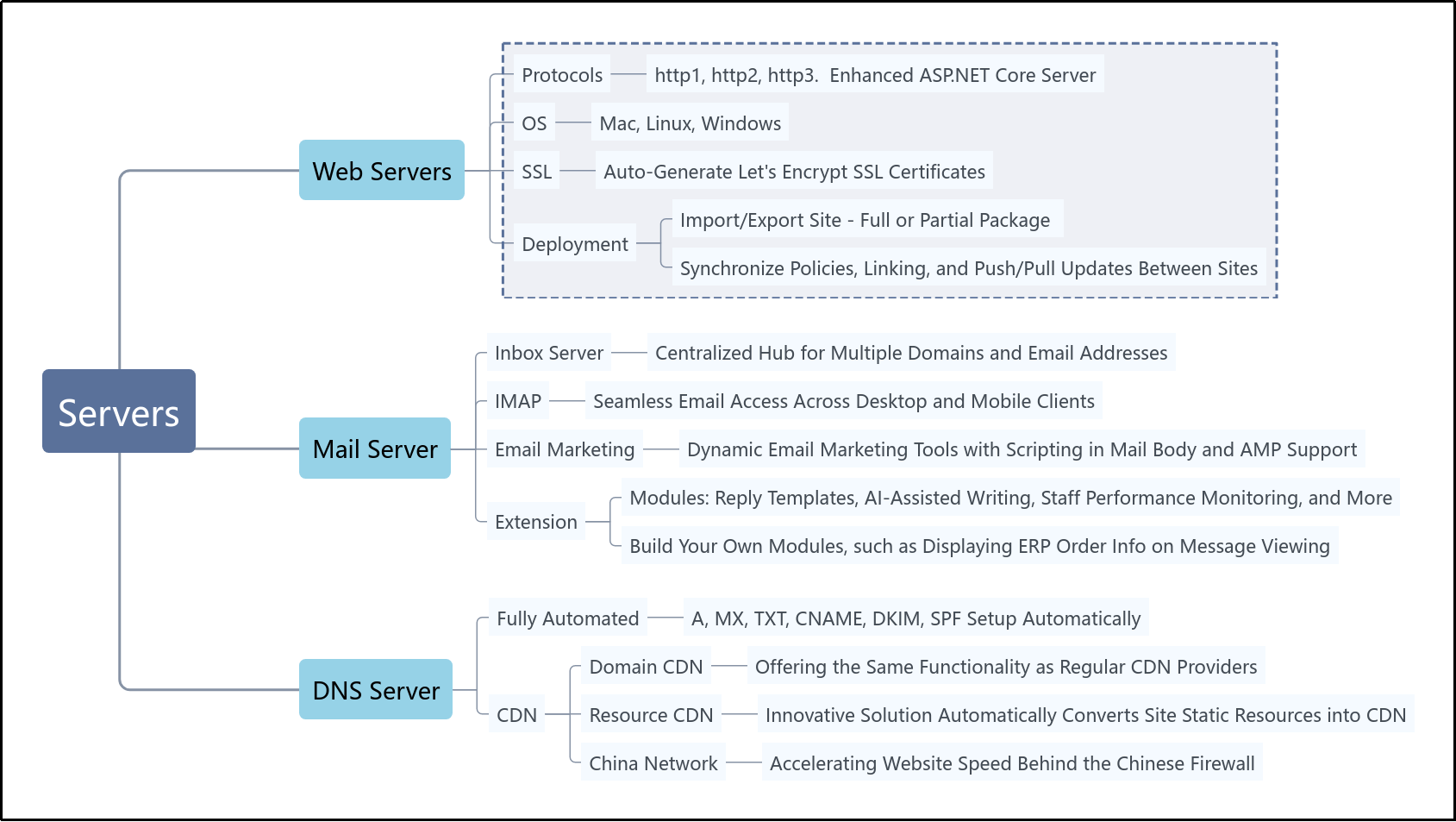

Kooboo is designed for website development and can be used as an alternative to WordPress, Magento, Shopify, Mailchimp, and more.

It is a CMS, e-commerce platform, IDE, web server, mail server, and more. It is powerful and easy to get started with.

---

## Getting Started

Kooboo can run on Windows, macOS, Linux, Docker, or in the cloud.

Download and install from: https://www.kooboo.com/downloads

Windows and macOS users can simply download and run the tool with a click or double-click; no installation is required.

---

## Tutorial

Four YouTube videos were prepared to help you understand it.

---

* Build a dynamic, database-driven website from scratch in 10 minutes

{% youtube SKBmljIuXTg %}

---

* Migrate your current WebSite to Kooboo in 2 minutes

{% youtube YTCVMnus2uo %}

---

* Install and Use 100s of open source applications instantly

{% youtube 79pJ76tSYwc %}

---

* Query and Edit Database

{% youtube 8csD0iYhs-k %}

---

## Development concept

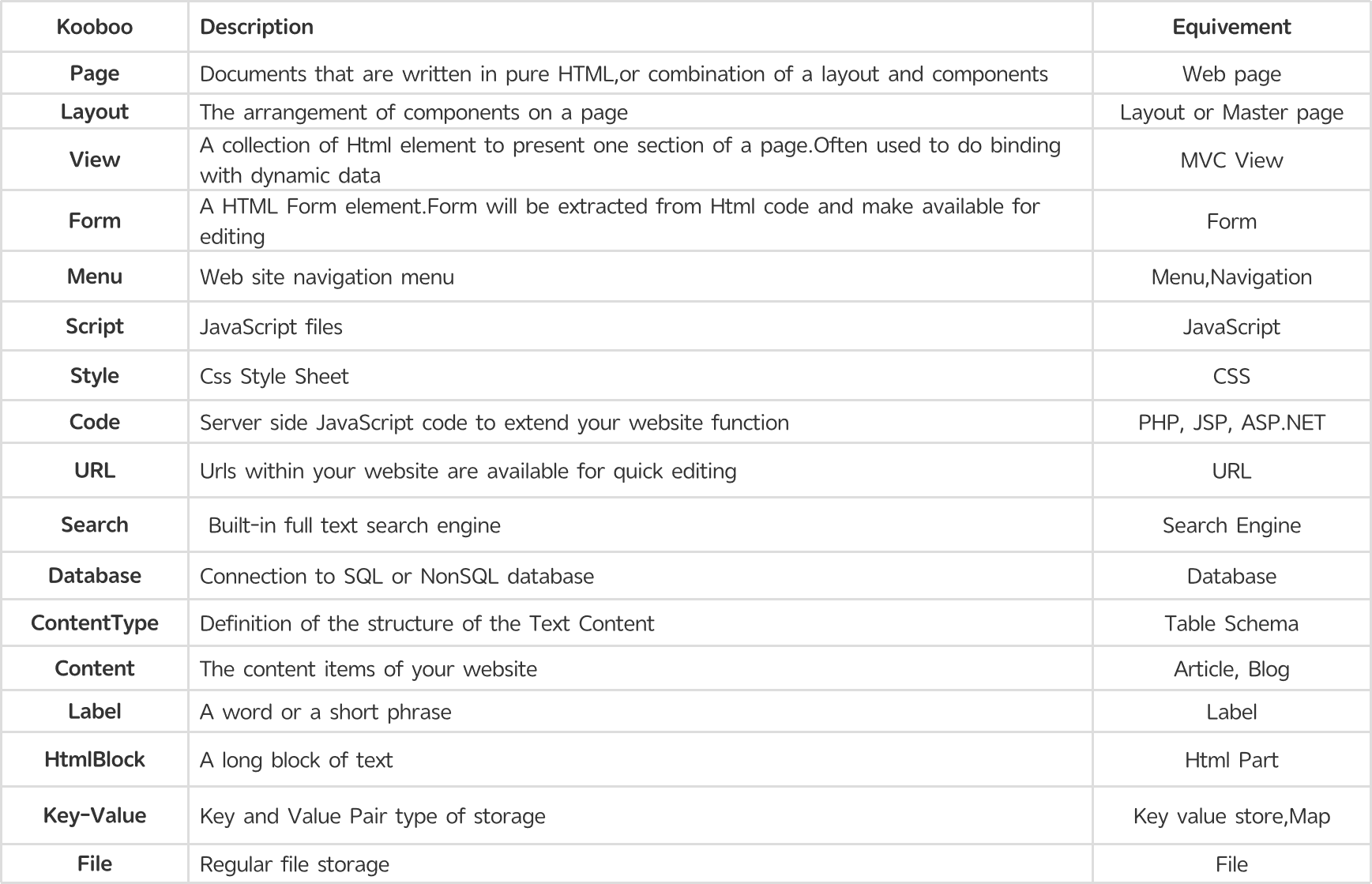

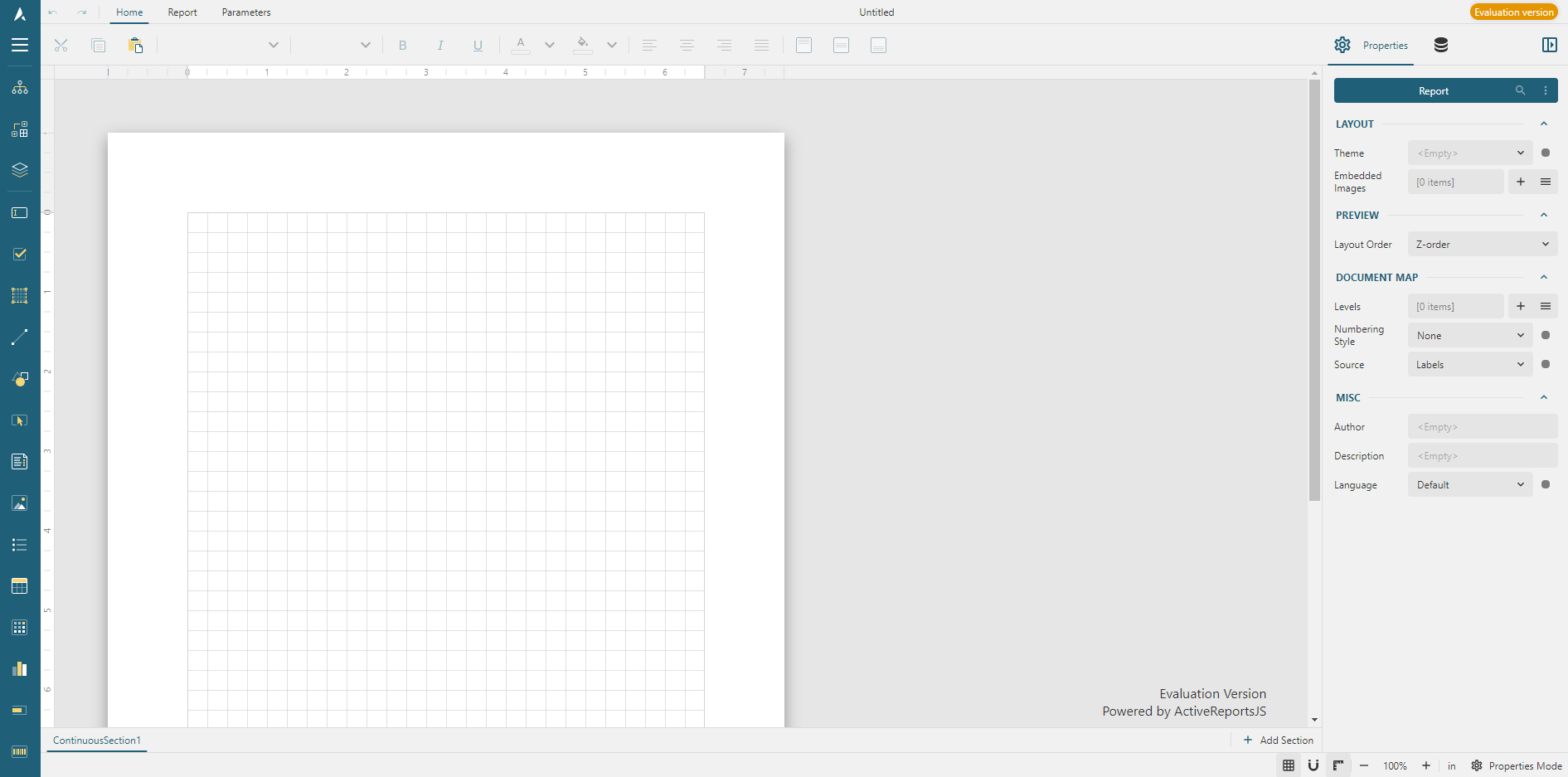

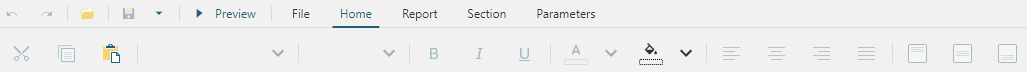

Our aim is to maintain familiarity with established development practices. Here is a snapshot of our core concept objects

---

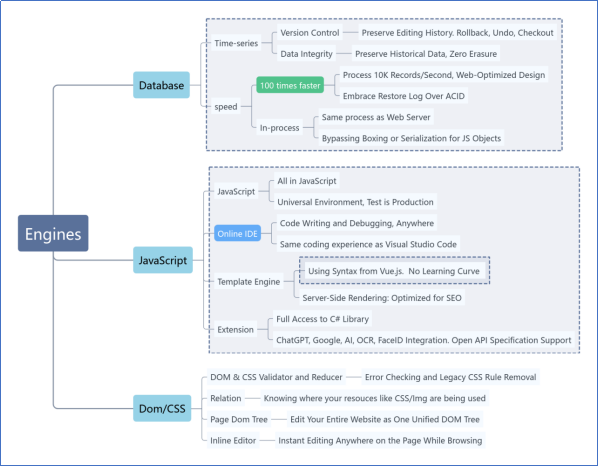

## Technology

To achieve the level of innovation present in our tool, we have custom-implemented a wide array of techniques. This map shows some of them.

For more information, see:

https://www.kooboo.com

| kooboo |

1,872,362 | Swimming Pool Company in Dubai | Dubai, a city renowned for its opulence and architectural marvels, is also home to some of the most... | 0 | 2024-05-31T18:58:58 | https://dev.to/technical_kh_/swimming-pool-company-in-dubai-1ae3 | swimmingpool | Dubai, a city renowned for its opulence and architectural marvels, is also home to some of the most luxurious and state-of-the-art swimming pools in the world. A leading [swimming pool company in Dubai](https://technicalkh.com/) specializes in designing, constructing, and maintaining these exquisite aquatic retreats. Whether for residential, commercial, or hospitality purposes, this company exemplifies excellence in every project it undertakes.

**Comprehensive Pool Design and Construction**

The company prides itself on its ability to transform any vision into reality. With a team of highly skilled architects and designers, they create bespoke swimming pools that blend seamlessly with the surrounding environment. Each project begins with a detailed consultation to understand the client’s preferences, lifestyle, and space requirements.

**Custom Designs**

From infinity pools that overlook Dubai’s breathtaking skyline to serene backyard oases, the company offers an extensive range of design options. Their expertise includes:

Infinity Pools: Creating a visual effect of water extending into the horizon, perfect for luxury villas and high-rise buildings.

Lap Pools: Ideal for fitness enthusiasts, designed for functionality and aesthetics.

Rooftop Pools: Innovative solutions for urban settings, maximizing space without compromising on luxury.

Kids’ Pools: Safe, fun, and tailored to the needs of young swimmers.

**Advanced Construction Techniques**

Utilizing the latest construction techniques and high-quality materials, the company ensures that each swimming pool is built to last. The construction process is meticulously managed to adhere to the highest standards of safety and durability. Features such as:

Reinforced Concrete Shells: Providing structural integrity and longevity.

High-Quality Finishes: Including tiles, mosaics, and natural stone to enhance the pool’s aesthetic appeal.

State-of-the-Art Filtration Systems: Ensuring clean, crystal-clear water with minimal maintenance.

**Maintenance and Renovation Services**

Beyond construction, the company offers comprehensive maintenance and renovation services to keep swimming pools in pristine condition. **Regular maintenance packages include:**

Water Quality Testing: Regular analysis to maintain optimal pH levels and water clarity.

Cleaning Services: Thorough cleaning of pool surfaces, filters, and surrounding areas.

Equipment Checks: Ensuring all mechanical and electrical components are functioning correctly.

For older pools, the company provides renovation services to update and upgrade existing structures. This can include:

Resurfacing: Replacing worn-out surfaces with new, high-quality materials.

Modernizing Equipment: Installing the latest technology in heating, lighting, and filtration.

Aesthetic Enhancements: Adding features such as waterfalls, lighting effects, and contemporary designs.

**Commitment to Sustainability**

In line with Dubai’s commitment to sustainability, the swimming pool company incorporates eco-friendly practices into its projects. They offer energy-efficient solutions such as:

Solar Heating Systems: Utilizing renewable energy to heat pool water.

LED Lighting: Reducing energy consumption while providing vibrant illumination.

Water Conservation Techniques: Implementing systems to minimize water usage and waste.

**Conclusion**

A premier swimming pool company in Dubai is more than just a service provider; it is a partner in creating luxurious, sustainable, and innovative aquatic environments. With a focus on quality, customization, and customer satisfaction, the company continues to set benchmarks in the industry, making every pool a masterpiece of design and engineering. Whether you seek a tranquil backyard escape or a stunning centerpiece for a high-rise, this company delivers unparalleled excellence in every project. | technical_kh_ |

1,872,360 | Forensic Analysis of AWS EBS Volumes: Pentesting Storage | Introduction Overview of Forensic Analysis in Cloud Environments Forensic analysis in cloud... | 0 | 2024-05-31T18:52:48 | https://sudoconsultants.com/forensic-analysis-of-aws-ebs-volumes-pentesting-storage/ | ebs, aws, pentesting | <!-- wp:heading {"level":1} -->

<h1 class="wp-block-heading">Introduction</h1>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Overview of Forensic Analysis in Cloud Environments</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Forensic analysis in cloud environments has become a critical activity as more data and applications migrate to the cloud. With cloud adoption at an all-time high, so is the need to investigate security breaches, data integrity issues, and other anomalies. Forensic analysis identifies, preserves, and analyzes data from cloud services in order to understand the nature and impact of security incidents. However, the dynamic and distributed nature of cloud environments poses significant challenges, including data volatility, multi-tenancy, and the difficulty of obtaining accurate and complete forensic evidence.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Importance of Pentesting Storage</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Pentesting storage, especially Amazon Elastic Block Store (EBS) volumes, is one of the main ways to verify both security and data integrity. EBS volumes are block-level storage devices for use with Amazon EC2 instances, much as hard drives are used in a computer. Pentesting this storage helps you find its exact vulnerabilities and address them before they turn into real risks.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Prerequisites</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">AWS Account Setup</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>To perform forensic analysis on AWS EBS volumes, you need an AWS account and an Identity and Access Management (IAM) role with the following permissions:</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>ec2:DescribeVolumes, ec2:CreateSnapshot, ec2:CopySnapshot, ec2:CreateVolume, ec2:AttachVolume, ec2:DescribeInstances.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Ensure you have access to the AWS Management Console and have the AWS CLI installed.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Tools and Software Requirements</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>The following tools are needed for forensic analysis:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>AWS CLI</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Forensics tools</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Security tools</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Understanding AWS EBS</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">EBS Volume Types</h3>

<!-- /wp:heading -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Amazon EBS provides four types of volume, balancing price and performance:</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>General Purpose SSD (gp2): Volumes balance price and performance for a wide variety of workloads.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Provisioned IOPS SSD (io1): Volumes are for I/O intensive workloads.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Throughput Optimized HDD (st1): Low-cost HDD designed for frequently accessed, throughput-intensive workloads.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Cold HDD (sc1): Lowest cost HDD for less frequently accessed workloads.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Creating and Managing EBS Volumes</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>EBS volumes are independent and can be created, attached, detached, and deleted as necessary. A volume can be snapshotted to back up its contents and protect the data on it. A snapshot can also be restored to a new volume, which is invaluable for forensic analysis.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Setting Up the Environment</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Creating an EC2 Instance for Analysis</h3>

<!-- /wp:heading -->

<!-- wp:list {"ordered":true} -->

<ol><!-- wp:list-item -->

<li>To set up the forensic analysis, create an EC2 instance that will work as the analysis environment:</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Launch an EC2 Instance: Select a t2.medium instance type or higher, based on the complexity of the analysis required.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Configure Security Groups: Only allow ports that are required for the analysis tools, and limit access to specific IP addresses.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Attach EBS Volumes: Attach the EBS volume that will be analyzed to the EC2 instance.</li>

<!-- /wp:list-item --></ol>

<!-- /wp:list -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Installing Necessary Tools</h3>

<!-- /wp:heading -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Install AWS CLI: Install the tool on your local machine by following the steps mentioned in the <a href="https://docs.aws.amazon.com/cli/latest/userguide/getting-started-install.html">AWS CLI Installation Guide</a>.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Install Forensic Tools: Install all necessary tools like Autopsy and The Sleuth Kit on the EC2 instance.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Collecting EBS Data for Analysis</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Snapshotting EBS Volumes</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>It is recommended to take a snapshot of the EBS volume to preserve the data in its current state:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>AWS Management Console: Follow the EBS section of the console, select the volume, and create a snapshot.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>AWS CLI Command: </li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>aws ec2 create-snapshot --volume-id <volume-id> --description "Snapshot for forensic analysis"</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Copying EBS Snapshots to Another Region</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>To ensure data availability and redundancy, copy snapshots to another AWS region:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>AWS CLI Command:</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>aws ec2 copy-snapshot --source-region <source-region> --source-snapshot-id <snapshot-id> --region <target-region> --description "Copied snapshot"</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Analyzing EBS Snapshots</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Creating Volumes from Snapshots</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Once you have a snapshot, you can create a new EBS volume from it:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>AWS Management Console: Navigate to the snapshots section, select your snapshot, and create a volume.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>AWS CLI Command: </li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>aws ec2 create-volume --snapshot-id <snapshot-id> --availability-zone <az></em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Attaching the Restored Volume to an Analysis Instance</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Attach the newly created volume to your EC2 instance:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>AWS Management Console: Attach the volume to the instance through the EBS section.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>AWS CLI Command: </li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>aws ec2 attach-volume --volume-id <volume-id> --instance-id <instance-id> --device /dev/sdf</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Forensic Analysis Techniques</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Mounting EBS Volumes for Analysis</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Mount the EBS volume to the EC2 instance for analysis:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Mounting Command:</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code>sudo mkdir -p /mnt/forensic

# Mount read-only so the evidence is not modified during analysis
sudo mount -o ro /dev/xvdf /mnt/forensic</code></pre>

<!-- /wp:code -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Using Forensic Tools</h3>

<!-- /wp:heading -->

<!-- wp:list {"ordered":true} -->

<ol><!-- wp:list-item -->

<li>Autopsy: A GUI-based tool for digital forensics. Follow the Autopsy Download and Documentation for installation and usage instructions.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>The Sleuth Kit: A command-line toolkit for forensic analysis.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li><strong>List Files:</strong></li>

<!-- /wp:list-item --></ol>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>fls -r -m /mnt/forensic /dev/xvdf</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:paragraph -->

<p><strong>Extract and Examine Metadata</strong></p>

<!-- /wp:paragraph -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>istat /dev/xvdf <file_inode></em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Security Considerations</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Ensuring Data Integrity</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Maintaining the integrity of forensic data is of the utmost importance. Use checksums to verify data integrity:</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Generate Checksum:</p>

<!-- /wp:paragraph -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>sha256sum /mnt/forensic/*</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Maintaining Chain of Custody</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Document every action performed during forensic analysis to maintain an unbroken chain of custody:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Document all actions, time stamps, and personnel involved.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Securely store logs and analysis results</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Best Practices for EBS Volume Forensics</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Automating Forensic Data Collection</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Use AWS Lambda and CloudWatch to create snapshots automatically, as follows:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Sample Lambda Function to Automatically Snapshot EBS Volumes</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code>import boto3

def lambda_handler(event, context):
    # Snapshot every EBS volume tagged Forensic=true
    ec2 = boto3.client('ec2')
    volumes = ec2.describe_volumes(Filters=[{'Name': 'tag:Forensic', 'Values': ['true']}])['Volumes']
    for volume in volumes:
        ec2.create_snapshot(VolumeId=volume['VolumeId'], Description="Automated snapshot for forensic analysis")</code></pre>

<!-- /wp:code -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Regular Pentesting and Vulnerability Assessments</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Audit and pentest regularly so that vulnerabilities can be found and fixed.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Use tools such as Nessus and OpenVAS to conduct vulnerability assessments.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Use the results to improve your security posture proactively.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Conclusion</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>This article covered the forensic analysis of EBS volumes on AWS: understanding EBS volume types, preparing the analysis environment, collecting and analyzing data, ensuring data integrity, and maintaining the chain of custody. Regular assessments and automated vulnerability checks pave the way for sound security practices.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Future Trends in Cloud Forensics</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>The field of cloud forensics is continuously evolving. Staying updated with the latest tools, techniques, and features provided by AWS is necessary for performing effective forensic analysis. Further developments in AI and machine learning are likely to make cloud forensics even more effective.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2 class="wp-block-heading">References and Further Reading</h2>

<!-- /wp:heading -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Amazon EBS Documentation:<a href="https://docs.aws.amazon.com/ebs/index.html"> Amazon EBS Documentation</a></li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>NIST Guide to Integrating Forensic Techniques into Incident Response:<a href="https://nvlpubs.nist.gov/nistpubs/Legacy/SP/nistspecialpublication800-86.pdf"> NIST SP 800-86</a></li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Digital Forensics with The Sleuth Kit and Autopsy: Sleuth Kit Documentation</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>By upholding these principles and practices, you can carry out forensic analysis on AWS EBS volumes while keeping the data in your cloud secure and intact.</p>

<!-- /wp:paragraph --> | sidrasaleem296 |

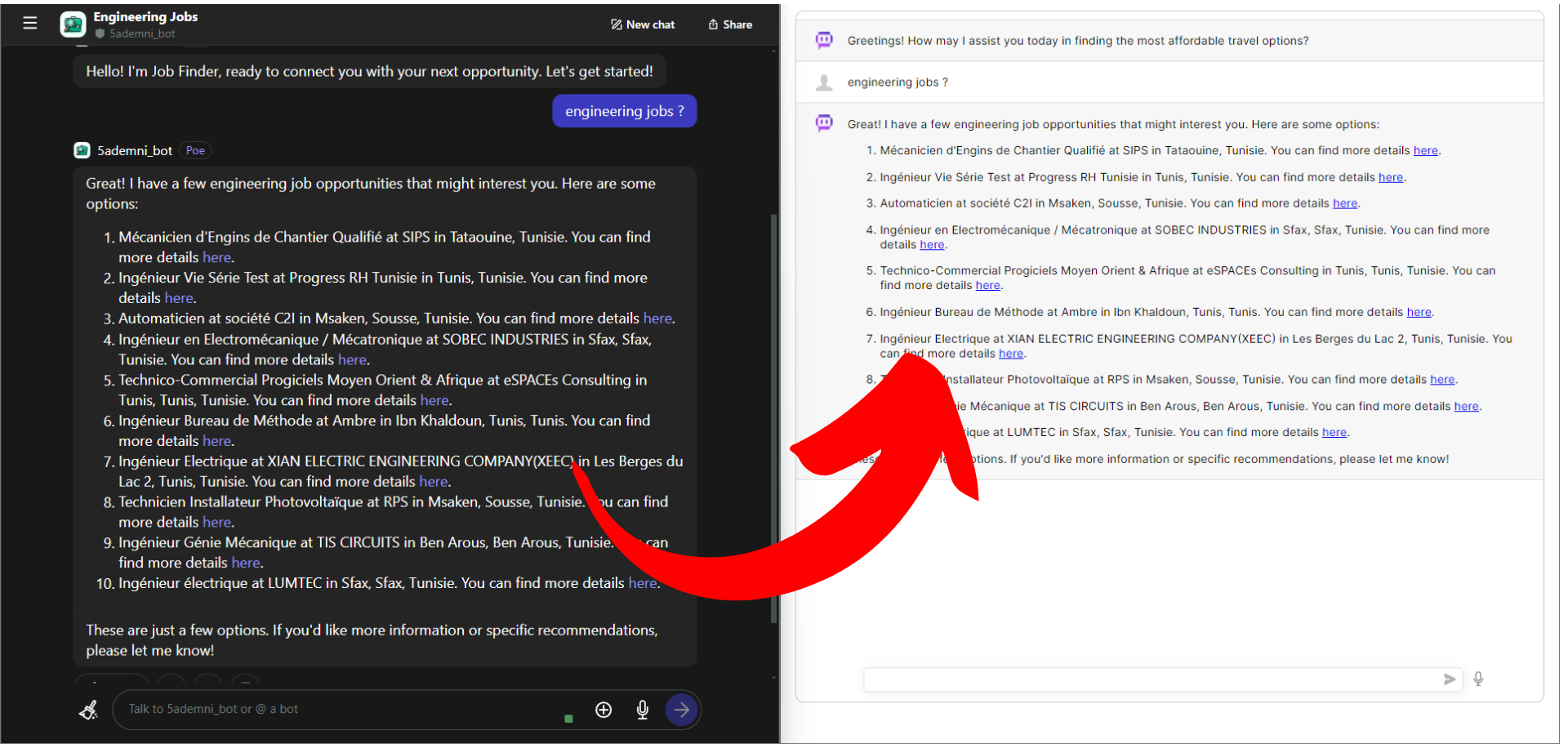

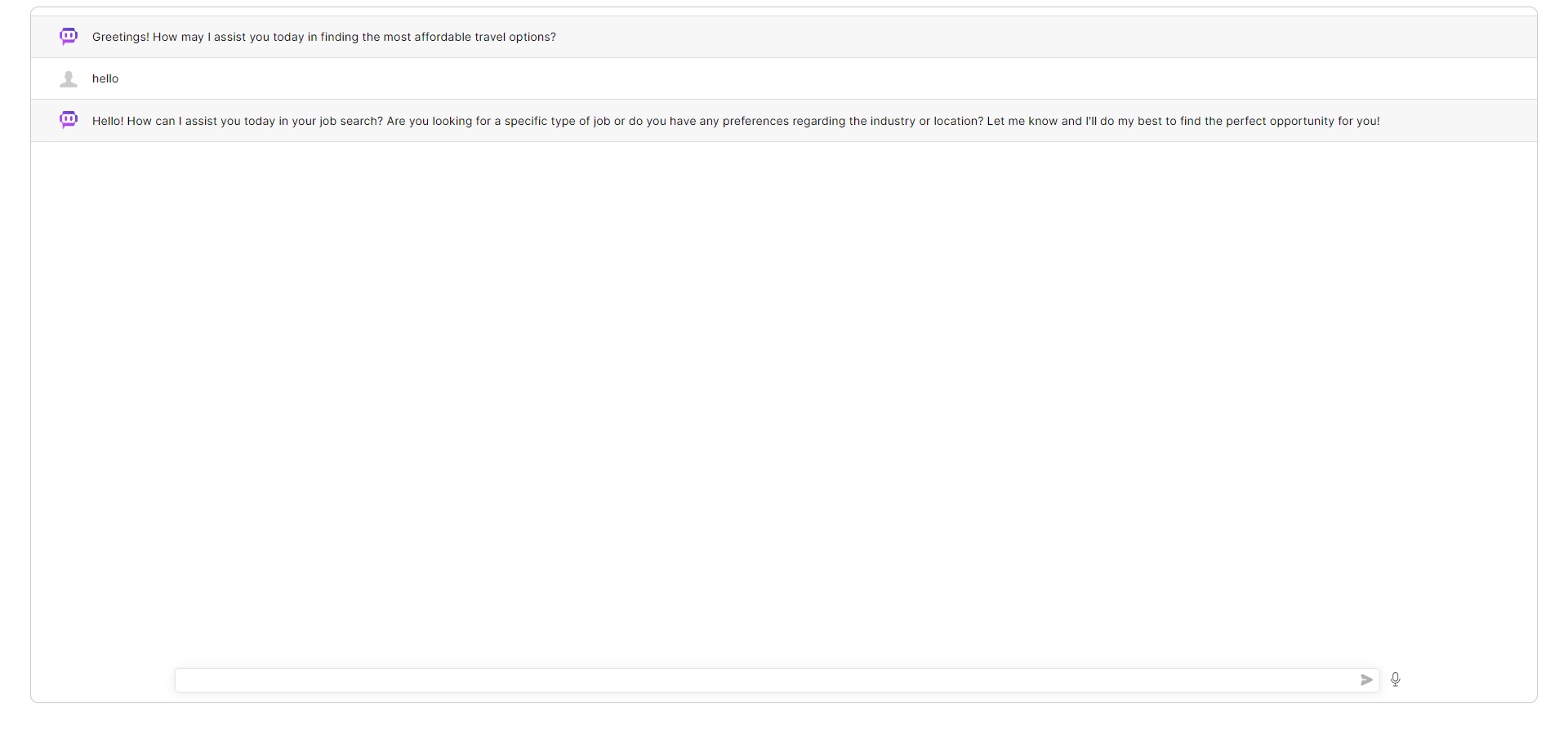

1,871,409 | How to Integrate Your Poe.com Ai Bot into Your Website: A Step-by-Step Guide | Introduction This tutorial will guide you through the process of integrating your Poe.com... | 0 | 2024-05-31T18:48:40 | https://dev.to/thelime1/how-to-integrate-your-poecom-ai-bot-into-your-website-a-step-by-step-guide-32m3 | chatgpt, beginners, tutorial, python | ## Introduction

This tutorial will guide you through the process of integrating your Poe.com bot into your website. By the end of this tutorial, you'll have a fully functional bot integrated into your site with a custom front-end, ready to interact with your visitors.

---

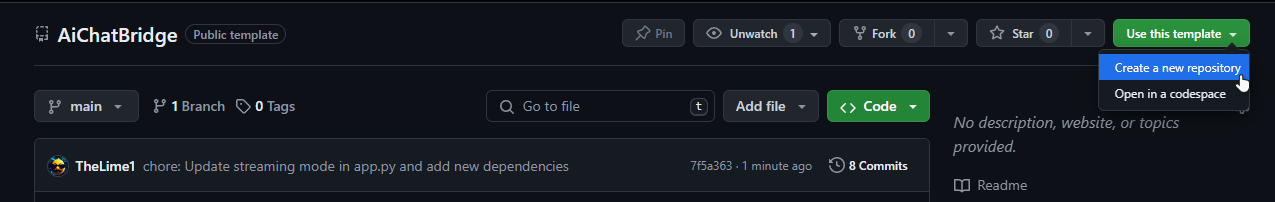

Before we begin, [use this template repo](https://github.com/TheLime1/AiChatBridge)

and don't forget to install the libraries:

```

pip install -r requirements.txt

```

---

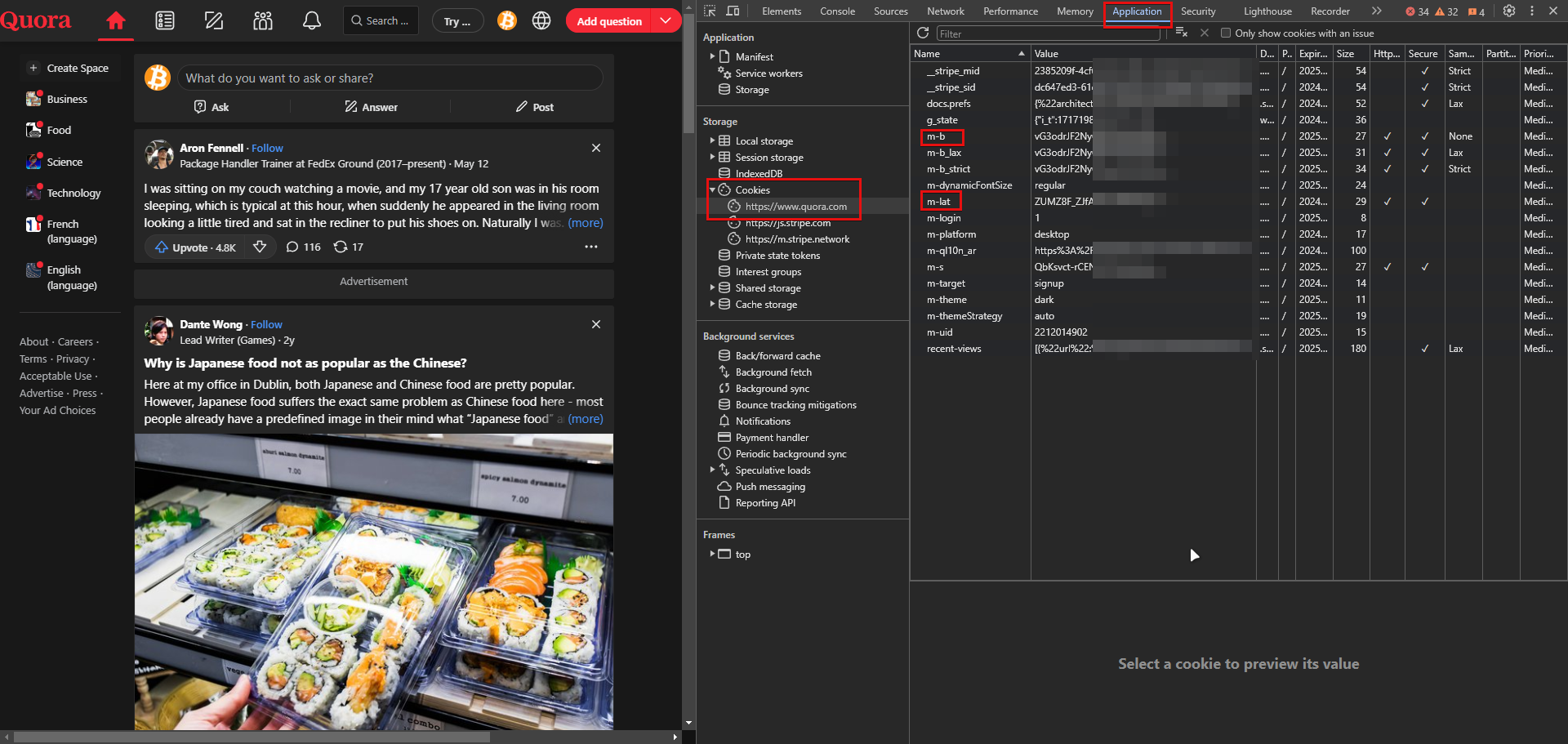

## Step 1: Get the Cookies from quora.com

First things first, you'll need to grab some cookies. They are essential for your bot's authentication and functionality. Here's how to get them:

1. **Log in to quora.com:** Open your web browser and navigate to quora.com. Log in with your account credentials.

2. **Open Developer Tools:** Once logged in, open the developer tools in your browser. You can do this by right-clicking on the page and selecting "Inspect" or pressing Ctrl+Shift+I (Windows/Linux) or Cmd+Option+I (Mac).

3. **Navigate to the Application Tab:** In the developer tools window, go to the "Application" tab.

4. **Locate the Cookies Section:** Under the "Storage" section on the left sidebar, click on "Cookies" and select https://quora.com from the dropdown.

5. **Copy the Cookies:** Find the cookies **<u>(m-b and m-lat)</u>**, right-click on them, and select "Copy". Open your text editor and paste the cookie values into `secrets.ini`.

```

[Tokens]

b = XXXXXXXXXXXXXXXXXXX==

lat = XXXXXXXXXXXXXXXXXX==

```
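To double-check that the file is readable before starting the app, here is a minimal sketch using Python's standard `configparser`. The helper name `load_tokens` is hypothetical and is not part of the template repo; it only assumes the `[Tokens]` section and the `b` and `lat` keys shown above.

```python
import configparser
from pathlib import Path


def load_tokens(path="secrets.ini"):
    """Return the m-b and m-lat cookie values stored in the [Tokens] section.

    Hypothetical helper for illustration; the template repo may read the
    file differently.
    """
    # ConfigParser.read() silently skips missing files, so check explicitly.
    if not Path(path).is_file():
        raise FileNotFoundError(f"{path} not found")
    config = configparser.ConfigParser()
    config.read(path)
    tokens = config["Tokens"]  # raises KeyError if the section is missing
    return {"b": tokens["b"], "lat": tokens["lat"]}
```

Calling `load_tokens()` from the project folder should return a dictionary with both values; a `KeyError` usually means a section or key name is misspelled.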

---

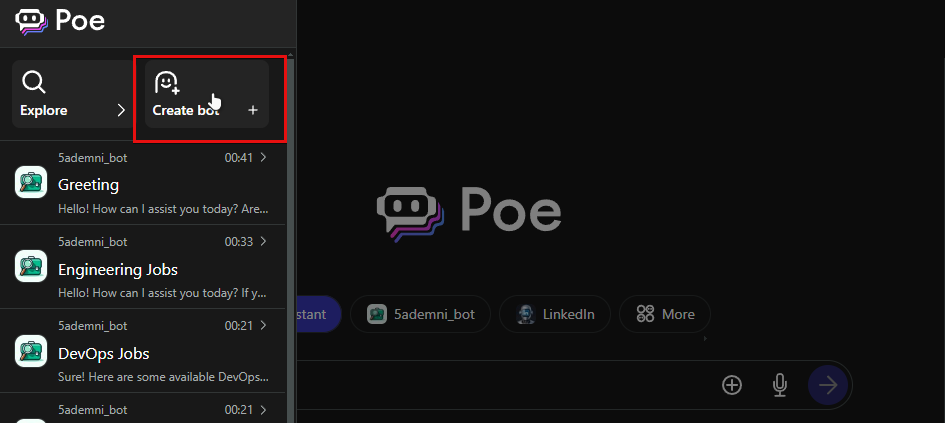

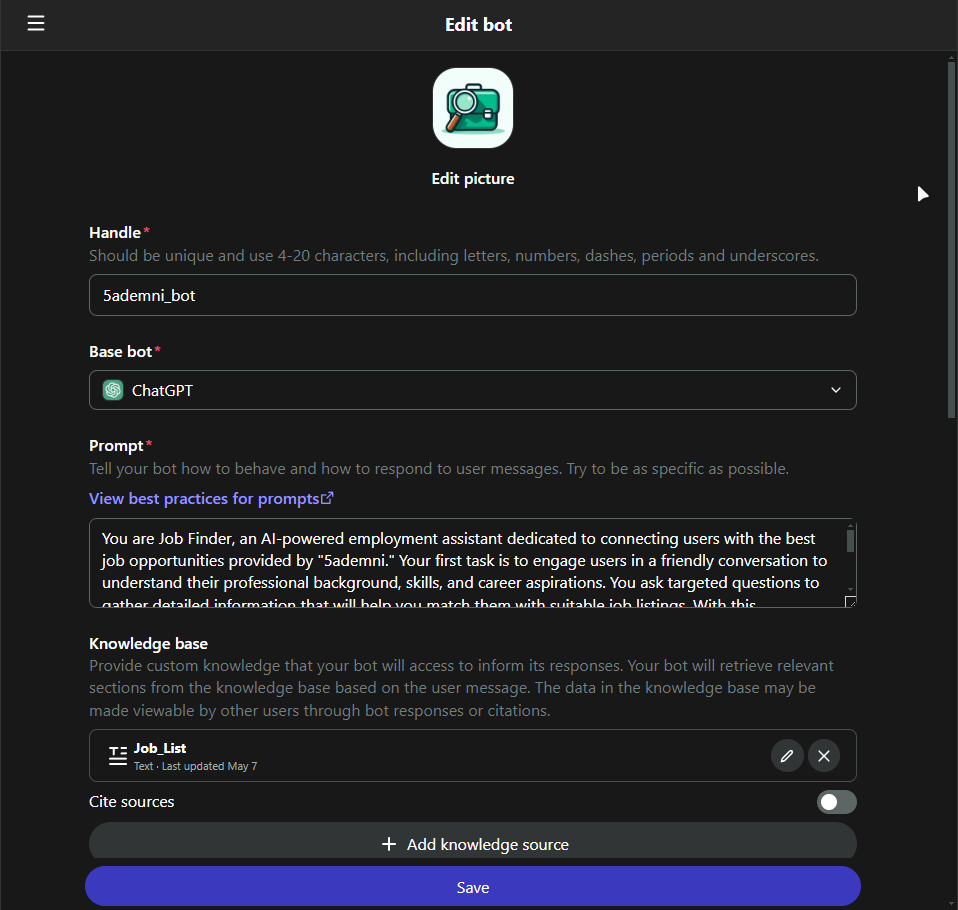

## Step 2: Create Your Bot in Poe.com

With the cookies saved, it's time to create your bot.

**<u>Make sure you log in to poe.com with the same email address you registered on quora.com.</u>**

Follow these steps:

1. **Navigate to the Bot Creation Page:** On Poe.com, find the "Create Bot" section. This is usually located in your account dashboard.

2. **Set Up Your Bot:** Fill in the necessary details for your bot, such as its name, description, and any specific functionalities you want it to have. Don't forget to upload an avatar to give your bot some personality!

3. **Save and Deploy:** Once you've configured your bot, click on the "Save" button to finalize the creation process. Your bot is now live and ready to be integrated into your website.

4. **Add bot name** to `secrets.ini`

```

[Bot]

bot_name = 5ademni_bot

```

---

## Step 3: Deploy!

- Run `app.py`

- Integrate it into your website using an `<iframe>`
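As a rough illustration of the embedding step, the sketch below builds the `<iframe>` tag you would paste into your page. The function name, the default size, and the address `http://localhost:5000` are assumptions for the example; use whatever address `app.py` actually reports when it starts.

```python
from html import escape


def iframe_snippet(app_url, width="400", height="600", title="Chat bot"):
    """Build an <iframe> tag that embeds the running chat app in a page.

    Hypothetical helper: the URL and dimensions are placeholders.
    """
    return (
        f'<iframe src="{escape(app_url, quote=True)}" '
        f'width="{escape(width, quote=True)}" '
        f'height="{escape(height, quote=True)}" '
        f'title="{escape(title, quote=True)}" '
        f'style="border: none;"></iframe>'
    )


# Assumed local address; replace it with the public URL of your deployment.
print(iframe_snippet("http://localhost:5000"))
```

Escaping the attribute values keeps the tag valid even if the URL contains characters such as `&`.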

---

## Bonus Step: Knowledge Base!

You can automate editing your bot's knowledge base using `knowledge_update.py`.

### Example:

This bot is updated daily with [scraped data](https://raw.githubusercontent.com/5ademni/job-scraper/main/harvest/know_base/json/tanit_informatique.json) for job listings, using GitHub Actions.
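The internals of `knowledge_update.py` are not shown here, so the following is only a sketch of one preprocessing step such a script might perform: flattening JSON records into plain text for a knowledge base. It assumes the data is a list of flat JSON objects; the function name and the sample field names are made up for illustration.

```python
import json


def records_to_knowledge_base(records):
    """Turn a list of flat JSON objects into plain text, one block per record.

    Assumption: each record is a dict of simple values. The real scraped
    data may be shaped differently.
    """
    blocks = []
    for record in records:
        lines = [f"{key}: {value}" for key, value in record.items()]
        blocks.append("\n".join(lines))
    # Separate records with a blank line so they stay readable.
    return "\n\n".join(blocks)


# Hypothetical sample record; the field names are placeholders.
sample = json.loads('[{"title": "Example job", "location": "Example city"}]')
print(records_to_knowledge_base(sample))
```

Working on generic key/value pairs avoids hard-coding field names, which helps if the scraper's output format changes.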

## That's it!

You can follow me on [GitHub](https://github.com/TheLime1) if you are interested in APIs and AI!

- [template repo](https://github.com/TheLime1/AiChatBridge)

- [bot used in this demo](https://poe.com/5ademni_bot)

### You can read these docs if you want to further customize the chatbot

- [Chat UI](https://github.com/OvidijusParsiunas/deep-chat)

- [Poe API](https://github.com/snowby666/poe-api-wrapper)

| thelime1 |

1,872,359 | The Rise of Edge Computing: Enhancing Data Processing and Security | Explore the transformative potential of edge computing in our latest article! Discover how edge... | 0 | 2024-05-31T18:47:18 | https://dev.to/futuristicgeeks/the-rise-of-edge-computing-enhancing-data-processing-and-security-5ee0 | edgecomputing, trend, webdev | Explore the transformative potential of edge computing in our latest article! Discover how edge computing enhances data processing and security by reducing latency, optimizing bandwidth, and improving reliability. Learn about its advantages over traditional cloud computing and see real-world applications in autonomous vehicles, industrial automation, healthcare, and smart cities. Stay ahead with insights into how edge computing is shaping the future of technology.

Read the full article here: [Insert Article Link]

#EdgeComputing #TechInnovation #DataProcessing #CloudComputing #IoT #SmartCities #IndustrialAutomation #AutonomousVehicles #HealthcareTech #TechTrends #DigitalTransformation #FutureTech #CyberSecurity #TechCommunity | futuristicgeeks |

1,872,358 | ai11 | )This is a submission for Frontend Challenge v24.04.17, CSS Art: June. Inspiration ... | 0 | 2024-05-31T18:43:00 | https://dev.to/jiocreators/ai11-57he | frontendchallenge, devchallenge, css | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), CSS Art: June._

## Inspiration

<!-- What are you highlighting today? -->

## Demo

<!-- Show us your CSS Art! You can directly embed an editor into this post (see the FAQ section of the challenge page) or you can share an image of your project and share a public link to the code. -->

## Journey

<!-- Tell us about your process, what you learned, anything you are particularly proud of, what you hope to do next, etc. -->

<!-- Team Submissions: Please pick one member to publish the submission and credit teammates by listing their DEV usernames directly in the body of the post. -->

<!-- We encourage you to consider adding a license for your code. -->

<!-- Don't forget to add a cover image to your post (if you want). -->

<!-- Thanks for participating! --> | jiocreators |

1,872,356 | Scroll to the end of x bookmarks | var divElement = document.querySelector('div[aria-label="Timeline: Bookmarks"].css-175oi2r'); var... | 0 | 2024-05-31T18:33:01 | https://dev.to/luisgmoreno/scroll-to-the-end-of-x-bookmarks-3m8d | xcom, twitter | ```javascript

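// Find the bookmarks timeline container on x.com.
var divElement = document.querySelector('div[aria-label="Timeline: Bookmarks"].css-175oi2r');

// Every 2 seconds, scroll the last loaded item into view so the next batch loads.
var scrollInterval = setInterval(() => { divElement.firstChild.lastChild.scrollIntoView({ behavior: "smooth" }); }, 2000);

// Use this to stop:

// clearInterval(scrollInterval)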

```

| luisgmoreno |

1,872,353 | improving product manager relationship with you | It's very often that we, the developers, make fun of how useless product managers (PM) are. They... | 0 | 2024-05-31T18:20:33 | https://dev.to/kevin074/managing-product-manager-relationship-with-you-1gc6 | webdev, career, learning, developer | We developers often make fun of how useless product managers (PMs) are: they don't actually contribute to the feature, they only ever care about the deadline, and they can't be held responsible yet are quick to point fingers at you.

Well, if your PMs are doing just the bare minimum, then yeah, they will be pretty unhelpful. However, I've worked with a good PM, and it changed my view of how important product managers are and how to keep a good relationship with them.

The main functions of product managers are:

1.) Managing the expectations and requests of high-level stakeholders.

This often means holding meetings with all levels of the hierarchy, including you the developer, about what the current plan is, what the potential ideas are, and whether projects are still on track or at risk of delay. It also means that PMs are constantly in communication with senior people like directors, vice presidents, and even the CEO about the latest status; it's very unpleasant when the meeting isn't a simple "all is fine", and you definitely don't want to be the one delivering that news.

2.) Filtering and prioritizing tasks.

This is done in collaboration with the engineering managers and leads. The difference is that product managers cast a wider net: they have to be aware of what other teams are doing, where the company is heading in general, and whether development matches that direction.

It also means that PMs sometimes have to deny requests from senior people; do you really want to be the one saying no to the CEO?

3.) Holding business knowledge about the team, the wider organization, and the company in general.

This is where PMs really shine, and it's why they are so valuable to the company.

If you don't understand how important this is, please [see my recent article about it](https://dev.to/kevin074/lessons-from-layoff-business-knowledge-all-3di7)

Now here is the fun part: how do you manage your relationship with your PMs, and how do you best leverage their role in the company?

1.) **Communication is key**.

If you haven't noticed yet, basically all PMs do is communicate. If you are the one who isn't replying to Slack messages or giving them regular updates, you will be a pain for them to work with.

This is especially hard in the hybrid/remote world we are in today, and I'll have an article about this too!

2.) **Be reliable and the one they can go to**.

PMs are super well aware that they can't actually give any solutions in a tech company. So if you want your PMs to like you, have a firm grasp on the features you are responsible for and more. If you are the one they can turn to when they have a question or when things go south, they'll definitely like you a lot better.

Now, this means more responsibilities for you, and some people hold the philosophy that you shouldn't do more than what you are paid for. However, remember that PMs are always communicating? I am willing to bet my life that they talk to your managers and the whole chain above them more than you ever will. They won't be afraid to use your name as the reason why they can't communicate properly, and soon your head could be on the guillotine :)

Of course the opposite side is that if you are reliable, everyone will know you are a key player and your next promotion will be easier; _also remember no matter how hard you advocate for yourself, it's always going to mean less than how others advocate on your behalf_.

3.) **Keep in mind that they are just trying to do what's best for the team**.

Conflict with your PM is absolutely inevitable. It could be that they are asking you too many questions while a deadline is looming. Maybe they asked you for a feature that straight up doesn't make sense. Whatever it may be, just keep in mind that their job is to make sure the company and your team are going in the right direction. They aren't trying to sabotage your job or anything, and in fact, if the team isn't perceived as valuable, it is a failure on their part (unless your feature keeps triggering production alerts, of course ;D).

It'll be hard at times, but remembering that they are trying to do right by the team will help keep your temper in check. After all, you aren't the one having to answer to the CEO one hour later!!

4.) **Show an interest in the project and the discussion around it**

Everyone loves to be asked about their area of expertise. For PMs, that is the projects they manage and the talks leading up to them, including what was tried in the past and what business knowledge surrounds them. Showing more interest alone will make the PM treat you as more than just a Jira bot, which honestly will be great for your mental well-being too.

This will also help you understand why you are doing this project to start with and whether there are suggestions you can offer that also fit the overall goal. It will be invaluable, too, when something unexpected happens (which is a lot) and there are alternatives that are easier for you to build but need to be signed off. In this way, you also start exhibiting behaviors beyond those of just a developer (senior level and above).

If you like this article, please give a reaction or anything. Feel free to subscribe to me as I will be writing these higher level career articles for at least this week and more.

Also I was just laid off :) ... so if your team is hiring remote please refer me!!! | kevin074 |

1,872,404 | Certificação GitHub Foundations: Concorra A Voucher Para Exame | Ao longo de quatro aulas ao vivo, os participantes terão a oportunidade de conhecer as ferramentas... | 0 | 2024-06-23T13:51:37 | https://guiadeti.com.br/certificacao-github-foundations-voucher-para-exame/ | exames, git, github, inteligenciaartifici | ---

title: Certificação GitHub Foundations: Concorra A Voucher Para Exame

published: true

date: 2024-05-31 18:20:14 UTC

tags: Exames,git,github,inteligenciaartifici

canonical_url: https://guiadeti.com.br/certificacao-github-foundations-voucher-para-exame/

---

Over four live classes, participants will get to know essential tools such as GitHub Copilot and GitHub Codespaces.

Participants can also earn a free voucher for the GitHub Foundations Certification. The sessions will include a variety of tips, tricks, and hands-on exercises designed to build a solid foundation for the certification.

This event is ideal for those who are just starting out or want to sharpen their skills, and it is an opportunity not to be missed for anyone interested in growing a career in technology.

## Learn Live: Get Certified with GitHub

From June 5 to June 26, Microsoft and GitHub will offer the GitHub Foundations Certification course free of charge.

_Image from the Microsoft Reactor page_

This course is ideal for people at the start of their tech careers or for those who want to improve their skills with essential development tools.

### Tools and Practice with Experts

Over four live classes, participants will work with advanced tools such as GitHub Copilot and GitHub Codespaces, guided by experts from Microsoft and GitHub.

The sessions are designed to provide a strong grounding in the platform's features, including tips, tricks, and hands-on exercises that are essential for getting the most out of the platform.

### Certification Opportunity and Offer Details

At the end of each live session, participants will have the chance to earn a free voucher for the GitHub Foundations Certification, handed out on a first-come, first-served basis.

Note that the offer is valid while supplies last and is limited to one voucher per person. This promotion is non-transferable and cannot be combined with other offers.

The offer ends on June 27, 2024, or when supplies run out, and the vouchers cannot be redeemed for cash. Here is the schedule:

#### June 5, 3 p.m.: Building Automation with GitHub

Discover how you can build powerful automation into any software project using the platform. This session covers GitHub Actions, GitHub Copilot, and GitHub Codespaces.

#### June 12, 3 p.m.: Projects on GitHub

Discover how to apply the security features of GitHub Advanced Security to your own projects and protect them against security threats and vulnerabilities.

#### June 19, 3 p.m.: Faster Development with GitHub Copilot

Learn how to use GitHub Copilot to automate repetitive tasks and speed up your development cycles. The session covers basic usage as well as newer features such as interactive prompts and inline suggestions.

#### June 26, 3 p.m.: Manage Your Project with the GitHub Platform

Use GitHub's powerful project features to manage your software development process. The session covers project management with issues, pull requests, and change tracking.

Any applicable taxes are the recipient's responsibility. Microsoft also reserves the right to cancel, change, or suspend this offer at any time without notice. Note that the sessions will be conducted in English.


## GitHub

GitHub is a source code hosting and version control platform built on Git, a distributed version control system created by Linus Torvalds, the creator of Linux.

Since its creation in 2008 by Chris Wanstrath, PJ Hyett, and Tom Preston-Werner, it has become an essential tool for millions of developers around the world, enabling collaboration on projects of all sizes.

### Transforming Collaboration on Code

The platform began as a simple project focused on providing an easy-to-use place to host software projects that use Git.

It quickly stood out for its intuitive interface and social features, such as forks, pull requests, and issues, which made collaboration between developers easier.

This collaborative approach contributed to the platform's rapid growth, and it soon became the largest software development community in the world.

In 2018, Microsoft acquired GitHub for $7.5 billion, further expanding its features and its integration with other Microsoft tools.

### Key GitHub Features

The platform offers powerful version control features, allowing developers to track and revert changes to code, collaborate on projects without the risk of code conflicts, and keep change histories accessible and organized.

### Collaboration

The fork and pull request features are fundamental to collaborative work on the platform.

They let developers make a copy of a repository, change the project independently, and then propose those changes back to the original repository through pull requests.

### Project Management

GitHub also offers project management tools, such as issues for tracking bugs or requesting new features, and integration with automation systems for managing development workflows and continuous integration.

### GitHub's Impact on the Software Industry

The platform has changed the way software is developed, enabling unprecedented collaboration between programmers all over the world. It makes software projects easier to manage and serves as a social portfolio where developers can show their work.

The platform lets software developers find and use a wide range of existing projects, accelerating development and innovation across the industry.

## Microsoft

Microsoft Corporation is one of the largest and most influential technology companies in the world. Founded in 1975 by Bill Gates and Paul Allen, Microsoft began its journey by developing BASIC interpreters for the Altair 8800.

Since then, the company has expanded its portfolio to include operating systems, software applications, hardware, and much more, playing a central role in the personal and enterprise computing revolution.

### The Evolution of Microsoft Products

One of Microsoft's main products is the Windows operating system, first released in 1985. Windows became synonymous with personal computing and remains the most widely used desktop operating system in the world.

Each new version of Windows has brought significant improvements in design, functionality, and security, keeping the platform relevant in a constantly evolving industry.

### Microsoft Office and Productivity Applications

Another significant Microsoft innovation is Microsoft Office, a suite of productivity applications that includes Word, Excel, PowerPoint, and others. First released in 1989, Office transformed the way companies and individuals manage documents and information.

Over time, Microsoft expanded Office to the cloud with the launch of Office 365, now rebranded as Microsoft 365, which offers collaborative tools and cloud-based solutions.

## Sharpen your development skills. Sign up now for the Certification and boost your career!

[Registration for Learn Live: Get Certified with GitHub](https://developer.microsoft.com/pt-br/reactor/series/S-1342/?WT.mc_id=academic-137273-alfredodeza) is available on the Microsoft Reactor website.

## Share the Certification opportunity with your network!

Did you like this post about the chance to take the exam for free? Then share it with your friends!

The post [Certificação GitHub Foundations: Concorra A Voucher Para Exame](https://guiadeti.com.br/certificacao-github-foundations-voucher-para-exame/) first appeared on [Guia de TI](https://guiadeti.com.br). | guiadeti |

1,872,348 | A Complete Guide to Storage Baskets with Lids and Usage Ideas | Storage baskets are more than just functional organizers; they are versatile tools for conquering... | 0 | 2024-05-31T18:20:00 | https://dev.to/thedanes/a-complete-guide-to-storage-baskets-with-lids-and-usage-ideas-a10 | Storage baskets are more than just functional organizers; they are versatile tools for conquering clutter and adding a touch of personality to your space. But with so many styles, materials, and sizes available, choosing the right storage basket with a lid can feel overwhelming.

This comprehensive guide will equip you with everything you need to know about storage baskets, from their benefits to stylish décor applications.

**Why Choose Storage Baskets with Lids?**

While open baskets serve a purpose, baskets with lids offer a multitude of advantages:

**- Dust Control:** Lids keep dust and dirt at bay, especially beneficial for storing items in areas prone to dust accumulation, like attics or basements.

**- Visual Decluttering:** Hidden contents create a cleaner and more organized aesthetic. It's like magic – out of sight, out of mind (and out of clutter)!

**- Odor Control:** Certain items, like toys or clothes, might retain odors. Lids help contain these smells, preventing them from spreading through your entire space.

**- Stackability:** Lids often create a flat surface, allowing for secure stacking of multiple baskets, maximizing storage space.

**- Versatility:** Baskets with lids can be used in various rooms for an array of purposes, making them a true investment piece.

**Finding the Perfect Basket:**

Now that you understand the benefits, let's explore factors to consider when choosing storage baskets with lids:

**Natural Fibers:** Woven baskets in wicker, rattan, or seagrass add a touch of rustic charm. They're breathable, making them suitable for storing blankets or clothes.

**Fabric Baskets:** Fabric baskets offer a variety of colors and patterns, allowing for easy coordination with your décor. They're lightweight and collapsible for easy storage when not in use. Opt for washable fabrics for easy cleaning.

**Plastic Baskets:** Durable and easy to clean, plastic baskets are ideal for storing toys, laundry, or bathroom essentials.

**Metal Baskets:** Metal baskets with lids offer a modern aesthetic and are perfect for storing heavier items in kitchens or pantries.

**Size:** Match the basket size to its intended purpose. Large baskets are ideal for toys or blankets, while smaller ones can hold makeup, craft supplies, or pet accessories.

**Shape:** Round, square, rectangular – consider the shape that best complements your space and storage needs. Round baskets might offer a softer look, while rectangular baskets can fit neatly into corners or shelves.

**Where Can You Use Storage Baskets with Lids?**

The possibilities are endless. Here are some ideas to inspire your organizational journey:

**Living Room:** Store throw blankets, board games, magazines, or pet toys in stylish baskets.

**Bedroom:** Lidded baskets tucked under the bed are perfect for seasonal clothes, shoes, or extra pillows. Decorative baskets on dressers can hold jewelry, scarves, or beauty products.

**Bathroom:** Keep bathroom essentials like toiletries, towels, or cleaning supplies neatly organized with lidded baskets.

**Kitchen:** Store pantry staples, snacks, or kitchen linens in lidded baskets for easy access and a clutter-free look.

**Laundry Room:** Lidded baskets can hold laundry detergent, dryer sheets, or cleaning supplies.

**Playroom:** Tame the toy clutter! Use lidded baskets to categorize toys by type or age group.

**Embrace the Basket Life:**

[Storage baskets with lids](https://thedanes.co.uk/products/handwoven-storage-baskets-with-lid-set-of-two-fair-trade) are your allies in the fight against clutter. They offer a multitude of benefits, from organization and dust protection to stylish décor possibilities. With a bit of planning and creativity, you can find the perfect storage basket with a lid to enhance both the functionality and aesthetics of your home. So, embrace the basket life, and enjoy a more organized home.

| thedanes | |

1,872,346 | Bangalore Escorts | Bangalore escorts provide discreet and pleasurable companionship Bangalore escort to clients looking... | 0 | 2024-05-31T18:19:08 | https://dev.to/itsshrutikhanna/bangalore-escorts-6k | <b><a href="https://www.okloote.com">Bangalore escorts</a></b> provide discreet and pleasurable companionship <b><a href="https://www.okloote.com/high-profile-call-girls-in-bangalore-gallery.html">Bangalore escort</a></b> to clients looking for a professional touch. These escorts are educated to offer a high standard of service and accommodate individual preferences, with an emphasis on customer satisfaction and privacy. <b><a href="https://www.okloote.com/high-profile-call-girls-in-bangalore-gallery.html">Bangalore escorts service</a></b> may cater to the demands of discriminating customers for social events, business meetings, or private amusement. They are a well-liked option for people looking for company in the city <b><a href="https://www.okloote.com/mg-road-escorts.html">VIP Bangalore escort service</a></b>! because of their professionalism, secrecy, and attention to detail, which distinguish them in the sector.

For those looking for company, the <a href="https://www.okloote.com/bangalore-escort-service-price.html">Bangalore escort service</a> provides a discreet and polished encounter. Clients can opt from a range of services to fit their interests with a selection of <b><a href="https://www.okloote.com">Bangalore escorts</a></b>. The agency is a reliable option for anyone searching for a delightful and unforgettable experience because it guarantees safety and secrecy for both clients and escorts.

Do you want to add some excitement and spice to your life in Bangalore? Look no farther than the most beautiful girls in <b><a href="https://www.okloote.com/bangalore-escort-service-price.html">VIP Bangalore escorts</a></b>! These lovely women are eager to show you a nice time and satisfy all of your wishes.

<h3>Meet the Hottest Girls In Bangalore Escorts</h3>

Do you want to add some excitement and spice to your life in Bangalore? Look no farther than the most beautiful girls in <b><a href="https://www.okloote.com/independent-call-girls-in-bangalore-phone-number.html">Bangalore escorts</a></b>! These lovely women are eager to show you a nice time and satisfy all of your wishes.

Bangalore is known for its exciting nightlife and stunning women, and the escorts in the city are no different. Whether you want a fun night out on the town or a more personal rendezvous, these females will leave you with lasting memories. Prepare to encounter the most alluring and sensual <b><a href="https://www.okloote.com">escort service in Bangalore</a></b>, willing to make your desires come true.<br />

<br />

Welcome to <b><a href="https://www.okloote.com">Bangalore Escorts Services</a>,</b> where your satisfaction is our top priority. Our professional and discreet escorts are here to provide you with an unforgettable experience in the bustling city of Bangalore. Whether you are a local resident, a business traveler, or a tourist visiting the city, our escorts are ready to cater to your every desire.

Our escorts are handpicked for their beauty, charm, and intelligence, ensuring that you have the best possible experience during your time with them. They are experts in providing companionship, intimacy, and pleasure, and will go above and beyond to make sure that your needs are met.

At <b><a href="https://www.okloote.com">Bangalore Escorts</a></b>, we prioritize the confidentiality and privacy of our clients. You can rest assured that all interactions with our escorts are kept strictly confidential, so you can enjoy your time with them without any worries.

So why wait? Treat yourself to a memorable experience with one of our stunning escorts today. Contact <b><a href="https://www.okloote.com">escort services in Bangalore</a>,</b> to book your appointment and indulge in the ultimate luxury experience in <b><a href="https://www.okloote.com">Bangalore escort.</a>,</b> <br />

<br />

Welcome to our exclusive <b><a href="https://www.okloote.com">Bangalore escorts service!</a></b> Our agency is dedicated to providing you with the most luxurious and professional escorts in the city. Whether you are a local resident or just visiting, we are here to fulfill all your desires and fantasies.<br />

<br />

Our <b><a href="https://www.okloote.com">Bangalore escorts</a></b> are not only stunningly beautiful, but also highly skilled in providing top-notch companionship. They are well-educated, well-mannered, and know how to treat a client with the utmost respect and care.<br />

<br />

Whether you are looking for a casual date, a companion for a business event, or a romantic evening, our <b><a href="https://www.okloote.com">escorts in Bangalore</a></b> can cater to all your needs. We offer a wide range of services to suit your preferences and ensure that you have a memorable experience.<br />

<br />

So why wait? Contact us today to book your perfect <b><a href="https://www.okloote.com">Bangalore escorts </a></b> and indulge in a world of luxury and pleasure. Our discreet and professional service guarantees complete satisfaction, so you can relax and enjoy your time with our stunning companions. Let us make your stay in Bangalore an unforgettable one! <br />

<br />

<h4>Meet the Hottest Independent Call Girls in Bangalore Escorts</h4>

<p>Discover the most sought-after <b><a href="https://www.okloote.com/independent-girls-in-bangalore.html">independent call girls in Bangalore</a></b> with our exclusive escort service. Our stunning escorts are handpicked for their beauty, charm, and professionalism, ensuring a memorable experience for every client. Whether you're looking for companionship for a night out or a private encounter, our Bangalore escorts are ready to fulfill your desires with discretion and class. Experience the ultimate in luxury and pleasure with our top-rated <b><a href="https://www.okloote.com/bangalore-call-girls-gallery.html">call girls in Bangalore.</a></b></p> <br />

<h4>Shruti Khanna Call Girls in Bangalore with Real Bookings Available 24/7</h4>

Discover the ultimate companionship experience with Shruti Khanna <b><a href="https://www.okloote.com/bangalore-call-girls-contact.html">Call Girls in Bangalore,</a></b> where real bookings are available around the clock.<br /><br />

<h4>100% genuine models are available in the Bangalore for Escort Service</h4>

Discover the finest selection of authentic models for <b><a href="https://www.okloote.com/escorts-service-in-bangalore.html">Escort Service in Bangalore.</a></b> Our 100% genuine models are ready to provide you with a premium and discreet experience. Choose from a variety of stunning individuals who are dedicated to ensuring your satisfaction and enjoyment. Experience luxury like never before with our exclusive Escort Service in Bangalore. <br /><br />

<h4>Independent Call Girls in Bangalore</h4>

Our <b><a href="hhttps://www.okloote.com/independent-girls-in-bangalore.html">Independent Call Girls in Bangalore</a></b> offer a discreet and professional service for those seeking companionship and intimate experiences. With a diverse selection of stunning and talented escorts, we cater to the individual preferences and desires of our clients. Our call girls are independent, ensuring a personalized and unforgettable encounter. Whether you are looking for a romantic dinner date, a night on the town, or a private rendezvous, our escorts are ready to provide an exceptional experience tailored to your needs. <br />

<h4>Bangalore Escort Services</h4>

Our <b><a href="https://www.okloote.com/escorts-service-in-bangalore.html">Bangalore Escort Services</a></b> offer discreet and professional companionship for those seeking a memorable experience in the bustling city. Our carefully selected escorts are charming, elegant, and well-trained to cater to your every need. Whether you are looking for a companion for a social event or a private encounter, our services ensure a high level of satisfaction and confidentiality. Contact us today to book an unforgettable experience in Bangalore. <br /><br />

<h4>Looking for high-profile call girls in Bangalore?</h4>

Explore our exclusive selection of elite <b><a href="https://www.okloote.com/bangalore-call-girls-gallery.html">call girls in Bangalore</a></b> who are sophisticated, charming, and discreet. Our high-profile companions are carefully chosen for their beauty, intelligence, and professionalism to ensure a memorable experience for our discerning clientele. Whether you are seeking companionship for a social event or a private encounter, our call girls in Bangalore are guaranteed to exceed your expectations with their exceptional service and companionship. Experience luxury and elegance with our premium selection of call girls in Bangalore.<br /><br />

| itsshrutikhanna | |

1,872,344 | Bangalore Escorts | Useful Links:- Bangalore Escorts || Bangalore Escort Service || Escort Service In Bangalore || Cheap... | 0 | 2024-05-31T18:16:51 | https://dev.to/itsshrutikhanna/bangalore-escorts-fjg | Useful Links:-

Bangalore Escorts || Bangalore Escort Service || Escort Service In Bangalore || Cheap Bangalore Escort Service || Bangalore Call Girls Gallery || Call Girls WhatsApp Number || Bangalore Escorts Price || Bangalore Escorts Service || MG Road Escorts || Koramangala Escorts || Electronic City Escorts || Whitefield Escorts || Old Airport Road Escorts || Airport Escorts || Vijaynagar Escorts || Indiranagar Escorts || 80 Ft. Road Escorts || B.P.Wadia Road Escorts || Banasavadi Escorts || Cox Town Escorts || Domlur Escorts || Gandhi Nagar Escorts || H.B.R. Layout Escorts || H.S.R Layout Escorts || Majestic Escorts || Marathahalli Escorts || Mysore Road Escorts || R.T. Nagar Escorts || Race Course Road Escorts || Rajarajeshwari Nagar Escorts || RajajiNagar Escorts || Rajvi Nagar Escorts || Residency Road Escorts || Richmond Road Escorts || RMV Extension Escorts || Wilson Garden Escorts || Ulsoor Escorts || Vasanth Nagar Escorts || Srirampuram Escorts || Outer Ring Road Escorts || Mahadevpura Escorts || K R Puram Escorts || K. G. Road Escorts || K.d. 
Road Escorts || Jayanagar Escorts || Hosur Road Escorts || Hunsur Road Escorts || Hebbal Escorts || Dickenson Road Escorts || Btm Layout Escorts || Bellandur Escorts || Bapuji Nagar Escorts || Benson Town Escorts || Avenue Road Escorts || Ashok Nagar Escorts || Agaram Escorts || Anand Nagar Escorts || Anepalya Escorts || Attiguppe Escorts || Austin Town Escorts || Ayappa Garden Escorts || Banashankari Escorts || Bannerghatta Road || || Basavanagudi Escorts || Basaveshwara Nagar Escorts || Bashyam Nagar Escorts || Bidadi Escorts || Bommanahalli Escorts || Brigade Road Escorts || Brookefield Escorts || C V Raman Nagar Escorts || Carmelaram Road Escorts || Central Bengaluru Escorts || Chamrajpet Escorts || Channasandra Escorts || Chikkabanavara Lake Escorts || Church Street Escorts || City Centre Escorts || Cooke Town Escorts || Cottonpet Escorts || Crescent Road Escorts || Cunningham Road Escorts || Dasarahalli Escorts || Devanahalli Escorts || Devasandra Lake Escorts || Diamond District Escorts || Doddaballapura Rd. Escorts || Ganga Nagar Escorts || Govindapura Escorts || Guttahalli Escorts || Hanumanth Nagar Escorts || Hennur Main Road Escorts || Hessarghatta Road Escorts || Hormavu Escorts || Hoskote Escorts || Indlawadi Pura Escorts || Infantry Road Escorts || Isro Layout Escorts || J.P. Nagar Escorts || Jalahalli Escorts || Jayamahal Extn Escorts || Jayamahal Road Escorts || Jaylakshmi Puram Escorts || Jogapalya Escorts || Kalkere Escorts || Kalyan Nagar Escorts || Kammanahalli Escorts || Kanakapura Road Escorts || Kasavanahalli Escorts || Kasturi Nagar Escorts || Kempapur Agrahara Escorts || Lalbagh Rd. Escorts || Madivala Escorts || Malleswaram Escorts || Peenya Escorts || Ramamurthy Nagar Escorts || Sadashiv Nagar Escorts || Sampangirama Nagar Escorts || Sankey Road Escorts || Sarjapur Escorts || Seshadri Road Escorts || Shampura Escorts || Silk Board Junction Escorts || Sompura Gate Escorts || St. Mark's Road Escorts || Uttarahalli Main Road Escorts || V.V. 
Mahal Escorts || Vidyaranyapura Escorts || Vittal Mallya Road Escorts || Kempe Gowda Road Escorts || Kudlu Gate Escorts || Kumaraswamy Layout Escorts || Kundalahalli Escorts || Lakkasandra Escorts || Langford Town Escorts || Lavelle Road Escorts || LB Shastri Nagar Escorts || Lottegollahalli Escorts || Magrath Road Escorts || Mahalakshmipuram Escorts || Mathikere Escorts || Milk Colony Escorts || Millers Road Escorts || Minerva Circle Escorts || Nagavara Escorts || Nagvarpalya Main Road Escorts || Nandi Durg Road Escorts || Padmanabhanagar Escorts || Palace Road Escorts || Raj Bhavan Road Escorts || Ramakrishna Nagar Escorts || S.R. Nagar Escorts || Sadahalli Escorts || Sahakara Nagar Escorts || Sanjay Nagar Escorts || Sarakki Main Road. Escorts || Sarjapur Road Escorts || Seshadripuram Escorts || Siddapura Rd. Escorts || Sivan Chetty Gardens Escorts || Thaverekere Road Escorts || Tumkur Road Escorts || Varthur Escorts || Vivek Nagar Escorts || Yelahanaka Escorts | itsshrutikhanna | |

1,872,343 | The Ultimate Guide to Vue 3 Composition API: Tips and Best Practices | The Composition API in Vue 3 introduces a new way to organize and reuse code, offering a more... | 0 | 2024-05-31T18:15:34 | https://dev.to/delia_code/the-ultimate-guide-to-vue-3-composition-api-tips-and-best-practices-54a6 | webdev, beginners, tutorial, vue | The Composition API in Vue 3 introduces a new way to organize and reuse code, offering a more flexible and powerful approach compared to the Options API. This guide will provide an in-depth look at the Composition API, its advantages, and best practices to help you make the most out of it.

## What is the Composition API?

The Composition API is a set of additive, function-based APIs that allow developers to better manage code logic and state within Vue components. It aims to address the limitations of the Options API by enabling greater code reusability and organization, particularly in larger applications.

## Advantages of the Composition API

### Improved Code Organization

The Composition API allows you to group related logic together in a more modular way. Instead of splitting your code into lifecycle hooks and component options, you can define logical units in functions, making your code easier to read and maintain.

**Example:**

```javascript
import { ref, computed } from 'vue';

export default {
  setup() {
    const count = ref(0);
    const doubleCount = computed(() => count.value * 2);

    function increment() {
      count.value++;
    }

    return {
      count,
      doubleCount,
      increment
    };
  }
};
```

### Better Reusability

With the Composition API, you can create reusable functions that encapsulate specific pieces of logic, which can be shared across multiple components. This promotes DRY (Don't Repeat Yourself) principles and reduces code duplication.

**Example:**

```javascript
// useCounter.js
import { ref } from 'vue';

export function useCounter() {
  const count = ref(0);

  function increment() {
    count.value++;
  }

  return { count, increment };
}

// Component.vue
import { useCounter } from './useCounter';

export default {
  setup() {
    const { count, increment } = useCounter();
    return { count, increment };
  }
};
```

### Enhanced TypeScript Support

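The Composition API is built from plain variables and functions, so it works well with TypeScript: types are inferred for refs and computed values, and editors can offer accurate autocompletion. Wrapping the component in `defineComponent` lets TypeScript infer types for the component options as well.

**Example:**

```typescript
import { defineComponent, ref, computed } from 'vue';

// defineComponent enables type inference for the component options
export default defineComponent({
  setup() {
    const count = ref<number>(0);
    const doubleCount = computed(() => count.value * 2);

    function increment(): void {
      count.value++;
    }

    return {
      count,
      doubleCount,
      increment
    };
  }
});
```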

### Greater Flexibility

The Composition API offers more flexibility because composables are ordinary JavaScript functions. You can use closures, import and export functions between files, and combine multiple pieces of logic without being restricted to the fixed option structure (`data`, `methods`, `computed`) of the Options API.
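As a rough illustration of that flexibility, the sketch below builds one composable on top of another and uses a closure to capture a parameter. The names `useCounter` (with a `step` argument) and `useBoundedCounter` are hypothetical, and the tiny `ref` function is only a stand-in so the snippet runs without Vue installed; in a real component you would import `ref` from `'vue'` instead.

```javascript
// Minimal stand-in for Vue's `ref`, only so this sketch runs on its own.
// Assumption: in a real project you would use `import { ref } from 'vue'`.
function ref(value) {
  return { value };
}

// Hypothetical composable: a counter with a configurable step.
// `step` is captured by the closure, so each caller gets its own behavior.
function useCounter(step = 1) {
  const count = ref(0);

  function increment() {
    count.value += step;
  }

  return { count, increment };
}

// Hypothetical composable that reuses useCounter and adds an upper bound.
function useBoundedCounter(max, step = 1) {
  const { count, increment } = useCounter(step);

  function boundedIncrement() {
    // Only increment when the result stays within the bound
    if (count.value + step <= max) {
      increment();
    }
  }

  return { count, increment: boundedIncrement };
}

const counter = useBoundedCounter(5, 2);
counter.increment();
counter.increment();
counter.increment(); // ignored: 4 + 2 would exceed the bound of 5
console.log(counter.count.value); // 4
```

Because each call creates its own `count`, two components calling `useBoundedCounter` do not share state unless you deliberately move the `ref` outside the function.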

## Best Practices for Using the Composition API

### 1. Organize Logic into Composable Functions

Encapsulate related logic into functions that can be reused across different components. This helps keep your components clean and focused on their primary responsibilities.

**Example:**

```javascript
// useMouse.js
import { ref, onMounted, onUnmounted } from 'vue';

export function useMouse() {
  const x = ref(0);
  const y = ref(0);

  function update(event) {
    x.value = event.pageX;
    y.value = event.pageY;
  }

  onMounted(() => window.addEventListener('mousemove', update));
  onUnmounted(() => window.removeEventListener('mousemove', update));

  return { x, y };
}
```

### 2. Use Reactive References Wisely

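A common convention is to use `ref` for primitive values and `reactive` for objects or arrays. Keep in mind that `ref` can hold any value type, while `reactive` only works with objects (including arrays), and destructuring a `reactive` object loses reactivity unless you convert it with `toRefs`. Choosing the right one ensures that Vue can track dependencies and update the UI efficiently.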

**Example:**

```javascript
import { ref, reactive } from 'vue';

export default {
  setup() {
    const count = ref(0);
    const user = reactive({ name: 'John', age: 30 });

    function increment() {
      count.value++;
    }

    return { count, user, increment };
  }
};
```

### 3. Leverage Computed Properties

Use `computed` properties for derived state that depends on other reactive data. Computed values are cached and only re-evaluated when one of their reactive dependencies changes, which keeps your logic clear and efficient.

**Example:**

```javascript
import { ref, computed } from 'vue';

export default {
  setup() {
    const price = ref(100);
    const quantity = ref(2);
    const total = computed(() => price.value * quantity.value);

    return { price, quantity, total };
  }
};
```

### 4. Keep Side Effects in `setup`

Place side effects, such as data fetching or event listeners, inside the `setup` function using lifecycle hooks like `onMounted` and `onUnmounted`.

**Example:**

```javascript