id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,872,146 | Day 3: Mastering Operators and Expressions in JavaScript | Introduction Welcome to Day 3 of your JavaScript journey! Yesterday, we explored variables... | 0 | 2024-05-31T14:35:04 | https://dev.to/dipakahirav/day-3-mastering-operators-and-expressions-in-javascript-3oa0 | javascript, beginners, html, css | #### Introduction

Welcome to Day 3 of your JavaScript journey! Yesterday, we explored variables and data types. Today, we will dive into operators and expressions, which are fundamental for performing calculations and making decisions in your code.

Please subscribe to my [YouTube channel](https://www.youtube.com/@DevDivewithDipak?sub_confirmation=1) to support it and get more web development tutorials.

#### Arithmetic Operators

Arithmetic operators allow you to perform mathematical operations on numbers.

**1. Addition (+)**

Adds two numbers.

```javascript

let sum = 5 + 3;

console.log(sum); // Output: 8

```

**2. Subtraction (-)**

Subtracts the second number from the first.

```javascript

let difference = 9 - 4;

console.log(difference); // Output: 5

```

**3. Multiplication (*)**

Multiplies two numbers.

```javascript

let product = 7 * 2;

console.log(product); // Output: 14

```

**4. Division (/)**

Divides the first number by the second.

```javascript

let quotient = 10 / 2;

console.log(quotient); // Output: 5

```

**5. Modulus (%)**

Returns the remainder of the division.

```javascript

let remainder = 10 % 3;

console.log(remainder); // Output: 1

```

**6. Increment (++)**

Increases a number by one.

```javascript

let count = 5;

count++;

console.log(count); // Output: 6

```
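One detail worth knowing: `++` can be written before or after the variable, and the two forms differ in the value the expression itself produces. A small sketch (the variable names are illustrative and not part of the lesson above):

```javascript
let n = 5;

// Postfix: the expression yields the old value, then n is increased.
const postfix = n++; // postfix is 5, n is now 6

// Prefix: n is increased first, then the expression yields the new value.
const prefix = ++n; // n is now 7, prefix is 7

console.log(postfix, prefix, n); // Output: 5 7 7
```

The `--` operator covered next follows the same prefix/postfix rule.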

**7. Decrement (--)**

Decreases a number by one.

```javascript

let count = 5;

count--;

console.log(count); // Output: 4

```

#### Comparison Operators

Comparison operators compare two values and return a boolean (true or false).

**1. Equal (==)**

Checks if two values are equal (type conversion may occur).

```javascript

let isEqual = (5 == '5');

console.log(isEqual); // Output: true

```

**2. Strict Equal (===)**

Checks if two values are equal and of the same type.

```javascript

let isStrictEqual = (5 === '5');

console.log(isStrictEqual); // Output: false

```

**3. Not Equal (!=)**

Checks if two values are not equal (type conversion may occur).

```javascript

let isNotEqual = (5 != '5');

console.log(isNotEqual); // Output: false

```

**4. Strict Not Equal (!==)**

Checks if two values are not equal or not of the same type.

```javascript

let isStrictNotEqual = (5 !== '5');

console.log(isStrictNotEqual); // Output: true

```
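The difference between the loose and strict operators matters most when the operands have different types. The following sketch lists a few well-known coercion results; the variable names are made up for illustration.

```javascript
// Loose equality converts operands to a common type before comparing.
const looseZeroAndEmpty = (0 == '');                 // true: '' converts to 0
const looseNullAndUndefined = (null == undefined);   // true by a special rule

// Strict equality never converts, so values of different types are never equal.
const strictZeroAndEmpty = (0 === '');               // false
const strictNullAndUndefined = (null === undefined); // false

// NaN is not equal to anything, including itself.
const nanEqualsItself = (NaN === NaN);               // false

console.log(looseZeroAndEmpty, looseNullAndUndefined); // Output: true true
console.log(strictZeroAndEmpty, strictNullAndUndefined, nanEqualsItself); // Output: false false false
```

A common recommendation is to default to `===` and `!==` and reach for the loose forms only when the conversion is intended.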

**5. Greater Than (>)**

Checks if the left value is greater than the right value.

```javascript

let isGreaterThan = (6 > 3);

console.log(isGreaterThan); // Output: true

```

**6. Less Than (<)**

Checks if the left value is less than the right value.

```javascript

let isLessThan = (6 < 3);

console.log(isLessThan); // Output: false

```

**7. Greater Than or Equal (>=)**

Checks if the left value is greater than or equal to the right value.

```javascript

let isGreaterThanOrEqual = (6 >= 6);

console.log(isGreaterThanOrEqual); // Output: true

```

**8. Less Than or Equal (<=)**

Checks if the left value is less than or equal to the right value.

```javascript

let isLessThanOrEqual = (6 <= 6);

console.log(isLessThanOrEqual); // Output: true

```

#### Logical Operators

Logical operators are used to combine multiple conditions.

**1. Logical AND (&&)**

Returns true if both operands are true.

```javascript

let andResult = (5 > 3 && 8 > 6);

console.log(andResult); // Output: true

```

**2. Logical OR (||)**

Returns true if at least one operand is true.

```javascript

let orResult = (5 > 3 || 8 < 6);

console.log(orResult); // Output: true

```

**3. Logical NOT (!)**

Inverts the truthiness of the operand.

```javascript

let notResult = !(5 > 3);

console.log(notResult); // Output: false

```
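With non-boolean operands, `&&` and `||` return one of the operands rather than a strict `true` or `false`, and they stop evaluating as soon as the result is known (short-circuiting). A minimal sketch, with illustrative names that do not come from the lesson:

```javascript
// || returns the first truthy operand, or the last operand if none is truthy.
const displayName = '' || 'Guest'; // 'Guest'

// && returns the first falsy operand, or the last operand if all are truthy.
const firstFalsy = 0 && 'never reached'; // 0

// Short-circuit: the right-hand side is skipped when the left side decides the result.
let calls = 0;
const countCall = () => { calls++; return true; };
const skipped = false && countCall(); // countCall is never invoked

console.log(displayName, firstFalsy, skipped, calls); // Output: Guest 0 false 0
```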

#### Expressions

Expressions are combinations of variables, operators, and values that yield a result.

**Example:**

```javascript

let a = 10;

let b = 5;

let c = a + b * 2;

console.log(c); // Output: 20

```
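The result above is 20 rather than 30 because `*` has higher precedence than `+`, so `b * 2` is evaluated first. Parentheses override that order, as this short sketch shows (it uses its own variable names to avoid clashing with the example above):

```javascript
const x = 10;
const y = 5;

const withoutParens = x + y * 2; // evaluated as 10 + (5 * 2)
const withParens = (x + y) * 2;  // evaluated as (10 + 5) * 2

console.log(withoutParens); // Output: 20
console.log(withParens);    // Output: 30
```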

#### Practice Activities

**1. Practice Code:**

- Write expressions using each arithmetic, comparison, and logical operator.

- Combine multiple operators in complex expressions.

**2. Mini Project:**

- Create a simple calculator script that performs basic arithmetic operations based on user input.

**Example:**

```javascript

let num1 = parseFloat(prompt("Enter the first number:"));

let num2 = parseFloat(prompt("Enter the second number:"));

let operation = prompt("Enter the operation (+, -, *, /, %):");

let result;

if (operation === "+") {

result = num1 + num2;

} else if (operation === "-") {

result = num1 - num2;

} else if (operation === "*") {

result = num1 * num2;

} else if (operation === "/") {

result = num1 / num2;

} else if (operation === "%") {

result = num1 % num2;

} else {

result = "Invalid operation";

}

console.log("Result:", result);

```
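One possible variation on the calculator above: moving the arithmetic into a function makes it easier to reuse and to guard against bad input. The `calculate` helper below is a hypothetical name, not part of the original example, and the error messages are placeholders.

```javascript
// Hypothetical helper: returns a number, or a string describing the problem.
function calculate(num1, operation, num2) {
  // parseFloat returns NaN for non-numeric input, so check before computing.
  if (Number.isNaN(num1) || Number.isNaN(num2)) {
    return "Invalid number";
  }
  // In JavaScript, dividing or taking a remainder by zero yields Infinity or NaN.
  if ((operation === "/" || operation === "%") && num2 === 0) {
    return "Cannot divide by zero";
  }
  switch (operation) {
    case "+": return num1 + num2;
    case "-": return num1 - num2;
    case "*": return num1 * num2;
    case "/": return num1 / num2;
    case "%": return num1 % num2;
    default: return "Invalid operation";
  }
}

console.log(calculate(10, "/", 4)); // Output: 2.5
console.log(calculate(10, "/", 0)); // Output: Cannot divide by zero
console.log(calculate(7, "^", 2));  // Output: Invalid operation
```

In the browser version, the values returned by `parseFloat(prompt(...))` could be passed straight into `calculate`.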

#### Summary

Today, we explored operators and expressions in JavaScript. We learned how to use arithmetic, comparison, and logical operators to build expressions and perform calculations. Understanding these operators is crucial for making decisions and manipulating data in your code.

Stay tuned for Day 4, where we'll dive into control structures like conditionals and loops!

#### Resources

- [Expressions and Operators](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Guide/Expressions_and_Operators)

- [JavaScript Basics](https://developer.mozilla.org/en-US/docs/Learn/Getting_started_with_the_web/JavaScript_basics)

Happy coding! If you have any questions or need further clarification, feel free to leave a comment below. Let's continue learning and growing together!

*Follow me for more tutorials and tips on web development. Feel free to leave comments or questions below!*

#### Follow and Subscribe:

- **Website**: [Dipak Ahirav](https://www.dipakahirav.com)

- **Email**: dipaksahirav@gmail.com

- **Instagram**: [devdivewithdipak](https://www.instagram.com/devdivewithdipak)

- **YouTube**: [devDive with Dipak](https://www.youtube.com/@DevDivewithDipak?sub_confirmation=1)

- **LinkedIn**: [Dipak Ahirav](https://www.linkedin.com/in/dipak-ahirav-606bba128) | dipakahirav |

1,864,919 | OS Fundamentals 101: Process and Syscalls | Hola! Let's dive into the world of operating systems. Today, we will discuss processes and... | 0 | 2024-05-31T14:35:02 | https://dev.to/xpertr2/os-fundamentals-101-process-and-syscalls-2h0n | linux, process, os, syscall | Hola! Let's dive into the world of operating systems. Today, we will discuss processes and syscalls.

## Process

First, let's quote the bookish answer.

> A process is a program in execution.

When we want to execute a program, the OS creates an entity called a process. A process is a kind of container that holds everything the computer needs to execute the program. Each process is given its own **address space**, a list of memory locations from 0 to some maximum (bounded by the architecture and the available physical and virtual memory), which the process can read and write. The address space contains the **executable code** of the program (machine code), the **program's data**, and its **stack**. A process is also associated with resources such as **registers** (including the **program counter** and the **stack pointer**), file descriptors, a list of related processes, and so on. The OS stores all of this information in the **process table**.

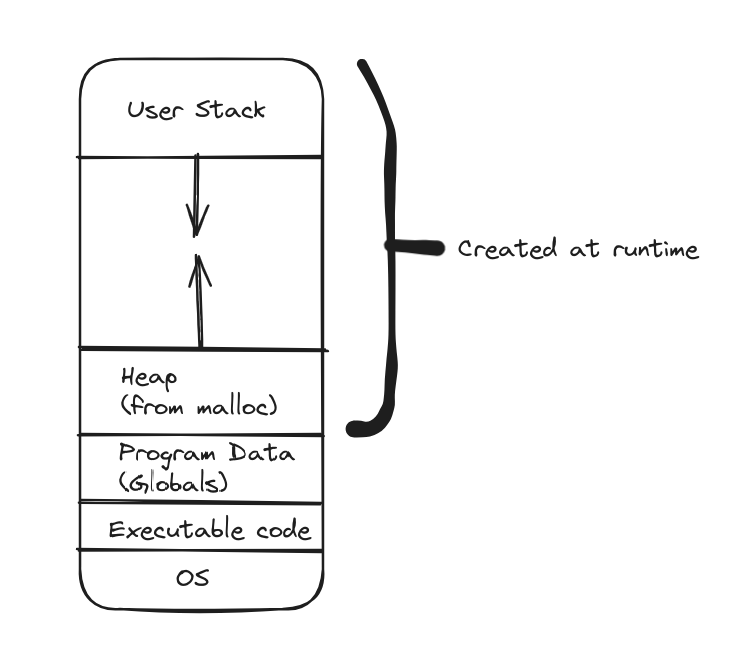

### Address Space

The address space is a mapping from memory locations, numbered from 0 up to some maximum value, to actual physical locations in RAM. When the OS creates a process, it allocates an address space and first stores the locations of the OS **procedures** at the beginning (*read-only*), as the program will need them to talk to the **Kernel**. Next, it reads the executable code and stores it (*read-only*), followed by the data segment, which contains things like the **globals** and is both **readable** and **writable** by the process. The remaining space is divided between the **heap** and the **stack**. The **heap** is allocated right after the data segment, whereas the **stack** is allocated at the end. So the heap grows **upwards** and the stack grows **downwards**, with free space in between.

> This address space is also referred to as the **core-image**.

## System Calls

System calls, or **syscalls**, are an interface provided by the OS that allows user-space code to talk to the **Kernel** and ask it to perform specific tasks.

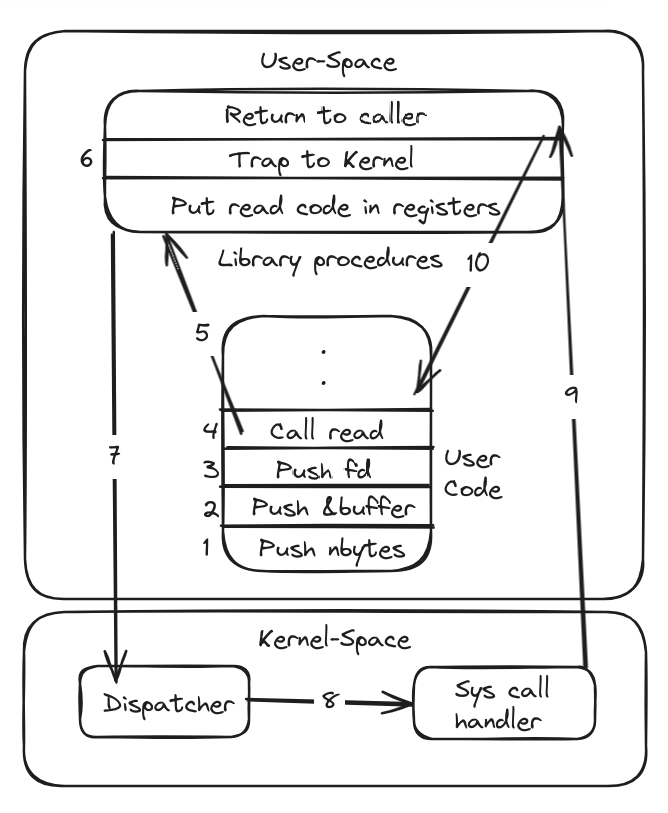

Let's take the example of reading a file. If a process running in user mode wants to read a file from the **hard disk**, which is a system service (it takes place in kernel mode), it issues a **read** system call. How? To make a **system call**, the process first sets up the parameters for the call, then calls the **read** library procedure, which places the **code** for **read** in a **register** and executes a **trap** instruction. The trap switches the CPU to kernel mode and transfers **control** to the **Kernel**. The Kernel then **reads** the **code**, works out which **syscall** is to be executed, and dispatches it to the **syscall handler**, which executes it. Control then flows back to the **user-space process**.

Learning to draw these kinds of diagrams, pls bear with this one 😜.

## Parent/Child Process

Let's take an example where we open a shell and type "htop" and press enter.

Firstly, we only have the **sh** (shell) process in the process table.

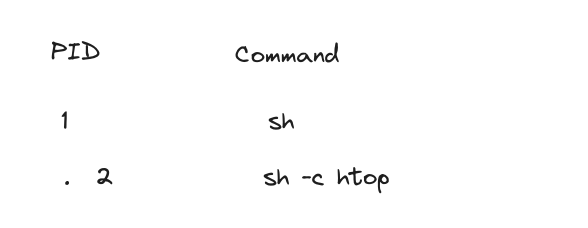

After we type "htop" and press enter, the shell process uses a syscall **fork()** to create a copy of itself. Fork also copies the registers, fds (file descriptors), address space, etc. This creates a new shell process in the process table which is a **child** of the shell that used the fork command (the **parent**).

> ***Fork*** creates a new process while copying the resources of the parent process. It is to be noted that the address space content is copied and not the locations, i.e., changing any value in the child process won't affect the parent process. Some systems may not copy all the content and rather implement **COW** (Copy-On-Write) but that's a different topic altogether.

Now, this forked shell process searches the `PATH` for the command and, if it is found, calls the **exec()** syscall with the user-entered command as the argument. Exec loads the executable code of "htop" into memory, replacing the shell's code, data, heap, and stack. In a nutshell, it replaces the shell program with htop inside the existing process: the process table entry, the open file descriptors (unless they are marked close-on-exec), and the **PID** (**Process Identifier**) stay the same.

Now, **htop** does its thing, and after it exits, the entry is removed from the process table, all the resources are released, and the parent shell wakes up.
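Node.js does not expose `fork()` and `exec()` as separate calls, so the following is only a rough analogue of the sequence above, not the shell's actual mechanism. It assumes Node's built-in `child_process` module: `spawnSync` creates a child process running a new program and blocks until that child exits, after which the parent can read the exit status. The child command and the exit code 3 are arbitrary choices for illustration.

```javascript
const { spawnSync } = require('child_process');

// Start a child process that runs a second Node.js program and exits with code 3.
// process.execPath is the path of the node binary that is currently running.
const child = spawnSync(process.execPath, ['-e', 'process.exit(3)']);

// The child received its own PID, distinct from the parent's.
console.log('parent pid:', process.pid);
console.log('child pid:', child.pid);

// spawnSync returns only after the child has exited, so its status is available here.
console.log('child exit status:', child.status); // Output: child exit status: 3
```

Note that Node's own `child_process.fork()` starts a fresh Node.js process and is not the POSIX `fork()` described in this article.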

## Zombie/Orphan Process

A **zombie** process is a process that has completed its execution but is still present in the process table. This happens when the parent doesn't **"reap"** the child, typically because the parent never calls the **waitpid()** syscall to wait for the child to finish and read its exit status. During the waitpid() call, the OS also removes the child's entry from the process table.

> When a child process dies, the parent receives a ***SIGCHLD*** signal.

> **Kill** command doesn't work on the zombie process.

An **orphan** process is a process that is still running even though its parent process has terminated. This may happen if the parent doesn't wait() for the child to finish after fork()ing, or if the parent crashes.

> A **zombie** process has completed execution but its parent is still running, whereas in the case of an **orphan** process, the parent has already exited but the child is still running.

> A **zombie** process doesn't hold any resources except for the data needed to store its entry in the process table, whereas an **orphan** process is still running and holds its resources.

---

Let's end here for today. In the next article let's discuss storage and filesystems.

Please comment below suggesting any changes, asking for any topic, or just hanging out in general. Also, pls reach out to me on my social channels.

[[GitHub]](https://github.com/sith-lord-vader) [[LinkedIn]](https://www.linkedin.com) [[Instagram]](https://www.instagram.com/xpertr2) [[YouTube]](https://www.youtube.com/@xpertdev)

| xpertr2 |

1,872,145 | HAMSTER KOMBAT or NOTCOIN 2.0 NEW NOTCOIN ON TONCOIN | Notcoin is an application in telegram, or a regular clicker game. Many did not believe in the success... | 0 | 2024-05-31T14:32:31 | https://dev.to/denis_usa_98c4de9fe521955/hamster-kombat-or-notcoin-20-new-notcoin-on-toncoin-402m | ton, notcoin, hamster, bitcoin |

**Notcoin** is an application in telegram, or a regular clicker game. Many did not believe in the success of notcoin, but recently there was a listing of this coin on the stock exchanges, and those who collected their coins daily received a pleasant amount of money!

Now you will not be able to play notcoin, as it is no longer available, but a new clicker has appeared in Telegram

https://t.me/hamsTer_kombat_bot/start?startapp=kentId894108942

[Hamster Kombat](https://t.me/hamsTer_kombat_bot/start?startapp=kentId894108942) is a new telegram clicker that is not inferior to notcoin in the audience and has more than 20 million subscribers in its public.

The daily amount of gaming in Hamster Kombat is more than 60 million.

The listing of this coin (that is, the conversion of this coin into real money) is expected in 3 months. Now everyone has a chance to make money on it, while there is time not to miss this game as a coin.

[Hamster Kombat](https://t.me/hamsTer_kombat_bot/start?startapp=kentId894108942) is suitable for people who don't have time to play often, because there are daily rewards, everything you need

But this is not the only bot/telegram clicker that you can make money on.

In the telegram channel, I have collected the top of the best telegram clickers now, each of which does not take much time to collect coins.

Find time to try out these games, because all of these coins are expected to be listed very soon. Do not miss such a freebie!

Everything is here @vseocrpt - https://t.me/vseocrupt | denis_usa_98c4de9fe521955 |

1,872,144 | Vanilla JavaScript - Modal | In this article, we'll build a modal using vanilla JavaScript, HTML, and SCSS that will include... | 0 | 2024-05-31T14:31:19 | https://dev.to/serhatbek/vanilla-javascript-modal-37af | webdev, javascript, scss, beginners |

In this article, we'll build a modal using vanilla JavaScript, HTML, and SCSS that will include features like opening and closing with a button, and closing when clicking outside of the modal content.

Before we start, I'd like to mention that I used:

- [Boxicons](https://boxicons.com/) for icons.

- [Google Fonts](https://fonts.google.com) Roboto.

- [SCSS (SASS)](https://sass-lang.com/) for styling.

- [BEM methodology](https://getbem.com/introduction) for reusable CSS class names for the modal.

### Modal HTML Structure

The HTML structure of the modal includes a button to trigger the modal and the modal itself with an overlay and content area.

```html

<!-- Add Boxicons to html head tag -->

<link

href="https://unpkg.com/boxicons@2.1.4/css/boxicons.min.css"

rel="stylesheet"

/>

<body class="container">

<button class="btn js-show-modal">

Show Modal

<i class="bx bx-right-arrow-alt"></i>

</button>

<div class="modal js-modal">

<div class="modal__overlay"></div>

<div class="modal__content">

<button class="modal__close js-close-modal">

<i class="bx bx-x-circle"></i>

</button>

<h4>Lorem ipsum dolor sit amet consectetur adipisicing!</h4>

<p>

Lorem ipsum dolor sit amet consectetur adipisicing elit. Explicabo hic

earum possimus itaque, aperiam tenetur quo ducimus doloremque maxime

voluptas natus laudantium nemo maiores ex ipsam quis. Nobis atque

incidunt esse architecto cupiditate quis neque ipsa animi deserunt

commodi perspiciatis aperiam nemo dignissimos libero, fugit dolorum

similique quas, ducimus ad?

</p>

<div class="modal__action">

<button class="btn js-close-modal">Close</button>

</div>

</div>

</div>

</body>

```

### SCSS Styling

The SCSS for the modal ensures it is visually appealing and functions correctly when opened and closed.

```scss

@import url('https://fonts.googleapis.com/css2?family=Roboto:wght@300;400;500;700&display=swap');

// COLORS

// $black: #202b2f;

$black2: #1e1e1e;

$white: aliceblue;

$grayish-blue: #003249;

$blue: #4983cf;

$pink: #be5064;

// RESET

*,

*::before,

*::after {

box-sizing: border-box;

margin: 0;

padding: 0;

}

body {

font-family: 'Roboto', sans-serif;

background-color: $grayish-blue;

color: $white;

&.overflowHidden {

overflow: hidden;

}

}

// STYLES

.container {

width: 100vw;

height: 100vh;

display: flex;

align-items: center;

justify-content: center;

}

.btn {

font-size: 14px;

color: $white;

padding: 8px 16px;

background-color: $blue;

border: 0;

cursor: pointer;

border-radius: 4px;

display: inline-flex;

align-items: center;

justify-content: center;

> i {

font-size: 18px;

}

}

.modal {

display: flex;

align-items: center;

justify-content: center;

position: fixed;

left: 0;

top: 0;

right: 0;

bottom: 0;

opacity: 0;

user-select: none;

pointer-events: none;

z-index: -22;

transition: all 200ms ease-in-out;

&--opened {

opacity: 1;

user-select: auto;

pointer-events: all;

z-index: 1;

}

&__overlay {

position: absolute;

left: 0;

top: 0;

right: 0;

bottom: 0;

background-color: rgba($color: $black2, $alpha: 0.8);

z-index: 11;

}

&__close {

border: 0;

outline: 0;

background-color: transparent;

font-size: 24px;

position: absolute;

right: 20px;

top: 20px;

cursor: pointer;

> i {

font-size: 34px;

color: $pink;

}

}

&__content {

background-color: $white;

color: $black2;

max-width: 600px;

width: 100%;

padding: 30px;

border-radius: 8px;

z-index: 22;

position: relative;

> h4 {

font-size: 18px;

margin: 30px 0;

}

> p {

margin-bottom: 20px;

}

}

&__action {

text-align: right;

}

}

```

### Adding JavaScript Functionality

The JavaScript code handles the opening and closing of the modal, including the feature to close the modal when clicking outside of it. We add an event listener to the button with the class **js-show-modal**. When this button is clicked, the **modal--opened** class is added to the modal, making it visible. Then we add event listeners to all elements with the class **js-close-modal**. When any of these elements is clicked, the **modal--opened** class is removed, hiding the modal. While the modal is open, pressing the Escape key closes it as well. Lastly, we add an event listener to the document. If the modal is open and the user clicks outside the modal content (but not on the trigger button), the modal will close.

```javascript

document.addEventListener('DOMContentLoaded', () => {

const modal = document.querySelector('.js-modal'),

openModalBtn = document.querySelector('.js-show-modal'),

closeModalBtns = document.querySelectorAll('.js-close-modal'),

body = document.querySelector('body');

const closeModal = () => {

modal.classList.remove('modal--opened');

body.classList.remove('overflowHidden');

document.removeEventListener('keydown', handleEscClose);

};

const openModal = () => {

modal.classList.add('modal--opened');

body.classList.add('overflowHidden');

document.addEventListener('keydown', handleEscClose);

};

const handleEscClose = (e) => {

if (e.key === 'Escape') {

closeModal();

}

};

if (modal && openModalBtn) {

openModalBtn.addEventListener('click', openModal);

closeModalBtns.forEach((btn) => btn.addEventListener('click', closeModal));

// Close the modal on clicks outside its content (but not on the trigger button).

// Registered inside the guard so a page without the modal does not throw.

document.addEventListener('click', (event) => {

if (

modal.classList.contains('modal--opened') &&

!event.target.closest('.modal__content') &&

!event.target.closest('.js-show-modal')

) {

closeModal();

}

});

}

});

```

We've just created a simple modal component using vanilla JavaScript. You can customize it for your project's needs. To see the full code, check the project's [GitHub](https://github.com/serhatbek/javascript-projects/tree/main/Modal) repo, and see [CodePen](https://codepen.io/serhatbek/pen/vYwXqGG) for a live demo.

Thank you for reading. If you find the article useful, please do not forget to give a star so that others can access it. Happy Coding! 🙃

<a href="https://www.buymeacoffee.com/serhatbek" target="_blank"><img src="https://cdn.buymeacoffee.com/buttons/default-orange.png" alt="Buy Me A Coffee" height="41" width="174"></a>

| serhatbek |

1,872,143 | JavaScript Geolocation Tracking with the Google Maps API | Check out the final part of our series on building real-time maps using the JavaScript Google Maps API and geolocation tracking. | 0 | 2024-05-31T14:30:46 | https://dev.to/pubnub-pl/sledzenie-geolokalizacji-javascript-za-pomoca-google-maps-api-2hl1 | 

This is the conclusion of a four-part series on building live, real-time web applications with geolocation features using the Google Maps JavaScript API and PubNub. Our tutorial walks you through generating flight paths using JavaScript and PubNub.

To see how it is all implemented, check out our [Showcase demo](https://showcase.pubnub.com/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) on the PubNub site. Go to the Geolocation demo to see how we use PubNub with real-time tracking. For the demo's code, visit our [GitHub](https://github.com/PubNubDevelopers/PubNub-Showcase/tree/main/web/geolocation) to see how it all works.

What are flight paths?

----------------------

Flight paths, as implemented in this **tutorial**, are **polylines** that let you **dynamically draw paths through user-specified points** on a map, whether on **mobile devices** or in a web browser. They are drawn with the **Google Maps API**, typically from positions supplied by the **HTML5 Geolocation API**, to track movement patterns.

Tutorial overview

-----------------

Make sure you have completed the prerequisites from parts [one](https://www.pubnub.com/blog/javascript-mapping-javascript-tracking/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl), [two](https://www.pubnub.com/blog/javascript-google-maps-api-map-markers/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl), and [three](https://www.pubnub.com/blog/javascript-google-maps-api-location-publishing/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl), where we set up [our JavaScript environment](https://www.pubnub.com/blog/javascript-mapping-javascript-tracking/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) and covered [map markers](https://www.pubnub.com/blog/javascript-google-maps-api-map-markers/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) and [location tracking](https://www.pubnub.com/blog/javascript-google-maps-api-location-publishing/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl).

Once you have done that, move on to the next section.

Code walkthrough

----------------

Let's start by defining the `let` variables `map`, `mark`, and `lineCoords` to hold our map, our marker, and the polyline **coordinates**. That way we can adjust them as PubNub events arrive. Next, we define the `initialize` callback, which the [Google Maps JavaScript API](https://developers.google.com/maps/documentation/javascript/overview) invokes once it has finished loading. Make sure you replace `YOUR_GOOGLE_MAPS_API_KEY` with your actual **API key**.

```js

let map;

let mark;

let lineCoords = [];

let initialize = function() {

map = new google.maps.Map(document.getElementById('map-canvas'), {center:{lat:lat,lng:lng},zoom:12});

mark = new google.maps.Marker({position:{lat:lat, lng:lng}, map:map});

};

window.initialize = initialize;

```

Now, with the "redraw" event handler, we will update the new location information on the fly by calling the geolocation method `getCurrentPosition()`.

### Lat/Long

Next, we define the redraw event handler, which we will call every time we receive a new position-change event. In the first part of the function, we set the latitude and longitude to the new values from the message. Then we call the appropriate methods on the map, marker, and polyline objects to update the position, append it to the end of the line, and re-center the map.

```js

var redraw = function(payload) {

lat = payload.message.lat;

lng = payload.message.lng;

map.setCenter({lat:lat, lng:lng, alt:0});

mark.setPosition({lat:lat, lng:lng, alt:0});

lineCoords.push(new google.maps.LatLng(lat, lng));

var lineCoordinatesPath = new google.maps.Polyline({

path: lineCoords,

geodesic: true,

strokeColor: '#2E10FF'

});

lineCoordinatesPath.setMap(map);

};

```

Initializing PubNub

-------------------

With our callbacks defined, we initialize PubNub's real-time data streaming functionality, which works on **mobile phones, tablets, browsers**, and **laptops** across technologies such as **iOS, Android, JavaScript, .NET, Java, Ruby, Python, PHP**, and others.

```js

const pnChannel = "map3-channel";

const pubnub = new PubNub({

publishKey: 'YOUR_PUB_KEY',

subscribeKey: 'YOUR_SUB_KEY'

});

pubnub.subscribe({channels: [pnChannel]});

pubnub.addListener({message:redraw});

```

PubNub's ability to **publish** and **subscribe** to topics on real-time channels provides efficient data streaming.

Publishing latitude and longitude

---------------------------------

In this simple tutorial, we set up a basic JavaScript interval timer to publish new positions based on the current time. Every 500 milliseconds, we invoke an anonymous callback that publishes a new latitude/longitude object (with coordinates moving in a northeasterly direction) to the specified PubNub channel. In your own application, you will probably take the position from the device's live location or from a user-reported location.

```js

setInterval(function() {

pubnub.publish({channel:pnChannel, message:{lat:window.lat + 0.001, lng:window.lng + 0.01}});

}, 500);

```

Finally, we initialize the Google Maps API at the very end to ensure that the DOM elements and JavaScript prerequisites are in place.

```html

<script src="https://maps.googleapis.com/maps/api/js?v=3.exp&key=YOUR_GOOGLE_MAPS_API_KEY&callback=initialize"></script>

```

Summary

-------

This tutorial series showed us how the Google Maps [API](https://developers.google.com/maps/documentation/javascript/overview) and [PubNub](https://www.pubnub.com/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) work exceptionally well together for real-time location tracking in web and mobile applications. This is similar to how ride-hailing services such as **Uber** and **Lyft** show the movement of their vehicles in real time.

Explore PubNub

--------------

Take the [Live Tour](https://www.pubnub.com/tour/introduction/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) to understand the core concepts behind every PubNub-powered application in under 5 minutes. Learn more about our users' experiences directly from our [GitHub page](https://github.com/PubNubDevelopers) and from the testimonials available on our website.

Get set up

----------

Sign up for a [PubNub account](https://admin.pubnub.com/#/login?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) to get immediate access to PubNub keys for free.

Get started

-----------

The PubNub [docs](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) will get you up and running, regardless of your use case. We have sections dedicated to the JavaScript Google Maps API and how to use it with real-time tracking in our SDK. | pubnubdevrel | |

1,872,142 | Image Labeling in React | Introducing ImageAnnotator: a powerful yet lightweight React component for image annotation.... | 0 | 2024-05-31T14:28:46 | https://dev.to/mohamad_mehdi_rajaei/image-labeling-in-react-lkd | react, labeling, annotation, image |

Introducing **ImageAnnotator**: a powerful yet lightweight React component for image annotation. Developed by @azadeh_koohjani and me, ImageAnnotator allows you to easily draw bounding boxes and polygons on images, making it ideal for applications in computer vision, medical imaging, and more.

We welcome your contributions and feedback on Github:

https://github.com/TaqBostan/react-image-label

Try it out and let us know what you think! | mohamad_mehdi_rajaei |

1,872,141 | Recipe Search Tool | Welcome to our recipe search tool, a place where cooking meets convenience and nutrition. Whether... | 0 | 2024-05-31T14:28:32 | https://dev.to/alanna_taylor_043a02c1744/recipe-search-tool-5b5b | Welcome to our [recipe search tool](https://discoverybody.com/recipes/), a place where cooking meets convenience and nutrition. Whether you're a seasoned home cook or just starting out, our platform offers a wide array of recipes tailored to suit your taste buds and dietary needs.

From simple weekday dinners to impressive dishes for special occasions, our collection has something for every mealtime. Explore our curated selection and discover how easy it can be to create delicious and wholesome meals in your own kitchen. Join us today and elevate your cooking game with our recipe search tool!

https://discoverybody.com/recipes/

| alanna_taylor_043a02c1744 | |

1,872,137 | Build error occurred Error: Could not load the "sharp" module using the linux-x64 runtime | showing this error in deploy: Could not load the "sharp" module using the linux-x64 runtime Solve:... | 0 | 2024-05-31T14:24:00 | https://dev.to/mdtanvirahamedshanto/build-error-occurred-error-could-not-load-the-sharp-module-using-the-linux-x64-runtime-kpn | webdev, javascript, sharp, errors | showing this error in deploy: Could not load the "sharp" module using the linux-x64 runtime

Solution: downgrade the `sharp` package to version 0.32.6.

package link: https://www.npmjs.com/package/sharp/v/0.32.6

Bengali (translated to English):

If you hit the error `Build error occurred Error: Could not load the "sharp" module using the linux-x64 runtime` during a Vercel deployment, downgrade your sharp package to version 0.32.6 and then deploy again. That should resolve it.

package link: https://www.npmjs.com/package/sharp/v/0.32.6 | mdtanvirahamedshanto |

1,872,136 | Vanilla JavaScript - Tooltip | Tooltips are a great way to provide additional information to users without cluttering the interface.... | 0 | 2024-05-31T14:23:58 | https://dev.to/serhatbek/vanilla-javascript-tooltip-27a4 | webdev, javascript, beginners, css | Tooltips are a great way to provide additional information to users without cluttering the interface. In this article, we'll create a simple and effective tooltip using vanilla JavaScript, HTML, and CSS.

Before we start, I'd like to mention that I used:

- [Google Fonts](https://fonts.google.com) Roboto.

- [SCSS (SASS)](https://sass-lang.com/) for styling.

- [BEM methodology](https://getbem.com/introduction) to keep the tooltip's CSS reusable.

### Tooltip HTML Structure

Let's start by defining the HTML structure for our tooltip component: three buttons, each with a `data-tooltip` attribute containing the tooltip text.

```html
<body class="container">
  <button class="trigger" data-tooltip="Alice In Wonderland">Show More</button>
  <button class="trigger trigger--primary" data-tooltip="Wizard Of Ozz">
    Show More
  </button>
  <button
    class="trigger trigger--secondary"
    data-tooltip="Lorem ipsum, dolor sit amet consectetur adipisicing elit."
  >
    Show More
  </button>
</body>
```

### SCSS Styling

Next, we'll style our tooltips and buttons using SCSS. Tooltips are initially hidden and positioned above the buttons. The .active class makes them visible. We'll also include some general styling for the body and container.

```scss
@import url('https://fonts.googleapis.com/css2?family=Roboto:wght@300;400;500;700&display=swap');

// COLORS
$black2: #1e1e1e;
$white: aliceblue;
$grayish-blue: #003249;
$blue: #4983cf;
$pink: #be5064;

// RESET
*,
*::before,
*::after {
  box-sizing: border-box;
  margin: 0;
  padding: 0;
}

body {
  font-family: 'Roboto', sans-serif;
  background-color: $black2;
  color: $white;
}

// STYLES
.container {
  width: 100vw;
  height: 100vh;
  display: flex;
  align-items: center;
  justify-content: center;

  h1 {
    text-align: center;
    margin-bottom: 30px;
  }

  .trigger {
    font-size: 14px;
    color: $white;
    padding: 4px 8px;
    background: transparent;
    border: 0;
    cursor: pointer;
    border-radius: 4px;
    margin: 0 10px;
    position: relative;

    &--primary {
      background-color: $blue;
    }

    &--secondary {
      background-color: $pink;
    }
  }

  [role='tooltip'] {
    width: calc(100% + 40px);
    height: auto;
    padding: 8px;
    line-height: 1.5;
    border-radius: 4px;
    background-color: $grayish-blue;
    position: absolute;
    left: 50%;
    bottom: calc(100% + 15px);
    transform: translateX(-50%);
    opacity: 0;
    visibility: hidden;
    transition: all 300ms ease-in-out;
  }

  [role='tooltip'].active {
    opacity: 1;
    visibility: visible;
  }

  [role='tooltip']::before {
    content: '';
    position: absolute;
    transform: translateX(-50%);
    left: 50%;
    bottom: -8px;
    width: 0;
    height: 0;
    border-left: 10px solid transparent;
    border-right: 10px solid transparent;
    border-top: 10px solid $grayish-blue;
  }
}
```

### Adding JavaScript Functionality

Finally, we'll add the JavaScript code to make our tooltips interactive. This involves creating tooltip elements dynamically and attaching event listeners to show and hide the tooltips. We select all elements with the **.trigger** class and for each trigger, we create a tooltip element, set its role to tooltip, and append it to the trigger button. We'll also add **mouseenter** and **mouseleave** event listeners that will call **openTooltip** and **closeTooltip** functions to show and hide the tooltip by adding and removing the **.active** class.

```javascript
const triggers = document.querySelectorAll('.trigger');

// Show the tooltip that belongs to the hovered trigger.
const openTooltip = (e) => {
  const tooltip = e.currentTarget.querySelector('[role=tooltip]');
  tooltip.classList.add('active');
};

// Hide it again when the pointer leaves the trigger.
const closeTooltip = (e) => {
  const tooltip = e.currentTarget.querySelector('[role=tooltip]');
  tooltip.classList.remove('active');
};

// querySelectorAll always returns a NodeList, so no existence check is needed.
triggers.forEach((trigger) => {
  // Build the tooltip from the trigger's data-tooltip attribute.
  const tooltip = document.createElement('span');
  tooltip.setAttribute('role', 'tooltip');
  tooltip.setAttribute('inert', '');
  tooltip.textContent = trigger.dataset.tooltip;
  trigger.appendChild(tooltip);

  trigger.addEventListener('mouseenter', openTooltip);
  trigger.addEventListener('mouseleave', closeTooltip);
});
```
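The listeners in this article respond only to the mouse, so keyboard users never see the tooltip. As a hedged sketch of one way to extend the idea (the `bindTooltip` helper is a hypothetical name, not part of the original code), the same show/hide logic could also be wired to `focus`, `blur`, and the Escape key:

```javascript
// Hypothetical helper (an assumption, not from the original article):
// wires the show/hide behaviour to keyboard focus as well as the mouse.
const bindTooltip = (trigger, tooltip) => {
  const show = () => tooltip.classList.add('active');
  const hide = () => tooltip.classList.remove('active');

  // Show on hover or when the button receives keyboard focus.
  ['mouseenter', 'focus'].forEach((type) => trigger.addEventListener(type, show));

  // Hide when the pointer leaves or focus moves away.
  ['mouseleave', 'blur'].forEach((type) => trigger.addEventListener(type, hide));

  // Let Escape dismiss the tooltip without moving focus.
  trigger.addEventListener('keydown', (e) => {
    if (e.key === 'Escape') hide();
  });
};
```

Inside the `forEach` loop, a call such as `bindTooltip(trigger, tooltip)` could stand in for the two `addEventListener` lines.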

We've just created a simple tooltip component using vanilla JavaScript. You can customize it for your project's needs. For the full code, check the project's [GitHub](https://github.com/serhatbek/javascript-projects/tree/main) repo, and see [CodePen](https://codepen.io/serhatbek/pen/vYwKdaG) for a live demo.

Thank you for reading. If you find the article useful, please do not forget to give a star so that others can access it. Happy Coding! 🙃

<a href="https://www.buymeacoffee.com/serhatbek" target="_blank"><img src="https://cdn.buymeacoffee.com/buttons/default-orange.png" alt="Buy Me A Coffee" height="41" width="174"></a> | serhatbek |

1,872,134 | DiscoveryBody | At DiscoveryBody, we believe that knowledge is power, especially when it comes to your well-being.... | 0 | 2024-05-31T14:23:19 | https://dev.to/alanna_taylor_043a02c1744/discoverybody-49b | At [DiscoveryBody](https://discoverybody.com/), we believe that knowledge is power, especially when it comes to your well-being. Our mission is simple: to provide you with the most up-to-date and reliable information about all things health.

We strive to be your go-to source for health and fitness news, health recipes, and everything in between. [DiscoveryBody](https://discoverybody.com/) is more than just a website. It's a community of like-minded individuals who are passionate about living their best lives.

https://discoverybody.com/

| alanna_taylor_043a02c1744 | |

1,872,133 | JavaScript Geolocation Tracking with Google Maps API | Check out the final part of our series on building real-time maps with the JavaScript Google Maps API and geolocation tracking. | 0 | 2024-05-31T14:22:55 | https://dev.to/pubnub-jp/google-maps-apiwoshi-tutajavascriptnowei-zhi-qing-bao-toratukingu-1047 | This is the final installment of a four-part series on building live, real-time web applications with geolocation features using the Google Maps JavaScript API and PubNub. The tutorial walks through the user experience of generating flight paths with JavaScript and PubNub.

For an example of how this is implemented, check out our [Showcase demo](https://showcase.pubnub.com/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja) on the PubNub website. Go to the Geolocation demo to see how PubNub is incorporated for real-time tracking. The demo's code is available on [GitHub](https://github.com/PubNubDevelopers/PubNub-Showcase/tree/main/web/geolocation).

What Are Flight Paths?

----------------------

The flight paths implemented in this **tutorial** are **polylines**, which let you **dynamically draw a path through user-specified points** on a map shown on a **mobile device** or in a web browser. Polylines are a **Google Maps API** feature and pair naturally with the **HTML5 Geolocation API** for tracking movement patterns.

Tutorial Overview

-----------------

[Parts One](https://www.pubnub.com/blog/javascript-mapping-javascript-tracking/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja), [Two](https://www.pubnub.com/blog/javascript-google-maps-api-map-markers/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja), and [Three](https://www.pubnub.com/blog/javascript-google-maps-api-location-publishing/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja) covered [setting up the JavaScript environment](https://www.pubnub.com/blog/javascript-mapping-javascript-tracking/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja), [map markers](https://www.pubnub.com/blog/javascript-google-maps-api-map-markers/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja), and [location tracking](https://www.pubnub.com/blog/javascript-google-maps-api-location-publishing/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja).

Once you have completed those, move on to the next part.

Code Walkthrough

----------------

Let's start by declaring the variables `map`, `mark`, and `lineCoords` with `let` to hold the map, marker, and polyline **coordinate** objects, so that we can adjust them as PubNub events come in. Next, we define the `initialize` callback, which the [Google Maps JavaScript API](https://developers.google.com/maps/documentation/javascript/overview) calls once it has loaded. Be sure to replace `YOUR_GOOGLE_MAPS_API_KEY` with your actual **API key**.

```js

let map;

let mark;

let lineCoords = [];

let initialize = function() {

map = new google.maps.Map(document.getElementById('map-canvas'), {center:{lat:lat,lng:lng},zoom:12});

mark = new google.maps.Marker({position:{lat:lat, lng:lng}, map:map});

};

window.initialize = initialize;

```

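Next comes the `redraw` event handler, which updates the map on the fly as new location data arrives. (In a real app, that data would typically come from the Geolocation API's `getCurrentPosition()` method.)

### Lat/Long

We define the redraw event handler and call it whenever we receive a position-changed event. In the first part of the function, we set the latitude and longitude to the new values from the message. Then we invoke the appropriate methods on the map, marker, and polyline objects to update the position, add it to the end of the line, and recenter the map.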

```js

var redraw = function(payload) {

lat = payload.message.lat;

lng = payload.message.lng;

map.setCenter({lat:lat, lng:lng, alt:0});

mark.setPosition({lat:lat, lng:lng, alt:0});

lineCoords.push(new google.maps.LatLng(lat, lng));

var lineCoordinatesPath = new google.maps.Polyline({

path: lineCoords,

geodesic: true,

strokeColor: '#2E10FF'

});

lineCoordinatesPath.setMap(map);

};

```

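Initialize PubNub

-----------------

After defining the callbacks, we initialize PubNub's real-time data streaming, which runs on **mobile phones, tablets, browsers,** and **laptops** across tech stacks such as **iOS, Android, JavaScript, .NET, Java, Ruby, Python, and PHP**.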

```js

const pnChannel = "map3-channel";

const pubnub = new PubNub({

publishKey: 'YOUR_PUB_KEY',

subscribeKey: 'YOUR_SUB_KEY'

});

pubnub.subscribe({channels: [pnChannel]});

pubnub.addListener({message:redraw});

```

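PubNub's ability to **publish** and **subscribe** to topics on real-time channels provides efficient data streaming.

Publishing Lat/Long

-------------------

For this simple tutorial, we set up a basic JavaScript interval timer that publishes a new position derived from the current one. Every 500 milliseconds, we invoke an anonymous callback function that publishes a new latitude/longitude object (with northeast-moving coordinates) to the specified PubNub channel. In your app, you'll likely get the position from a live device or a user-reported location.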

```js

setInterval(function() {

pubnub.publish({channel:pnChannel, message:{lat:window.lat + 0.001, lng:window.lng + 0.01}});

}, 500);

```

Finally, we initialize the Google Maps API at the very end, to make sure the DOM elements and JavaScript prerequisites are in place.

```html

<script src="https://maps.googleapis.com/maps/api/js?v=3.exp&key=YOUR_GOOGLE_MAPS_API_KEY&callback=initialize"></script>

```

Wrapping Up

-----------

This tutorial series has shown how well the Google [Maps API](https://developers.google.com/maps/documentation/javascript/overview) and [PubNub](https://www.pubnub.com/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja) work together for real-time location tracking in web and mobile apps. It's similar to how ride-hailing services like **Uber** and **Lyft** show their vehicles moving in real time.

Experience PubNub

-----------------

Check out the [Live Tour](https://www.pubnub.com/tour/introduction/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja) to understand the essential concepts behind every PubNub-powered app in under 5 minutes. You can hear about our users' experiences directly from our [GitHub page](https://github.com/PubNubDevelopers) and the testimonials on our website.

Get Set Up

----------

Sign up for a [PubNub account](https://admin.pubnub.com/#/login?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja) for free, immediate access to your PubNub keys.

Get Started

-----------

The [PubNub docs](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja) will get you up and running, regardless of your use case. We have sections dedicated to the Google Maps JavaScript API and how to use it with real-time tracking in our SDK. | pubnubdevrel | |

1,868,140 | Static variables and methods | Static methods and variables operate at the class level and are shared across all instances of the class... | 0 | 2024-05-28T21:14:22 | https://dev.to/mustafacam/static-degisken-ve-metodlar-1j35 | Static methods and variables play an important role in OOP because they operate at the class level and are shared across all instances of a class. Here is a detailed explanation of these concepts:

### Static Variables

Static variables belong to the class and are shared across all of its instances. Every object of the class shares the same static variable, and a change made to it is visible to all objects.

#### Properties:

- Belongs to the class, not to objects.

- A single copy is created in memory when the class is loaded.

- Can be accessed without creating an object.

#### Use Cases:

- Used to hold data that is common to all objects created from a class.

- Typically used for counters, constants, or general configuration data.

#### Example:

```java
// Araba.java
public class Araba {
    // Shared by every Araba instance.
    public static int arabaSayisi = 0;

    public Araba() {
        arabaSayisi++;
    }
}

// Main.java
public class Main {
    public static void main(String[] args) {
        Araba araba1 = new Araba();
        Araba araba2 = new Araba();
        System.out.println(Araba.arabaSayisi); // Output: 2
    }
}
```

In this example, `arabaSayisi` (the car count) is a variable shared by all `Araba` (car) objects. It is incremented each time a new `Araba` object is created, and all objects see the same counter.

### Static Methods

Static methods can be called without an instance of the class. They usually operate at the class level and cannot directly access instance-level data (non-static members).

#### Properties:

- Belongs to the class, not to objects.

- Can be called by class name without creating an object.

- Can directly access only static variables and other static methods.

#### Use Cases:

- Used for helper functions or class-level operations.

- Suitable for operations that do not need object state.

#### Example:

```java
// Matematik.java
public class Matematik {
    public static int toplama(int a, int b) {
        return a + b;
    }
}

// Main.java
public class Main {
    public static void main(String[] args) {
        int sonuc = Matematik.toplama(5, 3);
        System.out.println(sonuc); // Output: 8
    }
}
```

In this example, the `toplama` (addition) method can be called directly by class name without creating an instance of the `Matematik` class. It adds two numbers and returns the result.

### Summary

- **Static Variables:** Defined at the class level, shared across all objects, and accessible without creating an object. Typically used for data that is common to the whole class.

- **Static Methods:** Defined at the class level and callable by class name without creating an object. Typically used for helper functions or operations that do not need object state.

These properties show how static members are used to manage class-level operations and data. Static members make it easier to share functionality and data across classes in OOP. | mustafacam | |

1,872,132 | JavaScript Geolocation Tracking with Google Maps API | Explore the final series on real-time maps creation using JavaScript Google Maps API and geolocation tracking. | 0 | 2024-05-31T14:22:54 | https://dev.to/pubnub/javascript-geolocation-tracking-with-google-maps-api-1mmb | This is the conclusion of a four-part series about creating live, real-time web applications with geolocation features using the Google Maps JavaScript API and PubNub. This tutorial walks you through generating flight paths using JavaScript and PubNub.

For an example of how this is all implemented, check out our [Showcase demo](https://showcase.pubnub.com/?) on the PubNub website. Navigate to the Geolocation demo to see how we incorporate PubNub with real-time tracking. For the code behind the demo, see our [GitHub](https://github.com/PubNubDevelopers/PubNub-Showcase/tree/main/web/geolocation) repository.

What are Flight Paths?

----------------------

Flight paths, as implemented in this **tutorial**, are **polylines**, which allow the **dynamic drawing of paths through user-specified points** on a map shown on a **mobile device** or in a web browser. Polylines are a **Google Maps API** feature and pair naturally with the **HTML5 Geolocation API** for tracking movement patterns.

Tutorial Overview

-----------------

Make sure you have completed the prerequisites from [Parts One,](https://www.pubnub.com/blog/javascript-mapping-javascript-tracking/?) [Two](https://www.pubnub.com/blog/javascript-google-maps-api-map-markers/?) and [Three](https://www.pubnub.com/blog/javascript-google-maps-api-location-publishing/?), where we [set up our JavaScript environment](https://www.pubnub.com/blog/javascript-mapping-javascript-tracking/?) and covered [map markers](https://www.pubnub.com/blog/javascript-google-maps-api-map-markers/?) and [location tracking](https://www.pubnub.com/blog/javascript-google-maps-api-location-publishing/?).

Once you've done so, move on to the next part.

Code Walkthrough

----------------

Let's start by declaring the variables `map`, `mark`, and `lineCoords` with `let` to hold our map, marker, and polyline coordinate objects, so that we can adjust them as PubNub events come in. Next, we define the `initialize` callback, which the [Google Maps JavaScript API](https://developers.google.com/maps/documentation/javascript/overview) calls once it has loaded. Be sure to replace `YOUR_GOOGLE_MAPS_API_KEY` (in the script tag at the end of this tutorial) with your actual **API key**.

```js
let map;
let mark;
let lineCoords = [];

// `lat` and `lng` are expected to exist as globals
// (the publisher further down reads them as window.lat / window.lng).
let initialize = function() {
  map = new google.maps.Map(document.getElementById('map-canvas'), {center:{lat:lat,lng:lng},zoom:12});
  mark = new google.maps.Marker({position:{lat:lat, lng:lng}, map:map});
};

window.initialize = initialize;
```

Next comes the `redraw` event handler, which updates the map on the fly as new location data arrives. (In a real app, that data would typically come from the Geolocation API's `getCurrentPosition()` method.)

### Lat/Long

We define a redraw event handler and call it whenever we receive a position-changed event. In the first part of the function, we set the latitude and longitude to the new values from the message. Then, we invoke the appropriate methods on the map, marker, and polyline objects to update the position, add it to the end of the line, and recenter the map.

```js
var redraw = function(payload) {
  // Update the global position from the incoming PubNub message.
  lat = payload.message.lat;
  lng = payload.message.lng;

  // Recenter the map and move the marker.
  map.setCenter({lat:lat, lng:lng, alt:0});
  mark.setPosition({lat:lat, lng:lng, alt:0});

  // Extend the path and draw it on the map.
  lineCoords.push(new google.maps.LatLng(lat, lng));
  var lineCoordinatesPath = new google.maps.Polyline({
    path: lineCoords,
    geodesic: true,
    strokeColor: '#2E10FF'
  });
  lineCoordinatesPath.setMap(map);
};
```
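The handler in this tutorial keeps appending to `lineCoords` for as long as messages arrive, so a long-running session grows the path without limit. As a rough sketch of one way to bound it (the `appendPoint` helper and its `maxPoints` parameter are hypothetical, not part of the tutorial or of any library), the path could be capped to the most recent points:

```js
// Hypothetical helper (an assumption for illustration): returns a new path
// with `point` appended, keeping only the most recent `maxPoints` entries.
const appendPoint = (path, point, maxPoints = 500) => {
  const next = [...path, point];
  return next.length > maxPoints ? next.slice(next.length - maxPoints) : next;
};

// Example with plain {lat, lng} objects and a cap of two points.
let path = [];
path = appendPoint(path, { lat: 37.0, lng: -122.0 }, 2);
path = appendPoint(path, { lat: 37.1, lng: -122.1 }, 2);
path = appendPoint(path, { lat: 37.2, lng: -122.2 }, 2);
// `path` now holds only the last two points.
```

With the Maps API, a single `google.maps.Polyline` created once could then be refreshed through its `setPath()` method, rather than constructing a new polyline for every message.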

Initialize PubNub

-----------------

After defining our callbacks, we will initialize the PubNub real-time data streaming functionality which operates on **mobile phones, tablets, browsers,** and **laptops** across tech stacks like **iOS, Android, JavaScript, .NET, Java, Ruby, Python, PHP,** and more.

```js
const pnChannel = "map3-channel";

const pubnub = new PubNub({
  publishKey: 'YOUR_PUB_KEY',
  subscribeKey: 'YOUR_SUB_KEY'
});

pubnub.subscribe({channels: [pnChannel]});
pubnub.addListener({message:redraw});
```

PubNub's functionality to **publish** and **subscribe** to topics in real-time channels gives efficient data-streaming capabilities.

Publishing Lat/Long

-------------------

For this simple tutorial, we set up a basic JavaScript interval timer that publishes a new position derived from the current one. Every 500 milliseconds, we invoke an anonymous callback function that publishes a new latitude/longitude object (with northeast-moving coordinates) to the specified PubNub channel. In your app, you'll likely get the position from a live device or a user-reported location.

```js
setInterval(function() {
  pubnub.publish({channel:pnChannel, message:{lat:window.lat + 0.001, lng:window.lng + 0.01}});
}, 500);
```
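For a live device position, a minimal sketch might look like the following. It assumes the browser Geolocation API's `watchPosition()` and the same `publish({channel, message})` call shape used in this tutorial; the helper names `toLocationMessage` and `publishDevicePosition` are hypothetical and chosen only for illustration.

```js
// Hypothetical helper: converts a GeolocationPosition into the {lat, lng}
// message shape that the redraw handler reads.
const toLocationMessage = (position) => ({
  lat: position.coords.latitude,
  lng: position.coords.longitude,
});

// Hypothetical helper: publishes every position update to a channel.
// `geolocation` is expected to behave like navigator.geolocation, and
// `pubnub` like the client created earlier in this tutorial.
const publishDevicePosition = (geolocation, pubnub, channel) =>
  geolocation.watchPosition((position) => {
    pubnub.publish({ channel, message: toLocationMessage(position) });
  });
```

In the browser, this could be started with `publishDevicePosition(navigator.geolocation, pubnub, pnChannel)` in place of the interval timer. Passing the dependencies in as arguments keeps the sketch easy to exercise with stand-in objects.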

Last but not least, we initialize the Google Maps API at the very end to ensure the DOM elements and JavaScript prerequisites are satisfied.

```html

<script src="https://maps.googleapis.com/maps/api/js?v=3.exp&key=YOUR_GOOGLE_MAPS_API_KEY&callback=initialize"></script>

```

Wrapping Up

-----------

This tutorial series has shown us how [Google Maps API](https://developers.google.com/maps/documentation/javascript/overview) and [PubNub](https://www.pubnub.com/?) work exceptionally well together for real-time location tracking on web and mobile apps. It's similar to how ride-hailing services like **Uber** and **Lyft** show the movement of their vehicles in real time.

Experience PubNub

-----------------

Check out the [Live Tour](https://www.pubnub.com/tour/introduction/?) to understand the essential concepts behind every PubNub-powered app in under 5 minutes. Hear about our users' experience directly from our [GitHub page](https://github.com/PubNubDevelopers) and testimonials available on our website.

Get Setup

---------

Sign up for a [PubNub account](https://admin.pubnub.com/#/login?) for immediate access to PubNub keys for free.

Get Started

-----------

The [PubNub docs](https://www.pubnub.com/docs?) will get you up and running, regardless of your use case. We have sections dedicated to the Google Maps JavaScript API and how to use it with real-time tracking in our SDK. | pubnubdevrel | |

1,872,131 | TVTap Pro APK 3.0 on Firestick & Android TV Devices(kemo iptv) | Installing TVTap Pro APK 3.0 on your Firestick (kemo iptv)or Android TV device is akin to unlocking a... | 0 | 2024-05-31T14:22:31 | https://dev.to/iptv_subscriptions_68e56b/tvtap-pro-apk-30-on-firestick-android-tv-deviceskemo-iptv-2l12 | iptv | Installing TVTap Pro APK 3.0 on your Firestick (kemo iptv)or Android TV device is akin to unlocking a treasure trove of free live cable TV entertainment. But why should you listen to me? As a tech enthusiast who has navigated the choppy waters of countless APK installations, my experience could save you from many pitfalls. Let’s dive right into the process, shunning the fluff for hardcore, actionable advice.

Read More:

https://kemo-iptv.pro/tvtap-pro-apk-3-0-on-firestick-android-tv-deviceskemo-iptv/ | iptv_subscriptions_68e56b |

1,872,130 | One-Click Project Planning for smart IT Teams | Hi developers, We're Markus and Patric, two passionate developers who have navigated the... | 0 | 2024-05-31T14:20:57 | https://dev.to/markus_at/one-click-project-planning-for-smart-it-teams-42dh | Hi developers,

We're Markus and Patric, two passionate developers who have navigated the complexities of IT ecosystems and high-pressure software teams for years. Like many of you, we’ve grappled with challenging work cultures, vague customer requirements, scope creep, and a lack of transparency, all under the weight of tight deadlines and unhealthy pressure. 😟

After exploring numerous tools, from industry giants to lesser-known platforms, we found that none could fully meet our needs with a 100% focus on IT specialization. That’s why we created **BestCase**, designed by developers for developers, designers, product owners, project managers, scrum masters, dev ops specialists, testers, and other IT professionals.

BestCase cuts IT project planning time to under 5 minutes. It integrates everything from initial idea sketches to final solution findings, with AI-powered automation for cross-departmental steps. This framework supports genuine collaborative work, granting IT teams at least an additional 6 weeks for project execution and dramatically reducing time-to-market.

BestCase is more than just another tool; it's a complete project management solution and a simulated IT ecosystem in a single browser tab. It eliminates the manual and cross-departmental work steps that cost you and your team thousands of minutes, so you can focus on what matters most. At the same time, the time to market for everything you successfully implement is drastically reduced.

Get started for FREE: [https://www.bestcase.work](https://www.bestcase.work)

🐣Features

✔ AI-supported generation of user stories

✔ AI-supported generation of features, test cases and acceptance criteria

✔ Table, Gantt, Kanban, Dashboard views

✔ Workload-management of team-members

✔ Manage your team including their skills

✔ Task management

✔ Time-Tracking

✔ Project-Portfolio Dashboard

✔ Agile methodology

✔ Waterfall methodology

Read more about Bestcase: [https://www.bestcase.work](https://www.bestcase.work)

🥇Who is BestCase for?

✔ Freelancers in IT-industry

✔ Product Owners

✔ Project Managers

✔ IT-Teams

✔ Software Agencies

✔ Software Departments

✔ Remote teams

✔ AI-enthusiasts

✔ YOU

Feel free to register and PLEASE let us know what you think. We've just launched the beta version and are still looking for feedback. Made by developers for developers.

| markus_at | |

1,872,128 | The 80th Anniversary of the Deportation of the Crimean Tatars | May 18 marks the remembrance day of one of Stalin’s and the Soviet regime's most brutal crimes — the... | 0 | 2024-05-31T14:16:54 | https://www.heyvaldemar.com/the-80th-anniversary-of-the-deportation-of-the-crimean-tatars/ | crimeantatars, learning, repressions, history | May 18 marks the remembrance day of one of Stalin’s and the Soviet regime's most brutal crimes — the deportation of the Crimean Tatars.

The deportation operation began early on the morning of May 18, 1944, and concluded on the evening of May 20. It was the beginning of a cruel and inhumane operation carried out by the NKVD, which left an indelible scar in the history of the Crimean Tatar people. Residents were given just a few minutes to gather their belongings before being loaded into overcrowded cattle cars.

Those who refused to leave, resisted, or simply could not move were shot on the spot. Witnesses tell of bodies lying in the streets and courtyards, of screams and pleas for mercy that went unanswered. The conditions in the wagons were unbearable with overcrowding and lack of sanitation. People suffocated, died of dehydration, and lacked medical assistance, suffering from heat and suffocation. Mothers gave birth and lost their babies right before the eyes of other prisoners, and the dead were thrown directly onto the railway tracks.

About 200,000 people were sent to forced labor in Asian republics and Siberia. Upon arrival at their destinations, they faced not life, but a slow demise. Half of those who survived the hellish journey died in the first year of resettlement from hunger, cold, and unbearable working conditions. Many died from infections spread in overcrowded barracks. People were forced to work to exhaustion, often without clothing or footwear in the bitter cold.

This tragedy serves as a reminder that behind the facade of the "happy" life of the Soviet Union, which many now perceive as a time of stability and prosperity, lies the suffering and death of thousands of innocent people. Stalin's totalitarian regime turned the lives of millions into an endless nightmare filled with horror and bloody crimes.

## Contemporary Impact and Ongoing History

As highlighted in [the statement by the Canadian Minister of Foreign Affairs](https://www.canada.ca/en/global-affairs/news/2024/05/statement-by-minister-of-foreign-affairs-on-80th-anniversary-of-deportation-of-crimean-tatars.html), Mélanie Joly, the tragedy of the Crimean Tatars finds parallels in Russia's actions in Crimea following its illegal annexation in 2014. Russian authorities continue policies of infringing upon the rights of the Crimean Tatars, destroying their cultural heritage, replacing historical names, and persecuting those who oppose the annexation. Canada and the international community recognize these actions as a continuation of the policy of repression and support Ukraine's sovereignty and territorial integrity in response to ongoing aggression.

## Additional Resources for Study

📕 [Article on the deportation of the Crimean Tatars on English Wikipedia](https://en.wikipedia.org/wiki/Deportation_of_the_Crimean_Tatars)

📕 [Statement by the Minister of Foreign Affairs of Canada on the 80th anniversary of the deportation of the Crimean Tatars](https://www.canada.ca/en/global-affairs/news/2024/05/statement-by-minister-of-foreign-affairs-on-80th-anniversary-of-deportation-of-crimean-tatars.html)

📕 [Official statement by the Government of Norway on the 80th anniversary of the deportation of the Crimean Tatars](https://www.regjeringen.no/en/aktuelt/80-years-since-the-deportation-of-the-krym-tatars/id3040013/)

| heyvaldemar |

1,872,073 | Xperience by Kentico: 5 useful developer resources for getting started with XbyK | Have you tried Xperience by Kentico yet? 🤔 If not, it’s this easy to get started. 🙌 Here’s 5 useful... | 0 | 2024-05-31T14:13:54 | https://dev.to/michael419/xperience-by-kentico-5-useful-developer-resources-for-getting-started-with-xbyk-35m0 | kentico, xperience, cms | Have you tried Xperience by Kentico yet? 🤔

If not, it’s this easy to get started. 🙌 Here’s 5 useful resources to get you going:

## 📖 1. Read this blog post on the Kentico Community forum

It will tell you where the documentation lives and how to access tutorials and quick-start guides: https://community.kentico.com/blog/learning-xperience-by-kentico-as-a-software-developer

## ✉ 2. Sign-up to the Kentico Community Portal newsletter

As a developer, it’s one of the best methods for staying informed on the latest releases, useful articles and guides https://community.kentico.com/newsletter-signup

## 📽 3. Watch these brilliant technical spotlight videos by Kentico’s Lead Product Evangelist, @seangwright

We used these recently at our in-house agency hackathon to get developers, who were new to Xperience by Kentico, up-to-speed in minutes with setting up local environments (on Windows/Mac/Linux), creating content types, page templates, widgets, and using continuous integration https://www.youtube.com/playlist?list=PL9RdJplq_ukaIt4_V4GAbeJ_qk1AKuFrP

## 📄 4. Get to know the developer documentation

Kentico’s documentation is second-to-none and it’s one of the many reasons why I’ve been able to successfully build and deliver solutions for our clients, quickly and efficiently, as every aspect of the product is immaculately documented in a format that is easy to read, understand, and action. https://docs.kentico.com/developers-and-admins

## 📢 5. If you get stuck, you’re not alone...

Ask a question on the Kentico Community Portal Q&A forum. Other developers and Kentico MVPs, even Kentico employees, will be on-hand to help https://community.kentico.com/q-and-a

Become Kentico Developer Certified?

Once you're up and running and have accrued some experience with...Xperience, why not consider becoming a Kentico Certified Developer? The Xperience by Kentico Certified Developer exam is not currently available; it is due for release sometime in mid-2024. In the meantime, you can draw some lessons from the equivalent Kentico Xperience 13 developer exam in my post: [5 tips on how to ace the Kentico Certified Developer exam](https://dev.to/michael419/kentico-xperience-13-5-tips-on-how-to-ace-the-kentico-certified-developer-exam-3dkh) 😉

Good luck 👍

| michael419 |

1,872,127 | The Significance of Commercial Gate Repair and Its Effect on Your Company. | The operations of commercial properties in our highly competitive market require proper maintenance... | 0 | 2024-05-31T14:13:42 | https://dev.to/information-stock/the-significance-of-commercial-gate-repair-and-its-effect-on-your-company-2e8m | repair, comercialgaterepair, security | The operations of commercial properties in our highly competitive market require proper maintenance and security. [Commercial gate repair](https://www.gaterepairexperts.com/) is essential in the maintenance and protection of your business facilities. The commercial gate is a primary security barrier against intruders, so its maintenance is crucial. In addition to security, a gate that is well-functioning helps in conducting the functions of your business in a timely manner. It also makes the property attractive to clients and customers from the outside. A defective gate, on the other hand, not only causes delivery delays and lost sales but also impacts the public perception of the business. These issues can be prevented by regular maintenance and timely commercial gate repair to ensure that the gate lasts long. In addition, proper gate maintenance ensures that the industry and safety standards are met, thus reducing liability risks and guaranteeing a safe working environment. This guide aims to provide an overview of the importance of timely and professional commercial gate repair for your business.

## Increasing Protection and Lowering Risk

Security is critical for any organisation. A malfunctioning gate puts your employees, customers, and assets at risk. Commercial gates should therefore be maintained regularly and repaired promptly so that unauthorised parties cannot enter the facility and expose it to security threats. A secure gate protects your inventory and reduces the chance of theft and property damage, which in turn reduces the liability the business carries.

## Prevent Unauthorized Access

A commercial gate is one of the most effective and dependable ways to protect the premises from intruders. It ensures that only approved personnel can access the site, which contributes to overall security. High-security gate systems fitted with keypads, card readers, or other access-control components make your property safer still.

## Alleviate Liability Risks

Business owners can also be held responsible for accidents that happen on their premises. A faulty gate can cause injuries, property damage, or even litigation. By maintaining and repairing their gates regularly, businesses can eliminate or drastically minimise these risks.

## Improving Operational Efficiency

A working gate saves time for a commercial enterprise. It lets materials and personnel move in and out on schedule and avoids delays that inconvenience customers.

## Minimizing Downtime

The [most common problem with commercial gates](https://www.gaterepairexperts.com/commercial-gate-repair/) is breakdowns, which can seriously disrupt your operations. They may lead to delivery delays, missed sales, and lower customer satisfaction. Preventive maintenance and prompt repair of electrical faults will prevent or reduce the time your gate is out of service.

## Streamlining Logistics

Any warehouse or distribution center that depends on a steady flow of goods in and out of the facility needs a working gate system. The gate supports loading and unloading, which helps minimize waiting time and improve productivity. A well-kept gate also makes logistics and supply chain operations easier to run and manage.

## First Impressions: Developing a Positive Impression

First impressions matter. A gate that works smoothly makes a positive impression on customers, partners, and prospects. It shows that you are professional and pay attention to detail, which shapes how they perceive your business.

## Reinforcing Brand Identity

A customized commercial gate can also act as a billboard and an expression of your brand. Incorporating the firm's logo, colours, and design into the gate promotes the business across the premises. This not only improves the property's appearance but also makes the brand easier for customers to recognise.

## Life Extension and Cost-Saving

Periodic maintenance and timely repairs can extend the service life of commercial gates and save the company money down the road. Regular upkeep also lowers the overall cost of [repairing commercial gates](https://www.gaterepairexperts.com/commercial-gate-repair/). Resolving minor problems early prevents severe damage that would result in expensive repairs or replacements. Regular maintenance also helps detect the underlying causes of issues that keep recurring. Identifying and addressing these causes helps businesses avoid similar problems in the future and so save time and money.

## Complying with Safety Regulations

Safety regulations are important for any business. Commercial gates must comply with industry standards and regulations to avoid legal problems and keep the workplace safe. It is therefore vital that commercial gates are well maintained and promptly repaired. A properly maintained gate is less prone to breakdowns that could put the security of the premises in danger, whereas a poorly maintained gate is likely to fail at the worst possible moment. Companies that keep their gate working at its best can control access to their property and protect their resources and employees.

## Adhering to Industry Standards

Commercial gates should be manufactured and kept in line with the relevant industry standards and local regulations. Regular maintenance and repair are therefore crucial if your gate is to keep meeting these standards and you are to avoid fines or legal action. Compliance also shows that you take safety and regulatory obligations seriously.

## Ensuring Safe Operation

A defective gate can create safety risks. Routine maintenance and inspection keep your gate running smoothly and effectively. This not only protects your employees and customers but also strengthens your reputation as a safe, low-risk company.

## Selecting the Best Commercial Gate Repair Company

It is important to choose a commercial gate repair company that is professional and effective.

## Experience and Expertise

Choose a company that has substantial experience and knowledge of commercial gate repair. An ideal service provider will have a history of working with different kinds of gates and dealing with a range of problems.

## 24/7 Emergency Services

Gate malfunctions can arise at any time, and most likely at the most inconvenient one. Choose a [24/7 gate repair service](https://www.gaterepairexperts.com/) provider that is available at all hours of the day, so you can avoid downtime and security threats.

## Comprehensive Maintenance Plans

A maintenance plan keeps your gate in good condition between repairs. Select a service provider that regularly inspects and cleans your gate, provides preventive maintenance, and responds quickly to requests for repair.

## Summary

Regular maintenance and timely repair of the commercial gate are important steps in protecting the security, efficiency, and image of the enterprise. Identifying potential problems early, improving safety, and complying with requirements all help the gate system have a positive impact on the business. For commercial gate repair, hire a competent commercial gate repair company to ensure long-term use and save money on repairs. Maintenance and repair matter because equipment failure can result in business losses. Using high-quality materials and equipment when repairing gates gives the best results in terms of performance and durability. Security arrangements should also be in place while gate repairs and maintenance are carried out. Installing and maintaining professional gates is an essential way to support a business's activities and protect it from threats.

| information-stock |

1,872,125 | Exploring cognitive services use cases | Examples of applications and businesses transformed by cognitive services, along with a few future use cases | 0 | 2024-05-31T14:11:21 | https://dev.to/pubnub-fr/explorer-les-cas-dutilisation-des-services-cognitifs-e66 | Let's look at a few examples of applications and businesses transformed by cognitive services, along with a few future use cases, to see how much they are changing the technology landscape.

Thanks to cognitive services from cloud giants such as AWS, IBM, and Microsoft Azure, development teams of all sizes now have access to remarkably powerful [cognitive services](https://pubnub.com/resources/ebook/building-apps-with-cognitive-services/). Delivered through APIs, these services make it easy to inject next-generation intelligence into applications.

[**Chat**](https://pubnub.com/learn/glossary/what-is-a-chat-api/) **and social interaction**

--------------------------------------------------------------------------------------------

In 2015, monthly active users of chat apps overtook those of social networks, and the gap keeps widening. Indeed, messaging has become an essential part of social networks themselves. With this rapid growth, messaging apps have evolved from simple tools for sending and receiving short text messages into innovative, full-featured experiences with surprising and delightful features. Cognitive APIs are the engine of this innovation.

### **Chatbots and cognitive computing**

Chatbots are one of the earliest forms of AI algorithms. Although they are unlikely to pass the Turing test any time soon, they represent the natural evolution of voice-controlled applications. Where you once had to call a support line and press 1 for accounts payable, you can now speak in full sentences to a system capable of discerning your intent.

Whether you know it or not, chatbot adoption has exploded as companies look to cut wait times, improve the customer experience, and minimize the cost of human phone operators. For now, they are mainly used to handle simple tasks: understanding basic requests and responding according to predefined rules, answering questions such as "Where is my order?" or "Chatbot, turn on the mood lighting".
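
To make "predefined rules" concrete, here is a minimal sketch of a rule-based responder in JavaScript. It is only an illustration: the `rules` table, the `respond` function, the patterns, and the canned replies are all hypothetical, and it does not use any particular cognitive service or chatbot API.

```javascript
// Hypothetical rule table: each rule pairs a pattern with a canned reply.
// Patterns and replies are illustrative assumptions, not from a real product.
const rules = [
  { pattern: /where\s+is\s+my\s+order/i, reply: "Your order is on its way." },
  { pattern: /turn\s+on\s+the\s+(mood\s+)?light/i, reply: "Turning on the mood lighting." },
];

// Return the reply of the first matching rule, or a fallback when none match.
function respond(message) {
  const rule = rules.find((r) => r.pattern.test(message));
  return rule ? rule.reply : "Sorry, I didn't understand that.";
}

console.log(respond("Where is my order?"));
console.log(respond("Chatbot, turn on the mood lighting"));
```

A responder like this only recognises phrasings its patterns anticipate, which is why such bots tend to be limited to simple, predictable requests.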