id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,870,745 | DAFTAR | [](https://bungjpjaya2.online/register?ref=MCANAB01468 | 0 | 2024-05-30T20:03:28 | https://dev.to/pastijaya/daftar-bgd | [](https://bungjpjaya2.online/register?ref=MCANAB01468 | pastijaya | |

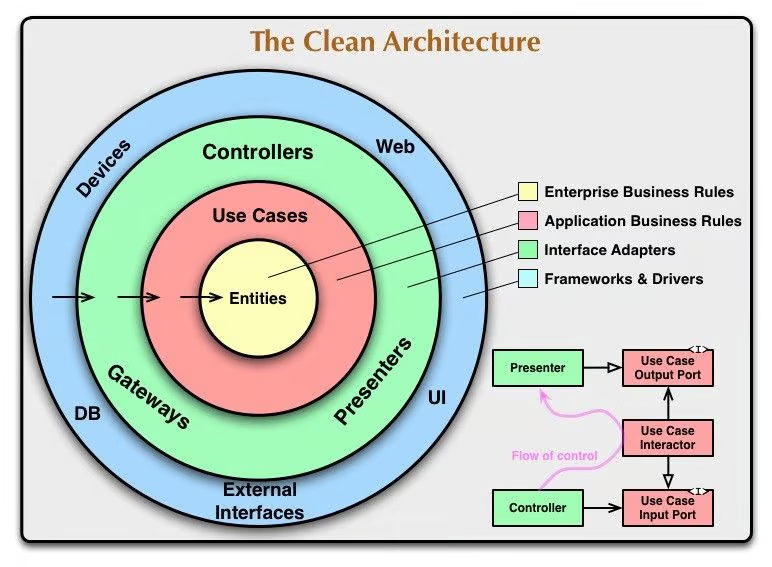

1,870,743 | Software Design and Architecture: Understanding Their Roles and Challenges in Development | Well, I'm starting to read the excellent book "Clean Architecture: A Craftsman's Guide to Software... | 0 | 2024-05-30T19:58:01 | https://dev.to/mathsena/software-design-and-architecture-understanding-their-roles-and-challenges-in-development-4jkf | I have started reading Robert C. Martin's excellent book "Clean Architecture: A Craftsman's Guide to Software Structure and Design", so I decided to write a bit about some of the important themes it covers.

We start with an important subject, which is the difference between Software Design and Architecture.

Effective software development requires a clear understanding of two fundamental concepts: software design and architecture. Although often used interchangeably, these terms describe distinct yet complementary aspects of the software creation process. To illustrate these differences, we can compare them to the different systems comprising a human body, where each part plays an essential role, but all work in harmony to ensure the proper functioning of the whole.

### Differences Between Software Design and Architecture

**Software architecture** is like the skeleton of the human body: a structure defining the arrangement and interconnection of the main components, just as bones support and connect all parts of the body. In the context of software, architecture involves high-level decisions about the systems and platforms to be used, the organization of code into modules or services, and the communication patterns between these components.

On the other hand, **software design** is comparable to the nervous system, responsible for ensuring that signals move efficiently throughout the body, controlling specific functions. In software, this translates to detailed specifications on how each part of the system should operate and interact, detailing algorithms, user interface patterns, and internal data management.

### Objectives and Importance

The goal of **software architecture** is to create a robust and scalable foundation that supports the system as a whole, ensuring that the software can grow and adapt without compromising its functionality. Effective architecture facilitates the maintenance and expansion of software, while poor architecture can lead to a system that is difficult to understand and costly to modify.

**Software design**, in contrast, aims to maximize the efficiency and quality of the system's internal operations. Good design improves code readability, simplifies debugging and maintenance, and reduces the risk of errors. It ensures that each software component functions correctly within the context established by the architecture.

### Costs and Examples

Investing in good software architecture and design can be expensive, but neglecting them often costs more. For example, the troubled 2013 launch of HealthCare.gov, the federal marketplace created under the Affordable Care Act (often called Obamacare), showed how poorly planned architecture can lead to system failures and very high repair costs. The cost of fixing the issues is estimated to have exceeded the original development budget several times over.

### Failures in Software Development

Failures in delivering high-quality software often occur due to several factors, such as **overconfidence**, **haste**, and **market pressure**. Developers might overestimate their ability to create complex systems within tight deadlines, resulting in poorly conceived architectures and rushed designs. The pressure to release products quickly can lead to poorly considered design and architecture decisions, negatively impacting the quality and sustainability of the software.

### The Importance of Clean Code

Clean, well-organized code is crucial for the maintenance and scalability of software. It allows other developers to quickly understand the system, reducing the time needed to implement new features or fix bugs. Moreover, clean code facilitates testing, which is essential for ensuring the stability and reliability of software over time.

### Conclusion

Software architecture and design are critical components that determine the success of a development project. Like a human body, each aspect must function in harmony to ensure the health and effectiveness of the system. Understanding these concepts and applying them carefully is key to avoiding the costs and failures associated with software development, culminating in the creation of durable and effective solutions. | mathsena | |

1,870,740 | Overview: Express.js Framework Middleware's | One of the most important things about Express.js is how minimal this framework is. Known for its... | 0 | 2024-05-30T19:54:58 | https://dev.to/buildwebcrumbs/overview-expressjs-framework-middlewares-4o65 | webdev, javascript, backend, express | One of the most important things about **Express.js** is how minimal this framework is. Known for its simplicity and performance, Express.js is one of the most popular frameworks in the **Node.js** ecosystem.

Let's dive into the interesting parts:

With **middleware** functions, you can access the request and response objects, which makes it easy to add functionality such as logging, authentication, and data parsing. Here is an example:

```js
const requestLogger = (req, res, next) => {
  console.log(`${req.method} ${req.url}`);
  next();
};
```

The **next()** function passes control to the next middleware. If it is never called, and the middleware does not send a response itself, the request will not reach the next middleware or route handler, and the request-response cycle will be left hanging.
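To make the role of `next()` concrete, here is a minimal sketch of a middleware chain in plain JavaScript. It is not Express's actual implementation; `runMiddleware`, `blocker`, and `handler` are hypothetical names used only for illustration.

```js
// Minimal stand-in for a middleware dispatch loop (illustration only, not Express's code).
function runMiddleware(stack, req, res) {
  let index = 0;
  const next = () => {
    const layer = stack[index++];
    if (layer) layer(req, res, next); // hand control to the next function in the stack
  };
  next();
}

const calls = [];
const logger = (req, res, next) => { calls.push('logger'); next(); };
const blocker = (req, res, next) => { calls.push('blocker'); /* next() is never called */ };
const handler = (req, res, next) => { calls.push('handler'); res.body = 'Hello, World!'; };

// Every middleware calls next(), so the handler is reached.
const res1 = {};
runMiddleware([logger, handler], { method: 'GET', url: '/' }, res1);
console.log(calls.join(' -> '));

// blocker does not call next(), so the chain stops and no response body is set.
calls.length = 0;
const res2 = {};
runMiddleware([logger, blocker, handler], { method: 'GET', url: '/' }, res2);
console.log(calls.join(' -> '));
```

In the second run the handler never executes, which is the "left hanging" situation described above.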

You can also apply the `requestLogger` **middleware** to every route with a single line of code:

```js
app.use(requestLogger);
```

This shows how easy **Express.js** code is to read! 😁

## Testing

_This is my favorite part, let's test it!!_

Add some basic routes to test the **middleware**:

```js
app.get('/', (req, res) => {
  res.send('Hello, World!');
});

app.get('/about', (req, res) => {
  res.send('About Page');
});
```

## Full code for testing the middleware functions

**Note:** Create a file named `index.js` and add the full code.

```js
const express = require('express');

const app = express();
const port = 3000;

const requestLogger = (req, res, next) => {
  console.log(`${req.method} ${req.url}`);
  next(); // Pass control to the next middleware function
};

app.use(requestLogger);

app.get('/', (req, res) => {
  res.send('Hello, World!');
});

app.get('/about', (req, res) => {
  res.send('About Page');
});

app.listen(port, () => {
  console.log(`Server running at http://localhost:${port}/`);
});
```

## IMPORTANT

Before running the server, check that the port set in `const port` is not already in use.

Then run this in the terminal:

```bash
node index.js
```
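The logger can also be checked without starting a server. The sketch below calls the middleware directly with fake request and response objects; `exerciseMiddleware` is a hypothetical helper written for this example, not part of Express.

```js
const requestLogger = (req, res, next) => {
  console.log(`${req.method} ${req.url}`);
  next();
};

// Hypothetical test helper: runs a middleware against fake objects and records what happened.
function exerciseMiddleware(middleware, req) {
  const logged = [];
  const originalLog = console.log;
  console.log = (...args) => logged.push(args.join(' ')); // capture output temporarily
  let nextCalled = false;
  try {
    middleware(req, {}, () => { nextCalled = true; });
  } finally {
    console.log = originalLog; // always restore the real console.log
  }
  return { logged, nextCalled };
}

const result = exerciseMiddleware(requestLogger, { method: 'GET', url: '/about' });
console.log(result);
```

Because middleware functions are plain functions of `(req, res, next)`, this kind of isolated check works for any of them, as long as the fake objects carry the properties the middleware reads.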

**Thank you! Please follow:** [Webcrumbs](https://webcrumbs.org)

_Bibliography: [Express.js Docs](https://expressjs.com/)_ | m4rcxs |

1,870,738 | The Critical Role of Mobile Optimization in Web Design and SEO | As the number of mobile users continues to surge, the importance of mobile optimization in web... | 0 | 2024-05-30T19:51:42 | https://dev.to/annamariapascual/the-critical-role-of-mobile-optimization-in-web-design-and-seo-2eeb | webdev | <p><img src="https://media.licdn.com/dms/image/C5612AQHxWDCLG5a8Dw/article-cover_image-shrink_720_1280/0/1570474066501?e=2147483647&v=beta&t=cXB17ofg5P3gFHuViWv_4tagHw5o-xv3Eu0EJsknZ3I" alt="How do I optimize my website for mobile?" width="672" height="378" /></p>

<p>As the number of mobile users continues to surge, the importance of mobile optimization in web design and SEO has never been more pronounced. A mobile-optimized website (<strong><a href="https://agenciafort.com.br/criacao-de-sites/">criação de sites</a></strong>) is not just a convenience; it’s a necessity. Here’s why mobile optimization should be at the forefront of your digital strategy:</p>

<p><strong>Mobile Usage Trends</strong> The mobile revolution has changed the way people access the internet. With smartphones becoming increasingly prevalent, more users are <strong>browsing</strong> the web on-the-go. This shift in user behavior means that websites must be designed with mobile users in mind to provide a seamless and accessible experience.</p>

<p><strong>Mobile-First Design Philosophy</strong> Adopting a mobile-first design philosophy involves creating a website with the mobile user’s needs as the primary focus. This approach ensures that the most critical information and functionality are presented in a clear, concise manner on smaller screens. It’s about prioritizing content and features that matter most to mobile users.</p>

<p><strong>Impact on User Experience (UX)</strong> Mobile optimization directly impacts UX. A mobile-friendly website loads quickly, has touch-friendly navigation, and scales content appropriately for smaller screens.<strong> <a href="https://agenciafort.com.br/">https://agenciafort.com.br</a></strong> This leads to higher user satisfaction, longer engagement times, and lower bounce rates, which are all positive signals to search engines.</p>

<p><strong>SEO Advantages</strong> Google and other search engines have recognized the shift towards mobile usage and have adjusted their algorithms accordingly. Mobile optimization is now a significant ranking factor. Websites that provide a superior mobile experience are more likely to rank higher in search results, making mobile optimization a critical component of SEO.</p>

<p><strong>Responsive Web Design</strong> Responsive web design is a technique that allows a website to adapt its layout to the screen size of the device it’s being viewed on. This flexibility ensures that whether a user is on a smartphone, tablet, or desktop, the website provides an optimal viewing experience.</p>

<p><strong>Speed Optimization</strong> Mobile users expect fast loading times. Speed optimization techniques such as image compression, caching, and minimizing code can significantly improve a website’s loading speed on mobile devices. Faster websites not only provide a better user experience but also contribute to better SEO performance.</p>

<p><strong>Local SEO and Mobile</strong> For businesses with a local presence, mobile optimization is even more critical. Mobile users often search for local information, and a mobile-optimized site with local SEO can drive foot traffic to physical locations.</p>

<p>In conclusion, mobile optimization is a cornerstone of modern web design and SEO. It enhances user experience, improves search engine rankings, and caters to the growing number of users who rely on mobile devices for their internet usage. Ignoring mobile optimization is no longer an option for businesses that want to succeed online.</p> | annamariapascual |

1,870,736 | Real-Time Tracking of Commits on the GitHub Dashboard | Learn how to build a dashboard that shows GitHub commits in real time. We use JavaScript, C3.js, and PubNub for this project. | 0 | 2024-05-30T19:46:34 | https://dev.to/pubnub-fr/suivi-en-temps-reel-des-commits-sur-le-tableau-de-bord-github-p51 | In software development, real-time C3.js charts offer an effective way to monitor activity within your organization. For engineering teams, one metric worth tracking is GitHub commits. This blog post provides a tutorial that walks you through using GitHub's API to retrieve GitHub commit data and display it in an interactive real-time chart. We will use HTML, JavaScript, and CSS, with PubNub to build the GitHub dashboard and stream the commit data, while C3.js handles the visualization.

To learn more about real-time C3.js charts, [we have an excellent tutorial](https://pubnub.com/blog/building-realtime-live-updating-animated-graphs-c3-js/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr). Now, let's dive in!

How to Build a Real-Time GitHub Dashboard
-----------------------------------------

Building a real-time GitHub dashboard involves connecting to data sources such as the GitHub repository and taking care of a few required dependencies. Keep the necessary security measures in mind, such as secure coding and data encryption, and follow industry-standard security protocols.

Here is a step-by-step guide:

### Add a GitHub Webhook

To set up the webhook, follow these steps:

1. Create a GitHub repository or use an existing Git repository.

2. Click "Settings" on the right side of the page.

3. Click "[Webhooks](https://www.pubnub.com/learn/glossary/what-is-a-webhook/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr)" on the left side of the page.

4. Click "Add webhook" in the top right.

5. GitHub will ask for your password; enter it.

6. Under 'Payload URL', enter **http://pubnub-git-hook.herokuapp.com/github/ORG-NAME/TEAM-NAME**, replacing ORG-NAME with the name of your organization and TEAM-NAME with the team that controls the repo.

### Load the Visual Dashboard

[Visit this page](https://pubnub.github.io/git-commits-ui/). You will see a list of all the commits sent through the PubNub dashboard. When you push one of your commits to GitHub, a message should appear on your GitHub commit dashboard within a few tens of milliseconds, and the charts will update in real time.

How We Built the GitHub Commit Dashboard
----------------------------------------

The dashboard is a mashup of GitHub, the PubNub Data Stream Network, and D3 chart visualizations powered by [C3.js](https://c3js.org/). When a commit is pushed to GitHub, the commit metadata is posted to a small Heroku instance, which publishes it to the PubNub network. [We host the dashboard page on GitHub Pages.](https://pubnub.github.io/git-commits-ui/)

Once our Heroku instance receives the commit data from GitHub, it publishes a summary of that data to PubNub using the public publish/subscribe keys on the **pubnub-git** channel. [You can monitor the pubnub-git channel through our developer console here](https://www.pubnub.com/docs/console/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr).

Here is an example message payload:

```js
{
  "name":"drnugent",
  "avatar_url":"https://avatars.githubusercontent.com/u/857270?v=3",
  "num_commits":4,
  "team":"team-pubnub",
  "org":"pubnub",
  "time":1430436692806,
  "repo_name":"drnugent/test"
}
```
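The relay's source code is not shown in this post, so the following is only a sketch of how such a summary might be derived from a GitHub push webhook payload. The function name `summarizePush` and the field mapping are assumptions; `sender.login`, `sender.avatar_url`, `repository.full_name`, and `commits` are fields of GitHub's push event payload.

```js
// Hypothetical relay step: build the dashboard message from a GitHub "push" payload.
// `org` and `team` are assumed to come from the ORG-NAME/TEAM-NAME segments of the Payload URL.
function summarizePush(payload, org, team, now = Date.now()) {
  return {
    name: payload.sender.login,
    avatar_url: payload.sender.avatar_url,
    num_commits: payload.commits.length,
    team,
    org,
    time: now, // milliseconds since the Unix epoch
    repo_name: payload.repository.full_name,
  };
}

// Trimmed-down sample payload containing only the fields read above.
const samplePush = {
  sender: { login: 'drnugent', avatar_url: 'https://avatars.githubusercontent.com/u/857270?v=3' },
  repository: { full_name: 'drnugent/test' },
  commits: [{ id: 'a1' }, { id: 'b2' }, { id: 'c3' }, { id: 'd4' }],
};

const message = summarizePush(samplePush, 'pubnub', 'team-pubnub', 1430436692806);
console.log(JSON.stringify(message, null, 2));
```

Passing the timestamp as a parameter keeps the function deterministic, which makes it easier to test than calling `Date.now()` inside.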

The second half of the magic happens when the dashboard receives this information through its **subscribe callback**. If you look at the dashboard's source, you will see this code:

```js
pubnub.subscribe({
  channel: 'pubnub-git',
  message: displayLiveMessage
});
```

This subscribe call ensures that the JavaScript function **displayLiveMessage()** is called every time a message is received on the **pubnub-git** channel. displayLiveMessage() adds the commit push notification to the top of the log and updates the C3 visualization charts.
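The body of displayLiveMessage() is not reproduced here. As a rough sketch of the chart-updating half, a handler could fold each message into running totals and produce column data in the `[seriesName, value]` shape that C3 charts accept. `applyCommitMessage`, `commitsByAuthor`, and the author `octocat` are hypothetical names for illustration.

```js
// Running totals keyed by author name (hypothetical in-memory state).
const commitsByAuthor = new Map();

// Hypothetical handler playing part of the role of displayLiveMessage():
// fold one message into the totals and return C3-style column data.
function applyCommitMessage(totals, message) {
  const previous = totals.get(message.name) || 0;
  totals.set(message.name, previous + message.num_commits);
  // Each column is an array of the form [seriesName, value].
  return Array.from(totals, ([name, count]) => [name, count]);
}

applyCommitMessage(commitsByAuthor, { name: 'drnugent', num_commits: 4 });
applyCommitMessage(commitsByAuthor, { name: 'octocat', num_commits: 1 });
const columns = applyCommitMessage(commitsByAuthor, { name: 'drnugent', num_commits: 2 });
console.log(columns); // [ [ 'drnugent', 6 ], [ 'octocat', 1 ] ]
```

In a page that already has a C3 chart object, the returned array could then be handed to the chart's data-loading call; that wiring is left out because it depends on how the dashboard creates its charts.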

But wait, how is the dashboard populated when it first loads?

Using the PubNub Storage & Playback API for Your Dashboard
----------------------------------------------------------

PubNub keeps a record of every message sent and gives developers a way to access those saved messages through the [Storage & Playback (History) API](https://www.pubnub.com/products/pubnub-platform/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr). Deeper in the web dashboard, you will see the following code:

```js
var displayMessages = function(ms) { ms[0].forEach(displayMessage); };

pubnub.history({
  channel: 'pubnub-git',
  callback: displayMessages,
  count: 100
});
```

This is a request to retrieve the last 100 messages sent on the pubnub-git channel. Even if the web dashboard was offline when those messages were sent, it can retrieve them and use that data to populate the dashboard as if it had been online the whole time.
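One practical detail is that a message can arrive both in the history batch and through the live subscription. The sketch below shows one way to merge the two without duplicates. It assumes, as the callback above does, that the message list is the first element of the history response; `mergeMessages` and the deduplication key are hypothetical choices.

```js
// Merge a history batch with messages that arrived live: oldest first, no duplicates.
function mergeMessages(historyResponse, liveMessages) {
  const backlog = historyResponse[0]; // same element the post's callback reads via ms[0]
  const seen = new Set();
  return [...backlog, ...liveMessages]
    .filter((m) => {
      const key = `${m.name}|${m.repo_name}|${m.time}`; // assumed to identify a message
      if (seen.has(key)) return false;
      seen.add(key);
      return true;
    })
    .sort((a, b) => a.time - b.time);
}

const sampleHistory = [[
  { name: 'drnugent', repo_name: 'drnugent/test', time: 1430436692806, num_commits: 4 },
  { name: 'drnugent', repo_name: 'drnugent/test', time: 1430436700000, num_commits: 1 },
]];
const sampleLive = [
  { name: 'drnugent', repo_name: 'drnugent/test', time: 1430436700000, num_commits: 1 }, // duplicate
  { name: 'drnugent', repo_name: 'drnugent/test', time: 1430436800000, num_commits: 2 },
];

const merged = mergeMessages(sampleHistory, sampleLive);
console.log(merged.map((m) => m.time));
```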

This feature is especially useful for devices with intermittent or unreliable connectivity, such as mobile apps on cellular networks or connected cars. Thanks to the PubNub network, our visualization dashboard needs no backend to store application state.

Building Your Own GitHub Dashboard
----------------------------------

To start building your GitHub dashboard, fork the Git Commit UI repository on github.com and follow the setup instructions in the README. Pull requests are welcome as part of open-source community collaboration.

Future Trends and Developments in Real-Time Dashboards
------------------------------------------------------

It is worth keeping an eye on the latest trends and developments in real-time dashboards and related technologies. These include WebSockets for real-time data transmission, notifications for immediate insight, and the use of real-time dashboards in a variety of workflows.

The PubNub Experience
---------------------

PubNub has helped many customers succeed with their real-time applications. For example, LinkedIn's real-time notification system...

Get Set Up
----------

Sign up for a PubNub account for immediate, free access to PubNub keys. The latest features available in your PubNub account include ...

Get Started
-----------

Our comprehensive [PubNub documentation](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr) will get you up and running in no time, whatever your use case or [SDK](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr).

PubNub offers a user-friendly platform to improve your user experience. Our services are designed with developers in mind for a seamless integration process.

Remember, we are here to make your real-time development journey smoother and more efficient. Set up your payload URL and let's get started!

Official documentation and authoritative sources can be referenced throughout the blog post to confirm the validity of the information.

How Can PubNub Help You?
========================

This article was originally published on [PubNub.com](https://www.pubnub.com/blog/tracking-realtime-github-dashboard-commits/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr)

Our platform helps developers build, deliver, and manage real-time interactivity for web apps, mobile apps, and IoT devices.

The foundation of our platform is the industry's largest and most scalable real-time messaging network. With over 15 points of presence worldwide, 800 million monthly active users, and 99.999% reliability, you will never have to worry about outages, concurrency limits, or latency issues caused by traffic spikes.

Experience PubNub
-----------------

Check out the [Live Tour](https://www.pubnub.com/tour/introduction/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr) to understand the essential concepts behind every PubNub-powered app in less than 5 minutes.

Get Set Up
----------

Sign up for a [PubNub account](https://admin.pubnub.com/signup/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr) for immediate, free access to PubNub keys.

Get Started
-----------

The [PubNub documentation](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr) will get you started, whatever your use case or [SDK](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr). | pubnubdevrel | |

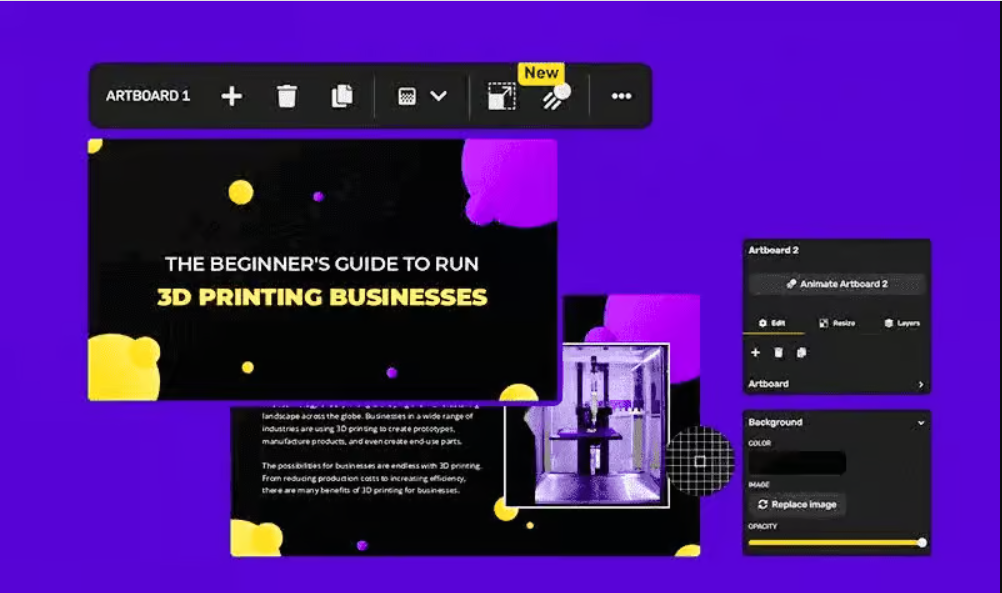

1,870,735 | Business Showcase Innovations for Success | Crafting a compelling business presentation is an art form that requires both strategic planning and... | 0 | 2024-05-30T19:43:14 | https://dev.to/businesspresentation/business-showcase-innovations-for-success-55ki | Crafting a compelling business presentation is an art form that requires both strategic planning and captivating visuals. It's about not only delivering information but also igniting the interest of your audience and inspiring action.

However, let's face it: starting from scratch with a blank slide can be daunting. That's where business PowerPoint templates come in – they provide a strong foundation to build upon, saving you valuable time and effort.

Finding the Perfect Template: Match Your Message to the Design

Business PowerPoint templates come in a wide array, offering a variety of styles and functionalities. However, with so many options to choose from, how do you select the perfect one? The key lies in aligning the template's design with your presentation's core message and objective.

Here are some key considerations:

Presentation Type: Are you delivering a formal pitch deck to investors, a project update to colleagues, or a sales presentation to potential clients? Different presentation types benefit from distinct design aesthetics. For instance, a pitch deck might favor a sleek, modern template, while a project update might utilize a more process-oriented layout.

Target Audience: Who are you presenting to? Understanding your audience's expectations is crucial. A presentation for a tech-savvy audience might leverage a data-heavy template with interactive elements. Conversely, a presentation for a more traditional audience might prioritize a classic, clean design.

Brand Identity: Does your company have established branding guidelines? If so, consider templates that complement your brand's color palette, fonts, and overall visual identity. Maintaining brand consistency fosters recognition and trust with your audience.

Beyond Aesthetics: Leveraging Templates for Maximum Impact

While a well-designed template provides a strong visual foundation, it's just the first step. To create a truly impactful presentation, here are some additional tips:

Content is King: Remember, even the most stunning template can't compensate for weak content. Focus on crafting a clear, concise, and engaging message that resonates with your audience.

Data Visualization: Infographics, charts, and graphs can effectively communicate complex information in a visually appealing way. Utilize the template's built-in charts or incorporate high-quality visuals to enhance your presentation's clarity.

Storytelling Power: Facts and figures are important, but weaving them into a compelling narrative is key. Use the template's layout to guide your storytelling, strategically placing key points and visuals to keep your audience engaged.

Practice Makes Perfect: Rehearse your delivery beforehand. A polished presentation, delivered with confidence, will leave a lasting impression on your audience.

[Business PowerPoint templates](https://simplified.com/ai-presentation-maker/business) are a valuable tool for anyone creating business presentations. By selecting the right template and focusing on strong content delivery, you can craft presentations that not only inform but also inspire and influence your audience. | businesspresentation | |

1,870,734 | Tracking GitHub Dashboard Commits in Real Time | Learn to build a dashboard that displays GitHub commits in real time. We use JavaScript, C3.js, and PubNub for this project. | 0 | 2024-05-30T19:41:33 | https://dev.to/pubnub-de/verfolgung-von-github-dashboard-commits-in-echtzeit-3a47 | In software development, real-time C3.js charts offer an effective way to monitor activity in your organization. For engineering teams, one trackable metric is GitHub commits. This blog post provides a tutorial that guides you through using the GitHub API to retrieve GitHub commit data and display it in an interactive real-time chart. We use HTML, JavaScript, and CSS, with PubNub to build the GitHub dashboard and stream the commit data, while C3.js helps with the visualization.

To learn more about real-time C3.js charts, [we have a great tutorial](https://pubnub.com/blog/building-realtime-live-updating-animated-graphs-c3-js/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de). Now let's dive in!

How to Build a Real-Time GitHub Dashboard
-----------------------------------------

To build a real-time GitHub dashboard, you need to connect to data sources such as the GitHub repository and take care of a few required dependencies. Pay attention to the necessary security measures, such as secure coding and data encryption, and follow industry-standard security protocols.

Here is a step-by-step guide:

### Add a GitHub Webhook

Follow these steps to set up the webhook:

1. Create a GitHub repository or use an existing Git repository.

2. Click "Settings" on the right side of the page.

3. Click "[Webhooks](https://www.pubnub.com/learn/glossary/what-is-a-webhook/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de)" on the left side of the page.

4. Click "Add webhook" in the top right.

5. GitHub will ask for your password; enter it.

6. Under 'Payload URL', enter **http://pubnub-git-hook.herokuapp.com/github/ORG-NAME/TEAM-NAME**, replacing ORG-NAME with the name of your organization and TEAM-NAME with the team that controls the repo.

### Load the Visual Dashboard

[Visit this page](https://pubnub.github.io/git-commits-ui/). You will see a list of all the commits sent through the PubNub dashboard. When you push one of your commits to GitHub, a message should appear on your GitHub commit dashboard within a few milliseconds, and the charts will update in real time.

How We Built the GitHub Commit Dashboard
----------------------------------------

The dashboard is a mashup of GitHub, the PubNub Data Stream Network, and D3 chart visualizations powered by [C3.js](https://c3js.org/). When a commit is pushed to GitHub, the commit metadata is sent to a small Heroku instance, which publishes it to the PubNub network. [We host the dashboard page on GitHub Pages.](https://pubnub.github.io/git-commits-ui/)

Once our Heroku instance receives the commit data from GitHub, it publishes a summary of that data to PubNub using the public publish/subscribe keys on the **pubnub-git** channel. [You can monitor the pubnub-git channel through our developer console here](https://www.pubnub.com/docs/console/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de).

Here is an example message payload:

```js
{
  "name":"drnugent",
  "avatar_url":"https://avatars.githubusercontent.com/u/857270?v=3",
  "num_commits":4,
  "team":"team-pubnub",
  "org":"pubnub",
  "time":1430436692806,
  "repo_name":"drnugent/test"
}
```
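Because the channel uses public keys, a dashboard may want to check that an incoming message has this shape before drawing it. The following is a minimal sketch under that assumption; `validateCommitMessage` is a hypothetical helper, and the expected types are inferred from the sample payload only.

```js
// Returns a list of problems; an empty list means the message looks usable.
function validateCommitMessage(message) {
  // Field types inferred from the sample payload (an assumption, not a published schema).
  const expected = {
    name: 'string',
    avatar_url: 'string',
    num_commits: 'number',
    team: 'string',
    org: 'string',
    time: 'number',
    repo_name: 'string',
  };
  const problems = [];
  for (const [field, type] of Object.entries(expected)) {
    if (typeof message[field] !== type) {
      problems.push(`${field} should be a ${type}`);
    }
  }
  return problems;
}

const goodMessage = {
  name: 'drnugent',
  avatar_url: 'https://avatars.githubusercontent.com/u/857270?v=3',
  num_commits: 4,
  team: 'team-pubnub',
  org: 'pubnub',
  time: 1430436692806,
  repo_name: 'drnugent/test',
};
const goodProblems = validateCommitMessage(goodMessage);
const badProblems = validateCommitMessage({ name: 'drnugent', num_commits: '4' });
console.log(goodProblems, badProblems);
```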

The second half of the magic happens when the dashboard receives this information through its **subscribe callback**. If you look at the dashboard's source, you will see this code:

```js
pubnub.subscribe({
  channel: 'pubnub-git',
  message: displayLiveMessage
});
```

This subscribe call ensures that the JavaScript function **displayLiveMessage()** is called every time a message is received on the **pubnub-git** channel. displayLiveMessage() adds the commit push notification to the top of the log and updates the C3 visualization charts.

But wait, how is the dashboard populated when it first loads?

Using the PubNub Storage & Playback API for Your Dashboard
----------------------------------------------------------

PubNub stores every message sent and gives developers a way to access those stored messages through the [Storage & Playback (History) API](https://www.pubnub.com/products/pubnub-platform/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de). Deeper in the web dashboard, you will see the following code:

```js
var displayMessages = function(ms) { ms[0].forEach(displayMessage); };

pubnub.history({
  channel: 'pubnub-git',
  callback: displayMessages,
  count: 100
});
```

This is a request to retrieve the last 100 messages sent over the pubnub-git channel. Even if the web dashboard was offline when those messages were sent, it can retrieve them and use that data to populate the dashboard as if it had been online the whole time.
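Once a batch of messages has been retrieved, it can be grouped for charting. As a small sketch, the function below totals commits per UTC day using each message's `time` field, which the sample payload suggests is a millisecond timestamp. `commitsPerDay` is a hypothetical helper, not part of the dashboard's source.

```js
// Total the commits per UTC calendar day from a list of dashboard messages.
function commitsPerDay(messages) {
  const totals = {};
  for (const m of messages) {
    const day = new Date(m.time).toISOString().slice(0, 10); // UTC date, YYYY-MM-DD
    totals[day] = (totals[day] || 0) + m.num_commits;
  }
  return totals;
}

const HOUR_MS = 60 * 60 * 1000;
const sampleMessages = [
  { time: 1430436692806, num_commits: 4 },               // 2015-04-30 23:31 UTC
  { time: 1430436692806 + HOUR_MS, num_commits: 2 },     // 2015-05-01 00:31 UTC
  { time: 1430436692806 + 2 * HOUR_MS, num_commits: 1 }, // 2015-05-01 01:31 UTC
];

const perDay = commitsPerDay(sampleMessages);
console.log(perDay); // { '2015-04-30': 4, '2015-05-01': 3 }
```

Grouping by UTC keeps the result independent of the viewer's time zone; a dashboard meant for a single team might prefer local dates instead.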

This feature is especially useful for devices with intermittent or unreliable connectivity, such as mobile apps on cellular networks or connected cars. Thanks to the PubNub network, our visualization dashboard needs no backend to store application state.

Build Your Own GitHub Dashboard
-------------------------------

To start building your GitHub dashboard, fork the Git Commit UI repository on github.com and follow the setup instructions in the README. Pull requests are welcome as part of open-source community collaboration.

Future Trends and Developments in Real-Time Dashboards
------------------------------------------------------

It is worth keeping an eye on the latest trends and developments in real-time dashboards and related technologies. These include WebSockets for real-time data transmission, notifications for immediate insight, and the use of real-time dashboards in a variety of workflows.

Erfahrung mit PubNub

--------------------

PubNub hat zahlreichen Kunden geholfen, mit ihren Echtzeitanwendungen erfolgreich zu sein. Zum Beispiel das Echtzeit-Benachrichtigungssystem von LinkedIn...

Einrichten

----------

Melden Sie sich für ein PubNub-Konto an, um sofort und kostenlos Zugang zu den PubNub-Schlüsseln zu erhalten. Die neuesten Funktionen in Ihrem PubNub-Konto umfassen ...

Anfangen

--------

Mit unseren umfassenden [PubNub-Dokumenten](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de) sind Sie im Handumdrehen startklar, unabhängig von Ihrem Anwendungsfall oder [SDK](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de).

PubNub bietet eine benutzerfreundliche Plattform zur Verbesserung der Benutzerfreundlichkeit. Unsere Dienste sind so konzipiert, dass sie Entwicklern einen nahtlosen Integrationsprozess ermöglichen.

Vergessen Sie nicht, dass wir hier sind, um Ihre Echtzeit-Entwicklungsreise reibungsloser und effizienter zu gestalten. Richten Sie Ihre Nutzdaten-URL ein und lassen Sie uns beginnen!

How Can PubNub Help You?
========================

This article was originally published on [PubNub.com](https://www.pubnub.com/blog/tracking-realtime-github-dashboard-commits/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de).

Our platform helps developers build, deliver, and manage realtime interactivity for web apps, mobile apps, and IoT devices.

The foundation of our platform is the industry's largest and most scalable realtime edge messaging network. With over 15 points of presence worldwide supporting 800 million monthly active users and 99.999% reliability, you do not have to worry about outages, concurrency limits, or latency issues caused by traffic spikes.

Experience PubNub
-----------------

Check out the [Live Tour](https://www.pubnub.com/tour/introduction/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de) to understand the essential concepts behind every PubNub-powered app in less than 5 minutes.

Get Set Up
----------

Sign up for a [PubNub account](https://admin.pubnub.com/signup/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de) for immediate, free access to PubNub keys.

Get Started
-----------

The [PubNub docs](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de) will get you up and running, regardless of your use case or [SDK](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de). | pubnubdevrel | |

1,870,806 | Publishing a Docker Image to a Repository with Azure DevOps | In this example we will push a Docker image to the Docker Hub repository with Azure DevOps. This... | 0 | 2024-05-30T20:56:48 | https://www.ahioros.info/2024/05/subir-imagen-docker-repositorio-con.html | azure, devops, linux, spanish | ---

title: Publishing a Docker Image to a Repository with Azure DevOps

published: true

date: 2024-05-30 19:39:00 UTC

tags: Azure,DevOps,Linux,spanish

canonical_url: https://www.ahioros.info/2024/05/subir-imagen-docker-repositorio-con.html

---

In this example we will push a Docker image to a Docker Hub repository using Azure DevOps.

This tutorial continues from [How to connect an Azure DevOps Pipelines pipeline to Docker Hub](https://www.ahioros.info/2024/05/como-conectar-un-pipeline-de-azure.html) and [Creating a Docker image of a React application](https://www.ahioros.info/2024/05/creando-una-imagen-docker-de-una.html). If you have not read them, please do so to see where we left off.

Now that we have created our Dockerfile and .dockerignore, we can continue. Here is what we will do:

1. Add the build of a Docker image for a React application to our pipeline.

2. Push that image to our Docker Hub repository.

<!-- agrega el botón leer más -->

We will do both in a single step (a task, in this case).

We take the pipeline YAML file we created earlier and add the **buildAndPush** task.

```yaml
pr:
  branches:
    include:
      - "*"

pool:
  vmImage: ubuntu-latest

stages:
  - stage: LoginAndLogout
    jobs:
      - job: buildandpush
        steps:
          - task: Docker@2
            displayName: Login
            inputs:
              command: login
              containerRegistry: docker-hub-test
          - task: Docker@2
            displayName: BuildAndPush
            inputs:
              command: buildAndPush
              containerRegistry: docker-hub-test
              repository: ahioros/rdicidr
              tags: latest
          - task: Docker@2
            displayName: Logout
            inputs:
              command: logout
              containerRegistry: docker-hub-test
```

**Note**: In this example every image we push gets the **latest** tag. If you prefer, you can change this by using $(Build.BuildId) as a tag:

```yaml
tags: |
  $(Build.BuildId)
  latest
```

Run the pipeline with these changes. When it finishes, go to your Docker Hub repository, where you will see the new image.

Here is the video of this configuration in case you have questions:

{% youtube nmhLqZ3Kk_I %}

| ahioros |

1,870,732 | Preventing Your Pet from Going Missing from Home: Simple Steps You Can Take | Learn how to keep your pet from escaping from home with simple methods you can apply right away | 0 | 2024-05-30T19:37:09 | https://pey-journey.co | pet, technology |

---

published: true

description: "Learn how to keep your pet from escaping from home with simple methods you can apply right away"

canonical_url: "https://pey-journey.co"

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/gywl0dwy8xi5c5a3vf4g.jpeg

---

## Preventing Your Pet from Going Missing from Home: Simple Steps You Can Take

Pets are part of our family, and losing one can bring grief and stress to its owner. Keeping pets from going missing from home is therefore very important. The following measures can help.

## 1. Install Sturdy Fences and Gates

Installing sturdy fences and gates is a good way to keep pets from escaping. The fence should be tall enough and have no gaps a pet could slip through. For gates, make sure they are a type the pet cannot open on its own.

## 2. Use an ID Tag or a Microchip

A tag or microchip carrying the owner's details makes a pet easy to identify if it goes missing. Microchipping is highly effective because, unlike a tag, a microchip cannot fall off.

## 3. Teach Your Pet Basic Commands

Teaching your pet basic commands such as "stop" or "come" gives you better control in situations where it might run off.

## 4. Give Care and Attention

Pets that get enough care and attention are less likely to try to escape in search of fun or attention outside. Set aside time every day to play and exercise with your pet.

## 5. Install a Lockable Pet Door

If you have a pet door in your home, choose one with a lock so your pet cannot go outside without permission.

## 6. Supervise Closely

Close supervision is important whenever your pet is outdoors. Make sure your pet stays in sight at all times, and use a leash when going out if needed.

Keeping a pet from going missing takes planning and attention to detail, but these measures will help you be confident that your pet stays safe and with you.

---

| poom-sci |

1,870,731 | Tracking Realtime GitHub Dashboard Commits | Learn how to build a dashboard that shows GitHub commits in realtime. This project uses JavaScript, C3.js, and PubNub. | 0 | 2024-05-30T19:36:32 | https://dev.to/pubnub-ko/silsigan-github-daesibodeu-keomis-cujeoghagi-39fc | In software development, realtime C3.js charts offer an effective way to monitor activity in your organization. For engineering teams, one trackable metric is GitHub commits. To explore this topic, this blog post provides a tutorial that walks you through using GitHub's API to retrieve GitHub commit data and display it in a realtime, interactive graph. We will use HTML, JavaScript, and CSS, with PubNub to build the GitHub dashboard and stream the commit data, while C3.js handles the visualization.

To learn more about [realtime C3.js charts](https://pubnub.com/blog/building-realtime-live-updating-animated-graphs-c3-js/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko), see our [tutorial](https://pubnub.com/blog/building-realtime-live-updating-animated-graphs-c3-js/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko). Now, let's get started!

How to Create a Realtime GitHub Dashboard
-----------------------------------------

Creating a realtime GitHub dashboard involves connecting to data sources such as a GitHub repository and handling a few required dependencies. Keep the necessary security measures in mind, such as secure coding and data encryption. Following industry-standard security protocols is essential.

Here is a step-by-step guide:

### Add a GitHub Webhook

To set up the webhook, follow these steps:

1. Create a GitHub repository or use an existing Git repository.

2. Click 'Settings' on the right side of the page.

3. Click '[Webhooks](https://www.pubnub.com/learn/glossary/what-is-a-webhook/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko)' on the left side of the page.

4. Click 'Add webhook' at the top right.

5. Enter your password if GitHub prompts for it.

6. For 'Payload URL', enter **http://pubnub-git-hook.herokuapp.com/github/ORG-NAME/TEAM-NAME**. Replace ORG-NAME with your organization's name and TEAM-NAME with the team that controls the repository.

### Load the Visual Dashboard

[Visit this page](https://pubnub.github.io/git-commits-ui/). You will see a list of all commits sent through the PubNub dashboard. When you push a commit to GitHub, a message appears on the GitHub commit dashboard within tens of milliseconds and the chart updates in realtime.

How the GitHub Commit Dashboard Is Built
----------------------------------------

This dashboard is a mashup of GitHub, the PubNub Data Stream Network, and D3 chart visualizations powered by [C3.js](https://c3js.org/). When a commit is pushed to GitHub, the commit metadata is posted to a small Heroku instance, which publishes it to the PubNub network. [We host the dashboard page on GitHub Pages.](https://pubnub.github.io/git-commits-ui/)

When the Heroku instance receives commit data from GitHub, it publishes a summary of that data to PubNub on the **pubnub-git** channel using public publish/subscribe keys. [You can monitor the pubnub-git channel through our developer console here](https://www.pubnub.com/docs/console/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko).

Here is an example message payload:

```js
{
  "name":"drnugent",
  "avatar_url":"https://avatars.githubusercontent.com/u/857270?v=3",
  "num_commits":4,
  "team":"team-pubnub",
  "org":"pubnub",
  "time":1430436692806,
  "repo_name":"drnugent/test"
}
```

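The second half of the magic happens when the dashboard receives this information through its **subscribe callback**. If you view the dashboard's source, you will see this code: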

```js
pubnub.subscribe({
  channel: 'pubnub-git',
  message: displayLiveMessage
});
```

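This subscribe call ensures that the JavaScript function **displayLiveMessage()** is called whenever a message is received on the **pubnub-git** channel. displayLiveMessage() adds the commit push notification to the top of the log and updates the C3 visualization chart.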
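
The article does not show the body of displayLiveMessage(), so the following is only a sketch of one way the chart data could be derived from incoming payloads. It sums `num_commits` per `name`, using the field names from the example payload. `tallyCommits` is a hypothetical helper, and the `[label, value]` output shape is an assumption chosen to resemble the column arrays that charting libraries such as C3 commonly accept.

```js
// Hypothetical helper: sums num_commits per author across received messages.
function tallyCommits(messages) {
  var totals = {};
  messages.forEach(function (msg) {
    var count = Number(msg.num_commits) || 0; // treat missing counts as 0
    totals[msg.name] = (totals[msg.name] || 0) + count;
  });
  // One [label, value] pair per author, largest total first.
  return Object.keys(totals)
    .map(function (name) { return [name, totals[name]]; })
    .sort(function (a, b) { return b[1] - a[1]; });
}

// Made-up messages shaped like the example payload.
var columns = tallyCommits([
  { name: 'drnugent', num_commits: 4 },
  { name: 'alice', num_commits: 1 },
  { name: 'drnugent', num_commits: 2 }
]);
console.log(columns);
```

Keeping the aggregation separate from the rendering means the same function can serve both live messages and messages replayed from history.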

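But how is the dashboard populated when it first loads?

Using the PubNub Storage & Playback API for the Dashboard
---------------------------------------------------------

PubNub keeps a record of each message sent and gives developers access to those stored messages through the [Storage & Playback (History) API](https://www.pubnub.com/products/pubnub-platform/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko). Deeper in the web dashboard, you will see the following code: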

```js
var displayMessages = function(ms) { ms[0].forEach(displayMessage); };

pubnub.history({
  channel: 'pubnub-git',
  callback: displayMessages,
  count: 100
});
```

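This request retrieves the last 100 messages sent over the pubnub-git channel. Even if the web dashboard was offline when those messages were sent, it can retrieve them and use that data to populate the dashboard as if it had been online the whole time.

This feature is especially useful for devices with intermittent or unreliable connectivity, such as mobile apps on cellular networks or connected cars. Thanks to the PubNub network, the visualization dashboard needs no backend to store the application's state.

Build Your Own GitHub Dashboard
-------------------------------

To start building your own GitHub dashboard, fork the Git Commit UI repository on github.com and follow the setup instructions in the README. Pull requests are welcome as part of open-source community collaboration.

Future Trends and Developments in Realtime Dashboards
-----------------------------------------------------

It is worth keeping an eye on recent trends and developments in realtime dashboards and related technologies. These include WebSockets for realtime data transfer, notifications for immediate insights, and the use of realtime dashboards across different workflows.

Experience PubNub
-----------------

PubNub has helped numerous customers succeed with their realtime applications. For example, LinkedIn's realtime notification system...

Get Set Up
----------

Sign up for a PubNub account for immediate, free access to PubNub keys. The latest features available in your PubNub account include...

Get Started
-----------

With our comprehensive [PubNub docs](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko), you will be up and running in no time, regardless of your use case or [SDK](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko).

PubNub offers a user-friendly platform for improving the user experience. Our services are designed with developers in mind for a seamless integration process.

We are here to make your realtime development journey smoother and more efficient. Set up your payload URL and get started!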

How Can PubNub Help You?
========================

This article was originally published on [PubNub.com](https://www.pubnub.com/blog/tracking-realtime-github-dashboard-commits/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko).

Our platform helps developers build, deliver, and manage realtime interactivity for web apps, mobile apps, and IoT devices.

The foundation of our platform is the industry's largest and most scalable realtime edge messaging network. With over 15 points of presence worldwide supporting 800 million monthly active users and 99.999% reliability, you do not have to worry about outages, concurrency limits, or latency issues caused by traffic spikes.

Experience PubNub
-----------------

Take the [Live Tour](https://www.pubnub.com/tour/introduction/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko) to understand the essential concepts behind every PubNub-powered app in less than 5 minutes.

Get Set Up
----------

Sign up for a PubNub [account](https://admin.pubnub.com/signup/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko) for immediate, free access to PubNub keys.

Get Started
-----------

The [PubNub docs](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko) will get you up and running, regardless of your use case or [SDK](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko). | pubnubdevrel | |

1,870,730 | Conquer Your Cloud Bill: Mastering Azure Cost Optimization | The cloud empowers businesses with unparalleled scalability, agility, and on-demand resources. But... | 0 | 2024-05-30T19:35:29 | https://dev.to/unicloud/conquer-your-cloud-bill-mastering-azure-cost-optimization-4o7k | azure, webdev, aws | The cloud empowers businesses with unparalleled scalability, agility, and on-demand resources. But with this flexibility comes the responsibility of managing Azure cloud costs. Unchecked spending can quickly erode your budget and hinder growth. Here's where Azure cost optimization steps in – a strategic approach to minimizing your Azure expenses while maximizing the value you get from your cloud investment.

**Why Optimize Your Azure Costs?**

Unlike traditional IT infrastructure with upfront costs, Azure offers a pay-as-you-go model. This flexibility can lead to uncontrolled spending if left unchecked. Here's why Azure cost optimization is crucial:

- **Reduced Costs:** Studies show organizations can achieve significant cost savings (up to 30%) by implementing optimization strategies. These savings can be re-invested in core business initiatives or fueling further cloud adoption.

- **Improved Budgeting & Forecasting:** Gaining deeper visibility into your Azure spending patterns allows for accurate budgeting and forecasting. This financial transparency empowers you to make informed decisions about resource allocation and avoid surprise cost spikes.

- **Enhanced Performance:** Optimizing your Azure resources ensures peak performance while keeping costs under control. This translates to faster processing times, improved application responsiveness, and a better overall user experience.

**Mastering the Art of Azure Cost Optimization**

Microsoft offers a robust set of tools and strategies to help you conquer your Azure cloud bill:

- **Leverage Azure Cost Management:** This built-in service provides a centralized view of your Azure spending across subscriptions, resource groups, and individual resources. Utilize cost analysis reports to identify trends, anomalies, and potential savings opportunities.

- **Embrace Reserved Instances (RIs):** Ideal for predictable workloads, RIs offer significant discounts compared to pay-as-you-go pricing. You commit to using a specific instance size for a one or three-year term in exchange for upfront savings.

- **Explore Azure Savings Plans:** Similar to RIs, Savings Plans offer significant discounts for consistent compute resource usage. They provide flexibility across different instance sizes within a chosen region.

- **Unleash the Power of VM Autoscaling:** Automate scaling your virtual machines (VMs) up or down based on real-time demand. This ensures you only pay for the resources you actually use, eliminating idle resource costs.

- **Identify and Shut Down Idle Resources:** Use Azure Advisor, a free service that recommends optimization opportunities. It can pinpoint unused VMs, databases, or other resources that can be stopped or scaled down to reduce costs.

- **Embrace Cost-Effective Storage Options:** Choose the right storage tier based on your data access needs. Consider cost-effective options like Azure Archive Blob storage for infrequently accessed data.

- **Promote a Culture of Cost Awareness:** Foster a culture within your organization where everyone understands the importance of responsible cloud usage. Educate users on cost optimization best practices.

**Taking Action: Your Azure Cost Optimization Journey**

**1. Assess Your Current Spending:** Utilize Azure Cost Management to understand your baseline cloud spending patterns. Identify areas with high costs or potential for optimization.

**2. Set SMART Goals:** Establish clear, measurable, achievable, relevant, and time-bound goals for your Azure cost optimization efforts. Aim for realistic cost reduction targets within a defined timeframe.

**3. Develop an Action Plan:** Create a roadmap outlining specific actions you'll take to achieve your cost optimization goals. Assign ownership for each action item and establish a timeline for implementation.

**4. Monitor and Refine:** Continuously monitor your progress and adjust your strategy as needed. Utilize Azure Cost Management to track your cost reduction efforts and identify new optimization opportunities.

By following these steps and leveraging the power of Azure's built-in tools, you can significantly reduce your Azure cloud costs and maximize the value you get from your cloud investment. Remember, Azure cost optimization is an ongoing process – constantly refine your approach and embrace a culture of cost awareness to ensure your cloud journey remains cost-effective and successful.

| unicloud |

1,870,682 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-05-30T18:34:31 | https://dev.to/katheogren04/buy-verified-paxful-account-2olm | webdev, javascript, beginners, programming | ERROR: type should be string, got "\nhttps://dmhelpshop.com/product/buy-verified-paxful-account/\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. 
Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. 
Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. 
These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. 
Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. 
By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. 
Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. 
It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n " | katheogren04 |

1,870,729 | Tracking Commits on a Real-Time GitHub Dashboard | Learn how to build a dashboard that shows GitHub commits in real time. This project uses JavaScript, C3.js, and PubNub. | 0 | 2024-05-30T19:31:31 | https://dev.to/pubnub-pl/sledzenie-zatwierdzen-na-pulpicie-nawigacyjnym-github-w-czasie-rzeczywistym-5a7b | In software development, real-time C3.js charts offer an effective way to monitor activity across an organization. For engineering teams, one metric worth tracking is GitHub commits. This tutorial walks you through using the GitHub API to fetch GitHub commit data and display it on an interactive, real-time chart. We will use HTML, JavaScript, and CSS to build the GitHub dashboard, PubNub to stream the commit data, and C3.js to visualize it.

To learn more, see [our tutorial on real-time C3.js charts](https://pubnub.com/blog/building-realtime-live-updating-animated-graphs-c3-js/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl). Now let's get to work!

How to create a real-time GitHub dashboard
------------------------------------------

Building a real-time GitHub dashboard involves connecting to data sources such as a GitHub repository and taking care of a few required dependencies. Keep the necessary cybersecurity measures in mind, such as secure coding and data encryption. Following industry-standard security protocols is a must.

Here is a step-by-step guide:

### Add a GitHub webhook

To set up the webhook, follow these steps:

1. Create a GitHub repository or use an existing git repository.

2. Click "Settings" on the right side of the page.

3. Click "[Webhooks](https://www.pubnub.com/learn/glossary/what-is-a-webhook/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl)" on the left side of the page.

4. Click "Add Webhook" in the top right corner.

5. GitHub will ask for your password; enter it.

6. In the "Payload URL" field, enter: **http://pubnub-git-hook.herokuapp.com/github/ORG-NAME/TEAM-NAME**. Replace ORG-NAME with the name of your organization and TEAM-NAME with the name of the team that controls the repository.

### Load the visual dashboard

Visit [this page](https://pubnub.github.io/git-commits-ui/). You will see a list of all the commits sent through the PubNub dashboard. Sweet! After you push a commit to GitHub, a message should appear on the GitHub commits dashboard within tens of milliseconds, and the charts will update in real time.

How we built the GitHub commits dashboard
-----------------------------------------

The dashboard combines GitHub, the PubNub Data Stream Network, and D3 chart visualizations powered by [C3.js](https://c3js.org/). When a commit is pushed to GitHub, the commit metadata is posted to a small Heroku instance, which publishes it to the PubNub network. [We host the dashboard page on GitHub Pages.](https://pubnub.github.io/git-commits-ui/)

When our Heroku instance receives commit data from GitHub, it publishes a summary of that data to PubNub using public publish/subscribe keys on the **pubnub-git** channel. [You can monitor the pubnub-git channel through our developer console here](https://www.pubnub.com/docs/console/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl).

Here is an example message payload:

```js
{
    "name":"drnugent",
    "avatar_url":"https://avatars.githubusercontent.com/u/857270?v=3",
    "num_commits":4,
    "team":"team-pubnub",
    "org":"pubnub",
    "time":1430436692806,
    "repo_name":"drnugent/test"
}
```
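The relay code running on Heroku is not shown in this post, so the following is only a minimal sketch of how such a summary might be derived from a GitHub push webhook payload. The function name `summarizePush` and its parameters are hypothetical; the input fields (`commits`, `sender.login`, `sender.avatar_url`, `repository.full_name`) are assumed to follow GitHub's push event payload, and only the output shape is taken from the example above.

```js
// Hypothetical helper: builds the compact summary message from a GitHub
// push payload. Not the actual relay code, which the article does not show.
function summarizePush(payload, org, team, now = Date.now()) {
  const commits = Array.isArray(payload.commits) ? payload.commits : [];
  const sender = payload.sender || {};
  const repository = payload.repository || {};
  return {
    name: sender.login || 'unknown',        // who pushed
    avatar_url: sender.avatar_url || '',
    num_commits: commits.length,            // commits contained in this push
    team: team,                             // taken from the webhook URL path
    org: org,                               // taken from the webhook URL path
    time: now,                              // milliseconds since the epoch
    repo_name: repository.full_name || ''
  };
}

// Usage with a trimmed-down, made-up push payload.
const examplePush = {
  commits: [{ id: 'a1' }, { id: 'b2' }],
  sender: {
    login: 'drnugent',
    avatar_url: 'https://avatars.githubusercontent.com/u/857270?v=3'
  },
  repository: { full_name: 'drnugent/test' }
};
const summary = summarizePush(examplePush, 'pubnub', 'team-pubnub', 1430436692806);
console.log(JSON.stringify(summary));
```

Keeping the summary small means the dashboard only has to handle a handful of fields rather than the full webhook payload.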

The other half of the magic happens when the dashboard receives this information through a **subscribe callback**. If you look at the dashboard source, you will see this code:

```js
// Subscribe to the channel; displayLiveMessage runs for every message received.
pubnub.subscribe({
    channel: 'pubnub-git',
    message: displayLiveMessage
});
```

This subscribe call ensures that the JavaScript function **displayLiveMessage()** is invoked every time a message is received on the **pubnub-git** channel. displayLiveMessage() adds a commit push notification to the top of the log and updates the C3 visualization charts.
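The body of displayLiveMessage() is not reproduced here. As a rough sketch of the two responsibilities just described, the hypothetical `recordMessage` function below keeps a newest-first log and a running commit total per user; all names and the in-memory state are assumptions for illustration, and the real dashboard also updates the DOM and the charts.

```js
// Hypothetical in-memory state; the real dashboard's internals may differ.
const commitLog = [];      // notification strings, newest first
const commitsByUser = {};  // user name -> total commits seen so far

// Record one summary message of the shape published on the channel.
function recordMessage(msg) {
  // Put the newest notification at the top of the log.
  commitLog.unshift(
    msg.name + ' pushed ' + msg.num_commits + ' commit(s) to ' + msg.repo_name
  );
  // Keep a running total that a chart could be redrawn from.
  commitsByUser[msg.name] = (commitsByUser[msg.name] || 0) + msg.num_commits;
}

recordMessage({ name: 'drnugent', num_commits: 4, repo_name: 'drnugent/test' });
recordMessage({ name: 'drnugent', num_commits: 1, repo_name: 'drnugent/test' });
console.log(commitLog[0]);
console.log(commitsByUser);
```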

But wait, how does the dashboard get populated when it first loads?

Using the PubNub Storage & Playback API for the dashboard
---------------------------------------------------------

PubNub keeps a record of every message sent and gives developers a way to access those stored messages through the [Storage & Playback (History) API](https://www.pubnub.com/products/pubnub-platform/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl). Deeper in the dashboard source you will see the following code:

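```js
// The history callback receives an array whose first element is the list of messages.
var displayMessages = function(ms) { ms[0].forEach(displayMessage); };

// Fetch up to the last 100 messages published on the channel.
pubnub.history({
    channel: 'pubnub-git',
    callback: displayMessages,
    count: 100
});
```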

This is a request to fetch the last 100 messages sent over the pubnub-git channel. So even if the dashboard was offline when those messages were sent, it can retrieve them and use the data to populate itself as though it had been online the whole time.

This feature is especially useful for devices with intermittent or unreliable connectivity, such as mobile apps on cellular networks or connected cars. Thanks to the PubNub network, our visualization dashboard needs no backend to store application state.
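To make the replay step concrete, here is a small sketch of how a history response might be folded into chart data. It assumes the callback receives an array whose first element is the list of messages (as `ms[0]` in the snippet above suggests) and produces rows in the `[name, value]` form that C3 accepts for `data.columns`. The function name `toColumns` and the sample data are hypothetical.

```js
// Hypothetical helper: total commits per user from a history response,
// returned as [name, total] rows in first-seen order.
function toColumns(historyResponse) {
  const totals = new Map();
  for (const msg of historyResponse[0]) {
    totals.set(msg.name, (totals.get(msg.name) || 0) + msg.num_commits);
  }
  return Array.from(totals, ([name, total]) => [name, total]);
}

// Made-up history response: [messages, start, end].
const sampleHistory = [
  [
    { name: 'alice', num_commits: 2 },
    { name: 'bob', num_commits: 1 },
    { name: 'alice', num_commits: 3 }
  ],
  0,
  0
];
const columns = toColumns(sampleHistory);
console.log(columns);

// In a browser with C3 loaded, the rows could then be charted, for example:
// c3.generate({ bindto: '#chart', data: { columns: columns, type: 'donut' } });
```

Because the stored messages have the same shape as live ones, the same aggregation can serve both the initial load and subsequent updates.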

Building your own GitHub dashboard
----------------------------------

To start building your own GitHub dashboard, fork the Git Commit UI repository on github.com and follow the README for setup instructions. Pull requests are welcome as part of open source community collaboration.

Future trends and developments in real-time dashboards
------------------------------------------------------

Keeping up with recent trends and developments in real-time dashboards and related technologies is important. These include WebSockets for real-time data transmission, notifications for immediate insight, and the use of real-time dashboards across different workflows.

PubNub's experience
-------------------

PubNub has helped many customers succeed with their real-time applications. For example, LinkedIn's real-time notification system...

Setup
-----

Sign up for a PubNub account to get immediate, free access to your PubNub keys. The latest features available with a PubNub account include ...

Get started
-----------

Our extensive PubNub [docs](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) will get you up and running in no time, whatever your use case or [SDK](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl).

PubNub offers a user-friendly platform that improves the user experience. Our services are designed with developers in mind to make integration smooth.

We are here to make building real-time applications easier and more efficient. Configure the payload URL and let's get started!

Official documentation and reliable sources can be cited throughout this blog post to confirm the validity of the information.

How can PubNub help you?
========================

This article was originally published on [PubNub.com](https://www.pubnub.com/blog/tracking-realtime-github-dashboard-commits/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl)

Our platform helps developers build, deliver, and manage real-time interactivity for web apps, mobile apps, and IoT devices.

The foundation of our platform is the industry's largest and most scalable real-time edge messaging network. With over 15 points of presence worldwide supporting 800 million monthly active users and 99.999% reliability, you will never have to worry about outages, concurrency limits, or latency caused by traffic spikes.

Experience PubNub
-----------------

Check out the [Live Tour](https://www.pubnub.com/tour/introduction/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) to understand the essential concepts behind every PubNub-powered app in less than 5 minutes.

Get set up
----------

Sign up for a PubNub [account](https://admin.pubnub.com/signup/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) for immediate, free access to your PubNub keys.

Get started
-----------

The PubNub [docs](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl) will get you up and running, whatever your use case or [SDK](https://www.pubnub.com/docs?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=pl). | pubnubdevrel | |

1,870,726 | Tracking Commits on a Real-Time GitHub Dashboard | Learn how to build a dashboard that shows GitHub commits in real time. This project uses JavaScript, C3.js, and PubNub. | 0 | 2024-05-30T19:24:39 | https://dev.to/pubnub-jp/github-datusiyubodonokomitutoworiarutaimudezhui-ji-suru-364f | In software development, real-time C3.js charts provide an effective way to monitor activity within an organization. For engineering teams, one trackable metric is GitHub commits. This post explains how to use the GitHub API to fetch GitHub commit data and display it on an interactive, real-time graph. We will use HTML, JavaScript, and CSS to build the GitHub dashboard and PubNub to stream the commit data.

For more on real-time C3.js charts, see [our tutorial](https://pubnub.com/blog/building-realtime-live-updating-animated-graphs-c3-js/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja). Now let's dive in!

How to create a real-time GitHub dashboard
------------------------------------------

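Building a real-time GitHub dashboard involves connecting to data sources such as a GitHub repository and handling the required dependencies. Keep the necessary cybersecurity measures in mind, such as secure coding and data encryption. Following industry-standard security protocols is a must.

Here is a step-by-step guide: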

### Add a GitHub webhook

To set up the webhook, follow these steps:

1. Create a GitHub repository or use an existing git repository.

2. Click 'Settings' on the right side of the page.

3. Click '[Webhooks](https://www.pubnub.com/learn/glossary/what-is-a-webhook/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja)' on the left side of the page.

4. Click 'Add Webhook' in the top right corner.

5. GitHub will ask for your password; enter it.

6. Under 'Payload URL', enter: **http://pubnub-git-hook.herokuapp.com/github/ORG-NAME/TEAM-NAME**. Replace ORG-NAME with the name of your organization and TEAM-NAME with the team that manages the repo.

### Load the visual dashboard