id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,871,732 | How to Improve Company Value in Your organization | Best Consultancy in Gurgaon | top 10 job consultancy in Gurgaon In these days’s competitive... | 0 | 2024-05-31T06:49:35 | https://dev.to/valuewisers_bc9b99a8f0c40/how-to-improve-company-value-in-your-organization-an4 | hrconsultancy, top10jobconsultancyingurgaon, bestconsultancyingurgaon | [](https://staffix.in/employers/best-consultancy-services-gurgaon/)

Best Consultancy in Gurgaon | top 10 job consultancy in Gurgaon

In today's competitive business environment, company culture plays a pivotal role in attracting and retaining top talent, driving employee engagement, and ensuring organizational success.

Understanding Company Culture

A strong, positive company culture can lead to higher employee satisfaction, better performance, and lower turnover rates.

Top Placement Agencies

Top placement agencies like TeamLease Services, Kelly Services India, and ManpowerGroup India focus on placing candidates in roles that fit their skills and career aspirations while also considering cultural compatibility. Their expertise in specific domains helps them provide candidates who not only perform well but also integrate seamlessly into the company culture.

Strategies to Improve Company Culture

1. Define and Communicate Your Company Values

The first step in improving company culture is to clearly define your company's values and ensure they are communicated effectively to all employees.

Mission Statement: Create a mission statement that encapsulates your company's purpose and core values. This should be prominently displayed in the workplace and included in onboarding materials.

Leadership Communication: Leaders should consistently communicate and embody these values. Regularly discussing the values during meetings and integrating them into business practices reinforces their importance.

2. Foster Open Communication

Open and transparent communication is critical for building trust and a positive work environment. Employees should feel comfortable sharing their ideas, feedback, and concerns without fear of retribution.

Regular Meetings: Hold regular team meetings and one-on-one sessions to facilitate open dialogue. Encourage employees to voice their opinions and listen actively to their feedback.

Feedback Mechanisms: Implement anonymous feedback mechanisms such as surveys or suggestion boxes to gather honest opinions and address issues promptly.

3. Recognize and Reward Employees

Regularly acknowledging and appreciating employees' hard work and achievements fosters a culture of gratitude and motivates others to excel.

Recognition Programs: Establish formal recognition programs that celebrate employees' accomplishments. This could include Employee of the Month awards, peer recognition programs, and public acknowledgment during meetings.

Incentives: Offer incentives such as bonuses, extra vacation days, or professional development opportunities to reward outstanding performance.

4. Invest in Employee Development

Providing opportunities for professional growth and development shows employees that the company values their career progression and is willing to invest in their future.

Training Programs: Offer training programs and workshops to help employees develop new skills and advance their careers.

Career Pathing: Work with employees to create clear career paths and provide the resources and support needed to reach their goals.

5. Encourage Team Building and Collaboration

A culture of collaboration and teamwork enhances creativity, problem-solving, and overall productivity.

6. Embrace Diversity and Inclusion

A diverse and inclusive workplace fosters innovation, creativity, and a broader range of perspectives. Embracing diversity means valuing employees' unique backgrounds and experiences.

Diversity Programs: Develop programs and policies that promote diversity and inclusion, including bias training, diversity hiring initiatives, and employee resource groups.

Inclusive Culture: Create an inclusive culture in which all employees feel valued and respected. Encourage open dialogue about diversity and provide platforms for underrepresented voices.

7. Lead by Example

Leadership plays a crucial role in shaping and maintaining company culture. Leaders should embody the values and behaviors they want to see in their employees.

Role Models: Leaders should act as role models by demonstrating integrity, empathy, and respect in their interactions.

Transparent Leadership: Practice transparent leadership by openly communicating company goals, challenges, and successes. This builds trust and fosters a sense of shared purpose.

8. Regularly Assess and Improve Culture

Improving company culture is an ongoing process that requires regular assessment and adjustment. Continuously gathering feedback and making improvements ensures that the culture evolves with the company.

Culture Surveys: Conduct regular culture surveys to assess employee satisfaction and identify areas for improvement.

Action Plans: Develop action plans based on survey results and feedback. Involve employees in the process to ensure their voices are heard and valued.

Top recruitment agencies in India and top placement agencies play a pivotal role in improving company culture by ensuring that new hires are not only skilled but also culturally aligned with the company.

Finding the Right Fit

Recruitment agencies conduct thorough assessments to match candidates with companies where they will thrive. This includes evaluating cultural fit, which helps ensure that new hires share the company's values and will integrate smoothly into the team.

Reducing Turnover

By placing candidates who align well with the company culture, recruitment and placement agencies help reduce turnover rates. Employees who feel connected to their workplace are more likely to stay, contributing to a stable and engaged workforce.

| valuewisers_bc9b99a8f0c40 |

1,871,731 | Best Machine For Weight Loss | At Best Machine For Weight Loss, we are proud to collaborate with top manufacturers and distributors... | 0 | 2024-05-31T06:48:59 | https://dev.to/bestmachine/best-machine-for-weight-loss-27n3 | At Best Machine For Weight Loss, we are proud to collaborate with top manufacturers and distributors of gym equipment in the industry. This partnership has endowed us with invaluable insights that our team leverages to assist our clients in choosing the most effective exercise machines for weight loss.

Website: https://bestmachineforweightloss.com/

Phone: 330-577-0430

Address: 2216 Little Street - Akron, OH 4431

https://muckrack.com/best-machine-for-weight-loss

https://rentry.co/wru2pcfu

https://pastelink.net/1gmc5glg

https://makersplace.com/bestmachineforweightloss/about

https://www.gaiaonline.com/profiles/bestmachine/46701144/

https://www.wpgmaps.com/forums/users/bestmachine/

https://visual.ly/users/bestmachineforweightloss

https://expathealthseoul.com/profile/best-machine-for-weight-loss/

https://guides.co/a/best-machine-for-weight-loss

https://www.funddreamer.com/users/best-machine-for-weight-loss

https://www.dnnsoftware.com/activity-feed/userid/3199386

https://forum.dmec.vn/index.php?members/bestmachine.61390/

https://webflow.com/@bestmachine

https://potofu.me/bestmachine

https://www.pearltrees.com/bestmachine

https://www.rctech.net/forum/members/bestmachine-375100.html

https://www.elephantjournal.com/profile/bestmachi-n-e-forw-eightloss/

https://www.artscow.com/user/3196903

https://linkmix.co/23519558

https://peatix.com/user/22446154/view

https://wperp.com/users/bestmachine/

https://controlc.com/3b45cea6

https://nhattao.com/members/bestmachine.6536211/

https://www.creativelive.com/student/best-machine-for-weight-loss?via=accounts-freeform_2

https://www.intensedebate.com/people/jdbestmachine

https://naijamp3s.com/index.php?a=profile&u=bestmachine

https://telegra.ph/bestmachine-05-31-2

https://myanimelist.net/profile/twbestmachine

https://devpost.com/bestmachi-n-e-forw-eightloss

https://dreevoo.com/profile.php?pid=643365

https://sinhhocvietnam.com/forum/members/74805/#about

https://dribbble.com/bestmachine/about

https://vimeo.com/user220450008

https://files.fm/bestmachine/info

https://www.kniterate.com/community/users/bestmachine/

https://collegeprojectboard.com/author/bestmachine/

https://bestmachine.notepin.co/

https://wibki.com/bestmachine?tab=Best%20Machine%20For%20Weight%20Loss

https://www.are.na/best-machine-for-weight-loss/channels

https://www.facer.io/u/bestmachine

https://able2know.org/user/bestmachine/

https://os.mbed.com/users/bestmachine/

https://www.instapaper.com/p/bestmachine

https://www.5giay.vn/members/bestmachine.101974775/#info

https://wmart.kz/forum/user/163759/

https://research.openhumans.org/member/bestmachine

https://starity.hu/profil/452802-bestmachine/

https://pxhere.com/en/photographer-me/4271498

https://www.scoop.it/u/best-machinefor-weight-loss

https://gettr.com/user/bestmachine

https://slides.com/bestmachine

https://hub.docker.com/u/bestmachine

https://link.space/@bestmachine

https://www.nexusmods.com/20minutestildawn/images/98

https://www.dohtheme.com/community/members/bestmachine.76688/#about

https://blender.community/bestmachineforweightloss/

https://app.talkshoe.com/user/bestmachine

https://bentleysystems.service-now.com/community?id=community_user_profile&user=e41b1e3047e2825088c56642846d43d7

https://www.equinenow.com/farm/bestmachine.htm

https://kumu.io/bestmachine/sandbox#untitled-map

https://www.angrybirdsnest.com/members/bestmachine/profile/

https://camp-fire.jp/profile/bestmachine

https://ficwad.com/a/bestmachine

https://allmylinks.com/bestmachine

https://www.beatstars.com/bestmachineforweightloss/about

https://www.anobii.com/fr/0131ae2b0d3ea0c921/profile/activity

https://topsitenet.com/profile/szbestmachine/1198308/

https://padlet.com/bestmachineforweightloss

https://community.tableau.com/s/profile/0058b00000IZZVH

https://www.speedrun.com/users/bestmachine

https://linktr.ee/bestmachine

https://www.robot-forum.com/user/160698-bestmachine/?editOnInit=1

https://wakelet.com/@BestMachineForWeightLoss27687

https://roomstyler.com/users/bestmachine

http://hawkee.com/profile/6988348/

https://www.pling.com/u/bestmachine/

http://idea.informer.com/users/bestmachine/?what=personal

https://vnxf.vn/members/bestmachine.81767/#about

https://qiita.com/bestmachine

https://tinhte.vn/members/bestmachine.3023699/

https://zzb.bz/r0uwb

https://jsfiddle.net/user/bestmachine/

https://newspicks.com/user/10325866

https://500px.com/p/bestmachine?view=photos

https://www.storeboard.com/bestmachineforweightloss

https://lab.quickbox.io/dcbestmachine

https://profile.ameba.jp/ameba/izbestmachine/

https://app.roll20.net/users/13394055/best-machine-f

https://www.reverbnation.com/bestmachine

https://unsplash.com/@bestmachine

https://leetcode.com/u/bestmachine/

https://taplink.cc/bestmachine

https://kktix.com/user/6123810

https://willysforsale.com/profile/bestmachine

https://www.patreon.com/bestmachine

https://www.babelcube.com/user/best-machine-for-weight-loss

https://forum.codeigniter.com/member.php?action=profile&uid=109079

https://www.noteflight.com/profile/4d76568b1046987440c2e3caa74de85e6b7ed395

https://disqus.com/by/bestmachine/about/

https://www.mountainproject.com/user/201832311/best-machine-for-weight-loss

https://confengine.com/user/best-machine-for-weight-loss

https://www.hahalolo.com/@66596d9e05740e60d09478e2

https://stocktwits.com/bestmachine

https://hackerone.com/bestmachine?type=user

https://www.diggerslist.com/bestmachine/about

https://www.metal-archives.com/users/bestmachine

https://www.nintendo-master.com/profil/bestmachine

https://diendannhansu.com/members/bestmachine.50555/#about

https://www.fimfiction.net/user/748504/bestmachine

https://vnseosem.com/members/bestmachine.31304/#info

https://turkish.ava360.com/user/bestmachine/#

https://www.plurk.com/bestmachine/public

https://www.mixcloud.com/bestmachine/

https://hypothes.is/users/bestmachine

https://gifyu.com/bestmachine

https://data.world/bestmachine

https://piczel.tv/watch/bestmachine

https://git.industra.space/bestmachine

https://thefeedfeed.com/prickly-pear5667

https://teletype.in/@bestmachine

https://solo.to/bestmachine

https://timeswriter.com/members/bestmachine/

https://8tracks.com/bestmachine

https://my.desktopnexus.com/bestmachine/

https://worldcosplay.net/member/1772327

https://notabug.org/bestmachine

https://magic.ly/bestmachine

https://www.cakeresume.com/me/bestmachine

https://www.metooo.io/u/66596f040c59a922425910e0

https://chart-studio.plotly.com/~bestmachine

https://www.credly.com/users/best-machine-for-weight-loss/badges

https://bandori.party/user/201782/bestmachine/

https://play.eslgaming.com/player/20137119/

https://participez.nouvelle-aquitaine.fr/profiles/bestmachine/activity?locale=en

https://tupalo.com/en/users/6797791

https://justpaste.it/u/bestmachine

https://www.ohay.tv/profile/bestmachine

https://doodleordie.com/profile/bestmachine

https://www.bark.com/en/gb/company/bestmachine/MO3L0/

https://sketchfab.com/bestmachine

https://www.exchangle.com/bestmachine

http://forum.yealink.com/forum/member.php?action=profile&uid=343539

https://filesharingtalk.com/members/596930-bestmachine?tab=aboutme#aboutme

https://glose.com/u/bestmachine

https://www.kickstarter.com/profile/bestmachine/about

https://rotorbuilds.com/profile/42809/

https://experiment.com/users/bmachineforweightloss

https://www.fitday.com/fitness/forums/members/bestmachine.html

https://www.webwiki.com/bestmachineforweightloss.com

https://pinshape.com/users/4478576-bestmachine#designs-tab-open

https://inkbunny.net/bestmachine

www.artistecard.com/bestmachine#!/contact

https://crowdin.com/project/bestmachine

https://www.dermandar.com/user/bestmachine/

https://chodilinh.com/members/bestmachine.79641/#about

http://gendou.com/user/ytbestmachine

https://www.divephotoguide.com/user/bestmachine/

https://myspace.com/webestmachine

| bestmachine | |

1,871,730 | New post test | Just to test the post update Hello koko sfsf skadfj asklfj asfl | 0 | 2024-05-31T06:48:20 | https://dev.to/ijatayam/new-post-test-54mh | test | Just to test the post update

**Hello**

_koko_

[](https://freeimage.host/)

1. sfsf

2. skadfj

- asklfj

- asfl

| ijatayam |

1,871,728 | King88 - 77King88 Nha Cai An Toan So #1 | DK Nhan 88k | 77King88.co la trang web chinh thuc cua King 88. Khi tham gia choi tai KING88. King88 tu hao la noi... | 0 | 2024-05-31T06:47:45 | https://dev.to/77king88co/king88-77king88-nha-cai-an-toan-so-1-dk-nhan-88k-24h0 | 77king88, king88 | 77King88.co is the official website of King 88. King88 describes itself as a leading provider of online betting games in Vietnam and invites readers to choose it with confidence and join today.

Address: 42 Hem 68/53/18, Quan Hoa, Cau Giay, Ha Noi, Viet Nam

Email: sksm51097@gmail.com

Website: https://77king88.co/

#King88 #77king88 #88king88

Social:

https://www.facebook.com/77king88/

https://twitter.com/77king88co

https://www.youtube.com/channel/UCGYohXZUdgN1HVNp0Tv39cw

https://www.pinterest.com/77king88co/

https://learn.microsoft.com/en-us/users/77king88co/

https://github.com/77king88co

https://www.blogger.com/profile/04622065633570877437

https://www.reddit.com/user/77king88co/

https://vi.gravatar.com/77king88co

https://en.gravatar.com/77king88co

https://medium.com/@sksm51097/about

https://www.tumblr.com/77king88co

https://sksm51097.wixsite.com/77king88co

https://77king88co.weebly.com/

https://77king88co.livejournal.com/profile/

https://soundcloud.com/77king88co

https://www.openstreetmap.org/user/77king88co

https://77king88co.wordpress.com/

https://sites.google.com/view/77king88co/home

https://linktr.ee/77king88co

https://www.twitch.tv/77king88co/about

https://tinyurl.com/77king88co

https://ok.ru/77king88co/statuses/592475306212

https://profile.hatena.ne.jp/king7788co/profile

https://issuu.com/77king88co

https://www.liveinternet.ru/users/king7788co

https://dribbble.com/77king88co/about

https://form.jotform.com/241510205041032

https://www.patreon.com/77king88co

https://archive.org/details/@77king88co

https://gitlab.com/77king88co

https://www.kickstarter.com/profile/1186605209/about

https://disqus.com/by/77king88co/about/

https://77king88co.webflow.io/

https://www.goodreads.com/user/show/178712703-77king88co

https://500px.com/p/77king88co?view=photos

https://about.me/king7788co

https://tawk.to/77king88co

https://www.deviantart.com/77king88co

https://ko-fi.com/77king88co

https://www.provenexpert.com/77king88co/

https://hub.docker.com/u/77king88co | 77king88co |

1,871,727 | How We are using office furniture? | In today's modern workplaces, office furniture plays a crucial role in enhancing productivity,... | 0 | 2024-05-31T06:46:56 | https://dev.to/p1_office_furniture/how-we-are-using-office-furniture-2010 | officefurniture, office | In today's modern workplaces, office furniture plays a crucial role in enhancing productivity, comfort, and overall well-being. From ergonomic chairs to adjustable standing desks, the right office furniture can make a significant difference in how we work. Let's explore how businesses are leveraging office furniture to create more efficient and ergonomic workspaces.

Introduction to Office Furniture

Office furniture encompasses a wide range of items designed to facilitate work activities in an office environment. It includes desks, chairs, storage solutions, and various accessories aimed at providing comfort and functionality to employees.

Importance of Ergonomic Office Furniture

[Ergonomic office furniture](https://p1officefurniture.com/) is designed to support the natural posture of the body, reducing strain and discomfort during long hours of work. Investing in ergonomic chairs and desks can significantly improve employee health and productivity while reducing the risk of musculoskeletal disorders.

Types of Office Furniture

Desks

Desks come in various shapes and sizes, including traditional rectangular desks, L-shaped desks, and height-adjustable standing desks. Each type serves different purposes and can be customized to meet the needs of individual employees.

Chairs

Office chairs are perhaps the most important piece of furniture in any workspace. Ergonomic chairs with adjustable features such as lumbar support, armrests, and seat height can help maintain proper posture and reduce the risk of back pain and fatigue.

Storage Solutions

Effective storage solutions such as filing cabinets, shelves, and modular storage units help keep the workspace organized and clutter-free. By providing designated storage space for documents and supplies, employees can work more efficiently without distractions.

Factors to Consider When Choosing Office Furniture

When selecting office furniture, several factors should be taken into account:

Comfort

Comfort should be a top priority when choosing office furniture. Employees who are comfortable at their desks are more likely to remain focused and productive throughout the day.

Functionality

Office furniture should serve its intended purpose efficiently. Desks should have ample workspace, chairs should provide adequate support, and storage solutions should be easily accessible.

Style

The aesthetic appeal of office furniture can contribute to the overall ambiance of the workspace. Choosing furniture that complements the company's brand and culture can create a positive impression on clients and visitors.

Budget

While quality [office furniture](https://p1logisticservices.com/office-furniture) is an investment, it's essential to consider budget constraints. Finding a balance between cost and quality ensures that businesses get the most value out of their furniture purchases.

Benefits of Using Proper Office Furniture

Investing in proper office furniture offers several benefits:

Increased Productivity

Comfortable and ergonomic furniture can boost employee productivity by reducing discomfort and fatigue, allowing them to focus on their tasks more effectively.

Improved Health and Well-being

Ergonomic chairs and desks promote better posture and reduce the risk of musculoskeletal injuries, leading to improved employee health and well-being.

Enhanced Workplace Aesthetics

Well-designed office furniture can enhance the visual appeal of the workspace, creating a more inviting and professional atmosphere for employees and clients alike.

Sustainability in Office Furniture

With growing awareness of environmental issues, many businesses are prioritizing sustainability in their furniture choices. Sustainable materials, energy-efficient designs, and recycling programs are becoming increasingly common in the office furniture industry.

Trends in Office Furniture Design

Modern office furniture designs focus on flexibility, adaptability, and multi-functionality to accommodate diverse work styles and preferences. Collaborative workspaces, open-plan layouts, and modular furniture systems are some of the key trends shaping the future of office design.

Tips for Arranging Office Furniture

Properly arranging office furniture can optimize space usage and improve workflow. Consider factors such as natural light, traffic flow, and the placement of equipment when arranging desks and chairs in the workspace.

Case Studies: Successful Office Furniture Implementation

Case studies highlighting successful office furniture implementations can provide valuable insights into best practices and innovative solutions for creating productive work environments.

Future of Office Furniture

The future of office furniture is likely to be influenced by advancements in technology, changing work habits, and evolving workplace cultures. As businesses continue to prioritize employee well-being and flexibility, office furniture designs will adapt to meet these changing needs.

Conclusion

In conclusion, office furniture plays a crucial role in shaping the modern workplace. By investing in ergonomic, functional, and aesthetically pleasing furniture, businesses can create environments that promote productivity, health, and well-being for their employees. With careful consideration of factors such as comfort, functionality, and sustainability, businesses can maximize the benefits of their office furniture investments. | p1_office_furniture |

1,871,725 | Startup Hiring: 5 Recruiting Tips Every Founder Should Know | Best Consultancy in Gurgaon | top 10 job consultancy in Gurgaon Recruiting the proper skills is one... | 0 | 2024-05-31T06:45:42 | https://dev.to/valuewisers_bc9b99a8f0c40/startup-hiring-5-recruiting-tips-every-founder-should-know-1727 | hrconsultancy, bestconsultancyingurgaon, top10jobconsultancyingurgaon | [](https://staffix.in/employers/best-consultancy-services-gurgaon/)

Best Consultancy in Gurgaon | top 10 job consultancy in Gurgaon

Recruiting the right talent is one of the most crucial aspects of building a successful startup. However, the hiring process can be daunting, especially for startups that need to compete with established companies for top talent. This blog explores five essential hacks every founder should know when recruiting, with a particular focus on leveraging the expertise of top recruitment agencies and placement agencies in India.

1. Define Your Startup's Unique Value Proposition

Understanding Your Value Proposition

One of the first steps in attracting top talent is clearly defining and communicating your startup's unique value proposition (UVP). This isn't just about the products or services you offer to customers but also about what you can offer potential employees.

Make sure potential hires understand the scope for personal and professional development within your company.

Work Environment: Emphasize your workplace culture, whether it is collaborative, innovative, flexible, or driven by a strong sense of community.

Crafting the Message

Ensure that your job postings, company website, and social media profiles reflect this UVP. Consistent messaging across all platforms helps attract candidates who resonate with your startup's ethos.

2. Leverage Top Recruitment Agencies in India

Why Use Recruitment Agencies?

Recruitment agencies bring expertise, extensive networks, and a deep understanding of the job market. Here's how you can leverage them effectively:

Time and Resource Efficiency: Outsourcing the recruitment process allows you to focus on other critical aspects of your business while professionals handle the hiring process.

Choosing the Right Agency

When selecting a recruitment agency, consider factors such as their specialization, reputation, and success rate. Top recruitment agencies in India, like ABC Consultants, Michael Page India, and Randstad India, have a proven track record and cater to diverse industries, including tech startups.

Building a Partnership

Establish a strong partnership with your chosen agency. Clearly communicate your hiring needs, company culture, and the specific skills and attributes you're looking for in candidates. Regular feedback and open communication can help the agency better understand your requirements and improve the quality of candidates they present.

3. Utilize Top Placement Agencies for Specialized Roles

Understanding Placement Agencies

Placement agencies typically focus on matching candidates with specific job roles based on their skills, qualifications, and career aspirations. Leveraging these agencies can be especially useful for hiring specialized or high-level positions.

Benefits of Placement Agencies

Expertise in Specific Domains: Placement agencies often have recruiters with expertise in particular industries or job functions, making them well-equipped to identify and attract top talent for specialized roles.

Targeted Searches: They can conduct targeted searches to find candidates with the specific skill sets and experience you need, saving you time and resources.

Streamlined Process: Placement agencies handle the initial screening, interviews, and background checks, ensuring you only meet candidates who are a good fit for your startup.

Examples of Top Placement Agencies

Some of the top placement agencies in India include TeamLease Services, Kelly Services India, and ManpowerGroup India.

4. Leverage Social Media and Professional Networks

Social media platforms allow you to reach a large audience, showcase your company culture, and engage directly with potential candidates.

LinkedIn: Create a strong LinkedIn company page and actively post about job openings, company updates, and industry insights. Join relevant groups and take part in discussions to increase your visibility.

Facebook and Twitter: Use these platforms to share job postings and company news. Facebook groups and Twitter chats can be effective for reaching specific communities.

Instagram: Showcase your company culture through photos and stories, highlighting team events, workspaces, and employee testimonials.

Networking and Referrals

Professional networks and employee referrals are valuable sources for finding top talent. Encourage your current employees to refer potential candidates and reward successful referrals. Attend industry events, conferences, and meetups to expand your network and connect with potential hires.

5. Optimize Your Hiring Process

Streamlining the Hiring Process

An efficient hiring process can significantly improve your ability to attract and retain top talent. Here are a few tips to optimize your recruitment process:

Clear Job Descriptions: Write clear, detailed job descriptions that outline the role, responsibilities, required skills, and qualifications. This helps attract the right candidates and sets clear expectations.

Efficient Screening: Use applicant tracking systems (ATS) to manage applications and streamline the screening process. Automated tools can help you filter candidates based on specific criteria, saving time and ensuring consistency.

Structured Interviews: Develop a structured interview process with standardized questions and evaluation criteria.

Timely Communication: Maintain regular communication with candidates throughout the hiring process. Prompt responses and updates show professionalism and respect, improving the candidate experience.

Assessing Cultural Fit

While technical skills and experience are important, cultural fit is equally vital. Assess candidates' alignment with your startup's values, work ethic, and team dynamics. This can be done through behavioral interviews, cultural fit assessments, and team-based evaluations.

Providing a Positive Candidate Experience

A positive candidate experience can enhance your reputation and attract top talent. Ensure that the hiring process is transparent, respectful, and engaging. Provide constructive feedback to candidates, regardless of the outcome, and make the onboarding process smooth and welcoming.

Conclusion

Hiring the right talent is a critical determinant of a startup's success. By defining your unique value proposition, leveraging top recruitment agencies and placement agencies in India, using social media and professional networks, and optimizing your hiring process, you can attract and retain the best candidates for your startup.

In the competitive landscape of startups, having the right recruitment strategies in place can make all the difference. Remember, the journey to building a successful startup starts with hiring the right people who share your vision and passion. | valuewisers_bc9b99a8f0c40 |

1,871,724 | Introducing ERC-7401: The Next Evolution in Nestable NFT Standards | With the rapid development of digital assets and blockchain technology today, non-fungible tokens... | 0 | 2024-05-31T06:45:31 | https://dev.to/nft_research/introducing-erc-7401-the-next-evolution-in-nestable-nft-standards-565g | nft, web3 | With the rapid development of digital assets and blockchain technology today, non-fungible tokens (NFTs) have become an important form of asset that is widely used in various fields such as art, gaming, collectibles, and more. As the market demands diversify, traditional NFT standards like ERC-721 and ERC-1155 are not able to fully meet the users’ needs, especially in terms of flexibility and interactivity.

To address these challenges, multiple innovative protocol standards have entered the market. This article focuses on ERC-7401, which introduces the concept of nestable NFTs and opens up new possibilities for the functionality and flexibility of NFTs.

## What is ERC-7401?

ERC-7401, also known as Parent-Managed Nestable Non-Fungible Tokens, was initially named ERC-6059 but was revised and renumbered after receiving feedback from the community. The standard was proposed in 2022 and finalized in September 2023. Its real-world applications are still emerging, but it is expected to extend what NFTs can do. Its core innovation lies in allowing one NFT (the parent NFT) to contain one or more other NFTs (child NFTs), which opens the door to managing and interacting with multi-layered assets.

The standard extends the basic NFT standard to allow nesting and parent-child relationships between NFTs. In simpler terms, NFTs can own and manage other NFTs, creating a hierarchical structure of tokens. This structure enables users to more flexibly manage and trade their digital assets while also providing more possibilities for the creation and use of NFTs. Compared to other standards, ERC-7401 is more focused on scalability and interactivity in its design, aiming to meet the needs of more complex applications.

## Concepts and Innovations of ERC-7401

We are accustomed to the idea that only user wallets or smart contracts can own NFTs, but NFTs can also be “nested” within one another. The technical implementation of the ERC-7401 standard is based on several core points:

Multi-level nesting: Supports infinite levels of NFT nesting, where each parent NFT can contain multiple child NFTs, which can themselves become parent NFTs of other NFTs. This multi-layered structure provides great flexibility for asset composition and separation, as well as enabling more complex asset relationships and management strategies.

Asset management flexibility: Users who own parent NFTs can freely manage their internal child NFTs, including but not limited to adding, removing, or replacing them. This is particularly important when managing complex asset collections such as art series or multiple items in a game.

Cross-collection interoperability: Parent NFTs and child NFTs can belong to different NFT collections, providing significant flexibility for cross-brand or cross-platform collaborations. For example, an NFT collection of a movie series can contain limited edition art pieces NFTs from different artists.
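
To make the three points above concrete, here is a minimal in-memory sketch of nestable ownership. It is only a toy model under stated assumptions: `NestableRegistry` and its method names are hypothetical, it is not the ERC-7401 Solidity interface, and it ignores on-chain concerns such as approvals and gas.

```javascript
// Toy model of parent/child NFT ownership. All names here are illustrative
// assumptions; this is not the ERC-7401 contract interface.
class NestableRegistry {
  constructor() {
    // token key -> { account } for wallet-owned tokens, { parent } for nested ones
    this.directOwner = new Map();
    // token key -> Set of child token keys
    this.children = new Map();
  }

  // Keys combine collection and id, so parent and child may come from different collections.
  mint(collection, tokenId, account) {
    const key = `${collection}#${tokenId}`;
    if (this.directOwner.has(key)) throw new Error(`already minted: ${key}`);
    this.directOwner.set(key, { account });
    this.children.set(key, new Set());
    return key;
  }

  // Make `childKey` a child of `parentKey`, at any depth.
  nest(childKey, parentKey) {
    this._require(childKey);
    this._require(parentKey);
    // Walk up from the parent; meeting the child on the way would form a cycle.
    for (let cur = parentKey; cur !== undefined; cur = this.directOwner.get(cur).parent) {
      if (cur === childKey) throw new Error('nesting would create a cycle');
    }
    this._detach(childKey);
    this.directOwner.set(childKey, { parent: parentKey });
    this.children.get(parentKey).add(childKey);
  }

  // Remove a token from its parent (if any) and hand it to a wallet.
  unnest(childKey, account) {
    this._require(childKey);
    this._detach(childKey);
    this.directOwner.set(childKey, { account });
  }

  // Follow parents upward until a wallet-owned token is reached.
  rootOwnerOf(key) {
    this._require(key);
    let owner = this.directOwner.get(key);
    while (owner.parent !== undefined) owner = this.directOwner.get(owner.parent);
    return owner.account;
  }

  childrenOf(key) {
    this._require(key);
    return [...this.children.get(key)];
  }

  _require(key) {
    if (!this.directOwner.has(key)) throw new Error(`unknown token: ${key}`);
  }

  _detach(key) {
    const { parent } = this.directOwner.get(key);
    if (parent !== undefined) this.children.get(parent).delete(key);
  }
}

const registry = new NestableRegistry();
const hero = registry.mint('Heroes', 1, '0xAlice');
const sword = registry.mint('Weapons', 7, '0xAlice');
const gem = registry.mint('Gems', 3, '0xAlice');
registry.nest(sword, hero); // a weapon nested in a character from another collection
registry.nest(gem, sword);  // a second level of nesting
```

In this sketch, `registry.rootOwnerOf(gem)` resolves to the wallet that holds `hero`, so handing the top-level token to another wallet carries the whole tree with it.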

## Diverse Application Scenarios

The actual application scenarios of ERC-7401 are diverse and varied, including but not limited to the following areas:

Gaming industry: Game developers can utilize ERC-7401 to design more complex in-game economies. For instance, a character NFT (parent NFT) can include multiple equipment NFTs (child NFTs), which can be updated or traded individually, adding strategy and player engagement to the game. This not only centralizes asset management but also allows adjusting the character’s abilities and appearance through trading child NFTs.

Art and collectibles: Artists can create a collection NFT (parent NFT) containing multiple artworks, so that their work can be collected either as a whole or piece by piece. This not only makes it easier for artists to manage and sell their works but also offers collectors more choice and flexibility.

Community management: The ERC-7401 standard also has significant applications in community management. Through ERC-7401, communities can create parent community NFTs containing multiple sub-communities or activities. For example, a large community can act as a parent NFT, with its various events, conferences, and sub-communities serving as child NFTs. This way, community managers can more conveniently organize and manage community activities while enhancing community participation and cohesion.

Identity and certifications: In terms of digital identity authentication, individuals or institutions can issue a parent NFT containing multiple certificates or qualifications. Each certificate is stored as a child NFT, making it easier to manage and verify a person’s multiple identities or qualifications.

## Impact of ERC-7401 on the NFT Ecosystem

1/ Enhancing the value and liquidity of NFTs

By introducing the nesting structure, the ERC-7401 standard can enhance the overall value and liquidity of NFTs. A parent NFT containing multiple child NFTs can be worth more than the sum of its individual child NFTs. Additionally, managing and trading nested NFTs as a single unit can increase the liquidity of NFTs and promote market activity.

2/ Fostering innovation

The ERC-7401 standard provides developers and creators with more innovative space. Through nesting structures, developers can design more complex and rich digital assets, sparking more creativity and applications. For example, in games, developers can design game assets with multiple levels and complex relationships, enhancing the depth and fun of the game.

3/ Optimizing user experience

The introduction of the ERC-7401 standard can greatly optimize the user experience. By managing and trading nested NFTs in a unified manner, users can more efficiently manage and trade their digital assets. Additionally, the nesting structure can visually display the hierarchy and relationships of digital assets, improving user understanding and operational experience.

4/ Driving standardization process

The introduction of ERC-7401 signifies the further advancement of NFT standardization. By introducing the nesting structure, ERC-7401 provides new directions and ideas for the standardization of NFTs, promoting the normalization and standardization of NFT technology and applications. This not only helps improve the technical level of NFTs but also enhances market trust and recognition.

## Technological Implementation and Challenges

The primary technological challenge in implementing the ERC-7401 standard lies in processing and storing a large amount of nested information efficiently. Additionally, the security of smart contracts is also an important consideration, as complex interactions and nesting may increase the risk of smart contract attacks. Developers need to ensure contract security and functionality while optimizing contract performance and costs.
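
One way to keep lookups over nested tokens predictable is to put a hard cap on how many levels a single lookup may walk. The sketch below illustrates that idea only; `MAX_DEPTH`, `resolveRootOwner`, and the shape of the `owners` map are assumptions made for this example and are not taken from the standard.

```javascript
// Hypothetical cap on how many nesting levels one lookup may traverse.
const MAX_DEPTH = 100;

// `owners` maps a token key to either { account } (held by a wallet)
// or { parent } (nested inside another token).
function resolveRootOwner(owners, tokenKey, maxDepth = MAX_DEPTH) {
  let current = tokenKey;
  for (let depth = 0; depth <= maxDepth; depth++) {
    const owner = owners.get(current);
    if (!owner) throw new Error(`unknown token: ${current}`);
    if (owner.account !== undefined) return { account: owner.account, depth };
    current = owner.parent;
  }
  // A bounded loop also terminates on corrupted, cyclic data.
  throw new Error(`nesting deeper than ${maxDepth} levels (or cyclic)`);
}

const owners = new Map([
  ['hero', { account: '0xAlice' }],
  ['sword', { parent: 'hero' }],
  ['gem', { parent: 'sword' }],
]);
const gemOwner = resolveRootOwner(owners, 'gem');
```

Bounding the walk trades unlimited nesting for a known worst-case cost per lookup, which is the kind of performance and safety trade-off the paragraph above describes.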

## Future Prospects and Outlook

With the continuous development of the NFT market, the introduction of the ERC-7401 standard undeniably brings new vitality and possibilities to the market. It not only provides users with more flexibility and choice but also opens the doors for developers to innovative applications. In the future, we can anticipate ERC-7401 playing a unique role in more areas, driving further integration and innovation of digital assets and blockchain technology.

With the promotion and application of this standard, the future NFT market will be more diversified and dynamic, providing users and developers with a richer and more in-depth digital asset experience.

NFTScan is the world’s largest NFT data infrastructure, including a professional NFT explorer and NFT developer platform, supporting the complete amount of NFT data for 20+ blockchains including Ethereum, Solana, BNBChain, Arbitrum, Optimism, and other major networks, providing NFT API for developers on various blockchains.

Official Links:

NFTScan: https://nftscan.com

Developer: https://developer.nftscan.com

Twitter: https://twitter.com/nftscan_com

Discord: https://discord.gg/nftscan

Join the NFTScan Connect Program | nft_research |

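1,871,723 | ERC-7401: A New Chapter for Nestable NFT Standards | With the rapid development of digital assets and blockchain technology today, non-fungible tokens (NFTs) have become an important form of asset, widely used in art, gaming, collectibles, and many other fields. As market demands diversify, traditional NFT standards such as ERC-721 and... | 0 | 2024-05-31T06:43:51 | https://dev.to/nft_research/erc-7401qian-tao-nft-biao-zhun-de-quan-xin-pian-zhang-246n | nft, web3 | With the rapid development of digital assets and blockchain technology today, non-fungible tokens (NFTs) have become an important form of asset, widely used in art, gaming, collectibles, and many other fields. As market demands diversify, traditional NFT standards such as ERC-721 and ERC-1155 can no longer fully meet users' needs, especially in terms of flexibility and interactivity. To address these challenges, a number of innovative protocol standards have entered the market. This article explores ERC-7401, whose concept of nestable NFTs opens up new possibilities for NFT functionality and flexibility.

What is ERC-7401?

ERC-7401, or Parent-Governed Nestable Non-Fungible Tokens, was originally named ERC-6059, but it was later revised and given a new number after extensive community feedback. The standard was proposed in 2022 and only finalized in September 2023. Its future applications remain to be seen, but it is expected to bring improved functionality. Its core innovation is allowing one NFT (the parent NFT) to contain one or more other NFTs (child NFTs), which opens the door to managing and interacting with multi-layered assets.

The standard extends the basic NFT standard to allow nesting and parent-child relationships between NFTs. In simpler terms, NFTs can own and manage other NFTs, creating a hierarchy of tokens. This structure lets users manage and trade their digital assets more flexibly, and it also offers more possibilities for creating and using NFTs. Compared with other standards, ERC-7401 puts more emphasis on scalability and interactivity in its design, aiming to meet the needs of more complex applications.

Concepts and Innovations of ERC-7401

We are used to the idea that only user wallets or smart contracts can own NFTs, but non-fungible items can also be “nested” within one another. The technical implementation of the ERC-7401 standard is based on the following core points:

Multi-level nesting: Supports unlimited levels of NFT nesting. Each parent NFT can contain multiple child NFTs, and those child NFTs can themselves become parents of other NFTs. This multi-layered structure gives great flexibility for combining and splitting assets, and it allows more complex asset relationships and management strategies.

Asset management flexibility: Users who own a parent NFT can freely manage the child NFTs inside it, including but not limited to adding, removing, or replacing them. This is especially important when managing complex asset collections, such as an art series or multiple pieces of in-game equipment.

Cross-collection interoperability: Parent NFTs and child NFTs can belong to different NFT collections, which gives great flexibility for cross-brand or cross-platform collaborations. For example, an NFT for a movie series can contain limited-edition artwork NFTs from several different artists.

Diverse Application Scenarios

The practical application scenarios of ERC-7401 are broad and varied, including but not limited to the following areas:

Gaming industry: Game developers can use ERC-7401 to design more complex in-game economies. For example, a character NFT (parent NFT) can contain multiple equipment NFTs (child NFTs), which can be updated or traded individually, adding strategy and player engagement to the game. This not only centralizes asset management but also allows a character's abilities and appearance to be adjusted by trading child NFTs.

Art and collectibles: Artists can create a collection NFT (parent NFT) containing multiple works, so that their work can be collected either as a whole or piece by piece. This makes it easier for artists to manage and sell their works, and it gives collectors more choice and flexibility.

Community management: The ERC-7401 standard also has important applications in community management. With ERC-7401, a community can create a parent community NFT that contains multiple sub-communities or activities. For example, a large community can act as the parent NFT, with its various events, conferences, and sub-communities as child NFTs. This way, community managers can organize and manage community activities more conveniently while increasing community participation and cohesion.

Identity and certifications: For digital identity authentication, individuals or institutions can issue a parent NFT containing multiple certifications or qualifications. Each certificate is stored as a child NFT, making it easier to manage and verify a person's multiple identities or qualifications.

Impact of ERC-7401 on the NFT Ecosystem

1. Enhancing the value and liquidity of NFTs

By introducing a nesting structure, the ERC-7401 standard can enhance the overall value and liquidity of NFTs. A parent NFT containing multiple child NFTs is often worth more than the sum of the individual NFTs. In addition, managing and trading nested NFTs as a unit can greatly increase NFT liquidity and promote market activity.

2. Fostering innovation

The ERC-7401 standard gives developers and creators more room to innovate. With nesting structures, developers can design richer and more complex digital assets, sparking more creativity and applications. For example, in games, developers can design game assets with multiple levels and complex relationships, adding depth and fun to the game.

3. Optimizing user experience

The introduction of the ERC-7401 standard can greatly improve the user experience. By managing and trading nested NFTs in a unified way, users can manage and trade their digital assets more conveniently. In addition, the nesting structure can show the hierarchy and relationships of digital assets more intuitively, improving users' understanding and ease of use.

4. Driving the standardization process

The launch of ERC-7401 marks a further step in NFT standardization. By introducing a nesting structure, ERC-7401 provides new directions and ideas for standardizing NFTs, promoting consistency in NFT technology and applications. This not only helps raise the technical level of NFTs but also strengthens market trust and recognition.

Technical Implementation and Challenges

The main technical challenge in implementing the ERC-7401 standard is how to process and store a large amount of nesting information efficiently. Smart contract security is another important consideration, because complex interactions and nesting may increase the risk of smart contract attacks. Developers need to ensure contract security and functionality while optimizing contract performance and cost.

Prospects and Outlook

As the NFT market continues to develop, the introduction of the ERC-7401 standard undoubtedly brings new vitality and possibilities to the market. It not only gives users more flexibility and choice but also opens the door to innovative applications for developers. In the future, we can expect ERC-7401 to have a distinctive influence in more areas, driving further integration and innovation in digital assets and blockchain technology. As the standard is promoted and adopted, the future NFT market will become more diverse and dynamic, offering users and developers a richer and deeper digital asset experience. | nft_research |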

1,869,929 | What We Learned From Building Share-Brewfiles (Astro + React + Clack CLI) | INTRO What did we build? The Share Brewfiles project was built to make it easy... | 0 | 2024-05-31T06:42:54 | https://dev.to/therubberduckiee/what-we-learned-from-building-share-brewfiles-astro-react-clack-cli-2p4c | astro, webdev, javascript, programming | # INTRO

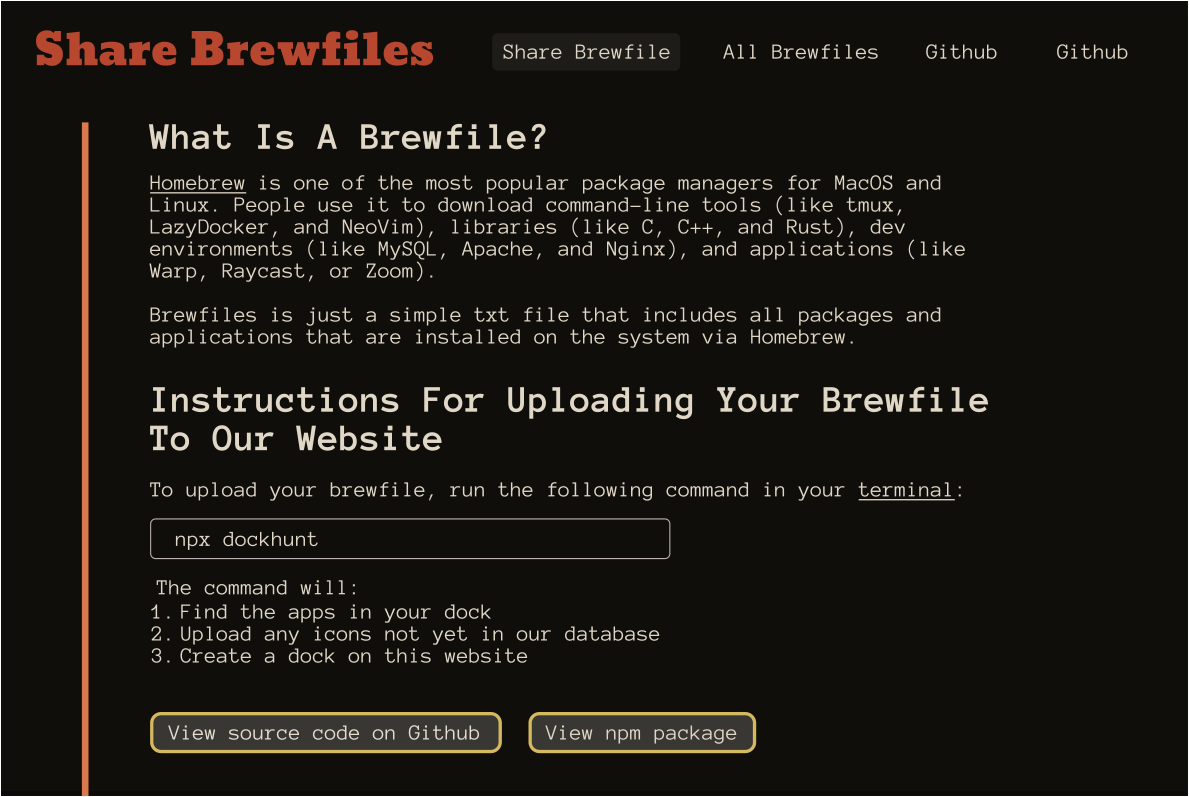

## What did we build?

The [Share Brewfiles project](https://www.brewfiles.com) was built to make it easy for people to share their full tech stack. We also added a social aspect to the site so that developers could have fun with it.

Here’s what we built:

{% embed https://youtu.be/_AaW7VPBY80 %}

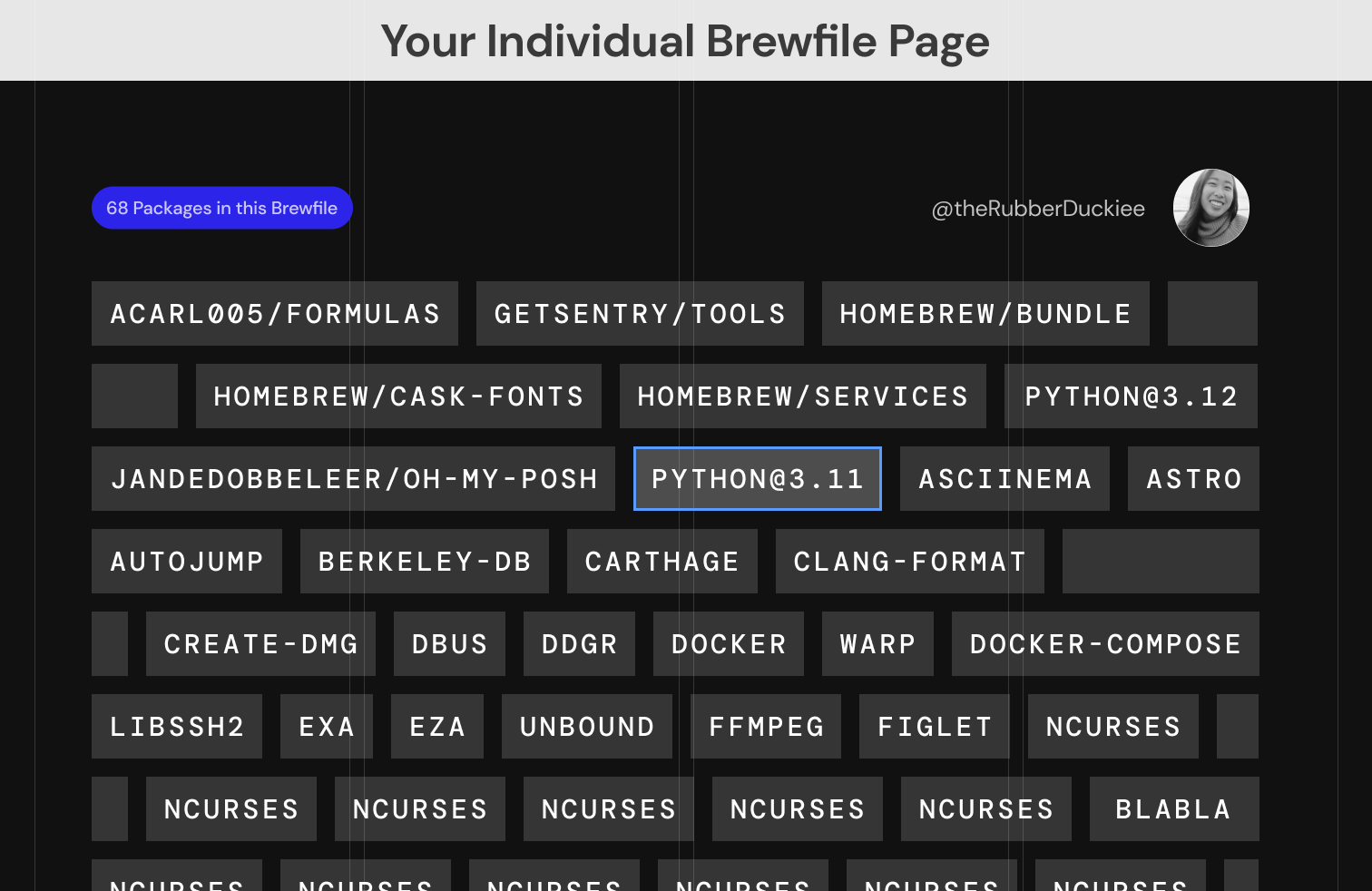

- A personalized Brewfile page that is easily shared and just requires one CLI command to be updated whenever you want.

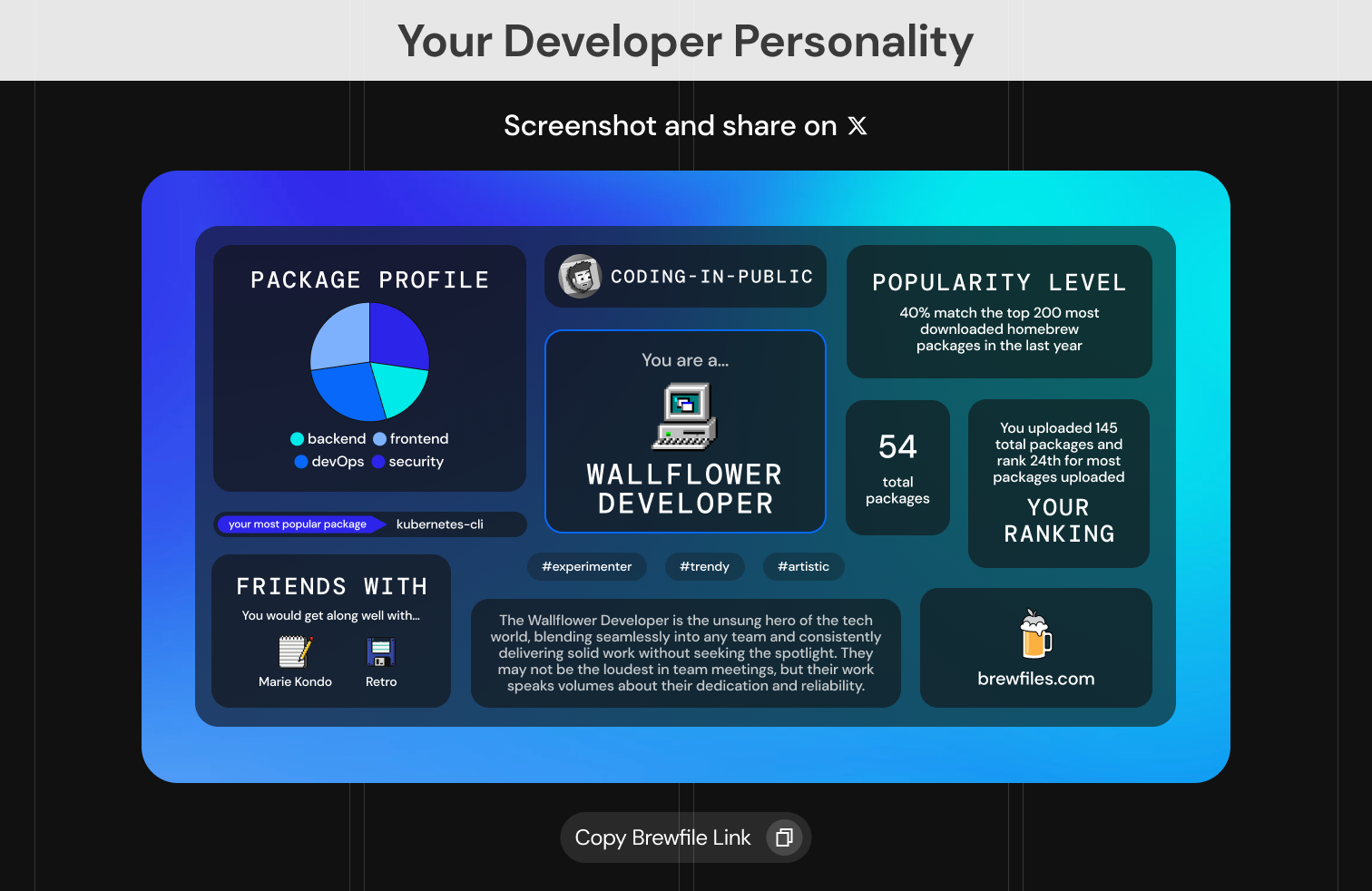

- A developer personality profile generated from the packages in your Brewfile, which is really fun to share with friends and on social media.

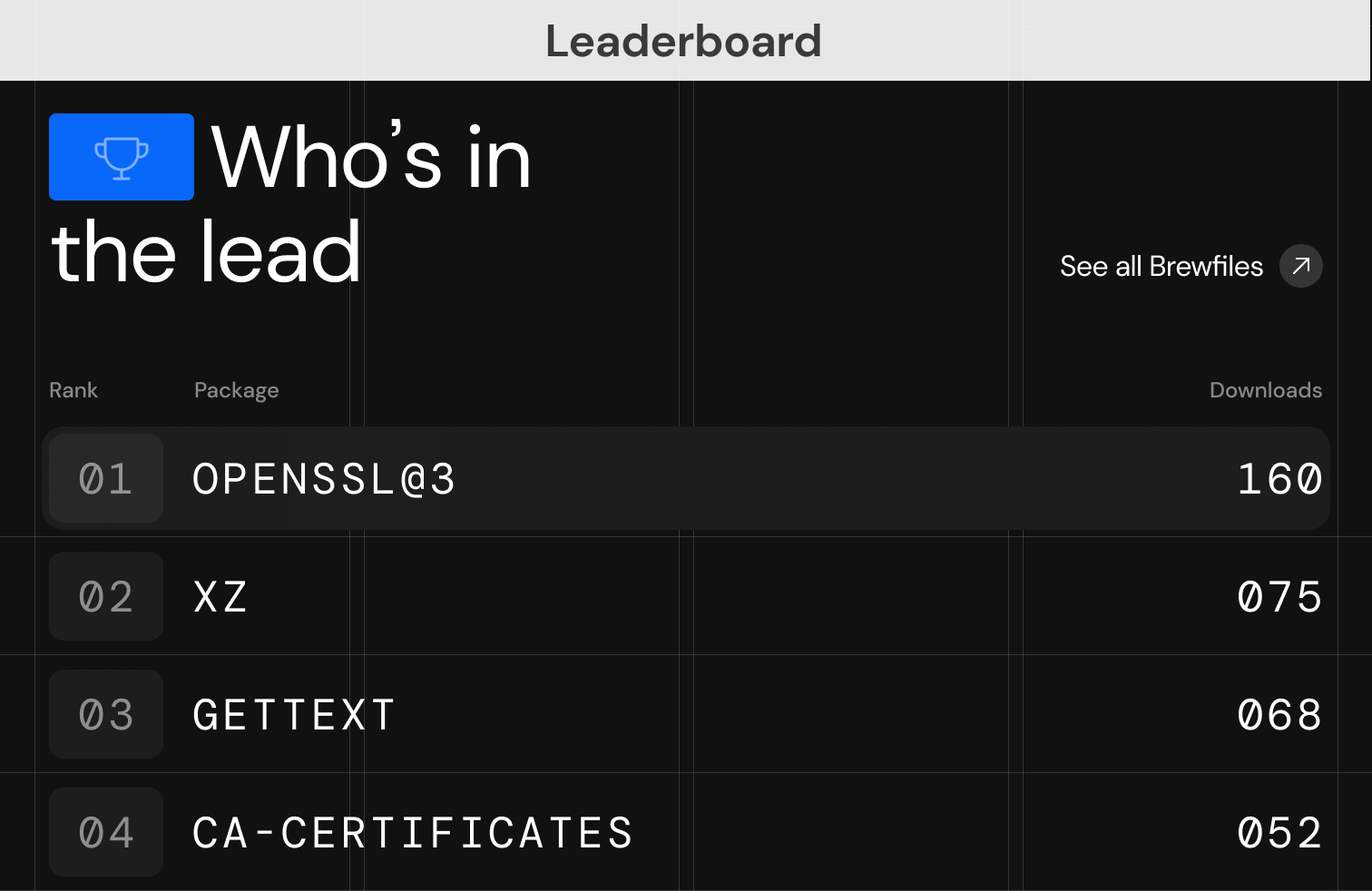

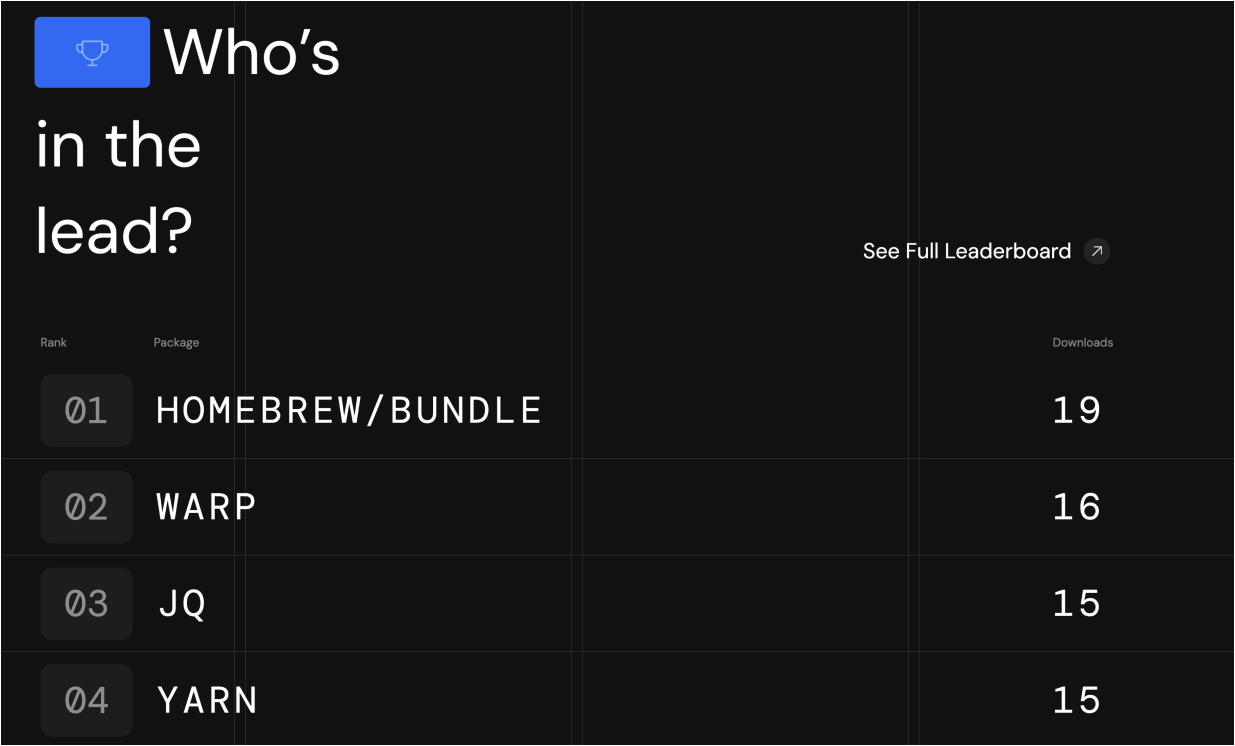

- A leaderboard that lets you see what’s popular overall and discover new tools.

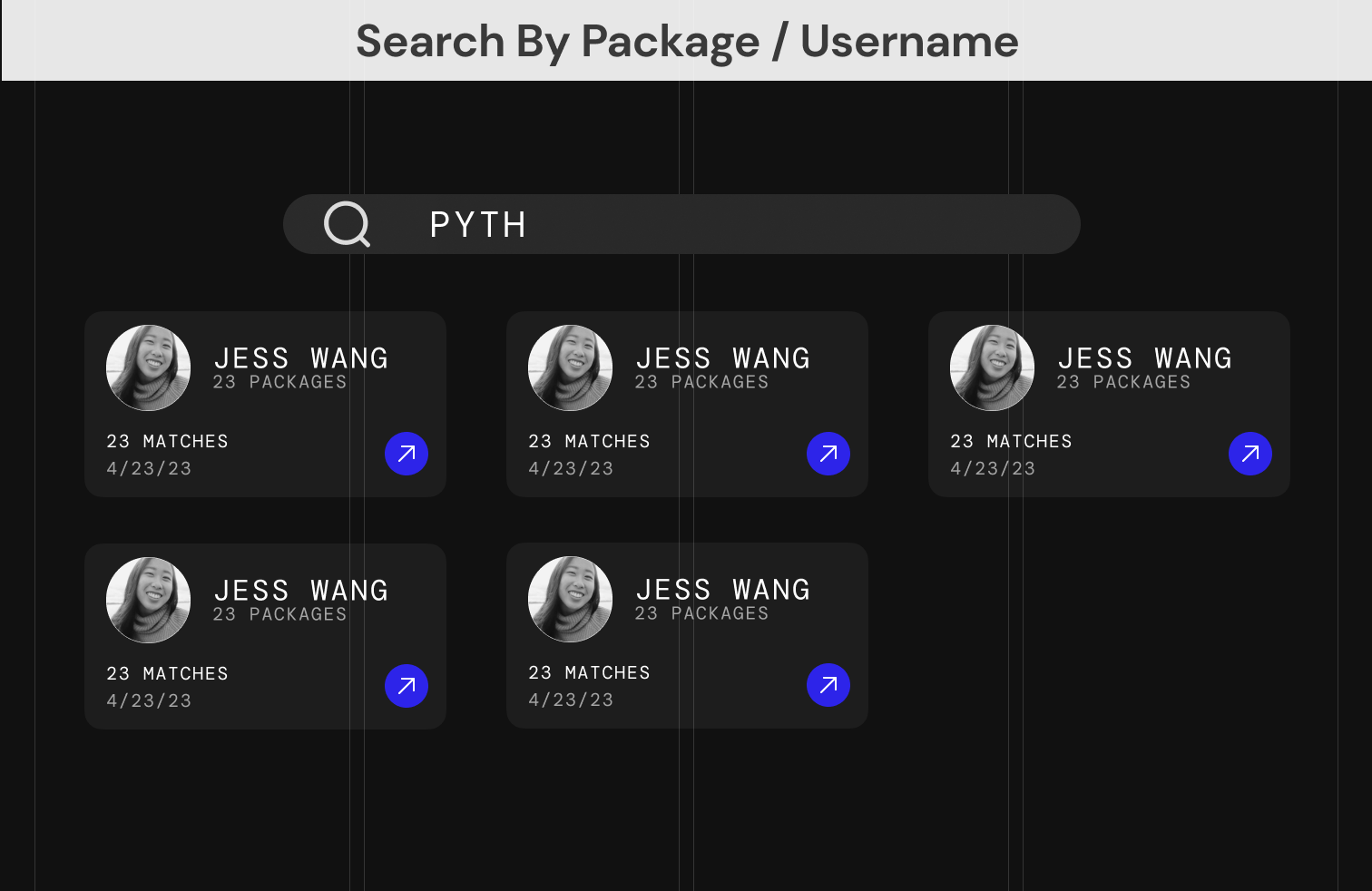

- Search that allows you to search by package or username so you can stalk other developers’ Brewfiles.

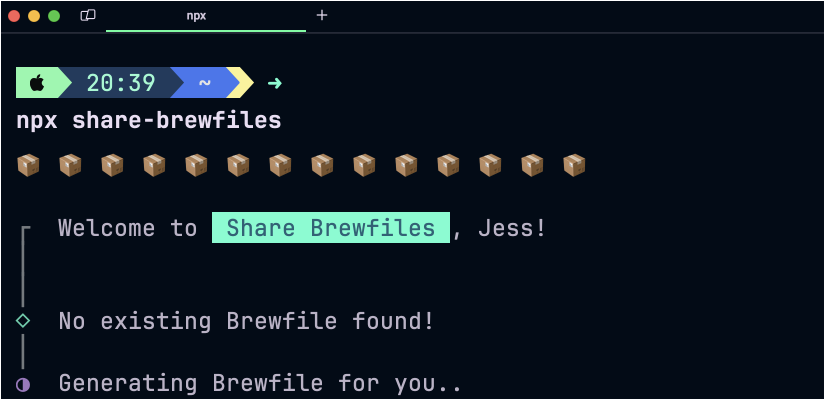

Run `npx share-brewfiles` in your CLI to try out the tool.

Also, this project is [100% open source](https://github.com/theRubberDuckiee/share-brewfiles-website). I want to give a huge thank you to [Warp](https://warp.dev) (the company I work at) for encouraging me to build this tool. We hope this project contributes to the developer community in a fun and educational way!

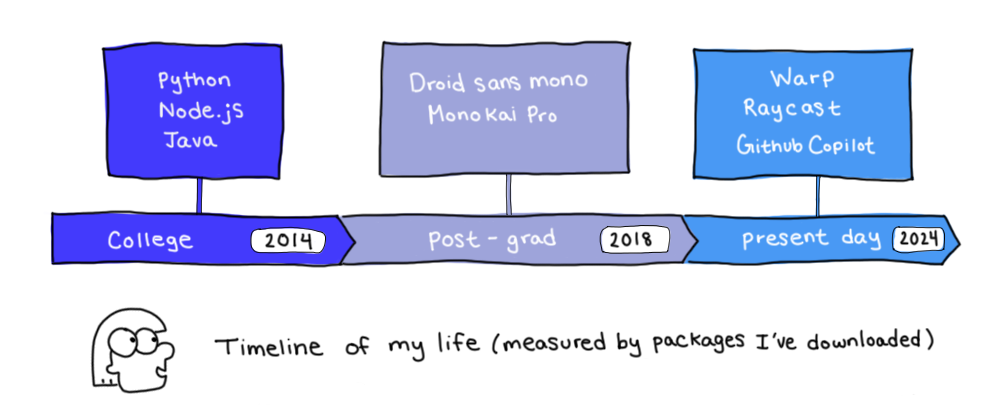

## Our inspiration

Most developers’ careers can be tracked by the packages they’ve downloaded over time. At school, I downloaded languages and runtimes like Python, Node.js, and Java. Upon graduating, Monokai Pro became my VSCode theme, Droid Sans Mono became my default NerdFont, and I used Starship to customize my terminal prompt. In recent years, I’ve experimented with newer tools like Warp, Raycast, and GitHub Copilot.

The tools we download tell the story of who we are as developers. We enjoy sharing our hand-selected tools in blogs or YouTube videos titled “Must-Have Tools And Apps” or “How To Customize Your Dev Machine In 2024”. But this content can’t be easily updated as you evolve, and it usually only covers 5-10 tools when realistically most developers are probably using >50 packages.

# Technical Overview + Tech Stack

Our tool can be split into 2 parts:

1: **The command line experience**

Stack: Clack CLI, GitHub auth, Node.js

Run `npx share-brewfiles` to generate your Brewfile package list if you don’t already have one in that directory. The CLI collects your GitHub auth and sanitizes your data before uploading it to the brewfiles.com API endpoint. The UI of the command line interface (white vertical lines, spinning and text animations) is dictated by Clack CLI.

2: **The brewfiles.com website**

Stack: Astro, React, Tailwind CSS, Firebase

Just a reminder that this project is completely [open source](https://github.com/theRubberDuckiee/share-brewfiles-website). This code supports the entire web experience (which we described in the “What did we build?” section) which is too much to cover, but we’ll point out a few interesting chunks of our codebase in case you decide to poke around.

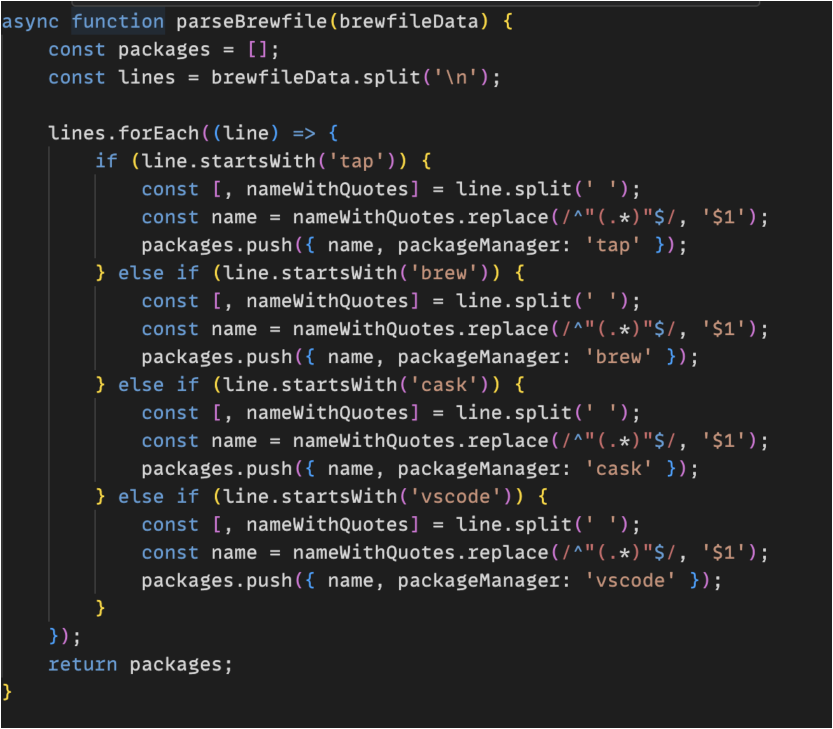

- `api/uploadBrewfile`

This is the endpoint that the CLI logic will hit when uploading a Brewfile to the site. This logic will sanitize the data and check if this user already has an existing Brewfile (and update it if needed) before uploading it to our Firebase database. It will also asynchronously kick off the process of parsing through the packages to determine the developer’s personality type.

- `generatePersonality.ts`

This file contains the logic through which we determine a developer’s personality type. You can see all the different possible personality types, as well as the criteria we set to be categorized for each personality. We realize these categories and criteria may be a bit arbitrary, but cut us some slack - we decided to build this feature 2 weeks before launching! If you want to see improvements, send in a PR.

- `labelledBrewfiles.ts`

This file is a dictionary of packages categorized by certain characteristics, like whether it’s frontend/backend/devops/ai, whether it’s related to the CLI, and more. This dictionary is what we use to filter our leaderboard by “top dev apps” and “top CLI tools”, as well as what we use to determine a developer’s personality type. We used ChatGPT to help us label these packages.
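
To give a feel for how a label dictionary like this can drive both the leaderboard filters and the personality type, here is a small sketch. The package entries, the `area` and `cli` keys, and both helper functions are assumptions made for illustration; the real `labelledBrewfiles.ts` and `generatePersonality.ts` may look quite different.

```javascript
// Hypothetical labels. The real dictionary may use different keys and values.
const labelledPackages = new Map([
  ['node', { area: 'backend', cli: true }],
  ['tailwindcss', { area: 'frontend', cli: false }],
  ['terraform', { area: 'devops', cli: true }],
  ['ollama', { area: 'ai', cli: true }],
  ['figma', { area: 'frontend', cli: false }],
]);

// Keep only the packages whose label satisfies the predicate,
// e.g. a "top CLI tools" filter. Unlabelled packages are dropped.
function filterByLabel(packageNames, predicate) {
  return packageNames.filter((name) => {
    const label = labelledPackages.get(name);
    return label !== undefined && predicate(label);
  });
}

// Pick the most common area among the labelled packages.
// Ties go to the area that was seen first.
function dominantArea(packageNames) {
  const counts = new Map();
  for (const name of packageNames) {
    const label = labelledPackages.get(name);
    if (!label) continue;
    counts.set(label.area, (counts.get(label.area) || 0) + 1);
  }
  let best = null;
  for (const [area, count] of counts) {
    if (best === null || count > best.count) best = { area, count };
  }
  return best ? best.area : 'unknown';
}

const cliTools = filterByLabel(['node', 'figma', 'some-unlabelled-tool'], (label) => label.cli);
const area = dominantArea(['tailwindcss', 'figma', 'node']);
```

A single dictionary keeps the two features consistent: relabelling a package changes the leaderboard filter and the personality result at the same time.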

# What we learned

## Astro vs React

When choosing a web framework, we settled on Astro. Admittedly, part of this decision was inherent bias. Astro was a technology that tech influencers were claiming to be “S-tier”, and we thought this newer tool would be fun to write about and attract attention from those who were curious. From a more logical standpoint, we chose Astro because server-side rendering (SSR) meant better performance and better SEO. Plus, it allowed for a high degree of flexibility by simplifying the management of dependencies like React/Vue/Svelte.

One downside of this approach is that Astro is meant for content-heavy, mostly static websites, whereas the Brewfiles website has many dynamic elements. While content-rich sites have their place, our issues with Astro mostly came down to planning. Because we were building the site while still designing the UI/UX, we kept making adjustments, each one making the site more and more dynamic.

Thankfully, Astro can include React and other UI frameworks with a simple integration, but we lost most of the benefit of Astro as we continued to make the site more dynamic. So while Astro could handle it, a React meta framework would have likely been a cleaner experience. In the end, we were left with a few Astro route shells filled mostly with React components and manual vanilla JavaScript components.

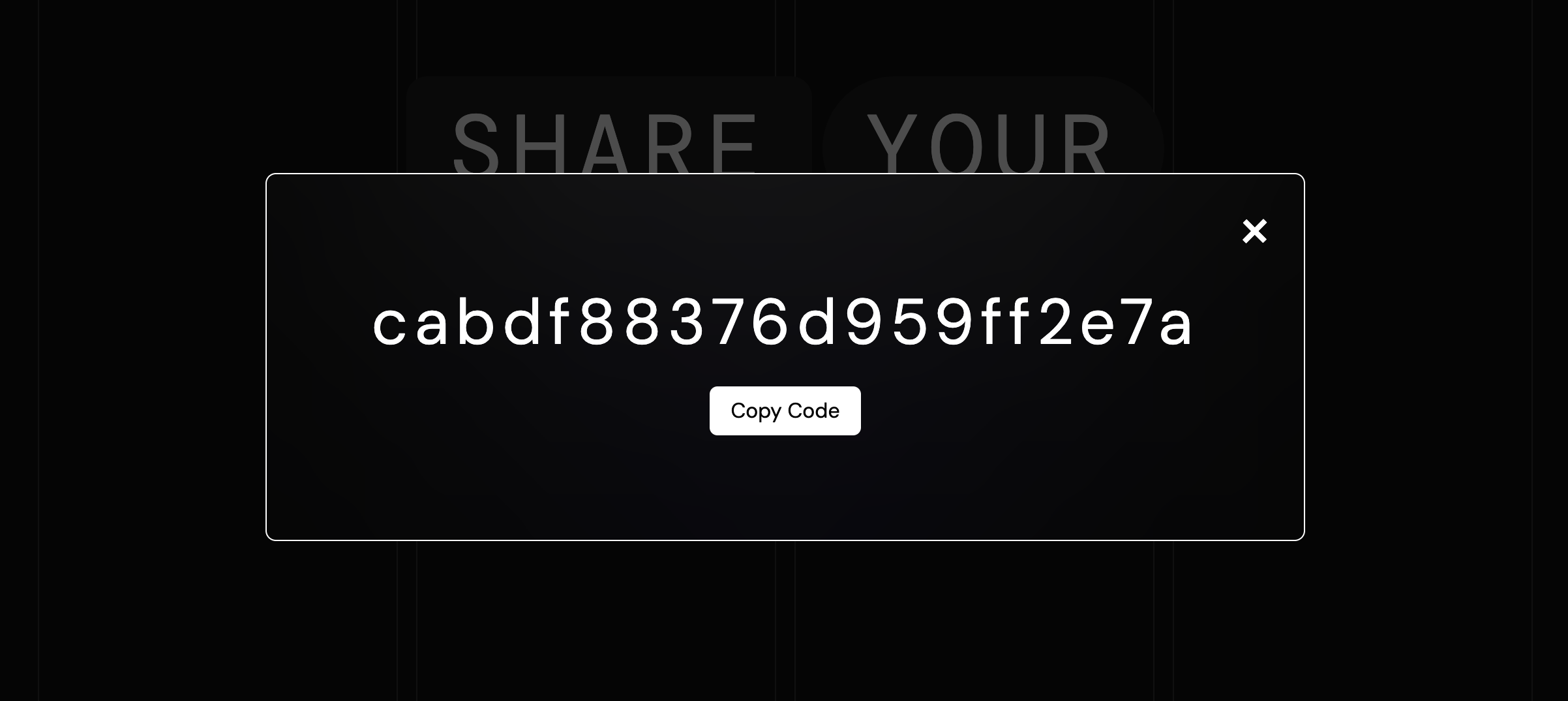

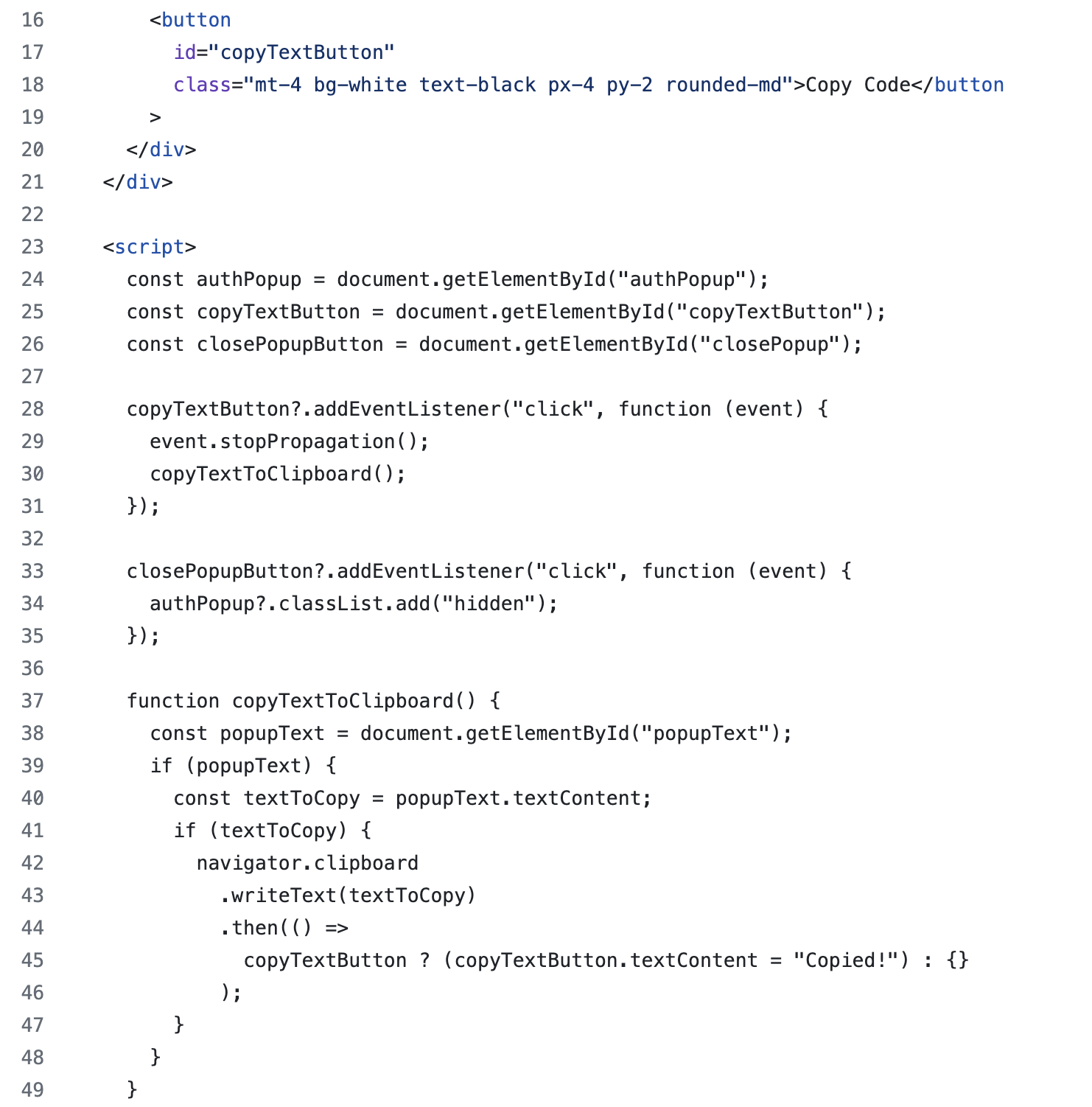

For example, on our website, we have a screen that pops up an auth code that the user needs to copy when uploading their Brewfile to the site. Here’s what it looks like.

When the user presses “Copy Code”, the text on the button will then change to “Copied!”. Here is what the Astro code looks like (or see code [here](https://github.com/theRubberDuckiee/share-brewfiles-website/blob/main/src/components/AuthPopup.astro)):

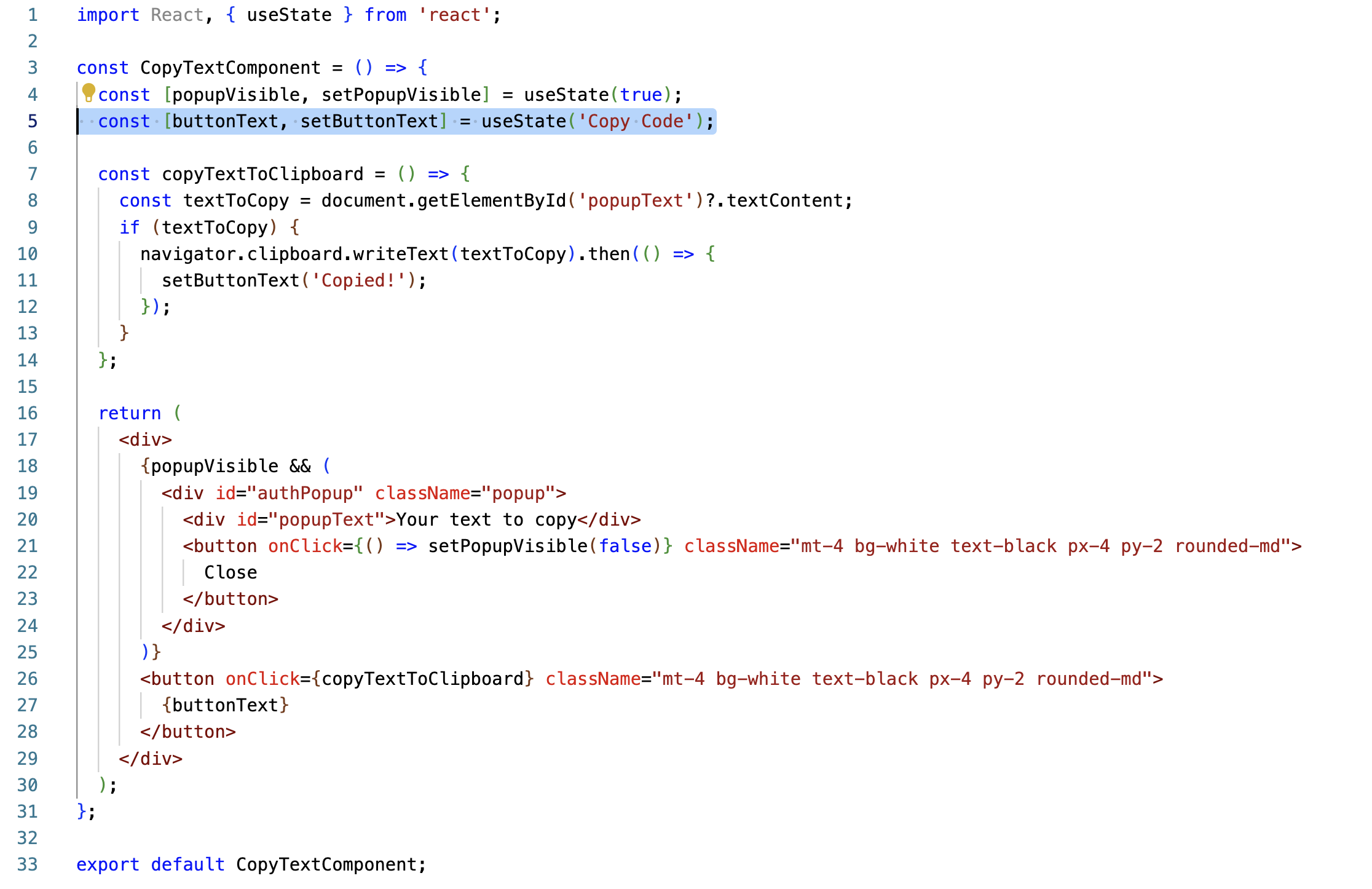

As you can see in the code, we need to interact with the DOM directly using JavaScript to add event listeners and perform actions based on those events. This approach is quite manual. It’s clear that Astro encourages developers to pre-render as much content as possible at build time. If we had written this code in React, we could simplify the logic using React’s state management and event handling capabilities. The code may look something like this:

As you can tell here, the use of `useState` and `onClick` is much cleaner than using the `<script>` tag to embed JavaScript code directly within the HTML markup. Because of the complexity caused by Astro, we ended up rewriting a few of our files using React instead.

Though we had to work around some of Astro’s capabilities as a framework, there were still some upsides, which we’ll talk about in the next section when it comes to rendering and performance.

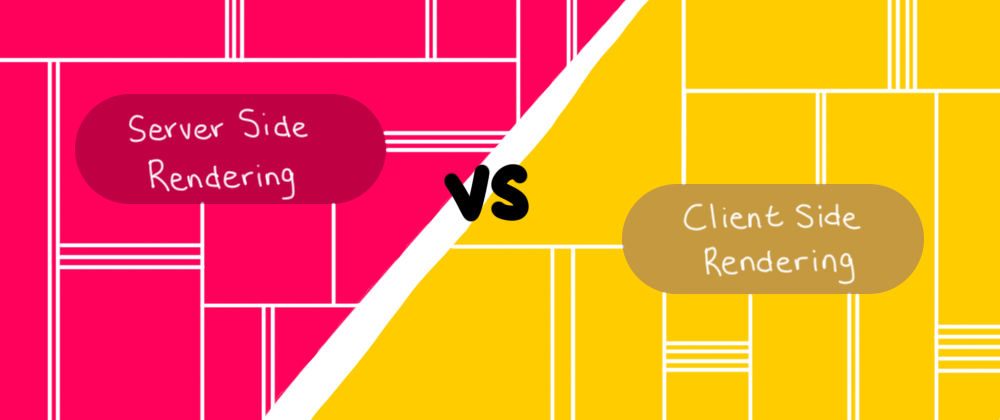

## Server-side (SSR) vs client-side (CSR) rendering

Astro SSR adapters enable server-side rendering, meaning as routes are requested, we could tell Astro to generate a finalized HTML page on the server. Some pages, however, require processing a lot of data before rendering the page. With each new brewfile submission, more processing will be required, and yet we want to maintain quick page loading speed as our data entries grow.

We decided to server-side render each route and embed slower sections as client-side rendered components. As an example, the homepage includes a leaderboard list at the bottom. Generating the list requires fetching all users’ Brewfiles from the database and calculating a top 10 list.

We created a server API route to handle the calculations and then embedded a client-side React component to hit the endpoint and display the final list. This combination of SSR and CSR allowed for the best possible performance and user experience.
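
As a rough illustration of the calculation such a server route might run, here is a sketch that counts how many Brewfiles contain each package and keeps the most common ones. The `topPackages` name and the `{ packages: [...] }` document shape are assumptions for this example, not the project's actual code.

```javascript
// Count how many Brewfiles include each package and return the `limit` most common.
// Assumes each Brewfile document looks like { packages: ['git', 'node', ...] }.
function topPackages(brewfiles, limit = 10) {
  const counts = new Map();
  for (const brewfile of brewfiles) {
    // Count a package once per Brewfile, even if it is listed twice.
    for (const name of new Set(brewfile.packages)) {
      counts.set(name, (counts.get(name) || 0) + 1);
    }
  }
  return [...counts.entries()]
    // Most popular first; break ties alphabetically so the order is stable.
    .sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]))
    .slice(0, limit)
    .map(([name, count]) => ({ name, count }));
}

const leaderboard = topPackages(
  [
    { packages: ['git', 'node'] },
    { packages: ['git', 'warp', 'git'] },
    { packages: ['node', 'git'] },
  ],
  2,
);
```

Because the function is pure, the server route can run it and return only the short result list, leaving the client-side component to fetch and render that list after the rest of the page has loaded.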

## Data decisions

- Basic data sanitization

We had to take into account the possibility that somebody could add random sentences into their Brewfile or generally corrupt the file (since it is just a plain .txt) before uploading it to our site. As a result, we checked for a format of `<packageManager>` followed by `<packageName>` and sanitized the strings of extra quotes and spaces.

- Client-side validation

The CLI logic hits the API endpoint on the website, and not Firebase directly, because we wanted to do some client-side validation before uploading the data to the database.

For example, we check if this user already has a Brewfile that’s been uploaded. If yes, then we will update the existing Brewfile with the incoming data so we can ensure that 1 user will only ever have 1 uploaded Brewfile on our site.

- Database/Server-side validation

Firebase implements security rules to lock down read/write access to your documents, but validating the content of your data is less robust. We decided to approach validation in two ways to provide some basic safety for our data.

First, we provided some basic rules for reading and writing to our documents in Firestore rules. While Firebase recently started offering an early-access PostgreSQL database solution, we used its traditional document collections. The security rules ensure any document in the collection follows a basic structure with only a particular set of keys, but you can’t easily ensure the shape of your data.

Secondly, due to some of the limitations of verification in Firestore, we decided to provide more structured validation when fetching documents in our server-side API endpoints. The `isValidBrewfile` function offers four validation options, one for each piece of data associated with a Brewfile. By default, all four options are enabled, but for any particular validation call we can turn off a setting to skip that check when needed.

Since so much of the UX depends on valid data, this two-tiered validation system means we can trust the data when processing Brewfiles. It’s not iron-clad validation, but good enough for our purposes.
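
The sanitization and validation steps described in this section could be sketched roughly as follows. This is a sketch under stated assumptions, not the project's code: the list of accepted package managers, the four field names (`owner`, `packages`, `personality`, `uploadedAt`), and the body of this `isValidBrewfile` are all made up for illustration.

```javascript
// Assumed set of accepted line prefixes; the real site may accept others.
const KNOWN_MANAGERS = new Set(['tap', 'brew', 'cask', 'mas', 'vscode']);

// Keep only lines shaped like `<packageManager> <packageName>`,
// stripping surrounding quotes, extra spaces, and trailing options.
function parseBrewfile(text) {
  const entries = [];
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.trim();
    if (line === '' || line.startsWith('#')) continue;
    const match = line.match(/^(\w+)\s+["']?([^"',]+)["']?/);
    if (!match) continue;
    const packageManager = match[1];
    const packageName = match[2].trim();
    if (!KNOWN_MANAGERS.has(packageManager) || packageName === '') continue;
    entries.push({ packageManager, packageName });
  }
  return entries;
}

// Hypothetical validator: four checks, all on by default, each can be switched off.
function isValidBrewfile(doc, options = {}) {
  const checks = { owner: true, packages: true, personality: true, uploadedAt: true, ...options };
  if (doc === null || typeof doc !== 'object') return false;
  if (checks.owner && (typeof doc.owner !== 'string' || doc.owner === '')) return false;
  if (checks.packages) {
    const ok = Array.isArray(doc.packages) && doc.packages.every(
      (p) => p !== null && typeof p === 'object' &&
        typeof p.packageManager === 'string' && typeof p.packageName === 'string',
    );
    if (!ok) return false;
  }
  if (checks.personality && typeof doc.personality !== 'string') return false;
  if (checks.uploadedAt && !Number.isFinite(doc.uploadedAt)) return false;
  return true;
}

const parsed = parseBrewfile([
  '# my setup',
  'brew "git"',
  "cask  'warp' ",
  'mas "Xcode", id: 497799835',
  'hello this is a random sentence',
  'brew ""',
].join('\n'));

// A freshly uploaded document has no personality yet, so that check is skipped.
const freshDoc = { owner: 'octocat', packages: parsed, uploadedAt: Date.now() };
const freshIsValid = isValidBrewfile(freshDoc, { personality: false });
```

Making each check optional means the same validator can be reused at different points in a document's life, for example before the asynchronous personality step has finished.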

## User experience decisions

### Overall UI/UX

When thinking of the design, we considered a few things.

First, we are trying to bring some “pizzazz” into Brewfiles, which are normally just plain, boring, black-and-white .txt files. For that reason, the UI should feel cool, sleek, and modern.

Second, package lists are very text-heavy, meaning that there won’t be many opportunities to show off graphics/images on this website. That’s why we chose an in-your-face font and a bold-blue color scheme that would catch people’s eyes.

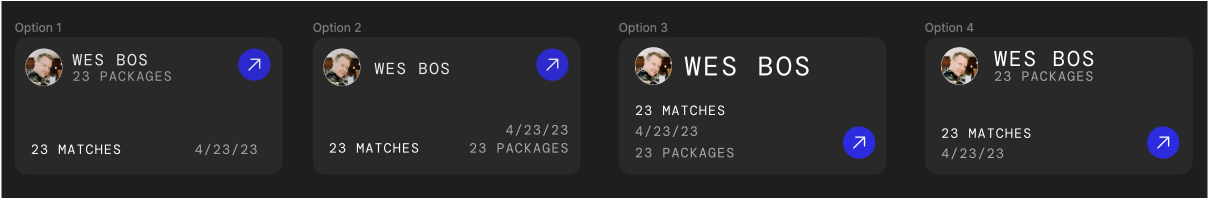

We didn’t have design resources when first starting this project, so one of our engineers decided to try mocking up some initial designs. Luckily, the UI you see today was created by Kyle, one of our very talented designers - but we thought it would be funny to show you some of the initial mockups:

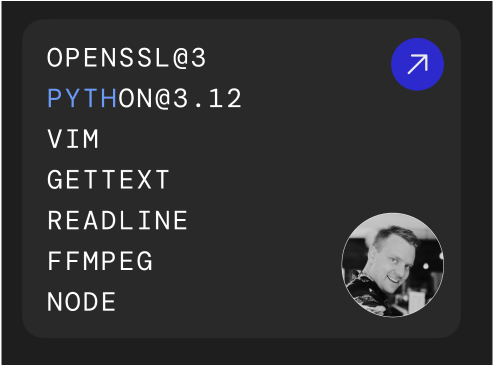

### Brewfile Cards

We had some interesting conversations around the design of our Brewfile card. Originally, we had coded our cards to look like this:

Where the card would show the first 7 packages in the developer’s Brewfile, and highlight packages when a certain keyword was being searched. However, we realized this wouldn’t work if the keyword matched with multiple packages within the Brewfile. For example, just the letter “a” would likely match with many packages. This is why product designers are important! Sadly, we had to rethink the UI for the card, which led to some backtracking in terms of our code and implementation. Here were the options we had come up with:

And you can check out the [“All Brewfiles”](https://www.brewfiles.com/brewfiles) page to see which option we decided on.

### Product decisions + product philosophy

A large part of this project was deciding what features to build. For example, we could have doubled-down on features that analyzed the packages within Brewfiles and gave developers more extensive statistics about the tools they download. We could have also changed the UI to only show the top 10 uploaded packages and only updated it once per week, to encourage people to come back to see the new leaderboard.

The leading philosophy behind the features we decided to build was education and social fun. We wanted this website to be a place where developers could discover new tools, which is why we have a complete leaderboard of every single package that has been uploaded, as well as different filters to sort by different categories. We also wanted to create a sense of community, which is why we required developers to connect a GitHub account with their Brewfile and added search functionality. And our “developer personality” summary added a little bit of fun to the whole experience, and encouraged developers to share their Brewfiles with the world.

### Discovering new tech/frameworks

Container queries have been stable in modern browsers since Firefox added them in February 2023 and they [currently are supported in 90% of browsers used globally](https://caniuse.com/?search=container-type). In short, container queries provide control over styling decisions based on the size of a parent container (instead of @media queries which are based on the size of the viewport).

The cards on the /brewfiles route change size as the viewport changes, but not in step with the viewport. At first, the cards take up the full viewport width and keep growing until 768px, where the list moves to a two-column layout.

Once again, the cards expand until displaying in three columns at 1280px. A simple @media query would only adjust the typography and other UI elements in a straight line, getting larger with the viewport. However, with container queries, we could adjust all the elements based on the intrinsic size of the cards creating a truly responsive interface!

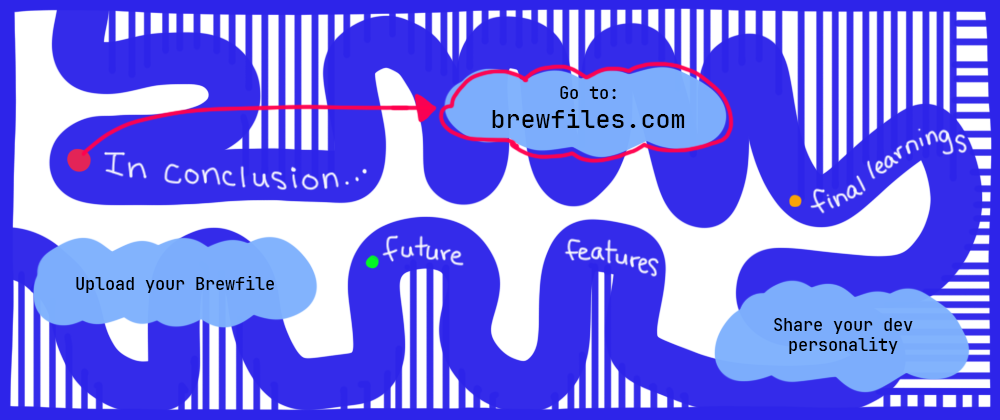

# Conclusion

### Future features

Before we end, here are some of the features/improvements we wanted to build, but didn’t get to before we launched.

**“Annotating” packages**

What if developers could “star” certain packages as ones they used daily or deserved special attention? Or flag other tools as deprecated?

**Template Brewfiles**

What if we had Brewfile templates for a specific project or project type? For example, a frontend dev setting up a brand new Mac could run one command to download all the packages from a specific template Brewfile.

### Closing remarks

We had so much fun creating this tool. It has been such a rewarding experience taking such a boring, black-and-white Brewfile .txt and turning it into a cool, fun, and social experience for the developer community. If you’d like to try it out, here are 2 things you can do.

- Run `npx share-brewfiles` in your CLI and follow the instructions to upload your Brewfile.

- Go to your [Brewfile page](https://www.brewfiles.com), generate your developer personality, and share it on social media!

And a HUGE thank you again to [Warp](https://warp.dev) for encouraging me to build this project.

Thank you!

| therubberduckiee |

1,871,721 | Instagram AI policy | Instagram is updating its privacy policy to give you the right to not have your data used to train... | 0 | 2024-05-31T06:40:36 | https://dev.to/lukeecart/instagram-ai-policy-h20 | ai, news | Instagram is updating its privacy policy to give you the right to not have your data used to train AI going forward.

Here is the email I received:

We're getting ready to expand our AI at Meta experiences to your region. AI at Meta is our collection of generative AI features and experiences, such as Meta AI and AI creative tools, along with the models that power them.

What this means for you

To help bring these experiences to you, we'll now rely on the legal basis called legitimate interests for using your information to develop and improve AI at Meta. This means that you have the right to object to how your information is used for these purposes. If your objection is honoured, it will be applied from then on.

## How do I object to my data being included in AI training data?

You can fill out [this form to object to having your data used in the future.](https://help.instagram.com/contact/233964459562201)

Once you have given a reason you need to verify your email (because they send you a code). Then, at least for me, it took minutes for them to reply and say my data will not be used going forward.

## Do you notice the wording?

`If your objection is honoured, it will be applied from then on.`

So it looks like our data has been used in the past but for any future AI training and developments it will not be used.

## Thanks 🙏

Thank you for reading and I hope this was helpful.

If you think someone else should know this, share this article with them so they can also object to Instagram training its AI on their data.

| lukeecart |

1,871,720 | A Beginner’s Guide to Using Vuex | State management is crucial for developing scalable and maintainable applications. In Vue.js, Vuex is... | 0 | 2024-05-31T06:39:13 | https://dev.to/delia_code/a-beginners-guide-to-using-vuex-4egh | vue, javascript, beginners, tutorial | State management is crucial for developing scalable and maintainable applications. In Vue.js, Vuex is the official state management library, providing a centralized store for all the components in an application. This ensures consistent and predictable state management. This guide will walk you through using Vuex with the Composition API, detailing how the code works, how to use it effectively, and when not to use it.

## What is Vuex?

Vuex is a state management pattern + library for Vue.js applications. It serves as a centralized store for all the components in an application, with rules ensuring that the state can only be mutated in a predictable fashion.

## Setting Up Vuex in a Vue.js Project

### Step 1: Install Vuex

First, install Vuex. If you haven't created your Vue.js project yet, set it up using Vue CLI:

```bash
npm install -g @vue/cli
vue create vue-vuex-example
cd vue-vuex-example
npm install vuex@next
npm run serve
```

### Step 2: Create a Vuex Store

Create a Vuex store to manage the state of your application.

**store/index.js:**

```javascript
import { createStore } from 'vuex';

export default createStore({
  state: {
    count: 0
  },
  mutations: {
    increment(state) {
      state.count++;
    },
    decrement(state) {
      state.count--;
    }
  },
  actions: {
    increment({ commit }) {
      commit('increment');
    },
    decrement({ commit }) {
      commit('decrement');
    }
  },
  getters: {
    doubleCount(state) {
      return state.count * 2;
    }
  }
});
```

### Step 3: Integrate Vuex with Vue.js

In your `main.js` file, integrate Vuex with your Vue.js application.

**main.js:**

```javascript
import { createApp } from 'vue';
import App from './App.vue';
import store from './store';

const app = createApp(App);
app.use(store);
app.mount('#app');
```

### Step 4: Using Vuex in a Component with Composition API

Now, let's use Vuex in a Vue component using the Composition API.

**App.vue:**

```html
<template>
  <div>
    <h1>Count: {{ count }}</h1>
    <h2>Double Count: {{ doubleCount }}</h2>
    <button @click="increment">Increment</button>
    <button @click="decrement">Decrement</button>
  </div>
</template>

<script>
import { computed } from 'vue';
import { useStore } from 'vuex';

export default {
  setup() {
    const store = useStore();

    const count = computed(() => store.state.count);
    const doubleCount = computed(() => store.getters.doubleCount);

    const increment = () => {
      store.dispatch('increment');
    };

    const decrement = () => {
      store.dispatch('decrement');
    };

    return {
      count,
      doubleCount,
      increment,
      decrement
    };
  }
};
</script>
```

### Explanation of the Code

- **State**: The state is an object that contains the application state. Here, we have a single state property `count`.

- **Mutations**: Mutations are synchronous functions that directly mutate the state. We have `increment` and `decrement` mutations to modify the `count`.

- **Actions**: Actions are functions that can contain asynchronous operations. They commit mutations. We have `increment` and `decrement` actions that commit the corresponding mutations.

- **Getters**: Getters are functions that return a derived state. We have a `doubleCount` getter that returns double the `count`.

- **useStore**: This function from Vuex is used to access the store instance in the setup function.

- **Computed Properties**: We use the Composition API's `computed` function to create reactive computed properties for `count` and `doubleCount`.

- **Methods**: Methods `increment` and `decrement` dispatch actions to modify the state.
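
If the relationship between `dispatch`, `commit`, and getters still feels abstract, the following stripped-down model may help. It is a teaching sketch only: `createMiniStore` is a made-up helper, it is not how Vuex is implemented, and it has no reactivity.

```javascript
// A tiny model of the commit/dispatch flow. Not Vuex's implementation.
function createMiniStore({ state, mutations, actions, getters }) {
  const has = (obj, key) => Object.prototype.hasOwnProperty.call(obj, key);
  const store = {
    state,
    getters: {},
    // commit: run a named mutation synchronously against the state.
    commit(type, payload) {
      if (!has(mutations, type)) throw new Error(`unknown mutation: ${type}`);
      mutations[type](store.state, payload);
    },
    // dispatch: run a named action, which may be async and usually ends in a commit.
    dispatch(type, payload) {
      if (!has(actions, type)) throw new Error(`unknown action: ${type}`);
      return Promise.resolve(actions[type]({ commit: store.commit, state: store.state }, payload));
    },
  };
  // Expose each getter as a property derived from the current state.
  for (const [name, fn] of Object.entries(getters)) {
    Object.defineProperty(store.getters, name, { get: () => fn(store.state), enumerable: true });
  }
  return store;
}

// The same counter as in the guide, wired into the mini store.
const counterStore = createMiniStore({
  state: { count: 0 },
  mutations: {
    increment(state) { state.count++; },
    decrement(state) { state.count--; },
  },
  actions: {
    increment({ commit }) { commit('increment'); },
    decrement({ commit }) { commit('decrement'); },
  },
  getters: {
    doubleCount(state) { return state.count * 2; },
  },
});

counterStore.dispatch('increment'); // action -> commit -> mutation -> state change
```

The point of the indirection is that every state change passes through a named mutation, which is what makes changes traceable in the real library.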

## Good Practices

- **Keep State Simple**: Avoid complex nested state structures. Use modules to split your store if necessary.

- **Use Namespaced Modules**: For larger applications, use namespaced modules to keep the store structure modular and maintainable.

- **Leverage Getters**: Use getters to encapsulate logic for derived state, ensuring that components remain simple and focused on rendering.

- **Mutations for Synchronous Changes**: Only use mutations for synchronous state changes. For asynchronous operations, use actions to commit mutations.
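
As a sketch of the last three points together, here is what a small namespaced module with an asynchronous action might look like. The module is a plain object, so it can be read on its own; `counterModule`, `incrementLater`, and the `loading` flag are names invented for this example.

```javascript
// A namespaced module written as a plain object. To use it, register it in
// your store setup, e.g. createStore({ modules: { counter: counterModule } }).
const counterModule = {
  namespaced: true,
  // A function, so that each store instance gets a fresh state object.
  state: () => ({ count: 0, loading: false }),
  mutations: {
    // Mutations stay synchronous and do one small thing each.
    setLoading(state, value) {
      state.loading = value;
    },
    incrementBy(state, amount) {
      state.count += amount;
    },
  },
  actions: {
    // The async work lives in the action; state only changes through commits.
    async incrementLater({ commit }, { amount, delayMs }) {
      commit('setLoading', true);
      await new Promise((resolve) => setTimeout(resolve, delayMs));
      commit('incrementBy', amount);
      commit('setLoading', false);
    },
  },
  getters: {
    // Derived state belongs in a getter rather than in each component.
    doubleCount: (state) => state.count * 2,
  },
};
```

Once registered under the `counter` key, a component would reach it with namespaced paths such as `store.dispatch('counter/incrementLater', { amount: 2, delayMs: 500 })` and `store.getters['counter/doubleCount']`.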

## Bad Practices

- **Direct State Mutation**: Avoid mutating the state directly outside of mutations. Always use mutations to ensure predictable state changes.

- **Complex Mutations and Actions**: Keep mutations and actions simple. Complex logic should be handled outside of the store or split into multiple smaller mutations/actions.

- **Overusing Getters**: Avoid using getters for every state access. Getters should be used for computed or derived state, not as a substitute for state properties.

## When Not to Use Vuex

### 1. Simple Applications

If your application is simple and doesn't require complex state management, using Vuex might be overkill. Vue's built-in reactivity system is often sufficient for smaller projects.

**Example:**

If you are building a small to-do list app, managing state directly within your components or using Vue's built-in reactive properties (`ref` and `reactive`) might be simpler and more efficient.

### 2. Component-Local State

When state is only relevant to a single component and doesn't need to be shared across the application, using Vuex can add unnecessary complexity.

**Example:**

A form component with local validation state doesn't need Vuex. The state can be managed directly within the component using `ref` or `reactive`.

### 3. Performance-Sensitive Applications

For highly performance-sensitive applications, the overhead of Vuex might not be desirable. In such cases, you might consider alternative state management libraries like Pinia or MobX, which offer different performance characteristics.

**Example:**

A real-time gaming application requiring millisecond-level performance might benefit from a more lightweight state management solution.

Using Vuex for state management in Vue.js applications provides a structured and maintainable way to manage shared state. By integrating Vuex with the Composition API, you can take advantage of Vue's reactivity system to build powerful and efficient applications. Remember to follow best practices to ensure your state management remains predictable and maintainable. Additionally, consider the complexity of your application and the scope of state sharing when deciding whether to use Vuex. Happy coding!

Twitter: [@delia_code](https://x.com/delia_code)

Instagram: [@delia.codes](https://www.instagram.com/delia.codes/)

Blog: [https://delia.hashnode.dev/](https://delia.hashnode.dev/) | delia_code |

1,871,719 | Tips to Making a Good First Impression at a New Job | Best Consultancy in Gurgaon | top 10 job consultancy in Gurgaon Starting a new activity is like... | 0 | 2024-05-31T06:38:46 | https://dev.to/valuewisers_bc9b99a8f0c40/tips-to-making-a-good-first-impression-at-a-new-job-3f28 | hrconsultancy, bestconsultancyingurgaon, top10jobconsultancyingurgaon | [](https://staffix.in/employers/best-consultancy-services-gurgaon/)

Best Consultancy in Gurgaon | top 10 job consultancy in Gurgaon

Starting a new job is like stepping onto a brand new stage. You want to make a good first impression that sets the stage for success and growth in your new role. The way you navigate those early days and weeks can shape how your colleagues and superiors perceive you. Here are some tips to help you make a good first impression at your new job:

1. Dress Professionally

Wearing professional attire shows your commitment to your work. Check the company’s dress code and try to wear something a little more formal than what’s typical. It shows that you respect the workplace and are a professional.

2. Be on Time

Arriving on time every day is critical, especially during your first weeks. Punctuality not only shows your dedication but also demonstrates respect for your colleagues and their time. Plan your commute and allow extra time in case of unexpected delays.

3. Show Enthusiasm

Expressing enthusiasm for your new job and the tasks at hand can go a long way toward creating a good first impression. Displaying a positive attitude and being eager to learn will show that you are motivated and ready to contribute to the team.

4. Be a Good Listener

During your initial interactions with colleagues and superiors, actively listen to what they have to say. By demonstrating good listening skills, you show that you value their input and respect their expertise. Listening attentively will also help you understand the dynamics of the team and your role within it.

5. Ask Questions

Don’t be afraid to ask questions. Asking thoughtful and relevant questions demonstrates your interest in understanding the company’s operations and the responsibilities assigned to you. By seeking clarification, you show that you are proactive and committed to doing your job well.

6. Take Initiative

While it’s important to listen and learn in the early stages, also look for opportunities to take initiative. Identify tasks that you can contribute to, even if they may not be explicitly assigned to you. Showing initiative helps establish yourself as a proactive and valuable team member.

7. Be Open to Feedback

Be open to receiving feedback and constructive criticism. Showing that you are receptive to feedback demonstrates your commitment to personal growth and improvement. Actively implement suggestions and work on the areas that need improvement.

8. Build Relationships

Take the time to build positive relationships with your colleagues. Offer help when possible and participate in team activities. Building strong relationships not only helps create a positive work environment but also enhances collaboration and team success.

9. Maintain Professionalism

Be professional at all times when interacting with clients, managers, and coworkers. Pay attention to your body language, tone, and words. Everyone should be treated with decency and respect, regardless of their job or position in the company.

10. Be Yourself

Making a good impression is important, but so is being sincere. Let your individuality come through and just be yourself. You can build deep ties with your coworkers and gain their trust by being real and authentic.

Remember, making a good first impression isn’t just about impressing others, but also about setting the stage for a successful and fulfilling career in your new job. | valuewisers_bc9b99a8f0c40 |

1,871,718 | Discovering the Mystical Kamakhya Temple: A Journey into the Heart of Assam's Spirituality | Nestled atop the Nilachal Hill in Guwahati, Assam, the Kamakhya Temple is one of the most revered... | 0 | 2024-05-31T06:38:42 | https://dev.to/travelsguide/discovering-the-mystical-kamakhya-temple-a-journey-into-the-heart-of-assams-spirituality-5c63 | temple, travelsguide, webdev | Nestled atop the Nilachal Hill in Guwahati, Assam, the Kamakhya Temple is one of the most revered Shakti Peethas in India. It is a place where spirituality, history, and culture converge, drawing thousands of devotees and tourists each year. This ancient temple, dedicated to Goddess Kamakhya, is not only a significant religious site but also a symbol of Assam's rich heritage.

Historical Significance

The **[Kamakhya Temple](https://jetsettersush.com/kamakhya-temple-5-important-things-you-must-know/)**'s origins are shrouded in mystery and myth. According to legend, it marks the site where the yoni (genitalia) of Sati, Lord Shiva's consort, fell when he carried her corpse after she immolated herself. This event is believed to have led to the creation of the fifty-one Shakti Peethas, sacred spots where parts of Sati's body are said to have fallen.

The temple's historical roots date back to the 8th century, with significant renovations carried out in the 17th century by King Nara Narayana of the Koch dynasty. Its unique architectural style, which includes a blend of Hindu temple architecture and indigenous elements, reflects the region's cultural diversity.

Architectural Marvel

The temple complex is a marvel of architecture, featuring a series of temples dedicated to ten Mahavidyas: Kali, Tara, Sodashi, Bhuvaneshwari, Bhairavi, Chinnamasta, Dhumavati, Bagalamukhi, Matangi, and Kamalatmika. The main temple houses the sacred sanctum sanctorum, a cave-like structure with a natural underground spring that symbolizes the goddess.

The outer walls of the temple are adorned with intricate carvings depicting various deities, mythological scenes, and floral motifs. The beehive-shaped dome, built in the Nilachal type of architecture, is a striking feature that stands out against the lush green backdrop of Nilachal Hill.

Religious Practices and Festivals

The Kamakhya Temple is a vibrant center of Tantric practices, and it plays a pivotal role in the religious life of its devotees. One of the unique aspects of the temple is the annual Ambubachi Mela, held in June, which celebrates the menstruation of the goddess. During this festival, the temple remains closed for three days, symbolizing the goddess's period, and reopens with great fanfare, drawing sadhus, devotees, and tourists from across the country.

Other significant festivals celebrated here include Durga Puja, Manasa Puja, and Kali Puja, each marked by elaborate rituals and a festive atmosphere.

Cultural Impact

The Kamakhya Temple is not just a religious site but a cultural landmark that influences various aspects of Assamese life. It has inspired numerous literary works, folk songs, and dances, reflecting its deep-rooted significance in the local culture. The temple also plays a crucial role in promoting Assam's tourism, contributing to the state's economy.

Visiting Kamakhya Temple

For those planning a visit, the [Kamakhya Temple](https://jetsettersush.com/kamakhya-temple-5-important-things-you-must-know/) is easily accessible from Guwahati, the largest city in Assam. The best time to visit is during the cooler months from October to March. However, visiting during the Ambubachi Mela offers a unique experience of witnessing the temple's vibrant traditions and the convergence of diverse spiritual practices.

When visiting, it's essential to respect the temple's customs and dress modestly. The serene environment of Nilachal Hill, coupled with the spiritual aura of the temple, provides a perfect setting for introspection and rejuvenation.

**Conclusion**

The Kamakhya Temple is more than just a place of worship; it is a beacon of spirituality, history, and culture. Its mystical aura, coupled with its architectural splendor and rich traditions, makes it a must-visit destination for anyone seeking to explore the spiritual heart of Assam. Whether you are a devotee, a history enthusiast, or a curious traveler, the Kamakhya Temple promises an unforgettable journey into the depths of India's sacred heritage. | travelsguide |

1,871,717 | Querying DNS Records with PowerShell | In the world of networking and system administration, efficiently managing DNS (Domain Name System)... | 0 | 2024-05-31T06:37:23 | https://www.techielass.com/querying-dns-records-with-powershell/ | powershell |

In the world of networking and system administration, efficiently managing DNS (Domain Name System) records is paramount. Whether you're troubleshooting connectivity issues, configuring mail servers, or ensuring proper domain mapping, having the ability to query DNS records quickly and accurately is indispensable. Fortunately, [PowerShell](https://www.techielass.com/tag/powershell/) provides a robust set of tools that make this task straightforward and efficient.

PowerShell, with its versatile scripting capabilities, allows administrators to automate repetitive tasks and perform complex operations with ease. When it comes to querying DNS records, PowerShell offers the `Resolve-DnsName` cmdlet in its DnsClient module, which streamlines the process. Let's delve into how you can harness the power of PowerShell to query DNS records effectively.

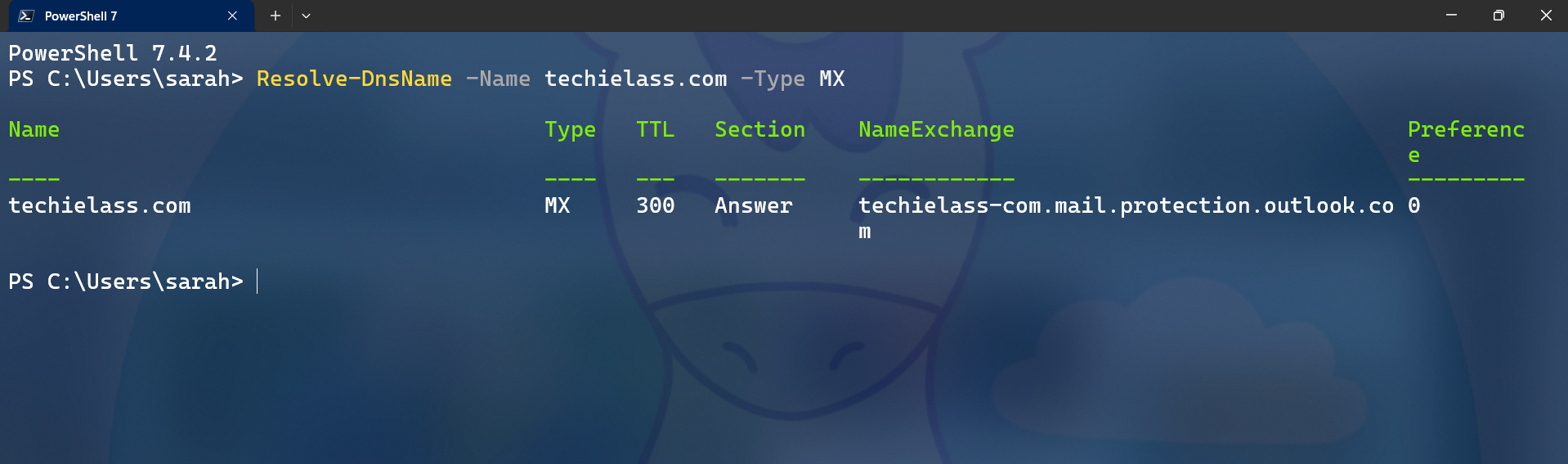

## MX Record Query

MX (Mail Exchange) records play a crucial role in email delivery, specifying the mail server responsible for receiving email on behalf of a domain. Here's how you can use PowerShell to query the MX record for 'techielass.com':

```
Resolve-DnsName -Name techielass.com -Type MX
```

_PowerShell MX Record query_

This command utilises the _Resolve-DnsName_ cmdlet, specifying the domain name and record type (MX). Upon execution, it returns information about the MX record(s) associated with the domain 'techielass.com', including the preference value and the mail server's hostname.

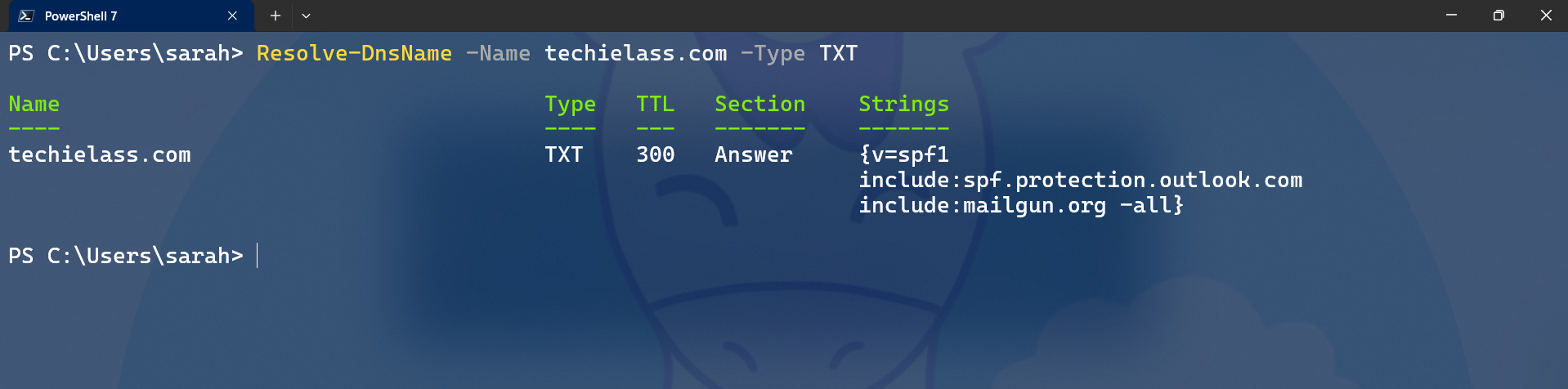

## TXT Record Query

TXT records serve various purposes, such as domain ownership verification and email authentication with SPF (Sender Policy Framework) and DKIM (DomainKeys Identified Mail). Let's use PowerShell to query the TXT record for 'techielass.com':

```
Resolve-DnsName -Name techielass.com -Type TXT
```

_PowerShell TXT DNS record query_

Executing this command retrieves the TXT records associated with the domain, providing valuable information encoded within these records, such as SPF policies or verification keys.

## CNAME Record Query