id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,871,542 | Demystifying Version Control: Your Guide to Git | Imagine working on a complex project, constantly editing and revising files. Wouldn't it be helpful... | 0 | 2024-05-31T03:09:53 | https://dev.to/epakconsultant/demystifying-version-control-your-guide-to-git-3jn | git | Imagine working on a complex project, constantly editing and revising files. Wouldn't it be helpful to rewind time and see previous versions? Or collaborate with others without version conflicts? This is where version control systems (VCS) come in, and Git, the most popular VCS, is your key to mastering this essential skill.

## What is Version Control?

Think of version control as a time machine for your files. It tracks changes made over time, allowing you to revert to previous versions, see who made what edits, and collaborate seamlessly. This is particularly crucial for software development, where a single wrong line can break everything. But VCS benefits extend far beyond code. Writers, designers, and even students can leverage its power.

[Trading Cryptocurrencies: Understanding the Basics and Mastering the Fundamentals](https://www.amazon.com/dp/B0CR9DV5HT)

## Why Use Git?

Git, a free and open-source VCS, stands out for its distributed nature. Unlike some systems that store everything on a central server, Git creates a local copy of the entire project history on each user's machine. This makes collaboration efficient, as everyone has a complete picture and can work offline. Additionally, Git excels at:

• Tracking Changes: It meticulously records every edit, allowing you to see the evolution of your project.

• Reverting to Previous States: Accidentally deleted a crucial section? No worries! Revert to a previous version with ease.

• Collaboration: Multiple users can work on the same project simultaneously without conflicts. Git helps merge changes smoothly.

• Branching: Create isolated branches to experiment with new features without affecting the main project.

• Security: Git ensures data integrity and allows access control for secure collaboration.

## Getting Started with Git

While Git might seem intimidating at first, understanding its core concepts is crucial. Here's a simplified breakdown:

• Repository (Repo): This is where all your project files and their version history are stored. Imagine it as a central archive.

• Working Directory: This is your local copy of the repo where you make changes to files.

• Staging Area: This is a temporary holding zone for changes you plan to commit to the main repository.

• Commit: This is the act of capturing a snapshot of your project at a specific point in time, along with a descriptive message.

## The basic Git workflow involves:

1.Making changes: Edit files in your working directory.

2.Staging changes: Use the git add command to mark specific changes for inclusion in the next commit.

3.Committing changes: Use the git commit command to create a permanent snapshot with a message describing the changes.

4.Pushing changes: (For collaboration) Upload your local commits to a remote repository, often hosted on platforms like GitHub.

## Beyond the Basics

As you become comfortable with Git, explore its more advanced features:

• Branching and Merging: Create isolated branches to experiment with features and seamlessly merge them back into the main project.

• Version Control for Different File Types: While Git excels with code, it can also manage documents, images, and other file types.

• Remote Collaboration with Platforms like GitHub: GitHub provides a user-friendly interface to manage your Git repositories, collaborate with others, and share code.

## Embrace the Power of Version Control

Git empowers you to work smarter, not harder. It streamlines collaboration, safeguards your work, and provides valuable insights into your project's history. Whether you're a developer, designer, or simply someone who values keeping track of revisions, Git is an invaluable tool. Take the first step today and unlock the power of version control!

## Additional Resources:

There are plenty of resources available to delve deeper into Git. Consider exploring the official Git documentation https://www.git-scm.com/ or online tutorials for a more hands-on learning experience. With dedication and practice, Git will become an indispensable part of your workflow.

| epakconsultant |

1,871,540 | Fortuna Tiger: slot online, mergulhe no mundo da sorte e da emoção | No mundo dos jogos de azar online, existem muitos jogos emocionantes que fazem seu coração bater mais... | 0 | 2024-05-31T03:07:24 | https://dev.to/tommydorton/fortuna-tiger-slot-online-mergulhe-no-mundo-da-sorte-e-da-emocao-433o | No mundo dos jogos de azar online, existem muitos jogos emocionantes que fazem seu coração bater mais rápido e sua respiração ficar mais lenta de excitação. Porém, entre todos esses jogos, há um que se destaca pelo seu charme único e atmosfera emocionante - Fortuna Tiger. Você deve [clicar na ligação](https://fortunatiger.org/). Neste artigo veremos o que torna este slot online tão único e emocionante.

**O fascinante mundo da Fortuna**

Fortuna Tiger não é apenas uma máquina caça-níqueis, é todo um mundo de aventuras e oportunidades emocionantes. Assim que você inicia este jogo, você fica imerso na emocionante atmosfera da China antiga, onde cada símbolo e som o transporta para o mundo da sorte e da riqueza.

**Ótimo design e gráficos**

Um dos aspectos mais impressionantes do Fortuna Tiger é seu design impressionante e gráficos de qualidade. Cada elemento do jogo é trabalhado nos mínimos detalhes, desde símbolos coloridos até animações emocionantes. Isso cria uma atmosfera única e torna a jogabilidade ainda mais emocionante.

**Recursos de bônus exclusivos**

Fortuna Tiger não oferece apenas um jogo de azar, mas também oferece muitos bônus interessantes que tornam a experiência de jogo ainda mais emocionante. Desde rodadas grátis até multiplicadores de ganhos, há algo para todos.

**Jogue em dispositivos móveis**

O mundo moderno exige acessibilidade e comodidade, por isso o Fortuna Tiger está disponível não só em computadores, mas também em dispositivos móveis. Isso significa que você pode aproveitar o jogo a qualquer hora e em qualquer lugar, sem estar limitado por tempo e lugar.

**Conclusão**

Fortuna Tiger não é apenas um jogo, é uma imersão em um mundo de sorte e emoção, onde cada rolo giratório pode trazer ganhos incríveis. Do design cativante aos emocionantes recursos de bônus, este jogo promete ser seu parceiro em suas aventuras no caminho para a riqueza. Faça uma viagem com Fortuna Tiger hoje e sinta a magia da sorte! | tommydorton | |

1,812,820 | 1. Concorrência Java: Threads! Processando em Paralelo e Ganhando Throughput | Seja bem vindo, esse daqui é o primeiro de 6 posts sobre concorrência em ... | 26,999 | 2024-05-31T03:00:00 | https://kaue.cat/posts/concorrencia-java/threads-java/ | programming, java, braziliandevs | Seja bem vindo, esse daqui é o primeiro de 6 posts sobre concorrência em

# Contexto

<mark style="background: #D2B3FFA6;">Threads são unidades de execução dentro de um processo</mark>. <mark style="background: #D2B3FFA6;">Um processo é um programa em execução que contém pelo menos uma thread.</mark> As threads permitem que um programa execute várias tarefas ao mesmo tempo (ou pelo menos aparentemente).

# **Vantagens de programar com múltiplas threads:**

Uma das principais razões para usar múltiplas threads é melhorar o desempenho de um programa. Tarefas pesadas e demoradas podem ser divididas em threads separadas, permitindo que diferentes partes do programa sejam executadas em paralelo. Isso pode levar a uma utilização mais eficiente dos recursos da CPU e, consequentemente, a um tempo de resposta mais rápido.

![[Untitled 109.png|Untitled 109.png]]

No entanto, programar com threads também traz desafios, como a necessidade de lidar com concorrência (quando várias threads tentam acessar ou modificar os mesmos recursos ao mesmo tempo) e a possibilidade de erros difíceis de depurar (como as condições de corrida), pois os resultados de um mesmo código não serão necessariamente os mesmos (não determinísticos).

# O multithreading ajuda ou não?

**1. Operações de I/O:**

Quando um programa precisa realizar operações de entrada/saída -- I/O (e elas são o gargalo), como leitura/gravação de arquivos, comunicação com bancos de dados ou solicitações de rede, <mark style="background: #D2B3FFA6;">há frequentemente momentos em que a CPU fica ociosa</mark>, esperando que os dados sejam lidos ou escritos.

Nessa situação, se uma nova thread tomasse conta da situação, ela não seria mais executada pelo processador enquanto estivesse ociosa, pois aconteceria o que chamamos de troca de contexto, que é basicamente fazer com que outra thread seja processada. Isso permite que outras threads que necessitem de processamento real tenham suas operações executadas pelos núcleos da CPU, ou até mesmo lançar (ou usar) mais threads para já lançar outras chamadas que também exigem esse tempo de espera, conhecidas como <mark style="background: #D2B3FFA6;">bloqueantes</mark>. Isso ajuda a aproveitar melhor o tempo da CPU, **melhorando a eficiência geral do programa.**

Imagine um contexto onde você precisa ler dois arquivos .txt, essa operação poderia ser realizada paralelamente se lançássemos duas threads, uma para ler cada arquivo, sendo cada uma processada em um núcleo, diminuindo o tempo de execução essencialmente pela metade

**2. Código CPU-bound:**

Quando o programa está executando tarefas intensivas em CPU, como cálculos matemáticos complexos, simulações ou processamento de imagem, uma única thread pode não ser capaz de aproveitar totalmente a capacidade de processamento da CPU. Dividir essas tarefas em threads separadas permite que múltiplos núcleos da CPU trabalhem em paralelo, acelerando o processamento.

Nesse caso, devemos tomar cuidado, pois a quantidade de tarefas que pode ser paralelizada realmente é igual a quantidade de núcleos do seu processador (lógicos + físicos).

![[Pasted image 20240404211738.png]]

A imagem acima representa a troca de contexto, note que esse processo não é necessariamente instantâneo e resulta em possível perda de cache, o que pode ser agressor à performance - [Fonte](https://wiki.inf.ufpr.br/maziero/lib/exe/fetch.php?media=socm:socm-05.pdf).

> A frequência de trocas de contexto tem impacto na eficiência do sistema operacional: quanto menor o número de trocas de contexto e menor a duração de cada troca, mais tempo sobrará para a execução das tarefas em si. Assim, é possível definir uma medida de eficiência E do uso do processador, em função das durações médias do quantum de tempo *t* e da troca de contexto *c*.

# Java: Threads!

## O Objeto Thread

O objeto `java.lang.Thread` é um *wrapper* em cima das threads do sistema operacional

> [!important]

> Note que as Threads são objetos wrappers em torno das threads do SO, portanto, se essas threads do S.O são pesadas (e são), as Threads em Java também são.

Em Java, podemos trabalhar com threads de algumas maneiras, a primeira que veremos é com a classe Thread, essas classes precisam dar o override do método `run`:

```Java

class ThreadExample extends Thread{

char c;

public ThreadExample(char c) {

this.c = c;

}

@Override

public void run() {

System.out.printf("\nComeçouuu!: %s\n", c);

for (int i = 0; i < 100 ; i++) {

System.out.print(c);

}

}

}

```

```Java

public static void main(String[] args) {

/* Todo programa em execução é "feito" de threads, esse não é uma exceção*/

Thread.currentThread().getName();

ThreadExample t1 = new ThreadExample('A');

ThreadExample t2 = new ThreadExample('B');

ThreadExample t3 = new ThreadExample('C');

t1.run();

t2.run();

t3.run();

}

```

![[Untitled 110.png|Untitled 110.png]]

Pronto! (Só que não) → Note que os objetos thread ainda estão rodando na mesma thread, nesse caso, usar `Thread.run()` executa o método run, nao inicia a thread, nesse caso, devemos rodar `start()`!

```Java

public class Thread01 {

public static void main(String[] args) {

/* Todo programa em execução é "feito" de threads, esse não é uma exceção*/

Thread.currentThread().getName();

ThreadExample t1 = new ThreadExample('A');

ThreadExample t2 = new ThreadExample('B');

ThreadExample t3 = new ThreadExample('C');

t1.start();

t2.start();

t3.start();

}

}

```

![[Untitled 1 70.png|Untitled 1 70.png]]

> [!question] Reflexão

> Criar um objeto do tipo thread faz sentido? Você está especializando uma thread realmente? A herança faz sentido nesse caso? [[2. SOLID]]

## 1. Interface Runnable

Nesse caso, acho válido começar diferente, vamos ler uma parte da javadoc da classe runnable

## 0. Javadoc

> The Runnable interface should be implemented by any class whose instances are intended to be executed by a thread. The class must define a method of no arguments called run.

>

> In addition, Runnable provides the means for a class to be active while not subclassing Thread. A class that implements `Runnable` can run without subclassing Thread by instantiating a Thread instance and passing itself in as the target.

> **<mark style="background: #D2B3FFA6;">In most cases, the Runnable interface should be used if you are only planning to override the run() method and no other Thread methods. This is important because classes should not be subclassed unless the programmer intends on modifying or enhancing the fundamental behavior of the class.</mark>**

A documentação do JAVA responde perfeitamente a reflexão anterior, se você discorda, pode seguir em frente, mas *particularmente* acho que é um argumento difícil de rebater.

Exemplo:

```Java

class ThreadRunnable implements Runnable {

char c;

public ThreadRunnable(char c) {

this.c = c;

}

@Override

public void run() {

System.out.printf("\nComeçouuu!: %s\n", c);

for (int i = 0; i < 100; i++) {

System.out.print(c);

}

}

}

public class Thread01 {

public static void main(String[] args) {

/* Todo programa em execução é "feito" de threads, esse não é uma exceção*/

Thread.currentThread().getName();

var t1Runnable = new ThreadRunnable('a');

var t2Runnable = new ThreadRunnable('b');

var t3Runnable = new ThreadRunnable('c');

Thread t1 = new Thread(t1Runnable);

Thread t2 = new Thread(t2Runnable);

Thread t3 = new Thread(t3Runnable);

t1.start();

t2.start();

t3.start();

}

}

```

![[Untitled 2 56.png|Untitled 2 56.png]]

# Estados de uma thread

![[Untitled 3 42.png|Untitled 3 42.png]]

É interessante sabermos disso, pois podemos dar dicas para o S.O como dizer para que uma thread running pare, ou notificando que uma thread se tornou *Runnable*.

# Melhorando o Código

Se não precisarmos de construtor! podemos usar uma lambda, pois Runnable é uma `@FunctionalInterface`:

```Java

Thread t1 = new Thread( () -> {/*codigo*/});

```

Ou, um pouco mais verboso:

```java

Runnable simplerRunnable = () -> {

System.out.printf("\nComeçouuu!: %s\n", c);

for (int i = 0; i < 100; i++) {

System.out.print(c);

}

};

```

## Prioridade

Prioridades podem ser atribuídas à threads, conforme mostra o código:

```Java

Thread t3 = new Thread(t3Runnable,"nomeC");

t3.setPriority(Thread.MAX_PRIORITY);

```

![[Untitled 111.png|Untitled 111.png]]

> [!important]

> Note que prioridades são indicações do que você deseja para o scheduler, uma thread de prioridade 1 pode rodar andar da prioridade 10, você não deve desenvolver um código baseado em prioridade

## Sleep

Imagine que você deseja que uma thread ocorra sem fim, mas rode a cada 2 minutos, como pode fazer isso? 🤔

Uma das maneiras é usar um `Thread.sleep(milis)` e pedir para que a thread pare por algum tempo, note que é importante esse código estar dentro de um try-catch, por sua possibilidade de gerar uma exceção (caso a thread seja interrompida, por exemplo)

```Java

@Override

public void run() {

System.out.printf("\nComeçouuu!: %s\n", c);

for (int i = 0; i < 100; i++) {

System.out.print(c);

}

try {

Thread.sleep(1000);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

}

```

![[Untitled 1 71.png|Untitled 1 71.png]]

## Yield

Yield serve para indicarmos / darmos uma **<mark style="background: #D2B3FFA6;">dica</mark>** para o scheduler do JVM faça a thread voltar para Runnable (pare) por um tempo. [[2. Começando com o Código]]

![[Untitled 2 57.png|Untitled 2 57.png]]

O *yield* é um dos principais elementos que permitem a existência de *Virtual Threads*.

## Join

![[Untitled 3 43.png|Untitled 3 43.png]]

Join serve para avisarmos a thread main que ela deve **esperar** para continuar seu fluxo q uando as operações terminarem

Quando você chama o método `join` em uma determinada (thread), você está essencialmente dizendo: "Pera ai, só continua quando essa tarefa terminar". Isso é útil quando você tem partes do programa que precisam estar totalmente prontas antes que outras partes possam prosseguir.

Um exemplo seria um cenário onde você precisa comparar 3 pesquisas de viagem de avião para conseguir ver o preço mais barato, você pode dar o `join` nas 3 threads que rodaram essa operação de I/O (a ordem não irá importar, pois estaremos limitados pela última de qualquer jeito) e então depois comparamos os resultados

Resumidamnete, o `join` é especialmente útil quando você precisa garantir a ordem correta das operações ou quando precisa coletar resultados de várias threads antes de prosseguir.

```Java

// t1 roda antes de t1 e t2

Thread t1 = new Thread(new ThreadRunnableYieldJoin('A'));

Thread t2 = new Thread(new ThreadRunnableYieldJoin('B'));

Thread t3 = new Thread(new ThreadRunnableYieldJoin('C'));

t1.start();

try {

t1.join();

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

var threads = List.of(t2,t3);

threads.forEach(Thread::start);

```

```Java

public static void main(String[] args) {

// t1 e t2 em paralelo

Thread t1 = new Thread(new ThreadRunnableYieldJoin('A'));

Thread t2 = new Thread(new ThreadRunnableYieldJoin('B'));

Thread t3 = new Thread(new ThreadRunnableYieldJoin('C'));

t1.start();

t2.start();

try {

t1.join();

t2.join();

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

t3.start();

}

}

```

# Referências

> [!info] Qual a finalidade do Transient e Volatile no Java?

> As vezes quando vou declarar meus atributos noto o transient e o volatile.

> [https://pt.stackoverflow.com/a/116080](https://pt.stackoverflow.com/a/116080)

> [!info] Maratona Java Virado no Jiraya

> Melhor, maior, e o mais completo curso de Java em português grátis de toda Internet está de volta.

> [https://www.youtube.com/playlist?list=PL62G310vn6nFIsOCC0H-C2infYgwm8SWW](https://www.youtube.com/playlist?list=PL62G310vn6nFIsOCC0H-C2infYgwm8SWW)>)

| kauegatto |

1,871,537 | Glam Up My Markup: Beaches | This is a submission for [Frontend Challenge... | 0 | 2024-05-31T02:54:12 | https://dev.to/altafsyah/glam-up-my-markup-beaches-4hfg | devchallenge, frontendchallenge, css, javascript | _This is a submission for [Frontend Challenge v24.04.17]((https://dev.to/challenges/frontend-2024-05-29), Glam Up My Markup: Beaches_

## What I Built

This is my first time doing challenge, so in this challenge i built a list of card for the beaches in the template that provided. I try not editing the HTML and make it pure CSS and JavaScript. I would challenge myself to do it with basic, without frameworks and libraries. I want to learn the basic and re-memorized the fundamental of CSS and also JavaScript DOM.

## Demo

You guys can see the code in here : [Repo](https://github.com/altafsyah/dev.to-beach)

Visit the live preview in [here](https://dev-to-beach.vercel.app/)

## Journey

In this challenge, I'm editing the HTML using JavaScript DOM to insert element and adding styles into it. Since the template doesn't included with phots. I challenge myself to make the visual design using SVG path. It's really challenging, but I learn a lot from this.

I re-learn about the fundamental of Javascript, CSS Flexbox, and using path in SVG to draw. It's worth the time, maybe I would make this website as my experiment for SVG path and animation, since I found out it's really fun to do.

I'm looking forward for the next challenges. | altafsyah |

1,871,535 | Next.js: Simple Example using revalidateTag | In Next.js, revalidating data is the process of clearing the Data Cache and retrieving the latest... | 0 | 2024-05-31T02:52:02 | https://dev.to/jonathan-dev/nextjs-simple-example-using-revalidatetag-41f5 | nextjs, beginners, typescript, tutorial | In Next.js, [revalidating data](https://nextjs.org/docs/app/building-your-application/data-fetching/fetching-caching-and-revalidating#revalidating-data) is the process of clearing the Data Cache and retrieving the latest data. This allows you to display the latest information to your users as your data changes.

There are two types of revalidation:

- Time-Base

- On-demand - `revalidatePath` and `revalidateTag`

In this post, we'll focus on On-demand revalidation `revalidateTag`, which manually revalidates data based on an event such as a form submission. This can only called in a Server Action or Router Handler.

Next.js has a cache tagging system for invalidating `fetch` requests across routes. In order to use `revalidateTag`, you'll need to add a tag in the `fetch` request:

```TS

const res = await fetch('https://baseurl.com', { next: { tags: ['mytag'] } });

```

This adds the cache tag `mytag` to the `fetch` request.

Then you can revalidate the `fetch` call by calling `revalidateTag` in a Server Action:

```TS

'use server'

import { revalidateTag } from 'next/cache'

export default async function action() {

revalidateTag('mytag')

}

```

Basic Next.js 14 example with Typescript can be found here: https://github.com/juhlmann75/Next.js-Examples/tree/main/src/app/examples/revalidateTag

More on how [On-demand revalidation works](https://nextjs.org/docs/app/building-your-application/caching#on-demand-revalidation).

| jonathan-dev |

1,871,534 | Estudo do KTO Casino: Novo Horizonte de Jogos Virtuais | Desde o advento dos casinos online no mundo do entretenimento online, os jogadores têm procurado... | 0 | 2024-05-31T02:47:15 | https://dev.to/tommydorton/estudo-do-kto-casino-novo-horizonte-de-jogos-virtuais-5ace | Desde o advento dos casinos online no mundo do entretenimento online, os jogadores têm procurado sites confiáveis que ofereçam jogos emocionantes, bónus generosos e um ambiente de jogo seguro. Neste contexto, o KTO Casino representa um fenômeno interessante. Vejamos [aqui](https://mixjogos.com.br/cassino/kto/) o que torna esta plataforma única e se vale a pena investir seu dinheiro e tempo nos jogos oferecidos por esta operadora.

**Plataforma de jogos**

O KTO Casino oferece uma ampla variedade de jogos, incluindo slots, jogos de mesa, jogos com crupiê ao vivo e muito mais. A colaboração com os principais desenvolvedores de software, como NetEnt, Microgaming e Evolution Gaming, garante conteúdo de jogos de alta qualidade. Os usuários podem desfrutar de slots emocionantes com uma variedade de temas, bem como jogos de mesa clássicos como blackjack, roleta e pôquer.

**Bônus e Promoções**

Um dos principais aspectos para atrair jogadores para cassinos online são os bônus e promoções. O KTO Casino oferece uma variedade de ofertas de bônus, incluindo bônus de boas-vindas para novos jogadores, rodadas grátis em slots, cashback e muito mais. Os bônus podem aumentar significativamente o saldo do jogo e melhorar suas chances de ganhar.

**Segurança e Confiabilidade**

Um dos aspectos mais importantes na escolha de um casino online é a segurança. O KTO Casino oferece um alto nível de proteção para os dados pessoais e transações financeiras dos jogadores usando modernas tecnologias de criptografia. Uma licença emitida pelas autoridades competentes garante a conformidade com os padrões da indústria de jogos e a justiça do processo de jogo.

**Suporte ao usuário**

Um bom suporte ao cliente desempenha um papel importante na experiência do jogador no cassino online. O KTO Casino oferece suporte 24 horas por dia, 7 dias por semana, via chat ao vivo, e-mail e telefone. A equipe profissional está pronta para ajudar os jogadores com quaisquer dúvidas ou problemas que surjam durante o jogo.

**Cassino Móvel**

Com a crescente popularidade dos dispositivos móveis, muitos jogadores preferem jogar jogos de casino nos seus smartphones ou tablets. O KTO Casino oferece uma plataforma móvel compatível com vários sistemas operacionais, permitindo aos jogadores desfrutar de jogos a qualquer hora e em qualquer lugar.

**Conclusão**

KTO Casino é uma plataforma que combina uma ampla seleção de jogos, bônus generosos, alto nível de segurança e atendimento de qualidade. Para quem procura uma experiência emocionante de jogo de cassino em um ambiente seguro, o KTO Casino pode ser uma ótima escolha. No entanto, os jogadores devem sempre lembrar-se de jogar com responsabilidade e gerir as suas finanças. | tommydorton | |

1,869,413 | Azure - Building Multimodal Generative Experiences. Part 2 | Previous Post Link Create a composed Document Intelligence Model Composed models in... | 0 | 2024-05-31T02:14:17 | https://dev.to/manjunani/azure-building-multimodal-generative-experiences-part-2-i3 | azure, ai, openai, information | Previous Post [Link](https://dev.to/manjunani/azure-building-multimodal-generative-experiences-part-1-j5o)

## Create a composed Document Intelligence Model

- Composed models in Azure AI document intelligence enable users to submit a form when they don't know which is the best model to use.

- Composed Models

- when you have forms with unusual or unique formats, you can create and train your own custom models in Azure AI Document Intelligence.

- You can create custom model of 2 types (custom template model and custom neural models) refer to previous post to know more about them.

- Once you have created a set of custom models, you must assemble them into a composed model. you can do this on the Azure AI Studio.

- Custom model Compatibility

- Custom template models are responsible with other custom template models across 3.0 and 2.1 API versions

- Custom neural models are composable with other custom neural models.

- Custom neural models can't be composed with custom template models.

- [Custom models](https://learn.microsoft.com/en-us/azure/ai-services/document-intelligence/concept-composed-models)

## Build a document intelligence custom skill for azure search.

- If you integrate AI Search with an Azure AI Document intelligence solution, you can enrich your index with fields that your Azure AI Document Intelligence models are trained to extract.

- Azure AI Search is a search service hosted in Azure that can index content on your permises or in a cloud location.

- There are 5 stages in Indexing process

- Document Cracking. In document cracking, the indexer opens the content files and extracts their content.

- Field Mappings. Fields such as titles, names, dates, and more are extracted from the content. You can use field mappings to control how they're stored in the index.

- Skillset Execution. In the optional skillset execution stage, custom AI processing is done on the content to enrich the final index.

- Output field mappings. If you're using a custom skillset, its output is mapped to index fields in this stage.

- Push to index. The results of the indexing process are stored in the index in Azure AI Search.

- AI Search Skillset

- Key Phrase extraction

- Language Detection

- Merge

- Sentiment

- Translation

- Image Analysis

- Optical character recognition

- we can use custom skills too and they can be used for 2 reasons

- The list of built-in skills doesn't include the type of AI Enrichment you need.

- you want to train your own model to analyze the data

- 2 types of custom skills that you can create

- Azure Machine Learning Custom Skills

- Custom Web API Skills

Refer to this [link](https://learn.microsoft.com/en-in/training/modules/build-form-recognizer-custom-skill-for-azure-cognitive-search/3-build-custom-skill) for building an Azure AI Document Intelligence Custom Skill

| manjunani |

1,871,533 | Water-Cooled Generators: Maintenance Tips and Tricks | Keep Your Water-Cooled Generator Running Smoothly with These Maintenance Tips and Tricks Are you... | 0 | 2024-05-31T02:45:45 | https://dev.to/hanna_prestonle_101c638d5/water-cooled-generators-maintenance-tips-and-tricks-578c | generators | Keep Your Water-Cooled Generator Running Smoothly with These Maintenance Tips and Tricks

Are you tired of dealing with power outages during storms or other emergencies? A water-cooled generator is a great solution that can provide reliable backup power when you need it most. But to keep your generator running smoothly, you need to perform regular maintenance. We'll explore the advantages, innovation, safety, use, service, quality, and application of water-cooled generators and provide tips and tricks for keeping them in tip-top shape.

Advantages of Water-Cooled Generators

A generator like water-cooled a type of that makes use of water to cool the motor and avoid overheating

These generators tend to be more efficient and sturdy than air-cooled generators, making them a option like popular commercial and commercial applications

They are able to also run for longer periods without the need for maintenance, which can be well suited for crisis situations

Innovation in Water-Cooled Generators

In recent times, water-cooled generator are becoming more energy-efficient and eco-friendly

They now use less fuel and produce fewer emissions, making them a option like fantastic those who are environmentally aware

Many generators which can be water-cooled have features such as for example automated shutdown and monitoring systems, which can make them more user-friendly and dependable

Safety and Use of Water-Cooled Generators

When using a generator like water-cooled it's important to follow all security directions to stop harm or injury

First of all, it's required to understand owner's manual completely before running the generator

Always make certain that the generator has air flow like proper counter carbon monoxide poisoning

Additionally it is important to shut the generator down before refueling in addition to only utilize authorized fuels

Maintaining a distance like secure the generator during procedure can be recommended also

Simple suggestions to make use of Water-Cooled Generator

Working with a generator like water-cooled fairly simple

After reading the master's manual, make certain you have the desired fuel and lubricants to use the generator

Check out the generator's oil coolant and degree, and ensure that every filters are clean

Connect the generator to an transfer like electric or extension cable, and begin the engine in line with the maker's directions

Monitor the generator's performance, and shut it well if it is not any longer needed

Provider and Maintenance of Water-Cooled Generators

Proper upkeep is crucial to keep your generator like water-cooled running

Regularly check out the oil level, coolant level, and air filters

Replace the oil after each and every 100 hours of operation or according to the maker's guidelines

The coolant ought to be changed and flushed every 500 hours of operation or every couple of years, whichever comes first

Clean the new air filters every 50 hours of procedure or more often in dusty surroundings

Regular upkeep and service will prolong the lifespan of your generator and ensure you'll need it that it's ready to operate whenever

Quality and Application of Water-Cooled Diesel engine Generators

When purchasing a generator like water-cooled it is necessary to decide on a professional brand recognized for quality and reliability

Choose for your energy needs as the application related to generator

As an example, a little generator could possibly be ideal for residential usage or a small company, while a more substantial generator could be required for a commercial or facility like commercial

Always pick a generator out that meets your needs that are specific

Conclusion

A water-cooled Diesel generator sets is a reliable backup power option for emergencies and other situations where power is unavailable. By following the maintenance tips and tricks discussed, you can ensure that your generator is always ready to operate when you need it. Remember to always follow safety guidelines and choose a generator that meets your specific needs for quality and application. With these tips, you'll be well on your way to being prepared for any power outage that may occur!

Source: https://www.kangwogroup.com/Diesel-generator-sets | hanna_prestonle_101c638d5 |

1,871,532 | Why You Should Choose TypeScript Over JavaScript | As the web development landscape continues to evolve, developers are constantly seeking tools and... | 0 | 2024-05-31T02:44:28 | https://dev.to/vyan/why-you-should-choose-typescript-over-javascript-d5m | webdev, javascript, typescript, beginners | As the web development landscape continues to evolve, developers are constantly seeking tools and technologies that enhance productivity, maintainability, and scalability. One of the most significant debates in this realm is whether to use TypeScript (TS) or JavaScript (JS). While JavaScript has been the backbone of web development for decades, TypeScript has rapidly gained popularity. In this blog post, we will explore why you should consider choosing TypeScript over JavaScript for your next project.

**1. Type Safety**

**JavaScript:**

JavaScript is a dynamically typed language, meaning that variable types are determined at runtime. This can lead to unexpected errors that are only caught during execution, making debugging a challenge.

**TypeScript:**

TypeScript introduces static typing, allowing developers to define types for variables, function parameters, and return values. This type safety helps catch errors at compile time, reducing the likelihood of runtime errors and making the code more predictable and easier to debug.

```

// JavaScript

function add(a, b) {

return a + b;

}

console.log(add(5, "10")); // Output: "510"

// TypeScript

function add(a: number, b: number): number {

return a + b;

}

console.log(add(5, 10)); // Output: 15

```

**2. Enhanced IDE Support**

**JavaScript:**

While modern IDEs offer some level of code completion and error checking for JavaScript, the dynamic nature of the language can limit the accuracy and usefulness of these features.

**TypeScript:**

TypeScript provides superior IDE support, with features like intelligent code completion, real-time type checking, and refactoring tools. This improved tooling leads to a more efficient and enjoyable development experience.

**3. Improved Code Readability and Maintainability

JavaScript:**

JavaScript's flexibility can sometimes lead to inconsistent coding practices, making it harder to maintain large codebases. Lack of type information can make the code less readable, especially for new team members.

**TypeScript:**

TypeScript enforces a more structured and consistent codebase. The explicit types and interfaces make the code self-documenting, improving readability and making it easier to maintain and refactor, especially in larger projects.

**4. Scalability

JavaScript:**

As JavaScript projects grow in size and complexity, managing the codebase can become increasingly difficult due to the lack of a robust type system.

**TypeScript:**

TypeScript's strong type system and modular approach make it easier to scale applications. The ability to define interfaces, generics, and custom types allows for better code organization and modularity, making large-scale applications more manageable.

**5. Backward Compatibility

JavaScript:**

JavaScript is a highly compatible language, but using newer features often requires polyfills or transpilers like Babel to ensure compatibility with older browsers.

**TypeScript:**

TypeScript is a superset of JavaScript, meaning any valid JavaScript code is also valid TypeScript code. Additionally, TypeScript can be transpiled to different versions of JavaScript (ES3, ES5, ES6, etc.), ensuring compatibility across different environments without the need for additional tools.

**6. Community and Ecosystem**

**JavaScript:**

JavaScript boasts a vast ecosystem and community with countless libraries and frameworks. However, managing dependencies and ensuring compatibility can sometimes be challenging.

**TypeScript:**

TypeScript has seen widespread adoption and support from major frameworks like Angular, React, and Vue. Many popular libraries are now written in or have type definitions for TypeScript, making it easier to integrate and use them in TypeScript projects.

**7. Error Reduction**

**JavaScript:**

Due to its dynamic nature, JavaScript code can be prone to type-related errors, which can be difficult to trace and debug.

**TypeScript:**

TypeScript's compile-time type checking helps catch errors early in the development process, reducing the number of bugs and issues that make it to production. This leads to more robust and reliable code.

**Conclusion**

While JavaScript remains a powerful and versatile language, TypeScript offers several compelling advantages that make it a superior choice for many projects. From type safety and enhanced IDE support to improved maintainability and scalability, TypeScript provides a robust framework for modern web development. By choosing TypeScript over JavaScript, you can create more reliable, readable, and maintainable code, ultimately leading to more successful and scalable applications.

| vyan |

1,871,531 | Industrial Generators: Cost-Effective Power Solutions | Are you looking for a reliable source of power for your daily needs? Industrial generators are here... | 0 | 2024-05-31T02:35:08 | https://dev.to/hanna_prestonle_101c638d5/industrial-generators-cost-effective-power-solutions-1of9 | industrial, generators | Are you looking for a reliable source of power for your daily needs? Industrial generators are here to provide you with cost-effective solutions. Generators are an innovative and advanced system designed to cater to people’s needs. Here are some advantages and innovative features of industrial generators, how to use them in a safe way, and maintain the quality and integrity of service.

Top features of Industrial Generators:

Industrial generator really are a reliable supply like alternate of that may be used anywhere once you want

A generator can offer ability to factories which can be tiny offices, also whole cities

The bonus like biggest of industrial generators will be the power to deliver stable and uninterrupted power for an excessive period

They've been considerably economical them a investment like worthy both residential and commercial purposes while they reduce electricity invoices, making

Innovations in Industrial Generators:

Below are a few innovative attributes of commercial which help them to stand call during the market:

Digital Controller: Digital controllers provide remote accessibility, monitoring, and diagnostics associated with generator system

This feature enables users to manage the generator from anywhere, rendering it simpler and much more convenient to use

Soundproofing: Industrial generators are really noisy, but with advanced soundproofing technology, they can now operate inside a environment like noise-free

Eco-Friendly: Most generators operate using fuel like non-renewable however with new technological advancements, generators are now able to run utilizing biogas like eco-friendly biodiesel, decreasing their carbon footprint

Safety:

Commercial Diesel generator sets is actually a bit intimidating for some individuals, however with all the safety that's right, you can properly run the generator

Check out fundamental ideas to just take really whenever running a generator:

Never ever run the generator inside

Generators produce fuel, that can be toxic in closed areas

Make certain the generator is correctly earthed before activating it to be able to prevent accidents

Keep carefully the generator not even close to any fluids that are flammable materials that could ignite and result in a fire

Usage:

Below are a actions that are few stick to whenever using an generator like commercial

Before you start the generator, examine the equipment visually to confirm its in good condition

Stick to an manual like individual know tips which are easy operate the generator properly

Link the generator to the load like required is electric ensure all connected products are deterred

Service:

To help keep the product quality up and integrity associated with solution, generators need regular maintenance

Consider recommendations that are easy maximize the lifespan concerning the generator:

Modify the oil and oil filter usually

Frequently inspect the atmosphere filters and clean or replace them if needed

Routinely check the battery away

Obtain the generator serviced by the technician that has experience as this helps identify dilemmas early

Application:

Industrial Diesel engine generators can supply ability to industries which are different factories, mining sites, construction websites, hospitals, and even schools

These structures often require uninterrupted power, and generators can provide just that

They could will also be obtainable in handy in the event of an outage like electric an all-natural tragedy and acquire used to restore energy

In conclusion, industrial generators provide cost-effective and reliable sources of energy. With innovative features, safety precautions, proper usage, and maintenance, they are an excellent investment for both commercial and residential use. Their applications are endless, and they are a must-have for those who need power solutions.

Source: https://www.kangwogroup.com/Diesel-generator-sets | hanna_prestonle_101c638d5 |

1,871,530 | Silent Generators: Solutions for Noise-Sensitive Environments | Silent Generators: Solutions for Noise-Sensitive Environments Are you tired of the loud noise that... | 0 | 2024-05-31T02:27:01 | https://dev.to/hanna_prestonle_101c638d5/silent-generators-solutions-for-noise-sensitive-environments-115b | silent, generators | Silent Generators: Solutions for Noise-Sensitive Environments

Are you tired of the loud noise that generators make? Do you live or work in a noise-sensitive environment where conventional generators are not an option? Then, you might want to consider getting a silent generator. We will discuss the advantages of silent generators, their innovation, safety, use, how to use them, their service, quality, and application.

Options that come with Silent Generators

Silent generators provide lots of benefits that traditional generatorare not able to

First, they create extremely noise that's low, making them well suitable for domestic areas, hospitals, schools, along side other places where levels which can be sound to be held towards the very least

Next, silent are safer when it comes to environment since they create less emissions than main-stream generators

Finally, they've been more fuel-efficient and possess a lengthier lifespan when compared with generators that can be conventional

What this means is you might save money on upkeep and gas costs as time passes

Innovation of Silent Generators

Quiet generators have really advanced notably since they certainly were first introduced in the marketplace

By means of technical development, manufacturers were created to make generators that are calm are lighter, scaled-down, more fuel-efficient, and safer to utilize

Some generators being peaceful operating on solar energy systems, consequently you don't have to stress about fuel prices or emissions

Other people have movement sensors that turn them on and off automatically, with regards to the conventional of power use

These are revolutionary features that make quiet generators more dependable and user-friendly

Security of Silent Generators

Quiet Diesel generator sets are produced with safety in your head

They truly are high in a safety like few that ensure they operate in the safe and manner like dependable

For example, they have automatic shutdown systems that activate when the generator overheats or you have a autumn like oil force like quick

This prevents the generator from causing harm or damage

Provider and Quality of Silent Generators

Peaceful generators are top-quality and reliable

They are developed to final so are made to withstand conditions being harsh are environmental

Moreover, they are supported by manufacturers' warranties, and so you'll be guaranteed associated with durability and quality

You should have to choose a more supplier like developed maker who offers client like exemplary and help like technical

Due to this, you'll be particular you encounter any problems or dilemmas with your generator you'll get aid that positively is prompt the reality

Application of Silent Generators

Peaceful generators can be used in several applications

Included in these are ideal for domestic areas, hospitals, schools, and locations where are various noise levels needs become held to your lowest

They are furthermore well suited for camping, outside activities, as well as other occasions where you may possibly require usage of reliable and energy like noise-free

Additionally, they could be correctly utilized as backup power sources for domiciles, businesses, as well as other facilities that be determined by electricity

In conclusion, silent Diesel engine generators are an excellent solution for noise-sensitive environments. They offer many advantages, are innovative, safe, and easy to use. Silent generators provide high-quality service and are suitable for various applications. So, if you are looking for a reliable, quiet, and efficient source of power, then a silent generator is definitely worth considering.

Source: https://www.kangwogroup.com/Diesel-generator-sets | hanna_prestonle_101c638d5 |

1,871,529 | Add an alarm clock to the trading strategy | Traders who design trading strategies often ask me how to design timing functions for strategies so... | 0 | 2024-05-31T02:17:40 | https://dev.to/fmzquant/add-an-alarm-clock-to-the-trading-strategy-4bo4 | trading, strategy, fmzquant, cryptocurrency | Traders who design trading strategies often ask me how to design timing functions for strategies so that strategies can handle certain tasks at specified times. For example, some intraday strategies need to close positions before the first section end in a trading day. How to design such requirements in the trading strategy? A strategy may use a lot of time control. In this way, we can encapsulate the time control function to minimize the coupling between the time control code and the strategy, so that the time control module can be reused and is concise in use.

## Design an "alarm clock"

```

// triggerTime: 14:58:00

function CreateAlarmClock(triggerHour, triggerMinute) {

var self = {} // constructed object

// Set members and functions to the constructed object below

self.isTrigger = false // Has it been triggered that day

self.triggerHour = triggerHour // The planned trigger hour

self.triggerMinute = triggerMinute // The planned trigger minute

self.nowDay = new Date().getDay() // what day is the current time

self.Check = function() { // Check function, check trigger, return true when triggered, return false if not triggered

var t = new Date() // Get the current time object

var hour = t.getHours() // Get the current decimal: 0~23

var minute = t.getMinutes() // Get the current minute: 0~59

var day = t.getDay() // Get the current number of days

if (day != self.nowDay) { // Judge, if the current day is not equal to the day of the record, reset the trigger flag as not triggered and update the number of days for the record

self.isTrigger = false

self.nowDay = day

}

if (self.isTrigger == false && hour == self.triggerHour && minute >= self.triggerMinute) {

// Determine whether the time is triggered, if it meets the conditions, set the flag isTrigger to true to indicate that it has been triggered

self.isTrigger = true

return true

}

return false // does not meet the trigger condition, that is, it is not triggered

}

return self // return the constructed object

}

```

We have designed and implemented a function to create an alarm clock object (can be understood as a constructor), and other languages can directly design an alarm clock class (for example, using Python, we will implement one in Python later).

Design the function to construct the "alarm clock" object, and only need one line of code to create an "alarm clock" object in use.

```

var t = CreateAlarmClock(14, 58)

```

For example, create an object t and trigger it at 14:58 every day.

You can create another object t1, which is triggered every day at 9:00.

```

var t1 = CreateAlarmClock(9, 0)

```

## Test strategy

We write a test strategy. The strategy uses the simplest moving average system. The strategy is just for testing and does not care about the profit.

The strategy plan is to open a position (long, short, no trade) based on the daily moving average golden cross and dead cross when the market opens at 9:00 every day, and close the position at 14:58 in the afternoon (close at 15:00).

```

function CreateAlarmClock(triggerHour, triggerMinute) {

var self = {} // constructed object

// Set members and functions to the constructed object below

self.isTrigger = false // Has it been triggered that day

self.triggerHour = triggerHour // The planned trigger hour

self.triggerMinute = triggerMinute // The planned trigger minute

self.nowDay = new Date().getDay() // what day is the current time

self.Check = function() {// Check function, check trigger, return true when triggered, return false if not triggered

var t = new Date() // Get the current time object

var hour = t.getHours() // Get the current decimal: 0~23

var minute = t.getMinutes() // Get the current minute: 0~59

var day = t.getDay() // Get the current number of days

if (day != self.nowDay) {// Judge, if the current day is not equal to the day of the record, reset the trigger flag as not triggered and update the number of days for the record

self.isTrigger = false

self.nowDay = day

}

if (self.isTrigger == false && hour == self.triggerHour && minute >= self.triggerMinute) {

// Determine whether the time is triggered, if it meets the conditions, set the flag isTrigger to true to indicate that it has been triggered

self.isTrigger = true

return true

}

return false // does not meet the trigger condition, that is, it is not triggered

}

return self // return the constructed object

}

function main() {

var q = $.NewTaskQueue()

var p = $.NewPositionManager()

// You can write: var t = CreateAlarmClock(14, 58)

// You can write: var t1 = CreateAlarmClock(9, 0)

var symbol = "i2009"

while (true) {

if (exchange.IO("status")) {

exchange.SetContractType(symbol)

var r = exchange.GetRecords()

if(!r || r.length <20) {

Sleep(500)

continue

}

if (/*Judging the conditions for opening a position at 9:00*/) {// You can write: t1.Check()

var fast = TA.MA(r, 2)

var slow = TA.MA(r, 5)

var direction = ""

if (_Cross(fast, slow) == 1) {

direction = "buy"

} else if(_Cross(fast, slow) == -1) {

direction = "sell"

}

if(direction != "") {

q.pushTask(exchange, symbol, direction, 1, function(task, ret) {

Log(task.desc, ret)

})

}

}

if (/*Judging 14:58 conditions for closing the position near the market close*/) {// You can write: t.Check()

p.CoverAll()

}

q.poll()

LogStatus(_D())

} else {

LogStatus(_D())

}

Sleep(500)

}

}

```

Put the CreateAlarmClock function we have implemented in the strategy, and construct two "alarm clock" objects at the beginning of the main function. In the strategy to determine the position of opening and closing, add the code that the "alarm clock" object calls the Check function, such as the commented out part of the code.

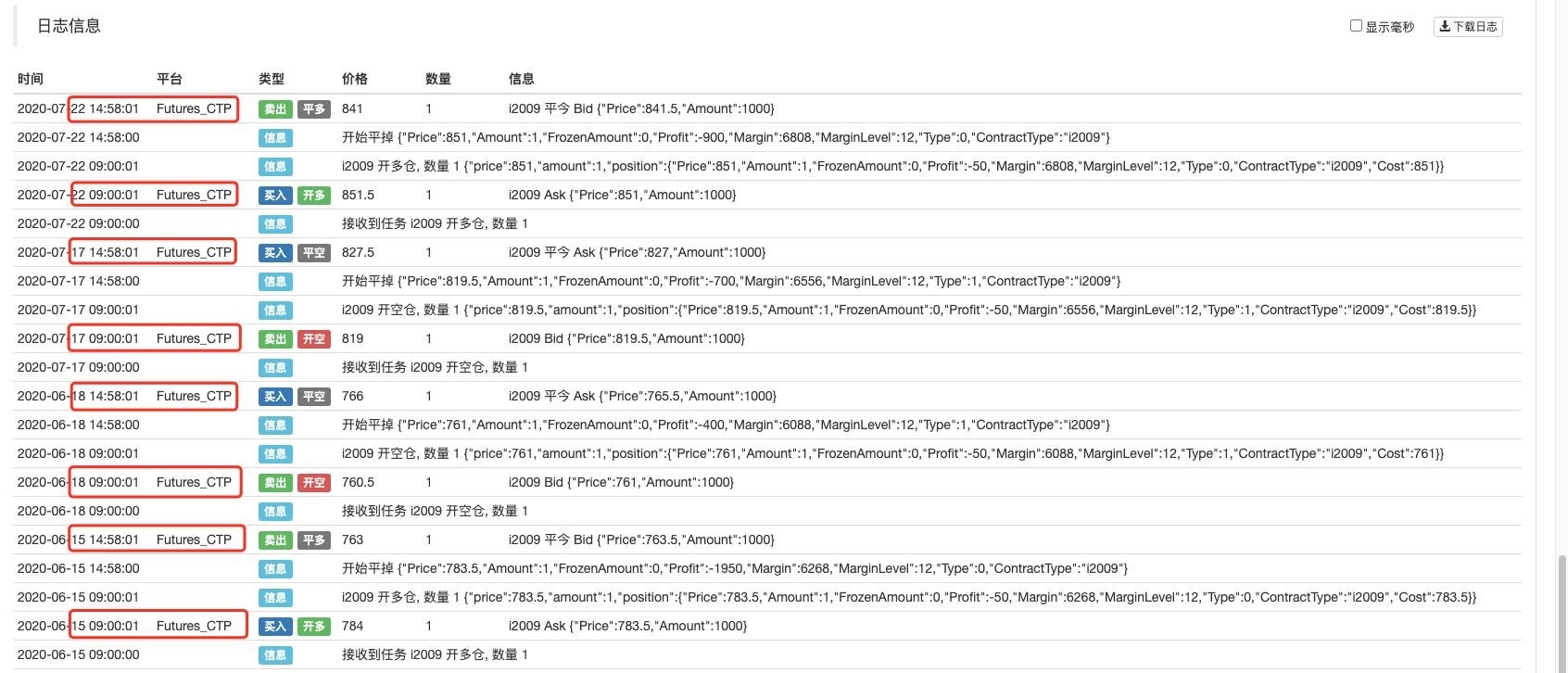

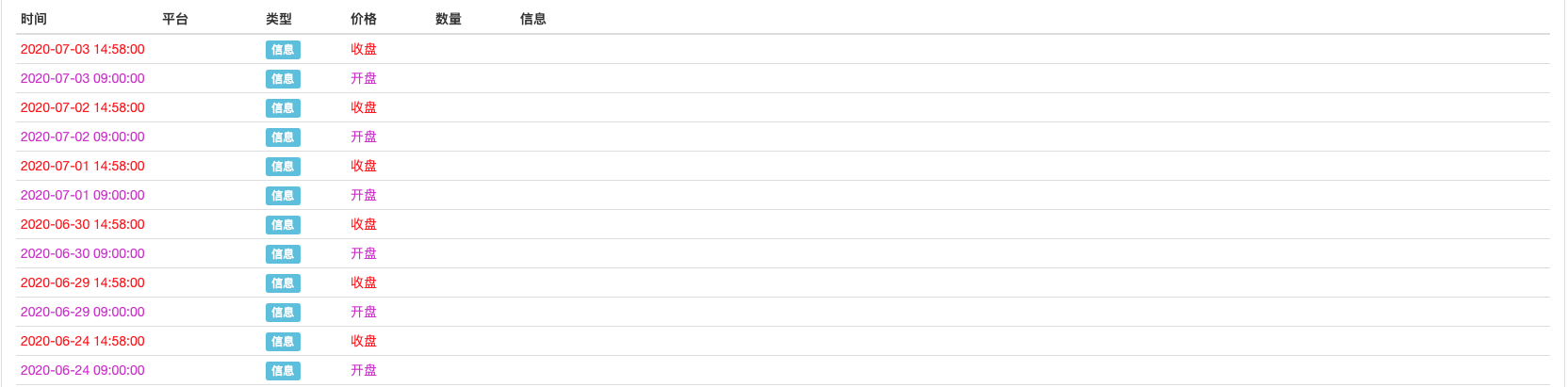

## Backtest

You can see the backtest, opening positions after 9 am and closing positions at 14:58 pm.

It can also be used for multi-variety strategies. Multiple such "alarm clock" objects can be created in multi-variety strategies for time control of multiple varieties without affecting each other.

## Python language implements alarm clock class

Implementation and test code:

```

import time

class AlarmClock:

def __init__(self, triggerHour, triggerMinute):

self.isTrigger = False

self.triggerHour = triggerHour

self.triggerMinute = triggerMinute

self.nowDay = time.localtime(time.time()).tm_wday

def Check(self):

t = time.localtime(time.time())

hour = t.tm_hour

minute = t.tm_min

day = t.tm_wday

if day != self.nowDay:

self.isTrigger = False

self.nowDay = day

if self.isTrigger == False and hour == self.triggerHour and minute >= self.triggerMinute:

self.isTrigger = True

return True

return False

def main():

t1 = AlarmClock(14,58)

t2 = AlarmClock(9, 0)

while True:

if exchange.IO("status"):

LogStatus(_D(), "Already connected!")

exchange.SetContractType("rb2010")

ticker = exchange.GetTicker()

if t1.Check():

Log("Market Close", "#FF0000")

if t2.Check():

Log("Market Open", "#CD32CD")

else :

LogStatus(_D(), "not connected!")

Sleep(500)

```

Backtest test run:

It should be noted that for backtest, the K-line cycle of the bottom layer cannot be set too large, otherwise the time detection point may be skipped directly and there will be no trigger.

From: https://blog.mathquant.com/2020/08/07/add-an-alarm-clock-to-the-trading-strategy-2.html | fmzquant |

1,867,140 | How To Tell An Amateur Programmer From a Professional | You are always learning new programming languages or frameworks. I think all of us have fallen... | 0 | 2024-05-31T02:10:00 | https://dev.to/thekarlesi/how-to-tell-an-amateur-programmer-from-a-professional-1dnn | webdev, beginners, html, programming | You are always learning new programming languages or frameworks.

I think all of us have fallen victims to this. Whether it is the hype of a certain technology, or you just decide one day that you want to master something completely new just for the challenge of it.

It is really a vain pursuit. Why? Well I'm all for you being a constant learner. That is a good thing you actually have to do in this industry. You have to always be learning.

But the question is, "What are you learning?"

It is much more beneficial to be learning and mastering the concepts of programming and how things are really working than learning many different languages.

Which to be honest, each one is just a new syntax. It is just the new language. Why do that.

Before we continue, If you are interested in more web related content, [subscribe to my newsletter](karlgusta.substack.com), especially if you are in a job search so that you don't miss future posts.

If you really understand the concepts that underline programming, a loop can be looked up in any language. A switch statement can be looked up in any language.

It is all just syntax!

So, if you are asked to build a feature in a new language or frameworks, something that you haven't used before, you should be able to:

1. Read a quick overview of that technology and how it works.

2. Be able to build that feature based on programming concepts or pseudo code even and then transforming it into whatever syntax that language speaks.

Also, and I did an article on this one recently. I will share a link. But if you are going to learn a new language for the sake of doing so, pick a lower level language like C or Rust or even Go or C#, if you are coming from Python or something high level like that.

And once you have put in the work of learning the deeper concepts that those languages force you to understand, you can really jump in anywhere.

## Too Many Things At Once

You are working on too many different things at once.

As a new developer, you want to look competent. And you want people to think that you are very efficient.

That you just happen to be this coding prodigy that came out of nowhere.

But take a look at senior to mid level devs.

They reject the extra work because they are in the middle of something. They are in the middle of, one thing.

They have a really good grasp of that one thing, and the requirements for that one thing.

And they get it done well.

You, on the other hand, have 3 things going on. Of which you don't fully understand. And your brain has to jump back and forth between them.

As a new dev, don't be embarrassed to say, "I'm currently in the middle of something. Let me get this done first, then I will jump on that."

Stop volunteering for everything.

Get your one assignment, understand the requirements well, and then knock out the park.

Be a dev that always delivers over one that is always in the middle of 10 different things. And always has to give updates and excuses for all the things that you are doing.

Take one task assignment at a time, and complete it.

And commit to a new task only when the previous task is delivered as requested.

In fact, building software is a slower process than you think. Especially if you want to do it right.

Happy Coding!

Karl

P.S. My new course [The 2 Hour Web Developer](https://karlgusta.gumroad.com/l/eofdr) will help you build ANY website you want. | thekarlesi |

1,742,432 | Dev Archetypes: How to Recognize Yours and Leverage It | Sorry to say this, but you can't be good at everything. Everyone, by design, has strengths and... | 0 | 2024-05-31T02:07:02 | https://dev.to/jeriel/dev-archetypes-how-to-recognize-yours-and-leverage-it-3o4o | programming, productivity, devjournal | Sorry to say this, but you can't be good at everything. Everyone, by design, has strengths and weaknesses, even in software development. We're all built differently and have our own contributions to bring to the table.

In many ways, recognizing that everyone is different will help you to collaborate better as a team. It also humbles you to know that you will have limitations but also that that's okay. While you may lack refinement in certain areas, you will still shine elsewhere.

The aim, then, is to figure out what those skills are and focus on expanding them.

## Archetypes

While the following archetypes are fun to think about, remember that they're not discrete buckets. There is lots of room for overlap, and you may find yourself excelling in one or more areas. The important thing to remember is that you'd rarely find anyone who's maxed out in all areas, if that's even possible. Ultimately, the idea isn’t to pigeonhole yourself but to recognize and embrace your strengths.

The following is a breakdown of what I believe are common developer archetypes on a given team. It may not be an exhaustive list, so if you have ideas of your own, feel free to share some in the comments!

#### The Artisan

When it comes to quality, syntax, and semantics, the Artisan crafts clean code that is maintainable and readable. This goes a long way in keeping tech debt out of the codebase. Additionally, you also excel at doing careful refactors, sometimes on a large scale, with minimal errors. This naturally comes along with thoughtful unit tests that protect the integrity of the code. In short, you pay attention to detail and have the sharpest eye for software quality, what it looks like and what it doesn’t look like.

You can almost liken the Artisan to a gardener, caring for and tending to the needs of the code, making sure to prune any weeds before they become too unwieldy to manage.

#### The Encyclopedia

The Encyclopedia is a fountain of knowledge, having a natural ability to recall nitty-gritty APIs and tools. You're able to maintain this knowledge through an appetite for blogs and podcasts since the software landscape changes almost weekly. Thus, people with an eagerness to learn and an excitement for new tech will usually excel at this. This comes in especially useful when the approach to implementing a new feature or bug fix isn't readily apparent.

One great way to continue sharpening this trait is to experiment with various side projects as your sandbox environment. By knowing broadly what tools are available, you're usually the first to ask, "What if we tried using XYZ?"

#### The Debugger

The Debugger is really good at pinpointing issues and finding a fix for them. For some reason, you have a sort of "sixth sense" in feeling out the root cause of a bug, which will oftentimes point to its solution.

Debuggers are typically methodical in their approach. Instead of banging your head against the wall when something isn't working, you tend to step back and look at the bigger picture. In this way, you're more efficient at diagnosing the issue because you're quick to synthesize the common denominator between what's working and what isn't. As a Debugger, you're not afraid to roll up your sleeves and use all the tools at your disposal to go deep into a particular problem.

#### The Architect

The Architect has a broad vision for software design and can identify the pros and cons of a given solution. This skill doesn't typically show itself until you've had years of experience, particularly after working on longer-term projects where you've been able to witness firsthand the consequences of bad design.

Still, the Architect has a natural ability of seeing how all the pieces of a larger system connect together and laying out the plan to put those pieces together, one step at a time. People trust your judgment because you're able to articulate and address the flaws in a software solution before spending time and resources to build it.

#### The Communicator

Bridging the gap between technical jargon and product value is a difficult balance. Especially when you're deep in the weeds of the code and all its edge cases, trying to explain any of that to someone outside your team will simply fall on deaf ears if you don't speak their language.

The Communicator excels at understanding what's relevant to non-developers and articulating that information in a way that will get buy-in from stakeholders and drive the product to completion. This is an important role that runs in tandem with your product owner because, as someone who also has your hands deep in the code, you deeply understand the “why” in what you’re building.

#### The Mentor

Similar to the Communicator, the Mentor understands the perspective of a new developer and how overwhelming onboarding can be. Not everyone can easily explain a codebase or advanced topics in a way that will stick with a developer who doesn’t have the same amount of context. Here, that context is the key piece, and empathy is the strongest trait of the Mentor. While everyone else on the team has had time to build up a shared understanding of historical decisions and past mistakes, a junior developer or new joiner has not. The Mentor acts as their representative and exercises patience and generosity with their time.

As the Mentor, you understand the multiplicative effects of raising up everyone else on the team, even if it takes time away from your own tasks.

## Caveats

Knowing your archetype isn't meant to be a way for you to flex. At least, it shouldn't be. Rather, it's a way to discover where your strengths lie so that you can bring the most value to your team. It also helps you remember that no one is perfect, allowing you to give yourself and others more grace.

Taking a step back, finding your niche skill(s) should not be an excuse to ignore all the other areas. Knowing your limits also has an upside by allowing you to understand where else you can improve. While it's important to work as a team, there's a fine line between collaboration and over-dependence. Harness your strengths but also find ways to understand and work around your weaknesses. | jeriel |

1,871,528 | First Glance at Ansible | What is ansible Ansible is an open-source IT automation tool that automates provisioning,... | 0 | 2024-05-31T02:03:37 | https://dev.to/feng_wei/first-glance-at-ansible-3522 | ansible | What is ansible

Ansible is an open-source IT automation tool that automates provisioning, configuration management, application deployment, orchestration, and many other IT processes. Ansible is written in Python and uses OpenSSH for transport.

Hands-on Ansible

1. Install ansible on a Linux machine, which is called control node. Use "ssh-keygen" and "ssh-copy-id"commands to generate ssh key and copy it to managed nodes for authentication

2. Playbooks are the simplest way in Ansible to automate repeating tasks in the form of reusable and consistent configuration files. Playbooks are scripts defined in YAML files and contain any ordered set of steps to be executed on managed nodes.

[My first playbook]

- hosts: webserver

remote_user: whocare

tasks:

- name: make ~/whocare directory

ansible.builtin.file:

path: ~/whocare

state: directory

- name: Copy file

copy:

src: /home/whocare.deb

dest: /home/whocare.deb

owner: whocare

group: whocare

mode: '0700'

- name: install whocare

become: true

become_method: sudo

ansible.builtin.apt:

deb: /home/whocare.deb

- name: copy dat file to /opt/Tanium/TaniumClient

become: true

become_method: sudo # need "-K" parameter, which is short form "--ask-become-pass". $ ansible-playbook <1.yaml> -K.

copy:

src: /home/whocare.dat

dest: /opt/whocare.dat

- name: restart service

become: true

become_method: sudo

service:

name: whocare

state: restarted

| feng_wei |

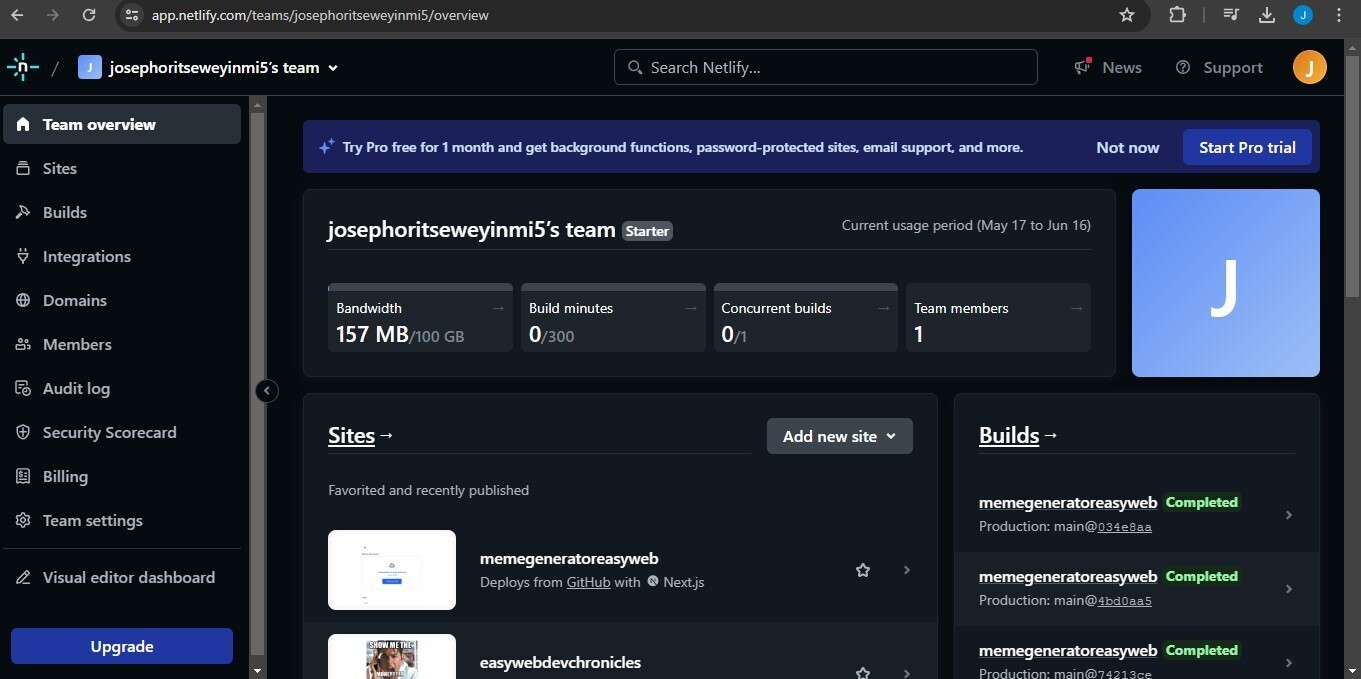

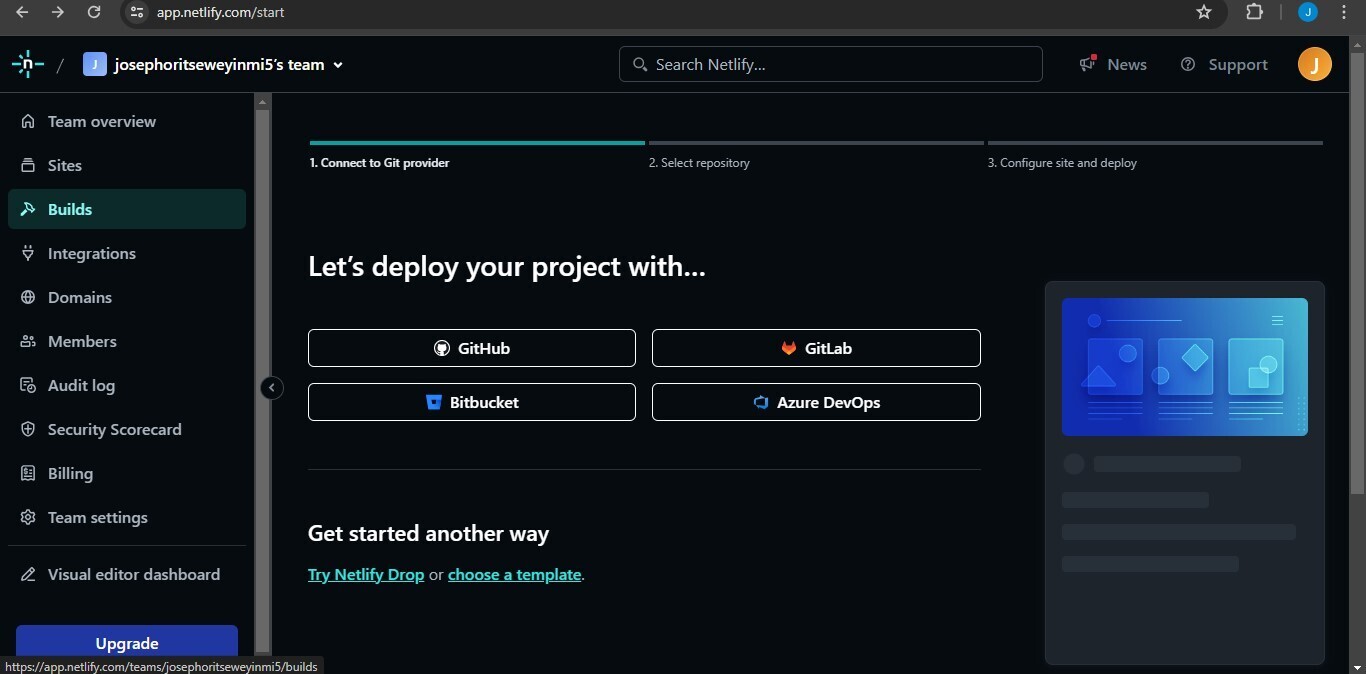

1,871,527 | How to host your Next14 app on Netlify in 2 minutes | To follow this tutorial, you need to create a Netlify account and sign in to it, also the code for... | 0 | 2024-05-31T02:03:09 | https://dev.to/joeskills/how-to-host-your-next14-app-on-netlify-in-2-minutes-566f | netlify, nextjs, webdev, howto | To follow this tutorial, you need to create a Netlify account and sign in to it, also the code for your Next app should be hosted on an online repository. I’m using GitHub to host the code.

---

## **Add a new site🔗:**

You will be taken to your Netlify dashboard after you log in. After you click on the add new site button. It will bring up a popup menu, choose the 'import an existing project' option.

---

## **Choose your code repository platform👨💻:**

Click on the platform of your choice and grant Netlify access to it. I'll choose GitHub because I'm using it to host my code.

---

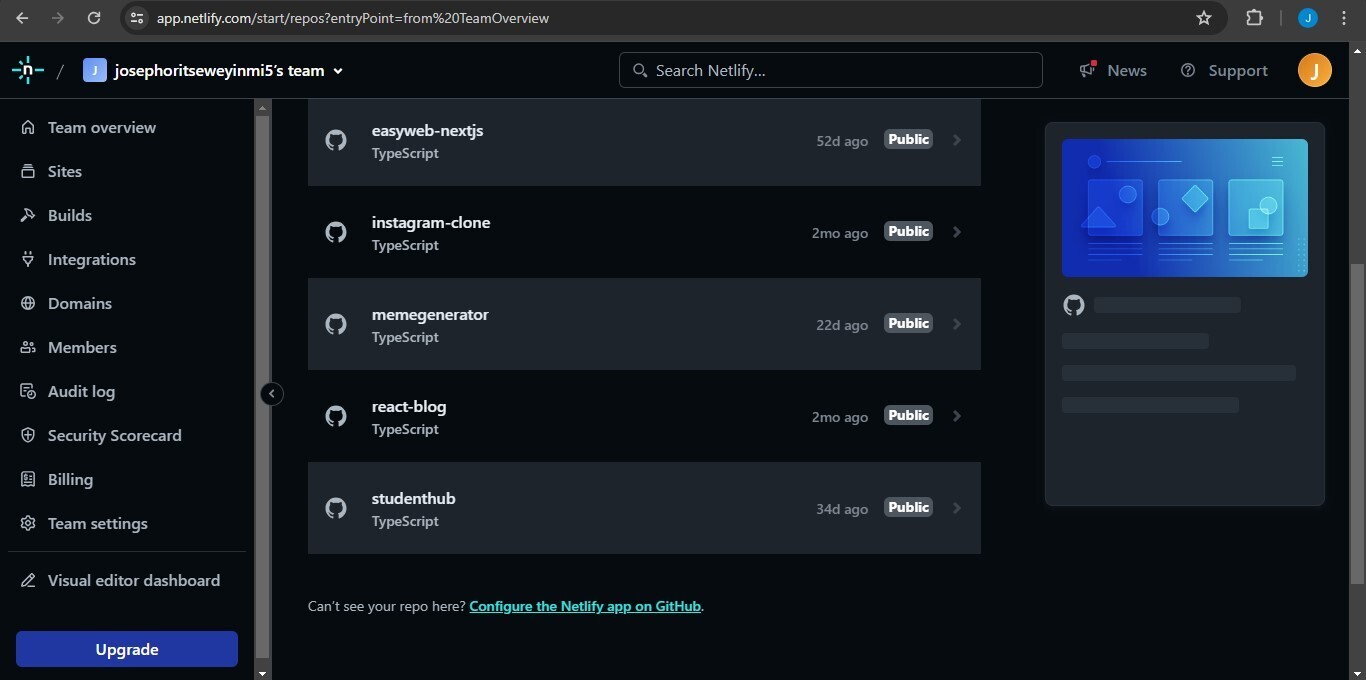

## **Pick the repository of your Next.js app👩🏻💻:**

After choosing a platform, Netlify will show you a list of repositories hosted on your platform. Pick the repository that contains the Next14 app.

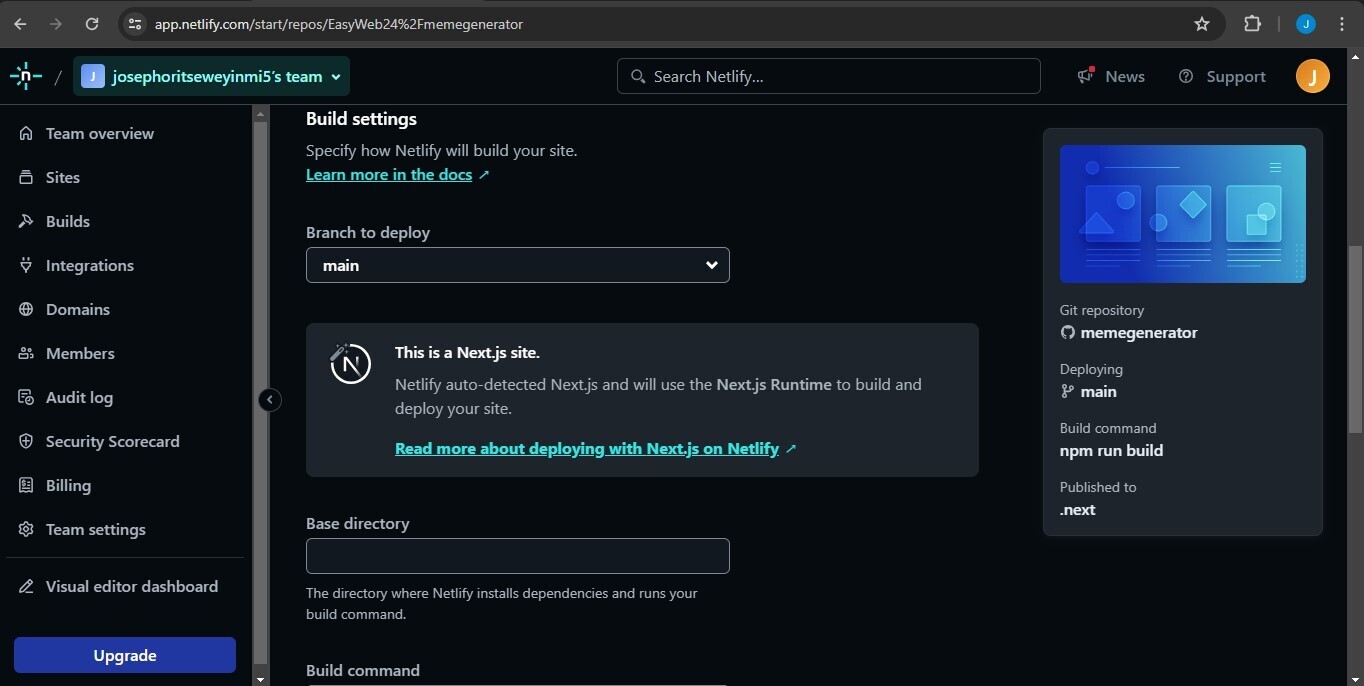

---

## **Choose the correct branch & build settings⚙️:**

Netlify automatically infers if you're using Next.js, the build settings will allow your app to run normally, but you'll also be able to customize your site name, build command, the base directory, and your publish directory here. This is where you can also add your environment variables. If you're using a different branch other than the default main branch, you can switch to it with the select menu. Once you're done, click on deploy 'site name' at the bottom.

---

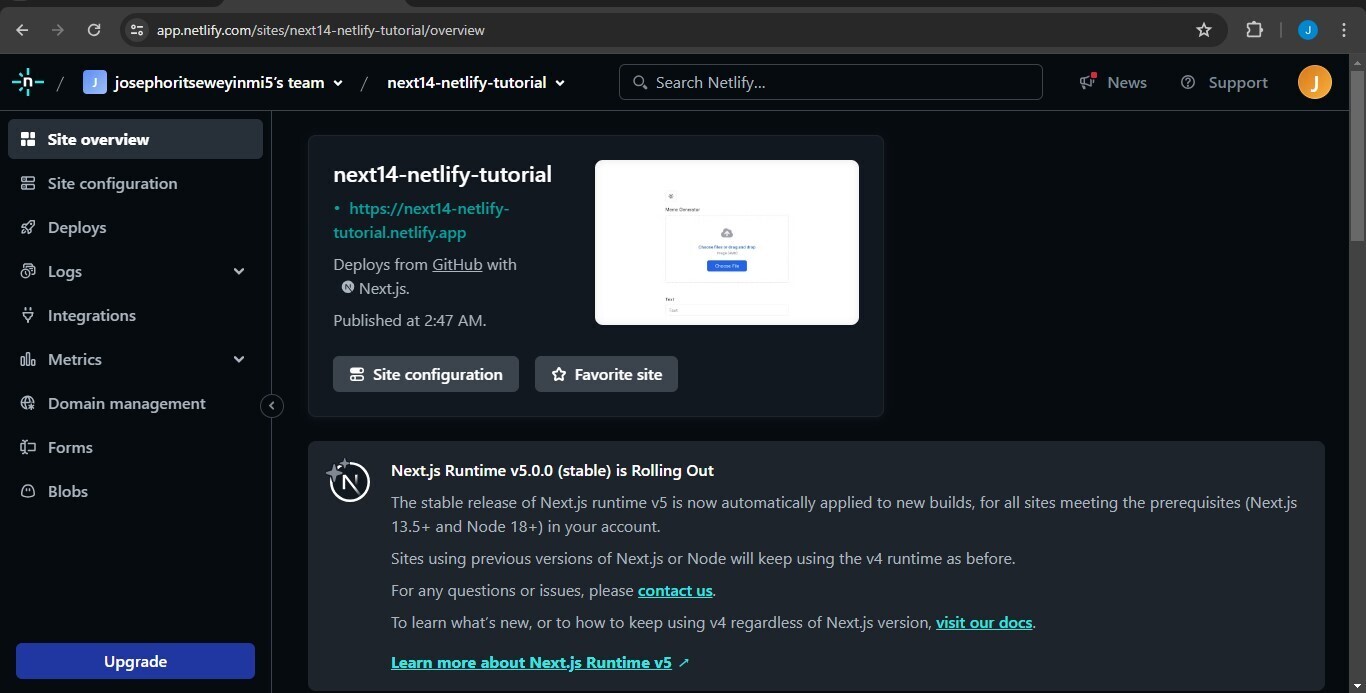

## **You Next14 app is now live⚡**

Your site is live! If there were no build errors, all you have to do is wait for Netlify to deploy your site. Once it's done deploying, it'll provide a domain name for you that you can use to visit your site. You might need to reload the page if you're being shown 'site in progress' for a long time.

---

Happy Coding!

You can hear more from me on:

𝕏 - https://x.com/code_withjoseph

| joeskills |

1,871,526 | Ensino superior na área de tecnologia | Ensino superior na área de tecnologia: benefícios e impacto na carreira | 0 | 2024-05-31T02:02:56 | https://dev.to/lexipedia/ensino-superior-na-area-de-tecnologia-39a2 | tecnologia, ensinosuperior, graduacao, educacao | ---

title: Ensino superior na área de tecnologia

published: true

description: Ensino superior na área de tecnologia: benefícios e impacto na carreira

tags: #tecnologia #ensinosuperior #graduacao #educacao

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-31 01:55 +0000

---

## A polêmica

Com certeza, o tema "faculdade na área de tecnologia" é um assunto que dá o que falar. Há quem seja fortemente contra a ideia de entrar na universidade se o objetivo é mergulhar no mercado de trabalho tecnológico. E há aqueles que são fervorosamente a favor da educação superior, independentemente do resto. Hoje, estou aqui para compartilhar minha visão sobre isso, então sem mais enrolações, vamos lá!

## Impacto da Educação Superior na Carreira em Tecnologia: Uma Perspectiva de Curto e Longo Prazo

### Início de carreira

Sem dúvidas cursar ou terminar uma graduação não é estritamente necessário para profissionais do mercado de tecnologia. Mas muitos conhecimentos que se ganha durante uma graduação podem ser relevantes no mercado, direta ou indiretamente. Análise de requisitos, estruturas de dados, algoritmos, programação orientada à objetos e engenharia de software são exemplos de matérias de faculdade que podem ser aplicadas facilmente no mercado de trabalho. Entendo que algumas pessoas que são contra a ideia do ensino superior talvez não vejam uma aplicação direta desses conteúdos, que às vezes são aplicados de forma mais abstrata ou indireta. Mas, se olharmos mais de perto, eles ainda estão lá.

### Carreira à longo prazo

Para profissionais que já têm uma carreira consolidada, acredito que ainda faz sentido investir em uma educação superior. Isso se deve às mesmas razões que mencionei ao falar sobre o início de carreira. Além disso, principalmente porque cargos de maior senioridade têm mais chances de exigir uma educação superior e até mesmo pós-graduação, dependendo da empresa. Sinto que isso é ainda mais evidente quando olhamos para duas coisas: vagas no exterior e o tamanho da empresa. As empresas maiores tendem a ter mais poder para definir os pré-requisitos de seus funcionários. Junto com isso, vejo que as vagas para o exterior geralmente exigem uma educação superior com mais frequência. Se você está sonhando em trabalhar para grandes empresas, como por exemplo as FAANG ou outras empresas de grande influência no mercado, acredito que o ensino superior se torna ainda mais essencial.

## Ensino superior público vs. privado

Escolher entre uma faculdade pública ou particular é uma decisão significativa que pode influenciar significativamente sua jornada profissional. As faculdades públicas, comumente, investem mais tempo em aprofundar a teoria, o que pode fortalecer sua base de conhecimento. Esta abordagem intensiva pode ajudá-lo a ter uma melhor compreensão das disciplinas estudadas, preparando-o para enfrentar desafios complexos que podem surgir em sua carreira.

Por outro lado, as faculdades particulares tendem a enfatizar mais as habilidades práticas e atualizadas. Elas se concentram em fornecer uma educação que está alinhada com as demandas do mercado de trabalho atual, o que pode ser extremamente benéfico para se destacar em um ambiente de trabalho competitivo.

Ambos os tipos de instituições têm seus pontos fortes. Portanto, a melhor escolha depende dos seus objetivos individuais de carreira e da sua situação pessoal. É importante ponderar cuidadosamente suas metas, seu estilo de aprendizado preferido e suas aspirações de carreira ao tomar essa decisão.

## Competências desenvolvidas durante o processo de graduação

### Habilidades Sociais e de Comunicação

O ensino superior não só fornece conhecimento técnico, mas também contribui para o desenvolvimento de habilidades sociais e de comunicação. Trabalhar em equipe, resolver problemas complexos e comunicar ideias de forma eficaz são habilidades valiosas no mercado de tecnologia. Durante a graduação, os alunos têm a oportunidade de aprimorar essas habilidades por meio de projetos de grupo, apresentações e interações com colegas e professores.

### Estágios e Experiências Práticas

Muitas instituições de ensino superior têm parcerias com empresas e indústrias locais, permitindo aos estudantes participarem de programas de cooperação e estágios. Essas experiências práticas dão aos alunos a oportunidade de aplicar o conhecimento teórico no mundo real, obtendo uma visão valiosa do ambiente de trabalho e das demandas do mercado.

### Certificações e Educação Continuada

Além do diploma de graduação, certificações em TI de empresas reconhecidas internacionalmente, como AWS(Amazon Web Services), GCP(Google Cloud Plataform), Microsoft e Cisco, são altamente valorizadas no mercado. Muitas instituições oferecem cursos preparatórios para estar certificações e até oportunidades de tirar elas por preços mais acessíveis

### Recursos gratuitos

Estar vinculado à uma instituição de ensino traz a oportunidade de ter acesso à uma série de ferramentas e recursos que originalmente seriam pagos, de forma gratuita ou com um significativo desconto. A maioria destes estão são oferecidos no [GitHub Student Developer Pack](https://education.github.com/pack). Esses benefícios não só economizam dinheiro, mas também proporcionam aos estudantes uma vantagem significativa no aprendizado e na prática do desenvolvimento de software com ferramentas usadas por profissionais ao redor do mundo.

### Networking e Eventos

A participação em eventos, conferências e hackathons oferece a chance de fazer networking e aprender com outros profissionais da área. Essas atividades podem abrir portas no mercado de trabalho e fornecer insights valiosos sobre as mais recentes inovações e tendências na indústria de tecnologia.

### Inclusão e Diversidade

A diversidade e a inclusão são aspectos cada vez mais importantes no ensino superior e no mercado de tecnologia. A promoção da inclusão e da diversidade não apenas enriquece o ambiente de aprendizado e de trabalho, mas também contribui para a inovação e a criatividade na indústria de tecnologia. Iniciativas e programas que incentivam a participação de grupos sub-representados na área de TI são passos importantes para criar um setor mais inclusivo e diversificado.

## Conclusão

Em conclusão, o ensino superior na área de tecnologia pode desempenhar um papel significativo na preparação de indivíduos para uma carreira no setor. Apesar de não ser estritamente necessário para entrar no mercado de trabalho tecnológico, a educação superior proporciona uma série de benefícios que podem ser úteis em todo o percurso profissional. Desde o desenvolvimento de habilidades sociais e de comunicação, à oportunidade de realizar estágios práticos e obter certificações reconhecidas. Além disso, a escolha entre uma instituição de ensino superior público ou privado deve ser pensada com base em suas metas de carreira e situação pessoal. Portanto, enquanto se reflete sobre essa decisão, é importante considerar cuidadosamente todos os aspectos e benefícios de uma educação superior na área de tecnologia. | lexipedia |

1,837,568 | Task18 | Describe the Python Selenium architecture in detail? What is the significance of the Python Virtual... | 0 | 2024-04-29T16:44:11 | https://dev.to/vignesh_89/task18-141c | **Describe the Python Selenium architecture in detail?**

**What is the significance of the Python Virtual Environment? Give Some Example to support your answer?**

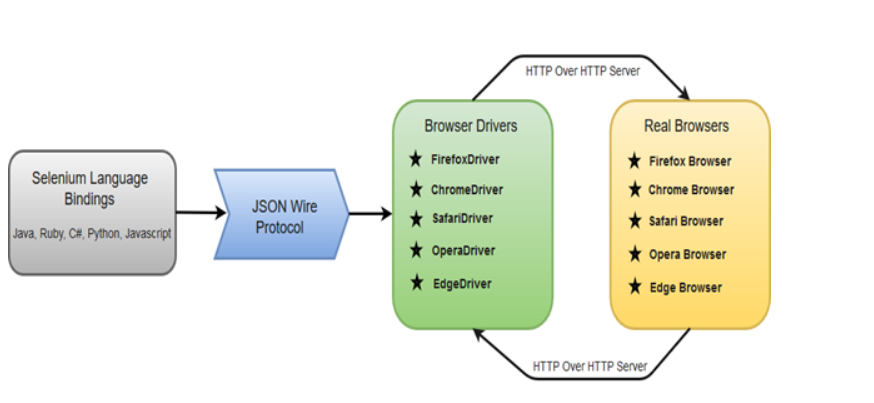

**Python Selenium Architecture:**