id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,867,751 | Staying Up-to-Date with the Latest AI Developments and Trends | Introduction In the rapidly evolving field of Artificial Intelligence (AI), staying... | 0 | 2024-05-28T15:24:46 | https://dev.to/wafa_bergaoui/staying-up-to-date-with-the-latest-ai-developments-and-trends-2kei | ai, development, machinelearning, programming | ## **Introduction**

In the rapidly evolving field of Artificial Intelligence (AI), staying current with the latest developments and trends is essential for professionals to remain competitive and relevant. This article outlines key strategies to help you stay informed and ahead of the curve in the AI landscape.

## **The Importance of Staying Informed**

AI is transforming industries and creating new opportunities, but it also demands continuous learning and adaptation. According to a [report by Gartner](https://www.gartner.com/en/newsroom/press-releases/2021-05-19-gartner-says-70-percent-of-organizations-will-shift-their-focus-from-big-to-small-and-wide-data-by-2025), AI is expected to create 97 million new jobs by 2025, while eliminating 85 million. This dynamic shift underscores the need for professionals to stay updated with the latest AI advancements to harness opportunities and mitigate risks.

## **Key Strategies for Staying Up-to-Date**

**1. Follow Industry News and Publications**

Regularly reading industry news, research papers, and publications is a foundational strategy for staying informed. Some valuable resources include:

. [TechCrunch](https://techcrunch.com/): Covers the latest technology news, including AI developments.

. [MIT Technology Review](https://www.technologyreview.com/): Provides in-depth articles on emerging technologies.

. [Towards Data Science:](https://towardsdatascience.com/) Offers insights and tutorials on data science and AI.

. [ArXiv.org](https://arxiv.org/): Hosts preprints of research papers across various fields, including AI.

**2. Attend Conferences and Workshops**

Participating in conferences, workshops, and webinars allows you to learn from experts, discover new tools, and network with peers. Key events for AI professionals include:

. NeurIPS (Conference on Neural Information Processing Systems)

. ICLR (International Conference on Learning Representations)

. AI Expo

. CVPR (Conference on Computer Vision and Pattern Recognition)

. AAAI Conference on Artificial Intelligence

**3. Join Professional Organizations and Online Communities**

Being part of professional organizations and online communities provides access to exclusive resources, journals, and networking opportunities. Some notable organizations and communities are:

.Association for Computing Machinery (ACM)

. [IEEE Computer Society](https://www.computer.org/)

. [Reddit’s r/MachineLearning](https://www.reddit.com/r/MachineLearning/?rdt=36759)

. [Stack Overflow](https://stackoverflow.com/)

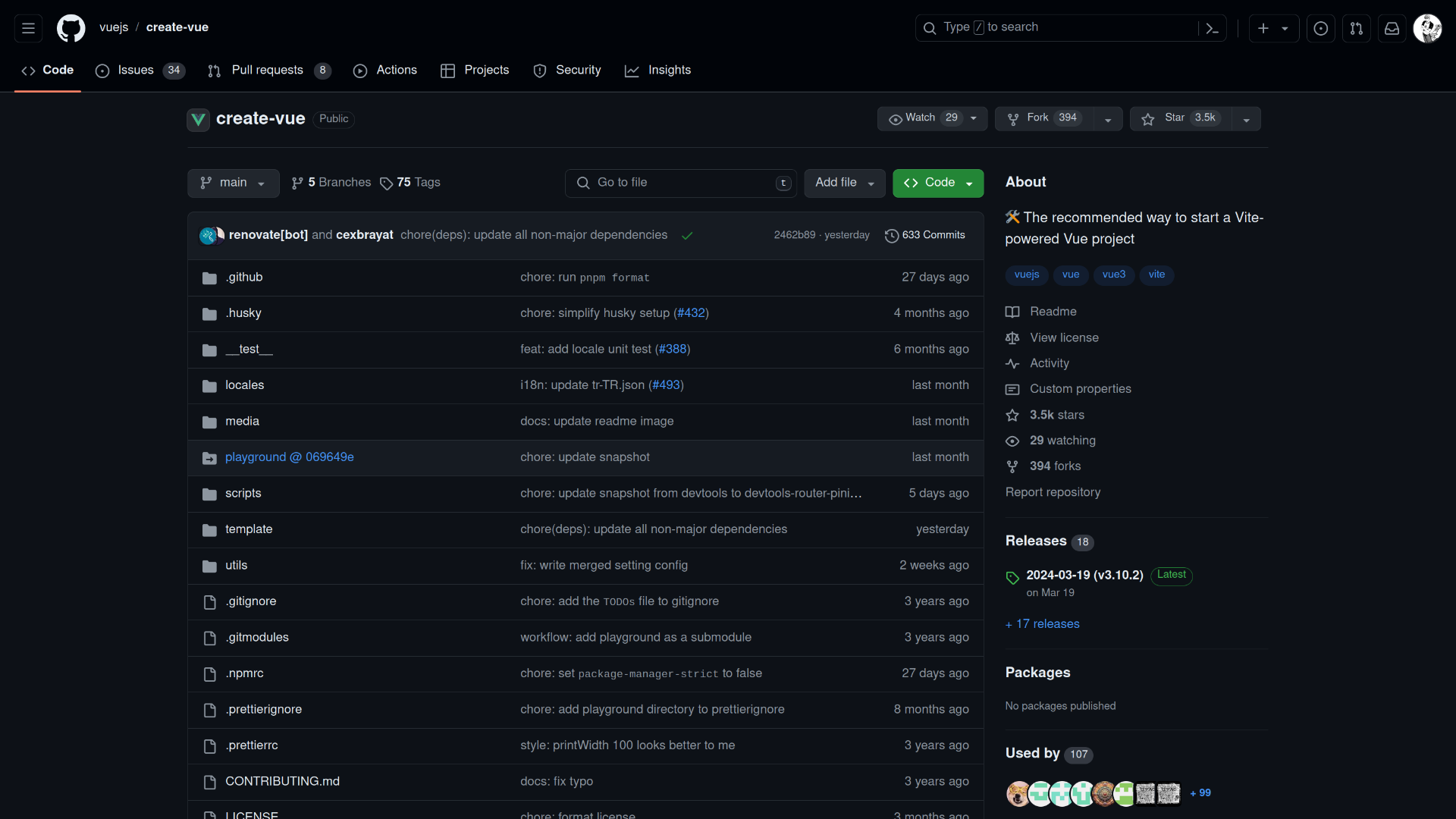

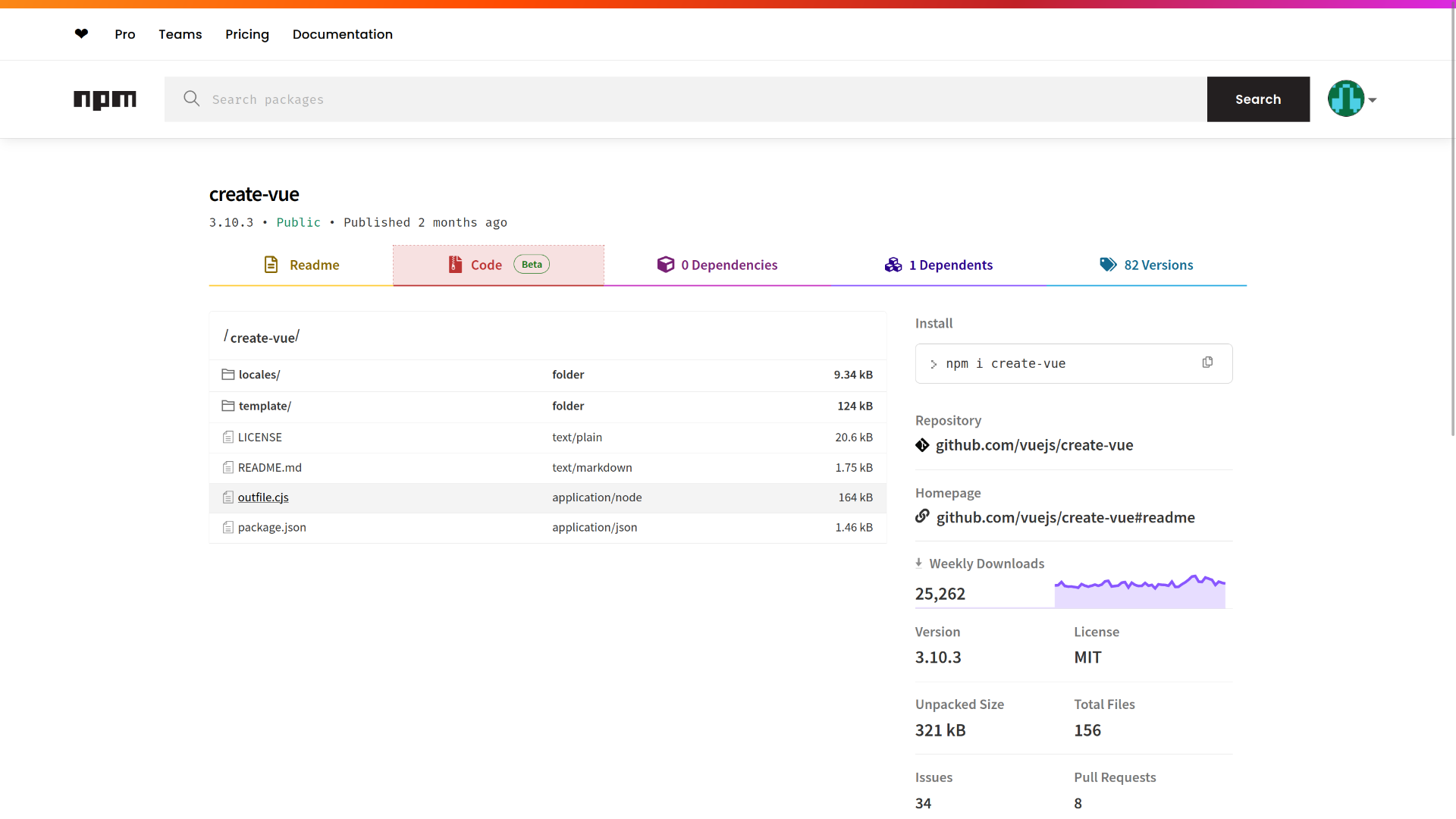

. [GitHub](https://github.com/)

. [Kaggle](https://www.kaggle.com/)

**4. Engage in Continuous Learning**

Leveraging online learning platforms helps you stay current with new technologies and methodologies in AI. These platforms offer courses and specializations that cover the latest advancements and practical applications of AI:

. [Coursera](https://www.coursera.org/courseraplus/?utm_medium=sem&utm_source=gg&utm_campaign=B2C_EMEA__coursera_FTCOF_courseraplus&campaignid=20858197888&adgroupid=156245795749&device=c&keyword=coursera&matchtype=e&network=g&devicemodel=&adposition=&creativeid=684297719990&hide_mobile_promo&term={term}&gad_source=1&gclid=CjwKCAjwgdayBhBQEiwAXhMxtll_TKdZu6hSyNEoLP5pHJl0P6pYgBxuhIK0Meowmij0mkBVvkUgzhoCLNYQAvD_BwE): Offers courses from top universities and institutions, including AI and machine learning specializations.

. [Udacity](https://www.udacity.com/): Known for its AI and machine learning Nanodegree programs.

. [edX](https://www.edx.org/): Provides courses from institutions like MIT and Harvard.

. [Fast.ai](https://www.fast.ai/): Offers practical deep learning courses.

**5. Experiment with AI Projects**

Hands-on experience is invaluable. Regularly working on personal or open source AI projects allows you to apply your knowledge and stay familiar with new tools and frameworks. Some platforms to consider are:

. [Kaggle](https://www.kaggle.com/): For competitions and datasets.

. [GitHub](https://github.com/): For open source projects and collaboration.

. [Colab](https://colab.research.google.com/): Google’s platform for building and sharing machine learning models.

**6. Follow Influential Figures and Organizations**

Following influential figures and organizations in the AI field on social media can provide insights into the latest trends and discussions. Some notable figures and organizations include:

. [Andrew Ng](https://www.linkedin.com/in/andrewyng/): Co-founder of Coursera and Adjunct Professor at Stanford University.

. [Yann LeCun](https://www.linkedin.com/in/yann-lecun/): Chief AI Scientist at Facebook.

. [OpenAI](https://www.linkedin.com/company/openai/)

. [Google DeepMind](https://www.linkedin.com/company/googledeepmind/)

**7. Read Books and Research Papers**

Books and research papers provide in-depth knowledge and understanding of AI concepts and advancements. Some recommended books include:

"Deep Learning" by Ian Goodfellow, Yoshua Bengio, and Aaron Courville

"Artificial Intelligence: A Modern Approach" by Stuart Russell and Peter Norvig

"Pattern Recognition and Machine Learning" by Christopher M. Bishop

**8. Participate in AI Competitions**

Engaging in AI competitions allows you to apply your skills to real-world problems and learn from others. Competitions hosted by platforms like Kaggle, DrivenData, and Codalab often push the boundaries of what is possible and offer practical experience in solving complex AI challenges.

**9. Network with Peers**

Networking with peers through meetups, hackathons, and local AI groups can provide new insights and foster collaborative learning. Websites like [Meetup](https://www.meetup.com/fr-FR/) and platforms like [LinkedIn](https://www.linkedin.com/) are great for finding local events and professional groups focused on AI and machine learning.

## **Conclusion**

Staying up-to-date with the latest AI developments and trends is not just beneficial but essential for professionals in the field. By following these strategies—engaging with industry news, attending events, joining professional communities, continuously learning, experimenting with projects, following influential figures, reading extensively, participating in competitions, and networking with peers—you can ensure that you remain at the forefront of AI advancements.

By consistently engaging with these resources and activities, software developers can stay up-to-date with the latest AI developments and trends, ensuring they remain competitive and well-equipped to handle the challenges and opportunities presented by the AI revolution.

| wafa_bergaoui |

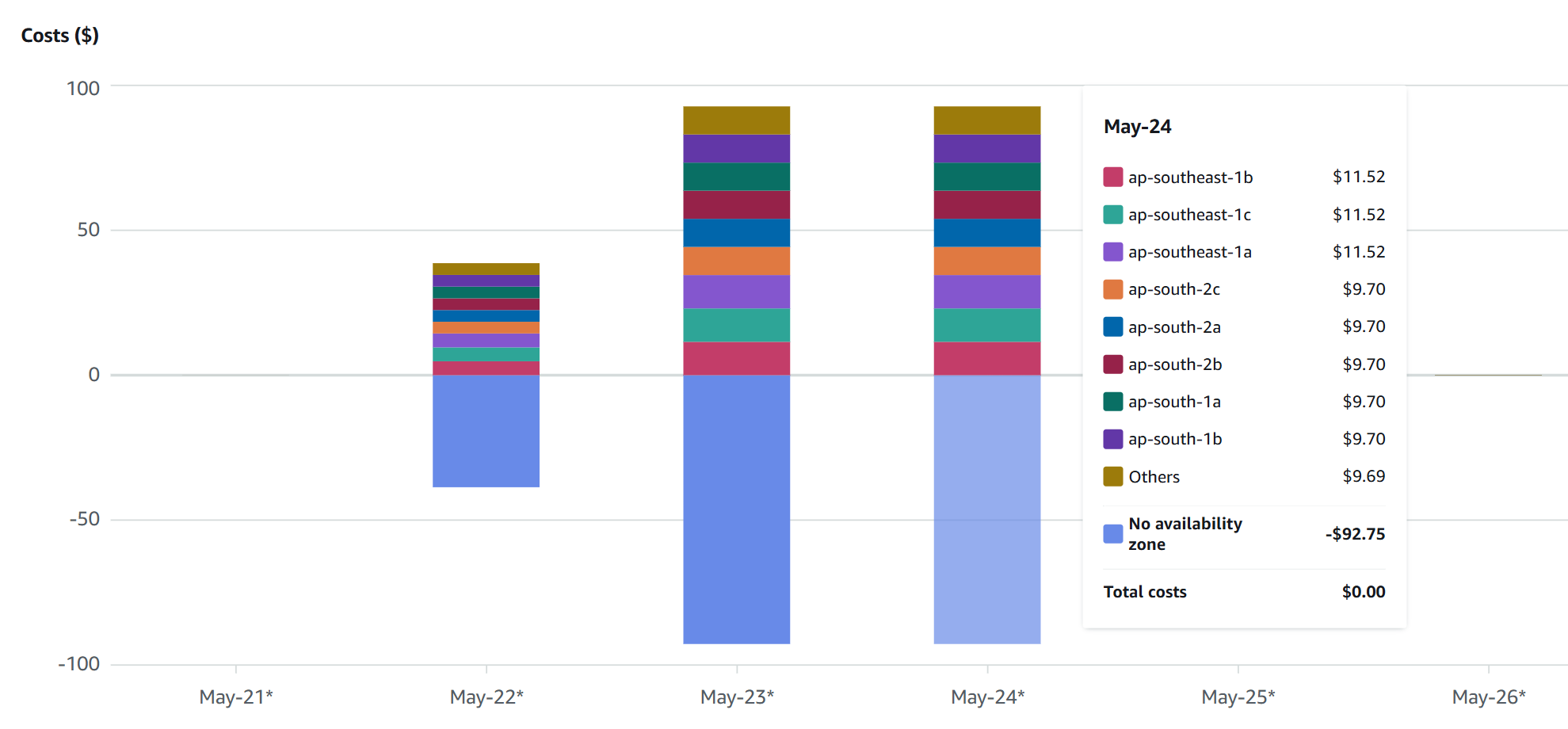

1,867,881 | Optimize AWS Costs by Managing Elastic IPs with Python and Boto3 | The Need for Cost Optimization As businesses increasingly rely on cloud infrastructure,... | 0 | 2024-05-28T15:24:28 | https://dev.to/karandaid/optimize-aws-costs-by-managing-elastic-ips-with-python-and-boto3-53m2 | python, eip, aws, cost | ### The Need for Cost Optimization

As businesses increasingly rely on cloud infrastructure, managing costs becomes critical. AWS Elastic IPs (EIPs) are essential for maintaining static IP addresses, but they can also become a source of unwanted costs if not managed properly. AWS charges for EIPs that are not associated with running instances, which can quickly add up if left unchecked.

To address this, we can write a Python script using Boto3 to automate the management of EIPs, ensuring that we only pay for what we use. Let's walk through the process of creating this script step-by-step.

### Step 1: Setting Up the Environment

First, we need to set up our environment. Install Boto3 if you haven't already:

```bash

pip install boto3

```

Make sure your AWS credentials are configured. You can set them up using the AWS CLI or by setting environment variables.

### Step 2: Initializing Boto3 Client

We'll start by initializing the Boto3 EC2 client, which will allow us to interact with AWS EC2 services.

```python

import boto3

# Initialize boto3 EC2 client

ec2_client = boto3.client('ec2')

```

With this code, we now have an `ec2_client` object that we can use to call various EC2-related functions.

### Step 3: Retrieving All Elastic IPs

Next, we need a function to retrieve all Elastic IPs along with their associated instance ID and allocation ID.

```python

def get_all_eips():

"""

Retrieve all Elastic IPs along with their associated instance ID and allocation ID.

"""

response = ec2_client.describe_addresses()

eips = [(address['PublicIp'], address.get('InstanceId', 'None'), address['AllocationId']) for address in response['Addresses']]

return eips

```

You can run this function to get a list of all EIPs in your AWS account:

```python

print(get_all_eips())

```

The output will be a list of tuples, each containing the EIP, associated instance ID (or 'None' if unassociated), and allocation ID.

### Step 4: Checking Instance States

We need another function to check the state of an instance. This helps us determine if an EIP is associated with a stopped instance.

```python

def get_instance_state(instance_id):

"""

Retrieve the state of an EC2 instance.

"""

response = ec2_client.describe_instances(InstanceIds=[instance_id])

state = response['Reservations'][0]['Instances'][0]['State']['Name']

return state

```

You can test this function with an instance ID to see its state:

```python

print(get_instance_state('i-1234567890abcdef0'))

```

This will output the state of the instance, such as 'running', 'stopped', etc.

### Step 5: Categorizing Elastic IPs

Now, let's categorize the EIPs based on their association and instance states.

```python

def categorize_eips():

"""

Categorize Elastic IPs into various categories and provide cost-related insights.

"""

eips = get_all_eips()

eip_map = {}

unassociated_eips = {}

stopped_instance_eips = {}

for eip, instance_id, allocation_id in eips:

eip_map[eip] = allocation_id

if instance_id == 'None':

unassociated_eips[eip] = allocation_id

else:

instance_state = get_instance_state(instance_id)

if instance_state == 'stopped':

stopped_instance_eips[eip] = instance_id

return {

"all_eips": eip_map,

"unassociated_eips": unassociated_eips,

"stopped_instance_eips": stopped_instance_eips

}

```

Run this function to get categorized EIPs:

```python

categorized_eips = categorize_eips()

print(categorized_eips)

```

The output will be a dictionary with categorized EIPs.

### Step 6: Printing the Results

To make our script user-friendly, we'll add functions to print the categorized EIPs and provide cost insights.

```python

def print_eip_categories(eips):

"""

Print categorized Elastic IPs and provide cost-related information.

"""

print("All Elastic IPs:")

if eips["all_eips"]:

for eip in eips["all_eips"]:

print(eip)

else:

print("None")

print("\nUnassociated Elastic IPs:")

if eips["unassociated_eips"]:

for eip in eips["unassociated_eips"]:

print(eip)

else:

print("None")

print("\nElastic IPs associated with stopped instances:")

if eips["stopped_instance_eips"]:

for eip in eips["stopped_instance_eips"]:

print(eip)

else:

print("None")

```

Test this function by passing the `categorized_eips` dictionary:

```python

print_eip_categories(categorized_eips)

```

### Step 7: Identifying Secondary EIPs

We should also check for instances that have multiple EIPs associated with them.

```python

def find_secondary_eips():

"""

Find secondary Elastic IPs (EIPs which are connected to instances already assigned to another EIP).

"""

eips = get_all_eips()

instance_eip_map = {}

for eip, instance_id, allocation_id in eips:

if instance_id != 'None':

if instance_id in instance_eip_map:

instance_eip_map[instance_id].append(eip)

else:

instance_eip_map[instance_id] = [eip]

secondary_eips = {instance_id: eips for instance_id, eips in instance_eip_map.items() if len(eips) > 1}

return secondary_eips

```

Run this function to find secondary EIPs:

```python

secondary_eips = find_secondary_eips()

print(secondary_eips)

```

### Step 8: Printing Secondary EIPs

Let's add a function to print the secondary EIPs.

```python

def print_secondary_eips(secondary_eips):

"""

Print secondary Elastic IPs.

"""

print("\nInstances with multiple EIPs:")

if secondary_eips:

for instance_id, eips in secondary_eips.items():

print(f"Instance ID: {instance_id}")

for eip in eips:

print(f" - {eip}")

else:

print("None")

```

Test this function by passing the `secondary_eips` dictionary:

```python

print_secondary_eips(secondary_eips)

```

### Step 9: Providing Cost Insights

Finally, we add a function to provide cost insights based on our findings.

```python

def print_cost_insights(eips):

"""

Print cost insights for Elastic IPs.

"""

unassociated_count = len(eips["unassociated_eips"])

stopped_instance_count = len(eips["stopped_instance_eips"])

total_eip_count = len(eips["all_eips"])

print("\nCost Insights:")

print(f"Total EIPs: {total_eip_count}")

print(f"Unassociated EIPs (incurring cost): {unassociated_count}")

print(f"EIPs associated with stopped instances (incurring cost): {stopped_instance_count}")

print("Note: AWS charges for each hour that an EIP is not associated with a running instance.")

```

Run this function to get cost insights:

```python

print_cost_insights(categorized_eips)

```

### Full Code

Here's the complete script with all the functions we've discussed:

```python

import boto3

# Initialize boto3 EC2 client

ec2_client = boto3.client('ec2')

def get_all_eips():

"""

Retrieve all Elastic IPs along with their associated instance ID and allocation ID.

"""

response = ec2_client.describe_addresses()

eips = [(address['PublicIp'], address.get('InstanceId', 'None'), address['AllocationId']) for address in response['Addresses']]

return eips

def get_instance_state(instance_id):

"""

Retrieve the state of an EC2 instance.

"""

response = ec2_client.describe_instances(InstanceIds=[instance_id])

state = response['Reservations'][0]['Instances'][0]['State']['Name']

return state

def categorize_eips():

"""

Categorize Elastic IPs into various categories and provide cost-related insights.

"""

eips = get_all_eips()

eip_map = {}

unassociated_eips = {}

stopped_instance_eips = {}

for eip, instance_id, allocation_id in eips:

eip_map[eip] = allocation_id

if instance_id == 'None':

unassociated_eips[eip] = allocation_id

else:

instance_state = get_instance_state(instance_id)

if instance_state == 'stopped':

stopped_instance_eips[eip] = instance_id

return {

"all_eips": eip_map,

"unassociated_eips": unassociated_eips,

"stopped_instance_eips": stopped

_instance_eips

}

def print_eip_categories(eips):

"""

Print categorized Elastic IPs and provide cost-related information.

"""

print("All Elastic IPs:")

if eips["all_eips"]:

for eip in eips["all_eips"]:

print(eip)

else:

print("None")

print("\nUnassociated Elastic IPs:")

if eips["unassociated_eips"]:

for eip in eips["unassociated_eips"]:

print(eip)

else:

print("None")

print("\nElastic IPs associated with stopped instances:")

if eips["stopped_instance_eips"]:

for eip in eips["stopped_instance_eips"]:

print(eip)

else:

print("None")

def find_secondary_eips():

"""

Find secondary Elastic IPs (EIPs which are connected to instances already assigned to another EIP).

"""

eips = get_all_eips()

instance_eip_map = {}

for eip, instance_id, allocation_id in eips:

if instance_id != 'None':

if instance_id in instance_eip_map:

instance_eip_map[instance_id].append(eip)

else:

instance_eip_map[instance_id] = [eip]

secondary_eips = {instance_id: eips for instance_id, eips in instance_eip_map.items() if len(eips) > 1}

return secondary_eips

def print_secondary_eips(secondary_eips):

"""

Print secondary Elastic IPs.

"""

print("\nInstances with multiple EIPs:")

if secondary_eips:

for instance_id, eips in secondary_eips.items():

print(f"Instance ID: {instance_id}")

for eip in eips:

print(f" - {eip}")

else:

print("None")

def print_cost_insights(eips):

"""

Print cost insights for Elastic IPs.

"""

unassociated_count = len(eips["unassociated_eips"])

stopped_instance_count = len(eips["stopped_instance_eips"])

total_eip_count = len(eips["all_eips"])

print("\nCost Insights:")

print(f"Total EIPs: {total_eip_count}")

print(f"Unassociated EIPs (incurring cost): {unassociated_count}")

print(f"EIPs associated with stopped instances (incurring cost): {stopped_instance_count}")

print("Note: AWS charges for each hour that an EIP is not associated with a running instance.")

# Main execution

if __name__ == "__main__":

categorized_eips = categorize_eips()

print_eip_categories(categorized_eips)

print_secondary_eips(find_secondary_eips())

print_cost_insights(categorized_eips)

```

### Pros and Cons

**Pros**:

- **Cost Savings**: Identifies and helps eliminate unwanted costs associated with unused or mismanaged EIPs.

- **Automation**: Automates the process of monitoring and categorizing EIPs.

- **Insights**: Provides clear insights into EIP usage and cost-related information.

- **Easy to Use**: Simple and straightforward script that can be run with minimal setup.

**Cons**:

- **AWS Limits**: The script relies on AWS API calls, which may be subject to rate limits.

- **Manual Intervention**: While it identifies cost-incurring EIPs, the script does not automatically release or reallocate them.

- **Resource Intensive**: For large-scale environments with many EIPs and instances, the script may take longer to run and consume more resources.

### How We Can Improve It

- **Automatic Remediation**: Extend the script to automatically release unassociated EIPs or notify administrators for manual intervention.

- **Scheduled Execution**: Use AWS Lambda or a cron job to run the script at regular intervals, ensuring continuous monitoring and cost management.

- **Enhanced Reporting**: Integrate with a logging or monitoring service to provide detailed reports and historical data on EIP usage and costs.

- **Notification System**: Implement a notification system using AWS SNS or similar services to alert administrators of cost-incurring EIPs in real time.

By incorporating these improvements, we can make the script even more robust and useful for managing AWS EIPs and reducing associated costs effectively. | karandaid |

1,867,875 | Understanding Certificates in the Digital World | TL;DR Digital certificates are the digital counterpart to physical ID cards or... | 0 | 2024-05-28T15:19:28 | https://dev.to/yannick555/understanding-certificates-in-the-digital-world-1bn6 | ### TL;DR

Digital certificates are the digital counterpart to physical ID cards or passports.

Their trustworthiness and validity stems from the reliability and credibility of the issuing entity (the CA, respectively the government).

### Intro

In our increasingly digital world, ensuring security and authenticity online is more crucial than ever. Whether you're shopping online, accessing your bank account, or simply browsing the web, the need for secure communication and trust in the entities you interact with cannot be overstated. This is where digital certificates come into play. Much like an ID card verifies your identity in the physical world, a digital certificate verifies identities and secures communications in the digital realm.

Let’s dive into what digital certificates are and how they function similarly to ID cards.

### What is a Digital Certificate?

A digital certificate is a digital document used to authenticate the identity of an entity and establish secure communications. Entities can include websites, individuals, devices, or software. Think of it as a virtual passport that confirms the legitimacy of the entity and contributes to ensure that any data transmitted between you and the entity is secure (the certificate alone is not enough, the communication protocol is important as well).

### Contents of a Digital Certificate

1. **Entity Information**:

- **Subject**: The entity the certificate is issued to. For example, in an SSL/TLS certificate for a website, the subject is typically the domain name of the website.

- **Issuer**: The entity that issued the certificate. This is usually a Certificate Authority (CA).

- **Serial Number**: A unique identifier assigned by the issuer to the certificate.

- **Validity Period**: The period during which the certificate is considered valid, including a start date and an expiration date.

2. **Public Key**:

- The public key of the entity, used for encryption, digital signatures, or both. This key is mathematically linked to a private key held by the entity.

3. **Digital Signature**:

- A digital signature created by the issuer using its private key. It ensures the integrity and authenticity of the certificate. If the signature can be verified using the issuer's public key, the certificate is considered valid.

4. **Certificate Extensions**:

- **Key Usage**: Specifies the cryptographic operations that the public key can be used for, such as encryption, digital signatures, or both.

- **Subject Alternative Name (SAN)**: Additional identities for which the certificate is valid, such as alternative domain names for a website.

- **Basic Constraints**: Specifies whether the certificate can be used as a Certificate Authority to issue other certificates.

- **Extended Key Usage**: Further specifies the purposes for which the public key can be used, such as client authentication, server authentication, or code signing.

> **Notes**

> 1. A certificate is meant to be shared with other entities, which is why...

> 2. The private key is *not* part of the certificate.

> 3. The CA itself may be authenticated by another CA, which might as well be authenticated by another one and so on, until the root CA is attained: this is the CA trust chain.

### How Does an ID Card Work?

1. **Issuance of ID Card**:

- An individual provides personal information and undergoes identity verification by a relevant authority, such as a government agency or employer, to obtain an ID card.

- The authority verifies the identity of the requester and issues the ID card containing their personal details and photograph.

2. **Authentication and Identity Verification**:

- When presenting the ID card, the information on the card is compared with the individual presenting it to verify their identity.

- This authentication process ensures that the person holding the ID card is indeed the legitimate cardholder and is authorised to access the services or privileges associated with the card.

3. **Access to Privileges and Services**:

- ID cards grant access to various privileges and services based on the authority associated with the card. For example, a driver's license grants the holder the privilege to operate a motor vehicle.

- By presenting the ID card, individuals can access these privileges and services, ensuring that only authorised individuals benefit from them.

### How Does a Digital Certificate Work?

1. **Certificate Issuance**: An entity generates a public-private key pair and submits a certificate signing request (CSR) to a Certificate Authority (CA). The CA verifies the identity of the requester and issues a digital certificate containing the public key.

2. **Authentication and Secure Communication**:

- **Websites**: Your browser requests the site’s digital certificate and verifies it, ensuring you are communicating with the legitimate site.

- **Emails**: Email clients can use certificates to encrypt and sign emails, ensuring confidentiality and authenticity.

- **Software**: Developers sign their software with certificates to prove that it has not been tampered with.

- **Individuals**: Digital certificates can authenticate individuals for access to secure systems or documents.

### Types of Digital Certificates

There are different times of digital certificates which serve different purposes:

1. **SSL/TLS Certificates**: Secure communications between browsers and websites.

2. **Client Certificates**: Authenticate users or devices to a server.

3. **Code Signing Certificates**: Verify the identity of the software publisher and ensure the integrity of the software.

4. **Email Certificates (S/MIME Certificates)**: Secure email communications by encrypting and signing emails.

5. **Document Signing Certificates**: Digitally sign documents to ensure their authenticity and integrity.

6. **Root and Intermediate Certificates**: Form the foundation of trust in the PKI hierarchy, used by Certificate Authorities to issue end-entity certificates.

> **Types of government-issued IDs**

> Governments typically issue various types of identification documents to their citizens, residents, and other individuals. These IDs serve different purposes and may vary depending on the country and its regulations. Here are some common types of IDs issued by governments:

> 1. **National Identity Cards (NIC)**:

> - National identity cards are government-issued documents that serve as official proof of identity for citizens or residents. They typically include the individual's name, photograph, date of birth, and sometimes other identifying information such as address or identification number.

> 2. **Passports**:

> - Passports are travel documents issued by governments to their citizens for international travel. They contain personal information about the passport holder, including their photograph, nationality, date of birth, and passport number.

> 3. **Driver's Licenses**:

> - Driver's licenses are issued by government agencies to individuals who have passed the required tests to operate motor vehicles legally. In addition to serving as proof of identity, driver's licenses also indicate the holder's authorisation to drive specific types of vehicles.

> 4. **Social Security Cards**:

> - Social Security cards are issued in some countries to individuals who have registered for government social security programs. They typically contain a unique identification number assigned to the individual for tracking purposes.

> 5. **Residence Permits**:

> - Residence permits are issued to non-citizen residents by governments to legally reside in a country for a specified period. These documents typically include the individual's name, photograph, and information about their immigration status.

> 6. **Voter ID Cards**:

> - Voter ID cards are issued to eligible voters by election authorities to facilitate voting in elections. They serve as proof of identity and eligibility to vote.

> 7. **Military IDs**:

> - Military identification cards are issued to members of the armed forces and their dependent. They serve as proof of military affiliation and may grant access to military facilities and services.

> 8. **Government Employee IDs**:

> - Government employee IDs are issued to individuals employed by government agencies. They serve as proof of employment and may grant access to government facilities and services.

>

> These are just a few examples of the types of IDs that governments commonly issue. The > specific types and requirements for obtaining them vary by country and jurisdiction.

### Digital Certificates vs. ID Cards: The Similarities

Usually, the government trusts local authorities (municipality) to authenticate a person and to make the ID request. The government then creates the ID, which is then handed to the person by the requesting local authority. The role of the government is similar to the role of a CA.

People and organisations usually trust the government-delivered ID: sometimes, this is even mandated by law. This trust in the government is what makes this system robust - whether one likes the government or not.

1. **Identity Verification**:

- **ID Card**: Confirms your identity with personal details and a photograph, typically issued by a government or other trusted entity.

- **Digital Certificate**: Confirms the identity of an entity, typically issued by a trusted CA after verifying the entity's credentials.

2. **Trust**:

- **ID Card**: We trust ID cards because they are issued by recognised authorities like governments.

- **Digital Certificate**: We trust digital certificates because they are issued by recognised CAs, which follow rigorous verification processes.

3. **Authentication**:

- **ID Card**: Used to prove your identity when accessing restricted areas, conducting transactions, or during identification checks.

- **Digital Certificate**: Used to prove the identity of an entity, ensuring secure and authenticated communications.

4. **Security Features**:

- **ID Card**: Contains physical security features like holograms, watermarks, and micro-printing to prevent forgery.

- **Digital Certificate**: Contains cryptographic elements like public keys and digital signatures to prevent tampering and ensure authenticity.

5. **Validity period:**

- **ID Card**: Has an expiration date.

- **Digital Certificate**: Has a limited validity period which specifies the time frame during which it is considered valid and trustworthy. After the expiration date, the certificate is no longer considered valid, and relying parties should not trust it for secure communications.

6. **Renewal Process:**

- **ID Card**: Must be renewed before it expires. Renewal typically involves obtaining a new ID from the issuing government and only using the new one (in some countries, authorities may even require that old IDs are given back to the government).

- **Digital Certificate**: Must be renew by their holders before they expire to ensure uninterrupted secure communication. Renewal typically involves obtaining a new certificate from the issuing Certificate Authority (CA) and replacing the old certificate with the new one.

7. **Types:**

- **ID Card:** Various types of IDs are issued by governments and are used for different purposes.

- **Digital Certificate:** Various types of digital certificates are used for different purposes.

### Practical Examples of Digital Certificates in Use

1. **Secure Web Browsing**: When you visit a website starting with `https://`, the communication protocol and the site’s digital certificate ensure that your communication with the site is encrypted and secure, protecting sensitive information like passwords and credit card numbers.

2. **Email Security**: Digital certificates can secure email communications by encrypting emails and enabling digital signatures, ensuring that your emails are confidential and authenticated.

3. **Software Integrity**: Developers use digital certificates to sign software, ensuring users that the software has not been tampered with and is from a legitimate source.

4. **Individual Authentication**: Organisations issue digital certificates to employees, enabling secure access to corporate systems and data. These certificates ensure that only authorised individuals can access sensitive information.

5. **Document Signing**: Digital certificates are used to sign electronic documents, providing a digital equivalent of a handwritten signature and ensuring the document’s integrity and authenticity.

### Conclusion

Digital certificates play a vital role in maintaining trust and security in the digital world, much like ID cards do in the physical world. They verify the identities of entities, establish secure communications, and ensure data integrity. By understanding how digital certificates function and their similarities to ID cards, we can better appreciate the underlying mechanisms that keep our online interactions safe and trustworthy. So next time you encounter a digital certificate, you’ll know that it is hard at work, verifying identities and securing your digital environment.

| yannick555 | |

1,867,863 | Architecting Microservices as Tenants on a Monolith with Zango | You must be this tall to use Microservices - Martin Fowler Microservices architectural pattern has... | 0 | 2024-05-28T15:15:01 | https://www.zango.dev/blog/architecting-microservices-as-a-tenant-on-a-monolith | python, microservices, architecture, opensource | ---

published: true

canonical_url: https://www.zango.dev/blog/architecting-microservices-as-a-tenant-on-a-monolith

---

> You must be this tall to use Microservices - Martin Fowler

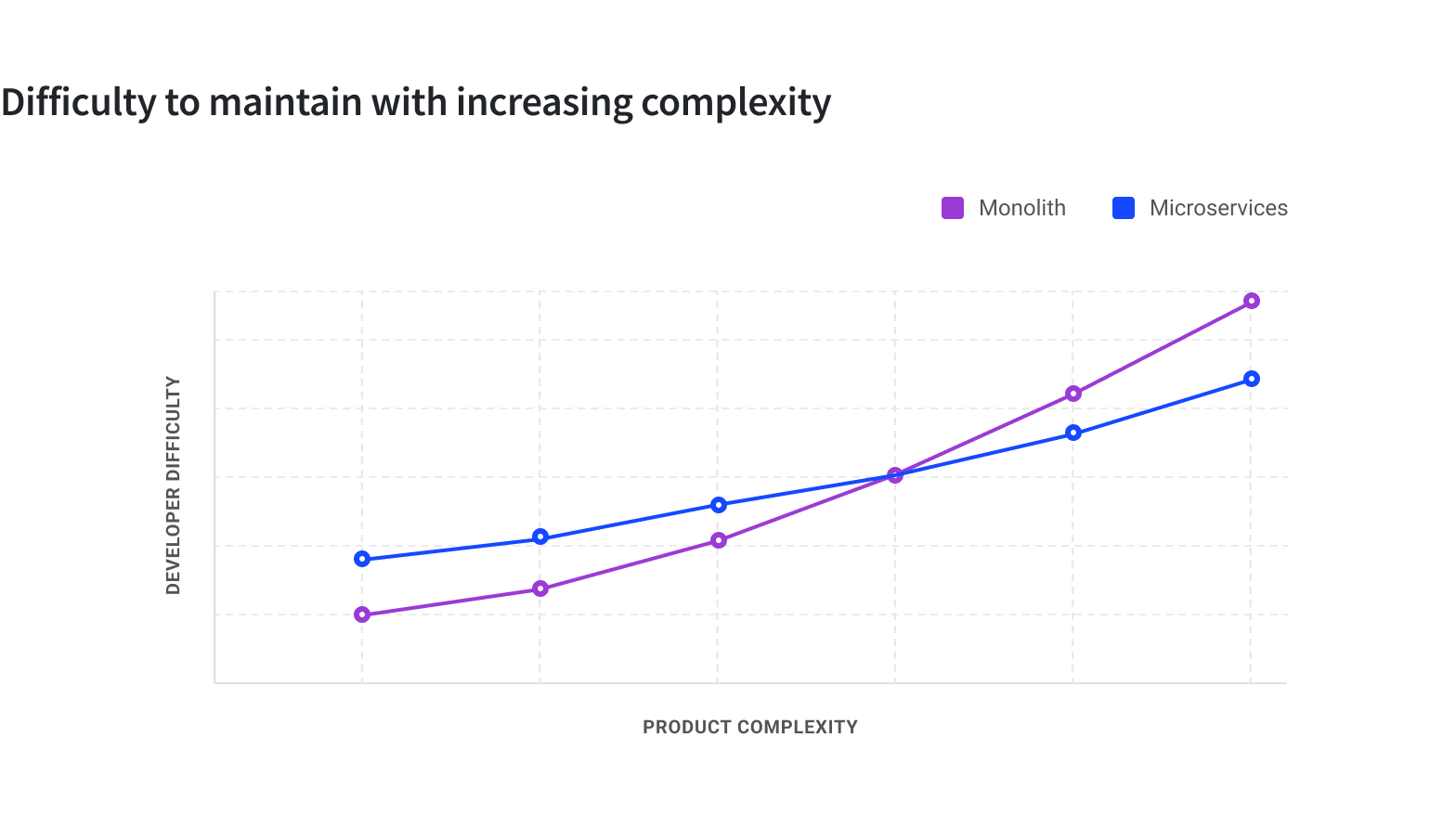

Microservices architectural pattern has gained massive prominence and widespread adoption over the last decade or so due to the many advantages it offers. It enables decentralized structures at the people level as well as at the technical level and improves the ability of systems to effectively respond to the demands of growth, both of features and scale. The microservices pattern definitely offers higher elasticity to the system it is powering to respond to changes in a dynamic environment.

On the other hand, a monolithic system is akin to a centralized structure where the complexity keeps increasing by the day. The addition of more lines of codes, new modules, new packages, new dependencies, etc. leads to a system where the elasticity to change is decreasing. Enhancements tends to become more cumbersome and expensive and it is easier for more crufts to surface in the codebase. The burden of bureaucracy also tends to be higher on the monolith for obvious reasons.

## Microservices are expensive

More recently, there has been a lot of discussion around the cost of microservices. The in-memory method calls in a monolith between modules becomes the more expensive network calls in microservices. Separate deployment makes it easy to run into a fleet of hundreds of servers in organizations running several microservices in production. Then there are overheads introduced from more complex monitoring, devops, etc.

While the ability to run and scale smaller independent systems introduces the leverage of agility, the higher infrastructural and operational overheads renders the choice of microservices impractical in a vast majority of use cases.

During the proliferation phase, the microservices pattern seemed like the silver bullet and organizations of all sizes were adopting it overlooking the overheads. In the current times, it seems as if the reverse is happening. It is becoming fashionable to suggest that microservices is not a suitable solution for the problem at hand and that monolith is ‘majestic’ or start with a monolith.

## The advantages of microservices, albeit the cost

The microservices pattern can revolutionize the way a product is developed, deployed and enhanced even for ecosystems that might not have hit the threshold of scale. Keeping aside the cost and concerns around the operational overhead, microservices as a pattern stands to quickly outshine a monolithic approach by remaining more adaptable to new requirements. The enablement of parallel development by establishing well enforced boundaries greatly adds to the agility of the development team.

The adage is to start with a monolith and branch out to microservices, using the approach known as the [Strangler Fig pattern](https://martinfowler.com/bliki/StranglerFigApplication.html).

## Don’t start with a monolith if the goal is microservices

To paraphrase [Simon Tilkov](https://z-framework-website-git-development-zelthy.vercel.app/blog/architecting-microservices-as-a-tenant-on-a-monolith#:~:text=To%20paraphrase-,Simon%20Tilkov,-I%E2%80%99m%20firmly%20convinced)

> I’m firmly convinced that starting with a monolith is usually exactly the wrong thing to do. Starting to build a new system is exactly the time when you should be thinking about carving it up into pieces. I strongly disagree with the idea that you can postpone this.

Building a greenfield monolith that is expected to grow out into a Strangler Fig and expecting to cut it off when the time is right will add stress to the design of the monolith in the first place.

Simon Tilkov continues

> You might be tempted to assume there are a number of nicely separated microservices hiding in your monolith, just waiting to be extracted. In reality, though, it’s extremely hard to avoid creating lots of connections, planned and unplanned. In fact the whole point of the microservices approach is to make it hard to create something like this.

## Zango - A new approach for microservices, sans the overheads

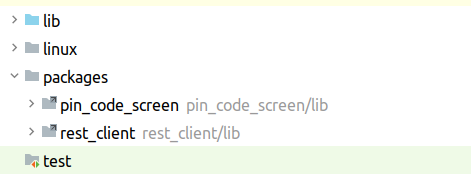

[Zango](https://github.com/Healthlane-Technologies/Zango) is a new Python web application development framework, implemented on top of Django, that allows leveraging the key advantages of the micorservices pattern without incurring any additional operational overheads.

Under the hood, Zango is a monolith that allows hosting multiple independently deployable applications or microservices on top of it. Each application is logically isolated from the other sibling apps at the data layer, the logic layer, the interface layer as well as in deployment, ensuring complete microservices type autonomy.

One of the key goals of Zango's design is to make sure that deployment of one app does not require downtime for any other sibling app or the underlying monolith. We do so by creating logically separated areas, enforcing rules and policies at the framework level and leveraging the plugin architectural pattern for executing the app's logics.

In addition, to meet the requirement of differential scaling, Zango also allows easy branching off of one or more services to deployment on a separate cluster without needing any code level refactoring.

Zango has been open sourced recently, but it has been in production to support hundreds of applications in global companies.

## Let’s understand the architecture in detail.

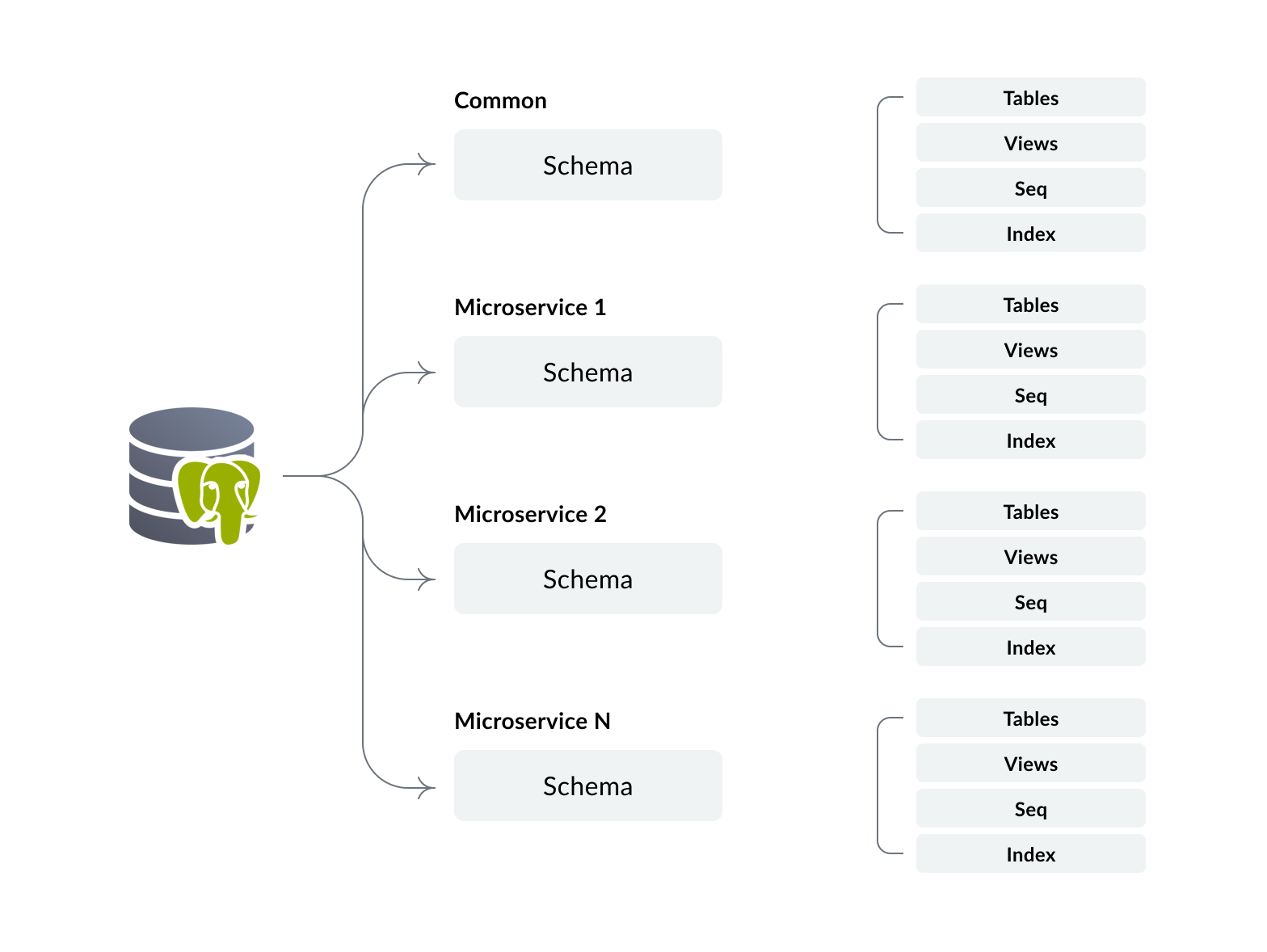

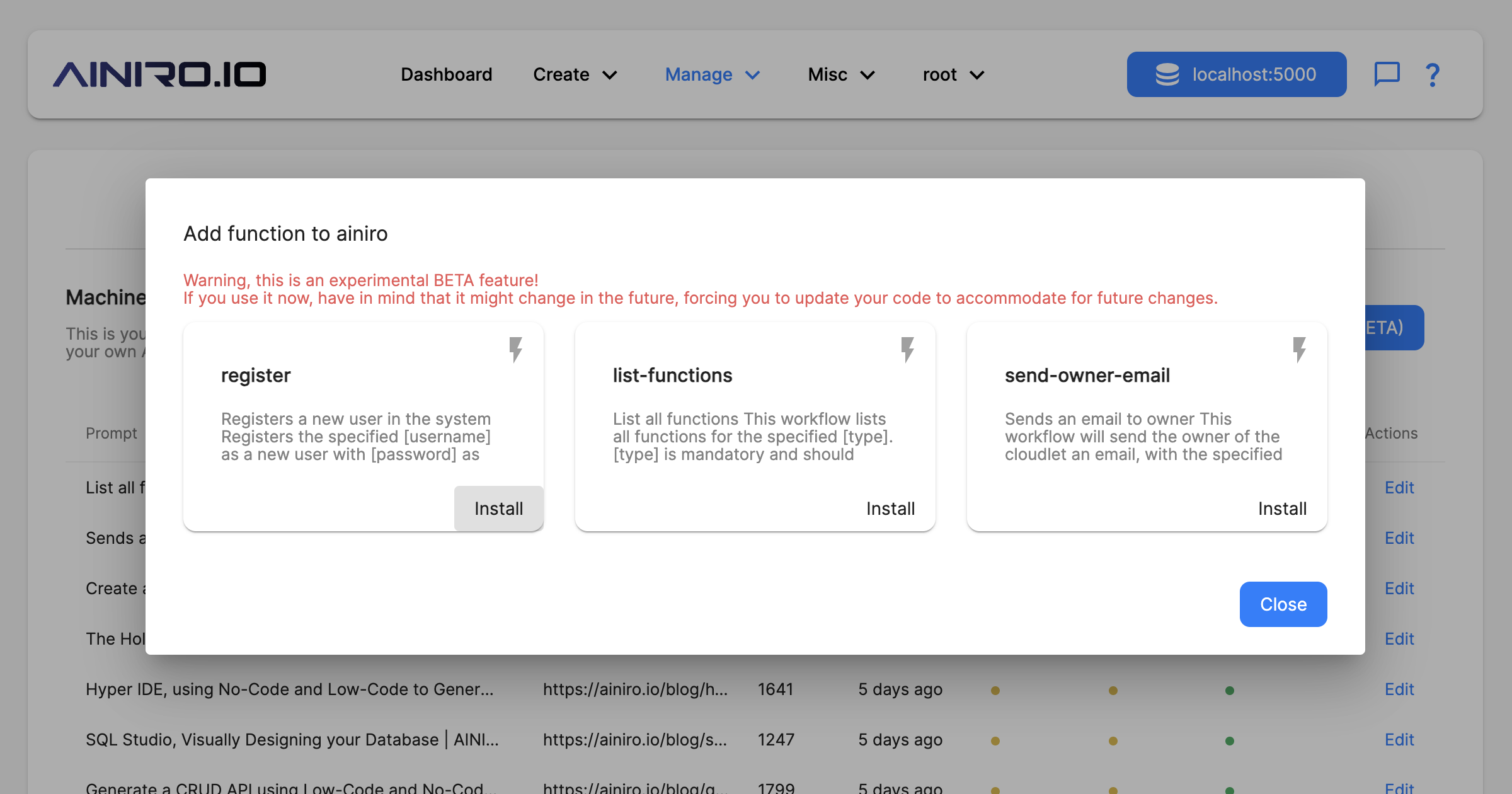

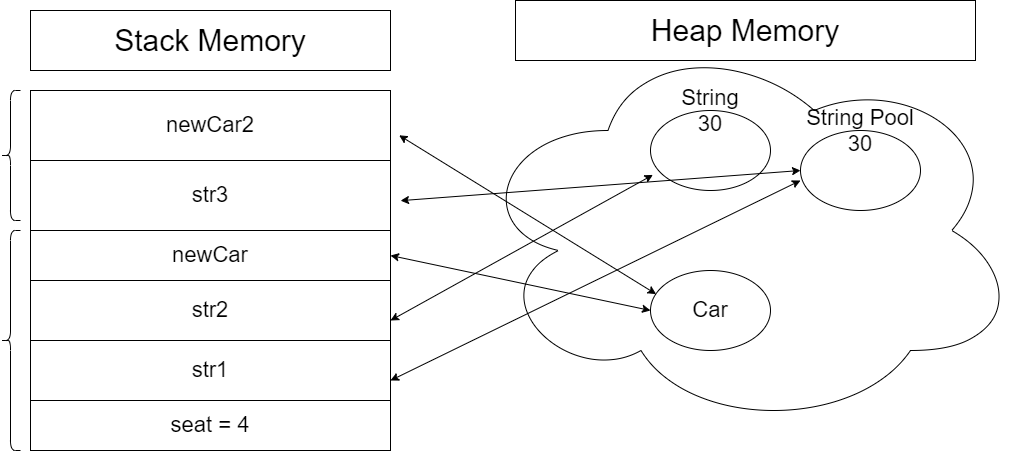

Zango comes with Postgres SQL database and the organization of the datastore is central to the framework. We utilize the [multi-tenancy](https://www.postgresql.org/docs/current/ddl-schemas.html) feature of Postgres to logically segregate the storage for each microservice. Each microservice will have a unique schema in the database where all the tables and associated objects for that microservice will be housed. The image below shows the organization at the database level.

Policies are enforced by the framework to prevent unauthorized access to data. For e.g. application logic implemented in microservice 1 that attempts to access the data for microservice 2 will be restricted by the default policies.

Additionally the common schema in the database will have a table to function as the registry of microservices. In this registry we store the generic details of the microservices, including:

- Primary ID

- Name

- Domain URL

- DB Schema Name

With the organization of data storage for the microservices in place, we can now look into how Zango handles the codebase, ensuring we meet the key requirements:

- A lot of microservices can be created on a single monolith deployment

- Deployment of one microservice must not require any downtime for other microservices

- Errors in one microservice must not propagate to outside the boundaries of the microservice

To meet these requirements, we leverage the Plugin Architectural pattern. Each microservice is implemented as a plugin. Caching techniques ensure the efficient loading of the plugin codebase and re-loading only when new releases are available for a particular microservice.

## Inside the request-response cycle

On receiving an incoming request, Zango first attempts to identify which microservice it is intended for, using the domain url from the request and the matching with the ones stored in the registry. If a matching microservice is found, an attempt to find a matching route for the URI in the microservice’s codebase. The framework enforces certain rules for defining the routes. If a matching route is found, the controller associated with the route is called to generate the response. If the match is not found, 404 is returned.

The framework also sets the path to the particular schema in the database that belongs to the microservice. By doing so, there will be no requirement to explicitly set the schema context in the codebase of the microservice and the developer gets the sense that he is working with a single tenant database only.

The overall architecture involves a lot of other nuances to handle finer details around access control and authorization framework, differential throttling, packages layer to enable a packages ecosystem, etc. which is beyond the scope of this introduction.

We believe that the possibility of implementing a microservices architecture without additional cost burden can bring a lot of advantages to a greenfield web application project. The power of differential scaling on the same deployment as well as the ease with which the microservices can be extracted fully into an independent deployment will significantly strengthen the teams

Zango is available under an open source AGPL license [here](https://github.com/Healthlane-Technologies/Zango). It is implemented on top of Django and requires basic Django understanding to start developing applications on it.

**Checkout Zango on Github:** [https://github.com/Healthlane-Technologies/Zango](https://github.com/Healthlane-Technologies/Zango)

| kcdiabeat |

1,867,877 | Light Bulb Challenge | Hi, This is one of the javascript challenges that was quite popular when I was studying Javascript.... | 0 | 2024-05-28T15:13:56 | https://dev.to/rusandu_dewm_galhena/light-bulb-challenge-595n | javascript, webdev, beginners, programming | Hi, This is one of the javascript challenges that was quite popular when I was studying Javascript. This challenge is a completly begginer friendly challenge for who ever began to code Javascript.

Bulb challenge is when you create button and when the user presses the button the content changes or in this case, the image of the unlit bulb changes to a lit bulb image when the use clicks on light off button.This challenge is performed by the onclick function in Javascript.

Hope this would be helpfull!! | rusandu_dewm_galhena |

1,867,730 | Testing React Applications | Table of contents Introduction 1. The importance of testing 2. Testing fundamentals 3.... | 0 | 2024-05-28T15:13:00 | https://www.marioyonan.com/blog/react-testing | webdev, react, testing, beginners | ## Table of contents

- [Introduction](#introduction)

- [1. The importance of testing](#1-the-importance-of-testing)

- [2. Testing fundamentals](#2-testing-fundamentals)

- [3. Writing unit tests with Jest and React Testing Library](#3-writing-unit-tests-with-jest-and-react-testing-library)

- [4. Integration Testing](#4-integration-testing)

- [5. End-to-End testing with Cypress](#5-end-to-end-testing-with-cypress)

- [6. Test-Driven Development (TDD)](#6-test-driven-development-tdd)

- [7. Mocking in tests](#7-mocking-in-tests)

- [8. Best practices and Tips](#8-best-practices-and-tips)

- [9. Continuous Integration and Deployment (CI/CD)](#9-continuous-integration-and-deployment-cicd)

- [Conclusion](#conclusion)

---

## Introduction

👋 Hello everyone!

Let’s dive into an essential aspect of developing robust React applications – testing. While it’s a well-known practice in the developer community, effectively testing React apps can still be challenging.

In this article, we’ll explore various aspects of testing React applications. We’ll cover the basics, like why testing is important and the different types of tests you should write. Then we’ll get our hands dirty with some practical examples using popular tools like Jest, React Testing Library, and Cypress.

By the end of this article, you’ll have a solid understanding of how to set up a robust testing strategy for your React apps, making your development process smoother and your applications more reliable.

Let’s get started!

---

## 1. The importance of testing

Testing is a critical component of software development, and for React applications, it’s no different. Here’s why testing your React apps is essential:

- **Improves Code Quality**: Regular testing helps identify and fix bugs early in the development process, leading to higher quality code. It ensures that your code meets the specified requirements and behaves as expected under different conditions.

- **Reduces Bugs**: Automated tests can catch bugs before they make it to production. By writing comprehensive tests, you can prevent many common issues that might otherwise slip through the cracks during manual testing.

- **Enhances Maintainability**: As your React application grows, maintaining and updating it becomes more challenging. Tests act as a safety net, ensuring that new changes do not break existing functionality. This makes refactoring and adding new features much safer and more efficient.

- **Increases Developer Confidence**: With a robust test suite, developers can make changes and add new features with greater confidence, knowing that the tests will catch any regressions or issues.

- **Supports Continuous Integration**: Automated tests are essential for continuous integration and continuous deployment (CI/CD) pipelines. They ensure that every change is tested automatically, maintaining the stability and reliability of your application.

Understanding the importance of testing helps in appreciating the effort put into writing and maintaining tests. It’s not just about finding bugs but also about building a reliable and maintainable codebase.

---

## 2. Testing fundamentals

Understanding the basics of testing is crucial before diving into the specifics of testing React applications. Here are some key concepts and strategies:

### Types of Testing

- **Unit Testing**: Focuses on individual components or functions. The goal is to test each part of the application in isolation to ensure it works as expected.

- **Integration Testing**: Tests the interaction between different parts of the application. This ensures that different components or services work together correctly.

- **End-to-End (E2E) Testing**: Simulates real user interactions with the application. These tests cover the entire application from the user interface to the back-end, ensuring everything works together as a whole.

### Common Testing Tools for React

- **Jest**: A powerful JavaScript testing framework developed by Facebook, commonly used for unit and integration tests in React applications.

- **React Testing Library**: A library for testing React components, focusing on testing user interactions rather than implementation details.

- **Enzyme**: A testing utility for React that allows you to manipulate, traverse, and simulate runtime behavior in a React component’s output. Though it’s less commonly used today, it’s still relevant in many projects.

- **Cypress**: A robust framework for writing end-to-end tests. It provides a developer-friendly experience and is known for its powerful features and ease of use.

Understanding these fundamentals will provide a strong foundation as we move into writing specific types of tests for React applications.

---

## 3. Writing unit tests with Jest and React Testing Library

Unit testing focuses on verifying the functionality of individual components in isolation. Jest and React Testing Library are commonly used together to write unit tests for React applications.

### Setting Up Your Testing Environment

First, you need to install Jest and React Testing Library. If you haven’t already, you can add them to your project using npm or yarn:

```bash

npm install --save-dev jest @testing-library/react @testing-library/jest-dom

# or

yarn add --dev jest @testing-library/react @testing-library/jest-dom

```

Next, create a setupTests.js file in your src directory to configure Jest and React Testing Library:

```javascript

// src/setupTests.js

import '@testing-library/jest-dom';

```

Ensure your package.json includes the following configuration for Jest:

```json

{

"scripts": {

"test": "jest"

},

"jest": {

"setupFilesAfterEnv": ["<rootDir>/src/setupTests.js"],

"testEnvironment": "jsdom"

}

}

```

### Writing your first unit test

Let’s start with a simple example. Suppose you have a Button component:

```jsx

// src/components/Button.js

import React from 'react';

const Button = ({ label, onClick }) => (

<button onClick={onClick}>{label}</button>

);

export default Button;

```

Now, let’s write a unit test for this component:

```jsx

// src/components/Button.test.js

import React from 'react';

import { render, fireEvent } from '@testing-library/react';

import Button from './Button';

test('renders the button with the correct label', () => {

const { getByText } = render(<Button label="Click me" />);

expect(getByText('Click me')).toBeInTheDocument();

});

test('calls the onClick handler when clicked', () => {

const handleClick = jest.fn();

const { getByText } = render(<Button label="Click me" onClick={handleClick} />);

fireEvent.click(getByText('Click me'));

expect(handleClick).toHaveBeenCalledTimes(1);

});

```

### Testing Component Rendering and User Interactions

React Testing Library encourages testing components in a way that resembles how users interact with them. Here are a few more examples:

**Testing State Management**:

Suppose you have a Counter component that increments a counter when a button is clicked:

```jsx

// src/components/Counter.js

import React, { useState } from 'react';

const Counter = () => {

const [count, setCount] = useState(0);

return (

<div>

<p>Count: {count}</p>

<button onClick={() => setCount(count + 1)}>Increment</button>

</div>

);

};

export default Counter;

```

Now, let’s write a unit test for this component:

```jsx

// src/components/Counter.test.js

import React from 'react';

import { render, fireEvent } from '@testing-library/react';

import Counter from './Counter';

test('increments the counter when the button is clicked', () => {

const { getByText } = render(<Counter />);

const button = getByText('Increment');

fireEvent.click(button);

expect(getByText('Count: 1')).toBeInTheDocument();

});

```

**Testing Props**:

Ensure that components render correctly based on different props:

```jsx

// src/components/Greeting.js

import React from 'react';

const Greeting = ({ name }) => <h1>Hello, {name}!</h1>;

export default Greeting;

```

```jsx

// src/components/Greeting.test.js

import React from 'react';

import { render } from '@testing-library/react';

import Greeting from './Greeting';

test('renders the correct greeting message', () => {

const { getByText } = render(<Greeting name="Alice" />);

expect(getByText('Hello, Alice!')).toBeInTheDocument();

});

```

---

## 4. Integration Testing

Integration testing focuses on verifying the interactions between different parts of your application to ensure they work together correctly. This type of testing is crucial for React applications, where components often interact with each other and external services.

### Setting up integration tests

To start writing integration tests, you’ll use the same tools as for unit testing, such as Jest and React Testing Library. However, you’ll focus on testing how multiple components interact with each other.

Here’s how to set up a basic integration test:

1. **Install necessary libraries:**

Make sure you have Jest and React Testing Library installed.

```bash

npm install --save-dev jest @testing-library/react @testing-library/jest-dom

# or

yarn add --dev jest @testing-library/react @testing-library/jest-dom

```

2. **Configure Jest for integration testing:**

Ensure your Jest setup can handle integration tests, especially if you’re mocking APIs or using other external services.

Let’s consider an example where you have a UserList component that fetches and displays a list of users. This component interacts with an API and another User component.

```jsx

// src/components/User.js

import React from 'react';

const User = ({ name }) => <li>{name}</li>;

export default User;

```

```jsx

// src/components/UserList.js

import React from 'react';

import User from './User';

const UserList = ({users}) => {

return (

<ul>

{users.map(user => (

<User key={user.id} name={user.name} />

))}

</ul>

);

};

export default UserList;

```

**Integration test**

```jsx

// src/components/UserList.test.js

import React from 'react';

import { render, screen, waitFor } from '@testing-library/react';

import UserList from './UserList';

beforeAll(() => server.listen());

afterEach(() => server.resetHandlers());

afterAll(() => server.close());

test('fetches and displays users', async () => {

render(<UserList users=["Alice", "Bob"] />);

expect(screen.getByText('Alice')).toBeInTheDocument();

expect(screen.getByText('Bob')).toBeInTheDocument();

});

```

---

## 5. End-to-End testing with Cypress

End-to-end (E2E) testing is a critical part of ensuring your React application works as expected from the user’s perspective. Cypress is a popular tool for E2E testing due to its developer-friendly features and powerful capabilities.

### Introduction to End-to-End Testing

End-to-end testing simulates real user interactions with your application, testing the entire workflow from start to finish. This type of testing helps ensure that all components of your application work together as intended, providing a seamless experience for users.

### Benefits of Cypress

- **Developer-Friendly**: Cypress provides an intuitive interface and easy-to-write tests, making it accessible for developers of all skill levels.

- **Fast and Reliable**: Cypress runs tests in the browser, allowing you to see exactly what the user sees. This results in fast and reliable test execution.

- **Built-In Features**: Cypress includes features like time travel, automatic waiting, and real-time reloads, which simplify the testing process and enhance debugging capabilities.

### Setting Up Cypress

To get started with Cypress, follow these steps:

1. **Install Cypress**:

Use npm or yarn to install Cypress in your project.

```bash

npm install cypress --save-dev

# or

yarn add cypress --dev

```

2. **Open Cypress:**

Open Cypress for the first time to complete the setup and generate the necessary folder structure.

```bash

npx cypress open

```

3. **Configure Cypress**:

Add a cypress.json file to configure Cypress settings as needed.

```json

{

"baseUrl": "http://localhost:3000"

}

```

### Writing E2E Tests

Let’s write a simple E2E test to verify the login functionality of a React application:

1. **Create a Test File**:

Create a new test file in the cypress/integration folder.

```jsx

// cypress/integration/login.spec.js

describe('Login', () => {

it('should log in the user successfully', () => {

cy.visit('/login');

cy.get('input[name="username"]').type('testuser');

cy.get('input[name="password"]').type('password123');

cy.get('button[type="submit"]').click();

cy.url().should('include', '/dashboard');

cy.get('.welcome-message').should('contain', 'Welcome, testuser');

});

});

```

2. 2.**Run the Test**:

Run the test using the Cypress Test Runner.

```bash

npx cypress open

```

### Running and Debugging Tests

Cypress makes it easy to run and debug tests with its robust set of features:

- **Time Travel**: Inspect snapshots of your application at each step of the test, allowing you to see exactly what happened at any point.

- **Automatic Waiting**: Cypress automatically waits for elements to appear and actions to complete, reducing the need for manual wait commands.

- **Real-Time Reloads**: The Test Runner reloads tests in real-time as you make changes, providing immediate feedback.

By using Cypress for end-to-end testing, you can ensure that your React application delivers a reliable and user-friendly experience.

---

## 6. Test-Driven Development (TDD)

Test-Driven Development (TDD) is a software development methodology where tests are written before the actual code. This approach ensures that the code meets the specified requirements and helps maintain high code quality.

### Principles of TDD

TDD is based on a simple cycle of writing tests, writing code, and refactoring. Here are the core principles:

- **Write a Test**: Start by writing a test for the next piece of functionality you want to add.

- **Run the Test**: Run the test to ensure it fails. This step confirms that the test is detecting the absence of the desired functionality.

- **Write the Code**: Write the minimal amount of code necessary to make the test pass.

- **Run the Test Again**: Run the test again to ensure it passes with the new code.

- **Refactor**: Refactor the code to improve its structure and readability while ensuring the tests still pass.

### TDD Workflow

1. **Red Phase**: Write a failing test that defines a function or feature.

2. **Green Phase**: Write the code to pass the test.

3. **Refactor Phase**: Refactor the code for optimization and clarity, ensuring the test still passes.

### Advantages of TDD

- **Improved Code Quality**: Writing tests first ensures that each piece of functionality is clearly defined and tested.

- **Early Bug Detection**: Bugs are caught early in the development process, reducing the cost and effort of fixing them later.

- **Better Design**: TDD encourages modular and maintainable code design.

- **Confidence in Code Changes**: Tests provide a safety net that gives developers confidence when making changes or adding new features.

### Practical Example

Let’s walk through a simple TDD example for a React component:

**Step 1: Write a Test**

Suppose we want to create a Counter component. We’ll start by writing a test:

```jsx

// src/components/Counter.test.js

import React from 'react';

import { render, fireEvent } from '@testing-library/react';

import Counter from './Counter';

test('increments counter when button is clicked', () => {

const { getByText } = render(<Counter />);

const button = getByText('Increment');

const counter = getByText('Count: 0');

fireEvent.click(button);

expect(counter).toHaveTextContent('Count: 1');

});

```

**Step 2: Run the Test**

Run the test to ensure it fails, indicating that the functionality is not yet implemented:

```bash

npm test

# or

yarn test

```

**Step 3: Write the Code**

Write the minimal code to pass the test:

```jsx

// src/components/Counter.js

import React, { useState } from 'react';

const Counter = () => {

const [count, setCount] = useState(0);

return (

<div>

<p>Count: {count}</p>

<button onClick={() => setCount(count + 1)}>Increment</button>

</div>

);

};

export default Counter;

```

**Step 4: Run the Test Again**

Run the test again to ensure it passes with the new code:

```bash

npm test

# or

yarn test

```

**Step 5: Refactor**

Refactor the code to improve its structure and readability while ensuring the test still passes. In this simple example, the initial implementation is already quite clean, so minimal refactoring is needed.

By following the TDD workflow, you can ensure that your code is thoroughly tested and meets the desired requirements from the outset.

---

## 7. Mocking in tests

Mocking is an essential technique in testing that allows you to isolate the component or function under test by simulating the behavior of dependencies. This helps ensure that tests are focused and reliable.

### Importance of Mocking

- **Isolation**: Mocking helps isolate the component or function being tested by simulating its dependencies. This ensures that tests are not affected by external factors or actual implementations of dependencies.

- **Control**: By using mocks, you can control the behavior of dependencies, making it easier to test different scenarios and edge cases.

- **Performance**: Mocks can improve test performance by avoiding calls to slow or resource-intensive external systems, such as databases or APIs.

### Mocking Functions and Modules with Jest

Jest provides powerful mocking capabilities that allow you to create mocks for functions, modules, and even entire libraries.

### Mocking Functions:

Suppose you have a utility function that performs a complex calculation, and you want to mock it in your tests:

```jsx

// src/utils/calculate.js

export const calculate = (a, b) => a + b;

// src/components/Calculator.js

import React from 'react';

import { calculate } from '../utils/calculate';

const Calculator = ({ a, b }) => {

const result = calculate(a, b);

return <div>Result: {result}</div>;

};

export default Calculator;

```

### Test with Mocked Function:

```jsx

// src/components/Calculator.test.js

import React from 'react';

import { render } from '@testing-library/react';

import Calculator from './Calculator';

import * as calculateModule from '../utils/calculate';

jest.mock('../utils/calculate');

test('renders the result of the calculation', () => {

calculateModule.calculate.mockImplementation(() => 42);

const { getByText } = render(<Calculator a={1} b={2} />);

expect(getByText('Result: 42')).toBeInTheDocument();

});

```

By mocking the calculate function, you can control its behavior and test different scenarios without relying on the actual implementation.

---

## 8. Best practices and Tips

Writing effective tests for your React applications involves following best practices that ensure your tests are maintainable, reliable, and efficient. Here are some tips to help you achieve that:

### Effective Test Writing

- **Write Tests Early**: Incorporate testing into your development process from the beginning. Writing tests early helps catch issues sooner and ensures new features are covered by tests.

- **Test Behavior, Not Implementation**: Focus on testing the behavior and output of your components rather than their implementation details. This makes your tests more robust and less likely to break with refactoring.

- **Keep Tests Small and Focused**: Write small, focused tests that cover specific pieces of functionality. This makes it easier to understand and maintain your test suite.

### Optimizing Test Performance

- **Avoid Unnecessary Re-renders**: Use utility functions like rerender from React Testing Library to avoid unnecessary re-renders in your tests.

- **Mock Expensive Operations**: Mock operations that are resource-intensive or slow, such as network requests, to speed up your tests.

- **Run Tests in Parallel**: Configure your testing framework to run tests in parallel where possible, reducing overall test execution time.

### Managing Test Data

- **Use Factories for Test Data**: Create factories or fixtures for generating test data. This ensures consistency and makes it easier to set up tests.

- **Clean Up After Tests**: Ensure that any side effects created during tests are cleaned up. Use Jest’s afterEach or afterAll hooks to reset state or clear mocks.

### Common Challenges and Solutions

- **Flaky Tests**: Identify and fix flaky tests that sometimes pass and sometimes fail. This can be due to timing issues, reliance on external services, or random data.

- **Testing Asynchronous Code**: Use utilities like waitFor or findBy from React Testing Library to handle asynchronous operations in your tests. Ensure that your tests account for delays or async behavior.

- **Handling Dependencies**: Use mocking to handle dependencies that are difficult to control in tests, such as API calls or global objects.

---

## 9. Continuous Integration and Deployment (CI/CD)

Continuous Integration and Continuous Deployment (CI/CD) are essential practices for modern software development. They automate the process of integrating code changes, running tests, and deploying applications, ensuring that your software is always in a deployable state.

### Role of CI/CD in Testing

CI/CD pipelines automate the testing process, allowing you to:

- **Run Tests Automatically**: Ensure that tests are run automatically on every code change, catching bugs early in the development process.

- **Maintain Code Quality**: Enforce code quality standards by running linting and formatting checks as part of the pipeline.

- **Deploy Continuously**: Deploy code changes to production or staging environments automatically, ensuring that new features and fixes are available to users as soon as they are ready.

### Popular CI/CD Platforms

Several CI/CD platforms are popular in the development community for their ease of use and powerful features. Here are a few:

- **GitHub Actions**: Integrated with GitHub repositories, it allows you to automate workflows directly within your GitHub environment.

- **CircleCI**: Known for its speed and efficiency, it supports various configurations and is easy to integrate with multiple environments.

- **Travis CI**: Popular for its simplicity and ease of setup, especially for open-source projects.

### Running and Monitoring CI/CD Pipelines

Once your CI/CD pipeline is set up, every code change will trigger the workflow, running your tests and providing feedback. You can monitor the status of your workflows directly in the GitHub Actions tab of your repository.

### Benefits:

- **Consistency**: Ensure that tests are run consistently and automatically on every code change.

- **Early Bug Detection**: Catch bugs early in the development process before they make it to production.

- **Continuous Delivery**: Automate the deployment process, ensuring that your application is always in a deployable state.

By integrating CI/CD into your development workflow, you can maintain high code quality, reduce the risk of introducing bugs, and ensure that new features are delivered to users quickly and reliably.

---

## Conclusion

Implementing a robust testing strategy is crucial for delivering high-quality, reliable software. By integrating tools like Jest, React Testing Library, and Cypress into your workflow, you can catch bugs early, improve code quality, and ensure a smooth user experience. Continuous Integration and Deployment (CI/CD) further enhance this process by automating tests and deployments, maintaining the stability of your application.

Remember, testing is not just about finding bugs—it’s about ensuring that your code behaves as expected and continues to do so as it evolves. By prioritizing testing in your development workflow, you can build more reliable and maintainable React applications.

| mario130 |

1,867,873 | AWS open source newsletter, #198 | Edition #198 Welcome to issue #198 of the AWS open source newsletter, the newsletter where... | 0 | 2024-05-28T15:05:53 | https://community.aws/content/2h6H82ceGfVpqbr5NOAWnvS1oO5/aws-open-source-newsletter-198 | opensource, aws | ## Edition #198

Welcome to issue #198 of the AWS open source newsletter, the newsletter where we try and provide you the best open source on AWS content. In this issue we feature new projects that provide integration of .NET Aspire with AWS resources, an automated data discovery tool to find data in your AWS environments, a tool to help incorporate good practices when building SaaS solutions, a cost allocation dashboard for your Kubernetes workloads, a project that might help you mitigate costs around Internet Gateway, and a few generative AI demos around food, news, and social media which you should definitely check out. Also in this edition is plenty of content on your favourite open source technologies, which this week includes Kubernetes, Leapp, OpenTelemetry, AWS CDK, llrt, Valkey, PostgreSQL, InfluxDB, High Performance Software Foundation, Karpenter, Multus, Kata, Grafana, Prometheus, Apache Flink, Zingg, Apache Hudi, Apache Iceberg, MySQL, Apache Tomcat, WordPress, AWS Amplify, Apache Airflow, OpenSearch, Apache Kafka, Bottlerocket, and Amazon EMR. As always, make sure you check out the events section at the end, and if you have your own event or online thing you want me to include here, just drop me a message.

### Latest open source projects

*The great thing about open source projects is that you can review the source code. If you like the look of these projects, make sure you that take a look at the code, and if it is useful to you, get in touch with the maintainer to provide feedback, suggestions or even submit a contribution. The projects mentioned here do not represent any formal recommendation or endorsement, I am just sharing for greater awareness as I think they look useful and interesting!*

### Tools

**.NET Aspire**

[aspire](https://aws-oss.beachgeek.co.uk/3x1) Provides extension methods and resources definition for a .NET Aspire AppHost to configure the AWS SDK for .NET and AWS application resources. If you are not familiar with Aspire, it is an opinionated, cloud ready stack for building observable, production ready, distributed applications in .NET. You can now use this with AWS resources, so check out the repo and the documentation that provides code examples and more.

**aws-sdk-python-signers**

[aws-sdk-python-signers](https://aws-oss.beachgeek.co.uk/3x3) AWS SDK Python Signers provides stand-alone signing functionality. This enables users to create standardised request signatures (currently only SigV4) and apply them to common HTTP utilities like AIOHTTP, Curl, Postman, Requests and urllib3. This project is currently in an Alpha phase of development. There likely will be breakages and redesigns between minor patch versions as we collect user feedback. We strongly recommend pinning to a minor version and reviewing the changelog carefully before upgrading. Check out the README for details on how to use the signing module.

**automated-datastore-discovery-with-aws-glue**

[automated-datastore-discovery-with-aws-glue](https://aws-oss.beachgeek.co.uk/3x7) This sample shows you how to automate the discovery of various types of data sources in your AWS estate. Examples include - S3 Buckets, RDS databases, or DynamoDB tables. All the information is curated using AWS Glue - specifically in its Data Catalog. It also attempts to detect potential PII fields in the data sources via the Sensitive Data Detection transform in AWS Glue. This framework is useful to get a sense of all data sources in an organisation's AWS estate - from a compliance standpoint. An example of that could be GDPR Article 30. Check out the README for detailed architecture diagrams and a break down of each component as to how it works.

**sbt-aws**

[sbt-aws](https://aws-oss.beachgeek.co.uk/3x8) SaaS Builder Toolkit for AWS (SBT) is an open-source developer toolkit to implement SaaS best practices and increase developer velocity. It offers a high-level object-oriented abstraction to define SaaS resources on AWS imperatively using the power of modern programming languages. Using SBT’s library of infrastructure constructs, you can easily encapsulate SaaS best practices in your SaaS application, and share it without worrying about boilerplate logic. The README contains all the resources you need to get started with this project, so if you are doing anything in the SaaS space, check it out.

**containers-cost-allocation-dashboard**

[containers-cost-allocation-dashboard](https://aws-oss.beachgeek.co.uk/3x9) provides everything you need to create a QuickSight dashboard for containers cost allocation based on data from Kubecost. The dashboard provides visibility into EKS in-cluster cost and usage in a multi-cluster environment, using data from a self-hosted Kubecost pod. The README contains additional links to resources to help you understand how this works, dependencies, and how to deploy and configure this project.

### Demos, Samples, Solutions and Workshops

**create-and-delete-ngw**

[create-and-delete-ngw](https://aws-oss.beachgeek.co.uk/3x2) This project contains source code and supporting files for a serverless application that allocates an Elastic IP address, creates a NAT Gateway, and adds a route to the NAT Gateway in a VPC route table. The application also deletes the NAT Gateway and releases the Elastic IP address. The process to create and delete a NAT Gateway is orchestrated by an AWS Step Functions State Machine, triggered by an EventBridge Scheduler. The schedule can be defined by parameters during the SAM deployment process.

**whats-new-summary-notifier**

[whats-new-summary-notifier](https://aws-oss.beachgeek.co.uk/3x4) is a demo repo that lets you build a generative AI application that summarises the content of AWS What's New and other web articles in multiple languages, and delivers the summary to Slack or Microsoft Teams.

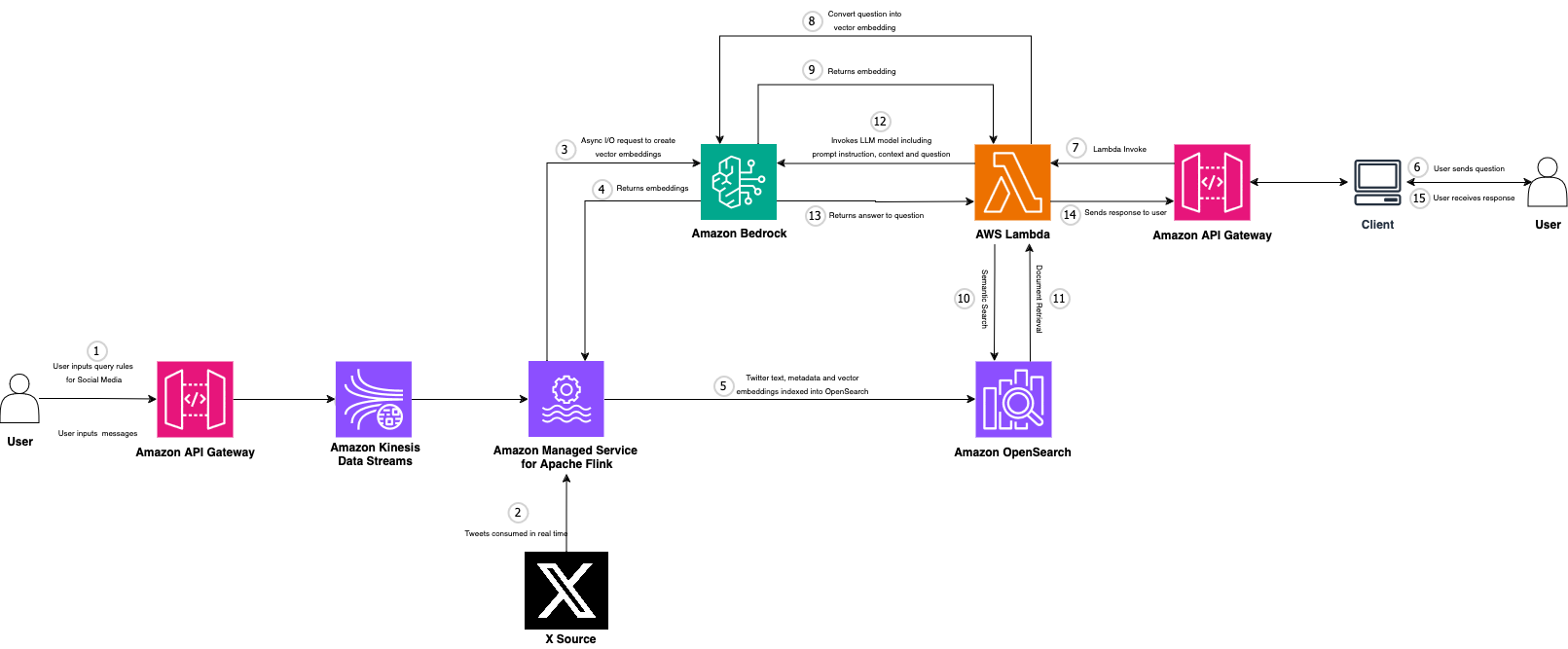

**real-time-social-media-analytics-with-generative-ai**

[real-time-social-media-analytics-with-generative-ai](https://aws-oss.beachgeek.co.uk/3x5) this repo helps you to build and deploy an AWS Architecture that is able to combine streaming data with GenAI using Amazon Managed Service for Apache Flink and Amazon Bedrock.

**serverless-genai-food-analyzer-app**

[serverless-genai-food-analyzer-app](https://aws-oss.beachgeek.co.uk/3x6) provides code for a personalised GenAI nutritional web application for your shopping and cooking recipes built with serverless architecture and generative AI capabilities. It was first created as the winner of the AWS Hackathon France 2024 and then introduced as a booth exhibit at the AWS Summit Paris 2024. You use your cell phone to scan a bar code of a product to get the explanations of the ingredients and nutritional information of a grocery product personalised with your allergies and diet. You can also take a picture of food products and discover three personalised recipes based on their food preferences. The app is designed to have minimal code, be extensible, scalable, and cost-efficient. It uses Lazy Loading to reduce cost and ensure the best user experience. Tres bon!

### AWS and Community blog posts

Each week I spent a lot of time reading posts from across the AWS community on open source topics. In this section I share what personally caught my eye and interest, and I hope that many of you will also find them interesting.

**The best from around the Community**

Starting this off this week we have AWS Community Builder Julian Michel, who shares his personal AWS setup with you, and more importantly some of the cool open source tools you can use to keep everything in order. I use some of these myself, so make sure you check "[My personal AWS account setup - IAM Identity Center, temporary credentials and sandbox account](https://aws-oss.beachgeek.co.uk/3wu)" out to see how you might be able to improve your setup.