id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,867,533 | What Are the Benefits of Digital Transformation? | In today's rapidly evolving business landscape, digital transformation has emerged as a key driver of... | 0 | 2024-05-28T11:12:04 | https://dev.to/justinsaran/what-are-the-benefits-of-digital-transformation-12k8 | webdev, productivity, learning | In today's rapidly evolving business landscape, digital transformation has emerged as a key driver of growth and innovation. But what exactly is digital transformation, and what benefits does it bring to businesses? This article explores the multifaceted advantages of digital transformation, shedding light on how it can revolutionize various aspects of an organization.

Understanding Digital Transformation

------------------------------------

Digital transformation involves integrating digital technologies into all areas of a business, fundamentally changing how it operates and delivers value to customers. This process goes beyond merely adopting new technologies; it requires a cultural shift that encourages innovation, embraces change, and continually challenges the status quo.

Key Benefits of Digital Transformation

--------------------------------------

### 1\. Enhanced Operational Efficiency

Digital transformation streamlines business processes, reducing the time and effort required to complete tasks. Automation plays a significant role here, as it eliminates manual, repetitive tasks and frees up employees to focus on more strategic activities. For instance, robotic process automation (RPA) can handle data entry, invoicing, and other routine tasks, significantly improving efficiency.

According to [Virtru](https://www.virtru.com/blog/8-benefits-digital-transformation), companies that embrace digital transformation see marked improvements in operational efficiency, leading to cost savings and enhanced productivity.

### 2\. Improved Customer Experience

At the heart of digital transformation is the goal of enhancing the customer experience. By leveraging digital tools and data analytics, businesses can gain deeper insights into customer preferences and behaviors, allowing them to deliver personalized and engaging experiences. For example, customer relationship management (CRM) systems help businesses understand customer needs and tailor their interactions accordingly.

[Quixy](https://quixy.com/blog/top-benefits-of-digital-transformation/) highlights that a superior customer experience leads to increased customer loyalty and retention, ultimately driving business growth.

### 3\. Data-Driven Decision Making

Digital transformation enables businesses to harness the power of data. With advanced analytics and big data technologies, organizations can collect, process, and analyze vast amounts of data in real time. This data-driven approach allows for more informed decision-making, helping businesses identify trends, optimize operations, and predict future outcomes.

As [Trustpair](https://trustpair.com/blog/what-are-the-benefits-of-digital-transformation/) points out, data-driven decision-making enhances agility and responsiveness, enabling businesses to adapt quickly to changing market conditions.

### 4\. Increased Agility and Innovation

In a competitive market, the ability to innovate quickly is crucial. Digital transformation fosters a culture of agility and continuous improvement, encouraging businesses to experiment with new ideas and technologies. Agile methodologies and digital tools facilitate rapid prototyping, iterative development, and swift feedback loops, accelerating the innovation process.

[PTC](https://www.ptc.com/en/blogs/corporate/digital-transformation-benefits) emphasizes that digital transformation helps businesses stay ahead of the curve by continuously evolving and embracing new opportunities.

### 5\. Enhanced Collaboration and Communication

Digital transformation breaks down silos within organizations, promoting better collaboration and communication among teams. Cloud-based tools, collaboration platforms, and digital workspaces enable employees to work together seamlessly, regardless of their physical location. This interconnected environment fosters teamwork, knowledge sharing, and collective problem-solving.

[Thales Group](https://cpl.thalesgroup.com/software-monetization/benefits-of-digital-transformation) notes that improved collaboration and communication lead to higher employee engagement and productivity, driving overall business performance.

### 6\. Improved Resource Management

Digital transformation provides businesses with tools to manage resources more effectively. Enterprise Resource Planning (ERP) systems, for example, integrate various business processes into a single platform, offering real-time visibility into operations. This integration helps optimize resource allocation, reduce waste, and improve overall efficiency.

By leveraging digital technologies, businesses can ensure optimal resource use, leading to cost savings and improved operational performance.

### 7\. Competitive Advantage

Incorporating digital transformation into business strategy provides a significant competitive edge. Companies that effectively utilize digital technologies can respond faster to market changes, offer superior customer experiences, and innovate more rapidly than their competitors. This agility and responsiveness position them as leaders in their industries.

According to [Virtru](https://www.virtru.com/blog/8-benefits-digital-transformation), businesses that embrace digital transformation are better equipped to navigate disruptions and capitalize on new opportunities, ensuring long-term success.

### 8\. Increased Revenue and Growth

Ultimately, the benefits of digital transformation translate into increased revenue and business growth. By enhancing efficiency, improving the customer experience, and fostering innovation, businesses can drive higher sales and expand their market reach. Digital transformation opens up new revenue streams, such as online sales channels, subscription models, and digital services.

[Quixy](https://quixy.com/blog/top-benefits-of-digital-transformation/) highlights that businesses that successfully undergo digital transformation often see substantial improvements in their financial performance.

Real-World Examples of Digital Transformation Success

-----------------------------------------------------

### 1\. General Electric (GE)

General Electric embarked on a digital transformation journey to become a leader in the Industrial Internet of Things (IIoT). By integrating digital technologies into its operations, GE created Predix, an industrial IoT platform that collects and analyzes data from industrial machines. This transformation enabled GE to offer predictive maintenance services, reducing downtime and operational costs for its customers.

### 2\. Starbucks

Starbucks leveraged digital transformation to enhance its customer experience through its mobile app and loyalty program. The app allows customers to order and pay ahead, earn rewards, and receive personalized offers. This digital initiative not only improved customer convenience but also provided Starbucks with valuable data to tailor its marketing strategies and drive customer engagement.

### 3\. Netflix

Netflix’s shift from a DVD rental service to a leading streaming platform is a prime example of digital transformation. By embracing digital technology, Netflix developed a robust streaming infrastructure and used data analytics to understand viewer preferences. This transformation allowed Netflix to deliver personalized content recommendations and create original programming that resonates with its audience.

Best Practices for Implementing Digital Transformation

------------------------------------------------------

### 1\. Develop a Clear Strategy

A successful digital transformation begins with a well-defined strategy. Outline your objectives, identify key areas for improvement, and develop a roadmap for implementation. This strategic approach ensures that digital initiatives align with business goals and deliver measurable results.

### 2\. Foster a Culture of Innovation

Encourage a culture that embraces change and innovation. Provide employees with the tools and training they need to leverage digital technologies effectively. Encourage experimentation and view failure as a learning opportunity.

### 3\. Invest in the Right Technologies

Choosing the right technologies is crucial for a successful digital transformation. Assess your business needs and invest in solutions that offer scalability, flexibility, and security. Cloud computing, AI, machine learning, and IoT are some of the technologies that can drive significant transformation.

### 4\. Focus on the Customer Experience

Put the customer at the forefront of your digital transformation efforts. Use data analytics to gain insights into customer behavior and preferences. Develop personalized experiences that meet customer needs and exceed their expectations.

### 5\. Ensure Data Security and Compliance

As you integrate digital technologies, prioritize data security and compliance. Implement robust security measures to protect sensitive information and ensure compliance with industry regulations. Regularly review and update your security protocols to address emerging threats.

### 6\. Monitor and Measure Progress

Continuously monitor the progress of your digital transformation initiatives. Use key performance indicators (KPIs) to measure success and identify areas for improvement. Regularly review your strategy and make adjustments as needed to ensure ongoing success.

Conclusion

----------

Digital transformation offers a multitude of benefits that can drive business growth, enhance efficiency, and improve customer experiences. By embracing digital technologies and fostering a culture of innovation, businesses can stay competitive and thrive in today’s dynamic market. From operational efficiency to increased revenue, the advantages of [digital transformation are clear and compelling](https://www.softura.com/digital-transformation-consulting/). Are you ready to embark on your digital transformation journey? Start by developing a clear strategy, investing in the right technologies, and focusing on delivering exceptional customer experiences. The future of your business awaits, transformed by the power of digital innovation. | justinsaran |

1,867,532 | Getting into full stack development at 50. | Hi guys, I am looking for some honest views here. Am deciding whether to start a level 5 course... | 0 | 2024-05-28T11:11:20 | https://dev.to/dumont-namin/getting-into-full-stack-development-at-50-58md | newtotech, entryintotechat50, agebarriersintech | Hi guys,

I am looking for some honest views here.

Am deciding whether to start a level 5 course offered by Code Institute to get into tech in general, specifically full stack development at the age of 50!

Is it possible to get into tech, i.e., full stack development or other areas of tech following on from this, such as AWS, at 50 years of age?

Code Institute, perhaps unsurprisingly, say age is no barrier, that it is irrelevant. However, is this really the case?

Would really appreciate any views on this. Is it possible to start a career in tech, in the areas mentioned at this age? Is there still scope for development? Without sounding crass, can you go on to earn the really good wages we hear about?

Should mention also, Bristol, UK, is the area, there are a lot of tech jobs here. Still, would very much appreciate any views people have on this.

Many thanks,

Kind regs,

Potential beginner, Bristol | dumont-namin |

1,867,531 | 🌈 2 Colors Extensions to make Visual Studio Code even better! | Colors 🌈 help us identify things in our surroundings, including Visual Studio Code instances and... | 20,133 | 2024-05-28T11:09:48 | https://leonardomontini.dev/vscode-folder-path-color-peacock/ | vscode, programming, productivity | Colors 🌈 help us identify things in our surroundings, including Visual Studio Code instances **and** files.

Today I show how I solved two problems I had, thanks to colors and 2 vscode extensions. Here's a video where I showcase them and how you can push the extension even further to get even better customization 👇

{% youtube bvaSo3tip2g %}

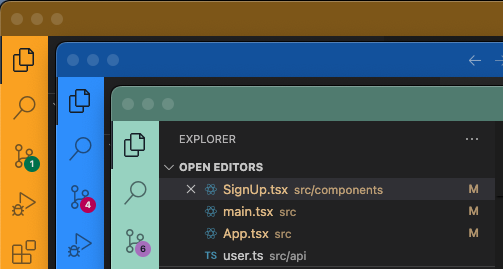

## 1. Identify different vscode instances

This is quite common: you may have a backend and a frontend repo, or you’re simply working on multiple projects and end up with two or more vscode instances open.

Short answer: Peacock ([get the extension](https://marketplace.visualstudio.com/items?itemName=johnpapa.vscode-peacock))

It's quite a famous and widely used extension, but if you don’t know it yet, it's maybe time to give it a try!

(you can also customize projects from the settings, manually, but this extension makes it so much easier)

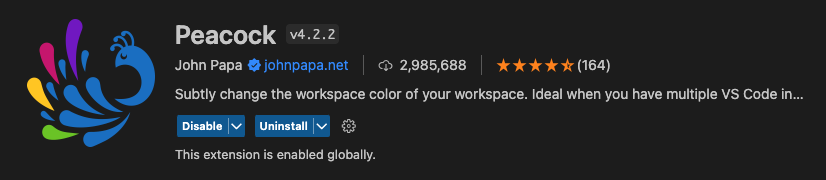

### Peacock Features

Some features you may find useful:

With the command `Peacock: Surprise me with a random color`, you can assign a random color to the current project. Try it if you dare!

If you have already a color in mind, you can set it with `Peacock: Enter a color`.

You're also covered if you want some suggestions, with `Peacock: Change to a Favorite Color` you'll get a list of predefined colors (you can also expand this list with your own colors).

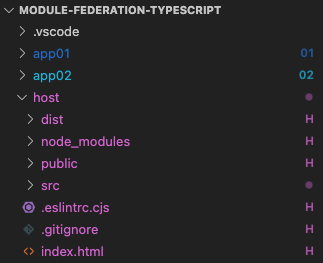

## 2. Identify files and folders in the same repo

I was recently playing around with Module Federation, but this applies pretty much in all monorepo scenarios.

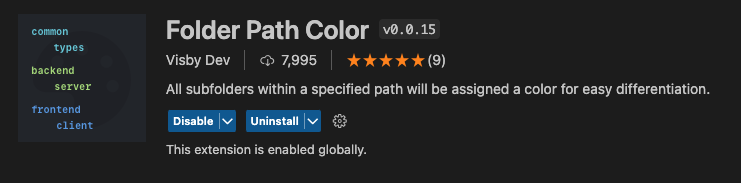

I wanted an easy way to know what project a file refers to and with another extension, Folder Path Color ([get the extension](https://marketplace.visualstudio.com/items?itemName=VisbyDev.folder-path-color)), I was able to do that!

It has some limitations since colors on filenames are already used to track git status, so it's up to you if you want to enable it or not.

### Folder Path Color Features

The core feature here is that you can customize the colors of your files based on their path. You can also assign a symbol.

This is the config I use in the video:

```json
{
  "folder-path-color.folders": [
    {
      "path": "host",
      "symbol": "H",
      "tooltip": "Host"
    },
    {
      "path": "app01",
      "symbol": "01",
      "tooltip": "App 01",
      "color": "blue"
    },
    {
      "path": "app02",
      "symbol": "02",
      "tooltip": "App 02",
      "color": "cyan"
    }
  ]
}
```

The symbol (that is a string) is particularly useful to overcome the limitation of the colors on filenames, so that git can color your newly added file in green but you still have the symbol next to the filename to identify the project.

You have some default colors, but you can also expand them with a set of custom colors.

### Conclusion

This was a quick one, but I wanted to mention these two extensions I found to solve a couple of problems I had. Peacock is quite famous, but Folder Path Color is less known and I think it has some use cases.

If you want to see me testing the two extensions and their customization, here's the video: https://www.youtube.com/watch?v=bvaSo3tip2g

---

Thanks for reading this article, I hope you found it interesting!

I recently launched a GitHub Community! We create Open Source projects with the goal of learning Web Development together!

Join us: https://github.com/DevLeonardoCommunity

Do you like my content? You might consider subscribing to my YouTube channel! It means a lot to me ❤️

You can find it here:

[Subscribe on YouTube](https://www.youtube.com/c/@DevLeonardo?sub_confirmation=1)

Feel free to follow me to get notified when new articles are out ;)

{% embed https://dev.to/balastrong %} | balastrong |

1,885,688 | The Magic and Reality of AI: What can Generative AI actually do? | For more content like this subscribe to the ShiftMag newsletter. Many things pass for AI, and... | 0 | 2024-06-13T13:49:13 | https://shiftmag.dev/what-can-generative-ai-do-3267/ | artificialintelligen, event, ai, christinespang | ---

title: The Magic and Reality of AI: What can Generative AI actually do?

published: true

date: 2024-05-28 11:08:53 UTC

tags: ArtificialIntelligen,Event,AI,ChristineSpang

canonical_url: https://shiftmag.dev/what-can-generative-ai-do-3267/

---

_For more content like this **[subscribe to the ShiftMag newsletter](https://shiftmag.dev/newsletter/)**._

Many things pass for AI, and sometimes it’s hard to put them under the common denominator, but Christine Spang still gives it a shot with a simple equation in her [Shift Miami talk](https://shift.infobip.com/us/): _Chatbots are cool, but what else can generative AI do_?

**Leverage = having a higher impact with a smaller input**

**AI = using computers to generate leverage**

**Computing** itself, argues Christine, **is about giving more leverage to individuals or groups,** and the rise of LLMs has driven AI magic into new sets of use cases.

> We used to carry water to the village; now we have tap water. We invented language, and then systems for storing information. We keep making better ways to use the data and information we have today – AI is our latest attempt at that.

## **Chatbots, knowledge bases, coding assistants**

Today, the most notable use cases are user-facing chatbots, knowledge bases, and coding assistants (or, as often happens, some combination of the three).

Chatbots have come a long way from their initial instances and can now boast **great UI and conversational intelligence.**

Christine argues that coding assistance **(or copilots) supercharges our coding powers**, ensuring enhanced productivity and efficiency in the dev cycle.

AI-powered knowledge bases give us **access to the right information at the time when we need it**, not when a customer service agent is available or can schedule a call.

As a good example of that (and the benefits that AI brings), Christine mentioned her company’s own chatbot, [Nylas Assist](https://nylas.com/blog/pr/nylas-launches-its-new-generative-ai-assist-chatbot/), a chat user interface for their docs. Launched in August 2023, **it has reduced the number of raised tickets by 25%**, even though the user base grew by 30% – that’s precisely the leverage she’s talking about.

## Language is messy; data should not be

All three use cases are pretty useful – but is this the peak AI we’re experiencing? We’ve probably all guessed that it’s not. At the moment, Christine notes, **we have generalized datasets, which only allow us to get generalized actions.**

Human language, on the other hand, is messy, full of nuances, and context-dependent. Communication is the bottleneck where most relevant information and context pass through, she states, and communication happens over many different channels, like messaging apps, email, voice, and social media, as well as asynchronously.

Current LLMs are trained on the entire scrapable content available online. That’s a lot of text but not necessarily a lot of (right) context. LLMs trained on social media, Christine offers as an example, don’t necessarily have the right context for business.

## Customization is the next step forward

Communication data is a haystack, she stresses; it’s not valuable as an unstructured pile.

Take, for example, email, which is Nylas’s bread-and-butter data. It is particularly messy, with plain text, images, links, and formatting. Passing that data to a model the right way is a challenge. Everything needs to be pre-processed in a specific way, **extracting not just information but also information order.** It needs to be structured to have value and the right context to move beyond generalized outputs.

Context is exactly how Christine sees generative AI evolving and generating even more leverage: When we access the context of a dataset rather than just the dataset itself, we can customize models and get customized actions instead of generalized ones.

The post [The Magic and Reality of AI: What can Generative AI actually do?](https://shiftmag.dev/what-can-generative-ai-do-3267/) appeared first on [ShiftMag](https://shiftmag.dev). | shiftmag |

1,867,530 | 🚀 The Importance of Understanding Social Networking Basics 🚀 | In today's digital era, almost every website incorporates social media components. Whether you're... | 0 | 2024-05-28T11:05:52 | https://dev.to/code0monkey1/the-importance-of-understanding-social-networking-basics-58l7 | social, node, tdd, mern |

In today's digital era, almost every website incorporates social media components. Whether you're browsing through reviews on Amazon, checking out product ratings on Flipkart, or engaging in discussions on Reddit, social networking features are ubiquitous. They drive user interaction and engagement, making it crucial for tech enthusiasts and professionals to grasp the fundamentals of social networking websites.

🔍 **Why Should You Care?**

Understanding these basics is not just about building traditional social media platforms like Facebook or Twitter. The same features show up across many kinds of sites:

- **E-commerce Sites**: User reviews and ratings (like on Amazon) play a vital role in purchasing decisions.

- **Content Platforms**: Comments, likes, and shares (as seen on YouTube and blogs) increase user interaction.

- **Professional Networks**: LinkedIn recommendations and endorsements help build professional credibility.

🛠️ **Exciting Backend Project Opportunity!**

This backend project encapsulates these social networking principles and is designed with industry best practices like Test-Driven Development (TDD).

Here's a glimpse into the project features:

### 🌐 Application Routes

#### 🔑 Auth Routes

1. **/auth/login**: Log in a user using their credentials.

2. **/auth/register**: Register a new user to the system.

3. **/auth/logout**: Log out a user from the system.

4. **/auth/refresh**: Refresh the user's authentication token.

#### 👤 User Routes

1. **/users**: Fetch a list of all users.

2. **/users/:userId**: Fetch details of a specific user.

3. **/users/:userId/avatar**: Fetch the avatar of a specific user.

4. **/users/:userId/follow**: Follow a specific user.

5. **/users/:userId/unfollow**: Unfollow a specific user.

6. **/users/:userId/recommendations**: Fetch recommendations for a specific user.

#### 📝 Post Routes

1. **/posts**: Fetch a list of all posts.

2. **/posts/:postId**: Fetch details of a specific post.

3. **/posts/:postId/photo**: Fetch the photo of a specific post.

4. **/posts/:postId/comment**: Comment on a specific post.

5. **/posts/:postId/uncomment**: Delete a comment on a specific post.

6. **/posts/:postId/like**: Like a specific post.

7. **/posts/:postId/unlike**: Unlike a specific post.

8. **/posts/by/user/:userId**: Fetch all posts by a specific user.

9. **/posts/feed/user/:userId**: Get the feed for a specific user.

#### 📋 Self Routes

1. **/self**: Fetch information about the logged-in user.
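To make the `:userId` and `:postId` placeholders in the routes above more concrete, here is a minimal sketch of how such patterns can be matched against an incoming path. It is not the project's actual code: the `Route` type, the `matchRoute` and `findRoute` helpers, and the HTTP methods attached to each path are all assumptions made for illustration, and only a handful of the listed routes are included.

```typescript
// Hypothetical route table: the paths come from the list above,
// the HTTP methods are assumptions.
type Method = "GET" | "POST" | "PUT" | "DELETE";

interface Route {
  method: Method;
  pattern: string;
}

const routes: Route[] = [
  { method: "POST", pattern: "/auth/login" },
  { method: "GET", pattern: "/users/:userId" },
  { method: "PUT", pattern: "/users/:userId/follow" },
  { method: "GET", pattern: "/posts/by/user/:userId" },
  { method: "GET", pattern: "/self" },
];

// Returns the extracted parameters when `path` matches `pattern`, otherwise null.
function matchRoute(pattern: string, path: string): Record<string, string> | null {
  const patternParts = pattern.split("/").filter(Boolean);
  const pathParts = path.split("/").filter(Boolean);
  if (patternParts.length !== pathParts.length) return null;

  const params: Record<string, string> = {};
  for (let i = 0; i < patternParts.length; i++) {
    const expected = patternParts[i];
    const actual = pathParts[i];
    if (expected.startsWith(":")) {
      // A segment such as ":userId" captures whatever value is in the path.
      params[expected.slice(1)] = decodeURIComponent(actual);
    } else if (expected !== actual) {
      return null;
    }
  }
  return params;
}

// Finds the first route in the table that matches both method and path.
function findRoute(method: Method, path: string): { route: Route; params: Record<string, string> } | null {
  for (const route of routes) {
    if (route.method !== method) continue;
    const params = matchRoute(route.pattern, path);
    if (params) return { route, params };
  }
  return null;
}
```

In a real Node backend a framework router would normally do this matching; the sketch only shows what the colon-prefixed segments mean.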

🔧 **Built with Precision**

This project adheres to best practices, including TDD, to ensure robust and maintainable code. In a job market that increasingly demands senior-level expertise, writing clean and efficient code is non-negotiable.

📎 **Check it Out on GitHub!**

Explore the project in detail on my GitHub profile: https://lnkd.in/gCiFTSfF

Stay tuned! The frontend for this backend application will be released soon, providing a complete full-stack solution.

Happy coding! 🌟 | code0monkey1 |

1,867,529 | Sell Your House Fast in Northern California | We Buy Houses in Northern California | Sell Your House Fast in Northern California and Close whenever you like with a direct cash buyer. No... | 0 | 2024-05-28T11:05:41 | https://dev.to/norcalhomeoffer/sell-your-house-fast-in-northern-california-we-buy-houses-in-northern-california-4682 | webuyhousesinnorthern, sellyourhousesinnorthern, sellmyhousesinnorthern | **[Sell Your House Fast in Northern](https://www.norcalhomeoffer.com)** California and Close whenever you like with a direct cash buyer. No REALTOR Fees and We Pay for all closing costs. **[We Buy Houses](https://www.norcalhomeoffer.com/redding-ca/)** in Northern California. Living In Northern California for over 35 years, Derek has provided value to the North State in Real Estate to many clients. He provides a no-lowball offer to anyone who opts into being a client. He wants to help anyone that he meets and provide a white glove service to sell your house. There will be NO Hassle way of selling your house. You won’t have to deal with an Agent but will still close with a reputable title company. You don’t have to close fast and can close whenever you like. Derek is a Cash Buyer | norcalhomeoffer |

1,866,462 | TypeScript: Interfaces vs Types - Understanding the Difference | This blog post makes a deep dive into TypeScript's object type system, exploring interfaces and... | 0 | 2024-05-28T11:05:36 | https://antondevtips.com/blog/typescript-interfaces-vs-types-understanding-the-difference | webdev, typescript, frontend, programming | ---

canonical_url: https://antondevtips.com/blog/typescript-interfaces-vs-types-understanding-the-difference

---

This blog post takes a deep dive into TypeScript's object type system, exploring **interfaces** and **types** and the differences between them, with various examples to help you master both.

_Originally published at_ [_https://antondevtips.com_](https://antondevtips.com/blog/typescript-interfaces-vs-types-understanding-the-difference)_._

## What Are Interfaces and Types In TypeScript

**Interfaces** and **types** belong to the [**object types**](https://antondevtips.com/blog/the-complete-guide-to-typescript-types) in TypeScript.

**Object types** in TypeScript group a set of properties under a single type.

Object types can be defined using either a `type` alias:

```typescript

type User = {

name: string;

age: number;

email: string;

};

const user: User = {

name: "Anton",

age: 30,

email: "info@antondevtips.com"

};

```

Or the `interface` keyword:

```typescript

interface User {

name: string;

age: number;

email: string;

}

const user: User = {

name: "Anton",

age: 30,

email: "info@antondevtips.com"

};

```

## Interfaces in TypeScript

**Interfaces** are extendable and can inherit from other interfaces using the `extends` keyword:

```typescript

interface Employee extends User {

employeeId: number;

salary: number;

}

const employee: Employee = {

name: "Jack Sparrow",

age: 40,

email: "captain.jack@gmail.com",

employeeId: 1,

salary: 2000

};

```

> **Classes** in TypeScript can implement interfaces using the `implements` keyword; `extends` is used for inheritance between classes or between interfaces.
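As a short illustration of how a class relates to an interface, the sketch below uses `implements` for the interface and `extends` for class-to-class inheritance. The class names `RegisteredUser` and `AdminUser` and the `permissions` field are hypothetical and not part of the original examples.

```typescript
interface User {
  name: string;
  age: number;
  email: string;
}

// `implements` makes the compiler check that the class has every member of `User`.
class RegisteredUser implements User {
  name: string;
  age: number;
  email: string;

  constructor(name: string, age: number, email: string) {
    this.name = name;
    this.age = age;
    this.email = email;
  }
}

// `extends` between classes inherits the implementation as well as the shape.
class AdminUser extends RegisteredUser {
  permissions: string[] = ["manage-users"];
}

// An AdminUser can be used wherever a User is expected.
const admin: User = new AdminUser("Anton", 30, "info@antondevtips.com");
console.log(admin.name);
```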

You can also extend **interfaces** by declaring the same interface multiple times:

```typescript

interface User {

name: string;

age: number;

email: string;

}

interface User {

phone: string;

}

const user: User = {

name: "Anton",

age: 30,

email: "info@antondevtips.com",

phone: "1234567890"

};

```

In TypeScript interfaces, you can use property modifiers to define **optional** and **readonly** properties.

### Optional Properties

These properties are not mandatory when creating an object:

```typescript

interface User {

name: string;

age: number;

email?: string; // Optional property

}

const user: User = {

name: "Anton",

age: 30

};

```

### Readonly Properties

These properties cannot be modified after the object is created:

```typescript

interface User {

name: string;

readonly age: number;

email: string;

}

const user: User = {

name: "Anton",

age: 30,

email: "john.doe@example.com"

};

// Error: Cannot assign to 'age' because it is a read-only property.

// user.age = 31;

```

### Index Signatures

Index signatures allow you to define the type of keys and values that an object can have.

Sometimes you may not know all the names of the properties during compile time, but you do know the shape of the values.

In such use cases you can use an index signature to define the properties:

```typescript

interface Names {

[index: number]: string;

}

const names: Names = ["Anton", "Jack"];

```

You can also define dictionaries by using index signatures:

```typescript

interface NameDictionary {

[key: string]: string;

}

const dictionary: NameDictionary = {

name: "Anton",

email: "info@antondevtips.com"

};

```

There is a limitation when working with index signatures: you can't have named properties of another type:

```typescript

interface NumberDictionary {

[index: string]: number;

length: number;

// Error: Property 'name' of type 'string' is not assignable to 'string' index type 'number'.

name: string;

}

```

You can bypass this limitation by using Unions:

```typescript

interface Dictionary {

[index: string]: number | string;

length: number;

name: string;

}

const dictionary: Dictionary = {

name: "Anton",

email: "info@antondevtips.com",

length: 5

};

```

It might be useful to make index signatures readonly to prevent assignment to their indexes:

```typescript

interface ReadonlyDictionary {

readonly [index: number]: string;

}

const dictionary: ReadonlyDictionary = {

0: "Anton"

};

dictionary[0] = "John";

// Error: Index signature in type 'ReadonlyDictionary' is readonly.

```

## Types in TypeScript

A **type** alias in TypeScript is similar to an interface, but you can't reopen an existing type to add new properties to it; declaring the same type twice is an error:

```typescript

type User = {

name: string;

age: number;

email: string;

};

type User = {

phone: string;

};

// Error: Duplicate identifier 'User'.

```
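Although a type alias can't be re-declared, a new alias can still build on an existing one through an intersection (covered in the next section). The following is a small sketch of that idea; the `UserWithPhone` name is an assumption chosen for illustration.

```typescript
type User = {
  name: string;
  age: number;
  email: string;
};

// A new alias that adds `phone` on top of every property of `User`.
// The original `User` alias is left unchanged.
type UserWithPhone = User & {
  phone: string;
};

const userWithPhone: UserWithPhone = {
  name: "Anton",
  age: 30,
  email: "info@antondevtips.com",
  phone: "1234567890"
};

console.log(userWithPhone.phone);
```

The trade-off compared with declaration merging is that the extended shape gets its own name, so existing code that refers to `User` is not affected.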

## Combining Interfaces and Types with Intersection Types

**Intersection types** in TypeScript allow you to combine multiple types into one.

An **intersection type** can be created by combining multiple interfaces or types using `&` operator:

```typescript

interface Person {

name: string;

}

interface ContactDetails {

email: string;

phone: string;

}

type Customer = Person & ContactDetails;

const customer: Customer = {

name: "Anton",

email: "info@antondevtips.com",

phone: "1234567890"

};

```

In this example, `Customer` is an **intersection type** that combines all properties from the `Person` and `ContactDetails` interfaces.

It's important to note that an **Intersection Type** can only be written as a **type** alias.

An interface declaration can't be defined as an intersection, although it can `extend` several interfaces to get a similar result.

Intersections are not limited to interfaces and object types; they can be used with other types, including primitives, unions, and other intersections.

## Combining Interfaces and Types with Union Types

A **union type** in TypeScript is a type formed from two or more other types.

When every member of a union shares a literal property that identifies it, the union is called a [**Discriminated Union**](https://antondevtips.com/blog/mastering-discriminated-unions-in-typescript).

A variable of a **union type** can hold a value of any one of the types in the union.

Let's explore an example of geometric shapes that have common and different properties:

```typescript
interface Circle {
  type: "circle";
  radius: number;
}

interface Square {
  type: "square";
  size: number;
}

type Shape = Circle | Square;
```

Let's create a function that calculates the area (the "square") of each shape:

```typescript
function getSquare(shape: Shape): number {
  if (shape.type === "circle") {
    return Math.PI * shape.radius * shape.radius;
  }

  // After the check above, TypeScript narrows `shape` to `Square`.
  return shape.size * shape.size;
}

const circle: Circle = {
  type: "circle",
  radius: 10
};

const square: Square = {
  type: "square",
  size: 5
};

console.log("Circle square: ", getSquare(circle));
console.log("Square square: ", getSquare(square));
```

Here the `getSquare` function accepts a `shape` parameter that can be one of two types: `Circle` or `Square`.

We need a property that lets us distinguish the types from each other.

In our example it's the `type` property.

It's important to note that you can only create a **Union Type** with a **type** keyword.

You can't declare an interface type that holds a Union Type.
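
A common way to build on the `type` discriminant is an exhaustive `switch`. The sketch below is self-contained and uses a hypothetical `getArea` function and `assertNever` helper (neither comes from the original code); it assumes that `Square` stores its side length in a numeric `size` property.

```typescript
interface Circle {
    type: "circle";
    radius: number;
}

interface Square {
    type: "square";
    size: number;
}

type Shape = Circle | Square;

// Hypothetical helper: only reachable if a union member is not handled
function assertNever(value: never): never {
    throw new Error(`Unhandled shape: ${JSON.stringify(value)}`);
}

function getArea(shape: Shape): number {
    switch (shape.type) {
        case "circle":
            return Math.PI * shape.radius * shape.radius;
        case "square":
            return shape.size * shape.size;
        default:
            // If a new member is added to Shape and not handled above,
            // this line stops compiling
            return assertNever(shape);
    }
}

console.log(getArea({ type: "circle", radius: 10 }));
console.log(getArea({ type: "square", size: 5 }));
```

The design choice here is to let the compiler report a forgotten case: inside `default`, `shape` has type `never` only when every member of the union is already handled.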

## Difference Between Interfaces and Types

**Interfaces:**

* can inherit from other interfaces

* can merge declarations, which is useful for extending existing objects

* can't represent more complex structures, like unions and intersections

**Types:**

* don't support inheritance

* don't allow extending existing objects through declaration merging

* can represent more complex structures, like unions and intersections
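
The differences listed above can be sketched roughly as follows. The names (`Animal`, `Dog`, `AppSettings`, `AnimalType`, `DogType`) are made up for illustration.

```typescript
// Interfaces can inherit from other interfaces
interface Animal {
    species: string;
}

interface Dog extends Animal {
    breed: string;
}

// Interfaces with the same name in the same scope are merged into one
interface AppSettings {
    theme: string;
}

interface AppSettings {
    fontSize: number;
}

// The merged interface requires both properties
const settings: AppSettings = { theme: "dark", fontSize: 14 };

// Type aliases have no `extends` clause and can't be re-declared,
// but a similar shape can be composed with an intersection
type AnimalType = { species: string };
type DogType = AnimalType & { breed: string };

const dog: Dog = { species: "dog", breed: "beagle" };
const sameDog: DogType = dog; // structurally compatible

console.log(settings, sameDog);
```

Declaring a second `type AnimalType = ...` in the same scope would be a compile error, which is the "can't be accidentally re-declared" behavior of type aliases.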

## Summary

**Interfaces** and **Types** in TypeScript are quite similar but have some differences.

Interfaces allow extension of existing objects, while types do not.

On the other hand, Types can represent unions and intersections.

Use **Interfaces** when you need to define the structure of an object and take advantage of declaration merging and extension.

Use **Types** when you don't need them to be extended or when you need to define complex types, such as unions or intersections.

There is no single right choice between Interfaces and Types.

You can choose based on your needs and personal preference. The most important part is to select a single approach and apply it consistently across the project.

My personal choice is **Types** as they can't be accidentally re-declared and extended, unless I need to do some fancy object-oriented stuff with interfaces and classes.

**P.S.:** you can find amazing cheat sheets on [**Types**](https://www.typescriptlang.org/static/TypeScript%20Types-ae199d69aeecf7d4a2704a528d0fd3f9.png) and [**Interfaces**](https://www.typescriptlang.org/static/TypeScript%20Interfaces-34f1ad12132fb463bd1dfe5b85c5b2e6.png) on the official TypeScript website.

Hope you find this blog post useful. Happy coding!

_Originally published at_ [_https://antondevtips.com_](https://antondevtips.com/blog/typescript-interfaces-vs-types-understanding-the-difference)_._


| antonmartyniuk |

1,867,528 | I want redesign recommendations. | I'm looking to redesign the website MEPCO Bill Checker and would love some advice. Here are a few... | 0 | 2024-05-28T11:04:21 | https://dev.to/shane_king_3ce799210d8264/i-want-redesign-recommendations-em5 | wordpress, javascript, php | I'm looking to redesign the [MEPCO Bill Checker website](https://mepcobillchecker.com.pk/) and would love some advice. Here are a few specifics:

**Design Improvements:** What modern design elements should I incorporate to enhance user experience?

**User Interface:** How can I make the interface more intuitive and user-friendly?

**Content Management System:** Would it be beneficial to shift the site to a different CMS? If so, which one would you recommend and why?

**SEO Considerations:** What key SEO strategies should I keep in mind during the redesign to maintain or improve our search rankings?

**Performance Optimization:** How can I ensure the redesigned site is fast and responsive?

Any tips, resources, or examples of well-designed websites would be greatly appreciated!

Thanks in advance! | shane_king_3ce799210d8264 |

1,867,527 | Hot789 - hot789.mobi home page - Download the Android | iOS app for free | Hot789 offers you a huge collection of prize-redemption online games. Relax... | 0 | 2024-05-28T11:03:35 | https://dev.to/hot789mobi/hot789-trang-chu-hot789mobi-tai-app-android-ios-mien-phi-1adb | hot789mobi | Hot789 offers you a huge collection of prize-redemption online games. Relax and seize the chance to win big with extremely attractive prizes. Easily download the Hot789 app to your iOS or Android phone to enjoy betting games anytime, anywhere.

Email: hoanganhlai1995@gmail.com

Website: https://hot789.mobi/

Address: 139 Chau Van Liem St., Ward 14, District 5, Ho Chi Minh City, Vietnam 72700

#hot789 #hot789mobi

Social:

https://www.facebook.com/hot789mobi/

https://twitter.com/hot789mobi

https://www.youtube.com/channel/UCVH4PN-0Ef-gs0x00PHKfzw

https://www.pinterest.com/hot789mobi/

https://vimeo.com/hot789mobi

https://github.com/hot789mobi

https://www.blogger.com/profile/03260261300158852990

https://www.reddit.com/user/hot789mobi/

https://vi.gravatar.com/hot789mobi

https://en.gravatar.com/hot789mobi

https://medium.com/@hot789mobi/about

https://www.tumblr.com/hot789mobi

https://hoanganhlai1995.wixsite.com/hot789mobi

https://hot789mobi.weebly.com/

https://hot789mobi.livejournal.com/profile/

https://soundcloud.com/hot789mobi

https://www.openstreetmap.org/user/hot789mobi

https://hot789mobi.wordpress.com/

https://sites.google.com/view/hot789mobi/trang-ch%E1%BB%A7

https://linktr.ee/hot789mobi

https://www.twitch.tv/hot789mobi/about

https://tinyurl.com/hot789mobi

https://ok.ru/profile/591581406342

https://profile.hatena.ne.jp/hot789mobi/profile

https://issuu.com/hot789mobi

https://www.liveinternet.ru/users/hot789mobi

https://dribbble.com/hot789mobi/about

https://www.patreon.com/hot789mobi/about

https://archive.org/details/@hot789mobi

https://gitlab.com/hot789mobi

https://www.kickstarter.com/profile/1013291841/about

https://disqus.com/by/hot789mobi/about/

https://hot789mobi.webflow.io/

https://www.goodreads.com/user/show/178628622-hot789mobi

https://500px.com/p/hot789mobi?view=photos

https://about.me/hot789mobi

https://tawk.to/hot789mobi

https://www.deviantart.com/hot789mobi

https://ko-fi.com/hot789mobi

https://www.provenexpert.com/hot789mobi/

https://hub.docker.com/u/hot789mobi | hot789mobi |

1,867,526 | C++: freeing resources in destructors using helper functions | In this article, we'll look at how to correctly destroy objects in the OOP-based C++ program without... | 0 | 2024-05-28T11:03:18 | https://dev.to/anogneva/c-freeing-resources-in-destructors-using-helper-functions-1f3d | cpp, programming, gamedev | In this article, we'll look at how to correctly destroy objects in the OOP\-based C\+\+ program without redundant operations\. This is the final article in the series about the bugs in qdEngine\.

## Failed resource release in qdEngine code

Here's a list of previous articles about checking the qdEngine game engine:

1. [Let's check the qdEngine game engine, part one: top 10 warnings issued by PVS\-Studio](https://pvs-studio.com/en/blog/posts/cpp/1119/)

1. [Let's check the qdEngine game engine, part two: simplifying C\+\+ code](https://pvs-studio.com/en/blog/posts/cpp/1121/)

1. [Let's check the qdEngine game engine, part three: 10 more bugs](https://pvs-studio.com/en/blog/posts/cpp/1123/)

Once I wrote them, I still had one more interesting PVS\-Studio warning\. So, I decided to make a separate article for this\. Here's the warning:

[V1053](https://pvs-studio.com/en/docs/warnings/v1053/) \[[CERT\-OOP50\-CPP](https://wiki.sei.cmu.edu/confluence/display/cplusplus/OOP50-CPP.+Do+not+invoke+virtual+functions+from+constructors+or+destructors)\] Calling the 'Finit' virtual function in the destructor may lead to unexpected result at runtime\. gr\_dispatcher\.cpp 54

We can call virtual functions in destructors, and the C\+\+ standard clearly describes how this works\. Unfortunately, such code is a magnet for errors, that's why many coding standards and analyzers recommend against using these calls\. I once wrote an article on this topic: "[Virtual function calls in constructors and destructors \(C\+\+\)](https://pvs-studio.com/en/blog/posts/cpp/0891/)"\. If you're a beginner in C\+\+ or want to refresh your memory, I suggest taking a peek at it before you continue reading\. I also encourage you to read it if you're not sure what we're talking about\.

The code fragment related to the warning is quite large, but you can safely skip it\. We'll break it down below using some synthetic examples\.

<spoiler title="The qdEngine code.">

The *Finit* function is called in the base class destructor\. Since the *DDraw\_grDispatcher* subclass has already been destroyed, its *Finit* function isn't called\.

```cpp

class grDispatcher

{

....

virtual ~grDispatcher();

virtual bool Finit();

....

};

grDispatcher::~grDispatcher()

{

Finit();

if (dispatcher_ptr_ == this) dispatcher_ptr_ = 0;

}

bool grDispatcher::Finit()

{

#ifdef _GR_ENABLE_ZBUFFER

free_zbuffer();

#endif

flags &= ~GR_INITED;

SizeX = SizeY = 0;

wndPosX = wndPosY = 0;

screenBuf = NULL;

delete yTable;

yTable = NULL;

return true;

}

class DDraw_grDispatcher : public grDispatcher

{

....

~DDraw_grDispatcher();

bool Finit();

....

};

DDraw_grDispatcher::~DDraw_grDispatcher()

{

if (ddobj_)

{

ddobj_ -> Release();

ddobj_ = NULL;

}

video_modes_.clear();

}

bool DDraw_grDispatcher::Finit()

{

grDispatcher::Finit();

if (back_surface_)

{

while(

back_surface_ -> GetBltStatus(DDGBS_ISBLTDONE) == DDERR_WASSTILLDRAWING);

back_surface_ -> Unlock(&back_surface_obj_);

ddobj_ -> SetCooperativeLevel((HWND)Get_hWnd(),DDSCL_NORMAL);

if (fullscreen_ && ddobj_) ddobj_ -> RestoreDisplayMode();

}

if (prim_surface_)

{

prim_surface_ -> Release();

prim_surface_ = NULL;

}

if (back_surface_)

{

back_surface_ -> Release();

back_surface_ = NULL;

}

return true;

}

```

</spoiler>

## Synthetic code example for error analysis

Now, let's figure out what the issue is\. We'll use the synthetic code and the [Compiler Explorer](https://godbolt.org/) website to quickly explore how the code works\.

We need to create a class hierarchy to manage some resources\. We'll also use the polymorphism principle to work with objects via a pointer to the base class\.

Let's start with the simplest base class:

```cpp

#include <memory>

#include <iostream>

class Resource

{

public:

void Create() {}

void Destroy() {}

};

class A

{

std::unique_ptr<Resource> m_a;

public:

void InitA()

{

m_a = std::make_unique<Resource>();

m_a->Create();

}

virtual ~A()

{

std::cout << "~A()" << std::endl;

if (m_a != nullptr)

m_a->Destroy();

}

};

int main()

{

std::unique_ptr<A> p = std::make_unique<A>();

return 0;

}

```

So far, everything seems OK\. We get the following output for the [online example](https://godbolt.org/z/z8EdqWv3n):

```cpp

~A()

```

Then we find out that the class state should be reset from time to time. We need to release resources on demand rather than wait for the destructor call when the object is destroyed. Here's the design error: a virtual function is created to clean up the class. A developer extends the class interface with a virtual function like this:

```cpp

#include <memory>

#include <iostream>

class Resource

{

public:

void Create() {}

void Destroy() {}

};

class A

{

std::unique_ptr<Resource> m_a;

public:

void InitA()

{

m_a = std::make_unique<Resource>();

m_a->Create();

}

virtual void Reset()

{

std::cout << "A::Reset()" << std::endl;

if (m_a != nullptr)

{

m_a->Destroy();

m_a.reset();

}

}

virtual ~A()

{

std::cout << "~A()" << std::endl;

Reset();

}

};

int main()

{

std::unique_ptr<A> p = std::make_unique<A>();

return 0;

}

```

[The online example displays the following output](https://godbolt.org/z/hTs4YGGqc):

```cpp

~A()

A::Reset()

```

The virtual *Reset* function is added to free resources. To avoid code duplication, the destructor no longer releases resources itself. Now it just calls the function.

So far, it seems like everything is still OK, but let's add the subclass:

```cpp

#include <memory>

#include <iostream>

class Resource

{

public:

void Create() {}

void Destroy() {}

};

class A

{

std::unique_ptr<Resource> m_a;

public:

void InitA()

{

m_a = std::make_unique<Resource>();

m_a->Create();

}

virtual void Reset()

{

std::cout << "A::Reset()" << std::endl;

if (m_a != nullptr)

{

m_a->Destroy();

m_a.reset();

}

}

virtual ~A()

{

std::cout << "~A()" << std::endl;

Reset();

}

};

class B : public A

{

std::unique_ptr<Resource> m_b;

public:

void InitB()

{

m_b = std::make_unique<Resource>();

m_b->Create();

}

void Reset()

{

std::cout << "B::Reset()" << std::endl;

if (m_b != nullptr)

{

m_b->Destroy();

m_b.reset();

}

A::Reset();

}

~B()

{

std::cout << "~B()" << std::endl;

Reset();

}

};

int main()

{

std::unique_ptr<A> p = std::make_unique<B>();

p->Reset();

std::cout << "------------" << std::endl;

p->InitA();

return 0;

}

```

[The online example displays the output](https://godbolt.org/z/rPzbdrfo1):

```cpp

B::Reset()

A::Reset()

------------

~B()

B::Reset()

A::Reset()

~A()

A::Reset()

```

If we explicitly call the *Reset* function from the external code, everything works fine\. The *B::Reset\(\)* function is called, and then it calls a function with the same name from the base class\.

There is an issue with the destructors. Each destructor calls the *Reset* function. This results in redundant operations because each *Reset* function also calls its base class version.

If we continue this strange inheritance, we will make the issue worse and worse\. And it will cause more and more redundant function calls\.

Here's the [code output](https://godbolt.org/z/dnGKEr95r) where another class has been added:

```cpp

C::Reset()

B::Reset()

A::Reset()

------------

~C()

C::Reset()

B::Reset()

A::Reset()

~B()

B::Reset()

A::Reset()

~A()

A::Reset()

```

There's obviously a class design error, but it's only obvious to us now. In a real project, such errors can thrive and remain unnoticed: the code works and the *Reset* functions do their job. But the redundant and inefficient operations are still there.

When a dev notices the described error and tries to fix it, they risk making two other typical errors\.

**The first option.** They declare the *Reset* functions as non-virtual and do not call the base class versions (*x::Reset*) in them. Then, each destructor calls only the *Reset* function from its own class and releases only its own resources. This does remove the redundancy from the destructors. However, when *Reset* is called externally, the cleanup of the object state breaks. [The broken code displays](https://godbolt.org/z/TM9eqo4Pz):

```cpp

A::Reset() // Cleanup resources is broken externally

------------

~C()

C::Reset()

~B()

B::Reset()

~A()

A::Reset()

```

**The second option.** They call the virtual *Reset* function once from the base class destructor. This doesn't work because, according to C++ rules, the base class implementation of *Reset* is called, not the subclass ones. This makes sense because by the time the *~A()* destructor is called, all subclass parts of the object have already been destroyed, so their functions can't be called. [The broken code displays](https://godbolt.org/z/6cs9aj4aG):

```cpp

C::Reset()

B::Reset()

A::Reset()

------------

~C()

~B()

~A()

A::Reset() // Release resources only in the base class

```

This is the type of error that we've found in the qdEngine project thanks to PVS-Studio. If you wish, you can now scroll up to the beginning of the article and see the corresponding code from the game engine.

## Fixed synthetic code

So, how can we correctly use classes to avoid numerous redundant calls?

To do this, we need to separate the release of internal class resources from the public interface. It'd be better to create non-virtual functions that are only responsible for releasing the data in the classes where they're declared. Let's name them *ResetImpl* and make them private because they're not for external use.

Destructors will simply delegate their work to the *ResetImpl* functions\.

The *Reset* function will remain public and virtual\. It'll release data of all classes using the same *ResetImpl* helper functions\.

Let's put everything together and write correct code:

```cpp

#include <memory>

#include <iostream>

class Resource

{

public:

void Create() {}

void Destroy() {}

};

class A

{

std::unique_ptr<Resource> m_a;

void ResetImpl()

{

std::cout << "A::ResetImpl()" << std::endl;

if (m_a != nullptr)

{

m_a->Destroy();

m_a.reset();

}

}

public:

void InitA()

{

m_a = std::make_unique<Resource>();

m_a->Create();

}

virtual void Reset()

{

std::cout << "A::Reset()" << std::endl;

ResetImpl();

}

virtual ~A()

{

std::cout << "~A()" << std::endl;

ResetImpl();

}

};

class B : public A

{

std::unique_ptr<Resource> m_b;

void ResetImpl()

{

std::cout << "B::ResetImpl()" << std::endl;

if (m_b != nullptr)

{

m_b->Destroy();

m_b.reset();

}

}

public:

void InitB()

{

m_b = std::make_unique<Resource>();

m_b->Create();

}

virtual void Reset()

{

std::cout << "B::Reset()" << std::endl;

ResetImpl();

A::Reset();

}

virtual ~B()

{

std::cout << "~B()" << std::endl;

ResetImpl();

}

};

class C : public B

{

std::unique_ptr<Resource> m_c;

void ResetImpl()

{

std::cout << "C::ResetImpl()" << std::endl;

if (m_c != nullptr)

{

m_c->Destroy();

m_c.reset();

}

}

public:

void InitC()

{

m_c = std::make_unique<Resource>();

m_c->Create();

}

virtual void Reset()

{

std::cout << "C::Reset()" << std::endl;

ResetImpl();

B::Reset();

}

virtual ~C()

{

std::cout << "~C()" << std::endl;

ResetImpl();

}

};

int main()

{

std::unique_ptr<A> p = std::make_unique<C>();

p->Reset();

std::cout << "------------" << std::endl;

return 0;

}

```

[The online example displays](https://godbolt.org/z/G3ohvE6EY):

```cpp

C::Reset()

C::ResetImpl()

B::Reset()

B::ResetImpl()

A::Reset()

A::ResetImpl()

------------

~C()

C::ResetImpl()

~B()

B::ResetImpl()

~A()

A::ResetImpl()

```

I can't say the synthetic code looks nice. It's full of As, Bs, and Cs, so it's very easy to make a typo. Let's forgive the synthetic examples for this. The code works, and we've removed the redundant operations. That's a good result.

## Conclusion

A virtual function call in a destructor isn't always an error. However, it may be a sign of poor class design. The *qdEngine* project is a great example of such a case.

The PVS\-Studio analyzer issues the [V1053](https://pvs-studio.com/en/docs/warnings/v1053/) warning if a virtual function is called in a constructor or destructor\. This is a good reason to take another look at the code and see if there's anything we can fix or refactor\. | anogneva |

1,867,525 | F# For Dummys - Day 16 Collections Sequence | Today we learn Sequence, represents an ordered, read-only series of elements Why... | 0 | 2024-05-28T11:01:54 | https://dev.to/pythonzhu/f-for-dummys-day-16-collections-sequence-can | fsharp | Today we learn about Sequence, which represents an ordered, read-only series of elements

#### Why Sequence

- Sequences are particularly useful when you have a large, ordered collection of data but do not necessarily expect to use all of the elements.

- Individual sequence elements are computed on demand, so a sequence can perform better than a list in situations where not all of the elements are used

**What does "computed on demand" mean?**

Think of buying a burger at KFC: the staff prepared 1000 burgers early in the morning, so you get your burger immediately. All the burgers are made before any customer orders. This is called *eager evaluation*.

Then you order fried chicken, and the staff replies: sorry, you have to wait a minute, as we need to fry the chicken first. They only do the work when someone orders. This is called *lazy evaluation*, also known as *on-demand computation*.

#### Create Sequence

- Explicitly specifying elements

```f#

let seq1 = seq [1; 2; 3; 4]

printfn "seq: %A" seq1

```

- Using range expression

```f#

let seq1 = seq { 1 .. 10 } // from 1 to 10

printfn "seq: %A" seq1 // seq: seq [1; 2; 3; 4; ...]

```

use step in range expression

```f#

let seq1 = seq { 1 .. 2 .. 10 } // start from 1, add 2 each time

printfn "seq: %A" seq1 // seq: seq [1; 3; 5; 7; ...]

```

- Using for loop

```f#

let seq1 = seq { for i in 1 .. 10 -> i }

printfn "seq: %A" seq1

```

- Seq.ofList

Views the given list as a sequence

```f#

let inputs = [ 1; 2; 5 ]

let seq1 = inputs |> Seq.ofList

printfn "seq: %A" seq1

```

- Seq.ofArray

Views the given array as a sequence

```f#

let inputs = [| 1; 2; 5 |]

let seq1 = inputs |> Seq.ofArray

printfn "seq: %A" seq1

```

- Seq.initInfinite

generate an infinite sequence

```f#

let infiniteSeq = Seq.initInfinite (fun i -> i * 2)

printfn "infiniteSeq %A" infiniteSeq

```

define a start for infinite sequence

```f#

let start = 5

let infiniteSeq = Seq.initInfinite (fun i -> i + start)

printfn "infiniteSeq %A" infiniteSeq // infiniteSeq seq [5; 6; 7; 8; ...]

```

or define a start like this

```f#

let infiniteSeq = Seq.initInfinite ((+) 5)

printfn "infiniteSeq %A" infiniteSeq // infiniteSeq seq [5; 6; 7; 8; ...]

```

#### Loop Sequence

- for loop

```f#

let numbers = seq { 1 .. 10 }

for number in numbers do

printfn "Number: %d" number

```

- Seq.iter

```f#

let numbers = seq { 1 .. 10 }

Seq.iter (fun x -> printfn "Number: %d" x) numbers

```

#### Access element

- Seq.item

syntax: Seq.item index source. It throws an ArgumentException when the index is negative or the input sequence does not contain enough elements

```f#

let numbers = seq { 1 .. 10 }

let thirdElement = Seq.item 2 numbers // Indexing is zero-based

printfn "The third element is %d" thirdElement

```

- Seq.head && Seq.tail

Seq.head: Returns the first element of the sequence

Seq.tail: Returns a sequence that skips 1 element of the underlying sequence and then yields the remaining elements of the sequence

```f#

let numbers = seq { 1 .. 10 }

let firstElement = Seq.head numbers

let restElement = Seq.tail numbers

printfn "The first element is %d" firstElement // The first element is 1

printfn "The rest element is %A" restElement // The rest element is seq [2; 3; 4; 5; ...]

```

#### Operate element

- Seq.updateAt

Returns a new sequence with the item at a given index set to the new value

syntax: Seq.updateAt index value source

```f#

// The sequence expression must be wrapped in parentheses to be passed as an argument

let newSeq = Seq.updateAt 1 9 (seq { 0; 1; 2 })

printfn "newSeq: %A" newSeq // newSeq: seq [0; 9; 2]

```

- Seq.filter

Returns a new collection containing only the elements of the collection for which the given predicate returns "true". This is a synonym for Seq.where

syntax: Seq.filter predicateFunc sourceSeq

```f#

let numbers = seq { 1 .. 10 }

let evenNumbers = Seq.filter (fun x -> x % 2 = 0) numbers

Seq.iter (fun x -> printfn "Even Number: %d" x) evenNumbers

```

- Seq.map

Builds a new collection whose elements are the results of applying the given function to each of the elements of the collection

syntax: Seq.map mappingFunc sourceSeq

```f#

let numbers = seq { 1 .. 10 }

let doubleNumbers = Seq.map (fun x -> x * 2) numbers

Seq.iter (fun x -> printfn "doubleNumbers: %d" x) doubleNumbers

```

- Seq.fold

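Applies a function to each element of the collection, threading an accumulator argument through the computation, and returns the final accumulated value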

syntax: Seq.fold folderFunc state sourceSeq

```f#

type Charge =

| In of int

| Out of int

let inputs = [In 1; Out 2; In 3]

let balance = Seq.fold (fun acc charge ->

match charge with

| In i -> acc + i

| Out o -> acc - o) 0 inputs

printfn "balance %A" balance // balance 2

```

Alternatively, use a pipeline operator to pass both the state and the source sequence. The `||>` operator passes a tuple of two arguments:

```f#

type Charge =

| In of int

| Out of int

let inputs = [In 1; Out 2; In 3]

let balance = (0, inputs) ||> Seq.fold (fun acc charge ->

match charge with

| In i -> acc + i

| Out o -> acc - o)

printfn "balance %A" balance // balance 2

``` | pythonzhu |

1,867,524 | Top 5 Agile Testing Interviews Questions and Answers | Whether you’re a seasoned professional or a fresh graduate, preparing for an Agile testing interview... | 0 | 2024-05-28T11:01:05 | https://dev.to/lalyadav/top-5-agile-testing-interviews-questions-and-answers-3m5m | agile, testing, agiletesting, agilesoftwaretesting | Whether you’re a seasoned professional or a fresh graduate, preparing for an [Agile testing interview](https://www.onlineinterviewquestions.com/agile-testing-interview-questions) requires a solid understanding of Agile principles, practices, and techniques. In this blog post, we’ll delve into the top 5 Agile testing interview questions and answers to help you ace your interview.

**Q1. What is Agile Testing?**

Ans: Agile testing is a software testing approach that follows Agile principles, focusing on iterative development, continuous feedback, and collaboration among team members to ensure high-quality software delivery.

**Q2. What are the key differences between Agile and traditional testing methodologies?**

Ans: Agile testing is iterative, adaptive, and emphasizes continuous feedback, while traditional testing methodologies like Waterfall are sequential, rigid, and involve extensive documentation.

**Q3. What is the Agile Manifesto, and how does it relate to Agile testing?**

Ans: The Agile Manifesto is a set of values and principles that prioritize individuals and interactions, working software, customer collaboration, and responding to change. Agile testing aligns with these principles by emphasizing collaboration, customer feedback, and adaptability.

**Q4. What are the roles and responsibilities of a tester in Agile?**

Ans: Testers in Agile teams collaborate with developers and stakeholders, write and execute test cases, provide feedback on product quality, and contribute to continuous improvement efforts by identifying and addressing defects early in the development cycle.

**Q5. How does Agile testing differ from traditional testing in terms of planning and documentation?**

Ans: Agile testing focuses on adaptive planning and minimal documentation, prioritizing working software over comprehensive documentation. In contrast, traditional testing methodologies often involve extensive upfront planning and documentation. | lalyadav |

1,867,523 | Cash App is Giving Away $750 Gift Cards – Here's How to Enter | Get_Your_$750_Wish_Gift_Card_Now! Giveaway Gift Card : Free Cash App Money Get $750 Cash App Gift... | 0 | 2024-05-28T11:00:38 | https://dev.to/hasib_jabitjabit_c70b1d2/cash-app-is-giving-away-750-gift-cards-heres-how-to-enter-p1j | cashmoney, cashapp, giveaway, funny | Get_Your_$750_Wish_Gift_Card_Now!

Giveaway Gift Card : Free Cash App Money Get $750 Cash App Gift Card . Your Chance to get $750 to your Cash Account!

Cash App Gift Card $750 Free-Unveiling the Offer.

In the fast-paced world of digital transactions, the allure of free money is hard to resist. Enter the Cash App Gift Card, a popular choice for those seeking financial perks in the form of a $750 windfall.

Let's dive into the details, exploring the offer, its legitimacy, and the steps to claim this tempting reward.

👉Get your reward after you fill in your information.

👉Instantly receive $750 in your Cash App account.

👉 Submit your email and zip code to win the gift card

👉 This offer is only allowed on Apple iOS in United States (US).

[CLICK HERE MORE INFO](https://sites.google.com/view/cashapp750544654/home) | hasib_jabitjabit_c70b1d2 |

1,867,522 | Best Earthmoving Spare Parts in India | JCB spare parts in India have become essential for maintaining the efficiency and longevity of JCB... | 0 | 2024-05-28T10:59:36 | https://dev.to/onlinepartsshop/best-earthmoving-spare-parts-in-india-19il | parts, jcbspareparts, onlinepartsshop | JCB spare parts in India have become essential for maintaining the efficiency and longevity of JCB equipment. As a leading **JCB spare parts manufacturer** and supplier, we at Online Parts Shop are committed to providing top-quality products and exceptional customer service. Our comprehensive range of **JCB spare parts online** ensures that you can find exactly what you need, whenever you need it.

**Why Choose JCB Spare Parts from Online Parts Shop?**

**Unmatched Quality and Reliability**

At Online Parts Shop, we understand that the performance of your machinery is critical to the success of your projects. That’s why we offer only the highest quality [Earthmoving Spare Parts in India](https://onlineparts.shop/). Our products are manufactured using the latest technology and the finest materials to ensure durability and reliability in the most demanding conditions.

**Extensive Range of Products**

Our extensive inventory includes a wide variety of JCB spare parts for different models and types of machinery. From engines and hydraulic parts to electrical components and undercarriage parts, we have everything you need to keep your equipment running smoothly. Whether you are looking for specific JCB spare parts in India or general maintenance items, we have you covered.

**Expert Support and Guidance**

Our team of experienced professionals is always ready to assist you with any queries or concerns you may have. We provide expert advice on selecting the right parts for your machinery, ensuring compatibility and optimal performance. Our commitment to customer satisfaction sets us apart from other JCB spare parts suppliers.

**The Importance of Using Genuine JCB Spare Parts**

Using genuine JCB spare parts is crucial for maintaining the performance and longevity of your equipment. Genuine parts are designed and tested to meet the exact specifications of JCB machinery, ensuring seamless integration and reliable operation. Here are some key benefits of using genuine JCB spare parts:

- **Enhanced Performance:** Genuine parts are engineered to deliver optimal performance, maximizing the efficiency and productivity of your machinery.

- **Improved Durability:** High-quality materials and precise manufacturing processes ensure that genuine parts are more durable and resistant to wear and tear.

- **Safety and Reliability:** Genuine parts undergo rigorous testing to ensure they meet safety and reliability standards, reducing the risk of equipment failure and accidents.

- **Warranty Protection:** Using genuine parts helps maintain the warranty on your equipment, protecting your investment and providing peace of mind.

**How to Purchase JCB Spare Parts Online**

Buying JCB spare parts online from Online Parts Shop is quick and easy. Our user-friendly website allows you to browse our extensive catalog and place orders from the comfort of your home or office. Here’s a step-by-step guide to purchasing **JCB spare parts online**:

1. **Browse Our Catalog:** Visit our website and browse our comprehensive catalog of JCB spare parts. Use the search function to find specific parts or explore different categories to discover the products you need.

2. **Select the Parts You Need:** Once you have found the parts you need, add them to your cart. Make sure to check the compatibility of the parts with your equipment model.

3. **Place Your Order:** Proceed to checkout and enter your shipping and payment details. Review your order to ensure all information is correct, and then submit your order.

4. **Receive Your Parts:** We offer fast and reliable shipping across India, ensuring that your parts arrive promptly and in perfect condition. Track your order online and receive updates on its status.

**The Benefits of Choosing Online Parts Shop**

**Competitive Pricing**

We offer competitive pricing on all our JCB spare parts, ensuring you get the best value for your money. By sourcing our products directly from manufacturers and maintaining efficient supply chain operations, we are able to pass on the savings to our customers.

**Convenient Shopping Experience**

Our online platform is designed to provide a seamless shopping experience. With detailed product descriptions, high-quality images, and easy navigation, finding and purchasing the right JCB spare parts has never been easier.

**Secure Payment Options**

We prioritize the security of our customers’ information. Our website uses advanced encryption technology to ensure that your payment details are safe and secure. Choose from a variety of payment options, including credit/debit cards, net banking, and mobile wallets.

**Fast and Reliable Shipping**

We understand the importance of timely delivery. That’s why we offer fast and reliable shipping across India. Our logistics partners ensure that your orders are delivered promptly, minimizing downtime and keeping your projects on track.

**Customer Satisfaction Guarantee**

At Online Parts Shop, customer satisfaction is our top priority. We are committed to providing high-quality products and exceptional service. If you are not completely satisfied with your purchase, we offer hassle-free returns and exchanges to ensure you get exactly what you need.

**Our Commitment to Sustainability**

We are committed to sustainability and environmental responsibility. By offering high-quality, durable JCB spare parts, we help reduce the need for frequent replacements and minimize waste. Our packaging materials are eco-friendly, and we continuously strive to improve our operations to reduce our environmental impact.

**Join Our Community of Satisfied Customers**

Join the growing community of satisfied customers who trust Online Parts Shop for their JCB spare parts needs. Our reputation for quality, reliability, and excellent customer service speaks for itself. Whether you are a contractor, equipment owner, or maintenance professional, we are here to support you with the best products and services.

**Contact Us Today**

Ready to experience the Online Parts Shop difference? Contact us today to learn more about our products and services. Our friendly and knowledgeable team is here to assist you with all your needs for [JCB spare parts in India](https://onlineparts.shop/).

| onlinepartsshop |

1,867,521 | What is Java Application Development for Businesses? | Java has been a cornerstone in the world of programming languages, particularly for enterprise... | 0 | 2024-05-28T10:56:54 | https://dev.to/justinsaran/what-is-java-application-development-for-businesses-1ej2 | java, softwaredevelopment, development, discuss | Java has been a cornerstone in the world of programming languages, particularly for enterprise applications. Its robustness, versatility, and scalability make it a preferred choice for businesses looking to build reliable and efficient software solutions. But what exactly is Java application development for businesses, and why is it so important? This article delves into the fundamentals, benefits, and best practices of Java application development for businesses, providing a comprehensive understanding of its significance.

Understanding Java application development

------------------------------------------

Java application development is the process of using the Java programming language to create applications that meet specific business needs. Java's platform-independent nature, extensive libraries, and strong community support make it an ideal choice for developing a wide range of applications, from web and mobile applications to complex enterprise systems.
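As a small illustration of what "meeting a specific business need" can look like in code, here is a minimal sketch that totals an invoice using only the standard library. The class and method names (`InvoiceTotals`, `LineItem`, `totalWithTax`) and the 10% tax rate are hypothetical, chosen for this example rather than taken from any real system.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;
import java.util.Arrays;
import java.util.List;

// Hypothetical example: all names here are illustrative assumptions.
final class InvoiceTotals {

    // One invoice line: a unit price and a quantity.
    static final class LineItem {
        final BigDecimal unitPrice;
        final int quantity;

        LineItem(BigDecimal unitPrice, int quantity) {
            this.unitPrice = unitPrice;
            this.quantity = quantity;
        }

        BigDecimal subtotal() {
            return unitPrice.multiply(BigDecimal.valueOf(quantity));
        }
    }

    // Sums the line subtotals, adds tax, and rounds to two decimal places.
    // BigDecimal is used instead of double to avoid binary rounding errors in money values.
    static BigDecimal totalWithTax(List<LineItem> items, BigDecimal taxRate) {
        BigDecimal net = items.stream()
                .map(LineItem::subtotal)
                .reduce(BigDecimal.ZERO, BigDecimal::add);
        BigDecimal tax = net.multiply(taxRate);
        return net.add(tax).setScale(2, RoundingMode.HALF_UP);
    }

    public static void main(String[] args) {
        List<LineItem> items = Arrays.asList(
                new LineItem(new BigDecimal("120.00"), 3),
                new LineItem(new BigDecimal("49.50"), 2));
        // Assumed tax rate of 10% for the sake of the example.
        System.out.println(totalWithTax(items, new BigDecimal("0.10")));
    }
}
```

Because the sketch relies only on `java.math` and `java.util`, the same compiled class should run unchanged on any machine with a compatible JVM.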

### Key components of Java application development

1. **Platform Independence**: Java applications can run on any device that has the Java Virtual Machine (JVM) installed. This write-once, run-anywhere capability ensures that Java applications are highly portable.

2. **Robustness and Security**: Java is known for its robustness and security features. Its automatic memory management and exception handling help keep applications reliable and secure, while its built-in multi-threading support lets them handle concurrent work.