id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,866,649 | BEM: The Best Way To Write CSS | BEM, as its name implies, means Block Element Modifier. If you don't write much CSS because you use... | 0 | 2024-05-27T14:16:49 | https://sotergreco.com/bem-the-best-way-to-write-css | css | BEM, as its name implies, means Block Element Modifier. If you don't write much CSS because you use Tailwind, then maybe this article is not for you, but it can still teach you a lot about how to write good CSS.

Also, a lot of people move to Tailwind because they find CSS cluttered, but the truth is that Tailwind is a lot more cluttered than CSS. If you write good CSS, it can be much cleaner than the alternatives.

So let's take a deep dive into how you can transform your entire CSS workflow with this simple way of writing CSS.

## Introduction

Before we start, here is an example code snippet.

```xml

<style>

/* Classic CSS */

#opinions_box h1 {

margin: 0 0 8px 0;

text-align: center;

}

#opinions_box {

p.more_pp {

a {

text-decoration: underline;

}

}

input[type="text"] {

border: 1px solid #ccc!important;

}

}

/* BEM CSS */

.opinions-box {

margin: 0 0 8px 0;

text-align: center;

&__view-more {

text-decoration: underline;

}

&__text-input {

border: 1px solid #ccc;

}

&--is-inactive {

color: gray;

}

}

</style>

```

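As you can see, BEM is a much cleaner way of writing CSS. (The nested `&__` and `&--` selectors in the snippet rely on a preprocessor such as Sass; the class names they produce are plain CSS.) Keep in mind that BEM itself is a methodology. You don't need to install anything for it. You just change the way you name things.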

## Blocks

Blocks are encapsulated and standalone blocks of code that are meaningful on their own.

Blocks can be nested and can interact with each other, but semantically they remain equal; there is no precedence or hierarchy.

```xml

<header class="layout__header header">

<div class="header__logo">My Website</div>

<nav class="header__nav">

<ul class="header__menu">

<li class="header__menu-item"><a href="#" class="header__menu-link">Home</a></li>

<li class="header__menu-item"><a href="#" class="header__menu-link">About</a></li>

<li class="header__menu-item"><a href="#" class="header__menu-link">Contact</a></li>

</ul>

</nav>

</header>

```

As you can see, one block sits inside another here. The `<header>` tag carries two classes: `layout__header` marks it as an element of the *Layout* block, and `header` makes it a *Header* block in its own right.

## Elements

Elements are the constituent parts of a block that have no standalone meaning and are semantically tied to their block. They are typically represented by two underscores connecting the block and the element.

```html

<div class="header">

<div class="header__logo"></div>

<div class="header__nav">

<div class="header__nav-item"></div>

</div>

</div>

```

In this example, `header__logo`, `header__nav`, and `header__nav-item` are elements of the `header` block.

## Modifiers

Modifiers are used to change the appearance, behavior, or state of a block or element. They are typically represented by two hyphens connecting the block or element and the modifier.

```html

<div class="header header--dark">

<div class="header__logo"></div>

<div class="header__nav header__nav--expanded">

<div class="header__nav-item header__nav-item--active"></div>

</div>

</div>

```

In this example, `header--dark`, `header__nav--expanded`, and `header__nav-item--active` are modifiers that alter the appearance or behavior of the respective blocks and elements.
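The naming rules above are mechanical enough to put into a small helper. The following is a minimal sketch, not part of any BEM tooling: the function name `bem` and its signature are made up for illustration. It joins a block, an optional element, and any active modifiers using the `__` and `--` separators described above.

```typescript
// Hypothetical helper for illustration; BEM itself needs no tooling.
type Modifiers = Record<string, boolean>;

function bem(block: string, element?: string, modifiers: Modifiers = {}): string {
  // "block" or "block__element"
  const base = element ? `${block}__${element}` : block;
  // one "base--modifier" class per modifier whose flag is true
  const active = Object.keys(modifiers)
    .filter((name) => modifiers[name])
    .map((name) => `${base}--${name}`);
  return [base, ...active].join(" ");
}

console.log(bem("header", undefined, { dark: true }));     // header header--dark
console.log(bem("header", "nav", { expanded: true }));     // header__nav header__nav--expanded
console.log(bem("header", "nav-item", { active: false })); // header__nav-item
```

The base class is always emitted, so a modifier only ever adds to the default styling instead of replacing it.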

## Benefits

The BEM methodology offers several benefits, including improved code readability and maintainability. By using a consistent naming convention, BEM makes it easier to understand the structure and relationships within your HTML and CSS.

This approach also promotes reusability and scalability, allowing developers to manage and update styles more efficiently. Additionally, BEM helps in avoiding CSS conflicts and specificity issues, leading to more predictable and stable styling across your project.

## Conclusion

In conclusion, adopting the BEM methodology can significantly enhance your CSS workflow by promoting cleaner, more organized, and maintainable code. By adhering to its structured naming conventions, you can avoid common pitfalls such as CSS conflicts and specificity issues, ultimately leading to a more efficient and scalable development process.

Whether you're new to CSS or looking to refine your skills, BEM offers a practical and effective approach to writing better CSS.

Thanks for reading, and I hope you found this article helpful. If you have any questions, feel free to email me at [**kourouklis@pm.me**](mailto:kourouklis@pm.me), and I will respond.

You can also keep up with my latest updates by checking out my X here: [**x.com/sotergreco**](http://x.com/sotergreco) | sotergreco |

1,866,647 | MOST COMMON AUTOMATION TESTING TOOLS IN MARKET | There are several automation testing tools available in the market, each with its own features,... | 0 | 2024-05-27T14:15:14 | https://dev.to/akshara_chandran_0f2b21d7/most-common-automation-testing-tools-in-market-4i9p | There are several automation testing tools available in the market, each with its own features, capabilities, and popularity among software testing professionals. Here are some of the most common automation testing tools widely used in the industry:

1. **Selenium WebDriver**:

- Selenium WebDriver is one of the most popular and widely used open-source automation testing frameworks for web applications.

- It supports various programming languages such as Java, Python, C#, etc.

- Selenium WebDriver allows testers to automate web browser interactions across different browsers and platforms.

2. **Appium**:

- Appium is an open-source automation tool for testing mobile applications across different platforms such as iOS, Android, and Windows.

- It supports multiple programming languages and offers a unified API for testing both native and hybrid mobile apps.

3. **Katalon Studio**:

- Katalon Studio is a comprehensive test automation solution for web, API, mobile, and desktop applications.

- It provides a range of features including recording and playback, scriptless automation, and built-in test reporting.

4. **TestComplete**:

- TestComplete is a commercial automation testing tool by SmartBear that supports testing of web, desktop, and mobile applications.

- It offers record and playback capabilities, keyword-driven testing, and script-based testing using various scripting languages.

5. **Robot Framework**:

- Robot Framework is an open-source automation framework that uses a keyword-driven approach for test automation.

- It supports testing of web, desktop, mobile, and API applications and can be extended through libraries for additional functionality.

6. **Jenkins**:

- Jenkins is an open-source automation server that is commonly used for continuous integration and continuous delivery (CI/CD) pipelines.

- It allows automation of various tasks including building, testing, and deployment of software applications.

7. **Cucumber**:

- Cucumber is a popular open-source tool for behavior-driven development (BDD) and acceptance testing.

- It allows writing test scenarios in a human-readable format using Gherkin syntax and automating them with various programming languages.

8. **Postman**:

- Postman is a widely used API testing tool that allows testers to create, organize, and automate API tests.

- It provides features for API endpoint testing, request/response validation, and automation of API workflows.

9. **SoapUI**:

- SoapUI is an open-source API testing tool that supports testing of SOAP, REST, and GraphQL web services.

- It offers features for functional testing, load testing, security testing, and mocking of web services.

10. **QTP/UFT (Micro Focus Unified Functional Testing)**:

- QTP/UFT is a commercial automation testing tool by Micro Focus (formerly HP; Micro Focus is now part of OpenText) that supports functional and regression testing of web, desktop, and mobile applications.

- It provides a range of features including record and playback, keyword-driven testing, and integration with ALM tools.

These are just a few examples of the many automation testing tools available in the market. | akshara_chandran_0f2b21d7 | |

1,866,645 | Software testing | Software is the process of checking the quality and functionality of a performance of software... | 0 | 2024-05-27T14:12:22 | https://dev.to/malaiyarasi/software-testing-1ldo | Software testing is the process of checking the quality, functionality, and performance of a software product. Its main purpose is to validate functionality, enhance performance, and improve the overall user experience. Testing also helps ensure compliance with legal and industry-specific standards. The main goal is to find errors, gaps, or missing requirements relative to the actual requirements, and ultimately to deliver a high-quality product that satisfies user needs.

1. A service-based company earns its revenue by performing services for customers rather than by manufacturing products.

2. A product-based company makes its own products, which may or may not be related to software. In IT, such companies concentrate mainly on developing those products.

Planning requires a clear definition of the problem, and the statement of requirements for a software solution must be complete, unambiguous, and interpretable.

Activities that integrate quality must be planned and carried out clearly at every stage of software development, through to the maintenance stage.

| malaiyarasi | |

1,866,644 | Low-Code Backend Solution for Refine.dev Using Prisma and ZenStack | Refine.dev is a very powerful and popular React-based framework for building web apps with less code.... | 0 | 2024-05-27T14:12:18 | https://zenstack.dev/blog/refine-dev-backend | webdev, react, lowcode, authorization | [Refine.dev](https://refine.dev/) is a very powerful and popular React-based framework for building web apps with less code. It focuses on providing high-level components and hooks to cover common use cases like authentication, authorization, and CRUD. One of the main reasons for its popularity is that it allows easy integration with many different kinds of backend systems via a flexible adapter design.

This post will focus on the most important type of integration: database CRUD. I'll show how easy it is, with the help of Prisma and ZenStack, to turn your database schema into a fully secured API that powers your Refine app. You'll see how we start by defining the data schema and access policies, derive an automatic CRUD API from it, and finally integrate with the Refine app via a "Data Provider."

## A quick overview of the tools

### Prisma

[Prisma](https://www.prisma.io) is a modern TypeScript-first ORM that allows you to manage database schemas easily, make queries and mutations with great flexibility, and ensure excellent type safety.

### ZenStack

[ZenStack](https://zenstack.dev) is a toolkit built on top of Prisma that adds access control, an automatic CRUD web API, and more. It unleashes the ORM's full power for full-stack development.

### Auth.js

[Auth.js](https://authjs.dev/) (successor of NextAuth) is a flexible authentication library that supports many authentication providers and strategies. Although you can use many external services for auth, simply storing everything inside your database is often the easiest way to get started.

## A blogging app

I'll use a simple blogging app as an example to facilitate the discussion. We'll first focus on implementing the authentication and CRUD with essential access control and then expand to more advanced topics.

You can find the link to the completed project's GitHub repo at the end of the post.

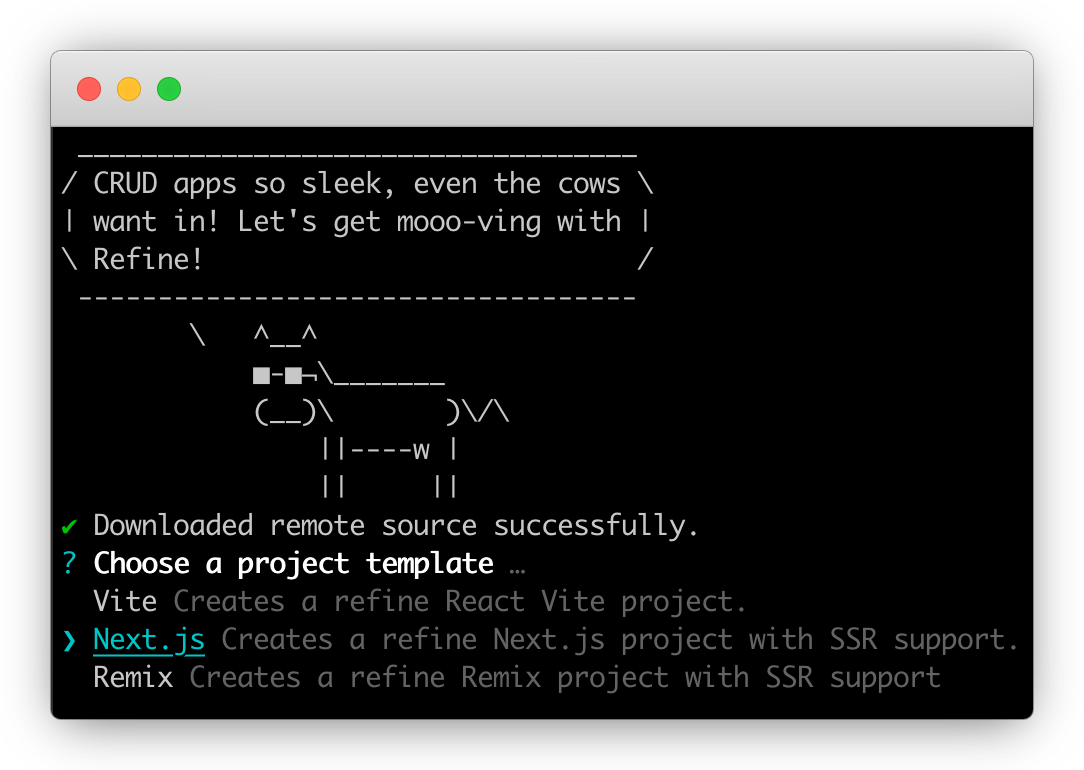

### Scaffolding the app

The `create-refine-app` CLI provides several handy templates to scaffold a new app. We'll use the "Next.js" one so that we can easily contain both the frontend and backend in the same project. Most of the ideas in this post can be applied to a standalone backend project as well.

We also need to install Prisma and NextAuth:

```bash

npm install --save-dev prisma

npm install @prisma/client next-auth@beta

```

Finally, we'll create the database schema for our app (schema.prisma):

```ts

datasource db {

provider = "sqlite"

url = "file:./dev.db"

}

generator client {

provider = "prisma-client-js"

}

model User {

id String @id() @default(cuid())

name String?

email String? @unique()

emailVerified DateTime?

image String?

createdAt DateTime @default(now())

updatedAt DateTime @updatedAt()

accounts Account[]

sessions Session[]

password String

posts Post[]

}

model Post {

id String @id() @default(cuid())

createdAt DateTime @default(now())

updatedAt DateTime @updatedAt()

title String

content String

status String @default("draft")

author User @relation(fields: [authorId], references: [id])

authorId String

}

model Account {

...

}

model Session {

...

}

model VerificationToken {

...

}

```

> The `Account`, `Session`, and `VerificationToken` models are [required by Auth.js](https://authjs.dev/getting-started/adapters/prisma#schema).

### Building authentication

The focus of this post will be data access and access control. However, they are only possible with an authentication system in place. We'll use simple credential-based authentication in this app. The implementation involves creating an Auth.js configuration, installing an API route to handle auth requests, and implementing a Refine "Authentication Provider".

I won't elaborate on the details of this part, but you can find the completed code [here](https://github.com/ymc9/refine-nextjs-zenstack/tree/main/src/providers/auth-provider). It should get the registration, login, and session management parts working.

### Set up access control

There are many ways to implement access control. People typically put the check in the API layer with imperative code. ZenStack offers a unique and powerful way to do it declaratively inside the database schema. Let's see how it works.

First, let's initialize the project for ZenStack:

```bash

npx zenstack@latest init

```

It installs a few dependencies and copies the `prisma/schema.prisma` file to `/schema.zmodel`. ZModel is a superset of Prisma Schema Language that adds more features like access control.

Next, we'll add policy rules to the schema:

```ts

model User {

...

// everybody can signup

@@allow('create', true)

// full access by self

@@allow('all', auth() == this)

}

model Post {

...

// allow signed-in users to read published posts

@@allow('read', auth() != null && status == 'published')

// full access by author

@@allow('all', author == auth())

}

```

As you can see, the overall schema still looks very similar to the original Prisma schema. The `@@allow` directive defines access control rules. The `auth()` function returns the current authenticated user. We'll see how it's connected with the authentication system next.
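To make the semantics of the two `Post` rules concrete, here is a toy model in plain TypeScript. It is only an illustration of how the rules combine (access is granted when any `@@allow` rule matches and denied otherwise); it is not how ZenStack is implemented, and the names `canAccessPost`, `User`, and `Post` are made up for this sketch.

```typescript
// Toy model of the Post policy rules; NOT ZenStack's implementation.
interface User { id: string }
interface Post { authorId: string; status: string }
type Operation = "create" | "read" | "update" | "delete";

function canAccessPost(user: User | undefined, post: Post, op: Operation): boolean {
  // @@allow('read', auth() != null && status == 'published')
  const publishedReadRule =
    op === "read" && user !== undefined && post.status === "published";
  // @@allow('all', author == auth())
  const authorRule = user !== undefined && post.authorId === user.id;
  // any matching allow rule grants access; with no match the operation is denied
  return publishedReadRule || authorRule;
}

const alice: User = { id: "alice" };
const bob: User = { id: "bob" };
const draft: Post = { authorId: "alice", status: "draft" };

console.log(canAccessPost(alice, draft, "read"));     // the author can read her own draft
console.log(canAccessPost(bob, draft, "read"));       // other users cannot see drafts
console.log(canAccessPost(undefined, draft, "read")); // anonymous users are denied
```

In the real setup these checks are derived from the schema and enforced by the enhanced client, so none of this logic is written by hand.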

The most straightforward way to use ZenStack is to create an "enhancement" wrapper around the Prisma client. First, run the CLI to generate JS modules that support the enforcement of policies:

```bash

npx zenstack generate

```

Then, you can call the `enhance` API to create an enhanced PrismaClient.

```ts

const session = await auth();

const user = session?.user?.id ? { id: session.user.id } : undefined;

const db = enhance(prisma, { user });

```

Besides the `prisma` instance, the `enhance` function also takes a second argument that contains the current user. The user object provides value to the `auth()` function call in the schema at runtime.

The enhanced PrismaClient has the same API as the original one, but it will enforce the policy rules automatically for you.

### Automatic CRUD API

Having the ORM instance enhanced with access control capabilities is great. We can now implement CRUD APIs without writing imperative authorization code as long as we use the enhanced client. However, wouldn't it be even cooler if the CRUD APIs were automatically derived from the schema?

ZenStack makes it possible by providing a set of server adapters for popular Node.js frameworks. Using it with Next.js is easy. You'll only need to create an API route handler:

```ts

// src/app/api/model/[...path]/route.ts

import { auth } from '@/auth';

import { prisma } from '@/db';

import { enhance } from '@zenstackhq/runtime';

import { NextRequestHandler } from '@zenstackhq/server/next';

// create an enhanced Prisma client with user context

async function getPrisma() {

const session = await auth();

const user = session?.user?.id ? { id: session.user.id } : undefined;

return enhance(prisma, { user });

}

const handler = NextRequestHandler({ getPrisma, useAppDir: true });

export {

handler as DELETE,

handler as GET,

handler as PATCH,

handler as POST,

handler as PUT,

};

```

You then have a set of CRUD APIs served at "/api/model/[Model Name]/...". The APIs closely resemble PrismaClient's API:

- `/api/model/post/findMany`

- `/api/model/post/create`

- ...

You can find the detailed API specification [here](https://zenstack.dev/docs/reference/server-adapters/api-handlers/rpc).
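As a rough sketch of what a client call to these endpoints can look like, the helper below builds a request URL. It assumes that read operations receive their arguments as URL-encoded JSON in a `q` query parameter, the same convention used by the `check` request later in this post; the helper name `buildRpcUrl` is hypothetical, so consult the API specification linked above for the authoritative details.

```typescript
// Hypothetical helper: builds a GET URL for the auto-generated CRUD API.
// Assumption: arguments travel as URL-encoded JSON in the "q" query parameter.
function buildRpcUrl(model: string, operation: string, args?: unknown): string {
  const base = `/api/model/${model}/${operation}`;
  if (args === undefined) {
    return base;
  }
  return `${base}?q=${encodeURIComponent(JSON.stringify(args))}`;
}

const url = buildRpcUrl("post", "findMany", {
  where: { status: "published" },
  take: 10,
});
console.log(url);
```

Because the argument object has the same shape as a PrismaClient call, moving a query between server code and the HTTP API mostly means serializing it.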

### Implementing a data provider

We've got the backend APIs ready. Now, the only missing piece is a Refine "Data Provider", which talks to the API to fetch and update data. The following code snippet shows how the `getList` method is implemented. The data structures used by Refine's data provider are conceptually very close to Prisma's, so we only need to do some lightweight translation:

```ts

// src/providers/data-provider/index.ts

export const dataProvider: DataProvider = {

getList: async function <TData extends BaseRecord = BaseRecord>(

params: GetListParams

): Promise<GetListResponse<TData>> {

const queryArgs: any = {};

// filtering

if (params.filters && params.filters.length > 0) {

const filters = params.filters.map((filter) =>

transformFilter(filter)

);

if (filters.length > 1) {

queryArgs.where = { AND: filters };

} else {

queryArgs.where = filters[0];

}

}

// sorting

if (params.sorters && params.sorters.length > 0) {

queryArgs.orderBy = params.sorters.map((sorter) => ({

[sorter.field]: sorter.order,

}));

}

// pagination

if (

params.pagination?.mode === 'server' &&

params.pagination.current !== undefined &&

params.pagination.pageSize !== undefined

) {

queryArgs.take = params.pagination.pageSize;

queryArgs.skip =

(params.pagination.current - 1) * params.pagination.pageSize;

}

// call the API to fetch data and count

const [data, count] = await Promise.all([

fetchData(params.resource, '/findMany', queryArgs),

// count only needs the filter; passing take/skip would cap the total at one page
fetchData(params.resource, '/count', { where: queryArgs.where }),

]);

return { data, total: count };

},

...

};

```
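The `transformFilter` helper is not shown in the snippet. A minimal sketch of what such a translation could look like follows, assuming a simplified filter shape (`field`, `operator`, `value`) and a small subset of operators. The names `SimpleFilter`, `transformFilterSketch`, and `toSkipTake` are made up here, and the project's actual implementation may differ.

```typescript
// Simplified stand-in for a Refine filter; covers only a few operators.
type Operator = "eq" | "ne" | "lt" | "lte" | "gt" | "gte" | "in" | "contains";

interface SimpleFilter {
  field: string;
  operator: Operator;
  value: unknown;
}

// Maps the operator names above to Prisma-style filter keys.
const operatorMap: Record<Operator, string> = {
  eq: "equals",
  ne: "not",
  lt: "lt",
  lte: "lte",
  gt: "gt",
  gte: "gte",
  in: "in",
  contains: "contains",
};

// Turns one filter into a Prisma-style "where" fragment.
function transformFilterSketch(filter: SimpleFilter): Record<string, unknown> {
  return { [filter.field]: { [operatorMap[filter.operator]]: filter.value } };
}

// Page numbers start at 1, so page 1 skips nothing.
function toSkipTake(current: number, pageSize: number): { skip: number; take: number } {
  return { skip: (current - 1) * pageSize, take: pageSize };
}

console.log(transformFilterSketch({ field: "status", operator: "eq", value: "published" }));
console.log(toSkipTake(3, 10));
```

Keeping the operator mapping in one table makes it easy to see which filters are supported and to extend the list later.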

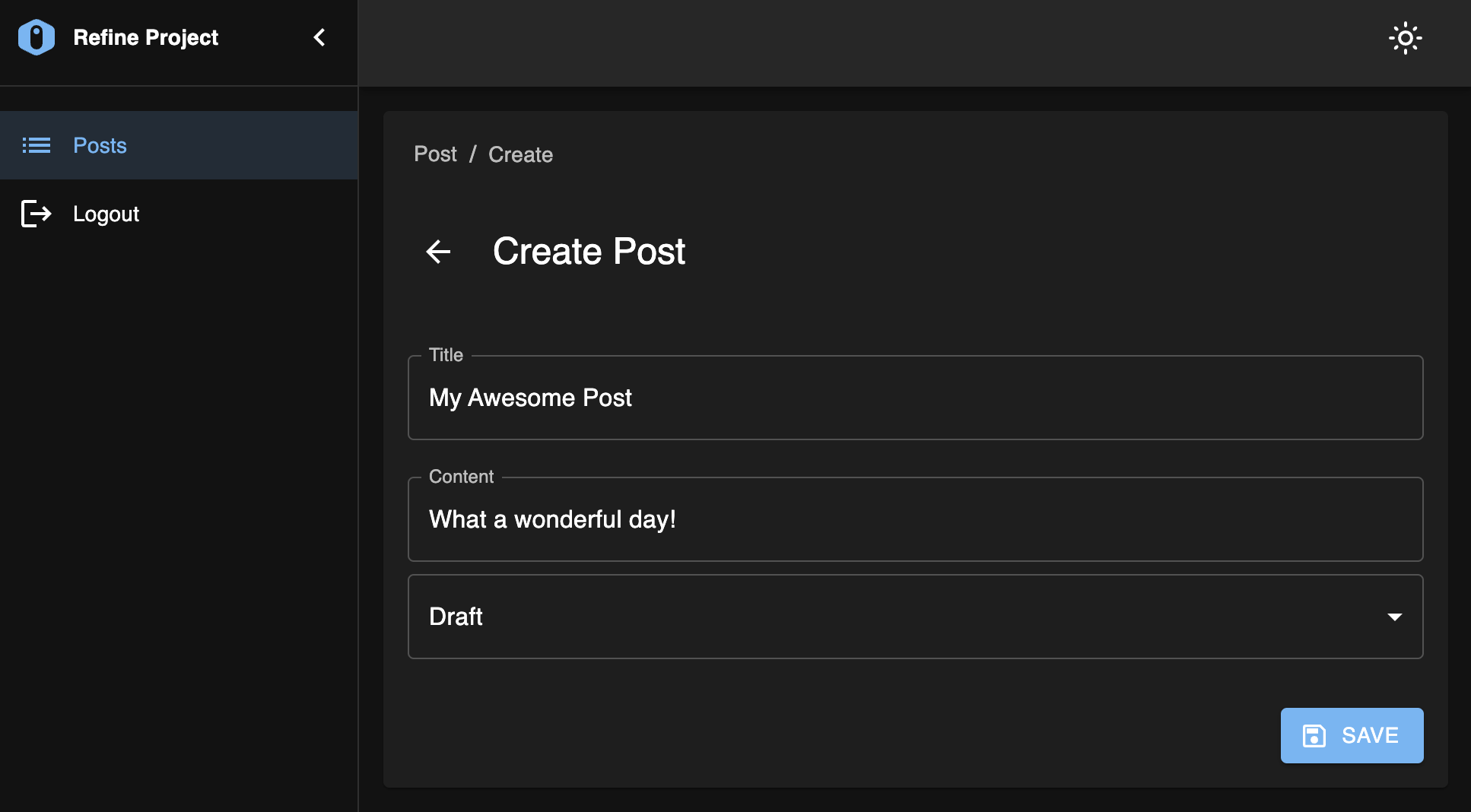

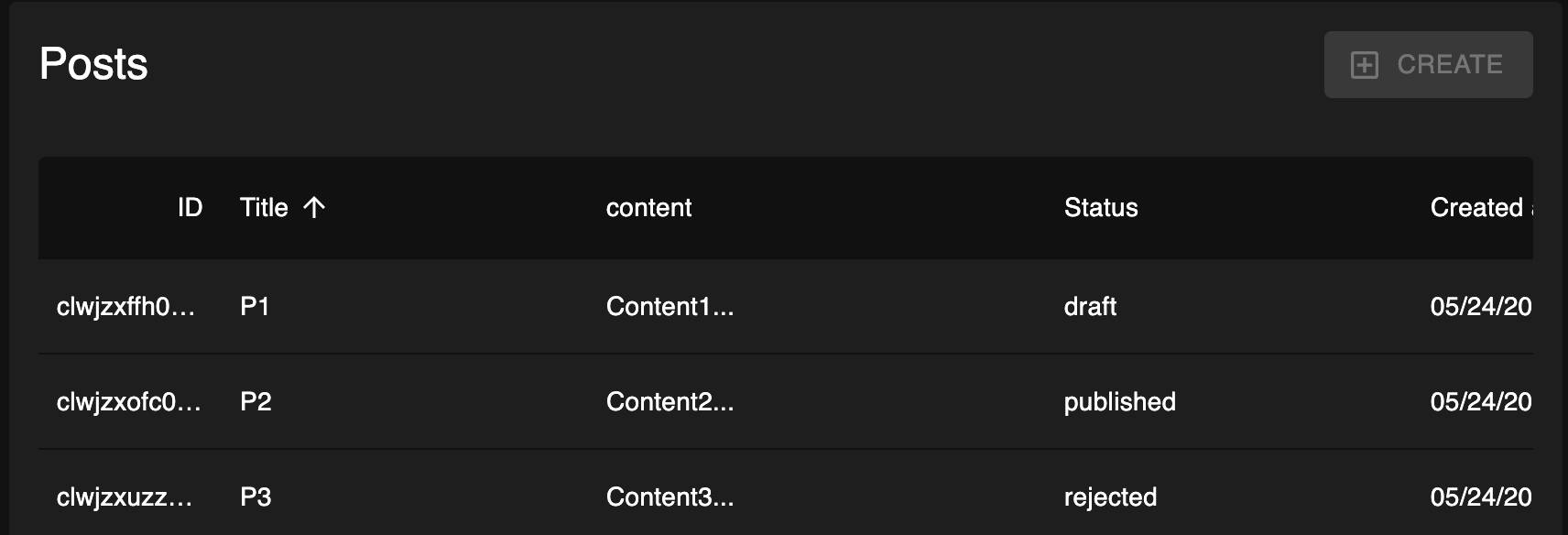

With the data provider in place, we now have a fully working CRUD UI.

You can sign up for two accounts and verify that the access control rules are working as expected - draft posts are only visible to the author.

### Bonus: guarding UI with permission checker

Let's add one more challenge to the problem: the users of our app will have two roles:

- Reader: can only read published posts

- Writer: can create new posts

Our schema needs to be updated accordingly:

```ts

model User {

...

role String @default('Reader')

}

model Post {

...

// allow signed-in users to read published posts

@@allow('read', auth() != null && status == 'published')

// allow "Writer" users to create

@@allow('create', auth().role == 'Writer')

// full access by author

@@allow('read,update,delete', author == auth())

}

```

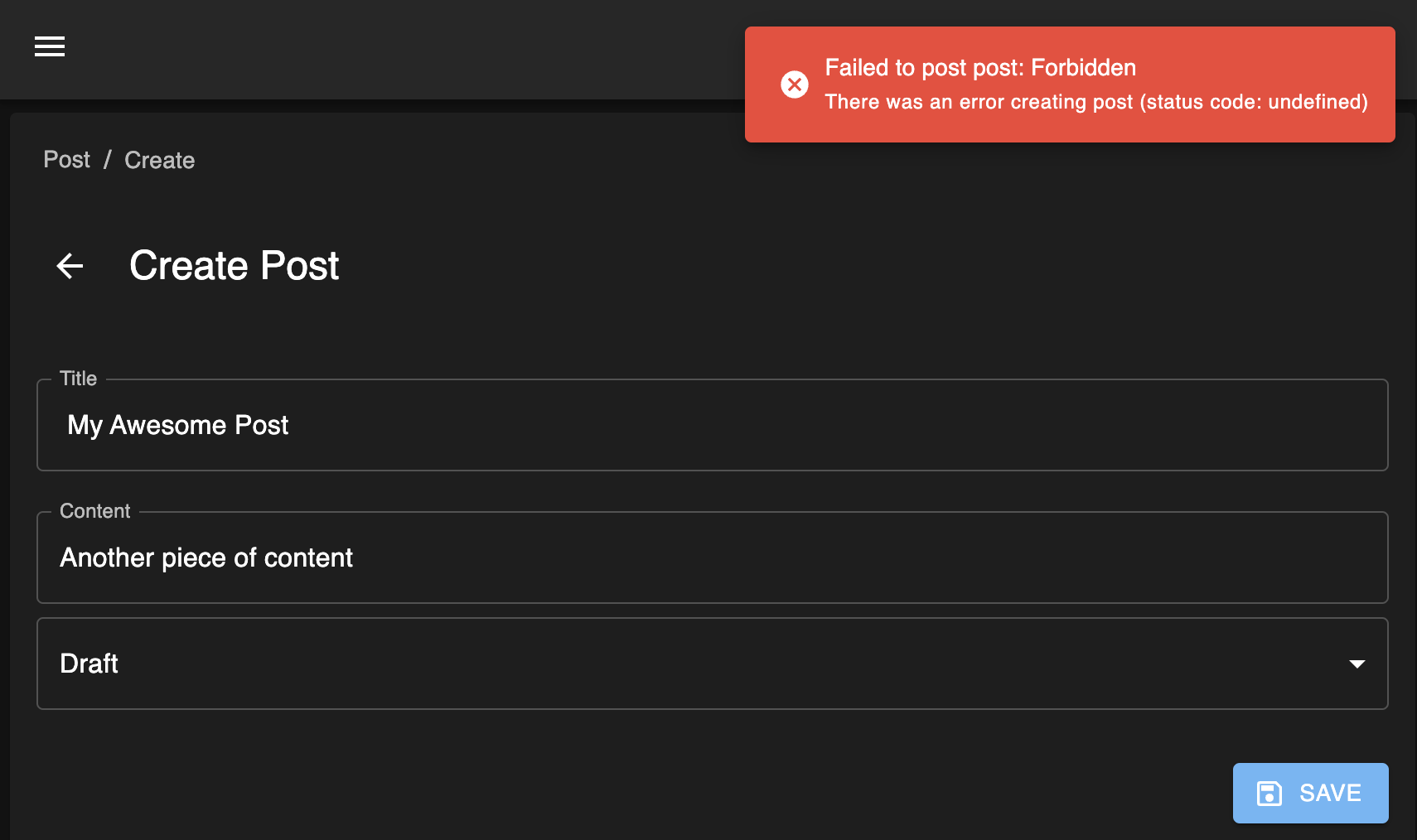

Now, if you try to create a new post with a "Reader" account, you'll see the following error:

The operation is denied correctly according to the rules. However, it's not an entirely user-friendly experience. It'd be nice to prevent the "Create" button from appearing in the first place. This can be achieved by combining two additional features from Refine and ZenStack:

- Refine allows you to implement an "Access Control Provider" to decide whether the current user has permission to perform an action.

- ZenStack's enhanced PrismaClient has an extra `check` API for inferring permission based on the policy rules. The `check` API is also available in the automatic CRUD API.

> ZenStack's `check` API doesn't query the database. It's based on logical inference from the policy rules. See more details [here](https://zenstack.dev/docs/guides/check-permission).

Let's see how these two pieces are put together. First, implement an `AccessControlProvider`:

```ts

// src/providers/access-control-provider/index.ts

export const accessControlProvider: AccessControlProvider = {

can: async ({ resource, action }: CanParams): Promise<CanReturnType> => {

if (action === 'create') {

// make a request to "/api/model/:resource/check?q={operation:'create'}"

let url = `/api/model/${resource}/check`;

url +=

'?q=' +

encodeURIComponent(

JSON.stringify({

operation: 'create',

})

);

const resp = await fetch(url);

if (!resp.ok) {

return { can: false };

} else {

const { data } = await resp.json();

return { can: data };

}

}

return { can: true };

},

options: {

buttons: {

enableAccessControl: true,

hideIfUnauthorized: false,

},

queryOptions: {},

},

};

```

Then, register the provider to the top-level `Refine` component:

```tsx

// src/app/layout.tsx

<Refine

accessControlProvider={ accessControlProvider }

...

/>

```

You'll immediately notice the difference that, with a "Reader" user, the "Create" button is grayed out and disabled.

However, you can still directly navigate to the "/blog-post/create" URL to access the create form. We can prevent that by using Refine's `CanAccess` component to guard it:

```tsx

// src/app/blog-post/create/page.tsx

<CanAccess

resource="post"

action="create"

fallback={<div>Not Allowed</div>}

>

<Create ... />

</CanAccess>

```

Mission accomplished! We've also done it elegantly without hard coding any permission logic in the UI. Everything about access control is still centralized in the ZModel schema.

## Conclusion

Refine.dev is a great tool for building complex UI without writing complex code. Combined with the superpowers of Prisma and ZenStack, we've now got a full-stack, low-code solution with excellent flexibility.

The completed sample project is here: [https://github.com/ymc9/refine-nextjs-zenstack](https://github.com/ymc9/refine-nextjs-zenstack).

---

We're building [ZenStack](https://github.com/zenstackhq/zenstack), a toolkit that supercharges Prisma ORM with a powerful access control layer and unleashes its full potential for full-stack development. If you enjoyed reading this and find the project interesting, please help star it so that more people can find it! | ymc9 |

1,866,643 | The Ultimate Checklist for Applying for an LLC in Texas Online | Starting your own business is an exciting venture, and forming a Limited Liability Company (LLC) can... | 0 | 2024-05-27T14:11:44 | https://dev.to/tom_ford_a41cfbc89a2ddf43/the-ultimate-checklist-for-applying-for-an-llc-in-texas-online-48dl | llc, finance, file | Starting your own business is an exciting venture, and forming a Limited Liability Company (LLC) can be a great way to structure your enterprise. If you're considering setting up an LLC in the Lone Star State, you’ll be pleased to know that the process is straightforward and can be done entirely online. Here’s your ultimate checklist for [how to apply for LLC in Texas online](https://truspanfinancial.com/set-up-an-llc/).

**Step 1: Choose Your LLC Name**

Your LLC’s name must be unique and distinguishable from other business entities registered with the Texas Secretary of State. To ensure your desired name is available, you can perform a name search on the Texas Secretary of State's website. The name must contain "Limited Liability Company" or an abbreviation such as "LLC."

**Step 2: Appoint a Registered Agent**

A registered agent is required for your Texas LLC. This person or business entity is responsible for accepting legal documents on behalf of the LLC. The registered agent must have a physical street address in Texas and be available during normal business hours. You can choose yourself, another individual, or a professional service as your registered agent.

**Step 3: File the Certificate of Formation**

To legally establish your LLC, you need to file Form 205 (Certificate of Formation) with the Texas Secretary of State. This form can be filed online through the SOSDirect website. Here’s what you’ll need to provide:

- The LLC’s name

- The duration of the LLC (perpetual or a specific end date)

- The registered agent’s name and address

- The management structure (member-managed or manager-managed)

- The names and addresses of the founding members or managers

The filing fee is $300, and you can pay by credit card, debit card, or through a pre-funded SOSDirect account.

**Step 4: Create an Operating Agreement**

Although not required by Texas law, having an operating agreement is highly recommended. This document details the ownership structure and operational procedures of the LLC. It helps prevent misunderstandings and disputes among members. The operating agreement should include:

- Member roles and responsibilities

- Voting rights and decision-making processes

- Profit and loss distribution

- Procedures for adding or removing members

- Dissolution procedures

**Step 5: Obtain an EIN**

An Employer Identification Number (EIN) is required for tax purposes and is needed to open a business bank account, hire employees, and file federal taxes. You can obtain an EIN for free from the IRS by applying online on the IRS website.

**Step 6: Register for Texas State Taxes**

Depending on the nature of your business, you may need to register for one or more Texas state taxes. Common registrations include:

- Sales tax permit

- Franchise tax

- Employer taxes if you have employees

You can register for these taxes online through the Texas Comptroller of Public Accounts website.

**Step 7: Open a Business Bank Account**

Separating your personal and business finances is crucial. Open a business bank account using your LLC’s EIN and Certificate of Formation. This step ensures that your business transactions are kept separate, which is important for liability protection and tax purposes.

**Step 8: Comply with Ongoing Requirements**

Once your LLC is formed, you must comply with ongoing requirements to maintain good standing. These include:

- Submitting annual reports and paying any related fees

- Keeping detailed records of your LLC’s activities and finances

- Adhering to any additional state or local licensing requirements

**Step 9: Obtain Required Permits and Licenses**

Depending on your business type and location, you may need various permits and licenses to operate legally in Texas. Check with local city and county offices, as well as relevant state agencies, to determine what is required.

**Conclusion**

Applying for an LLC in Texas online is a streamlined process that can set the foundation for your business success. By following this ultimate checklist, you ensure that all legal and administrative bases are covered, allowing you to focus on growing your business. Remember that you can [create your LLC online in Texas](https://truspanfinancial.com/set-up-an-llc/), which makes for a smooth and efficient start to your entrepreneurial journey.

| tom_ford_a41cfbc89a2ddf43 |

1,866,642 | Automation vs Manual Testing in Software | Automated testing and manual testing are two approaches used in software testing, each with its own... | 0 | 2024-05-27T14:11:26 | https://dev.to/akshara_chandran_0f2b21d7/automation-vs-manual-testing-in-software-566i | Automated testing and manual testing are two approaches used in software testing, each with its own advantages and disadvantages. Here's a breakdown of the key differences between them:

1. **Automated Testing:**

- **Definition**: Automated testing involves the use of tools and scripts to execute pre-defined test cases automatically without human intervention.

- **Advantages**:

- **Efficiency**: Automated tests can be run quickly and repeatedly, saving time compared to manual testing.

- **Consistency**: Automated tests execute the same steps and checks consistently, reducing the risk of human error.

- **Repeatability**: Automated tests can be easily repeated across different builds and environments, ensuring consistent results.

- **Regression Testing**: Automated tests are particularly useful for regression testing, where previous functionality is verified after code changes.

- **Disadvantages**:

- **Initial Setup**: Setting up automated tests requires time and effort, especially for complex systems.

- **Maintenance**: Automated tests require regular maintenance to keep them up-to-date with changes in the application.

- **Limited Scope**: Some aspects of testing, such as usability testing and exploratory testing, are difficult to automate.

- **Cost**: There may be initial costs associated with purchasing testing tools and resources.

2. **Manual Testing:**

- **Definition**: Manual testing involves testers executing test cases manually, following pre-defined steps and instructions.

- **Advantages**:

- **Flexibility**: Manual testing allows testers to adapt to changes and explore the application in ways that automated tests cannot.

- **Exploratory Testing**: Manual testing is well-suited for exploratory testing, where testers explore the application to uncover defects and usability issues.

- **Human Judgment**: Manual testers can apply human judgment and intuition to identify issues that may not be caught by automated tests.

- **Usability Testing**: Manual testing is effective for evaluating the user interface and overall user experience.

- **Disadvantages**:

- **Time-consuming**: Manual testing can be time-consuming, especially for repetitive or large-scale testing efforts.

- **Inconsistency**: Manual tests may produce inconsistent results due to human error or variability in tester skills.

- **Regression Testing**: Repeating manual tests for each build or release can be tedious and error-prone.

- **Resource Intensive**: Manual testing requires human testers, which can be costly and may not scale well for large projects.

In summary, automated testing offers efficiency, repeatability, and consistency but requires initial setup and ongoing maintenance. Manual testing offers flexibility, human judgment, and effectiveness for certain types of testing but can be time-consuming and resource-intensive. Often, a combination of both automated and manual testing is used to achieve comprehensive test coverage in software development projects. | akshara_chandran_0f2b21d7 | |

1,866,040 | Generics in Rust: little library for Bezier curves -- Part 2 | Some time ago, I decided to write a couple of posts about my experience with generic programming in... | 0 | 2024-05-27T14:10:20 | https://dev.to/iprosk/generics-in-rust-little-library-for-bezier-curves-part-2-2cpi | rust, generic, beginners, numeric | Some time ago, I decided to write a couple of posts about my experience with [generic programming](https://en.wikipedia.org/wiki/Generic_programming) in Rust. For me, when someone says generics, [C++ template metaprogramming](https://en.cppreference.com/w/cpp/language/template_metaprogramming) and [Alexander Stepanov](https://en.wikipedia.org/wiki/Alexander_Stepanov) immediately come to mind. Rust is different. So it was interesting to see what's going on out there, which motivated my original interest.

Learning by doing is the best way to get quick hands-on experience, so I decided to write a little generic numeric library for manipulating [Bezier curves](https://en.wikipedia.org/wiki/B%C3%A9zier_curve) (polynomials in the [Bernstein basis](https://en.wikipedia.org/wiki/Bernstein_polynomial)) that (i) uses static dispatch (and no heap allocation calls), and (ii) can be used with different types for specifying the Bezier control polygon: reals, rationals, complex numbers, and, in general, anything that implements standard vector-space operations.

What I [learned](https://dev.to/iprosk/experimenting-with-generics-in-rust-little-library-for-bezier-curves-part-1-4093) is that writing generic libraries in Rust is not a piece of cake. There are two major facts that contribute into this: (i) Rust's explicit safety-oriented type system, and (ii) the absence of decent support of generic constant expressions in stable Rust. So what I am writing about here is based on Rust Nightly.

So before we take of, there is a couple of previous posts

- [Generic constant expressions: a future bright side of nightly Rust](https://dev.to/iprosk/generic-constant-expressions-a-future-bright-side-of-nightly-rust-3bp7).

- [Experimenting with generics in Rust: little library for Bezier curves - part 1](https://dev.to/iprosk/experimenting-with-generics-in-rust-little-library-for-bezier-curves-part-1-4093).

And the [GitHub repo](https://github.com/sciprosk/bernstein) that contains examples from these posts, some tests, and maybe even some demos, if I manage to add them in the near future.

## First steps

First, make sure we use the nightly Rust toolchain. I will just set it as the default for all projects with `rustup default nightly`, but it is also possible to apply it to a single folder with `rustup override set nightly`. After that:

```
PS > rustc --version
rustc 1.80.0-nightly (1ba35e9bb 2024-05-25)
```

and we also make sure that we include the following lines in our crate

```
#![allow(incomplete_features)]
#![feature(generic_const_exprs)]
```

As I briefly outlined [here](https://dev.to/iprosk/generic-constant-expressions-a-future-bright-side-of-nightly-rust-3bp7), stable Rust currently does not support generic constant expressions in its type system. It is not that something is wrong with the idea in general; it is just not fully implemented yet due to [technical difficulties](https://hackmd.io/OZG_XiLFRs2Xmw5s39jRzA), so it is considered an unstable feature.

To describe a generic Bezier curve `c(u) = (x(u), y(u), z(u), ...)`, I basically wrap a primitive array type `[T; N]` into a struct

```
pub struct Bernstein<T, U, const N: usize> {
    coef: [T; N],
    segm: (U, U),
}
```

that contains a generic type parameter `T` for the Bezier control polygon, a type parameter `U` for the curve parametrization, and a const generic parameter `N` for the number of basis polynomials (or just the size of the Bezier control polygon). For example, a cubic Bezier curve should have four points in its control polygon, i.e. `N = 4`. The curve is always parameterized on `0 <= u <= 1`, so right now `segm` is defaulted to `(0, 1)`.

Some more details can be found in my [previous post](https://dev.to/iprosk/experimenting-with-generics-in-rust-little-library-for-bezier-curves-part-1-4093). In this post, I would like to discuss how to implement generic methods on this type that leverage generic constant expressions on the size of the control polygon `N`.

## Implementing eval-method

The first method to implement is, of course, `eval()`, which finds a point on the curve at some value of the parameter `u`, so that we can write something like this:

```
let p0 = Complex::new(0.0, 0.0);
let p1 = Complex::new(2.5, 1.0);
let p2 = Complex::new(-0.5, 1.0);
let p3 = Complex::new(2.0, 0.0);

// Define cubic Bezier curve in the complex plane.
let c: Bernstein<Complex<f32>, f32, 4> = Bernstein::new([p0, p1, p2, p3]);

let p = c.eval(0.5); // point on a curve of type Complex<f32>
```

This part is easy, and can be done even in stable Rust. I just use [De Casteljau's algorithm](https://en.wikipedia.org/wiki/De_Casteljau%27s_algorithm):

```
impl<T, U, const N: usize> Bernstein<T, U, N> where
    T: Copy + Add<T, Output = T> + Sub<T, Output = T> + Mul<U, Output = T>,
    U: Copy + Num,
{
    pub fn eval(&self, u: U) -> T {
        // -- snippet --
        // De Casteljau's algorithm
    }
}
```

The trait bounds are quite transparent. I require the `Copy` trait to make my life easier when manipulating mathematical expressions. Type `U` should be a number, which is expressed by the [`num::Num`](https://docs.rs/num/latest/num/trait.Num.html) trait bound. This is especially useful because `Num` requires the generic `Zero` and `One` traits, which provide methods such as `U::zero()` and `U::one()`. Type `T` is required to implement the vector-space operations of addition, subtraction, and right-hand-side multiplication by a value of type `U`, with the result being of type `T` (`Mul<U, Output = T>`).

## Implementing diff and integ methods

The next step is to implement generic `diff()` and `integ()` methods to find the parametric derivative of the Bezier curve `dc(u)/du` and the integral with respect to the parameter `u`. That's where generic constant expressions come into play.

The problem is that our methods should take an array of control points `[T; N]` of size `N` as input and return an array of size `N - 1` for `diff()` or `N + 1` for `integ()` as output, nicely wrapped into our custom `Bernstein` type, so that the function signatures look like this:

```
fn diff(&self) -> Bernstein<T, U, {N - 1}> {}

// c: T -- is the initial point to fix the constant of integration.
fn integ(&self, c: T) -> Bernstein<T, U, {N + 1}> {}
```

Stable Rust does not allow us to do that. With the `generic_const_exprs` feature, it becomes possible, as we shall see shortly.

Another difficulty is related to Rust's explicit type system. In these methods, the size of the array `N` becomes part of the mathematical expressions in the `diff()` and `integ()` algorithms. Rust requires the size of an array to be of the machine-dependent pointer-sized type `usize` (which totally makes sense, but it is not generic). Converting from `usize` to another type can be lossy, so Rust does not do it implicitly, and I have to rely on third-party traits for that purpose, such as the `num::FromPrimitive` trait from the `num` crate, which provides `from_usize()`. Otherwise, multiplying an expression of type, let's say, `f64` by `N` is not defined.

With this in mind, let's discuss the `diff()` method (implementing `integ()` is similar and can be found in the [repo](https://github.com/sciprosk/bernstein)):

```
use std::array;
use std::ops::{Add, Mul, Sub};

use num::{FromPrimitive, Num};

impl<T, U, const N: usize> Bernstein<T, U, N> where
    T: Copy + Add<T, Output = T> + Sub<T, Output = T> + Mul<U, Output = T>,
    U: Copy + Num + FromPrimitive,
{
    pub fn diff(&self) -> Bernstein<T, U, {N - 1}> where
        [(); N - 1]:
    {
        // Each new control point is the difference of two neighbours,
        // scaled by (N - 1) / (length of the parameter segment).
        let coef: [T; N - 1] = array::from_fn(
            |i| -> T {
                (self.coef[i + 1] - self.coef[i]) *
                (U::from_usize(N - 1).unwrap() / (self.segm.1 - self.segm.0))
            }
        );

        Bernstein {
            segm: self.segm,
            coef: coef,
        }
    }
}
```

Here, there are a couple of new details. First, I require type `U` to be bounded by the `FromPrimitive` trait, which allows converting from `usize` to `U` in generic code by calling `U::from_usize(N - 1).unwrap()`. Second, there is a new bound `[(); N - 1]:`, which is [required by the `generic_const_exprs` feature](https://hackmd.io/OZG_XiLFRs2Xmw5s39jRzA):

> We currently use where [(); expr]: as a way to add additional const wf bounds. Once we have started experimenting with this it is probably worth it to add a more intuitive way to add const wf bounds.

The bounds on type `T` are basically the same as in the `eval()` method.

Now we can get the derivative curve from the original curve, for example:

```
// Define cubic Bezier curve in the complex plane.
let c: Bernstein<Complex<f32>, f32, 4> = Bernstein::new([p0, p1, p2, p3]);

// Get the derivative, or hodograph curve at u = 0.2.
let d = c.diff().eval(0.2); // `d` is of type Complex<f32>
```

## Generic product of two polynomials

The next example is a product of two polynomials of order `N - 1` and `M - 1` (the sizes of the coefficient arrays are `N` and `M`, respectively). This is a little more involved, since it has to take the array `[T; N]` as input, multiply it by `[T; M]`, and produce an output of type `[T; N + M - 1]`. For example, multiplying a polynomial of the third order (`N = 4`) by a second-order polynomial (`M = 3`) should give a quintic polynomial (`N + M - 1 = 6` coefficients).

The implementation may look like this:

```
impl<T, U, const N: usize, const M: usize>
    Mul<Bernstein<T, U, {M}>> for Bernstein<T, U, {N}> where
    T: Copy + Add<Output = T> + Sub<Output = T> + Mul<Output = T> + Mul<U, Output = T>,
    U: Num + FromPrimitive,
    [(); N]:,
    [(); M]:,
    [(); N + M - 1]:
{
    type Output = Bernstein<T, U, {N + M - 1}>;

    fn mul(self, rhs: Bernstein<T, U, {M}>) -> Self::Output {
        let mut coef = [self.coef[0] - self.coef[0]; N + M - 1];

        // -- snippet --
        // actual algorithm

        Bernstein {
            coef: coef,
            segm: self.segm,
        }
    }
}
```

The required operation is specified by `Mul<Bernstein<T, U, {M}>>` in the `impl`, and the resulting type should be `type Output = Bernstein<T, U, {N + M - 1}>`.

Another subtle point is that I have to initialize the array in the body of `mul()` because Rust does not allow the use of uninitialized variables (remember plain old C89? Who cared about initializing all the variables). One way to do it is to put a trait bound on `T` that provides `T::zero()`, which can be used as the initial value. In this case, I chose a workaround instead (I may change it later), which is to require subtraction `Sub<Output = T>` and use `self.coef[0] - self.coef[0]` as a kind of generic zero.

Note that `generic_const_exprs` requires additional bounds to be imposed for each of the array types we use: `[(); N]:`, `[(); M]:`, and `[(); N + M - 1]:`.

Now, it is possible to write

```
let p: Bernstein<f64, f64, 3> = Bernstein::new([0.0, 2.0, 1.0]);
let q: Bernstein<f64, f64, 4> = Bernstein::new([1.0, 2.0, 0.0, 0.0]);

// Quintic polynomial with real coefficients
let c = p * q;
```

## Summary

Generic constant expressions in Rust give the flexibility to implement generic types whose size is known at compile time. So far, using unstable Rust nightly 1.80, I haven't noticed any issues with the `generic_const_exprs` feature. | iprosk |

1,866,639 | Objects vs. Data Structures | ✨ Objects vs. Data Structures ✨ Did you know that understanding the difference between objects and data structures... | 0 | 2024-05-27T14:08:48 | https://dev.to/jackienascimento/objetos-vs-estruturas-de-dados-codigo-limpo-capitulo-6-goj | codigolimpo, cleancode, desenvolvimentodesoftware | ✨ **Objects vs. Data Structures** ✨

Did you know that understanding the difference between objects and data structures can transform your code? Let's see what Robert C. Martin teaches us in chapter 6 of "Clean Code"! 👇

---

## Objects 🛠️

- **Encapsulation**: Objects hide data and expose behavior through methods.

- **Information hiding**: The main job of an object is to hide implementation details, exposing only what is necessary.

- **Interaction**: Objects interact with each other through methods, which promotes modularity and maintainability.

---

## Data Structures 🗄️

- **Data transparency**: Data structures are transparent and focus on exposing data directly.

- **Focus on representation**: They concentrate on representing and storing data in an accessible way.

---

## When to Use Each One? 🤔

- **Objects**:

  - Hiding complex implementations.

  - Guaranteeing data integrity.

- **Data structures**:

  - Making direct, simple access to data easy.

  - Manipulating data in algorithms.

---

## Practical Tips 📝

- **Abstraction principle**: Objects should expose high-level operations and hide the details.

- **Trade-offs**: Choose between objects and data structures depending on whether you need encapsulation or direct access to the data.

- **Design and maintenance**: Think about the future, and make the code easy to maintain and evolve.

---

🔗 **Read more in "Clean Code" and improve your programming skills!**

---

I hope you like the tip! See you next time! 🚀 | jackienascimento |

1,866,641 | FD | Hey there! I just wanted to share a quick message about the importance of monitoring our health. In... | 0 | 2024-05-27T14:05:56 | https://dev.to/dsfgsg34g/fd-51pg | Hey there! I just wanted to share a quick message about the importance of monitoring our health. In our fast-paced world, it's easy to ignore our physical well-being, but doing so can lead to bigger problems down the road. Simple habits like eating a balanced diet, staying active, and getting regular health screenings can go a long way. Make your health a priority and take those steps towards a healthier you. Remember, good health is priceless! [https://jacanawellness.com/shop/wellness/body-oil/](https://jacanawellness.com/shop/wellness/body-oil/)

| dsfgsg34g | |

1,866,640 | Meme Monday | Meme Monday! Today's cover image comes from last week's thread. DEV is an inclusive space! Humor in... | 0 | 2024-05-27T14:03:42 | https://dev.to/ben/meme-monday-4l95 | jokes, discuss, watercooler | **Meme Monday!**

Today's cover image comes from [last week's thread](https://dev.to/ben/meme-monday-ha7).

DEV is an inclusive space! Humor in poor taste will be downvoted by mods. | ben |

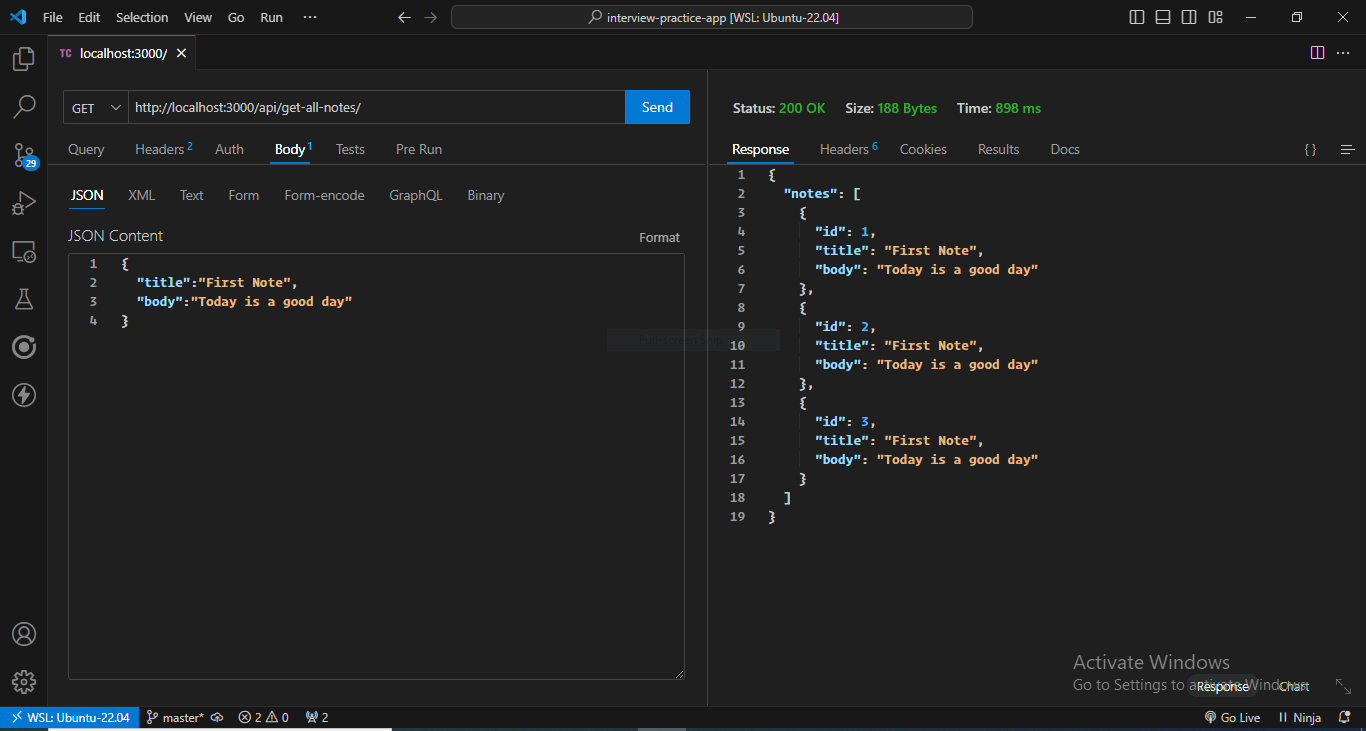

1,866,468 | Building a Scalable REST API with TypeScript, Express, Drizzle ORM, and Turso Database: A Step-by-Step Guide | Building REST APIs with Express.js is straightforward and a must-have skill for every web developer.... | 0 | 2024-05-27T13:57:44 | https://dev.to/ibrocodes/build-a-scalable-rest-api-with-typescript-express-drizzle-orm-and-turso-database-a-step-by-step-guide-2hnd | typescript, express, drizzle, turso | Building REST APIs with Express.js is straightforward and a must-have skill for every web developer. In this guide, we'll learn a step-by-step approach to building a REST API. We'll use the following technologies to build an API for a note-taking application:

- **TypeScript**: Literally JavaScript with static typing. It compiles down to JavaScript. Suitable for building large-scale JavaScript applications with confidence.

- **Express**: A minimalist, unopinionated Node.js framework for building server-side applications.

- **Drizzle**: An SQL Object-Relational Mapping (ORM) tool that makes it easy to interact with the database.

- **Turso**: A fast and scalable SQLite database technology for building production-ready applications. Easy to set up and has a generous free tier.

## Why Learn Express?

Using opinionated Node.js frameworks such as Nest.js and Sails.js is great, especially for large-scale applications. However, they're not ideal for beginners because they abstract away a lot of how the server actually works. In fact, Nest.js is an abstraction on top of Express.js.

Express.js, on the other hand, is unopinionated and allows for more flexibility and a better learning experience. It's lightweight and easy to get started with, especially if you're coming from frontend JavaScript. Moreover, understanding Express will give you the knowledge and confidence to pick up other Node.js backend frameworks.

## What the Guide Covers

We will cover the following topics in this article:

- How to set up a TypeScript Project with Express

- How Express middlewares work

- Setting up Turso database

- Connecting and interacting with Turso database using Drizzle ORM

- Creating a CRUD (Create, Read, Update, Delete) API

- How to validate and sanitize data using the third-party `express-validator` middlewares

- Testing an API with Thunder Client VS Code extension

## Prerequisites

To effortlessly follow this tutorial, you should have a basic understanding of the following:

- JavaScript (We will be using TypeScript, but JavaScript knowledge is enough to follow this guide)

- Node.js

- Structured Query Language (SQL)

- How a server works

Let's begin!

## Setting Up A TypeScript Project with Express

The first steps to building a REST API with Express.js and TypeScript are:

1. Generating and configuring `package.json`

2. Installing basic dependencies necessary to initially run our app

3. Generating and configuring `tsconfig.json`

### 1. Generating and configuring `package.json` file

```bash

npm init -y

```

This command generates a `package.json` file in the root directory.

Next, update the file to look like this:

```json
{
  "name": "article",
  "version": "1.0.0",
  "main": "dist/index.js",
  "type": "module",
  "scripts": {
    "dev": "node --loader=ts-node/esm --env-file=.env --watch src/index.ts",
    "build": "npx tsc",
    "start": "node --env-file=.env dist/index.js"
  },
  ...
}
```

The `main` option in the above `package.json` file points to the destination of the JavaScript file after compilation. The `dist/index.js` file will be generated (usually for production) when you execute the `build` script command by running `npm run build` on the terminal. The `start` command is used to start the server in production.

The `dev` script command is used to run the application in development mode. It is made up of the following parts:

- `node`: Uses the Node runtime to execute the code.

- `--loader=ts-node/esm`: Uses the `ts-node` package (we'll install it later) to compile our code to JavaScript during development.

- `--env-file=.env`: Tells Node where the `.env` file is located.

- `--watch`: Reruns our code whenever we make an update, enabling automatic reloading during development.

### 2. Installing starter dependencies

We'll install some packages needed to kickstart our application. Aside from `typescript` and `express`, we will install the following packages:

- `ts-node`: A package that compiles our TypeScript code to JavaScript during development, allowing us to run our application without the need for explicit compilation.

- `@types/express`: A package that provides type definitions for Express.js, helping TypeScript to recognize and understand the types and interfaces of Express.js, enabling better code completion, error reporting, and overall development experience.

```bash

npm i express; npm i -D typescript ts-node @types/express

```

The `-D` flag, short for `--save-dev`, indicates that the packages being installed are development dependencies, meaning they are only required for development purposes and will not be needed in production. By using the `-D` flag, we are telling npm to include these packages in the `devDependencies` section of our `package.json` file, rather than the `dependencies` section, which is used for production dependencies.

### 3. Generating and configuring `tsconfig.json`

The following command generates the `tsconfig.json` file in the root directory:

```bash

npx tsc --init

```

The generated `tsconfig.json` file will contain many commented-out TypeScript compiler options. Uncomment the following options and update them accordingly:

```json
{
  "compilerOptions": {
    ....
    "module": "NodeNext",
    "rootDir": "./src",
    "moduleResolution": "NodeNext",
    "outDir": "./dist",
    ....
  }
}
```

### Recommended starter file structure for the project

**Create the necessary folders and files**

Your file structure should look like this:

```
├── .env
├── .gitignore
├── package-lock.json
├── package.json
├── src
│   ├── db
│   ├── handlers
│   ├── routes
│   ├── lib
│   ├── middleware
│   ├── index.ts
│   └── server.ts
└── tsconfig.json
```

## Express Middleware

We'll use middleware functions throughout the project. So, let's take some time to understand them. If you're already familiar with Express middleware, you can skip this section.

### Without middleware

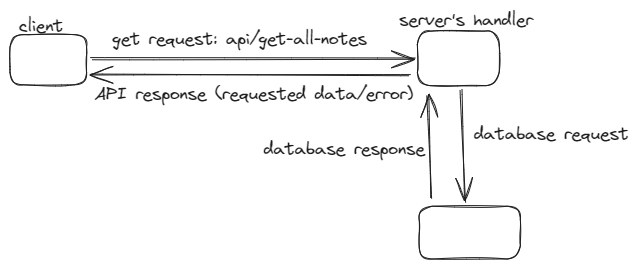

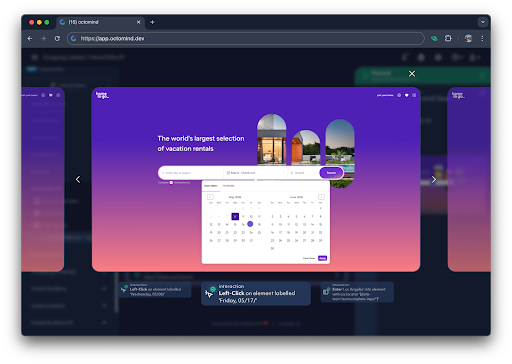

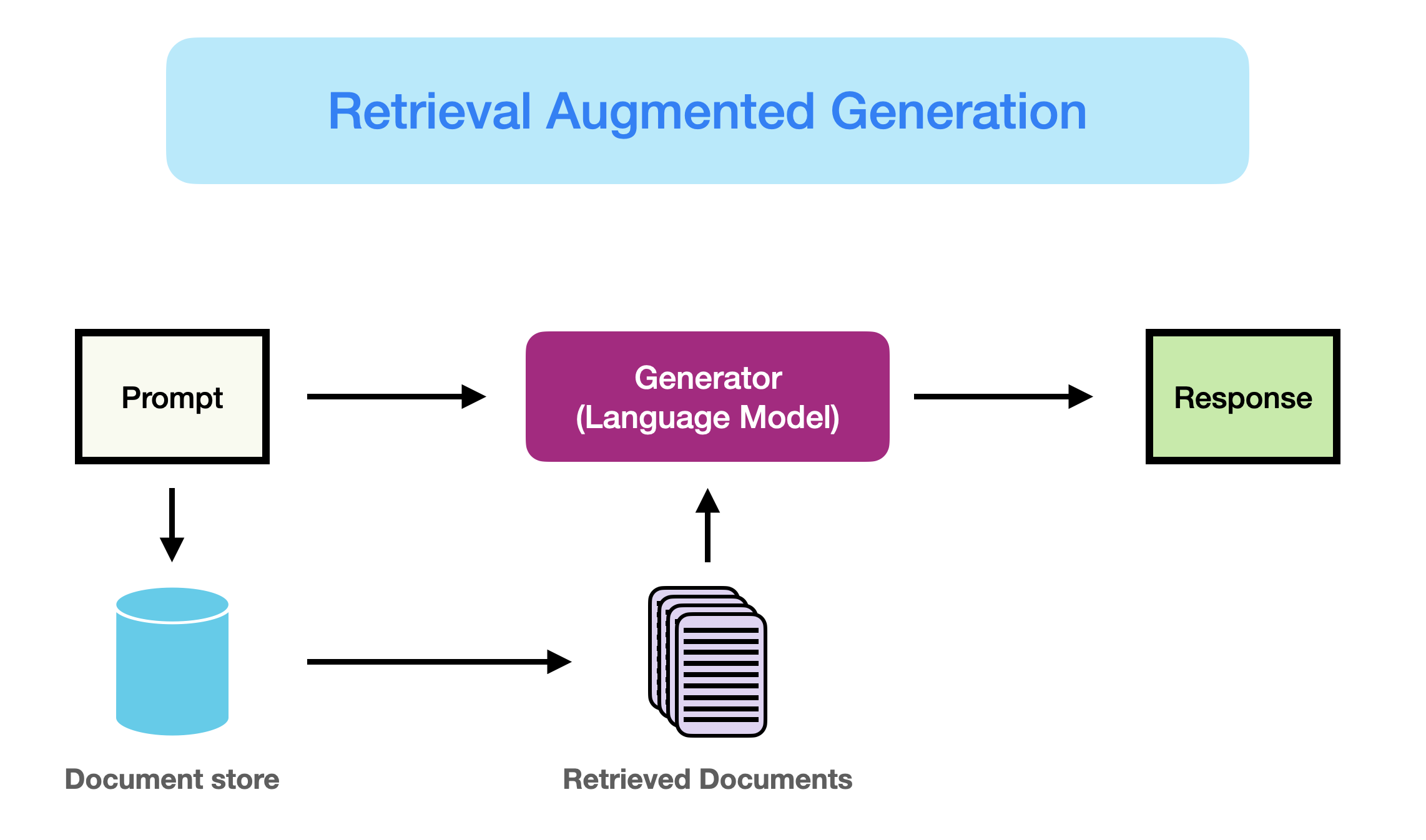

To best illustrate this concept, I made a little sketch using [excalidraw](https://excalidraw.com/):

Normally, this is how a client will communicate with a server. In this case, the client requests all the notes in the database. The server makes a request to the database and, depending on the database's response, returns the requested resources or an error.

This approach keeps the server open for all kinds of requests. As API developers, we want to ensure that the client is requesting the right way and is not a malicious actor. So, we introduce middleware into the equation:

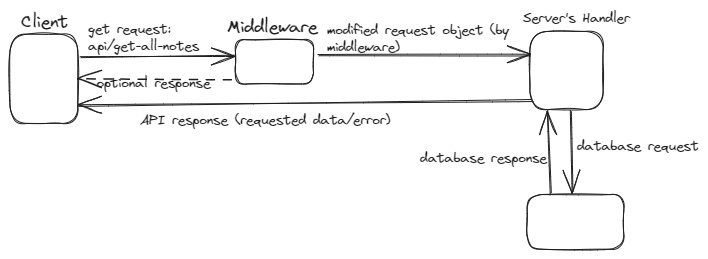

### With middleware

The middleware sits between the client's request and the server's handler function. Whenever the server receives a request from the client, the request has to pass through the middleware and gets scrutinized in the process. In the above sketch, the middleware modified the request object. Express middleware can do more, including the following:

- Execute any code

- Terminate the request

- Make changes to the request or response object

- Detect errors

- Authentication and authorization

### How to define an express middleware

```typescript
import express, { Request, Response, NextFunction } from "express"

const app = express()

const logRequestMethod = (req: Request, res: Response, next: NextFunction) => {
  console.log(req.method)
  next()
}

const logHostname = (req: Request, res: Response, next: NextFunction) => {
  console.log(req.hostname)
  next()
}
```

`logRequestMethod` and `logHostname` are middlewares responsible for logging the request's HTTP method and hostname respectively. Typically, an Express middleware has three arguments:

- request

- response

- next

If we don't call the `next()` function, respond back to the client, or terminate the request, the server will hang in the middleware. The `next()` function transfers control to the next middleware or handler.
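To see why a forgotten `next()` call leaves a request hanging, here is a minimal, dependency-free sketch of a middleware chain. The `runChain` helper and the `Req` and `Middleware` types are hypothetical stand-ins made up for this illustration; Express's real dispatcher is more involved, so treat this as a mental model only.

```typescript
// Simplified stand-ins for Express's request object and middleware signature.
type Req = { method: string; log: string[] };
type Middleware = (req: Req, next: () => void) => void;

// Runs the stack in order. The chain only advances when a middleware calls next().
// Returns true if control reached the end of the stack.
function runChain(req: Req, stack: Middleware[]): boolean {
  let index = 0;
  let finished = false;
  const next = (): void => {
    const current = stack[index++];
    if (current === undefined) {
      finished = true; // nothing left to run
      return;
    }
    current(req, next);
  };
  next();
  return finished;
}

const logMethod: Middleware = (req, next) => {
  req.log.push(`method: ${req.method}`);
  next(); // hand control to the next middleware
};

const forgetsNext: Middleware = (req) => {
  req.log.push("stuck here"); // never calls next(), so the chain stops
};

const lastStep: Middleware = (req, next) => {
  req.log.push("last step");
  next();
};

const okReq: Req = { method: "GET", log: [] };
const completed = runChain(okReq, [logMethod, lastStep]);

const stuckReq: Req = { method: "GET", log: [] };
const stalled = runChain(stuckReq, [logMethod, forgetsNext, lastStep]);
```

In the second run, `lastStep` never executes because `forgetsNext` neither calls `next()` nor responds, which is the "hanging" situation described above.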

### How to use an express middleware

Basically, there are two ways of using an Express middleware:

- On an application level: An application-level middleware executes whenever a request reaches the server and has not been terminated by another middleware before it. We can register application-level middleware using `app.use(middlewareFunction)`. For example, let's use the above `logRequestMethod` on the app level:

```typescript

app.use(logRequestMethod)

```

- Route-specific middleware: We can restrict a middleware to a particular route. This type of middleware will only execute when a request is made to that particular route.

Note that whether we're using middleware on the app or route level, we can pass one or more of them, separated by commas. For instance, let's use `logRequestMethod` and `logHostname` only when the client makes a request to the `/about` path:

```typescript
app.get("/about", logRequestMethod, logHostname, (req, res) => {})
```

`app.use()` can also take a path as its first argument, which restricts the middleware to that path: `app.use("/about", logRequestMethod)`.

### Error middleware

Whenever a client request results in an unhandled error, Express.js has a default error middleware that sends the error message back to the client in HTML format. There are two common ways an error can go unhandled:

- Not wrapping a code block that may result in an error in a try-catch

- The client sending a request to a route that doesn't exist

We would create the following middlewares and use them on the application level to handle errors:

- A custom error middleware that handles all errors and sends back an error response in JSON format to the client.

- A not-found middleware that gets triggered whenever the client requests resources from a route that doesn't exist. This not-found middleware will forward an error message to the custom error middleware, which will send back an appropriate JSON response to the client.

### Setting up the Error Middleware

As mentioned earlier, the `next()` function triggers a jump from the current middleware in execution to the next middleware or handler. However, if we pass an argument to the `next()` function, it jumps to the next error middleware on the stack. If we haven't defined a custom error middleware, it transfers control to Express's default error middleware.

Typically, you want to pass an Error object to the `next()` function. The issue with the built-in `Error` class is that it is built around a single piece of information, the error message string. However, we want our error object to carry both an error message and a status code, so that we can send the appropriate error response back to the client.

Let's create a child class of the error class and make it receive an extra `statusCode` argument. So, within the `lib` folder, create a `custom-error.ts` file and insert the following lines of code:

**`src/lib/custom-error.ts`**

```typescript
export class CustomError extends Error {
  // `message` is inherited from Error, so only the extra field is declared here.
  statusCode: number;

  constructor(message: string, statusCode: number) {
    super(message);
    this.statusCode = statusCode;
  }
}
```

Our `CustomError` class can form error objects with two arguments — the message and status code. We'll use `CustomError` throughout our code to forward errors that happen in our server to the error middleware we'll create in a moment.
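As a quick illustration of what the extra `statusCode` buys us, the sketch below repeats the class so it is self-contained and adds a hypothetical `statusFor` helper (not part of the project) that picks an HTTP status for any thrown value. It assumes a compile target of ES2015 or later, where `instanceof` works for subclasses of `Error`.

```typescript
// Same shape as the CustomError class above, repeated so this sketch runs on its own.
class CustomError extends Error {
  statusCode: number;

  constructor(message: string, statusCode: number) {
    super(message);
    this.statusCode = statusCode;
  }
}

// Hypothetical helper: use the carried status code when there is one,
// and fall back to 500 for any other kind of error.
function statusFor(err: unknown): number {
  return err instanceof CustomError ? err.statusCode : 500;
}

const notFoundError = new CustomError("Note not found", 404);
const plainError = new Error("Something broke");

const notFoundStatus = statusFor(notFoundError);
const plainStatus = statusFor(plainError);
```

A plain `Error` gives the error middleware nothing to choose a status from, which is why the handlers in this guide forward `CustomError` objects instead.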

Next, we'll create an error middleware that will receive the error object and form a proper JSON response for the client. Unlike the traditional middleware functions, error middleware takes four arguments — `error`, `request`, `response`, `next`.

Within the `middleware` folder, create `error.ts` file. Within, paste the following lines of code:

**`src/middleware/error.ts`**

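```typescript
import { Request, Response, NextFunction } from "express";
import { CustomError } from "../lib/custom-error.ts";

// Express recognizes error middleware by its four parameters,
// so `next` stays in the signature even though it is unused.
export function error(
  err: CustomError,
  req: Request,
  res: Response,
  next: NextFunction
) {
  // Errors that are not CustomError instances carry no statusCode; fall back to 500.
  const statusCode = err.statusCode || 500;
  try {
    // The message may be a JSON string, e.g. a list of validation errors.
    const msg = JSON.parse(err.message);
    res.status(statusCode).json({ msg });
  } catch (error) {
    // Otherwise, send the plain-text message as-is.
    res.status(statusCode).json({ msg: err.message });
  }
}
```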

The `err` argument is the same error that is passed into the `next()` function from some middleware or handler. The error middleware can handle both JSON strings and text strings.

By handling both JSON and text strings, our error middleware can flexibly accommodate different types of error messages, making it more robust and adaptable to various scenarios.
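The JSON-or-text fallback can be tried in isolation. The sketch below pulls the same `try`/`catch` idea into a hypothetical `parseErrorMessage` function (the name and the sample validation payload are assumptions for illustration, not part of the project).

```typescript
// Mirrors the try/catch in the error middleware: try JSON first, fall back to plain text.
function parseErrorMessage(message: string): unknown {
  try {
    return JSON.parse(message);
  } catch {
    return message; // not valid JSON, so keep the original text
  }
}

// A plain-text message comes back unchanged.
const plainResult = parseErrorMessage("Route not found");

// A JSON-encoded message comes back as structured data.
const structuredResult = parseErrorMessage(
  JSON.stringify([{ field: "title", msg: "Title is required" }])
);
```

One thing to keep in mind with this approach: a message such as `"404"` or `"true"` is also valid JSON, so it would be parsed into a number or boolean rather than kept as text.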

### Setting up not-found middleware

We need to create a middleware that handles requests made to routes that do not exist. This is straightforward. Express executes every middleware in the stack unless some middleware terminates the request. So, we'll simply create a middleware that forwards an error message to our error middleware and place it at the tail end of the middleware stack. At that position, it only gets hit when our Express app has scanned through all the routes we have defined and doesn't find any that matches the one the client is requesting.

Inside the middleware folder, create a `not-found.ts` file and paste the following lines of code:

**`src/middleware/not-found.ts`**

```typescript
import { Response, Request, NextFunction } from "express";
import { CustomError } from "../lib/custom-error.ts";

export function notFound(req: Request, res: Response, next: NextFunction) {
  return next(new CustomError("Route not found", 404));
}
```

This not-found middleware creates a `CustomError` object with a 404 status code and passes it to the next error middleware using the `next()` function.

### Setting up `server.ts` file

Let's set up the application `server.ts` file and implement the two middlewares we just created. Within the `src` folder, create the `server.ts` file:

**`src/server.ts`**

```typescript
import express, { urlencoded, json } from "express";
import { notFound } from "./middleware/not-found.ts";
import { error } from "./middleware/error.ts";

const app = express();

app.use(urlencoded({ extended: true }));
app.use(json());

// ... other middlewares and routes ...

app.use(notFound);
app.use(error);

export default app;
```

The order of middleware matters in Express, and it's important to place the error middleware last, as it will catch any errors that occur in the previous middlewares or handlers. The not-found middleware should be placed just above the error middleware, as it will handle any requests that don't match any of the previous routes.
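To make the ordering rule concrete, here is a small dependency-free model of a middleware stack. The `Layer` type and `dispatch` function are hypothetical simplifications: each layer either answers the request or passes, and the first answer wins.

```typescript
// Each layer either answers the request (returns a string) or passes (returns undefined).
type Layer = (path: string) => string | undefined;

const notesRoute: Layer = (path) => (path === "/notes" ? "200 notes" : undefined);
const aboutRoute: Layer = (path) => (path === "/about" ? "200 about" : undefined);
const notFoundLayer: Layer = () => "404 Route not found"; // answers every request

// Walk the stack in registration order and stop at the first layer that answers.
function dispatch(stack: Layer[], path: string): string {
  for (const layer of stack) {
    const result = layer(path);
    if (result !== undefined) return result;
  }
  return "no response";
}

// Not-found layer registered last: real routes get a chance first.
const correctOrder: Layer[] = [notesRoute, aboutRoute, notFoundLayer];

// Not-found layer registered first: it shadows every route behind it.
const wrongOrder: Layer[] = [notFoundLayer, notesRoute, aboutRoute];
```

With `correctOrder`, `/notes` is served and only unknown paths fall through to the 404 layer; with `wrongOrder`, even `/notes` gets a 404.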

The `urlencoded({ extended: true })` and `json()` middlewares are built-in Express middleware functions that parse requests with urlencoded and JSON payloads, respectively. They should be placed early in the middleware stack, as they need to parse the request body before any other middlewares or routes can handle the request.

## Setting up the Turso Database and Drizzle ORM

In this section, we’ll learn how to set up and use Turso database and Drizzle ORM in the API.

### How to set up a Turso database

To use Turso database, you need the connection URL and authentication token. Follow these steps to obtain the credentials:

1. Go to the [Turso website](https://turso.tech/)

2. Click on the `Sign Up` button

3. Sign up with either Gmail or Github

4. Choose a username

5. Skip the "About you" form

6. Click on `Create Database`

7. Name your database (e.g., *express-api-project*)

8. Click on `Create Database`

9. Scroll down and click on `Continue to Dashboard`

10. In the dashboard's side menu, click on `Databases`

11. Click on the name of the database you created (e.g., *express-api-project*) at the base of the page

12. Scroll down, copy the URL, and click on the `Generate Token` button

13. Leave everything as it is and click on `Generate Token`

14. Copy the token

15. Paste the URL and token in your `.env` file as follows:

```

TURSO_AUTH_TOKEN=paste-token-here

TURSO_CONNECTION_URL=paste-url-here

PORT=3000

```

### Installing necessary packages for our database

```bash

npm i @libsql/client drizzle-orm; npm i -D drizzle-kit

```

### Create the Drizzle configuration file

After installing the necessary packages, create a `drizzle.config.ts` file in the root directory and paste the following configuration options:

**`drizzle.config.ts`**

```typescript
import { defineConfig } from "drizzle-kit";

export default defineConfig({
  schema: "./src/db/schema.ts",
  out: "./src/db/migrations",
  driver: "turso",
  dbCredentials: {
    // The `!` tells TypeScript these variables are set in the environment.
    url: process.env.TURSO_CONNECTION_URL!,
    authToken: process.env.TURSO_AUTH_TOKEN!,
  },
  dialect: "sqlite",
  verbose: true,
  strict: true,
});
```

This file contains the configuration for Drizzle ORM, including the location of our schema and migrations. The `verbose: true` property prints out every action executed when making changes to the database. The `strict: true` property adds a safety check by asking for confirmation before any changes are applied to the database.

### Connect Turso database with Drizzle

Next, create the `db.ts` file in the `db` folder and paste the following lines of code:

**`src/db/db.ts`**

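```typescript
import { drizzle } from "drizzle-orm/libsql";
import { createClient } from "@libsql/client";

// Credentials come from the .env file loaded via `--env-file=.env`.
// The `!` tells TypeScript these variables are defined at runtime.
const client = createClient({
  url: process.env.TURSO_CONNECTION_URL!,
  authToken: process.env.TURSO_AUTH_TOKEN!,
});

export const db = drizzle(client);
```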

The `drizzle(client)` function establishes a connection between the database client and Drizzle ORM, enabling the use of the ORM’s capabilities to interact with the database.

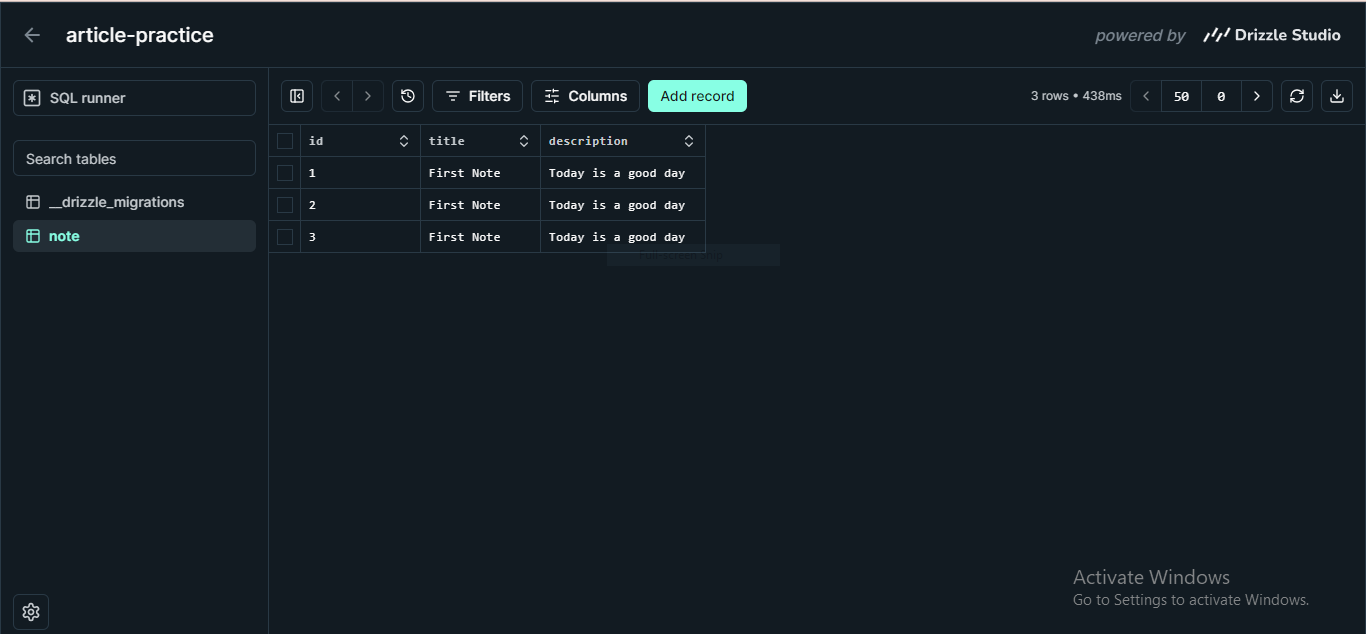

## Create Notes Schema and Migrations

Now that we have set up our database, let's create the notes schema, generate migrations, and apply the migrations to the Turso database.

### Creating the notes schema

Let's define our schema file for the notes app project. In the `db` folder, create a new file named `schema.ts` and add the following code:

**`src/db/schema.ts`**

```typescript
import { sqliteTable, integer, text } from "drizzle-orm/sqlite-core";

export const NotesTable = sqliteTable("note", {
  id: integer("id").primaryKey(),
  title: text("title").notNull(),
  body: text("body"),
});
```

This is similar to a `CREATE TABLE` statement in SQL. It creates a table with the following columns:

- `id`: A primary key that auto-increments, uniquely identifying each note

- `title`: A text column that must not be null, representing the title of the note

- `body`: A text column that can be null, representing the body or content of the note

### Generating migrations

Next, we'll generate migrations using the following command in your terminal:

```bash

npx drizzle-kit generate

```

This command will create our database migrations in the `"/src/db/migrations"` directory, as specified in the `drizzle.config.ts` file.

### Apply migrations

Next, apply the generated migrations to the Turso database by running the following command in your terminal:

```bash

npx drizzle-kit migrate

```

## Creating Notes Router in a Modular Way

When building an API using Express, we can place all our routes directly on the Express app:

```typescript
import express from "express";

const app = express();

// GET request
app.get("/", handlerFunction1);

// POST request
app.post("/add-something", handlerFunction2);
```

This approach is fine for small and demo applications. In large-scale projects, however, it can lead to a cluttered and hard-to-maintain codebase. Let’s see a better way.

### Express Router function explained

Express.js provides the `Router()` function, which creates a router object. This object acts as a middleware, but with the added capability to attach HTTP methods (such as GET, POST, PUT, and DELETE) to it. This allows for modular and organized routing.

Here's an example of creating a simple router:

```typescript
import { Router } from "express";

// Defining the router
const routerObject = Router();

routerObject.get("/get-something", (req, res) => {});
routerObject.post("/post-something", (req, res) => {});
routerObject.delete("/delete-something", (req, res) => {});

// Using the router in our app
app.use("/our-api", routerObject);
```

When a request URL starts with `/our-api`, the `routerObject` will be invoked, and the appropriate handler will be executed based on the HTTP method and the remaining part of the URL. For instance, if the request URL is `/our-api/get-something`, the GET handler attached to the `/get-something` route will be executed.
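The prefix-then-remainder matching described above can be modeled in a few lines. This is a deliberately simplified sketch and not how Express is implemented internally; `MiniRouter` and `dispatch` are hypothetical names used only to illustrate the idea.

```typescript
type Handler = (path: string) => string;

class MiniRouter {
  private routes = new Map<string, Handler>();

  // Register a handler for an HTTP method and a path relative to the mount point.
  on(method: string, path: string, handler: Handler): void {
    this.routes.set(`${method} ${path}`, handler);
  }

  // Look up a handler using the path that remains after the prefix is stripped.
  handle(method: string, path: string): string | undefined {
    const handler = this.routes.get(`${method} ${path}`);
    return handler ? handler(path) : undefined;
  }
}

// Mimics app.use(prefix, router): check the prefix, strip it, then delegate.
function dispatch(prefix: string, router: MiniRouter, method: string, url: string): string | undefined {
  if (url !== prefix && !url.startsWith(prefix + "/")) return undefined;
  const rest = url.slice(prefix.length) || "/";
  return router.handle(method, rest);
}

const router = new MiniRouter();
router.on("GET", "/get-something", () => "got something");

const hit = dispatch("/our-api", router, "GET", "/our-api/get-something");
const wrongMethod = dispatch("/our-api", router, "POST", "/our-api/get-something");
const wrongPrefix = dispatch("/other", router, "GET", "/our-api/get-something");
```

Only the first call finds a handler: the second uses a method with no registered route, and the third never reaches the router because the prefix does not match.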

### Creating the `notesRouter` object

The notes API will have the following endpoints:

| API Endpoint | Method | Description |
| --- | --- | --- |
| /add-note | POST | Add new note |
| /get-note/:id | GET | Get note with id |
| /get-all-notes | GET | Get all notes |
| /update-note/:id | PUT | Update note with id |
| /delete-note/:id | DELETE | Delete note with id |

Within the `routes` folder, create a `notes.ts` file and insert the following lines of code:

**`src/routes/notes.ts`**

```typescript
import { Router } from "express";
import { addNote, deleteNote, getAllNotes, getNote, updateNote } from "../handlers/notes.ts";

const notesRouter = Router();

notesRouter.get("/get-note/:id", getNote);
notesRouter.get("/get-all-notes", getAllNotes);
notesRouter.post("/add-note", addNote);
notesRouter.put("/update-note/:id", updateNote);
notesRouter.delete("/delete-note/:id", deleteNote);

export default notesRouter;
```

Now that our `notesRouter` object is ready, let’s hook it to our app in our `server.ts` file:

**`src/server.ts`**

```typescript
import express, { urlencoded, json } from "express";
import { notFound } from "./middleware/not-found.ts";
import { error } from "./middleware/error.ts";
import notesRouter from "./routes/notes.ts";

const app = express();

// Parse URL-encoded and JSON request bodies
app.use(urlencoded({ extended: true }));
app.use(json());

// Mount the notes routes under /api
app.use("/api", notesRouter);

// Fallbacks: unknown routes first, then the error handler
app.use(notFound);
app.use(error);

export default app;
```

The URL to request any notes resource must start with `/api`. As we've seen, Express Router makes our application easier to manage and scale.

Next, we'll define our handlers, including `addNote` and `getNote`. Although we've imported them, we haven't created them yet.

## Creating the Notes Handlers

Handlers are technically middlewares. Just like middleware functions, they have three arguments — `request`, `response`, and `next`. Typically, they respond to the client or send an error message to the error middleware using the `next()` function.

More importantly, we define the logic to interact with the database in the handlers. This is where Drizzle shines. Within the `handlers` folder, create a new file named `notes.ts` and add the following code:

**`src/handlers/notes.ts`**

```typescript

import { eq } from "drizzle-orm";

import { db } from "../db/db.ts";

import { NotesTable } from "../db/schema.ts";

import { Response, Request, NextFunction } from "express";

import { CustomError } from "../lib/custom-error.ts";

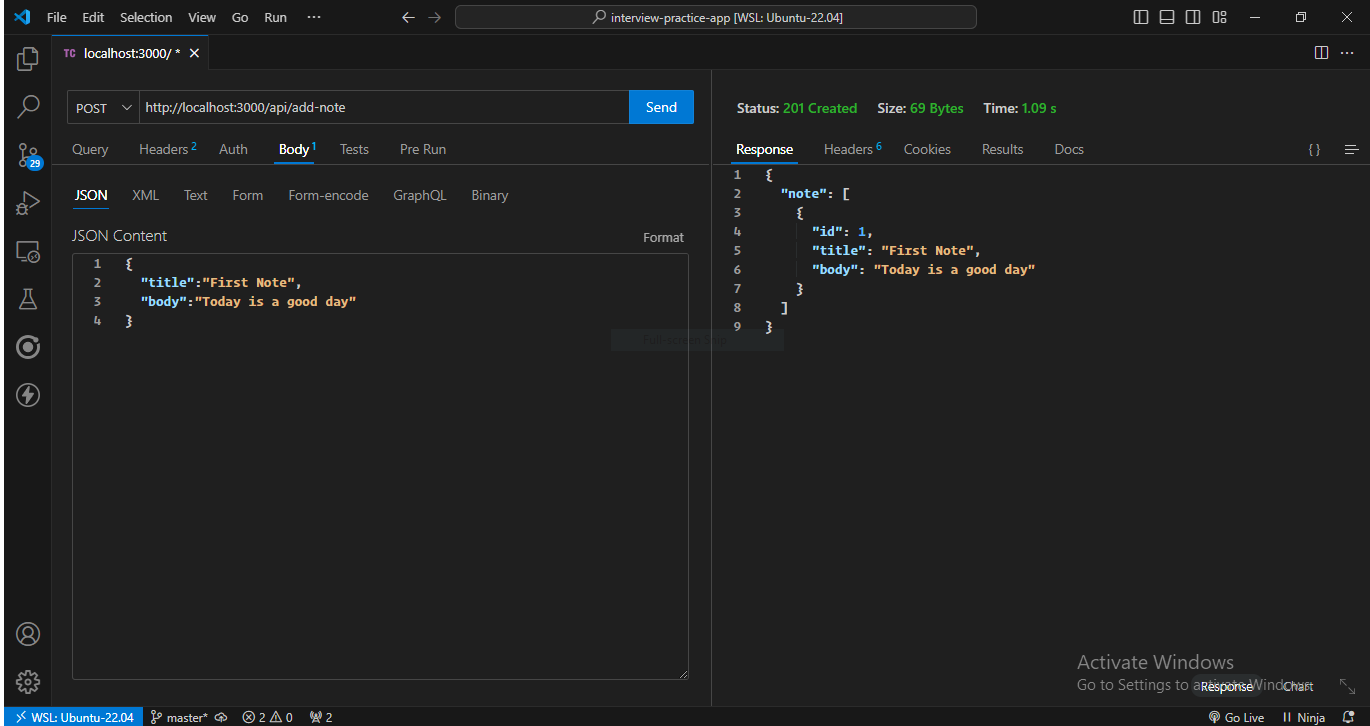

export async function addNote(req: Request, res: Response, next: NextFunction) {

try {

const note = await db.insert(NotesTable).values(req.body).returning();

res.status(201).json({ note });

} catch (error) {

next(new CustomError("Failed to add note", 500));

}

}

export async function getAllNotes(req: Request,res: Response, next: NextFunction) {

try {

const notes = await db.select().from(NotesTable);

res.status(200).json({ notes });

} catch (error) {

next(new CustomError("Failed to fetch notes", 500));

}

}

export async function getNote(req: Request, res: Response, next: NextFunction) {

try {

const note = await db

.select()

.from(NotesTable)

.where(eq(NotesTable.id, +req.params.id));

res.status(200).json({ note });

} catch (error) {

next(new CustomError("Failed to fetch note", 500));

}

}

export async function deleteNote(req: Request, res: Response, next: NextFunction) {

try {

const note = await db

.delete(NotesTable)

.where(eq(NotesTable.id, +req.params.id))

.returning({

deletedNoteId: NotesTable.id,

});

res.status(200).json({ note });

} catch (error) {

next(new CustomError("Failed to delete note", 500));

}

}

export async function updateNote(req: Request, res: Response, next: NextFunction) {

try {

const note = await db

.update(NotesTable)

.set(req.body)

.where(eq(NotesTable.id, +req.params.id))

.returning();

res.status(201).json({ note });

} catch (error) {

next(new CustomError("Failed to update note", 500));

}

}

```

Similar to SQL, Drizzle provides methods like `insert`, `select`, `update`, `delete`, `set`, `where`, and more to interact with the database. The `eq` function builds an equality condition, here matching the `id` column against the id from the route parameter. The `returning` method at the end of the `insert`, `update`, and `delete` queries specifies what Drizzle should return after successful execution. When called without arguments, it returns all columns of the affected rows. Drizzle offers a wide range of functions to help you achieve your API goals, and they are well-documented in the [official documentation](https://orm.drizzle.team/docs/overview).

## Validating and Sanitizing Data from Client’s Request

Our app is still missing a crucial component. Consider a scenario where we expect a numerical value in the request body but receive a string instead, or anticipate an email address but receive plain text. Even worse, a malicious actor might attempt to inject code into our database to steal or compromise stored information. We must prevent these scenarios from occurring, and one way to do so is to thoroughly examine client data before it reaches the handler.

We could write custom middlewares to validate and sanitize client data, but a more efficient approach is to utilize the battle-tested [express-validator](https://express-validator.github.io/docs) npm package. This package provides a set of middlewares that enable us to inspect client data and ensure it meets our requirements, making our app more secure and robust.

### Installing `express-validator`

```bash

npm i express-validator

```

### Defining `express-validator` middlewares

Within the `src/lib` folder, create the `validator-functions.ts` file and include the following lines of code:

**`src/lib/validator-functions.ts`**

```typescript
import { body, param } from "express-validator";

// Validating and sanitizing title from request body
export function validateNoteTitle() {
  return body("title").notEmpty().isString().trim().escape();
}

// Validating and sanitizing body from request body
export function validateNoteBody() {
  return body("body").notEmpty().isString().trim().escape();
}

// Validating id from route parameter
export function validateIdParam() {
  return param("id").toInt().isInt();
}
```

To validate individual values from the client request, we utilize method chaining, where the output of the previous method becomes the input for the current method. For the `title` and `body` fields, we employ the following methods:

- `notEmpty()`: Validates that the value is not empty

- `isString()`: Validates that the value is a string

- `trim()`: A sanitizer that removes whitespace from both ends of the value, if present

- `escape()`: A sanitizer that replaces special characters, such as `<` and `>`, with HTML entities, which helps guard against Cross-Site Scripting (XSS) attacks when the stored data is later rendered in a browser

For a comprehensive list of validators and sanitizers, along with their uses, refer to the [validator.js](https://github.com/validatorjs/validator.js) GitHub repository.
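To see what the `trim()` and `escape()` sanitizers do to a value, here is a simplified stand-in written by hand. It is only an approximation for illustration: `sanitizeText` is a hypothetical name, and the real `escape()` in validator.js handles a few more characters than the five covered here.

```typescript
// Simplified stand-in for .trim().escape(); not the library's implementation.
const HTML_ENTITIES: Record<string, string> = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#x27;",
};

function sanitizeText(input: string): string {
  // Strip surrounding whitespace, then replace each special character with its entity.
  return input.trim().replace(/[&<>"']/g, (ch) => HTML_ENTITIES[ch] ?? ch);
}

const cleaned = sanitizeText("  <b>Tom & Jerry</b>  ");
// cleaned is "&lt;b&gt;Tom &amp; Jerry&lt;/b&gt;" in this simplified version
```

The markup can no longer be interpreted as HTML, which is the property the sanitizer chain is meant to provide.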

### Implementing `express-validator` middleware functions

Now that we have written the validator functions, we can use them in our routes. Let’s update our `src/routes/notes.ts` file:

**`src/routes/notes.ts`**

```typescript
import { Router } from "express";
import { addNote, deleteNote, getAllNotes, getNote, updateNote } from "../handlers/notes.ts";
import { validateIdParam, validateNoteBody, validateNoteTitle } from "../lib/validator-functions.ts";

const notesRouter = Router();

notesRouter.get("/get-note/:id", validateIdParam(), getNote);
notesRouter.get("/get-all-notes", getAllNotes);
notesRouter.post("/add-note", validateNoteBody(), validateNoteTitle(), addNote);
notesRouter.put("/update-note/:id", validateIdParam(), validateNoteBody(), validateNoteTitle(), updateNote);
notesRouter.delete("/delete-note/:id", validateIdParam(), deleteNote);

export default notesRouter;
```

When an `express-validator` middleware encounters a validation error, it doesn't terminate the request immediately. Instead, it collects the errors and passes control to the next middleware or handler in the chain. This allows for more flexibility in handling validation errors.
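The collect-then-decide flow can be modeled without any library. The sketch below is a simplified illustration, not the package's implementation: in `express-validator` the collected errors are read with `validationResult(req)`, whereas here a plain `errors` array on a fake request plays that role, and all names (`FakeRequest`, `requireString`, `rejectIfInvalid`, `run`) are hypothetical.

```typescript
type FakeRequest = { body: Record<string, unknown>; errors: string[] };

// A middleware returns a response string to end the chain, or undefined to continue.
type Middleware = (req: FakeRequest) => string | undefined;

// A validator records a problem but never ends the chain itself.
function requireString(field: string): Middleware {
  return (req) => {
    const value = req.body[field];
    if (typeof value !== "string" || value.trim() === "") {
      req.errors.push(`${field} must be a non-empty string`);
    }
    return undefined;
  };
}

// A later middleware decides what to do with the collected errors.
const rejectIfInvalid: Middleware = (req) =>
  req.errors.length > 0 ? `400: ${req.errors.join("; ")}` : undefined;

const addNoteHandler: Middleware = () => "201: note created";

// Run each step in order until one of them produces a response.
function run(chain: Middleware[], body: Record<string, unknown>): string | undefined {
  const req: FakeRequest = { body, errors: [] };
  for (const step of chain) {
    const response = step(req);
    if (response !== undefined) return response;
  }
  return undefined;
}

const chain = [requireString("title"), requireString("body"), rejectIfInvalid, addNoteHandler];
const bad = run(chain, { title: "" });
const good = run(chain, { title: "Groceries", body: "Milk, eggs" });
```

With the invalid payload both validators run and both errors are reported together; the handler is only reached when the error list is empty.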