id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,866,330 | External CSS to Inline CSS Converter | Tired of manually converting external CSS styles to Inline CSS? Introducing the Inline CSS Converter... | 0 | 2024-05-27T09:17:23 | https://dev.to/foxinfotech/external-css-to-inline-css-converter-32k5 | css, tooling, showdev, webdev | **Tired of manually converting external CSS styles to Inline CSS?** Introducing the [Inline CSS Converter Tool](https://webutility.io/external-css-to-inline-css-converter) - your web dev sidekick!

This tool makes converting external CSS to inline styles a BREEZE. Simply input your CSS and HTML, click "Convert CSS", and BOOM! Your HTML code now rocks inline styles. No more tedious manual work!

##Try now: [External CSS to Inline CSS](https://webutility.io/external-css-to-inline-css-converter)

###Key Features:

👉 Side-by-Side Input Columns: Easily input CSS and HTML for a clear workflow.

👉 Real-Time Conversion: Watch the magic happen as your CSS instantly converts to inline styles!

👉 Formatted Output: The converted HTML is neatly displayed, ready to copy and use.

👉 Copy to Clipboard: With one click, copy the converted code to paste anywhere.

👉 Intuitive Design: User-friendly interface for a smooth experience on any device.

Whether you're a web dev pro or just starting, this tool is a game-changer. Say goodbye to manual CSS conversion and hello to boosted productivity!

Try the [External CSS to Inline CSS Converter Tool](https://webutility.io/external-css-to-inline-css-converter) today and streamline your workflow like a boss!

(No data stored or transmitted - your code stays confidential in your browser.) | foxinfotech |

1,866,329 | KINGDOM77 - Website Game Online Terbaik | KINGDOM77: Portal gaming favorit para gamer yang mencari pengalaman bermain game online dengan... | 0 | 2024-05-27T09:15:19 | https://dev.to/kingdom77/kingdom77-website-game-online-terbaik-5gin | webdev, javascript, beginners, programming | [KINGDOM77](https://hartakingdom.com): Portal gaming favorit para gamer yang mencari pengalaman bermain game online dengan kualitas premium dan berbagai fitur menarik, memastikan setiap sesi bermain selalu penuh dengan kesenangan.

| kingdom77 |

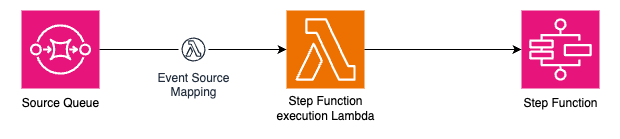

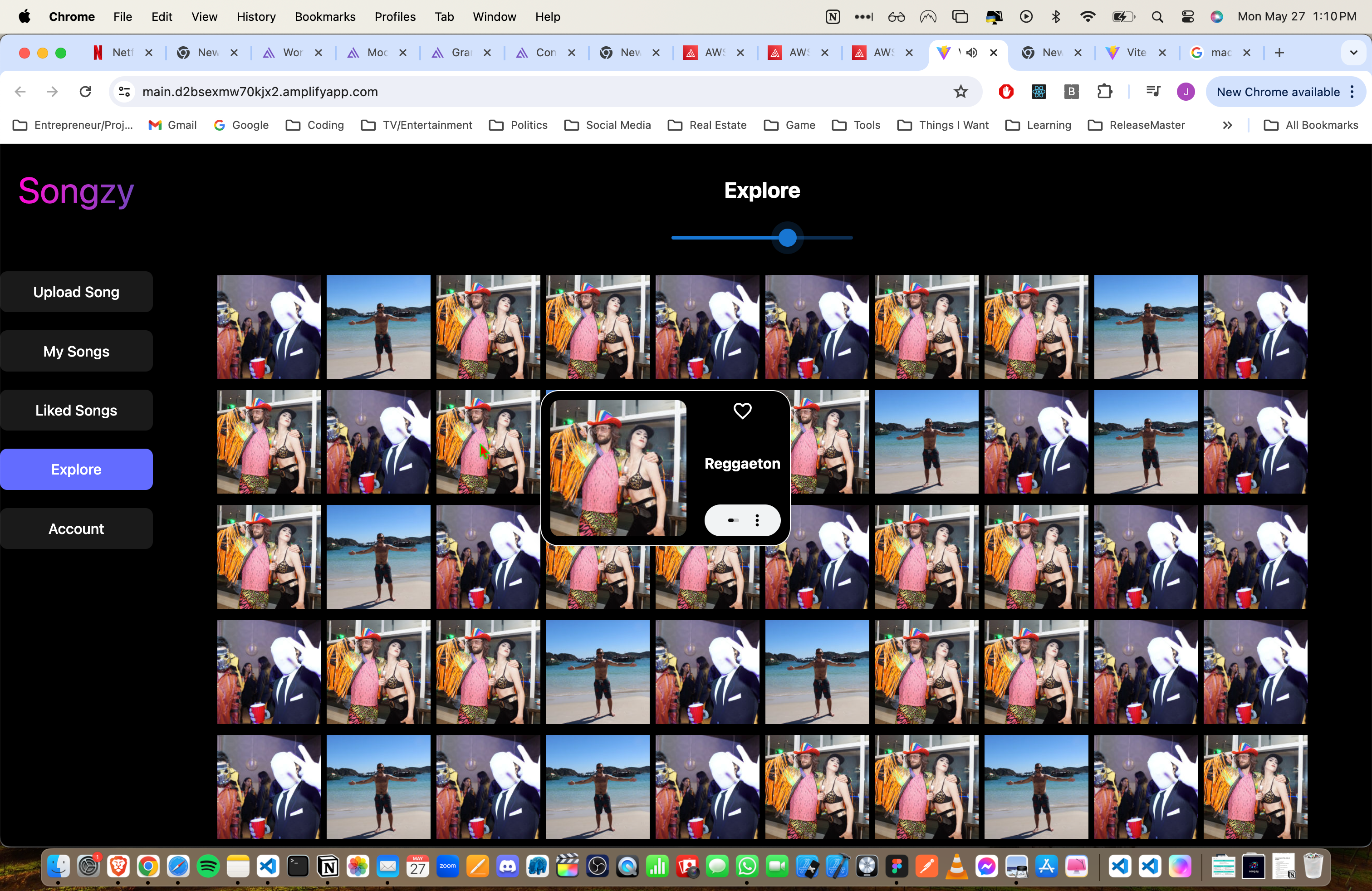

1,866,328 | A Deep Dive into a Video Rendering Pipeline | Hi everyone! My name is Igor Samokhovets and I'm a music producer who goes by the artist name Tequila... | 0 | 2024-05-27T09:14:49 | https://www.inngest.com/blog/banger | webdev, startup, javascript, tutorial | Hi everyone! My name is [Igor Samokhovets](https://twitter.com/IgorSamokhovets) and I'm a music producer who goes by the artist name Tequila Funk. In this blog post I will walk you through our video rendering pipeline built with Inngest, which powers [banger.show](https://banger.show/).

[banger.show](https://banger.show/) is a video maker app for musicians, DJs, and labels, which I built with Mark Beziaev. It allows music industry people to create stunning visual assets for their music.

{% embed https://www.youtube.com/watch?v=eSLKm6IfZM4 %}

Creating a video for your new song takes only a few minutes and you don't need to install or learn any complex software because banger.show works in your browser!

## Making background processing snappy

At banger.show, we do a lot of background processing. Managing render states, generating visual assets in the background, and even handling the rendering process on remote distributed workers.

We chose Inngest because there's no better way to handle background jobs if you're using Next.js **without a custom server**. **It's a primary "flow controller" for us**, even though we have a simple queue solution based on Redis to handle tasks on our infra. It allows us to orchestrate, observe, and abstract away lower-level processes, for example:

- We don't have to delegate every post-render task to the video rendering machine, but have some flow where the main server can receive render results from the workers and do something with it.

- We can observe each [step](/docs/reference/functions/step-run) and its return data in the dashboard.

- We can handle emergency cases when all of our infra goes down and we need to spin up some backup workers on AWS.

Before we dive in, let's take a high-level look at our video rendering pipeline:

- First, the app receives an uploaded project from the user.

- Next, it converts the audio for the more efficient rendering.

- The project is then sent to the render machine, which controls the progress, updates statuses, and handles error retries and "stalled render" cases.

- Finally, when the render is finished, a number of tasks need to run, such as invalidating CDN cache, creating video thumbnails, or sending email to the user when the video is ready.

Let's now dive into some parts of the video rendering pipeline.

### 1. Updating the render status

The first step in the render pipeline is to update the render status and set user credits on hold. Here we are using the default Inngest [concurrency](/docs/guides/concurrency).

```js

export const renderVideo = inngest.createFunction(

{

name: 'Render video',

id: 'render-video',

cancelOn: [

{

event: 'banger/video.create',

match: 'data.videoId'

}

],

},

{ event: 'banger/video.create' },

async ({ event, step, attempt, logger }) => {

const updatedVideo = await step.run('update-user-balance', async () => {

await dbConnect()

const render = await VideoModel.findOneAndUpdate(

{ _id: videoId },

{ $set: { renderProgress: 0, renderTime: 0, status: 'pending' } },

{ new: true }

)

.populate('user')

.lean()

invariant(video, 'no render found')

// Simplified

await UserModel.updateOne(

{ _id: video.user._id },

{ $inc: { unitsRemaining: -video.videoDuration } }

)

return video

})

})

```

### 2. Cropping the audio file

In banger.show, the user selects a fragment of the song to create a "videoization" for it. In the background job, we:

- Crop audio file based on the user selection.

- Convert the file to mp3 format for disk space efficiency and optimal compatibility.

Let's see it in code.

```js

const croppedMp3Url = await step.run(

'trim-audio-and-convert-to-mp3',

async () => {

// create temporary file

const tempFilePath = `${os.tmpdir()}/${videoId}.mp3`

await execa(`ffmpeg`, [

'-i',

updatedVideo.audioFileURL, // ffmpeg will grab input from URL

'-map',

'0:a',

'-map_metadata',

'-1',

'-ab',

'320k',

'-f',

'aac',

'-ss',

String(updatedVideo.regionStartTime), // start time

'-to',

String(updatedVideo.regionEndTime), // end time

tempFilePath

])

const croppedAudioS3Key = await getAudioFileKey(videoId)

// upload mp3 to file storage

const mp3URL = await uploadFile({

Key: croppedAudioS3Key,

Body: fs.createReadStream(tempFilePath)

})

// remove temp file

await unlink(tempFilePath)

await dbConnect()

await VideoModel.updateOne(

{ _id: videoId },

{ $set: { croppedAudioFileURL: mp3URL } }

)

return mp3URL

}

)

```

### 3. Rendering the video

The next step is to render the video using remote workers with beefy CPUs and GPUs.

We have a sub-queue that communicates with our own infrastructure. We send a job to the queue while Inngest allows us to wait until the job is done and handles new progress events.

```js

const { videoFileURL, renderTime } = await step.run(

'render-video-to-s3',

async () => {

const outKey = await getVideoOutKey(videoId)

const userBundle = bundles.find((p) => p.key === updatedVideo.user.bundle)

if (!userBundle) {

throw new NonRetriableError('no bundle assigned to user')

}

await dbConnect()

const video = await VideoModel.findOne({

_id: videoId

}).populate('user')

if (!video) {

throw new NonRetriableError('no video found')

}

// attempt is provided by Inngest.

// if video fails to render from the first attempt, we will pick different worker

const renderer = await determineRenderer(video, attempt)

// CRF of the video based on user bundle

const constantRateFactor = determineRemotionConstantRateFactor(

video.user.bundle

)

const renderPriority = await determineQueuePriority(video.user.bundle)

logger.info(

`Rendering Remotion video with renderer ${renderer} and crf ${constantRateFactor}`

)

const renderedVideo = await renderVideo({

videoId: videoId,

priority: renderPriority,

renderOptions: {

crf: constantRateFactor,

concurrency: determineRemotionConcurrency(video),

...(video.hdr && {

colorSpace: 'bt2020-ncl'

})

},

inputPropsOverride: {

...video.videoSettings,

videoFormat: video.videoFormat

},

renderer,

audioURL: croppedMp3Url,

startTime: 0,

endTime: video.videoDuration,

outKey,

onProgress: async (progress) => {

await VideoModel.updateOne(

{

_id: videoId

},

{ $set: { renderProgress: progress, status: 'processing' } }

)

}

})

return renderedVideo

}

)

```

### 4. Invalidate CDN cache

There are some cases when video needs to be re-rendered. We host our videos on a CDN, but some times, the video needs to be re-rendered. To make sure CDN cache is always fresh, we purge it each time the video renders.

```js

await step.run('create-invalidation-on-CloudFront', async () => {

try {

const { pathname: videoPathnameToInvalidate } = new URL(videoFileURL)

return await invalidateCloudFrontPaths([

videoPathnameToInvalidate,

`/thumbnails/${videoId}.jpg`,

`/thumbnails/${videoId}-square.jpg`

])

} catch (error) {

sendTelegramLog(`Invalidation failed for ${videoId}: ${error.message}`)

return `Invalidation failed, skipping: ${error.message}`

}

})

```

### 5. Updating video status to "ready"

After video is successfully rendered and we have obtained a URL, we set video status to "ready" and update `renderTime`.

```js

await step.run('update-video-status-to-ready', () =>

Promise.all([

VideoModel.updateOne(

{ _id: videoId },

{

$set: {

status: 'ready',

videoFileURL

},

$inc: {

renderTime

}

}

)

])

)

```

### 6. Creating a video thumbnail

Finally, we also want to create a thumbnail for each video to show it in listings or use as a video posters.

```js

await step.run('generate-thumbnail-and-upload-to-s3', async () => {

const thumbnailFilePath = `${os.tmpdir()}/${videoId}-thumbnail.jpg`

await execa(`ffmpeg`, [

'-i',

videoFileURL, // ffmpeg will grab input from URL

'-vf',

'thumbnail=300',

'-frames:v', // only one frame

'1',

thumbnailFilePath

])

const thumbnailFileURL = await uploadFile({

Key: `thumbnails/${videoId}.jpg`,

Body: fs.createReadStream(thumbnailFilePath)

})

await dbConnect()

await VideoModel.updateOne(

{ _id: videoId },

{ $set: { thumbnailURL: thumbnailFileURL } }

)

await unlink(thumbnailFilePath)

})

```

### 7. Handling failures

We set a graceful flow termination strategy in the [`onFailure`](/docs/reference/functions/handling-failures) function (please keep in mind that it's simplified for this blog post).

```js

export const renderVideo = inngest.createFunction(

{

name: 'Render video',

id: 'render-video',

cancelOn: [

{

event: 'banger/video.create',

match: 'data.videoId'

}

],

onFailure: async ({ error, event, step }) => {

await dbConnect()

const isStalled = RenderStalledError.isRenderStalledError(error)

const updatedVideo = await step.run(

'Update video status to failed',

() =>

VideoModel.findOneAndUpdate(

{ _id: event.data.event.data.videoId },

{

$set: {

status: isStalled ? 'stalled' : 'error',

...(isStalled && { stalledAt: new Date() }),

renderProgress: null

}

},

{ new: true }

)

.lean()

)

invariant(updatedVideo, 'no video found')

// refund user units if error is not recoverable

// if it's stalled, we're going to recover it later

if (!isStalled) {

await step.run('Refund user units', async () => {

await UserModel.updateOne(

{

_id: event.data.event.data.userId

},

{ $inc: { unitsRemaining: updatedVideo.videoDuration } }

)

})

}

if (process.env.NODE_ENV === 'production') {

const errorJson = _.truncate(JSON.stringify(event), {

length: 3000

})

await sendTelegramLog(

_.truncate(

`🚨 Error while rendering video: ${error.message}\n

Event: ${errorJson}\n`,

{ length: 3000 }

)

)

}

Sentry.captureException(error)

}

},

{ event: 'banger/video.create' },

async ({ event, step, attempt, logger }) => {

// ...

})

```

## Why I chose Inngest

Inngest makes the difficult parts easy.

For example, there's no simpler way to put it: I find the [`steps`](/docs/reference/functions/step-run) concept mindblowing. I wished something like this was available back in 2019, when I was just starting out with BullMQ, Agenda.js, and other solutions. It's a really sweet abstraction. I also enjoy observability, so I can track each step and function run in one dashboard. | samohovets |

1,866,327 | Errores Comunes de Pylint. Guía Práctica | Pylint es una herramienta de análisis estático de código que se utiliza para encontrar errores y... | 0 | 2024-05-27T09:10:20 | https://dev.to/gfouz/errores-comunes-de-pylint-guia-practica-2ko | Pylint es una herramienta de análisis estático de código que se utiliza para encontrar errores y mejorar la calidad del código Python. Sin embargo, enfrentarse a los errores que reporta puede ser desalentador, especialmente para los desarrolladores novatos. Aquí exploramos algunos de los errores más comunes que lanza Pylint, cómo resolverlos y cómo podemos beneficiarnos de estas correcciones para escribir un código más limpio y eficiente.

1- C0103: Variable name "x" doesn't conform to snake_case naming style

Error:

Este error se produce cuando el nombre de una variable no sigue la convención de nombres en Python, que es usar snake_case.

Ejemplo de código que lanza el error:

```py

userName = "John Doe"

```

Solución:

Renombrar la variable para que siga la convención snake_case.

```py

user_name = "John Doe"

```

2- E1101: Instance of 'class' has no 'member' member

Error:

Pylint no puede encontrar el atributo especificado en la instancia de la clase. Esto suele ocurrir cuando se intenta acceder a un atributo que no existe.

Ejemplo de código que lanza el error:

```py

class Person:

def __init__(self, name):

self.name = name

p = Person("Alice")

print(p.age)

```

Solución:

Asegurarse de que el atributo exista en la clase.

```py

class Person:

def __init__(self, name, age=None):

self.name = name

self.age = age

p = Person("Alice", 30)

print(p.age)

```

3- R0913: Too many arguments (6/5)

Error:

Esta advertencia surge cuando una función o método tiene demasiados parámetros, lo cual puede hacer que el código sea difícil de mantener y leer.

Ejemplo de código que lanza el error:

```py

def create_user(username, password, email, first_name, last_name, age):

pass

```

Solución:

Reducir el número de parámetros, por ejemplo, utilizando un diccionario o una clase.

```py

class User:

def __init__(self, username, password, email, first_name, last_name, age):

self.username = username

self.password = password

self.email = email

self.first_name = first_name

self.last_name = last_name

self.age = age

def create_user(user):

pass

user_info = User("johndoe", "12345", "john@example.com", "John", "Doe", 30)

create_user(user_info)

```

4- W0611: Unused 'import'

Error:

Este error aparece cuando hay una importación en el código que no se utiliza, lo que puede añadir desorden innecesario.

Ejemplo de código que lanza el error:

```py

import os

def greet(name):

return f"Hello, {name}"

```

Solución:

Eliminar las importaciones no utilizadas.

```py

def greet(name):

return f"Hello, {name}"

```

5- C0301: Line too long (82/80)

Error:

Este error se produce cuando una línea de código supera la longitud máxima recomendada de 80 caracteres.

Ejemplo de código que lanza el error:

```py

def greet(name): return f"Hello, {name}. Welcome to the platform. We hope you have a great experience here!"

```

Solución:

Dividir la línea larga en varias líneas más cortas.

```py

def greet(name):

return (f"Hello, {name}. Welcome to the platform. "

"We hope you have a great experience here!")

```

Conclusión:

Abordar y resolver los errores que Pylint destaca puede parecer un desafío, pero es un paso crucial hacia la mejora continua y la profesionalización de nuestro código. Cada corrección que hacemos nos acerca a un código más limpio, más eficiente y más fácil de mantener. Acepta los mensajes de Pylint como oportunidades de aprendizaje y crecimiento profesional.

Para más detalles, puedes consultar la documentación oficial de Pylint que proporciona una guía exhaustiva sobre cómo interpretar y resolver los mensajes de error.

Mantén la motivación y recuerda: escribir un buen código no es solo una habilidad técnica, sino también un arte que se perfecciona con práctica y perseverancia. ¡Sigue adelante y convierte cada error en un aprendizaje!

| gfouz | |

1,866,326 | The evolution of social media platform | The evolution of social media platform Table of contents Introduction Early Days (1990s - early... | 0 | 2024-05-27T09:07:10 | https://dev.to/swahilipotdevs/the-evolution-of-social-media-platform-455i |

The evolution of social media platform

Table of contents

- Introduction

- Early Days (1990s - early 2000s)

- Rise of Blogging and Early Social Networks (2000-2005)

- Dominance of Facebook and Expansion (2004-2010)

- The Smartphone Era (2007 - 2015):

- Diversification and Mobile Era (2010-2015)

- The Era Of Visual Content (2010-2020)

- Current Trends and Future Directions

- Positive impacts of social media platforms

- Negative impacts of social media platforms

- Challenges of social media platforms

- References

Introduction

Social media platforms are online services or websites which allow users to create and share content, interact with others, and be part of virtual communities. Examples include Facebook, Twitter, Instagram, Tiktok, LinkedIn, Snapchat, Pinterest, Reddit, Tumblr and YouTube.

The evolution of social media platforms has changed the way we connect, communicate and collaborate.

This evolution has been brought about by technology advancement, previous limitations and the ever growing user needs.

In this article we will cover the evolution of the social media platforms from early years to the present.

Early Days (1990s - early 2000s)

The foundations of social media were laid in the late 1990s and early 2000s with the emergence of platforms like Six Degrees, Friendster, and LiveJournal. These early platforms focused on building online communities and allowing users to create personal profiles, connect with friends, and share content:

BBS and Usenet: Bulletin Board Systems (BBS) and Usenet groups were early forms of online communities, allowing users to post messages and share files.

Six Degrees (1997): Often considered the first recognizable social media site, it allowed users to create profiles and friend others.

Rise of Blogging and Early Social Networks (2000-2005)

- LiveJournal (1999): A platform for journaling and blogging, emphasizing community building.

https://www.livejournal.com

- Friendster (2002): Focused on connecting friends and meeting new people, though it struggled with technical issues.

https://friendster.com

- MySpace (2003): Became hugely popular for its customizable profiles and music sharing, attracting a large youth demographic.

https://myspace.com

- LinkedIn (2003): Targeted professional networking, helping users to establish business connections.

https://www.linkedin.com

Dominance and Expansion of social media platforms (2004-2010)

- Facebook (2004): Initially launched by Harvard student Mark Zuckerberg gaining popularity to college students. It is later expanded to the general public in 2006 and quickly became the dominant social network due to its user-friendly interface and wide range of features.

- Monthly active users: 2.96 billion, Daily active users: 2 billion.

https://www.facebook.com

- YouTube (2005): Revolutionized video sharing, becoming a platform for user-generated content and a new form of social media.

- Monthly active users: 2.6 billion, Daily active users: 122 million.

https://www.youtube.com

- X, formerly twitter: Introduced the concept of microblogging with its 140-character limit, providing a platform for short real-time updates, conversations and trending topics.

- Monthly active users: 450 million, Daily active users: 206 million.

https://x.com

The Smartphone Era (2007 - 2015):

The introduction of the iPhone in 2007 and the subsequent proliferation of smartphones significantly impacted the social media landscape. Platforms like Instagram (2010) and Snapchat (2011) were designed with mobile-first experiences, capitalizing on the growing use of smartphones and the desire to share visual content.

Diversification and Mobile Era (2010-2015)

- Instagram (2010): Focused on photo and video sharing with a simple, visually appealing interface, becoming a key player in the social media landscape.

https://www.instagram.com

Features:

1. Photo and Video Sharing:

- Users can upload photos and videos to their profile.

- Editing tools, filters, and tags can be added to enhance the posts.

- Users can caption their posts, tag other users, and add location information.

2. Stories:

- Photos and videos that disappear after 24 hours.

- Stories can include text, stickers, music, and interactive elements like polls and questions.

- Highlights allow users to save and showcase their Stories on their profile.

3. Reels:

- Short, engaging videos similar to TikTok.

- Users can add music, effects, and new creative tools.

- Reels can be shared on the Explore page to reach a wider audience.

4. IGTV:

- A platform for longer videos.

- Users can create and upload videos up to an hour long (or longer for verified accounts).

- IGTV videos can be previewed in the feed.

5. Direct Messaging:

- Private messaging for direct communication.

- Includes text, photos, videos, and disappearing messages.

- Group chats and video calls are also supported.

- Pinterest (2010): Allowed users to "pin" images to virtual boards, catering to interests and hobbies.

https://www.pinterest.com

Features:

1. Pins:

- Users can save (pin) images, videos, and links to their boards.

- Pins can be customized with descriptions, tags, and links back to the original source.

2. Boards:

- Collections of pins organized by themes or topics.

- Boards can be public or private, and users can invite others to collaborate on them.

3. Home Feed:

- A personalized feed of pins based on user interests and activity.

- Includes content from followed accounts and recommended pins.

4. Explore:

- A section for discovering trending and popular content across various categories.

- Provides curated content and inspiration based on themes like fashion, home decor, food, and travel.

- Snapchat (2011): Introduced ephemeral content with disappearing messages and stories, appealing to a younger audience.

https://www.snapchat.com

Features:

1. Snaps:

- Send photos and videos that disappear after being viewed.

- Add text, drawings, stickers, and filters to personalize Snaps.

- Snaps can be sent to individual friends or added to your Story.

2. Stories:

- Share Snaps that stay live for 24 hours.

- Friends can view Stories as many times as they like within that period.

- My Story is visible to all your friends, while Custom Stories can be shared with selected friends or groups.

3. Chat:

- Send text messages, photos, videos, and voice notes.

- Messages disappear after they are viewed, unless saved in the chat.

- Includes stickers, Bitmojis, and GIFs for more expressive chats.

- WhatsApp (2009) and WeChat (2011): Emerged as major messaging platforms with social features.

https://whatsapp.com

Features:

1. Text Messaging:

- Send and receive text messages.

- Supports rich text formatting like bold, italics, and strikethrough.

2. Multimedia Messaging:

- Share photos, videos, and audio recordings.

- Supports GIFs and stickers for more expressive conversations.

3. Voice and Video Calls:

- Make free voice and video calls over the internet.

- Supports one-on-one and group calls.

Other Features:

- Group communication

- End-To-End Encryption

- Whatsapp Status

Wechat:

https://www.wechat.com

Features:

1. Text Messaging:

- Send and receive text messages.

- Supports rich text features like emojis and stickers.

2. Voice and Video Calls:

- Make free voice and video calls over the internet.

- Supports one-on-one and group calls.

3. Multimedia Messaging:

- Share photos, videos, audio messages, and documents.

- Supports GIFs, stickers, and short videos.

4. Group Chats:

- Create group chats with up to 500 members.

- Share messages, media, and documents within the group.

- Admin controls for managing group settings and participants.

The Era Of Visual Content (2010-2020)

Social media platforms evolved to cater to the demand of more visual forms of content since there was an advancement in the user preferences.

Instagram, Snapchat, and later TikTok (2016) gained widespread popularity, enabling users to capture, edit, and share photos, videos, and short-form content.

Live Streaming and Short-Form Content (2015-Present)

- Periscope (2015): Pioneered live streaming, integrated with Twitter for real-time broadcasts.

https://www.periscope.tv

- TikTok (2016): Originally launched as Douyin in China, it gained global popularity for its short, engaging video content, using powerful algorithms to surface trending content.

https://tiktok.com

- Clubhouse (2020): Popularized audio-based social networking, focusing on live, drop-in conversations.

https://www.clubhouse.com

Current Trends and Future Directions

- Integration with E-commerce: Platforms like Instagram and Facebook have integrated shopping features, enabling social commerce.

- Augmented Reality (AR) and Virtual Reality (VR): Platforms like Snapchat and emerging metaverse platforms are exploring immersive experiences.

- Privacy and Data Security: Growing concerns over data privacy and handling of user information led to social media platforms facing increased scrutiny and had to adapt their policies and practices. This led to the implementation of stronger privacy controls, data transparency measures, and user empowerment features.

- AI and Personalization: Enhanced algorithms and AI are driving more personalized content and advertisements.

- The Integration Of Emerging Technologies

- Social media platforms have integrated emerging technologies, such as artificial intelligence, augmented reality, and virtual reality, to enhance user experiences, content creation, and engagement. This has led to the development of features like AR filters, interactive experiences, and AI-powered content recommendations.

Positive impacts of social media platforms

Social media platforms have brought about several positive impacts on individuals, societies, and various aspects of our lives:

1. Connection and Communication: Social media enables people to connect and communicate with friends, family, and acquaintances regardless of geographical barriers. It fosters relationships, facilitates staying in touch, and provides a platform for sharing updates, photos, and memories.

2. Information Sharing and Awareness: Social media serves as a powerful tool for sharing information, news, and updates on a wide range of topics. It helps raise awareness about social issues, current events, and humanitarian causes, mobilizing support and driving positive change.

3. Community Building: Social media platforms facilitate the formation of online communities based on shared interests, hobbies, identities, or causes. These communities provide support and a sense of belonging to individuals who may feel isolated or marginalized in offline spaces.

4. Education and Learning: Social media platforms offer access to a wealth of educational resources, including tutorials, webinars, and online courses. They provide opportunities for lifelong learning, skill development, and knowledge sharing in various fields, from academics to hobbies to professional development.

5. Business and Entrepreneurship: Social media has transformed the way businesses engage with customers, market their products or services, and build brand awareness. It offers cost-effective marketing tools, targeted advertising options, and opportunities for businesses to connect directly with their target audiences.

6. Crisis Response and Support: Social media plays a crucial role in crisis response, providing real-time updates, emergency alerts, and information dissemination during natural disasters, humanitarian crises, or public health emergencies. It also facilitates fundraising efforts and mobilizes support for affected communities.

7. Political Engagement and Activism: Social media platforms empower individuals to participate in political discourse, express their opinions, and advocate for social and political change. It facilitates grassroots activism, mobilizes communities, and holds governments and institutions accountable.

8. Cultural Exchange and Diversity: Social media exposes users to diverse perspectives, cultures, and experiences from around the world, fostering cross-cultural understanding, empathy, and appreciation for diversity. It breaks down barriers and promotes intercultural dialogue and exchange.

Negative impacts of social media platforms

Social media platforms have brought about several negative impacts on individuals, societies, and various aspects of our lives:

1. Addiction and Dependence: Social media can be addictive.Individuals may find it difficult to disconnect from social media, leading to distractions and decreased productivity.

2. Cyberbullying and Harassment: Social media provides a platform for cyberbullying, harassment, and online abuse.

3. Privacy Concerns: Social media platforms often collect vast amounts of user data, raising concerns about privacy breaches, data misuse, and surveillance.

4. Distorted Self-Image: Social media often portrays unrealistic standards of beauty, success, and lifestyle, leading to feelings of inadequacy, body image issues, and self-esteem problems, especially among young users.

5. Sleep Disturbance: The use of social media before bedtime can disrupt sleep patterns and quality. The blue light emitted by screens interferes with the production of melatonin, making it harder to fall asleep and negatively affecting overall sleep health.

6. Decreased Attention Span: Constant exposure to short-form content and notifications on social media platforms can lead to decreased attention spans and difficulties in focusing on tasks requiring sustained concentration.

Challenges of social media platforms

Social media platforms face various challenges, including:

1. Privacy Concerns: Social media platforms collect vast amounts of user data, raising concerns about privacy breaches, data misuse, and surveillance. Users may be unaware of how their data is being used and may have limited control over their privacy settings.

2. Misinformation and Fake News: Social media facilitates the rapid spread of misinformation, rumors, and fake news. False or misleading information can easily go viral, leading to public confusion, polarization, and undermining trust in traditional media and institutions.

3. Cyberbullying and Harassment: Social media provides a platform for cyberbullying, harassment, and online abuse. Individuals, particularly young people, may experience bullying, hate speech, or threats, leading to psychological distress and negative mental health outcomes.

4. Addiction and Mental Health Impacts: Social media can be addictive, leading to excessive use and dependency. Excessive use of social media has been linked to various mental health issues, including anxiety, depression, loneliness, and low self-esteem.

5. Online Safety and Security: Social media platforms face challenges related to online safety and security, including hacking, account hijacking, phishing scams, and identity theft. Users may be vulnerable to online predators, fraudsters, and malicious actors seeking to exploit personal information.

6. Regulatory and Legal Issues: Social media platforms operate in a complex regulatory environment, facing scrutiny from lawmakers and regulators regarding issues such as data privacy, content moderation, antitrust concerns, and the spread of harmful content online. Compliance with diverse regulatory frameworks across different jurisdictions poses challenges for global platforms.

7. Content Moderation and Trust: Social media platforms grapple with the challenge of moderating user-generated content to prevent the spread of harmful or illegal content, including hate speech, violence, misinformation, and extremist propaganda, while upholding principles of free speech and user expression. Inconsistent or opaque moderation policies can erode trust and credibility.

8. Monetization and Advertiser Trust: Social media platforms rely heavily on advertising revenue, which can incentivize attention-grabbing content and clickbait, potentially compromising user experience and trust. Advertiser trust may be undermined by concerns about brand safety, ad fraud, and the effectiveness of advertising metrics.

9. Ethical and Societal Impacts: Social media platforms raise broader ethical and societal questions about their role in shaping public discourse, influencing behavior, and exacerbating societal challenges such as misinformation, polarization, and mental health issues. Platforms must grapple with these complex ethical dilemmas while striving to uphold user trust and social responsibility.

Conclusion

The evolution of social media has been a rapid journey, fundamentally altering how we interact with the world around us. As we move forward, it's important to remain aware of both the possibilities and pitfalls that lie ahead. By fostering open dialogue, holding platforms accountable, and advocating for ethical practices, we can shape the future of social media to be a positive force for connection, creativity, and positive social change.

Moreover, the social media landscape continues to evolve, with change and shift in user preferences, emergence of new platforms and the ongoing integration of emerging technologies. As the roles of social media in daily life continue to grow, platforms will need to adapt to meet new expectations of the users, as that will be addressing the challenges posed by privacy, content moderation and the impact of social media in the society at large.

References

https://www.youtube.com/watch?v=x1caQa_7CpE&

https://phrasee.co/news/the-history-of-social-media-a-timeline/#:~:text=August%202003%3A%20Myspace%20is%20founded,of%20America's%20Funniest%20Home%20Videos.

https://online.maryville.edu/blog/evolution-social-media/

| ngemuantony | |

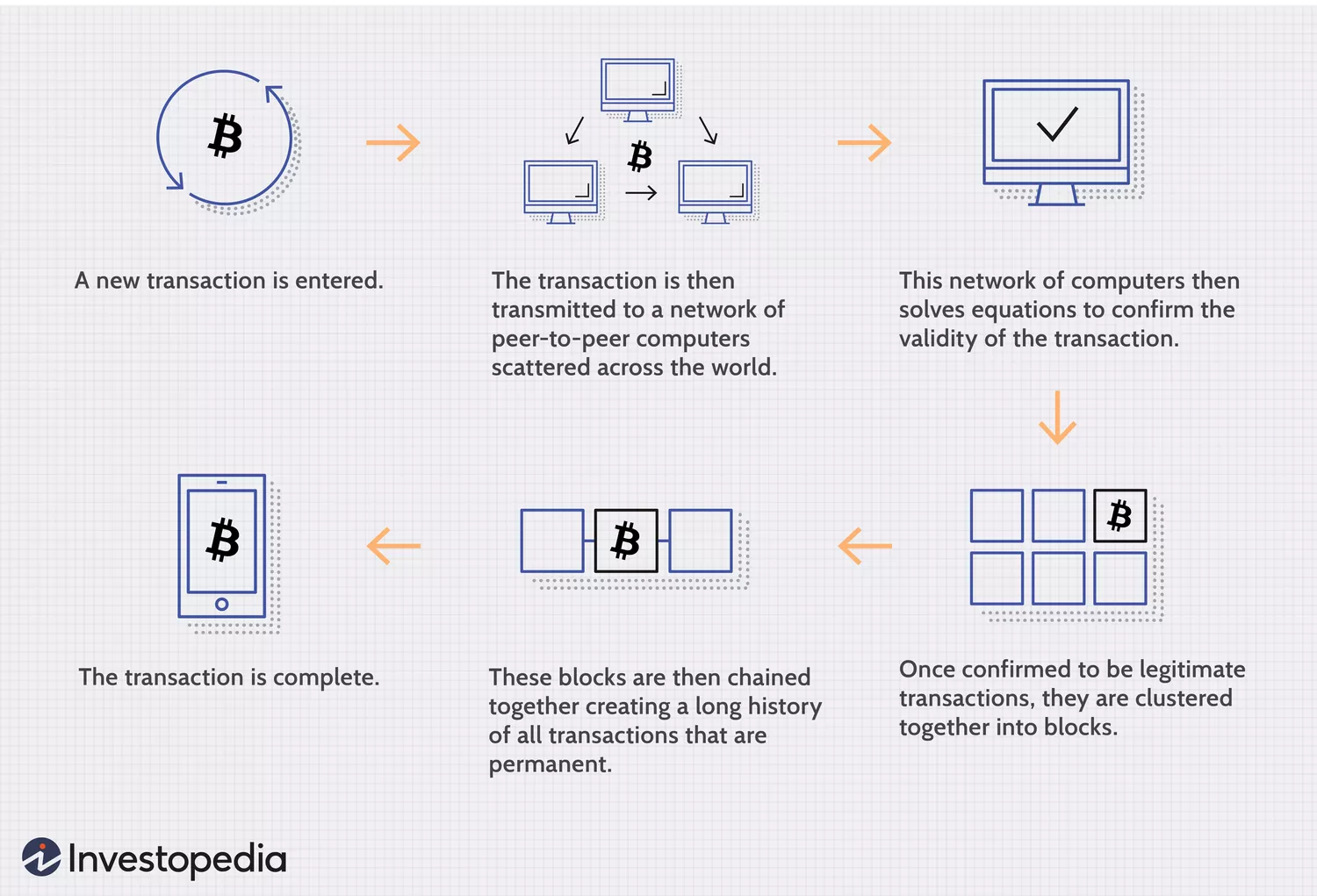

1,866,325 | A Brief Discussion on the Balance of Order Books in Centralized Exchanges | Recently, I have summarized some key insights from papers studying limit order books. You will learn... | 0 | 2024-05-27T09:03:45 | https://dev.to/fmzquant/a-brief-discussion-on-the-balance-of-order-books-in-centralized-exchanges-27f0 | exchange, balance, order, fmzquant | Recently, I have summarized some key insights from papers studying limit order books. You will learn how to measure the imbalance of transaction volume in the order book and its predictive ability for price trends. This article explores methods of using order book data to model price changes.

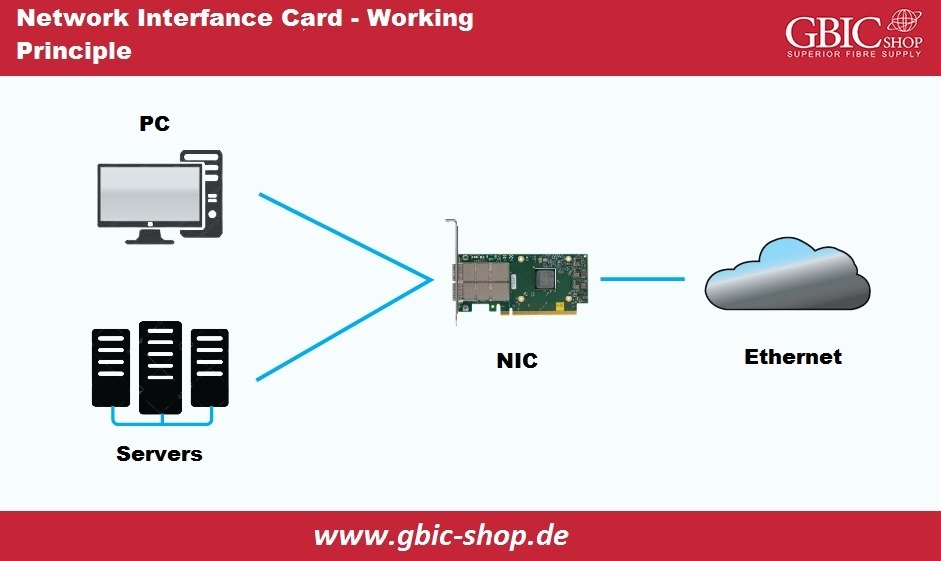

### First, let's talk about the order book.

Exchange order book balance refers to the relative balance state between buy and sell orders in an exchange. The order book is a real-time record of all pending buy and sell orders on the market. This includes orders from buyers and sellers who are willing to trade at different prices.

Below are some key concepts related to exchange order book balance:

- Buyer and Seller Orders: Buyer orders in the order book represent investors willing to purchase assets at a specific price, while seller orders represent investors willing to sell assets at a specific price.

- Order Book Depth: Order book depth refers to the number of orders on both the buyer and seller sides. A greater depth indicates there are more buy and sell orders in the market, which may be more liquid.

- Transaction Price and Transaction Volume: The transaction price is the price of the most recent trade, while the transaction volume is the quantity of assets traded at that price. The transaction price and volume are determined by the competition between buyers and sellers in the order book.

- Order Book Imbalance: Order book imbalance refers to the discrepancy between the number of buy and sell orders or total transaction volume. This can be determined by examining the depth of the order book, if one side has significantly more orders than the other, there may be an order book imbalance.

- Market Depth Chart: The market depth chart graphically presents the depth and balance of the order book. Typically, the number of orders from buyers and sellers is displayed on the price level in a bar chart or other visual ways.

- Factors Affecting the Price: The balance of the order book directly affects market prices. If there are more buy orders, it may push up the price; on the contrary, if there are more sell orders, it may cause a drop in price.

- High-frequency Trading and Algorithmic Trading: Order book balance is crucial for high-frequency trading and algorithmic trading, as they rely on real-time order book data to make decisions, aiming to seize market opportunities quickly.

Understanding the balance of order books is important for investors, traders, and market analysts, because it provides useful information about market liquidity, potential price direction, and market trends.

### Imbalance in Trading Volume

A key idea when analyzing limit order books is to determine whether the overall market tends to buy or sell. This concept is known as imbalance in trading volume.

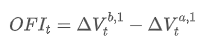

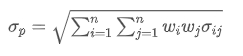

The imbalance in trading volume at time t is defined as:

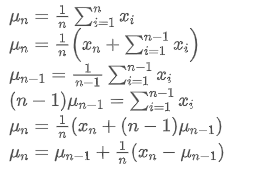

Where,

represents the transaction volume of the best buy order at time t,

represents the transaction volume of the best sell order at time t. We can interpret ρt close to 1 as strong buying pressure, and ρt close to -1 as strong selling pressure. This only considers the transaction volumes of orders placed at the best buy price and best sell price, that is, L1 order book.

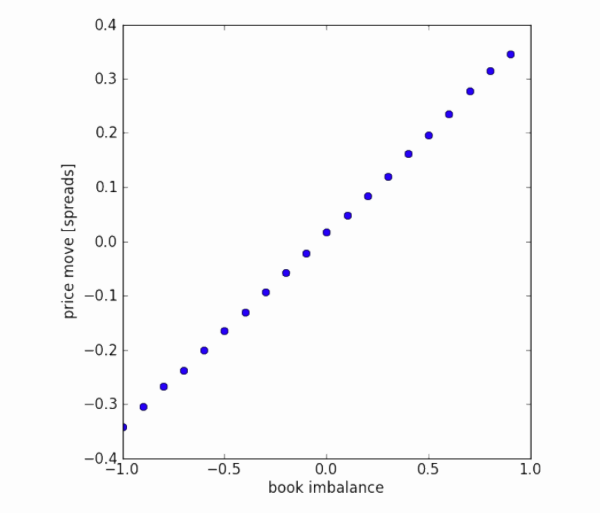

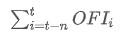

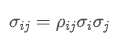

Imbalance in trading volume and price changes. The graph shows the imbalance of tiered trading volumes (x-axis) and the average value of future price movements, standardized by price difference (y-axis). The dataset is a quarter's order flow from a certain market. There seems to be a linear relationship between first-level order imbalance and future price changes. However, on average, future price changes are within the bid-ask spread.

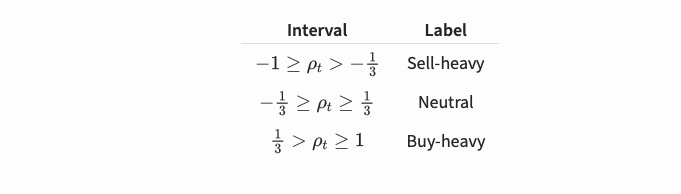

The imbalance in trading volume ρt will be divided into the following three paragraphs:

It was discovered that these segments can predict future price changes:

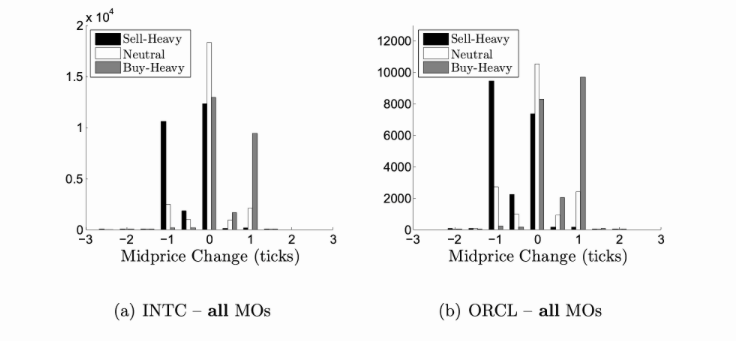

Regarding the predictive ability of volume imbalance, an analysis was conducted on the tick-by-tick order book of a certain commodity from January 2014 to December 2014. For each arriving market order (MO), the volume imbalance was recorded and segmented according to the number of ticks in which the mid-price changed within the next 10 milliseconds. The chart shows the distribution and mid-price changes for each segment. We can see that positive price changes are more likely to occur before order books with greater buying pressure. Similarly, negative changes are more likely to occur before order books with greater selling pressure.

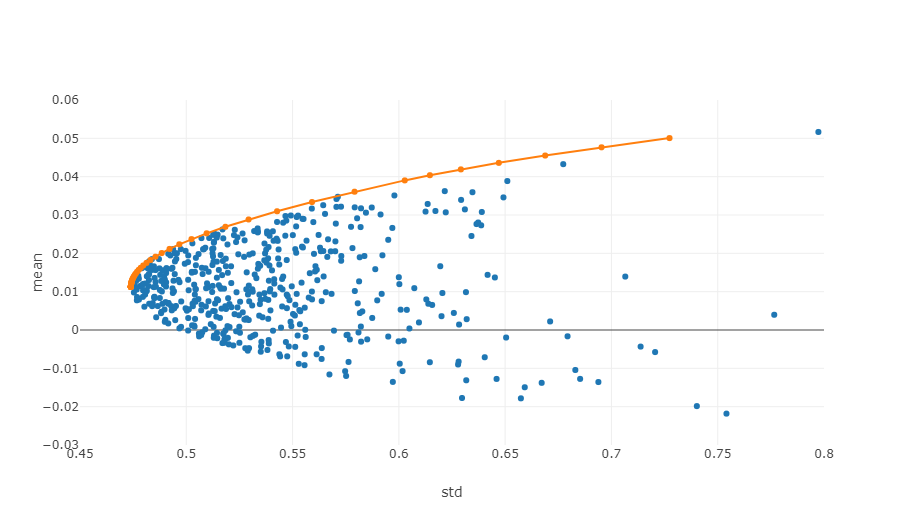

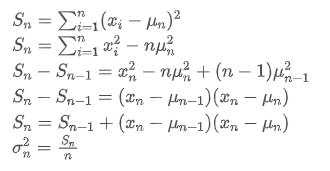

### Order Flow Imbalance

The imbalance of trading volume focuses on the total trading volume in the limit order book. One drawback is that some of this volume may come from old orders, which contain less relevant information. We can instead focus on the trading volume of recent orders. This concept is known as order flow imbalance. You can achieve this by tracking individual markets and limit orders (requires Level 3 data) or observing changes in the limit order book.

Since Level 3 data is expensive and usually only available to institutional traders, we will focus on changes in the limit order book.

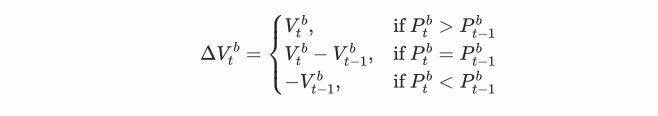

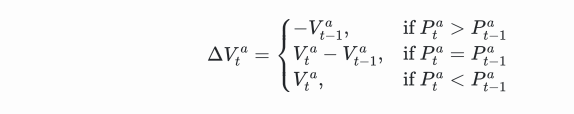

We can calculate the order flow imbalance by looking at how much the trading volumes have moved at best bid price and best ask price. The change in trading volume at best bid price is:

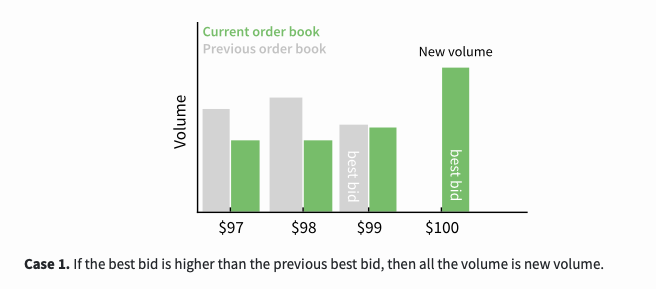

This is a function involving three scenarios. The first scenario is, if the best buying price is higher than the previous best buying price, then all transaction volumes are new transaction volumes.

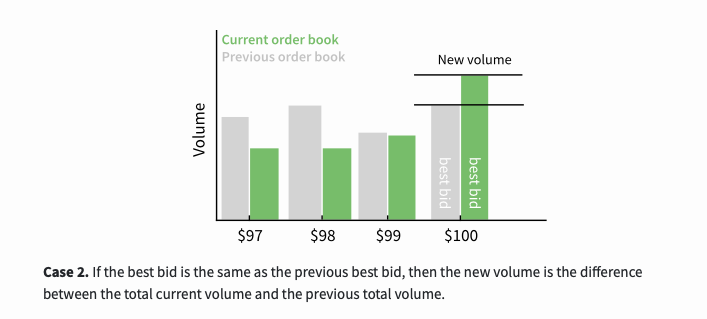

The second scenario is, if the best buying price is the same as the previous best buying price, then the new transaction volume is the difference between the current total transaction volume and the previous total transaction volume.

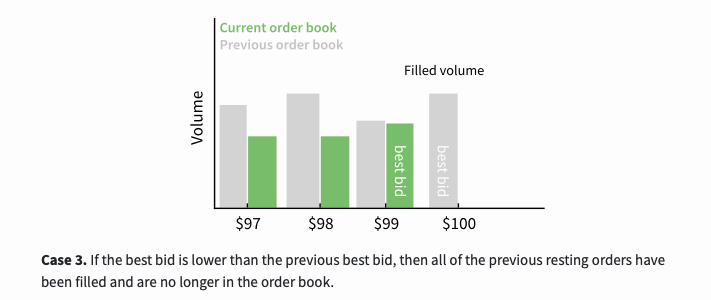

The third scenario is, if the best buying price is lower than the previous best buying price, then all previous orders have been traded and are no longer in the order book.

The calculation method for the change in transaction volume at the best selling price is similar:

The net order flow imbalance (OFI) at time t is given by the following formula:

This will be a positive value when there are more buy orders, and a negative value when there are more sell orders. It measures both the quantity and direction of the transaction volume. In the previous part, order imbalance only measured direction without measuring the quantity of transactions.

You can add these values to get the net order flow imbalance (OFI) over a period of time:

Use regression models to test whether order flow imbalance contains information about future price changes:

The calculated OFI value above focuses on the best buying price and selling price. In part 4, the values of the top 5 best prices were also calculated, providing 5 inputs instead of just one. They found that a deep study of the order book can provide new information for future price changes.

### Summary

Here, I have summarized some key insights from papers studying the order volume in limit order books. These papers indicate that the order book contains information highly predictive of future price changes. However, these changes cannot overcome the bid-ask spread.

I have added links to the papers in the references section. Please refer to them for more detailed information.

References & Notes

- Álvaro Cartea, Ryan Francis Donnelly, and Sebastian Jaimungal: "Enhancing Trading Strategies with Order Book Signals" Applied Mathematical Finance 25(1) pp. 1–35 (2018)

- Alexander Lipton, Umberto Pesavento, and Michael G Sotiropoulos: "Trade arrival dynamics and quote imbalance in a limit order book" arXiv (2013)

- Álvaro Cartea, Sebastian Jaimungal, and J. Penalva: "Algorithmic and high-frequency trading." Cambridge University Press

- Ke Xu, Martin D. Gould, and Sam D. Howison: "Multi-Level Order-Flow Imbalance in a Limit Order Book" arXiv (2019)

Reprinted from: Author ~ {Leigh Ford, Adrian}.

From: https://blog.mathquant.com/2023/11/13/a-brief-discussion-on-the-balance-of-order-books-in-centralized-exchanges.html | fmzquant |

1,866,324 | TASK_2_JS_College | Check out this Pen I made! | 0 | 2024-05-27T09:02:56 | https://dev.to/__c07737ed6f/task2jscollege-1np6 | codepen | Check out this Pen I made!

{% codepen https://codepen.io/olena-bolshunova/pen/BaeaxGq %} | __c07737ed6f |

1,866,323 | Use Golang Migrate on Docker Compose | Previously I setup docker compose for golang application and PostgreSQL. It can run the application... | 0 | 2024-05-27T09:01:13 | https://ynrfin.com/blogs/docker/add-docker-migrate-to-docker-compose/ | webdev, docker, database, go | Previously I setup docker compose for golang application and PostgreSQL. It can run the application and can connect to PostgreSQL. While doing basic CRUD, I found that I need a tool to migrate my database structure and seed the database for easy onboarding and development. Thus I want to use `golang-migrate` to do that.

## What Is Golang Migrate

Contrary to the name, it is not a migration tool specifically created for golang development, although we can use it as a golang package in your application. This tool is a CLI tool that can be installed on Windows, Mac, and Linux. As a CLI tool, it means that no matter what language you use to code, your migration can be managed using this `golang-migrate`.

[Here](https://github.com/golang-migrate/migrate/blob/master/cmd/migrate/README.md) is the documentation how to install it.

## Golang migrate commands

What I use is this for now.

Create migration script:

```bash

migrate create -ext sql -dir migrations -seq create_users_table

```

- `migrate` golang-migrate command

- `create` create new migration script, both up and down

- `-ext sql` use `sql` extension

- `-dir migrations` the target directory where the migration files is generated

- `-seq` make the file name sequential, increment from 1. if not it would be current datetime

- `create_users_table` migration files name

which will create 2 files in `migrations` dir

```diff

├── Dockerfile

├── Dockerfile.multistage

├── README.md

├── cmd

│ └── main.go

├── controllers

├── docker-compose.yml

├── go-market-warehouse-api

├── go.mod

├── go.sum

├── main

├── middlewares

├── migrations

+++ │ ├── 000001_create_users_table.down.sql <- this

+++ │ └── 000001_create_users_table.up.sql <- and this

├── models

└── repositories

```

`000001_create_users_table.up.sql` contains the DDL for the changes that I want to make, in this case creating `users` table

```sql

CREATE EXTENSION IF NOT EXISTS "uuid-ossp";

CREATE TABLE users (

id UUID PRIMARY KEY DEFAULT uuid_generate_v4(),

name VARCHAR NOT NULL,

email VARCHAR(255) NOT NULL UNIQUE

);

```

and `000001_create_users_table.down.sql` contains the DDL to undo the above code.

```sql

DROP TABLE IF EXISTS "users";

```

And Apply the migration once the DDL is supplied in the files:

```

migrate -path /migrations/ -database "postgres://username:password@host:port/db_name?sslmode=disable" up

```

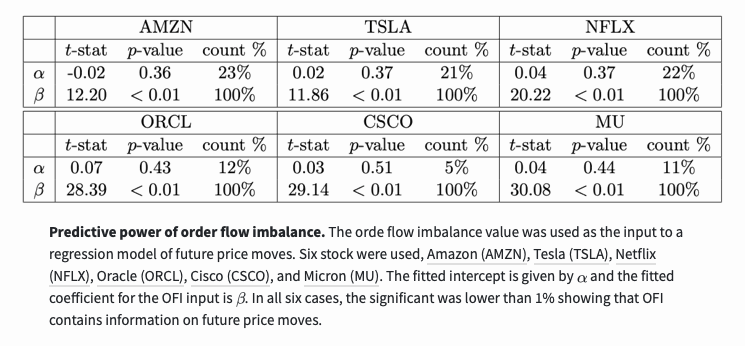

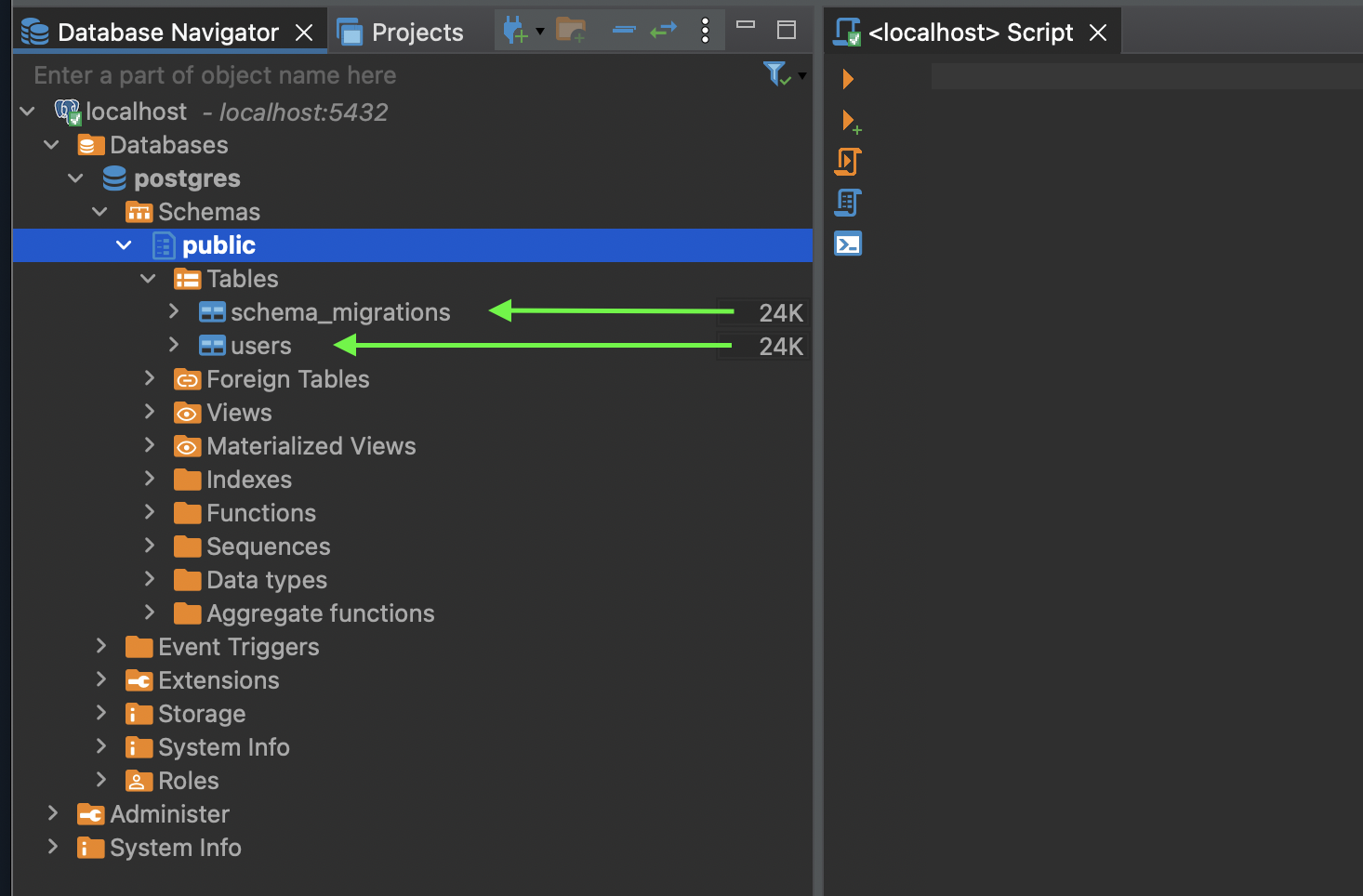

Here's the database tables before we execute the migration:

And here it is after running migration

- `schema_migrations` is used by golang migrate to track migrations

- `users` table is the table that being generated from my script above

## How To Use Golang Migrate in Docker Compose

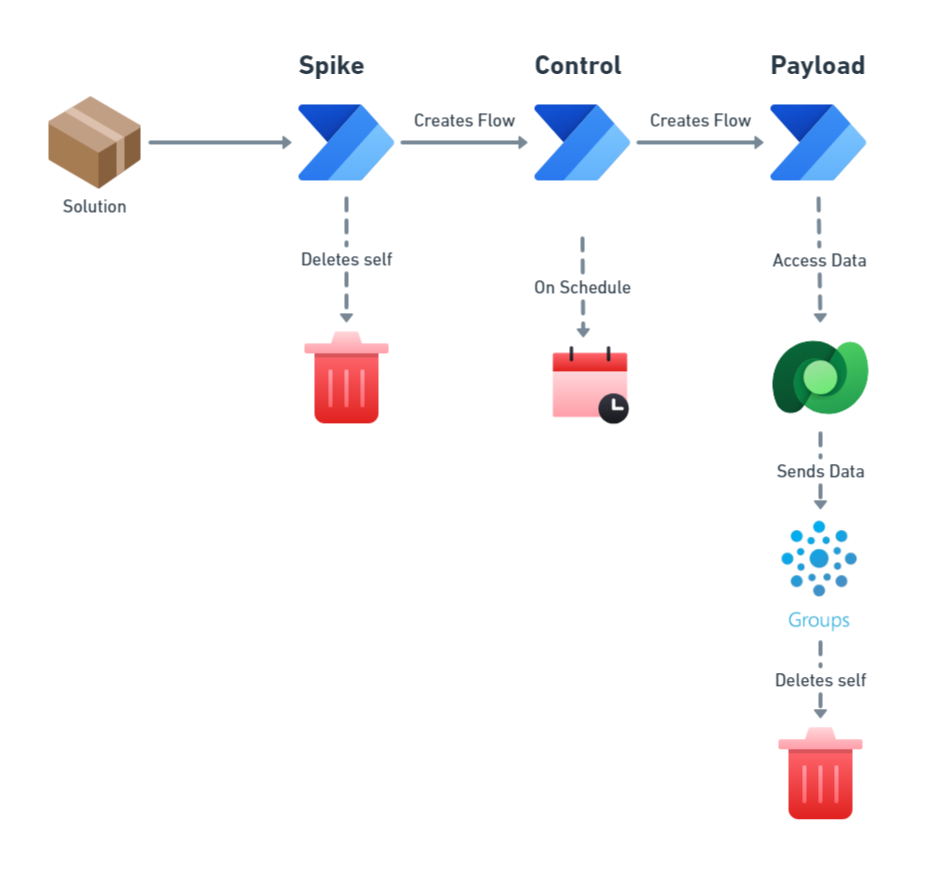

Golang migrate has its own image in docker hub. The way to use this, is to create a new service for this golang migrate, then run the migration that targetted to PostgreSQL service. By creating it's own service and execute it, the migration is another service that is not added to the main application service, make the application smaller.

Here's the new `golang-migrate` service:

```yml

service:

# ... go-market-warehouse-api & local-pg-16 services

migrate:

image: migrate/migrate

depends_on:

local-pg-16:

condition: service_healthy

networks:

- my-local-net

volumes:

- ./migrations/:/migrations

command: ["-path", "/migrations/", "-database", "postgres://${PGUSER}:${PGPASSWORD}@local-pg-16:5432/postgres?sslmode=disable", "up"]

```

Full code [in this commit](https://github.com/ynrfin/go-market-warehouse-api/tree/83ed175ea03b75cad75f36f63c7295ef8f33352f)

`image: migrate/migrate` base this service to the image of `golang-migrate`

`depends_on: local-pg-16: condition: service_healthy` I rearrange the application startup order to `PostgreSQL` then `golang-migrate` then `go-market-warehouse-api` because after we startup the database, I want the database to be updated using latest DDL then the application can connect to database.

`networks` use already declared network, which is `my-local-net` to connect to PostgreSQL service

`volumes` here I specify which directory contains the migration scripts, in my case, it is the `migrations` directory, the LEFT one `./migrations/`.

`commands` is the arguments the `migrate` service use to run the migration

The `commands` is the bash command to apply the migration(s) like the one above without using docker.

```bash

migrate -path /migrations/ -database "postgres://${PGUSER}:${PGPASSWORD}@local-pg-16:5432/postgres?sslmode=disable" up

```

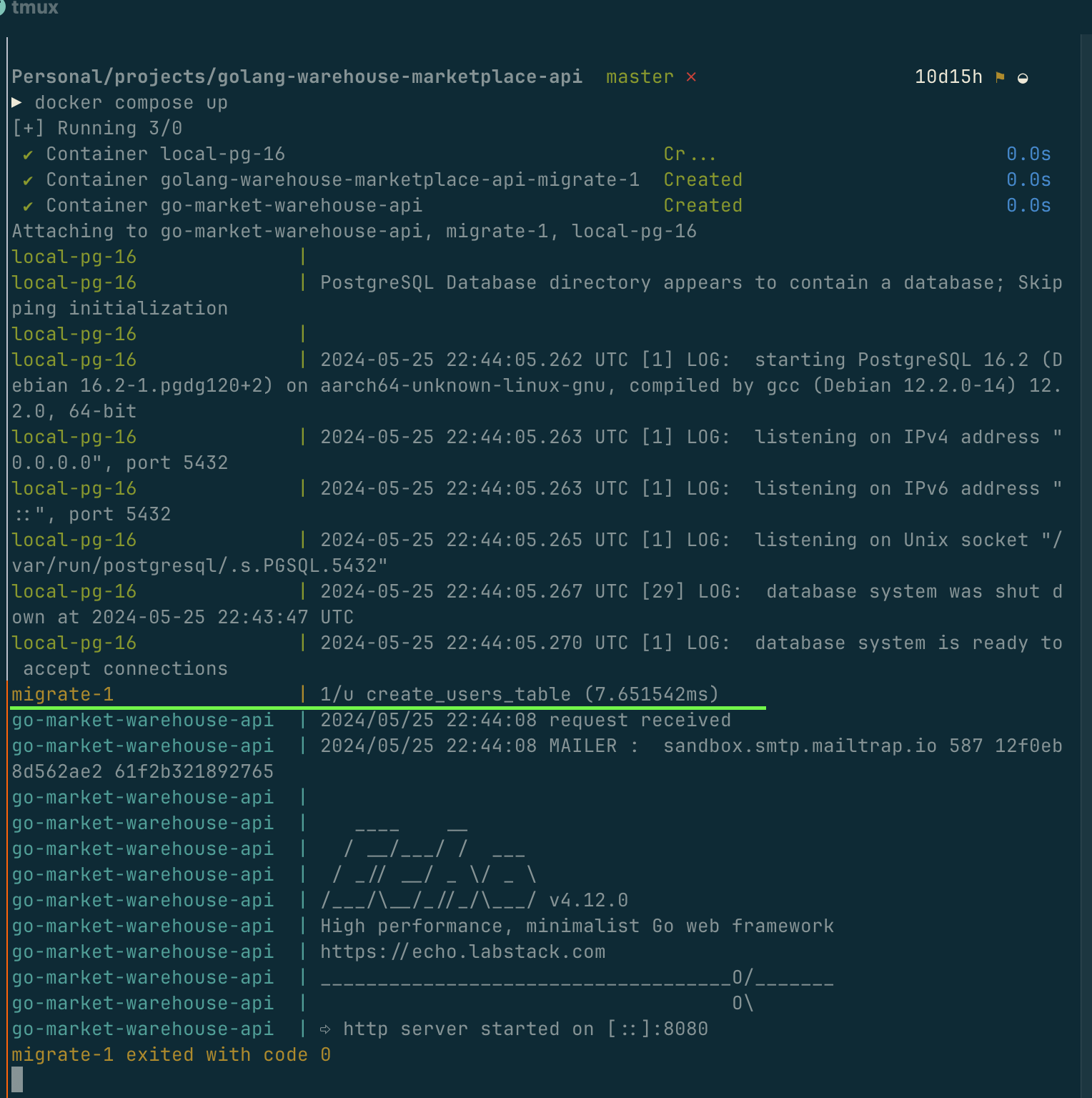

This is the docker log with migration executed

`migrate-1` is the service name

`1/u create_users_table` 1 migration upped, with name of create_users_table

If no new migration found, there won't be message on the log.

That is it for this article, hope you enjoy it.

| ynrfin |

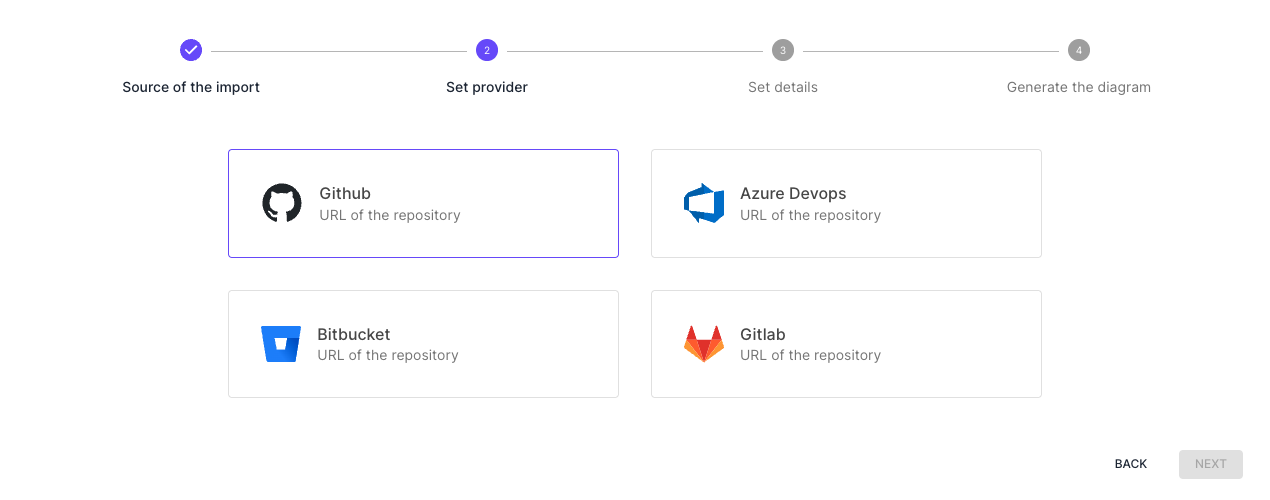

1,866,322 | GitHub Basics: Creating Repository and Maintaining It | Table of content: Definition Requirements Creating a new Repository Maintaining a Repository ... | 0 | 2024-05-27T08:59:31 | https://dev.to/swahilipotdevs/github-basics-creating-repository-and-maintaining-it-4i04 |

Table of content:

1. Definition

2. Requirements

3. Creating a new Repository

4. Maintaining a Repository

- Fork the repository

- Clone the repository

- Create a branch

- Initializing a repository

- Adding files to the repository

- Make an Initial Commit

- Push changes to your repository

- Pull requests

- Renaming a repository

- Transferring a repository

- Deleting a repository

- Restoring a deleted repository

5. Conclusion

6. Useful resources

Definition

GitHub is a web-based tool for version control and collaboration. It provides developers with an easy-to-use interface for creating repositories (remote storage sites), tracking code changes over time (version control), and collaborating on projects with others.

Requirements

- GitHub Account

- Git Installed

- Text Editor or IDE

- Basic Command Line Skills

1. Creating a new repository

As a prerequisite you need a GitHub account to be able to create a repository.

Creating a GitHub account

1. Navigate to https://github.com/.

2. Click Sign up.

3. Follow the prompts to create your personal account.

During sign up, you'll be asked to verify your email address. Without a verified email address, you won't be able to complete some basic GitHub tasks, such as creating a repository.

If you're having problems verifying your email address, there are some troubleshooting steps you can take. For more information, see "Verifying your email address."

Creating a new repository from the web UI

step 1-;

Once you have GitHub account, In the upper-right corner of your page, click the + sign .

Step 2-:

On the drop down list, click on the New repository option.

Step 3-;

Use the Owner drop-down menu to choose the account that will own the repository.

step 4-:

In the repository name field, enter a name for your repository and optionally provide a description. Select the desired repository visibility, then click the "Create repository" button at the bottom of the page.

For example-;

Step 5-;

At this point, you have successfully created a new repository using the web UI.

2. Maintaining a Repository

1. Fork the repository

Fork the repository by clicking the fork button on the top of the page. This will create an instance of that entire repository in your account.

2. Clone the repository

To work with the repository locally, clone it to your machine. Click on the "Code" button and copy the provided link.

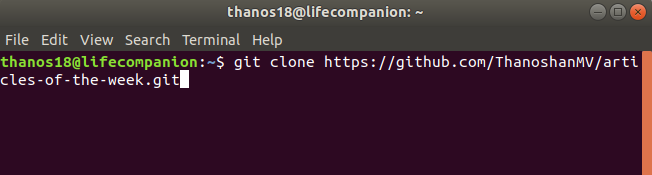

Open the terminal and run the following command. It will clone the repository locally.

$ git clone [HTTPS ADDRESS]

For example

$ git clone hhtps://github.com/ThanoshanMV/articles-of-the-week.git

Now we have set up a copy of the master branch from the main online project repository.

$ cd [NAME OF REPOSITORY]

3. Create a branch

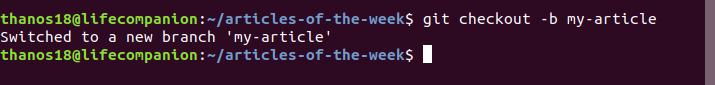

It’s good practice to create a new branch when working with repositories, whether it’s a small project or contributing to a group's work.

Branch name should be short and it should reflect the work we’re doing.

Now create a branch using the `git checkout` command:

$ git checkout -b [Branch Name]

4. Initializing a repository:

To initialize a new Git repository, open the terminal, navigate to the root directory of your project, and execute git init. This command initializes an empty Git

repository in the specified directory and generates a .git sub-directory that contains versioning information.

5. Adding files to the repository

Make essential changes to the project and save it.

Then execute `git status` , and you’ll see the changes.

Use the `git add` `.` command to add your project files to the repository after it has been initialized. For instance, you may use git add . to add all files in the repository. The files are ready to be included in the subsequent commit at this point.

6. Make an Initial Commit

Use the `git commit -m "Initial commit message"` command to generate an initial commit once you have added the required files. Commits are a snapshot of the project at a particular moment in time and ought to have a detailed message detailing the modifications that were made.

7. Push changes to your repository

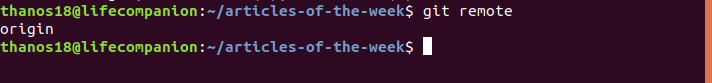

In order to push the changes to GitHub, we need to identify the remote’s name.

$ git remote

For this repository the remote’s name is “origin”.

After identifying the remote’s name we can safely push those changes to GitHub.

1. On GitHub.com, navigate to the main page of the repository.

2. Above the list of files, click Code.

3. To clone your repository using the command line using HTTPS, under "Quick setup", copy link . To clone the repository using an SSH key, including a certificate issued by your organization's SSH certificate authority, click SSH, then copy link .

4. Open Terminal .

5. Change the current working directory to the location where you want the cloned directory.

git push origin [Branch Name]

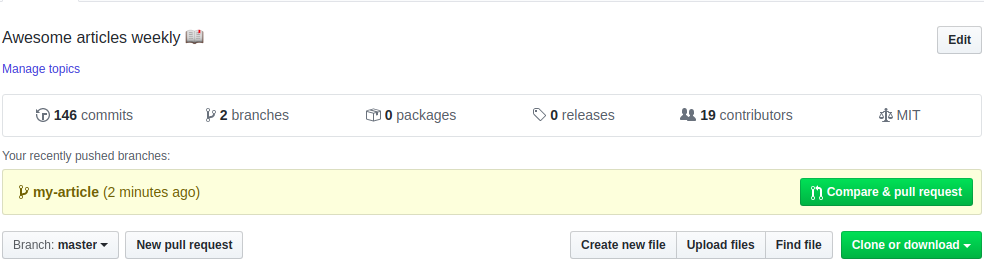

8. Pull requests

To create a pull request that is ready for review, click Create Pull Request. To create a draft pull request, use the drop-down and select Create Draft Pull Request, then click Draft Pull Request.

9. Renaming a repository

- On GitHub.com, navigate to the main page of the repository.

- Under your repository name, click Settings. If you cannot see the "Settings" tab, select the drop-down menu, then click Settings.

- In the Repository Name field, type the new name of your repository.

Click Rename.

- Warning: If you create a new repository under your account in the future, do not reuse the original name of the renamed repository. If you do, redirects to the renamed repository will no longer work.

10. Transferring a repository

- When you transfer a repository that you own to another personal account, the new owner will receive a confirmation email. The confirmation email includes instructions for accepting the transfer. If the new owner doesn't accept the transfer within one day, the invitation will expire.

1. On GitHub.com, navigate to the main page of the repository.

2. Under your repository name, click Settings. If you cannot see the "Settings" tab, select the drop down menu, then click Settings.

3.

1. At the bottom of the page, in the "Danger Zone" section, click Transfer.

1. Read the information about transferring a repository, then under "New owner", choose how to specify the new owner.

- To choose one of your organizations, select “Select one of my organizations.”

- Select the drop-down menu and click an organization.

- Optionally, in the "Repository name" field, type a new name for the repository.

- Note: You must be an owner of the target organization to rename the repository.

- To specify an organization or username, select Specify an organization or username, then type the organization name or the new owner's username.

2. Read the warnings about potential loss of features depending on the new owner's GitHub subscription.

3. Following “Type Repository Name to confirm”, type the name of the repository you'd like to transfer, then click I understand, transfer this repository.

11. Deleting a repository

- On GitHub.com, navigate to the main page of the repository.

- Under your repository name, click Settings. If you cannot see the "Settings" tab, select the drop-down menu, then click Settings.

- On the "General" settings page (which is selected by default), scroll down to the "Danger Zone" section and click Delete this repository.

- Click I want to delete this repository.

- Read the warnings and click I have read and understand these effects.

- To verify that you're deleting the correct repository, in the text box, type the name of the repository you want to delete.

- Click Delete this repository.

12. Restoring a deleted repository

Some deleted repositories can be restored within 90 days of deletion.

1. In the upper-right corner of any page, click your profile photo, then click Settings.

1. In the "Code planning, and automation" section of the sidebar, click Repositories.

2. Under "Repositories", click Deleted repositories.

Next to the repository you want to restore, click Restore.

Read the warning, then click I understand, restore this repository.

13. Conclusion

Repositories on GitHub are an effective tool for organizing and working together on software projects. Developers may assure project transparency and version control, promote teamwork, and expedite workflows by adhering to best practices when creating, organizing, and managing repositories. Leveraging GitHub repository capabilities can greatly improve code quality and efficiency, whether working on a team project or a personal one.

14. Useful Resources

Official GitHub Documentation

- GitHub Docs: https://docs.github.com/en

- Creating a repository: https://docs.github.com/en/repositories/creating-and-managing-repositories/creating-a-new-repository

- Managing repositories: https://docs.github.com/en/repositories

Git and GitHub Learning Resources

- Git Handbook: https://guides.github.com/introduction/git-handbook/

- GitHub Learning Lab: https://lab.github.com/

- Git & GitHub Crash Course for Beginners (Video): https://www.youtube.com/watch?v=RGOj5yH7evk

Tutorials and Guides

- GitHub Guides: https://guides.github.com/

- GitHub Skills: https://skills.github.com/

- FreeCodeCamp GitHub Tutorial: https://www.freecodecamp.org/news/git-and-github-for-beginners/

Books

- "Pro Git" by Scott Chacon and Ben Straub (free online book): https://git-scm.com/book/en/v2

- "GitHub For Dummies" by Sarah Guthals and Phil Haack

Community and Forums

- GitHub Community: https://github.community/

- Stack Overflow (GitHub tag): https://stackoverflow.com/questions/tagged/github | sanaipei | |

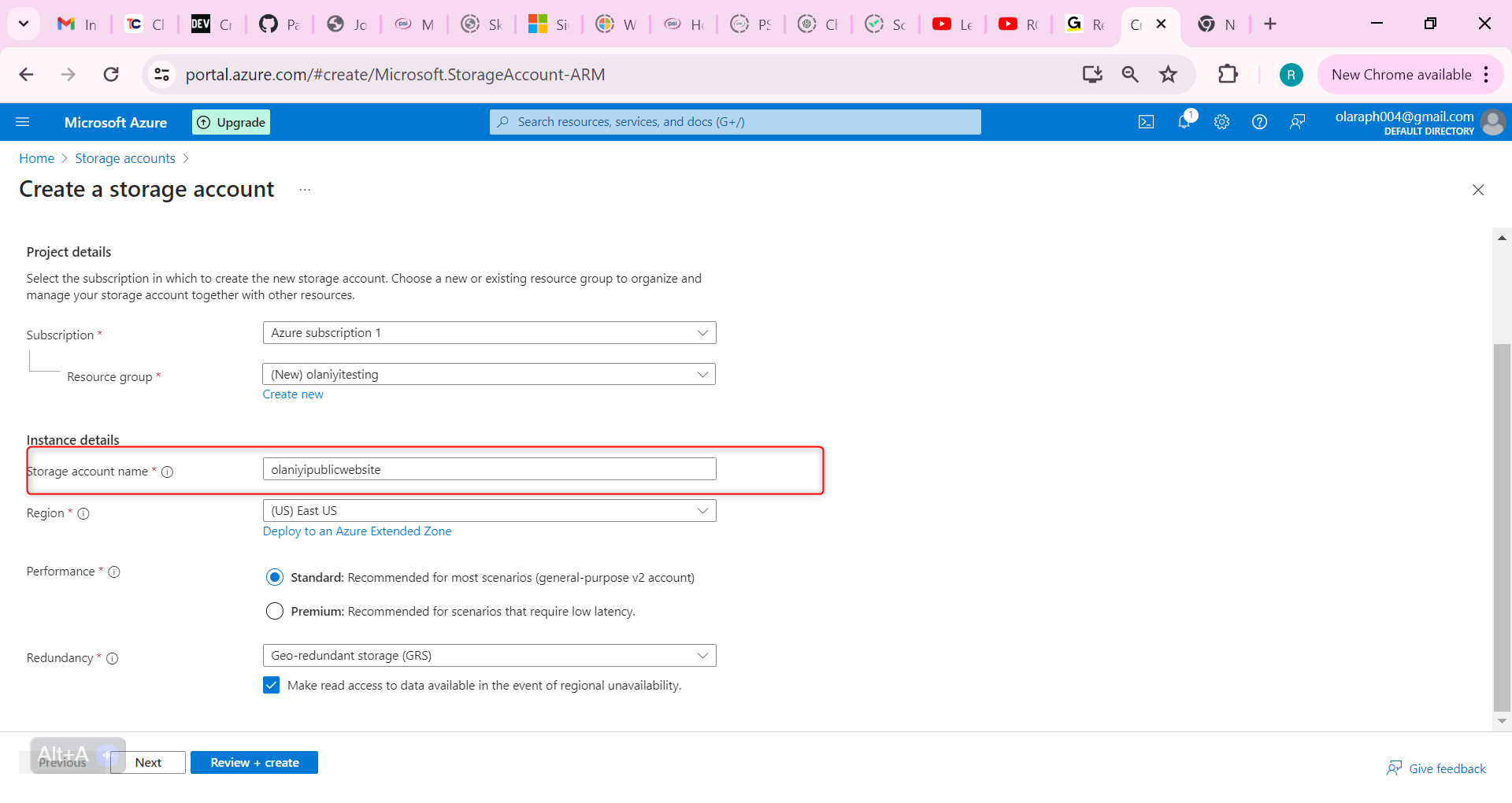

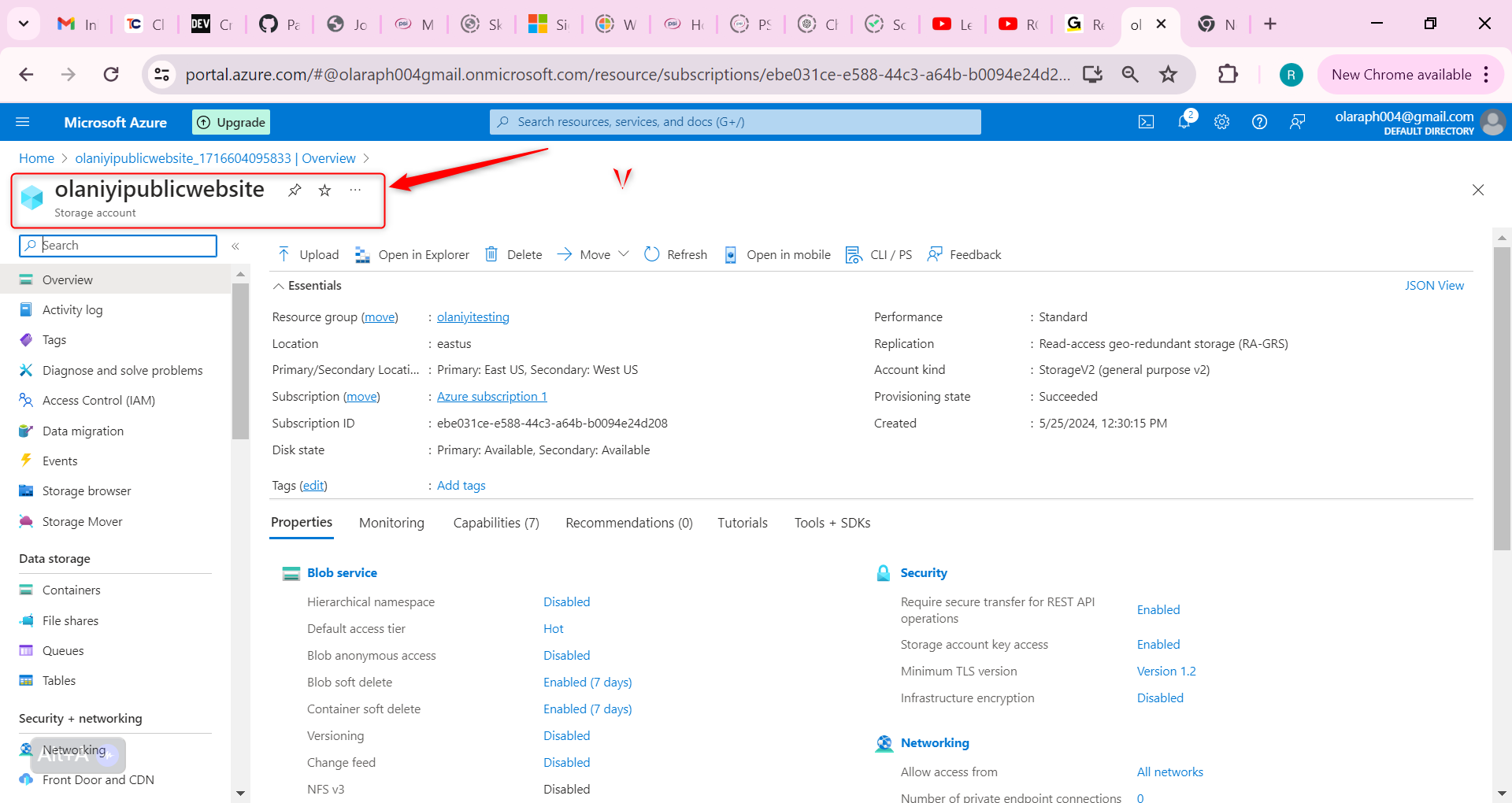

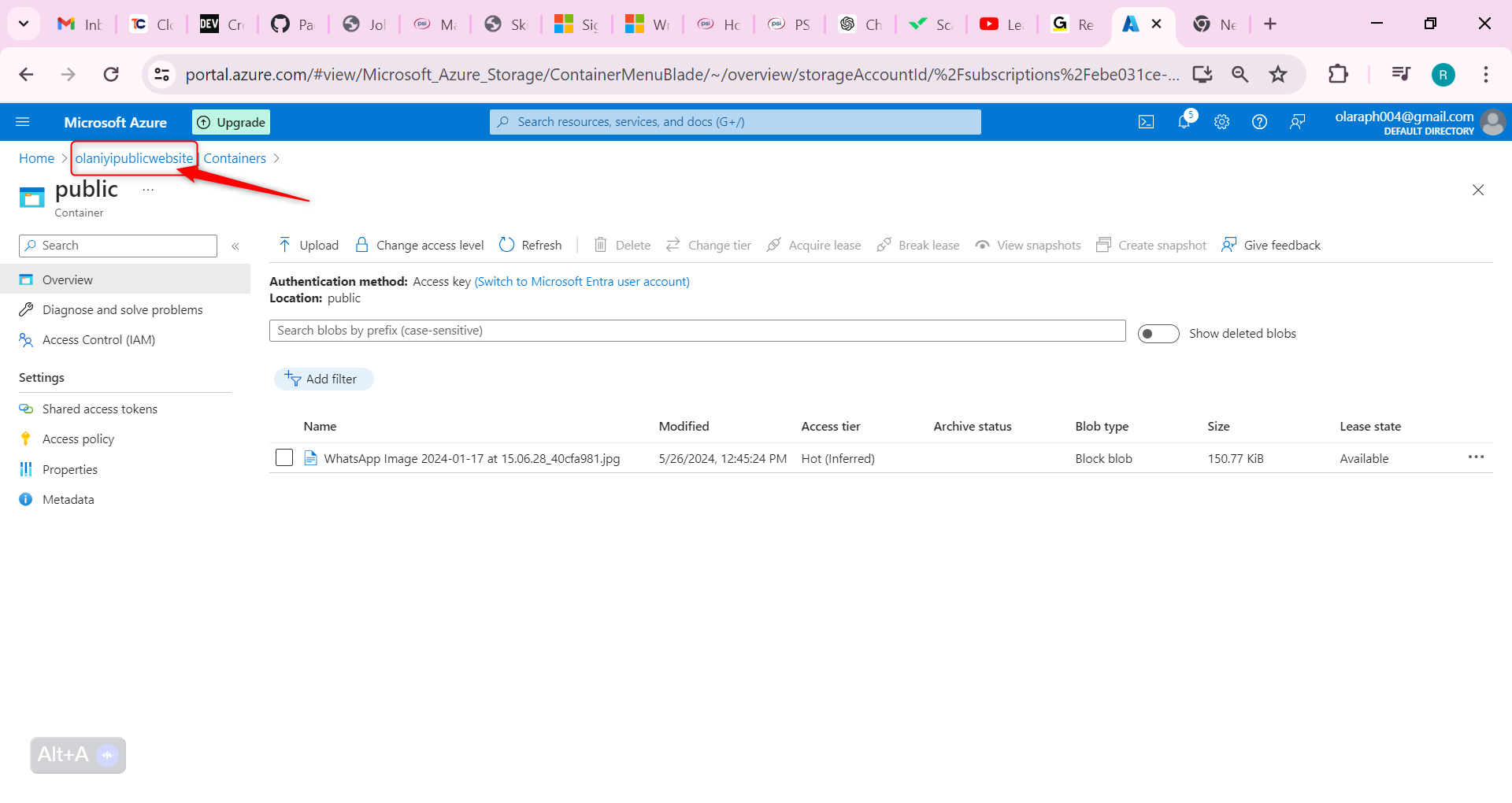

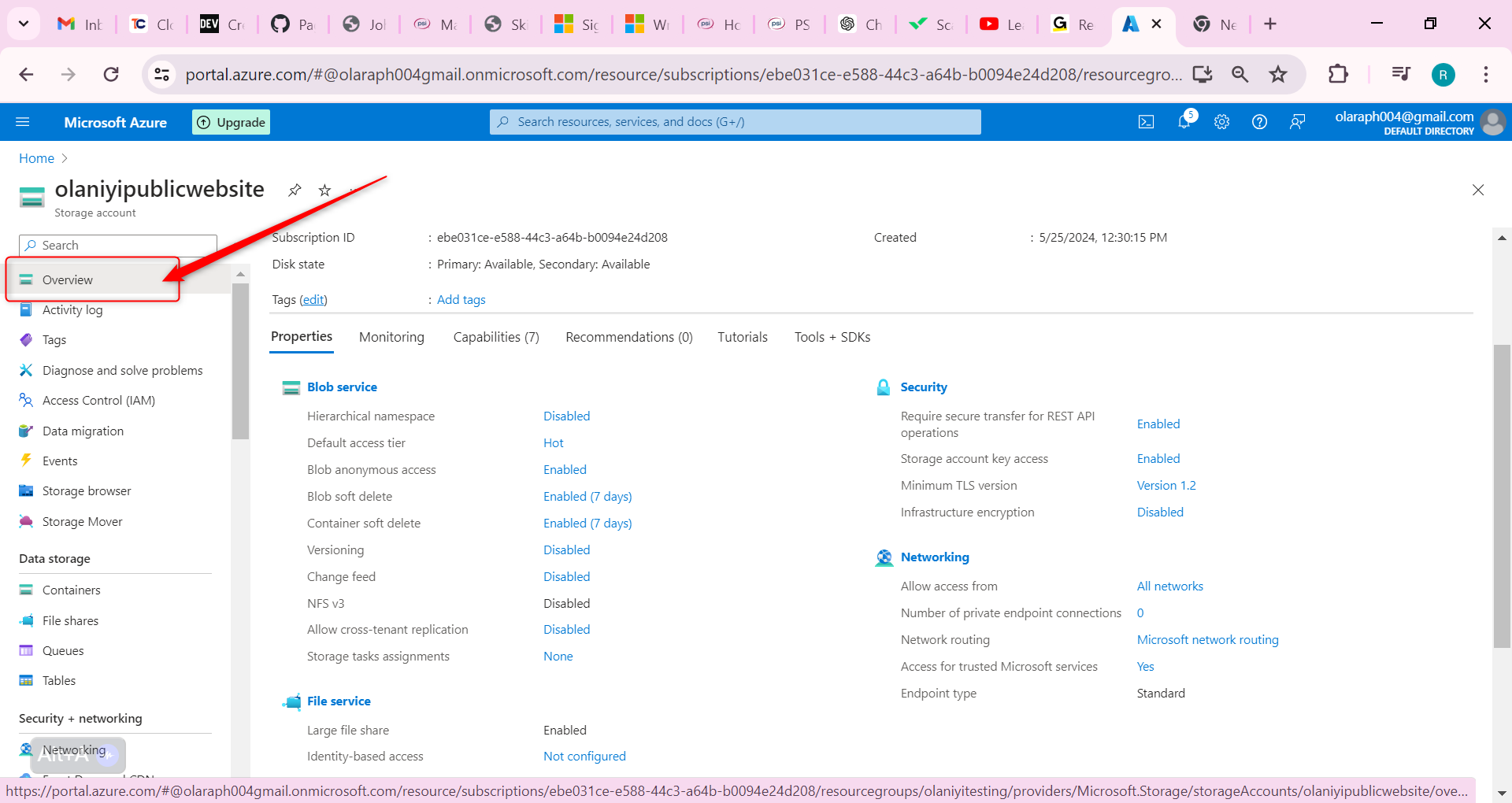

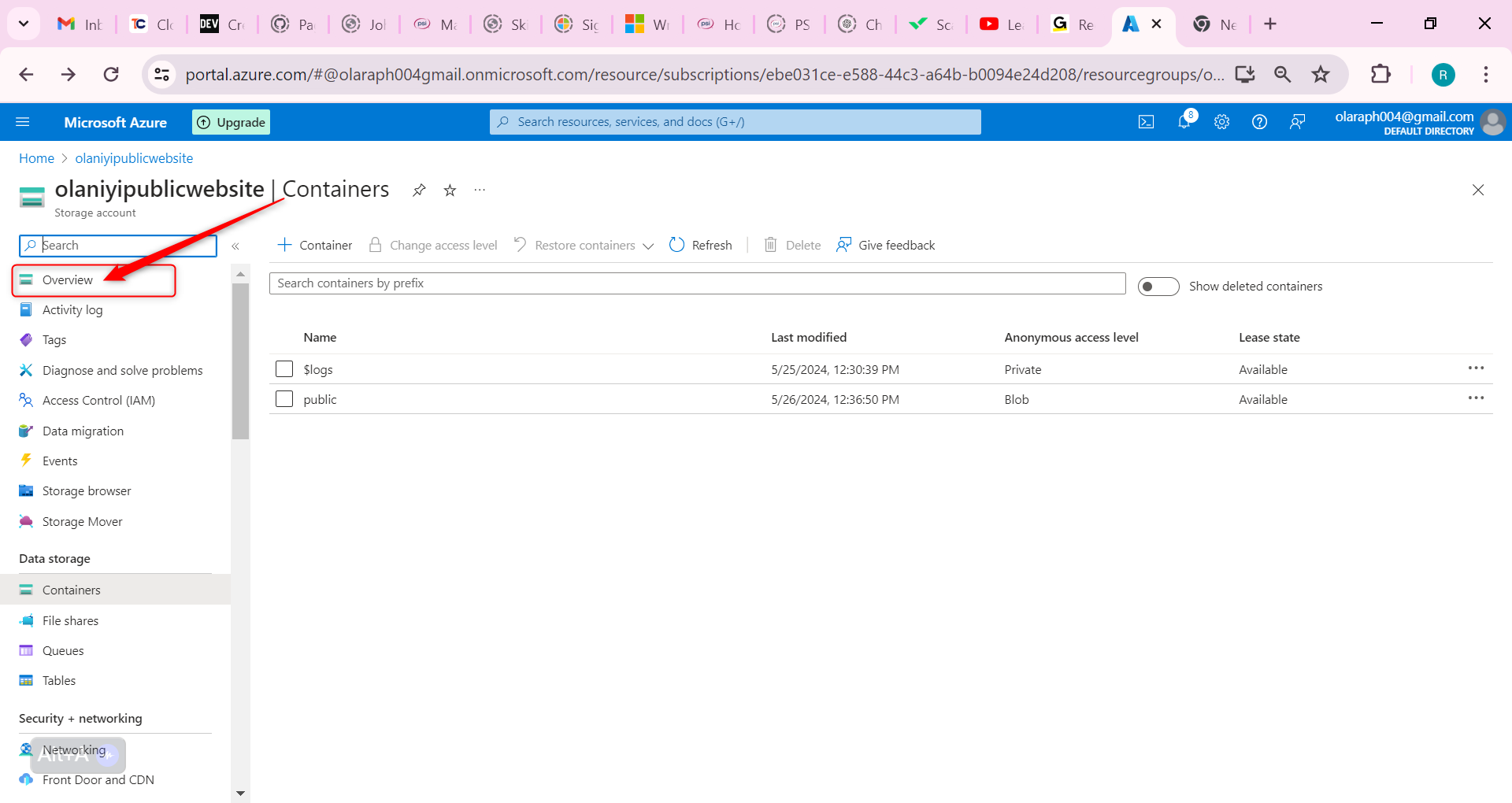

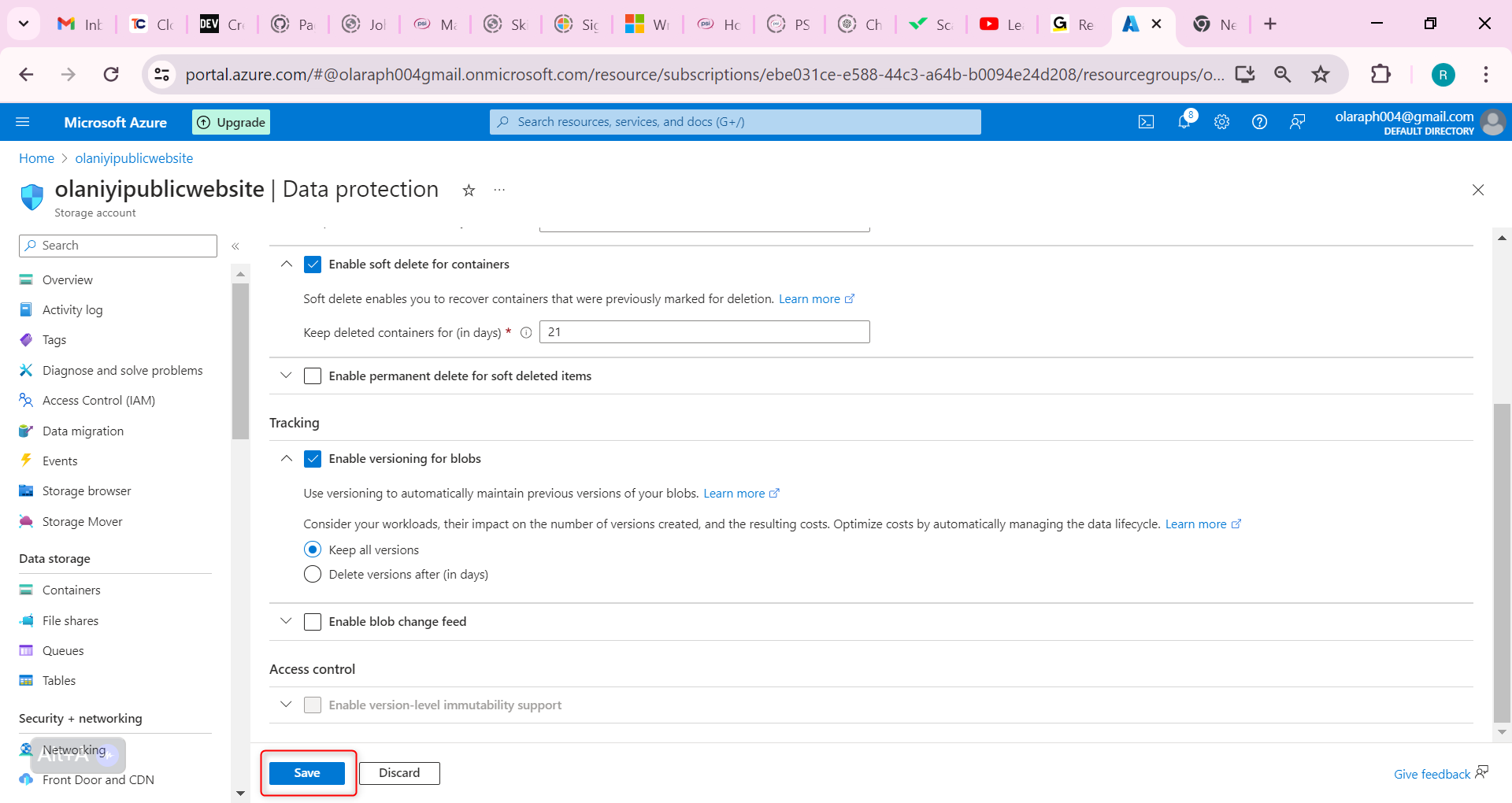

1,866,321 | How to Create Storage Account With High Availability On Microsoft Azure. | An example of the need for a high-availability storage account is the storage supporting the company... | 0 | 2024-05-27T08:58:46 | https://dev.to/olaraph/how-to-create-storage-account-with-high-availability-on-microsoft-azure-42a4 | _An example of the need for a high-availability storage account is the storage supporting the company website. This site offers product images, videos, marketing literature, and customer success stories. Given the global customer base and rapidly increasing demand, it's vital to ensure low latency load times for this mission-critical content. Additionally, maintaining version control of documents and enabling quick restoration of deleted files are crucial._

**Our goals are as follows**:

- Create a storage account with high availability.

- Ensure the storage account has anonymous public access.

- Create a blob storage container for the website documents.

- Enable soft delete so files can be easily restored.

- Enable blob versioning.

_Lets start_

Create a storage account with high availability.

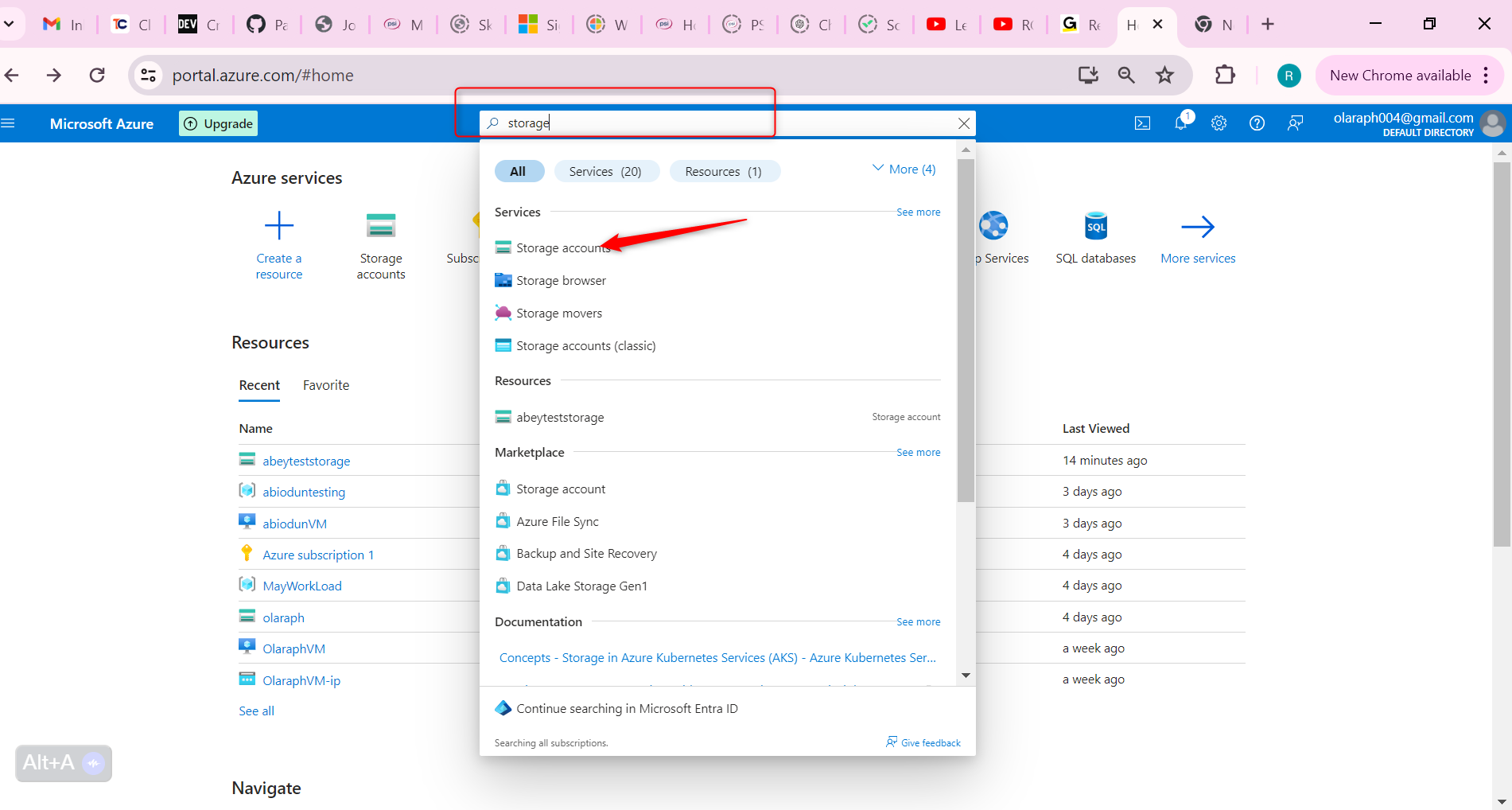

In the portal, **search** for and **select** Storage accounts.

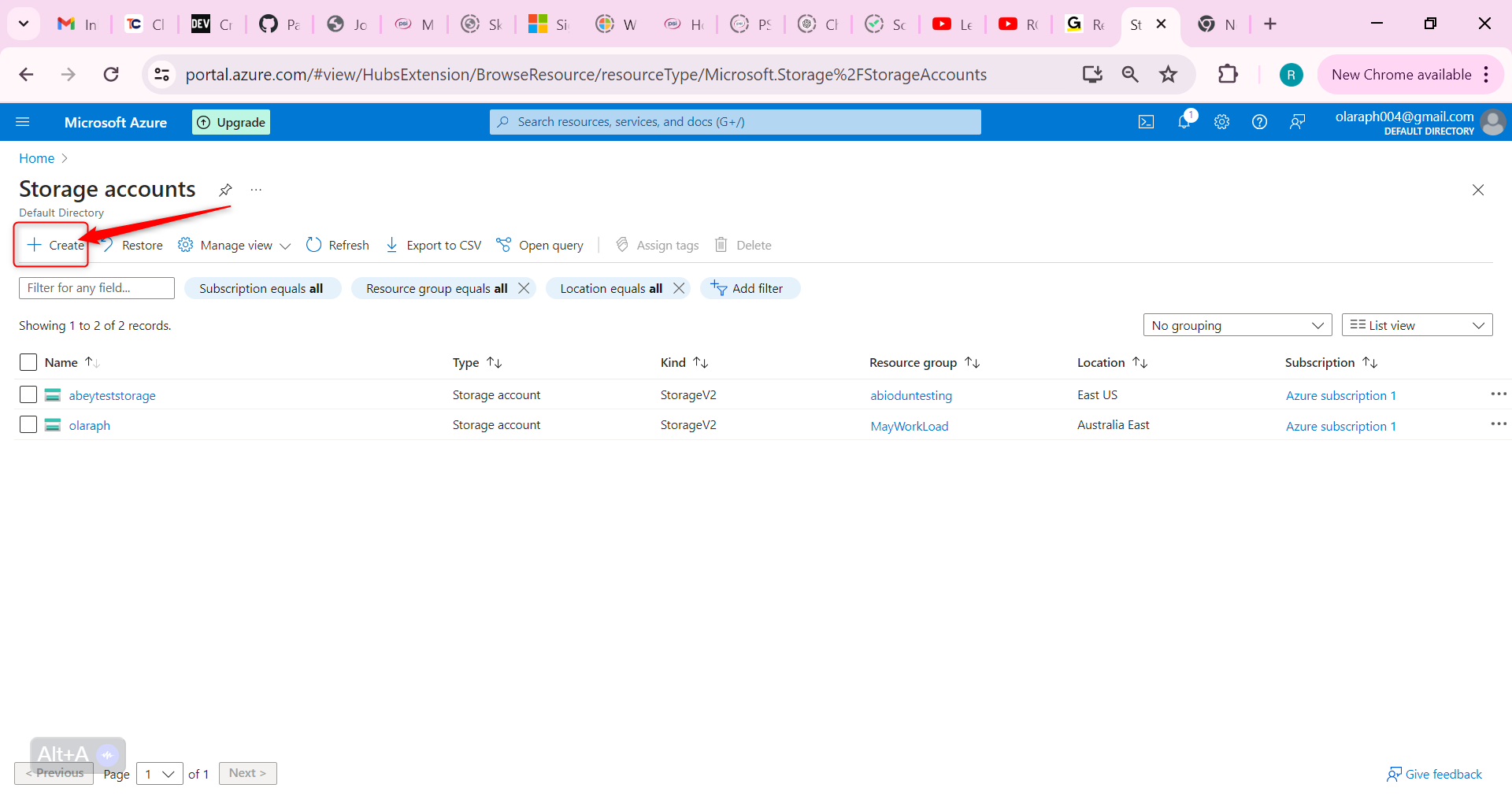

**Select + Create**.

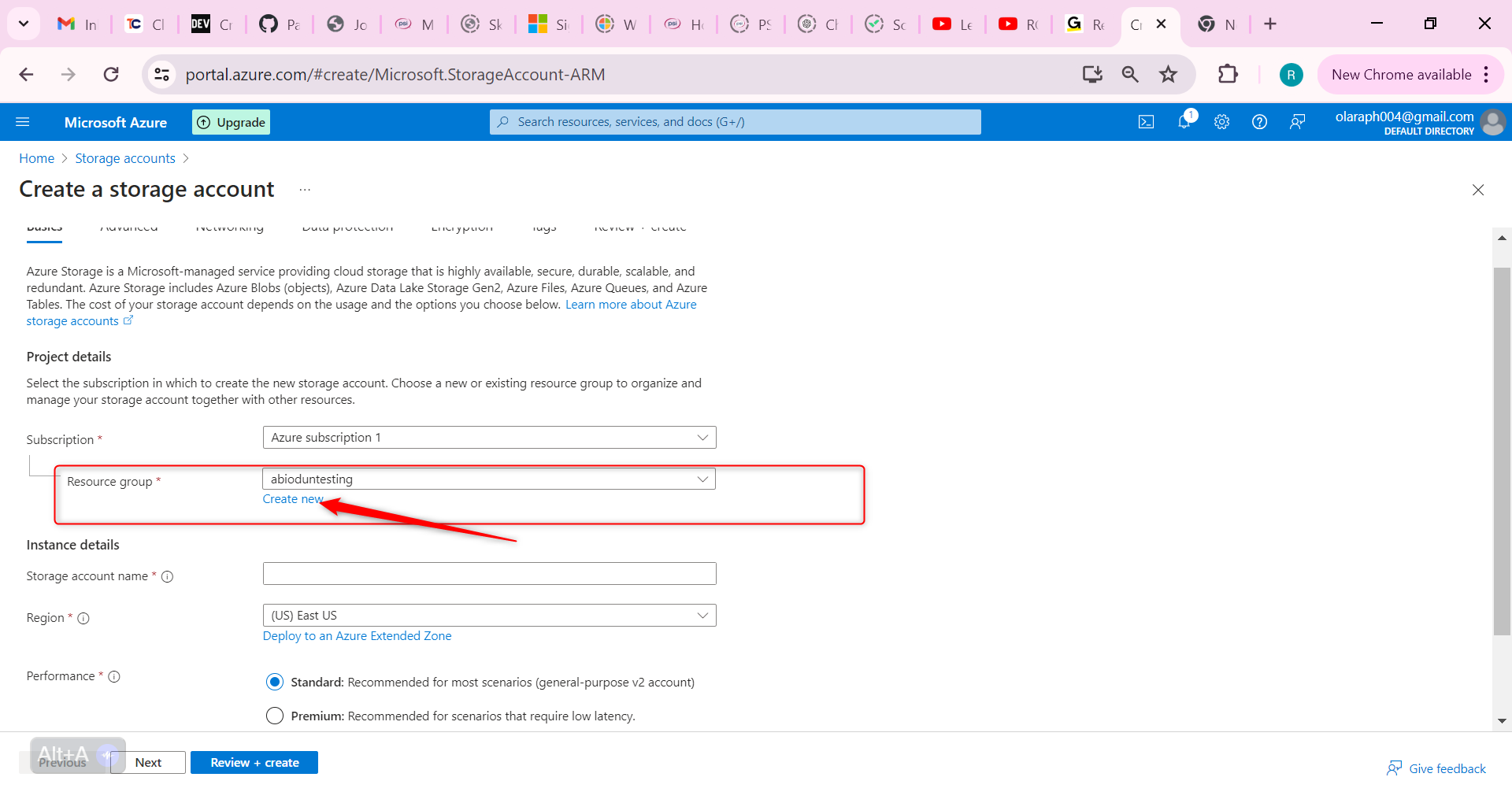

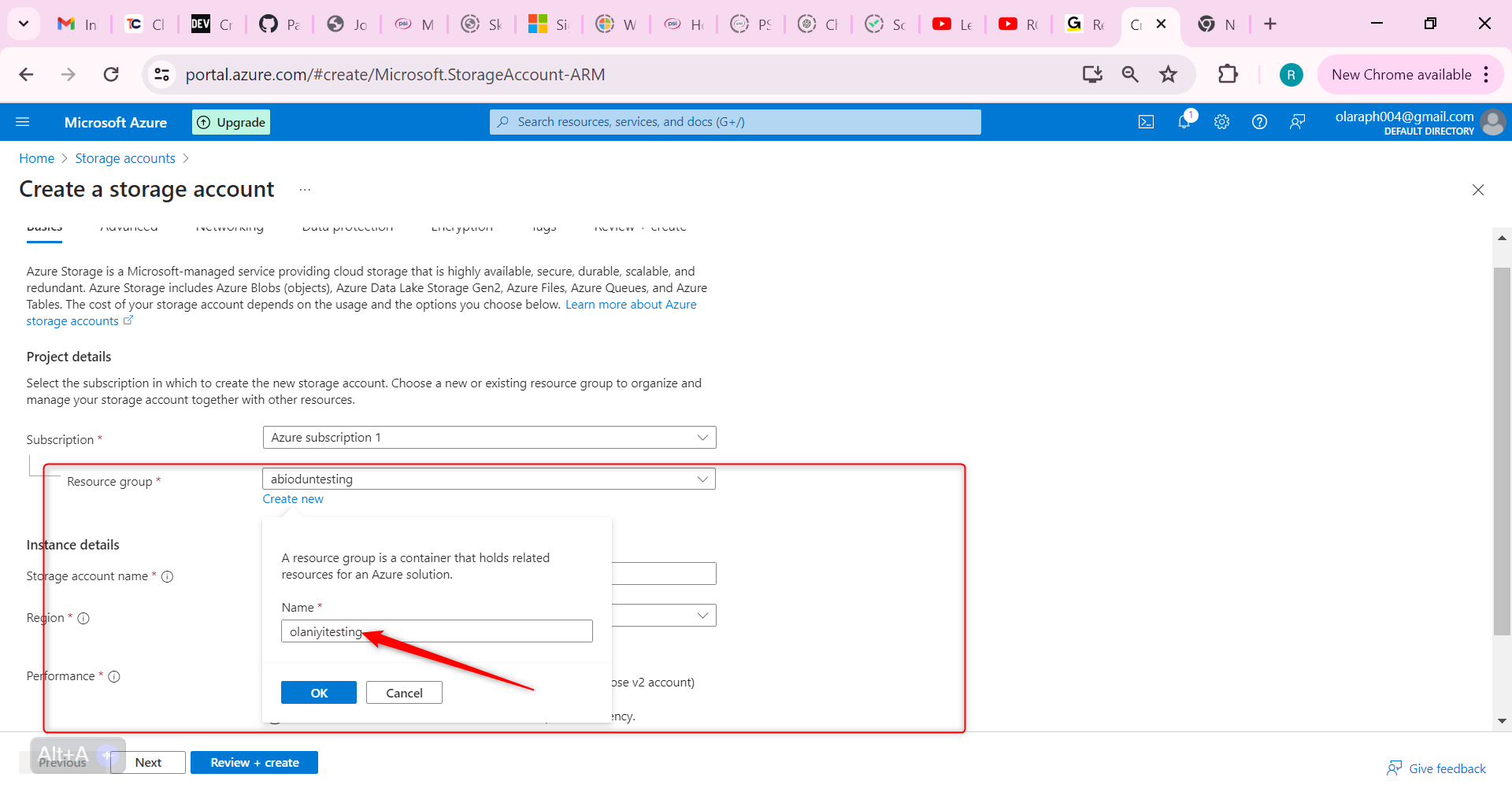

For resource group select new. Give your resource group a name and select OK.

Set the Storage account name to publicwebsite. Make sure the storage account name is unique by adding an identifier.

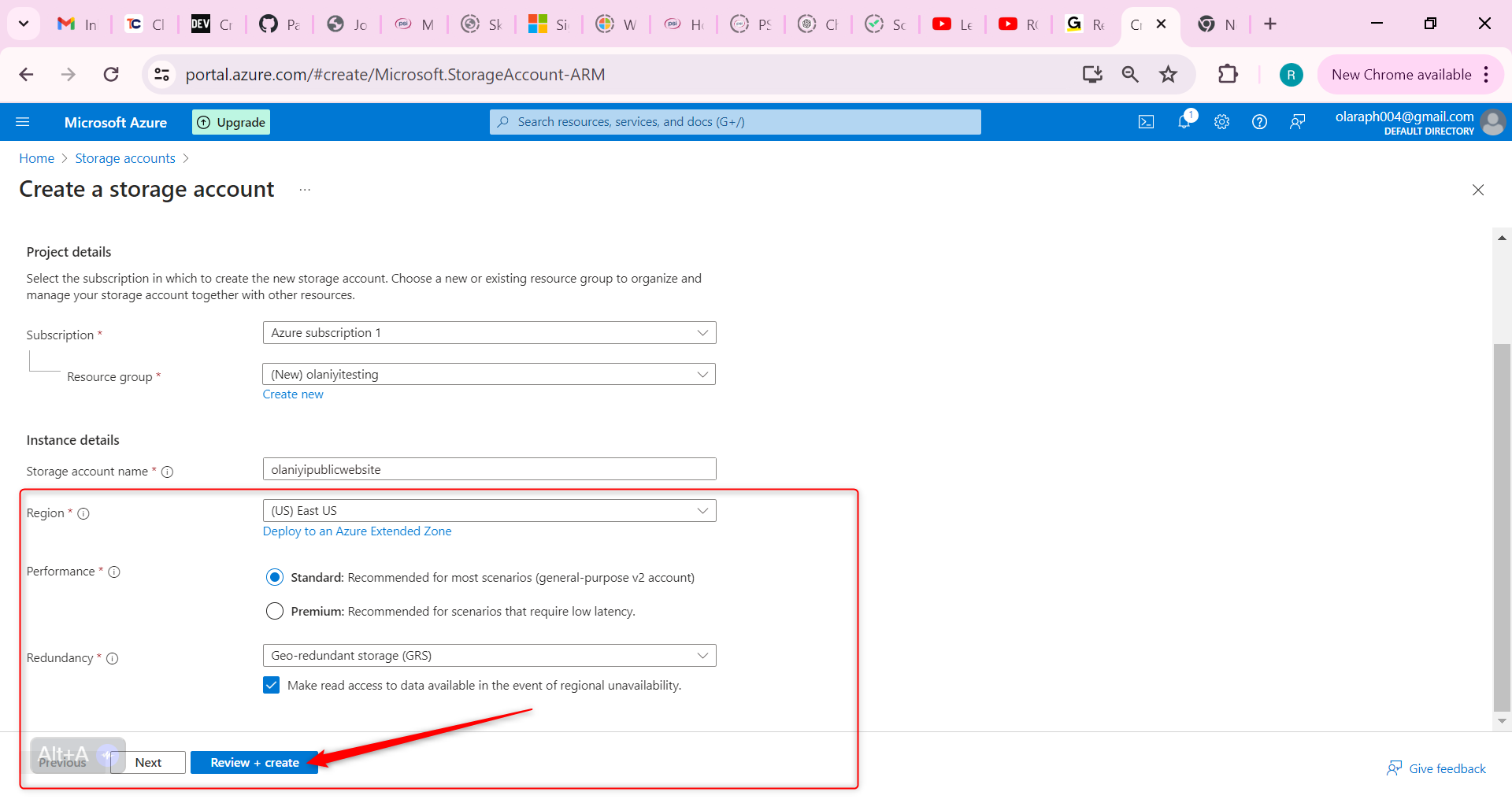

Take the defaults for other settings.

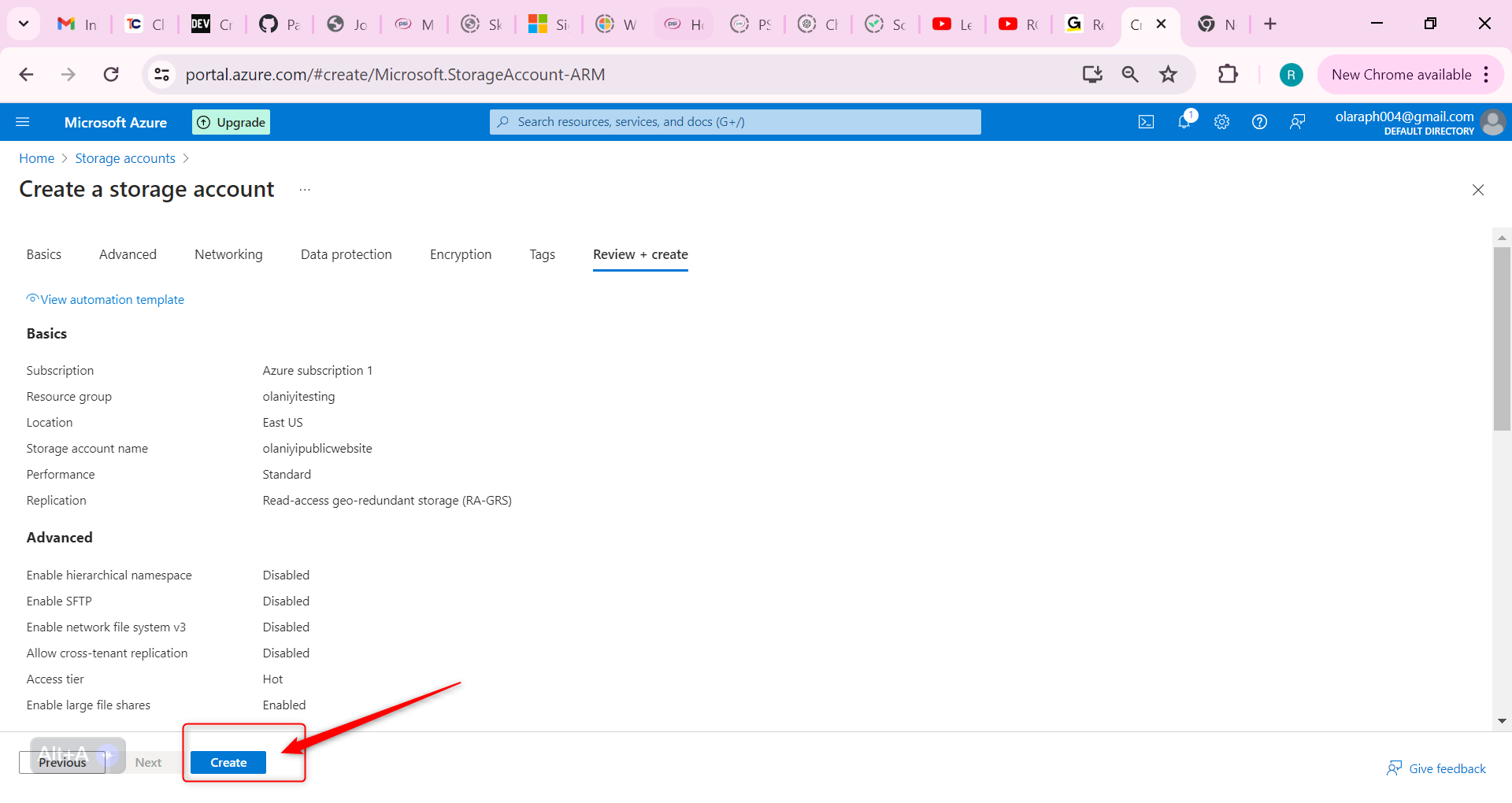

Then Select Review and then Create.

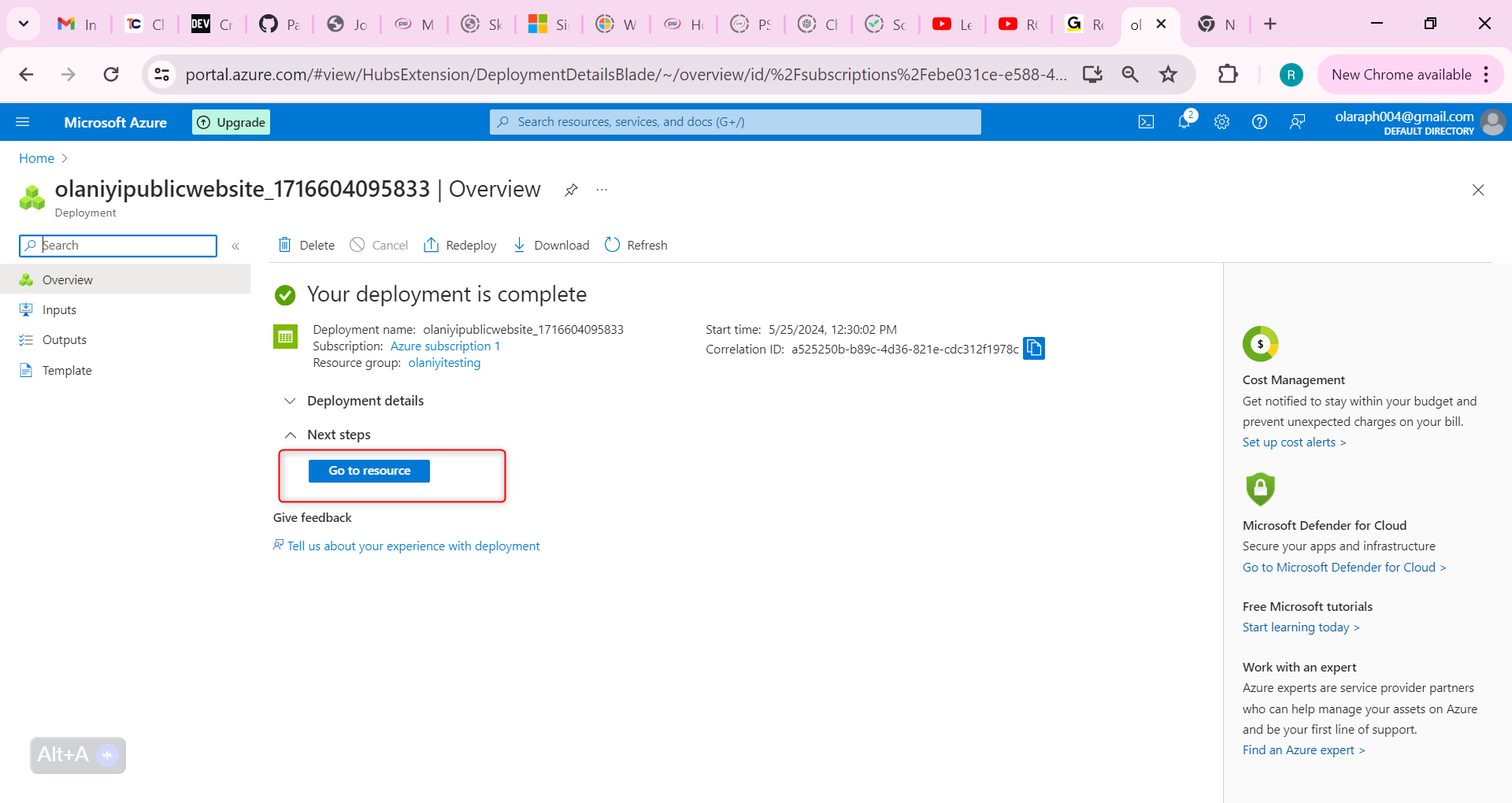

Wait for the storage account to deploy, and then select Go to resource

This storage requires high availability if there’s a regional outage. Additionally, enable read access to the secondary region.

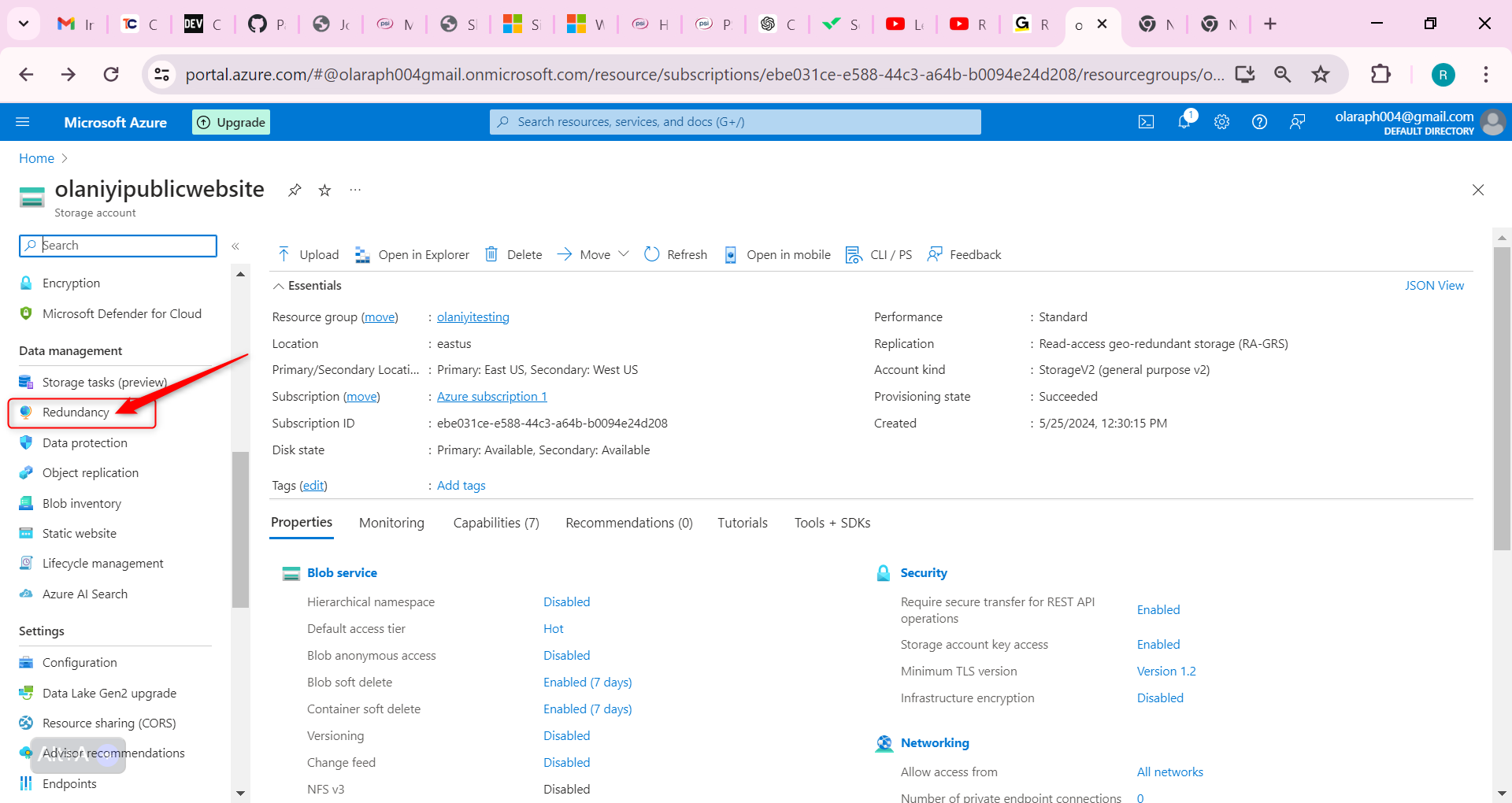

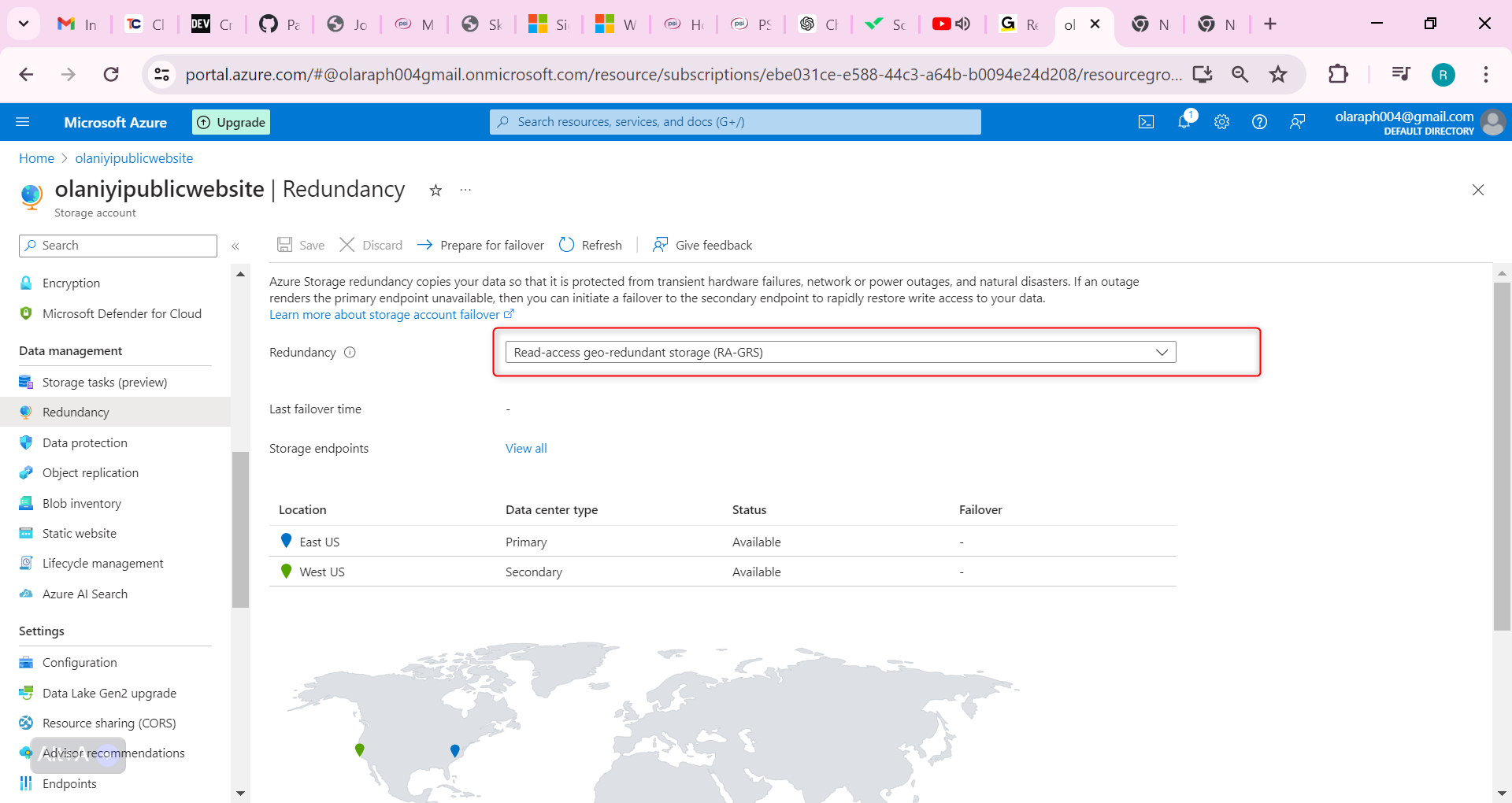

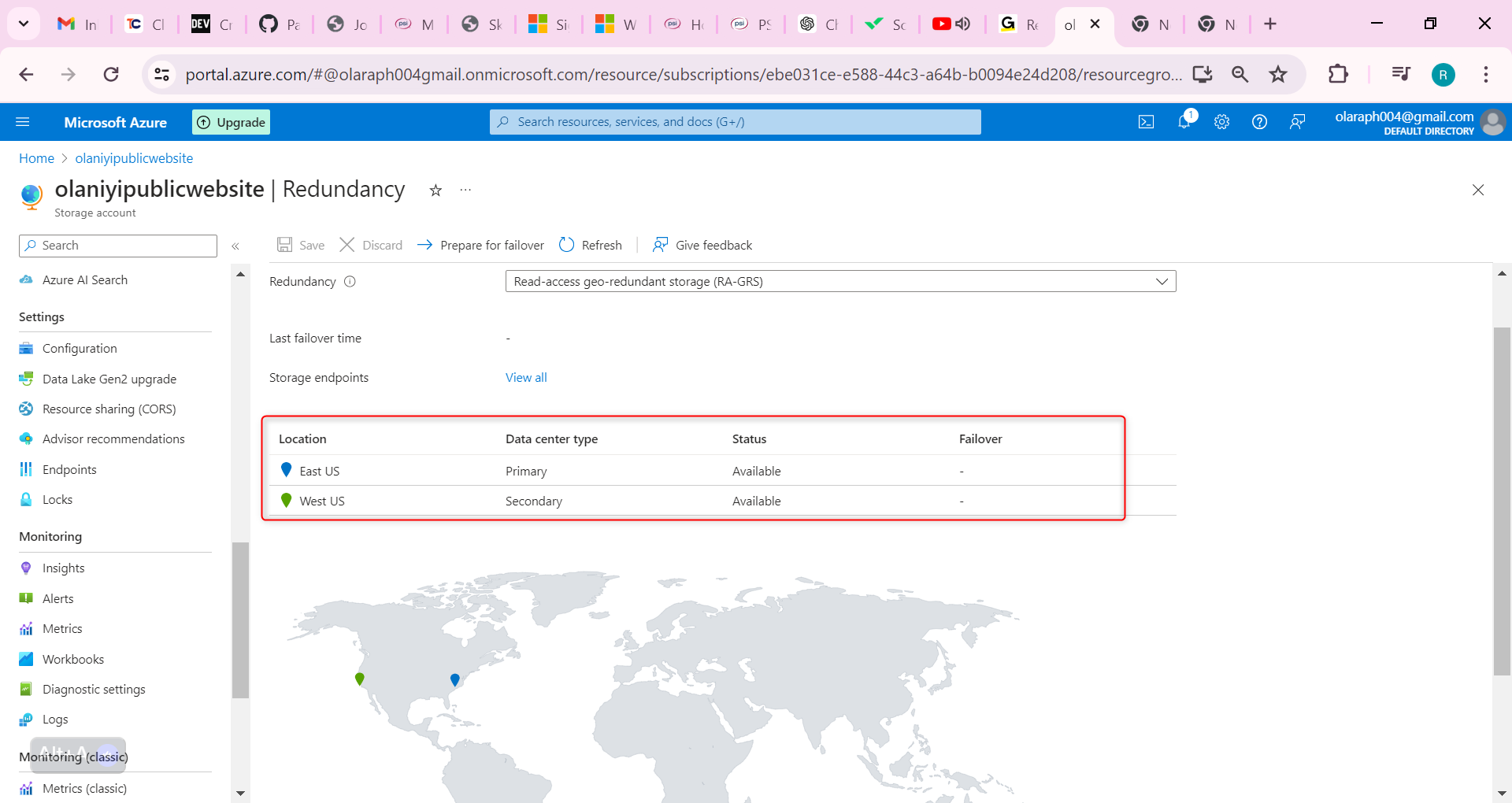

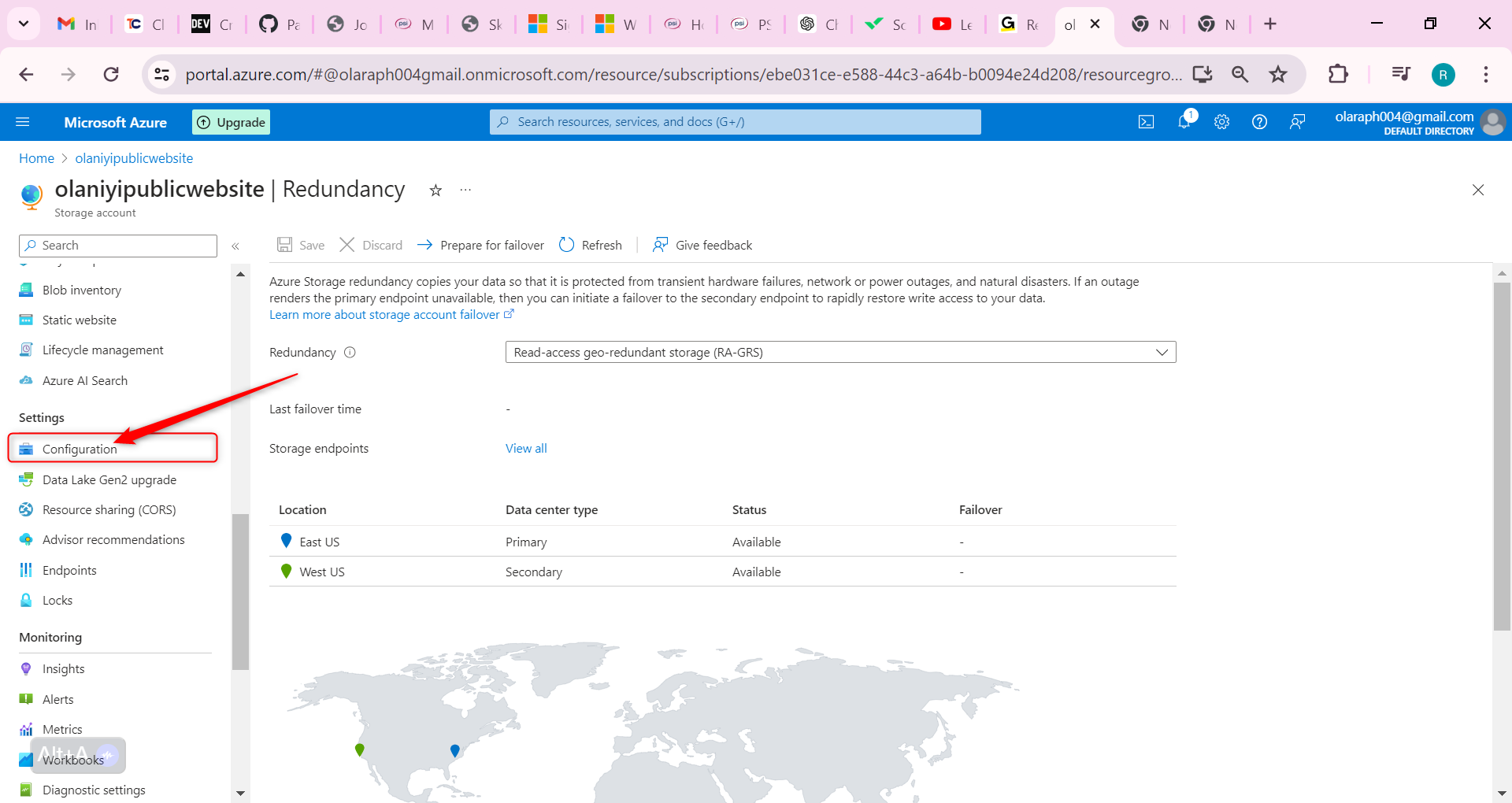

In the storage account, in the Data management section, select the Redundancy blade.

Ensure Read-access Geo-redundant storage is selected.

Review the primary and secondary location information.

It is important to note that Information on the public website should be accessible without requiring customers to login.

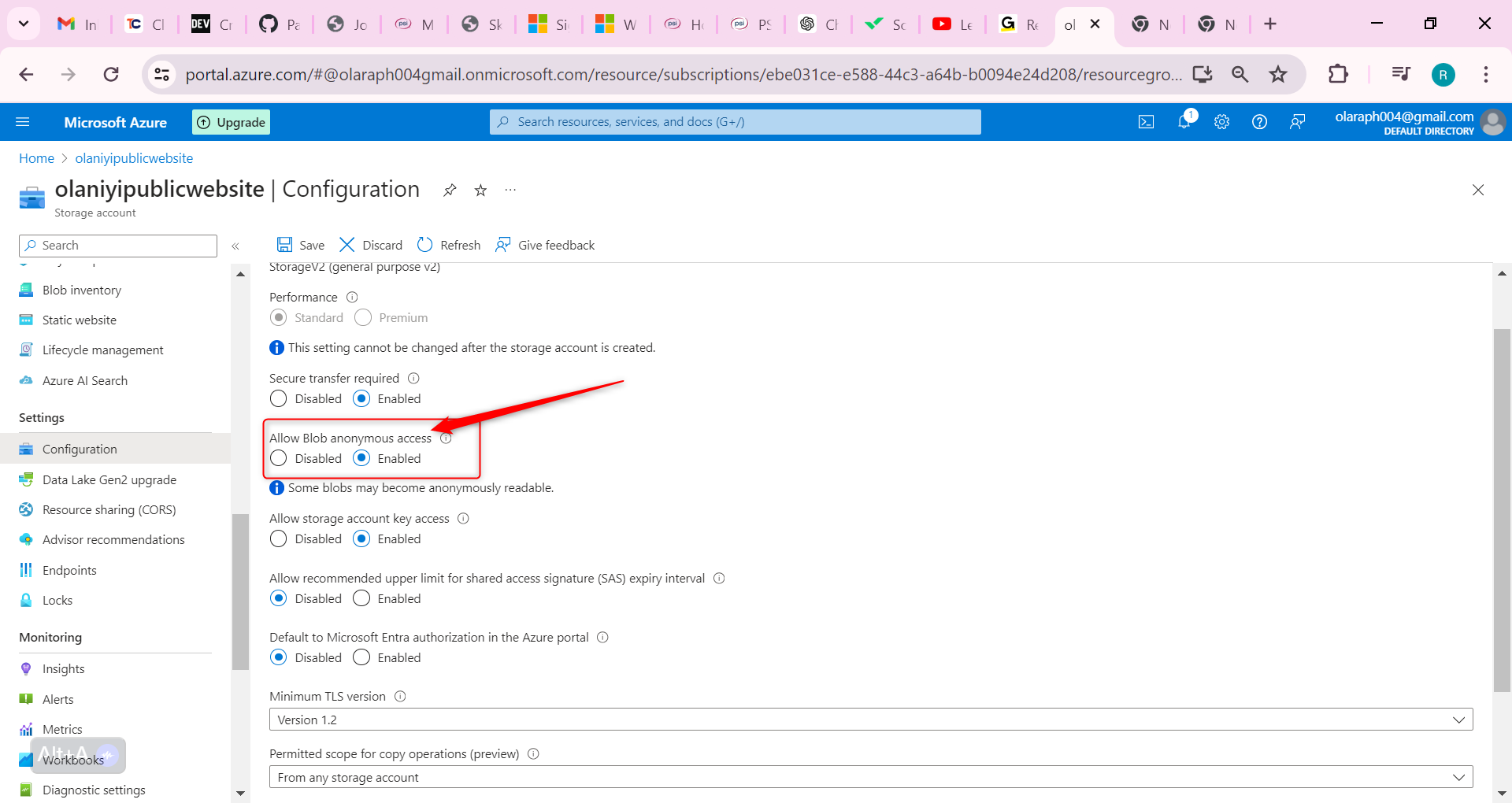

Hence In the storage account, in the Settings section, select the Configuration blade.

Ensure the Allow blob anonymous access setting is Enabled.

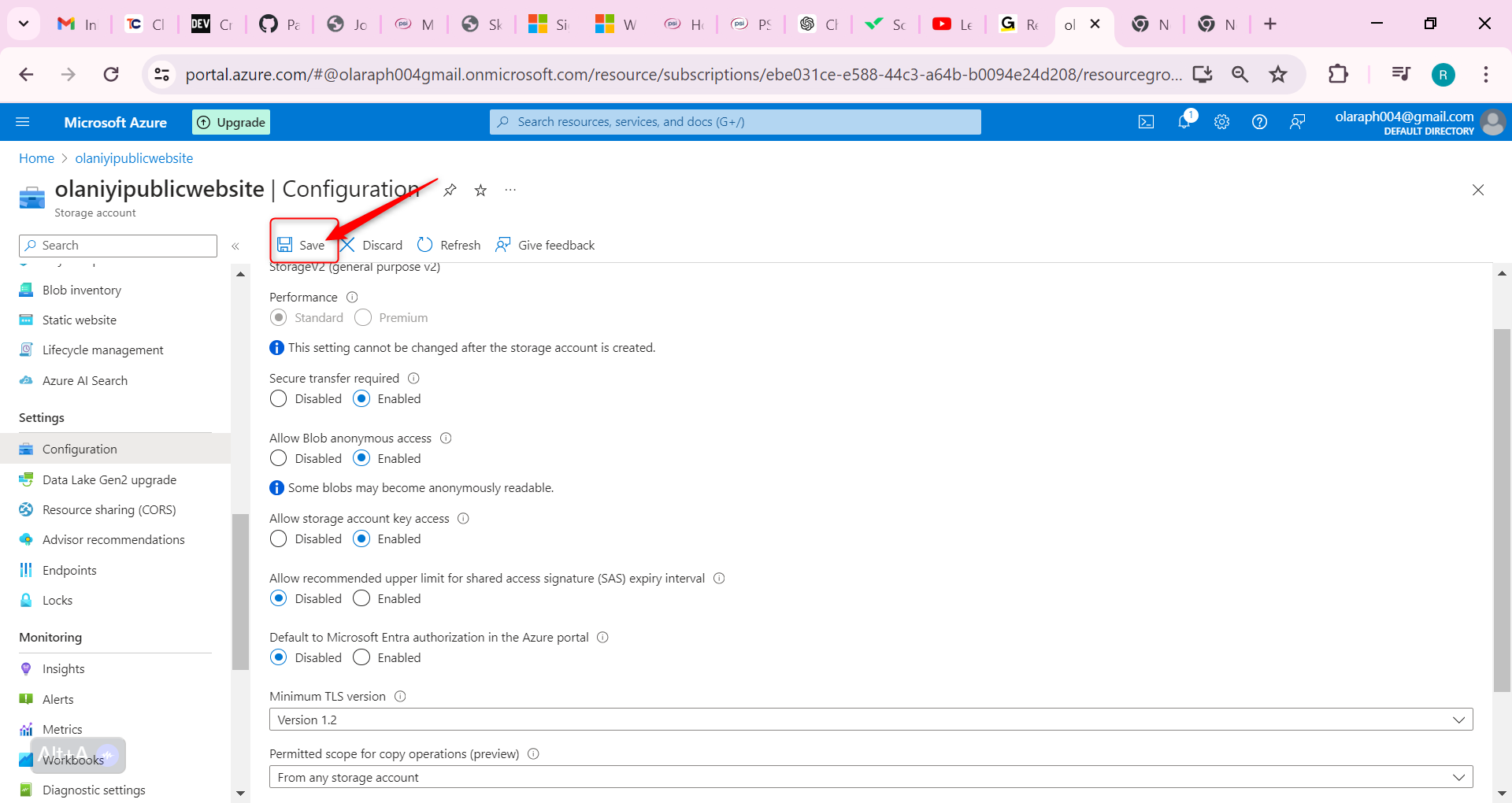

Be sure to Save your changes.

Ensure the storage account has anonymous public access.

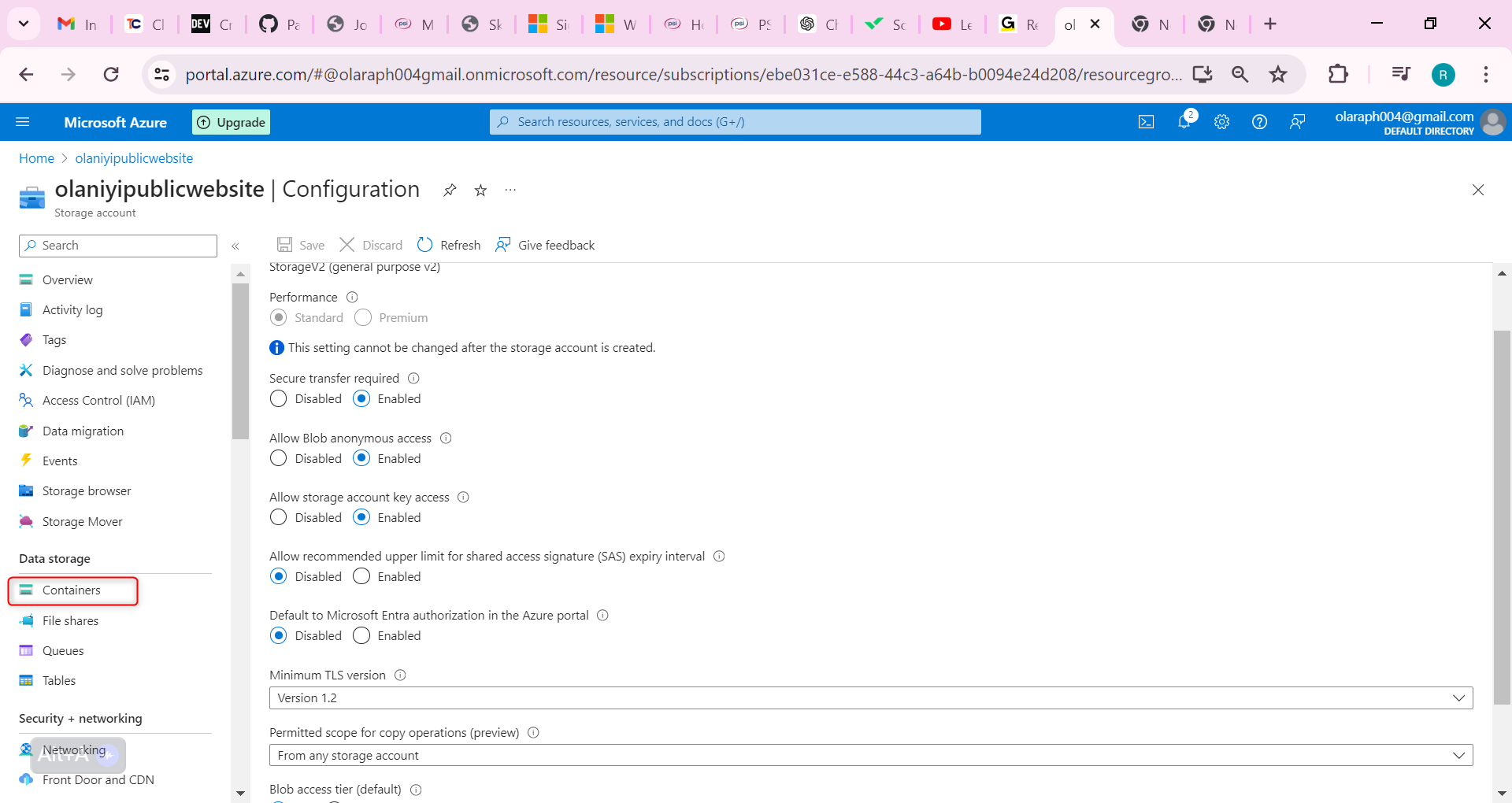

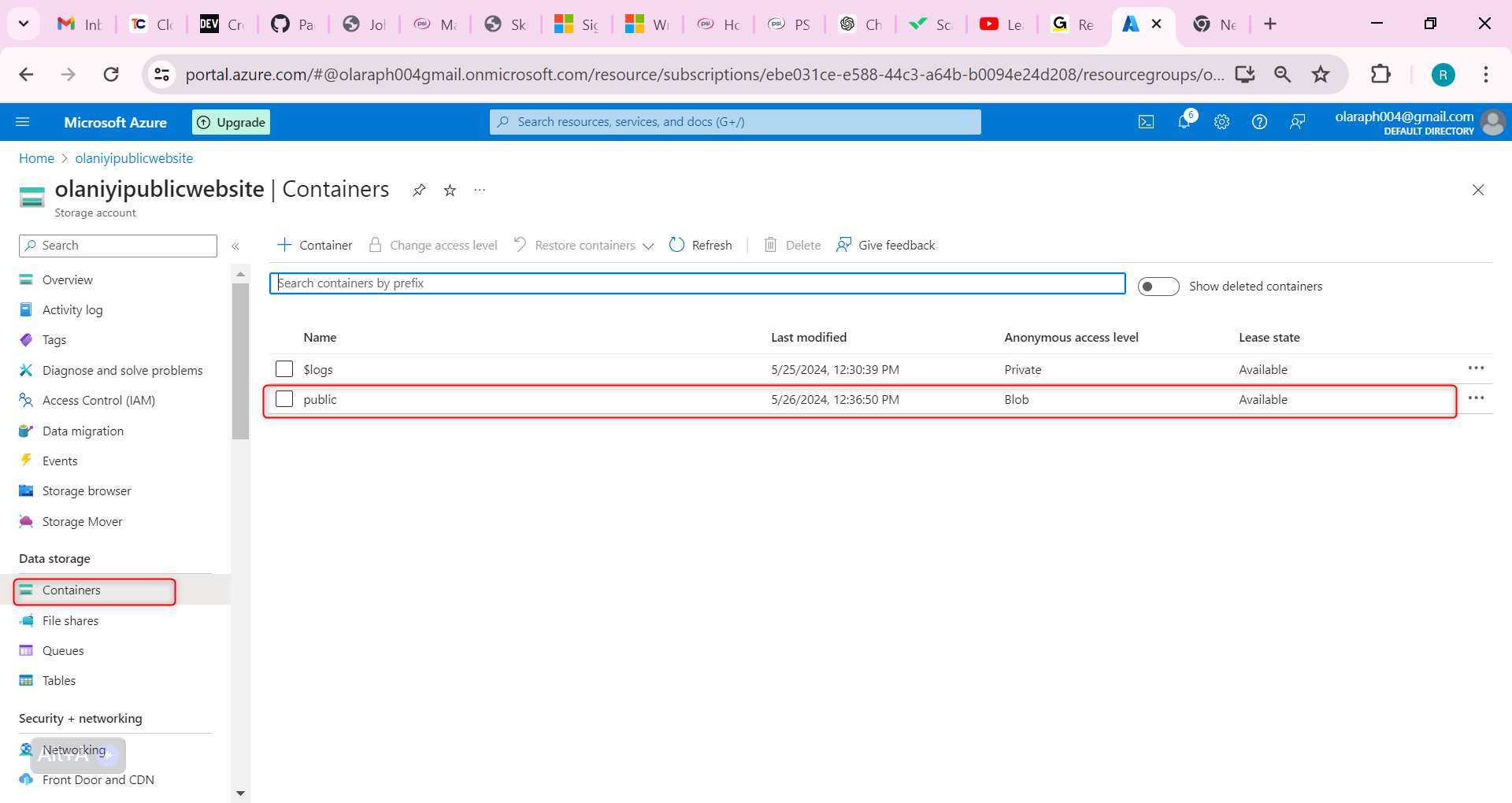

The public website has various images and documents. Create a blob storage container for the content.

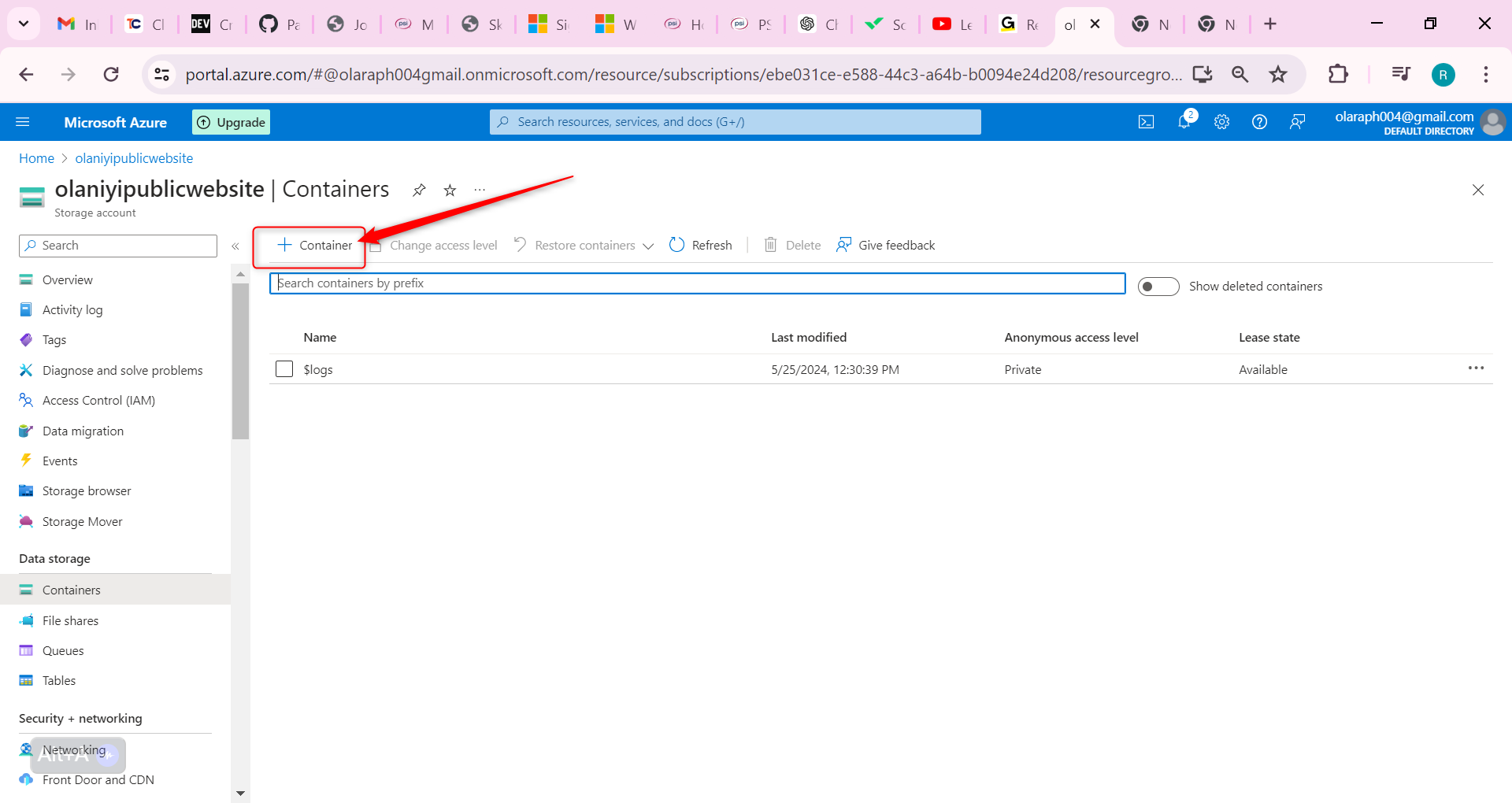

In your storage account, in the Data storage section, select the Containers blade.

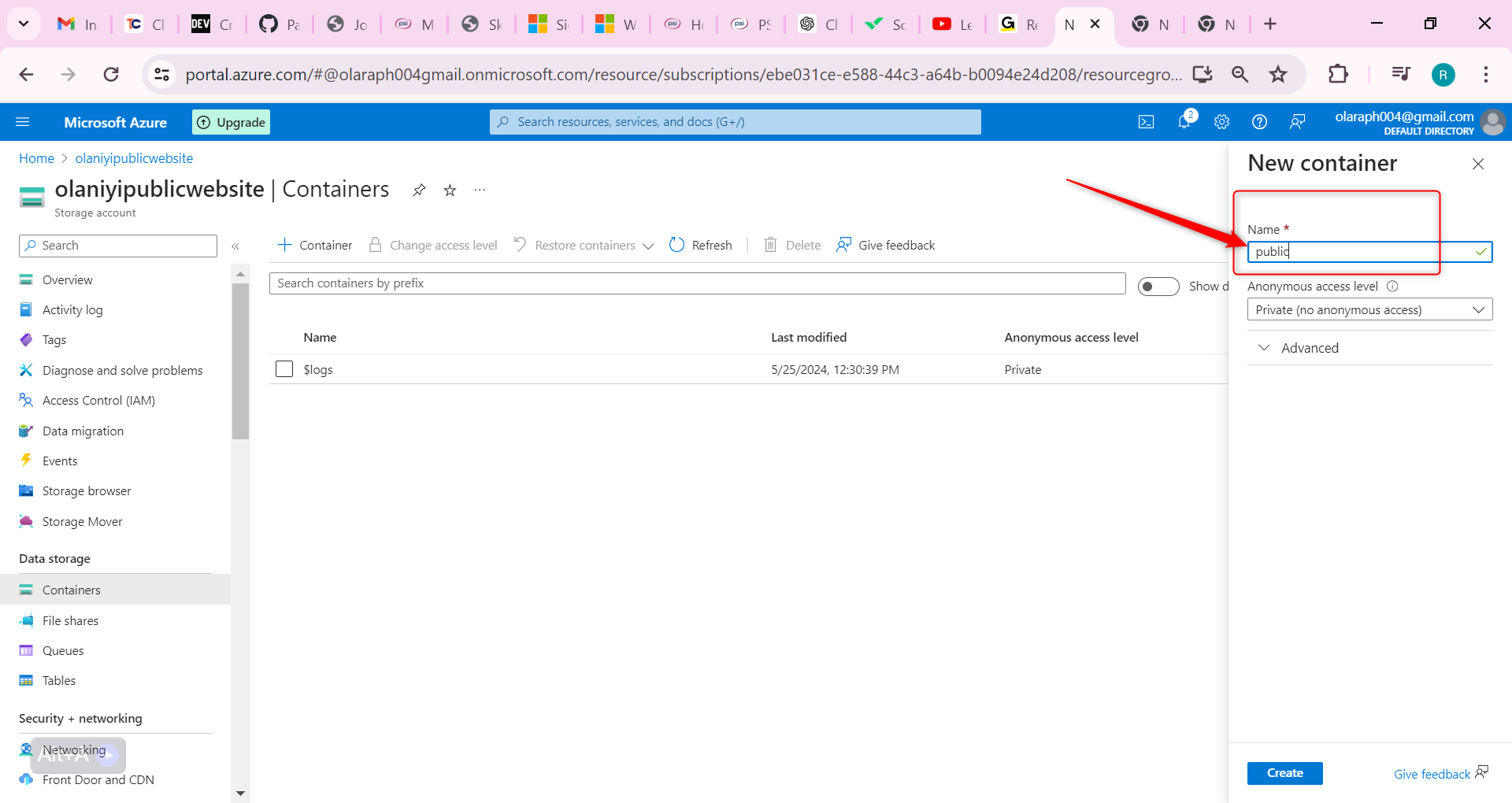

Select + Container.

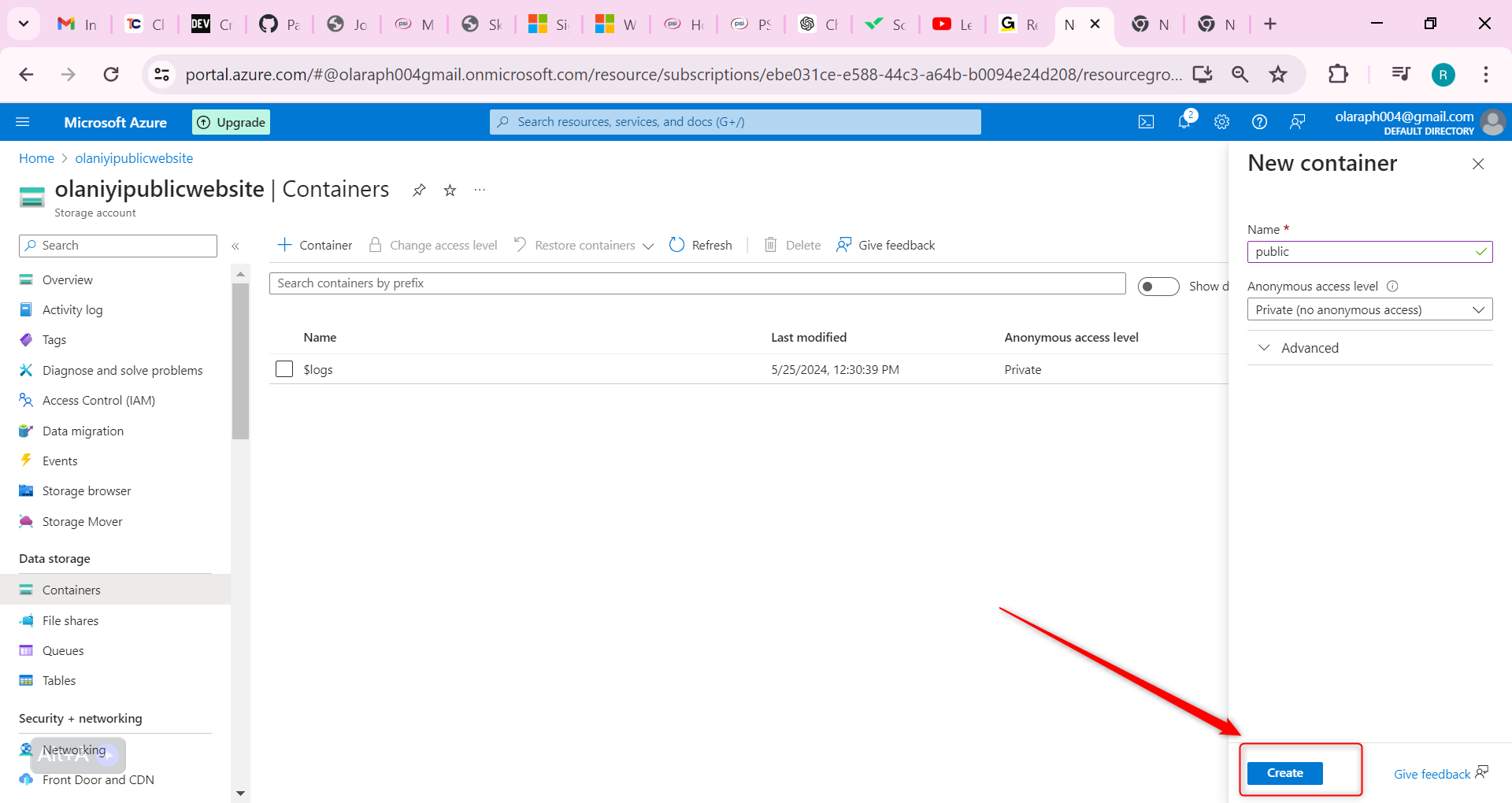

Ensure the Name of the container is public.

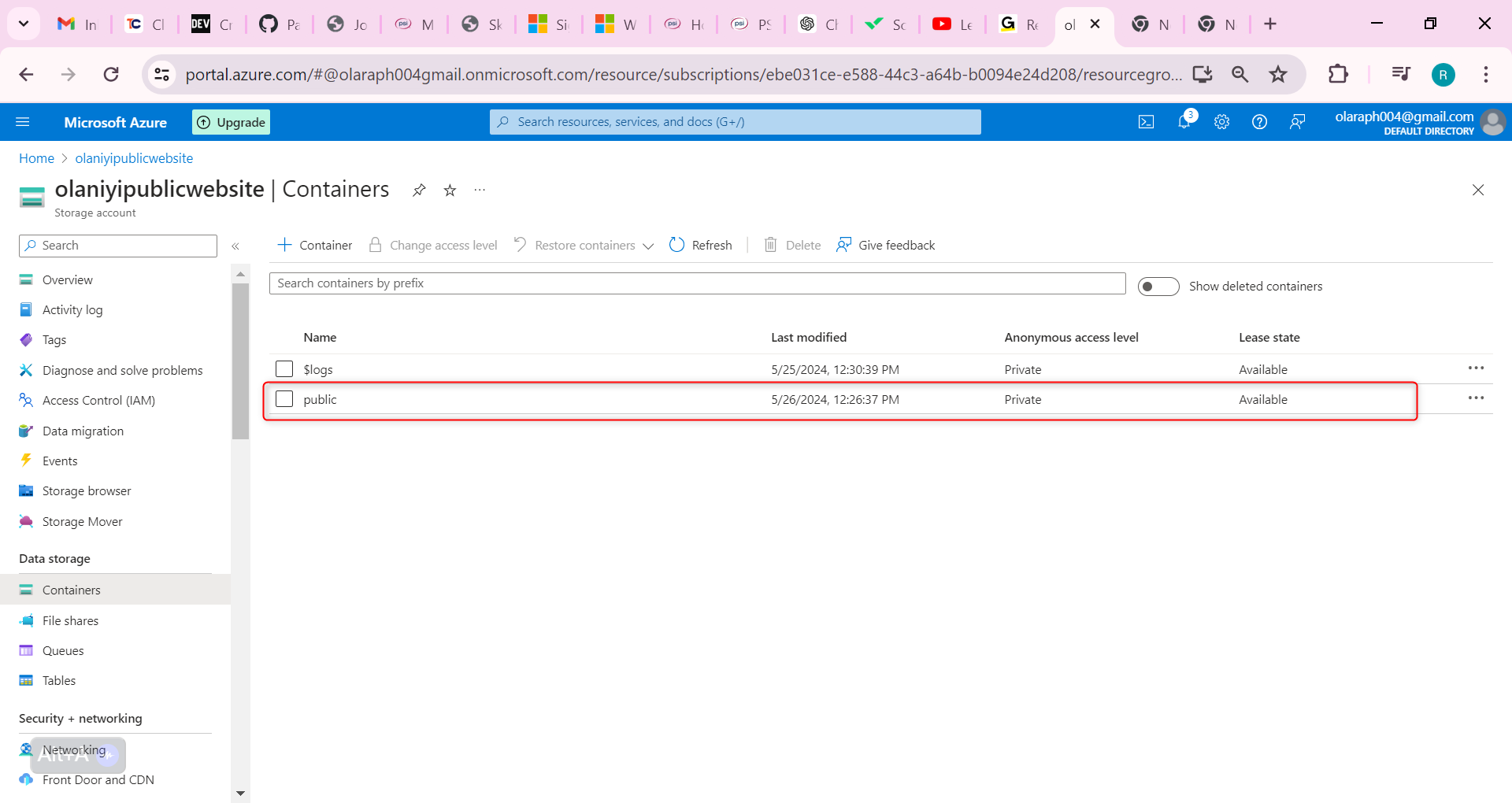

Select Create.

Customers should be able to view the images without being authenticated. Configure anonymous read access for the public container blobs. Learn more about configuring anonymous public access. to do this follow the step below;

Select your public container.

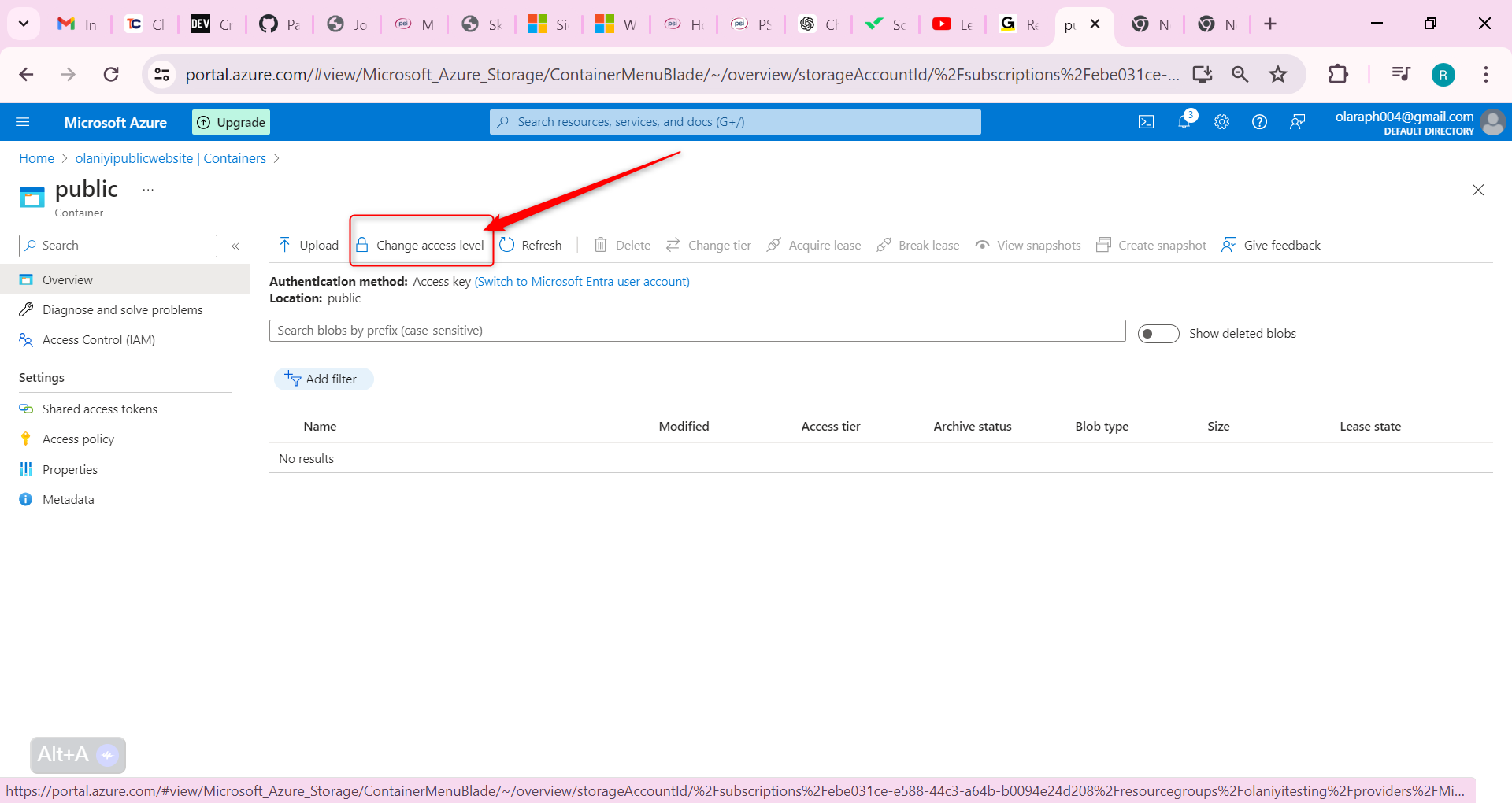

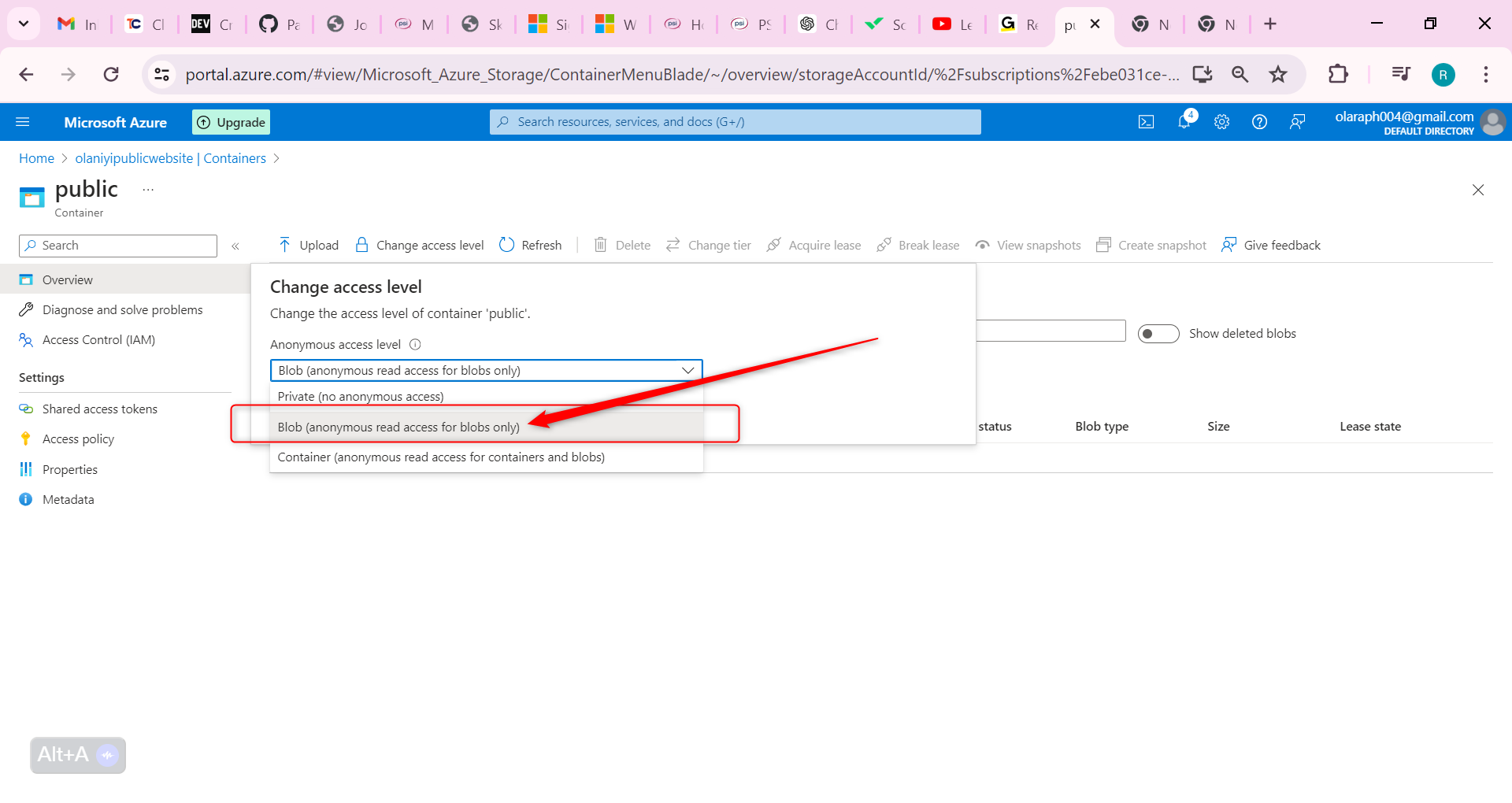

On the Overview blade, select Change access level.

Ensure the Public access level is Blob (anonymous read access for blobs only). Select OK.

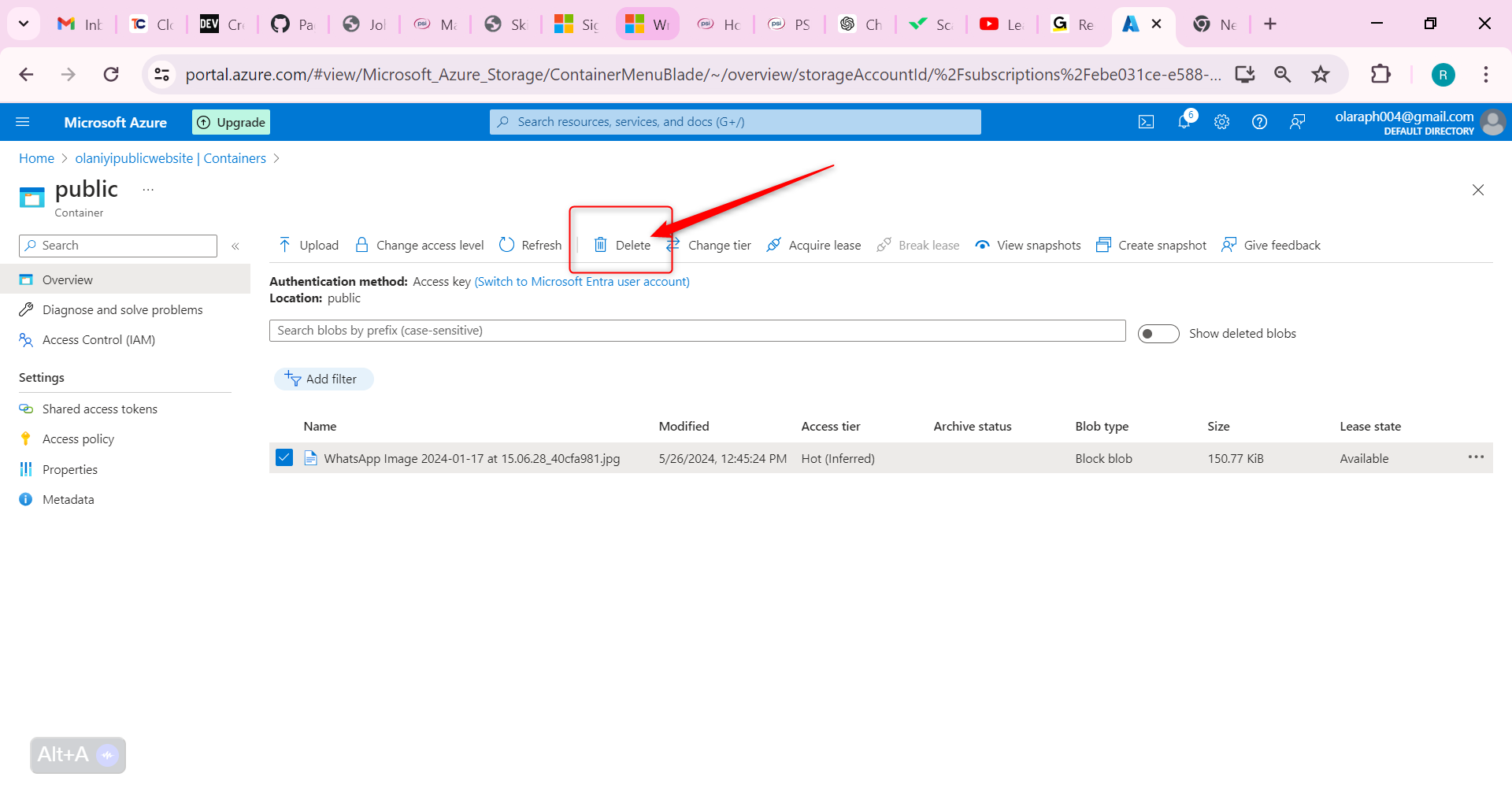

Lets Practice uploading files and testing access to be sure of what we have done

For testing, upload a file to the public container. The type of file doesn’t matter. A small image or text file is a good choice

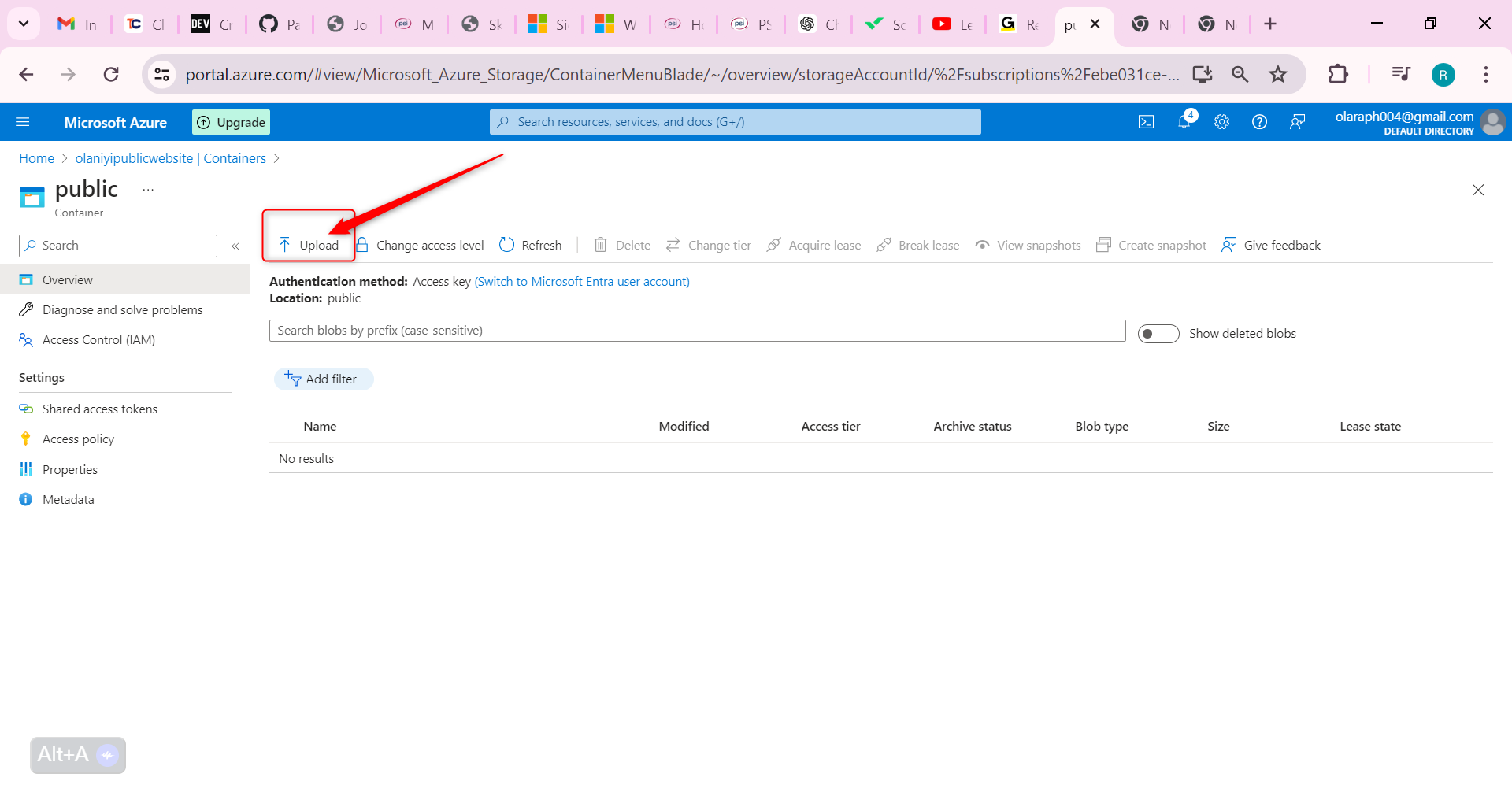

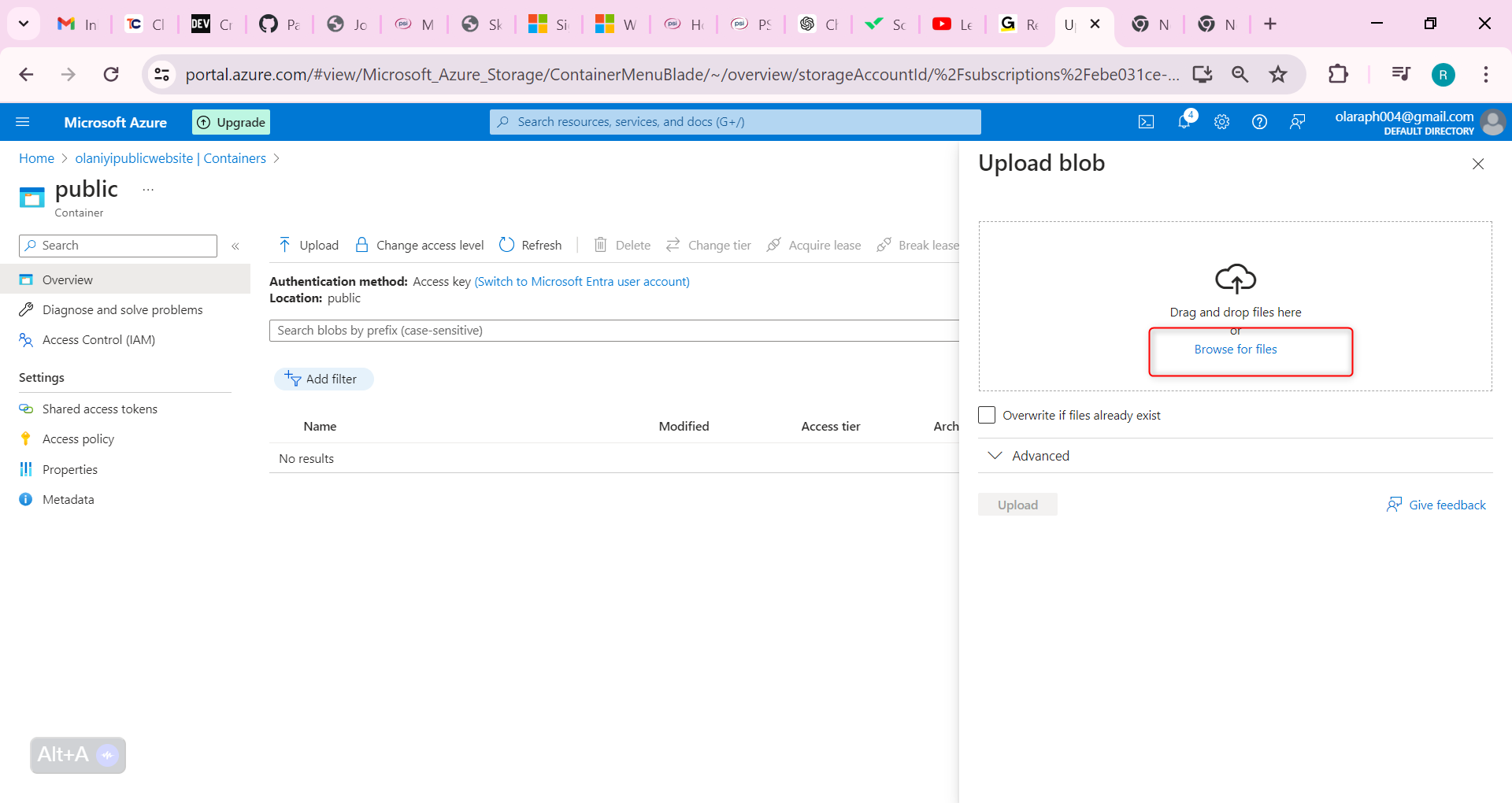

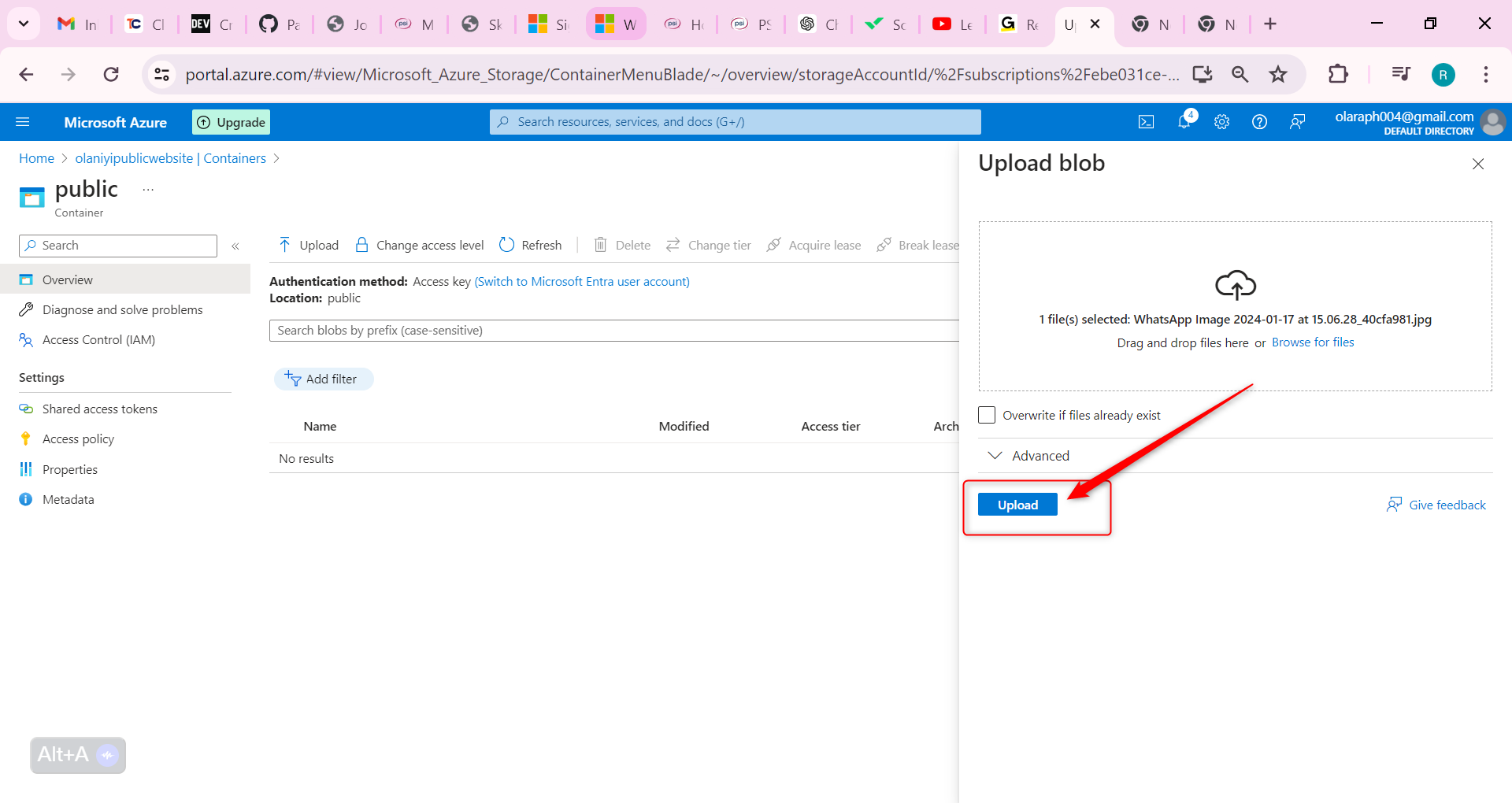

Ensure you are viewing your container then Select Upload.

Browse to files and select a file. Browse to a file of your choice.

Select Upload.

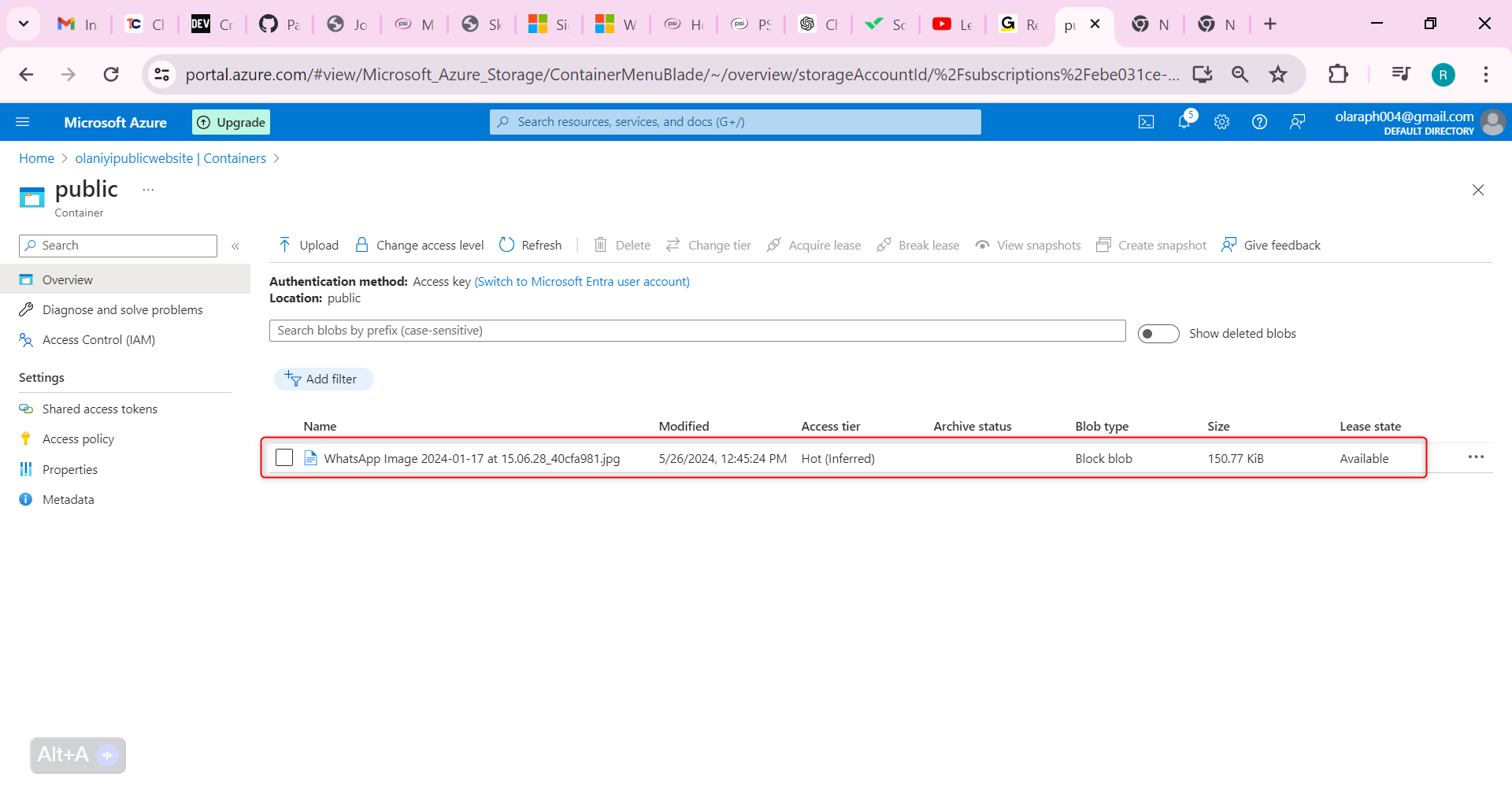

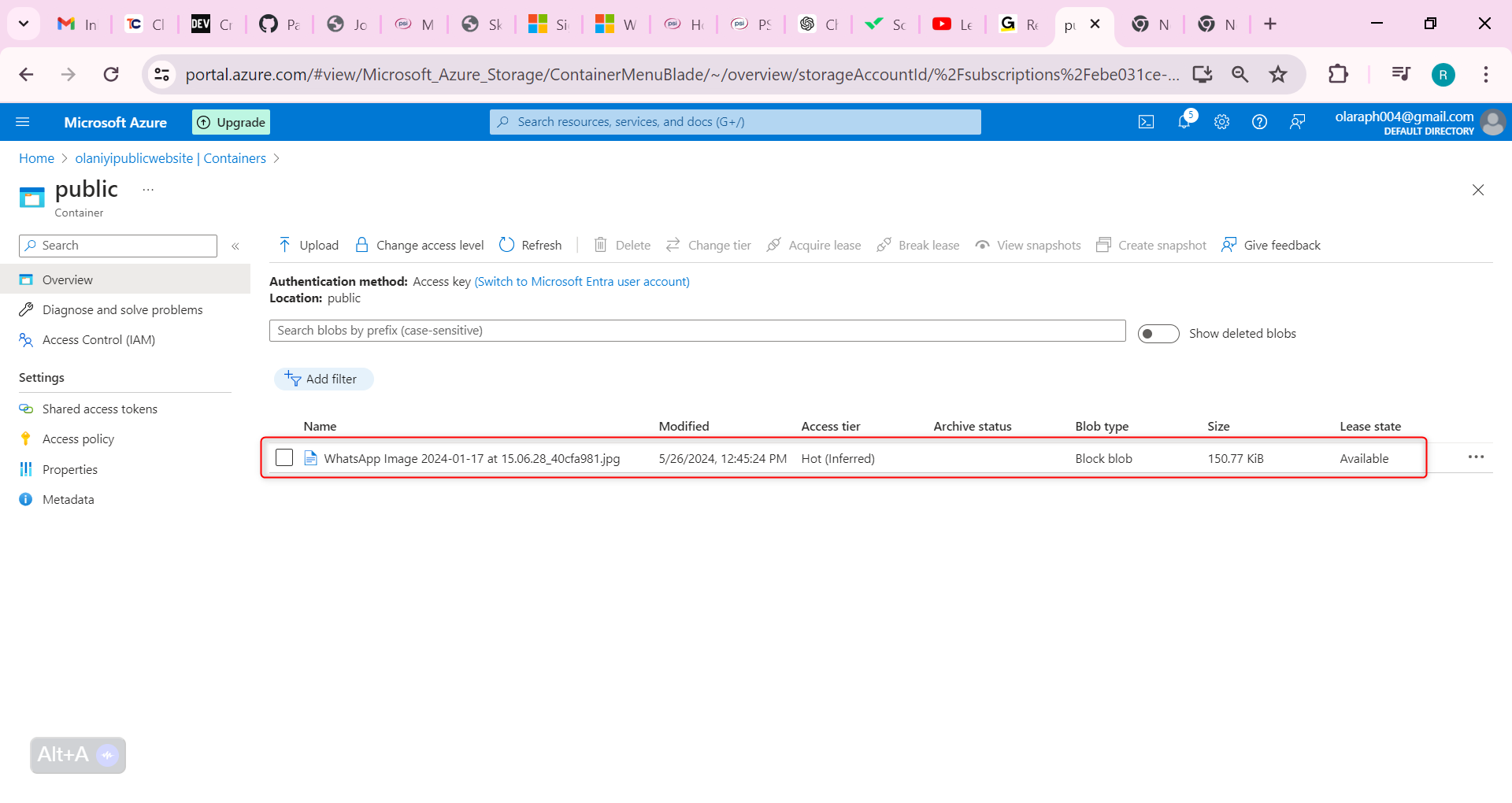

Close the upload window, Refresh the page and ensure your file was uploaded.

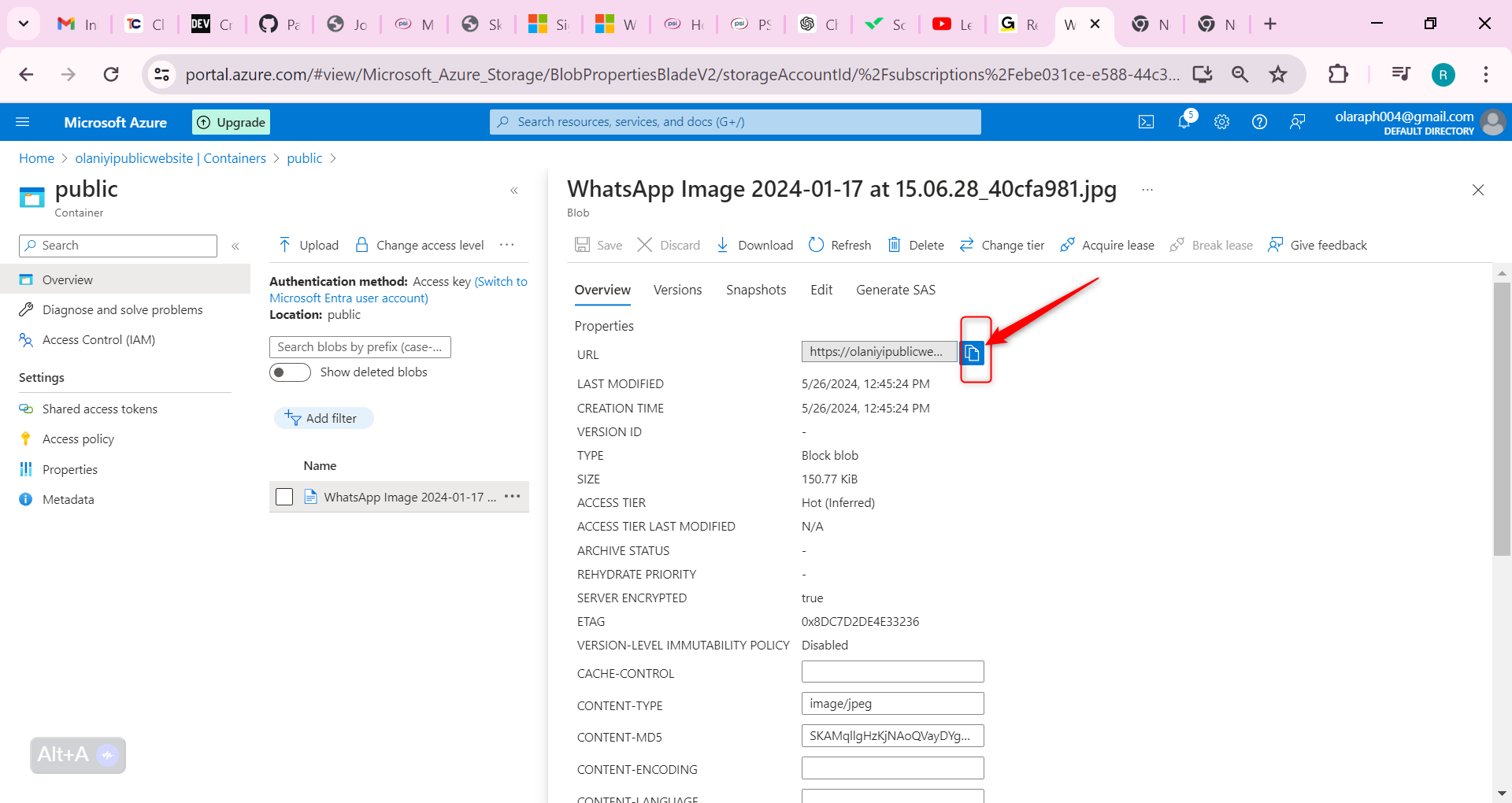

Determine the URL for your uploaded file. Open a browser and test the URL.

Select your uploaded file.

On the Overview tab, copy the URL.

Paste the URL into a new browser tab, If you have uploaded an image file it will display in the browser. Other file types should be downloaded.

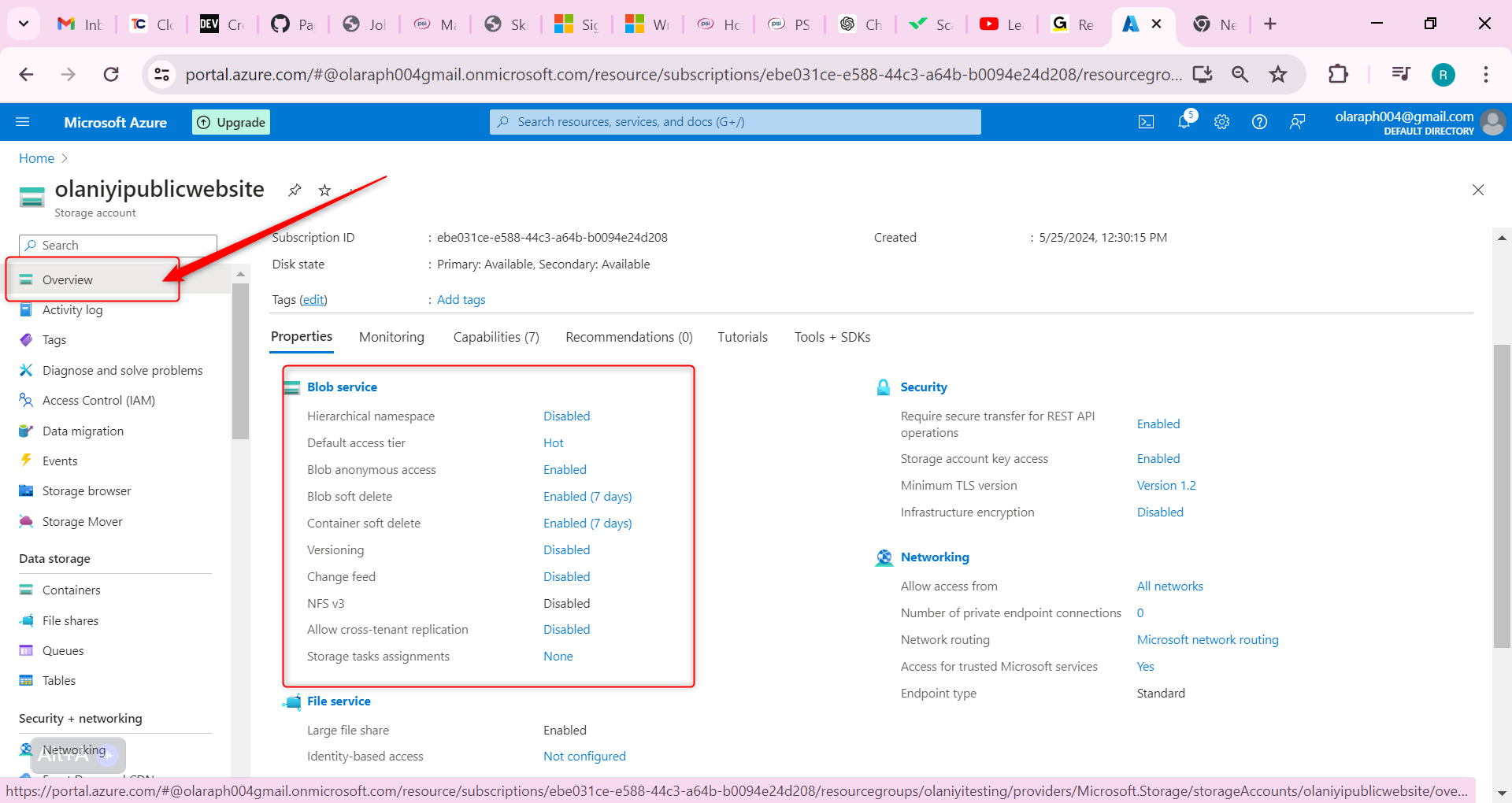

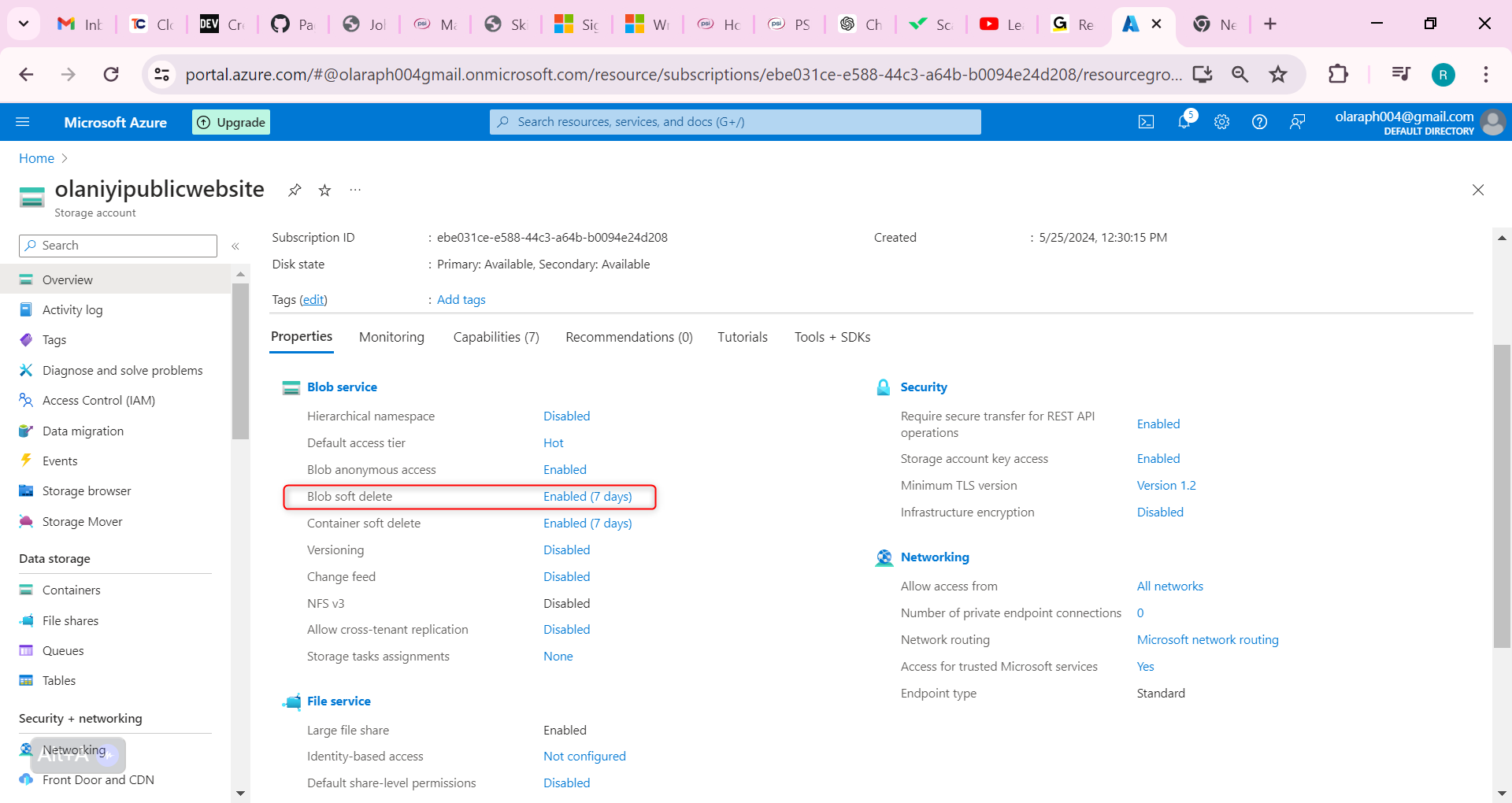

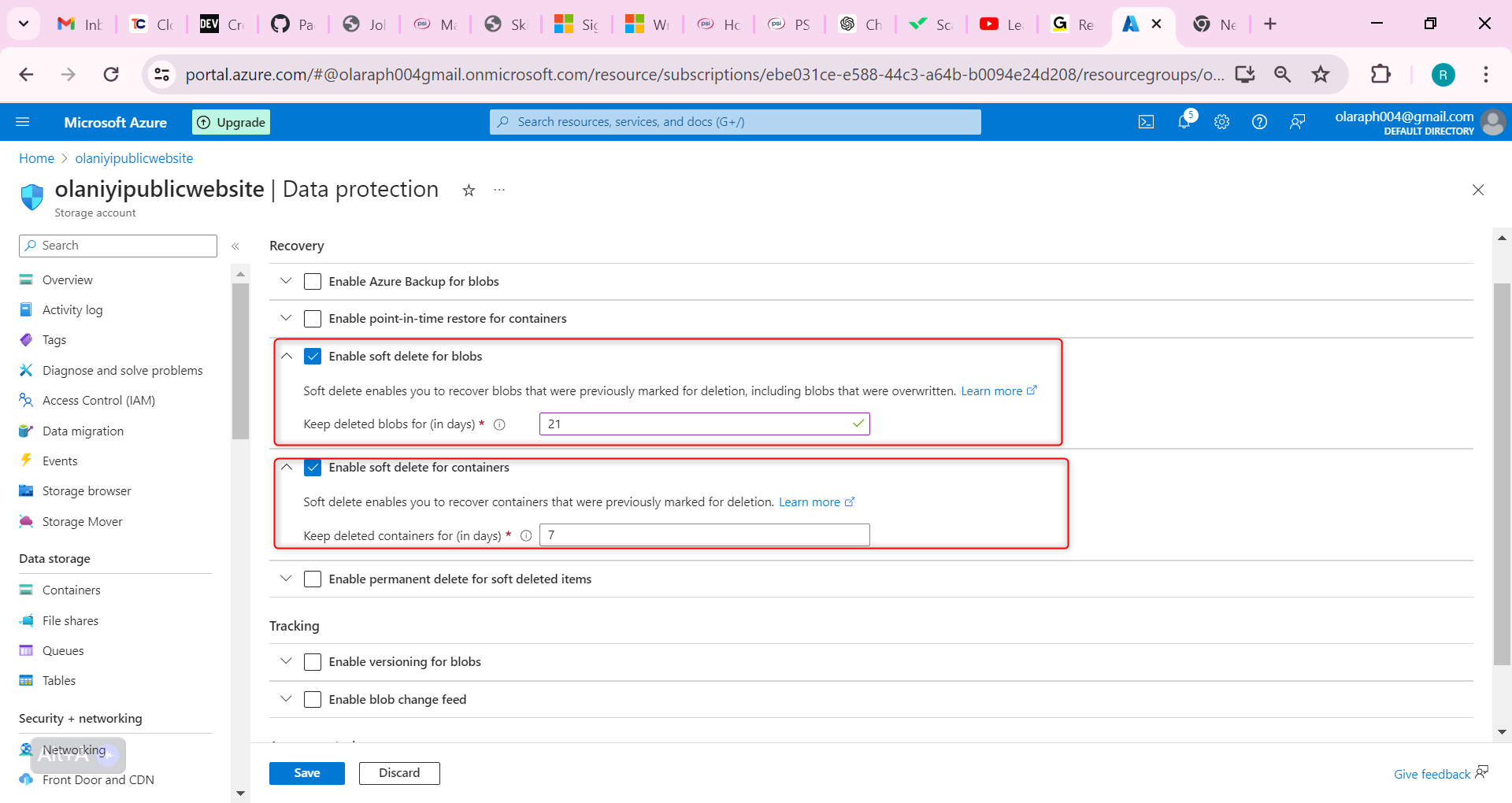

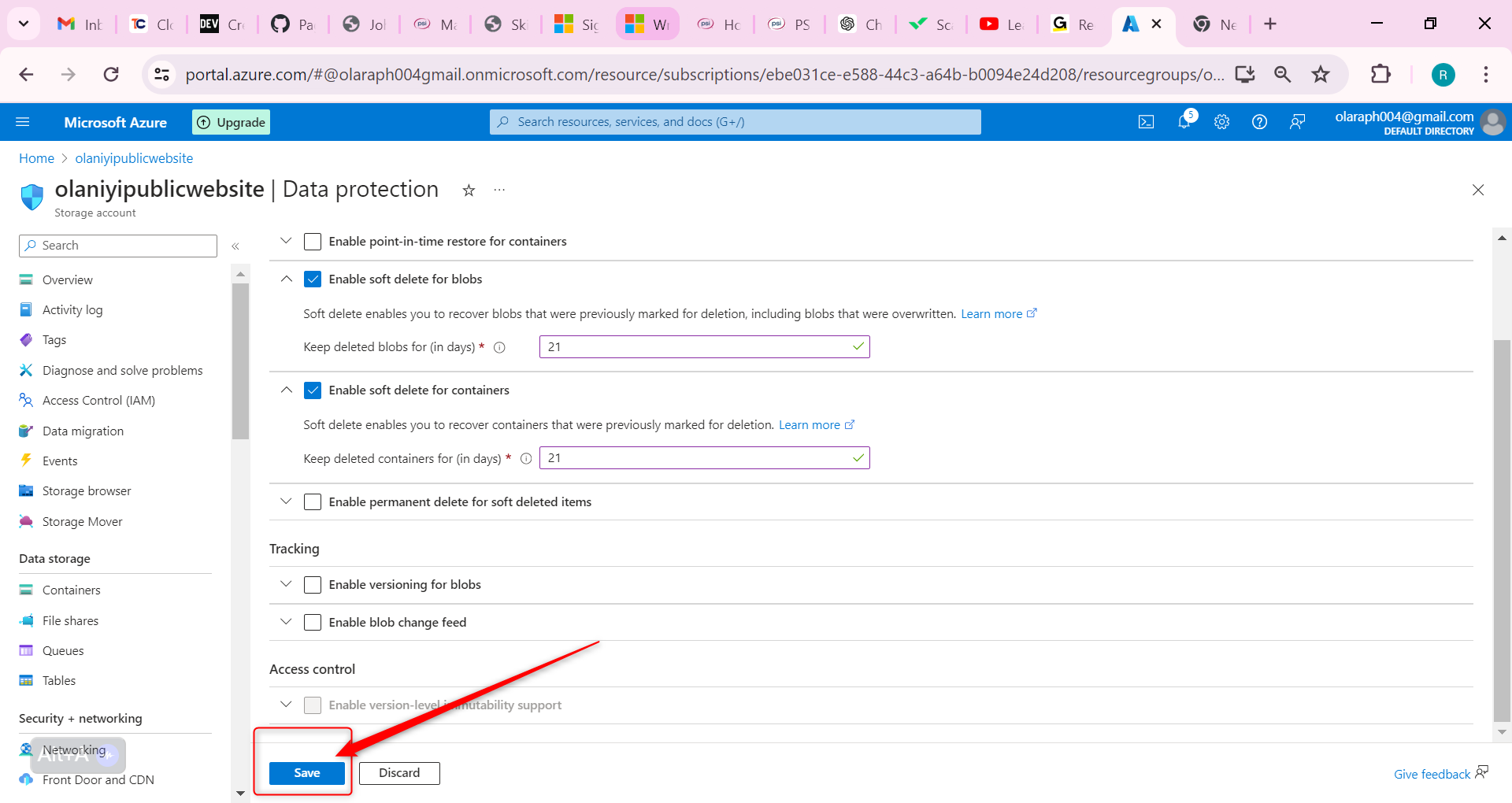

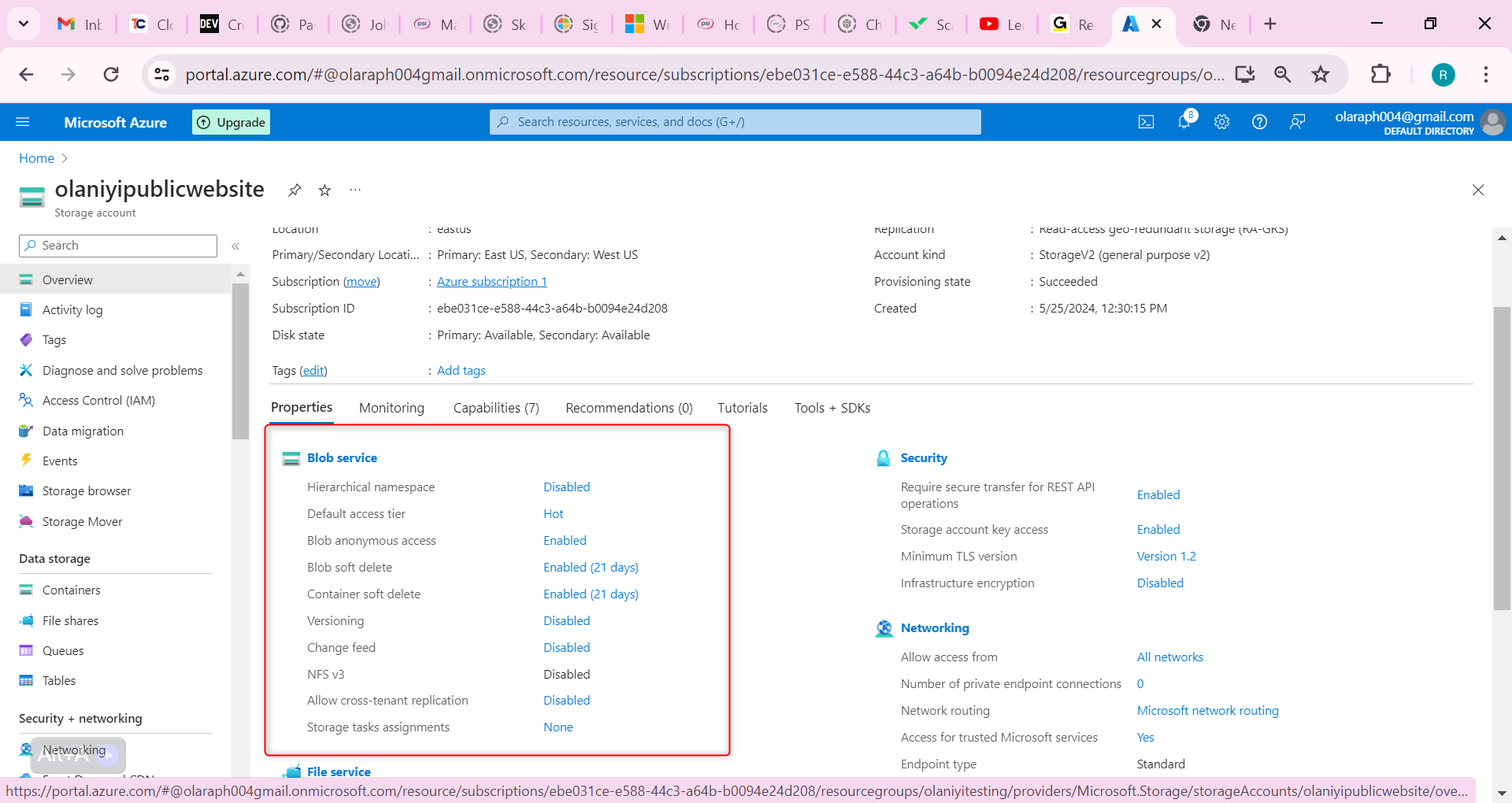

Now let us configure Soft Delete; It’s important that the website documents can be restored if they’re deleted. Configure blob soft delete for 21 days.

Go to the Overview blade of the storage account.

On the Properties page, locate the Blob service section.

Select the Blob soft delete setting.

Ensure the Enable soft delete for blobs is checked.

Change the Keep deleted blobs for (in days setting is 21.

Notice you can also Enable soft delete for containers.

Don’t forget to Save your changes.

If something gets deleted, you need to practice using soft delete to restore the files

Navigate to your container where you uploaded a file.

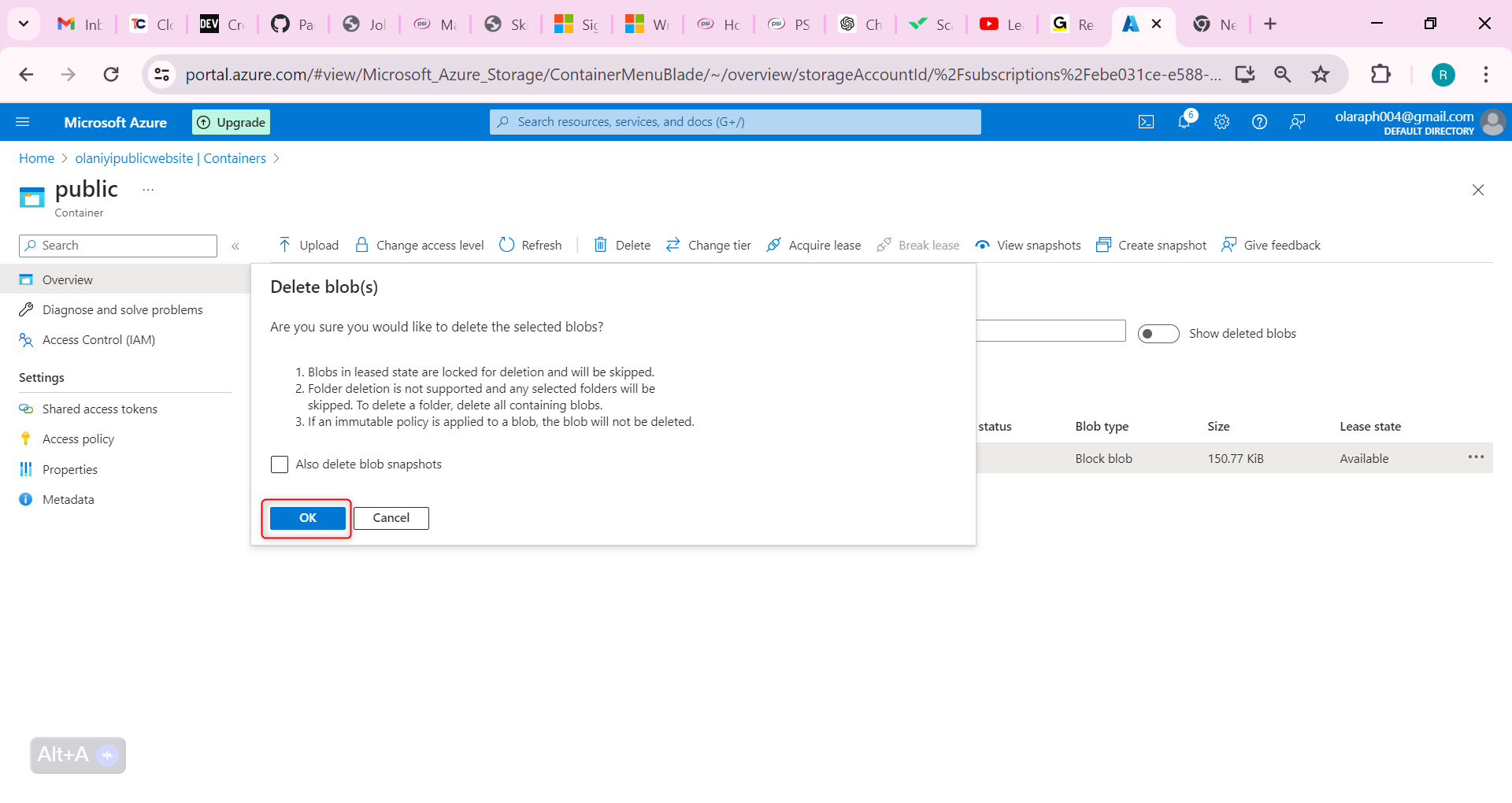

Select the file you uploaded and then select Delete.

Select OK to confirm deleting the file.

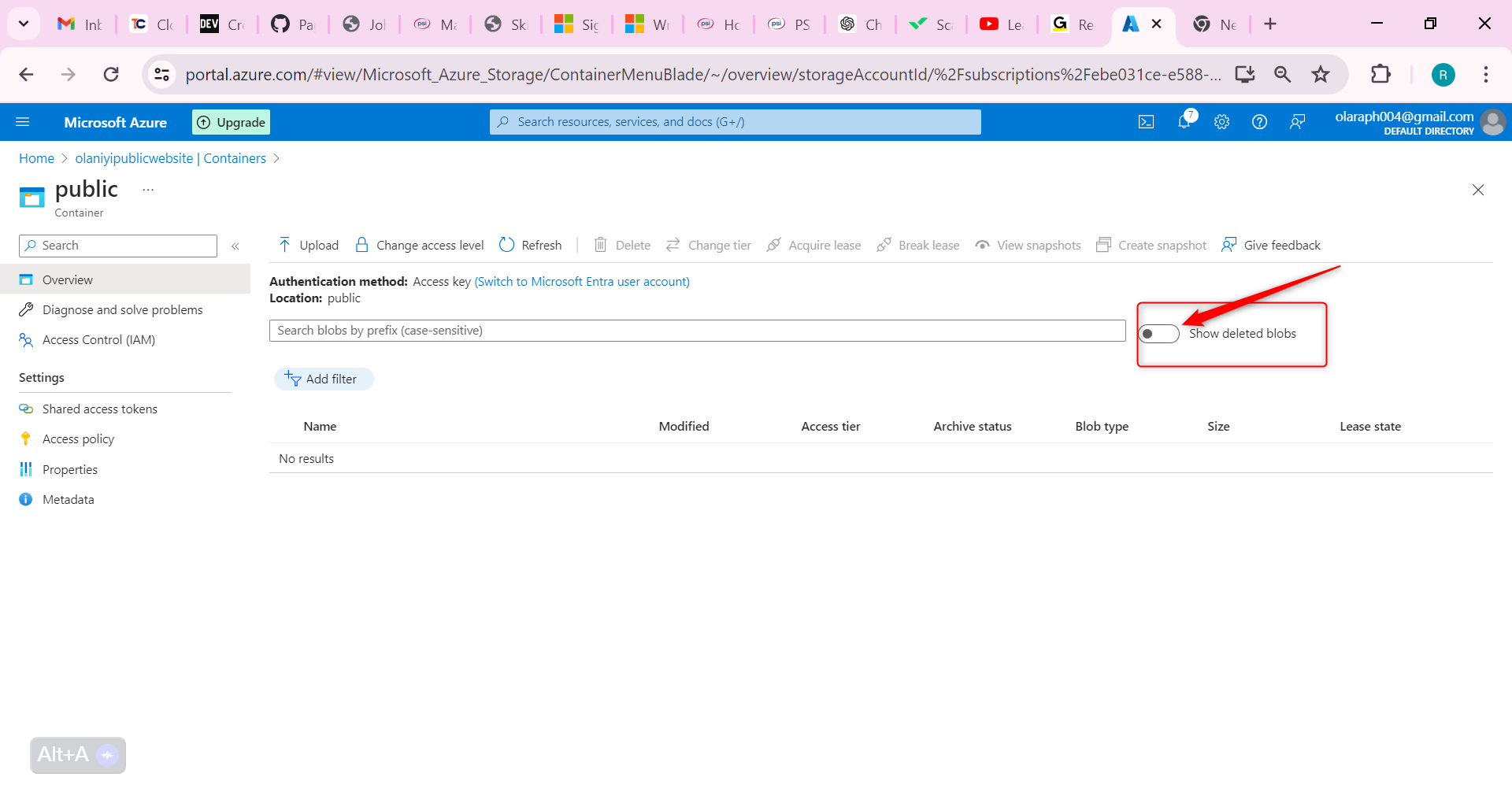

On the container Overview page, toggle the slider Show deleted blobs. This toggle is to the right of the search box.

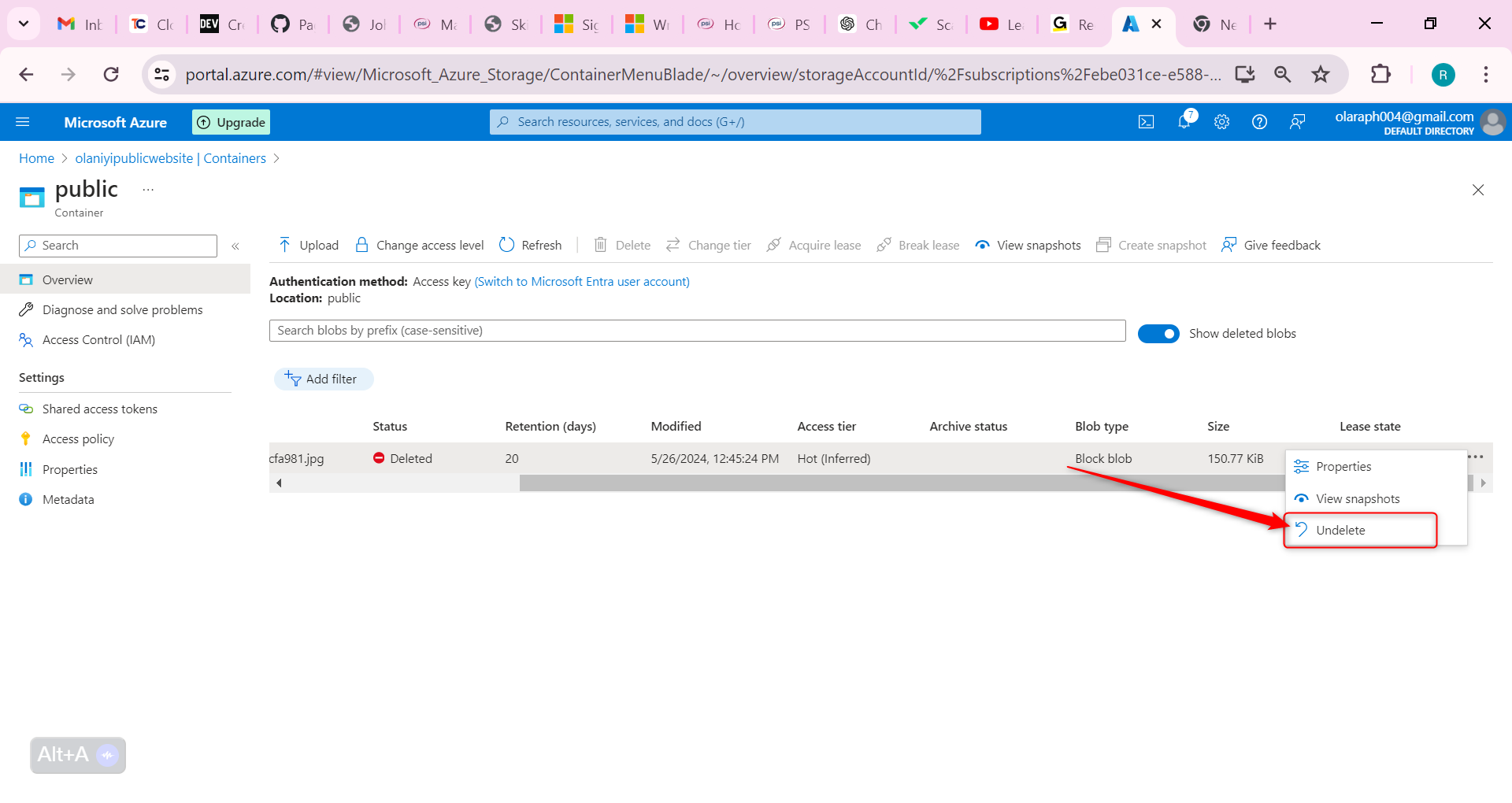

Select your deleted file, and use the ellipses on the far right, to Undelete the file.

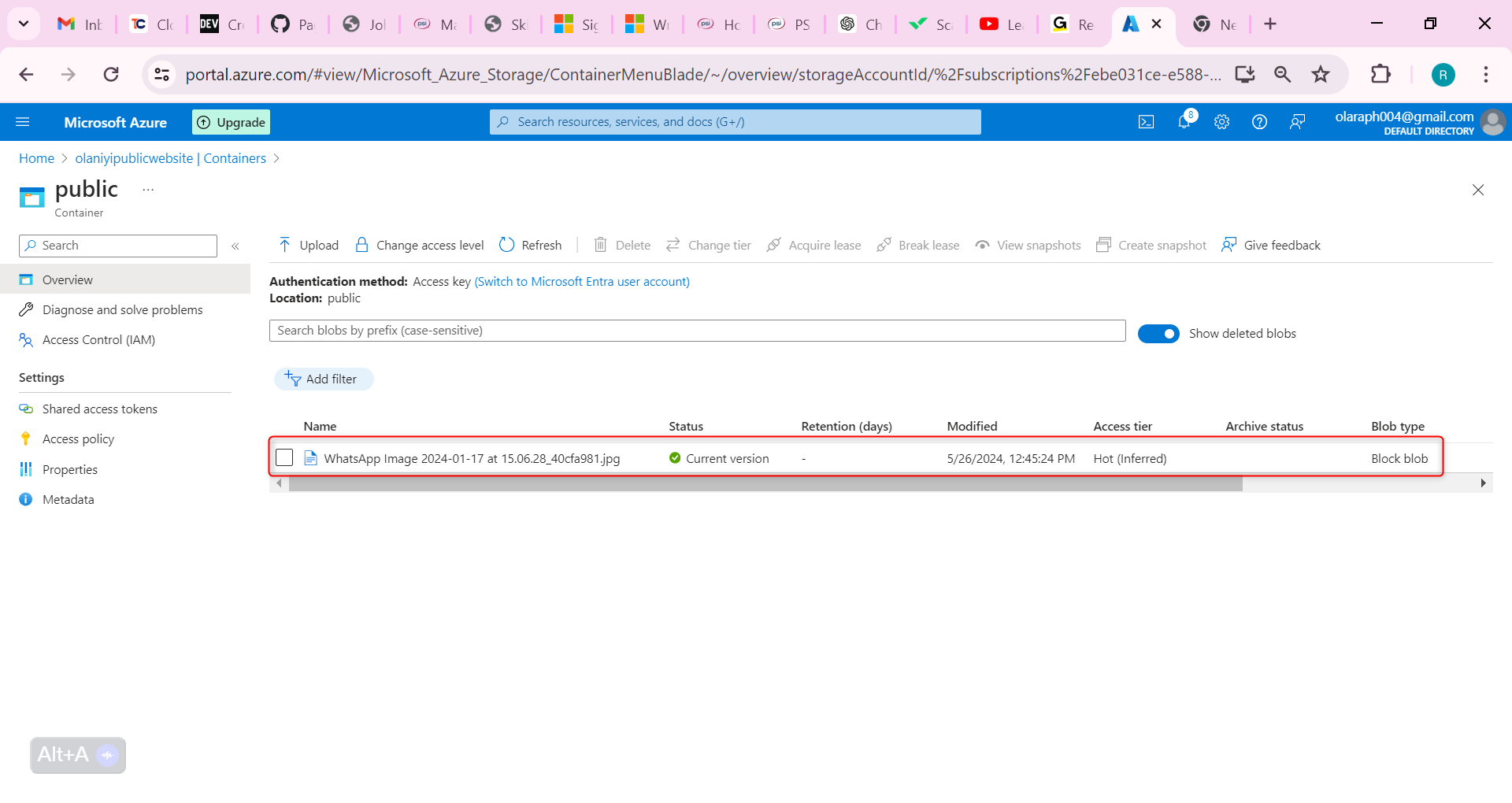

Refresh the container and confirm the file has been restored.

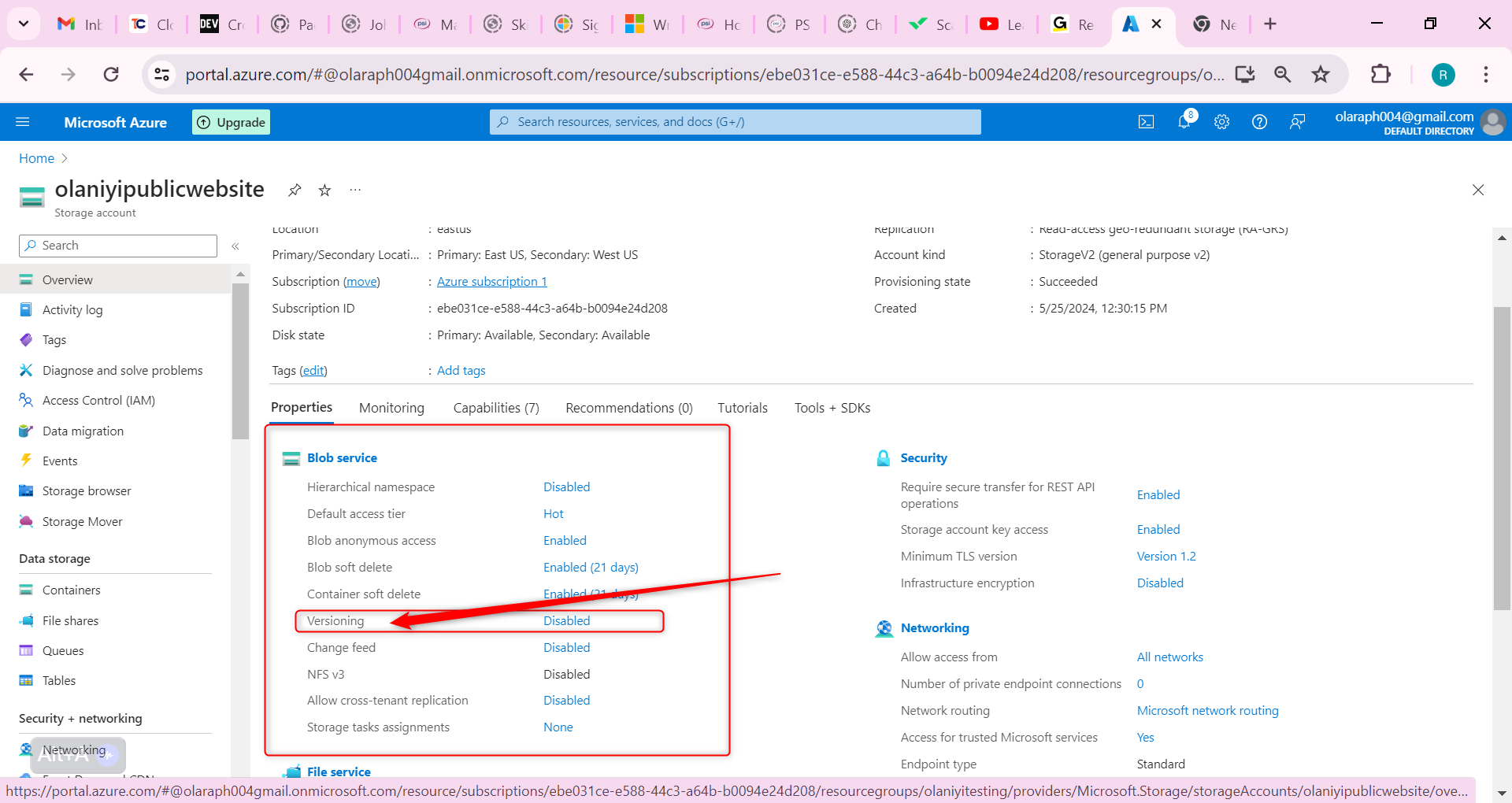

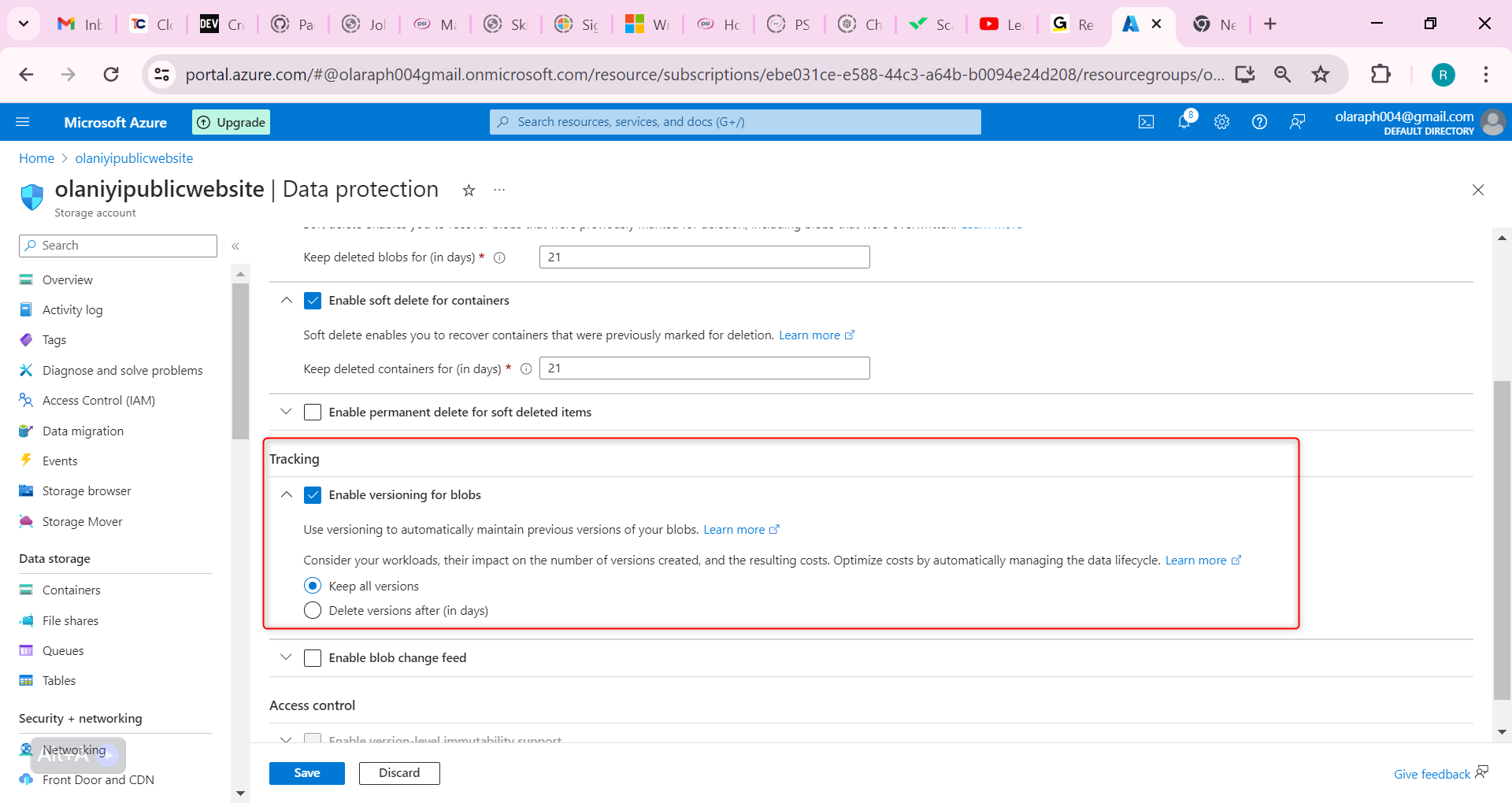

Now let us configure blob versioning, this will help us keep track of the different website product document versions.

Go to the Overview blade of the storage account.

In the Properties section, locate the Blob service section.

Select the Versioning setting.

Ensure the Enable versioning for blobs checkbox is checked.

Notice your options to keep all versions or delete versions after.

Don’t forget to Save your changes.

As you have time experiment with restoring previous blob versions.

Upload another version of your container file. This overwrites your existing file.

Your previous file version is listed on Show deleted blobs page.

Now you have been able to create a storage account that has high availability on Microsoft Azure

| olaraph | |

1,866,320 | Unable to verify digital signature with public key and detached payload | Hi Team, I have a response from the external API in the below format: const inputData = { ... | 0 | 2024-05-27T08:56:57 | https://dev.to/shreya_dalvi_40fd31b69610/unable-to-verify-digital-signature-with-public-key-and-detached-payload-3dh5 | Hi Team,

I have a response from the external API in the below format:

const inputData = {

signature: 'eyJhbGciOiJSUzI1NiIsImtpZCI6InNhbXBsZS1rZXktaWQifQ..SflKxwRJSMeKKF2QT4fwpMeJf36POk6yJV_adQssw5c',

response: 'jdskhfgdjskfgkjsdhf'

};

The signature is without the payload check ("..") in inputData signature, and I am trying to verify the signature.

My public key format is:

PublicKey: {

"kty": "RSA",

"e": "AQAB",

"use": "sig",

"kid": "erityuiuerot",

"n": "kjfghdsjkbfdasbf"

}

The inputData is:

const inputData = {

signature: 'eyJhbGciOiJSUzI1NiIsImtpZCI6InNhbXBsZS1rZXktaWQifQ..SflKxwRJSMeKKF2QT4fwpMeJf36POk6yJV_adQssw5c',

response: 'jdskhfgdjskfgkjsdhf'

};

I am using the below code to verify it in nodejs:

const jose = require("node-jose");

async function createKeystore() {

const keystore = jose.JWK.createKeyStore();

// Add the public key to the keystore

const key = await keystore.add({

kty: 'RSA',

kid: "erityuiuerot",

use: 'sig',

alg: 'RS256',

n: "kjfghdsjkbfdasbf",

e: 'AQAB'

}, 'json');

return keystore;

}

async function verifyDetachedJWS(jws, payload) {

try {

const keystore = await createKeystore();

console.log("keystore", keystore);

// Use JWS.createVerify to verify the token

const verifier = jose.JWS.createVerify(keystore);

const result = await verifier.verify(jws);

console.log('Verification successful:', result);

} catch (error) {

console.error('Verification failed:', error);

}

}

// Example JWS token (without payload) and payload (replace with your actual values)

const jws = 'eyJhbGciOiJSUzI1NiIsImtpZCI6InNhbXBsZS1rZXktaWQifQ..SflKxwRJSMeKKF2QT4fwpMeJf36POk6yJV_adQssw5c';

verifyDetachedJWS(jws, payload);

But I am getting the following error:

Verification failed: Error: no key found

at processSig (/node_modules/node-jose/lib/jws/verify.js:132:22)

I am unable to figure out where the issue is. Can you please help me resolve this as soon as possible?

Do I need to use private for verification, if yes then please suggest code how to do it.

my private key is in below format :

PrivateKey : {

keys : [{

"p": "",

"kty": "RSA",

"q": "",

"d": "",

"e": "",

"use": "sig",

"kid": "",

"qi": "",

"dp": "",

"dq": "",

"n": ""

}]

};

Kindest Regards | shreya_dalvi_40fd31b69610 | |

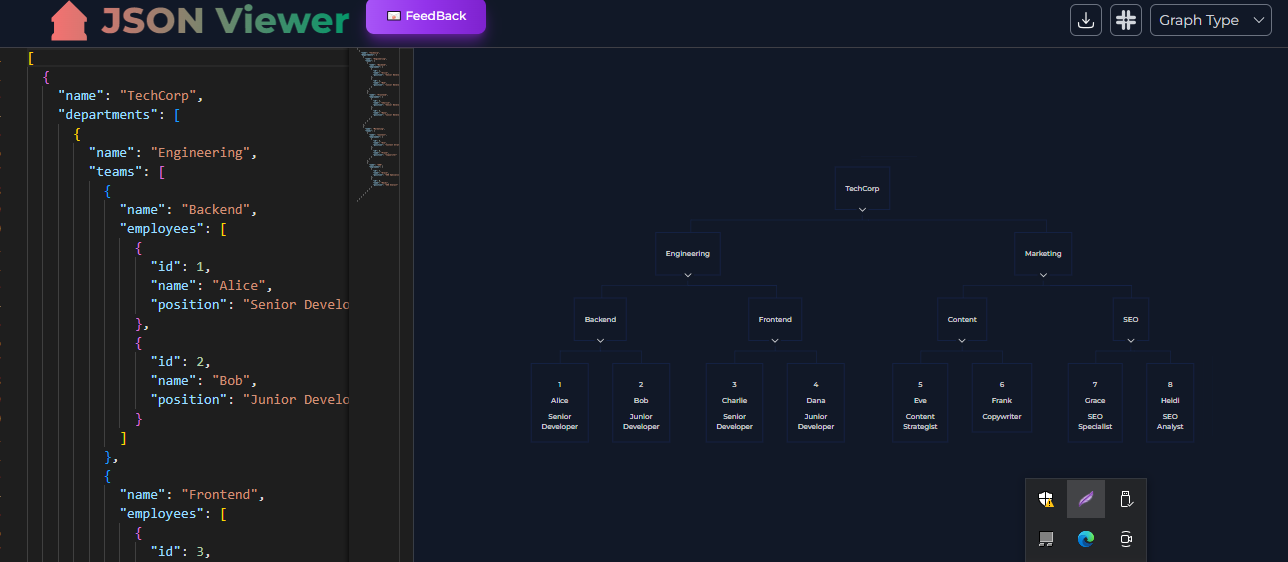

1,866,319 | Online Genarate Graph,Org Chart and roadmap by JSON | Hello Dev, Experience the power of seamless organization and visualization with our online tool for... | 0 | 2024-05-27T08:56:18 | https://dev.to/bugblitz98/online-genarate-graphorg-chart-and-roadmap-by-json-5aag | webdev, javascript, programming, showdev | Hello Dev,

Experience the power of seamless organization and visualization with our online tool for generating graphs, org charts, and roadmaps from JSON data. With our intuitive interface, transform your data into clear, insightful diagrams that enhance collaboration and decision-making. Whether you're managing teams, planning projects, or mapping out strategies, our platform simplifies the process, allowing you to focus on what matters most. Join us and unlock the potential of your data with ease.

Visit :

[jsonviewer.tools](url)

| bugblitz98 |

1,866,318 | Highly Effective 7 Habits for Developers | As a software developer, success doesn't just come from luck or chance. It is the result of years of... | 0 | 2024-05-27T08:52:13 | https://dev.to/cyberjon/highly-effective-7-habits-for-developers-5aig | As a software developer, success doesn't just come from luck or chance. It is the result of years of hard work, continuous learning and development, and forming good habits. In the fast-paced world of technology, software developers must always be learning and adapting to keep up with the latest trends and advancements in their field. In this article, we will discuss 7 habits that can help you become a highly effective software developer.

01 Map out a timetable: Just like in school, having a timetable is essential for software developers. It helps you keep track of your daily activities and make sure you're using your time efficiently. When you're learning a new programming language, it's important to have a schedule in place that outlines when you'll be working on it and for how long. This way, you can stay focused and avoid distractions, and make the most of your learning time.

02 Embrace mistakes and learn from experiences: No one is perfect, and as a software developer, you will make mistakes. It's important to embrace these mistakes and use them as opportunities to learn and grow. When you make a mistake, take time to reflect on what went wrong and what you can do better next time. This way, you'll be able to avoid making the same mistake in the future and become a better developer.

03 Be consistent: Consistency is key when it comes to software development. By setting aside time every day to work on your craft, you'll be able to make steady progress and become more skilled over time. Consistency also helps you identify areas that need improvement and gives you the time and motivation to work on them.

04 Find a mentor: Having a mentor can be incredibly beneficial for software developers. A mentor can offer guidance, and advice, and help you overcome challenges. They can provide you with a fresh perspective and share their experiences and insights, which can be valuable when working on complex projects.

05 Work on projects: Learning by doing is one of the most effective ways to become a better software developer. By working on projects, you'll have the opportunity to put your skills to the test and gain real-world experience. It's important to choose projects that are aligned with your skill level and gradually increase the difficulty as you grow more comfortable.

06 Don't be a jack of all trades: As a software developer, it's tempting to try and learn as many programming languages and technologies as possible. However, it's important to remember that being a jack of all trades won't necessarily make you a master of any. Instead, focus on mastering one area, and then move on to the next once you feel comfortable. This way, you'll be able to become a more specialized and in-demand developer.

07 Stay up to date with the latest advancements: The world of technology is constantly changing, and software developers must keep up with the latest advancements in their field. Read articles, attend webinars and conferences, and follow industry leaders on social media to stay informed and up to date with the latest trends and advancements.

In conclusion, forming good habits as a software developer can greatly enhance your career and lead to long-term success. By following these 7 habits, you'll be able to become a more effective, knowledgeable, and in-demand developer in no time. | cyberjon | |

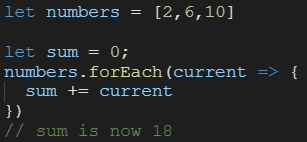

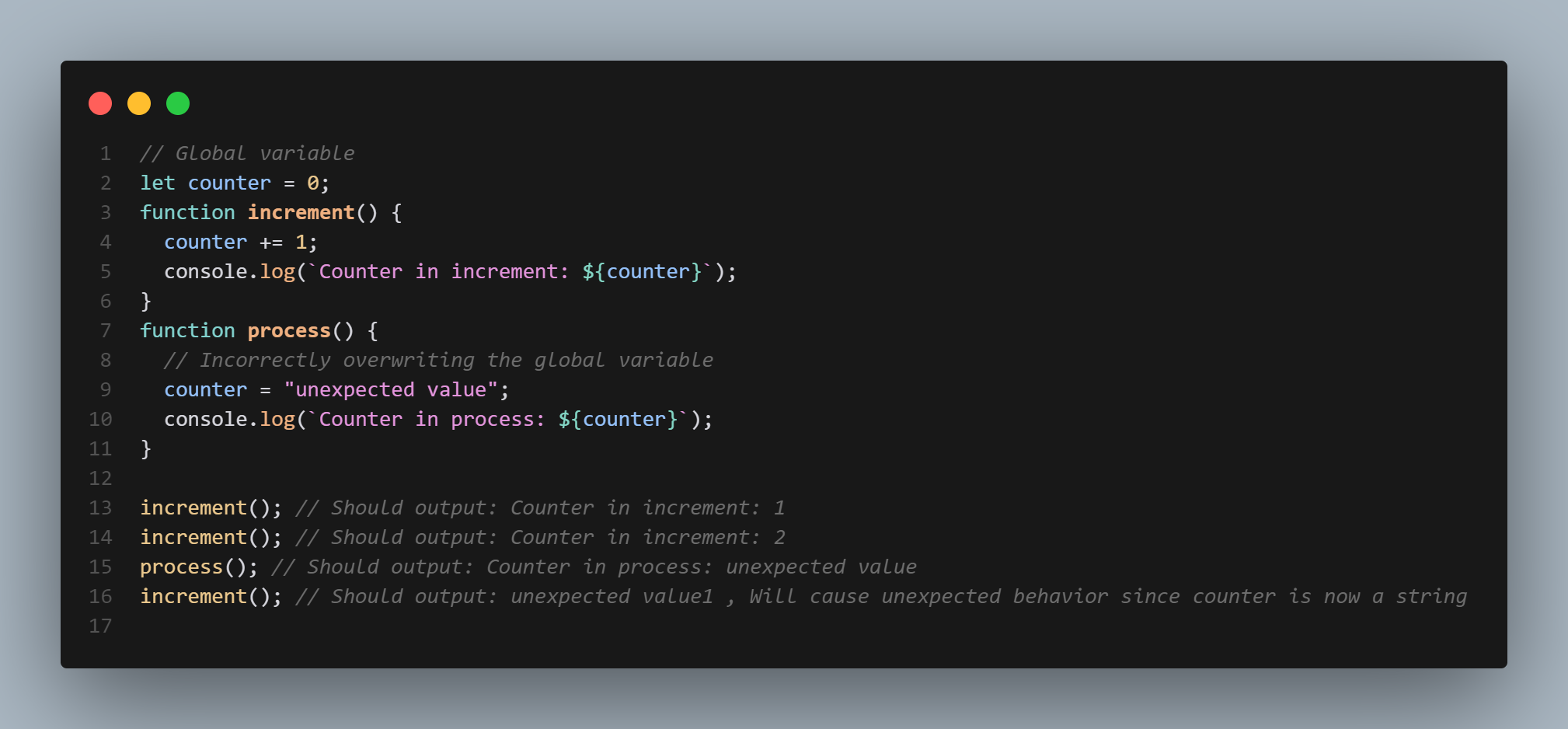

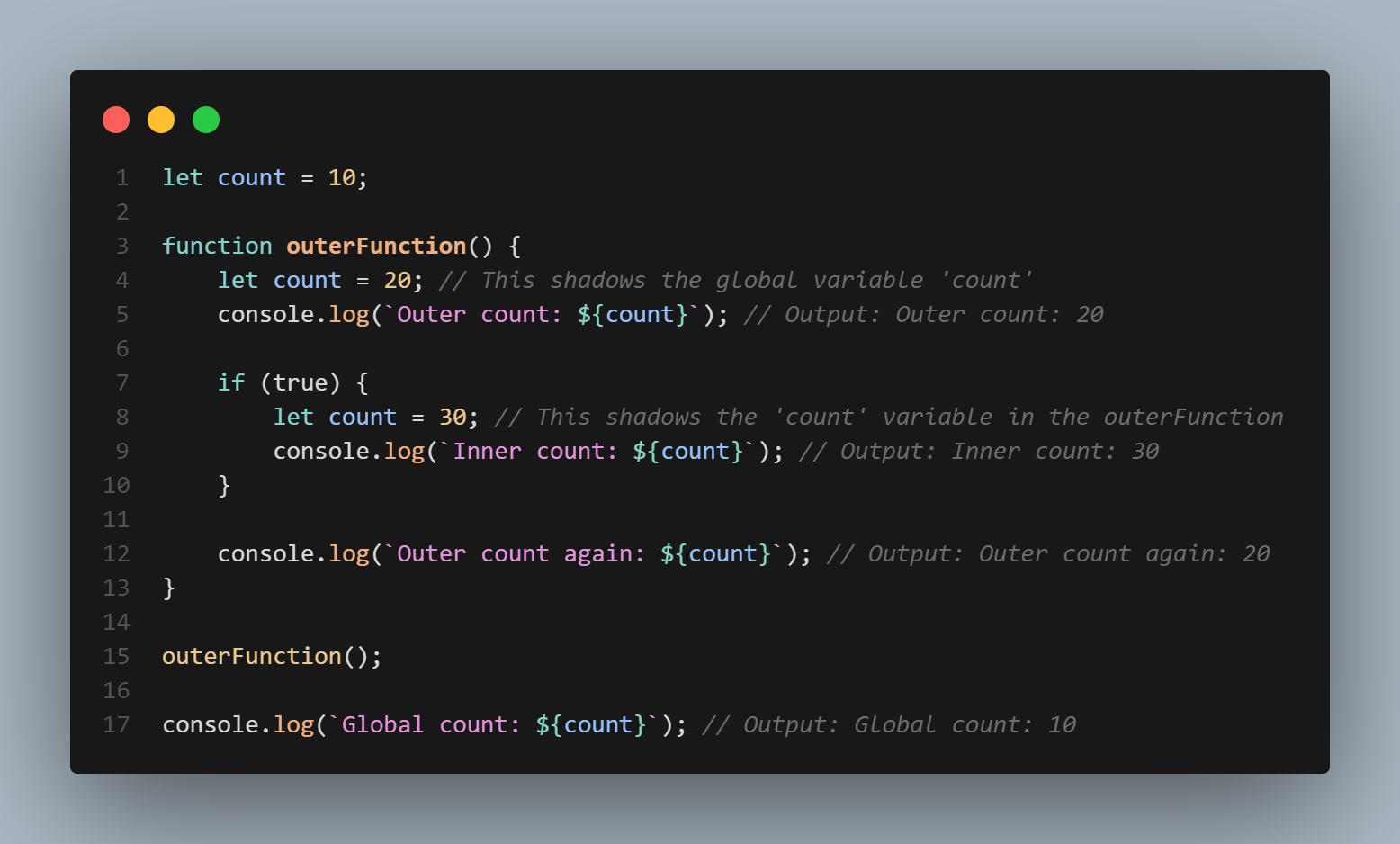

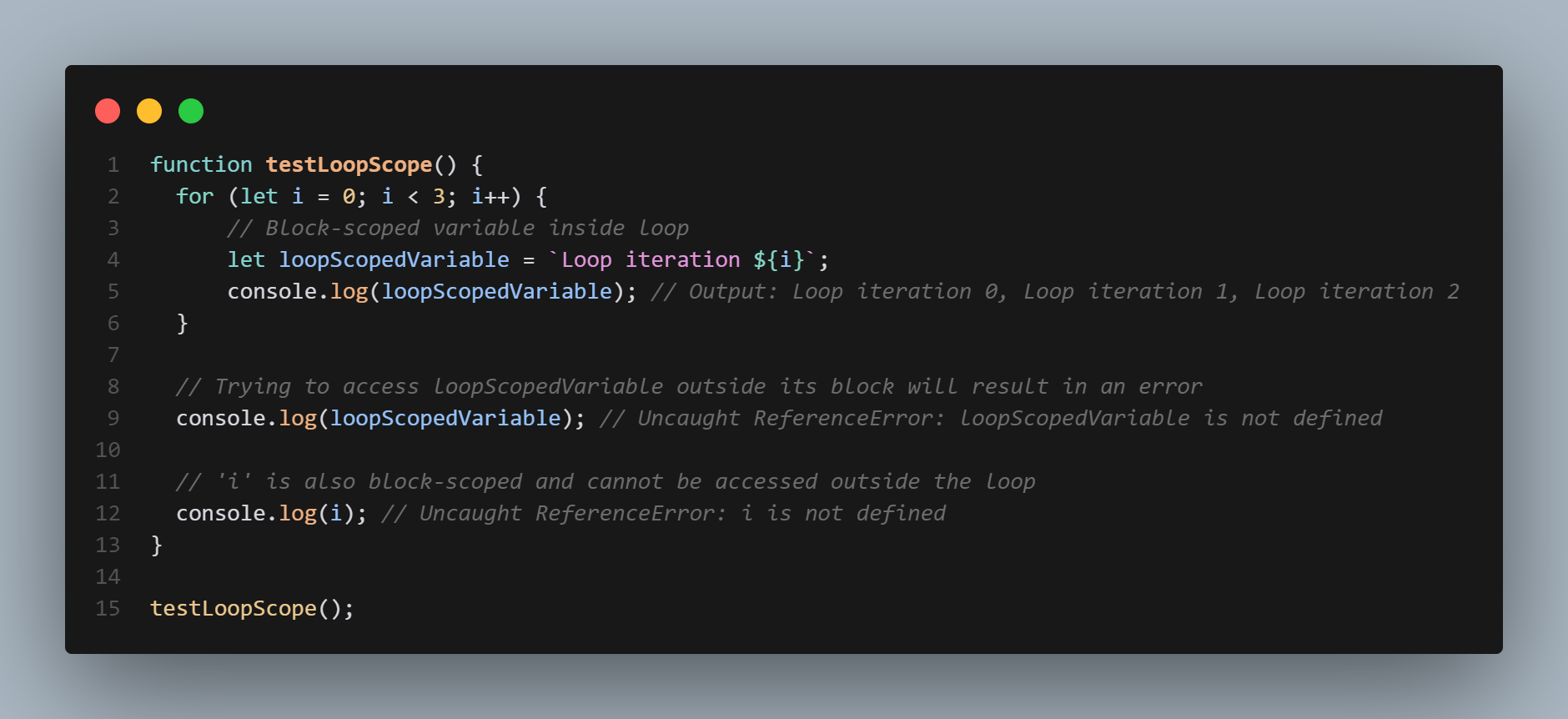

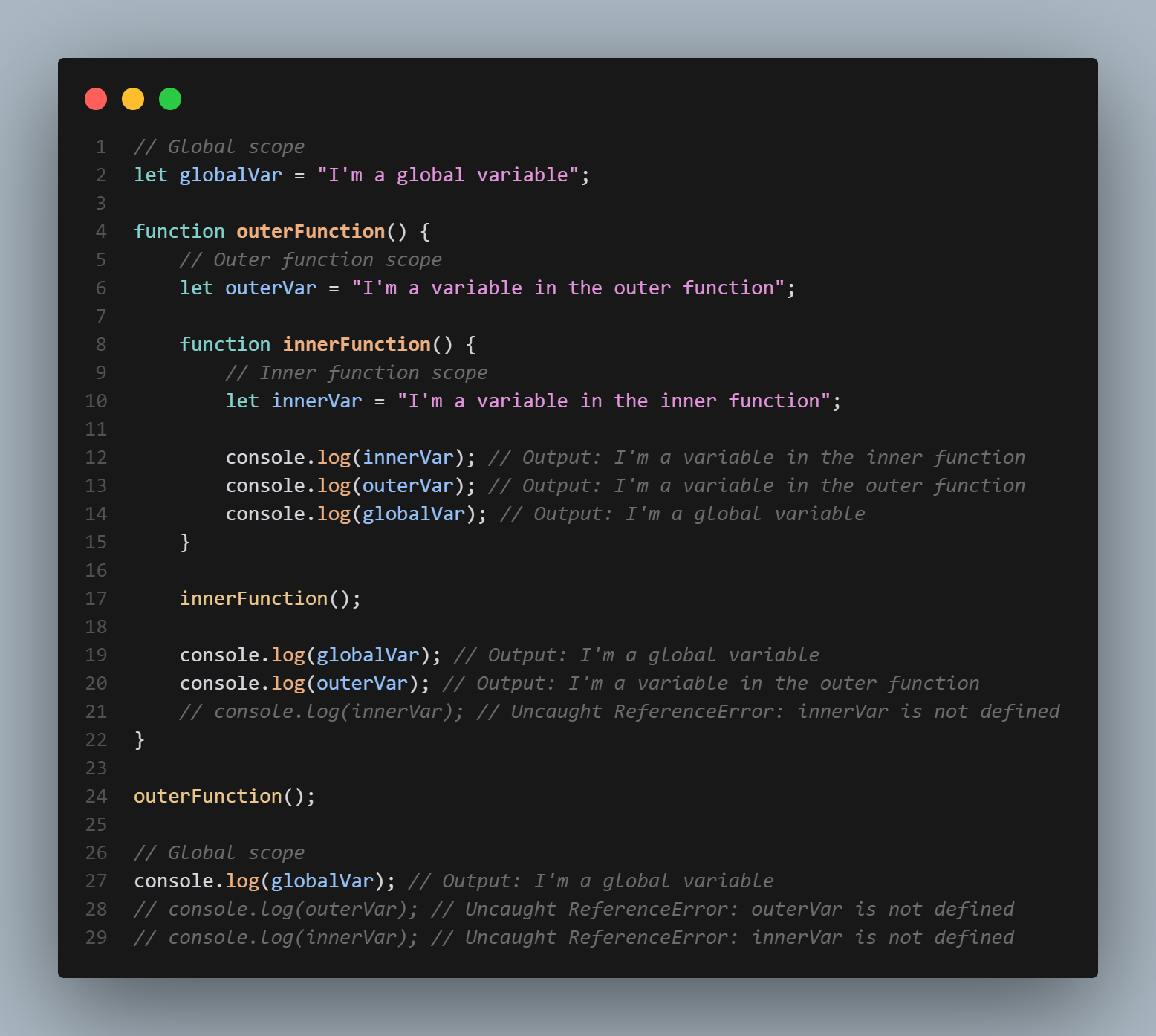

1,866,315 | Scop in Javascript with example | JavaScript is a powerful and versatile programming language that is widely used for web development.... | 0 | 2024-05-27T08:47:51 | https://dev.to/cyberjon/scop-in-javascript-with-example-n02 | JavaScript is a powerful and versatile programming language that is widely used for web development. One of the key concepts in JavaScript is scope, which refers to the accessibility of variables, functions, and objects within a program. In this blog post, we will explain the different types of scope in JavaScript, including global scope, local scope, and function scope, and provide examples to help you understand how they work.

Global scope

Global scope in JavaScript refers to variables, functions, and objects that can be accessed from anywhere within a program. These variables, functions, and objects are defined outside of any function or block of code.

For example, consider the following code:

```

let globalVariable = "Hello, World!";

function myFunction() {

console.log(globalVariable); // prints "Hello, World!"

}

```

console.log(globalVariable); // prints "Hello, World!"

In this example, the variable globalVariable is declared outside of any function or block of code, making it accessible from anywhere within the program. Both the myFunction function and the console.log statement outside of the function are able to access and print the value of globalVariable.

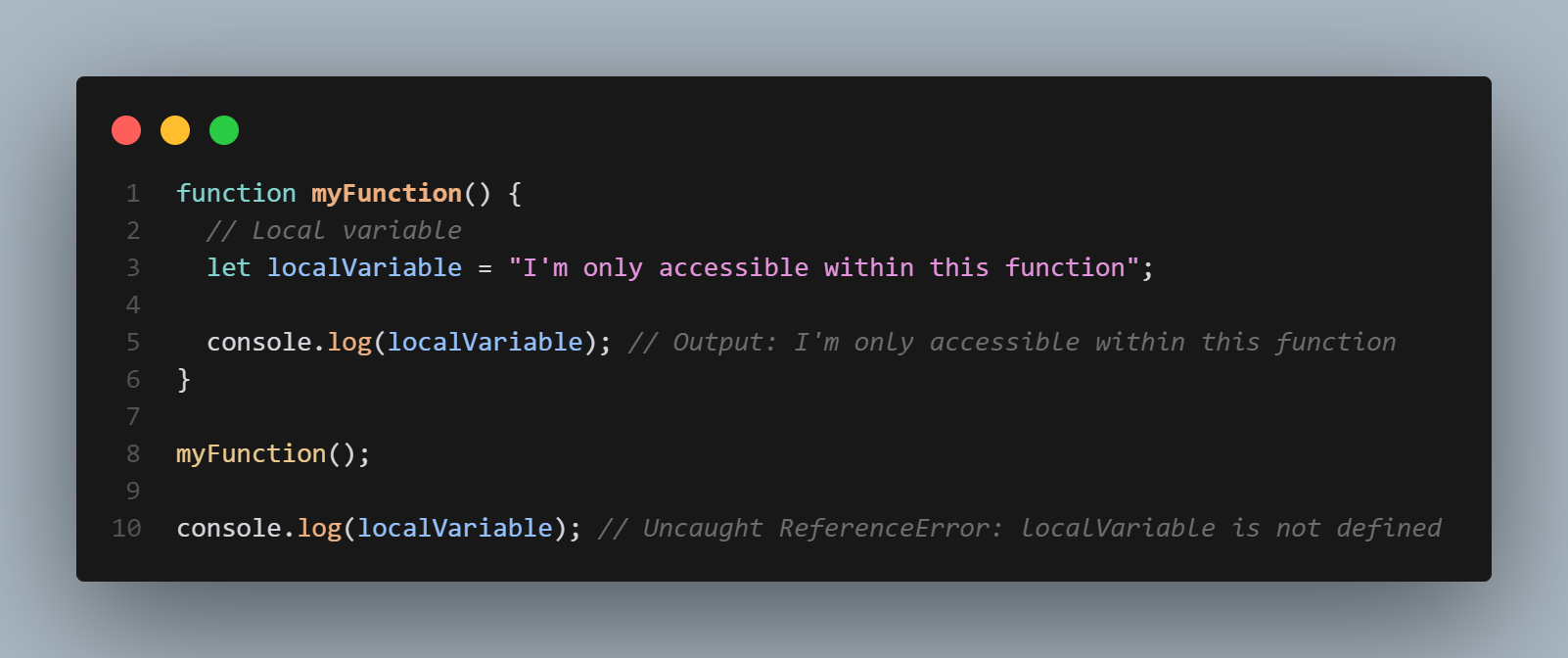

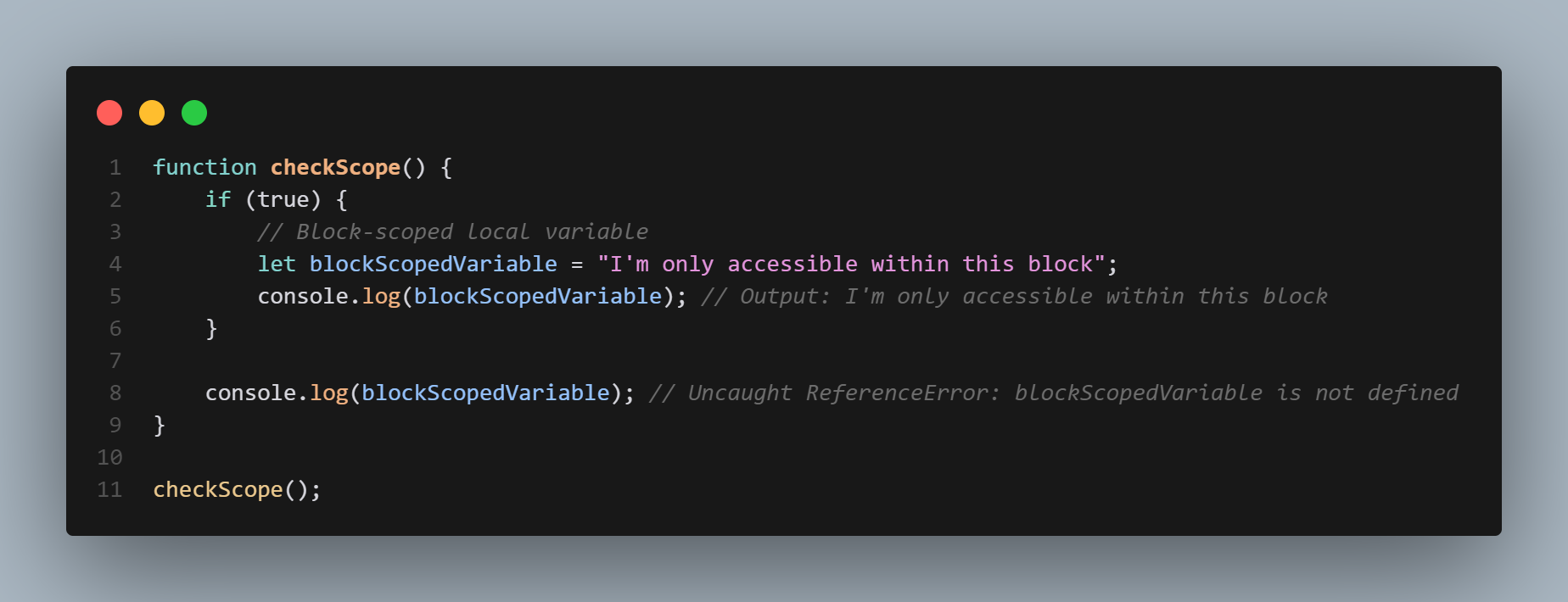

Local scope

Local scope in JavaScript refers to variables, functions, and objects that can only be accessed within a specific block of code. These variables, functions, and objects are defined within a block of code, such as a if statement or a for loop.

For example, consider the following code:

```

if (true) {

let localVariable = "Hello, World!";

console.log(localVariable); // prints "Hello, World!"

}

console.log(localVariable); // throws an error, localVariable is not defined

```

In this example, the variable localVariable is defined within the if statement, making it only accessible within that block of code. The console.log statement within the if statement is able to access and print the value of localVariable, but the console.log statement outside of the ifstatement throws an error because localVariable is not defined in the global scope.

Function scope

Function scope in JavaScript refers to variables, functions, and objects that can only be accessed within a specific function. These variables, functions, and objects are defined within a function, and are not accessible outside of that function.

For example, consider the following code:

```

function myFunction() {

let functionVariable = "Hello, World!";

console.log(functionVariable); // prints "Hello, World!"

}

console.log(functionVariable); // throws an error, functionVariable is not defined

```

In this example, the variable functionVariable is defined within the myFunction function, making it only accessible within that function. The console.log statement within the function is able to access and print the value of functionVariable, but the console.log statement outside of the function throws an error because functionVariable is not defined in the global or local scope.