id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,863,647 | Top-Rated CCTV Camera Dealers in Jaipur 2024 | <p>In the vibrant city of Jaipur, security is a top priority for both residents... | 0 | 2024-05-24T07:20:01 | https://dev.to/vermaharshita/top-rated-cctv-camera-dealers-in-jaipur-2024-5f7n | webdev, javascript, beginners, programming | <div class="flex flex-grow flex-col max-w-full">

<div class="min-h-[20px] text-message flex flex-col items-start whitespace-pre-wrap break-words [.text-message+&]:mt-5 juice:w-full juice:items-end overflow-x-auto gap-2" data-message-author-role="assistant" data-message-id="b077b2f7-fcbc-429d-8df7-f25f855d5028" dir="auto">

<div class="flex w-full flex-col gap-1 juice:empty:hidden juice:first:pt-[3px]">

<div class="markdown prose w-full break-words dark:prose-invert dark">

<p>In the vibrant city of Jaipur, security is a top priority for both residents and businesses. With the increasing need for surveillance, the demand for reliable CCTV cameras has surged. Whether you're looking to enhance the security of your home or business, finding the <a target="_blank" rel="noopener noreferrer" href="https://orbitinfotech.com/cctv.html"><strong>best CCTV camera dealers in Jaipur</strong></a> is crucial. This article will guide you through the top-rated CCTV camera dealers in Jaipur for 2024, highlighting key players like Orbit Infotech (Registered as Orbit Infosoft Pvt. Ltd.), and provide answers to frequently asked questions about CCTV cameras and their installation services.</p>

<h2>Why CCTV Cameras Are Essential in Jaipur</h2>

<p>With the rise in urbanization, Jaipur, known for its historical significance and modern growth, has seen an increase in the need for enhanced security measures. <strong>CCTV cameras</strong> play a pivotal role in:</p>

<ul>

<li><strong>Deterring Crime</strong>: Visible cameras can deter potential intruders.</li>

<li><strong>Monitoring Activities</strong>: Keeping an eye on daily activities within a property.</li>

<li><strong>Gathering Evidence</strong>: Useful for legal proceedings in the event of a crime.</li>

<li><strong>Remote Surveillance</strong>: Monitoring your property from anywhere via mobile devices.</li>

</ul>

<p>Given these benefits, it's important to partner with reputable dealers and <strong>CCTV camera service providers</strong>.</p>

<h2>Top-Rated CCTV Camera Dealers in Jaipur</h2>

<h3>Orbit Infotech (Registered as Orbit Infosoft Pvt. Ltd.)</h3>

<p>Orbit Infotech stands out as one of the best CCTV camera dealers in Jaipur. Known for their commitment to quality and customer satisfaction, they offer a comprehensive range of services, including sales, installation, and maintenance of <a target="_blank" rel="noopener noreferrer" href="https://www.reddit.com/user/orbitinfosoft/comments/1cddfec/cctv_camera_trends_surveillance_solutions_evolve/"><strong>CCTV cameras</strong></a>.</p>

<h4>Services Offered by Orbit Infotech</h4>

<ol>

<li><strong>CCTV Camera Sales</strong>: A wide range of cameras suited for different needs, from basic models to advanced systems with high-definition capabilities.</li>

<li><strong>CCTV Camera Installation Services</strong>: Professional installation ensuring optimal placement and performance.</li>

<li><strong>Maintenance and Support</strong>: Regular maintenance services to ensure your system remains in top working condition.</li>

<li><strong>Remote Monitoring Solutions</strong>: Advanced systems that allow for remote monitoring via smartphones and computers.</li>

</ol>

<h3>Why Choose Orbit Infotech?</h3>

<ul>

<li><strong>Expertise</strong>: With years of experience in the field, they provide expert advice tailored to your specific needs.</li>

<li><strong>Customer Service</strong>: Known for excellent customer support, ensuring all your queries are promptly addressed.</li>

<li><strong>Quality Products</strong>: They offer high-quality, reliable <strong>CCTV cameras</strong> that guarantee security and peace of mind.</li>

<li><strong>Competitive Pricing</strong>: Offering competitive prices without compromising on quality.</li>

</ul>

<h2>Other Notable CCTV Camera Dealers in Jaipur</h2>

<h3>Securitex India</h3>

<p><strong>Securitex India</strong> is another prominent name in Jaipur's security solutions market. They offer a variety of <strong>CCTV cameras</strong> and installation services tailored to both residential and commercial needs.</p>

<h4>Key Offerings</h4>

<ul>

<li><strong>Wide Range of Cameras</strong>: From bullet and dome cameras to PTZ and wireless options.</li>

<li><strong>Installation Services</strong>: Expert technicians ensure that your system is installed correctly and efficiently.</li>

<li><strong>After-Sales Support</strong>: Comprehensive support and maintenance packages to keep your system running smoothly.</li>

</ul>

<h3>Rajdeep Security Systems</h3>

<p>Rajdeep Security Systems is renowned for their comprehensive security solutions, including CCTV camera installation services. They cater to a diverse clientele, ensuring customized security setups for each client.</p>

<h4>Key Offerings</h4>

<ul>

<li><strong>Customized Solutions</strong>: Tailored solutions to meet specific security needs.</li>

<li><strong>High-Quality Products</strong>: Offering some of the best brands in the industry.</li>

<li><strong>Professional Installation</strong>: Ensuring optimal camera placement for maximum coverage.</li>

</ul>

<figure class="image image_resized" style="width:52.6%;" data-ckbox-resource-id="C8bu6h_nXYic">

<picture>

<source srcset="https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/108.webp 108w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/216.webp 216w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/324.webp 324w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/432.webp 432w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/540.webp 540w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/648.webp 648w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/756.webp 756w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/864.webp 864w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/972.webp 972w,https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/1080.webp 1080w" sizes="(max-width: 1080px) 100vw, 1080px" type="image/webp"><img alt="Best CCTV Camera Dealers in Jaipur" src="https://ckbox.cloud/f172671a18cb67c44269/assets/C8bu6h_nXYic/images/1080.jpeg" width="1080" height="1080">

</picture>

</figure>

<h2>How to Choose the Best CCTV Camera Dealer in Jaipur</h2>

<p>When selecting a <strong>CCTV camera service provider</strong>, consider the following factors:</p>

<h3>Product Range</h3>

<p>Ensure the dealer offers a variety of <strong>CCTV cameras</strong> to choose from, catering to different needs and budgets. From basic models to advanced systems with features like night vision and motion detection, a good dealer should have it all.</p>

<h3>Installation Services</h3>

<p>Professional installation is crucial for the effectiveness of your surveillance system. Opt for dealers that provide comprehensive <a target="_blank" rel="noopener noreferrer" href="https://worldwide-entertainment-club.mn.co/posts/streamline-security-cctv-camera-installation-services"><strong>CCTV camera installation services</strong></a>, ensuring proper setup and configuration.</p>

<h3>After-Sales Support</h3>

<p>Ongoing support and maintenance are essential to keep your security system functioning optimally. Choose dealers known for their reliable after-sales service.</p>

<h3>Customer Reviews and Testimonials</h3>

<p>Check customer reviews and testimonials to gauge the reputation of the dealer. Positive feedback from previous clients is a good indicator of reliable service.</p>

<h2>FAQs About Best CCTV Camera Dealers in Jaipur</h2>

<h3>1. What should I look for in the <strong>best CCTV camera dealers in Jaipur</strong>?</h3>

<p>Look for dealers with a strong reputation, wide product range, professional installation services, and excellent after-sales support. Checking customer reviews and testimonials can also help in making an informed decision.</p>

<h3>2. Why is <strong>CCTV camera installation near me</strong> important?</h3>

<p>Choosing a local service provider ensures prompt installation and maintenance services. It also facilitates quick response times in case of technical issues.</p>

<h3>3. Can I <strong>buy CCTV cameras online</strong> in Jaipur?</h3>

<p>Yes, many dealers offer the option to buy <strong>CCTV cameras online</strong>. This can be convenient and often comes with detailed product descriptions, customer reviews, and sometimes even installation services.</p>

<h3>4. How much does <strong>CCTV camera installation services</strong> cost?</h3>

<p>The cost varies based on the type and number of cameras, complexity of the installation, and specific requirements of the site. It’s best to get a quote from the dealer after a site assessment.</p>

<h3>5. What are the benefits of choosing a professional <strong>CCTV camera service provider</strong>?</h3>

<p>Professional service providers ensure proper installation, offer reliable products, and provide ongoing maintenance and support. They also offer expert advice on the best security solutions for your specific needs.</p>

<h3>6. How do I find a reliable <strong>cctv camera shop near me</strong>?</h3>

<p>You can search online, check local business directories, or ask for recommendations from friends or family. Reading online reviews and ratings can also help in identifying a trustworthy shop.</p>

<p> </p>

<p><strong>Also Read: </strong><a target="_blank" rel="noopener noreferrer" href="https://dailynewsupdate247.in/jaipurs-hot-trend-cctv-camera-installation-soars"><strong>Jaipur's Hot Trend: CCTV Camera Installation Soars</strong></a></p>

<h2>Conclusion</h2>

<p>Ensuring the security of your home or business in Jaipur requires investing in reliable CCTV cameras and partnering with the best CCTV camera dealers in Jaipur. Orbit Infotech (Registered as Orbit Infosoft Pvt. Ltd.) and other reputable dealers like Securitex India and Rajdeep Security Systems offer top-notch products and services to meet your security needs. By choosing the right dealer, you can rest assured that your property is well-protected, giving you peace of mind.</p>

<p>For those looking to enhance their security setup, now is the perfect time to reach out to these top-rated dealers, explore their offerings, and secure your property with the latest surveillance technology.</p>

</div>

</div>

</div>

</div>

<div class="mt-1 flex gap-3 empty:hidden juice:-ml-3">

<div class="items-center justify-start rounded-xl p-1 flex">

<div class="flex items-center"><span class="" data-state="closed"></span></div>

</div>

</div>

<p><span class="" data-state="closed"></span></p>

<p><span class="" data-state="closed"></span></p>

<div class="flex"><span class="" data-state="closed"></span></div> | vermaharshita |

1,863,646 | Types of crypto coin | What is a crypto coin? A crypto coin is a digital or virtual currency designed to work as a medium... | 0 | 2024-05-24T07:19:41 | https://dev.to/ritesh_saini_45667ac4b3a5/types-of-crypto-coin-4pf1 | coin, cryptocurrency, cryptocoin | **What is a crypto coin?**

A [crypto coin](https://lbmsolutions.in/crypto-coin-development-services/

) is a digital or virtual currency designed to work as a medium of exchange. It utilizes cryptographic techniques to secure transactions, control the creation of new units, and verify the transfer of assets. Unlike traditional currencies, cryptocurrencies operate on decentralized networks, typically based on blockchain technology, which ensures transparency, immutability, and security.

**Types of crypto coins:**

**1. Bitcoin (BTC):**

Bitcoin (BTC) entered the scene in 2009, brought forth by the enigmatic Satoshi Nakamoto. Renowned as the "digital gold," its supply is capped at 21 million coins. BTC holds sway as both a secure store of value and a ubiquitous medium of exchange, profoundly influencing the digital economic landscape.

**2. Ethereum (ETH):**

Ethereum, envisioned by Vitalik Buterin in late 2013 and launched on July 30, 2015, is a decentralized platform. It empowers the creation and execution of [smart contracts](https://lbmsolutions.in/smart-contract-development-company/

) and decentralized applications (DApps) without the need for intermediaries. ETH, the platform's native cryptocurrency, fuels its operations and transactions.

**3. Ripple (XRP):**

Ripple operates as both a platform and a currency. The Ripple platform functions as an open-source protocol designed for fast and cost-effective transactions. XRP, the currency within the Ripple network, is utilized for facilitating transactions, particularly emphasizing real-time cross-border payments. It's preferred by financial institutions due to its efficient transaction process.

**4.Litecoin (LTC):**

Litecoin, often dubbed as the silver to Bitcoin's gold, was introduced by Charlie Lee back in 2011. Its aim is to provide quicker and more affordable transactions than Bitcoin. Using a distinct hashing algorithm called Scrypt, Litecoin offers faster transaction times, making it a compelling option for digital transactions.

**5. Stablecoins:**

Stablecoins represent a category of digital currencies that are tethered to conventional assets like fiat currencies (such as USD or EUR) or commodities like gold. Their primary objective is to uphold a stable value within the often turbulent cryptocurrency market. Notable examples of stablecoins include Tether (USDT), USD Coin (USDC), and Dai (DAI).

**6.Privacy Coins:**

**Monero (XMR)**, launched in April 2014, is dedicated to enhancing privacy, decentralization, and scalability within the cryptocurrency space. It achieves this by employing sophisticated cryptographic methods to conceal transaction details, ensuring anonymity for users.

**Zcash (ZEC)**, introduced in October 2016, introduces the concept of "shielded" transactions, leveraging zero-knowledge proofs to offer heightened privacy. This innovative approach enables transactions to be conducted without revealing the sender, recipient, or transaction amount.

The primary purpose of both Monero and Zcash is to prioritize user privacy by obscuring transaction details, rendering them untraceable. This emphasis on anonymity enhances security and confidentiality, appealing to users seeking greater privacy protection in their financial transactions.

**7.Utility Tokens:**

Utility tokens serve as digital assets utilized within particular [blockchain platforms](https://lbmsolutions.in/blockchain-development-services/) to enable access to specific services or products.

**Binance Coin (BNB):**

Initially devised as a discount token for reducing trading fees on the Binance exchange, BNB has evolved to become the backbone of the Binance ecosystem. This includes powering the Binance Smart Chain, a blockchain network facilitating decentralized applications and smart contracts.

**Chainlink (LINK):**

Chain Link serves as the native token on the Chainlink network. It is utilized for paying for services provided by Chainlink, which acts as a bridge between smart contracts and real-world data sources. This enables smart contracts to access and utilize data from outside the blockchain.

In essence, utility tokens like BNB and LINK grant users access to specific functionalities and services within their respective blockchain ecosystems. They facilitate transactions, provide discounts, and enable interaction with decentralized applications, contributing to the overall functionality and utility of the platforms.

**8. Governance Tokens:**

Governance tokens are a type of cryptocurrency that grants holders the ability to engage in the decision-making process of a blockchain project.

**Maker (MKR)** is a governance token within the MakerDAO ecosystem. Holders of MKR tokens possess the authority to participate in the decision-making process regarding proposed changes to the Maker Protocol. This protocol governs the operation of the stablecoin DAI, ensuring its stability and functionality within the [decentralized finance (DeFi)](https://medium.com/@riteshsaini.lbmsolutions/decentralized-finance-defi-and-its-impact-on-token-development-b237cb0e3a4d) landscape.

**Compound (COMP)** is another governance token used within the Compound protocol. Holders of COMP tokens have the privilege to propose and vote on modifications to the Compound lending and borrowing platform. These alterations can include adjustments to interest rates, collateral requirements, or other parameters that influence the platform's operation and user experience within the DeFi ecosystem.

Governance tokens empower users to shape a project's future by enabling voting on key decisions. Through democratic mechanisms, holders propose and decide on matters, ensuring community involvement, transparency, and decentralization.

**Conclusion:**

In Conclusion , the [crypto coin](https://lbmsolutions.in/crypto-coin-development-services/) space offers a wide variety of coins, each serving distinct purposes. From Bitcoin's pioneering role in digital currency to Ethereum's smart contract capabilities, Ripple's efficient cross-border transactions, and Litecoin's focus on faster transactions, the ecosystem is diverse.

Stablecoins provide stability, privacy coins prioritize anonymity, utility tokens grant access to specific services, and governance tokens empower community participation. Together, they shape the evolving landscape of digital assets, driving innovation and decentralization in the financial world.

| ritesh_saini_45667ac4b3a5 |

1,862,827 | Cross-Origin Resource Sharing (CORS) in ASP.NET Core: A Comprehensive Guide | Originally published at https://antondevtips.com. Cross-Origin Resource Sharing (CORS) is a standard... | 0 | 2024-05-24T07:17:50 | https://antondevtips.com/blog/cors-in-asp-net-core-a-comprehensive-guide | programming, dotnet, backend, development | ---

canonical_url: https://antondevtips.com/blog/cors-in-asp-net-core-a-comprehensive-guide

---

_Originally published at_ [_https://antondevtips.com_](https://antondevtips.com/blog/cors-in-asp-net-core-a-comprehensive-guide)_._

Cross-Origin Resource Sharing (CORS) is a standard in web applications that allows or restricts web pages from making requests to a domain different from the one that served the initial web page.

Today we will explore how to implement and manage CORS in ASP.NET Core applications effectively.

## How CORS works

When a web page makes a cross-origin HTTP request, the browser automatically adds an `Origin` header to the request.

The server checks this header against its CORS policy.

If the origin is allowed, the server responds with a `Access-Control-Allow-Origin` CORS header.

This indicates that the request is allowed to be served on the server.

The server doesn't return an error if the CORS policy doesn't allow the request to be executed.

The client is responsible for returning error to the client and blocking the response.

CORS is a way for a server to allow web browsers to execute a cross-origin requests.

Browsers without CORS can't do cross-origin requests.

## When To Use CORS

**CORS should be enabled in ASP.NET Core:**

* To allow your API to be accessed from different domains

* When your frontend and backend are hosted separately

* To control specific resources in your application to be accessible from other domains

* In development mode

To enable CORS in ASP.NET Core, call the `AddCors` method on the `IServiceCollection` to add it to the DI container:

```csharp

builder.Services.AddCors(options =>

{

options.AddPolicy("AllowSpecificOrigin",

policyBuilder => policyBuilder.WithOrigins("http://example.com"));

});

```

And add CORS to the middleware pipeline by calling `UseCors` method:

```csharp

app.UseCors("AllowSpecificOrigin");

```

> Often CORS are enabled in ASP.NET Core apps only in the **development mode** to simplify development when frontend and backend are run on different hosts or ports.

> Consider using CORS in **production** only when it is absolutely required

## Correct Order of Middlewares When Using CORS

Here are few important tips when placing `UseCors` middleware:

* `UseCors` middleware should be placed before `UseResponseCaching` due to this [bug](https://github.com/dotnet/aspnetcore/issues/23218).

* `UseCors` middleware should be placed before `UseStaticFiles` to ensure CORS headers are properly added to the static file responses

```csharp

app.UseCors();

app.UseResponseCaching();

app.UseStaticFiles();

```

## Enable CORS in WebApi Controllers

To apply CORS policies to specific endpoints, use the `RequireCors` method in endpoint routing configuration:

```csharp

app.UseEndpoints(endpoints =>

{

endpoints.MapControllers().RequireCors("AllowSpecificOrigin");

});

```

You can enable or disable CORS for a specific controller:

```csharp

[EnableCors("AllowSpecificOrigin")]

public class UsersController : ControllerBase

{

// Controller methods

}

[DisableCors]

public class ProductsController : ControllerBase

{

// Controller methods

}

```

You can also enable or disable CORS for different controller methods:

```csharp

public class UsersController : ControllerBase

{

[EnableCors("CorsPolicy1")]

[HttpGet]

public ActionResult<IEnumerable<string>> Get(Guid id)

{

var user = new User

{

Id = id,

Name = "Anton"

};

return Ok(user);

}

[EnableCors("CorsPolicy2")]

[HttpPost]

public ActionResult<IEnumerable<string>> Create(CreateUserRequest request)

{

return Ok();

}

[DisableCors]

[HttpDelete]

public ActionResult<IEnumerable<string>> Create(Guid id)

{

return NoContent();

}

}

```

## Enable CORS in Minimal APIs

To enable CORS for minimal API endpoints simply call `RequireCors` method for each endpoint:

```csharp

app.MapGet("/api/books", () =>

{

var books = SeedService.GetBooks(10);

return Results.Ok(books);

}).RequireCors("AllowAllOrigins");

```

## CORS Policy Options

We already learned what the CORS is and how to enable it in the ASP.NET Core.

It's time to explore what policy options does the CORS provide us:

* Set the allowed origins

* Set the allowed HTTP methods

* Set the allowed request headers

* Set the exposed response headers

* Credentials in cross-origin requests

### Allowed Origin

Specify what origins are allowed to access the resource.

```csharp

builder.WithOrigins("http://example.com");

```

### Allowed HTTP Methods

Define what HTTP methods can be used when accessing the resource.

```csharp

builder.WithMethods("GET", "POST", "PUT", "DELETE", "PATCH");

```

### Allowed Request Headers

Specify headers that can be used when making the request.

```csharp

builder.WithHeaders("Content-Type", "Authorization");

```

### Exposed Response Headers

Control which headers are exposed to the browser.

```csharp

builder.WithExposedHeaders("X-Custom-Header");

```

### Credentials in Cross-Origin Requests

Determine if cookies should be included with requests.

```csharp

builder.AllowCredentials();

```

## Allow Any Policy Options

There is a way to enable all policy options, for example for development:

```csharp

if (builder.Environment.IsDevelopment())

{

builder.Services.AddCors(options =>

{

options.AddPolicy("AllowAllOrigins",

policyBuilder => policyBuilder.AllowAnyHeader()

.AllowAnyMethod()

.AllowAnyOrigin()

//.AllowCredentials()

.SetIsOriginAllowed(_ => true)

);

});

}

// ...

if (app.Environment.IsDevelopment())

{

app.UseCors("AllowAllOrigins");

}

```

> **NOTE:** you can't use AllowAnyOrigin (wild card, allowing all origins) with AllowCredentials at the same time

## Summary

Implementing CORS in ASP.NET Core is essential for modern web applications to securely manage cross-origin requests in the web browsers.

You can configure CORS for controllers and minimal APIs.

You can use CORS in **development mode** to simplify your frontend and backend development.

Use CORS in **production mode** only when it is absolutely required.

Hope you find this blog post useful. Happy coding!

_Originally published at_ [_https://antondevtips.com_](https://antondevtips.com/blog/cors-in-asp-net-core-a-comprehensive-guide)_._

### After reading the post consider the following:

- [Subscribe](https://antondevtips.com/blog/cors-in-asp-net-core-a-comprehensive-guide#subscribe) **to receive newsletters with the latest blog posts**

- [Download](https://github.com/AntonMartyniuk-DevTips/dev-tips-code/tree/main/backend/AspNetCore/Cors) **the source code for this post from my** [github](https://github.com/AntonMartyniuk-DevTips/dev-tips-code/tree/main/backend/AspNetCore/Cors) (available for my sponsors on BuyMeACoffee and Patreon)

If you like my content — **consider supporting me**

Unlock exclusive access to the source code from the blog posts by joining my **Patreon** and **Buy Me A Coffee** communities!

[](https://www.buymeacoffee.com/antonmartyniuk)

[](https://www.patreon.com/bePatron?u=73769486) | antonmartyniuk |

1,863,645 | Introducing Google Play Points: Elevating Your Rewards Experience | Get ready to level up your rewards game with Google Play Points! Since 2018, over 220 million members... | 0 | 2024-05-24T07:17:28 | https://dev.to/n968941/introducing-google-play-points-elevating-your-rewards-experience-1jc | news, flutter, playstore, googlecloud | Get ready to level up your rewards game with Google Play Points! Since 2018, over 220 million members have enjoyed exclusive perks, and now, we're taking it even further. From exciting new games like Diamond Valley to VIP event experiences, join the adventure today and elevate your gaming journey like never before.

Unlocking Exciting New Perks and RewardsDiscover New Adventures with Diamond ValleyExclusive Pre-registration BonusEarly Access to Exciting New GamesBuild Your Squad TodayElevate Your Experience with VIP EventsJoin the Play Points Adventure TodayInformation in Table formatFAQs about Google Play PointsWhat is Google Play Points?How many members currently benefit from Google Play Points rewards?What are the benefits of being a Google Play Points member?How do I join Google Play Points?What are the perks of being a Diamond or Platinum member?What is Diamond Valley?How can I pre-register for Diamond Valley?How do I access early game releases like Squad Busters?How can I stay updated on upcoming VIP events?What rewards can I expect from Google Play Points?

[read full article](https://flutters.in/introducing-google-play-points-elevating-your-rewards-experience/)

Since its launch in 2018, Google Play Points has been our way of expressing gratitude to the millions of users who choose Google Play for their app, game, and digital content needs. With over 220 million members benefiting from Play Points rewards, we're thrilled to announce that we're taking the rewards game to the next level.

Unlocking Exciting New Perks and Rewards

This year, we're enhancing Google Play Points with a host of thrilling new perks and rewards designed to immerse you in experiences you'll adore. Moreover, the higher your status within the program, the greater the rewards you'll unlock. Here's a sneak peek of what's in store:

Introducing Google Play Points: Elevating Your Rewards Experience

Discover New Adventures with Diamond Valley

Launching on June 17th in the U.S., Diamond Valley is an engaging treasure hunt mini-game that has already captured the hearts of players in Korea and Japan. Hunt for precious diamonds and utilize them for a chance to win fantastic prizes such as Pixel devices, exclusive merchandise from your favorite games, bonus points, and much more.

Exclusive Pre-registration Bonus

Secure your spot in the treasure hunt by pre-registering for Diamond Valley today and kickstart your journey with bonus diamonds. As an added perk, the first 50,000 Diamond and Platinum members to pre-register will receive an exclusive Diamond Valley t-shirt. Head over to the Perks tab of the Play Points home to pre-register and prepare for the adventure ahead.

Early Access to Exciting New Games

As a token of appreciation to our top members, we're offering exclusive early access to the latest mobile gaming sensations. Starting immediately, Diamond, Platinum, and Gold members can dive into the action-packed world of Squad Busters from Supercell before anyone else.

Build Your Squad Today

To claim your early access and assemble your squad, simply navigate to the Perks tab of the Play Points home. Keep an eye out for additional exclusive Squad Busters rewards dropping soon.

Introducing Google Play Points: Elevating Your Rewards Experience

Elevate Your Experience with VIP Events

Get ready to elevate your summer with VIP experiences at premier gaming and entertainment events. Stay tuned to the Google Play Store for upcoming announcements and seize the opportunity to indulge in unforgettable experiences this season.

[read full article](https://flutters.in/introducing-google-play-points-elevating-your-rewards-experience/)

Join the Play Points Adventure Today

Ready to embark on your Play Points journey? Simply head to the Google Play Store, tap your profile icon, select "Play Points," and hit "Join for free." Then, let the fun begin!

Also read:

Google Play Points Introduces Exciting New Features to Enhance User Rewards

Is Flutter Right For Your Mobile App?

Faq about Google Play Points

What is Google Play Points?

Google Play Points is a rewards program launched in 2018 to thank users for choosing Google Play for their app, game, and digital content needs. It offers points and rewards to members for their activities on Google Play.

How many members currently benefit from Google Play Points rewards?

Over 220 million members are currently benefiting from Google Play Points rewards.

What are the benefits of being a Google Play Points member?

As a Google Play Points member, you can earn points and unlock various rewards, including exclusive perks, early access to new games, VIP experiences at gaming and entertainment events, and more.

How do I join Google Play Points?

Joining Google Play Points is simple and free. Just head to the Google Play Store, tap on your profile icon, select "Play Points," and hit "Join for free."

What are the perks of being a Diamond or Platinum member?

Diamond and Platinum members enjoy exclusive perks such as early access to new games like Squad Busters, exclusive merchandise, bonus points, and more.

What is Diamond Valley?

Diamond Valley is an engaging treasure hunt mini-game available to Google Play Points members. Players hunt for diamonds and have the chance to win exciting prizes such as Pixel devices, exclusive merchandise, bonus points, and more.

How can I pre-register for Diamond Valley?

To pre-register for Diamond Valley, visit the Perks tab of the Play Points home. As an added bonus, the first 50,000 Diamond and Platinum members to pre-register will receive an exclusive Diamond Valley t-shirt.

[read full article](https://flutters.in/introducing-google-play-points-elevating-your-rewards-experience/)

How do I access early game releases like Squad Busters?

Diamond, Platinum, and Gold members can access early game releases like Squad Busters by visiting the Perks tab of the Play Points home.

How can I stay updated on upcoming VIP events?

Stay tuned to the Google Play Store for announcements about upcoming VIP events. These events offer members exclusive VIP experiences at premier gaming and entertainment events.

What rewards can I expect from Google Play Points?

Google Play Points offers a variety of rewards, including exclusive merchandise, bonus points, early access to new games, VIP experiences, and more. | n968941 |

1,863,642 | Saving data without triggering events in Laravel | How to | Tutorial | Quick Win Wednesday #QWW | A post by Bert De Swaef | 0 | 2024-05-24T07:15:06 | https://dev.to/burtds/saving-data-without-triggering-events-in-laravel-how-to-tutorial-quick-win-wednesday-qww-628 | laravel, php, tutorial, beginners | {% embed https://youtu.be/S7oBTTLORHg %} | burtds |

1,863,640 | Development and Futures Trading: A Dynamic Duo in the Crypto World | In the rapidly evolving landscape of cryptocurrency, the intersection of development and futures... | 0 | 2024-05-24T07:13:23 | https://dev.to/andylarkin677/development-and-futures-trading-a-dynamic-duo-in-the-crypto-world-58n4 | webdev, news, development, learning | In the rapidly evolving landscape of cryptocurrency, the intersection of development and futures trading plays a pivotal role in shaping the future of the market. As digital assets become increasingly mainstream, developers and traders find themselves in a symbiotic relationship, driving innovation and expanding trading possibilities. This article explores the vital connection between development and futures trading, with a focus on leading cryptocurrency exchanges like WhiteBIT, and introduces the new futures trading pair $NOT-PERP.

The Role of Development in Crypto Futures Trading

Developers are the backbone of the cryptocurrency ecosystem, crafting the technologies that make blockchain networks, decentralized applications (dApps), and trading platforms possible. Their work ensures the reliability, security, and efficiency of these platforms, which are crucial for enabling complex financial instruments like futures contracts.

Futures trading, a staple in traditional financial markets, has found a robust foothold in the crypto world. Futures contracts allow traders to speculate on the future price of a cryptocurrency, providing opportunities for hedging and leveraging positions. This type of trading requires a sophisticated technological infrastructure to handle high transaction volumes, rapid execution, and stringent security measures—tasks that fall squarely on the shoulders of developers.

Trading Futures on Major Crypto Exchanges

Several major cryptocurrency exchanges have made significant strides in futures trading, each bringing unique features and offerings to the table.

Binance Futures: One of the largest and most popular platforms, Binance Futures offers a wide range of perpetual and quarterly contracts. The platform is known for its liquidity, diverse trading pairs, and advanced trading tools, all supported by a robust technical framework that ensures seamless operation.

Bybit: Specializing in perpetual contracts, Bybit is renowned for its user-friendly interface and high-performance trading engine. Bybit emphasizes security and customer support, making it a preferred choice for both novice and experienced traders.

WhiteBIT: As a rapidly growing exchange, WhiteBIT has made notable advancements in futures trading. The platform recently introduced the $NOT-PERP trading pair, allowing traders to speculate on the future price movements of the NOT token. This addition enhances WhiteBIT’s offerings, providing more options for traders looking to diversify their portfolios.

The $NOT-PERP Pair on WhiteBIT

The introduction of the $NOT-PERP futures trading pair on WhiteBIT is a testament to the exchange's commitment to innovation and user-centric development. This new pair allows traders to engage with the NOT token in a futures market context, opening up new avenues for strategic trading and risk management. The launch of $NOT-PERP showcases the collaborative efforts of developers and market strategists in delivering cutting-edge trading options.

The Synergy Between Developers and Traders

The relationship between developers and traders is symbiotic. Developers create the tools and platforms that facilitate efficient trading, while traders provide feedback and demand that drive continuous improvement and innovation. This dynamic interaction ensures that trading platforms remain at the forefront of technological advancements, offering enhanced features and security protocols.

Conclusion

The fusion of development and futures trading is a driving force behind the growth and evolution of the cryptocurrency market. Exchanges like Binance, Bybit, and WhiteBIT exemplify how cutting-edge technology and innovative trading solutions come together to create vibrant and efficient trading environments. The introduction of the $NOT-PERP pair on WhiteBIT highlights the ongoing collaboration between developers and traders, paving the way for new opportunities and advancements in the crypto space.

As the market continues to evolve, the interplay between development and futures trading will remain crucial in shaping the future of cryptocurrency, driving both technological progress and market expansion. | andylarkin677 |

1,863,639 | Where to Learn Prompt Engineering | Whether you're a seasoned AI practitioner looking to enhance your skills or a newcomer eager to delve... | 0 | 2024-05-24T07:13:17 | https://dev.to/ailearning/where-to-learn-prompt-engineering-3dl0 | learnpromptengineering, promptengineering, certifiedpromptengineer, promptengineeringcertification | Whether you're a seasoned AI practitioner looking to enhance your skills or a newcomer eager to delve into the world of NLP, mastering prompt engineering opens up a wealth of opportunities for shaping the future of human-computer interaction. But where can you learn [prompt engineering](https://futureskillsacademy.com/blog/become-certified-prompt-engineer-expert/), and how can you obtain certification to validate your expertise?

#### 1. Online Courses:

One of the most accessible and flexible ways to learn prompt engineering is through online courses. Platforms like Coursera, Udemy, and edX offer a variety of courses specifically tailored to NLP and prompt engineering. Look for courses that cover topics such as prompt design strategies, model fine-tuning techniques, and practical applications in real-world scenarios. These courses often provide a combination of video lectures, hands-on exercises, and quizzes to reinforce learning and ensure mastery of key concepts.

#### 2. Specialized Training Programs:

For a more immersive learning experience, consider enrolling in specialized training programs or workshops focused specifically on prompt engineering that can help anyone become a [certified prompt engineer](https://futureskillsacademy.com/certification/certified-prompt-engineering-expert/). Some organizations and institutes offer intensive boot camps or certificate programs that provide in-depth instruction and practical experience with industry-standard tools and methodologies. These programs typically cater to professionals seeking to advance their careers or transition into roles focused on AI and NLP.

#### 3. Self-Study Resources:

In addition to formal courses and programs, aspiring prompt engineers can access a wealth of self-study resources online. Blogs, research papers, and online forums like Reddit's prompt engineering community offer valuable insights, case studies, and discussions on prompt design techniques and best practices. Dive deep into the latest research papers and experiment with open-source tools and libraries to gain hands-on experience and stay up-to-date with the latest advancements in the field.

## Prompt Engineering Certification:

Once you've acquired the necessary knowledge and skills in prompt engineering, obtaining certification can help validate your expertise and distinguish you in the job market. While there isn't yet a standardized certification specifically for prompt engineering, you can demonstrate your proficiency through various means:

#### 1. Industry-Recognized Certifications:

Some organizations offer certifications in related fields such as NLP, machine learning, or AI engineering, which may include components or modules on prompt engineering. Look for certifications from reputable institutions or industry leaders that align with your career goals and interests.

#### 2. Project Portfolio:

Building a strong portfolio of projects showcasing your prompt engineering skills can be just as valuable as formal certification. Create and showcase projects demonstrating your ability to design effective prompts, fine-tune models, and achieve desired outcomes in real-world applications. Highlighting your practical experience and contributions to the field can impress potential employers and collaborators.

#### 3. Networking and Collaboration:

Engage with the prompt engineering community through online forums, social media groups, and networking events. Participate in hackathons, competitions, or collaborative projects where you can showcase your skills, learn from others, and establish connections with industry professionals. Networking can lead to valuable opportunities for mentorship, collaboration, and career advancement in the field of prompt engineering.

### Conclusion:

As the demand for AI-powered applications continues to grow across industries, prompt engineering has become an essential skill for shaping the future of NLP and human-computer interaction. Whether you choose to learn through online courses, specialized training programs, or self-study resources, mastering prompt engineering opens doors to exciting career opportunities and meaningful contributions to the advancement of AI technology.

Future Skill Academy is at the forefront of preparing individuals for the future of work through its [prompt engineering certification](https://futureskillsacademy.com/certification/certified-prompt-engineering-expert/) program. Recognizing the growing importance of natural language processing (NLP) and its applications in various industries, the academy offers comprehensive courses and workshops focused on mastering prompt engineering techniques.

| ailearning |

1,863,638 | What study Abroad consultants do for you? | If you are also planning to move abroad for higher studies, you must hire abroad study visa... | 0 | 2024-05-24T07:11:54 | https://dev.to/abroad_gateway_20e7b5165b/what-study-abroad-consultants-do-for-you-13eb |

If you are also planning to move abroad for higher studies, you must hire abroad [study visa consultant](https://abroadgateway.com/) as per the current scenario of the market these students are finding it difficult to select the appropriate study abroad consultant. There are many institutes are available in the market which is claiming for the best, but few of them are unauthorized and fake. So if you are also planning to hire a study abroad consultant you need keep in mind few parameters such as check their credentials, reviews, reputation and quality of service. Once you hire a an authorized study abroad consultant, you are sorted he is going to make visa filing process very easy and enjoyable on the flip side if you do not hire a good study abroad you might find this process hectic and challenging. Basically these consultants are act as education advisor in your abroad journey as they will give you right guidance as per your profile by helping you to selecting the best university and best course.

Let’s have look what an abroad study visa consultant can do to help you:

Academic advising: In this, your consultant will give academic advice as by accessing your profile he will give you advice according to your previous studies so that you can select the right course in the right university.

University selection: Your consultant will help you in choosing the right university in which he will aware you about the location and reputation of the university and course availability also. As if you choose the private/ not registered university and the demanding course you will not get the education points at the end.

Application assistance: Your consultant will help you by explaining you the application process of the university and also help you by arranging documents such as SOP which stands for statement of purpose, LOR which stands for the letter of recommendation as they understand the demand of the university and the embassy.

Test preparation: As you are applying in the foreign universities for the studies so you need to fulfill the eligibility criteria of the language proficiency test such as IELTS and PTE as they are compulsory to get admission in the universities.

Visa guidance: Your consultant will provide you right visa guidance as per your profile and wish and also help you to arrange the documents which are required to apply for the visa and also make sure to apply for the same before the deadlines.

Financial planning: Your consultant will help you by providing the information regarding tuition fees, living expenses and the scholarships which will help the student a lot during his academic journey in the abroad.

Cultural orientation: Your consultant will aware you in advance about the cultural diversity of the country where you are moving, moreover, he will give you advice on how to adapt the new culture by joining cultural groups there so that you will suffer from cultural shock.

Travel arrangements: Your consultant will help you by booking your air tickets and arrange the accommodation in the host country for you so that you can start your academic journey without any hustle-bustle.

Pre-departure orientation: Your consultant will arrange a pre- departure session for you before flying in which they will provide you information regarding the host country rules and regulation as you will be newcomer over there.

Post arrival support: Most of the consultants gives post arrival support by keeping in touch with you after you lands your dream country as they are ready to assist you if you face any kind of difficulty there.

Career counseling: Your consultant will provide you career counseling for the short term and long term career goals by giving you right guidance on how to achieve them.

Networking: Your consultant will have networks with the universities and students so they can connect you with the alumni and the experts who can help you if you experience any kind of difficulty in the university and the campus.

Monitoring progress: In this, your [immigration consultant](https://abroadgateway.com/) will monitor your progress and provide you support in visa conversion and obtaining the work permit and after that they assist you in applying for PR of that country.

Read More at : [https://medium.com/@abroadgateway700/which-is-the-best-uk-study-visa-consultant-in-chandigarh-15ae60426070](https://medium.com/@abroadgateway700/which-is-the-best-uk-study-visa-consultant-in-chandigarh-15ae60426070

)

| abroad_gateway_20e7b5165b | |

1,863,636 | DashVector x 通义千问大模型:打造基于专属知识的问答服务 | =================================================== 本教程演示如何使用向量检索服务(DashVector),结合LLM大模型等能力,来打造基于垂直领... | 0 | 2024-05-24T07:10:53 | https://dev.to/dashvector/dashvector-x-tong-yi-qian-wen-da-mo-xing-da-zao-ji-yu-zhuan-shu-zhi-shi-de-wen-da-fu-wu-2f0 |

===================================================

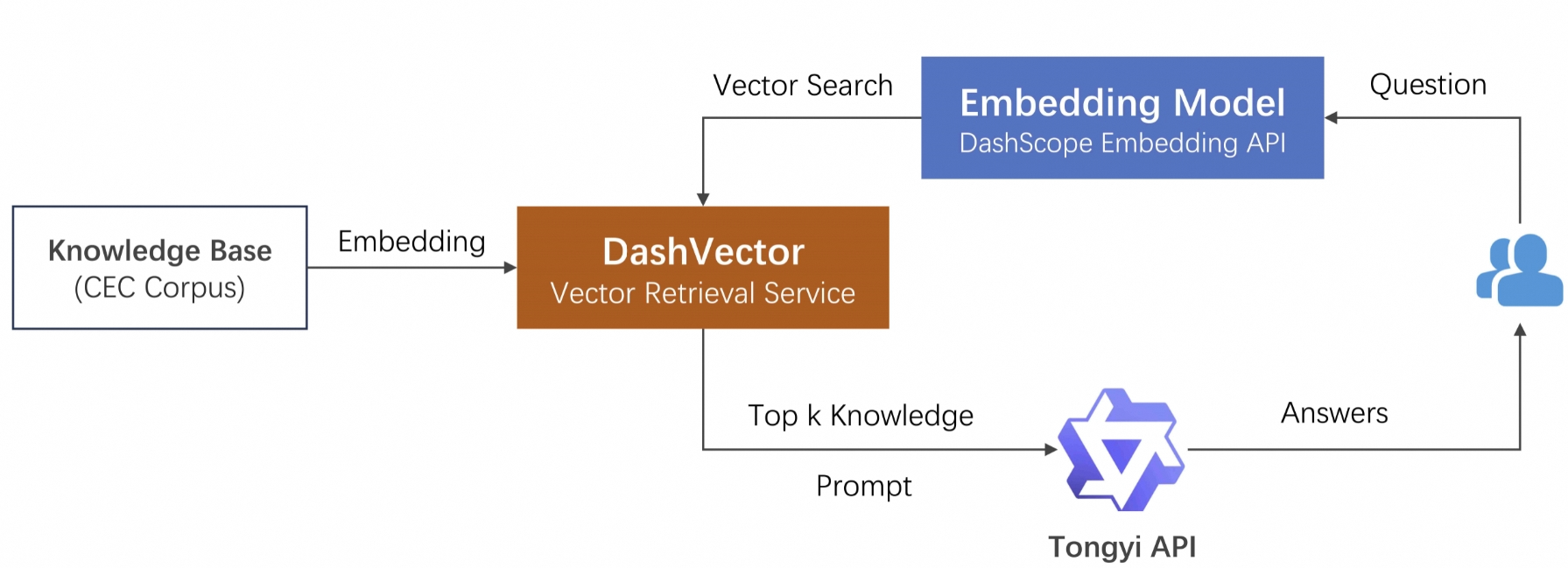

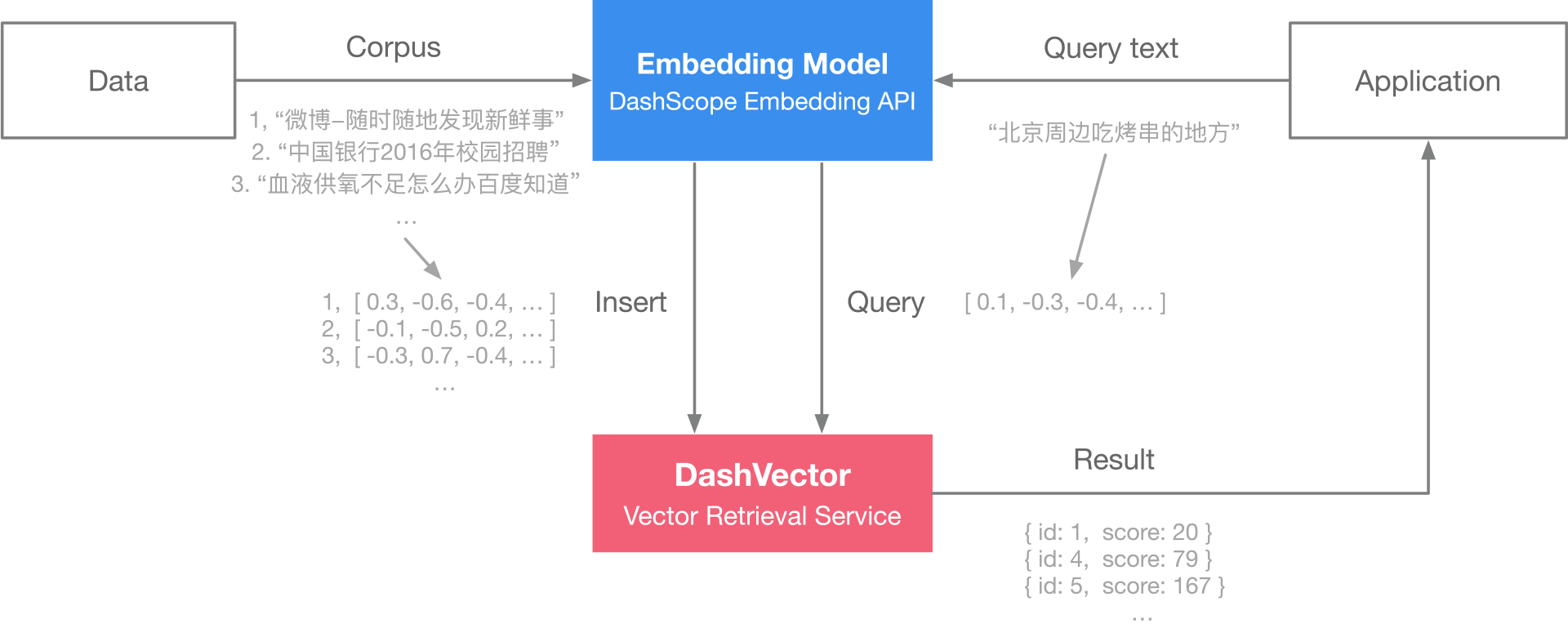

本教程演示如何使用向量检索服务(DashVector),结合LLM大模型等能力,来打造基于垂直领域专属知识等问答服务。其中LLM大模型能力,以及文本向量生成等能力,这里基于[灵积模型服务](https://dashscope.aliyun.com/)上的通义千问 API以及Embedding API来接入。

背景及实现思路

----------------

大语言模型(LLM)作为自然语言处理领域的核心技术,具有丰富的自然语言处理能力。但其训练语料库具有一定的局限性,一般由普适知识、常识性知识,如维基百科、新闻、小说,和各种领域的专业知识组成。导致 LLM 在处理特定领域的知识表示和应用时存在一定的局限性,特别对于垂直领域内,或者企业内部等私域专属知识。

实现专属领域的知识问答的关键,在于如何让LLM能够理解并获取存在于其训练知识范围外的特定领域知识。同时可以通过特定Prompt构造,提示LLM在回答特定领域问题的时候,理解意图并根据注入的领域知识来做出回答。在通常情况下,用户的提问是完整的句子,而不像搜索引擎只输入几个关键字。这种情况下,直接使用关键字与企业知识库进行匹配的效果往往不太理想,同时长句本身还涉及分词、权重等处理。相比之下,倘若我们把提问的文本,和知识库的内容,都先转化为高质量向量,再通过向量检索将匹配过程转化为语义搜索,那么提取相关知识点就会变得简单而高效。

接下来我们将基于[中文突发事件语料库](https://github.com/shijiebei2009/CEC-Corpus/)(CEC Corpus)演示关于突发事件新闻报道的知识问答。

整体流程

-------------

主要分为三个阶段:

1. **本地知识库的向量化** 。通过文本向量模型将其转化为高质量低维度的向量数据,再写入DashVector向量检索服务。这里数据的向量化我们采用了[灵积模型服务](https://dashscope.aliyun.com/)上的Embedding API实现。

2. **相关知识点的提取** 。将提问文本向量化后,通过 DashVector 提取相关知识点的原文。

3. **构造 Prompt 进行提问** 。将相关知识点作为"限定上下文+提问" 一起作为prompt询问通义千问。

前提准备

-------------

### 1. API-KEY 和 Cluster准备

* 开通灵积模型服务,并获得 API-KEY。请参考:[开通DashScope并创建API-KEY](https://help.aliyun.com/zh/dashscope/developer-reference/activate-dashscope-and-create-an-api-key?spm=a2c4g.2510235.0.i10)。

* 开通DashVector向量检索服务,并获得 API-KEY。请参考:DashVector [API-KEY管理](https://help.aliyun.com/document_detail/2510230.html?spm=a2c4g.2510235.0.i11)。

* 开通DashVector向量检索服务,并[创建Cluster](https://help.aliyun.com/document_detail/2631966.html?spm=a2c4g.2510235.0.i12)。

* 获取Cluster的Endpoint,Endpoint获取请查看 [Cluster详情](https://help.aliyun.com/document_detail/2568084.html?spm=a2c4g.2510235.0.i13#3c0384e4e6asc)。

**说明**

灵积模型服务DashScope的API-KEY与DashVector的API-KEY是独立的,需要分开获取。

### 2. 环境准备

**说明**

需要提前安装 Python3.7 及以上版本,请确保相应的 python 版本。

```

pip3 install dashvector dashscope

```

### 3. 数据准备

```

git clone https://github.com/shijiebei2009/CEC-Corpus.git

```

搭建步骤

-------------

**说明**

本教程所涉及的 *your-xxx-api-key* 以及 *your-xxx-cluster-endpoint* ,均需要替换为您自己的API-KAY及CLUSTER_ENDPOINT后,代码才能正常运行。

### 1. 本地知识库的向量化

[CEC-Corpus](https://github.com/shijiebei2009/CEC-Corpus)数据集包含 332 篇突发事件的新闻报道的语料和标注数据,这里我们只需要提取原始的新闻稿文本,并将其向量化后入库。文本向量化的教程可以参考[《基于向量检索服务与灵积实现语义搜索》](https://help.aliyun.com/document_detail/2510234.html?spm=a2c4g.2510235.0.i14)。创建`embedding.py`文件,并将如下示例代码复制到`embedding.py`中:

```python

import os

import dashscope

from dashscope import TextEmbedding

from dashvector import Client, Doc

def prepare_data(path, batch_size=25):

batch_docs = []

for file in os.listdir(path):

with open(path + '/' + file, 'r', encoding='utf-8') as f:

batch_docs.append(f.read())

if len(batch_docs) == batch_size:

yield batch_docs

batch_docs = []

if batch_docs:

yield batch_docs

def generate_embeddings(news):

rsp = TextEmbedding.call(

model=TextEmbedding.Models.text_embedding_v1,

input=news

)

embeddings = [record['embedding'] for record in rsp.output['embeddings']]

return embeddings if isinstance(news, list) else embeddings[0]

if __name__ == '__main__':

dashscope.api_key = '{your-dashscope-api-key}'

# 初始化 dashvector client

client = Client(

api_key='{your-dashvector-api-key}',

endpoint='{your-dashvector-cluster-endpoint}'

)

# 创建集合:指定集合名称和向量维度, text_embedding_v1 模型产生的向量统一为 1536 维

rsp = client.create('news_embedings', 1536)

assert rsp

# 加载语料

id = 0

collection = client.get('news_embedings')

for news in list(prepare_data('CEC-Corpus/raw corpus/allSourceText')):

ids = [id + i for i, _ in enumerate(news)]

id += len(news)

vectors = generate_embeddings(news)

# 写入 dashvector 构建索引

rsp = collection.upsert(

[

Doc(id=str(id), vector=vector, fields={"raw": doc})

for id, vector, doc in zip(ids, vectors, news)

]

)

assert rsp

```

在示例中,我们将 Embedding 向量和新闻报道的文稿(作为raw字段)一起存入DashVector向量检索服务中,以便向量检索时召回原始文稿。

### 2. 知识点的提取

将 CEC-Corpus 数据集所有新闻报道写入DashVector服务后,就可以进行快速的向量检索。实现这个检索,我们同样将提问的问题进行文本向量化后,再在DashVector服务中检索最相关的知识点,也就是相关新闻报道。创建`search.py`文件,并将如下示例代码复制到`search.py`文件中。

```

from dashvector import Client

from embedding import generate_embeddings

def search_relevant_news(question):

# 初始化 dashvector client

client = Client(

api_key='{your-dashvector-api-key}',

endpoint='{your-dashvector-cluster-endpoint}'

)

# 获取刚刚存入的集合

collection = client.get('news_embedings')

assert collection

# 向量检索:指定 topk = 1

rsp = collection.query(generate_embeddings(question), output_fields=['raw'],

topk=1)

assert rsp

return rsp.output[0].fields['raw']

```

### 3. 构造 Prompt 向LLM(通义千问)提问

在通过提问搜索到相关的知识点后,我们就可以将 "提问 + 知识点" 按照特定的模板作为 prompt 向LLM发起提问了。在这里我们选用的LLM是通义千问,这是阿里巴巴自主研发的超大规模语言模型,能够在用户自然语言输入的基础上,通过自然语言理解和语义分析,理解用户意图。可以通过提供尽可能清晰详细的指令(prompt),来获取更符合预期的结果。这些能力都可以通过[通义千问API](https://help.aliyun.com/document_detail/613695.html)来获得。

具体我们这里设计的提问模板格式为: *请基于我提供的内容回答问题。内容是{___},我的问题是{___}* ,当然您也可以自行设计合适的模板。创建`answer.py`,并将如下示例代码复制到`answer.py`中。

````

from dashscope import Generation

def answer_question(question, context):

prompt = f'''请基于```内的内容回答问题。"

```

{context}

```

我的问题是:{question}。

'''

rsp = Generation.call(model='qwen-turbo', prompt=prompt)

return rsp.output.text

````

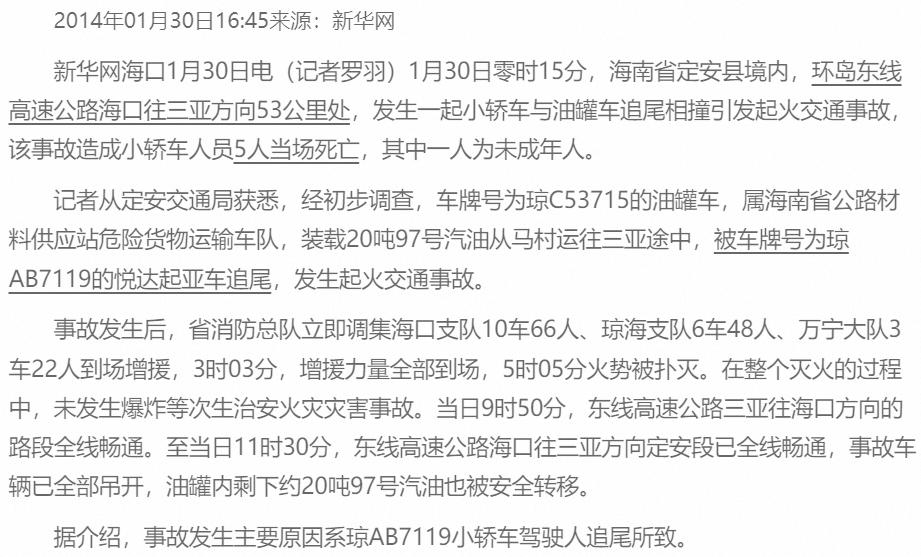

知识问答

-------------

做好这些准备工作以后,就可以对LLM做与具体知识点相关的提问了。比如在 CEC-Corpus 新闻数据集里,有如下一篇报道。因为整个新闻数据集已经在之前的步骤里,转换成向量入库了,我们现在就可以把这个新闻报道作为一个知识点,做出针对性提问:海南安定追尾事故,发生在哪里?原因是什么?人员伤亡情况如何?,并查看相应答案。

创建`run.py`文件,并将如下示例代码复制到`run.py`文件中。

```

import dashscope

from search import search_relevant_news

from answer import answer_question

if __name__ == '__main__':

dashscope.api_key = '{your-dashscope-api-key}'

question = '海南安定追尾事故,发生在哪里?原因是什么?人员伤亡情况如何?'

context = search_relevant_news(question)

answer = answer_question(question, context)

print(f'question: {question}\n' f'answer: {answer}')

```

可以看到,基于DashVector作为向量检索的底座,LLM大模型的知识范畴得到了针对性的扩展,并且能够对于专属的特定知识领域做出正确的回答。

写在最后

-------------

从本文的范例中,可以看到DashVector作为一个独立的向量检索服务,提供了开箱即用的强大向量检索服务能力,这些能力和各个AI模型结合,能够衍生多样的AI应用的可能。这里的范例中,LLM大模型问答,以及文本向量生成等能力,都是基于[灵积模型服务](https://dashscope.aliyun.com/)上的通义千问API和Embedding API来接入的,在实际操作中,相关能力同样可以通过其他三方服务,或者开源模型社区,比如ModelScope上的[各种开源LLM模型](https://modelscope.cn/topic/dfefe5be778b49fba8c44646023b57ba/pub/summary)来实现。

| dashvector | |

1,863,634 | Google Play Points Introduces Exciting New Features to Enhance User Rewards | Choosing the right framework is vital for your mobile app's success. Flutter, a popular UI toolkit by... | 0 | 2024-05-24T07:02:35 | https://dev.to/n968941/google-play-points-introduces-exciting-new-features-to-enhance-user-rewards-1453 | flutter, firebase, beginners, programming | Choosing the right framework is vital for your mobile app's success. Flutter, a popular UI toolkit by Google, allows developers to create natively compiled applications for mobile, web, and desktop from a single codebase. This article explores Flutter's features, benefits, and drawbacks to help you decide if it's the right choice for your app.

IntroductionWhat is Flutter?Key Features of FlutterSingle CodebaseHot ReloadRich Widget LibraryHigh PerformanceStrong Community and SupportBenefits of Using FlutterCross-Platform DevelopmentFast Development CycleCustomizable WidgetsStrong PerformanceReduced Testing EffortsConsistent UI Across PlatformsPotential Drawbacks of Using FlutterLarge App SizeLimited Native FeaturesLearning CurveEcosystem MaturityPerformance OverheadWhen to Choose FlutterCross-Platform NeedsRapid DevelopmentBudget ConstraintsCustom UI RequirementsPrototypingWhen Not to Choose FlutterPlatform-Specific FeaturesHigh-Performance RequirementsApp Size ConcernsLong-Term MaintenanceComparison with Other FrameworksFlutter vs. React NativeFlutter vs. Native DevelopmentFlutter vs. XamarinInformation in Table formatBenefits of Using FlutterPotential Drawbacks of Using FlutterWhen to Choose FlutterWhen Not to Choose FlutterComparison with Other FrameworksConclusionFAQs About Flutter for Mobile App DevelopmentWhat is Flutter and why is it popular?What are the key features of Flutter?What are the benefits of using Flutter for mobile app development?What are the potential drawbacks of using Flutter?When should I choose Flutter for my mobile app development project?When should I not choose Flutter for my mobile app development project?How does Flutter compare to other frameworks like React Native, Native Development, and Xamarin?In conclusion, is Flutter a suitable choice for my mobile app development project?

Introduction

Mobile app development is crucial in today's digital world. With many frameworks available, choosing the right one can make or break your app's success. Flutter, a UI toolkit by Google, has become popular for its ability to create natively compiled applications for mobile, web, and desktop from a single codebase. This article helps you decide if Flutter is the right choice for your mobile app by exploring its features, benefits, and potential drawbacks.

[read full article](https://flutters.in/google-play-points-introduces-exciting-new-features-to-enhance-user-rewards/)

What is Flutter?

Flutter is an open-source UI software development kit (SDK) created by Google. It allows developers to build apps for Android, iOS, web, and desktop from one codebase. Flutter uses the Dart programming language and provides a rich set of pre-designed widgets, making it easy to create visually appealing and highly responsive applications.

Key Features of Flutter

Single Codebase

Flutter lets developers write code once and deploy it across multiple platforms. This reduces the time and effort needed to maintain separate codebases.

Hot Reload

This feature lets developers see changes in real-time without restarting the app, speeding up the development process.

Rich Widget Library

Flutter offers a comprehensive library of customizable widgets that follow Material Design and Cupertino design guidelines, ensuring a consistent look and feel across different platforms.

High Performance

Flutter apps are compiled to native ARM code, ensuring high performance on both Android and iOS devices.

Strong Community and Support

As an open-source project backed by Google, Flutter has a robust community and extensive documentation, making it easier for developers to find resources and support.

Benefits of Using Flutter

Cross-Platform Development

Flutter’s single codebase approach saves time and resources and ensures a consistent user experience across different devices.

Fast Development Cycle

The hot reload feature allows developers to experiment, build UIs, add features, and fix bugs faster, speeding up the development process and improving productivity.

Customizable Widgets

Flutter’s rich set of widgets can be customized to create unique and branded app experiences, allowing developers to implement complex user interfaces easily.

Strong Performance

Flutter’s use of Dart and its compilation to native code ensure apps run smoothly and efficiently, which is crucial for performance-critical applications.

Reduced Testing Efforts

With a single codebase, testing requirements are significantly reduced. QA teams can focus on a single app version, streamlining the testing process and reducing the likelihood of platform-specific bugs.

[read full article](https://flutters.in/google-play-points-introduces-exciting-new-features-to-enhance-user-rewards/)

Consistent UI Across Platforms

Flutter’s widget-based architecture ensures that the UI looks and behaves consistently across different platforms, enhancing the overall user experience.

Potential Drawbacks of Using Flutter

Large App Size

Flutter apps tend to have larger file sizes compared to native apps, which can be a concern for users with limited storage space or slow internet connections.

Limited Native Features

While Flutter provides access to many native features, it may not support all functionalities available in native development environments. This can limit apps requiring deep integration with platform-specific features.

Learning Curve

Although Dart is relatively easy to learn, developers unfamiliar with it may face a learning curve. Additionally, getting used to Flutter’s widget-based architecture may take some time.

Ecosystem Maturity

Flutter is newer compared to other frameworks like React Native or native development platforms, meaning some libraries or tools may not be as mature or comprehensive.

Performance Overhead

While Flutter performs well for most applications, extremely performance-sensitive apps (like high-end games) may experience some overhead compared to apps developed with native code.

When to Choose Flutter

Cross-Platform Needs

If you need an app that works on both Android and iOS with a consistent user experience, Flutter’s single codebase approach is a strong contender.

Rapid Development

For projects with tight deadlines or those requiring frequent updates and iterations, Flutter’s hot reload and fast development cycle are highly beneficial.

Budget Constraints

Startups and small businesses with limited budgets can benefit from Flutter’s cost-effective development process by reducing the need for separate development teams for different platforms.

Custom UI Requirements

If your app requires a highly customized user interface with complex animations and visual elements, Flutter’s rich widget library and flexible customization options make it a suitable choice.

Prototyping

Flutter is ideal for creating prototypes and MVPs (Minimum Viable Products) due to its quick development capabilities and ability to produce visually appealing interfaces rapidly.

When Not to Choose Flutter

Platform-Specific Features

If your app requires extensive use of platform-specific features or APIs that Flutter does not support natively, you may encounter limitations that hinder your app’s functionality.

High-Performance Requirements

For applications that demand the highest level of performance, such as complex 3D games or apps requiring intensive computational tasks, native development might be more suitable.

App Size Concerns

If minimizing the app size is crucial for your target audience, consider native development, as Flutter apps generally have larger file sizes.

Long-Term Maintenance

If you anticipate needing to maintain and update the app over a long period, consider the availability of resources and the maturity of the ecosystem. While Flutter is growing rapidly, native development platforms have a longer track record and may offer more stability in the long run.

[read full article](https://flutters.in/google-play-points-introduces-exciting-new-features-to-enhance-user-rewards/)

Comparison with Other Frameworks

Flutter vs. React Native

Performance: Flutter often provides better performance due to its direct compilation to native code, while React Native relies on a JavaScript bridge.

Development Speed: Both frameworks offer fast development cycles, but Flutter’s hot reload is considered slightly more efficient.

UI Consistency: Flutter provides more consistent UI across platforms as it uses its own rendering engine, whereas React Native uses native components, which can lead to slight variations.

Community and Ecosystem: React Native has a more mature ecosystem and more third-party libraries compared to Flutter.

Flutter vs. Native Development

Development Time: Native development requires separate codebases for Android and iOS, leading to longer development times compared to Flutter’s single codebase approach.

Performance: Native apps generally offer the best performance and access to platform-specific features, which is crucial for certain types of applications.

Cost: Native development is usually more expensive due to the need for separate development teams and longer development cycles.

Flutter vs. Xamarin

Language: Flutter uses Dart, while Xamarin uses C#. Your team's familiarity with these languages can influence the decision.

Performance: Both offer good performance, but Flutter’s direct compilation to native code gives it a slight edge.

Ecosystem: Xamarin, backed by Microsoft, has a mature ecosystem and better integration with Microsoft’s development tools, which can be beneficial for enterprise applications.

Also read:

Artificial Intelligence Act: All You Need to Know About the European Council’s First Worldwide Rules on AI

New Diploma Course in Artificial Intelligence and Machine Learning at GTTC: Belagavi

Information in Table format

AspectDescriptionWhat is Flutter?Flutter is an open-source UI SDK by Google, using the Dart language to build apps for Android, iOS, web, and desktop from one codebase.Single CodebaseWrite code once and deploy across multiple platforms, reducing time and effort for separate codebases.Hot ReloadSee changes in real-time without restarting the app, speeding up development.Rich Widget LibraryOffers a library of customizable widgets following Material Design and Cupertino guidelines for a consistent look and feel.High PerformanceCompiled to native ARM code, ensuring high performance on both Android and iOS.Strong Community and SupportBacked by Google, with a robust community and extensive documentation for easy resource access.

Join Our Whatsapp Group

Join Telegram group

Benefits of Using Flutter

BenefitDescriptionCross-Platform DevelopmentSingle codebase saves time and resources, ensuring a consistent user experience across devices.Fast Development CycleHot reload feature allows for rapid experimentation, UI building, feature addition, and bug fixing.Customizable WidgetsRich set of widgets customizable for unique and branded app experiences, simplifying complex UI implementation.Strong PerformanceDart and native code compilation ensure smooth and efficient app performance.Reduced Testing EffortsSingle codebase reduces testing requirements, streamlining the QA process and lowering the likelihood of platform-specific bugs.Consistent UI Across PlatformsWidget-based architecture ensures consistent UI behavior across different platforms, enhancing user experience.

Potential Drawbacks of Using Flutter

DrawbackDescriptionLarge App SizeFlutter apps tend to be larger in file size compared to native apps, which can be an issue for users with limited storage or slow internet.Limited Native FeaturesWhile Flutter supports many native features, it may not cover all functionalities available in native development environments.Learning CurveDevelopers unfamiliar with Dart and Flutter’s widget-based architecture may need time to adapt.Ecosystem MaturityFlutter is newer compared to other frameworks, meaning some libraries or tools may not be as mature or comprehensive.Performance OverheadExtremely performance-sensitive apps, like high-end games, might experience some overhead compared to native development.

When to Choose Flutter

ScenarioDescriptionCross-Platform NeedsIdeal for apps needing consistent user experience on both Android and iOS with a single codebase.Rapid DevelopmentSuitable for projects with tight deadlines or frequent updates due to fast development cycles enabled by hot reload.Budget ConstraintsBeneficial for startups and small businesses by reducing costs of maintaining separate development teams for different platforms.Custom UI RequirementsGreat for apps needing highly customized user interfaces with complex animations and visual elements, thanks to Flutter’s rich widget library.PrototypingPerfect for creating prototypes and MVPs quickly due to rapid development capabilities and visually appealing interfaces.

When Not to Choose Flutter

ScenarioDescriptionPlatform-Specific FeaturesIf your app requires extensive use of platform-specific features or APIs not supported natively by Flutter, this may limit functionality.High-Performance RequirementsNative development might be more suitable for apps demanding the highest performance levels, like complex 3D games or apps requiring intensive computational tasks.App Size ConcernsIf minimizing the app size is crucial, consider native development as Flutter apps generally have larger file sizes.Long-Term MaintenanceNative development platforms might offer more stability and resources over the long term compared to Flutter, which is newer and evolving.

Comparison with Other Frameworks

ComparisonDescriptionFlutter vs. React NativePerformance: Flutter’s direct native code compilation vs. React Native’s JavaScript bridge. Development Speed: Flutter’s hot reload slightly more efficient. UI Consistency: Flutter’s own rendering engine for consistent UI. Community and Ecosystem: React Native’s mature ecosystem and more third-party libraries.Flutter vs. Native DevelopmentDevelopment Time: Flutter’s single codebase vs. native’s separate codebases. Performance: Native apps offer best performance and platform-specific features. Cost: Native development generally more expensive due to separate teams and longer cycles.Flutter vs. XamarinLanguage: Flutter’s Dart vs. Xamarin’s C#. Performance: Both perform well, but Flutter’s native code compilation has an edge. Ecosystem: Xamarin’s mature ecosystem and Microsoft integration for enterprise apps.

Conclusion

Deciding whether Flutter is right for your mobile app depends on your project requirements, target audience, budget, and timeline. Flutter’s cross-platform development, fast cycles, customizable widgets, and strong performance make it a compelling choice. However, drawbacks like larger app sizes, limited native features, and a learning curve should be considered. Flutter is well-suited for projects needing quick multi-platform targeting and consistent, appealing UI. Carefully evaluate your needs to determine if Flutter fits your mobile app development project.

FAQs About Flutter for Mobile App Development

What is Flutter and why is it popular?

Answer: Flutter is an open-source UI software development kit (SDK) created by Google, allowing developers to build apps for Android, iOS, web, and desktop from one codebase. It has gained popularity for its ability to create natively compiled applications, its rich widget library, and its strong community support.

What are the key features of Flutter?

Answer: Some key features of Flutter include its single codebase approach, enabling developers to write code once and deploy it across multiple platforms. It also offers hot reload for real-time changes, a rich widget library following Material Design and Cupertino guidelines, high performance through native ARM code compilation, and strong community support.

What are the benefits of using Flutter for mobile app development?

Answer: Using Flutter offers benefits such as cross-platform development, fast development cycles with hot reload, customizable widgets for unique UI experiences, strong performance due to Dart and native code compilation, reduced testing efforts with a single codebase, and consistent UI across platforms.

What are the potential drawbacks of using Flutter?

Answer: Some potential drawbacks of Flutter include larger app sizes compared to native apps, limitations in accessing certain native features, a learning curve for developers new to Dart and Flutter's widget-based architecture, and the ecosystem's relative immaturity compared to other frameworks.

When should I choose Flutter for my mobile app development project?

Answer: Flutter is a good choice for projects needing cross-platform development, rapid development cycles, cost-effective development, highly customized UI requirements, and quick prototyping.

When should I not choose Flutter for my mobile app development project?

Answer: You may want to avoid Flutter if your app requires extensive use of platform-specific features not supported natively by Flutter, demands the highest level of performance, needs to minimize app size, or requires long-term maintenance where ecosystem maturity is a concern.

[read full article](https://flutters.in/google-play-points-introduces-exciting-new-features-to-enhance-user-rewards/)

Join Our Whatsapp Group

Join Telegram group

How does Flutter compare to other frameworks like React Native, Native Development, and Xamarin?

Answer: Flutter often provides better performance compared to React Native, offers faster development cycles with hot reload, and ensures more consistent UI across platforms. Compared to native development, Flutter reduces development time with its single codebase approach but may not offer the same level of platform-specific features. Flutter vs. Xamarin: Flutter uses Dart while Xamarin uses C#, both offer good performance but Flutter has a slight edge with direct compilation to native code.

In conclusion, is Flutter a suitable choice for my mobile app development project?

Answer: Deciding whether Flutter is the right choice for your mobile app depends on various factors such as project requirements, target audience, budget, and development timeline. Flutter offers many advantages but also has potential drawbacks. By carefully evaluating your specific needs and considering the trade-offs, you can determine whether Flutter is the best fit for your mobile app development project. | n968941 |

1,863,633 | 基于向量检索服务与TextEmbedding实现语义搜索 | 本教程演示如何使用向量检索服务(DashVector),结合灵积模型服务上的Embedding API,来从0到1构建基于文本索引的构建+向量检索基础上的语义搜索能力。具体来说,我们将基于QQ... | 0 | 2024-05-24T07:01:07 | https://dev.to/dashvector/ji-yu-xiang-liang-jian-suo-fu-wu-yu-textembeddingshi-xian-yu-yi-sou-suo-25lm | 本教程演示如何使用向量检索服务(DashVector),结合[灵积模型服务](https://dashscope.aliyun.com/)上的[Embedding API](https://help.aliyun.com/zh/dashscope/developer-reference/api-details-15),来从0到1构建基于文本索引的构建+向量检索基础上的语义搜索能力。具体来说,我们将基于QQ 浏览器搜索标题语料库([QBQTC](https://github.com/CLUEbenchmark/QBQTC):QQ Browser Query Title Corpus)进行实时的文本语义搜索,查询最相似的相关标题。

什么是 Embedding

----------------------

简单来说,Embedding是一个多维向量的表示数组,通常由一系列数字组成。Embedding可以用来表示任何数据,例如文本、音频、图片、视频等等,通过Embedding我们可以编码各种类型的非结构化数据,转化为具有语义信息的多维向量,并在这些向量上进行各种操作,例如相似度计算、聚类、分类和推荐等。

整体流程概述

---------------

* **Embedding** :通过DashScope提供的通用文本向量模型,对语料库中所有标题生成对应的embedding向量。

* **构建索引服务和查询** :

* 通过DashVector向量检索服务对生成embedding向量构建索引。

* 将查询文本embedding向量作为输入,通过DashVector搜索相似的标题。

具体操作流程

---------------

### 前提条件

* 开通灵积模型服务,并获得 API-KEY:[开通DashScope并创建API-KEY](https://help.aliyun.com/zh/dashscope/developer-reference/activate-dashscope-and-create-an-api-key?spm=a2c4g.2510234.0.i8)。

* 开通DashVector向量检索服务,并获得 API-KEY[API-KEY管理](https://help.aliyun.com/document_detail/2510230.html?spm=a2c4g.2510234.0.i9)。

### 1、环境安装

**说明**

需要提前安装 Python3.7 及以上版本,请确保相应的 python 版本。

```

pip3 install dashvector dashscope

```

### 2、数据准备

[QQ浏览器搜索相关性数据集](https://github.com/CLUEbenchmark/QBQTC)(QBQTC, QQ Browser Query Title Corpus),是QQ浏览器搜索引擎目前针对大搜场景构建的一个融合了相关性、权威性、内容质量、 时效性等维度标注的学习排序(LTR)数据集,广泛应用在搜索引擎业务场景中。作为CLUE-beanchmark的一部分,QBQTC 数据集可以直接从github上下载(训练集路径为dataset/train.json)。

```

git clone https://github.com/CLUEbenchmark/QBQTC.git

wc -l QBQTC/dataset/train.json

```

数据集中的训练集(train.json)其格式为 json:

```

{

"id": 0,

"query": "小孩咳嗽感冒",

"title": "小孩感冒过后久咳嗽该吃什么药育儿问答宝宝树",

"label": "1"

}

```

我们将从这个数据集中提取title,方便后续进行embedding并构建检索服务。

```

import json

def prepare_data(path, size):

with open(path, 'r', encoding='utf-8') as f:

batch_docs = []

for line in f:

batch_docs.append(json.loads(line.strip()))

if len(batch_docs) == size:

yield batch_docs[:]

batch_docs.clear()

if batch_docs:

yield batch_docs

```

### 3、通过 DashScope 生成 Embedding 向量

DashScope灵积模型服务通过标准的API提供了多种模型服务。其中支持文本Embedding的模型中文名为通用文本向量,英文名为text-embedding-v1。我们可以方便的通过DashScope API调用来获得一段输入文本的embedding向量。

**说明**

需要使用您的api-key替换示例中的 *your-dashscope-api-key* ,代码才能正常运行。

```

import dashscope

from dashscope import TextEmbedding

dashscope.api_key='{your-dashscope-api-key}'

def generate_embeddings(text):

rsp = TextEmbedding.call(model=TextEmbedding.Models.text_embedding_v1,

input=text)

embeddings = [record['embedding'] for record in rsp.output['embeddings']]

return embeddings if isinstance(text, list) else embeddings[0]

# 查看下embedding向量的维数,后面使用 DashVector 检索服务时会用到,目前是1536

print(len(generate_embeddings('hello')))

```

### 4、通过 DashVector 构建检索:向量入库

DashVector 向量检索服务上的数据以集合(Collection)为单位存储,写入向量之前,我们首先需要先创建一个集合来管理数据集。创建集合的时候,需要指定向量维度,这里的每一个输入文本经过DashScope上的text_embedding_v1模型产生的向量,维度统一均为1536。

DashVector 除了提供向量检索服务外,还提供倒排过滤功能 和 scheme free 功能。所以我们为了演示方便,可以写入数据时,可以将title内容写入 DashVector 以便召回。写入数据还需要指定 id,我们可以直接使用 QBQTC 中id。

**说明**

需要使用您的api-key替换示例中的 *your-dashvector-api-key* ,以及您的Cluster Endpoint替换示例中的 *your-dashvector-cluster-endpoint* ,代码才能正常运行。

```

from dashvector import Client, Doc

# 初始化 DashVector client

client = Client(

api_key='{your-dashvector-api-key}',

endpoint='{your-dashvector-cluster-endpoint}'

)

# 指定集合名称和向量维度

rsp = client.create('sample', 1536)

assert rsp

collection = client.get('sample')

assert collection

batch_size = 10

for docs in list(prepare_data('QBQTC/dataset/train.json', batch_size)):

# 批量 embedding

embeddings = generate_embeddings([doc['title'] for doc in docs])

# 批量写入数据

rsp = collection.insert(

[

Doc(id=str(doc['id']), vector=embedding, fields={"title": doc['title']})

for doc, embedding in zip(docs, embeddings)