id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,863,811 | Scram Authentication: Overcoming Mock Testing Challenges | In the vast landscape of cybersecurity, authentication stands as the guardian of digital... | 0 | 2024-05-24T10:16:43 | https://keploy.io/blog/technology/scram-authentication-overcoming-mock-testing-challenges | webdev, javascript, programming, python |

In the vast landscape of cybersecurity, authentication stands as the guardian of digital fortresses, ensuring that only the right individuals gain access to sensitive information and services. Imagine you're at the entrance of a top-secret facility, and you need to prove your identity to the security personnel. In the digital realm, this is precisely what authentication mechanisms do – they verify your identity before granting access.

When it comes to databases, making sure that the right programs have access is super important for keeping things safe and organised. At first, I thought databases might use a method called **JWT tokens** for this because it's popular and strong for security. However, I found out that there are different ways of authentication based on the connection state.

**- Token Based Authentications:** Token-based authentication is a method where users or applications receive a special "token" after initial authentication. This token is then used for further requests without repeatedly verifying credentials for each new connection.

Due to this property, it is used in the **Stateless Services** (like REST APIs, SSO, etc)

**- Connection oriented Authentications:** It is a method where users authenticates with the credentials for each connection or session. It is typically used in scenarios where a continuous connection or session is established between the client and server (like Databases, SSH).

In this blog post, we will explore how to mock SCRAM authentication in a testing environment. Before we dive into the details, let's first understand what SCRAM (Salted Challenge Response Authentication Mechanism) is and how it is used by databases like MongoDB.

**What is SCRAM authentication**

SCRAM, which stands for "Salted Challenge Response Authentication Mechanism," is a powerful challenge-based authentication method that follows the SASL (Simple Authentication and Security Layer) framework. SASL is designed to provide a framework for authentication and security without exposing sensitive credentials like passwords during the authentication process. This helps with several important aspects of authentication and security in the digital world:

**- Password Protection:** In SCRAM, the password is not shared between the client and server which ensures the security of user password. This also prevents from the MITM attacks.

**- Protection Against Credential Attacks:** SCRAM is resistant to common credential-based attacks such as brute force and dictionary attacks. The salted and hashed passwords, along with challenge-response mechanisms, add a layer of complexity that makes it challenging for attackers to guess passwords.

**- Confidentiality:** SCRAM ensures the confidentiality of sensitive data during authentication. By using cryptographic techniques and secure channels, it prevents unauthorised entities from eavesdropping on authentication attempts.

**- Secure Access Control:** SCRAM plays a crucial role in access control, ensuring that only authorised users or applications can interact with a system or database.

`There is a good example on wikipedia describing scram. here`

**How SCRAM authentication works**

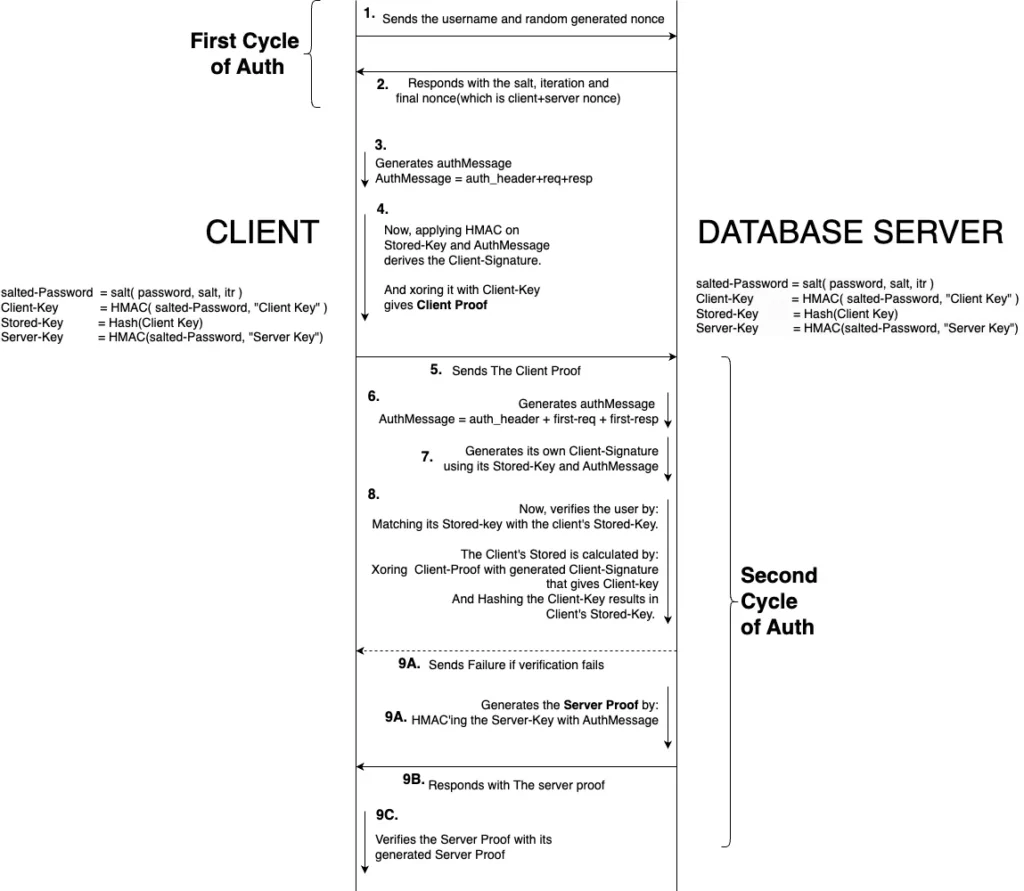

After exploring what SCRAM is and understanding its purpose, it's natural to wonder how it functions and ensures all the necessary properties for ideal authentication in database environments.

In SCRAM authentication, the client and server securely establish their identities without the need to exchange the user password. This is achieved by generating and validating cryptographic proofs. These proofs are created using robust cryptographic algorithms, such as HMAC and SHA-hashing. This method ensures that even if network packets are intercepted by an intruder, the **password remains undecipherable.**

As the generation and validation of proofs in SCRAM authentication rely on randomly generated nonces by the client and server, it ensures robust security against potential intruders. The key factor here is that for each new connection, a unique nonce is generated and associated with it. This dynamic and one-time use of nonces effectively mitigates the risk of packet capture leading to unauthorised access, enhancing the overall security of the communication process.

Here is a detailed flow of communication for SCRAM authenticaton.

**Challenges for mocking SCRAM Auth**

In a test environment, mocking libraries need to bypass authentication simply by returning a message indicating successful authentication. However, SCRAM introduces a more complex scenario. In SCRAM, the client not only authenticates itself but also verifies the server's identity, adding an additional layer of security. This dual-verification process presents a significant hurdle for mocking tools like Keploy, an API testing tool designed to capture and replay network data. Due to SCRAM's complex nature, these libraries often experience Auth failure error like:

```

Exception authenticating MongoCredential{mechanism=SCRAM-SHA-1, userName='USER', source='ADMIN', password=<hidden>, mechanismProperties=<hidden>}; nested exception is com.mongodb.MongoSecurityException

```

Upon debugging the network packets, we've identified key insights. Attached below are the pertinent packet details for reference:

```

# SaslStart request. Here, r is the client nonce.

n,,n=root,r=hjO1c6p6PaNbeDUGhn/Jak3FFuUZQBxN1xmOeWr5L1c=

# The mocked SaslStart response. Here, r is the client/server nonce.

r=cjr1a0k0KanfoMMNkr/LaedRRtTQUBxM4fe3tuc6K1d=/igGE0M3BBiDmR/et9DN4cOR+CoNtHxs,s=ZPGEqaD8ImD95Vt3c1uuVQkImxrntgG4Wjh37Q==,i=15000

```

In the mechanism under discussion, the r key plays a crucial role in transmitting nonces within the payload. During the process, the client initiates the interaction by sending a new client nonce within the request. Subsequently, it receives a combined client/server nonce, along with the salt and iteration count, in the SaslStart response. However, a notable issue arises here: the client nonce often does not match in the response payload of SaslStart. This mismatch is a critical point of concern, as it can disrupt the intended flow of the SaslContinue authentication cycle because client and server proof depends on the nonces.

**Nonce Syncing: A Quick Fix for Successful auth**

To bypass the nonce mismatch issue during SCRAM authentication in Keploy tests, a straightforward solution is to update the client nonce directly in the SaslStart response payload. This adjustment ensures that the client's nonce matches the server's, leading to successful authentication. Attaching the pseudo code:

```

func (saslStartRequest, saslStartResponse string) {

expectedNonce, err := extractClientNonce( saslStartRequest )

actualNonce, err := extractClientNonce( saslStartRequest )

// Since, the nonce are randomlly generated string. so, each session have unique nonce.

// Thus, the mocked server response should be updated according to the current nonce

return strings.Replace( saslStartResponse, expectedNonce, actualNonce, -1), nil

}

```

`Here is the code in production to update client nonce.`

After implementing the quick fix of replacing the client nonce, we anticipated a smooth resolution. However, the error persisted, leading us to delve deeper into the problem. Further investigation required debugging the client driver, where we uncovered a critical issue: the server verification was failing on the client side.

**Final step: Correct Server Proof Generation**

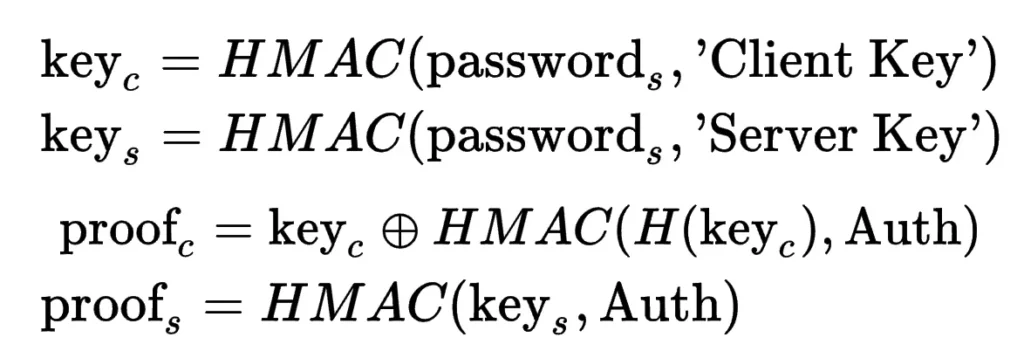

In tackling SCRAM authentication issues with Keploy, the critical final step is ensuring the correct generation of server proof. This is essential for successful authentication, especially with how the client side works.

1.**Client-Side Verification:** The client uses the combined client/server nonce to verify the server. The method to generate the server proof, which is key to this verification, is straightforward yet precise.

2.**Why Authentication Fails:** Our tests showed that authentication failures were mainly due to the mismatch between the recorded server proof and the new nonces. Each new nonce combination requires a unique server proof, and if they don't match, authentication fails.

3.**Fixing the Issue:** The solution is simple: generate a new server proof for each new set of nonces. This means moving away from using a fixed, recorded proof to creating a new proof every time, based on the current nonces. This change solves the authentication problems and ensures that the client can successfully verify the server each time, making the process more secure and reliable.

The image below provides a detailed description of the formula used in generating server proofs:

Here, is the code in production for generating the server proof in SaslContinue

`Hurray! Our fixes worked – authentication is now a smooth success in our test environment! 🎉🎊`

**Conclusion**

To sum up our exploration of SCRAM authentication:

1.**SCRAM Auth Insights:** We've learned how SCRAM auth secures user verification without revealing passwords, a smart way to safeguard user credentials.

2.**Mocking Library Challenges:** Mocking tools (like Keploy), reliant on network packets, face hurdles with SCRAM due to its dynamic and secure nature.

3.**Solution for Testing:** To successfully test with mocking libraries, it's essential to configure them with the user's password. This allows for generating accurate server proofs, aligning with the ever-changing nonces in SCRAM.

This journey underscores the delicate balance between robust security and effective software testing, highlighting the evolving landscape of digital security.

**FAQ's**

**How can authentication issues with SCRAM be resolved in testing environments?**

Authentication issues with SCRAM in testing environments can be resolved by ensuring that mocking tools are configured to handle dynamic nonce generation and server proof validation accurately. Keploy does this by aligning it's mocking capabilities with the authentication requirements of SCRAM, and ensuring authentication failures can be mitigated, and testing can proceed smoothly.

**What are the key takeaways from exploring SCRAM authentication?**

Key takeaways from exploring SCRAM authentication include:

- SCRAM auth secures user verification without revealing passwords, enhancing security.

- Mocking libraries may face challenges with SCRAM due to its dynamic nature.

- Solutions for testing with mocking libraries involve configuring them with accurate server proofs and handling dynamic nonce generation effectively.

- Balancing robust security with effective software testing is crucial in the evolving landscape of digital security.

**How does SCRAM authentication compare to other authentication methods like JWT tokens?**

SCRAM authentication is primarily used in connection-oriented environments like databases, whereas JWT tokens are commonly used in stateless services like REST APIs. Each method has its advantages and is suited to different use cases based on factors like security requirements and connection state.

**What databases support SCRAM authentication?**

SCRAM authentication is supported by various databases, including MongoDB, PostgreSQL, CouchDB, and others. It's becoming a standard method for securing user authentication in database environments. | keploy |

1,863,810 | Best Video Conference API for Websites in 2024 | We all recognise how integral video conferencing has become in our daily routines, having spent... | 0 | 2024-05-24T10:14:24 | https://dev.to/digitalsamba/best-video-conference-api-for-websites-in-2024-3dfd | api, sdk, videoconfererencing, webdev | We all recognise how integral video conferencing has become in our daily routines, having spent countless hours using these tools. A less familiar but equally significant development is the video conferencing API. So, what exactly is it? An API, or "Application Programming Interface", is essentially a mechanism that enables one system to communicate with another.

A video conferencing API allows you to embed video conferencing capabilities into your application and manage the platform's features through an API. The platform itself is often referred to as an API, and while this isn't entirely accurate, the term "video conferencing API" has gained widespread usage. Essentially, a video conferencing API is just an API.

This article reviews [the leading video conferencing APIs](https://www.digitalsamba.com/blog/best-free-video-conferencing-api-for-website-integration) available today. We analyse established industry leaders and emerging contenders, evaluating their features, costs, and ideal use cases. Whether you are a developer, business owner, or decision-maker, this guide will assist you in selecting the best video conferencing API for your needs.

## What is a video conferencing API?

Video conferencing APIs efficiently integrate real-time video and audio communication into your application or website. Rather than building complex video streaming protocols from scratch, these APIs provide all the necessary tools. They handle the intricate processes of capturing, encoding, transmitting, and decoding video and audio data, ensuring a smooth, low-latency experience for your users.

Utilising a video conferencing API saves you from the substantial investment required to develop this technology in-house, conserving both time and resources. You benefit from a reliable, secure, and continually evolving infrastructure the API provider maintains, allowing you to concentrate on your application's core strengths.

Many video conferencing APIs come with advanced features and basic video functionality. Screen sharing, virtual whiteboards, recording options, and integrated chat form a comprehensive collaboration suite. This enables you to customise the solution for specific use cases, such as remote healthcare consultations, interactive online classes, international team meetings, or personalised customer support.

The possibilities are extensive with a [powerful video conferencing API](https://www.digitalsamba.com/video-api). You can deliver the engaging, face-to-face interactions that modern users expect. By leveraging an API, you streamline development, speed up your launch, and provide rich, real-time communication experiences that enhance user satisfaction and loyalty.

## Things to consider when choosing a video conferencing API

Adding video calls to your website or app opens up exciting possibilities. However, with so many video conferencing APIs available, how do you choose the right one? Here’s a breakdown of key factors to keep in mind:

- Quality matters

Will your video calls be clear and crisp? Opt for an API that prioritises high-definition video and audio. This is essential for professional use cases, such as online job interviews or remote doctor consultations. Ensure the API offers low latency (minimal delay), as this significantly impacts the natural flow of conversation.

- Features to fit your needs

Basic video calls are just the beginning! Consider additional features that could enhance your use case. Screen sharing, virtual whiteboards, file transfers, and text chat can transform simple video calls into powerful collaboration sessions.

- Ease of use

A complex API can be challenging for your developers. Select an API that is easy to embed and use. This will save development time and ensure users can join calls effortlessly.

- Scalability

Will the API grow with your needs? If you plan to host large-scale meetings or anticipate a user surge, ensure the API can handle increased traffic without compromising quality.

- Security and privacy

How robust are the API’s security measures? Strong encryption and data protection protocols are crucial when dealing with sensitive data, such as in healthcare or finance.

- Cost-effectiveness

Video APIs typically offer flexible pricing models. Consider factors like minutes used per month, the number of participants, and required features before selecting a plan that suits both your budget and needs.

Choosing wisely means building a seamless video conferencing experience that enhances your app or website. With some research and planning, you'll find the perfect video chat API for your project.

## Key features to look for in a video conferencing API

Are you looking to facilitate clear and secure virtual meetings? Choosing the right video conferencing API is essential. Here’s what to consider:

### High-quality audio and video

Avoid grainy visuals and choppy audio. Select an API that supports HD video resolution for sharp images and includes noise cancellation to minimise distracting background sounds. This ensures a professional and focused meeting environment.

### Screen sharing

Effective collaboration often requires everyone to view the same content simultaneously. Ensure the API offers simple and reliable screen sharing for presentations, live demonstrations, or real-time document collaboration.

### Recording

The ability to record meetings is crucial. Recorded sessions can be used to train new staff, revisit important decisions, or share with those unable to attend live.

### Security

When evaluating an API, prioritise data security and privacy. Look for features like encrypted data transmission, robust authentication, and compliance with data privacy regulations such as GDPR. These measures protect your meetings and safeguard your organisation and users.

### Scalability

If you anticipate large meetings, the API must handle increased demand without sacrificing performance. Choose proven solutions that maintain call quality even with numerous participants.

### Customisation

A customisable API allows you to control the look, feel, and features of your video conferencing experience. This enables seamless integration into your existing application or website, creating a cohesive user experience.

### Virtual backgrounds

Let users replace or blur their backgrounds for added privacy, professionalism, or a touch of fun. This feature is particularly useful for those working in less-than-ideal environments.

### Live chat

Enable text-based communication alongside the video stream for quick questions, side discussions, or sharing links without interrupting the main conversation.

### Whiteboarding

Offer a virtual whiteboard for participants to collaborate visually in real time. This encourages brainstorming, problem-solving, and the free flow of ideas.

Remember, prioritise the features most important to your video conferencing needs. Selecting a dependable API with strong security will ensure smooth and secure virtual meetings for all participants.

## 10 Best Video Conferencing APIs in 2024

The video conferencing market is thriving in 2024, offering businesses a wide range of options. Selecting the right provider can be challenging. To help you make an informed decision, let's delve into some top solutions, examining their features, potential drawbacks, and pricing models.

### 1. Digital Samba

[Digital Samba](https://www.digitalsamba.com/) revolutionises online business communication and collaboration beyond mere video conferencing. Their APIs facilitate seamless, high-definition video and audio calls, effortless screen sharing for presentations or demonstrations, and reliable recording options for training or compliance purposes.

Imagine video calls so sharp and lag-free that it feels like everyone's in the same room, even if they're continents apart—that's the power of Digital Samba's API! You can easily embed fluid video conferencing into your websites and apps. Additionally, Digital Samba offers a video call SDK for further customisation and flexibility.

Digital Samba goes above and beyond the basics by prioritising security and scalability. User data is fiercely protected, and their solutions are designed to handle traffic surges, ensuring no downtime during crucial meetings. With built-in data privacy compliance (like GDPR), you can confidently integrate their privacy-focused API into various services, including healthcare, finance, and other sensitive industries.

#### [Digital Samba features](https://www.digitalsamba.com/features):

- Embeddable conferencing

- Screen sharing

- Virtual whiteboard

- Recording capabilities

- Customisation options

- End-to-end encryption

- Robust SDKs

#### [Digital Samba pricing](https://www.digitalsamba.com/pricing):

- The free tier (10,000 minutes/month limit) is ideal for testing and smaller projects. Explore Digital Samba's API and see how it meets your needs. Once you exceed the minute limit, upgrade to a paid plan.

- Paid plans (from €99/month to custom pricing): Unlock higher minute limits and advanced features as your video conferencing needs grow. Paid plans offer flexibility and scalability. For high-volume enterprise needs, contact the sales team for custom pricing.

### 2. Agora.io

Agora.io goes beyond just enabling video calls; it helps you create online experiences that feel truly connected. Imagine a video so clear it feels like you're in the same room, voice calls without glitches, and easy recording to capture important moments. With features like messaging and live streaming, Agora.io's smart technology ensures strong connections, no matter where your users are globally.

#### Agora.io key features:

- Real-time video and audio

- Screen sharing

- Whiteboarding

- Recording

- Robust SDKs for high customisation

- Global low-latency network

#### Agora.io limitations:

- Can be complex for simple use cases.

- Pricing can become expensive at very high volumes.

- Occasional reports of audio/video quality inconsistencies.

#### Agora.io pricing:

- Free Plan: Up to 10,000 minutes per month.

- Paid Plans: Tiered pricing based on usage:

- Video HD: $3.99 per 1,000 minutes.

- Video Full HD: $8.99 per 1,000 minutes.

**Note:** Pricing complexity increases with additional features and higher usage volumes.

### 3. CometChat

CometChat prioritises a smooth development experience with extensive documentation, easy-to-follow demos, and intuitive code samples. Their APIs provide everything needed for text, voice, and video chat, ensuring beautiful and functional video conferencing experiences across devices. It's the ultimate tool for creating a chat experience that looks as good as it performs.

#### Key CometChat features:

- Video and audio calling

- Text chat

- File sharing

- Whiteboarding

- User presence

- Pre-built UI components

#### CometChat limitations:

- Some users report issues with call quality in low-bandwidth scenarios.

#### CometChat pricing:

- Free plan: basic features.

- Usage-based pricing:

- Text chat: $0.001/user minute.

- Voice calling/conferencing: $0.003/user minute.

- Video calling/conferencing: $0.006/minute.

### 4. MirrorFly

With MirrorFly, you're not just purchasing technology; you're shaping your communication experience. Their SaaS and SaaP solutions completely control every aspect of your video calls. Expect bank-grade security to protect sensitive conversations and unparalleled customisation, with full ownership of your SDKs and source code—a game-changer for enterprises and developers seeking long-term control.

#### MirrorFly features:

- Highly customisable video, voice, and chat solutions

- In-app messaging

- Push notifications

- Moderation tools

- Analytics

- Source code ownership

#### MirrorFly limitations:

- Requires more development effort than some prebuilt solutions.

- Can be more expensive for smaller projects.

- Support response times can sometimes be slow.

#### MirrorFly pricing:

- Usage-based pricing:

- Essentials (5k Monthly Active Users): $0.08/user/month.

- Premium (5k Monthly Active Users): $0.2/user/month.

- For enterprise clients, contact sales for tailored pricing.

### 5. Daily.co

Daily.co provides flexibility to build the video conferencing experience you envision. Use their pre-built UI components for a quick launch or dive into granular customisation for a bespoke interface. Expect stunning 1080p video, flexible recording options (including automatic transcriptions), and scalability to handle any audience size your business can manage.

#### Daily.co features:

- Easy integration into websites and apps

- Recording

- Screen sharing

- Developer-friendly API

- Strong support for live-streaming

#### Daily.co limitations:

- Limited features for complex moderation.

- Can be less cost-effective for high-volume use.

- Some UI components feel basic compared to others.

#### Daily.co pricing:

- Free Plan: Limited use.

- Paid Plans: Scales are based on the minutes streamed and features, starting at $0.0015 per participant per minute.

For complex implementations, contact them for a detailed breakdown.

### 6. VideoSDK.live

VideoSDK.live offers developers a comprehensive suite of tools to embed real-time audio and video communication experiences within websites and applications. It provides high customisation, excellent performance with low latency, and features designed to enhance interactivity within video sessions. VideoSDK.live prioritises developer experience with straightforward APIs and SDKs.

#### Features highlight:

- Flexible customisation

- Low-latency video conferencing

- Interactive live-streaming

- Robust analytics

- Developer-focused APIs and SDKs

#### Limitations:

- Effective utilisation might require a certain level of technical expertise.

- Occasional reports of inconsistencies with certain browsers.

#### Pricing:

- Free Tier: Generous free usage limits for development and experimentation.

- Conferencing (audio/video calls): Starts at $0.003 per participant per minute for video calls and $0.0006 per participant per minute for audio-only calls.

### 7. Sinch

Sinch is a trusted choice for industries like healthcare and retail, where reliable, customisable video communication is critical. Their Live Video Call API allows developers to effortlessly add instant messaging capabilities to iOS, Android, and web applications. This facilitates crystal-clear doctor-patient consultations, virtual shopping experiences with personalised support, and more. Sinch's focus on customisation empowers you to tailor the experience perfectly to your brand's and industry's needs.

#### Sinch features overview:

- Voice and video calling

- SMS and verification solutions

- User-to-user chat

- Push notifications

- Strong emphasis on reliability

#### Sinch Limitations:

- Less emphasis on pure video customisation compared to dedicated video providers.

- It can be overkill for projects needing only basic video chat.

- UI toolkits may feel dated compared to some.

#### Pricing:

- Free Trial: Available.

- Contact sales for a price quote.

### 8. Enablex

Enablex offers a budget-friendly yet powerful solution for developers seeking a HIPAA-compliant video conferencing API. They provide top-notch SDKs for seamless one-on-one and group video calls. Enablex also stands out with live streaming features that let you push content directly to social media, which is ideal for webinars, training sessions, or product demos.

#### Enablex features:

- Low-latency video conferencing

- Whiteboarding and collaboration tools

- Recording

- Moderation features

- Breakout rooms

#### Enablex limitations:

- Fewer pre-built UI components compared to alternatives.

- Can be less intuitive for non-technical users.

- Documentation isn't as extensive as some competitors.

#### Pricing:

- Usage-Based Model: $0.004 per participant per minute (for a 50 participant session).

- For detailed plans and specific pricing, contact sales.

### 9. Apphitect

Apphitect provides robust video conferencing for businesses of all sizes, built on WebRTC to ensure smooth communication across mobile, desktop, and web browsers. Apphitect's adaptability allows their solutions to match your company's workflow and branding, creating a seamless and intuitive experience.

#### Apphitect features:

- Video and voice calling

- Messaging solutions

- Screen sharing and collaboration features

- Secure and reliable infrastructure

#### Limitations:

- Call quality may not be stable due to potential lags.

- Limited advanced analytics and reporting tools.

#### Pricing:

- Contact the sales team for custom pricing.

### 10. Vonage Video Chat API

Building upon the well-respected TokBox OpenTok, Vonage's Video Chat API offers reliable video experiences for your applications. Expect high-quality, WebRTC-powered video streaming that's visually impressive and secure with AES encryption. It’s the evolution of proven technology, ready to meet modern communication needs.

#### Features of Vonage Video Chat API:

- Robust and customisable video solutions

- Archiving (recording)

- Session monitoring tools

- Cross-platform support

- Strong developer community

#### Vonage Video Chat API limitations:

- Can be complex for simpler implementations.

- Support quality has been inconsistent in reviews.

- Call quality may not be consistent in some cases.

#### Pricing:

- $0.00395 per participant per minute.

The video conferencing landscape is vast and ever-evolving. The providers we've explored offer a diverse range of features, from basic functionality to advanced customisation and enterprise-level security. When choosing your provider, consider the features you need and your budget to make the best decision.

## Conclusion

When selecting a video conferencing API, consider the features that are most important to you. Are high-quality video and sound crucial? Do you need screen-sharing or call-recording capabilities? How essential are security and privacy? Should the API support large groups, and can it be customised to fit your specific project?

Additionally, assess any usage limits and the cost of the API. Does it fit within your budget? Many APIs offer free trials or plans, allowing you to test them before committing. This is an excellent way to determine which one best suits your needs.

Digital Samba is a standout option for those seeking the freedom to create exceptional video experiences. Our robust API supports everything from simple team chats to large-scale online conferences. We prioritise security and are equipped to handle projects of any size.

Ready to explore Digital Samba's capabilities? [Sign up now](https://dashboard.digitalsamba.com/signup) and receive 10,000 free credits to enhance your video experiences. | digitalsamba |

1,863,809 | Destructuring Assignments in JavaScript | Destructuring in JavaScript lets you easily take apart arrays or objects and assign their parts to... | 0 | 2024-05-24T10:13:37 | https://dev.to/linusmwiti21/destructuring-assignments-in-javascript-5hik | javascript, webdev, beginners, programming | Destructuring in JavaScript lets you easily take apart arrays or objects and assign their parts to new variables. It's like unpacking a box: instead of grabbing each item one by one, you can quickly grab what you need all at once. This makes your code easier to read and write because you can do more with less.

There are two main types of destructuring in JavaScript:

1. **Array Destructuring:** With array destructuring in JavaScript, you can grab values from an array and assign them to variables in just one go. Plus, if you don't need certain values, you can easily skip over them.

2. **Object Destructuring:** With object destructuring, you can extract properties from objects and assign them to variables with the same name. You can also provide default values in case the property doesn't exist in the object.

Destructuring help increase the readability, decrease verbosity, and improve maintainability of your Javascript code.

In this article, we'll take you through destructuring assignments, exploring how to work with both arrays and objects while providing practical examples and highlighting valuable use cases. Additionally, we'll look into advanced techniques such as rest operators and combining array and object destructuring to demonstrate the full power and flexibility of this JavaScript feature.

### Destructuring Objects

Object is a basic data structure in JavaScript that is used to arrange data in a key-value pair manner. It functions similarly to a container that stores attributes (keys) and the values that go with them. Think of it like a box with sections that are labelled.

**Example**

```javascript

const person = {

name: "Alice", // Key: "name", Value: "Alice"

age: 30, // Key: "age", Value: 30

hobbies: ["coding", "reading"] // Key: "hobbies", Value: Array of strings

};

```

**Data Types for Values:** Object values can be of any data type in JavaScript, including strings, numbers, booleans, arrays, or even other objects (nested objects).

**Accessing properties:** You can use bracket notation (``object["key"]``) or dot notation (``object.key``) to access particular properties of an object. The value connected to the key is retrieved by both notations.

Objects are a great method to organise related data in JavaScript. They function similarly to labelled boxes, with keys serving as labels that identify certain values (the contents therein). The key-value pair method facilitates efficient data access and manipulation. Moreover, objects serve as the foundation for complex data structures and can even be used to represent actual objects in JavaScript programmes.

**Accessing Object Properties using Destructuring**

Destructuring in JavaScript isn't just for retrieving values from regular objects. It also lets you reach and access properties from objects hidden inside other objects. Now let's see how we may effectively access object properties by using destructuring syntax.

Imagine a scenario where an object is designed to represent a user:

```javascript

const user = {

name: 'John Doe',

age: 30,

email: 'john@example.com'

};

```

To access the individual properties of this object, we can use destructuring assignment:

```js

const { name, age, email } = user;

console.log(name); // Output: John Doe

console.log(age); // Output: 30

console.log(email); // Output: john@example.com

```

**Nested Object Destructuring**

Objects in JavaScript can hold other objects within them, creating a hierarchy of data structures.

Often, objects may contain nested structures, such as:

```js

const user = {

name: "Patel",

age: 40,

address: {

city: "Mumbai",

country: "India",

},

};

```

To access nested properties, we can destructure them directly:

```js

const { address: { city } } = user;

console.log(city); // Output: Mumbai

```

We destructure the user object, extracting the address property and assigning it to a new variable named address.

Within the nested address object, we further destructure to extract the city property and assign it to the standalone variable city.

This approach simplifies object access, especially when dealing with deeply nested structures.

**Renaming Properties**

JavaScript destructuring assignments have a useful feature that allows you to rename properties as you extract them from objects. This can be really helpful:

* The property name in the object doesn't clearly reflect its meaning.

* You want to use a different variable name for clarity or consistency within your code.

**Example**

```js

const person = {

fullName: "John Doe",

age: 30,

};

```

Destructuring allows you to rename the property during extraction. Here, we'll rename ``fullName`` to ``firstNameLastName``:

```js

const { fullName: firstNameLastName } = person;

console.log(firstNameLastName); // Output: John Doe

```

In this example, we separate the individual object.

The property ``fullName`` is specified inside the curly braces {}, and is followed by a colon :.

To capture the value from the ``fullName`` field, we declare a new variable named ``firstNameLastName`` after the colon.

JavaScript's object destructuring completely changes the way we work with complex data. Curly braces are used to extract values from objects directly, eliminating the need for tedious dot notation. Clarity requires renaming attributes on. Destructuring efficiently captures particular data in one go, even with nested objects.

### Destructuring Arrays

An array is a type of variable that has the capacity to store several values. JavaScript's array destructuring makes working with arrays easier by enabling you to extract values directly from the array.

To assign values to variables, use square brackets rather than indexing elements. Selecting specific elements from an array becomes clear-cut and easy. You can also skip elements and define default values during destructuring, which increases flexibility in managing different cases. Ultimately, array destructuring simplifies your code, improving its readability and productivity.

**Accessing Array Elements using Destructuring**

In JavaScript, array destructuring offers a simple method for accessing array elements. Square brackets allow you to assign array values directly to variables, without the need for the conventional index notation.

**Example**

```js

const colors = ["red", "green", "blue"];

// Destructuring the first two elements:

const [firstColor, secondColor] = colors;

console.log(firstColor); // Output: red

console.log(secondColor); // Output: green

```

We destructure the ``shoppingList`` array.

We define two variables: ``firstItem`` for the first element.

For ``secondItem``, we set a default value of "eggs".

If shoppingList only has one element, "eggs" is assigned to ``secondItem`` to avoid undefined.

**Skipping values example**

Sometimes you only need specific elements from an array, and the rest are irrelevant. Destructuring allows you to skip elements elegantly. Simply add commas (,) where you want to skip elements.

**Example**

```js

const colors = ["red", "green", "blue", "yellow"];

const [firstColor, , thirdColor] = colors;

console.log(firstColor); // Output: red

console.log(thirdColor); // Output: blue (skipping the second element)

```

In this case, We destructure the colors array using square brackets [].

We define two variable names, ``firstColor`` and ``secondColor``, separated by a comma.

These variable names automatically capture the values at the corresponding positions (index 0 and 1) in the array.

### Destructuring using the Rest Operator (...)

A function can consider an infinite number of arguments as an array by using the rest operator (...). This operator allows you to capture all the remaining elements of an array into a single variable, after extracting the specific elements you need upfront.

Imagine a shopping list that might have an indefinite number of items beyond the essentials (milk, bread, eggs). Destructuring with the rest operator lets you handle this flexibility with ease. Here's the concept in action:

```js

const shoppingList = ["milk", "bread", "eggs", "chips", "cookies"];

const [firstItem, secondItem, ...remainingItems] = shoppingList;

console.log(firstItem); // Output: milk

console.log(secondItem); // Output: bread

console.log(remainingItems); // Output: ["chips", "cookies"] (all remaining items)

```

We destructure the ``shoppingList`` array.

We extract the first two elements (``milk`` and ``bread``) into separate variables.

The magic happens with the rest operator (``...``). It captures all the remaining elements (``chips`` and ``cookies``) into an array named ``remainingItems``.

The rest operator (... ) in destructuring works when you're dealing with arrays of unknown length. It lets you grab the specific elements you need upfront and effortlessly capture any remaining items in a single variable. This keeps your code concise, adaptable to different array sizes, and ultimately more reusable for various scenarios.

### Conclusion

In JavaScript, destructuring assignments provide a strong and efficient method of working with both arrays and objects. You can greatly increase the readability, maintainability, and flexibility of your code by utilising destructuring techniques.

This article provided a foundational understanding of destructuring, covering:

* Extracting specific properties from objects

* Renaming properties during extraction

* Accessing elements from arrays

* Using defaults and skipping elements in arrays

* Capturing the remaining elements of an array with the rest operator (...)

Even though this is only a brief introduction to destructuring, we've already seen how it simplifies complex data manipulation tasks.

Start incorporating destructuring assignments into your JavaScript coding practices. You'll find it becomes a natural and efficient way to interact with data structures.

These resources offer deep understanding into destructuring and related concepts:

* [Modern JavaScript- Destructuring Assignment]( https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/OperatorsDestructuring_assignment)

* [freeCodeCamp](https://forum.freecodecamp.org/t/use-destructuring-assignment-to-assign-variables-from-arrays/170377)

| linusmwiti21 |

1,863,807 | Abstract Art Awards as Symbols of Recognition: Honoring Artistic Excellence | It is in our inherent human nature to provide motivation and encouragement to the people around us... | 0 | 2024-05-24T10:12:50 | https://dev.to/corporateolympiasydney/abstract-art-awards-as-symbols-of-recognition-honoring-artistic-excellence-3o7d | award | It is in our inherent human nature to provide motivation and encouragement to the people around us and push them towards becoming a better version of themselves.

When it comes to art and artists, it is important to provide them with the much-needed support and encouragement. Why? It is to bring complex ideas and emotions to life.

Providing the artists with [abstract art awards](https://www.olympia.com.au/corporate/abstract-awards/) is important because it will help provide them with an opportunity to connect with people from the art community. The abstract awards Award will encourage them to showcase their creations. This article will teach us more about abstract art and the importance of providing abstract awards.

## What is Abstract Art?

The work of artists can leave a lasting impact on our minds, helping bring imagination and creativity to the forefront. Abstract art mostly is a reflection of the thought process of the artist itself. It is their innate ability to think beyond the traditional ideas and bring something extraordinary on paper.

Abstract art can be anything from geometric shapes, to just a wide amalgamation of colors. In the truest sense it reflects the inner workings of the artist's minds and brings their thoughts and feelings on the canvas.

## Why do we need Abstract Art Awards?

It is important to hold abstract words because it will allow for better art appreciation. Generally, abstract art is very difficult for most people to understand. Most people find it difficult to understand the context of these paintings, and thus reduce their interest.

Organizing abstract awards, will help educate the masses about this art form along with honoring talented artists in the world. It will take a positive step towards appreciating art for its varied forms and ideas.

It is easier these days to hold abstract awards online to unite the abstract art community and appreciate their talent. Through abstract awards online, it also becomes easier to learn about different new abstract artists and encourage others.

The advantage associated with abstract awards online is that it helps in networking. As artists convene to talk about different techniques and topics leading to the sharing of knowledge. This shows that holding abstract art awards, [sports trophies and medals](https://www.olympia.com.au/corporate/school-trophies/) even if they are abstract awards online, enriches the art community as a whole.

## 1. The case of Emotions

Through the abstract award of art it allows artists to showcase how they use their emotions and intuition to create art. It helps them to make people understand that there is no set way to view abstract art, but rather their own feelings and imagination.

## 2. Challenging Traditional Ideas and Interpretations

Organizing abstract words will allow people to understand the different perspectives and emotions associated with abstract art. It will help provide an opportunity to break down any conventional ideas or views that may be present and help provide a new Outlook. It will allow people to conserve more about abstract artwork, which will lead to better knowledge and understanding.

## 3. Bringing together artists from different walks of Life

Another important reason why organizing abstract art awards is important is because it will provide a platform for people performing abstract art to come together. It will help create a place where abstract art can be appreciated without any judgment or preconceived notions. It will help in creating thought-provoking conversations that will have a positive impact on the artists.

## Why is Art Appreciation important?

There can be many reasons outlined necessary for holding abstract awards and art appreciation. One should not forget that it provides a historical perspective of different cultures, societies, and the modern world. Hence, having the ability to analyze art will allow an individual to reflect on the problems that were prevalent in society and compare if history has been repeating itself.

By appreciating art and organizing abstract awards, it will be possible to bring the community together to analyze different perspectives of artists, their designs, skilled techniques, and mastery of different hues of colors. Mostly, abstract art awards will provide an opportunity to appreciate art more from emotions, thoughts, and minds rather than what is just being viewed by the eye.

The abstract award will help in the following:

1. Helping create awareness about viewing art more from their heart and emotions than just the mind.

2. Improving their conscience and consciousness to understand the different emotions and perspectives.

3. Through the abstract art awards, it will be possible to create a Foundation for artists to come together and appreciate each other's work.

4. The abstract art awards will also contribute to learning about different stories and reflections on the canvas.

5. Lastly, through the Abstract Award, it will be possible to connect with art on a deeper level and learn the ability to reflect our thoughts and ideas.

## Relevance of Honoring Artistic Excellence

There always lies the question of whether abstract art awards or any awards relating to art are even relevant or not to society. And the answer is that it is indeed relevant.

The reason is that an announcement regarding abstract awards online or about abstract art awards in general creates a positive buzz around the event. This allows people to start learning about abstract awards and understand abstract art, which is usually beneficial to the growth of artists in general.

It is important to understand that art and artists are vital to sustaining a developing and liberal economy. Art allows an artist to express emotions and ideas, this sense of the world, and its many works through abstract patterns. It provides the people with a window not only to the artist's soul but also to the world itself. Hence, it becomes possible to recognize all these facets through the abstract award.

Most importantly, abstract art awards allow abstract artists to hear and see their work. They are allowed a platform to showcase their life's work and be appreciated for the mastery of their ideas and techniques in front of many critics and viewers alike. Abstract awards help them network and connect with like-minded individuals, leading to the community's growth as a whole.

At a time when society is becoming more modernized, with the foray of digital art, it has become important now more than ever to take the initiative of honoring artistic excellence. To help people learn about different abstract artists and their ability to weave emotions into stories and put them in their canvases. Providing an abstract award to such artists will allow them to enthusiastically showcase their creations.

Thus, it is important to hold abstract art awards and honor artists' excellence because:

1. It will help provide more exposure to new and old artists, bringing together art lovers, critics, curators, and buyers.

2. It will improve networking opportunities, allowing people to learn about them and their creations and helping them with finding new contacts.

3. With the abstract award and associated prizes, artists will be leveraged to manage their expenses better and be encouraged to develop more.

4. Apart from the prize money from the abstract award, they will be allowed to become published in magazines and newspapers and improve their standing in the art community.

## Parting Words

Creating a platform for holding abstract art awards is vital for the sustenance of artists. Even if there are abstract awards online, many opportunities present themselves to improve the appreciation and knowledge of abstract art. Hence, organizing an abstract award will provide artists with the platform to appreciate their artistic excellence. The abstract award will also push them toward further growth in the art community. | corporateolympiasydney |

1,863,804 | Exploring the Synergy Between Development and Futures Trading in the Crypto Space | In the dynamic world of cryptocurrency, the convergence of development and futures trading has... | 0 | 2024-05-24T10:07:24 | https://dev.to/klimd1389/exploring-the-synergy-between-development-and-futures-trading-in-the-crypto-space-3458 | webdev, beginners, news, cryptocurrency | In the dynamic world of cryptocurrency, the convergence of development and futures trading has emerged as a critical intersection. Developers are leveraging their expertise and innovative solutions to reshape the landscape of financial markets, particularly in the realm of futures trading.

The Role of Developers:

Developers play a pivotal role in driving innovation and technological advancement within the crypto space. Armed with cutting-edge technology and forward-thinking strategies, developers are constantly pushing the boundaries of what is possible in the world of finance. Their expertise in blockchain technology, smart contracts, and decentralized applications (dApps) enables them to create innovative solutions that address the evolving needs of traders and investors.

The Rise of Futures Trading:

Futures trading has gained significant traction in the crypto market, offering traders the opportunity to speculate on the future price movements of various digital assets. By entering into futures contracts, traders can hedge their risk, amplify their returns, and diversify their investment portfolios. The availability of futures trading on leading cryptocurrency exchanges has democratized access to these sophisticated financial instruments, allowing traders of all skill levels to participate in the market.

The Importance of WhiteBIT:

WhiteBIT, a premier cryptocurrency exchange, has established itself as a leader in the field of futures trading. With its robust trading platform and wide range of trading pairs, WhiteBIT provides traders with the tools they need to navigate the complex world of futures trading with ease. The recent listing of the $NOT-PERP trading pair on WhiteBIT further expands the exchange's offerings, providing traders with additional opportunities to capitalize on market opportunities and diversify their trading strategies.

Unlocking New Opportunities:

The integration of futures trading into development strategies opens up a world of possibilities for developers. By leveraging futures contracts, developers can hedge their exposure to volatile markets, lock in profits, and mitigate risk. Additionally, futures trading allows developers to gain insights into market sentiment and price trends, enabling them to make more informed decisions when developing new projects or allocating capital.

Looking Ahead:

As the crypto ecosystem continues to evolve, the synergy between development and futures trading is expected to grow stronger. Developers will play a key role in shaping the future of finance, driving innovation and pushing the boundaries of what is possible in the crypto market. By embracing futures trading and leveraging platforms like WhiteBIT, developers can unlock new opportunities for growth and success in the ever-changing landscape of cryptocurrency. | klimd1389 |

1,863,803 | Integrating with WordPress | A hands-on guide and example to integrate WordPress with Logto. This guide will walk you through... | 27,498 | 2024-05-24T10:07:09 | https://blog.logto.io/integrate-with-wordpress/ | webdev, wordpress, developer, opensource | A hands-on guide and example to integrate WordPress with Logto.

---

This guide will walk you through the process of integrating Logto with WordPress using the OIDC plugin. But first, let's take a look at the user experience once the integration is complete.

# The sign-in process with Logto integration

1. The user accesses the WordPress site's login page, `which is [SITE URL]/wp-login.php` by default, and there is a sign in by OIDC button.

2. The user clicks the button, and is redirected to the Logto's sign in page with your customized sign in experience.

3. The user choose a sign in method, and Logto will authenticate the user.

4. Once the user is authenticated, Logto will redirect the user back to the WordPress site.

5. WordPress will create a new user account or sign in the existing user account, and redirect the user to the previous page.

Now that we've seen the end result of integrating Logto with WordPress, let's get our hands dirty and dive into the integration process.

# Prerequisites

Before we begin, make sure you have the following:

1. A WordPress site: A fully operational WordPress site is required. Ensure administrative access to manage plugins and configure settings.

2. A Logto instance: Create a new Logto instance by visiting the [Logto Console](https://auth.logto.io/sign-in), or host your own instance by following the [installation guide](https://docs.logto.io/docs/tutorials/get-started/#logto-oss-self-hosted).

# Integration Steps

### Step 1: Create a Logto application

1. Visit the [Logto Console](https://auth.logto.io/sign-in).

2. Navigate to "Applications" and click "Create application".

3. Click "Create app without framework".

4. Select "Traditional Web" as the application type.

5. Name your application and click "Create application".

### Step 2: Install the plugin

1. Log in to your WordPress site.

2. Navigate to "Plugins" and click "Add New".

3. Search for "OpenID Connect Generic" and install the plugin by [daggerhart](https://www.daggerhartlab.com/).

4. Activate the plugin.

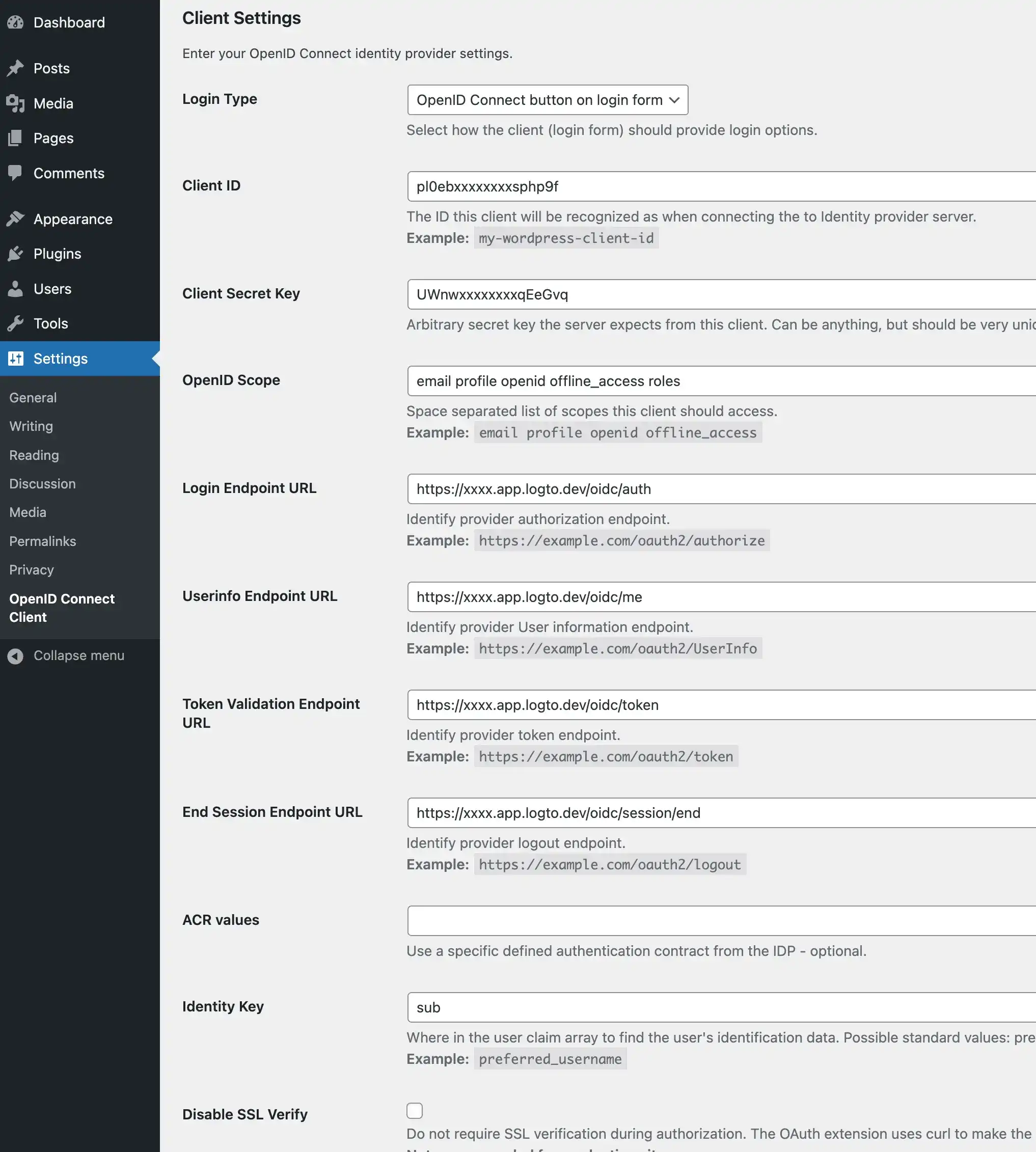

### Step 3: Configure the plugin

1. Open the plugin settings by navigating to "Settings" > "OpenID Connect Generic".

2. Fill in the following fields:

- **Client ID**: The app ID of your Logto application.

- **Client Secret**: The app secret of your Logto application.

- **OpenID Scope**: Enter `email profile openid offline_access`.

- **Login Endpoint URL**: The authorization endpoint URL of your Logto application, which is `https://[tenant-id].logto.app/oidc/auth`, you can click "show endpoint details" in the Logto application page to get the URL.

- **Userinfo Endpoint URL**: The userinfo endpoint URL of your Logto application, which is `https://[tenant-id].logto.app/oidc/me`.

- **Token Validation Endpoint URL**: The token validation endpoint URL of your Logto application, which is `https://[tenant-id].logto.app/oidc/token`.

- **End Session Endpoint URL**: The end session endpoint URL of your Logto application, which is `https://[tenant-id].logto.app/oidc/session/end`.

- **Identity Key**: The unique key in the ID token that contains the user's identity, it can be `email` or sub, depending on your configuration.

- **Nickname Key**: The key in the ID token that contains the user's nickname, you can set it to `sub` and change it later.

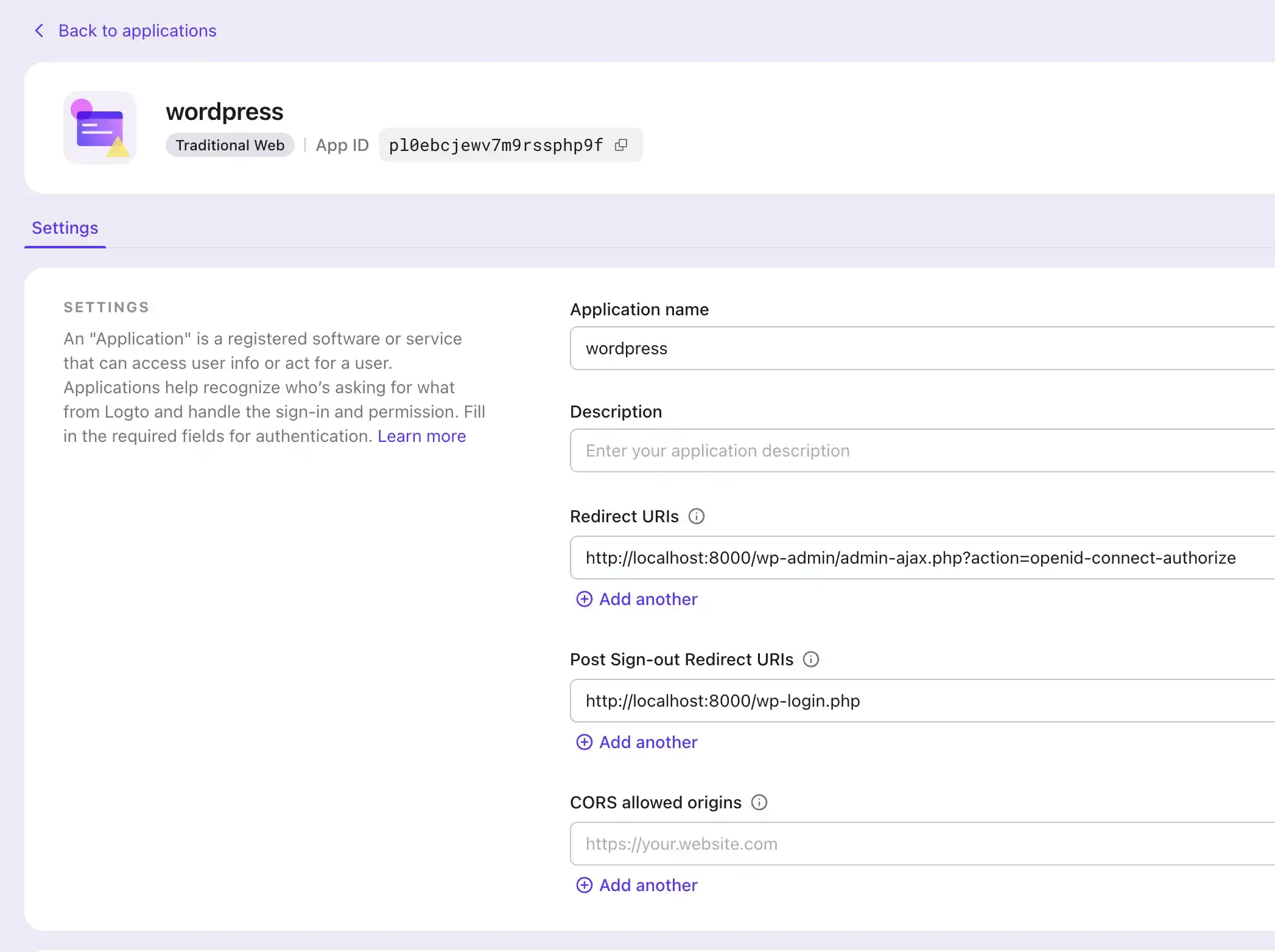

### Step 4: Configure the application

1. In the plugin settings, scroll down to the "Notes" section, and copy the "Redirect URI" value.

2. In the Logto Console, navigate to your application's settings.

3. Add the copied "Redirect URI" value to the "Redirect URIs" field.

### Step 5: Test the integration

1. Log out of your WordPress site.

2. Visit the WordPress login page and click the "Sign in with Logto" button.

3. You will be redirected to the Logto sign-in page.

4. Sign in with your Logto account.

5. You will be redirected back to the WordPress site and logged in automatically.

# Final steps

Congratulations! You have successfully integrated Logto with WordPress.

To further customize the integration, you can explore additional features in the plugin's setting page, such as linking existing users, customizing the login button, using SSO mode, and more.

{% cta https://logto.io/?ref=dev %} Try Logto Cloud for free {% endcta %}

| palomino |

1,863,802 | Issue Report: Dialogs Dismissed Prematurely with ensureSemantics | In this issue report (#149001), reported by user "rkunboxed", a problem in Flutter Web is outlined... | 0 | 2024-05-24T10:07:03 | https://dev.to/n968941/issue-report-dialogs-dismissed-prematurely-with-ensuresemantics-3162 | flutter, firebase, webdev, beginners | In this issue report (#149001), reported by user "rkunboxed", a problem in Flutter Web is outlined where dialogs dismiss prematurely when ensureSemantics is included. Clicking inside the dialog causes dismissal, contrary to expected behavior. A workaround is suggested involving the use of a hidden Semantics widget. This issue affects releases 3.22 and 3.23.

[read full article](https://flutters.in/issue-report-dialogs-dismissed-prematurely-with-ensuresemantics/)

Issue Details:

Issue Number: #149001

Reported by: rkunboxed

Date: 11 hours ago

Comments: 2

Description

Dialogs in Flutter Web are dismissed prematurely when ensureSemantics is included. Clicking inside the dialog on whitespace causes dismissal, contrary to expected behavior.

Issue Report: Dialogs Dismissed Prematurely with `ensureSemantics`

Steps to Reproduce

Run the code sample as a Web project in Chrome.

Click on the "Show Dialog" button.

Click anywhere inside the dialog that is not a form field.

Observe the dialog being dismissed.

Expected Results

The dialog should not dismiss when any content inside it is clicked. It should only dismiss if the area outside the content (the barrier) is clicked.

Actual Results

Clicking on whitespace inside the dialog causes it to be dismissed in Web builds. This only occurs in Web builds and only when WidgetsFlutterBinding.ensureInitialized().ensureSemantics(); is included.

Code Sample

import 'package:flutter/material.dart';

void main() {

runApp(const MyApp());

WidgetsFlutterBinding.ensureInitialized().ensureSemantics();

}

class MyApp extends StatelessWidget {

const MyApp({Key? key}) : super(key: key);

@override

Widget build(BuildContext context) {

return const MaterialApp(

title: 'Semantics Issue',

home: MyHomePage(),

);

}

}

class MyHomePage extends StatefulWidget {

const MyHomePage({Key? key}) : super(key: key);

@override

State<MyHomePage> createState() => _MyHomePageState();

}

class _MyHomePageState extends State<MyHomePage> {

void _onPressed() {

showDialog(

context: context,

barrierColor: Colors.grey.shade400,

builder: (context) {

return _dialogContent;

},

);

}

@override

Widget build(BuildContext context) {

return Scaffold(

body: Center(

child: Column(

mainAxisAlignment: MainAxisAlignment.center,

children: <Widget>[

ElevatedButton(

onPressed: _onPressed,

child: const Text('Show Dialog'),

)

],

),

),

);

}

Widget get _dialogContent => Center(

child: Container(

margin: const EdgeInsets.all(50),

child: const Material(

child: SizedBox(

height: 250,

width: 250,

child: Padding(

padding: EdgeInsets.all(20),

child: Column(

children: [

TextField(

decoration: InputDecoration(

border: OutlineInputBorder(),

labelText: 'Form field one',

),

),

SizedBox(height: 40),

TextField(

decoration: InputDecoration(

border: OutlineInputBorder(),

labelText: 'Form field two',

),

),

],

),

),

),

),

),

);

}

Workaround

A workaround is available by wrapping the dialog contents in a Stack and including a hidden Semantics widget inside Positioned.fill().

Widget get _dialogContentHack => Center(

child: Container(

margin: const EdgeInsets.all(50),

child: Material(

child: SizedBox(

height: 250,

width: 250,

child: Padding(

padding: const EdgeInsets.all(20),

child: Stack(

children: [

Positioned.fill(

child: Semantics(hidden: true),

),

const Column(

children: [

TextField(

decoration: InputDecoration(

border: OutlineInputBorder(),

labelText: 'Form field one',

),

),

SizedBox(height: 40),

TextField(

decoration: InputDecoration(

border: OutlineInputBorder(),

labelText: 'Form field two',

),

),

],

),

],

),

),

),

),

),

);

Screenshots or Video

Issue Report: Dialogs Dismissed Prematurely with ensureSemantics

[read full article](https://flutters.in/issue-report-dialogs-dismissed-prematurely-with-ensuresemantics/)

Logs

[Paste your logs here]

Flutter Doctor Output

[✓] Flutter (Channel stable, 3.19.2, on macOS 14.2.1 23C71 darwin-arm64, locale en-US)

• Flutter version 3.19.2 on channel stable at /Users/rona/Sites/Tools/flutter

• Upstream repository https://github.com/flutter/flutter.git

• Framework revision 7482962148 (3 months ago), 2024-02-27 16:51:22 -0500

• Engine revision 04817c99c9

• Dart version 3.3.0

• DevTools version 2.31.1

[✗] Android toolchain - develop for Android devices

✗ Unable to locate Android SDK.

Install Android Studio from: https://developer.android.com/studio/index.html

On first launch it will assist you in installing the Android SDK components.

(or visit https://flutter.dev/docs/get-started/install/macos#android-setup for detailed instructions).

If the Android SDK has been installed to a custom location, please use

`flutter config --android-sdk` to update to that location.

[!] Xcode - develop for iOS and macOS (Xcode 15.3)

• Xcode at /Applications/Xcode.app/Contents/Developer

• Build 15E204a

✗ Unable to get list of installed Simulator runtimes.

• CocoaPods version 1.15.2

[✓] Chrome - develop for the web

• Chrome at /Applications/Google Chrome.app/Contents/MacOS/Google Chrome

[!] Android Studio (not installed)

• Android Studio not found; download from https://developer.android.com/studio/index.html

(or visit https://flutter.dev/docs/get-started/install/macos#android-setup for detailed instructions).

[✓] VS Code (version 1.89.1)

• VS Code at /Applications/Visual Studio Code.app/Contents

• Flutter extension version 3.88.0

[✓] Connected device (2 available)

• macOS (desktop) • macos • darwin-arm64 • macOS 14.2.1 23C71 darwin-arm64

• Chrome (web) • chrome • web-javascript • Google Chrome 125.0.6422.76

[✓] Network resources

• All expected network resources are available.

! Doctor found issues in 3 categories.

Additional Information

Flutter Doctor Output: Provided above.

Workaround: A hacky workaround has been discovered by the author, wrapping the dialog contents in a Stack and including a hidden Semantics widget inside Positioned.fill().

Platform: Web

Found in Releases: 3.22, 3.23

Also read:

[What Lies Ahead for Flutter: Advancements, Innovations, & Beyond](https://flutters.in/what-lies-ahead-for-flutter/)

[NVIDIA Accelerates GPU, CPU, & AI Platform Roadmap: Launching New Chips Every Year](https://flutters.in/nvidia-accelerates-gpu/)

FAQs

What is the issue described in the report?

The issue revolves around dialogs being dismissed prematurely on Flutter Web when ensureSemantics is included. Clicking inside the dialog's whitespace causes dismissal contrary to expected behavior.

How can I reproduce the issue?

To reproduce the issue:

Run the provided code sample as a Web project in Chrome.

Click on the "Show Dialog" button.

Click anywhere inside the dialog that is not a form field.

Observe the dialog being dismissed.

What are the expected results?

The dialog should not dismiss when clicking inside it. It should only dismiss if the area outside the dialog (the barrier) is clicked.

[read full article](https://flutters.in/issue-report-dialogs-dismissed-prematurely-with-ensuresemantics/)

What are the actual results?

Clicking on whitespace inside the dialog causes it to be dismissed in Web builds. This behavior is observed only in Web builds and when WidgetsFlutterBinding.ensureInitialized().ensureSemantics(); is included.

Is there a workaround available?

Yes, a workaround has been discovered by the author. It involves wrapping the dialog contents in a Stack and including a hidden Semantics widget inside Positioned.fill().

How can I implement the workaround?

You can implement the workaround by using the provided _dialogContentHack method in the code sample. This method wraps the dialog contents in a Stack and includes a hidden Semantics widget inside Positioned.fill().

Additional Information

Platform: Web

Found in Releases: 3.22, 3.23

[read full article](https://flutters.in/issue-report-dialogs-dismissed-prematurely-with-ensuresemantics/) | n968941 |

1,863,801 | Bamboo products | Uravu Bamboo India, based in the picturesque Wayanad district of Kerala, India, is your premier... | 0 | 2024-05-24T10:05:49 | https://dev.to/uravu/bamboo-products-k4d | Uravu Bamboo India, based in the picturesque Wayanad district of Kerala, India, is your premier destination for high-quality bamboo handicrafts and sustainable **[bamboo products](https://www.uravustore.com/)**. As a leading advocate for bamboo as a versatile and eco-friendly resource, Uravu is dedicated to offering a wide range of bamboo items crafted by skilled artisans.

Our online store provides a convenient platform for customers in Kerala and across India to explore and purchase premium bamboo products. From exquisite handicrafts to bamboo saplings for plantation, Uravu Bamboo India is committed to promoting the use of bamboo as a sustainable alternative to traditional resources like wood.

Bamboo, known for its remarkable qualities, is gaining popularity as an environmentally friendly substitute. At Uravu, we recognize the importance of this incredible resource in reducing the ecological impact of single-use plastic pollution. Our extensive range of bamboo products is designed to offer not only functionality and beauty but also a conscious choice towards a greener and more sustainable future.

As a company rooted in the Wayanad district, we take pride in our efforts to contribute to the long-term sustainability of our planet. By providing premium bamboo products at competitive prices, Uravu aims to make a positive impact on both the local community and the global environment. Choose Uravu Bamboo India for an authentic and sustainable shopping experience that aligns with your commitment to environmental responsibility.

| uravu | |

1,863,800 | All India Typing Test, Typing Skill Test, Typing Certificate, CPCT English Typing | The adequacy of a business relies upon how things are done speedier . To finish your work speedier... | 0 | 2024-05-24T10:05:26 | https://dev.to/typingspeed2021/all-india-typing-test-typing-skill-test-typing-certificate-cpct-english-typing-462m | The adequacy of a business relies upon how things are done <a href="https://www.blog.typingspeedtestonline.com/hindi-typing-test mangal/"> speedier </a>. To finish your work speedier it is essential to make Typing <a href="https://www.blog.typingspeedtestonline.com/online typing-test-in-english-advanced/"> aptitudes </a> . All India Typing Test urges you to work cautiously on the PC, it helps in chatting with aides and clients, making reports, and finding new data.

How to moreover develop Typing speed?

Forming is about muscle memory, so the best system for <a href="https://www.blog.typingspeedtestonline.com/krutidev-typing-test/"> development </a> is to work on Typing continually. We made The India Typing Test Hindi give you the contraption to learn and work on Typing by memory in the best way. The way toward making <a href="https://www.blog.typingspeedtestonline.com/typing-test-in numeric/"> genuine </a> affinities gathers that you should set up your fingers at times and show limits. You ought to at <a href="https://www.blog.typingspeedtestonline.com/english-typing-test free/"> beginning </a> zero in director on <a href="https://www.blog.typingspeedtestonline.com/typing-master-online typing-test/"> accuracy </a>, ergonomics, and high India Typing Test Online Hindi Speed will go with time. Not to cripple yourself. Keep in mind, it is an <a href="https://www.blog.typingspeedtestonline.com/5-minute-typing-test-2/"> enormous </a> distance race, not a run, it is savvier to require ten minutes of training gradually than a solitary one hour run... <a href="https://www.blog.typingspeedtestonline.com/all-india-typing-test/"> read more </a>

Suggested Link:

<a href="https://www.blog.typingspeedtestonline.com/how-fast-can-i-type-the-alphabet/">How Fast Can I Type the Alphabet</a>

<a href="https://www.blog.typingspeedtestonline.com/alphabet-typer/">Alphabet Typer</a>

<a href="https://www.blog.typingspeedtestonline.com/speed-typing-online-games/">Speed Typing Online Games</a>

<a href="https://www.blog.typingspeedtestonline.com/speed-typer/">Speed Typer</a>

<a href="https://www.blog.typingspeedtestonline.com/alphabet-typing-test-calculate-typing-speed-and-accuracy-online-what-is-the-average-typing-speed-free-typing-test/">Alphabet Typing Test Calculate</a>

<a href="https://www.blog.typingspeedtestonline.com/mangal-typing-test/">Mangal Typing Test</a>

<a href="https://www.blog.typingspeedtestonline.com/online-typing-test-for-ssc-chsl/">Online Typing Test for SSC CHSL</a>

<a href="https://www.blog.typingspeedtestonline.com/typing-speed-test-software/">Typing Speed Test Software</a>

<a href="https://www.blog.typingspeedtestonline.com/punjabi-typing-test-in-raavi-font-2022/">Punjabi Typing Test</a>

<a href="https://www.blog.typingspeedtestonline.com/online-typing-tests/">Online Typing Tests</a>

<a href="https://www.blog.typingspeedtestonline.com/typing-speed-test-software/">Typing Speed Test Software</a>

RIT

| typingspeed2021 | |

1,863,799 | Software Development Service Ahmedabad | Ahmedabad, the bustling metropolis known for its rich heritage and vibrant culture, is also emerging... | 0 | 2024-05-24T10:04:14 | https://dev.to/benchkart/software-development-service-ahmedabad-5efj | benchkart, softwaredevelopment, b2b, services | Ahmedabad, the bustling metropolis known for its rich heritage and vibrant culture, is also emerging as a tech hub, with a myriad of [software development companies in Ahmedabad](https://benchkart.com/services/software-development-agency-in-ahmedabad

) propelling businesses to unprecedented heights. Among these innovative players, one name stands out – Benchkart.

At Benchkart, we don't just offer software solutions; we craft digital experiences that resonate with your audience and drive tangible results. With a finger on the pulse of industry trends and cutting-edge technologies, we are committed to delivering excellence in every project we undertake.

What sets Benchkart apart from the rest? Our unwavering dedication to client success. We understand that every business is unique, which is why we take a personalized approach to software development. Whether you're a startup looking to disrupt the market or an established enterprise seeking to optimize operations, we tailor our solutions to meet your specific needs and objectives.

From web applications to mobile apps, e-commerce platforms to enterprise software, our team of seasoned professionals possesses the expertise and creativity to bring your vision to life. We combine technical prowess with a keen eye for design, ensuring that your software not only functions flawlessly but also captivates users from the first click.

But our commitment to your success doesn't end with project delivery. At Benchkart, we believe in fostering long-term partnerships built on trust, transparency, and mutual growth. We stand by our clients every step of the way, providing ongoing support and guidance to help them navigate the ever-evolving digital landscape.

So why choose Benchkart for your software development needs in Ahmedabad? Because we don't just create software – we empower businesses to thrive in the digital age. Experience the Benchkart difference today and unlock the full potential of your enterprise.

Reach out to us and let's embark on a journey of innovation and success together. Your vision, our expertise – the perfect recipe for digital transformation. | benchkart |

1,863,795 | Scheduling Events in Firebase Firestore with Server Timestamps | Explore how to utilize Firebase Firestore to schedule events with server timestamps effectively, showcasing a practical implementation. | 0 | 2024-05-24T10:00:40 | https://dev.to/itselftools/scheduling-events-in-firebase-firestore-with-server-timestamps-3ccd | firebase, firestore, javascript, webdev |

At [itselftools.com](https://itselftools.com), we have developed over 30 applications using technologies like Next.js and Firebase. Throughout these projects, we have leveraged Firebase's powerful features, particularly Firestore, to handle real-time data management efficiently. In this tutorial, we'll explore how to use Firebase Firestore to add documents with server timestamps, specifically focusing on scheduling events.

## Understanding the Code

The provided code snippet demonstrates how to create a new document in the Firestore database under the collection 'events'. Here’s a breakdown of each part of the code:

```javascript

// Add a document with server timestamp

const db = firebase.firestore();

db.collection('events').add({

name: 'Webinar',

startTime: firebase.firestore.FieldValue.serverTimestamp()

}).then(docRef => {

console.log('Event scheduled with ID: ', docRef.id);

}).catch(error => {

console.error('Error scheduling event: ', error);

});

```

- **firebase.firestore()**: This function initializes the Firestore service.

- **db.collection('events')**: Specifies the 'events' collection where the document will be added.

- **add({...})**: Adds a new document to the collection. The document contains two fields: 'name' and 'startTime'. The 'name' field is a simple string that describes the event, while 'startTime' is a special field that uses `firebase.firestore.FieldValue.serverTimestamp()`, telling Firestore to fill this field with the server's current timestamp at the moment of document creation.

- **.then(docRef => {...})**: This callback function is executed when the document is successfully added. It logs the unique identifier of the document (`docRef.id`).

- **.catch(error => {...})**: If there is any error during the document creation, this callback function captures and logs the error.

## Benefits of Using Server Timestamps