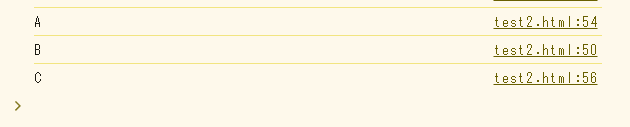

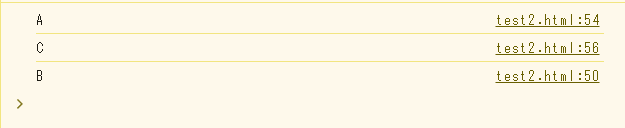

id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,863,476 | How to Create a Virtual Machine (Windows 11 Pro) | Login to Azure Portal Locate the search field In the search field, type virtual machine Select... | 0 | 2024-05-24T02:19:49 | https://dev.to/opsyog/create-a-virtual-machine-4boj |

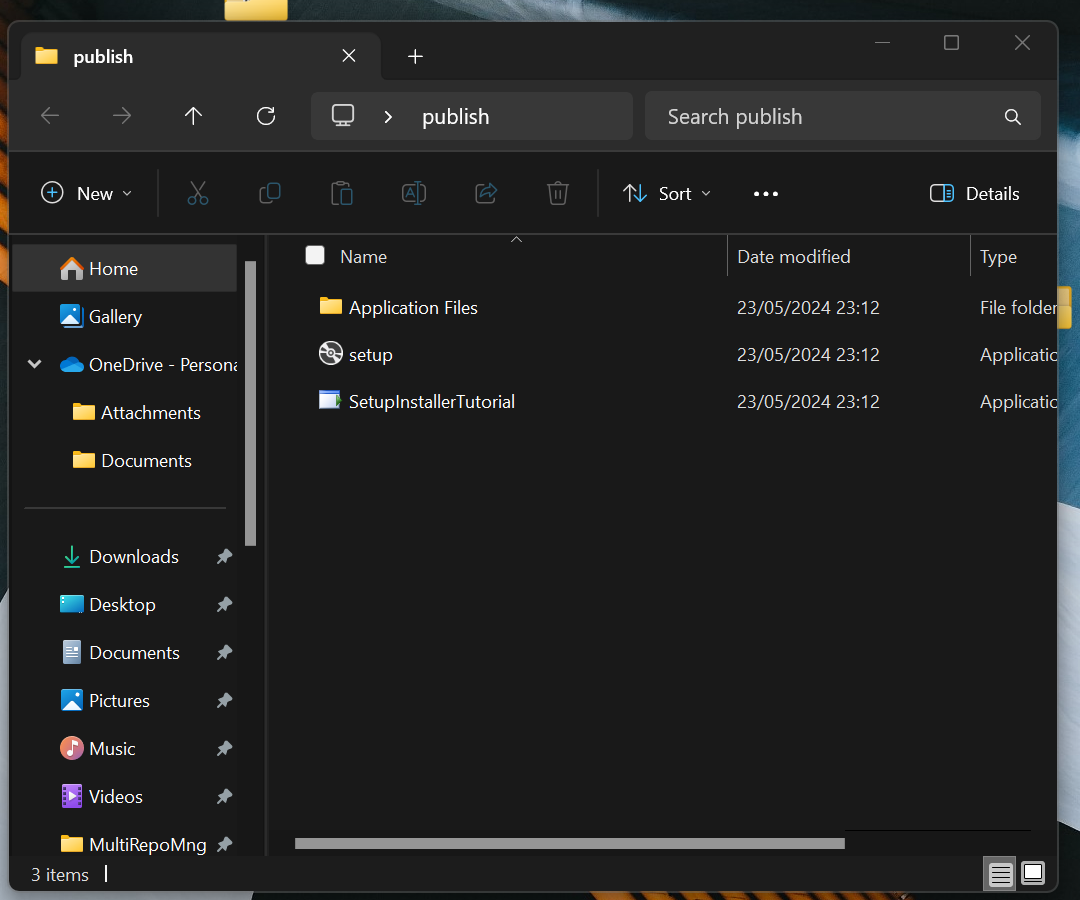

**Login to Azure Portal**

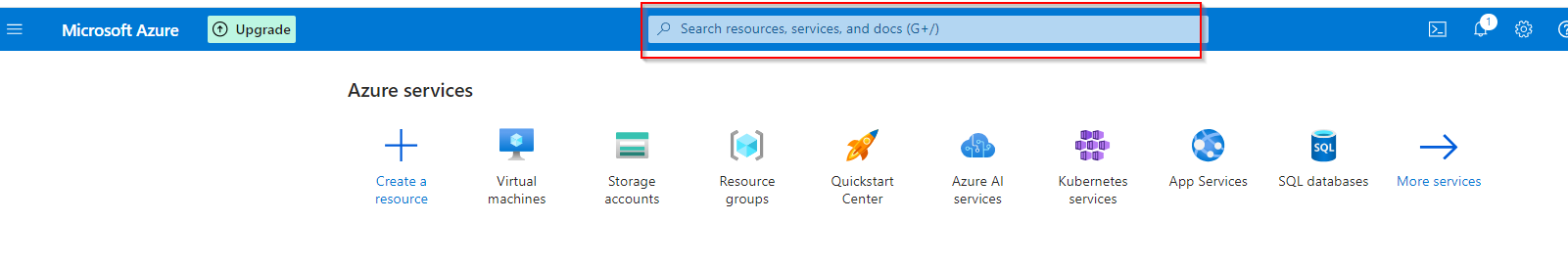

**Locate the search field**

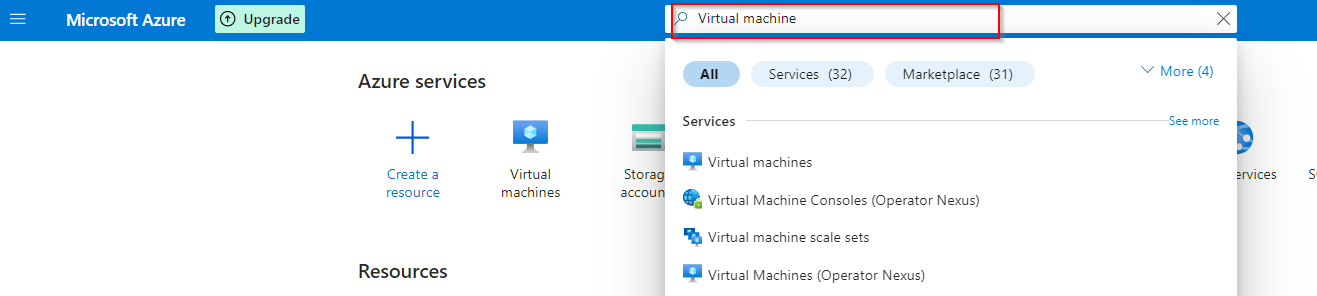

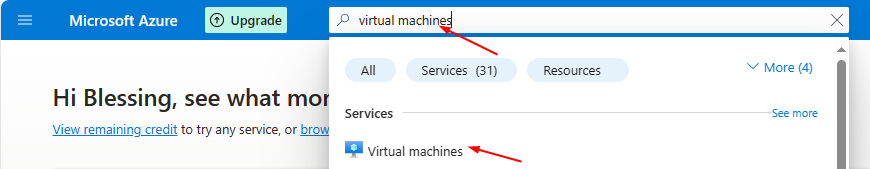

**In the search field, type virtual machine**

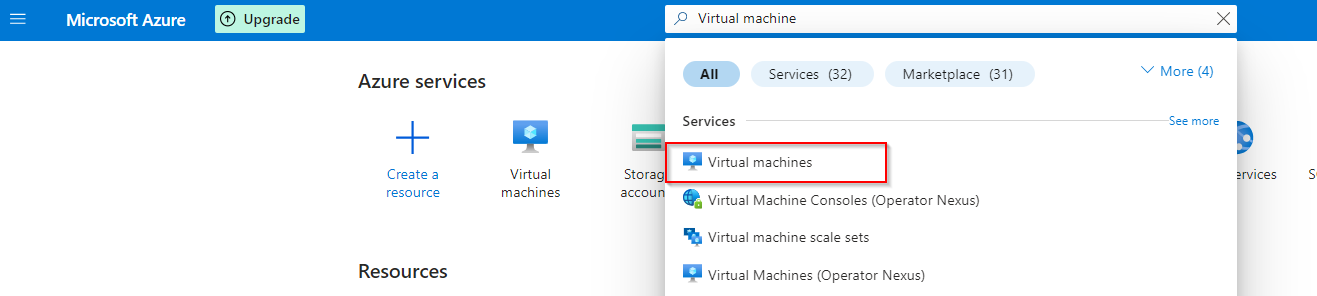

**Select Virtual machine from the list of options**

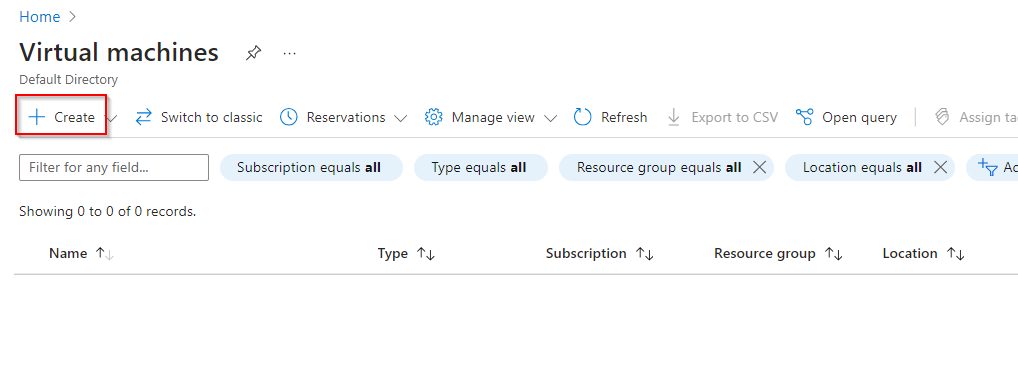

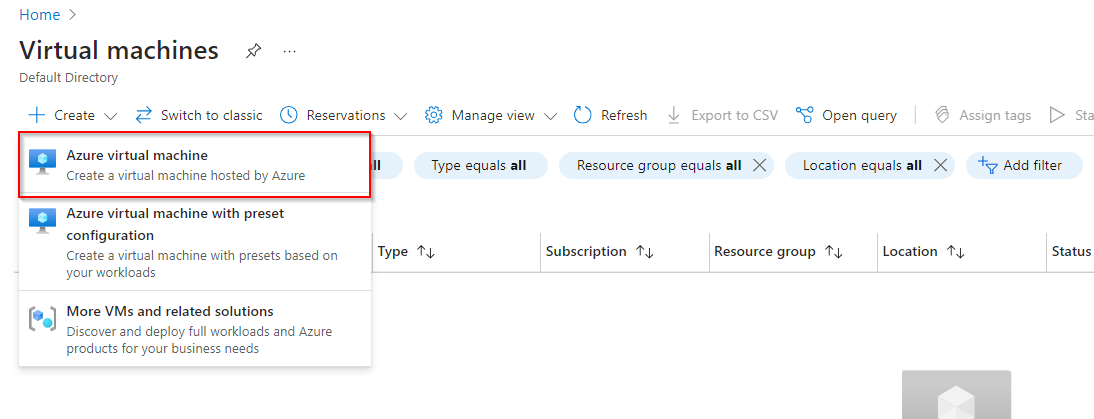

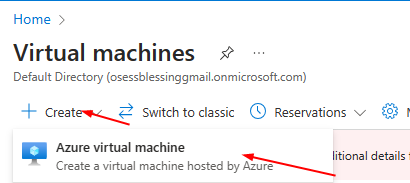

**Click "Create"**

**Select Azure Virtual Machine**

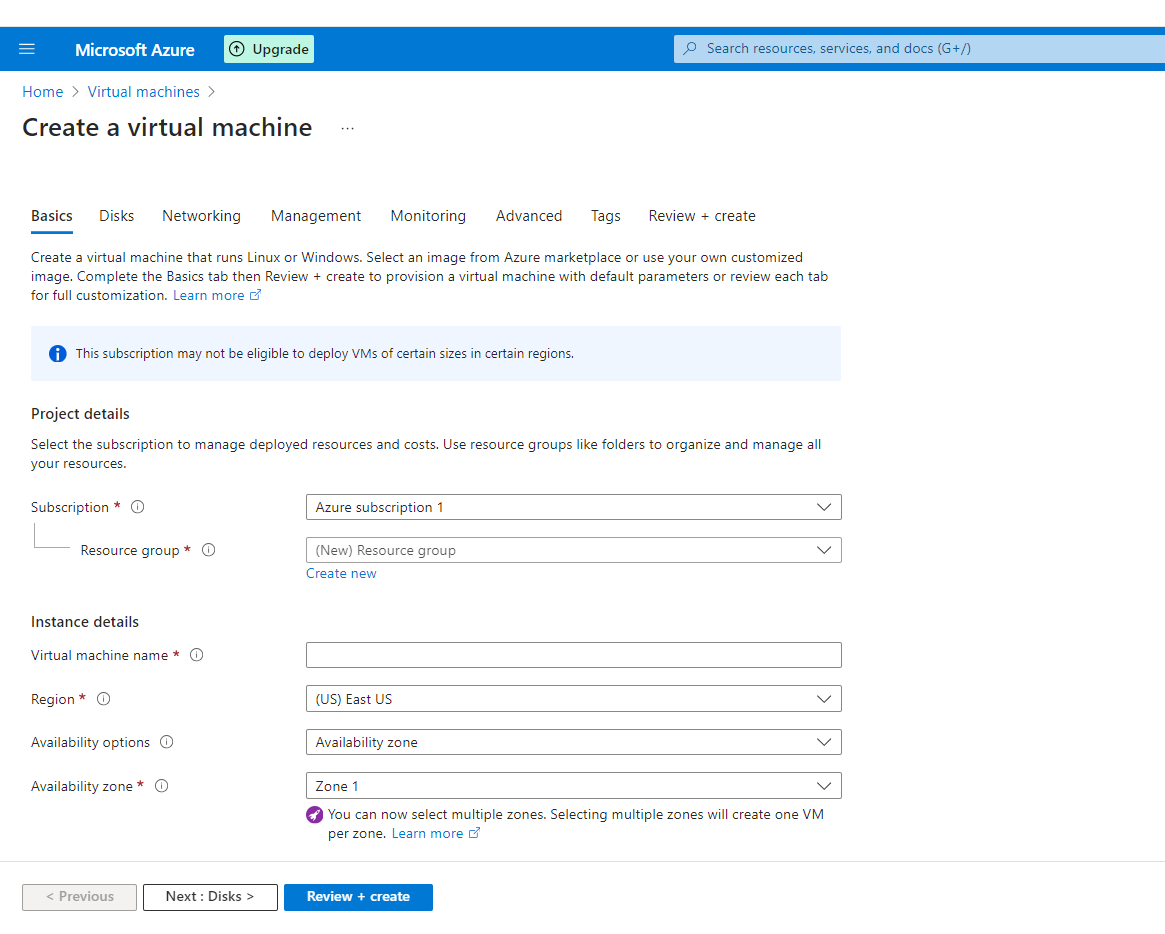

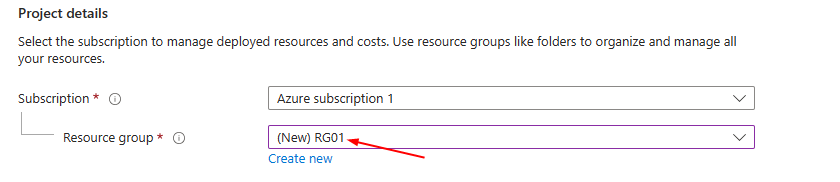

**Create a Virtual Machine**

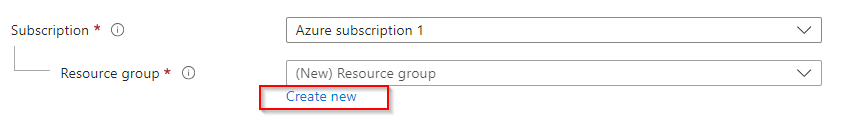

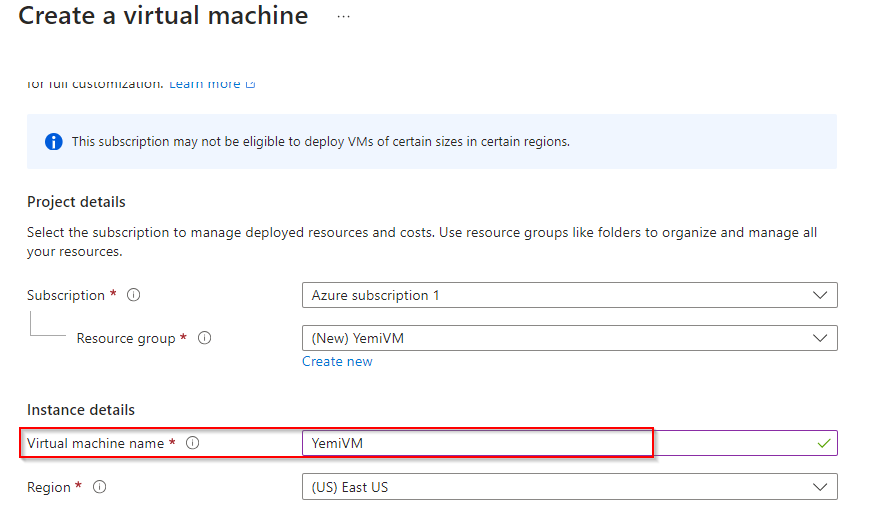

**Create new Resource Group**

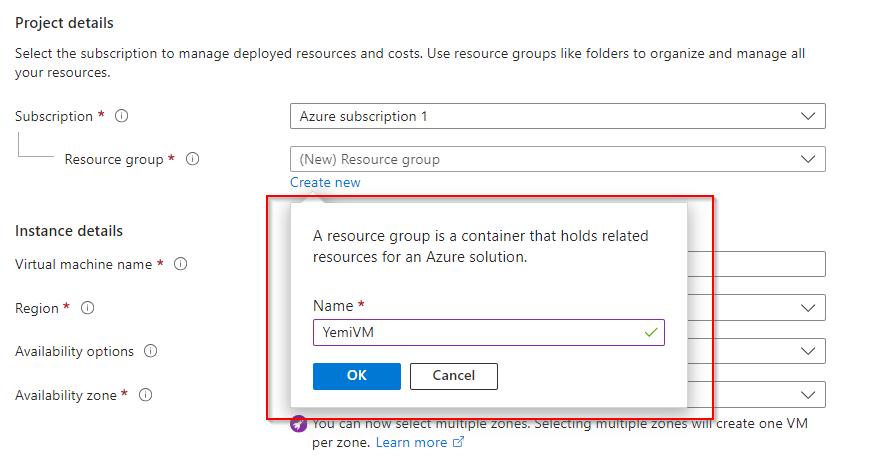

**Name Resource Group**

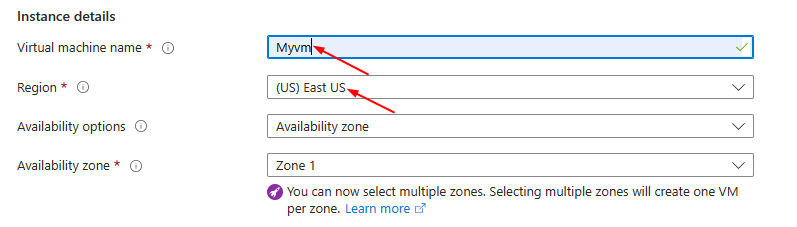

**Enter Virtual Machine Name**

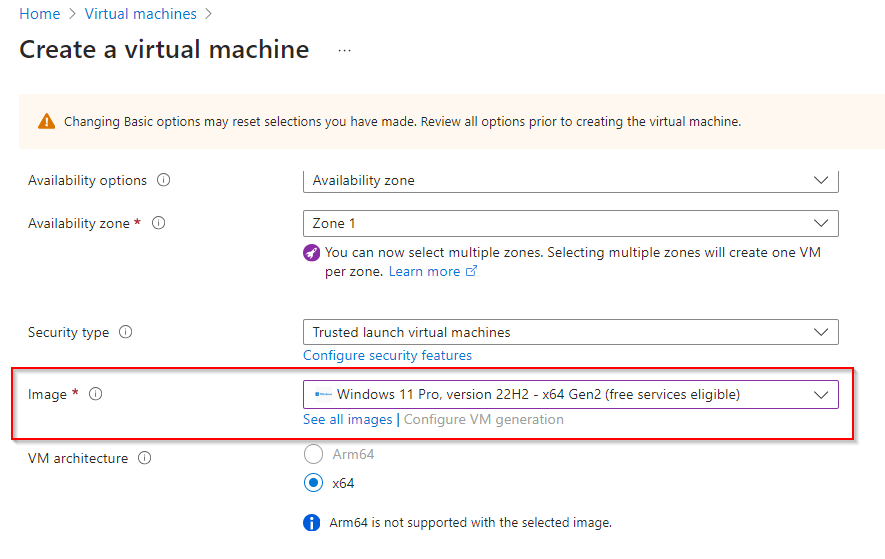

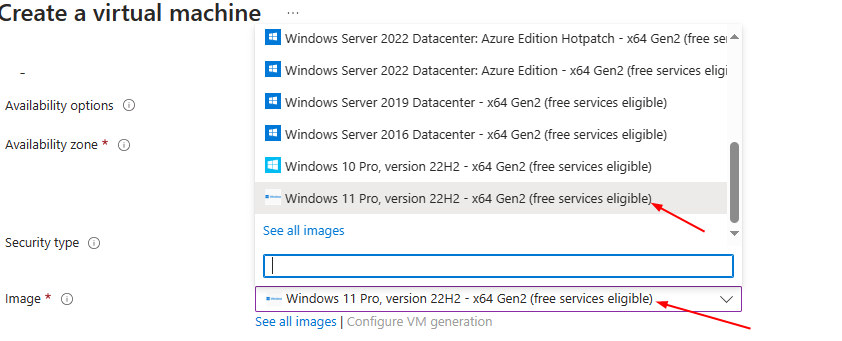

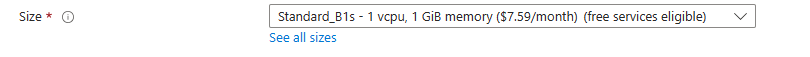

**Select Image "Windows 11 Pro, Version 22H2..."**

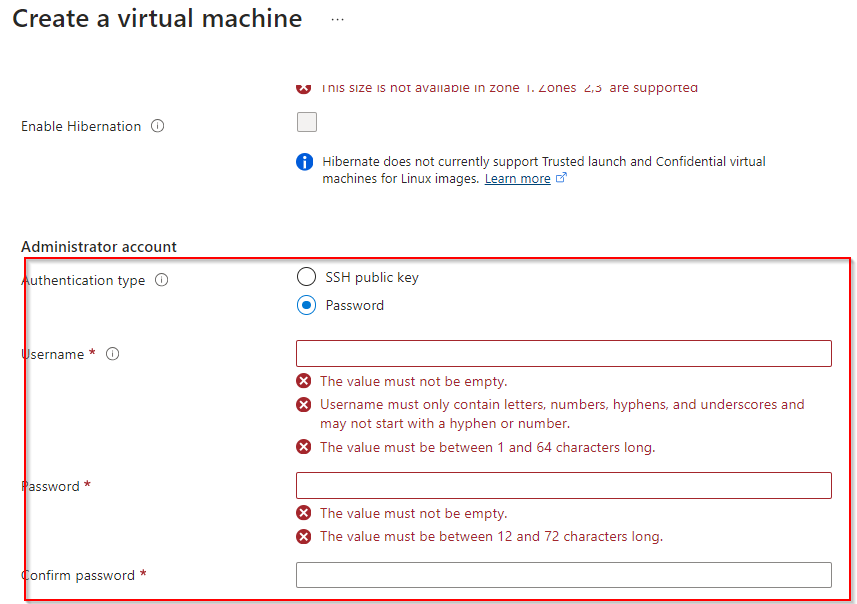

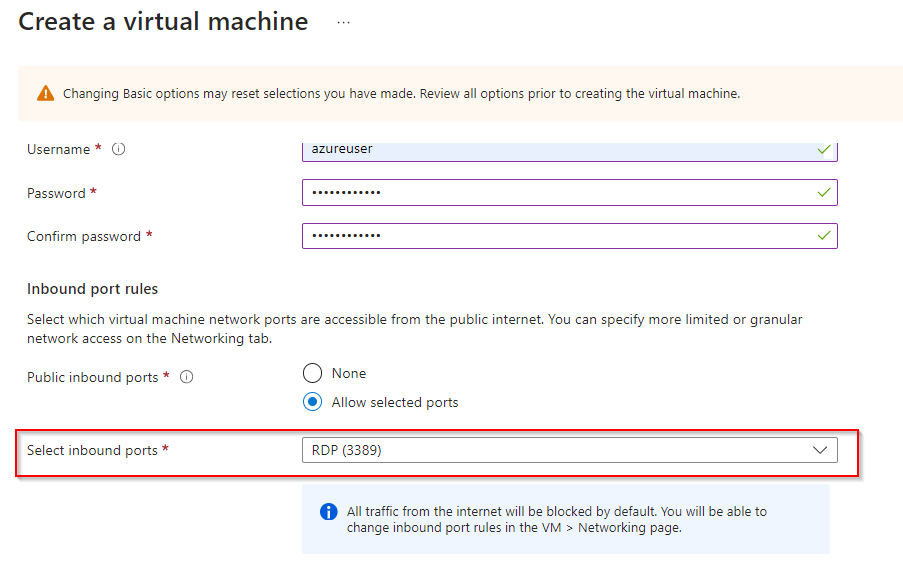

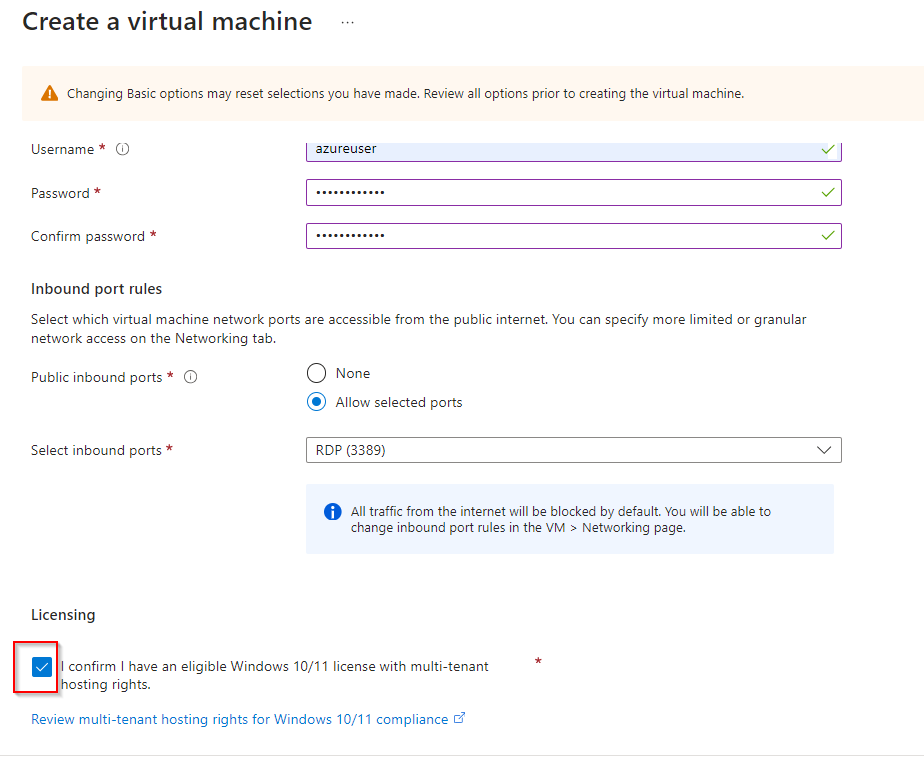

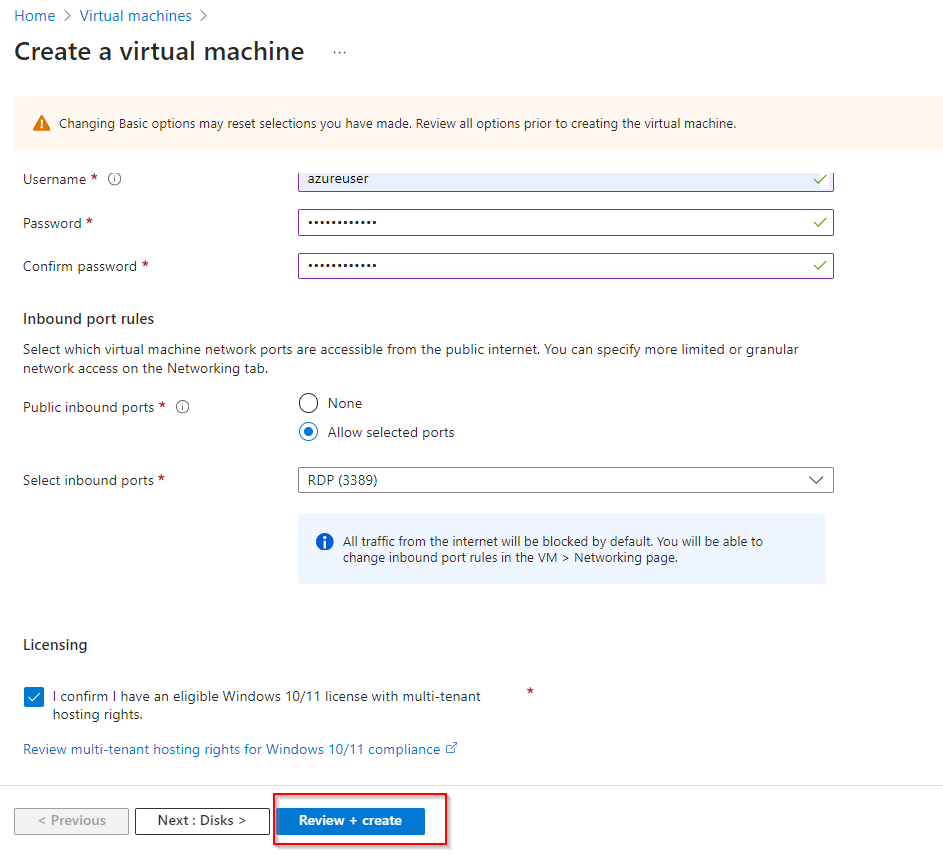

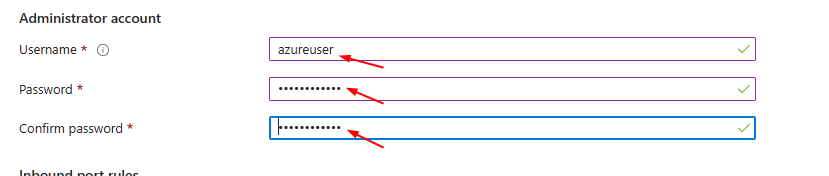

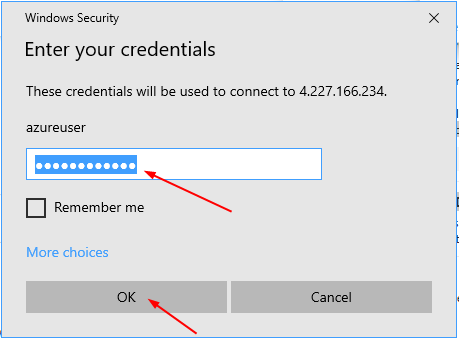

**Authentication Type as password and enter username and password**

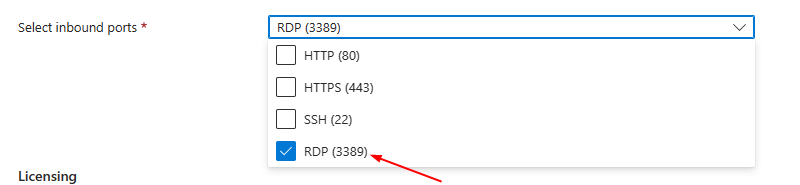

**Select inbound port rule as "RDP"**

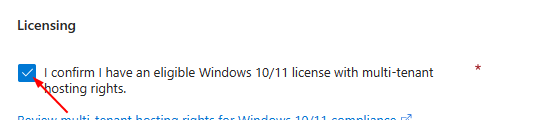

**Check Licensing**

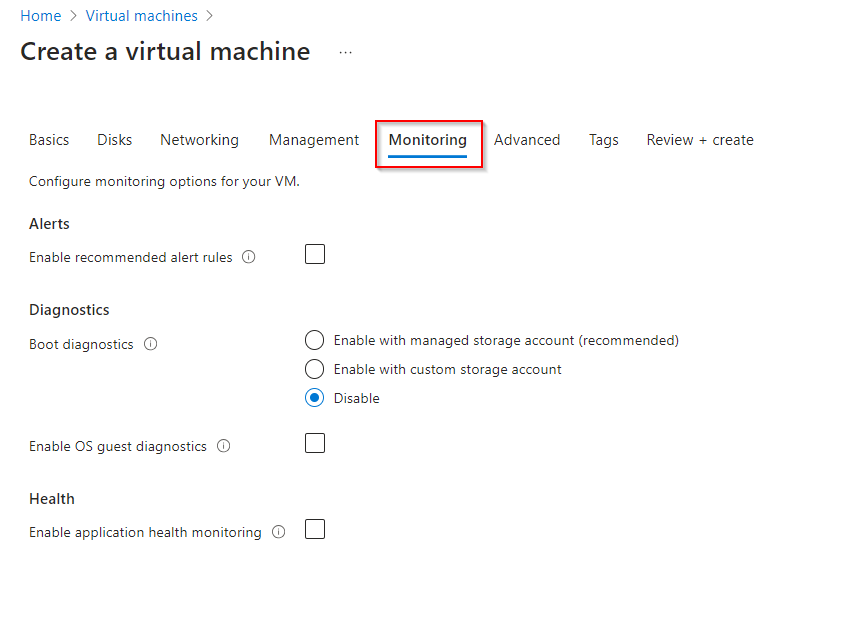

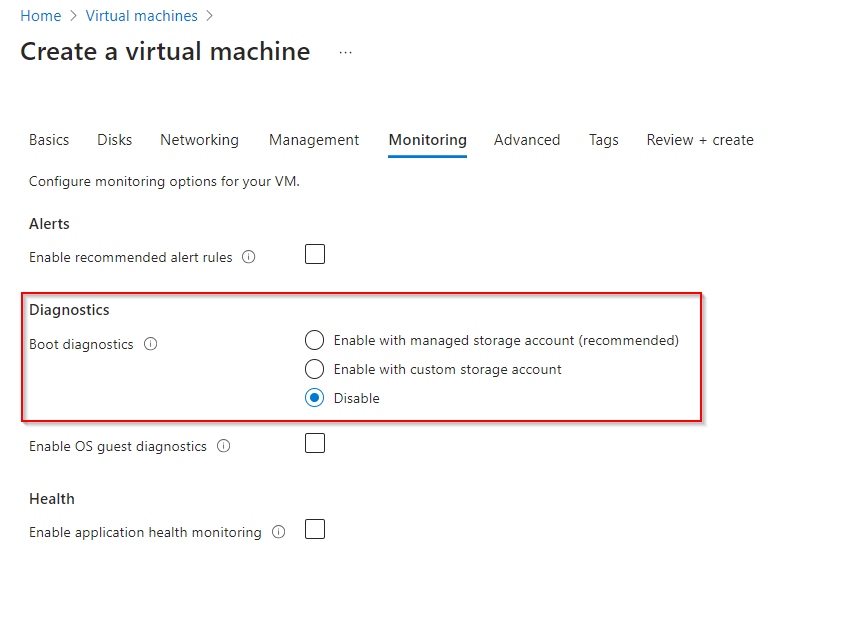

**Click on "Monitoring" Tab**

**Disable Diagnostics in Monitoring Tab**

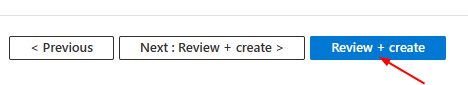

**Review and Create**

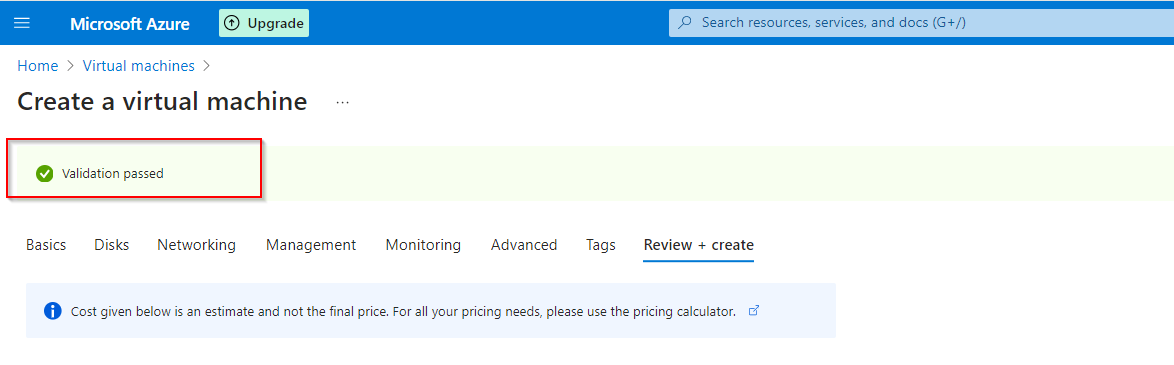

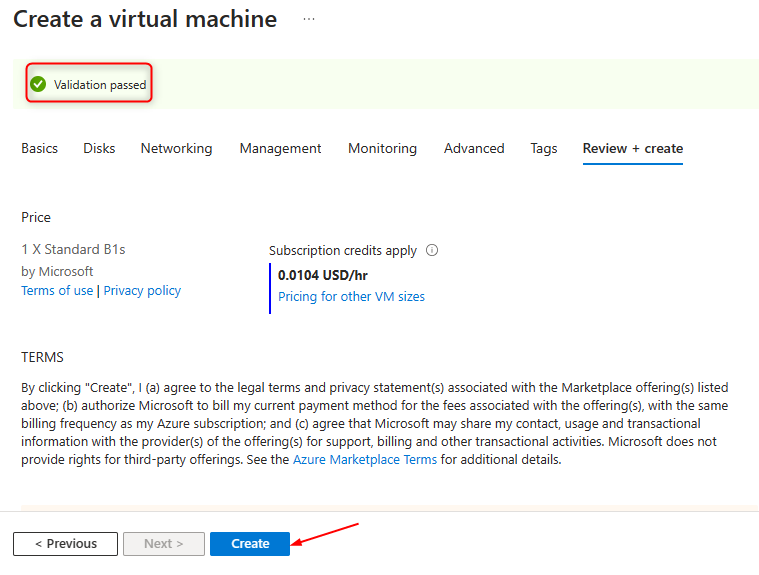

**Validation**

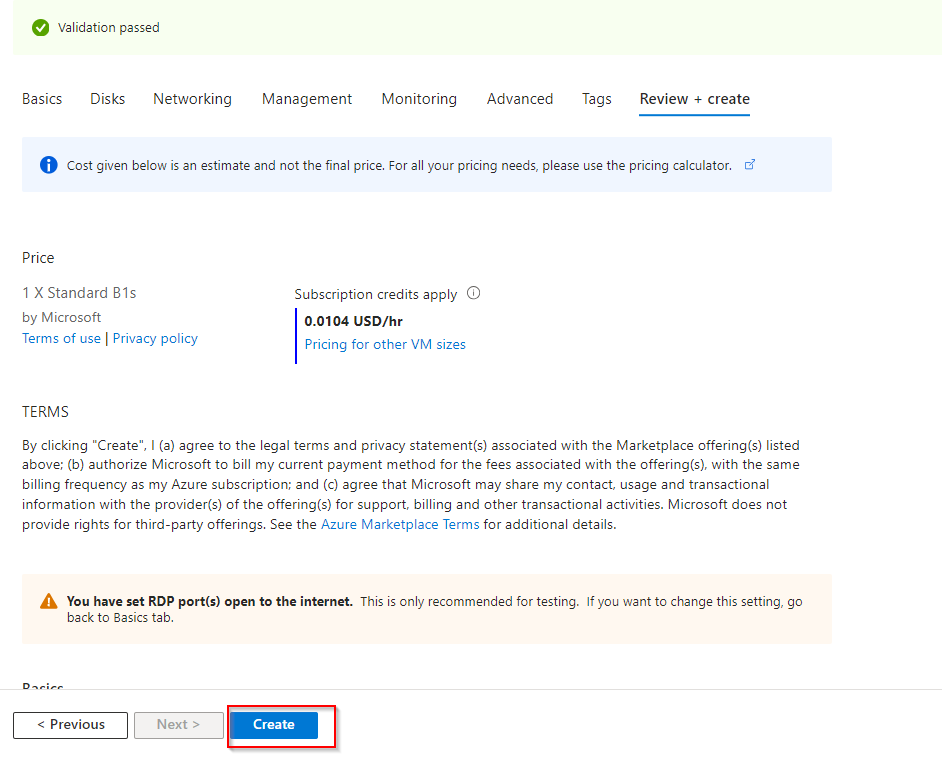

**Click "Create"**

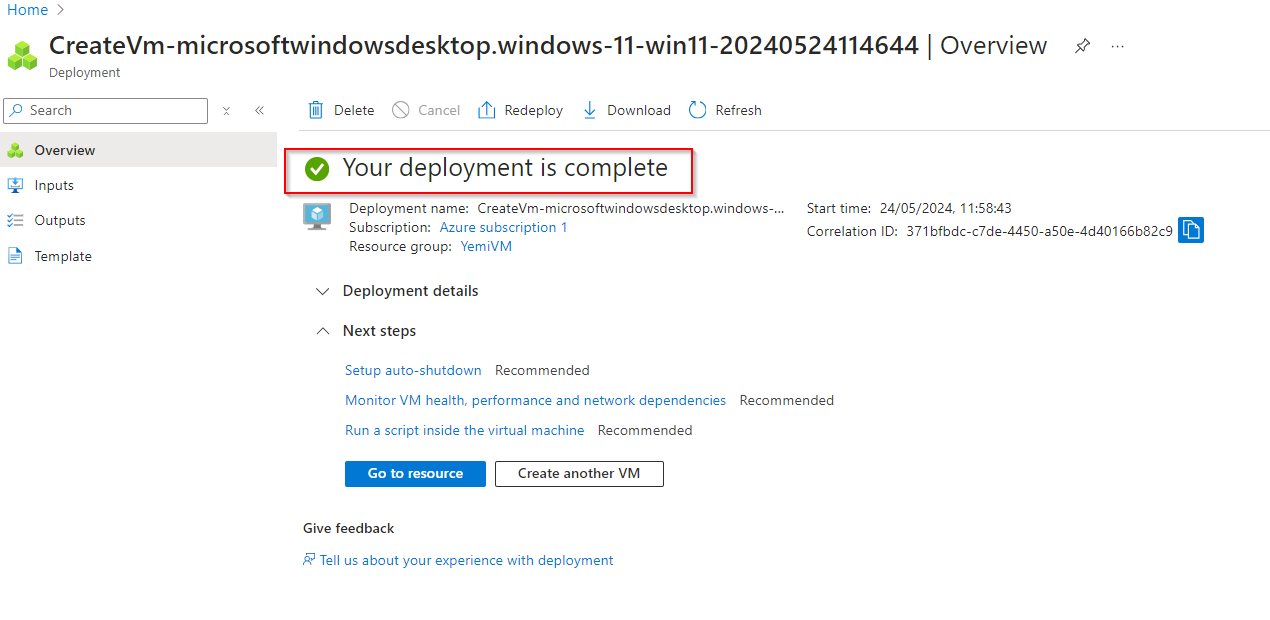

**Confirm Deployment**

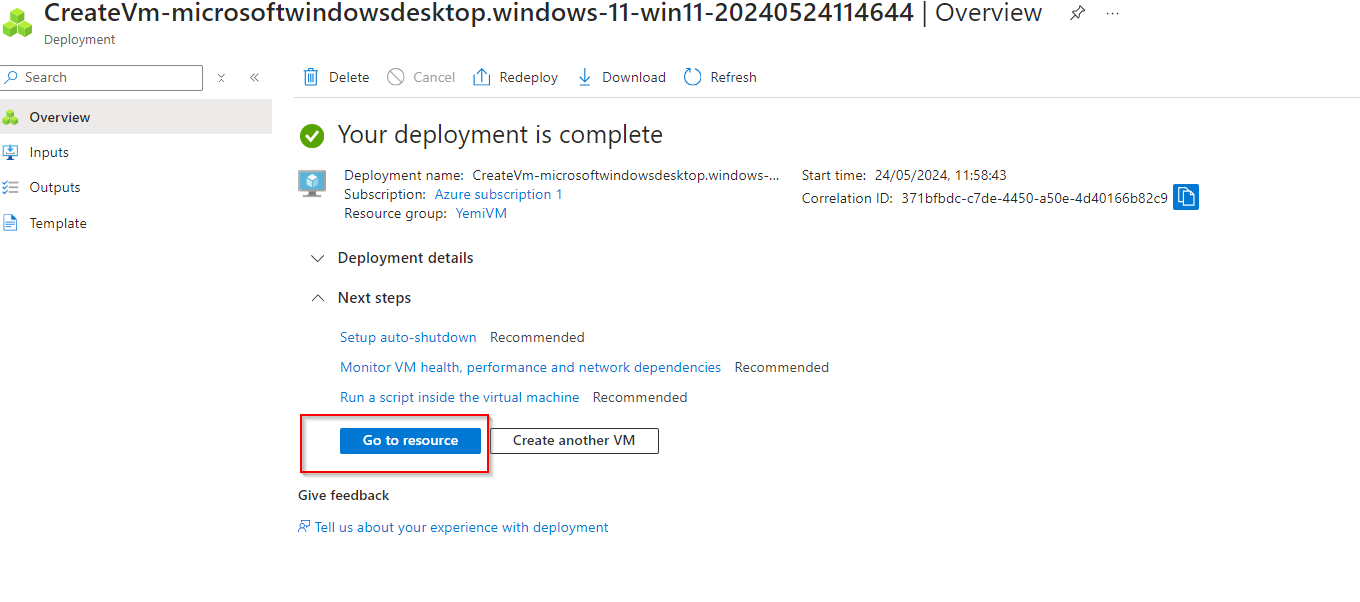

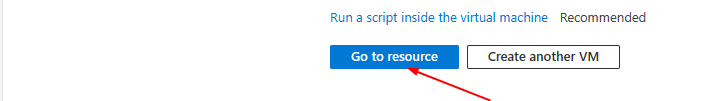

**Click on "Go to resource" **

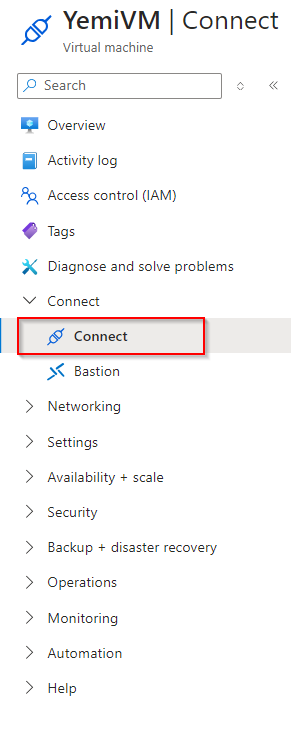

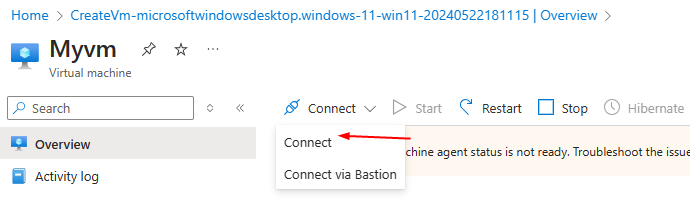

**Click on "Connect"**

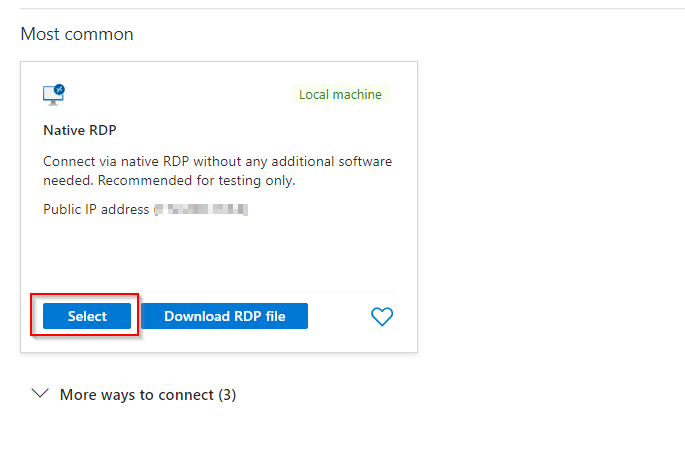

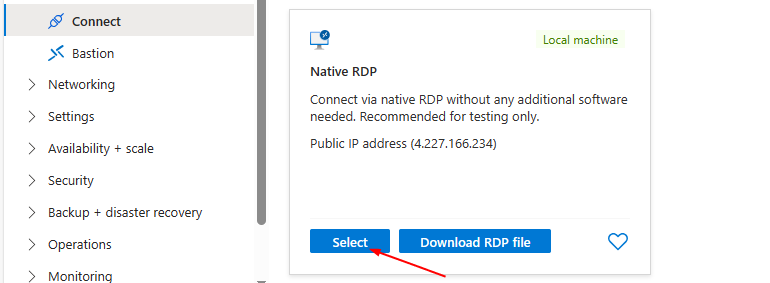

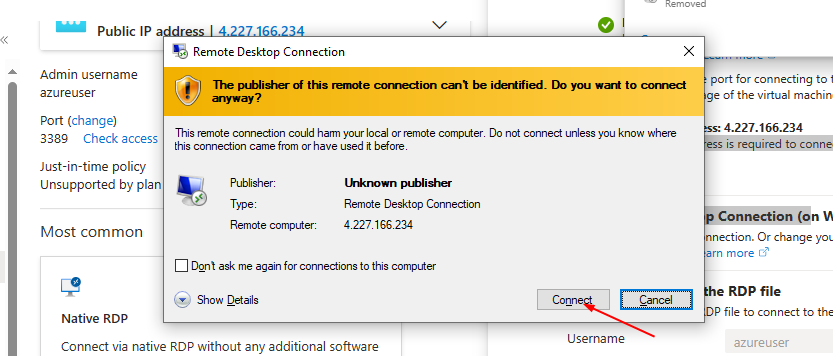

**Select Native RDP & Click "Select"**

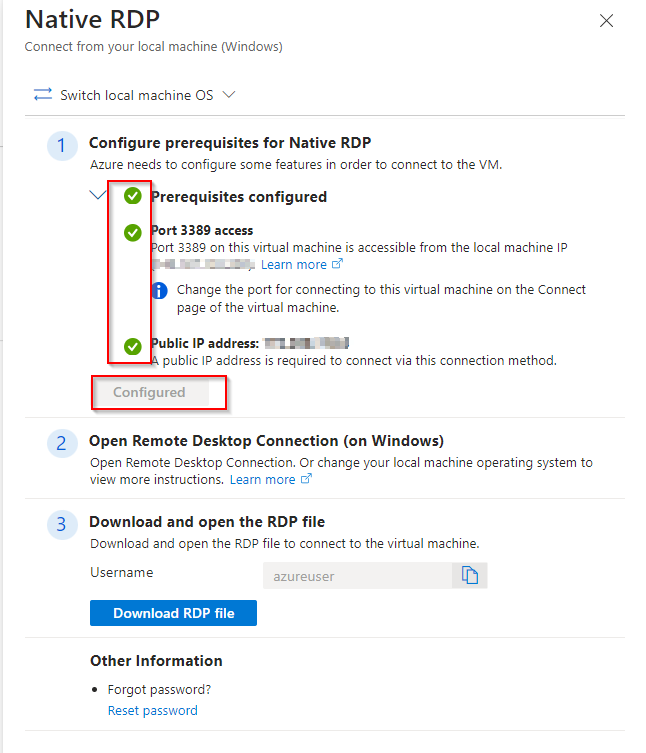

**Ensure all configurations are ticked green and configured**

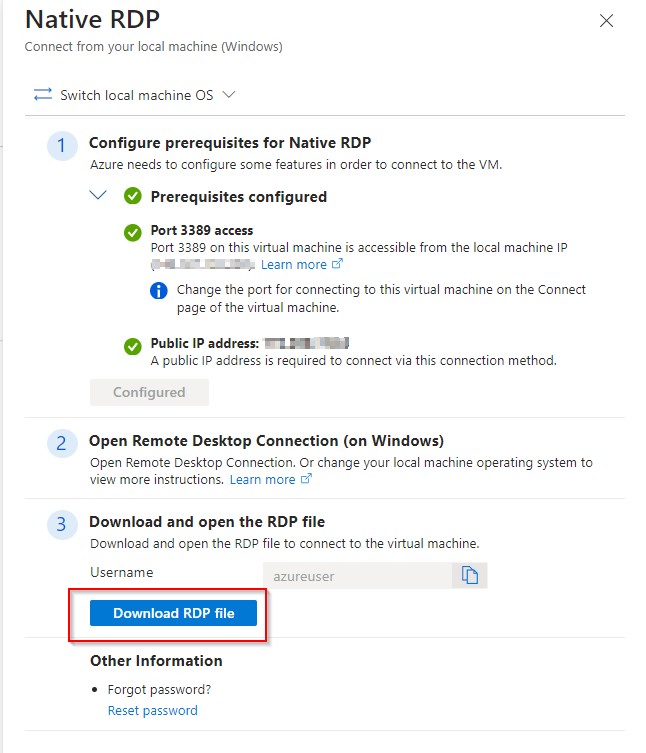

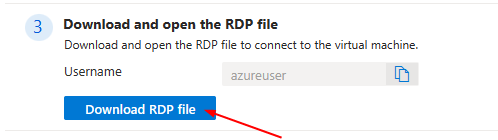

**Download RDP File**

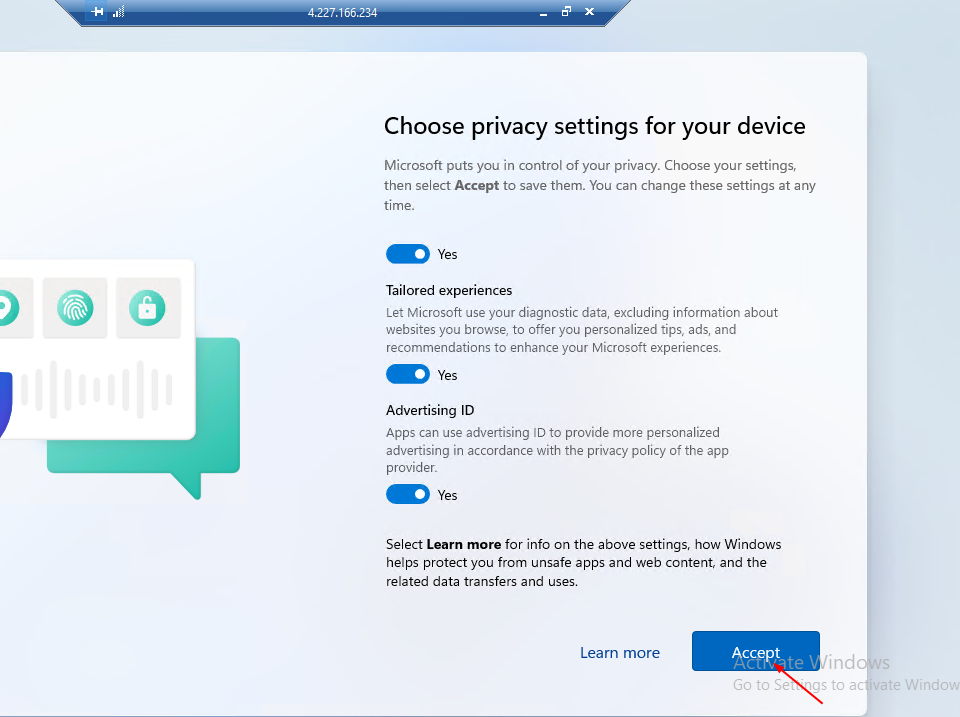

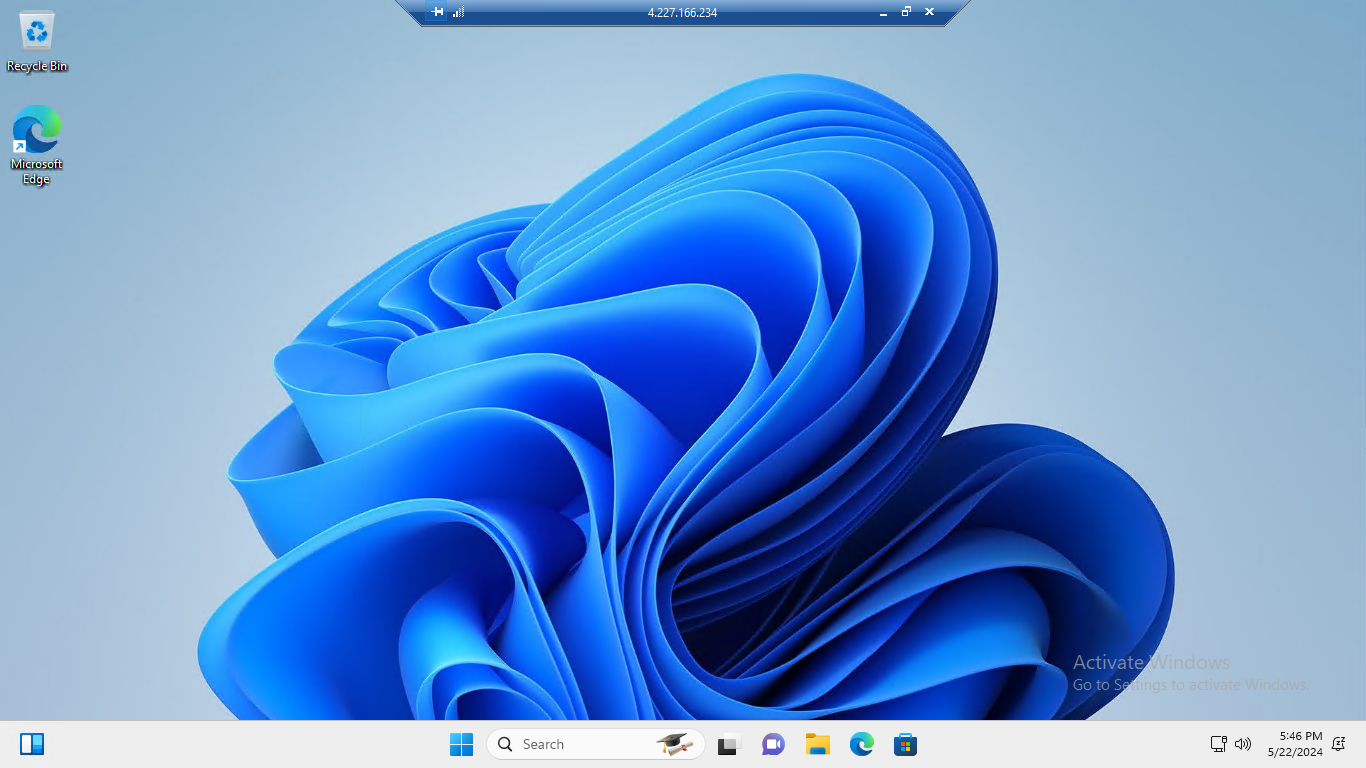

Further installation settings will be required and you will be able to access your virtual machine.

| opsyog | |

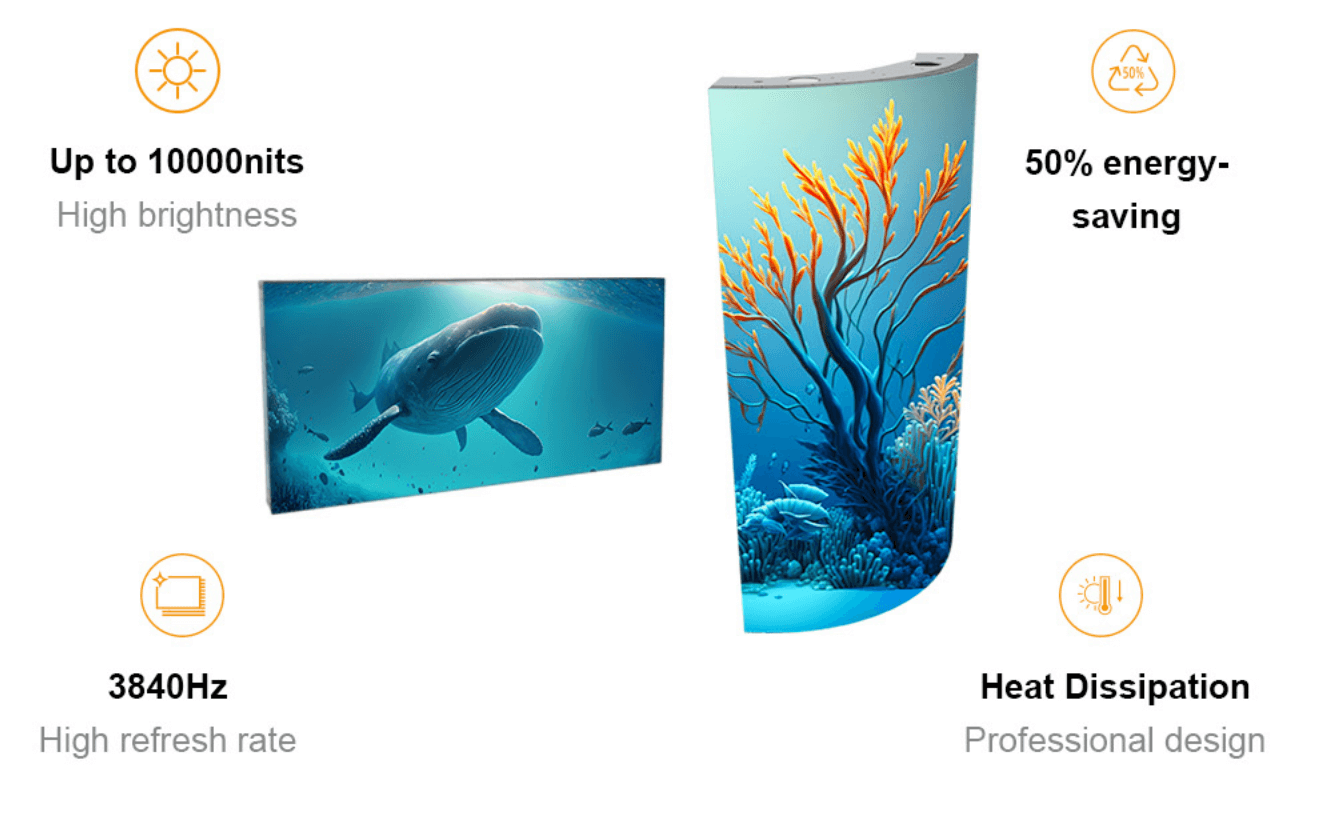

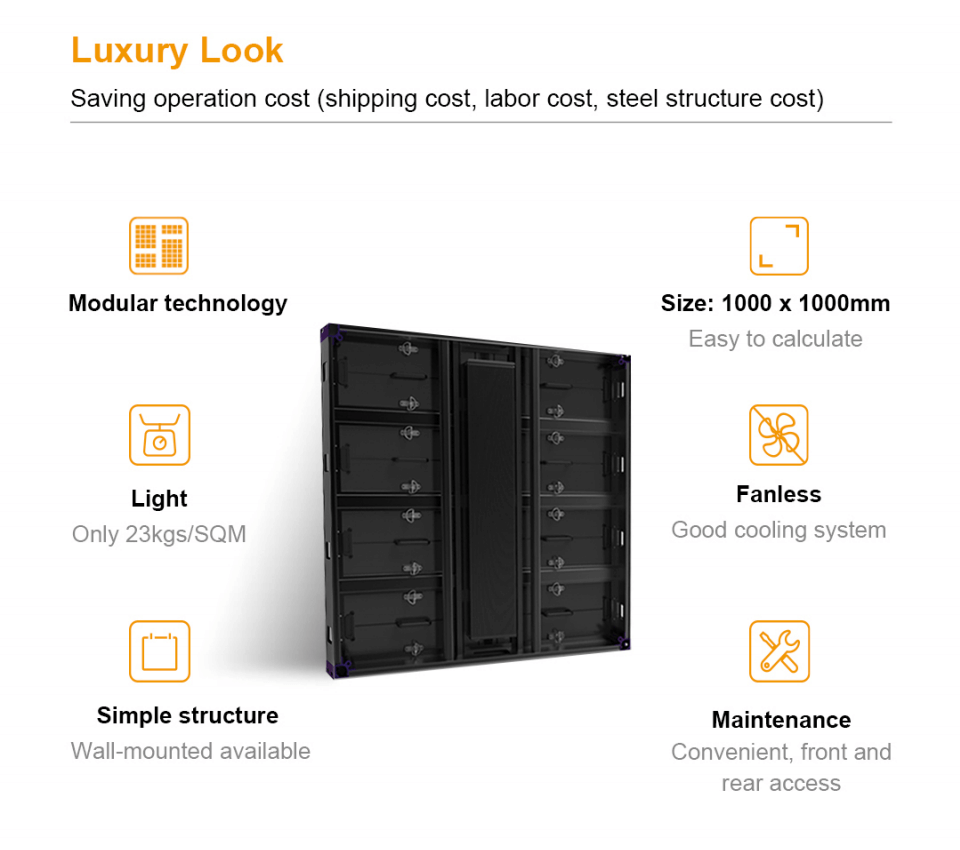

1,863,475 | Full-color LED display: the integration of rich colors and technological innovation | In today's digital era, the importance of visual communication has become increasingly prominent, and... | 0 | 2024-05-24T02:19:37 | https://dev.to/sostrondylan/full-color-led-display-the-integration-of-rich-colors-and-technological-innovation-50ik | led, display, technological | In today's digital era, the importance of visual communication has become increasingly prominent, and full-color LED display screens, as an important medium for information display, have become the first choice in advertising, publicity, display and other fields with their unique color expression and high definition. This article will delve into the working principle of [full-color LED displays](https://www.sostron.com/product?category=2) and their application value in modern society.

Introduction to full-color LED display

LED display screens can be divided into three types: single color, double color and full color according to color classification. Full-color LED display, as the name suggests, refers to a display that can display three basic colors of red, green and blue. Through different combinations of these three colors, up to 16,777,216 colors can be generated, thereby achieving high saturation and high resolution. High-definition and richly colored dynamic picture images. [What should I do if the LED display screen cannot load images? ](https://www.sostron.com/service/faq/4332)

Full color LED display performance characteristics

The performance advantages of full-color LED displays are mainly reflected in the following aspects:

Nonlinear correction technology: Through nonlinear correction technology, the screen image is clearer and has a distinct sense of layering. [Here is the technological evolution of LED screens. ](https://sostron.com/service/faq/3274)

Stable performance: The use of layout scanning technology and modular design ensures the stable performance of the display.

Diversified display: Supports multiple playback formats and screen display modes to meet the display needs of different scenarios.

Easy to operate: Equipped with video playback software and an operating system unique to the LED display, simplifying the operation process. [Take you 5 minutes to understand the LED display control system. ](https://www.sostron.com/service/faq/4384)

Working principle of full-color LED display

The working principle of the full-color LED display is relatively complex and mainly consists of the following parts:

Main controller: Responsible for obtaining the brightness data of each color of each pixel of a screen from the computer display card and assigning it to the scanning board.

Scanning board: controls several rows (columns) on the LED display and is responsible for transmitting signals to the display control unit.

Display control unit: Directly facing the LED display body, it is responsible for controlling the brightness and color of the LED lamp beads.

LED display body: It is composed of thousands of LED lamp beads and is the physical carrier that ultimately forms the image. [Provide you with the working principle of LED lamp beads. ](https://www.sostron.com/service/faq/7842)

The main controller converts the signal from the computer display card into the data and control signal format required by the LED display. The display control unit usually consists of a shift register and a latch with grayscale control function to achieve precise control of the brightness of the LED lamp beads. The scanning board plays a role as a link between the previous and the following. It not only receives the video signal from the main controller, but also transmits the data to the display control unit, and coordinates the differences in space, time, sequence, etc. between the video signal and the LED display data.

Application of full-color LED display

With its unique advantages, full-color LED displays have been widely used in various industries, especially in the following fields:

Advertising industry: used for outdoor billboards, shopping mall displays, etc. to attract customers' attention.

Promotional activities: Provide real-time information and visual enjoyment at various event sites, such as sports events, concerts, etc.

Exhibition display: Display high-definition images and videos in museums, exhibitions and other places to enhance the audience experience.

Conclusion

The working principle of the full-color LED display reflects the innovation of modern display technology. Its advantages in color performance, clarity and stability make it an indispensable visual communication tool in modern society. With the continuous advancement of technology, the application range of full-color LED displays will be further expanded, bringing more convenience and fun to people's lives and work.

Thank you for watching. I hope we can solve your problems. Sostron is a professional [LED display manufacturer](https://sostron.com/about). We provide all kinds of displays, display leasing and display solutions around the world. If you want to know: [LED display: stage art revolution.](https://dev.to/sostrondylan/led-display-stage-art-revolution-4aa2) Please click read.

Follow me! Take you to know more about led display knowledge.

Contact us on WhatsApp:https://api.whatsapp.com/send/?phone=8613570218702&text&type=phone_number&app_absent=0 | sostrondylan |

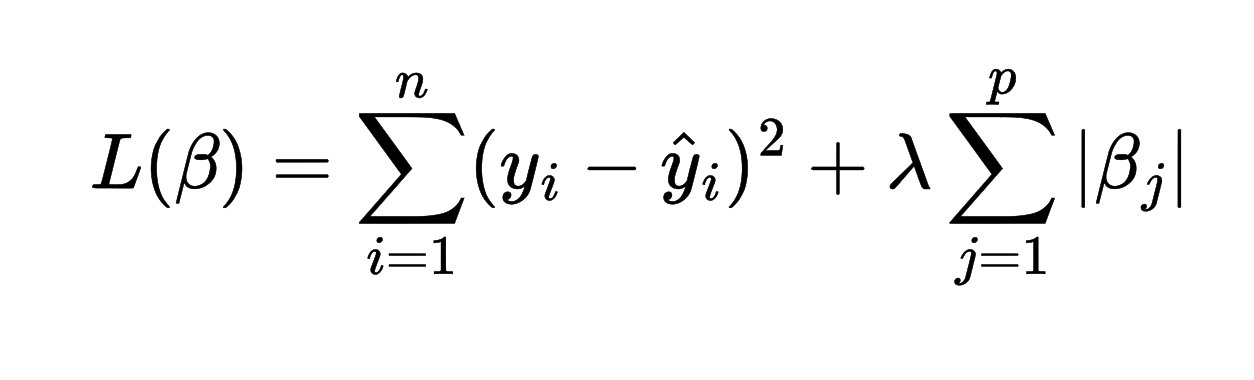

1,863,473 | Understanding Lasso Regularization: Enhancing Model Performance and Feature Selection | Lasso regularization is a powerful technique in machine learning, which is used to prevent... | 0 | 2024-05-24T02:16:33 | https://dev.to/harsimranjit_singh_0133dc/understanding-lasso-regularization-enhancing-model-performance-and-feature-selection-330p | Lasso regularization is a powerful technique in machine learning, which is used to prevent overfitting. But lasso goes a step further- it can also help us identify the most important features of the model. In this article today we will discuss the theoretical aspects of lasso along with its mathematical formulation.

## Lasso Regularization

Lasso regularization is designed to enhance model sparsity, meaning it can zero out coefficients of less important features, effectively performing feature selection. This is particularly useful in high-dimensional data scenarios where we want to identify the most relevant predictors.

## Mathematical formulation

Lasso regularization modifies the objective function (linear regression)by adding a penalty term to the function. This penalty is the L1 norm of the coefficient vector defined as the sum of the absolute values of the coefficients

where:

- lambda is the regularization parameter that controls the strength of the penalty.

- another term is the L1 norm

The L1 penalty encourages sparsity in the model by shrinking some coefficients to zero, effectively performing the feature selection.

## Benefits of Lasso Regularization

- **Feature Selection:** Lasso can automatically perform feature selection by setting the coefficients of less important features to zero.

- **Prevents Overfitting:** By reducing the variance of model, the lasso helps to prevent overfitting.

## Practical Implementation

```

import numpy as np

import matplotlib.pyplot as plt

from sklearn.linear_model import Lasso

from sklearn.datasets import make_regression

X, y = make_regression(n_samples=100, n_features=10, noise=0.1, random_state=42)

lasso = Lasso(alpha=0.1)

lasso.fit(X, y)

plt.figure(figsize=(12, 6))

plt.plot(range(X.shape[1]), lasso.coef_, marker='o', linestyle='none')

plt.xlabel('Feature Index')

plt.ylabel('Coefficient Value')

plt.title('Lasso Coefficients')

plt.xticks(range(X.shape[1]))

plt.grid(True)

plt.show()

```

In the above code, the alpha is the hyperparameter that we need to tune. It is the value of the lambda in the equation.

## Choosing the Optimal Parameter

The value of lambda significantly impacts the sparsity and performance of the model. A higher value leads to a stronger penalty, potentially driving more coefficients to zero and risking underfitting. Conversely, a lower value provides less regularization, potentially resulting in overfitting.

## Feature Selection

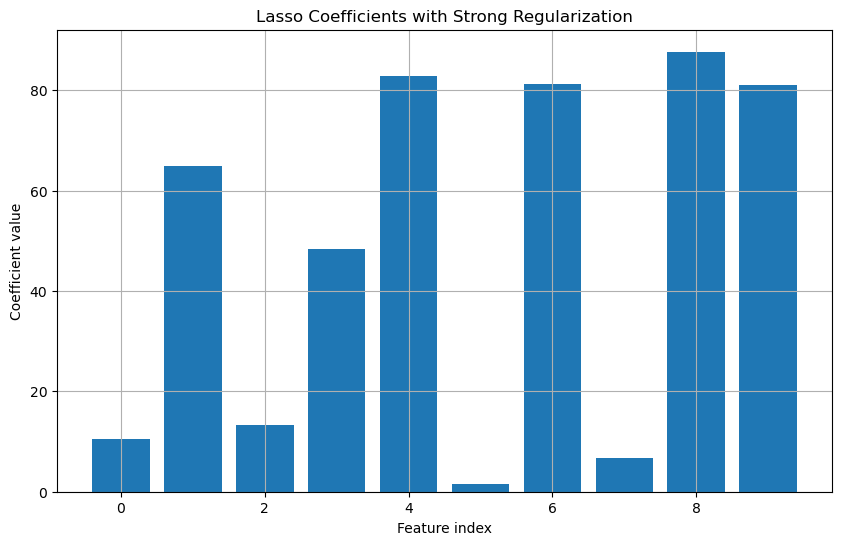

Consider a more complex dataset with multiple features. By fitting a Lasso model and examining the coefficients, we can determine which features are most important.

```

X, y = make_regression(n_samples=100, n_features=10, noise=0.1)

lasso = Lasso(alpha=0.5)

lasso.fit(X, y)

plt.figure(figsize=(10, 6))

plt.bar(range(X.shape[1]), lasso.coef_)

plt.title('Lasso Coefficients with Strong Regularization')

plt.xlabel('Feature index')

plt.ylabel('Coefficient value')

plt.grid(True)

plt.show()

```

In this plot, many of the coefficients would be zero, indicating that Lasso has selected only the most relevant features.

## Conclusion

Lasso regularization is a robust technique for enhancing model interpretability and performance. By adding an L1 penalty to the linear regression objective function, Lasso encourages sparsity in the model, effectively performing feature selection. This helps in identifying the most relevant predictors and prevents overfitting, making it particularly useful in high-dimensional datasets.

| harsimranjit_singh_0133dc | |

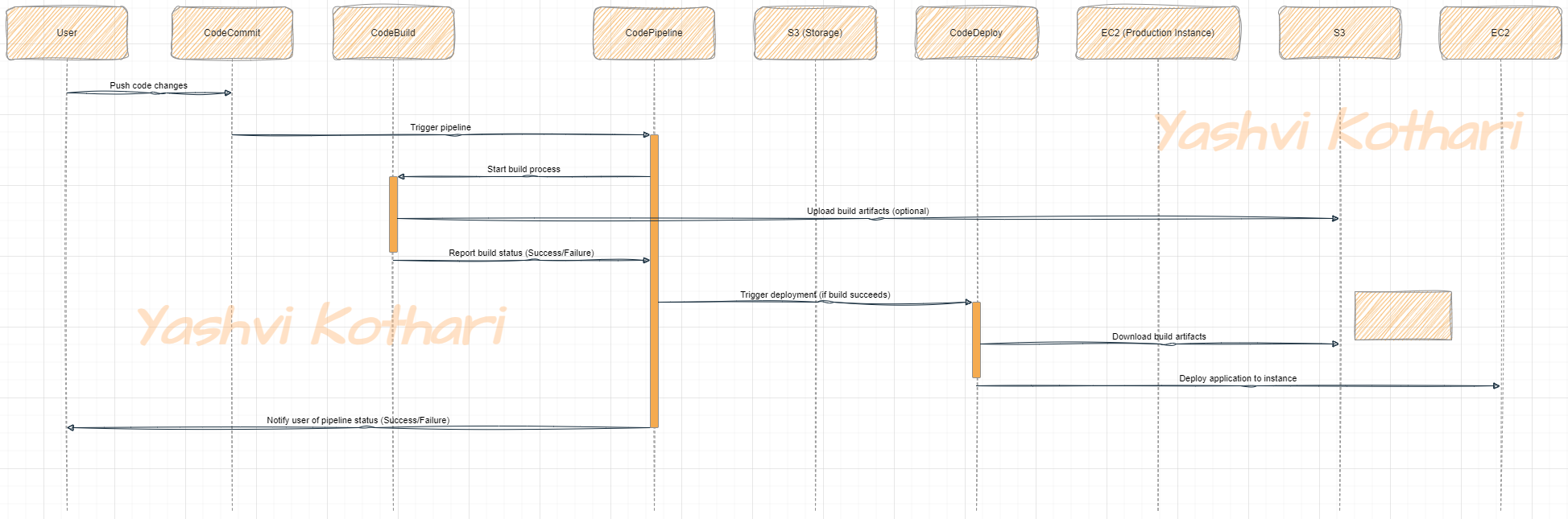

1,863,471 | Migration of a Workload running in a Corporate Data Center to AWS using the Amazon EC2 and RDS service | In another project based on a real-world scenario, I acted as the Cloud Specialist responsible for... | 0 | 2024-05-24T02:10:44 | https://dev.to/cansu_tekin_b017634d64dfd/migration-of-a-workload-running-in-a-corporate-data-center-to-aws-using-the-amazon-ec2-and-rds-service-5e6h | aws, rds, ec2, mysql |

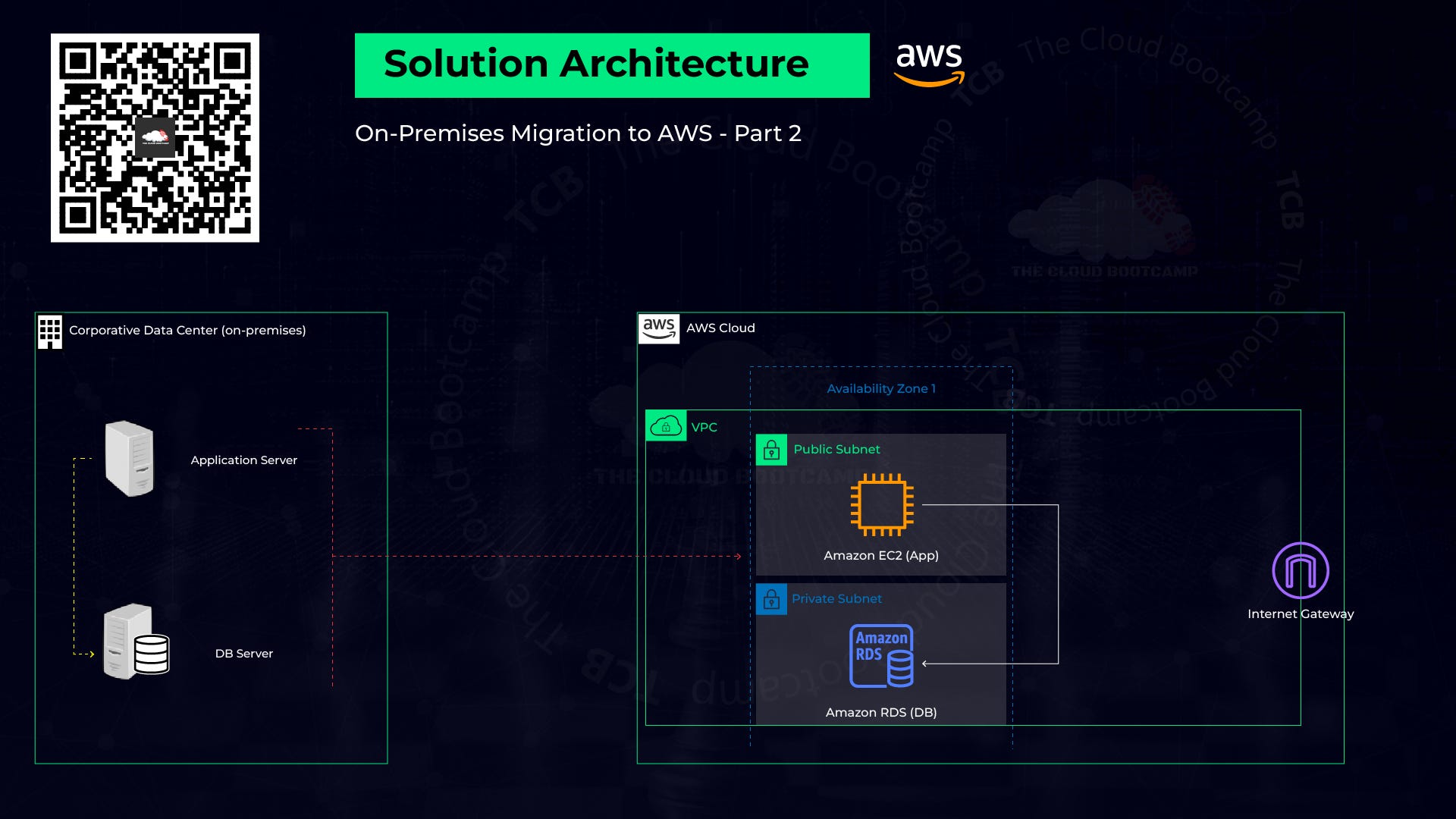

In another project based on a real-world scenario, I acted as the Cloud Specialist responsible for migrating a workload running in a Corporate Data Center to AWS.

The application and database were migrated to AWS using the Lift & Shift (rehost) model, moving both application and database data.

Migration steps:

1. Planning: sizing, prerequisites, resource naming

2. Implementation: resource provisioning, best practices

3. Go-live: validation test — Dry-run, final migration — Cutover

4. Post Go-live: ensuring the operation of the application and user access

1. Planning

The client provided information and files for the application and the database to migrate from the on-premise environment to the AWS cloud.

Python Web — Wiki Server Application: Prerequisite python packages and libraries for the application to be run successfully on the AWS EC2 application server are determined.

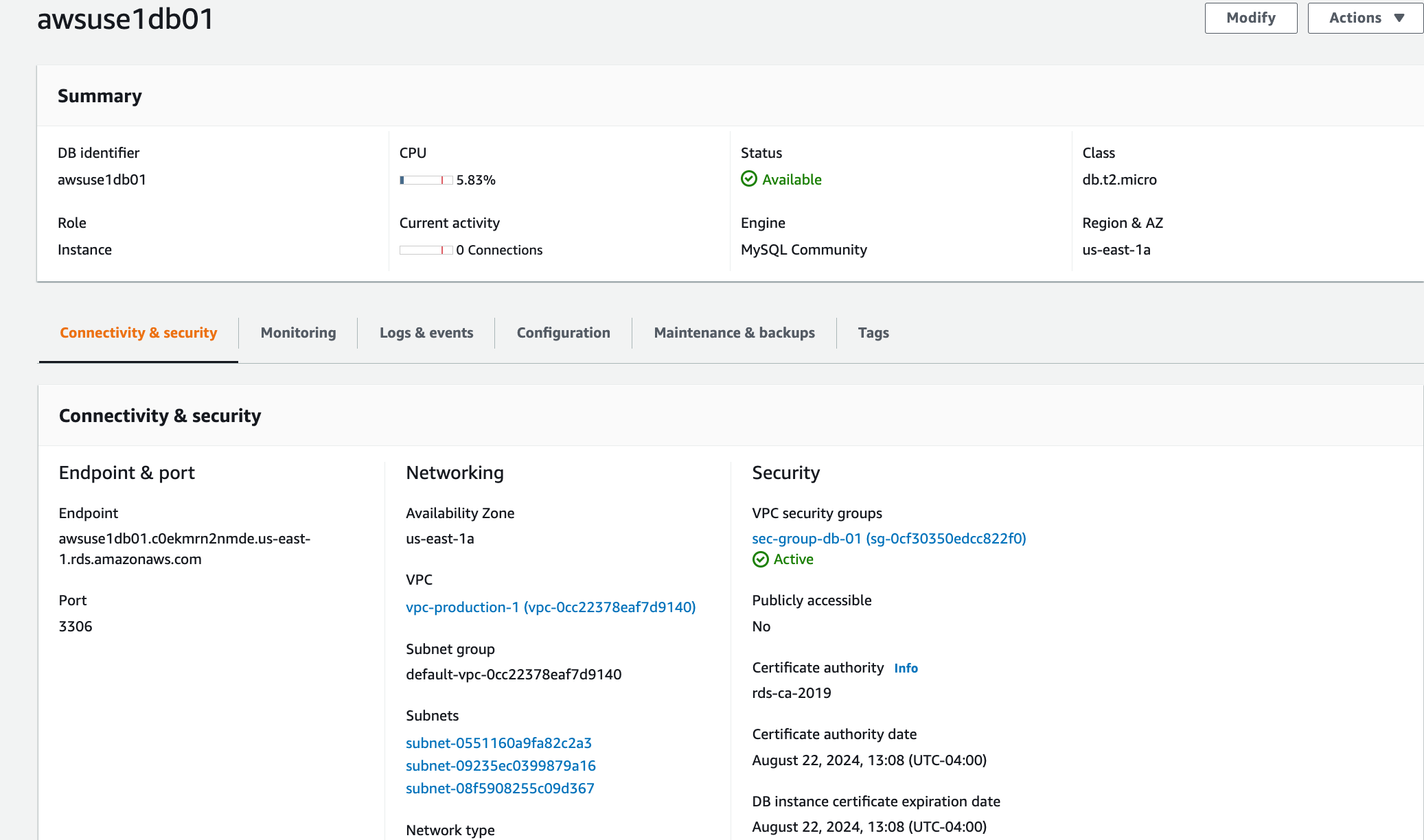

MySQL 5.7 Python Web — Wiki DB Server: Size, host/server name, IP address, CPU, and necessary description are provided to migrate it to the Amazon RDB server.

2. Implementation

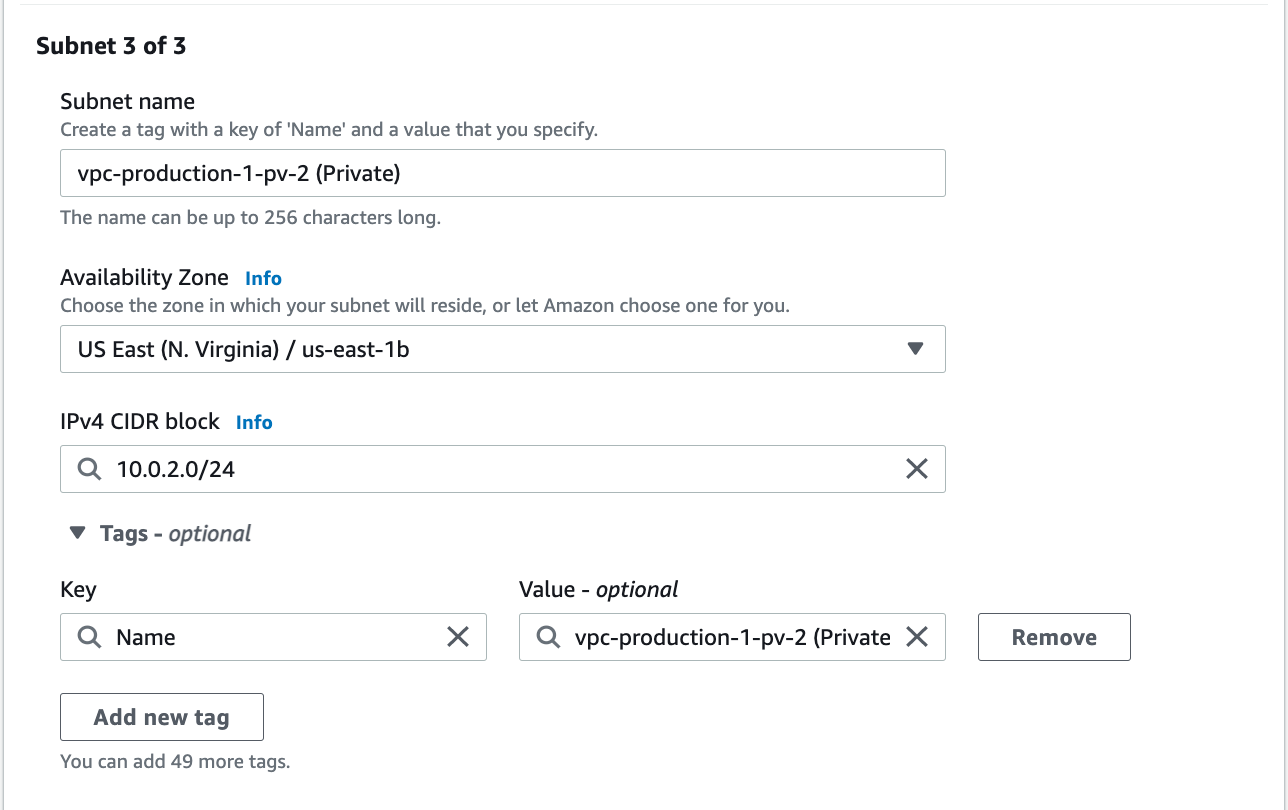

* Create a VPC (Amazon Virtual Private Cloud): The purpose is to build a virtual and isolated network. The accessibility of the Amazon RDB and EC2 instance resources on the Internet will be controlled by the assignment of IP addresses. One public subset and two private subsets were added to VCP. The IPv4 CIDR block must not overlap with any existing CIDR block that’s associated with the VPC.

* Create an Internet Gateway and attach it to a VPC: Necessary for the connection between EC2 and the Internet.

* Create a Route Table: There is already an attached route table to VPC when it is created but it is local and only routing internal traffics. We want to add new traffics to allow users coming from the Internet to access the EC2 application instance. The EC2 instance also can initiate internet connections from itself like connection to download packages etc.

* Create an EC2 instance with a new Security Group and Key Pair (*.pem)*: EC2 instance(AMI: Ubuntu 18.04) within the previously launched VPC network was created.

* Key pair was created while creating the EC2 instance and downloaded to the desktop. It is necessary to connect remotely to the EC2 instance from the desktop via ssh.

* Security Group was created and configured to open a port so that we can access the services running on the virtual machine. A new security rule was added for the application to be accessed over the internet only port 8080.

* Create MySQL RDS instance: It should be the same version from the on-promises environment or a newer version but we need to make sure this change will not affect anything else. Public access: No, never set it to “yes” if it is not really necessary.

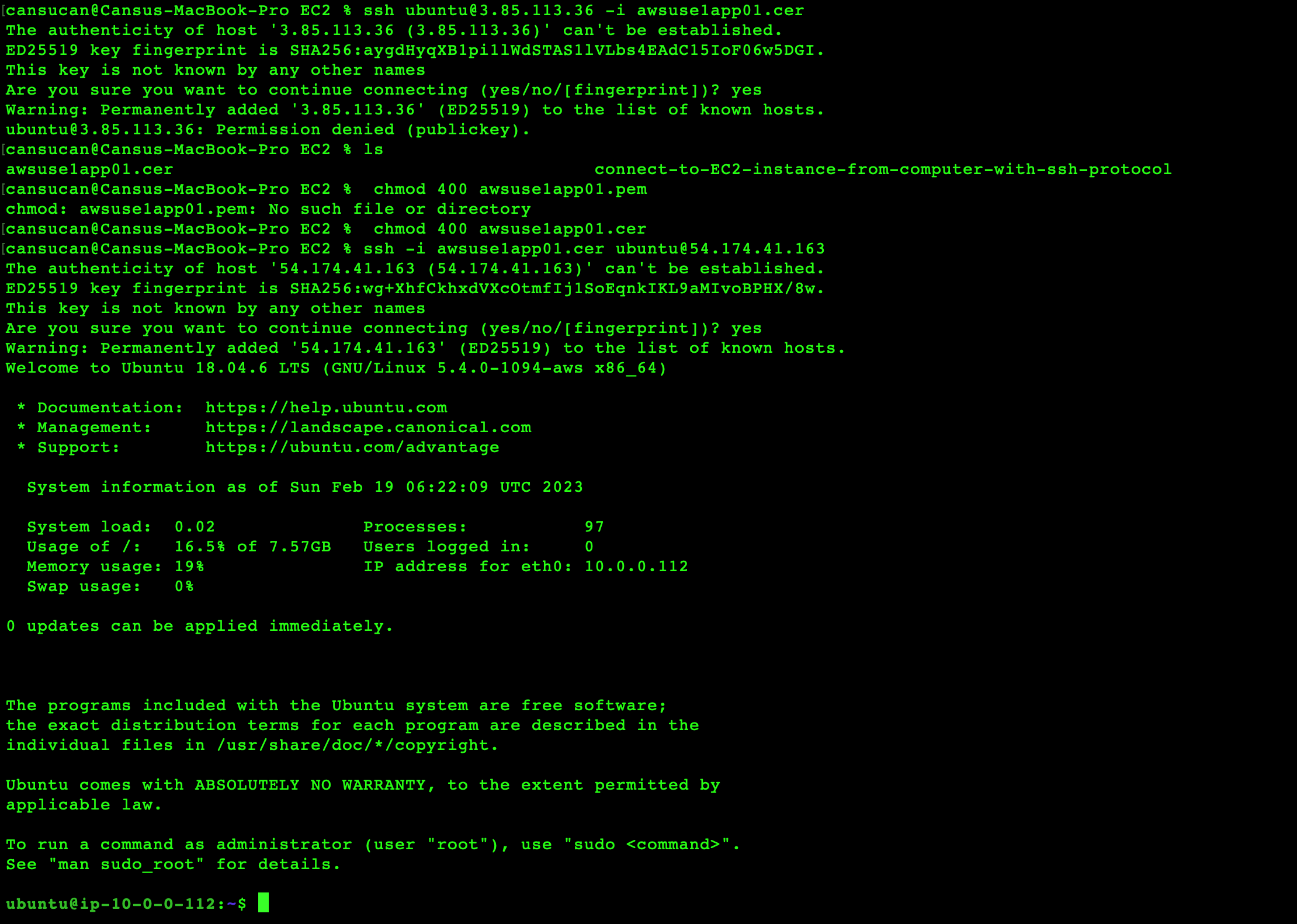

* Pre-requisites steps: It should be connected to the EC2 instance using ssh and pair-key which was downloaded before in “.pem” format. It is important to prepare the EC2 instance to make the application work properly on it. Required python packages and libraries were installed as determined in the planning step.

* Set the permissions of your private key so that only you can read it. chmod 400 key-pair-name.pem

3. On-Live

This is broken into two steps, the validation (dry run) and the cutover.

Validation(dry-run):

The on-premises resources should be up and running at this stage. Once the validation is completed successfully, a downtime window can be scheduled when the business is not running and run the final migration switching from the on-premise environment to the cloud (cutover).

* 1) Database and 2) application deployment resources from the on-premises environment were exported and 3) put in an AWS S3 bucket. Then we can transfer files from the S3 bucket to related subnets in VCP. It is connected to the EC2 instance and opened remote connectivity from the local computer.

* 4) The application deployment files were imported to the EC2 instance.

$ ssh ubuntu@<PUBLIC_IP> -i <ssh_private_key>

* 5) Remotely Connected to MySQL running on AWS RDS so that we can import the data coming from the on-premise DB. DB files were imported to the AWS RDB. 6) The connectivity between the EC2 instance and RDB was established in the application configuration file by pointing to the AWS RDB hostname.

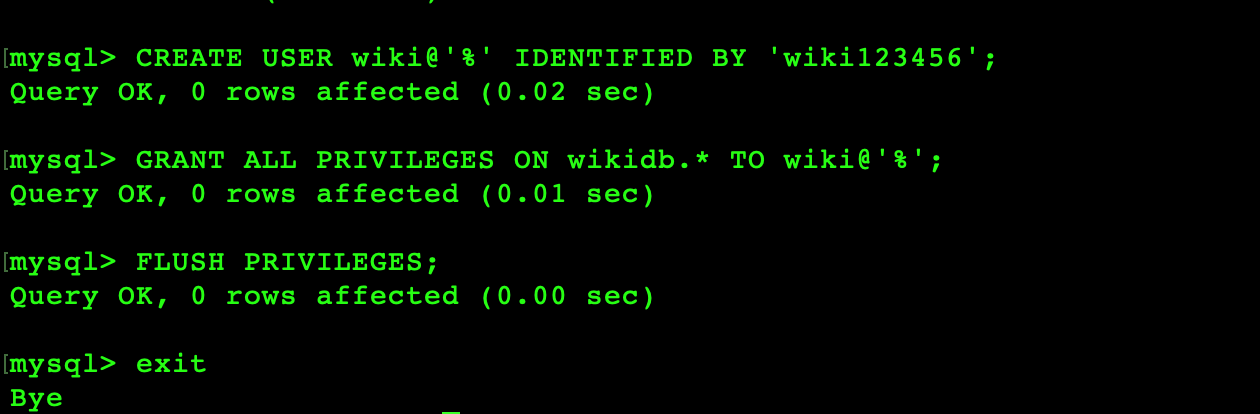

* A new user wiki in the wikidb was created so that application can go ahead and connect to the database, it will do connectivity from the application to the database.

* Unzip the app files, it is configuration files from the application that we want to point to MySQL DB running on AWS.

* Launch the application to validate the migration: Bring up the application to see if the application will be connected to the RDS.

<EC2_PUBLIC_IP>:8080

* A new article was created to see if the application is able to not only read from MySQL database but also write on it.

Cutover: It is the next step of the On-Live process. So, in a production environment, we can schedule the downtime to bring the actual data from the on-premises environment, import the data, and make sure that the on-premises database and application are down. Once it is done we can switch from the on-premises environment to the AWS completely.

4. Post Go-Live:

Last step of migration. We should make sure there is no problem after go-live. Stability, ongoing support; access, performance, integration. Ongoing support can continue for 2 weeks, more or less depending on the complexity of the application.

| cansu_tekin_b017634d64dfd |

1,863,470 | Transitioning away from Nursing | Transitioning out of nursing after spending that last decade in the field. I really hope to be able... | 0 | 2024-05-24T02:09:04 | https://dev.to/malukanoa/transitioning-away-from-nursing-1i | nursetotech, new, careerdevelopment, webdev | Transitioning out of nursing after spending that last decade in the field. I really hope to be able to land work that is remote, challanging skill wise and leaves me time to travel. Nursing while it has been rewarding has become a challange. I want to meet new people and discover other sectors of the workforce. | malukanoa |

1,863,466 | Automated user migration and management of AWS Identity and Access Management (IAM) resources | Automated user migration and management of AWS Identity and Access Management (IAM)... | 0 | 2024-05-24T02:07:03 | https://dev.to/cansu_tekin_b017634d64dfd/automated-user-migration-and-management-of-aws-identity-and-access-management-iam-resources-2j7c | cloudcomputing, aws, multiplatform, iam |

## *Automated user migration and management of AWS Identity and Access Management (IAM) resources*

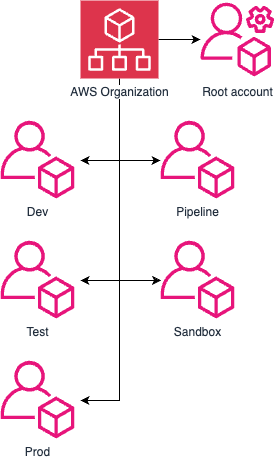

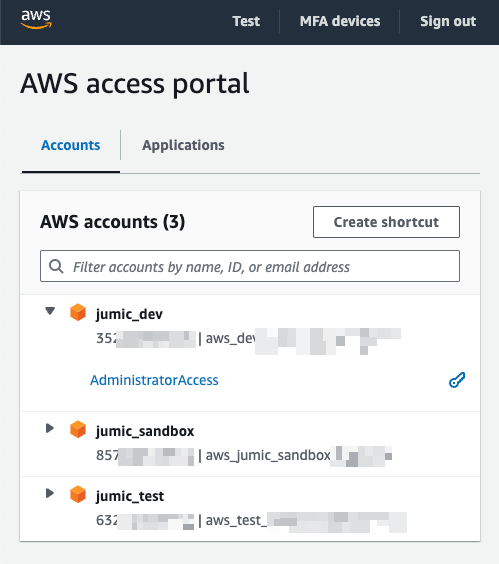

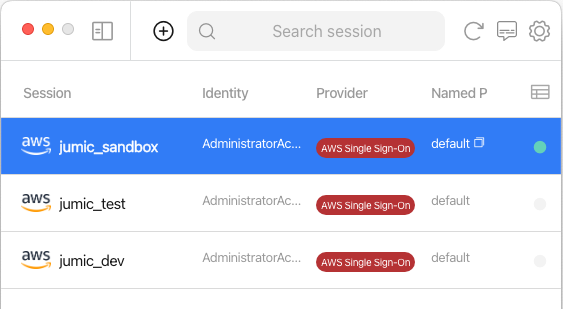

In this project based on a real-world scenario, I acted as Cloud Specialist with the mission to migrate users in an automated way and manage AWS IAM (Identity and Access Management) resources.

There were 100 users that needed to be migrated and have MFA (Multi-factor authentication) enabled on their accounts, as this is a security best practice.

Using GitBash with AWS CLI and Shell Script, I avoided repetitive and manual tasks in the AWS console and automated the processes.

PART-1: On-Premises Migration to AWS

Create 5 user groups, attach policies, and migrate users from the on-premise environment

**CloudAdmin: A**dministratorAccess, IAMUserChangePassword

**NetworkAdmin: **VPCFullAccess, IAMUserChangePassword

**DatabaseAdmin:** AmazonRDSFullAccess, IAMUserChangePassword

**LinuxAdmin: **AmazonEC2FullAccess, IAMUserChangePassword

**Trainees: **ReadOnlyAccess, IAMUserChangePassword

PART-2: Enable MFA (MultiFactor Authentication) on your root and IAM users applying the IAM Password Policy

Create a custom policy, named **EnforceMFAPolicy.json, **for the MFA and add it to each user group, then enable it for root and each IAM user.

| cansu_tekin_b017634d64dfd |

1,863,469 | vaytien banglai | Vay vốn theo bằng lái xe máy, xe ô tô A1, B2, C...Lãi suất thấp, không giữ giấy tờ gốc, duyệt vay... | 0 | 2024-05-24T02:03:56 | https://dev.to/cavpbankvntop/vaytien-banglai-4nce | Vay vốn theo bằng lái xe máy, xe ô tô A1, B2, C...Lãi suất thấp, không giữ giấy tờ gốc, duyệt vay nhanh an toàn. Đăng ký vay ngay tại Webstie VPBankvn.top

Website: https://vpbankvn.top/vay-bang-cccd-va-bang-lai-xe/

Phone: 0708866285

Address: 144 Cộng Hòa, Q.Tân Bình, TP. Hồ Chí Minh

https://tupalo.com/en/users/6759050

https://glose.com/u/stvpbankvntop

https://www.silverstripe.org/ForumMemberProfile/show/151835

https://dreevoo.com/profile.php?pid=641155

https://potofu.me/fcvpbankvntop

https://edenprairie.bubblelife.com/users/gbvpbankvntop

https://muckrack.com/vaytien-banglai

https://leetcode.com/u/spvpbankvntop/

https://www.diggerslist.com/rdvpbankvntop/about

http://buildolution.com/UserProfile/tabid/131/userId/405251/Default.aspx

https://hypothes.is/users/xpvpbankvntop

https://8tracks.com/vuvpbankvntop

https://www.ohay.tv/profile/cmvpbankvntop

https://www.reverbnation.com/vpbankvntop33

https://www.funddreamer.com/users/vaytien-banglai

https://www.bark.com/en/gb/company/vpbankvntop/MOdD0/

https://piczel.tv/watch/fkvpbankvntop

http://idea.informer.com/users/mkvpbankvntop/?what=personal

https://os.mbed.com/users/gdvpbankvntop/

https://rotorbuilds.com/profile/41910/

https://research.openhumans.org/member/szvpbankvntop

https://wmart.kz/forum/user/162895/

https://participez.nouvelle-aquitaine.fr/profiles/vpbankvntop_5/activity?locale=en

https://vocal.media/authors/vaytien-banglai

https://community.tableau.com/s/profile/0058b00000IZXoq

https://www.mountainproject.com/user/201826004/vaytien-banglai

https://www.dermandar.com/user/xnvpbankvntop/

https://www.creativelive.com/student/vaytien-banglai?via=accounts-freeform_2

https://zzb.bz/ApMv0

https://www.copytechnet.com/member/355334-cjvpbankvntop/about

https://www.storeboard.com/vaytienbanglai

https://slides.com/rxvpbankvntop

https://stocktwits.com/wivpbankvntop

https://www.designspiration.com/thuhoai10049826/

https://devpost.com/thuh-o-a-i-100-498

https://data.world/vvvpbankvntop

https://hackerone.com/vpbankvntop?type=user

https://hackmd.io/@zqvpbankvntop

https://www.chordie.com/forum/profile.php?id=1962106

https://www.cineplayers.com/dsvpbankvntop

https://makersplace.com/thuhoai10049821/about

https://fileforum.com/profile/zovpbankvntop

https://qiita.com/tvvpbankvntop

https://socialtrain.stage.lithium.com/t5/user/viewprofilepage/user-id/64386

https://gitee.com/thuhoai

https://active.popsugar.com/@qbvpbankvntop/profile

https://able2know.org/account/profile/

https://teletype.in/@vpbankvntop

https://www.speedrun.com/users/wmvpbankvntop

https://www.kickstarter.com/profile/cpvpbankvntop/about

https://www.credly.com/users/vaytien-banglai/badges

https://gifyu.com/yvvpbankvntop

https://www.metooo.io/u/664fed928eb6540b99161bc1

https://inkbunny.net/rvvpbankvntop

https://chart-studio.plotly.com/~tnvpbankvntop

https://www.5giay.vn/members/wjvpbankvntop.101974073/#info

https://www.facer.io/u/spvpbankvntop

https://www.noteflight.com/profile/0d26fd39dde2f66ef4421b209f435e3d1f7bd175

https://vnseosem.com/members/yivpbankvntop.30866/#info

https://wakelet.com/@vaytienbanglai40639

https://vimeo.com/user220125603

https://pinshape.com/users/4401533-dqvpbankvntop#designs-tab-open

https://diendannhansu.com/members/xkvpbankvntop.49071/#about

https://doodleordie.com/profile/rpvpbankvntop

https://www.divephotoguide.com/user/edvpbankvntop/

https://chodilinh.com/members/qovpbankvntop.78091/#about

https://hashnode.com/@ksvpbankvntop

https://www.proarti.fr/account/wovpbankvntop

https://www.cakeresume.com/me/vpbankvntop-74f1e0

https://englishbaby.com/

https://lab.quickbox.io/jyvpbankvntop

https://visual.ly/users/thuhoai10049821

https://circleten.org/a/291402

https://www.wpgmaps.com/forums/users/owvpbankvntop/

https://www.fimfiction.net/user/744817/okvpbankvntop

https://forum.dmec.vn/index.php?members/jdvpbankvntop.60636/

https://pastelink.net/sz0og2c5

https://www.dnnsoftware.com/activity-feed/my-profile/userid/3198382

https://play.eslgaming.com/player/20122851/

https://www.artscow.com/user/3196021

https://disqus.com/by/disqus_vTnftEhflC/about/

https://topsitenet.com/profile/vrvpbankvntop/1193080/

https://www.intensedebate.com/people/hdvpbankvntop

https://pxhere.com/en/photographer-me/4265538

https://www.instapaper.com/p/ievpbankvntop

https://collegeprojectboard.com/author/imvpbankvntop/

https://www.pearltrees.com/divpbankvntop

https://portfolium.com/mlvpbankvntop

https://penzu.com/p/0e80f197fab85cc4

https://peatix.com/user/22340032/view

https://www.scoop.it/u/vaytienbanglai

https://bentleysystems.service-now.com/community?id=community_user_profile&user=935815aa1b168e90dc6db99f034bcb41

https://app.talkshoe.com/user/syvpbankvntop

https://www.ekademia.pl/@vaytienbanglai

https://telegra.ph/vpbankvntop-05-24

https://www.anibookmark.com/user/arvpbankvntop.html

www.artistecard.com/ilvpbankvntop#!/contact

https://www.ethiovisit.com/myplace/wgvpbankvntop

https://pbase.com/vpbankvntop/profile

https://controlc.com/7b491582

https://www.quia.com/profiles/vaytienba

https://rentry.co/szh8b5ww

https://linkmix.co/23370846

https://jsfiddle.net/user/krvpbankvntop/

https://expathealthseoul.com/profile/vaytien-banglai/

https://www.equinenow.com/farm/vpbankvntop-1127640.htm

https://app.roll20.net/users/13366800/vaytien-b

https://allmylinks.com/lhvpbankvntop

https://www.titantalk.com/members/vpbankvntop.375307/#about

http://forum.yealink.com/forum/member.php?action=profile&uid=341364

https://www.discogs.com/user/apvpbankvntop

https://www.exchangle.com/vtvpbankvntop

https://naijamp3s.com/index.php?a=profile&u=cuvpbankvntop

https://willysforsale.com/profile/jlvpbankvntop

| cavpbankvntop | |

1,863,462 | Introducing react-tools: A Toolbox for Streamlining React Development | Introducing @galiprandi/react-tools: A Toolbox for Streamlining React Development Are... | 0 | 2024-05-24T01:56:18 | https://dev.to/galiprandi/introducing-react-tools-a-toolbox-for-streamlining-react-development-2f23 |

### Introducing `@galiprandi/react-tools`: A Toolbox for Streamlining React Development

Are you looking to simplify your React development process? Look no further than `@galiprandi/react-tools`. This comprehensive package offers a range of intuitive utilities designed to enhance your React applications. Let's explore some of its key components and hooks:

### Playground

Before diving into the details, why not take a test drive? Visit the [@galiprandi/react-tools Playground](https://stackblitz.com/edit/vitejs-vite-7c9m54?file=src%2FApp.tsx) to experiment with the components firsthand.

### Installation

Getting started is a breeze. Simply install the package using your preferred package manager:

```bash

npm i @galiprandi/react-tools

```

```bash

pnpm i @galiprandi/react-tools

```

```bash

yarn add @galiprandi/react-tools

```

### Components

#### `<Form />`

Simplify form creation with the `<Form />` component. This component wraps the `form` HTML tag, offering a straightforward approach to building forms in your React application. Additional props like `onSubmitValues` and `filterEmptyValues` provide enhanced functionality.

#### `<Input />`

Enhance user input experiences with the `<Input />` component. This reusable input wrapper adds consistency to your forms and accepts various props for customization, including `label`, `onChangeValue`, and `debounceDelay`.

#### `<DateTime />`

Need date and time input functionality? Look no further than the `<DateTime />` component. This wrapper around the native `input` element with `type="datetime-local"` offers convenient date selection with additional props for customization.

#### `<Dialog />`

Create accessible dialogs and modals effortlessly with the `<Dialog />` component. This wrapper around the `dialog` HTML tag simplifies dialog creation, with options for defining behavior, callbacks, and content.

#### `<Observer />`

Track element visibility with the `<Observer />` component. Whether you're implementing lazy loading images or infinite scrolling, this component offers a straightforward solution for monitoring viewport interactions.

### Hooks

#### `useDebounce()`

Streamline asynchronous operations with the `useDebounce()` hook. This simple yet powerful hook accepts a value and delay, returning a debounced value to optimize performance.

### Contribution

Your contributions are valuable! Whether it's bug fixes, feature enhancements, or documentation improvements, contributions are welcome. Simply fork the repository, make your changes, and open a pull request.

### License

`@galiprandi/react-tools` is licensed under the MIT License, providing flexibility for both personal and commercial use.

Ready to simplify your React development workflow? Install [@galiprandi/react-tools](https://www.npmjs.com/package/@galiprandi/react-tools) today and experience the difference firsthand. Happy coding! | galiprandi | |

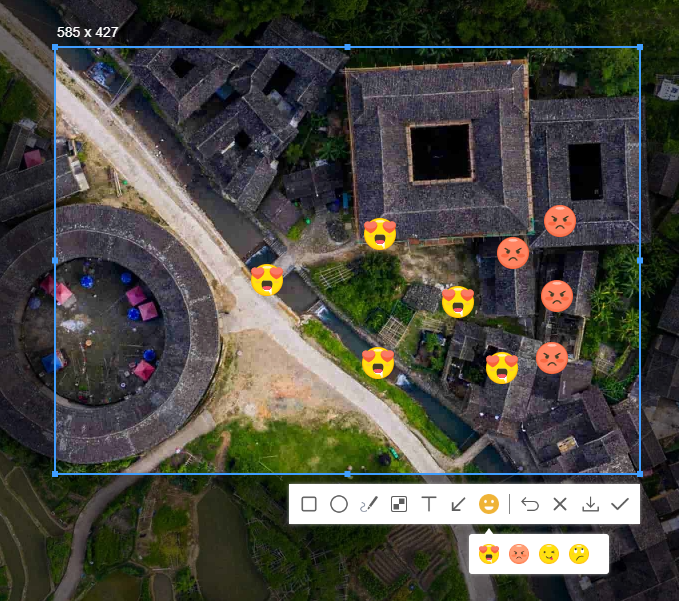

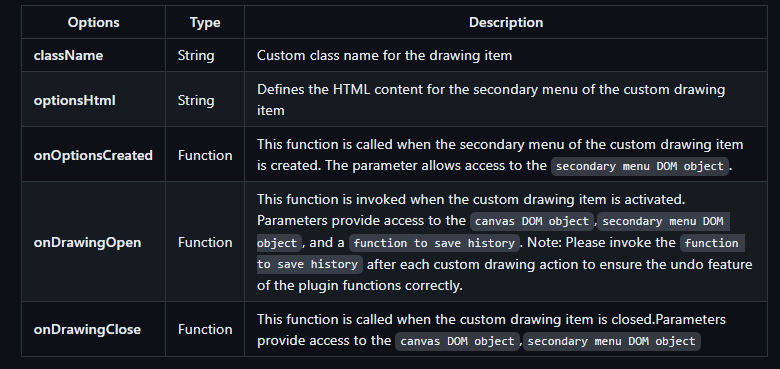

1,863,461 | region-screenshot-js helps you quickly build the selection screenshot function | live... | 0 | 2024-05-24T01:52:16 | https://dev.to/brilliant/region-screenshot-js-helps-you-quickly-build-the-selection-screenshot-function-5mg | live demo:https://github.com/weijun-lab/region-screenshot-js

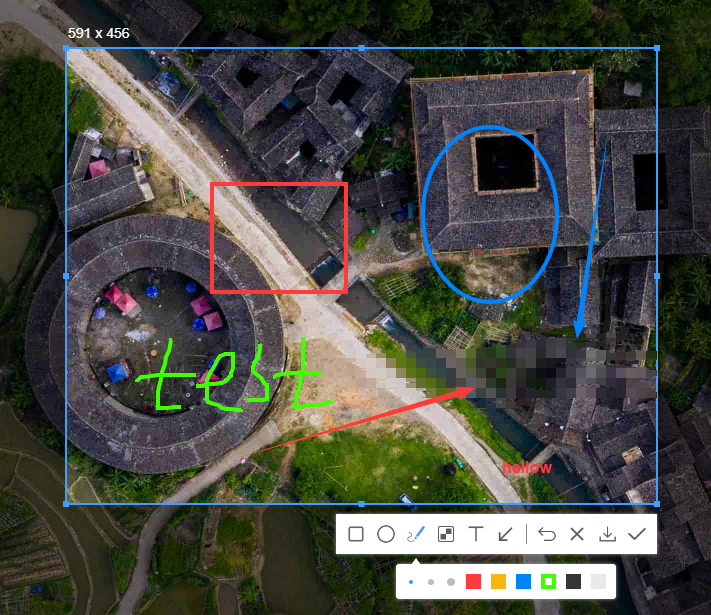

github:https://weijun-lab.github.io/region-screenshot-js

This article describes how to use the plug-in region-screenshot-js to succinctly and efficiently achieve the selection screenshot function on the web side, and draw specific patterns and mosaics on the screenshot. If the plug-in comes with pattern drawing can not meet your needs, you can customize drawing through the customDrawing configuration item of the plug-in. The following is only a basic indication, more functions and usage refer to the documentation. This is a plug-in I developed in my spare time, nearly two thousand lines of code, writing a little two months. If you have any suggestions or comments about this plugin, you can put them in the Issues. If it helps you, please click on star.

Code example:

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Document</title>

<style>

body,

html {

height: 100%;

width: 100%;

}

* {

margin: 0;

padding: 0;

}

body {

background-image: url(./assets/bg.png);

background-size: cover;

background-position: center;

}

</style>

</head>

<body>

</body>

<script src="./dist/region-screenshot.umd.js"></script>

<script>

let screenshot = new RegionScreenshot({

regionColor:"#00ff28"

});

let screenshot = new RegionScreenshot();

screenshot.on("successCreated",(dataUrl)=>{

console.log("Plugin initialized successfully.");

});

screenshot.on("screenshotGenerated",(dataUrl)=>{

console.log(dataUrl);

});

</script>

</html>

```

If the current drawing tool does not meet your needs, you can customize a drawing tool through the customDrawing configuration item

Code example:

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Document</title>

<style>

body,

html {

height: 100%;

width: 100%;

}

* {

margin: 0;

padding: 0;

}

body {

background-image: url(./assets/bg.png);

background-size: cover;

background-position: center;

}

.region-screenshot_custom_tools.emoji .region-screenshot_tools_btn {

background-image: url(./assets/emoji.png);

}

.region-screenshot_custom_tools.emoji.region-screenshot_active .region-screenshot_tools_btn {

background-image: url(./assets/emoji_active.png);

}

.region-screenshot_custom_tools.emoji .region-screenshot_tools_options img {

width: 20px;

margin-right: 10px;

cursor: pointer;

}

.region-screenshot_custom_tools.emoji .region-screenshot_tools_options img.active {

filter: brightness(1.2)

}

</style>

</head>

<body>

</body>

<script src="./dist/region-screenshot.umd.js"></script>

<script src="https://unpkg.com/jquery@3.7.1/dist/jquery.js"></script>

<script>

let screenshot = new RegionScreenshot({

customDrawing: [

{

className: "emoji",

optionsHtml: `

<img class="active" src="assets/emoji-1.png"/>

<img src="assets/emoji-2.png"/>

<img src="assets/emoji-3.png"/>

<img src="assets/emoji-4.png"/>

`,

onOptionsCreated(optionsEl) {

$(optionsEl)

.find("img")

.click(function () {

$(this).addClass("active");

$(this).siblings().removeClass("active");

});

},

onDrawingOpen(canvasEl, optionsEl, saveCallback) {

let ctx = canvasEl.getContext("2d");

canvasEl.style.cursor = "crosshair";

canvasEl.onclick = function (e) {

let img = $(optionsEl).find(".active")[0];

ctx.drawImage(

img,

e.offsetX - img.naturalWidth / 2,

e.offsetY - img.naturalWidth / 2

);

saveCallback();

};

},

onDrawingClose(canvasEl,optionsEl) {

canvasEl.onclick = null;

canvasEl.style.cursor = "default";

},

},

],

});

</script>

</html>

```

emoji.png

emoji_active.png

emoji-1.png

emoji-2.png

emoji-3.png

emoji-4.png

| brilliant | |

1,863,460 | Axial Fans: Understanding Airflow Dynamics and Applications | Axial Fans: Understanding Airflow Dynamics and Applications Have actually you ever before really... | 0 | 2024-05-24T01:39:22 | https://dev.to/mobika/axial-fans-understanding-airflow-dynamics-and-applications-2akm | axial, fans | Axial Fans: Understanding Airflow Dynamics and Applications

Have actually you ever before really experienced that the space is actually as well stale and damp? Perform you wish to produce a comfy atmosphere in your house, workplace, or even work environment? If your response is actually indeed, after that you have to learn about axial fans.

An axial fan is actually a gadget that utilizes turning cutters towards produce airflow and distribute sky in a specific instructions. It is actually an important device for air flow, cooling down, and home heating bodies. We'll check out the benefits, development, security, utilize, ways to utilize, solution, high top premium, and request of axial fans.

Benefits of Axial Fans

Axial fans have actually a great deal of benefits over various other kinds of fans. Very initial, they are actually extremely effective and can easily relocate big quantities of sky along with reduced energy usage. This implies that they can easily conserve power and decrease sets you back. 2nd, axial fans are actually peaceful and create much less sound compared with various other fans. This is actually perfect for locations where sound is actually a problem, like bed rooms, seminar spaces, and collections. 3rd, axial fans are actually light-weight and small, creating all of them the simple towards set up and utilize in a selection of setups.

Development in Axial Fans

Recently, axial fans have actually gone through considerable development. For instance, brand-brand new products, like compounds, have actually been actually utilized to create fans lighter and more powerful. Likewise, brand-brand new styles, like combined stream fans, have actually been actually designed towards integrate the benefits of axial and centrifugal fans. Additionally, brand-brand new innovations, like adjustable rate steers, have actually been actually utilized towards enhance fan effectiveness and command.

Security of Axial Fans

Security is actually an essential factor to consider when utilizing Short tube axial fans. One security include is actually the fan protector, which avoids fingers or even various other items coming from obtaining captured in the cutters. Another security include is actually the thermal overload security, which turns off the fan in the event of getting too hot. It is essential towards comply with the manufacturer's directions and security standards when utilizing axial fans.

Use Axial Fans

Axial fans have actually a wide variety of utilizes, consisting of air flow, cooling down, home heating, drying out, and dirt compilation. They are actually typically utilized in houses, workplaces, manufacturing facilities, warehouses, and agricultural setups. Axial fans could be utilized as standalone systems or even incorporated right in to bigger bodies. They can easily likewise be actually utilized in mix along with various other air flow and cooling down bodies, like a/c and evaporative cooling down.

Ways to Utilize Axial Fans

Utilizing Stand axial fans is actually simple, however certainly there certainly are actually a couple of points towards bear in mind. Very initial, ensure towards choose the appropriate dimension fan for the area to become aerated. A fan that's as well little will certainly not work, while a fan that's as well big will certainly squander power. 2nd, setting the fan towards attract sky coming from one location and tire it towards another. This produces a stream of sky and guarantees appropriate air flow. 3rd, cleanse and preserve the fan routinely to guarantee appropriate procedure and prolong its life expectancy.

Solution and High premium of Axial Fans

When purchasing an axial fan, it is essential to select a reliable producer and provider. This guarantees that the fan is actually of top quality and satisfies security and efficiency requirements. It is likewise essential to select a provider that provides after-sales solutions and sustains. This consists of setup, upkeep, and repair work. A great provider will help you enhance your airflow and cool down bodies and accomplish optimum effectiveness and efficiency.

Request of Axial Fans

Axial fans have a wide variety of applications in various markets and setups. For instance, in farming, axial fans are utilized for all-organic air flow and cooling down of animal structures. In production, axial fans are utilized for tire and airflow of fumes and dirt. In buildings, axial fans are utilized for drying out and cooling down structures. In transport, axial fans are utilized for cooling down motors in vehicles, vehicles, and planes. The request of axial fans is restricted just through creativity and imagination.

Source: https://www.hangdafans.com/axial-fans | mobika |

1,851,871 | How to scale a Django application to serve one million users? | Wish your Django app could handle a million hits? This post is a compilation of articles, books, and... | 0 | 2024-05-24T00:34:13 | https://coffeebytes.dev/en/is-your-django-application-slow-maximize-its-performance-with-these-tips/ | python, django, opinion, performance | ---

title: How to scale a Django application to serve one million users?

published: true

date: 2024-05-24 01:59:00 UTC

tags: python,django,opinion,performance

canonical_url: https://coffeebytes.dev/en/is-your-django-application-slow-maximize-its-performance-with-these-tips/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/5lfie46djdyz2k3q3zki.jpg

---

Wish your Django app could handle a million hits? This post is a compilation of articles, books, and videos I’ve read on how to take a Django application to its maximum capabilities, I’ve even implemented some of these recommendations myself.

It's also a good time to remember that if your application is just starting out, you probably [shouldn't obsess about its performance... yet](https://coffeebytes.dev/en/dont-obsess-about-your-web-application-performance/).

## Reduce slow queries in Django

As you know, database access is usually the bottleneck of most applications. \*\*The most important action to take is to reduce the number of queries and the impact of each one of them. You can reduce the impact of your queries by 90%, and I am not exaggerating.

It is quite common to write code that occasions multiple queries to the database, as well as quite expensive searches.

Identify what queries are being made in your application using [django-debug-toolbar](https://github.com/jazzband/django-debug-toolbar) and reduce them, or make them more efficient:

- **select\_related()** to [avoid multiple searches in foreign key or one-to-one relationships](https://coffeebytes.dev/en/differences-between-select_related-and-prefetch_related-in-django/)

- **prefetch\_related()** to prevent excessive searches on many-to-many or many-to-one relationships

- **django\_annotate()** to add information to each object in a query. I have an entry where I explain [the difference between annotate and aggregate](https://coffeebytes.dev/en/django-annotate-and-aggregate-explained/).

- **django\_aggregate()** to process all information from a single query into a single data (summation, averages).

- **Object Q** to join queries by OR or AND directly from the database.

- F-Expressions\*\* to perform operations at the database level instead of in Python code.

_Django debug tool bar showing the SQL queries of a Django request_

Example of use with _select\_related_.

``` python

# review/views.py

from .models import Review

def list_reviews(request):

queryset = Review.objects.filter(product__id=product_id).select_related('user')

# We're preventing a new query everytime we access review.user

# ...

```

## Configure gunicorn correctly

Gunicorn is the most widely used Python WSGI HTTP server for Django applications. But it is not asynchronous, consider combining it with one of its asynchronous counterparts: hypercorn or uvicorn. The latter implements gunicorn workers.

### Configure gunicorn correctly

Make sure you are using the correct gunicorn workers, according to the number of cores in your processor. They recommend setting the workers to (2 x number of cores) + 1. According to the documentation, **with 4-12 workers you can serve from hundreds to thousands of requests per second** , so that should be enough for a medium to large scale website.

## Improve the performance of your serializers

If you use DRF and use its generic classes to create serializers, you may not exactly be getting the best performance. The generic classes for serializers perform data validation, which can be quite time consuming if you are only going to read data.

Even if you remembered to mark your fields as read\_only, DRF serializers are not the fastest, you might want to check out [Serpy](https://serpy.readthedocs.io/en/latest/), [Marshmallow](https://marshmallow.readthedocs.io/en/stable/). The topic is quite broad, but stay with the idea that there is a major area of improvement in Django serializers.

I leave you this article that explains [how some developers managed to reduce the time cost of serialization by 99%.](https://hakibenita.com/django-rest-framework-slow)

## Use pagination in your views

It probably sounds pretty obvious, yet I feel I should mention it: you don’t need to return an entire database table if your user only finds the first few records useful.

Use the _paginator_ object provided by Django, or limit the results of a search to a few.

DRF also has an option to [paginate your results](https://www.django-rest-framework.org/api-guide/pagination/), check it out.

``` python

# review/views.py

from django.views.generic import ListView

from .models import Review

class ReviewList(ListView):

model = Review

paginate_by = 25

context_object_name = 'review_list'

```

## Use indexes in your models

Understand your more complex queries and try to create indexes for them. The index will make your searches in Django faster, but it will also slow down, slightly, the creations and updates of new information, besides taking up a little more space in your database. Try to strike a healthy balance between speed and storage space used.

``` python

from django.db import models

class Review(models.Model):

created = models.DateTimeField(

auto_now_add=True,

db_index=True,

)

```

## Use indexes for your searches

If your application makes heavy use of information searches, consider using an efficient [search engine, such as Solr](https://coffeebytes.dev/en/searches-with-solr-with-django-haystack/), rather than implementing the code yourself.

There are many options available:

- ElasticSearch

- Solr

- Whoosh

- Xapian

## Remove unused middleware

Each middleware implies an extra step in each web request, so removing all those middlewares that you do not use will mean a slight improvement in the response speed of your application.

Here are some common middleware that are not always used: messages, flat pages and localization, no, I don’t mean geographic location, but translating the content according to the local context.

``` python

MIDDLEWARE = [

# ...

'django.contrib.messages.middleware.MessageMiddleware',

'django.contrib.flatpages.middleware.FlatpageFallbackMiddleware',

'django.middleware.locale.LocaleMiddleware'

]

```

## Caching in Django

When the response time of your application becomes a problem, you should start caching all time-consuming and resource-intensive results.

Would you like to dig deeper into the caching system, I have a post about [caching in django using memcached](https://coffeebytes.dev/en/caching-in-django-rest-framework-using-memcached/) that you can check out to dig deeper.

If your page has too many models, and they rarely change, it does not make sense to access the database each time to request them with each new HTTP request. Just put the response of that request in cache and your response time will improve, this way every time the same content is requested, it will not be necessary to make a new request or calculations to the database, but the value will be returned directly from memory.

Among the options available are:

- Memcached

- Redis

- Database cache

- File system cache

``` python

# settings.py

CACHES = {

'default': {

'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',

'LOCATION': '127.0.0.1:11211',

}

}

```

The django cache is configurable at many, many levels, from the entire site to views or even small pieces of information.

``` python

# myapp/views.py

from django.shortcuts import render

from django.views.decorators.cache import cache_page

@cache_page(60*15)

def my_view(request):

return render(request, 'myapp/template.html', {

'time_consuming_data': get_time_consuming_data()

})

```

Note that **memcached cache (memcached, redis) is an ephemeral storage method**, the entire cache will disappear if the system is rebooted or shutdown.

## Uses Celery for asynchronous tasks

Sometimes the bottleneck is the responsibility of third parties. When you send an email or request information from a third party, you have no way of knowing how long your request will take, a slow connection or an oversaturated server can keep you waiting for a response. There is no point in keeping the user waiting tens of seconds for an email to be sent, send them a reply back and transfer the email to a queue to be processed later. [Celery](https://docs.celeryproject.org/en/stable/) is the most popular way to do this.

No idea where to start, I have a couple of posts where I explain [how to run asynchronous tasks with celery and django](https://coffeebytes.dev/en/celery-and-django-to-run-asynchronous-tasks/).

``` python

# myapp/views.py

from celery import shared_task

@shared_task

def send_order_confirmation(order_pk):

email_data = generate_data_for_email(order_pk)

send_customized_mail(**email_data)

```

## Partition the tables in your database

When your tables exceed millions of records, each search will go through the entire database, taking a very long time in the process. How could we solve this? By splitting the tables in parts so that each search is done on one of the parts, for example, one table for data from one year ago (or the period you prefer), another for data from two years ago and so on up to the first data.

The instructions for implementing partitioning depend on the database you are using. If you are using postgres this feature is only available for Postgres versions higher than 10. You can use [django-postgres-extra](https://django-postgres-extra.readthedocs.io/en/master/table_partitioning.html) to implement those extra features not found in the django ORM.

The implementation is too extensive and would require a full entry. There is an excellent article that explains how to implement [Postgresql partitioning in Django.](https://pganalyze.com/blog/postgresql-partitioning-django/)

Consider also looking into database replicas for reading files, depending on the architecture of your application, you can implement multiple replicas for reading and a master for writing. This approach is a whole topic and is beyond the scope of a short post, but now you know what to look for.

## Use a CDN (Content Delivery Network)

Serving static images and files can hinder the important part of your application; generating dynamic content. You can delegate the task of serving static content to a content delivery network (CDN).

In addition to benefiting from the geographic locations of CDNs; a server in the same country (or continent) as your user will result in a faster response.

There are many CDN options available, among the most popular options are AWS, [Azure](https://coffeebytes.dev/en/azure-az-900-certification-exam-my-experience/), Digital Ocean, Cloud Flare, among others.

## Denormalization

Sometimes there are quite costly runtime queries that could be solved by adding redundancy, repeated information. For example, imagine you want to return the number of products that have the phrase “for children” on your home page, running a query that searches for the word and then executes a count is fairly straightforward. But what if you have 10,000 or 100,000 or 1,000,000 products, every time you want to access the count value, your database will go through the entire table and count the data.

Instead of performing a count, you could store that number in the database or in memory and return it directly, to keep it updated you could use a periodic count or increment it with each addition.

Of course this brings the problem that you now have more data to maintain, not coupled together, so \*\*you should only use this option to solve your Django performance problems if you have already exhausted the other options.

``` python

count = my_model.objects.filter(description__icontains="para niños").count()

# ... denormalizing

count = my_count.objects.get(description="para niños") # Each row of the my_count model contains a description and the total results.

total_count = count.total

```

## Review the impact of third-party plugins

Sometimes our website works almost perfectly, but third party plugins, such as facebook analytics tools, google, social media chat integrations plugins affect the performance of our application. Learn how to delay their loading or modify them to reduce their impact, using async, defer or other HTML attributes, in combination with Javascript.

If the above is impossible, evaluate alternatives or consider eliminating them.

## Consider using another interpreter to improve django performance

It’s not all about the database, sometimes the problem is in the Python code itself.

In addition to the normal Python interpreter, the one offered by default on the official Python website, there are other interpreters that are sure to give you better performance.

[Pypy](https://www.pypy.org/) is one of them, it is responsible for optimizing Python code by analyzing the type of objects that are created with each execution. This option is ideal for applications where Django is in charge of returning a result that was mainly processed using Python code.

But not everything is wonderful; third-party interpreters, including pypy, are usually not 100% compatible with all Python code, but they are compatible with most of it, so, just like the previous option. \*\*Using a third-party interpreter should also be one of the last options you consider to solve your Django performance problem.

## Write bottlenecks in a low-level language with Swig

If you’ve tried all of the above and still have a bottlenecked application, you’re probably squeezing too much out of Python and need the speed of another language. But don’t worry, you don’t have to redo your entire application in C or C++. [Swig](http://www.swig.org/) allows you to create modules in C, C++, Java, Go or other lower level languages and import them directly from Python.

Do you want to know how much difference there is between Python and a compiled language like go? in my post [Python vs Go I compare the speed of both languages](https://coffeebytes.dev/en/python-vs-go-go-which-is-the-best-programming-language/)

If you have a bottleneck caused by some costly mathematical computation, which highlights the lack of speed of Python being an interpreted language, you may want to rewrite the bottleneck in some low-level language and then call it using Python. This way you will have the ease of use of Python with the speed of a low-level language.

Keep an eye on language Mojo, it promises to be a super set of Python but much faster

## ORMs and alternative frameworks

Depending on the progress of your application, you may want to migrate to another framework faster than Django. Django’s ORM is not exactly the fastest out there, and, at the time of writing, it is not asynchronous. You might want to consider giving [sqlalchemy](https://www.sqlalchemy.org/), [ponyorm](https://ponyorm.org/) a try.

Or, if your application is not very complex at the database level, you may want to write your own sql queries and combine them with some other framework.

The current trend is to separate frontend and backend, so Django is being used in conjunction with Django Rest Framework to create APIs, so if your plans include the creation of an API, you may want to consider FastAPI, if you don’t know it, take a look at my post where I explain [the basics of FastAPI](https://coffeebytes.dev/en/fastapi-tutorial-the-best-python-framework/).

## Bonus: applications with more than 63 000 models

There is a talk they gave at djangocon2019 where the speaker explains how they managed to deal with an application with 63000 endpoints, each with different permissions.

{% youtube O6-PbTPAFXw %}

## Bonus: Technical blogs

Pinterest and Instagram are two gigantic sites that started out by choosing Django as their backend. You can find information about optimization and very specific problems in their technical blogs.

The instagram blog has a post called [Web Service efficiency at Instagram with Python](https://instagram-engineering.com/web-service-efficiency-at-instagram-with-python-4976d078e366), where they explain some problems encountered when handling 500 million users and how to fix them.

Here are the links to the blogs below:

- [Pinterest engineering](https://medium.com/pinterest-engineering)

- [Ingeniería de Instagram](https://instagram-engineering.com/)

References:

- Definitive Guide to Django: Web Development Done Right by Adrian Holovaty and Jacob Kaplan Moss

- Two scoops of Django 1.8 by Daniel Roy Greenfeld and Audrey Roy Greenfeld

- High performance Django by Peter Baumgartner and Yann Malet | zeedu_dev |

1,863,458 | Testando das trincheiras: Como criar mocks e stubs dinâmico com mockito em java | Criando mocks e stubs dinâmicos com Mockito em Java para simplificar testes quando a construção de objetos reais é impraticável. | 0 | 2024-05-24T01:30:58 | https://dev.to/hugaomarques/testando-das-trincheiras-como-criar-mocks-e-stubs-dinamico-com-mockito-em-java-3bmi | java, mockito, junit | ---

title: Testando das trincheiras: Como criar mocks e stubs dinâmico com mockito em java

published: true

description: Criando mocks e stubs dinâmicos com Mockito em Java para simplificar testes quando a construção de objetos reais é impraticável.

tags: #java #mockito #junit

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-24 01:00 +0000

---

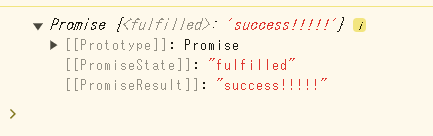

Esse vai ser curtinho. Hoje eu estava tentando testar uma classe que segue o seguinte comportamento:

```java

Book book = bookManager.getBook(id);

book.getId();

```

Por vários motivos que não vêm ao caso agora, imagine que você não consegue construir o objeto `BookManager` e também não consegue criar um `FakeBook` para injetar o ID conforme você deseja.

Pois bem, eu lembrei que era possível criar um mock dinâmico usando `Answer` do Mockito.

## Solução: Um mock dinâmico

A solução fica assim:

```java

@ExtendWith(MockitoExtension.class)

public class MyBookManagerTest {

@Mock

private BookManager bookManager;

@Mock

private Book book;

@Test

public void testMyMethod() {

// Define the behavior of the bookManager mock

when(bookManager.getBook(anyInt())).thenAnswer(new Answer<Book>() {

@Override

public Book answer(InvocationOnMock invocation) throws Throwable {

Object[] args = invocation.getArguments();

int id = (Integer) args[0];

when(book.getId()).thenReturn(id);

return book;

}

});

// Use the mock in the test

Book book = bookManager.getBook(12345);

// Verify the behavior of the mock

assertEquals(12345, book.getId());

}

}

```

Note que, ao definirmos o comportamento do `BookManager`, retornamos uma `Answer`. Nessa `Answer`, capturamos o parâmetro passado (veja como usamos a `invocation`) e o configuramos no mock `Book` para ser retornado quando fizermos a chamada `book.getId()`.

Dessa forma, em vez de definirmos o mock diversas vezes, podemos definir apenas uma vez e fazer várias chamadas:

```java

// Use the mock in the test

Book book = bookManager.getBook(12345);

// Verify the behavior of the mock

assertEquals(12345, book.getId());

// Esse aqui também funciona porque o nosso mock é configurável

Book book = bookManager.getBook(6789);

// Verify the behavior of the mock

assertEquals(6789, book.getId());

```

## Simplificando: Java 8 + Lambdas 🥰

Se usarmos lambdas em vez da `anonymous` classe, o nosso exemplo fica ainda mais simples:

```java

when(bookManager.getBook(anyInt()))

.thenAnswer(invocation -> {

int id = invocation.getArgument(0);

when(book.getId()).thenReturn(id);

return book;

});

```

É isso, essa foi direto das trincheiras. Normalmente, eu gosto de evitar mocks se possível e tento usar os objetos reais. No meu caso específico criar o objeto ia ser um trampo do cão e aí eu decidi usar a ferramenta pra simplicar a minha vida.

Keep coding! 💻 | hugaomarques |

1,863,456 | 12章33 | 以下に、2つの回避方法をそれぞれ別々のコードとして示し、コメントアウトで解説を追加します。 方法1: keys.clear();を削除して例外を回避 import... | 0 | 2024-05-24T01:24:11 | https://dev.to/aaattt/12zhang-33-3l9c | 以下に、2つの回避方法をそれぞれ別々のコードとして示し、コメントアウトで解説を追加します。

### 方法1: `keys.clear();`を削除して例外を回避

```java

import java.util.HashMap;

import java.util.List;

import java.util.Map;

public class Main {

// HashMapを作成してキーと値を格納するための静的マップ

static Map<String, String> map = new HashMap<>();

// 不変リストを使用 (例外が発生する可能性のあるリスト)

static List<String> keys = List.of("A", "B", "C");

// 値の配列を定義

static String[] values = {"1", "2", "3"};

// 静的初期化ブロックでキーと値をマップに追加

static {

for (int i = 0; i < keys.size(); i++) {

map.put(keys.get(i), values[i]);

}

}

public static void main(String[] args) {

// 例外が発生するkeys.clear()の呼び出しを削除

// keys.clear(); // この行を削除することで例外を回避

// values配列を空にする

values = new String[0];

// マップのサイズ、キーリストのサイズ、values配列の長さを出力。3,3,0

System.out.println(map.size() + "," + keys.size() + "," + values.length);

}

}

```

### 解説:

1. **方法1: `keys.clear();`を削除して例外を回避**

- `keys`リストは`List.of("A", "B", "C")`を使用して不変リストとして作成されています。

- 不変リストのため、変更操作(例:`clear()`)はサポートされておらず、`keys.clear();`を呼び出すと`UnsupportedOperationException`が発生します。

- この例外を回避するために、`keys.clear();`の行を削除しました。

map.size() は 3(マップには3つのキーと値のペアが格納されています)

keys.size() は 3(keysリストは不変であり、変更されないので3つの要素を持っています)

values.length は 0(values配列は空に変更されました)

### 方法2: 可変リストを使用して例外を回避

```java

import java.util.ArrayList;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

public class MainWithMutableKeys {

// HashMapを作成してキーと値を格納するための静的マップ

static Map<String, String> map = new HashMap<>();

// 可変リストを使用 (例外を回避するためのリスト)

static List<String> keys = new ArrayList<>(List.of("A", "B", "C"));

// 値の配列を定義

static String[] values = {"1", "2", "3"};

// 静的初期化ブロックでキーと値をマップに追加

static {

for (int i = 0; i < keys.size(); i++) {

map.put(keys.get(i), values[i]);

}

}

public static void main(String[] args) {

// 可変リストの場合、keys.clear()は正常に動作する

keys.clear(); // この行は正常に動作

// values配列を空にする

values = new String[0];

// マップのサイズ、キーリストのサイズ、values配列の長さを出力。3,0,0

System.out.println(map.size() + "," + keys.size() + "," + values.length);

}

}

```

### 解説:

2. **方法2: 可変リストを使用して例外を回避**

- `keys`リストを`new ArrayList<>(List.of("A", "B", "C"))`を使用して可変リストとして作成します。

- これにより、`keys.clear();`が正常に動作し、例外は発生しません。

- `keys.clear();`を呼び出しても問題なくリストをクリアすることができます。

map.size() は 3(マップには3つのキーと値のペアが格納されています)

keys.size() は 0(keys.clear();によってkeysリストが空になります)

values.length は 0(values配列は空に変更されました) | aaattt | |

1,863,454 | Thoughts on High-Frequency Trading Strategies (1) | I have written two articles on high-frequency trading of digital currencies, namely "Digital Currency... | 0 | 2024-05-24T01:19:59 | https://dev.to/fmzquant/thoughts-on-high-frequency-trading-strategies-1-3l7f | trading, strategy, fmzquant, cryptocurrency | I have written two articles on high-frequency trading of digital currencies, namely "[Digital Currency High-Frequency Strategy Detailed Introduction](https://www.fmz.com/bbs-topic/10009)" and "[Earn 80 Times in 5 Days, the Power of High-frequency Strategy](https://www.fmz.com/bbs-topic/9750)". However, these articles can only be considered as sharing experiences and provide a general overview. This time, I plan to write a series of articles to introduce the thought process behind high-frequency trading from scratch. I hope to keep it concise and clear, but due to my limited expertise, my understanding of high-frequency trading may not be very in-depth. This article should be seen as a starting point for discussion, and I welcome corrections and guidance from experts.

## Source of High-Frequency Profits

In my previous articles, I mentioned that high-frequency strategies are particularly suitable for markets with extremely volatile fluctuations. The price changes of a trading instrument within a short period of time consist of overall trends and oscillations. While it is indeed profitable if we can accurately predict trend changes, this is also the most challenging aspect. In this article, I will primarily focus on high-frequency maker strategies and will not delve into trend prediction. In oscillating markets, by placing bid and ask orders strategically, if the frequency of executions is high enough and the profit margin is significant, it can cover potential losses caused by trends. In this way, profitability can be achieved without predicting market movements. Currently, exchanges provide rebates for maker trades, which are also a component of profits. The more competitive the market, the higher the proportion of rebates should be.

## Problems to be Addressed

1. The first problem in implementing a strategy that places both buy and sell orders is determining where to place these orders. The closer the orders are placed to the market depth, the higher the probability of execution. However, in highly volatile market conditions, the price at which an order is instantly executed may be far from the market depth, resulting in insufficient profit. On the other hand, placing orders too far away reduces the probability of execution. This is an optimization problem that needs to be addressed.

2. Position control is crucial to manage risk. A strategy cannot accumulate excessive positions for extended periods. This can be addressed by controlling the distance and quantity of orders placed, as well as setting limits on overall positions.

To achieve the above objectives, modeling and estimation are required for various aspects such as execution probabilities, profit from executions, and market estimation. There are numerous articles and papers available on this topic, using keywords such as "High-Frequency Trading" and "Orderbook." Many recommendations can also be found online, although further elaboration is beyond the scope of this article. Additionally, it is advisable to establish a reliable and fast backtesting system. Although high-frequency strategies can easily be validated through live trading, backtesting provides additional insights and helps reduce the cost of trial and error.

## Required Data

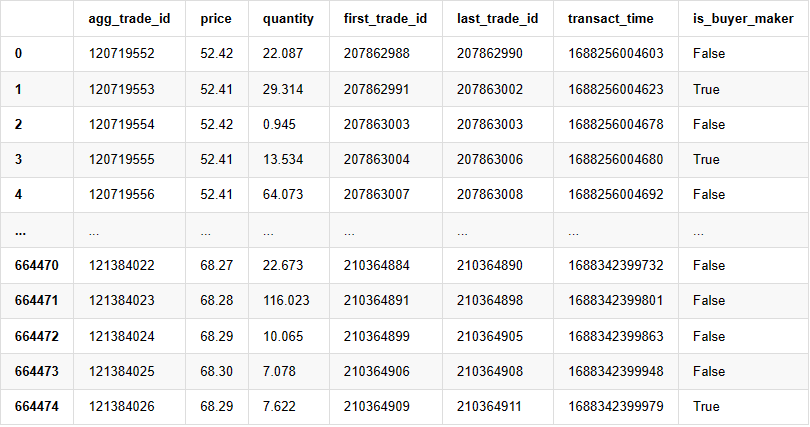

Binance provides [downloadable data](https://www.binance.com/en/landing/data) for individual trades and best bid/ask orders. Depth data can be downloaded through their API by being whitelisted, or it can be collected manually. For backtesting purposes, aggregated trade data is sufficient. In this article, we will use the example of HOOKUSDT-aggTrades-2023-01-27 data.

In [1]:

```

from datetime import date,datetime

import time

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

%matplotlib inline

```

The individual trade data includes the followings:

1. agg_trade_id: The ID of the aggregated trade.

2. price: The price at which the trade was executed.

3. quantity: The quantity of the trade.

4. first_trade_id: In cases where multiple trades are aggregated, this represents the ID of the first trade.

5. last_trade_id: The ID of the last trade in the aggregation.

6. transact_time: The timestamp of the trade execution.

7. is_buyer_maker: Indicates the direction of the trade. "True" represents a buy order executed as a maker, while a sell order is executed as a taker.

It can be seen that there were 660,000 trades executed on that day, indicating a highly active market. The CSV file will be attached in the comments section.

In [4]:

```

trades = pd.read_csv('COMPUSDT-aggTrades-2023-07-02.csv')

trades

```

Out[4]:

, , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , , ,

664475 rows × 7 columns

## Modeling Individual Trade Amount

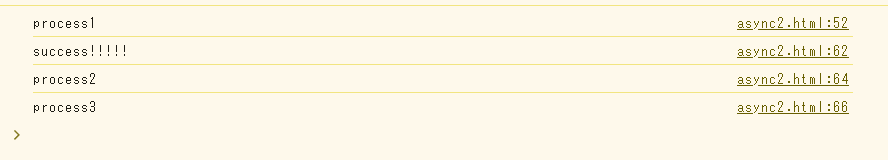

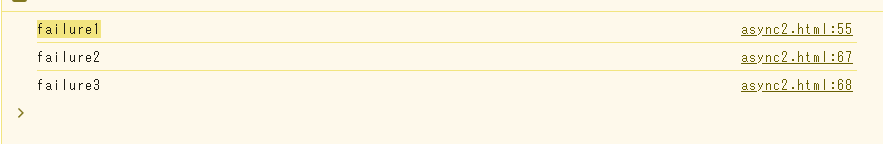

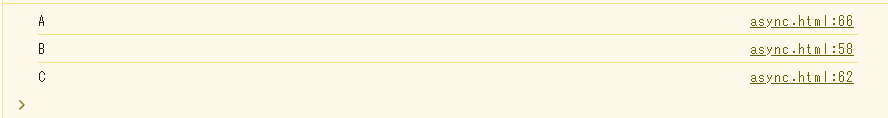

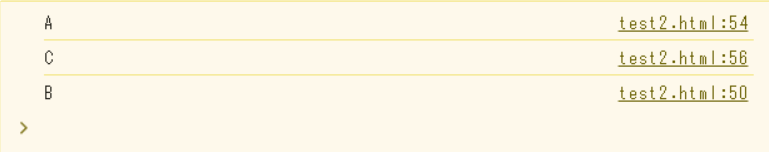

First, the data is processed by dividing the original trades into two groups: buy orders executed as makers and sell orders executed as takers. Additionally, the original aggregated trade data combines trades executed at the same time, at the same price, and in the same direction into a single data point. For example, if there is a single buy order with a volume of 100, it may be split into two trades with volumes of 60 and 40, respectively, if the prices are different. This can affect the estimation of buy order volumes. Therefore, it is necessary to aggregate the data again based on the transact_time. After this second aggregation, the data volume is reduced by 140,000 records.

In [6]:

```

trades['date'] = pd.to_datetime(trades['transact_time'], unit='ms')

trades.index = trades['date']

buy_trades = trades[trades['is_buyer_maker']==False].copy()

sell_trades = trades[trades['is_buyer_maker']==True].copy()

buy_trades = buy_trades.groupby('transact_time').agg({

'agg_trade_id': 'last',

'price': 'last',

'quantity': 'sum',

'first_trade_id': 'first',

'last_trade_id': 'last',

'is_buyer_maker': 'last',

'date': 'last',

'transact_time':'last'

})

sell_trades = sell_trades.groupby('transact_time').agg({

'agg_trade_id': 'last',

'price': 'last',

'quantity': 'sum',

'first_trade_id': 'first',

'last_trade_id': 'last',

'is_buyer_maker': 'last',

'date': 'last',

'transact_time':'last'

})

buy_trades['interval']=buy_trades['transact_time'] - buy_trades['transact_time'].shift()

sell_trades['interval']=sell_trades['transact_time'] - sell_trades['transact_time'].shift()

```

In [10]:

```

print(trades.shape[0] - (buy_trades.shape[0]+sell_trades.shape[0]))

```

Out [10]:

146181

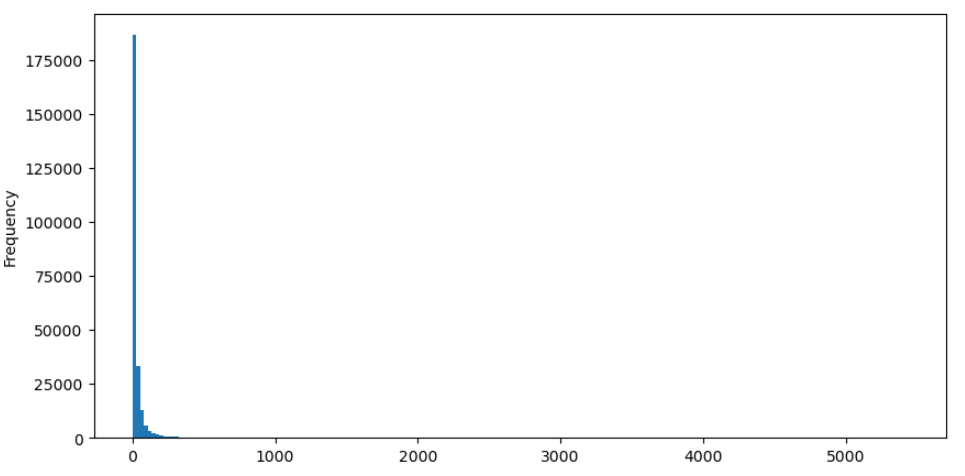

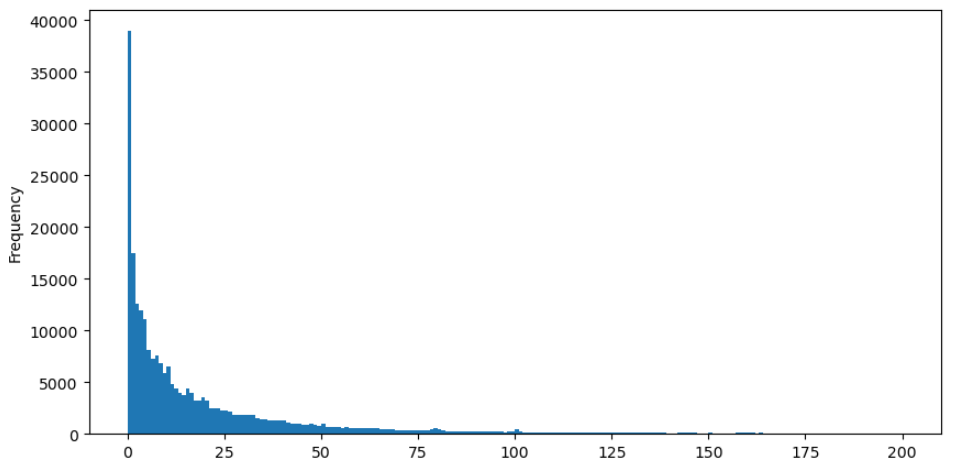

Take buy orders as an example, let's first plot a histogram. It can be observed that there is a significant long-tail effect, with the majority of data concentrated towards the leftmost part of the histogram. However, there are also a few large trades distributed towards the tail end.

In [36]:

```

buy_trades['quantity'].plot.hist(bins=200,figsize=(10, 5));

```

Out [36]:

For easier observation, let's trim the tail and analyze the data. It can be observed that as the trade amount increases, the frequency of occurrence decreases, and the rate of decrease becomes faster.

In [37]:

```

buy_trades['quantity'][buy_trades['quantity']<200].plot.hist(bins=200,figsize=(10, 5));

```

Out [37]:

There have been numerous studies on the distribution of trade amounts. It has been found that trade amounts follow a power-law distribution, also known as a Pareto distribution, which is a common probability distribution in statistical physics and social sciences. In a power-law distribution, the probability of an event's size (or frequency) is proportional to a negative exponent of that event's size. The main characteristic of this distribution is that the frequency of large events (i.e., those far from the average) is higher than expected in many other distributions. This is precisely the characteristic of trade amount distribution. The form of the Pareto distribution is given by P(x) = Cx^(-α). Let's empirically verify this.

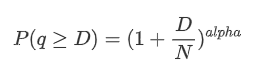

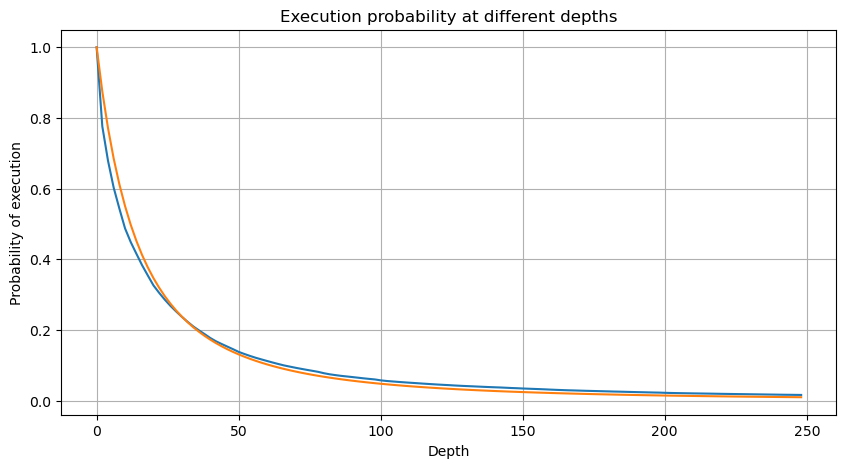

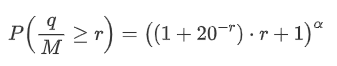

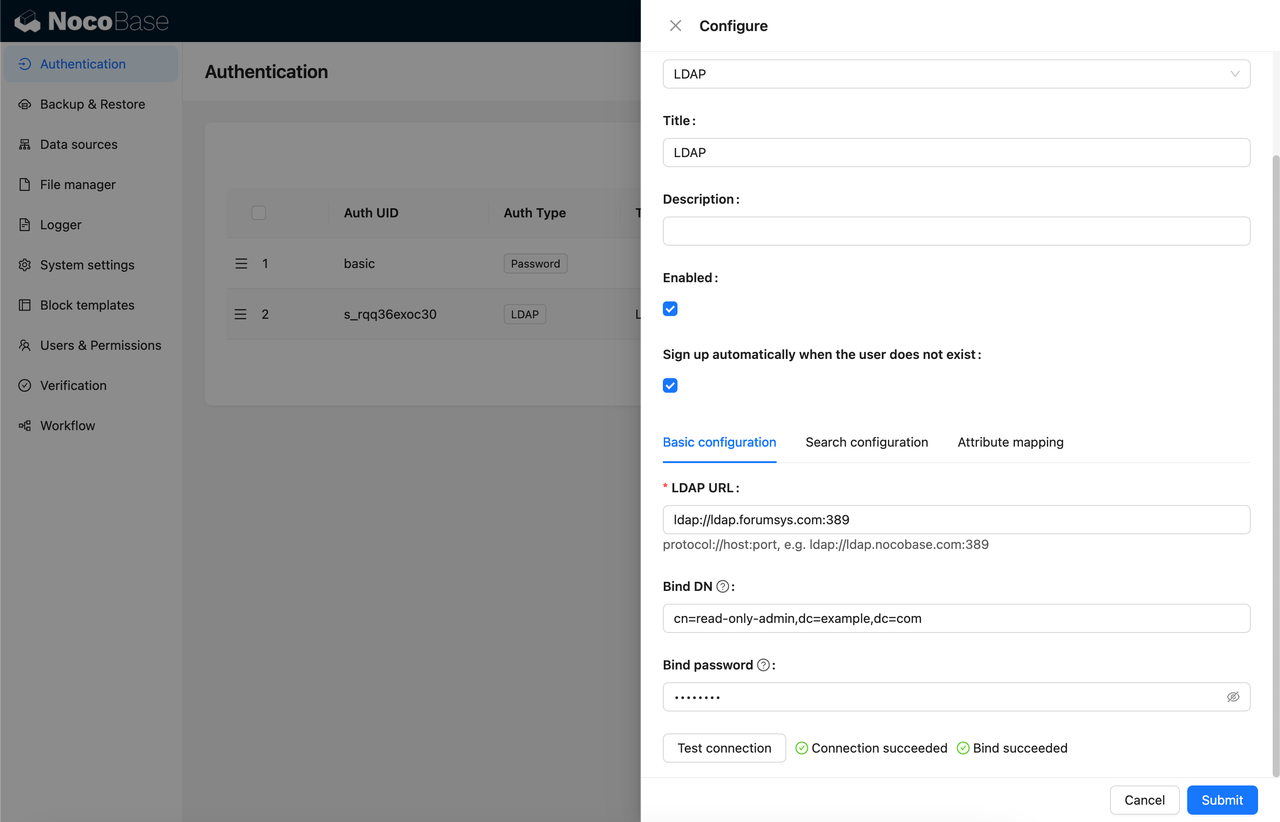

The following graph represents the probability of trade amounts exceeding a certain value. The blue line represents the actual probability, while the orange line represents the simulated probability. Please note that we won't go into the specific parameters at this point. It can be observed that the distribution indeed follows a Pareto distribution. Since the probability of trade amounts being greater than zero is 1, and in order to satisfy normalization, the distribution equation should be as follows:

Here, N is the parameter for normalization. We will choose the average trade amount, M, and set alpha to -2.06. The specific estimation of alpha can be obtained by calculating the P-value when D=N. Specifically, alpha = log(P(d>M))/log(2). The choice of different points may result in slight differences in the value of alpha.

In [55]:

```

depths = range(0, 250, 2)

probabilities = np.array([np.mean(buy_trades['quantity'] > depth) for depth in depths])

alpha = np.log(np.mean(buy_trades['quantity'] > mean_quantity))/np.log(2)

mean_quantity = buy_trades['quantity'].mean()

probabilities_s = np.array([(1+depth/mean_quantity)**alpha for depth in depths])

plt.figure(figsize=(10, 5))

plt.plot(depths, probabilities)

plt.plot(depths, probabilities_s)

plt.xlabel('Depth')

plt.ylabel('Probability of execution')

plt.title('Execution probability at different depths')

plt.grid(True)

```

Out[55]:

In [56]:

```

plt.figure(figsize=(10, 5))

plt.grid(True)

plt.title('Diff')

plt.plot(depths, probabilities_s-probabilities);

```

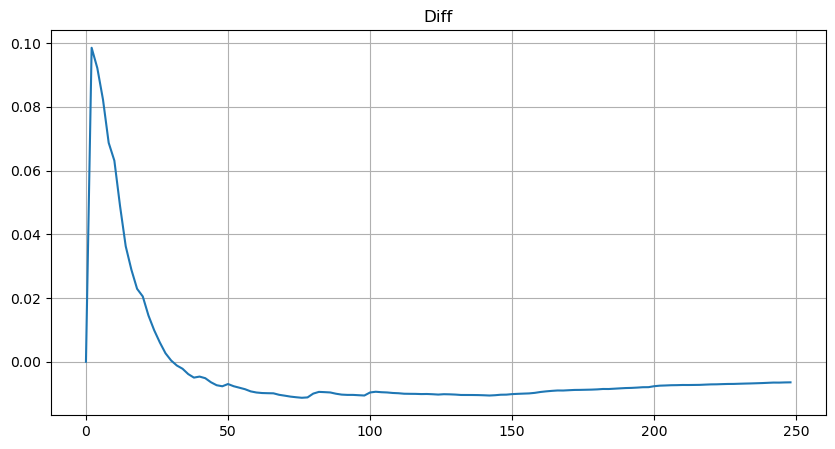

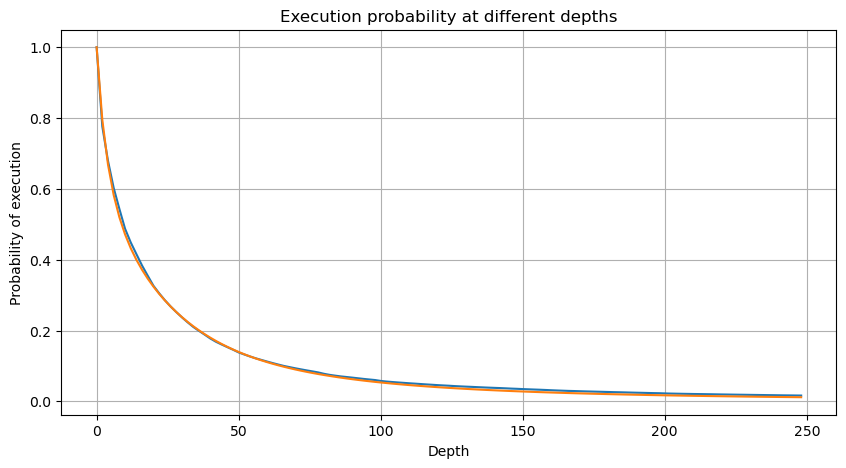

Out[56]:

However, this estimation is only approximate, as shown in the graph where we plot the difference between the simulated and actual values. When the trade amount is small, the deviation is significant, even approaching 10%. Although selecting different points during parameter estimation may improve the accuracy of that specific point's probability, it does not solve the deviation issue as a whole. This discrepancy arises from the difference between the power-law distribution and the actual distribution. To obtain more accurate results, the equation of the power-law distribution needs to be modified. The specific process is not elaborated here, but in summary, after a moment of insight, it is found that the actual equation should be as follows:

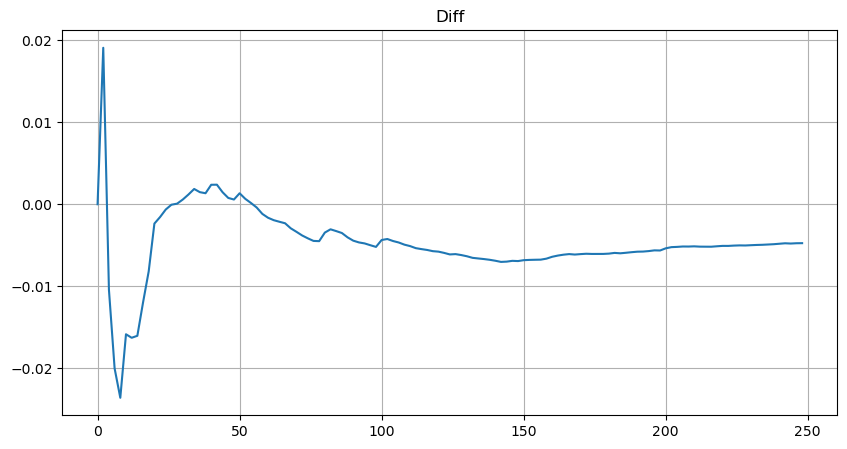

To simplify, let's use r = q/M to represent the normalized trade amount. We can estimate the parameters using the same method as before. The following graph shows that after the modification, the maximum deviation is no more than 2%. In theory, further adjustments can be made, but this level of accuracy is already sufficient.

In [52]:

```

depths = range(0, 250, 2)

probabilities = np.array([np.mean(buy_trades['quantity'] > depth) for depth in depths])

mean = buy_trades['quantity'].mean()

alpha = np.log(np.mean(buy_trades['quantity'] > mean))/np.log(2.05)

probabilities_s = np.array([(((1+20**(-depth/mean))*depth+mean)/mean)**alpha for depth in depths])

plt.figure(figsize=(10, 5))

plt.plot(depths, probabilities)

plt.plot(depths, probabilities_s)

plt.xlabel('Depth')

plt.ylabel('Probability of execution')

plt.title('Execution probability at different depths')

plt.grid(True)

```

Out[52]:

In [53]:

```

plt.figure(figsize=(10, 5))

plt.grid(True)

plt.title('Diff')

plt.plot(depths, probabilities_s-probabilities);

```

Out[53]:

With the estimated equation for the trade amount distribution, it is important to note that the probabilities in the equation are not the actual probabilities, but conditional probabilities. At this point, we can answer the question: What is the probability that the next order will be greater than a certain value? We can also determine the probability of orders at different depths being executed (in an ideal scenario, without considering order additions, cancellations, and queueing at the same depth).

At this point, the length of the text is already quite long, and there are still many questions that need to be answered. The following series of articles will attempt to provide answers.

From: https://blog.mathquant.com/2023/08/04/thoughts-on-high-frequency-trading-strategies-1.html | fmzquant |

1,863,453 | Huaxia Laser: A Pioneer in Fiber Laser Cutting Technology | Huaxia Laser: A Pioneer in Fiber Laser Cutting Technology Are you searching for a quicker, much a... | 0 | 2024-05-24T01:15:34 | https://dev.to/mobika/huaxia-laser-a-pioneer-in-fiber-laser-cutting-technology-213k | fiber, laser | Huaxia Laser: A Pioneer in Fiber Laser Cutting Technology

Are you searching for a quicker, much a lot extra effective method towards reducing products such as steel as well as timber? Huaxia Laser may simply be the service you require. Huaxia Laser is a business that specializes in fiber laser cutting technology. We will talk about the benefits of this particular technology, the development responsible for it, ways to utilize it securely, as well as its lots of requests.

Benefits of Fiber Laser Cutting Technology

Fiber laser cutting technology has several benefits over various other cutting techniques. Firstly, it is extremely quick. Along with fiber laser cutting, you can easily puncture products at a rate that is as much as 5 opportunities quicker compared to conventional cutting techniques. Another benefit of fiber laser cutting technology is its accuracy. Along with a fiber laser cutting machine, you can easily reduce products with amazing precision, by reducing as little as 0.02mm. Another profit of this particular technology is actually that it is extremely flexible. You can easily utilize it to reduce a broad variety of products, consisting of steel, timber, and plastic, as well as a lot extra.

Development responsible for Fiber Laser Cutting Technology

Exactly just what creates fiber laser cutting technology therefore revolutionary? The response deceptions in the method the technology jobs. Unlike conventional cutting techniques, which depend on a bodily device to puncture products, fiber laser cutting devices utilize a high-powered laser to puncture products. This laser produces an extremely extreme beam, along with power that is concentrated on a solitary area. When this beam strikes the product, it quickly heats up as well as vaporizes the product, leaving behind a cleanse, and accurate reduction. This ingenious method of cutting products has transformed production as well as construction markets worldwide.

Security of Fiber Laser Cutting Technology