id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,862,682 | Create a Weather App using ToolJet and OpenWeatherMap API | Introduction In this tutorial, we will create a weather app using ToolJet and... | 0 | 2024-05-23T13:07:01 | https://blog.tooljet.com/create-a-weather-app-using-openweathermap-api-and-tooljet/ | restapi, lowcode, tooljet, javascript | ## Introduction

In this tutorial, we will create a weather app using [ToolJet](https://github.com/ToolJet/ToolJet) and OpenWeatherMap. By the end of this guide, you'll have a functional app that fetches and displays weather data for any city using the OpenWeatherMap API.

### Prerequisites:

Before we start, make sure you have:

- **[ToolJet](https://github.com/ToolJet/ToolJet):** An open-source, low-code platform that lets you build internal tools and applications rapidly. If you don’t have an account, sign up for a free ToolJet cloud account [here](https://app.tooljet.com/).

- **API key from OpenWeatherMap:** Create an account on [OpenWeatherMap](https://openweathermap.org/api) to generate an API key, which we will use in the query that fetches the weather data.

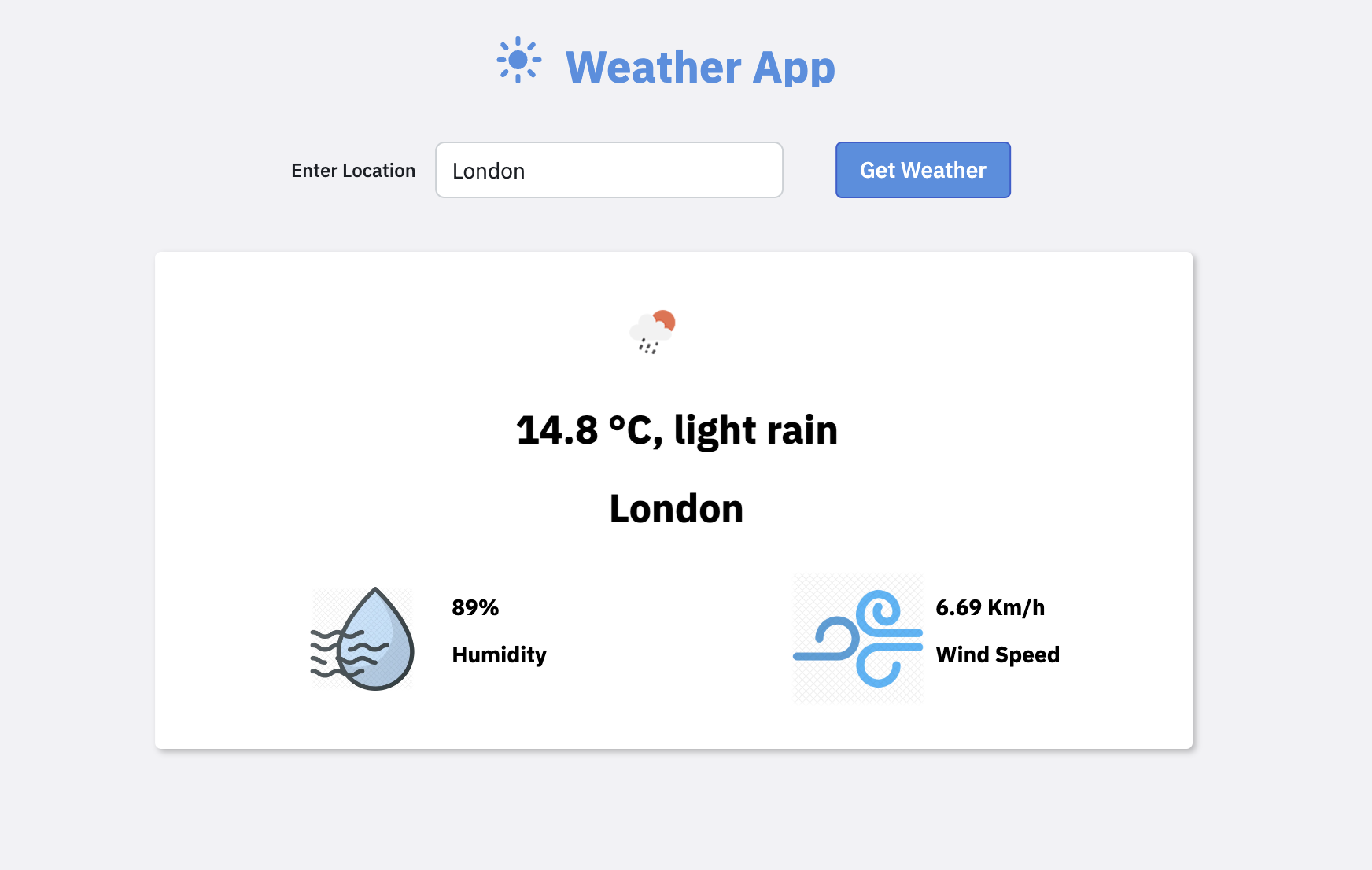

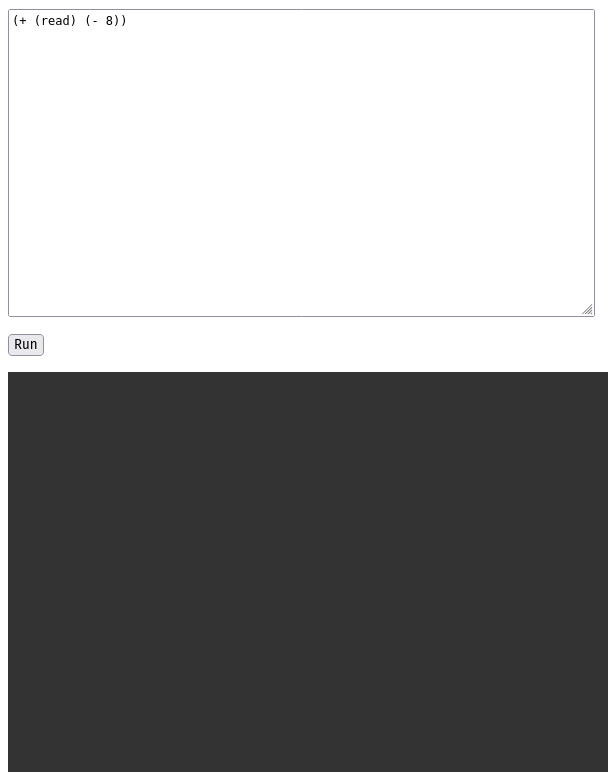

Here’s a quick preview of the app that we will be building by the end of this tutorial:

Before creating the UI, let’s create a new ToolJet App. On your ToolJet dashboard, click on the **Create an app** button and name the app **Weather App**.

Once the app is created, we can start with the UI.

## Creating the UI

Creating a custom UI is straightforward with the help of ToolJet’s [built-in components](https://docs.tooljet.com/docs/tooljet-concepts/what-are-components).

Let’s start by creating the app’s header.

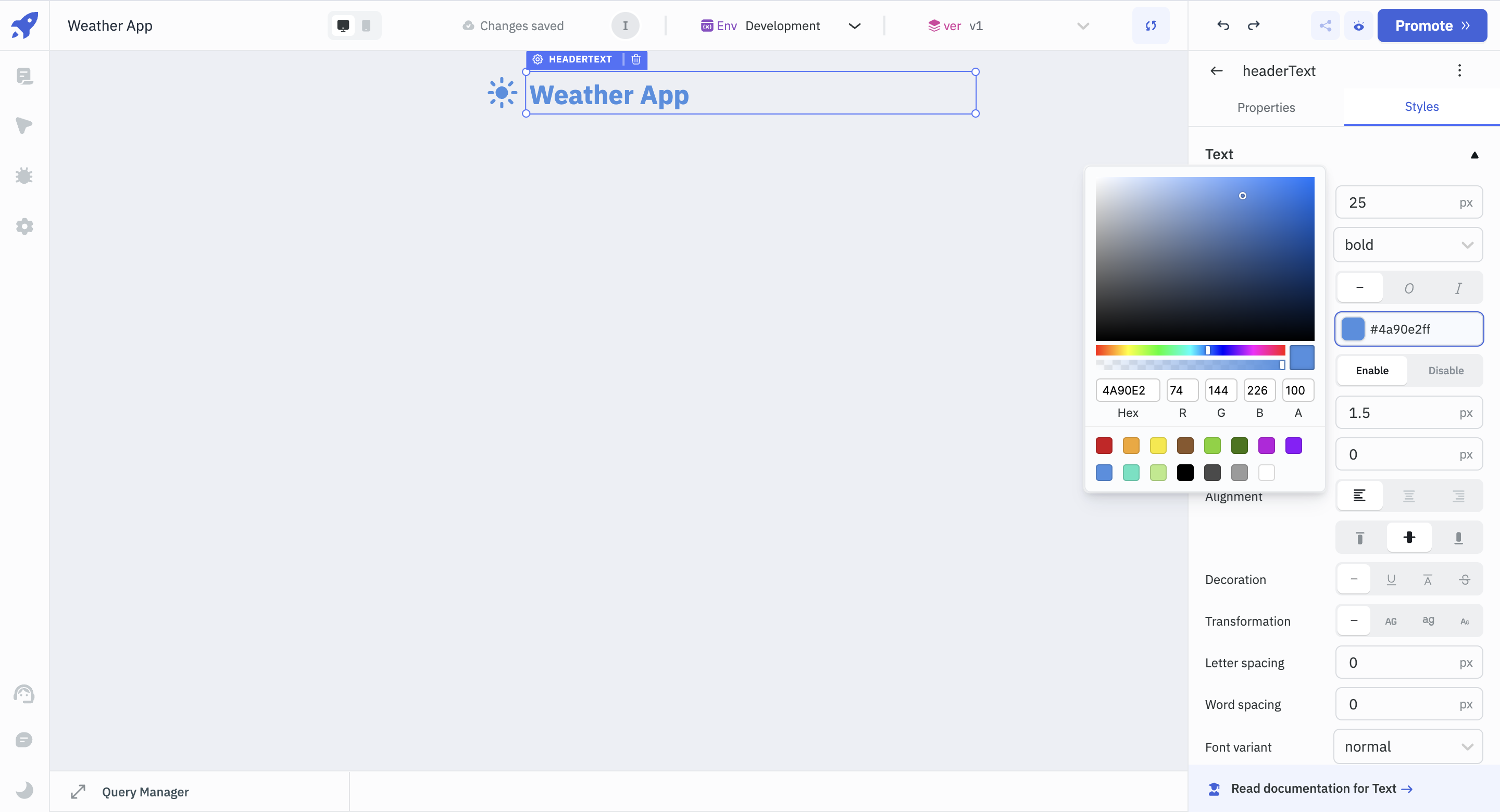

- Drag and drop the **Icon** and a **Text** Component from the [components library](https://docs.tooljet.com/docs/tooljet-concepts/what-are-components) on the right and rename them to _headerIcon_ and _headerText_, respectively.

- To configure the Text component, click on it and see the Properties Panel on the right.

- Set its **Data** property to 'Weather App', and under the **Styles** section, change its colour to light blue (Hex code: #4a90e2ff), font-weight to bold and font size to 25.

_Renaming components is an effective way to refer to components as the app grows._

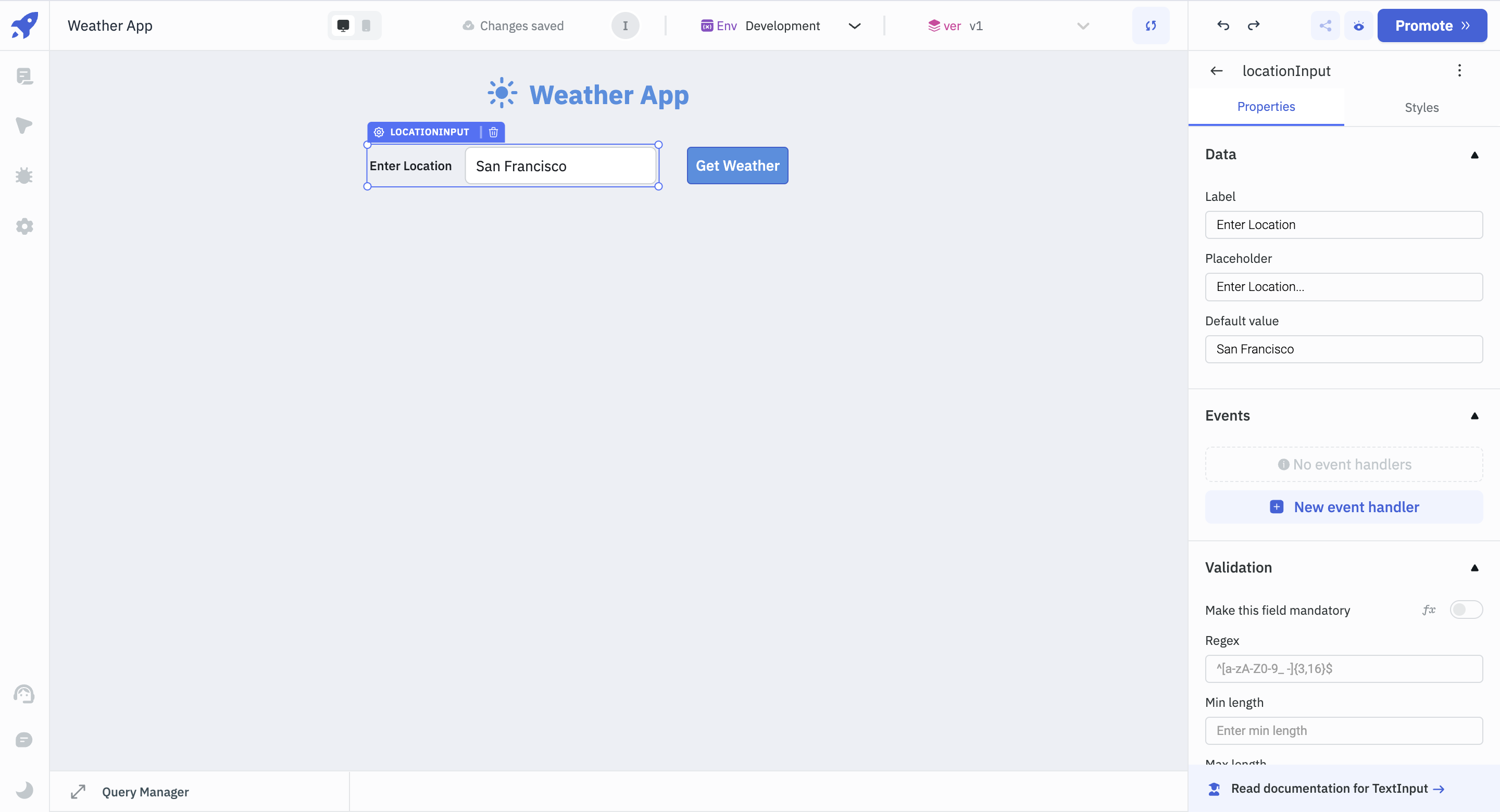

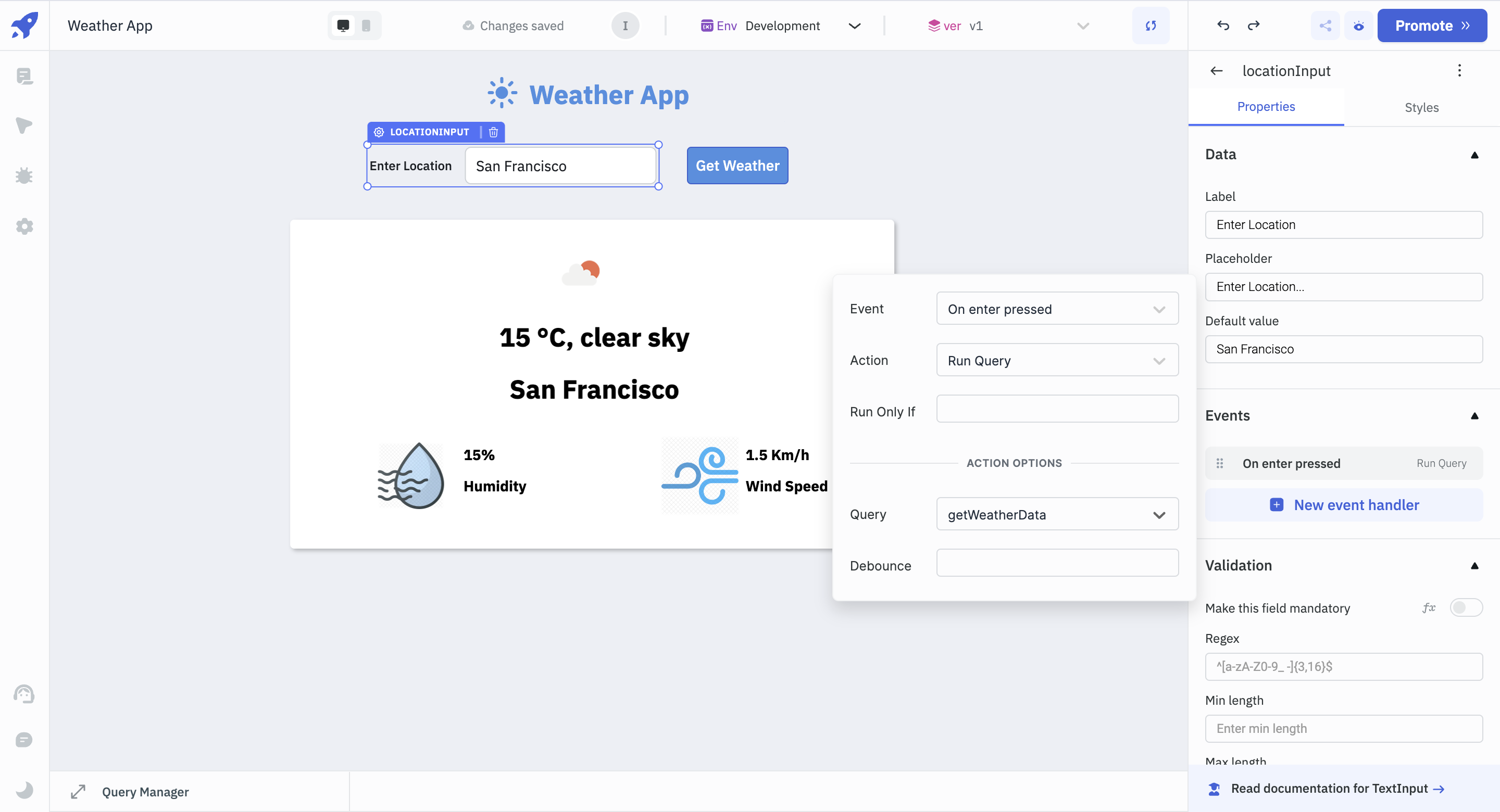

- Once the header is ready, drag and drop a **Text Input** component onto the canvas. This is where we will be entering our desired locations. Rename it to _locationInput_.

- For _locationInput_, change **Label** to **Enter Location** and **Placeholder** to **Enter Location**. Let’s set the default value to 'San Francisco'.

- Next to the _locationInput_ component, add a **Button** component, rename it to _getWeatherButton_, and change the **Button text** property to **Get Weather** from the properties panel. From the **Styles** tab, change the **Background color** of the button to blue (Hex code: #4a90e2ff).

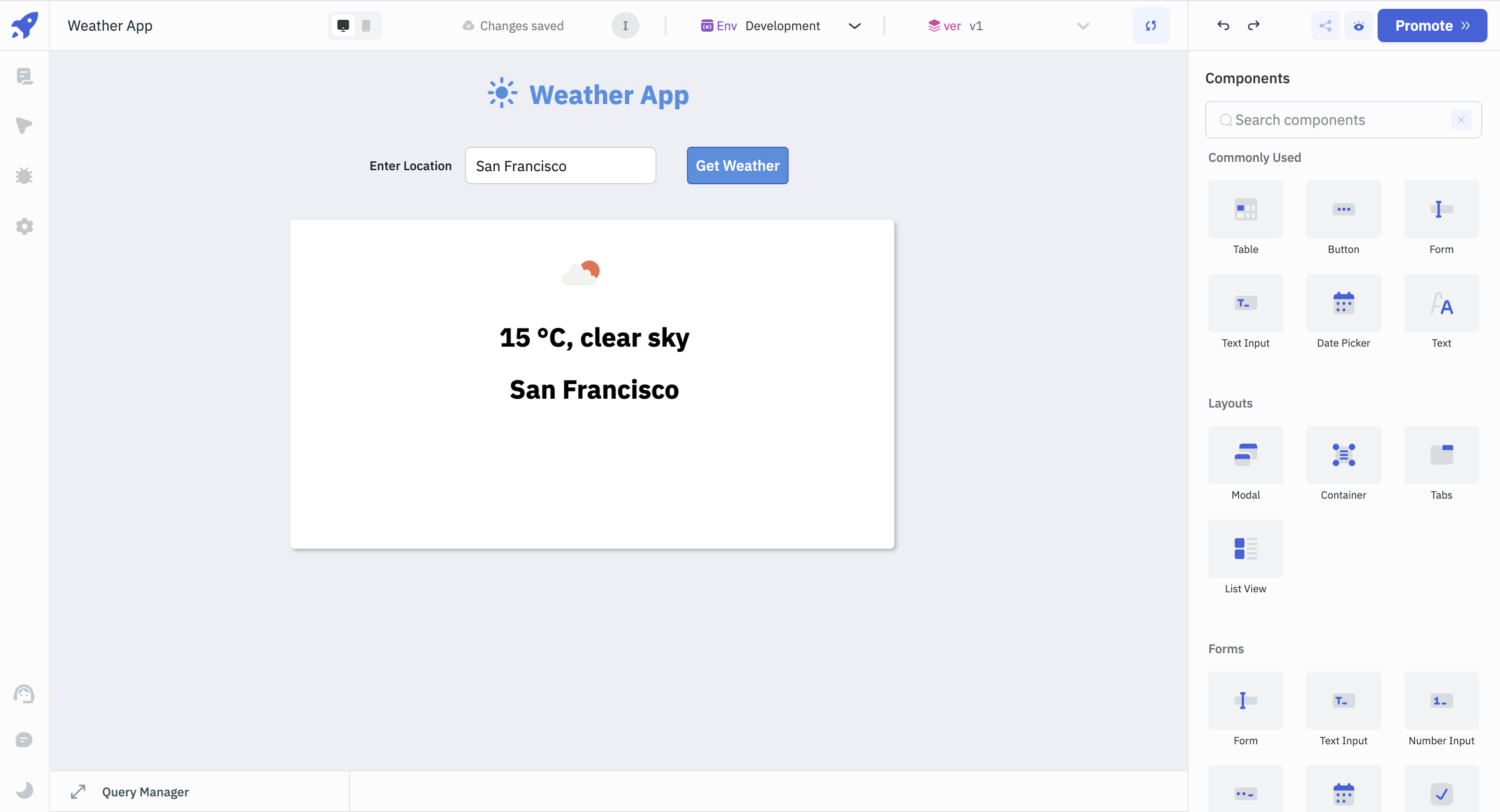

Next we will create the layout where we will be displaying the weather data.

- Drag and drop a **Container** component. Containers are used to group related components.

- Add an **Image** component inside the Container and rename it to _weatherImage_. This component will be used to display the weather through an image later in the tutorial. For now, let’s add a dummy image. In the **URL** section, add the URL, [http://openweathermap.org/img/wn/02d@2x.png](http://openweathermap.org/img/wn/02d@2x.png).

- Next, add two **Text** components and rename them to _weatherDescription_ and _locationText_, respectively. Add some dummy text under their **Data** property. The dummy data will be made dynamic as the tutorial progresses.

- For both the text components, in the **Styles** section, change the font size to 25, font-weight to bold and alignment to center.

We will also display a particular location's humidity and wind speed.

- Drag and drop the **Image** component and rename it to _humidityIcon_. In the **URL** property, let’s use the following URL for now: [https://cdn.iconscout.com/icon/premium/png-512-thumb/humidity-14-532297.png?f=webp&w=512](https://cdn.iconscout.com/icon/premium/png-512-thumb/humidity-14-532297.png?f=webp&w=512).

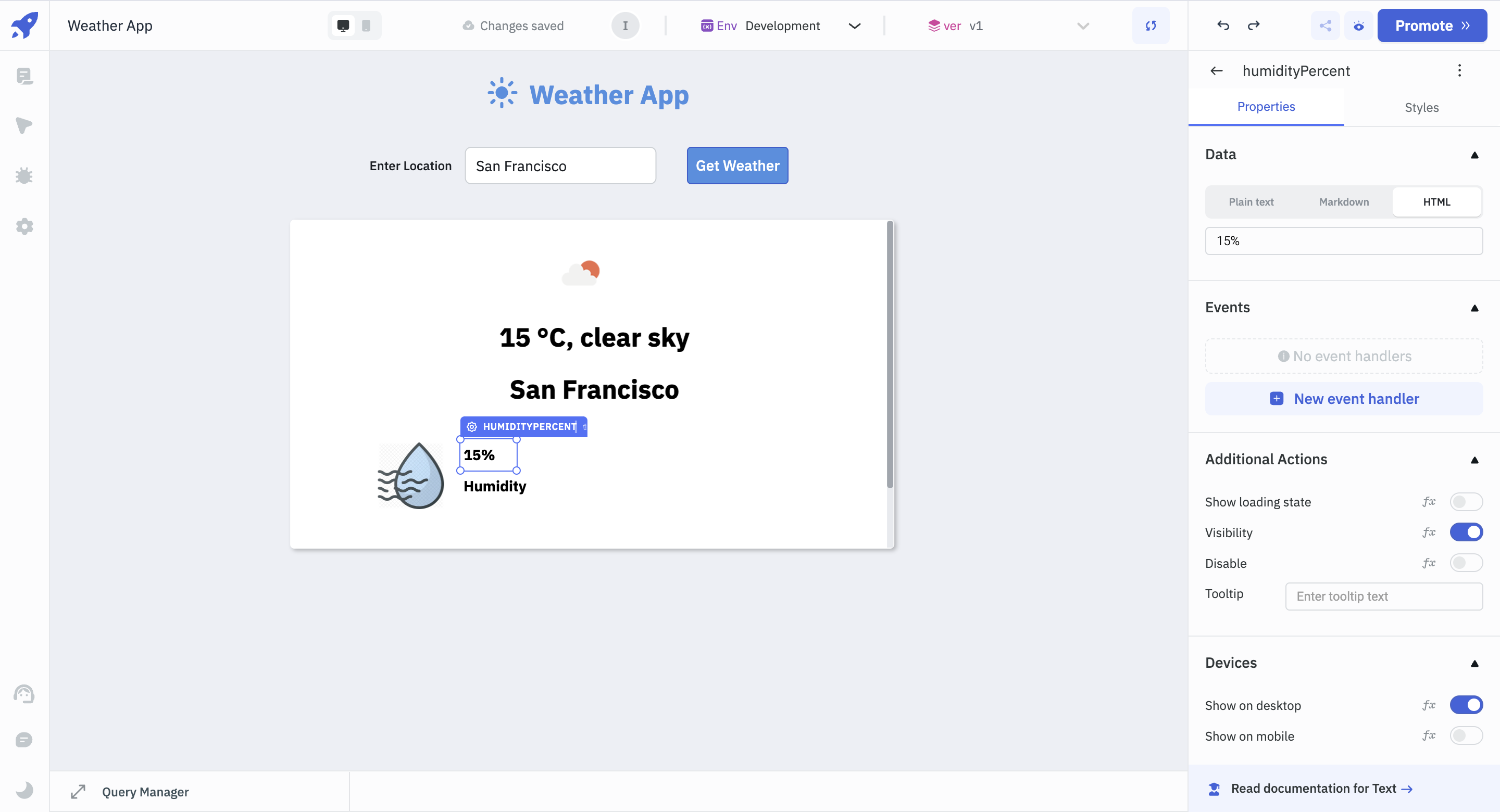

- Place two Text components next to the Image component and rename them to _humidityPercent_ and _humidityText_, respectively. In the Styles section, set the font weight to bold for both.

- For the _humidityPercent_ and _humidityText_ components, add some dummy data in the **Data** section of the Properties panel. As the tutorial progresses, we will be fetching the humidity data dynamically.

- To add wind-speed data, copy the Image and Text components and paste them beside the humidity section.

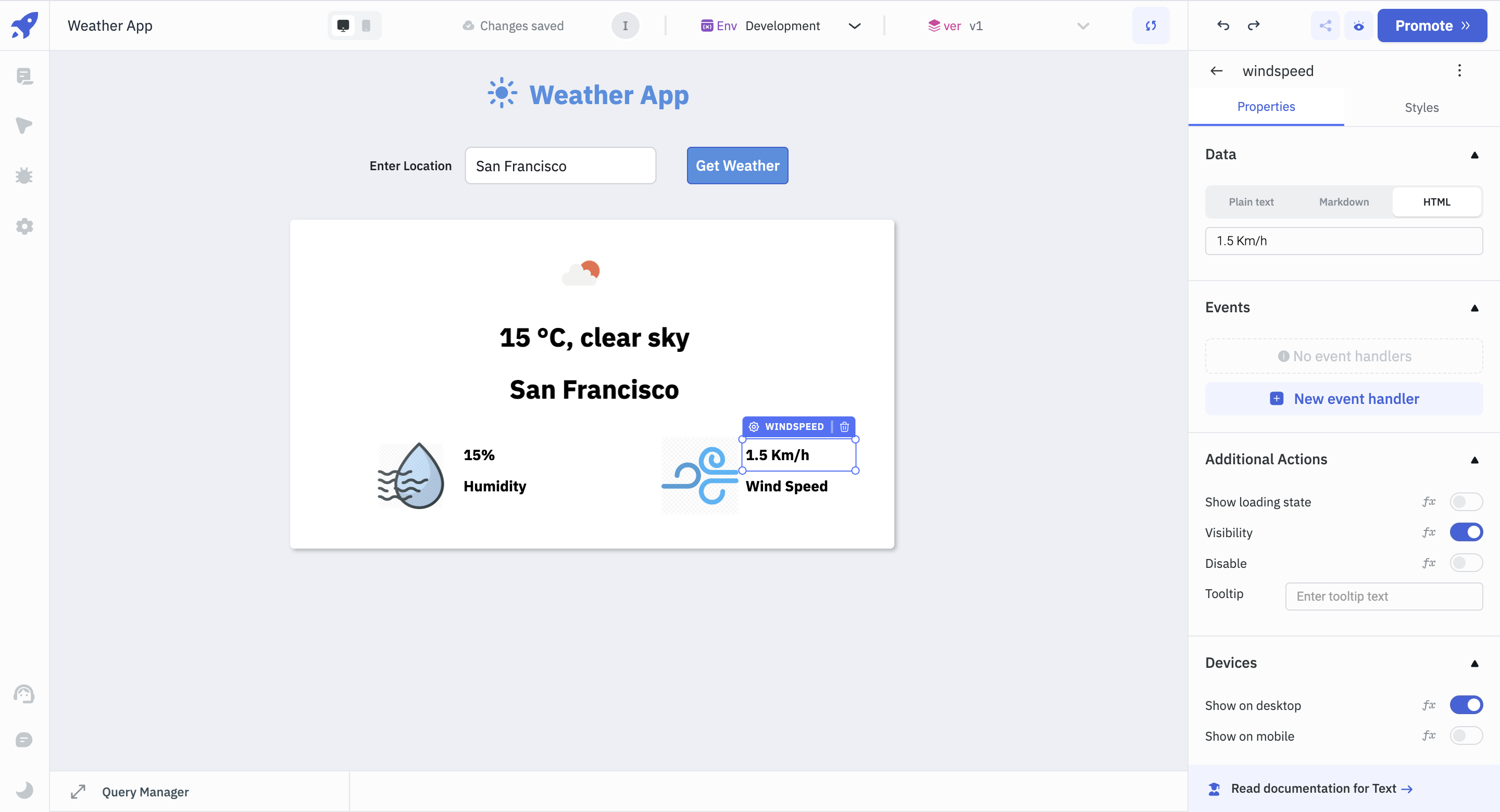

- Rename the Image component to _windspeedImage_ and the Text components to _windspeed_ and _windspeedText_, respectively.

- Select the _windspeedImage_ component, and in the **URL** section, add the following URL for the image: [https://cdn.iconscout.com/icon/premium/png-512-thumb/wind-336-974466.png?f=webp&w=512](https://cdn.iconscout.com/icon/premium/png-512-thumb/wind-336-974466.png?f=webp&w=512)

- Select the _windspeed_ component, add some dummy data in the **Data** property. In the **Styles** tab, set the font weight to bold.

- For the _windspeedText_ component, add some dummy data in the **Data** property. In the **Styles** tab, set the font weight to bold.

With the above steps, our UI is now ready. It’s time to use OpenWeatherMap API to fetch the weather data for our desired location.

## Use OpenWeatherMap API to Fetch Weather Data

### 1. Creating the Query

To fetch the weather data from OpenWeatherMap API, we will use ToolJet’s [queries](https://docs.tooljet.com/docs/tooljet-concepts/what-are-queries).

Follow the steps below to create a query and fetch the data.

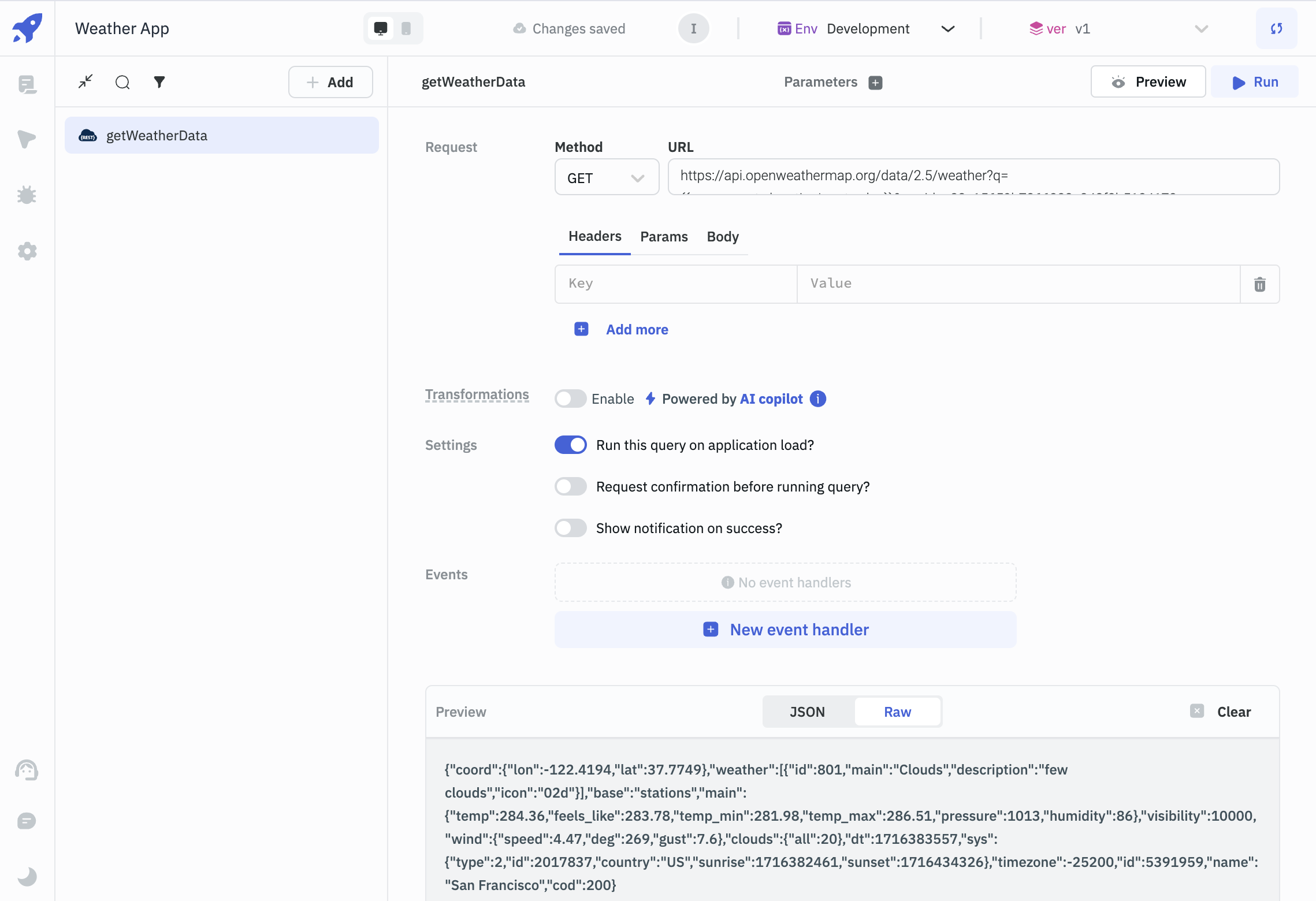

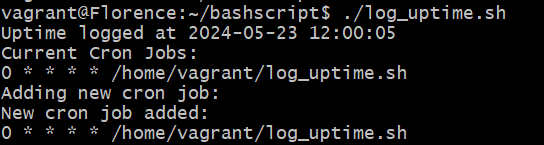

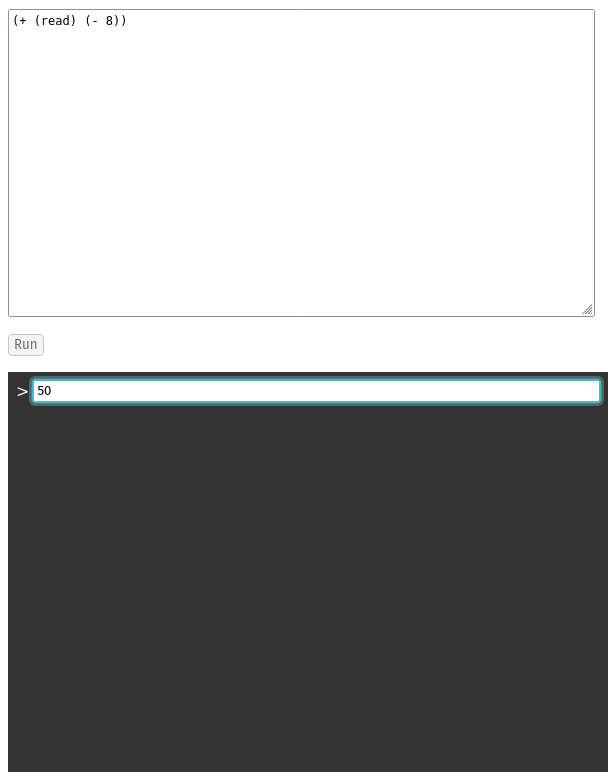

- Expand the **Query Panel** at the bottom and click the **Add** button to create a query.

- Choose the **REST API** query and rename it to _getWeatherData_.

- Since we will just be fetching the data, in the **Request** section, choose the **Method** as **GET** from the dropdown, and add the following as the **URL**: `https://api.openweathermap.org/data/2.5/weather?q=<LOCATION>&appid=<APP_ID>`.

- Replace `<LOCATION>` with the following code: `{{components.locationInput.value}}`. Since we have set the default value to 'San Francisco', the location will resolve to 'San Francisco' for now.

- Replace `<APP_ID>` with the API key that you generated after creating your OpenWeatherMap account.

- To ensure that the query runs every time the application loads, enable **Run this query on application load?**

- Click on the **Run** button to see that the weather data is being fetched from the OpenWeatherMap API for the city of San Francisco.

_In ToolJet, [double curly braces](https://docs.tooljet.com/docs/tooljet-concepts/how-to-access-values) are used to access or refer to dynamic values._
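To see what the query actually sends, here is a minimal sketch in plain Python, outside ToolJet, of how the final request URL is assembled. The function name `build_weather_url` and the placeholder key are assumptions made for illustration; ToolJet handles this step for you inside the REST API query.

```python
from urllib.parse import urlencode

# Endpoint used in the tutorial's REST API query.
BASE_URL = "https://api.openweathermap.org/data/2.5/weather"


def build_weather_url(location: str, api_key: str) -> str:
    """Return the GET URL for a city, URL-encoding both query parameters."""
    # urlencode escapes spaces and special characters in the city name.
    return f"{BASE_URL}?{urlencode({'q': location, 'appid': api_key})}"


# "YOUR_API_KEY" is a placeholder, not a real key.
print(build_weather_url("San Francisco", "YOUR_API_KEY"))
# https://api.openweathermap.org/data/2.5/weather?q=San+Francisco&appid=YOUR_API_KEY
```

Encoding matters for multi-word city names such as 'San Francisco', where the space must not appear raw in the URL.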

### 2. Adding Event to _locationInput_ and _getWeatherButton_ Components

Now that we’ve successfully configured the query, we will bind it to our _locationInput_ and _getWeatherButton_ components.

- Select the _locationInput_ component. In the properties panel, click on the **[New event handler](https://docs.tooljet.com/docs/tooljet-concepts/what-are-events)** button, set **Event** as **On enter pressed**, choose **Action** as **Run Query**, and select _getWeatherData_ as the query.

- To test it, let’s change the location to 'London' and press enter. If you followed the above steps correctly, the query should fetch the data related to London.

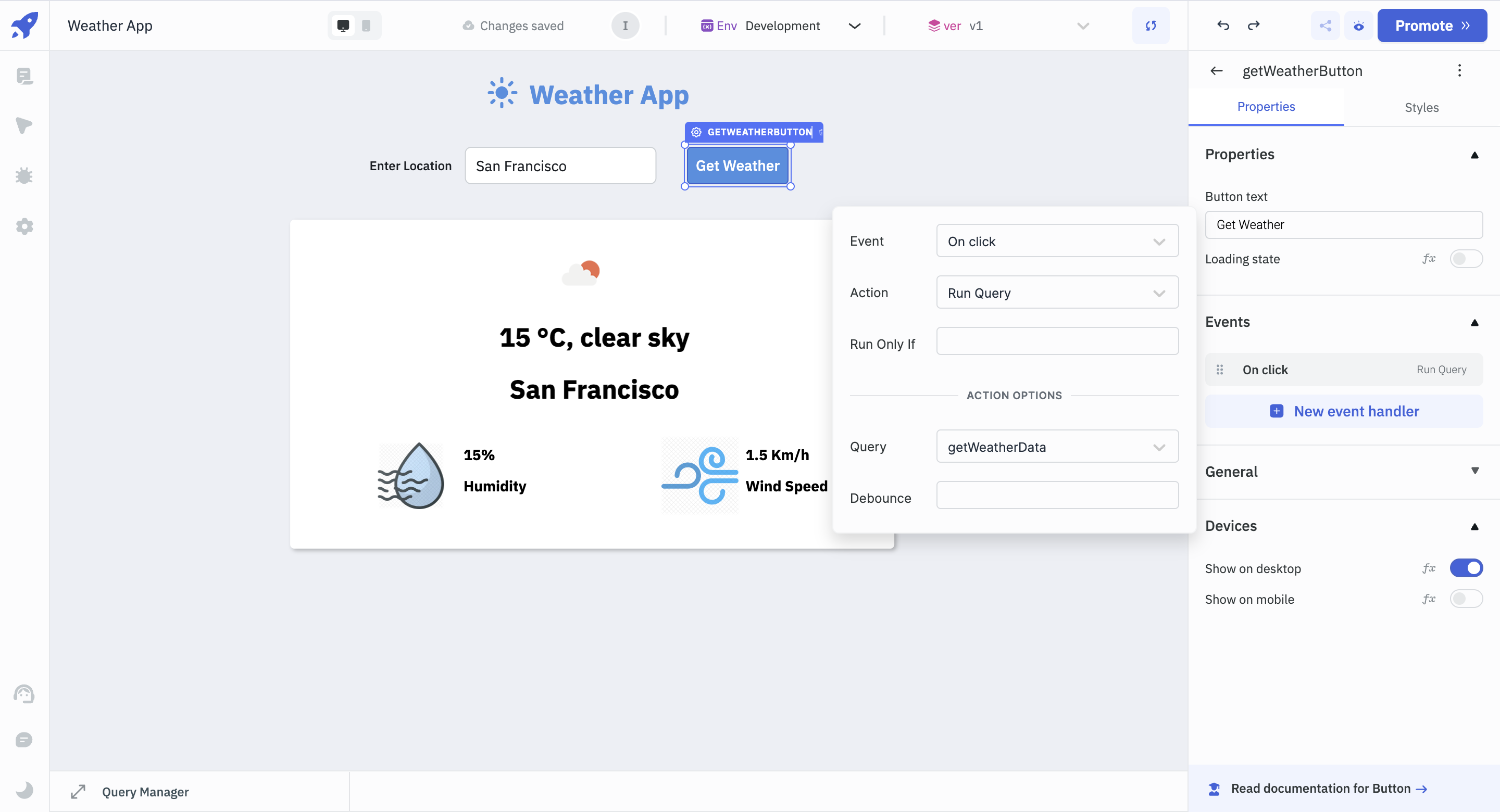

We would also want the query to run once we enter a location and press the **Get Weather** button. To do so, follow the steps below:

- Select the _getWeatherButton_ button, and click on **New event handler**.

- Choose the **Event** as **On click**, **Action** as **Run Query**, and select _getWeatherData_ as the **Query**.

- If you followed the above steps correctly, you can now run the query by clicking on the **Get Weather** button.

### 3. Binding the Data to the Components

Since we can now run the query by pressing enter or clicking the **Get Weather** button, we will bind the fetched data to our UI to make our app dynamic.

Let’s start with the weatherImage component first.

- Select the _weatherImage_ component, and replace the URL with `http://openweathermap.org/img/wn/{{queries.getWeatherData.data.weather[0].icon}}@2x.png`. This ensures that the image changes to match the current weather in the selected city.

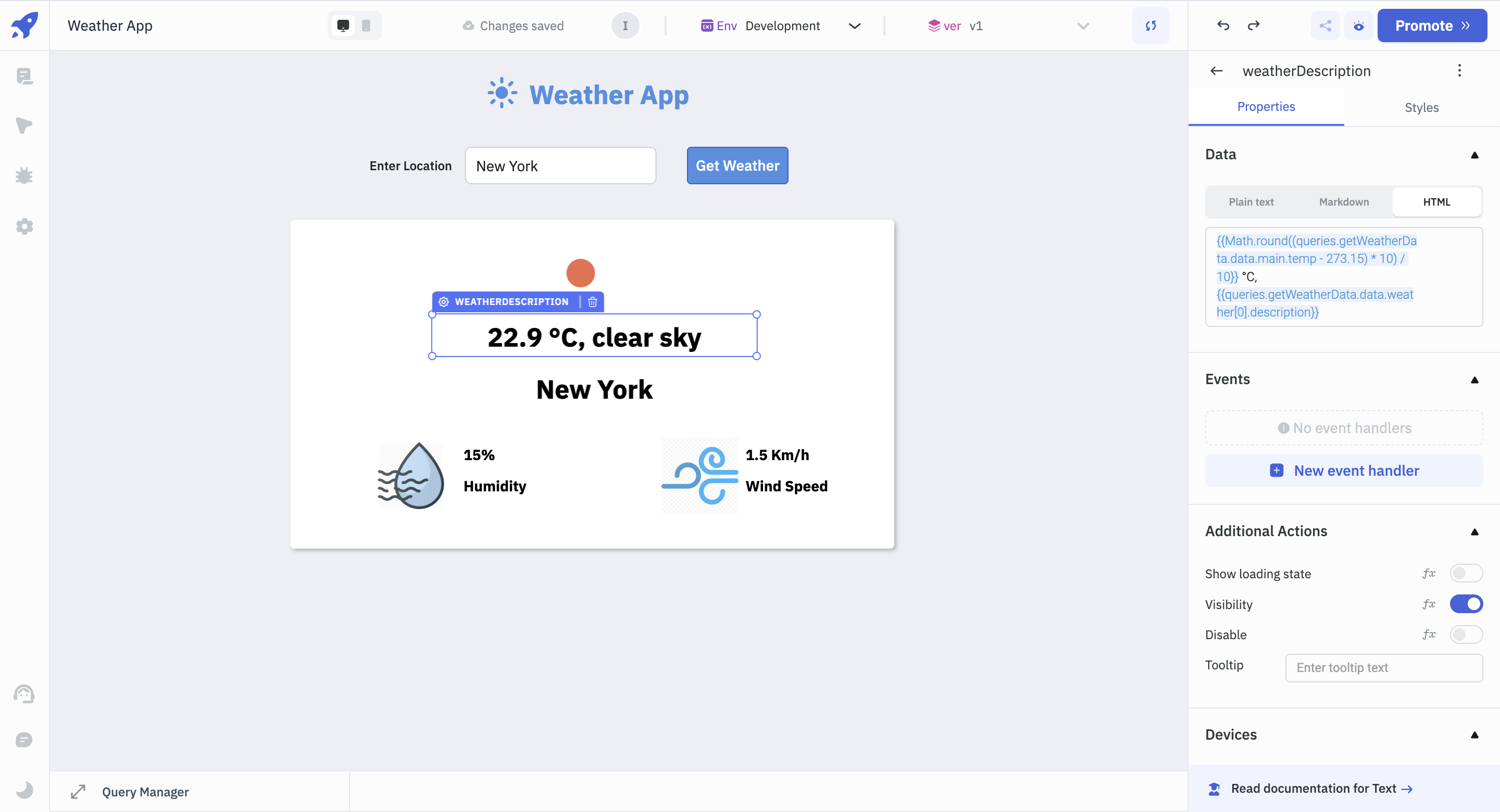

- Next, select the weatherDescription component and replace the text under the **Data** property with the following code:

`{{Math.round((queries.getWeatherData.data.main.temp - 273.15) * 10) / 10}} °C, {{queries.getWeatherData.data.weather[0].description}}`. Here, we’re converting the temperature to degrees Celsius and adding the weather description.

- Select the _locationText_ component, and add the following code to the **Data** property: `{{queries.getWeatherData.data.name}}`.

If you followed the above steps correctly, you should see the image, temperature, weather description and city name changing according to the location we’re adding to the _locationInput_ component.
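The three bindings above can be checked outside ToolJet with a small Python sketch. The `sample` dictionary is a made-up, trimmed-down response containing only the fields the bindings read, and the helper names are hypothetical. The rounding uses `math.floor(x + 0.5)` so that halves round up, which is how JavaScript's `Math.round` treats them.

```python
import math


def kelvin_to_celsius(kelvin: float) -> float:
    """Convert Kelvin to Celsius, rounded to one decimal place (halves round up)."""
    return math.floor((kelvin - 273.15) * 10 + 0.5) / 10


def describe(data: dict) -> dict:
    """Build the values shown by weatherImage, weatherDescription and locationText."""
    weather = data["weather"][0]
    return {
        "icon_url": f"http://openweathermap.org/img/wn/{weather['icon']}@2x.png",
        "summary": f"{kelvin_to_celsius(data['main']['temp'])} °C, {weather['description']}",
        "location": data["name"],
    }


# Hypothetical sample response, reduced to the fields used above.
sample = {
    "name": "London",
    "main": {"temp": 285.32},
    "weather": [{"icon": "04d", "description": "overcast clouds"}],
}

print(describe(sample)["summary"])  # 12.2 °C, overcast clouds
```

The subtraction of 273.15 is needed because the API returns temperatures in Kelvin unless a `units` parameter is added to the request.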

The last step would be to display the actual humidity percentage and the windspeed of the entered location.

- Select the _humidityPercent_ component, add `{{queries.getWeatherData.data.main.humidity}}%` under the **Data** property. This will give the humidity percentage of the entered location.

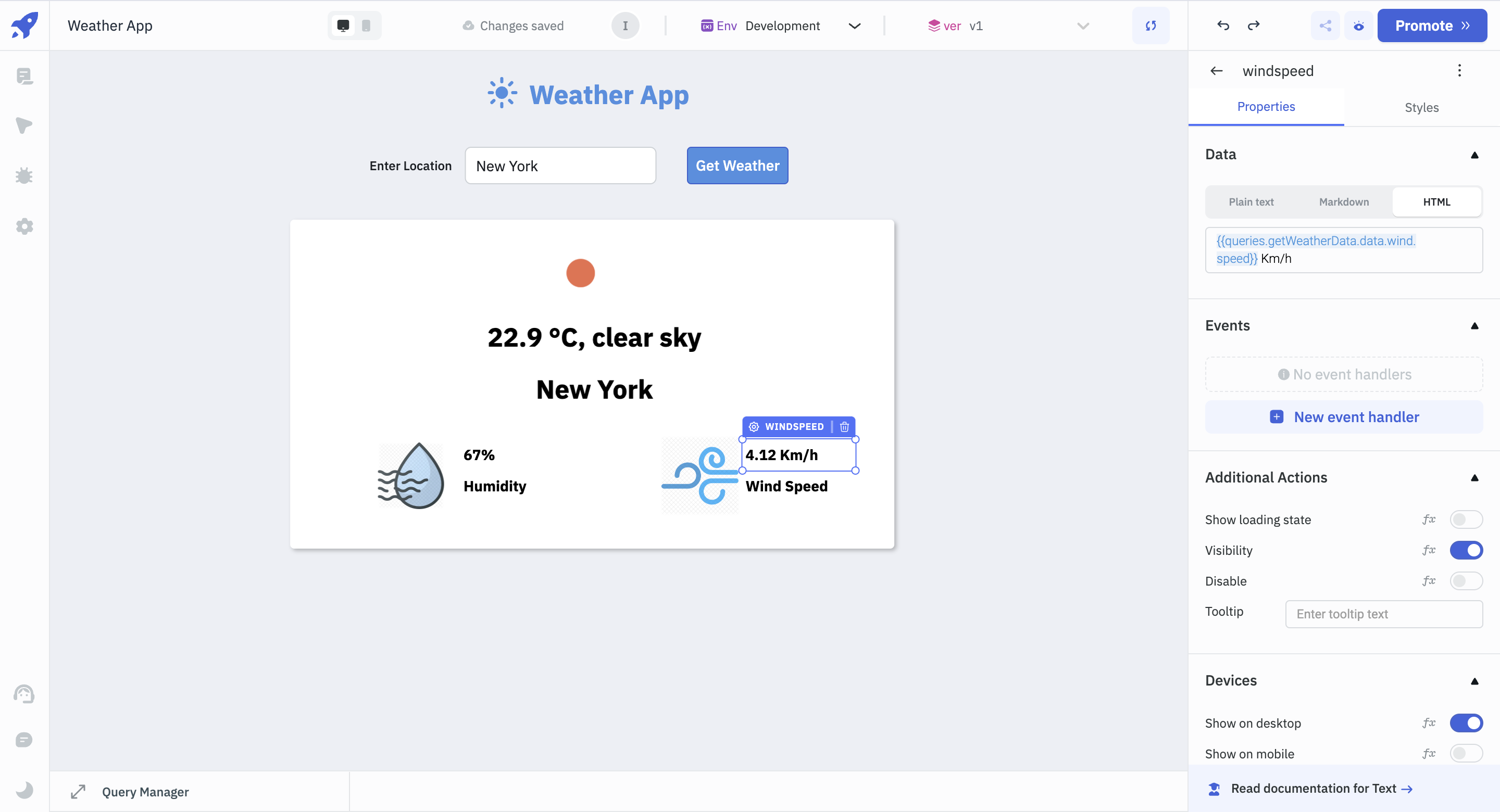

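- Next, click on the _windspeed_ component, and add `{{Math.round(queries.getWeatherData.data.wind.speed * 3.6 * 10) / 10}} km/h` under the **Data** property. This will display the wind speed of the entered location (the API reports wind speed in metres per second by default, hence the factor of 3.6).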

If you have followed all the steps mentioned above, you can see that all of the component data gets updated after running the query according to the location we add.
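As a quick check on units, here is a sketch of the humidity and wind formatting in Python. It assumes the request does not set a `units` parameter, in which case OpenWeatherMap reports `wind.speed` in metres per second; the helper names and the sample values are made up for illustration.

```python
def format_humidity(data: dict) -> str:
    """Humidity is already a percentage in the response."""
    return f"{data['main']['humidity']}%"


def format_wind_kmh(data: dict) -> str:
    """Convert wind speed from m/s (assumed API default) to km/h: 1 m/s = 3.6 km/h."""
    return f"{round(data['wind']['speed'] * 3.6, 1)} km/h"


# Hypothetical sample values, reduced to the fields used above.
sample = {"main": {"humidity": 72}, "wind": {"speed": 5.0}}

print(format_humidity(sample))  # 72%
print(format_wind_kmh(sample))  # 18.0 km/h
```

If the wind figure is shown without this conversion, labelling it m/s keeps the display accurate.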

To make sure that our app displays a loader whenever the data is being fetched for the new city, select the Container component and in the **Loading state** property, click _fx_ and add `{{queries.getWeatherData.isLoading}}`.

## Conclusion

You've successfully built a weather app using ToolJet by consuming the OpenWeatherMap REST API. This simple app demonstrates how straightforward it is to create functional apps in ToolJet. To learn more about what [ToolJet](https://docs.tooljet.com/docs/) can do, visit the ToolJet docs or join us and post your queries on [Slack](https://join.slack.com/t/tooljet/shared_invite/zt-2ij7t3rzo-qV7WTUTyDVQkwVxTlpxQqw).

| asjadkhan |

1,862,859 | Dive into Creativity: Toca Life World APK for iOS | "Dive into Creativity: Toca Life World APK for iOS invites users into a boundless world of... | 0 | 2024-05-23T13:05:04 | https://dev.to/wendlland_tabler_6d6176b0/dive-into-creativity-toca-life-world-apk-for-ios-59eg | "Dive into Creativity: [Toca Life World APK for iOS](https://tocaapkboca.com/toca-life-world-apk-for-ios/) invites users into a boundless world of imagination and exploration. This APK version tailored for iOS devices unlocks endless possibilities within the Toca Life universe. From bustling city streets to serene countryside retreats, players can immerse themselves in various vibrant locations, each brimming with interactive elements and quirky characters. With intuitive touch controls optimized for iOS, navigating through this virtual world becomes seamless and enjoyable. Whether creating unique stories, designing intricate scenes, or simply unleashing creativity, Toca Life World APK for iOS provides an unparalleled sandbox experience, empowering users to craft their own adventures and memories. | wendlland_tabler_6d6176b0 | |

1,862,858 | 20 Top C# Frameworks and Libraries on GitHub for Building Powerful Applications | GitHub offers a wealth of frameworks and libraries that can greatly enhance your projects. Below,... | 0 | 2024-05-23T13:03:07 | https://dev.to/crafting-code/20-top-c-frameworks-and-libraries-on-github-for-building-powerful-applications-29dg | csharp, aspdotnet, github, coding | GitHub offers a wealth of frameworks and libraries that can greatly enhance your projects. Below, I’ve listed 20 essential tools, with what they do, how they can benefit you, and where to find them on GitHub.

If you find our content enriching and helpful, consider Supporting Us.

So, let's get started!!🚀

## 1. [ASP.NET Core](https://github.com/dotnet/aspnetcore)

A framework for building web applications and APIs. It’s fast, modular, and cross-platform, allowing you to create high-performance web solutions with ease.

## 2. [Entity Framework Core](https://github.com/dotnet/efcore)

Simplifies database interaction by providing a powerful object-relational mapping (ORM) framework. With EF Core, you can manipulate database data using C# objects, saving time and reducing complexity.

## 3. [Dapper](https://github.com/DapperLib/Dapper)

A lightweight ORM that offers high performance and simplicity for data access. It lets you execute SQL queries and map results directly to C# objects, making database operations straightforward.

## 4. [FluentValidation](https://github.com/FluentValidation/FluentValidation)

Enables easy validation of complex objects in C#. It provides a fluent interface for defining validation rules, ensuring data integrity in your applications.

## 5. [Serilog](https://github.com/serilog/serilog)

A logging library that simplifies structured logging in various formats. It helps you troubleshoot and monitor your applications effectively by providing flexible logging capabilities.

## 6. [Hangfire](https://github.com/HangfireIO/Hangfire)

Facilitates background processing in your applications. You can use it to perform tasks asynchronously, schedule jobs, and manage workflows, ensuring smooth application operation.

## 7. [AutoMapper](https://github.com/AutoMapper/AutoMapper)

Simplifies object-to-object mapping in C#. It automates the process of copying data between different object types, saving you time and effort.

## 8. [Moq](https://github.com/Moq/moq4)

A mocking library for .NET that simplifies unit testing. Moq allows you to create mock objects and set expectations easily, making it ideal for testing complex interactions within your code.

## 9. [Newtonsoft.Json](https://github.com/JamesNK/Newtonsoft.Json)

Provides high-performance JSON serialization and deserialization for .NET applications. It’s easy to use and offers robust support for manipulating JSON data.

## 10. [SignalR](https://github.com/SignalR/SignalR)

Adds real-time web functionality to your applications. With SignalR, you can build interactive, real-time features such as chat, notifications, and live updates.

## 11. [NLog](https://github.com/NLog/NLog)

A flexible logging platform for .NET. NLog allows you to configure logging behavior dynamically, making it easy to log messages in various environments.

## 12. [Refit](https://github.com/reactiveui/refit)

Simplifies API integration by generating REST client implementations from C# interfaces. With Refit, you can consume HTTP APIs in a type-safe and efficient manner.

## 13. [CsvHelper](https://github.com/JoshClose/CsvHelper)

Makes it easy to read and write CSV files in C#. CsvHelper simplifies CSV parsing and writing, saving you time when working with tabular data.

## 14. [Humanizer](https://github.com/Humanizr/Humanizer)

Adds human-friendly extensions to various data types in .NET. Humanizer helps you format strings, handle dates, and manipulate numbers in a natural and readable way.

## 15. [FluentAssertions](https://github.com/fluentassertions/fluentassertions)

Provides a fluent syntax for writing clear and concise unit tests in .NET. With FluentAssertions, you can create expressive assertions that enhance the readability of your test code.

## 16. [Xamarin.Forms](https://github.com/xamarin/Xamarin.Forms)

Enables cross-platform mobile development using C#. Xamarin.Forms allows you to create native user interfaces and share code across iOS, Android, and Windows platforms.

## 17. [Unity](https://github.com/Unity-Technologies/UnityCsReference)

A powerful game engine that supports C# scripting. Unity simplifies game development by providing tools for creating immersive and engaging experiences across various platforms.

## 18. [FluentValidation.AspNetCore](https://github.com/FluentValidation/FluentValidation)

Extends FluentValidation to integrate seamlessly with ASP.NET Core applications. It provides additional functionality for validating request payloads and model objects in MVC projects.

## 19. [Hangfire.Dashboard.Authorization](https://github.com/HangfireIO/Hangfire.Dashboard.Authorization)

Adds role-based access control to Hangfire dashboards in ASP.NET Core applications. With this extension, you can secure your Hangfire dashboard and restrict access based on user roles.

## 20. [xUnit](https://github.com/xunit/xunit)

A testing framework for .NET that supports test-driven and behavior-driven development. xUnit makes it easy to write clear and concise unit tests, helping you ensure the quality and reliability of your code.

---

These 20 essential frameworks and libraries on GitHub are invaluable tools for C# developers. They can streamline your development process, enhance functionality, and improve the overall quality of your projects. Explore them, experiment with them, and leverage them.

Feel free to reach out to me at **toshiah213@gmail.com** if you’re interested in collaborating, sponsoring, or discussing business opportunities.

---

_If you found this content helpful, Please Support us:_

_PayPal:_ **[toshiah213@gmail.com](https://www.paypal.com/paypalme/tauseef69?country.x=IN&locale.x=en_GB)** 🌟✨ | crafting-code |

1,862,857 | vsplit() in PyTorch | *Memos: My post explains split(). My post explains hsplit(). My post explains dsplit(). My post... | 0 | 2024-05-23T13:02:51 | https://dev.to/hyperkai/vsplit-in-pytorch-4915 | pytorch, vsplit, split, tensor | *Memos:

- [My post](https://dev.to/hyperkai/split-in-pytorch-nga) explains [split()](https://pytorch.org/docs/stable/generated/torch.split.html).

- [My post](https://dev.to/hyperkai/hsplit-in-pytorch-4b1d) explains [hsplit()](https://pytorch.org/docs/stable/generated/torch.hsplit.html).

- [My post](https://dev.to/hyperkai/dsplit-in-pytorch-594c) explains [dsplit()](https://pytorch.org/docs/stable/generated/torch.dsplit.html).

- [My post](https://dev.to/hyperkai/tensorsplit-in-pytorch-30m5) explains [tensor_split()](https://pytorch.org/docs/stable/generated/torch.tensor_split.html).

- [My post](https://dev.to/hyperkai/chunk-in-pytorch-30f5) explains [chunk()](https://pytorch.org/docs/stable/generated/torch.chunk.html).

- [My post](https://dev.to/hyperkai/unbind-in-pytorch-3lk9) explains [unbind()](https://pytorch.org/docs/stable/generated/torch.unbind.html).

[vsplit()](https://pytorch.org/docs/stable/generated/torch.vsplit.html) splits a 2D or higher-dimensional tensor vertically (along dimension 0, i.e. by rows) into one or more tensors with the same number of dimensions, each holding zero or more elements, as shown below:

*Memos:

- `vsplit()` can be used with [torch](https://pytorch.org/docs/stable/torch.html) and a tensor.

- The 1st argument with `torch` or using a tensor is `input`(Required-Type:`tensor` of `int`, `float`, `complex` or `bool`).

- The 2nd argument with `torch` or the 1st argument with a tensor is `sections`(Required-Type:`int`). It must evenly divide the number of rows.

- Alternatively, the 2nd argument with `torch` or the 1st argument with a tensor is `indices`(Required-Type:`tuple` of `int` or `list` of `int`).

- The total number of elements across the returned tensors can differ from that of `input`, because with `indices` the returned pieces can overlap or be empty.

- Each returned tensor keeps the number of dimensions of the `input` tensor.
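How `indices` turns into row slices can be sketched with plain Python lists, without PyTorch. The helper `split_rows` is hypothetical and is not PyTorch's implementation; it only mirrors the documented rule that the pieces are `input[:i0]`, `input[i0:i1]`, ..., `input[ik:]`, which also explains the overlapping and empty results in the outputs below.

```python
def split_rows(rows, indices):
    """Split a list of rows at each index, using ordinary Python slicing."""
    pieces = []
    start = 0
    for stop in indices:
        # Negative or out-of-order indices behave exactly like list slices.
        pieces.append(rows[start:stop])
        start = stop
    # The last piece runs from the final index to the end.
    pieces.append(rows[start:])
    return pieces


rows = [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]

print(split_rows(rows, (1, 2)))
# [[[0, 1, 2, 3]], [[4, 5, 6, 7]], [[8, 9, 10, 11]]]

print(split_rows(rows, (1, 0)))
# [[[0, 1, 2, 3]], [], [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]]
```

With `(1, 0)` the middle slice `rows[1:0]` is empty and the last slice `rows[0:]` restarts from the first row, so the first row appears twice across the pieces.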

```python

import torch

my_tensor = torch.tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]])

torch.vsplit(input=my_tensor, sections=1)

my_tensor.vsplit(sections=1)

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]),)

torch.vsplit(input=my_tensor, sections=3)

# (tensor([[0, 1, 2, 3]]),

# tensor([[4, 5, 6, 7]]),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(0,))

torch.vsplit(input=my_tensor, indices=(-3,))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(1,))

torch.vsplit(input=my_tensor, indices=(-2,))

# (tensor([[0, 1, 2, 3]]),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(2,))

torch.vsplit(input=my_tensor, indices=(-1,))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(3,))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64))

torch.vsplit(input=my_tensor, indices=(0, 0))

torch.vsplit(input=my_tensor, indices=(0, -3))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(0, 1))

torch.vsplit(input=my_tensor, indices=(0, -2))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3]]),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(0, 2))

torch.vsplit(input=my_tensor, indices=(0, -1))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(0, 3))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64))

torch.vsplit(input=my_tensor, indices=(1, 0))

torch.vsplit(input=my_tensor, indices=(1, -3))

# (tensor([[0, 1, 2, 3]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(1, 1))

torch.vsplit(input=my_tensor, indices=(1, -2))

# (tensor([[0, 1, 2, 3]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(1, 2))

torch.vsplit(input=my_tensor, indices=(1, -1))

# (tensor([[0, 1, 2, 3]]),

# tensor([[4, 5, 6, 7]]),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(1, 3))

# (tensor([[0, 1, 2, 3]]),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64))

torch.vsplit(input=my_tensor, indices=(2, 0))

torch.vsplit(input=my_tensor, indices=(2, -3))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(2, 1))

torch.vsplit(input=my_tensor, indices=(2, -2))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(2, 2))

torch.vsplit(input=my_tensor, indices=(2, -1))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(2, 3))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([[8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64))

torch.vsplit(input=my_tensor, indices=(3, 0))

torch.vsplit(input=my_tensor, indices=(3, -3))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(3, 1))

torch.vsplit(input=my_tensor, indices=(3, -2))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(3, 2))

torch.vsplit(input=my_tensor, indices=(3, -1))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(3, 3))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([], size=(0, 4), dtype=torch.int64))

torch.vsplit(input=my_tensor, indices=(-1, 0))

torch.vsplit(input=my_tensor, indices=(-1, -3))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-1, 1))

torch.vsplit(input=my_tensor, indices=(-1, -2))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-1, 2))

torch.vsplit(input=my_tensor, indices=(-1, -1))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-1, 3))

# (tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([[8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64))

torch.vsplit(input=my_tensor, indices=(-2, 0))

# (tensor([[0, 1, 2, 3]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-2, 1))

# (tensor([[0, 1, 2, 3]]),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-2, 2))

# (tensor([[0, 1, 2, 3]]),

# tensor([[4, 5, 6, 7]]),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-2, 3))

# (tensor([[0, 1, 2, 3]]),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64))

torch.vsplit(input=my_tensor, indices=(-3, 0))

torch.vsplit(input=my_tensor, indices=(-3, -3))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-3, 1))

torch.vsplit(input=my_tensor, indices=(-3, -2))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3]]),

# tensor([[4, 5, 6, 7], [8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-3, 2))

torch.vsplit(input=my_tensor, indices=(-3, -1))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7]]),

# tensor([[8, 9, 10, 11]]))

torch.vsplit(input=my_tensor, indices=(-3, 3))

# (tensor([], size=(0, 4), dtype=torch.int64),

# tensor([[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]),

# tensor([], size=(0, 4), dtype=torch.int64))

my_tensor = torch.tensor([[0., 1., 2., 3.],
                          [4., 5., 6., 7.],
                          [8., 9., 10., 11.]])

torch.vsplit(input=my_tensor, sections=1)

# (tensor([[0., 1., 2., 3.],
#          [4., 5., 6., 7.],
#          [8., 9., 10., 11.]]),)

my_tensor = torch.tensor([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j],
                          [4.+0.j, 5.+0.j, 6.+0.j, 7.+0.j],
                          [8.+0.j, 9.+0.j, 10.+0.j, 11.+0.j]])

torch.vsplit(input=my_tensor, sections=1)

# (tensor([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j],
#          [4.+0.j, 5.+0.j, 6.+0.j, 7.+0.j],
#          [8.+0.j, 9.+0.j, 10.+0.j, 11.+0.j]]),)

my_tensor = torch.tensor([[True, False, True, False],
                          [False, True, False, True],
                          [True, False, True, False]])

torch.vsplit(input=my_tensor, sections=1)

# (tensor([[True, False, True, False],
#          [False, True, False, True],
#          [True, False, True, False]]),)

``` | hyperkai |

1,862,856 | annapolis taxi cabs | Annapolis Taxi Cabs Service drivers are the most professional independent business drivers in the... | 0 | 2024-05-23T13:02:47 | https://dev.to/alliedmaterial/annapolis-taxi-cabs-4pe8 | Annapolis Taxi Cabs Service drivers are the most professional independent business drivers in the industry. The service provides businesses with a convenient method for managing their corporate transportation needs.

[](https://annapolistaxicabs.com/) | alliedmaterial | |

1,862,855 | makeovergames dotnet | Welcome to Makeover Games Net, your ultimate destination for free online makeover games! Dive into a... | 0 | 2024-05-23T13:01:37 | https://dev.to/makeovergames/makeovergames-dotnet-508h | Welcome to Makeover Games Net, your ultimate destination for free online makeover games! Dive into a world where creativity meets fashion, and every game is an opportunity to explore new styles, trends, and transformations. From casual chic to runway glamour, our extensive collection of makeover games will satisfy every fashion enthusiast's dream. Experience the joy of makeovers, where you're the stylist, and every character is your canvas

Website: https://makeovergames.net/

Phone: 986956312

Address: 64 To Huu, Nam Tu Liem, Ha Noi, Vietnam


| makeovergames | |

1,845,240 | 5 steps to grow from junior dev to senior | I've been writing code for eleven years now, and slowly moved from intern, to junior, to senior, to... | 0 | 2024-05-23T13:01:16 | https://dev.to/sashkan/5-steps-to-grow-from-junior-dev-to-senior-46n8 | progression, webdev, management | I've been writing code for eleven years now, and slowly moved from intern, to junior, to senior, to lead developer, with the occasional job as a CTO.

Over the past two years, I've spent roughly 250 hours reviewing applications and technical tests, both on site and online, and helped wonderful teams build great products.

Here are the 5 key differences between a junior and a senior developer. To illustrate each concept, I'll give examples based on real-life events that led either to great success or to tremendous failure.

## 1 - Ask, Challenge, Believe

Whatever you're working on, it is way easier to build a great product if you actually believe in it. And the only way to build conviction is to ask around. Why am I adding this feature? Why are we making these changes? Does my team know about this pattern?

The key idea here is, you have to believe in the product you are building. You have to build conviction before laying down your first line of code. You cannot harbor any doubt as to why you are shipping this feature, who's going to use it, or how.

In 2020, I was working at a major video game company. We were hosting an e-sport tournament, and our marketing team thought about developing a scoreboard client app and plugging it into an external API to fetch scores in real time. I was in charge of the technical part, managing a team of 5 developers.

Unfortunately, the same marketing team did its own research for available APIs, and decided to sign a contract with a company before including any tech member in the process.

This led to a very goofy meeting when I finally met my tech counterpart at said company. For 90 minutes, we listened to my manager, knowing that their API was not the one we needed. In fact, I knew of half a dozen APIs that could have done the job for a fraction of the price.

I went back to my team, and we took a moment to decide how to tackle this issue. They responded with the ONE question you have to fear when working on any product: "Why do we need this?" They did not believe in this new scoreboard app. Neither did I: there was no conviction whatsoever.

So, I calmly asked for a quick talk with my manager, and asked him what we needed exactly. He gave me a short list of features, and I told him that it would take roughly 3 weeks to ship them all, and that I knew of an API that could get the job done for 12.99 a month. He responded that he had already paid the API company in full, for the use of their API and the services of a consultant, for the next 4 months. We're talking 115k euros.

So, I did the only sensible thing to do. I went to my manager's manager, took the blame for the miscommunication, and asked him what to do next. We thanked the API company, we thanked the consultant, and based simply on the short list of features my manager had given me, we managed to ship the whole project in three weeks. Suddenly, our tech team believed in the project, because we knew WHY we were doing it, and HOW we could do it properly.

The key idea here is: a senior developer spends a lot of time building conviction, questioning patterns, and proactively bringing solutions through his knowledge and past experiences. Once your whole team believes in an app, or a feature, you'll know exactly how to code it.

## 2 - Know your tools

There are thousands of ways to add authentication to your app. Or real-time. Or to build an endpoint that fetches data. Which means that there are thousands of ways to do it poorly.

A senior dev knows the main patterns to add a feature, and therefore can code it quickly, shipping code that is clean, reliable, and well documented.

A great way to assess your "seniority" is to take a look at a few numbers:

- How many threads do I have on my merge requests?

- How long does it take for my MR to be merged?

- How often am I asked for advice or a review?

No matter how complex your app might be, there are not that many tools that we use on a daily basis. My current stack includes Nest.js, Next.js, Tailwind and GraphQL, which can be overwhelming for a junior dev.

So, instead of simply reading the documentation, every time I start working on a new topic, or using a new tool, I document it for myself, using the [micro-thesis system](https://www.youtube.com/watch?v=XE_CGBlQ17o). I'm using Obsidian as my Documentation system, but there are a lot of viable tools out there.

Here is my process:

- I use my daily note to list my tasks for the day.

- If this task requires learning a new skill, I turn it into a link using the [[]] syntax.

- As I learn how to use this new tool, I write the best documentation I can. It has to be simple, include pieces of code, be reusable, and include links to useful documentation.

This way, the next time I have to use said tool, all I have to do is search for this page in my Obsidian vault. Not only will I have a head start on how to use it, the page will also include links to all of my notes that mention it, making it easier to decide whether or not this is the right tool for the task at hand.

This also means that, whenever a tool is updated, I must update my documentation accordingly. This is pretty much how I keep myself updated on the latest versions of my stack.

Another important thing to keep in mind is the ratio between the time needed to ship a feature and the cost of using a third-party application.

Let's say you are building an app that requires both authentication and authorization. Maybe you have a repository you can fork, maybe you don't. If you don't, it will take you some time to add these features. Meanwhile, libraries and freemium SaaS products might help you set up your authentication in no time.

If I'm building a small app, I'll always go for free solutions for everything I don't feel like coding myself. Let's keep it simple: a freelance software engineer charges 1000 dollars a day. Adding proper authentication might take two to three working days. Meanwhile, adding and configuring Clerk/Kinde/Lucia takes roughly an hour, and these solutions are free.
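To make that trade-off concrete, here is a rough back-of-the-envelope sketch using the figures above. The 8-hour working day and the `compareAuthOptions` helper are my own assumptions for illustration, not part of any library.

```javascript
// Rough build-vs-buy comparison using the figures quoted in the text.
// Assumption (not from the article): one working day = 8 billable hours.
const HOURS_PER_DAY = 8;

// Hypothetical helper: cost of coding a feature yourself versus wiring in a ready-made solution.
function compareAuthOptions({ dayRate, buildDays, integrateHours, monthlyFee = 0, months = 0 }) {
  const build = dayRate * buildDays;
  const buy = dayRate * (integrateHours / HOURS_PER_DAY) + monthlyFee * months;
  return { build, buy, savings: build - buy };
}

// 1000 dollars a day, 2 to 3 days to build, about 1 hour to integrate a free solution.
const low = compareAuthOptions({ dayRate: 1000, buildDays: 2, integrateHours: 1 });
const high = compareAuthOptions({ dayRate: 1000, buildDays: 3, integrateHours: 1 });

console.log(low);  // { build: 2000, buy: 125, savings: 1875 }
console.log(high); // { build: 3000, buy: 125, savings: 2875 }
```

Passing a non-zero `monthlyFee` and `months` lets you rerun the same comparison for a paid plan.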

The key idea here is: your tools are not limited to your coding skills. Just like in the old RTS games I used to play, consider your time, money, and skills as resources, and pick wisely which one to use, and how much you want to spend.

## 3 - Code Small.

Being a software engineer is awesome. It feels like having a bottomless box of Lego bricks at your disposal. You can use them to build whatever you want, from the smallest car to the biggest city.

But when it comes to professional projects, you have to understand that every line of code is a liability. Which means, the less you code, the more reliable your codebase is.

So, here are the 3 golden rules I try to live by:

- A merge request solves ONE issue.

- If I need to use a bullet list to explain the changes I made, and said bullet list includes more than 3 or 4 elements, I probably messed up.

- The ability to properly split up your code requires experience, and is as important as the actual performance of your code. This applies both on the front end (think composition in React) and the back end (think modules/services/whatever architecture you are using to split your code into small manageable chunks).

If you do so, you'll manage to ship faster, build confidence within your team, and quickly set up a flow that'll allow you to deliver value on a daily basis.

On the other hand, a pull request that affects countless files, is not properly documented, and includes loads of code smells will be a nightmare to review, and will either stay open for a very long time or simply be closed. I usually go for the second option, and ask the author to split its content into smaller parts.

## 4 - Reviews are gold

Consider this: as a software engineer, 90% of your knowledge comes from experience. Actual work experience. And said experience comes from mistakes and failures, both yours and your teammates'.

The lesson here is, your main goal as a senior developer is to provide and receive feedback. As we said, there are thousands of ways to add a feature, some are better than others, and you don't have enough of a lifetime to try them all. So, ask around and collaborate.

Here is the usual process I follow when reviewing an MR:

- Understand the underlying goal. "What are we trying to achieve here?"

- "How would I code it?"

From there, I'm more of a multi-commits kind of guy, so I simply review one commit at a time. If, at any point, I don't understand something (Why do you use this lib? Why did you name it that way?), I add a comment.

Nowadays, I'm working as a lead developer, and my team has a wide range of seniority. Which means some MRs will receive a couple of comments (usually questions or interesting debates), and some others will receive 54 comments, from naming to logic to performance. In which case, I switch from review to pair programming.

If you are managing a team, note that the number of comments on any given review is an actual metric for the quality of code.

## 5 - Own it

It usually takes me about two months to decide whether or not I'm confident in the level of expertise of a coworker. If my standards are met, I don't have a problem with telling them that they are responsible for 99% of the development process, from the tools they use to the patterns they pick. The last percent is the review, and the consequences. I want them to be owners of the code, but I'll be the custodian of their wellbeing.

At the end of the day, you have to embrace ownership of your decisions, both personal and professional, which brings me to the following personal statements:

- I'm way more efficient at work when I do the things that matter to me first thing in the morning. And not a single one of them is work-related.

- This includes drinking water, moisturising, flossing, working out, and meditating.

- Keep track of everything that matters. The good thing is, there are not a lot of things that matter.

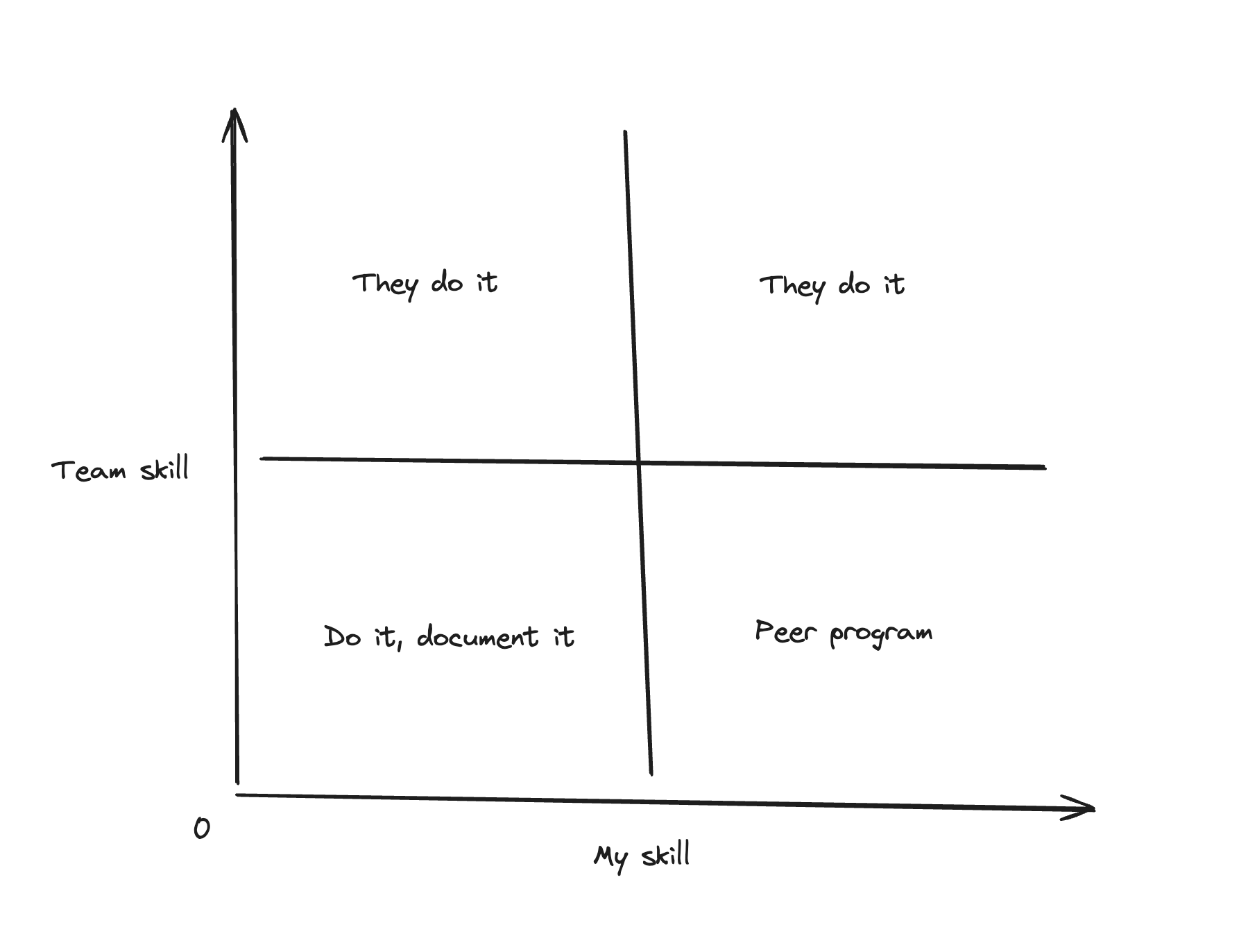

- Communicate to find your love/delegate balance, using the following chart:

As a senior developer, you are responsible for both the quality of the code you ship AND the quality of the code you review. As a manager, you are responsible for any failure that comes from either your code or the code your team ships. Which means that the BEST way to ensure code quality is not only to write thoughtful reviews, but to create the best possible environment so that everyone can perform well.

## TL;DR

A senior dev would read the article. | sashkan |

1,862,849 | Bigg Boss 18 All Latest Episodes | "Bigg Boss" is an Indian reality television show that is modeled after the global "Big Brother"... | 0 | 2024-05-23T12:51:08 | https://dev.to/biggboss18watch/bigg-boss-18-all-latest-episodes-206o | "Bigg Boss" is an Indian reality television show that is modeled after the global "Big Brother" formula. It has grown to be one of the most well-liked and often viewed TV programs in the nation throughout time. Even with "Bigg Boss 18," the format's distinctive blend of drama, challenges, evictions, and celebrity participants manages to keep viewers interested.

[Bigg Boss 18 Full Episode](https://biggboss18watch.live/)

"Bigg Boss 18" sticks to the tried-and-true formula of locking up a group of celebrities in a specially designed home, cutting them off from the outside world and preventing them from using any kind of communication gadget. Cameras are positioned throughout the house to monitor them around-the-clock. The competitors, also known as housemates, are required to live together for a number of weeks while taking part in a variety of activities and challenges that test | biggboss18watch | |

1,857,397 | Leveraging Docker in Your Web Development Workflow: A Comprehensive Guide | Introduction: In recent years, Docker has revolutionized the way developers build, ship, and run... | 0 | 2024-05-23T13:00:00 | https://dev.to/nitin-rachabathuni/leveraging-docker-in-your-web-development-workflow-a-comprehensive-guide-2e8d | Introduction:

In recent years, Docker has revolutionized the way developers build, ship, and run applications. Its lightweight containerization technology has become an indispensable tool in the toolkit of web developers worldwide. In this article, we'll explore how you can integrate Docker into your web development workflow to streamline processes, enhance collaboration, and ensure consistency across environments.

Why Docker?

Before diving into the practical aspects, let's briefly touch on why Docker has gained such widespread adoption in the web development community:

Isolation: Docker containers encapsulate your application and its dependencies, ensuring consistency across different environments, from development to production.

Portability: Containers are lightweight and portable, enabling seamless deployment across various platforms and cloud providers.

Scalability: Docker's container-based approach makes it easy to scale your applications horizontally by spinning up multiple instances of containers.

Reproducibility: With Docker, you can define your application's environment using code (Dockerfiles), making it easy to reproduce and share with your team.

Now, let's dive into how you can incorporate Docker into your web development workflow.

Setting Up Your Development Environment:

The first step is to set up Docker on your machine. Docker provides comprehensive documentation for installing Docker Desktop on different operating systems, including Windows, macOS, and Linux.

Once Docker is installed, you'll need to create a Dockerfile to define your application's environment. Here's a simple example for a Node.js application:

```
# Use the official Node.js image as a base
FROM node:14

# Set the working directory in the container
WORKDIR /app

# Copy package.json and package-lock.json to the working directory
COPY package*.json ./

# Install dependencies
RUN npm install

# Copy the rest of the application code
COPY . .

# Expose port 3000
EXPOSE 3000

# Command to run the application
CMD ["npm", "start"]
```

In this Dockerfile:

We start with the official Node.js image from Docker Hub.

Set the working directory inside the container.

Copy package.json and package-lock.json to install dependencies.

Copy the rest of the application code.

Expose port 3000 (a common convention for Node.js applications; use whichever port your app actually listens on).

Define the command to start the application.
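The Dockerfile's `CMD` runs `npm start`, so it presumes an application with a `start` script in `package.json`. As a minimal sketch of what such an app could look like, the hypothetical `server.js` below uses only Node's built-in `http` module. The file name, the `serve` argument, the optional `PORT` override, and the assumed start script (`node server.js serve`) are illustrative choices, not part of the original setup.

```javascript
const http = require("http");

// 3000 mirrors `EXPOSE 3000` in the Dockerfile; PORT is an optional override (an assumption).
function resolvePort(env = process.env) {
  const port = Number.parseInt(env.PORT ?? "", 10);
  return Number.isInteger(port) && port > 0 && port < 65536 ? port : 3000;
}

// Answer every request with a short plain-text message.
function handleRequest(req, res) {
  res.writeHead(200, { "Content-Type": "text/plain; charset=utf-8" });
  res.end("Hello from the container\n");
}

// Listen only when started as `node server.js serve`, so the file can also be loaded in tests.
if (process.argv[2] === "serve") {
  const port = resolvePort();
  // Bind to 0.0.0.0: a server bound only to 127.0.0.1 inside the container
  // cannot be reached through a published port such as `-p 3000:3000`.
  http.createServer(handleRequest).listen(port, "0.0.0.0", () => {
    console.log(`Listening on port ${port}`);
  });
}
```

Binding to `0.0.0.0` is the part that matters most here: it is what makes the port mapping to your local machine work.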

Building and Running Your Docker Container:

Once you have your Dockerfile ready, you can build your Docker image using the docker build command. Navigate to your project directory containing the Dockerfile and run:

```

docker build -t my-node-app .

```

This command builds a Docker image with the tag my-node-app.

To run your application as a Docker container, use the docker run command:

```

docker run -p 3000:3000 my-node-app

```

This command starts a container based on the my-node-app image and forwards port 3000 from the container to port 3000 on your local machine.

Integrating Docker Compose:

While running individual containers with docker run works well for simple applications, managing multiple containers can become cumbersome. Docker Compose is a tool for defining and running multi-container Docker applications.

Here's an example docker-compose.yml file for our Node.js application:

```
version: '3'
services:
  web:
    build: .
    ports:
      - "3000:3000"
```

With this docker-compose.yml file in your project directory, you can start your application and its dependencies (if any) with a single command:

```

docker-compose up

```

Conclusion:

Docker has become an indispensable tool for modern web development workflows. By containerizing your applications, you can ensure consistency, scalability, and reproducibility across different environments. In this article, we've covered the basics of using Docker in your web development workflow, from setting up your development environment to running multi-container applications with Docker Compose. Incorporating Docker into your workflow will not only streamline your development process but also enhance collaboration and deployment agility. So why wait? Start containerizing your applications with Docker today!

---

Thank you for reading my article! For more updates and useful information, feel free to connect with me on LinkedIn and follow me on Twitter. I look forward to engaging with more like-minded professionals and sharing valuable insights.

| nitin-rachabathuni | |

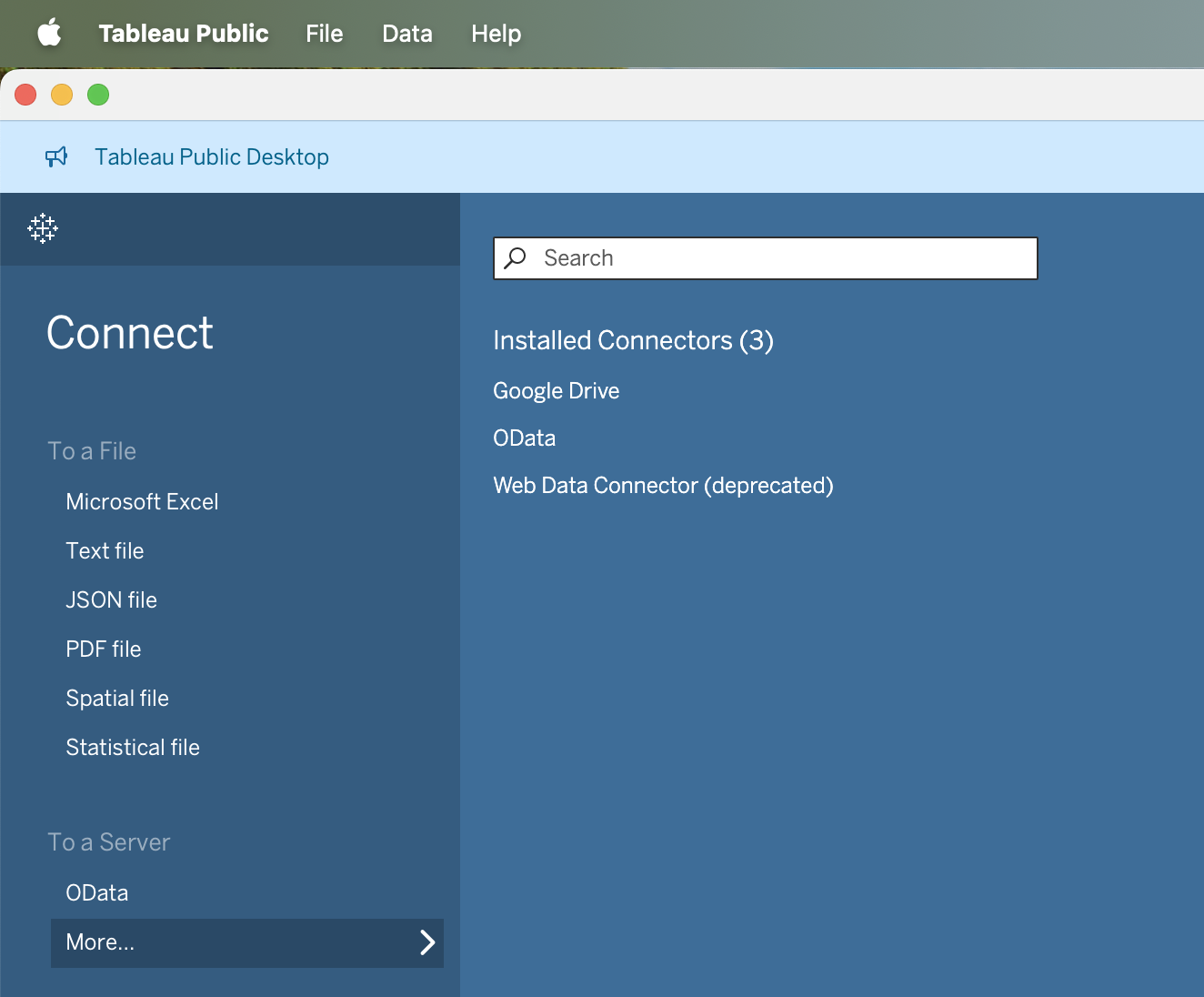

1,861,527 | Tableau Desktop Public: Try the Free Version for Data Visualization | Introduction Ahead of Tableau Conference 2024, Tableau has made a groundbreaking... | 0 | 2024-05-23T13:00:00 | https://dev.to/luca1iu/tableau-desktop-public-try-the-free-version-for-data-visualization-46b8 | tutorial, tableau, visualization, software | # Introduction

Ahead of Tableau Conference 2024, Tableau has made a groundbreaking announcement: the transition of Tableau Public Desktop into the **Tableau Desktop Public Edition**, now available for free. This new version is equipped with most of the powerful features previously found only in Tableau Desktop Professional, minus the ability to connect to enterprise databases.

# **Key Features**

- Connectivity: Users can link to multiple data sources, including Excel spreadsheets, CSV files, and cloud-based platforms.

- Ease of Use: With its drag-and-drop functionality, Tableau Public makes it incredibly easy to create sophisticated charts, graphs, and maps.

- Customization: A wide array of customization options are available, allowing users to tailor their visualizations to their specific needs.

- Collaboration: Tableau Public facilitates sharing and collaboration, enabling users to publish their work online and engage with a community of data enthusiasts.

# **Accessibility**

The Tableau Desktop Public Edition supports local files, automatic saving, and cloud sharing options such as Google Drive for non-commercial use. This makes it a versatile tool for data enthusiasts, educators, and professionals looking to enhance their data visualization skills without financial barriers.

# **How to Get Started**

To experience the full potential of Tableau Desktop Public Edition, download it from the [**official Tableau website**](https://www.tableau.com/products/public/download). The installation is simple, and you'll be prompted to fill out some personal information for the initial download.

# **Conclusion**

Tableau's decision to offer a free, robust data visualization tool is a significant step towards democratizing data analysis. Tableau Desktop Public Edition empowers users to create, share, and learn from a vast array of data visualizations, making it an invaluable resource for anyone interested in data analytics. Don't miss this opportunity—download it today and start exploring the endless possibilities of data visualization!

---

## Explore more

{% embed https://dev.to/luca1iu %}

Thank you for taking the time to explore data-related insights with me. I appreciate your engagement.

{% cta https://www.linkedin.com/in/lucaliu-data %} 🚀 Connect with me on LinkedIn {% endcta %}

{% cta https://twitter.com/Luca_DataTeam %} 🎃 Connect with me on X {% endcta %} | luca1iu |

1,862,854 | Crafting the Peacock Rentals Website: Overcoming Challenges and Embracing the Future | In the ever-evolving landscape of online rental services, Peacock Rentals emerges as a beacon of... | 0 | 2024-05-23T12:57:31 | https://dev.to/blazetzkrieg66/crafting-the-peacock-rentals-website-overcoming-challenges-and-embracing-the-future-319o | javascript, python |

In the ever-evolving landscape of online rental services, Peacock Rentals emerges as a beacon of luxury and convenience. With a commitment to offering top-tier vehicles and accommodations across multiple locations, the journey of creating the Peacock Rentals website has been as exhilarating as a drive in one of our exotic cars. However, like any ambitious project, it has come with its fair share of challenges and triumphs.

**Choosing the Right Technology Stack:** One of the initial hurdles we faced was selecting the appropriate technology stack to bring our vision to life. After careful consideration, we decided to harness the power of C++, JavaScript, and Python. Each language offers unique strengths that contribute to the functionality and performance of our website. C++ provides robustness and efficiency, JavaScript enhances interactivity and user experience, while Python offers versatility and ease of development.

**Designing a Seamless User Experience:** Creating an intuitive and seamless user experience was paramount to us. From browsing luxury vehicles to booking vacation homes, we wanted every step of the rental process to be effortless and enjoyable. This required meticulous planning and iteration of our website's design and navigation. Through user feedback and rigorous testing, we refined our interface to ensure clarity, simplicity, and accessibility for all users.

**Integrating Complex Features:** [Peacock Rentals](https://peacock-rentals.com/) offers a range of services beyond traditional car rentals, including vacation homes and boats. Integrating these diverse offerings into a cohesive platform posed a significant technical challenge. We leveraged the flexibility of Python to develop robust backend systems capable of handling complex data and transactions seamlessly. Additionally, JavaScript played a vital role in creating dynamic and interactive elements, enhancing the overall user experience.

**Navigating Regulatory Compliance:** Operating in multiple locations means navigating a maze of regulatory requirements and legal considerations. From insurance regulations to local ordinances, ensuring compliance across jurisdictions presented a formidable challenge. However, by working closely with legal experts and industry professionals, we developed comprehensive strategies to address regulatory hurdles while maintaining the highest standards of integrity and legality.

**Future Goals and Aspirations:** As we look to the future, our aspirations for Peacock Rentals are as grand as the vehicles in our fleet. We envision expanding our services to new cities and destinations, offering customers unparalleled luxury and convenience wherever they travel. Furthermore, we are committed to harnessing emerging technologies such as artificial intelligence and augmented reality to enhance the rental experience further. Whether it's through personalized recommendations or immersive virtual tours, we strive to push the boundaries of innovation and redefine the standards of excellence in the rental industry.

In conclusion, the creation of the Peacock Rentals website has been a journey filled with challenges, triumphs, and endless possibilities. By leveraging the power of C++, JavaScript, and Python, we have built a platform that epitomizes luxury, convenience, and sophistication. As we embark on this exciting adventure, we remain steadfast in our commitment to delivering exceptional experiences and shaping the future of luxury rentals. | blazetzkrieg66 |

1,862,873 | Secure File Handling in Blazor: Implement JWT Authentication | TL;DR: Are you worried about unauthorized access to your Blazor apps’ file uploads? For enhanced... | 0 | 2024-05-30T04:34:08 | https://www.syncfusion.com/blogs/post/blazor-file-upload-jwt-authentication | blazor, development, syncfusion, web | ---

title: Secure File Handling in Blazor: Implement JWT Authentication

published: true

date: 2024-05-23 12:56:53 UTC

tags: blazor, development, syncfusion, web

canonical_url: https://www.syncfusion.com/blogs/post/blazor-file-upload-jwt-authentication

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/3jkq933xahpdwfe3dioa.png

---

**TL;DR:** Are you worried about unauthorized access to your Blazor apps’ file uploads? For enhanced security, learn to implement JWT authentication in the Syncfusion Blazor File Upload component. This guide covers adding the File Upload component, incorporating JWT headers for authentication, and handling file upload and removal on the server to restrict access to authenticated users only.

File uploads are a common requirement in modern web applications.

Syncfusion [Blazor File Upload](https://www.syncfusion.com/blazor-components/blazor-file-upload "Blazor File Upload") is a component for uploading files, images, documents, and audio and video files to a server. It works in both WebAssembly and server-side Blazor apps. It also supports a rich set of features, including multiple file selection, progress bars, auto-uploading, drag and drop, folder (directory) uploading, file validation, and more.

In this blog, we’ll see how to integrate the Syncfusion Blazor File Upload component with [JWT (JSON Web Token)](https://en.wikipedia.org/wiki/JSON_Web_Token "Wikipedia Link: JWT (JSON Web Token)") authentication in a Blazor app. This combination allows us to securely upload and remove files while ensuring that only authenticated users can perform the actions.

Let’s get started!

## Prerequisites

- [Visual Studio 2022](https://visualstudio.microsoft.com/vs/ "Visual Studio 2022")

- [.NET Core 6.0 and above](https://dotnet.microsoft.com/en-us/download/dotnet/6.0 "Download .NET Core")

## Step 1: Create a Blazor WebAssembly app

First, create a new Blazor WebAssembly app using Visual Studio. Then, install Syncfusion Blazor packages and configure the styles and script references using the [getting started documentation](https://blazor.syncfusion.com/documentation/getting-started/blazor-webassembly-visual-studio "Getting started with Blazor WebAssembly App in Visual Studio").

## Step 2: Add Blazor File Upload component

Now, integrate the Syncfusion Blazor File Upload component into your Blazor page. Refer to the following code example.

```xml
@using Syncfusion.Blazor.Inputs

<SfUploader ID="UploadFiles">
    <UploaderAsyncSettings SaveUrl="api/FileAction/Save" RemoveUrl="api/FileAction/Remove">
    </UploaderAsyncSettings>
</SfUploader>
```

## Step 3: Add JWT authentication for file upload action

The Blazor File Upload component lets you attach an additional header that carries the authentication token during **file** **upload**, so the server can read and validate it. Configure the header as a key-value pair through the [CurrentRequest](https://help.syncfusion.com/cr/blazor/Syncfusion.Blazor.Inputs.SelectedEventArgs.html#Syncfusion_Blazor_Inputs_SelectedEventArgs_CurrentRequest "CurrentRequest property of Blazor File Upload component") argument of the [FileSelected](https://help.syncfusion.com/cr/blazor/Syncfusion.Blazor.Inputs.UploaderEvents.html#Syncfusion_Blazor_Inputs_UploaderEvents_FileSelected "FileSelected property of Blazor File Upload component") event; the [BeforeRemove](https://help.syncfusion.com/cr/blazor/Syncfusion.Blazor.Inputs.UploaderEvents.html#Syncfusion_Blazor_Inputs_UploaderEvents_BeforeRemove "BeforeRemove property of Blazor File Upload component") event exposes the same argument for removals.

Refer to the following code example.

```xml
<SfUploader ID="UploadFiles">
    <UploaderEvents FileSelected="onFileSelect" BeforeRemove="onRemove"></UploaderEvents>
    <UploaderAsyncSettings SaveUrl="api/FileAction/Save" RemoveUrl="api/FileAction/Remove">
    </UploaderAsyncSettings>
</SfUploader>

@code {
    // Attach the Authorization header to the upload request.
    // The value must match the one the server checks ("test123" in this sample).
    private void onFileSelect(SelectedEventArgs args)
    {
        args.CurrentRequest = new List<object> { new { Authorization = "test123" } };
    }

    // onRemove is defined in Step 5.
}
```

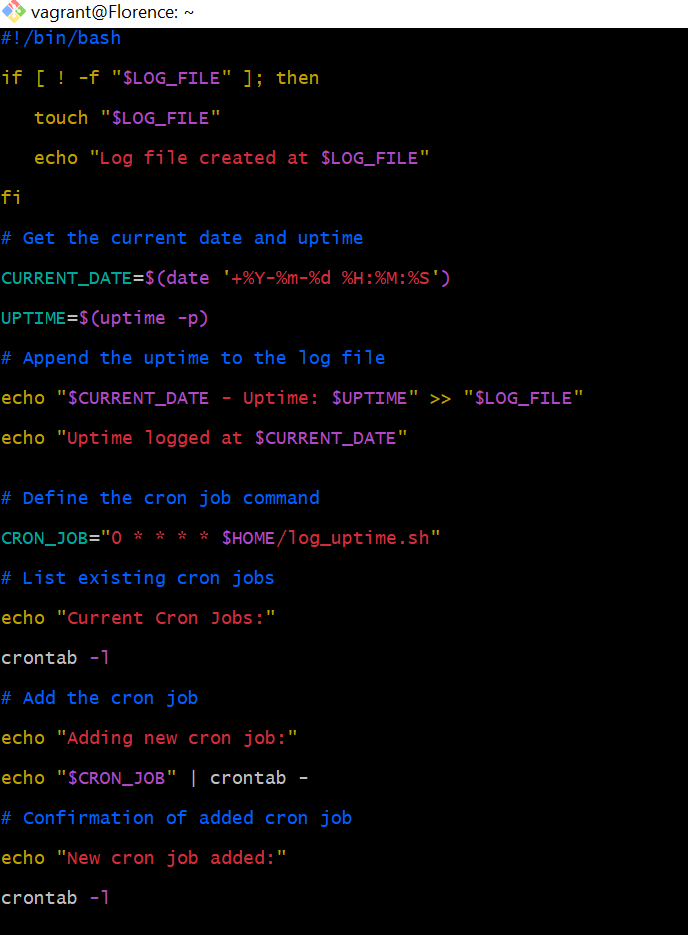

## Step 4: Implement server-side handling for upload action

We should implement the API endpoints to handle file uploads and removals on the server side. These endpoints validate the token sent by the client so that only authenticated requests are processed.

In the server-side controller code, you can retrieve the authentication token from the request's Authorization header for the file upload action, as demonstrated in the following code example.

```csharp
[HttpPost("[action]")]
public void Save(IList<IFormFile> UploadFiles)
{
    // Read the Authorization header sent by the uploader and reject the request if it does not match.
    var authorizationHeader = Request.Headers["Authorization"];
    if (authorizationHeader.Count == 0 || authorizationHeader[0] != "test123")
    {
        Response.Clear();
        Response.StatusCode = 401;
        Response.HttpContext.Features.Get<IHttpResponseFeature>().ReasonPhrase = "Unauthorized";
        return;
    }
    try
    {
        foreach (var file in UploadFiles)
        {
            if (file != null)
            {
                // Build the target path under the web root from the uploaded file name.
                var filename = ContentDispositionHeaderValue.Parse(file.ContentDisposition).FileName.Trim('"');
                filename = hostingEnv.WebRootPath + $@"\{filename}";
                // Skip files that already exist on the server.
                if (!System.IO.File.Exists(filename))
                {
                    using (FileStream fs = System.IO.File.Create(filename))
                    {
                        file.CopyTo(fs);
                        fs.Flush();
                    }
                }
            }
        }
    }
    catch (Exception e)
    {
        // Report the failure to the client with a server error status.
        Response.Clear();
        Response.ContentType = "application/json; charset=utf-8";
        Response.StatusCode = 500;
        Response.HttpContext.Features.Get<IHttpResponseFeature>().ReasonPhrase = e.Message;
    }
}
```
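The controller above compares the header with a fixed placeholder string (`test123`). With a real JWT, the server would also check the token's signature and expiry before touching any file. The following is a minimal, framework-independent sketch of that check, written in JavaScript (Node.js built-in `crypto`) purely for illustration. It assumes an HS256-signed token and a shared secret, and the helper names `signJwt`, `verifyJwt`, and `extractBearerToken` are hypothetical. In an ASP.NET Core app you would normally rely on the framework's JWT bearer authentication rather than hand-rolled code.

```javascript
const crypto = require("crypto");

// Encode a string as base64url, the encoding JWT uses for all three segments.
const toBase64Url = (text) => Buffer.from(text, "utf8").toString("base64url");

// HMAC-SHA256 over "<header>.<payload>", returned as raw bytes.
const hmac = (data, secret) => crypto.createHmac("sha256", secret).update(data).digest();

// Hypothetical helper: issue an HS256 token (normally done by a login endpoint).
function signJwt(claims, secret) {
  const header = toBase64Url(JSON.stringify({ alg: "HS256", typ: "JWT" }));
  const payload = toBase64Url(JSON.stringify(claims));
  const signature = hmac(`${header}.${payload}`, secret).toString("base64url");
  return `${header}.${payload}.${signature}`;
}

// Hypothetical helper: return the claims if the token is valid, otherwise null.
function verifyJwt(token, secret, nowSeconds = Math.floor(Date.now() / 1000)) {
  const parts = String(token).split(".");
  if (parts.length !== 3) return null;
  const [header, payload, signature] = parts;

  // Recompute the signature and compare in constant time.
  const expected = hmac(`${header}.${payload}`, secret);
  const actual = Buffer.from(signature, "base64url");
  if (actual.length !== expected.length || !crypto.timingSafeEqual(actual, expected)) {
    return null;
  }

  try {
    const { alg } = JSON.parse(Buffer.from(header, "base64url").toString("utf8"));
    if (alg !== "HS256") return null; // refuse unexpected algorithms such as "none"
    const claims = JSON.parse(Buffer.from(payload, "base64url").toString("utf8"));
    // Reject expired tokens when an `exp` claim (seconds since epoch) is present.
    if (typeof claims.exp === "number" && claims.exp <= nowSeconds) return null;
    return claims;
  } catch {
    return null; // malformed JSON in header or payload
  }
}

// Pull the token out of an "Authorization: Bearer <token>" header value.
function extractBearerToken(headerValue) {
  const match = /^Bearer\s+(\S+)$/i.exec(headerValue ?? "");
  return match ? match[1] : null;
}
```

On the server, the flow would be: read the `Authorization` header, pass it through `extractBearerToken`, and only save or remove the file when `verifyJwt` returns a claims object.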

## Step 5: Add JWT authentication for file removal action

In the same way, you can secure the **file** **removal** action: in the [BeforeRemove](https://help.syncfusion.com/cr/blazor/Syncfusion.Blazor.Inputs.UploaderEvents.html#Syncfusion_Blazor_Inputs_UploaderEvents_BeforeRemove "BeforeRemove property of Blazor File Upload component") event handler, set the JWT authentication header as a key-value pair through the [CurrentRequest](https://help.syncfusion.com/cr/blazor/Syncfusion.Blazor.Inputs.SelectedEventArgs.html#Syncfusion_Blazor_Inputs_SelectedEventArgs_CurrentRequest "CurrentRequest property of Blazor File Upload component") argument.

Refer to the following code example.

```csharp
// Attach the same Authorization header to the remove request.
private void onRemove(BeforeRemoveEventArgs args)
{
    args.CurrentRequest = new List<object> { new { Authorization = "test123" } };
}
```

## Step 6: Implementing server-side file removal action

Within the server-side controller code, you can retrieve the authentication token from the request header to perform the file removal action, mirroring the procedure in the file-saving controller action. Verify the authentication before executing the file removal process.

Refer to the following code example.

```csharp
[HttpPost("[action]")]
public void Remove(IList<IFormFile> UploadFiles)
{
    // Read the Authorization header and reject the request if it does not match.
    var authorizationHeader = Request.Headers["Authorization"];
    if (authorizationHeader.Count == 0 || authorizationHeader[0] != "test123")
    {
        Response.Clear();
        Response.StatusCode = 401;
        Response.HttpContext.Features.Get<IHttpResponseFeature>().ReasonPhrase = "Unauthorized";
        return;
    }
    try
    {
        // Look for the file in the same location the Save action writes to.
        var filename = hostingEnv.WebRootPath + $@"\{UploadFiles[0].FileName}";
        if (System.IO.File.Exists(filename))
        {
            System.IO.File.Delete(filename);
        }
    }
    catch (Exception e)
    {
        // Report the failure to the client with a server error status.
        Response.Clear();
        Response.StatusCode = 500;
        Response.HttpContext.Features.Get<IHttpResponseFeature>().ReasonPhrase = e.Message;
    }
}
```

Refer to the following output image.

<figure>

<img src="https://www.syncfusion.com/blogs/wp-content/uploads/2024/05/Implementing-JWT-authentication-in-the-Blazor-File-Upload-component-2.png" alt="Implementing JWT authentication in the Blazor File Upload component" style="width:100%">

<figcaption>Implementing JWT authentication in the Blazor File Upload component</figcaption>

</figure>

## GitHub reference

Also, check out the complete source code for [integrating JWT authentication in Blazor File Upload component on GitHub](https://github.com/SyncfusionExamples/blazor-file-upload-jwt-authentication "Integrating JWT authentication in Blazor File Upload component GitHub demo").

## Conclusion

Thanks for reading! This blog shows how to integrate JWT authentication in the Syncfusion [Blazor File Upload](https://www.syncfusion.com/blazor-components/blazor-file-upload "Blazor File Upload") component. This can enhance the security of our file upload functionality. Authenticated users can securely upload and manage files while unauthorized access is effectively prevented.

Experience our Blazor component firsthand by downloading a [free 30-day trial](https://www.syncfusion.com/account/manage-trials/downloads "Get free evaluation of Essential Studio products") or utilizing our [NuGet package](https://www.nuget.org/packages/Syncfusion.Blazor "Syncfusion.Blazor NuGet package"). Explore additional features through our Blazor [online examples](https://blazor.syncfusion.com/ "Blazor online demo") and [documentation](https://blazor.syncfusion.com/documentation/introduction/ "Welcome to Syncfusion Blazor Components").

If you have any questions, please don’t hesitate to let us know in the comments section given below. You can also contact us through our [support forum](https://www.syncfusion.com/forums/blazor-components "Syncfusion Support Forum"), [support portal](https://support.syncfusion.com/ "Syncfusion Support Portal"), and [feedback portal](https://www.syncfusion.com/feedback "Syncfusion Feedback Portal"). We are always eager to assist you!

## Related blogs

- [Easily Perform CRUD Actions in Blazor Pivot Table with SQL Database & Entity Framework](https://www.syncfusion.com/blogs/post/crud-blazor-pivot-table-sql-entity-framework "Blog: Easily Perform CRUD Actions in Blazor Pivot Table with SQL Database & Entity Framework")

- [Seamlessly Load Data from Different Data Sources into Blazor Charts](https://www.syncfusion.com/blogs/post/load-data-sources-in-blazor-charts "Blog: Seamlessly Load Data from Different Data Sources into Blazor Charts")

- [Advanced Query Building Techniques: Connecting Tables with Joins using Blazor Query Builder](https://www.syncfusion.com/blogs/post/advanced-query-building-blazor-connecting-tables-joins "Blog: Advanced Query Building Techniques: Connecting Tables with Joins using Blazor Query Builder")

- [Creating Custom Forms and Validation in a Blazor Hybrid App](https://www.syncfusion.com/blogs/post/blazor-hybrid-app-custom-forms-validation "Blog: Creating Custom Forms and Validation in a Blazor Hybrid App") | jollenmoyani |

1,862,847 | Let build a website | Describe a web page and let me design it for free | 0 | 2024-05-23T12:48:12 | https://dev.to/marvellousabio/let-build-a-website-37j3 | Describe a web page and let me design it for free | marvellousabio | |

1,862,853 | A beginner's guide to the Meta-Llama-3-8b-Instruct model by Meta on Replicate | meta-llama-3-8b-instruct | 0 | 2024-05-23T12:55:58 | https://aimodels.fyi/models/replicate/meta-llama-3-8b-instruct-meta | coding, ai, beginners, programming | *This is a simplified guide to an AI model called [Meta-Llama-3-8b-Instruct](https://aimodels.fyi/models/replicate/meta-llama-3-8b-instruct-meta) maintained by [Meta](https://aimodels.fyi/creators/replicate/meta). If you like these kinds of guides, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) or follow me on [Twitter](https://twitter.com/mikeyoung44).*

## Model overview

`meta-llama-3-8b-instruct` is an 8 billion parameter language model from [Meta](https://aimodels.fyi/creators/replicate/meta) that has been fine-tuned for chat completions. This model is part of the Llama 3 series, which also includes the base `meta-llama-3-8b` and the larger `meta-llama-3-70b` models. Compared to the base Llama 3 models, the `meta-llama-3-8b-instruct` version has been further trained on dialogue and instruction-following tasks, giving it enhanced capabilities for open-ended conversations and task completion.

## Model inputs and outputs

The `meta-llama-3-8b-instruct` model takes a prompt as input and generates text as output. The prompt can be a statement, question, or instruction that the model uses to continue the conversation or complete the task. The output is a completion of the prompt, generated based on the model's understanding of the context and its training on dialogue and instruction-following.

### Inputs

- **Prompt**: The starting text that the model should use to generate a completion.

### Outputs

- **Text completion**: The model's generated continuation or completion of the input prompt.

## Capabilities

The `meta-llama-3-8b-instruct` model is capable of engaging in open-ended dialogue, answering questions, and following instructions. It can be used for a variety of tasks such as language modeling, text generation, question answering, and task completion. The model's fine-tuning on dialogue and instruction-following allows it to generate more coherent and relevant responses compared to the base Llama 3 models.

## What can I use it for?

The `meta-llama-3-8b-instruct` model can be used for a wide range of applications, such as building chatbots, virtual assistants, and content generation tools. Its ability to understand and respond to instructions makes it well-suited for automating various tasks, from customer service to content creation. Developers and businesses can leverage this model to enhance their products and services, while researchers can use it to further explore the capabilities of large language models.

## Things to try

One interesting aspect of the `meta-llama-3-8b-instruct` model is its ability to follow complex instructions and generate coherent responses. You can try prompting the model with multi-step tasks or open-ended questions and observe how it handles the complexity. Additionally, you can experiment with different temperature and top-k/top-p settings to see how they affect the model's output in terms of creativity, coherence, and safety.
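
To build some intuition for what the temperature and top-p settings do, here is a toy sketch in JavaScript. It is not how the model is implemented or how Replicate exposes these parameters; the scores in `logits` and the function names `softmaxWithTemperature`, `topPFilter`, and `sample` are made up for illustration. It only shows the general idea: temperature reshapes the probability distribution, and top-p trims its unlikely tail before a token is drawn.

```javascript
// Toy next-token scores (logits). A real model produces one score per vocabulary token.
const logits = { cat: 2.0, dog: 1.0, fish: 0.5, rock: -1.0 };

// Turn scores into probabilities, dividing by the temperature first.
// Low temperature sharpens the distribution, high temperature flattens it.
function softmaxWithTemperature(scores, temperature) {
  const scaled = Object.entries(scores).map(([token, s]) => [token, s / temperature]);
  const max = Math.max(...scaled.map(([, s]) => s)); // subtract the max for numerical stability
  const exps = scaled.map(([token, s]) => [token, Math.exp(s - max)]);
  const total = exps.reduce((sum, [, e]) => sum + e, 0);
  return exps.map(([token, e]) => ({ token, p: e / total }));
}

// Keep the smallest set of most likely tokens whose cumulative probability
// reaches topP, then renormalise so the kept probabilities sum to 1.
function topPFilter(probs, topP) {
  const sorted = [...probs].sort((a, b) => b.p - a.p);
  const kept = [];
  let cumulative = 0;
  for (const item of sorted) {
    kept.push(item);
    cumulative += item.p;
    if (cumulative >= topP) break;
  }
  const total = kept.reduce((sum, item) => sum + item.p, 0);
  return kept.map(({ token, p }) => ({ token, p: p / total }));
}

// Draw one token from the distribution. `rand` is injectable so the draw can be made deterministic.
function sample(probs, rand = Math.random) {
  let r = rand();
  for (const { token, p } of probs) {
    r -= p;
    if (r < 0) return token;
  }
  return probs[probs.length - 1].token; // guard against floating-point drift
}

// Example: sample with temperature 0.7 and top-p 0.9.
const candidates = topPFilter(softmaxWithTemperature(logits, 0.7), 0.9);
console.log(candidates, sample(candidates));
```

Running this with a few different temperature and top-p values shows the trade-off: lower values concentrate the probability on the most likely token, while higher values leave more room for less likely ones.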

**If you enjoyed this guide, consider subscribing to the [AImodels.fyi newsletter](https://aimodels.substack.com) or following me on [Twitter](https://twitter.com/mikeyoung44) for more AI and machine learning content.** | mikeyoung44 |

1,862,173 | JavaScript Mini Password Generator | Intro: This is a small password generator made with JavaScript. It's a very simple program. All it... | 0 | 2024-05-23T12:55:41 | https://dev.to/petrinaropra/javascript-mini-password-generator-30b | beginners, javascript, programming, tutorial | **Intro:** This is a small password generator made with JavaScript. It's a very simple program. All it does is display a new password every time the user presses the generate password button. I added pictures to it for fun. You can add your own pictures if you want to. By the way, this is for complete beginners, as I have commented every line of my JavaScript file. If I have made mistakes, please let me know, and feel free to copy and tweak this program to your liking.

**Watch Demo Here:** [https://youtube.com/shorts/A5BLMB5H1mI?feature=share](https://youtube.com/shorts/A5BLMB5H1mI?feature=share)

**Note:** I got my pictures from [https://www.canva.com/ai-image-generator/](https://www.canva.com/ai-image-generator/)

**Tip:** You can use random images at first until the program works, and then swap in images that fit the description. Name each image after the first two words of the password. For example, when the password fluffyapple95h shows up, the program loads fluffyapple.png. Make sure the code uses the full file path to your images.

**Tweak:** You can change the adjectives and nouns and put as many or as few as you like.

The first code block is HTML, the second is CSS and the third is JavaScript.

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Password Picker</title>

<link rel="stylesheet" href="password_picker.css"> <!-- Link to the external CSS file -->

</head>

<body>

<div id="password-container">

<h2>Welcome to Password Picker!</h2>

<div id="password-output"></div>

<br>

<img id="password-image" src="" alt="Password Image"> <!-- Image element to display the image -->

<br><br>

<button id="generate-btn">Generate Password</button>

</div>

<script src="password_picker.js"></script> <!-- Link to the external JavaScript file -->

</body>

</html>

```

```css

body {

font-family: Arial, sans-serif;

background-color: #f0f0f0;

margin: 0;

padding: 0;

display: flex;

justify-content: center;

align-items: center;

height: 100vh;

}

#password-container {

background-color: #fff;

border-radius: 5px;

padding: 80px;

width: 20%;

box-shadow: 0 2px 5px rgba(0, 0, 0, 0.1);

text-align: center;

}

button {

padding: 10px 20px;

font-size: 16px;

background-color: #007bff;

color: #fff;

border: none;

border-radius: 3px;

cursor: pointer;

transition: background-color 0.3s;

}

button:hover {

background-color: #0056b3;

}

#password-output{

font-size: 20px;

background-color: orange;

text-align: center;

}

```

```javascript

//create an array of adjectives

const adjectives = ['sleepy', 'slow', 'smelly', 'wet', 'fat', 'red', 'orange', 'yellow', 'green', 'blue', 'purple', 'fluffy', 'white', 'proud', 'brave'];

//create an array of nouns

const nouns = ['apple', ]; //'dinosaur', 'ball', 'toaster', 'goat', 'dragon', 'hammer', 'duck', 'panda'];

//create a function called generatePassword

const generatePassword = () => {

//pick a random item in the adjectives array every time the function is called

//Math.random gives a random floating point number from 0 up to (but not including) 1, which is multiplied by the length of the adjectives array

//to stay within the array, then Math.floor rounds it down to the nearest integer.

const adjective = adjectives[Math.floor(Math.random() * adjectives.length)];

//same thing above here but for nouns array

const noun = nouns[Math.floor(Math.random() * nouns.length)];

//get a random whole number from 0 to 99

const number = Math.floor(Math.random() * 100);

//pick one random printable ASCII character (character codes 33 to 126)

const specialChar = String.fromCharCode(33 + Math.floor(Math.random() * 94));

//join the values of the previous variables together and put them in a variable called password

const password = adjective + noun + number + specialChar;

//display the message below in the html file

//For example: Your new password is: fluffyapple95h

document.getElementById('password-output').textContent = 'Your new password is: ' + password;

//get the image that matches the password and display it in the html file

document.getElementById('password-image').src = `C:/Users/petix/OneDrive/Desktop/my_javascript_games/Password_picker/${adjective}${noun}.png`; // Set the source of the image

};

//add an event to the button so that when it is pressed it will call the generatePassword function

document.getElementById('generate-btn').addEventListener('click', generatePassword);

//show an image when the program loads for the first time

document.getElementById('password-image').src = 'C:/Users/petix/OneDrive/Desktop/my_javascript_games/Password_picker/letters.png'

```
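
If you want to check the password logic without opening the page, one option is to separate it from the DOM code. The sketch below is a variation, not the original program: `pickRandom` and `buildPassword` are hypothetical names, and the `rand` parameter is an assumption added so a fixed random source can be passed in for testing. It reuses the same arithmetic and the same image naming rule as the code above.

```javascript
// Hypothetical helper: pick one item from an array using an injectable random source.
const pickRandom = (items, rand = Math.random) =>
  items[Math.floor(rand() * items.length)];

// Hypothetical pure version of generatePassword: it returns the pieces
// instead of writing them to the page, so it also runs outside a browser.
const buildPassword = (adjectives, nouns, rand = Math.random) => {
  const adjective = pickRandom(adjectives, rand);
  const noun = pickRandom(nouns, rand);
  const number = Math.floor(rand() * 100); // whole number from 0 to 99
  const symbol = String.fromCharCode(33 + Math.floor(rand() * 94)); // character codes 33 to 126
  return {
    password: adjective + noun + number + symbol,
    imageName: `${adjective}${noun}.png`, // same naming rule as the image lookup above
  };
};

// Example: log one generated password and the image file name that goes with it.
console.log(buildPassword(['fluffy', 'brave'], ['apple', 'duck']));
```

In the page, the click handler could call `buildPassword(adjectives, nouns)` and then set the text and image from the returned object. Keep in mind that `Math.random` is fine for a fun project like this one, but it is not designed for generating passwords that protect real accounts.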

| petrinaropra |

1,862,739 | Integrating PWA in a Next.js App | Introduction Progressive Web Apps (PWAs) combine the best features of web and mobile... | 0 | 2024-05-23T12:52:59 | https://dev.to/wafa_bergaoui/integrating-pwa-in-a-nextjs-app-3a1 | pwa, nextjs, react, frontend | ## Introduction

Progressive Web Apps (PWAs) combine the best features of web and mobile applications, offering offline capabilities, push notifications, and the ability to install the app on a user’s device. For a detailed overview of PWAs, refer to my previous article [The Evolution and Necessity of Progressive Web Apps.](https://dev.to/wafa_bergaoui/the-evolution-and-necessity-of-progressive-web-apps-pwas-42pl)

This guide will take you through the steps to integrate a PWA into your Next.js application.

## Steps to Integrate PWA in Next.js

**1. Initial Setup**

Ensure you have a Next.js app set up. If not, create one using:

```

npx create-next-app@latest pwa-app

cd pwa-app

```

**2. Install Dependencies**

Install the **next-pwa** package to add PWA capabilities to your app:

```

npm install next-pwa

# or, if you use Yarn:

yarn add next-pwa

```

**3. Configure next.config.js**

Modify `next.config.js` to use the **next-pwa** plugin:

```javascript

const withPWA = require('next-pwa')({

dest: 'public',

disable: process.env.NODE_ENV === 'development',

});

module.exports = withPWA({

// Your existing Next.js configuration

});

```

**4. Create a Manifest File**

- Add a `manifest.json` file in the public directory. This file contains metadata about your web application, including details such as the app's name, short name, theme color, and icons.

- You can create this file manually or use online tools like:

- [SimiCart Manifest Generator](https://www.simicart.com/manifest-generator.html/)

- [Manifest Generator](https://manifest-gen.netlify.app/)

- Example `manifest.json`:

```json

{

"name": "Progressive Web App",

"short_name": "PWA",

"start_url": "/",

"display": "standalone",

"background_color": "#ffffff",

"theme_color": "#000000",

"icons": [

{

"src": "/icons/icon-192x192.png",

"sizes": "192x192",

"type": "image/png"

},

{

"src": "/icons/icon-512x512.png",

"sizes": "512x512",

"type": "image/png"

}

]

}

```

- Save the `manifest.json` file in the `/public` directory of your project.
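
Before moving on, it can help to sanity-check the manifest. The sketch below is a hypothetical helper, not part of **next-pwa** or Next.js: `checkManifest` is a made-up name, and the rules it applies (a name, a start URL, an app-like display mode, and 192x192 and 512x512 icons) are an assumption based on commonly cited installability checks, which vary between browsers.

```javascript
// Hypothetical checker: returns a list of problems found in a parsed manifest object.
function checkManifest(manifest) {
  const problems = [];

  if (!manifest.name && !manifest.short_name) {
    problems.push('missing name or short_name');
  }
  if (!manifest.start_url) {
    problems.push('missing start_url');
  }

  // Display modes that make the app open in its own window.
  const displayModes = ['fullscreen', 'standalone', 'minimal-ui'];
  if (!displayModes.includes(manifest.display)) {
    problems.push('display should be fullscreen, standalone, or minimal-ui');
  }

  // An icon entry may list several sizes separated by spaces.
  const sizes = (manifest.icons || []).flatMap((icon) =>
    String(icon.sizes || '').split(/\s+/)
  );
  for (const required of ['192x192', '512x512']) {
    if (!sizes.includes(required)) problems.push(`missing ${required} icon`);
  }

  return problems;
}

// Example with the manifest shown above; an empty array means no problems were found.
const exampleManifest = {
  name: 'Progressive Web App',
  short_name: 'PWA',
  start_url: '/',
  display: 'standalone',
  background_color: '#ffffff',
  theme_color: '#000000',
  icons: [
    { src: '/icons/icon-192x192.png', sizes: '192x192', type: 'image/png' },
    { src: '/icons/icon-512x512.png', sizes: '512x512', type: 'image/png' },
  ],
};
console.log(checkManifest(exampleManifest));
```

To run it against your own file in Node.js, you could load the manifest with `JSON.parse(fs.readFileSync('public/manifest.json', 'utf8'))` and pass the result to `checkManifest`. The browser's developer tools remain the authoritative check for whether the app is installable.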

**5. Service Worker Setup**

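The **next-pwa** plugin handles the creation and configuration of the service worker for you. When you build the app, it writes the generated service worker files to the directory set in `dest` (here, `public`), so make sure that directory exists and is served by your app. Note that with the configuration above, the service worker is disabled in development, so test caching and offline behavior with a production build.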

**6. Link Manifest in `_document.js`**

Modify the `_document.js` file to include the manifest file and specify theme colors:

```javascript

import Document, { Html, Head, Main, NextScript } from 'next/document';

class MyDocument extends Document {

render() {

return (

<Html>

<Head>

<link rel="manifest" href="/manifest.json" />

<meta name="theme-color" content="#000000" />

</Head>

<body>