id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,862,720 | Hello #freshspartechnologies | Helllo Everyone #freshspartechnologies | 0 | 2024-05-23T10:47:59 | https://dev.to/freshspartechnologies/hello-freshspartechnologies-48la | Helllo Everyone #freshspartechnologies | freshspartechnologies | |

1,862,719 | Oracle EPM Cloud Release Notes - February 2024 Update | Oracle Enterprise Performance Management (EPM) Cloud is a cloud-based business suite that integrates... | 0 | 2024-05-23T10:47:16 | https://www.iuemag.com/m24/sf/oracle-epm-cloud-release-notes-february-2024-update | oracle, epm, cloud, release |

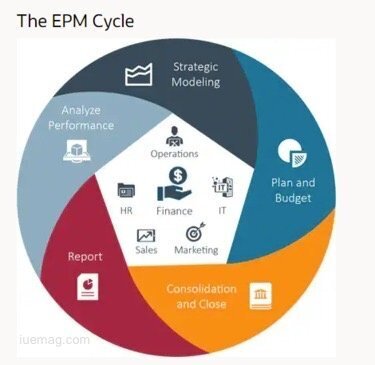

Oracle Enterprise Performance Management (EPM) Cloud is a cloud-based business suite that integrates financial planning, analytics, and reporting. It is a crucial tool for informed decision-making and monitoring processes like financial close.

Oracle EPM Cloud Release Notes are rolled out monthly along with updates to help businesses in growth and success.

These updates provide businesses with the latest developments, enhancements, and trends in EPM. It empowers them to elevate their performance to new heights. The February 2024 update brings several key enhancements across various modules.

Keeping up with these Oracle EPM Cloud Releases can be challenging, which is why we bring you this blog. We will highlight the enhancement across various modules and how to strategically test to ensure their systems aren't disrupted.

**Account Reconciliation Enhancements**

The Account Reconciliation feature now automatically makes reverse adjustments. When a user unmatches a match that contains an adjustment the Extract Status of the adjustment is set to close. This enhancement streamlines the reconciliation process and reduces manual effort.

Users can now export data to Microsoft Excel in .xlsx format, in addition to the existing .xls format. This enhancement provides users with more flexibility in how they export and analyze their reconciliation data.

The new "importAttributeValues" command allows users to import values into group attributes, transaction matching lists, and account reconciliation. This feature simplifies the data import process and improves efficiency.

**Enterprise Data Management Improvements**

A new Help Center tab "How Do I..." has been added to answer common questions about Enterprise Data Management. This feature provides users with quick access to information and resources, improving user experience and productivity.

**Financial Consolidation and Close Updates**

A new troubleshooting section has been added to the Oracle EPM Cloud Release. The new release can solve enterprise journal functional issues. This enhancement helps users troubleshoot and resolve issues more effectively, reducing downtime and improving system reliability.

Enterprise Profitability and Cost Management Enhancements

**Oracle EPM**

The module "Migrating from Profitability and Cost Management to Enterprise Profitability and Cost Management" has been improved. This will handle duplicate member names during migration. This enhancement ensures data integrity and accuracy during the migration process.

The initial page load time for Calculation Control and Calculation Analysis pages has been reduced. This improvement enhances user experience by reducing wait times and improving overall system performance.

**Planning Module Improvements**

A new module has been added to demonstrate how to allocate Planning and Budgeting costs using the Allocation System Templates in Calculation Manager. This tutorial helps users effectively manage their planning and budgeting processes.

Users can now import and export model data and metadata to and from a .csv file. This feature provides users with greater flexibility in managing their models. It allows them to make changes offline and then import the updated data back into the model.

**Role of Test Automation in Maximizing Efficiency and Reliability of EPM Release**

**Efficient Deployment**

Opkey accelerates EPM updates by analyzing testing procedures, suggesting improvements, and enabling users to write and execute tests three times faster. This speed ensures that businesses can quickly adapt to new features and functionalities, gaining a competitive edge in the market.

**Continuous Testing**

Continuous testing is a key component of effective EPM testing. It helps identify bugs early in the development lifecycle, resulting in faster bug fixes and reducing overall downtime risk by over 90%. This proactive approach ensures that the EPM system remains stable and reliable, even with frequent updates.

**Reduced Testing Expenditure**

Opkey lowers testing costs by detecting defects early in the development lifecycle. This aligns with the Systems Sciences Institute's estimate that post-release bug discovery is 4-5 times more expensive. By identifying and fixing issues early, businesses can significantly reduce the cost of maintaining and updating their EPM system.

Mitigating Operational Risks

**Oracle EPM**

Opkey's AI-enabled features mitigate financial, operational, and reputational risks by concentrating testing efforts on the most critical processes within Oracle Cloud EPM projects. It prevents potential worst-case scenarios, ensuring that businesses can operate smoothly without disruptions.

**Automated Test Report Generation**

Automation reduces manual effort and saves time without compromising testing quality. Automated documentation tools enhance traceability, efficiency, accuracy, and consistency in testing operations, ensuring that all tests are well-documented and easily accessible.

**Increased Test Coverage**

Manual testing can be challenging, especially with twelve EPM releases annually. Opkey's test automation platform enhances test coverage by addressing the majority of testing scenarios. This comprehensive approach ensures that all aspects of the EPM system are thoroughly tested, reducing the risk of undiscovered issues.

**Ensuring Regulatory Adherence**

Ensuring regulatory compliance is vital for organizations. Insufficient testing can jeopardize application data and result in compliance violations. Opkey's quality assurance system meticulously documents data setups, parameters, and testing steps, ensuring accuracy and traceability, and helping businesses maintain compliance.

**Opkey: Your Invaluable Partner for Oracle Cloud EPM Updates Testing**

Opkey is a codeless testing platform that specializes in Oracle Cloud EPM testing. It offers EPM-based scripts that facilitate organizations to automate regression testing for monthly Oracle EPM Cloud Release Notes. This helps organizations to shorten the testing completion time. Additionally, it also offers impact analysis for monthly releases and has an extensive library of 7,000-plus pre-built test libraries. | rohitbhandari102 |

1,862,718 | The Future is Now: Exploring the Impact of Generative AI on Telecom Services | The telecom industry has always been at the forefront of technological innovation, continuously... | 0 | 2024-05-23T10:43:51 | https://dev.to/maysanders/the-future-is-now-exploring-the-impact-of-generative-ai-on-telecom-services-223m | The telecom industry has always been at the forefront of technological innovation, continuously evolving to meet the increasing demands for connectivity and communication. In recent years, the advent of generative AI has begun to revolutionize telecom services, promising to enhance efficiency, customer experience, and overall service delivery. This blog delves into the transformative impact of generative AI on the telecom sector and what the future holds for this dynamic industry.

**Transforming Customer Service**

One of the most visible impacts of generative AI in telecom is the transformation of customer service. Traditional call centers, often plagued by long wait times and inconsistent service quality, are now being augmented with AI-powered chatbots and virtual assistants. These advanced systems can handle a wide range of customer queries in real-time, providing accurate and prompt responses.

Generative AI models can understand and process natural language with high precision, enabling them to engage in meaningful and contextually relevant conversations with customers. This not only improves customer satisfaction but also allows human agents to focus on more complex issues, thereby enhancing overall efficiency.

**Optimizing Network Management**

Network management is another critical area where generative AI is making significant strides. The complexity of managing telecom networks, with their vast and intricate infrastructure, demands constant monitoring and optimization. [AI software development](https://binmile.com/services/ai-development-company/) has led to the creation of advanced algorithms capable of predictive maintenance, identifying potential issues before they escalate into major problems.

Generative AI can analyze large volumes of network data, detect anomalies, and predict future trends, enabling telecom operators to proactively address potential disruptions. This results in improved network reliability and performance, which is crucial in an era where connectivity is essential for both personal and business activities.

**Enhancing Service Personalization**

In an increasingly competitive market, telecom companies are leveraging [Applied AI services](https://binmile.com/services/artificial-intelligence-as-a-service/) to offer personalized experiences to their customers. Generative AI can analyze user data to understand individual preferences and behaviors, allowing telecom providers to tailor their services and offers accordingly.

For instance, AI can suggest the most suitable data plans based on a user's usage patterns or recommend value-added services that align with their interests. This level of personalization not only enhances customer loyalty but also drives revenue growth by offering targeted and relevant services.

**Revolutionizing Content Generation**

Generative AI is also set to revolutionize content generation within the telecom sector. AI-driven tools can create high-quality content for marketing, customer engagement, and educational purposes. This includes generating compelling blog posts, social media content, and even video scripts that resonate with target audiences.

Moreover, generative AI can assist in developing interactive content, such as personalized video messages or dynamic newsletters, which can significantly improve customer engagement. By automating content creation, telecom companies can maintain a consistent and impactful presence across various digital platforms.

**The Road Ahead**

The integration of [generative AI in telecom](https://binmile.com/blog/generative-ai-in-telecom-industry/) is still in its early stages, but the potential is vast. As AI technology continues to advance, we can expect even more innovative applications that will further transform the industry. Telecom operators must stay ahead of the curve by investing in AI research and development, collaborating with AI experts, and continuously exploring new ways to enhance their services.

In conclusion, generative AI is poised to redefine the telecom landscape, offering unprecedented opportunities for improvement in customer service, network management, service personalization, and content generation. The future of telecom is undoubtedly intertwined with the advancements in AI, and those who embrace this technology will be well-positioned to lead the industry into the next era of digital transformation. | maysanders | |

1,862,717 | My Pen on CodePen | Check out this Pen I made! | 0 | 2024-05-23T10:43:49 | https://dev.to/etholite/my-pen-on-codepen-35c3 | codepen | Check out this Pen I made!

{% codepen https://codepen.io/xyaiujlg-the-animator/pen/LYoZQyK %} | etholite |

1,862,716 | Kitchen Colors as per Vastu: A Guide to Harmonious Living | Introduction Choosing the right colors for your kitchen is more than just an aesthetic... | 0 | 2024-05-23T10:43:14 | https://dev.to/mjvedicmeet/kitchen-colors-as-per-vastu-a-guide-to-harmonious-living-37h2 | ## **Introduction**

Choosing the right colors for your kitchen is more than just an aesthetic decision; it’s a step towards creating a harmonious and positive environment in your home. Vastu Shastra, the ancient Indian science of architecture, emphasizes the importance of colors in maintaining balance and flow of energy. In this guide, we’ll explore the best **[kitchen colors as per Vastu](https://vedicmeet.com/vastu/colours-for-kitchen/)** and how they can enhance your living space.

## **Understanding Vastu Shastra**

Vastu Shastra, rooted in ancient Indian traditions, is the science of architecture and spatial arrangement. It aims to harmonize the environment by balancing the five elements: earth, water, fire, air, and space. The principles of Vastu focus on creating a positive energy flow, ensuring peace, prosperity, and health.

## **Significance of the Kitchen in Vastu**

The kitchen is often considered the heart of the home, playing a crucial role in the family’s health and well-being. According to Vastu, the placement and color of the kitchen significantly impact the household’s overall harmony and energy.

## **General Guidelines for Kitchen Colors**

Vastu Shastra provides specific guidelines for kitchen colors based on its direction and placement within the home. The key is to choose colors that align with the natural elements associated with each direction.

## **Best Colors for East-facing Kitchens**

For east-facing kitchens, **[Vastu](https://vedicmeet.com/topics/vastu/)** recommends using colors that represent the sun and air elements. Light blue, white, and green are ideal choices. These colors promote clarity, creativity, and freshness, enhancing the kitchen's energy flow.

## **Best Colors for West-facing Kitchens**

West-facing kitchens benefit from colors that reflect the earth and metal elements. Shades of grey, beige, and yellow are perfect. These colors bring stability, warmth, and a grounding effect, creating a balanced environment.

## **Best Colors for North-facing Kitchens**

North-facing kitchens should incorporate colors that resonate with the water element. Blue, green, and black are recommended. These colors encourage calmness, prosperity, and a soothing atmosphere, ideal for cooking and dining spaces.

## **Best Colors for South-facing Kitchens**

For south-facing kitchens, colors representing the fire element are most beneficial. Red, orange, and pink can be used, but it’s essential to use them in moderation. These colors stimulate energy, passion, and appetite, making the kitchen a vibrant space.

## **Avoiding Inauspicious Colors**

Vastu advises against using certain colors in the kitchen, such as dark brown, black, and dark grey. These colors can create negative energy, leading to stress, health issues, and conflicts among family members.

## **Using Natural Elements and Materials**

Incorporating natural elements like wood, stone, and earthy tones can enhance the kitchen’s Vastu compliance. These materials bring a touch of nature indoors, promoting balance and tranquillity.

## **Balancing Modern Aesthetics with Vastu**

While adhering to Vastu principles, it’s possible to maintain a modern and stylish kitchen. Choose contemporary shades that align with Vastu guidelines and complement them with modern appliances and fixtures.

## **Common Mistakes in Kitchen Color Selection**

One common mistake is choosing colors solely based on personal preference without considering Vastu principles. Another is using excessive bright colors, which can disrupt the energy balance. It's crucial to strike a balance between Vastu and aesthetics.

## **Enhancing Positive Energy with Accessories**

Complement your kitchen’s color scheme with Vastu-approved accessories. Use green plants, colorful utensils, and decorative items that align with Vastu principles to enhance positive energy.

## **Conclusion**

Applying Vastu principles to your kitchen’s color scheme can transform it into a space filled with positive energy, harmony, and prosperity. By carefully selecting colors based on direction and elements, you can create a kitchen that’s not only aesthetically pleasing but also beneficial for your family’s well-being.

## **FAQs**

**What are the best colors for a small kitchen as per Vastu?**

Light colors such as white, light blue, and green are ideal for small kitchens. They make the space look larger and more open, enhancing positive energy.

**Can we use black in the kitchen according to Vastu?**

Black is generally not recommended in Vastu for kitchens as it can attract negative energy and create a heavy atmosphere.

**How does lighting affect kitchen Vastu?**

Proper lighting is crucial in Vastu. Natural light is best, but if that's not possible, use bright, warm lights to create a welcoming and energetic environment.

**Are there any Vastu remedies for an inauspicious kitchen color?**

Yes, you can use Vastu remedies like placing certain plants, crystals, or decorative items to counteract the effects of inauspicious colors.

**Can Vastu principles be applied to open kitchens?**

Absolutely. Vastu principles can be adapted for open kitchens by focusing on the placement and color coordination within the larger living space.

| mjvedicmeet | |

1,862,715 | How to Create a User using Tinker in Laravel | Tinker allows you to interact with your entire Laravel application on the command line, including the... | 0 | 2024-05-23T10:42:53 | https://larainfo.com/blogs/how-to-create-a-user-using-tinker-in-laravel/ | laravel, php, webdev | Tinker allows you to interact with your entire Laravel application on the command line, including the Eloquent ORM, jobs, events, and more. To enter the Tinker environment, run the tinker Artisan command.

```php

php artisan tinker or php artisan ti

```

By using the tinker command line, we can create a new user or insert new data into the database.

Now run `php artisan ti`

```php

Psy Shell v0.10.6 (PHP 7.4.16 — cli) by Justin Hileman

>>> User::create(["name"=> "larainfo","email"=>"larainfo@gmail.com","password"=>bcrypt("123456")]);

=> App\Models\User {#4290

name: "larainfo",

email: "larainfo@gmail.com",

updated_at: "2021-04-22 08:23:28",

created_at: "2021-04-22 08:23:28",

id: 1,

}

>>>

```

Or you can explore different approaches to storing new data. Let's see.

`php artisan ti`

```php

>>> $user = new App\Models\User;

=> App\Models\User {#4301}

>>> $user->name = "larainfo";

=> "larainfo"

>>> $user->email= "larainfo@gmail.com";

=> "larainfo@gmail.com"

>>> $user->password=bcrypt('123456');

=> "$2y$10$uSdO/eBCQPNK3eVjXlSh.ulBVamZOhc.Hu5bp8Xzzb.uWyS3MSwRC"

>>> $user->save();

=> true

``` | saim_ansari |

1,862,713 | unsupported! | Check out this Pen I made! | 0 | 2024-05-23T10:41:50 | https://dev.to/itzad_20/unsupported-508l | codepen | Check out this Pen I made!

{% codepen https://codepen.io/ITzAD20/pen/rNgLJpq %} | itzad_20 |

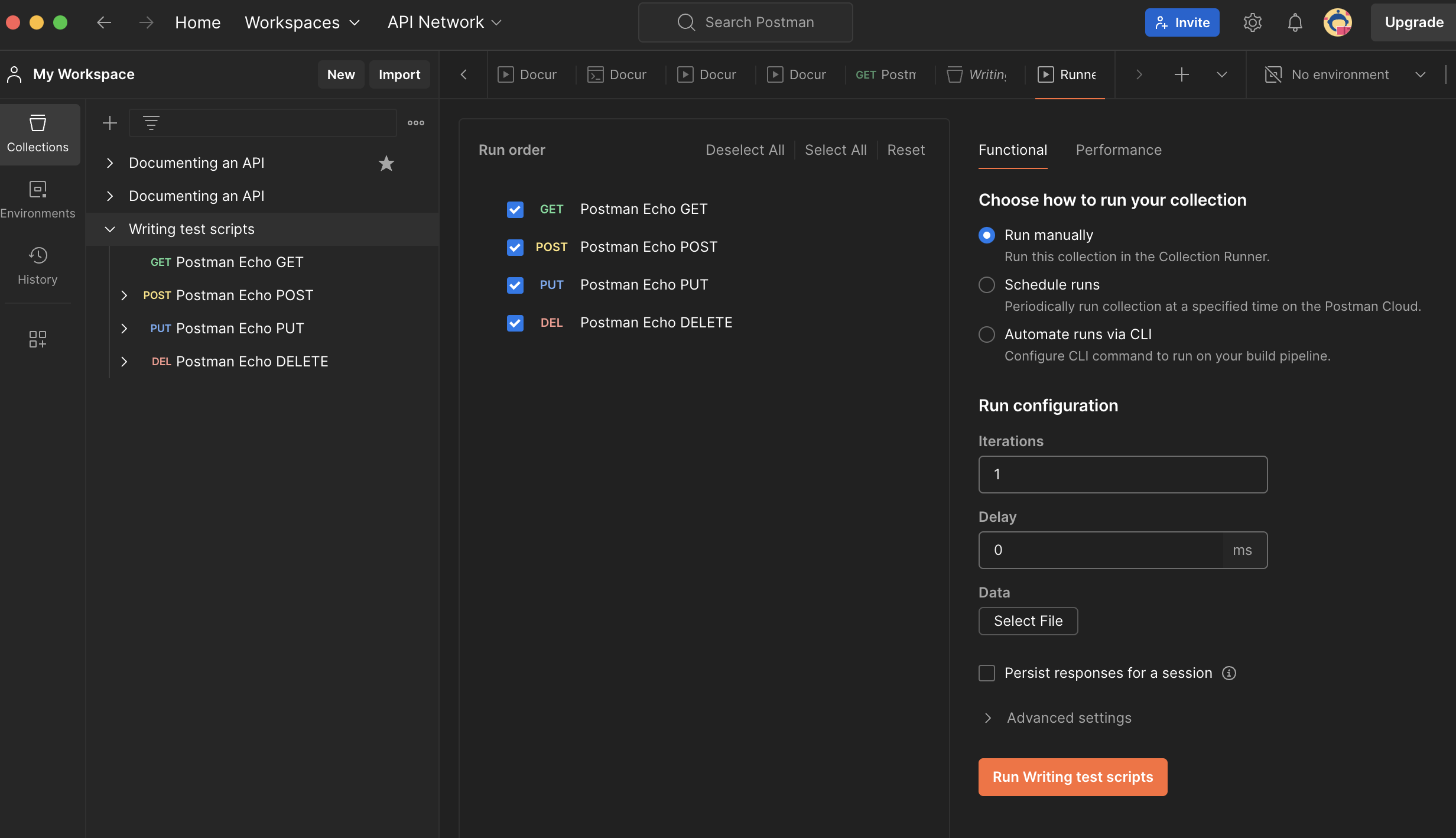

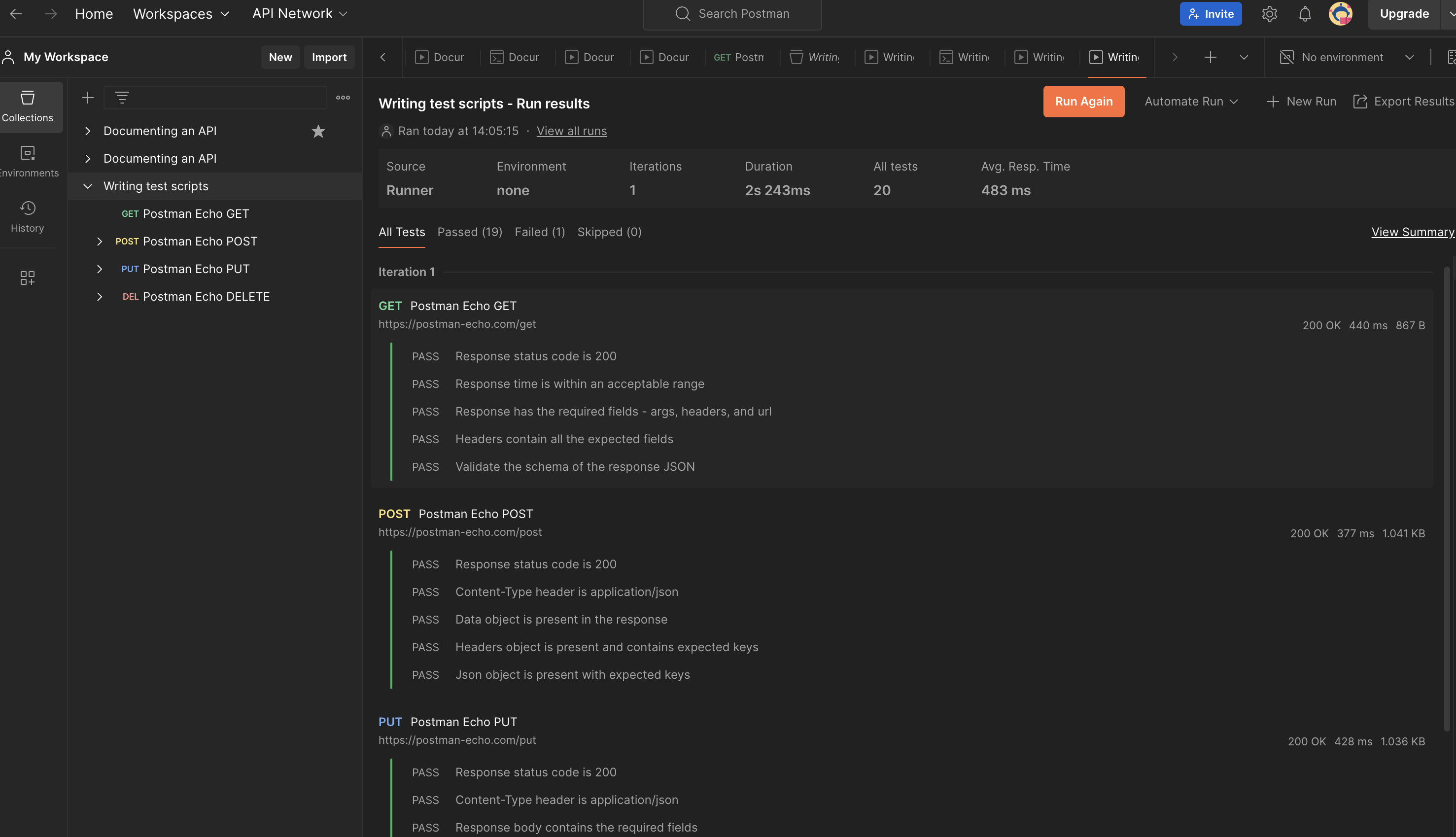

1,862,712 | RateMyReads API | Introduction RateMyReads, a platform dedicated to book enthusiasts, relies on a robust API... | 0 | 2024-05-23T10:41:46 | https://dev.to/nimo08/ratemyreads-api-b8p | python, api, django, postman |

## Introduction

RateMyReads, a platform dedicated to book enthusiasts, relies on a robust API to facilitate seamless user interaction and efficient data management. This article delves into the development journey of RateMyReads API, outlining the technologies utilized, challenges encountered, and future directions.

## Project Description

The RateMyReads API project was a solo endeavor, with the goal of crafting an intuitive platform for users to manage their book preferences and engage with a vibrant community of readers. Spearheaded entirely by me, the API development journey spanned from inception to completion, ensuring every aspect of the platform's functionality was meticulously crafted to deliver an exceptional user experience.

## Technologies Used

- Python: Chosen for its readability and extensive libraries, Python served as the primary programming language.

- Django: Leveraged as a high-level Python web framework, Django accelerated development with its built-in features and adherence to the DRY principle.

- Django Rest Framework (DRF): Complemented Django by simplifying API development, providing tools for serialization, authentication, and authorization.

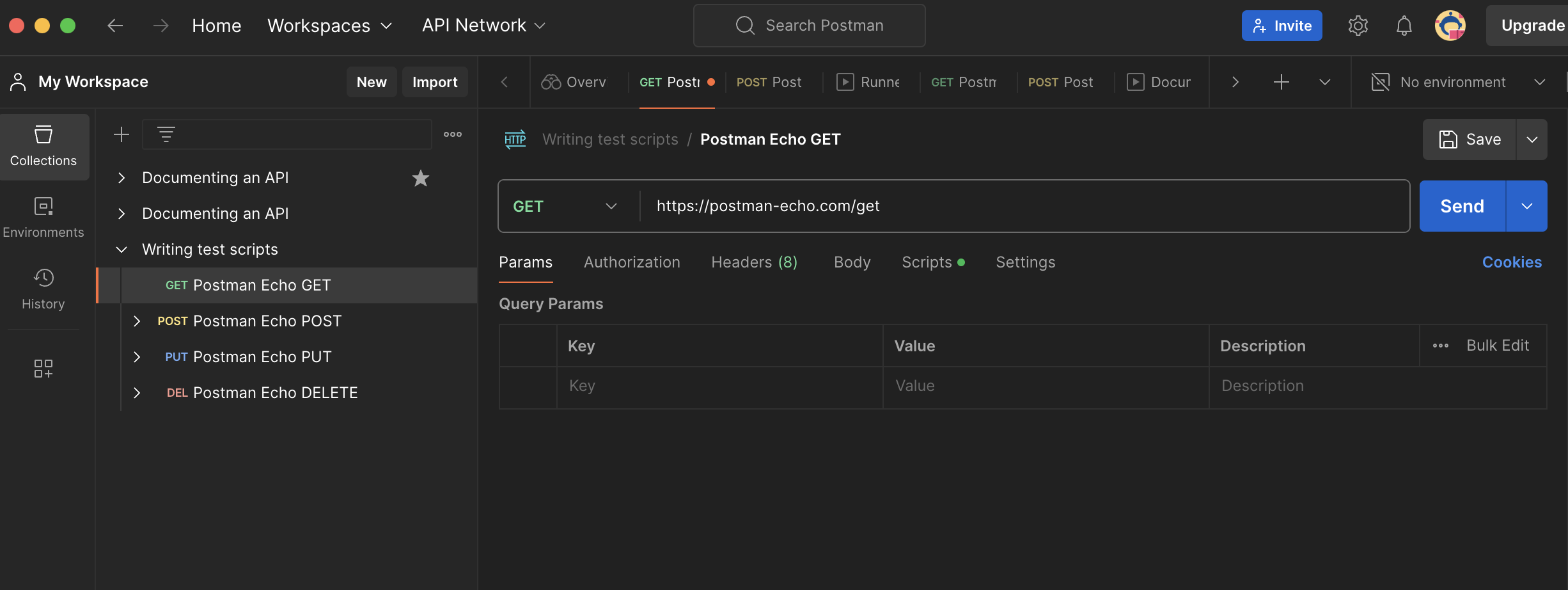

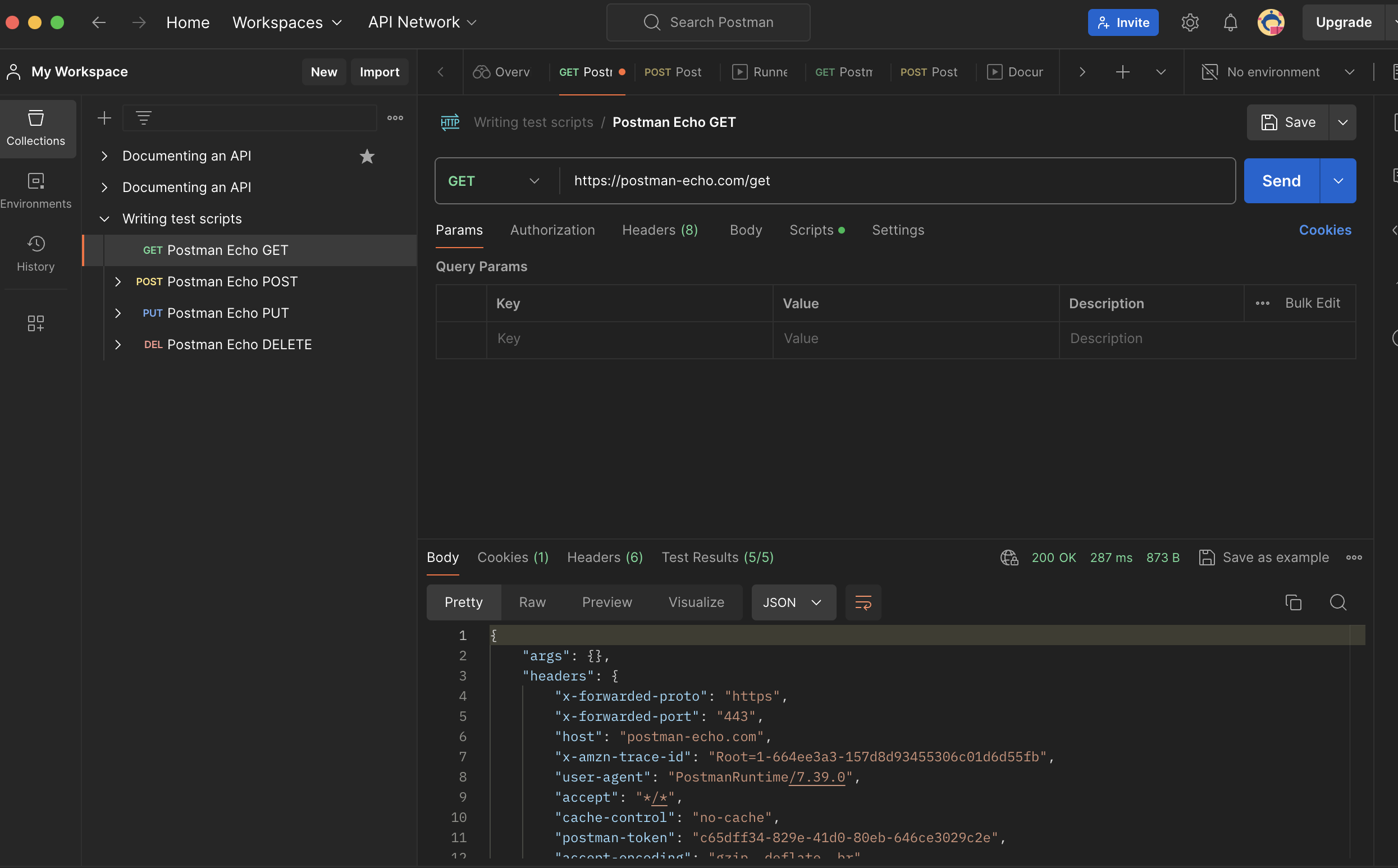

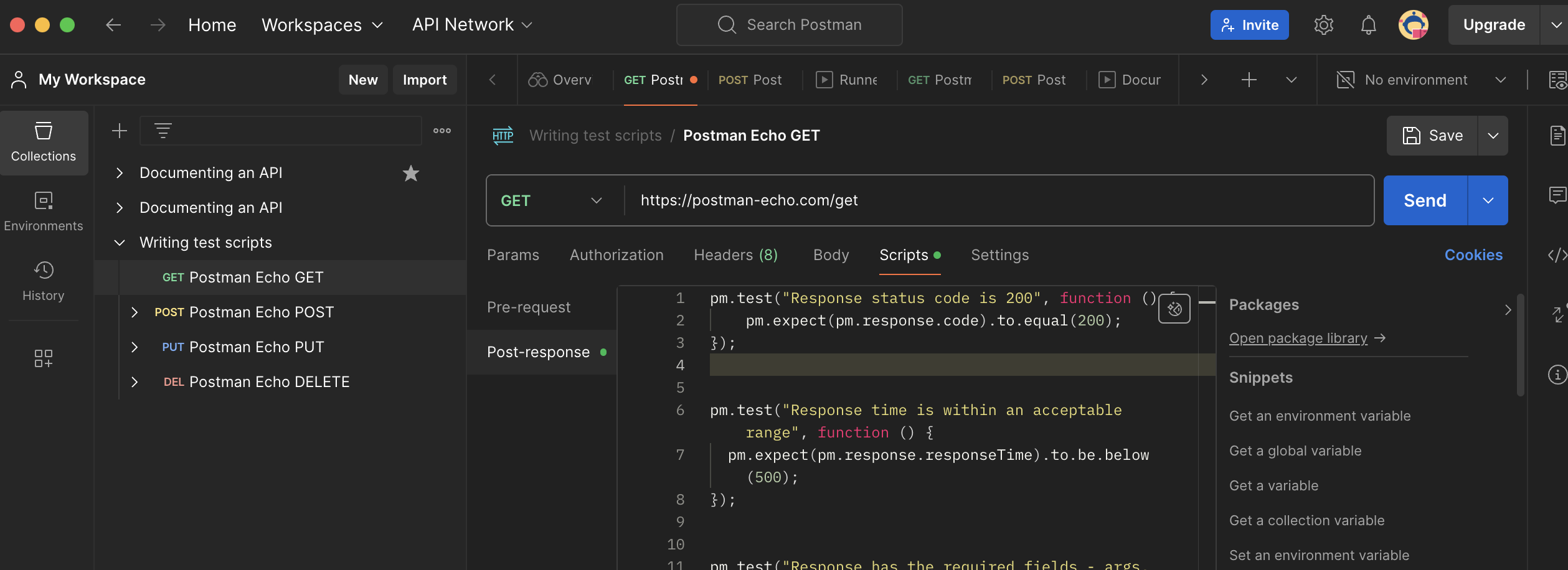

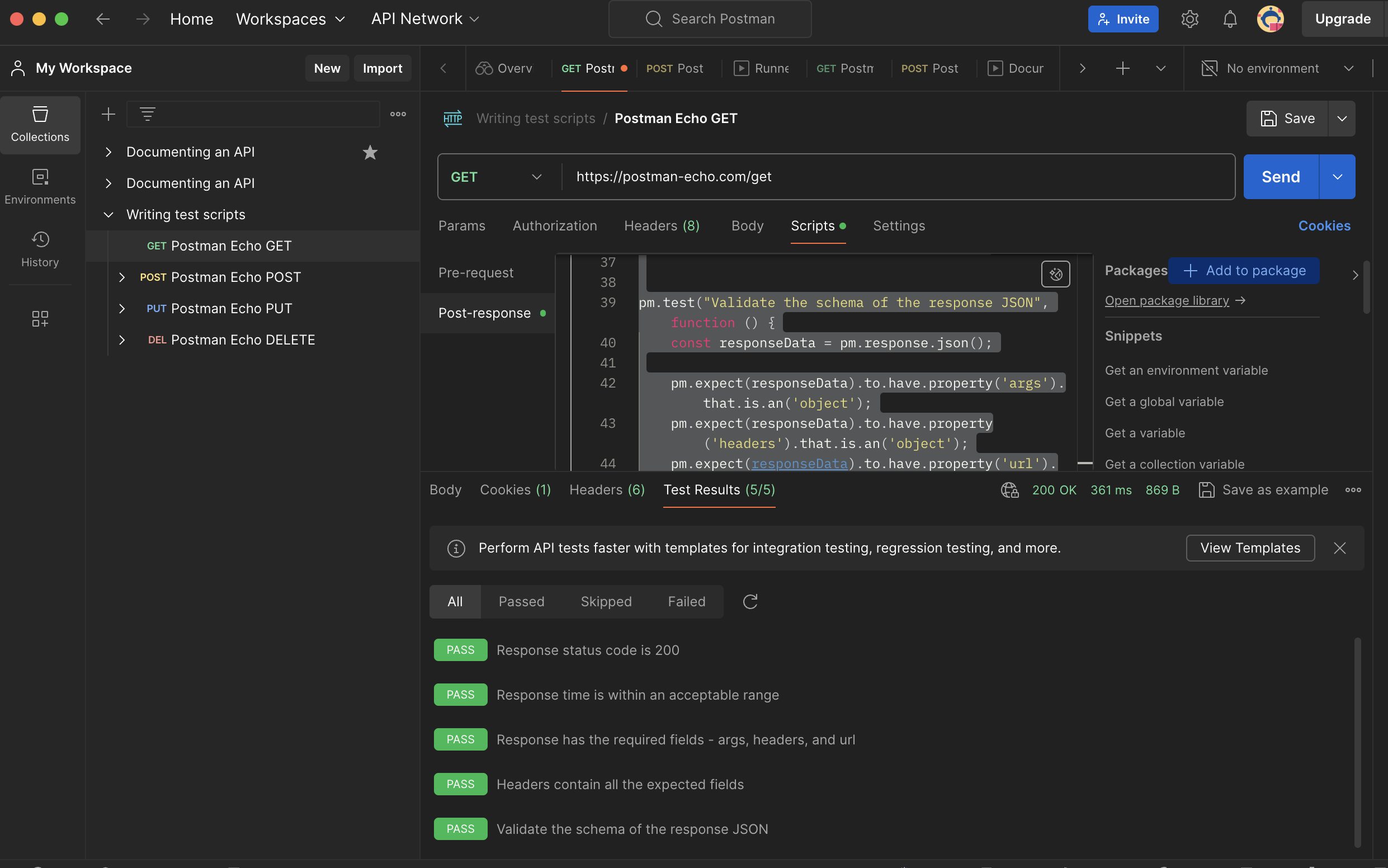

- Postman: Utilized as a comprehensive testing and debugging tool to ensure the reliability and functionality of RateMyReads API.

- Docker: Employed for containerization, simplifying dependency management and deployment processes.

## Developments

Throughout the development process, several milestones were achieved:

- RESTful API Implementation: A robust API architecture was developed, seamlessly connecting user, book, and comment models for efficient data management.

- User Authentication and Authorization: Robust authentication mechanisms were implemented to ensure secure access to the platform, prioritizing user data privacy.

- Email Notification Integration: An email backend was integrated to enhance user communication, providing confirmation emails upon sign-up for a smooth registration process.

## Challenges & Areas of Improvement

While significant progress was made, challenges were encountered:

- Deployment Issues: The learning curve with deployment frameworks posed challenges during deployment, highlighting the need for optimization.

- Areas for Improvement: Future iterations will focus on optimizing the deployment process, enhancing API functionality and security, and improving user experience with additional features.

## Key Findings

- Thorough Deployment Research: Emphasized the importance of mastering deployment procedures for smoother project deployment, prompting further exploration into deployment strategies.

- Continuous Technology Learning: Highlighted the value of staying updated with new technologies for enhanced development processes, inspiring ongoing learning and adaptation.

## Next Steps

- Optimize Deployment Process: Research and implement deployment strategies to streamline the deployment process and mitigate deployment-related challenges.

- Enhance API Functionality and Security: Continuously improve API functionality and security measures to ensure a seamless and secure user experience.

- Incorporate Additional Features: Gather user feedback to identify and implement additional features that enhance the overall user experience and engagement on the platform.

## Conclusion

The development of RateMyReads API has been an enriching experience, marked by significant achievements and valuable learnings. Despite challenges, I remain committed to refining and expanding the platform to meet user needs effectively and enhance overall functionality and usability.

## Project Repository

https://github.com/Nimo08/RateMyReads-API

| nimo08 |

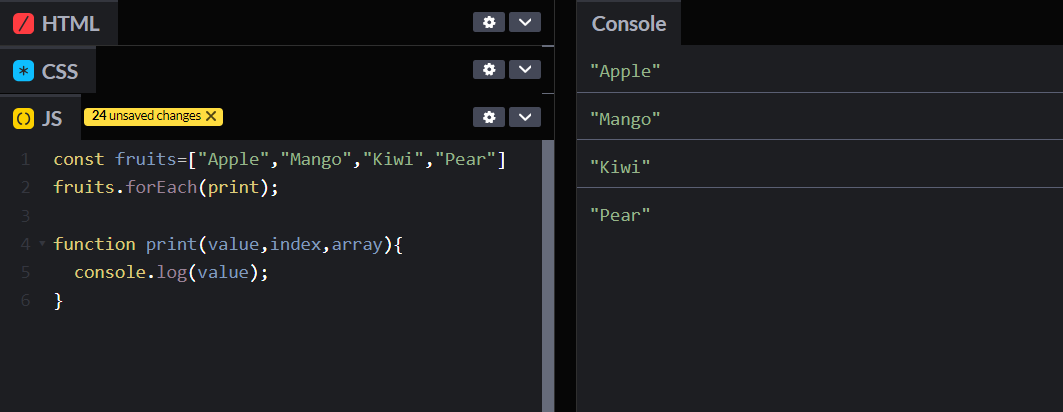

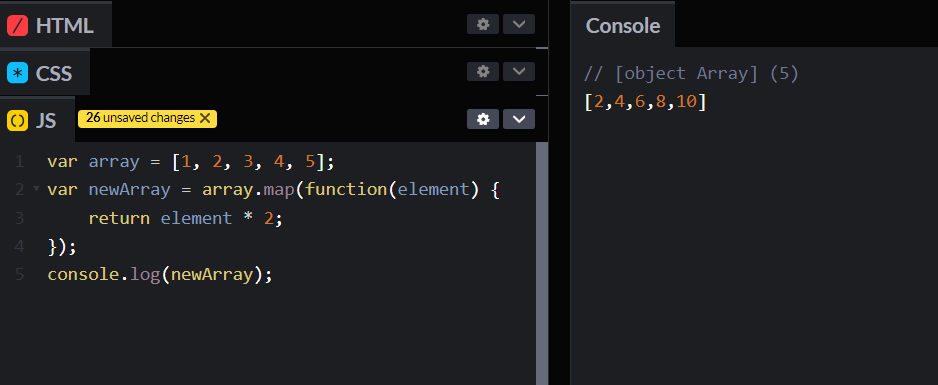

1,862,711 | Recursion in JavaScript | Today, we'll talk about recursion in JavaScript, a powerful tool in your coding arsenal. You'll learn... | 0 | 2024-05-23T10:38:27 | https://dev.to/shehzadhussain/recursion-in-javascript-2kh3 | webdev, javascript, programming, beginners | Today, we'll talk about recursion in JavaScript, a powerful tool in your coding arsenal. You'll learn how to implement it through clear, practical examples.

Understanding recursion is crucial for JavaScript developers. It simplifies complex problems, improves readability, and is often a preferred solution in interviews and real-world coding challenges.

Many developers struggle with recursion due to its abstract concept and potential for errors like infinite loops. But, with the right approach, it can be learned effectively

## **Recursion is not just a technique but a new way of thinking.

Recursion involves a function calling itself until it reaches a base condition. This approach is beneficial for tasks like traversing trees or solving algorithms that require backtracking, such as searching or sorting.

Here are 4 takeaways:

- Recursion simplifies complex problems by breaking them into smaller, manageable parts.

- It's essential to define a clear base case to prevent infinite loops.

- Recursion can lead to more readable and elegant code than iterative solutions.

- Understanding stack overflow and how JavaScript manages memory in recursive calls is crucial.

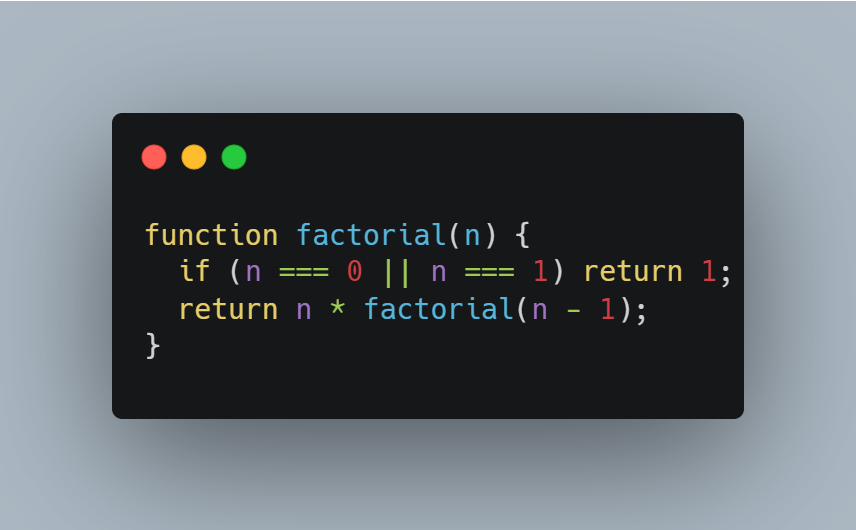

## **Code Example: Factorial Calculation

**

**

## **Common Pitfalls

**

1. Stack Overflow: In JavaScript, each recursive call adds a frame to the call stack. If your recursion is too deep (i.e., too many calls without reaching the base case), you can exhaust the stack memory, leading to a "stack overflow" error. This often happens if the base case is not correctly defined or the recursion is not converging towards it.

2. Lack of Base Case: The base case is what stops the recursion. Without a proper base case, your function will keep calling itself indefinitely, leading to infinite recursion and, eventually, a stack overflow error.

3. Large Memory Consumption: Each recursive call uses memory to maintain its execution context. Inefficient recursion, especially with many levels, can consume significant memory, leading to performance issues.

## **Advanced Techniques: Tail Call Optimization (TCO)

**

In ES6 (ECMAScript 2015), JavaScript introduced a feature called "tail call optimization." This optimization allows certain recursive calls (tail calls) to be executed without adding a new stack frame. For a recursive call to be a tail call, it must be the last operation in the function. This optimization significantly reduces the risk of stack overflow and improves performance for deep recursive calls.

Here are 4 takeaways:

- Tail Call Optimization is a feature that makes recursive functions more efficient.

- Normally, whenever a function calls itself, it adds a new layer to the "call stack" (a pile of ongoing function calls). If this stack gets too big, it can cause problems like a "stack overflow."

- In TCO, if a function's last action is calling itself (a "tail call"), JavaScript can optimize it. Instead of adding a new layer to the stack, it reuses the current one. This means you can have more recursive calls without the risk of stack overflow.

- However, for TCO to work, the recursive call must be the last thing the function does.

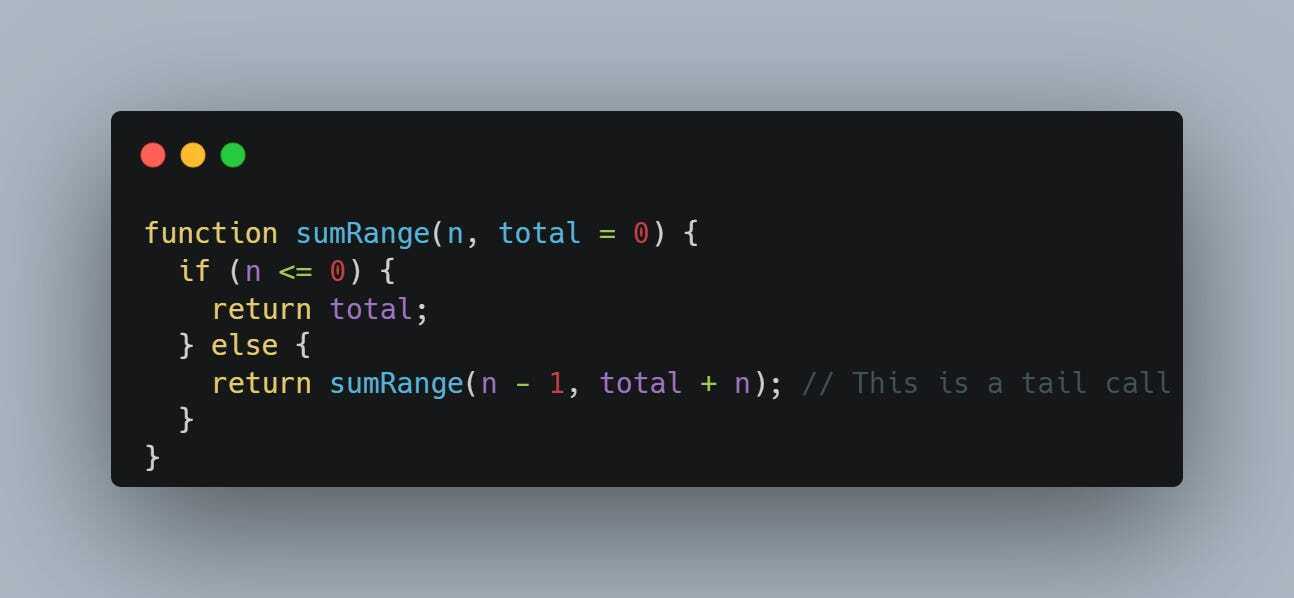

Here's a more straightforward example of a recursive function using TCO:

In this sumRange function:

- We're calculating the sum of numbers from 1 to n.

- The function keeps calling itself, but its last operation is the recursive call (return sumRange(n - 1, total + n)).

- Because this call is the last action, it's a tail call and can be optimized by JavaScript, allowing it to run more efficiently, especially for large values of n.

In summary, TCO in JavaScript allows you to write recursive functions that are more efficient and less likely to run into problems with large numbers of recursive calls.

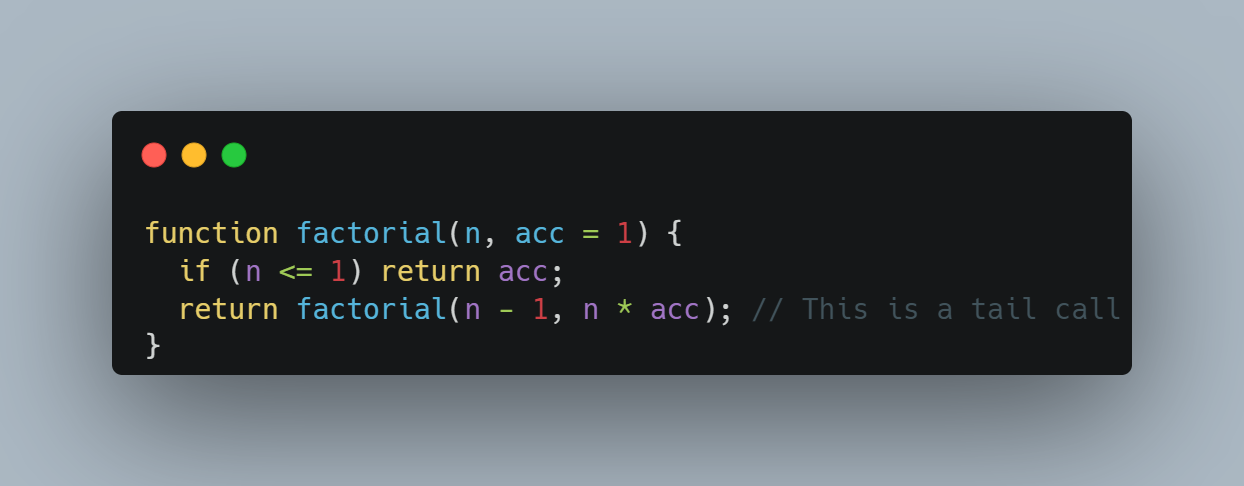

Here's an example to illustrate TCO with the factorial function:

Understanding these pitfalls and techniques can significantly enhance your proficiency in writing efficient and safe recursive functions in JavaScript.

Conclusion

Recursion in JavaScript is a valuable skill that, once mastered, opens up new possibilities in coding. It encourages cleaner, more intuitive solutions and is a favorite in algorithmic challenges. Practising and understanding the theory behind recursion are key to mastering it.

I hope you enjoyed the article.

If you have any questions, feel free to reply to the email or leave a comment in the post.

See you in the next post.

Have a great day!

| shehzadhussain |

1,862,710 | Technology Events and Their Role in Promoting Digital Transformation | In today's fast-paced world, going digital isn't just a trend – it's essential for staying ahead.... | 0 | 2024-05-23T10:38:21 | https://dev.to/ajaytie/technology-events-and-their-role-in-promoting-digital-transformation-13hb | technology, digitaltransformation, techtalks, eventdriven | In today's fast-paced world, going digital isn't just a trend – it's essential for staying ahead. Technology events are like powerhouses of innovation, bringing together minds from all over to share ideas and drive change. This piece dives into how these events are shaping the digital landscape, sparking inspiration, and helping organizations navigate the journey towards a tech-driven future.

## Understanding Digital Transformation

Digital transformation is like a big makeover for businesses, changing the way they work using digital tools. It covers a range of technologies, like cloud computing, AI, IoT, and data analytics, all aimed at making things faster, more flexible, and more innovative.

## The Transformative Power of Technology Events

**1. Knowledge Sharing and Education**

Technology events serve as vibrant forums for thought leadership, offering attendees access to cutting-edge insights, trends, and best practices in digital transformation. Through keynote speeches, panel discussions, and interactive workshops, industry luminaries and experts share their experiences, successes, and lessons learned. These sessions empower attendees with the knowledge and inspiration needed to navigate the complexities of digital transformation.

**2. Showcasing Innovation**

One of the hallmarks of [technology events](https://www.expresscomputer.in/events/) is the opportunity for companies to showcase their latest innovations and solutions. From groundbreaking startups to industry titans, exhibitors unveil cutting-edge technologies and applications that are reshaping industries. Attendees gain firsthand exposure to innovative products and services, sparking ideas and igniting the imagination for what's possible in their own digital transformation journey.

**3. Networking and Collaboration**

Networking is the beating heart of tech events, where collaboration and idea-sharing thrive. Attendees mingle with peers, industry experts, and potential partners, creating a rich environment for digital innovation. These connections often blossom into partnerships and alliances, fueling the drive towards digital transformation.

**4. Inspiration and Vision**

Tech events go beyond displaying gadgets; they're about molding tomorrow and igniting change. Leaders and innovators share their visions, sparking creativity and pushing boundaries. Their ideas inspire attendees to think big, take risks, and embrace innovation for a brighter future.

## Real-World Examples of Digital Transformation Enabled by Technology Events

**1. Company A: Embracing Cloud Computing**

After attending a cloud computing conference, Company A, a traditional manufacturing firm, embarked on a digital transformation journey by migrating its legacy systems to the cloud. By leveraging cloud-based infrastructure and software-as-a-service (SaaS) solutions, Company A achieved greater agility, scalability, and cost-efficiency, transforming its operations and driving business growth.

**2. Company B: Harnessing AI and Analytics**

Inspired by insights gained at an AI and analytics summit, Company B, a leading retailer, implemented advanced analytics and AI-powered solutions to personalize customer experiences and optimize supply chain operations. By leveraging predictive analytics and machine learning algorithms, Company B improved customer engagement, reduced costs, and gained a competitive edge in the market.

## Overcoming Challenges and Driving Success

While technology events offer tremendous opportunities for digital transformation, organizations must navigate various challenges along the way:

- **Resistance to Change:** Overcoming resistance to change and fostering a culture of innovation is essential for successful digital transformation.

- **Skills Gap:** Addressing the skills gap and ensuring that employees are equipped with the necessary digital skills and competencies is critical.

- **Integration Complexity:** Managing the complexity of integrating new technologies and systems into existing infrastructure requires careful planning and execution.

To drive success in their digital transformation endeavors, organizations must:

- **Set Clear Goals:** Define clear and measurable goals for digital transformation initiatives, aligning them with overall business objectives.

- **Invest in Talent:** Invest in upskilling and reskilling employees to ensure they have the necessary digital capabilities to drive transformation.

- **Collaborate and Iterate:** Foster a culture of collaboration, experimentation, and continuous improvement, embracing agility and adaptability as core principles.

**Also Read: [What Programming Language Should a Startup Choose?](https://dev.to/ajaytie/what-programming-language-should-a-startup-choose-gp6)**

**Conclusion**

Technology events are like fuel for the fire of digital transformation, spurring organizations to innovate, collaborate and adapt to change. They offer a space for sharing knowledge, showcasing new ideas, and forming partnerships, all of which are essential for navigating the journey towards a more agile and competitive future. As businesses embrace the challenges and opportunities of digital transformation, technology events will remain vital hubs of inspiration and progress. | ajaytie |

1,813,455 | Rendering the TRUE Argo CD diff on your PRs | TL;DR — The safest way to make changes to your Helm Charts and Kustomize Overlays is to let Argo CD... | 0 | 2024-05-23T10:33:41 | https://dev.to/dag-andersen/rendering-the-true-argo-cd-diff-on-your-prs-10bk | kubernetes, devops, git, argocd | > TL;DR — The safest way to make changes to your Helm Charts and Kustomize Overlays is to let Argo CD render them for you. This can be done by spinning up an ephemeral cluster in your automated pipelines. This article presents a tool ([`argocd-diff-preview`](https://github.com/dag-andersen/argocd-diff-preview)) for rendering manifest changes on pull requests. The rendered output is similar to what Atlantis creates for Terraform.

## Problem

In the Kubernetes world, we often use templating tools like Kustomize and Helm to generate our Kubernetes manifests. These tools make maintaining and streamlining configuration easier across applications and environments. However, they also make it harder to visualize the application's actual configuration in the cluster.

Mentally parsing Helm templates and Kustomize patches is hard without rendering the actual output. Thus, making mistakes while modifying an application's configuration is relatively easy.

In the field of GitOps and infrastructure as code, all configurations are checked into Git and modified through PRs. The code changes in the PR are reviewed by a human, who needs to understand the changes made to the configuration. This is hard when the configuration is generated through templating tools like Kustomize and Helm.

If you are interested in a more detailed walkthrough for this problem, I recommend watching [_Nicholas Morey_](https://www.linkedin.com/in/nicholas-morey/)'s talk at KubeCon 2024: ["The Rendered Manifests Pattern: Reveal Your True Desired State"](https://www.youtube.com/watch?v=TonN-369Qfo&ab_channel=CNCF%5BCloudNativeComputingFoundation%5D)

This article introduces the tool [`argocd-diff-preview`](https://github.com/dag-andersen/argocd-diff-preview) that solves this problem by rendering manifest changes directly on pull requests.

... but first, let's go through two simple examples where not rendering manifests can result in misconfiguration:

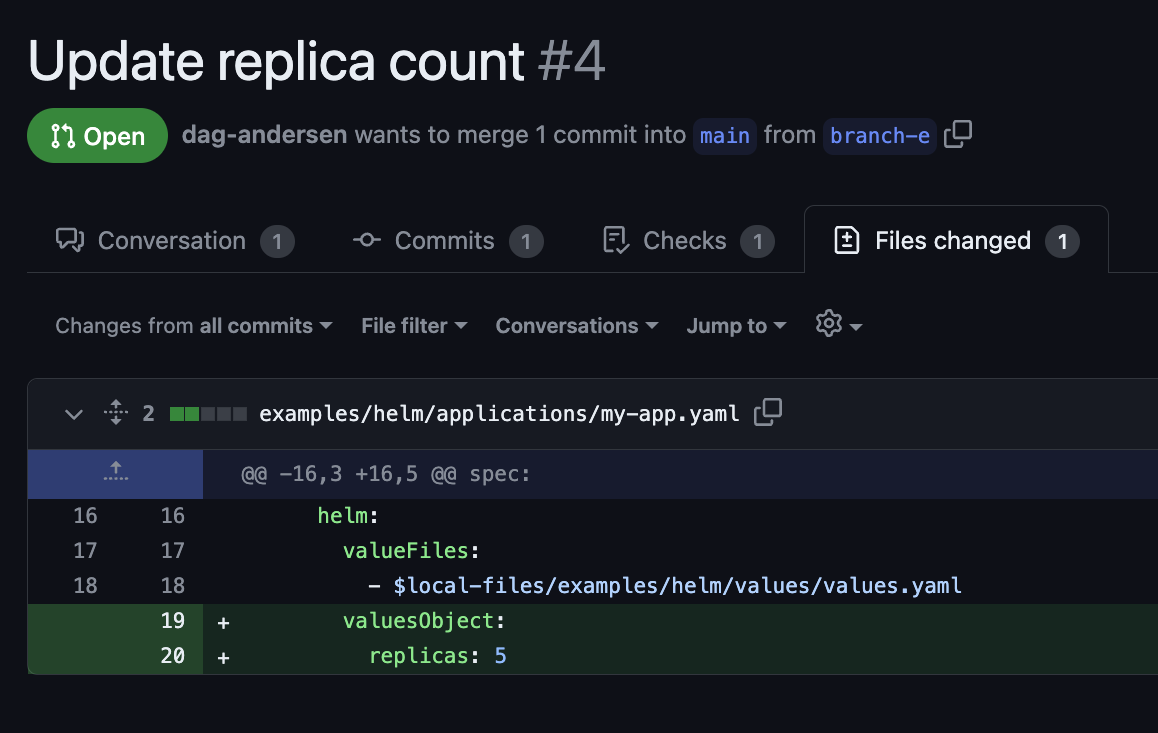

#### Helm misconfiguration example

Here we see an example of a developer trying to override the replica count on an Argo CD application:

This PR may look correct, but as a reviewer, you do not know if the value specified in the Helm Chart is named `replicas:` or `replicaCount:`. The code change has no effect if the value name is incorrect. Without rendering the Helm templates, the likelihood of these errors going to production is high.

#### Kustomize misconfiguration example

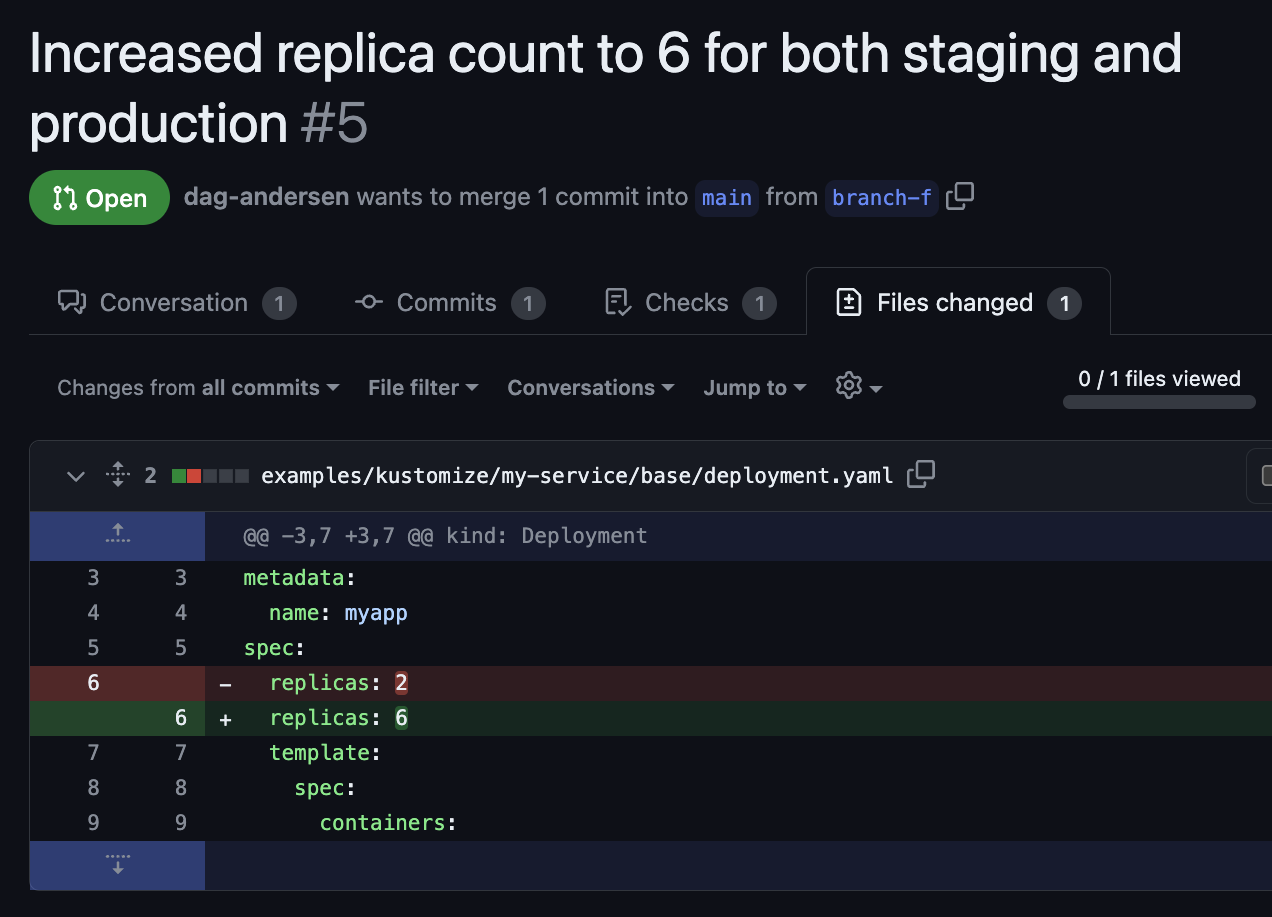

Here we see an example of a developer trying to set the replica count for both staging and production:

Again, this PR may look correct because the change happens in a base folder, so the change applies to all overlays (production and staging). But as a reviewer, you do not know if this value is overridden later down the chain of overlays.

```yaml

~/someApp

├── base

│ ├── deployment.yaml ⬅️ File changed in Pull Request

│ ├── kustomization.yaml

│ └── service.yaml

└── overlays

├── staging

│ ├── cpu_count.yaml

│ ├── kustomization.yaml

└── production

├── cpu_count.yaml

├── kustomization.yaml

└── replica_count.yaml ⬅️ replicaCount overwritten here

```

This unintended result might not have been caught without rendering the final output for staging and production.

### Other solutions to the problem

This problem has been pointed out many times in articles and tech talks about GitOps and infrastructure as code.

If you are interested in different approaches to solving the problem and their limitations, check out [_Kostis Kapelonis_](https://www.linkedin.com/in/kostiskapelonis/)'s [article](https://codefresh.io/blog/argo-cd-preview-diff/) on the topic.

`argocd-diff-preview` is not the first tool that tries to tackle this problem. Other open-source repos include [quizlet/argocd-diff-action](https://github.com/quizlet/argocd-diff-action) and [zapier/kubechecks](https://github.com/zapier/kubechecks).

- [quizlet/argocd-diff-action](https://github.com/quizlet/argocd-diff-action) generates an Argo CD diff between the current PR and the current state of the cluster using the `argocd app diff` command. Thus, this tool needs the Argo CD applications to already be in sync with Git to be helpful. Applications that are out-of-sync on the Argo CD instance will be rendered as a diff on every PR. Additionally, you need to provide your CI pipeline with credentials to your Argo CD server, which may not be possible or desirable.

- [zapier/kubechecks](https://github.com/zapier/kubechecks) is a system that you install on your cluster, which may not be desirable for organizations with strict security restrictions. The tool is complex but has many interesting features. Again, this tool requires access to your running Argo CD instance, which may not be possible or desirable.

`argocd-diff-preview` was created to avoid installing a tool directly on a cluster or providing it with credentials to your live Argo CD instance.

---

## New solution: `argocd-diff-preview`

### Goal

Create a tool that works like Atlantis for Terraform but for Argo CD. The tool should render a reliable diff of the configuration changes directly on the PR. Additionally, it should work without needing access to your existing infrastructure.

Instead of creating some scripts that try to mimic how Argo CD would render the manifests, why not let Argo CD render the manifests itself? This would ensure that the rendered manifests are exactly how Argo CD would render the manifests.

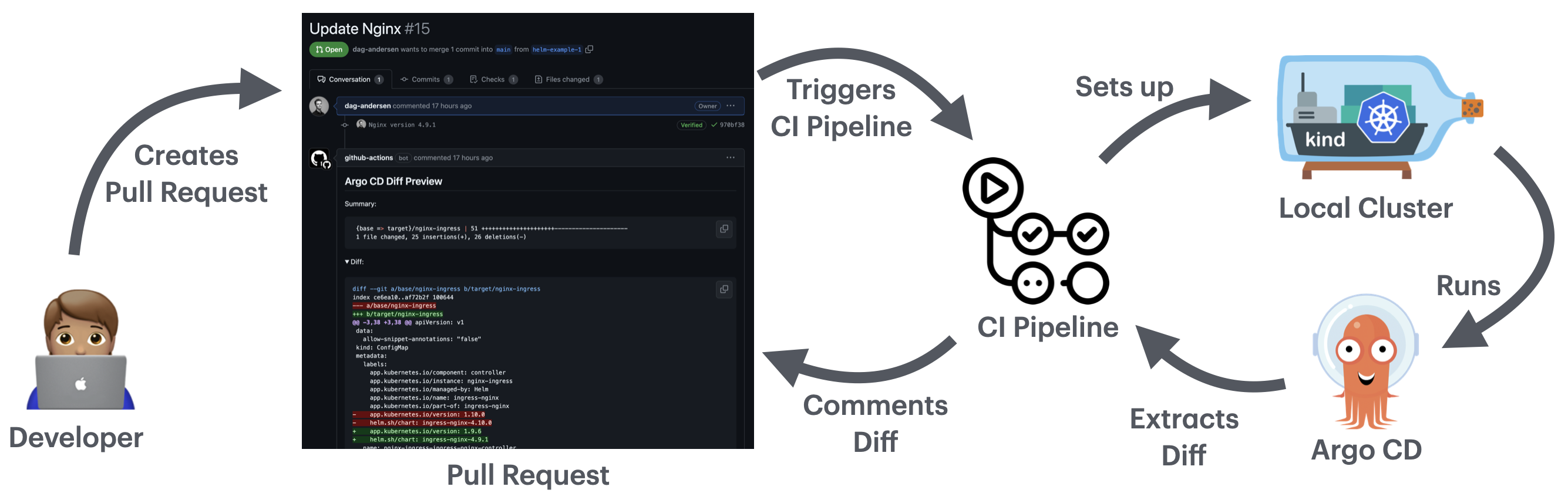

### How it works

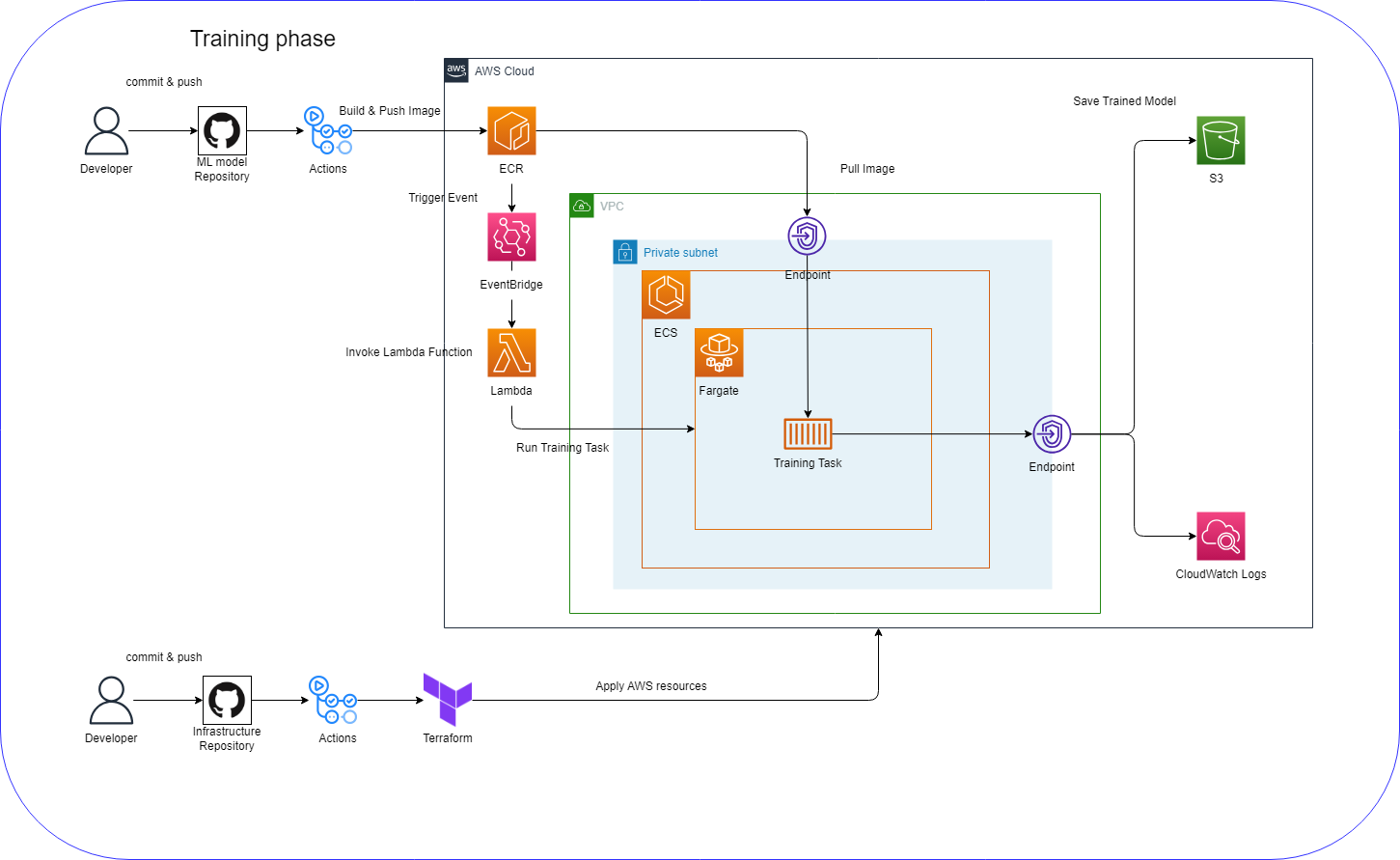

`argocd-diff-preview` spins up a local cluster, installs Argo CD, applies the manifests to the cluster, extracts the rendered manifests from Argo CD, and compares it to the main branch.

This tool runs an ephemeral local cluster inside Docker, so it does not need access to your infrastructure. It only needs read access to the Git repository and your Helm Charts (either stored in Git or a registry)

#### In other words, it follows these 10 steps:

1. Start a local cluster

2. Install Argo CD

3. Add the required credentials (Git credentials, image pull secrets, etc.)

4. Fetch all Argo CD application files on your PR branch

- Point their `targetRevision` to the Pull Request branch

- Remove the `syncPolicy` from the application (to avoid the application to sync locally)

1. Apply the modified applications to the cluster

1. Let Argo CD do its magic

1. Extract the rendered manifests from the Argo CD server

1. Repeat steps 4–7 for the base branch (main branch)

1. Create a diff between the manifests rendered from each branch

1. Display the diff in the PR

**The flow visualized:**

### Example

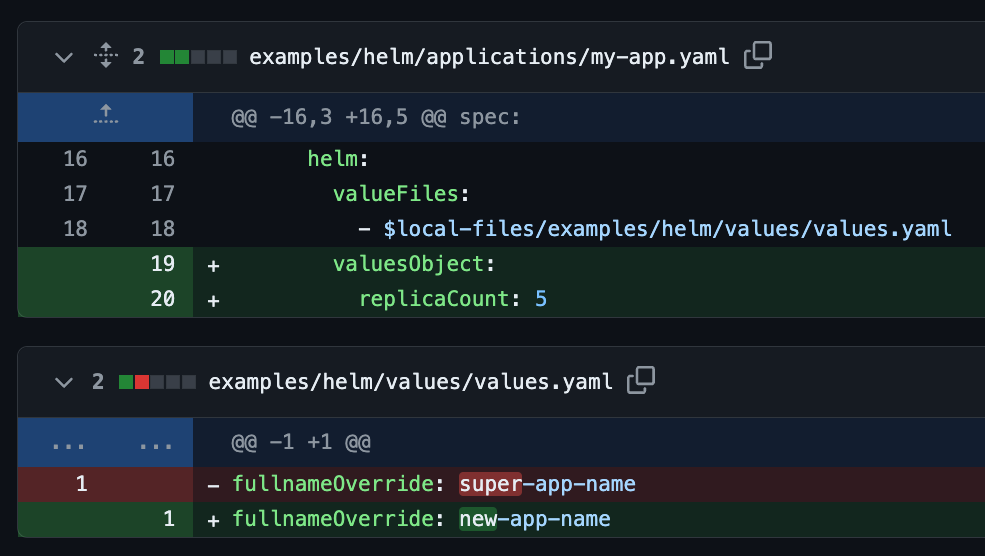

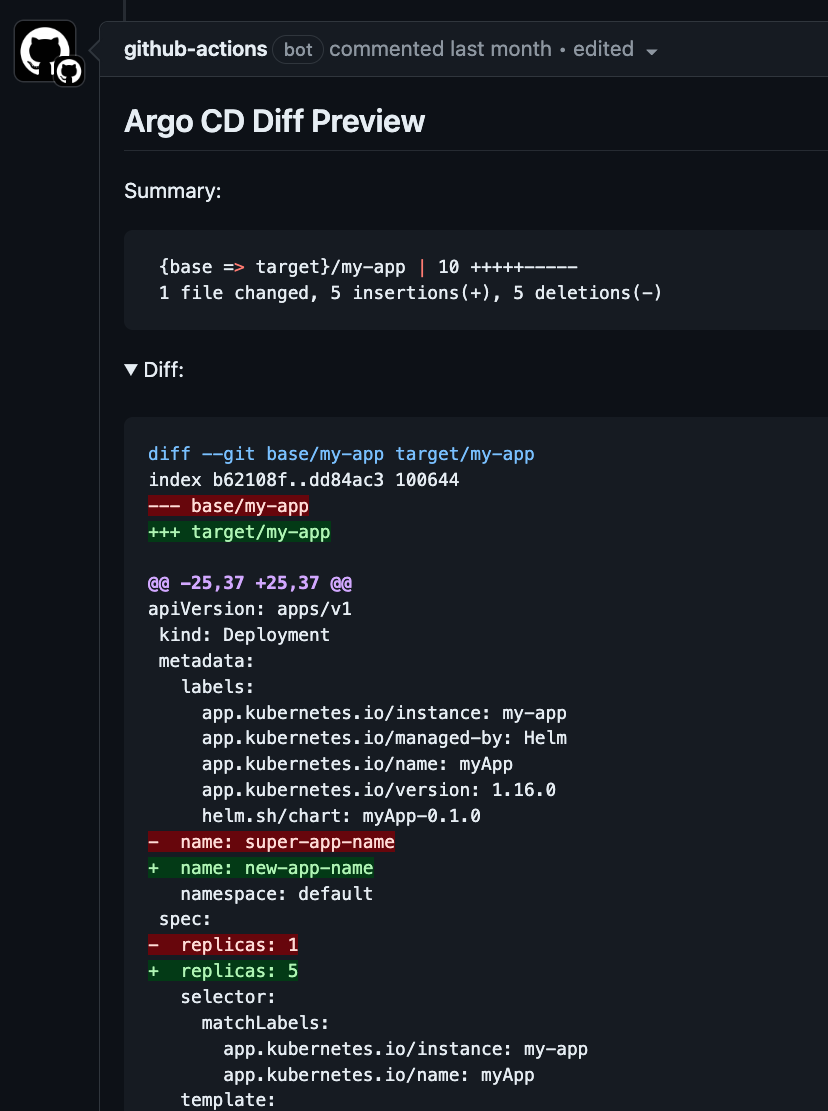

If you are asked for a review on a PR that looks like this:

Then you can verify that it is configured correctly by checking the output generated by `argocd-diff-preview`. The output would look similar to this:

### Pros

- Always renders the correct difference between branches because it is rendered by Argo CD itself.

- Fully ephemeral cluster.

- Does not access any of your existing infrastructure. It only requires read access to the Git repository and your Helm Charts.

- Can be run locally before you open the pull request.

- Render changes in resources from external sources (e.g., Helm Charts). For example, when you update the Helm Chart version of `nginx`, you can see what exactly changed - [PR example](https://github.com/dag-andersen/argocd-diff-preview/pull/15).

### Cons

- It is slow. Spinning up a cluster and installing Argo CD takes a few minutes each run (see table below)

- Does not support [Argo CD CMP plugins](https://argo-cd.readthedocs.io/en/stable/operator-manual/config-management-plugins/)

- Does not work with [Cluster Generators](https://argocd-applicationset.readthedocs.io/en/stable/Generators-Cluster/) in your ApplicationSets.

### Comparing desired states - Not actual state

An important point to understand is that, unlike Atlantis or the `argocd diff` CLI command, this approach doesn't compare the desired state in Git with the actual state in Kubernetes. Instead, it compares the desired state of the two branches stored in Git. I would argue that this is better than comparing Git with the actual state in Kubernetes because the state can change, resulting in non-deterministic output. The actual state in Kubernetes can temporarily go out-of-sync with Git, and we don't want this to be highlighted in our diff preview. Developers who work with Altanis experience this a lot - each time you run `atlantis plan`, it may produce a different result if the infrastructure changes often.

### How to use it in GitHub Actions

Here is an example of how you would trigger `argocd-diff-preview` on your pull requests in GitHub Actions

```yaml

name: Argo CD Diff Preview

on:

pull_request:

branches:

- main

jobs:

build:

runs-on: ubuntu-latest

permissions:

contents: read

pull-requests: write

steps:

- uses: actions/checkout@v4

with:

path: pull-request

- uses: actions/checkout@v4

with:

ref: main

path: main

- name: Generate Diff

run: |

docker run \

--network=host \

-v /var/run/docker.sock:/var/run/docker.sock \

-v $(pwd)/main:/base-branch \

-v $(pwd)/pull-request:/target-branch \

-v $(pwd)/output:/output \

-e TARGET_BRANCH=${{ github.head_ref }} \

-e REPO=${{ github.repository }} \

dagandersen/argocd-diff-preview:v0.0.8

- name: Post diff as comment

run: |

gh pr comment ${{ github.event.number }} --repo ${{ github.repository }} --body-file output/diff.md --edit-last || \

gh pr comment ${{ github.event.number }} --repo ${{ github.repository }} --body-file output/diff.md

env:

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

```

### Handling credentials

In the simple code example above, I do not provide `argocd-diff-preview` with any credentials, which only works if the Helm Chart registry and the Git repository are public. If you want to use this tool in a private repository, you need to provide the tool with the required credentials. More details on this can be seen in the [GitHub Repository](https://github.com/dag-andersen/argocd-diff-preview#handling-credentials)

### Output

On a successful run, the tool prints the following output:

```

✨ Running with:

✨ - base-branch: main

✨ - target-branch: helm-example-3

✨ - repo: dag-andersen/argocd-diff-preview

✨ - timeout: 180

🚀 Creating cluster...

🚀 Cluster created successfully

🦑 Installing Argo CD...

...

🤖 Patching applications for branch: main

🤖 Patching applications for branch: helm-example-3

🌚 Getting resources for base-branch

🌚 Getting resources for target-branch

...

🔮 Generating diff between main and helm-example-3

🙏 Please check the ./output/diff.md file for differences

```

If something is wrong with your configuration, it prints the Argo CD Application error message:

```

...

🤖 Patching 4 Argo CD Application[Sets] for branch: helm-example-3

🌚 Getting resources for target-branch

⏳ Waiting for 4 out of 4 applications to become 'OutOfSync'. Retrying in 5 seconds. Timeout in 180 seconds...

❌ Failed to process application, my-app, with error:

Failed to load target state: failed to generate manifest for source 2 of 2: rpc error: code = Unknown desc = authentication required

```

### Speed

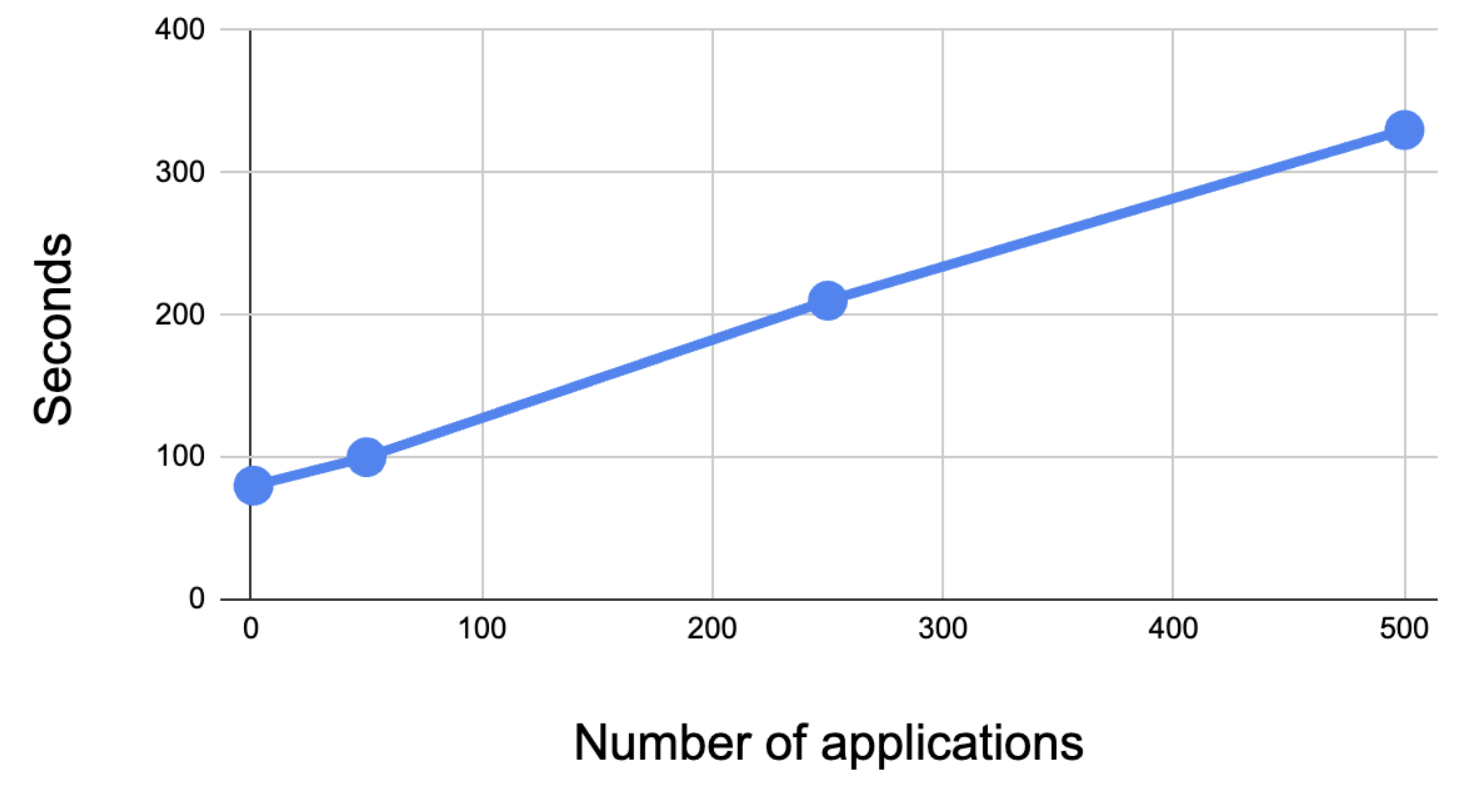

The table below shows how the number of applications correlates with the time it takes to render them all:

| Number of applications | 1 | 50 | 250 | 500 |

|:----------------------:|:--:|:---:|:---:|:---:|

| Seconds** | 80 | 100 | 210 | 330 |

Creating a cluster and installing Argo CD on it takes around 1 minute, which is why rendering a single application takes over a minute.

> **The speed can vary depending on the distribution between applications used with Kustomize, Helm, and raw manifests. This test's result is based on a codebase mainly filled with Helm Charts.

#### Speeding up the rendering process

Rendering the manifests generated by all applications in the repository on each pull request is slow. The tool supports grepping applications with regex. Setting the environment variable `FILE_REGEX` only allows the tool to run on manifests that match a particular regex.

For example, if someone in your organization from *Team A* makes changes to one of their applications, the tool can be run with `FILE_REGEX=/Team-A/` so it only renders changes in folders matching `*/Team-A/*`. This speeds up the process significantly.

---

## Conclusion

In conclusion, tackling the challenge of accurately visualizing Kubernetes configuration changes within GitOps workflows is essential for ensuring smooth operations and minimizing errors.

`argocd-diff-preview` works like Atlantis for Terraform. The tool lets you render the diff on PRs, making it easier to review the changes made to the configuration. Since the diff is rendered by Argo CD itself, it is as accurate as possible.

In contrast to other existing solutions, `argocd-diff-preview` works without direct access to your infrastructure, which can be desirable for organizations with strict security requirements.

If you experience any issues with the tool, please open an issue on the [repository](https://github.com/dag-andersen/argocd-diff-preview) | dag-andersen |

1,862,708 | Reshape your Online Business Smartly with AI in E-commerce | Numerous e-commerce businesses employ artificial intelligence to understand their customers better.... | 0 | 2024-05-23T10:30:40 | https://dev.to/techdynesty/reshape-your-online-business-smartly-with-ai-in-e-commerce-48na | ai, ecommerce, aiinecommerce | Numerous e-commerce businesses employ artificial intelligence to understand their customers better. With AI tools such as machine learning, natural language processing, deep learning, and more, online retailers can make sense of the vast amount of data they gather, gain insights that enhance consumer satisfaction, expedite internal company operations, and combat fraud.

AI in e-commerce offers many opportunities for your company to expand. This blog will teach you more about using artificial intelligence (AI) in e-commerce and how it can assist companies in increasing process efficiency cost-effectively.

## **Application of AI in E-Commerce**

AI has the capabilities to transform your online business in every corner. Here are some of the significant uses of AI in e-commerce:

**- Personalization**

E-commerce companies can use artificial intelligence (AI) to tailor their clients' shopping experiences by making product recommendations based on their past purchases, tastes, and behavior.

This raises the likelihood of generating more sales and enhances consumer loyalty. It can also consider factors like search engine queries to attract more prospective clients.

**- Better Support for Customers**

The most likely way to achieve this is through improved chatbots. AI-powered chatbots are a great way for e-commerce companies to offer 24/7 customer care. Chatbots and virtual assistants can automate customer support requests, respond to frequently asked questions, and even help with the purchase process.

Customers benefit from a more efficient experience, and customer service personnel's workload is lessened.

**- Optimizing Prices**

Businesses want to focus on price optimization to achieve several benefits. However, AI in ecommerce can optimize pricing to guarantee companies the highest possible profit margins. Seasonal variations or supply chain problems may affect some products' costs. AI may consider all of this, allowing for real-time dynamic pricing.

**- Improved Customer Segmentation**

Software driven by artificial intelligence may examine millions of data points and offer insights into the most consistent purchasing behaviors, customer trends, and segment preferences.

This allows online retailers to tailor promotions and marketing messaging to each subdivided group, increasing income and sales and improving the personalized shopping experience.

**- Mitigation of Fraud**

Fraudsters attack e-commerce platforms and enterprises. On the other hand, fraud detection systems driven by AI in ecommerce domain can help to lessen the possibility of fraudulent transactions. These systems can trace fake IP addresses, identify consumer activity trends, and analyze them. This enhances the e-commerce platform's security and reliability in addition to helping to lower losses.

## **Wrapping Up**

There is no denying that artificial intelligence has advanced the e-commerce industry. AI applications in the e-commerce sector can improve customers' purchasing experience by making it more convenient and customized. [AI in e-commerce ](https://www.bacancytechnology.com/blog/ai-in-ecommerce)can help your businesses succeed in the market and differentiate themselves from competitors.

| techdynesty |

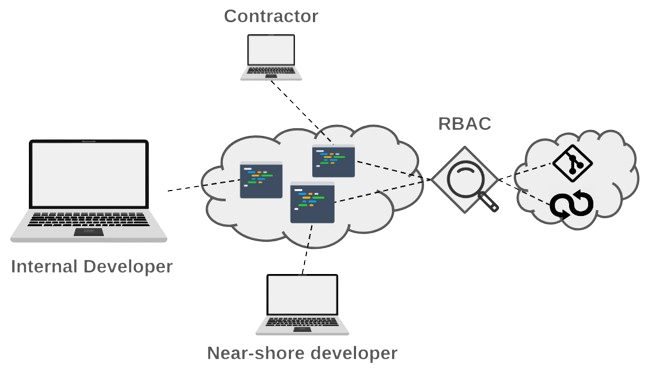

1,862,703 | Securing Development: Key Differences in Virtual Desktops, Enterprise Browsers and Cloud-Based Development | Which technology to secure development? Check the top differences between virtual desktops,... | 0 | 2024-05-23T10:29:12 | https://strong.network/article/key-differences | vdi, browser, cloud, development | > Which technology to secure development? Check the top differences between virtual desktops, enterprise browsers, and secure cloud development environments.

Many organizations struggle to keep an application development workflow that is secure against data leaks, while jointly preserving developers’ experience and productivity. This challenge has driven market demand for secure development environments especially due to developers being increasingly targeted by hackers. Breaches are notably [around source code ](https://www.securityweek.com/leaked-github-token-exposed-mercedes-source-code/) and [credentials ](https://www.securityweek.com/leaked-github-token-exposed-mercedes-source-code/)([see also this report](https://www.f5.com/labs/articles/threat-intelligence/2023-identity-threat-report-the-unpatchables)), some leading to [personal information leaks](https://techcrunch.com/2021/11/22/godaddy-breach-million-accounts/).

In this article, I’ll start with a quick rundown of the technologies available to organizations to address this challenge. Then, I’ll compare them across three dimensions: their applicability to support an application development process, their focus on security, and their impact on developer experience. At the end of this article, I’ll give a nuanced conclusion, in the sense that choosing any of these technologies shouldn’t necessarily be clear-cut. In other words, mixing them could even be beneficial in some cases.

## What Are Virtual Desktops?

The need to protect the development workflow has led to the emergence of technologies such as virtual desktops notably from organizations such as [Citrix ](https://www.citrix.com/solutions/vdi-and-daas/virtualization-vdi.html)([Virtual Desktop Infrastructure, or VDI](https://www.citrix.com/solutions/vdi-and-daas/virtualization-vdi.html)) and [VMWare (Horizon)](https://www.vmware.com/products/horizon.html), etc. These are general-purpose desktops (Windows or Linux), where developers can install applications like Integrated Development Environments (IDE), and DevOps tools (e.g. [container management](https://dzone.com/guides/containers-development-and-management)) and access web applications supporting their workflow. In effect, remote access to a virtual desktop removes the need to maintain sensitive data on the local device. In addition, it provides access to an alternative source of computing power to build applications. A typical access method for the remote desktop is the [Remote Desktop Protocol](https://en.wikipedia.org/wiki/Remote_Desktop_Protocol) (RDP) which streams the desktop image to the local device.

## What Are Enterprise Browsers?

More recently, enterprise browsers, with companies such as [Island](https://www.island.io/), [Talon](https://talon-sec.com/), and [Citrix](https://docs.citrix.com/en-us/citrix-enterprise-browser.html), have appeared as web-based alternatives to Virtual Desktops, although the focus is securing access to web applications, typically SaaS services, as opposed to providing access to a desktop. However, these browsers also support protocols such as RDP to provide access to remote desktops (also as virtual machines). Vendors in this field often position themselves as a VDI replacement. A marked difference is that their offerings typically do not include computing resources (unlike Citrix and VMWare’s offerings). Hence, it is likely that organizations adopt them in the scope of a broader infrastructure set-up including [Desktop-as-a-Service](https://www.techtarget.com/searchvirtualdesktop/definition/desktop-as-a-service-DaaS) (DaaS) when computing resources are needed.

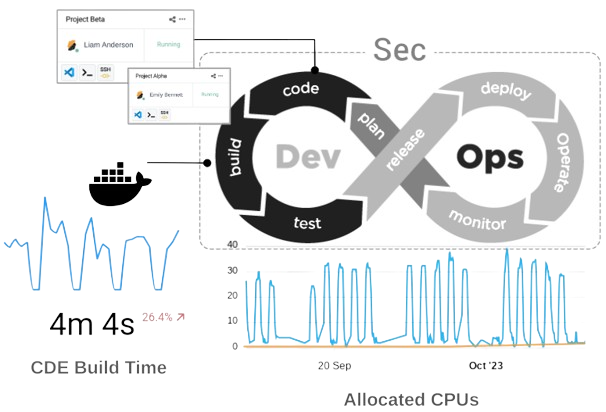

What Are Secure Cloud Development Environments?

As a means to secure an application development process, recent technology is [Secure Cloud Development Environments](https://strong.network/article/the-need-for-secure-cloud-development-environments) (CDEs) and the associated platform used to manage them. The basic role of such a platform is to provide online access to development environments with security mechanisms via an IDE, in addition to providing secured access to the web applications used by developers (e.g. for code management). For the latter, a technology similar to the one used for enterprise browsers is used.

The combination of remote access via IDE and secured web browsing aims at protecting the entire developer workflow against data leaks. Like in the case of a virtual desktop, local development data is in effect “removed” from local devices and computing is delivered via the Cloud. Hence, secure CDEs can be seen as a technology blending aspects of the previous two presented here.

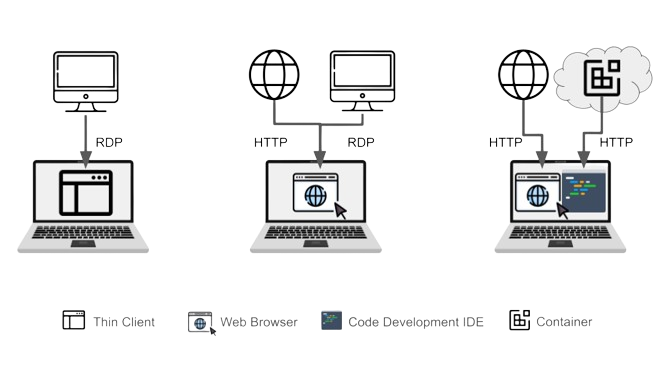

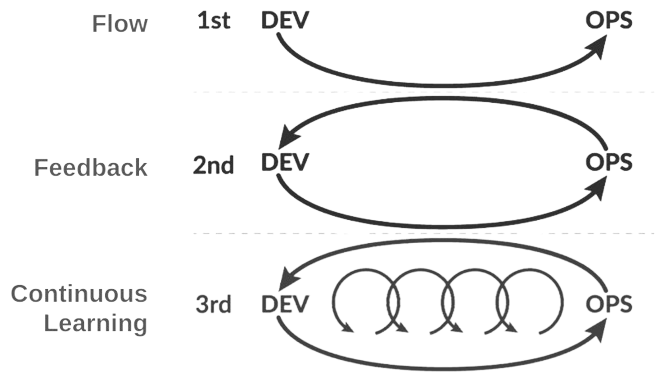

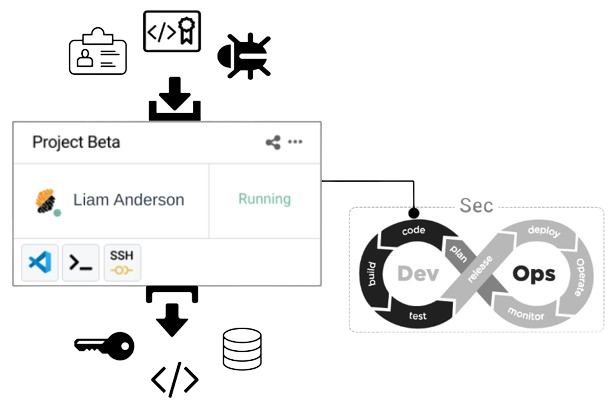

The figure below depicts the three technologies compared in this article.

**Figure:** _From left to right, a thin client accesses a remote desktop, while an enterprise browser provides access to both web applications and remote desktops via RDP. On the right, a secure CDE platform provides a combination of remote access to a development environment via an IDE and secure web browsing. _

## Comparison of the Technologies

Let's dive now into key differences between the three technologies and highlight roles and benefits. Note that, albeit virtual desktops and enterprise browsers are business process-agnostic, I only discuss their application in securing application development here.

### Code Development Applicability

#### Virtual Desktops

In the context of code development virtual desktops are used across business units, but more commonly in scenarios involving the onboarding of remote developers, implementation of BYOD policies, and others requiring centralized control and security over remote desktop environments, mostly Windows-based (although Linux hosts are also used).

Any code development activities are performed on a generic desktop, which typically requires the installation of tools such as an IDE to start coding. As illustrated in the picture below (left), the remote desktop is used to code using the IDE and access a code management application. The remote desktop is also the primary source of compute to build applications.

#### Enterprise Browsers

When used in the scope of a development process, an enterprise browser acts as a web front-end to access a remote desktop set-up for development (via RDP). Because secure browsers impose fewer infrastructure constraints than virtual desktops, they can be more easily deployed across both internal and remote developers. Still, enterprise browsers do not provide any development environments by themselves, hence in this setting, they are merely the front-end to an existing park of development machines (physical or virtual), accessed via the browser client.

Hence, in the scope of a development process where a source of compute is needed, the setup is similar to a virtual desktop. A small difference is that the code management application in this case can be accessed securely using the browser on the developer device as opposed to a browser running on the virtual desktop. Here too, the remote desktop is the primary source of compute to build applications. This is shown in the middle part of the figure below.

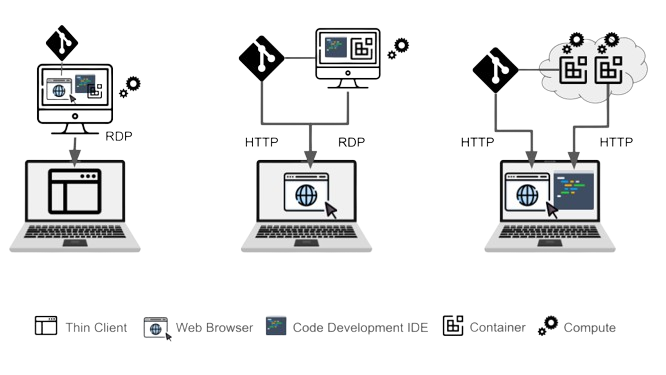

#### Secure Cloud Development

A secure CDE Platform is designed to onboard both internal and remote developers (incl. BYOD) on centrally managed and standardized environments. To run environments, the platform relies on lightweight virtualization using containers (i.e. a virtual process) as opposed to a virtual machine. Hence set-up and operations are much more efficient and more scalable because containers require fewer resources. Hence it is easy and economical to assign multiple environments to a single developer. Each container has its own source of compute and is easy to set or reconfigure without any loss of data.

**Figure:** _From left to right, in both the cases of virtual desktops and enterprise browsers, a virtual machine hosts all the tools, computing power and provides connection to DevOps services. Secure Cloud Development provides access via IDE and secure browser to containerized environments and services, respectively._

The striking difference with a remote desktop is that developers do not need a desktop to develop applications. The platform is primarily suited for cloud-native, i.e. web-based (back-end/front-end) and mobile development (left part in the next figure). The Cloud environment is accessed directly via the IDE and developers typically run web applications on an environment’s port. The running application is then accessed via a local web browser. Note that it is possible to run a desktop on the containers if needed. In this case, it is steamed over a port and accessed via the browser as well (right part of the figure below).

**Figure:** _Secure Cloud-based development does not require a desktop to build backend, web, and mobile applications. The same mechanism used to run applications on containers lets users access a desktop when necessary. _

### Security Focus of the Technologies

What about their security focus?

#### Virtual Desktops and Enterprise Browsers

Virtual desktops secure the end-user environment by centralizing data and desktop applications, reducing endpoint vulnerabilities. Both Citrix and VMWare clients offer data leak prevention (DLP) measures that protect from data exfiltration via [system hardening measures](https://community.citrix.com/tech-zone/build/tech-papers/cvad-security-best-practices/) encompassing user access, data egress restrictions, etc.

Enterprise browsers aim to protect the organization from phishing, malware, and other threats when accessing web applications, and from user operations that could result in data leaks, including insider threats using DLP as well.

In the case of enterprise browsers, security measures are primarily “client-focused”, because users do not have access to the backend of the application they use. Security measures are more complex and include network policies in the case of virtual desktops. Such policies might be necessary to avoid data exfiltration using internet connectivity once on the remote application. This shows a potential limitation of Enterprise browsers as a VDI replacement when accessing desktops: it is likely that no secure measures are provided to protect from operations on the desktop. However, this aspect could depend on the vendor-dependent.

#### Secure Cloud Development

A secure CDE platform focuses on securing data in development environments and web applications against exfiltration. This is akin to client-side DLP.

The platform also provides “back-end DLP” by protecting access to the data used for development. This is achieved by controlling the network and providing authentication services to the organization’s resources.

Although a CDE-based infrastructure is simpler than a virtual desktop counterpart, it is indeed this simplicity that allows it to build a more holistic approach to data loss prevention with minimal impact on the local device used to access the platform.

An additional aspect is that, because a secure CDE platform is designed to support application development, security mechanisms can beneficially use the context to make security a productivity enabler as opposed to a hassle.

## Impact and Benefits to Developer Experience

Accessibility to the platform and more generally the developer experience are important factors when assessing the fitness of these technologies to support development.

Virtual Desktops lets developers interact with a remote desktop via a locally installed client by streaming the image of the remote desktop to the client. Such access protocol often suffers from latency due to network requirements, which unfortunately impacts the developer experience (check this real-life story).

Enterprise browsers let developers access web applications without usability issues However, because developers need access to a remote desktop for coding, this requires again the use of a streaming protocol such as RDP and results in display latency impacting the developer experience and productivity.

**Figure:** _In the case of a secure CDE platform, the IDE used for coding (right part) is not streamed to the desktop and rendered locally, which preserves the developer’s experience. In comparison, secure browsing might be implemented using streaming (left part)._

A secure CDE platform provides developers access to the online development environment via a web-based IDE, and to web applications via a secure browser. The web-based IDE is a web application on its own and renders natively in the browser on the developer’s device. Hence no streaming is required which provides optimal developer experience (see the above figure).

In contrast, the chosen implementation for the secure browser can impact the experience. However, in practice, developers spend the majority of their time in the IDE and use web applications for less frequent operations such as pull requests.

## Opportunities When Combining Technologies

In general, virtual desktops and enterprise browsers play an important role across enterprise business processes by providing general-purpose security for desktops and web applications, each with distinct infrastructure requirements and performance outcomes. Historically, virtual desktops have been a staple in the enterprise environment, representing the oldest technology among the ones that I discussed in this article.

In comparison, enterprise browsers are designed as a lightweight alternative to virtual desktop infrastructure. They are however optimized mainly for SaaS applications delivered through the web. Their utilization for accessing developer desktops via RDP is akin to a modern reinterpretation of virtual desktops via a web browser.

To protect the application development process, a secure Cloud Development Environment (CDE) platform centralizes all essential resources, including access to clients (IDE and web applications) and development environments, in one place. The targeted usage allows the platform to offer context-specific security and preserves the developer experience when working in a secure environment.

In a larger organizational context, integrating a secure CDE platform with virtual desktops or an existing enterprise browser setup might be necessary. This provides an opportunity to balance development productivity, security, and asset utilization optimally.

One key feature of a secure CDE platform is its use of a dedicated browser for safe access to web applications. This feature is particularly enhanced when integrated with an enterprise browser or application virtualization technologies. Essentially, this integration allows for replacing the CDE platform's secure browser with a more seamless solution and incorporates secure CDE technologies into the existing infrastructure.

This way, organizations can standardize security mechanisms across the infrastructure, ensuring access to legacy applications while modernizing application development. It also offers them an opportunity to improve asset utilization by leveraging lightweight virtualization for on-demand access to cheap computing power dedicated to development workloads (see the next figure),

In the implementation of a Virtual Desktop Infrastructure, incorporating a secure CDE platform elevates the developer experience by providing on-demand development environments (with associated computational resources) and bolstering data access security.

**Figure:** _The combination of the secure CDE platform and the other technologies to fit different needs and scenarios in an enterprise setting._

In conclusion, integrations between secure CDE platforms, enterprise browsers, and virtual desktops provide opportunities for enhancing both the security and productivity of the development process, while jointly improving developer experience and resource utilization.

Although a secure CDE platform alone provides a contemporary approach to prevent data leaks during application development, it also delivers an opportunity to enrich the existing infrastructure ecosystem of modern organizations.

**Published at Dev.to with permission of Laurent Balmelli, PhD. See the original article [here](https://strong.network/article/key-differences).** | loransha256 |

1,862,689 | Automating Next.js Builds and Firebase Deployment with Node.js | Learn how to automate your Next.js application build process and deploy it to Firebase using Node.js scripts. | 0 | 2024-05-23T10:00:50 | https://dev.to/itselftools/automating-nextjs-builds-and-firebase-deployment-with-nodejs-48g0 | javascript, node, nextjs, devops |

At [itselftools.com](https://itselftools.com), we've gained extensive experience from developing over 30 web applications using Next.js and Firebase. In this article, I'll share a snippet of code that automates the building and deployment of a Next.js app to Firebase, making the deployment process as efficient as possible.

## Understanding the Code Snippet

Here is a concise breakdown of each segment of the code provided:

```javascript

const { readFileSync, writeFileSync } = require('fs');

const yaml = require('js-yaml');

const prepareDeployment = () => {

const doc = yaml.load(readFileSync('./deployment.yaml', 'utf8'));

const config = {

public: 'out',

ignore: doc.ignoreFiles

};

writeFileSync('firebase.json', JSON.stringify(config, null, 2));

console.log('Firebase configuration updated for deployment.');

};

const buildAndDeploy = () => {

prepareDeployment();

execSync('next build && next export && firebase deploy');

console.log('App successfully deployed to Firebase.');

};

```

### Step-by-Step Explanation

1. **Reading and Parsing Configuration:** The `prepareDeployment` function reads a YAML configuration file, 'deployment.yaml', which specifies files to ignore during deployment. It uses the 'js-yaml' package to parse this file.

2. **Creating the Firebase Configuration File:** It then creates or updates the 'firebase.json' file with deployment configuration such as the public directory and ignored files, which is essential for the Firebase deployment settings.

3. **Building and Deploying the App:** The `buildAndDeploy` function first calls `prepareDeployment` to ensure the Firebase config is set up correctly. It then executes a series of commands to build the Next.js application, export it, and finally deploy it to Firebase using `execSync`, which runs these commands in the system's shell.

## Recommended Tools and Libraries

- **Node.js**: Provides the runtime environment to run JavaScript on the server.

- **Next.js**: A React framework that enables functionalities such as server-side rendering and generating static websites.

- **Firebase**: An application development platform that provides hosting and backend services.

- **js-yaml**: A library to safely parse YAML files in Node.js.

## Conclusion

This scripted approach reduces the likelihood of deployment errors and streamlines the entire build and deployment process. To see how effective this setup can be, feel free to explore some of our applications like [Free High-Quality Screen Recording](https://online-screen-recorder.com), [Free Online English Word Search Tool](https://find-words.com), and [Extract Text from Images and PDFs Online](https://ocr-free.com).

Switching to automated deployments can significantly improve the reliability and efficiency of software releases, especially in agile development environments where frequent updates are common. | antoineit |

1,862,707 | Why Divsly is the Best Choice for Your Email Marketing Needs | In today's digital age, email marketing has become a cornerstone of business communication and... | 0 | 2024-05-23T10:25:52 | https://dev.to/divsly/why-divsly-is-the-best-choice-for-your-email-marketing-needs-5cko | emailmarketing, emailcampai, emails, emailbusin | In today's digital age, email marketing has become a cornerstone of business communication and customer engagement strategies. With countless options available, it can be overwhelming to choose the right email marketing platform for your needs. However, amidst the sea of choices, one platform stands out as a reliable and effective solution: Divsly. In this blog post, we'll explore why Divsly is the best choice for your email marketing needs.

## 1. User-Friendly Interface

[Divsly](https://divsly.com/) prides itself on its intuitive and user-friendly interface. Whether you're a seasoned marketer or just starting out, Divsly makes it easy to create, send, and track email campaigns. With drag-and-drop functionality and pre-designed templates, you can quickly customize emails to suit your brand and message without any coding knowledge required. This simplicity and ease of use mean that you can focus on crafting engaging content rather than getting bogged down in technical details.

## 2. Powerful Automation Tools

Automation is key to streamlining your email marketing efforts and maximizing efficiency. Divsly offers a robust suite of automation tools that allow you to set up personalized email workflows based on customer behavior, demographics, and preferences. From welcome emails to abandoned cart reminders, you can automate various touchpoints along the customer journey, ensuring timely and relevant communication that drives results. With Divsly's automation tools, you can nurture leads, re-engage dormant subscribers, and ultimately, boost conversions with minimal effort.

## 3. Advanced Segmentation Capabilities

One size does not fit all when it comes to email marketing. Divsly understands this and offers advanced segmentation capabilities that enable you to divide your audience into targeted groups based on specific criteria such as location, purchase history, or engagement level. By segmenting your email list, you can deliver highly tailored content that resonates with each segment, increasing open rates, click-through rates, and ultimately, conversions. Whether you're running a promotional campaign or sending out a newsletter, Divsly's segmentation tools help you deliver the right message to the right people at the right time.

## 4. Comprehensive Analytics

Effective [email marketing](https://divsly.com/features/email-marketing) requires data-driven insights to measure performance, identify areas for improvement, and optimize campaigns for better results. Divsly provides comprehensive analytics that give you a deep understanding of how your emails are performing. From open rates and click-through rates to conversion tracking and revenue attribution, you can track key metrics and monitor the success of your campaigns in real-time. Armed with this valuable data, you can make informed decisions to refine your email strategy and achieve your marketing goals.

## 5. Seamless Integration

Divsly seamlessly integrates with a wide range of third-party tools and platforms, including CRM systems, e-commerce platforms, and analytics software. This integration allows you to sync customer data, track interactions across multiple touchpoints, and leverage existing tools to enhance your email marketing efforts. Whether you're managing contacts, analyzing sales data, or optimizing website performance, Divsly plays nicely with your existing tech stack, making it easy to integrate email marketing into your overall marketing strategy.

## 6. Exceptional Support

Last but not least, Divsly provides exceptional customer support to help you every step of the way. Whether you have a question about a feature, need assistance with a campaign, or encounter a technical issue, Divsly's support team is readily available to provide guidance and resolve any issues promptly. With responsive support via email, chat, and phone, you can rest assured that help is always just a click or call away, ensuring a smooth and hassle-free experience with the platform.

In conclusion, Divsly offers a winning combination of user-friendly interface, powerful automation tools, advanced segmentation capabilities, comprehensive analytics, seamless integration, and exceptional support that makes it the best choice for your email marketing needs. Whether you're a small business owner, a marketing professional, or an e-commerce entrepreneur, Divsly empowers you to create engaging email campaigns, nurture customer relationships, and drive business growth with ease. Try Divsly today and experience the difference for yourself! | divsly |

1,862,704 | Interactive Color-Changing Button | This project demonstrates a simple interactive button using HTML, CSS, and JavaScript. When the... | 0 | 2024-05-23T10:25:18 | https://dev.to/megha_sangapur_98205768ca/interactive-color-changing-button-5fil | codepen | This project demonstrates a simple interactive button using HTML, CSS, and JavaScript. When the button is clicked, its color changes randomly. This example is perfect for beginners who want to learn how to manipulate DOM elements and handle events in JavaScript.

{% codepen https://codepen.io/Megha-Sangapur/pen/PovzEVo %} | megha_sangapur_98205768ca |

1,862,705 | Puppeteer Docker | Hi, i am running into an error while performing html to pdf generation using puppeteer ,i am using... | 0 | 2024-05-23T10:24:07 | https://dev.to/let_alliance_bcc6d5511681/puppeteer-docker-4c2i | programming, devops, aws | Hi,

i am running into an error while performing html to pdf generation using puppeteer ,i am using docker to deploy on aws but when i excute my code i am getting error

"Read-only file system : '/var/task/chrome-linux64.zip'"

Thanks

| let_alliance_bcc6d5511681 |

1,862,702 | Creating Open Source Connections | Explore how StarSearch revolutionizes open source collaboration by connecting projects with top talent using AI-driven insights into GitHub events and contributor activities. | 0 | 2024-05-23T10:17:39 | https://opensauced.pizza/blog/open-source-insights-with-starsearch | opensource, ai, community, showdev | ---

title: Creating Open Source Connections

published: true

description: Explore how StarSearch revolutionizes open source collaboration by connecting projects with top talent using AI-driven insights into GitHub events and contributor activities.

tags: opensource, ai, community

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/ifvsdgm6y0y0r0pkmmu8.png

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-23 10:04 +0000