id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,863,052 | 2597. The Number of Beautiful Subsets | 2597. The Number of Beautiful Subsets Medium You are given an array nums of positive integers and a... | 27,523 | 2024-05-23T17:06:04 | https://dev.to/mdarifulhaque/2597-the-number-of-beautiful-subsets-ih5 | php, leetcode, algorithms, programming | 2597\. The Number of Beautiful Subsets

Medium

You are given an array nums of positive integers and a positive integer k.

A subset of nums is beautiful if it does not contain two integers with an absolute difference equal to k.

Return the number of non-empty beautiful subsets of the array nums.

A subset of nums is an array that can be obtained by deleting some (possibly none) elements from nums. Two subsets are different if and only if the chosen indices to delete are different.

**Example 1:**

- **Input:** nums = [2,4,6], k = 2

- **Output:** 4

- **Explanation:** The beautiful subsets of the array nums are: [2], [4], [6], [2, 6].

It can be proved that there are only 4 beautiful subsets in the array [2,4,6].

**Example 2:**

- **Input:** nums = [1], k = 1

- **Output:** 1

- **Explanation:** The beautiful subset of the array nums is [1].

It can be proved that there is only 1 beautiful subset in the array [1].

**Example 3:**

- **Input:** nums = [4,2,5,9,10,3], k = 1

- **Output:** 23

**Constraints:**

- <code>1 <= nums.length <= 20</code>

- <code>1 <= nums[i], k <= 1000</code>

**Solution:**

```php

class Solution {

/**

* @param Integer[] $nums

* @param Integer $k

* @return Integer

*/

function beautifulSubsets($nums, $k) {

$countBeautifulSubsets = -1;

$countNums = array_fill(0, 1010, 0);

$size = count($nums);

$dfs = function($index) use (&$dfs, &$countBeautifulSubsets, &$nums, &$countNums, $size, $k) {

if ($index >= $size) {

++$countBeautifulSubsets;

return;

}

$dfs($index + 1);

$isBeautifulIncrement = $nums[$index] + $k >= 1010 || $countNums[$nums[$index] + $k] == 0;

$isBeautifulDecrement = $nums[$index] - $k < 0 || $countNums[$nums[$index] - $k] == 0;

if ($isBeautifulIncrement && $isBeautifulDecrement) {

++$countNums[$nums[$index]];

$dfs($index + 1);

--$countNums[$nums[$index]];

}

};

$dfs(0);

return $countBeautifulSubsets;

}

}

```
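The solution above is a backtracking search: for each element it first tries skipping it, then tries taking it if no already-chosen value differs from it by exactly `k`. For readers who do not use PHP, the same idea can be sketched in TypeScript. This is an illustrative port, not the author's code; the `chosen` map and the choice to return counts from the recursion (rather than incrementing a shared counter) are assumptions of this sketch.

```ts
// Counts non-empty subsets in which no two elements differ by exactly k.
function beautifulSubsets(nums: number[], k: number): number {
  // value -> how many times it is currently chosen on this search path
  const chosen = new Map<number, number>();

  const dfs = (index: number): number => {
    if (index === nums.length) {
      return 1; // one complete subset (possibly the empty one)
    }
    // Option 1: skip nums[index].
    let total = dfs(index + 1);

    // Option 2: take nums[index] if it conflicts with nothing chosen so far.
    const value = nums[index];
    const conflict =
      (chosen.get(value + k) ?? 0) > 0 || (chosen.get(value - k) ?? 0) > 0;
    if (!conflict) {
      chosen.set(value, (chosen.get(value) ?? 0) + 1);
      total += dfs(index + 1);
      chosen.set(value, (chosen.get(value) ?? 0) - 1); // undo the choice
    }
    return total;
  };

  return dfs(0) - 1; // drop the empty subset
}
```

With at most 20 elements, the search explores at most 2^20 subsets, which is why plain backtracking is enough here.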

**Contact Links**

- **[LinkedIn](https://www.linkedin.com/in/arifulhaque/)**

- **[GitHub](https://github.com/mah-shamim)** | mdarifulhaque |

1,863,051 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-05-23T17:04:21 | https://dev.to/mcynthiahortonotyu/buy-verified-paxful-account-4e9b | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. 
Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. 
Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. 
These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. 
Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. 
By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. 
Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. 
It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | mcynthiahortonotyu |

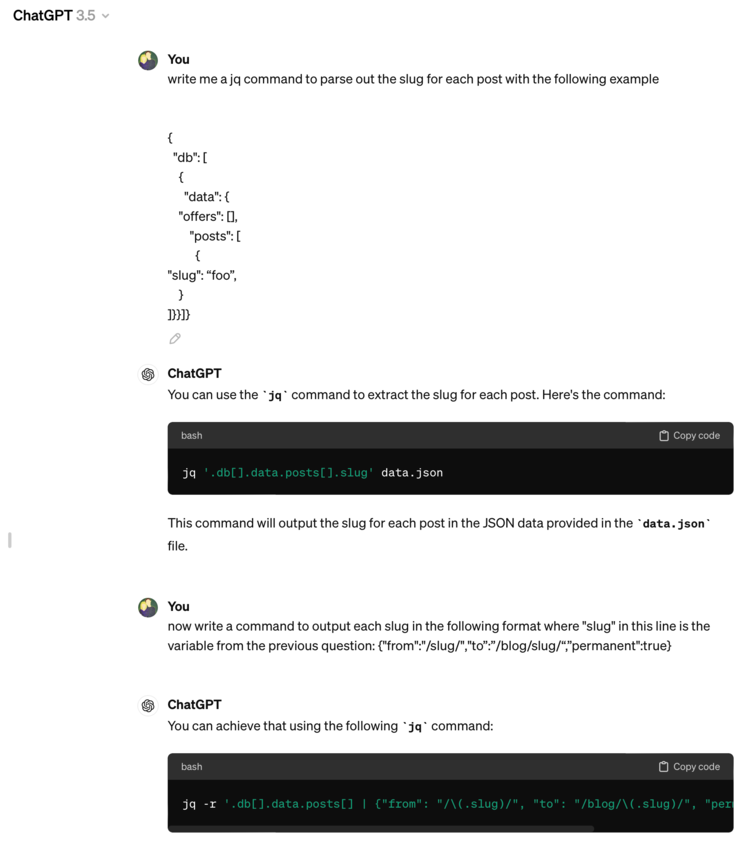

1,863,050 | TypeScript, Understanding the code you write | TypeScript has given the frontend a powerful chance to deliver reliable and maintainable code. The... | 0 | 2024-05-23T17:02:24 | https://dev.to/zeyadetman/typescript-understanding-the-code-you-write-12eg | typescript, javascript, webdev, frontend |

TypeScript has given the frontend a powerful chance to deliver reliable and maintainable code. The more you correctly type your code, the more you fall into its strengths and importance. In this post, I'll mention some TypeScript tips that you may or may not know about.

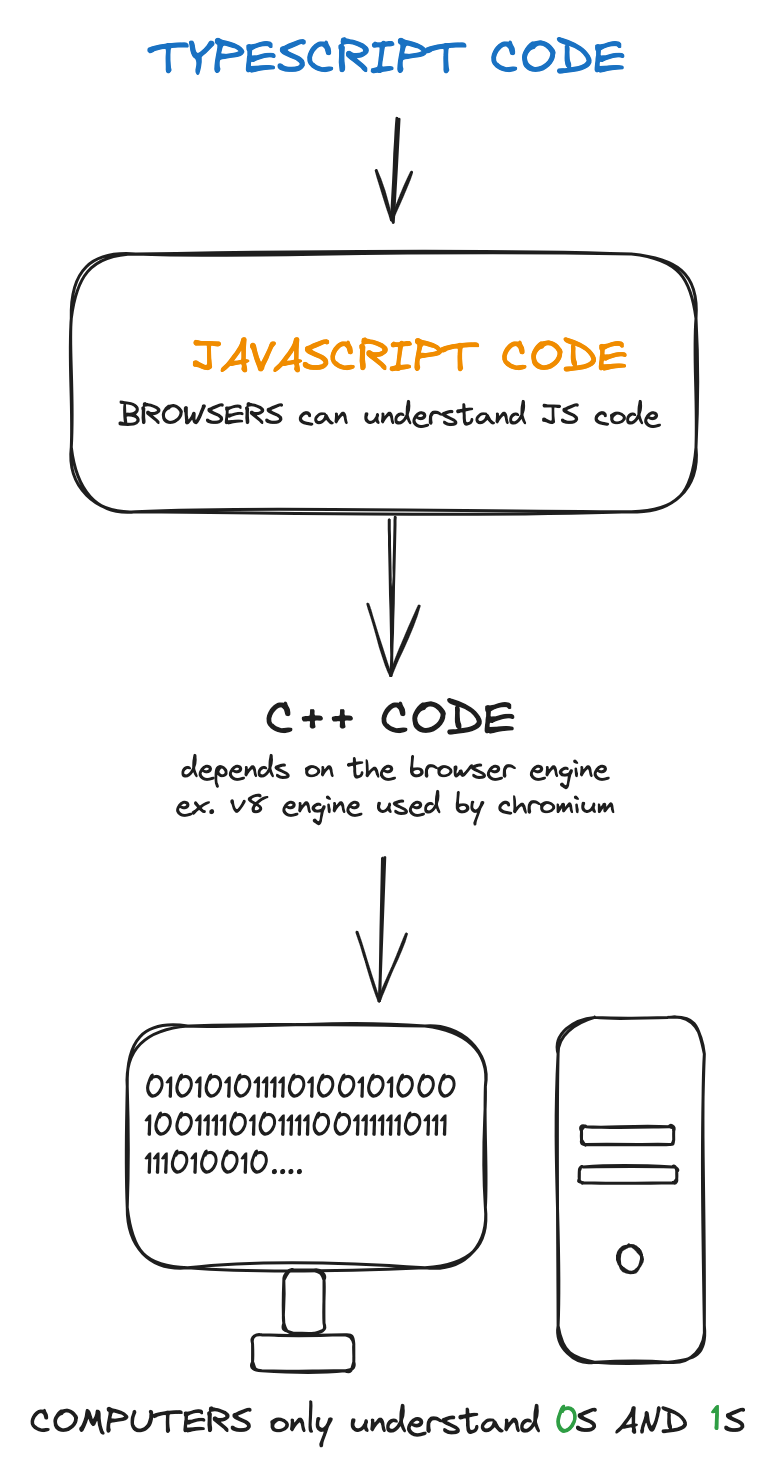

## How does TypeScript work?

The first thing you should know is **how TypeScript works**.

<figure style={{ textAlign: "center" }}>

<figcaption>

[Source](https://gist.github.com/zeyadetman/e2435ca1e2e0fe78d3dc981250e42499)

</figcaption>

</figure>

Browsers are designed to understand JavaScript, not TypeScript, just as a CPU only understands machine code. For a CPU, we compile high-level code down to machine code, the zeros and ones it can execute. Similarly, we transpile (translate) TypeScript to JavaScript so that browsers and other JavaScript engines can run it.

To do this, we can use the popular `tsc` compiler. You can also check out the [awesome-typescript-compilers](https://github.com/JohnDeved/awesome-typescript-compilers?tab=readme-ov-file) repository to learn about the alternatives.

For example, code like this:

```ts

type animals = "cat" | "dog";

const getAnimal = (animal: animals) => animal;

getAnimal("cat");

```

Is translated to this JavaScript code, so browsers can understand it:

```js

const getAnimal = (animal) => animal;

getAnimal("cat");

```

**Note: the TypeScript-only code (the type alias and the annotations) is erased during transpilation.**

This leads us to an **important conclusion**. Once you internalize it, a lot of TypeScript's concepts, and why they are written the way they are, start to make sense.

**Conclusion: TypeScript does not run in the browser. Its type checks happen only at compile time, never at runtime.**

> **Compile time** is the time when your code is being built, before it runs and becomes interactive. **Run time** is when your code is running and interacting with the client.

### Differences in Action

Let's compare TypeScript, whose types are erased at compile time, with a language like C#, where types still exist at runtime:

#### Function overloading

> Some JavaScript functions can be called in a variety of argument counts and types. For example, you might write a function to produce a `Date` that takes either a timestamp (one argument) or a month/day/year specification (three arguments).

> [Resource](https://www.typescriptlang.org/docs/handbook/2/functions.html#function-overloads)

In C#, we can overload a function by declaring it several times with different parameter lists, each with its own body. This works because the C# compiler selects the matching overload from the argument types, and every overload is a separate method that still exists at runtime:

```c#

void display() { ... }

void display(int a) { ... }

float display(double a) { ... }

float display(int a, float b) { ... }

```

In TypeScript, however, the types are gone by the time the code runs, so there are no runtime type checks to choose between implementations. JavaScript also allows only one value per name, so a function cannot have several bodies. Instead, we declare several overload signatures and write a single implementation that branches on its arguments:

```ts

function makeDate(timestamp: number): Date;

function makeDate(m: number, d: number, y: number): Date;

function makeDate(m: number, d: string, y: number): string;

function makeDate(

mOrTimestamp: number,

d?: number | string,

y?: number

): Date | string {

if (typeof d === "string") {

return "hello";

}

if (d !== undefined && y !== undefined) {

return new Date(y, mOrTimestamp, d);

} else {

return new Date(mOrTimestamp);

}

}

const d1 = makeDate(1234);

const d2 = makeDate(5, 5, 5);

```

This compiles to the following JavaScript code:

```js

function makeDate(mOrTimestamp, d, y) {

if (typeof d === "string") {

return "hello";

}

if (d !== undefined && y !== undefined) {

return new Date(y, mOrTimestamp, d);

} else {

return new Date(mOrTimestamp);

}

}

const d1 = makeDate(1234);

const d2 = makeDate(5, 5, 5);

```

There are libraries that add runtime checks to JavaScript. One of the most popular is [zod](https://zod.dev/); give it a try.
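If you only need a small check and no library, a hand-written type guard does the same job on a smaller scale. The sketch below is an illustration, not zod's API; `isAnimal` and `parseAnimal` are hypothetical helper names.

```ts
type Animal = "cat" | "dog";

// Type guard: inspects the value at runtime and, when it returns true,
// tells the compiler that `value` is an Animal.
function isAnimal(value: unknown): value is Animal {
  return value === "cat" || value === "dog";
}

// Validates untrusted input (for example, a query parameter) before use.
function parseAnimal(input: unknown): Animal {
  if (!isAnimal(input)) {
    throw new Error(`Not an animal: ${String(input)}`);
  }
  return input; // narrowed to Animal by the guard
}
```

Unlike the type annotation, the body of `isAnimal` survives transpilation, so the check still runs in the browser.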

## Narrowing (make it more specific)
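Narrowing means refining a broad type to a more specific one inside a branch, usually with a check such as `typeof`. A minimal sketch, assuming a hypothetical `formatValue` helper:

```ts
function formatValue(value: string | number): string {
  if (typeof value === "string") {
    // In this branch the compiler knows `value` is a string.
    return value.toUpperCase();
  }
  // The string case returned above, so `value` must be a number here.
  return value.toFixed(2);
}
```

The `typeof` check is ordinary JavaScript that runs at runtime; TypeScript only follows the control flow at compile time to work out which type is possible in each branch.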

## `keyof` and `typeof`

- `keyof`, as its name suggests, extracts the keys of a type. Since object types are the ones that have keys, `keyof` gives you the keys of an object type as a **union**.

```ts

type obj = { [n: number]: string };

type A = keyof obj; // number

```

- `typeof`, as its name suggests, returns the type of a variable. Simple!

```ts

let str = "Alia";

type a = typeof str; // string

const str2 = "Alia";

type b = typeof str2; // "Alia"

```

**Important note: the first variable is declared with `let`, so its type is `string`. With `const`, as in `str2`, the type is the specific string literal itself.**

Let's combine both:

```ts

const obj = {

id: 1,

name: "Alia",

}; // javascript variable no types

type typeOfObj = typeof obj; // type of the javascript object

type keysOfObj = keyof typeOfObj; // "id" | "name"

type keysOfObjOneLine = keyof typeof obj;

```

Notice that:

- `keyof` extracts the keys of an (object) type.

- `typeof` extracts the type of a variable.

_Keep this in mind for the mapped types section._
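A common use of `keyof typeof` is deriving a union of allowed keys from a plain object, so the object stays the single source of truth. A small sketch, where `statusLabels` and `labelFor` are hypothetical names used only for illustration:

```ts
const statusLabels = {
  draft: "Draft",
  published: "Published",
  archived: "Archived",
};

// "draft" | "published" | "archived", derived from the object above
type Status = keyof typeof statusLabels;

function labelFor(status: Status): string {
  return statusLabels[status];
}
```

Adding a new entry to `statusLabels` automatically widens `Status`, and a call such as `labelFor("deleted")` is rejected at compile time.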

## `in` operator and `extends`

Like `keyof`, the `in` operator works with keys: inside a type definition it iterates over the members of a union and creates one property per member, which limits the keys to that group.

```ts

type keysOfObj = "id" | "name";

type obj = { [key in keysOfObj]: string };

// type obj = {

// id: string;

// name: string;

// }

```

But we need to change the code so that the `id` key is a `number`, not a `string`:

- `extends` in a conditional type works like the `if`/`else` of TypeScript.

```ts

type keysOfObj = "id" | "name";

type obj = { [key in keysOfObj]: key extends "id" ? number : string };

// That's it

```

_This is called a mapped type._
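Mapped types become more useful when they are built from an existing type with `keyof` and `typeof`. The sketch below derives an "all keys optional" type from an object; `defaults`, `Patch`, and `applyPatch` are hypothetical names chosen for this example.

```ts
const defaults = { id: 0, name: "" };

type Defaults = typeof defaults; // { id: number; name: string }

// Mapped type: same keys and value types as Defaults, but every key is optional.
type Patch = { [K in keyof Defaults]?: Defaults[K] };

// Later properties win, so values in `patch` override those in `base`.
function applyPatch(base: Defaults, patch: Patch): Defaults {
  return { ...base, ...patch };
}

const updated = applyPatch(defaults, { name: "Alia" });
```

This is essentially what the built-in `Partial<T>` utility type does for you.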

## Generics

Think of generics as functions for types: if you have a type that you keep repeating with slight changes, you probably need `generics`.

```ts

interface BasicType<T> {

input: T;

}

const x: BasicType<string> = { input: "test" };

const y: BasicType<number> = { input: "test" }; // Error: a string is not assignable to number

```

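```ts
// Step 1: untyped; `a` and `b` are implicitly `any`.
function basicUntyped(a, b) {
  return { a, b };
}

// Step 2: type parameters are declared but not used yet.
function basicUnused<A, B>(a, b) {
  return { a, b };
}

// Step 3: the type parameters tie the arguments to the return type.
function basic<A, B>(a: A, b: B): { a: A; b: B } {
  return { a, b };
}

basic<string, number>("hello", 2);
```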

We can also combine `extends` with generics to constrain a type parameter, which makes the resulting types much more powerful.
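As a short sketch of `extends` used as a generic constraint, the hypothetical `pluck` helper below only accepts keys that actually exist on the object, and its return type follows the chosen key:

```ts
// K is constrained to the keys of T, so T[K] is the type of that property.
function pluck<T, K extends keyof T>(item: T, key: K): T[K] {
  return item[key];
}

const user = { id: 1, name: "Alia" };

const userName = pluck(user, "name"); // inferred as string
const userId = pluck(user, "id"); // inferred as number
```

A call like `pluck(user, "email")` fails to compile because `"email"` is not in `keyof typeof user`.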

## TypeScript Coverage

In addition to adding TypeScript rules to your project, it's important to track TypeScript type coverage, that is, how much of your code is actually typed. A coverage report helps identify variables that are missing types.

You might think it's obvious if a variable has a type, but when using third-party libraries, classes, or functions, it's easy to miss some. Not everything, such as query parameters, will be tracked by the TypeScript coverage library, but it definitely helps.

I suggest using [typescript-coverage-report](https://www.npmjs.com/package/typescript-coverage-report) for this purpose. If you know of a better option, feel free to share it in the comments below.

Resources:

- [TypeScript Documentation](https://www.typescriptlang.org/docs/)

- [type-challenges](https://github.com/type-challenges/type-challenges)

- [typehero.dev](https://typehero.dev/)

[Find more... ](https://notes.zeyadetman.com/programming-languages/typescript)

---

_While writing this post, civilians and children in Gaza are being killed in the ongoing genocide. It's important to be informed about what is happening in Palestine and to consider the humanitarian aspects of the situation. Please take the time to research and understand the events and their impact on Palestinians' lives. [Know more](https://blog.paulbiggar.com/i-cant-sleep/)_

_Originally Published on [https://zeyadetman.com/posts/typescript-understand-the-code-you-write](https://zeyadetman.com/posts/typescript-understand-the-code-you-write)_ | zeyadetman |

1,863,049 | The Power of Cryptocurrency: Insights from Cardano’s Founder Charles Hoskinson | In a world where the use of digital currencies is becoming increasingly popular, China is trying to... | 0 | 2024-05-23T17:01:45 | https://36crypto.com/the-power-of-cryptocurrency-insights-from-cardanos-founder-charles-hoskinson/ | cryptocurrency, news | In a world where the use of digital currencies is becoming increasingly popular, China is trying to become a leader by actively introducing the digital yuan. However, citizens are in no hurry to use the currency. Why is this happening? Let’s take a closer look at it.

**Chinese Workers Prefer Fiat Money**

The Chinese government is actively trying to introduce a digital yuan. According to the South China Morning Post, some Chinese cities have started paying CBDC to the country’s civil servants. However, a large number of employees are still hesitant to use them for daily transactions and convert them directly into cash.

Sammy Lin, a customer service manager at a Chinese state-owned bank in Suzhou, explains that she does not keep funds in the e-CNY app because it does not charge interest for storage. She also notes that there are currently not many places online or offline where you can use the digital yuan.

Although China has been a “functionally cashless” society for more than a decade, many citizens are still hesitant to use a purely digital currency due to concerns about traceability and limited use cases.

But despite such concerns, according to Yi Gang, former governor of the People’s Bank of China, more than $250 million worth of transactions were made using the digital yuan in July 2023. He also emphasized that the digital yuan is able to “fully protect privacy” due to the so-called “controlled anonymity,” which means that there is no tracking of small payments, but some tracking of large payments is possible.

Charles Hoskinson, the founder of Cardano, emphasizes the importance of cryptocurrency in the modern world. On May 11, he reminded his followers on social media that the fundamental concept of cryptocurrency is the creation of new social contracts. Hoskinson stressed that these contracts are what will make governments accountable to the people, and he urged the industry to focus on promoting this goal.

He also warned that anyone who opposes the spread of cryptocurrencies unknowingly supports the concentration of power in the hands of a few.

_“Crypto gives us our voices, financial freedom, and shared humanity back.”_ Hoskinson [remarked](https://x.com/iohk_charles/status/1789403123804688520?s=46&t=aw6ZR-6aD050XLPVXY8AQA).

**European Crypto Industry Development**

The crypto industry is growing significantly in Europe. European countries are actively working to create a legal environment that protects investors and keeps the financial system stable. For example, Switzerland is known for its “Crypto Valley” in Zug, where numerous blockchain companies and startups are based. Malta has adopted a number of laws aimed at regulating cryptocurrencies and ensuring their legality and transparency.

European countries are also developing new blockchain solutions and cryptocurrency payment systems and exploring ways to integrate cryptocurrencies into their financial systems. For example, Switzerland was one of the first countries to [introduce](https://www.bloomberg.com/news/articles/2023-12-06/taxpayers-in-swiss-city-can-settle-bills-with-bitcoin-tether) the possibility of paying taxes in Bitcoin.

Many countries have long accepted cryptocurrency as a means of payment, and Ukraine is no exception. Ukraine is intensively expanding the crypto sphere, attracting investors, startups, and regulators to create a favorable landscape for this spectrum.

Ukrainians have long had the opportunity to pay for purchases in grocery stores using payment solutions from [Corefy](https://corefy.com/en-ua), [Switchere](https://switchere.com/accept-crypto), [Whitepay](https://whitepay.com/), and many others. Moreover, the first cryptocurrency [transaction](https://gncrypto.news/news/ukraines-first-electric-vehicle-purchase-with-crypto/) for the purchase of an electric car has recently taken place in Ukraine. This step demonstrates the significant development and potential of the industry.

**Summary**

The introduction of the digital yuan in China faces a number of challenges, but despite the difficulties, the government continues to promote this initiative. At the same time, the development of the crypto industry in Europe is showing remarkable progress, creating new opportunities for businesses and citizens. The involvement of cryptocurrencies in everyday financial transactions, including charity and shopping, demonstrates the importance of digital assets for economic development. As Charles Hoskinson has aptly noted, the rise of cryptocurrencies is not only shifting financial paradigms, but also has the potential to redefine social contracts and empower people in unprecedented ways. | hryniv_vlad |

1,863,048 | autollama | From the ollama help discord channel: Docker compose: How do you pull a model automatically with... | 0 | 2024-05-23T17:01:36 | https://dev.to/spara_50/autollama-4mi8 | From the [ollama help discord](https://discord.com/invite/ollama) channel:

> Docker compose: How do you pull a model automatically with container creation?

The conundrum of containerizing Ollama is that it must be running to pull a model. If you run ollama using `docker compose`, it doesn't provide a way to pull a model from the ollama registry. What's a budding generative AI nerd to do?

The answer is more `docker compose`, as in `docker compose exec`, which runs a script to download a model. Add both commands to a startup script, and you have [autollama](https://github.com/spara/autollama).

```bash

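# Start the Ollama container in the background
docker compose up -d

# Give the Ollama server a few seconds to come up
sleep 5

# Run the model pull script inside the running container
docker compose exec autollama sh /root/.ollama/pull_model.sh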

```

Come and get your ollama love [here](https://github.com/spara/autollama). | spara_50 | |

1,863,002 | sCrypt Hackathon 2024 Winners Announced! | sCrypt Hackathon 2024 Winners Announced! The sCrypt community is thrilled to announce the winners of... | 0 | 2024-05-23T17:00:41 | https://dev.to/bitruslukag/scrypt-hackathon-2024-winners-announced-127i | **sCrypt Hackathon 2024 Winners Announced!**

The sCrypt community is thrilled to announce the winners of the 2024 sCrypt Hackathon, held from March 25 to April 25, 2024!

This year's theme revolved around merging sCrypt (Bitcoin smart contracts) with Ordinals (Bitcoin tokens) to pioneer innovative solutions in the blockchain space. We received a fantastic response from developers worldwide, with a wide range of creative projects submitted.

The winners were chosen through a combination of judge evaluation and community votes. You can explore all the amazing projects on Devfolio: [scrypt.devfolio.co/projects](https://scrypt.devfolio.co/projects)

And the ten best selected projects are...

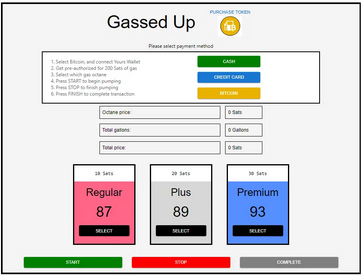

1. Grand Prize Winner ($5,000): Gassed-Up

2. The Bitcoin CPU

3. Satoshi Dragons

4. BlockTrust

5. OneSatRollup

6. mediumSv

7. Smart Ordinals

8. Autochaintrade App

9. Facial-Identity-Verification

10. Auoz

We're especially excited to congratulate [Gassed-Up](https://devfolio.co/projects/gassedup-08ec), the project taking home the Grand Prize of $5,000! Their innovative approach exemplifies the potential of sCrypt to revolutionize everyday processes.

Congratulations to all the participants for their innovative projects and dedication to advancing the sCrypt ecosystem. We're confident these projects will continue to develop and contribute significantly to the growth of the sCrypt ecosystem.

**Summary of the Ten Best Projects Developed During the sCrypt Hackathon 2024**

1. **Gassed-Up**

**Description:** A gas pump simulator optimizing cost, trust, and customer experience at the pump with a Bitcoin smart contract.

**Project Link:** [Gassed-Up](https://devfolio.co/projects/gassedup-08ec)

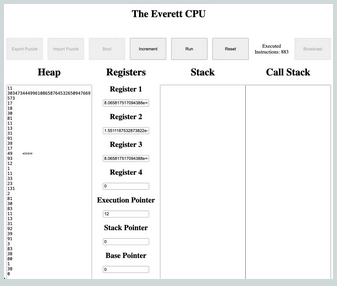

2. **The Bitcoin CPU**

**Description:** Executes code with the Everett CPU architecture on Bitcoin.

**Project Link:** [The Bitcoin CPU](https://devfolio.co/projects/the-bitcoin-cpu-cfe7)

3. **Satoshi Dragons**

**Description:** A smart contract-enforced NFT game on Bitcoin.

**Project Link:** [Satoshi Dragons](https://devfolio.co/projects/satoshi-dragons-08f5)

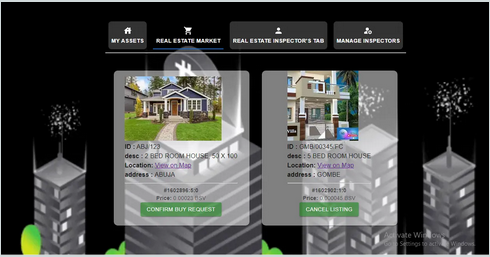

4. **BlockTrust: Real Estate Management System**

**Description:** A Blockchain Based Real Estate Management System on Bitcoin SV Blockchain.

**Project Link:** [BlockTrust](https://devfolio.co/projects/blocktrust-32fc)

5. **OneSatRollup**

**Description:** Zero knowledge rollups for Onesat Ordinals and other transaction chains.

**Project Link:** [OneSatRollup](https://devfolio.co/projects/onesatrollup-cbf0)

6. **mediumSv: Blockchain Content Rights Platform**

**Description:** Ensures content authenticity and ownership, combating plagiarism and unauthorized use while providing transparent, immutable records of creative works.

**Project Link:** [MediumSv](https://devfolio.co/projects/mediumsv-blockchain-content-rights-platform-5946)

7. **Smart Ordinals**

**Description:** Demonstrates the potential of turning a stateless UTXO script into a stateful one to leverage the benefits of stateful UTXO scripts.

**Project Link:** [Smart Ordinals](https://devfolio.co/projects/smart-ordinals-8034)

8. **Autochaintrade App**

**Description:** A decentralized automotive trading platform utilizing Ordinal NFTs for secure and transparent vehicle transactions.

**Project Link:** [Autochaintrade App](https://devfolio.co/projects/autochaintrade-app-2308)

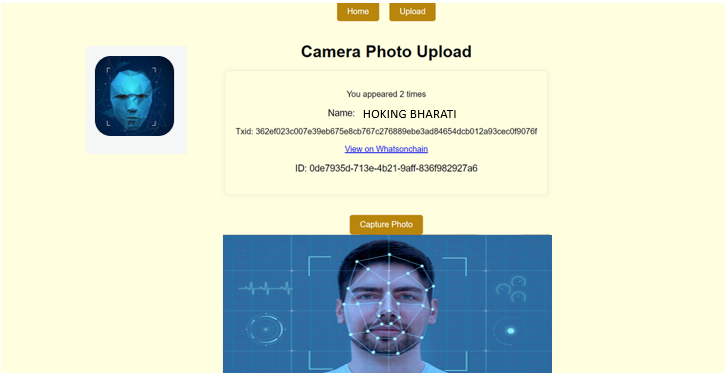

9. **Facial-Identity-Verification**

**Description:** A groundbreaking platform where cutting-edge technology meets the simplicity of facial recognition.

**Project Link:** [Facial-Identity-Verification](https://devfolio.co/projects/facialidentityverificationonblockchainwithma-2edd)

10. **Auoz**

**Description:** Auoz (Au-oz), a fully Bitcoin-backed stablecoin linked to the price of one ounce of gold (Au).

**Project Link:** [Auoz](https://devfolio.co/projects/auoz-13b3)

In conclusion, these projects demonstrated the creativity and technical prowess of the participants, showcasing a diverse array of applications and solutions within the sCrypt ecosystem.

Stay tuned for more updates and future events from sCrypt at [scrypt.devfolio.co/projects](https://scrypt.devfolio.co/projects).

| bitruslukag | |

1,863,047 | Understanding Lawyers in Corpus Christi, TX | Nestled along the Gulf Coast of Texas, Corpus Christi boasts a vibrant community, bustling economy,... | 0 | 2024-05-23T16:56:52 | https://dev.to/backlink_30/understanding-lawyers-in-corpus-christi-tx-5d30 | Nestled along the Gulf Coast of Texas, Corpus Christi boasts a vibrant community, bustling economy, and a rich tapestry of legal needs. Whether it's a maritime dispute, personal injury claim, or family matter, the residents of Corpus Christi often turn to the expertise of seasoned attorneys to navigate the complex terrain of the legal system. In this comprehensive guide, we delve into the role of lawyers in Corpus Christi, TX, exploring their specialties, qualifications, and the crucial services they provide to individuals and businesses alike.

**Legal Landscape of Corpus Christi**

Corpus Christi, with its strategic location and thriving industries, presents a diverse array of legal challenges. From maritime law stemming from its bustling port to civil litigation arising from its vibrant economy, the legal landscape here is multifaceted. Additionally, family law matters, such as divorce and child custody, are common, reflecting the dynamics of the community.

**Specialties of Lawyers in Corpus Christi**

Maritime Law: Given its position as a major port city, Corpus Christi is a hub for maritime activities. Lawyers specializing in maritime law handle a range of issues, including vessel collisions, cargo disputes, and offshore injuries. They possess a deep understanding of admiralty law and strive to protect the rights of seamen, vessel owners, and maritime businesses.

Personal Injury Law: Accidents happen, and when they do, residents of Corpus Christi rely on personal injury attorneys to seek compensation for their injuries. Whether it's a car accident, slip and fall, or workplace injury, these lawyers are adept at negotiating with insurance companies and litigating in court to ensure their clients receive fair compensation for medical expenses, lost wages, and pain and suffering.

Real Estate Law: With a growing real estate market, the need for skilled real estate attorneys in Corpus Christi is paramount. These lawyers assist clients with property transactions, lease agreements, zoning issues, and property disputes. Whether you're buying your dream home or investing in commercial real estate, having a knowledgeable real estate attorney by your side can make all the difference.

Family Law: Navigating the complexities of family law can be emotionally challenging, but experienced family law attorneys in Corpus Christi offer compassionate guidance and steadfast advocacy. From divorce proceedings to child custody disputes and adoption cases, these lawyers work tirelessly to protect the best interests of their clients and their families.

Business Law: Entrepreneurs and businesses in Corpus Christi rely on business law attorneys to navigate the legal intricacies of commerce. Whether it's forming a business entity, drafting contracts, or resolving disputes, these attorneys provide invaluable counsel to ensure their clients' ventures thrive in a competitive market.

**Qualifications and Credentials**

Lawyers in Corpus Christi, like their counterparts across the country, must meet rigorous standards to practice law. They must graduate from an accredited law school, pass the Texas Bar Exam, and meet the ethical requirements set forth by the State Bar of Texas. Additionally, many lawyers pursue specialized certifications or memberships in professional organizations to enhance their expertise and credibility in their respective fields.

**The Role of Lawyers in the Community**

Beyond their legal expertise, **[Lawyers in Corpus Christi Tx](https://perkinsperkinslaw.com/)** play an integral role in their community. They volunteer their time and resources to various charitable organizations, provide pro bono legal services to those in need, and serve as advocates for social justice and equality. Through their dedication to serving others, these lawyers embody the principles of justice and compassion that underpin the legal profession.

**Finding the Right Lawyer for Your Needs**

When facing a legal issue, finding the right lawyer is essential. Residents of Corpus Christi can utilize various resources to identify attorneys who specialize in their particular area of need. Referrals from friends, family, or trusted professionals are often a valuable starting point. Additionally, online directories, such as the State Bar of Texas website, provide comprehensive listings of licensed attorneys in the area, along with their practice areas and contact information.

**Conclusion**

Lawyers in Corpus Christi, TX, play a pivotal role in upholding justice, protecting rights, and ensuring the rule of law in their community. Whether advocating for the injured, guiding businesses through legal challenges, or helping families navigate turbulent times, these attorneys are dedicated professionals committed to serving the needs of their clients with integrity and compassion. In a city as dynamic as Corpus Christi, the expertise and counsel of skilled lawyers are invaluable assets in navigating the complexities of the legal system. | backlink_30 | |

1,863,046 | How to Craft an Effective Tech Resume Without Experience | How to Craft an Effective Tech Resume Without Experience Highlight Relevant... | 0 | 2024-05-23T16:56:01 | https://dev.to/bingecoder89/how-to-craft-an-effective-tech-resume-without-experience-1l1m | beginners, tutorial, career, codenewbie | ### How to Craft an Effective Tech Resume Without Experience

1. **Highlight Relevant Education:**

- Emphasize your degree, coursework, and any academic projects related to tech. Mention specific subjects that align with the job you're applying for.

2. **Showcase Personal Projects:**

- Include any self-initiated tech projects, such as building a website, creating a mobile app, or contributing to open-source projects. Detail your role and the technologies used.

3. **Certifications and Online Courses:**

- List any tech certifications or online courses you've completed, such as those from Coursera, Udemy, or Codecademy. Highlight skills and knowledge gained from these courses.

4. **Technical Skills Section:**

- Create a dedicated section for technical skills. List programming languages, tools, and software you are familiar with. Include any relevant frameworks or libraries.

5. **Soft Skills and Transferable Skills:**

- Emphasize soft skills like problem-solving, analytical thinking, and teamwork. Mention any transferable skills from other jobs or experiences that are relevant to tech roles.

6. **Internships and Volunteer Work:**

- If you've interned or volunteered in any capacity that involved tech, detail these experiences. Focus on the skills learned and any accomplishments during these roles.

7. **Relevant Extracurricular Activities:**

- Mention tech-related clubs, hackathons, or coding bootcamps you've participated in. Highlight leadership roles or significant contributions in these activities.

8. **Customize for Each Job:**

- Tailor your resume to the specific job you're applying for. Use keywords from the job description and align your skills and experiences with the job requirements.

9. **Include a Personal Statement:**

- Write a brief summary or objective at the top of your resume. Focus on your passion for tech, eagerness to learn, and career aspirations.

10. **Professional Format and Presentation:**

- Use a clean, professional resume template. Ensure it's well-organized, free of errors, and easy to read. A polished presentation can make a strong first impression.

Happy Learning 🎉 | bingecoder89 |

1,863,037 | Building Robust Applications in React with TypeScript and Zod for REST API Validation | When building applications with React and TypeScript, leveraging TypeScript's static typing... | 0 | 2024-05-23T16:54:55 | https://dev.to/schead/building-robust-applications-in-react-with-typescript-and-zod-for-rest-api-validation-cl8 | When building applications with React and TypeScript, leveraging TypeScript's static typing capabilities can significantly enhance your code's reliability. However, even with TypeScript, you can't guarantee the shape and type of data coming from external APIs. This potential discrepancy can lead to runtime errors that disrupt your application's functionality. In this blog post, we'll explore how to handle such situations using Zod, a TypeScript-first schema declaration and validation library.

#### Scenario: Fetching User Data with TypeScript

Imagine you have a React application that fetches user data from an API. The `User` object has a `phoneNumber` property, which is expected to be a string. You'll format this phone number to display it in a user-friendly manner. Here's how you might start:

```tsx

// types.ts
export interface User {
  id: number;
  name: string;
  phoneNumber: string;
}

// api.ts
import { User } from './types';

export const fetchUser = async (userId: number): Promise<User> => {
  const response = await fetch(`https://api.example.com/users/${userId}`);
  // The annotation below is only a compile-time claim; nothing checks it at runtime.
  const data: User = await response.json();
  return data;
};

// UserComponent.tsx
import React, { useEffect, useState } from 'react';
import { User } from './types';
import { fetchUser } from './api';

const UserComponent: React.FC<{ userId: number }> = ({ userId }) => {
  const [user, setUser] = useState<User | null>(null);
  const [error, setError] = useState<string | null>(null);

  useEffect(() => {
    fetchUser(userId)
      .then(setUser)
      .catch(err => setError(err.message));
  }, [userId]);

  if (error) return <div>Error: {error}</div>;
  if (!user) return <div>Loading...</div>;

  const formattedPhoneNumber = formatPhoneNumber(user.phoneNumber);

  return (
    <div>
      <h1>{user.name}</h1>
      <p>Phone: {formattedPhoneNumber}</p>
    </div>
  );
};

const formatPhoneNumber = (phoneNumber: string): string => {
  // Format a 10-digit number as (123) 456-7890
  return phoneNumber.replace(/(\d{3})(\d{3})(\d{4})/, '($1) $2-$3');
};

export default UserComponent;

```

In this example, we expect `phoneNumber` to be a string. However, if the backend changes `phoneNumber` to a number, this will lead to a runtime error in the `formatPhoneNumber` function.

#### Runtime Error Example

Suppose the backend now sends:

```json

{

"id": 1,

"name": "John Doe",

"phoneNumber": 1234567890

}

```

This change will cause a runtime error in the `formatPhoneNumber` function because `replace` is not a method on numbers. The error will look like this:

```text

TypeError: phoneNumber.replace is not a function

```

This error indicates that `phoneNumber` is not a string as expected, but a number, leading to the failure of the `replace` method.

#### Introducing Zod for Schema Validation

To safeguard against such issues, we can use Zod to validate the data we receive from the API. Here's how we can do it step-by-step:

1. **Install Zod**:

```bash

npm install zod

```

2. **Define a Zod Schema**:

```tsx

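// schema.ts
import { z } from 'zod';

// Runtime description of the shape we expect from the API
export const userSchema = z.object({
  id: z.number(),
  name: z.string(),
  phoneNumber: z.string(),
});

// Derive the static type from the schema so the two cannot drift apart
export type User = z.infer<typeof userSchema>;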

```

3. **Validate API Response and Provide Feedback**:

```tsx

// api.ts
import { userSchema, User } from './schema';

export const fetchUser = async (userId: number): Promise<User> => {
  const response = await fetch(`https://api.example.com/users/${userId}`);
  const data = await response.json();

  // safeParse returns a result object instead of throwing
  const result = userSchema.safeParse(data);

  if (!result.success) {
    console.error("Validation Error:", result.error.format());
    throw new Error('Invalid data format');
  }

  return result.data;
};

```

4. **Handle Validation in the Component**:

```tsx

// UserComponent.tsx
import React, { useEffect, useState } from 'react';
import { User } from './schema';
import { fetchUser } from './api';

const UserComponent: React.FC<{ userId: number }> = ({ userId }) => {
  const [user, setUser] = useState<User | null>(null);
  const [error, setError] = useState<string | null>(null);

  useEffect(() => {
    fetchUser(userId)
      .then(setUser)
      .catch(err => setError(err.message));
  }, [userId]);

  if (error) return <div>Error: {error}</div>;
  if (!user) return <div>Loading...</div>;

  const formattedPhoneNumber = formatPhoneNumber(user.phoneNumber);

  return (
    <div>
      <h1>{user.name}</h1>
      <p>Phone: {formattedPhoneNumber}</p>
    </div>
  );
};

const formatPhoneNumber = (phoneNumber: string): string => {
  // Format a 10-digit number as (123) 456-7890
  return phoneNumber.replace(/(\d{3})(\d{3})(\d{4})/, '($1) $2-$3');
};

export default UserComponent;

```

In this updated example, the `fetchUser` function uses the Zod schema to validate the API response. If the data doesn't match the expected schema, an error is thrown, and a detailed validation error is logged to the console. This feedback helps developers quickly identify and resolve issues with the API data.

#### Error Message Example

With the Zod validation in place, if the backend sends the `phoneNumber` as a number, the application will catch this discrepancy and throw an error. The console will log a detailed error message like this:

```text

Validation Error: {
  "phoneNumber": {
    "_errors": ["Expected string, received number"]
  }
}

```

In addition to logging the error, `fetchUser` throws, and the component's `catch` handler stores the message, so the user sees:

```text

Error: Invalid data format

```

This message indicates to the user that there was an issue with the data received from the API.

#### Conclusion

Using Zod for schema validation in your React and TypeScript applications ensures that your application can gracefully handle unexpected changes in the API response structure. This approach helps you catch potential issues early, leading to more robust and reliable applications. By incorporating Zod into your development workflow, you can enhance your application's resilience against API changes and reduce runtime errors.

Remember, while TypeScript provides excellent compile-time type checking, runtime validation with tools like Zod is essential for dealing with external data sources. This combination ensures that your application remains stable and predictable, even when the data it relies on changes unexpectedly.

| schead | |

1,863,043 | Tricky Golang interview questions - Part 2: BigO of len(...) | An example I want to discuss is quite simple and requires knowledge about how some data types are... | 0 | 2024-05-23T16:50:48 | https://dev.to/crusty0gphr/tricky-golang-interview-questions-part-2-bigo-of-len-2om9 | go, interview, tutorial, programming | An example I want to discuss is quite simple and requires knowledge about how some data types are constructed under the hood.

**Question: What is the time complexity of `len(...)` for each data type?**

```go

package main

func main() {
	// len of a string, a slice, and a map
	_ = len("string")
	_ = len([]int{1, 2, 3})
	_ = len(map[string]int{"one": 1, "two": 2, "three": 3})
}

```

The answer to this question is very easy:

**The time complexity of the `len(...)` for all cases is O(1).**

If you answer like this, the interviewer will come up with the following question: **why?** To answer this question, we need to look under the hood of each of the types.

### String

According to the Go documentation, a string can be represented as a struct, a **StringHeader** (defined in the `reflect` package). StringHeader is the runtime representation of a string.

```go

type StringHeader struct {
	Data uintptr
	Len  int
}

```

- `Data` is a pointer to the underlying (backing) byte array that stores the string data

- `Len` is the length of that backing byte array, that is, the length of the string in bytes

### Slice

A slice can also be represented as a struct, a **SliceHeader** (also defined in the `reflect` package). SliceHeader is the runtime representation of a slice.

```go

type SliceHeader struct {
	Data uintptr
	Len  int
	Cap  int
}

```

- `Data` is a pointer to the underlying (backing) array that stores the slice's elements

- `Len` is the number of elements in the slice

- `Cap` is the capacity of the slice.
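The two headers above can be sketched in runnable form. This is only an illustration under assumptions: `stringHeader`, `sliceHeader`, `stringLen`, and `sliceLenCap` are hypothetical names that mirror the layouts described here, and the `unsafe` casts are for demonstration, not something to use in production code.

```go
package main

import (
	"fmt"
	"unsafe"
)

// stringHeader and sliceHeader are hypothetical local mirrors of the
// layouts described above; they are not types exported by the runtime.
type stringHeader struct {
	Data unsafe.Pointer // pointer to the backing bytes
	Len  int            // length in bytes
}

type sliceHeader struct {
	Data unsafe.Pointer // pointer to the backing array
	Len  int            // number of elements
	Cap  int            // capacity of the backing array
}

// stringLen reads the length straight from the header: a single field
// load, no matter how long the string is.
func stringLen(s string) int {
	return (*stringHeader)(unsafe.Pointer(&s)).Len
}

// sliceLenCap reads the length and capacity from the slice header.
func sliceLenCap(xs []int) (int, int) {
	h := (*sliceHeader)(unsafe.Pointer(&xs))
	return h.Len, h.Cap
}

func main() {
	s := "string"
	xs := make([]int, 3, 10)

	// Compare the header fields with the built-ins.
	fmt.Println(stringLen(s) == len(s))
	n, c := sliceLenCap(xs)
	fmt.Println(n == len(xs), c == cap(xs))
}
```

If the layouts match the description, every comparison printed by `main` should agree with the built-in `len` and `cap`.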

### Map

A map is a more complex data structure, and its implementation lives in the Go runtime ([runtime/map.go](https://go.dev/src/runtime/map.go)). In that file, we can find the map header struct with its corresponding fields.

```go

type hmap struct {
	count      int
	flags      uint8
	B          uint8
	noverflow  uint16
	hash0      uint32
	buckets    unsafe.Pointer
	oldbuckets unsafe.Pointer
	nevacuate  uintptr
	extra      *mapextra
}

```

Of these fields, we only need `count`. According to the comments in the source:

- `count` is the number of live cells, which equals the size (length) of the map
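As a rough illustration of why the position of `count` matters, the sketch below reads the first machine word of a map's header and compares it with `len`. It rests on several assumptions that the language does not guarantee: that a map value is a pointer to its runtime header, that the element count is the header's first field, and that the platform is 64-bit. `mapCount` is a hypothetical helper, and the trick depends on unexported runtime internals that can change between Go versions.

```go
package main

import (
	"fmt"
	"unsafe"
)

// mapCount is a hypothetical helper for illustration only. It assumes
// a map value is a pointer to the runtime map header and that the
// element count is the first word of that header.
func mapCount(m map[string]int) int {
	if m == nil {
		return 0 // a nil map has no header to read
	}
	// Reinterpret the map variable as the pointer to its header.
	header := *(*unsafe.Pointer)(unsafe.Pointer(&m))
	// Read the first word of the header as the element count.
	return *(*int)(header)
}

func main() {
	m := map[string]int{"one": 1, "two": 2, "three": 3}
	fmt.Println(mapCount(m) == len(m))

	delete(m, "two")
	fmt.Println(mapCount(m) == len(m))
}
```

The point is not the cast itself but that the count is read from a fixed location, with no walk over the buckets.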

Now that we know how these data structures are constructed under the hood, we can answer the interviewer's follow-up question:

**Why are `len(...)` operations O(1)?**

___

The built-in `len` function simply returns the value of the header field that stores the length, so it never has to iterate over the data.

___

It's that easy! | crusty0gphr |

1,863,042 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-05-23T16:49:56 | https://dev.to/mcynthiahortonotyu/buy-verified-cash-app-account-3h8c | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com[](url)" | mcynthiahortonotyu |

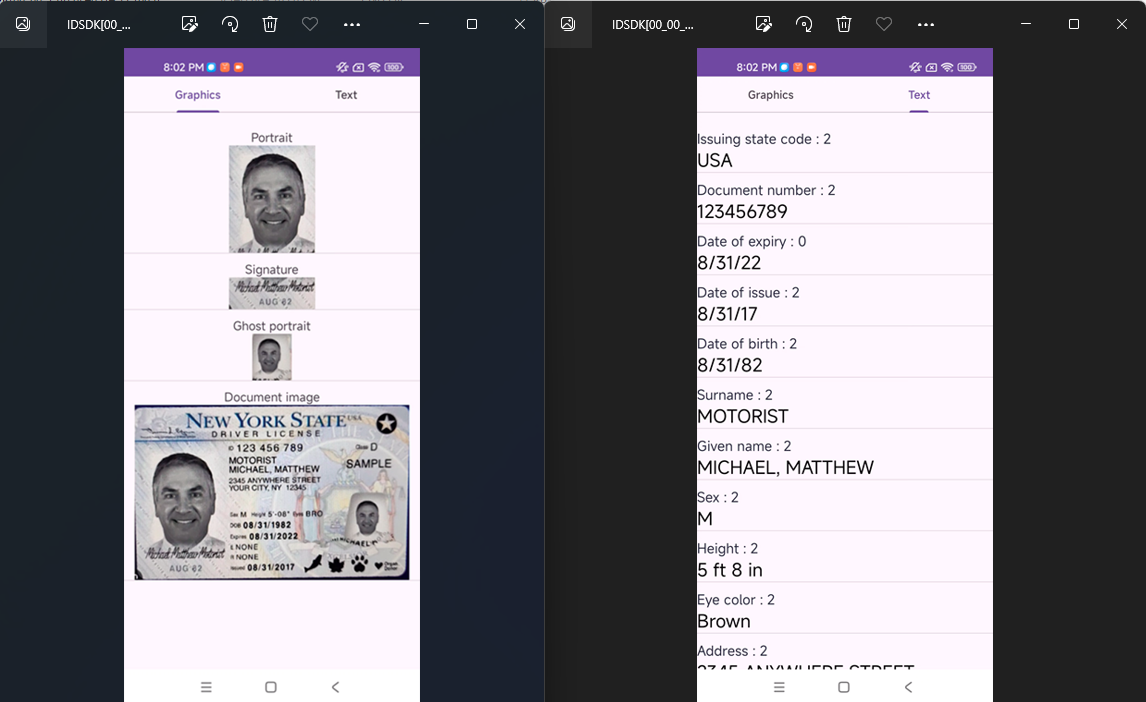

1,863,040 | ID document verification using MiniAiLive ID Recognition SDK | Miniailive ID Recognition SDK offers efficient ID document verification. It ensures accurate, fast,... | 0 | 2024-05-23T16:48:15 | https://dev.to/miniailive/id-document-verification-using-miniailive-id-recognition-sdk-54hi | webdev, androiddev, biometrics, identity | The MiniAiLive ID Recognition SDK offers efficient ID document verification. It ensures accurate, fast, and secure identity checks.

The MiniAiLive ID Recognition SDK is a solution for verifying identity documents quickly. Businesses can use it to enhance security and reduce fraud. It supports various ID types, including passports and driver’s licenses, which makes it suitable for global use.

The SDK integrates into existing systems and provides a user-friendly experience. With its accuracy and speed, businesses can streamline operations and improve customer satisfaction. MiniAiLive’s technology uses advanced algorithms to check the legitimacy of documents, which makes it a useful tool for industries that require stringent identity verification. The SDK also helps maintain compliance with regulatory standards, offering peace of mind to both businesses and users.

For more reading:

https://miniai.live/id-document-verification-using-miniailive-id-recognition-sdk/

| miniailive |

1,862,985 | Agile has fallen. Or has it ? | In today's fast moving world it is not news to hear of companies adapting new ways of working and... | 0 | 2024-05-23T16:45:55 | https://dev.to/polinaeliana/agile-has-fallen-or-has-it--470m | agile, productivity, writing, scrum | In today's fast-moving world, it is not news to hear of companies adopting new ways of working and shedding old ones. Many chase the trend only to find themselves stuck in a vicious cycle: money and resources have been spent, and it is too late to get out. So it is no surprise that so many leaders are raising their voices about the side effects of agile. Here I want to share some observations from both my own experience and what I have read online on this topic. To start, I truly empathise with the trauma endured by those in the tech world who have been forced into the maze of Scrum or some other framework under the agile umbrella. As an agile practitioner I cannot deny these horror stories, so before I continue with my 2 cents I want to make it clear that I totally understand why people are standing up to express their frustration and disillusionment.

**The question is this: has agile fallen?** I would say yes and no.

Now let's look at the real-life example of an average corporation that has adopted this fancy new way of working and is rapidly trying to turn the entire company into an agile superhero. They have big dreams, which is commendable, so what's the big deal? Well, everything. Diving deeper, many companies use the term "agile" to cover up a waterfall-ish way of doing business; for them agile is nothing more than business. They see it as a means to cut budgets, lay off onshore staff and rehire offshore, and then demand that projects be completed on time and with quality. When these high expectations are not met, someone has to be blamed, and in our case it's Mister Agile. This kind of stressful environment, with constant changes and unstable teams, not only creates burnout but above all becomes the bottleneck that keeps teams from delivering a better product in a shorter time. So the very thing that was supposed to aid continuous improvement has become the monster that stops them from growing.

**Let's ask ourselves: is this really what agility is?**

Another issue is the focus on certification over mindset. Project managers are undergoing Scrum conversion, but instead of carefully leading their teams through the transformation by upholding the 12 principles of the Agile Manifesto, they forcibly impose this new way on them without leaving room to experiment, make mistakes, learn and grow together as a unit. Agile will not work, and will certainly fail, when we use it to conceal our micromanaging ways. It cannot thrive in a rigid climate where the focal point is how many story points you can deliver in 2 weeks and how fast you can make this and that happen. Instead, true agility creates a channel for business and tech to have transparent conversations in a safe, fearless environment, with the customer at the center and the teams empowered to share their technical expertise. Any agile framework will expose the weaknesses of the system in place, and it is up to the leadership whether they desire a true shift or not.

Nevertheless, we rarely see holistic agility happen in real life, not because agile is bad (that is like saying all chocolate is bad when in fact good-quality cacao is a superfood if consumed wisely), but because there is misalignment between the leadership and its employees. Real change starts at the top and trickles down. If you are seeing great dissatisfaction with agile at your workplace, ask yourself whether your teams are encouraged to fail fast or are reprimanded for not meeting the highly favoured velocity. We have to stop using agile as a mantra and instead be open to trying new things; if one way doesn't work for your team, try something else. In the end it is about building healthy teams and amplifying creativity and growth. It is not a one-size-fits-all method but an invitation to a journey of discovering what works for you and not against you. So my encouragement to struggling scrum masters and teams would be to do what's best for everyone, have empathy and don't be afraid of uncomfortable conversations.

I would love to hear your opinions on this topic, so don't be shy about leaving comments. Talk soon!

| polinaeliana |

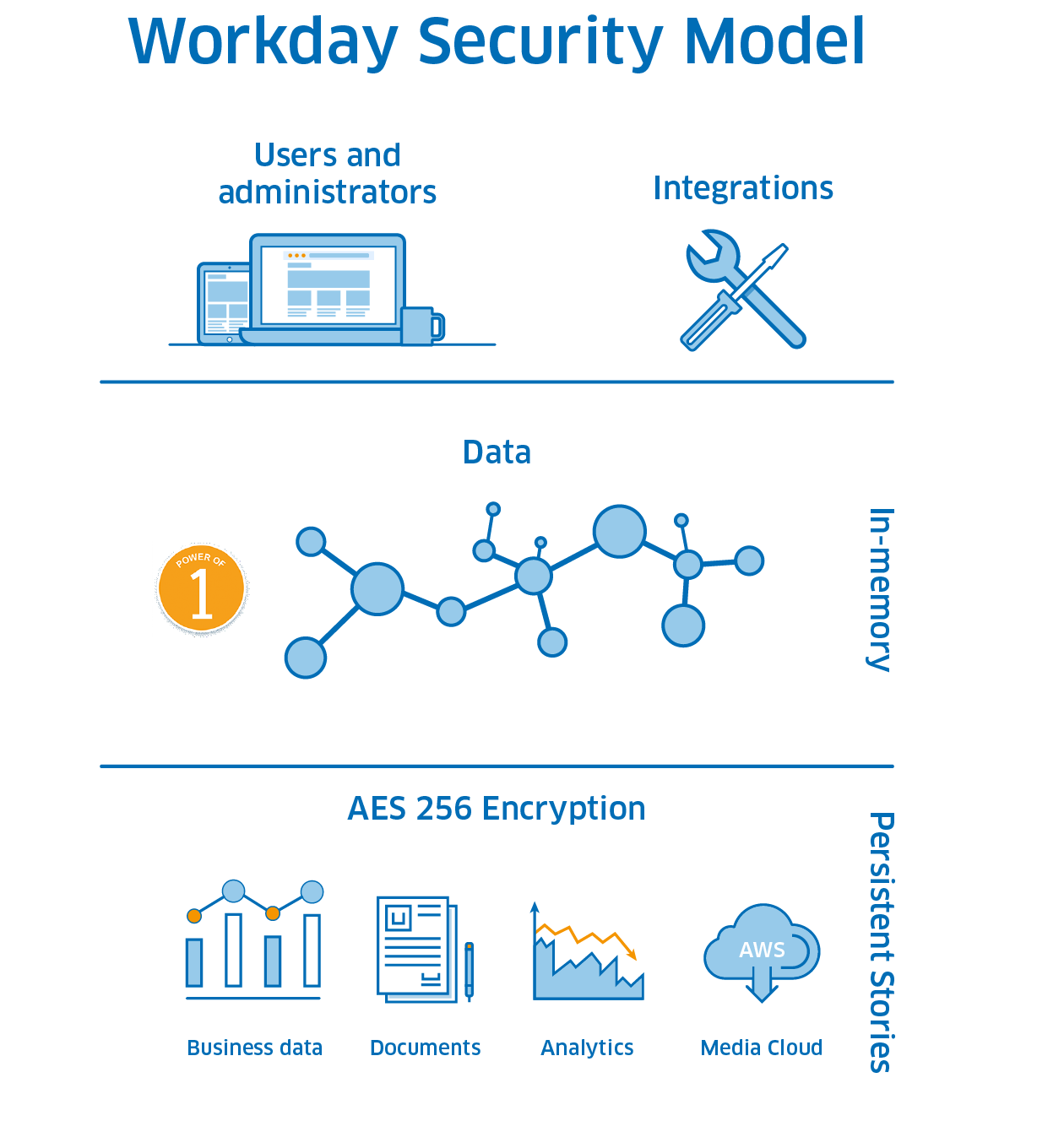

1,863,036 | Workday Security: Everything You Need to Know | If you're a malicious actor in cyberspace, you could do much worse than targeting a Workday instance... | 0 | 2024-05-23T16:41:38 | https://www.suridata.ai/blog/workday-security/ | cybersecurity, devops | If you're a malicious actor in cyberspace, you could do much worse than targeting a Workday instance at a large corporation. As one of the world's leading Human Capital Management (HCM) applications, Workday holds valuable data from employees and businesses worldwide.