id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

1,860,912 | Domain Events | Introduction to domain events Definition of domain events: Domain events are events that... | 0 | 2024-07-02T08:00:00 | https://dev.to/ben-witt/domain-events-2772 | development, microsoft, domainevents, dotnet |

## Introduction to domain events

**Definition of domain events:**

Domain events are events that occur in a specific area or domain and are important for the business logic of an application. They represent significant state changes or activities within the system. In contrast to system-wide events, which can affect... | ben-witt |

1,908,712 | ⚡ MyFirstApp - React Native with Expo (P19) - Code Layout List Orders | ⚡ MyFirstApp - React Native with Expo (P19) - Code Layout List Orders | 27,894 | 2024-07-02T10:02:46 | https://dev.to/skipperhoa/myfirstapp-react-native-with-expo-p19-code-layout-list-orders-3lj8 | react, reactnative, webdev, tutorial | ⚡ MyFirstApp - React Native with Expo (P19) - Code Layout List Orders

{% youtube iPBI6GhvfG8 %} | skipperhoa |

1,908,711 | Styling Buttons with styled-jsx in Next.js | Learn how to style buttons using styled-jsx in the Next.js framework to enhance the UI of your web projects. | 0 | 2024-07-02T10:00:39 | https://dev.to/itselftools/styling-buttons-with-styled-jsx-in-nextjs-29fb | javascript, css, nextjs, webdev |

In our ongoing journey at [itselftools.com](https://itselftools.com), where we've developed over 30 projects using Next.js and Firebase, we've encountered and implemented a variety of ways to style applications effectively. One of the tools we frequently utilize in our Next.js projects for component-level styling is `... | antoineit |

1,908,710 | Marriage Halls In Medavakkam | For those seeking marriage halls in Medavakkam, their collection showcases versatile options to suit... | 0 | 2024-07-02T10:00:31 | https://dev.to/soundarya_b4c0664448181e2/marriage-halls-in-medavakkam-2j87 | For those seeking [marriage halls in Medavakkam](https://sgrmahal.in/marriage-halls-medavakkam.php), their collection showcases versatile options to suit every taste and budget. Picture-perfect settings and modern amenities make these venues a top choice for couples embarking on their marital journey. Whether nestled ... | soundarya_b4c0664448181e2 | |

1,908,709 | Introduction to Lotus365 | Welcome to Lotus365, the ultimate online gaming platform where excitement and entertainment meet!... | 0 | 2024-07-02T10:00:11 | https://dev.to/lotus365india/introduction-to-lotus365-edo | lotus365, login, register | Welcome to **[Lotus365](https://lotus365india.in/)**, the ultimate online gaming platform where excitement and entertainment meet! Whether you're a seasoned gamer or new to the world of online gaming, Lotus365 offers a unique and immersive experience that will keep you coming back for more.

Why Choose Lotus365?

At Lot... | lotus365india |

1,908,708 | The Ultimate Redis Command Cheatsheet: A Comprehensive Guide | Introduction Redis, an open-source, in-memory data structure store, is widely used for its... | 0 | 2024-07-02T09:59:47 | https://blog.spithacode.com/posts/a7540bc2-7b5a-4f3b-8768-3979f3f9e523 | webdev, beginners, javascript, redis | ## Introduction

Redis, an open-source, in-memory data structure store, is widely used for its high performance, versatility, and simplicity. It supports various data structures, including strings, hashes, lists, sets, and more. This cheatsheet serves as a comprehensive guide to Redis commands, complete with detailed ... | stormsidali2001 |

1,908,705 | Top 5 Best Hotel Management Courses 2024 | Introduction No Doubt! Hotel management is a great career option for those students who are... | 0 | 2024-07-02T09:57:22 | https://dev.to/thegihm2_45/top-5-best-hotel-management-courses-2024-5a4i | hotel, management | Introduction

No doubt, hotel management is a great career option for students who are passionate about hospitality. The industry spans a wide range of sectors, including hotels, resorts, restaurants, events, tourism, and even healthcare. There is a huge demand for employees with hospitality managem... | thegihm2_45 |

1,908,706 | Top 5 Best Hotel Management Courses 2024 | Introduction No Doubt! Hotel management is a great career option for those students who are... | 0 | 2024-07-02T09:57:22 | https://dev.to/thegihm2_45/top-5-best-hotel-management-courses-2024-2929 | hotel, management | Introduction

No doubt, hotel management is a great career option for students who are passionate about hospitality. The industry spans a wide range of sectors, including hotels, resorts, restaurants, events, tourism, and even healthcare. There is a huge demand for employees with hospitality managem... | thegihm2_45 |

1,908,636 | Backtracking, Design and Analysis of Algorithms | Fundamentals of Backtracking Definition and Importance of... | 0 | 2024-07-02T09:57:15 | https://dev.to/harshm03/backtracking-design-and-analysis-of-algorithms-4ooj | algorithms, coding, programming, design | ### Fundamentals of Backtracking

#### Definition and Importance of Backtracking

**Definition:**

Backtracking is a general algorithmic technique that incrementally builds candidates for the solution to a problem and abandons a candidate (backtracks) as soon as it determines that the candidate cannot possibly be comple... | harshm03 |

1,908,704 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-07-02T09:57:14 | https://dev.to/mojashfinding/buy-verified-paxful-account-5a0a | webdev, javascript, beginners, programming | "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. 
Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. 
Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. 
These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. 
Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. 
By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. 
Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. 
It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n " | mojashfinding |

1,908,703 | Popular Test Automation Frameworks: How to Choose | A test automation framework provides a platform through which the entire test automation process is... | 0 | 2024-07-02T09:56:49 | https://dev.to/alishahndrsn/popular-test-automation-frameworks-how-to-choose-1298 | testautomation | A test automation framework provides a platform through which the entire test automation process is optimized for maximum productivity. Every project has its own requirements, scope, budget, and tooling needs, which is why it is important to use a test automation framework.

A framework will i... | alishahndrsn |

1,908,696 | CUDA 12: Optimizing Performance for GPU Computing | Introduction CUDA 12 is a significant advancement in GPU computing, offering new... | 0 | 2024-07-02T09:55:00 | https://dev.to/novita_ai/cuda-12-optimizing-performance-for-gpu-computing-3j13 | ## Introduction

CUDA 12 is a significant advancement in GPU computing, bringing a range of improvements for software developers. With enhanced memory management and faster kernel start times, NVIDIA demonstrates its commitment to innovation. The updates in CUDA 12 are poised to have a substantial impact on machine learning an... | novita_ai | |

1,908,699 | Guide on Outsourcing Laravel Development Services in Canada 20 | Outsourcing Laravel development services in Canada in 2024 offers numerous benefits, including access... | 0 | 2024-07-02T09:54:00 | https://dev.to/nectarbits1/guide-on-outsourcing-laravel-development-services-in-canada-20-1pjc | laravel, development | Outsourcing Laravel development services in Canada in 2024 offers numerous benefits, including access to skilled developers, cost-efficiency, and high-quality standards. When choosing a partner, prioritize firms with a strong portfolio, proven expertise in Laravel, and positive client feedback. Evaluate their communica... | nectarbits1 |

1,908,698 | Everything You Need to Know About Medical Gel Pads | Medical gel pads find critical applications in health care set-ups. They offer protection and comfort... | 0 | 2024-07-02T09:51:43 | https://dev.to/lenvitz/everything-you-need-to-know-about-medical-gel-pads-3acb | Medical gel pads have critical applications in healthcare settings. They provide protection and comfort for patients undergoing various medical procedures. These pads come in different forms, shapes, sizes, and materials to suit specific applications. In this detailed review, we look at the different forms of medical gel pads, t... | lenvitz | |

1,908,697 | Revolutionizing Beauty Retail with Cutting-Edge Makeup App | Welcome to the Future of Makeup Shopping Hello, beauty lovers and tech enthusiasts!... | 27,673 | 2024-07-02T09:51:25 | https://dev.to/rapidinnovation/revolutionizing-beauty-retail-with-cutting-edge-makeup-app-34e0 | ## Welcome to the Future of Makeup Shopping

Hello, beauty lovers and tech enthusiasts! Imagine a scenario where trying on makeup merges seamlessly with the ease and fun of looking into a mirror, enhanced by digital technology. This is the reality we're stepping into with the launch of a groundbreaking makeup applicati... | rapidinnovation | |

1,908,695 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-07-02T09:45:01 | https://dev.to/mojashfinding/buy-verified-cash-app-account-1djd | webdev, javascript, beginners, programming | "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n" | mojashfinding |

1,908,694 | Qatar Airways: Hamad Int. Airport Lounge Experience | Office Like Qatar Airways Tbilisi Office in Georgia gives some perks like lounge access at Hamad... | 0 | 2024-07-02T09:43:52 | https://dev.to/allairlinesoffice/qatar-airways-hamad-int-airport-lounge-experience-2ok0 | Offices like the [Qatar Airways Tbilisi Office in Georgia](https://allairlinesoffice.com/qatar-airways/qatar-airways-tbilisi-office-in-georgia/) offer perks such as lounge access at Hamad International Airport, where guests can enjoy a luxurious and quiet experience at the Qatar Airways lounge. It provides an ideal environment ... | allairlinesoffice | |

1,908,693 | Finding the Right Residential Roofing Experts in Your Area | When it comes to protecting your home, the roof plays a crucial role. Whether you're in need of... | 0 | 2024-07-02T09:43:06 | https://dev.to/byadmin/finding-the-right-residential-roofing-experts-in-your-area-fp6 | roofing |

When it comes to protecting your home, the roof plays a crucial role. Whether you're in need of repairs, replacement, or installation, finding the right residential roofing professionals is essential. This article w... | byadmin |

1,908,692 | Optimize Manufacturing Automation with OEM USB Camera Solutions: Boosting Efficiency and Accuracy | In today’s fast-paced manufacturing environment, the integration of advanced technologies like OEM... | 0 | 2024-07-02T09:41:58 | https://dev.to/finnianmarlowe_ea801b04b5/optimize-manufacturing-automation-with-oem-usb-camera-solutions-boosting-efficiency-and-accuracy-3cb5 | oemusbcamera, usbcamera, camera, photography | In today’s fast-paced manufacturing environment, the integration of advanced technologies like OEM USB cameras has become crucial for enhancing operational efficiency and ensuring precision across various processes. This blog explores how **[OEM USB camera ](https://www.vadzoimaging.com/product/ar0233-1080p-hdr-usb-3-0... | finnianmarlowe_ea801b04b5 |

1,908,691 | Exploring the Blockchain Trends and Innovations in 2024 | In recent years, blockchain technology has grown rapidly from a niche interest to a disruptive force... | 0 | 2024-07-02T09:41:22 | https://dev.to/shifali8990/exploring-the-blockchain-trends-and-innovations-in-2024-4ikl | blockchain, cryptocurrency | In recent years, blockchain technology has grown rapidly from a niche interest to a disruptive force in sectors around the world. As we approach 2024, the blockchain environment continues to grow, pushed by technology improvements and innovative applications. This article goes into the growing trends and innovations in... | shifali8990 |

1,908,690 | DumpsBoss: Effective Preparation with AWS Practitioner Exam Dumps | Benefits of Using AWS Practitioner Exam Dumps Comprehensive Coverage: AWS Practitioner Exam Dumps ... | 0 | 2024-07-02T09:41:15 | https://dev.to/romero796/dumpsboss-effective-preparation-with-aws-practitioner-exam-dumps-d2d | Benefits of Using AWS Practitioner Exam Dumps

1. Comprehensive Coverage:

AWS Practitioner Exam Dumps cover a wide range of topics outlined in the exam blueprint, including <a href="https://dumpsboss.com/certification-provider/amazon/">AWS Practitioner Exam Dumps</a> concepts, AWS services, security, compliance, and b... | romero796 | |

1,908,689 | AI and Data Ethics: A Balancing Act for Responsible Innovation (A Thought Leader's Perspective) | The marriage of Artificial Intelligence (AI) and data analytics holds immense promise for progress.... | 0 | 2024-07-02T09:40:33 | https://dev.to/blogsx/ai-and-data-ethics-a-balancing-act-for-responsible-innovation-a-thought-leaders-perspective-28h4 | ai, dataethics, thoughtleadership, responsibleai | The marriage of Artificial Intelligence (AI) and data analytics holds immense promise for progress. However, as a thought leader in this field, I believe it's crucial to address the ethical considerations that come with this powerful union. Let's delve into the potential pitfalls and explore strategies for responsible ... | blogsx |

1,908,688 | Elevate Your Vlogging with an HDR USB Camera: High Dynamic Range for Professional Content Creation | In the dynamic world of content creation, quality is paramount. Whether you're a seasoned vlogger or... | 0 | 2024-07-02T09:39:03 | https://dev.to/finnianmarlowe_ea801b04b5/elevate-your-vlogging-with-an-hdr-usb-camera-high-dynamic-range-for-professional-content-creation-3p0n | hdrusbcamera, usbcamera, camera, photography | In the dynamic world of content creation, quality is paramount. Whether you're a seasoned vlogger or just starting out, the right equipment can make all the difference. Enter HDR USB cameras – a game-changer in the realm of video production. In this blog post, we'll explore how [**HDR USB camera**](https://www.vadzoima... | finnianmarlowe_ea801b04b5 |

1,908,683 | Optimize Robotics Vision with High-Performance GMSL Camera Modules | Vision systems are essential to robotics because they allow machines to see and interact with their... | 0 | 2024-07-02T09:35:54 | https://dev.to/finnianmarlowe_ea801b04b5/optimize-robotics-vision-with-high-performance-gmsl-camera-modules-2p3l | gsmlcamera, usbcamera, camera, photography | Vision systems are essential to robotics because they allow machines to see and interact with their surroundings on their own. A major technology propelling robotic vision developments is the GMSL (Gigabit Multimedia Serial Link) camera module. Without getting into specific product names, we will explore the definition... | finnianmarlowe_ea801b04b5 |

1,908,682 | Optimize Robotics Vision with High-Performance GMSL Camera Modules | Vision systems are essential to robotics because they allow machines to see and interact with their... | 0 | 2024-07-02T09:35:51 | https://dev.to/finnianmarlowe_ea801b04b5/optimize-robotics-vision-with-high-performance-gmsl-camera-modules-3bcd | gsmlcamera, usbcamera, camera, photography | Vision systems are essential to robotics because they allow machines to see and interact with their surroundings on their own. A major technology propelling robotic vision developments is the GMSL (Gigabit Multimedia Serial Link) camera module. Without getting into specific product names, we will explore the definition... | finnianmarlowe_ea801b04b5 |

1,908,253 | Mobile Dev.. | Mobile dev has become an essential skill in today's society. As a mobile developer, my progress and... | 0 | 2024-07-01T23:02:03 | https://dev.to/joe_asam/mobile-dev-5c82 | Mobile dev has become an essential skill in today's society. As a mobile developer, I will share my progress and experience in the world of mobile development. In this post, I will explain the merits and demerits of cadres and discuss my motivation for joining the HNG internship.

My name is Joseph Asam Sunday, a studen... | joe_asam | |

1,908,680 | Quarterly Rewards for security researchers! | 🕹️Do you still remember your annual goals for 2024? 💫Now, we have an important announcement for... | 0 | 2024-07-02T09:34:31 | https://dev.to/tecno-security/quarterly-rewards-for-security-researchers-2d34 | security, cybersecurity, bug, career | 🕹️Do you still remember your annual goals for 2024?

💫Now, we have an important announcement for you: The quarterly rewards for Q3 and Q4 will be calculated as normal. Are you confident in getting a reward? Looking forward to your answer!

Details:

1) Rising Star Award: A newly registered researcher who submits th... | tecno-security |

1,908,679 | The Vital Role of Investment Banks in Global Finance and Economic Growth | Investment banking is a cornerstone of global finance, serving as a vital intermediary between... | 0 | 2024-07-02T09:33:52 | https://dev.to/linda0609/the-vital-role-of-investment-banks-in-global-finance-and-economic-growth-5h7n | Investment banking is a cornerstone of global finance, serving as a vital intermediary between corporations seeking capital and investors looking to deploy funds. These institutions play a multifaceted role that extends beyond traditional financial transactions to encompass advisory services, market-making activities, ... | linda0609 | |

1,908,678 | Enlio sports flooring brand | Enlio is a world-leading brand in the manufacture of sports flooring, especially badminton court mats. With... | 0 | 2024-07-02T09:30:53 | https://dev.to/enliovietnamgo/hang-tham-san-the-thao-enlio-29p6 | 

Enlio is a world-leading brand in sports flooring manufacturing, especially badminton court mats. With a reputation and quality proven through sponsoring and supplying mats for many major international badminton tournaments, Enlio has established itself as a trusted partner of professional athletes and sports organizations.

Thả... | enliovietnamgo | |

1,908,522 | Mastering Cloud-Based Quantum Machine Learning Applications | Key Highlights Quantum machine learning and cloud computing are shaking things up across... | 0 | 2024-07-02T09:30:00 | https://dev.to/novita_ai/mastering-cloud-based-quantum-machine-learning-applications-3pdg | ## Key Highlights

Quantum machine learning and cloud computing are shaking things up across different sectors. With quantum machine learning applications on the cloud, businesses can scale up easily, access resources from anywhere, and cut down on extra costs. At the heart of these systems lie quantum algorithms and the ph... | novita_ai | |

1,908,673 | Challenge with RBAC Authentication | Building great and memorable experiences for any software requires a lot of things and one of those... | 0 | 2024-07-02T09:29:06 | https://dev.to/emmanuelomoiya/challenge-with-rbac-authentication-329h | security, webdev, backend, api | Building great and memorable experiences for any software requires a lot of things and one of those things is for your user to feel safe and "actually" be safe and secure...

The last thing any engineer would hope for is to wake up in the early hours of the morning and notice that the software has been down for close to... | emmanuelomoiya |

1,908,672 | Doing Hard Things | "What doesn't kill you makes you stronger" - A strong man(probably). On the 9th of March,... | 0 | 2024-07-02T09:28:46 | https://dev.to/afeh/doing-hard-things-227f |

## "What doesn't kill you makes you stronger" - A strong man (probably).

On the 9th of March, 2024, I woke up around 6 am. It was a Saturday so it was unusual for me to be up that early. I said my prayers, picked up my phone and opened WhatsApp. Scrolling through unread messages, a post in my school's Google Develo... | afeh | |

1,908,677 | UK Private Healthcare Market Analysis: Size, Share, Trends, Growth, Forecast 2024-2033 and Key Player Strategies | The UK private healthcare market is expected to grow from $14.5 billion in 2024 to $19.3 billion by... | 0 | 2024-07-02T09:28:43 | https://dev.to/swara_353df25d291824ff9ee/uk-private-healthcare-market-analysis-size-share-trends-growth-forecast-2024-2033-and-key-player-strategies-gii |

The [UK private healthcare market](https://www.persistencemarketresearch.com/market-research/uk-private-healthcare-market.asp) is expected to grow from $14.5 billion in 2024 to $19.3 billion by 2033, with a CAGR of ... | swara_353df25d291824ff9ee | |

1,908,676 | Transform Digital Signage with 4K USB Cameras: Superior Image Quality for Engagement | Improving visual experiences is essential to drawing in and holding the interest of viewers in the... | 0 | 2024-07-02T09:28:19 | https://dev.to/finnianmarlowe_ea801b04b5/transform-digital-signage-with-4k-usb-cameras-superior-image-quality-for-engagement-34km | 4kusbcamera, usbcamera, 4kcamera, camera | Improving visual experiences is essential to drawing in and holding the interest of viewers in the current digital era. Digital signage is being revolutionized by technologies such as [**4K USB cameras**](https://www.vadzoimaging.com/product-page/ar0821-4k-hdr-usb-3-0-camera). Because of their unmatched image quality, ... | finnianmarlowe_ea801b04b5 |

1,908,674 | DevOps | DevOps for beginners | 0 | 2024-07-02T09:26:41 | https://dev.to/samad_rufai_732247b1547df/devops-51o2 | DevOps for beginners | samad_rufai_732247b1547df | |

1,908,649 | Comparing Svelte and Vue.js: A Battle of Frontend Technologies | The field of frontend development is dynamic and offers a wide selection of frameworks and... | 0 | 2024-07-02T09:26:21 | https://dev.to/mundianderi/comparing-svelte-and-vuejs-a-battle-of-frontend-technologies-1co2 | webdev, frontend, javascript, programming |

The field of frontend development is dynamic and offers a wide selection of frameworks and libraries. Notable participants are Vue.js and Svelte. Both provide strong tools for creating contemporary web apps, but t... | mundianderi |

1,908,671 | i have created sample Website for a Coffee Shop | This is a submission for the Wix Studio Challenge . What I Built Here i built Sample... | 0 | 2024-07-02T09:23:25 | https://dev.to/sibi1103/i-have-created-sample-website-for-a-coffee-shop-5caa | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

## What I Built

Here I built a sample website for a coffee shop.

## Demo

https://sibisid90.wixsite.com/coffee-point

, and today I’m going to explain a new and exciting hook in React called **useActionState**.

_[Follow me on GitHub⭐](https://github.com/taqui-786)_

## What is useActionState?

useActionState is a new React hook that helps us... | random_ti |

1,908,661 | Optimize Telemedicine with AutoFocus Camera Technology: Precision in Remote Consultations | The importance of cutting-edge technology in the rapidly changing field of telemedicine cannot be... | 0 | 2024-07-02T09:18:01 | https://dev.to/finnianmarlowe_ea801b04b5/optimize-telemedicine-with-autofocus-camera-technology-precision-in-remote-consultations-220j | autofocuscamera, usbcamera, camera, cameraautofocus | The importance of cutting-edge technology in the rapidly changing field of telemedicine cannot be overemphasized. Autofocus camera technology is one such important invention that is revolutionizing remote healthcare. Healthcare professionals are transforming the way they communicate electronically with patients with... | finnianmarlowe_ea801b04b5 |

1,907,866 | My recent, difficult backend problem | I’m Elijah Odefemi, Backend Developer. Am five years of experience developer. I was tasked with... | 0 | 2024-07-01T15:48:25 | https://dev.to/heliphem/my-recent-difficult-backend-problem-4i13 | I’m Elijah Odefemi, a backend developer with five years of experience.

I was tasked with resolving difficult backend problems on a project that required technical skill, working to a timeframe, documentation, and creativity. I recently encountered a difficult backend problem with one educational institution webs... | heliphem | |

1,908,660 | Java for Machine Learning: Libraries and Frameworks | The machine learning (ML) market is expected to reach a valuation of over $31 billion in five years.... | 0 | 2024-07-02T09:17:49 | https://dev.to/pritesh80/java-for-machine-learning-libraries-and-frameworks-30j7 | javascript, java, machinelearning, news | The machine learning (ML) market is expected to reach a valuation of over [$31 billion in five years](https://www.globenewswire.com/en/news-release/2022/11/10/2552929/0/en/Machine-Learning-ML-Market-Projected-to-Surpass-US-31360-million-and-Grow-at-a-CAGR-of-33-6-During-the-2022-2028-Forecast-Timeframe-102-Pages-Report... | pritesh80 |

1,908,659 | core java -Basics | Day-4: Today We learn about Some important topics are you excited Java... | 0 | 2024-07-02T09:16:53 | https://dev.to/sahithi_puppala/core-java-basics-n4j | beginners, java, learning, programming | ## Day-4:

Today we will learn about some important topics. Are you excited?

## Java Classes:

Java classes are divided into two types:

1) Predefined classes

2) User-defined classes

## 1) Predefined Class:

- Every predefined Java class name starts with a **CAPITAL LETTER**

[**EX:** System, String, etc.]

## 2) User d... | sahithi_puppala |

1,908,658 | Shillong Travel Guides | Here are some of the Places to visit in Shillong. Getting There Shillong is well-connected by road... | 0 | 2024-07-02T09:14:35 | https://dev.to/travenjo/shillong-travel-guides-laf | travel, meghalaya, natural, shillong | Here are some of the [Places to visit in Shillong](https://travenjo.com/places-to-visit-in-shillong/).

**Getting There**

Shillong is well-connected by road and air. The nearest major airport is in Guwahati, Assam, about 100 kilometers away. From Guwahati, you can take a taxi or a bus to reach Shillong. The journey through t... | travenjo |

1,908,657 | Courier Software Market Analysis: Latest Developments, Growth Forecast, and Key Players Insights | The global courier software market, valued at US$ 0.54 billion in 2022, is projected to reach US$... | 0 | 2024-07-02T09:14:30 | https://dev.to/swara_353df25d291824ff9ee/courier-software-market-analysis-latest-developments-growth-forecast-and-key-players-insights-419a |

The global courier software market, valued at US$ 0.54 billion in 2022, is projected to reach US$ 1.4 billion by 2032, growing at a CAGR of 10%. This growth is driven by the rising popularity of e-commer... | swara_353df25d291824ff9ee | |

1,908,656 | Vancouver Airport Hotels with Shuttle | Onsite Dining & Fitness Centre Amenities | Hotels Near Vancouver Airport YVR, Grand Park Hotel Vancouver Airport, Vancouver Airport Hotels. At... | 0 | 2024-07-02T09:14:04 | https://dev.to/grandparkvancouverairporthotel/vancouver-airport-hotels-with-shuttle-onsite-dining-fitness-centre-amenities-48i0 | Hotels Near Vancouver Airport YVR, Grand Park Hotel Vancouver Airport, Vancouver Airport Hotels.

At the Grand Park Hotel Vancouver Airport, your comfort is our top priority. That’s why our hotel provides a variety of convenient amenities to make your stay stress-free. Our hotel offers flexible meeting and confe... | grandparkvancouverairporthotel | |

1,908,655 | Another developer in your Town. | Yes, I am available, I've done many projects which are more advanced than this. So I can easily do... | 0 | 2024-07-02T09:13:37 | https://dev.to/nishadchowdhury/another-developer-in-your-town-39bo | webdev, react, nextjs | Yes, I am available. I've done many projects more advanced than this, so I can easily build your website with all the functionality you need.

If you want a landing page, you can check out this gig: https://www.fiverr.com/s/R7rj6jN

For an advanced website, see this one: https://www.fiverr.com/s/7Y1dB6W | nishadchowdhury |

1,908,575 | Decorator-Pattern | Javascript Design Pattern Simplified | Part 5 | As a developer, understanding various JavaScript design patterns is crucial for writing maintainable,... | 27,934 | 2024-07-02T09:10:00 | https://dev.to/aakash_kumar/decorator-pattern-javascript-design-pattern-simplified-part-5-26kf | webdev, javascript, programming, tutorial | As a developer, understanding various JavaScript design patterns is crucial for writing maintainable, efficient, and scalable code. Here are some essential JavaScript design patterns that you should know:

## Decorator Pattern

The Decorator pattern allows behavior to be added to an individual object, dynamically, withou... | aakash_kumar |

1,908,654 | Building a Secure & Trustworthy Reputation System on Your Blockchain Ecosystem | Introduction In blockchain ecosystems, reputation systems play a crucial role. They are pivotal for... | 0 | 2024-07-02T09:08:50 | https://dev.to/capsey/building-a-secure-trustworthy-reputation-system-on-your-blockchain-ecosystem-4lcj | blockchain, cryptocurrency, bitcoin, trad | **Introduction**

In blockchain ecosystems, reputation systems play a crucial role. They are pivotal for entrepreneurs and businessmen as they nurture trust and reliability. These systems ensure that transactions and interactions within the blockchain are transparent and secure, fostering a dependable environment for bu... | capsey |

1,908,653 | Rendering Videos in the Browser Using WebCodecs API | Every time we mention rendering videos in the browser, we get raised eyebrows and concerns about... | 0 | 2024-07-02T09:08:43 | https://dev.to/rendley/rendering-videos-in-the-browser-using-webcodecs-api-328n | webdev, javascript, programming, frontend | Every time we mention rendering videos in the browser, we get raised eyebrows and concerns about performance. This is for a good reason, mainly because, for a long time, browsers were not capable of doing it and had to use the CPU for decoding and encoding frames.

This bottleneck made companies willing to implement a ... | rendley |

1,908,643 | Real-Time Testing: Best Practices Guide | OVERVIEW Real time testing is a crucial part of the Software Development Life Cycle that... | 0 | 2024-07-02T08:54:00 | https://dev.to/nazneenahmd/real-time-testing-best-practices-guide-16om | ## OVERVIEW

Real-time testing is a crucial part of the Software Development Life Cycle that involves testing software applications for their reliability and functionality in real time. This involves simulating the real-time environment or scenarios to verify the performance of the software application under various lo... | nazneenahmd | |

1,908,650 | Why Your Business Needs Laravel Development in 2024 | The global market is growing rapidly. Laravel development helps businesses reach a wider audience and... | 0 | 2024-07-02T09:07:16 | https://dev.to/dhruvil_joshi14/why-your-business-needs-laravel-development-in-2024-8ib | laravel, php, webdev, technology | The global market is growing rapidly. Laravel development helps businesses reach a wider audience and increase revenue through their products and services. Laravel, an effective open-source PHP framework, is well-known for creating dynamic and interactive websites, so it has become a leading option for web development.... | dhruvil_joshi14 |

1,908,648 | .Net Versions | There are several kinds of .Net, namely .Net Framework, .Net Core, and .Net Standard. .Net Framework - This... | 0 | 2024-07-02T09:05:55 | https://dev.to/xojimurodov/net-versiyalari-4b37 |

There are several kinds of .Net, namely .Net Framework, .Net Core, and .Net Standard.

.Net Framework - This includes the Common Language Runtime (CLR), which manages code, and the Base Class Library (BCL) for building applications... | xojimurodov | |

1,908,573 | Module-Pattern | Javascript Design Pattern Simplified | Part 4 | As a developer, understanding various JavaScript design patterns is crucial for writing maintainable,... | 27,934 | 2024-07-02T09:05:00 | https://dev.to/aakash_kumar/module-pattern-javascript-design-pattern-simplified-part-4-ln5 | webdev, javascript, programming, tutorial | As a developer, understanding various JavaScript design patterns is crucial for writing maintainable, efficient, and scalable code. Here are some essential JavaScript design patterns that you should know:

## Module Pattern

The Module pattern allows you to create public and private methods and variables, helping to kee... | aakash_kumar |

1,908,641 | Unforgettable Escapes: Top 5 International Honeymoon Destinations for 2024 | Your honeymoon is a once-in-a-lifetime experience, a celebration of your new life together. It should... | 0 | 2024-07-02T09:02:50 | https://dev.to/nitsa_holidays_7/unforgettable-escapes-top-5-international-honeymoon-destinations-for-2024-4715 | Your honeymoon is a once-in-a-lifetime experience, a celebration of your new life together. It should be filled with romance, adventure, and unforgettable memories. But finding the perfect destination can be overwhelming, especially when trying to balance dreams and budget. Fear not! Here are five of the [best internat... | nitsa_holidays_7 | |

1,908,571 | Observer-Pattern | Javascript Design Pattern Simplified | Part 3 | As a developer, understanding various JavaScript design patterns is crucial for writing maintainable,... | 27,934 | 2024-07-02T09:00:00 | https://dev.to/aakash_kumar/observer-pattern-javascript-design-pattern-simplified-part-3-4cn6 | webdev, javascript, programming, tutorial | As a developer, understanding various JavaScript design patterns is crucial for writing maintainable, efficient, and scalable code. Here are some essential JavaScript design patterns that you should know:

## Observer Pattern

The Observer pattern allows objects to notify other objects about changes in their state.

**E... | aakash_kumar |

1,908,647 | Shop 0.8mm Laminates Sheets by Saket Mica | Looking for quality & decorative 0.8mm laminate sheets? Explore Saket Mica's range of stylish,... | 0 | 2024-07-02T08:58:04 | https://dev.to/saketmica/shop-08mm-laminates-sheets-by-saket-mica-1pff | Looking for quality & decorative 0.8mm laminate sheets? Explore [Saket Mica's](https://www.saketmica.com/) range of stylish, durable options, perfect for any interior design. Order now!

Saket Mica's [0.8mm laminate sheet](https://www.saketmica.com/collections/0-8mm-laminates) is a top-quality, versatile option for var... | saketmica | |

1,908,645 | Big Brother or Big Benefits? The Impact of Face Recognition on Our Lives | Facial recognition technology has proved to be an innovation with the power to reshape our society.... | 0 | 2024-07-02T08:55:19 | https://dev.to/luxandcloud/big-brother-or-big-benefits-the-impact-of-face-recognition-on-our-lives-45cf | ai, security, machinelearning, discuss | Facial recognition technology has proved to be an innovation with the power to reshape our society. Still, it is a controversial tool capable of influencing our lives both positively and negatively.

On the one hand, facial recognition offers heightened security and convenience. For example, in January 2020, the New De... | luxandcloud |

1,908,523 | Factory-Pattern | Javascript Design Pattern Simplified | Part 2 | As a developer, understanding various JavaScript design patterns is crucial for writing maintainable,... | 27,934 | 2024-07-02T08:55:00 | https://dev.to/aakash_kumar/factory-pattern-javascript-design-pattern-simplified-part-2-3fhd | webdev, javascript, programming, tutorial | As a developer, understanding various JavaScript design patterns is crucial for writing maintainable, efficient, and scalable code. Here are some essential JavaScript design patterns that you should know:

## Factory Pattern

The Factory pattern provides a way to create objects without specifying the exact class of th... | aakash_kumar |

1,908,644 | Durable & Stylish 1mm Laminate Sheets l Saket Mica | Introducing Saket Mica's exclusive range of 1mm laminate sheets. We are laminates suppliers and... | 0 | 2024-07-02T08:54:32 | https://dev.to/saketmica/durable-stylish-1mm-laminate-sheets-l-saket-mica-4efb | Introducing [Saket Mica's](https://www.saketmica.com/) exclusive range of 1mm laminate sheets. We are laminates suppliers and dealers. Check out our collection for amazing designs.

Saket Mica's [1 mm laminate sheets](https://www.saketmica.com/collections/1mm-laminates) provide both durability and aesthetic appeal, ma... | saketmica | |

1,908,642 | Introduction to Microservices with .NET 8 | Introduction to the Series Welcome to our series on microservices with .NET 8! In this... | 27,935 | 2024-07-02T08:52:10 | https://dev.to/moh_moh701/introduction-to-microservices-with-net-8-36p9 | dotnetcore, aspdotnet, api | #### Introduction to the Series

Welcome to our series on microservices with .NET 8! In this series, we will explore the fundamental concepts of microservices, delve into the principles of Clean Architecture, and provide step-by-step guides to help you design, develop, and deploy microservices using .NET 8. Whether you'... | moh_moh701 |

1,908,640 | Best Laminate Company in India - Saket Mica | Since 1999, Saket Mica has been a leading laminate manufacturer and supplier in India. Our... | 0 | 2024-07-02T08:50:15 | https://dev.to/saketmica/best-laminate-company-in-india-saket-mica-d87 | | Since 1999, [Saket Mica](https://www.saketmica.com/) has been a leading laminate manufacturer and supplier in India. Our dedication to quality and customer satisfaction has made us a trusted partner.

Buy top-quality [laminate sheets in India](https://www.saketmica.com/). Saket Mica offers a wide range of laminates, l... | saketmica | |

1,908,639 | What should pond products contain to meet your specific needs? | Every pond is unique, with its own ecosystem and challenges. Before we dive into pond products... | 0 | 2024-07-02T08:46:14 | https://dev.to/toddepsmith/que-deberian-contener-los-productos-para-estanques-para-sus-necesidades-especificas-1l36 | | Every pond is unique, with its own ecosystem and challenges. Before diving into pond product selection, it is important to assess your pond's specific needs. Consider factors such as size, depth, water volume, plant and fish populations, and environmental conditions... | toddepsmith | |

1,908,638 | Unleashing the Power of Hybrid Cloud with Azure Stack HCI | Hey there, tech aficionados! Recently, I dove deep into the world of Azure Stack HCI, and let me... | 0 | 2024-07-02T08:44:07 | https://dev.to/karleeov/unleashing-the-power-of-hybrid-cloud-with-azure-stack-hci-2dhb | azurestack, hci, azure | Hey there, tech aficionados!

Recently, I dove deep into the world of Azure Stack HCI, and let me tell you, I was pretty amazed by what I found. This platform is a game-changer for anyone looking to leverage the true potential of hybrid cloud environments. Whether you're a fan of container-based applications with AKS (... | karleeov |

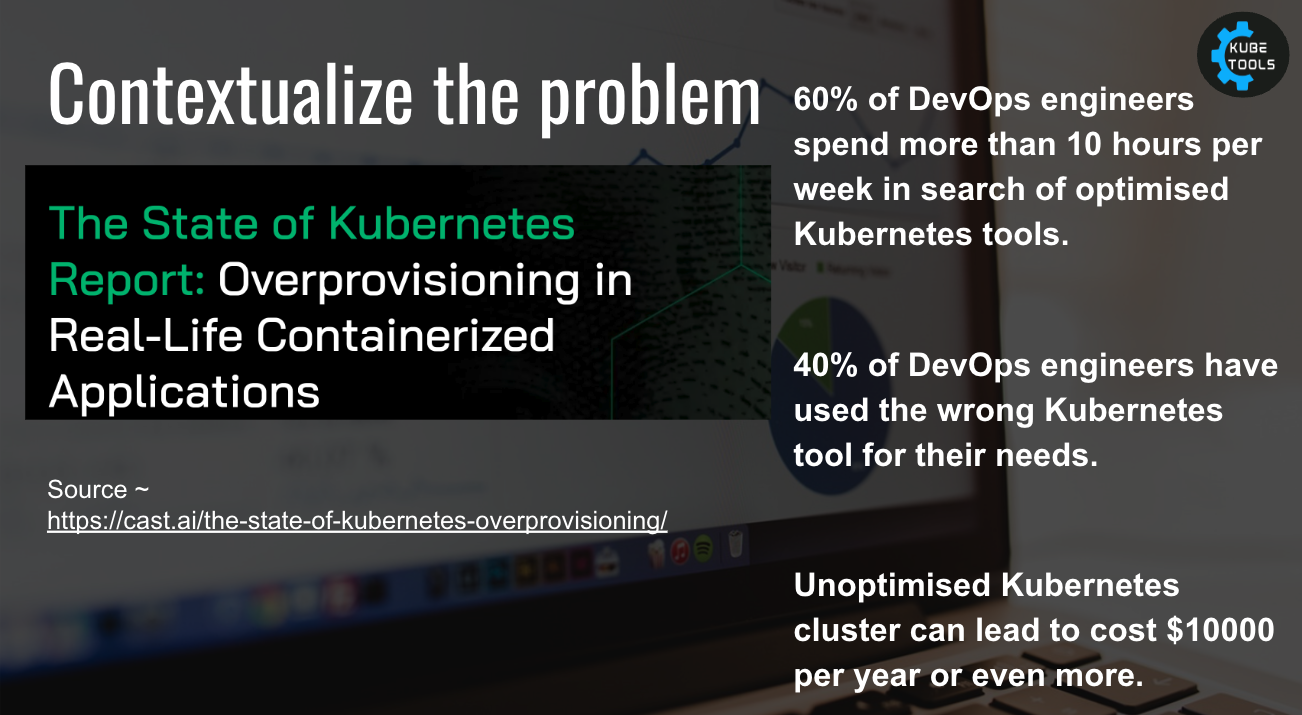

1,908,637 | Leveraging AI for Kubernetes Troubleshooting via K8sGPT | Nowadays, there is a lot of excitement around AI and its new applications. For instance, in April/May... | 0 | 2024-07-02T08:42:14 | https://gtrekter.medium.com/leveraging-ai-for-kubernetes-troubleshooting-via-k8sgpt-12ceb42bb51f | kubernetes, ai, k8s, chatgpt | Nowadays, there is a lot of excitement around AI and its new applications. For instance, in April/May 2024, there were at least four AI conventions in Seoul with thousands of attendees. So, what about Kubernetes? Can AI help us manage Kubernetes? The answer is yes. In this article, I will introduce K8sGPT.

# What does... | gtrekter |

1,908,634 | Comparing HTML and CSS in Frontend Development | Comparison between HTML and CSS, discussing their roles, differences, and strengths in frontend... | 0 | 2024-07-02T08:40:16 | https://dev.to/ayomide_aina/comparing-html-and-css-in-frontend-development-cd0 | Comparison between HTML and CSS, discussing their roles, differences, and strengths in frontend development:

# Comparing HTML and CSS in Frontend Development

In the realm of web development, HTML (HyperText Markup Language) and CSS (Cascading Style Sheets) are foundational technologies. They play distinct but complem... | ayomide_aina | |

1,908,633 | The Ultimate MongoDB Configuration Cheatsheet: Tips and Commands | Introduction to MongoDB Overview MongoDB is a leading NoSQL database that... | 0 | 2024-07-02T08:38:54 | https://blog.spithacode.com/posts/76f06ed0-6838-4763-b224-75de1297c682 | webdev, javascript, beginners, mongodb | ## Introduction to MongoDB

### Overview

MongoDB is a leading NoSQL database that provides high performance, high availability, and easy scalability. Unlike traditional SQL databases, MongoDB uses a flexible, schema-less data model, making it ideal for handling unstructured data.

### Key Features

* Scalability: Easily... | stormsidali2001 |

1,908,632 | Email Marketing Services in London: Prabisha Consulting! | In the bustling hub of London's business landscape, effective marketing can make all the difference.... | 0 | 2024-07-02T08:38:49 | https://dev.to/prabisha_dev_1bfefdc54339/email-marketing-services-in-london-prabisha-consulting-2n24 | In the bustling hub of London's business landscape, effective marketing can make all the difference. Prabisha Consulting stands at the forefront, offering specialized Email Marketing Services designed to maximize your outreach and engagement.

Tailored Email Marketing Solutions

At Prabisha Consulting, we understand tha... | prabisha_dev_1bfefdc54339 | |

1,908,630 | Navigating the Future: Exploring Appium Testing for Smart TVs | In the realm of software testing, smart TVs represent a distinct challenge. Their unique interfaces,... | 0 | 2024-07-02T08:35:55 | https://dev.to/jennife05918349/navigating-the-future-exploring-appium-testing-for-smart-tvs-3d9a | automation, testing, webdev | In the realm of software testing, smart TVs represent a distinct challenge. Their unique interfaces, embedded features, and distinct operating systems demand specialized tools and expertise. Enter Appium, a powerful testing tool that has been extended to support platforms like LG webOS TV and Samsung Tizen TV. This ar... | jennife05918349 |

1,908,629 | Navigating the Future: Exploring Appium Testing for Smart TVs | In the realm of software testing, smart TVs represent a distinct challenge. Their unique interfaces,... | 0 | 2024-07-02T08:35:55 | https://dev.to/jennife05918349/navigating-the-future-exploring-appium-testing-for-smart-tvs-fjg | automation, testing, webdev | In the realm of software testing, smart TVs represent a distinct challenge. Their unique interfaces, embedded features, and distinct operating systems demand specialized tools and expertise. Enter Appium, a powerful testing tool that has been extended to support platforms like LG webOS TV and Samsung Tizen TV. This ar... | jennife05918349 |

1,908,628 | 10 Factors to Choose the Best Magento Development Company | Are you also one of the Magento store owners who attempt to give your store new heights? If you are... | 0 | 2024-07-02T08:32:09 | https://dev.to/elightwalk/10-factors-to-choose-the-best-magento-development-company-1ack | magentodevelopment, magento, magentodevelopers |

Are you a Magento store owner trying to take your store to new heights? If you are facing issues along the way, you can hire a Magento developer to help. However, choosing the best Magento De... | elightwalk |

1,908,627 | How ZK-Rollups are Streamlining Crypto Banking in 2024 | The scalability limitations of traditional blockchains have long hindered the mass adoption of crypto... | 0 | 2024-07-02T08:30:46 | https://dev.to/donnajohnson88/how-zk-rollups-are-streamlining-crypto-banking-in-2024-3oi2 | cryptocurrency, blockchain, zkrollups, learning | The scalability limitations of traditional blockchains have long hindered the mass adoption of crypto banking. Enter [ZK-Rollups](https://blockchain.oodles.io/blog/zero-knowledge-zk-rollups/?utm_source=devto), one of the revolutionary [Blockchain application development services](https://blockchain.oodles.io/blockchain... | donnajohnson88 |

1,908,626 | {SDK vs Runtime} | SDK (Software Development Kit): The SDK, used for developing applications on the .NET platform... | 0 | 2024-07-02T08:30:26 | https://dev.to/firdavs090/sdk-vs-runtime-28b | | SDK (Software Development Kit):

The SDK is a set of tools and libraries designed for developing applications on the .NET platform. It includes:

Compilers: to turn source code written in C#, F#, or VB.NET into executable code.

Libraries and ... | firdavs090 | |

1,908,624 | How is Angus Grill Brazilian Steakhouse Different from other Steakhouses? | If you are a food lover choosing places to eat, let alone a steak house, it is not just a meal that... | 0 | 2024-07-02T08:28:10 | https://dev.to/jandewrede/how-is-angus-grill-brazilian-steakhouse-different-from-other-steakhouses-1m3j |

If you are a food lover choosing where to eat, especially a steakhouse, you know it is not just the meal that defines an exceptional experience.

According to a report published on DatamarNews, “Brazilian beef sales to the i... | jandewrede | |

1,908,623 | Elevate Your Career with the Best CV Writing Service by Bidisha Ray | In today's competitive job market, a professionally crafted CV is your ticket to landing interviews... | 0 | 2024-07-02T08:27:41 | https://dev.to/bidisha_ray_adbcb4641e0dd/elevate-your-career-with-the-best-cv-writing-service-by-bidisha-ray-5be2 | In today's competitive job market, a professionally crafted CV is your ticket to landing interviews and advancing your career. At Bidisha Ray, we specialize in delivering the Best CV Writing Service tailored to your unique career aspirations and strengths.

Why Choose Bidisha Ray's CV Writing Service?

Bidisha Ray stand... | bidisha_ray_adbcb4641e0dd | |

1,908,622 | A Comprehensive Guide to NFT Drops | The world of Non-Fungible Tokens (NFTs) has rapidly evolved, creating a digital revolution that has... | 0 | 2024-07-02T08:24:40 | https://dev.to/ram_kumar_c4ad6d3828441f2/a-comprehensive-guide-to-nft-drops-1jm9 | nft, webdev, beginners, programming |

The world of Non-Fungible Tokens (NFTs) has rapidly evolved, creating a digital revolution that has captivated artists, collectors, and investors alike. Among the many facets of this burgeoning industry, NFT drops s... | ram_kumar_c4ad6d3828441f2 |

1,908,620 | GBase 8s Database Performance Testing and Optimization Guide | Performance testing is a crucial part of database management and optimization. It not only helps us... | 0 | 2024-07-02T08:20:50 | https://dev.to/congcong/gbase-8s-database-performance-testing-and-optimization-guide-3n40 | database | Performance testing is a crucial part of database management and optimization. It not only helps us understand the current performance status of the system but also guides us in effective tuning. This article will provide a detailed introduction on how to conduct performance testing and optimization for the GBase 8s dat... | congcong |

1,908,612 | Automating User Creation and Management with a Bash Script | Introduction Managing users on a Linux system can be a daunting task, especially in environments... | 0 | 2024-07-02T08:19:05 | https://dev.to/gbenga700/automating-user-creation-and-management-with-a-bash-script-5bad | **Introduction**