id (int64) | title (string) | description (string) | collection_id (int64) | published_timestamp (timestamp[s]) | canonical_url (string) | tag_list (string) | body_markdown (string) | user_username (string) |

|---|---|---|---|---|---|---|---|---|

1,908,589 | Why Are My Gmail Emails Blank? | Gmail is a popular email service platform that is used by millions of people for personal as well as... | 0 | 2024-07-02T07:49:29 | https://dev.to/shivang_sharma_621e321184/why-are-my-gmail-emails-blank-15ai |

Gmail is a popular email service platform that is used by millions of people for personal as well as professional correspondence. However, users may occasionally face an annoying issue where Gmail emails appear blank, lacking any visible content even though they have legitimate subject lines and attachments. This iss... | shivang_sharma_621e321184 | |

1,908,587 | In the land of Potato PCs | The term "Potato Computer" is used for essentially underperforming PCs. For instance, if you get 30... | 0 | 2024-07-02T07:48:21 | https://dev.to/ithun/in-the-land-of-potato-pcs-1fe8 | lowcode, nandtotetris | The term "Potato Computer" is used for essentially underperforming PCs. For instance, if you get 30 FPS on a game that is relatively light on resources, you have yourself a Potato PC.

[Here's an actual potato PC for reference](https://www.youtube.com/watch?app=desktop&v=yWBzsBaU-Os)

But how much of a potato is it, really?

... | ithun |

1,908,500 | Writing a User Creation Script in Linux Bash | If you've never written a bash script before, today you'll be seeing how to write one. Bash scripts... | 0 | 2024-07-02T07:46:12 | https://dev.to/brightest/writing-a-user-creation-script-in-linux-bash-5clb | webdev, beginners, tutorial | If you've never written a bash script before, today you'll be seeing how to write one.

Bash scripts are used to automate Linux processes. Knowing how to write and implement them will serve you well in your DevOps journey.

In this article, I'll be showing you how I wrote a bash script that automates the process of cre... | brightest |

1,908,585 | Guide: Free Ai Image Enhancer | In today's visually-driven digital environment, enhancing image quality is a top priority for many... | 0 | 2024-07-02T07:46:03 | https://dev.to/emma_rodriguez_9ff90506b6/guide-free-ai-image-enhancer-10mg | aimageenhancer, editing, imageediting, tutorial | In today's visually-driven digital environment, [enhancing image quality](https://www.spyne.ai/image-enhancer) is a top priority for many professionals. Spyne.ai's AI image enhancer offers a sophisticated solution to this need, using artificial intelligence to significantly improve the quality and resolution of photos.... | emma_rodriguez_9ff90506b6 |

1,908,584 | GBase 8c Join Query Performance Optimization: A Practical Analysis | Join queries are one of the primary methods in relational databases, including methods like hash... | 0 | 2024-07-02T07:43:49 | https://dev.to/congcong/gbase-8c-join-query-performance-optimization-a-practical-analysis-46ll | database | Join queries are one of the primary methods in relational databases, including methods like hash join, merge join, or nested loop join. This article explores how to optimize join query performance in GBase 8c database through practical examples.

## 1. Creating Tables and Importing Data

Create tables `departments` and... | congcong |

1,908,583 | Dotnet's versions. | .NET Framework: 1.0: The first version, with ASP.NET, ADO.NET, and Windows Forms. 1.1: Mobile... | 0 | 2024-07-02T07:42:17 | https://dev.to/firdavs090/dotnets-versions-e89 | dotnet, dotnetcore, dotnetframework, documentation | .NET Framework:

1.0: The first version, with ASP.NET, ADO.NET, and Windows Forms.

1.1: Support for mobile development, security improvements.

2.0: Introduction of generics, new controls, support for 64-bit systems.

3.0: WPF (Windows Presentation Foundation), WCF (Windo... | firdavs090 |

1,908,582 | Effortlessly Accept Payments on Your Website with Web Payments | Any online business hoping to flourish needs to be able to collect payments on their website in... | 0 | 2024-07-02T07:42:12 | https://dev.to/david_mark_61fd09e0f67a52/effortlessly-accept-payments-on-your-website-with-web-payments-4b3f | paymentgateway, paymentprocess, paymentsolutions, onlinepayments | Any online business hoping to flourish needs to be able to collect payments on their website in today's digital marketplace. With the correct payment gateway, you can turn your website into a powerful e-commerce platform that accepts secure online payments and boosts client happiness. To optimize your online sales and ... | david_mark_61fd09e0f67a52 |

1,908,581 | BatchGPT - Run multiple prompts, download conversations - No API key required | Hi everyone, I just released my first chrome extension called BatchGPT 👋 You can run multiple... | 0 | 2024-07-02T07:39:17 | https://dev.to/penguin_dev/my-first-product-batchgpt-4dl8 | watercooler, chatgpt, ai | Hi everyone, I just released my first chrome extension called BatchGPT 👋

You can run multiple ChatGPT prompts one after another automatically without an API key! The extension also allows you to download any ChatGPT conversation to a CSV (useful for importing into Excel) or JSON for easy processing.

You can find t... | penguin_dev |

1,908,580 | Demystifying Concurrency and Parallelism in Software Development | Concurrency and parallelism are fundamental concepts in software development, often misunderstood or... | 0 | 2024-07-02T07:38:57 | https://dev.to/ruzny_ma/demystifying-concurrency-and-parallelism-in-software-development-25cm | webdev, beginners, javascript, node | Concurrency and parallelism are fundamental concepts in software development, often misunderstood or used interchangeably. Let's clarify these terms and understand their implications for building efficient applications.

## Introduction

In the realm of software development, understanding the nuances between concurrenc... | ruzny_ma |

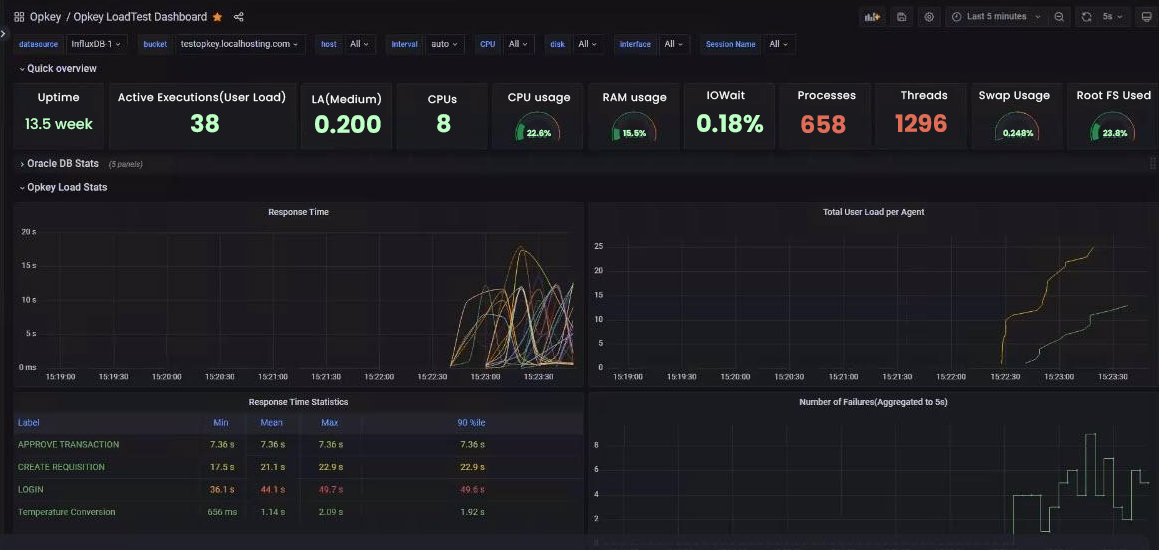

1,908,579 | Tips To Choose The Best Performance Testing Tools | Choosing the right performance testing tool is essential in verifying that your software... | 0 | 2024-07-02T07:36:17 | https://thedatascientist.com/tips-to-choose-the-best-performance-testing-tools/ | performance, testing, tools |

Choosing the right performance testing tool is essential in verifying that your software applications adhere to agreed performance standards and offer users a great experience. The selection process can be intimidati... | rohitbhandari102 |

1,908,578 | Designing Mobile-Friendly Financial Dashboards | In today's fast-paced, data-driven world, having instant access to crucial financial insights is a... | 0 | 2024-07-02T07:32:43 | https://dev.to/stevejacob45678/designing-mobile-friendly-financial-dashboards-4n8n | powerbi, powerbifinancialdashboard, powerbiconsultingservice | In today's fast-paced, data-driven world, having instant access to crucial financial insights is a game-changer. Mobile-friendly financial dashboards are becoming indispensable for businesses and financial professionals who need to make quick, informed decisions on the go. This blog will delve into the key aspects of d... | stevejacob45678 |

1,908,577 | Frontend Technology: An Overview of Modern Tools and Trends | Frontend technology is the cornerstone of web development, responsible for everything users interact... | 0 | 2024-07-02T07:32:36 | https://dev.to/matthew1/frontend-technology-an-overview-of-modern-tools-and-trends-2boc | webdev, programming, productivity, frontend | **Frontend technology** is the cornerstone of web development, responsible for everything users interact with on a website.

## **Main Cores of Frontend Development**

The three main cores of front-end technology are:

1. **HTML**

HTML which is called Hypertext Markup Language... | matthew1 |

1,908,574 | Difference Between TailwindCSS and Bootstrap | Philosophy and Approach Bootstrap: *Component-Based: * Bootstrap provides... | 0 | 2024-07-02T07:30:32 | https://dev.to/darshan_kumar_c9883cffc18/difference-between-tailwindcss-and-bootstrap-2dei | 1.

## **Philosophy and Approach**

Bootstrap:

## **Component-Based:**

Bootstrap provides pre-designed components like buttons, navbars, modals, and more. It aims to help developers quickly build responsive websites by using these ready-made components.

## **Opinionated Design:**

Bootstrap comes with a specific des... | darshan_kumar_c9883cffc18 | |

1,908,572 | Uttam Prayas Foundation: Growing Education and Health | The Uttam Prayas Foundation is a remarkable organization making a big difference in Greater Noida... | 0 | 2024-07-02T07:29:08 | https://dev.to/ravikumarr15/uttam-prayas-foundation-growing-education-and-health-38ek | productivity, uttamprayasfoundation, ngo | The [Uttam Prayas Foundation](https://www.facebook.com/people/Uttam-Prayas-Foundation/61560324803267/) is a remarkable organization making a big difference in Greater Noida West. They help people in need and care deeply about the environment. Their belief, "True education lies in doing charity, serving others, and doin... | ravikumarr15 |

1,908,570 | Rentals at Kuwait International Airport | RideRove | Traveling can be an exhilarating experience, but transportation logistics can sometimes dampen the... | 0 | 2024-07-02T07:27:59 | https://dev.to/riderovetaxi/rentals-at-kuwait-international-airport-riderove-3ibi | carrenta, carrenalkuwait, carhirekuwait, kuwaittaxi | Traveling can be an exhilarating experience, but transportation logistics can sometimes dampen the excitement. If you're flying into Kuwait and want to hit the ground running, having a rental car waiting for you at Kuwait International Airport can be a game-changer. This guide provides valuable insights into car rental... | riderovetaxi |

1,908,569 | Xe Tải Hà Nội xetai | "XE TẢI HÀ NỘI, with 10 years of experience in the truck business, specializes in supplying trucks... | 0 | 2024-07-02T07:23:58 | https://dev.to/xetaihanoi/xe-tai-ha-noi-xetai-8p9 | "XE TẢI HÀ NỘI, with 10 years of experience in the truck business, specializes in supplying box trucks, light trucks, van trucks, and 1-ton, 2-ton, 3.5-ton, and 8-ton trucks.

Phone: 0968236395, Web: https://xetaihanoi.edu.vn/ , #xetai #hanoi #xetaihanoi #car #truck #terav6 #dongvangD8 #1tan #2tan #8tan

Địa ... | xetaihanoi | |

1,908,568 | On fuzzing, fuzz testing, stateless and stateful fuzzing, and then invariant testing and all those scary stuff | For blockchain developers only You might've heard the scary terms fuzzing, fuzz tests, invariant... | 0 | 2024-07-02T07:23:42 | https://dev.to/muratcanyuksel/on-fuzzing-fuzz-testing-stateless-and-stateful-fuzzing-and-then-invariant-testing-and-all-those-scary-stuff-21fj | For blockchain developers only

You might've heard the scary terms fuzzing, fuzz tests, invariant testing, stateless fuzzing etc. They're not as scary as they sound. But, what are they?

If you're a developer, you know about unit tests. What do they do? They isolate and test. What will happen if I call this function wi... | muratcanyuksel | |

1,908,567 | My Journey in Android Development: Learning Java and Building Apps | Introduction Hello, Dev Community! Today, I want to share my journey in Android... | 0 | 2024-07-02T07:21:51 | https://dev.to/ankittmeena/my-journey-in-android-development-learning-java-and-building-apps-35a3 | android, java, androiddev, beginners | ## Introduction

Hello, Dev Community! Today, I want to share my journey in Android development, how I learned Java, and my progress in building Android applications. This experience has been both challenging and rewarding, and I hope it inspires others to embark on a similar path.

## Getting Started with Java

When I... | ankittmeena |

1,908,525 | What are the potential ethical concerns associated with AI advancements in 2024? | As AI technology continues to advance in 2024, there are several potential ethical concerns that need... | 0 | 2024-07-02T07:17:38 | https://dev.to/topainewsindia/what-are-the-potential-ethical-concerns-associated-with-ai-advancements-in-2024-592n | As AI technology continues to advance in 2024, there are several potential ethical concerns that need to be carefully considered:

**Bias and Fairness:**

AI systems can perpetuate or amplify existing biases present in the training data or algorithmic design, leading to unfair and discriminatory outcomes, especially in ... | topainewsindia | |

1,908,524 | 5 Mistakes to Avoid While Using System Integration Testing Tools | A significant stage in the software development life cycle is system integration testing (SIT) as it... | 0 | 2024-07-02T07:17:17 | https://25pr.com/5-mistakes-to-avoid-while-using-system-integration-testing-tools/ | system, integration, testing, tools |

A significant stage in the software development life cycle is system integration testing (SIT) as it helps to verify that different parts and subsystems work together and perform as intended. Organizations frequently... | rohitbhandari102 |

1,908,521 | 5 Ways to Unleash the Power of Your VoIP System | Communication, in any small or large business in the digital world today, is crucial.... | 0 | 2024-07-02T07:16:23 | https://dev.to/william_taranto_d999d7ffc/5-ways-to-unleash-the-power-of-your-voip-system-77m | voip |

Communication, in any small or large business in the digital world today, is crucial. State-of-the-art VoIP systems have brought flexibility, cost-effectiveness, and features to corporate communication that traditio... | william_taranto_d999d7ffc |

1,908,520 | Dotnet's hisotry. | .NET (full name Microsoft .NET Framework) is a platform developed by Microsoft to simplify building... | 0 | 2024-07-02T07:13:42 | https://dev.to/firdavs090/dotnets-hisotry-37b1 | dotnet, learning | .NET (full name Microsoft .NET Framework) is a platform developed by Microsoft to simplify building and deploying various kinds of applications. In the early 2000s, as the next generation of Windows services that brought various technologies and tools together in a single development platform, it... | firdavs090 |

1,908,519 | Wink APK MOD VIP Unlocked Free For Android | How Can Wink APK Help You? Easy To Use Could it be said that you are somebody who appreciates... | 0 | 2024-07-02T07:13:41 | https://dev.to/fueqanwaleed/wink-apk-mod-vip-unlocked-free-for-android-5aj3 |

How Can Wink APK Help You?

1. Easy To Use

Do you enjoy streaming movies, TV shows, or even listening to music on the go? Wink APK gives access to an immense library of multimedia content. Whether you're into the most r... | fueqanwaleed | |

1,908,518 | Aizarrain Gutters | Best Pvc Pipe Manufacture in Kerala Aizar gutters are “Customer First – Fabricator Friendly”... | 0 | 2024-07-02T07:13:32 | https://dev.to/aizarrain_gutters_6ad726c/aizarrain-gutters-214n | pvcraingutter, pvcpipe, pipefiting | **Best Pvc Pipe Manufacture in Kerala**

Aizar gutters are “Customer First – Fabricator Friendly” products that offer beauty, Quality and Durability to the end users and easy, secure and problem-free installation to the fabricators.

[website](https://aizarraingutters.com/#)

Mek Aizar Pvt.Ltd. VIII/357 B, Industrial De... | aizarrain_gutters_6ad726c |

1,908,514 | Umami, simple self-hosted analytics | I was looking for a simple analytics solution because I wasn't satisfied with Google Analytics'... | 0 | 2024-07-02T07:11:33 | https://dev.to/indyman/umami-simple-self-hosted-analytics-4pk8 | webdev, analytics, news, javascript | I was looking for a simple analytics solution because I wasn't satisfied with Google Analytics' footprint on page performance and overall heaviness. After trying various options, I have been using Umami for a few weeks, and I'm very happy with it! Let's see why.

## What is Umami?

Umami is an open-source, lightweight,... | indyman |

1,908,517 | Blockchain Application Development: Benefits, Challenges and Future | Basic concepts Blockchain has emerged as a disruptive technology that is transforming... | 0 | 2024-07-02T07:10:23 | https://dev.to/blockchainx358/blockchain-application-development-benefits-challenges-and-future-27p0 | blockchain, blockchainapplication, blockchainapplicatio |

## **Basic concepts**

Blockchain has emerged as a disruptive technology that is transforming various industries. From its origin with Bitcoin to its evolution with Ethereum , blockchain has demonstrated its ability ... | blockchainx358 |

1,908,516 | how many teeths of lion? | A post by furqan | 0 | 2024-07-02T07:07:53 | https://dev.to/fueqanwaleed/how-many-teeths-of-lion-3k61 | webdev, beginners | fueqanwaleed | |

1,908,515 | Web Development & AI: Does AI will Affects its cost, effort and time: A Glimpse into Future | Artificial intelligence in Web Development Philadelphia has become increasingly popular in recent... | 0 | 2024-07-02T07:06:23 | https://dev.to/blog98/web-development-ai-does-ai-will-affects-its-cost-effort-and-time-a-glimpse-into-future-7j4 | webdevelopmentphiladelphia, philadelphiawebdesign, aidevelopers, webdevelopmentandai | Artificial intelligence in Web Development Philadelphia has become increasingly popular in recent years.

## Web Development & AI

Artificial intelligence (AI) is a disruptive force in the dynamic world of web development.

According to researchers, the AI market is expected to be worth $126 billion by 2025, growing at a... | blog98 |

1,907,764 | Build a Pokédex with React and PokéAPI 🔍 | I recently leveled up my React skills by building a Pokédex! This was such a fun project that I... | 0 | 2024-07-02T07:05:43 | https://dev.to/axelfrache/build-a-pokedex-with-react-and-pokeapi-4f2d | webdev, javascript, react, beginners | I recently leveled up my React skills by building a Pokédex! This was such a fun project that I wanted to share the process with you all. The app allows users to search for Pokémon by name or ID, fetching detailed information from the PokéAPI, including their type, abilities, and game appearances.

You can check out t... | axelfrache |
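The lookup described in the row above (search by name or ID, then read type, abilities, and game appearances) could be sketched roughly as follows. This is a minimal sketch rather than the author's implementation: it assumes the public PokéAPI v2 `pokemon` endpoint, the helper names `summarizePokemon` and `fetchPokemon` are hypothetical, and the `sample` object is a hand-trimmed illustration of the response shape, not a full API response.

```javascript
// Assumed endpoint: the public PokéAPI v2 pokemon resource.
const API_BASE = "https://pokeapi.co/api/v2/pokemon";

// Hypothetical helper: reduce a PokéAPI pokemon response to the fields the post mentions.
function summarizePokemon(data) {
  return {
    id: data.id,
    name: data.name,
    types: data.types.map((t) => t.type.name),
    abilities: data.abilities.map((a) => a.ability.name),
    games: data.game_indices.map((g) => g.version.name),
  };
}

// Hypothetical helper: fetch by name or numeric ID.
// Needs a runtime with a global fetch (a browser, or Node 18+).
async function fetchPokemon(nameOrId) {
  const key = String(nameOrId).trim().toLowerCase();
  const res = await fetch(`${API_BASE}/${encodeURIComponent(key)}`);
  if (!res.ok) {
    throw new Error(`PokéAPI request failed with status ${res.status}`);
  }
  return summarizePokemon(await res.json());
}

// Hand-trimmed sample shaped like a PokéAPI response, for illustration only.
const sample = {
  id: 1,
  name: "bulbasaur",
  types: [{ type: { name: "grass" } }, { type: { name: "poison" } }],
  abilities: [{ ability: { name: "overgrow" } }, { ability: { name: "chlorophyll" } }],
  game_indices: [{ version: { name: "red" } }, { version: { name: "blue" } }],
};

// Summarize the sample without touching the network.
console.log(summarizePokemon(sample));
```

Keeping the parsing step separate from the network call means a React component could call `fetchPokemon` inside an effect while the summarizing logic stays easy to test on its own.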

1,908,513 | Top Online Science Assignment Help in Australia | In the academic journey of a student, assignments play a crucial role in evaluating their... | 0 | 2024-07-02T07:05:05 | https://dev.to/aakash_panchal_51294e86b9/top-online-science-assignment-help-in-australia-gjb | scienceassignmenthelp, scienceassignmenthelpaustralia, onlinescienceassignmenthelp, assignmentwriter | In the academic journey of a student, assignments play a crucial role in evaluating their understanding and grasp of various subjects. Among these, science assignments often stand out due to their complexity and the analytical skills they require. As students in Australia juggle their academic responsibilities with par... | aakash_panchal_51294e86b9 |

1,908,510 | Month in WordPress: June 2024 | A supply chain attack hits plugins, WordPress 6.5.5 and 6.6 RC 1 are released, plugin install limit... | 0 | 2024-07-02T07:04:46 | https://wplake.org/blog/month-in-wordpress-june-2024/ | wordpress, cms, news, webdev | A supply chain attack hits plugins, WordPress 6.5.5 and 6.6 RC 1 are released, plugin install limit tops 10M, and ACF launches its 2024 survey.

## 1. Supply chain attack on WordPress.org plugins

> WP Team: We identified that some plugin authors were reusing passwords exposed in data breaches elsewhere. The compromis... | wplake |

1,907,350 | Launching a dev tool on Product Hunt? Keep it simple | The question isn't if you should launch on Product Hunt. It's how. To start, some... | 27,917 | 2024-07-02T07:01:00 | https://dev.to/fmerian/launching-a-dev-tool-on-product-hunt-keep-it-simple-54o9 | startup, developer, marketing, devjournal | **The question isn't _if_ you should launch on Product Hunt. It's *how*.**

To start, some definitions:

- **Maker**: a user who works on the product;

- **Hunter**: a user who submits a product and doesn't work on it;

- **Upvoter**: a user who upvotes a product;

- **Commenter**: a user who comments on a product;

- **La... | fmerian |

1,908,511 | Monolithic vs Microservices Architecture: Which is Best? | Introduction When architecting software systems, one of the fundamental decisions... | 0 | 2024-07-02T07:00:12 | https://dev.to/ruzny_ma/--3b48 | webdev, beginners, microservices, systemdesign | ## Introduction

When architecting software systems, one of the fundamental decisions developers face is choosing between monolithic and microservices architectures. Each approach comes with its own set of advantages and challenges, impacting scalability, flexibility, and overall system complexity. In this article, we’... | ruzny_ma |

1,908,509 | The Gemini AI and Google AI Features that We Have Been Waiting For | Google’s relentless pursuit of AI innovation has led to many exciting Gemini AI features slated for... | 0 | 2024-07-02T06:57:41 | https://dev.to/hyscaler/the-gemini-ai-and-google-ai-features-that-we-have-been-waiting-for-l1b | geminiai, aifeatures, webdev | Google’s relentless pursuit of AI innovation has led to many exciting Gemini AI features slated for integration across its vast ecosystem. From enhancing photography on Pixel devices to revolutionizing search and productivity with Gmail and Google Workspace, Gemini is poised to redefine how users interact with technolo... | amulyakumar |

1,908,508 | The Gemini AI and Google AI Features that We Have Been Waiting For | Google’s relentless pursuit of AI innovation has led to many exciting Gemini AI features slated for... | 0 | 2024-07-02T06:57:41 | https://dev.to/hyscaler/the-gemini-ai-and-google-ai-features-that-we-have-been-waiting-for-2c09 | geminiai, aifeatures, webdev | Google’s relentless pursuit of AI innovation has led to many exciting Gemini AI features slated for integration across its vast ecosystem. From enhancing photography on Pixel devices to revolutionizing search and productivity with Gmail and Google Workspace, Gemini is poised to redefine how users interact with technolo... | amulyakumar |

1,908,507 | How CTFA Certification Enhances Your Trust and Financial Expertise | • Independent Study Resources: Supplement your official ABA resources with additional study ctfa... | 0 | 2024-07-02T06:55:20 | https://dev.to/wrion1958/how-ctfa-certification-enhances-your-trust-and-financial-expertise-225 | webdev, javascript, beginners, programming | • Independent Study Resources: Supplement your official ABA resources with additional study <a href="https://dumpsarena.com/aba-dumps/ctfa/">ctfa certification</a> materials. Consider CTFA exam prep books, online courses offered by reputable institutions, or study groups with fellow CTFA aspirants.

• Practice Makes Pe... | wrion1958 |

1,908,506 | In 2024, should we still use React and check out other frameworks too? | As frontend developers, we've reached an era where new technologies are continuously emerging, and... | 0 | 2024-07-02T06:54:43 | https://dev.to/jawnchuks/in-2024-should-we-still-use-react-and-check-out-other-frameworks-too-2hle | react, frontend, svelte, solidjs | As frontend developers, we've reached an era where new technologies are continuously emerging, and established ones are rapidly evolving. Each passing month brings a wave of innovation, and it’s easy to question whether you're a well-rounded frontend engineer or just someone proficient in a single framework. This atta... | jawnchuks |

1,908,504 | A quick survey on AI Assistants in project management | Hi everyone, Imagine an AI assistant that helps you: Answer any project-related questions you... | 0 | 2024-07-02T06:51:05 | https://dev.to/june_luo/a-quick-survey-on-ai-assistants-in-project-management-18c4 | productivity, management, ai | Hi everyone,

Imagine an AI assistant that helps you:

- Answer any project-related questions you have

- Generate detailed reports on projects, sprints, and team performance

- Monitor progress and provide real-time risk alerts

This AI assistant can seamlessly integrate into your workflow, making project management mor... | june_luo |

1,907,991 | A Comprehensive Guide to SharePoint Embedded Graph APIs | Are you a developer looking to leverage the power of SharePoint Embedded in your applications?... | 26,993 | 2024-07-02T06:30:00 | https://intranetfromthetrenches.substack.com/p/a-guide-to-sharepoint-embedded-api-methods | sharepoint | Are you a developer looking to leverage the power of SharePoint Embedded in your applications? Managing files and documents within SharePoint Embedded containers is crucial for building robust solutions. This blog post is your one-stop guide to understanding SharePoint Embedded container and file Graph API methods!

**

By using Screen Flow... | sfdcnews |

1,908,501 | Custom Software Development – An Ultimate Guide For 2024 | Forget the one-size-fits-all narrative, it’s time for custom software development specialized... | 0 | 2024-07-02T06:44:43 | https://dev.to/rubengrey/custom-software-development-an-ultimate-guide-for-2024-c2b | react | Forget the one-size-fits-all narrative; it’s time for custom software development tailored to the specialized requirements of businesses. Every industry these days is navigating a competitive market and looking for unique approaches to attract and retain its consumer base.

The trick to any successful business these ... | rubengrey |

1,908,499 | Why Dream99 Game Stands Out | Dream99 Game is a fun and engaging online gaming platform that has captured the attention of many... | 0 | 2024-07-02T06:42:41 | https://dev.to/dhfyjgkh/why-dream99-game-stands-out-2mc9 | Dream99 Game is a fun and engaging online gaming platform that has captured the attention of many gamers worldwide. With a wide variety of games to choose from, Dream99 Game provides endless entertainment for players of all ages. Whether you're looking to relax, challenge your mind, or compete with friends, Dream99 Gam... | dhfyjgkh | |

1,908,494 | How to get Single Console | My Sir Asked Me To create single console of this : let tableOf2=[] for(let i=1; i<=10; i++){ ... | 0 | 2024-07-02T06:37:54 | https://dev.to/raja_musawir/how-to-get-single-console-1of0 | help | My sir asked me to create a single console output of this:

let tableOf2=[]

for(let i=1; i<=10; i++){

tableOf2.push({value : `2 x ${i} = ${2*i}`})

}

// console.log(tableOf2)

let tableOf5=[]

for(let i=1; i<=10; i++){

tableOf5.push( {value :`5 x ${i} = ${5*i}`})

}

// console.log(tableOf5)

let combined=[

{

name... | raja_musawir |
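One possible reading of the question in the row above is that both tables should be printed with a single `console.log` call. The sketch below works under that assumption. The original `combined` array is cut off after `name`, so the `rows` key and the `buildTable` helper are hypothetical, not the poster's code.

```javascript
// Hypothetical helper: builds the rows of one multiplication table,
// using the same `{ value: "n x i = result" }` shape as the original loops.
function buildTable(n, upTo = 10) {
  const rows = [];
  for (let i = 1; i <= upTo; i++) {
    rows.push({ value: `${n} x ${i} = ${n * i}` });
  }
  return rows;
}

// The `rows` key is an assumption; the original object shape is truncated after `name`.
const combined = [
  { name: "tableOf2", rows: buildTable(2) },
  { name: "tableOf5", rows: buildTable(5) },
];

// One console call prints both tables as formatted JSON.
console.log(JSON.stringify(combined, null, 2));
```

Generating each table with one helper removes the duplicated loop, and `JSON.stringify` with an indent keeps nested objects readable instead of collapsing them.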

1,908,498 | You can't use up creativity. The more you use, the more you have. | A post by Abhishek Kumar | 0 | 2024-07-02T06:41:52 | https://dev.to/abhishek_kumar_468ba87afa/you-cant-use-up-creativity-the-more-you-use-the-more-you-have-2kme | webdev, beginners, productivity, ai |

| abhishek_kumar_468ba87afa |

1,908,486 | Driving Business Success with Gen AI: Using LLMs in Production for Enterprises | Are you ready to take your business to the next level with the power of Generative AI? We are... | 0 | 2024-07-02T06:41:00 | https://dev.to/calsoftinc/driving-business-success-with-gen-ai-using-llms-in-production-for-enterprises-1mfl | ai, machinelearning, tutorial, news | Are you ready to take your business to the next level with the power of Generative AI? We are thrilled to invite you to our upcoming webinar titled "Driving Business Success with Gen AI: Using LLMs in Production for Enterprises."

This insightful event is scheduled for **12th July 2024 at 10:00 AM PST.**

### Why Atten... | calsoftinc |

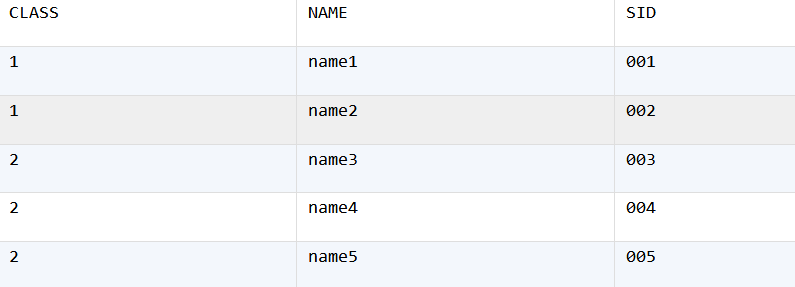

1,908,497 | How to Transpose Columns in Each Group to a Single Row | We have a database table STAKEHOLDER as follows: We are trying to group the table by CLASS and... | 0 | 2024-07-02T06:40:27 | https://dev.to/esproc_spl/how-to-transpose-columns-in-each-group-to-a-single-row-5451 | sql, development, spl | We have a database table STAKEHOLDER as follows:

We are trying to group the table by CLASS and convert all columns to a same row. Below is the desired result set:

| 1. Memory Parameters 1.1 Memory Size Parameter for INSERT... | 0 | 2024-07-02T06:36:49 | https://dev.to/congcong/gbase-8a-implementation-guide-parameter-optimization-3-2be3 | database | ## 1. Memory Parameters

### 1.1 Memory Size Parameter for `INSERT SELECT`

**`_gbase_insert_malloc_size_limit`**

This parameter controls the memory allocation size in the `INSERT SELECT` scenario. The default value is 10240, which is optimal. For scenarios involving long `VARCHAR` fields, such as multiple `VARCHAR(20... | congcong |

1,908,492 | A linux session after a while | I had my next Linux session after a while. Got to learn new commands like find, locate, who. Commands... | 0 | 2024-07-02T06:36:29 | https://dev.to/anakin/a-linux-session-after-a-while-2m50 | I had my next Linux session after a while. I got to learn new commands like `find`, `locate`, and `who`. Commands like `wc`, combined with `grep` and pipes, can do wonders and give you results in real time. I also got to learn about various text editors available in Linux, such as vim, nano, and vi. | anakin | |

1,908,491 | Feedback on Amazon ECS Developer Experience | Hi everyone, I'm working on a project to improve the early developer experience of Amazon ECS and... | 0 | 2024-07-02T06:35:55 | https://dev.to/nikitand/feedback-on-amazon-ecs-developer-experience-3k0i | Hi everyone,

I'm working on a project to improve the early developer experience of Amazon ECS and would love to hear your thoughts on:

- Challenges: What are the main pain points you've encountered while using ECS?

- Improvements: How do you think the developer experience with ECS can be improved?

- Comparison: Are... | nikitand | |

1,908,490 | Introducing App Review | We at Appriview have provided this opportunity for you who are interested in mobile applications and... | 0 | 2024-07-02T06:35:36 | https://dev.to/appreview/introducing-app-review-4agd | We at Appriview have provided this opportunity for you who are interested in mobile applications and games to choose the best and most practical ones while saving time and money.

We try to help you with the experience we have in producing better products and increasing downloads.

[](https://appreview.ir/) | appreview | |

1,908,487 | Hacking Alibaba Cloud's Kubernetes Cluster | Hacking Alibaba Cloud's Kubernetes Cluster with Hillai Ben-Sasson & Ronen Shustin, Security... | 0 | 2024-07-02T06:34:16 | https://dev.to/gulcantopcu/hacking-alibaba-clouds-kubernetes-cluster-ofp | kubernetes, cloudcomputing, cybersecurity, hacking | Hacking Alibaba Cloud's Kubernetes Cluster with Hillai Ben-Sasson & Ronen Shustin, Security Researchers at Wiz and Bart Farrell, KubeFM Host

Securing Kubernetes clusters is one of the toughest challenges in cloud security, but for Ronen Shustin and Hillai Ben-Sasson at Wiz, it's just another day at work. These top-tier... | gulcantopcu |

1,908,434 | dfgdfg fgdfg fdgfd gdfg | g df gdfgfdg fdgfd gfgfdgfd gfdgfgfdgffdgfddf g dfgdf gdfgfd gfd | 0 | 2024-07-02T05:52:49 | https://dev.to/tel5_australia_117a27af06/dfgdfg-fgdfg-fdgfd-gdfg-106p | g df gdfgfdg fdgfd gfgfdgfd gf[dgfgfdg](dgfgfdg)ffdgfddf g dfgdf gdfgfd gfd | tel5_australia_117a27af06 | |

1,908,424 | Top 5 Essential React Libraries🚀 | In the ever-evolving world of web development, efficiency and functionality are key. React.js, one of... | 0 | 2024-07-02T06:28:56 | https://dev.to/vedansh0412/top-5-essential-react-libraries-for-boosting-your-web-development-efficiency-5a0n | webdev, javascript, react, frontend | In the ever-evolving world of web development, efficiency and functionality are key. React.js, one of the most popular JavaScript libraries, provides a solid foundation for building user interfaces. However, to fully leverage its potential, integrating the right set of libraries can make a significant difference, for B... | vedansh0412 |

1,908,488 | VS code Keyboard Short-cut for Developers⌨ | ! = html boilerplate show ctrl + z = undo ctrl + backspace = delete a word at a time shift +alt+down... | 0 | 2024-07-02T06:26:17 | https://dev.to/shemanto_sharkar/vs-code-keyboard-short-cut-for-developers-4bbe | webdev, javascript, beginners, programming | ! = html boilerplate show

ctrl + z = undo

ctrl + backspace = delete a word at a time

shift + alt + down = duplicate line

ctrl + shift + L = select all occurrences of the current selection

ctrl + L = select a whole line

ctrl + B = toggle sidebar

ctrl + shift + F = search word among the whole folder

ctrl + F = search word among the file

ctrl + H = replac... | shemanto_sharkar |

1,908,485 | Boosting Web Application Performance: Strategies for Full-Stack Developers | Introduction As a full-stack developer, I always strive to improve the performance of web... | 0 | 2024-07-02T06:25:25 | https://dev.to/ruzny_ma/boosting-web-application-performance-strategies-for-full-stack-developers-mo0 | webdev, javascript, beginners, performance | # **Introduction**

As a full-stack developer, I always strive to improve the performance of web applications. Performance problems come up regularly in this work, and each one calls for its own approach and best practices. This post attempts to review the insights and modern ways of ha... | ruzny_ma |

1,908,484 | MIMI's Security Measures: Comprehensive User Asset Protection Strategies | In the era of digital finance, blockchain technology is revolutionizing global financial markets... | 0 | 2024-07-02T06:24:15 | https://dev.to/mimi_official/mimis-security-measures-comprehensive-user-asset-protection-strategies-2n8m |

In the era of digital finance, blockchain technology is revolutionizing global financial markets with its innovative potential. However, as blockchain and decentralized finance (DeFi) become more widespread, the security of user assets has become a pressing concern. Threats like hacker attacks ... | mimi_official | |

1,908,446 | #7 Modern SQL Databases You Must Know in 2024 | clickhouse #MongoDB #Redis #MindsDB Dolt Dolt is an open-source, version-controlled... | 0 | 2024-07-02T06:03:36 | https://dev.to/dipalee_gaware_b4630cc678/7-modern-sql-databases-you-must-know-in-2024-4g16 | sql, dolt, snowflake, elasticsearch | #clickhouse #MongoDB #Redis #MindsDB

1. Dolt

Dolt is an open-source, version-controlled database that combines the power of Git with the functionality of a relational database. With Dolt, you can fork, clone, branch, merge, push, and pull databases just like you would with a Git repository.

Dolt is MySQL-c... | dipalee_gaware_b4630cc678 |

1,908,483 | Web Development & AI | Artificial intelligence in Web Development Philadelphia has become increasingly popular in recent... | 0 | 2024-07-02T06:23:43 | https://dev.to/blog98/how-these-10-tech-trends-will-transform-web-design-3913 | softcircles, aidevelopers, webdevelopmentphiladelphia, philadelphiawebdesign | Artificial intelligence in Web Development Philadelphia has become increasingly popular in recent years.

## Web Development & AI

Artificial intelligence (AI) is a disruptive force in the dynamic world of web development.

According to researchers, the AI market is expected to be worth $126 billion by 2025, growing at a... | blog98 |

1,908,480 | From leveraging it's Javascript development capabilities | Certainly! When you code with Wix Studio, you have several options for leveraging its JavaScript... | 0 | 2024-07-02T06:21:12 | https://dev.to/olatunjiayodel9/from-leveraging-its-javascript-development-capabilities-4e5m | devchallenge, wixstudiochallenge, webdev, javascript |

Certainly! When you code with **Wix Studio**, you have several options for leveraging its JavaScript development capabilities¹. Here are some key features:

1. **Coding Environments**:

- You can code directly in Wix Studio's built-in Code panel, the Wix IDE (based on VS Code), or your own IDE integrated with GitHub... | olatunjiayodel9 |

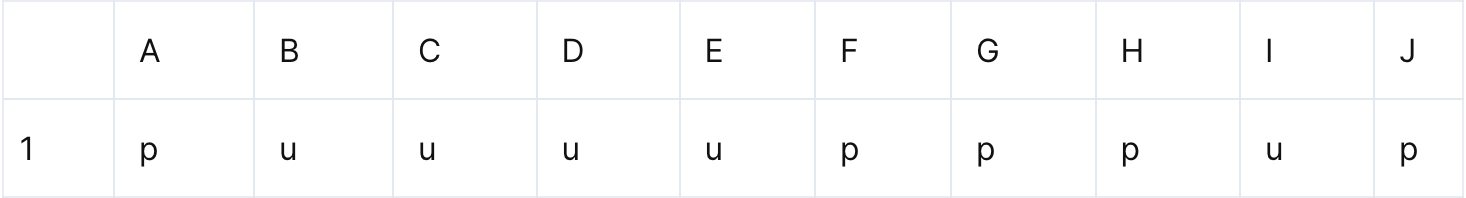

1,908,447 | List the Positions of Each Character | Problem description & analysis: Below is a row of letters. The letters in certain positions are... | 0 | 2024-07-02T06:18:09 | https://dev.to/judith677/list-the-positions-of-each-character-1367 | beginners, programming, tutorial, productivity | **Problem description & analysis**:

Below is a row of letters. The letters in certain positions are continuous.

We need to arrange them according to the format of “letter+positions”, as shown below:

![desired table]... | judith677 |

1,907,452 | Move aws resources from one stack to another cloudformation stack | Why do we need this? The AWS CloudFormation resource limit is currently set at 500,... | 0 | 2024-07-02T06:18:00 | https://dev.to/distinction-dev/move-aws-resources-from-one-stack-to-another-cloudformation-stack-5d1m | aws, guide, serverless, cloudformation | ## Why do we need this?

- The AWS CloudFormation limit is currently 500 resources per stack, and a stack's resource count can keep growing as new features are added to the application.

- To accommodate this limitation, we must distribute all resources across various stacks.

- Our approach involves isolating Lambda functions int... | bhavin03 |

1,907,608 | Automating User Management and Permissions on Linux using Bash Scripting | Linux is a multi-user operating system and as such, an administrator can create users and groups for... | 0 | 2024-07-02T06:04:17 | https://dev.to/vicradon/working-with-users-groups-and-permissions-on-linux-ale | linux, authorization | Linux is a multi-user operating system and as such, an administrator can create users and groups for different purposes. Both users and groups have their own permissions. When a user is added to a group, the user inherits the permissions of that group. In this article, you will learn how to work with permissions for users and g... | vicradon |

1,908,445 | Help Us Pick the Best Slogan for Our New SaaS Startup | Hey everyone, We're pumped to introduce our latest SaaS innovation and we need your help to nail... | 0 | 2024-07-02T06:02:37 | https://dev.to/june_luo/help-us-pick-the-best-slogan-for-our-new-saas-startup-34i4 | saas, developer, insights, softwareengineering | Hey everyone,

We're pumped to introduce our latest SaaS innovation and we need your help to nail down the perfect slogan. If you're a development manager or leader, your input is gold to us. Check out these potential slogans:

1. Drive Engineering Excellence with AI Insights into Action

2. Optimize Engineering with AI... | june_luo |

1,908,443 | Navigating the Job Market in Data Analytics: Key Trends and Opportunities in 2024 | Introduction The data analytics job market is more dynamic than ever in 2024, driven by... | 0 | 2024-07-02T06:01:58 | https://dev.to/sejal_4218d5cae5da24da188/navigating-the-job-market-in-data-analytics-key-trends-and-opportunities-in-2024-fa5 | dataanalytics, dataanalyticsjobs, dataanalyticsfreelancer | ## Introduction

The [data analytics job](https://www.pangaeax.com/browse-talent/freelancer-data-analyst/) market is more dynamic than ever in 2024, driven by technological advancements and the growing importance of data-driven decision-making. As businesses continue to harness the power of big data, the demand for skil... | sejal_4218d5cae5da24da188 |

1,908,442 | The Ecosystem of India Interior Design Market | The India interior design industry has witnessed remarkable growth in recent years, driven by... | 0 | 2024-07-02T06:00:00 | https://dev.to/harshita_09/the-ecosystem-of-india-interior-design-market-5072 | interior, design, market | <p><span style="font-weight: 400;">The </span><strong>India interior design</strong><span style="font-weight: 400;"> industry has witnessed remarkable growth in recent years, driven by urbanization, rising disposable incomes, and an increasing appetite for aesthetic living spaces. The </span><a href="https://www.kenres... | harshita_09 |

1,901,700 | Develop APIs Quicker With API Testing | API development is a complex process due to two main reasons: (1) the number of variables and people... | 0 | 2024-07-02T06:00:00 | https://www.getambassador.io/blog/api-testing-quick-development | testing, api, development | [API development](https://www.getambassador.io/blog/api-development-comprehensive-guide) is a complex process due to two main reasons: (1) the number of variables and people involved in creating an API and (2) the process of building and improving your APIs never ends. At a quick glance, the steps within building an AP... | getambassador2024 |

1,908,441 | MOBILE DEVELOPMENT PLATFORMS. Software Architecture Patterns | Introduction: A Look into the world of Mobile app development: As the mobile world is growing,... | 0 | 2024-07-02T05:59:58 | https://dev.to/oreoluwa_eniola_eaa58bdf3/mobile-development-platforms-software-architecture-patterns-24k4 | Introduction:

A Look into the world of Mobile app development:

As the mobile world grows, changes follow suit. Platforms are expanding, and architecture patterns are becoming the norm. A mobile developer needs extensive knowledge of both, along with strong technical skills, before starting a projec... | oreoluwa_eniola_eaa58bdf3 | |

1,908,440 | How digital signage software can help improve the healthcare sector | The healthcare sector is an intricate and essential part of our society, demanding constant... | 0 | 2024-07-02T05:58:52 | https://dev.to/nextbraincanada/how-digital-signage-software-can-help-improve-the-healthcare-sector-2l4c | digitalsignagesoftware | The healthcare sector is an intricate and essential part of our society, demanding constant communication, efficient operations, and high levels of patient engagement and satisfaction. Digital signage software has emerged as a transformative tool in this sector, offering myriad benefits that streamline operations, enha... | nextbraincanada |

1,908,439 | How To Hire A Software Developer? | How To Hire A Software Developer? Hiring a software developer is a critical decision that... | 0 | 2024-07-02T05:58:10 | https://dev.to/bytesfarms/how-to-hire-a-software-developer-1m06 | softwaredevelopment, webdev, javascript, beginners | ## How To Hire A Software Developer?

Hiring a software developer is a critical decision that can significantly impact your project's success. Here's a comprehensive guide to help you navigate the process effectively.

### 1. Define Your Needs

Before starting the hiring process, clearly define what you need:

**Projec... | bytesfarms |

1,908,438 | The Rise of Vending Machines in India: A Growing Business Opportunity | The vending machine market in India is experiencing a promising uptrend. As of 2023, the market is... | 0 | 2024-07-02T05:56:42 | https://dev.to/harshita_09/the-rise-of-vending-machines-in-india-a-growing-business-opportunity-598j | vendingmachine, market, size, industry | The vending machine market in India is experiencing a promising uptrend. As of 2023, the market is valued at approximately USD 1.5 billion, with projections suggesting a robust compound annual growth rate (CAGR) of around 14% over the next five years. This growth is fueled by various factors, including increasing dispo... | harshita_09 |

1,908,437 | History of .NET | The .NET platform (pronounced "dot net") is a free, managed computer software... | 0 | 2024-07-02T05:54:51 | https://dev.to/fazliddin7777/history-of-net-5fcl | The .NET platform (pronounced "dot net") is a free, open-source, managed computer software platform for the Windows, Linux, and macOS operating systems. The project is developed primarily by Microsoft employees through the .NET Foundation and is released under the MIT License.

In the late 1990s... | fazliddin7777 | |

1,908,436 | What is Bitmain Antminer S21 XP? | Bitmain unveiled the Antminer S21 XP at one of the biggest events in the cryptocurrency industry. The... | 0 | 2024-07-02T05:53:46 | https://dev.to/lillywilson/what-is-bitmain-antminer-s21-xp-19a5 | cryptocurrency, bitcoin, bitmain, asic | Bitmain unveiled the **[Antminer S21 XP](https://asicmarketplace.com/blog/bitmain-antminer-s21-xp/)** at one of the biggest events in the cryptocurrency industry, the World Digital Mining Summit. At this event, they highlighted the innovative steps that boast not only the mi... | lillywilson |

1,908,435 | The atoi and strcat functions in C | Hi! I'm learning the C programming language, and as a resource I'm using the... | 0 | 2024-07-02T05:53:13 | https://dev.to/omem/la-funcion-atoi-y-strcat-en-c-1go4 | c, atoi, csaga, strcat | Hi! I'm learning the C programming language, and as a resource I'm using the book "The C Programming Language" by Kernighan and Ritchie. As I learn, I'll share everything I find interesting or challenging. All of these posts will be linked by the `#csaga` tag.

Ac... | omem |

1,908,433 | Cracking the Code Of Brand Strategy vs Creative Strategy | Understanding the distinction between Brand Strategy and Creative Strategy is a common query in... | 0 | 2024-07-02T05:52:08 | https://dev.to/tgtg/cracking-the-code-of-brand-strategy-vs-creative-strategy-2ka5 | creative, strategy, brand, branding | Understanding the distinction between Brand Strategy and Creative Strategy is a common query in business, often accompanied by complex responses don’t you think? Different people might give you different explanations, throwing in various marketing terms that might leave you scratching your head. Well, we’re here to mak... | tgtg |

1,908,432 | Building a Travel Checklist Generator using Lyzr SDK | In this blog post, we’ll explore how to build a Travel Checklist Generator using Streamlit, the Lyzr... | 0 | 2024-07-02T05:51:02 | https://dev.to/akshay007/building-a-travel-checklist-generator-using-lyzr-sdk-18e8 | ai, python, productivity, coding | In this blog post, we’ll explore how to build a **Travel Checklist Generator** using Streamlit, the Lyzr Automata SDK, and OpenAI’s GPT-4 Turbo. This application will provide users with a customized packing list based on their travel details.

**Why use Lyzr SDKs?**

With **Lyzr SDKs**, crafting your own **GenAI** app... | akshay007 |

1,908,431 | Shared Responsibility Model in Azure: A Comprehensive Guide | The shared responsibility model is a fundamental concept in cloud computing, outlining the division... | 0 | 2024-07-02T05:47:18 | https://dev.to/azizularif/shared-responsibility-model-in-azure-a-comprehensive-guide-3kba | The shared responsibility model is a fundamental concept in cloud computing, outlining the division of security and compliance responsibilities between a cloud service provider (CSP) and its customers. Understanding this model is crucial for effectively managing and securing your cloud resources. In this article, we wi... | azizularif | |

1,908,430 | Get Information Related to All Airports Terminal in 1 Second | Visit All airport terminal for comprehensive information on airports and terminals worldwide. Whether... | 0 | 2024-07-02T05:43:14 | https://dev.to/airportterminal/get-information-related-to-all-airports-terminal-in-1-second-cm5 | Visit All airport terminal for comprehensive information on airports and terminals worldwide. Whether you need details on amenities, transportation options, or terminal maps, we've got you covered. Our platform ensures you stay informed about check-in procedures, security guidelines, and more, making your travel experi... | airportterminal | |

1,908,429 | How to Change a Southwest Airlines Flight? | Southwest Airlines offers multiple ways for passengers to use their change flight date option if they... | 0 | 2024-07-02T05:41:12 | https://dev.to/flightsyo/how-to-change-a-southwest-airlines-flight-jma | airtravels, cheapflighttickets, southwestnamechange, policy | Southwest Airlines offers multiple ways for passengers to use their change flight date option if they are unable to take the originally planned flight. You can change your schedule both online and offline with the airline. You only need to decide which procedure is best for you and implement necessary adjustments, if r... | flightsyo |

1,908,428 | Farewell MongoDB: 5 reasons why you only need PostgreSQL | Discuss the reasons why you should consider PostgreSQL over MongoDB for your next project. ... | 0 | 2024-07-02T05:39:35 | https://blog.logto.io/postgresql-vs-mongodb/ | webdev, programming, opensource, identity | Discuss the reasons why you should consider PostgreSQL over MongoDB for your next project.

# Introduction

In the database world, MongoDB and PostgreSQL are both highly regarded choices. MongoDB, a popular NoSQL dat... | palomino |

1,908,427 | Leetcode Day 1: Two Sum Explained | The problem is as follows: Given an array of integers nums and an integer target, return indices of... | 0 | 2024-07-02T05:34:12 | https://dev.to/simona-cancian/leetcode-day-1-two-sum-45fp | leetcode, python, coding, codenewbie | The problem is as follows:

Given an array of integers `nums` and an integer `target`, _return indices of the two numbers such that they add up to `target`_.

You may assume that each input would have **_exactly_ one solution**, and you may not use the _same_ element twice.

You can return the answer in any order.
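
As a minimal sketch of the problem statement above (not necessarily the approach the original post goes on to explain), one common technique is a single pass with a dictionary that maps each value already seen to its index. The function name `two_sum` and the `seen` dictionary are illustrative choices, not names taken from the post.

```python
from typing import Dict, List


def two_sum(nums: List[int], target: int) -> List[int]:
    """Return indices of the two numbers in nums that add up to target."""
    seen: Dict[int, int] = {}  # value -> index of an element visited earlier
    for index, value in enumerate(nums):
        complement = target - value
        # If the complement was seen earlier, the pair is found.
        if complement in seen:
            return [seen[complement], index]
        # Record the value only after the check, so the same element
        # is never paired with itself.
        seen[value] = index
    # The problem guarantees one solution; this covers invalid input.
    return []
```

Each element is visited once, so the sketch runs in linear time and uses linear extra space for the dictionary.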

Exa... | simona-cancian |

1,908,423 | 🚀 Mastering Loop Control in C Programming: Leveraging break and continue 🌟 | Hey Dev Community! Are you ready to enhance your C programming skills and optimize your code? Today,... | 0 | 2024-07-02T05:34:02 | https://dev.to/moksh57/mastering-loop-control-in-c-programming-leveraging-break-and-continue-1116 | Hey Dev Community! Are you ready to enhance your C programming skills and optimize your code? Today, let's delve into the powerful world of loop control with break and continue statements.

**Understanding break and continue**

In C programming, break and continue are essential tools for managing loops effectively:

-

... | moksh57 | |

1,908,426 | Does Every Airline Have A Name Change Policy? | Looking to change your name on your scheduled ticket? Before initiating the name change process, you... | 0 | 2024-07-02T05:33:09 | https://dev.to/flightsyo/does-every-airline-have-a-name-change-policy-3d2e | airtravels, cheapflighttickets | Looking to change your name on your scheduled ticket? Before initiating the name change process, you must acquire information on the name change policy of your respective airline. You can make changes to your name effortlessly if you have a clear overview of the name change policy. Let’s discuss some of the name change p... | flightsyo |

1,908,425 | Rails have introduced new features like Hotwire and Async Query Loading. | Hotwire (HTML Over The Wire) is a new approach for building modern, dynamic web applications without... | 0 | 2024-07-02T05:31:59 | https://dev.to/m_hussain/rails-have-introduced-new-features-like-hotwire-and-async-query-loading-g58 |

**Hotwire** (HTML Over The Wire) is a new approach for building modern, dynamic web applications without writing much custom JavaScript. Hotwire consists of several components, primarily Turbo and Stimulus, which help developers create fast and interactive applications.

**Async Query Loading** allows you to load Acti... | m_hussain | |

1,908,422 | High Level System Design | High-Level System Design: Creating a system capable of supporting millions of users involves a... | 0 | 2024-07-02T05:28:03 | https://dev.to/zeeshanali0704/high-level-system-design-4b70 | systemdesignwithzeeshanali, systemdesign, javascript | High-Level System Design:

Creating a system capable of supporting millions of users involves a complex, iterative process that requires ongoing refinement and improvement. In this article, we will discuss all the key components of a system. By the end, you will have a foundational understanding of system design and t... | zeeshanali0704 |

1,908,421 | Get Udemy Courses for Free with Certificate | udemy courses for free,how to get paid udemy courses for free,download udemy courses for... | 0 | 2024-07-02T05:22:26 | https://dev.to/banmyaccount/get-udemy-courses-for-free-with-certificate-4nlg | udemy | {% youtube https://www.youtube.com/watch?v=C-neomEDbcM %}

udemy courses for free,how to get paid udemy courses for free,download udemy courses for free,udemy free courses,udemy free courses certificate,get udemy paid courses for free,get udemy courses for free,how to get udemy courses for free,free udemy courses,how... | banmyaccount |

1,908,419 | hello | A post by Fabrice NZ | 0 | 2024-07-02T05:17:52 | https://dev.to/fabrice_nz_d7bd159119d98a/hello-38l5 | fabrice_nz_d7bd159119d98a | ||

1,908,416 | The Productivity apps I use in 2024 | Cassidy's current "stack" of task-tracking, calendar, and note-taking apps | 0 | 2024-07-02T05:11:02 | https://cassidoo.co/post/producivity-apps-2024/ | productivity, applications, todo, opensource | ---

title: The Productivity apps I use in 2024

published: true

description: Cassidy's current "stack" of task-tracking, calendar, and note-taking apps

tags: productivity, applications, todo, oss

canonical_url: https://cassidoo.co/post/producivity-apps-2024/

---

I often get asked what my favorite tools are and how I us... | cassidoo |

1,908,415 | How to Find the Best Angular Web Development Services for Your Needs | When embarking on a web application development project, choosing the right development services can... | 0 | 2024-07-02T05:06:05 | https://dev.to/chicmicllp/how-to-find-the-best-angular-web-development-services-for-your-needs-3k18 | When embarking on a web application development project, choosing the right development services can be the key to success. Angular, a powerful and versatile framework, is ideal for building dynamic, single-page applications (SPAs). To help you navigate the options, here are some of the best [Angular web app developmen... | chicmicllp | |

1,908,414 | Install HomeAssistant on TVBox Coolme BB2 S912 | Requirements: Video guide to installing ARMbian on the TVBox:... | 0 | 2024-07-02T05:05:26 | https://dev.to/bachhuynh/install-homeassistant-on-tvbox-coolme-bb2-s912-2hd9 | Requirements:

- Video guide to installing ARMbian on the TVBox: https://www.youtube.com/watch?v=k4qzfOOPbYA&ab_channel=i12bretro

- Image: https://github.com/ophub/amlogic-s9xxx-armbian (I chose: https://github.com/ophub/amlogic-s9xxx-armbian/releases/download/Armbian_bullseye_save_2024.07/Armbian_24.8.0_amlogic_s912_bulls... | bachhuynh | |

1,908,412 | Demystifying Entitlement Management: A Deep Dive into OpenMeter | The world of APIs and cloud services thrives on controlled access and usage. OpenMeter emerges as a... | 0 | 2024-07-02T05:01:02 | https://dev.to/epakconsultant/demystifying-entitlement-management-a-deep-dive-into-openmeter-5coa | openmeter | The world of APIs and cloud services thrives on controlled access and usage. OpenMeter emerges as a powerful tool for entitlement management, empowering businesses to manage access to their resources effectively. This article delves into the core functionalities of OpenMeter, equipping you to understand how it can bene... | epakconsultant |

1,908,408 | My FreeCodeCamp Contributions | Contributed to Testing using Typescript and Playwright for the... | 0 | 2024-07-02T04:59:18 | https://dev.to/harshanand/my-freecodecamp-contributions-2674 | **Contributed to Testing using Typescript and Playwright for the FreeCodeCamp.org**

[https://github.com/freeCodeCamp/freeCodeCamp/pull/51977](https://github.com/freeCodeCamp/freeCodeCamp/pull/51977)

[https://github.com/freeCodeCamp/freeCodeCamp/pull/51947](https://github.com/freeCodeCamp/freeCodeCamp/pull/51947)

[http... | harshanand | |

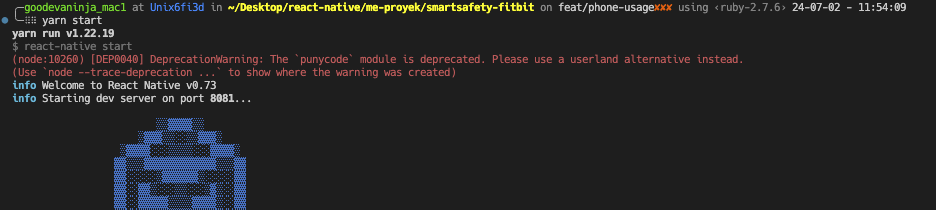

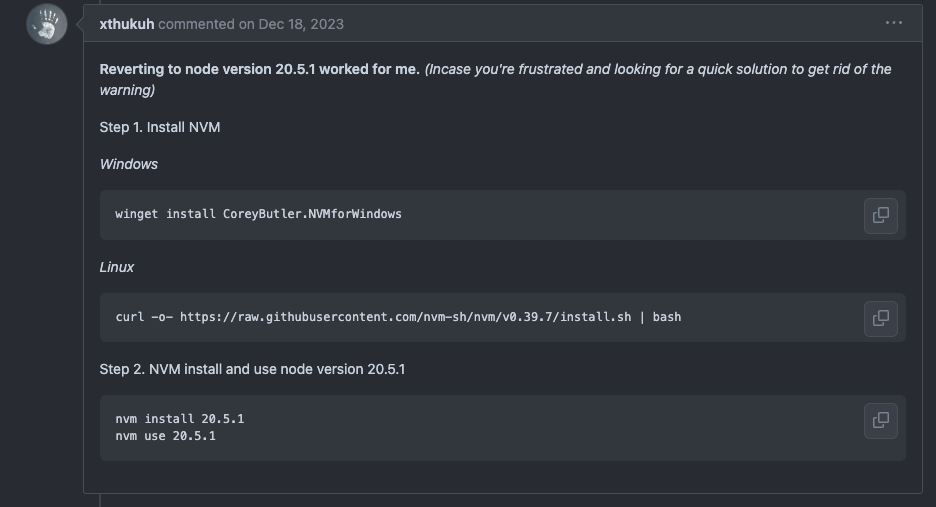

1,908,409 | (node:10260) [DEP0040] DeprecationWarning: The `punycode` module is deprecated | Solve with this state Source:... | 0 | 2024-07-02T04:58:33 | https://dev.to/aspsptyd/node10260-dep0040-deprecationwarning-the-punycode-module-is-deprecated-d11 |

Solve with this state

Source: https://github.com/yarnpkg/yarn/issues/9005#issuecomment-1861008960

Result

... | aspsptyd | |

1,908,407 | How to Create and Save data with NextJS Server Actions, Prisma ORM, and React Hook Forms | Tutorial | Hey everyone! I'm excited to share the latest video in my Code Snippet Sharing App series! In this... | 0 | 2024-07-02T04:57:44 | https://dev.to/gkhan205/how-to-create-and-save-data-with-nextjs-server-actions-prisma-orm-and-react-hook-forms-tutorial-9cg | webdev, javascript, beginners, nextjs | Hey everyone!

I'm excited to share the latest video in my Code Snippet Sharing App series! In this tutorial, we dive into creating and saving code snippets to the database using NextJS Server Actions, Prisma ORM, and React Hook Forms. Whether you're a seasoned developer or just starting out, this video has something f... | gkhan205 |

1,908,406 | SOLID Design Principles in Ruby | SOLID: principles that make a program more understandable, easier to modify, and easier to scale... | 0 | 2024-07-02T04:57:04 | https://dev.to/faxriddinmaxmadiyorov/solid-principles-in-ruby-49p7 | solid, ruby, oop | **SOLID is a set of principles that make a program more understandable, easier to modify, and easier to scale.**

- Single Responsibility Principle (SRP)

- Open/Closed Principle (OCP)

- Liskov Substitution Principle (LSP)

- Interface Segregation Principle (ISP)

- Dependency Inversion Principle (DIP)

**1. Single Respo... | faxriddinmaxmadiyorov |

1,908,405 | Unveiling the Dev Arsenal: Exploring TypeScript, React, Next.js, and Redux DevTools | The modern web development landscape thrives on powerful tools and frameworks. This article delves... | 0 | 2024-07-02T04:56:25 | https://dev.to/epakconsultant/unveiling-the-dev-arsenal-exploring-typescript-react-nextjs-and-redux-devtools-446g | typescript | The modern web development landscape thrives on powerful tools and frameworks. This article delves into four key players: TypeScript, React, Next.js, and Redux DevTools, equipping you to build robust and efficient web applications.

1. TypeScript: Supercharging JavaScript

TypeScript adds a layer of type safety on top ... | epakconsultant |

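1,908,404 | 健牌: A Complete Guide to Building a Healthy Life | In modern society, health has become one of the goals everyone pursues. However, because of factors such as work pressure, eating habits, and lifestyle, staying healthy is an ever greater challenge. Fortunately, by understanding and practicing a few key principles, we can significantly improve our health. This guide takes "... | 0 | 2024-07-02T04:53:07 | https://dev.to/johnvicky/jian-pai-da-zao-jian-kang-sheng-huo-de-wan-zheng-zhi-nan-21bo | 健牌, 健康指南, 健康生活, 健康習慣 | In modern society, health has become one of the goals everyone pursues. However, because of factors such as work pressure, eating habits, and lifestyle, staying healthy is an ever greater challenge. Fortunately, by understanding and practicing a few key principles, we can significantly improve our health. This guide takes " **[健牌](https://www.hksmoke.com/product/m3-健牌-(免稅煙)) **" as its core and offers a complete, practical strategy for healthy living, covering diet, exercise, mindset, and daily habits, to explain in full how to build your own "健牌" healthy life.

1. Balanced Diet

The first principle of 健牌 is to maintain balanced eating habits. People today often neglect the importance of diet because of a busy pace of life, which leads to nutritional imbalance and in turn affects health. A balanced diet includes getting enough protein, carbohydrates, fats, vitamins, and minerals. You can... | johnvicky |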

1,908,403 | Building Robust Backends: Mastering NestJS for Design and Development of Services and APIs | NestJS emerges as a powerful framework for crafting robust and scalable backend services and APIs.... | 0 | 2024-07-02T04:47:48 | https://dev.to/epakconsultant/building-robust-backends-mastering-nestjs-for-design-and-development-of-services-and-apis-38km | NestJS emerges as a powerful framework for crafting robust and scalable backend services and APIs. This article delves into the core concepts of NestJS, guiding you through the design and development process. By the end, you'll be equipped to build efficient and well-structured backend solutions.

What is NestJS?

Nes... | epakconsultant | |

1,908,402 | Unlock the Power of App Development with the Best Free Flutter App Builder | As an experienced app developer, I've witnessed the remarkable evolution of the app development... | 0 | 2024-07-02T04:43:45 | https://dev.to/apptagsolution/unlock-the-power-of-app-development-with-the-best-free-flutter-app-builder-3okc | free, flutter, app, builder | As an experienced app developer, I've witnessed the remarkable evolution of the app development landscape. One technology that has particularly captivated my attention is Flutter, a cross-platform framework developed by Google. Flutter's ability to create high-performance, visually stunning, and natively compiled appli... | apptagsolution |
