id (int64) | title (string) | description (string) | collection_id (int64) | published_timestamp (timestamp[s]) | canonical_url (string) | tag_list (string) | body_markdown (string) | user_username (string) |

|---|---|---|---|---|---|---|---|---|

1,908,143 | How I Build Projects Faster - My Stack and Strategies | Hello everyone, I'm Juan, and today I want to share with you the strategies and technologies that I... | 0 | 2024-07-01T20:28:45 | https://dev.to/juanemilio31323/how-i-build-projects-faster-my-stack-and-strategies-3hpg | softwaredevelopment, saas, entrepreneurship, webdev | Hello everyone, I'm Juan, and today I want to share with you the strategies and technologies that I use to develop projects as fast as I can in my free time. In my last post, I talked about how I try to apply the **[48-hour rule](https://medium.com/@theprof301/48hs-is-all-you-need-15083345c5d5)** and how that helped me... | juanemilio31323 |

1,908,142 | Playwright vs. Cypress: Comparing E2E Tools | This article was originally published on the Shipyard Blog Playwright and Cypress are the... | 0 | 2024-07-01T20:28:39 | https://shipyard.build/blog/playwright-vs-cypress/ | testing, playwright, devops, tdd | *<a href="https://shipyard.build/blog/playwright-vs-cypress/" target="_blank">This article was originally published on the Shipyard Blog</a>*

---

<a href="https://playwright.dev/" target="_blank">Playwright</a> and <a href="https://www.cypress.io/" target="_blank">Cypress</a> are the industry standard when it comes t... | shipyard |

1,908,141 | HNG: What I aim to Solve with Backend Development | I'm really excited to share that I'm beginning a new phase in my career as a backend developer. I'm... | 0 | 2024-07-01T20:27:47 | https://dev.to/thedanielokoye/hng-what-i-aim-to-solve-with-backend-development-3bl7 | I'm really excited to share that I'm beginning a new phase in my career as a backend developer. I'm Daniel, and while I am currently a DevOps engineer, I am diving into backend development not to change careers, but to increase my understanding of software and grow my skill set while at it. I’m about to start an incred... | thedanielokoye | |

1,908,140 | Web Design Trends for 2024: What’s Hot and What’s Not | As we move further into 2024, web design continues to evolve rapidly, driven by technological... | 0 | 2024-07-01T20:27:43 | https://dev.to/zinotrust/web-design-trends-for-2024-whats-hot-and-whats-not-2fi9 | As we move further into 2024, web design continues to evolve rapidly, driven by technological advancements and changing user preferences. Staying current with the latest trends is essential for creating engaging and effective websites. This article explores the hottest web design trends for 2024 and highlights those th... | zinotrust | |

1,908,108 | SQL Course: Many-to-many relationship and Nested Joins. | You have learned about one-to-one and one-to-many relationships and they are quite straightforward.... | 27,924 | 2024-07-01T20:24:21 | https://dev.to/emanuelgustafzon/sql-course-many-to-many-relationship-and-nested-joins-4c28 | sql | You have learned about one-to-one and one-to-many relationships, and they are quite straightforward. Many-to-many relationships work a bit differently because you need an extra table, a so-called join table.
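To make the join table idea concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table names (`users`, `posts`, `likes`), the column names, and the helper `likers_of` are assumptions chosen for illustration; they are not necessarily the schema this course uses.

```python
import sqlite3

# Hypothetical schema: all table and column names are illustrative.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE posts (id INTEGER PRIMARY KEY, title TEXT NOT NULL);
    -- Join table: one row per (user, post) pair, so a user can like
    -- many posts and a post can be liked by many users.
    CREATE TABLE likes (
        user_id INTEGER NOT NULL REFERENCES users(id),
        post_id INTEGER NOT NULL REFERENCES posts(id),
        PRIMARY KEY (user_id, post_id)
    );
    INSERT INTO users VALUES (1, 'Ann'), (2, 'Ben');
    INSERT INTO posts VALUES (1, 'Hello SQL'), (2, 'Joins');
    INSERT INTO likes VALUES (1, 1), (2, 1), (1, 2);
""")


def likers_of(post_title):
    """Return the names of users who liked the given post, sorted by name."""
    rows = conn.execute(
        """
        SELECT users.name
        FROM likes
        JOIN users ON users.id = likes.user_id
        JOIN posts ON posts.id = likes.post_id
        WHERE posts.title = ?
        ORDER BY users.name
        """,
        (post_title,),
    ).fetchall()
    return [name for (name,) in rows]


print(likers_of("Hello SQL"))
```

The composite primary key on `likes` is one possible design choice: it prevents the same user from liking the same post twice.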

In this example we will let users like posts in the database. To achieve this functionality we need to create a many-to-many re... | emanuelgustafzon |

1,907,877 | AWS All Builders Welcome Grant & re:Inforce Newbie | Earlier this year, I was excited to learn that I was one of ~fifty applicants selected to receive the... | 0 | 2024-07-01T20:23:22 | https://dev.to/ahoughro/aws-all-builders-welcome-grant-reinforce-newbie-32pp | security, awscommunity, cloudcomputing, aws | Earlier this year, I was excited to learn that I was one of ~fifty applicants selected to receive the inaugural AWS [All Builders Welcome Grant](https://reinvent.awsevents.com/all-builders-welcome/) to attend the 2024 [AWS re:Inforce Conference](https://reinforce.awsevents.com/) held in Philadelphia, PA in June of 2024... | ahoughro |

1,908,119 | Core CI/CD Concepts: A Comprehensive Overview | Core CI/CD Concepts: A Comprehensive Overview In the fast-paced world of software... | 0 | 2024-07-01T19:58:42 | https://dev.to/kshyam/core-cicd-concepts-a-comprehensive-overview-ma6 | [

](https://razorops.com/)

## Core CI/CD Concepts: A Comprehensive Overview

In the fast-paced world of software development, the ability to quickly and reliably deliver software is paramount. This need has led to the e... | kshyam | |

1,908,117 | The Most Comprehensive Guide to Read On Mobile Testing | Mobile Testing has become vital today as millions of mobile applications are available on the play... | 0 | 2024-07-01T19:57:45 | https://dev.to/morrismoses149/the-most-comprehensive-guide-to-read-on-mobile-testing-4lcf | mobiletesting, testgrid | Mobile Testing has become vital today as millions of mobile applications are available on the play store.

That number will keep growing as more people come online each year and spend more time in mobile applications.

People nowadays are very selective with which mobile app... | morrismoses149 |

1,908,115 | Spoofing | I am attempting to verify numbers that were spoofed to my home landline. I have 20 numbers. Anyone... | 0 | 2024-07-01T19:49:57 | https://dev.to/legaleagle/spoofing-elh | I am attempting to verify numbers that were spoofed to my home landline. I have 20 numbers. Anyone here have the talent to identify them? | legaleagle | |

1,907,860 | Creating Bash Scripts for User Management | Introduction A Bash script is a file containing a sequence of commands that are executed... | 0 | 2024-07-01T19:47:55 | https://dev.to/oluchi_oraekwe_b0bf2c5abc/creating-bash-scripts-for-user-management-47ce | linux, devops |

## Introduction

A Bash script is a file containing a sequence of commands that are executed on a Bash shell. It allows performing a series of actions or automating tasks. This article will examine how to use a Bash script to create users dynamically by reading a CSV file. A CSV (Comma Separated Values) file contains ... | oluchi_oraekwe_b0bf2c5abc |

1,908,074 | Segment Anything in a CT Scan with NVIDIA VISTA-3D | Author: Dan Gural (Machine Learning Engineer at Voxel51) The Future Of Analyzing Medical... | 0 | 2024-07-01T19:41:43 | https://voxel51.com/blog/segment-anything-in-a-ct-scan-with-nvidia-vista-3d/ | computervision, ai, datascience, machinelearning | _Author: [Dan Gural](https://www.linkedin.com/in/daniel-gural/) (Machine Learning Engineer at [Voxel51](https://voxel51.com/))_

## The Future Of Analyzing Medical Imagery Is Here

## No time to read the blog? No worries! Here’s a video of me covering what’s in this blog!

{% embed https://youtu.be/zi4AzEaQ5BA %}

Visu... | jguerrero-voxel51 |

1,908,081 | Learning VisionPro: Let's Build a Stock Market App | I joined Polygon.io a few months back and have been looking for a project that I can dive in to help... | 0 | 2024-07-01T19:31:16 | https://dev.to/quintonwall/learning-visionpro-lets-build-a-stock-market-app-2opk | swift, visionpro, api, devdiary | I joined [Polygon.io](https://polygon.io?utm_campaign=quintondevto) a few months back and have been looking for a project that I can dive in to help me learn the [platform’s APIs](https://polygon.io/docs/stocks/getting-started?utm_campaign=quintondevto) better. Then, when watching [this year’s WWDC](https://developer.a... | quintonwall |

1,908,113 | Regular dev pod day | This image was created by dollyyyy :) 😭🥺❤️🍦😂🥹🦙😍**** | 0 | 2024-07-01T19:26:23 | https://dev.to/yunhaozz/regular-dev-pod-day-dpi | This image was created by dollyyyy :) 😭🥺❤️🍦😂🥹🦙😍**** | yunhaozz | |

1,908,110 | Architect level: React State and Props | As an architect-level developer, your focus is on building scalable, maintainable, and... | 0 | 2024-07-01T19:19:53 | https://dev.to/david_zamoraballesteros_/architect-level-react-state-and-props-426f | react, webdev, javascript, programming | As an architect-level developer, your focus is on building scalable, maintainable, and high-performance applications. Understanding the core concepts of state and props in React, and leveraging them effectively, is crucial for achieving these goals. This article delves into advanced uses and best practices for props an... | david_zamoraballesteros_ |

1,908,109 | Add Filter choices to resources | I worked on the project Add Filter Choices to Resources as my first task, focusing on implementing... | 0 | 2024-07-01T19:17:38 | https://dev.to/ccokeke/add-filter-choices-to-resources-4g3a | I worked on the project Add Filter Choices to Resources as my first task, focusing on implementing filterable actions for both users and administrators. This enhancement ensures a user-friendly interface for managing Share Networks by utilizing filters and workflows.

The ShareNetworksView class is central to this func... | ccokeke | |

1,907,807 | Subsets and Backtracking, Coding Interview Pattern | Subsets The Subsets technique is a fundamental approach used to solve problems involving... | 0 | 2024-07-01T19:14:35 | https://dev.to/harshm03/subsets-and-backtracking-coding-interview-pattern-11of | coding, algorithms, dsa, interview | ## Subsets

The Subsets technique is a fundamental approach used to solve problems involving the generation and manipulation of subsets from a given set of elements. This technique is particularly useful in scenarios where we need to explore all possible combinations of a set, such as in problems related to combinatori... | harshm03 |

1,907,989 | How to deploy React App to GitHub Pages - Caveman Style 🌋 🔥🦴 | Like a good caveman you have to do everything by hand and with as little automation as possible. ... | 0 | 2024-07-01T19:10:10 | https://dev.to/uxxxjp/how-to-deploy-react-app-to-github-pages-caveman-style-2alh | webdev, github, react, staticwebapps | Like a good caveman you have to do everything by hand and with as little automation as possible.

## Create the New Repo

Create the repo on your GitHub Account

Create a public repo named `<user-name>.github.io`

Naming things is probably the most common thing a developer does.

Naming your API properly is essential to provide clarity and facilitate its usage. Let’s see some best practices for naming REST APIs.

## Tips

### ... | thiagobfim | |

1,908,097 | SQL Course: One-to-many Relationships and Left Joins | In the last chapter we learned about one-to-one fields and inner joins. In this chapter we learn... | 27,924 | 2024-07-01T19:05:57 | https://dev.to/emanuelgustafzon/sql-course-one-to-many-relationships-and-left-joins-pgm | sql | In the last chapter we learned about one-to-one fields and inner joins. In this chapter we learn about one-to-many relationships and left joins. If you followed the last chapter this should be easy.
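As a quick preview of why a left join matters for one-to-many data, here is a minimal sketch using Python's built-in `sqlite3` module. The schema, the sample rows, and the helper `post_counts` are assumptions made for illustration, not necessarily what this chapter builds.

```python
import sqlite3

# Hypothetical schema: each post belongs to exactly one user,
# and a user can have many posts (or none).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE posts (
        id INTEGER PRIMARY KEY,
        title TEXT NOT NULL,
        user_id INTEGER NOT NULL REFERENCES users(id)
    );
    INSERT INTO users VALUES (1, 'Ann'), (2, 'Ben');
    INSERT INTO posts VALUES (1, 'First', 1), (2, 'Second', 1);
""")


def post_counts():
    """Return (user name, number of posts) for every user, including users with no posts."""
    return conn.execute(
        """
        SELECT users.name, COUNT(posts.id)
        FROM users
        LEFT JOIN posts ON posts.user_id = users.id
        GROUP BY users.id
        ORDER BY users.name
        """
    ).fetchall()


print(post_counts())
```

With an inner join, a user without posts would drop out of the result; the left join keeps every row from `users` and fills the missing post columns with `NULL`, which `COUNT(posts.id)` then ignores.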

We will create a `posts table` and a `post is related to one user` and a `user can have many posts`. That is why it is ... | emanuelgustafzon |

1,908,106 | Character Encoding and Rendering | Introduction to Character Encoding Character encoding is a method used to convert text... | 0 | 2024-07-01T19:05:40 | https://dev.to/hichem-mg/character-encoding-and-rendering-4j7f | webdev, learning, codenewbie, unicode | ## Introduction to Character Encoding

Character encoding is a method used to convert text data into a format that computers can efficiently process and display. It maps characters to specific numeric values that are stored in computer memory, enabling the representation of diverse languages, symbols, and characters in ... | hichem-mg |

1,908,061 | Figma Config 2024 | This past week I attended the 2024 Figma Config conference. The conference was held in person in San... | 0 | 2024-07-01T19:05:37 | https://jamesiv.es/blog/frontend/design/2024/06/29/figma-config-2024 | figma, webdev, conference | This past week I attended the [2024 Figma Config conference](https://config.figma.com). The conference was held in person in San Francisco, California, at the Moscone Convention Center and was a great opportunity to learn more about [Figma](https://figma.com) and how other teams in the industry manage their [design sys... | jamesives |

1,908,105 | Lead level: React State and Props | As a lead developer, your responsibility extends beyond just writing code; you need to ensure that... | 0 | 2024-07-01T19:04:11 | https://dev.to/david_zamoraballesteros_/lead-level-react-state-and-props-2ad1 | react, webdev, javascript, programming | As a lead developer, your responsibility extends beyond just writing code; you need to ensure that your team understands and effectively uses React's core concepts, such as state and props. This article provides an in-depth exploration of these concepts, focusing on best practices, advanced techniques, and architectura... | david_zamoraballesteros_ |

1,908,104 | Senior level: React State and Props | As a senior developer, understanding and leveraging the core concepts of React, such as state and... | 0 | 2024-07-01T19:03:26 | https://dev.to/david_zamoraballesteros_/senior-level-react-state-and-props-3710 | react, webdev, javascript, programming | As a senior developer, understanding and leveraging the core concepts of React, such as state and props, is crucial for designing scalable, maintainable, and efficient applications. This article delves into the advanced use of state and props, including type-checking with PropTypes, managing state in both functional an... | david_zamoraballesteros_ |

1,908,103 | Mid level: React State and Props | Understanding state and props in React is fundamental for building dynamic and maintainable... | 0 | 2024-07-01T19:02:31 | https://dev.to/david_zamoraballesteros_/mid-level-react-state-and-props-1k86 | react, webdev, javascript, programming | Understanding state and props in React is fundamental for building dynamic and maintainable applications. As a mid-level developer, you should not only grasp these concepts but also be adept at implementing best practices and advanced techniques. This article delves into the core concepts of state and props, including ... | david_zamoraballesteros_ |

1,908,100 | Junior level: React State and Props | Understanding state and props in React is essential for building dynamic and interactive... | 0 | 2024-07-01T19:00:51 | https://dev.to/david_zamoraballesteros_/junior-level-react-state-and-props-4b3a | react, webdev, javascript, programming | Understanding state and props in React is essential for building dynamic and interactive applications. This guide will introduce you to these concepts, explain how to use them, and highlight the differences between them.

## Props

### What Are Props?

Props, short for properties, are read-only attributes used to pass ... | david_zamoraballesteros_ |

1,908,080 | First Post | I just wrote a script for a terminal-based blackjack game. It is not perfect, believe me, but I have... | 0 | 2024-07-01T18:41:21 | https://dev.to/joosedev/first-post-4e99 | python, blackjack | I just wrote a script for a terminal-based blackjack game. It is not perfect, believe me, but I have the functionality for a full game of blackjack.

import random

class Player:
    def __init__(self, name, balance):
        self.name = name
        self.balance = balance
        self.hand = []

    def place_bet(sel... | joosedev |

1,908,098 | Intern level: React State and Props | Introduction to State and Props In React, state and props are essential concepts that... | 0 | 2024-07-01T18:59:48 | https://dev.to/david_zamoraballesteros_/intern-level-react-state-and-props-4hch | react, webdev, javascript, programming | ## Introduction to State and Props

In React, **state** and **props** are essential concepts that allow you to manage data and make your applications dynamic and interactive. Understanding how to use state and props effectively is crucial for building robust React applications.

## Props

### What Are Props?

Props, sh... | david_zamoraballesteros_ |

1,908,096 | Architect level: Core Concepts of React | As an architect-level developer, you are responsible for designing scalable, maintainable, and... | 0 | 2024-07-01T18:58:36 | https://dev.to/david_zamoraballesteros_/architect-level-core-concepts-of-react-5973 | react, webdev, javascript, programming |

As an architect-level developer, you are responsible for designing scalable, maintainable, and high-performance applications. Understanding the core concepts of React is crucial for creating robust architectures. This article delves into the fundamental concepts of React, focusing on components, JSX, and their advanc... | david_zamoraballesteros_ |

1,908,094 | API Gateways | The What API Gateways to extract bunch of code that would otherwise be inside your servers... | 0 | 2024-07-01T18:52:28 | https://dev.to/abhishek_konthalapalli_38/api-gateways-2dcl | networking, webdev |

## The What

API gateways extract a bunch of code that would otherwise live inside your servers and move it to a separate server.

## The Why / Advantages

Authorization: User authentication can be done before sending the request to your microservices.

Transformation & Validation : Client would have the libe... | abhishek_konthalapalli_38 |

1,908,092 | A Quick Guide to Creating Laravel Factories and Seeders | A Quick Guide to Creating Laravel Factories and Seeders | 0 | 2024-07-01T18:50:02 | https://dev.to/bn_geek/a-quick-guide-to-creating-laravel-factories-and-seeders-3o09 | php, laravel | ---

title: A Quick Guide to Creating Laravel Factories and Seeders

published: true

description: A Quick Guide to Creating Laravel Factories and Seeders

tags: PHP, Laravel

# cover_image: https://images.pexels.com/photos/21711160/pexels-photo-21711160/free-photo-of-drone-shot-of-a-tractor-with-a-seeder-on-a-cropland.jpeg... | bn_geek |

1,908,065 | User Management Automation : Bash Script Guide | Trying to manage user accounts in a Linux environment can be a stressful, time-consuming and... | 0 | 2024-07-01T18:46:28 | https://dev.to/augusthottie/user-management-automation-bash-script-guide-14pl | devops, linux, sysops, tutorial | Trying to manage user accounts in a Linux environment can be a stressful, time-consuming and error-prone process, especially in a large organization with many users. Automating this process not only makes things easy, efficient and time-saving, but also ensures consistency and accuracy. In this article, we'll look at a... | augusthottie |

1,908,091 | Lead level: Core Concepts of React | As a lead developer, your role extends beyond writing code. You need to ensure that your team... | 0 | 2024-07-01T18:46:08 | https://dev.to/david_zamoraballesteros_/lead-level-core-concepts-of-react-f9p | react, webdev, javascript, programming | As a lead developer, your role extends beyond writing code. You need to ensure that your team understands and utilizes the core concepts of React efficiently to build scalable, maintainable, and high-performance applications. This article delves into these fundamental concepts with an emphasis on best practices, advanc... | david_zamoraballesteros_ |

1,906,120 | Security in Requirements phase | Building requirements is one of the first steps in the SDLC, where we define the goals and objectives... | 27,930 | 2024-07-01T18:46:07 | https://dev.to/owasp/security-in-requirements-phase-33ad | cybersecurity, security, requirements, sdlc | Building requirements is one of the first steps in the SDLC, where we define the goals and objectives of our future application. Usually, at this phase, we collect relevant stakeholders and start discussing their needs and expectations. We talk to people who will use the application, those who will manage it, and anyon... | exploitorg |

1,908,042 | Intro to Application Security | In the face of increasing cyberattacks, application security is becoming critical, requiring... | 27,930 | 2024-07-01T18:45:43 | https://dev.to/owasp/intro-to-application-security-3cj3 | cybersecurity, learning, security, softwaredevelopment | In the face of increasing cyberattacks, application security is becoming critical, requiring developers to integrate robust measures and best practices to build secure applications.

But what exactly does the term "secure application" mean?

Let's take a brief look at some notable security incidents in history:

#### T-M... | exploitorg |

1,908,089 | Senior level: Core Concepts of React | Components What Are Components? Components are the fundamental units of a React... | 0 | 2024-07-01T18:45:15 | https://dev.to/david_zamoraballesteros_/senior-level-core-concepts-of-react-3e75 | react, webdev, javascript, programming | ## Components

### What Are Components?

Components are the fundamental units of a React application. They enable the building of complex UIs from small, isolated pieces of code. Each component encapsulates its own structure, logic, and styling, making it reusable and maintainable. Components can be compared to the mic... | david_zamoraballesteros_ |

1,908,088 | A beginner's guide to the Codellama-70b-Python model by Meta on Replicate | codellama-70b-python | 0 | 2024-07-01T18:44:40 | https://aimodels.fyi/models/replicate/codellama-70b-python-meta | coding, ai, beginners, programming | *This is a simplified guide to an AI model called [Codellama-70b-Python](https://aimodels.fyi/models/replicate/codellama-70b-python-meta) maintained by [Meta](https://aimodels.fyi/creators/replicate/meta). If you like these kinds of guides, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack... | mikeyoung44 |

1,908,087 | Mobile Development Platforms and software architecture pattern in mobile development | Mobile app development has become an integral part of the tech industry, with millions of apps... | 0 | 2024-07-01T18:44:37 | https://dev.to/webking/mobile-development-platforms-and-software-architecture-pattern-in-mobile-development-fo4 | platforms, mobile, patterns, internship | Mobile app development has become an integral part of the tech industry, with millions of apps available for various purposes. For anyone looking to dive into mobile development, understanding the different platforms and architecture patterns is crucial. In this article, I’ll discuss popular mobile development platform... | webking |

1,908,086 | Mid level: Core Concepts of React | Components What Are Components? Components are the fundamental building blocks... | 0 | 2024-07-01T18:44:29 | https://dev.to/david_zamoraballesteros_/mid-level-core-concepts-of-react-3ki | react, webdev, javascript, programming | ## Components

### What Are Components?

Components are the fundamental building blocks of React applications. They allow you to split the UI into independent, reusable pieces that can be managed separately. A component in React is essentially a JavaScript function or class that optionally accepts inputs (known as "pro... | david_zamoraballesteros_ |

1,908,084 | A beginner's guide to the Clip-Vit-Large-Patch14 model by Cjwbw on Replicate | clip-vit-large-patch14 | 0 | 2024-07-01T18:44:06 | https://aimodels.fyi/models/replicate/clip-vit-large-patch14-cjwbw | coding, ai, beginners, programming | *This is a simplified guide to an AI model called [Clip-Vit-Large-Patch14](https://aimodels.fyi/models/replicate/clip-vit-large-patch14-cjwbw) maintained by [Cjwbw](https://aimodels.fyi/creators/replicate/cjwbw). If you like these kinds of guides, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.s... | mikeyoung44 |

1,908,083 | Junior level: Core Concepts of React | Components What Are Components? Components are the building blocks of any React... | 0 | 2024-07-01T18:43:51 | https://dev.to/david_zamoraballesteros_/junior-level-core-concepts-of-react-2l5e | react, webdev, javascript, programming | ## Components

### What Are Components?

Components are the building blocks of any React application. Think of components as small, reusable pieces of code that define how a section of the user interface (UI) should look and behave. Each component can manage its own state and props, making it easier to build and mainta... | david_zamoraballesteros_ |

1,908,082 | Intern level: Core Concepts of React | Components What Are Components? Components are the building blocks of any React... | 0 | 2024-07-01T18:43:06 | https://dev.to/david_zamoraballesteros_/intern-level-core-concepts-of-react-enk | react, webdev, javascript, programming | ## Components

### What Are Components?

Components are the building blocks of any React application. They let you split the UI into independent, reusable pieces, and think about each piece in isolation. A component in React can be thought of as a JavaScript function or class that optionally accepts inputs (known as "p... | david_zamoraballesteros_ |

1,908,079 | Detail Differences Between AngularJs And VueJs. | AngularJS and Vue.js are both popular JavaScript frameworks/libraries used for building web... | 0 | 2024-07-01T18:41:03 | https://dev.to/solenn_ebangha_9970e15990/detail-differences-between-angularjs-and-vuejs-525e |

AngularJS and Vue.js are both popular JavaScript frameworks/libraries used for building web applications, but they have some significant differences in terms of architecture, learning curve, performance, and community support. Here's a detailed comparison:

AngularJS (Angular 1.x):

Architecture:

MVVM Architecture: An... | solenn_ebangha_9970e15990 | |

1,908,078 | Architect level: Setting Up the React Environment | As an architect-level developer, setting up the React environment involves strategic decisions that... | 0 | 2024-07-01T18:34:32 | https://dev.to/david_zamoraballesteros_/architect-level-setting-up-the-react-environment-304 | react, webdev, javascript, programming | As an architect-level developer, setting up the React environment involves strategic decisions that impact the scalability, maintainability, and performance of applications. This guide will cover advanced practices for configuring the development environment, providing flexibility and efficiency.

## Development Enviro... | david_zamoraballesteros_ |

1,908,077 | Building a Training Schedule Generator using Lyzr SDK | In the fast-paced world of athletic training, having a personalized training plan can make a... | 0 | 2024-07-01T18:34:12 | https://dev.to/akshay007/building-a-training-schedule-generator-using-lyzr-sdk-1432 | ai, streamlit, python, github | In the fast-paced world of **athletic training**, having a personalized training plan can make a significant difference in an athlete’s performance. Leveraging advanced AI technologies, we can now create **tailored training schedules** that cater to individual needs. In this blog post, we explore how to develop a Train... | akshay007 |

1,908,076 | Breaking down pointers (in C++) | This post was originally posted on my Hashnode blog. I recently began learning C++ (or CPP), a... | 0 | 2024-07-01T18:33:44 | https://shafspecs.hashnode.dev/breaking-down-pointers | cpp, beginners, programming, computerscience | > This post was originally posted on [my Hashnode blog](https://shafspecs.hashnode.dev/breaking-down-pointers).

I recently began learning C++ (or CPP), a powerful language built on C. C++ is a language that exposes a lot more than conventional languages like JS, Python and Java. Having worked with weird languages (lik... | shafspecs |

1,908,075 | Lead level: Setting Up the React Environment | As a lead developer, ensuring that your team’s development environment is efficient, scalable, and... | 0 | 2024-07-01T18:33:21 | https://dev.to/david_zamoraballesteros_/lead-level-setting-up-the-react-environment-3ona | react, webdev, javascript, programming | As a lead developer, ensuring that your team’s development environment is efficient, scalable, and maintainable is critical. This guide covers advanced practices for setting up a React development environment, emphasizing flexibility and optimization.

## Development Environment Setup

### Installing Node.js and npm

N... | david_zamoraballesteros_ |

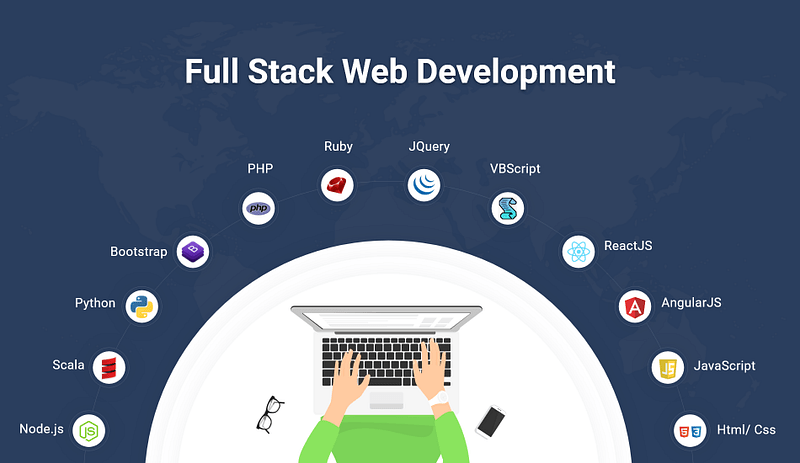

1,908,059 | The Future of Full Stack Development: Is It Still Worth Learning in 2024? | In today's rapidly evolving tech landscape, many aspiring developers question the relevance of... | 0 | 2024-07-01T18:32:46 | https://dev.to/helloworldttj/the-future-of-full-stack-development-is-it-still-worth-learning-in-2024-1bf4 | javascript, webdev, frontend, backend |

In today's rapidly evolving tech landscape, many aspiring developers question the relevance of full-stack development. This blog post delves into the current state of full-stack development and explores its prospects... | helloworldttj |

1,908,073 | Automating Git Commands: Streamline Your Workflow with Shell Scripts and Aliases | As developers, we frequently use Git for version control, and a common task is pushing our latest... | 0 | 2024-07-01T18:30:27 | https://dev.to/gulshank721/automating-git-commands-streamline-your-workflow-with-shell-scripts-and-aliases-al2 | git, automation, webdev, github | As developers, we frequently use Git for version control, and a common task is pushing our latest code changes to the repository. This typically involves running three commands in sequence: `git add .`, `git commit -m "message"`, and `git push origin main`. Repeating these commands can be tedious, especiall... | gulshank721 |

1,908,069 | Senior level: Setting Up the React Environment | Development Environment Setup As a senior developer, it's crucial to have a... | 0 | 2024-07-01T18:29:09 | https://dev.to/david_zamoraballesteros_/senior-level-setting-up-the-react-environment-2e4h | react, webdev, javascript, programming | ## Development Environment Setup

As a senior developer, it's crucial to have a well-configured development environment to streamline your workflow and maximize productivity. This section covers the essentials of setting up Node.js, npm, and a code editor like VS Code.

### Installing Node.js and npm

Node.js and npm a... | david_zamoraballesteros_ |

1,908,068 | Distinction With(out) a difference | I'm relearning Java. It's one of the first programming languages I studied in school and it is... | 0 | 2024-07-01T18:28:21 | https://dev.to/caseyeee/distinction-without-a-difference-3n62 | java, beginners | I'm relearning Java. It's one of the first programming languages I studied in school and it is hitting differently this time around.

For instance, I never mastered when to use public vs. private, the purpose of (String[] args) or the logic behind choosing a data type. I memorized patterns and tinkered with things lik... | caseyeee |

1,908,067 | Why Galvalume Gutters Are the Best Choice for Homes in Litchfield, CT | When selecting gutters for your home in Litchfield, CT, Galvalume gutters stand out as an exceptional... | 0 | 2024-07-01T18:27:57 | https://dev.to/allk12kg/why-galvalume-gutters-are-the-best-choice-for-homes-in-litchfield-ct-1fgh | When selecting gutters for your home in Litchfield, CT, Galvalume gutters stand out as an exceptional choice. Known for their durability, cost-effectiveness, and aesthetic appeal, these gutters are designed to handle the diverse weather conditions typical of the area. This article will explore the benefits of Galvalume... | allk12kg | |

1,908,066 | Mid level: Setting Up the React Environment | Development Environment Setup Installing Node.js and npm As a mid-level... | 0 | 2024-07-01T18:27:52 | https://dev.to/david_zamoraballesteros_/mid-level-setting-up-the-react-environment-ibl | react, webdev, javascript, programming |

## Development Environment Setup

### Installing Node.js and npm

As a mid-level developer, you might already be familiar with the basics of Node.js and npm. However, ensuring that you have the latest stable versions and understanding their roles in React development is crucial.

#### Steps to Install Node.js and npm... | david_zamoraballesteros_ |

1,908,063 | Junior level: Setting Up the React Environment | Development Environment Setup Installing Node.js and npm To start developing... | 0 | 2024-07-01T18:26:23 | https://dev.to/david_zamoraballesteros_/junior-level-setting-up-the-react-environment-1gan | react, webdev, javascript, programming | ## Development Environment Setup

### Installing Node.js and npm

To start developing with React, you need to have Node.js and npm (Node Package Manager) installed. Node.js allows you to run JavaScript on your local machine, and npm helps you manage and install JavaScript packages.
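
A small script that Node.js runs locally shows both ideas in practice. The sketch below is illustrative only: the file name `check-node.js`, the helper names `parseMajor` and `isSupported`, and the minimum major version of 18 are assumptions made for this example, not requirements stated in this guide.

```javascript
// check-node.js (hypothetical file name); run with: node check-node.js

// Assumed minimum major version for this sketch; adjust to your project's needs.
const MIN_MAJOR = 18;

// Extract the major version number from a string such as "v20.11.1".
function parseMajor(version) {
  const match = /^v?(\d+)\./.exec(version);
  return match ? Number(match[1]) : NaN;
}

// Report whether a version string meets the assumed minimum.
// An unparsable string yields NaN, which compares as not supported.
function isSupported(version, minMajor = MIN_MAJOR) {
  return parseMajor(version) >= minMajor;
}

// process.version is the version of the Node.js runtime executing this script.
console.log(
  `Node.js ${process.version} meets minimum ${MIN_MAJOR}: ${isSupported(process.version)}`
);
```

Running `node -v` and `npm -v` in a terminal gives the same version information without a script; the script form is mainly useful when you want a project to check its own environment.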

#### Steps to Install Node.js and np... | david_zamoraballesteros_ |

1,908,064 | Enclave Games Monthly Report: June 2024 | During June the countdown to js13kGames 2024 started, new Gamedev.js Weekly newsletter website was... | 0 | 2024-07-01T18:29:50 | https://enclavegames.com/blog/monthly-report-june-2024/ | gamedev, js13k, enclavegames, monthlyreport | ---

title: Enclave Games Monthly Report: June 2024

published: true

date: 2024-07-01 18:26:17 UTC

tags: gamedev,js13k,enclavegames,monthlyreport

canonical_url: https://enclavegames.com/blog/monthly-report-june-2024/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/uzgoxuw0ut7mvnpz2dzm.png

---

Durin... | end3r |

1,908,062 | Intern level: Setting Up the React Environment | Development Environment Setup Installing Node.js and npm Before you can start... | 0 | 2024-07-01T18:24:40 | https://dev.to/david_zamoraballesteros_/intern-level-setting-up-the-react-environment-120o | react, webdev, javascript, programming | ## Development Environment Setup

### Installing Node.js and npm

Before you can start working with React, you need to have Node.js and npm (Node Package Manager) installed on your computer. Node.js allows you to run JavaScript on the server side, while npm helps you manage and install JavaScript packages.

#### Steps ... | david_zamoraballesteros_ |

1,908,060 | Building a Serverless Web Scraper with a ~little~ lot of help from Amazon Q | I moved to Seattle at the end of 2021 on an L1b visa, and need to keep an eye on the priority dates for my Green Card application, so I decided to build an app that will pull the historic data for me and graph it. | 27,940 | 2024-07-01T18:23:00 | https://community.aws/content/2hsUp8ZV7UoQpVCApnaTOBljCai | amazonqdeveloper, terraform, lambda, dynamodb |

---

title: "Building a Serverless Web Scraper with a ~little~ lot of help from Amazon Q"

description: "I moved to Seattle at the end of 2021 on an L1b visa, and need to keep an eye on the priority dates for my Green Card application, so I decided to build an app that will pull the historic data for me and graph it."

t... | cobusbernard |

1,908,050 | App building | colorful pictures 4 colors picture with the following properties A black wide thick ring Golden... | 0 | 2024-07-01T18:19:47 | https://dev.to/aboladale01/app-building-30od | webdev, python, javascript, programming | **colorful pictures**

_A four-color picture with the following properties_

1. A black wide thick ring

2. Golden shiny backgrounds

3. Flying green feathers eagle

4. Silver shiny crown

| aboladale01 |

1,908,049 | The Future of Entertainment: Unveiling the New Streaming IPTV | In the ever-evolving landscape of digital entertainment, IPTV (Internet Protocol Television) has... | 0 | 2024-07-01T18:19:01 | https://dev.to/furycodz/the-future-of-entertainment-unveiling-the-new-streaming-iptv-1n0d | In the ever-evolving landscape of digital entertainment, IPTV (Internet Protocol Television) has emerged as a revolutionary force, transforming how we consume content. The latest advancements in streaming IPTV are set to redefine our viewing experiences, offering unparalleled convenience, personalization, and variety. ... | furycodz | |

1,908,048 | Why Organizations Should Opt for Independent Software Testing | Software applications have become indispensable to business success in today’s digital world.... | 0 | 2024-07-01T18:18:24 | https://dev.to/testree/why-organizations-should-opt-for-independent-software-testing-39i6 | testing, software, performance, programming | Software applications have become indispensable to business success in today’s digital world. However, delivering high-quality software that performs reliably can be complex and challenging. Software testing has emerged as a crucial practice to ensure the quality, reliability, and effectiveness of software applications... | testree |

1,908,047 | Tailwind CSS and Bootstrap: A Comparison of Modern CSS Frameworks. | Introduction Hello, Fellow Developers Good day, this is Goodness David Ireogbu. I am currently... | 0 | 2024-07-01T18:17:44 | https://dev.to/sheisgoodness/tailwind-css-and-bootstrap-a-comparison-of-modern-css-frameworks-26ca | webdev, frontend, tailwindcss, bootstrap | **Introduction**

_Hello, fellow developers. Good day, this is Goodness David Ireogbu. I am currently enrolled in the HNG program and am particularly interested in front-end development. In this post, I'm going to compare two well-known CSS frameworks: Tailwind CSS and Bootstrap. They have multiple advantages as well a... | sheisgoodness |

1,908,045 | Comparing Alpine.js and Svelte: A Niche Frontend Technology Analysis | In the rapidly changing landscape of frontend development, choosing the right technology can... | 0 | 2024-07-01T18:16:54 | https://dev.to/nwoko_gabriella_46c73a70f/comparing-alpinejs-and-svelte-a-niche-frontend-technology-analysis-28m3 | In the rapidly changing landscape of frontend development, choosing the right technology can significantly impact the success of a project. While popular frameworks like React and Angular often dominate the conversation, there are several niche technologies that offer unique advantages. In this article, we’ll be lo... | nwoko_gabriella_46c73a70f | |

1,899,531 | Top 15 Linux Commands Every Beginner Should Know | Why is it important to learn Linux commands? Learning Linux commands is essential for... | 0 | 2024-07-01T18:16:48 | https://dev.to/mennahaggag/top-15-linux-commands-every-beginner-should-know-1mco | linux, terminal, ubuntu, shell | ## Why is it important to learn Linux commands?

Learning Linux commands is essential for anyone who wants to use the terminal effectively. If you are a beginner interested in fields where Linux is important, this article is perfect for you. Mastering these commands will make you more efficient and give you a deeper un... | mennahaggag |

1,908,044 | How to send email with Go and MailTrap | Sending emails is a common requirement in many applications. Whether it's for account verification,... | 0 | 2024-07-01T18:15:09 | https://dev.to/gbubemi22/how-to-send-email-with-go-and-mailtrap-3o6i | go | Sending emails is a common requirement in many applications. Whether it's for account verification, password reset, or notifications, integrating email functionality can enhance the user experience. In this post, we'll walk through the steps to send emails using Go, leveraging the `gomail` package.

Prerequisites

Ensur... | gbubemi22 |

1,908,043 | Overcoming a Challenging Backend Problem: A Journey of Learning | As a growing software developer, I constantly find myself navigating through complex problems and... | 0 | 2024-07-01T18:14:26 | https://dev.to/easygtheprogrammer/overcoming-a-challenging-backend-problem-a-journey-of-learning-ddl | beginners, database, learning, backenddevelopment | As a growing software developer, I constantly find myself navigating through complex problems and devising innovative solutions. Recently, I encountered a particularly challenging backend issue that tested my skills and patience. This experience not only honed my technical abilities but also reinforced my passion for c... | easygtheprogrammer |

1,908,041 | Migrating from .NET Framework to .NET 8: | Why You Should Migrate Migrating from the .NET Framework to .NET 8 is essential for... | 0 | 2024-07-01T18:09:06 | https://dev.to/jwtiller_c47bdfa134adf302/migrating-from-net-framework-to-net-8-34d5 | dotnet, dotnetframework, dotnetcore | ## Why You Should Migrate

Migrating from the .NET Framework to .NET 8 is essential for modernizing your applications. Here’s why:

### Performance

.NET 8 offers significant performance improvements, including faster runtime execution and optimized libraries, leading to more responsive applications.

### Security

.NET ... | jwtiller_c47bdfa134adf302 |

1,907,628 | Quick guide to setting up a shortcut/alias in Windows | This is a quick 30-second guide to setting up an alias in Windows. All this is applicable for... | 0 | 2024-07-01T18:06:54 | https://dev.to/minhaz1217/quick-guide-to-setting-up-an-shortcutalias-in-windows-g49 | productivity, automation, windows, powershell | This is a quick 30-second guide to setting up an alias in Windows. All this is applicable for Windows.

## Motivation

The main motivation behind this is that I wanted to simplify the ping command, so that I can type a single command and have it execute `ping www.google.com -t`, which I use to check if my internet... | minhaz1217 |

1,908,036 | Automate REST API Management with Terraform: AWS API Gateway Proxy Integration and Lambda | In my previous post, I talked about how to simplify REST API management with AWS API Gateway Proxy... | 0 | 2024-07-01T18:06:24 | https://dev.to/sepiyush/automate-rest-api-management-with-terraform-aws-api-gateway-proxy-integration-and-lambda-5dh | terraform, aws, lambda, apigateway | In my previous post, I talked about how to simplify REST API management with [AWS API Gateway Proxy Integration and Lambda](https://dev.to/sepiyush/simplify-rest-api-management-with-aws-api-gateway-proxy-integration-and-lambda-1j5j) using the AWS Console. In this blog, I will discuss how to achieve the same setup using... | sepiyush |

1,906,059 | Is your fine-tuned LLM any good? | Over the past few weeks, I've been diving deep into fine-tuning Large Language Models (LLMs) for... | 0 | 2024-07-01T18:06:18 | https://dev.to/shannonlal/is-your-fine-tuned-llm-any-good-3k7h | llm, ai, finetuning, evaluate | Over the past few weeks, I've been diving deep into fine-tuning Large Language Models (LLMs) for various applications, with a particular focus on creating detailed summaries from multiple sources. Throughout this process, we've leveraged tools like Weights & Biases to track our learning rates and optimize our results... | shannonlal |

1,908,035 | Building a Yoga Assistant using Lyzr SDK | In today’s fast-paced world, finding time for self-care and wellness can be challenging. Whether... | 0 | 2024-07-01T18:05:53 | https://dev.to/akshay007/building-a-yoga-assistant-using-lyzr-sdk-3kjf | ai, lyzr, yoga, python | In today’s fast-paced world, finding time for **self-care and wellness** can be challenging. Whether you’re a beginner looking to start your yoga journey or an experienced yogi aiming to deepen your practice, having personalized guidance tailored to your goals and schedule can make all the difference. That’s where tech... | akshay007 |

1,908,034 | Final Blog | After 3 months of learning as much about the basics as you can, completing the FlatIron Software... | 0 | 2024-07-01T18:03:44 | https://dev.to/dillybunn/final-blog-3pnd | After 3 months of learning as much about the basics as you can, completing the FlatIron Software Engineering Bootcamp is a great feeling. I was not sure what to expect when I started, but at the end, it is a great feeling. Having a strong foundation for what comes next feels like it sets me up for success in the future... | dillybunn | |

1,908,030 | Why WordPress is the Best Platform for Your Website: Insights from Mike Savage | Mike Savage, a tech-savvy enthusiast from New Canaan, knows the importance of choosing the right... | 0 | 2024-07-01T17:56:18 | https://dev.to/savagenewcanaan/why-wordpress-is-the-best-platform-for-your-website-insights-from-mike-savage-ifp | wordpress, website, development, seo | <p style="text-align: justify;"><a href="https://www.instagram.com/michaelsavagenc/">Mike Savage</a>, a tech-savvy enthusiast from New Canaan, knows the importance of choosing the right platform for building and managing websites. Among the numerous options available, WordPress stands out as the best choice for blogger... | savagenewcanaan |

1,908,029 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-07-01T17:55:54 | https://dev.to/ladriohariox/buy-verified-cash-app-account-42n7 | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | ladriohariox |

1,908,028 | Do LLMs Have Distinct and Consistent Personality? TRAIT: Personality Testset designed for LLMs with Psychometrics | Do LLMs Have Distinct and Consistent Personality? TRAIT: Personality Testset designed for LLMs with Psychometrics | 0 | 2024-07-01T17:54:47 | https://aimodels.fyi/papers/arxiv/do-llms-have-distinct-consistent-personality-trait | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Do LLMs Have Distinct and Consistent Personality? TRAIT: Personality Testset designed for LLMs with Psychometrics](https://aimodels.fyi/papers/arxiv/do-llms-have-distinct-consistent-personality-trait). If you like these kinds of analysis, you should su... | mikeyoung44 |

1,908,027 | From Artificial Needles to Real Haystacks: Improving Retrieval Capabilities in LLMs by Finetuning on Synthetic Data | From Artificial Needles to Real Haystacks: Improving Retrieval Capabilities in LLMs by Finetuning on Synthetic Data | 0 | 2024-07-01T17:54:12 | https://aimodels.fyi/papers/arxiv/from-artificial-needles-to-real-haystacks-improving | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [From Artificial Needles to Real Haystacks: Improving Retrieval Capabilities in LLMs by Finetuning on Synthetic Data](https://aimodels.fyi/papers/arxiv/from-artificial-needles-to-real-haystacks-improving). If you like these kinds of analysis, you should... | mikeyoung44 |

1,908,026 | Computational Life: How Well-formed, Self-replicating Programs Emerge from Simple Interaction | Computational Life: How Well-formed, Self-replicating Programs Emerge from Simple Interaction | 0 | 2024-07-01T17:53:38 | https://aimodels.fyi/papers/arxiv/computational-life-how-well-formed-self-replicating | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Computational Life: How Well-formed, Self-replicating Programs Emerge from Simple Interaction](https://aimodels.fyi/papers/arxiv/computational-life-how-well-formed-self-replicating). If you like these kinds of analysis, you should subscribe to the [AIm... | mikeyoung44 |

1,908,025 | Evaluating the Social Impact of Generative AI Systems in Systems and Society | Evaluating the Social Impact of Generative AI Systems in Systems and Society | 0 | 2024-07-01T17:53:04 | https://aimodels.fyi/papers/arxiv/evaluating-social-impact-generative-ai-systems-systems | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Evaluating the Social Impact of Generative AI Systems in Systems and Society](https://aimodels.fyi/papers/arxiv/evaluating-social-impact-generative-ai-systems-systems). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi news... | mikeyoung44 |

1,908,024 | From Decoding to Meta-Generation: Inference-time Algorithms for Large Language Models | From Decoding to Meta-Generation: Inference-time Algorithms for Large Language Models | 0 | 2024-07-01T17:52:29 | https://aimodels.fyi/papers/arxiv/from-decoding-to-meta-generation-inference-time | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [From Decoding to Meta-Generation: Inference-time Algorithms for Large Language Models](https://aimodels.fyi/papers/arxiv/from-decoding-to-meta-generation-inference-time). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi ne... | mikeyoung44 |

1,908,023 | Thermometer: Towards Universal Calibration for Large Language Models | Thermometer: Towards Universal Calibration for Large Language Models | 0 | 2024-07-01T17:51:21 | https://aimodels.fyi/papers/arxiv/thermometer-towards-universal-calibration-large-language-models | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Thermometer: Towards Universal Calibration for Large Language Models](https://aimodels.fyi/papers/arxiv/thermometer-towards-universal-calibration-large-language-models). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi new... | mikeyoung44 |

1,908,022 | MobileLLM: Optimizing Sub-billion Parameter Language Models for On-Device Use Cases | MobileLLM: Optimizing Sub-billion Parameter Language Models for On-Device Use Cases | 0 | 2024-07-01T17:50:46 | https://aimodels.fyi/papers/arxiv/mobilellm-optimizing-sub-billion-parameter-language-models | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [MobileLLM: Optimizing Sub-billion Parameter Language Models for On-Device Use Cases](https://aimodels.fyi/papers/arxiv/mobilellm-optimizing-sub-billion-parameter-language-models). If you like these kinds of analysis, you should subscribe to the [AImode... | mikeyoung44 |

1,908,021 | Assessing the nature of large language models: A caution against anthropocentrism | Assessing the nature of large language models: A caution against anthropocentrism | 0 | 2024-07-01T17:50:12 | https://aimodels.fyi/papers/arxiv/assessing-nature-large-language-models-caution-against | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Assessing the nature of large language models: A caution against anthropocentrism](https://aimodels.fyi/papers/arxiv/assessing-nature-large-language-models-caution-against). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi... | mikeyoung44 |

1,908,020 | MAGIS: LLM-Based Multi-Agent Framework for GitHub Issue Resolution | MAGIS: LLM-Based Multi-Agent Framework for GitHub Issue Resolution | 0 | 2024-07-01T17:49:37 | https://aimodels.fyi/papers/arxiv/magis-llm-based-multi-agent-framework-github | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [MAGIS: LLM-Based Multi-Agent Framework for GitHub Issue Resolution](https://aimodels.fyi/papers/arxiv/magis-llm-based-multi-agent-framework-github). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimo... | mikeyoung44 |

1,908,019 | The Remarkable Robustness of LLMs: Stages of Inference? | The Remarkable Robustness of LLMs: Stages of Inference? | 0 | 2024-07-01T17:49:03 | https://aimodels.fyi/papers/arxiv/remarkable-robustness-llms-stages-inference | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [The Remarkable Robustness of LLMs: Stages of Inference?](https://aimodels.fyi/papers/arxiv/remarkable-robustness-llms-stages-inference). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substac... | mikeyoung44 |

1,908,018 | ReFT: Reasoning with Reinforced Fine-Tuning | ReFT: Reasoning with Reinforced Fine-Tuning | 0 | 2024-07-01T17:48:28 | https://aimodels.fyi/papers/arxiv/reft-reasoning-reinforced-fine-tuning | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [ReFT: Reasoning with Reinforced Fine-Tuning](https://aimodels.fyi/papers/arxiv/reft-reasoning-reinforced-fine-tuning). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) or follow m... | mikeyoung44 |

1,908,017 | Automating User Management in Linux with Bash | Hey DevOps enthusiasts! If you're anything like me, you've probably found yourself stuck in the... | 0 | 2024-07-01T17:48:01 | https://dev.to/pat6339/automating-user-management-in-linux-with-bash-5gmm | linux, bash, automation, devops | Hey DevOps enthusiasts!

If you're anything like me, you've probably found yourself stuck in the repetitive cycle of managing user accounts and groups, especially when onboarding new employees. It's one of those essential tasks that, while critical, can eat up a lot of your valuable time. But what if I told you there's... | pat6339 |

1,908,016 | SciBench: Evaluating College-Level Scientific Problem-Solving Abilities of Large Language Models | SciBench: Evaluating College-Level Scientific Problem-Solving Abilities of Large Language Models | 0 | 2024-07-01T17:47:54 | https://aimodels.fyi/papers/arxiv/scibench-evaluating-college-level-scientific-problem-solving | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [SciBench: Evaluating College-Level Scientific Problem-Solving Abilities of Large Language Models](https://aimodels.fyi/papers/arxiv/scibench-evaluating-college-level-scientific-problem-solving). If you like these kinds of analysis, you should subscribe... | mikeyoung44 |

1,908,015 | Middleware function Execution Problem and Solution | What is Middleware? A middleware can be defined as a function that will have all the access for... | 0 | 2024-07-01T17:43:40 | https://dev.to/officiabreezy/middleware-function-execution-problem-and-solution-4325 | webdev, javascript, programming, middleware | What is Middleware?

Middleware is a function that has access to the request object, the response object, and the next middleware function in the application's request-response cycle. Middleware acts as a bridge between client requests and server responses. It's also respon... | officiabreezy |

1,908,014 | Day 9 of Machine Learning|| Linear Regression implementation | Hey reader👋Hope you are doing well😊 In the last post we have read about how we can minimize our cost... | 0 | 2024-07-01T17:43:02 | https://dev.to/ngneha09/day-9-of-machine-learning-linear-regression-implementation-5487 | machinelearning, datascience, tutorial, beginners | Hey reader👋Hope you are doing well😊

In the last post, we read about how to minimize the cost function using the gradient descent algorithm.

In this post, we are going to discuss the implementation of Linear Regression in Python using scikit-learn.

So let's get started🔥

## Assumptions for Linear Regression

... | ngneha09 |

1,908,013 | Akira - Respond to all feedback, fix every listing, and get insights | Unleash the Power of AI-Driven Reputation Management! We understand the challenges you face daily.... | 0 | 2024-07-01T17:42:43 | https://dev.to/akira7/akira-respond-to-all-feedback-fix-every-listing-and-get-insights-1452 | reputation, restaurant, feedback, reviews | Unleash the Power of AI-Driven Reputation Management! We understand the challenges you face daily. Keeping up with reviews across multiple platforms, promptly responding to feedback, and identifying potential risks can be overwhelming. It's a constant juggling act that drains your time and resources. Let Akira's all-in... | akira7 |

1,908,012 | mobile development platforms and the common software architecture patterns | Mobile programming has evolved significantly over the years, with various platforms and architectural... | 0 | 2024-07-01T17:35:24 | https://dev.to/uti/mobile-development-platforms-and-the-common-software-architecture-patterns-2ifo | Mobile programming has evolved significantly over the years, with various platforms and architectural patterns available to developers. These platforms include iOS (Swift/Objective-C), Android (Kotlin/Java), React Native, Flutter, Ionic, and Cordova. Each architecture has its advantages and disadvantages, and developer... | uti | |

1,908,011 | Top 3 PHP Frameworks: Speed, Response Time, and Efficiency Compared | In the bustling world of web development, PHP frameworks play a crucial role in shaping robust,... | 0 | 2024-07-01T17:33:40 | https://dev.to/arafatweb/top-3-php-frameworks-speed-response-time-and-efficiency-compared-25bi | php, laravel, symfony, codeigniter | In the bustling world of web development, PHP frameworks play a crucial role in shaping robust, efficient, and scalable web applications. Whether you're a seasoned developer or a newcomer, picking the right framework can significantly impact your project's success. Today, we're diving into the top 3 PHP frameworks: Lar... | arafatweb |

1,907,372 | Comparing ReactJS vs Alpine.js: A Deep Dive into Frontend Technologies. | In the world of frontend development, numerous frameworks and libraries compete for developers'... | 0 | 2024-07-01T17:32:08 | https://dev.to/adurangba/comparing-reactjs-vs-alpinejs-a-deep-dive-into-frontend-technologies-2f47 | In the world of frontend development, numerous frameworks and libraries compete for developers' attention. Among them, ReactJS has established itself as a powerhouse, while Alpine.js is a more niche, lightweight alternative. This article will compare ReactJS and Alpine.js, highlighting their differences, strengths, and... | adurangba | |

1,908,009 | #5 Dependency Inversion Principle ['D' in SOLID] | DIP - Dependency Inversion principle The Dependency Inversion Principle is the fifth principle in the... | 0 | 2024-07-01T17:30:40 | https://dev.to/vinaykumar0339/5-dependency-inversion-principle-d-in-solid-1ip2 | solidprinciples, dependencyinversion, designprinciples | **DIP - Dependency Inversion principle**

The Dependency Inversion Principle is the fifth of the SOLID design principles.

1. High-level modules should not depend on low-level modules. Both should depend on abstractions.

2. Abstractions should not depend on details. Details should depend on abstractions.
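
The two rules above can be sketched in Python roughly as follows. This is a minimal illustration, not code from the original article: the names `MessageSender`, `EmailSender`, `SmsSender`, and `NotificationService` are hypothetical and chosen only to show the direction of the dependencies.

```python
from abc import ABC, abstractmethod


class MessageSender(ABC):
    """Abstraction (hypothetical) that both layers depend on."""

    @abstractmethod
    def send(self, text: str) -> str:
        """Deliver the text and return a description of what was sent."""


class EmailSender(MessageSender):
    """Low-level detail: it depends on (implements) the abstraction."""

    def send(self, text: str) -> str:
        return f"email: {text}"


class SmsSender(MessageSender):
    """Another low-level detail that can be swapped in freely."""

    def send(self, text: str) -> str:
        return f"sms: {text}"


class NotificationService:
    """High-level module: it knows only the MessageSender abstraction."""

    def __init__(self, sender: MessageSender) -> None:
        # The concrete sender is injected, never constructed here.
        self._sender = sender

    def notify(self, text: str) -> str:
        return self._sender.send(text)


if __name__ == "__main__":
    print(NotificationService(EmailSender()).notify("hello"))
    print(NotificationService(SmsSender()).notify("hello"))
```

Because `NotificationService` receives its sender through the constructor, switching from email to SMS requires no change to the high-level class, which is the practical payoff of both rules.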

### High... | vinaykumar0339 |

1,880,580 | Ibuprofeno.py💊| #129: Explica este código Python | Explain this Python code Difficulty: Easy x = {"a", "b",... | 25,824 | 2024-07-01T17:30:07 | https://dev.to/duxtech/ibuprofenopy-129-explica-este-codigo-python-649 | python, learning, spanish, beginners | ## **<center>Explain this Python code</center>**

#### <center>**Difficulty:** <mark>Easy</mark></center>

```py

x = {"a", "b", "c"}

x.add("a")

print(len(x))

```

* **A.** `4`

* **B.** `3`

* **C.** `2`

* **D.** `5`

---

{% details **Answer:** %}

👉 **B.** `3`

`len()` is useful for finding out the length of the set... | duxtech |

1,908,008 | How to Make a Barcode Generator in Python | Generating barcodes programmatically can be a valuable tool for many applications, from inventory... | 0 | 2024-07-01T17:28:41 | https://dev.to/hichem-mg/how-to-make-a-barcode-generator-in-python-4hmg | python, programming, tutorial, beginners | Generating barcodes programmatically can be a valuable tool for many applications, from inventory management to event ticketing. Python offers a convenient library called [python-barcode](https://github.com/WhyNotHugo/python-barcode) that simplifies the process of creating barcodes.

In this comprehensive guide... | hichem-mg |

1,908,007 | Building a NL2Typescript using Lyzr SDK | In the rapidly evolving world of software development, bridging the gap between human language and... | 0 | 2024-07-01T17:27:41 | https://dev.to/akshay007/building-a-nl2typescript-using-lyzr-sdk-5f40 | ai, typescript, streamlit, python | In the rapidly evolving world of software development, bridging the gap between human language and programming syntax is a sought-after goal. Imagine if you could simply describe what you want in plain English, and have it transformed into functional **TypeScript** code.

are two intricate facets of early... | 0 | 2024-07-01T17:08:37 | https://dev.to/marth_ji_1dea6a00a8d8b4/examining-adhd-in-talented-children-difficulties-and-advantages-3cei | healthcare, healthydebate, fitness, leetcode | Giftedness and Attention Deficit Hyperactivity Disorder (ADHD) are two intricate facets of early development that together offer special advantages and difficulties. While giftedness usually shows up as extraordinary academic prowess and creativity, ADHD is characterized by difficulties maintaining concentration as wel... | marth_ji_1dea6a00a8d8b4 |

1,907,987 | Procrastination: A Young Developer's Secret Weapon | The Beginning A 22-year-old freshly graduated and excited to start his journey as a software... | 0 | 2024-07-01T17:05:04 | https://dev.to/zain725342/procrastination-a-young-developers-secret-weapon-37no | programming, productivity, software, development | **The Beginning**

A 22-year-old freshly graduated and excited to start his journey as a software developer, our story's hero loves coding and is eager to make a mark in the tech world. But like many new developers, our hero quickly encounters a familiar foe: procrastination. Instead of seeing it as a hurdle, our hero l... | zain725342 |
