id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,907,361 | The Ultimate Guide to Virtual Assistant Hiring | Outsourcing through hiring a VA can be one of the most productive decisions a business owner has to... | 0 | 2024-07-01T08:26:44 | https://dev.to/ayesha1379/the-ultimate-guide-to-virtual-assistant-hiring-1nbf | job, remotejob |

Hiring a virtual assistant (VA) can be one of the most productive decisions a business owner makes, because it frees the core functions of the business from nonessential everyday obligations. This guide will help you in [hiring a virtual assistant](https://prosmarke... | ayesha1379 |

1,904,421 | Git | I. Add Commits to A Repo Configuration $ git --version $ git config --global... | 0 | 2024-07-01T08:25:46 | https://dev.to/congnguyen/git-2of0 | ## I. Add Commits to A Repo

- Configuration

```$ git --version```

```

$ git config --global user.name "<NAME>"

$ git config --global user.email "<EMAIL>"

$ git config --global color.ui auto

$ git config --global merge.conflictstyle diff3

$ git config --global core.editor "code --wait"

```

- Check configuration

`... | congnguyen | |

1,907,358 | GBase 8c Slow SQL Queries and Optimization | GBase 8c database supports slow SQL diagnostics and provides several parameter interfaces for... | 0 | 2024-07-01T08:25:06 | https://dev.to/congcong/gbase-8c-slow-sql-queries-and-optimization-4dli | database | GBase 8c database supports slow SQL diagnostics and provides several parameter interfaces for developers.

## 1. Slow SQL Related Parameters

### `track_stmt_stat_level`

Controls the level of statement execution tracking. This parameter has two parts, formatted as `'full sql stat level, slow sql stat level'`.

**full s... | congcong |

1,906,109 | Neural Network From Scratch Project | In Progress... | 0 | 2024-06-29T22:25:17 | https://dev.to/nelson_bermeo/neural-network-from-scratch-project-12ec | In Progress... | nelson_bermeo | |

1,446,411 | A review of this week's APIs: List Payments, IP info and Geocode Address | This week, we will offer an introduction to three new APIs for you. This week's roundup features... | 0 | 2024-07-01T08:23:00 | https://dev.to/worldindata/a-review-of-this-weeks-apis-list-payments-ip-info-and-geocode-address-2ln0 | api, ip, paymentapi, geocode | This week, we introduce three new APIs. This week's roundup features APIs that we really enjoy and think you will too. We discuss each API's purpose, industry, and client types. Full details of the APIs are available on [Worldindata's API marketplace](https://www.worldindat... | worldindata |

1,906,170 | Moz Domain Authority Score for SEO Success | At Form und Zeichen, we continually navigate the evolving SEO landscape to provide top-notch digital... | 0 | 2024-07-01T08:18:13 | https://dev.to/christofkarisch/moz-domain-authority-score-for-seo-success-nfn | At [Form und Zeichen](https://www.formundzeichen.at/), we continually navigate the evolving SEO landscape to provide top-notch digital marketing solutions. Recently, for our client [California Metals](https://www.californiametals.com/), we needed a reliable metric to compare their website's performance against competit... | christofkarisch | |

1,907,348 | Catch, Optimize Client-Side Data Fetching in Next.js Using SWR || Tech Shade | A post by Shaswat Raj | 0 | 2024-07-01T08:14:30 | https://dev.to/sh20raj4/catch-optimize-client-side-data-fetching-in-nextjs-using-swr-tech-shade-1mnn | nextjs, javascript, webdev, beginners | {% youtube https://www.youtube.com/watch?v=OjAwwGV38Ms&t=12s&ab_channel=ShadeTech %} | sh20raj4 |

1,907,347 | Free Database Hosting Providers | SQL/NoSQL/Reddis/MongoDB/Neon/Supabase | A post by Shaswat Raj | 0 | 2024-07-01T08:14:27 | https://dev.to/sh20raj4/free-database-hosting-providers-sqlnosqlreddismongodbneonsupabase-2ll9 | webdev, javascript, beginners, programming | {% youtube https://www.youtube.com/watch?v=wUVQ0yHZ1SU&ab_channel=ShadeTech %} | sh20raj4 |

1,907,346 | ye left moscow, why? | taylor swift next? | 0 | 2024-07-01T08:13:56 | https://dev.to/taylorye/ye-left-moscow-why-ghi | hype |

taylor swift next? | taylorye |

1,907,345 | Which Industries Benefit the Most from Offshore Laravel Developers? | Introduction to Offshore Laravel Developers In today's digital economy, leveraging offshore Laravel... | 0 | 2024-07-01T08:13:06 | https://dev.to/hirelaraveldevelopers/which-industries-benefit-the-most-from-offshore-laravel-developers-p67 | programming | <h2>Introduction to Offshore Laravel Developers</h2>

<p>In today's digital economy, leveraging offshore Laravel developers has become a strategic advantage for many businesses aiming to enhance their web development capabilities. Laravel, known for its robust features and scalability, paired with offshore outsourcing, ... | hirelaraveldevelopers |

1,907,344 | Learning Activities For Kids At Home | The preschool years are a period of rapid development. This is a time of infinite curiosity for your... | 0 | 2024-07-01T08:11:50 | https://dev.to/prinsy_3d5b88480d84c4e9b8/learning-activities-for-kids-at-home-4lnn | bestpreschool, daycare, babysitter, webdev | The preschool years are a period of rapid development. This is a time of infinite curiosity for your child, and their mind absorbs information and experiences like a sponge. While formal schooling may not be here just yet, you can nurture their love of learning with fun [activities at home](https://hikalpaa.org/)!

Enga... | prinsy_3d5b88480d84c4e9b8 |

1,907,342 | Meme Monday | Marketers Absolutely Love Creating Campaigns, Really! Source | 0 | 2024-07-01T08:10:31 | https://dev.to/td_inc/meme-monday-l87 | ai, memes, mondaymotivation, marketing | **Marketers Absolutely Love Creating Campaigns, Really!**

[Source](https://imgflip.com/i/85u47a) | td_inc |

1,907,341 | Magic Cloud Security | Since we're attracting larger clients today than a year ago, with more complex needs for security, we... | 0 | 2024-07-01T08:07:56 | https://ainiro.io/blog/magic-cloud-security | security | Since we're attracting larger clients today than a year ago, with more complex needs for security, we wanted to write some words about our platform's security to ease our clients' minds.

[Magic Cloud](https://ainiro.io/magic-cloud) is the name of our platform. Magic is what allows us to deliver our [AI solutions](http... | polterguy |

1,907,339 | Recommended 5 SQL tools most suitable for beginners to get started | SQLynx Reason for recommendation: SQLynx is a powerful and user-friendly web-based database... | 0 | 2024-07-01T08:06:32 | https://dev.to/tom8daafe63765434221/recommended-5-sql-tools-most-suitable-for-beginners-to-get-started-1na1 | SQLynx

Reason for recommendation: SQLynx is a powerful and user-friendly web-based database management tool. It features an intuitive web interface and supports multiple database types, making it suitable for both beginners and professionals. SQLynx offers real-time collaboration, an intelligent SQL editor, and securi... | tom8daafe63765434221 | |

1,907,338 | How to Automatically Approve All Posts in Your Reddit Subreddit | A post by Shaswat Raj | 0 | 2024-07-01T08:05:57 | https://dev.to/sh20raj4/how-to-automatically-approve-all-posts-in-your-reddit-subreddit-49h3 | webdev, javascript, beginners, programming | {% youtube https://www.youtube.com/watch?v=TuL8k15cCm0&t=22s&ab_channel=ShadeTech %} | sh20raj4 |

1,907,337 | How to Integrate Plyr.io's Video Player with Custom Controls | A post by Shaswat Raj | 0 | 2024-07-01T08:05:46 | https://dev.to/sh20raj4/how-to-integrate-plyrios-video-player-with-custom-controls-1g41 | {% youtube https://www.youtube.com/watch?v=SR8pFHpsC8c&ab_channel=ShadeTech %} | sh20raj4 | |

1,907,336 | Implementing Multiple Density Image Assets in Flutter | As the mobile landscape continues to evolve, the range of screen sizes and resolutions across devices... | 0 | 2024-07-01T08:04:57 | https://dev.to/vincwestley/implementing-multiple-density-image-assets-in-flutter-3h05 | As the mobile landscape continues to evolve, the range of screen sizes and resolutions across devices has expanded significantly. From compact smartphones to expansive tablets, ensuring that your application’s visuals remain sharp and consistent across all these devices is crucial. This is where the concept of **multip... | vincwestley | |

1,907,485 | StirlingPDF: Free Open-source PDF Tools & API | PDF files are a staple of digital document management, and having the right tools to handle them... | 0 | 2024-07-05T16:48:49 | https://blog.elest.io/stirlingpdf-free-open-source-pdf-tools-api/ | stirlingpdf, opensource, elestio | ---

title: StirlingPDF: Free Open-source PDF Tools & API

published: true

date: 2024-07-01 08:01:32 UTC

tags: StirlingPDF, OpenSource,Elestio

canonical_url: https://blog.elest.io/stirlingpdf-free-open-source-pdf-tools-api/

cover_image: https://blog.elest.io/content/images/2024/07/stirling-pdf.png

---

PDF files are a sta... | kaiwalyakoparkar |

1,907,335 | The Significance of Test Automation: Some unsaid Benefits | In the ever-evolving landscape of software development, the need for robust and efficient testing... | 0 | 2024-07-01T08:01:07 | https://mobilemarketingwatch.com/the-significance-of-test-automation-some-unsaid-benefits/ | test, automation |

In the ever-evolving landscape of software development, the need for robust and efficient testing processes has become paramount. With the increasing complexity of applications and the demand for faster release cycle... | rohitbhandari102 |

1,907,332 | How we used network tunneling to create a Minecraft server. | Every year we all feel the need to play Minecraft, whether there is some big... | 0 | 2024-07-01T08:00:44 | https://dev.to/nevidomyyb/como-usamos-tunelamento-de-rede-para-criar-um-servidor-de-minecraft-3ih7 | tunelamento, aws, minecraft, servidor | Every year we all feel the need to play Minecraft, whether because of a big update or because we want to relive the old days, and the 1.21 update was no different. But how do you avoid free, low-quality services like Aternos, or depending on Hamachi/Radmin, to create a server ... | nevidomyyb |

1,907,334 | Free Play Store Alternatives to Publish Android Apps for Free with high traffic | A post by Shaswat Raj | 0 | 2024-07-01T08:00:28 | https://dev.to/sh20raj4/free-play-store-alternatives-to-publish-android-apps-for-free-with-high-traffic-4m8m | {% youtube https://www.youtube.com/watch?v=GAWFgu3smwM&t=2s&ab_channel=ShadeTech %} | sh20raj4 | |

1,907,333 | Get .js.org domain name for Free and Lifetime | GitHub Pages | A post by Shaswat Raj | 0 | 2024-07-01T08:00:26 | https://dev.to/sh20raj4/get-jsorg-domain-name-for-free-and-lifetime-github-pages-41a2 | {% youtube https://www.youtube.com/watch?v=_2XkHU-8I3I&ab_channel=ShadeTech %} | sh20raj4 | |

1,906,396 | Understanding JavaScript's `==` and `===`: Equality and Identity | In JavaScript, understanding the differences between == and === is crucial for writing effective and... | 0 | 2024-07-01T08:00:00 | https://dev.to/manthanank/understanding-javascripts-and-equality-and-identity-34lj | webdev, javascript, beginners, programming | In JavaScript, understanding the differences between `==` and `===` is crucial for writing effective and bug-free code. Both operators are used to compare values, but they do so in distinct ways, leading to different outcomes. Let's delve into what sets these two operators apart and when to use each.
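As a rough illustration of the difference described above, the following sketch compares a few value pairs with both operators. The `compare` helper and the sample pairs are assumptions chosen for this example and do not come from the original post.

```javascript
// Loose equality (==) converts operands to a common type before comparing.
// Strict equality (===) compares both type and value, with no conversion.
// `compare` is a hypothetical helper used only for this illustration.
function compare(a, b) {
  return { loose: a == b, strict: a === b };
}

// Sample pairs (illustrative, not taken from the article).
const pairs = [
  [5, "5"],          // number vs. numeric string
  [0, false],        // number vs. boolean
  [null, undefined], // the two "empty" values
  [NaN, NaN],        // NaN is never equal to anything, including itself
];

// Quote strings so that "5" and 5 are distinguishable in the output.
const show = (v) => (typeof v === "string" ? JSON.stringify(v) : String(v));

for (const [a, b] of pairs) {
  const { loose, strict } = compare(a, b);
  console.log(`${show(a)} vs ${show(b)}: == gives ${loose}, === gives ${strict}`);
}
```

The first three pairs are equal under `==` but not under `===`, while `NaN` is unequal to itself under both operators.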

## `==` (Equality... | manthanank |

1,906,670 | How a Solo Android Developer Can Gather 20 Testers | For My Readers Features Offered by the App As a Tester Listing Apps That Are... | 0 | 2024-07-01T08:00:00 | https://zmsoft.org/pt/devspayforward-libere-o-potencial-do-seu-aplicativo-com-20-testadores-gratuitos/ | androiddev, 20testadores, teste, playconsole | - [For My Readers](#para-meus-leitores)

  - [Features Offered by the App](#funcionalidades-oferecidas-pelo-app)

    - [As a Tester](#como-testador)

      - [Listing Apps That Are Recruiting Testers](#listando-apps-que-estão-recrutando-testadores)

      - [Support for Joining as a Tester](#suporte-para-entrar-com... | zmsoft |

1,907,331 | Mastering the Potential of B.Sc. Degrees: A Comprehensive Guide | Introduction The Bachelor of Science (B.Sc.) degree stands as a cornerstone of higher education,... | 0 | 2024-07-01T07:59:39 | https://dev.to/nisha_rawat_b538a76f5cc46/mastering-the-potential-of-bsc-degrees-a-comprehensive-guide-4fdn | **Introduction**

The Bachelor of Science (B.Sc.) degree stands as a cornerstone of higher education, offering a robust foundation in various scientific disciplines. This comprehensive guide delves into the academic rigor, diverse career pathways, and the pivotal role of platforms like Universitychalo in assisting stud... | nisha_rawat_b538a76f5cc46 | |

1,907,330 | Patriotic T-Shirts and Essentials Hoodies: A Trendsetter in American Fashion | Fashion has always been a reflection of cultural values, societal changes, and individual expression.... | 0 | 2024-07-01T07:59:29 | https://dev.to/akki_sarsaniya_e90f816375/patriotic-t-shirts-and-essentials-hoodies-a-trendsetter-in-american-fashion-451i | tshirts, patriot, crew, patriotcrew | Fashion has always been a reflection of cultural values, societal changes, and individual expression. In the United States, one trend that stands out is the rise of patriotic clothing, particularly patriotic t-shirts and essentials hoodies. These pieces not only symbolize national pride but also offer a versatile and s... | akki_sarsaniya_e90f816375 |

1,907,329 | Controlling the API Landscape: Implementing an API Governance Framework for Enterprises | In the age of digital transformation, APIs (Application Programming Interfaces) play a critical role... | 0 | 2024-07-01T07:58:27 | https://dev.to/syncloop_dev/controlling-the-api-landscape-implementing-an-api-governance-framework-for-enterprises-2b04 | javascript, programming, ai, api | In the age of digital transformation, APIs (Application Programming Interfaces) play a critical role in enabling seamless data exchange and driving innovation. However, with a growing API portfolio, managing their lifecycle effectively becomes paramount. This blog delves into the importance of API governance framew... | syncloop_dev |

1,890,498 | Maintain chat history in generative AI apps with Valkey | Integrate Valkey with LangChain A while back I wrote up a blog post on how to use Redis as a chat... | 0 | 2024-07-01T07:57:54 | https://community.aws/content/2hxacO2sAyLAWy1eCFERO8d6Xrb | redis, database, machinelearning, go | > Integrate Valkey with LangChain

A while back I wrote up a blog post on how to use [Redis as a chat history component with LangChain](https://community.aws/content/2aq9ju6xvYtywGVbuPoWFTk5oK4/build-a-streamlit-app-using-langchain-amazon-bedrock-and-redis). Since LangChain already had Redis chat history available as a... | abhirockzz |

1,906,547 | Security: BitPower's impeccable security | Security: BitPower's impeccable security BitPower, a decentralized platform built on the blockchain,... | 0 | 2024-06-30T11:53:50 | https://dev.to/ping_iman_72b37390ccd083e/security-bitpowers-impeccable-security-4ioi |

Security: BitPower's impeccable security

BitPower, a decentralized platform built on the blockchain, knows the importance of security. In this rapidly changing digital world, security is the top priority for every u... | ping_iman_72b37390ccd083e | |

1,907,328 | 4DEV: The Ultimate Toolkit Collection for Developers 🚀 | Are you a developer looking to boost your productivity and streamline your workflow? Look no further!... | 0 | 2024-07-01T07:55:43 | https://dev.to/raja_rakoto/4dev-the-ultimate-toolkit-collection-for-developers-3l9n | Are you a developer looking to boost your productivity and streamline your workflow? Look no further! [4dev](https://github.com/RajaRakoto/4dev) is the solution you need ...

### What is 4dev? 🤔

[4dev](https://github.com/RajaRakoto/4dev) is an all-in-one solution specifically designed to meet the diverse needs of dev... | raja_rakoto | |

1,907,327 | ISO 27001 Certification in Saudi Arabia | ISO 27001 certification is crucial for Saudi Arabian organizations looking to strengthen their... | 0 | 2024-07-01T07:55:23 | https://dev.to/popularcert12/iso-27001-certification-in-saudi-arabia-36oa | isoconsultants, isocertification, isocertificationcost, iso |

ISO 27001 certification is crucial for Saudi Arabian organizations looking to strengthen their information security management systems (ISMS). This internationally recognized standard outlines requirements for estab... | popularcert12 |

1,907,326 | How zkRollups and FHE Rollups Tackle Privacy Challenges Differently | One of the most serious challenges Ethereum faces today is privacy. Privacy problems have grown in... | 0 | 2024-07-01T07:53:13 | https://www.zeeve.io/blog/how-zkrollups-and-fhe-rollups-tackle-privacy-challenges-differently/ | rollups, zkrollups | <p>One of the most serious challenges Ethereum faces today is privacy. Privacy problems have grown in priority as the number of users and apps on the network has increased. To solve these challenges, <a href="https://www.zeeve.io/appchains/polygon-zkrollups/">zk-rollups</a> and FHE rollups have emerged as some of the m... | zeeve |

1,907,325 | ISO 45001 Certification in Saudi Arabia | ISO 45001 certification holds significant importance for Saudi Arabian businesses aiming to... | 0 | 2024-07-01T07:53:09 | https://dev.to/popularcert12/iso-45001-certification-in-saudi-arabia-31c4 | isocertificationcost, isocertification, isoconsultants, iso |

ISO 45001 certification holds significant importance for Saudi Arabian businesses aiming to prioritize occupational health and safety within their workplaces. This international standard provides a framework to syst... | popularcert12 |

1,907,324 | The Three Kinds of Role-Playing Magic | Everyone who's played an RPG knows "in-game" magic. The stuff of clerics, wizards, warlocks,... | 0 | 2024-07-01T07:50:55 | https://dev.to/djradon/the-three-kinds-of-role-playing-magic-3mkb | roleplay, magic, storytelling, art |

Everyone who's played an RPG knows "in-game" magic. The stuff of clerics, wizards, warlocks, enchanted weapons, magical traps, and cursed knickers.

You could easily argue that the GM's ability to invoke arbitrary fantasy-reality and the players' ability to give life to characters are forms of performative (real-worl... | djradon |

1,907,323 | Web Design Strategies to Win Attention from Top and Bottom | In today's digital landscape, where the average attention span is shorter... | 0 | 2024-07-01T07:50:47 | https://dev.to/junkerdigital/webdesign-strategien-um-die-aufmerksamkeit-von-oben-und-unten-zu-gewinnen-14ef | webdesignagentureninberlin, webdesign | <p>In today's digital landscape, where the average attention span is shorter than ever, capturing and holding users' attention is critical to the success of your website. As one of the leading <strong><a href="https://junker-digital.de/webdesign-agent... | junkerdigital |

1,907,322 | Ensuring Compliance: ISO 45001 Certification in Saudi Arabian Industries | In an era where workplace safety is paramount, ISO 45001 certification has emerged as the gold... | 0 | 2024-07-01T07:50:20 | https://dev.to/popularcert12/ensuring-compliance-iso-45001-certification-in-saudi-arabian-industries-28l | isocertification, isoconsultants, isocertificationcost, iso | In an era where workplace safety is paramount, ISO 45001 certification has emerged as the gold standard for Occupational Health and Safety Management Systems (OHSMS). This international standard provides a framework that helps organizations enhance employee safety, reduce workplace risks, and create better, safer worki... | popularcert12 |

1,907,321 | NextJS 15 Update | Upgrade to latest version of Next JS @rc || experimental | A post by Shaswat Raj | 0 | 2024-07-01T07:50:07 | https://dev.to/sh20raj4/nextjs-15-update-upgrade-to-latest-version-of-next-js-rc-experimental-1icm | {% youtube https://www.youtube.com/watch?v=099DcQu1-2A&ab_channel=ShadeTech %} | sh20raj4 | |

1,907,320 | Add TopLoader to NextJS App Router | Add top Loading Line | A post by Shaswat Raj | 0 | 2024-07-01T07:50:04 | https://dev.to/sh20raj4/add-toploader-to-nextjs-app-router-add-top-loading-line-35dj | {% youtube https://www.youtube.com/watch?v=952ERYbbcxg&t=45s&ab_channel=ShadeTech %} | sh20raj4 | |

1,907,319 | How to hack your Google Lighthouse scores in 2024 | Google Lighthouse has been one of the most effective ways to gamify and promote web page performance... | 0 | 2024-07-01T07:49:28 | https://www.smashingmagazine.com/2024/06/how-hack-google-lighthouse-scores-2024/ | performance, webdev, javascript | Google Lighthouse has been one of the most effective ways to gamify and promote web page performance among developers. Using Lighthouse, we can assess web pages based on overall performance, accessibility, SEO, and what Google considers “best practices”, all with the click of a button.

We might use these tests to eval... | whitep4nth3r |

1,906,593 | 📋 📝 Manage your clipboard in the CLI with Python | As someone who frequently copies and pastes content, I've often wondered about the inner workings of... | 0 | 2024-07-01T07:48:37 | https://dev.to/audreyk/manage-your-clipboard-in-the-cli-with-python-5f0d | python, automation, productivity, clipboard | As someone who frequently copies and pastes content, I've often wondered about the inner workings of the clipboard and whether it was possible to manage it programmatically. I ended up writing a simple Python script that allows you to get the current value in the clipboard, clear it, and get its history via the command... | audreyk |

1,907,318 | Technical Report: Titanic Passenger List | Introduction The Titanic dataset, available on Kaggle, contains detailed information about the... | 0 | 2024-07-01T07:48:10 | https://dev.to/dee_grayce/technical-report-titanic-passenger-list-556h | datascience, dataanalysis, titanic, python | **Introduction**

The Titanic dataset, available on Kaggle, contains detailed information about the passengers aboard the ill-fated RMS Titanic. This dataset is a popular choice for data analysis and machine learning practice due to its rich variety of numerical and categorical variables. The purpose of this review is t... | dee_grayce |

1,907,317 | Unlock Your Future with TIME Education - The Best Coaching for CAT in Jaipur! | Looking to crack the toughest exams and secure your dream career? TIME Education is your trusted... | 0 | 2024-07-01T07:47:53 | https://dev.to/rahul_saini_98e0a8405ff9a/unlock-your-future-with-time-education-the-best-coaching-for-cat-in-jaipur-4cb8 | Looking to crack the toughest exams and secure your dream career? TIME Education is your trusted partner for comprehensive exam preparation in Jaipur. Recognized as the "[Best Coaching for CAT in Jaipur](https://www.time4education.com/jaipur)," we offer expert guidance and tailored courses to help you excel in a variet... | rahul_saini_98e0a8405ff9a | |

1,907,316 | How to Download "Happy Birthday" MP3 | Legal Sources: There are legal sources where you can purchase or download royalty-free versions of... | 0 | 2024-07-01T07:46:59 | https://dev.to/beatdreamer55/how-to-download-happy-birthday-mp3-43em | Legal Sources: There are legal sources where you can purchase or download royalty-free versions of "Happy Birthday" that are intended for personal or commercial use without infringing copyright. These versions are often available for a small fee.

Public Domain: In some cases, a version of "Happy Birthday" might be in ... | beatdreamer55 | |

1,907,315 | CE Mark Certification in Saudi Arabia | CE Mark certification in Saudi Arabia is vital for products intended to be marketed within the... | 0 | 2024-07-01T07:44:59 | https://dev.to/popularcert12/ce-mark-certification-in-saudi-arabia-2hjg | power, qyality, battery, qualitycontrol | 

[CE Mark certification in Saudi Arabia](https://popularcert.com/saudi-arabia/ce-mark-certification-in-saudi-arabia/) is vital for products intended to be marketed within the European Economic Area (EEA), encompassin... | popularcert12 |

1,907,313 | ipify - Free API to GET User IP Address | Get IP Address with JavaScript | A post by Shaswat Raj | 0 | 2024-07-01T07:43:47 | https://dev.to/sh20raj4/ipify-free-api-to-get-user-ip-address-get-ip-address-with-javascript-5320 | {% youtube https://www.youtube.com/watch?v=cE4PzMonikc&ab_channel=ShadeTech %} | sh20raj4 | |

1,907,312 | Deploy Static Website for Free with Unlimited Bandwidth ✨ - GitHub Pages - (2024) - Latest Way | A post by Shaswat Raj | 0 | 2024-07-01T07:43:43 | https://dev.to/sh20raj4/deploy-static-website-for-free-with-unlimited-bandwidth-github-pages-2024-latest-way-hf0 | {% youtube https://www.youtube.com/watch?v=lphelsAvYoA&t=55s&ab_channel=ShadeTech %} | sh20raj4 | |

1,908,512 | ChatGPT in Recruitment: Revolutionizing the Hiring Process | In today’s fast-paced job market, the integration of artificial intelligence (AI) in recruitment is... | 0 | 2024-07-02T07:02:21 | https://www.tech-careers.de/chatgpt-in-recruitment-revolutionizing-the-hiring-process/ | techjobsingermany | ---

title: ChatGPT in Recruitment: Revolutionizing the Hiring Process

published: true

date: 2024-07-01 07:41:57 UTC

tags: TechJobsinGermany

canonical_url: https://www.tech-careers.de/chatgpt-in-recruitment-revolutionizing-the-hiring-process/

---

In today’s fast-paced job market, the integration of artificial intellige... | nadin |

1,907,310 | Steps to Success: ISO 9001 Certification for Saudi Arabian Companies | In today's competitive business environment, maintaining high standards of quality is crucial for... | 0 | 2024-07-01T07:41:22 | https://dev.to/popularcert12/steps-to-success-iso-9001-certification-for-saudi-arabian-companies-215e | isocertification, isoconsultants, isocertificationcost, iso9001certification |

In today's competitive business environment, maintaining high standards of quality is crucial for success. ISO 9001 certification is a recognized benchmark for quality management systems (QMS) worldwide, and its imp... | popularcert12 |

1,907,309 | Exploring Dua e Qunoot and Ayatul Kursi: Essential Islamic Prayers for Daily Life | Introduction In Islam, prayers and Quranic verses are central to spiritual life. Two significant... | 0 | 2024-07-01T07:37:00 | https://dev.to/qari_ahmadshoaib_c5b9393/exploring-dua-e-qunoot-and-ayatul-kursi-essential-islamic-prayers-for-daily-life-heg | Introduction

In Islam, prayers and Quranic verses are central to spiritual life. Two recitations, Dua e Qunoot and Ayatul Kursi, are of special importance. Let's explore their meanings, benefits, and how to integrate them into daily life.

What is Dua e Qunoot?

[Dua e Qunoot](https://qari.live/blog/dua-e-q... | qari_ahmadshoaib_c5b9393 | |

1,907,308 | Deploy Next.js App for Free on Render 🚀 | A post by Shaswat Raj | 0 | 2024-07-01T07:36:15 | https://dev.to/sh20raj4/deploy-nextjs-app-for-free-on-render-3133 | {% youtube https://www.youtube.com/watch?v=dBqEr278XH0&ab_channel=ShadeTech %} | sh20raj4 | |

1,907,307 | Embed Codes with Code Runner on Dev.to Using Liquid Syntax | A post by Shaswat Raj | 0 | 2024-07-01T07:36:00 | https://dev.to/sh20raj4/embed-codes-with-code-runner-on-devto-using-liquid-syntax-4oog | webdev, javascript, beginners, programming | {% youtube https://www.youtube.com/watch?v=yEWLR9kcFPY&ab_channel=ShadeTech %} | sh20raj4 |

1,907,306 | Top Medical Coding Trends to Watch Out for in 2024 | Medical coding is essential in healthcare for billing, data analysis, and patient care. As... | 0 | 2024-07-01T07:35:40 | https://dev.to/sanchit_chaudhri_8a7e91cf/top-medical-coding-trends-to-watch-out-for-in-2024-dp0 | coding, clinical, medicalcoding |

Medical coding is essential in healthcare for billing, data analysis, and patient care. As technology evolves, professionals must adapt. In 2024, trends like AI and blockchain integration, real-time coding, and pers... | sanchit_chaudhri_8a7e91cf |

1,907,305 | Streamline Your Email Marketing Efforts with Divsly | Email marketing remains a cornerstone of digital marketing strategies, allowing businesses to connect... | 0 | 2024-07-01T07:35:17 | https://dev.to/divsly/streamline-your-email-marketing-efforts-with-divsly-4jl1 | emailmarketing, emailcampaigns, emailmarketingcampaigns | Email marketing remains a cornerstone of digital marketing strategies, allowing businesses to connect directly with their audience in a personalized and effective manner. However, managing email campaigns can be complex and time-consuming without the right tools. This is where Divsly steps in, offering a comprehensive ... | divsly |

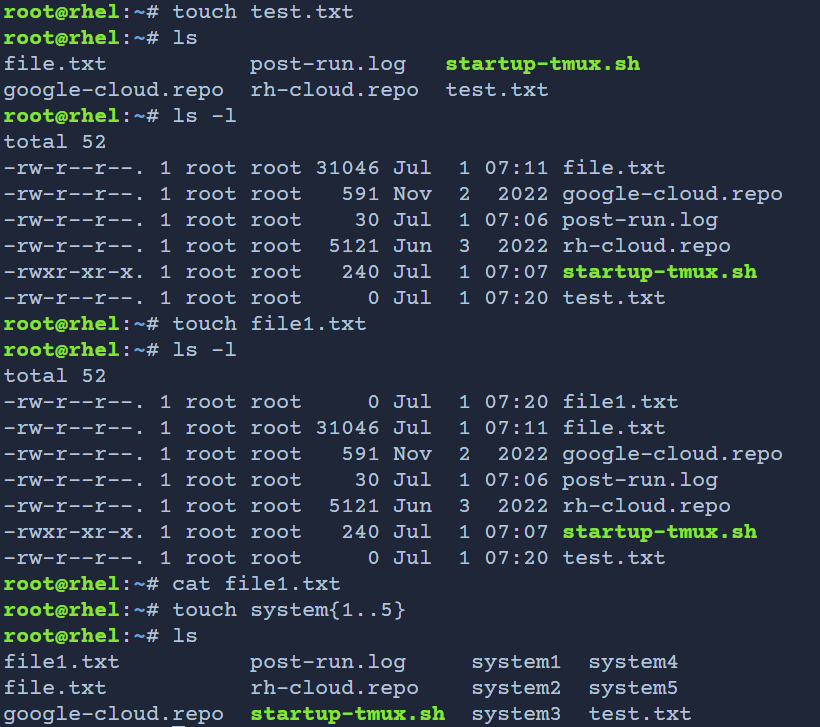

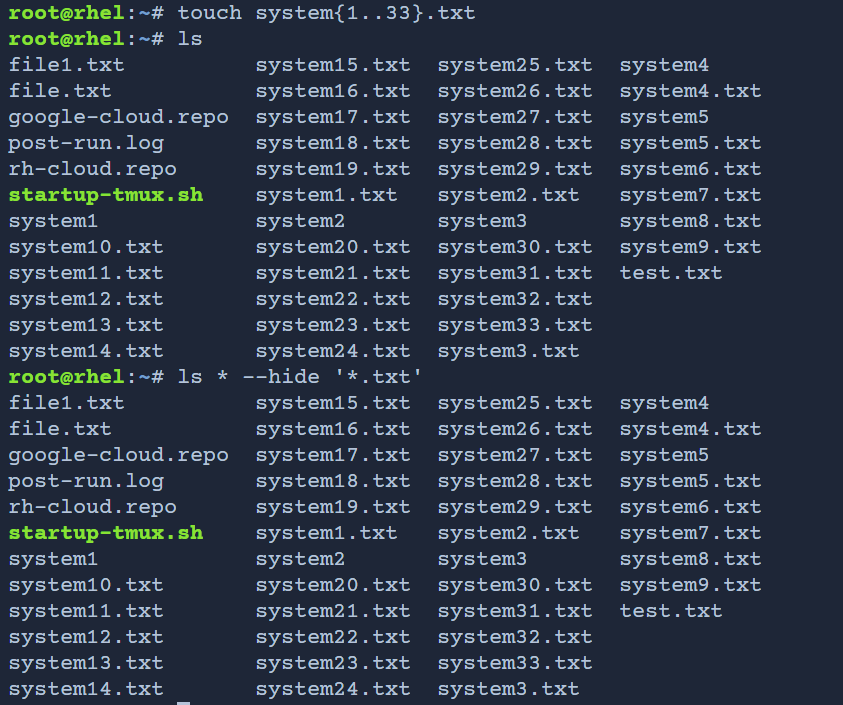

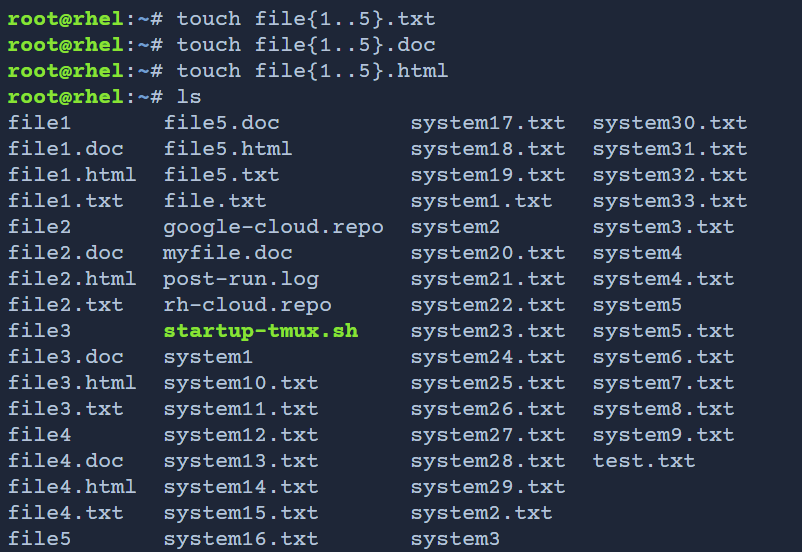

1,907,304 | Quick Guide: Making Files with Touch Command | A post by mahir dasare | 0 | 2024-07-01T07:33:46 | https://dev.to/mahir_dasare_333/quick-guide-making-files-with-touch-command-g07 |

| mahir_dasare_333 | |

1,907,303 | Exploring the World of Testing: Automated vs Manual | Testing is like the superhero of the software world – it ensures everything works smoothly and saves... | 0 | 2024-07-01T07:31:41 | https://www.thetealmango.com/featured/automated-vs-manual-testing/ | automated, testing, manual |

Testing is like the superhero of the software world – it ensures everything works smoothly and saves the day. But did you know there are different ways to do it? Let’s dive into the two main types: automated testing ... | rohitbhandari102 |

1,907,302 | Bet smart with Be8fair: 7up & 7down, your winning streak! | 7Up & 7Down is a betting game where players wager on the outcome of two dice rolls, predicting if... | 0 | 2024-07-01T07:31:23 | https://dev.to/be8fair_asia_9ab36c02b674/bet-smart-with-be8fair-7up-7down-your-winning-streak-16d2 | **[7Up & 7Down](https://be8fair.asia/)** is a betting game where players wager on the outcome of two dice rolls, predicting if the sum will be above, below, or exactly seven. Be8Fair ensures transparent, secure, and fair betting, enhancing **[your gaming](https://be8fair.asia/)** experience with reliable odds and real-... | be8fair_asia_9ab36c02b674 | |

1,907,301 | Difference Between Signed and Unsigned Drivers | Signed drivers are the main actors who guarantee the system’s safety and stability by permitting... | 0 | 2024-07-01T07:27:41 | https://dev.to/sign_my_code/difference-between-signed-and-unsigned-drivers-1o2n | signeddrivers, unsigneddrivers |

Signed drivers help guarantee system safety and stability by ensuring that only trusted, verified software components communicate with the kernel.

Signing a driver is a process of valida... | sign_my_code |

1,907,290 | How to Annul Promises in JavaScript | In JavaScript, you might already know how to cancel a request: you can use xhr.abort() for XHR and... | 0 | 2024-07-01T07:26:16 | https://webdeveloper.beehiiv.com/p/cancel-promises-javascript | webdev, react, javascript, programming | In JavaScript, you might already know [how to cancel a request](https://levelup.gitconnected.com/how-to-cancel-a-request-in-javascript-67f98bd1f0f5?utm_source=webdeveloper.beehiiv.com&utm_medium=newsletter&utm_campaign=how-to-annul-promises-in-javascript): you can use `xhr.abort()` for XHR and `signal` for fetch. But h... | zacharylee |

1,907,300 | ZIP/UNZIP using Command Line | A post by Shaswat Raj | 0 | 2024-07-01T07:25:51 | https://dev.to/sh20raj4/zipunzip-using-command-line-2jgc | webdev, javascript, beginners, programming | {% youtube https://www.youtube.com/watch?v=wYmPXVmVB3U&ab_channel=ShadeTech %} | sh20raj4 |

1,907,299 | TweetX: HTML5 Twitter like Video Player for Your Website ✨ | A post by Shaswat Raj | 0 | 2024-07-01T07:25:36 | https://dev.to/sh20raj4/tweetx-html5-twitter-like-video-player-for-your-website-2j8e | webdev, javascript, beginners, programming | {% youtube https://www.youtube.com/watch?v=HQ1uZl4-Sq8&t=99s&ab_channel=ShadeTech %} | sh20raj4 |

1,907,298 | Understanding Cultural Bias in AI Computer Vision: A Technical Overview | Artificial Intelligence (AI) has revolutionized many fields, and computer vision is one of... | 0 | 2024-07-01T07:25:18 | https://dev.to/juanmorales10/comprendiendo-el-sesgo-cultural-en-la-vision-por-computadora-con-ia-una-vision-tecnica-2n6e | ai, computervision |

Artificial Intelligence (AI) has revolutionized many fields, and computer vision is one of the areas where its impact is most pronounced. However, as with any technology, the development and deployment of AI systems are not free of biases and limitations. This article delves into the ... | juanmorales10 |

1,907,297 | Why is Testing Important for Your Mobile App? | What is Mobile App Testing? Mobile App testing is the process of validating mobile Apps... | 0 | 2024-07-01T07:25:13 | https://dev.to/robort_smith/why-is-testing-important-for-your-mobile-app-2l9i | mobileapptesting, testing, mobile, software |

## **What is Mobile App Testing?**

**[Mobile App testing](https://www.alphabin.co/services/mobile-app-testing)** is the process of validating mobile apps (Android, iOS) for better functionality, usability, perfor... | robort_smith |

1,907,296 | Score big with soccer betting – be8fair, your winning partner! | Bet on soccer with confidence at Be8Fair, where fair play meets exciting opportunities. Enjoy... | 0 | 2024-07-01T07:24:49 | https://dev.to/be8fair_asia_9ab36c02b674/score-big-with-soccer-betting-be8fair-your-winning-partner-2m11 | Bet on **[soccer with confidence](https://be8fair.asia/)** at Be8Fair, where fair play meets exciting opportunities. Enjoy real-time odds, secure transactions, and expert insights. Elevate your soccer experience with... | be8fair_asia_9ab36c02b674 | |

1,907,291 | The Evolution of Web Development: A Historical Perspective | Since the Internet's inception, web development has undergone significant transformations.... | 0 | 2024-07-01T07:21:25 | https://dev.to/ray_parker01/the-evolution-of-web-development-a-historical-perspective-4f2j | ---

title: The Evolution of Web Development: A Historical Perspective

published: true

---

Since the Internet's inception, web development has undergone significant transformations. Understanding its evolution provide... | ray_parker01 | |

1,904,353 | Binary Decision Tree in Ruby ? Say hello to Composite Pattern 🌳 | Decision trees are very common algorithms in the world of development. On paper, they are quite... | 0 | 2024-07-01T07:23:16 | https://dev.to/wecasa/binary-decision-tree-in-ruby-say-hello-to-composite-pattern-h8n | ruby, rails, tutorial, programming | Decision trees are very common algorithms in the world of development. On paper, they are quite simple. You chain if-else statements nested within each other. The problem arises when the tree starts to grow. If you're not careful, it becomes complex to read and, therefore, difficult to maintain.

In this article, we'll... | pimp_my_ruby |

1,907,295 | Introduction to Cloud Computing & AWS Cloud Services | Cloud computing refers to the on-demand delivery of IT resources via the Internet, operating on a... | 27,845 | 2024-07-01T07:22:35 | https://dev.to/ansumannn/introduction-to-cloud-computing-aws-cloud-services-50n | aws, cloud, devops, cloudcomputing | Cloud computing refers to the on-demand delivery of IT resources via the Internet, operating on a pay-as-you-go model. Instead of investing in and maintaining physical data centers and servers, users can leverage technology services like computing power, storage, and databases as needed from cloud providers such as Ama... | ansumannn |

1,907,293 | Your trusted partner in discovering premium real estate in Dubai. | At Dubai New Developments, we understand the importance of investment value, which is why we offer... | 0 | 2024-07-01T07:22:22 | https://dev.to/dubainewdevelopments_69/your-trusted-partner-in-discovering-premium-real-estate-in-dubai-4me4 | At [Dubai New Developments](https://dubai-new-developments.com/), we understand the importance of investment value, which is why we offer tailored advice to help you maximize your returns in one of the world's most dynamic real estate markets. Whether you are buying your first home, seeking a lucrative investment, or ... | dubainewdevelopments_69 | |

1,907,292 | Top Tennis Betting Strategies Revealed: Bet Smart with Be8fair | [Bet on tennis] matches with confidence at be8fair, your trusted platform for accurate odds,... | 0 | 2024-07-01T07:22:17 | https://dev.to/be8fair_asia_9ab36c02b674/top-tennis-betting-strategies-revealed-bet-smart-with-be8fair-58f7 | webdev | **[Bet on tennis](https://be8fair.asia/)** matches with confidence at be8fair, your trusted platform for accurate odds, real-time updates, and secure transactions. Whether you're a novice or an expert, **[be8fair](https://be8fair.asia/)** ensures a fair and thrilling betting experience every game, every set, every ma... | be8fair_asia_9ab36c02b674 |

1,907,287 | Data Analytics Companies vs. Freelancers: Making the Right Choice for Your Business | Introduction In the rapidly evolving field of data analytics, businesses often face the... | 0 | 2024-07-01T07:20:51 | https://dev.to/sejal_4218d5cae5da24da188/data-analytics-companies-vs-freelancers-making-the-right-choice-for-your-business-572d | dataanalyticscompanies, dataanalyticsfreelancers, freelancer | ## Introduction

In the rapidly evolving field of data analytics, businesses often face the dilemma of choosing between hiring a data analytics company or a [freelancer](https://www.pangaeax.com/). Each option has its advantages and drawbacks, making it crucial to understand which is the best fit for your specific needs... | sejal_4218d5cae5da24da188 |

1,907,289 | Day 1 of 100 Days of Code | Mon, Jul 1, 2024 Let's get this done. | 0 | 2024-07-01T07:17:36 | https://dev.to/jacobsternx/day-1-of-100-days-of-code-3e23 | 100daysofcode, webdev, javascript, beginners | Mon, Jul 1, 2024

Let's get this done. | jacobsternx |

1,907,288 | Comparing Lexical Scope for Function Declarations and Arrow Functions | In JavaScript, understanding lexical scope is crucial for understanding how variables are accessed... | 0 | 2024-07-01T07:14:39 | https://dev.to/rahulvijayvergiya/comparing-lexical-scope-for-function-declarations-and-arrow-functions-go3 | In JavaScript, understanding lexical scope is crucial for understanding how variables are accessed and managed within functions. Lexical scope refers to the context in which variables are declared and how they are accessible within nested functions. Let's explore how lexical scope behaves differently between traditiona... | rahulvijayvergiya | |

1,907,286 | Effortless Travel: Private Jet Charter Services | In today's fast-paced world, time is of the essence. For those who value efficiency, comfort, and... | 0 | 2024-07-01T07:11:38 | https://dev.to/theairchartergroupau/effortless-travel-private-jet-charter-services-327m |

In today's fast-paced world, time is of the essence. For those who value efficiency, comfort, and exclusivity, private jet charter services provide the perfect solution. Whether traveling for business or leisure, ch... | theairchartergroupau | |

1,907,284 | echo3D’s 3D Asset Manager Is Now Available | Hi there, We are excited to share the release of our much anticipated 3D asset manager. echo3D... | 0 | 2024-07-01T07:09:58 | https://dev.to/echo3d/echo3ds-3d-asset-manager-is-now-available-1aie | assetmanagement, softwaredevelopment, news, startup | **Hi there,**

We are excited to share the release of our much anticipated **3D asset manager**.

echo3D offers a whole set of tools to help you take control over your 3D content, streamline workflows and collaboration, and discover, process, and stream 3D assets across your organization and beyond, with built-in integ... | _echo3d_ |

1,907,282 | A Guide to AI Video Analytics: Applications and Opportunities | AI video analytics has emerged as a transformative technology, revolutionizing various industries by... | 0 | 2024-07-01T07:07:55 | https://dev.to/nextbraincanada/a-guide-to-ai-video-analytics-applications-and-opportunities-1ko6 | aivideoanalyticsoftware | AI video analytics has emerged as a transformative technology, revolutionizing various industries by providing enhanced capabilities in video surveillance, security, marketing, and beyond. By leveraging machine learning, computer vision, and data analysis, AI video analytics offers a powerful tool for extracting action... | nextbraincanada |

1,907,281 | Casino Betting Soars to New Heights: Embrace the Thrill and Be8fair in Every Bet! | Step into a world where excitement meets fairness with Casino Betting Soars to New Heights. At... | 0 | 2024-07-01T07:05:37 | https://dev.to/be8fair_asia_9ab36c02b674/casino-betting-soars-to-new-heights-embrace-the-thrill-and-be8fair-in-every-bet-2n75 | [](https://be8fair.asia/)

Step into a world where excitement meets fairness with Casino Betting Soars to New Heights. [At Be8fair](https://be8fair.asia/), we redef... | be8fair_asia_9ab36c02b674 | |

1,907,280 | Breaking the Blockchain Silos: Exploring Link Network’s Cross-Chain Interoperability | While blockchain technology has revolutionized the management and exchange of digital assets, it... | 0 | 2024-07-01T07:05:07 | https://dev.to/linknetwork/breaking-the-blockchain-silos-exploring-link-networks-cross-chain-interoperability-eho |

While blockchain technology has revolutionized the management and exchange of digital assets, it has brought forth a significant challenge known as “blockchain silos.” This issue refers to the lack of effective int... | linknetwork | |

1,907,277 | BIG ISSUE BUT RESOLVED | Hey everyone, Hope you all have been doing great! I was supposed to post an update about 3 days... | 0 | 2024-07-01T07:03:04 | https://dev.to/kevinpalma21/big-issue-but-resolved-5655 | beginners, productivity, design, tutorial | Hey everyone,

Hope you all have been doing great! I was supposed to post an update about 3 days after my last post, but things went south. My AutoCAD crashed, and when I booted everything back up, all my progress was lost.

My Mario Tube was gone, so I had to redo everything. Technically, I have made some progress sin... | kevinpalma21 |

1,907,276 | #EP 43 - Enhancing DevEx, Code Review & Leading Gen Z | Jacob Singh from Alpha Wave Global 🎙️ | In this episode, I, groCTO host Kovid Batra is joined by Jacob Singh, CTO in Residence at Alpha Wave... | 0 | 2024-07-01T07:01:22 | https://dev.to/grocto/ep-43-enhancing-devex-code-review-leading-gen-z-jacob-singh-from-alpha-wave-global-1jpb | podcast, developer, career, techtalks | In this episode, I, groCTO host Kovid Batra, am joined by Jacob Singh, CTO in Residence at Alpha Wave Global. Jacob's impressive career spans over two decades, during which he has held pivotal CTO and Director roles at renowned startups and organizations such as Blinkit, Sequoia Capital, and Acquia.

Podcast link... | grocto |

1,900,087 | 42 launches on Product Hunt | I've launched 42 dev tools on Product Hunt in the last 2 years — or maybe 55? To be honest, I've lost... | 27,917 | 2024-07-01T07:01:00 | https://dev.to/fmerian/42-launches-on-product-hunt-4ioa | startup, developer, marketing, devjournal | I've launched 42 dev tools on Product Hunt in the last 2 years — or maybe 55? To be honest, I've lost count.

I could bore you with the details of how Specify launched or that Product Hunt awarded me Community Member of the Year in 2022 (runner-up).

No. What you probably care about is what my key takeaways are.

So, h... | fmerian |

1,905,421 | Unraveling the URL Enigma with Power Automate’s C# Plugin | Intro: Emails often contain links that are valuable for various reasons. Power Automate by... | 26,301 | 2024-07-01T07:00:43 | https://dev.to/balagmadhu/unraveling-the-url-enigma-with-power-automates-c-plugin-jni | powerautomate, hacks, powerfuldevs | ## Intro:

Emails often contain links that are valuable for various reasons. Power Automate by Microsoft is a tool that can automate many tasks, but it doesn’t have a built-in feature to find all instances of a specific string (as in Excel). Falling in love with bringing in your own C# code as a plugin helped to solve a sma... | balagmadhu |

1,872,078 | PostgreSQL Backups Simplified with pg_dump | pg_dump is an essential tool for creating PostgreSQL backups. This guide highlights key features and... | 21,681 | 2024-07-01T07:00:00 | https://dev.to/dbvismarketing/postgresql-backups-simplified-with-pgdump-504b | pgdump, postgres | `pg_dump` is an essential tool for creating PostgreSQL backups. This guide highlights key features and examples to streamline your backup process.

**SQL Script Backup**

```

pg_dump -U admin -d company -f company_backup.sql

```

Restore using:

```

psql -d new_company -f company_backup.sql

```
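A common variation on the dump command above is to put the date in the backup file name so that successive runs do not overwrite each other. The following is only a minimal sketch under that assumption: the helper names `backup_name` and `dump_cmd` are hypothetical and not part of `pg_dump`, and the command is printed rather than executed so it can be inspected first.

```shell
#!/bin/sh
# Hypothetical helper (not part of pg_dump): build a dated backup file name.
# $1 = database name, $2 = date in YYYY-MM-DD form.
backup_name() {
  printf '%s_%s.sql\n' "$1" "$2"
}

# Compose the same pg_dump invocation shown earlier, but with a dated file name.
# The command is only printed here; drop the leading `echo` to actually run it.
# $1 = database user, $2 = database name, $3 = date in YYYY-MM-DD form.
dump_cmd() {
  echo pg_dump -U "$1" -d "$2" -f "$(backup_name "$2" "$3")"
}

# Print the command for today's backup of the `company` database.
dump_cmd admin company "$(date +%Y-%m-%d)"
```

Restoring works exactly as shown above, with the dated file name passed to `psql -f`.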

**Directory-Format Arch... | dbvismarketing |

1,907,149 | Mondev's summer break | Good morning everyone and happy MonDEV! How was this week? Have you tried anything new? Both for... | 25,147 | 2024-07-01T07:00:00 | https://dev.to/giuliano1993/mondevs-summer-break-3h9p | Good morning everyone and happy MonDEV!

How was this week? Have you tried anything new?

Both for work and personal projects, I am studying a lot and this certainly makes me happy; moreover, this allows me to gather some material for newsletters and future articles: what more could I ask for?

Meanwhile, we have also ... | giuliano1993 | |

1,906,033 | How to Containerize Your Backend Applications Using Docker | Imagine you have spent months meticulously building a backend application. It handles user requests... | 0 | 2024-07-01T07:00:00 | https://dev.to/mlasunilag/how-to-containerize-your-backend-applications-using-docker-3ap7 | devops, docker, aws | Imagine you have spent months meticulously building a backend application. It handles user requests flawlessly, scales beautifully, and is ready to take your product to the next level. But then comes deployment dread. Different environments, dependency conflicts, and a looming fear of something breaking - all potential... | alexindevs |

1,907,275 | How to Recover Roadrunner Email Password 2024 | For many users, Roadrunner (now Spectrum) email is an essential tool for personal and professional... | 0 | 2024-07-01T06:58:34 | https://dev.to/shivam_kushwaha_a070ed655/how-to-recover-roadrunner-email-password-2024-20en | For many users, Roadrunner (now Spectrum) email is an essential tool for personal and professional communication. Losing access to your account due to a forgotten password can be frustrating, but recovering it is a straightforward process. This guide will walk you through the steps to recover your [Roadrunner email pas... | shivam_kushwaha_a070ed655 | |

1,907,251 | How to Track USDT TRC20 Transactions | Tether USDT (TRC20) is a USDT stablecoin issued on the TRON network using the trc20 token standard.... | 0 | 2024-07-01T06:57:56 | https://dev.to/bitquery/how-to-track-usdt-trc20-transactions-565e | tracing, cryptocurrency, cybersecurity, data |

Tether USDT (TRC20) is a USDT stablecoin issued on the TRON network using the trc20 token standard. It is pegged to the US Dollar, which allows it to provide the stability a traditional currency gives while leveraging the benefits of blockchain technology.

By combining the stability of Tether and the efficiency of th... | divyasshree |

1,907,274 | Checklist for a Successful Website Launch | Launching a website is an exciting milestone, but it’s crucial to ensure everything is in place for a... | 0 | 2024-07-01T06:57:48 | https://dev.to/digvijayjadhav98/checklist-for-a-successful-website-launch-1dop | webdev, javascript, websitelaunch, checklist | Launching a website is an exciting milestone, but it’s crucial to ensure everything is in place for a smooth and successful launch. Here’s a comprehensive checklist to help you cover all essential aspects of your website launch.

1. **Content Checklist:** Content is the backbone of your website. It engages users, conve... | digvijayjadhav98 |

1,907,273 | Building a RESTful API with Spring Boot: A Comprehensive Guide to @RequestMapping | @RequestMapping is a versatile annotation in Spring that can be used to map HTTP requests to handler... | 27,843 | 2024-07-01T06:57:21 | https://dev.to/jottyjohn/building-a-restful-api-with-spring-boot-a-comprehensive-guide-to-requestmapping-49lf | springboot, api | @RequestMapping is a versatile annotation in Spring that can be used to map HTTP requests to handler methods of MVC and REST controllers. Here’s an example demonstrating how to use @RequestMapping with different HTTP methods in a Spring Boot application.

**Step-by-Step Example**

Set up the Spring Boot project as menti... | jottyjohn |

1,907,272 | Hoisting, Lexical Scope, and Temporal Dead Zone in JavaScript | JavaScript is often a quirky language. It's crucial to understand concepts like hoisting, lexical... | 0 | 2024-07-01T06:56:11 | https://dev.to/rahulvijayvergiya/hoisting-lexical-scope-and-temporal-dead-zone-in-javascript-55pg | webdev, javascript, react, beginners | JavaScript is often a quirky language. It's crucial to understand concepts like **hoisting, lexical scope, and the temporal dead zone (TDZ)**. Rather than just going through theory, let's dive into practical examples that illustrate these concepts, some of which might initially seem confusing.

## Hoisting

Hoisting is ... | rahulvijayvergiya |

1,907,271 | Fostering Productive Dev Culture; Intro to SLA, SLO, and SLI | 🙏 We’re at Issue Number TEN already. Huge thank you to all of our supporters, your feedback and trust... | 0 | 2024-07-01T06:55:49 | https://dev.to/grocto/fostering-productive-dev-culture-intro-to-sla-slo-and-sli-59kc | devops, developer, softwareengineering, beginners | 🙏 We’re at Issue Number TEN already. Huge thank you to all of our supporters, your feedback and trust help us bring better weekly content to you.

Read the full newsletter here - https://grocto.substack.com/p/fostering-productive-dev-culture

in React. We will... | 27,921 | 2024-07-01T06:45:02 | https://coluzziandrea.hashnode.dev/react/react-daw | webdev, javascript, react, music | ---

In this blog post, we will explore how to build a Digital Audio Workstation (DAW) in React. We will use the Tone.js library to create music and audio effects, and we will discuss the key components and features of a DAW application.

** solutions at Qualistery GmbH. Ensure compliance and reliability with our precise temperature mapping services tailored to your pharmaceutical, healthcare, or industrial environment. Our expert team... | qualistery | |

1,907,263 | Driving Developer Productivity: Insights and Strategies from Gaurav Batra, CTO @Semaai | In the fast-paced world of tech startups, developer productivity is not just a metric—it's the... | 0 | 2024-07-01T06:49:17 | https://dev.to/grocto/driving-developer-productivity-insights-and-strategies-from-gaurav-batra-cto-semaai-dbk | beginners, productivity, learning, career | In the fast-paced world of tech startups, developer productivity is not just a metric—it's the lifeblood of innovation and progress. During my discussion with our guest Gaurav Batra, CTO at Semaai (a hyper-growth agritech startup from Indonesia), he stressed the criticality of optimizing their engineering team's... | grocto |

1,907,262 | Secrets of the Zhao Yun Online Slot Game | Background and Story Behind the Zhao Yun Online Slot Game The Zhao Yun online slot game... | 0 | 2024-07-01T06:48:28 | https://dev.to/panlipovenzov/rahasia-game-slot-online-zhao-yun-48i | ![Image description](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/hz4v5pxmgulcchtktzcn.jpg)

# **Background and Story Behind the Zhao Yun Online Slot Game**

The Zhao Yun online slot game is based on ancient Chinese history and takes inspiration from the legendary figure Zhao Yun. Zhao Yun was a famous general of the Three Kingdoms period in China. He is known for his bravery in... | panlipovenzov | |

1,907,259 | Top 5 Email Autoresponders to Supercharge Your Marketing Strategy | Top 5 Email Autoresponders to Supercharge Your Marketing Strategy **Email marketing remains one of... | 0 | 2024-07-01T06:45:34 | https://dev.to/steven_hunter_88bde44af70/top-5-email-autoresponders-to-supercharge-your-marketing-strategy-4oon | **[Top 5 Email Autoresponders to Supercharge Your Marketing Strategy](https://downloadfreepic.com/5-best-email-autoresponders-for-marketing-automation/)**

**Email marketing remains one of the most effective tools for businesses to engage with their audience, nurture leads, and drive conversions. With the right email au... | steven_hunter_88bde44af70 | |

1,907,258 | Boost Your Online Presence: Why Qorvatech is the Best Social Networking Website Company in India | Do you want to make an identity in the social media market? Are you probably searching for Social... | 0 | 2024-07-01T06:45:23 | https://dev.to/qorva_tech_e2ab7722e88080/boost-your-online-presence-why-qorvatech-is-the-best-social-networking-website-company-in-india-4la4 | digitalmarketing |

Do you want to make an identity in the social media market? Are you probably searching for Social Networking Website Company in India for your business? At Qorvatech, we are here for you when it comes to designing ... | qorva_tech_e2ab7722e88080 |

1,907,256 | Top React UI Libraries 🌟 - ShadCN, Redix UI - NextJS UI Library - Tailwind CSS 2024 | A post by Shaswat Raj | 0 | 2024-07-01T06:42:17 | https://dev.to/sh20raj4/top-react-ui-libraries-shadcn-redix-ui-nextjs-ui-library-tailwind-css-2024-dh7 | {% youtube https://www.youtube.com/watch?v=Gyp0AfK-19U&t=34s&ab_channel=ShadeTech %} | sh20raj4 | |

1,907,254 | Creating a GitHub Repository Collection Using GitHub Lists ✨ | A post by Shaswat Raj | 0 | 2024-07-01T06:41:41 | https://dev.to/sh20raj4/creating-a-github-repository-collection-using-github-lists-2p2c | webdev, javascript, beginners, programming | {% youtube https://www.youtube.com/watch?v=oxom2JV--p4&ab_channel=ShadeTech %} | sh20raj4 |
