id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

788,458 | Crystal for the curious Ruby on Rails Developer | Have you been hearing about the language Crystal lately and you're curious what it's all about? Well,... | 0 | 2021-08-11T14:46:16 | https://dev.to/seesethcode/crystal-for-the-curious-ruby-on-rails-developer-1dc | crystal, webdev, alwayslearning, 12monthsofmakes | Have you been hearing about the language [Crystal](https://www.crystal-lang.org) lately and you're curious what it's all about? Well, I'm right there with you! Specifically I'm curious how Crystal can be used to create web applications that are highly performant but use less resources.

##A little about me

My name is Seth and I'm a self-taught full-stack Ruby on Rails developer. My strong suites are mostly back-end and infrastructure.

##The goal of this article

Since I'm self-taught, and because of the way I learn, I rely heavily on video tutorials to help me build my understanding of how Ruby, Javascript, HTML/CSS and infrastructure work together to create functional web applications.

Crystal caught my attention because of it's similarity to Ruby, but with benefits of a compiled language that I think Ruby is naturally trying to move towards. The challenge I find myself having is... **a lack of videos helping introduce the different frameworks and the language**.

So, I decided I'll take multiple Crystal web frameworks for a test drive and we'll build the same app in each one to explore how each one is different. I plan to personally highlight the differences I notice between the Crystal framework and Rails because most of my app development experience is with Rails.

##Crystal Web Frameworks I'll Be Exploring

Here's the list of frameworks I will be making videos for. I'll be creating the same simple blog app on each framework so that anyone following along can compare how each one. I'll update each list item with a link to the article specifically about the framework with links to the videos and the source code for anyone to clone/fork and play around with. So if you don't see a link yet, please check back in the future 😄

- [Amber](https://amberframework.org/)

- [Lucky](https://www.luckyframework.org/)

- [Spider Gazelle](https://spider-gazelle.net/)

- [Kemal](https://github.com/kemalcr/kemal)

- [Athena](https://athenaframework.org/)

The order here is roughly based on the full featured-ness of the framework. I say that because from my current experience Lucky is more fully featured as an out of the box system, but I'm already working with Amber currently and I'm more familiar with it so I plan to start there. Kemal and Athena are much lighter weight and are better compared to a Sinatra type framework.

##Blog Project Outline

I'm rarely this structured, but a simple blog site is a good way to explore a frameworks ORM, controller and view layer, routing, simple user authentication and all of the basic CRUD actions.

Since the purpose of this project is to explore the frameworks themselves I'll be using bootstrap for the front-end and making all of the projects look essentially the same with standard bootstrap styling. If I use any Javascript, it'll be through Webpack and using Stimulus or just plain Javascript (clearly I haven't decided yet).

###Pages

- Home: This will act as the index page for the posts, displaying up to 10 posts and paginating any additional posts.

- Sign Up

- Sign In

- Post: the individual post page displaying the post contents. Post pages will accept comments from non-signed in users.

- User Account: screen showing all of the posts belonging to the signed in user, with all of the CRUD actions for all the posts belonging to that user.

All pages will have a sticky top nav with links to sign in/out and create/manage posts.

##And there you have it!

I'll update this post as I finish the videos and posts with each framework. If this has helped you, or caused you to have more questions that I didn't answer, please let me know. I'd love to get your feedback! | seesethcode |

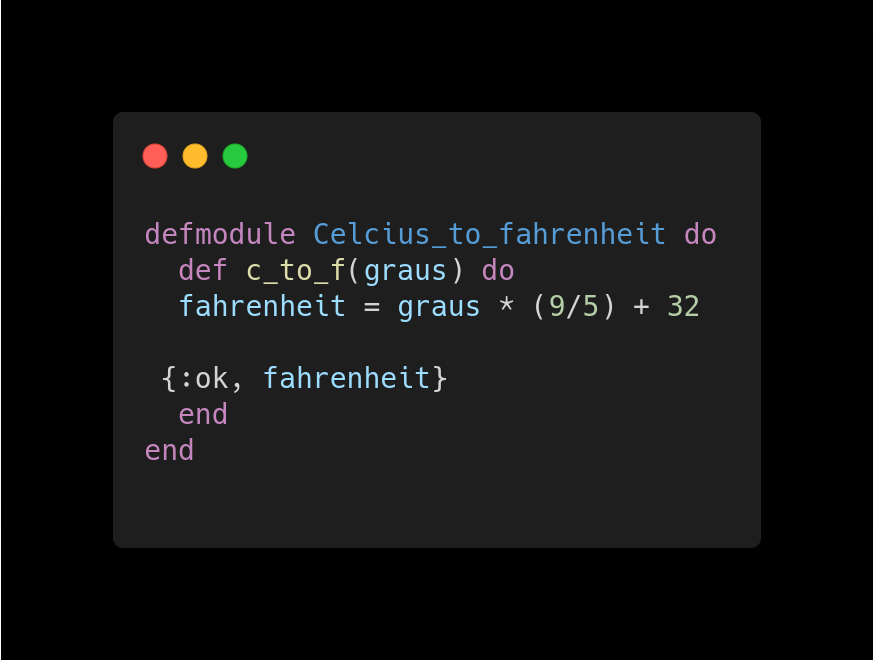

788,576 | Um errinho em Elixir | Elixir não é uma linguagem perfeita, claro. O "erro" que vou comentar aqui está na função... | 0 | 2021-08-11T15:10:21 | https://dev.to/elixir_utfpr/um-errinho-em-elixir-1dof | elixir, erlang | Elixir não é uma linguagem perfeita, claro.

O "erro" que vou comentar aqui está na função [Enum.at/3](https://hexdocs.pm/elixir/1.12/Enum.html#at/3).

Deixa explicar melhor. Se eu tenho uma lista com 5 elementos:

```elixir

[:a, :b, :c, :d, :e]

```

e eu perguntar a você qual é o terceiro elemento, obviamente você vai me responder que é o `:c`.

Mas se eu usar [Enum.at/3](https://hexdocs.pm/elixir/1.12/Enum.html#at/3) da seguinte forma:

```elixir

Enum.at([:a, :b, :c, :d, :e],3)

```

o resultado vai ser:

```elixir

iex> Enum.at([:a, :b, :c, :d, :e],3)

:d

```

Interessante é que é um "erro" (coloco entre aspas por não ser um erro de verdade mas sim uma escolha que eu acredito ser errada) que [não veio do Erlang](https://erlang.org/doc/man/lists.html#nth-2):

```erlang

> lists:nth(3, [a, b, c, d, e]).

c

```

O Maxsuel Maccari explicou [neste tweet](https://twitter.com/maxmaccari/status/1425476422425092106?s=20) a razão provável deste erro estar em Elixir. Ruby faz isso. Veja [aqui](https://ruby-doc.org/core-2.7.0/Array.html) e abaixo:

```ruby

arr = [1, 2, 3, 4, 5, 6]

arr[2] #=> 3

arr[100] #=> nil

arr[-3] #=> 4

```

Ruby adiciona requintes de crueldade permitindo índices negativos.

| elixir_utfpr |

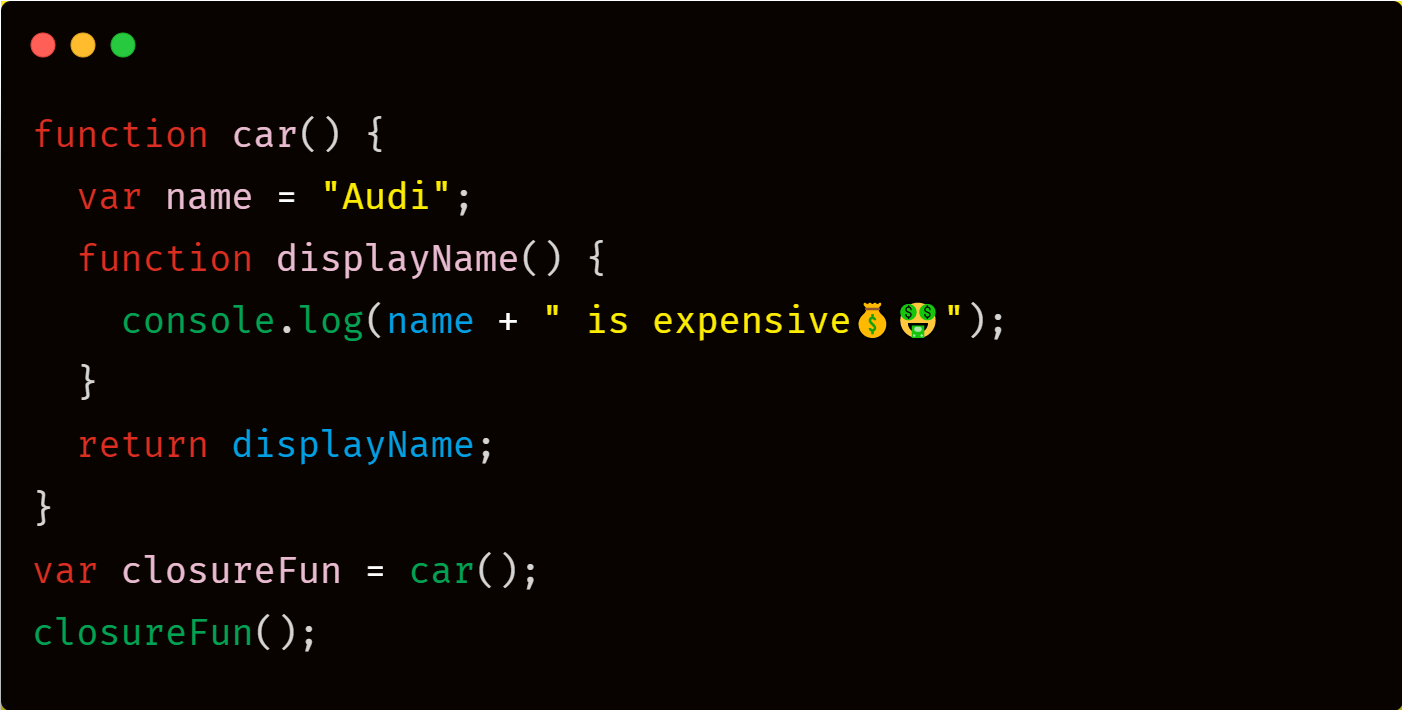

788,645 | #14) Explain Closures in JS❓ | ✅A Closure is the combination of function enclosed with refrences to it's surrounding state. OR ✅A... | 13,804 | 2021-08-11T16:24:51 | https://dev.to/myk/14-explain-closures-in-js-h8g | javascript, webdev, beginners, career | ✅A Closure is the combination of function enclosed with refrences to it's surrounding state.

`OR`

✅A Closure gives you the access to an outer function's scope from an inner function.

✅Closure are created every time a function is created.

✅It is an ability of a function to remember the variables and function declared in it's outer scope.

Let's talk about the above code👇

💠The function `car` gets executed and return a function when we assign it to a variable.

`var closureFun = car();`

💠The returned function is then executed when we invoke closureFun:

`closureFun();`

💠Because of closure the output is `Audi is expensive💰🤑`

When the function _car()_ runs, it sees that the returning function is using the variable _name_ inside it:

`console.log(name + " is expensive💰🤑");`

💠Therefore car(), instead of destroying the value of name after execution, saves the value in the memory for further reference.

💠This is the reason why the returning function is able to use the variable declared in the outer scope even after the function is already executed.

✔This ability of a function to store a variable for further reference even after it is executed, is called Closure.

__________________________________________________________________ | myk |

788,658 | How to Install Elementor Pro in WordPress 2021 | How to Install Elementor Pro in WordPress 2021 | How to Buy Elementor Pro To Buy and Install... | 0 | 2021-08-11T16:48:56 | https://dev.to/hmawebdesign/how-to-install-elementor-pro-in-wordpress-2021-48o9 | wordpress, programming, php, webdev | How to Install Elementor Pro in WordPress 2021 | How to Buy Elementor Pro

To Buy and Install Elementor Pro version in WordPress follow these steps:

1. Go to the elementor.com website link

2. Go to pricing and choose 'essential plan' for the Elementor Pro WordPress Plugin.

3. Create a new account to download the Elementor Pro version.

4. After filling in the billing details, download the elementor Pro Plugin

**How to Install the Elementor Pro - Vodeo Tutorial!**

{% youtube ERQn0Vh84ik %}

5. After complete the downloading, open WordPress, go to plugins, and click add new.

6. Upload the downloaded zip file of Elementor Pro Plugin and install.

7. After installation activates the Plugin.

8. Must install elementor now, to use the pro version of Elementor Plugin.

9. Now, connect and activate the plugin with provided license key.

10. Congratulations! Elementor Pro is activated and ready to use.

| hmawebdesign |

788,666 | Frontend Environment Variables – What, Why and How | Photo by Pascal Debrunner on Unsplash What If you ever touched backend code you probably... | 0 | 2021-09-05T14:58:24 | https://dev.to/henriqueinonhe/frontend-environment-variables-what-why-and-how-1c1 | frontend, javascript, webpack, environment | *Photo by [Pascal Debrunner](https://unsplash.com/@debrupas?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText) on [Unsplash](https://unsplash.com/?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText)*

## What

If you ever touched backend code you probably already know that environment variables really come in handy when dealing with multiple environments, like local, dev, qa, prod by decoupling configuration from code.

In case you didn't, you may think of environment variables as **inputs** that you application take as parameters, after all, a program is pretty much like a function, with inputs, outputs and sometimes side effects.

So, just as with functions, where parametrizing values that were previously hardcoded in the function's body yields a more flexible implementation, we may extract **hardcoded values** from our frontend code as **environment variables**, so that we are able to change our application behavior without touching the code itself.

## Why

When working with a real project you'll probably deal with multiple environments (local, dev, qa, prod) and each of these environments will most likely have its own dedicated API service, and thus each one will be accessed using a different URL.

So instead of hardcoding the API URL, we read this value from an environment variable so that we can deploy the same code for all these different environments.

Another interesting use case for environment variables is to implement **feature flags** which are used to enable or disable certain features depending on the context (e.g. A/B testing or the application might serve multiple countries/regions and some features might not be available in some of them).

Currently at the place I work we also rely on environment variables to set the "check for updates" polling interval and to tweak some testing scenarios.

In summary, environment variables are a widely supported way of decoupling **configuration** from **code**. (See [12factorapp](https://12factor.net/config) for an in depth explanation)

## How

If we were talking about environment variables at the backend we could just `npm install dotenv` and `dotenv.config()` and then call it a day.

However, as the frontend runs on the client's machine it can't access environment variables (and even if it could, it would make no sense), so we need a different approach.

### Enter the compiler

As reading environment variables at **run time** is not an option for the frontend, we must fallback to **compile time** substitution.

Nowadays you'll most likely be using a compiler for the frontend, either because you're using JSX, or relying on Babel polyfills, or maybe you recognize the value of static type checking and need to transpile from Typescript.

Even if you don't really care about any of those things, you'll probably be minifying your JS code to reduce the bundle size and get that perfect Page Speed (*is this still relevant?*) score.

What we're going to do then is use the compiler to substitute environment variables in the code by their actual values at **build/compile time**.

In this example I'll be using **Webpack** as it is the standard bundler.

So, supposing you already have your build configuration in place with Webpack, setting up environment variables is a 3-step process:

```js

//webpack.config.js

//1. Read environment variables from our .env file

import dotenv from "dotenv";

dotenv.config();

//2. List environment variables you'll use

// The keys listed here are the ones that will

// be replaced by their actual value in the code.

// Also, their presence will be validated, so that

// if they're undefined webpack will complain and

// refuse to proceed with compilation

const environmentVariables = [

"API_BASE_URL",

"CHECK_FOR_UPDATES_TIME_INTERVAL"

];

//...

//3. Use Webpack's EnvironmentPlugin

plugins: [

//...

new webpack.EnvironmentPlugin(environmentVariables)

//...

],

//...

```

And then you can use environment variables the same way you'd do with backend code:

```js

const response = await fetch(`${process.env.API_BASE_URL}/login`);

```

Once again it is very important to keep in mind that what actually happens is essentially **textual substitution** of environment variables in build time, and a fortunate consequence of this is that for some cases like with feature flags, the minification process is even able to completely wipe out unreachable code branches, eliminating code related to unused features.

By the way, if you ever programmed with C or C++, this substitution process works pretty much the same way the C/C++ preprocessor would when you're using `#define`. | henriqueinonhe |

798,308 | Best Programming Stuff. | I have seen some of the programming stuff article on internet as usual sharing it with you... | 0 | 2021-08-20T15:21:28 | https://dev.to/stuffsuggested/best-programming-stuff-5a88 | I have seen some of the programming stuff article on internet as usual sharing it with you guys...Some of the websites are having affiliate links.

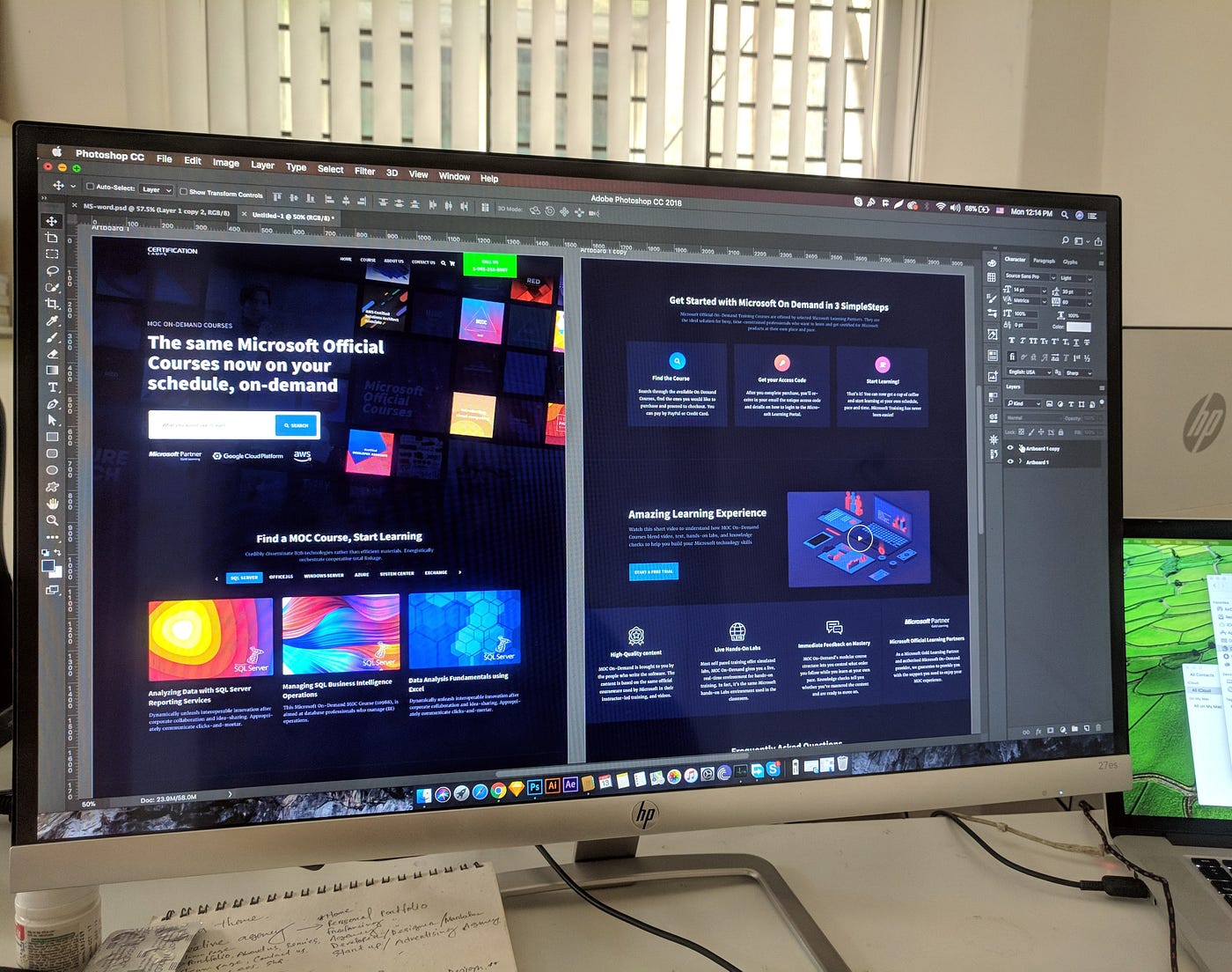

## 1. Best Monitors For Programming ##

When programming or coding for long periods, it's essential to have a comfortable monitor to keep eye strain to a minimum.

1. [Best Monitor For Programming](https://suggestedstuff.com/best-monitors-for-programming-in-india-2021/)

2. [Best Monitor For Programming](https://www.rtings.com/monitor/reviews/best/by-usage/programming-and-coding)

3. [Best Monitor for Programming & Coding (2021 Update)](https://www.guru99.com/best-monitor-programming.html)

## 2. Best Keyboard For Programing ##

As developers we don’t need many tools to work, many would say that we just need a laptop or computer, and even though that’s true, there’s a difference between having the minimum required to work to have the tools that you need to work comfortably, productive and healthy.

1. [List of Best keyboard for programming India 2021](https://cmsinstallation.blogspot.com/2021/04/best-keyboards-for-programming-in-2021.html)

2. [8 Best Keyboards for Programming in India 2021](https://www.thecrazyprogrammer.com/2020/09/best-keyboards-for-programming-in-india.html)

3. [Best Keyboard for Programming & Coding in 2021](https://www.guru99.com/best-keyboards.html)

## 3. Best Mouse For Programing ##

Programmer, Gammers, and coders spend their whole day with computers. So they have comfortable things for use. A standard mouse is irritating to use for a long time. A mouse that puts your hand in an unprejudiced position is maybe the best way to reduce these problems you just have to find a vertical or trackball mouse. Here, I've listed the Best mouse for programming.

1. [7 Best Mouse For Developer / Gaming / Coding](https://cmsinstallation.blogspot.com/2021/05/7-best-mouse-for-developer-gaming-coding.html)

2. [Best MOUSE for Programming & Coding (2021 Updated List)](https://www.guru99.com/best-mouse-programming.html)

3. [The best mouse of 2021: 10 top computer mice compared](https://www.techradar.com/in/news/computing-components/peripherals/what-mouse-10-best-mice-compared-1027809)

| stuffsuggested | |

788,668 | New JavaScript Features improving the Web Design World | The new decade has been a year of discovery and introduction of novice features for web developers... | 0 | 2021-08-11T17:10:07 | https://dev.to/dailydevnews/new-javascript-features-improving-the-web-design-world-2b5g | The new decade has been a year of discovery and introduction of novice features for web developers and programmers. JavaScript has been on the forefront of these innovations and system developments. In the current world, JavaScript has been defined as a well-thought-out programming language based on its user-friendly code and versatility. JavaScript platforms and tools are well recognized and globally appreciated for creating top-notch and cutting edge online casino games available in sites such as [onlinecasinosincanada.ca](http://onlinecasinosincanada.ca/).

Programmers and online casino games developers agree that JavaScript platforms creates fully-fledged games that offer the most fascinating user experience and the start of the art of security. The best part of JavaScript is the capacity to develop new features in constant frequentness with the aim of aiding their clients have the best experience and create more powerful, reliable, and efficient sites and applications. Let’s take you through some of the new JavaScript features that will enable the development of super cool online casino games.

###Dynamic import

This new JS feature saves us the challenge of importing files as modules. The most intriguing factor about this feature is how it enables the use to ship-on-demand request codes or conduct a code splitting function without the unnecessary module bundlers or even the overhead of web pack. It helps in making an import without adulterating the global namespace.

Dynamic import is a syntax that has solved the ever-evolving problems of failing to dynamically produce the parameters of import. Users have widely expressed their contention in regards to the dynamic import capacities that include the factor that it can be called from any place in the code and that it returns a promise that resolves into a module object. The previous features that are made in static means that developers will use more compile-time checking while the dynamism aspect provides for analyzable information at a lesser build time. Dynamic import enhances the use of one bundle for a single app and this enables sites built through JavaScript to be faster and efficient for demanding tasks such as the online casino games.

###BigInt

BigInt value or popularly known as BigInt is a new addition to the JavaScript programming that adds integer value or string value to the integers. It simply means that the feature gives developers the power to have greater integer representation as they code with JavaScript for improved data processing during data handling procedure.

The feature operates by adding an appended n at the end of the numeral to give the JavaScript engine the notion of treating it differently allowing the engine to support numbers of larger sizes. Strong sites such as online casino websites require a code that allows larger and more integers to reduce downtime and create more value for the user.

###Nullish coalescing

For a longer period developers have had the challenge of assigning values to be either false or null due to predictability hardships. But not anymore, the nullish coalescing feature provides through and efficient specification of assigning values. The feature handles default values through short-circuiting operators by assigning them as “truthy” or “falsy”. For instance, if a code is assigned to the left-hand side, it is considered as false while in the right-hand side it is considered as truth without leading to bugs.

Developers enjoy an added advantage of in that nullish coalescing evaluates its operands at most once which means it reduces the time used for evaluation increasing the value and reducing time used. For online casino web developers, this is a simple and invaluable addition to their repositories that will enable efficiency, predictability, and clean coding.

###Prototype based programming

Prototype-based programming refers to the object-oriented programming design where the behavior re-use involves cloning and extending objects and re-using them to serve as prototypes. This distinctive procedure gives objects the capacity to just inherit from other objects directly as opposed to creating something from nothing.

This feature gives [programmers using JavaScript language](http://www.forbes.com/sites/forbestechcouncil/2017/03/14/what-language-should-budding-programmers-learn-first/) the opportunity to create more objects by just cloning the initial ones. Cloning for this case is viewed as a style of developing new objects by simply copying the behaviors of an existing object where the clone or the prototype carries the qualities of the original object. This is a simpler, faster, and more efficient process that gives developers an upper hand in their activities.

###Import.meta

Import.meta is a uniquely designed feature that allows the object to provide precise metadata elements to the defined JavaScript module. This feature is known for bringing out or exposing the information about the module and in this case the information may include the module’s URL and resolve. For the URL obtained can be from the script; that is for external scripts, or it can be document base URL for the inline scripts.

An important thing to note is that the obtained URL will provide other details such as the query parameters or hash. [Programmers also need to understand](https://dev.to/hrishikesh1990/using-python-to-print-variable-in-strings-1d11) that the import meta object was created using the ECMAScript implementation.

###Optional chaining

This remains among the most fascinating features that have been recently introduced into the JS programming language. Previously, identification of a deep-rooted value has been a humongous challenge since you have to check if the intermediate node exist. Another challenge has been that the object maybe assigned as undefined or null but you want to get the result when the object is defined.

As a result, the optional chaining feature gives the user the ability to read the value of a property that is deeply located in a cycle of inter-joined objects without necessary having to [check](https://www.deviantart.com/us-news/posts) the validity of each reference within the chain. Developers note that optional chaining is a syntax with the capacity to access deeply nested object properties without being concerned of their existence.

Basically, it provides shorter expressions due to its short-circuiting capacities which eliminates the error reference. The other advantage of this feature is that it aids in the exploration of object context in cases when there is no guarantee of the properties.

| dailydevnews | |

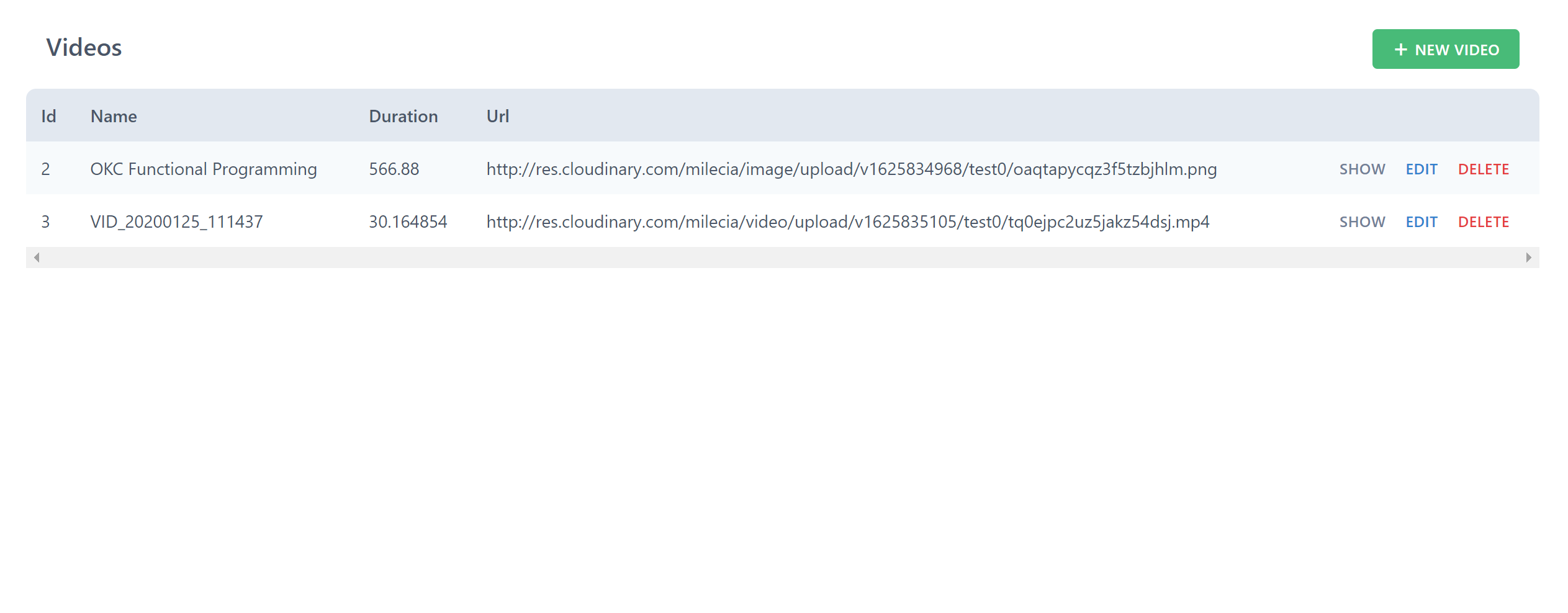

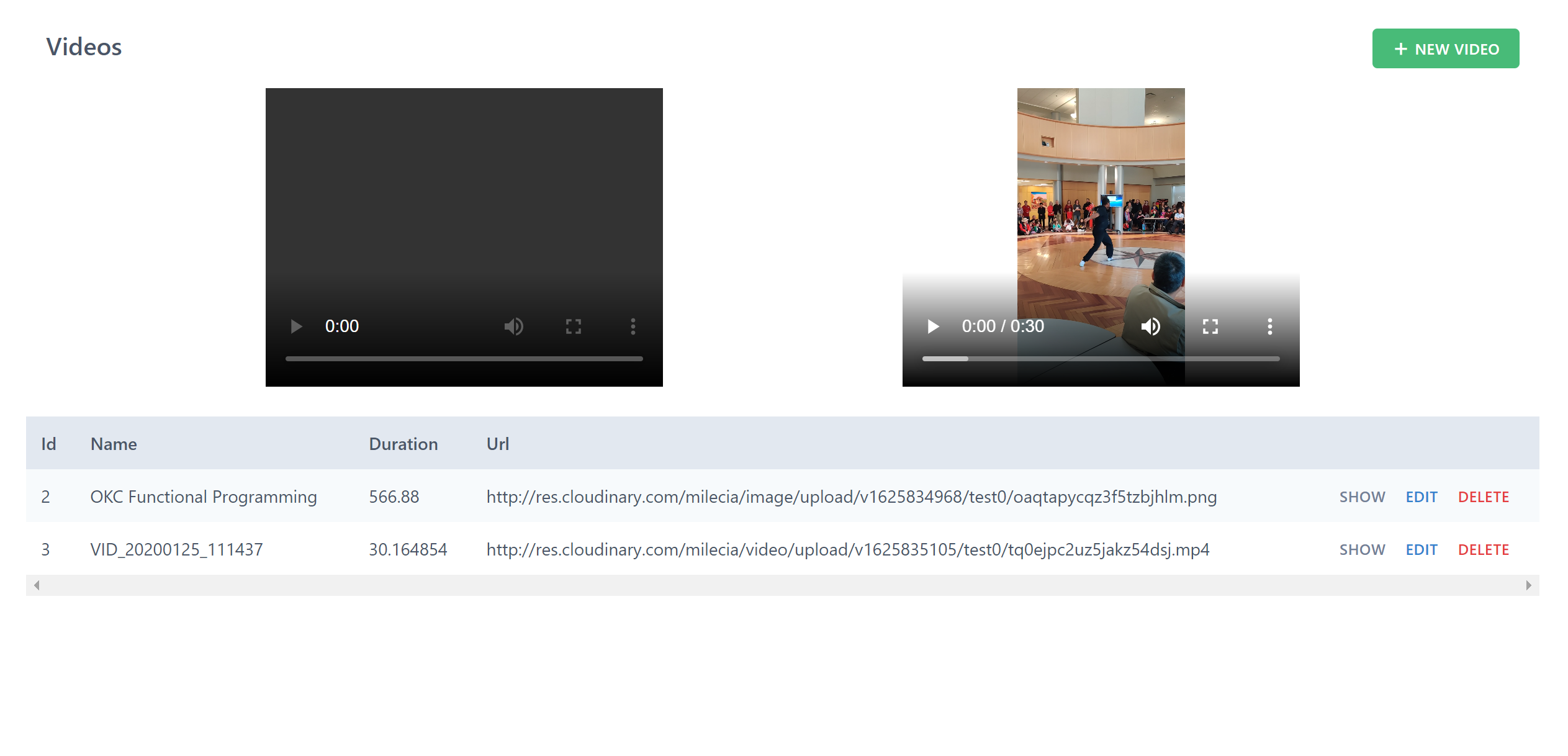

788,718 | AluraChallenges #2 ( Semana 2) | No post anterior, nós fizemos todas as implementações que tínhamos da semana 1, deixando nossa API de... | 14,064 | 2021-09-01T21:50:33 | https://dev.to/delucagabriel/alurachallenges-2-semana-2-4d33 | nestjs, typescript, jest, typeorm | No post anterior, nós fizemos todas as implementações que tínhamos da semana 1, deixando nossa API de vídeos pronta para consumo.

Só que ainda temos desafios pela frente e nessa semana faremos a inserção de categorias, para classificarmos os nossos vídeos.

A história dessa semana é a seguinte:

> Depois de alguns testes com usuários, foi definido que a próxima feature a ser desenvolvida nesse projeto é a divisão dos vídeos por categoria, para melhorar a experiência de organização da lista de vídeos pelo usuário.

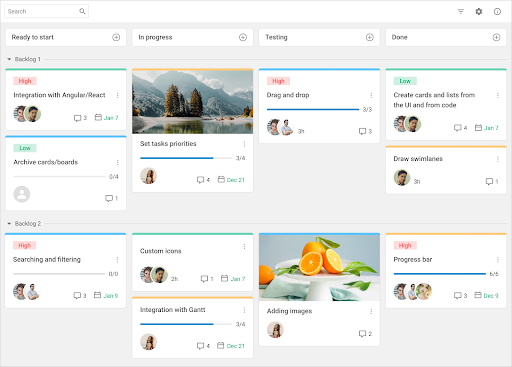

Então, faremos nesse post as seguintes [implementações](https://trello.com/b/iX8Xeg4k/alura-challenge-backend-semana-2) :

* **Adicionar `categorias` e seus campos na base de dados**;

* **Rotas CRUD para `/categorias`**;

* **Incluir campo `categoriaId` no modelo `video`**;

* **Escrever os testes unitários**.

Vamos começar fazendo os dois primeiros pontos, e novamente usaremos o generate do Nest, para criamos o recurso Categorias.

```bash

nest generate resource categorias

```

Após a criação, faremos a definição da nossa classe CreateCategoriaDto:

```typescript

// src/categorias/dto/create-categoria.dto.ts

import { Video } from '../../videos/entities/video.entity';

export class CreateCategoriaDto {

id: number;

titulo: string;

cor: string;

videos: Video[];

}

```

e alteraremos o nosso CreateVideoDto, adicionando a categoria:

```typescript

// src/videos/dto/create-video.dto.ts

import { Categoria } from '../../categorias/entities/categoria.entity';

export class CreateVideoDto {

...

categoria: Categoria;

}

```

Agora vamos na nossa "entity" e precisaremos fazer um pouco diferente.

Como será necessário ligar as tabelas de videos e categorias, utilizaremos o decorator @OneToMany(), que significa que teremos 1 categoria para vários vídeos:

```typescript

// src/categorias/entities/categoria.entity.ts

import { IsNotEmpty, IsString } from 'class-validator';

import { Video } from '../../videos/entities/video.entity';

import { Column, Entity, OneToMany, PrimaryGeneratedColumn } from 'typeorm';

@Entity()

export class Categoria {

@PrimaryGeneratedColumn()

id: number;

@IsNotEmpty()

@IsString()

@Column()

titulo: string;

@IsNotEmpty()

@IsString()

@Column()

cor: string;

@OneToMany(() => Video, (video) => video.categoria)

videos: Video[];

}

```

Será necessário alterar nossa "entity" Video também, informando que teremos varios vídeos para cada categoria. Utilizaremos o decorator @ManyToOne() após todos os atributos que já temos:

```typescript

// src/videos/entities/video.entity.ts

import { PrimaryGeneratedColumn, Column, Entity, ManyToOne } from 'typeorm';

import { IsNotEmpty, IsString, IsUrl } from 'class-validator';

import { Categoria } from '../../categorias/entities/categoria.entity';

@Entity()

export class Video {

...

@ManyToOne(() => Categoria, (categoria) => categoria.id, { nullable: false })

categoria: Categoria;

}

```

Entidades ajustadas, vamos para o nosso "categoria.service" para implementar as funcionalidades dela.

A diferença nela está no findAll, que passamos o parâmetro indicando a relação (relations: ['videos']) e já pedindo para trazer os dados dessa relação (loadEagerRelations: true):

```typescript

// src/categorias/categorias.service.ts

import { Injectable } from '@nestjs/common';

import { InjectRepository } from '@nestjs/typeorm';

import { Repository } from 'typeorm';

import { CreateCategoriaDto } from './dto/create-categoria.dto';

import { UpdateCategoriaDto } from './dto/update-categoria.dto';

import { Categoria } from './entities/categoria.entity';

@Injectable()

export class CategoriasService {

@InjectRepository(Categoria)

private categoriaRepository: Repository<Categoria>;

create(createCategoriaDto: CreateCategoriaDto) {

return this.categoriaRepository.save(createCategoriaDto);

}

findAll() {

return this.categoriaRepository.find({

relations: ['videos'],

loadEagerRelations: true,

});

}

findOne(id: number) {

return this.categoriaRepository.findOne(id);

}

update(id: number, updateCategoriaDto: UpdateCategoriaDto) {

return this.categoriaRepository.update(id, updateCategoriaDto);

}

async remove(id: number) {

const categoria = await this.findOne(id);

return this.categoriaRepository.remove(categoria);

}

}

```

Novamente, temos que ajustar nosso "video.service" também, que no caso, só terá alteração no método "findAll()":

```typescript

// src/videos/videos.service.ts

...

findAll() {

return this.videoRepository.find({

relations: ['categoria'],

loadEagerRelations: true,

});

}

...

```

Agora, para que a tabela de categorias seja criada no banco e identificada pelo Nest, precisamos ir no categorias.module e importar o TypeOrmModule passando a Categoria como parâmetro:

```typescript

// src/categorias/categorias.module.ts

import { Module } from '@nestjs/common';

import { CategoriasService } from './categorias.service';

import { CategoriasController } from './categorias.controller';

import { Categoria } from './entities/categoria.entity';

import { TypeOrmModule } from '@nestjs/typeorm';

@Module({

imports: [TypeOrmModule.forFeature([Categoria])],

controllers: [CategoriasController],

providers: [CategoriasService],

})

export class CategoriasModule {}

```

Maravilha, implementamos a categoria para os nossos videos, porém, temos um card que pede para que ao acessar a rota "/categorias/:id/videos" retorne os vídeos de uma determinada categoria:

Para que isso seja possível, vamos precisar de uma nova rota no nosso controller de categorias.

A nova rota vai ficar assim:

```typescript

// src/categorias/categorias.controller.ts

...

@Get(':id/videos')

findVideosByCategoryId(@Param('id') id: string) {

return this.categoriasService.findVideoByCategory(+id);

}

...

```

Mas espera aí, nós não temos esse método "categoriasService.findVideoByCategory()".

Precisamos criar esse método lá no nosso serviço "categorias.service":

```typescript

// src/categorias/categorias.service.ts

...

async findVideoByCategory(id: number): Promise<Video[]> {

const categoria = await this.findOne(id);

return categoria.videos;

}

...

```

Mas se tentarmos acessar a rota, veremos uma tela branca, sem retorno de nenhum dado. --'

Para que seja retornado, precisamos alterar também o nosso método findOne, passando um objeto de configuração, informando que queremos que ele faça o carregamento dos dados relacionados à essa tabela.

O método ficará assim:

```typescript

// src/categorias/categorias.service.ts

...

findOne(id: number) {

return this.categoriaRepository.findOne(id, {

relations: ['videos'],

loadEagerRelations: true,

});

}

...

```

Agora sim, ao acessar nossa rota ".../categorias/1/videos", teremos nossos vídeos referentes à essa categoria.

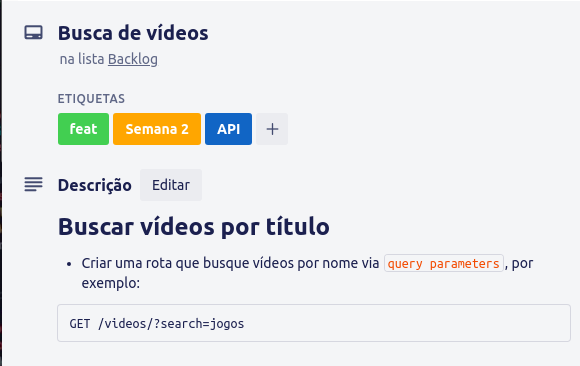

O próximo card, tem a seguinte descrição:

Para atender a esse requisito, precisaremos alterar nosso controller e nosso service de videos:

```typescript

// src/videos/videos.controller.ts

...

@Get()

findAll(@Query() query) {

return this.videosService.findAll(query.search);

}

...

```

```typescript

// src/videos/videos.service.ts

...

findAll(search = '') {

return this.videoRepository.find({

where: { titulo: ILike(`%${search}%`) },

relations: ['categoria'],

});

}

...

```

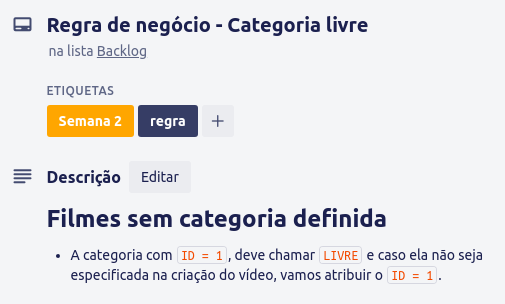

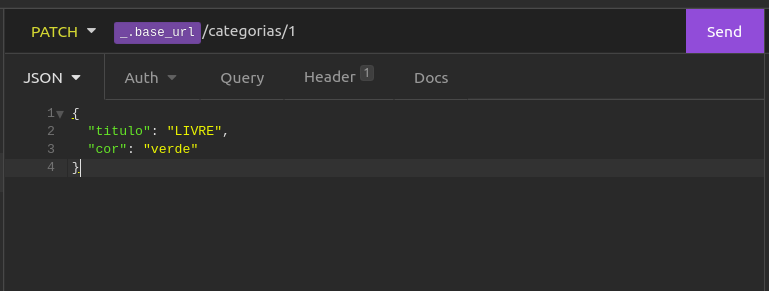

Próximo card:

Como já criamos uma categoria anteriormente, vamos enviar uma requisição de update para a categoria de ID 1, alterando o título para LIVRE

E após isso, vamos no videos.service e alteraremos a lógica do método create:

```typescript

// src/videos/videos.service.ts

...

create(createVideoDto: CreateVideoDto) {

if (!createVideoDto.categoria)

return this.videoRepository.save({

...createVideoDto,

categoria: { id: 1 },

});

return this.videoRepository.save(createVideoDto);

}

...

```

Pronto, requisito atendido!

>(Faça uma requisição criando um vídeo sem informar a categoria e veja se está tudo funcionando como o esperado, o retorno deve ser o vídeo criado e com a "categoria":{"id": 1})

O próximo requisito é para que se crie os testes automatizados e aí que o negócio começa a ficar legal.

## Implementando testes automatizados

Para facilitar nossos mocks, vamos inserir um construtor para as nossas entidades, deixando elas assim:

```typescript

// src/categorias/entities/categoria.entity.ts

...

constructor(private categoria?: Partial<Categoria>) {}

```

```typescript

// src/categorias/entities/categoria.entity.ts

...

constructor(private video?: Partial<Categoria>) {}

```

Vamos criar também uma pasta common dentro de src e nela criar uma pasta test, que ficarão os arquivos necessário e comum à todos os testes. Por agora, teremos dois stubs:

videos.stub.ts (crie esse arquivo)

```typescript

// src/common/test/videos.stub.ts

import { Categoria } from '../../categorias/entities/categoria.entity';

import { Video } from '../../videos/entities/video.entity';

import { categoriasStub } from './categorias.stub';

export const videosStub: Video[] = [

new Video({

id: 1,

titulo: 'título qualquer',

descricao: 'descrição qualquer',

url: 'http://url_qualquer.com',

categoria: new Categoria({ id: 1, titulo: 'LIVRE', cor: 'verde' }),

}),

new Video({

id: 2,

titulo: 'outro título qualquer',

descricao: 'outra descrição qualquer',

url: 'http://outra_url_qualquer.com',

categoria: categoriasStub[1],

}),

new Video({

id: 3,

titulo: 'titulo qualquer',

descricao: 'descrição qualquer',

url: 'http://url_qualquer.com',

categoria: categoriasStub[1],

}),

];

```

e categorias.stub.ts (crie também)

```typescript

// src/common/test/categorias.stub.ts

import { Categoria } from '../../categorias/entities/categoria.entity';

export const categoriasStub: Categoria[] = [

new Categoria({ id: 1, titulo: 'LIVRE', cor: 'verde' }),

new Categoria({ id: 2, titulo: 'Programação', cor: 'azul' }),

];

```

### Testando os controllers

Vamos rodas os testes e ver no que vai dar.

```bash

npm run test

```

Que delícia, nenhum teste passou!

Isso acontece pois alteramos e implementamos tudo sem criar nenhum teste o que não é muito legal, mas enfim, vamos começar a ajustar as coisa...

#### Testando nosso categorias.controller

O Nestjs já deixa uma estrutura pré-montada, então, vamos até o nosso arquivo categorias.controller.spec.ts para trabalhar nele.

Nosso controller tem como dependência o service e para que ele seja disponibilizado no teste precisamos prover ele.

Faremos dessa forma:

```typescript

// src/categorias/categorias.controller.spec.ts

import { Test, TestingModule } from '@nestjs/testing';

import { categoriasStub } from '../common/test/categorias.stub';

import { CategoriasController } from './categorias.controller';

import { CategoriasService } from './categorias.service';

import { videosStub } from '../common/test/videos.stub';

describe('CategoriasController', () => {

let controller: CategoriasController;

let service: CategoriasService;

beforeEach(async () => {

const module: TestingModule = await Test.createTestingModule({

controllers: [CategoriasController],

providers: [

CategoriasService,

{

provide: CategoriasService,

useValue: {

create: jest.fn().mockResolvedValue(categoriasStub[0]),

findAll: jest.fn().mockResolvedValue(categoriasStub),

findOne: jest.fn().mockResolvedValue(categoriasStub[0]),

findVideoByCategory: jest.fn().mockResolvedValue(videosStub),

update: jest.fn().mockResolvedValue(categoriasStub[0]),

remove: jest.fn().mockResolvedValue(categoriasStub[0]),

},

},

],

}).compile();

controller = module.get<CategoriasController>(CategoriasController);

service = module.get<CategoriasService>(CategoriasService);

});

it('should be defined', () => {

expect(controller).toBeDefined();

expect(service).toBeDefined();

});

});

```

repare que nós criamos um mock para cada função que temos no serviço e definimos o retorno delas com nosso stub da categoria.

com isso, podemos rodar novamente o comando de testes do npm, só que agora, deixaremos ele em modo watch para que ele fique observando as alterações, enquanto implementamos os testes, mas faremos somente para o arquivo que estamos trabalhando no momento:

```bash

npm run test:watch -t /home/gabriel/Documentos/alura-challenges-2/src/categorias/categorias.controller.spec.ts

# substitua essa parte '/home/gabriel/Documentos' pelo caminho do seu computador, é claro.

```

Feito isso, o teste vai rodar e faremos a implementação do restante.

No final, esse arquivo ficará assim:

```typescript

// src/categorias/categorias.controller.spec.ts

import { Test, TestingModule } from '@nestjs/testing';

import { categoriasStub } from '../common/test/categorias.stub';

import { CategoriasController } from './categorias.controller';

import { CategoriasService } from './categorias.service';

import { videosStub } from '../common/test/videos.stub';

describe('CategoriasController', () => {

let controller: CategoriasController;

let service: CategoriasService;

beforeEach(async () => {

const module: TestingModule = await Test.createTestingModule({

controllers: [CategoriasController],

providers: [

CategoriasService,

{

provide: CategoriasService,

useValue: {

create: jest.fn().mockResolvedValue(categoriasStub[0]),

findAll: jest.fn().mockResolvedValue(categoriasStub),

findOne: jest.fn().mockResolvedValue(categoriasStub[0]),

findVideoByCategory: jest.fn().mockResolvedValue(videosStub),

update: jest.fn().mockResolvedValue(categoriasStub[0]),

remove: jest.fn().mockResolvedValue(categoriasStub[0]),

},

},

],

}).compile();

controller = module.get<CategoriasController>(CategoriasController);

service = module.get<CategoriasService>(CategoriasService);

});

it('should be defined', () => {

expect(controller).toBeDefined();

expect(service).toBeDefined();

});

describe('create', () => {

it('should create a category', async () => {

const result = await service.create(categoriasStub[0]);

expect(result).toEqual(categoriasStub[0]);

expect(service.create).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'create').mockRejectedValueOnce(new Error());

expect(service.create(categoriasStub[0])).rejects.toThrowError();

expect(service.create).toHaveBeenCalledTimes(1);

});

});

describe('findAll', () => {

it('should return a category list', async () => {

const result = await service.findAll();

expect(result).toEqual(categoriasStub);

expect(service.findAll).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'findAll').mockRejectedValueOnce(new Error());

expect(service.findAll()).rejects.toThrowError();

expect(service.findAll).toHaveBeenCalledTimes(1);

});

});

describe('findOne', () => {

it('should return a category', async () => {

const result = await service.findOne(categoriasStub[0].id);

expect(result).toEqual(categoriasStub[0]);

expect(service.findOne).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'findOne').mockRejectedValueOnce(new Error());

expect(service.findOne(1)).rejects.toThrowError();

expect(service.findOne).toHaveBeenCalledTimes(1);

});

});

describe('findVideosByCategoryId', () => {

it('should return videos from a category', async () => {

const result = await service.findVideoByCategory(categoriasStub[0].id);

expect(result).toEqual(videosStub);

expect(service.findVideoByCategory).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest

.spyOn(service, 'findVideoByCategory')

.mockRejectedValueOnce(new Error());

expect(service.findVideoByCategory(1)).rejects.toThrowError();

expect(service.findVideoByCategory).toHaveBeenCalledTimes(1);

});

});

describe('update', () => {

it('should return a updated category', async () => {

const result = await service.update(

categoriasStub[0].id,

categoriasStub[0],

);

expect(result).toEqual(categoriasStub[0]);

expect(service.update).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'update').mockRejectedValueOnce(new Error());

expect(service.update(1, categoriasStub[0])).rejects.toThrowError();

expect(service.update).toHaveBeenCalledTimes(1);

});

});

describe('remove', () => {

it('should return a removed category', async () => {

const result = await service.remove(categoriasStub[0].id);

expect(result).toEqual(categoriasStub[0]);

expect(service.remove).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'remove').mockRejectedValueOnce(new Error());

expect(service.remove(1)).rejects.toThrowError();

expect(service.remove).toHaveBeenCalledTimes(1);

});

});

});

```

Passou todos os testes? vamos para o próximo!

#### Testando nosso videos.controller

```bash

npm run test:watch -t /home/gabriel/Documentos/alura-challenges-2/src/videos/videos.controller.spec.ts

# substitua essa parte '/home/gabriel/Documentos' pelo caminho do seu computador, é claro.

```

A ideia é a mesma para cá, então, esse arquivo fica assim:

```typescript

// src/videos/videos.controller.spec.ts

import { Test, TestingModule } from '@nestjs/testing';

import { VideosController } from './videos.controller';

import { VideosService } from './videos.service';

import { videosStub } from '../common/test/videos.stub';

describe('VideosController', () => {

let controller: VideosController;

let service: VideosService;

beforeEach(async () => {

const module: TestingModule = await Test.createTestingModule({

controllers: [VideosController],

providers: [

VideosService,

{

provide: VideosService,

useValue: {

create: jest.fn().mockResolvedValue(videosStub[0]),

findAll: jest.fn().mockResolvedValue(videosStub),

findOne: jest.fn().mockResolvedValue(videosStub[0]),

update: jest.fn().mockResolvedValue(videosStub[0]),

remove: jest.fn().mockResolvedValue(videosStub[0]),

},

},

],

}).compile();

controller = module.get<VideosController>(VideosController);

service = module.get<VideosService>(VideosService);

});

it('should be defined', () => {

expect(controller).toBeDefined();

expect(service).toBeDefined();

});

describe('create', () => {

it('should create a video', async () => {

const result = await service.create(videosStub[0]);

expect(result).toEqual(videosStub[0]);

expect(service.create).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'create').mockRejectedValueOnce(new Error());

expect(service.create(videosStub[0])).rejects.toThrowError();

expect(service.create).toHaveBeenCalledTimes(1);

});

});

describe('findAll', () => {

it('should return a video list', async () => {

const result = await service.findAll();

expect(result).toEqual(videosStub);

expect(service.findAll).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'findAll').mockRejectedValueOnce(new Error());

expect(service.findAll()).rejects.toThrowError();

expect(service.findAll).toHaveBeenCalledTimes(1);

});

});

describe('findOne', () => {

it('should return a video', async () => {

const result = await service.findOne(videosStub[0].id);

expect(result).toEqual(videosStub[0]);

expect(service.findOne).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'findOne').mockRejectedValueOnce(new Error());

expect(service.findOne(1)).rejects.toThrowError();

expect(service.findOne).toHaveBeenCalledTimes(1);

});

});

describe('update', () => {

it('should return a updated video', async () => {

const result = await service.update(videosStub[0].id, videosStub[0]);

expect(result).toEqual(videosStub[0]);

expect(service.update).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'update').mockRejectedValueOnce(new Error());

expect(service.update(1, videosStub[0])).rejects.toThrowError();

expect(service.update).toHaveBeenCalledTimes(1);

});

});

describe('remove', () => {

it('should return a removed video', async () => {

const result = await service.remove(videosStub[0].id);

expect(result).toEqual(videosStub[0]);

expect(service.remove).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(service, 'remove').mockRejectedValueOnce(new Error());

expect(service.remove(1)).rejects.toThrowError();

expect(service.remove).toHaveBeenCalledTimes(1);

});

});

});

```

Testes dos controllers ok, vamos para os services.

### Testando os services

Sem muitas mudanças para cá também, faremos o mesmo que nos controllers, exceto pelo fato de que a dependência deixa de ser o serviço (óbvio, pois estamos no serviço rs) e passa a ser o nosso repository.

Trecho para prestar atenção:

```typescript

// src/categorias/categorias.service.spec.ts

...

beforeEach(async () => {

const module: TestingModule = await Test.createTestingModule({

providers: [

CategoriasService,

{

provide: getRepositoryToken(Categoria),

useValue: {

save: jest.fn().mockResolvedValue(categoriasStub[0]),

find: jest.fn().mockResolvedValue(categoriasStub),

findOne: jest.fn().mockResolvedValue(categoriasStub[0]),

update: jest.fn().mockResolvedValue(categoriasStub[0]),

remove: jest.fn().mockResolvedValue(categoriasStub[0]),

},

},

],

}).compile();

service = module.get<CategoriasService>(CategoriasService);

repository = module.get(getRepositoryToken(Categoria));

});

...

```

Dada a devida atenção para o que muda dos controllers, vamos para as implementações dos services.

#### Testando nosso categorias.service

```bash

npm run test:watch -t /home/gabriel/Documentos/alura-challenges-2/src/categorias/categorias.service.spec.ts

# substitua essa parte '/home/gabriel/Documentos' pelo caminho do seu computador, é claro.

```

O código para testar nosso service de categorias ficará assim:

```typescript

// src/categorias/categorias.service.spec.ts

import { Test, TestingModule } from '@nestjs/testing';

import { CategoriasService } from './categorias.service';

import { getRepositoryToken } from '@nestjs/typeorm';

import { Categoria } from './entities/categoria.entity';

import { categoriasStub } from '../common/test/categorias.stub';

import { Repository } from 'typeorm';

describe('CategoriasService', () => {

let service: CategoriasService;

let repository: Repository<Categoria>;

beforeEach(async () => {

const module: TestingModule = await Test.createTestingModule({

providers: [

CategoriasService,

{

provide: getRepositoryToken(Categoria),

useValue: {

save: jest.fn().mockResolvedValue(categoriasStub[0]),

find: jest.fn().mockResolvedValue(categoriasStub),

findOne: jest.fn().mockResolvedValue(categoriasStub[0]),

update: jest.fn().mockResolvedValue(categoriasStub[0]),

remove: jest.fn().mockResolvedValue(categoriasStub[0]),

},

},

],

}).compile();

service = module.get<CategoriasService>(CategoriasService);

repository = module.get(getRepositoryToken(Categoria));

});

it('should be defined', () => {

expect(service).toBeDefined();

expect(repository).toBeDefined();

});

describe('save', () => {

it('should create a category', async () => {

const newCategory: Omit<Categoria, 'id'> = categoriasStub[0];

const result = await service.create(newCategory);

expect(result).toEqual(categoriasStub[0]);

expect(repository.save).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'save').mockRejectedValueOnce(new Error());

expect(service.create(categoriasStub[0])).rejects.toThrowError();

expect(repository.save).toHaveBeenCalledTimes(1);

});

});

describe('findAll', () => {

it('should return a categories list', async () => {

const result = await service.findAll();

expect(result).toEqual(categoriasStub);

expect(repository.find).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'find').mockRejectedValueOnce(new Error());

expect(service.findAll()).rejects.toThrowError();

expect(repository.find).toHaveBeenCalledTimes(1);

});

});

describe('findOne', () => {

it('should return a category', async () => {

const result = await service.findOne(categoriasStub[0].id);

expect(result).toEqual(categoriasStub[0]);

expect(repository.findOne).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'findOne').mockRejectedValueOnce(new Error());

expect(service.findOne(1)).rejects.toThrowError();

expect(repository.findOne).toHaveBeenCalledTimes(1);

});

});

describe('update', () => {

it('should return a updated category', async () => {

const result = await service.update(

categoriasStub[0].id,

categoriasStub[0],

);

expect(result).toEqual(categoriasStub[0]);

expect(repository.update).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'update').mockRejectedValueOnce(new Error());

expect(service.update(1, categoriasStub[0])).rejects.toThrowError();

expect(repository.update).toHaveBeenCalledTimes(1);

});

});

describe('remove', () => {

it('should return a removed category', async () => {

const result = await service.remove(categoriasStub[0].id);

expect(result).toEqual(categoriasStub[0]);

expect(repository.remove).toHaveBeenCalledTimes(1);

expect(repository.findOne).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'remove').mockRejectedValueOnce(new Error());

expect(service.remove(1)).rejects.toThrowError();

expect(repository.findOne).toHaveBeenCalledTimes(1);

});

});

});

```

#### Testando nosso videos.service

E para o nossos testes do service de videos:

```bash

npm run test:watch -t /home/gabriel/Documentos/alura-challenges-2/src/videos/videos.service.spec.ts

# substitua essa parte '/home/gabriel/Documentos' pelo caminho do seu computador, é claro.

```

```typescript

// src/videos/videos.service.spec.ts

import { Test, TestingModule } from '@nestjs/testing';

import { getRepositoryToken } from '@nestjs/typeorm';

import { Repository } from 'typeorm';

import { videosStub } from '../common/test/videos.stub';

import { Video } from './entities/video.entity';

import { VideosService } from './videos.service';

describe('VideosService', () => {

let service: VideosService;

let repository: Repository<Video>;

beforeEach(async () => {

const module: TestingModule = await Test.createTestingModule({

providers: [

VideosService,

{

provide: getRepositoryToken(Video),

useValue: {

save: jest.fn().mockResolvedValue(videosStub[0]),

find: jest.fn().mockResolvedValue(videosStub),

findOne: jest.fn().mockResolvedValue(videosStub[0]),

update: jest.fn().mockResolvedValue(videosStub[0]),

remove: jest.fn().mockResolvedValue(videosStub[0]),

},

},

],

}).compile();

service = module.get<VideosService>(VideosService);

repository = module.get(getRepositoryToken(Video));

});

it('should be defined', () => {

expect(service).toBeDefined();

expect(repository).toBeDefined();

});

describe('save', () => {

const newVideo: Omit<Video, 'id'> = videosStub[0];

it('should create a video', async () => {

const result = await service.create(newVideo);

expect(result).toEqual(videosStub[0]);

expect(repository.save).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'save').mockRejectedValueOnce(new Error());

expect(service.create(videosStub[0])).rejects.toThrowError();

expect(repository.save).toHaveBeenCalledTimes(1);

});

});

describe('findAll', () => {

it('should return a videos list if search is not informed', async () => {

const result = await service.findAll();

expect(result).toEqual(videosStub);

expect(repository.find).toHaveBeenCalledTimes(1);

});

it('should return a videos list if search is informed', async () => {

//Arrange

const expectedResult = videosStub.filter((video) =>

video.titulo?.includes('teste'),

);

jest.spyOn(repository, 'find').mockResolvedValue(expectedResult);

//Act

const result = await service.findAll('teste');

//Assert

expect(result).toEqual([]);

expect(repository.find).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'find').mockRejectedValueOnce(new Error());

expect(service.findAll()).rejects.toThrowError();

expect(repository.find).toHaveBeenCalledTimes(1);

});

});

describe('findOne', () => {

it('should return a video', async () => {

const result = await service.findOne(videosStub[0].id);

expect(result).toEqual(videosStub[0]);

expect(repository.findOne).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'findOne').mockRejectedValueOnce(new Error());

expect(service.findOne(1)).rejects.toThrowError();

expect(repository.findOne).toHaveBeenCalledTimes(1);

});

});

describe('update', () => {

it('should return a updated video', async () => {

const result = await service.update(videosStub[0].id, videosStub[0]);

expect(result).toEqual(videosStub[0]);

expect(repository.update).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'update').mockRejectedValueOnce(new Error());

expect(service.update(1, videosStub[0])).rejects.toThrowError();

expect(repository.update).toHaveBeenCalledTimes(1);

});

});

describe('remove', () => {

it('should return a removed video', async () => {

const result = await service.remove(videosStub[0].id);

expect(result).toEqual(videosStub[0]);

expect(repository.remove).toHaveBeenCalledTimes(1);

expect(repository.findOne).toHaveBeenCalledTimes(1);

});

it('should throw an exception', () => {

jest.spyOn(repository, 'remove').mockRejectedValueOnce(new Error());

expect(service.remove(1)).rejects.toThrowError();

expect(repository.findOne).toHaveBeenCalledTimes(1);

});

});

});

```

E com isso, fechamos as implementações da segunda semana.

Aaaah, estou fazendo meus commits conforme vou implementando as coisas... (Padronizadinho, conforme configuramos no início)

Está lá no meu [Github](https://github.com/delucagabriel).

Abraços e até a próxima semana! | delucagabriel |

788,731 | Pandas Core Components - The Data Frame Object

| • The DataFrame Object • 1. Constructing a DataFrame From a Series Object • 2. Constructing a... | 0 | 2021-08-11T19:11:05 | https://dev.to/222010301035/pandas-core-components-the-data-frame-object-4dl1 | dataframe, pandas, series, columns | • The DataFrame Object

• 1. Constructing a DataFrame From a Series Object

• 2. Constructing a DataFrame From a Dictionary

• 3. Constructing a Dataframe by Importing Data From a File

The DataFrame Object #

In the previous lesson, we learned about Series. The next fundamental

structure in Pandas that we will learn about is the DataFrame. While a Series

is essentially a column, a DataFrame is a multi-dimensional table made

up of a collection of Series. Dataframes allow us to store and manipulate

tabular data where rows consist of observations and columns represent

variables.

There are several ways to create a DataFrame using pd.DataFrame() . For

example, we can create a DataFrame by passing multiple Series into the

DataFrame object, we can convert a dictionary to a DataFrame or we can

import data from a csv file. Let’s look at each of these in detail.

1. Constructing a DataFrame From a Series Object #

We can create a DataFrame from a single Series by passing the Series object as

input to the DataFrame creation method, along with an optional input

parameter, column, which allows us to name the columns:

import pandas as pd

data_s1 = pd.Series([12, 24, 33, 15],

index=['apples', 'bananas', 'strawberries', 'oranges'])

# 'quantity' is the name for our column

dataframe1 = pd.DataFrame(data_s1, columns=['quantity'])

print(dataframe1)

2. Constructing a DataFrame From a Dictionary #

We can construct a DataFrame form any list of dictionaries. Say we have a

dictionary with countries, their capitals and some other variable (population,

size of that country, number of schools, etc.):

dict = {"country": ["Norway", "Sweden", "Spain", "France"],

"capital": ["Oslo", "Stockholm", "Madrid", "Paris"],

"SomeColumn": ["100", "200", "300", "400"]}

data = pd.DataFrame(dict)

print(data)

We can also construct a DataFrame from a dictionary of Series objects. Say we

have two different Series; one for the price of fruits and one for their quantity.

We want to put all the fruits related data together into a single table. We can

do this like so:

import pandas as pd

quantity = pd.Series([12, 24, 33, 15],

index=['apples', 'bananas', 'strawberries', 'oranges'])

price = pd.Series([4, 4.5, 8, 7.5],

index=['apples', 'bananas', 'strawberries', 'oranges'])

df = pd.DataFrame({'quantity': quantity,

'price': price})

print(df)

3. Constructing a Dataframe by Importing Data From a File #

It’s quite simple to load data from various file formats, e.g., CSV, Excel, json

into a DataFrame. We will be importing actual data for analyzing the IMDB-

movies dataset in the next lesson. Here is what loading data from different file

formats looks like in code:

import pandas as pd

# Given we have a file called data1.csv in our working directory:

df = pd.read_csv('data1.csv')

#given json data

df = pd.read_json('data2.json')

We have only just scratched the surface and learned how to construct

DataFrames. In the next lessons we will go deeper and learn-by-doing the

many methods that we can call on these powerful objects. | 222010301035 |

788,755 | Testing Authenticated Routes in AdonisJS | If you haven't created an AdonisJS 5.0 app yet, you can check out my previous post or follow the... | 0 | 2021-08-12T00:22:59 | https://warrenwong.org/posts/testing-authenticated-routes-in-adonisjs | adonisjs, node, testing, typescript | ---

title: Testing Authenticated Routes in AdonisJS

published: true

date: 2021-08-11 00:00:00 UTC

tags: [AdonisJS, NodeJS, Testing, TypeScript]

canonical_url: https://warrenwong.org/posts/testing-authenticated-routes-in-adonisjs

---

> If you haven't created an AdonisJS 5.0 app yet, you can check out my [previous post](https://warrenwong.org/posts/posts/setting-up-vscode-for-adonisjs) or follow the docs [here](https://preview.adonisjs.com/guides/quick-start).

> We'll be testing authenticated routes, so if you haven't added authentication to your AdonisJS project, take a look at **[Add Authentication to Your AdonisJS Project](https://warrenwong.org/posts/posts/add-authentication-to-adonisjs)**. For some background on the libraries used, check out [this post](https://docs.adonisjs.com/cookbooks/testing-adonisjs-apps#sidenav-open) [Aman Virk](https://twitter.com/AmanVirk1) wrote.

## Set Up the Test Runner

So it's time to add tests to your brand new AdonisJS project, but what to do? AdonisJS doesn't come with a test-runner out-of-the-box at the moment. Well, for the most part, it's fairly simple if you just follow these simple steps.

First, install the dependencies:

```bash

# npm

npm i -D japa execa get-port supertest @types/supertest jsdom @types/jsdom

# yarn

yarn add -D japa execa get-port supertest @types/supertest jsdom @types/jsdom

```

Now, just copy `japaFile.ts` from the [article here](https://docs.adonisjs.com/cookbooks/testing-adonisjs-apps#interacting-with-the-database). We'll need to interact with the database so just copy it verbatim and place it at the base directory of the project:

```ts

import { HttpServer } from "@adonisjs/core/build/src/Ignitor/HttpServer";

import execa from "execa";

import getPort from "get-port";

import { configure } from "japa";

import { join } from "path";

import "reflect-metadata";

import sourceMapSupport from "source-map-support";

process.env.NODE_ENV = "testing";

process.env.ADONIS_ACE_CWD = join(__dirname);

sourceMapSupport.install({ handleUncaughtExceptions: false });

export let app: HttpServer;

async function runMigrations() {

await execa.node("ace", ["migration:run"], {

stdio: "inherit",

});

}

async function rollbackMigrations() {

await execa.node("ace", ["migration:rollback"], {

stdio: "inherit",

});

}

async function startHttpServer() {

const { Ignitor } = await import("@adonisjs/core/build/src/Ignitor");

process.env.PORT = String(await getPort());

app = new Ignitor(__dirname).httpServer();

await app.start();

}

async function stopHttpServer() {

await app.close();

}

configure({

files: ["test/**/*.spec.ts"],

before: [runMigrations, startHttpServer],

after: [stopHttpServer, rollbackMigrations],

});

```

To run the test, we'll create a test script in our `package.json` file:

```json

{

"scripts": {

"test": "node -r @adonisjs/assembler/build/register japaFile.ts"

}

}

```

When working locally, I like to have a different database for `dev` and `testing`. AdonisJS can read the `.env.testing` file when `NODE_ENV=testing`, which was set in the `japaFile.ts` file. The easiest thing to do is to copy the `.env` file and rename it to `.env.testing`. Then go and add `_test` to the end of the current database name you have for your dev environment.

```bash

...

PG_DB_NAME=todos_test

```

Since we configured our test runner to look in the `test` directory for any file with the `.spec.ts` extension, we can just place any file matching that pattern in the test directory, and we will run it with the `npm test` command.

## Set Up the Authentication Secured Routes (To-dos)

As with any tutorial, we want to have a simple, but practical, example. Let's just use a Tt-do list app as an example. Let's go over what we want to do with our To-dos.

I want a user to be signed-in in order to create and/or update a todo. What good are todos if no one can see them? So let's allow anyone to look at the list of todos, as well as look at each individual todo. I don't think I want anyone to delete a todo, maybe just to change the status (Open, Completed, or Closed).

Let's leverage the generators to create the model, controller, and migration.

### Let's `make:migration`

```bash

node ace make:migration todos

```

Let's add a `name`, a `description`, and a foreign key of `user_id` to our new table:

```ts

import BaseSchema from "@ioc:Adonis/Lucid/Schema";

export default class Todos extends BaseSchema {

protected tableName = "todos";

public async up() {

this.schema.createTable(this.tableName, table => {

table.increments("id");

table.string("name").notNullable();

table.text("description");

table.integer("user_id").notNullable();

/**

* Uses timestamptz for PostgreSQL and DATETIME2 for MSSQL

*/

table.timestamp("created_at", { useTz: true });

table.timestamp("updated_at", { useTz: true });

table.foreign("user_id").references("users_id");

});

}

public async down() {

this.schema.dropTable(this.tableName);

}

}

```

Run the migration:

```bash

node ace migration:run

```

### Let's `make:model`

```bash

node ace make:model Todo

```

We'll want to add the same 3 fields we added to our migration, but we'll also want to add a `belongsTo` relationship to our model linking the `User` through the `creator` property:

```ts

import { BaseModel, BelongsTo, belongsTo, column } from "@ioc:Adonis/Lucid/Orm";

import { DateTime } from "luxon";

import User from "App/Models/User";

export default class Todo extends BaseModel {

@column({ isPrimary: true })

public id: number;

@column()

public userId: number;

@column()

public name: string;

@column()

public description: string;

@belongsTo(() => User)

public creator: BelongsTo<typeof User>;

@column.dateTime({ autoCreate: true })

public createdAt: DateTime;

@column.dateTime({ autoCreate: true, autoUpdate: true })

public updatedAt: DateTime;

}

```

Add the corresponding `hasMany` relationship to the `User` model now:

```ts

...

import Todo from "App/Models/Todo";

export default class User extends BaseModel {

...

@hasMany(() => Todo)

public todos: HasMany<typeof Todo>;

...

}

```

### Let's `make:controller`

```bash

node ace make:controller Todo

```

Now let's add our new `/todos` path to the `routes.ts` file:

```ts

...

Route.resource("todos", "TodosController").except(["destroy"]).middleware({

create: "auth",

edit: "auth",

store: "auth",

update: "auth",

});

```

Here, we want a RESTful resource, except `destroy`. I also want the request to run through the "auth" middleware for the `create`, `edit`, `store`, and `update` resources. Basically, anyone can view `index` and `show`, but anything else will require authentication.

We can see a list of our new routes with the `node ace list:routes` command. It's handy that it show which routes require authentication. It also lists the route names (handy for redirecting the linking).

```

┌────────────┬────────────────────────────────────┬────────────────────────────┬────────────┬────────────────────────┐

│ Method │ Route │ Handler │ Middleware │ Name │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ HEAD, GET │ / │ Closure │ │ home │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ HEAD, GET │ /login │ SessionsController.create │ │ login │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ POST │ /login │ SessionsController.store │ │ │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ POST │ /logout │ SessionsController.destroy │ │ │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ HEAD, GET │ /register │ UsersController.create │ │ │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ POST │ /register │ UsersController.store │ │ │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ HEAD, GET │ /users/:id │ UsersController.show │ │ users.show │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ HEAD, GET │ /todos │ TodosController.index │ │ todos.index │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ HEAD, GET │ /todos/create │ TodosController.create │ auth │ todos.create │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ POST │ /todos │ TodosController.store │ auth │ todos.store │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ HEAD, GET │ /todos/:id │ TodosController.show │ │ todos.show │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ HEAD, GET │ /todos/:id/edit │ TodosController.edit │ auth │ todos.edit │

├────────────┼────────────────────────────────────┼────────────────────────────┼────────────┼────────────────────────┤

│ PUT, PATCH │ /todos/:id │ TodosController.update │ auth │ todos.update │

└────────────┴────────────────────────────────────┴────────────────────────────┴────────────┴────────────────────────┘

```

## Back to Our Tests

Let's create a new test file called `test/functional/todos.spec.ts`. While I normally just start writing tests as I they come to my head, that's probably not idea. For just a high level overview, I know I'd like to test the To-do features. So far, it's just creating, saving, editing, and updating. Also, I'd want to make sure I test that anyone can access the `index` and `show` routes, but only an authenticated user can see the others.

### Testing "To-dos"

- Todo list shows up at the `index` route.

- Individual todo shows up a the `show` route.

- Create a todo and check the `show` route to see if it exists.

- Edit a todo and check the `show` route to see if the data is updated.

- Navigate to the `create` route without logging in to test if we get redirected to the sign-in page.

- Navigate to the `edit` route without loggin in to test if we get redirected to the sign-in page.

This should cover it for now. As always, feel free to add more if you feel like it.

## Write the tests

### Testing the `index` Route

Anyone should be able to view the list of todos. A good question to ask is what should someone see if there are no todos to see (the null state). Well, there should at least be a link to the `create` route to create a new todo. If there are todos, we should show them.

First, let's start off testing for a page to load when we go to the `index` route, `/todos`. I have an inkling that I will massively refactor this later, but let's just start out simple. No point in premature optimization, expecially if it turns out we need less tests than we think.

```ts

import supertest from "supertest";

import test from "japa";

const baseUrl = `http://${process.env.HOST}:${process.env.PORT}`;

test.group("Todos", () => {

test("'index' should show a link to create a new todo", async assert => {

await supertest(baseUrl).get("/todos").expect(200);

});

});

```

Here we use the [supertest](https://github.com/visionmedia/supertest) library to see if we get a status of 200 back when we navigate to `/todos`. After running the test with `npm test`, it looks like we forgot to even open up our controller file.

```

Missing method "index" on "TodosController"

...

✖ 'index' should show a link to create a new todo

Error: expected 200 "OK", got 500 "Internal Server Error"

```

Let's go a create that `index` method and the Edge template that goes along with it:

```ts

import { HttpContextContract } from "@ioc:Adonis/Core/HttpContext";

export default class TodosController {

public async index({ view }: HttpContextContract) {

return await view.render("todos/index");

}

}

```

```bash

node ace make:view todos/index

```

```handlebars

@layout('layouts/default')

@section('body')

<a href="{{ route('todos.create') }}">Create Todo</a>

@endsection

```

Looks like we're passing the tests after adding this little bit of code. Red-green-refactor FTW!

Let's add some more to our test. I want to test for that link.

```ts

test("'index' should show a link to create a new todo", async assert => {

const { text } = await supertest(baseUrl).get("/todos").expect(200);

const { document } = new JSDOM(text).window;

const createTodosLink = document.querySelector("#create-todo");

assert.exists(createTodosLink);

});

```

Here I want to query the document for an element with the `create-todos` `id`. Once I put the `id` on my "Create Todo" link, I should be green again.

```handlebars

<a href="{{ route('todos.create') }}" id="create-todo">Create Todo</a>

```

Now comes time to actually persist some `Todo`s in the database and test to see if we can see them on `/todos`. Let's just simply create 2 new todos and test for their existence on the page.

```ts

test("'index' should show all todos created", async assert => {

const items = ["Have lunch", "Grocery shopping"];

items.forEach(async name => await Todo.create({ name }));

const { text } = await supertest(baseUrl).get("/todos");

assert.include(text, items[0]);

assert.include(text, items[1]);

});

```

This looks simple enough. Let's create 2 `Todo`s, "Have lunch" and "Grocery shopping". Once these are saved, I should be able to navigate to `/todos` and see both. Since we're doing red-green-refactor, let's run our tests first to get our "red" before we try to turn it "green" by implementing our solution.

```

"uncaughtException" detected. Process will shutdown

error: insert into "todos" ("created_at", "name", "updated_at") values ($1, $2, $3) returning "id" - null value in column "user_id" of relation "todos" violates not-null constraint

```

Oops, looks like we forgot to add a `user_id` to our `Todo`. Let's create a user first, then add these `Todo`s as "related" to the `User`.

```ts

test("'index' should show all todos created", async assert => {

const items = ["Have lunch", "Grocery shopping"];

const user = await User.create({ email: "alice@email.com", password: "password" });

await user.related("todos").createMany([{ name: items[0] }, { name: items[1] }]);

const { text } = await supertest(baseUrl).get("/todos");

assert.include(text, items[0]);

assert.include(text, items[1]);

});

```

Okay, now we're still not passing, but we don't have that knarly "uncaughtException" anymore. Now let's render out our list of todos. To do that, we'll need to query for the list of all todos in the controller, and then pass it to our view.

```ts

import Todo from "App/Models/Todo";

export default class TodosController {

public async index({ view }: HttpContextContract) {

const todos = await Todo.all();

return await view.render("todos/index", { todos });

}

}

```

```handlebars

@section('body')

<ul>

@each(todo in todos)

<li>{{ todo.name }}</li>

@endeach

</ul>

<a href="{{ route('todos.create') }}" id="create-todo">Create Todo</a>

@endsection

```

Awesome. Back to "green".

Now let's work on the `show` route. We should be able to navigate there once the todo has been created.

```ts

test.group("Todos", () => {

...

test("'show' should show the todo details", async assert => {

const user = await User.create({ email: "alice@email.com", password: "password" });

const todo = await user

.related("todos")

.create({ name: "Buy shoes", description: "Air Jordan 1" });

const { text } = await supertest(baseUrl).get(`/todos/${todo.id}`);

assert.include(text, todo.name);

assert.include(text, todo.description);

});

});

```

We're flying now. Our tests seem to have a lot of similar setup code. Possible refactor candidate. I'll note that for later.

```ts