id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

802,255 | Participate in a study: Gender in Online Software Communities | Participate in Gender Research | 0 | 2021-08-24T16:16:06 | https://dev.to/mccurcio/participate-in-a-study-gender-in-online-software-communities-5441 | discuss, learning, career | ---

title: Participate in a study: Gender in Online Software Communities

published: true

description: Participate in Gender Research

tags: #discuss #learning #career

//cover_image: https://home.cc.umanitoba.ca/~maftounm/static/UofM-Logo.png

---

Greetings all, I had an interesting time participating in this study.

Check it out: https://home.cc.umanitoba.ca/~maftounm/

Mahya Maftouni is conducting a user study to explore the role of a design element that emphasizes prosocial behavior in addition to content accuracy in Q&A communities and see if such interventions affect people of different genders.

Who can participate: You are invited to participate if

- You are 18 years old or over,

- You are a Stack Overflow member and visit Stack Overflow at least monthly,

- You have a computer with a browser installed (preferably chrome).

What will you do:

If you decide to participate, you will be asked to interact with a mock Q&A site for 15 minutes. You are not expected to post any questions, answers, or comments. After exploring the prototype, you will be given a short survey that gathers your feedback. In the end, there will be an interview.

Time commitment and compensation:

This study will take approximately one hour. As a thank you for your participation, you will receive $20 CAD (converted to requested currency) at the start of the study. Depending on your preference, it can be provided as an Amazon gift card or cash (via Paypal or e-Transfer).

I had an interesting time participating in this study. | mccurcio |

802,293 | TinyML: Deploying TensorFlow models to Android | What is TinyML? Tiny machine learning (TinyML) is a field that focuses on running machine... | 0 | 2021-08-24T17:21:30 | https://dev.to/badasstechie/tinyml-deploying-tensorflow-models-to-android-2i73 | machinelearning, deeplearning, tinyml, tensorflow | ## What is TinyML?

Tiny machine learning (TinyML) is a field that focuses on running machine learning (mostly deep learning) algorithms directly on edge devices such as microcontrollers and mobile devices. The algorithms have to be highly optimized to be able to run on such systems since most of them are low powered.

## Wait, what do you mean by 'edge devices'?

An edge device is the device which makes use of the **final** output of machine learning algorithms, for instance, a camera that displays the result of image recognition, or a smartphone that plays speech synthesized from text. Most practitioners run machine learning models on more powerful devices, then send the output to edge devices, but this is starting to change with the advent of TinyML.

## Why TinyML?

The need to run machine learning directly on edge devices and the convenience that comes with this has made TinyML become one of the fastest growing fields in deep learning.

## How does one go about deploying ML to edge devices?

1. Train a machine learning model on a more powerful environment such as a cloud virtual machine or a faster computer.

2. Optimize the model, say, by reducing the number of parameters, or by using low precision data types such as 16 bit floats. This will make the model smaller and the inference faster and more power efficient at the cost of accuracy, which is a compromise you'll have to take.

3. Run the model 'on the edge'!

## TensorFlow Lite Quick Start

TensorFlow Lite is TensorFlow's take on TinyML.

### Converting a saved model from TensorFlow to TensorFlow Lite

```python

import tensorflow as tf

model=tf.keras.models.load_model("/path_to_model.h5")

converter = tf.lite.TFLiteConverter.from_keras_model(model)

tflite_model = converter.convert()

open("/tflite_model.tflite", "wb").write(tflite_model)

```

As you can see, it only takes a few lines of code 😊.

### Running a TensorFlow Lite model in TensorFlow Lite's Python Interpreter

```python

import tensorflow as tf

interpreter = tf.lite.Interpreter(model_path="/tflite_model.tflite") #initialize interpreter with model

interpreter.allocate_tensors()

input_details = interpreter.get_input_details()

output_details = interpreter.get_output_details()

inputs = [] #list of input tensors

for index,item in enumerate(inputs):

interpreter.set_tensor(input_details[index]['index'], item)

interpreter.invoke() #run model

outputs = [] #output tensors

num_outputs = len(output_details) #number of output tensors

for index in range(num_outputs):

outputs.append(interpreter.get_tensor(output_details[index]['index']))

```

### Running a TensorFlow Lite model in an Android application

#### 1. Create a new Android Studio Project

#### 2. Import the model into Android Studio

Copy the .tflite model to app/src/main/assets/ - create the assets folder if it does not exist.

#### 3. Import TensorFlow Lite into your project

Add the following dependency to your app-level build.gradle

`implementation 'org.tensorflow:tensorflow-lite:+'`

#### 4. Load Model

Load the .tflite model you placed in your assets folder as a MappedByteBuffer.

```java

private MappedByteBuffer loadModelFile(Context c, String MODEL_FILE) throws IOException {

AssetFileDescriptor fileDescriptor = c.getAssets().openFd(MODEL_FILE);

FileInputStream inputStream = new FileInputStream(fileDescriptor.getFileDescriptor());

FileChannel fileChannel = inputStream.getChannel();

long startOffset = fileDescriptor.getStartOffset();

long declaredLength = fileDescriptor.getDeclaredLength();

return fileChannel.map(FileChannel.MapMode.READ_ONLY, startOffset, declaredLength);

}

model = loadModelFile(this, 'name_of_model.tflite')

```

#### 5. Initialize Interpreter

```java

try {

interpreter = new Interpreter(model);

}

catch (IOException e) {

e.printStackTrace();

}

```

#### 6. Run Model

```Java

Object[] inputs = {input1, input2, ...} //the objects in inputs{} are jagged arrays - what in TensorFlow would be considered tensors

Map<Integer, Object> outputs = new HashMap<>(); //same for outputs

outputs.put(0, output1); //add outputs to the map

interpreter.runForMultipleInputsOutputs(inputs, outputs); //get inference from interpreter

```

And there you go.

## Sample project

[Here is source code](https://github.com/badass-techie/IAmNotReal) for a [GAN](https://en.wikipedia.org/wiki/Generative_adversarial_network) deployed to an Android app with TensorFlow Lite. [Here is the android app](https://play.google.com/store/apps/details?id=com.apptasticmobile.iamnotreal) for you to play with.

| badasstechie |

802,297 | Making programs interact using qtalk | qtalk-go is a versatile IPC/RPC library for Go | 0 | 2021-08-24T18:53:49 | https://dev.to/progrium/making-programs-interact-using-qtalk-4gdc | programming, showdev, go | ---

title: Making programs interact using qtalk

published: true

description: qtalk-go is a versatile IPC/RPC library for Go

tags: programming, showdev, go

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/t3bazpkc56ju321zlsvk.gif

---

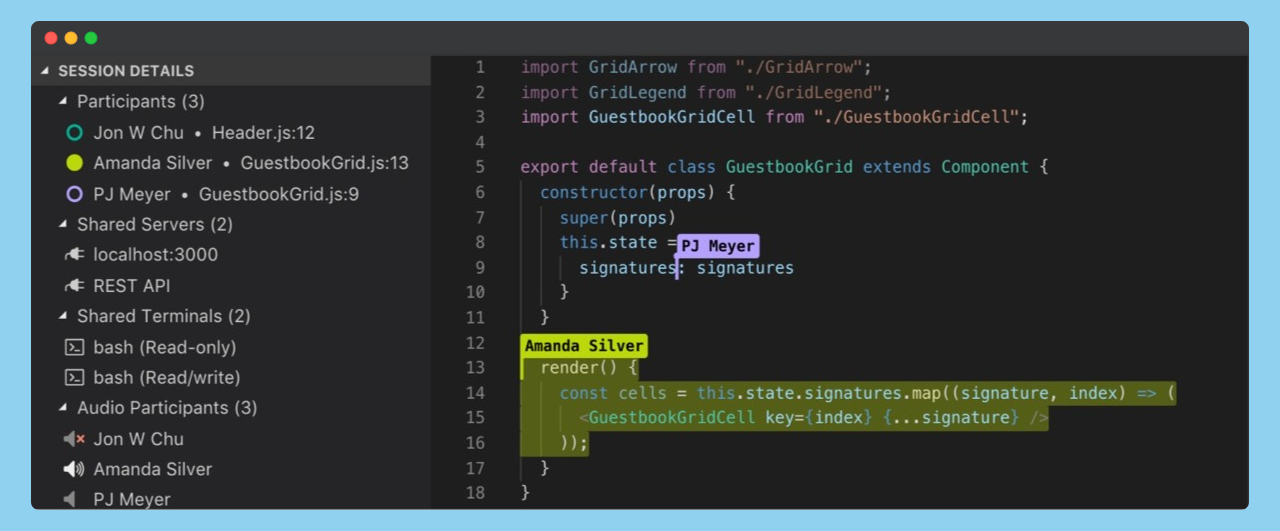

Today I'm releasing a beta of [qtalk-go](https://github.com/progrium/qtalk-go), a versatile IPC/RPC library for Go. I've been using and iterating on it for 5 years to get it as simple and clear as possible.

```golang

// client.go

package main

import (

"context"

"log"

"github.com/progrium/qtalk-go/codec"

"github.com/progrium/qtalk-go/fn"

"github.com/progrium/qtalk-go/talk"

)

func main() {

ctx := context.Background()

// use talk.Dial to get a client

client, err := talk.Dial("tcp", "localhost:9999", codec.JSONCodec{})

if err != nil {

log.Fatal(err)

}

defer client.Close()

// call Upper and print the string return value

var ret string

_, err = client.Call(ctx, "Upper", fn.Args{"hello world"}, &ret)

if err != nil {

log.Fatal(err)

}

log.Println(ret)

// call Error and expect err to be the returned error

_, err = client.Call(ctx, "Error", fn.Args{"user error"}, nil)

log.Println(err)

// Output:

// HELLO WORLD

// remote: user error [/Error]

}

```

qtalk is based on over a decade of building and cataloging approaches, patterns, anti-patterns, and best practices in network programming. My interest goes all the way back to high school when I first started playing with Winsock attempting to make massively multiplayer games. Then as a young web developer, pushing the limits of HTTP, discovering how to stream real-time to the browser years before Websocket was dreamed up. Then further abusing HTTP to model other protocols like DNS and IMAP. I pioneered distributed callbacks with webhooks, which got me working at early Twilio where I started going deep on scalable, highly-available messaging architectures. This led me into distributed systems: discovery, coordination, scheduling, etc. I've seen a lot.

I originally wanted to release qtalk with a paper describing all the significant choices to consider when building a stack like this: message framing, data formats, transports, security mechanisms, protocol flows, queuing, multiplexing, batching, layering, schemas, IDLs, symmetrical vs asymmetrical, stateful vs stateless, TCP vs UDP, etc. It would be a sort of guide for building your own stack. I'd still like to write that at some point, but this post will have to suffice for now.

**qtalk makes no significant claims other than being the most bang for buck in simplicity and versatility.** I've made a full walkthrough of various ways it can be used on the [wiki](https://github.com/progrium/qtalk-go/wiki/Examples), but I'll share a taste here.

Here is the server to the client code from above for you to try. Together they show qtalk being used in the simplest case, traditional RPC:

```golang

// server.go

package main

import (

"fmt"

"log"

"net"

"strings"

"github.com/progrium/qtalk-go/codec"

"github.com/progrium/qtalk-go/fn"

"github.com/progrium/qtalk-go/rpc"

)

type service struct{}

func (svc *service) Upper(s string) string {

return strings.ToUpper(s)

}

// methods can opt-in to receive the call as last argument.

// also, errors can be returned to be received as remote errors.

func (svc *service) Error(s string, c *rpc.Call) error {

return fmt.Errorf("%s [%s]", s, c.Selector)

}

func main() {

// create a tcp listener

l, err := net.Listen("tcp", "localhost:9999")

if err != nil {

log.Fatal(err)

}

// setup a server using fn.HandlerFrom to

// handle methods from the service type

srv := &rpc.Server{

Codec: codec.JSONCodec{},

Handler: fn.HandlerFrom(new(service)),

}

// serve until the listener closes

srv.Serve(l)

}

```

## Features

Some basic features of qtalk-go are:

* heavily `net/http` inspired API

* pluggable format codecs

* optional reflection handlers for funcs and methods

* works over any `io.ReadWriteCloser`, including STDIO

* easily portable to other languages

The more unique features of qtalk-go I want to talk about are:

* connection multiplexing

* bidirectional calling

## Multiplexing Layer

The connection multiplexing layer is based on [qmux](https://github.com/progrium/qmux), a subset of SSH that I've [written about previously](https://dev.to/progrium/the-history-and-future-of-socket-level-multiplexing-1d5n). It was designed to optionally be swapped out with QUIC as needed. Either way, everything in qtalk happens over flow-controlled channels, which can be used like embedded TCP streams. Whatever you do with qtalk, you can also tunnel other connections and protocols on the same connection.

RPC is just a layer on top, where each call gets its own channel. This makes request/reply correlation simple, streaming call input/output easy, and lets you hijack the call channel to do something else without interrupting other calls. You can start with an RPC call and then let it become a full-duplex bytestream pipe. Imagine a call that provisions a database and then becomes a client connection to it.

## Bidirectional Calling

Bidirectional calling allows both the client and server to make and respond to calls. Decoupling the caller and responder roles from the connection topology lets you implement patterns like the worker pattern, where a worker connects to a coordinator and responds to its calls.

This also allows for various forms of callbacks in either direction. Not only do callbacks let you build more extensible services, but generally open up more ways for processes to talk to each other. Especially when combined with the other aspects of qtalk.

Imagine a TCP proxy with an API letting services register a callback whenever a connection comes through, and the callback includes a tee of the client bytestream letting this external service monitor and maybe close the connection when it sees something it doesn't like.

## State Synchronization

State synchronization isn't a feature but a common pattern you can easily implement in a number of ways with qtalk. While many people think about pubsub with messaging, which you can also implement with qtalk, I've learned you usually actually want state synchronization instead. Below is a simple example.

Our server will have a list of usernames connected, which is our state. When a client connects, it calls Join to add its username to the list. This also registers the client to receive a callback passing the list of usernames whenever it changes. The client can then call Leave, or if it disconnects abruptly it will be unregistered with the next update.

```golang

// server.go

package main

import (

"context"

"log"

"net"

"sync"

"github.com/progrium/qtalk-go/codec"

"github.com/progrium/qtalk-go/fn"

"github.com/progrium/qtalk-go/rpc"

)

// State contains a map of usernames to callers,

// which are used as a callback client to that user

type State struct {

users sync.Map

}

// Users gets a list of usernames from the keys of the sync.Map

func (s *State) Users() (users []string) {

s.users.Range(func(k, v interface{}) bool {

users = append(users, k.(string))

return true

})

return

}

// Join adds a username and caller using the injected rpc.Call

// value, then broadcasts the change

func (s *State) Join(username string, c *rpc.Call) {

s.users.Store(username, c.Caller)

s.broadcast()

}

// Leave removes the user from the sync.Map and broadcasts

func (s *State) Leave(username string) {

s.users.Delete(username)

s.broadcast()

}

// broadcast uses the rpc.Caller values to perform a callback

// with the "state" selector, passing the current list of

// usernames. any callers that return an error are added to

// gone and then removed with Leave

func (s *State) broadcast() {

users := s.Users()

var gone []string

s.users.Range(func(k, v interface{}) bool {

_, err := v.(rpc.Caller).Call(context.Background(), "state", users, nil)

if err != nil {

log.Println(k.(string), err)

gone = append(gone, k.(string))

}

})

for _, u := range gone {

s.Leave(u)

}

}

func main() {

// create a tcp listener

l, err := net.Listen("tcp", "localhost:9999")

if err != nil {

log.Fatal(err)

}

// setup a server using fn.HandlerFrom to

// handle methods from the state value

srv := &rpc.Server{

Codec: codec.JSONCodec{},

Handler: fn.HandlerFrom(new(State)),

}

// serve until the listener closes

srv.Serve(l)

}

```

The Call pointer that handlers can receive has a reference to a Caller, which is a client to make calls back to the caller, allowing callbacks.

Our client is straightforward. After setting up a connection and a handler to receive and display an updated username listing, we call Join with a username, wait for SIGINT, and call Leave before exiting.

```golang

// client.go

package main

import (

"context"

"flag"

"fmt"

"log"

"os"

"os/signal"

"github.com/progrium/qtalk-go/codec"

"github.com/progrium/qtalk-go/fn"

"github.com/progrium/qtalk-go/rpc"

"github.com/progrium/qtalk-go/talk"

)

func fatal(err error) {

if err != nil {

log.Fatal(err)

}

}

func main() {

flag.Parse()

// establish connection to server

client, err := talk.Dial("tcp", "localhost:9999", codec.JSONCodec{})

fatal(err)

// state callback handler that redraws the user list

client.Handle("state", rpc.HandlerFunc(func(r rpc.Responder, c *rpc.Call) {

var users interface{}

if err := c.Receive(&users); err != nil {

log.Println(err)

return

}

// the nonsense are terminal escape codes

// to return to the last line and clear it

fmt.Println("\u001B[1A\u001B[K", users)

}))

// respond to incoming calls

go client.Respond()

// call Join passing a username from arguments

_, err = client.Call(context.Background(), "Join", fn.Args{flag.Arg(0)}, nil)

fatal(err)

// wait until we get SIGINT

ch := make(chan os.Signal)

signal.Notify(ch, os.Interrupt)

<-ch

// call Leave before finishing

_, err = client.Call(context.Background(), "Leave", fn.Args{flag.Arg(0)}, nil)

fatal(err)

}

```

See the [Examples wiki page](https://github.com/progrium/qtalk-go/wiki/Examples) for more code examples, including tunnels and proxies, selector routing, and streaming responses.

## Roadmap

I'm trying to get to a 1.0 for [qtalk-go](https://github.com/progrium/qtalk-go), so I'd like more people to use and review its code. I also haven't actually gotten around to [putting in QUIC](https://github.com/progrium/qtalk-go/issues/2) as a usable base layer, which I think should be in a 1.0 release. It's in the name, qtalk was started with QUIC in mind. Not only will QUIC improve performance, resolve head of line blocking, and eventually be native to browsers, but being UDP-based means that hole punching can be used to establish peer-to-peer qtalk connections. I'd like to one day be able to use qtalk directly between machines behind NAT.

Meanwhile, I'm wrapping up a JavaScript implementation (in TypeScript) to officially release soon. I have the start of a Python implementation I could use help with, and I'd love to have a C# implementation.

That's it for now. A big thanks to my [sponsors](https://github.com/sponsors/progrium) for making this happen and thanks to you for reading!

---

*For more great posts like this sent directly to your inbox and to find out what all I'm up to, get on the list at [progrium.com](http://progrium.com) ✌️* | progrium |

802,371 | Operadores Lógicos: Exemplos (Parte 2) | Vou criar quatro variáveis com valores definidos: const A = 5; const B = 3; const C = 7; const D =... | 0 | 2021-09-04T14:52:02 | https://ananopaisdojavascript.hashnode.dev/operadores-logicos-exemplos-parte-2 | javascript, algorithms | Vou criar quatro variáveis com valores definidos:

```javascript

const A = 5;

const B = 3;

const C = 7;

const D = 2;

```

Vamos ver na prática como funcionam os operadores lógicos.

```javascript

A > B && C > D; // true

```

`A` é maior do que `B` e `C` é maior do que `D`. As duas afirmações são verdadeiras, portanto o resultado geral é `true`.

```javascript

A < B && C > D; // false

```

`A` não é menor do que `B` e `C` é maior do que `D`. A primeira afirmação é falsa, portanto o resultado geral será `false`.

```javascript

A < B || C > D; // true

```

Usei as mesmas expressões do exemplo anterior, agora com `||`. A primeira afirmação é falsa, porém a segunda é verdadeira, portanto o resultado geral será `true`.

```javascript

const isThisNumberEven = false;

console.log(!isThisNumberEven); // true

```

A variável `isThisNumberEven` foi declarada com um valor `false`. Porém, com o uso do `!`, o valor tornou-se `true`.

E aí? Gostaram? Até a próxima anotação! 😊 | ananopaisdojavascript |

802,493 | Day 437 : So Ambitious | liner notes: Professional : Had a group meeting in the morning. After that, just worked on cleaning... | 0 | 2021-08-24T23:40:29 | https://dev.to/dwane/day-437-so-ambitious-2hdn | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : Had a group meeting in the morning. After that, just worked on cleaning up some code for one demo and figured out a workaround for another demo. Yeah, pretty good day. It went by super quick.

- Personal : Last night, I started back watching Boruto. I actually remembered the story line. Also took a look at where I left off with my side project when I first started it in the beginning of the year. I got a lot more done than I remember. I was so ambitious. haha I'm going to pull it back a little to make sure I can launch by the deadline I set for myself. I also tested out the new equipment for the radio show. Everything worked as expected. I think I'll be using it for this week's radio show.

Normally I try to get the blog posted before eating dinner, but I was pretty hungry. haha. I'm going to see if I can get an idea I had for the side project working in a Codepen before implementing it into my code. I've been researching yet another camera to do some live streams. It's similar to the one I backed on IndieGogo, but I can't get it to transmit video to my media server like other cameras I have. It may have to do with the encoding of the video, but I'm not sure. This other camera looks like it has more controls of the video that is transmitted. I don't need the camera until I start travelling again, so what I may do is put the money into some sort of account to gain more interest than my regular savings account. We'll see, more research to be done.

Have a great night!

peace piece

Dwane / conshus

https://dwane.io / https://HIPHOPandCODE.com

{% youtube Z0Hb0BS8FOg %} | dwane |

802,657 | COMPUTATIONAL THINKING | Future of education is impossible without the use of communication technologies. It emphasized that... | 0 | 2021-08-25T03:48:20 | https://dev.to/viktorilie/computational-thinking-37bi | algorithms, programming, computerscience, logic | Future of education is impossible without the use of communication technologies. It emphasized that it is necessary to start computer programming from the lowest levels of education.

It has been observed that programming in a child development is a logical way of thinking that contributes to creative problem solving. In a way it prepares the child for the future by developing a number of skills such as:

• Persistence;

• Successful teamwork;

• Practice thinking;

• Precision

This helps to successfully overcome the problems of everyday life activities.

It helps to master easily and successfully the contents in other branches of education (other subjects).

**Computational thinking** is a thinking process that formulates the problem and creates its solution in a way that is understandable to the machine (computer) and that can be performed mechanically.

In other words, it allows us to understand a complex problem:

• the nature of the problem

• to develop possible solutions that can be presented in a way that the computer, human, or both can understand.

Computer thinking is used in algorithmic problem solving. A person who has the ability to think computerically is able to logically organize and analyze data, and is able to break the problem into smaller pieces, to solve it using some programming techniques such as iterations, logic operations and the like.

In addition, knowing how to identify, analyze and apply possible solutions in order to achieve the most efficient problem solving.

There are four main techniques of computer thinking:

• **Decomposition**

• **Pattern recognition**

• **Abstraction**

• **Algorithms**

**Decomposition** is the breakdown of a complex problem into smaller problems.

A complex problem is a problem that at first glance we do not understand or do not know how to solve. Therefore, we break it down into smaller problems that are easy to understand and solve.

We solve all minor problems. We integrate their solutions, and through their integration we solve the complex problem.

**Pattern recognition** is a process that takes place after the decomposition process.

During this process, each smaller problem obtained by breaking down the big problem is analyzed and the similarities and connections between the smaller problems are sought.

Once they are found, questions are asked about how to make the best use of that knowledge.

It is a way of solving new problems based on solutions to previous problems and previous experiences.

Solutions to some specific problems can be adapted and used to solve a whole class of similar problems.

Namely, the solutions to similar problems are grouped in a general solution. So whenever we encounter problems of a similar kind, for their solution, we use this general solution.

In educational practice this method can be used very effectively.

Students are given a set of tasks to solve.They look for similarities between the set tasks, ie common elements between those tasks. After that they find a solution for one task that is adaptable for everyone else.

**Abstraction** is a process that facilitates thinking about a problem.

Abstraction means focusing only on important information and ignoring unimportant details.

It makes the problem understandable by removing unnecessary details.

The basic motto of abstraction is to remove details that can be neglected to make the problem easier, but at the same time nothing of what is important for solving it must be lost.

**Algorithms** are a precise description of the rules and procedures needed to achieve the desired result.

The algorithm is a procedure (procedure) that describes to the last detail the activities and their order, necessary to process the input data to reach the output data, respectively to reach the solution.

All operations performed in the algorithm must be simple enough to be performed, accurately and at the final time, using pencil and paper.

The algorithm must complete a finite number of steps. The procedure that has all the properties as the algorithm, but does not have to end in a final number of steps is a computer procedure.

The procedures for making algorithms are not simple and often require a great deal of creativity. There are no hard and fast rules for defining an algorithm.

Algorithms can be represented in different ways:

• Descriptive, with sentences;

• Graphically, with block diagram of the flow;

• In a language close to human (pseudocode)

• In a strictly formalized programming language.

However, the program on the computer is always in binary form only.

Each of these four techniques mentioned above is equally important.

To successfully solve the problem, it is recommended to use all techniques, ie to be used in combination.

If a technique is not used, there is a possibility that the problem will not be solved successfully.The order of using the techniques depends on the type of problem.Order is very important when solving various tasks. If it is not set correctly, then we can get the wrong result.

Also, when solving a logical task, if we use different sequences, different results can be obtained.

| viktorilie |

802,681 | How to setup the dlink wifi extender? | Overcome poor network signals in your home with the dlink extender. You don’t have to always stick to... | 0 | 2021-08-25T05:30:51 | https://dev.to/customertechn12/how-to-setup-the-dlink-wifi-extender-57fj | dlinkwifiextender, dlinklogin | Overcome poor network signals in your home with the dlink extender. You don’t have to always stick to a particular location in order to benefit from a fast internet speed. With the <a href="https://www.d-linkap.net/">D-Link WiFi Extender Setup</a> in your house, you can increase productivity and multi-task without any kind of disruption.

<a href="https://www.d-linkap.net/dlinkrouter-local/">Dlinkrouter.local</a>

<a href="https://www.d-linkap.net/">Dlinkap.local</a>

| customertechn12 |

802,848 | Search Meter für WordPress | Es gibt WordPress-Plugins, die scheinen erst einmal unwichtig zu sein.... WordPress ist eine... | 0 | 2021-08-25T09:32:41 | https://bloggerpilot.com/search-meter/ | wordpress, seo, wordpressplugins | ---

title: Search Meter für WordPress

published: true

date: 2021-08-25 08:44:32 UTC

tags: WordPress,seo,WordPressPlugins

canonical_url: https://bloggerpilot.com/search-meter/

---

Es gibt [WordPress-Plugins](https://bloggerpilot.com/search-meter/), die scheinen erst einmal unwichtig zu sein....

1. WordPress ist eine großartige Möglichkeit, einen Blog zu erstellen

2. Einfach zu installieren und zu benutzen

3. Du wirst ein Plugin installieren müssen, das dir hilft, etwas zu tun (was im Titel hinzugefügt werden sollte)

3. Dieses Plugin wird dir helfen, etwas zu tun (was im Titel hinzugefügt werden sollte)

4. Dieses Plugin wird dein Leben einfacher machen

5. Und es kann mit nur wenigen Klicks installiert werden | j0e |

802,859 | Creating The Sign Up Page Part I | Hello, glad to see you here again. In today's part of the series, we are going to create the sign up... | 14,274 | 2021-08-25T10:03:13 | https://dev.to/earthcomfy/creating-a-django-registration-login-app-part-ii-3k6 | python, django, webdev, codenewbie | Hello, glad to see you here again. In today's part of the series, we are going to create the sign up page.

------------------------------------------------------------------

Okay to create users we need a model right, we need to define what kind of attributes users should have. Django comes with a built in User model whose primary attributes are:

* username

* password

* email

* first_name

* last_name

If we want our users to have more attributes than this built in model gives us, there are a couple of ways to do that and we will see one way in the future when we create a user profile. But for now we are using the Django User model as it is so we should be fine.

Next, we need a form that will be displayed to the users, validate the data and save it to the database. Luckily we don't have to reinvent the wheel because Django has a built in easy to use UserCreationForm.

> UserCreationForm is a form that creates a user, with no privileges, from the given username and password. - [docs](https://docs.djangoproject.com/en/1.8/_modules/django/contrib/auth/forms/)

- Looks good, but what if we want to include extra fields in our form and get other information about the user upon sign up?

For that, we can just simply extend the built in model form and add more fields. I'm going to add email, firstname, and lastname of the user.

**Don't forget that the fields am adding are already in the User model**

Create forms.py module inside the users app and first let's import the necessary dependencies.

forms.py

```python

from django import forms

from django.contrib.auth.models import User

from django.contrib.auth.forms import UserCreationForm

```

- Forms in Django is a class whose fields map to HTML form elements.

- forms class has different fields for handling different types of data. For example, CharField, DateField... Check out the [docs](https://docs.djangoproject.com/en/3.2/ref/forms/fields/#built-in-field-classes) here to look at more of these fields.

- These form fields are represented to the user as an HTML widget (Widgets render HTML form elements) therefore unless we explicitly specify the widget for our form field, Django will use default widgets which may not look that good.

- By overriding the default widget for the form field, we can bootstrapify our form fields.

forms.py

```python

from django import forms

from django.contrib.auth.models import User

from django.contrib.auth.forms import UserCreationForm

class RegisterForm(UserCreationForm):

# fields we want to include and customize in our form

first_name = forms.CharField(max_length=100,

required=True,

widget=forms.TextInput(attrs={'placeholder': 'First Name',

'class': 'form-control',

}))

last_name = forms.CharField(max_length=100,

required=True,

widget=forms.TextInput(attrs={'placeholder': 'Last Name',

'class': 'form-control',

}))

username = forms.CharField(max_length=100,

required=True,

widget=forms.TextInput(attrs={'placeholder': 'Username',

'class': 'form-control',

}))

email = forms.EmailField(required=True,

widget=forms.TextInput(attrs={'placeholder': 'Email',

'class': 'form-control',

}))

password1 = forms.CharField(max_length=50,

required=True,

widget=forms.PasswordInput(attrs={'placeholder': 'Password',

'class': 'form-control',

'data-toggle': 'password',

'id': 'password',

}))

password2 = forms.CharField(max_length=50,

required=True,

widget=forms.PasswordInput(attrs={'placeholder': 'Confirm Password',

'class': 'form-control',

'data-toggle': 'password',

'id': 'password',

}))

class Meta:

model = User

fields = ['first_name', 'last_name', 'username', 'email', 'password1', 'password2']

```

- I added an id for the password fields because we are gonna use a plugin that shows password hide/show icon - you know that weird looking eye icon. But more on that later when we create the template.

- Under Meta class we can create the link between our model's fields and the different fields we want to have in our form(order matters).

Alright! Let's head over to *views.py* and use the form we just created.

Here I'm using class-based view to handle the register form. Check the [docs](https://docs.djangoproject.com/en/3.2/topics/class-based-views/intro/) for more info about what am using.

views.py

```python

from django.shortcuts import render, redirect

from django.contrib import messages

from django.views import View

from .forms import RegisterForm

class RegisterView(View):

form_class = RegisterForm

initial = {'key': 'value'}

template_name = 'users/register.html'

def get(self, request, *args, **kwargs):

form = self.form_class(initial=self.initial)

return render(request, self.template_name, {'form': form})

def post(self, request, *args, **kwargs):

form = self.form_class(request.POST)

if form.is_valid():

form.save()

username = form.cleaned_data.get('username')

messages.success(request, f'Account created for {username}')

return redirect(to='/')

return render(request, self.template_name, {'form': form})

```

- First by overriding the `form_class` attribute, we are able to tell django which form to use, `template_name` -> the template we want django to look for

- If the request is get, it creates a new instance of an empty form.

- If the request is post,

-- It creates a new instance of the form with the post data. Then checks if the form is valid or not by calling the [`form.is_valid()`](https://docs.djangoproject.com/en/3.2/ref/forms/api/) method.

-- Then if the form is valid, process the cleaned form data and save the user to our database.

- To let the user know that his/her account is successfully created, we can generate a flash message and display his/her username to the page they are getting redirected to(home page).

Next let's map our urlpatterns to our register view.

users/urls.py

```python

from django.urls import path

from .views import home, RegisterView # Import the view here

urlpatterns = [

path('', home, name='users-home'),

path('register/', RegisterView.as_view(), name='users-register'), # This is what we added

]

```

This is getting kinda long so, we will create the template in the next part.

Thanks for your time, you can find the finished app in [github](https://github.com/earthcomfy/Django-registration-and-login-system).

Feel free to ask, and any suggestions are welcome. See ya!

| earthcomfy |

802,886 | Mastering Web Scraping in Python: Scaling to Distributed Crawling | Wondering how to build a website crawler and parser at scale? Implement a project to crawl, scrape,... | 0 | 2021-08-25T13:35:12 | https://www.zenrows.com/blog/mastering-web-scraping-in-python-scaling-to-distributed-crawling | python, beginners, tutorial, webdev | Wondering how to build a website crawler and parser at scale? Implement a project to crawl, scrape, extract content, and store it at scale in a distributed and fault-tolerant manner. We will take all the knowledge from previous posts and combine it.

First, we learned about [pro techniques to scrape content](https://dev.to/anderrv/mastering-web-scraping-in-python-from-zero-to-hero-4fj4), although we'll only use CSS selectors today. Then [tricks to avoid blocks](https://dev.to/anderrv/stealth-web-scraping-in-python-avoid-blocking-like-a-ninja-16ok), from which we will add proxies, headers, and headless browsers. And lastly, we [built a parallel crawler](https://dev.to/anderrv/mastering-web-scraping-in-python-crawling-from-scratch-1dgd), and this blog post begins with that code.

If you do not understand some part or snippet, it might be in an earlier post. Brace yourselves; lengthy snippets are coming.

### Prerequisites

For the code to work, you will need [Redis](https://redis.io/) and [python3](https://www.python.org/downloads/) installed. Some systems have it pre-installed. After that, install all the necessary libraries by running pip install.

```bash

pip install install requests beautifulsoup4 playwright "celery[redis]"

npx playwright install

```

## Intro to Celery and Redis

[Celery](https://docs.celeryproject.org/en/stable/getting-started/introduction.html) "is an open source asynchronous task queue." We created a simple parallel version in the last blog post. Celery takes it a step further by providing an actual distributed queue implementation. We will use it to distribute our load among workers and servers.

[Redis](https://redis.io/) "is an open source, in-memory data structure store, used as a database, cache, and message broker." Instead of using arrays and sets to store all the content (in memory), we will use Redis as a database. Moreover, Celery can use Redis as a broker, so we won't need other software to run it.

## Simple Celery Task

Our first step will be to create a task in Celery that prints the value received by parameter. Save the snippet in a file called `tasks.py` and run it. If you run it as a regular python file, only one string will be printed. The console will print two different lines if you run it with `celery -A tasks worker`.

The difference is in the `demo` function call. Direct call implies "execute that task," while `delay` means "enqueue it for a worker to process." Check the docs for more info on [calling tasks](https://docs.celeryproject.org/en/stable/userguide/calling.html#basics).

{% gist https://gist.github.com/AnderRV/f741355b768c7f4faee9ed5c4cd15f55 %}

The `celery` command will not end; we need to kill it by exiting the console (i.e., `ctrl + C`). We'll need it several times because Celery does not reload after code changes.

## Crawling from Task

The next step is to connect a Celery task with the crawling process. This time we will be using a slightly altered version of the [helper functions](https://www.zenrows.com/blog/mastering-web-scraping-in-python-crawling-from-scratch#final-code) seen in the last post. `extract_links` will get all the links on the page except the `nofollow` ones. We will add filtering options later.

{% gist https://gist.github.com/AnderRV/4e06185b0e53da256622a4f85479db14 %}

We could loop over the retrieved links and enqueue them, but that would end up crawling the same pages repeatedly. We saw the basics to execute tasks, and now we will start splitting into files and keeping track of the pages on Redis.

## Redis for Tracking URLs

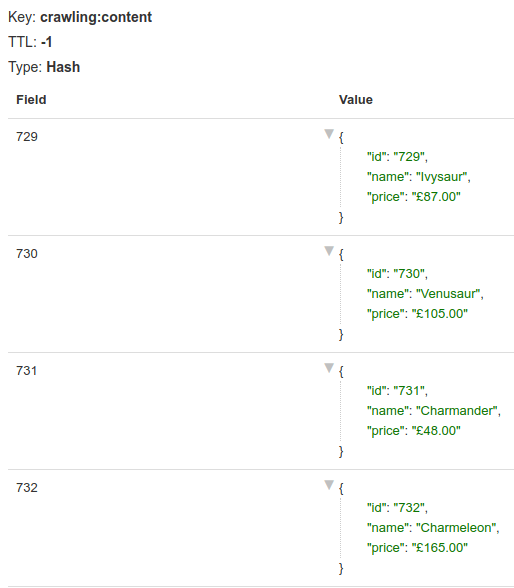

We already said that relying on memory variables is not an option anymore. We will need to persist all that data: visited pages, the ones being currently crawled, keep a "to visit" list, and store some content later on. For all that, instead of enqueuing directly to Celery, we will use Redis to avoid re-crawling and duplicates. And enqueue URLs only once.

We won't go into further details on Redis, but we will use [lists](https://redis.io/commands#list), [sets](https://redis.io/commands#set), and [hashes](https://redis.io/commands#hash).

Take the last snippet and remove the last two lines, the ones calling the task. Create a new file `main.py` with the following content. We will create a list named `crawling:to_visit` and push the starting URL. Then we will go into a loop that will query that list for items and block for a minute until an item is ready. When an item is retrieved, we call the `crawl` function, enqueuing its execution.

{% gist https://gist.github.com/AnderRV/22c4e888e547a1bcd029038a4122f730 %}

It does almost the same as before but allows us to add items to the list, and they will be automatically processed. We could do that easily by looping over `links` and pushing them all, but it is not a good idea without deduplication and a maximum number of pages. We will keep track of all the `queued` and `visited` using sets and exit once their sum exceeds the maximum allowed.

{% gist https://gist.github.com/AnderRV/05702a70a0368a51d826acce8faf08d5 %}

{% gist https://gist.github.com/AnderRV/ca76b6733c381c0949fdc2be45900881 %}

After executing, everything will be in Redis, so running again won't work as expected. We need to clean manually. We can do that by using `redis-cli` or a GUI like [redis-commander](https://github.com/joeferner/redis-commander#readme). There are commands for deleting keys (i.e., `DEL crawling:to_visit`) or [flushing the database](https://redis.io/commands/flushdb) (careful with this one).

## Separate Responsabilities

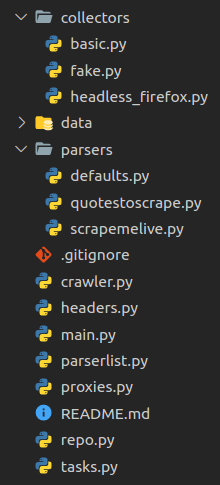

We will start to separate concepts before the project grows. We already have two files: `tasks.py` and `main.py`. We will create another two to host crawler-related functions (`crawler.py`) and database access (`repo.py`). Please look at the snippet below for the repo file, it is not complete, but you get the idea. There is a [GitHub repository](https://github.com/ZenRows/scaling-to-distributed-crawling) with the final content in case you want to check it.

{% gist https://gist.github.com/AnderRV/797bfb64cbdd5e4e2d996dc184c121c4 %}

And the `crawler` file will have the functions for crawling, extracting links, and so on.

## Allow Parser Customization

As mentioned above, we need some way to extract and store content and add only a particular subset of links to the queue. We need a new concept for that: default parser (`parsers/defaults.py`).

{% gist https://gist.github.com/AnderRV/4c0dfe8a584334a49eef09cd30287028 %}

And in the repo.py file:

{% gist https://gist.github.com/AnderRV/e00bdaa8652518dca9c4c3e91a63a474 %}

There is nothing new here, but it will allow us to abstract the link and content extraction. Instead of hardcoding it in the crawler, it will be a set of functions passed as parameters. Now we can substitute the calls to these functions by an import (for the moment).

For it to be completely abstracted, we need a generator or factory. We'll create a new file to host it - `parserlist.py`. To simplify a bit, we allow one custom parser per domain. The demo includes two domains for testing: [scrapeme.live](https://scrapeme.live/shop/page/1/) and [quotes.toscrape.com](http://quotes.toscrape.com/page/1/).

There is nothing done for each domain yet so that we will use the default parser for them.

{% gist https://gist.github.com/AnderRV/004d891f58cb88e52d6f4048209bb24e %}

We can now modify the task with the new per-domain-parsers.

{% gist https://gist.github.com/AnderRV/004d891f58cb88e52d6f4048209bb24e %}

## Custom Parser

We will use `scrapeme` first as an example. Check the [repo](https://github.com/ZenRows/scaling-to-distributed-crawling/blob/main/parsers/scrapemelive.py) for the final version and the other custom parser.

Knowledge of the page and its HTML is required for this part. Take a look at it if you want to get the feeling. To summarize, we will get the product id, name, and price for each item in the product list. Then store that in a set using the id as the key. As for the links allowed, only the ones for pagination will go through the filtering.

{% gist https://gist.github.com/AnderRV/a1a33d102e43893f278b379dd17e104c %}

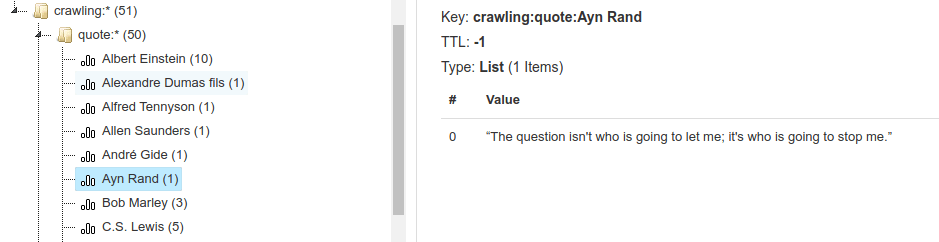

In the `quotes` site, we need to handle it differently since there is no ID per quote. We will extract the author and quote for each entry in the list. Then, in the `store_content` function, we'll create a list for each author and add that quote. Redis handles the creation of the lists when necessary.

{% gist https://gist.github.com/AnderRV/08feeafc01861b10a414b0e4675a17cf %}

With the last couple of changes, we have introduced custom parsers that will be easy to extend. When adding a new site, we must create one file per new domain and one line in `parserlist.py` referencing it. We could go a step further and "auto-discover" them, but no need to complicate it even more.

## Get HTML: Headless Browsers

Until now, every page visited was done using `requests.get`, which can be inadequate in some cases. Say we want to use a different library or headless browser, but just for some cases or domains. Loading a browser is memory-consuming and slow, so we should avoid it when it is not mandatory. The solution? Even more customization. New concept: collector.

We will create a file named `collectors/basic.py` and paste the already known `get_html` function. Then change the defaults to use it by importing it. Next, create a new file, `collectors/headless_firefox.py`, for the new and shiny method of getting the target HTML. As in the previous post, we will be using [playwright](https://playwright.dev/python/docs/intro/). And we will also parametrize headers and proxies in case we want to use them. _Spoiler: we will_.

{% gist https://gist.github.com/AnderRV/deb0b6231eed6cc8133159bf841ef4f0 %}

If we want to use a headless Firefox for some domain, merely modify the `get_html` for that parser (i.e., `parsers/scrapemelive.py`).

{% gist https://gist.github.com/AnderRV/56fa3a6d8e58cb3cb8d73015997a69d7 %}

As you can see in the [final repo](https://github.com/ZenRows/scaling-to-distributed-crawling/blob/main/collectors/fake.py), we also have a `fake.py` collector used in `scrapemelive.py`. Since we used that website for intense testing, we downloaded all the product pages the first time and stored them in a `data` folder. We can customize with a headless browser, but we can do the same with a file reader, hence the "fake" name.

{% gist https://gist.github.com/AnderRV/75bb1291af682e1d2678656b5dd1800d %}

## Avoid Detection with Headers and Proxies

You guessed it: we want to add custom headers and use proxies. We will start with the headers creating a file `headers.py`. We won't paste the entire content here, there are three different sets of headers for a Linux machine, and it gets pretty long. Check the [repo](https://github.com/ZenRows/scaling-to-distributed-crawling/blob/main/headers.py) for the details.

{% gist https://gist.github.com/AnderRV/ababe91d84e3d5486c2294853b6db33a %}

We can import a concrete set of headers or call the `random_headers` to get one of the available options. We will see a usage example in a moment.

The same applies to the proxies: create a new file, `proxies.py`. It will contain a list of them grouped by the provider. In our example, we will include only [free proxies](https://free-proxy-list.net/). Add your paid ones in the `proxies` dictionary and change the default type to the one you prefer. If we were to complicate things, we could add a retry with a different provider in case of failure.

_Note that these [free proxies](https://free-proxy-list.net/) might not work for you. They are short-time lived._

{% gist https://gist.github.com/AnderRV/663a0667e384c79bcedcda8e1d8eff27 %}

And the usage in a parser:

{% gist https://gist.github.com/AnderRV/3a4e6b582cbf3027025ee937c42ce637 %}

## Bringing it All Together

It's been a long and eventful trip. It is time to put an end to it by completing the puzzle. We hope you understood the whole process and all the challenges that scraping and crawling at scale have.

We cannot show here the final code, so take a look at the [repository](https://github.com/ZenRows/scaling-to-distributed-crawling) and do not hesitate to comment or contact us with any doubt.

The two entry points are `tasks.py` for Celery and `main.py` to start queueing URLs. From there, we begin storing URLs in Redis to keep track and start crawling the first URL. A custom or the default parser will get the HTML, extract and filter links, and generate and store the appropriate content. We add those links to a list and start the process again. Thanks to Celery, once there is more than one link in the queue, the parallel/distributed process starts.

## Points Still Missing

We already covered a lot of ground, but there is always a step more. Here are a few functionalities that we did not include. Also, note that most of the code does not contain error handling or retries for brevity's sake.

### Distributed

We didn't include it, but Celery offers it out-of-the-box. For local testing, we can start two different workers `celery -A tasks worker --concurrency=20 -n worker1` and `... -n worker2`. The way to go is to do the same in other machines as long as they can connect to the broker (Redis in our case). We could even add or remove workers and servers on the fly, no need to restart the rest. Celery handles the workers and distributes the load.

It is important to note that the worker's name is essential, especially when starting several in the same machine. If we execute the above command twice without changing the worker's name, Celery won't recognize them correctly. Thus launch the second one as `-n worker2`.

### Rate Limit

Celery does not allow a rate limit per task and parameter (in our case, domain). Meaning that we can throttle workers or queues, but not to a fine-grained detail as we would like to. There are several [issues](https://github.com/celery/celery/issues/5732) open and [workarounds](https://stackoverflow.com/questions/29854102/celery-rate-limit-on-tasks-with-the-same-parameters). From reading several of those, the take-away is that we cannot do it without keeping track of the requests ourselves.

We could easily rate-limit to 30 requests per minute for each task with the provided param `@app.task(rate_limit="30/m")`. But remember that it would affect the task, not the crawled domain.

### Robots.txt

Along with the `allow_url_filter` part, we should also add a robots.txt checker. For that, the [robotparser library](https://docs.python.org/3/library/urllib.robotparser.html) can take a URL and tell us if it is allowed to crawl it. We can add it to the default or as a standalone function, and then each scraper decides whether to use it. We thought it was complex enough and did not implement this functionality.

If you were to do it, consider the last time the file was accessed with `mtime()` and reread it from time to time. And also, cache it to avoid requesting it for every single URL.

## Conclusion

Building a custom crawler/parser at scale is not an easy nor straightforward task. We provided some guidance and tips, hopefully helping you all with your day-to-day tasks.

Before developing something as big as this project at scale, think about some important take-aways:

1. Separate responsabilities.

2. Use abstractions when necessary, but do not over-engineer.

3. Don't be afraid of using specialized software instead of building everything.

4. Think about scaling even if you don't need it now; just keep it in mind.

Thanks for joining us until the end. It's been a fun series to write, and we hope it's also been attractive from your side. If you liked it, you might be interested in [the Javascript Web Scraping guide](https://www.zenrows.com/blog/web-scraping-with-javascript-and-nodejs?utm_source=devto&utm_medium=blog&utm_campaign=distributed_crawling).

Do not forget to take a look at the rest of the posts in this series.

+ [Crawling from Scratch](https://www.zenrows.com/blog/mastering-web-scraping-in-python-crawling-from-scratch?utm_source=devto&utm_medium=blog&utm_campaign=distributed_crawling) (3/4)

+ [Avoid Blocking Like a Ninja](https://www.zenrows.com/blog/stealth-web-scraping-in-python-avoid-blocking-like-a-ninja?utm_source=devto&utm_medium=blog&utm_campaign=distributed_crawling) (2/4)

+ [Mastering Extraction](https://www.zenrows.com/blog/mastering-web-scraping-in-python-from-zero-to-hero?utm_source=devto&utm_medium=blog&utm_campaign=distributed_crawling) (1/4)

Did you find the content helpful? Please, spread the word and share it. 👈

---

Originally published at [https://www.zenrows.com](https://www.zenrows.com/blog/mastering-web-scraping-in-python-scaling-to-distributed-crawling?utm_source=devto&utm_medium=blog&utm_campaign=distributed_crawling) | anderrv |

803,089 | How does wrinkle treatment with injections work? | https://osloplastikkirurgi.no/behandlinger/behandlinger-hos-kvinner/rynkebehandling/ | 0 | 2021-08-25T12:46:15 | https://dev.to/osloplastikkir1/how-does-wrinkle-treatment-with-injections-work-38e9 | plastikkirurg | https://osloplastikkirurgi.no/behandlinger/behandlinger-hos-kvinner/rynkebehandling/ | osloplastikkir1 |

803,458 | Day 183 | Day 183/200 of #100DaysOfCode Solved three problems on Codewars. Practice webpack using... | 11,311 | 2021-08-25T17:57:07 | https://dev.to/rb_wahid/day-183-5di9 | 100daysofcode, programming | Day 183/200 of #100DaysOfCode

- Solved three problems on Codewars.

- Practice webpack using babel-loader, css-loader, html-loader | rb_wahid |

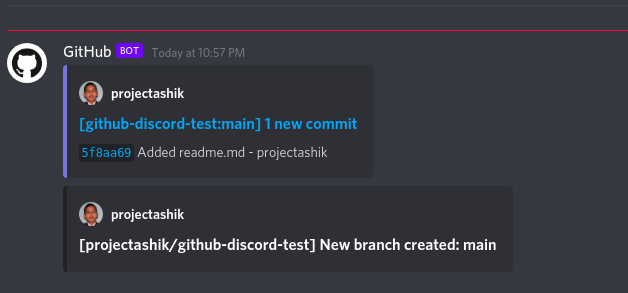

803,533 | Producing packages for Windows Package Manager | In my previous articles about winget I talked about installing packages but I did not talk about... | 0 | 2021-08-25T22:03:02 | https://techwatching.dev/posts/wingetcreate | winget, windows, tooling, githubactions | ---

title: Producing packages for Windows Package Manager

published: true

date: 2021-08-25 00:00:00 UTC

tags: winget, windows, tooling, githubactions

canonical_url: https://techwatching.dev/posts/wingetcreate

---

In my [previous articles about winget](https://www.techwatching.dev/tags/winget) I talked about installing packages but I did not talk about producing packages for Windows Package Manager. So let's set things right.

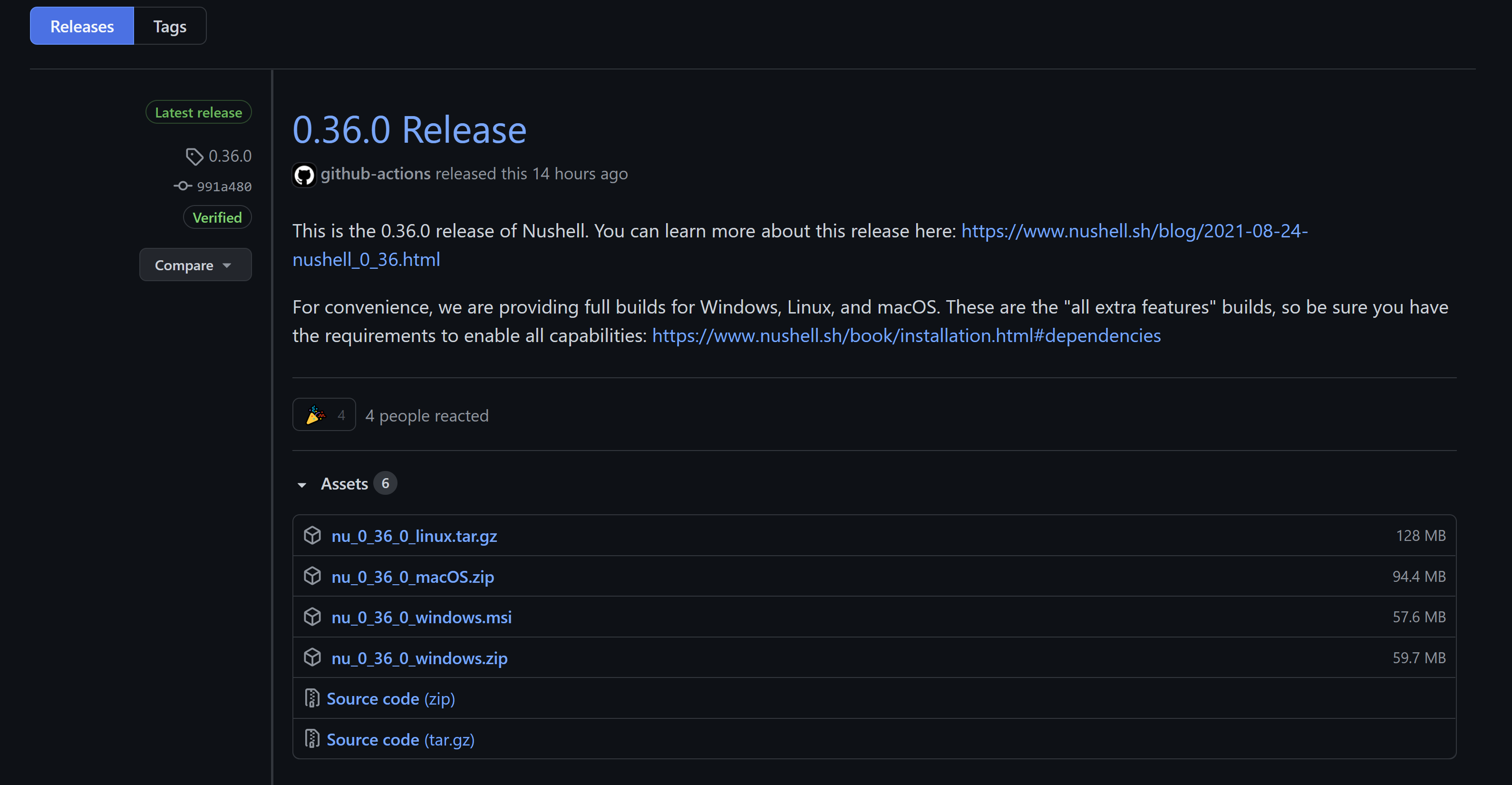

## About winget packages

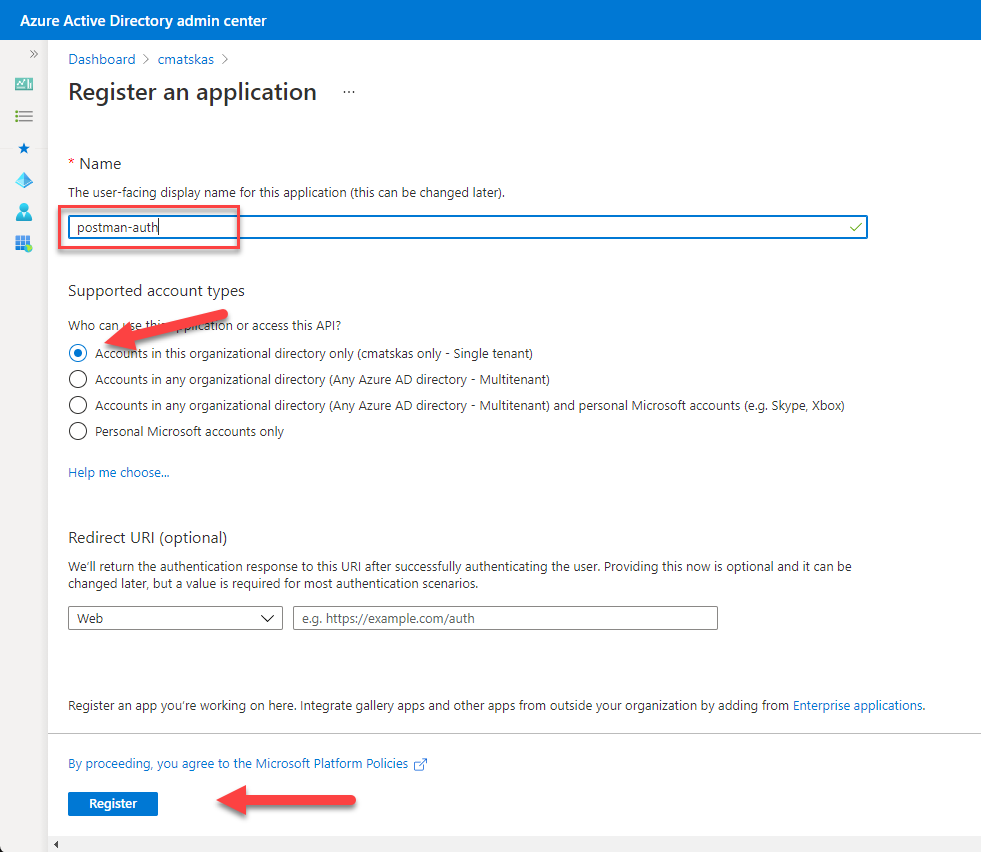

Windows Package Manager allows you to search and install applications that are referenced by the sources you have configured to be used by the winget tool. Sources are repositories that list applications that can be installed by winget and the data needed for them to be installed (in the form of a manifest file containing information such as the installer location of a package for instance). The default source is the [Windows Package Manager Community Repository](https://github.com/microsoft/winget-pkgs) which is a public GitHub repository where everyone can submit its application package manifest to make an applications available for installation to Windows Package Manager users.

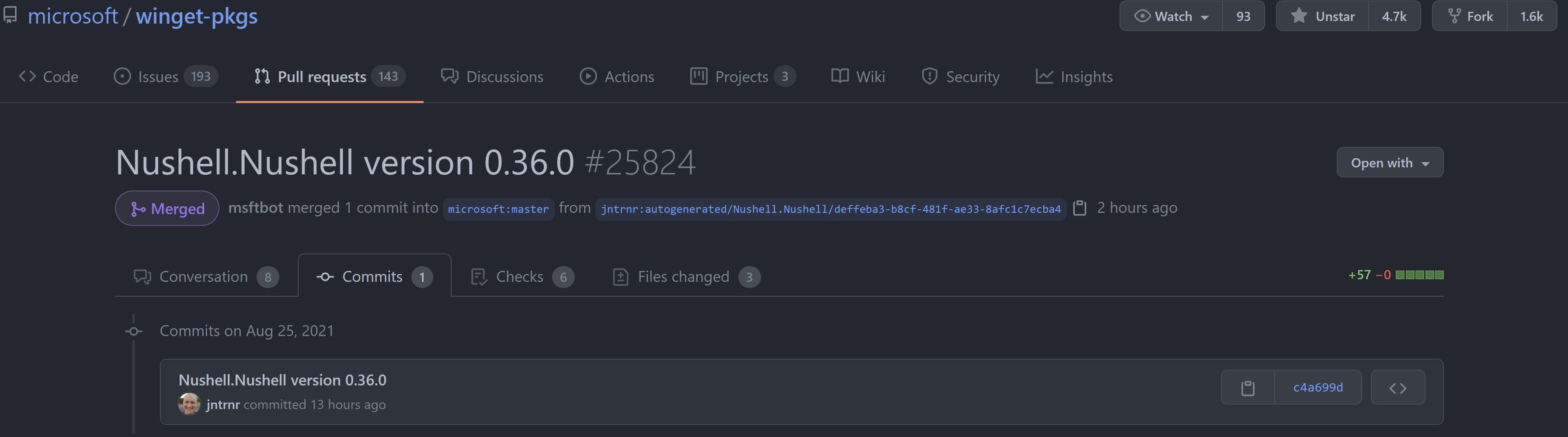

Once you know that, if you are the developer of an application you want to distribute on Windows through a the Windows Package Manager you have to create a manifest for your application and publish it through a Pull Request on the Windows Package Manager Community Repository. And each time you release a new version of you application, you have to update your app manifest with the information of your new package version (new version number, new installer location...) and create a PR to the Windows Package Manager Community Repository with this updated version of your manifest. For more details you can have a look at the official [documentation](https://docs.microsoft.com/en-us/windows/package-manager/package/)

As a package creator you probably do not want to create and update this app manifest manually. Luckily for you there is a tool to do that for you.

## WingetCreate to the rescue

### Introducing WingetCreate

[Windows Package Manager Manifest Creator](https://github.com/microsoft/winget-create) aka WingetCreate is a tool "designed to help generate or update manifest files for the Community repo" (quoting the readme of WingetCreate repository). At the time of writing it is still in preview but you can already use it to help you with your manifest files. You can download the installer from [this link](https://aka.ms/wingetcreate/latest) but of course it is available from winget: `winget install wingetcreate`.

The main commands are [New](https://github.com/microsoft/winget-create/blob/main/doc/new.md), [Update](https://github.com/microsoft/winget-create/blob/main/doc/update.md) and [Submit](https://github.com/microsoft/winget-create/blob/main/doc/submit.md).

### The `New` command

It allows you to create a new manifest from scratch. If you don't really know where to start to deal with manifest files it is a nice way of getting started. Yet having a look at existing manifests in the [winget community repository](https://github.com/microsoft/winget-pkgs) can be sometimes more efficient.

### The `Update` command

It allows you to update an existing manifest, that is to say to create an updated version of your manifest when you have released a new version of your application (so new version number and new installer url). You can use this command to `submit` your updated package to the Windows Package Manager Community Repository. In my opinion it is the most useful command from WingetCreate as it can be easily be integrated in a build pipeline to publish your installer.

### The `Submit` command

It allows you to submit an existing manifest (you created earlier on disk with the create or update command) to the Windows Package Manager Community Repository automatically. Basically what it does it that is uses the Github personal access token you give it to create a Pull Request with your manifest in this repository.

### What else ?

If you look at the [settings command](https://github.com/microsoft/winget-create/blob/main/doc/settings.md) you will see that you can specify the name of the GitHub repository to target for your package submission. This is really interesting if you want to host a private source for winget available to your organization only where you will publish applications for related to your business needs and that you don't want to make available publicly.

WingetCreate is a really helpful tool to create, update and validate a manifest for you winget package. Still you probably don't want to manually run WingetCreate each time you release a new package version. So let's see how to automate that with GitHub Actions.

## Automating your app manifest upgrade with GitHub Actions

### Why using GitHub Actions to demonstrate the automation of app manifests upgrades ?

In my daily work, Azure Pipelines are the pipelines I used to do CI/CD and they are great. Currently they offer more functionalities than GitHub Actions and as the code I develop is hosted in Azure Repos it makes more sense to use the Azure DevOps built-in CI/CD tool than something else (although Azure DevOps does not enforce at all you to choose their tools). However there is already in WingetCreate's readme a section with a link to an example about using WingetCreate with Azure Pipelines, but there is no example with GitHub Actions.

Moreover I think many applications that are available or will want to be available as a winget package are open source applications whose code are hosted in a GitHub repository and that are already using GitHub Actions for their CI/CD. So I though it could be useful to have an example of using WingetCreate with GitHub Actions, especially as GitHub has this concept of "releases".

### An interesting use case for with Nushell

[Nushell](https://www.nushell.sh/) is a cross platform shell written in Rust. Nushell's developers took the best of existing shells (like the structured data approach from PowerShell) and created a shell that feels modern, easy-to-use and very useful in my opinion.

There was a [GitHub issue](https://github.com/nushell/nushell/issues/1859) to support the new official Windows package manager so I though it was the opportunity to contribute to Nushell. Contributing to this project was something that I had not been able to do yet because I did not know Rust, writing CI/CD pipelines however is something I can do.

Nushell already uses GitHub Actions for its continuous integration and to create releases. If you are not familiar with GitHub releases you can read the [official documentation](https://docs.github.com/en/github/administering-a-repository/releasing-projects-on-github/about-releases) but basically a release is a version of your software (corresponding to a git tag in your repository) that you make available with release notes and binaries files.

Therefore, the idea was to update Nushell manifest with the latest version of Nushell using `WingetCreate` each time a new release of Nushell is published.

### Triggering a new workflow from a release event

Automating the app manifest upgrade of Nushell just meant creating a `job` in a GitHub Actions workflow that would call `WingetCreate` with the new version number and the new installer url.

I first wanted to modify the existing Nushell GitHub Actions workflow that was creating the releases by adding a new `job` at the end of the workflow just after the release was created. Well this is was a bad idea, I pushed this change and during the next release of Nushell the workflow failed because I did not pay attention the workflow was creating releases in draft, so the installer url of the new version did not exist when my job called `WingetCreate`.

Because of that I decided to create a separate workflow that would be triggered each time a Nushell release is published. In Nushell this is done manually (passing from draft to release) but even if it were done automatically by the release workflow I think it is a better idea to have a specific workflow triggered by the publication of a release.

```

name: Submit Nushell package to Windows Package Manager Community Repository

on:

release:

types: [published]

jobs:

winget:

name: Publish winget package

```

I really like how it is possible with GitHub Actions to trigger on many different GitHub events. It is something that seems more limited in Azure Pipelines.

### Calling `WingetCreate` from a GitHub Actions workflow.

Windows Package Manager Manifest Creator needs to be run in windows so we need to specify that in the job that will submit a new version of Nushell package to Windows Package Manager Community Repository:

```

jobs:

winget:

name: Publish winget package

runs-on: windows-latest

```

This job will only contain one step that is the execution of the commands to call `WingetCreate`. These commands will be in PowerShell as this is the default runner (`pwsh`) in a windows job.

```

winget:

name: Publish winget package

runs-on: windows-latest

steps:

- name: Submit package to Windows Package Manager Community Repository

run: |

```

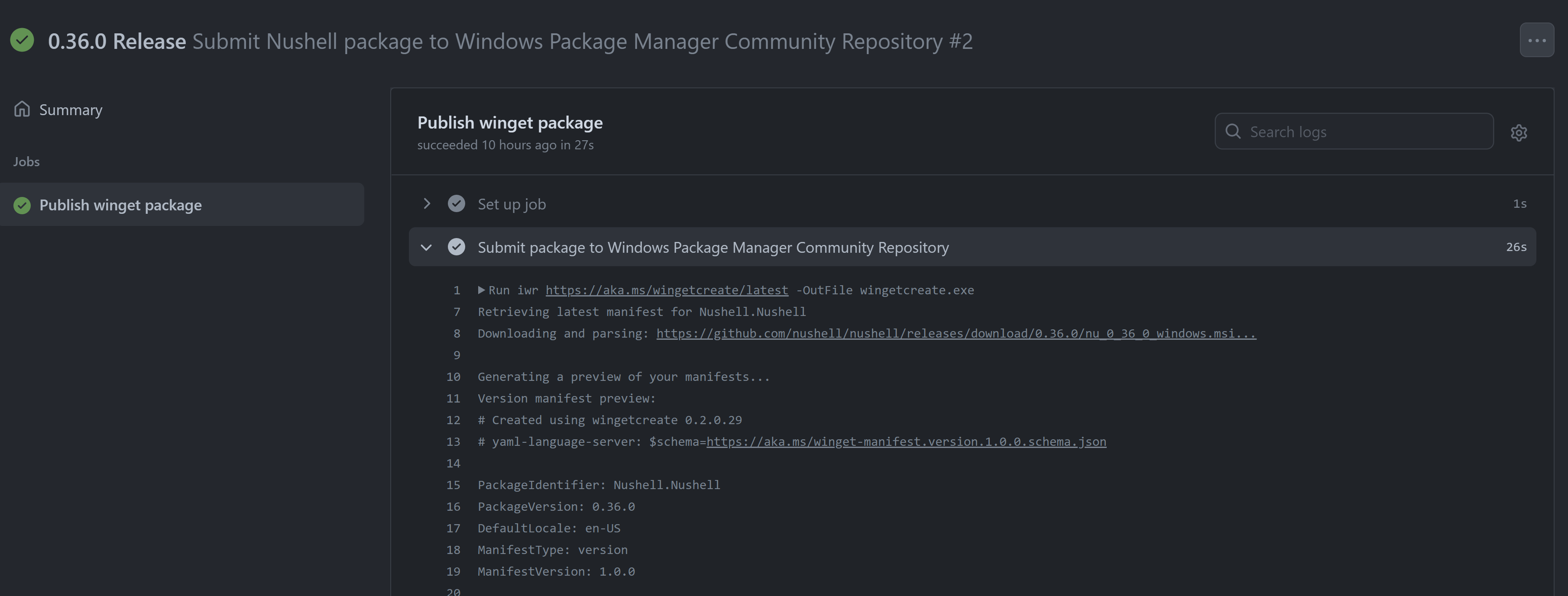

First we need to download latest version of `WingetCreate` by using the following command :

```

iwr https://aka.ms/wingetcreate/latest -OutFile wingetcreate.exe

```

Second we want to retrieve the version number and the installer url of the new package. These 2 pieces of information will be needed as parameter to the WingetCreate update command. We can find these in the github context which contains the release event that triggered the workflow. We are using these 2 lines of PowerShell to get assets associated to the release and filter on the msi file which is the Windows installer of Nushell.

```

$github = Get-Content '${{ github.event_path }}' | ConvertFrom-Json

$installerUrl = $github.release.assets | Where-Object -Property name -match 'windows.msi' | Select -ExpandProperty browser_download_url -First 1

```

> 💡 I just though that instead of doing this in PowerShell we could have done this in Nushell, which would have been fun 'using Nushell to provide a new version of Nushell' but as it is not installed by default on windows agents it would mean a loss of time each time the workflow runs.

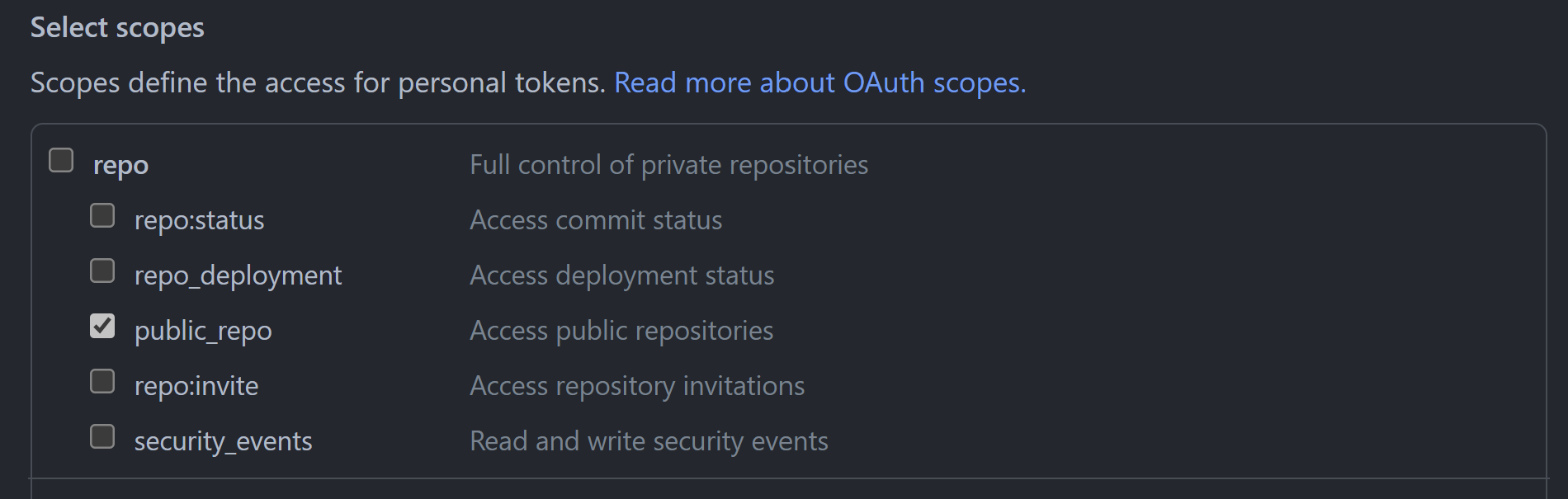

Third we can call the `WingetCreate` update command by specifying the version, the url of the installer, and a Personal Access Token that will be use by `WingetCreate`to make the Pull Request in the Windows Package Manager Community Repository. This PAT needs to be created by a maintainer of the repository with the permission and added to the secrets of the project.

Here you can see a run of the workflow in GitHub:

## Overview of the created workflow

You can find the complete workflow below and [here](https://github.com/nushell/nushell/blob/main/.github/workflows/winget-submission.yml) in the Nushell repository.

```

name: Submit Nushell package to Windows Package Manager Community Repository

on:

release:

types: [published]

jobs:

winget:

name: Publish winget package

runs-on: windows-latest

steps:

- name: Submit package to Windows Package Manager Community Repository

run: |

iwr https://aka.ms/wingetcreate/latest -OutFile wingetcreate.exe

$github = Get-Content '${{ github.event_path }}' | ConvertFrom-Json

$installerUrl = $github.release.assets | Where-Object -Property name -match 'windows.msi' | Select -ExpandProperty browser_download_url -First 1

.\wingetcreate.exe update Nushell.Nushell -s -v $github.release.tag_name -u $installerUrl -t ${{ secrets.NUSHELL_PAT }}

```

Here is what a Pull Request generated by the GitHub Actions workflow looks like:

## To summarize

We have introduced the notion of source for winget packages, and in particular the Windows Package Manager Community Repository where we can open PR to submit a new application or new versions of an existing application. We have seen how Windows Package Manager Manifest Creator could help us doing that and how it could be automated from a GitHub Actions workflow like it was done for the Nushell project.

Do not hesitate to copy some of the GitHub Actions workflow I showed you. I hope this will inspire you to do the same to distribute your applications through winget.

A big thank you to [Edward Thomson](https://twitter.com/ethomson) who explained to me how to retrieve GitHub Actions contexts in PowerShell. Thanks also to [Darren Schroeder](https://twitter.com/fdncred) and [Jonathan Turner](https://twitter.com/jntrnr) who supported me to set up a workflow that publish new releases of Nushell in winget. | techwatching |

803,622 | JS Coding Question #2: Reverse a string [Common Question - 3 Solutions] | Front end job interview question, Reverse a string | 14,457 | 2021-08-25T22:02:24 | https://dev.to/frontendengineer/technical-interview-1-reverse-a-string-33pb | challenge, javascript, tutorial, career | ---

title: JS Coding Question #2: Reverse a string [Common Question - 3 Solutions]

published: true

description: Front end job interview question, Reverse a string

tags: challenge, javascript, tutorial, career

cover_image: https://images.unsplash.com/photo-1565688527174-775059ac429c?ixlib=rb-1.2.1&ixid=MnwxMjA3fDB8MHxwaG90by1wYWdlfHx8fGVufDB8fHx8&auto=format&fit=crop&w=1650&q=80

series: Job Interview Preparation Materials

---

#### Interview Question #2:

Write a function that reverses a string❓🤔

> If you need practice, try to solve this on your own. I have included 3 potential solutions below.

> Note: There are many other potential solutions to this problem.

Feel free to bookmark 🔖 even if you don't need this for now. You may need to refresh/review down the road when it is time for you to look for a new role.

Code: https://codepen.io/angelo_jin/pen/LYBPrKo

#### Solution #1: Array methods

- very simple solution that will utilize array methods to reverse the string.

```js

function reverseString(str) {

return str.split("").reverse().join("");

}

```

#### Solution #2: Array forEach

- will cycle through each characters and push it on the temp variable created one by one in reversed order.

```js

function reverseString(str) {

let reversedString = ''

str.split('').forEach(char => {

reversedString = char + reversedString

})

return reversedString

}

```

#### Solution #3: Array reduce

- slightly better than second solution above. Will use reduce and add the result to the empty string in reverse.

```js

function reverseString(str) {

return str.split('')

.reduce((prev, curr) => curr + prev, '')

}

```

Happy coding and good luck if you are interviewing!

If you want to support me - [Buy Me A Coffee](https://www.buymeacoffee.com/letscode77)

In case you like a video instead of bunch of code 👍😊

{% youtube Ol-vKinc6s0 %}

| frontendengineer |

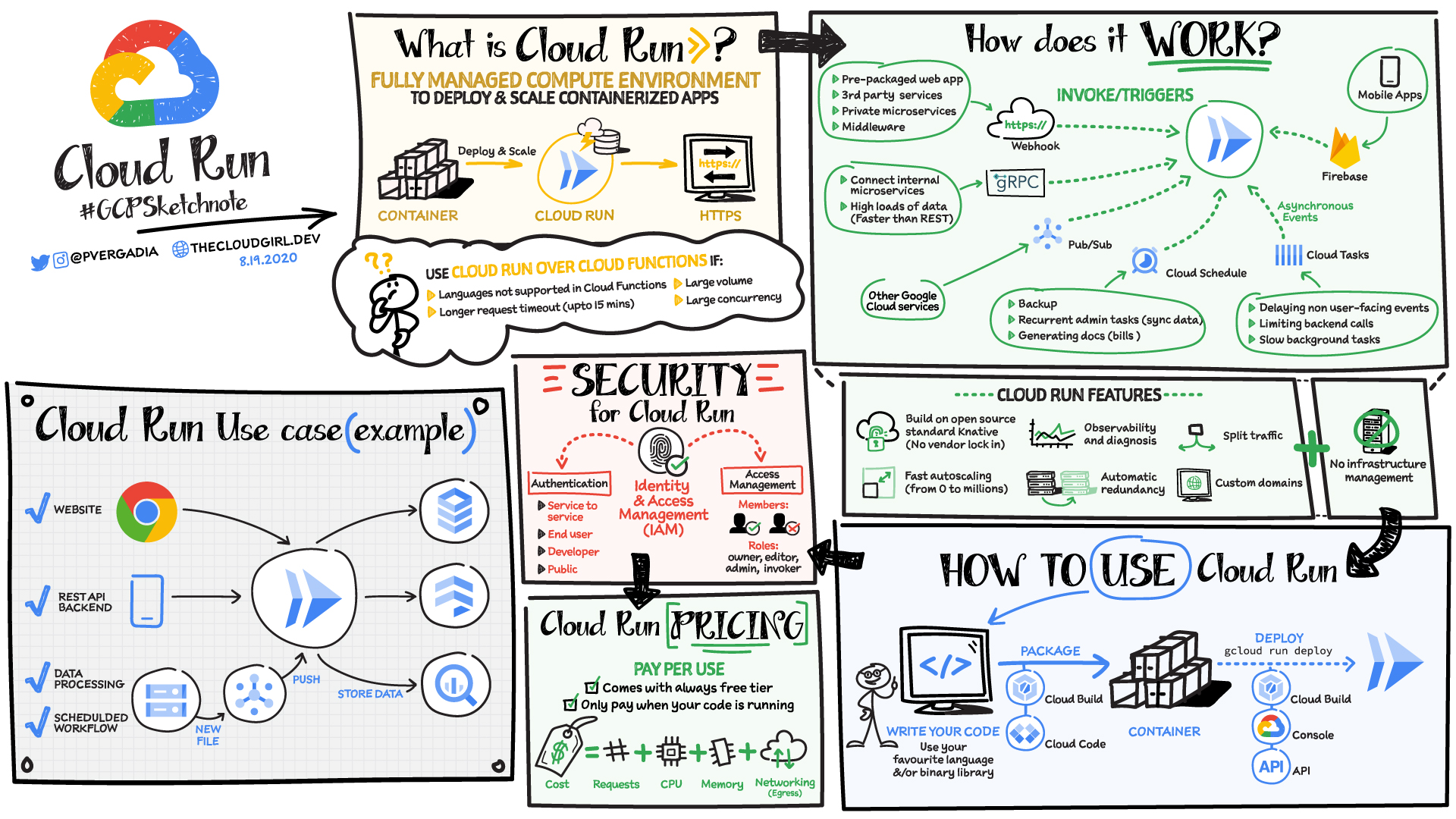

810,307 | Google Cloud Run Combines Serverless with Containers | When it comes to managed Kubernetes services, Google Kubernetes Engine (GKE) is a great choice if you... | 0 | 2021-09-01T13:34:26 | https://dev.to/spawar1991/google-cloud-run-combines-serverless-with-containers-5433 | containers, serverless, googlecloud, kubernetes | When it comes to managed Kubernetes services, Google Kubernetes Engine (GKE) is a great choice if you are looking for a container orchestration platform that offers advanced scalability and configuration flexibility. GKE gives you complete control over every aspect of container orchestration, from networking to storage, to how you set up observability—in addition to supporting stateful application use cases.

However, if your application does not need that level of cluster configuration and monitoring, then a fully managed Cloud Run might be the right solution for you.

> Cloud Run is a fully-managed compute environment for deploying and scaling serverless containerized microservices.

Fully managed Cloud Run is an ideal serverless platform for stateless containerized microservices that don’t require Kubernetes features like namespaces, co-location of containers in pods (sidecars), or node allocation and management.

### **You must be thinking, Why Cloud Run?**

Cloud Run is a fully managed compute environment for deploying and scaling serverless HTTP containers without worrying about provisioning machines, configuring clusters, or autoscaling.

The managed serverless compute platform Cloud Run provides a number of features and benefits:

* **Easy deployment of microservices.** A containerized microservice can be deployed with a single command without requiring any additional service-specific configuration. Si

* **Simple and unified developer experience.** Each microservice is implemented as a Docker image, Cloud Run’s unit of deployment.

* **Scalable serverless execution.** A microservice deployed into managed Cloud Run scales automatically based on the number of incoming requests, without having to configure or manage a full-fledged Kubernetes cluster. Managed Cloud Run scales to zero if there are no requests, i.e., uses no resources.

* **Support for code written in any language.** Cloud Run is based on containers, so you can write code in any language, using any binary and framework.

* **No vendor lock-in** - Because Cloud Run takes standard OCI containers and implements the standard Knative Serving API, you can easily port over your applications to on-premises or any other cloud environment.

* **Split traffic** - Cloud Run enables you to split traffic between multiple revisions, so you can perform gradual rollouts such as canary deployments or blue/green deployments.

* **Automatic redundancy** - Cloud Run offers automatic redundancy so you don’t have to worry about creating multiple instances for high availability

Cloud Run is available in two configurations:

* Fully managed Google Cloud Service.

* Cloud Run For Anthos (s (this option deploys Cloud Run into an Anthos GKE cluster).

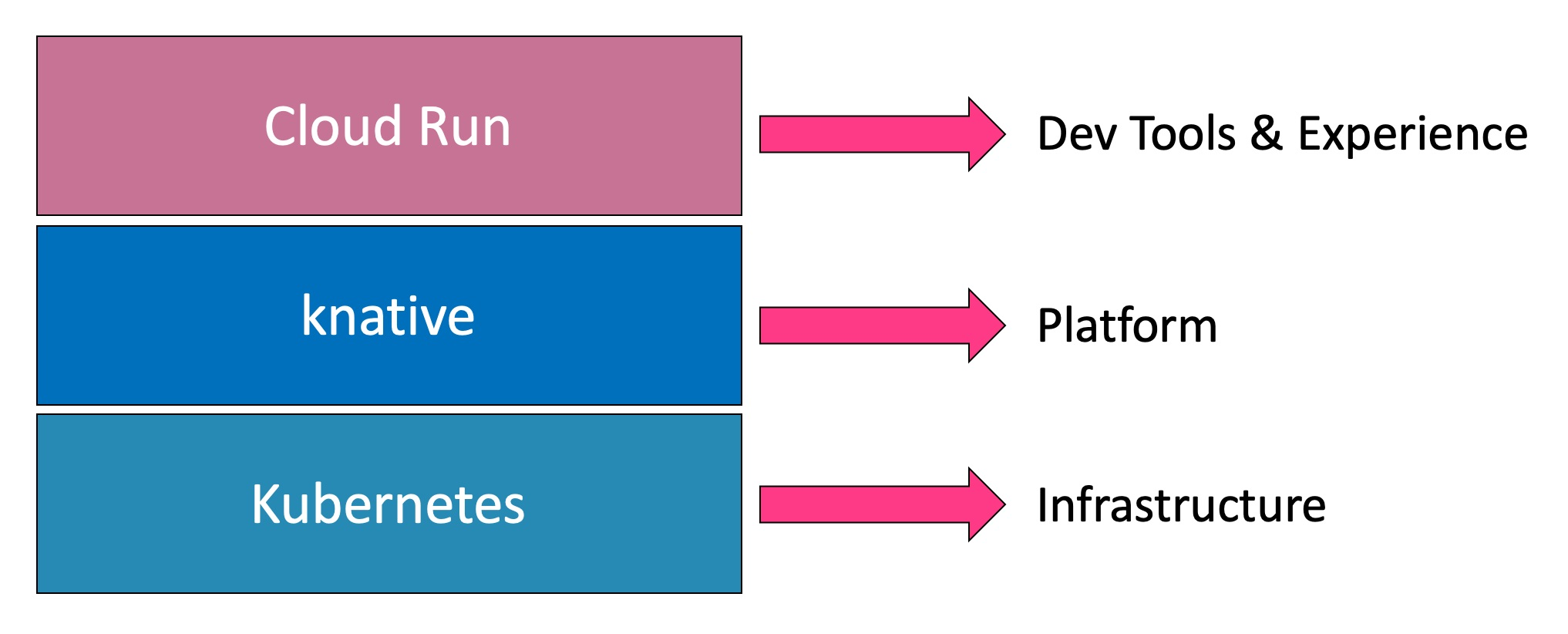

Cloud Run is a layer that Google built on top of Knative to simplify deploying serverless applications on the Google Cloud Platform.

> Google is one of the first public cloud providers to deliver a commercial service based on the open-source Knative project. Like the way it offered a managed Kubernetes service before any other provider, Google moved fast in exposing Knative through Cloud Run to developers.

Knative has a set of building blocks for building a serverless platform on Kubernetes. But dealing with it directly doesn’t make developers efficient or productive. While it acts as the meta-platform running on the core Kubernetes infrastructure, the developer tooling and workflow are left to the platform providers.

### **How does Cloud Run work?**

Cloud Run service can be invoked in the following ways:

**HTTPS:** You can send HTTPS requests to trigger a Cloud Run-hosted service. Note that all Cloud Run services have a stable HTTPS URL. Some use cases include:

1. Custom RESTful web API

2. Private microservice

3. HTTP middleware or reverse proxy for your web applications

4. Prepackaged web application

**gRPC:** You can use gRPC to connect Cloud Run services with other services—for example, to provide simple, high-performance communication between internal microservices. gRPC is a good option when you:

1. Want to communicate between internal microservices

2. Support high data loads (gRPC uses protocol buffers, which are up to seven times faster than REST calls)

3. Need only a simple service definition you don't want to write a full client library

4. Use streaming gRPCs in your gRPC server to build more responsive applications and APIs

**WebSockets:** WebSockets applications are supported on Cloud Run with no additional configuration required. Potential use cases include any application that requires a streaming service, such as a chat application.

**Trigger from Pub/Sub:** You can use Pub/Sub to push messages to the endpoint of your Cloud Run service, where the messages are subsequently delivered to containers as HTTP requests. Possible use cases include:

1. Transforming data after receiving an event upon a file upload to a Cloud Storage bucket

2. Processing your Google Cloud operations suite logs with Cloud Run by exporting them to Pub/Sub

3. Publishing and processing your own custom events from your Cloud Run services

**Running services on a schedule:** You can use Cloud Scheduler to securely trigger a Cloud Run service on a schedule. This is similar to using cron jobs.

Possible use cases include:

1. Performing backups on a regular basis

2. Performing recurrent administration tasks, such as regenerating a sitemap or deleting old data, content, configurations, synchronizations, or revisions

3. Generating bills or other documents

**Executing asynchronous tasks:** You can use Cloud Tasks to securely enqueue a task to be asynchronously processed by a Cloud Run service.

Typical use cases include:

1. Handling requests through unexpected production incidents

2. Smoothing traffic spikes by delaying work that is not user-facing

3. Reducing user response time by delegating slow background operations, such as database updates or batch processing, to be handled by another service,

4. Limiting the call rate to backend services like databases and third-party APIs

**Events from Eventrac:** You can trigger Cloud Run with events from more than 60 Google Cloud sources. For example:

1. Use a Cloud Storage event (via Cloud Audit Logs) to trigger a data processing pipeline

2. Use a BigQuery event (via Cloud Audit Logs) to initiate downstream processing in Cloud Run each time a job is completed

### **How is Cloud Run different from Cloud Functions?**

Cloud Run and Cloud Functions are both fully managed services that run on Google Cloud’s serverless infrastructure, auto-scale, and handle HTTP requests or events. They do, however, have some important differences: