id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

816,630 | Hello class! | My name is Michael and I am a senior at Penn State University. Currently I am majoring in... | 0 | 2021-09-07T18:11:32 | https://dev.to/michaelzwhang/hello-class-50dj | My name is Michael and I am a senior at Penn State University. Currently I am majoring in Cybersecurity Analytics & Operations and minoring in Information Science & Technology.

This past summer and overlapping into the first week of the semester, I had the opportunity to intern at CrowdStrike, where I was working as a threat intelligence analyst. Though I am not so sure how much web component work I will do in my future career, I am optimistic and excited to learn in this class and discover where I will be able to apply this knowledge in the future. | michaelzwhang | |

816,650 | A Look into a terminal emulator's text stack | I describe how I implemented proper text shaping in my terminal emulator with all its today's wishes: ZWJ Emoji, variation selectors, programming ligatures. | 0 | 2021-09-07T19:02:43 | https://dev.to/christianparpart/look-into-a-terminal-emulator-s-text-stack-3poe | programming, terminal, unicode, emoji | ---

title: A Look into a terminal emulator's text stack

published: true

description: I describe how I implemented proper text shaping in my terminal emulator with all its today's wishes: ZWJ Emoji, variation selectors, programming ligatures.

tags: [programming, terminal, unicode, emoji]

cover_image: https://contour-terminal.github.io/images/terminal-zwj-emoji.png?2

---

TL;DR

-----

I am going to describe how I implement rendering text in my terminal emulator so that we get programming ligatures, emoji, variation selectors, and yes, even ZWJ emoji.

Introduction

------------

Text rendering in a virtual terminal emulator can be as simple as just iterating over each grid cell's character, mapping it to a Font's bitmap glyph, and rendering it to the target surface at the appropriate position. But it can also be as complex as a web browser's text stack[1] if one may want to do it right.

In contrast to web browsers (or word processors),

terminal emulators are still rendering text the way they did render text 50 years ago - plus some non-standard extensions that did arise over the decades.

Also, terminal screens weren't made with Unicode in mind, Unicode did not even exist back then, so there were a few workarounds and non-standardized ideas implemented in order to display complex Unicode text and symbols in terminals without a common formal ground that terminal application developers can rely on.

Text rendering in a terminal emulator puts some additional constraints on how to render, mostly because character placement is decided before text shaping is performed and must align to a fixed-size grid, which makes it almost impossible to properly render traditional Japanese text into the terminal, or Hewbrew right-to-left text (though, there is a handful of virtual terminal emulators that specialize

on the latter and an informal agreement on how to deal with wide characters on the terminal screen).

Not every character, or to be displayed symbol (such as Emoji) is as wide as exactly one grid cell's width, so additional measurements have to be taken into account for dealing with these characters as well.

Terminals and terminal applications until now did depend on a standard library API call `wcwidth()` that does ideally return the number of grid cells that an input character will occupy. This however became useless as soon as multi codepoint grapheme clusters have been introduced that still form a single user perceived character. `wcswidh()` should return the total number of grid cells of a sequence of characters, but these do not handle grapheme clusters nor variation selectors either.

Unicode - a very quick rundown in the context of terminals

----------------------------------------------------------

Unicode is aiming to have one huge universal space where every imaginable "user perceived character" can be represented. A "user perceived character" is what the user that looks at that character thinks of as one unit. This is in direct contrast to what a character is in computer science. A "user perceived character" can be as simple as one single codepoint (32-bit value representing that

character) and as complex as an ordered sequence of 7 unbreakable codepoints to compose one single "user perceived character".

This places additional requirements to a virtual terminal emulator where each grid cell SHOULD contain exactly one *"user perceived character"* (also known as *grapheme cluster*), that is, an unbreakable codepoint sequence of one or more codepoints that must not be broken up into multiple grid cells before the actual text shaping or screen rendering has been performed.

Also, some grapheme clusters take up more than one grid cell in terms of display width, such as Emoji usually take two grid cells in width in order to merely match the Unicode (TR 51, section 2.2) specification's wording that the best practice to display Emoji is to render them in a square block to match the behavior of the old japanese phone companies that that first introduced Emoji.

Rendering text - a top level view

---------------------------------

A terminal emulator's screen is divided into fixed width and (not necessarily equal) fixed height grid cells.

When rendering this grid, it is sufficient to iterate over each line and column and render each grid cell individually, at least when doing basic rendering.

Now, when non-trivial user perceived characters need to be supported, the rendering cannot just render each character individually, but must be first grouped into smaller chunks of text with common shared properties, across the grid cell boundaries.

Here we enter the world text shaping.

Text Shaping

------------

Simply put, text shaping is the process of translating a sequence of codepoints into glyphs and their glyph positions. This differs from normal text processors and web browsers in a way because glyph placement in virtual terminal emulators are constrained.

When shaping text of a single grid line, the line is split into words, delimited by spaces, gaps and SGR attributes, that is, each word must represent the same SGR attributes for every "user perceived character" - for example the text's font style (such as bold or italic) or background color must be equal for each position in this sequence, from now on called "word").

The word can be used as a cacheable unit, in order to significantly speed up rendering for future renders.

The cache key is composed of the codepoint sequence of that word, as well as, the common shared SGR attributes.

This cacheable word is further segmented into sub runs by a series categories before text shaping can occur, that is, by Unicode script attribute (such as Latin or Hangul) and by symbol presentation (such as Emoji text presentation or Emoji presentation). This is important because one cannot just pass a string of text to the underlying text shaping engine with mixed properties, such as Hebrew text along with some Latin and Kanji or Emoji in between or a font style change for obvious reasons.

Each segment (usually called run) must be shaped individually with its own set of fallback fonts. Emoji are using a different font and font fallback list than regular text which uses a different font and font fallback list then bold, italic, or bold italic fonts.

Emoji also have two different presentation styles, the one that everybody expects and is named Emoji Emoji presentation (double-width colored Emoji) and the other one is named Emoji text presentation, which renders Emoji in non-colored text-alike pictograms.

The result of all sub runs is composing the sequence of glyph indices and glyph positions and is used as the cache value for the cacheable word to be passed to the next stage, the text renderer.

Text Rendering

--------------

The text renderer receives an already pre-shaped string of glyphs and glyph positions relative to screen coordinates of the first glyph to be rendered onto the screen.

In order to lower the pressure on the GPU and reduce synchronization times between CPU and GPU, all glyph bitmaps are stored into a texture atlas on the GPU, such that the text rendering (when everything has been already uploaded once) just needs to deal with indices to those glyph bitmaps into the appropriate texture atlas as well as screen coordinates where to render those glyphs on the target surface.

There is one texture atlas for gray scaled glyphs (this is usually standard text) as well as one texture atlas for colored glyphs (usually colored Emoji). Additionally there can be a third type of texture atlas for storing LCD anti-aliased bitmap glyphs.

Now, when rendering a string of glyphs and glyph positions, each glyph's texture atlas ID and atlas texture coordinate is appended into an atlas coordinate array along with each glyph's absolute screen coordinate and color attributes into a vertex buffer to be uploaded to the GPU.

When iterating over the whole screen buffer has finished, the atlas texture and vertex buffer are filled with all glyphs and related information that are required for rendering one frame. These buffers are then uploaded to the GPU to be drawn in a single GPU render command (such as `glDrawArrays`, or `glDrawElements`).

Other Terminal Emulator related Challenges

------------------------------------------

Most terminal applications use `wcwidth()` to detect the width of a potential "wide character". This is broken by design and a terminal emulator has to deal with such broken client applications. Some however use utf8proc's `utf8proc_charwidth`, another library to deal with Unicode,

and maybe some are using `wcswidth()`, which doesn't handle grapheme clusters nor variation selectors either.

The suggested way for future applications (emulator and client) would be to introduce feature detection and mode switching on how to process grapheme clusters and their width, if legacy apps are of concern.

Just looking at the algorithm, implementing grapheme cluster segmentation isn't too hard but in execution very expensive. Also grapheme cluster width computation is expensive. But luckily, in the context of terminal emulators, both can be optimized for the general case in terminal emulators, which is mostly US-ASCII, and yields almost no penalty with optimizations or a ~60% performance penalty when naively implemented.

Also, implementing proper text shaping into a fixed-grid terminal wasn't really the easiest when there is no other project or text to look at. I used "Blink's text stack" documentation and the Blink's source code (the Web renderer of Google Chrome) as bases and source of truth to understand this complex topic and then mapped my findings to the terminal world.

Since text shaping *IS* expensive, this cannot be done without caching without severely hurting user experience.

After investigating into the above optimization possibilities however, I do not see why a terminal emulator should *not* do provide support for complex Unicode, as the performance I have achieved so far is above average at least, and therefore should be sufficient for everyday use.

Bidirectional text was not addressed in this document nor in the implementation in the Contour terminal yet, as this imposes a new set of challenges that have to be dealt with separately. If that is of interest, there are a few terminals (such as mlterm) that have put great effort in getting such scripts into the terminal.

Hopefully this will be eventually added (or contributed) to my project at any time in the future, too and if so, I'll update this document accordingly.

Conclusion

----------

If one went through all the pain on how Unicode, text segmentation, and text shaping works, you will be rewarded with a terminal emulator that is capable of rendering complex Unicode. At least as much as most of us desire - being able to use (power user/) programming ligatures and composed Emoji.

Some terminal emulators do partly support ligatures or rendering trivial single codepoint Emoji or a few of the composed Emoji codepoint sequences, but sadly, most seem to get Emoji wrong. While this is a great start, I'd say we can deliver better and more.

Final notes

-----------

I'd like to see the whole virtual terminal emulator world to join forces and agree on how to properly deal with complex text in a somewhat future-proof way.

And while we would be in such an ideal world, we could even throw away all the other legacies that are inevitably inherited from the ancient VT standards that are partly even older than I am. What would we be without dreams. ;-)

Some other terminal emulator developers and I have

[started to address](https://github.com/contour-terminal/contour/issues/404) at least some of the many Unicode problems that are up until now undefined behavior by creating a [formal specification](https://github.com/contour-terminal/terminal-unicode-core) on how a terminal emulator should behave in a backward and forward compatible way so that app developers and users will benefit.

References

----------

- [Blink's text stack](https://chromium.googlesource.com/chromium/src/+/master/third\_party/blink/renderer/platform/fonts/README.md)

- [UTS 11](https://unicode.org/reports/tr11/) - character width

- [UTS 24](https://unicode.org/reports/tr24/) - script property

- [UTS 29](https://unicode.org/reports/tr29/) - text segmentation (grapheme cluster, word boundary)

- [UTS 51](https://unicode.org/reports/tr51/) - Emoji

| christianparpart |

816,700 | [PT-BR]Guia instalando Node.js em ambientes linux Manjaro/Fedora/Ubuntu | Ola, irei te guiar em como instalar Node.js nos ambientes linux mais famosos, provavelmente você já... | 0 | 2021-09-07T20:32:18 | https://dev.to/mfortunat0/pt-br-guia-instalando-node-js-em-ambientes-linux-manjaro-fedora-ubuntu-nom | node, javascript, linux, ubuntu | Ola, irei te guiar em como instalar Node.js nos ambientes linux mais famosos, provavelmente você já deva ter utilizado ou utilizava uma das distros da imagem acima.

Antes de começarmos as instalações, e preciso saber que o Node.js possui versões LTS e as para testes. neste caso iremos utilizar as LTS pois são seguras, a versão LTS enquanto escrevo esta na 14.17.6 como a imagem abaixo mostrara:

Ao entrar na aba “downloads” existem varias maneiras de instalar-mos como por exemplo por arquivos binários. As maneiras que iremos utilizar sera via “package manager” e Snap.

## Instalação via Package Manager

Das três distros que iremos utilizar teremos packages manager Apt para o Ubuntu, Dnf/Yum para Fedora, Pacman para Manjaro. Bom chega de enrolação e vamos abrir o terminal e começar.

### Ubuntu

Antes de tudo, veja se seu ubuntu possui o pacote Curl, caso não, apenas instale-o com comando abaixo:

sudo apt install curl

Tendo agora o Curl, basta executarmos os comandos abaixo:

curl -fsSL https://deb.nodesource.com/setup_lts.x | sudo -E bash -

sudo apt-get install -y nodejs

Caso queira consultar de onde tirei estas linhas de comandos, estarão no próprio site do Node.js, no repositório **distributions** da **nodesource** hospedado no github.

### Fedora

Bom acredito que das três distro, fedora facilita muito para instalar o Node.js pois ele já o possui em seu repositório do workstation, então basta apenas um comando:

sudo dnf install nodejs

### Manjaro

No manjaro caso tente instalar o pacote “nodejs” você obterá a versão mais atual do Node e não a LTS, não e isto que queremos. Entao para instalarmos a LTS acesse pagina oficial do Arch linux e acesse a aba **packages**ou [clique aqui](https://archlinux.org/packages/), procure pelas palavras chaves “node” e “lts” como na imagem abaixo:

Repare que obtive como resultado a versão 14.17.4 nomeada **nodejs-lts-fermium**, basta instalarmos o mesmo agora junto com Npm:

sudo pacman -S nodejs-lts-fermium npm

## Testando Node.js

Feito isso, basta executarmos o seguinte comando para checar qual a versão do Node e Npm foi instalada:

node -v && npm -v

Vamos executar um arquivo javascript:

echo 'console.log("Hello World")' > index.js

node index.js

Se tudo der certo, devera exibir no console o famoso “Hello World”.

Caso houver erro “command not found”, tente fechar o terminal aberto e abrir novamente e testar novamente. Em ultimo caso tente um reboot em sua maquina.

## Instalação via Snap

Node.js também possui seu pacote Snap podendo ser facilmente instalado via Snap, veja abaixo como instalá-lo:

sudo snap install node --classic

Apos instalar basta fechar o terminal e abri-lo novamente e fazer seu teste.

## Node Manager Version

Node.js também possui seu próprio gerenciador de versões caso você precise manusear mais de uma versão do Node.js. Veja como e simples instalar e utilizar o **nvm**.

### Instalação

Acessando o repository oficial do [nvm no github](https://github.com/nvm-sh/nvm), terá um guia para instalação bem simples optando por uso do Curl ou Wget.

{% gist https://gist.github.com/mfortunat0/c6434b31bda27c6f2e0d19616b4eff63 %}

Escolhido uma das duas opções, basta agora adicionar ao seu arquivo “~/.bash_profile” , “~/.zshrc” “~/.profile” ou “~/.bashrc” as seguintes linhas:

{% gist https://gist.github.com/mfortunat0/854364695812b24974655483135b5912 %}

Os arquivos que variam pelo shell você utiliza. Agora basta fechar e abrir um novo terminal e testar o nvm com comando:

nvm -v

### Comandos básicos utilizando NVM

Listando versões do node instaladas

nvm ls

Listando todas versões possível para instalação

nvm ls-remote

Instalando outras versões do node

nvm install 14.7.1

Indicar qual versão utilizar no ambiente atual

nvm use 14.7.1

Bom, agora já podemos estudar/testar muita coisa fornecida pelo Node.js.

Espero que este artigo tenha sido util e se puder deixe um aplauso 👋

| mfortunat0 |

816,820 | When Reviewing Others’ Work, Ask ‘How’ Instead of ‘Why’ Questions | In my API governance experience, I interviewed a lot of teams. The purpose of those chats was... | 0 | 2021-09-07T21:55:44 | https://matthewreinbold.com/2021/06/23/HowVersusWhyWhyQuestions/ | management, sociotechnical, governance, api |

In my API governance experience, I interviewed *a lot* of teams. The purpose of those chats was always to reach a better understanding of the problem. A better understanding leads to better design. **Most** of the time, these conversations were amicable, easy-going affairs. One contributing factor that kept these affairs from becoming confrontations was when I switched from asking "Why" to asking "How" questions.

When you ask someone "Why" they do or don't do something, you'll inevitably provoke a defensive response. For example, when trying to learn more about somebody's API practice, I could ask:

* "Why don't you have 100% contract test coverage?"

* "Why aren't your API descriptions captured in a single, discoverable place?"

* "Why did you produce so many microservices?"

* "Why is your domain terminology inconsistent?"

* And the classic catch-all, *"Why did you do it this way?"*

Each of those may be a legitimate question. However, the nature of the question comes across as a request for justification. Worse, answers to a why question immediately entrenches the person in a list, of their own making, of confirmation bias. What was supposed to be a positive discourse has turned exhausting, as each side attempts to prove that their approach is superior.

Asking "how" is much more productive. Reframing our points from earlier changes the questions to:

* "How do you detect breaking changes in production?"

* "How do developers discover and learn how to use APIs in your area?"

* "How do you manage the greater complexity with your number of microservices?"

* "How do you describe this concept within your domain?"

* *"How did you work through the difficult decisions in this design?"*

Both the 'how' and 'why' examples address, roughly, the same areas of concern. However, the 'how' questions are more likely to result in people talking in greater length, detail, and thoughtfulness. They assist us in going further in exploration.

Asking quality, open-ended questions creates a better rapport and a clearer idea of how to help. And the faster we get to help, the sooner our customers will find success.

The next time you feel the urge to ask "why", try reaching for "how" instead. | matthewreinbold |

816,896 | Design reinforcement learning agents using Unity ML-Agents | This article is part 3 of the series 'A hands-on introduction to deep reinforcement learning using... | 14,314 | 2021-09-08T01:22:22 | https://www.gocoder.one/blog/designing-reinforcement-learning-agents-using-unity-ml-agents | machinelearning, deeplearning, unity3d, tutorial |

This article is part 3 of the series '[A hands-on introduction to deep reinforcement learning using Unity ML-Agents](https://dev.to/joooyz/a-hands-on-introduction-to-deep-reinforcement-learning-using-unity-ml-agents-4f8i)'. It's also suitable for anyone new to Unity interested in using ML-Agents for their own reinforcement learning project.

## **Recap and overview**

In [part 2](https://dev.to/joooyz/build-a-reinforcement-learning-environment-using-unity-ml-agents-112e), we built a 3D physics-based volleyball environment in Unity. We also added rewards to encourage agents to 'volley'.

In this tutorial, we'll add agents to the environment. The goal is to let them observe and interact with the environment so that we can train them later using deep reinforcement learning.

## Letting our agents make decisions

We want our agent to learn which actions to take given a certain state of the environment — e.g. if the ball is on our side of the court, our agent should get it before it hits the floor.

The goal of reinforcement learning is to learn the ***best* policy** (a mapping of states to actions) **that will maximise possible rewards.** The theory behind how reinforcement learning algorithms achieve this is beyond the scope of this series, but the courses I shared in the [series introduction](https://dev.to/joooyz/a-hands-on-introduction-to-deep-reinforcement-learning-using-unity-ml-agents-4f8i) will cover it in great depth.

While training, the agent will either take actions:

1. At random (to explore which actions lead to rewards and which don't)

2. From its current policy (the optimal action given the current state)

ML-Agents provides a convenient **Decision Requester** component which will handle the alternation between these for us during training.

To add a Decision Requester:

1. Select the **PurpleAgent** game object (within the **PurplePlayArea** parent).

2. Add Component > Decision Requester.

3. Leave decision period default as 5.

## Defining the agent behavior

Both agents are already set up with the `VolleyballAgent.cs` script and **Behavior Parameters** component (which we'll come back to later).

In this part we'll walk through `VolleyballAgent.cs`. This script contains all the logic that defines the agents' actions and observations. It contains some helper methods already:

- `Start()` — called when the environment is first rendered. Grabs the parent Volleyball environment and saves it to a variable `envController` for easy reference to its methods later.

- `Initialize()` — called when the **agent** is first initialized. Grabs some useful constants and objects. Also sets `agentRot` to ensure symmetry so that the same policy can be shared between both agents.

- `MoveTowards()`, `CheckIfGrounded()` & `Jump()` — from ML-Agents sample projects. Used for jumping.

- `OnCollisionEnter()` — called when the Agent collides with something. Used to update `lastHitter` to decide which agent gets penalized if the ball is hit out of bounds or rewarded if hit over the net.

Adding an agent in Unity ML-Agents usually involves extending the base `Agent` class, and implementing the following methods:

- `OnActionReceived()`

- `Heuristic()`

- `CollectObservations()`

- `OnEpisodeBegin()` (**Note:** usually used for resetting starting conditions. We don't implement it here, because the reset logic is already defined at the environment-level in `VolleyballEnvController`. This makes more sense for us since we also need to reset the ball and not just the agents.)

## Agent actions

At a high level, the Decision Requester will select an action for our agent to take and trigger `OnActionReceived()`. This in turn calls `MoveAgent()`.

### `MoveAgent()`

This method resolves the selected action.

Within the `MoveAgent()` method, start by declaring vector variables for our agents direction and rotation movements:

```csharp

var dirToGo = Vector3.zero;

var rotateDir = Vector3.zero;

```

We'll also add a 'grounded' check to see whether its possible for the agent to jump:

```csharp

var grounded = CheckIfGrounded();

```

The actions passed into this method (`actionBuffers.DiscreteActions`) will be an array of integers which we'll map to some behavior. It's not important which order we assign them, as long as they remain consistent:

```csharp

var dirToGoForwardAction = act[0];

var rotateDirAction = act[1];

var dirToGoSideAction = act[2];

var jumpAction = act[3];

```

In Unity, every object has a `transform` class that stores its position, rotation and scale. We'll use it to create a vector pointing to the correct direction in which we want our agent to move.

Based on the previous assignment order, this is how we'll map our actions to behaviors:

1. `dirToGoForwardAction`: Do nothing [0] | Move forward [1] | Move backward [2]

2. `rotateDirAction`: Do nothing [0] | Rotate clockwise [1] | Rotate anti-clockwise [2]

3. `dirToGoSideAction`: Do nothing [0] | Move left [1] | Move right [2]

4. `jumpAction`: Don't jump [0] | Jump [1]

Add to the `MoveAgent()` method:

```csharp

if (dirToGoForwardAction == 1)

dirToGo = (grounded ? 1f : 0.5f) * transform.forward * 1f;

else if (dirToGoForwardAction == 2)

dirToGo = (grounded ? 1f : 0.5f) * transform.forward * volleyballSettings.speedReductionFactor * -1f;

if (rotateDirAction == 1)

rotateDir = transform.up * -1f;

else if (rotateDirAction == 2)

rotateDir = transform.up * 1f;

if (dirToGoSideAction == 1)

dirToGo = (grounded ? 1f : 0.5f) * transform.right * volleyballSettings.speedReductionFactor * -1f;

else if (dirToGoSideAction == 2)

dirToGo = (grounded ? 1f : 0.5f) * transform.right * volleyballSettings.speedReductionFactor;

if (jumpAction == 1)

{

if (((jumpingTime <= 0f) && grounded))

{

Jump();

}

}

```

**Note:**

`volleyballSettings.speedReductionFactor` is a constant that slows backwards and strafe movement to be more 'realistic'.

Next, apply the movement using Unity's provided `Rotate` and `AddForce` methods:

```csharp

transform.Rotate(rotateDir, Time.fixedDeltaTime * 200f);

agentRb.AddForce(agentRot * dirToGo * volleyballSettings.agentRunSpeed,

ForceMode.VelocityChange);

```

Finally, add in the logic for controlling jump behavior:

```csharp

// makes the agent physically "jump"

if (jumpingTime > 0f)

{

jumpTargetPos =

new Vector3(agentRb.position.x,

jumpStartingPos.y + volleyballSettings.agentJumpHeight,

agentRb.position.z) + agentRot*dirToGo;

MoveTowards(jumpTargetPos, agentRb, volleyballSettings.agentJumpVelocity,

volleyballSettings.agentJumpVelocityMaxChange);

}

// provides a downward force to end the jump

if (!(jumpingTime > 0f) && !grounded)

{

agentRb.AddForce(

Vector3.down * volleyballSettings.fallingForce, ForceMode.Acceleration);

}

// controls the jump sequence

if (jumpingTime > 0f)

{

jumpingTime -= Time.fixedDeltaTime;

}

```

### `Heuristic()`

To test that we've resolved the actions properly, lets implement the `Heuristic()` method. This will map actions to a keyboard input, so that we can playtest as a human controller.

Add to `Heuristic()`:

```csharp

var discreteActionsOut = actionsOut.DiscreteActions;

if (Input.GetKey(KeyCode.D))

{

// rotate right

discreteActionsOut[1] = 2;

}

if (Input.GetKey(KeyCode.W) || Input.GetKey(KeyCode.UpArrow))

{

// forward

discreteActionsOut[0] = 1;

}

if (Input.GetKey(KeyCode.A))

{

// rotate left

discreteActionsOut[1] = 1;

}

if (Input.GetKey(KeyCode.S) || Input.GetKey(KeyCode.DownArrow))

{

// backward

discreteActionsOut[0] = 2;

}

if (Input.GetKey(KeyCode.LeftArrow))

{

// move left

discreteActionsOut[2] = 1;

}

if (Input.GetKey(KeyCode.RightArrow))

{

// move right

discreteActionsOut[2] = 2;

}

discreteActionsOut[3] = Input.GetKey(KeyCode.Space) ? 1 : 0;

```

Save your script and return to the Unity editor.

In the Behavior Parameters component of the PurpleAgent:

1. Set Behavior Type to Heuristic Only. This will call the `Heuristic()` method.

2. Set up the Actions:

1. Discrete Branches = 4

1. Branch 0 Size = 3 [No movement, move forward, move backward]

2. Branch 1 Size = 3 [No movement, move left, move right]

3. Branch 2 Size = 3 [No rotation, rotate clockwise, rotate anti-clockwise]

4. Branch 4 Size = 2 [No Jump, jump]

Press ▶️ in the editor and you'll be able to use the arrow keys (or WASD) and space bar to control your agent!

**Note:** It might be easier to playtest if you comment out the `EndEpisode()` calls in `ResolveEvent()` of `VolleyballEnvController.cs` to stop the episode resetting.

## Observations

Observations are how our agent 'sees' its environment.

In ML-Agents, there are 3 types of observations we can use:

- **Vectors** — "direct" information about our environment (e.g. a list of floats containing the position, scale, velocity, etc of objects)

- **Raycasts** — "beams" that shoot out from the agent and detect nearby objects

- **Visual/camera input**

In this project, we'll implement **vector observations** to keep things simple. **The goal is to include only the observations that are relevant for making an informed decision.**

With some trial and error, here's what I decided to use for observations:

- Agent's y-rotation [1 float]

- Agent's x,y,z-velocity [3 floats]

- Agent's x,y,z-normalized vector to the ball (i.e. direction to the ball) [3 floats]

- Ball's x,y,z-velocity [3 floats]

This is a total of **11 vector observations**. Feel free to experiment with different observations. For example, you might've noticed that the agent knows nothing about its opponent. This ends up working fine for training a simple agent that can bounce the ball over the net, but won't be great at training a competitive agent that wants to win.

Also note that selecting observations depends on your goal. If you're trying to replicate a 'real world' scenario, these observations won't make sense. It would be very unlikely for a player to 'know' these direct values about the environment .

To add observations, you'll need to implement the Agent class `CollectObservations()` method:

```csharp

public override void CollectObservations(VectorSensor sensor)

{

// Agent rotation (1 float)

sensor.AddObservation(this.transform.rotation.y);

// Vector from agent to ball (direction to ball) (3 floats)

Vector3 toBall = new Vector3((ballRb.transform.position.x - this.transform.position.x)*agentRot,

(ballRb.transform.position.y - this.transform.position.y),

(ballRb.transform.position.z - this.transform.position.z)*agentRot);

sensor.AddObservation(toBall.normalized);

// Distance from the ball (1 float)

sensor.AddObservation(toBall.magnitude);

// Agent velocity (3 floats)

sensor.AddObservation(agentRb.velocity);

// Ball velocity (3 floats)

sensor.AddObservation(ballRb.velocity.y);

sensor.AddObservation(ballRb.velocity.z*agentRot);

sensor.AddObservation(ballRb.velocity.x*agentRot);

}

```

Now we'll finish setting up the Behavior Parameters:

1. Set **Behavior Name** to 'Volleyball'. Later, this is how our trainer will know which agent to train.

2. Set Vector Observation:

1. Space Size: 11

2. Stacked Vectors: 1

## Wrap-up

You're now all set up to train your reinforcement learning agents.

If you get stuck, check out the pre-configured `BlueAgent` , or see the full source code in the [Ultimate Volleyball project repo](https://github.com/CoderOneHQ/ultimate-volleyball).

In the next section, we'll train our agents using [PPO](https://openai.com/blog/openai-baselines-ppo/) — a state of the art RL algorithm provided out-of-the-box by Unity ML-Agents.

If you have any feedback or questions, please let me know! | joooyz |

817,111 | Speedy Grade - Physical Chemistry For The Life Science | Do not waste countless hours on your college assignments anymore. Get Solution Manual For Physical... | 0 | 2021-09-08T05:57:33 | https://dev.to/kimberly2e/speedy-grade-physical-chemistry-for-the-life-science-4d53 | Do not waste countless hours on your college assignments anymore. Get <a href="https://speedygrade.com/p/solution-manual-for-physical-chemistry-for-the-life-sciences/">Solution Manual For Physical Chemistry For The Life Sciences</a> and work smart with better results. | kimberly2e | |

817,339 | New Year Countdown | A post by Atulwinlooper | 0 | 2021-09-08T11:22:43 | https://dev.to/atulwinlooper/new-year-countdown-3d2h | codepen | {% codepen https://codepen.io/bhadoria_atul_/pen/abwpLXJ %} | atulwinlooper |

817,382 | Custom radio button CSS only | Custom and fancy checkbox style with automatic fallback for older browsers. Forked from Giandomenico... | 0 | 2021-09-08T12:24:56 | https://dev.to/adenlall/custom-radio-button-css-only-bm5 | codepen | <p>Custom and fancy checkbox style with automatic fallback for older browsers.</p>

<p>Forked from <a href="http://codepen.io/giando110188/">Giandomenico Pastore</a>'s Pen <a href="http://codepen.io/giando110188/pen/zxoboB/">Custom checkbox CSS only</a>.</p>

<p>Forked from <a href="http://codepen.io/giando110188/">Giandomenico Pastore</a>'s Pen <a href="http://codepen.io/giando110188/pen/zxoboB/">Custom checkbox CSS only</a>.</p>

{% codepen https://codepen.io/manabox/pen/raQmpL %} | adenlall |

817,412 | Reader Response to "Can you work from an airplane?" | My recent message Can you work from an airplane? earned a fair amount of attention, both in email,... | 0 | 2021-09-21T08:26:43 | https://jhall.io/archive/2021/09/08/reader-response-to-can-you-work-from-an-airplane/ | serverless, productivity | ---

title: Reader Response to "Can you work from an airplane?"

published: true

date: 2021-09-08 00:00:00 UTC

tags: serverless,productivity

canonical_url: https://jhall.io/archive/2021/09/08/reader-response-to-can-you-work-from-an-airplane/

---

My recent message [Can you work from an airplane?](https://jhall.io/archive/2021/09/06/can-you-work-from-an-airplane/) earned a fair amount of attention, both in email, and on LinkedIn. But I wanted to respond to one particular response I got from fellow reader Paul Swail, because he brings up a very interesting case where “from an airplane” work is almost completely impractical (quoted with permission):

> Hi Jonathan,

>

> This is a very interesting topic and I do note that you caveated that “full of technical nuances that depend on your tech stack.”

>

> My dev work is almost exclusively building backend APIs using cloud services on AWS. The workloads are often integration-heavy. Many of these cloud services can’t run at all on a local developer workstation or if there are emulators, they’re suboptimal and can introduce other issues. For that reason, I almost always advocate developing directly in the cloud against real resources, with each developer having their own dedicated cloud stack so they can work in isolation. In my experience for serverless/cloud services solutions, focusing on building a fully local developer environment is actually detrimental and just introduces too many “works on my machine” bugs due to the large environment differential.

>

> For the 1% of time where lack of internet connection or cloud downtime is an issue, there will still be something meaningful that devs can do (e.g. writing local unit tests for Lambda functions or writing docs).

>

> Anyway, on a more general note, I want to say that I love your daily emails — so much value in there and your consistency is amazing!

>

> —Paul

Paul is absoultely right. And serverless is an area I’ve done embarassingly little work, even despite [having done a podcast episode on the topic](https://podcast.jhall.io/episodes/zachary-randall-the-benefits-of-serverless-for-small-and-understaffed-teams).

My reply:

> Hi Paul,

>

> Thanks for writing!

>

> You’re absolutely correct that there are times when connectivity is an absolute must for development. If your job is solely focused on that level of integration, then it may well be possible that you cannot be productive “from an airplane.”

>

> Most products that rely on this level of integration _tend_ to do so only at the boundaries of the application, which leaves open the possibility of working on internal components without a network connection. In some cases emulators or other mocks/stubs can be used to allow productive work when touching those boundaries, but as you say, they’re all imperfect.

>

> If your application relies on Lambda functions, though, then it sounds like a majority of your application lives really close to the API layer, so I’d guess that you’re right that there’s limited opportunity for “from an airplane” work.

>

> Jonathan

Paul also referred me to some of his own writing on the topic, which I share here for your benefit as well: [_A fully local development workflow is impossible for most serverless applications_](https://notes.serverlessfirst.com/public/A+fully+local+development+workflow+is+impossible+for+most+serverless+applications).

Thanks again, Paul, for the response!

* * *

_If you enjoyed this message, [subscribe](https://jhall.io/daily) to <u>The Daily Commit</u> to get future messages to your inbox._ | jhall |

817,596 | Top 7 Benefits Of Serverless CMS in 2021 | Advantages of Using Serverless CMS Every business owner or marketer tends to be... | 9,823 | 2021-09-08T14:47:28 | https://dev.to/techmagic/top-7-benefits-of-serverless-cms-in-2021-26k2 | ##Advantages of Using Serverless CMS

Every business owner or marketer tends to be omnipresent as much as possible, bringing new and personalized content to engage with a broader range of audiences and turning users into clients. For startups, it’s crucial.

With the constant growth of the Internet of Things, marketing specialists face a new challenge - to keep up with time and trends and power their content anytime through all possible smart devices around us.

Thanks to the advantages of serverless CMS, your digital presence is a question of a few weeks now. With serverless architecture, you focus entirely on code and content. Maintaining a back-end database doesn't bother or distract you. You are free to use your favourite stack platforms to write code. Or you can choose ready-to-use solutions and don't work with code at all.

And as a result, you have your once created content simultaneously presented through the web, mobile, and other IoT devices.

Let's find out the top benefits of serverless CMS.

##Scalability

You can create, manage, and reuse content and campaign pages when you need and as you’d like. Serverless CMS are optimized for speed and scalability by default, giving marketers the ease of increasing engagement with no distractions and limitations.

For developers, it means no maintenance since a serverless CMS runs in the cloud. You won't ever need to update anything like you needed to do with traditional CMS. In addition to that, scalability in serverless hosting means zero performance or hosting issues for your developers to face.

For example, suppose your business is super-successful, and your website receives an enormous amount of traffic. Your host automatically deals with this avalanche and manages accordingly. You don't do this manually; you don't even need to keep it in mind.

The serverless CMS always has enough capacity to deal with your traffic. You, in turn, dedicate time and energy to marketing, design, and content itself that will boost it.

##User Security

Serverless is rightly considered one of the safest (if not the safest) content management approaches. A physical web server is a metaphorical door for hackers, but since all content is stored in the cloud, the door is not even closed; it just doesn’t exist. Moreover, most CDN headless CMS use their own app firewall, adding an extra protection cover.

Hackers' attacks are harmful by the theft of personal data and posting impudent or restricted content. Both the first and the second scenarios lead you to reputation loss. Who would like the idea of giving you personal data if they were stolen once?

In this regard, cloud services demonstrate their advantage by offering a range of security mechanisms like the latest web application firewalls, real-time threat management, and ongoing penetration testing.

##Data Security

Here we consider two aspects. First, even if the equipment is damaged or broken, the data remains secure. You can use any other device and get access to your content and all related data, download them if needed, and continue working with no loss.

The second aspect is that you can always roll back to a particular stage and go the other way. This ability is invaluable. In this way, your business has enough space for experiments, research, keeping up with the times, and regularly updating without spending extra time, money, and other resources.

On top of that, serverless CMS can be proclaimed unsinkable. It is highly unlikely for you to lose any data while using one of them. From a marketer's point of view, you can focus on the quality of your content, have no worries about the clients and data safety, and scale when the time is right.

##Simple Use

Migrating your project to the cloud service can make it easier for all types of users to work with. How come and what stays behind it?

**Mobility**. Your resource is accessible from any device. Cloud storage means that you never meet data overload that is able to slow down your device.

**Speed**. Cloud services that specialize in providing storage services produce a higher playback speed than the internal server. It's all about capacity, tech equipment quality, expertise, and focus on a specific task.

##Cost-Effectiveness

You don’t need to pay for back-end maintenance if you don’t deal with one. This is where API-based serverless CMS systems significantly save your budget and allow you to direct these costs on improving the product, for example.

On the other hand, hosting static files rather than dynamic means no need for you to pay for web servers or databases themselves. All you need is a content delivery network (CDN). You just use less paid services.

In short, you are relieved of worries about storage costs and costly back-end maintenance. In the long term, such a state of affairs is beneficial to both developers and marketers, combined with the simplicity of using a serverless CMS.

It deserves reminding that your provider manages all the updates. Their internal team closely monitors the performance, which means you always get the best version of hosting services they can provide and find the perfect balance between the service package and the price.

##Easy Teamwork

Never before has the work of remote teams been so synchronous, productive, and well-coordinated as in the era of serverless CMS.

Developers, marketers, and authors are all exposed to the same infrastructure and can operate simultaneously without stepping on each other's toes. It's easy to keep track of who made which changes and avoid misunderstandings and conflicts. Also, it's a great time saver since one team doesn't need to wait until another one is done.

One of the best benefits of serverless CMS is that they make it much easier to collaborate transparently.

##Total Freedom For Developers

Developers are free to work with their favourite technologies without losing time and effort for learning and onboarding new ones. Serverless CMS are flexible and adaptable, so developers can focus on their work without the fuss of handling bugs in an already existing tech stack.

Marketers, in turn, have the freedom to connect with any third-party software or automation tools (CRM, conversion optimization, localization technologies for an international reach, etc.). With a serverless CMS, developers and marketers never have to settle for a “second-best."

One of the main benefits of serverless CMS functions for marketers is that the website structure becomes easier for them to understand and, accordingly, to improve. A serverless CMS allows you to try all the content building and managing tools throughout the process and preview the result before publishing. Thanks to this, you have the priceless power to decide how to structure and architect your site and display your content accordingly.

Moreover, including marketers in the building process leads to better understanding, communication, and collaboration between marketers, content creators, and developers.

##Conclusion

The modern trend of being omnichannel is dictating the market, not just influencing it. Flexible, scalable, customizable content management systems offer their services for turning your content into the experience your customers expect. Either a serverless or a pure headless CMS enables organizations to increase delivery time and iterate faster.

The question of whether to migrate to a traditional, serverless, or headless CMS depends on your business needs and expectations. With all the benefits of using serverless CMS, it is simple for you to publish content once with a single authoring point and deploy it anywhere you want with no stress and mess.

We have a significant number of serverless-based projects in our portfolio. If you want to upscale your business with all the benefits of serverless CMS AWS, for example, we, as big fans of Amazon, will be glad to help you with the implementation of the serverless CMS. | techmagic | |

817,632 | How to Install SSL Certificate on WordPress on Ubuntu Server? | In the current days, most modern websites have SSL certificates installed. So, let's look at how we... | 0 | 2021-09-08T15:43:55 | https://dev.to/yolchyolchyan/how-to-install-ssl-certificate-on-wordpress-on-ubuntu-server-43fc | wordpress, security, ssl |

In the current days, most modern websites have SSL certificates installed. So, let's look at how we can install an SSL certificate with WordPress.

First things first, it depends on your WordPress hosting provider how you can set up an SSL certificate. Some hosting providers will [setup an SSL certificate automatically](https://alandcloud.com/how-to-setup-ssl-with-aland-cloud/) for you. So, sometimes you don't need to have any technical knowledge.

So, now let's look at the ways to install an SSL certificate on WordPress when you manage your own servers.

## Prerequisites to install SSL

First of all, you should have an access to your server where you want to install an SSL certificate. You will usually need [SSH access](https://study.com/academy/lesson/what-is-ssh-access.html) to perform the installation.

Another thing is to make sure, your server IP address provided by your hosting provider is added to your domain as an A record.

The best way to install an SSL certificate for WordPress is by certbot. Certbot can power your WordPress website with a [Let's Encrypt](https://letsencrypt.org/) SSL certificate for free!

So, if you get the SSH access and mapped your domain with the IP Address we are ready to go.

## Install Certificate

1. First thing, SSH to your server.

2. Execute this command: `apt-get install software-properties-common python-software-properties`

3. Execute this command: `add-apt-repository ppa:certbot/certbot`

4. Execute this command: `apt-get update`

5. Execute this command: `apt-get install python-certbot-apache`

6. Execute this command `certbot --apache -d change-this-to-your domain.com`

7. Should you popped up with Y/N, enter `Y` and press enter to continue.

8. When it will ask if it should redirect `http` traffic to `https`, enter `Y` and press enter to continue.

You're done with setting up an SSL certificate for your WordPress website. Please not, you might need to renew this certificate every three months.

| yolchyolchyan |

818,038 | React Native: Optimizing FlatList performance | Flatlist is react-native component should be used for rendering scrollable lists. If you don't... | 0 | 2021-09-09T02:29:23 | https://dev.to/erdenezayaa/react-native-optimizing-flatlist-performance-31k | reactnative, performance | Flatlist is react-native component should be used for rendering scrollable lists. If you don't optimize well enough, performance starts to drop once dataset gets large. This causes laggy scroll and blank spaces between the list items.

### 1. Avoid inline functions

Inline functions are recreated every time component renders. This may be okay for some components but can slow performance for flatlists.

**Avoid this!**

```JSX

return (

<Flatlist

data={data}

renderItem={({item}) => <Item item={item} />}

keyExtractor={(item) => item.id}

/>

);

```

**Instead use this**

```JSX

const renderItem = ({item}) => <Item item={item} />;

const keyExtractor = (item) => item.id;

return (

<Flatlist

data={data}

renderItem={renderItem}

keyExtractor={keyExtractor}

/>

);

```

### 2. Provide height value for every item

If you don't provide getItemLayout function as props, Flatlist have to calculate height for every item in the list. By the result of this, sometimes when you scroll fast enough, you will see some gaps in the list. By providing our own getItemLayout function, it won't have to calculate every item's height and the performance will improve.

```JSX

const ITEM_HEIGHT = 65; // fixed height of item component

const getItemLayout = (data, index) => {

return {

length: ITEM_HEIGHT,

offset: ITEM_HEIGHT * index,

index,

};

};

return (

<Flatlist

data={data}

renderItem={renderItem}

getItemLayout={getItemLayout}

keyExtractor={keyExtractor}

/>

);

```

### 3. Keep component that renders the list item as light as possible

Don't do any extra work in renderItem function like formatting the data and declaring another function. Also props you pass to renderItem should be only the data that will render in the UI.

In this example, Item component is formatting the date with moment which is heavy js library that should be avoided, formatting a number value right before rendering and getting really big navigation as props every time. All of these, should be avoided to keep the Item component light.

**Don't do!**

```JSX

const Item = ({item, navigation}) => {

const created_at = moment(item.created_at).format('YYY/MM/DD HH:mm');

const total_value = formatNumber(item.total);

// it is common to pass navigation instance of react-navigation library, avoid this because navigation props is too big

const onPressItem = () => {

navigation.navigate('DetailItem', {item});

};

return (

<View>

...

</View>

);

}

const renderItem = ({item}) => <Item item={item} navigation={navigation} />;

return (

<Flatlist

data={data}

renderItem={renderItem}

getItemLayout={getItemLayout}

keyExtractor={keyExtractor}

/>

);

```

**Do this**

```JSX

const Item = ({item, onItemPress}) => {

return (

<View onPress={onItemPress(item)}>

...

</View>

);

}

// Handle item press event in parent component that has already access to navigation component

const onItemPress = (item) => {

navigation.navigate('ItemDetail');

};

const renderItem = ({item}) => <Item item={item} navigation={navigation} />;

// do the data formatting and manipulation before the flatlist render

const preparedData = data.map((item) => {

const created_at = moment(item.created_at).format('YYY/MM/DD HH:mm');

const total_value = formatNumber(item.total);

// only return the properties that need to be rendered and leave everything else

return {

label: item.label,

total_value,

created_at,

};

});

return (

<Flatlist

data={preparedData}

renderItem={renderItem}

getItemLayout={getItemLayout}

keyExtractor={keyExtractor}

/>

);

```

### 4. Use Pure Component or Memo

PureComponent re-renders a component by shallow comparing the props and re-renders only if the props have changed. PureComponent is used for class components. React memo is the alternative to PureComponent for functional components.

### 5. Use cached optimized images

If your list have lots of images, you should use optimal size d, cached images. You can use libraries like [react-native-fast-image](https://github.com/DylanVann/react-native-fast-image) to implement this. These libraries can do following things.

- resize images according to device dimensions, therefore reduces the memory consumption.

- Caches images to memory and storage to better load times.

Keep in mind it works better when you have already optimized images for different sizes of devices.

### Conclusion

In my experience, those methods really improved the performance of the Flatlist. I hope you find this helpful. If these methods didn't help, you should checkout the sources I used for this article.

#### Sources:

[Official Documentation for Optimizing Flatlist Configuration](https://reactnative.dev/docs/0.61/optimizing-flatlist-configuration)

[8 ways to optimize React native FlatList performance](https://codingislove.com/optimize-react-native-flatlist-performance/)

| erdenezayaa |

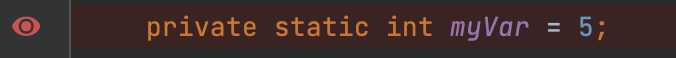

818,051 | Top 7 VS Code extensions | The truth is not that they are the best, I was just looking for an eye-catching title. VS... | 0 | 2021-09-09T02:09:17 | https://dev.to/sebasttiandaza/top-7-vs-code-extensions-2dkc | vscode, frontend, productivity, webdev | ###The truth is not that they are the best, I was just looking for an eye-catching title.

VS Code for me is one of the best cross-platform text editors, developed with TypeScript, JavaScript and CSS technologies, of course one of its best parts is the large section of extensions that has to improve code development and work productivity, I will tell you about these 7 extensions based on the ones I use most in my day to day coding.

The first of all, and that for me has been very helpful is:

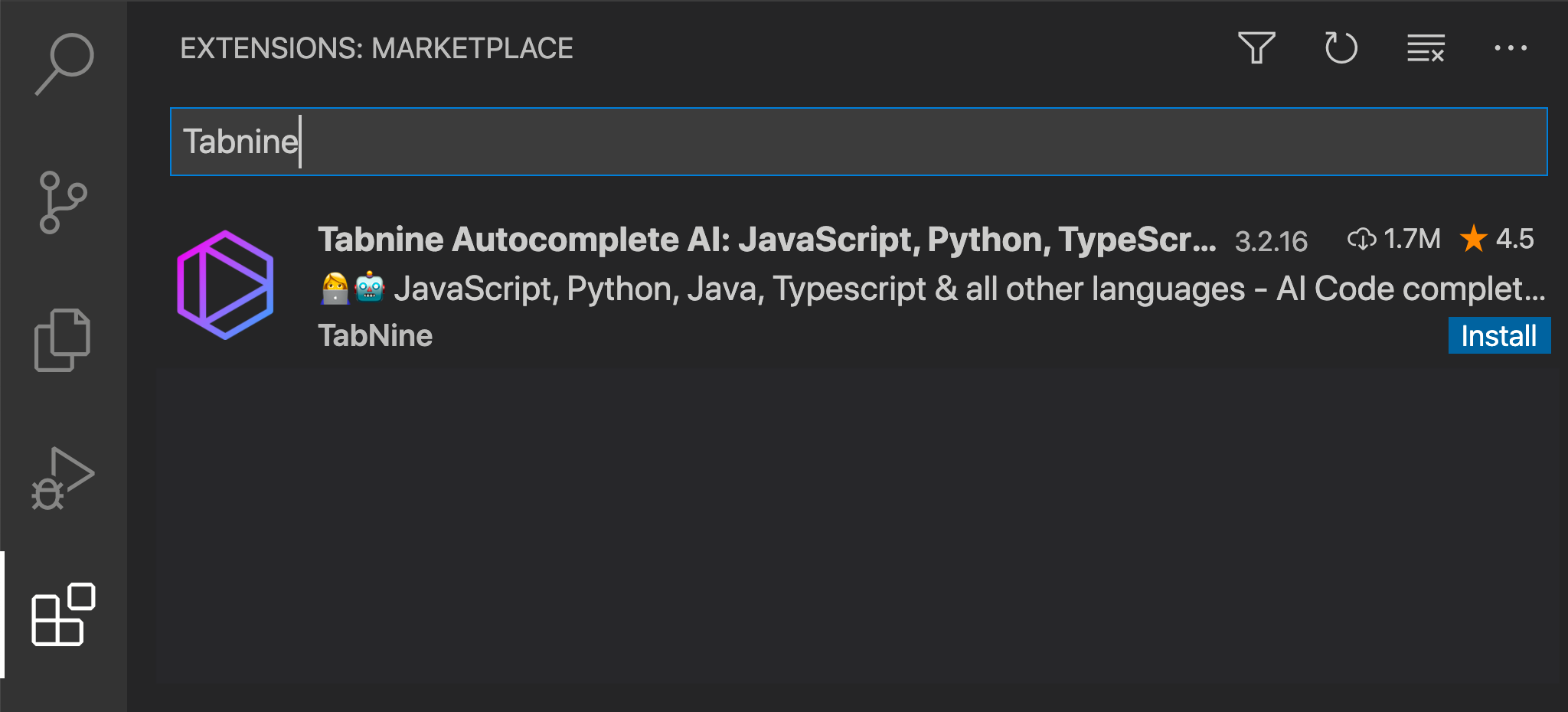

1. [Tabnine](https://tab9.in/eqypp) - Code: It is one of the best Artificial Intelligence assistants, you code much faster, with less errors and of course improves your productivity, it also supports many languages and is totally free, I highly recommend it!

In this case it is an extension that will help you to better identify your code, it is about

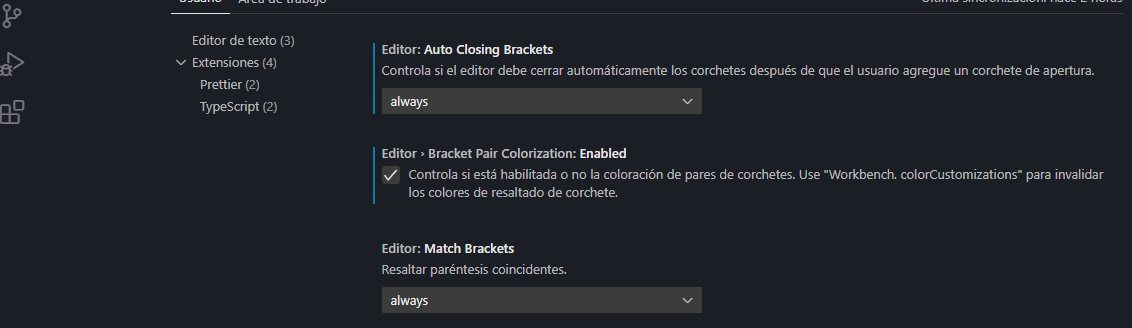

2. [Bracket Pair Colorizer](https://marketplace.visualstudio.com/items?itemName=CoenraadS.bracket-pair-colorizer): It allows you to identify the brackets of your code with matching colors, so that in this way they match and are better identified, with support for different languages and very customizable, although relatively this extension became obsolete in the last [update](https://code.visualstudio.com/updates/v1_60) of VS Code, because it comes included in the editor itself:

By activating this option, it will be ready for you. Let's go right away to the third one

3. [ESLint](https://marketplace.visualstudio.com/items?itemName=dbaeumer.vscode-eslint): it is a code analyzer that allows us to write quality code, that is to say, it will allow you to identify errors in your code, faster and identifiable at sight.

From the third to the fourth:

4. [Live Server](https://marketplace.visualstudio.com/items?itemName=ritwickdey.LiveServer), this extension offers you in a very fast way a live development server, so you can see what you are doing.

The fifth is a tool that for Spanish speakers, we are very grateful for.

5. [Spanish Languaje Pac for VS Code](https://marketplace.visualstudio.com/items?itemName=MS-CEINTL.vscode-language-pack-es), it is the pack in Spanish of the editor, although I do not recommend it much, it is better that you learn to handle this tools in English, personally I prefer to have it in English, and the main reason is that the language at world-wide level of the programming is English, so it is better that you relate with this one.

6. [Auto rename Tag](https://marketplace.visualstudio.com/items?itemName=formulahendry.auto-rename-tag) and [Auto Close Tag](https://marketplace.visualstudio.com/items?itemName=formulahendry.auto-close-tag): The first one serves you for an automatic tag change in your HTML code and the second one for an automatic tag close in your code.

7. [Git Lens](https://marketplace.visualstudio.com/items?itemName=eamodio.gitlens), if you work with git, this is your extension, it will enhance the capabilities of git integrated with VS Code, offering many more very effective features.

Obviously I clarify, that does not mean that these are the only good extensions, but I think that from my side are extensions that can not miss in your VS Code, but I hope you leave them in the comments.

| sebasttiandaza |

818,063 | My first post on OSD | Introduction Hello, my name is Leyang and I'm a student in the Computer Programming and... | 0 | 2021-09-09T19:29:59 | https://dev.to/lyu4321/my-first-post-on-osd-4dp2 | opensource | ###Introduction

Hello, my name is Leyang and I'm a student in the Computer Programming and Analysis Program at Seneca College. I'm so excited to be taking a course on open source development in my last semester! During my time at Seneca and at my co-op, I have used many open source projects in creating my own projects and they have made my life so much easier. I hope to learn how I can also make contributions to this community, discover some cool new projects, and improve my ability to understand code written by many different people by working on open source projects.

###Where I Am

I am from and am currently based in Toronto, Canada. However, I look forward to working with people from all over the world through various open source projects.

###What I Hope To Learn

Over the semester, I hope to work on all sorts of projects in many different languages. Currently, I am most comfortable with working on web apps using JavaScript and C#. However, I hope to be able to broaden my horizons and gain more experience using languages I have less experience in such as Python. I love games and would love to work on some gaming projects or projects that I would use in the future.

###Open Source Project

After researching some trending open source projects, the one I chose to fork to my own repo is [30 Days of Python](https://github.com/Asabeneh/30-Days-Of-Python). As a beginner to Python, I think this project would be a very useful tool to help people like me learn the basics.

Thanks for reading!

[GitHub](https://github.com/lyu4321)

[LinkedIn](https://www.linkedin.com/in/leyang-yu-48668ba8/) | lyu4321 |

818,234 | Set Git Proxy | You clone a Git repo and get this error message: Git failed with a fatal error. unable to access... | 0 | 2021-09-09T06:34:33 | https://dev.to/dileno/set-git-proxy-3i69 | github | You clone a Git repo and get this error message:

```

Git failed with a fatal error.

unable to access 'https://github.com/THE_REPO':

OpenSSL SSL_connect: SSL_ERROR_SYSCALL in connection github.com:443

```

This might be because you're behind a proxy. You then need to set proxy for Git in your command tool:

```

git config --global http.proxy http://your-proxy-url:8080

git config --global https.proxy https://your-proxy-url:8080

```

You also might need to disable SSL verification:

```

git config --global http.sslVerify false

```

| dileno |

818,244 | Learn how to create a secure mobile application. | 📜 About this program: "14 Days of Mobile Security" is a social learning and accountability... | 0 | 2021-09-09T08:50:26 | https://aviyel.com/post/333/14-days-of-mobile-security | 14daysofsec, mobile, security, event | ## 📜 About this program:

"14 Days of Mobile Security" is a social learning and accountability program for mobile developers to build a security-centric culture and to achieve the goal of creating secure mobile apps.

One of the most common mistakes made by new or young mobile developers is a lack of knowledge or foundations in security development practice. They begin working on their 'development style' before learning security practices and methods. To assist you, we have developed a practical roadmap called "14 Days of Security," which is based on extensive research and the experience of mobile security developers working in large tech companies.

## 📦 So, what exactly are you going to receive?

- You will learn the fundamentals of mobile security from the ground up.

- Even if you have never picked up a security book, you will learn about best security practices to follow.

- You will be able to master the fundamentals of mobile security.

- You'll learn about security best practices by utilizing open source tools and frameworks, along with videos and text tutorials.

## 🎀 Win Exciting Prizes

- After completing the challenge, you will receive a free subscription to a premium course.

- You will receive a certificate of completion.

- Submissions with more than 5 upvotes will receive the top peer badge of the day or week.

- Anyone who earned 14 challenge completion badges and 5 top peer badges can request a 14-day mobile security challenge completion certificate from the Aviyel Team.

## 🏁 How do you start?

- Sign-up for free and Text "Pledge" on the comment box of this article [14 days of mobile security](https://bit.ly/14DaysMobSec)

- Dedicate 1 hour every day for the next 14 days to learn one mobile security.

- Every day, set a goal of reading an article, developing a security tool to audit your app, or utilizing a security framework to encrypt your data-in-transit or data-in-storage. You can choose articles and tutorials from the content repository, or you can follow any article or tutorial on Mobile Security. We have also created a repository of mobile security concepts to search or research for the program to help you narrow your search.

- After finishing the day's activities, you can choose a challenge from our Challenge repo. To complete the challenge, simply share a screenshot of your tutorial's results, GitHub code, a short post, and so on. This earns you a completion badge.

- Any challenge submission that receives more than 5 upvotes will be awarded the top peer badge of the day or week.

- Any participant who received 14 challenge completion badges with 5 top peer badges can apply for 14 days of mobile security challenge completion certificate from the Aviyel Team.

- Top "Day X Submission" of the Day and Week will be selected and pinned 📍.

## 🗺️ Roadmap Content

To help you with this, we have created a practical roadmap, “14 days of Security,” based on a lot of research and the experience of mobile security developers working in big tech companies. This roadmap will give you a complete guideline to build a strong coding habit and to achieve your goal of creating a secure mobile app.

- Video

- Blogs

- Audio

[Here](https://aviyel.com/post/401/roadmap-content) are the list of the entire roadmap content of 14days of security.

## 🗺️ Roadmap Content Theme

- [End to End Encryption](https://aviyel.com/post/431/end-to-end-encryption)

- [Code Quality Checklist](https://aviyel.com/post/332/secure-code-review-checklist)

- [Obfuscating](https://aviyel.com/post/734/obfuscating)

- [Encrypt your data](https://aviyel.com/post/735/encrypt-your-data)

- [Certificate Transparent](https://aviyel.com/post/739/certificate-transparent)

✨ Tweet your progress [@AviyelHq](https://aviyel.com/discussions) by using hashtag #14DaysOfSec and the hashtag of the challenge in which you participate from this day forward for the next 14 days. | pramit_marattha |

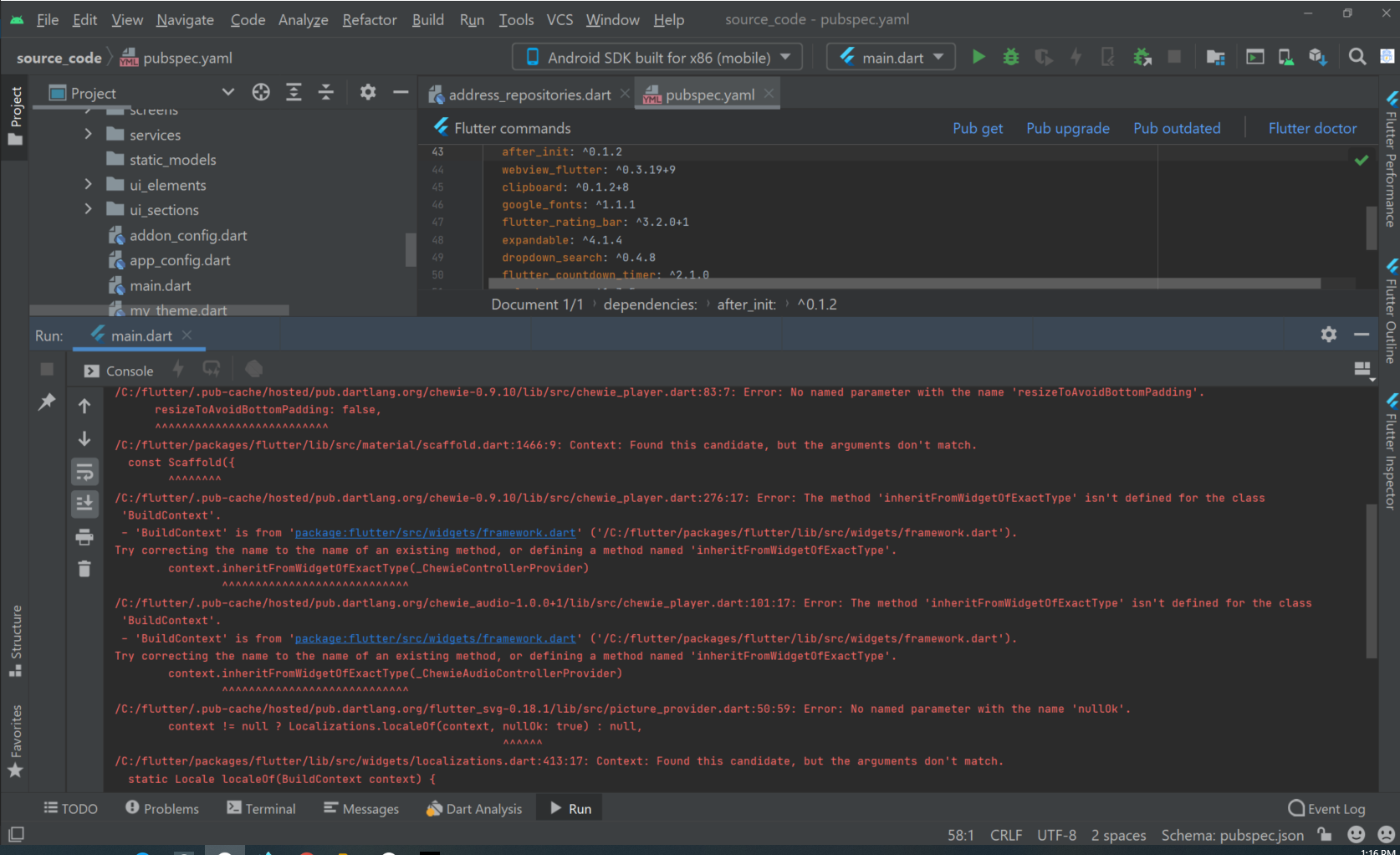

818,265 | Help Solved this error #Flutter | A post by chathu pathirana | 0 | 2021-09-09T07:49:55 | https://dev.to/chathuranga0005/help-me-solved-this-error-flutter-4mo8 |

| chathuranga0005 | |

830,047 | Day 454 : And So | liner notes: Professional : My day started really early for a feedback session with a coding... | 0 | 2021-09-17T21:41:04 | https://dev.to/dwane/day-454-and-so-2lb7 | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : My day started really early for a feedback session with a coding bootcamp in Japan that we teamed up with to see what they could build with our APIs. Got really good feedback and some ideas on how to improve even more. Then right after that was their demo day to present what they built. I was really impressed. The built a really good application. After that, I worked on completing the Web Components I've been adding the ability to style it how a user wants or stick with the default. There's 5 in total so it took a little time, especially when I had other projects due sooner. I had to rework the code to allow for custom styling, luckily I learned about CSS part. Then come up with a default style that would look good. Then create some graphics for each Web Component to hopefully detail the different parts that can be styled. Then update the readme documentation of each component to help explain the changes. Then push a PR so it could be reviewed. Then published the changes to NPM so that demos I created could be updated automatically. And so... I got everything done today. Feels good to finally get it out there. Good thing because I got some info for some things I need to work on Monday. haha.

- Personal : Last night, basically worked on finishing up my Web Component project for work. I did get to watch an episode of "Boruto" while eating dinner.

It's starting to rain. Going to go inside and get some dinner and watch "Boruto". I want to finish up going through tracks for the radio show tomorrow. Maybe even get that image uploading with Cloudinary working on my side project. We'll see.

Have a great night and weekend!

peace piece

Dwane / conshus

https://dwane.io / https://HIPHOPandCODE.com

{% youtube gv4DZ7AnptE %} | dwane |

830,122 | Review Lab 1 | I find my partner- Le Minh Pham - through Slack and we both prefer Python. I just started learning... | 0 | 2021-09-18T00:07:00 | https://dev.to/yodacanada/review-lab-1-4fog | > I find my partner- Le Minh Pham - through Slack and we both prefer Python. I just started learning Python, but he should be an expert. I learned some new libraries and methods after testing and reviewing Le’s code. I am surprised at the beautiful structure of his code. My partner helped me solve a big problem of finding folders, so I’m lucky to have such a partner.

> Reviewing and testing code is an exciting and painful thing for me. I'm excited because I can learn a lot of skills from my partner. For example, the original code only has one file, but after optimization, it has four files, and many auxiliary functions are added, and the structure is clearer.

> The pain is because as a new language, it takes me a long time to review and test it. Especially, my partner is very diligent. His code changes a lot every few days. Based on my level, it’s hard to find issues about his constantly improving code. Despite all this, I can still find problems and put forward what needs to be improved. For example, the [issue “--input folder command line isn't working”]

(https://github.com/lmpham1/cool_ssg_generator/issues/2). I also give some [suggestion]. (https://github.com/lmpham1/cool_ssg_generator/issues/3)

> There are 2 main issues about my code. [issue1] (https://github.com/Yoda-Canada/Magic-SSG/issues/1), [issue2](https://github.com/Yoda-Canada/Magic-SSG/issues/2) Le give me lots of suggestion to fix the bugs, and I am fixing them.

This lab is very important for me, because a lot of basic knowledge needs to be learned, and it also help me to recall the knowledge I learned before. I am confident to complete the future project

| yodacanada | |

830,262 | Create Filters in AngularJS | Filters are used to modify the data. They can be used in expression or directives using pipe (|)... | 14,655 | 2021-09-18T03:27:24 | https://www.w3courses.org/create-filters-in-angularjs/ | angular, javascript | **Filters are used to modify the data.** They can be used in expression or directives using pipe (|) character. We can use **angularjs built in filters or can create filters in angularjs.**

##There are some commonly used filters in angularjs

###uppercase

converts a text to upper case text.

###lowercase

converts a text to lower case text.

###currency

formats text in a currency format.

###filter

filter the array to a subset of it based on provided criteria.

###orderby

orders the array based on provided criteria.

##Installing AngularJS in Website

To Install angularjs paste the script in head tag of your website layout

```js

<script src = "https://ajax.googleapis.com/ajax/libs/angularjs/1.3.14/angular.min.js"></script>

```

##Using built in Filters in AngularJS

```html

<div ng-app = "mainApp" ng-controller = "studentController">

<table border = "0">

<tr>

<td>Enter first name:</td>

<td><input type = "text" ng-model = "student.firstName"></td>

</tr>

<tr>

<td>Enter last name: </td>

<td><input type = "text" ng-model = "student.lastName"></td>

</tr>

<tr>

<td>Enter fees: </td>

<td><input type = "text" ng-model = "student.fees"></td>

</tr>

<tr>

<td>Enter subject: </td>

<td><input type = "text" ng-model = "subjectName"></td>

</tr>

</table>

<br/>

<table border = "0">

<tr>

<td>Name in Upper Case: </td><td>{{student.fullName() | uppercase}}</td>

</tr>

<tr>

<td>Name in Lower Case: </td><td>{{student.fullName() | lowercase}}</td>

</tr>

<tr>

<td>fees: </td><td>{{student.fees | currency}}

</td>

</tr>

<tr>

<td>Subject:</td>

<td>

<ul>

<li ng-repeat = "subject in student.subjects | filter: subjectName |orderBy:'marks'">

{{ subject.name + ', marks:' + subject.marks }}

</li>

</ul>

</td>

</tr>

</table>

</div>

<script>

var mainApp = angular.module("mainApp", []);

mainApp.controller('studentController', function($scope) {

$scope.student = {

firstName: "Mahesh",

lastName: "Parashar",

fees:500,

subjects:[

{name:'Physics',marks:70},

{name:'Chemistry',marks:80},

{name:'Math',marks:65}

],

fullName: function() {

var studentObject;

studentObject = $scope.student;

return studentObject.firstName + " " + studentObject.lastName;

}

};

});

</script>

```

##Create Filters in AngularJS

```html

<div ng-app = "mainApp" ng-controller = "myController">

<div ng-bind-html="htmlData |safeAs"></div>

</div>

<script>

var mainApp = angular.module("mainApp", []);

/*controller*/

mainApp.controller('myController', function($scope) {

$scope.htmlData = "<p>Hello AngularJS";

});

/*filter*/

mainApp.filter('safeAs', ['$sce',

function($sce) {

return function (input, type) {

if (typeof input === "string") {

return $sce.trustAs(type || 'html', input);

}

console.log("trustAs filter. Error. input isn't a string");

return "";

};

}

]);

</script>

```

Using these methods you can make custom filters in angularjs.

___

###See Also

[How to Create Multiple Parameters Dynamic Routes in Laravel](https://www.w3courses.org/how-to-create-multiple-parameters-dynamic-routes-in-laravel/)

[Laravel 8 Multiple Database and Resource Routes with Controllers](https://www.w3courses.org/laravel-8-multiple-database-and-resource-routes-with-controllers/)

[Optimize Database Queries in Laravel](https://www.w3courses.org/how-to-optimize-database-queries-in-laravel/)

[Flash Messages in AngularJS](https://www.w3courses.org/flash-messages-in-laravel-with-angular-material/)

[Create REST API in Node.js](https://www.w3courses.org/how-to-create-rest-api-in-nodejs/)

___

**Thanks For Reading :)**

**Please give your comments :)**

**Please Must Visit My Website :)**

[W3Courses](https://www.w3courses.org)

| readymadecode |

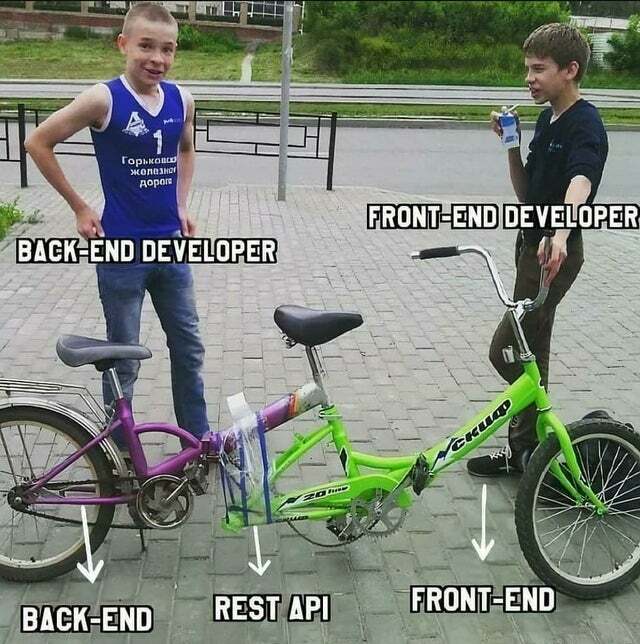

891,096 | The End of the backend | We're finished backend So its been 2 weeks again since I last posted, but I though I would... | 14,682 | 2021-11-07T16:07:18 | https://dev.to/newcastlegeek/the-end-of-the-backend-352g | javascript, beginners, bootcamp |

## We're finished backend

So its been 2 weeks again since I last posted, but I though I would wait and round up backend in one post,

Its been an interesting couple of weeks consolidating and putting our new knowledge into practice, I'm happy to say that a lot of it has sunk in.

Over the past few weeks we have finished off the knowledge we needed to cover us for last week, during last week my fellow peers have worked on a portfolio project. we have worked on either a backend news API with article and comments or a games API.

I got all excited when I heard games API but it turns out it was board games so I chose the news API.

We started from the beginning NorthCoders had given us a repo with some of the more boiler plate style code in to get us going but essentially that was just enough to get us going.

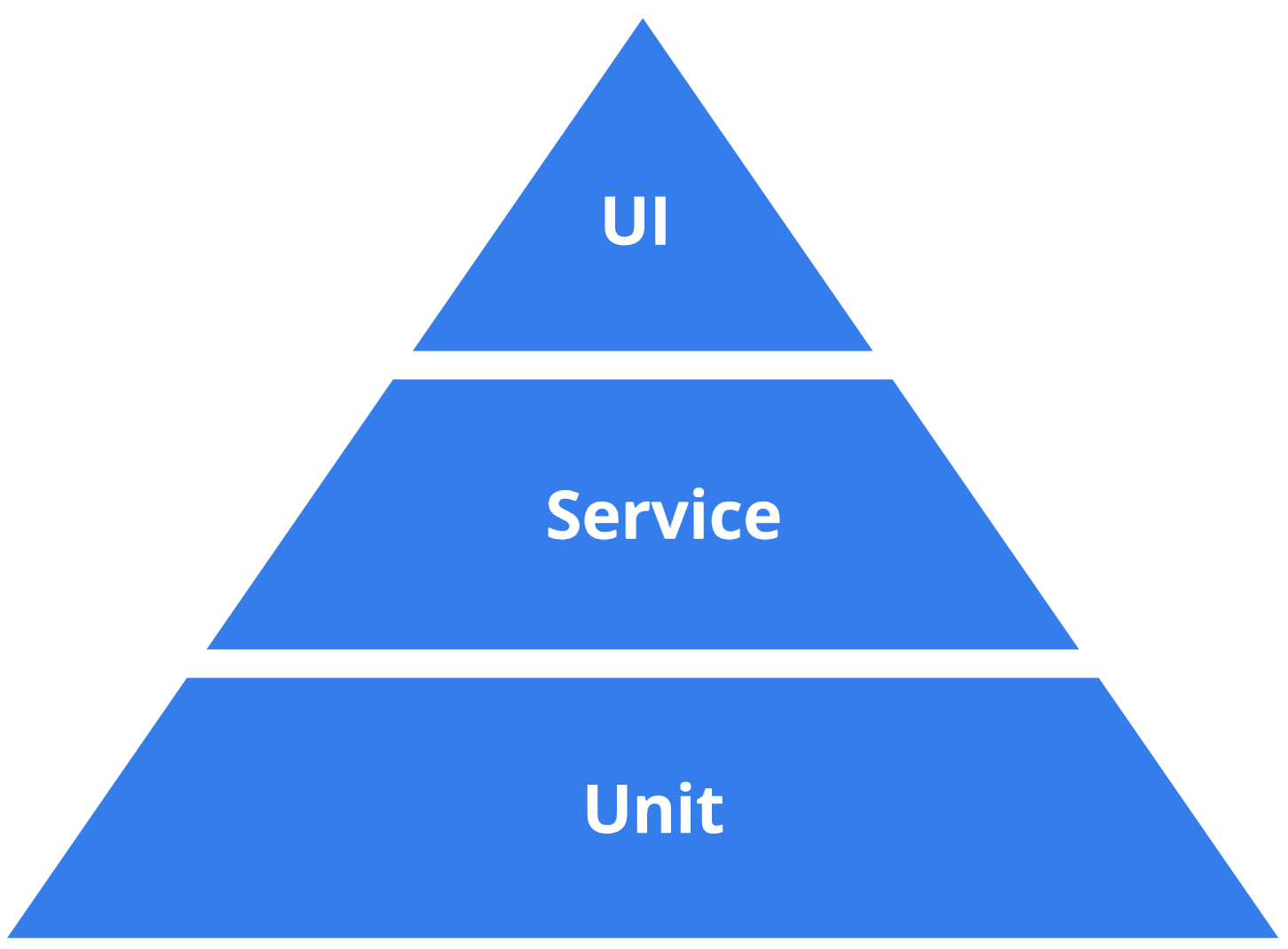

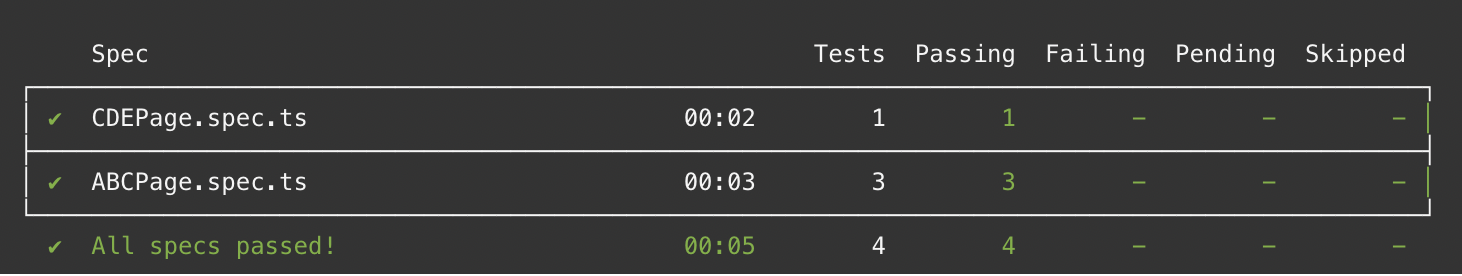

###testing testing 1... 2... 3...

as with everything in we have done at NorthCoders we started with testing, Its been really interesting over the past few week for me to go from hating/not really using testing to using it to drive my development, we've been using testing to build it relatively small functions but I can easily see how the basis we have now in testing could help us in the future to build larger function and projects.

### they planted the seed

the repo we were given contained a whole bunch of seed data for our project, it was our task to use this data and turn it into a working and functional API, I think I might have went a little far with my data, I wrote a function to make sure all of the data was presented to the Database in a way I wanted, I also then spent time writing tests the best I could to test this data and make sure it came back in the format I intended, I then tested the data from the database to make sure that was returned Properly.

### our ROUTE to salvation

the next thing we needed to do was build up a whole bunch of endpoints using the REST ideology we had to build ourselves some GET, POST, PATCH and DELETE endpoints to be able to manipulate the data we send and receive from the database.

### HerokWHO?

the whole idea of building this project was so that we have something after we have finished the bootcamp, something that employers can look at and play with, some code that they can read and understand, to help us do this we made use of Heroku the online app hosting site. while I've used Heroku once before to host something, this time in true NorthCoders fashion we used the command line to do 90% of the work. Its nice that it has the website but there's something awesome about using the command line to do things.

## Up Next

I've really enjoyed my time on backend but I'm excited to move onto front end, looking at the calendar where going to be working with the REACT framework which I haven worked with yet, Taking a sneak peak ahead though it looks like we might be revisiting our projects at the end of front end to round it out and give it more functionality using react.

Kind of like this...

| newcastlegeek |