id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

941,073 | API3:2019 - Excessive Data Exposure | Hey there! How's your day going so far? Hope you are doing great! For the third post of our OWASP... | 17,377 | 2021-12-31T08:38:25 | https://dev.to/therealbrenu/api32019-excessive-data-exposure-4c4p | cybersecurity, owasp, api, hacking | Hey there! How's your day going so far? Hope you are doing great!

For the third post of our OWASP API Security Top 10 series, it is time to talk about Excessive Data Exposure! Hope you guys like it :hugs:

## :national_park: What is Sensitive Data?

Excessive data exposure is an issue that includes different intrinsic topics, such as database performance for example. However, this whole series is about API security, and when it comes to the security aspect of excessive data exposure, sensitive data always comes up.

Okay, so what the flip is sensitive data? Generically speaking, we may consider as sensitive data everything that would cause damage to a company if publicly exposed. Some examples are listed below:

- SSH keys

- Database credentials

- Users' billing information

- Biometric and health records

Of course, not all of these things will appear as a result of calling API endpoints. The most common cases of excessive data exposure in APIs usually involve a more specific type of sensitive data, that we may call PII (Personally Identifiable Information).

Personally Identifiable Information is any data that, once publicly available, could be used directly or indirectly to identify a person. From the examples listed above, we can consider the last two items as being PII.

## :gear: Getting Sensitive Data From APIs

Now, I am going to be showing two different cases where we have API3:2019 happening, one for GraphQL APIs and one for REST APIs.

### :books: GraphQL Example

As a first practical example, let's take a GraphQL API of a fictional game, from where the introspection defines the following query:

> query {

> user(id: 123) {

> username

> level

> rankingPosition

> }

> }

Basically, it receives a number which corresponds to the ID of a user, and returns their username, level and position on the ranking. Theoretically, if the API really follows what the introspection is saying, we are only capable of retrieving these three attributes of a user.

Also, the introspection defines this query right down below, from which is possible to retrieve some of your own user's information:

> query {

> myUser {

> username

> level

> rankingPosition

> address {

> street

> number

> postCode

> }

> creditCard

> }

> }

Notice that the query `myUser` returns more attributes than the previous one, it returns your address and your credit card. Returning your credit card in plain text, per se, is something that might already be enough to say that we have an excessive data exposure happening, but it can get worse.

What both queries do is to retrieve data from a specific user, and because of that, although they are differently defined by the GraphQL introspection, possibly both `user` and `myUser` were implemented in a similar way, with very similar code.

By assuming that they "share code" to each other, an attacker might try to call `user` passing `creditCard` as a response attribute, like this:

> query {

> user(id: 1337) {

> creditCard

> }

> }

If it works, this means that the attacker is able to retrieve the credit card of any user they want. In other words, this means that our fictional game has a business logic error leading to excessive data exposure.

### :books: REST Example

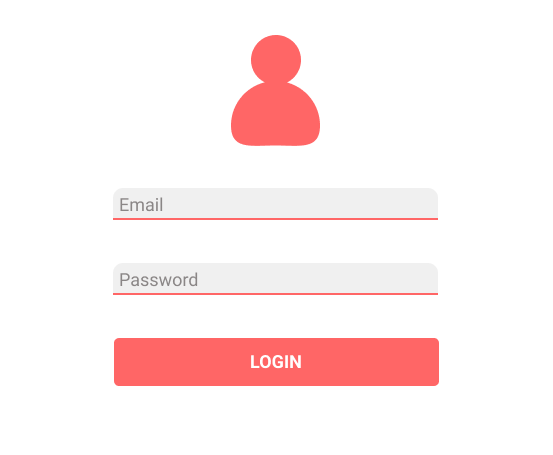

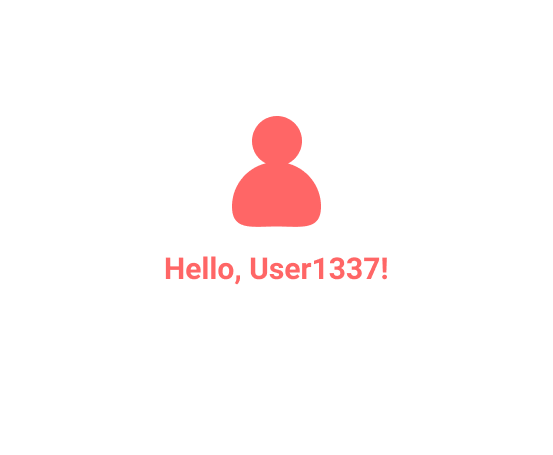

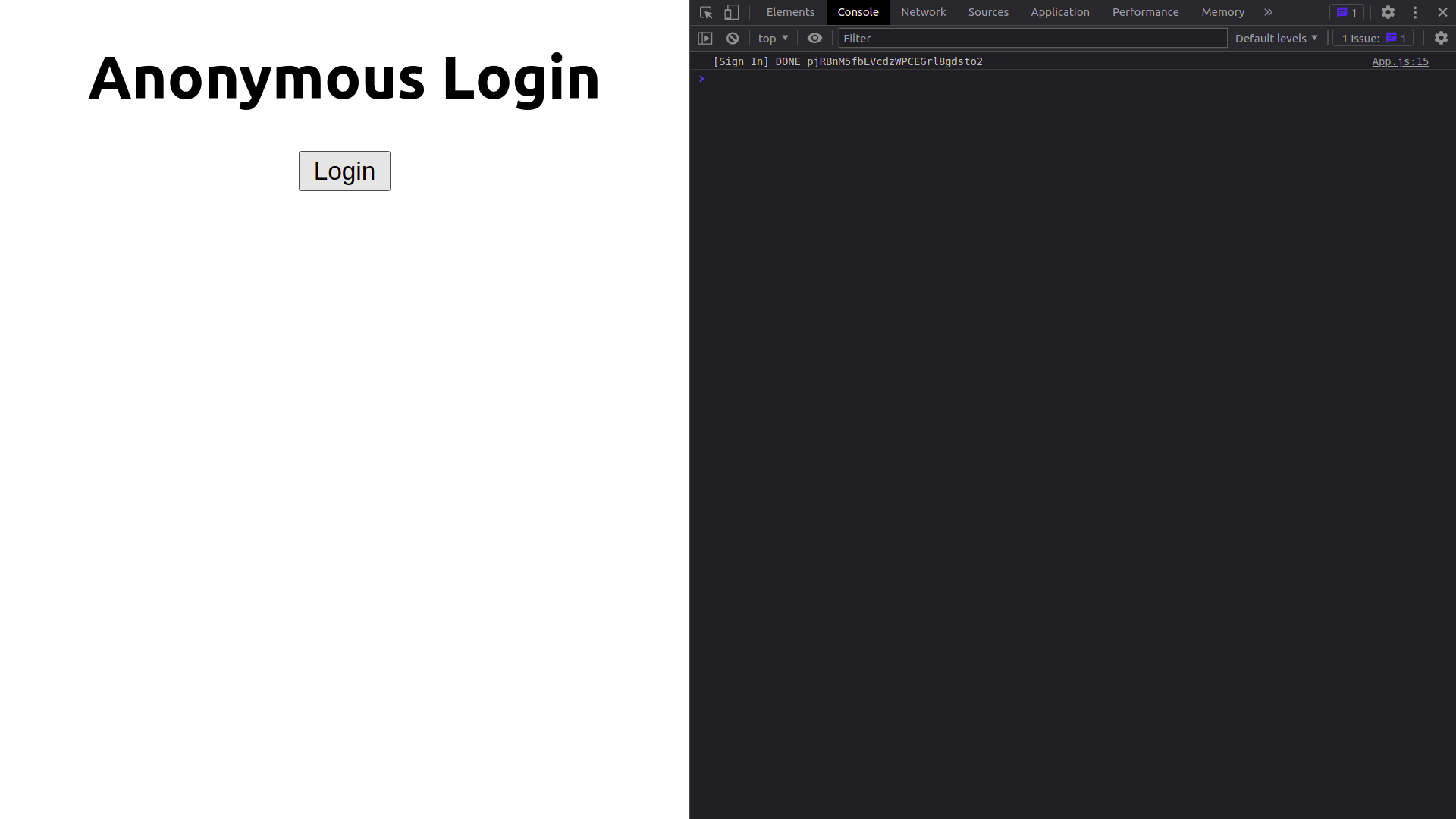

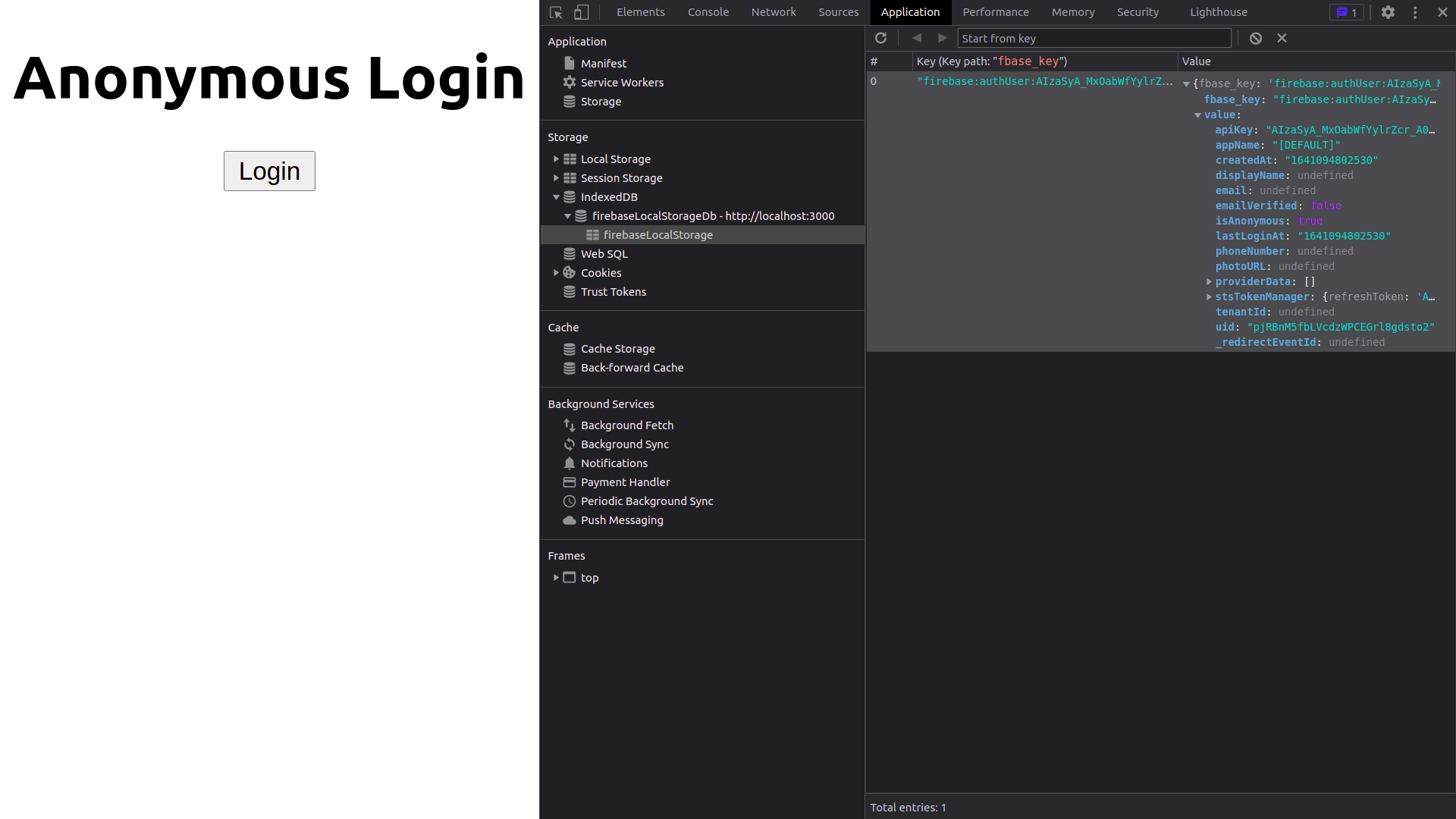

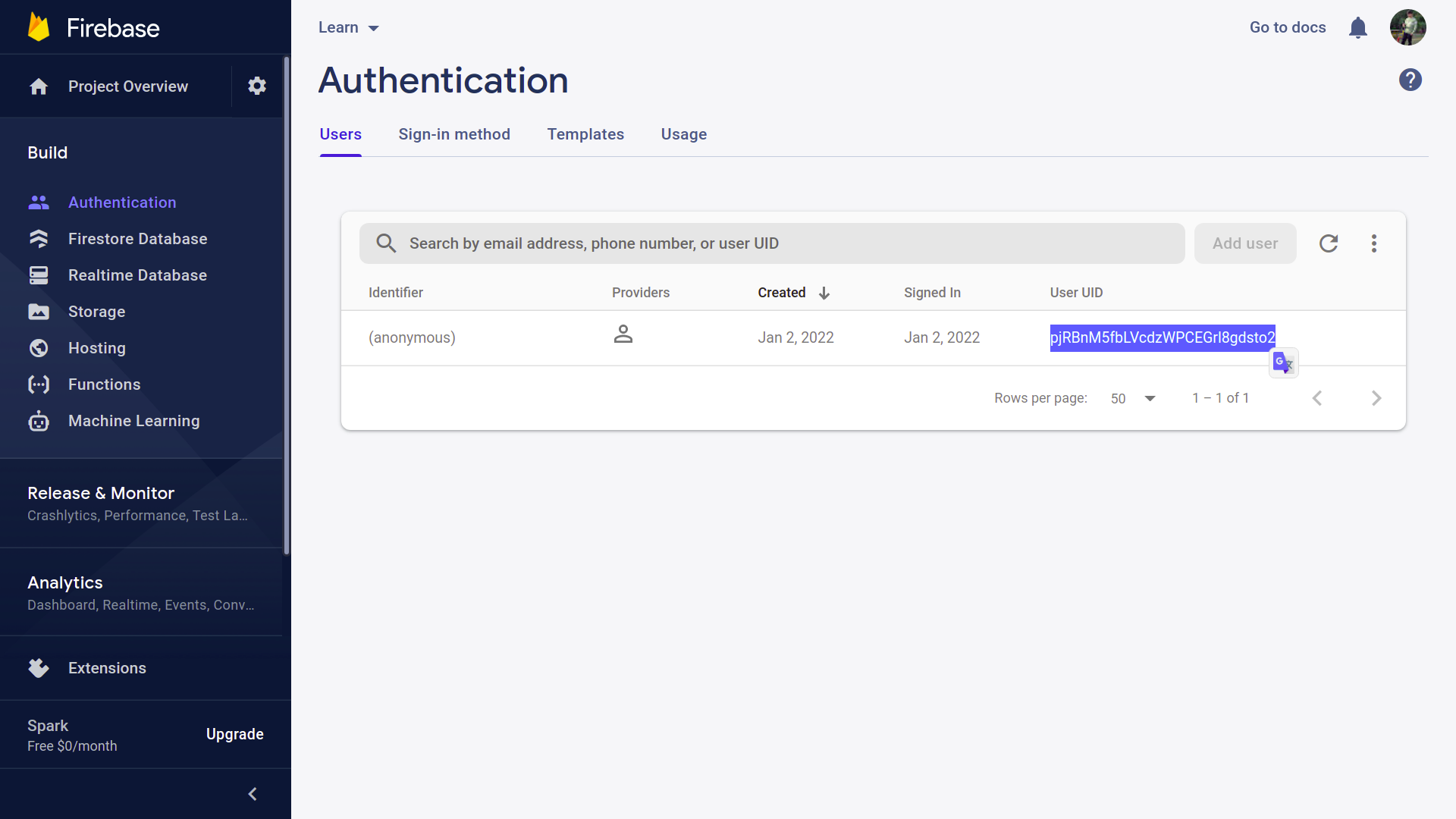

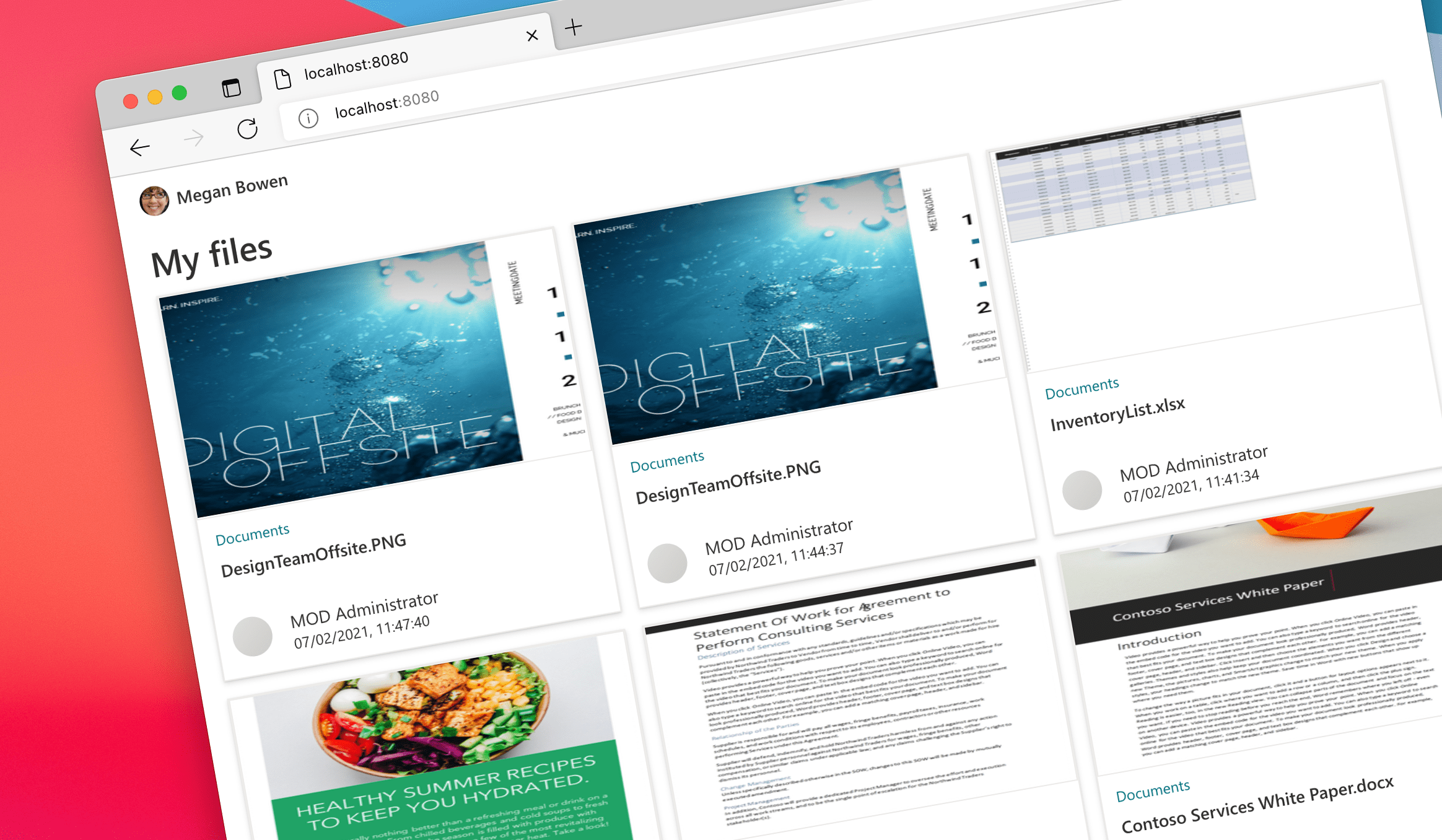

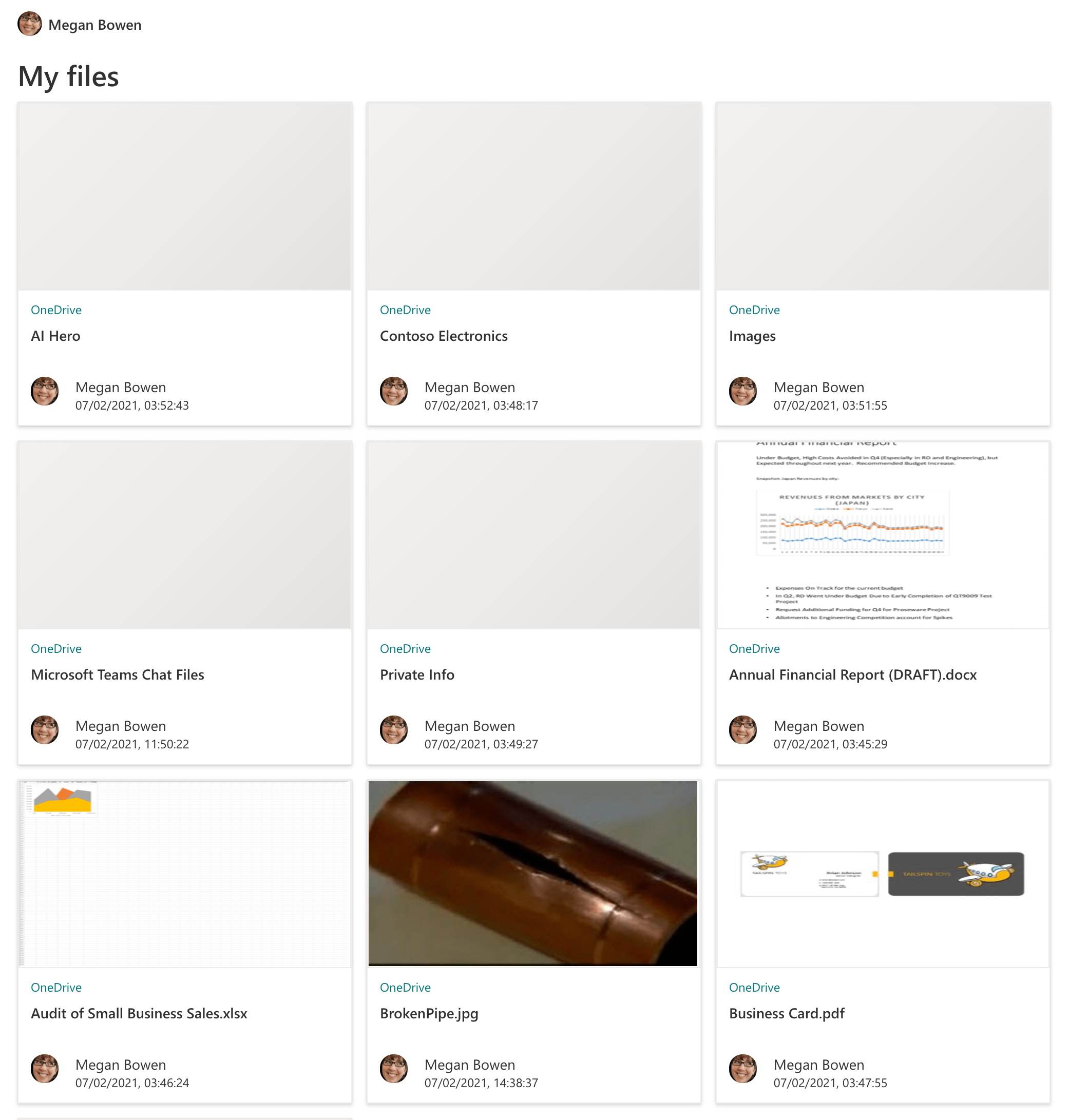

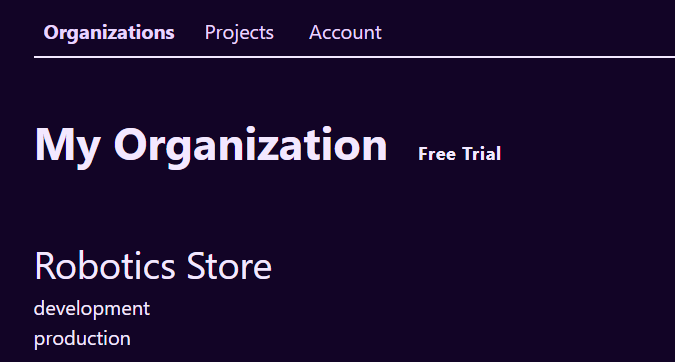

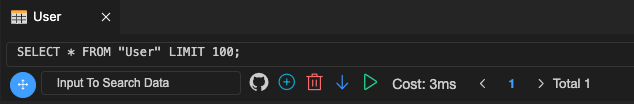

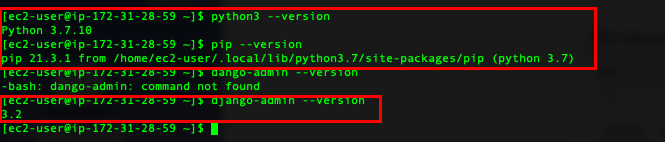

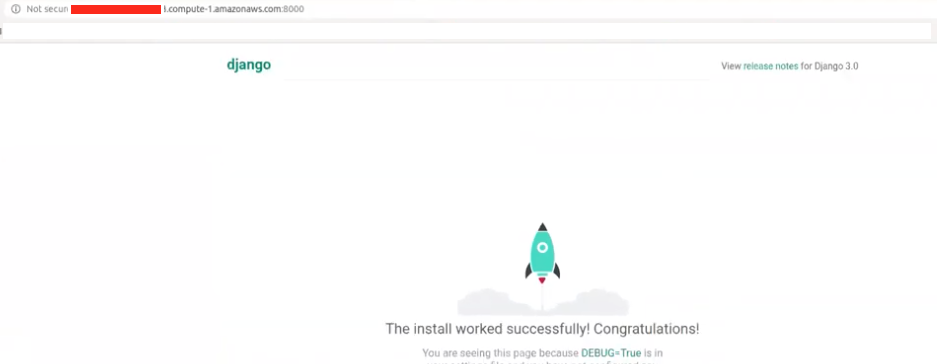

For the REST example, let's get back to those screens presented in the [last post](https://dev.to/therealbrenu/api22019-broken-user-authentication-nep). We have this login page:

And when you submit your credentials, a new page is generated:

Let's say that the new page makes the following request, in order to obtain your name and display your "hello" message:

> GET /users/welcome HTTP/1.1

> Host: api.example.com

> Authorization: Bearer sup3r_t0k3n_h3r3

And for the request above, this is the response:

> HTTP/1.1 200 OK

> Content-Type: application/json

>

> {

> "firstName": "Naruto",

> "lastName": "Uzumaki",

> "username": "User1337",

> "email": "dattebayo@mail.com",

> "password": "HashedVersionOfRamen123"

> }

Notice that the response not only has more data than necessary (it should ideally contain only the username), but it also contains the password of the user in it. One more case of API3:2019 :persevere:

Cases like this happen when the endpoint implementation is something like:

> user = db.run('SELECT * FROM users WHERE id = 1337')

>

> return user

Basically, it just picks up everything related to the user and throws in the response, without filtering what is not important, and without filtering sensitive data. A similar implementation using an ORM would be just:

> user = User::find(1337)

>

> return user

You can, of course, implement a filter in the database itself, or elsewhere in the API code, but if it is not done, this kind of endpoint implementation where you rely exclusively on the client side to filter stuff may lead to huge problems.

## :notebook_with_decorative_cover: External materials

As my goal with this series is to just explain what each flaw is while I'm learning about them all, I would like to suggest some materials about data exposure issues, so you understand better the details of it:

https://github.com/OWASP/API-Security/blob/master/2019/en/src/0xa3-excessive-data-exposure.md

https://salt.security/blog/api3-2019-excessive-data-exposure

https://portswigger.net/support/using-burp-to-test-for-sensitive-data-exposure-issues | therealbrenu |

941,163 | How to Use Observables with Vanilla JavaScript | No frameworks used, just pure vanilla JavaScript. While working on a side project just... | 0 | 2021-12-31T09:36:37 | https://www.developmentsimplyput.com/post/how-to-use-observables-with-vanilla-javascripta-javascript-aca40a7590ff | javascript, programming, webdev | ### No frameworks used, just pure vanilla JavaScript.

<br>

While working on a side project just for fun, I wanted to write a JavaScript script to call a REST API and eventually do some cool stuff on a webpage. It is purely vanilla JavaScript, with no fancy frameworks or even libraries being used.

First, I thought of using **Promises** for my calls and this was easy for me. I have done that a ton of times. However, it then hit me hard — why don’t I use **Observables?** I knew that vanilla JavaScript didn’t natively support Observables. But couldn’t I implement it myself? And that’s what I did.

## This is how I thought things through

1. The Observable itself would be of a new object type called **Subject.**

2. This **Subject** object should expose the subscribe and next functions.

3. subscribe should be called by observers to subscribe to the observable stream of data.

4. next should be called by the **Subject** owner to push/publish new data whenever available.

5. Additionally, I wanted the **Subject** owner to be able to know whenever no observers were interested in its data. This would enable the **Subject** owner to decide if he still wanted to get the data or not.

6. Also, the **Subject** owner should be able to know whenever at least one observer started being interested in its data. This would give the **Subject** owner more control on its data flow and any related operations.

7. Now back to the **observer**. He should be able to **unsubscribe** from the **Subject** at any time.

8. This leads us to a new object type called **Subscription.**

9. This **Subscription** object should expose an unsubscribe function.

10. unsubscribe should be called by the **observer** whenever he wants to stop listening to the data stream coming from the **Subject**.

Following these rules, I came up with the following implementation.

## Implementation

### Subscription

```javascript

let Subscription = function(handlerId, unsubscribeNotificationCallback) {

let self = this;

self.unsubscribe = () => {

if(unsubscribeNotificationCallback) {

unsubscribeNotificationCallback(handlerId);

}

};

return self;

};

```

Note that **Subscription** just notifies the **Subject** when the unsubscribe function is called.

---

### Subject

```javascript

let Subject = function(subscribersStateChangeNotificationCallback) {

let self = this;

let handlers = {};

Object.defineProperty(self, "subscribersFound", {

get() {

let found = false;

for(const prop in handlers) {

if(handlers.hasOwnProperty(prop)) {

found = true;

break;

}

}

return found;

}

});

Object.defineProperty(self, "subscribersCount", {

get() {

let count = 0;

for(const prop in handlers) {

if(handlers.hasOwnProperty(prop)) {

count++;

}

}

return count;

}

});

let unsubscribeNotificationCallback = (handlerId) => {

if(handlerId && handlerId !== '' && handlers.hasOwnProperty(handlerId)) {

delete handlers[handlerId];

if(subscribersStateChangeNotificationCallback && !self.subscribersFound) {

subscribersStateChangeNotificationCallback(false);

}

}

};

self.subscribe = (handler) => {

let handlerId = createGuid();

handlers[handlerId] = handler;

if(subscribersStateChangeNotificationCallback && self.subscribersCount === 1) {

subscribersStateChangeNotificationCallback(true);

}

return new Subscription(handlerId, unsubscribeNotificationCallback);

};

self.next = (data) => {

for(const handlerId in handlers) {

handlers[handlerId](data);

}

};

return self;

};

let createGuid = function() {

return 'xxxxxxxx-xxxx-4xxx-yxxx-xxxxxxxxxxxx'.replace(/[xy]/g, function(c) {

var r = Math.random()*16|0, v = c === 'x' ? r : (r&0x3|0x8);

return v.toString(16);

});

};

```

---

### Somewhere in the Subject Owner

```javascript

.

.

.

let subscribersStateChangeNotificationCallback = (subscriberFound) => {

if(!subscriberFound && isNowWatching) {

stopWatching();

isNowWatching = false;

} else if(subscriberFound && !isNowWatching) {

startWatching();

}

};

self.data = new Subject(subscribersStateChangeNotificationCallback);

.

.

.

self.data.next(self.snapshot.data);

.

.

.

```

---

### Somewhere in the Observer

```javascript

.

.

.

const dashboardServiceSubscription = myDashboardService.data.subscribe((data) => {

...

});

.

.

.

dashboardServiceSubscription.unsubscribe();

.

.

.

```

That’s it, everything worked like a charm and I was proud of what I achieved.

So, the punch line is that coding in vanilla JavaScript is not always equal to writing boring code, you can make it much more fun 😃

<br>

Hope you found reading this story as interesting as I found writing it.

<br><br>

This article was originally published [here](https://www.developmentsimplyput.com/post/how-to-use-observables-with-vanilla-javascript). | ahmedtarekhasan |

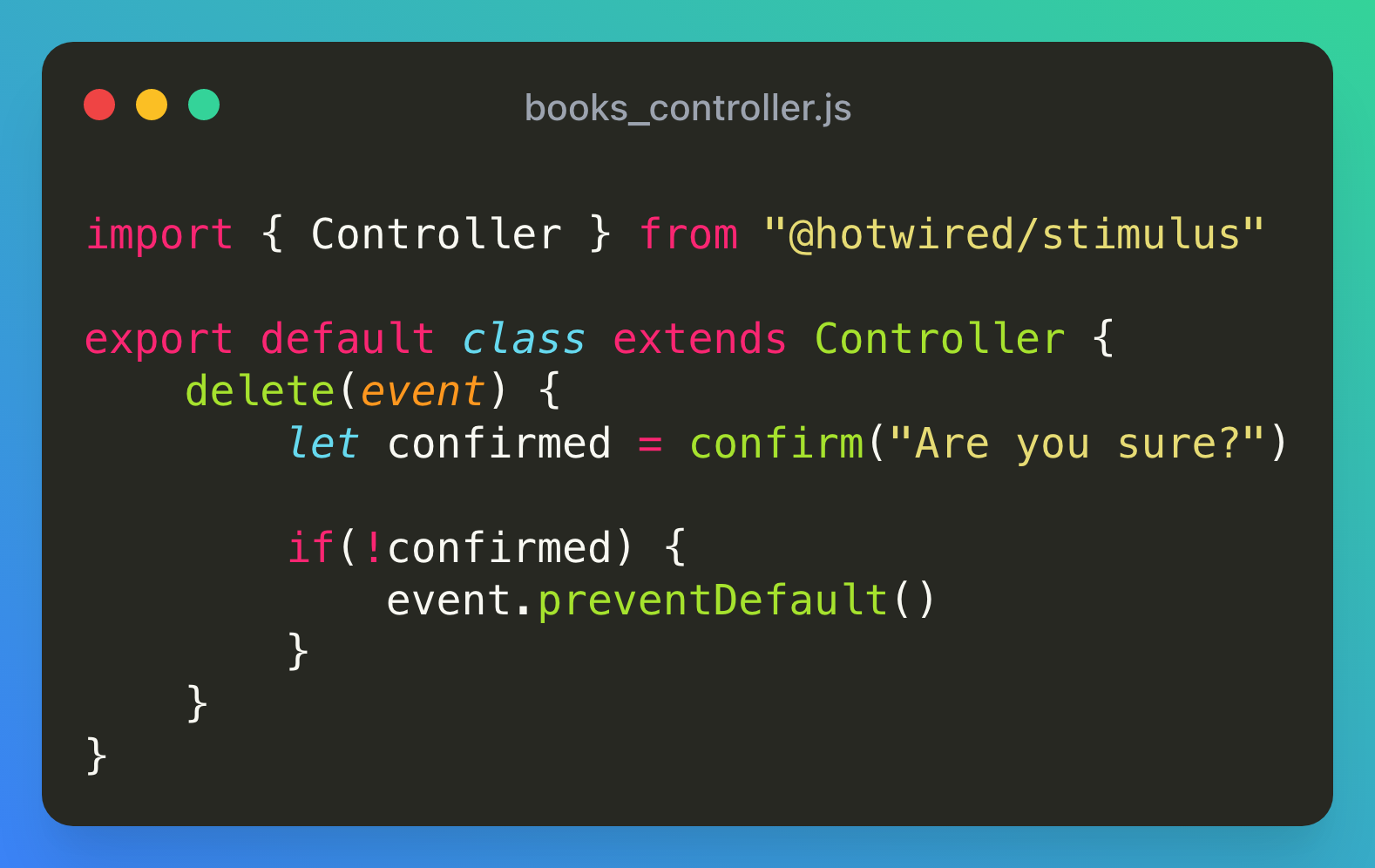

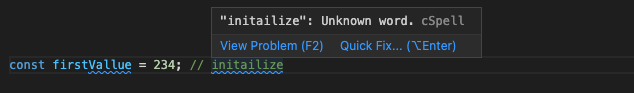

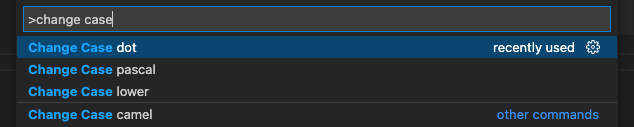

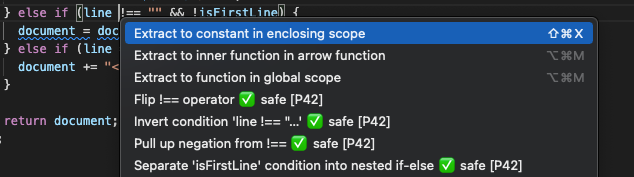

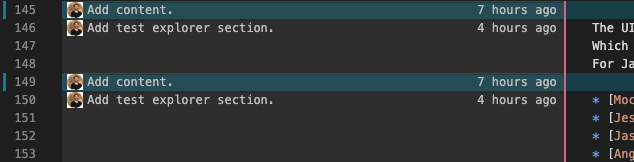

941,197 | Wrap up 2021 with these VS Code extensions | Visual Studio Code is arguably the most popular IDE at the moment for quite a while now. 2021 was no... | 0 | 2021-12-31T11:08:03 | https://dev.to/pythonbutsnake/wrap-up-2021-with-these-vs-code-extensions-19af | webdev, yearinreview, vscode, extensions | Visual Studio Code is arguably the most popular IDE at the moment for quite a while now. 2021 was no different for this successful code editor. The wide range of different features that VS Code allows us to use is what makes it special. One of the things that stands out about VS Code is the massive number of extensions it provides you. Today we will have a look at some of the distinct VS Code Extensions that every developer should install by the end of this year.

## [Visual Studio IntelliCode](https://marketplace.visualstudio.com/items?itemName=VisualStudioExptTeam.vscodeintellicode)

This extension is based on Artificial Intelligence and it recommends code completion automatically. It also gives various development features based on Artificial Intelligence.

[Marketplace Link

](https://marketplace.visualstudio.com/items?itemName=VisualStudioExptTeam.vscodeintellicode)

## [Peacock](https://marketplace.visualstudio.com/items?itemName=johnpapa.vscode-peacock)

This extension adds various colors to different windows of VS Code. While working on the front-end and back-end at the same time, you can add different colors to identify which repo you are working on. It also helps you add colors in live share and remote integration.

[Marketplace Link

](https://marketplace.visualstudio.com/items?itemName=johnpapa.vscode-peacock)

## [Regex Previewer](https://marketplace.visualstudio.com/items?itemName=chrmarti.regex)

Regular expressions are often confusing to understand. Regex Previewer gives you a separate document similar to your regex.

The extension provides many examples of comparisons, so it is much easier to quickly and accurately regex the various use cases.

[Marketplace Link

](https://marketplace.visualstudio.com/items?itemName=chrmarti.regex)

## [blox](https://www.vsblox.com/)

blox is another incredibly useful extension with a mammoth 1500+ drop-in-ready UI snippets. All you have to do is just drag and drop the pre-made code snippets in your project and save a great amount of time writing code.

[Marketplace Link

](https://www.vsblox.com/)

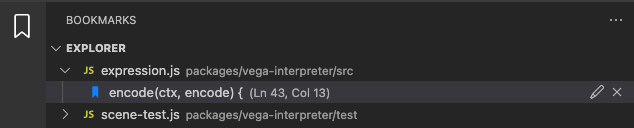

## [Bookmarks](https://marketplace.visualstudio.com/items?itemName=alefragnani.Bookmarks)

Bookmark your code at a certain line with this extension so that you can refer to it later. Add the bookmark with the command “ctrl+alt+k” and it is also the same command for toggling the bookmark. “ctrl+alt+l” is the command for jumping to the next bookmark and “ctrl+alt+j” is the command for jumping to the previous bookmark.

[Marketplace Link

](https://marketplace.visualstudio.com/items?itemName=alefragnani.Bookmarks)

## [Quokka.js](https://marketplace.visualstudio.com/items?itemName=WallabyJs.quokka-vscode)

This extension speeds up development by displaying runtime values in the editor as you code, so you can focus on writing code instead of building custom configs.

[Marketplace Link

](https://marketplace.visualstudio.com/items?itemName=WallabyJs.quokka-vscode)

## [Profile Switcher](https://marketplace.visualstudio.com/items?itemName=aaronpowell.vscode-profile-switcher)

Profile Switcher helps you create various profiles on VS code that you can use for different reasons. This is a must-have extension for those who use VS code for more than one reason. You can save specific extensions for each profile as well.

[Marketplace Link

](https://marketplace.visualstudio.com/items?itemName=aaronpowell.vscode-profile-switcher)

## [SonarLint](https://www.sonarlint.org/vscode)

Sonarlint is an outstanding extension for lone workers without a team. Basically, it detects code quality and code security issues, and generates reports for the user. Sonarlint can also be used by teams with sonarCube and sonarCloud. It has built-in rules in it but a developer can also mute rules or add new rules.

[Marketplace Link

](https://www.sonarlint.org/vscode)

If you are going in 2022 without these extensions then you should definitely check out these extensions for better productivity.

| pythonbutsnake |

941,206 | Create Image slider using Js and CSS | In this article, we are going to make an Image Slider with a clean UI and smooth transition. First,... | 0 | 2022-01-01T11:25:25 | https://dev.to/anomaly3108/create-image-slider-using-js-and-css-48l3 | webdev, javascript, css, beginners | In this article, we are going to make an Image Slider with a clean UI and smooth transition. First, Let's see what are we building.

#PREVIEW

#HTML

```

<div class="container">

<div class="img-comp-container">

<div class="img-comp-img">

<img src="a.png" height="400" width="300">

</div>

<div class="img-comp-img img-comp-overlay">

<img src="b.png" height="400" width="300">

</div>

</div>

</div>

```

We will have an outer div with class `.img-comp-container`. It will have two separate children.

- `.img-comp-img`: It will contain the first image.

- `.img-comp-overlay`: It will contain the second image for overlay. This image will be overlayed over the top of first image to create the effect of sliding.

I guess now you have an overview of what are we doing. Now let's get into the CSS.

#CSS

```

* {

box-sizing: border-box;

}

.img-comp-container {

position: relative;

height: 500px;

}

.img-comp-img {

position: absolute;

width: auto;

height: auto;

overflow: hidden;

}

.img-comp-img img {

padding: 20px;

display: table-row;

}

.container {

display: table;

}

```

This CSS is for the image that will be displayed on the screen.

Everything above is self-explanatory but If you have any queries then comment down.

```

.img-comp-slider {

position: absolute;

z-index: 9;

cursor: ew-resize;

/*set the appearance of the slider:*/

width: 40px;

height: 40px;

background: url(slider_icon.jpg);

background-color: #ffffff70;

background-repeat: round;

backdrop-filter: blur(5px);

border-radius: 50%;

}

```

this CSS is for the slider button

#Javascript

This is where the fun begins. Let's see from the scratch.

First, we need to find all elements with an "overlay" (`img-comp-overlay`) class

```

var x, i;

/*find all elements with an "overlay" class:*/

x = document.getElementsByClassName("img-comp-overlay");

for (i = 0; i < x.length; i++) {

/*once for each "overlay" element:

pass the "overlay" element as a parameter when executing the compareImages function:*/

compareImages(x[i]);

}

```

Next, we will create a function `compareImages` with an `img` parameter

```

function compareImages(img) {

var slider, img, clicked = 0, w, h;

/*get the width and height of the img element*/

w = img.offsetWidth;

h = img.offsetHeight;

/*set the width of the img element to 50%:*/

img.style.width = (w / 2) + "px";

}

```

Now, We will create the slider using Js in the same function

```

/*create slider:*/

slider = document.createElement("DIV");

slider.setAttribute("class", "img-comp-slider");

/*insert slider*/

img.parentElement.insertBefore(slider, img);

position the slider in the middle:*/

slider.style.top = (h / 2) - (slider.offsetHeight / 2) + "px";

slider.style.left = (w / 2) - (slider.offsetWidth / 2) + "px";

```

Now, Let us add the listeners that will be triggered when we press the mouse button.

```

/*execute a function when the mouse button is pressed:*/

slider.addEventListener("mousedown", slideReady);

/*and another function when the mouse button is released:*/

window.addEventListener("mouseup", slideFinish);

/*or touched (for touch screens:*/

slider.addEventListener("touchstart", slideReady);

/*and released (for touch screens:*/

window.addEventListener("touchstop", slideFinish);

```

Now, Basic structure of our slider is created. Next we need to create some functions that will perform the main functionality of the slider. i.e, Slide over the the image.

For this we will first create `slideReady` Function inside the `compareImages` Function that will be executed when mouse button is pressed.

```

function slideReady(e) {

/*prevent any other actions that may occur when moving over the image:*/

e.preventDefault();

/*the slider is now clicked and ready to move:*/

clicked = 1;

/*execute a function when the slider is moved:*/

window.addEventListener("mousemove", slideMove);

window.addEventListener("touchmove", slideMove);

}

```

Next, create another Function inside the `compareImages` Function when the slider is no longer clicked

```

function slideFinish() {

/*the slider is no longer clicked:*/

clicked = 0;

}

```

Now, we will create 3 more functions in `compareImages` with which we will get the cursor position and move the slider accordingly across the Image window

```

function slideMove(e) {

var pos;

/*if the slider is no longer clicked, exit this function:*/

if (clicked == 0) return false;

/*get the cursor's x position:*/

pos = getCursorPos(e)

/*prevent the slider from being positioned outside the image:*/

if (pos < 0) pos = 0;

if (pos > w) pos = w;

/*execute a function that will resize the overlay image according to the cursor:*/

slide(pos);

}

function getCursorPos(e) {

var a, x = 0;

e = e || window.event;

/*get the x positions of the image:*/

a = img.getBoundingClientRect();

/*calculate the cursor's x coordinate, relative to the image:*/

x = e.pageX - a.left;

/*consider any page scrolling:*/

x = x - window.pageXOffset;

return x;

}

function slide(x) {

/*resize the image:*/

img.style.width = x + "px";

/*position the slider:*/

slider.style.left = img.offsetWidth - (slider.offsetWidth / 2) + "px";

}

```

Wrap it all in a parent function with name `initComparisons`.

Now we have covered all the aspects of this now let's see the full `Scripts.js` file

```

function initComparisons() {

var x, i;

/*find all elements with an "overlay" class:*/

x = document.getElementsByClassName("img-comp-overlay");

for (i = 0; i < x.length; i++) {

/*once for each "overlay" element:

pass the "overlay" element as a parameter when executing the compareImages function:*/

compareImages(x[i]);

}

function compareImages(img) {

var slider, img, clicked = 0,

w, h;

/*get the width and height of the img element*/

w = img.offsetWidth;

h = img.offsetHeight;

/*set the width of the img element to 50%:*/

img.style.width = (w / 2) + "px";

/*create slider:*/

slider = document.createElement("DIV");

slider.setAttribute("class", "img-comp-slider");

/*insert slider*/

img.parentElement.insertBefore(slider, img);

/*position the slider in the middle:*/

slider.style.top = (h / 2) - (slider.offsetHeight / 2) + "px";

slider.style.left = (w / 2) - (slider.offsetWidth / 2) + "px";

/*execute a function when the mouse button is pressed:*/

slider.addEventListener("mousedown", slideReady);

/*and another function when the mouse button is released:*/

window.addEventListener("mouseup", slideFinish);

/*or touched (for touch screens:*/

slider.addEventListener("touchstart", slideReady);

/*and released (for touch screens:*/

window.addEventListener("touchstop", slideFinish);

function slideReady(e) {

/*prevent any other actions that may occur when moving over the image:*/

e.preventDefault();

/*the slider is now clicked and ready to move:*/

clicked = 1;

/*execute a function when the slider is moved:*/

window.addEventListener("mousemove", slideMove);

window.addEventListener("touchmove", slideMove);

}

function slideFinish() {

/*the slider is no longer clicked:*/

clicked = 0;

}

function slideMove(e) {

var pos;

/*if the slider is no longer clicked, exit this function:*/

if (clicked == 0) return false;

/*get the cursor's x position:*/

pos = getCursorPos(e)

/*prevent the slider from being positioned outside the image:*/

if (pos < 0) pos = 0;

if (pos > w) pos = w;

/*execute a function that will resize the overlay image according to the cursor:*/

slide(pos);

}

function getCursorPos(e) {

var a, x = 0;

e = e || window.event;

/*get the x positions of the image:*/

a = img.getBoundingClientRect();

/*calculate the cursor's x coordinate, relative to the image:*/

x = e.pageX - a.left;

/*consider any page scrolling:*/

x = x - window.pageXOffset;

return x;

}

function slide(x) {

/*resize the image:*/

img.style.width = x + "px";

/*position the slider:*/

slider.style.left = img.offsetWidth - (slider.offsetWidth / 2) + "px";

}

}

}

```

Now the final step, use this script in HTML and call the `initComparisons` function at the starting of the page where you want the slider.

```

<script>

initComparisons();

</script>

```

The final product will look like:-

#Wrapping Up

I hope you enjoyed the article, if yes then don't forget to press ❤️. You can also bookmark it for later use. It was fun to make this slider and If you have any queries or suggestions don't hesitate to drop them. See you.

| anomaly3108 |

941,215 | Look yourself. | When I think about my dreams, goals and start to see and feel my conquest, to come there, this is a... | 0 | 2021-12-31T12:25:50 | https://dev.to/jubarcelos/end-year-philosophy-5c5h | learning, development, language, personal | When I think about **my dreams, goals** and start to see and **feel my conquest**, to come there, this is a great indication I'm going in the **correct way**.

---

I hated English when I was a kid, teenager, and adult being honest, but I realize I need this language to get my dream, so I started to practice that **every day, I read, speak, write, and now I like it**.

I watch all my favorite series and film in English. I mark some meetings with old or new friends to practice English, and of course, I'm not fluent yet, I commit mistakes when I write or speak, but definitive I'm better than before.

**I was tired to feel stagnating in that and decided to improve myself**.

---

I've been using this channel to practice my English because is very difficult to maintain my knowledge if I don't practice frequently.

Some weeks I don't practice because I need to focus on another thing, but the most important I did, I set that as a routine, so... I can stop, but I'll return to that.

**I started to feel great doing that just when I stopped to compare myself with other people** and analyze my stories, by myself.

The same happened when I looked at my olds projects and thought about my learning journey, all my **baby steps**, and I noticed how math I **grew up** and improve my expertise. **Everything has a process to pass**, and everybody needs to know their **time and necessities**.

**What are you compromising with your personal development?**

Think about that, make one checklist and do some things to get that, step by step you will feel this same energy. " | jubarcelos |

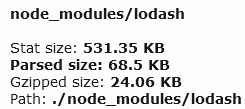

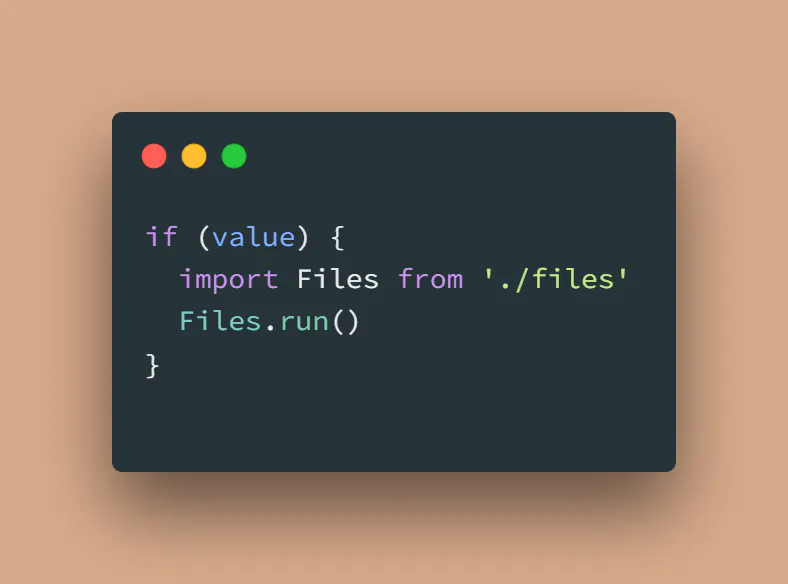

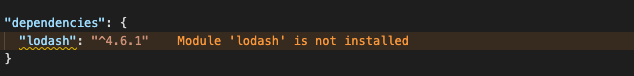

941,320 | Do not use destructuring on import | When importing it is common to use destructuring, but it seems to have its drawbacks. By not using... | 0 | 2021-12-31T13:50:02 | https://dev.to/theproductivecoder/do-not-use-destructuring-on-import-1440 | When importing it is common to use destructuring, but it seems to have its drawbacks. By not using destructuring I got a bundled JavaScript file down from 76kb to 7kb.

I made a simple web app that prints hello world in camelcase to the console.

`const lodash = require('lodash')

console.log(lodash.camelCase('hello world'))`

After bundling it with webpack the bundled js file was 76kb. Checking out the file with webpack-bundle-analyzer it seems to have loaded the whole of lodash. Maybe it would be smaller with destructoring.

`const {camelCase} = require('lodash')

console.log(camelCase('hello world'))`

Now the file is 70kb. Not a huge saving. I tried another syntax.

`const camelCase = require('lodash/camelCase')

console.log(camelCase('hello world'))`

Now the bundled file was only 7kb, a huge saving. I got the same results whether using nodejs require or ES6 import syntax. Try it out in your own projects and see if you have similar results.

| theproductivecoder | |

941,383 | Symfony Station Communique - 31 December 2021. A Look at Symfony and PHP News. | This post originally appeared on Symfony Station. Welcome to this week's Symfony Station Communique.... | 0 | 2021-12-31T17:07:39 | https://dev.to/reubenwalker64/symfony-station-communique-31-december-2021-a-look-at-symfony-and-php-news-4ckf | symfony, php, drupal, docker | This post originally appeared on [Symfony Station](

https://www.symfonystation.com/Symfony-Station-Communique-31-December-2021).

Welcome to this week's Symfony Station Communique. It's your weekly review of the most valuable and essential news in the Symfony and PHP development communities. There was not much Symfony news this week, so we are expanding coverage of general coding articles in our Other section. Take your time and enjoy the items most valuable for you.

###And more importantly, we want to wish you a Happy New Year!

Thanks to Javier Eguiluz and Symfony for sharing [our last communique](https://www.symfonystation.com/Symfony-Station-Communique-24-December-2021) in their [Week of Symfony](https://symfony.com/blog/a-week-of-symfony-782-20-26-december-2021).

*Please note that links will open in a new browser window. My opinions, if I present any, will be in bold.

##Symfony

As always, we will start with the official news from Symfony.

Highlight -> "This week, Symfony development activity was low because of the Christmas holidays and focused on fixing various small bugs. Meanwhile, the upcoming Symfony 6.1 version, to be released in May 2022, introduced a new feature to create draft emails."

A Week of Symfony #782 (20-26 December 2021)

https://symfony.com/blog/a-week-of-symfony-782-20-26-december-2021

I ran across this in the Symfony Docs. It's a good one to bookmark.

Quick Tour / The Big Picture

https://symfony.com/doc/current/quick_tour/the_big_picture.html

The open-sourcing of Symfony CLI is now official.

Announcing the open-sourcing of the Symfony CLI

https://symfony.com/blog/announcing-the-open-sourcing-of-the-symfony-cli

##Featured Item

We continue to highlight a post of the week. This one was an obvious and easy decision.

2021 was an incredibly hard year for many people and companies. The Symfony community was no exception, and our thoughts are with all of you who suffered because of the COVID-19 pandemic. Luckily, thanks to your help and support, there were some reasons for optimism throughout the year. This blog post highlights the main Symfony achievements during 2021.

Symfony 2021 Year in Review

https://symfony.com/blog/symfony-2021-year-in-review

###This week

Eelco Verbrugge reminds us that "Symfony is the fastest major PHP framework with the philosophy to start small and add features when you need them.

So today, I'll show you what is needed at a minimum to easily serve your new Symfony project. Bonuses are some packages which can be installed, and I think are useful to start with for developing."

Starting a new Symfony project on Linux

https://dev.to/eelcoverbrugge/starting-a-new-symfony-project-on-linux-2amh

He also has:

My top 5 favorite Symfony packages

https://dev.to/eelcoverbrugge/my-top-5-favorite-symfony-packages-22i8

Jolie Code, @jolicode, explores how to how to use systemd properly to run Symfony Messenger workers.

Symfony Messenger 💛 systemd

https://jolicode.com/blog/symfony-messenger-systemd

Smaine Milianni explores how to:

Use PHP Enums as Doctrine type in Symfony

https://smaine-milianni.medium.com/use-php-enums-as-doctrine-type-in-symfony-85909aa0a19a

Speaking of Doctrine, Mike Zukowski explores the best way to:

Iterating billions of objects in Doctrine

https://medium.com/@dotcom.software/iterating-billions-of-objects-in-doctrine-23256f7d539

Who knew you could? ;)

2021 was an eventful year for the Drupal community. Explore all the highlights in the following article.

The Year in Review: Drupal in 2021

https://evolvingweb.ca/blog/year-review-drupal-2021

##Timeless

_Sponsored Article_

All sponsored articles are for products we have vetted and stand behind. We either use them or would do so if they were applicable to the Symfony Station site.

We published our first sponsored article on Symfony Station exploring how Code Execution Monitoring helps you identify bugs and bottlenecks in your Symfony app before your customers do. Like all our articles it is now available via audio.

Why You Should Use Code Execution Monitoring with Symfony

https://www.symfonystation.com/why-code-execution-monitoring-symfony

##PHP

###ThisWeek

Dariusz Gafka, @DariuszGafka, shows us:

How To Build Maintainable PHP Applications

https://dariuszgafka.medium.com/how-to-build-maintainable-php-applications-29c48e2a258c

The following from Geni Jaho is specific to Laravel, but the general points apply to all PHP programming.

A significant pain point in programming has to deal with all the clutter that is shoved into controller methods. One of the best ways to remedy that is to extract some functionality into their own classes. They’re called Actions, or Services, or whatever.

Refactoring #2: From controllers to actions

https://genijaho.medium.com/refactoring-2-from-controllers-to-actions-e4d448194abb

The Drinks & Co. engineering team is a fan of concurrency and parallelism to get the best performance and resource optimization in their systems.

Here, they explain how to start adding Async PHP to your toolkit.

How we are adding Async PHP to our Stack

https://uvinum.engineering/how-we-are-adding-async-php-to-our-stack-3bb7c2192cb

This article on Dev.to shows us a way to gain readability and efficiency in our foreach loops that go over a collection of objects: iterators.

PHP iterators and generators: get started with the `ArrayIterator` and `FilterIterator` classes

https://dev.to/yactouat/php-iterators-and-generators-get-started-with-the-arrayiterator-and-the-filteriterator-classes-39co

Samuel Fontebasso continues his series looking at "how to use Docker containers to run applications in a production environment."

PHP + Nginx with Docker in production

https://blog.fontebasso.com.br/php-nginx-with-docker-in-production-8c1ad71182f2

DEVRiMS says "There are many professionals in the software development field, but very few of them are real inspirations in the community. We are glad to feature Mr. Chris Hartjes in our first interview today."

PHP Interview with The Grumpy Programmer - Chris Hartjes

https://devrims.com/blog/grumpy-programmer-php-interview/

In Portuguese, Guilherme Donizetti writes "When we use the PHP language in our projects, in more or less time we will need to use some database as well. Databases are always a tool apart from what we are developing, but we want to connect the two things, and there are different ways to realize this connection. In this article, I will present PDO, a simple and efficient technique of connecting applications that use PHP to a database!"

PDO — CONEXÃO COM BANCO DE DADOS

https://guilhermedonizettiads.medium.com/pdo-conex%C3%A3o-com-banco-de-dados-5df0ac93f22f

The following series of Advent posts look at functional programming in PHP.

Advent of Functional PHP: Review

https://peakd.com/hive-168588/@crell/aoc2021-review

##Timeless

We ran across a promising tool this week. And it's building a Symfony integration.

PSX: A modern set of PHP components to simplify API development

https://phpsx.org/

DeliciousBrains writes "You could just debug your PHP code using dump debugging functions such as error_log, print, and var_dump, and let’s be honest, we’ve all done it a lot! While helpful sometimes, they often just aren’t enough and can actually slow you down while developing. There must be a better way, surely?!

Enter Xdebug, the rather awesome step debugging and profiling tool for PHP."

How to Use Xdebug for Advanced PHP Debugging

https://deliciousbrains.com/xdebug-advanced-php-debugging/

You can also watch a video version

How to use Xdebug with PhpStorm

https://www.youtube.com/watch?v=1nryJL3kCx0

And by the way, don't host your videos on YouTube.

We found this bookmarkable article via Joshua Otwell's, @j21120, OpenLamp newsletter.

Buggy PHP Code: The 10 Most Common Mistakes PHP Developers Make

https://www.toptal.com/php/10-most-common-mistakes-php-programmers-make

##Other

While there are many resources to help programmers write better code—such as books and static analyzers—there are few for writing better comments. While it's easy to measure the number of comments in a program, it's hard to measure the quality, and the two are not necessarily correlated. A bad comment is worse than no comment at all. Here are some rules to help you achieve a happy medium.

Best practices for writing code comments

https://stackoverflow.blog/2021/12/23/best-practices-for-writing-code-comments/

With the dust settled in the wake of Elastic’s relicensing controversy, VentureBeat interviewed co-founder and CEO Shay Banon to get his take on why they made the license change; what impact — if any — it has had on business, and what being a “free and open” company (vs. “open source”) really means.

Elastic CEO reflects on Amazon spat, license switch, and the principles of open-source

https://venturebeat.com/2021/12/27/elastic-ceo-reflects-on-amazon-spat-license-switch-and-the-principles-of-open-source/

Venture Beat also explores:

How open-source is powering data sovereignty and digital autonomy

https://venturebeat.com/2021/12/29/how-open-source-is-powering-data-sovereignty-and-digital-autonomy/

Ingo Steinke writes: "Inspired by my article “Nothing New in 2022?”, I will share some thoughts about what to expect and not to expect of a new year from the perspective of a frontend web developer."

What not to expect from 2022 as a Web Developer

https://ingosteinke.medium.com/what-not-to-expect-from-2022-as-a-web-developer-55f095268d30

James Read states: "I propose that DockerHub as a single repository of container images is becoming less and less important than it once was, and in its place, GitHub is a strong contender to take over as the #1 repository for containers — as it has its sights on being everything a developer needs."

GitHub may replace DockerHub

https://levelup.gitconnected.com/github-may-replace-dockerhub-a5da5e547f01

Apoorv Tyagi published this informative article.

How to Scale a Distributed System

https://www.freecodecamp.org/news/how-to-scale-a-distributed-system/

Web3 is the latest Silicon Valley buzzword, which is being dubbed as the next phase of the Internet. It has got tech and cryptocurrency enthusiasts buzzing but others, Jack Dorsey in particular, argues it is already in the hands of deep-pocketed venture capitalists.

But what is Web3, and can this future vision of a decentralized and egalitarian Internet work?

What is Web3, and why is Jack Dorsey attacking the 'next phase of the internet'?

https://www.euronews.com/next/2021/12/24/what-is-web3-is-it-the-new-phase-of-the-internet-and-why-are-elon-musk-and-jack-dorsey-aga

We all know nation-states aren't necessarily the best forces for an open, free, and democratic internet. Dr. Samantha Hoffman writes:

"Recent prominent data breach incidents, ... have made clear how vulnerable both public and private systems remain to espionage and cybercrime. What is less obvious is the way that a foreign adversary or competitor might target data that is less clearly relevant from a national security or espionage perspective. Today, data about public sentiments, such as the kinds of data used by advertisers to analyze consumer preferences, has become as strategically valuable as data about traditional military targets. As the definition of what is strategically valuable becomes increasingly blurred, the ability to identify and protect strategic data will be an increasingly complex and vital national security task."

How to avoid falling into China’s ‘data trap’

https://techcrunch.com/2021/12/26/how-to-avoid-falling-into-chinas-data-trap/

Brian Kardell ponders upon this "We’ve built the web so far on a very particular model, with web engine implementers at the center. The whole world is leaning extremely heavily on the independent, voluntary funding (and management and prioritization) of a very few steward organizations.

Maybe that isn’t a great idea."

Webrise

https://bkardell.com/blog/Webrise.html

Have you published or seen something related to Symfony or PHP that we missed? If so, please contact us. https://www.symfonystation.com/contact

That's it for this week. Thanks for making it to the end of another extended edition. I look forward to sharing next week's Symfony and PHP news with you on Friday.

Please share this post. :) Be sure to join our newsletter list at the bottom of any of our site’s pages. https://www.symfonystation.com/contact Joining gets you each week's communique in your inbox (a day early). And [follow us on Twitter](https://twitter.com/symfonystation).

###Once again, Happy New Year Symfonistas!

Reuben Walker

Founder [Symfony Station](https://www.symfonystation.com/)

Reuben is also Ringmaster of [Mobile Atom Media](https://media.mobileatom.net/about-us/) and its division [Mobile Atom Code](https://www.mobileatom.net/). | reubenwalker64 |

941,403 | In-Demand Database Administrator Skills You Need To Get Hired In 2022 | The Database Administrator (DBA), as the name suggests, operates and administers the database. The... | 0 | 2021-12-31T18:18:44 | https://dev.to/bpb_online/in-demand-database-administrator-skills-you-need-to-get-hired-in-2022-1gb1 | database, datascience, datascientist, dba | The Database Administrator (DBA), as the name suggests, operates and administers the database. The technical skills required by a DBA are SQL, scripting, database performance tuning, and system and network design. A DBA also handles the backup and recovery of databases. This job is critical as a business function properly only when the database is stored and managed well.

Listing a few tasks a Database Administrator is involved in:

Database designing as per end-user requirements.

- Providing (or revoking) rights to or from database end-users.

- Enabling efficient data backup and data recovery mechanisms.

- Database-related training to end-users.

- Ensuring data privacy and security.

- Managing data integrity for end-users.

- Monitoring the performances of the database.

The proper functioning of databases is solely the responsibility of a DBA. If at any point in time the database functioning fails, the DBA should be able to quickly and efficiently manage data recovery mechanisms to recover the functioning of data.

Thorough knowledge of SQL and related scripting languages makes a DBA well-tuned manage any database queries that need to be handled by various end-users of a database.

Hope this was helpful. | bpb_online |

941,565 | ESP8266 Adventures | My first experience with ESP8266. Cover image shows the ESP12e module. | 0 | 2021-12-31T23:02:42 | https://dev.to/thspinto/esp8266-adventures-12g4 | arduino, esp8266, grafana, mqtt | ---

title: "ESP8266 Adventures"

published: true

description: My first experience with ESP8266. Cover image shows the ESP12e module.

cover_image: https://ae01.alicdn.com/kf/HTB19oGtXojrK1RkHFNRq6ySvpXac/Esp8266-ESP-12E-esp12e-substituir-esp12-ESP-12-esp8266-remoto-porta-serial-m-dulo-sem-fio.jpg

tags: [arduino, esp8266, grafana, mqtt]

---

How can my knowledge of technology help in global warming impacted future? My main area of study is in Computer Science and I've mostly had contact with intangible stuff. If, as a consequence of climate change, we enter a new era of scarcity, how can this intangible knowledge help?

With this in mind and with curiosity about IoT. Our most basic need is food. Vegetal food needs light, healthy soil and the right amount of water to grow. The most basic gadget I could build that can help food to grow and to learn with my experimentation is a weather station.

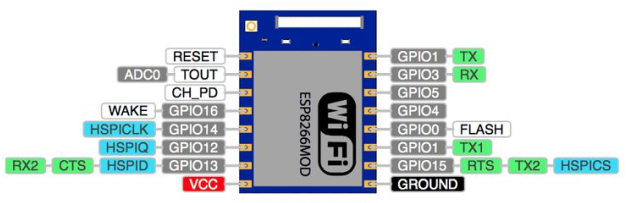

All that introduction is just to say that my goal is collecting the temperature, air humidity, soil humidity and sending it to my cluster for processing. Since we are in the future, no cables are allowed therefore of wireless connection is required. So I went online and bought the most simple-looking and cheap module I could find to couple it to an Arduino I've already owned. I got the ESP-12e and that is where a whole new world opened beneath my feet and thing got a little more complicated.

## Programming the ESP8266

What I didn't know is that the ESP8266 is not just a Wifi module, it can be programmed and even run a web server inside. Of course there was a community leveraging this for IOT, and they led me to [OpenMQTTGateway](https://docs.openmqttgateway.com/). This project collects various signals and sensor from IOT devices and sends it to a queue to be used by HomeAutomation services such as [Home Assistant](https://www.home-assistant.io/). Given my astonishment of the capacity of this module of course I didn't have any tools that would make my life easier in programming it.

Since I had an Arduino I thought it would be easy to use it to program the ESP. My first blocker is that I saw on a tutorial that I needed an external 3,3V power source because Arduino's 3,3V output didn't have enough current for the module. I found 3 used 1,5V batteries that connected in series gave me 3,6V. The datasheet says that 3,6V is within the operational voltage, so achievement unlocked for recycling used batteries. (I do have to notice that I found out later that used batteries are really bad at keeping the voltage I measured without any usage.)

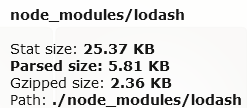

Now I just had to flash the OpenMQTTGateway project into the module. Well, to put it into flash mode you have to reboot the module with the GPIO0. I found this nice schematics in instructables:

[https://www.instructables.com/ESP-12E-ESP8266-With-Arduino-Uno-Getting-Connected/](https://www.instructables.com/ESP-12E-ESP8266-With-Arduino-Uno-Getting-Connected/)

My execution of it was a disaster because I bought the wrong pin bar (the ESP-12E has a smaller distance between the roles in comparison to the default protoboard) so I just attached the wires directly to the module. Of course that doing so made it very unstable and I couldn't breath near it without triggering a reset. But it I got it be be stable enough to flash it. The next issue I had was not flowing correctly the instructions: I was trying to use Arduino IDE to program the module and it wasn't able to connect. First of all it is very important to connect the ground to reset in the Arduino board. Apparently when you open the usb-to-serial converter Arduino automatically resets and keeping reset pin to ground avoids that.

## Pin info

⚠️ To flash software to the module no other sensors can be attached.

I ended buying a fiberglass protoboard to weld everything together and make the setup more stable (I admit I do have practice welding to get more motor coordination). Finally, I also added a USB cable and a tension regulator to 3,3V for a constant power supply.

You might ask me why didn't you just buy the [nodemcu](https://www.nodemcu.com/)? Well, I basically didn't know of its existence and I never thought that "wifi module" could do so much. It was fun to learn, but it is certainly better to buy the nodemcu because it is pretty cheap (even in Brazil), and flashing it and connecting stuff to it is a lot simpler.

## OpenMQTTGateway Configuration

To manually configure the network and MQTT I added the following to [User_config.h](https://github.com/1technophile/OpenMQTTGateway/blob/development/main/User_config.h):

```c

#define ESPWifiManualSetup true

#define MQTT_SERVER "your.server"

#define MQTT_USER "user"

#define MQTT_PASS "pass"

#define wifi_ssid "ssid"

#define wifi_password "pass"

#define SECURE_CONNECTION

#define MQTT_PORT "9443"

const char* certificate CERT_ATTRIBUTE = R"EOF("

-----BEGIN CERTIFICATE-----

... // your root certificate: see here for let`s encypt https://letsencrypt.org/certificates/

-----END CERTIFICATE-----

")EOF";

```

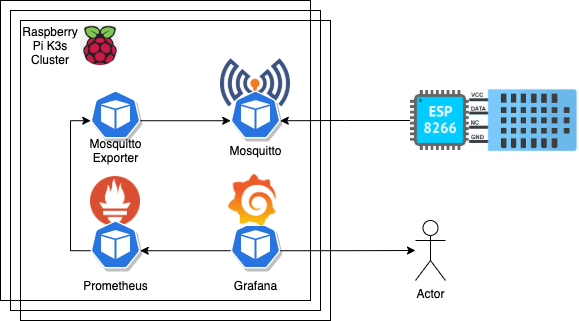

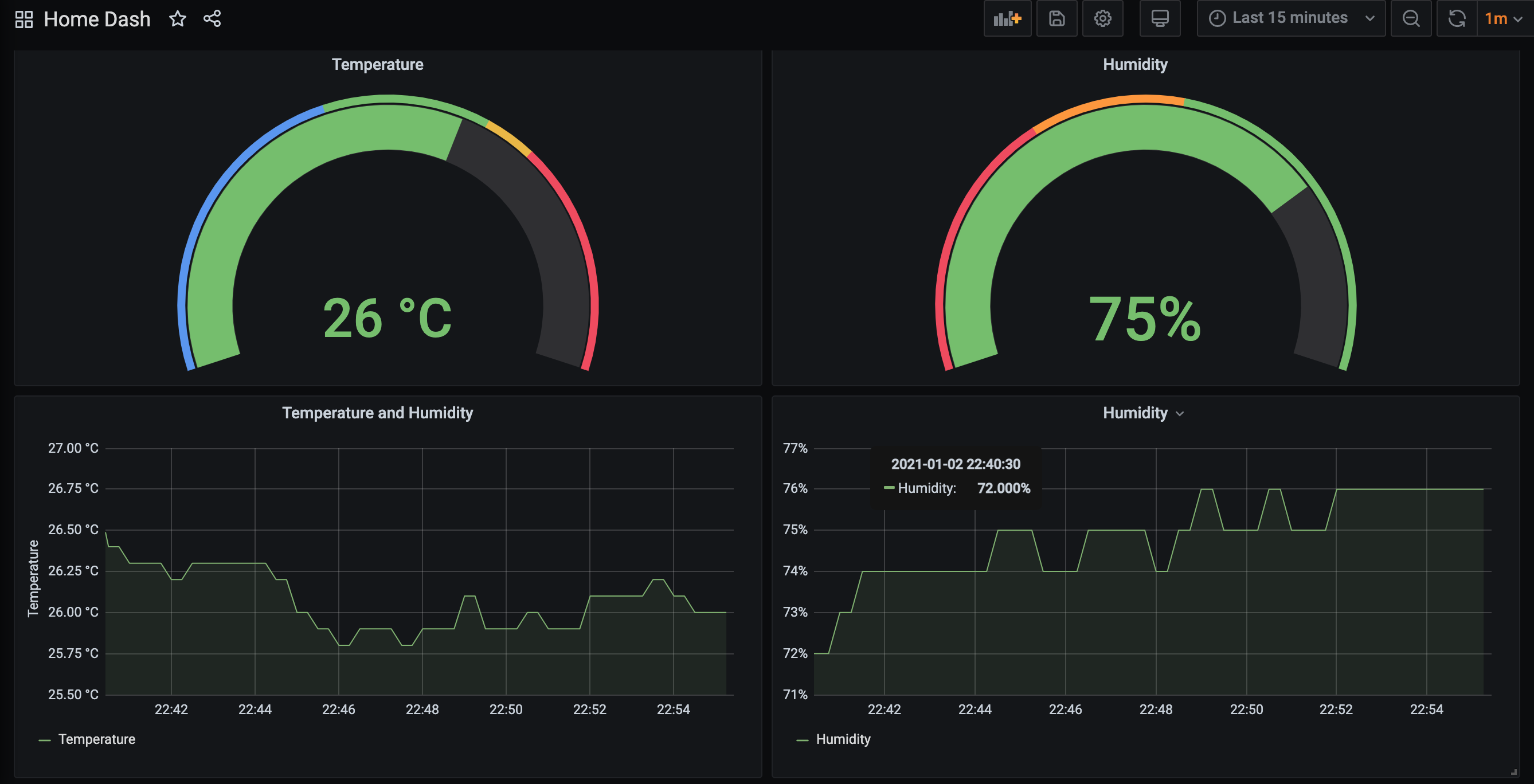

## Collecting the metrics

To collect and view the sensor data I'm using Mosquitto, Prometheus, and Grafana. For Prometheus to be able to collect the data I'm running [an exporter](https://github.com/kpetremann/mqtt-exporter) that reads the data from the queue and provides an HTTP endpoint in the format Prometheus expects.

## References

[🔗 OpenMQTTGateway](https://docs.openmqttgateway.com/)

[🔗 AT instruction set](https://www.espressif.com/sites/default/files/documentation/4a-esp8266_at_instruction_set_en.pdf)

| thspinto |

941,621 | Using Kyverno Policies for Kubernetes Governance | Kubernetes gives developers a lot of flexibility in terms of developing and deploying applications. With flexibility, comes the opportunity for configuration to get out of control. Kyverno is a great tool for helping create policies in Kubernetes to ensure that flexibility remains, but guardrails are in place. | 0 | 2021-12-31T23:58:15 | https://dev.to/mda590/using-kyverno-policies-for-kubernetes-governance-3e17 | kubernetes, kyverno, policy, governance | ---

title: Using Kyverno Policies for Kubernetes Governance

published: true

description: Kubernetes gives developers a lot of flexibility in terms of developing and deploying applications. With flexibility, comes the opportunity for configuration to get out of control. Kyverno is a great tool for helping create policies in Kubernetes to ensure that flexibility remains, but guardrails are in place.

tags: kubernetes, kyverno, policy, governance

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/xki3qslzpu9s3rzw9k9p.jpg

---

[Kyverno](https://kyverno.io/) is a great tool that can be installed into any Kubernetes cluster, allowing cluster administrators to enforce policies for resources in the cluster, and even modify resources before they are applied. Kyverno can be used to ensure deployments are secure, ensure deployments meet certain organizational criteria (e.g. define a cost center label), or even ensure all deployments mount a common volume.

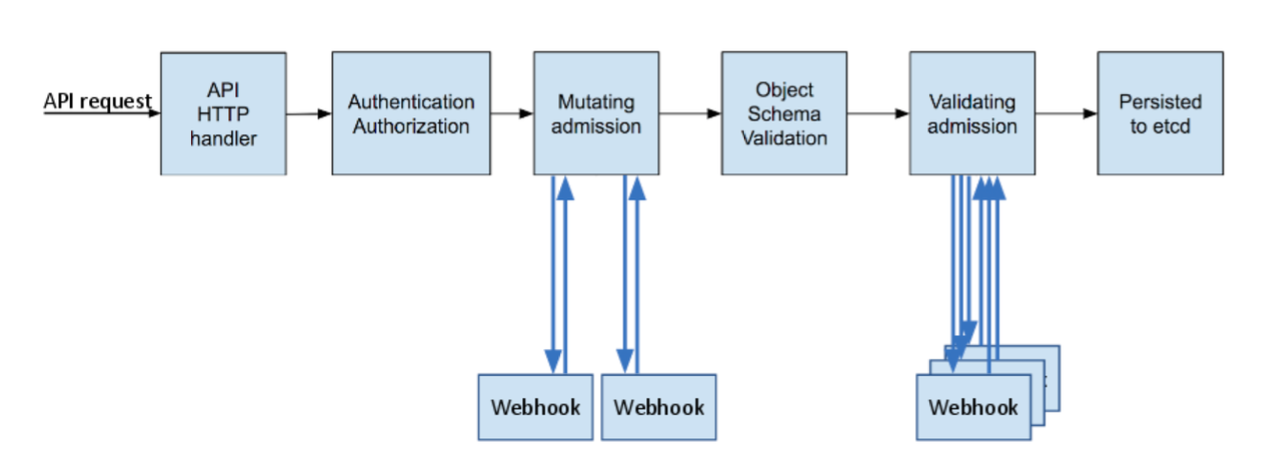

Kyverno works by deploying a pod and services into your existing cluster. It creates multiple Admission Webhooks in the cluster. These webhooks are responsible for handling API requests coming in to Kubernetes and either validating something from the request ([Validating Admission Webhook](https://kubernetes.io/docs/reference/access-authn-authz/admission-controllers/#validatingadmissionwebhook), or modifying the request before it is applied ([Mutating Admission Webhook](https://kubernetes.io/docs/reference/access-authn-authz/admission-controllers/#mutatingadmissionwebhook)).

In the diagram below, you can see where in the process of an API call to Kubernetes, each of the Mutating Admission and Validating Admission webhooks will run.

All of the Kubernetes Manifests and Kyverno Policies are available in [this GitHub repo](https://github.com/mda590/do-k8s-2021-challenge).

## Deploying Kyverno

Getting started with Kyverno is pretty simple. While there are a lot of knobs that can be turned to configure Kyverno, the initial default goes a long way. *I deployed this into my DigitalOcean Kubernetes cluster.*

To install, I simply ran:

```

$ helm repo add kyverno https://kyverno.github.io/kyverno/

$ helm repo update

$ helm install kyverno kyverno/kyverno --namespace kyverno --create-namespace

```

I now have a single Kyverno pod running in the cluster:

```

$ kubectl get pods -n kyverno

NAME READY STATUS RESTARTS AGE

kyverno-6d94754db4-tdl9s 1/1 Running 0 5s

```

## Policies

### Anatomy of a basic policy

Policies can be written with many different options in Kyverno. The most basic policies can be written to check values of specific field(s) within an API request to Kubernetes, and make a decision whether the request should be allowed or not.

### Require specific pod labels

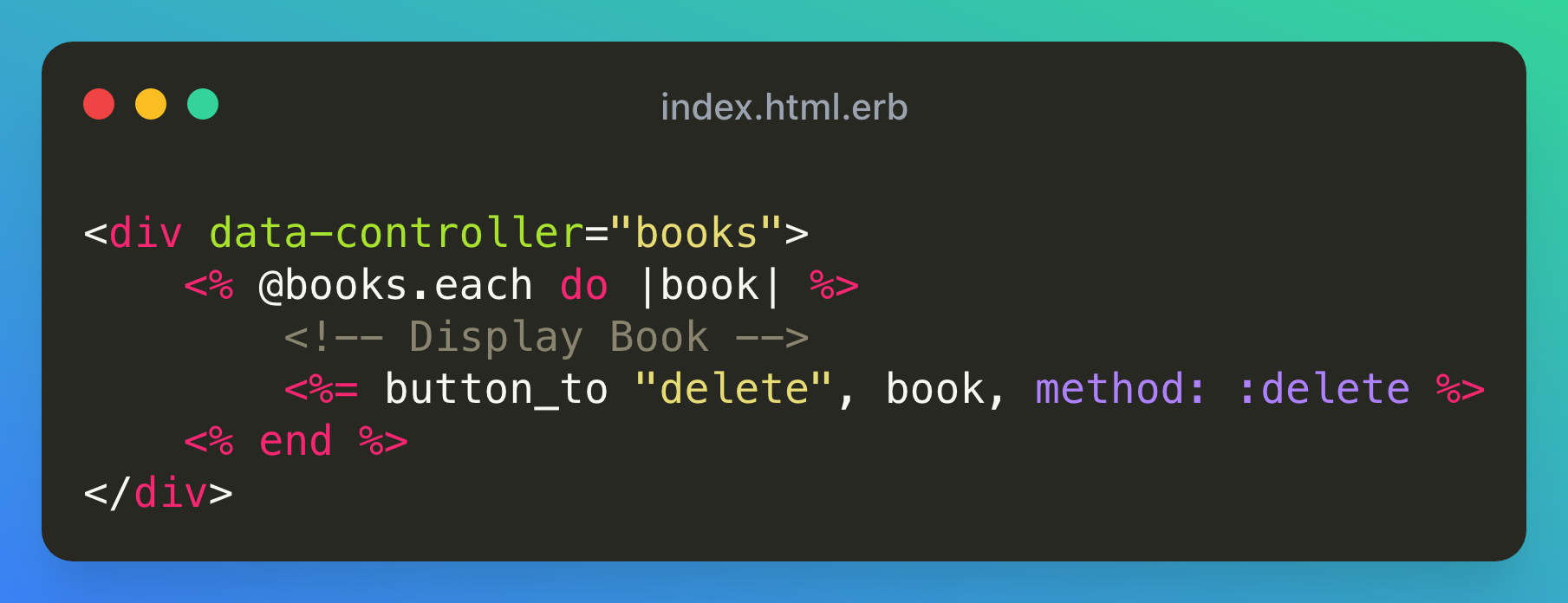

This example requires specific labels to be set on a pod prior to creating those resources. One of the great benefits Kyverno provides is that you can specify `Pod` as the resource kind, but it will also check against the policy whenever creating resources which will end up creating pods (Deployment, StatefulSet, etc.)

The below example policy requires that all pods have the `labels.acmecorp.com/costCenter` and `labels.acmecorp.com/department` labels. They can be set to any value.

```yaml

apiVersion: kyverno.io/v1

kind: ClusterPolicy

metadata:

name: require-business-labels

annotations:

policies.kyverno.io/title: Require Business Labels

policies.kyverno.io/category: Best Practices

policies.kyverno.io/severity: medium

policies.kyverno.io/subject: Pod, Label

policies.kyverno.io/description: >-

Define required labels used by our internal business processes to understand which applications

are running in each cluster, and used to handle chargeback activities for resources consumed

by this specific application.

spec:

validationFailureAction: enforce

background: false

rules:

- name: check-for-business-labels

match:

resources:

kinds:

- Pod

validate:

message: "The labels `labels.acmecorp.com/costCenter` and `labels.acmecorp.com/department` are required."

pattern:

metadata:

labels:

labels.acmecorp.com/costCenter: "?*"

labels.acmecorp.com/department: "?*"

```

#### Example Policy Tests:

* [Example Deployment which will be denied under this policy.](https://github.com/mda590/do-k8s-2021-challenge/blob/main/deployments/01_denied.yaml)

* [Example Deployment which will be allowed under this policy.](https://github.com/mda590/do-k8s-2021-challenge/blob/main/deployments/01_allowed.yaml)

#### Demo showing policy in cluster:

{% youtube yd_f8XIgLfw %}

### Require the use of a specific container registry

This policy checks for 2 important items: 1. images specified in a pod definition must be from a specific container registry (in this case, from the DigitalOcean registry); and 2. images cannot have the `latest` tag.

```yaml

apiVersion: kyverno.io/v1

kind: ClusterPolicy

metadata:

name: restrict-image-registries

annotations:

policies.kyverno.io/title: Restrict Image Registries and Latest

policies.kyverno.io/category: Best Practices

policies.kyverno.io/severity: medium

policies.kyverno.io/subject: Pod

policies.kyverno.io/description: >-

Requires all images for pods be sourced from the Digital Ocean Container Registry. Any other

image sources are denied.

spec:

validationFailureAction: enforce

background: false

rules:

- name: validate-registries

match:

resources:

kinds:

- Pod

validate:

message: "Unknown image registry."

pattern:

spec:

containers:

- image: "registry.digitalocean.com/*"

- name: validate-image-tag

match:

resources:

kinds:

- Pod

validate:

message: "Must not use the tag `latest` on any images."

pattern:

spec:

containers:

- image: "!*:latest"

```

#### Example Policy Tests:

* [Example Deployment which will be denied under this policy.](https://github.com/mda590/do-k8s-2021-challenge/blob/main/deployments/02_denied.yaml)

* [Example Deployment which will be allowed under this policy.](https://github.com/mda590/do-k8s-2021-challenge/blob/main/deployments/02_allowed.yaml)

#### Demo showing policy in cluster:

{% youtube Sb0zzsif7ZM %}

### Require a runAsUser be specified

This policy requires that every container specified within a pod has a `runAsUser` defined and that the value is greater than `0`, meaning the container cannot run as root. In reality, there are additional items you will probably want to check in a policy like this, but this provides a good place to get started.

```yaml

apiVersion: kyverno.io/v1

kind: ClusterPolicy

metadata:

name: require-pod-runasuser

annotations:

policies.kyverno.io/title: Require the RunAsUser to be Specified

policies.kyverno.io/category: Best Practices

policies.kyverno.io/severity: medium

policies.kyverno.io/subject: Pod

policies.kyverno.io/description: >-

Requires Pods to specify as runAsUser value within their containers which are not root.

spec:

validationFailureAction: enforce

background: false

rules:

- name: check-userid

match:

resources:

kinds:

- Pod

validate:

message: >-

The field spec.containers.*.securityContext.runAsUser must specified and greater than zero.

pattern:

spec:

containers:

- securityContext:

runAsUser: ">0"

```

#### Example Policy Tests:

* [Example Deployment which will be denied under this policy.](https://github.com/mda590/do-k8s-2021-challenge/blob/main/deployments/03_denied.yaml)

* [Example Deployment which will be allowed under this policy.](https://github.com/mda590/do-k8s-2021-challenge/blob/main/deployments/03_allowed.yaml)

#### Demo showing policy in cluster:

{% youtube qTVkgwBQcA0 %}

## Conclusion

Overall, this is meant to be a couple basic examples of using policies with Kyverno in Kubernetes. You can certainly get much more complex, or implement a policy which mutates a resource, to keep resources in your cluster compliant with your rules.

If you end up trying Kyverno and find out you need something more complex, take a look at Gatekeeper. The [Gatekeeper](https://open-policy-agent.github.io/gatekeeper/website/docs/) project works very similar to Kyverno, except it allows for defining policies in Rego language, which adds complexity but allow for additional customization.

| mda590 |

941,640 | Scilab: An Introductory Course | What is Scilab? Why should you learn Scilab? Advantages Disadvantages What's next? ... | 16,117 | 2022-01-02T02:00:18 | https://dev.to/fcomovaz/scilab-an-introductory-course-188o | scilab, course, learning | - [What is Scilab?](#what-is-scilab)

- [Why should you learn Scilab?](#why-should-you-learn-scilab)

- [Advantages](#advantages)

- [Disadvantages](#disadvantages)

- [What's next?](#whats-next)

## What is Scilab?

Scilab is a free and open source software for engineers & scientists, with a long history (first release in 1994) and a growing community.

![Scilab Logo][logo-scilab]

Also, Scilab is a high level programming language for scientific programming. It enables a rapid prototyping of algorithms, without having to deal with the complexity of other more low level programming language such as C and Fortran.

## Why should you learn Scilab?

Scilab is a powerful software and a pretty good alternative to the commercial Matlab, besides it's free and open source what allows us use it as we want.

### Advantages

I'll list yo some advantages about the us of Scilab as numerical computing software:

- *Open Source & Free*: This's very useful because is easy to get and there is no problem when you use it.

- *Easy to use*: Scilab doens't havea very complex programming language is very similar to Matlab syntaxis.

- *OS compatible*: It's available for any OS.

- *Lightweight software*: In comparition to Matlab instalation, Scilab is much lighter than Matlab.

### Disadvantages

Likewise, Scilab has certain point i don't really like about it. So, I'm going to be very honest with you and I'll give you the pros/cons i find when i've used Scilab.

- *Small community*: It's not a common software, for this reason its community is not as big as Matlab community.

- *Lack of functions*: This is because of the lightweight of the software, it just includes indispensable functions to work.

- *Documentation looks old*: Scilab has its official docummentation but the page and examples are not very friendly at first sight.

## What's next?

The first step to begin with Scilab develop is download it.

Scilab, being Open Source, is very easy to install in any kind of OS accessing from the [Scilab download page][download-scilab].

Once you got it installed, you can wath this short overview to Scilab:

{% youtube VfNV7wr4338 %}

Nevertheless, sometimes you don't want to install programs because a lot of reasons, in this case you can use the [Scilab Online Version][cloud-scilab] which is mainteined by **IITB** (*Indian Institute of Technology Bombay*).

As well as Matlab, Scilab has its own IDE integrated in the instalation, you can use it but we going to implement Scilab execution in the Visual Studio Code Editor, you can download it from the [VS Code download page][download-code], we'll configure it in following posts.

[download-scilab]: https://www.scilab.org/download/

[download-code]: https://code.visualstudio.com/download

[cloud-scilab]: https://cloud.scilab.in/

[logo-scilab]: https://ftp.sun.ac.za/ftp/pub/mirrors/scilab/www.scilab.org/images/scilab_logo.jpg "scilab logo"

| fcomovaz |

941,672 | I've created a graphql-codegen plugin that generates type-safe hooks for GraphQL queries for Flutter! | If you are a Flutter & GraphQL fan, please try it. (And if you like it, please give it a star... | 0 | 2022-01-01T04:24:57 | https://dev.to/seya/ive-created-a-graphql-codegen-plugin-that-generates-type-safe-hooks-for-graphql-queries-for-flutter-27h0 | flutter, graphql | ---

title: I've created a graphql-codegen plugin that generates type-safe hooks for GraphQL queries for Flutter!

published: true

description:

tags: flutter, graphql

//cover_image: https://direct_url_to_image.jpg

---

If you are a Flutter & GraphQL fan, please try it.

(And if you like it, please give it a star ⭐️)

https://github.com/kazuyaseki/graphql-codegen-flutter-artemis-hooks

## Motivation

I'm from React, and when building an application with React + Apollo configuration, I really liked the experience of using graphql-codegen to generate hooks like this

```tsx

export function useFetchUserQuery(baseOptions?: ApolloReactHooks.QueryHookOptions<FetchUserQuery, FetchUserQueryVariables>) {

return ApolloReactHooks.useQuery<FetchUserQuery, FetchUserQueryVariables>(FetchUserDocument, baseOptions);

}

```

(This is the code that will be generated when you use this plugin with `withHooks` set to true.)

https://www.graphql-code-generator.com/plugins/typescript-react-apollo

I want to develop with the same experience in Flutter! So I created this plugin.

https://github.com/kazuyaseki/graphql-codegen-flutter-artemis-hooks

In Flutter, there is a library called [artemis](https://pub.dev/packages/artemis) that generates the type definitions, so this plugin is based on that.

For example, suppose you define a query like this as a .graphql file

```graphql

query ExampleQuery {

objects {

id

name

}

}

mutation TestMutation($variable: String!) {

testMutation(variable: $variable) {

result

}

}

```

This plugin will generate the following hooks function

```dart

import 'package:flutter/material.dart';

import 'package:flutter_hooks/flutter_hooks.dart';

import 'package:graphql_flutter/graphql_flutter.dart';

import 'package:gql/ast.dart';

import 'package:your_project/your_artemis_generated/graphql_api.dart';

QueryResult useQuery<DataType>(BuildContext context, DocumentNode query,

[Map<String, dynamic>? variables]) {

final client = GraphQLProvider.of(context).value;

final state =

useState<QueryResult>(QueryResult(source: QueryResultSource.network));

useEffect(() {

late Future<QueryResult> promise;

if (variables ! = null) {

promise = client.query(

QueryOptions(document: query, variables: variables),

);

} else {

promise = client.query(

QueryOptions(document: query),

);

}

promise.then((result) {

state.value = result;

});

return () {};

}, []);

return state.value;

}

class ExampleQuery$QueryReturnType {

bool isLoading;

OperationException? exception;

ExampleQuery$Query? data;

ExampleQuery$QueryReturnType(this.isLoading, this.exception, this.data);

}

ExampleQuery$QueryReturnType useExampleQueryQuery<DataType>(BuildContext context) {

final result = useQuery<ExampleQuery$Query>(context, EXAMPLE_QUERY_QUERY_DOCUMENT);

return ExampleQuery$QueryReturnType(result.isLoading, result.exception, result.data == null ? null : ExampleQuery$Query.fromJson(result.data!));

}

class TestMutation$MutationReturnType {

bool isLoading;

OperationException? exception;

TestMutation$Mutation? data;

TestMutation$MutationReturnType(this.isLoading, this.exception, this.data);

}

TestMutation$MutationReturnType useTestMutationQuery<DataType>(BuildContext context, TestMutationArguments variables) {

final result = useQuery<TestMutation$Mutation>(context, TEST_MUTATION_MUTATION_DOCUMENT, variables.toJson());

return TestMutation$MutationReturnType(result.isLoading, result.exception, result.data == null ? null : TestMutation$Mutation.fromJson(result.data!));

}

```

Then all that's left to do is import the above file and use the hooks! 🔥

```dart

class PageWidget extends HookWidget {

const PageWidget({Key key}) : super(key: key);

@override

Widget build(BuildContext context) {

final queryResult = useExampleQueryQuery(context);

final mutationResult = useTestMutationQuery(context, TestMutationArguments(variable: ""));

return ...

}

}

```

## Usage

### Installation

```bash

npm i --save-dev graphql-codegen-flutter-artemis-hooks

```

### Create a configuration file for graphql-codegen.

Create a configuration file for graphql-codegen, including the schema, the path to the .graphql file, and the path to the artemis-generated file.

```yml:codegen.yml

schema: your_schema_file.graphql

documents: '. /your_project/**/*.graphql'.

generates:

your_project/generated_hooks.dart:

config:

artemisImportPath: package:your_project/your_artemis_generated/graphql_api.dart

plugins:

- graphql-codegen-flutter-artemis-hooks

```

### Run graphql-codegen

If you do not have graphql-codegen itself installed, install the following `@graphql-codegen/cli`.

```bash

npm i --save-dev @graphql-codegen/cli

```

Then add the following script to package.json and run `npm run codegen`!

```json

{

"scripts": {

"codegen": "graphql-codegen"

},

}

```

That's it, I hope you find it useful!

| seya |

941,825 | Day 67 of 100 Days of Code & Scrum: Back From Holiday Break! | Happy New Year, everyone! I took a long time off from coding and blogging during the holiday season.... | 14,990 | 2022-01-01T10:25:37 | https://blog.rammina.com/day-67-of-100-days-of-code-and-scrum-back-from-holiday-break | 100daysofcode, beginners, javascript, productivity | Happy New Year, everyone!

I took a long time off from coding and blogging during the holiday season. My [previous post](https://blog.rammina.com/day-66-of-100-days-of-code-and-scrum-services-and-portfolio-pages-for-my-business-website) was back from December 21, 2021. I just wanted to take a break from my usual daily routine and spend time with people that I cared for the most.

Today, I finally went back into it! I have a long list of tasks that I have to deal with as a result of taking a break for about two weeks. I managed to deal with my social media backlog, which I haven't checked in a while. Also, I checked my e-mails and other messages.

In my remaining free time, I studied Scrum for my upcoming Professional Scrum Master I (PSM I) certification exam. Also, I re-examined my [company website](https://www.rammina.com), found some of the things that didn't work as intended, as well as thought of ways to improve the [Portfolio](https://www.rammina.com/portfolio) and [Services](https://www.rammina.com/services) sections of the website. It was kind of difficult to start working after such a long break, but it shouldn't take me long to get back my lost momentum.

Anyway, let's move on to my first daily report of the year!

## Two Week Holiday Break

No coding, blogging, and social media. Time was spent with my wife playing games, reading novels, eating unhealthy Christmas food, watching movies, and unproductive things.

## Today

Here are the things I learned and worked on today:

### Company Website

- I found a few things that are either bugs or not working as intended in my [business website](https://www.rammina.com).

- plan out how to improve the [Portfolio](https://www.rammina.com/portfolio) and [Services](https://www.rammina.com/services) sections of the website.

### Scrum

- I reread the [2020 Scrum Guide](https://scrumguides.org/scrum-guide.html).

- Took some practice exams for the Professional Scrum Master I (PSM I) certification.

- I did some practice flashcards for Scrum.

- reviewed some of the things I've learned before.

### Other Stuff

- replied to emails and social media messages.

I hope everyone had a great time during the holidays. And I wish you all the best for this new year!

### Resources/Recommended Readings

- [The 2020 Scrum Guide](https://scrumguides.org/scrum-guide.html)

- [Open Assessments | Scrum.org](https://www.scrum.org/open-assessments)

- [Mikhail Lapshin's Scrum Quizzes](https://mlapshin.com/index.php/scrum-quizzes/)

### DISCLAIMER

**This is not a guide**, it is just me sharing my experiences and learnings. This post only expresses my thoughts and opinions (based on my limited knowledge) and is in no way a substitute for actual references. If I ever make a mistake or if you disagree, I would appreciate corrections in the comments!

<hr />

### Other Media

Feel free to reach out to me in other media!

<span><a target="_blank" href="https://www.rammina.com"><img src="https://res.cloudinary.com/rammina/image/upload/v1638444046/rammina-button-128_x9ginu.png" alt="Rammina Logo" width="128" height="50"/></a></span>

<span><a target="_blank" href="https://twitter.com/RamminaR"><img src="https://res.cloudinary.com/rammina/image/upload/v1636792959/twitter-logo_laoyfu_pdbagm.png" alt="Twitter logo" width="128" height="50"/></a></span>

<span><a target="_blank" href="https://github.com/Rammina"><img src="https://res.cloudinary.com/rammina/image/upload/v1636795051/GitHub-Emblem2_epcp8r.png" alt="Github logo" width="128" height="50"/></a></span>

| rammina |

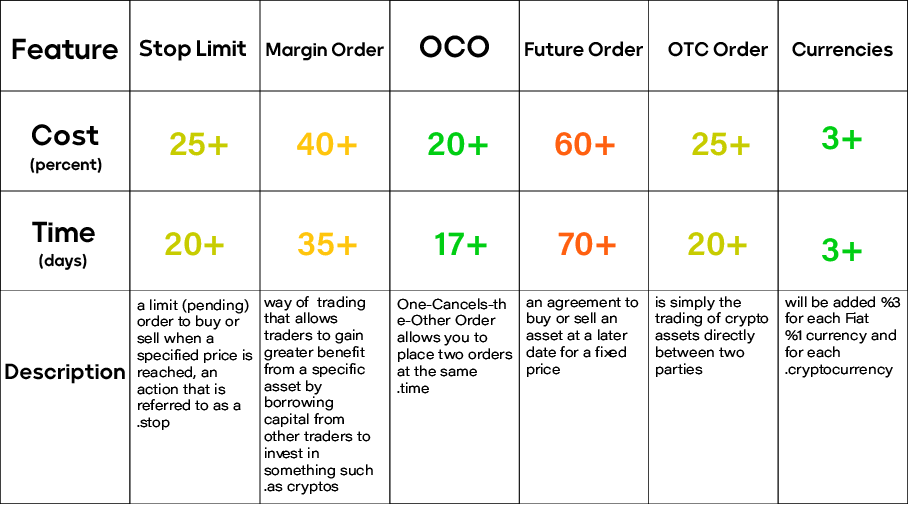

941,863 | P2P Crypto Exchange Software- story of peer to peer platforms and its usages in crypto exchanges | Peer-to-peer, abbreviated P2P, is a collection of interconnected devices that store and share... | 0 | 2022-01-01T13:17:30 | https://dev.to/jonathanberg/p2p-crypto-exchange-software-story-of-peer-to-peer-platforms-and-its-usages-in-crypto-exchanges-23n4 | blockchain, webdev, cryptocurrency | Peer-to-peer, abbreviated P2P, is a collection of interconnected devices that store and share specific files and documents. Each of these devices or systems is called a node. In this structure, each node has the same power and tasks.

But the term p2p or peer-to-peer has taken on a different color and flavor in financial technologies. It is usually used to refer to the exchange of cryptocurrencies on a person-to-person and decentralized basis. A [p2p crypto exchange software](https://radindev.com/p2p-cryptocurrency-exchange-software/) records the bid price of the buyer and the seller and, if they match, connects them to make the exchange. Some of the more advanced platforms have even provided a platform for automatic borrowing based on smart contracts.

Let's see where P2P was first used.

## How P2P networks work:

It was stated above that a p2p system is an aggregate of interconnected systems that have the same tasks and power. There is no administrator or admin or supervisor in this system because each node keeps a copy of the file and plays two roles simultaneously. One server and one client.

So each node can download files from other nodes. While in a centralized system, client devices download information from a centralized server.

In P2P networks, networked devices share files stored on their drives. Using software designed to mediate data sharing, users can search for other devices on the web, find and download files. Once the user downloads the file, it can act as the source of that file.

Simply put, a node acts as a client when downloading from other sources, but when it acts as a server, it is a resource from which other nodes can download files. (This can happen at the same time.)

## P2P history:

For the first time in the '80s, after introducing computers, one of the first examples of a user network was Internet Relay Chat, which was a way to send text and chat between two people.

In 1999, Napster introduced a P2P network for music sharing. This service made it possible for anyone to share their favorite music with others.

In 2000, a project called Gnutella started decentralized peer-to-peer sharing, which is still ongoing. Gnutella allows users to access folders on other systems.

Finally, it was in 2009 that Satoshi Nakamoto introduced the Bitcoin network as a peer-to-peer network. Perhaps the most famous sentence in the world about P2P is the first sentence of Bitcoin White Paper:

Bitcoin: A Peer-to-Peer Electronic Cash System

Most people have never heard of P2P before Bitcoin.

## Advantages of P2P networks:

1. Elimination of intermediaries: From the point of view of some economic actors, probably the most essential advantage of P2P is the elimination of intermediaries such as banks or financial and credit institutions.

In this way, digital currency is transferred directly from one person's wallet to another, and no bank in the middle plays the role of intermediary.

2. High security: Many others believe that much more security of this system than centralized client-server systems is the most significant advantage of p2p. It is P2P that protects digital currencies such as bitcoin against Dos attacks. Dos is a type of cyber attack that takes the server off the network and out of the reach of users. However, since peer-to-peer information systems are divided among many nodes, they are resistant to these attacks.

3. Avoid censorship: Avoiding censorship is another benefit of peer-to-peer networks. Because no one has the permission and power to block users' accounts, some people, such as merchants, see cryptocurrencies as a surefire way to prevent government payments from being blocked.

## Disadvantages of P2P networks:

1. Scalability: Because distributed offices must be updated at each node instead of the central server, adding any transaction in the blockchain requires a great deal of computing power. While this increases security, it dramatically reduces efficiency and is one of the main obstacles to scalability and widespread acceptance. However, blockchain encoders and developers are exploring options that may be used as scalability solutions. Prominent examples are the Lightning network, the atrium plasma, and the Wembley protocol.

2. Hard fork attacks: Another potential limitation is related to seizures during a hard fork. Because most blockchains are decentralized and open-source, nodes can freely copy and modify the code, detach from the main chain, and form a new parallel network. A hard fork is perfectly normal and is not a threat in itself. But if security measures are not taken properly, both chains will be vulnerable to replay attacks.

In addition, the distributed nature of P2P networks makes it difficult to control and regulate them. For example, several P2P programs and companies have engaged in illegal activities and copyright infringement.

## Unstructured P2P networks

In unstructured P2P networks, there is no specific organization of nodes. In this network, participants communicate with each other randomly. These systems are resistant to high churn activity (i.e., multiple nodes frequently joining and exiting the web).